Validating Molecular Dynamics Force Fields: A Comprehensive Guide to Accuracy, Methods, and Best Practices


Abstract

This article provides a comprehensive framework for researchers, scientists, and drug development professionals to validate molecular dynamics (MD) force field parameters. Covering foundational principles to advanced applications, it explores the critical importance of force field accuracy for reliable simulations in biomedical research. The content details modern parameterization methods, including automated iterative protocols, machine learning, and quantum mechanics datasets. It addresses common challenges like overfitting and limited sampling, offering troubleshooting and optimization strategies. A strong emphasis is placed on rigorous validation against experimental data and quantum mechanical benchmarks, with comparative analyses of force field performance across diverse systems, from intrinsically disordered proteins to drug-like molecules and bacterial membranes. The goal is to equip practitioners with the knowledge to critically assess and improve force fields, thereby enhancing the predictive power of MD simulations for drug discovery and material design.

The Critical Role of Force Field Validation in Accurate Molecular Simulations

Foundational Concepts: FAQs on Molecular Force Fields

What is a molecular mechanics force field and what are its core components?

A molecular mechanics (MM) force field is a mathematical model that describes the potential energy surface (PES) of a molecular system as a function of atomic positions. It is a critical component in molecular dynamics (MD) simulations, offering high computational efficiency for studying dynamical behaviors and physical properties [1]. The total energy is typically decomposed into bonded and non-bonded interactions [1]:

$$E_{MM} = E_{MM}^{\text{bonded}} + E_{MM}^{\text{non-bonded}}$$

What are the key differences between conventional and machine learning force fields?

Conventional MM force fields (like Amber, GAFF, OPLS) use fixed analytical forms to approximate the energy landscape, providing excellent computational speed but potentially suffering from inaccuracies due to inherent approximations [1]. Machine learning force fields (MLFFs) use neural networks to map atomistic features and coordinates to the PES without being limited by fixed functional forms. While MLFFs can capture more subtle interactions, they require large training datasets and have relatively lower computational efficiency [1].

Why can't I use my AMBER or GROMACS topology files directly with SMIRNOFF force fields?

SMIRNOFF force fields use direct chemical perception, meaning they apply parameters based on substructure searches acting directly on molecular chemistry [2]. Most molecular dynamics parameter files don't encode enough chemical information (such as formal bond orders and charges) to identify molecules chemically without drawing inferences [2]. Traditional topology files typically lack this complete chemical identity information, making them incompatible as direct input.

Troubleshooting Guide: Common Force Field Issues

Problem: Inaccurate Torsional Energy Profiles

Issue: Force field produces incorrect torsional energy profiles, leading to inaccurate conformational distributions that affect properties like protein-ligand binding affinity [1].

Solution:

- Validation: Compare force field torsion profiles against quantum mechanical (QM) benchmark data

- Parameter Refinement: Use specialized torsion fitting procedures or consider switching to force fields with enhanced torsion parameterization

- Data-Driven Approaches: Implement modern parameterization methods like those used in ByteFF, which trained on 3.2 million torsion profiles for improved accuracy [1]

Problem: Missing Parameters for Novel Chemical Systems

Issue: No existing parameters for non-standard residues or novel molecules in your system.

Solution:

Force Field Parameter Development Workflow

Problem: Noisy or Unphysical Dynamics

Issue: Simulations exhibit unstable dynamics, abnormal energies, or unphysical conformations.

Solution:

- Verify Initial Structure: Ensure your starting configuration has proper bond orders and formal charges [2]

- Check Parameter Transferability: Confirm parameters were derived from appropriate chemical analogs

- Validate Non-Bonded Terms: Verify electrostatic and van der Waals parameters against reference data

- Test with Simplified Systems: Run minimal test cases to isolate the problematic interaction

Problem: Chemical Perception Failures with PDB Files

Issue: PDB files for biomolecular systems containing small molecules don't provide sufficient chemical identity information for parameter assignment [2].

Solution:

- Infer Bond Orders: Use cheminformatics toolkits (like OpenEye's OEPerceiveBondOrders) to infer bond orders [2]

- Database Lookup: Identify ligands from databases like the PDB Ligand Expo for putative chemical identities [2]

- Use Complete Chemical Representations: Start with formats that provide full chemical identity: .mol2 files (with correct bond orders), isomeric SMILES strings, InChI strings, or IUPAC names [2]

Experimental Protocols for Force Field Validation

Protocol 1: Geometry and Hessian Validation

Purpose: Validate force field accuracy in predicting molecular geometries and vibrational frequencies.

Methodology:

- Reference Data Generation: Perform QM geometry optimizations and frequency calculations at appropriate level (e.g., B3LYP-D3(BJ)/DZVP) [1]

- Comparison Metrics: Calculate root-mean-square deviations (RMSD) for:

  - Bond lengths

  - Bond angles

  - Dihedral angles

- Hessian Validation: Compare vibrational frequencies using partial Hessian analysis [1]

Expected Results: Modern force fields like ByteFF should achieve state-of-the-art performance predicting relaxed geometries and vibrational properties [1].
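
The geometry comparison in this protocol can be sketched in a few lines. The example below is a minimal illustration, not a reference implementation: the coordinates, the bond list, and the helper names (`bond_length`, `rmsd`, `bond_length_rmsd`) are hypothetical, and it assumes the QM and MM structures share the same atom ordering.

```python
import math

def bond_length(a, b):
    """Euclidean distance between two 3D points (same units as the input, e.g. Å)."""
    return math.dist(a, b)

def rmsd(reference, predicted):
    """Root-mean-square deviation between two equal-length sequences of values."""
    if len(reference) != len(predicted) or not reference:
        raise ValueError("inputs must be non-empty and of equal length")
    squared = sum((p - r) ** 2 for r, p in zip(reference, predicted))
    return math.sqrt(squared / len(reference))

def bond_length_rmsd(qm_coords, mm_coords, bonds):
    """RMSD of bond lengths between a QM-optimized and an MM-optimized geometry."""
    qm = [bond_length(qm_coords[i], qm_coords[j]) for i, j in bonds]
    mm = [bond_length(mm_coords[i], mm_coords[j]) for i, j in bonds]
    return rmsd(qm, mm)

# Hypothetical three-atom geometries (Å); atom order is assumed identical.
qm_coords = [(0.0, 0.0, 0.0), (0.96, 0.0, 0.0), (-0.24, 0.93, 0.0)]
mm_coords = [(0.0, 0.0, 0.0), (0.97, 0.0, 0.0), (-0.24, 0.94, 0.0)]
bonds = [(0, 1), (0, 2)]

print(f"bond-length RMSD = {bond_length_rmsd(qm_coords, mm_coords, bonds):.4f} Å")
```

The same `rmsd` helper applies unchanged to lists of bond angles or dihedral angles, provided dihedral differences are first wrapped into a common periodic range.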

Protocol 2: Torsional Profile Validation

Purpose: Validate torsional energy profiles critical for conformational sampling.

Methodology:

- Scan Dihedral Angles: Perform constrained QM calculations rotating target dihedrals in 15° increments [1]

- Energy Profile Comparison: Compare MM and QM energy profiles for key torsions

- Statistical Analysis: Calculate mean absolute errors (MAE) and root-mean-square errors (RMSE) across torsion profiles

Quality Standards: ByteFF demonstrated exceptional accuracy across 3.2 million torsion profiles in benchmarks [1].
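
As an illustration of the statistical analysis step, the sketch below computes MAE and RMSE between a QM and an MM torsion profile sampled on the same 15° grid. The two profiles are synthetic cosine curves invented for the example, and shifting each profile so that its minimum is zero is an assumed alignment convention; other conventions (for example, aligning mean energies) are also in use.

```python
import math

def relative_profile(energies):
    """Shift a torsion profile so that its lowest point is zero."""
    e_min = min(energies)
    return [e - e_min for e in energies]

def profile_errors(qm_energies, mm_energies):
    """Return (MAE, RMSE) between two profiles sampled on the same angle grid."""
    if len(qm_energies) != len(mm_energies) or not qm_energies:
        raise ValueError("profiles must be non-empty and of equal length")
    qm = relative_profile(qm_energies)
    mm = relative_profile(mm_energies)
    diffs = [m - q for q, m in zip(qm, mm)]
    mae = sum(abs(d) for d in diffs) / len(diffs)
    rmse = math.sqrt(sum(d * d for d in diffs) / len(diffs))
    return mae, rmse

# Synthetic threefold profiles (kJ/mol) on a 15° grid; not real QM or MM data.
angles = range(0, 360, 15)
qm = [6.0 * (1 + math.cos(math.radians(3 * a))) for a in angles]
mm = [5.0 * (1 + math.cos(math.radians(3 * a))) for a in angles]

mae, rmse = profile_errors(qm, mm)
print(f"MAE = {mae:.3f} kJ/mol, RMSE = {rmse:.3f} kJ/mol")
```

Because both profiles are shifted before comparison, a constant energy offset between QM and MM does not contribute to either error.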

Protocol 3: Reactive Process Validation (ReaxFF)

Purpose: Validate force fields for chemical reactions, such as dehydration processes.

Methodology:

- Activation Energy Calculation: Compare simulated and experimental activation energies [3]

- Structural Analysis: Monitor radial distribution functions during reactions [3]

- Charge Transfer Analysis: Track charge evolution during bond breaking/formation [3]

Validation Metrics: Successful implementations show minimal deviation (∼4% for activation energy, ∼1% for thermal conductivity) from experimental values [3].

Quantitative Force Field Performance Data

Table 1: Performance Comparison of Modern Force Fields

| Force Field | Parameterization Approach | Chemical Coverage | Torsion Profile MAE (kJ/mol) | Geometry RMSD (Å) | Specialized Capabilities |

|---|---|---|---|---|---|

| ByteFF | GNN on 2.4M fragments & 3.2M torsions [1] | Expansive drug-like space [1] | State-of-the-art [1] | State-of-the-art [1] | Simultaneous parameter prediction [1] |

| OPLS3e | Look-up table (146,669 torsion types) [1] | Extensive [1] | Moderate [1] | Moderate [1] | FFBuilder for beyond-list refinement [1] |

| OpenFF | SMIRKS patterns [1] | Moderate [1] | Moderate [1] | Moderate [1] | Direct chemical perception [2] |

| ReaxFF (K₂CO₃·1.5H₂O) | Multi-objective genetic algorithm [3] | Specific hydrated salts [3] | Reaction-specific [3] | Reaction-specific [3] | Bond breaking/formation [3] |

Table 2: Force Field Validation Metrics and Thresholds

| Validation Type | Target Property | Optimal Performance | Acceptable Threshold | Validation Method |

|---|---|---|---|---|

| Geometrical | Bond lengths | < 0.01 Å [1] | < 0.02 Å | QM optimization comparison [1] |

| Geometrical | Bond angles | < 1° [1] | < 2° | QM optimization comparison [1] |

| Energetic | Torsion profiles | < 1 kJ/mol [1] | < 2 kJ/mol | Dihedral scanning [1] |

| Reactive | Activation energy | < 5% deviation [3] | < 10% deviation | MD simulation vs experiment [3] |

| Physical | Thermal conductivity | < 2% deviation [3] | < 5% deviation | Property calculation [3] |
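
The thresholds in Table 2 lend themselves to a simple automated check. The sketch below copies the numerical limits from the table; the dictionary keys, the function names, and the three-way "optimal / acceptable / fail" labelling are assumptions made for illustration.

```python
# (optimal, acceptable) upper bounds taken from Table 2; keys are hypothetical names.
THRESHOLDS = {
    "bond_length_error_angstrom": (0.01, 0.02),
    "bond_angle_error_degree": (1.0, 2.0),
    "torsion_profile_error_kj_mol": (1.0, 2.0),
    "activation_energy_deviation_percent": (5.0, 10.0),
    "thermal_conductivity_deviation_percent": (2.0, 5.0),
}

def percent_deviation(simulated, experimental):
    """Absolute deviation of a simulated value from experiment, in percent."""
    return abs(simulated - experimental) / abs(experimental) * 100.0

def classify(metric, value):
    """Label a validation result against the Table 2 limits for that metric."""
    optimal, acceptable = THRESHOLDS[metric]
    if value < optimal:
        return "optimal"
    if value < acceptable:
        return "acceptable"
    return "fail"

# Hypothetical activation energies (arbitrary but consistent units).
deviation = percent_deviation(simulated=104.0, experimental=100.0)
print(classify("activation_energy_deviation_percent", deviation))
```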

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Software Tools for Force Field Development and Validation

| Tool Name | Primary Function | Application Context | Key Features |

|---|---|---|---|

| OpenFF Toolkit | SMIRNOFF force field application [2] | Small molecule parameterization [2] | Direct chemical perception [2] |

| GARFFIELD | Force field training [3] | Reactive force field development [3] | Multi-objective optimization [3] |

| LAMMPS | Molecular dynamics simulation [3] | Reactive process simulation [3] | ReaxFF implementation [3] |

| CASTEP | DFT calculations [3] | Training set generation [3] | Periodic boundary conditions [3] |

| geomeTRIC | Geometry optimization [1] | QM reference data generation [1] | Optimized molecular geometries [1] |

| GNN Models | Parameter prediction [1] | Data-driven force field development [1] | Simultaneous parameter prediction [1] |

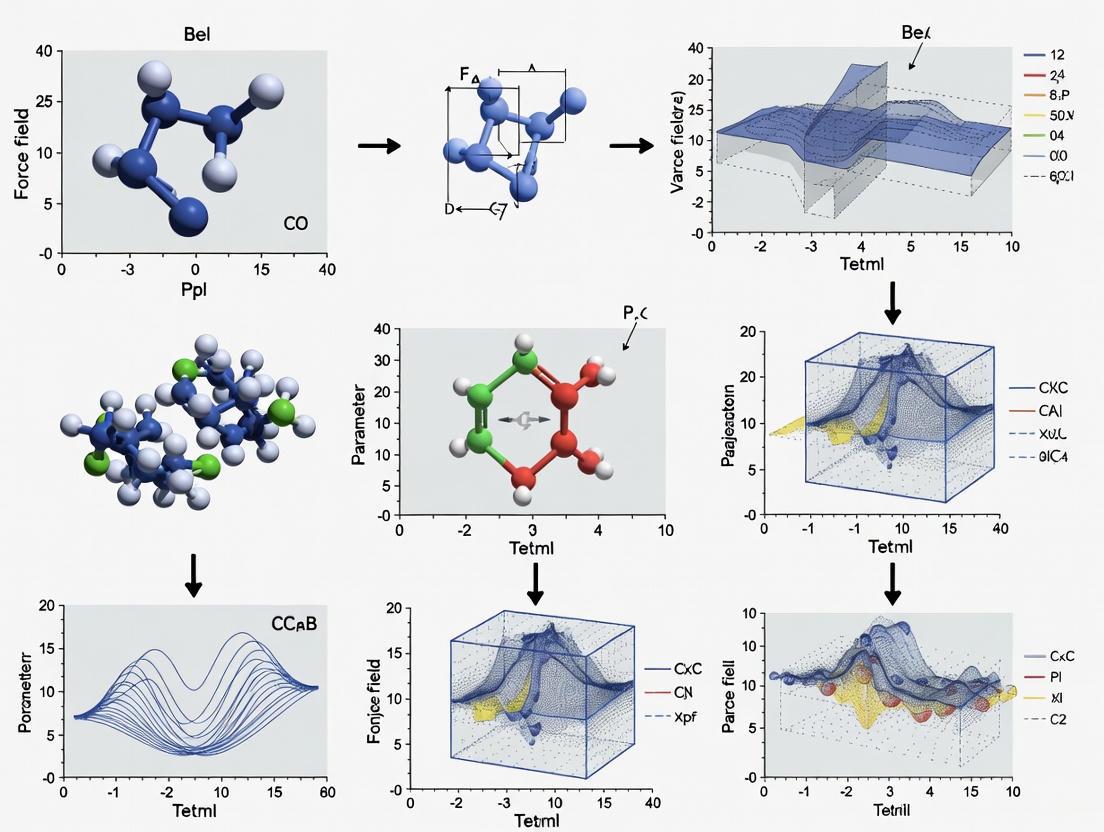

Advanced Methodologies: Force Field Workflows

Force Field Validation Workflow

Frequently Asked Technical Questions

What are the best starting file formats for force field application?

For SMIRNOFF force fields, we recommend formats that provide complete chemical identity: .mol2 files with correct bond orders and formal charges, isomeric SMILES strings, InChI strings, Chemical Identity Registry numbers, or IUPAC names [2]. Avoid formats that lack bond order information like most PDB files or simulation package outputs [2].

How are atom types and classes defined in force field development?

Atom types are the most specific identification - two atoms share a type only if the force field always treats them identically. Atom classes group types that are usually treated similarly. Parameters can be specified by either type or class, with classes making definitions more compact [4].
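
A small data-structure sketch may help make the type/class distinction concrete. Everything below is hypothetical: the type names, the class names, the parameter values, and the rule that a type-level entry takes precedence over a class-level one are assumptions for illustration, not the behavior of any particular package.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AtomType:
    name: str        # most specific label
    atom_class: str  # coarser grouping shared by similar types

# Hypothetical atom types: two aliphatic carbons share the class "CT".
TYPES = {
    "CT_methyl": AtomType("CT_methyl", "CT"),
    "CT_methylene": AtomType("CT_methylene", "CT"),
    "HC": AtomType("HC", "HC"),
}

# Bond parameters as (force constant, equilibrium length); values are made up.
BOND_BY_TYPE = {frozenset(["CT_methyl", "HC"]): (2850.0, 1.090)}
BOND_BY_CLASS = {
    frozenset(["CT", "HC"]): (2845.0, 1.092),
    frozenset(["CT"]): (2242.0, 1.529),  # CT-CT bond
}

def bond_parameters(type_a, type_b):
    """Look up by atom type first, then fall back to the atom classes."""
    type_key = frozenset([type_a, type_b])
    if type_key in BOND_BY_TYPE:
        return BOND_BY_TYPE[type_key]
    class_key = frozenset([TYPES[type_a].atom_class, TYPES[type_b].atom_class])
    return BOND_BY_CLASS[class_key]

print(bond_parameters("CT_methyl", "HC"))            # type-level entry
print(bond_parameters("CT_methylene", "HC"))         # class-level fallback
print(bond_parameters("CT_methyl", "CT_methylene"))  # class-level CT-CT entry
```

The class-level table stays compact because a single entry covers every pair of types that map to the same classes.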

What physical constraints must force field parameters satisfy?

Force field parameters must (1) be permutationally invariant, (2) respect chemical symmetries, and (3) conserve charge, meaning that the partial charges sum to the molecular net charge [1]. These constraints are essential guidelines for machine-learned parameters [1].
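
Two of these constraints are easy to check, and to enforce after the fact, on a set of predicted partial charges. The sketch below is one simple post-processing scheme under stated assumptions: the raw charges are invented, symmetry-equivalent atoms are supplied by hand as index groups, and the residual charge is spread uniformly over all atoms. It is not the procedure used by any specific force field.

```python
def symmetrize(charges, equivalence_groups):
    """Give symmetry-equivalent atoms the average charge of their group."""
    out = list(charges)
    for group in equivalence_groups:
        mean = sum(charges[i] for i in group) / len(group)
        for i in group:
            out[i] = mean
    return out

def conserve_charge(charges, net_charge):
    """Shift every charge by the same amount so the total equals net_charge."""
    residual = (net_charge - sum(charges)) / len(charges)
    return [q + residual for q in charges]

# Hypothetical raw predictions for a methane-like molecule: C, then four H.
raw = [-0.42, 0.11, 0.10, 0.12, 0.10]
hydrogens = [1, 2, 3, 4]

fixed = conserve_charge(symmetrize(raw, [hydrogens]), net_charge=0.0)
print([round(q, 4) for q in fixed], "sum =", round(sum(fixed), 10))
```

A uniform shift is applied after symmetrization so that equivalent atoms remain equal once the total charge has been corrected.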

Frequently Asked Questions (FAQs)

FAQ 1: What are the primary consequences of using unvalidated force field parameters in my drug discovery simulations?

Using unvalidated force fields can lead to significant errors in predicting key drug properties. Inaccurate parameters for Lennard-Jones interactions or atomic charges can cause large errors in binding affinity predictions, sometimes exceeding chemical precision (1 kcal/mol), which is critical for ranking potential drug candidates [5]. Force fields like PRODRGFF have been shown to produce osmotic coefficients in poor agreement with experimental data for certain molecules, while even generalized force fields (GAFF, CGenFF, OPLS-AA) struggle with specific molecule types like purine-derived compounds [6]. These inaccuracies can misdirect experimental efforts, wasting valuable time and resources.

FAQ 2: Beyond binding affinity, what other properties should I validate when developing a new force field?

A comprehensive validation should assess both structural and dynamic properties:

- Structural predictions: Compare against experimental crystal structures or NMR data [7]

- Thermodynamic properties: Calculate enthalpies of vaporization, heat capacities, and solvation free energies [7]

- Dynamic properties: Evaluate diffusion coefficients and viscosities against experimental measurements [8]

- Membrane properties: For lipid systems, validate rigidity and lateral diffusion rates against biophysical experiments like FRAP [8]

FAQ 3: I'm developing parameters for a novel bacterial lipid. What validation is essential?

For specialized systems like mycobacterial membranes, validation should confirm that the force field captures unique biophysical properties. The BLipidFF force field was validated by demonstrating it could reproduce the high tail rigidity and slow diffusion rates of α-mycolic acid bilayers observed in fluorescence spectroscopy and FRAP experiments [8]. This specialized validation was crucial because general force fields poorly described these membrane properties essential for understanding bacterial pathogenicity.

FAQ 4: How can machine learning accelerate force field parameter optimization?

Machine learning surrogate models can dramatically speed up parameter optimization. One study substituted time-consuming molecular dynamics calculations with a ML surrogate model, reducing the optimization time by a factor of approximately 20 while maintaining similar force field quality [9]. Graph neural networks can also predict bonded and non-bonded parameters across expansive chemical space, though they require extensive quantum mechanical training data [1].

Troubleshooting Guides

Problem: Poor Binding Affinity Predictions

Symptoms:

- Calculated binding free energies significantly deviate from experimental values (RMSE > 1 kcal/mol)

- Incorrect ranking of congeneric ligand series

- Poor correlation between computed and experimental binding affinities

Solutions:

- Improve nonbonded parameters: Implement electron density-derived parameters using methods like Minimal Basis Iterative Stockholder (MBIS) partitioning, which has shown improved accuracy for host-guest systems [5]

- Account for polarization: Use multiple configurations from both bound and unbound states to derive parameters that capture electronic polarization effects [10]

- Validate against reference data: Test parameters on known systems before applying to novel compounds [11]

Table 1: Validation Metrics for Improved Force Field Parameters

| System | Standard FF RMSE (kcal/mol) | Improved FF RMSE (kcal/mol) | Key Improvement |

|---|---|---|---|

| CB7 Host-Guest [5] | >1.0 | ~0.7 | MBIS-derived nonbonded parameters |

| T4 Lysozyme [10] | Not specified | 0.7 | MBIS parameters + validated simulation procedure |

| Mycobacterial Membranes [8] | Poor description of rigidity | Experimentally consistent | Specialized lipid parameterization |
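
The symptoms listed for this problem (RMSE above 1 kcal/mol, incorrect ranking of a ligand series) can be quantified with two short functions. The binding free energies below are invented for illustration, and the Kendall tau shown is the simple tau-a variant, in which tied pairs count as neither concordant nor discordant.

```python
import math
from itertools import combinations

def rmse(computed, experimental):
    """Root-mean-square error between computed and experimental values."""
    n = len(computed)
    return math.sqrt(sum((c - e) ** 2 for c, e in zip(computed, experimental)) / n)

def kendall_tau(computed, experimental):
    """Kendall tau-a rank correlation: +1 is identical ranking, -1 is reversed."""
    concordant = discordant = 0
    for i, j in combinations(range(len(computed)), 2):
        sign = (computed[i] - computed[j]) * (experimental[i] - experimental[j])
        if sign > 0:
            concordant += 1
        elif sign < 0:
            discordant += 1
    n_pairs = len(computed) * (len(computed) - 1) // 2
    return (concordant - discordant) / n_pairs

# Hypothetical binding free energies (kcal/mol) for a five-ligand series.
experimental = [-9.1, -8.4, -7.9, -7.2, -6.5]
computed = [-9.8, -8.0, -8.3, -6.6, -6.9]

print(f"RMSE = {rmse(computed, experimental):.2f} kcal/mol")
print(f"Kendall tau = {kendall_tau(computed, experimental):.2f}")
```

Reporting both numbers is useful because a force field can meet the 1 kcal/mol RMSE target while still swapping the order of closely spaced ligands.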

Problem: Inaccurate Conformational Sampling

Symptoms:

- Incorrect populations of ligand rotamers

- Improper protein secondary structure stability

- Unrealistic conformational energy distributions

Solutions:

- Optimize torsion parameters: Refine dihedral terms to match quantum mechanical torsion profiles [8]

- Use expanded training sets: Employ large-scale torsion datasets (millions of profiles) for parameter development [1]

- Validate against QM data: Compare conformational energies against high-level quantum mechanical calculations [1]
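
To see why torsion errors translate into wrong rotamer populations, it helps to convert conformational energies into Boltzmann weights. In the sketch below the three rotamer energies are hypothetical, and degeneracy and entropic contributions are ignored. With these numbers, a 1 kcal/mol error on the middle rotamer lowers its population at 298.15 K from roughly 28% to roughly 7%.

```python
import math

R_KCAL = 0.0019872041  # gas constant in kcal/(mol·K)

def boltzmann_populations(energies_kcal, temperature=298.15):
    """Fractional populations of states from their relative energies (kcal/mol)."""
    beta = 1.0 / (R_KCAL * temperature)
    e_min = min(energies_kcal)  # subtract the minimum for numerical stability
    weights = [math.exp(-beta * (e - e_min)) for e in energies_kcal]
    total = sum(weights)
    return [w / total for w in weights]

# Hypothetical rotamer energies: QM reference vs. a force field that
# overestimates the second rotamer by 1 kcal/mol.
qm_pop = boltzmann_populations([0.0, 0.5, 1.5])
mm_pop = boltzmann_populations([0.0, 1.5, 1.5])

print("QM populations:", [round(p, 3) for p in qm_pop])
print("MM populations:", [round(p, 3) for p in mm_pop])
```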

Problem: Force Field Transferability Issues

Symptoms:

- Parameters work for training molecules but fail on novel chemotypes

- Systematic errors for specific functional groups

- Deteriorating performance as chemical space expands

Solutions:

- Implement data-driven approaches: Use graph neural networks trained on diverse chemical space (e.g., 2.4+ million molecular fragments) [1]

- Ensure chemical symmetry preservation: Force field parameters should respect molecular symmetry despite different SMILES representations [1]

- Cover expansive chemical space: Include diverse elements, hybridization states, and functional groups in training data [1]

Experimental Protocols

Protocol 1: Validation Against Experimental Osmotic Coefficients

Adapted from Zhu, 2019 [6]

Purpose: Validate force field performance for small drug-like molecules by comparing calculated and experimental osmotic coefficients.

Procedure:

- System Preparation:

  - Create simulation boxes with 5-20 solute molecules (e.g., drug-like compounds) solvated in explicit water

  - Ensure typical solute concentrations of 0.5-1.0 M

- Simulation Details:

  - Use molecular dynamics with semipermeable membrane method

  - Employ production runs of 20-50 ns after equilibration

  - Maintain constant temperature (298 K) and pressure (1 atm)

- Analysis:

  - Calculate osmotic pressure from average pressure tensor components

  - Compute osmotic coefficient: $\Phi = (\Pi_{\text{solution}} - \Pi_{\text{water}}) / (R T \, c_{\text{ideal}})$

  - Compare with experimental osmotic coefficient data

Troubleshooting Note: Poor agreement for purine-derived molecules (purine, caffeine) has been observed across multiple force fields, suggesting intrinsic challenges with these chemotypes [6].
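
The analysis step of this protocol reduces to a one-line formula once the osmotic pressures are known. The sketch below assumes that the concentration in the denominator is the solute molarity, so that R·T·c is the ideal (van 't Hoff) osmotic pressure, and that pressures are given in bar. The input pressures are placeholder numbers, not simulation results.

```python
R_L_BAR = 0.08314462618  # gas constant in L·bar/(mol·K)

def osmotic_coefficient(pi_solution_bar, pi_water_bar, molarity, temperature=298.0):
    """Osmotic coefficient: measured osmotic pressure over the ideal value R*T*c."""
    ideal_pressure = R_L_BAR * temperature * molarity  # bar
    return (pi_solution_bar - pi_water_bar) / ideal_pressure

# Placeholder pressures (bar) for a 0.5 M solution at 298 K.
phi = osmotic_coefficient(pi_solution_bar=11.5, pi_water_bar=0.3, molarity=0.5)
print(f"osmotic coefficient = {phi:.3f}")
```

A value near 1 indicates near-ideal behavior. Values well below 1 suggest that the force field makes the solutes aggregate, which is worth checking against experimental data for that compound.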

Protocol 2: MBIS Nonbonded Parameter Derivation

Adapted from González et al., 2022 and preprints [5] [10]

Purpose: Derive accurate atomic charges and Lennard-Jones parameters from molecular electron density.

Procedure:

- Configuration Sampling:

  - Perform QM calculations on multiple configurations of the ligand from both bound and unbound states

  - Use molecular dynamics to sample relevant conformational space

- Electron Density Analysis:

  - Calculate molecular electron density at DFT level (e.g., B3LYP/def2-TZVP)

  - Apply Minimal Basis Iterative Stockholder (MBIS) partitioning to derive atomic charges

- Parameter Assignment:

  - Derive Lennard-Jones parameters from the partitioned electron density

  - Implement parameters in molecular dynamics simulations

- Validation:

  - Test binding affinity predictions against experimental data

  - Target RMSE < 1 kcal/mol (chemical precision)

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Force Field Development and Validation

| Tool/Resource | Function | Application Example |

|---|---|---|

| MBIS Partitioning [5] [10] | Derives atomic charges & LJ parameters from electron density | Improving host-guest binding affinity predictions |

| BLipidFF [8] | Specialized force field for bacterial lipids | Simulating mycobacterial membrane properties |

| ByteFF [1] | Data-driven MMFF trained on 2.4M+ molecular fragments | Expanding chemical space coverage for drug-like molecules |

| ALMO-EDA [11] | Decomposes interaction energies into physical components | Validating nonbonded parameters against QM reference |

| Osmotic Pressure MD [6] | Computes osmotic coefficients from simulation | Validating force fields for small drug-like molecules |

| ReaxFF [12] | Reactive force field for chemical reactions | Simulating bond formation/breaking in complex systems |

Problem: Force Field Parameterization for Metal-Organic Systems

Symptoms:

- Unrealistic metal-ligand coordination geometry

- Poor reproduction of framework flexibility

- Inaccurate host-guest interactions in MOF systems

Solutions:

- Employ cluster-to-periodic approach: Derive parameters from finite molecular clusters then transfer to periodic structures [13]

- Use hybrid parametrization: Combine GAFF for organic components with specialized parameters for metal centers [13]

- Validate framework flexibility: Ensure the force field reproduces pore contraction/expansion during guest adsorption [13]

Workflow Implementation:

- Perform QM calculations on representative clusters with 3+ whole linkers

- Calculate partial charges using RESP fitting at B3LYP/def2-TZVP level

- Derive metal parameters using tools like MCPB.py

- Transfer cluster parameters to periodic system with automatic assignment [13]

Potential Energy Surface (PES), Parameter Space, and Transferability

This technical support center provides troubleshooting guides and FAQs for researchers validating molecular dynamics (MD) force field parameters, with a focus on the interplay between Potential Energy Surfaces (PES), parameter space exploration, and force field transferability.

Frequently Asked Questions (FAQs)

Q1: What is a Potential Energy Surface (PES) and how is it used in molecular simulations?

A Potential Energy Surface describes the energy of a system, typically a collection of atoms, in terms of the positions of the atoms [14] [15]. It is a foundational concept for exploring molecular properties and reaction dynamics. In the context of force field validation, the PES, as defined by the force field, should reproduce key features like energy minima (stable structures) and saddle points (transition states) that are consistent with higher-level theoretical or experimental data [14].

Q2: What does "transferability" mean for a force field?

A force field is considered transferable if it can be successfully used in molecular simulations outside of the specific chemical environments for which it was originally developed and parameterized [16]. For example, a water model parameterized to reproduce pure water properties should also yield accurate results when simulating a supersaturated salt solution without modification [16]. Transferability is a key indicator of a force field's robustness and general applicability.

Q3: Why is exploring the "parameter space" important for force field validation?

Mathematical models, including force fields, often have a large number of parameters. Combinatorial variations of these parameters can yield distinct qualitative behaviors in a simulation [17]. Systematically exploring this high-dimensional parameter space is essential to understand the full scope of behaviors a force field can produce and to identify which parameters most strongly regulate key outputs [17]. This helps in assessing the force field's reliability and predictive power.

Q4: What is a common error when trying to make a force field transferable?

A frequent mistake is mixing parameters from incompatible force fields [18]. Different force fields use distinct functional forms, methods for deriving charges, and combination rules for non-bonded interactions [18]. Combining them carelessly disrupts the balance between bonded and non-bonded interactions, leading to unphysical simulation behavior [18]. Parameters should only be mixed if the force fields are explicitly designed to be compatible [18].
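
The effect of differing combination rules can be shown with a short calculation. The sketch below compares the Lorentz-Berthelot rule (arithmetic mean for σ, geometric mean for ε, the convention commonly associated with the AMBER and CHARMM families) with the all-geometric rule commonly associated with OPLS. The per-atom σ and ε values are hypothetical.

```python
import math

def lorentz_berthelot(sigma_i, eps_i, sigma_j, eps_j):
    """Arithmetic mean for sigma, geometric mean for epsilon."""
    return 0.5 * (sigma_i + sigma_j), math.sqrt(eps_i * eps_j)

def geometric(sigma_i, eps_i, sigma_j, eps_j):
    """Geometric mean for both sigma and epsilon."""
    return math.sqrt(sigma_i * sigma_j), math.sqrt(eps_i * eps_j)

# Hypothetical per-atom parameters: sigma in Å, epsilon in kcal/mol.
atom_a = (3.4, 0.1)
atom_b = (2.5, 0.4)

sigma_lb, eps_lb = lorentz_berthelot(*atom_a, *atom_b)
sigma_geo, eps_geo = geometric(*atom_a, *atom_b)
print(f"Lorentz-Berthelot: sigma = {sigma_lb:.4f} Å, epsilon = {eps_lb:.4f}")
print(f"Geometric:         sigma = {sigma_geo:.4f} Å, epsilon = {eps_geo:.4f}")
```

The same pair of atoms therefore gets a different contact distance depending on the rule, which is one reason parameters fitted under one convention should not be dropped into a force field that uses another.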

Q5: My simulation failed with an error that a residue was not found in the topology database. What does this mean?

This error occurs when the software cannot find a definition for a molecule (residue) in your system within the chosen force field's database [19]. This means the force field lacks parameters for that specific molecule, directly challenging its transferability for your system. Solutions include ensuring the residue name matches the database, using a different force field that includes the residue, or manually parameterizing the missing residue, which is a non-trivial task [19].

Troubleshooting Guides

Issue 1: Handling Missing Force Field Parameters

Problem: A residue or ligand in your structure is not recognized by the force field, leading to errors during topology generation (e.g., Residue ‘XXX’ not found in residue topology database) [19].

Diagnosis: This indicates a limitation in the transferability of your chosen force field. Its construction plan does not include the building blocks for your specific molecule [19] [20].

Resolution:

- Check Naming and Available Force Fields: Verify the residue name in your structure file matches the name in the force field's database. If not, rename it. Alternatively, see if another force field contains parameters for your molecule [19].

- Search for Pre-existing Parameters: Look in the primary literature or force field databases for a topology file (*.itp) for your molecule that is consistent with your chosen force field [19].

- Manual Parameterization: If no parameters exist, you must parameterize the molecule yourself. This involves:

  - Deriving equilibrium bond lengths and angles, and dihedral parameters.

  - Assigning partial atomic charges.

  - Defining van der Waals parameters, often via atom types.

  This process requires significant expertise and often involves matching the molecule's PES from quantum mechanical calculations [19].

Issue 2: Instabilities During Molecular Dynamics Simulation

Problem: The simulation crashes or exhibits unrealistic behavior, such as bonds breaking or atoms moving too fast.

Diagnosis: This can stem from several root causes, including inaccuracies in the PES described by the force field, poor initial structure, or incorrect simulation parameters [18].

Resolution:

- Ensure Proper System Preparation: Always check your starting structure for missing atoms, steric clashes, and correct protonation states. Use minimization to relax high-energy regions [18].

- Validate Force Field Suitability: Confirm your force field is appropriate for your molecule class (e.g., do not use a protein-specific force field for carbohydrates) [18].

- Check Simulation Parameters: Use an appropriate time step (e.g., 2 fs with bond constraints). Ensure temperature and pressure controls (thermostat/barostat) are correctly configured [18].

- Verify Minimization and Equilibration: Do not rush these steps. Ensure energy minimization has converged and that system energy, temperature, and density have stabilized before starting production runs [18].
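
Whether a monitored quantity "has stabilized" can be given a rough numerical meaning. The sketch below is a deliberately crude heuristic, not an established convergence test: it splits a time series into halves and flags drift when the half-means differ by more than the larger of the two half standard deviations. The function name, the tolerance, and the example series are assumptions for illustration.

```python
from statistics import mean, stdev

def has_stabilized(series, tolerance_sigmas=1.0):
    """True if the means of the first and second halves agree within the noise."""
    if len(series) < 4:
        raise ValueError("need at least four samples")
    half = len(series) // 2
    first, second = series[:half], series[half:]
    spread = max(stdev(first), stdev(second))
    drift = abs(mean(second) - mean(first))
    return drift <= tolerance_sigmas * spread

# Hypothetical density traces (kg/m^3): one still drifting, one fluctuating.
drifting = [950.0 + 0.5 * step for step in range(100)]
fluctuating = [1000.0 + (-1) ** step for step in range(100)]

print("drifting stabilized?   ", has_stabilized(drifting))
print("fluctuating stabilized?", has_stabilized(fluctuating))
```

In practice such a check complements visual inspection of energy, temperature, and density traces and does not replace it.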

Issue 3: Inadequate Sampling of Conformational Space

Problem: A single simulation trajectory does not capture all relevant conformational states of the molecule, leading to non-representative results.

Diagnosis: Biomolecular systems have vast conformational spaces with energy barriers. A single simulation may be trapped in a local minimum and fail to explore the full PES [18].

Resolution:

- Perform Multiple Replica Simulations: Run several independent simulations with different initial velocities. This increases the probability of sampling different conformational states [18].

- Extend Simulation Time: If possible, run longer simulations to allow the system to overcome energy barriers.

- Use Enhanced Sampling Techniques: For complex transitions, consider advanced methods (e.g., metadynamics, replica-exchange MD) to systematically explore the PES.

Experimental Protocols for Validation

Protocol 1: Systematic Exploration of Force Field Parameter Space

This protocol outlines a computational method to map how variations in force field parameters affect simulation outcomes [17].

Methodology:

- Define Parameter Ranges: Select a set of k parameters P1, P2, …, Pk (e.g., torsion force constants, Lennard-Jones ε) and specify their admissible value ranges [17].

- Generate Parameter Combinations: Use Latin hypercube sampling to generate N points within the defined parameter space. This technique ensures efficient coverage of the high-dimensional space with fewer samples [17].

- Run Ensemble of Simulations: For each generated parameter combination, run a simulation and record the temporal profile of key target variables (e.g., radius of gyration, bond rotation rates) [17].

- Cluster Behaviors: Group the simulated temporal profiles into distinct clusters using an algorithm like k-means. Each cluster represents a qualitative behavior of the model [17].

- Partition Parameter Space: Use a regression tree model to partition the parameter space into sub-regions that correspond to the different behavioral clusters. This identifies which parameter combinations produce specific behaviors [17].

Objective: To understand the combinational effects of parameters, identify sensitive parameters, and locate regions of parameter space that yield robust, physically realistic behaviors [17].
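
The sampling step of this protocol can be sketched without external libraries. The example below is a basic Latin hypercube sampler: each parameter range is cut into N equal strata, one value is drawn from every stratum, and the strata are shuffled independently per parameter. The parameter names and ranges are hypothetical, and this is not the implementation used by any particular tool.

```python
import random

def latin_hypercube(ranges, n_samples, seed=0):
    """Return n_samples points; each dimension hits every one of its n strata once."""
    rng = random.Random(seed)
    columns = []
    for low, high in ranges:
        # One uniform draw inside each of the n equal-width strata of [0, 1).
        unit = [(i + rng.random()) / n_samples for i in range(n_samples)]
        rng.shuffle(unit)  # decouple the stratum order between dimensions
        columns.append([low + u * (high - low) for u in unit])
    return [tuple(column[i] for column in columns) for i in range(n_samples)]

# Hypothetical ranges: a torsion force constant (kJ/mol) and a Lennard-Jones ε (kJ/mol).
parameter_ranges = [(0.0, 10.0), (0.1, 1.0)]

for k_torsion, epsilon in latin_hypercube(parameter_ranges, n_samples=5):
    print(f"k_torsion = {k_torsion:6.3f}, epsilon = {epsilon:.3f}")
```

Each resulting tuple would then be used to configure one simulation in the ensemble.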

Protocol 2: Validating Transferability via Free Energy Calculations

This protocol assesses a force field's transferability by calculating a thermodynamic property across a range of conditions or for a series of molecules and comparing it to experimental data.

Methodology:

- Select a Benchmark Property: Choose a thermodynamic property such as solvation free energy, partition coefficient (LogP), or enthalpy of vaporization.

- Choose a Test Set: Select a series of molecules not used in the original force field parameterization.

- Run Simulations and Compute Properties: Use simulation methods (e.g., thermodynamic integration, free energy perturbation) to calculate the chosen property for each molecule in the test set.

- Quantify Agreement: Compare the simulation results with experimental data using statistical measures like the root-mean-square error (RMSE) or correlation coefficient (R²).

Objective: To quantitatively evaluate how well a transferable force field can predict properties for novel compounds, which is critical for drug development applications.
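
The final step of this protocol can be illustrated with a small summary function. The solvation free energies below are invented, R² is taken here as the squared Pearson correlation coefficient, and the mean signed error is added as an assumption of this sketch because it exposes systematic bias that RMSE and R² alone can hide.

```python
import math
from statistics import mean

def agreement_summary(predicted, experimental):
    """Return RMSE, mean signed error, and squared Pearson correlation."""
    errors = [p - e for p, e in zip(predicted, experimental)]
    rmse = math.sqrt(mean(err * err for err in errors))
    mp, me = mean(predicted), mean(experimental)
    cov = sum((p - mp) * (e - me) for p, e in zip(predicted, experimental))
    var_p = sum((p - mp) ** 2 for p in predicted)
    var_e = sum((e - me) ** 2 for e in experimental)
    return {
        "rmse": rmse,
        "mean_signed_error": mean(errors),
        "r_squared": cov * cov / (var_p * var_e),
    }

# Hypothetical solvation free energies (kcal/mol) for a four-molecule test set.
experimental = [-1.0, -2.0, -3.0, -5.0]
predicted = [0.0, -1.0, -2.0, -4.0]  # perfectly correlated but shifted by +1

for name, value in agreement_summary(predicted, experimental).items():
    print(f"{name}: {value:.3f}")
```

The shifted example has a perfect R² yet an RMSE of 1 kcal/mol, which is why correlation and error metrics are best reported together.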

Data Presentation

Table 1: Common Force Field Types and Their Characteristics

| Force Field Type | Description | Common Examples | Typical Application Scope |

|---|---|---|---|

| All-Atom | Explicitly represents every atom in the system. [20] | AMBER, [20] CHARMM, [20] OPLS-AA [20] | High-resolution studies of proteins, nucleic acids, and detailed biomolecular interactions. |

| United-Atom | Groups hydrogen atoms with their attached heavy atom into a single interaction site. [20] | TraPPE-UA [20] | Increased computational efficiency for simulations of lipids and large molecular systems. |

| Coarse-Grained | Represents groups of atoms (e.g., beads for amino acids) as single interaction sites. [20] | MARTINI | Studying large-scale biomolecular assemblies and long timescale processes. |

Table 2: Key Parameters in a Classical Force Field and Their Validation Metrics

| Parameter Class | Mathematical Form (Example) | Key Validation Metrics |

|---|---|---|

| Bond Stretching | $E_{bond} = \frac{1}{2} k_b (r - r_0)^2$ | Vibrational frequencies, bond length distributions from crystallographic data. |

| Angle Bending | $E_{angle} = \frac{1}{2} k_{\theta} (\theta - \theta_0)^2$ | Angle distributions from crystallographic data, IR spectra. |

| Torsional | $E_{dihedral} = \frac{1}{2} k_{\phi} [1 + \cos(n\phi - \delta)]$ | Conformational populations (e.g., from NMR), rotational energy barriers (from QM). |

| Non-Bonded | $E_{LJ} = 4\epsilon [ (\frac{\sigma}{r})^{12} - (\frac{\sigma}{r})^{6} ]$ | Density, enthalpy of vaporization, hydration free energies, radial distribution functions. |

Workflow and Relationship Diagrams

Diagram 1: Force field validation and refinement cycle.

Diagram 2: The relationship between force fields and the PES.

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Item | Function in Force Field Validation |

|---|---|

| Molecular Dynamics Software | Software (e.g., GROMACS, AMBER, NAMD, OpenMM) to run simulations using the force field parameters. |

| Quantum Chemistry Software | Used to compute high-accuracy reference data (e.g., conformational energies, charge distributions) for parameterization and validation. |

| Parameter Space Exploration Tool | Tools like PSExplorer [17] help systematically sample parameter combinations and cluster resulting behaviors. |

| Standardized Force Field Format | Data schemes like TUK-FFDat [20] enable interoperable, machine-readable force field definitions, improving reproducibility. |

| System Preparation & Analysis Tools | Utilities (e.g., pdb2gmx, cpptraj) for building simulation topologies and analyzing trajectories to compute validation metrics. [19] |

Fundamental Force Field Concepts and Components

What is a force field in molecular dynamics?

In the context of chemistry and molecular modeling, a force field is a computational model used to describe the forces between atoms within molecules or between molecules [21]. It consists of a specific functional form and associated parameter sets used to calculate the potential energy of a system at the atomistic level [21]. Force fields are the foundation of Molecular Dynamics (MD) and Monte Carlo simulations, enabling the prediction of molecular behavior without the prohibitive computational cost of quantum mechanical methods [21].

What are the main components of a traditional force field?

The potential energy in a traditional molecular mechanics force field is calculated as the sum of bonded and non-bonded interactions [21]. The general form is expressed as:

E_total = E_bonded + E_nonbonded [21]

Bonded Interactions (E_bonded) describe the energy associated with the covalent bond structure:

- Bond Stretching (E_bond): Energy required to stretch or compress a bond from its equilibrium length, typically modeled with a harmonic potential [21].

- Angle Bending (E_angle): Energy associated with the deviation of bond angles from their equilibrium values.

- Dihedral/Torsional (E_dihedral): Energy related to rotation around central bonds, described by periodic functions [21].

- Improper Dihedrals: Used to enforce planarity in aromatic rings and other conjugated systems [21].

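Non-bonded Interactions (E_nonbonded) describe interactions between atoms not directly connected by bonds, namely van der Waals and electrostatic interactions. Both are summarized, together with the bonded terms, in the table below.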

Table: Core Components of a Traditional Force Field

| Interaction Type | Functional Form (Common) | Key Parameters | Physical Basis |

|---|---|---|---|

| Bond Stretching | Harmonic: $E = \frac{k_{ij}}{2}(l_{ij}-l_{0,ij})^2$ | Force constant $k_{ij}$, equilibrium length $l_{0,ij}$ | Vibration of covalent bonds |

| Angle Bending | Harmonic: $E = \frac{k_{ijk}}{2}(\theta_{ijk}-\theta_{0,ijk})^2$ | Force constant $k_{ijk}$, equilibrium angle $\theta_{0,ijk}$ | Bending of bond angles |

| Dihedral Torsion | Periodic: $E = k_{ijkl}(1 + \cos(n\phi - \phi_0))$ | Force constant $k_{ijkl}$, periodicity $n$, phase $\phi_0$ | Barrier to rotation around a bond |

| van der Waals | Lennard-Jones: $E = 4\epsilon[ (\frac{\sigma}{r})^{12} - (\frac{\sigma}{r})^{6} ]$ | Well depth $\epsilon$, size parameter $\sigma$ (separation at which $E = 0$) | Dispersion and Pauli repulsion |

| Electrostatic | Coulomb: $E = \frac{1}{4\pi\varepsilon_0}\frac{q_i q_j}{r_{ij}}$ | Atomic partial charges $q_i, q_j$ | Interaction between permanent charges |
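As a minimal sketch of how the functional forms in this table translate into code, the functions below evaluate each term for a single interaction. The function and argument names, and the choice of kJ/mol, nm, radians, and elementary charges as units, are illustrative assumptions and are not taken from any particular MD package.

```python
import math

# Coulomb prefactor 1/(4*pi*eps0) in kJ mol^-1 nm e^-2 (approximate value,
# assuming the kJ/mol, nm, elementary-charge unit system).
COULOMB_PREFACTOR = 138.935


def bond_energy(k, length, length0):
    """Harmonic bond stretching: (k / 2) * (l - l0)^2."""
    return 0.5 * k * (length - length0) ** 2


def angle_energy(k, theta, theta0):
    """Harmonic angle bending: (k / 2) * (theta - theta0)^2, angles in radians."""
    return 0.5 * k * (theta - theta0) ** 2


def dihedral_energy(k, n, phi, phi0):
    """Periodic torsion: k * (1 + cos(n * phi - phi0)), angles in radians."""
    return k * (1.0 + math.cos(n * phi - phi0))


def lennard_jones_energy(epsilon, sigma, r):
    """12-6 Lennard-Jones: 4 * eps * [(sigma / r)^12 - (sigma / r)^6]."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)


def coulomb_energy(qi, qj, r):
    """Coulomb interaction between two fixed partial charges."""
    return COULOMB_PREFACTOR * qi * qj / r
```

The Lennard-Jones term is zero at r = σ and reaches its minimum of −ε at r = 2^(1/6) σ, which is a convenient sanity check when parameters are converted between force field formats.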

Traditional vs. Machine Learning Force Fields

What are the main types of traditional force fields?

Traditional force fields can be categorized based on their scope and granularity [21]:

- Component-Specific vs. Transferable: Component-specific force fields are developed for a single substance (e.g., a specific water model), while transferable force fields use building blocks (e.g., a methyl group) applicable to many substances [21].

- All-Atom vs. United-Atom vs. Coarse-Grained:

- All-Atom: Explicitly represents every atom, including hydrogen [21].

- United-Atom: Treats hydrogen and carbon atoms in groups like methyl as a single interaction center, reducing computational cost [21].

- Coarse-Grained: Sacrifices chemical details by grouping multiple atoms into "beads" for simulating large macromolecules over long timescales [21].

How do Machine Learning Force Fields (MLFFs) differ from traditional force fields?

Machine Learning Force Fields (MLFFs) represent a paradigm shift. Instead of using a fixed functional form with pre-tabulated parameters, they use machine learning models to learn the relationship between atomic structure and potential energy directly from quantum mechanical data [22] [23].

- Key Distinction: Traditional force fields rely on physicist-defined functional forms, while MLFFs use data-driven models to infer a potential energy surface [23].

- Computational Cost: MLFFs bridge the gap between the high accuracy of quantum mechanics (QM) and the low cost of traditional MM. Models that predict energies directly are more accurate than traditional MM but several orders of magnitude more expensive, while remaining significantly faster than QM [23].

- Approaches: Some MLFFs, like Grappa, predict parameters for a traditional MM functional form from the molecular graph. Others, like the DPmoire package, create potentials that bypass the MM functional form entirely [22] [23].

Table: Comparison of Force Field Types

| Feature | Traditional MM | Machine-Learned MM (e.g., Grappa) | Pure MLFF (e.g., from DPmoire) |

|---|---|---|---|

| Functional Form | Pre-defined, physics-based (bonds, angles, etc.) [21] | Pre-defined, physics-based MM [23] | Learned, black-box or neural network [22] |

| Parameter Source | Lookup tables based on atom types [21] | Predicted by ML model from molecular graph [23] | N/A (direct energy prediction) |

| Computational Cost | Lowest | Same as Traditional MM (parameters predicted once) [23] | Higher than MM, lower than QM [23] |

| Accuracy | Good for known systems, limited by functional form | State-of-the-art for MM, improved dihedral landscapes [23] | Can approach QM accuracy [22] |

| Transferability | Limited to predefined atom types | High, can extrapolate to new chemical environments [23] | Depends on training data |

| Bond Breaking | Not possible (harmonic bonds) [21] | Not possible | Possible with specific architectures |

Troubleshooting Force Field Selection and Performance

How do I choose the right force field for my system?

Selecting an appropriate force field is critical for meaningful simulation results. The following workflow provides a systematic approach to force field selection, incorporating both traditional and modern options.

My simulation results do not match experimental data. What could be wrong?

This common issue can stem from several sources related to force field accuracy and application:

- Incorrect Force Field for Material Type: Using a force field designed for organic molecules to simulate a different material class (e.g., metals, minerals) will yield poor results. For metals, embedded atom potentials are typically used, while covalent crystals may require bond-order potentials like Tersoff [21].

- Improper Parameterization: Force field parameters can be derived from different sources (quantum mechanics, experimental data), impacting accuracy. Heuristic parametrization procedures can introduce subjectivity and reproducibility issues [21].

- Inadequate Treatment of Non-bonded Interactions: Electrostatic interactions are critical. The assignment of atomic charges often uses heuristic approaches, which can lead to significant deviations in representing specific properties [21]. Van der Waals interactions also vary in their parameterization.

- Missing Polarizability: Many traditional force fields are non-polarizable, meaning atomic charges are fixed. This can fail in environments where electronic polarization is significant [21].

- System Preparation Issues: For membrane simulations, for example, the composition (O/N ratio, proportions of O and N species) must be validated against experimental characterizations to ensure the simulated system is representative [24].

How can I validate my force field choice?

A comprehensive validation protocol is essential, especially when using a force field for a new system. The table below outlines key properties to compare against experimental or high-level theoretical data.

Table: Force Field Validation Checklist

| Validation Category | Specific Properties to Check | Reference Method |

|---|---|---|

| Structural Properties | Density, pore size distribution, radius of gyration, crystal lattice parameters | X-ray diffraction, NMR, experimental scattering data [24] |

| Mechanical Properties | Young's modulus, bulk modulus, compressibility | Experimental stress-strain measurements [24] |

| Thermodynamic Properties | Enthalpy of vaporization/sublimation, free energy of solvation, heat capacity | Calorimetry, experimental thermodynamic data [21] |

| Dynamic Properties | Diffusion coefficients, viscosity, conformational dynamics (J-couplings) | NMR, quasi-elastic neutron scattering, spectroscopy [23] |

| Transport Properties (Membranes) | Pure water permeability, salt rejection | Laboratory-scale permeability tests [24] |

| Electronic Properties | Band structures (for materials like moiré systems) | Density Functional Theory (DFT) [22] |
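One simple way to apply such a checklist is to compute the signed percent deviation of each simulated property from its reference and compare it with a per-property tolerance. The sketch below assumes this pass/fail scheme; the property names, numerical values, and tolerances are placeholders chosen for illustration, not recommended thresholds or real results.

```python
def percent_deviation(simulated, reference):
    """Signed deviation of a simulated value from its reference, in percent."""
    return 100.0 * (simulated - reference) / reference


def validation_report(simulated, reference, tolerance_percent):
    """Return {property: (deviation in percent, within tolerance?)}."""
    report = {}
    for name, ref_value in reference.items():
        deviation = percent_deviation(simulated[name], ref_value)
        report[name] = (deviation, abs(deviation) <= tolerance_percent[name])
    return report


# Placeholder numbers for illustration only (not measured or simulated data).
reference = {"density": 1000.0, "enthalpy_of_vaporization": 40.0}
simulated = {"density": 990.0, "enthalpy_of_vaporization": 46.0}
tolerance = {"density": 2.0, "enthalpy_of_vaporization": 5.0}

for name, (deviation, ok) in validation_report(simulated, reference, tolerance).items():
    print(f"{name}: {deviation:+.1f}% {'OK' if ok else 'outside tolerance'}")
```

Keeping the tolerances explicit per property makes it obvious when a force field passes on structure but fails on thermodynamics, which is the kind of trade-off the checklist is meant to expose.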

Machine Learning Force Fields: A Practical Guide

What are the practical steps to build and use an MLFF?

Building a reliable MLFF requires careful data generation and training. The following diagram illustrates a robust workflow for constructing an MLFF for complex systems, such as moiré materials, as implemented in tools like DPmoire [22].

What is "Active Learning" in the context of MLFFs?

Active Learning is a powerful strategy for on-the-fly training of machine learning potentials during molecular dynamics simulations [25]. The core idea is to let the ML model identify regions of configuration space where it is uncertain and then perform targeted reference calculations (usually DFT) for those structures to improve its own training.

- Workflow: An MD simulation is run using a current version of the MLFF. At certain intervals, the model's prediction is compared to a reference calculation. If the disagreement is larger than a predefined threshold (the "success criteria"), the simulation is stopped, the model is retrained on the new data, and the simulation is restarted [25].

- Benefits: This automates the generation of a robust and representative training dataset, ensuring the model learns from its own mistakes and becomes accurate for the specific thermodynamic conditions of the simulation.

- Implementation: Software like the Simple (MD) Active Learning workflow in the Amsterdam Modeling Suite implements this for potentials like M3GNet [25].
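The active learning loop described above can be sketched with toy stand-ins. In this example, `reference_energy` plays the role of the reference (DFT) calculation and a least-squares polynomial plays the role of the MLFF; both are assumptions made for illustration and do not correspond to any package's API. For brevity the loop continues from the current point after retraining instead of restarting the simulation.

```python
import numpy as np


def reference_energy(x):
    """Stand-in for an expensive reference calculation (e.g. DFT)."""
    return np.sin(x)


def fit_model(xs, ys, degree=5):
    """Stand-in for MLFF training: a least-squares polynomial fit."""
    deg = min(degree, len(xs) - 1)
    return np.poly1d(np.polyfit(xs, ys, deg))


def active_learning(trajectory, check_every=5, threshold=0.05):
    """Walk along a 1D 'trajectory', retraining whenever the model disagrees
    with the reference by more than `threshold` at a checkpoint."""
    train_x = [trajectory[0], trajectory[1]]
    train_y = [reference_energy(x) for x in train_x]
    model = fit_model(train_x, train_y)
    n_reference_calls = len(train_x)

    for step, x in enumerate(trajectory):
        if step % check_every != 0:
            continue  # between checkpoints the model is trusted
        y_ref = reference_energy(x)
        n_reference_calls += 1
        if abs(model(x) - y_ref) > threshold:
            # Success criterion violated: add the structure and retrain.
            train_x.append(x)
            train_y.append(y_ref)
            model = fit_model(train_x, train_y)
    return model, train_x, n_reference_calls


model, train_x, n_calls = active_learning(np.linspace(0.0, 3.0, 61))
print(f"{len(train_x)} training points, {n_calls} reference calculations")
```

The number of reference calculations is set by the checkpoint interval, while the size of the training set is set by how often the model fails the success criterion. A well-pretrained model therefore adds few new points.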

I have a limited budget for DFT calculations. Can I still use MLFFs?

Yes. Transfer learning is a key technique for applying MLFFs efficiently. Instead of training a model from scratch, you can start from a pre-trained universal potential and fine-tune it for your specific system with a relatively small amount of targeted data [25]. For example, the M3GNet Universal Potential can be fine-tuned using active learning, significantly reducing the number of required reference calculations [25].

Research Reagents and Computational Tools

Table: Essential Software Tools for Force Field Development and Application

| Tool Name | Type | Primary Function | Relevance |

|---|---|---|---|

| GROMACS [23] | MD Engine | High-performance molecular dynamics simulation. | Industry-standard for running simulations with both traditional and ML force fields. |

| OpenMM [23] | MD Engine | Flexible toolkit for molecular simulation with GPU acceleration. | Known for its versatility and speed; supports custom forces. |

| DPmoire [22] | MLFF Software | Constructs accurate MLFFs for moiré systems. | Example of a specialized tool for building MLFFs for complex materials. |

| Grappa [23] | ML Force Field | Predicts MM parameters from a molecular graph using a graph neural network. | Represents the new generation of machine-learned molecular mechanics force fields. |

| VASP MLFF [22] | MLFF Module | On-the-fly MLFF algorithm within the VASP electronic structure package. | Used for generating training data and performing active learning. |

| Allegro/NequIP [22] | MLFF Architecture | E(3)-equivariant neural network for building interatomic potentials. | State-of-the-art MLFF models that can achieve very low errors (~meV/atom). |

| AMS [25] | Modeling Suite | Includes a "Simple Active Learning" workflow for on-the-fly training. | Provides an integrated platform for non-experts to apply MLFFs. |

| LAMMPS [22] | MD Engine | Classical molecular dynamics code with extensive force field support. | Can be interfaced with many MLFF models for production simulations. |

Troubleshooting Guides and FAQs

FAQ: Fundamental Force Field Limitations

Q1: What are the most common sources of error originating from the force field functional form itself?

The functional form of classical force fields introduces several inherent limitations. Most notably, they typically employ fixed-charge models and lack explicit polarization, meaning atomic partial charges cannot respond to changes in their electrostatic environment [26] [27]. This can lead to inaccurate descriptions of interactions in heterogeneous environments like protein-ligand binding sites. Furthermore, the common use of simple Lennard-Jones potentials for van der Waals interactions is a pairwise approximation that does not capture many-body dispersion effects [28] [1]. Finally, the functional form itself may be too simplistic to fully represent the complex quantum mechanical potential energy surface, a gap that machine-learned force fields are now aiming to address [29].

Q2: Why does my force field perform well for one class of molecules but poorly for another?

This is a classic problem of poor transferability, often rooted in the parameterization process [30] [31]. Force fields are often trained on specific types of data (e.g., thermodynamic properties of neat liquids or conformational energies of small peptides). Consequently, they can become specialized for those specific chemistries and properties. For instance, a force field parameterized for combustion chemistry may perform poorly for predicting mechanical properties of materials [30]. This underscores the importance of using broad and diverse training datasets that represent the wide variety of interactions and systems the force field is expected to model.

Q3: How can I assess whether errors in my simulation are due to poor parameterization or inadequate sampling?

Distinguishing between these two error sources is critical. A robust approach involves validating against multiple experimental observables that are sensitive to different aspects of the force field [26]. The table below summarizes key metrics you can use for this validation. If your simulation shows significant deviations from a range of these target properties, especially static structural properties, the force field parameters are likely a primary source of error. Conversely, if ensemble-averaged properties match experiment but time-dependent or rare events are inaccurate, sampling may be the issue.

Table 1: Key Experimental and Theoretical Properties for Force Field Validation

| Property Category | Specific Metrics | What It Primarily Validates |

|---|---|---|

| Structural Properties | Root-mean-square deviation (RMSD), radius of gyration, number of hydrogen bonds, backbone dihedral distributions [26] | Bonded parameters, non-bonded interactions, overall structural fidelity |

| Energetic Properties | Heats of vaporization, conformational energies, binding free energies and enthalpies [32] [31] | Non-bonded parameters (Lennard-Jones, charges), torsional parameters |

| Dynamical Properties | Diffusion coefficients, order parameters, residual dipolar couplings [26] | Balance of forces, often sensitive to both parameters and sampling |

| Spectroscopic Properties | NMR chemical shifts, J-coupling constants [27] | Local electronic environment and geometry, highly sensitive to atomic coordinates |
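A practical way to separate the two error sources is to attach a statistical uncertainty to each simulated average before comparing it with experiment. The sketch below uses block averaging, a standard technique that this guide does not prescribe explicitly, so treat it as one possible approach. The function names, block count, and the 2σ criterion are assumptions.

```python
import numpy as np


def block_standard_error(series, n_blocks=5):
    """Standard error of the mean estimated from the scatter of block averages."""
    series = np.asarray(series, dtype=float)
    usable = (len(series) // n_blocks) * n_blocks  # drop the remainder frames
    block_means = series[:usable].reshape(n_blocks, -1).mean(axis=1)
    return block_means.std(ddof=1) / np.sqrt(n_blocks)


def deviation_is_significant(series, reference, n_blocks=5, n_sigma=2.0):
    """True if the simulated mean differs from the reference by more than
    n_sigma standard errors."""
    mean = float(np.mean(series))
    sem = block_standard_error(series, n_blocks)
    return abs(mean - reference) > n_sigma * sem
```

If the deviation is significant and the block averages agree with each other, the force field is the more likely culprit. If the block averages scatter widely or drift, the estimate is not converged and longer or enhanced sampling is needed before blaming the parameters.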

FAQ: Parameterization and Optimization Challenges

Q4: What are the main challenges in optimizing force field parameters?

Parameter optimization is a complex, high-dimensional problem. Key challenges include:

- High Dimensionality and Coupling: Force fields can contain hundreds of highly correlated parameters [30] [26]. Optimizing them sequentially neglects coupling effects and can lead to sub-optimal solutions [31].

- Conflicting Target Properties: A parameter change that improves agreement with one experimental property (e.g., density) may worsen agreement with another (e.g., enthalpy of vaporization) [26]. This makes multi-objective optimization essential but challenging.

- Computational Cost: Iteratively running molecular dynamics simulations to evaluate each candidate parameter set is computationally prohibitive [33].

- Overfitting: Optimizing against a narrow range of target data risks creating a parameter set that performs well for those specific properties but fails to generalize [26].

Q5: What advanced optimization methods are available beyond manual tuning?

Several automated, computational strategies have been developed to overcome the limitations of manual parameter fitting:

Table 2: Comparison of Force Field Parameter Optimization Methods

| Optimization Method | Key Principle | Advantages | Disadvantages/Limitations |

|---|---|---|---|

| Genetic Algorithms (GA) [31] | Evolves parameter sets via selection, crossover, and mutation inspired by natural selection. | Effective for complex, high-dimensional problems; does not require gradient information. | Can be computationally intensive; performance depends on initial population and hyperparameters [30]. |

| Simulated Annealing (SA) [30] | Probabilistically explores parameter space, accepting worse solutions early to escape local minima. | Simpler to implement than GA; less prone to premature convergence [30]. | Cooling schedule significantly affects efficiency; completely random search can be slow [30]. |

| Particle Swarm Optimization (PSO) [30] | Parameters ("particles") move through space based on their own and the swarm's best-known positions. | Easy to implement and parallelize; records optimization direction for efficiency. | Can fall into local optima; may require many iterations [30]. |

| Hybrid SA/PSO with CAM [30] | Combines SA and PSO, using a Concentrated Attention Mechanism to focus on key training data. | More accurate and faster than traditional metaheuristic methods alone [30]. | Increased algorithmic complexity. |

| Sensitivity Analysis [32] | Computes gradients of simulation observables with respect to parameters to guide optimization. | Efficiently predicts how parameter changes will affect the output, enabling directed search. | Requires calculation of derivatives, which can be non-trivial. |

| Machine Learning Surrogates [33] | Trains a neural network to predict simulation outcomes from parameters, replacing costly MD. | Dramatically reduces optimization time (e.g., by ~20x [33]). | Requires initial training data; potential for model error. |
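To make one row of this table concrete, here is a minimal simulated annealing sketch. The objective is a toy quadratic standing in for a real force field loss (which would require MD or QM evaluations), and the geometric cooling schedule, step size, and parameter names are assumptions chosen for illustration.

```python
import math
import random


def simulated_annealing(objective, x0, step=0.1, t_start=1.0, t_end=1e-3,
                        n_steps=5000, seed=0):
    """Minimize `objective` with Metropolis acceptance and geometric cooling."""
    rng = random.Random(seed)
    x, fx = list(x0), objective(x0)
    best_x, best_f = list(x), fx
    for i in range(n_steps):
        # Temperature decays geometrically from t_start to t_end.
        t = t_start * (t_end / t_start) ** (i / (n_steps - 1))
        candidate = [xi + rng.uniform(-step, step) for xi in x]
        fc = objective(candidate)
        # Always accept improvements; accept worse moves with Boltzmann probability.
        if fc < fx or rng.random() < math.exp(-(fc - fx) / t):
            x, fx = candidate, fc
            if fx < best_f:
                best_x, best_f = list(x), fx
    return best_x, best_f


def toy_objective(params):
    """Toy loss with its minimum at sigma = 0.35, epsilon = 0.5 (made-up values)."""
    sigma, epsilon = params
    return (sigma - 0.35) ** 2 + (epsilon - 0.5) ** 2


best_params, best_loss = simulated_annealing(toy_objective, [0.5, 1.0])
print(best_params, best_loss)
```

The cooling schedule is the main tuning knob: cooling too fast behaves like a greedy local search, while cooling too slowly wastes evaluations at temperatures where almost every move is accepted.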

Q6: How can quantum mechanical (QM) data improve force field parameterization?

QM data provides a fundamental, physics-based foundation for parameterization. Modern approaches use QM-to-MM mapping to derive parameters directly from quantum calculations [28]. This can include:

- Deriving bond and angle force constants from the QM Hessian matrix.

- Calculating atomic partial charges from the molecular electrostatic potential.

- Estimating Lennard-Jones dispersion coefficients from atomic electron densities.

This approach significantly reduces the number of empirical parameters that need to be fit to experimental data, thereby lessening the risk of overfitting and improving physical rigor [28]. For example, one protocol using this method achieved high accuracy in liquid properties with only seven fitting parameters [28].
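As an illustration of the second item, deriving charges from the electrostatic potential, the sketch below fits atom-centred point charges to potential values on a set of grid points by linear least squares, with the total charge enforced through a Lagrange multiplier. It is a plain constrained ESP fit under assumed atomic units, not a reproduction of any specific published protocol, and the function and argument names are hypothetical.

```python
import numpy as np


def fit_esp_charges(atom_xyz, grid_xyz, esp, total_charge=0.0):
    """Least-squares point charges reproducing `esp` at `grid_xyz`,
    subject to sum(q) = total_charge (atomic units assumed)."""
    atom_xyz = np.asarray(atom_xyz, dtype=float)
    grid_xyz = np.asarray(grid_xyz, dtype=float)
    esp = np.asarray(esp, dtype=float)

    # A[g, a] = 1 / |r_g - r_a|, so that the model potential is A @ q.
    dist = np.linalg.norm(grid_xyz[:, None, :] - atom_xyz[None, :, :], axis=2)
    A = 1.0 / dist
    n = A.shape[1]

    # KKT system for: minimize |A q - esp|^2 subject to sum(q) = total_charge.
    kkt = np.zeros((n + 1, n + 1))
    kkt[:n, :n] = A.T @ A
    kkt[:n, n] = 1.0
    kkt[n, :n] = 1.0
    rhs = np.concatenate([A.T @ esp, [total_charge]])
    return np.linalg.solve(kkt, rhs)[:n]
```

In a real workflow the grid points would be placed on shells outside the molecular surface and the potential would come from a QM calculation. Here both are left to the caller.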

Experimental Protocols for Validation and Optimization

Protocol 1: Validating a Protein Force Field Using Structural Metrics

Objective: To systematically evaluate the performance of a protein force field against a curated set of high-resolution protein structures.

Materials:

- Software: A molecular dynamics simulation package (e.g., GROMACS, AMBER, NAMD).

- Test Set: A curated set of 50+ high-resolution protein structures (X-ray and NMR-derived) [26].

- Computational Resources: High-performance computing cluster.

Methodology:

- Preparation: Obtain the experimental structures and prepare them for simulation (e.g., add missing atoms, protonate, solvate in a water box, add ions).

- Simulation: Run multiple, independent MD simulations for each protein using the force field under evaluation. Ensure simulation times are long enough to achieve convergence for the properties of interest.

- Analysis: Calculate a range of structural properties from the simulation trajectories and compare them to the experimental reference data. Key metrics should include [26]:

- Root-mean-square deviation (RMSD) from the native structure.

- Radius of gyration.

- Solvent-accessible surface area (SASA).

- Number of native hydrogen bonds.

- Distribution of backbone dihedral angles (Ramachandran plots).

- Statistical Comparison: Use statistical tests to determine if observed differences between the simulation averages and experimental values are significant. Do not rely on a single protein or a single metric for the final assessment [26].
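Two of the structural metrics in this protocol, RMSD after optimal superposition and radius of gyration, can be sketched with NumPy alone. The functions below assume coordinates are supplied as (N, 3) arrays with matching atom order; in practice the analysis tools bundled with the MD package would normally be used instead, and the function names here are illustrative.

```python
import numpy as np


def kabsch_rmsd(mobile, reference):
    """RMSD between two (N, 3) coordinate sets after optimal rigid superposition."""
    P = np.asarray(mobile, dtype=float)
    Q = np.asarray(reference, dtype=float)
    P = P - P.mean(axis=0)  # remove translation
    Q = Q - Q.mean(axis=0)
    U, _, Vt = np.linalg.svd(P.T @ Q)  # covariance matrix H = P^T Q
    # Correct for a possible reflection so that R is a proper rotation.
    d = np.sign(np.linalg.det(Vt.T @ U.T))
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    P_rot = P @ R.T
    return float(np.sqrt(np.mean(np.sum((P_rot - Q) ** 2, axis=1))))


def radius_of_gyration(coords, masses):
    """Mass-weighted radius of gyration of an (N, 3) coordinate set."""
    coords = np.asarray(coords, dtype=float)
    masses = np.asarray(masses, dtype=float)
    center = np.average(coords, axis=0, weights=masses)
    sq_dist = np.sum((coords - center) ** 2, axis=1)
    return float(np.sqrt(np.sum(masses * sq_dist) / np.sum(masses)))
```

Computing these per frame and then averaging over independent replicas gives the distributions needed for the statistical comparison step.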

Protocol 2: Optimizing Lennard-Jones Parameters Using a Surrogate Model

Objective: To efficiently optimize non-bonded Lennard-Jones parameters to reproduce target bulk-phase density, using a machine learning surrogate model to accelerate the process.

Materials:

- Software: Optimization toolkit (e.g., FFLOW [33]), MD software, machine learning library (e.g., Scikit-learn, PyTorch).

- System: A target molecule (e.g., n-octane).

- Target Data: Experimental bulk-phase density.

Methodology:

- Define Feasible Parameter Space: Set physically reasonable minimum and maximum bounds for the parameters to be optimized (e.g., σ and ε for relevant atom types) [33].

- Generate Training Data: Sample the parameter space using a strategy like grid-based or space-filling sampling. For each sampled parameter set, run an MD simulation to compute the bulk-phase density. This creates a dataset mapping parameters to properties [33].

- Train Surrogate Model: Train a neural network (or other ML model) to predict the bulk-phase density from the Lennard-Jones parameters, using the dataset generated in the previous step.

- Run Optimization: Execute the optimization algorithm (e.g., genetic algorithm). When the optimizer needs to evaluate the density for a given parameter set, query the surrogate model instead of running a new, costly MD simulation [33].

- Validation: Run a final MD simulation using the optimized parameters from the surrogate-assisted process to confirm that the target density is accurately reproduced.
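The five steps of this protocol can be sketched end to end with toy stand-ins. In the example below, `run_md_density` is a made-up analytic function replacing the MD simulation, a quadratic least-squares fit replaces the neural network surrogate, and a dense grid search replaces the genetic algorithm. All names, bounds, and numbers are assumptions for illustration and do not reflect the FFLOW interface or real n-octane data.

```python
import numpy as np

TARGET_DENSITY = 700.0        # placeholder target in kg/m^3, not an experimental value
SIGMA_BOUNDS = (0.30, 0.45)   # step 1: assumed feasible range for sigma (nm)
EPSILON_BOUNDS = (0.20, 0.80) # step 1: assumed feasible range for epsilon (kJ/mol)


def run_md_density(sigma, epsilon):
    """Stand-in for a costly MD run: a made-up smooth response, not physics."""
    return (900.0 - 1500.0 * (sigma - 0.30) + 200.0 * (epsilon - 0.30)
            - 800.0 * (sigma - 0.30) ** 2)


def features(sigma, epsilon):
    """Quadratic feature vector [1, s, e, s^2, s*e, e^2] for the surrogate."""
    s = np.asarray(sigma, dtype=float)
    e = np.asarray(epsilon, dtype=float)
    return np.stack([np.ones_like(s), s, e, s ** 2, s * e, e ** 2], axis=-1)


# Step 2: generate training data on a coarse 5 x 5 grid of parameter sets.
s_train, e_train = np.meshgrid(np.linspace(*SIGMA_BOUNDS, 5),
                               np.linspace(*EPSILON_BOUNDS, 5))
s_train, e_train = s_train.ravel(), e_train.ravel()
rho_train = np.array([run_md_density(s, e) for s, e in zip(s_train, e_train)])

# Step 3: train the surrogate (here a linear least-squares fit).
coeffs, *_ = np.linalg.lstsq(features(s_train, e_train), rho_train, rcond=None)


def surrogate_density(sigma, epsilon):
    """Cheap prediction of the density from the fitted surrogate."""
    return features(sigma, epsilon) @ coeffs


# Step 4: optimize against the surrogate only (no new "MD" runs).
s_grid, e_grid = np.meshgrid(np.linspace(*SIGMA_BOUNDS, 200),
                             np.linspace(*EPSILON_BOUNDS, 200))
loss = (surrogate_density(s_grid, e_grid) - TARGET_DENSITY) ** 2
best = np.unravel_index(np.argmin(loss), loss.shape)
best_sigma, best_epsilon = float(s_grid[best]), float(e_grid[best])

# Step 5: validate the optimum with one real evaluation.
validated_density = run_md_density(best_sigma, best_epsilon)
print(best_sigma, best_epsilon, validated_density)
```

With a single target property, many (σ, ε) pairs reproduce the density equally well, which is why real workflows add further targets such as enthalpy of vaporization to constrain the solution.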

Visualizations

Diagram 1: Force Field Validation and Optimization Workflow

This diagram outlines a comprehensive strategy for assessing and improving force field accuracy, integrating both validation metrics and modern optimization techniques.

Diagram 2: QM-to-MM Parameter Mapping Protocol

This diagram illustrates the data-driven process of deriving molecular mechanics parameters directly from quantum mechanical calculations, reducing empirical fitting.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Computational Tools for Force Field Development

| Tool Name | Type/Brief Description | Primary Function in Force Field Research |

|---|---|---|

| ForceBalance [28] | Parameter Optimization Software | Automates the fitting of force field parameters against quantum mechanical and experimental target data. |

| QUBEKit [28] | Force Field Derivation Toolkit | Implements QM-to-MM mapping protocols to generate bespoke force field parameters from quantum chemical calculations. |

| FFLOW [33] | Multiscale Optimization Workflow | Provides a modular toolkit for automated parameter optimization against objectives from different property domains (e.g., conformational energy and bulk density). |

| sGDML [29] | Machine-Learned Force Field | Constructs accurate, high-dimensional force fields directly from quantum data by incorporating physical symmetries, enabling MD with near-CCSD(T) accuracy. |

| LAMMPS [34] | Molecular Dynamics Simulator | A widely used software for performing MD simulations, often the engine for evaluating candidate parameter sets during optimization. |

| CheShift [27] | Validation Utility | Assigns accurate chemical shifts to MD trajectory frames via template matching, providing a sensitive metric for validating atomic-level structural accuracy. |

| ByteFF / Espaloma [1] | Data-Driven Parameterization | Graph neural network-based systems that predict molecular mechanics parameters for drug-like molecules across expansive chemical spaces. |

Modern Force Field Parameterization: From Quantum Data to Automated Fitting

Leveraging High-Accuracy Quantum Mechanical Datasets (OMol25, MD17) for Training

FAQs: Dataset Selection and Application

What are the primary differences between the MD17 and OMol25 datasets, and how do I choose?

The MD17 and OMol25 datasets serve different purposes and scopes. Your choice should depend on your project's specific goals, as summarized in the table below.

| Feature | MD17 Dataset | OMol25 Dataset |

|---|---|---|

| Primary Use Case | Benchmarking machine-learned potentials for small, gas-phase molecules [35] | Training generalizable ML models across expansive chemical space [36] |

| System Size | Up to ~21 atoms [35] | Up to 350 atoms [36] |

| Chemical Diversity | Limited to small organic molecules (e.g., aspirin, malonaldehyde) [35] | High; includes 83 elements, biomolecules, metal complexes, electrolytes [36] |

| Configurational Coverage | Near-equilibrium, thermal sampling at 500 K [35] | Extensive; includes conformers, reactive structures, explicit solvation [36] |

| Data Volume | Tens of thousands of geometries per molecule [35] | ~100 million DFT calculations [36] |

| Key Limitation | Narrow energy range; poor for reactive or quantum property prediction [35] | High computational cost for full dataset training [36] |

I am getting poor simulation stability despite low force MAE on MD17. What could be wrong?

This is a known issue where models overfit to the narrowly sampled, near-equilibrium configuration space of MD17. To improve stability [35]:

- Incorporate Diverse Data: Pre-train your model on a larger, more diverse dataset like OMol25 or OC20 before fine-tuning on MD17. This builds a more robust foundational understanding of chemical space.

- Use Advanced Sampling: Employ gradient-guided sampling algorithms like Gradient Guided Furthest Point Sampling (GGFPS) to ensure your training set includes high-force regions and strained configurations, not just low-energy minima.

- Validate with MD: Always run a short molecular dynamics simulation as a stability check during model validation; low force MAE does not guarantee long-term trajectory integrity.
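The sampling idea in the second point can be illustrated with plain farthest point sampling (FPS), which greedily selects the configuration that is farthest from everything already chosen. This sketch shows only the basic FPS step on generic feature vectors; the gradient-guided weighting that distinguishes GGFPS is not reproduced here, and the function name and feature representation are assumptions.

```python
import numpy as np


def farthest_point_sampling(features, n_select, start=0):
    """Greedy FPS: return indices of `n_select` rows of `features` that are
    mutually far apart in Euclidean distance."""
    features = np.asarray(features, dtype=float)
    selected = [start]
    # Distance from every point to its nearest already-selected point.
    min_dist = np.linalg.norm(features - features[start], axis=1)
    while len(selected) < n_select:
        next_index = int(np.argmax(min_dist))
        selected.append(next_index)
        new_dist = np.linalg.norm(features - features[next_index], axis=1)
        min_dist = np.minimum(min_dist, new_dist)
    return selected


# Three near-duplicate configurations and two outliers (toy 1D descriptors).
descriptors = [[0.0], [0.1], [0.2], [5.0], [10.0]]
print(farthest_point_sampling(descriptors, n_select=3))  # [0, 4, 3]
```

Because near-duplicate configurations are skipped in favour of outliers, the selected set covers strained geometries that uniform random subsampling of a thermal trajectory would rarely include.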

How do I handle missing parameters for residues or small molecules when setting up a simulation?

The GROMACS tool pdb2gmx will throw an error like Residue 'XXX' not found in residue topology database if your chosen force field lacks parameters for a molecule in your system [19]. Here are the standard solutions:

- Search for Existing Parameters: Look for topology files (.itp) from reputable sources or published literature that are consistent with your force field.

- Parameterize Yourself: If parameters are missing, you must derive them. This typically involves:

- Quantum Mechanics (QM) Calculations: Generating reference data for the molecule's geometry, electrostatic potential, and torsional energy profiles at an appropriate level of theory (e.g., DFT) [37] [8].

- Charge Fitting: Using methods like Restrained Electrostatic Potential (RESP) to derive partial atomic charges [8].

- Parameter Optimization: Fitting bonded and non-bonded parameters to reproduce the QM reference data and/or experimental measurements [7].

Troubleshooting Guides

Problem: Simulation Instability and Crashes (Bond Breakage, Unphysical Motions)

This occurs when the force field or machine-learned potential fails to accurately describe atomic interactions outside its training domain.

- Possible Cause 1: Limited Training Data Configuration. Models trained solely on MD17's near-equilibrium configurations may fail when simulations sample higher-energy regions [35].

- Solution:

- Use Expanded Datasets: Train or refine your model using datasets with broader configurational coverage, such as xxMD (includes reactive configurations) or QM-22 (broader energy range) [35].

- Implement Robust Validation: Move beyond energy and force MAE. Monitor bond length deviations and the onset time for instability during validation MD runs [35].

- Possible Cause 2: Incorrect Simulation Parameters. Mismatched temperature or pressure coupling settings between equilibration and production runs can cause system instability [38].

- Solution:

- Double-Check Parameters: Before a production run, ensure the temperature for velocity generation and pressure coupling parameters match those used during the NVT and NPT equilibration steps [38].

- Use Auto-Fill Features: Tools like the SAMSON GROMACS Wizard's "Auto-fill" feature can help prevent manual path and parameter errors [38].
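The validation advice for the first cause, monitoring bond length deviations and the onset time of instability, can be sketched as follows. The function assumes the trajectory is available as an array of shape (frames, atoms, 3) and that bonds are given as index pairs; the 25% relative-deviation threshold is an arbitrary illustrative choice, not a recommended value.

```python
import numpy as np


def instability_onset(trajectory, bonds, ref_lengths, max_rel_dev=0.25):
    """Return the first frame index at which any bond deviates from its
    reference length by more than `max_rel_dev`, or None if none does."""
    trajectory = np.asarray(trajectory, dtype=float)  # (n_frames, n_atoms, 3)
    ref_lengths = np.asarray(ref_lengths, dtype=float)
    i, j = np.asarray(bonds).T
    # Bond lengths for every frame: shape (n_frames, n_bonds).
    lengths = np.linalg.norm(trajectory[:, i] - trajectory[:, j], axis=2)
    rel_dev = np.abs(lengths - ref_lengths) / ref_lengths
    unstable_frames = np.where((rel_dev > max_rel_dev).any(axis=1))[0]
    return int(unstable_frames[0]) if unstable_frames.size else None
```

Multiplying the returned frame index by the trajectory output interval gives the onset time, which can then be compared across models alongside their energy and force MAE.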

Problem: Energy Minimization Fails to Converge

During the initial energy minimization step, the process halts with a warning that forces have not converged to the requested precision, often with a very high Fmax value [39].

- Possible Cause: Bad Atomic Contacts or Incorrect Geometry. The starting structure may have atoms too close together (steric clashes) or have incorrect bond geometries.

- Solution:

- Identify the Clash: The minimization log file will report the atom number with the maximum force (e.g., Maximum force = 2.2208766e+04 on atom 5166). Visually inspect this atom and its surroundings in a molecular viewer to identify the bad contact [39].

- Check for Missing Atoms: Use pdb2gmx with the -ignh flag to let the tool add missing hydrogens with correct nomenclature. For missing heavy atoms, you will need to model them using external software [19].

- Two-Step Minimization: Try a two-step minimization protocol: start with the steepest descent algorithm to resolve severe clashes, then switch to the conjugate gradient method for finer convergence [39].

Problem: "Out of Memory" Error When Running Analysis

- Possible Cause: The system or trajectory is too large for available RAM. The memory cost scales with the number of atoms and trajectory frames [19].

- Solution:

- Reduce Scope: Analyze a subset of atoms or a shorter segment of the trajectory.

- Check Unit Errors: A common mistake is confusing Ångström and nanometers when defining the simulation box. Because 1 nm = 10 Å, this makes each box edge 10 times longer and the system volume 10³ times larger than intended. Verify your initial system size [19].
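The effect of the unit mix-up is easy to quantify. The short sketch below converts box edge lengths to nanometers and compares the intended volume with the volume obtained when Ångström values are read as nanometers; the helper name and the example box size are hypothetical.

```python
ANGSTROM_PER_NM = 10.0


def box_volume_nm3(edge_lengths, unit="nm"):
    """Volume of a rectangular box in nm^3 for edges given in 'nm' or 'angstrom'."""
    if unit not in ("nm", "angstrom"):
        raise ValueError("unit must be 'nm' or 'angstrom'")
    scale = 1.0 if unit == "nm" else 1.0 / ANGSTROM_PER_NM
    a, b, c = (length * scale for length in edge_lengths)
    return a * b * c


intended = box_volume_nm3((50.0, 50.0, 50.0), unit="angstrom")  # 5 nm cube
mistaken = box_volume_nm3((50.0, 50.0, 50.0), unit="nm")        # values read as nm
print(intended, mistaken, mistaken / intended)
```

A thousandfold larger solvated box means roughly a thousandfold more solvent atoms, which is usually enough to exhaust memory during setup or analysis.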

Experimental Protocols for Force Field Validation

This section outlines a standardized workflow for developing and validating force field parameters using high-accuracy quantum mechanical data.

Workflow for Force Field Parameterization and Validation

The following diagram illustrates the iterative cycle of parameterization and validation.

Detailed Methodology

1. Generate Quantum Mechanical Reference Data

This is the foundational step for parameterization. The quality of the QM data directly determines the accuracy of the force field [37] [8].

- Level of Theory: For organic and drug-like molecules, use a density functional theory (DFT) method that accounts for dispersion forces, such as B3LYP-D3(BJ)/def2-TZVP or ωB97M-V/def2-TZVPD [37] [36].

- Key Data to Compute:

- Optimized Geometries and Hessians: Provides reference for bond lengths, angles, and vibrational frequencies. The ByteFF study generated 2.4 million optimized molecular fragment geometries with analytical Hessian matrices [37].

- Torsional Energy Profiles: Scan dihedral angles to fit rotational barriers. The ByteFF dataset included 3.2 million torsion profiles [37].

- Electrostatic Potentials: Used to derive partial atomic charges via fitting methods like RESP (Restrained Electrostatic Potential) [8].

2. Parameterize the Force Field

- Bonded Parameters: Bond and angle force constants can often be derived directly from the QM-calculated Hessian. Dihedral parameters are optimized by minimizing the difference between the classical potential and the QM torsional energy profile [8].

- Non-Bonded Parameters: Atomic partial charges are fitted to reproduce the QM electrostatic potential. Lennard-Jones parameters are typically transferred from existing force fields or optimized against QM interaction energies [37] [40].

- Automated Approaches: Leverage machine learning. For example, the ByteFF project used a graph neural network (GNN) trained on a massive QM dataset to predict all force field parameters simultaneously [37].
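The dihedral fitting step can be illustrated with a linear least-squares fit. The sketch below assumes the periodic form k_n (1 + cos(nφ)) with all phases fixed at zero and adds a constant offset, which makes the problem linear in the force constants. In practice the fitting target is often the QM profile minus the MM energy evaluated without the torsion term, and the profile here is synthetic. The function name and the choice of periodicities are assumptions.

```python
import numpy as np


def fit_torsion(phi, energy, periodicities=(1, 2, 3)):
    """Fit E(phi) = sum_n k_n * (1 + cos(n * phi)) + offset by least squares.

    Returns ({n: k_n}, offset). Angles are in radians.
    """
    phi = np.asarray(phi, dtype=float)
    columns = [1.0 + np.cos(n * phi) for n in periodicities]
    columns.append(np.ones_like(phi))  # constant energy offset
    design = np.stack(columns, axis=1)
    solution, *_ = np.linalg.lstsq(design, np.asarray(energy, dtype=float), rcond=None)
    force_constants = {n: float(k) for n, k in zip(periodicities, solution[:-1])}
    return force_constants, float(solution[-1])


# Synthetic "QM" scan in 15 degree steps, built from known force constants.
phi_scan = np.deg2rad(np.arange(-180, 180, 15))
qm_profile = (2.0 * (1 + np.cos(phi_scan))
              + 0.5 * (1 + np.cos(2 * phi_scan))
              + 1.0 * (1 + np.cos(3 * phi_scan)))
fitted_k, fitted_offset = fit_torsion(phi_scan, qm_profile)
print(fitted_k, fitted_offset)
```

Allowing non-zero phases makes the fit nonlinear. A common workaround is to add sine terms for each periodicity, which keeps the problem linear and is equivalent to fitting an amplitude and a phase.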

3. Validate against Benchmark Properties

Validation must use properties not included in the parameterization process to test transferability and robustness [7].

- Structural Properties: Compare simulated bond lengths, angles, and crystal packing geometries against experimental X-ray crystallography data [7] [8].

- Thermodynamic Properties: Calculate densities, enthalpies of vaporization, and solvation free energies, comparing to experimental measurements [7].

- Dynamical Properties: Validate against experimental data such as lateral diffusion coefficients (from FRAP experiments) and vibrational spectra (IR/Raman) [7] [8].
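As one example of a dynamical check, a lateral diffusion coefficient can be estimated from the linear regime of a two-dimensional mean squared displacement (MSD) curve using the Einstein relation, MSD = 4Dt. The sketch below assumes the MSD has already been computed from a trajectory; the function name, the fit window fractions, and the synthetic data are illustrative assumptions.

```python
import numpy as np

def lateral_diffusion_coefficient(time_ns, msd_nm2, fit_start=0.2, fit_end=0.8):
    """Estimate D (cm^2/s) from a 2D MSD curve via MSD = 4 * D * t + b.

    Only the middle part of the curve is fitted (an assumed window) to avoid
    the short-time ballistic regime and the noisy long-time tail.
    """
    time_ns = np.asarray(time_ns, dtype=float)
    msd_nm2 = np.asarray(msd_nm2, dtype=float)
    n = len(time_ns)
    lo, hi = int(fit_start * n), int(fit_end * n)
    slope, _intercept = np.polyfit(time_ns[lo:hi], msd_nm2[lo:hi], 1)
    d_nm2_per_ns = slope / 4.0
    # Unit conversion: 1 nm^2/ns = 1e-14 cm^2 / 1e-9 s = 1e-5 cm^2/s.
    return d_nm2_per_ns * 1e-5

# Synthetic MSD with D = 0.008 nm^2/ns (hypothetical value).
time = np.linspace(0.0, 100.0, 101)
msd = 4 * 0.008 * time + 0.05
d_lateral = lateral_diffusion_coefficient(time, msd)
```

When comparing against FRAP data, keep in mind that simulated diffusion coefficients depend on box size and on the fit window, so both should be reported with the result.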

The Scientist's Toolkit

| Item | Function in Research | Example in Context |
|---|---|---|
| OMol25 Dataset | Training foundational ML models on chemically diverse systems; fine-tuning for specific applications [36] | Provides a massive, diverse training set to improve model generalization beyond small molecules. |
| MD17 Dataset | Benchmarking and rapid prototyping of new machine learning potential architectures [35] | Serves as a standard testbed to compare the accuracy of a new model against existing ones. |
| QM-22 / xxMD | Testing model performance on broad energy ranges and reactive configurations [35] | Validates whether a model can correctly describe bond breaking and formation. |
| ByteFF Methodology | A data-driven framework for parameterizing molecular mechanics force fields across expansive chemical space [37] | Demonstrates an end-to-end pipeline using a GNN to predict Amber-compatible parameters for drug-like molecules. |
| BLipidFF Framework | A parameterization protocol for complex biological molecules not well-covered by standard force fields [8] | Provides a template for deriving parameters for unique lipids, like those in the Mycobacterium tuberculosis membrane. |
| GROMACS | A versatile software package for performing molecular dynamics simulations [38] [19] | The primary engine for running simulations, from energy minimization to production runs. |
| LigParGen Server | A web-based tool for generating initial atomistic OPLS-AA parameters and topologies for small molecules [40] | Useful for creating starting parameters for a ligand or small molecule prior to refinement. |

Force Field Parameterization Workflow

This diagram details the key steps in the parameterization module, which is often iterative.

Automated Iterative Parameter Optimization Protocols

Frequently Asked Questions (FAQs)

Q1: What is the core concept behind automated iterative parameter optimization? Automated iterative parameter optimization is a computational procedure that systematically refines molecular mechanics force field parameters. It works by optimizing parameters against quantum mechanical (QM) calculations, running dynamics simulations to sample new conformations, computing QM energies and forces for these new structures, adding them to the training dataset, and then repeating the cycle. This iterative process continues until convergence is achieved, often determined using a separate validation set to prevent overfitting [41].

Q2: My optimization process is not converging or keeps falling into local minima. What strategies can help? This is a common challenge. Advanced optimization frameworks now combine multiple algorithms to improve robustness. For instance, one effective approach integrates Simulated Annealing (SA) with Particle Swarm Optimization (PSO). SA helps escape local minima by occasionally accepting worse solutions, while PSO efficiently uses knowledge of the swarm's best-known positions to guide the search. Introducing a "concentrated attention mechanism" (CAM) that focuses optimization effort on representative key data, like optimal structures, can further enhance accuracy and convergence [30].
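To make the idea concrete, here is a generic sketch of how SA-style acceptance can be layered on a particle swarm. It is not the algorithm of [30] and does not include the CAM component. The inertia and acceleration constants, the cooling schedule, and the toy objective are all assumptions chosen for illustration.

```python
import numpy as np

def sa_pso_minimize(objective, bounds, n_particles=20, n_iter=200,
                    t_start=1.0, cooling=0.97, seed=0):
    """PSO in which each particle move passes a Metropolis (SA) acceptance test."""
    rng = np.random.default_rng(seed)
    lower, upper = np.asarray(bounds, dtype=float).T
    dim = lower.size
    pos = rng.uniform(lower, upper, size=(n_particles, dim))
    vel = np.zeros_like(pos)
    cost = np.array([objective(p) for p in pos])
    pbest, pbest_cost = pos.copy(), cost.copy()
    g = int(np.argmin(cost))
    gbest, gbest_cost = pos[g].copy(), float(cost[g])
    temperature = t_start
    for _ in range(n_iter):
        r1, r2 = rng.random((2, n_particles, dim))
        # Standard PSO velocity update toward personal and global bests.
        vel = 0.7 * vel + 1.5 * r1 * (pbest - pos) + 1.5 * r2 * (gbest - pos)
        trial = np.clip(pos + vel, lower, upper)
        trial_cost = np.array([objective(p) for p in trial])
        # SA step: always accept improvements, sometimes accept worse moves.
        delta = trial_cost - cost
        prob = np.exp(-np.maximum(delta, 0.0) / temperature)
        accept = (delta <= 0) | (rng.random(n_particles) < prob)
        pos[accept], cost[accept] = trial[accept], trial_cost[accept]
        improved = cost < pbest_cost
        pbest[improved], pbest_cost[improved] = pos[improved], cost[improved]
        g = int(np.argmin(pbest_cost))
        if pbest_cost[g] < gbest_cost:
            gbest, gbest_cost = pbest[g].copy(), float(pbest_cost[g])
        temperature *= cooling  # geometric cooling schedule (assumed)
    return gbest, gbest_cost

def toy_objective(params):
    """Hypothetical stand-in for the error between force field and QM data."""
    return float(np.sum((params - np.array([1.0, -2.0])) ** 2))

best_params, best_cost = sa_pso_minimize(toy_objective, [(-5, 5), (-5, 5)])
```

In a real parameterization the objective would evaluate the force field against the QM training set, and each evaluation would be far more expensive than this toy function.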

Q3: How can I detect and prevent overfitting during force field parameterization? A reliable approach is to hold out a validation set that is never used for parameter optimization. During the iterative process, the quality of the parameters is monitored against this held-out set. Convergence is declared when the validation error stops improving or starts to increase, which marks the point where overfitting to the training data begins. This strategy circumvents the parameter convergence problems that plagued earlier iterative methods [41].
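The stopping rule can be written in a few lines. The sketch below assumes a list of validation errors recorded once per iteration; the `patience` and `min_improvement` thresholds are illustrative choices, not values taken from [41].

```python
def find_stopping_iteration(validation_errors, patience=2, min_improvement=1e-3):
    """Return (best_iteration, best_error) using a simple early-stopping rule.

    The search stops once `patience` consecutive iterations fail to improve
    the validation error by at least `min_improvement`.
    """
    best_error = float("inf")
    best_iteration = None
    stale = 0
    for iteration, error in enumerate(validation_errors):
        if error < best_error - min_improvement:
            best_error, best_iteration, stale = error, iteration, 0
        else:
            stale += 1
            if stale >= patience:
                break
    return best_iteration, best_error

# Hypothetical validation errors: improvement, plateau, then overfitting.
history = [1.20, 0.80, 0.55, 0.50, 0.52, 0.58, 0.70]
best_iteration, best_error = find_stopping_iteration(history)
```

The parameters saved at `best_iteration` are the ones to keep, since later iterations fit the training data better but generalize worse.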

Q4: The optimization process is computationally very expensive. Are there ways to speed it up? Yes, a promising approach is to use machine learning to create surrogate models. In multiscale force-field parameter optimization, the most time-consuming element is often molecular dynamics (MD) simulations. These can be substituted with a pre-trained neural network surrogate model that instantly predicts the target property (e.g., bulk density) for a given set of parameters. This substitution can reduce the required optimization time by a factor of approximately 20 while retaining similar force field quality [33].
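The surrogate idea can be illustrated with a much simpler model than a neural network. The sketch below swaps in a quadratic least-squares regression as the surrogate, and replaces the MD simulation with a hypothetical analytic function `expensive_density`. The parameter names, ranges, and target value are all assumptions.

```python
import numpy as np

def expensive_density(sigma, epsilon):
    """Hypothetical stand-in for an MD run that returns bulk density (g/cm^3)."""
    return (1.0 - 2.0 * (sigma - 0.35) + 0.5 * (epsilon - 0.6)
            - 3.0 * (sigma - 0.35) ** 2)

def quadratic_features(params):
    """Feature map for a full quadratic model in two parameters."""
    s, e = params[:, 0], params[:, 1]
    return np.column_stack([np.ones_like(s), s, e, s * s, s * e, e * e])

# Train the surrogate once on a modest number of "simulations".
rng = np.random.default_rng(0)
train_params = rng.uniform([0.30, 0.40], [0.40, 0.80], size=(30, 2))
train_density = expensive_density(train_params[:, 0], train_params[:, 1])
weights, *_ = np.linalg.lstsq(quadratic_features(train_params),
                              train_density, rcond=None)

def surrogate_density(params):
    """Near-instant prediction that replaces the expensive evaluation."""
    return quadratic_features(np.atleast_2d(params)) @ weights

# Search the parameter space using only the surrogate.
target = 0.95
sigma_grid, eps_grid = np.meshgrid(np.linspace(0.30, 0.40, 101),
                                   np.linspace(0.40, 0.80, 101))
grid = np.column_stack([sigma_grid.ravel(), eps_grid.ravel()])
errors = (surrogate_density(grid) - target) ** 2
best_params = grid[np.argmin(errors)]
```

A single target property rarely pins down several parameters at once, which is one reason multiscale workflows combine targets from different property domains. Any candidate found through the surrogate should be confirmed with a real simulation.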

Q5: How do I choose the right optimization algorithm for my parameterization task? The choice depends on your specific problem. Here is a comparison of common algorithms:

Table: Comparison of Force Field Parameter Optimization Algorithms

| Algorithm | Key Features | Advantages | Disadvantages |
|---|---|---|---|
| Gradient-Based Methods [42] [43] | Uses sensitivity analysis (gradients) to guide parameter changes. | Fast, directed convergence; efficient at refining toward a nearby minimum; can handle many parameters. | Requires gradient calculation; can be sensitive to statistical noise in simulations. |
| Particle Swarm Optimization (PSO) [30] [44] | A population-based method that mimics social behavior. | Simple implementation; effective parallelization; does not require gradients. | Can be prone to getting stuck in local optima; may require many iterations. |
| Simulated Annealing (SA) [30] | Probabilistically accepts worse solutions to explore search space. | Can escape local minima; does not require a good initial guess. | Convergence speed depends on cooling schedule; can be slow. |
| Genetic Algorithm (GA) [30] | Evolves parameters via selection, crossover, and mutation. | Effective for complex, non-linear landscapes. | Complex implementation; premature convergence is a common issue. |
| Hybrid SA+PSO+CAM [30] | Combines SA and PSO with a focus on key data. | High accuracy and efficiency; avoids local traps; improved convergence. | More complex to implement than individual algorithms. |

Q6: What are the essential targets for validating newly parameterized coarse-grained models for small molecules? For coarse-grained models like those in Martini 3, key validation targets include:

- Experimental Log P Values: The octanol-water partition coefficient is a primary indicator of hydrophobicity and must be reproduced [44].

- Atomistic Density Profiles in Lipid Bilayers: This provides a membrane-specific target, capturing the molecule's orientation and distribution within a biologically relevant environment [44].

- Structural Properties: The model should reproduce the overall shape and volume of the atomistic molecule, which can be assessed via metrics like the Solvent Accessible Surface Area (SASA) [44].
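For the log P target, the partition coefficient follows from the difference between the solvation free energies in water and in octanol: log P = (ΔG_water − ΔG_octanol) / (RT ln 10). The sketch below applies this relation; the function name and the example free energies are hypothetical.

```python
import math

GAS_CONSTANT_KJ = 8.314462618e-3  # kJ/(mol*K)

def log_p_from_solvation(dg_water_kj, dg_octanol_kj, temperature_k=298.15):
    """Octanol-water log P from solvation free energies in kJ/mol.

    A more negative (more favorable) free energy in octanol than in water
    gives a positive log P, i.e. a hydrophobic molecule.
    """
    rt_ln10 = GAS_CONSTANT_KJ * temperature_k * math.log(10)
    return (dg_water_kj - dg_octanol_kj) / rt_ln10

# Hypothetical free energies: -10 kJ/mol in water, -20 kJ/mol in octanol.
log_p = log_p_from_solvation(-10.0, -20.0)
```

At 298.15 K, RT ln 10 is about 5.71 kJ/mol, so each log P unit corresponds to roughly that much transfer free energy.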

Troubleshooting Guide

Table: Common Issues and Solutions in Parameter Optimization

| Problem | Potential Causes | Recommended Solutions |
|---|---|---|
| Non-Physical Simulation Results | Poorly optimized bonded terms (dihedrals, angles); incorrect partial charges; unbalanced non-bonded parameters. | Re-optimize torsion parameters against QM energy scans [8]. Recalculate partial charges using high-level QM (e.g., B3LYP/def2-TZVP) and RESP fitting [8]. |
| Poor Transferability | Overfitting to a narrow training set (e.g., a single conformation). | Implement iterative Boltzmann sampling (e.g., at 400 K) to expand the training dataset with relevant conformations [41]. |
| High Computational Cost | Expensive target property evaluation (e.g., long MD simulations). | Substitute costly MD simulations with a machine learning surrogate model to predict target properties [33]. |
| Parameter Set Fails for Certain Properties | The current functional form of the force field may be inherently limited for reproducing some observables. | Use sensitivity analysis to check whether the observable responds to the parameters being tuned. If no parameter set can fit the data, the functional form itself may need revision [42]. |
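A basic form of the sensitivity analysis mentioned in the last row is a central finite-difference estimate of how an observable responds to each parameter. The sketch below is a generic illustration rather than the method of [42]; the observable is a hypothetical analytic function standing in for a simulated property.

```python
import numpy as np

def finite_difference_sensitivity(observable, params, rel_step=1e-3):
    """Central-difference estimate of d(observable)/d(param) for each parameter."""
    params = np.asarray(params, dtype=float)
    sensitivities = np.zeros_like(params)
    for i in range(params.size):
        step = rel_step * max(abs(params[i]), 1e-8)
        up, down = params.copy(), params.copy()
        up[i] += step
        down[i] -= step
        sensitivities[i] = (observable(up) - observable(down)) / (2 * step)
    return sensitivities

def toy_density(params):
    """Hypothetical observable that depends on sigma but not on epsilon."""
    sigma, _epsilon = params
    return 1.2 - 1.5 * sigma

sens = finite_difference_sensitivity(toy_density, [0.35, 0.60])
```

A sensitivity near zero, as for the second parameter here, means that tuning that parameter cannot fix the observable. With properties computed from MD, statistical noise can easily exceed the finite-difference signal, so step sizes and sampling lengths need care.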

Detailed Experimental Protocols

Protocol 1: Automated Iterative Force Field Fitting

This protocol describes an automated, iterative procedure for fitting single-molecule force fields, designed to achieve superior accuracy compared to general force fields [41].

Initialization:

- Begin with an initial set of force field parameters and a small dataset of QM calculations (energies and forces) for a set of reference molecular conformations.

Parameter Optimization Loop:

- Step 1 - Optimize Parameters: Run a parameter optimization algorithm (e.g., gradient-based, PSO) to minimize the difference between the force field's predictions and the QM data in the current dataset.

- Step 2 - Run Dynamics: Perform molecular dynamics simulations using the newly optimized parameters. The sampling temperature (e.g., 400 K) should be sufficient to explore a broad range of conformations on the potential energy surface.

- Step 3 - Expand QM Dataset: Select new conformations sampled from the MD trajectory. Compute QM energies and forces for these new structures and add them to the training dataset.

- Step 4 - Validate: Assess the quality of the current parameters against a separate, static validation set of QM data.

- Step 5 - Check Convergence: Return to Step 1 unless the error on the validation set has converged or begins to increase, indicating the onset of overfitting.
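The loop can be sketched end to end on a one-dimensional toy problem. Everything below is a stand-in chosen for illustration: a Morse curve plays the role of the QM calculation, a quadratic fit plays the role of the force field, and Metropolis sampling replaces molecular dynamics. It is not the implementation described in [41].

```python
import numpy as np

def qm_energy(r):
    """Hypothetical 'QM' reference: a Morse bond (kcal/mol, angstrom)."""
    return 100.0 * (1.0 - np.exp(-2.0 * (r - 1.1))) ** 2

def sample_conformations(coeffs, r_start, kt, n_steps, rng):
    """Metropolis sampling on the current fitted surface (stand-in for MD)."""
    r, e = r_start, np.polyval(coeffs, r_start)
    samples = []
    for _ in range(n_steps):
        r_new = r + rng.normal(scale=0.05)
        e_new = np.polyval(coeffs, r_new)
        if e_new <= e or rng.random() < np.exp(-(e_new - e) / kt):
            r, e = r_new, e_new
        samples.append(r)
    return np.array(samples)

rng = np.random.default_rng(1)
kt = 0.0019872 * 400.0                    # kcal/mol at 400 K
train_r = np.array([1.05, 1.10, 1.15])    # small initial data set
train_e = qm_energy(train_r)
valid_r = np.linspace(1.0, 1.25, 11)      # separate, static validation set
valid_e = qm_energy(valid_r)

best_rmse, history = np.inf, []
for cycle in range(10):
    # Step 1: fit E = a*r^2 + b*r + c (harmonic bond plus offset).
    coeffs = np.polyfit(train_r, train_e, 2)
    # Step 2: sample conformations with the newly fitted parameters.
    trajectory = sample_conformations(coeffs, 1.1, kt, 500, rng)
    # Step 3: label a subset of new conformations and extend the data set.
    new_r = trajectory[::100]
    train_r = np.concatenate([train_r, new_r])
    train_e = np.concatenate([train_e, qm_energy(new_r)])
    # Step 4: evaluate the current parameters on the validation set.
    residual = np.polyval(coeffs, valid_r) - valid_e
    rmse = float(np.sqrt(np.mean(residual ** 2)))
    history.append(rmse)
    # Step 5: stop when the validation error no longer improves.
    if rmse >= best_rmse - 1e-3:
        break
    best_rmse = rmse
```

The validation set stays fixed throughout, while the training set grows each cycle. That separation is what allows the validation error to signal overfitting.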

The following diagram illustrates this iterative workflow:

Protocol 2: Charge and Torsion Parameterization for Complex Lipids

This protocol outlines a rigorous, QM-based method for developing force field parameters for complex biological molecules, such as mycobacterial membrane lipids [8].

Partial Charge Calculation:

- Segmentation: Divide the large lipid molecule into smaller, manageable segments at chemically appropriate junctions.

- Capping: Cap the segmentation points with appropriate chemical groups (e.g., methyl, acetate) to maintain the local electronic environment.

- Conformational Sampling: Generate multiple (e.g., 25) conformations for each segment from MD trajectories to account for flexibility.

- QM Calculation & RESP Fitting: For each conformation, (a) optimize the geometry at the B3LYP/def2-SVP level, then (b) compute the electrostatic potential (ESP) at the B3LYP/def2-TZVP level and fit RESP charges to it.

- Averaging and Integration: Calculate the average RESP charge for each segment across all conformations. Integrate the segment charges to obtain the final partial charges for the full molecule, removing the capping groups.
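The averaging step can be sketched as follows. The input is assumed to be an array of RESP charges with one row per conformation. The uniform redistribution of the leftover charge after removing the caps is an assumption made here so that the segment carries the intended net charge; the source does not specify how this is handled in [8].

```python
import numpy as np

def average_segment_charges(resp_charges, cap_indices, target_charge=0.0):
    """Average RESP charges over conformations and drop capping atoms.

    resp_charges: array of shape (n_conformations, n_atoms).
    cap_indices:  atom indices belonging to the capping groups.
    """
    charges = np.asarray(resp_charges, dtype=float)
    mean_charges = charges.mean(axis=0)
    keep = np.ones(mean_charges.size, dtype=bool)
    keep[list(cap_indices)] = False
    kept = mean_charges[keep]
    # Assumption: spread the residual charge evenly over the remaining atoms
    # so that the segment sums to the target net charge.
    kept += (target_charge - kept.sum()) / kept.size
    return kept

# Two hypothetical conformations of a four-atom segment; atom 3 is a cap.
resp = [[-0.5, 0.3, 0.1, 0.1],
        [-0.7, 0.5, 0.1, 0.1]]
segment_charges = average_segment_charges(resp, cap_indices=[3])
```

Other schemes are possible, for example placing the residual only on the atoms at the junction. Whichever is used, the net charge of the assembled molecule should be checked.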

Torsion Parameter Optimization:

- Identify Torsions: Select all torsion angles involving heavy atoms for parameterization.

- Subdivision: Further subdivide the molecule into even smaller elements to make high-level QM torsion scans computationally feasible.

- QM Target Data: Perform a relaxed torsion scan for each targeted dihedral angle using QM methods to obtain the energy profile.

- Optimization: Iteratively adjust the torsion parameters (barrier height Vn, periodicity n, and phase γ) in the force field to minimize the difference between the classical and QM energy profiles.
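For reference, the torsion term and the fitting objective can be written explicitly. The form below is the Amber-style cosine series. The weights $w_i$ and the constant offset $c$ in the objective are common choices and are assumptions here, not details taken from [8].

$$
E_{\text{tors}}(\phi) = \sum_{n} \frac{V_n}{2}\left[1 + \cos\left(n\phi - \gamma_n\right)\right]
$$

$$
\chi^2 = \sum_{i} w_i \left[E_{\text{QM}}(\phi_i) - E_{\text{MM}}\left(\phi_i; \{V_n, \gamma_n\}\right) - c\right]^2
$$

Here $\phi_i$ are the scanned dihedral values and $E_{\text{MM}}$ is the full classical energy of the relaxed structure, including the torsion term being fitted.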

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Software and Computational Tools for Parameter Optimization

| Tool / Reagent | Function / Purpose | Application Context |
|---|---|---|
| FFLOW [33] | A modular, multiscale force-field parameter optimization toolkit. | Enables simultaneous optimization of parameters against target data from different property domains (e.g., conformational energies and bulk density). |
| CGCompiler [44] | A Python package for automated parametrization within the Martini coarse-grained force field. | Uses mixed-variable particle swarm optimization to assign bead types and optimize bonded parameters against targets like log P and density profiles. |
| GROW [43] | A gradient-based optimization workflow for the automated development of molecular models. | Implements efficient gradient-based algorithms (e.g., Steepest Descent) for force field parameter refinement. |