The NPT Ensemble Explained: From Theory to Application in Drug Discovery and Biomolecular Simulation


Abstract

This article provides a comprehensive exploration of the isothermal-isobaric (NPT) ensemble, a cornerstone of statistical mechanics and molecular simulation. Tailored for researchers and drug development professionals, it details the ensemble's foundational theory, its practical implementation via modern algorithms and barostats, and strategies for troubleshooting common issues. Highlighting a 2025 case study, the article demonstrates how NPT simulations, when combined with machine learning, are used to predict critical drug properties like aqueous solubility, validating the ensemble's pivotal role in advancing biomedical research.

Understanding the NPT Ensemble: Statistical Mechanics and Thermodynamic Foundations

The isothermal-isobaric ensemble, almost universally referred to as the NPT ensemble, is a foundational concept in statistical mechanics and computational physics. It describes a thermodynamic system in contact with a surrounding environment that maintains constant particle number (N), constant pressure (P), and constant temperature (T) [1] [2]. This ensemble represents a system that can exchange both energy and volume with its surroundings, thereby existing in simultaneous thermal and mechanical equilibrium with a heat bath and a pressure reservoir [3]. The NPT ensemble is exceptionally valuable because it closely mirrors a vast array of real-world experimental conditions, particularly in chemistry and materials science, where reactions and processes often occur at atmospheric pressure and controlled temperature [2] [3].

Within the framework of statistical mechanics, the NPT ensemble connects the microscopic properties of a system to its macroscopic observables through statistical averages [3]. The characteristic thermodynamic potential for this ensemble is the Gibbs free energy (G), which is minimized when the system reaches equilibrium [1] [3]. The ability to study systems under constant N, P, and T conditions makes this ensemble indispensable for investigating phase transitions, material compressibility, and the properties of liquids, polymers, and biological macromolecules [1] [3].

Theoretical Foundations

The Partition Function and Probability Distribution

The partition function serves as the cornerstone of any statistical ensemble, encapsulating all its thermodynamic properties. For the NPT ensemble, the partition function, denoted as Δ(N, P, T), is derived from the canonical (NVT) partition function by introducing volume as an additional variable and incorporating the pressure-volume work term [1] [3].

For a classical system, the NPT partition function can be expressed as:

Δ(N, P, T) = ∫ e^(-βPV) Z(N, V, T) C dV [1]

Here:

- β = 1/(k_B T), where k_B is the Boltzmann constant.

- P is the external pressure.

- V is the volume of the system.

- Z(N, V, T) is the canonical (NVT) partition function.

- C is a constant ensuring the partition function is dimensionless [1].

An alternative formulation sums over all possible microstates i of the system. The probability p_i of finding the system in a specific microstate i with energy E_i and volume V_i is given by the generalized Boltzmann distribution:

p_i = Z^{-1} e^{-β(E_i + PV_i)} [1]

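In this equation, Z is the normalization constant, i.e. the sum of the weights e^{-β(E_i + PV_i)} over all microstates; it should not be confused with the canonical partition function Z(N, V, T) used above. This probability distribution highlights that the likelihood of a microstate depends on both its energy and its volume, weighted by the external pressure.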
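The distribution above can be evaluated directly for a small, discrete set of microstates. The following is a minimal sketch, assuming reduced units (k_B T = 1) and three made-up microstates; the function name `npt_probabilities` and the numerical values are illustrative and do not come from the cited sources.

```python
import math

def npt_probabilities(energies, volumes, pressure, kT):
    """Return p_i = exp(-beta*(E_i + P*V_i)) / Z for a discrete set of microstates."""
    beta = 1.0 / kT
    # Exponent arguments beta*(E_i + P*V_i) for every microstate
    args = [beta * (e + pressure * v) for e, v in zip(energies, volumes)]
    # Shift by the smallest argument so the largest weight is exactly 1 (numerical stability)
    shift = min(args)
    weights = [math.exp(-(a - shift)) for a in args]
    z = sum(weights)  # normalization constant (up to the common shift factor)
    return [w / z for w in weights]

# Three hypothetical microstates in reduced units (k_B T = 1, P = 1)
probs = npt_probabilities(
    energies=[0.0, 0.0, 1.0],
    volumes=[1.0, 2.0, 1.0],
    pressure=1.0,
    kT=1.0,
)
print(probs)
```

In this toy example the second and third microstates have the same value of E_i + PV_i, so they are equally probable: one unit of extra energy costs as much as one unit of extra volume at P = 1.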

Connection to Thermodynamics: Gibbs Free Energy

The bridge between the microscopic description of the NPT ensemble and macroscopic thermodynamics is the Gibbs free energy (G). It is calculated directly from the partition function [1] [3]:

G(N, P, T) = -k_B T ln Δ(N, P, T)

The Gibbs free energy is the relevant thermodynamic potential for processes occurring at constant pressure and temperature. The equilibrium state of a system under these conditions is determined by the minimization of G. This fundamental connection allows for the calculation of all other thermodynamic quantities through derivatives of G or the partition function [3].

Table 1: Key Thermodynamic Relationships in the NPT Ensemble

| Thermodynamic Quantity | Relation to Partition Function or Averages |

|---|---|

| Gibbs Free Energy (G) | G = -k_B T ln Δ(N, P, T) [1] [3] |

| Average Energy (⟨E⟩) | ⟨E⟩ = - (∂ ln Δ / ∂ β)_{N, P} - P⟨V⟩ |

| Average Volume (⟨V⟩) | ⟨V⟩ = - (1/β) (∂ ln Δ / ∂ P)_{N, T} [3] |

| Enthalpy (H) | H = ⟨E⟩ + P⟨V⟩ [3] |

| Entropy (S) | S = - (∂ G / ∂ T)_{N, P} [3] |
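As a worked check of the relations in Table 1, consider an ideal gas. The sketch below assumes C = 1 and introduces Λ for the thermal de Broglie wavelength, a symbol not used in the original text.

```latex
\begin{align}
Z(N,V,T) &= \frac{V^{N}}{N!\,\Lambda^{3N}}, \\
\Delta(N,P,T) &= \int_{0}^{\infty} e^{-\beta P V}\,\frac{V^{N}}{N!\,\Lambda^{3N}}\,dV
              = \frac{1}{\Lambda^{3N}\,(\beta P)^{N+1}}, \\
\langle V \rangle &= -\frac{1}{\beta}\left(\frac{\partial \ln \Delta}{\partial P}\right)_{N,T}
                  = \frac{N+1}{\beta P} \;\approx\; \frac{N k_B T}{P} \quad (N \gg 1).
\end{align}
```

For large N this recovers the ideal gas law, PV = N k_B T, from the partition function alone.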

Fluctuations and Response Functions

In the NPT ensemble, both the energy and the volume are fluctuating quantities. The magnitude of these fluctuations is not arbitrary; it is intrinsically linked to the system's fundamental response functions. The variance of the volume fluctuations, for instance, is proportional to the isothermal compressibility, κ_T, which measures how much a material compresses under pressure at constant temperature [3]:

κ_T = - (1/⟨V⟩) (∂⟨V⟩/∂P)_T = (⟨V²⟩ - ⟨V⟩²) / (k_B T ⟨V⟩)

Similarly, fluctuations of the enthalpy (H = E + PV) are related to the system's constant-pressure heat capacity. These relationships demonstrate how measurable material properties emerge from the statistical nature of molecular motions and configurations.
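The volume-fluctuation formula for κ_T can be applied directly to a series of sampled volumes. The sketch below is illustrative only: instead of a real trajectory it uses synthetic ideal-gas volumes, which follow a Gamma distribution with shape N + 1 and scale k_B T / P and for which κ_T = 1/P exactly. The function name and all parameter values are assumptions.

```python
import random

def isothermal_compressibility(volumes, kT):
    """kappa_T = (<V^2> - <V>^2) / (k_B T <V>) from a series of sampled volumes."""
    n = len(volumes)
    mean_v = sum(volumes) / n
    var_v = sum((v - mean_v) ** 2 for v in volumes) / n
    return var_v / (kT * mean_v)

# Synthetic check in reduced units: ideal-gas volumes ~ Gamma(N + 1, kT / P),
# so the estimator should return approximately 1 / P.
rng = random.Random(0)
N, P, kT = 100, 2.0, 1.0
samples = [rng.gammavariate(N + 1, kT / P) for _ in range(50_000)]
kappa = isothermal_compressibility(samples, kT)
print(kappa)
```

With a real NPT trajectory, the same function would be fed the box volumes from the production phase only, since equilibration drift inflates the variance.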

Practical Implementation in Computer Simulations

Molecular Dynamics with Thermostats and Barostats

In Molecular Dynamics (MD) simulations, the NPT ensemble is generated by applying algorithms that control both temperature and pressure. This requires the combined use of a thermostat and a barostat [4] [5].

Thermostats maintain a constant temperature by adjusting the atomic velocities. Common algorithms include:

- Nosé-Hoover Thermostat: An extended system method that introduces an additional degree of freedom to represent the heat bath, generating a correct canonical distribution [6] [5].

- Berendsen Thermostat: A weak-coupling method that scales velocities to gently drive the temperature towards the target value. It is efficient but does not produce a strictly rigorous ensemble [4].

- Langevin Thermostat: Applies stochastic and friction forces to particles to maintain temperature, often used in conjunction with barostats like Parrinello-Rahman [7].

Barostats maintain constant pressure by dynamically adjusting the simulation cell's volume or shape. Key algorithms include:

- Parrinello-Rahman Barostat: An extended system method that allows for fully flexible simulation cells, enabling the study of solid-state phase transitions where the cell shape may change. It is considered one of the most robust and versatile barostats [7] [5].

- Berendsen Barostat: Scales the cell dimensions to gradually relax the internal pressure to the target pressure. Like its thermostat counterpart, it is efficient for equilibration but does not generate the exact NPT ensemble [5].
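To make the weak-coupling idea concrete, the per-step scaling factors of the Berendsen thermostat and barostat can be sketched as follows. This is a minimal illustration of the standard weak-coupling formulas, not the implementation of any particular MD package; the function names and the example values (a water-like compressibility of 4.5×10⁻⁵ bar⁻¹, a 1 fs step) are assumptions.

```python
import math

def berendsen_velocity_scale(T_inst, T_target, dt, tau_T):
    """Velocity scaling factor: lambda = sqrt(1 + (dt/tau_T) * (T_target/T_inst - 1))."""
    return math.sqrt(1.0 + (dt / tau_T) * (T_target / T_inst - 1.0))

def berendsen_length_scale(P_inst, P_target, dt, tau_P, compressibility):
    """Box-length scaling factor: mu = [1 - kappa*(dt/tau_P)*(P_target - P_inst)]^(1/3)."""
    return (1.0 - compressibility * (dt / tau_P) * (P_target - P_inst)) ** (1.0 / 3.0)

# System slightly too hot: velocities are scaled down a little each step
lam = berendsen_velocity_scale(T_inst=310.0, T_target=300.0, dt=1.0, tau_T=100.0)

# Internal pressure above target: the box is expanded a little each step
mu = berendsen_length_scale(P_inst=101.0, P_target=1.0, dt=1.0, tau_P=1000.0,
                            compressibility=4.5e-5)
print(lam, mu)
```

Because both factors only relax the instantaneous temperature and pressure towards their targets, they suppress the natural fluctuations, which is why these methods are typically reserved for equilibration.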

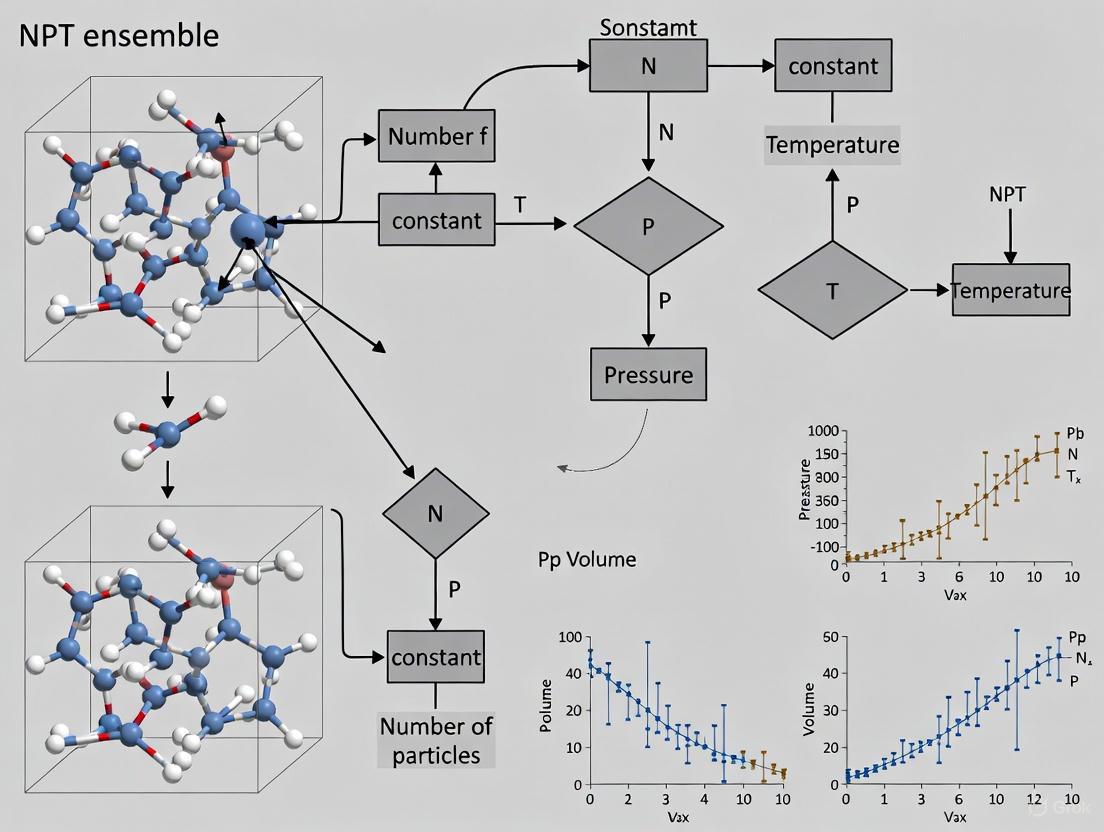

The following diagram illustrates the workflow of an NPT-MD simulation, integrating these core components:

Monte Carlo Methods

In Monte Carlo (MC) simulations, the NPT ensemble is sampled through random moves. In addition to the particle displacement moves used in the NVT ensemble, NPT Monte Carlo incorporates volume change moves [3] [8]. A trial move involves randomly changing the volume of the simulation box and uniformly scaling the coordinates of all particles within it. This trial move is then accepted or rejected based on a criterion that ensures the system samples configurations according to the correct NPT probability distribution, which includes the e^(-β P ΔV) term from the partition function [8]. This method is particularly powerful for studying phase equilibria and calculating free energy differences.
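The volume move described above can be sketched in a few lines. The example assumes a random walk in V with uniformly scaled coordinates, for which the standard Metropolis rule also carries an N ln(V_new/V_old) term from the coordinate scaling. To keep the sketch self-contained it samples an ideal gas (ΔU = 0), whose exact mean volume is (N + 1) k_B T / P; the function names, step size, and parameters are illustrative assumptions.

```python
import math
import random

def accept_volume_move(dU, V_old, V_new, N, pressure, kT, rng):
    """Metropolis test for a trial volume change with uniformly scaled coordinates."""
    if V_new <= 0.0:
        return False  # reject unphysical volumes outright
    log_acc = -(dU + pressure * (V_new - V_old)) / kT + N * math.log(V_new / V_old)
    return log_acc >= 0.0 or rng.random() < math.exp(log_acc)

def sample_ideal_gas_volume(N, pressure, kT, n_steps, dV_max, seed=0):
    """Run volume moves only (dU = 0 for an ideal gas) and return the mean volume."""
    rng = random.Random(seed)
    V = N * kT / pressure  # start near the expected volume
    total = 0.0
    for _ in range(n_steps):
        V_trial = V + dV_max * (2.0 * rng.random() - 1.0)
        if accept_volume_move(0.0, V, V_trial, N, pressure, kT, rng):
            V = V_trial
        total += V  # the current state is counted whether or not the move was accepted
    return total / n_steps

mean_V = sample_ideal_gas_volume(N=50, pressure=1.0, kT=1.0, n_steps=200_000, dV_max=10.0)
print(mean_V)  # should be close to (N + 1) * kT / P = 51
```

For an interacting system, `dU` would be the potential-energy change after rescaling all particle coordinates to the trial box, and volume moves would be interleaved with ordinary particle displacement moves.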

Experimental Protocols and Research Applications

A Representative Workflow: Epoxy Network Mechanics

A study on the elastic properties of elastomeric epoxy networks provides an excellent example of a detailed NPT-MD protocol [6]. The research investigated stoichiometric networks formed from poly(ethylene glycol) diglycidyl ether (PEGDE) and 1,4-diaminobutane (DAB).

Table 2: Key Reagents and Computational Tools for Epoxy Network Simulation

| Research Reagent / Tool | Function / Description |

|---|---|

| Poly(ethylene glycol) diglycidyl ether (PEGDE) | Bifunctional epoxy precursor that forms the network backbone. |

| 1,4-diaminobutane (DAB, Putrescine) | Tetrafunctional cross-linker; amine groups react with epoxy groups. |

| PCFF-IFF Force Field | An interatomic potential providing parameters for polymers, used to calculate energies and forces. |

| LAMMPS (Large-scale Atomic/Molecular Massively Parallel Simulator) | Open-source MD software used to perform the energy minimization, equilibration, and production runs. |

| LUNAR | Open-source Python code used for system configuration, file preparation, and force field application. |

| Nosé-Hoover Thermostat & Barostat | Algorithms used to maintain constant temperature (27°C) and pressure (1 atm) during the NPT equilibration phase. |

Detailed Protocol [6]:

- System Preparation: PEGDE and DAB molecules were packed into a low-density simulation box (0.2 g/cm³).

- Energy Minimization: The conjugate-gradient method was used to relax the system and find a local energy minimum.

- NPT Equilibration: The system was equilibrated in the NPT ensemble at 27°C and 1 atm for 500 ps using a Nosé-Hoover thermostat and barostat with a 1 fs time step.

- Densification: The simulation box volume was gradually reduced over 5 ns to reach the target bulk density of ~1.11 g/cm³.

- Annealing: The system underwent a thermal annealing cycle (heating to 227°C and cooling back to 27°C) to enhance relaxation.

- Cross-linking: Epoxide-amine cross-linking reactions were simulated using the REACTER protocol in LAMMPS until a specific reaction extent (~82% or ~92.5%) was achieved.

- Final Equilibration: The newly formed network was equilibrated again in the NPT ensemble for 100 ns at 27°C and 1 atm.

- Mechanical Characterization: The equilibrated network was subjected to uniaxial tensile and shear deformations at various high strain rates (10⁷ to 10¹⁰ s⁻¹) under the NPT ensemble to calculate Young's modulus and other elastic constants.
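The final step of the protocol, extracting Young's modulus from a tensile run, amounts to fitting the initial slope of the stress-strain curve. The sketch below is a generic least-squares fit, not the analysis used in [6]; the 2% small-strain cutoff, the function name, and the synthetic data are assumptions for illustration.

```python
def youngs_modulus(strains, stresses, max_strain=0.02):
    """Least-squares slope of stress vs. strain over the small-strain region."""
    pts = [(e, s) for e, s in zip(strains, stresses) if e <= max_strain]
    n = len(pts)
    mean_e = sum(e for e, _ in pts) / n
    mean_s = sum(s for _, s in pts) / n
    num = sum((e - mean_e) * (s - mean_s) for e, s in pts)
    den = sum((e - mean_e) ** 2 for e, _ in pts)
    return num / den

# Synthetic, noise-free data (NOT simulation output): linear with slope 5.0
# up to the cutoff, then a softer response at larger strain.
strains = [i * 0.002 for i in range(26)]
stresses = [5.0 * e if e <= 0.02 else 0.1 + 2.0 * (e - 0.02) for e in strains]
E = youngs_modulus(strains, stresses)
print(E)
```

The modulus comes out in whatever units the stress is recorded in, since strain is dimensionless. With real MD data the stress signal is noisy, so the cutoff and any averaging over independent runs should be chosen with the strain rate in mind.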

Case Study: Negative Thermal Expansion in ScF₃

The NPT ensemble is crucial for studying unusual material properties, such as negative thermal expansion (NTE). Ab initio MD simulations in the NPT ensemble were used to investigate the lattice contraction of scandium fluoride (ScF₃) upon heating [9]. The simulations were performed at temperatures from 300 K to 1600 K. A key finding was the sensitivity of the results to the size of the simulation supercell; a supercell larger than 2a×2a×2a was required to accurately capture the dynamic disorder responsible for the NTE effect. The study concluded that the NTE in ScF₃ arises from the interplay between the expansion of Sc-F bonds and the rotation of ScF₆ octahedra, a mechanism that could only be elucidated through the volume-fluctuating NPT ensemble [9].

Calculation of Thermal Expansion Coefficients

A common application of NPT-MD is the calculation of the coefficient of thermal expansion for solids. A typical protocol, as demonstrated for fcc-Cu, involves [5]:

- Running a series of NPT simulations at different target temperatures (e.g., from 200 K to 1000 K in 100 K increments).

- For each temperature, allowing the system to equilibrate fully and then sampling the average lattice constant over a production run.

- Plotting the average lattice constant or volume as a function of temperature.

- Deriving the thermal expansion coefficient from the slope of this curve.

This protocol requires careful parameterization of the barostat, such as selecting an appropriate pfactor (related to the barostat's time constant and the system's bulk modulus) in the Parrinello-Rahman method [5].
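The last step of this protocol, turning averaged lattice constants into a thermal expansion coefficient, can be sketched as a linear fit. The example assumes the linear coefficient is defined as α_L = (1/a) da/dT at a reference temperature; the lattice constants below are synthetic values generated from a known α_L, not simulation results, and the function names are illustrative.

```python
def linear_fit(x, y):
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sum((xi - mx) ** 2 for xi in x)
    return slope, my - slope * mx

def linear_thermal_expansion(temps_K, lattice_consts, T_ref):
    """alpha_L = (1 / a(T_ref)) * da/dT, using a linear fit of a(T)."""
    slope, intercept = linear_fit(temps_K, lattice_consts)
    a_ref = intercept + slope * T_ref
    return slope / a_ref

# Synthetic lattice constants in Å: a(T) = 3.60 * (1 + 1.7e-5 * (T - 300))
temps = list(range(200, 1001, 100))
a_vals = [3.60 * (1.0 + 1.7e-5 * (T - 300)) for T in temps]
alpha = linear_thermal_expansion(temps, a_vals, T_ref=300)
print(alpha)  # recovers the 1.7e-5 per K used to generate the data
```

For a cubic crystal the volumetric coefficient is approximately three times this linear value. Real a(T) data are often curved over a wide temperature range, in which case a low-order polynomial fit or a local slope would be more appropriate than a single straight line.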

Comparison with Other Statistical Ensembles

The NPT ensemble is one of several used in statistical mechanics and simulation, each suited to different physical conditions.

Table 3: Comparison of Common Statistical Ensembles

| Ensemble | Fixed Variables | Fluctuating Quantities | Common Applications |

|---|---|---|---|

| NVE (Microcanonical) | Number (N), Volume (V), Energy (E) | Temperature, Pressure | Fundamental MD; study of isolated systems. |

| NVT (Canonical) | Number (N), Volume (V), Temperature (T) | Energy, Pressure | Simulating systems in a heat bath with fixed boundaries. |

| NPT (Isothermal-Isobaric) | Number (N), Pressure (P), Temperature (T) | Energy, Volume | Real-world lab conditions; studying density, phase transitions. |

| μVT (Grand Canonical) | Chemical Potential (μ), Volume (V), Temperature (T) | Energy, Particle Number (N) | Open systems; adsorption, phase equilibria. |

The NPT ensemble is often preferred over NVT for modeling realistic experimental conditions where pressure, not volume, is controlled. It is distinct from the grand canonical (μVT) ensemble, which allows particle exchange and is better suited for studying open systems [3].

Based on the protocols and studies cited, successful implementation of the NPT ensemble in research relies on a combination of software, algorithms, and physical models.

Table 4: Essential Tools for NPT Ensemble Research

| Tool / Reagent | Category | Specific Examples | Function |

|---|---|---|---|

| Simulation Software | Software | LAMMPS [6], VASP [7], CP2K [9], ASE [5] | Core platforms for performing MD or MC simulations. |

| Thermostat Algorithms | Algorithm | Nosé-Hoover [6] [5], Berendsen [4] [5], Langevin [7] | Controls and stabilizes the simulation temperature. |

| Barostat Algorithms | Algorithm | Parrinello-Rahman [7] [5], Berendsen [5] | Controls and stabilizes the simulation pressure. |

| Interatomic Potentials | Model/Force Field | PCFF-IFF (for polymers) [6], EMT (for metals) [5], DFT (for ab initio) [9] | Describes the interactions between atoms, the core of the energy calculation. |

| System Builder Tools | Software | LUNAR [6], ASE.build [5] | Prepares initial atomic configurations and simulation cells. |

| Analysis Tools | Software | In-house scripts, visualization software (e.g., OVITO, VMD) | Processes simulation trajectories to compute properties like RDF, stress, etc. |

The NPT ensemble provides an indispensable framework for bridging the microscopic world of atoms and molecules with the macroscopic thermodynamic behavior of systems at constant pressure and temperature. Its theoretical foundation in statistical mechanics is robust, centered on the Gibbs free energy and a partition function that accounts for volume fluctuations. From a practical standpoint, the implementation of the NPT ensemble in molecular dynamics and Monte Carlo simulations via thermostats and barostats allows researchers to accurately model realistic conditions. As demonstrated by its applications in predicting material properties like the elasticity of polymer networks and the anomalous negative thermal expansion in ScF₃, the NPT ensemble remains a cornerstone technique for advancing research in drug development, materials science, and chemical physics.

The isothermal-isobaric (NPT) ensemble is a cornerstone of statistical mechanics, providing the essential theoretical framework for understanding systems that exchange both energy and volume with their surroundings at constant temperature (T), pressure (P), and particle number (N). This ensemble mirrors countless real-world experimental conditions, from chemical reactions at atmospheric pressure to the physiological environment of biological systems [3]. Its power lies in connecting the microscopic details of molecular interactions to macroscopic thermodynamic observables, enabling the prediction of material properties, phase behavior, and reaction equilibria.

This technical guide delineates the statistical mechanical basis of the NPT ensemble, beginning with a formal derivation of its partition function. We will establish the connection between this partition function and the Gibbs free energy, the characteristic thermodynamic potential for the ensemble. Furthermore, the document will explore the theoretical underpinnings of fluctuations and response functions, and conclude with a discussion of the practical implementation of the NPT ensemble in modern computational methods, providing detailed protocols for molecular dynamics simulations.

Theoretical Foundation and Derivation

The NPT ensemble describes a system in simultaneous thermal and mechanical equilibrium with its environment. The derivation of its partition function can be approached from multiple angles, each illuminating a different aspect of the ensemble's statistical nature.

The System-and-Bath Derivation

A physically intuitive derivation involves considering a system of interest coupled to a much larger bath that acts as both a heat and a pressure reservoir [1]. The system has a fixed number of particles N, but its volume V can fluctuate. The bath, with volume V₀ and a vastly greater number of particles M (where M - N >> N), is characterized by constant temperature T and pressure P.

The total partition function for the combined system (sys) and bath is given by:

Z_{sys+bath}(N, V, T) = Z_{sys}(N, V, T) × Z_{bath}(M-N, V₀-V, T)

Assuming the bath is an ideal gas, its partition function is proportional to (V₀ - V)^{M-N}. In the thermodynamic limit (V₀ → ∞, M → ∞, with (M-N)/V₀ = ρ constant), the dependence on the system's volume V can be isolated. Using the ideal gas law, the particle density ρ is equivalent to βP, leading to the probability density for the system to be in a microstate with volume V and coordinates s^N being proportional to exp[-β( U(s^N) + PV )] [1].

Integrating over all possible volumes and microstates yields the isothermal-isobaric partition function, Δ(N, P, T):

Δ(N, P, T) = ∫ dV e^{-βPV} Z(N, V, T)    (1)

where Z(N, V, T) is the canonical partition function [1] [3]. The pre-factor C in the integral (C dV) is a matter of convention; it can be set to βP or 1, with the latter being more common in modern treatments [1].
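The limiting step in this derivation, in which the bath factor turns into the Boltzmann-like weight e^{-βPV}, can be written out explicitly. This is a short sketch of the standard argument, using only the symbols defined above:

```latex
\begin{align}
(V_0 - V)^{M-N}
  &= V_0^{\,M-N}\left(1 - \frac{V}{V_0}\right)^{M-N} \\
  &\xrightarrow[\;V_0,\,M \to \infty\;]{}\;
     V_0^{\,M-N}\exp\!\left[-\frac{(M-N)\,V}{V_0}\right]
   = V_0^{\,M-N}\, e^{-\rho V}
   = V_0^{\,M-N}\, e^{-\beta P V}.
\end{align}
```

The factor V₀^{M-N} does not depend on the system's volume V and is absorbed into the normalization, leaving e^{-βPV} as the only trace of the bath.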

The Laplace Transform and Legendre Transform Relationship

Equation (1) reveals that the NPT partition function is the Laplace transform of the canonical partition function with respect to volume [10] [1]. This mathematical structure directly facilitates the Legendre transform that connects the Helmholtz free energy F(N, V, T) = -k_B T ln Z(N, V, T) to the Gibbs free energy G(N, P, T) in the thermodynamic limit.

The saddle-point approximation of the Laplace transform integral shows that in the thermodynamic limit (N → ∞), the primary contribution to the integral comes from the most probable volume, which in this limit coincides with the mean volume ⟨V⟩. This establishes the connection:

G(N, P, T) = -k_B T ln Δ(N, P, T)    (2)

which is the fundamental thermodynamic relationship for the NPT ensemble [1] [11] [3]. The Gibbs free energy G is the characteristic state function for variables N, P, and T, and its minimization determines the equilibrium state of the system.
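The saddle-point step can be sketched explicitly. Here V* denotes the most probable volume (a symbol introduced only for this illustration), and the canonical partition function is written as Z = e^{-βF}:

```latex
\begin{align}
\Delta(N,P,T) &= \int dV\, e^{-\beta\left[F(N,V,T) + PV\right]}
  \;\approx\; e^{-\beta\left[F(N,V^{*},T) + PV^{*}\right]}, \\
\left.\frac{\partial F}{\partial V}\right|_{V^{*}} &= -P
  \quad\Longrightarrow\quad
  G = -k_B T \ln \Delta \;\approx\; F(N,V^{*},T) + PV^{*}.
\end{align}
```

The stationarity condition ∂F/∂V = -P is the familiar thermodynamic definition of pressure, so the dominant term of the Laplace transform reproduces the Legendre transform G = F + PV.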

Table 1: Key Partition Functions and their Corresponding Thermodynamic Potentials

| Ensemble | Partition Function | Thermodynamic Potential | Fundamental Relation |

|---|---|---|---|

| Microcanonical (NVE) | Ω(N, V, E) = e^{S(N,V,E)/k_B} | Entropy (S) | dE = TdS - PdV + μdN |

| Canonical (NVT) | Z(N, V, T) = ∫ dE Ω e^{-βE} | Helmholtz Free Energy (F) | F = E - TS |

| Isothermal-Isobaric (NPT) | Δ(N, P, T) = ∫ dV Z e^{-βPV} | Gibbs Free Energy (G) | G = F + PV = H - TS |

Thermodynamic Quantities and Fluctuations

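The NPT partition function serves as a generating function for all thermodynamic properties. The ensemble averages of energy and volume follow from its derivatives:

⟨E⟩ + P⟨V⟩ = - ( ∂ ln Δ / ∂β )_{P,N}

⟨V⟩ = - k_B T ( ∂ ln Δ / ∂P )_{T,N}

The β-derivative at fixed P yields the average enthalpy, so ⟨E⟩ is obtained by subtracting P⟨V⟩.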

Furthermore, the Gibbs entropy formula for the NPT ensemble is:

S = k_B β ⟨E⟩ + k_B β P ⟨V⟩ + k_B ln Δ(P, N, β)    (3)

which confirms that the NPT distribution is the one of maximum entropy for a given average energy and average volume [11].

A key feature of the NPT ensemble is the presence of natural fluctuations in energy and volume. These fluctuations are not mere noise but are quantitatively linked to the system's response functions. The isothermal compressibility, κ_T, which measures the relative volume change with pressure at constant temperature, is directly related to the variance of volume fluctuations [3]:

κ_T = - (1/⟨V⟩) (∂⟨V⟩/∂P)_T = ( ⟨V²⟩ - ⟨V⟩² ) / ( k_B T ⟨V⟩ )    (4)

Similarly, the constant-pressure heat capacity, C_P, is related to the fluctuations in the enthalpy, H = E + PV [11]:

C_P = ( ∂⟨H⟩/∂T )_P = ⟨(H - ⟨H⟩)²⟩ / ( k_B T² )

Table 2: Fluctuation-Response Relations in the NPT Ensemble

| Fluctuating Quantity | Statistical Expression for Fluctuations | Related Thermodynamic Response Function |

|---|---|---|

| Volume (V) | ⟨(δV)²⟩ = ⟨V²⟩ - ⟨V⟩² | Isothermal Compressibility: κ_T = ⟨(δV)²⟩ / (k_B T ⟨V⟩) |

| Enthalpy (H = E + PV) | ⟨(δH)²⟩ = ⟨H²⟩ - ⟨H⟩² | Constant-Pressure Heat Capacity: C_P = ⟨(δH)²⟩ / (k_B T²) |

Computational Implementation and Protocols

The theoretical framework of the NPT ensemble is realized in computer simulations through Molecular Dynamics (MD) and Monte Carlo (MC) methods, enabling the atomistic study of materials under realistic conditions.

Molecular Dynamics and Barostat Algorithms

In MD, the NPT ensemble is generated by coupling the system to a thermostat and a barostat. The barostat dynamically adjusts the simulation cell's size and/or shape to maintain a constant internal pressure. Several barostat algorithms are commonly used [12] [5]:

- Berendsen Barostat: Scales the volume and coordinates with a first-order rate equation towards the target pressure. It is robust and efficient for equilibration but does not generate a rigorously correct NPT ensemble [12].

- Parrinello-Rahman Barostat: An extended system method that introduces additional dynamical variables for the simulation cell. It allows for fully flexible simulation boxes and correctly samples the NPT ensemble, making it suitable for studying solid-state phase transitions [5].

- Martyna-Tobias-Klein (MTK) Barostat: The Hamiltonian version of the Parrinello-Rahman method, also generating a correct NPT ensemble [12].

- Stochastic Barostats (e.g., Bernetti-Bussi): Stochastic variants that provide correct sampling even for very small unit cells and are often recommended for production simulations [12].

The following diagram illustrates the logical workflow and key components of an NPT molecular dynamics simulation.

Diagram 1: NPT-MD simulation workflow.

Detailed Protocol: NPT-MD Simulation with the Parrinello-Rahman Method

This protocol outlines the steps for performing an NPT-MD simulation to calculate the thermal expansion of a solid, such as a 3x3x3 supercell of fcc-Cu [5].

System Setup:

- Construct the initial crystal structure. For isotropic solids (e.g., cubic crystals), use isotropic pressure control.

- Define the empirical or first-principles calculator (e.g., EMT, PFP, DFT).

Parameter Initialization:

- Time Step: Set to 1.0 fs for systems with light atoms or high frequencies.

- Temperature: Set the target temperature (e.g., 300 K).

- Pressure: Set the external pressure (e.g., 1 bar = 10⁻⁴ GPa).

- Thermostat: Use a Nosé-Hoover thermostat with a time constant (τ_T or ttime) of ~20-100 fs.

- Barostat: Use the Parrinello-Rahman method. The key parameter is pfactor (e.g., 2×10⁶ GPa·fs² for metals), which is related to the barostat time constant and the system's bulk modulus.

Equilibration:

- Initialize atomic velocities from a Maxwell-Boltzmann distribution at the target temperature.

- Run the simulation for a sufficient number of steps (e.g., 20,000 steps for 20 ps) until the potential energy, temperature, and volume (or density) reach a stable plateau.

Production and Analysis:

- Continue the simulation, saving the trajectory at regular intervals.

- Calculate the equilibrium volume ⟨V⟩ as a time average over the production phase.

- To compute the thermal expansion coefficient, repeat the simulation and analysis at different temperatures.
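The protocol above maps naturally onto ASE, whose `ase.md.npt.NPT` class takes `ttime` and `pfactor` arguments. The following is a minimal sketch rather than the exact script from [5]: it assumes the EMT calculator, a Cu lattice constant of 3.61 Å, and the example parameter values listed above. Cell-shape constraints (the `mask` argument) are left at their defaults, so isotropic control would need additional settings. The helper `steps_for` and the function `run_npt_cu` are hypothetical names.

```python
def steps_for(duration_ps, timestep_fs):
    """Number of MD steps needed to cover duration_ps at the given time step."""
    return round(duration_ps * 1000.0 / timestep_fs)

def run_npt_cu(temperature_K=300.0, duration_ps=20.0, timestep_fs=1.0):
    """Run NPT-MD on a 3x3x3 fcc-Cu supercell and return the mean cell volume (Å^3)."""
    # ASE is imported inside the function so the helper above works without it installed.
    from ase import units
    from ase.build import bulk
    from ase.calculators.emt import EMT
    from ase.md.npt import NPT
    from ase.md.velocitydistribution import MaxwellBoltzmannDistribution

    # System setup: cubic fcc-Cu cell repeated 3x3x3, with the EMT calculator
    atoms = bulk("Cu", "fcc", a=3.61, cubic=True).repeat((3, 3, 3))
    atoms.calc = EMT()

    # Initial velocities from a Maxwell-Boltzmann distribution at the target temperature
    MaxwellBoltzmannDistribution(atoms, temperature_K=temperature_K)

    dyn = NPT(
        atoms,
        timestep=timestep_fs * units.fs,
        temperature_K=temperature_K,
        externalstress=1.0 * units.bar,            # target pressure of 1 bar
        ttime=20.0 * units.fs,                     # thermostat time constant
        pfactor=2e6 * units.GPa * units.fs ** 2,   # barostat parameter from the protocol
    )

    # Record the cell volume every 10 steps
    volumes = []
    dyn.attach(lambda: volumes.append(atoms.get_volume()), interval=10)
    dyn.run(steps_for(duration_ps, timestep_fs))

    # Average over the second half only, treating the first half as equilibration
    second_half = volumes[len(volumes) // 2:]
    return sum(second_half) / len(second_half)

if __name__ == "__main__":
    print(run_npt_cu())
```

Calling `run_npt_cu` at several temperatures and passing the resulting mean volumes to a linear fit gives the thermal expansion coefficient described in the last step. Splitting the run in half is a simple stand-in for checking that the energy, temperature, and volume have actually reached a plateau.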

Table 3: Research Reagent Solutions for Computational NPT Studies

| Item / Software | Type / Function | Key Feature |

|---|---|---|

| LAMMPS | Molecular Dynamics Package | Highly versatile, supports many barostats and force fields. |

| GROMACS | Molecular Dynamics Package | Extremely fast for biomolecular systems. |

| QuantumATK | MD & Multi-scale Simulator | Integrates DFT with MD, user-friendly NPT setup. |

| ASE (Atomic Simulation Environment) | Python Library | Provides framework for defining and running NPT simulations with various calculators. |

| Parrinello-Rahman Barostat | Algorithm | Correctly samples NPT ensemble with a flexible cell. |

| Bernetti-Bussi Barostat | Algorithm | Stochastic barostat for correct NPT sampling in small cells. |

| PLUMED | Library | Enhanced sampling and analysis of MD trajectories, works with major MD engines. |

The NPT partition function, Δ(N, P, T), provides the complete statistical mechanical description of a system at constant particle number, pressure, and temperature. Its derivation as a Laplace transform of the canonical partition function formally introduces pressure as a control variable and establishes the Gibbs free energy as the relevant thermodynamic potential. The formalism naturally accounts for volume and enthalpy fluctuations, linking them directly to fundamental material properties like compressibility and heat capacity. The transition of this theoretical framework from abstract mathematics to practical tool, through sophisticated computational algorithms like the Parrinello-Rahman barostat, has made the NPT ensemble indispensable for simulating and predicting the behavior of materials and biomolecules under realistic, constant-pressure conditions.

The isothermal-isobaric (NPT) ensemble is a cornerstone of statistical mechanics, providing the essential framework for modeling systems under constant temperature (T), pressure (P), and number of particles (N)—conditions that mirror most laboratory experiments and natural phenomena. Within this ensemble, the Gibbs free energy (G) emerges as the fundamental thermodynamic potential, serving as a bridge between the microscopic interactions of particles and the macroscopic thermodynamic properties we observe [11]. This whitepaper provides an in-depth technical examination of the NPT ensemble, detailing how Gibbs free energy is derived from, and fundamentally governs, this ensemble. It further explores modern computational methodologies for its calculation, supported by structured data, experimental protocols, and visualization tools tailored for researchers and drug development professionals.

The critical role of Gibbs free energy extends across scientific disciplines. In materials science, it determines phase stability and guides the design of new alloys [13]. In drug discovery, accurate prediction of binding affinities—a process governed by free energy changes—is paramount for optimizing lead compounds [14]. The NPT ensemble is the natural environment for these studies, and mastering its connection to Gibbs free energy is essential for advancing research in these fields.

Theoretical Foundations of the NPT Ensemble

Definition and Partition Function

The NPT ensemble describes a system in contact with both a thermal bath, maintaining constant temperature, and a mechanical piston, maintaining constant pressure. The ensemble is defined by its partition function, which for a classical system is given by Eq. (73) in [11]:

\[ \Delta(P, N, \beta) = \int dV \int d^{6N}\Gamma \, \exp\left\{-\beta\left[H(\mathbf{p}^N, \mathbf{q}^N; V) + PV\right]\right\} \]

Here, \( \beta = 1/(k_B T) \), \( H \) is the system Hamiltonian, \( V \) is the system volume, and the integral is taken over the entire phase space of the system [11]. The partition function \( \Delta \) is the cornerstone from which all thermodynamic properties of the ensemble are derived. For quantum systems, the partition function involves a trace over quantum states and an integral over volume [11]:

\[ \Delta(P, N, \beta) = \int dV \, \text{Tr} \exp\left\{-\beta\left[\hat{H}(V) + PV\right]\right\} \]

where \( \hat{H}(V) \) is the quantum Hamiltonian operator.

The Gibbs Free Energy as the Characteristic State Function

The Gibbs free energy, G, is the thermodynamic characteristic function for the variables N, P, and T. It is directly connected to the NPT partition function by [11] [1]:

\[ G(N, P, T) = -k_B T \ln \Delta(N, P, T) \]

This relationship is of profound importance because it implies that if the partition function \( \Delta \) is known, all other thermodynamic properties can be calculated by taking appropriate derivatives of G. The fundamental thermodynamic relation for G is [11]:

\[ dG = -S \, dT + V \, dP + \sum_l \mu_l \, dN_l \]

From this, the entropy (S), volume (V), and chemical potential (\( \mu_l \)) can be obtained as conjugate variables:

\[ S = -\left(\frac{\partial G}{\partial T}\right)_{P, N}, \quad V = \left(\frac{\partial G}{\partial P}\right)_{T, N}, \quad \mu_l = \left(\frac{\partial G}{\partial N_l}\right)_{T, P, N'} \]

Table 1: Key Thermodynamic Relations in the NPT Ensemble

| Thermodynamic Property | Statistical Mechanical Relation | Reference Equation |

|---|---|---|

| Gibbs Free Energy, G | \( G = -k_B T \ln \Delta(P, N, \beta) \) | [11] Eq. (81) |

| Entropy, S | \( S = k_B T \frac{\partial \ln \Delta}{\partial T} + k_B \ln \Delta \) | [11] Eq. (86) |

| Average Volume, ⟨V⟩ | \( \langle V \rangle = -k_B T \frac{\partial \ln \Delta}{\partial P} \) | [11] Eq. (87) |

| Enthalpy, H | \( H = -\frac{\partial \ln \Delta}{\partial \beta} \) | [11] Eq. (89) |

| Constant-Pressure Heat Capacity, C_P | \( C_P = k_B \beta^2 \langle [(\mathcal{H}+PV) - \langle \mathcal{H}+PV \rangle]^2 \rangle \) | [11] Eq. (91a) |

Fluctuations and Their Physical Significance

In the NPT ensemble, thermodynamic quantities are not fixed but fluctuate around their mean values. These fluctuations are not mere noise; they are directly related to the system's thermodynamic response functions. A key example is the fluctuation in the enthalpy-like quantity \( \mathcal{H} + PV \), which is connected to the constant-pressure heat capacity, \( C_P \) [11]:

\[ \langle [(\mathcal{H}+PV) - \langle \mathcal{H}+PV \rangle]^2 \rangle = \frac{\partial^2 \ln \Delta}{\partial \beta^2} = - \frac{\partial \langle \mathcal{H}+PV \rangle}{\partial \beta} \]

This result demonstrates that the constant-pressure heat capacity, a measurable macroscopic property, is fundamentally a measure of enthalpy fluctuations within the system.

Computational Methodologies for the NPT Ensemble

Simulating the NPT ensemble requires algorithms that faithfully generate system configurations distributed according to the NPT probability density. The following diagram illustrates the logical relationship between the core theoretical concept (Gibbs Free Energy) and the primary computational methods used to sample the NPT ensemble.

Diagram 1: From Gibbs Energy to Computational Methods. This diagram outlines how the foundational concept of Gibbs Free Energy gives rise to the NPT partition function and the probability of microstates, which in turn dictates the design of major Monte Carlo and Molecular Dynamics sampling algorithms.

Monte Carlo Methods

Monte Carlo (MC) methods are a primary tool for sampling the NPT ensemble. They work by generating a Markov chain of system states (particle coordinates and system volume) where each new state is proposed randomly and accepted with a probability that ensures convergence to the correct NPT distribution [15]. The key acceptance criterion is based on the change in the effective energy \( E_i + PV_i \).

A powerful variant is the Gibbs Ensemble Monte Carlo (GEMC), which is particularly efficient for simulating phase equilibria without an interface. In GEMC, two simulation boxes (e.g., representing vapor and liquid phases) are simulated in parallel, allowing for particle exchange and volume changes that equalize pressure and chemical potential between the boxes [16].

Molecular Dynamics Methods

Molecular Dynamics (MD) simulates the system by numerically solving Newton's equations of motion, modified to maintain constant temperature and pressure. This is typically achieved using extended Lagrangian formalisms, which introduce additional dynamical variables to represent the thermostat and barostat.

Recent algorithmic advances focus on improving the stability and sampling efficiency of NPT simulations. For instance, Li & Peng (2025) proposed a second-order Langevin sampler that preserves a positive volume for the simulation box [17]. Their method derives equations of motion by sending the artificial mass of the periodic box to zero, resulting in a system that guarantees the positivity of the volume and allows for the development of high-performance weak numerical schemes.

Table 2: Key Computational Methods for NPT Ensemble Sampling

| Method Type | Specific Algorithm | Key Feature | Typical Application |

|---|---|---|---|

| Monte Carlo | Standard NPT MC | Volume change moves | General purpose NPT simulation [1] [15] |

| Monte Carlo | Gibbs Ensemble MC (GEMC) | Simulates two coexisting phases without an interface | Vapor-liquid equilibrium [16] |

| Molecular Dynamics | Nosé-Hoover / Parrinello-Rahman | Extended system with coupled thermostat/barostat | General purpose NPT MD [15] |

| Molecular Dynamics | 2nd-Order Langevin Sampler | Positivity-preserving volume dynamics | Robust NPT sampling for small systems [17] |

Experimental and Computational Protocols

Protocol 1: Calculating Phase Diagrams for Alloys using MLIPs

Predicting phase stability in complex alloys like high-entropy alloys (HEAs) is a critical application of NPT thermodynamics. The following workflow, as implemented in tools like PhaseForge, integrates machine learning interatomic potentials (MLIPs) for efficient and accurate phase diagram calculation [13].

Diagram 2: Workflow for Alloy Phase Diagram Calculation. This protocol shows the steps for constructing a phase diagram using Machine Learning Interatomic Potentials (MLIPs), from generating representative atomic structures to the final thermodynamic modeling and benchmarking.

Detailed Methodology [13]:

- Structure Generation: Generate Special Quasirandom Structures (SQS) for various phases (e.g., FCC, BCC, HCP) and compositions using a tool like the Alloy Theoretic Automated Toolkit (ATAT). SQS are periodic structures that best mimic the randomness of a true solid solution.

- Energy Calculation: Optimize the SQS structures and calculate their energy at 0 K using a trained MLIP (e.g., Grace, SevenNet, CHGNet). This provides the configurational energy of the ordered phases.

- Liquid Phase Handling: Perform NPT-MD simulations on the liquid phase at different compositions. The free energy of the liquid is often calculated using a "ternary search" method to integrate the thermodynamic relations.

- Thermodynamic Modeling: Fit all the calculated energies (for solid and liquid phases) using CALPHAD (CALculation of PHAse Diagrams) modeling within ATAT. This involves finding polynomial expressions for the Gibbs free energy of each phase as a function of composition and temperature.

- Diagram Construction: Construct the final phase diagram using CALPHAD software (e.g., Pandat), which calculates the phase equilibria by finding the global minimum of the total Gibbs free energy of the system.

- Benchmarking: The resulting phase diagram can be used to benchmark the quality of the MLIP by comparing the Zero-Phase-Fraction (ZPF) lines with those obtained from ab-initio calculations (e.g., VASP) or experimental data. Metrics like True Positive (TP) and False Negative (FN) regions for specific phase fields provide a quantitative accuracy assessment.

Protocol 2: Quantum-Centric Alchemical Free Energy Calculations

In drug discovery, predicting the binding affinity of a ligand to a receptor requires calculating the Gibbs free energy change of binding. Alchemical free energy (AFE) calculations are a powerful class of methods for this purpose. A cutting-edge advancement is the integration of quantum mechanics (QM) to improve accuracy, as outlined in the protocol below [14].

Detailed Methodology [14]:

System Preparation:

- Generate initial coordinates for the ligand and receptor.

- Assign classical force field parameters (e.g., using GAFF) and derive atomic partial charges (e.g., using RESP fitting).

- Solvate the system in a water box (e.g., TIP3P, OPC models) with a minimum padding of 24 Å and apply Periodic Boundary Conditions (PBC).

Classical AFE Calculation:

- Perform a series of MD simulations at different values of a coupling parameter ( \lambda ) (e.g., using Thermodynamic Integration (TI) or Multistate Bennett Acceptance Ratio (MBAR)).

- The Hamiltonian ( U(\lambda) ) is defined as ( U(\lambda) = (1-\lambda)U_0 + \lambda U_1 ), where ( U_0 ) and ( U_1 ) represent the Hamiltonians of the initial and final states, respectively. This transformation "annihilates" or "grows" the ligand in solution or the binding site.
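With the linear coupling above, the thermodynamic integration estimate is the integral over λ of the ensemble average of U_1 - U_0. The following is a minimal sketch of that quadrature, assuming a one-dimensional harmonic toy model (spring constants `k0` and `k1`, both hypothetical) for which the per-window averages are known analytically. In a real calculation each average would come from an MD simulation at that λ value.

```python
import math


def trapezoid(xs, ys):
    """Trapezoidal rule for samples ys taken at points xs."""
    return sum(
        0.5 * (ys[i] + ys[i + 1]) * (xs[i + 1] - xs[i]) for i in range(len(xs) - 1)
    )


def mean_du_dlambda(lam, k0, k1, kt):
    """<U_1 - U_0> at coupling lam for U_j = 0.5 * k_j * x**2 (analytic toy model)."""
    k_lam = (1.0 - lam) * k0 + lam * k1  # effective spring constant at this lambda
    return 0.5 * (k1 - k0) * kt / k_lam  # uses <x^2> = kT / k_lam


k0, k1, kt = 1.0, 4.0, 1.0                   # hypothetical values, reduced units
lambdas = [i / 20 for i in range(21)]        # 21 evenly spaced lambda windows
averages = [mean_du_dlambda(lam, k0, k1, kt) for lam in lambdas]

delta_g_ti = trapezoid(lambdas, averages)
delta_g_exact = 0.5 * kt * math.log(k1 / k0)  # exact result for this toy model
print(f"TI estimate: {delta_g_ti:.4f}, exact: {delta_g_exact:.4f}")
```

The remaining difference between the two numbers is quadrature error, which shrinks as more λ windows are added or as windows are concentrated where the integrand changes fastest.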

Book-Ending Correction via Configuration Interaction (CI):

- This step corrects the classical result with higher-accuracy quantum mechanics.

- For a set of configurations sampled from the classical ( \lambda )-simulations, recalculate the potential energy using a QM or QM/MM method. A novel approach is to use a Full Configuration Interaction (FCI) or a quantum-centric Sample-based Quantum Diagonalization (SQD) workflow, which can provide near-exact solutions for the electronic energy within a given basis set.

- Compute the free energy difference for transforming the system from the MM to the QM/MM description at both end states (( \lambda=0 ) and ( \lambda=1 )).

- Apply this "book-ending" correction to the classically obtained AFE to get the final, more accurate QM-corrected free energy.
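The book-ending arithmetic can be sketched as a thermodynamic cycle. The text does not specify which estimator is used for the MM to QM/MM step, so this sketch assumes the one-sided Zwanzig (exponential averaging) estimator over MM-sampled configurations. All function names and energy values are hypothetical.

```python
import math


def zwanzig_correction(delta_energies, kt):
    """Free energy of switching MM -> QM: -kT ln < exp(-(U_QM - U_MM) / kT) >_MM."""
    xs = [-d / kt for d in delta_energies]
    x_max = max(xs)  # log-sum-exp shift for numerical stability
    log_mean = x_max + math.log(sum(math.exp(x - x_max) for x in xs) / len(xs))
    return -kt * log_mean


def book_ended_free_energy(dg_mm, corr_lambda0, corr_lambda1):
    """Close the cycle: QM(0) -> MM(0) -> MM(1) -> QM(1)."""
    return dg_mm - corr_lambda0 + corr_lambda1


kt = 0.593  # kcal/mol near 298 K
# Hypothetical U_QM - U_MM values (kcal/mol) for configurations at each end state.
diffs_lambda0 = [1.10, 0.95, 1.02, 1.07, 0.99]
diffs_lambda1 = [2.80, 3.05, 2.95, 3.10, 2.90]

dg_classical = -5.00  # hypothetical classical alchemical free energy
dg_corrected = book_ended_free_energy(
    dg_classical,
    zwanzig_correction(diffs_lambda0, kt),
    zwanzig_correction(diffs_lambda1, kt),
)
print(f"QM-corrected free energy: {dg_corrected:.2f} kcal/mol")
```

One-sided exponential averaging converges poorly when the MM and QM energy surfaces overlap weakly, so the spread of the energy differences is worth inspecting before trusting the correction.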

The Scientist's Toolkit: Essential Reagents and Software

Table 3: Key Research Reagent Solutions for NPT-based Free Energy Calculations

| Item Name | Type | Function in NPT Research | Example Use Case |

|---|---|---|---|

| ATAT | Software Toolkit | Generates Special Quasirandom Structures (SQS) and performs cluster expansion for alloy thermodynamics. | Predicting stable phases in Ni-Re binary systems [13]. |

| PhaseForge | Software Program | Integrates MLIPs into a workflow for calculating phase diagrams. | Automated phase stability prediction in high-entropy alloys [13]. |

| Grace, CHGNet, SevenNet | Machine Learning Interatomic Potentials (MLIPs) | Provides quantum-accurate energies and forces at a fraction of the cost of ab-initio methods. | Large-scale NPT-MD for free energy estimation in solids and liquids [13]. |

| AMBER | Molecular Dynamics Package | Performs classical MD and alchemical free energy simulations (TI, MBAR). | Calculating hydration free energies and ligand-receptor binding affinities [14]. |

| QUICK/PySCF/Qiskit | Quantum Chemistry Software | Provides the electronic structure engine for book-ending corrections, using DFT, FCI, or SQD methods. | Incorporating quantum mechanics into alchemical free energy calculations [14]. |

| Berendsen/Andersen Barostat | Algorithm | Controls pressure in MD simulations by dynamically scaling the simulation box size. | Maintaining constant pressure during NPT equilibration and production runs [14]. |

| Langevin Sampler (2nd-Order) | Algorithm | Samples the NPT ensemble with guarantees on volume positivity and improved numerical stability. | Robust NPT sampling, particularly for systems with small particle counts [17]. |

Applications in Research

The combination of the NPT ensemble and Gibbs free energy calculation is driving progress in multiple fields:

Materials Design and Alloy Development: The calculation of phase diagrams is essential for discovering new materials. The PhaseForge workflow [13] has been successfully applied to binary systems like Ni-Re and Cr-Ni, and even complex quinary systems like Co-Cr-Fe-Ni-V, dramatically accelerating the exploration of compositionally complex alloys by leveraging the efficiency of MLIPs within the NPT thermodynamic framework.

Drug Discovery: Accurate prediction of binding free energies is a central goal in computer-aided drug design. The quantum-centric book-ending approach [14] addresses a key limitation of classical simulations—the force field inaccuracy—by providing a path to incorporate highly accurate, but computationally expensive, Configuration Interaction methods. This hybrid quantum-classical workflow, applied to calculate hydration free energies of small molecules, establishes a benchmark for future studies on drug-receptor interactions.

The NPT ensemble provides the essential link between the microscopic world of atoms and molecules and the macroscopic experimental conditions of constant temperature and pressure. Its characteristic thermodynamic potential, the Gibbs free energy, is the fundamental quantity that governs phase stability, chemical reactions, and biomolecular binding. As computational power and algorithms advance, the ability to calculate Gibbs free energy with high accuracy continues to improve. The emergence of machine learning potentials and the nascent integration of quantum computing into free energy workflows are pushing the boundaries of what is possible, enabling researchers to tackle increasingly complex problems in materials science and drug discovery with greater confidence and predictive power.

The isothermal-isobaric (NPT) ensemble represents a cornerstone of statistical mechanics, providing the fundamental theoretical framework for connecting microscopic particle behavior to macroscopic thermodynamic observables under constant temperature and pressure conditions. This ensemble maintains constant number of particles (N), constant pressure (P), and constant temperature (T), mirroring most real-world experimental conditions in materials science, chemistry, and pharmaceutical development [3]. The NPT ensemble describes systems that can exchange both energy and volume with their surroundings, making it indispensable for studying pressure-induced phase transitions, material compressibility, and biomolecular behavior in physiological environments [1] [3].

In pharmaceutical research and drug development, the NPT ensemble enables researchers to simulate molecular behavior under biologically relevant conditions, predicting properties such as protein folding stability, membrane permeability, and ligand-binding affinities. By establishing a rigorous connection between microscopic configurations and macroscopic observables, this ensemble provides the theoretical foundation for molecular simulations that drive rational drug design and formulation development [18].

Theoretical Foundations of the NPT Ensemble

Probability Distribution in the NPT Ensemble

In the NPT ensemble, the probability of observing a specific microstate i with energy E_i and volume V_i follows the generalized Boltzmann distribution [1]:

P(i) = Z⁻¹ exp[-β(E_i + PV_i)]

where β = 1/(k_BT), k_B is Boltzmann's constant, T is absolute temperature, P is pressure, and Z is the partition function that normalizes the probability distribution (written Δ(N,P,T) in the sections that follow). This probability distribution fundamentally connects microscopic states, characterized by their energy and volume, to the macroscopic constraints of constant temperature and pressure through the exponential weighting factor [1] [3].

The exponential term exp[-β(E_i + PV_i)] demonstrates how both energy and volume fluctuations contribute to the probability of observing a given microstate. States with lower overall enthalpy (E + PV) are exponentially favored, creating a balance between the system's internal energy and the mechanical work required to maintain the specified pressure [1].

The NPT Partition Function

The partition function Z serves as the fundamental bridge connecting microscopic states to macroscopic thermodynamics in the NPT ensemble. For a continuous system, the NPT partition function is derived from the canonical partition function by incorporating volume as an additional variable and adding the pressure-volume work term [1] [3]:

Δ(N,P,T) = ∫₀^∞ e^(-βPV) Z(N,V,T) dV

where Z(N,V,T) is the canonical partition function at fixed volume V. The exponential term e^(-βPV) represents the Boltzmann factor associated with the pressure-volume work done by the system against the external pressure P [3]. For discrete systems, the integral is replaced by a summation over all possible volumes accessible to the system.

Table 1: Key Mathematical Expressions in the NPT Ensemble

| Quantity | Mathematical Expression | Physical Significance |

|---|---|---|

| Probability Distribution | P(i) ∝ exp[-β(E_i + PV_i)] | Probability of microstate i with energy E_i and volume V_i |

| Partition Function | Δ(N,P,T) = ∫ e^(-βPV) Z(N,V,T) dV | Normalization factor summing over all states |

| Gibbs Free Energy | G = -k_BT ln Δ(N,P,T) | Thermodynamic potential for NPT systems |

| Volume Fluctuations | ⟨(ΔV)²⟩ = k_BT⟨V⟩κ_T | Relationship between volume variance and compressibility |

Connecting to Macroscopic Observables through Ensemble Averages

Fundamental Thermodynamic Quantities

The NPT ensemble enables calculation of macroscopic observables through statistical averages over microscopic states. The Gibbs free energy emerges as the characteristic thermodynamic potential for the NPT ensemble, connecting directly to the partition function [1] [3]:

G(N,P,T) = -k_BT ln Δ(N,P,T)

This fundamental relationship demonstrates how the partition function, which sums over all possible microscopic states, determines the macroscopic Gibbs free energy. Other thermodynamic observables follow through appropriate derivatives of G or through direct ensemble averaging [3].

The enthalpy H is obtained as an ensemble average of the microscopic energy and volume:

H = ⟨E⟩ + P⟨V⟩

where ⟨E⟩ represents the ensemble average of the system's internal energy and ⟨V⟩ is the average volume. The entropy S can be derived from the temperature derivative of G or through the statistical definition based on the probability distribution [3]:

S = - (∂G/∂T)_N,P

Fluctuation-Response Relationships

The NPT ensemble provides profound connections between fluctuations of microscopic quantities and macroscopic response functions through statistical mechanics. Volume fluctuations directly relate to the isothermal compressibility κ_T [3]:

κ_T = -(1/⟨V⟩) (∂⟨V⟩/∂P)_T = ⟨(ΔV)²⟩ / (k_BT⟨V⟩)

where ⟨(ΔV)²⟩ = ⟨V²⟩ - ⟨V⟩² represents the variance of volume fluctuations in the ensemble. This fluctuation-dissipation relationship demonstrates how the microscopic volume variations determine the system's macroscopic response to pressure changes—a fundamental connection between microscopic dynamics and bulk material properties [3].
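As a minimal sketch of how this relation is used in practice, the function below estimates κ_T from a list of instantaneous volumes saved during an NPT run. The sample volumes are made up for illustration, and the function name and the choice of SI units are assumptions.

```python
from statistics import fmean, pvariance

K_B = 1.380649e-23  # Boltzmann constant in J/K


def isothermal_compressibility(volumes, temperature, k_b=K_B):
    """kappa_T = <(dV)^2> / (k_B * T * <V>) from sampled instantaneous volumes."""
    mean_v = fmean(volumes)
    var_v = pvariance(volumes, mu=mean_v)  # population variance <V^2> - <V>^2
    return var_v / (k_b * temperature * mean_v)


# Hypothetical box volumes in m^3 from an NPT trajectory at 300 K.
sample_volumes = [2.700e-26, 2.706e-26, 2.695e-26, 2.703e-26, 2.698e-26, 2.701e-26]
kappa = isothermal_compressibility(sample_volumes, temperature=300.0)
print(f"kappa_T = {kappa:.3e} 1/Pa")
```

Real trajectories need many decorrelated samples for the variance to converge, and weak-coupling barostats that suppress volume fluctuations will bias this estimate.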

Similarly, fluctuations of the instantaneous enthalpy E + PV relate to the constant-pressure heat capacity:

C_P = ⟨[Δ(E + PV)]²⟩ / (k_BT²)

where ⟨[Δ(E + PV)]²⟩ is the variance of the enthalpy fluctuations in the ensemble. The variance of E alone does not give C_P at constant pressure, because the volume fluctuates together with the energy. These fluctuation-response relationships provide a powerful methodology for computing thermodynamic response functions from molecular simulations that track microscopic fluctuations [1].

Table 2: Key Ensemble Averages and Their Macroscopic Counterparts

| Ensemble Average | Macroscopic Equivalent | Fluctuation-Response Relation |

|---|---|---|

| ⟨E⟩ | Internal Energy | Fundamental thermodynamic observable |

| ⟨V⟩ | Volume | Equilibrium value minimizing G |

| ⟨(ΔV)²⟩ | Isothermal Compressibility | κ_T = ⟨(ΔV)²⟩/(k_BT⟨V⟩) |

| ⟨[Δ(E+PV)]²⟩ | Constant-Pressure Heat Capacity | C_P = ⟨[Δ(E+PV)]²⟩/(k_BT²) |

| ⟨Δ(E+PV) ΔV⟩ | Thermal Expansion Coefficient | α_P = ⟨Δ(E+PV) ΔV⟩/(k_BT²⟨V⟩) |
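The heat capacity and thermal expansion rows can be evaluated from the same trajectory data. The sketch below assumes that both are computed from the instantaneous enthalpy E + PV, consistent with the enthalpy fluctuation formula for C_P, and that energies and volumes were saved at the same time steps. The function name and the reduced-unit sample data are hypothetical.

```python
from statistics import fmean


def cp_and_alpha(energies, volumes, pressure, temperature, k_b):
    """Return (C_P, alpha_P) from paired samples of energy and volume."""
    enthalpies = [e + pressure * v for e, v in zip(energies, volumes)]
    mean_h = fmean(enthalpies)
    mean_v = fmean(volumes)
    n = len(enthalpies)
    # Population variance of the enthalpy and covariance of enthalpy with volume.
    var_h = sum((h - mean_h) ** 2 for h in enthalpies) / n
    cov_hv = sum((h - mean_h) * (v - mean_v) for h, v in zip(enthalpies, volumes)) / n
    c_p = var_h / (k_b * temperature ** 2)
    alpha_p = cov_hv / (k_b * temperature ** 2 * mean_v)
    return c_p, alpha_p


# Hypothetical samples in reduced units (k_B = 1).
c_p, alpha_p = cp_and_alpha(
    energies=[1.0, 2.0, 3.0, 4.0],
    volumes=[1.0, 1.0, 2.0, 2.0],
    pressure=1.0,
    temperature=1.0,
    k_b=1.0,
)
print(f"C_P = {c_p}, alpha_P = {alpha_p}")
```

If E is taken as the total energy, C_P includes the kinetic contribution. Using only the potential energy gives the configurational part, to which the ideal kinetic term must be added separately.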

Computational Implementation and Methodologies

Molecular Dynamics in the NPT Ensemble

Molecular dynamics (MD) simulations implement the NPT ensemble through specialized algorithms that control both temperature and pressure. The equations of motion are modified to include coupling to external thermal and pressure baths, enabling the system to explore the appropriate distribution of microstates [5]. For the Parrinello-Rahman method, a sophisticated barostat implementation, the equations of motion take the form [5]:

where η represents the pressure control degrees of freedom, h = (a,b,c) defines the simulation cell vectors, τT and τP are the temperature and pressure coupling time constants, and ζ is the thermal friction coefficient [5]. These equations generate trajectories that sample from the isothermal-isobaric ensemble, allowing proper calculation of ensemble averages.

Barostat Algorithms and Implementation

Two primary barostat methods are commonly employed in NPT simulations, each with distinct characteristics and applications [5]:

Parrinello-Rahman Barostat: This extended system method allows for full flexibility of the simulation cell, enabling both isotropic and anisotropic volume fluctuations. It introduces additional degrees of freedom for the cell vectors and provides rigorous sampling of the NPT ensemble. The method requires careful parameter selection, particularly the pfactor parameter (τ_P²B, where B is the bulk modulus), which controls the response time of volume fluctuations [5].

Berendsen Barostat: This weak-coupling method provides efficient pressure control by scaling coordinates and box vectors to gradually approach the target pressure. While computationally efficient, it does not generate a rigorously correct NPT ensemble but is useful for equilibration purposes. The method requires specification of the pressure coupling constant τ_P and the system's compressibility [5].

The following diagram illustrates the complete workflow for NPT molecular dynamics simulations:

Experimental Protocols and Research Applications

Calculation of Thermal Expansion Coefficients

A fundamental application of NPT ensemble simulations involves calculating thermal expansion coefficients of materials, which requires precise control of both temperature and pressure. The following protocol outlines this methodology [5]:

System Preparation: Construct the initial crystal structure with periodic boundary conditions. For example, a 3×3×3 supercell of fcc-Cu containing 108 atoms provides adequate statistical sampling while maintaining computational efficiency.

Force Field Selection: Choose an appropriate potential energy function. The ASAP3-EMT force field offers computational efficiency for metals, while more accurate potentials like PFP may be employed for higher precision.

Parameter Specification: Set the target external pressure (typically 1 bar for standard conditions), temperature range (e.g., 200-1000 K in 100 K increments), and coupling constants. The pressure control parameter pfactor typically ranges from 10⁶ to 10⁷ GPa·fs² for metallic systems.

Equilibration Protocol: Conduct initial equilibration using:

- Time step: 1.0 fs

- Temperature coupling constant (τ_T): 20 fs

- Simulation duration: 20 ps (20,000 steps)

- Maxwell-Boltzmann initialization of velocities

Production Simulation: Extend the simulation to collect sufficient statistics for ensemble averages, typically 100-200 ps depending on system size and required precision.

Data Analysis: Calculate the thermal expansion coefficient from the temperature dependence of the average lattice constant: α = (1/a₀)(da/dT)_P, where a₀ is the reference lattice constant and da/dT is the slope of the lattice constant versus temperature plot [5].
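The data analysis step can be sketched as a least-squares fit of lattice constant against temperature. The lattice constants below are synthetic values generated from an assumed linear law, not simulation results. The reference temperature of 300 K and the function names are likewise assumptions for illustration.

```python
def linear_fit(xs, ys):
    """Ordinary least-squares slope and intercept for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def linear_expansion_coefficient(temperatures, lattice_constants, t_ref):
    """alpha = (1/a0) * da/dT, with a0 taken from the fitted line at t_ref."""
    slope, intercept = linear_fit(temperatures, lattice_constants)
    a_ref = slope * t_ref + intercept
    return slope / a_ref


# Synthetic averages: one <a> per NPT run at 200, 300, ..., 1000 K (angstrom).
temperatures = [float(t) for t in range(200, 1001, 100)]
lattice_constants = [3.60 + 6.0e-5 * (t - 300.0) for t in temperatures]

alpha = linear_expansion_coefficient(temperatures, lattice_constants, t_ref=300.0)
print(f"alpha = {alpha:.3e} 1/K")
```

A single straight line assumes that α is roughly constant over the temperature range. If the averaged lattice constants show visible curvature, a local slope or a low-order polynomial fit is the safer choice.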

Research Reagent Solutions and Computational Tools

Table 3: Essential Computational Tools for NPT Ensemble Research

| Tool Category | Specific Implementation | Function and Application |

|---|---|---|

| Force Fields | ASAP3-EMT | Empirical potential for metallic systems enabling rapid simulations |

| Force Fields | PFP (PFPAPIClient) | First-principles potential for higher accuracy across diverse materials |

| Barostat Algorithms | Parrinello-Rahman | Extended system method for rigorous NPT sampling with cell flexibility |

| Barostat Algorithms | Berendsen Barostat | Weak-coupling method for efficient pressure equilibration |

| Thermostat Algorithms | Nosé-Hoover Thermostat | Extended system method for canonical temperature distribution |

| Simulation Packages | ASE (Atomic Simulation Environment) | Python framework for setting up, running and analyzing simulations |

| Analysis Tools | Custom Python Scripts | Calculation of ensemble averages and fluctuation properties |

Advanced Theoretical Considerations

Generalized Boltzmann Distribution and Phase Space Sampling

The generalized Boltzmann distribution provides the fundamental connection between microscopic states and their probabilities in the NPT ensemble [1]:

p(μ,x) = Z⁻¹ exp[-βH(μ) + βJ·x]

where H(μ) represents the system Hamiltonian, J represents the external field conjugate to the extensive variable x, and Z is the normalization factor. For the specific case of the NPT ensemble, J = -P and x = V, yielding the familiar form with the PV term [1].

Proper sampling of phase space requires careful consideration of the ensemble definition. Recent theoretical work has highlighted the necessity of a "shell" molecule to uniquely identify the system volume and avoid redundant counting of configurations in the partition function [19]. This theoretical refinement ensures consistent sampling between Monte Carlo and molecular dynamics implementations of the NPT ensemble.

Ensemble Averages and Ergodicity

The ergodic hypothesis forms the foundation for connecting molecular dynamics trajectories to ensemble averages. This hypothesis assumes that time averages over sufficiently long trajectories equal ensemble averages over the statistical ensemble [3]:

⟨A⟩_ensemble = lim_{τ→∞} (1/τ) ∫₀^τ A(t) dt

where A is any observable quantity and τ is the simulation time. In the NPT ensemble, this principle enables the calculation of macroscopic properties from molecular dynamics simulations by averaging over the generated trajectory [3]. However, ergodicity may be violated in systems with complex energy landscapes, such as glasses or large biomolecules, requiring specialized sampling techniques to ensure proper convergence of ensemble averages.
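In practice the limit is replaced by a mean over a finite trajectory, so an uncertainty estimate is needed alongside the average. Block averaging is one common way to obtain it. It is not described in the cited sources and is shown here only as an illustrative sketch with a made-up time series.

```python
import math
from statistics import fmean, stdev


def block_average(series, n_blocks):
    """Return (mean, standard error) using the means of n_blocks contiguous blocks."""
    block_len = len(series) // n_blocks  # trailing samples that do not fit are dropped
    block_means = [
        fmean(series[i * block_len:(i + 1) * block_len]) for i in range(n_blocks)
    ]
    return fmean(block_means), stdev(block_means) / math.sqrt(n_blocks)


# Hypothetical observable A(t) sampled at equal time intervals.
observable = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
mean_a, err_a = block_average(observable, n_blocks=4)
print(f"<A> = {mean_a:.3f} +/- {err_a:.3f}")
```

The error estimate is only meaningful when each block is longer than the correlation time of the observable. An error that keeps growing as the blocks are lengthened indicates that the trajectory has not yet sampled the ensemble adequately.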

The following diagram illustrates the conceptual relationship between microscopic states and macroscopic observables in the NPT ensemble:

The isothermal-isobaric ensemble provides a powerful theoretical and computational framework for connecting microscopic particle configurations to macroscopic thermodynamic observables under constant temperature and pressure conditions. Through the generalized Boltzmann distribution and the partition function, researchers can establish rigorous relationships between atomic-level interactions and bulk material properties including volume, enthalpy, entropy, and various response functions.

The fluctuation-dissipation theorems intrinsic to the NPT ensemble enable the calculation of important material properties such as compressibility and thermal expansion from the natural fluctuations occurring in molecular simulations. For pharmaceutical researchers and drug development professionals, this framework offers invaluable insights into molecular behavior under physiologically relevant conditions, supporting rational design of drug candidates and formulation strategies with optimized properties.

As molecular simulation methodologies continue to advance, the NPT ensemble remains an essential tool for bridging scales from molecular interactions to macroscopic observables, enabling predictive computational design across diverse scientific and engineering disciplines.

Within statistical mechanics and molecular simulation, the choice of statistical ensemble is foundational, defining the thermodynamic boundary conditions and determining the properties accessible from a simulation. This guide provides an in-depth technical comparison of three central ensembles: the Isothermal-Isobaric (NPT), Canonical (NVT), and Grand Canonical (μVT) ensembles. Framed within the context of a broader thesis on the NPT ensemble, this document details the theoretical underpinnings, practical implementation protocols, and specific applications of each ensemble, serving as a resource for researchers, scientists, and drug development professionals who utilize molecular simulations to study and design materials and molecules.

Theoretical Foundations

Statistical ensembles are collections of microstates that represent a system under specific macroscopic constraints. The probability of each microstate is derived from the fundamental postulates of statistical mechanics, and the connection to thermodynamics is made through the associated thermodynamic potential.

The Canonical (NVT) Ensemble

The Canonical ensemble describes a system with a constant number of particles (N), a constant volume (V), and a constant temperature (T), maintained through contact with a thermal reservoir [20] [21]. Its probability distribution is given by the Boltzmann distribution.

- Classical Probability Density: ( \rho(\vec{p}^N, \vec{q}^N) = Q^{-1}(N, V, T) \exp[-\beta H(\vec{p}^N, \vec{q}^N)] ) where ( \beta = 1/k_B T ), ( H ) is the classical Hamiltonian, and ( Q ) is the canonical partition function [21].

- Quantum Mechanical Density Matrix: ( \hat{\rho} = \exp[ (F - \hat{H}) / k_B T ] ) where ( \hat{H} ) is the Hamiltonian operator [21].

- Partition Function & Thermodynamics: The partition function ( Q(N, V, T) = \sum_{\text{microstates}} e^{-\beta E} ) (or the integral over phase space in classical mechanics) links to the Helmholtz free energy, ( F(N, V, T) = -k_B T \ln Q ) [21]. This is the characteristic potential for NVT, with its exact differential being ( dF = -S dT - \langle p \rangle dV ) [21].

The Isothermal-Isobaric (NPT) Ensemble

The Isothermal-Isobaric ensemble describes a closed system at constant temperature (T) and constant pressure (P), allowing its volume (V) to fluctuate [7] [11]. This is the ensemble of choice for simulating condensed phases under common experimental conditions.

- Classical Probability Density: ( \rho(\vec{p}^N, \vec{q}^N; V) = \Delta^{-1}(P, N, \beta) \exp[-\beta (H(\vec{p}^N, \vec{q}^N; V) + P V) ] ) [11].

- Semi-Classical Density Operator: ( \hat{\rho}(V) = \Delta^{-1}(P, N, \beta) \exp[-\beta (\hat{H}(V) + P V) ] ) where the system's quantum energy states depend parametrically on the volume [11].

- Partition Function & Thermodynamics: The isothermal-isobaric partition function is ( \Delta(P, N, \beta) = \int dV \int d\Gamma \exp[-\beta (H + P V)] ) and connects to the Gibbs free energy, ( G(P, N, T) = -k_B T \ln \Delta ) [11]. The exact differential is ( dG = -S dT + V dP + \sum_l \mu_l dN_l ) [11].

The Grand Canonical (μVT) Ensemble

The Grand Canonical ensemble describes an open system at constant temperature (T) and constant chemical potential (μ), which can exchange both energy and particles with a reservoir [22] [23].

- Probability of a Microstate: For a quantum state with energy ( E ) and particle number ( N ), the probability is ( P = \Xi^{-1} e^{\beta (\mu N - E)} ) or, alternatively, ( P = e^{(\Omega + \mu N - E)/(k_B T)} ) [22].

- Partition Function & Thermodynamics: The grand canonical partition function is ( \Xi(\mu, V, T) = \sum_{N} \sum_{\text{microstates}} e^{\beta (\mu N - E)} ) and links to the Grand Potential, ( \Omega(\mu, V, T) = -k_B T \ln \Xi ) [22] [23]. The exact differential is ( d\Omega = -S dT - \langle N \rangle d\mu - \langle p \rangle dV ) [22]. Pressure is given by ( P V = k_B T \ln \Xi(\mu, V, T) ) [23].

Table 1: Core Characteristics of Statistical Ensembles

| Feature | NVT Ensemble (Canonical) | NPT Ensemble (Isothermal-Isobaric) | μVT Ensemble (Grand Canonical) |

|---|---|---|---|

| Fixed Variables | Number of particles (N), Volume (V), Temperature (T) | Number of particles (N), Pressure (P), Temperature (T) | Chemical Potential (μ), Volume (V), Temperature (T) |

| Fluctuating Quantity | Energy (E) | Energy (E) and Volume (V) | Energy (E) and Number of particles (N) |

| Thermodynamic Potential | Helmholtz Free Energy (F) | Gibbs Free Energy (G) | Grand Potential (Ω) |

| Typical Application | Systems in a fixed container; initial equilibration [20] [24] | Simulating liquids, solids, and phase transitions at experimental conditions [24] [5] | Open systems: adsorption, interfaces, quantum gases [22] |

Comparative Analysis of Ensembles

Thermodynamic and Statistical Equivalence and Differences

In the thermodynamic limit (infinite system size), and away from phase transitions, the different ensembles are expected to yield equivalent results for thermodynamic properties [25] [23]. However, for the finite-sized systems used in simulations, the choice of ensemble matters.

- Fluctuations: Ensembles differ most significantly in the statistical fluctuations of their observables. These fluctuations are not merely noise but are related to thermodynamic derivatives [23] [24]. In the NPT ensemble, volume fluctuations are related to the isothermal compressibility, ( \kappa_T ) [11]. In the Grand Canonical ensemble, particle number fluctuations are also related to ( \kappa_T ): ( \langle (\Delta N)^2 \rangle / \langle N \rangle^2 = (k_B T \kappa_T) / V ) [23]. In the NVT ensemble, energy fluctuations are related to the constant-volume heat capacity, ( C_V ) [21].

- Ensemble Breaking: The equivalence of ensembles can break down, particularly for small systems or near critical points where fluctuations become large [23]. For example, near a liquid-vapor critical point, density fluctuations become macroscopic, making the Grand Canonical ensemble the most natural choice [23].

Practical Implications for Simulation

The choice of ensemble is often dictated by the experimental conditions one wishes to mimic or the specific properties of interest [25] [24].

- NVT Use Cases: This ensemble is suitable for studying systems in a fixed container, such as ion diffusion in a rigid crystal lattice [26], or for gas-phase reactions without a buffer gas [25]. It is also used for equilibration before switching to NVE for dynamical property calculation [25].

- NPT Use Cases: This is the most common ensemble for simulating condensed phases (liquids, solids) as it naturally reproduces experimental conditions of constant temperature and pressure [24] [5]. It allows the system to find its equilibrium density and is essential for studying pressure-dependent phenomena, thermal expansion, and phase transitions [5].

- Grand Canonical Use Cases: This ensemble is vital for studying open systems, such as adsorption in porous materials (e.g., metal-organic frameworks), where the number of adsorbate molecules changes [22] [23]. It is also the natural framework for modeling quantum gases (Bose-Einstein and Fermi-Dirac statistics) in a cavity [22].

Table 2: Fluctuations and Related Thermodynamic Properties

| Ensemble | Fluctuating Quantity | Relation to Thermodynamic Property |

|---|---|---|

| NVT | Energy (E) | ( \langle (\Delta E)^2 \rangle = k_B T^2 C_V ) [21] |

| NPT | Enthalpy (H+PV) | ( \langle [\Delta(H+PV)]^2 \rangle = k_B T^2 C_P ) [11] |

| NPT | Volume (V) | ( \langle (\Delta V)^2 \rangle = k_B T \langle V \rangle \kappa_T ) [11] |

| μVT | Particle Number (N) | ( \langle (\Delta N)^2 \rangle = k_B T (\partial \langle N \rangle / \partial \mu)_{T,V} ) [23] |

Experimental and Computational Protocols

Implementing the NPT Ensemble with the Parrinello-Rahman Method

A widely used method for NPT simulations, especially in solids, is the Parrinello-Rahman algorithm, which allows the simulation cell's shape and size to change [7] [5].

Workflow Overview:

- System Setup: Define the initial atomic coordinates and the initial simulation cell vectors.

- Force Calculation: Use a potential (force field) to compute atomic forces.

- Barostat Coupling: The pressure of the system is calculated from the stress tensor. The Parrinello-Rahman equations of motion use the difference between the internal and target pressure to drive the evolution of the simulation cell matrix ( \mathbf{h} = (\mathbf{a}, \mathbf{b}, \mathbf{c}) ) via ( \dot{\mathbf{h}} = \mathbf{\eta} \mathbf{h} ), where ( \mathbf{\eta} ) is a dynamical variable associated with the barostat [5].

- Thermostat Coupling: A thermostat (e.g., Nosé-Hoover) is applied to control the temperature by coupling to the atomic velocities [5].

- Integration: The coupled equations of motion for atoms, cell, and thermostat variables are integrated over time.

VASP INCAR File Example for NpT (Parrinello-Rahman & Langevin) [7]:
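A minimal sketch of such an input, written as a small Python script that prints INCAR lines for Langevin NpT dynamics on a single-species system. The tag names are standard VASP tags, but every numerical value is an illustrative assumption to be tuned per system, and the `render_incar` helper is hypothetical.

```python
def render_incar(tags):
    """Format a tag dictionary as INCAR lines of the form 'TAG = value'."""
    return "\n".join(f"{name} = {value}" for name, value in tags.items())

# Illustrative values only; tune them for the system at hand.
npt_langevin_tags = {
    "IBRION": 0,               # run molecular dynamics
    "MDALGO": 3,               # Langevin thermostat
    "ISIF": 3,                 # let the cell volume and shape change
    "TEBEG": 300,              # target temperature (K)
    "NSW": 10000,              # number of MD steps
    "POTIM": 1.0,              # time step (fs)
    "LANGEVIN_GAMMA": 10.0,    # atomic friction (1/ps), one value per species
    "LANGEVIN_GAMMA_L": 10.0,  # lattice friction (1/ps)
    "PMASS": 1000,             # fictitious lattice mass (amu)
}

incar_text = render_incar(npt_langevin_tags)
print(incar_text)
```

The printed text can be saved as the MD-related part of an INCAR file; electronic-structure settings are deliberately left out of this sketch.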

Key Parameters:

- PMASS: The fictitious mass of the lattice degrees of freedom. A larger value leads to slower, more damped cell oscillations [7].

- LANGEVIN_GAMMA_L: The friction coefficient for the lattice, providing damping to the cell motion [7].

- pfactor (ASE): In the ASE implementation, this is a key parameter, defined as ( \tau_P^2 B ), where ( \tau_P ) is the pressure control time constant and ( B ) is the bulk modulus. A value on the order of ( 10^6 ) to ( 10^7 ) GPa·fs² is a typical starting point for metals [5].
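As a worked example of the pfactor definition, the snippet below evaluates ( \tau_P^2 B ) for copper. The bulk modulus of roughly 140 GPa and the 120 fs pressure time constant are assumed, illustrative inputs, chosen because they land in the range quoted above.

```python
def pfactor_gpa_fs2(tau_p_fs, bulk_modulus_gpa):
    """Return tau_P^2 * B in GPa*fs^2."""
    return tau_p_fs ** 2 * bulk_modulus_gpa

BULK_MODULUS_CU_GPA = 140.0   # assumed approximate value for copper
TAU_P_FS = 120.0              # assumed pressure-coupling time constant

pfactor = pfactor_gpa_fs2(TAU_P_FS, BULK_MODULUS_CU_GPA)
print(f"pfactor = {pfactor:.3e} GPa*fs^2")
```

When such a value is handed to ASE it typically needs converting to ASE's internal units, for example by multiplying by `ase.units.GPa * ase.units.fs**2`; check the documentation of the ASE version in use before relying on this.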

Implementing the NVT Ensemble

The NVT ensemble is implemented by applying a thermostat to control the kinetic energy of the system. Multiple thermostats are available, each with different characteristics.

Thermostat Comparison [20] [26]:

- Andersen Thermostat (MDALGO=1): Stochastic. Randomly assigns new velocities from a Maxwell-Boltzmann distribution. Good for equilibration but disrupts dynamics [20].

- Nosé-Hoover Thermostat (MDALGO=2): Deterministic. Introduces an extended Lagrangian with a fictitious variable that acts as a heat reservoir. Generally produces a correct canonical ensemble but can have ergodicity issues for small or stiff systems [20] [26].

- Langevin Thermostat (MDALGO=3): Stochastic. Applies a friction force and a random force to atoms. Good for equilibration and useful for systems with limited long-range order, but the stochastic nature means exact trajectories are not reproducible [20] [26].
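To make the stochastic option concrete, here is a minimal sketch of Andersen-style velocity resampling in one dimension with reduced units (k_B = m = 1). It leaves out forces and position updates entirely, so it only illustrates how random collisions drive the velocity distribution toward the target temperature. The function name, collision probability, and particle count are illustrative assumptions.

```python
import math
import random

def andersen_step(velocities, temperature, collision_prob, rng, mass=1.0, k_b=1.0):
    """Resample each velocity from Maxwell-Boltzmann with probability collision_prob."""
    sigma = math.sqrt(k_b * temperature / mass)
    return [rng.gauss(0.0, sigma) if rng.random() < collision_prob else v
            for v in velocities]

rng = random.Random(42)
velocities = [0.0] * 5000          # start "cold": zero kinetic energy
target_temperature = 2.0           # reduced units

for _ in range(200):
    velocities = andersen_step(velocities, target_temperature, 0.1, rng)

# In 1D, equipartition gives m <v^2> = k_B T.
kinetic_temperature = sum(v * v for v in velocities) / len(velocities)
print(f"kinetic temperature ~ {kinetic_temperature:.3f}")
```

Because each collision discards the particle's previous velocity, the same mechanism that thermalizes the system also interrupts its natural dynamics, which is the trade-off noted in the list above.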

VASP INCAR File Example for NVT (Nosé-Hoover) [20]:
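A minimal sketch of such an input, again written as a short Python script that prints the INCAR lines. The tags are standard VASP tags for Nosé-Hoover NVT dynamics; the numerical values are illustrative assumptions rather than recommended settings.

```python
# Illustrative values only; tune them for the system at hand.
nvt_nose_hoover_tags = {
    "IBRION": 0,    # run molecular dynamics
    "MDALGO": 2,    # Nosé-Hoover thermostat
    "ISIF": 2,      # compute the stress tensor but keep the cell fixed
    "TEBEG": 300,   # target temperature (K)
    "NSW": 10000,   # number of MD steps
    "POTIM": 1.0,   # time step (fs)
    "SMASS": 1.0,   # Nosé mass parameter controlling the thermostat coupling
}

incar_text = "\n".join(f"{tag} = {value}" for tag, value in nvt_nose_hoover_tags.items())
print(incar_text)
```

Compared with an NpT run, the key differences are the fixed cell (ISIF = 2) and the thermostat choice (MDALGO = 2 with SMASS).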

The Scientist's Toolkit: Essential Reagents and Parameters

Table 3: Key Parameters and "Reagents" for Ensemble Simulations

| Item Name | Function / Role | Typical Units | Considerations |
|---|---|---|---|
| Thermostat Time Constant (τ_T) | Controls the coupling strength to the heat bath. A large value gives weak coupling. | femtoseconds (fs) | Too small can cause temperature overshoot; too large leads to slow equilibration [5]. |
| Barostat Mass / pfactor | (Parrinello-Rahman) The fictitious mass for the lattice degrees of freedom. | amu or GPa·fs² | A higher mass leads to slower box oscillations. Must be tuned for stability [7] [5]. |
| Isothermal Compressibility (β_T) | (Berendsen Barostat) The assumed volume response of the system to pressure changes, used to scale the coupling. | 1/GPa | Together with the pressure time constant it sets the effective coupling rate, so a poor estimate changes how quickly the pressure relaxes [5]. The Berendsen barostat does not reproduce correct NPT volume fluctuations in any case. |
| Friction Coefficient (γ) | (Langevin Thermostat/Barostat) Determines the damping of atomic/lattice velocities. | 1/ps | A high friction damps quickly but can obscure natural dynamics [7]. |
| Chemical Potential (μ) | (Grand Canonical) The driving force for particle exchange with the reservoir. | eV, kJ/mol | The key controlled variable in μVT simulations, defining the reservoir [22] [23]. |

Applications and Case Studies

Case Study: Thermal Expansion Coefficient using NPT-MD

A classic application of the NPT ensemble is calculating the coefficient of thermal expansion for a solid, which requires the volume to change with temperature at constant pressure.

Protocol [5]:

- System Preparation: Create an initial crystal structure (e.g., 3x3x3 supercell of fcc-Cu with 108 atoms).

- NPT Simulation Setup: Use a script (e.g., in ASE) to set up an NPT dynamics run with the Parrinello-Rahman method. Key parameters include a target pressure (e.g., 1 bar), a thermostat time constant (ttime, e.g., 20 fs), and a barostat parameter (pfactor, e.g., 2×10⁶ GPa·fs² for Cu).

- Equilibration & Production: For each target temperature in a range (e.g., 200 K to 1000 K in 100 K steps), run the NPT simulation. The first ~5-10 ps is often discarded as equilibration.

- Data Analysis: The instantaneous cell volume and lattice constant are recorded during the production phase. The thermal expansion coefficient ( \alpha ) is calculated from the slope of the average lattice constant vs. temperature curve.
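The data analysis step can be sketched as a least-squares line through the average lattice constants, followed by ( \alpha = (1/a_0)\, da/dT ). The lattice constants below are synthetic numbers generated from an assumed ( \alpha ) of 1.7×10⁻⁵ K⁻¹ purely to exercise the code; they are not simulation results, and the reference lattice constant and helper name are likewise assumptions.

```python
def linear_fit(xs, ys):
    """Ordinary least-squares slope and intercept for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

temperatures = list(range(200, 1001, 100))      # K
ASSUMED_ALPHA = 1.7e-5                          # 1/K, synthetic input
A_REF, T_REF = 3.61, 300.0                      # angstrom, K (assumed)
lattice_constants = [A_REF * (1.0 + ASSUMED_ALPHA * (t - T_REF)) for t in temperatures]

slope, intercept = linear_fit(temperatures, lattice_constants)
a_at_ref = slope * T_REF + intercept
alpha = slope / a_at_ref   # linear thermal expansion coefficient at T_REF
print(f"alpha ~ {alpha:.2e} 1/K")
```

With real NPT averages the curve is rarely perfectly linear over such a wide temperature range, so a local fit or a low-order polynomial may be more appropriate than a single straight line.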

Case Study: Adsorption in a Porous Material using Grand Canonical Monte Carlo (GCMC)

While molecular dynamics can be adapted, the Grand Canonical ensemble is most naturally sampled using Grand Canonical Monte Carlo (GCMC), which involves particle insertion/deletion moves.

Protocol:

- Framework Definition: A rigid model of the porous material (e.g., a zeolite or MOF) is created, and its energy landscape is characterized.

- Reservoir Definition: The chemical potential ( \mu ) of the adsorbate gas is set, often related to the bulk gas pressure via an equation of state.

- Monte Carlo Moves: The simulation proceeds by randomly attempting three types of moves: displacing a molecule, inserting a molecule, and deleting a molecule. The acceptance of these moves follows criteria based on the change in the energy ( \Delta E ) and particle number ( \Delta N ), ensuring detailed balance for the Grand Canonical distribution.

- Analysis: The average number of adsorbed molecules ( \langle N \rangle ) is computed, yielding the adsorption isotherm. The fluctuations in ( N ) can provide information about adsorption thermodynamics.
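The insertion and deletion moves above can be sketched for the simplest possible case, an ideal gas, where every energy change is zero and the acceptance rules reduce to ratios involving zV, with ( z = e^{\beta\mu}/\Lambda^3 ) the activity. This is a toy model under that stated assumption, not a framework-adsorbate simulation, and displacement moves are omitted because they are always accepted without interactions. For this case the exact result is ( \langle N \rangle = zV ) with Poisson fluctuations, which gives a simple check.

```python
import random

def gcmc_ideal_gas(z_times_v, n_steps, rng):
    """Sample particle numbers of an ideal gas with insertion/deletion moves."""
    n = 0
    samples = []
    for _ in range(n_steps):
        if rng.random() < 0.5:
            # Insertion: accept with min(1, zV / (N + 1)); exp(-beta*dU) = 1 here.
            if rng.random() < min(1.0, z_times_v / (n + 1)):
                n += 1
        elif n > 0:
            # Deletion: accept with min(1, N / zV); rejected outright when N = 0.
            if rng.random() < min(1.0, n / z_times_v):
                n -= 1
        samples.append(n)
    return samples

rng = random.Random(7)
samples = gcmc_ideal_gas(z_times_v=20.0, n_steps=200_000, rng=rng)
production = samples[20_000:]          # discard equilibration
mean_n = sum(production) / len(production)
var_n = sum((n - mean_n) ** 2 for n in production) / len(production)
print(f"<N> ~ {mean_n:.2f}, var(N) ~ {var_n:.2f}")
```

For an interacting adsorbate, each acceptance ratio would additionally carry the Boltzmann factor of the energy change for that specific trial move, and repeating the run over a range of chemical potentials would trace out the isotherm.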

The NPT, NVT, and Grand Canonical ensembles are complementary tools in the computational scientist's arsenal. The NPT ensemble is indispensable for modeling realistic conditions in materials science and drug development, where pressure and temperature are controlled. The NVT ensemble provides a foundation for studying systems at fixed volume or for pre-equilibration. The Grand Canonical ensemble is the gateway to modeling open systems and adsorption phenomena. A deep understanding of their theoretical basis, practical implementation, and respective limitations, as detailed in this guide, is crucial for the rigorous application of molecular simulation to research and development challenges.

The isothermal-isobaric (NPT) ensemble stands as a cornerstone of molecular simulation, providing an essential bridge between theoretical models and empirical science. Unlike the canonical (NVT) and microcanonical (NVE) ensembles, which hold the volume fixed (and, for NVE, the total energy as well), the NPT ensemble maintains constant pressure and temperature while allowing the volume to fluctuate. This fundamental characteristic enables researchers to mimic the conditions prevalent in most laboratory experiments and biological environments, where systems typically experience constant atmospheric pressure rather than fixed volume constraints. As noted in statistical mechanics literature, the NPT ensemble represents a thermodynamic system in both thermal and mechanical equilibrium with its surroundings, allowing fluctuations in both energy and volume while keeping particle number, pressure, and temperature constant [3].

The critical importance of the NPT ensemble stems from its unique capacity to model how molecular systems naturally behave when exposed to environmental pressures. Where the microcanonical ensemble describes isolated systems and the canonical ensemble describes systems in thermal contact with a heat bath, the isothermal-isobaric ensemble describes systems in contact with both a heat bath and a pressure reservoir [1]. This dual coupling makes it indispensable for investigating phenomena ranging from protein folding to material phase transitions, as it faithfully replicates the thermodynamic conditions under which these processes naturally occur.

Theoretical Foundations of the NPT Ensemble

Statistical Mechanical Basis

The NPT ensemble derives from rigorous statistical mechanical principles, with its partition function serving as the foundational element from which all thermodynamic properties emerge. For a system of N particles at constant pressure P and temperature T, the partition function is expressed as:

[ \Delta(N,P,T) = \int_{0}^{\infty} e^{-\beta PV} Q(N,V,T) dV ]

where (\beta = 1/(k_B T)), (k_B) is Boltzmann's constant, and (Q(N,V,T)) represents the canonical partition function [1] [3]. This formulation integrates over all possible volumes, with each volume weighted by the Boltzmann factor (e^{-\beta PV}) that accounts for the pressure-volume work done by the system against the external pressure.

The probability density of observing a microstate (\Gamma) with energy (H(\Gamma)) at volume (V) within this ensemble follows the generalized Boltzmann distribution:

[ P(\Gamma) \propto e^{-\beta(H(\Gamma) + PV)} ]

where (H(\Gamma)) represents the system Hamiltonian [1]. This distribution shows that the NPT ensemble weights each microstate by both its energy and its volume, so that high-energy and large-volume configurations are exponentially suppressed.
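A worked example helps make the volume integral concrete. For an ideal gas, (Q(N,V,T) \propto V^N), so the volume distribution is (P(V) \propto V^N e^{-\beta PV}) and the mean volume is ((N+1)/(\beta P)). The sketch below checks this by numerical quadrature in reduced units; the ideal-gas assumption, the parameter values, and the midpoint rule are illustrative choices.

```python
import math

def mean_volume_ideal_gas(n_particles, beta, pressure, v_max, n_grid=200_000):
    """Midpoint-rule estimate of <V> for P(V) proportional to V^N exp(-beta*P*V)."""
    dv = v_max / n_grid
    norm = 0.0
    first_moment = 0.0
    for i in range(n_grid):
        v = (i + 0.5) * dv
        # Build the weight from its logarithm to keep V**N numerically tame.
        log_w = n_particles * math.log(v) - beta * pressure * v
        w = math.exp(log_w)
        norm += w
        first_moment += v * w
    return first_moment / norm

N, BETA, P = 50, 1.0, 2.0          # reduced units (assumed values)
numeric = mean_volume_ideal_gas(N, BETA, P, v_max=200.0)
exact = (N + 1) / (BETA * P)
print(f"numeric <V> = {numeric:.4f}, exact = {exact:.4f}")
```

For large N the result approaches the familiar ideal-gas law, since ((N+1)/(\beta P) \approx N k_B T / P).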

Connection to Thermodynamics

The characteristic thermodynamic potential for the NPT ensemble is the Gibbs free energy, which can be directly derived from the partition function:

[ G(N,P,T) = -k_B T \ln \Delta(N,P,T) ]

This fundamental relationship connects microscopic configurations to macroscopic thermodynamics. At constant N, P, and T, the decrease in G gives the maximum non-expansion work obtainable from the system in a reversible process [1] [3]. The Gibbs free energy further relates to other thermodynamic quantities through:

[ G = H - TS = U + PV - TS ]

where H represents enthalpy, S entropy, and U internal energy. The minimization of G determines the equilibrium state of any system under constant pressure conditions, making it particularly valuable for studying phase equilibria and chemical reactions.

Table 1: Key Thermodynamic Relationships in the NPT Ensemble

| Thermodynamic Quantity | Mathematical Expression | Physical Significance |
|---|---|---|
| Gibbs Free Energy | ( G = -k_B T \ln \Delta(N,P,T) ) | Characteristic state function for NPT |
| Enthalpy | ( H = \langle E \rangle + P\langle V \rangle ) | System's heat content at constant pressure |
| Entropy | ( S = -(\partial G/\partial T)_{N,P} ) | Degree of disorder |
| Average Volume | ( \langle V \rangle = (\partial G/\partial P)_{N,T} ) | Equilibrium volume at pressure P |
| Volume Fluctuations | ( \langle (\delta V)^2 \rangle = k_B T \langle V \rangle \kappa_T ) | Related to isothermal compressibility |

The "Shell Particle" Formulation

Traditional formulations of the NPT ensemble faced theoretical challenges related to redundant configuration counting when volume is treated as a continuous variable. This problem was resolved by introducing a "shell particle" approach, which uniquely defines the system volume by requiring that at least one particle resides in the volume element dV encapsulating V [27]. This formulation eliminates the ambiguous volume scale factor ((V_0)) that previously appeared in the partition function and provides a more physically intuitive picture of how volume fluctuations occur in real systems.

In molecular dynamics implementations, this approach replaces the arbitrary "piston mass" parameter of earlier methods with a shell particle of known mass, allowing the system itself to control the timescale of volume fluctuations rather than an artificially imposed parameter [27]. This advancement provides not only a stronger theoretical foundation but also more physically realistic simulation dynamics.

Computational Implementation: Barostats and Algorithms

Barostat Methodologies

Implementing the NPT ensemble in molecular dynamics simulations requires specialized algorithms known as barostats, which control pressure by adjusting the simulation cell volume. Several barostat methods have been developed, each with distinct characteristics and applications:

Parrinello-Rahman Barostat: This extended system method allows for both isotropic and anisotropic pressure control by treating the simulation cell vectors as dynamical variables [7] [5]. The cell matrix (\mathbf{h} = (\mathbf{a}, \mathbf{b}, \mathbf{c})) evolves under its own equation of motion alongside the atomic coordinates, which provides flexibility in simulating crystalline materials under stress.

Berendsen Barostat: This weak coupling method adjusts the volume gradually to approach the target pressure through a first-order kinetic equation [5]. While computationally efficient, it may not generate the correct NPT ensemble distribution and is primarily used for equilibration purposes.

Stochastic Barostats: Methods like the Langevin piston introduce stochastic elements to control oscillations in volume fluctuations [28]. The COMPEL algorithm, for instance, combines molecular pressure concepts with Ewald summation for long-range forces and stochastic relaxation to achieve highly efficient and accurate sampling [28].
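The Berendsen weak-coupling update described above can be sketched with a toy equation of state. Each step scales the volume by ( \mu^3 = 1 - (\beta_T \Delta t / \tau_P)(P_0 - P) ), where ( \beta_T ) is the isothermal compressibility and ( \tau_P ) the pressure time constant. Here the pressure comes from an assumed ideal-gas-like relation P = C/V rather than from a virial, so this illustrates only the first-order relaxation toward the target pressure, not a real MD barostat. All names and values are illustrative.

```python
def berendsen_volume_scale(pressure, target_pressure, compressibility, dt, tau_p):
    """Return the volume scaling factor mu^3 for one Berendsen barostat step."""
    return 1.0 - (compressibility * dt / tau_p) * (target_pressure - pressure)

C = 100.0                 # toy equation of state: P = C / V (assumed)
TARGET_P = 1.0
COMPRESSIBILITY = 1.0     # equals 1/P for this toy model at the target pressure
DT, TAU_P = 1.0, 100.0

volume = 50.0             # start compressed: P = 2.0
for _ in range(2000):
    pressure = C / volume
    volume *= berendsen_volume_scale(pressure, TARGET_P, COMPRESSIBILITY, DT, TAU_P)

final_pressure = C / volume
print(f"final pressure = {final_pressure:.6f}, final volume = {volume:.3f}")
```

In an actual simulation the atomic coordinates and cell lengths are scaled by the cube root of this factor. The smooth, deterministic decay seen here is also why the method suppresses the natural volume fluctuations of the NPT ensemble.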

Table 2: Comparison of Barostat Methods in Molecular Dynamics

| Barostat Method | Ensemble Accuracy | Computational Cost | Typical Applications |
|---|---|---|---|
| Parrinello-Rahman | High | Moderate to High | Solids, anisotropic materials, phase transitions |
| Berendsen | Moderate (biased) | Low | Equilibration, rapid convergence |
| Nosé-Hoover-Langevin | High | Moderate | Accurate thermodynamic sampling |
| Stochastic (COMPEL) | High | Moderate | Complex molecular systems |

Molecular Pressure and Advanced Integration