Systematic Error Reduction in Force Field Parameter Optimization: Advanced Strategies for Biomolecular Simulation and Drug Discovery



Abstract

This comprehensive review addresses the critical challenge of systematic errors in force field parameterization, which significantly impacts the reliability of molecular simulations in drug discovery and biomolecular research. We explore foundational concepts of systematic versus random errors, advanced methodological approaches including Bayesian inference and machine learning, targeted troubleshooting strategies for specific force field deficiencies, and rigorous validation protocols. By synthesizing recent advances in uncertainty quantification, element-specific corrections, and cross-validation techniques, this article provides researchers with a practical framework for developing more accurate and transferable force fields, ultimately enhancing predictive capabilities in pharmaceutical development and clinical research applications.

Understanding Systematic Errors in Force Fields: Sources, Detection, and Impact on Molecular Simulations

Defining Systematic vs. Random Errors in Force Field Parameterization

Definitions and Core Concepts

What is the fundamental difference between a systematic error and a random error in force field parameterization?

Systematic errors are reproducible inaccuracies that consistently skew results in one direction due to flaws in the force field model itself, parameter derivation methods, or underlying approximations. These errors persist across multiple simulations and sampling attempts.

Random errors are statistical fluctuations that vary unpredictably between different simulations, arising from insufficient conformational sampling, finite simulation times, or stochastic processes in molecular dynamics algorithms.

Table: Characteristics of Systematic vs. Random Errors in Force Fields

| Feature | Systematic Error | Random Error |

|---|---|---|

| Direction | Consistent bias in one direction | Unpredictable variation around true value |

| Reproducibility | Reproducible across simulations | Varies between simulation runs |

| Source | Imperfect functional forms, inaccurate parameters | Incomplete sampling, finite simulation time |

| Solution | Force field reparameterization, improved theory | Enhanced sampling, longer simulations |

| Example | Consistently overestimating solvation free energies for halogenated compounds | Variations in calculated free energy between replicate simulations |

How do these errors manifest in practical molecular simulations?

Systematic errors appear as consistent deviations from experimental or quantum mechanical reference data across multiple molecules sharing similar chemical features. For example, the General AMBER Force Field (GAFF) exhibits systematic errors in hydration free energy predictions for molecules containing chlorine, bromine, iodine, and phosphorus atoms [1]. These errors were identified by applying an element count correction to hydration free energy calculations, which revealed consistent biases for specific elements [1].

Random errors manifest as inconsistencies when repeating the same simulation with different initial conditions. In benchmarking studies, random errors appear as variations in computed observables like J-couplings or nuclear Overhauser effect (NOE) violations between replica simulations of the same system [2].

Identification and Diagnosis

What methodologies can identify systematic force field errors?

Element Counting Analysis: Apply corrections based on chemical composition, such as the Element Count Correction (ECC), which identifies systematic deviations for specific elements. Combined with a partial molar volume correction (PMVECC), this approach brings 3D-RISM hydration free energies to a mean unsigned error of 1.01±0.04 kcal/mol and a root mean squared error of 1.44±0.07 kcal/mol by absorbing systematic force field deficiencies [1].

Bayesian Inference Methods: Use algorithms like Bayesian Inference of Conformational Populations (BICePs) that sample the full posterior distribution of conformational populations and experimental uncertainty. BICePs can distinguish between systematic and random errors by modeling uncertainty parameters while accounting for outliers [3].

Multi-system Benchmarking: Validate force fields against diverse experimental datasets. For example, validating macrocyclic compound ensembles against NOE distance bounds reveals when force fields consistently violate experimental constraints [2].

Machine Learning Approaches: Employ ML-based force fields like Grappa which can reveal systematic biases in traditional force fields by comparing their predictions against quantum mechanical reference data [4].

How can researchers diagnose random errors in their simulations?

Convergence Testing: Monitor observables over simulation time to ensure proper equilibration and sampling. A standard deviation above 0.4 kcal/mol in hydration free energies between replicas, as is typical for flexible molecules, indicates significant random error [1].

Replica Analysis: Compare results from multiple independent simulations. Random errors appear as variations between replicas, while systematic errors persist across all replicas.

Statistical Analysis: Calculate standard errors of mean values. The standard error of the mean (SEM) quantifies random error in replica-averaged forward models used in Bayesian methods [3].

Troubleshooting Guide

Table: Troubleshooting Force Field Errors

| Problem | Possible Cause | Diagnosis Method | Solution Approach |

|---|---|---|---|

| Consistent over/under-estimation of solvation free energies for specific chemical groups | Systematic error in Lennard-Jones parameters | Element Count Correction (ECC) [1] | Re-optimize non-bonded parameters for affected elements |

| Ensemble-averaged observables disagree with experiment despite good sampling | Systematic error in torsional parameters | Bayesian Inference of Conformational Populations (BICePs) [3] | Refine dihedral parameters using variational optimization |

| High variability in computed observables between simulation replicates | Random error from insufficient sampling | Calculate standard deviation between replicas [1] | Increase simulation time, use enhanced sampling methods |

| Poor reproduction of experimental NOE bounds for macrocycles | Systematic force field bias | Compare distance distributions to experimental bounds [2] | Switch to modern force fields (OpenFF 2.0, XFF) or reparameterize |

| Inconsistent hydration free energy predictions | Combination of systematic and random errors | 3D-RISM with PMVECC analysis [1] | Apply combined partial molar volume and element count corrections |

Workflow for Error Diagnosis and Resolution

Experimental Protocols

Protocol for Identifying Systematic Errors Using Element Count Correction

Calculate hydration free energies for a diverse set of molecules (e.g., FreeSolv database with 642 molecules) using your force field and 3D-RISM [1].

Apply the Element Count Correction:

- Compute ΔG_ECC = ΔG_RISM + Σ c_i * N_i, where c_i are element-specific coefficients and N_i are atom counts [1].

- Fit coefficients ci to minimize deviation from experimental data.

Identify problematic elements: Large magnitude ci values indicate elements with systematic parameter errors [1].

Validate with explicit solvent: Confirm identified systematic errors using explicit solvent free energy calculations [1].
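One way to make the fitting step concrete is to write it as an ordinary least-squares problem over the M molecules in the benchmark set. The squared-error form and the index m are assumptions for illustration; the protocol above only states that the coefficients are fitted to minimize deviation from experiment.

```latex
\hat{c} = \arg\min_{c} \sum_{m=1}^{M}
\left( \Delta G_{\mathrm{exp},m} - \Delta G_{\mathrm{RISM},m} - \sum_{i} c_i N_{i,m} \right)^{2}
```

Under this form, each fitted c_i is the average per-atom shift needed to bring the calculated hydration free energies into agreement with experiment for element i.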

Protocol for Parameter Optimization Using Bayesian Methods

Prepare prior ensemble: Generate conformational ensemble using existing force field [3].

Set up BICePs calculation:

- Define experimental observables and uncertainties

- Use replica-averaged forward model: f_j(X) = (1/N_r) Σ_r f_j(X_r), where the sum runs over the N_r replicas [3]

- Configure likelihood function (Gaussian or Student's model for outliers)

Sample posterior distribution: Run Markov chain Monte Carlo sampling of conformational populations and uncertainty parameters [3].

Compute BICePs score: Calculate free energy of "turning on" conformational populations under experimental restraints [3].

Optimize parameters: Use variational method to minimize BICePs score and obtain improved force field parameters [3].
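The replica-averaged forward model and its standard error can be sketched in a few lines of NumPy. This is not the BICePs package API: the function names are hypothetical, and combining the uncertainty parameter `sigma_b` with the SEM in quadrature inside a Gaussian likelihood is an assumption made for illustration.

```python
import numpy as np

def replica_average(forward_values):
    """forward_values: array (N_r, N_j) holding f_j(X_r) for each replica r.
    Returns the replica average f_j(X) and its standard error of the mean."""
    f = np.asarray(forward_values, dtype=float)
    n_r = f.shape[0]
    mean = f.mean(axis=0)                        # f_j(X) = (1/N_r) * sum_r f_j(X_r)
    sem = f.std(axis=0, ddof=1) / np.sqrt(n_r)   # random error of the replica average
    return mean, sem

def gaussian_neg_log_likelihood(mean, sem, d_exp, sigma_b):
    """Gaussian -log L with total variance sigma_b^2 + sem^2 (assumed combination)."""
    var = sigma_b ** 2 + sem ** 2
    resid = np.asarray(d_exp, dtype=float) - mean
    return float(np.sum(0.5 * np.log(2.0 * np.pi * var) + resid ** 2 / (2.0 * var)))

# Hypothetical forward-model values: 4 replicas, 2 observables
forward = [[3.0, 7.1], [3.4, 6.9], [3.2, 7.0], [3.2, 7.0]]
mean, sem = replica_average(forward)
nll_close = gaussian_neg_log_likelihood(mean, sem, d_exp=mean, sigma_b=0.3)
nll_far = gaussian_neg_log_likelihood(mean, sem, d_exp=mean + 1.0, sigma_b=0.3)
print(mean, sem, nll_close, nll_far)
```

In a full calculation the uncertainty parameter would be sampled alongside the conformational populations rather than fixed, as described in the sampling step above.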

Research Reagent Solutions

Table: Essential Tools for Force Field Error Analysis

| Tool/Resource | Function | Application Context |

|---|---|---|

| 3D-RISM with ECC [1] | Identifies systematic element-specific errors | Hydration free energy calculation and force field validation |

| BICePs [3] | Bayesian refinement against experimental data | Parameter optimization with uncertainty quantification |

| Grappa [4] | Machine-learned force field generation | Alternative parameterization avoiding systematic human biases |

| FreeSolv Database [1] | Benchmark dataset for solvation free energies | Validation against experimental hydration free energies |

| Force Field Toolkit (ffTK) [5] | Workflow for CHARMM-compatible parameterization | Systematic parameter development and refinement |

| OpenFF 2.0/Sage [2] | Modern force field for drug-like molecules | Benchmarking against macrocyclic compounds |

Frequently Asked Questions

How can I determine if my force field error is systematic or random without extensive benchmarking?

Run three to five independent simulations of the same system with different random seeds. If the deviation from experimental reference is consistent in direction and magnitude across all replicas, the error is likely systematic. If the deviations vary randomly around the reference value, the error is predominantly random. For quantitative work, compare the between-replica variance (random error) to the average deviation from reference (potential systematic error) [1] [3].
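The comparison described above can be sketched as follows. The function name, the sample values, and the "twice the standard error" decision rule are illustrative assumptions, not a published criterion.

```python
import numpy as np

def diagnose_error(replica_values, reference):
    """Compare the mean deviation from a reference with the between-replica scatter."""
    values = np.asarray(replica_values, dtype=float)
    bias = values.mean() - reference             # signed mean deviation
    spread = values.std(ddof=1)                  # between-replica standard deviation
    sem = spread / np.sqrt(values.size)          # standard error of the replica mean
    # Illustrative rule (an assumption): treat the bias as systematic only if it
    # exceeds twice the standard error of the replica mean.
    if abs(bias) > 2.0 * sem:
        label = "likely systematic"
    else:
        label = "consistent with random error"
    return {"bias": bias, "spread": spread, "sem": sem, "label": label}

# Hypothetical hydration free energies (kcal/mol) from five random seeds
biased = diagnose_error([-3.9, -4.1, -4.0, -3.8, -4.2], reference=-5.0)
noisy = diagnose_error([-4.8, -5.3, -5.1, -4.7, -5.2], reference=-5.0)
print(biased["label"], noisy["label"])
```

With only three to five replicas the standard error is itself uncertain, so a borderline result is better treated as a prompt for more sampling than as a verdict.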

Which force fields are most susceptible to systematic errors for drug-like molecules?

Studies show that GAFF and GAFF2 exhibit systematic errors for specific chemical elements (Cl, Br, I, P) in hydration free energy calculations [1]. For macrocyclic compounds, GAFF2 and OPLS/AA show systematic biases in reproducing NOE distance bounds, while modern force fields like OpenFF 2.0 and XFF generally perform better [2]. Transferable force fields are particularly prone to systematic errors for unusual chemical motifs not well-represented in their training sets.

Can machine learning force fields completely eliminate systematic errors?

No, ML force fields like Grappa can reduce but not eliminate systematic errors. While they outperform traditional force fields on many benchmarks [4], they can still inherit biases from their training data or have systematic errors in uncharted chemical regions. However, they reduce human-introduced systematic errors from manual parameterization and can be more systematically improved against quantum mechanical reference data.

What is the most efficient approach to handle both systematic and random errors simultaneously?

Implement a multi-stage approach: First, use enhanced sampling (e.g., REST2) to minimize random errors [2]. Then, apply Bayesian methods (e.g., BICePs) with replica-averaging to identify and correct systematic errors while accounting for residual random errors [3]. This combination addresses both error types in a statistically rigorous framework.

FAQs: Diagnosing and Resolving Systematic Errors

How can I distinguish between poor sampling (statistical error) and an incorrect parameter (systematic error) in my simulation?

Incorrect parameters and poor sampling produce distinct symptoms. A bad parameter causes your simulation to converge consistently to an incorrect value, no matter how long you run it. With purely statistical error, by contrast, results fluctuate randomly around the true value, and the variance decreases as simulation time increases [6].

A system with slow conformational relaxations may exhibit misleading behavior: properties may appear to converge rapidly but to a wrong value if a major conformational state is missing from the sampling. In such cases, variance might initially decrease and then increase again as the simulation finally crosses a major energy barrier [6]. The gold standard is to repeat calculations from different initial structures, but this can fail if your system construction protocol systematically favors one starting conformation [6].
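A common way to check whether the statistical uncertainty of a single trajectory is being estimated honestly is block averaging; it is shown here as a minimal sketch on a synthetic correlated time series. The AR(1) process is a stand-in for an MD observable and the block counts are arbitrary choices.

```python
import numpy as np

def block_sem(series, n_blocks):
    """Standard error of the mean estimated from n_blocks contiguous block averages."""
    x = np.asarray(series, dtype=float)
    usable = (x.size // n_blocks) * n_blocks      # drop the remainder frames
    blocks = x[:usable].reshape(n_blocks, -1).mean(axis=1)
    return blocks.std(ddof=1) / np.sqrt(n_blocks)

rng = np.random.default_rng(0)
n, phi = 20000, 0.95                              # strongly correlated synthetic series
noise = rng.normal(size=n)
obs = np.empty(n)
obs[0] = noise[0]
for t in range(1, n):
    obs[t] = phi * obs[t - 1] + noise[t]

naive_sem = obs.std(ddof=1) / np.sqrt(n)          # ignores time correlation
for n_blocks in (200, 50, 10):
    print(n_blocks, block_sem(obs, n_blocks))
print("naive:", naive_sem)
```

The naive estimate treats every frame as independent and is too small for correlated data. Block estimates that keep growing as blocks get longer suggest the correlation time has not yet been resolved, which is the situation the slow-relaxation warning above describes.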

Why does my simulation reproduce quantum mechanical target data but fail to match experimental liquid properties?

This common issue often originates from the tight coupling between Lennard-Jones (LJ) parameters and partial atomic charges. Force fields like OPLS and CHARMM parameterize these terms simultaneously to reproduce ab initio interaction energies and condensed-phase experimental properties [7]. If your partial charges are derived from quantum mechanical calculations without subsequent validation against experimental data like density or heat of vaporization, the resulting force field may perform poorly in molecular dynamics simulations [7].

Using charges from methods like RESP or CHELPG without optimizing the coupled LJ parameters can lead to this discrepancy. Similarly, LJ parameters optimized for one set of charges may not work with another [7].

What are the consequences of using dihedral parameters that are not properly optimized?

Poorly optimized dihedral terms can systematically bias your simulated conformational ensemble. This error manifests as incorrect populations of rotameric states, which in turn affects computed observables like NMR parameters or protein flexibility [3] [5]. Unlike bonds and angles which typically use harmonic potentials, dihedrals employ a more complex periodic function, making them more challenging to parameterize correctly [7].

Systematic errors in dihedrals are particularly problematic because they affect the fundamental structural preferences of your molecule, potentially leading to incorrect conclusions about stability, binding, or mechanism.

How do inconsistent van der Waals parameters cause simulation failures?

Inconsistent LJ parameters between different atom types in your force field can trigger warnings and unstable simulations. The ReaxFF documentation notes that such inconsistencies lead to screening and repulsion parameters that don't work harmoniously across atom types [8]. This can cause sudden energy changes or unphysical interactions.

Similarly, for polarizable force fields, inconsistent electronegativity equalization method (EEM) parameters can trigger "polarization catastrophe" at short interatomic distances, where excessive charge transfer occurs between atoms [8]. The relationship between eta and gamma parameters (eta > 7.2*gamma) must be maintained to prevent this issue [8].
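The eta/gamma relationship quoted above is easy to screen for before running a simulation. The sketch below uses made-up atom-type labels and parameter values; it does not read any real force field file format.

```python
def eem_is_consistent(eta, gamma, factor=7.2):
    """Return True if eta > factor * gamma, the relationship quoted above."""
    return eta > factor * gamma

# Hypothetical (eta, gamma) pairs for illustration only
params = {"A": (9.0, 0.8), "B": (5.0, 0.9)}
flagged = [name for name, (eta, gamma) in params.items()
           if not eem_is_consistent(eta, gamma)]
print("atom types violating eta > 7.2*gamma:", flagged)
```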

Parameter-Specific Troubleshooting Guides

Lennard-Jones (vdW) Parameters

Table: Diagnosing and Fixing LJ Parameter Errors

| Symptoms | Potential Causes | Validation Methods |

|---|---|---|

| Density deviations >2% from experiment [7] | Parameters optimized only for gas phase; poor transferability | Liquid-state simulation comparing density, heat of vaporization [7] |

| Incorrect solvation free energies (±0.5 kcal/mol error acceptable [5]) | Coupling between LJ parameters and partial charges not accounted for | Free energy perturbation calculations compared to experimental values |

| Unphysical aggregation or repulsion | LJ well depth (ε) too strong/weak; radius (σ) incorrect | Radial distribution functions analysis; comparison to neutron scattering data |

| Temperature-dependent property failures | Parameters optimized only for room temperature | Simulations across temperature range comparing to experimental data [7] |

Optimization Protocol: Genetic Algorithms (GAs) provide an efficient approach for multidimensional LJ parameter optimization. The process involves: (1) Defining a fitness function incorporating multiple experimental properties (density, heat of vaporization, diffusion coefficients); (2) Generating an initial population of parameter sets; (3) Running parallel simulations to evaluate each set's performance; (4) Applying selection, crossover, and mutation to evolve better parameter sets over generations [7]. This method simultaneously optimizes all parameters, accounting for their coupling, unlike sequential hand-tuning [7].
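The four steps can be sketched with a toy genetic algorithm. Everything specific here is an assumption for illustration: `surrogate_properties` is an analytic stand-in for the MD simulations (which would normally run in parallel), and the targets, bounds, and GA settings are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(42)
TARGET = np.array([0.97, 10.6])        # hypothetical density (g/cm^3) and dHvap (kcal/mol)
LOW, HIGH = np.array([0.05, 2.5]), np.array([0.30, 4.0])   # bounds on (epsilon, sigma)

def surrogate_properties(params):
    """Stand-in for an MD run mapping (epsilon, sigma) to (density, dHvap).
    Purely illustrative, not a physical model."""
    eps, sig = params
    density = 1.2 * (3.0 / sig) ** 3 * (1.0 + 0.5 * eps)
    dhvap = 60.0 * eps + 0.5 * sig
    return np.array([density, dhvap])

def fitness(params):
    """Step 1: sum of squared relative deviations from the targets (lower is better)."""
    rel = (surrogate_properties(params) - TARGET) / TARGET
    return float(np.sum(rel ** 2))

def evolve(pop_size=40, generations=60, mutation_scale=0.02):
    pop = rng.uniform(LOW, HIGH, size=(pop_size, 2))        # step 2: initial population
    history = []
    for _ in range(generations):
        scores = np.array([fitness(p) for p in pop])        # step 3: evaluate each set
        pop = pop[np.argsort(scores)]
        history.append(float(scores.min()))
        elite = pop[: pop_size // 4]                        # step 4a: selection
        children = []
        while len(children) < pop_size - len(elite):
            a, b = elite[rng.integers(len(elite), size=2)]
            child = np.where(rng.random(2) < 0.5, a, b)     # step 4b: uniform crossover
            child = child + rng.normal(scale=mutation_scale * (HIGH - LOW))  # 4c: mutation
            children.append(np.clip(child, LOW, HIGH))
        pop = np.vstack([elite, children])
    best = min(pop, key=fitness)
    return best, history

best_params, history = evolve()
print(best_params, fitness(best_params))
```

Because the elite quarter is carried over unchanged, the best fitness never gets worse from one generation to the next. In a real application each fitness evaluation is a full simulation, so population size and generation count are limited by compute budget.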

Partial Atomic Charges

Table: Charge Parameterization Issues and Solutions

| Error Symptom | Root Cause | Best Practice Solution |

|---|---|---|

| Excessive intermolecular association | Charges underestimated | Derive charges from water-interaction profiles (CHARMM philosophy) [5] |

| Insufficient binding affinities | Charges overestimated | Combine quantum mechanical data with liquid property validation |

| Transferability failures between phases | Gas-phase derived charges without a condensed-phase correction | Environment-specific charge derivation (e.g., COSMO) |

| Inconsistent polarization response | Missing polarization treatment | Consider polarizable force fields or context-specific charges |

Charge Optimization Methodology: The Force Field Toolkit (ffTK) implements a CHARMM-compatible protocol: (1) Perform QM calculations of the molecule interacting with water probes; (2) Calculate electrostatic potential (ESP) for charge fitting; (3) Use a penalty function to balance QM data and water interaction energies; (4) Iteratively refine charges to reproduce both targets [5]. This approach ensures charges work effectively in biological environments where water interactions dominate.

Dihedral Terms

Systematic Error Detection: Compare torsional energy profiles computed with the force field against quantum mechanical rotational scans [7]. Significant deviations (>1-2 kcal/mol) indicate poor dihedral parameterization. For complex molecules, use Bayesian Inference of Conformational Populations (BICePs) to assess how well your force field reproduces ensemble-averaged experimental measurements [3].

Optimization Workflow:

- Perform QM rotational scan around the dihedral bond of interest

- Compute energy profile using multiple theory levels (e.g., MP2, DFT with different functionals)

- Fit MM parameters to QM energy profile using truncated Fourier series

- Validate against experimental data where available (e.g., NMR observables such as J-couplings)

- Use BICePs score for model selection when multiple parameter sets seem plausible [3]
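The Fourier-fitting step in this workflow reduces to linear least squares when the series is written with cosine and sine terms, which is equivalent to the usual amplitude-and-phase form of a dihedral term. The sketch below fits a synthetic "QM scan" built from known coefficients; in practice the target is typically the QM profile minus the MM energy computed without the dihedral being fitted. The function name and the scan data are illustrative assumptions.

```python
import numpy as np

def fit_fourier(phi_deg, energy, n_terms=3):
    """Least-squares fit of E(phi) = c0 + sum_n [a_n cos(n*phi) + b_n sin(n*phi)]."""
    phi = np.radians(np.asarray(phi_deg, dtype=float))
    energy = np.asarray(energy, dtype=float)
    cols = [np.ones_like(phi)]
    for n in range(1, n_terms + 1):
        cols += [np.cos(n * phi), np.sin(n * phi)]
    design = np.column_stack(cols)
    coeffs, *_ = np.linalg.lstsq(design, energy, rcond=None)
    rmse = float(np.sqrt(np.mean((design @ coeffs - energy) ** 2)))
    return coeffs, rmse      # coeffs ordered as [c0, a1, b1, a2, b2, a3, b3]

# Synthetic scan (kcal/mol) every 15 degrees, generated from known coefficients
phi_scan = np.arange(-180, 180, 15)
phi_rad = np.radians(phi_scan)
e_qm = 1.5 + 1.0 * np.cos(phi_rad) + 0.2 * np.sin(2 * phi_rad) + 0.4 * np.cos(3 * phi_rad)

coeffs, rmse = fit_fourier(phi_scan, e_qm)
print(np.round(coeffs, 3), rmse)
```

A residual RMSE above the 1-2 kcal/mol range mentioned earlier would suggest adding terms or revisiting the other parameters that contribute to the torsional profile.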

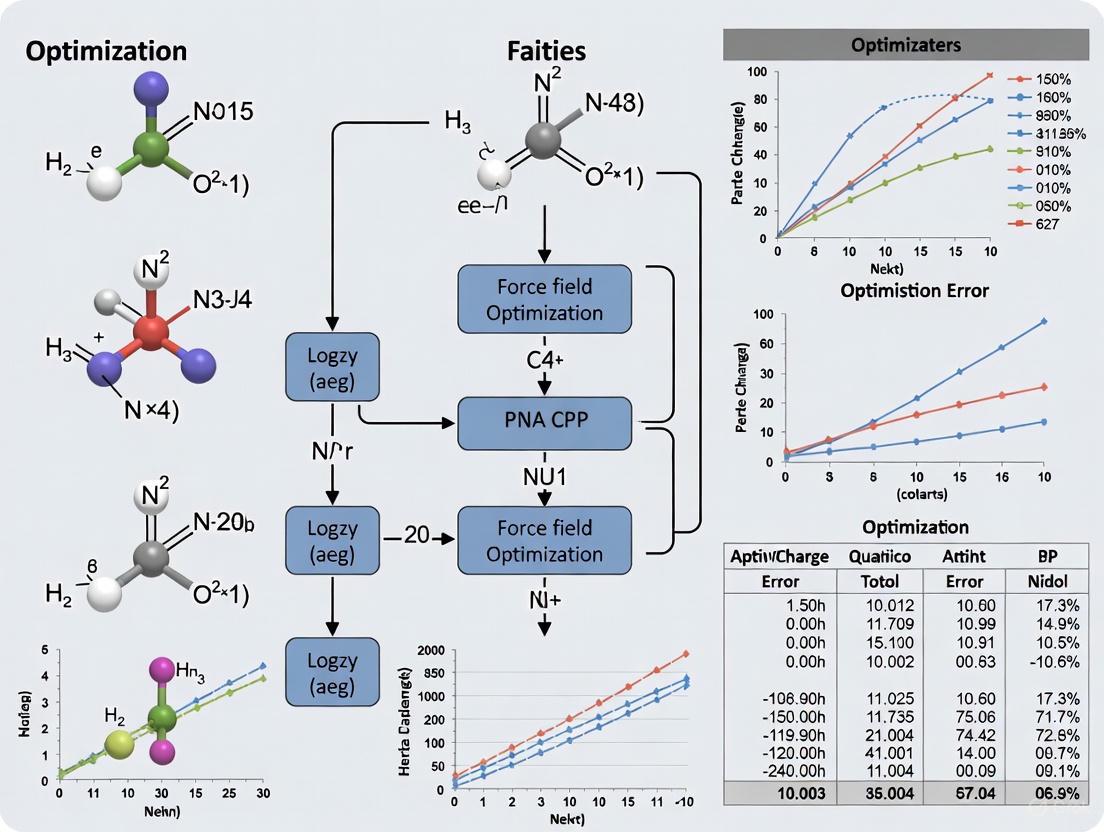

Diagram: Dihedral Parameter Optimization and Validation Workflow

Experimental Protocols for Parameter Validation

Ensemble Validation with BICePs

Bayesian Inference of Conformational Populations (BICePs) is a robust method for validating force fields against sparse or noisy experimental data. The protocol involves: (1) Running MD simulations to generate prior conformational distributions; (2) Computing theoretical observables from the simulation trajectory; (3) Using replica-averaged forward models to compare with experimental measurements; (4) Sampling the full posterior distribution of conformational populations and experimental uncertainty using MCMC; (5) Calculating the BICePs score for model selection [3].

BICePs is particularly valuable because it uses specialized likelihood functions (e.g., Student's model) that are robust to systematic errors and outliers in experimental data [3]. The method automatically detects and down-weights problematic data points during the refinement process.

Liquid Property Validation

For small molecules, validate parameters by comparing simulated and experimental liquid properties:

- Density: Run NpT simulations at ambient conditions; compute average density

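- Enthalpy of Vaporization: Calculate using ΔH_vap = ⟨E_gas⟩ - ⟨E_liq⟩ + RT, where ⟨E_gas⟩ and ⟨E_liq⟩ are the average potential energies per molecule in the gas and liquid phases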

- Diffusion Coefficient: Run NVT simulation; compute the mean squared displacement and extract the diffusion coefficient from its long-time slope

- Static Dielectric Constant: Calculate from dipole moment fluctuations

Acceptable errors are <15% for pure-solvent properties and ±0.5 kcal/mol for free energy of solvation [5].
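Two of these properties reduce to short post-processing formulas, sketched below. The helper names and all numerical inputs are hypothetical; the diffusion estimate assumes the three-dimensional Einstein relation, MSD(t) ≈ 6Dt, applied to the linear regime of the mean squared displacement.

```python
import numpy as np

R_KCAL = 1.987204e-3     # gas constant in kcal/(mol K)

def enthalpy_of_vaporization(e_gas, e_liq_per_molecule, temperature):
    """dH_vap = <E_gas> - <E_liq> + RT, with energies in kcal/mol per molecule."""
    return e_gas - e_liq_per_molecule + R_KCAL * temperature

def diffusion_coefficient(time, msd):
    """Einstein relation in 3D: slope of MSD(t) divided by 6."""
    slope, _intercept = np.polyfit(time, msd, 1)
    return slope / 6.0

def percent_error(simulated, reference):
    return 100.0 * (simulated - reference) / reference

# Hypothetical averages from gas- and liquid-phase runs at 298.15 K
dh_vap = enthalpy_of_vaporization(e_gas=2.0, e_liq_per_molecule=-7.9, temperature=298.15)

# Synthetic MSD (A^2) versus time (ps), constructed with D = 0.23 A^2/ps
t = np.linspace(10.0, 100.0, 50)
d_est = diffusion_coefficient(t, 6.0 * 0.23 * t + 0.5)

print(dh_vap, d_est, percent_error(dh_vap, 10.5))   # 10.5 is a made-up reference value
```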

Table: Key Software Tools for Parameter Optimization

| Tool Name | Primary Function | Application Context |

|---|---|---|

| Force Field Toolkit (ffTK) [5] | Complete parameterization workflow | CHARMM-compatible small molecule parameterization |

| BICePs [3] | Bayesian model selection and validation | Assessing force field performance against ensemble data |

| Genetic Algorithms [7] | Multidimensional parameter optimization | Simultaneous optimization of coupled parameters |

| ParamChem [5] | Automated parameter assignment | Initial parameter estimation by analogy |

| VASP MLFF [9] | Machine-learned force field generation | Ab initio parameterization for complex materials |

Advanced Optimization Workflows

Diagram: Comprehensive Parameter Optimization and Error Diagnosis Workflow

For complex parameterization challenges, follow this integrated workflow:

Initial Parameter Guess: Use tools like ParamChem for initial atom typing and parameter assignment, or ffTK for ab initio parameterization [5]

Systematic Error Diagnosis: Run production MD simulations and compare results with multiple experimental observables. Use BICePs to quantify agreement and identify potential systematic errors [3]

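Focused Refinement: Based on diagnosis, target the specific parameter types implicated (Lennard-Jones parameters, partial atomic charges, or dihedral terms), following the parameter-specific guides above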

Iterative Validation: Continuously validate against both QM target data and experimental measurements until convergence

This approach minimizes the risk of introducing compensating errors and ensures parameters remain transferable across different chemical contexts and simulation conditions.

The Impact of Systematic Errors on Hydration Free Energies and Conformational Sampling

Frequently Asked Questions (FAQs)

FAQ 1: What are the most common sources of systematic error in hydration free energy (HFE) calculations?

Systematic errors in HFE calculations primarily originate from two key areas: force field inaccuracies and insufficient conformational sampling.

- Force Field Inaccuracies: Errors in the parameterization of the force field itself, particularly in the Lennard-Jones (vdW) parameters for certain elements, are a major source of systematic error. Studies have identified systematic errors for molecules containing chlorine (Cl), bromine (Br), iodine (I), and phosphorus (P) in the GAFF force field [10] [1]. The choice of partial charge model (e.g., AM1-BCC vs. MP2/cc-pVTZ SCRF RESP) and water model (e.g., TIP3P vs. OPC) also significantly impacts accuracy [11] [12].

- Sampling Deficiencies: Free energy simulations, especially of solute-bilayer systems or flexible molecules, are prone to sampling errors [13]. Inadequate sampling can occur during solute adsorption, insertion, and when crossing the bilayer center. These "hidden sampling barriers" can dramatically influence the results, and the initial conformations can heavily bias the outcome if sampling is insufficient [13].

FAQ 2: My HFE calculations show large errors for drug-like molecules. Is this a known issue?

Yes. A key finding is that the accuracy of calculated hydration free energies tends to decrease as molecular weight increases [12]. For smaller molecules, errors are often within 1-2 kcal/mol, but for drug-like molecules in the higher molecular weight region, HFE predictions can easily be off by 5 kcal/mol or more [12]. This is highly problematic for drug discovery, as HFE is a critical determinant of a drug's absorption and distribution [12].

FAQ 3: How can I quickly check if my force field has systematic parameter errors?

A computationally efficient method is to use an Element Count Correction (ECC). This approach fits a simple correction term based on the number of atoms of each element in a molecule to the calculated HFEs [10] [1]. If the correction parameters (c_i in the equation below) for certain elements are consistently large, it indicates a systematic error in the non-bonded parameters for those elements. This method can be applied to both implicit solvent (like 3D-RISM) and explicit solvent calculations [10] [1].

ΔG_ECC = ΔG_RISM + Σ c_i * N_i (where N_i is the count of atoms of element i)

FAQ 4: What advanced methods are available for automated force field optimization?

Bayesian inference methods are powerful for automated force field refinement. The Bayesian Inference of Conformational Populations (BICePs) algorithm, for example, can refine force field parameters against sparse or noisy experimental data while simultaneously sampling the full distribution of uncertainties [3]. It uses a variational method to minimize the BICePs score, an objective function that is resilient to systematic error and outliers in the training data [3]. Other meta-heuristic methods like simulated annealing (SA) and particle swarm optimization (PSO) have also been successfully combined to optimize complex reactive force fields (ReaxFF) [14].

Troubleshooting Guides

Issue 1: Systematic Force Field Errors in HFE Predictions

Problem: Calculated hydration free energies consistently deviate from experimental values for specific classes of molecules (e.g., halogenated compounds).

Diagnosis and Solution:

- Identify Errant Elements: Apply an Element Count Correction (ECC) or a combined Partial Molar Volume with ECC (PMVECC) to a dataset of calculated HFEs (e.g., from FreeSolv). The fitted coefficients will reveal which elements are associated with the largest errors [10] [1].

- Retrain vdW Parameters: Focus refinement efforts on the Lennard-Jones parameters for the identified elements. Use a robust optimization framework.

- Objective Function: Use the BICePs score or a similar Bayesian method that accounts for uncertainty and systematic error in the reference data [3].

- Training Data: Include not just pure liquid properties but also properties of binary mixtures. Training against mixture data encodes more information about the underlying physics and can reduce systematic errors that persist when training only on pure system properties [15].

- Algorithm: Employ a multi-objective optimization algorithm, such as a hybrid of Simulated Annealing and Particle Swarm Optimization, which can help avoid local minima and improve convergence [14].

Experimental Protocol: Element Count Correction for Error Diagnosis [10] [1]

- Objective: To identify systematic force field errors by analyzing residuals of hydration free energy calculations.

- Procedure:

- Calculate HFEs for a large set of diverse molecules (e.g., the FreeSolv database) using your force field and solvation model (e.g., 3D-RISM or explicit solvent).

- For each molecule i, compute the error: Error_i = ΔG_calc,i - ΔG_exp,i.

- Fit a linear model: Error = c_C * N_C + c_N * N_N + c_O * N_O + c_Cl * N_Cl + ..., where N_X is the count of atoms of element X.

- Analyze the fitted coefficients c_X. Large absolute values indicate elements whose parameters may be systematically biased.

- Key Output: A list of elements ranked by the magnitude of their correction coefficient, guiding parameter refinement efforts.
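The regression in this protocol can be sketched with NumPy's least-squares solver. The data below are synthetic and constructed so that chlorine carries a deliberate per-atom bias; the function name is hypothetical. Note that these coefficients describe the error (calculated minus experimental), so they carry the opposite sign to the c_i in the correction form ΔG_ECC = ΔG_RISM + Σ c_i * N_i.

```python
import numpy as np

def fit_element_coefficients(counts, errors, elements):
    """Least-squares fit of Error = sum_X c_X * N_X; returns (element, c_X) ranked by |c_X|."""
    counts = np.asarray(counts, dtype=float)      # shape (n_molecules, n_elements)
    errors = np.asarray(errors, dtype=float)      # dG_calc - dG_exp for each molecule
    coeffs, *_ = np.linalg.lstsq(counts, errors, rcond=None)
    return sorted(zip(elements, coeffs), key=lambda pair: abs(pair[1]), reverse=True)

# Synthetic atom counts per molecule for three elements
elements = ["C", "O", "Cl"]
counts = np.array([[2, 1, 0], [3, 0, 1], [4, 1, 2], [1, 0, 0], [6, 2, 1], [2, 0, 3]])
true_c = np.array([0.05, -0.10, 0.80])            # Cl given a +0.8 kcal/mol per-atom bias
errors = counts @ true_c

ranked = fit_element_coefficients(counts, errors, elements)
for element, c in ranked:
    print(f"{element}: {c:+.2f} kcal/mol per atom")
```

With real data the fit is noisy, so coefficients for elements that appear in only a handful of molecules should be interpreted with caution.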

Issue 2: Sampling Errors in Free Energy Simulations

Problem: Free energy profiles (e.g., for membrane insertion) are noisy, non-convergent, or show hysteresis, indicating inadequate sampling.

Diagnosis and Solution:

- Identify Hidden Barriers: Be aware that significant orthogonal barriers (e.g., in lipid headgroup regions) may not be apparent in the primary reaction coordinate but can severely limit sampling [13].

- Increase Simulation Time: For molecular dynamics (MD)-based methods, simply extending simulation time is a straightforward but costly approach.

- Use Enhanced Sampling Methods: Implement techniques like metadynamics or replica exchange to improve sampling over slow degrees of freedom.

- Leverage Implicit Solvent for Conformational Pre-sampling: For flexible molecules, use extensive conformational sampling with a fast implicit solvent model (like Generalized Born) to identify low-energy conformers before running more expensive explicit solvent free energy calculations [10]. This helps ensure the starting configuration is representative.

Experimental Protocol: Identifying Rigid vs. Flexible Molecules [10]

- Objective: To classify molecules as rigid or flexible to assess potential sampling errors in single-conformer HFE calculations.

- Procedure:

- Perform molecular dynamics simulations of each solute in implicit solvent (e.g., GB).

- Monitor the standard deviation of the HFE (σ_ΔG_GB) over the course of the simulation.

- Compare the HFE from a single, static frame (ΔG_GB,static) to the HFE from the entire simulation (ΔG_GB,MD).

- Classification: Molecules with low standard deviation (σ_ΔG_GB ≤ 0.4 kcal/mol) and a small difference between static and MD HFEs (|ΔG_GB,static - ΔG_GB,MD| ≤ 0.2 kcal/mol) can be treated as rigid. Others should be considered flexible and require enhanced sampling [10].
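The classification rule can be written as a small helper. Taking ΔG_GB,MD to be the mean of per-frame values is an assumption for illustration, as are the function name and sample numbers; the two thresholds are the ones quoted in the protocol.

```python
import numpy as np

def classify_flexibility(dg_gb_frames, dg_gb_static, sigma_max=0.4, diff_max=0.2):
    """Label a solute 'rigid' or 'flexible' from implicit-solvent HFEs in kcal/mol."""
    frames = np.asarray(dg_gb_frames, dtype=float)
    sigma = frames.std(ddof=1)                    # sigma_dG_GB over the trajectory
    diff = abs(dg_gb_static - frames.mean())      # |dG_GB,static - dG_GB,MD|
    return "rigid" if (sigma <= sigma_max and diff <= diff_max) else "flexible"

# Hypothetical per-frame HFEs for three solutes
print(classify_flexibility([-5.0, -5.1, -4.9, -5.0], dg_gb_static=-5.05))
print(classify_flexibility([-5.0, -6.5, -3.8, -5.2], dg_gb_static=-5.00))
print(classify_flexibility([-5.0, -5.1, -4.9, -5.0], dg_gb_static=-4.50))
```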

Issue 3: Error Propagation from HFEs to Binding Affinities

Problem: Inaccurate absolute binding free energy (ABFE) calculations, where errors in the ligand desolvation penalty dominate the total error.

Diagnosis and Solution:

- Understand the Link: The error in computed binding free energies is often dominated by the error in the hydration free energy, as binding partially involves desolvating the ligand [11] [12].

- Focus on HFE Accuracy: Improving the accuracy of HFE predictions for your force field, as described in Issue 1, will directly improve the accuracy of ABFE calculations.

- Use Relative Binding Free Energies (RBFE): When possible, use RBFE calculations. Since RBFE computes the difference in binding between similar ligands, the large systematic errors in the absolute desolvation free energy often cancel out, leading to more reliable results [12].
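The cancellation argument can be written schematically. The decomposition below is an illustrative assumption rather than a result from the cited work: each calculated binding free energy is split into the true value, a systematic term δ (for example, the desolvation error), and a random term ε.

```latex
\Delta G^{\mathrm{calc}}_{L} = \Delta G^{\mathrm{true}}_{L} + \delta_{L} + \varepsilon_{L},
\qquad
\Delta\Delta G^{\mathrm{calc}}_{A \to B}
= \Delta\Delta G^{\mathrm{true}}_{A \to B} + (\delta_{B} - \delta_{A}) + (\varepsilon_{B} - \varepsilon_{A})
```

For chemically similar ligands δ_A ≈ δ_B, so the systematic term largely drops out of the relative free energy. The random terms do not cancel, and the cancellation weakens when the perturbation adds or removes one of the poorly parameterized elements.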

Data Presentation

Table 1: Post-Processing Corrections for 3D-RISM Hydration Free Energies

This table summarizes how various post-processing corrections can improve agreement with experimental hydration free energy data.

| Correction Method | Description | Mean Unsigned Error (kcal/mol) | Root Mean Squared Error (kcal/mol) |

|---|---|---|---|

| Uncorrected 3D-RISM | Base calculation without any correction. | ~5.0 (typical) | ~7.0 (typical) |

| PMVC | Partial Molar Volume Correction: ΔG + aV + b | ~1.3 | ~1.8 |

| ECC | Element Count Correction: ΔG + Σ c_i N_i | ~1.2 | ~1.7 |

| PMVECC | Combined PMV and Element Count Correction | 1.01 ± 0.04 | 1.44 ± 0.07 |
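The two error metrics in the table can be computed as follows. The sample values are hypothetical, and the bootstrap is only one common way to attach a ± uncertainty; the source does not state how the quoted uncertainties were obtained.

```python
import numpy as np

def mean_unsigned_error(calc, exp):
    return float(np.mean(np.abs(np.asarray(calc, float) - np.asarray(exp, float))))

def root_mean_squared_error(calc, exp):
    return float(np.sqrt(np.mean((np.asarray(calc, float) - np.asarray(exp, float)) ** 2)))

def bootstrap_std(metric, calc, exp, n_boot=1000, seed=0):
    """Standard deviation of a metric over bootstrap resamples of the molecule set."""
    rng = np.random.default_rng(seed)
    calc, exp = np.asarray(calc, float), np.asarray(exp, float)
    samples = []
    for _ in range(n_boot):
        idx = rng.integers(calc.size, size=calc.size)   # resample with replacement
        samples.append(metric(calc[idx], exp[idx]))
    return float(np.std(samples, ddof=1))

# Hypothetical calculated and experimental HFEs (kcal/mol)
calc = [-3.0, -1.5, -6.2, 0.4]
exp = [-2.5, -2.0, -5.0, 0.9]
mue = mean_unsigned_error(calc, exp)
rmse = root_mean_squared_error(calc, exp)
print(mue, rmse, bootstrap_std(mean_unsigned_error, calc, exp))
```

RMSE is never smaller than MUE for the same data, and a large gap between them points to a few molecules with large errors rather than a uniform bias.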

Table 2: The Scientist's Toolkit: Essential Research Reagents and Software

This table lists key resources used in force field development, validation, and free energy calculations as cited in the literature.

| Item | Function / Description | Example Use Case |

|---|---|---|

| FreeSolv Database [10] [12] | A public database of experimental and calculated hydration free energies for 642 small molecules. | A benchmark set for validating force fields and solvation models [10] [1]. |

| General AMBER Force Field (GAFF) [11] [12] | A popular force field for small organic molecules. | Often used as a base force field for HFE calculations and parameter refinement studies [10] [11]. |

| 3D-RISM [10] [1] | An implicit solvation model based on statistical mechanics. | Rapid calculation of solvation thermodynamics, useful for high-throughput error screening [10]. |

| BICePs Algorithm [3] | A Bayesian inference method for reweighting ensembles and refining force field parameters. | Automated optimization of parameters against ensemble-averaged data with robust error handling [3]. |

| TIP3P Water Model [11] [12] | A widely used 3-point rigid water model in biomolecular simulations. | The default solvent for many explicit solvent HFE calculations; known to have limitations [12]. |

| OPC Water Model [12] | A newer, more accurate 4-point rigid water model. | Used to test if improved water models lead to better HFE predictions for drug-like molecules [12]. |

Workflow Visualization

Systematic Error Identification and Correction Workflow: This diagram outlines the systematic process for diagnosing and correcting force field errors, moving from initial problem identification through parameter refinement and final validation.

FAQs and Troubleshooting Guides

FAQ 1: What are the most common sources of error when using Bayesian inference for force field optimization?

The most common sources of error can be categorized as follows:

- Systematic Error in Experimental Data: Inaccurate or biased reference data used for training or validation, such as experimental measurements subject to unknown random and systematic errors [3] [16].

- Forward Model Error: Imperfections in the computational model that predicts experimental observables from molecular configurations, leading to an inherent discrepancy even with perfect parameters [3] [16].

- Inadequate Prior Selection: Using prior distributions that do not accurately reflect existing knowledge about parameters, which can bias the posterior results [17].

- Insufficient Sampling: Failing to adequately explore the parameter space during Markov Chain Monte Carlo (MCMC) sampling, resulting in an inaccurate posterior distribution that does not capture the true uncertainty [3] [17].

- Overfitting: A model that is too complex relative to the available data, characterized by excellent performance on training data but poor generalization to new, unseen test data [18].

Troubleshooting Guide: Addressing Poor Model Generalization

- Symptom: Low training-set error but high test-set error [18].

- Potential Causes & Solutions:

- Cause: The force field is overfitted to the specific structures in the training set.

- Solution: Introduce more variability into your training set by including a wider diversity of molecular structures and conformations. Tune hyperparameters to reduce model complexity [18].

- Cause: The test set is not representative of the production conditions.

- Solution: Ensure your test set contains structures with similar numbers of atoms and is sampled from the same thermodynamic phase as your intended production simulations [18].

FAQ 2: How can I identify and down-weight the influence of experimental outliers in my Bayesian analysis?

Bayesian frameworks can be equipped with specialized likelihood functions that are robust to outliers.

- Student's t-likelihood Model: This model marginalizes over uncertainty parameters for individual observables. It operates under the assumption that while most data points have a consistent level of noise, a few erratic measurements (outliers) can exist. This approach automatically detects and down-weights the importance of data points subject to systematic error without requiring a large number of additional parameters [3].

- Replica-Averaged Forward Model: This method, used in algorithms like BICePs, treats the forward model prediction as an average over multiple replicas. The uncertainty parameter (σ) in the likelihood function then combines both the Bayesian error and the standard error of this replica average, making the model more resilient to inconsistencies in both data and predictions [3].
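To make the down-weighting effect concrete, the sketch below compares the penalty that a Gaussian and a generic scaled Student's t likelihood assign to the same residuals. It is an illustration only: the function names, the choice of `nu = 3` degrees of freedom, and the residual values are assumptions, and the exact likelihood used in BICePs may differ in form.

```python
import math

def gaussian_nll(residual, sigma):
    """Negative log-likelihood of one residual under a Gaussian error model."""
    return 0.5 * math.log(2.0 * math.pi * sigma**2) + residual**2 / (2.0 * sigma**2)

def student_t_nll(residual, sigma, nu=3.0):
    """Negative log-likelihood under a scaled Student's t with nu degrees of freedom."""
    log_norm = (math.lgamma((nu + 1.0) / 2.0) - math.lgamma(nu / 2.0)
                - 0.5 * math.log(nu * math.pi * sigma**2))
    return -log_norm + 0.5 * (nu + 1.0) * math.log1p(residual**2 / (nu * sigma**2))

# Hypothetical residuals (prediction minus experiment); the last one is an outlier.
residuals = [0.1, -0.2, 0.15, 5.0]
sigma = 0.5  # assumed common noise scale

for r in residuals:
    print(f"residual={r:+.2f}  gaussian={gaussian_nll(r, sigma):7.3f}  "
          f"student_t={student_t_nll(r, sigma):7.3f}")
```

For small residuals the two penalties are similar, while for the outlier the heavy-tailed model grows only logarithmically, so a single erratic measurement cannot dominate the fit.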

FAQ 3: My MCMC sampling for parameter estimation is not converging efficiently. What steps can I take?

- Utilize Gradient Information: Employ methods that leverage the first and second derivatives of the objective function (e.g., the BICePs score) to guide the sampling or optimization process, which can significantly improve convergence speed in complex parameter spaces [3].

- Employ Surrogate Models: To reduce computational cost, train a local Gaussian process (LGP) surrogate model. This surrogate quickly predicts quantities of interest from trial parameters, allowing for efficient evaluation of candidates during MCMC sampling without running full, costly molecular dynamics simulations each time [17].

- Validate with Analytical Solutions: For problems with a linear mapping between parameters and outputs (e.g., in certain robot dynamic identification problems), derive an analytical solution for the posterior distribution. This bypasses sampling challenges entirely and provides a ground truth for validation [19].
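As an illustration of the analytical route mentioned in the last point, the following sketch computes the closed-form posterior for a linear model with Gaussian noise and a Gaussian prior. The design matrix, noise level, and prior are synthetic assumptions chosen for the example; the function name is hypothetical.

```python
import numpy as np

def linear_gaussian_posterior(A, y, noise_sigma, prior_mean, prior_cov):
    """Closed-form posterior for y = A @ theta + noise, noise ~ N(0, noise_sigma^2 I)."""
    prior_precision = np.linalg.inv(prior_cov)
    post_precision = prior_precision + A.T @ A / noise_sigma**2
    post_cov = np.linalg.inv(post_precision)
    post_mean = post_cov @ (prior_precision @ prior_mean + A.T @ y / noise_sigma**2)
    return post_mean, post_cov

# Synthetic test problem with known parameters (all values are assumptions).
rng = np.random.default_rng(0)
true_theta = np.array([1.5, -0.7])
A = rng.normal(size=(200, 2))
y = A @ true_theta + rng.normal(scale=0.1, size=200)

post_mean, post_cov = linear_gaussian_posterior(
    A, y, noise_sigma=0.1, prior_mean=np.zeros(2), prior_cov=10.0 * np.eye(2)
)
print("posterior mean:", post_mean)
print("posterior std: ", np.sqrt(np.diag(post_cov)))
```

An MCMC run on the same problem should reproduce this mean and covariance, which gives a direct check on sampler convergence.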

Key Experimental Protocols and Data

Protocol: Bayesian Inference of Conformational Populations (BICePs) for Force Field Refinement

This protocol outlines the use of BICePs for refining force field parameters against ensemble-averaged experimental data [3] [16].

Define the Posterior: The posterior distribution is formulated as: ( p(\mathbf{X}, \bm{\sigma}, \bm{\theta} | D) \propto p(D | \mathbf{X}, \bm{\sigma}, \bm{\theta}) p(\mathbf{X}) p(\bm{\sigma}) p(\bm{\theta}) ) where:

- ( \mathbf{X} ): Conformational states.

- ( \bm{\sigma} ): Nuisance parameters quantifying uncertainty in observables.

- ( \bm{\theta} ): Force field parameters to be optimized.

- ( D ): Experimental data.

- ( p(D | \mathbf{X}, \bm{\sigma}, \bm{\theta}) ): Likelihood function using a forward model.

- ( p(\mathbf{X}) ): Prior from a theoretical model (e.g., a molecular simulation).

- ( p(\bm{\sigma}), p(\bm{\theta}) ): Priors for uncertainty and force field parameters [16].

Replica-Averaging: Use a replica-averaged forward model for predictions. The forward model prediction for an observable ( j ) is ( f_j(\mathbf{X}) = \frac{1}{N_r}\sum_{r}^{N_r} f_j(X_r) ), where ( N_r ) is the number of replicas. The total uncertainty is modeled as ( \sigma_j = \sqrt{(\sigma^B_j)^2 + (\sigma^{SEM}_j)^2} ), incorporating both Bayesian error and the standard error of the mean [3].

MCMC Sampling: Sample the full posterior distribution, including conformational states, uncertainty parameters, and force field parameters, using MCMC methods [3] [16].

Variational Optimization (Alternative): Alternatively, minimize the BICePs score—a free energy-like quantity that reflects the total evidence for a model—with respect to the force field parameters ( \bm{\theta} ) using variational methods. This has been shown to be equivalent to the sampling approach [3] [16].
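The replica-averaging step of this protocol can be sketched numerically as follows. This is a minimal illustration, assuming the standard error of the mean is estimated from the sample standard deviation across replicas; the function names and the prediction values are hypothetical and are not taken from the BICePs code base.

```python
import numpy as np

def replica_average(predictions):
    """Mean and standard error of the mean per observable.

    predictions: array of shape (N_r, N_obs) holding f_j(X_r) for each replica r.
    """
    predictions = np.asarray(predictions, dtype=float)
    n_replicas = predictions.shape[0]
    mean = predictions.mean(axis=0)
    sem = predictions.std(axis=0, ddof=1) / np.sqrt(n_replicas)
    return mean, sem

def total_sigma(sigma_bayes, sigma_sem):
    """Combine Bayesian error and finite-sampling error in quadrature."""
    return np.sqrt(np.asarray(sigma_bayes) ** 2 + np.asarray(sigma_sem) ** 2)

# Four replicas, two observables (made-up forward-model predictions).
preds = np.array([[7.0, 2.0], [8.0, 2.5], [6.0, 1.5], [7.0, 2.0]])
mean, sem = replica_average(preds)
sigma = total_sigma(0.5, sem)  # 0.5 is an assumed Bayesian error
print("replica average:", mean)
print("sigma_SEM:", sem)
print("sigma_total:", sigma)
```

Because the two terms add in quadrature, the total uncertainty can never fall below the Bayesian error, however many replicas are used.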

Protocol: Bayesian Learning for Biomolecular Force Field Parameters

This protocol describes a framework for learning partial charge distributions from ab initio molecular dynamics (AIMD) data [17].

Data Generation: Run AIMD simulations of solvated molecular fragments to generate reference data for target quantities of interest (QoIs), such as radial distribution functions (RDFs) and hydrogen bond counts [17].

Surrogate Model Training: Perform multiple force field molecular dynamics (FFMD) simulations with trial parameter sets. Use the results to train a local Gaussian process (LGP) surrogate model that maps partial charges to the QoIs [17].

Bayesian Inference: Use MCMC sampling to draw from the posterior distribution of parameters. The LGP surrogate enables fast evaluation of the likelihood for candidate parameters against the AIMD reference data [17].

Validation: Assess the accuracy of the optimized parameters by comparing FFMD results using posterior parameter samples against the AIMD reference and, if available, experimental data (e.g., aqueous solution densities) [17].
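The inference step of this protocol can be illustrated with a toy Metropolis sampler that evaluates a cheap surrogate instead of running a simulation. Everything here is an assumption made for illustration: the linear `surrogate_qoi` stands in for a trained LGP surrogate, a single scalar charge stands in for a charge distribution, and the prior bounds, reference value, and step size are arbitrary.

```python
import numpy as np

def surrogate_qoi(charge):
    """Hypothetical cheap surrogate mapping one partial charge to one QoI."""
    return 2.0 * charge + 1.0

def log_posterior(charge, reference, sigma):
    """Gaussian likelihood against the reference with a flat prior on [-2, 2]."""
    if not -2.0 <= charge <= 2.0:
        return -np.inf
    residual = surrogate_qoi(charge) - reference
    return -0.5 * (residual / sigma) ** 2

def metropolis(reference, sigma, n_steps=20000, step=0.1, seed=0):
    """Random-walk Metropolis sampling of the posterior over the charge."""
    rng = np.random.default_rng(seed)
    current = 0.0
    current_lp = log_posterior(current, reference, sigma)
    samples = np.empty(n_steps)
    for i in range(n_steps):
        proposal = current + step * rng.normal()
        proposal_lp = log_posterior(proposal, reference, sigma)
        # Accept with probability min(1, exp(proposal_lp - current_lp)).
        if np.log(rng.random()) < proposal_lp - current_lp:
            current, current_lp = proposal, proposal_lp
        samples[i] = current
    return samples

samples = metropolis(reference=0.2, sigma=0.1)
posterior = samples[5000:]  # discard burn-in
print(f"posterior mean = {posterior.mean():.3f}, std = {posterior.std():.3f}")
```

Each likelihood evaluation costs one surrogate call, which is what makes long chains affordable; the posterior samples would then be validated with full FFMD simulations as described in the final step.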

Quantitative Performance Data

Table 1: Performance of Bayesian Deep Reinforcement Learning in Geotechnical Engineering. This example from a different field illustrates the potential quantitative benefits of advanced Bayesian methods for parameter optimization and uncertainty quantification [20].

| Metric | Performance | Comparison to Deterministic Approach |

|---|---|---|

| Prediction Accuracy (R²) | 0.91 | Not Reported |

| Uncertainty Coverage Probability | 96.8% | Not Reported |

| Maximum Wall Displacement | 29.7 mm | 35% reduction (from 45.8 mm) |

| Surface Settlement | 16.5 mm | 42% reduction (from 28.5 mm) |

| Cost Savings | ¥2.3 million | 18% reduction [20] |

Table 2: Error Metrics for Bayesian-Optimized Biomolecular Force Fields. Data from the optimization of partial charges for 18 biologically relevant molecular fragments [17].

| Quantity of Interest (QoI) | Normalized Mean Absolute Error (NMAE) | Key Improvement |

|---|---|---|

| Radial Distribution Functions (RDFs) | < 5% for most species | Systematic improvements, especially for charged anions [17] |

| Hydrogen Bond Counts | Typically 10-20% deviation | Larger variability due to rigid water model limitations [17] |

| Ion-Pair Distance Distributions | Generally under 20% deviation | Larger errors for some anionic species [17] |

| Aqueous Solution Densities | < 1% deviation from experiment for nearly all solutions | Validates transferability of optimized charges [17] |

Research Reagent Solutions

Table 3: Key Software and Computational Tools for Bayesian Force Field Optimization

| Tool / Algorithm | Type | Primary Function | Application Example |

|---|---|---|---|

| BICePs (Bayesian Inference of Conformational Populations) | Algorithm | A reweighting algorithm that refines structural ensembles against sparse/noisy experimental data by sampling posterior distributions of populations and uncertainties [3] [16]. | Force field validation and optimization against ensemble-averaged measurements like NMR observables [3] [16]. |

| Markov Chain Monte Carlo (MCMC) | Computational Method | A class of algorithms for sampling from a probability distribution, essential for estimating posterior distributions in Bayesian inference [20] [17]. | Sampling the posterior distribution of force field parameters, conformational states, and uncertainty hyperparameters [3] [17]. |

| Local Gaussian Process (LGP) Surrogate | Surrogate Model | A fast, interpretable surrogate model that predicts simulation outcomes from parameters, drastically reducing computational cost during MCMC sampling [17]. | Mapping partial charge distributions to quantities of interest (RDFs, HB counts) during Bayesian optimization of biomolecular force fields [17]. |

| Replica-Averaged Forward Model | Modeling Technique | A forward model that predicts observables as an average over multiple replicas, improving uncertainty estimation by incorporating standard error [3]. | Used within BICePs to provide more robust predictions and better handle forward model error [3]. |

Frequently Asked Questions (FAQs)

FAQ 1: What are the specific element-related systematic errors identified in GAFF? Systematic force field errors have been identified for molecules containing chlorine (Cl), bromine (Br), iodine (I), and phosphorus (P) [21]. These errors were uncovered through large-scale benchmarking of hydration free energy calculations for 642 molecules in the FreeSolv database. The deviations were consistent enough to be corrected using a simple element count correction (ECC), strongly suggesting an origin in the underlying non-bonded, specifically Lennard-Jones, parameters within GAFF [21].

FAQ 2: What is the physical origin of these errors for halogen atoms? The errors are largely attributed to the inability of standard, isotropic force fields to accurately model the anisotropic electron distribution around halogen atoms [22]. When a halogen is covalently bonded to a carbon atom, its electron density is not uniform. This creates a region of positive electrostatic potential (a "σ-hole") along the C-X bond axis, and a region of negative potential in the perpendicular plane [22]. This anisotropy allows halogens to participate in both halogen bonds (as donors) and hydrogen bonds (as acceptors). Traditional force fields, which assign a single, spherical atom-centered point charge and Lennard-Jones parameter set, fail to capture this complex reality, leading to systematic errors in interaction energies and distances [22].

FAQ 3: How can I diagnose if my system is affected by these errors? You can perform a diagnostic calculation using the 3D-RISM model with an Element Count Correction (ECC) [21]. The protocol is as follows:

- Calculate the hydration free energy (HFE) for your molecule(s) of interest using the 3D-RISM model.

- Apply the ECC to the results. The ECC is a simple linear correction based on the counts of specific atoms in the molecule.

- A significant improvement in the agreement with benchmark explicit solvent calculations or experimental data after applying the ECC indicates that your molecule is likely affected by systematic force field errors related to those elements [21].

FAQ 4: What are the available solutions to overcome these limitations? Several strategies are being developed to address these issues:

- Parameter Refinement: Directly optimizing the Lennard-Jones parameters for problematic elements against experimental data or higher-level quantum mechanical calculations [21].

- Polarizable Force Fields: Adopting advanced models like the classical Drude oscillator, which explicitly accounts for electronic polarization. These models can be extended with a virtual particle and anisotropic atomic polarizability on the halogen to accurately reproduce both halogen-bonding and hydrogen-bonding interactions [22].

- Improved Charge Models: Utilizing new charge assignment methods, such as the ABCG2 model for GAFF2, which significantly improves the accuracy of solvation free energy calculations across diverse environments by optimizing bond charge correction terms [23] [24].

Troubleshooting Guides

Issue: Inaccurate Hydration Free Energies for Halogen/Phosphorus-Containing Compounds

Problem: Calculations of hydration free energies (HFEs) for molecules containing Cl, Br, I, or P show significant deviations from experimental values or benchmark explicit solvent calculations when using standard GAFF parameters.

Solution: Apply a 3D-RISM/ECC Diagnostic Correction

Objective: To quickly identify and correct systematic element-specific errors in HFE predictions.

Experimental Protocol:

System Setup:

- Obtain the molecular structure of the compound under investigation.

- Assign GAFF or GAFF2 parameters and partial charges (e.g., using ANTECHAMBER).

3D-RISM Calculation:

- Use the 3D Reference Interaction Site Model (3D-RISM) to calculate the hydration free energy. This method requires significantly less computational time than explicit solvent simulations (reportedly <15 seconds per molecule on a single CPU core) [21].

- The calculation yields an uncorrected HFE value, labeled as `ΔG_3D-RISM`.

Apply the Element Count Correction (ECC):

- The ECC is a linear function of the number of specific atoms in the molecule. The general form is `ECC = Σ (n_i * c_i)`, where `n_i` is the count of atom `i` and `c_i` is its correction coefficient [21].

- Apply the ECC to the 3D-RISM result: `ΔG_corrected = ΔG_3D-RISM + ECC`.

- For maximum accuracy, a combined Partial Molar Volume Correction and ECC (PMVECC) can be applied, which was shown to produce a mean unsigned error of 1.01 kcal/mol and a root mean squared error of 1.44 kcal/mol on the FreeSolv database [21].
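The element count correction can be sketched as a small linear-algebra exercise. The example below assumes the coefficients `c_i` are obtained by an ordinary least-squares fit of the residual between reference and 3D-RISM values; that fitting procedure, the function names, and every number are illustrative assumptions rather than published coefficients.

```python
import numpy as np

def fit_ecc(element_counts, dg_rism, dg_reference):
    """Least-squares fit of c_i so that dg_rism + counts @ c approximates dg_reference."""
    counts = np.asarray(element_counts, dtype=float)
    residual = np.asarray(dg_reference, dtype=float) - np.asarray(dg_rism, dtype=float)
    coeffs, *_ = np.linalg.lstsq(counts, residual, rcond=None)
    return coeffs

def apply_ecc(element_counts, dg_rism, coeffs):
    """dG_corrected = dG_3D-RISM + sum_i n_i * c_i."""
    return np.asarray(dg_rism, dtype=float) + np.asarray(element_counts, dtype=float) @ coeffs

# Synthetic data: columns are counts of (C, Cl) per molecule; values in kcal/mol.
counts = np.array([[1, 0], [2, 1], [3, 0], [2, 2]])
assumed_coeffs = np.array([-0.5, 1.2])          # made-up "true" corrections
dg_reference = np.array([-1.0, -2.5, 0.3, -4.1])
dg_rism = dg_reference - counts @ assumed_coeffs  # uncorrected values with element bias

coeffs = fit_ecc(counts, dg_rism, dg_reference)
dg_corrected = apply_ecc(counts, dg_rism, coeffs)
print("fitted coefficients:", coeffs)
print("corrected HFEs:", dg_corrected)
```

A large fitted coefficient for a given element is the diagnostic signal: it indicates that molecules containing that element are shifted consistently in one direction.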

Interpretation:

- Compare `ΔG_corrected` with your reference data (experiment or explicit solvent benchmarks).

- A substantial improvement after correction confirms the presence of a systematic force field error related to the elemental composition.

The following workflow outlines the diagnostic procedure:

Issue: Poor Modeling of Halogen Bonding and Anisotropic Interactions

Problem: Simulations fail to accurately reproduce interaction geometries (distances, angles) and energies for halogen bonds or the dual hydrogen-bond acceptor role of halogens.

Solution: Utilize a Polarizable Force Field with Anisotropic Features

Objective: To achieve a more physically accurate representation of halogen interactions.

Experimental Protocol:

Force Field Selection: Employ a polarizable force field based on the Drude oscillator model that has been explicitly parameterized for halogens [22].

Model Features: Ensure the force field includes two key features for halogens:

- A Virtual Particle: A positively charged "virtual" site is placed along the C-X covalent bond to represent the σ-hole, enabling the formation of halogen bonds [22].

- Anisotropic Treatment: Lennard-Jones parameters are applied to the halogen's Drude particle, and atomic polarizability is made anisotropic. This allows the model to mimic the flattened van der Waals surface along the σ-hole and the larger electron density perpendicular to the C-X bond [22].

Parameterization Target: The parameters for these models are optimized against a combination of:

Validation: The resulting force field should be validated by its ability to reproduce pure solvent properties for halogenated compounds and accurately model both XB and X-HBD interaction energy profiles against QM reference data [22].

Table 1: Performance of Correction Methods on Hydration Free Energy Prediction

| Method | Test System | Mean Unsigned Error (MUE) | Root Mean Squared Error (RMSE) | Key Finding |

|---|---|---|---|---|

| 3D-RISM + PMVECC [21] | 642 molecules (FreeSolv) | 1.01 ± 0.04 kcal/mol | 1.44 ± 0.07 kcal/mol | Identified systematic errors for Cl, Br, I, P. |

| ABCG2 Charge Model [23] | 442 neutral solutes in water | 0.37 kcal/mol | Not Reported | Improved GAFF2 charges reduce HFE error. |

| ABCG2 Charge Model [23] [24] | 895 solvent-solute systems | 0.51 kcal/mol | 0.65 kcal/mol | Shows transferability across solvents. |

Table 2: Key Software Tools and Resources for Force Field Optimization

| Tool / Resource Name | Type / Category | Primary Function | Reference |

|---|---|---|---|

| 3D-RISM with ECC/PMVC | Implicit Solvent Model | Rapid calculation and error diagnosis of hydration free energies. | [21] |

| BICePs (Bayesian Inference) | Refinement Algorithm | Reweighting simulated ensembles against sparse/noisy experimental data, accounting for uncertainty. | [3] |

| Drude Oscillator Model | Polarizable Force Field | Explicitly models electronic polarization; can be extended for halogen anisotropy. | [22] |

| SA + PSO + CAM | Optimization Framework | Meta-heuristic algorithm for automated, efficient ReaxFF parameter optimization. | [14] |

| ABCG2 | Charge Model | An optimized AM1-BCC method for GAFF2 that drastically improves solvation free energy predictions. | [23] [24] |

| RosettaGenFF | Force Field | Parameters optimized against thousands of small molecule crystal structures to favor native lattice arrangements. | [25] |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Computational Tools

| Item | Specifications / Examples | Function in Research |

|---|---|---|

| Benchmark Datasets | FreeSolv database (642 molecules with experimental and calculated HFEs) [21]. | Provides a standardized set of molecules for validating and identifying force field errors. |

| Quantum Chemical Software | Gaussian, PSI4, NWCHEM [22]. | Generates target data (interaction energies, polarizabilities, geometries) for force field parameterization. |

| Molecular Dynamics Engines | CHARMM, NAMD, AMBER [22]. | Performs simulations using the developed parameters to compute properties and compare with experiment. |

| Parameterization Toolkits | ForceGen, QUBEKit, ffTK, ContraDRG [24]. | Automates the process of deriving force field parameters from quantum mechanical data. |

| Cambridge Structural Database (CSD) | A repository of small molecule crystal structures [25]. | Serves as a rich source of experimental structural information for training and validating force fields. |

Advanced Parameter Optimization Methods: From Bayesian Inference to Machine Learning Approaches

Bayesian Inference of Conformational Populations (BICePs) for Error-Resilient Optimization

Troubleshooting Guides

Frequently Asked Questions (FAQs)

Q: My BICePs optimization is converging slowly or appears stuck. What can I do?

A: Slow convergence often relates to high-dimensional parameter spaces or poorly scaled gradients. The BICePs algorithm can be accelerated by utilizing its gradient capabilities. Enable gradient-based sampling and consider adjusting the learning rate in the stochastic gradient descent update: θ_trial = θ_old - lrate · ∇u + η · N(0,1). The integration of gradients, especially with neural network potentials, significantly improves convergence in higher dimensions [3] [26].
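The update rule quoted above can be tried on a toy objective to see how the learning rate and noise scale interact. This sketch assumes a simple quadratic `u(θ)` with a known minimum; the names `grad_u`, `TARGET`, and `noisy_gradient_step` are hypothetical, and any accept/reject logic a real sampler may apply to the trial move is omitted.

```python
import numpy as np

TARGET = np.array([1.0, -2.0])  # minimum of the toy objective (assumption)

def grad_u(theta):
    """Gradient of the toy objective u(theta) = 0.5 * |theta - TARGET|^2."""
    return theta - TARGET

def noisy_gradient_step(theta_old, lrate, eta, rng):
    """theta_trial = theta_old - lrate * grad(u) + eta * N(0, 1)."""
    noise = rng.normal(size=theta_old.shape)
    return theta_old - lrate * grad_u(theta_old) + eta * noise

rng = np.random.default_rng(1)
theta = np.zeros(2)
for _ in range(500):
    theta = noisy_gradient_step(theta, lrate=0.1, eta=0.01, rng=rng)
print("final parameters:", theta)
```

With these settings the parameters settle close to the minimum and fluctuate with a spread set by `eta`; a larger `lrate` speeds convergence until the steps start to overshoot.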

Q: How can I identify if my results are affected by systematic errors in the experimental data?

A: BICePs contains specialized likelihood functions designed to automatically detect and down-weight outliers. Use the Student's likelihood model, which marginalizes uncertainty parameters for individual observables. This model assumes noise is mostly uniform except for a few erratic measurements, making it robust against systematic errors without requiring numerous additional parameters [3].

Q: My conformational sampling seems insufficient. How does this affect uncertainty quantification?

A: Insufficient sampling increases the standard error of the mean (σSEM) in replica-averaged forward models. BICePs accounts for this by combining Bayesian error (σB) with finite sampling error: σ_j = √((σ^B_j)² + (σ^SEM_j)²). The σSEM is estimated from the variance across replicas and decreases with the square root of the replica count. Increase the number of replicas (Nr) to reduce this error source [3] [26].

Q: What constitutes a "good" BICePs score, and how do I interpret it for model selection?

A: The BICePs score (f(k) = -ln(Z(k)/Z0)) is a free energy-like quantity where lower values indicate better agreement between the prior model and experimental data. It quantifies the evidence for model k relative to a uniform reference prior. Use it for comparative model selection—the model with the lowest BICePs score is most consistent with experimental restraints [27] [28].
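As a small worked example of this comparison, the sketch below converts evidences into scores and picks the lowest. The evidence values and model names are made up for illustration; in practice the evidences come from the BICePs calculation itself.

```python
import math

def biceps_score(evidence, reference_evidence):
    """f = -ln(Z / Z0): lower values mean stronger evidence for the model."""
    return -math.log(evidence / reference_evidence)

# Hypothetical evidences Z(k) for three candidate models and a reference Z0.
evidences = {"model_a": 2.0e-3, "model_b": 8.0e-3, "model_c": 5.0e-4}
z0 = 1.0e-3

scores = {name: biceps_score(z, z0) for name, z in evidences.items()}
best = min(scores, key=scores.get)

for name, score in scores.items():
    print(f"{name}: f = {score:+.3f}")
print("preferred model:", best)
```

A negative score means the model has more evidence than the reference, and a positive score means less; only differences between scores matter for ranking.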

Common Error Messages and Solutions

WARNING: Suspicious force-field EEM parameters

- Problem: This warning, while from a specific force field implementation, highlights a common parameterization issue: the relationship between the eta and gamma parameters for the electronegativity equalization method (EEM) does not satisfy `eta > 7.2*gamma` [8].

- Solution: A polarization catastrophe can occur at short interatomic distances if this relation is violated. Check and adjust your force field's EEM parameters to meet this stability criterion [8].
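A quick pre-flight check of this criterion can be scripted before launching a simulation. The sketch below is a minimal illustration: the element labels and parameter values are invented, and the values are assumed to be in the units used by the force field file.

```python
def eem_is_stable(eta, gamma, factor=7.2):
    """Return True when the stability criterion eta > factor * gamma holds."""
    return eta > factor * gamma

# Hypothetical (eta, gamma) pairs per element, as read from a parameter file.
params = {"C": (7.0, 0.9), "H": (9.0, 0.8), "X": (5.0, 1.0)}

suspicious = [element for element, (eta, gamma) in params.items()
              if not eem_is_stable(eta, gamma)]
print("elements violating eta > 7.2*gamma:", suspicious)
```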

Issue: Discontinuities in energy derivatives during optimization

- Problem: Force field energy functions can have discontinuities, often related to cutoffs (e.g., bond order cutoffs) that determine whether interaction terms are included. This causes sudden changes in forces and breaks optimization convergence [8].

- Solution:

Experimental Protocols & Workflows

Core BICePs Workflow for Force Field Refinement

The following diagram illustrates the complete BICePs workflow for error-resilient force field optimization, from initial ensemble preparation to final parameter selection.

Detailed Methodology: Variational Optimization of Force Field Parameters

Objective: Refine force field parameters θ to minimize disagreement between simulated and experimental ensemble-averaged data while accounting for errors.

Step 1: Prepare Prior Ensemble and Data

- Generate a conformational ensemble using your initial force field via molecular dynamics (MD) or Monte Carlo (MC) simulations. This provides the prior distribution `p(X)` [27] [28].

- Compile experimental observables `D = {d_j}` (e.g., NMR J-couplings, NOE distances, chemical shifts). Note that data can be sparse and noisy [3] [28].

Step 2: Set Up Forward Model Predictions

- For each conformational state `X` in your prior ensemble, compute the theoretical prediction for each experimental observable using a forward model `g(X, θ)`. For example, this could be a Karplus relation for J-coupling constants [26].

- In BICePs v2.0, this is handled by the `biceps.Preparation` class, which stores experimental data and forward model predictions as Pandas DataFrame objects [28].
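A Karplus-type forward model of the kind mentioned in this step can be written in a few lines. The sketch uses the general form J(φ) = A cos²θ + B cos θ + C with θ = φ + phase; the coefficient values, phase offset, dihedral angles, and populations are illustrative assumptions and should be replaced by the parameterization appropriate to the coupling being modeled. It does not use the BICePs API.

```python
import numpy as np

def karplus_j(phi_deg, a=6.51, b=-1.76, c=1.60, phase_deg=-60.0):
    """Karplus relation J = a*cos^2(theta) + b*cos(theta) + c, theta = phi + phase."""
    theta = np.deg2rad(np.asarray(phi_deg, dtype=float) + phase_deg)
    return a * np.cos(theta) ** 2 + b * np.cos(theta) + c

# Hypothetical backbone dihedrals (degrees) for three conformational states
# and their prior populations.
phi_states = np.array([-60.0, -120.0, 60.0])
populations = np.array([0.5, 0.3, 0.2])

j_per_state = karplus_j(phi_states)
j_ensemble = np.average(j_per_state, weights=populations)
print("J per state (Hz):", np.round(j_per_state, 2))
print("ensemble-averaged J (Hz):", round(float(j_ensemble), 2))
```

Here `a`, `b`, and `c` play the role of the forward-model parameters θ, so treating them as adjustable is what allows the forward model itself to be refined alongside the ensemble.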

Step 3: Configure and Run BICePs Optimization

- Choose a Likelihood Model: For data with potential systematic errors, select the Student's likelihood model, which is more robust against outliers [3].

- Enable Replica Averaging: Use the replica-averaged forward model `f_j(𝐗) = (1/N_r) ∑_r f_j(X_r)` to approximate the ensemble average and compute the associated standard error of the mean (σ_SEM) [3] [26].

- Sample the Full Posterior: Use Markov Chain Monte Carlo (MCMC) to sample the posterior distribution that now includes force field parameters: `p(𝐗, 𝛔, 𝛉 | D) ∝ p(D | g(𝐗, 𝛉), 𝛔) p(𝐗) p(𝛔) p(𝛉)` [26].

- Minimize the BICePs Score: Alternatively, or concurrently, perform variational optimization by minimizing the BICePs score, `f(θ) = -ln(Z(θ)/Z_0)`, with respect to the parameters `θ`. This leverages gradient descent for efficient high-dimensional optimization [3] [26] [27].

Step 4: Analyze Results and Validate

- Examine the marginalized posterior distribution of the force field parameters `p(θ|D)` to obtain refined values and their uncertainties [26].

- Use the BICePs score to compare different parameter sets or force fields; the model with the lowest score is preferred [27].

- Check the posterior distribution of the uncertainty parameters `p(σ|D)` to assess the overall agreement with experimental restraints [28].

The Scientist's Toolkit

Research Reagent Solutions

Table 1: Essential Computational Tools for BICePs-Based Force Field Optimization

| Tool / Resource | Function | Relevance to Error-Resilience |

|---|---|---|

| BICePs Software (v2.0+) | Open-source Python package for ensemble reweighting and parameter optimization [28]. | Provides the core algorithms, including robust likelihoods for outliers and replica-averaging for uncertainty quantification. |

| Theoretical Prior Ensemble (p(X)) | Conformational states from MD/MC simulation with an initial force field [27]. | Serves as the baseline model to be refined. Accuracy of the final result depends on the coverage and quality of this ensemble. |

| Replica-Averaged Forward Model | Predicts ensemble averages from a finite set of replicas, estimating finite-sampling error (σ_SEM) [3] [26]. | Key for correctly accounting for uncertainty arising from limited conformational sampling. |

| Student's t Likelihood Model | A specialized likelihood function that marginalizes over uncertainty parameters for individual observables [3]. | Automatically detects and down-weights the influence of experimental outliers, reducing systematic error. |

| BICePs Score (f(k)) | A free energy-like quantity for quantitative model selection [27] [28]. | Enables objective comparison of different force field models or parameter sets, even with sparse/noisy data. |

| Neural Network Potentials | Trainable, differentiable potentials (e.g., MACE, CHGNet) [29]. | Can be integrated as the forward model or force field and optimized directly using BICePs score gradients [3] [26]. |

Key Quantitative Benchmarks

Table 2: Performance Metrics from BICePs Applications and Related Methods

| System / Method | Key Metric | Result / Benchmark | Implication for Error Reduction |

|---|---|---|---|

| BICePs (Variational Optimization) | Parameter recovery for a 12-mer HP lattice model with synthetic experimental error [3] [30]. | Successfully refined multiple interaction parameters despite added random and systematic errors. | Demonstrates inherent resilience of the BICePs score for automated force field parameterization in the presence of noise. |

| BICePs (Model Selection) | Discrimination between force fields for beta-hairpin peptides using NMR data [27]. | BICePs score correctly identified the force field most consistent with experimental chemical shifts. | Validates the BICePs score as an objective function for force field validation and selection. |

| 3D-RISM with PMVECC | Mean Unsigned Error (MUE) for hydration free energies (FreeSolv database) [1]. | MUE of 1.01 ± 0.04 kcal/mol, identifying systematic errors for Cl, Br, I, and P parameters in GAFF. | Illustrates how element-specific corrections can diagnose and reduce systematic force field errors, a goal shared with BICePs refinement. |

Leveraging Small Molecule Crystal Structures for Balanced Force Field Development

Frequently Asked Questions (FAQs)

Q1: How can small molecule crystal structures lead to more balanced force fields compared to traditional parameterization methods? Traditional force field parameterization often fits different parameter subsets independently on simple representative molecules, which can challenge the transferability of the resulting model to new chemical spaces [25]. Using small molecule crystal structures as a rich information source helps create a more balanced force field. By requiring that experimentally determined molecular lattice arrangements have lower energy than all alternative packing arrangements, the optimization process directly captures the subtle trade-offs between deviations from bonded geometry minima and the optimization of non-bonded interactions. This method ensures the force field is trained on a large diversity of drug-like molecules, making it more robust for practical applications like drug discovery [25].
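The requirement that the experimental lattice be lower in energy than alternative packings can be expressed as a simple check plus a penalty term. The sketch below is a generic illustration, assuming a hinge-style margin penalty; that loss form, the function names, and the energies are assumptions and do not describe the objective used in any specific published force field.

```python
import numpy as np

def native_energy_gap(native_energy, decoy_energies):
    """Lowest decoy energy minus native energy; positive means the native is favoured."""
    return float(np.min(decoy_energies) - native_energy)

def margin_loss(native_energy, decoy_energies, margin=1.0):
    """Hinge penalty for every decoy that is not at least `margin` above the native."""
    gaps = np.asarray(decoy_energies, dtype=float) - native_energy
    return float(np.sum(np.clip(margin - gaps, 0.0, None)))

# Hypothetical lattice energies (kcal/mol) for one crystal and three decoy packings.
native = -25.0
decoys = [-24.5, -22.0, -26.0]

gap = native_energy_gap(native, decoys)
loss = margin_loss(native, decoys)
print(f"energy gap = {gap:+.2f} kcal/mol, margin loss = {loss:.2f}")
```

A negative gap flags a crystal for which the current parameters prefer a non-native packing; summing the penalty over many crystals gives one possible training signal for the parameters.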

Q2: My lattice energy calculations show systematic underestimation. Is this a known issue with certain force fields? Yes, systematic underestimation of lattice energy is a known limitation in some empirically parameterized atom-atom force fields. Benchmarks on the X23 dataset revealed that anisotropic atom-atom multipole-based force fields can be as accurate as several popular DFT-D methods but may have errors 2–3 times larger than the current best DFT-D methods, with a systematic underestimation of the absolute lattice energy being a primary error source [31].

Q3: What are the common computational errors I might encounter during force field parameter optimization or application? Common errors can arise from various stages of force field development and application:

- During Parameter Optimization: Insufficient memory allocation when handling large numbers of atoms or long trajectories [32].

- During Topology Generation (e.g., with `pdb2gmx`):

  - Residue not found in database: The force field lacks an entry for the residue/molecule you are using [32].

  - Long bonds and/or missing atoms: Atoms are missing in your initial structure file, causing the program to place atoms incorrectly [32].

  - Atom naming mismatches: Atom names in your coordinate file do not match those in the force field's residue topology database (`.rtp` file) [32].

- During Simulation Setup (e.g., in LAMMPS or GROMACS):

Q4: Are there specialized software tools to help analyze and troubleshoot force field performance? Yes, specialized tools like FFAST (Force Field Analysis Software and Tools) are designed for this purpose. FFAST is a cross-platform package that provides detailed insights into any molecular force field's performance and limitations. It offers a graphical user interface to analyze error distributions, identify outliers in configurational space, visualize atomic projection of errors, and compare multiple models. This helps move beyond average error metrics to understand a model's specific failures and limitations [34].

Troubleshooting Guides

Issue 1: Handling Missing Residues or Parameters in Topology Generation

Problem: When using a tool like pdb2gmx to generate a molecular topology, you encounter an error that a residue is not found in the residue topology database [32].

Solution:

- Check Residue Naming: Ensure the residue name in your structure file exactly matches the name used in the force field's database (`.rtp` file).

- Find an Existing Topology: Search the literature or force field repositories for a topology file (`.itp`) for your molecule and include it manually in your system topology.

- Parameterize the Residue Yourself: If no parameters exist, you must parameterize the residue yourself, which is expert-level work. Alternatively, search for publications with consistent parameters [32].

- Use a Different Force Field: Consider switching to a force field that already includes parameters for your molecule of interest.
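The residue-naming check in the first step can be automated before running pdb2gmx. The following is a minimal sketch, assuming that residue entries in the .rtp file appear as `[ NAME ]` headers and that residue names sit in PDB columns 18-20; the function names and the list of non-residue section names are illustrative assumptions, not part of GROMACS.

```python
import re

# Section names that are not residue entries in an .rtp file
# (assumed list; extend it for force fields that use other directives).
NON_RESIDUE_SECTIONS = {
    "bondedtypes", "atoms", "bonds", "angles",
    "dihedrals", "impropers", "exclusions", "cmap",
}


def rtp_residue_names(rtp_text):
    """Return the set of residue names declared as [ NAME ] in .rtp text."""
    names = set()
    for line in rtp_text.splitlines():
        line = line.split(";", 1)[0]  # drop ';' comments
        match = re.match(r"\s*\[\s*(\S+)\s*\]", line)
        if match and match.group(1).lower() not in NON_RESIDUE_SECTIONS:
            names.add(match.group(1))
    return names


def pdb_residue_names(pdb_text):
    """Return residue names from ATOM/HETATM records (PDB columns 18-20)."""
    names = set()
    for line in pdb_text.splitlines():
        if line.startswith(("ATOM", "HETATM")):
            names.add(line[17:20].strip())
    return names


def missing_residues(pdb_text, rtp_text):
    """Residue names present in the structure but absent from the .rtp text."""
    return sorted(pdb_residue_names(pdb_text) - rtp_residue_names(rtp_text))
```

Any name returned by `missing_residues` is a candidate for the "residue not found" error and points you to one of the remaining options in the list above.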

Issue 2: Diagnosing Energetic and Thermodynamic Inconsistencies in MD Simulations

Problem: In molecular dynamics simulations, you observe diverging behavior, such as a linear increase in the thermostat/barostat coupling energy (Ecouple) or an incorrect density, when comparing your custom force field implementation against a reference, even though pairwise energy and force calculations match [33].

Solution: This suggests an error in how the force field is integrated into the MD engine, not in the functional form itself.

- Verify Energy Conservation: Run simulations in the NVE ensemble (using fix nve in LAMMPS) without a thermostat or barostat. Confirm that your implementation correctly conserves total energy [33].

- Check Force and Virial Calculation:

- Code Implementation Details: In LAMMPS, ensure that within your pair style code, the fpair variable is correctly handled as force/r (force divided by distance), which requires multiplication by the distance components (dx, dy, dz) to project the force onto Cartesian coordinates [33].
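The force/r convention can be checked outside the MD engine. The sketch below is written in Python with NumPy rather than as LAMMPS C++ code, and uses a 12-6 Lennard-Jones potential purely as an assumed example; the function names are hypothetical. It shows that when fpair already holds force divided by distance, multiplying by (dx, dy, dz) yields the Cartesian force without any further division by r.

```python
import numpy as np


def lj_energy(r, epsilon=1.0, sigma=1.0):
    """12-6 Lennard-Jones pair energy."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)


def lj_fpair(r, epsilon=1.0, sigma=1.0):
    """Return F(r)/r, where F(r) = -dE/dr is the radial force magnitude."""
    sr6 = (sigma / r) ** 6
    return 24.0 * epsilon * (2.0 * sr6 * sr6 - sr6) / (r * r)


def pair_force_and_virial(pos_i, pos_j, epsilon=1.0, sigma=1.0):
    """Cartesian force on atom i and the pair contribution to the virial."""
    delta = np.asarray(pos_i, float) - np.asarray(pos_j, float)  # (dx, dy, dz)
    r = np.linalg.norm(delta)
    fpair = lj_fpair(r, epsilon, sigma)
    force_i = delta * fpair            # fpair is F/r, so no extra 1/r here
    virial = np.outer(delta, force_i)  # W_ab = delta_a * F_b for this pair
    return force_i, virial
```

Comparing `force_i` against a finite-difference derivative of `lj_energy` is a quick way to catch a missing or duplicated factor of r; an error of that kind also corrupts the virial and therefore the pressure and density.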

Issue 3: Identifying and Analyzing Force Field Failures and Outliers

Problem: Your force field has good average error metrics but produces unphysical results or fails for specific molecular configurations during simulations [34].

Solution: Adopt a systematic analysis workflow using tools like FFAST [34]:

- Error Distribution Overview: Start by plotting the energy and force error distributions to understand overall performance and to spot deviations from a normal (Gaussian) error curve.

- Outlier Detection: Use correlation scatter plots (predicted vs. true values) to visually identify configurations where the model fails badly.

- Cluster Analysis: Apply clustering algorithms to group similar configurations in your dataset. Analyze the per-cluster error to identify specific regions of configurational space (e.g., folded states, specific functional group interactions) where the force field is inaccurate [34].

- Visualize Atomic Errors: Use 3D visualization tools to project force errors onto individual atoms of the molecular structure. This can pinpoint specific atoms or chemical motifs (e.g., carbons and oxygens in glycosidic bonds) that consistently have high errors [34].
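The numerical side of this workflow can be sketched with plain NumPy. This is not the FFAST API; it is a hypothetical illustration that assumes force arrays of shape (n_config, n_atoms, 3) and cluster labels produced by any clustering method of your choice. The outlier rule (median plus a multiple of the scaled median absolute deviation) is one assumed choice among many.

```python
import numpy as np


def per_config_force_rmse(f_pred, f_ref):
    """Force RMSE per configuration; arrays have shape (n_config, n_atoms, 3)."""
    diff = np.asarray(f_pred, float) - np.asarray(f_ref, float)
    return np.sqrt(np.mean(diff ** 2, axis=(1, 2)))


def outlier_indices(errors, n_mad=5.0):
    """Indices of errors far above the median (robust MAD-based rule)."""
    errors = np.asarray(errors, float)
    median = np.median(errors)
    mad = np.median(np.abs(errors - median))
    return np.flatnonzero(errors > median + n_mad * 1.4826 * mad)


def per_cluster_mean(errors, labels):
    """Mean error per cluster label."""
    errors, labels = np.asarray(errors, float), np.asarray(labels)
    return {int(c): float(errors[labels == c].mean()) for c in np.unique(labels)}


def per_atom_force_error(f_pred, f_ref):
    """Mean force-error magnitude per atom, averaged over configurations."""
    diff = np.asarray(f_pred, float) - np.asarray(f_ref, float)
    return np.linalg.norm(diff, axis=2).mean(axis=0)
```

The per-atom array can be mapped onto a structure in any molecular viewer to reproduce the atomic projection step, and the per-cluster means show which regions of configurational space dominate the average error.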

Experimental Protocols & Data

Key Methodology: Crystal Lattice Discrimination for Parameter Optimization

This protocol outlines the core method for using crystal structures to optimize force field parameters [25].

1. Data Curation:

- Source thousands of small molecule crystal structures from the Cambridge Structural Database (CSD).

- Apply filters: one molecule per asymmetric unit, high occupancy, common organic elements (H, C, N, O, S, P, F, Cl, Br, I), and a defined range of rotatable bonds.

- Split the data into training and validation sets.

2. Decoy Structure Generation:

- For each native crystal structure, run thousands of independent Crystal Structure Prediction (CSP) simulations.

- Use a Metropolis Monte Carlo with minimization (MCM) search to sample:

- Space Groups: Commonly observed ones (e.g., P 1 21/c 1, P-1, P 21 21 21).

- Internal Coordinates: Randomize all rotatable dihedral angles.

- Rigid-body Orientation: Rotate and translate the molecule within the lattice.

- Lattice Parameters: Vary unit cell lengths and angles.

- For each molecule, generate a large decoy set containing >1,000 de novo predicted structures and >100 near-native perturbed structures.

3. Parameter Optimization:

- Define a force field energy model (e.g., RosettaGenFF) containing hundreds of parameters for non-bonded (Lennard-Jones, implicit solvation) and torsional terms.

- Use an optimization algorithm (e.g., Simplex-based dualOptE) to adjust these parameters. The objective is to maximize the energy gap between the experimentally observed native crystal lattice and all the generated decoy arrangements.

- Iterate between parameter optimization and decoy regeneration to refine the force field.
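The objective in step 3 can be illustrated with a toy linear energy model. The sketch below is an assumption-laden simplification, not RosettaGenFF or dualOptE: each lattice is represented by a hypothetical vector of per-term energies, the parameters are weights on those terms, and the gap is measured against the lowest-energy decoy.

```python
import numpy as np


def lattice_energies(weights, features):
    """Linear energy model: each row of `features` holds per-term energies."""
    return np.asarray(features, float) @ np.asarray(weights, float)


def energy_gap(weights, native_features, decoy_features):
    """Lowest decoy energy minus native energy; positive means native wins."""
    e_native = float(np.dot(np.asarray(native_features, float),
                            np.asarray(weights, float)))
    e_decoys = lattice_energies(weights, decoy_features)
    return float(e_decoys.min() - e_native)


def discrimination_loss(weights, crystals):
    """Negative mean gap over (native, decoys) pairs, to be minimized."""
    gaps = [energy_gap(weights, native, decoys) for native, decoys in crystals]
    return -float(np.mean(gaps))
```

A loss of this form could be passed to any derivative-free optimizer, such as a simplex method. Note that with a purely linear model the gap grows with the overall scale of the weights, so a practical objective would need some normalization or restraint on the parameters; that detail is left out here.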

Performance Benchmarking of Force Fields

The table below summarizes the force fields and methods compared on the X23 benchmark set for lattice energies, listing the electrostatic model each one uses and notes on its accuracy [31].

Table 1: Force Field and DFT Performance on the X23 Benchmark Set

| Method / Force Field | Electrostatic Model Used | Typical Performance / Notes |
|---|---|---|
| Best DFT-D Methods | Self-consistent electron density | Highest accuracy; serves as the top-tier benchmark. |
| Anisotropic Atom-Atom Multipole Force Fields | Distributed Atomic Multipoles | Accuracy comparable to several popular DFT-D methods, but errors can be 2-3 times larger than the best DFT-D methods [31]. |
| FIT | Atomic Multipoles | A revision of the W84 force field, parameterized for use with multipoles [31]. |
| W99_ESP | Atomic Partial Charges (ESP-fit) | The original W99 force field applied with electrostatic potential-derived charges [31]. |
| W99_DMA | Distributed Atomic Multipoles | The original W99 force field applied with a multipole electrostatic model [31]. |
| W99rev6311 | Distributed Atomic Multipoles | A revised W99 parameterized for use with multipoles from an isolated molecule charge density [31]. |
| W99rev6311P3/P5 | PCM-derived Multipoles (ε=3/5) | A revised W99 parameterized for use with multipoles from a charge density polarized by a continuum model [31]. |

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Resources for Force Field Development and Testing

| Item | Function / Description |
|---|---|
| Cambridge Structural Database (CSD) | A primary repository for experimentally determined organic and metal-organic crystal structures. Serves as the essential source of training and validation data for the parameter optimization process [25] [31]. |
| Rosetta Software Suite | A comprehensive software platform for macromolecular modeling. It was extended with symmetry machinery and a new energy model (RosettaGenFF) to perform crystal lattice prediction and parameter optimization [25]. |
| FFAST (Force Field Analysis Software and Tools) | A cross-platform software package with a GUI designed to provide detailed insights into a force field's performance. It helps with error analysis, outlier detection, and 3D visualization of errors on molecular structures [34]. |
| X23 Benchmark Set | A curated set of 23 high-quality crystal structures of small organic molecules with accurately known lattice energies. Used to rigorously assess and benchmark the performance of computational methods for molecular crystals [31]. |
| LAMMPS | A widely used open-source molecular dynamics simulator. It allows for the implementation and testing of custom force fields but requires careful coding and validation [33]. |
| GROMACS | A high-performance molecular dynamics package. Common errors during setup (e.g., in pdb2gmx or grompp) are well-documented, aiding in troubleshooting topology and parameter issues [32]. |
| Machine Learning Surrogate Models | ML models can be trained to predict molecular properties, substituting expensive molecular dynamics calculations during parameter optimization. This can speed up the optimization process by a factor of 20 or more [35]. |

Workflow Visualization

[Figure: Crystal-Based Force Field Optimization]

[Figure: Systematic Force Field Analysis with FFAST]

Frequently Asked Questions (FAQs)

1. What are the primary advantages of MLFFs over traditional force fields? Machine-learned force fields (MLFFs) are trained directly on quantum-chemical (ab-initio) data, allowing them to achieve accuracy close to quantum mechanical methods at a fraction of the computational cost. Traditional molecular mechanics force fields rely on fixed, parametrized energy terms and are not easily transferable. MLFFs, by contrast, can inherently model complex interactions, including bond breaking and formation, without extensive reparameterization for each new system [36] [4].

2. How can I diagnose an overfitted MLFF? An overfitted force field is typically indicated by a low training-set error but a high test-set error [18]. This means your force field performs well on the data it was trained on but fails to generalize to new, unseen structures. To resolve this, you can include more diverse training structures or tune the model's hyperparameters [18].

3. My MD simulations with an MLFF are unstable. What could be wrong? Simulation instability can stem from several issues during training [37] [38]:

- Poor description of inter-molecular interactions: This is a common challenge in molecular liquids. Models may seem stable in NVT or NVE ensembles but fail in the more sensitive NPT ensemble, leading to unphysical density collapse [38].

- Insufficient or non-diverse training data: The training set may not adequately represent the configurations explored during your production MD run.

- Inappropriate time step (POTIM): If your system contains light elements like hydrogen, the time step may be too large. As a rule of thumb, it should not exceed 0.7 fs for hydrogen-containing compounds [9].

4. When should I treat atoms of the same element as different species? It is helpful to split a single element into multiple species when the atoms exist in significantly different chemical environments. Examples include atoms with different oxidation states, or surface atoms versus bulk atoms. This can improve the accuracy of the force field, though it comes at the cost of reduced computational efficiency [9].

5. Why is the density predicted by my MLFF inaccurate? The density in the NPT ensemble is highly sensitive to errors in weak inter-molecular interactions [38]. An MLFF that appears accurate on intramolecular interactions and forces might still fail to reproduce the correct density. This requires specific attention during training, such as using iterative training protocols to improve the description of these interactions [38].

Troubleshooting Guide

This guide addresses common errors and provides solutions based on established best practices.

Problem: High Error in Force Predictions on Test Set

Symptoms: The root-mean-square error (RMSE) for forces is high when the MLFF is applied to a test set of structures not used in training.

Solutions:

- Expand and Diversify Training Data: Ensure your training set covers the relevant phase space. For molecular dynamics, this means sampling various temperatures and densities. If you are studying a surface with an adsorbate, consider training on the bulk, the clean surface, and the isolated molecule first before combining them [9].

- Tune the Error Threshold (ML_CTIFOR): This parameter sets the threshold on the estimated force error above which new ab-initio calculations are triggered during on-the-fly learning. If the default value (0.002) is too high, not enough reference data is collected. Adjusting it to a lower value can improve data collection and model accuracy [9].

- Optimize Hyperparameters: Systematically optimize machine learning hyperparameters, such as the cutoff radius and atomic environment descriptor resolution, to improve both accuracy and performance [18].
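Before changing the training set or hyperparameters, it helps to quantify the symptom. The following is a minimal sketch, assuming forces are available as arrays for both a training and a held-out test set; the function names and the ratio cutoff of 2.0 are arbitrary illustrative choices, not values taken from any package.

```python
import numpy as np


def force_rmse(f_pred, f_ref):
    """Root-mean-square error over all force components."""
    diff = np.asarray(f_pred, float) - np.asarray(f_ref, float)
    return float(np.sqrt(np.mean(diff ** 2)))


def overfitting_report(train_rmse, test_rmse, max_ratio=2.0):
    """Compare test and training RMSE; `max_ratio` is an arbitrary cutoff."""
    ratio = test_rmse / train_rmse if train_rmse > 0 else float("inf")
    return {
        "train_rmse": train_rmse,
        "test_rmse": test_rmse,
        "ratio": ratio,
        "suspect_overfit": ratio > max_ratio,
    }
```

A test RMSE several times larger than the training RMSE points toward overfitting or a test set that lies outside the sampled phase space, while similar but uniformly high values suggest the model or descriptor resolution is the limiting factor.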

Problem: Unphysical Structural Collapse During NPT Simulation

Symptoms: The simulation volume expands or contracts unphysically, often with the formation of "bubbles" in a liquid, leading to a completely wrong density [38].

Solutions:

- Employ Iterative Training: Do not rely on a single, static training set. Use an active learning or iterative protocol where the MLFF itself is used to sample new, relevant configurations (e.g., from NPT dynamics) which are then computed with DFT and added to the training set. This ensures the model learns the correct inter-molecular interactions [39] [38].

- Incorporate Specific Training Data: Add data from "rigid-molecule volume scans" to the training set. This explicitly teaches the model about the energy landscape as a function of molecular separation [38].

- Check Ab-Initio Settings for Variable Cell Simulations: When training in the NPT ensemble, set the plane wave cutoff (ENCUT) at least 30% higher than for fixed-volume calculations to avoid Pulay stress. Restart training frequently to reinitialize the basis set [9].
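The 30% rule is simple arithmetic, shown here as a small helper so the margin is applied consistently across input files. The function names are hypothetical, and the 400 eV value in the usage note is only an example, not a recommended setting.

```python
def npt_encut(fixed_volume_encut_ev, margin=0.30):
    """Plane-wave cutoff (eV) raised by `margin` for variable-cell training."""
    if fixed_volume_encut_ev <= 0 or margin < 0:
        raise ValueError("cutoff must be positive and margin non-negative")
    return fixed_volume_encut_ev * (1.0 + margin)


def incar_encut_line(fixed_volume_encut_ev, margin=0.30):
    """Format the ENCUT line for an INCAR file, rounded to whole eV."""
    return f"ENCUT = {npt_encut(fixed_volume_encut_ev, margin):.0f}"
```

For example, a fixed-volume cutoff of 400 eV gives `incar_encut_line(400)` equal to `ENCUT = 520`.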

Problem: MLFF Fails to Describe a Catalytic Reaction Pathway

Symptoms: The MLFF yields inaccurate energy barriers for reaction paths, limiting its utility for catalysis studies.

Solutions:

- Implement a Specialized Training Protocol: Follow a multi-stage active learning protocol designed for catalytic systems [39]. This involves:

- Training on the bulk and clean surface.

- Running MD with adsorbates to sample configurations.

- Including data from nudged elastic band (NEB) calculations of the reaction barriers themselves.

- Use Local Energy Uncertainty for Sampling: Employ an active learning loop that interrupts simulations when the model's uncertainty for any atom exceeds a threshold (e.g., 50 meV). The configuration is then sent for DFT calculation, ensuring the training set grows in the most relevant parts of the potential energy surface [39].
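The uncertainty-driven loop can be sketched independently of any particular MLFF package. In the sketch below, `step_fn`, `uncertainty_fn`, `label_fn`, and `retrain_fn` are hypothetical callables standing in for one MD step, the model's per-atom uncertainty estimate (in eV), a DFT single-point calculation, and a model refit; the 0.050 eV default mirrors the 50 meV example threshold above.

```python
def run_until_uncertain(step_fn, uncertainty_fn, config, max_steps,
                        threshold_ev=0.050):
    """Advance MD until any per-atom uncertainty exceeds the threshold.

    Returns (config, steps_taken, interrupted).
    """
    for step in range(1, max_steps + 1):
        config = step_fn(config)
        if max(uncertainty_fn(config)) > threshold_ev:
            return config, step, True
    return config, max_steps, False


def active_learning(step_fn, uncertainty_fn, label_fn, retrain_fn, config,
                    max_steps, max_rounds, threshold_ev=0.050):
    """Collect (configuration, reference data) pairs where the model is unsure."""
    training_set = []
    for _ in range(max_rounds):
        config, _, interrupted = run_until_uncertain(
            step_fn, uncertainty_fn, config, max_steps, threshold_ev
        )
        if not interrupted:
            break  # the model stayed confident for a full MD segment
        training_set.append((config, label_fn(config)))
        retrain_fn(training_set)  # refit before resuming the dynamics
    return training_set
```

The loop ends either when the model completes an MD segment without exceeding the threshold or after `max_rounds` interruptions, so the number of expensive reference calculations is bounded in advance.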

Problem: Slow Performance in Production MD Runs

Symptoms: Predictive MD simulations (ML_MODE = run) are slower than expected.

Solutions:

- Always Refit for Production: After on-the-fly training (