Strategies for Reducing Computational Cost in Long Molecular Dynamics Simulations


Abstract

Long-timescale Molecular Dynamics (MD) simulations are pivotal for studying biomolecular processes but are often prohibitively expensive. This article provides a comprehensive guide for researchers and drug development professionals on strategies to significantly reduce computational costs. We explore the foundational principles governing MD expense, detail hardware acceleration using GPUs and FPGAs, and explain advanced algorithmic methods like enhanced sampling and machine learning potentials. The guide also covers practical system-specific optimizations and best practices for validating results, synthesizing these approaches into an actionable framework for achieving faster, more efficient, and scientifically robust simulations.

Understanding the Bottleneck: Why Molecular Dynamics Simulations Are Computationally Expensive

Frequently Asked Questions

How do I choose the right enhanced sampling method for my biomolecular system? The choice depends on your system's size and the biological process you're studying [1].

- Replica-Exchange MD (REMD) is widely used for studying conformational changes and protein folding. It works by running multiple parallel simulations at different temperatures and periodically swapping their states, helping the system overcome high energy barriers [1].

- Metadynamics is effective for exploring free-energy landscapes. It works by adding a bias potential to "fill" free energy wells, which discourages the system from revisiting already sampled states. It is particularly useful for processes like protein-ligand binding, but requires careful selection of a small set of collective variables [1].

- Simulated Annealing is well-suited for characterizing very flexible systems and for finding low-energy configurations. It involves gradually cooling the system from a high temperature, which helps avoid getting trapped in local energy minima [1].

What is the optimal system size for simulating polymer resins like epoxy? A 2024 systematic study on an epoxy system found that a model size of roughly 15,000 atoms (14,625 in the series tabulated below) provides the best balance between simulation precision and computational cost. Larger systems did not significantly improve the precision of predicted properties like mass density, elastic modulus, and thermal properties, but took longer to simulate [2].

| Number of Atoms | Key Findings and Convergence of Properties [2] |

|---|---|

| 5,265 | Smaller systems; may show size effects and less precise property prediction. |

| 10,530 | |

| 14,625 | Found to be the optimal size for epoxy resins, balancing precision and speed. |

| 20,475 | |

| 31,590 | Larger systems; simulation time increases without significant gain in precision. |

| 36,855 | |

My simulations are too slow. What are some advanced strategies to speed them up? Beyond choosing the right system size, machine learning (ML) offers promising paths:

- ML-Driven Integrators: New methods are being developed to use ML for predicting molecular trajectories with much longer time steps. However, many current approaches do not conserve energy properly. A solution is to learn structure-preserving maps (symplectic and time-reversible) that are equivalent to learning the mechanical action of the system, which maintains physical fidelity and energy conservation even with large steps [3].

- Adaptive Sampling: This class of algorithms enhances sampling by intelligently choosing new starting points (seeds) for multiple simulations rather than adding biasing forces. This preserves the thermodynamic ensemble while more efficiently exploring conformational space, especially when combined with machine learning to guide the seeding process [4].
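One simple way to picture the seeding step is a count-based rule: restart new simulations from the states visited least often. The sketch below assumes trajectory frames have already been assigned to discrete states (for example by clustering). The function name `pick_seeds` and the state labels are illustrative, and the ML-guided schemes in [4] may use more sophisticated selection criteria.

```python
from collections import Counter

def pick_seeds(state_assignments, n_seeds):
    """Return the n_seeds least-visited states as restart points.

    state_assignments: iterable of discrete state labels, one per saved frame.
    Ties are broken by state label so the result is deterministic.
    """
    counts = Counter(state_assignments)
    ranked = sorted(counts, key=lambda s: (counts[s], s))
    return ranked[:n_seeds]

# Hypothetical frame-to-state assignments from a first round of short runs.
frames = ["A"] * 50 + ["B"] * 30 + ["C"] * 3 + ["D"] * 1
print(pick_seeds(frames, 2))  # ['D', 'C']
```

Because no biasing force is added, each new trajectory started from a seed still samples the unmodified potential.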

Troubleshooting Guides

Problem: Inadequate Sampling of Conformational States

Issue: The molecular system gets trapped in local energy minima and fails to explore all biologically relevant conformations within a feasible simulation time [1].

Solution: Implement an enhanced sampling protocol. Below is a workflow to select and apply the appropriate method.

Experimental Protocol: Replica-Exchange MD (REMD)

- System Setup: Prepare your system (protein, solvent, ions) in a simulation box as for a standard MD run.

- Replica Generation: Create multiple copies (replicas) of your system, each running at a different temperature. The temperature range should span from the temperature of interest to a high temperature where the system can easily overcome energy barriers [1].

- Simulation Run:

  - Run each replica independently for a short period (e.g., 1-2 ps).

  - Periodically attempt to swap the configurations of two adjacent replicas (e.g., replicas at temperature T1 and T2) based on a Metropolis criterion using their potential energies and temperatures [1].

- Analysis: After completion, use the weighted histogram analysis method (WHAM) to reconstruct the unbiased thermodynamic properties and free energy landscape at the temperature of interest.
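The swap step of the REMD protocol can be sketched with the standard Metropolis acceptance rule for temperature replica exchange, p = min(1, exp[(β_i − β_j)(E_i − E_j)]) with β = 1/(k_B T). The helper names and the geometric temperature spacing are illustrative assumptions, not a prescription from [1]; production codes handle this internally.

```python
import math

KB = 0.0083144626  # Boltzmann constant in kJ/(mol*K)

def swap_probability(e_i, t_i, e_j, t_j):
    """Metropolis acceptance probability for exchanging replicas i and j.

    e_i, e_j: potential energies (kJ/mol); t_i, t_j: temperatures (K).
    """
    beta_i = 1.0 / (KB * t_i)
    beta_j = 1.0 / (KB * t_j)
    delta = (beta_i - beta_j) * (e_i - e_j)
    return 1.0 if delta >= 0 else math.exp(delta)

def geometric_ladder(t_min, t_max, n_replicas):
    """Temperatures spaced by a constant ratio between t_min and t_max."""
    ratio = (t_max / t_min) ** (1.0 / (n_replicas - 1))
    return [t_min * ratio ** k for k in range(n_replicas)]

# Hypothetical energies for two neighboring replicas.
print([round(t, 1) for t in geometric_ladder(300.0, 400.0, 4)])
print(swap_probability(-5000.0, 300.0, -4990.0, 310.0))
```

A swap is always accepted when the colder replica currently holds the higher potential energy; otherwise it is accepted with a probability that shrinks as the temperature gap or the energy gap grows.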

Experimental Protocol: Metadynamics

- Collective Variables (CVs): Identify 1-2 slow degrees of freedom that describe the process of interest (e.g., a distance, angle, or dihedral).

- Simulation Run:

  - As the simulation progresses, periodically add a small Gaussian-shaped repulsive bias potential to the current location in CV space.

  - This "fills" the free energy wells, pushing the system to explore new areas [1].

- Analysis: The accumulated bias potential provides an estimate of the underlying free energy surface as a function of your chosen CVs [1].
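To make the bias and the analysis step concrete, here is a minimal one-dimensional sketch of standard (non-tempered) metadynamics bookkeeping: the bias is a sum of Gaussian hills, and the free energy estimate is its negative up to a constant. The hill centers, height, and width are made-up values, and real workflows would normally rely on a dedicated plugin rather than code like this.

```python
import math

def bias_potential(s, centers, height, width):
    """Sum of Gaussian hills deposited at `centers`, evaluated at CV value s."""
    return sum(height * math.exp(-((s - c) ** 2) / (2.0 * width ** 2))
               for c in centers)

def free_energy_estimate(s, centers, height, width):
    """Non-tempered metadynamics estimate: F(s) ~ -V_bias(s) + constant."""
    return -bias_potential(s, centers, height, width)

# Hypothetical hill centers recorded along a single distance CV (nm).
hills = [0.30, 0.31, 0.30, 0.45]
for s in (0.30, 0.45, 0.60):
    print(s, round(free_energy_estimate(s, hills, height=1.2, width=0.05), 3))
```

Regions that received many hills come out as deep wells in the estimate, which is why the choice of CVs determines what the reconstructed surface can resolve.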

Problem: Determining the Optimal System Size for a New Material

Issue: Uncertainty in whether a simulation box is large enough to yield statistically precise results without being wastefully large.

Solution: Follow a systematic sizing procedure to determine the convergence of properties versus computational cost [2].

Experimental Protocol: System Size Convergence Study

- Model Building:

  - Build a series of systems of increasing size (e.g., from 5,000 to 40,000 atoms).

  - For each size, construct at least 5 independent replicate models using different initial random velocity distributions to ensure statistical robustness [2].

- Simulation and Equilibration:

  - Subject all replicates to an identical simulation protocol: energy minimization, densification, annealing, and equilibration in the NPT ensemble [2].

- Property Calculation:

  - Using the equilibrated structures, compute key properties of interest (e.g., mass density, Young's modulus, shear modulus, and glass transition temperature) for each replicate.

- Data Analysis:

  - For each system size, calculate the mean and standard deviation for each property.

  - Plot the mean and standard deviation of each property against the system size.

  - The optimal system size is the smallest size at which the mean values have stabilized and the standard deviation has decreased to an acceptable, low level [2].
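The data-analysis step can be sketched as follows. The convergence rule used here (relative standard deviation below a tolerance, and mean within a tolerance of the largest system's mean) is an assumption chosen for illustration; [2] does not prescribe these thresholds, and the density values are invented.

```python
from statistics import mean, stdev

def smallest_converged_size(results, rel_std_tol=0.01, rel_mean_tol=0.01):
    """Return the smallest system size whose property has converged.

    results: dict mapping atom count -> list of replicate values.
    A size counts as converged when its relative standard deviation is below
    rel_std_tol and its mean is within rel_mean_tol of the largest system's mean.
    """
    sizes = sorted(results)
    reference = mean(results[sizes[-1]])
    for n in sizes:
        m, s = mean(results[n]), stdev(results[n])
        if s / abs(m) < rel_std_tol and abs(m - reference) / abs(reference) < rel_mean_tol:
            return n
    return None

# Hypothetical mass densities (g/cm^3), five replicates per size.
density = {
    5000:  [1.12, 1.18, 1.15, 1.20, 1.10],
    15000: [1.16, 1.17, 1.16, 1.15, 1.16],
    40000: [1.16, 1.16, 1.17, 1.16, 1.16],
}
print(smallest_converged_size(density))  # 15000
```

In practice the same check would be repeated for every property of interest, taking the largest of the per-property answers as the working system size.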

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Computational Experiments |

|---|---|

| Replica-Exchange MD (REMD) | A generalized-ensemble algorithm that enhances conformational sampling by allowing parallel simulations at different temperatures to exchange states, facilitating escape from local energy minima [1]. |

| Metadynamics | An enhanced sampling technique that applies a history-dependent bias potential to collective variables to efficiently explore free-energy landscapes and estimate free energies [1]. |

| Simulated Annealing | A global optimization method that mimics the physical annealing process by running simulations at high temperature and gradually cooling to find low-energy configurations [1]. |

| LAMMPS | A widely used open-source molecular dynamics simulator that is highly flexible and can be used with various force fields and enhanced sampling protocols, such as the REACTER method for cross-linking [2]. |

| Interface Force Field (IFF) | A force field parameterized for a broad range of materials, including polymers, which has been validated for predicting physical, mechanical, and thermal properties accurately [2]. |

| Collective Variables (CVs) | Low-dimensional descriptors (e.g., distances, angles, radii of gyration) that are used to describe the slow motions of a system and are essential for biased sampling methods like metadynamics [1]. |

Troubleshooting Guide: Common Force Field Calculation Issues

FAQ: My simulation is too slow. What are the main force-field-related factors that could be causing this?

The performance of a Molecular Dynamics (MD) simulation is heavily dependent on the choice and configuration of the force field and its associated parameters. The table below summarizes the key factors and their impact on the computational load.

| Factor | Description & Impact on Computational Load | Common Solutions |

|---|---|---|

| Force Field Type | Classical Molecular Mechanics (MM) force fields are much faster than quantum mechanical (QM) descriptions. MM is the method of choice for most biomolecular simulations in the condensed phase [5]. | Use a classical MM force field for large systems; reserve more accurate QM methods for small systems or specific reactive regions [5]. |

| Non-bonded Interaction Cutoff | Calculating non-bonded (electrostatic and van der Waals) interactions is the most computationally intensive part of a force field calculation. A larger cutoff radius increases the number of atom pairs evaluated. | Use a reasonable cutoff (e.g., 1.0-1.2 nm). For long-range electrostatics, use Particle Mesh Ewald (PME), which is efficient for accurate calculations [6]. |

| System Size and Solvation | Simulating molecules in solution requires thousands to millions of solvent atoms, dramatically increasing the number of force calculations per step [5]. | Use a minimal solvent box. Consider implicit solvent models for specific studies, though explicit solvent is more common for accuracy. |

| Constraints and Rigid Bodies | The timestep for integration is limited by the fastest motions in the system (e.g., bond vibrations). Treating these bonds as rigid allows a larger timestep [5]. | Use algorithms like LINCS to constrain bond lengths involving hydrogen atoms, allowing a timestep of 2 fs instead of 1 fs [5]. |

| Precision Model | Using double precision for all calculations instead of the default mixed precision can significantly increase computation time without always improving accuracy [7] [6]. | Use the default mixed-precision mode of your MD software (e.g., GROMACS) unless required for specific hardware or reproducibility [6]. |
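As a rough illustration of the cutoff entry in the table, the number of neighbors per atom grows with the cube of the cutoff radius. The sketch below assumes a uniform number density of about 100 atoms per nm³ (roughly liquid water); the function name is illustrative and the numbers are order-of-magnitude estimates only.

```python
import math

def neighbors_within_cutoff(cutoff_nm, number_density_per_nm3=100.0):
    """Average number of atoms inside a sphere of radius cutoff_nm.

    The default density (~100 atoms/nm^3) is an illustrative assumption.
    """
    return number_density_per_nm3 * (4.0 / 3.0) * math.pi * cutoff_nm ** 3

for rc in (1.0, 1.2, 1.4):
    ratio = neighbors_within_cutoff(rc) / neighbors_within_cutoff(1.0)
    print(f"cutoff {rc:.1f} nm: ~{neighbors_within_cutoff(rc):.0f} neighbors, "
          f"{ratio:.2f}x the 1.0 nm pair count")
```

Going from 1.0 nm to 1.2 nm therefore means about 1.7 times as many short-range pairs per atom, before any change to the PME grid is considered.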

FAQ: How can I ensure my simulation is reproducible, especially when using force fields on different hardware?

MD simulations are inherently chaotic, and even a single bit of difference can cause trajectories to diverge. However, observables like energy should converge to the same average values [7]. The following factors specifically affect the reproducibility of force field calculations:

- Precision: Using double precision improves reproducibility over mixed or single precision [7].

- Hardware and Summation Order: The type of processors (CPU/GPU) and the number of cores used can change the order of floating-point operations for force accumulations. Because floating-point addition is not associative, (a+b)+c ≠ a+(b+c) in general, so results are not binary identical across configurations [7].

- GPU Reductions: On GPUs, the reduction of non-bonded forces has a non-deterministic summation order, making any fast implementation non-reproducible by design [7].

Solution: To obtain a reproducible trajectory for debugging purposes, use the -reprod flag in gmx mdrun. This eliminates all sources of non-reproducibility that the software can control, ensuring that the same executable, hardware, and input file produce identical results [7].
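The summation-order effect is easy to reproduce outside any MD code. This small demonstration uses ordinary double-precision floats; the three values are arbitrary and stand in for force contributions accumulated in a different order on different hardware.

```python
a, b, c = 0.1, 0.2, 0.3

left = (a + b) + c   # one accumulation order
right = a + (b + c)  # the same terms, grouped differently

print(repr(left))     # 0.6000000000000001
print(repr(right))    # 0.6
print(left == right)  # False
```

A difference in the last bit like this one is enough for two chaotic trajectories to diverge over time, even though ensemble averages should still agree.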

FAQ: I am simulating a novel molecule. Can I mix parameters from different force fields?

No. You should not mix parameters from different force fields [6]. Molecules parameterized for one force field will not behave physically when interacting with molecules parameterized under different standards. If your molecule is missing from your chosen force field, you must parameterize it yourself according to that force field's specific methodology [6].

Experimental Protocol: Extending a Simulation Efficiently

To extend a completed simulation, thus maximizing the return on your initial computational investment, follow this protocol. This avoids restarting from scratch and ensures continuity.

1. Protocol: Extending a Simulation using gmx convert-tpr

This method is efficient when you only need to add more simulation time without changing any other parameters [7].

Step 1: Use the `gmx convert-tpr` tool to create a new run input file (tpr) that extends the simulation time.

- `-s previous.tpr`: Specifies the original input file.

- `-extend 10000`: Extends the simulation by 10,000 ps (adjust as needed).

- `-o next.tpr`: Specifies the name of the new input file [7].

Step 2: Restart the simulation using the new tpr file and the last checkpoint (cpt) file from the previous run.

- `-cpi state.cpt`: Reads the checkpoint file containing full-precision coordinates and velocities for a continuous restart [7].

2. Protocol: Restarting with Modified Parameters using gmx grompp

Use this method if you need to change any parameters in your mdp file or topology for the continuation run [7].

Step 1: Run `gmx grompp` with the original structure file and the final checkpoint file from the previous run.

- `-t state.cpt`: Supplies the checkpoint file so that `grompp` can read the full-precision coordinates and velocities from the end of the last run [7].

Step 2: Launch the new simulation with the generated `continued.tpr` file.
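The two continuation protocols can be assembled as command lines in a small driver script. The sketch below only builds and prints the commands and does not run GROMACS. The file names `new.mdp`, `conf.gro`, and `topol.top` are placeholders that do not come from the text above, and the flag combinations should be checked against the documentation of your GROMACS version.

```python
import shlex

def extend_commands(prev_tpr="previous.tpr", extend_ps=10000,
                    next_tpr="next.tpr", checkpoint="state.cpt"):
    """Protocol 1: extend the run time, then continue from the checkpoint."""
    return [
        ["gmx", "convert-tpr", "-s", prev_tpr, "-extend", str(extend_ps), "-o", next_tpr],
        ["gmx", "mdrun", "-s", next_tpr, "-cpi", checkpoint],
    ]

def restart_commands(mdp="new.mdp", structure="conf.gro", topology="topol.top",
                     checkpoint="state.cpt", out_tpr="continued.tpr"):
    """Protocol 2: regenerate the .tpr with changed parameters, then run it."""
    return [
        ["gmx", "grompp", "-f", mdp, "-c", structure, "-p", topology,
         "-t", checkpoint, "-o", out_tpr],
        ["gmx", "mdrun", "-s", out_tpr],
    ]

# Print the commands; to execute one, pass the list to subprocess.run(cmd, check=True).
for cmd in extend_commands() + restart_commands():
    print(shlex.join(cmd))
```

Keeping the commands as lists avoids shell-quoting problems and makes the script easy to log in a lab notebook.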

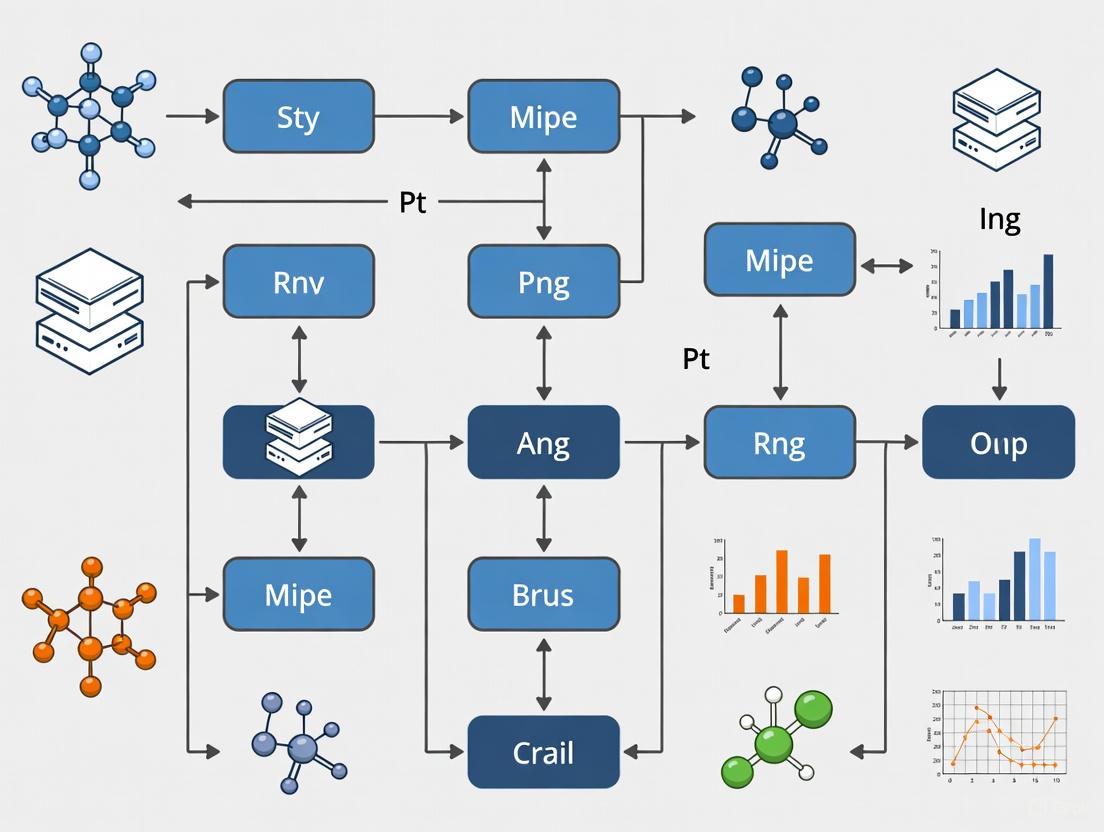

Workflow Diagram: Force Field Simulation Optimization

The diagram below outlines the logical workflow for setting up and optimizing a force field-based MD simulation to manage computational cost.

Force Field Simulation Optimization Workflow

The Scientist's Toolkit: Research Reagent Solutions

The table below details key computational "reagents" — software tools and file formats — essential for managing force field calculations and long simulations.

| Item | Function & Role in Cost Reduction |

|---|---|

| Checkpoint File (.cpt) | A binary file written periodically by gmx mdrun that contains full-precision coordinates, velocities, and all simulation state information. It is the only reliable method for restarting a simulation, preventing loss of computational progress due to interruptions [7]. |

| GROMACS (gmx mdrun) | The primary simulation engine. Its efficient implementation of force field calculations and support for various hardware (CPUs, GPUs) makes it a standard for high-performance MD [7] [6]. |

| Topology File (.top/.itp) | Defines the force field parameters for all molecules in the system, including bonded terms (bonds, angles, dihedrals) and non-bonded terms (atom charges, types). Correct topology is essential for physical accuracy [6]. |

| Run Input File (.tpr) | A portable binary file containing all information about the simulation (coordinates, topology, parameters). It is produced by gmx grompp and is the input for gmx mdrun [7]. |

| gmx convert-tpr | A utility that modifies an existing .tpr file, most commonly to extend the simulation time. This is the most straightforward way to continue a finished simulation without changing other parameters [7]. |

| Structure File (.gro) | A unified structure file format that can be read by all GROMACS utilities. It contains atom coordinates and, importantly, velocities, which are crucial for continuous dynamics [6]. |

| Machine-Learned Force Fields (sGDML) | An advanced approach that constructs force fields from high-level ab initio calculations. It enables converged MD simulations with spectroscopic accuracy for small molecules, bridging the gap between accuracy and computational cost [8]. |

Technical Support Center

Frequently Asked Questions (FAQs)

FAQ 1: Our molecular dynamics simulations are producing terabytes of data, making storage and analysis prohibitively expensive. What strategies can we use to manage this?

Managing large MD data requires a multi-pronged approach. First, consider data reduction techniques, such as saving simulation snapshots at less frequent intervals or removing solvent molecules after the simulation is complete [9]. Second, implement efficient data organization from the start. Using a chronological and logical directory structure for your projects, with a dedicated "lab notebook" file documenting each step, makes data easier to locate and process, reducing time and computational waste [10]. For long-term projects, explore remote analysis solutions, where analysis is performed on a remote server, and only the results are transmitted, avoiding the need to move massive trajectory files [9].
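A back-of-the-envelope estimate shows how much the two data reduction levers (fewer frames, fewer atoms) can save. The sketch assumes uncompressed single-precision coordinates only; compressed formats will be smaller, and the system sizes and intervals are hypothetical.

```python
def frame_count(sim_length_ns, save_interval_ps):
    """Number of saved frames for a run of the given length."""
    return int(sim_length_ns * 1000 / save_interval_ps)

def trajectory_bytes(n_atoms, n_frames, bytes_per_coord=4):
    """Uncompressed size of a coordinates-only trajectory (3 floats per atom)."""
    return n_atoms * 3 * bytes_per_coord * n_frames

# 1 microsecond run: full solvated system saved every 10 ps
# versus solute only (solvent stripped) saved every 100 ps.
full = trajectory_bytes(100_000, frame_count(1000, 10))
reduced = trajectory_bytes(10_000, frame_count(1000, 100))
print(f"full: {full / 1e9:.1f} GB, reduced: {reduced / 1e9:.2f} GB")
```

In this example the two choices together cut storage by a factor of 100, which is the kind of saving that makes routine archiving feasible.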

FAQ 2: The neural network potentials (NNPs) we are using are highly accurate but too slow for the timescales we need to study. Are there methods to accelerate them?

Yes, a promising strategy is the use of a Multi-Time-Step (MTS) integrator with a distilled neural network model [11]. This involves using two NNPs: a large, accurate "foundation" model and a smaller, faster model. The fast model handles the frequent calculations of bonded interactions, while the accurate model corrects the trajectory less often. This approach can yield speedups of 2.3 to 4 times over standard 1 fs integration while preserving accuracy [11].

FAQ 3: How can we better connect our expensive simulation results with experimental data to ensure our computational investment is worthwhile?

Validation against experiments is crucial. Use NMR relaxation measurements for comparison, as they cover a wide range of time scales that can be matched to simulation data [9]. Be aware that in highly crowded systems, like a simulated cytoplasm, experiments often report ensemble averages, while simulations might reveal rare events (e.g., individual protein unfolding) that affect only a small percentage of molecules [9]. Explicitly designing simulations to include multiple copies of the same molecule can help you generate meaningful ensemble averages for a more direct comparison [9].

FAQ 4: Our simulation results are complex and difficult to interpret. Manually analyzing them is time-consuming. What tools can help?

For complex systems, manual analysis is no longer feasible. It is recommended to employ automated feature analysis and machine learning (ML) techniques [9]. These AI-driven approaches can identify structural changes, dynamic features, and causal relationships within the simulation data that would be difficult or impossible to spot manually, thereby extracting more value from your costly simulations [9].

Troubleshooting Guides

Issue: Simulation is unstable or produces unphysical results when using a large time step.

- Problem: The time step is too large to accurately capture the fastest motions (e.g., bond vibrations), leading to energy drift or system failure.

- Solution:

  - Reduce the time step: Always start with a small time step (e.g., 1 fs) to ensure stability [11].

  - Consider an MTS scheme: If performance is critical, implement a multiple time-step integrator like RESPA. This allows you to use a small time step for fast bonded forces and a larger one for slower non-bonded interactions, improving efficiency without sacrificing stability [11].

  - Validate carefully: After any change to the integrator, always check that the method conserves energy and reproduces key dynamic and thermodynamic properties [11].

Issue: Difficulty reproducing or building upon past simulation results.

- Problem: Inadequate documentation of data, parameters, and procedures makes it hard to repeat or extend previous work.

- Solution:

  - Create a driver script: For each experiment, create a script (e.g., `runall`) that automatically executes the entire simulation and analysis workflow. This script should be heavily commented to explain every operation [10].

  - Maintain a lab notebook: Keep a chronologically organized document (can be a text file or wiki) that records the goals, parameters, observations, and conclusions for each simulation run [10].

  - Organize files chronologically: Structure project directories with date-stamped folders (e.g., `2025-11-26`) to make the experimental timeline clear [10].

The table below summarizes key performance data for the neural network potential (NNP) acceleration strategy [11].

Table 1: Performance of Multi-Time-Step Scheme with Distilled Neural Network Models

| System Type | Standard 1 fs Integration (Baseline) | MTS with Distilled NNP (Speedup) | Key Metric Preserved |

|---|---|---|---|

| Homogeneous System | 1x | 4x | Static & dynamical properties |

| Large Solvated Protein | 1x | 2.3x | Static & dynamical properties |

| Outer Time Step | 1 fs | 3-6 fs | Accuracy of reference NNP |

Experimental Protocol: Multi-Time-Step Acceleration for Neural Network Potentials

Objective: To significantly accelerate molecular dynamics simulations using a foundation NNP while preserving accuracy, via a dual-level multi-time-step scheme.

Methodology:

Model Selection and Distillation:

- Reference Model: Select a large, accurate foundation NNP (e.g., FeNNix-Bio1(M) with an 11 Å receptive field).

- Distilled Model: Create a smaller, faster model (e.g., with a 3.5 Å receptive field and reduced network capacity) via knowledge distillation. This model is trained on data labeled by the reference model, not on ab initio data [11].

- Training Data: Generate a reference dataset by running a short MD simulation (<1 ns) with the reference model on the system of interest. For large systems like proteins, a fragmentation strategy may be used to reduce computational load [11].

Integration Scheme (BAOAB-RESPA):

- Implement a RESPA-like MTS integrator [11].

- The forces from the fast, distilled model (`FENNIX_small`) are evaluated at every inner time step (1 fs).

- The force difference between the reference and distilled models (`FENNIX_large - FENNIX_small`) is evaluated less frequently, at the outer time step (3-6 fs).

- This corrects the trajectory to that of the accurate reference model without needing to evaluate it at every step.
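The structure of the integration scheme can be illustrated on a toy problem. The sketch below is a plain velocity-Verlet RESPA step without a thermostat, which is a simplification of the BAOAB-RESPA scheme named above. Here `fast_force` plays the role of the distilled model and `slow_force` the role of the reference-minus-distilled correction; both are simple harmonic springs with arbitrary constants, not neural network potentials.

```python
def respa_step(x, v, fast_force, slow_force, dt_outer, n_inner, mass=1.0):
    """One outer RESPA step: slow half-kick, n_inner fast Verlet steps, slow half-kick."""
    dt_inner = dt_outer / n_inner
    v += 0.5 * dt_outer * slow_force(x) / mass      # expensive correction, once per outer step
    for _ in range(n_inner):                        # cheap force at every inner step
        v += 0.5 * dt_inner * fast_force(x) / mass
        x += dt_inner * v
        v += 0.5 * dt_inner * fast_force(x) / mass
    v += 0.5 * dt_outer * slow_force(x) / mass
    return x, v

K_FAST, K_SLOW = 100.0, 1.0  # toy spring constants (arbitrary units)

def fast_force(x):
    return -K_FAST * x

def slow_force(x):
    return -K_SLOW * x

def total_energy(x, v, mass=1.0):
    return 0.5 * mass * v * v + 0.5 * (K_FAST + K_SLOW) * x * x

x, v = 1.0, 0.0
e0 = total_energy(x, v)
for _ in range(1000):
    x, v = respa_step(x, v, fast_force, slow_force, dt_outer=0.04, n_inner=4)
drift = abs(total_energy(x, v) - e0) / e0
print(f"relative energy error after 1000 outer steps: {drift:.2e}")
```

The slow force is evaluated twice per outer step in this listing for clarity; a production integrator would reuse the second evaluation as the first half-kick of the next step, so the expensive model is called once per outer step.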

Validation and Analysis:

- Compare the MTS simulation results against a control simulation run with the reference model using a standard 1 fs time step.

- Monitor key thermodynamic (potential energy, temperature) and dynamic (diffusion coefficients) properties to ensure they are preserved within acceptable limits [11].

Research Workflow and Signaling Pathways

The following diagram illustrates the logical workflow and data flow for the Multi-Time-Step acceleration protocol.

Multi-Time-Step Acceleration Workflow

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Computational Tools for Cost-Effective MD Research

| Item / Software | Function / Purpose | Relevance to Cost Reduction |

|---|---|---|

| Multi-Time-Step (RESPA) Integrator | Enables use of different time steps for fast/slow forces. | Reduces number of expensive force evaluations; core acceleration method [11]. |

| Distilled Neural Network Potential | A fast, simplified model trained to mimic a larger, accurate NNP. | Serves as the "fast" component in MTS schemes, enabling large outer time steps [11]. |

| Foundation NNP (e.g., FeNNix-Bio1) | A general-purpose, accurate machine-learned force field. | Provides high-accuracy reference for distillation and corrective forces in MTS [11]. |

| Centralized Data Repository | A standardized database for all simulation data across projects. | Prevents data silos, enables reuse, and supports training of better ML models [12]. |

| Automated Driver Script (e.g., runall) | A script that automatically executes an entire simulation/analysis workflow. | Ensures reproducibility, saves researcher time, and simplifies re-running experiments [10]. |

| Electronic Lab Notebook | A chronologically organized document for tracking progress and conclusions. | Prevents redundant work by clearly documenting what has been tried and its outcome [10]. |

FAQ: Understanding and Managing Computational Cost

What are the key metrics for evaluating MD simulation performance?

The primary metric for evaluating Molecular Dynamics simulation performance is throughput, measured in nanoseconds simulated per day (ns/day). This indicates how much simulated time you can achieve in a 24-hour period [13]. A higher ns/day value means faster time to results.

Other critical metrics include:

- Cost per 100 ns or cost per microsecond: Essential for budgeting long-running simulations [14] [15].

- GPU Utilization: A high percentage (e.g., ≥90%) indicates the hardware is being used efficiently [15].

- Time-to-Solution: The total wall-clock time needed to complete a simulation.
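The cost-per-100-ns metric follows directly from throughput and hourly price. In this sketch the hourly rates are hypothetical placeholders, not quotes from any provider, and the function name is illustrative.

```python
def cost_per_100ns(ns_per_day, hourly_rate_usd):
    """Cost in USD to simulate 100 ns at a given throughput and hourly price."""
    hours_needed = 100.0 / ns_per_day * 24.0
    return hours_needed * hourly_rate_usd

# Hypothetical throughput and prices; substitute measured ns/day and real rates.
for name, ns_day, rate in [("slower GPU", 100.0, 0.50), ("faster GPU", 500.0, 1.50)]:
    print(f"{name}: ${cost_per_100ns(ns_day, rate):.2f} per 100 ns")
```

With these made-up numbers the faster instance costs three times as much per hour yet is cheaper per result ($7.20 versus $12.00), which is why hourly price alone can mislead.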

How does my choice of hardware impact simulation cost and performance?

Your choice of hardware, particularly the GPU, has a profound impact on both simulation speed and cost-efficiency. Raw performance does not always equate to the best value.

The table below benchmarks various GPUs for a ~44,000-atom system (T4 Lysozyme), showing how speed translates into operational cost [15].

| GPU | Cloud Provider | Performance (ns/day) | Cost per 100 ns (Indexed to AWS T4) |

|---|---|---|---|

| H200 | Nebius | 555 | ~13% cheaper than T4 |

| L40S | Nebius/Scaleway | 536 | ~60% cheaper than T4 |

| H100 | Scaleway | 450 | More efficient than T4 |

| A100 | Hyperstack | 250 | More efficient than T4 |

| V100 | AWS | 237 | ~33% more expensive than T4 |

| T4 | AWS | 103 | Baseline |

For traditional MD workloads, the NVIDIA L40S often provides the best balance of performance and affordability [15]. For smaller systems, the consumer-grade NVIDIA RTX 5090 can offer exceptional single-GPU throughput, while server-grade cards like the RTX PRO 4500 Blackwell are excellent for scalable, multi-GPU workstations [16].

What are the most effective strategies to reduce computational costs?

- Optimize I/O Intervals: Frequently saving trajectory data can throttle performance by up to 4x. Reduce the save frequency (e.g., every 1,000-10,000 steps) to maintain high GPU utilization [15].

- Leverage Multi-Time-Step (MTS) Integrators: Advanced algorithms like RESPA evaluate cheap forces for fast motions (e.g., bonds) at every step and evaluate the expensive forces governing slower motions less frequently. This strategy can provide a 2.3x to 4x speedup for neural network force fields [11].

- Use High-Throughput Computing Clusters: For running hundreds of independent simulations (ensemble methods), use clusters with automated provisioning. Platforms like Fovus have demonstrated the ability to run complex biomolecular structure predictions for as little as $0.10 per simulation [14].

- Enable Efficient Interconnects: For multi-node simulations, a high-performance network like the Elastic Fabric Adapter (EFA) on AWS is critical. It can lead to a 5.4x runtime improvement at scale compared to simulations without it [13].

How can I accurately benchmark my own MD simulation setup?

To ensure your benchmarks reflect production performance, follow this detailed protocol based on a standard T4 Lysozyme system [15]:

- System Preparation: Obtain the T4 Lysozyme structure (PDB ID: 4W52). Solvate it in explicit water, resulting in a system of ~43,861 atoms.

- Simulation Parameters:

  - Integration Timestep: 2 fs.

  - Electrostatics: Particle Mesh Ewald (PME).

  - Simulation Length: 100 ps for a quick benchmark.

  - Precision: Mixed precision.

- I/O Optimization: Set the trajectory save interval to every 1,000 or 10,000 steps (2-20 ps) to minimize GPU-CPU data transfer overhead.

- Execution: Use the `quickrun` function in UnoMD or a similar command in your chosen MD engine.

- Data Collection: Measure the final performance in ns/day and monitor GPU utilization (target ≥90%).
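If your MD engine does not report throughput directly, ns/day can be computed from the step count, the timestep, and the measured wall-clock time. The 20-second wall time below is a hypothetical measurement; the step count corresponds to the 100 ps benchmark at 2 fs.

```python
def ns_per_day(n_steps, timestep_fs, wall_seconds):
    """Throughput in simulated nanoseconds per 24 hours of wall-clock time."""
    simulated_ns = n_steps * timestep_fs * 1e-6  # 1 fs = 1e-6 ns
    return simulated_ns / wall_seconds * 86400.0

# 50,000 steps x 2 fs = 100 ps, finished in a hypothetical 20 s of wall time.
print(round(ns_per_day(50_000, 2.0, 20.0), 1))  # 432.0
```

Short benchmarks include startup overhead, so it is worth timing only the stepping loop or using a longer run when the numbers will drive purchasing decisions.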

What common mistakes lead to poor performance or inflated costs?

- Neglecting I/O Overhead: Saving data too frequently is a common bottleneck. Optimize save intervals for production runs [15].

- Ignoring Interconnect Performance: When scaling to multiple nodes, using standard networking instead of a high-performance interconnect like EFA will cause performance to plateau rapidly [13].

- Overlooking Cost-Per-Result: Choosing a GPU instance based only on its hourly rate or raw speed can be misleading. Always calculate the cost per 100 ns or cost per microsecond for a true comparison [14] [15].

- Using Outdated Hardware: Older GPUs like the T4 and V100 are significantly less cost-effective than modern alternatives like the L40S, leading to longer runtimes and higher total project costs [15].

The Scientist's Toolkit: Research Reagent Solutions

| Category | Item | Function / Relevance |

|---|---|---|

| MD Software & Tools | GROMACS [13], AMBER [16], OpenMM [15], Tinker-HP [11] | Core simulation engines with GPU acceleration. |

| Optimization Algorithms | SHAKE [17], RESPA (MTS) [11] | Allows larger timesteps by constraining bonds; enables faster force evaluation. |

| Performance Tools | UnoMD [15], AWS ParallelCluster [13], Fovus Platform [14] | Tools for benchmarking, workflow automation, and managed HPC. |

| Neural Network Potentials | FeNNix-Bio1(M) [11] | Foundation model for accurate, transferable force fields. |

| Critical Hardware | NVIDIA L40S / RTX 5090 GPUs [16] [15], Elastic Fabric Adapter (EFA) [13] | Cost-effective compute; high-performance networking for multi-node scaling. |

Acceleration Arsenal: Hardware and Algorithmic Strategies for Faster MD

Installation, Setup, and Verification

How do I install OpenMM with full GPU acceleration?

Install OpenMM via conda or pip to easily access its CUDA, HIP, or OpenCL platforms. After installation, you must verify that the software correctly detects and uses your GPU [18].

Detailed Protocol:

- Using Conda: Open a terminal and run `conda install -c conda-forge openmm`. Recent versions of conda will automatically install a version of OpenMM compiled with the latest CUDA version supported by your drivers. You can also specify a CUDA version with `conda install -c conda-forge openmm cuda-version=12` [18].

- Using Pip: Run `pip install openmm`. To include the CUDA platform (for NVIDIA GPUs), use `pip install openmm[cuda12]`. For AMD GPUs, use `pip install openmm[hip6]` [18].

- Verification: Crucially, test your installation by running `python -m openmm.testInstallation`. This command confirms the installation is correct, checks for available GPU platforms, and verifies that all platforms produce consistent results [18].

What is the number one thing to check if my simulation is running slower than expected?

The first step is to verify that your simulation is actually running on the GPU and not falling back to the CPU.

Troubleshooting Guide:

- Check Platform Selection: Ensure your simulation script explicitly specifies the CUDA, HIP, or OpenCL platform when creating the `Context`. For example, in a Python script, you might use `platform = Platform.getPlatformByName('CUDA')` [18].

- Verify GPU Usage: While your simulation is running, use the `nvidia-smi` command in a separate terminal (for NVIDIA GPUs) to monitor GPU utilization. High utilization percentage confirms the GPU is being used.

- Review Output: The verification command `python -m openmm.testInstallation` will list the available platforms and which one is being used for the test.
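The platform check can also be done from inside a script. The sketch below lists the platforms OpenMM reports and picks the first one from a preference order; the helper `pick_platform` and its default ordering are illustrative choices rather than part of OpenMM. The script falls back to a printed example if OpenMM is not installed.

```python
GPU_PLATFORMS = ("CUDA", "HIP", "OpenCL")

def pick_platform(available, preference=GPU_PLATFORMS + ("CPU", "Reference")):
    """Return the first preferred platform name present in `available`."""
    for name in preference:
        if name in available:
            return name
    raise RuntimeError(f"no known platform among {list(available)}")

try:
    from openmm import Platform
    names = [Platform.getPlatform(i).getName()
             for i in range(Platform.getNumPlatforms())]
except ImportError:
    names = ["Reference", "CPU", "OpenCL"]  # example list when OpenMM is absent
    print("OpenMM is not installed; using an example platform list")

chosen = pick_platform(names)
print("available:", names, "-> selected:", chosen)
if chosen not in GPU_PLATFORMS:
    print("warning: no GPU platform found, simulation would run on the CPU")
```

The selected name can then be passed to `Platform.getPlatformByName` when constructing the simulation, so a silent CPU fallback shows up as an explicit warning instead of a slow run.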

Performance Optimization

How can I run multiple simulations at once without a multi-GPU system?

You can use NVIDIA's Multi-Process Service (MPS) to run multiple molecular dynamics simulations concurrently on a single GPU. This is ideal for smaller system sizes that cannot fully saturate a modern GPU on their own [19].

Experimental Protocol:

- Enable MPS: In a terminal, run `nvidia-cuda-mps-control -d` to start the MPS service [19].

- Launch Concurrent Simulations: Run your multiple simulation scripts, ensuring they are all targeted to the same GPU. This can be done using `CUDA_VISIBLE_DEVICES=0 python sim1.py &` and `CUDA_VISIBLE_DEVICES=0 python sim2.py &` for two simulations [19].

- Fine-tune Performance (Advanced): Use the `CUDA_MPS_ACTIVE_THREAD_PERCENTAGE` environment variable to allocate GPU resources. A setting of `$(( 200 / NSIMS ))` for `NSIMS` concurrent simulations has been shown to further increase throughput by 15-25% for some workloads [19].

- Disable MPS: When finished, stop the service with `echo quit | nvidia-cuda-mps-control` [19].
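The launch and fine-tuning steps above can also be driven from Python. This is a minimal sketch that assumes the MPS daemon is already running. The helper names are hypothetical, and capping the percentage at 100 is an added assumption, since the shell expression would yield 200 for a single simulation.

```python
import os
import subprocess
import sys


def mps_thread_percentage(nsims):
    """Integer 200 / NSIMS as in the shell expression, capped at 100 (assumption)."""
    if nsims < 1:
        raise ValueError("nsims must be at least 1")
    return min(100, 200 // nsims)


def mps_environment(nsims, gpu_index=0, base_env=None):
    """Build the environment for one of `nsims` concurrent runs on one GPU."""
    env = dict(os.environ if base_env is None else base_env)
    env["CUDA_VISIBLE_DEVICES"] = str(gpu_index)
    env["CUDA_MPS_ACTIVE_THREAD_PERCENTAGE"] = str(mps_thread_percentage(nsims))
    return env


def launch_all(scripts, gpu_index=0):
    """Start every script concurrently on the same GPU and wait for all of them."""
    env = mps_environment(len(scripts), gpu_index)
    procs = [subprocess.Popen([sys.executable, s], env=env) for s in scripts]
    return [p.wait() for p in procs]
```

For example, `launch_all(["sim1.py", "sim2.py", "sim3.py", "sim4.py"])` would start four runs with a thread percentage of 50 each. Whether that setting helps depends on the workload, as noted in [19].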

Quantitative Performance Uplift with MPS: The performance gain from MPS depends on the GPU model and the size of the simulated system. The table below summarizes throughput increases observed in benchmarks [19].

| GPU Model | Benchmark System (Size) | Concurrent Simulations | Reported Throughput Effect |

|---|---|---|---|

| NVIDIA H100 | DHFR (23,558 atoms) | 2 | > 100% (More than double) |

| NVIDIA L40S | DHFR (23,558 atoms) | 8 | Approaches 5 µs/day |

| NVIDIA H100 | Cellulose (408,609 atoms) | 2 | ~20% |

What are the key hardware considerations for building a GPU-accelerated MD workstation?

The optimal hardware configuration balances CPU, GPU, and memory to avoid bottlenecks. For MD simulations, GPU selection is the highest priority as it performs the bulk of the calculations [20] [21].

Research Reagent Solutions: Essential Hardware Components

| Component | Recommended Examples | Function in MD Simulations |

|---|---|---|

| GPU | NVIDIA GeForce RTX 4090, NVIDIA RTX 6000 Ada | Executes the parallelized force calculations and particle interactions. High CUDA core count and memory bandwidth are critical [20] [21]. |

| CPU | AMD Threadripper PRO, Intel Xeon W-3400 | Manages simulation setup, data I/O, and coordinates the GPU. Prioritize high clock speeds over extreme core counts for most MD software [20] [21]. |

| System Memory | 128 GB - 256 GB DDR4/5 | Holds the entire simulation state and coordinates. Ample RAM is needed to prevent bottlenecking large systems [20]. |

| Storage | 2 TB - 4 TB NVMe SSD | Provides high-speed storage for reading input files and writing trajectory data, which can be massive [20]. |

Key Hardware Selection Workflow:

Common Errors and Troubleshooting

Why does my GROMACS simulation slow down dramatically after several hours?

This is a known issue often related to the CPU-based "update" phase of the simulation becoming a bottleneck. The solution is to offload this computation to the GPU [22] [23].

Solution:

- Modify the `mdrun` command: Add the `-update gpu` flag to your command. The full command should look like: `gmx mdrun -s test.tpr -v -x test.xtc -c test.gro -nb gpu -bonded gpu -pme gpu -update gpu` [22].

- Check Thermostat Compatibility: The `-update gpu` flag requires the use of the v-rescale thermostat (`tcoupl = v-rescale` in your `.mdp` file). It is not compatible with the Nose-Hoover thermostat [22].
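The two steps above can be combined into a small pre-flight check that only adds `-update gpu` when the `.mdp` thermostat allows it. This is a sketch under stated assumptions: the parser handles only simple `key = value` lines, the function names are hypothetical, and the incompatibility set contains only the Nose-Hoover case named in the text.

```python
# Thermostats the text above reports as incompatible with `-update gpu`.
INCOMPATIBLE_THERMOSTATS = {"nose-hoover"}


def parse_mdp(text):
    """Parse simple 'key = value' lines of an .mdp file; ';' starts a comment."""
    params = {}
    for line in text.splitlines():
        line = line.split(";", 1)[0].strip()
        if "=" not in line:
            continue
        key, value = line.split("=", 1)
        # Normalise underscores so 'ref_t' and 'ref-t' map to the same key.
        params[key.strip().replace("_", "-")] = value.strip()
    return params


def gpu_update_allowed(mdp_text):
    """False when the thermostat is one the text lists as incompatible."""
    tcoupl = parse_mdp(mdp_text).get("tcoupl", "no").lower()
    return tcoupl not in INCOMPATIBLE_THERMOSTATS


def mdrun_command(mdp_text, tpr="test.tpr", xtc="test.xtc", gro="test.gro"):
    """Build the mdrun argument list, adding -update gpu only when allowed."""
    cmd = ["gmx", "mdrun", "-s", tpr, "-v", "-x", xtc, "-c", gro,
           "-nb", "gpu", "-bonded", "gpu", "-pme", "gpu"]
    if gpu_update_allowed(mdp_text):
        cmd += ["-update", "gpu"]
    return cmd
```

The returned list can be passed directly to `subprocess.run`. Other GROMACS settings may also restrict GPU update, so the GROMACS documentation for your version remains the authority.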

What should I do if I get an error when testing my OpenMM installation?

An error like "Failed to import OpenMM packages" or an "undefined symbol" indicates an incomplete or corrupted installation, often due to library path issues [24].

FAQ & Resolution Steps:

- Reinstall via Conda/Pip: The most reliable method is to use the recommended package managers. First, try reinstalling OpenMM using `conda` or `pip` as described in the installation protocol [18].

- Environment Conflict: If you have multiple Python environments, ensure you have installed and are testing OpenMM in the same active environment.

- Driver Issues: Verify that you have installed the latest GPU drivers from your vendor (NVIDIA or AMD) [18].

My GPU utilization is high, but performance is poor. What could be wrong?

This could indicate a problem with GPU thermal throttling or a suboptimal simulation configuration.

Troubleshooting Guide:

- Monitor GPU Temperature: Use `nvidia-smi -l 5` to monitor your GPU temperature in real time. If the temperature approaches ~86°C, the GPU will throttle its performance to cool down, reducing speed [22] [23].

- Mitigate Overheating:

  - Ensure your computer case has good airflow and that the GPU heatsinks are free of dust.

  - Consider improving the cooling system in your workstation.

- Review Simulation Parameters: For GROMACS, adjusting parameters like `nstlist` (e.g., `-nstlist 400`) can improve performance by reducing the frequency of neighbor list updates [22].
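Temperature monitoring can be automated rather than watched by eye. The sketch below parses the CSV output of an `nvidia-smi` query and flags GPUs close to the ~86°C figure quoted above. The 3°C warning margin and the function names are assumptions, and the query fields are assumed to be supported by your driver version.

```python
import subprocess

# Query assumed to be supported by the installed nvidia-smi.
QUERY = ["nvidia-smi",
         "--query-gpu=index,temperature.gpu,utilization.gpu",
         "--format=csv,noheader,nounits"]
THROTTLE_C = 86  # approximate throttle temperature quoted in the text


def parse_gpu_status(csv_text):
    """Turn lines such as '0, 84, 99' into dictionaries of integers."""
    rows = []
    for line in csv_text.strip().splitlines():
        index, temp, util = (field.strip() for field in line.split(","))
        rows.append({"index": int(index), "temp_c": int(temp), "util_pct": int(util)})
    return rows


def near_throttle(rows, limit_c=THROTTLE_C, margin_c=3):
    """Return indices of GPUs within `margin_c` degrees of the throttle limit."""
    return [r["index"] for r in rows if r["temp_c"] >= limit_c - margin_c]


def read_gpu_status():
    """Run nvidia-smi once and parse its output (requires an NVIDIA driver)."""
    out = subprocess.run(QUERY, capture_output=True, text=True, check=True).stdout
    return parse_gpu_status(out)
```

Calling `near_throttle(read_gpu_status())` periodically during a run gives an early warning that high utilization is coinciding with thermal throttling.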

Performance Optimization and Troubleshooting Workflow

The following diagram outlines a systematic approach to diagnosing and resolving common performance issues in GPU-accelerated MD simulations.

Frequently Asked Questions (FAQs)

Q1: What are the primary advantages of using an FPGA coprocessor over a GPU for Molecular Dynamics simulations? FPGA coprocessors offer deterministic, low-latency performance and high power efficiency for fixed, well-defined computational pipelines like the short-range force calculation in MD. Unlike GPUs, which excel at massive, floating-point parallelism, FPGAs can be tailored at the gate level to execute a specific algorithm with minimal overhead, leading to higher performance per watt in edge computing or dedicated server environments [25] [26].

Q2: Our simulations require high numerical precision. Can FPGAs handle this? Yes, but the precision must be carefully designed. One successful approach for MD simulations used 35-bit precision, which was systematically determined through energy fluctuation experiments to maintain simulation quality while optimizing hardware resource usage. FPGAs also support other arithmetic modes like block floating point to retain accuracy without the full cost of double-precision floating-point logic [25].

Q3: What is a common data transfer bottleneck when integrating an FPGA coprocessor? A major bottleneck often occurs when moving particle data between the host processor and the FPGA. Inefficient transfer mechanisms can nullify the acceleration benefits. Utilizing Direct Memory Access (DMA) controllers and high-throughput streaming interfaces (like AXI4-Stream) is critical to minimize CPU overhead and maintain continuous data flow [27] [26].

Q4: How are complex computations like the Lennard-Jones force implemented efficiently on an FPGA? Computationally complex functions are often implemented using lookup tables (LUTs) and interpolation. The order of interpolation and the size of the lookup tables can be systematically optimized to achieve the best trade-off between resource usage, performance, and numerical accuracy for the specific MD simulation [25].
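To make the lookup-table idea concrete, the following software sketch tabulates the Lennard-Jones force coefficient as a function of r² and evaluates it by linear interpolation. It is a simplified model under stated assumptions: a uniform table and first-order interpolation in reduced units, whereas the hardware design in [25] tunes the interpolation order and table size. All names are illustrative.

```python
def lj_force_over_r(r2, epsilon=1.0, sigma=1.0):
    """Exact F(r)/r for the Lennard-Jones potential, as a function of r squared."""
    s6 = sigma ** 6 / r2 ** 3
    return 24.0 * epsilon * (2.0 * s6 * s6 - s6) / r2


def build_table(r2_min, r2_max, n_entries, func=lj_force_over_r):
    """Tabulate `func` on a uniform grid in r squared; returns (table, step)."""
    step = (r2_max - r2_min) / (n_entries - 1)
    return [func(r2_min + i * step) for i in range(n_entries)], step


def lookup(table, step, r2_min, r2):
    """Linear interpolation between the two table entries bracketing r2."""
    pos = (r2 - r2_min) / step
    i = min(int(pos), len(table) - 2)
    frac = pos - i
    return table[i] + frac * (table[i + 1] - table[i])


if __name__ == "__main__":
    r2_min, r2_max = 0.81, 6.25  # r from 0.9 to 2.5 in units of sigma
    table, step = build_table(r2_min, r2_max, 4096)
    for r2 in (1.0, 1.5, 4.0):
        exact = lj_force_over_r(r2)
        approx = lookup(table, step, r2_min, r2)
        print(f"r2={r2}: relative error {abs(approx - exact) / abs(exact):.2e}")
```

Indexing by r² avoids a square root per pair, and the trade-off between table size and interpolation order is the resource-versus-accuracy choice described above.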

Troubleshooting Guide

| Problem Area | Common Symptoms | Potential Solutions |

|---|---|---|

| System Integration | Driver conflicts; CPU hangs when accessing FPGA; system crashes. | Verify operating system support for your PCIe board. Ensure correct installation of low-level DMA driver and that the FPGA bitstream is correctly loaded [27]. |

| Performance | Simulation speed-up is lower than expected. | Profile the application: use a logic analyzer to check for pipeline stalls; ensure DMA transfers are configured for burst mode; check that the host software is not introducing delays [26]. |

| Numerical Accuracy | Energy drift or unexpected physical behavior in the simulation. | Validate the FPGA output against a trusted software model for a single timestep. Check for precision overflow/underflow in the force pipeline and verify lookup table values [25]. |

| Data Transfer | Low sustained throughput between CPU and FPGA; corrupted particle data. | Confirm that the DMA controller's FIFO thresholds are set for efficient block transfers. Check the alignment of data buffers in host memory [27] [26]. |

Experimental Protocols & Methodologies

Protocol 1: Setting up a Short-Range Force Computation Pipeline This protocol outlines the steps for implementing the core short-range force computation, which is a primary target for FPGA acceleration in MD simulations [25].

- System Modeling: The target system consists of a host PC and an FPGA coprocessor on a PCI plug-in board. The host runs the main MD application (e.g., ProtoMol), handling motion integration and long-range forces, while the FPGA offloads the short-range force calculations [25].

- Cell List Construction: The "cell-list processor" on the FPGA manages the spatial partitioning of particles. This micro-architecture efficiently streams particle data from off-chip memory and identifies which particles are within the cutoff distance for force calculations [25].

- Force Pipeline Configuration:

- Precision: Configure the arithmetic logic for 35-bit fixed-point or other required precision.

- Arithmetic Mode: Employ a specialized arithmetic mode that supports only the small number of alignments actually occurring in the computation for efficiency.

- Interpolation: Use precomputed lookup tables with interpolation for the Lennard-Jones and Coulomb force functions.

- Memory Controller: Utilize a custom off-chip memory controller designed to handle the high, random-access bandwidth required for reading particle data from the cell lists [25].

Quantitative Performance Data: The following table summarizes key performance metrics from a reference implementation [25].

| Metric | Value / Specification |

|---|---|

| Supported Model Size | Up to 256,000 particles |

| Precision | 35-bit (derived from energy fluctuation experiments) |

| Arithmetic Mode | Non-floating point, alignment-specific |

| Speed-up vs. NAMD | 5x to 10x (on 2004-era FPGA hardware) |

Protocol 2: Hardware-Software Co-Design for FPGA Coprocessors This methodology ensures efficient partitioning of the MD application between the host CPU and the FPGA coprocessor [26].

- Workload Profiling: Identify the computationally dominant kernels in the MD simulation. The short-range force calculation often consumes over 95% of the execution time, making it an ideal candidate for offloading (addressing Amdahl's law limitations) [25].

- Architecture Selection: Choose an integration model based on system constraints.

- Interface Definition: Implement standardized hardware interfaces (e.g., AXI4-Stream for data, Avalon for control) and a corresponding software API. The low-level driver should be processor-specific, while the high-level API is coprocessor-specific [27] [26].

- Data Flow Optimization: Structure the data flow to use DMA for bulk transfers and FIFOs to buffer data between the CPU's memory space and the FPGA's processing pipelines, allowing for concurrent operation [27].
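The Amdahl's law limit mentioned in the workload profiling step can be quantified with a short calculation. This sketch assumes the 95% figure from the text and treats the host-side remainder as unaccelerated; the function name is illustrative.

```python
def amdahl_speedup(offloaded_fraction, kernel_speedup):
    """Overall speedup when a fraction of the runtime is accelerated by a factor."""
    serial = 1.0 - offloaded_fraction
    return 1.0 / (serial + offloaded_fraction / kernel_speedup)


if __name__ == "__main__":
    for s in (5, 10, 100):
        print(f"kernel speedup {s}x -> overall {amdahl_speedup(0.95, s):.2f}x")
```

With 95% of the time offloaded, the overall speedup can never exceed 20x regardless of how fast the FPGA kernel is, which is why data transfer and the host-side remainder deserve attention once the force pipeline is fast.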

The Scientist's Toolkit: Research Reagent Solutions

Essential hardware and software components for building an FPGA-accelerated MD simulation system.

| Item | Function / Description |

|---|---|

| FPGA Development Board | A commercial PCIe board (e.g., with Xilinx Virtex FPGAs) providing the reconfigurable hardware platform and host interface [25]. |

| HDL Code (VHDL/Verilog) | The core design files describing the cell-list processor, force pipeline, memory controller, and system integration logic [25]. |

| MD Software Framework | A software base like ProtoMol, which is designed for experimentation and allows for clear hardware/software partitioning [25]. |

| DMA Controller IP | A pre-designed intellectual property (IP) block for Direct Memory Access, enabling high-speed data transfer between host memory and the FPGA [27] [26]. |

| Standardized Interface IP (AXI, Avalon) | Pre-defined interface IP cores to ensure robust and reusable communication between custom modules and system infrastructure [27] [26]. |

Architectural and Workflow Diagrams

FPGA-Accelerated MD Workflow

FPGA Coprocessor System Architecture

FPGA Force Computation Pipeline

Troubleshooting Common Enhanced Sampling Issues

FAQ 1: My metadynamics simulation is not crossing the desired energy barrier. What could be wrong?

This is often related to the selection of Collective Variables (CVs) or bias deposition parameters.

- Suboptimal Collective Variables (CVs): The chosen CVs might not accurately describe the true reaction coordinate of the process you are studying. A suboptimal CV cannot effectively distinguish between the metastable states or describe their interconversion dynamics, leading to hysteresis and poor sampling [28].

- Incorrect Bias Deposition Rate: If the Gaussian bias potential is deposited too slowly, the simulation may remain trapped in a free energy minimum for an extended period. Conversely, if the deposition is too aggressive, it can lead to an oscillatory behavior of the system between states and make it difficult to converge the free energy estimate [28].

- Solution - CV Validation and Resetting: Ensure your CVs are capable of discriminating between the initial, final, and any known intermediate states. If refining CVs is challenging, a recent solution is to combine metadynamics with Stochastic Resetting. This approach involves periodically stopping the simulation and restarting it from independent initial conditions, which can help prevent the simulation from being trapped and provide significant acceleration, even when using suboptimal CVs [28].
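The deposition trade-off described above is governed by the Gaussian height, width, and deposition stride. The sketch below shows, for a single CV, how well-tempered metadynamics scales each new Gaussian by the bias already accumulated at that point. It is a toy bookkeeping class, not a simulation engine, and the class and parameter names are illustrative.

```python
import math

KB = 0.0083144626  # Boltzmann constant in kJ/(mol K)


class WellTemperedBias:
    """Accumulates Gaussian hills on one CV with well-tempered height scaling."""

    def __init__(self, height, sigma, temperature, bias_factor):
        self.height = height  # initial Gaussian height (kJ/mol)
        self.sigma = sigma    # Gaussian width in CV units
        # kB * dT, where dT = (bias_factor - 1) * T.
        self.kb_delta_t = KB * temperature * (bias_factor - 1.0)
        self.hills = []       # list of (center, height) pairs

    def value(self, s):
        """Total bias potential at CV value s."""
        return sum(w * math.exp(-0.5 * ((s - c) / self.sigma) ** 2)
                   for c, w in self.hills)

    def deposit(self, s):
        """Add a hill at s; its height shrinks where bias has already built up."""
        w = self.height * math.exp(-self.value(s) / self.kb_delta_t)
        self.hills.append((s, w))
        return w
```

Repeated calls to `deposit` at the same CV value return progressively smaller heights, which is the mechanism that lets the bias converge instead of oscillating. A larger initial height or shorter stride fills wells faster at the cost of a noisier free energy estimate.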

FAQ 2: How do I determine the correct number and temperature range for replicas in REMD?

Inadequate temperature distribution is a primary cause of poor replica exchange rates.

- Problem: The number of replicas and their temperature spacing is critical for achieving a high acceptance probability for exchanges between adjacent replicas. If the temperature gap is too wide, the exchange rate will be low, hindering the random walk through temperature space and slowing down convergence [1].

- Solution - Replica Spacing Calculation: The number of replicas required grows with the square root of the number of degrees of freedom in the system. The temperature range must span from the target temperature to a temperature high enough to overcome all relevant energy barriers (often near or above the folding/unfolding transition). Online REMD temperature calculators can help estimate the required replica count and temperature distribution based on your system size and desired temperature range to maintain an exchange rate of ~20-25% [1]. After a run, the `demux` utility in GROMACS can be used to follow individual replicas through temperature space and confirm that exchanges are actually occurring.
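As a starting point before using a dedicated calculator, a temperature ladder can be sketched by hand. The example below uses geometric spacing, which is a common first guess and an assumption here rather than the method of [1], and scales a known replica count with the square root of the degrees of freedom as stated above. The function names are illustrative.

```python
import math


def geometric_ladder(t_min, t_max, n_replicas):
    """Temperatures with a constant ratio between neighbouring replicas."""
    if n_replicas < 2:
        raise ValueError("need at least two replicas")
    ratio = (t_max / t_min) ** (1.0 / (n_replicas - 1))
    return [t_min * ratio ** i for i in range(n_replicas)]


def scale_replica_count(n_ref, dof_ref, dof_new):
    """Scale a replica count that worked for dof_ref degrees of freedom."""
    return math.ceil(n_ref * math.sqrt(dof_new / dof_ref))


if __name__ == "__main__":
    ladder = geometric_ladder(300.0, 450.0, 8)
    print([round(t, 1) for t in ladder])
    print(scale_replica_count(8, 10_000, 40_000))
```

The resulting ladder should still be validated with a short test run: if measured exchange rates between neighbours fall well below the ~20-25% target, add replicas or narrow the range.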

FAQ 3: My simulation is sampling unrealistic configurations. How can I ensure physical relevance?

This can occur in highly biased simulations if the underlying potential energy surface is not accurate or if the biasing method disrupts the system's physical dynamics.

- Solution - Machine Learning Potentials and Structure-Preserving Integrators: To maintain physical fidelity:

- Use Machine-Learning Potentials (MLPs): MLPs offer a solution by providing near-ab initio accuracy at a much lower computational cost, ensuring a more faithful representation of atomic interactions during enhanced sampling [29].

- Employ Structure-Preserving Integrators: When using machine-learning to enable long-time-step MD, ensure the integrator is symplectic and time-reversible. These properties are crucial for long-term stability as they conserve a modified Hamiltonian, leading to excellent energy conservation and equipartition, which are often lost with non-structure-preserving predictors [3].

Quantitative Performance Data

The table below summarizes performance metrics for running Molecular Dynamics simulations on cloud platforms, providing a benchmark for the computational cost of both standard and enhanced sampling simulations.

Table 1: Benchmarking Data for GPU-Accelerated MD Simulations in the Cloud [30]

| System Size & Description | Performance (ns/day) | Cost Efficiency ($/µs) | Optimal For |

|---|---|---|---|

| Small System (e.g., RNA piece in water, ~32,000 atoms) | Up to 1,139.4 ns/day | ~$101.85 / µs | Rapid testing, method development, REMD of small peptides. |

| Medium System (e.g., Protein in membrane, ~80,000 atoms) | Up to 428.3 ns/day | ~$284.30 / µs | Typical protein-ligand binding studies, protein folding with REMD/MetaD. |

| Large System (e.g., Membrane protein in lipid bilayer, ~616,000 atoms) | Up to 65.3 ns/day | ~$1,870.30 / µs | Studying large complexes, viral capsids, or molecular motors. |
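The figures in Table 1 can be turned into a rough budget estimate for a planned campaign. This sketch assumes the best-case ("up to") throughput is sustained and that replicas run concurrently on separate, identically priced instances. The dictionary keys and function name are illustrative.

```python
# Best-case values copied from Table 1 [30].
BENCHMARKS = {
    "small": {"atoms": 32_000, "ns_per_day": 1139.4, "usd_per_us": 101.85},
    "medium": {"atoms": 80_000, "ns_per_day": 428.3, "usd_per_us": 284.30},
    "large": {"atoms": 616_000, "ns_per_day": 65.3, "usd_per_us": 1870.30},
}


def estimate(system, simulated_ns, n_replicas=1):
    """Wall-clock days per replica and total cost for the requested sampling."""
    bench = BENCHMARKS[system]
    days = simulated_ns / bench["ns_per_day"]
    total_usd = simulated_ns / 1000.0 * bench["usd_per_us"] * n_replicas
    return {"wall_days_per_replica": days, "total_usd": total_usd}


if __name__ == "__main__":
    # Example: an 8-replica REMD run of 500 ns per replica on the medium system.
    print(estimate("medium", 500.0, n_replicas=8))
```

Because the table reports upper bounds on throughput, real costs are likely to be higher, so the output is best read as a lower bound.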

Experimental Protocols

Protocol 1: Calculating Kinetics from Temperature Accelerated Sliced Sampling (TASS)

TASS is an enhanced sampling method that combines umbrella biases, metadynamics, and temperature acceleration for exhaustive exploration of high-dimensional CV spaces. This protocol outlines how to recover kinetic rate constants from TASS simulations [31].

- Simulation Setup: Implement the TASS Lagrangian, which couples the physical system to high-temperature auxiliary variables. Apply a harmonic umbrella bias along a primary CV and a well-tempered metadynamics bias on a subset of other auxiliary variables.

- Run Multiple Simulations: Conduct multiple independent TASS simulations, each with the umbrella bias centered at a different location (ξ_h) along the primary CV, creating "slices" of the free energy landscape.

- Free Energy Representation: Use an artificial neural network to construct a continuous, high-dimensional representation of the free energy landscape from the data collected across all slices.

- Kinetics Extraction: Apply the principles of Infrequent Metadynamics (IMetaD) to the reconstructed free energy landscape. This allows for the calculation of the rate constant for the barrier crossing event, bridging the gap between free energy data and kinetic information [31].
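The kinetics extraction step relies on the time-rescaling idea behind infrequent metadynamics: each biased time step is stretched by the Boltzmann factor of the bias acting at that moment. The sketch below shows only this generic rescaling, under the assumption that the bias values along the trajectory are available in kJ/mol. It does not reproduce the neural-network reconstruction specific to [31], and the function names are illustrative.

```python
import math

KB = 0.0083144626  # Boltzmann constant in kJ/(mol K)


def rescaled_time(bias_values, dt, temperature):
    """Estimate of unbiased elapsed time: sum of dt * exp(V / kB T) over frames."""
    beta = 1.0 / (KB * temperature)
    return sum(dt * math.exp(beta * v) for v in bias_values)


def acceleration_factor(bias_values, temperature):
    """Average of exp(V / kB T) along the biased trajectory."""
    beta = 1.0 / (KB * temperature)
    return sum(math.exp(beta * v) for v in bias_values) / len(bias_values)
```

With zero bias the rescaled time equals the simulated time. The rescaling is only meaningful when no bias has been deposited on the transition state, which is the condition infrequent deposition is meant to satisfy.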

Protocol 2: Combining Stochastic Resetting with Metadynamics

This protocol enhances the efficiency of metadynamics, particularly when using suboptimal CVs, by periodically restarting the simulation [28].

- Run Initial Metadynamics: Perform a standard well-tempered metadynamics simulation using your available CVs.

- Analyze First-Passage Times (FPT): From the initial simulation, analyze the distribution of times taken for the system to transition between states of interest. Calculate the Coefficient of Variation (COV = standard deviation / mean) of the FPT distribution. A COV > 1 indicates that stochastic resetting will be effective.

- Determine Optimal Resetting Rate: Using the FPT distribution data, identify the resetting rate that maximizes the speedup. This is often a non-zero rate that balances exploration with the need to restart from the initial state.

- Run Resetting MetaD: Implement the metadynamics simulation with stochastic resetting. At random or fixed time intervals (determined by the optimal rate), stop the simulation, reset the bias potential to zero, and restart the dynamics from the initial condition using a new random seed.

- Recover Unbiased Kinetics: Use a derived procedure to extract the unbiased mean first-passage time from the resetting-augmented simulations, correcting for the resetting effect [28].
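The first-passage-time analysis in the second step reduces to a few lines. This is a minimal sketch using the sample standard deviation, with illustrative function names. The FPT values would come from your own initial metadynamics runs.

```python
import statistics


def coefficient_of_variation(first_passage_times):
    """COV = standard deviation / mean of the first-passage-time sample."""
    mean = statistics.fmean(first_passage_times)
    return statistics.stdev(first_passage_times) / mean


def resetting_is_promising(first_passage_times):
    """Apply the COV > 1 criterion from the protocol above."""
    return coefficient_of_variation(first_passage_times) > 1.0


if __name__ == "__main__":
    fpts = [0.1, 0.1, 0.1, 0.1, 10.0]  # hypothetical values, arbitrary time units
    print(coefficient_of_variation(fpts), resetting_is_promising(fpts))
```

A broad distribution with a few very long passages, as in the hypothetical sample, is the situation where restarting trapped trajectories pays off. With few samples the COV estimate is noisy, so it is worth collecting enough transitions before deciding.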

Workflow Visualization

The following diagram illustrates the logical workflow for combining metadynamics with stochastic resetting, a modern approach to improve sampling efficiency.

Combining Metadynamics with Stochastic Resetting

The Scientist's Toolkit: Research Reagents & Computational Solutions

This table lists key computational "reagents" and their roles in implementing enhanced sampling simulations for computational cost reduction.

Table 2: Essential Computational Tools for Enhanced Sampling

| Tool / Resource | Function | Role in Cost Reduction |

|---|---|---|

| Collective Variables (CVs) | Low-dimensional functions of atomic coordinates (e.g., distances, angles, RMSD) that describe slow modes of a process. | Enables focusing computational resources on sampling the most relevant degrees of freedom, ignoring faster, less relevant motions [29]. |

| Machine Learning Potentials (MLPs) | A machine-learned model that provides a highly accurate potential energy surface at a computational cost lower than ab initio methods. | Allows for more accurate simulations of bond formation/breaking and complex interactions without the prohibitive cost of full QM calculations, enabling enhanced sampling on more realistic PESs [29]. |

| Cloud HPC Platforms (e.g., Fovus) | AI-optimized, scalable cloud computing platforms that dynamically provision optimal GPU/CPU resources for MD. | Reduces hardware costs and simulation time via intelligent resource allocation, spot instance utilization, and multi-region failover, making large-scale sampling more accessible and affordable [30]. |

| Structure-Preserving ML Integrator | A machine-learning-based integrator that is symplectic and time-reversible, allowing for much longer time steps. | Directly reduces the number of simulation steps required to reach a given physical time, providing a foundational speedup for any MD simulation, including enhanced sampling runs [3]. |

| Infrequent Metadynamics (IMetaD) | A variant of metadynamics where the bias is deposited so infrequently that it does not affect the transition state, allowing for direct estimation of kinetics. | Enables the calculation of rate constants from biased simulations, avoiding the need for multiple extremely long unbiased simulations to measure slow kinetics [31]. |

Molecular dynamics (MD) simulations are indispensable for atomic-scale research in drug development and materials science. However, the computational cost of ab initio molecular dynamics (AIMD) severely restricts the accessible time and length scales. Machine Learning Potentials (MLPs) have emerged as a transformative solution, dramatically accelerating force calculations by leveraging artificial intelligence to approximate quantum mechanical energies and forces with near-ab initio accuracy. This technical support center provides troubleshooting guides and FAQs to help researchers effectively implement MLPs, framed within the broader thesis of computational cost reduction strategies for long-timescale MD simulations.

Core Concepts and Quantitative Benchmarks

Machine Learning Potentials are trained on data generated from accurate but expensive quantum mechanical calculations. Once trained, they can predict energies and forces for new atomic configurations at a fraction of the computational cost. A key application is Artificial Intelligence Accelerated Ab Initio Molecular Dynamics (AI2MD), which uses MLPs to extend simulation timescales to nanoseconds while maintaining ab initio accuracy [32].

The table below summarizes the performance characteristics of different simulation methods.

Table 1: Performance Comparison of MD Simulation Methods

| Simulation Method | Typical Timescale | Computational Cost | Key Characteristics |

|---|---|---|---|

| Classical MD | Nanoseconds to Microseconds | Low | Relies on pre-defined force fields; accuracy is limited and system-dependent [32]. |

| Ab Initio MD (AIMD) | Picoseconds | Very High (Reference) | High accuracy; describes electronic interactions explicitly but is prohibitively slow [32]. |

| Machine Learning-Accelerated MD (AI2MD) | Nanoseconds | ~10,000x faster than AIMD | Achieves accuracy comparable to AIMD at dramatically reduced cost, enabled by MLPs [32]. |

Public datasets are invaluable for training and benchmarking MLPs. The ElectroFace dataset is a prominent example, compiling over 60 distinct AIMD and MLMD trajectories for various charge-neutral electrochemical interfaces [32].

Table 2: Key Contents of the ElectroFace Dataset

| Data Type | Format | Description | Example System |

|---|---|---|---|

| Atomic Trajectories | Gromacs XTC | Atomic positions over time; compressed for size [32]. | Pt(111)-, SnO2(110)-, and CoO(100)-water interfaces [32]. |

| Forces & Velocities | Zip Archive | Forces (and velocities if applicable) for atoms in trajectories [32]. | N/A |

| ML Potentials & Training Sets | 7z Archive | Trained MLPs and the ab initio data used for training [32]. | IF-SnO2-110-136-H2O-X-MLTDJia2024_PrecisChem.MLP.7z [32]. |

| AIMD/MLMD Input Files | 7z Archive | Input parameters for CP2K and LAMMPS simulations for reproducibility [32]. | N/A |

Experimental Protocol: Implementing an MLP Workflow

This section details a standard concurrent learning protocol for generating robust MLPs, as used for creating the datasets in ElectroFace [32].

The following diagram illustrates the iterative, four-step active learning workflow for generating a robust Machine Learning Potential.

Detailed Methodology

Initial Dataset Preparation

- Extract 50-100 atomic structures evenly distributed across a short, exploratory AIMD trajectory [32].

- Ensure these structures capture a diverse range of atomic environments relevant to your system.

Training

- Use the initial dataset to train four separate MLPs (e.g., using DeePMD-kit) with different random initializations. This ensemble is crucial for uncertainty quantification [32].

- The loss function typically combines energy and force errors.

Exploration

- Use one of the trained MLPs to run an MD simulation (e.g., with LAMMPS) to sample new, unexplored configurations that the model has not been trained on [32].

Screening

- Analyze the configurations sampled during exploration. Calculate the maximum disagreement (standard deviation) on the predicted forces among the four MLPs in the ensemble.

- Categorize structures into:

  - Good: Low model disagreement. The MLP is confident.

  - Decent: Moderate model disagreement. These are candidates for new training data.

  - Poor: High model disagreement. May be outliers or unphysical states [32].

Labeling

- Randomly select 50 structures from the "decent" group.

- Perform accurate ab initio calculations (e.g., using CP2K) on these selected structures to compute their energies and forces.

- Add these newly labeled structures to the existing training dataset [32].

The loop (Steps 2-5) continues until a convergence criterion is met, such as having over 99% of the explored structures categorized as "good" for two consecutive iterations [32].
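The screening and convergence logic of this loop can be sketched in plain Python. The deviation measure here is an assumption: the maximum over atoms of the standard deviation of the force vector across the ensemble. The `lo` and `hi` thresholds are hypothetical values that would need tuning per system, and tools such as DP-GEN implement their own version of this step.

```python
import math


def max_force_deviation(ensemble_forces):
    """ensemble_forces[m][i] is the (fx, fy, fz) force on atom i from model m."""
    n_models = len(ensemble_forces)
    n_atoms = len(ensemble_forces[0])
    worst = 0.0
    for i in range(n_atoms):
        mean = [sum(ensemble_forces[m][i][k] for m in range(n_models)) / n_models
                for k in range(3)]
        var = sum((ensemble_forces[m][i][k] - mean[k]) ** 2
                  for m in range(n_models) for k in range(3)) / n_models
        worst = max(worst, math.sqrt(var))
    return worst


def categorize(deviation, lo=0.12, hi=0.25):
    """Map a deviation to the three groups; thresholds are hypothetical."""
    if deviation < lo:
        return "good"
    if deviation < hi:
        return "decent"
    return "poor"


def converged(good_fractions, threshold=0.99, patience=2):
    """True when the last `patience` iterations all exceed the 'good' threshold."""
    recent = good_fractions[-patience:]
    return len(recent) == patience and all(f > threshold for f in recent)
```

Structures labelled "decent" feed the labeling step, and `converged` encodes the stopping rule of more than 99% "good" structures for two consecutive iterations.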

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Software and Tools for MLP Development

| Tool Name | Function | Key Feature |

|---|---|---|

| CP2K/QUICKSTEP | Ab Initio Simulation | Generates reference data for training; uses Gaussian and plane-wave bases [32]. |

| DeePMD-kit | MLP Training | Open-source tool for training Deep Potential models [32]. |

| LAMMPS | MD Simulation with MLPs | Performs high-performance MD simulations using trained MLPs [32]. |

| DP-GEN | Concurrent Learning | Automates the active learning workflow (Training, Exploration, Screening) [32]. |

| ai2-kit | Concurrent Learning & Analysis | Toolkit for workflow management and analysis (e.g., proton transfer pathways) [32]. |

| ECToolkits | Trajectory Analysis | Python package for analyzing properties like water density profiles [32]. |

Frequently Asked Questions (FAQs) & Troubleshooting

Data Generation and Training

Q1: My MLP model fails to generalize to new configurations outside my training set. What could be wrong? A: This is typically a data coverage issue.

- Cause 1: Insufficient Initial Data. The initial AIMD trajectory may be too short or may not capture the full diversity of atomic environments (e.g., different phases, reaction intermediates).

- Solution: Extend the sampling of your initial AIMD run. Consider using enhanced sampling techniques to explore rare events for the initial data.

- Cause 2: Inadequate Active Learning. The exploration phase in the concurrent learning loop may not be thorough enough, failing to visit new, relevant parts of the configuration space.

- Solution: Ensure your exploration MD simulations are long enough and run at appropriate temperatures to sufficiently perturb the system.

Q2: The training process is unstable, with my loss function fluctuating wildly. How can I fix this? A: This often points to problems with the training data or hyperparameters.

- Cause 1: Inconsistent or Noisy Data. The ab initio data may have convergence issues or high numerical noise.

- Solution: Tighten the convergence criteria (e.g., SCF convergence, k-point sampling) in your ab initio calculations. Visually inspect the training structures and energies for outliers.

- Cause 2: Suboptimal Hyperparameters. The learning rate may be too high, or the network architecture may be unsuitable for your system's complexity.

- Solution: Implement a learning rate schedule. Systematically optimize hyperparameters using a validation set. Start with architectures known to work for similar systems.

Simulation and Performance

Q3: My MLP-MD simulation becomes unstable and atoms "blow up." What steps should I take? A: This is a critical failure indicating a poor or untrustworthy MLP prediction.

- Cause 1: Extrapolation. The simulation has entered a region of configuration space where the MLP has no training data and is extrapolating unreliably.

- Solution: This is precisely what the active learning loop is designed to fix. Check the model disagreement (using your ensemble of MLPs) for the step before the explosion. If the disagreement was high, this configuration should have been flagged and added to the training set. Return to the concurrent learning loop and improve sampling.

- Cause 2: Energy Conservation Failure. In an NVE simulation, significant energy drift indicates that the MLP is not accurately representing the potential energy surface.

- Solution: This is a key validation metric. Always test your MLP in a short NVE simulation of a simple system (e.g., a well-defined crystal) and monitor energy conservation. Poor conservation necessitates retraining.
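The NVE check described above can be reduced to a single number: the fitted slope of total energy against time, normalized per atom. The sketch below uses an ordinary least-squares fit. The units follow whatever the MD engine reports, and what counts as an acceptable drift is system-dependent and not specified by the source.

```python
def energy_drift(times, energies, n_atoms):
    """Least-squares slope of total energy versus time, divided by atom count."""
    n = len(times)
    t_mean = sum(times) / n
    e_mean = sum(energies) / n
    num = sum((t - t_mean) * (e - e_mean) for t, e in zip(times, energies))
    den = sum((t - t_mean) ** 2 for t in times)
    return num / den / n_atoms


if __name__ == "__main__":
    # Hypothetical log: time in ps, total energy in eV, for a 2-atom toy system.
    times = [0.0, 1.0, 2.0, 3.0]
    energies = [-100.0, -99.8, -99.6, -99.4]
    print(energy_drift(times, energies, n_atoms=2))
```

Comparing this value between the MLP and a short reference run of the same system gives a more meaningful pass/fail criterion than an absolute threshold.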

Q4: The promised speedup is not achieved. Where are the common bottlenecks? A: Performance depends on the balance between several factors.

- Cause 1: System Size is Too Small. For small systems (a few tens of atoms), the overhead of evaluating the neural network may outweigh the cost of a direct ab initio calculation.

- Solution: MLPs show their greatest speed advantage for systems containing hundreds to thousands of atoms.

- Cause 2: Hardware and Implementation. Running DeePMD-kit/LAMMPS on a single CPU core will not yield high performance.

- Solution: Leverage GPU acceleration. Ensure your MD engine (LAMMPS) and MLP interface (DeePMD-kit) are compiled and configured to use available GPUs and multiple CPU cores.

Analysis and Validation

Q5: How can I be confident that my MLP results are physically accurate? A: Validation against ab initio and experimental data is non-negotiable.

- Strategy 1: Hold-Out Test Set. Before starting production runs, create a test set of ab initio calculations that were never used in training. Compare the MLP's predictions for energy and forces on this set.

- Strategy 2: Property Comparison. Compute experimentally observable properties from your MLP-MD simulation and compare them directly to experimental data or benchmark AIMD results. The ElectroFace dataset, for example, analyzes water density profiles and proton transfer pathways for this purpose [32]. A good agreement validates the model's physical realism.

This technical support center is designed for researchers implementing multiscale simulations that combine Brownian Dynamics (BD) and Molecular Dynamics (MD). This hybrid approach addresses a critical challenge in computational biology and drug design: the excessive cost of achieving sufficient sampling with all-atom MD simulations alone. By using BD to simulate long-range diffusion and MD to model short-range, atomic-level interactions, this method significantly reduces computational expense while maintaining critical mechanistic details. This guide provides targeted troubleshooting and protocols to help you successfully deploy this strategy in your research.

Frequently Asked Questions (FAQs) & Troubleshooting Guides

FAQ 1: What is the fundamental principle behind coupling BD and MD?

- Answer: BD and MD operate at different spatial and temporal scales. BD efficiently simulates the diffusional motion of molecules over large distances and long timescales, treating the solvent as an implicit continuum and using random forces to model collisions. MD, while computationally intensive, provides atomic-level detail of interactions, such as those in a protein's binding pocket. The multiscale approach uses BD to generate an ensemble of diffusional encounter complexes, which then serve as initial structures for MD simulations to model the final formation of the stable bound complex [33] [34]. This synergy avoids the need for prohibitively long MD simulations to observe rare binding events.

FAQ 2: How do I determine the appropriate handover parameters between BD and MD simulations?

- Answer: The handover, or "coupling," is critical. A common strategy is to define a reaction criterion or a distance threshold in the BD simulation. When a ligand diffuses within this critical distance of the protein's active site, the simulation is stopped, and the coordinates are passed to the MD engine. This threshold should be small enough to ensure the ligand is in close proximity but large enough to avoid steric clashes that could destabilize the initial MD steps [34]. Testing different thresholds on a small system is recommended for calibration.

FAQ 3: My BD-generated structures cause immediate instability in the subsequent MD simulation. How can I fix this?

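- Answer: This is a common issue. BD simulations often use simplified, rigid-body representations and implicit solvent, so the encounter complexes they produce can contain steric clashes or unrelaxed contacts that destabilize an all-atom MD simulation.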

- Solution 1: Implement a brief energy minimization and equilibration protocol for the structures obtained from BD before starting the production MD run. This allows the atomic contacts to relax and the explicit solvent molecules (if present) to organize around the protein-ligand complex.

- Solution 2: Check for severe atomic overlaps in the BD output. You may need to adjust the reaction criterion in your BD setup to be slightly larger, giving the MD simulation more space to relax the encounter complex [34].
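The handover criterion from FAQ 2 and the overlap check from Solution 2 can be combined into a simple screening step. The following is a minimal sketch, assuming coordinates in ångströms held in NumPy arrays; the function names, the 3.5 Å handover cutoff, and the 2.0 Å clash cutoff are illustrative assumptions that should be calibrated for your own system.

```python
import numpy as np

def pairwise_min_distance(a_xyz, b_xyz):
    """Smallest distance between any atom in a and any atom in b."""
    a = np.asarray(a_xyz, dtype=float)
    b = np.asarray(b_xyz, dtype=float)
    diff = a[:, None, :] - b[None, :, :]          # shape (n_a, n_b, 3)
    return float(np.linalg.norm(diff, axis=-1).min())

def screen_snapshot(protein_xyz, ligand_xyz, site_atom_xyz, ligand_atom_xyz,
                    handover_cutoff=3.5, clash_cutoff=2.0):
    """Classify one BD snapshot before passing it to MD.

    Cutoff values are hypothetical defaults, not recommendations.
    """
    key_distance = float(np.linalg.norm(
        np.asarray(site_atom_xyz, dtype=float)
        - np.asarray(ligand_atom_xyz, dtype=float)
    ))
    if key_distance > handover_cutoff:
        return "not_yet_encountered"   # reaction criterion not met
    if pairwise_min_distance(protein_xyz, ligand_xyz) < clash_cutoff:
        return "clash"                 # needs relaxation or a larger criterion
    return "handover"                  # suitable starting point for MD
```

Counting how many snapshots fall into the `"clash"` category for several candidate thresholds is one quick way to see whether the reaction criterion is set too tightly.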

FAQ 4: How can I validate that my multiscale workflow is producing physically accurate results?

- Answer: Validation is a multi-step process.

- Kinetic Checks: Compare the computed association rate constant (\(k_{on}\)) from your multiscale simulations against available experimental data (e.g., from surface plasmon resonance) [34].

- Structural Checks: Ensure the final bound pose from your MD simulation matches experimentally determined structures (e.g., from X-ray crystallography or Cryo-EM).

- Convergence Checks: Run multiple independent BD/MD trials to ensure your results are reproducible and not dependent on a single trajectory.

Troubleshooting Table: Common Errors and Solutions

| Problem Symptom | Potential Cause | Recommended Solution |
|---|---|---|
| MD simulation crashes immediately after handover from BD. | Severe steric clashes in the initial BD structure. | 1. Increase the BD handover distance. 2. Perform thorough energy minimization and solvent equilibration in MD. [34] |
| The computed binding rate (\(k_{on}\)) is too low compared to experiment. | BD reaction radius may be set too small, missing productive encounters. | Re-calibrate the reaction radius, potentially using the Smoluchowski equation as a starting point: \( \varrho = k / (4\pi (D_A + D_B)) \), where \(\varrho\) is the reaction radius, \(k\) the diffusion-limited rate constant, and \(D_A\), \(D_B\) the diffusion coefficients of the two molecules [35]. |
| The ligand fails to reach the binding site in BD simulations. | Inaccurate diffusion coefficients (\(D\)) or attractive/repulsive forces in the BD model. | Review the assignment of diffusion constants and the force field parameters (electrostatics, desolvation) used in the BD simulation. [35] |
| The simulation is still computationally too expensive. | The MD phase is too long or the BD sampling is inefficient. | Optimize BD sampling algorithms; use the MD phase only for short-range refinement. Consider using a Markov State Model (MSM) to extract kinetics from shorter MD runs. [33] |
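The Smoluchowski relation mentioned in the table, k = 4π(D_A + D_B)ϱ, is easy to misapply because of units. The sketch below works through the conversion between a per-molecule rate in m³/s and the usual M⁻¹ s⁻¹, assuming SI inputs; the function names and the example values are illustrative only.

```python
import math

AVOGADRO = 6.02214076e23   # molecules per mole
LITERS_PER_M3 = 1000.0

def smoluchowski_rate(d_sum_m2_s, radius_m):
    """Diffusion-limited rate constant in M^-1 s^-1 for a given reaction radius."""
    k_m3_per_s = 4.0 * math.pi * d_sum_m2_s * radius_m   # per molecule pair
    return k_m3_per_s * AVOGADRO * LITERS_PER_M3

def smoluchowski_radius(k_per_M_s, d_sum_m2_s):
    """Reaction radius in meters that reproduces a target rate constant."""
    k_m3_per_s = k_per_M_s / (AVOGADRO * LITERS_PER_M3)
    return k_m3_per_s / (4.0 * math.pi * d_sum_m2_s)

# Hypothetical inputs: D_A + D_B = 1e-9 m^2/s and a 1 nm reaction radius.
k_example = smoluchowski_rate(1e-9, 1e-9)
print(f"k = {k_example:.2e} M^-1 s^-1")
```

The relation ignores electrostatic steering and orientational constraints, so the resulting radius is only a starting point for the calibration described in the table.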

Key Experimental Protocols & Workflows

Protocol 1: A Standard Workflow for Computing Protein-Ligand Association Rates

This protocol outlines a validated multiscale approach for calculating association rate constants (\(k_{on}\)) [34].

System Preparation:

- Prepare the protein and ligand structures (e.g., with standard tools like pdb2gmx or tleap).

- Assign partial charges and force field parameters to the ligand.

Brownian Dynamics Simulation:

- Objective: Generate a large ensemble of diffusional encounter complexes between the protein and ligand.

- Software: Use a BD simulator like SDA (Simulation of Diffusional Association).

- Key Parameters:

- Diffusion Constants: Calculate for both protein and ligand.

- Reaction Criterion: Define a distance threshold (e.g., 3.5 Å between key atoms) to identify successful encounters [34].

- Electrostatic Forces: Use a Poisson-Boltzmann-derived force field to guide diffusion.

- Output: Hundreds or thousands of structural snapshots where the ligand is near the binding site.

Structure Handover and Preparation for MD:

- Extract all BD snapshots that meet the reaction criterion.

- Solvate these structures in an explicit water box and add ions to neutralize the system.

Molecular Dynamics Simulation:

- Objective: Simulate the short-range interactions and conformational changes leading to stable binding.

- Software: Use an MD package like GROMACS, NAMD, or AMBER [33].

- Protocol:

- Energy Minimization: Relax each structure to remove bad contacts.

- Equilibration: Briefly equilibrate the solvent and ions around the fixed protein-ligand complex, then release all restraints in a short NVT/NPT equilibration.

- Production Run: Run multiple short, independent MD simulations from different BD-generated starting points.

Analysis and Rate Calculation:

- Determine the fraction of MD simulations that successfully form a stable bound complex, defined by a specific structural metric.

- Combine this probability with the rate of encounter complex formation from BD to compute the overall \(k_{on}\) [34].
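The final analysis step can be sketched as follows. This assumes the simplest possible combination, in which the overall rate is the BD encounter rate multiplied by the fraction of MD runs that reach the bound state; the cited workflow may use a more elaborate expression. The function name and the normal-approximation confidence interval are illustrative choices.

```python
import math

def association_rate(k_encounter, n_bound, n_total, z=1.96):
    """Estimate k_on and an approximate confidence interval.

    k_encounter : encounter-complex formation rate from BD (e.g., M^-1 s^-1)
    n_bound     : number of MD runs that reached the stable bound state
    n_total     : total number of MD runs started from BD snapshots
    z           : normal quantile for the interval (1.96 ~ 95%)
    """
    if n_total <= 0:
        raise ValueError("n_total must be positive")
    p_bind = n_bound / n_total
    # Binomial standard error of the binding fraction.
    se = math.sqrt(p_bind * (1.0 - p_bind) / n_total)
    low = max(0.0, p_bind - z * se)
    high = min(1.0, p_bind + z * se)
    return k_encounter * p_bind, k_encounter * low, k_encounter * high
```

The width of the interval gives a direct indication of whether more independent MD runs are needed before comparing against experiment.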

Workflow for Computing Association Rates

Protocol 2: Using Milestoning to Integrate MD and BD Scales

Milestoning is a powerful technique to bridge scales and calculate kinetic parameters by efficiently sampling the transitions between defined states ("milestones") [33].

- Define Reaction Coordinates and Milestones: Identify a key coordinate (e.g., distance between protein and ligand) and define several milestones along its path.

- Run Short MD Simulations from each Milestone: Launch multiple independent, short MD simulations starting from each milestone. The goal is to observe which adjacent milestone is reached first.

- Calculate Transition Probabilities and Times: Analyze the MD trajectories to compute the probability of transitioning from one milestone to another and the average time it takes.

- Construct a Kinetic Model: Use the transition probabilities and times to build a model (e.g., a Markov State Model) that describes the entire association process and allows calculation of the overall rate constant [33].
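One common way to turn the transition probabilities and lifetimes from the steps above into a kinetic quantity is to solve for the mean first-passage time (MFPT) to the final milestone. The sketch below uses the absorbing-chain relation τ_i = t_i + Σ_j K_ij τ_j with τ = 0 at the target; the function name and the three-milestone example are illustrative, and production milestoning codes may use a different but equivalent flux-based formulation.

```python
import numpy as np

def milestoning_mfpt(K, lifetimes, target):
    """Mean first-passage time from every milestone to the target milestone.

    K         : (n, n) matrix, K[i, j] = probability that a trajectory
                started at milestone i reaches milestone j next
    lifetimes : (n,) mean time spent before leaving each milestone
    target    : index of the absorbing (bound-state) milestone
    """
    K = np.asarray(K, dtype=float)
    t = np.asarray(lifetimes, dtype=float)
    n = K.shape[0]
    keep = [i for i in range(n) if i != target]

    # Restrict to non-target milestones and solve (I - K_sub) tau = t.
    K_sub = K[np.ix_(keep, keep)]
    tau_sub = np.linalg.solve(np.eye(len(keep)) - K_sub, t[keep])

    tau = np.zeros(n)
    tau[keep] = tau_sub
    return tau

# Hypothetical example: milestone 0 always moves to 1; milestone 1 moves
# back to 0 or forward to the bound milestone 2 with equal probability.
K = [[0.0, 1.0, 0.0],
     [0.5, 0.0, 0.5],
     [0.0, 0.0, 1.0]]
print(milestoning_mfpt(K, [1.0, 1.0, 0.0], target=2))
```

The inverse of the MFPT from the outermost milestone gives a first-order rate, which still has to be combined with the diffusional (BD) contribution and the concentration to obtain an association rate constant.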

The Scientist's Toolkit: Essential Research Reagents & Software

The following table details key computational "reagents" and tools essential for setting up and running multiscale BD/MD simulations.

Research Reagent Solutions

| Item Name | Function / Purpose | Key Considerations |
|---|---|---|
| BD Simulators (e.g., SDA, Smoldyn [35]) | Simulates long-range, stochastic diffusion of molecules. Uses implicit solvent, making it much faster than MD for sampling large volumes. | Correct assignment of diffusion constants and interaction potentials (electrostatics) is critical for accuracy. |
| MD Packages (e.g., GROMACS, NAMD, AMBER [33]) | Simulates atomic-level interactions with high fidelity using Newton's equations of motion and an explicit solvent model. | Computationally demanding. Force field choice (CHARMM, AMBER, OPLS) and water model can affect results. |
| Force Fields (e.g., CHARMM, AMBER, OPLS [33]) | A set of empirical parameters that mathematically describe the potential energy of a system of particles. | Must be self-consistent. Ligand parameters often need to be generated separately. |
| Markov State Model (MSM) Frameworks (e.g., PyEMMA, MSMBuilder [33]) | A computational method to reconstruct the long-timescale kinetics of a molecular system from many short, distributed MD simulations. | Ideal for analyzing the ensemble of short MD runs started from BD encounter complexes to determine binding pathways and rates. |
| Visualization & Analysis (e.g., VMD, PyMOL) | Used to visualize trajectories, analyze structures, and debug simulations. | Essential for checking the quality of BD-generated structures and the final bound state from MD. |

Advanced Data Management: Handling Trajectory Data

Multiscale simulations generate enormous amounts of trajectory data, creating storage and processing bottlenecks. Applying context-aware compression can yield significant efficiency gains without sacrificing scientific value [36].

- Generalization: As a pre-processing step, the motion of entire molecules can be represented by a single trajectory of their center of mass, rather than storing every atom's path. This drastically reduces the data's initial volume [36].

- Compression: Standard trajectory compression algorithms can then be applied to this generalized data for further storage reduction.

- Benefit: This approach can significantly speed up the processing of common queries, such as detecting when two molecules come within a proximity threshold (e.g., for hydrogen bond formation), by reducing the data that must be searched [36].
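The generalization step and a proximity query on the reduced data can be sketched as follows. This is a minimal illustration, assuming the trajectory is already loaded as a NumPy array of shape (frames, atoms, 3) and that periodic boundary conditions can be ignored; the function names and the integer `molecule_ids` labeling are assumptions made for the example, not the method of the cited study.

```python
import numpy as np

def center_of_mass_trajectory(coords, masses, molecule_ids):
    """Reduce an all-atom trajectory to one center-of-mass point per molecule.

    coords       : (n_frames, n_atoms, 3) atomic positions
    masses       : (n_atoms,) atomic masses
    molecule_ids : (n_atoms,) integer labels 0..M-1 assigning atoms to molecules
    returns      : (n_frames, M, 3) center-of-mass positions
    """
    coords = np.asarray(coords, dtype=float)
    masses = np.asarray(masses, dtype=float)
    ids = np.asarray(molecule_ids)
    n_mol = int(ids.max()) + 1
    com = np.zeros((coords.shape[0], n_mol, 3))
    for m in range(n_mol):
        sel = ids == m
        w = masses[sel]
        # Mass-weighted average over the atoms of molecule m, for all frames.
        com[:, m, :] = (coords[:, sel, :] * w[None, :, None]).sum(axis=1) / w.sum()
    return com

def frames_within(com, mol_a, mol_b, cutoff):
    """Indices of frames where two molecules' centers are within the cutoff."""
    d = np.linalg.norm(com[:, mol_a, :] - com[:, mol_b, :], axis=-1)
    return np.nonzero(d <= cutoff)[0]
```

Because a center-of-mass distance is larger than the closest atom-atom distance, the cutoff used on the reduced data generally needs to be padded by the molecular radii if atom-level contacts must not be missed; candidate frames can then be rechecked against the full-resolution data.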

Quantitative Data from Compression Studies

| Data Processing Method | Storage Savings | Query Processing Time | Key Advantage |
|---|---|---|---|
| Uncompressed Trajectories | Baseline (0%) | Baseline (e.g., 38.83 sec) [36] | Full atomic detail. |
| Generalization + Compression | Significant (High %) | Much Faster (e.g., < 10 sec) [36] | Retains semantic features needed for proximity queries; eliminates false negatives. |
| Lossless Compression Only [36] | Moderate | Slower than Generalized+Compressed | Perfect data fidelity, but less efficient for fast querying of specific events. |

Practical Optimization: Fine-Tuning Simulations for Maximum Throughput

Maximizing GPU Utilization with NVIDIA Multi-Process Service (MPS)

Performance and Cost-Benefit Analysis

Quantitative Benefits of MPS for MD Simulations

Enabling MPS can significantly increase the total simulation throughput, especially for smaller molecular systems. The table below summarizes performance gains observed in benchmark studies.

Table 1: Throughput Gains Using MPS on Various GPUs and System Sizes

| GPU Model | Test System (Atoms) | Configuration | Throughput Gain | Key Metric |
|---|---|---|---|---|
| NVIDIA H100 | DHFR (23,558) | Multiple concurrent simulations with MPS | >2x | Total throughput vs. single simulation [19] |
| NVIDIA A100 | RNAse (23,558) | Multiple simulations per GPU with MPS | 1.8x | Total throughput on an 8-GPU server [37] |
| NVIDIA A100 | ADH Dodec (96,448) | Multiple simulations per GPU with MPS | 1.3x | Total throughput on an 8-GPU server [37] |
| NVIDIA L40S | DHFR (23,558) | MPS with CUDA_MPS_ACTIVE_THREAD_PERCENTAGE tuning | Approaches 5 μs/day | Simulation speed on a single GPU [19] |
| General (e.g., A100) | Small Systems (~10,000) | MPS enabled | ~4x higher throughput, 7x shorter wall-clock time | Overall workflow efficiency [15] |
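The "throughput gain" in the table compares the combined output of all concurrent simulations on a GPU with that of a single simulation running alone. The short sketch below makes that arithmetic explicit and extends it to a cost per simulated microsecond; all numbers in the example are hypothetical placeholders, not benchmark results, and the function names are illustrative.

```python
def throughput_gain(single_ns_day, concurrent_ns_day):
    """Ratio of aggregate throughput with MPS to a single exclusive run.

    single_ns_day     : ns/day of one simulation running alone on the GPU
    concurrent_ns_day : ns/day of each simulation when sharing the GPU
    """
    return sum(concurrent_ns_day) / single_ns_day

def cost_per_microsecond(hourly_price, total_ns_day):
    """Cost of one simulated microsecond at a given aggregate throughput."""
    us_per_day = total_ns_day / 1000.0
    return hourly_price * 24.0 / us_per_day

# Hypothetical values: one run alone reaches 1000 ns/day; four concurrent
# runs each reach 550 ns/day; the GPU costs 2.00 per hour.
shared = [550.0, 550.0, 550.0, 550.0]
print(throughput_gain(1000.0, shared))            # aggregate gain
print(cost_per_microsecond(2.00, 1000.0))         # exclusive use
print(cost_per_microsecond(2.00, sum(shared)))    # shared with MPS
```

Each individual simulation runs slower when the GPU is shared, so this trade-off favors workflows that need many independent trajectories rather than one long one.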

Cost Analysis of GPU Utilization

Maximizing GPU utilization translates directly into lower computational cost and faster research cycles, which is the central aim of this guide.

Table 2: Economic Impact of Improved GPU Utilization

| Factor | Typical Baseline | With Optimization | Impact on Research |
|---|---|---|---|
| Average GPU Utilization | <30% [38] | Can be significantly increased [19] [37] | Wasted infrastructure investment; delays model deployments [38] |
| Cloud Cost Savings | - | Up to 40% reduction possible [38] | Frees budget for other research activities; extends grant funding [38] |
| Training Time | Weeks or months | Reduced to days [38] | Accelerates time-to-solution from months to weeks [38] |
| Infrastructure ROI | Low on a large capital investment | Effectively doubles capacity without new hardware [38] | Allows more simulations to be run concurrently on existing resources [19] |

Implementation Guide: Experimental Protocols

Protocol 1: Basic MPS Setup for OpenMM Simulations

This protocol enables MPS so that multiple concurrent OpenMM simulations can share a single GPU [19] [39].

Enable the MPS Server Daemon: Open a terminal and start the MPS control daemon. This service will manage GPU resource sharing among different processes.

Launch Concurrent Simulations: In the same terminal session, launch multiple simulation instances, ensuring they are directed to the same GPU. The