Step Size Control in Steepest Descent: Achieving Robust Convergence in Biomedical Optimization

This article provides a comprehensive analysis of step size adaptation strategies for the steepest descent method, focusing on applications in drug discovery and clinical research.


Abstract

This article provides a comprehensive analysis of step size adaptation strategies for the steepest descent method, focusing on applications in drug discovery and clinical research. It explores foundational convergence theory, methodological implementations for ill-conditioned problems, advanced troubleshooting for unstable iterations, and comparative validation of techniques. Aimed at researchers and scientists, the content synthesizes recent theoretical advances with practical guidance to enhance the efficiency and reliability of optimization in high-dimensional, noisy biomedical data environments.

Understanding Steepest Descent Convergence: Why Step Size Matters

The Fundamental Principle of Steepest Descent and Linear Convergence

Troubleshooting Guides

Frequently Asked Questions (FAQs)

Q1: My steepest descent algorithm is not converging. What could be wrong?

The most common cause is an improperly chosen step size (learning rate, η). A step size that is too large can cause the algorithm to overshoot the minimum and diverge, while one that is too small leads to impractically slow convergence [1]. To resolve this, implement an adaptive step size strategy. The Armijo line search [2] shrinks the step until it achieves a sufficient decrease in the objective function, and the Barzilai-Borwein method [1] sets the step from local curvature estimates built from successive gradients.
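
As a minimal sketch of the Armijo strategy mentioned in Q1, the Python code below runs steepest descent with a backtracking line search. The test function, the starting point, and the parameters `eta0`, `beta`, and `c` are illustrative assumptions, not values taken from the cited sources.

```python
import math
from typing import Callable, List, Tuple

Vector = List[float]


def armijo_step(f: Callable[[Vector], float], x: Vector, g: Vector,
                eta0: float = 1.0, beta: float = 0.5, c: float = 1e-4) -> float:
    """Shrink eta until f(x - eta*g) <= f(x) - c*eta*||g||^2 (Armijo condition)."""
    g_sq = sum(gi * gi for gi in g)
    fx = f(x)
    eta = eta0
    while f([xi - eta * gi for xi, gi in zip(x, g)]) > fx - c * eta * g_sq:
        eta *= beta
    return eta


def steepest_descent(f, grad, x0: Vector, tol: float = 1e-8,
                     max_iter: int = 10_000) -> Tuple[Vector, int]:
    """Steepest descent with a backtracking line search; returns (x, iterations)."""
    x = list(x0)
    for k in range(max_iter):
        g = grad(x)
        if math.sqrt(sum(gi * gi for gi in g)) < tol:
            return x, k
        eta = armijo_step(f, x, g)
        x = [xi - eta * gi for xi, gi in zip(x, g)]
    return x, max_iter


# Hypothetical test problem: f(x) = x0^2 + 10*x1^2, minimized at the origin.
def f(x: Vector) -> float:
    return x[0] ** 2 + 10.0 * x[1] ** 2


def grad(x: Vector) -> Vector:
    return [2.0 * x[0], 20.0 * x[1]]


x_min, iterations = steepest_descent(f, grad, [3.0, -2.0])
```

Starting each search from `eta0 = 1.0` keeps the sketch simple; reusing the previously accepted step as the next initial guess usually saves function evaluations.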

Q2: The algorithm has stalled, making very slow progress near a suspected minimum. How can I improve the convergence rate?

This behavior indicates a vanishing gradient in a flat region, and the convergence rate may be linear [3]. You can verify this by monitoring the norm of the gradient, ||∇f(xₖ)||. To improve the rate, consider switching to a second-order method like Newton's method if the Hessian is available and inexpensive to compute [3]. Alternatively, a quasi-Newton method can approximate second-order information to achieve faster convergence [3].

Q3: For my multi-objective optimization problem (MOP), the algorithm fails to find large portions of the Pareto front. What modifications can help?

This is a known limitation of some front steepest descent algorithms [2]. An effective solution is the Improved Front Steepest Descent (IFSD) algorithm. Key modifications include [2]:

- Performing a preliminary steepest descent step from any non-dominated point using an Armijo line search.

- Initiating further searches from these updated, potentially improved points rather than the originals.

Together, these changes enhance the algorithm's exploration capability, allowing it to span a more complete Pareto front.

Common Error Messages and Resolutions

| Error Symptom | Probable Cause | Resolution |
|---|---|---|
| Diverging values/NaN | Step size (η) too large [1]. | Reduce η; use a conservative value (e.g., 1e-5) and use a line search. |
| Slow convergence in late stages | Fixed step size is too small for flat regions [1]. | Replace the fixed step with an adaptive method, such as a line search or Barzilai-Borwein steps, that can lengthen the step where curvature is low [4]. |
| Oscillation around minimum | Step size is large relative to the basin [1]. | Systematically reduce η after each iteration or use a momentum term. |
| Pareto front has gaps | Poor exploration from initial points [2]. | Adopt the IFSD algorithm with its modified point generation strategy [2]. |

Key Experimental Protocols and Methodologies

Protocol: Verifying Linear Convergence Rate

Objective: Empirically validate the linear convergence rate of the steepest descent method on a strongly convex function as proven in theoretical analyses [3].

Materials: See "Research Reagent Solutions" under "The Scientist's Toolkit".

Methodology:

- Function Selection: Choose a simple, strongly convex quadratic function like f(x) = xᵀAx - 2xᵀb, where A is a symmetric positive definite matrix [1].

- Algorithm Setup: Implement the steepest descent update rule xₖ₊₁ = xₖ - ηₖ∇f(xₖ). For this experiment, a fixed, sufficiently small step size η or an exact line search can be used.

- Data Collection: Run the algorithm from a defined initial point x₀. At each iteration k, record:

  - The function value f(xₖ)

  - The norm of the gradient ||∇f(xₖ)||

  - The distance to the optimal point ||xₖ - x*||

- Analysis: Plot the recorded values (e.g., ||∇f(xₖ)||) on a semi-log scale. A straight-line trend on this plot confirms a linear convergence rate, as it indicates the error decreases geometrically [3].
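
The protocol above can be sketched in a few lines of Python. The matrix `A`, vector `b`, step size `eta`, and iteration count are hypothetical choices made only for illustration; instead of plotting, the sketch checks that the ratio of successive gradient norms settles to a constant below 1, which is what a straight line on a semi-log plot expresses.

```python
import math

# Hypothetical 2x2 symmetric positive definite test problem.
A = [[3.0, 1.0], [1.0, 2.0]]
b = [1.0, 1.0]
x_star = [0.2, 0.4]  # solves A x = b, the minimizer of f(x) = x^T A x - 2 x^T b


def gradient(x):
    # grad f(x) = 2 (A x - b)
    return [2.0 * (sum(A[i][j] * x[j] for j in range(2)) - b[i]) for i in range(2)]


def norm(v):
    return math.sqrt(sum(vi * vi for vi in v))


eta = 0.1          # fixed step, below 2/L for this problem
x = [0.0, 0.0]
grad_norms = []
distances = []
for k in range(60):
    g = gradient(x)
    grad_norms.append(norm(g))
    distances.append(norm([xi - si for xi, si in zip(x, x_star)]))
    x = [xi - eta * gi for xi, gi in zip(x, g)]

# Linear convergence: successive ratios approach a constant rho in (0, 1).
ratios = [grad_norms[k + 1] / grad_norms[k] for k in range(len(grad_norms) - 1)]

# Slope of log10(||grad||) against k over the tail; equals log10(rho).
tail_start = 30
slope = (math.log10(grad_norms[-1]) - math.log10(grad_norms[tail_start])) / (
    len(grad_norms) - 1 - tail_start
)
```

For a fixed step on a quadratic, the limiting ratio is 1 - 2·η·λ_min(A), so the measured slope can be compared directly against the theoretical rate.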

Protocol: Testing Robust Efficiency for Uncertain Multi-Objective Problems (UMOP)

Objective: Find a robust efficient solution for an UMOP using the Objective-Wise Worst-Case Robust Counterpart (OWRC) and the steepest descent method [3].

Methodology:

- Problem Formulation: Define an UMOP where the objective functions Fᵢ(x) depend on uncertain parameters within a known uncertainty set. Formulate the OWRC problem, which aims to minimize, for each objective, the worst-case value over the uncertainty set [3].

- Steepest Descent Direction: Compute the steepest descent direction for the OWRC. This involves solving a sub-problem to find a direction d that minimizes the maximum of the directional derivatives of all objective functions over the uncertainty set [3].

- Iteration: Update the solution point using xₖ₊₁ = xₖ + ηₖdₖ, where the step size ηₖ is determined by a line search ensuring sufficient decrease for all worst-case objectives.

- Termination: Iterate until a Pareto stationarity condition for the robust problem is satisfied within a tolerance, e.g., |min over ‖d‖ ≤ 1 of maxⱼ ∇fⱼ(x̄)ᵀd| < ε [2]. Because d = 0 is always feasible, this minimum is never positive, so the test checks that it lies within ε of zero.
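
To make the direction sub-problem concrete, here is a hedged Python sketch for the simplest setting: two smooth objectives and no uncertainty set. It uses the regularized form min over d of maxⱼ ∇fⱼ(x)ᵀd + ½‖d‖², whose solution is the negative of the minimum-norm point in the convex hull of the two gradients. This is a deliberate simplification of the OWRC setting in [3], and the function names are hypothetical.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def common_descent_direction(g1, g2):
    """Return d = -(lam*g1 + (1-lam)*g2), the negative min-norm convex combination."""
    diff = [a - b for a, b in zip(g1, g2)]
    denom = dot(diff, diff)
    if denom == 0.0:
        lam = 0.5  # gradients coincide; any weight gives the same direction
    else:
        # Unconstrained minimizer of ||g2 + lam*(g1 - g2)||^2, clipped to [0, 1].
        lam = dot([b - a for a, b in zip(g1, g2)], g2) / denom
        lam = min(1.0, max(0.0, lam))
    return [-(lam * a + (1.0 - lam) * b) for a, b in zip(g1, g2)]


def is_pareto_stationary(g1, g2, eps=1e-6):
    """A point is (approximately) Pareto stationary when no common descent direction exists."""
    d = common_descent_direction(g1, g2)
    return math.sqrt(dot(d, d)) < eps


# Hypothetical objectives: f1 = (x0-1)^2 + x1^2 and f2 = (x0+1)^2 + x1^2.
def grad_f1(x):
    return [2.0 * (x[0] - 1.0), 2.0 * x[1]]


def grad_f2(x):
    return [2.0 * (x[0] + 1.0), 2.0 * x[1]]


x = [0.0, 1.0]
d = common_descent_direction(grad_f1(x), grad_f2(x))
```

At any non-stationary point, both directional derivatives along `d` are at most -‖d‖², so a single Armijo line search can decrease every objective at once.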

Protocol: Implementing Improved Front Steepest Descent (IFSD)

Objective: Approximate the entire Pareto front of a multi-objective problem more effectively than the standard front steepest descent algorithm [2].

Methodology:

- Initialization: Start with a set X₀ of non-dominated points [2].

- Preliminary Descent: For each point in X₀ that is still non-dominated, perform a steepest descent step using a standard Armijo line search. This creates a new set of points.

- Multi-Directional Search: From these updated points, initiate new searches. For each point, solve sub-problems (e.g., minimizing a weighted sum of a subset of objectives) to find new candidate points [2].

- Update Front: Evaluate all new candidate points and update the current set of non-dominated points (Xₖ) to form the new approximation of the Pareto front.

- Convergence Check: Continue until the set of points converges, meaning no significant changes occur for several iterations [2].
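
The "Update Front" step relies on a non-dominated filter. The sketch below shows one straightforward way to write it in Python for minimization problems; it is a generic quadratic-time filter, not the specific bookkeeping used by IFSD in [2], and the sample objective vectors are made up.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """True if a is no worse than b in every objective and strictly better in one."""
    return all(ai <= bi for ai, bi in zip(a, b)) and any(
        ai < bi for ai, bi in zip(a, b)
    )


def non_dominated(points: List[Point]) -> List[Point]:
    """Keep only the objective vectors that no other vector dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


# Hypothetical objective vectors (f1, f2) of candidate points.
candidates = [(1.0, 4.0), (2.0, 2.0), (3.0, 3.0), (4.0, 1.0), (2.0, 5.0)]
front = non_dominated(candidates)
```

Comparing every pair costs O(n²) dominance checks, which is acceptable for the small point sets typical of a single front update.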

Data Presentation and Visualization

Convergence Criteria and Parameters

The following table summarizes key parameters and their role in analyzing steepest descent convergence.

| Parameter | Symbol | Role in Convergence Analysis | Typical Test Value/Range |
|---|---|---|---|
| Step Size | η (eta) | Controls update magnitude; critical for stability & speed [1]. | Fixed: 1e-3 to 1e-1; Adaptive: Barzilai-Borwein [1]. |
| Gradient Norm | ‖∇f(x)‖ | Measures optimality; convergence requires → 0 [3]. | Tolerance: 1e-6 to 1e-8. |
| Function Value Decrease | f(xₖ) - f(x*) | Tracks progress to minimum [1]. | Monitor for monotonic decrease. |
| Pareto Stationarity Tolerance | ε (epsilon) | For MOPs, threshold for stationarity condition [2]. | 1e-6. |

Step Size Strategies for Convergence

This table compares step size selection strategies, the central lever for obtaining reliable convergence.

| Strategy | Principle | Pros | Cons |
|---|---|---|---|
| Constant Step Size | Fixed value η for all iterations [4]. | Simple to implement. | Must be chosen carefully; often slow or divergent [1]. |
| Armijo Line Search | Finds η that ensures sufficient decrease in f [2]. | Guarantees convergence; robust. | Requires multiple function evaluations per step. |
| Barzilai-Borwein | Uses gradient differences to approximate Hessian information for η [1]. | Often faster than simple line search; no extra evaluations. | Does not guarantee monotonic decrease of f. |
| Diminishing Step Size | Systematically reduces η over time (e.g., ηₖ = 1/k) [4]. | Guarantees convergence for convex functions. | Very slow convergence in practice. |
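
To illustrate the Barzilai-Borwein row, the following Python sketch uses the first BB formula, η = sᵀs / sᵀy with s = xₖ - xₖ₋₁ and y = ∇f(xₖ) - ∇f(xₖ₋₁). The quadratic test function, the initial step `eta0`, and the fallback used when sᵀy is not positive are assumptions made for this example.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def barzilai_borwein(grad, x0, eta0=1e-3, tol=1e-8, max_iter=1000):
    """Gradient descent with BB1 steps; returns (x, iterations)."""
    x = list(x0)
    g = grad(x)
    eta = eta0
    for k in range(max_iter):
        if math.sqrt(dot(g, g)) < tol:
            return x, k
        x_new = [xi - eta * gi for xi, gi in zip(x, g)]
        g_new = grad(x_new)
        s = [a - b for a, b in zip(x_new, x)]
        y = [a - b for a, b in zip(g_new, g)]
        sy = dot(s, y)
        # BB1 step; fall back to eta0 if the curvature estimate is not positive.
        eta = dot(s, s) / sy if sy > 0.0 else eta0
        x, g = x_new, g_new
    return x, max_iter


# Hypothetical ill-conditioned quadratic: f(x) = 0.5*(x0^2 + 100*x1^2).
def grad(x):
    return [x[0], 100.0 * x[1]]


x_min, iterations = barzilai_borwein(grad, [1.0, 1.0])
```

The objective is never evaluated and may rise on individual iterations, which matches the "no extra evaluations" and "no monotonic decrease" entries in the table.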

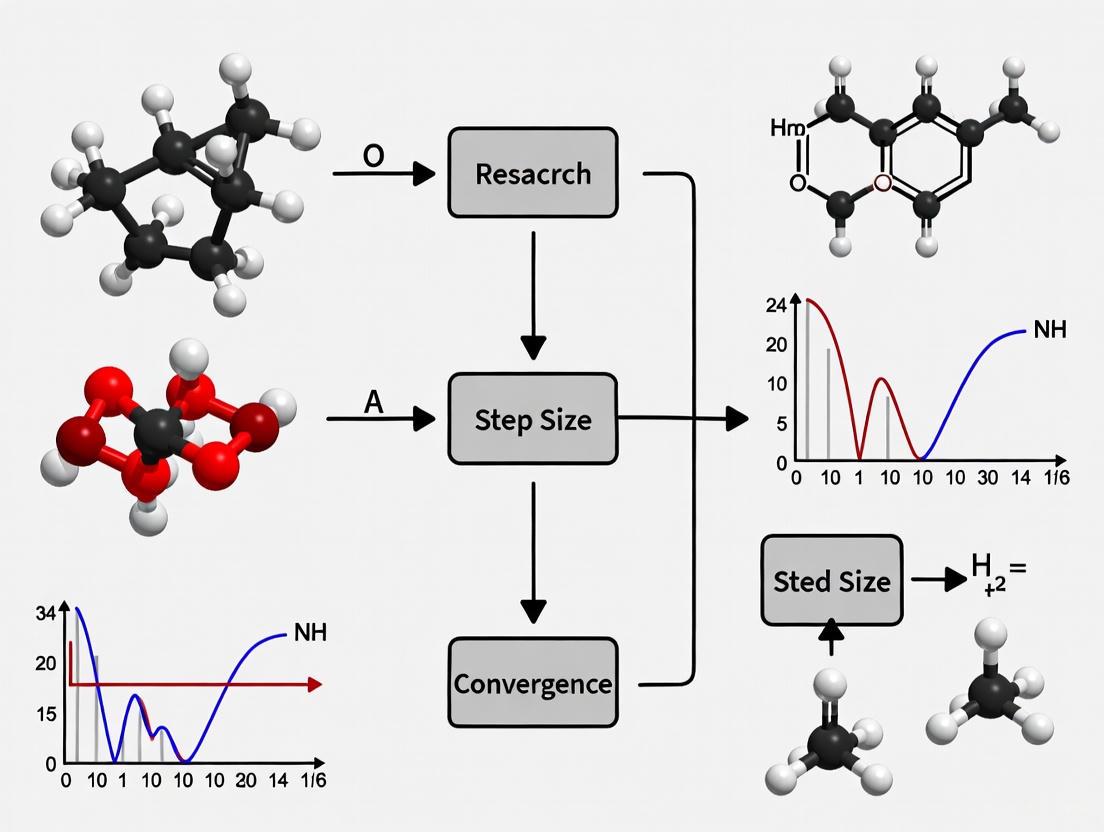

Figure: Steepest Descent Experimental Workflow

Figure: Convergence Regimes and Step Size Logic

The Scientist's Toolkit

Research Reagent Solutions

| Item | Function in Experiment |
|---|---|
| Strongly Convex Test Function (e.g., quadratic) | A well-understood benchmark with a known minimum to validate algorithm correctness and measure convergence rate [1] [3]. |
| Multi-Objective Test Problem (MOP) | A problem with a known Pareto front (e.g., ZDT series) to test the ability of algorithms like IFSD to span the entire front [2]. |
| Uncertainty Set Simulator | For UMOPs, defines the range of parameter variations to model real-world uncertainty and test robust optimization methods [3]. |
| Line Search Algorithm | A subroutine (e.g., Armijo, Wolfe conditions) to automatically determine a productive step size in each iteration, ensuring convergence [2]. |
| Numerical Linear Algebra Library | Provides efficient routines for matrix operations and solving linear systems, which are often required to compute descent directions [1]. |
| Gradient Computing Tool | Either analytical gradient expressions or automatic differentiation tools to compute the required gradient ∇f(x) accurately and efficiently [4]. |

Frequently Asked Questions

Q1: Why does my gradient descent algorithm zigzag and progress very slowly towards the minimum?

This is a classic symptom of an ill-conditioned problem. The issue arises when the objective function has a very high condition number, which is the ratio of the largest to the smallest eigenvalue of its Hessian matrix. In high-dimensional space, imagine the function creates a narrow, steep-sided valley. The gradient descent path will zigzag down this valley because the negative gradient direction, which is the steepest local direction, rarely points directly toward the minimum. The algorithm makes rapid progress along steep, high-curvature directions but only very slow progress along shallow, low-curvature directions [5] [6].

Q2: What is the fundamental relationship between the Hessian's condition number and convergence rate?

For a strongly convex function, the gradient descent method is proven to have a global linear convergence rate [7]. However, the speed of this convergence is dictated by the condition number, \( \kappa \), of the Hessian. A high \( \kappa \) leads to slow convergence. Intuitively, the algorithm must eliminate the error in the steepest direction first before it can effectively minimize along the shallowest direction. The greater the difference in steepness (the higher the condition number), the less progress is made on the shallow ridge during the process of climbing down the steep one, leading to the characteristic zigzag path and slow convergence [5] [8].

Q3: How does the steepest descent method with exact line search behave on an ill-conditioned quadratic function?

Even with an exact line search, which eliminates overshooting along each search direction, convergence on an ill-conditioned quadratic function is slow. Steepest descent does not terminate in a number of steps bounded by the number of dimensions; that finite-termination property belongs to the conjugate gradient method. Because an exact line search makes each new gradient orthogonal to the previous one, the iterates zigzag, and every step mixes progress along all eigenvectors of the Hessian rather than eliminating them one at a time. In the worst case, the objective error shrinks only by the factor ((κ - 1)/(κ + 1))² per iteration, which approaches 1 when the condition number κ is high [5].
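
The behavior described in Q3 can be reproduced with a short Python experiment. The sketch assumes the diagonal quadratic f(x) = ½(x₀² + κ·x₁²) and the starting point (κ, 1), a standard worst-case choice; for a quadratic with Hessian A, the exact line search step has the closed form η = gᵀg / gᵀAg.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def exact_line_search_descent(kappa, tol=1e-8, max_iter=100_000):
    """Steepest descent on f(x) = 0.5*(x0^2 + kappa*x1^2) with exact steps.

    Returns (iterations, largest |cos| of the angle between successive gradients).
    """
    diag = [1.0, kappa]      # Hessian is diag(1, kappa)
    x = [kappa, 1.0]         # worst-case starting point (assumption)
    g_prev = None
    max_abs_cos = 0.0
    for k in range(max_iter):
        g = [d * xi for d, xi in zip(diag, x)]
        g_norm = math.sqrt(dot(g, g))
        if g_norm < tol:
            return k, max_abs_cos
        if g_prev is not None:
            cos = dot(g, g_prev) / (g_norm * math.sqrt(dot(g_prev, g_prev)))
            max_abs_cos = max(max_abs_cos, abs(cos))
        # Exact minimizer of f along -g: eta = g.g / g.A.g
        eta = dot(g, g) / sum(d * gi * gi for d, gi in zip(diag, g))
        x = [xi - eta * gi for xi, gi in zip(x, g)]
        g_prev = g
    return max_iter, max_abs_cos


iters_10, cos_10 = exact_line_search_descent(10.0)
iters_100, cos_100 = exact_line_search_descent(100.0)
```

Both runs need far more than two iterations even though the problem is two-dimensional, and the iteration count grows with κ, while the recorded cosines stay at rounding-error level, confirming the right-angle zigzag.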

Q4: What are the main limitations of the standard steepest descent method?

- Linear Convergence: It exhibits linear convergence, which becomes very slow for ill-conditioned problems [8] [7].

- Zigzagging Behavior: It is prone to zigzagging in narrow valleys, which drastically reduces efficiency [8] [6].

- Sensitivity to Step Size: A step size that is too large may cause divergence, while one that is too small leads to slow convergence [8].

- Local Minima: For non-convex functions, it can at best guarantee convergence to a stationary point, which may be a local minimum or a saddle point rather than the global minimum [8].

Troubleshooting Guides

Problem: Slow Convergence in Narrow Valleys

Symptoms: The optimization path shows a pronounced zigzag pattern with minimal net progress per iteration. The function value decreases very slowly after an initial rapid decline.

Diagnosis: High condition number of the Hessian matrix, leading to ill-conditioning.

Solutions:

Use Advanced First-Order Methods:

- Momentum-Based Methods: Incorporate a momentum term that adds a fraction of the previous update to the current update. This helps to smooth out the zigzagging path and accelerate progress in shallow directions [8].

- Conjugate Gradient Methods: This method combines information from the current gradient and the previous search direction to construct a new, conjugate direction. It is designed to avoid zigzagging and can converge in at most \( n \) steps for a quadratic problem in \( n \) dimensions [8].
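
As a small illustration of the n-step property, the Python sketch below applies the linear conjugate gradient method to the system Ax = b, which is equivalent to minimizing the quadratic xᵀAx - 2xᵀb. The 3×3 matrix and right-hand side are hypothetical test data.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def matvec(A, v):
    return [dot(row, v) for row in A]


def conjugate_gradient(A, b, x0, tol=1e-12):
    """Linear CG for symmetric positive definite A; runs at most n = len(b) steps."""
    n = len(b)
    x = list(x0)
    r = [bi - ai for bi, ai in zip(b, matvec(A, x))]   # residual b - A x
    p = list(r)                                        # first direction: steepest descent
    rs_old = dot(r, r)
    for k in range(n):
        if math.sqrt(rs_old) < tol:
            return x, k
        Ap = matvec(A, p)
        alpha = rs_old / dot(p, Ap)                    # exact step along p
        x = [xi + alpha * pi for xi, pi in zip(x, p)]
        r = [ri - alpha * api for ri, api in zip(r, Ap)]
        rs_new = dot(r, r)
        # New direction is conjugate to the previous ones with respect to A.
        p = [ri + (rs_new / rs_old) * pi for ri, pi in zip(r, p)]
        rs_old = rs_new
    return x, n


# Hypothetical symmetric positive definite system.
A = [[4.0, 1.0, 0.0], [1.0, 3.0, 1.0], [0.0, 1.0, 2.0]]
b = [1.0, 2.0, 3.0]
x_cg, steps = conjugate_gradient(A, b, [0.0, 0.0, 0.0])
residual = math.sqrt(sum((bi - ai) ** 2 for bi, ai in zip(b, matvec(A, x_cg))))
```

In floating-point arithmetic the residual after n steps is small rather than exactly zero, so practical codes keep iterating against a tolerance on larger problems.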

Employ Second-Order or Quasi-Newton Methods:

- Newton's Method: Uses the inverse Hessian to pre-condition the gradient, effectively rescaling the problem so that the contours become more circular. This allows for much faster convergence but is computationally expensive per iteration due to the calculation and inversion of the Hessian [8].

- Quasi-Newton Methods (e.g., BFGS): These methods approximate the inverse Hessian over successive iterations. They offer faster convergence than steepest descent with a lower computational cost per iteration than Newton's method [8].

Implement Adaptive Step-Size Algorithms:

- Recent research has developed gradient methods with step adaptation that mimic the steepest descent principle but are more efficient. One algorithm aims to find a new point where the current gradient is orthogonal to the previous one, replacing complete relaxation with a form of incomplete or over-relaxation. On average, this method can outperform the standard steepest descent by 2.7 times in the number of iterations required [7].

Problem: Sensitivity to Step Size and Oscillations

Symptoms: The algorithm diverges (function value increases) with a large step size or stalls (no meaningful progress) with a small step size. Oscillations are observed around the minimum.

Diagnosis: The fixed step size is inappropriate for the local curvature of the function.

Solutions:

Use a Line Search Method:

Adopt Adaptive Learning Rate Schedules:

- Polyak Step Size: For a function with a known minimum value \( f^* \), the step is calculated as \( h_k = \frac{f(x_k) - f^*}{\|\nabla f(x_k)\|_2^2} \). This is often used in its stochastic variants in machine learning (e.g., AdaSPS, AdaSLS) [7].

- AdaGrad and Family: These methods adapt the step size for each parameter based on the historical sum of squared gradients. This is particularly beneficial for problems with sparse gradients and is widely used in deep learning [7].
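
The Polyak rule is simple enough to sketch directly. The Python example below assumes a deterministic problem whose minimum value `f_star` is known exactly (here 0 for a hypothetical quadratic); it does not cover the stochastic variants or AdaGrad.

```python
import math


def polyak_descent(f, grad, x0, f_star, tol=1e-8, max_iter=100_000):
    """Gradient descent with the Polyak step h_k = (f(x_k) - f*) / ||grad f(x_k)||^2."""
    x = list(x0)
    for k in range(max_iter):
        g = grad(x)
        g_sq = sum(gi * gi for gi in g)
        if math.sqrt(g_sq) < tol:
            return x, k
        h = (f(x) - f_star) / g_sq
        x = [xi - h * gi for xi, gi in zip(x, g)]
    return x, max_iter


# Hypothetical test problem with known minimum value f* = 0.
def f(x):
    return x[0] ** 2 + 10.0 * x[1] ** 2


def grad(x):
    return [2.0 * x[0], 20.0 * x[1]]


x_min, iterations = polyak_descent(f, grad, [3.0, -2.0], f_star=0.0)
```

For convex f, each Polyak step reduces the distance to the minimizer, although the function value itself need not decrease monotonically.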

Problem: Convergence in Noisy or Interfered Gradients

Symptoms: Optimization becomes unstable or fails to converge when the gradient measurements are corrupted by noise, which is common in real-world experimental data.

Diagnosis: The standard step-size selection methods are sensitive to relative interference on the gradient.

Solutions:

- Use Noise-Immune Step Adaptation:

- Algorithms based on the principle of the steepest descent have been developed to be highly robust to noise. Some proposed methods can converge even when the gradient is corrupted by uniformly distributed interference vectors in a ball with a radius 8 times greater than the gradient norm [7]. These methods rely on a structured step adaptation strategy that does not require pre-tuning parameters based on unknown noise constants.

Experimental Data & Protocols

Table 1: Comparison of Gradient-Based Optimization Methods

| Method | Convergence Rate | Computational Cost per Iteration | Key Advantage | Key Disadvantage |
|---|---|---|---|---|
| Steepest Descent | Linear | Low (1 Gradient) | Guaranteed convergence on smooth, convex functions [7] | Slow for ill-conditioned problems; zigzags [8] |
| Conjugate Gradient | Linear (n-step for quadratic) | Low (1 Gradient) | Faster than steepest descent; low memory footprint [8] | Requires fine-tuning for general non-linear functions |
| Newton's Method | Quadratic | High (Hessian + Inversion) | Very fast convergence near optimum [8] | Computationally expensive for large-scale problems |
| BFGS (Quasi-Newton) | Superlinear | Medium (Update Approx.) | Faster than steepest descent; no second derivatives needed [8] | Higher memory usage (O(n²)) |
| Adaptive Step [7] | Linear | Low (1 Gradient) | Robust to significant gradient noise; no parameter tuning | Newer method, less established in all domains |

| Metric | Steepest Descent | Proposed Adaptive Algorithm |
|---|---|---|
| Average Number of Iterations | Baseline | 2.7x fewer |
| Noise Immunity | Standard | Operable with noise radius >8x gradient norm |
| Parameter Tuning | Requires line search | Universal, no optimal parameters to select |

Experimental Protocol: Benchmarking Optimization Algorithms

- Test Functions: Select a set of multidimensional, ill-conditioned test functions (e.g., strongly convex quadratics with high condition numbers, Rosenbrock function).

- Initialization: Choose a standard initial point \( x_0 \) for each test function.

- Stopping Criterion: Define a convergence threshold (e.g., \( \|\nabla f(x_k)\| < 10^{-6} \) or maximum number of iterations).

- Algorithm Configuration:

  - For Steepest Descent, implement an exact or backtracking line search.

  - For the Adaptive Algorithm [7], code the step adjustment rule that aims for orthogonality between successive gradients.

  - For Momentum and Conjugate Gradient, use standard implementations.

- Metrics Recording: For each run, record the number of iterations and function evaluations until convergence, and the final function value.

- Noise Introduction (Optional): To test robustness, add a random noise vector \( \xi_k \) to the gradient at each iteration, where \( \|\xi_k\| \) is a multiple of \( \|\nabla f(x_k)\| \).
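
The optional noise step can be sketched as follows. This Python example adds a noise vector whose norm is a fixed multiple of the gradient norm and feeds the corrupted gradient to plain fixed-step gradient descent. The noise ratio of 0.5, the step 0.25/L, the seed, and the test function are assumptions; this is a baseline harness, not the noise-immune algorithm of [7], and plain gradient descent is not expected to tolerate ratios above 1.

```python
import math
import random


def relative_noise(g, ratio, rng):
    """Random-direction vector xi with ||xi|| = ratio * ||g||."""
    direction = [rng.gauss(0.0, 1.0) for _ in g]
    d_norm = math.sqrt(sum(d * d for d in direction))
    g_norm = math.sqrt(sum(gi * gi for gi in g))
    return [ratio * g_norm * d / d_norm for d in direction]


def noisy_gradient_descent(grad, x0, step, ratio, seed=0,
                           tol=1e-6, max_iter=20_000):
    """Fixed-step descent driven by a gradient corrupted with relative noise."""
    rng = random.Random(seed)
    x = list(x0)
    for k in range(max_iter):
        g = grad(x)                      # true gradient, used only for the stopping test
        if math.sqrt(sum(gi * gi for gi in g)) < tol:
            return x, k
        xi = relative_noise(g, ratio, rng)
        g_noisy = [gi + ni for gi, ni in zip(g, xi)]
        x = [a - step * b for a, b in zip(x, g_noisy)]
    return x, max_iter


# Hypothetical test problem: f(x) = 0.5*(x0^2 + 10*x1^2), so L = 10.
def grad(x):
    return [x[0], 10.0 * x[1]]


L_const = 10.0
x_final, iterations = noisy_gradient_descent(
    grad, [3.0, -2.0], step=0.25 / L_const, ratio=0.5
)
```

Seeding the generator keeps runs reproducible, which matters when iteration counts are compared across algorithms.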

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in the Research Context |
|---|---|
| Smooth, Strongly Convex Test Functions | Provides a controlled, well-understood benchmark for analyzing algorithm performance and convergence rates on problems with a known unique minimum [7]. |
| Polyak-Lojasiewicz Condition | A mathematical property used to prove global linear convergence for gradient descent on a class of non-convex problems, expanding the theoretical understanding of optimization [7]. |
| Backtracking Line Search | An inexact line search method that efficiently finds a step size satisfying the Armijo condition, ensuring sufficient decrease in the objective function without a costly minimization [8]. |
| Stochastic Objective Functions | Objective functions composed of a sum of independent terms (common in machine learning), which enable the use of stochastic gradients and specialized step-size methods like AdaSPS [7]. |
| Relative Gradient Noise Model | A model where gradient interference is proportional to the true gradient norm, used to experimentally test and validate the robustness of new optimization algorithms [7]. |

Visualization of Concepts

Optimization Paths in Ill-Conditioned Landscapes

Step Adaptation Principle

In optimization algorithm research, establishing global convergence guarantees and explicit convergence rates represents a fundamental theoretical challenge, particularly for descent methods like gradient descent and Quasi-Newton approaches. This technical resource center addresses the crucial role of step size reduction in achieving guaranteed convergence for steepest descent methods and their variants, synthesizing recent theoretical advances with practical implementation guidance. Within the broader context of convergence research, careful management of step size parameters emerges as a critical mechanism for transforming locally convergent algorithms into globally reliable optimization tools with predictable performance characteristics.

Frequently Asked Questions (FAQs)

Q1: Why does reducing step size help guarantee global convergence for steepest descent methods?

Reducing step size ensures that each iteration sufficiently decreases the objective function value, preventing oscillation and divergence. Theoretical analysis shows that under appropriate step size conditions, the sequence of iterates generated by gradient descent converges to a stationary point even when started far from the optimum [9]. This is particularly important for non-convex problems where aggressive step sizes can lead to convergence failures.

Q2: What convergence rates can be expected from properly tuned gradient descent methods?

For convex functions with Lipschitz-continuous gradients, gradient descent with appropriate fixed step size achieves a convergence rate of O(1/k) where k is the iteration count [9]. For strongly convex functions, this improves to a linear convergence rate O(ρ^k) for some ρ ∈ (0,1) [3] [9]. Recent Quasi-Newton methods with controlled step sizes can achieve accelerated rates of O(1/k²) under certain conditions [10].
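
For reference, the first two rates can be written in their commonly quoted textbook forms. The block assumes f is L-smooth with minimizer x*, and, for the second bound, μ-strongly convex with κ = L/μ; the exact constants in [9] may differ.

```latex
% Convex, L-smooth, fixed step \alpha = 1/L:
\[
  f(x_k) - f(x^*) \;\le\; \frac{L\,\|x_0 - x^*\|^2}{2k}
\]
% \mu-strongly convex, L-smooth, fixed step \alpha = 2/(\mu + L):
\[
  \|x_k - x^*\| \;\le\; \left(\frac{\kappa - 1}{\kappa + 1}\right)^{k} \|x_0 - x^*\|,
  \qquad \kappa = \frac{L}{\mu}
\]
```

In the second bound the contraction factor ρ = (κ - 1)/(κ + 1) plays the role of ρ in O(ρ^k), which makes the dependence on conditioning explicit.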

Q3: How does step size selection affect convergence in practical applications?

The step size (learning rate) directly controls the trade-off between convergence speed and stability. Too large a step size causes oscillation or divergence, while too small a step size leads to unacceptably slow progress [1]. Adaptive step size strategies that balance descent guarantees with performance include the Barzilai-Borwein method, which uses curvature information to select more aggressive steps while maintaining convergence [1].

Q4: What special considerations apply to step size selection in multiobjective optimization problems?

For uncertain multiobjective optimization problems, the steepest descent method requires careful step size control to ensure convergence to robust efficient solutions. Recent research has established that with appropriate step size selection, these methods achieve linear convergence rates even in the presence of objective uncertainty [3].

Q5: How do Quasi-Newton methods with global convergence guarantees differ from classical approaches?

Classical Quasi-Newton methods like BFGS typically use unit step sizes (η_k = 1) and exhibit only local convergence properties [10]. Newer approaches incorporate carefully designed step size schedules or cubic regularization to guarantee global convergence without requiring strong convexity assumptions [10].

Troubleshooting Guides

Problem 1: Non-Convergence or Divergence in Steepest Descent

Symptoms: Iterates oscillate between values or move away from the suspected optimum; objective function values increase or show no consistent decrease.

Diagnosis: Typically caused by excessively large step sizes that overshoot the descent region, particularly in regions of high curvature.

Solutions:

- Implement step size reduction with backtracking line search

- Verify the Lipschitz continuity constant of the gradient and set α_t ≤ 1/L [9]

- For Quasi-Newton methods, employ the Cubically Enhanced Quasi-Newton (CEQN) stepsize schedule [10]

- Monitor the gradient norm ‖∇f(x_k)‖ to detect divergence early

Problem 2: Slow Convergence Despite Correct Formulation

Symptoms: Algorithm makes consistent but prohibitively slow progress; many iterations yield minimal improvement.

Diagnosis: Overly conservative step sizes or poor local curvature approximation.

Solutions:

- For convex problems, employ diminishing step sizes of form η_t = O(1/t) [9]

- Implement adaptive methods like Barzilai-Borwein that use local curvature information [1]

- For Quasi-Newton methods, ensure Hessian approximations satisfy relative inexactness conditions with controlled bounds [10]

- Consider switching to accelerated methods when high precision is required

Problem 3: Convergence to Non-Stationary Points

Symptoms: Algorithm terminates with non-zero gradient norm; gets stuck in regions with moderate slope.

Diagnosis: Insufficient descent control or problematic objective function geometry (saddle points, flat regions).

Solutions:

- Implement sufficient decrease conditions (Armijo-Wolfe conditions) [1]

- For non-convex problems, use stochastic perturbations to escape saddle points

- Employ cubic regularization techniques that explicitly model higher-order information [10]

- Verify that descent directions satisfy cos θ_n > 0 in relation to the negative gradient [1]
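
The first and last checks in this list can be written as small Python helpers. The sketch tests the Armijo (sufficient decrease) and Wolfe curvature conditions for a trial step and computes the cosine of the angle between a direction and the negative gradient. The constants `c1` and `c2` are conventional defaults, and the test function is a hypothetical example.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def wolfe_conditions(f, grad, x, d, eta, c1=1e-4, c2=0.9):
    """Return (armijo_ok, curvature_ok) for the step x + eta*d."""
    slope = dot(grad(x), d)                 # directional derivative at x
    x_new = [xi + eta * di for xi, di in zip(x, d)]
    armijo_ok = f(x_new) <= f(x) + c1 * eta * slope
    curvature_ok = dot(grad(x_new), d) >= c2 * slope
    return armijo_ok, curvature_ok


def descent_cosine(g, d):
    """cos(theta) between d and -g; positive means d is a descent direction."""
    return -dot(g, d) / (math.sqrt(dot(g, g)) * math.sqrt(dot(d, d)))


# Hypothetical test function f(x) = x0^2 + 10*x1^2.
def f(x):
    return x[0] ** 2 + 10.0 * x[1] ** 2


def grad(x):
    return [2.0 * x[0], 20.0 * x[1]]


x = [1.0, 1.0]
d = [-gi for gi in grad(x)]                 # steepest descent direction

good = wolfe_conditions(f, grad, x, d, eta=0.05)
too_small = wolfe_conditions(f, grad, x, d, eta=1e-6)
too_large = wolfe_conditions(f, grad, x, d, eta=0.2)
cos_theta = descent_cosine(grad(x), d)
```

The two conditions fail in opposite regimes: an overly long step violates sufficient decrease, while an overly short step violates the curvature condition, which is why the pair brackets acceptable steps.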

Convergence Rates Comparison Table

Table 1: Theoretical Convergence Rates Under Different Assumptions

| Method | Function Class | Step Size Strategy | Convergence Rate | Global Guarantee? |
|---|---|---|---|---|
| Gradient Descent | Convex, L-smooth | Fixed: α ≤ 1/L | O(1/k) | Yes [9] |
| Gradient Descent | Strongly Convex | Fixed: α ≤ 2/(μ+L) | Linear: O(ρ^k) | Yes [9] |
| Steepest Descent (Multiobjective) | Uncertain Convex | Diminishing | Linear | Yes [3] |
| Classical Quasi-Newton | General Convex | Unit (η_k = 1) | Asymptotic only | No [10] |
| CEQN Method | General Convex | Simple schedule | O(1/k) | Yes [10] |
| CEQN with Controlled Inexactness | General Convex | Adaptive schedule | O(1/k²) | Yes [10] |

Table 2: Step Size Selection Strategies and Their Properties

| Strategy | Implementation Complexity | Convergence Guarantee | Practical Performance | Best Application Context |
|---|---|---|---|---|
| Fixed Step Size | Low | Requires knowledge of L | Variable | Well-conditioned problems |
| Backtracking Line Search | Medium | Strong | Robust | General purpose |
| Barzilai-Borwein | Medium | Local only | Excellent for smooth problems | Quadratic and near-quadratic functions |
| Diminishing Schedules | Low | Strong | Slow but reliable | Convex stochastic optimization |
| Adaptive (CEQN) | High | Strong with verification | State-of-the-art | Ill-conditioned and non-convex problems |

Experimental Protocols

Protocol 1: Verifying Global Convergence in Steepest Descent

Purpose: Empirically validate global convergence guarantees for gradient descent with reduced step sizes.

Materials: Objective function f(x), gradient computation ∇f(x), initialization point x₀.

Methodology:

- Compute or estimate Lipschitz constant L of ∇f(x)

- Set fixed step size α = 0.9/L (conservative choice)

- For k = 0, 1, 2, ..., K_max:

  - Compute gradient g_k = ∇f(x_k)

  - Update x_{k+1} = x_k - α g_k

  - Record f(x_k) and ‖g_k‖

  - Terminate when ‖g_k‖ < ε or k = K_max

Validation Metrics:

- Monotonic decrease: f(x_{k+1}) ≤ f(x_k) for all k

- Gradient norm convergence: lim_{k→∞} ‖g_k‖ = 0

- Objective value convergence: |f(x_k) - f(x^*)| ≤ C/k for convex f [9]
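
Protocol 1 translates almost line by line into Python. In the sketch below the test function, its Lipschitz constant `L_const`, the starting point, and the limits `eps` and `k_max` are assumptions; the O(1/k) check uses the standard bound f(x_k) - f(x*) ≤ ‖x₀ - x*‖² / (2αk), which holds for convex L-smooth f when α ≤ 1/L.

```python
import math

# Hypothetical test problem: f(x) = 0.5*(x0^2 + 10*x1^2), L = 10, x* = 0, f* = 0.
def f(x):
    return 0.5 * (x[0] ** 2 + 10.0 * x[1] ** 2)


def grad(x):
    return [x[0], 10.0 * x[1]]


L_const = 10.0
alpha = 0.9 / L_const          # conservative fixed step from the protocol
eps = 1e-8
k_max = 500

x0 = [3.0, -2.0]
x = list(x0)
f_history = []
g_history = []
for k in range(k_max + 1):
    g = grad(x)
    g_norm = math.sqrt(sum(gi * gi for gi in g))
    f_history.append(f(x))
    g_history.append(g_norm)
    if g_norm < eps or k == k_max:
        break
    x = [xi - alpha * gi for xi, gi in zip(x, g)]

# Validation metric 1: monotonic decrease of the objective.
monotone = all(b <= a for a, b in zip(f_history, f_history[1:]))

# Validation metric 3: f(x_k) - f* <= C / k with C = ||x0 - x*||^2 / (2*alpha).
C = sum(v * v for v in x0) / (2.0 * alpha)
rate_ok = all(f_history[k] <= C / k for k in range(1, len(f_history)))
```

On this strongly convex example the observed decay is much faster than C/k, so the O(1/k) bound is comfortably met rather than tight.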

Protocol 2: Adaptive Step Size Selection for Quasi-Newton Methods

Purpose: Implement and validate the Cubically Enhanced Quasi-Newton (CEQN) method with global convergence guarantees.

Materials: Objective function f(x), gradient computation ∇f(x), Hessian approximation B_k.

Methodology:

- Initialize x₀, approximation accuracy parameters α, ᾱ

- For k = 0, 1, 2, ...:

  - Compute Hessian approximation B_k satisfying relative inexactness condition: (1-ᾱ)B_k ⪯ ∇²f(x_k) ⪯ (1+ᾱ)B_k [10]

  - Calculate step size η_k using CEQN schedule based on local curvature

  - Update x_{k+1} = x_k - η_k H_k ∇f(x_k) where H_k = B_k^{-1}

  - Verify descent condition f(x_{k+1}) < f(x_k)

- Continue until convergence criteria satisfied

Validation Metrics:

- Hessian approximation quality: ‖B_k - ∇²f(x_k)‖/‖∇²f(x_k)‖

- Convergence rate: Compare empirical rate to theoretical O(1/k) or O(1/k²)

- Adaptive performance: Method should automatically adjust to local curvature [10]
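
The CEQN step size schedule itself is specified in [10] and is not reproduced here. The relative inexactness condition, however, can be checked independently. The Python sketch below does so for symmetric 2×2 matrices only, using the fact that such a matrix is positive semidefinite exactly when both diagonal entries and the determinant are non-negative; the matrices and the helper names are hypothetical, and larger problems would need an eigenvalue or Cholesky routine instead.

```python
from typing import List

Matrix = List[List[float]]


def is_psd_2x2(M: Matrix, tol: float = 1e-12) -> bool:
    """Positive semidefiniteness test for a symmetric 2x2 matrix."""
    a, b, d = M[0][0], M[0][1], M[1][1]
    return a >= -tol and d >= -tol and a * d - b * b >= -tol


def combine(scale_b: float, B: Matrix, scale_h: float, H: Matrix) -> Matrix:
    """Return scale_b*B + scale_h*H entrywise."""
    return [[scale_b * B[i][j] + scale_h * H[i][j] for j in range(2)]
            for i in range(2)]


def satisfies_relative_inexactness(B: Matrix, H: Matrix, alpha_bar: float) -> bool:
    """Check (1 - alpha_bar)*B <= H <= (1 + alpha_bar)*B in the Loewner order."""
    lower_ok = is_psd_2x2(combine(-(1.0 - alpha_bar), B, 1.0, H))   # H - (1-a)B
    upper_ok = is_psd_2x2(combine(1.0 + alpha_bar, B, -1.0, H))     # (1+a)B - H
    return lower_ok and upper_ok


# Hypothetical true Hessian and approximation.
H_true = [[2.0, 0.0], [0.0, 10.0]]
B_approx = [[2.1, 0.0], [0.0, 9.5]]

loose = satisfies_relative_inexactness(B_approx, H_true, alpha_bar=0.1)
tight = satisfies_relative_inexactness(B_approx, H_true, alpha_bar=0.01)
```

The same approximation passes with a loose tolerance and fails with a tight one, which is the kind of signal a verifier would use before relying on the faster rate.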

Protocol 3: Robust Multiobjective Optimization with Guaranteed Convergence

Purpose: Implement steepest descent for uncertain multiobjective problems with convergence verification.

Materials: Multiple objective functions F(x) = (F₁(x), ..., F_m(x)), uncertainty set U.

Methodology:

- Formulate robust counterpart using objective-wise worst-case approach [3]

- Compute descent direction for the robust multiobjective problem

- Select step size ensuring sufficient decrease for all objective components

- Iterate using x_{k+1} = x_k + α_k d_k, where d_k is the descent direction from the previous step and α_k is carefully chosen

- Verify convergence to robust efficient solution

Validation Metrics:

- Pareto optimality: No other point improves all objectives simultaneously

- Convergence rate: Linear convergence for strongly convex case [3]

- Robustness: Performance stability across uncertainty realizations

Diagrammatic Representations

Diagram 1: Gradient Descent with Convergence Guarantees

Diagram 2: Step Size Selection Hierarchy for Global Convergence

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Convergence Experiments

| Reagent/Tool | Function | Implementation Considerations |
|---|---|---|
| Lipschitz Constant Estimator | Determines maximum safe fixed step size | Can be computed globally or locally; conservative estimates ensure stability but slow convergence [9] |
| Backtracking Line Search | Adaptively reduces step size to ensure sufficient decrease | Requires parameters (typically β=0.5-0.8, c=1e-4); guarantees monotonic decrease [1] |
| Relative Inexactness Verifier | Validates Hessian approximation quality in Quasi-Newton methods | Ensures (1-ᾱ)B_k ⪯ ∇²f(x_k) ⪯ (1+ᾱ)B_k; critical for O(1/k²) rates [10] |
| Curvature Pair Monitor | Tracks (s_k, y_k) for Quasi-Newton updates | s_k = x_k - x_{k-1}, y_k = ∇f(x_k) - ∇f(x_{k-1}); enables Hessian approximation [10] |
| Robust Counterpart Formulator | Converts uncertain multiobjective problems to deterministic form | Uses objective-wise worst-case approach; enables standard optimization techniques [3] |
| Convergence Diagnostic Suite | Monitors multiple convergence indicators | Tracks ‖∇f(x_k)‖, \|f(x_k) - f(x_{k-1})\|, ‖x_k - x_{k-1}‖; detects stalls and oscillations [9] |

The theoretical guarantees for global convergence in steepest descent methods fundamentally rely on appropriate step size reduction strategies. From fixed step sizes based on Lipschitz constants to sophisticated adaptive schedules like the CEQN method, proper step size control transforms locally convergent algorithms into globally reliable optimization tools. Recent advances have established non-asymptotic convergence rates for broad classes of Quasi-Newton methods, bridging the gap between practical performance and theoretical guarantees. For researchers in drug development and scientific computing, these convergence guarantees provide confidence in optimization results while the troubleshooting guides address common implementation challenges encountered in experimental settings.

The Kantorovich Inequality and Its Implications for Convergence Bounds

The Kantorovich inequality is a fundamental result in matrix analysis that complements the Cauchy-Schwarz inequality. Cauchy-Schwarz bounds the product of a quadratic form and the quadratic form of the inverse matrix from below, while the Kantorovich inequality provides an upper bound for that product. This inequality is crucial in optimization, particularly in analyzing the convergence rate of iterative algorithms like the steepest descent method [11].

For a symmetric positive definite matrix \( A \) with eigenvalues \( 0 < \lambda_1 \leq \cdots \leq \lambda_n \), and any non-zero vector \( \mathbf{x} \in \mathbb{R}^n \), the inequality states [12]: \[ \frac{(\mathbf{x}^{\top}A\mathbf{x})(\mathbf{x}^{\top}A^{-1}\mathbf{x})}{(\mathbf{x}^{\top}\mathbf{x})^2} \leq \frac{1}{4}\frac{(\lambda_1+\lambda_n)^2}{\lambda_1\lambda_n} = \frac{1}{4}\Bigg(\sqrt{\frac{\lambda_1}{\lambda_n}}+\sqrt{\frac{\lambda_n}{\lambda_1}}\Bigg)^2. \] This bound depends only on the condition number \( \kappa(A) = \frac{\lambda_n}{\lambda_1} \) of the matrix \( A \), highlighting its role in assessing problem conditioning and algorithm efficiency [11] [13].
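
A quick numerical check can make the inequality tangible. The Python sketch below assumes a diagonal matrix, which loses no generality because any symmetric positive definite matrix is diagonal in its eigenbasis; the eigenvalues, sample count, and seed are arbitrary illustrative choices.

```python
import random

# Hypothetical eigenvalues of a diagonal SPD matrix A, sorted ascending.
eigenvalues = [1.0, 3.0, 7.0, 50.0]


def kantorovich_ratio(x, lam):
    """(x^T A x)(x^T A^{-1} x) / (x^T x)^2 for A = diag(lam)."""
    xAx = sum(l * xi * xi for l, xi in zip(lam, x))
    xAinvx = sum(xi * xi / l for l, xi in zip(lam, x))
    xx = sum(xi * xi for xi in x)
    return xAx * xAinvx / (xx * xx)


lam_min, lam_max = eigenvalues[0], eigenvalues[-1]
bound = (lam_min + lam_max) ** 2 / (4.0 * lam_min * lam_max)

rng = random.Random(0)
samples = [[rng.uniform(-1.0, 1.0) for _ in eigenvalues] for _ in range(1000)]
ratios = [kantorovich_ratio(x, eigenvalues) for x in samples]

# Equal weight on the extreme eigenvectors attains the bound.
extremal = [1.0] + [0.0] * (len(eigenvalues) - 2) + [1.0]
extremal_ratio = kantorovich_ratio(extremal, eigenvalues)
```

Every sampled ratio lies between 1 (the Cauchy-Schwarz lower bound) and the Kantorovich bound, and the extremal vector shows that the upper bound cannot be improved.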

How the Kantorovich Inequality Bounds Convergence

Role in Steepest Descent Convergence Analysis

The Kantorovich inequality is instrumental in convergence analysis, specifically bounding the convergence rate of the steepest descent method for unconstrained optimization [11]. The condition number ( \kappa(A) ) of the Hessian matrix directly influences how quickly the algorithm converges. For a quadratic objective minimized with exact line search, the inequality shows that each iteration shrinks the objective error ( f(\mathbf{x}_k) - f(\mathbf{x}^*) ) by a factor of at most ( \left( \frac{\kappa(A) - 1}{\kappa(A) + 1} \right)^2 ). This factor approaches 1 as ( \kappa(A) ) increases, so convergence slows on ill-conditioned problems [11] [3].
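The link between the inequality and the rate can be written out for the quadratic model. The derivation below is a standard sketch under stated assumptions: the objective is ( f(\mathbf{x}) = \tfrac{1}{2}\mathbf{x}^{\top}A\mathbf{x} - \mathbf{b}^{\top}\mathbf{x} ) with ( A ) symmetric positive definite, ( g_k = A\mathbf{x}_k - \mathbf{b} ) denotes the gradient, ( \mathbf{x}^* ) the minimizer, and the step size is chosen by exact line search. The symbols ( g_k ), ( \alpha_k ), and ( \mathbf{x}^* ) are introduced here for illustration.

```latex
\begin{aligned}
\alpha_k &= \frac{g_k^{\top} g_k}{g_k^{\top} A g_k},
\qquad \mathbf{x}_{k+1} = \mathbf{x}_k - \alpha_k g_k, \\
f(\mathbf{x}_{k+1}) - f(\mathbf{x}^*)
 &= \left(1 - \frac{(g_k^{\top} g_k)^2}{(g_k^{\top} A g_k)(g_k^{\top} A^{-1} g_k)}\right)
    \bigl(f(\mathbf{x}_k) - f(\mathbf{x}^*)\bigr) \\
 &\leq \left(1 - \frac{4\lambda_1\lambda_n}{(\lambda_1+\lambda_n)^2}\right)
    \bigl(f(\mathbf{x}_k) - f(\mathbf{x}^*)\bigr)
  = \left(\frac{\kappa(A)-1}{\kappa(A)+1}\right)^2
    \bigl(f(\mathbf{x}_k) - f(\mathbf{x}^*)\bigr).
\end{aligned}
```

The second line uses ( f(\mathbf{x}_k) - f(\mathbf{x}^*) = \tfrac{1}{2} g_k^{\top} A^{-1} g_k ), and the inequality is the Kantorovich bound applied to the vector ( g_k ).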

Practical Implications for Step Size Reduction

In practice, a large condition number indicates an ill-conditioned problem, where the objective function's curvature varies significantly across dimensions. This often necessitates reducing the step size to maintain stability in iterative methods, directly impacting efficiency. The Kantorovich inequality quantifies this relationship, providing a theoretical foundation for step-size selection strategies [3].

Frequently Asked Questions (FAQs)

Q1: Why is the Kantorovich inequality important in optimization? It provides a theoretical upper bound on the convergence rate of gradient-based methods, helping researchers analyze and predict algorithm performance, especially for ill-conditioned problems [11] [3].

Q2: How does the condition number affect convergence? A larger condition number ( \kappa(A) ) leads to a slower convergence rate. The Kantorovich inequality shows the convergence rate is bounded by a function of this condition number [11].

Q3: Can the Kantorovich inequality be applied to non-quadratic problems? While originally for quadratic forms, its principles extend to general unconstrained optimization via local quadratic approximations (e.g., using the Hessian matrix) [3].

Q4: What are the implications for drug development and scientific computing? In drug development, optimization problems (e.g., molecular modeling) often involve ill-conditioned data. Understanding convergence bounds helps in designing efficient and robust computational experiments [3].

Troubleshooting Common Experimental Issues

Problem: Slow Convergence in Steepest Descent Experiments

- Possible Cause: Large condition number of the Hessian matrix.

- Solution:

- Preconditioning: Transform the problem to improve conditioning.

- Adaptive Step Sizes: Use methods like line search to dynamically adjust step sizes [3].
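As an illustration of the preconditioning remedy, the sketch below applies symmetric diagonal (Jacobi) scaling to a small matrix and compares condition numbers. Jacobi scaling is only one possible preconditioner, chosen here for brevity; the matrix `A` and the helper name `jacobi_precondition` are hypothetical.

```python
import numpy as np

def jacobi_precondition(A):
    """Return D^{-1/2} A D^{-1/2}, where D = diag(A) (symmetric Jacobi scaling)."""
    d = np.sqrt(np.diag(A))
    return A / np.outer(d, d)

# Hypothetical badly scaled SPD matrix: a well-conditioned core with mismatched units.
A = np.array([[2.0, 100.0],
              [100.0, 20000.0]])

cond_before = np.linalg.cond(A)
cond_after = np.linalg.cond(jacobi_precondition(A))
print(f"condition number before: {cond_before:.1f}, after: {cond_after:.1f}")
```

Running steepest descent on the scaled problem corresponds to working in the variable ( D^{1/2}\mathbf{x} ). Scaling helps most when the poor conditioning comes from mismatched feature units, and much less when it comes from strong correlations.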

Problem: Numerical Instability in Calculations

- Possible Cause: Extreme eigenvalues causing overflow/underflow in ( \mathbf{x}^{\top}A^{-1}\mathbf{x} ).

- Solution:

- Eigenvalue Analysis: Check the range of eigenvalues.

- Regularization: Add a small positive constant to the diagonal of ( A ) to bound eigenvalues away from zero [11].

Problem: Validating Kantorovich Inequality in Code

- Steps:

- Compute eigenvalues ( \lambda_1, \lambda_n ) of ( A ).

- For random unit vectors ( \mathbf{x} ), compute ( \text{LHS} = (\mathbf{x}^{\top}A\mathbf{x})(\mathbf{x}^{\top}A^{-1}\mathbf{x}) ).

- Compute ( \text{RHS} = \frac{(\lambda_1 + \lambda_n)^2}{4 \lambda_1 \lambda_n} ).

- Verify ( \text{LHS} \leq \text{RHS} ) holds [12].

Key Mathematical Expressions and Bounds

Table 1: Key Components of the Kantorovich Inequality

| Component | Mathematical Expression | Role in Inequality |

|---|---|---|

| Quadratic Form | ( \mathbf{x}^{\top}A\mathbf{x} ) | Represents the primary objective landscape. |

| Inverse Quadratic Form | ( \mathbf{x}^{\top}A^{-1}\mathbf{x} ) | Relates to the conjugate direction performance. |

| Condition Number | ( \kappa(A) = \frac{\lambda_n}{\lambda_1} ) | Determines the upper bound of the product. |

| Kantorovich Bound | ( \frac{1}{4} \left( \sqrt{\kappa(A)} + \sqrt{\frac{1}{\kappa(A)}} \right)^2 ) | Worst-case upper limit for the product of forms. |

Research Reagent Solutions: Mathematical Tools

Table 2: Essential Mathematical Tools for Convergence Analysis

| Tool Name | Function in Analysis | Application Context |

|---|---|---|

| Eigenvalue Decomposition | Determines the condition number ( \kappa(A) ) | Assessing problem conditioning and convergence bounds. |

| Quadratic Form Analysis | Evaluates ( \mathbf{x}^{\top}A\mathbf{x} ) and ( \mathbf{x}^{\top}A^{-1}\mathbf{x} ) | Directly computing the terms in the Kantorovich inequality. |

| Spectral Theory | Analyzes matrix properties via eigenvalues | Proving the inequality and its extensions. |

| Numerical Linear Algebra | Provides algorithms for matrix computations | Implementing checks and applying the inequality in code. |

Experimental Protocol: Validating the Bound

Objective: Verify the Kantorovich inequality for a given positive definite matrix ( A ) and multiple vectors ( \mathbf{x} ).

Methodology:

- Input: A symmetric positive definite matrix ( A ), number of random vectors ( N ).

- Compute Eigenvalues: Calculate ( \lambda_1 ) and ( \lambda_n ), the smallest and largest eigenvalues of ( A ).

- Calculate RHS Bound: Compute ( \text{Bound} = \frac{(\lambda_1 + \lambda_n)^2}{4 \lambda_1 \lambda_n} ).

- Generate Vectors: For ( i = 1 ) to ( N ), generate a random vector ( \mathbf{x}_i ) and normalize it: ( \mathbf{x}_i \leftarrow \frac{\mathbf{x}_i}{\|\mathbf{x}_i\|} ).

- Compute LHS: For each ( \mathbf{x}_i ), calculate ( \text{LHS}_i = (\mathbf{x}_i^{\top}A\mathbf{x}_i)(\mathbf{x}_i^{\top}A^{-1}\mathbf{x}_i) ).

- Validation: Check that ( \text{LHS}_i \leq \text{Bound} ) for all ( i ). The bound is attained at ( \mathbf{x} = (\mathbf{v}_1 + \mathbf{v}_n)/\sqrt{2} ), where ( \mathbf{v}_1 ) and ( \mathbf{v}_n ) are unit eigenvectors for ( \lambda_1 ) and ( \lambda_n ), so the maximum of ( \text{LHS}_i ) over random samples approaches the bound only when some samples fall near that direction (more likely in low dimensions).
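The protocol above can be sketched in NumPy as follows. This is a minimal illustration rather than a reference implementation: the function name `kantorovich_check`, the diagonal test matrix, and the sample count are assumptions made for the example. The sketch also evaluates the product at the normalized sum of the two extreme eigenvectors, where the bound is attained.

```python
import numpy as np

def kantorovich_check(A, n_vectors=1000, seed=0):
    """Compare sampled values of (x^T A x)(x^T A^{-1} x) with the Kantorovich bound."""
    rng = np.random.default_rng(seed)
    eigvals, eigvecs = np.linalg.eigh(A)           # eigenvalues in ascending order
    lam_1, lam_n = eigvals[0], eigvals[-1]
    bound = (lam_1 + lam_n) ** 2 / (4.0 * lam_1 * lam_n)

    X = rng.standard_normal((n_vectors, A.shape[0]))
    X /= np.linalg.norm(X, axis=1, keepdims=True)  # rows are unit vectors
    AX = X @ A                                     # rows are (A x_i)^T because A is symmetric
    AinvX = np.linalg.solve(A, X.T).T              # rows are (A^{-1} x_i)^T
    lhs = np.sum(X * AX, axis=1) * np.sum(X * AinvX, axis=1)

    # Direction at which the bound is attained.
    x_star = (eigvecs[:, 0] + eigvecs[:, -1]) / np.sqrt(2.0)
    lhs_star = (x_star @ A @ x_star) * (x_star @ np.linalg.solve(A, x_star))
    return lhs, bound, lhs_star

A = np.diag([1.0, 4.0, 9.0, 100.0])                # illustrative SPD matrix
lhs, bound, lhs_star = kantorovich_check(A)
print(f"max sampled LHS = {lhs.max():.4f}, bound = {bound:.4f}, extremal LHS = {lhs_star:.4f}")
```

Using `np.linalg.solve` instead of forming the inverse explicitly is a deliberate choice: it is generally the more stable option when ( A ) is ill-conditioned.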

Workflow Diagram

The following diagram illustrates the logical process of using the Kantorovich inequality in the convergence analysis of the steepest descent method.

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: Why does my steepest descent algorithm converge slowly or become unstable when training machine learning models on my biomedical dataset? A1: Slow convergence or instability in steepest descent is frequently caused by the high levels of noise and high-dimensional nature of biomedical data. Noise enters the cost function nonlinearly and can cause the optimization process to oscillate or converge to poor local minima [14]. Reducing the step size can stabilize convergence, but it must be balanced against the increased number of iterations required [6]. For multiobjective problems common in drug design, specialized robust steepest descent methods have been developed that guarantee global convergence with a linear convergence rate, even under data uncertainty [3].

Q2: What are the main sources of noise and uncertainty in biomedical data that affect computational analysis? A2: The primary sources can be categorized as follows:

- Aleatoric Uncertainty: This is inherent, irreducible noise in the data measurements themselves. In signal processing terms, this noise can be characterized by its power spectrum (e.g., white, pink, or red noise) [14]. In medicine, this can stem from measurement imprecision in lab tests or the inherent stochasticity of biological processes, like cancer metastasis [15].

- Epistemic Uncertainty: This arises from a lack of knowledge, including uncertainty in the model's parameters, its structure, or from incomplete training data that lacks representation from diverse demographic groups [16] [17] [18]. This is particularly problematic in "small-data" biomedical problems [19].

- Data Complexity: Biomedical data is often high-dimensional, heterogeneous (combining text, images, and numerical values), and multimodal (e.g., integrating genomic sequences with clinical records). These characteristics complicate preprocessing and can introduce variability that undermines reproducibility [16].

Q3: How can I make my ML model more resilient to noise in biomedical data? A3: Several strategies can improve resilience:

- Uncertainty Quantification (UQ): Integrate methods like Bayesian Inference, Monte Carlo Dropout, or deep ensembles to estimate predictive uncertainty. This allows the model to signal when its predictions are unreliable [17] [18] [15].

- Robust Preprocessing: Employ careful feature selection and discretization techniques to mitigate the impact of noisy features. Logic-based machine learning models like the Tsetlin Machine have shown particular resilience to noise injection, maintaining performance even at low signal-to-noise ratios [19].

- Sampling and Robust Optimization: Instead of random sampling, use smart model parameterizations and robust optimization approaches designed for uncertain multiobjective problems. These methods help find solutions that remain effective across a range of possible scenarios [14] [3].

Q4: My model performs well on training data but fails on new clinical data. What could be the cause? A4: This is often a result of dataset shift, where the statistical properties of the deployment data differ from the training data. This can be covariate shift (change in the input feature distributions) or label shift (change in the output class distributions) [18]. Another common cause is data leakage, where information from the test set inadvertently influences the training process (e.g., by performing normalization before splitting the data), which artificially inflates performance metrics [16].
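The normalization example of data leakage can be avoided by splitting first and computing scaling statistics from the training rows only. The sketch below shows this with plain NumPy; the function name `split_then_standardize` and the synthetic feature matrix are assumptions for illustration, not part of any cited workflow.

```python
import numpy as np

def split_then_standardize(X, test_fraction=0.25, seed=0):
    """Split first, then standardize using statistics from the training rows only."""
    rng = np.random.default_rng(seed)
    idx = rng.permutation(X.shape[0])
    n_test = int(round(test_fraction * X.shape[0]))
    test_idx, train_idx = idx[:n_test], idx[n_test:]

    mean = X[train_idx].mean(axis=0)
    std = X[train_idx].std(axis=0)
    std = np.where(std == 0.0, 1.0, std)     # guard against constant features

    X_train = (X[train_idx] - mean) / std
    X_test = (X[test_idx] - mean) / std      # reuse the training statistics
    return X_train, X_test

# Synthetic stand-in for a clinical feature matrix (assumption for illustration).
X = np.random.default_rng(1).normal(loc=5.0, scale=2.0, size=(200, 3))
X_train, X_test = split_then_standardize(X)
```

The test features are generally not exactly zero-mean after this transformation, which is expected: they are scaled the way unseen clinical data would be at deployment.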

Troubleshooting Guides

Problem: Irreproducible AI Model Results

- Symptoms: The model produces different results upon repeated runs with the same data and code.

- Potential Causes:

- Inherent Non-Determinism: Use of stochastic algorithms (e.g., Stochastic Gradient Descent), random weight initialization, or dropout layers in deep learning [16].

- Hardware and Software Variability: Floating-point precision limitations and non-deterministic parallel computing on GPUs/TPUs [16].

- Non-Deterministic Preprocessing: Use of inherently variable dimensionality reduction techniques like t-SNE or UMAP [16].

- Solutions:

- Set random seeds for all random number generators used by your libraries (e.g., NumPy, TensorFlow, PyTorch).

- Utilize deterministic algorithms and deep learning layers where available, though this may come with a performance cost.

- Document all preprocessing steps, software versions, and hardware settings meticulously to improve replicability.
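The seeding advice can be illustrated with the standard library and NumPy alone. In the sketch below, `seeded_run` is a hypothetical helper standing in for a training run. Deep learning frameworks provide their own seeding calls (for example `torch.manual_seed` and `tf.random.set_seed`), which would be set in the same place.

```python
import random
import numpy as np

def seeded_run(seed):
    """Run a small stochastic computation with every random generator seeded explicitly."""
    random.seed(seed)                        # Python's built-in generator
    rng = np.random.default_rng(seed)        # local NumPy generator, passed around explicitly
    shuffled = list(range(10))
    random.shuffle(shuffled)                 # stands in for data shuffling
    init_weights = rng.standard_normal(4)    # stands in for random weight initialization
    return shuffled, init_weights

order_a, weights_a = seeded_run(42)
order_b, weights_b = seeded_run(42)
print(order_a == order_b, np.array_equal(weights_a, weights_b))
```

Seeding removes only the algorithmic sources of variation. Differences from non-deterministic GPU kernels or library versions need the separate measures listed above.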

Problem: High Predictive Uncertainty in Clinical Predictions

- Symptoms: Model outputs have low confidence or high variance, making clinical decisions difficult.

- Potential Causes:

- Solutions:

- Quantify Uncertainty: Implement methods like conformal prediction to create prediction sets with guaranteed coverage, or use Bayesian methods to estimate posterior distributions [18].

- Implement Abstention: Program the model to abstain from making a prediction when uncertainty exceeds a predefined, clinically safe threshold, and flag the case for human expert review [18].

- Dynamic Calibration: Use continual learning strategies to update the model with new data, improving its calibration and adaptability to changing data distributions [15].
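The abstention idea can be sketched with a simple ensemble-disagreement rule. This is one illustrative way to measure uncertainty, not the method of the cited works: the function name `predict_or_abstain`, the threshold `max_std`, and the example probabilities are all assumptions, and a real threshold would have to be set with clinical input.

```python
import numpy as np

def predict_or_abstain(member_probs, max_std=0.15):
    """Return the mean probability per case, with NaN where ensemble disagreement is too high.

    member_probs: array of shape (n_members, n_cases) with predicted probabilities.
    max_std: hypothetical disagreement threshold above which the model abstains.
    """
    member_probs = np.asarray(member_probs, dtype=float)
    mean = member_probs.mean(axis=0)
    std = member_probs.std(axis=0)
    abstain = std > max_std
    decision = np.where(abstain, np.nan, mean)   # NaN marks cases flagged for expert review
    return decision, abstain

# Three ensemble members scoring three cases (made-up numbers).
probs = np.array([[0.90, 0.20, 0.55],
                  [0.88, 0.25, 0.10],
                  [0.93, 0.15, 0.95]])
decision, abstain = predict_or_abstain(probs)
```

Here the members agree on the first two cases and disagree strongly on the third, so only the third is flagged.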

Experimental Protocols & Data

The following table summarizes key results from a study on logic-based ML resilience against noise in biomedical data [19].

Table 1: Performance of a Tsetlin Machine (TM) under varying levels of injected noise.

| Dataset | Signal-to-Noise Ratio (SNR) | Reported Performance Metric | Resilience Observation |

|---|---|---|---|

| Breast Cancer | -15 dB | High Sensitivity & Specificity | Effective classification remains possible even at very low SNRs. |

| Pima Indians Diabetes | Multiple low SNRs | Accuracy, Sensitivity, Specificity | TM's training parameters (Nash equilibrium) remain resilient to noise injection. |

| Parkinson's Disease | Multiple low SNRs | Accuracy, Sensitivity, Specificity | A rule mining encoding method allowed for a 6x reduction in training parameters while retaining performance. |

Detailed Experimental Protocol: Testing Model Resilience to Injected Noise

This protocol is adapted from research on resilient biomedical systems design [19].

Objective: To evaluate the robustness of a machine learning model against environmentally induced noise in a biomedical dataset.

Materials:

- Datasets: Publicly available biomedical datasets (e.g., UCI Breast Cancer, Pima Indians Diabetes, Parkinson's disease voice recordings) [19].

- ML Models: The model under test (e.g., a neural network) and a logic-based model like the Tsetlin Machine for comparison.

- Software: Python with libraries such as NumPy, Scikit-learn, and PyTM.

Methodology:

- Data Preprocessing: Handle missing values and normalize features. For logic-based models, apply a feature discretization method (e.g., fixed thresholding or a rule mining-based encoding).

- Noise Injection: Systematically inject additive white Gaussian noise into the training and/or testing data. The noise level should be quantified by the Signal-to-Noise Ratio (SNR) in decibels (dB).

- Model Training: Train the ML models on both the clean and noise-injected training sets.

- Model Evaluation: Evaluate the trained models on a held-out test set (which can also be clean or noisy). Key metrics include:

- Accuracy: Overall correctness.

- Sensitivity (Recall): Ability to identify true positives.

- Specificity: Ability to identify true negatives.

- Resilience Analysis: Plot performance metrics (e.g., sensitivity) against SNR. A more resilient model will maintain higher performance as SNR decreases. Monitor the stability of the model's internal parameters (e.g., convergence efficiency expressed in terms of Nash equilibrium for the TM).
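The noise injection step can be sketched as below. The helper `add_awgn` is hypothetical, and it assumes that signal power is the mean squared value over the whole feature matrix; per-feature definitions of power are equally plausible and would change the noise scale.

```python
import numpy as np

def add_awgn(X, snr_db, seed=0):
    """Add white Gaussian noise to X so that the signal-to-noise ratio is about snr_db."""
    rng = np.random.default_rng(seed)
    X = np.asarray(X, dtype=float)
    signal_power = np.mean(X ** 2)                         # assumption: global mean-square power
    noise_power = signal_power / (10.0 ** (snr_db / 10.0))
    noise = rng.normal(0.0, np.sqrt(noise_power), size=X.shape)
    return X + noise

# Synthetic stand-in for a normalized feature matrix.
X_clean = np.random.default_rng(1).normal(size=(5000, 8))
X_noisy = add_awgn(X_clean, snr_db=-15.0)

# Measure the SNR that was actually achieved.
measured_snr_db = 10.0 * np.log10(np.mean(X_clean ** 2) / np.mean((X_noisy - X_clean) ** 2))
print(f"measured SNR: {measured_snr_db:.2f} dB")
```

Sweeping `snr_db` over a range and re-evaluating the model at each level gives the performance-versus-SNR curve described in the resilience analysis step.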

Research Reagent Solutions

Table 2: Essential materials and computational tools for experiments in noisy biomedical data environments.

| Item / Reagent | Function / Application |

|---|---|

| UCI Machine Learning Repository Datasets | Provides standardized, publicly available biomedical datasets (e.g., Breast Cancer, Pima Indians Diabetes) for benchmarking model performance and noise resilience [19]. |

| Tsetlin Machine (TM) | A logic-based ML algorithm that uses propositional logic for pattern recognition. It is particularly resilient to noise and can produce interpretable models, making it suitable for clinical data [19]. |

| Monte Carlo Dropout | A technique to estimate epistemic uncertainty in deep learning models by performing multiple stochastic forward passes during inference [17] [18]. |

| Conformal Prediction Framework | A method to generate prediction sets (rather than single point estimates) for any standard ML model, providing formal, sample-specific coverage guarantees under minimal assumptions [18]. |

| Bayesian Inference Libraries | Software tools (e.g., PyMC3, Stan) that enable model parameter estimation and uncertainty quantification through Markov Chain Monte Carlo (MCMC) sampling or variational inference [17]. |

Workflow Visualizations

Diagram 1: Uncertainty Management Workflow

Diagram 2: Steepest Descent in Noisy Biomedical Optimization

Practical Step Size Adaptation Algorithms for Biomedical Optimization

Exact Line Search Methods for Polynomial Objective Functions

Exact line search is an iterative optimization approach that finds a local minimum of a multidimensional nonlinear function by calculating the optimal step size in a chosen descent direction during each iteration [20]. When applied to polynomial objective functions, these methods leverage the specific algebraic structure of polynomials to efficiently compute exact minimizers, offering potential advantages in convergence speed and stability [21] [22]. This technical guide addresses common implementation challenges and provides methodological details for researchers applying these techniques in scientific computing and drug development contexts, particularly within research focused on steepest descent convergence.

Troubleshooting Guides

Frequently Encountered Issues and Solutions

Problem: Slow Convergence in Ill-Conditioned Problems Symptoms: Method progresses very slowly despite polynomial structure; iteration count becomes excessively high. Diagnosis: This occurs when the Hessian of the polynomial objective has a high condition number [22] [23]. Solution: For quadratic polynomials, implement preconditioning. For higher-degree polynomials, consider variable transformations to improve conditioning. Monitor the relationship between gradient norms and iteration count [22].

Problem: Computational Expense of Exact Minimization Symptoms: Each iteration takes prohibitively long despite theoretical convergence guarantees. Diagnosis: Exact minimization of high-degree polynomials requires finding roots of derivative polynomials [24]. Solution: For quartic or higher polynomials, implement efficient root-finding algorithms specifically designed for the polynomial degree. Balance computational cost against convergence benefits [21] [22].

Problem: Convergence to Non-Minimizing Stationary Points Symptoms: Algorithm stagnates at points where gradient is zero but function value is not minimized. Diagnosis: Exact line search may converge to any stationary point without additional safeguards [20]. Solution: Implement curvature conditions to ensure sufficient decrease. For higher-degree polynomials, verify that the Hessian is positive definite at candidate solutions [20].

Problem: Numerical Instability with Large-Scale Problems Symptoms: Erratic convergence behavior or overflow errors with high-dimensional polynomial objectives. Diagnosis: Accumulation of numerical errors in polynomial evaluation and gradient calculations [22]. Solution: Use multi-precision arithmetic for critical computations. Implement residual control strategies and regularly check descent conditions [7].

Experimental Protocols and Methodologies

Protocol 1: Implementing Exact Line Search for Quadratic Polynomials

Initialization: Define quadratic objective function f(x) = ½xᵀAx - bᵀx, where A is symmetric positive definite [22].

Gradient Calculation: Compute ∇f(xₖ) = Axₖ - b at current iterate xₖ [22].

Step Size Calculation: For quadratic objectives, compute exact step size using αₖ = (∇f(xₖ)ᵀ∇f(xₖ)) / (∇f(xₖ)ᵀA∇f(xₖ)) [22].

Update Iterate: Calculate new iterate xₖ₊₁ = xₖ - αₖ∇f(xₖ) [22].

Convergence Check: Terminate when ‖∇f(xₖ)‖ < ε or maximum iterations reached [20].
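Protocol 1 translates almost line by line into NumPy. The sketch below is a minimal illustration; the function name, tolerance, and the 2x2 test system are assumptions chosen for the example.

```python
import numpy as np

def steepest_descent_quadratic(A, b, x0, tol=1e-10, max_iter=10_000):
    """Minimize f(x) = 0.5 x^T A x - b^T x with exact line search (A must be SPD)."""
    x = np.asarray(x0, dtype=float).copy()
    for k in range(max_iter):
        g = A @ x - b                       # gradient at the current iterate
        if np.linalg.norm(g) < tol:         # convergence check
            return x, k
        alpha = (g @ g) / (g @ (A @ g))     # exact minimizer along -g
        x = x - alpha * g
    return x, max_iter

A = np.array([[4.0, 1.0],
              [1.0, 3.0]])
b = np.array([1.0, 2.0])
x_min, iterations = steepest_descent_quadratic(A, b, x0=np.zeros(2))
print(x_min, iterations)
```

For a quadratic, the minimizer also solves the linear system ( A\mathbf{x} = \mathbf{b} ), which gives an independent check on the result.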

Protocol 2: Exact Line Search for Higher-Degree Polynomials

Function Representation: Represent polynomial objective in canonical form with stored coefficients [21].

Direction Computation: Calculate descent direction pₖ (typically negative gradient for steepest descent) [20].

Univariate Minimization: Construct the univariate polynomial φ(α) = f(xₖ + αpₖ) and find the real positive roots of its derivative φ′(α), which are the candidate step sizes [21].

Root Selection: Identify α* that minimizes φ(α) among all critical points [24].

Safeguards: Implement conditions to ensure α* provides sufficient decrease (e.g., Armijo condition) [20].
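A hedged sketch of Protocol 2 follows. It makes several choices that are not taken from the cited sources: instead of expanding the polynomial symbolically, it recovers the coefficients of φ′ by interpolating directional derivatives; it works with the normalized direction for numerical scaling; and it assumes the total degree is known and that φ′ has full degree along the search direction. The separable quartic test objective is hypothetical, and the Armijo safeguard is omitted for brevity.

```python
import numpy as np

def exact_step_polynomial(grad, x, p, degree):
    """Exact line search along p for a polynomial objective of total degree `degree`.

    phi'(t) = grad(x + t u)^T u, with u = p / ||p||, is a polynomial of degree at most
    degree - 1, so `degree` samples determine its coefficients.
    Returns alpha such that x + alpha * p is the best positive critical point of phi.
    """
    norm_p = np.linalg.norm(p)
    u = p / norm_p
    ts = np.arange(degree, dtype=float)                  # sample points 0, 1, ..., degree - 1
    slopes = np.array([grad(x + t * u) @ u for t in ts])
    dphi = np.polyfit(ts, slopes, degree - 1)            # coefficients of phi', highest power first
    roots = np.roots(dphi)
    candidates = [r.real for r in roots if abs(r.imag) < 1e-9 and r.real > 0.0]
    if not candidates:
        return 0.0                                       # no positive critical point found
    phi = np.polyint(dphi)                               # phi up to an additive constant
    t_best = min(candidates, key=lambda t: np.polyval(phi, t))
    return t_best / norm_p

def grad(z):
    # Gradient of the hypothetical quartic z0^4 + z1^4 + (z0 - 1)^2 + (z1 + 2)^2.
    return np.array([4.0 * z[0] ** 3 + 2.0 * (z[0] - 1.0),
                     4.0 * z[1] ** 3 + 2.0 * (z[1] + 2.0)])

x = np.array([2.0, 2.0])
for _ in range(200):
    g = grad(x)
    if np.linalg.norm(g) < 1e-8:
        break
    x = x - exact_step_polynomial(grad, x, -g, degree=4) * g
print(x)
```

Interpolating φ′ rather than φ avoids subtracting nearly equal function values close to the solution, which is one of the numerical precision issues that exact line search on polynomials has to manage.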

Research Reagent Solutions

Table 1: Essential Computational Tools for Exact Line Search Implementation

| Tool/Category | Specific Implementation | Function/Purpose |

|---|---|---|

| Optimization Libraries | TensorFlow, PyTorch [25] | Automatic differentiation for polynomial gradients |

| Polynomial Solvers | NumPy (Python), Eigen (C++) [21] | Root finding for derivative polynomials |

| Linear Algebra | LAPACK, ARPACK [22] | Eigenvalue computation for conditioning analysis |

| Specialized Software | Matplotlib (visualization) [25] | Convergence monitoring and performance profiling |

Quantitative Performance Data

Table 2: Convergence Properties of Exact Line Search Methods

| Problem Type | Convergence Rate | Iteration Cost | Stability |

|---|---|---|---|

| Well-Conditioned Quadratic | Linear, with contraction factor (λₙ-λ₁)/(λₙ+λ₁) [22] | Low (closed-form solution) [22] | High [22] |

| Ill-Conditioned Quadratic | Linear (deteriorates with condition number) [22] [23] | Low (closed-form solution) [22] | Medium [22] |

| Quartic Polynomials | Superlinear (when close to solution) [26] | Medium (root finding) [21] | Medium-High [21] |

| General Polynomials | Varies with degree and structure [26] | High (numerical optimization) [24] | Medium [20] |

Frequently Asked Questions (FAQs)

Q: When is exact line search preferred over approximate methods for polynomial objectives? A: Exact line search is particularly beneficial when the polynomial structure allows efficient computation of minimizers (e.g., low-degree polynomials), when computational resources allow for more accurate steps, and when convergence stability is prioritized over per-iteration cost [21] [22].

Q: How does exact line search improve upon standard gradient descent for polynomial optimization? A: Research demonstrates that exact line search can enhance convergence speed and computational efficiency compared to standard methods. For polynomial matrix equations, it requires fewer iterations to reach solutions and shows improved stability, especially with ill-conditioned matrices [21].

Q: What are the computational bottlenecks when implementing exact line search for high-degree polynomials? A: The primary challenges include: (1) solving for roots of high-degree derivative polynomials, (2) selecting the correct minimizer among multiple critical points, and (3) managing numerical precision in polynomial evaluations [24].

Q: Can exact line search be combined with Newton-type methods for polynomial objectives? A: Yes, exact line search can enhance Newton-type methods by ensuring sufficient decrease at each iteration, potentially improving global convergence while maintaining fast local convergence near optima [26] [24].

Workflow Visualization

The Armijo Rule and Wolfe Conditions

In unconstrained minimization problems, inexact line search methods provide an efficient way to determine an acceptable step length without spending excessive computational resources to find the exact minimum along a search direction. The Armijo rule (also called the sufficient decrease condition) and Wolfe conditions are inequalities used to ensure that the step length achieves adequate reduction in the objective function while maintaining reasonable convergence properties [27] [28].

The Armijo condition alone ensures that the function value decreases sufficiently, but it may accept step lengths that are too small, leading to slow convergence. The Wolfe conditions combine the Armijo condition with a curvature condition to prevent excessively small steps while still guaranteeing convergence [29] [28].

Table: Key Parameters in Inexact Line Search Conditions

| Parameter | Typical Value Range | Function | Mathematical Expression |

|---|---|---|---|

| c₁ (Armijo parameter) | 10⁻⁴ or smaller [29] | Controls sufficient decrease | ( f(x_k + α_k p_k) ≤ f(x_k) + c_1 α_k p_k^T ∇f(x_k) ) [28] |

| c₂ (Curvature parameter) | 0.1-0.9 [29] | Controls step acceptance | ( p_k^T ∇f(x_k + α_k p_k) ≥ c_2 p_k^T ∇f(x_k) ) [28] |

| Relationship requirement | 0 < c₁ < c₂ < 1 [28] | Ensures existence of acceptable steps | Critical for convergence guarantees |
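The conditions in the table can be checked directly for a candidate step length. The sketch below is illustrative: the function name `wolfe_conditions` and the simple convex test function are assumptions, and the defaults c1 = 1e-4 and c2 = 0.9 follow the typical ranges listed above.

```python
import numpy as np

def wolfe_conditions(f, grad, x, p, alpha, c1=1e-4, c2=0.9):
    """Return (armijo_ok, curvature_ok, strong_curvature_ok) for step length alpha."""
    slope0 = grad(x) @ p                       # directional derivative at x (negative for descent)
    x_new = x + alpha * p
    slope_new = grad(x_new) @ p                # directional derivative at the trial point
    armijo_ok = bool(f(x_new) <= f(x) + c1 * alpha * slope0)
    curvature_ok = bool(slope_new >= c2 * slope0)
    strong_ok = bool(abs(slope_new) <= c2 * abs(slope0))
    return armijo_ok, curvature_ok, strong_ok

f = lambda z: 0.5 * (z @ z)                    # simple convex test function (illustrative)
grad = lambda z: z
x = np.array([1.0, -2.0])
p = -grad(x)

tiny = wolfe_conditions(f, grad, x, p, alpha=1e-3)   # decreases f, but the step is too short
good = wolfe_conditions(f, grad, x, p, alpha=1.0)    # lands on the minimizer
far = wolfe_conditions(f, grad, x, p, alpha=2.5)     # overshoots and increases f
print(tiny, good, far)
```

The three trial steps show the division of labor: the Armijo condition rejects the overshooting step, while the curvature condition rejects the very short one.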

Visualization of Condition Relationships

Relationship between different line search conditions

Implementation Guide

Backtracking Line Search with Armijo Rule

Backtracking line search provides a simple method for implementing the Armijo condition. It starts with a relatively large estimate of the step size and iteratively shrinks it until the Armijo condition is satisfied [30].

Algorithm Steps:

- Initialize: Choose initial step length α₀ > 0, contraction factor τ ∈ (0,1), and c₁ ∈ (0,1)

- Set j = 0 and compute m = ∇f(x)ᵀp (local slope along direction p)

- Iterate: While ( f(x + α_j p) > f(x) + c₁ α_j m ), set α_{j+1} = τ α_j and increment j

- Return α_j when the condition is satisfied [30]
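The steps above can be sketched as follows. The function name, the cap on backtracking steps, and the ill-conditioned quadratic used for the demonstration are assumptions for illustration; the defaults α₀ = 1, τ = 0.5, and c₁ = 10⁻⁴ match the values discussed in this section.

```python
import numpy as np

def backtracking_armijo(f, grad_x, x, p, alpha0=1.0, tau=0.5, c1=1e-4, max_backtracks=50):
    """Shrink alpha by tau until f(x + alpha p) <= f(x) + c1 * alpha * m, with m = grad_x^T p."""
    m = grad_x @ p                              # local slope along p
    if m >= 0:
        raise ValueError("p is not a descent direction")
    fx = f(x)
    alpha = alpha0
    for _ in range(max_backtracks):
        if f(x + alpha * p) <= fx + c1 * alpha * m:
            return alpha
        alpha *= tau
    raise RuntimeError("no acceptable step found")

# Illustrative ill-conditioned quadratic with condition number 25.
f = lambda z: 0.5 * (z[0] ** 2 + 25.0 * z[1] ** 2)
grad = lambda z: np.array([z[0], 25.0 * z[1]])

x_start = np.array([5.0, 1.0])
first_alpha = backtracking_armijo(f, grad(x_start), x_start, -grad(x_start))

x = x_start.copy()
for _ in range(1000):
    g = grad(x)
    if np.linalg.norm(g) < 1e-8:
        break
    x = x - backtracking_armijo(f, g, x, -g) * g
print(first_alpha, x)
```

Raising an error after a fixed number of contractions, rather than looping indefinitely, makes a wrong gradient or a non-descent direction visible early.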

Table: Backtracking Line Search Parameter Selection

| Parameter | Recommended Values | Effect on Performance | Stability Considerations |

|---|---|---|---|

| Initial α₀ | 1.0 or BB step size [31] | Larger values may reduce iterations but increase function evaluations | Too large may cause overflow or numerical instability |

| Contraction factor τ | 0.5 [30] | Smaller values find acceptable steps faster but may result in smaller steps | Values too close to 1 may require many iterations |

| c₁ | 10⁻⁴ [29] | Larger values enforce stricter decrease requirements | Values close to 1 accept only very short steps; with Wolfe conditions, c₁ ≥ c₂ can leave no acceptable step |

Wolfe Conditions Implementation

For more sophisticated optimization algorithms, particularly quasi-Newton methods, implementing the full Wolfe conditions often yields better performance [28].

Algorithm Workflow:

Wolfe conditions step length selection workflow

Troubleshooting Guide

Common Implementation Issues

Problem: Line Search Taking Too Small Steps

Symptoms: Slow convergence, minimal objective function improvement between iterations Diagnosis: Armijo condition too strict (c₁ too large) or initial step length too small Solution:

- Reduce c₁ to 10⁻⁴ or smaller [29]

- Implement interpolation instead of fixed contraction

- Use Barzilai-Borwein (BB) step size as initial guess [31]

Problem: Line Search Failing to Find Acceptable Step

Symptoms: Algorithm terminates early or enters infinite loop Diagnosis: Descent direction not properly computed or curvature condition violated Solution:

- Verify descent direction satisfies ∇f(x)ᵀp < 0 [29]

- Check gradient computation for errors

- Implement bracketing with zoom algorithm [29]

Problem: Excessive Function Evaluations

Symptoms: Slow runtime despite good convergence Diagnosis: Overly strict Wolfe conditions or inefficient implementation Solution:

- Increase c₂ to 0.9 to widen acceptance interval [28]

- Implement caching of function and gradient evaluations

- Consider two-way backtracking for large-scale problems [30]

Problem: Non-Monotonic Gradient Norm Reduction

Symptoms: Gradient norm oscillates between iterations Diagnosis: Using standard Wolfe conditions instead of strong Wolfe conditions Solution:

- Implement strong Wolfe conditions: ( |p_k^T ∇f(x_k + α_k p_k)| ≤ c_2 |p_k^T ∇f(x_k)| ) [29] [28]

- This prevents the directional derivative from being "too positive" at the new point

Research Reagent Solutions

Table: Essential Computational Tools for Line Search Implementation

| Tool/Component | Function | Implementation Notes |

|---|---|---|

| Gradient Verifier | Validates analytical gradient computation | Use finite differences per coordinate: ( [f(x + ε e_i) - f(x)]/ε ) approximates the i-th gradient component, where e_i is the i-th unit vector |

| Direction Checker | Ensures p is a descent direction | Must satisfy: ∇f(x)ᵀp < 0 [29] |

| Bracketing Algorithm | Finds interval containing acceptable step | Combine with zoom for strong Wolfe conditions [29] |

| Function Evaluator | Computes objective function | Cache previous evaluations to reduce computation |

| Step Length Interpolator | Generates candidate step lengths | Quadratic/cubic interpolation often effective |
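A gradient verifier of the kind listed in the table might look like the sketch below. It uses central differences, a common and more accurate variant of the forward difference shown in the table. The function name `check_gradient`, the step `eps`, and the test function are assumptions for illustration.

```python
import numpy as np

def check_gradient(f, grad, x, eps=1e-6):
    """Return the largest absolute gap between grad(x) and a central-difference estimate."""
    x = np.asarray(x, dtype=float)
    numeric = np.zeros_like(x)
    for i in range(x.size):
        e = np.zeros_like(x)
        e[i] = eps
        numeric[i] = (f(x + e) - f(x - e)) / (2.0 * eps)   # central difference in coordinate i
    return np.max(np.abs(numeric - grad(x)))

f = lambda z: z[0] ** 4 + 10.0 * (z[1] - z[0] ** 2) ** 2
grad = lambda z: np.array([4.0 * z[0] ** 3 - 40.0 * z[0] * (z[1] - z[0] ** 2),
                           20.0 * (z[1] - z[0] ** 2)])

x_test = np.array([0.7, -0.3])
gap = check_gradient(f, grad, x_test)
gap_wrong = check_gradient(f, lambda z: grad(z) + 0.1, x_test)   # deliberately wrong gradient
print(gap, gap_wrong)
```

A gap many orders of magnitude above the finite-difference error, as in the second call, usually points to a mistake in the analytical gradient rather than in the line search.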

Frequently Asked Questions

Q: Why must c₁ be smaller than c₂ in the Wolfe conditions?

A: The relationship 0 < c₁ < c₂ < 1 is mathematically necessary to guarantee that there exists a range of step lengths satisfying both conditions simultaneously. If c₁ were larger than c₂, it might be impossible to find any step length that satisfies both the sufficient decrease and curvature conditions, causing the line search to fail [28].

Q: When should I use Armijo alone versus full Wolfe conditions?

A: Use Armijo alone (backtracking) for simpler algorithms like gradient descent where computational efficiency is prioritized over convergence rate. Use Wolfe conditions for quasi-Newton methods where preserving the positive-definiteness of Hessian approximations is important, or when you need faster convergence [28].

Q: How do I choose between standard and strong Wolfe conditions?

A: Use standard Wolfe conditions for general purposes. Prefer strong Wolfe conditions when you need to exclude points where the directional derivative is large and positive, i.e. steps that overshoot well past a minimizer along the search direction; the standard curvature condition only rules out slopes that are still too negative. Strong Wolfe conditions typically lead to better convergence behavior [29].

Q: What causes the "curvature condition" to fail and how is it resolved?

A: The curvature condition ( ∇f(x + αp)^T p ≥ c₂ ∇f(x)^T p ) fails when the step length is too short, causing insufficient change in the directional derivative. This is resolved by increasing the step length until the gradient at the new point is sufficiently less negative than at the current point [32] [29].

Q: How does the Barzilai-Borwein (BB) method relate to these conditions?

A: The BB method uses a specific formula to compute step sizes that can be viewed as a special case of more general line search methods. Recent extensions to BB-like step sizes show how the principles behind Wolfe conditions can be adapted to create new step size strategies with proven convergence guarantees [27] [31].
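For reference, the two classical BB step sizes are computed from the change in iterate s and the change in gradient y between successive iterations: sᵀs / sᵀy (the long step) and sᵀy / yᵀy (the short step). The sketch below is a minimal illustration; the function name, the fallback behavior when sᵀy is not positive, and the diagonal test problem are assumptions.

```python
import numpy as np

def bb_step_sizes(x_prev, x_curr, g_prev, g_curr):
    """Return the two Barzilai-Borwein step sizes (long, short) from successive iterates."""
    s = x_curr - x_prev            # change in iterate
    y = g_curr - g_prev            # change in gradient
    sy = s @ y
    if sy <= 0:
        return None                # no positive curvature; the caller should fall back
    return (s @ s) / sy, sy / (y @ y)

A = np.diag([1.0, 10.0])           # illustrative quadratic f(x) = 0.5 x^T A x
grad = lambda z: A @ z
x0 = np.array([1.0, 1.0])
x1 = x0 - 0.05 * grad(x0)          # one plain gradient step to obtain a second point
bb_long, bb_short = bb_step_sizes(x0, x1, grad(x0), grad(x1))
print(bb_long, bb_short)
```

On a quadratic both values are reciprocals of Rayleigh quotients of the Hessian, so they lie between 1/λₙ and 1/λ₁. Either one can serve as the initial trial step α₀ in a backtracking line search.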

Troubleshooting Guide: Common Issues and Solutions

Q1: My algorithm's convergence slows down significantly in high-dimensional problems, even when the problem is well-conditioned. What is causing this, and how can I fix it?

Problem: This is a known limitation of the standard Polyak step-size in high-dimensional settings, where the problem dimension d grows much faster than the sample size n. The issue arises from a mismatch in how smoothness is measured. The standard approach estimates the global Lipschitz smoothness constant, which becomes ineffective in high dimensions [33].

Solution: Implement the Sparse Polyak step-size. This variant is designed for high-dimensional M-estimation problems. It modifies the step size to estimate the restricted Lipschitz smoothness constant (RSS), which measures smoothness only in directions relevant to the problem. This adaptation helps maintain a constant number of iterations to achieve optimal statistical precision, preserving the rate invariance property even as d/n grows [33] [34].

Q2: When using gradient descent on a function like the Rosenbrock function, the algorithm oscillates in the "ravine" and converges very slowly. How can adaptive step-sizes help?

Problem: The Rosenbrock-type function f(x,y)=x^4+10(y-x^2)^2 has a valley (or ravine) along the parabola y=x^2. The function grows rapidly (quadratically) away from this ravine but only slowly (quartically) along it. Constant step-size gradient descent struggles to navigate this terrain efficiently [35].

Solution: Use an epoch-based adaptive strategy that interlaces multiple constant step-size gradient steps with a single long Polyak step [35].

- Constant Step-size Phase (GD): Run several iterations with a constant step-size. This brings the iterates close to the ravine.

- Polyak Step (Polyak): Execute a single step using the Polyak rule: η = f(x_t) / ||∇f(x_t)||^2 (this form assumes the minimum value is 0, as it is for the function above). This large step moves the iterate significantly closer to the minimum along the ravine.

This hybrid method, GDPolyak, can achieve (nearly) linear convergence on problems where both constant step-size GD and pure Polyak exhibit sublinear convergence [35].
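The epoch structure can be sketched on the function from this question. This is an illustrative reading of the idea, not the algorithm from [35] as published: the epoch count, the number of GD steps per epoch, the constant step size `eta`, and the starting point are all assumed values, and the Polyak step uses the known minimum value 0.

```python
import numpy as np

def f(z):
    x, y = z
    return x ** 4 + 10.0 * (y - x ** 2) ** 2

def grad_f(z):
    x, y = z
    r = y - x ** 2
    return np.array([4.0 * x ** 3 - 40.0 * x * r, 20.0 * r])

def gd_polyak(z0, epochs=20, gd_steps=50, eta=0.02):
    """Alternate `gd_steps` constant-step GD iterations with one Polyak step per epoch.

    Assumes the minimum value of f is 0. The values of `gd_steps` and `eta` are
    illustrative choices, not taken from the cited work.
    """
    z = np.asarray(z0, dtype=float).copy()
    history = [f(z)]
    for _ in range(epochs):
        for _ in range(gd_steps):                # constant step-size phase: return to the ravine
            z = z - eta * grad_f(z)
        g = grad_f(z)
        g_norm_sq = g @ g
        if g_norm_sq == 0.0:
            break
        z = z - (f(z) / g_norm_sq) * g           # single long Polyak step along the ravine
        history.append(f(z))
    return z, history

z_final, history = gd_polyak([0.5, 0.5])
print(history[0], history[-1])
```

The constant step must stay below 2 divided by the largest local curvature, which is why the sketch starts close to the origin. With a fixed number of GD steps per epoch the improvement eventually stalls once the iterates are very close to the minimizer, so the constants here should be treated as tunable.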

Q3: How can I implement a Polyak step-size without prior knowledge of the optimal value f(x*)?

Problem: The classical Polyak step-size, η_k = (f(x_k) - f(x*)) / ||∇f(x_k)||^2, requires knowing the optimal function value f(x*), which is often unavailable in real-world problems [36].

Solution: While the core method requires f(x*), research has proposed modifications for when it is unknown.

- Lower Bound Approach: The requirement can be relaxed by paying a logarithmic factor in complexity if a lower bound on f(x*) is available [36].

- Stochastic Variants: In stochastic settings, variants like AdaSPS and AdaSLS have been designed that do not require f(x*) or knowledge of problem parameters and still guarantee convergence to the exact minimizer [37] [7].

Q4: In noisy optimization environments, the gradient norm can be unreliable. Are there robust alternatives for step-size adaptation?

Problem: When gradients are subject to significant interference or noise, calculating the step size based on the gradient norm can be unstable and harm convergence [7].

Solution: Implement a step adaptation algorithm based on orthogonality. The core idea is to adapt the step h_k to find a new point where the current gradient is orthogonal to the previous one, aiming for a 90-degree angle between successive gradients. This method mimics the steepest descent principle but is more robust to noise. The step is adjusted to achieve incomplete relaxation or over-relaxation to enforce this orthogonality condition, which can provide better performance than the steepest descent method under significant relative interference on the gradient [7].

Performance Comparison of Adaptive Step-Size Methods

The table below summarizes the characteristics and performance of different adaptive step-size algorithms discussed in the troubleshooting guide.

| Algorithm Name | Key Principle | Typical Convergence Rate | Problem Context / Assumptions | Key Advantage |
|---|---|---|---|---|
| Standard Polyak [36] | Hyperplane projection; step-size uses f(x*). | O(1/√K) (nonsmooth), O(1/K) (smooth) | Star-convex functions. | No need for Lipschitz constant; simple update. |
| Sparse Polyak [33] | Uses restricted Lipschitz smoothness (RSS). | Near-optimal statistical precision in high dimensions. | High-dimensional sparse M-estimation (d >> n). | Maintains rate invariance; superior high-dimensional performance. |
| GDPolyak [35] | Alternates constant GD steps with large Polyak steps. | Local (nearly) linear convergence. | Functions with fourth-order growth (e.g., Rosenbrock). | Handles "ravine" structures effectively. |
| MomSPSmax (Stochastic HB) [37] | Polyak step-size integrated with heavy-ball momentum. | Fast rate (matching deterministic HB under interpolation). | Convex, smooth stochastic optimization. | Combines benefits of momentum and adaptive step-size. |
| Orthogonality-Based [7] | Adjusts step to enforce orthogonality of successive gradients. | ~2.7x faster than steepest descent in iterations (avg.). | Noisy gradients; non-convex smooth functions. | High noise immunity; only one gradient calc per iteration. |

Experimental Protocols for Key Algorithms

Protocol 1: Evaluating Sparse Polyak for High-Dimensional Estimation

This protocol outlines the methodology for comparing Sparse Polyak against standard adaptive methods in a high-dimensional sparse regression setting [33].

- Problem Setup: Generate data for a high-dimensional linear model where the true parameter vector θ* is sparse (s* non-zero entries). The design dimension d should be much larger than the sample size n.

- Algorithm Configuration:

  - Sparse Polyak: Implement the Iterative Hard Thresholding (IHT) algorithm, where the step-size is adapted using the Sparse Polyak rule, which estimates the Restricted Lipschitz Smoothness constant.

  - Baselines: Run standard IHT with a fixed step-size (requiring knowledge of the RSS constant L̄) and IHT with the standard Polyak step-size.

- Evaluation Metrics: Track the following over iterations:

  - Statistical Error: ||θ_t - θ*||_2.

  - Optimization Error: f(θ_t) - f(θ*).

  - Number of Iterations to reach a pre-specified statistical precision ε.

- Key Experiment: Measure how the number of required iterations scales as the dimension d increases (while keeping s* log(d)/n constant). The Sparse Polyak method should maintain a nearly constant iteration count, unlike the standard Polyak, whose iteration count will increase [33].
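The fixed-step baseline in this protocol can be sketched as follows. This is a minimal illustration assuming a least-squares loss f(θ) = ||Xθ - y||^2 / (2n). The function names, the synthetic data sizes, and the step-size `eta` are assumptions made for the example, and the Sparse Polyak rule of [33] itself is not implemented here.

```python
import numpy as np

def hard_threshold(v, s):
    """HT_s: keep the s largest-magnitude entries of v and zero the rest."""
    out = np.zeros_like(v)
    if s > 0:
        keep = np.argsort(np.abs(v))[-s:]
        out[keep] = v[keep]
    return out

def iht_fixed_step(X, y, s, eta, n_iter=200, theta_star=None):
    """Baseline IHT with a fixed step-size eta (hypothetical helper).

    Returns the final iterate and, if theta_star is given, the statistical
    error ||theta_t - theta_star||_2 recorded at every iteration.
    """
    n, d = X.shape
    theta = np.zeros(d)
    stat_err = []
    for _ in range(n_iter):
        grad = X.T @ (X @ theta - y) / n
        theta = hard_threshold(theta - eta * grad, s)
        if theta_star is not None:
            stat_err.append(float(np.linalg.norm(theta - theta_star)))
    return theta, stat_err

# Synthetic sparse regression with d >> n (sizes are illustrative only).
rng = np.random.default_rng(0)
n, d, s = 100, 400, 5
theta_star = np.zeros(d)
theta_star[rng.choice(d, size=s, replace=False)] = rng.standard_normal(s)
X = rng.standard_normal((n, d))
y = X @ theta_star
theta_hat, stat_err = iht_fixed_step(X, y, s, eta=0.3, theta_star=theta_star)
print(stat_err[0], stat_err[-1])
```

To run the scaling experiment, the same loop would be repeated for increasing d while holding s* log(d)/n fixed, with the adaptive rules swapped in for the constant `eta`.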

Protocol 2: Testing the GDPolyak Algorithm on Degenerate Functions

This protocol tests the hybrid GDPolyak algorithm on a function with a "ravine" structure and fourth-order growth [35].

- Test Function: Use the Rosenbrock-type function f(x,y) = x^4 + 10(y - x^2)^2. Both terms are non-negative and vanish together only at the origin, so the minimum is at (0, 0) with f = 0 (not at (1, 1), which is the minimizer of the classical Rosenbrock function).

- Algorithm Configuration:

  - GDPolyak: Choose an epoch length K (e.g., 5-10). In each epoch, perform K gradient descent steps with a small constant step-size η. Then, perform one Polyak step: η_polyak = f(x_t) / ||∇f(x_t)||^2.

  - Baselines: Run standard gradient descent with a constant step-size and gradient descent using only the Polyak step-size at every iteration.

- Evaluation Metrics: Record over time (iterations):

  - Function value f(x_t).

  - Distance to optimum ||x_t - x*||.

  - The adaptive step-size η_t used.

- Expected Outcome: The GDPolyak algorithm should exhibit linear convergence in both function value and distance to the optimum, while the baselines show sublinear convergence. The log-plot of the step-size η_t for GDPolyak should show an exponential growth pattern [35].
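The protocol can be sketched in Python as below. Since f(x,y) = x^4 + 10(y - x^2)^2 is non-negative and equals zero only at the origin, the sketch uses f(x*) = 0 in the Polyak step. The starting point (0.5, 0.25), the step-size 0.02, the epoch length K = 10, and the number of epochs are illustrative assumptions rather than settings from [35]. Both methods are given the same budget of 330 gradient evaluations.

```python
import numpy as np

def f(p):
    x, y = p
    return x**4 + 10.0 * (y - x**2) ** 2

def grad_f(p):
    x, y = p
    r = y - x**2
    return np.array([4.0 * x**3 - 40.0 * x * r, 20.0 * r])

def gd(p0, eta, n_steps):
    """Plain gradient descent with a constant step-size."""
    p = np.asarray(p0, dtype=float)
    for _ in range(n_steps):
        p = p - eta * grad_f(p)
    return p

def gd_polyak(p0, eta, K, n_epochs):
    """Each epoch: K constant-step GD steps, then one Polyak step with f* = 0."""
    p = np.asarray(p0, dtype=float)
    for _ in range(n_epochs):
        p = gd(p, eta, K)
        g = grad_f(p)
        g_norm_sq = float(g @ g)
        if g_norm_sq == 0.0:        # already stationary
            break
        p = p - (f(p) / g_norm_sq) * g
    return p

p0 = [0.5, 0.25]
p_hybrid = gd_polyak(p0, eta=0.02, K=10, n_epochs=30)   # 30 * (10 + 1) gradients
p_gd = gd(p0, eta=0.02, n_steps=330)                    # same gradient budget
print("GDPolyak f:", f(p_hybrid), " GD f:", f(p_gd))
```

Logging f(x_t), ||x_t||, and the Polyak step-size inside `gd_polyak` at every epoch would give the curves listed under Evaluation Metrics.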

Research Reagent Solutions

The table below lists key conceptual "reagents" and their functions in the context of researching adaptive step-size algorithms.

| Research Reagent / Concept | Function / Role in the Experiment |
|---|---|
| Restricted Lipschitz Smoothness (RSS) Constant [33] | A key smoothness parameter in high-dimensional spaces; ensures convergence of algorithms like IHT when the problem is restricted to sparse vectors. |
| Ravine Manifold (M) [35] | A smooth manifold containing the solution along which the function grows slowly. Its identification allows for designing efficient hybrid algorithms (e.g., GDPolyak). |
| Hard Thresholding Operator (HT_s) [33] | A non-linear projection used in IHT to enforce sparsity by retaining only the s largest (in magnitude) elements of a vector. |
| Orthogonality Principle (for step adaptation) [7] | A criterion used to adjust the step-size by aiming for orthogonality between successive gradients, improving robustness to noise. |
| Star-Convexity [36] | A generalization of convexity (the function is convex with respect to all its minimizers) sufficient for the convergence of the subgradient method with Polyak stepsize. |

Workflow and Conceptual Diagrams

This diagram illustrates a decision workflow for choosing between the standard and Sparse Polyak step-size within an iterative optimization algorithm, highlighting the key differentiation point for high-dimensional problems.

This diagram shows the conceptual decomposition of a function near a minimizer, which underpins the GDPolyak method. The function is split into a normal component (decreased by constant GD steps) and a tangential component (decreased by large Polyak steps).

Angle Condition Methods for Controlling Iterative Instability

The angle condition is a stabilization technique for the steepest descent method in structural reliability analysis. It controls instabilities by monitoring the angle between successive search direction vectors and dynamically adjusting the step size to prevent oscillatory or chaotic divergence [38]. This method is particularly valuable for highly nonlinear performance functions where traditional first-order reliability methods (FORM) like HL-RF become unstable [38].

Troubleshooting Guides

Issue 1: Oscillatory or Divergent Iterations

- Problem: Iterates oscillate between values or diverge chaotically instead of converging to the Most Probable Point (MPP).

- Diagnosis: This occurs when the iterative FORM formulation, particularly the standard HL-RF method with a step size of 1, is applied to highly nonlinear limit state functions [38]. The search direction overshoots.

- Solution: Implement the Angle Condition to adaptively control the step size.

- Procedure:

  - At each iteration \( k \), compute the standard HL-RF point \( U_{k+1}^{HLRF} \) [38].

  - Calculate the angle \( \theta_k \) between the current normalized steepest descent vector \( \alpha_{k+1} \) and the vector from the current point to the new HL-RF point [38].

  - Compare \( \theta_k \) to the angle from the previous iteration, \( \theta_{k-1} \).

  - If the new angle is larger (\( \theta_k > \theta_{k-1} \)), it indicates potential instability. Reduce the step size \( \lambda_k \) using an inner loop (e.g., \( \lambda_k = \lambda_k / 2 \)) until the angle condition (\( \theta_k \leq \theta_{k-1} \)) is satisfied [38].

  - Update the design point: \( U_{k+1} = U_k + \lambda_k (U_{k+1}^{HLRF} - U_k) \).
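The procedure can be sketched in Python as below. This is one possible reading of the steps, not the implementation of [38]. In particular, evaluating the steepest descent vector at the trial point so that the angle depends on the step size, the cap on the number of halvings, and all function names are assumptions made for illustration. The HL-RF update itself is the standard formula U^{HLRF} = [(∇g·U - g(U)) / ||∇g||^2] ∇g.

```python
import numpy as np

def hlrf_point(u, g, grad_g):
    """Standard HL-RF update in standard normal space."""
    dg = grad_g(u)
    return (dg @ u - g(u)) / (dg @ dg) * dg

def angle(a, b):
    """Angle in radians between a and b (0 if either vector is zero)."""
    na, nb = np.linalg.norm(a), np.linalg.norm(b)
    if na == 0.0 or nb == 0.0:
        return 0.0
    return float(np.arccos(np.clip(a @ b / (na * nb), -1.0, 1.0)))

def form_angle_condition(g, grad_g, u0, tol=1e-8, max_iter=100, max_halvings=20):
    """HL-RF iteration with step halving until theta_k <= theta_{k-1}."""
    u = np.asarray(u0, dtype=float)
    theta_prev = np.inf                      # first step is unrestricted
    for _ in range(max_iter):
        d = hlrf_point(u, g, grad_g) - u     # vector to the HL-RF point
        if np.linalg.norm(d) < tol:
            break
        lam = 1.0
        # Assumed reading: steepest descent vector taken at the trial point.
        theta = angle(-grad_g(u + lam * d), d)
        halvings = 0
        while theta > theta_prev + 1e-12 and halvings < max_halvings:
            lam *= 0.5
            theta = angle(-grad_g(u + lam * d), d)
            halvings += 1
        u = u + lam * d
        theta_prev = theta
    return u, float(np.linalg.norm(u))       # MPP estimate and reliability index

# Linear limit state g(u) = 3 - a.u with ||a|| = 1: the MPP is 3*a, beta = 3.
a = np.array([0.6, 0.8])
u_mpp, beta = form_angle_condition(lambda u: 3.0 - a @ u, lambda u: -a, np.zeros(2))
print(u_mpp, beta)
```

The linear example only checks that the sketch reduces to plain HL-RF when no instability occurs. Behaviour on strongly nonlinear limit states depends on the details in [38] that this sketch does not reproduce.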

Issue 2: Slow Convergence Rate

- Problem: The algorithm converges stably but requires an excessive number of iterations.

- Diagnosis: Overly conservative step sizes from the angle condition can slow convergence.

- Solution:

- Use a dynamical-accelerated step size within the angle condition framework. The inner loop for step size reduction can be designed to find the largest step size that still satisfies the angle condition, rather than the smallest [38].

- For non-chaotic cases, consider a hybrid approach where the standard HL-RF method (step size=1) is used initially and the angle condition is activated only when oscillations are detected.

Issue 3: Inaccurate MPP and Reliability Index

- Problem: The solution converges but to an incorrect MPP, leading to an inaccurate failure probability.

- Diagnosis: This can be caused by an inaccurate gradient vector or numerical precision issues in the limit state function.

- Solution:

- Verify the gradient calculation. Use a central difference method for better accuracy rather than forward difference.

- Ensure the neighborhood size for numerical gradient calculation is appropriately small and decreases with iterations to prevent cycling, as demonstrated in proof-of-concept code where Nsize is reduced by a factor of \( k \) [39].

- Check the sensitivity of the limit state function. Highly discontinuous or noisy functions may require specialized treatment.
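The difference between the two finite-difference schemes can be illustrated with a short sketch. The function names and the default spacing `h` are hypothetical. The central scheme has truncation error of order h^2, whereas the forward scheme has error of order h.

```python
import numpy as np

def forward_diff_grad(f, x, h=1e-5):
    """Forward-difference gradient of f at a 1-D point x; error is O(h)."""
    x = np.asarray(x, dtype=float)
    fx = f(x)
    grad = np.zeros_like(x)
    for i in range(x.size):
        e = np.zeros_like(x)
        e[i] = h
        grad[i] = (f(x + e) - fx) / h
    return grad

def central_diff_grad(f, x, h=1e-5):
    """Central-difference gradient of f at a 1-D point x; error is O(h^2)."""
    x = np.asarray(x, dtype=float)
    grad = np.zeros_like(x)
    for i in range(x.size):
        e = np.zeros_like(x)
        e[i] = h
        grad[i] = (f(x + e) - f(x - e)) / (2.0 * h)
    return grad

# Example limit state with exact gradient (2*u0, 3) at u = (1, 2).
g = lambda u: u[0] ** 2 + 3.0 * u[1]
u = np.array([1.0, 2.0])
print(forward_diff_grad(g, u), central_diff_grad(g, u))
```

A shrinking neighborhood could be obtained by passing something like `h = h0 / k` at iteration k. That schedule is an assumed reading of the `Nsize` reduction described in [39], not a value taken from it.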

Frequently Asked Questions (FAQs)

Q1: How does the angle condition method compare to other stabilized FORM algorithms like the Finite-Step Length (FSL) or Chaos Control (STM) methods?

A1: The angle condition method is recognized for its simple application and effectiveness in enhancing robustness [38]. Unlike methods that rely on merit functions or Armijo rules, which can lead to complicated formulations and increased computational burden, the angle condition provides a geometrically intuitive and computationally simpler criterion for step size adjustment [38]. It has been shown to offer a superior balance of stability and efficiency compared to some traditional iterative methods [38].

Q2: My research involves multiobjective optimization under uncertainty. Can the steepest descent method with step size control be applied?

A2: Yes, the principles are actively being extended. Recent research has developed steepest descent methods for uncertain multiobjective optimization problems (UMOP) using a robust optimization framework [3]. While the specific "angle condition" may not be used, the fundamental challenge of achieving global convergence and controlling the step size is critical. Rigorous proofs for the global convergence and linear convergence rate of these steepest descent algorithms in UMOP are a current research focus [3].

Q3: What is the computational cost of implementing the inner loop for the angle condition?

A3: The inner loop for step size adjustment adds computational overhead per iteration, but the overall computational burden is often reduced. This is because the method prevents wasteful, divergent iterations and achieves stabilization more efficiently than some other controlled FORM formulations, leading to a net reduction in total computation time for complex problems [38].

Q4: Are there alternatives to decreasing step sizes for ensuring convergence?

A4: Yes, the core requirement is a balance between step sizes going to zero (for convergence) and their sum being infinite (to avoid getting stuck far from the optimum) [39]. A harmonic sequence (\( a_k = a_1 / k \)) is a common choice, but more general sequences (\( a_k = a_1 / k^t \) with \( 0 < t \leq 1 \)) can also be used [39].
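A small sketch of such a schedule is given below. The function name is hypothetical, and the range check simply encodes the condition 0 < t ≤ 1 stated above. Within that range the steps shrink to zero while their sum still diverges.

```python
def diminishing_step(k, a1=1.0, t=1.0):
    """Step size a_k = a1 / k**t for iteration k = 1, 2, ..., with 0 < t <= 1."""
    if k < 1:
        raise ValueError("k must be a positive iteration index")
    if not 0.0 < t <= 1.0:
        raise ValueError("t must satisfy 0 < t <= 1")
    return a1 / k**t

# Compare the harmonic schedule (t = 1) with a slower decay (t = 0.5).
for k in (1, 10, 100, 1000):
    print(k, diminishing_step(k), diminishing_step(k, t=0.5))
```

Smaller values of t keep the steps larger for longer, which trades slower damping of oscillations for faster initial progress.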

Experimental Protocol and Data

The following table summarizes key parameters and their roles in implementing the angle condition method for a typical structural reliability analysis.

Table 1: Key Parameters for Angle Condition Method Implementation

| Parameter | Symbol | Role & Specification | Recommended Value / Range |
|---|---|---|---|
| Initial Step Size | \( a_1 \) or \( \lambda_1 \) | Governs the initial aggressiveness of the search. Too large causes instability; too small slows convergence. | Start at 1.0, then reduce via angle condition [38] [39]. |
| Initial Point | \( x_1 \) or \( U_1 \) | The starting point for the iterative MPP search in the standard normal space. | Problem-dependent; often the origin or a known design point. |