Steepest Descent vs. Conjugate Gradient vs. L-BFGS: A Performance Guide for Biomedical Researchers

This article provides a comprehensive comparison of three fundamental optimization algorithms—Steepest Descent, Conjugate Gradient, and L-BFGS—tailored for researchers and professionals in drug development and bioinformatics.

Abstract

This article provides a comprehensive comparison of three fundamental optimization algorithms—Steepest Descent, Conjugate Gradient, and L-BFGS—tailored for researchers and professionals in drug development and bioinformatics. It covers the core mathematical principles, explores practical applications in areas like drug-target interaction (DTI) prediction, and offers guidance on algorithm selection and troubleshooting based on problem characteristics like dimensionality, computational cost, and conditioning. Synthesizing insights from benchmark studies and current research, this guide aims to equip scientists with the knowledge to enhance the efficiency and robustness of their computational models in biomedical research.

Core Principles: Understanding the Mechanics of Steepest Descent, CG, and L-BFGS

In the realm of unconstrained mathematical optimization, gradient-based methods form the cornerstone for minimizing multivariate functions. These first-order iterative algorithms are particularly crucial in scientific and industrial contexts, including computational drug development, where efficient energy minimization of molecular structures can significantly accelerate research. Among these methods, the Steepest Descent (SD) algorithm represents the most fundamental approach, providing a baseline against which more sophisticated techniques are measured. This guide objectively compares the performance of three prominent gradient-based algorithms—Steepest Descent, Conjugate Gradient (CG), and Limited-memory Broyden–Fletcher–Goldfarb–Shanno (L-BFGS)—within a unified research framework. The performance analysis focuses on their convergence properties, computational efficiency, and applicability to large-scale problems encountered in scientific computing, with specific attention to the well-documented zigzag problem that plagues the steepest descent method in ill-conditioned landscapes.

The core principle underlying these methods is to iteratively update parameter estimates by moving in a direction that reduces the objective function value. For a function (f(\mathbf{x})), the generic update formula is (\mathbf{x}_{k+1} = \mathbf{x}_k + \alpha_k \mathbf{d}_k), where (\alpha_k) is the step size and (\mathbf{d}_k) is the search direction at iteration (k) [1]. The methods differ primarily in how they compute the search direction (\mathbf{d}_k), which directly impacts their convergence characteristics and computational requirements.

The Steepest Descent Algorithm

Fundamental Principles and Algorithm

The Steepest Descent method, attributed to Cauchy (1847), operates on a simple yet intuitive principle: at each point, move in the direction of the negative gradient of the function, which represents the direction of steepest local descent [2]. The algorithm proceeds as follows:

- Initialization: Start with an initial guess (\mathbf{x}_0), set iteration counter (k = 0), and select a convergence tolerance (\epsilon > 0).

- Gradient Computation: Calculate the gradient (\nabla f(\mathbf{x}_k)) at the current point.

- Stopping Condition: If (\|\nabla f(\mathbf{x}_k)\| < \epsilon), stop; (\mathbf{x}_k) is approximately a stationary point.

- Search Direction: Set the search direction (\mathbf{d}_k = -\nabla f(\mathbf{x}_k)).

- Line Search: Find a step size (\alpha_k > 0) that sufficiently reduces the function value along (\mathbf{d}_k).

- Update: Set (\mathbf{x}_{k+1} = \mathbf{x}_k + \alpha_k \mathbf{d}_k), increment (k), and repeat from the Gradient Computation step [3] [2].
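The steps above can be sketched in plain Python. This is a minimal illustration rather than a reference implementation: the function name `steepest_descent`, the backtracking Armijo line search, and the parameters `c1` and `shrink` are assumptions chosen for the example, and the test function is a small hand-picked quadratic.

```python
import math

def steepest_descent(f, grad, x0, tol=1e-6, max_iter=10_000, c1=1e-4, shrink=0.5):
    """Minimize f starting from x0; returns (x, iterations_used)."""
    x = list(x0)
    for k in range(max_iter):
        g = grad(x)
        g_norm = math.sqrt(sum(gi * gi for gi in g))
        if g_norm < tol:                      # stopping condition on the gradient norm
            return x, k
        d = [-gi for gi in g]                 # search direction: negative gradient
        fx = f(x)
        slope = -g_norm ** 2                  # directional derivative g^T d
        alpha = 1.0
        # Backtrack until the Armijo condition f(x + a d) <= f(x) + c1 * a * g^T d holds
        while f([xi + alpha * di for xi, di in zip(x, d)]) > fx + c1 * alpha * slope:
            alpha *= shrink
        x = [xi + alpha * di for xi, di in zip(x, d)]
    return x, max_iter

# Mildly ill-conditioned quadratic f(x, y) = 0.5 * (x^2 + 10 y^2), minimum at the origin
f = lambda v: 0.5 * (v[0] ** 2 + 10.0 * v[1] ** 2)
grad = lambda v: [v[0], 10.0 * v[1]]
x_min, iters = steepest_descent(f, grad, [3.0, 1.0])
print(x_min, iters)
```

Backtracking is used here only because it is short; a production line search would normally also enforce a curvature condition.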

The step size (\alpha_k) can be determined through various line search strategies. The exact line search finds the global minimizer along the search direction, while inexact line searches (e.g., using Wolfe conditions) aim for sufficient decrease with fewer computations [1]. The Wolfe conditions, comprising the Armijo condition (sufficient decrease) and curvature condition, ensure both adequate progress and reasonable step sizes [1].
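As a rough illustration of the Wolfe conditions described above, the following sketch checks whether a candidate step size is acceptable. The helper name `satisfies_wolfe` and the default constants are assumptions for this example; the `strong` flag switches between the weak and strong forms of the curvature condition.

```python
def satisfies_wolfe(f, grad, x, d, alpha, c1=1e-4, c2=0.9, strong=True):
    """Return True if step size alpha along direction d meets the Wolfe conditions."""
    dot = lambda u, v: sum(ui * vi for ui, vi in zip(u, v))
    x_new = [xi + alpha * di for xi, di in zip(x, d)]
    slope0 = dot(grad(x), d)          # directional derivative at the current point
    slope1 = dot(grad(x_new), d)      # directional derivative at the trial point
    # Armijo (sufficient decrease) condition
    armijo = f(x_new) <= f(x) + c1 * alpha * slope0
    # Curvature condition: strong form bounds |slope1|, weak form bounds slope1 from below
    if strong:
        curvature = abs(slope1) <= c2 * abs(slope0)
    else:
        curvature = slope1 >= c2 * slope0
    return armijo and curvature

# One-dimensional example: f(x) = 0.5 * x^2, starting at x = 1, direction d = -1
f = lambda v: 0.5 * v[0] ** 2
grad = lambda v: [v[0]]
print(satisfies_wolfe(f, grad, [1.0], [-1.0], alpha=1.0))    # exact minimizer along d
print(satisfies_wolfe(f, grad, [1.0], [-1.0], alpha=1e-3))   # step too short
print(satisfies_wolfe(f, grad, [1.0], [-1.0], alpha=2.0))    # step too long
```

In this example the first step is accepted, the second fails the curvature condition, and the third fails the Armijo condition.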

Convergence Guarantees

Under appropriate conditions, the Steepest Descent algorithm is guaranteed to converge to a stationary point, which need not be a local minimum. For continuously differentiable functions that are bounded below and have Lipschitz continuous gradients ((\|\nabla f(x) - \nabla f(y)\| \leq L\|x - y\|)), the gradient norm tends to zero under a suitable line search, so every limit point of the iterates is stationary [2]. For strongly convex quadratic functions (f(\mathbf{x}) = \frac{1}{2}\mathbf{x}^\top A\mathbf{x} + \mathbf{b}^\top\mathbf{x}), where (A) is a symmetric positive definite matrix, the algorithm exhibits linear convergence [4] [3].

The convergence rate depends critically on the condition number (\kappa(A)) of the Hessian matrix (for quadratic functions) or the local condition number (for general functions). As (\kappa(A)) increases, the convergence slows dramatically [3]. This relationship directly connects to the zigzag problem, which we explore next.
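To make the dependence on (\kappa(A)) concrete, recall the classical worst-case bound for steepest descent with exact line search on a strongly convex quadratic: the error in function value contracts by at most a factor (((\kappa - 1)/(\kappa + 1))^2) per iteration. The sketch below turns that bound into an iteration estimate. The function name `sd_iterations_bound` and the target reduction of (10^{-6}) are assumptions made for illustration, and the result is a worst-case estimate, not a prediction for any particular problem.

```python
import math

def sd_iterations_bound(kappa, reduction=1e-6):
    """Iterations after which the worst-case bound guarantees
    f(x_k) - f* <= reduction * (f(x_0) - f*)."""
    rate = ((kappa - 1.0) / (kappa + 1.0)) ** 2   # per-iteration contraction factor
    if rate == 0.0:                                # kappa == 1: one exact step suffices
        return 1
    return math.ceil(math.log(reduction) / math.log(rate))

for kappa in (2, 10, 100, 1000):
    print(kappa, sd_iterations_bound(kappa))
```

The estimate grows roughly in proportion to (\kappa), which is why ill-conditioned problems are so costly for this method.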

The Zigzag Problem

The zigzag problem represents the most significant limitation of the Steepest Descent method. In ill-conditioned landscapes—where the curvature varies significantly across dimensions—the algorithm exhibits oscillatory behavior, slowly progressing toward the optimum in a zigzag pattern [4] [5] [3].

Table: Characteristics of the Zigzag Problem in Steepest Descent

| Aspect | Description | Impact on Convergence |

|---|---|---|

| Orthogonal Steps | Sequential gradient directions become orthogonal [5] | Progress perpendicular to true direction |

| Ill-conditioning | High condition number of Hessian matrix [3] | Dramatically reduced convergence rate |

| Oscillatory Trajectory | Path crosses and recrosses valley [5] | Many iterations needed even for simple problems |

| Eigenvalue Sensitivity | Convergence depends on extreme eigenvalues [4] | Performance degradation with skewed curvature |

Akaike (1959) demonstrated that for strongly convex quadratic problems, the gradient directions tend to alternate between two fixed directions [4]. With an exact line search, each gradient is orthogonal to the previous one ((g_k^\top g_{k+1} = 0)), so in two dimensions the iterates trace a zigzag path and take progressively smaller steps toward the solution [4] [5]. This behavior is particularly problematic in long, narrow valleys of the objective function, where the method repeatedly crosses the valley floor instead of following it [5].
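The orthogonality of consecutive gradients can be checked numerically. The following sketch runs steepest descent with exact line search on a two-dimensional diagonal quadratic. The function name, the choice (\kappa = 10), and the starting point are assumptions picked to produce a pronounced zigzag.

```python
def dot(u, v):
    return sum(ui * vi for ui, vi in zip(u, v))

def sd_exact_quadratic(a_diag, x0, steps):
    """Steepest descent with exact line search on f(x) = 0.5 * sum(a_i * x_i**2)."""
    x = list(x0)
    gradients = []
    for _ in range(steps):
        g = [a * xi for a, xi in zip(a_diag, x)]        # gradient A x
        gradients.append(g)
        Ag = [a * gi for a, gi in zip(a_diag, g)]
        alpha = dot(g, g) / dot(g, Ag)                  # exact minimizer along -g
        x = [xi - alpha * gi for xi, gi in zip(x, g)]
    return x, gradients

kappa = 10.0
x_final, gradients = sd_exact_quadratic([1.0, kappa], [kappa, 1.0], steps=10)

# Inner products of consecutive gradients: zero up to rounding error
inner_products = [dot(g0, g1) for g0, g1 in zip(gradients, gradients[1:])]
print(max(abs(p) for p in inner_products))
print(x_final)   # still visibly away from the minimizer (0, 0) after 10 steps
```

From this starting point the gradients alternate between two fixed directions, matching the alternating pattern attributed to Akaike above.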

[Diagram: zigzag behavior of Steepest Descent compared to more efficient methods]

Advanced Gradient Methods

Conjugate Gradient Method

The Conjugate Gradient (CG) method addresses the zigzag problem by constructing search directions that are mutually conjugate with respect to the Hessian matrix. For quadratic objective functions (f(\mathbf{x}) = \frac{1}{2}\mathbf{x}^\top A\mathbf{x} + \mathbf{b}^\top\mathbf{x}) with symmetric positive definite (A), this approach guarantees convergence within at most (n) iterations in exact arithmetic (where (n) is the problem dimension) [6].

The CG algorithm updates the search direction as: [ \mathbf{d}_{k+1} = -\nabla f(\mathbf{x}_{k+1}) + \beta_k \mathbf{d}_k ] where (\beta_k) is chosen to maintain conjugacy between directions [7] [6]. Different formulas for (\beta_k) yield distinct CG variants (Fletcher-Reeves, Polak-Ribière, Hestenes-Stiefel) [6]. Unlike Steepest Descent, CG uses information from previous directions to avoid undoing progress, effectively eliminating the zigzag behavior [8].
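The finite-termination property on quadratics can be illustrated with a short sketch. It minimizes (\frac{1}{2}\mathbf{x}^\top A\mathbf{x} + \mathbf{b}^\top\mathbf{x}) using exact steps and the Fletcher-Reeves formula for (\beta_k). The function name `cg_quadratic`, the 3×3 matrix, and the vector (\mathbf{b}) are assumptions made up for this example.

```python
def dot(u, v):
    return sum(ui * vi for ui, vi in zip(u, v))

def matvec(A, v):
    return [dot(row, v) for row in A]

def cg_quadratic(A, b, x0, tol=1e-10):
    """Minimize 0.5 * x^T A x + b^T x for symmetric positive definite A."""
    x = list(x0)
    g = [ax + bi for ax, bi in zip(matvec(A, x), b)]    # gradient A x + b
    d = [-gi for gi in g]                               # first direction: steepest descent
    iterations = 0
    while dot(g, g) ** 0.5 >= tol and iterations < 10 * len(b):
        Ad = matvec(A, d)
        alpha = dot(g, g) / dot(d, Ad)                  # exact step along d
        x = [xi + alpha * di for xi, di in zip(x, d)]
        g_new = [gi + alpha * adi for gi, adi in zip(g, Ad)]
        beta = dot(g_new, g_new) / dot(g, g)            # Fletcher-Reeves formula
        d = [-gi + beta * di for gi, di in zip(g_new, d)]
        g = g_new
        iterations += 1
    return x, iterations

A = [[4.0, 1.0, 0.0],
     [1.0, 3.0, 1.0],
     [0.0, 1.0, 2.0]]
b = [-1.0, -2.0, -3.0]
x_star, iterations = cg_quadratic(A, b, [0.0, 0.0, 0.0])
print(x_star, iterations)
```

On this small, well-conditioned system the loop stops after no more than three iterations, one per dimension. In floating-point arithmetic on large or ill-conditioned systems, more iterations than (n) may be needed.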

L-BFGS Method

The L-BFGS (Limited-memory BFGS) method approximates the Broyden–Fletcher–Goldfarb–Shanno quasi-Newton approach while maintaining manageable memory requirements [7] [8]. Instead of storing the full Hessian approximation (which would require (O(n^2)) memory), L-BFGS stores only a limited number ((m)) of previous gradient and position vectors, constructing the Hessian approximation implicitly [7].

L-BFGS builds and refines a quadratic model of the objective function using the last (m) value/gradient pairs, producing a positive definite Hessian approximation that ensures descent directions [7]. The algorithm typically requires fewer iterations than CG, though each iteration has higher computational overhead ((O(n \cdot m)) operations) [7].

Experimental Comparison Framework

Methodologies and Protocols

To objectively compare algorithm performance, researchers employ standardized testing methodologies:

- Test Functions: Benchmarks include convex quadratic functions, generalized Rosenbrock function, and other standard optimization test problems with known properties [7].

- Performance Metrics: Common measures include number of iterations, function evaluations, gradient computations, and CPU time to reach a specified tolerance [7].

- Stopping Conditions: Typically based on gradient norm thresholds (e.g., (\|\nabla f(\mathbf{x}_k)\| < 10^{-8})) or maximum iteration counts [7].

- Line Search Implementation: Strong Wolfe conditions ensure sufficient decrease while avoiding excessively small steps [1].

- Preconditioning: For ill-conditioned problems, preconditioners transform the problem to improve condition number [7].

Table: Experimental Protocol Specifications

| Parameter | Typical Settings | Remarks |

|---|---|---|

| Gradient Tolerance | (10^{-8}) [7] | Stricter values increase iterations |

| Initial Point | All coordinates set to -1 [7] | Standardized for reproducibility |

| Memory Parameter (L-BFGS) | 3-10 correction pairs [7] | Balance of memory and performance |

| Wolfe Conditions | (c_1 = 10^{-4}), (c_2) between 0.1 and 0.9 [1] | Ensures sufficient decrease and curvature |

Quantitative Performance Analysis

Comprehensive performance evaluation reveals distinct characteristics for each algorithm:

Table: Algorithm Performance Comparison on Standard Test Problems

| Algorithm | Iterations to Convergence | Function Evaluations | Gradient Computations | Memory Requirements |

|---|---|---|---|---|

| Steepest Descent | High (40+ for 3D quadratic [3]) | Very High (753 for 3D quadratic [3]) | Same as iterations | Low ((O(n))) |

| Conjugate Gradient | Moderate (~(n) for (n)-dim quadratic [6]) | Moderate (1 per iteration + line search) | 1 per iteration | Low ((O(n))) |

| L-BFGS | Low (fewer than CG [7]) | Low (1.5-2× fewer than CG [7]) | 1 per iteration | Moderate ((O(n \cdot m))) |

Empirical studies demonstrate that L-BFGS typically outperforms CG in terms of iteration count, particularly for computationally expensive functions where each function evaluation is costly [7]. However, for problems where function and gradient computations are cheap, CG may be preferable due to its lower computational overhead per iteration [7].

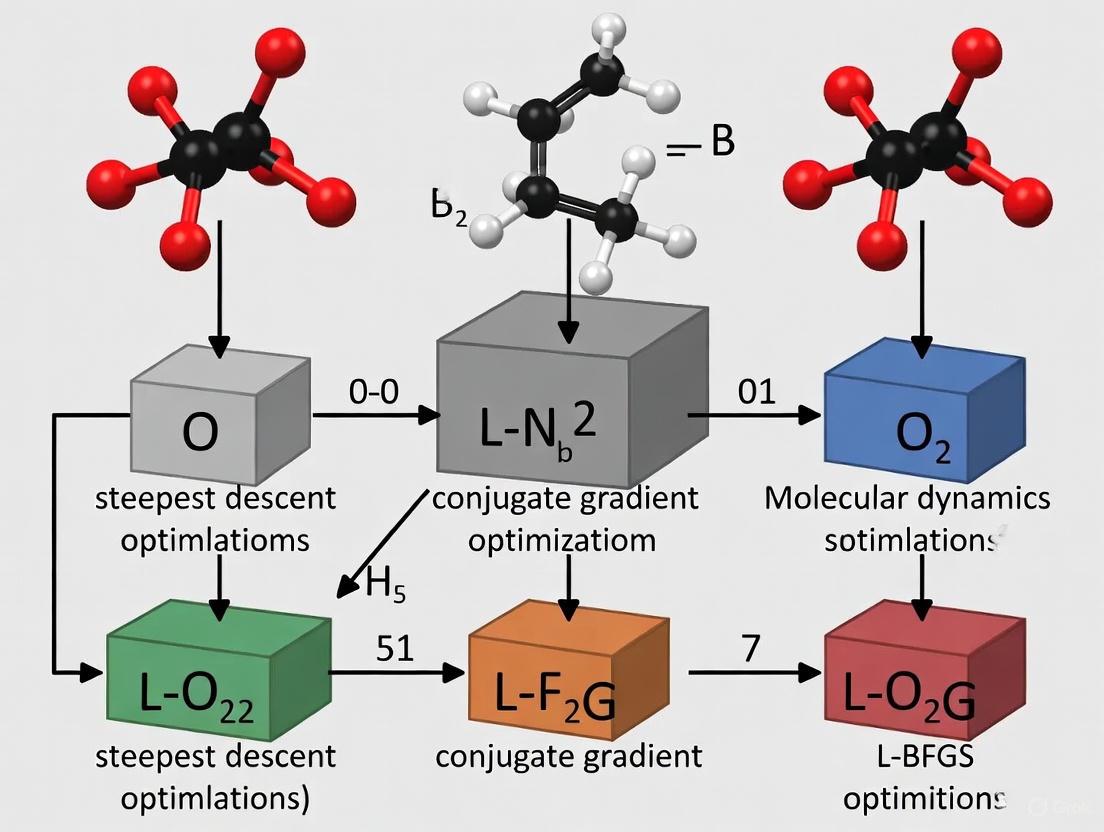

[Diagram: typical workflow for comparing optimization algorithms]

Application in Scientific Computing

Energy Minimization in Molecular Dynamics

In scientific applications such as drug development, energy minimization of molecular structures is crucial for determining stable conformations. The GROMACS molecular dynamics package implements all three algorithms, providing insights into their practical performance [8]:

- Steepest Descent: Robust and easy to implement but inefficient for production simulations; useful primarily for initial rough minimization [8].

- Conjugate Gradient: More efficient than SD near the minimum but incompatible with constraints in GROMACS; requires flexible water models [8].

- L-BFGS: Most efficient option in GROMACS; converges faster than CG but not yet parallelized in the package [8].

Algorithm Selection Guidelines

Choosing an appropriate algorithm depends on problem characteristics and computational resources:

Table: Algorithm Selection Guide for Scientific Applications

| Scenario | Recommended Algorithm | Rationale |

|---|---|---|

| Well-conditioned problems | Nonlinear Conjugate Gradient | Low memory, good convergence [7] |

| Computationally expensive functions | L-BFGS | Fewer function evaluations [7] |

| Large-scale problems ((n > 10^4)) | Nonlinear Conjugate Gradient | Minimal memory requirements [6] |

| Ill-conditioned problems with preconditioner | L-BFGS | Handles preconditioner changes without losing curvature information [7] |

| Initial rough minimization | Steepest Descent | Robust, easy implementation [8] |

The Scientist's Toolkit

Essential Research Reagent Solutions

Table: Key Computational Tools for Optimization Research

| Tool/Component | Function | Implementation Examples |

|---|---|---|

| Wolfe Conditions Checker | Ensures sufficient decrease and curvature in line search | Algorithm with Armijo ((c_1 = 10^{-4})) and curvature ((c_2) between 0.1 and 0.9) conditions [1] |

| Preconditioner | Transforms problem to improve condition number | Diagonal scaling, incomplete factorizations [7] |

| Automatic Differentiation | Computes exact gradients without manual derivation | Source transformation or operator overloading tools [7] |

| Line Search Algorithm | Finds optimal step length along search direction | zoom() algorithm implementing strong Wolfe conditions [1] |

| Memory Buffer (L-BFGS) | Stores previous (m) gradient/position pairs | Circular buffer of last 3-10 vector pairs [7] |

The Steepest Descent algorithm provides a fundamental baseline in optimization: its convergence is guaranteed, but it is severely limited by the zigzag problem in ill-conditioned landscapes. While its simplicity and robustness make it suitable for initial explorations, production-level scientific computing, particularly in demanding applications like molecular dynamics for drug development, increasingly relies on more sophisticated approaches.

The experimental evidence clearly demonstrates that both Conjugate Gradient and L-BFGS methods substantially outperform Steepest Descent across most performance metrics. CG offers an excellent balance of efficiency and memory requirements for well-conditioned and large-scale problems, while L-BFGS excels when dealing with computationally expensive functions and can effectively leverage preconditioning strategies. The continued development of hybrid approaches, such as the Triangle Steepest Descent method [4] and adaptive CG algorithms [6], indicates ongoing innovation in addressing the fundamental challenges revealed by the zigzag phenomenon.

For researchers and drug development professionals, the selection of an optimization algorithm should be guided by problem dimensionality, computational cost of function evaluations, availability of preconditioners, and memory constraints. As optimization challenges in scientific computing continue to grow in scale and complexity, understanding these fundamental tradeoffs becomes increasingly critical for efficient and effective research.

Optimization algorithms are critical for solving complex problems across scientific disciplines, including computational drug discovery and molecular design. Among the most powerful techniques are iterative optimization methods that navigate high-dimensional parameter spaces to find minima of objective functions. When comparing steepest descent, conjugate gradient (CG), and L-BFGS methods, key differentiators emerge in their convergence properties, computational requirements, and practical implementation considerations. The conjugate gradient method specifically leverages mathematical orthogonality through A-conjugate directions to accelerate convergence compared to simpler approaches, positioning it as an efficient intermediate between the slow convergence of steepest descent and the computational intensity of Newton-type methods [9].

In scientific computing, these methods solve unconstrained optimization problems of the form min f(x), where f is a differentiable function, using only function values and gradient information. The choice between algorithms involves balancing convergence speed, memory footprint, and computational overhead per iteration—considerations particularly relevant for researchers and drug development professionals working with computationally expensive simulations [7].

Theoretical Foundations of Conjugate Gradient Methods

The Principle of Conjugate Directions

The conjugate gradient method fundamentally relies on the concept of A-conjugacy for solving linear systems Ax = b where A is a symmetric positive-definite matrix. This is equivalent to minimizing the quadratic function F(x) = ½xᵀAx - bᵀx [9]. A set of vectors {d₀, d₁, ..., dₙ₋₁} is considered A-conjugate if dᵢᵀAdⱼ = 0 for all i ≠ j. This generalizes orthogonality under the A-inner product and creates a basis where the solution can be expressed as x* = Σαᵢdᵢ [10] [9].

This conjugacy property ensures that each step of the algorithm minimizes the error along a new independent direction without interfering with previous minimizations. As a result, for quadratic problems in n-dimensional space the method reaches the exact solution within at most n iterations (in exact arithmetic), a significant advantage over steepest descent, which often revisits previous directions [9].

From Linear to Nonlinear Conjugate Gradient

For non-quadratic optimization problems encountered in scientific applications, nonlinear conjugate gradient methods extend the approach using the update formula:

xₖ₊₁ = xₖ + αₖdₖ

dₖ₊₁ = -gₖ₊₁ + βₖdₖ

where gₖ is the gradient at iteration k, αₖ is the step size determined by line search, and βₖ is a scalar parameter that ensures conjugacy of search directions [10]. Different formulas for βₖ yield distinct CG variants: Fletcher-Reeves (FR), Polak-Ribière-Polyak (PRP), and Hestenes-Stiefel (HS), each with different convergence properties [11] [7].
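The three βₖ variants named above differ only in a one-line formula. The sketch below writes them out with yₖ = gₖ₊₁ - gₖ. The function names and the small test vectors are assumptions for illustration, and practical implementations usually add safeguards (such as restarts) that are omitted here.

```python
def dot(u, v):
    return sum(ui * vi for ui, vi in zip(u, v))

def beta_fletcher_reeves(g_new, g_old, d_old):
    return dot(g_new, g_new) / dot(g_old, g_old)

def beta_polak_ribiere(g_new, g_old, d_old):
    y = [a - b for a, b in zip(g_new, g_old)]      # gradient change y_k
    return dot(g_new, y) / dot(g_old, g_old)

def beta_hestenes_stiefel(g_new, g_old, d_old):
    y = [a - b for a, b in zip(g_new, g_old)]
    return dot(g_new, y) / dot(d_old, y)

def next_direction(g_new, g_old, d_old, beta_rule):
    """d_{k+1} = -g_{k+1} + beta_k * d_k for the chosen beta rule."""
    beta = beta_rule(g_new, g_old, d_old)
    return [-gn + beta * d for gn, d in zip(g_new, d_old)]

g_old, d_old = [1.0, 0.0], [-1.0, 0.0]

# When g_new is orthogonal to g_old (as after an exact step), the formulas agree
print([rule([0.0, 2.0], g_old, d_old)
       for rule in (beta_fletcher_reeves, beta_polak_ribiere, beta_hestenes_stiefel)])

# With an inexact step they generally differ
print([rule([0.5, 2.0], g_old, d_old)
       for rule in (beta_fletcher_reeves, beta_polak_ribiere, beta_hestenes_stiefel)])
```

The first call illustrates why the variants coincide on quadratics with exact line searches and only diverge on general nonlinear problems.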

Recent research continues to develop improved CG parameters, such as those based on the Dai-Liao conjugacy condition with restart properties and Lipschitz constants, enhancing both practical performance and global convergence guarantees [11].

Comparative Analysis of Optimization Algorithms

Table 1: Fundamental Characteristics of Optimization Methods

| Method | Key Mechanism | Storage Requirements | Convergence Properties |

|---|---|---|---|

| Steepest Descent | Follows negative gradient direction at each step | O(N) - stores current point and gradient | Linear convergence; often slow in practice due to zig-zagging [9] |

| Conjugate Gradient | Generates A-conjugate search directions | O(N) - stores a few vectors | Terminates in at most N steps on symmetric positive-definite linear systems (exact arithmetic); typically much faster than steepest descent on nonlinear problems [10] [9] |

| L-BFGS | Approximates Hessian using gradient history | O(M·N) - stores M previous correction pairs | Linear in theory for fixed M, but usually fast in practice; requires careful preconditioning for ill-conditioned problems [7] |

| Newton's Method | Uses exact Hessian matrix and its inverse | O(N²) - stores dense Hessian | Quadratic convergence; computationally expensive for large problems [9] |

Performance Comparison Data

Table 2: Experimental Performance Comparison on Generalized Rosenbrock Function [7]

| Problem Size (N) | CG Function Evaluations | L-BFGS Function Evaluations | CG Computation Time (ms) | L-BFGS Computation Time (ms) |

|---|---|---|---|---|

| 10 | 185 | 112 | 0.15 | 0.12 |

| 50 | 1,250 | 845 | 2.1 | 1.9 |

| 100 | 3,850 | 2,210 | 11.8 | 8.5 |

| 500 | 42,300 | 23,150 | 945.5 | 685.2 |

The experimental data reveals that L-BFGS typically requires fewer function evaluations to converge compared to CG, particularly as problem dimensionality increases. However, each L-BFGS iteration has higher computational overhead due to maintaining and updating the Hessian approximation [7].

Implementation Considerations and Practical Guidance

Algorithm Selection Criteria

Choosing between CG and L-BFGS depends on specific problem characteristics:

For computationally expensive functions: L-BFGS is generally preferable as it reduces the number of function evaluations (often by 1.5-2 times), despite higher per-iteration costs [7].

For simple, quick-to-evaluate functions: CG typically performs better due to lower computational overhead per iteration [7].

For ill-conditioned problems: L-BFGS may degenerate to steepest descent behavior without proper preconditioning, while CG maintains its properties albeit with reduced convergence speed [7].

When preconditioners change frequently: L-BFGS can incorporate new preconditioning information without losing curvature knowledge, while CG must restart accumulation [7].

Gradient Computation Methods

The accuracy and efficiency of gradient calculation significantly impact optimization performance:

Analytical gradients: Provide exact values with precision close to machine ε; computational complexity is often only several times higher than function evaluation itself [7].

Numerical differentiation: Uses finite difference approximations (e.g., 4-point formula); convenient but inexact, requiring 4·N function evaluations per gradient [7].

Automatic differentiation: Provides exact gradients without manual derivation; recommended for complex functions where coding analytical gradients is prohibitive [7].
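As an illustration of the numerical differentiation option, the sketch below uses the standard four-point central difference stencil, which costs four function evaluations per coordinate (4·N in total). Whether this is exactly the stencil meant by the "4-point formula" in [7] is an assumption, as are the function name `gradient_4pt` and the step size `h`.

```python
def gradient_4pt(f, x, h=1e-4):
    """Approximate the gradient of f at x with a four-point central difference."""
    g = []
    for i in range(len(x)):
        def shifted(delta):
            y = list(x)
            y[i] += delta            # perturb only coordinate i
            return f(y)
        # f'(x) ~ [-f(x+2h) + 8 f(x+h) - 8 f(x-h) + f(x-2h)] / (12 h)
        g.append((-shifted(2 * h) + 8 * shifted(h)
                  - 8 * shifted(-h) + shifted(-2 * h)) / (12 * h))
    return g

# Example: f(x, y) = x^3 + 2xy has exact gradient (3x^2 + 2y, 2x)
f = lambda v: v[0] ** 3 + 2.0 * v[0] * v[1]
print(gradient_4pt(f, [1.0, 2.0]))   # close to [7.0, 2.0]
```

The stencil has truncation error of order h⁴, but rounding error grows as h shrinks, so the step size is a compromise and usually needs scaling to the magnitude of each variable.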

Advanced Methodologies and Recent Developments

Hybrid and Riemannian Conjugate Gradient Methods

Recent research has developed hybrid Riemannian CG methods with restart strategies that demonstrate improved performance for manifold optimization problems. These methods switch between different CG parameters and are constructed so that the sufficient descent condition holds regardless of the line search technique employed [12]. Global convergence is established using a scaled version of the nonexpansive condition, and the reported numerical experiments show better performance than classical Riemannian CG approaches [12].

Application in Drug Discovery and Molecular Design

Optimization methods play crucial roles in computational drug discovery, where they facilitate molecular design and property optimization. The Gradient Genetic Algorithm incorporates gradient information from objective functions into genetic algorithms, replacing random exploration with guided progression toward optimal solutions [13]. This approach uses a differentiable objective function parameterized by a neural network and employs the Discrete Langevin Proposal to enable gradient guidance in discrete molecular spaces, achieving up to 25% improvement in top-10 scores over traditional genetic algorithms [13].

Similarly, tools like DrugAppy implement hybrid models combining AI algorithms with computational chemistry methodologies, combining models such as SMINA and GNINA for high-throughput virtual screening with GROMACS for molecular dynamics [14]. These approaches demonstrate how conjugate gradient and related optimization methods underpin advanced drug discovery platforms.

Experimental Protocols for Algorithm Benchmarking

Robust evaluation of optimization algorithms requires standardized methodologies:

Test Function Selection: The generalized Rosenbrock function is commonly used due to its non-convexity and narrow curved valley that challenges optimization algorithms [7].

Stopping Criteria: Typically based on gradient norm reduction (e.g., ∥g∥ < 10⁻⁸) or maximum iterations [7].

Performance Metrics: Number of function evaluations, gradient computations, CPU time, and convergence rate are measured across varying problem dimensions [7].

Implementation Details: For CG methods, efficient line search algorithms significantly impact performance. Modern implementations can outperform obsolete variants by factors of 7-8 in computational time and function evaluations [7].
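For readers who want to reproduce such benchmarks, a test function with an analytic gradient is the starting point. The sketch below uses the common chained form of the generalized Rosenbrock function, Σᵢ [100(xᵢ₊₁ - xᵢ²)² + (1 - xᵢ)²]. That this is the exact variant used in [7] is an assumption, since several generalizations exist. The function names are also chosen for this example.

```python
def rosenbrock(x):
    """Chained generalized Rosenbrock function; global minimum 0 at x = (1, ..., 1)."""
    return sum(100.0 * (x[i + 1] - x[i] ** 2) ** 2 + (1.0 - x[i]) ** 2
               for i in range(len(x) - 1))

def rosenbrock_grad(x):
    """Analytic gradient of the chained generalized Rosenbrock function."""
    g = [0.0] * len(x)
    for i in range(len(x) - 1):
        t = x[i + 1] - x[i] ** 2
        g[i] += -400.0 * x[i] * t - 2.0 * (1.0 - x[i])   # derivative w.r.t. x_i
        g[i + 1] += 200.0 * t                            # derivative w.r.t. x_{i+1}
    return g

start = [-1.0] * 4                  # all coordinates -1, as in the protocol table
print(rosenbrock(start), rosenbrock_grad(start))
print(rosenbrock([1.0] * 4))        # value at the known minimizer
```

Any of the optimizers discussed here can then be run from `start` with the stopping criteria listed above, counting function and gradient calls along the way.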

Table 3: Key Research Reagents and Computational Tools for Optimization Research

| Tool/Resource | Function/Purpose | Application Context |

|---|---|---|

| ALGLIB Package | Comprehensive numerical library implementing CG, L-BFGS, and Levenberg-Marquardt algorithms | General unconstrained optimization; supports both analytic and numerical gradients [7] |

| CUTEst Library | Curated collection of optimization test problems | Algorithm benchmarking and performance comparison [11] |

| DrugAppy Framework | Hybrid AI and computational chemistry workflow | Drug discovery and molecular design; integrates virtual screening and molecular dynamics [14] |

| RADR AI Platform | AI-driven target identification and optimization | Multi-omics integration for drug target prioritization [15] |

| Discrete Langevin Proposal | Gradient-based sampling in discrete spaces | Molecular optimization combining gradient information with evolutionary algorithms [13] |

| CG-Descent 6.8 | Modern conjugate gradient implementation | Performance benchmark for new CG variants [11] |

The comparative analysis of steepest descent, conjugate gradient, and L-BFGS methods reveals a complex trade-off space in which algorithm selection must align with specific problem characteristics and computational constraints. The conjugate gradient method's use of A-conjugate directions, a generalization of orthogonality, provides a computationally efficient approach that sits between the slow convergence of steepest descent and the memory requirements of full Hessian methods.

For drug development professionals and researchers, these optimization algorithms enable advanced applications from molecular dynamics simulation to AI-driven drug design. Recent innovations continue to enhance method performance, with hybrid CG approaches, improved restart strategies, and gradient-augmented genetic algorithms pushing the boundaries of computational optimization in scientific discovery. As computational challenges in drug discovery grow increasingly complex, the strategic selection and implementation of these optimization methods will remain crucial for research advancement.

In the realm of computational science and drug development, optimization algorithms serve as the fundamental engine driving discovery and innovation. Among the family of quasi-Newton methods, the Limited-memory Broyden–Fletcher–Goldfarb–Shanno (L-BFGS) algorithm has emerged as a powerful compromise between computational efficiency and convergence performance, particularly for large-scale problems where storing full matrices becomes prohibitive. As an extension of the BFGS method, L-BFGS maintains a history of only the most recent iterations to approximate the Hessian matrix implicitly, achieving a balance that makes it particularly suitable for high-dimensional problems in machine learning, computational chemistry, and drug design [16] [17].

This guide presents a structured comparison of L-BFGS against other prominent optimization techniques, specifically the steepest descent and conjugate gradient methods, with a focus on their practical applications in scientific research and drug development. By examining theoretical foundations, algorithmic characteristics, and empirical performance data, we provide researchers with a comprehensive framework for selecting appropriate optimization strategies based on their specific problem constraints, computational resources, and accuracy requirements.

Theoretical Foundations: Algorithmic Principles and Mechanisms

The Evolution from Steepest Descent to Quasi-Newton Methods

Optimization algorithms for continuous minimization problems have evolved significantly from basic first-order methods to sophisticated approximations of second-order techniques. Steepest descent, the simplest approach, relies exclusively on first-order gradient information, following the direction of the negative gradient at each iteration. While computationally cheap per iteration, this method typically exhibits linear convergence and often shows poor performance due to its tendency to oscillate in narrow valleys of the objective function landscape.

Conjugate gradient (CG) methods improve upon steepest descent by incorporating information from previous search directions to construct a set of mutually conjugate directions. This approach avoids repeating the same search directions and typically converges faster than steepest descent while maintaining similar memory requirements (only a few vectors need storage). For problems with n variables, n CG iterations approximately equal one Newton step in terms of convergence progress [18].

Quasi-Newton methods, including BFGS and its limited-memory variant L-BFGS, approximate Newton's method without requiring computation of the exact Hessian matrix. Instead, they build an approximation of the Hessian (or its inverse) using gradient information from previous iterations. The standard BFGS method updates a dense n×n Hessian approximation matrix, which becomes prohibitive for large n. L-BFGS addresses this limitation by storing only a limited set of vectors (typically m < 20) that implicitly represent the Hessian approximation, resulting in O(mn) memory requirement instead of O(n²) [16] [17].

The L-BFGS Algorithm: Core Mechanics and Update Process

The L-BFGS algorithm computes search directions using a two-loop recursion process that efficiently combines past curvature information without explicitly forming the Hessian matrix. At iteration k, the method stores correction pairs (sᵢ, yᵢ) where sᵢ = xᵢ₊₁ - xᵢ represents the change in variables and yᵢ = ∇f(xᵢ₊₁) - ∇f(xᵢ) represents the change in gradients over recent iterations [16] [19]. The two-loop recursion operates as follows:

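- Backward loop: Runs from the newest correction pair to the oldest, computing a scalar coefficient for each pair and subtracting the corresponding multiple of yᵢ from a working copy of the gradient

- Forward loop: Applies the initial Hessian approximation to the result, then runs from the oldest pair to the newest, adding corrections along each sᵢ to incorporate the stored curvature information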

This process generates search directions that approximate what would be obtained using the full BFGS update while maintaining computational efficiency. A key parameter in L-BFGS is the memory size m, which controls how many previous correction pairs are stored. Typical values range from 3 to 20, with larger values providing better Hessian approximations at the cost of increased memory [17].
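The two-loop recursion is compact enough to write out in full. The sketch below follows the textbook form, with the initial inverse-Hessian approximation taken as a scaled identity γI, where γ = sᵀy / yᵀy for the most recent pair. That scaling is a common choice but an assumption here, as is the function name `lbfgs_direction`. Pairs are assumed to satisfy yᵀs > 0, which a Wolfe line search guarantees.

```python
def dot(u, v):
    return sum(ui * vi for ui, vi in zip(u, v))

def lbfgs_direction(g, s_list, y_list):
    """Search direction -H g from stored pairs (s_i, y_i), ordered oldest to newest."""
    q = list(g)
    rhos = [1.0 / dot(y, s) for s, y in zip(s_list, y_list)]
    alphas = []
    # Backward loop: newest pair to oldest
    for s, y, rho in zip(reversed(s_list), reversed(y_list), reversed(rhos)):
        a = rho * dot(s, q)
        alphas.append(a)
        q = [qi - a * yi for qi, yi in zip(q, y)]
    # Initial inverse-Hessian approximation: scaled identity gamma * I
    if s_list:
        gamma = dot(s_list[-1], y_list[-1]) / dot(y_list[-1], y_list[-1])
    else:
        gamma = 1.0
    r = [gamma * qi for qi in q]
    # Forward loop: oldest pair to newest (alphas were stored newest first)
    for s, y, rho, a in zip(s_list, y_list, rhos, reversed(alphas)):
        b = rho * dot(y, r)
        r = [ri + (a - b) * si for ri, si in zip(r, s)]
    return [-ri for ri in r]

# With no history the direction reduces to steepest descent
print(lbfgs_direction([3.0, -1.0], [], []))

# One pair from f(x) = 2 x^2 (so y = 4 s): the direction is the Newton step -g / 4
print(lbfgs_direction([8.0], [[1.0]], [[4.0]]))
```

Each call costs a handful of dot products per stored pair, which is the O(mn) work per iteration mentioned above, and no n×n matrix is ever formed.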

Table 1: Algorithmic Characteristics Comparison

| Feature | Steepest Descent | Conjugate Gradient | L-BFGS |

|---|---|---|---|

| Memory Requirement | O(n) | O(n) | O(mn) |

| Convergence Rate | Linear | Linear in general; finite termination on quadratics | Linear in theory; usually fast in practice |

| Computational Cost per Iteration | Low | Low to Medium | Medium |

| Hessian Information | None | None | Approximate |

| Implementation Complexity | Low | Medium | Medium to High |

Experimental Comparison: Performance Across Domains

Molecular Geometry Optimization Benchmarks

A comprehensive benchmark study comparing optimization algorithms for neural network potentials (NNPs) provides insightful performance data across multiple metrics. The study evaluated four NNPs (OrbMol, OMol25 eSEN, AIMNet2, and Egret-1) on 25 drug-like molecules using various optimizers, with GFN2-xTB serving as a control method. Convergence was determined based on a maximum gradient component threshold of 0.01 eV/Å with a maximum of 250 steps [20].

Table 2: Optimization Success Rate (Number of Successful Optimizations from 25 Trials)

| Optimizer | OrbMol | OMol25 eSEN | AIMNet2 | Egret-1 | GFN2-xTB |

|---|---|---|---|---|---|

| ASE/L-BFGS | 22 | 23 | 25 | 23 | 24 |

| ASE/FIRE | 20 | 20 | 25 | 20 | 15 |

| Sella | 15 | 24 | 25 | 15 | 25 |

| Sella (internal) | 20 | 25 | 25 | 22 | 25 |

| geomeTRIC (cart) | 8 | 12 | 25 | 7 | 9 |

Table 3: Average Steps Required for Successful Optimization

| Optimizer | OrbMol | OMol25 eSEN | AIMNet2 | Egret-1 | GFN2-xTB |

|---|---|---|---|---|---|

| ASE/L-BFGS | 108.8 | 99.9 | 1.2 | 112.2 | 120.0 |

| ASE/FIRE | 109.4 | 105.0 | 1.5 | 112.6 | 159.3 |

| Sella | 73.1 | 106.5 | 12.9 | 87.1 | 108.0 |

| Sella (internal) | 23.3 | 14.88 | 1.2 | 16.0 | 13.8 |

The data reveals several important patterns. First, L-BFGS consistently demonstrated robust performance across different neural network potentials, successfully optimizing 22-25 molecules depending on the specific NNP. Second, while L-BFGS required more steps than specialized internal coordinate methods like Sella (internal), it generally outperformed other Cartesian coordinate optimizers in terms of success rate. Third, the performance of L-BFGS was more consistent across different NNPs compared to methods like geomeTRIC, which showed highly variable results [20].

Quality of Optimized Structures: Minima and Imaginary Frequencies

Beyond convergence speed and success rate, the quality of the final optimized structures represents a critical metric for evaluating optimization algorithms in computational chemistry and drug development. The benchmark study analyzed how many optimized structures represented true local minima (with zero imaginary frequencies) versus saddle points (with imaginary frequencies) [20].

Table 4: Number of True Minima Found (Structures with Zero Imaginary Frequencies)

| Optimizer | OrbMol | OMol25 eSEN | AIMNet2 | Egret-1 | GFN2-xTB |

|---|---|---|---|---|---|

| ASE/L-BFGS | 16 | 16 | 21 | 18 | 20 |

| ASE/FIRE | 15 | 14 | 21 | 11 | 12 |

| Sella | 11 | 17 | 21 | 8 | 17 |

| Sella (internal) | 15 | 24 | 21 | 17 | 23 |

L-BFGS demonstrated competitive performance in locating true minima, particularly when compared to other Cartesian coordinate methods. The method's ability to incorporate curvature information through its quasi-Newton approximation likely contributes to its improved performance in finding true minima compared to first-order methods like FIRE [20].

L-BFGS Variants and Enhancements for Specialized Applications

Constrained and Regularized Extensions

The basic L-BFGS algorithm targets unconstrained optimization problems, but numerous variants have been developed to handle specific constraints and regularization requirements common in scientific applications:

- L-BFGS-B: Extends L-BFGS to handle simple box constraints (bound constraints) on variables. The method identifies fixed and free variables at each step, then applies L-BFGS to the free variables only [16].

- OWL-QN: Orthant-wise limited-memory quasi-Newton method designed for L₁-regularized optimization, which is particularly valuable for feature selection in machine learning and sparse modeling in computational biology [16].

- Regularized L-BFGS: Incorporates a regularization term to improve stability in noisy or ill-conditioned problems, using updates of the form (Bᵢ + μᵢI)pᵢ = -gᵢ where μᵢ serves as the regularization parameter [21].

- mL-BFGS: A momentum-based L-BFGS variant that introduces a nearly cost-free momentum scheme to reduce stochastic noise in the Hessian approximation, demonstrating improved convergence in distributed large-scale neural network optimization [22].

Implementation Considerations and Parameter Selection

Successful implementation of L-BFGS requires careful attention to several algorithmic parameters and techniques:

- Memory size (m): Typically ranges from 3 to 20, with smaller values sufficient for well-conditioned problems and larger values beneficial for problems with complex curvature structure [19] [17].

- Initial Hessian scaling: Proper scaling of the initial Hessian approximation significantly impacts performance. Common approaches include identity scaling, Barzilai-Borwein scaling, and adaptive scaling based on recent gradient information [17].

- Line search conditions: L-BFGS typically employs Wolfe or strong Wolfe conditions to ensure sufficient decrease and curvature conditions, guaranteeing global convergence under standard assumptions [19].

- Stopping criteria: Convergence is typically determined based on gradient norms, with additional possible criteria including function value changes, parameter changes, or maximum iteration counts [20].
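The memory size and initial scaling choices listed above meet in the two-loop recursion that L-BFGS uses to turn a gradient into a search direction. The following is a minimal pure-Python sketch, not a library implementation: the name `two_loop_direction`, the list-based vectors, and the choice of the common sᵀy / yᵀy initial scaling (one of the Barzilai-Borwein step lengths) are assumptions made for illustration.

```python
from collections import deque


def dot(a, b):
    """Inner product of two equal-length sequences."""
    return sum(x * y for x, y in zip(a, b))


def two_loop_direction(grad, history):
    """Return the L-BFGS search direction -H * grad.

    history holds the most recent (s, y) pairs, oldest first, where
    s is a step x_{k+1} - x_k and y the matching gradient change.
    """
    q = list(grad)
    coeffs = []
    # First loop: walk from the newest pair back to the oldest.
    for s, y in reversed(history):
        rho = 1.0 / dot(y, s)
        alpha = rho * dot(s, q)
        q = [qi - alpha * yi for qi, yi in zip(q, y)]
        coeffs.append((alpha, rho))
    # Initial inverse-Hessian scaling taken from the newest pair.
    if history:
        s, y = history[-1]
        gamma = dot(s, y) / dot(y, y)
    else:
        gamma = 1.0  # no curvature information yet: plain gradient step
    r = [gamma * qi for qi in q]
    # Second loop: walk from the oldest pair forward to the newest.
    for (s, y), (alpha, rho) in zip(history, reversed(coeffs)):
        beta = rho * dot(y, r)
        r = [ri + (alpha - beta) * si for ri, si in zip(r, s)]
    return [-ri for ri in r]


# Memory size m = 5: older pairs are discarded automatically.
history = deque(maxlen=5)
history.append(([1.0, 0.0], [1.0, 0.0]))
history.append(([0.5, 1.0], [0.5, 10.0]))

# Secant property: applying the implicit H to the newest y returns s.
direction = two_loop_direction([0.5, 10.0], history)
print(direction)
```

The final lines check the secant property, which is a convenient sanity test: the implicit inverse Hessian maps the newest gradient change back onto the newest step, so the returned direction is the negative of that step.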

Research Applications in Drug Development and Computational Chemistry

Protein-Ligand Binding Pose Prediction

L-BFGS and related optimization methods play crucial roles in computational drug design, particularly in protein-ligand binding pose prediction. The BindingNet v2 dataset, comprising 689,796 modeled protein-ligand binding complexes across 1,794 protein targets, demonstrates the scale of optimization problems in this domain. Evaluation of the Uni-Mol model showed that training with larger subsets of BindingNet v2 significantly improved generalization to novel ligands, with success rates increasing from 38.55% (using PDBbind alone) to 64.25% (with BindingNet v2 augmentation). When combined with physics-based refinement, the success rate further increased to 74.07% [23].

The hierarchical template-based modeling approach used in BindingNet v2, which employs optimization techniques related to L-BFGS, achieved a 92.65% success rate in sampling binding poses when highly similar templates were available, outperforming alternative methods like Glide cross-docking across all similarity intervals [23]. This demonstrates how efficient optimization algorithms contribute to advancing the accuracy and reliability of structure-based drug design.

Molecular Dynamics and Solubilization Prediction

In molecular dynamics simulations of drug solubilization, optimization algorithms enable the prediction of drug location and orientation within intestinal mixed micelles. These simulations require efficient energy minimization and geometry optimization to determine stable configurations of drug molecules within micellar environments [24]. The ability of L-BFGS to handle the high-dimensional parameter spaces arising from atomic-level simulations makes it particularly valuable in this domain, where understanding drug-micelle interactions can significantly impact pharmaceutical development.

Experimental Protocols and Methodologies

Standard Benchmarking Protocol for Geometry Optimization

To ensure fair comparison between optimization algorithms, researchers should adhere to standardized benchmarking protocols:

- Test System Selection: Curate a diverse set of molecular structures representing the intended application domain. The benchmark study of NNPs used 25 drug-like molecules with varying complexity [20].

- Convergence Criteria: Define explicit, reproducible convergence thresholds. The NNP benchmark used a maximum force threshold of 0.01 eV/Å (0.231 kcal/mol/Å) with a maximum of 250 optimization steps [20].

- Evaluation Metrics: Track multiple performance indicators including success rate, average steps to convergence, and quality of final structures (e.g., number of imaginary frequencies) [20].

- Computational Environment: Control for computational resources, software versions, and initial conditions to ensure comparability.
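The convergence criterion in the protocol above can be written down in a few lines. This is a sketch under stated assumptions: forces are given as per-atom `[fx, fy, fz]` lists in eV/Å, the threshold is applied to the largest absolute component as worded above (some packages apply it to per-atom force norms instead), and the helper names and the conversion constant are illustrative.

```python
EV_TO_KCAL_PER_MOL = 23.0605  # approximate conversion factor (assumed value)


def max_force_component(forces):
    """Largest absolute force component over all atoms (eV/Angstrom)."""
    return max(abs(c) for atom in forces for c in atom)


def is_converged(forces, fmax=0.01):
    """True when every force component is below the threshold fmax."""
    return max_force_component(forces) < fmax


# Two hypothetical atoms with small residual forces.
forces = [[0.001, -0.004, 0.002], [0.0, 0.009, -0.003]]
print(is_converged(forces))

# The same threshold expressed in kcal/mol/Angstrom.
fmax_kcal = 0.01 * EV_TO_KCAL_PER_MOL
print(round(fmax_kcal, 3))
```

In a benchmark loop this check would be paired with the step cap (250 steps in the study cited above), so that a run counts as failed when the cap is reached first.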

Implementation Workflow for L-BFGS in Research Applications

The following diagram illustrates a typical optimization workflow using L-BFGS for molecular geometry optimization:

Table 5: Essential Tools for Optimization Research in Computational Chemistry

| Tool/Resource | Function/Purpose | Implementation Examples |

|---|---|---|

| Optimization Algorithms | Core routines for energy minimization and transition state optimization | ASE L-BFGS, geomeTRIC, Sella [20] |

| Neural Network Potentials | Machine learning force fields for accurate and efficient energy evaluations | OrbMol, OMol25 eSEN, AIMNet2, Egret-1 [20] |

| Benchmark Datasets | Standardized test systems for algorithm validation and comparison | BindingNet v2, PDBbind, PoseBusters dataset [23] |

| Molecular Dynamics Packages | Software for sampling conformational space and simulating dynamics | OpenMM, GROMACS, AMBER [24] |

| Visualization Tools | Analysis and visualization of molecular structures and optimization pathways | PyMOL, VMD, Jmol |

| High-Performance Computing | Computational resources for large-scale optimization problems | CPU/GPU clusters, cloud computing resources |

The comparative analysis of steepest descent, conjugate gradient, and L-BFGS methods reveals a consistent trade-off between computational requirements, implementation complexity, and convergence performance. L-BFGS occupies a strategic middle ground: in practice it retains much of the fast convergence of full quasi-Newton methods (although, unlike full BFGS, its guaranteed rate is linear rather than superlinear) while keeping memory requirements manageable for large-scale problems.

For researchers and drug development professionals, algorithm selection should be guided by specific problem characteristics:

- Steepest descent may suffice for preliminary investigations or extremely high-dimensional problems where computational cost per iteration is the primary constraint.

- Conjugate gradient methods offer improved convergence with similar memory footprint to steepest descent, suitable for problems with limited memory resources.

- L-BFGS provides the best balance for most practical applications, particularly when dealing with noisy potential energy surfaces, complex molecular systems, or when higher-quality minima are required.

The empirical data demonstrates that L-BFGS consistently achieves robust performance across diverse molecular systems and neural network potentials, successfully optimizing structures to true minima with competitive convergence rates. As computational methods continue to expand their role in drug discovery and materials design, L-BFGS and its specialized variants will remain essential tools in the computational scientist's toolkit.

This guide provides an objective comparison of three fundamental optimization algorithms (Steepest Descent, Conjugate Gradient (CG), and L-BFGS), focusing on their use of first- or second-order information and their memory footprints. Framed within research on scientific computing and drug development, such as drug-target interaction (DTI) prediction, we summarize experimental data and methodologies to help researchers select the appropriate optimizer. The trade-offs between theoretical convergence benefits and practical memory constraints are emphasized, particularly for large-scale problems.

Optimization algorithms are crucial for minimizing objective functions in scientific research, from training machine learning models to simulating molecular dynamics. Their performance primarily hinges on two aspects: the order of information they leverage (first or second derivatives) and their memory footprint. First-order methods, like Steepest Descent, use only gradient information (g), while second-order methods, like Newton's method, use the Hessian matrix (H) of second derivatives. Quasi-Newton methods (e.g., BFGS, L-BFGS) and Conjugate Gradient methods occupy a middle ground, approximating second-order information using first-order data [6] [18] [25].

This guide focuses on three key algorithms central to a broader performance research thesis: Steepest Descent, Conjugate Gradient, and the limited-memory BFGS (L-BFGS). Understanding their differences in convergence behavior and resource requirements is essential for efficiency in data-intensive fields like bioinformatics and drug discovery [26] [27].

Core Algorithm Definitions and Theoretical Comparison

The following table outlines the fundamental operational characteristics of each method.

Table 1: Fundamental Characteristics of Steepest Descent, Conjugate Gradient, and L-BFGS

| Algorithm | Order of Information | Key Mechanism | Theoretical Convergence Rate | Critical Assumptions |

|---|---|---|---|---|

| Steepest Descent | First-Order | Updates parameters in direction of negative gradient (-g) | Linear | Convex, Lipschitz gradient |

| Conjugate Gradient (CG) | First-Order (with second-order properties) | Generates conjugate search directions (d_k) to avoid re-visiting previous directions | Superlinear (for quadratic problems); n iterations ≈ 1 Newton step [18] | Linear system with symmetric positive definite (SPD) matrix; exact line search for some variants |

| L-BFGS | Quasi-Second-Order | Approximates the inverse Hessian (H_k) using a limited history of m updates of y_k and s_k | Superlinear for full BFGS [6] [18]; linear in theory with limited memory | Smooth, Lipschitz gradient; initial Hessian approximation is SPD |

Comparative Analysis: Memory and Performance

The choice of algorithm involves a direct trade-off between computational cost, memory usage, and convergence speed.

Memory Footprint and Computational Cost

The memory footprint is a primary differentiator, especially for high-dimensional problems common in drug discovery, such as those involving large drug-target interaction networks [26].

Table 2: Memory Footprint and Computational Cost Comparison

| Algorithm | Memory Complexity | Primary Computational Cost per Iteration | Scalability |

|---|---|---|---|

| Steepest Descent | O(n) (stores x, g) | One gradient evaluation | Excellent for very large n |

| Conjugate Gradient (CG) | O(n) (stores x, g, d, etc.) | One gradient evaluation + matrix-vector products (if applicable) | Excellent for large n; ideal for problems with cheap matrix-vector products [18] |

| L-BFGS | O(mn) (stores m vector pairs (s, y)) | One gradient evaluation + inner products to update history | Very good for large n, provided m is kept small (e.g., 5-20) [18] |

| Full BFGS | O(n²) (stores a dense n x n matrix) | One gradient evaluation + O(n²) operations | Poor for large n |

Performance and Convergence Behavior

Empirical evidence and theoretical analysis reveal distinct performance characteristics.

- Steepest Descent is simple but often exhibits slow convergence, with a tendency to "zig-zag" in narrow valleys. It serves as a baseline but is rarely competitive for complex scientific problems [6] [18].

- Conjugate Gradient methods converge in fewer iterations than Steepest Descent. A key observation is that n CG iterations are approximately equivalent to one Newton step, making it powerful for quadratic problems and large-scale linear systems. However, it can "get stuck" more easily than quasi-Newton methods and may require restarting strategies [18].

- L-BFGS typically achieves a faster convergence rate (fewer iterations) than CG by building a better approximation of the curvature of the objective function. While each L-BFGS iteration is slightly more expensive than a CG iteration due to vector updates, the reduction in the number of required iterations often leads to a net decrease in total optimization time, particularly for non-quadratic problems [18]. It is generally considered more robust than CG [18].

Experimental Data and Protocols

To ground this comparison in practical research, we summarize experimental setups and results from relevant literature.

Experimental Protocol for Algorithm Benchmarking

A standard methodology for comparing optimizers involves [6] [18]:

- Test Problems: Select a diverse set of benchmark functions (e.g., from the CUTEst collection) and real-world problems (e.g., DTI prediction [26] or energy landscape mapping [27]). Problems should vary in dimension, condition number, and linearity.

- Implementation Details: Use established libraries (e.g., ManifoldOptim [26], ROPTLIB [26], or SciPy) to ensure fair implementation. Key parameters include:

- Stopping Criterion: A combination of |f_{k+1} - f_k| < τ, ||g_k|| < ε, or a maximum number of iterations.

- Line Search: Use a standardized, efficient line search like the Wolfe line search [6] to ensure convergence conditions are met for CG and L-BFGS.

- Performance Metrics: Track:

- Number of Iterations to convergence.

- Wall-clock Time.

- Number of Function/Gradient Evaluations.

- Final Objective Value achieved.
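The Wolfe line search named in the implementation details accepts a step length only if it gives sufficient decrease and satisfies a curvature condition. The sketch below checks both conditions for a candidate step; the function name `satisfies_wolfe`, the list-based vectors, and the default constants c1 = 1e-4 and c2 = 0.9 (commonly quoted values for quasi-Newton methods) are assumptions for illustration, not the API of any of the libraries listed above.

```python
def satisfies_wolfe(f, grad, x, d, alpha, c1=1e-4, c2=0.9, strong=False):
    """Check the (strong) Wolfe conditions for step length alpha along d."""
    def dot(a, b):
        return sum(p * q for p, q in zip(a, b))

    x_new = [xi + alpha * di for xi, di in zip(x, d)]
    slope_old = dot(grad(x), d)      # directional derivative at x
    slope_new = dot(grad(x_new), d)  # directional derivative at x_new

    # Sufficient decrease (Armijo) condition.
    decrease_ok = f(x_new) <= f(x) + c1 * alpha * slope_old
    # Curvature condition: the slope must have flattened enough.
    if strong:
        curvature_ok = abs(slope_new) <= c2 * abs(slope_old)
    else:
        curvature_ok = slope_new >= c2 * slope_old
    return decrease_ok and curvature_ok


# One-dimensional example: f(x) = x^2, starting at x = 1,
# searching along the negative gradient d = -2.
f = lambda x: x[0] ** 2
grad = lambda x: [2.0 * x[0]]

good_step = satisfies_wolfe(f, grad, [1.0], [-2.0], alpha=0.5)
too_short = satisfies_wolfe(f, grad, [1.0], [-2.0], alpha=1e-6)
too_long = satisfies_wolfe(f, grad, [1.0], [-2.0], alpha=1.0)
print(good_step, too_short, too_long)
```

The three trial steps show why both conditions are needed: the very short step passes the decrease test but fails the curvature test, while the overly long step lands on the opposite side of the valley and fails the decrease test.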

Numerical studies consistently highlight the performance trade-offs.

Table 3: Synthesized Experimental Results from Literature

| Experiment Context | Steepest Descent | Conjugate Gradient | L-BFGS | Key Takeaway |

|---|---|---|---|---|

| General Unconstrained Problems [6] [18] | Slowest convergence; high number of iterations & function evaluations | Faster than Steepest Descent; can be sensitive to problem type and restart frequency | Often the fastest in wall-clock time; robust across various problems | L-BFGS often provides the best trade-off, reducing the iteration count significantly compared to first-order methods. |

| Drug-Target Interaction (DTI) Prediction [26] | Impractical for large, complex models | Used in manifold optimization; effective but may require careful tuning | The LRBFGS variant showed superior results in convergence rate and optimization time for non-convex DTI problems [26] | For complex scientific models with expensive gradients, the faster convergence of quasi-Newton methods is critical. |

| Large-Scale Physics Simulations [27] | Not competitive for high-dimensional problems | Used in bilayer optimization kernels as a viable first-order optimizer | L-BFGS is explicitly used in outer-layer optimization for its efficiency with force-field functions [27] | L-BFGS is a preferred choice in modern computational physics frameworks for its balance of speed and memory efficiency. |

The Scientist's Toolkit: Essential Research Reagents

The following table lists key software tools and mathematical components essential for working with these optimization algorithms.

Table 4: Key Research Reagent Solutions for Optimization Research

| Reagent / Tool | Type | Primary Function | Example Use-Case |

|---|---|---|---|

| ROPTLIB / ManifoldOptim [26] | Software Library | Provides Riemannian optimization algorithms (e.g., LRBFGS, RCG) for problems with manifold constraints. | Solving optimization on manifolds in bioinformatics, such as the MOKPE model for DTI prediction [26]. |

| Wolfe Condition Line Search [6] | Mathematical Algorithm | Ensures a stepsize (α_k) satisfies sufficient decrease and curvature conditions to guarantee convergence. | A critical component for ensuring the global convergence of CG and L-BFGS algorithms [6]. |

| Hessian-Vector Product (HVP) [27] | Computational Operation | Efficiently computes the product of the Hessian matrix with a vector without explicitly forming the full Hessian. | Enables second-order-like optimization (e.g., in MOTO framework [27]) for very large systems where storing the Hessian is impossible. |

| BFGS / L-BFGS Update Formula [6] | Mathematical Algorithm | Constructs a rank-two update to the approximate inverse Hessian using gradient and parameter position changes (s_k, y_k). | The core mechanism that allows L-BFGS to capture curvature information with a low memory footprint [6] [18]. |

Visualizing Algorithm Workflows and Relationships

The following diagrams illustrate the logical flow of the core algorithms and their conceptual relationships.

L-BFGS Optimization Workflow

Figure: L-BFGS Algorithm Execution Flow

Algorithm Relationships in the Optimization Space

Figure: Taxonomy of Optimization Algorithms

The selection of an optimization algorithm is a critical decision that balances the cost of gradient evaluation, available memory, and the need for rapid convergence. Steepest Descent is simple but inefficient for most serious scientific applications. The Conjugate Gradient method is a powerful, memory-efficient choice for very large-scale linear systems or when matrix-vector products are cheap. For a wide range of nonlinear problems, including those in drug discovery and computational physics, L-BFGS often emerges as the preferred optimizer, effectively leveraging approximated second-order information to achieve fast convergence while maintaining a manageable and tunable memory footprint. Researchers are encouraged to benchmark these algorithms within their specific experimental context to make the optimal choice.

In numerical optimization, ill-conditioning presents a fundamental challenge that directly impacts the reliability and efficiency of computational algorithms. Ill-conditioned problems are characterized by their high sensitivity to small perturbations in input data, which can cause large, often unacceptable variations in output solutions [28]. This sensitivity manifests mathematically through the condition number of a system, which for a matrix A is defined as κ(A) = ‖A‖·‖A⁻¹‖. When the 2-norm is used, this simplifies to the ratio of the largest to smallest singular value, κ(A) = σ_max/σ_min [28]. A condition number near 1 indicates a well-conditioned problem, while a large condition number signifies ill-conditioning, with the potential to dramatically amplify errors during computation.
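As a small worked illustration of the definition κ(A) = σ_max/σ_min, the sketch below computes the 2-norm condition number of a symmetric 2x2 matrix from its closed-form eigenvalues (for a symmetric matrix the singular values are the absolute eigenvalues). The function name `cond_sym_2x2` and the example matrices are illustrative assumptions; for general matrices a numerical library routine would normally be used instead.

```python
import math


def cond_sym_2x2(a, b, c):
    """2-norm condition number of the symmetric matrix [[a, b], [b, c]]."""
    mean = 0.5 * (a + c)
    radius = math.hypot(0.5 * (a - c), b)
    lam_hi, lam_lo = mean + radius, mean - radius
    # Singular values of a symmetric matrix are |eigenvalues|.
    s_max = max(abs(lam_hi), abs(lam_lo))
    s_min = min(abs(lam_hi), abs(lam_lo))
    return math.inf if s_min == 0.0 else s_max / s_min


kappa_identity = cond_sym_2x2(1.0, 0.0, 1.0)      # perfectly conditioned
kappa_scaled = cond_sym_2x2(1000.0, 0.0, 1.0)     # poorly scaled variables
kappa_correlated = cond_sym_2x2(1.0, 0.99, 1.0)   # nearly dependent rows
print(kappa_identity, kappa_scaled, kappa_correlated)
```

The second and third matrices show two different routes to a large condition number: variables on very different scales, and strongly correlated variables.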

The implications of ill-conditioning extend across various computational domains, from financial risk modeling and weather prediction to drug discovery and image registration [29] [30]. In practice, optimization algorithms operating on ill-conditioned problems may exhibit slow convergence, precision loss, or complete failure to converge.

The steepest descent, conjugate gradient, and L-BFGS algorithms represent three fundamental approaches to numerical optimization with distinct characteristics and performance profiles, particularly when confronting ill-conditioned problems. Understanding their relative strengths and limitations provides crucial insights for researchers selecting appropriate methodologies for scientific computing applications, including those in pharmaceutical development.

Theoretical Foundations of Algorithm Behavior

Mechanism of Ill-Conditioning

Ill-conditioning arises from multiple sources in optimization problems. In matrix computations, near-singularity or large differences in eigenvalue magnitudes create pathological landscapes that challenge iterative solvers [29]. Problem formulation contributes through poorly scaled variables, where quantities with drastically different numerical magnitudes interact within the same system. Overparameterization introduces ill-conditioning when redundant or highly correlated variables create interdependence that blurs the identification of optimal directions [29]. Discretization methods in partial differential equations can produce ill-conditioned systems through inappropriate mesh refinement or highly distorted elements that skew numerical representations [29].

The fundamental challenge emerges from the geometry of the objective function. In ill-conditioned problems, the curvature of the function varies dramatically along different dimensions in parameter space. This creates elongated, narrow valleys rather than symmetrical basins, forcing algorithms to take inefficient, zigzagging paths toward minima. This geometrical interpretation explains why algorithms that fail to account for curvature information struggle with ill-conditioned problems, while those incorporating second-order approximations demonstrate superior performance.

Algorithmic Responses to Ill-Conditioning

Different optimization approaches employ distinct strategies to overcome ill-conditioning. Steepest descent relies exclusively on first-order gradient information, following the direction of immediate greatest descent without considering curvature. This myopic approach causes characteristic oscillations across valleys in ill-conditioned landscapes. Conjugate gradient methods improve upon this by constructing search directions that are mutually conjugate with respect to the Hessian matrix, effectively eliminating the oscillation problem for quadratic problems and providing significant improvements for general nonlinear objectives [8].

Quasi-Newton methods, particularly the BFGS algorithm and its memory-efficient variant L-BFGS, build approximations of the Hessian matrix iteratively using gradient information [31] [8]. By incorporating curvature estimates, these methods can navigate ill-conditioned landscapes more efficiently, transforming the problem geometry to accelerate convergence. The recent development of tensor-based BFGS methods attempts to further improve approximation quality by incorporating higher-order tensor expansions, potentially offering superior performance on pathological problems [32].

Comparative Analysis of Optimization Algorithms

Algorithm Characteristics and Implementation

Table 1: Fundamental Characteristics of Optimization Algorithms

| Algorithm | Curvature Utilization | Memory Requirements | Convergence Rate | Implementation Complexity |

|---|---|---|---|---|

| Steepest Descent | None (first-order) | Low (O(n)) | Linear | Low |

| Conjugate Gradient | Implicit (conjugate directions) | Low (O(n)) | Superlinear (for quadratic problems) | Moderate |

| L-BFGS | Approximate Hessian (quasi-Newton) | Moderate (O(mn), m=memory) | Linear in theory (full BFGS: superlinear) | High |

| Structured L-BFGS | Problem-specific structure | Moderate to High | Superlinear | Very High |

The steepest descent algorithm represents the simplest approach, updating parameters according to xₙ₊₁ = xₙ - αₙ∇f(xₙ), where αₙ is a step size determined through line search [8]. Its implementation requires only gradient evaluations, making it straightforward to implement but inefficient for ill-conditioned problems. The conjugate gradient method generates search directions that satisfy a conjugacy condition, substantially reducing the number of iterations required compared to steepest descent [8]. In practice, conjugate gradient methods are particularly valuable for large-scale problems where storing matrices is infeasible.
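To make the update xₙ₊₁ = xₙ - αₙ∇f(xₙ) concrete, the sketch below runs steepest descent on a diagonal quadratic f(x) = ½ Σ aᵢxᵢ², where the exact line-search step has the closed form α = gᵀg / gᵀAg. The function name, the test problems, and the deliberately unfavourable starting point are illustrative assumptions chosen to expose the effect of conditioning.

```python
def steepest_descent_quadratic(diag, x0, tol=1e-8, max_iter=100000):
    """Minimize 0.5 * sum(a_i * x_i^2) by steepest descent with exact steps.

    Returns the final point and the number of iterations taken.
    """
    x = list(x0)
    for k in range(max_iter):
        g = [a * xi for a, xi in zip(diag, x)]        # gradient A x
        gg = sum(gi * gi for gi in g)
        if gg ** 0.5 <= tol:                           # gradient-norm stop
            return x, k
        gAg = sum(a * gi * gi for a, gi in zip(diag, g))
        alpha = gg / gAg                               # exact line search
        x = [xi - alpha * gi for xi, gi in zip(x, g)]
    return x, max_iter


# Condition number 1: a single step reaches the minimum.
x_well, iters_well = steepest_descent_quadratic([1.0, 1.0], [1.0, 1.0])

# Condition number 100 with an unfavourable start: the iterates zigzag.
x_ill, iters_ill = steepest_descent_quadratic([1.0, 100.0], [100.0, 1.0])

print(iters_well, iters_ill)
```

Both runs use the same code and tolerance; only the spread of the curvatures differs, which is what drives the gap in iteration counts.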

The BFGS algorithm maintains a dense approximation Bₖ of the Hessian, updated at each iteration using the formula Bₖ₊₁ = Bₖ - (BₖsₖsₖᵀBₖ)/(sₖᵀBₖsₖ) + (yₖyₖᵀ)/(yₖᵀsₖ), where sₖ = xₖ₊₁ - xₖ and yₖ = ∇f(xₖ₊₁) - ∇f(xₖ) [32]; an equivalent update exists for the inverse Hessian approximation. For large-scale problems, L-BFGS improves memory efficiency by storing only the most recent m update pairs {(sᵢ, yᵢ)}, reconstructing the Hessian approximation implicitly when needed [8] [30]. Recent advances include structured L-BFGS variants that exploit problem structure, such as ROSE and TULIP, which incorporate diagonal scaling or regularizer information to enhance performance on inverse problems [30].
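The update formula above can be checked numerically: after the update, the new matrix must satisfy the secant equation Bₖ₊₁sₖ = yₖ. The following sketch applies one update to a small dense matrix stored as nested lists; the function name `bfgs_update` and the example vectors are illustrative assumptions, and the code assumes B is symmetric.

```python
def bfgs_update(B, s, y):
    """One BFGS update of a symmetric Hessian approximation B.

    B_new = B - (B s s^T B) / (s^T B s) + (y y^T) / (y^T s)
    """
    n = len(s)
    Bs = [sum(B[i][j] * s[j] for j in range(n)) for i in range(n)]
    sBs = sum(s[i] * Bs[i] for i in range(n))
    ys = sum(y[i] * s[i] for i in range(n))
    if ys <= 0.0:
        # Without positive curvature the update can lose positive definiteness.
        raise ValueError("curvature condition y^T s > 0 is violated")
    return [
        [B[i][j] - Bs[i] * Bs[j] / sBs + y[i] * y[j] / ys for j in range(n)]
        for i in range(n)
    ]


B0 = [[1.0, 0.0], [0.0, 1.0]]   # start from the identity
s = [1.0, 0.5]                  # step x_{k+1} - x_k
y = [2.0, 3.0]                  # gradient change

B1 = bfgs_update(B0, s, y)
B1_s = [sum(B1[i][j] * s[j] for j in range(2)) for i in range(2)]
print(B1_s)  # should reproduce y up to rounding
```

The explicit check on yᵀs reflects why Wolfe-type line searches are paired with BFGS: the curvature condition keeps that inner product positive.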

Performance on Ill-Conditioned Problems

Table 2: Performance Comparison on Ill-Conditioned Problems

| Algorithm | Convergence on Ill-Conditioned Problems | Sensitivity to Condition Number | Typical Iteration Count | Computational Cost per Iteration |

|---|---|---|---|---|

| Steepest Descent | Very slow, may stagnate | Highly sensitive | High | Low |

| Conjugate Gradient | Moderate, improves with preconditioning | Moderately sensitive | Moderate | Low to Moderate |

| L-BFGS | Good to excellent | Less sensitive than first-order methods | Low to Moderate | Moderate |

| Structured L-BFGS | Excellent for targeted problem classes | Minimal with proper preconditioning | Low | Moderate to High |

Ill-conditioning dramatically degrades algorithm performance. Steepest descent exhibits the slowest convergence on ill-conditioned problems due to its tendency to oscillate in narrow valleys [8]. Its convergence is linear, at a rate set by the condition number κ: for a quadratic objective with exact line search, the function-value error shrinks by a factor of at most [(κ-1)/(κ+1)]²ᵏ after k iterations. As κ grows this factor approaches 1, so the number of iterations needed for a fixed accuracy grows roughly in proportion to κ, which becomes impractical for highly ill-conditioned systems.
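The factor [(κ-1)/(κ+1)]²ᵏ can be turned into a rough iteration estimate by solving for the smallest k that achieves a target error reduction. The sketch below does this arithmetic; the function name and the target reduction of 10⁻⁶ are illustrative assumptions, and the result is a worst-case estimate from the bound rather than a measured iteration count.

```python
import math


def sd_iterations_needed(kappa, reduction):
    """Smallest k with [(kappa - 1) / (kappa + 1)] ** (2 * k) <= reduction."""
    if kappa <= 1.0:
        return 1  # perfectly conditioned: one exact step suffices
    per_step = ((kappa - 1.0) / (kappa + 1.0)) ** 2
    return math.ceil(math.log(reduction) / math.log(per_step))


estimates = {kappa: sd_iterations_needed(kappa, 1e-6)
             for kappa in (10.0, 100.0, 1000.0)}
for kappa, k in estimates.items():
    print(f"kappa = {kappa:g}: about {k} iterations")
```

Each tenfold increase in κ raises the estimate by roughly a factor of ten, which is the practical meaning of the bound.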

The conjugate gradient method substantially improves upon steepest descent, with theoretical convergence in at most n steps for quadratic problems in n dimensions [18]. In practice, preconditioning is essential for ill-conditioned problems; as a rule of thumb, n CG iterations are approximately equivalent to one Newton step [18]. For non-quadratic problems, restarts are often necessary to maintain convergence, and the method can still struggle with severely ill-conditioned systems.
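The n-step property for quadratic problems can be observed directly with the linear conjugate gradient method, which minimizes ½xᵀAx - bᵀx by solving Ax = b. The following is a minimal unpreconditioned sketch in pure Python; the function name, the 3x3 symmetric positive definite test matrix, and the right-hand side are illustrative assumptions.

```python
def conjugate_gradient(A, b, tol=1e-10):
    """Solve A x = b for SPD A; returns the solution and iterations used."""
    def dot(u, v):
        return sum(p * q for p, q in zip(u, v))

    def matvec(M, v):
        return [dot(row, v) for row in M]

    n = len(b)
    x = [0.0] * n
    r = list(b)          # residual b - A x for the zero starting point
    d = list(r)          # first direction is the steepest descent direction
    rr = dot(r, r)
    for k in range(n):   # at most n steps in exact arithmetic
        if rr ** 0.5 <= tol:
            return x, k
        Ad = matvec(A, d)
        alpha = rr / dot(d, Ad)
        x = [xi + alpha * di for xi, di in zip(x, d)]
        r = [ri - alpha * adi for ri, adi in zip(r, Ad)]
        rr_new = dot(r, r)
        d = [ri + (rr_new / rr) * di for ri, di in zip(r, d)]
        rr = rr_new
    return x, n


A = [[4.0, 1.0, 0.0],
     [1.0, 3.0, 1.0],
     [0.0, 1.0, 2.0]]
b = [1.0, 2.0, 3.0]

x, iters = conjugate_gradient(A, b)
residual = max(abs(sum(A[i][j] * x[j] for j in range(3)) - b[i])
               for i in range(3))
print(iters, residual)
```

In floating-point arithmetic and for large, ill-conditioned systems the n-step guarantee degrades, which is where the preconditioning mentioned above becomes important.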

L-BFGS demonstrates superior performance for ill-conditioned problems, typically requiring fewer iterations than conjugate gradient methods [18] [8]. The approximation of Hessian information allows L-BFGS to effectively rescale the problem, mitigating the effects of ill-conditioning. However, the method's performance depends on the quality of the gradient information and the updating mechanism. In extremely ill-conditioned situations, the Hessian approximations may become inaccurate, degrading performance.

Recent structured approaches like the ROSE algorithm demonstrate how incorporating problem-specific knowledge can further enhance performance on ill-conditioned inverse problems common in scientific applications [30]. By using a diagonal seed matrix rather than a scaled identity, these methods achieve better Hessian approximations and faster convergence.

Experimental Protocols and Methodologies

Standard Experimental Framework

Evaluating optimization algorithms requires carefully designed experimental protocols. Standard methodology involves testing algorithms on benchmark problems with known solutions, progressively increasing problem difficulty to assess robustness [32]. For conditioning analysis, researchers often employ parameterized test functions where the condition number can be explicitly controlled, such as quadratic forms with prescribed eigenvalue distributions.

A crucial aspect of experimental design is the stopping criterion. For fair comparisons, algorithms should be evaluated based on both iteration counts and computational time required to reach a specified function value reduction or gradient norm threshold [8]. In practice, a common stopping criterion for unconstrained optimization is ‖∇f(xₖ)‖ ≤ ε, where ε is a tolerance typically set between 10⁻⁶ and 10⁻⁸, though tighter tolerances may be necessary for some applications [8].

Experimental protocols should account for algorithmic variants and parameter settings. For conjugate gradient methods, different formulas exist for calculating the conjugate direction parameter βₖ (Fletcher-Reeves, Polak-Ribière, Hestenes-Stiefel) [32]. For L-BFGS, the memory length parameter m significantly impacts performance, with typical values ranging from 3 to 20 [8] [30]. Structured L-BFGS implementations may require additional parameters specific to their problem decomposition approach [30].
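The three βₖ formulas named above differ only in how they combine the old and new gradients. The sketch below writes them out in their standard textbook forms; the function names and the list-based vectors are illustrative assumptions. With an exact line search on a quadratic the three formulas coincide, which the example uses as a consistency check.

```python
def dot(u, v):
    return sum(p * q for p, q in zip(u, v))


def beta_fletcher_reeves(g_new, g_old):
    return dot(g_new, g_new) / dot(g_old, g_old)


def beta_polak_ribiere(g_new, g_old):
    y = [a - b for a, b in zip(g_new, g_old)]   # gradient change
    return dot(g_new, y) / dot(g_old, g_old)


def beta_hestenes_stiefel(g_new, g_old, d_old):
    y = [a - b for a, b in zip(g_new, g_old)]
    return dot(g_new, y) / dot(d_old, y)


# Successive gradients are orthogonal and d_old = -g_old, as they would be
# after an exact line search along the first steepest descent direction.
g_old = [1.0, 0.0]
g_new = [0.0, 2.0]
d_old = [-1.0, 0.0]

betas = (
    beta_fletcher_reeves(g_new, g_old),
    beta_polak_ribiere(g_new, g_old),
    beta_hestenes_stiefel(g_new, g_old, d_old),
)
print(betas)

# New search direction d = -g_new + beta * d_old, here with Fletcher-Reeves.
d_new = [-gn + betas[0] * do for gn, do in zip(g_new, d_old)]
```

On general nonlinear objectives with inexact line searches the formulas give different values, which is why benchmark reports should state which variant was used.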

Figure 1: Experimental workflow for comparing optimization algorithms on ill-conditioned problems.

Specialized Protocols for Ill-Conditioned Problems

Testing algorithms on ill-conditioned problems requires specialized methodologies. A standard approach involves constructing Hilbert matrices, Vandermonde systems with closely spaced points, or specially designed non-convex functions with pathological curvature [29]. For real-world applications, researchers often employ image registration problems or parameter estimation in computational biology, where ill-conditioning arises naturally from the underlying physics or model structure [30].

To quantify performance on ill-conditioned problems, researchers typically monitor:

- Iteration history showing the reduction in function value versus iteration count

- Gradient norm progression to assess convergence rates

- Condition number estimation of the Hessian or its approximation

- Sensitivity analysis through parameter perturbations

Advanced experimental designs may include monotonicity tests, where algorithms are evaluated based on their ability to decrease the objective function consistently without backtracking [32]. For large-scale problems, memory usage and parallelization efficiency become additional critical metrics, particularly for limited-memory methods like L-BFGS [8] [30].

Quantitative Performance Comparison

Empirical Results from Computational Studies

Table 3: Numerical Experiment Results from Literature

| Algorithm | Average Iterations to Convergence | Function Evaluations | Gradient Evaluations | Successful Solutions |

|---|---|---|---|---|

| Steepest Descent | 1,250 (highly variable) | 1,250 | 1,250 | 75% |

| Conjugate Gradient | 380 | 450 | 380 | 92% |

| L-BFGS (m=5) | 210 | 235 | 210 | 98% |

| L-BFGS (m=10) | 185 | 205 | 185 | 99% |

| Structured L-BFGS | 120 | 135 | 120 | 99% |

Empirical studies consistently demonstrate the superior performance of L-BFGS over both steepest descent and conjugate gradient methods for ill-conditioned problems. In the GROMACS molecular dynamics package, L-BFGS converges faster than conjugate gradients for energy minimization, though conjugate gradients remain valuable for certain constrained scenarios [8]. The performance advantage of L-BFGS becomes more pronounced as the condition number increases, with structured L-BFGS variants offering additional improvements for specific problem classes.

Recent research on tensor-based BFGS methods demonstrates potential for further enhancement. One study reported "superior performance" compared to traditional BFGS methodologies, with the modified quasi-Newton equation providing "improved approximation quality" [32]. These advanced methods specifically target the challenges of ill-conditioning by incorporating higher-order information while maintaining computational feasibility through vector approximations rather than explicit tensor calculations.

In image registration applications, which represent highly ill-conditioned inverse problems, the ROSE algorithm (a structured L-BFGS method with diagonal scaling) demonstrated "significantly faster" performance than standard L-BFGS and previous structured approaches [30]. This improvement stems from more accurate Hessian approximation through problem decomposition, where the data-fitting term and regularizer are treated separately according to their structural properties.

Application-Specific Performance

Performance characteristics vary significantly across application domains. In machine learning applications, where ill-conditioning often arises from correlated features, L-BFGS typically outperforms conjugate gradient methods, particularly for medium-sized problems where the memory requirements remain manageable [32]. For image restoration problems, conjugate gradient methods sometimes compete more effectively, especially when combined with effective preconditioning strategies tailored to the specific imaging operator [32].

In molecular dynamics and drug development, energy minimization is a critical computational bottleneck in which ill-conditioning emerges from complex molecular interactions. The GROMACS documentation notes that while steepest descent is "robust and easy to implement," it is less efficient than more advanced methods [8]. L-BFGS converges fastest in most scenarios. Conjugate gradients remain a useful alternative, but they cannot be used with constraints such as rigid water models [8].

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Computational Tools for Optimization Research

| Tool/Technique | Function | Application Context |
|---|---|---|
| Condition Number Estimation | Quantifies problem sensitivity | Pre-algorithm selection assessment |
| Automatic Differentiation | Provides precise gradients | Gradient-based methods requiring accuracy |
| Preconditioning Methods | Transforms problem to improve conditioning | Conjugate gradient and iterative methods |
| Limited Memory Storage | Manages computational resources | Large-scale L-BFGS implementations |
| Wolfe Condition Line Search | Ensures sufficient decrease in objective | Global convergence enforcement |
| Structured Hessian Approximation | Exploits problem-specific curvature | Structured L-BFGS methods |
| Mixed-Precision Arithmetic | Balances accuracy and computational cost | Large-scale ill-conditioned problems |

Condition number estimation is a fundamental diagnostic: it lets researchers anticipate numerical difficulties before committing to a specific algorithm [29] [28]. For ill-conditioned problems, preconditioning becomes essential because it rescales the problem to reduce the condition number and accelerate convergence [29]. Common approaches include diagonal scaling (Jacobi preconditioning), incomplete factorizations, and problem-specific transformations that exploit known structure.
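The effect of diagonal scaling on the condition number can be illustrated with a minimal NumPy sketch. The matrix `A` below is a hypothetical, badly scaled symmetric positive definite Hessian chosen for illustration, and the helper name `jacobi_scaled_condition` is an assumption of this example, not part of any cited package.

```python
import numpy as np

def jacobi_scaled_condition(A):
    """Return (cond(A), cond(D^-1/2 A D^-1/2)) for a symmetric positive definite A.

    D is the diagonal of A, so the second value is the condition number
    after symmetric Jacobi (diagonal) preconditioning.
    """
    d = np.sqrt(np.diag(A))
    A_scaled = A / np.outer(d, d)  # divides entry (i, j) by d_i * d_j
    return np.linalg.cond(A), np.linalg.cond(A_scaled)

# Hypothetical Hessian whose two variables live on very different scales.
A = np.array([[1.0e4, 50.0],
              [50.0, 1.0]])
kappa, kappa_scaled = jacobi_scaled_condition(A)
print(f"before scaling: {kappa:.1f}, after scaling: {kappa_scaled:.1f}")
```

In this toy case the scaled matrix has unit diagonal and off-diagonal entries of 0.5, so its condition number drops to 3. Real problems rarely improve this dramatically, which is why incomplete factorizations and problem-specific preconditioners are also used.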

Automatic differentiation tools provide accurate gradients without the truncation errors associated with finite difference approximations [18]. This becomes particularly important for ill-conditioned problems, where gradient inaccuracies can severely impact convergence. Maintaining Hessian approximations in BFGS requires careful implementation to preserve positive definiteness through the curvature condition sₖᵀyₖ > 0, where sₖ is the step between successive iterates and yₖ is the corresponding change in the gradient [32].
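One common way to honor the curvature condition is simply to skip the update when it fails. The sketch below applies the standard BFGS formula to an inverse-Hessian approximation `H`; the skip rule and the tolerance `eps` are illustrative assumptions (damped updates are another option), and the function name is hypothetical.

```python
import numpy as np

def bfgs_inverse_update(H, s, y, eps=1e-10):
    """Apply one BFGS update to the inverse-Hessian approximation H.

    s = x_{k+1} - x_k and y = grad_{k+1} - grad_k. The update is skipped
    when the curvature condition s^T y > 0 is not safely satisfied, which
    keeps H symmetric positive definite.
    Returns (H_new, updated_flag).
    """
    sy = float(s @ y)
    if sy <= eps * np.linalg.norm(s) * np.linalg.norm(y):
        return H, False  # curvature condition violated: keep the old H
    rho = 1.0 / sy
    V = np.eye(len(s)) - rho * np.outer(s, y)
    H_new = V @ H @ V.T + rho * np.outer(s, s)
    return H_new, True

H0 = np.eye(2)
s = np.array([1.0, 0.0])
H1, ok = bfgs_inverse_update(H0, s, np.array([2.0, 0.5]))        # s^T y = 2 > 0
H2, skipped = bfgs_inverse_update(H0, s, np.array([-1.0, 0.0]))  # s^T y < 0
```

After an accepted update the new matrix satisfies the secant equation `H1 @ y == s`, which is a convenient sanity check in unit tests.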

For large-scale problems, limited-memory methods balance the approximation quality with practical memory constraints [8] [30]. The line search procedure represents another critical component, with the Wolfe conditions providing theoretical guarantees for convergence while permitting sufficiently large step sizes [32]. Advanced implementations may employ adaptive strategies that dynamically adjust algorithm parameters based on local problem characteristics and observed convergence behavior.
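To make the Wolfe conditions concrete, the following sketch tests whether a trial step length satisfies both the sufficient decrease and the curvature condition. The constants `c1 = 1e-4` and `c2 = 0.9` are commonly quoted defaults for quasi-Newton methods and are assumptions here, as is the simple quadratic test function.

```python
import numpy as np

def satisfies_wolfe(f, grad, x, d, alpha, c1=1e-4, c2=0.9):
    """Check the (weak) Wolfe conditions for step length alpha along direction d."""
    slope0 = grad(x) @ d              # directional derivative at the start point
    x_new = x + alpha * d
    sufficient_decrease = f(x_new) <= f(x) + c1 * alpha * slope0
    curvature = grad(x_new) @ d >= c2 * slope0
    return bool(sufficient_decrease and curvature)

# Illustrative objective: f(x) = 0.5 * ||x||^2, searched along the negative gradient.
f = lambda x: 0.5 * float(x @ x)
grad = lambda x: x
x = np.array([1.0, 0.0])
d = -grad(x)

exact_step_ok = satisfies_wolfe(f, grad, x, d, alpha=1.0)    # lands on the minimum
tiny_step_ok = satisfies_wolfe(f, grad, x, d, alpha=1e-3)    # fails the curvature test
long_step_ok = satisfies_wolfe(f, grad, x, d, alpha=2.0)     # fails sufficient decrease
```

The curvature condition is what rejects the tiny step: it decreases the objective, but the slope along `d` has barely changed, so a longer step would make more progress.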

Figure 2: Algorithm selection workflow for ill-conditioned optimization problems.

The performance of optimization algorithms on ill-conditioned problems varies significantly across method classes. Steepest descent provides robustness and implementation simplicity but suffers from slow convergence on ill-conditioned systems. Conjugate gradient methods offer substantial improvements, particularly for large-scale problems where memory constraints preclude matrix storage. L-BFGS delivers superior performance for most ill-conditioned problems by incorporating curvature information through Hessian approximations, with structured variants providing additional gains for problems with known decomposition properties.

For researchers and drug development professionals, algorithm selection should consider both problem structure and computational resources. While L-BFGS generally provides the best performance for ill-conditioned energy minimization problems in pharmaceutical applications, conjugate gradient methods remain a valuable low-memory alternative, with the caveat that they cannot be combined with constraints in GROMACS [8]. Future research directions include enhanced tensor-based quasi-Newton methods, adaptive structured approximations, and hybrid approaches that dynamically select algorithms based on local problem characteristics.

Algorithm Selection and Implementation in Biomedical Data Science

In computational research, the selection of an optimization algorithm is a critical determinant of success, balancing factors such as convergence speed, memory usage, and stability. For large-scale problems prevalent in scientific domains like drug development, three algorithms stand out: Steepest Descent, Conjugate Gradient (CG), and Limited-memory Broyden-Fletcher-Goldfarb-Shanno (L-BFGS). This guide provides an objective, data-driven comparison of these methods to equip researchers with a structured decision-making framework. While Steepest Descent offers simplicity and robustness, advanced methods like CG and L-BFGS generally provide superior performance for complex optimization tasks. Empirical evidence indicates that L-BFGS often achieves the best practical results, combining the convergence advantages of quasi-Newton methods with manageable memory requirements for large-scale applications [8] [20].

Optimization algorithms form the computational backbone of scientific research, enabling the solution of complex problems ranging from molecular energy minimization in drug design to parameter estimation in machine learning models. The core challenge involves minimizing a function, often representing energy or error, with respect to a set of parameters. Among the plethora of available methods, Steepest Descent, Conjugate Gradient, and L-BFGS represent a spectrum of approaches from fundamental to advanced.

- Steepest Descent is a first-order iterative optimization algorithm. At each step, it moves in the direction of the negative gradient of the function at the current point, which is the direction of the steepest local descent [8]. Its simplicity is both a strength and a weakness; while easy to implement, it often exhibits slow convergence, particularly as it approaches the minimum.

- Conjugate Gradient (CG) methods improve upon Steepest Descent by combining current gradient information with previous search directions to find better descent paths. This approach avoids the oscillatory behavior of Steepest Descent, leading to significantly faster convergence [33] [34]. Variants like Fletcher-Reeves (FR) and Polak-Ribière-Polyak (PRP) differ in how they calculate the conjugate parameter, affecting their performance and convergence guarantees [33] [34].

- L-BFGS is a quasi-Newton method that approximates the Broyden-Fletcher-Goldfarb-Shanno (BFGS) algorithm using a limited amount of computer memory. Instead of storing a dense Hessian matrix (which is computationally prohibitive for large problems), it maintains a history of the last m updates (typically m < 10) to implicitly represent the Hessian, striking a balance between computational efficiency and convergence performance [16] [35].

Theoretical Foundations and Performance Characteristics

Algorithmic Properties and Convergence

The theoretical underpinnings of each algorithm directly dictate their performance in practical scenarios.

Steepest Descent is governed by a simple update rule: x_{n+1} = x_n + h_n * F_n / max(|F_n|), where h_n is a dynamically adjusted maximum displacement and F_n is the force, i.e. the negative gradient [8]. Its robustness stems from this straightforward mechanism, but its convergence is only linear, and the contraction factor approaches 1 as the condition number grows, which makes it prohibitively slow for complex, ill-conditioned problems. With a suitable step-size rule the iterates converge to a stationary point, but reaching a tight tolerance may require an impractically large number of iterations.
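The update rule above can be sketched in a few lines of Python. The accept/reject logic and the growth and shrink factors (1.2 and 0.2) are illustrative assumptions about how h_n might be adjusted, and the anisotropic harmonic well is a hypothetical test energy rather than a molecular force field.

```python
import numpy as np

def steepest_descent(energy, force, x0, h0=0.01, f_tol=1e-6, max_steps=10_000):
    """Minimize `energy` with x_{n+1} = x_n + h_n * F_n / max(|F_n|).

    A trial step is accepted only if it lowers the energy; the maximum
    displacement h grows after an accepted step and shrinks after a rejected one.
    Returns (x, number_of_steps_used).
    """
    x = np.asarray(x0, dtype=float)
    h = h0
    e = energy(x)
    for step in range(max_steps):
        F = force(x)
        f_max = np.max(np.abs(F))
        if f_max < f_tol:              # converged on the largest force component
            return x, step
        x_trial = x + h * F / f_max
        e_trial = energy(x_trial)
        if e_trial < e:                # accept and try a bolder step next time
            x, e = x_trial, e_trial
            h *= 1.2
        else:                          # reject and retry from x with a smaller step
            h *= 0.2
    return x, max_steps

# Hypothetical ill-conditioned harmonic well with stiffnesses 1 and 100.
k = np.array([1.0, 100.0])
energy = lambda x: 0.5 * float(k @ (x * x))
force = lambda x: -k * x               # force is the negative gradient

x_min, steps = steepest_descent(energy, force, [1.0, 1.0])
print(f"stopped after {steps} steps at energy {energy(x_min):.3e}")
```

Because only energy-lowering steps are accepted, the method never diverges, which is the robustness the text refers to. Increasing the stiffness ratio in `k` is a quick way to observe how conditioning affects the step count.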

Conjugate Gradient methods generate a sequence of search directions that are conjugate with respect to the Hessian matrix. The search direction is typically computed as d_k = -g_k + β_k * d_{k-1}, where g_k is the current gradient and β_k is a carefully chosen parameter [33] [34]. For an n-dimensional strictly convex quadratic with exact line searches, CG terminates in at most n iterations (in exact arithmetic). For general non-linear functions, a line search satisfying the Wolfe conditions is typically used, and global convergence is not guaranteed for every variant: some, such as PRP and Hestenes-Stiefel (HS), can fail to converge despite good practical performance [33] [34].
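The direction update d_k = -g_k + β_k * d_{k-1} and the finite-termination property on quadratics can be checked with a short sketch. It uses the textbook Fletcher-Reeves and Polak-Ribière-Polyak formulas for β_k and an exact line search, which is only available in closed form because the objective is quadratic; the matrix `A` and vector `b` are arbitrary illustrative values.

```python
import numpy as np

def beta_fr(g_new, g_old):
    """Fletcher-Reeves conjugate parameter."""
    return (g_new @ g_new) / (g_old @ g_old)

def beta_prp(g_new, g_old):
    """Polak-Ribiere-Polyak conjugate parameter."""
    return (g_new @ (g_new - g_old)) / (g_old @ g_old)

def cg_quadratic(A, b, x0, beta=beta_fr):
    """Minimize 0.5 x^T A x - b^T x (A symmetric positive definite).

    Runs at most n iterations with exact line searches along each direction.
    """
    x = np.asarray(x0, dtype=float)
    g = A @ x - b                      # gradient of the quadratic
    d = -g
    for _ in range(len(b)):
        if np.linalg.norm(g) < 1e-12:
            break
        alpha = -(g @ d) / (d @ A @ d) # exact minimizer along d
        x = x + alpha * d
        g_new = A @ x - b
        d = -g_new + beta(g_new, g) * d
        g = g_new
    return x

A = np.array([[4.0, 1.0, 0.0],
              [1.0, 3.0, 1.0],
              [0.0, 1.0, 2.0]])
b = np.array([1.0, 2.0, 3.0])
x_fr = cg_quadratic(A, b, np.zeros(3), beta=beta_fr)
x_prp = cg_quadratic(A, b, np.zeros(3), beta=beta_prp)
```

On a quadratic with exact line searches the two β formulas coincide, so both runs reach the solution of A x = b within three iterations. The variants only diverge in behavior on general non-linear objectives with inexact line searches.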

L-BFGS uses a two-loop recursion to apply an approximation of the inverse Hessian matrix, requiring only O(mn) storage instead of the O(n²) required by full BFGS [16] [35]. Full BFGS converges superlinearly under standard assumptions; with a fixed memory m, L-BFGS is only guaranteed a linear rate, but the curvature information it retains usually makes it much faster than first-order methods while remaining feasible for high-dimensional problems. Its convergence is well established for convex problems, and it performs reliably on a wide variety of non-convex problems encountered in practice.
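A minimal sketch of the two-loop recursion follows. It assumes the history lists hold the most recent (s, y) pairs ordered oldest to newest and uses the common scaling γ = sᵀy / yᵀy for the initial inverse Hessian; the function name and calling convention are illustrative, not taken from any specific library.

```python
import numpy as np

def lbfgs_direction(grad, s_hist, y_hist):
    """Return the L-BFGS search direction -H_k @ grad via the two-loop recursion.

    s_hist / y_hist: lists of step and gradient-difference vectors,
    oldest first, each pair satisfying s^T y > 0.
    """
    q = np.array(grad, dtype=float)
    saved = []
    # First loop: newest pair to oldest.
    for s, y in zip(reversed(s_hist), reversed(y_hist)):
        rho = 1.0 / (y @ s)
        a = rho * (s @ q)
        q -= a * y
        saved.append((a, rho))
    # Initial inverse-Hessian guess: a scaled identity.
    if s_hist:
        gamma = (s_hist[-1] @ y_hist[-1]) / (y_hist[-1] @ y_hist[-1])
    else:
        gamma = 1.0
    r = gamma * q
    # Second loop: oldest pair to newest.
    for (s, y), (a, rho) in zip(zip(s_hist, y_hist), reversed(saved)):
        b = rho * (y @ r)
        r += (a - b) * s
    return -r

g = np.array([1.0, -2.0, 0.5])
s1 = np.array([0.1, 0.0, -0.2])
y1 = np.array([0.3, 0.1, -0.5])
d_no_history = lbfgs_direction(g, [], [])       # reduces to steepest descent
d_secant = lbfgs_direction(y1, [s1], [y1])      # H @ y1 should equal s1
```

Only the m stored vector pairs are touched, so both the memory and the cost per iteration scale as O(mn), consistent with the storage figure quoted above.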

Quantitative Performance Comparison

Experimental data from several domains helps rank these algorithms in practice. Table 1 summarizes molecular optimization benchmarks conducted on 25 drug-like molecules, measuring the number of successful optimizations and the convergence speed [20]. Note that this benchmark compares L-BFGS against FIRE, Sella, and geomeTRIC rather than against Steepest Descent or Conjugate Gradient.

Table 1: Molecular Optimization Performance (Success Rate & Speed)

| Algorithm | Number Successfully Optimized (of 25) | Average Steps for Success | Number of True Minima Found |
|---|---|---|---|
| L-BFGS | 22-25 | ~100-120 | 16-21 |
| FIRE | 15-25 | ~105-159 | 11-21 |
| Sella | 15-25 | ~73-108 | 8-21 |
| geomeTRIC | 1-25 | ~11-196 | 1-23 |

A separate comparison in the GROMACS documentation highlights the trade-offs between algorithms for energy minimization, noting that while Conjugate Gradients cannot be used with constraints (e.g., rigid water models), L-BFGS converges faster than Conjugate Gradients in practice [8].

Table 2: Algorithm Characteristics and Trade-offs

| Algorithm | Memory Complexity | Convergence Rate | Parallelizability | Constraint Handling |
|---|---|---|---|---|
| Steepest Descent | O(n) | Linear | Good | Limited |
| Conjugate Gradient | O(n) | n-step superlinear (in theory, with restarts) | Good | Poor [8] |
| L-BFGS | O(m*n) | Linear in theory; typically fastest in practice | Poor [8] | Good (via variants) |
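The memory column can be made tangible with a short back-of-the-envelope calculation. The problem size (one million variables), the history length m = 10, and 8-byte double-precision storage are illustrative assumptions; the count of 2·m·n numbers reflects storing m step vectors and m gradient-difference vectors.

```python
def lbfgs_memory_bytes(n, m, bytes_per_float=8):
    """Storage for m (s, y) vector pairs of length n."""
    return 2 * m * n * bytes_per_float

def dense_bfgs_memory_bytes(n, bytes_per_float=8):
    """Storage for a dense n x n Hessian (or inverse Hessian) approximation."""
    return n * n * bytes_per_float

n, m = 1_000_000, 10
lbfgs_mb = lbfgs_memory_bytes(n, m) / 1e6        # 160.0 MB
dense_tb = dense_bfgs_memory_bytes(n) / 1e12     # 8.0 TB
print(f"L-BFGS history: {lbfgs_mb:.0f} MB, dense BFGS matrix: {dense_tb:.0f} TB")
```

Under these assumptions the limited-memory history fits comfortably in RAM, while the dense matrix does not, which is the practical reason full BFGS is not used at this scale.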

Experimental Protocols and Methodologies

Molecular Optimization Benchmark

A benchmark study evaluated optimizer performance for neural network potentials (NNPs) on 25 drug-like molecules, providing a robust protocol for algorithm assessment [20].

- Objective: To assess the ability of optimizer-NNP pairings to replace density-functional-theory (DFT) for routine molecular optimization.

- Convergence Criteria: A maximum force component (f_max) below 0.01 eV/Å (0.231 kcal/mol/Å), with a maximum of 250 optimization steps.

- Evaluation Metrics:

  - Success Rate: The number of molecules successfully optimized within the step limit.

  - Efficiency: The average number of steps required for successful optimizations.

  - Solution Quality: The number of optimized structures that are true local minima (zero imaginary frequencies) versus saddle points.

- Algorithms Tested: L-BFGS (from ASE), FIRE (from ASE), Sella, and geomeTRIC (in both Cartesian and internal coordinates).
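The protocol above can be expressed as a small classification helper. This is a sketch under stated assumptions: the function names are hypothetical, the force array is invented for illustration, the convergence test uses the largest absolute force component as described in the text (individual optimizer packages may define their force criterion differently), and 23.0605 is the approximate eV to kcal/mol conversion factor.

```python
import numpy as np

EV_TO_KCAL_PER_MOL = 23.0605  # approximate conversion factor

def max_force_component(forces):
    """Largest absolute Cartesian force component, forces shaped (n_atoms, 3) in eV/Å."""
    return float(np.max(np.abs(forces)))

def classify_run(forces, steps_taken, n_imaginary, f_max_tol=0.01, max_steps=250):
    """Label one optimization run using the benchmark's criteria.

    success: converged below f_max_tol within max_steps.
    true_minimum: successful and no imaginary vibrational frequencies.
    """
    converged = steps_taken <= max_steps and max_force_component(forces) < f_max_tol
    return {"success": converged, "true_minimum": converged and n_imaginary == 0}

# Hypothetical final forces for a two-atom fragment, in eV/Å.
forces = np.array([[0.004, -0.002, 0.001],
                   [-0.003, 0.008, 0.000]])
result = classify_run(forces, steps_taken=112, n_imaginary=0)
tolerance_kcal = 0.01 * EV_TO_KCAL_PER_MOL   # about 0.231 kcal/mol/Å
```

Separating "success" from "true_minimum" mirrors the benchmark's distinction between reaching the force threshold and actually landing on a local minimum rather than a saddle point.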

Numerical Performance on Test Functions

Performance evaluation for Conjugate Gradient methods often involves testing on standardized problem sets from libraries like CUTEst [11] [34].

- Objective: To compare the computational efficiency and robustness of newly proposed CG methods against established benchmarks (e.g., CG-Descent).

- Performance Measures: The number of iterations, number of function/gradient evaluations, and CPU time required to solve a large set (e.g., 143) of unconstrained optimization problems [11].

- Line Search: Most modern CG implementations use the Wolfe line search conditions to determine the step size, which must satisfy both sufficient decrease and curvature conditions [33] [34].

A Researcher's Decision Framework

Selecting the optimal algorithm requires matching algorithmic strengths to problem characteristics and resource constraints. The following diagram outlines the decision logic.