Steepest Descent vs Conjugate Gradient: Algorithmic Breakdown and Applications in Drug Discovery

This article provides a comprehensive explanation of the Steepest Descent and Conjugate Gradient algorithms, tailored for researchers and professionals in drug development.

Abstract

This article provides a comprehensive explanation of the Steepest Descent and Conjugate Gradient algorithms, tailored for researchers and professionals in drug development. It covers the foundational mathematics of both methods, explores their practical application in critical tasks like energy minimization for small-molecule drug discovery, offers strategies for troubleshooting and optimization, and presents a comparative analysis of their performance. The content synthesizes theoretical insights with real-world biomedical case studies, including applications for Covid-19 and diabetes drug candidates, to guide the selection and implementation of efficient optimization techniques in clinical research.

Gradient Basics: Unraveling the Core Principles of Descent Algorithms

Optimization algorithms form the computational backbone of modern scientific inquiry, from simulating molecular interactions to training complex artificial intelligence models. At its core, an optimization problem involves finding the parameters that minimize (or maximize) an objective function. In computational chemistry and drug discovery, this typically translates to identifying molecular configurations with the lowest possible energy states, which correspond to stable, biologically relevant structures. The efficiency and effectiveness of these optimization procedures directly impact research timelines, computational costs, and ultimately, the success of scientific endeavors.

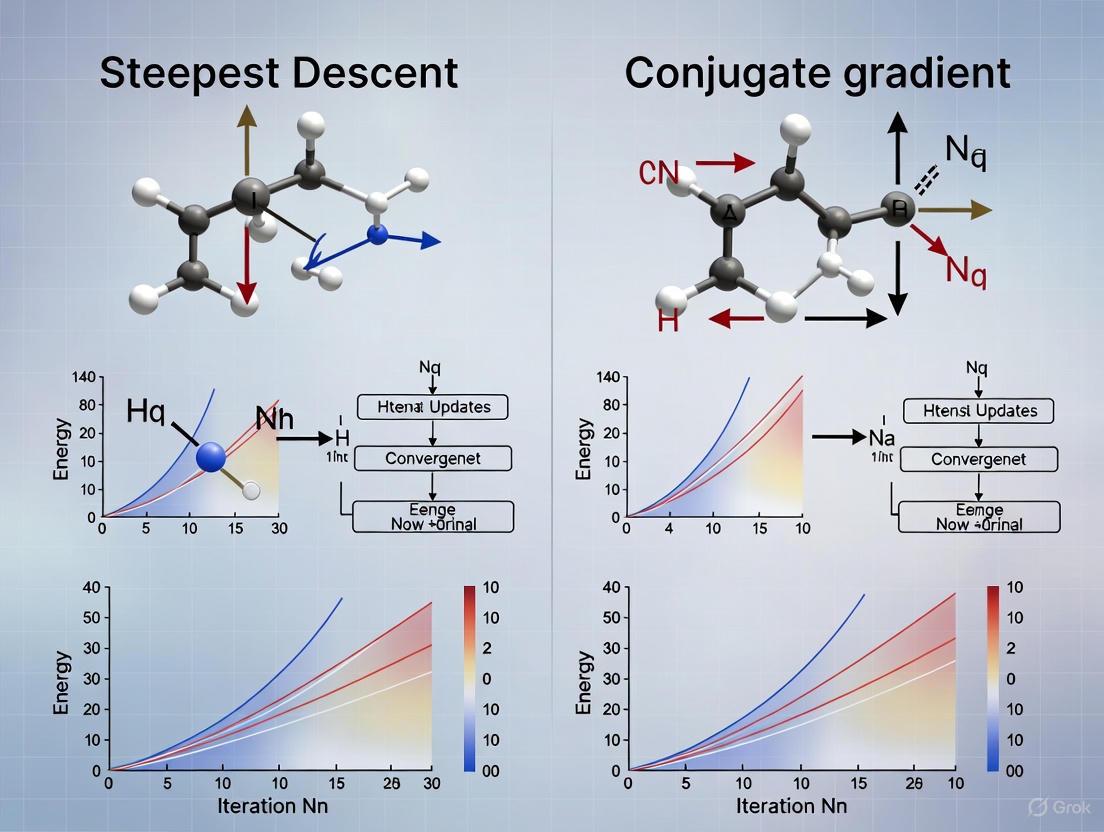

This technical guide examines two fundamental optimization approaches—steepest descent and conjugate gradient methods—within the context of solving linear systems and energy minimization problems. While both are first-order iterative optimization techniques, their convergence properties, computational requirements, and applicability to different problem domains vary significantly. Understanding their theoretical foundations and practical implementations is essential for researchers selecting appropriate methodologies for scientific computing applications, particularly in molecular modeling and drug development workflows where energy minimization is a critical step [1].

Mathematical Foundations of Optimization

The General Minimization Problem

The minimization problem can be formally stated as follows: given a function ( f(\mathbf{x}) ) where ( \mathbf{x} ) is a vector of variables, find the value ( \mathbf{x}^* ) such that ( f(\mathbf{x}^*) ) is a local minimum. In molecular modeling contexts, ( f ) typically represents the potential energy of a system, and ( \mathbf{x} ) represents the atomic coordinates [1]. The potential energy function incorporates multiple components:

- Bond energy and angle energy, representing covalent bonds and bond angles

- Dihedral energy, accounting for torsional strain

- van der Waals terms (Lennard-Jones potential) preventing steric clashes

- Electrostatic energy modeling long-range forces between charged particles [1]

These quantitative terms are parameterized in what is collectively known as a force field (e.g., CHARMM, AMBER, GROMOS) [1].

Stationary Points and the Energy Surface

The potential energy surface (PES) represents the mathematical relationship between molecular structure and its energy [2]. Minimum points on this surface correspond to stable molecular conformations, with the global energy minimum representing the most stable configuration [1]. The challenge lies in efficiently navigating this complex, high-dimensional surface to locate minima, particularly the global minimum among potentially many local minima.

Table 1: Key Concepts in Energy Minimization

| Concept | Mathematical Definition | Physical Interpretation |
|---|---|---|
| Stationary Point | ( \nabla f(\mathbf{x}) = 0 ) | Geometry where net force on all atoms is zero |
| Local Minimum | ( f(\mathbf{x}^*) \leq f(\mathbf{x}) ) for ( \lVert\mathbf{x} - \mathbf{x}^*\rVert < \varepsilon ) | Stable molecular conformation |
| Global Minimum | Lowest value of ( f(\mathbf{x}) ) across entire domain | Most thermodynamically stable conformation |
| Saddle Point | Point where gradient is zero but not a minimum | Transition state between stable conformations |

First-Order Optimization Algorithms

First-order iterative optimization methods generate a sequence ( \{\mathbf{x}^k\}_{k\in\mathbb{N}} ) that converges to a minimum (or stationary point) through the iterative process:

- Selecting a search direction ( \mathbf{d}^k \in \mathbb{R}^n )

- Determining a step size ( \tau_k \in \mathbb{R} )

- Updating the position: ( \mathbf{x}^{k+1} = \mathbf{x}^k + \tau_k \mathbf{d}^k )

- Repeating until convergence criteria are met [3]

These methods use only function values ( f(\mathbf{x}^j) ) and gradients ( \nabla f(\mathbf{x}^j) ) for ( j \leq k ) when determining ( \mathbf{d}^k ) and ( \tau_k ).

Steepest Descent Algorithm

Theoretical Foundation

The steepest descent method (also known as gradient descent) represents the simplest first-order optimization approach, choosing the search direction as the negative gradient: ( \mathbf{d}^k = -\nabla f(\mathbf{x}^k) ) [3]. This direction represents the locally steepest downhill direction on the energy surface.

In molecular modeling implementations such as GROMACS, the algorithm proceeds as follows [4]:

- Compute forces ( \mathbf{F} ) (negative gradient) and potential energy at current position

- Calculate new positions: ( \mathbf{r}_{n+1} = \mathbf{r}_n + \frac{\mathbf{F}_n}{\max(|\mathbf{F}_n|)} h_n ), where ( h_n ) is the maximum displacement

- Recompute forces and energy for the new positions

- If ( V_{n+1} < V_n ): accept new positions and increase step size ( h_{n+1} = 1.2 h_n )

- If ( V_{n+1} \geq V_n ): reject new positions and decrease step size ( h_n = 0.2 h_n )

The algorithm terminates when the maximum absolute value of the force components falls below a specified threshold ( \epsilon ) [4].
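The accept/reject rule above can be sketched in a few lines of plain Python. This is a minimal illustration, not GROMACS code: the two-dimensional energy function, the function names, and the default values of `h0` and `f_tol` are all assumptions chosen for the example, and the normalization uses the largest force component rather than the largest per-atom force.

```python
def energy_and_forces(r):
    """Toy 2D energy surface (a hypothetical stand-in for a force field).

    V(x, y) = 0.5 * (x^2 + 10 y^2); the force is the negative gradient.
    """
    x, y = r
    energy = 0.5 * (x * x + 10.0 * y * y)
    forces = (-x, -10.0 * y)
    return energy, forces


def adaptive_steepest_descent(r0, h0=0.1, f_tol=1e-3, max_steps=10_000):
    """Steepest descent with the accept/reject step-size rule described above."""
    r, h = tuple(r0), h0
    energy, forces = energy_and_forces(r)
    for step in range(max_steps):
        f_max = max(abs(f) for f in forces)
        if f_max < f_tol:              # converged: largest force component is small
            return r, energy, step
        # Move along the force; h is the largest displacement of any coordinate.
        trial = tuple(ri + fi / f_max * h for ri, fi in zip(r, forces))
        new_energy, new_forces = energy_and_forces(trial)
        if new_energy < energy:        # accept and lengthen the next step
            r, energy, forces = trial, new_energy, new_forces
            h *= 1.2
        else:                          # reject and shorten the step
            h *= 0.2
    return r, energy, max_steps


start = (3.0, 2.0)
start_energy, _ = energy_and_forces(start)
r_min, e_min, n_steps = adaptive_steepest_descent(start)
print(f"energy {start_energy:.3f} -> {e_min:.3e} after {n_steps} steps")
```

Because a rejected trial never replaces the current positions, the energy cannot increase from one accepted step to the next, which is the source of the method's robustness on structures with bad contacts.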

Convergence Properties and Limitations

Steepest descent exhibits robust convergence in initial iterations, particularly when starting configurations are far from a minimum [2] [3]. However, its asymptotic convergence is poor: for convex objectives with Lipschitz-continuous gradients, the guaranteed rate is only ( f(\mathbf{x}^k) - \min_{\mathbf{x}} f(\mathbf{x}) = \mathcal{O}(1/k) ) [3]. As the method approaches a minimum, progress slows dramatically, and the iterates often oscillate across narrow valleys of the energy surface [2].

Despite these limitations, steepest descent remains valuable for preliminary minimization stages where rapid initial progress is more important than precise convergence, and for systems with significant steric clashes that require initial resolution [4].

Conjugate Gradient Algorithm

Theoretical Foundation

The conjugate gradient method improves upon steepest descent by incorporating information from previous search directions. While classical CG solves linear systems with symmetric positive definite matrices, the nonlinear conjugate gradient method (NLCGM) extends this approach to general nonlinear optimization [3].

NLCGM determines search directions through a linear combination of the current gradient and previous search direction: ( \mathbf{d}^k = -\nabla f(\mathbf{x}^k) + \beta^k \mathbf{d}^{k-1} ), where ( \beta^k ) is calculated using various formulae (Fletcher-Reeves, Hestenes-Stiefel, Polak-Ribière, Hager-Zhang) [3].

In the special case of a quadratic function ( f(\mathbf{x}) = \frac{1}{2}\mathbf{x}^T\mathbf{A}\mathbf{x} + \mathbf{b}^T\mathbf{x} + c ) with ( \mathbf{A} ) symmetric positive definite, CG generates conjugate search directions satisfying ( \mathbf{d}_i^T\mathbf{A}\mathbf{d}_j = 0 ) for ( i \neq j ), which guarantees convergence to the minimum in at most ( n ) steps for an ( n )-dimensional problem in exact arithmetic [3].
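The conjugacy property and the ( n )-step termination can be checked numerically on a small problem. The sketch below is illustrative only: the 3x3 matrix, the vector `b`, and the helper names are assumptions made for the example, and plain Python lists are used to keep it dependency-free (NumPy would be the usual choice in practice).

```python
def matvec(A, v):
    """Dense matrix-vector product for a list-of-rows matrix."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def conjugate_gradient(A, b, x0):
    """Minimise 0.5 x^T A x + b^T x (gradient A x + b) with linear CG."""
    x = list(x0)
    g = [ax + bi for ax, bi in zip(matvec(A, x), b)]
    d = [-gi for gi in g]
    directions = []
    for _ in range(len(b)):                 # at most n steps
        if dot(g, g) < 1e-24:               # already at the minimum
            break
        Ad = matvec(A, d)
        alpha = dot(g, g) / dot(d, Ad)      # exact minimiser along d
        x = [xi + alpha * di for xi, di in zip(x, d)]
        g_new = [gi + alpha * adi for gi, adi in zip(g, Ad)]
        beta = dot(g_new, g_new) / dot(g, g)    # Fletcher-Reeves
        directions.append(d)
        d = [-gn + beta * di for gn, di in zip(g_new, d)]
        g = g_new
    return x, g, directions


# Symmetric positive definite example matrix (hypothetical values).
A = [[4.0, 1.0, 0.0],
     [1.0, 3.0, 1.0],
     [0.0, 1.0, 2.0]]
b = [-1.0, -2.0, -3.0]

x_min, g_final, dirs = conjugate_gradient(A, b, [0.0, 0.0, 0.0])
residual = max(abs(gi) for gi in g_final)
conjugacy_error = max(
    abs(dot(dirs[i], matvec(A, dirs[j])))
    for i in range(len(dirs)) for j in range(i)
)
print(len(dirs), residual, conjugacy_error)
```

Both `residual` and `conjugacy_error` should come out at the level of floating-point rounding, which is the numerical counterpart of the exact-arithmetic statement above.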

Convergence Properties and Advantages

The conjugate gradient method achieves superior convergence compared to steepest descent, with ( f(\mathbf{x}^k) - \min_{\mathbf{x}} f(\mathbf{x}) = \mathcal{O}(1/k^2) ) for quadratic functions [3]. In molecular modeling contexts, CG "is slower than steepest descent in the early stages of the minimization, but becomes more efficient closer to the energy minimum" [4].

For non-quadratic functions, NLCGM maintains better convergence than steepest descent while requiring only marginally more computational effort per iteration (primarily inner products and AXPY operations) [3]. However, implementations may face restrictions; for instance, GROMACS cannot use conjugate gradient with constraints including the SETTLE algorithm for water [4].

Comparative Analysis: Steepest Descent vs. Conjugate Gradient

Performance Characteristics

Table 2: Algorithm Comparison for Energy Minimization

| Characteristic | Steepest Descent | Conjugate Gradient |
|---|---|---|
| Initial convergence | Rapid far from minimum [4] | Slower initially [4] |
| Asymptotic convergence | Slow near minimum [4] [3] | Fast near minimum [4] [3] |
| Computational cost per iteration | Low | Moderately higher [3] |
| Memory requirements | Low | Low (does not store full history) [3] |
| Robustness | High - rarely fails to find lower energy [2] | Moderate - may require restarts |
| Implementation complexity | Simple [2] | Moderate |
| Optimal for | Initial minimization, poorly conditioned systems [4] | Refined minimization prior to analysis [4] |

A technical analysis demonstrates that "the conjugate-gradient algorithm is actually superior to the steepest-descent algorithm in that, in the generic case, at each iteration it yields a lower cost than does the steepest-descent algorithm, when both start at the same point" [5].

Practical Implementation Considerations

Stopping Criteria

Effective termination criteria are essential for practical implementations. In molecular dynamics packages like GROMACS, minimization typically stops when the maximum absolute value of force components falls below a specified threshold ( \epsilon ) [4]. A reasonable value can be estimated from the root mean square force ( f ) a harmonic oscillator exhibits at temperature ( T ):

[ f = 2\pi\nu\sqrt{2mkT} ]

where ( \nu ) is the oscillator frequency, ( m ) the reduced mass, and ( k ) Boltzmann's constant [4]. For a weak oscillator (100 cm(^{-1}) wave number, 10 atomic units mass) at 1 K, ( f = 7.7 ) kJ mol(^{-1}) nm(^{-1}), suggesting that a value of ( \epsilon ) between 1 and 10 kJ mol(^{-1}) nm(^{-1}) is acceptable [4].
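The quoted figure can be reproduced directly from the formula. The short calculation below is a sketch: the function name and the unit-conversion layout are assumptions made for illustration, and the physical constants are the standard SI values.

```python
import math

C_CM_PER_S = 2.99792458e10      # speed of light in cm/s
AMU_KG = 1.66053906660e-27      # atomic mass unit in kg
K_B = 1.380649e-23              # Boltzmann constant in J/K
N_A = 6.02214076e23             # Avogadro constant in 1/mol


def rms_oscillator_force(wavenumber_cm, mass_amu, temperature_k):
    """RMS force of a harmonic oscillator, f = 2*pi*nu*sqrt(2*m*k*T),
    returned in kJ mol^-1 nm^-1."""
    nu = C_CM_PER_S * wavenumber_cm          # wave number -> frequency in Hz
    mass = mass_amu * AMU_KG
    f_newton = 2.0 * math.pi * nu * math.sqrt(2.0 * mass * K_B * temperature_k)
    # N = J/m; convert to per nm (1e-9), per mole (N_A), and J -> kJ (1e-3).
    return f_newton * 1e-9 * N_A * 1e-3


f_weak = rms_oscillator_force(wavenumber_cm=100.0, mass_amu=10.0, temperature_k=1.0)
print(f"{f_weak:.1f} kJ mol^-1 nm^-1")   # about 7.7, matching the value quoted above
```

Since the force scales with the square root of the temperature, the same function can be used to see how the estimate moves for other assumed temperatures or oscillator strengths.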

Workflow Integration

Optimization Protocol

A hybrid approach often yields optimal results, beginning with steepest descent for rapid initial progress followed by conjugate gradient for refined minimization [4]. This strategy is particularly valuable for molecular systems starting from experimentally-derived coordinates that may contain steric clashes or other high-energy interactions.

Applications in Drug Discovery and Molecular Modeling

Energy Minimization in Molecular Systems

Energy minimization serves as a fundamental procedure in molecular modeling to locate stable molecular conformations by finding arrangements where "the net interatomic force on each atom is acceptably close to zero and the position on the Potential Energy Surface (PES) is a stationary point" [2]. Key applications include:

- Correcting structural anomalies: Resolving bad stereochemistry and short contacts in experimentally-derived structures [1]

- Locating stable conformations: Identifying low-energy arrangements for drug candidates [2]

- Preparing for advanced simulations: Generating starting configurations for molecular dynamics or normal-mode analysis [4]

The GROMACS documentation emphasizes that conjugate gradient "can not be used with constraints," requiring flexible water models when aqueous environments are simulated [4]. This limitation makes steepest descent preferable for certain constrained systems despite its slower convergence.

Integration with AI-Driven Drug Discovery

Modern drug discovery increasingly integrates optimization algorithms with artificial intelligence approaches. Generative models (GMs) employing variational autoencoders (VAEs) with active learning cycles represent an emerging paradigm that combines physics-based energy minimization with data-driven exploration [6].

AI-Driven Molecular Optimization

This integrated workflow enables "exploration of novel chemical spaces tailored for specific targets" while ensuring generated molecules exhibit favorable energy characteristics and synthetic accessibility [6]. The integration of traditional optimization with machine learning approaches represents the cutting edge of computational drug development.

Research Reagent Solutions

Table 3: Essential Computational Tools for Optimization in Drug Discovery

| Tool/Category | Representative Examples | Primary Function in Optimization |
|---|---|---|
| Force Fields | CHARMM, AMBER, GROMOS [1] | Parameterize energy functions for molecular systems |
| Molecular Dynamics Packages | GROMACS [4] | Implement optimization algorithms for energy minimization |
| AI/Generative Platforms | Insilico Medicine Pharma.AI, Recursion OS, Iambic Therapeutics [7] | Integrate optimization with generative molecular design |
| Active Learning Frameworks | VAE with nested AL cycles [6] | Iteratively refine predictions using optimization oracles |
| Quantum Mechanics Packages | Various [1] | Provide energy and derivative calculations for small systems |

The selection between steepest descent and conjugate gradient optimization algorithms represents a fundamental strategic decision in computational chemistry and drug discovery workflows. While steepest descent offers robustness and rapid initial convergence, conjugate gradient methods provide superior asymptotic performance with minimal additional computational overhead. Understanding the mathematical foundations, implementation details, and practical considerations of these algorithms enables researchers to make informed decisions based on their specific scientific objectives and computational constraints.

As drug discovery evolves toward increasingly integrated, AI-driven approaches, the role of efficient optimization algorithms becomes ever more critical. The synergy between traditional energy minimization techniques and modern machine learning frameworks promises to accelerate the identification and optimization of novel therapeutic compounds, potentially addressing the pharmaceutical industry's persistent challenges of excessive costs, lengthy timelines, and high failure rates.

Within the broader research landscape comparing steepest descent with conjugate gradient algorithms, understanding the fundamental intuition behind the steepest descent method is paramount. This first-order iterative optimization algorithm, foundational to modern machine learning and scientific computing, operates on a simple yet powerful principle: at each point, it moves in the direction of the negative gradient of the function [8]. This direction is mathematically guaranteed to be the path of steepest descent, providing a natural and computationally feasible approach to minimizing objective functions [9]. While contemporary research often focuses on more advanced methods like conjugate gradient, the intuition and mechanics of steepest descent remain critical for understanding the optimization landscape, particularly for researchers and scientists in fields like drug development where complex, high-dimensional parameter spaces are commonplace [10] [11].

The historical significance of steepest descent, attributed to Cauchy in 1847, underscores its enduring relevance [8]. This technical guide will deconstruct the core mathematical intuition, provide a detailed comparative analysis with conjugate gradient methods, and present practical experimental protocols for implementing and evaluating these algorithms, with a specific focus on applications relevant to scientific research.

Mathematical Foundations of Steepest Descent

The Core Principle: Direction of Steepest Descent

The steepest descent algorithm is built upon a fundamental fact from multivariable calculus. For a continuously differentiable multivariable function ( f(\mathbf{x}) ), the gradient ( \nabla f(\mathbf{x}) ) evaluated at a point ( \mathbf{x}_0 ) represents the direction of the greatest rate of increase of the function at that point [8] [9]. Consequently, the negative gradient ( -\nabla f(\mathbf{x}_0) ) points in the direction of the steepest decrease [12]. This local property provides the foundational intuition for the algorithm: by repeatedly moving in the direction opposite to the gradient, we can descend towards a local minimum.

This relationship is formalized by the directional derivative. For a unit vector ( \mathbf{v} ), the directional derivative ( \frac{\partial f}{\partial \mathbf{v}} ) measures the rate of change of ( f ) in the direction of ( \mathbf{v} ). This derivative is maximized when ( \mathbf{v} ) aligns with the gradient and minimized when ( \mathbf{v} ) aligns with the negative gradient [12]. The algorithm leverages this local property to generate a global search strategy.
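The claim about the directional derivative follows from a one-line identity. The worked example below is an illustration: the function ( x^2 + 3y^2 ) and the evaluation point are chosen here for concreteness and are not taken from the cited sources.

```latex
% Directional derivative along a unit vector v, with \theta the angle
% between v and the gradient:
\frac{\partial f}{\partial \mathbf{v}}(\mathbf{x}_0)
  = \nabla f(\mathbf{x}_0)^T \mathbf{v}
  = \|\nabla f(\mathbf{x}_0)\| \cos\theta ,
  \qquad \|\mathbf{v}\| = 1 .

% The minimum is attained at \theta = \pi, i.e.
\mathbf{v}^* = -\frac{\nabla f(\mathbf{x}_0)}{\|\nabla f(\mathbf{x}_0)\|},
  \qquad
\frac{\partial f}{\partial \mathbf{v}^*}(\mathbf{x}_0) = -\|\nabla f(\mathbf{x}_0)\| .

% Example: f(x, y) = x^2 + 3y^2 at \mathbf{x}_0 = (1, 1):
\nabla f(\mathbf{x}_0) = (2, 6), \qquad
\|\nabla f(\mathbf{x}_0)\| = \sqrt{40} \approx 6.32, \qquad
\mathbf{v}^* = \tfrac{1}{\sqrt{40}}(-2, -6) .
```

No other unit direction at that point decreases ( f ) faster than about 6.32 per unit length, which is why the negative gradient is called the steepest descent direction.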

The Iterative Update Rule

The steepest descent method generates a sequence of points ( \mathbf{x}_0, \mathbf{x}_1, \mathbf{x}_2, \ldots ) intended to converge to a local minimum. The sequence is defined by the following iterative update rule:

[ \mathbf{x}_{n+1} = \mathbf{x}_n - \eta_n \nabla f(\mathbf{x}_n) ]

Here, ( \eta_n ) is a positive scalar known as the step size or learning rate [8]. The term ( \eta_n \nabla f(\mathbf{x}_n) ) represents the step taken, and its magnitude is controlled by ( \eta_n ). This process can be visualized as being stuck on a mountain in a dense fog and determining your next step downhill by measuring the local steepness of the terrain [8]. You repeatedly measure and take steps in the steepest downward direction until you can descend no further.

The Critical Role of the Step Size

The choice of step size ( \eta ) is critical to the algorithm's performance. A step size that is too small leads to slow convergence, requiring many iterations to reach the minimum. Conversely, a step size that is too large can cause overshooting, where the algorithm diverges or oscillates wildly around the minimum instead of stably converging to it [8] [13].

Several strategies exist for selecting ( \eta ):

- Constant Step Size: A fixed value used for all iterations. Simple but requires careful tuning.

- Diminishing Step Size: A sequence such as ( \eta_n = 1/n ) that decreases over time, guaranteeing convergence under certain conditions.

- Line Search: An adaptive approach where ( \eta_n ) is chosen at each step to minimize the function along the direction of the negative gradient, i.e., ( \eta_n = \arg \min_{\alpha > 0} f(\mathbf{x}_n - \alpha \nabla f(\mathbf{x}_n)) ) [12].

The following diagram illustrates the logical workflow of the steepest descent algorithm, highlighting the iterative process and the role of the gradient and step size.

Steepest Descent vs. Conjugate Gradient: A Comparative Analysis

While steepest descent provides a foundational approach, its performance is often surpassed by the conjugate gradient (CG) method, particularly for solving large-scale linear systems and optimization problems. The primary weakness of steepest descent is its tendency to exhibit slow convergence, especially in valleys of ill-conditioned functions, where it often takes many small, zig-zagging steps [10]. This occurs because while each step is locally optimal, the sequence of steps is not necessarily globally efficient.

The conjugate gradient method addresses this by constructing a sequence of conjugate search directions. As a result, the algorithm minimizes the function over an expanding subspace spanned by all directions used so far, which yields the exact solution for quadratic problems in at most ( n ) steps in exact arithmetic (where ( n ) is the dimension) [10] [11]. Unlike steepest descent, which can undo progress along directions it has already minimized over, CG ensures that each step remains optimal with respect to all previous directions.

The table below summarizes the key quantitative differences between the two algorithms, based on theoretical and empirical findings.

Table 1: Quantitative Comparison of Steepest Descent and Conjugate Gradient

| Feature | Steepest Descent | Conjugate Gradient |
|---|---|---|
| Iteration Complexity | Can be very high for ill-conditioned problems [10] | Convergence in at most ( n ) steps for quadratic problems in exact arithmetic [11] |
| Computational Cost per Iteration | One matrix-vector product plus ( O(n) ) vector operations | One matrix-vector product plus ( O(n) ) vector operations (for linear systems) |
| Storage Requirements | ( O(n) ) (stores current point and gradient) | ( O(n) ) (stores several vectors) |
| Typical Convergence Rate | Linear (can be very slow) [10] | At least linear with a better rate constant; superlinear behavior is often observed on quadratic problems [11] |
| Dependence on Condition Number | High sensitivity to the condition number ( \kappa ) of the Hessian [10]; worst-case rate factor ( (\kappa - 1)/(\kappa + 1) ) | Milder sensitivity for problems with high condition numbers [11]; worst-case rate factor ( (\sqrt{\kappa} - 1)/(\sqrt{\kappa} + 1) ) |
| Use of Second-Order Information | No | Only implicitly: conjugacy is defined with respect to the Hessian, but no Hessian is formed or stored [10] |

The fundamental difference in their search behavior is visualized in the following pathway diagram, which contrasts the typical trajectories of each algorithm when minimizing a quadratic function.

Experimental Protocols and Implementation

Protocol 1: Benchmarking Algorithm Performance

To empirically compare the performance of steepest descent and conjugate gradient algorithms, researchers can follow this detailed protocol. The objective is to minimize a predefined cost function, typically a strongly convex quadratic function, and measure metrics like the number of iterations and computational time required to reach a specified tolerance.

1. Problem Formulation:

- Define the cost function, ( f(\mathbf{x}) ). For controlled experiments, use ( f(\mathbf{x}) = \frac{1}{2}\mathbf{x}^T A \mathbf{x} - \mathbf{b}^T \mathbf{x} ), where ( A ) is a symmetric positive definite matrix. The condition number of ( A ) can be varied to test algorithm robustness [11].

- The global minimum is located at ( A^{-1}\mathbf{b} ).

2. Algorithm Initialization:

- Initialize the parameter vector ( \mathbf{x}_0 ) randomly or at a fixed point.

- Set a convergence tolerance ( \epsilon ) (e.g., ( 10^{-6} )) and a maximum number of iterations ( K_{max} ).

3. Steepest Descent Implementation:

- For ( k = 0 ) to ( K_{max} ):

  - Compute Gradient: ( \mathbf{g}_k = \nabla f(\mathbf{x}_k) = A\mathbf{x}_k - \mathbf{b} ).

  - Check Convergence: If ( \|\mathbf{g}_k\| < \epsilon ), terminate.

  - Compute Step Size: Perform an exact line search: ( \eta_k = \frac{\mathbf{g}_k^T \mathbf{g}_k}{\mathbf{g}_k^T A \mathbf{g}_k} ) [8].

  - Update Parameters: ( \mathbf{x}_{k+1} = \mathbf{x}_k - \eta_k \mathbf{g}_k ).

4. Conjugate Gradient Implementation:

- Initialization: ( \mathbf{x}_0 ), ( \mathbf{g}_0 = A\mathbf{x}_0 - \mathbf{b} ), ( \mathbf{d}_0 = -\mathbf{g}_0 ).

- For ( k = 0 ) to ( K_{max} ):

  - Compute Step Size: ( \alpha_k = \frac{\mathbf{g}_k^T \mathbf{g}_k}{\mathbf{d}_k^T A \mathbf{d}_k} ).

  - Update Parameters: ( \mathbf{x}_{k+1} = \mathbf{x}_k + \alpha_k \mathbf{d}_k ).

  - Compute Gradient: ( \mathbf{g}_{k+1} = A\mathbf{x}_{k+1} - \mathbf{b} ).

  - Check Convergence: If ( \|\mathbf{g}_{k+1}\| < \epsilon ), terminate.

  - Compute Conjugate Parameter: ( \beta_k = \frac{\mathbf{g}_{k+1}^T \mathbf{g}_{k+1}}{\mathbf{g}_k^T \mathbf{g}_k} ) (Fletcher-Reeves formula) [11].

  - Update Search Direction: ( \mathbf{d}_{k+1} = -\mathbf{g}_{k+1} + \beta_k \mathbf{d}_k ).

5. Data Collection and Analysis:

- For each run, record the iteration count ( k ) and the value of ( \|\nabla f(\mathbf{x}_k)\| ) at each iteration.

- Plot ( \|\nabla f(\mathbf{x}_k)\| ) vs. iteration number for both algorithms on a log-linear scale to visualize convergence rates.

- Repeat experiments with different condition numbers of ( A ) to analyze performance degradation.
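The protocol above can be sketched as a small, dependency-free benchmark. Several choices here are assumptions made for illustration rather than part of the protocol: the matrix ( A ) is taken to be diagonal with eigenvalues spread linearly over ( [1, \kappa] ) so that the condition number is set exactly, ( \mathbf{b} ) is a vector of ones, the start point is the origin, and all function names are hypothetical.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def make_problem(n, kappa):
    """Diagonal SPD matrix (stored as its diagonal) with condition number kappa."""
    diag = [1.0 + (kappa - 1.0) * i / (n - 1) for i in range(n)]
    return diag, [1.0] * n


def gradient(diag, x, b):
    """Gradient of 0.5 x^T A x - b^T x for diagonal A."""
    return [d * xi - bi for d, xi, bi in zip(diag, x, b)]


def steepest_descent(diag, b, tol=1e-6, max_iter=5000):
    x = [0.0] * len(b)
    for k in range(max_iter):
        g = gradient(diag, x, b)
        if math.sqrt(dot(g, g)) < tol:
            return x, k
        Ag = [d * gi for d, gi in zip(diag, g)]
        eta = dot(g, g) / dot(g, Ag)            # exact line search
        x = [xi - eta * gi for xi, gi in zip(x, g)]
    return x, max_iter


def conjugate_gradient(diag, b, tol=1e-6, max_iter=5000):
    x = [0.0] * len(b)
    g = gradient(diag, x, b)
    d = [-gi for gi in g]
    for k in range(max_iter):
        if math.sqrt(dot(g, g)) < tol:
            return x, k
        Ad = [di * pi for di, pi in zip(diag, d)]
        alpha = dot(g, g) / dot(d, Ad)
        x = [xi + alpha * pi for xi, pi in zip(x, d)]
        g_new = gradient(diag, x, b)
        beta = dot(g_new, g_new) / dot(g, g)    # Fletcher-Reeves
        d = [-gn + beta * pi for gn, pi in zip(g_new, d)]
        g = g_new
    return x, max_iter


results = {}
for kappa in (10.0, 100.0):
    diag, b = make_problem(n=50, kappa=kappa)
    x_sd, sd_iters = steepest_descent(diag, b)
    x_cg, cg_iters = conjugate_gradient(diag, b)
    results[kappa] = (sd_iters, cg_iters)
    print(f"kappa={kappa:g}: SD {sd_iters} iterations, CG {cg_iters} iterations")
```

Recording the gradient norm inside each loop, rather than only the final iteration count, would give the data needed for the log-linear convergence plots described in step 5.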

The Scientist's Toolkit: Essential Research Reagents

Implementing and testing optimization algorithms requires a set of core computational "reagents." The following table details these essential components and their functions in the experimental workflow.

Table 2: Key Research Reagent Solutions for Optimization Experiments

| Research Reagent | Function & Purpose | Example/Notes |
|---|---|---|
| Test Problem Generator | Creates benchmark functions (e.g., quadratics, Rosenbrock) with known minima to validate algorithms. | make_spd_matrix from scikit-learn for generating matrix ( A ) [11]. |
| Gradient Computation Tool | Computes the gradient of the cost function efficiently, a prerequisite for both SD and CG. | Automatic differentiation libraries like autograd or JAX [14]. |
| Line Search Module | An auxiliary optimizer that finds the optimal step size ( \eta ) along a given search direction. | Implement Wolfe condition checks for robust step size selection [12] [11]. |
| Convergence Analyzer | Tracks the norm of the gradient and/or function value over iterations to assess convergence speed and stability. | Custom script to plot ( f(\mathbf{x}_k) ) vs. ( k ) on a log-scale. |
| Performance Profiler | Measures computational time and memory usage, providing data for complexity analysis. | Python's built-in time module and the third-party memory_profiler package. |

Protocol 2: Application in Image Restoration

Steepest descent and conjugate gradient methods find practical application in solving large-scale linear inverse problems, such as image restoration [11]. This protocol outlines their use in deblurring an image.

1. Problem Formulation:

- The goal is to recover a clean image ( \mathbf{x} ) from a blurred and noisy observation ( \mathbf{b} ). The relationship is often modeled as ( \mathbf{b} = A\mathbf{x} + \mathbf{n} ), where ( A ) is a matrix representing the blur operator, and ( \mathbf{n} ) is noise.

- This is framed as minimizing ( f(\mathbf{x}) = \frac{1}{2}\|A\mathbf{x} - \mathbf{b}\|^2 + \lambda R(\mathbf{x}) ), where ( R(\mathbf{x}) ) is a regularization term (e.g., Tikhonov) and ( \lambda ) is a regularization parameter.

2. Experimental Workflow:

- Data Preparation: Use a standard dataset (e.g., Lena, Cameraman). Create a blurred version ( \mathbf{b} ) by convolving the original image with a Gaussian blur kernel and adding Gaussian noise.

- Algorithm Setup: Implement both steepest descent and conjugate gradient to minimize ( f(\mathbf{x}) ). The gradient is ( \nabla f(\mathbf{x}) = A^T(A\mathbf{x} - \mathbf{b}) + \lambda \nabla R(\mathbf{x}) ).

- Evaluation: Run both algorithms, monitoring the reduction in the cost function. Compare the quality of the restored images using metrics like Peak Signal-to-Noise Ratio (PSNR) and the time taken to reach a satisfactory result.
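For the Tikhonov case, the minimization reduces to a linear system to which the linear conjugate gradient method applies directly. The derivation below is a sketch under one stated assumption, namely the specific regularizer ( R(\mathbf{x}) = \frac{1}{2}\|\mathbf{x}\|^2 ); other regularizers change the gradient term and may make the problem nonlinear.

```latex
% Objective with the assumed regulariser R(x) = (1/2) ||x||^2:
f(\mathbf{x}) = \tfrac{1}{2}\|A\mathbf{x} - \mathbf{b}\|^2
              + \tfrac{\lambda}{2}\|\mathbf{x}\|^2

% Gradient, consistent with the general formula
% \nabla f = A^T(A x - b) + \lambda \nabla R(x):
\nabla f(\mathbf{x}) = A^T(A\mathbf{x} - \mathbf{b}) + \lambda\,\mathbf{x}

% Setting the gradient to zero gives the regularised normal equations:
(A^T A + \lambda I)\,\mathbf{x} = A^T \mathbf{b}

% For \lambda > 0 the matrix is symmetric positive definite, since
\mathbf{v}^T (A^T A + \lambda I)\,\mathbf{v}
  = \|A\mathbf{v}\|^2 + \lambda\|\mathbf{v}\|^2 > 0
  \quad \text{for all } \mathbf{v} \neq \mathbf{0}.
```

In an imaging setting the matrix is never formed explicitly: each iteration only needs the product ( (A^T A + \lambda I)\mathbf{v} ), which can be evaluated by applying the blur operator and its adjoint to the current image.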

The workflow for this image-based experiment is summarized in the following diagram.

The intuition of following the negative gradient path provides a powerful and accessible entry point into the field of mathematical optimization. The steepest descent algorithm embodies this principle, offering a guaranteed local descent direction that is simple to compute and implement. Its first-order nature makes it computationally lightweight per iteration, a characteristic that sustains its relevance in modern applications, including the training of massive deep learning models, where exact gradients are too expensive to compute and stochastic approximations are used instead [8] [13].

However, as demonstrated through both theoretical comparison and experimental protocol, steepest descent is often hampered by its slow convergence properties, particularly on ill-conditioned problems prevalent in scientific computing. The conjugate gradient algorithm and its modern hybrids represent a significant evolution, implicitly exploiting curvature information to construct more efficient search paths that avoid the zig-zagging behavior of steepest descent [11]. For researchers and scientists, particularly in drug development where optimizing complex molecular models is routine, the choice between these algorithms is not merely academic. It has direct implications for the speed and feasibility of discovery. A robust understanding of both the foundational intuition of steepest descent and the advanced efficiency of conjugate gradient methods provides an essential toolkit for tackling the intricate optimization challenges at the forefront of science and technology.

The steepest descent method represents one of the most fundamental optimization algorithms for minimizing multivariable nonlinear functions. As a first-order iterative optimization algorithm, its convergence properties and practical efficiency are critically dependent on the careful selection of step sizes. This technical guide examines the mathematical formulation of steepest descent methods with particular emphasis on how step size strategies influence convergence behavior, framed within a broader comparative analysis with conjugate gradient methods.

Within optimization research, understanding the trade-offs between various step size selection methodologies provides crucial insights for developing efficient algorithms tailored to specific problem domains, including applications in drug development where parameter optimization frequently arises in molecular modeling and pharmacokinetic analysis.

Mathematical Foundations of Steepest Descent

Core Algorithmic Framework

The steepest descent method follows an intuitive yet powerful iterative process for minimizing a function (f: \mathbb{R}^n \rightarrow \mathbb{R}). At each iteration (k), the algorithm moves in the direction opposite to the gradient (\nabla f(\mathbf{x}_k)), which represents the direction of steepest local descent [15]. The fundamental update equation is given by:

[ \mathbf{x}_{k+1} = \mathbf{x}_k - \alpha_k \nabla f(\mathbf{x}_k) ]

where (\mathbf{x}_k) denotes the current iterate, (\nabla f(\mathbf{x}_k)) is the gradient at the current point, and (\alpha_k > 0) is the step size (or learning rate) at iteration (k) [15] [16].

The convergence behavior of this algorithm is heavily influenced by how the step size (\alpha_k) is selected at each iteration. Proper step size selection balances two competing objectives: achieving sufficient function reduction at each iteration while avoiding overshooting that leads to divergence or oscillatory behavior [16] [17].

Geometric Interpretation

The steepest descent direction (-\nabla f(\mathbf{x}_k)) guarantees immediate local improvement but doesn't necessarily yield global optimization efficiency. When the step size is chosen by exact line search, successive gradient directions are orthogonal, which leads to characteristic zig-zag behavior, particularly in narrow valleys of the objective function landscape. This phenomenon explains why the theoretical convergence guarantee doesn't always translate to practical efficiency, especially when compared with methods like conjugate gradient that explicitly address this directional interdependence [15].

Step Size Selection Methodologies

The selection of the step size (\alpha_k) represents a critical design choice in implementing steepest descent algorithms. Different approaches offer distinct trade-offs between computational expense and convergence rate guarantees.

Constant Step Size

The simplest approach employs a constant step size (\alpha_k = \alpha) for all iterations. This strategy requires minimal computation per iteration but may lead to slow convergence or divergence if poorly chosen. For convergence, the step size must satisfy (0 < \alpha < 2/L), where (L) is the Lipschitz constant of (\nabla f) [17].
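The effect of the bound (0 < \alpha < 2/L) is easy to observe on a small quadratic. In the sketch below, the test function, the three step sizes, and the iteration count are assumptions chosen for illustration; for this function the Lipschitz constant of the gradient is (L = 10), so the threshold is (\alpha = 0.2).

```python
def gradient_descent(grad, x0, alpha, n_iter):
    """Plain steepest descent with a constant step size alpha."""
    x = list(x0)
    for _ in range(n_iter):
        g = grad(x)
        x = [xi - alpha * gi for xi, gi in zip(x, g)]
    return x


def grad(x):
    """Gradient of f(x) = 0.5 * (x0^2 + 10 * x1^2); its Lipschitz constant is 10."""
    return [x[0], 10.0 * x[1]]


L = 10.0
final_size = {}
for alpha in (0.01, 0.19, 0.21):      # too small, just below 2/L, just above 2/L
    x = gradient_descent(grad, [1.0, 1.0], alpha, n_iter=200)
    final_size[alpha] = max(abs(c) for c in x)
    print(f"alpha={alpha}: largest coordinate after 200 steps = {final_size[alpha]:.3e}")
```

With (\alpha = 0.01) the iterates are still far from the minimum after 200 steps, with (\alpha = 0.19) they converge, and with (\alpha = 0.21) the stiff coordinate is multiplied by (-1.1) at every step and grows without bound.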

Diminishing Step Sizes

Diminishing step size sequences follow predetermined patterns that decrease to zero as iterations progress. A common choice is the harmonic sequence (\alpha_k = \alpha_1/k), which ensures that the step sizes approach zero in the limit (k \to \infty) while the cumulative sum diverges ((\sum_{k=1}^{\infty} \alpha_k = \infty)) [17]. This divergent series condition ensures the algorithm can reach any point in the space rather than getting stuck prematurely [17].

The decreasing nature of these step sizes provides theoretical convergence guarantees at the potential cost of practical convergence speed, particularly as the step sizes become exceedingly small in later iterations [17].
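
The trade-off can be seen in a small sketch, again on an assumed test quadratic with (A = \mathrm{diag}(1, 10)). The helper name `gradient_descent_harmonic` and the choice (\alpha_1 = 0.1) are illustrative assumptions:

```python
import numpy as np

def gradient_descent_harmonic(grad, x0, alpha1, n_iter):
    """Gradient descent with the harmonic schedule alpha_k = alpha1 / k, k = 1, 2, ..."""
    x = np.asarray(x0, dtype=float)
    for k in range(1, n_iter + 1):
        x = x - (alpha1 / k) * grad(x)
    return x

# Illustrative quadratic f(x) = 0.5 * x^T A x with minimizer at the origin.
A = np.diag([1.0, 10.0])
grad = lambda x: A @ x

x_100 = gradient_descent_harmonic(grad, [1.0, 1.0], alpha1=0.1, n_iter=100)
x_1000 = gradient_descent_harmonic(grad, [1.0, 1.0], alpha1=0.1, n_iter=1000)
# The first coordinate is still far from zero after 1000 iterations.
print(x_100[0], x_1000[0])
```

In this example the slowly varying coordinate is multiplied by (1 - 0.1/k) at step (k), so it decays only about as fast as (k^{-0.1}): convergence is guaranteed, but ten times more iterations buy very little extra accuracy.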

Exact Line Search

Exact line search determines the optimal step size at each iteration by solving the one-dimensional minimization problem:

[ \alpha_k = \arg\min_{\alpha > 0} f(\mathbf{x}_k - \alpha \nabla f(\mathbf{x}_k)) ]

While this approach optimizes progress along the search direction, the computational cost of solving this subproblem exactly often outweighs its benefits, making it impractical for many applications [15].
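
One case where exact line search is cheap is the quadratic (f(\mathbf{x}) = \frac{1}{2}\mathbf{x}^T A \mathbf{x} - \mathbf{b}^T \mathbf{x}), where the one-dimensional minimizer has the closed form (\alpha_k = \mathbf{g}_k^T \mathbf{g}_k / \mathbf{g}_k^T A \mathbf{g}_k) with (\mathbf{g}_k = A\mathbf{x}_k - \mathbf{b}). The sketch below assumes that setting; the function name and the 2×2 test system are illustrative:

```python
import numpy as np

def steepest_descent_exact(A, b, x0, tol=1e-8, max_iter=10_000):
    """Steepest descent with exact line search for f(x) = 0.5 x^T A x - b^T x (A SPD)."""
    x = np.asarray(x0, dtype=float)
    for k in range(max_iter):
        g = A @ x - b                      # gradient of the quadratic
        if np.linalg.norm(g) < tol:
            return x, k
        alpha = (g @ g) / (g @ (A @ g))    # closed-form minimizer along -g
        x = x - alpha * g
    return x, max_iter

# Small illustrative SPD system.
A = np.array([[3.0, 1.0], [1.0, 2.0]])
b = np.array([1.0, 1.0])
x, iters = steepest_descent_exact(A, b, [0.0, 0.0])
print(x, iters)
```

For a general nonlinear objective no such formula exists, which is where the cost concern raised above applies.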

Adaptive Barzilai-Borwein (BB) Methods

The Barzilai-Borwein method represents a sophisticated approach that approximates the Hessian information using previous gradient and iterate differences [16]. The two standard BB step sizes are:

[ \alpha_k^{BB1} = \frac{\mathbf{s}_{k-1}^T \mathbf{s}_{k-1}}{\mathbf{s}_{k-1}^T \mathbf{y}_{k-1}} \quad \text{and} \quad \alpha_k^{BB2} = \frac{\mathbf{s}_{k-1}^T \mathbf{y}_{k-1}}{\mathbf{y}_{k-1}^T \mathbf{y}_{k-1}} ]

where (\mathbf{s}_{k-1} = \mathbf{x}_k - \mathbf{x}_{k-1}) and (\mathbf{y}_{k-1} = \nabla f(\mathbf{x}_k) - \nabla f(\mathbf{x}_{k-1})) [16]. Both are reciprocals of Rayleigh-quotient estimates of the curvature along the last step, and (\alpha_k^{BB2} \leq \alpha_k^{BB1}) whenever (\mathbf{s}_{k-1}^T \mathbf{y}_{k-1} > 0), by the Cauchy-Schwarz inequality.

Recent research has extended these concepts beyond the original BB bounds. One approach proposes step sizes longer than (\alpha_k^{BB1}) and shorter than (\alpha_k^{BB2}), with analysis revealing that under certain conditions, the longer step size can yield monotonic gradient norm decrease while the shorter step size consistently produces non-monotonic behavior [16].
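
The two classical BB step sizes can be sketched as follows, using the common convention (\alpha^{BB1} = \mathbf{s}^T\mathbf{s} / \mathbf{s}^T\mathbf{y}) and (\alpha^{BB2} = \mathbf{s}^T\mathbf{y} / \mathbf{y}^T\mathbf{y}). The function name, the initial step `alpha0`, and the diagonal test problem are assumptions for illustration; the extended step sizes of [16] are not implemented here:

```python
import numpy as np

def bb_gradient_descent(grad, x0, alpha0=1e-3, tol=1e-8, max_iter=1000, variant="BB1"):
    """Gradient descent with Barzilai-Borwein step sizes (no line search)."""
    x = np.asarray(x0, dtype=float)
    g = grad(x)
    alpha = alpha0                          # the first step has no history, so use a guess
    for k in range(max_iter):
        if np.linalg.norm(g) < tol:
            return x, k
        x_new = x - alpha * g
        g_new = grad(x_new)
        s, y = x_new - x, g_new - g         # iterate and gradient differences
        sy = s @ y
        if sy > 0:                          # keep the old step if curvature is not positive
            alpha = (s @ s) / sy if variant == "BB1" else sy / (y @ y)
        x, g = x_new, g_new
    return x, max_iter

# Illustrative ill-conditioned quadratic: f(x) = 0.5 x^T A x - b^T x.
A = np.diag([1.0, 10.0, 100.0])
b = np.ones(3)
x, iters = bb_gradient_descent(lambda v: A @ v - b, np.zeros(3))
print(x, iters)
```

Note that the gradient norm is not required to decrease at every iteration; BB methods are typically non-monotone, which is the behavior the stability discussion in [16] addresses.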

Table 1: Classification of Step Size Selection Methods

| Method Type | Computational Cost | Convergence Guarantee | Practical Efficiency |

|---|---|---|---|

| Constant | Very Low | Conditional | Variable |

| Diminishing | Low | Strong | Often Slow |

| Exact Line Search | High | Strong | Often Impractical |

| BB Methods | Moderate | Strong | Generally Good |

| Extended BB | Moderate | R-linear for quadratics | Promising |

Convergence Analysis

Theoretical Convergence Properties

For convex objective functions with L-Lipschitz continuous gradients, steepest descent with appropriate step sizes achieves a convergence rate of (O(1/k)) for the function value suboptimality [16] [17]. Under stronger assumptions such as strong convexity, this can be improved to linear convergence (O(\rho^k)) for some (0 < \rho < 1).

For the strictly convex quadratic minimization problem:

[ \min_{\mathbf{x} \in \mathbb{R}^n} f(\mathbf{x}) = \frac{1}{2}\mathbf{x}^T A \mathbf{x} - \mathbf{b}^T \mathbf{x} ]

where (A) is symmetric positive definite, extended BB-like step sizes have been proven to achieve R-linear convergence using dynamics of difference equations [16].
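
For context, a classical worst-case bound for this quadratic under exact line search makes the role of conditioning explicit. This is a standard textbook result rather than a finding of [16], and the symbol (\kappa) for the condition number of (A) is introduced here for illustration:

```latex
f(\mathbf{x}_{k+1}) - f(\mathbf{x}^*) \le \left(\frac{\kappa - 1}{\kappa + 1}\right)^{2} \bigl(f(\mathbf{x}_k) - f(\mathbf{x}^*)\bigr),
\qquad \kappa = \frac{\lambda_{\max}(A)}{\lambda_{\min}(A)}
% Example: \kappa = 100 gives a contraction factor (99/101)^2 \approx 0.961 per iteration,
% so reducing the error by a factor of 10^{-6} can take roughly 350 iterations in the worst case.
```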

Stability and Monotonicity Considerations

Recent research on extended BB-like step sizes has revealed intriguing stability properties. The stability here refers to whether the gradient norm decreases monotonically. Surprisingly, under certain conditions, gradient descent with the longer extended step size exhibits stability (monotonic gradient decrease), while the shorter step size consistently leads to instability [16].

This behavior can be visualized through the following workflow diagram:

Diagram 1: Steepest descent iteration workflow

Comparative Analysis with Conjugate Gradient Methods

Performance Metrics Comparison

When comparing optimization algorithms, both iteration count and computational time provide valuable perspectives on efficiency [15]. Empirical studies implementing both steepest descent and conjugate gradient methods in MATLAB have quantified their relative performance:

Table 2: Steepest Descent vs. Conjugate Gradient Performance Comparison [15]

| Performance Metric | Steepest Descent Method | Conjugate Gradient Method |

|---|---|---|

| Iteration Count | Higher | Fewer |

| Time per Iteration | Lower | Higher |

| Overall Efficiency | Less Efficient | More Efficient |

| Convergence Time | Shorter | Longer |

| Stability | Monotonic decrease | Non-monotonic |

The conjugate gradient method generally requires fewer iterations to achieve the same accuracy level due to its utilization of conjugate directions rather than purely gradient-based directions [15]. This allows it to avoid the zig-zagging behavior that plagues steepest descent in certain geometries. However, each conjugate gradient iteration carries higher computational overhead due to the additional vector operations and orthogonalization requirements.

Interestingly, despite requiring more iterations, steepest descent can sometimes converge in less total time than conjugate gradient for certain problem classes, particularly when function and gradient evaluations are computationally inexpensive [15].

Hybrid Approaches and Modern Variants

Recent research has explored innovative combinations and modifications of these classical approaches. The frozen gradient method, which computes the derivative of nonlinear operators only at an initial point, significantly reduces computational complexity while maintaining convergence through conditional stability estimates [18].

For inverse problems with conditional stability on convex and compact sets, projected steepest descent with frozen derivatives has been shown to converge while avoiding expensive derivative recomputations at each iteration [18]. This approach extends to multi-level methods that leverage nested families of convex compact subsets with controlled growth of stability constants [18].

Experimental Protocols and Implementation

MATLAB Implementation Framework

Empirical comparison of steepest descent and conjugate gradient methods typically follows a structured experimental protocol [15]:

Test Function Selection: Choose representative nonlinear functions with varying curvature properties and dimensionalities.

Algorithm Implementation: Code both algorithms with consistent stopping criteria, typically based on gradient norm tolerance ((\|\nabla f(x_k)\| < \delta)).

Step Size Configuration: For steepest descent, implement multiple step size strategies (constant, diminishing, BB) for comparison.

Performance Metrics Collection: Track iterations until convergence, computational time, and final function value accuracy.

Statistical Analysis: Execute multiple runs with different initializations to account for variability.

Research Reagent Solutions

Table 3: Essential Computational Tools for Optimization Experiments

| Tool/Component | Function in Research | Implementation Notes |

|---|---|---|

| MATLAB Optimization Environment | Algorithm implementation and testing | Provides matrix operations and visualization capabilities |

| Gradient Computation Module | Numerical gradient approximation | Can use finite differences or automatic differentiation |

| Step Size Selector | Implements various αₖ strategies | Constant, diminishing, BB, and extended BB options |

| Convergence Monitor | Tracks progress and determines stopping | Typically based on ‖∇f(xₖ)‖ < δ or iteration limit |

| Performance Profiler | Measures computation time and memory usage | Should account for both iterations and wall-clock time |

The mathematical formulation of steepest descent reveals a sophisticated interplay between step size selection and convergence behavior. While the algorithm's theoretical foundation guarantees convergence under relatively mild conditions, its practical effectiveness heavily depends on appropriate step size strategies. Contemporary research continues to extend these classical methods through adaptive BB-like step sizes, frozen derivative approaches, and multi-level frameworks that enhance computational efficiency while maintaining convergence guarantees.

The comparative analysis with conjugate gradient methods highlights fundamental trade-offs: steepest descent offers simplicity and lower per-iteration cost, while conjugate gradient provides superior directional efficiency at the expense of increased computational overhead per iteration. This understanding enables researchers and practitioners—particularly in computationally intensive fields like drug development—to select and customize optimization approaches based on their specific problem structures and computational constraints.

In the field of numerical optimization, the steepest descent method represents one of the most intuitive and fundamental approaches for minimizing continuous, differentiable functions. As a first-order iterative optimization algorithm, it finds local minima by moving in the direction opposite to the gradient at each point [19]. Despite its conceptual simplicity and guaranteed movement toward lower function values, the method suffers from significant limitations that practically constrain its application to real-world problems, particularly those found in scientific computing and drug development research [20].

When applied to complex optimization landscapes—such as those encountered in molecular docking studies, protein folding simulations, or pharmacokinetic modeling—the steepest descent method exhibits characteristic oscillatory behavior and slow convergence rates, especially in narrow valleys or poorly conditioned systems [19]. These limitations become particularly problematic in high-dimensional parameter spaces where computational efficiency directly impacts research feasibility. This technical guide examines the mathematical foundations of these limitations, contrasts steepest descent with the more efficient conjugate gradient approach, and provides experimental protocols for researchers seeking to implement robust optimization techniques in their computational workflows.

Mathematical Foundations of Steepest Descent

Algorithmic Framework and Implementation

The steepest descent method operates through a straightforward iterative process that begins with an initial parameter estimate and progressively updates this estimate by moving in the direction of the negative gradient. The complete algorithmic implementation follows these steps [19]:

- Choose initial point ( x_0 )

- Set iteration counter ( k = 0 )

- Compute gradient ( \nabla f(x_k) )

- Determine step size ( \alpha_k ) through line search

- Update solution: ( x_{k+1} = x_k - \alpha_k \nabla f(x_k) )

- Check convergence criteria

- Increment ( k ) and repeat if not converged

The critical component at each iteration is the calculation of the step size ( \alpha_k ), which can be determined through various strategies including fixed values, exact line search, or inexact methods such as the Armijo rule [19]. The convergence criteria typically include assessing whether the gradient norm falls below a specified threshold, monitoring changes in function values between iterations, or establishing a maximum iteration count to prevent infinite loops in poorly converging scenarios.
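
The steps above can be sketched with an Armijo backtracking rule for the step size. The parameter names (`alpha0`, `c`, `shrink`), their default values, and the elongated quadratic used as a test function are assumptions for illustration:

```python
import numpy as np

def steepest_descent_armijo(f, grad, x0, alpha0=1.0, c=1e-4, shrink=0.5,
                            tol=1e-6, max_iter=50_000):
    """Steepest descent with Armijo backtracking; stops when ||grad f(x)|| < tol."""
    x = np.asarray(x0, dtype=float)
    for k in range(max_iter):
        g = grad(x)
        if np.linalg.norm(g) < tol:        # convergence check on the gradient norm
            return x, k
        alpha, fx = alpha0, f(x)
        # Shrink alpha until the sufficient-decrease (Armijo) condition holds.
        while f(x - alpha * g) > fx - c * alpha * (g @ g):
            alpha *= shrink
        x = x - alpha * g
    return x, max_iter                      # iteration cap reached

# Narrow-valley test function: f(x) = 0.5 * (x1^2 + 25 * x2^2).
f = lambda x: 0.5 * (x[0] ** 2 + 25.0 * x[1] ** 2)
grad = lambda x: np.array([x[0], 25.0 * x[1]])
x_min, iters = steepest_descent_armijo(f, grad, [10.0, 1.0])
print(x_min, iters)
```

The iteration cap plays the role of the maximum iteration count mentioned above, so the loop always terminates even on poorly conditioned problems.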

Theoretical Basis for Oscillatory Behavior

The oscillatory behavior characteristic of steepest descent arises from the fundamental property that the negative gradient represents only the locally steepest direction rather than the globally optimal path toward the minimum. This results in a zigzagging trajectory through the optimization landscape, particularly in valleys with non-spherical curvature [19] [20].

Mathematically, this phenomenon occurs because each search direction is orthogonal to the previous direction when using exact line searches:

[ \nabla f(x_{k+1})^T \nabla f(x_k) = 0 ]

This orthogonality relationship forces the algorithm to continually correct its course, creating an inefficient oscillatory path toward the solution. In ill-conditioned problems where the Hessian matrix has a high condition number, these oscillations become particularly pronounced, dramatically reducing convergence rates [20].
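
The orthogonality follows in one line from the first-order optimality condition of the exact line search. The auxiliary function (\phi) is notation introduced here for the derivation:

```latex
\phi(\alpha) = f\bigl(x_k - \alpha \nabla f(x_k)\bigr), \qquad
\phi'(\alpha) = -\nabla f\bigl(x_k - \alpha \nabla f(x_k)\bigr)^T \nabla f(x_k)
% At the exact minimizer \alpha_k we have \phi'(\alpha_k) = 0 and x_{k+1} = x_k - \alpha_k \nabla f(x_k), hence
\nabla f(x_{k+1})^T \nabla f(x_k) = 0
```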

Comparative Analysis: Steepest Descent vs. Conjugate Gradient

Performance Characteristics and Convergence Rates

The limitations of steepest descent become particularly evident when compared directly with conjugate gradient methods. The following table summarizes key performance differences based on numerical experiments and theoretical analysis:

| Characteristic | Steepest Descent | Conjugate Gradient |

|---|---|---|

| Convergence rate | Linear convergence [19] | Quadratic termination (for n steps in exact arithmetic) [21] |

| Iteration count | Higher number of iterations [22] | Fewer iterations required [22] |

| Computational efficiency | Lower efficiency, especially for large problems [23] | Higher efficiency in large-scale problems [23] |

| Memory requirements | Low storage requirements [19] | Low storage requirements [23] [21] |

| Oscillatory behavior | Pronounced zigzagging in narrow valleys [19] | Reduced zigzagging through conjugate directions [21] |

| Step size sensitivity | Highly sensitive to step size choice [19] | Less sensitive with appropriate line search [24] |

Experimental comparisons demonstrate that the conjugate gradient method typically requires significantly fewer iterations to achieve the same precision in solution quality compared to steepest descent [22]. This efficiency advantage becomes increasingly pronounced as problem dimensionality grows, making conjugate gradient methods particularly valuable for large-scale optimization problems encountered in scientific research, including drug development applications such as molecular dynamics simulations and quantum chemistry calculations.

Visualization of Convergence Patterns

The following diagram illustrates the characteristic optimization paths of steepest descent versus conjugate gradient methods in a quadratic bowl with high condition number, highlighting the inefficient oscillatory trajectory of steepest descent compared to the more direct conjugate gradient path:

The zigzag pattern characteristic of steepest descent occurs because each new search direction is orthogonal to the previous one, forcing the algorithm to continually correct its course. In contrast, the conjugate gradient method constructs search directions that are mutually conjugate with respect to the Hessian matrix, enabling more direct progress toward the minimum [21].

Methodological Approaches for Analyzing Convergence

Experimental Protocol for Convergence Testing

Researchers can implement the following experimental protocol to quantitatively evaluate and compare the performance of steepest descent and conjugate gradient methods:

Test Function Selection: Choose benchmark optimization functions with known characteristics, particularly those with narrow valleys or high condition numbers that highlight steepest descent limitations (e.g., Rosenbrock function, quadratic functions with non-spherical contours) [23].

Algorithm Implementation:

- Implement steepest descent with both fixed and adaptive step sizes

- Implement conjugate gradient with a chosen conjugacy parameter (e.g., Fletcher-Reeves or Polak-Ribière) and a restart strategy [23] [24]

- Utilize Wolfe line search conditions for step size determination: [ f(x_k + \alpha_k d_k) \leq f(x_k) + \rho \alpha_k g_k^\top d_k ] [ g(x_k + \alpha_k d_k)^\top d_k \geq \sigma g_k^\top d_k ] where ( 0 < \rho < \sigma < 1 ) [24]

Performance Metrics: Track iteration count, function evaluations, computational time, gradient norm reduction, and solution accuracy across multiple problem dimensions [23].

Visualization: Plot convergence trajectories (as shown in Section 3.2) and performance profiles to illustrate comparative efficiency [22].
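
As a small aid for the algorithm implementation step of this protocol, the two Wolfe conditions can be checked directly for a candidate step. The function name `satisfies_wolfe` and the default values of `rho` and `sigma` are assumptions for illustration; this checks a given step rather than searching for one:

```python
import numpy as np

def satisfies_wolfe(f, grad, x, d, alpha, rho=1e-4, sigma=0.9):
    """Return True if step alpha along descent direction d satisfies both Wolfe conditions."""
    slope0 = grad(x) @ d                    # directional derivative g_k^T d_k (negative for descent)
    x_new = x + alpha * d
    sufficient_decrease = f(x_new) <= f(x) + rho * alpha * slope0
    curvature = grad(x_new) @ d >= sigma * slope0
    return bool(sufficient_decrease and curvature)

# Illustrative check on f(x) = 0.5 * ||x||^2 along the steepest descent direction.
f = lambda x: 0.5 * (x @ x)
grad = lambda x: x
x = np.array([1.0, 0.0])
d = -grad(x)
for alpha in (1e-3, 1.0, 2.0):
    print(alpha, satisfies_wolfe(f, grad, x, d, alpha))
```

In this example the very short step fails the curvature condition and the overly long step fails sufficient decrease, which is the role each condition is meant to play.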

Research Reagent Solutions: Computational Tools

The following table details essential computational tools and their functions for implementing and testing optimization algorithms:

| Research Tool | Function in Optimization Experiments |

|---|---|

| MATLAB R2022b [24] | Primary environment for algorithm implementation and numerical testing |

| Wolfe Line Search [24] | Condition to determine appropriate step size with convergence guarantees |

| Performance Profiles [23] | Visualization technique for comparative algorithm performance |

| Test Function Library [23] | Benchmark problems (100+ test functions) for validation |

| Condition Number Analysis [19] | Diagnostic tool to assess problem difficulty and algorithm sensitivity |

The Conjugate Gradient Alternative

Theoretical Foundation and Algorithmic Implementation

The conjugate gradient method addresses the fundamental limitations of steepest descent by constructing a sequence of mutually conjugate directions with respect to the Hessian matrix, ensuring that each step does not undo progress made in previous directions [21]. For a quadratic objective function ( f(x) = \frac{1}{2}x^TAx - b^Tx ) with symmetric positive-definite matrix ( A ), two vectors ( p_i ) and ( p_j ) are conjugate if:

[ p_i^T A p_j = 0 \quad \text{for} \quad i \neq j ]

This conjugate property enables the algorithm to reach the exact minimum of an n-dimensional quadratic function in at most n steps, a significant improvement over steepest descent's linear convergence rate [21]. The algorithmic implementation follows:

- Initialize: ( x_0 ), ( d_0 = -g_0 ), ( k = 0 )

- While convergence criteria not met:

  - Compute step size ( \alpha_k = \frac{g_k^T g_k}{d_k^T A d_k} )

  - Update solution: ( x_{k+1} = x_k + \alpha_k d_k )

  - Compute new gradient: ( g_{k+1} = g_k + \alpha_k A d_k )

  - Compute conjugate parameter: ( \beta_{k+1} = \frac{g_{k+1}^T g_{k+1}}{g_k^T g_k} )

  - Update search direction: ( d_{k+1} = -g_{k+1} + \beta_{k+1} d_k )

  - Increment ( k = k + 1 )
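
The loop above translates almost line for line into code. The sketch below assumes a dense symmetric positive-definite `A` and caps the iteration count at (n) by default to illustrate the finite-termination property; the function name and the 2×2 example are illustrative:

```python
import numpy as np

def conjugate_gradient(A, b, x0=None, tol=1e-10, max_iter=None):
    """Linear CG in gradient form for f(x) = 0.5 x^T A x - b^T x with A SPD."""
    n = b.shape[0]
    x = np.zeros(n) if x0 is None else np.asarray(x0, dtype=float)
    g = A @ x - b                           # g_0
    d = -g                                  # d_0 = -g_0
    max_iter = n if max_iter is None else max_iter
    for k in range(max_iter):
        if np.linalg.norm(g) < tol:
            return x, k
        Ad = A @ d                          # the only matrix-vector product per iteration
        alpha = (g @ g) / (d @ Ad)
        x = x + alpha * d
        g_new = g + alpha * Ad
        beta = (g_new @ g_new) / (g @ g)
        d = -g_new + beta * d
        g = g_new
    return x, max_iter

A = np.array([[4.0, 1.0], [1.0, 3.0]])
b = np.array([1.0, 2.0])
x, iters = conjugate_gradient(A, b)
print(x, iters)
```

For this 2×2 system the iterate after two steps already solves (Ax = b) up to rounding error, consistent with the at-most-(n)-steps property stated above.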

Modern extensions to non-quadratic problems incorporate hybrid conjugate parameters and restart strategies to maintain efficiency while guaranteeing global convergence [23] [24].

Workflow Diagram: Conjugate Gradient Method

The following diagram illustrates the complete workflow of a modern conjugate gradient algorithm with restart strategy, highlighting key components that address steepest descent limitations:

The restart strategy component is particularly important for maintaining convergence efficiency in non-quadratic problems, periodically resetting the search direction to the negative gradient to recover conjugacy and accelerate progress [23].

Applications in Scientific Computing and Drug Development

Case Study: Image Restoration and Signal Processing

The practical advantages of conjugate gradient methods over steepest descent extend beyond theoretical improvements to tangible benefits in real-world applications. In image restoration problems, conjugate gradient algorithms have demonstrated superior performance in recovering degraded images while minimizing computational requirements [23] [24]. Experimental results show that modified conjugate gradient methods achieve higher Peak Signal-to-Noise Ratio (PSNR) values compared to standard optimization approaches, indicating better restoration quality [23].

Similarly, in compressive sensing applications for sparse signal recovery, conjugate gradient methods efficiently solve the underlying optimization problems with limited memory requirements, making them suitable for large-scale datasets encountered in medical imaging and spectroscopic analysis [24]. These capabilities directly benefit drug development researchers working with complex analytical instrumentation data where optimal parameter estimation is crucial for accurate results interpretation.

Molecular Modeling and Optimization in Drug Discovery

In molecular modeling applications central to drug discovery, conjugate gradient methods play a vital role in energy minimization procedures during molecular dynamics simulations and protein-ligand docking studies [21]. The ability to efficiently navigate complex, high-dimensional energy landscapes enables more thorough exploration of conformational spaces and more accurate prediction of binding affinities.

Compared to steepest descent, which exhibits slow convergence in the narrow valleys characteristic of molecular potential energy surfaces, conjugate gradient methods provide faster approach to minimum energy configurations while maintaining reasonable computational requirements [21]. This efficiency advantage becomes increasingly significant when performing virtual screening of large compound libraries, where thousands or millions of individual energy minimizations may be required to identify promising drug candidates.

The steepest descent method, despite its conceptual simplicity and intuitive appeal, suffers from fundamental limitations including oscillatory behavior and slow convergence rates, particularly for ill-conditioned problems and optimization landscapes with narrow valleys. These limitations significantly impact its practical utility in scientific computing and drug development applications where computational efficiency directly influences research progress.

The conjugate gradient method addresses these limitations through its use of conjugate directions, which prevent the inefficiencies of zigzagging trajectories and enable more direct paths to solutions. With proper implementation including hybrid parameters, restart strategies, and appropriate line search conditions, conjugate gradient algorithms demonstrate superior performance across diverse applications including image restoration, signal processing, and molecular modeling.

For researchers and professionals in drug development, adopting conjugate gradient methods over steepest descent can yield significant improvements in computational efficiency, particularly for energy minimization in molecular dynamics simulations, parameter estimation in pharmacokinetic modeling, and optimization in quantitative structure-activity relationship (QSAR) studies. As optimization challenges continue to grow in scale and complexity with advances in high-throughput screening and multi-parameter optimization, selecting appropriate algorithms based on thorough understanding of their convergence properties becomes increasingly critical to research success.

The conjugate gradient method represents a cornerstone algorithm for the numerical solution of large systems of linear equations, particularly those with symmetric positive-definite matrices. This technical guide explores the mathematical foundation and computational advantages of conjugate gradient methods, emphasizing the crucial role of A-conjugate search directions. Framed within a broader comparison with the steepest descent algorithm, this review demonstrates how conjugacy transformations fundamentally improve convergence behavior and computational efficiency. Through structured quantitative comparisons, detailed methodological protocols, and visual workflow representations, we provide researchers with a comprehensive framework for understanding and implementing these powerful optimization techniques in scientific computing and data-intensive research applications.

The conjugate gradient (CG) method is an iterative algorithm primarily used for solving large systems of linear equations where the coefficient matrix is symmetric positive-definite [21]. Originally developed by Hestenes and Stiefel, the method has become particularly valuable for sparse systems too large for direct solution methods like Cholesky decomposition [21]. The fundamental innovation of the CG method lies in its use of A-conjugate search directions – vectors that are orthogonal with respect to the A-inner product – which enables theoretically exact convergence in at most n steps for an n-dimensional problem [25].

When compared to the steepest descent method, the conjugate gradient approach demonstrates superior convergence properties, particularly for ill-conditioned systems. While both methods aim to minimize quadratic functions of the form f(x) = ½xᵀAx - bᵀx, the conjugate gradient method achieves significantly faster convergence by constructing search directions that avoid interference between successive iterations [15]. This property makes it particularly valuable for large-scale optimization problems in scientific computing, machine learning, and engineering applications where computational efficiency is paramount.

Mathematical Foundations

The Quadratic Optimization Problem

The conjugate gradient method finds its most natural application in solving linear systems arising from quadratic optimization problems. Consider the objective function:

[f(\mathbf{x}) = \frac{1}{2}\mathbf{x}^T\mathbf{A}\mathbf{x} - \mathbf{b}^T\mathbf{x}]

where A is an n×n symmetric positive-definite matrix, and x, b ∈ ℝⁿ [25]. The minimization of this function is equivalent to solving the linear system Ax = b, as the gradient ∇f(x) = Ax - b equals zero at the solution x∗ [21].

For a symmetric positive-definite matrix A, two vectors u and v are defined as A-conjugate (or A-orthogonal) if:

[\mathbf{u}^T\mathbf{A}\mathbf{v} = 0]

This conjugacy condition generalizes the concept of orthogonality from the standard inner product to the A-inner product, defined as ⟨u, v⟩ₐ = ⟨Au, v⟩ = ⟨u, Av⟩ [21].

Theoretical Basis of Conjugate Directions

The power of the conjugate gradient method stems from its use of mutually conjugate vectors with respect to the matrix A. A set of nonzero vectors {p₁, p₂, ..., pₙ} is mutually conjugate if pᵢᵀApⱼ = 0 for all i ≠ j. Because A is positive definite, such vectors are linearly independent, so n of them form a basis for ℝⁿ, ensuring that any solution vector x∗ can be expressed as a linear combination of these conjugate directions [21] [25].

Table 1: Key Properties of A-Conjugate Vectors

| Property | Mathematical Expression | Significance |

|---|---|---|

| Mutual Conjugacy | pᵢᵀApⱼ = 0 for i ≠ j | Ensures linear independence |

| Basis Formation | x∗ = Σαᵢpᵢ | Enables solution expansion |

| Optimal Step Size | αₖ = pₖᵀrₖ / pₖᵀApₖ | Minimizes function along search direction |

The expansion coefficients αₖ have closed-form expressions when conjugate directions are known:

[\alpha_k = \frac{\langle \mathbf{p}_k, \mathbf{b} \rangle}{\langle \mathbf{p}_k, \mathbf{p}_k \rangle_{\mathbf{A}}} = \frac{\mathbf{p}_k^T\mathbf{b}}{\mathbf{p}_k^T\mathbf{A}\mathbf{p}_k}]

This formulation allows the exact solution to be constructed by progressing along each conjugate direction exactly once [21].
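
The expansion can be checked numerically. The sketch below builds an A-conjugate basis by Gram-Schmidt in the A-inner product, starting from the standard basis, and then assembles the solution from the closed-form coefficients. The helper name `a_conjugate_basis` and the 3×3 matrix are assumptions for illustration; practical CG never forms the full basis explicitly:

```python
import numpy as np

def a_conjugate_basis(A):
    """Gram-Schmidt in the A-inner product, applied to the standard basis vectors."""
    directions = []
    for e in np.eye(A.shape[0]):
        p = e.copy()
        for q in directions:
            p -= (q @ A @ e) / (q @ A @ q) * q   # remove the A-component of e along q
        directions.append(p)
    return np.array(directions)                   # row i is direction p_i

A = np.array([[4.0, 1.0, 0.0],
              [1.0, 3.0, 1.0],
              [0.0, 1.0, 2.0]])                   # symmetric positive definite
b = np.array([1.0, 2.0, 3.0])

P = a_conjugate_basis(A)
alphas = np.array([(p @ b) / (p @ A @ p) for p in P])   # alpha_k = p_k^T b / p_k^T A p_k
x_star = alphas @ P                                     # x* = sum_k alpha_k p_k
print(x_star, np.linalg.solve(A, b))
```

Each coefficient depends only on its own direction, which is why the solution can be assembled one direction at a time.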

Conjugate Gradient Algorithm

Algorithm Derivation

The conjugate gradient method generates successive approximations to the solution through an iterative process that constructs conjugate directions from the residual vectors. For each iteration k, the algorithm updates:

[\mathbf{x}_{k+1} = \mathbf{x}_k + \alpha_k\mathbf{p}_k]

where the step size αₖ is chosen to minimize the objective function along the search direction pₖ [21]. The optimal step size is given by:

[\alpha_k = \frac{\mathbf{p}_k^T\mathbf{r}_k}{\mathbf{p}_k^T\mathbf{A}\mathbf{p}_k}]

where rₖ = b - Axₖ is the residual vector at iteration k [21].

The key innovation lies in how the search directions are updated. Each new search direction is constructed to be A-conjugate to all previous directions:

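[\mathbf{p}_k = \mathbf{r}_k - \sum_{i<k} \frac{\mathbf{p}_i^T\mathbf{A}\mathbf{r}_k}{\mathbf{p}_i^T\mathbf{A}\mathbf{p}_i}\mathbf{p}_i]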

In practice, this simplifies to a recursive update that requires only the previous search direction, making the algorithm computationally efficient [21].

Complete Algorithm Specification

The standard conjugate gradient algorithm can be summarized as follows:

Initialize:

- Choose initial guess x₀

- Compute r₀ = b - Ax₀

- Set p₀ = r₀

For k = 0, 1, 2, ... until convergence:

- Compute αₖ = pₖᵀrₖ / pₖᵀApₖ

- Update solution: xₖ₊₁ = xₖ + αₖpₖ

- Update residual: rₖ₊₁ = rₖ - αₖApₖ

- Compute βₖ = rₖ₊₁ᵀrₖ₊₁ / rₖᵀrₖ

- Update search direction: pₖ₊₁ = rₖ₊₁ + βₖpₖ

This implementation highlights the algorithm's efficiency: each iteration requires only one matrix-vector multiplication, making it suitable for large sparse systems [21].
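
Because the matrix enters only through products with a vector, the algorithm can be written against a function that applies A without ever storing it. The sketch below assumes a zero initial guess and a relative-residual stopping rule; the names `cg_matrix_free` and `laplacian_1d`, and the tridiagonal test operator, are illustrative choices:

```python
import numpy as np

def cg_matrix_free(matvec, b, tol=1e-6, max_iter=1000):
    """Residual-form CG with x0 = 0; matvec(v) must return A @ v for an SPD operator A."""
    x = np.zeros_like(b, dtype=float)
    r = b - matvec(x)                       # r_0 = b - A x_0
    p = r.copy()                            # p_0 = r_0
    rs_old = r @ r
    r0_norm = np.sqrt(rs_old)
    for k in range(max_iter):
        if np.sqrt(rs_old) <= tol * r0_norm:
            return x, k
        Ap = matvec(p)                      # single operator application per iteration
        alpha = rs_old / (p @ Ap)           # equals p^T r / p^T A p in exact arithmetic
        x += alpha * p
        r -= alpha * Ap
        rs_new = r @ r
        p = r + (rs_new / rs_old) * p       # beta_k = r_{k+1}^T r_{k+1} / r_k^T r_k
        rs_old = rs_new
    return x, max_iter

def laplacian_1d(v):
    """Apply the sparse SPD matrix tridiag(-1, 2, -1) without forming it."""
    out = 2.0 * v
    out[1:] -= v[:-1]
    out[:-1] -= v[1:]
    return out

b = np.ones(50)
x, iters = cg_matrix_free(laplacian_1d, b)
print(iters, np.linalg.norm(b - laplacian_1d(x)))
```

Storage is limited to a handful of length-n vectors, which is what makes the method attractive for large sparse systems.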

Comparative Analysis: Conjugate Gradient vs. Steepest Descent

Fundamental Differences in Search Strategy

The steepest descent method follows the negative gradient direction at each iteration, which often leads to zig-zagging behavior, especially in narrow valleys of the objective function. In contrast, the conjugate gradient method constructs search directions that are mutually conjugate with respect to the system matrix A, ensuring that each step is optimal and does not undo progress from previous steps [15].

Table 2: Algorithm Comparison: Conjugate Gradient vs. Steepest Descent

| Characteristic | Steepest Descent | Conjugate Gradient |

|---|---|---|

| Search Direction | Negative gradient | A-conjugate directions |

| Convergence Rate | Linear | Superlinear (theoretically exact in n steps) |

| Iteration Cost | O(n²) per iteration | O(n²) per iteration |

| Memory Requirements | O(n) | O(n) |

| Number of Iterations | Higher for ill-conditioned systems | Fewer iterations needed |

| Performance on Quadratic Functions | Good | Superior [5] |

Quantitative Performance Comparison

Experimental comparisons between the two methods reveal significant differences in performance characteristics. Research shows that the conjugate gradient method typically requires fewer iterations to achieve the same accuracy level compared to steepest descent [15]. However, each conjugate gradient iteration may be computationally more expensive due to the additional vector operations required for maintaining conjugacy.

When implemented in MATLAB for minimizing nonlinear functions, studies have demonstrated that "the Conjugate gradient method needs fewer iterations and has more efficiency than the Steepest descent method. On the other hand, the Steepest descent method converges a function in less time than the Conjugate gradient method" [15]. This suggests a trade-off between iteration count and per-iteration computational cost that must be considered for specific applications.

Visualization of Algorithm Workflows

Conjugate Gradient Method Logic Flow

Search Direction Comparison

The Scientist's Toolkit: Essential Research Reagents

Table 3: Computational Research Reagents for Optimization Algorithms

| Tool/Component | Function | Implementation Example |

|---|---|---|

| Symmetric Positive-Definite Matrix | Problem formulation | Matrix A in Ax = b |

| Residual Vector | Optimality measurement | rₖ = b - Axₖ |

| Search Direction Vector | Optimization path | pₖ updated to maintain A-conjugacy |

| Step Size Parameter | Progress control | αₖ = pₖᵀrₖ / pₖᵀApₖ |

| Conjugacy Coefficient | Direction orthogonalization | βₖ = rₖ₊₁ᵀrₖ₊₁ / rₖᵀrₖ |

| Matrix-Vector Multiplier | Core computation | Apₖ product each iteration |

| Convergence Criterion | Termination condition | ‖rₖ‖ < ε or maximum iterations |

Advanced Methodological Protocols

Experimental Setup for Algorithm Comparison

To conduct a rigorous comparison between conjugate gradient and steepest descent methods, researchers should implement the following protocol:

Test Problem Generation: Create a set of symmetric positive-definite matrices with varying condition numbers and sizes. These may include diagonal matrices with controlled eigenvalue distributions and sparse matrices from real-world applications.

Implementation Details: Code both algorithms with efficient linear algebra operations. Compute each matrix-vector product once per iteration and reuse it, so that timing comparisons are fair. Use a consistent programming environment (e.g., MATLAB, Python with NumPy/SciPy).

Convergence Metrics: Define precise stopping criteria, such as ‖rₖ‖/‖r₀‖ < 10⁻⁶ or a maximum iteration count of 1000. Track both iteration count and computational time to convergence.

Performance Analysis: Measure the number of iterations, computation time, and final solution accuracy for each test case. Analyze how performance varies with problem condition number and dimensionality.
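
A compact version of this protocol might look like the following sketch. It assumes dense test matrices built from a random orthogonal factor and logarithmically spaced eigenvalues, and it uses the relative-residual criterion and iteration cap given above. The helper names `spd_with_condition` and `run_solver`, the problem size, and the random seed are assumptions for illustration:

```python
import numpy as np

def spd_with_condition(n, kappa, rng):
    """Random SPD matrix with eigenvalues log-spaced in [1, kappa]."""
    Q, _ = np.linalg.qr(rng.standard_normal((n, n)))
    eigvals = np.logspace(0.0, np.log10(kappa), n)
    return Q @ np.diag(eigvals) @ Q.T

def run_solver(A, b, method, tol=1e-6, max_iter=1000):
    """Run 'sd' (steepest descent) or 'cg' from x0 = 0; return (x, iterations used)."""
    x = np.zeros_like(b)
    r = b - A @ x
    p = r.copy()
    r0 = np.linalg.norm(r)
    for k in range(max_iter):
        if np.linalg.norm(r) / r0 < tol:
            return x, k
        Ap = A @ p                          # one matrix-vector product, reused below
        alpha = (p @ r) / (p @ Ap)
        x += alpha * p
        r_new = r - alpha * Ap
        if method == "cg":
            p = r_new + ((r_new @ r_new) / (r @ r)) * p
        else:                               # steepest descent searches along the new residual
            p = r_new.copy()
        r = r_new
    return x, max_iter

rng = np.random.default_rng(0)
for kappa in (10.0, 100.0, 1000.0):
    A = spd_with_condition(50, kappa, rng)
    b = rng.standard_normal(50)
    _, sd_iters = run_solver(A, b, "sd")
    _, cg_iters = run_solver(A, b, "cg")
    print(f"kappa={kappa:g}: SD {sd_iters} iterations, CG {cg_iters} iterations")
```

Wall-clock timing can be added around the `run_solver` calls with `time.perf_counter`; it is left out here so that the comparison depends only on iteration counts.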

Nonlinear Conjugate Gradient Extensions

For nonlinear optimization problems, the conjugate gradient method extends through several variants:

Fletcher-Reeves Method:

- Update parameter: βₖ = gₖ₊₁ᵀgₖ₊₁ / gₖᵀgₖ

- Where gₖ = ∇f(xₖ) is the gradient

Polak-Ribière Method:

- Update parameter: βₖ = gₖ₊₁ᵀ(gₖ₊₁ - gₖ) / gₖᵀgₖ

Hestenes-Stiefel Method:

- Update parameter: βₖ = gₖ₊₁ᵀ(gₖ₊₁ - gₖ) / pₖᵀ(gₖ₊₁ - gₖ)

These variants maintain the core concept of conjugate directions while adapting to general nonlinear functions [25].
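
The three update parameters differ only in one line of code. The sketch below plugs them into a nonlinear CG loop that, as assumptions of this example rather than requirements of the cited methods, uses a simple Armijo backtracking line search and restarts with the negative gradient every n iterations or whenever the new direction is not a descent direction. The function names and the convex test function are illustrative:

```python
import numpy as np

def beta_fr(g_new, g, d):
    return (g_new @ g_new) / (g @ g)                 # Fletcher-Reeves

def beta_pr(g_new, g, d):
    return (g_new @ (g_new - g)) / (g @ g)           # Polak-Ribiere

def beta_hs(g_new, g, d):
    y = g_new - g
    return (g_new @ y) / (d @ y)                     # Hestenes-Stiefel

def nonlinear_cg(f, grad, x0, beta_rule=beta_pr, tol=1e-6, max_iter=10_000):
    x = np.asarray(x0, dtype=float)
    g = grad(x)
    d = -g
    n = x.size
    for k in range(max_iter):
        if np.linalg.norm(g) < tol:
            return x, k
        alpha, fx, slope = 1.0, f(x), g @ d
        while f(x + alpha * d) > fx + 1e-4 * alpha * slope:   # Armijo backtracking
            alpha *= 0.5
        x = x + alpha * d
        g_new = grad(x)
        d_new = -g_new + beta_rule(g_new, g, d) * d
        slope_new = g_new @ d_new
        # Restart periodically, or if d_new is not a finite descent direction.
        if (k + 1) % n == 0 or not np.isfinite(slope_new) or slope_new >= 0:
            d_new = -g_new
        d, g = d_new, g_new
    return x, max_iter

# Illustrative convex, non-quadratic objective.
A = np.array([[3.0, 1.0], [1.0, 2.0]])
b = np.array([1.0, 1.0])
f = lambda x: 0.5 * x @ A @ x - b @ x + 0.25 * np.sum(x ** 4)
grad = lambda x: A @ x - b + x ** 3
x_min, iters = nonlinear_cg(f, grad, [2.0, -1.0], beta_rule=beta_pr)
print(x_min, iters)
```

On a quadratic with exact line search all three rules coincide; they behave differently only once the objective is non-quadratic or the line search is inexact.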

The conjugate gradient method's power fundamentally stems from its use of A-conjugate search directions, which enables more efficient navigation of the solution space compared to steepest descent approaches. By ensuring that each step is optimal and does not interfere with previous progress, the algorithm achieves superior convergence characteristics, particularly for large-scale linear systems and optimization problems.

The comparative analysis presented in this work demonstrates that while both conjugate gradient and steepest descent methods aim to solve similar problems, their strategic approaches yield significantly different performance profiles. The conjugate gradient method typically requires fewer iterations due to its more intelligent search direction selection, though each iteration may be computationally more intensive. This trade-off makes the conjugate gradient method particularly valuable for problems where matrix-vector products are computationally expensive or where high accuracy is required.

For researchers implementing these algorithms, understanding the mathematical foundation of A-conjugacy is essential for proper application and potential extension to novel problem domains. The experimental protocols and visualization tools provided in this work offer a foundation for further investigation and practical implementation of these powerful optimization techniques.

Within the broader context of research on steepest descent versus conjugate gradient algorithms, understanding the precise mathematical differences in their update directions is fundamental. While both are iterative first-order methods for solving large-scale optimization problems, their core mechanisms and performance characteristics diverge significantly [21] [26]. This guide provides an in-depth technical analysis of these differences, focusing on their mathematical formulation, geometric interpretation, and practical implications for researchers and scientists in fields like drug development where complex optimization problems are prevalent.

The conjugate gradient (CG) method was originally developed for solving systems of linear equations with positive-definite matrices and was later extended to general nonlinear unconstrained optimization problems [21] [23]. Its development marked a significant advancement over classical steepest descent approaches, particularly in addressing the inefficiencies of the characteristic "zig-zag" path that plagues gradient descent in certain geometries [27] [28].

Mathematical Foundations

Gradient Descent Update Rule

The gradient descent method, in its simplest form, follows the update rule:

\[ x_{k+1} = x_k + \alpha_k d_k \]

where the search direction \(d_k\) is always the negative gradient:

\[ d_k = -\nabla f(x_k) = -g_k \]

The step size \(\alpha_k > 0\) is determined through a line search procedure to minimize the function along \(d_k\) [21] [29]. In steepest descent, a specific variant of gradient descent, the step size \(\alpha_k\) (the learning rate, often written \(\eta\)) is chosen so that it yields the largest decrease in \(f\) along the negative gradient direction [29].

Conjugate Gradient Update Rule

The conjugate gradient method uses a more sophisticated update rule for the search direction:

\[ d_k = -g_k + \beta_k d_{k-1} \]

where \(\beta_k\) is a scalar parameter that ensures consecutive search directions are conjugate with respect to the Hessian matrix (or an approximation thereof) [21] [23]. Different formulas for \(\beta_k\) yield different CG variants, such as Fletcher-Reeves (FR), Polak-Ribière-Polyak (PRP), and Hestenes-Stiefel (HS) [23].

For nonlinear problems, more advanced three-term conjugate gradient methods have been developed with the form:

\[ d_k = -\vartheta g_k + \beta_k d_{k-1} + p_k r_k \]

where \(p_k\) is a suitable scalar and \(r_k\) is a suitable vector [30]. This structure provides additional flexibility and often exhibits better convergence properties.

The Concept of A-Conjugacy

The fundamental innovation in conjugate gradient methods is the concept of A-conjugacy (or Q-conjugacy for quadratic forms). Two vectors \(d_i\) and \(d_j\) are considered A-conjugate if:

\[ d_i^T A d_j = 0, \quad \forall i \neq j \]

where \(A\) is a symmetric positive-definite matrix [26]. This generalizes the concept of orthogonality from Euclidean geometry to a geometry defined by \(A\). When search directions satisfy this conjugacy condition, each step minimizes the function over a progressively expanding subspace [21] [26].
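To make the definition concrete, the sketch below builds a set of A-conjugate directions by Gram-Schmidt conjugation of the standard basis, then minimizes a quadratic along each direction in turn. This is the generic conjugate directions idea, not the conjugate gradient recurrence itself; the function name `a_conjugate_directions` and the 3×3 matrix are assumptions chosen for illustration.

```python
import numpy as np

def a_conjugate_directions(A):
    """Gram-Schmidt conjugation of the standard basis with respect to A."""
    n = A.shape[0]
    directions = []
    for i in range(n):
        d = np.zeros(n)
        d[i] = 1.0
        for p in directions:
            # Remove the component of d that is not A-conjugate to p
            d = d - (p @ A @ d) / (p @ A @ p) * p
        directions.append(d)
    return np.array(directions)          # one direction per row

# Hypothetical symmetric positive-definite test matrix
A = np.array([[4.0, 1.0, 0.0],
              [1.0, 3.0, 1.0],
              [0.0, 1.0, 2.0]])
b = np.array([1.0, 2.0, 3.0])

D = a_conjugate_directions(A)
gram = D @ A @ D.T                       # entries are d_i^T A d_j
off_diagonal = gram - np.diag(np.diag(gram))
print("largest |d_i^T A d_j|, i != j:", np.abs(off_diagonal).max())
print("Euclidean inner product d_0 . d_1:", D[0] @ D[1])

# One exact line minimization per direction reaches the solution of A x = b
x = np.zeros(3)
for d in D:
    alpha = d @ (b - A @ x) / (d @ A @ d)
    x = x + alpha * d
print("residual norm after n steps:", np.linalg.norm(b - A @ x))
```

The directions are conjugate with respect to A but not orthogonal in the ordinary sense, which is the distinction the definition draws.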

Comparative Performance Analysis

Geometric Interpretation and Convergence

The geometric behavior of these methods reveals fundamental differences:

Gradient descent exhibits orthogonal successive directions, which leads to inefficient zig-zagging, especially in ill-conditioned problems with elongated contours [27] [28]. In contrast, conjugate gradient methods construct A-conjugate search directions, ensuring that each step minimizes over an expanding subspace and does not undo progress from previous steps [21] [26].
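The zig-zag pattern can be observed numerically. The sketch below runs steepest descent with exact line search on a two-dimensional quadratic with elongated contours and compares successive gradients. The matrix `diag(1, 50)`, the starting point, and the number of steps are assumptions picked to make the effect visible.

```python
import numpy as np

# Hypothetical ill-conditioned quadratic: f(x) = 0.5 x^T A x, gradient g = A x
A = np.diag([1.0, 50.0])
x = np.array([50.0, 1.0])

grads = []
for _ in range(6):
    g = A @ x
    grads.append(g)
    alpha = (g @ g) / (g @ A @ g)   # exact line search along -g
    x = x - alpha * g

def cosine(u, v):
    return (u @ v) / (np.linalg.norm(u) * np.linalg.norm(v))

# Consecutive gradients are orthogonal; in 2D every second gradient is parallel
consecutive = [cosine(grads[k], grads[k + 1]) for k in range(5)]
alternate = [cosine(grads[k], grads[k + 2]) for k in range(4)]
print("cos(g_k, g_k+1):", consecutive)
print("cos(g_k, g_k+2):", alternate)
```

Because every other direction repeats, the iterates bounce between the walls of the narrow valley while making little progress along its floor.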

Theoretical Properties Comparison

Table 1: Mathematical Properties Comparison

| Property | Gradient Descent | Conjugate Gradient |
|---|---|---|
| Direction Formula | \(d_k = -g_k\) | \(d_k = -g_k + \beta_k d_{k-1}\) |
| Orthogonality | \(g_{k+1}^T g_k = 0\) (with exact line search) | \(d_i^T A d_j = 0,\ \forall i \neq j\) |
| Convergence Rate | Linear | Finite (at most n steps for quadratic problems) |
| Memory Requirement | O(n) | O(n) |
| Computational Cost per Iteration | Low | Moderate (additional \(\beta_k\) calculation) |
| Optimal Step Size | Line search along \(-g_k\) | \(\alpha_k = -g_k^T d_k / (d_k^T A d_k)\) |

Computational Characteristics

Table 2: Computational Trade-offs

| Aspect | Gradient Descent | Conjugate Gradient |
|---|---|---|
| Implementation Complexity | Simple | Moderate |
| Sensitivity to Condition Number | High | Reduced |
| Line Search Requirements | Standard | Requires strong Wolfe conditions for convergence guarantees |
| Stochastic Settings | Well-suited (SGD) | Less effective |
| Parallelization Potential | High | Moderate |
| Modern Variants | Adam, RMSProp, Adagrad | Hybrid three-term, memoryless BFGS-inspired |

For quadratic objectives with Hessian A, the conjugate gradient method theoretically converges to the exact solution in at most n steps (where n is the problem dimension), while gradient descent typically requires many more iterations [21] [26]. This finite termination property is particularly valuable for large-scale problems where direct factorization methods are computationally prohibitive.
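The gap in iteration counts can be quantified with the standard textbook worst-case bounds for a quadratic objective. These bounds are not taken from the cited sources; the symbols \(x^*\) (the exact minimizer), \(\kappa\) (the condition number of A), and the A-norm \(\|v\|_A = \sqrt{v^T A v}\) are introduced here for the illustration.

```latex
% Worst-case error bounds for f(x) = 1/2 x^T A x - b^T x,
% with \kappa = \lambda_{\max}(A) / \lambda_{\min}(A).
\[
\|x_k - x^*\|_A \le \left(\frac{\kappa - 1}{\kappa + 1}\right)^{k} \|x_0 - x^*\|_A
\quad \text{(steepest descent with exact line search)}
\]
\[
\|x_k - x^*\|_A \le 2\left(\frac{\sqrt{\kappa} - 1}{\sqrt{\kappa} + 1}\right)^{k} \|x_0 - x^*\|_A
\quad \text{(conjugate gradient)}
\]
% Example with \kappa = 100: the contraction factors are
% 99/101 \approx 0.980 and 9/11 \approx 0.818, so reducing the bound by a
% factor of 10^{-6} takes roughly 690 steepest descent steps
% but only roughly 70 conjugate gradient steps.
```

These are upper bounds, so observed iteration counts are often lower, particularly for conjugate gradient when the eigenvalues of A are clustered.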

In practice, for non-quadratic problems, conjugate gradient methods are usually implemented with restart strategies, which periodically reset the search direction to the negative gradient, to enhance performance [23]. Recent advances in three-term conjugate gradient methods have further improved performance by better approximating quasi-Newton directions while maintaining low memory requirements [23] [30].

Experimental Protocols and Methodologies

Standard Testing Framework

Researchers evaluating these algorithms typically employ the following methodological framework:

- Test Problem Selection: A diverse set of benchmark functions with varying characteristics (ill-conditioned, poorly scaled, non-convex)

- Convergence Criteria:

  - Gradient norm tolerance: \(\|g_k\| \le \varepsilon\)

  - Function value change: \(|f(x_{k+1}) - f(x_k)| \le \varepsilon\)

  - Maximum iterations: \(k \le k_{\max}\)

- Performance Metrics:

  - Number of iterations to convergence

  - Number of function/gradient evaluations

  - Computational time

  - Solution accuracy
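The convergence criteria listed above are often combined into a single check that also reports which rule fired, which helps when comparing runs. The following is one possible sketch; the function name `stopping_reason`, its default tolerances, and the returned labels are assumptions for illustration rather than a fixed convention.

```python
import numpy as np

def stopping_reason(g, f_new, f_old, k, eps_g=1e-6, eps_f=1e-12, k_max=1000):
    """Return the name of the first stopping rule that fires, or None to continue."""
    if np.linalg.norm(g) <= eps_g:
        return "gradient_norm"
    if abs(f_new - f_old) <= eps_f:
        return "function_change"
    if k >= k_max:
        return "max_iterations"
    return None

# Usage: a tiny gradient stops the run even though f is still changing
print(stopping_reason(np.array([1e-8, 0.0]), f_new=1.0, f_old=2.0, k=3))
print(stopping_reason(np.array([1.0, 0.0]), f_new=1.0, f_old=2.0, k=3))
```

Recording the reason alongside the iteration count makes it clear whether a method converged or merely ran out of iterations.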

Line Search Implementation

Both methods require careful implementation of line search procedures. The strong Wolfe conditions are commonly employed for convergence guarantees:

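\[ f(x_k + \alpha_k d_k) \le f(x_k) + \delta \alpha_k g_k^T d_k \]

\[ \left| g(x_k + \alpha_k d_k)^T d_k \right| \le -\sigma g_k^T d_k \]

where \(0 < \delta < \sigma < 1\) [23] [30]. The first condition ensures sufficient decrease in the function value, while the second bounds the directional derivative at the new point, which rules out excessively small steps.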
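A candidate step can be tested against the two inequalities directly. The sketch below is a checker only, not a line search; the function name `satisfies_strong_wolfe`, the defaults `delta=1e-4` and `sigma=0.1` (values commonly suggested for conjugate gradient methods), and the toy objective are assumptions for the example.

```python
import numpy as np

def satisfies_strong_wolfe(f, grad, x, d, alpha, delta=1e-4, sigma=0.1):
    """Check the sufficient-decrease and strong curvature conditions for step alpha."""
    slope0 = grad(x) @ d                     # g_k^T d_k, negative for a descent direction
    x_new = x + alpha * d
    sufficient_decrease = f(x_new) <= f(x) + delta * alpha * slope0
    curvature = abs(grad(x_new) @ d) <= -sigma * slope0
    return bool(sufficient_decrease and curvature)

# Toy objective f(x) = 0.5 ||x||^2 with gradient x
f = lambda x: 0.5 * (x @ x)
grad = lambda x: x
x = np.array([1.0, 1.0])
d = -grad(x)

for alpha in (1e-3, 1.0, 2.0):
    print(alpha, satisfies_strong_wolfe(f, grad, x, d, alpha))
```

In this toy case the tiny step fails the curvature condition, the overly long step fails sufficient decrease, and the exact minimizer along `d` passes both.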

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools for Algorithm Implementation

| Tool/Component | Function | Implementation Considerations |
|---|---|---|
| Gradient Calculator | Computes \(\nabla f(x)\) | Analytical (preferred) or numerical differentiation |
| Line Search Routine | Finds \(\alpha\) satisfying Wolfe conditions | Sectioning or interpolation methods |
| Function Evaluator | Computes \(f(x)\) | Often the computational bottleneck |
| Conjugacy Condition Checker | Monitors \(d_i^T A d_j = 0\) | Critical for maintaining theoretical properties |
| Restart Mechanism | Resets \(d_k = -g_k\) | Prevents numerical instability; typically every n iterations |
| Preconditioner | Improves condition number | Transform problem to reduce eccentricity of contours |
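As an illustration of the preconditioner entry, the sketch below applies symmetric diagonal (Jacobi) scaling to a badly scaled matrix and compares condition numbers before and after. Jacobi scaling is only one simple choice, used here as an assumption for the example; the matrix is hypothetical and the source does not prescribe a particular preconditioner.

```python
import numpy as np

# Hypothetical badly scaled symmetric positive-definite matrix
A = np.array([[1000.0, 1.0, 0.0],
              [1.0,    10.0, 0.5],
              [0.0,    0.5,  0.1]])

# Symmetric Jacobi scaling: D^{-1/2} A D^{-1/2}, where D = diag(A)
d_inv_sqrt = 1.0 / np.sqrt(np.diag(A))
A_scaled = d_inv_sqrt[:, None] * A * d_inv_sqrt[None, :]

cond_before = np.linalg.cond(A)
cond_after = np.linalg.cond(A_scaled)
print(f"condition number before: {cond_before:.1f}")
print(f"condition number after:  {cond_after:.1f}")
```

The scaled matrix has a unit diagonal, so its contours are far less elongated and both methods need fewer iterations on the transformed system.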

Application to Real-World Problems

Image Restoration

Conjugate gradient methods have demonstrated superior performance in image restoration problems, achieving higher peak signal-to-noise ratio (PSNR) values compared to conventional gradient-based approaches [23]. The ability to handle large-scale linear systems arising in image deconvolution efficiently makes CG particularly suitable for such applications.

Robotic Motion Control

Recent research has applied three-term conjugate gradient methods to robotic arm motion control, leveraging their strong convergence properties for real-time trajectory optimization [30]. The sufficient descent property of modern CG variants ensures stable and predictable convergence behavior critical for control applications.

Machine Learning and Neural Networks

Despite the theoretical advantages of conjugate gradient methods, gradient descent variants (particularly stochastic gradient descent with momentum and adaptive learning rates) dominate deep learning applications [31]. This preference stems from:

- The stochastic nature of machine learning objectives

- The high computational cost of accurate line searches in high dimensions

- The effectiveness of simple momentum in mitigating zig-zagging

- Better generalization properties observed with SGD variants

As noted in research, "CG doesn't generalize well" in stochastic settings, while methods like Adam were specifically designed for such environments [31].

The mathematical differences between gradient and conjugate direction updates translate to significant practical implications for scientific computing and optimization. Gradient descent, with its simplicity and suitability for stochastic environments, remains popular in machine learning. Conjugate gradient methods, with their superior convergence properties for deterministic problems, excel in scientific computing applications where precision and finite termination are valued.

Recent developments in three-term conjugate gradient methods with restart strategies continue to bridge the gap between theoretical convergence guarantees and practical performance, making them valuable tools for researchers tackling complex optimization problems in fields ranging from drug development to engineering design.

From Theory to Therapy: Implementing Algorithms in Drug Discovery

The Conjugate Gradient (CG) Method is a fundamental iterative algorithm in computational mathematics, serving as a cornerstone for solving large-scale linear systems and optimization problems. Originally developed by Hestenes and Stiefel in 1952, this algorithm efficiently solves systems of linear equations where the coefficient matrix is symmetric and positive-definite [32]. Its significance lies in its position between Newton's method, which requires computationally expensive Hessian matrix calculations, and the method of steepest descent, which often exhibits slow convergence [26]. For researchers and drug development professionals, understanding the CG method provides powerful capabilities for tackling complex computational challenges in fields ranging from structural biology to pharmacokinetic modeling, where large, sparse systems frequently arise from discretized differential equations or high-dimensional optimization problems [33].

Mathematical Foundations

Problem Formulation

The Conjugate Gradient Method addresses two equivalent mathematical problems:

- Linear Systems: Solving \(A\mathbf{x} = \mathbf{b}\) where \(A\) is an \(n \times n\) symmetric positive-definite matrix [32]

- Optimization Problems: Minimizing the quadratic function \(f(\mathbf{x}) = \frac{1}{2}\mathbf{x}^T A\mathbf{x} - \mathbf{b}^T\mathbf{x}\) [32] [26]

The gradient of this quadratic function equals \(\nabla f(\mathbf{x}) = A\mathbf{x} - \mathbf{b}\), demonstrating the equivalence between finding the minimum of \(f(\mathbf{x})\) and solving the linear system \(A\mathbf{x} = \mathbf{b}\) [32] [26].
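The equivalence can be spelled out in two short steps. The derivation below uses only the symmetry and positive-definiteness of \(A\) stated above; the symbol \(\mathbf{x}^*\) for the solution is introduced here for convenience.

```latex
% Step 1: differentiate the quadratic; symmetry of A collapses the two terms.
\[
\nabla f(\mathbf{x})
  = \tfrac{1}{2}\left(A + A^T\right)\mathbf{x} - \mathbf{b}
  = A\mathbf{x} - \mathbf{b}
  \qquad \text{since } A = A^T .
\]
% Step 2: for the solution x^* of A x = b, expand f around x^*.
\[
f(\mathbf{x}) - f(\mathbf{x}^*)
  = \tfrac{1}{2}\,(\mathbf{x} - \mathbf{x}^*)^T A\,(\mathbf{x} - \mathbf{x}^*)
  \;\ge\; 0 ,
\]
% with equality only at x = x^* because A is positive-definite.
```

The stationary point of \(f\) is therefore its unique global minimizer, and it coincides with the solution of the linear system.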

The Principle of Conjugacy

The core innovation of the CG method lies in its use of A-conjugate search directions. A set of vectors \(\{\mathbf{d}_0, \mathbf{d}_1, \ldots, \mathbf{d}_{n-1}\}\) is considered A-conjugate if:

\[ \mathbf{d}_i^T A\mathbf{d}_j = 0 \quad \forall i \neq j \]

This conjugacy condition generalizes the concept of orthogonality [26]. When search directions satisfy this property, each step optimally reduces the error in the solution, guaranteeing convergence within at most \(n\) iterations for an \(n\)-dimensional problem [26].
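The n-step property can be checked on a small example. The sketch below runs exactly n conjugate gradient iterations on a randomly generated 5×5 symmetric positive-definite system and records the residual norm after each one. The seed, the dimension, and the way the matrix is constructed are assumptions for the illustration; in floating-point arithmetic the final residual is small rather than exactly zero.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 5
M = rng.standard_normal((n, n))
A = M @ M.T + n * np.eye(n)      # symmetric positive-definite by construction
b = rng.standard_normal(n)

x = np.zeros(n)
r = b - A @ x                    # residual, equal to the negative gradient
d = r.copy()                     # first direction: steepest descent
residual_norms = [np.linalg.norm(r)]

for _ in range(n):               # exactly n iterations
    Ad = A @ d
    alpha = (r @ r) / (d @ Ad)
    x = x + alpha * d
    r_new = r - alpha * Ad
    beta = (r_new @ r_new) / (r @ r)
    d = r_new + beta * d         # new direction is A-conjugate to the previous ones
    r = r_new
    residual_norms.append(np.linalg.norm(r))

for k, norm in enumerate(residual_norms):
    print(f"iteration {k}: residual norm = {norm:.3e}")
```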

Table 1: Key Mathematical Properties of the Conjugate Gradient Method

| Property | Mathematical Expression | Significance |

|---|---|---|