Statistical Ensembles in Molecular Dynamics: A Complete Guide for Biomedical Researchers

Abstract

This article provides a comprehensive examination of statistical ensembles in molecular dynamics simulations, tailored for researchers, scientists, and drug development professionals. It covers foundational concepts including microcanonical (NVE), canonical (NVT), and isothermal-isobaric (NPT) ensembles, explores their practical applications in drug discovery and conformational sampling, addresses common troubleshooting and optimization challenges, and validates ensemble selection through statistical accuracy measures and experimental comparisons. The content synthesizes current methodologies with emerging trends to guide effective implementation in biomedical research.

Understanding Statistical Ensembles: The Foundation of Molecular Dynamics

What is a Statistical Ensemble? Definitions from Gibbs to Modern Interpretations

In the realm of molecular dynamics and statistical mechanics, a statistical ensemble provides the fundamental theoretical framework for connecting the microscopic behavior of atoms and molecules to macroscopic thermodynamic properties. Introduced by J. Willard Gibbs, the ensemble concept involves considering a large number of virtual copies of a system simultaneously, with each copy representing a possible microstate that the real system might occupy [1]. In contemporary molecular dynamics (MD) research, particularly in drug discovery, this century-old concept remains indispensable for simulating biomolecular behavior and predicting ligand-target interactions [2] [3] [4].

The core principle underlying statistical ensembles is ergodicity—the hypothesis that the time average of a system's property equals its average over an ensemble of all possible microstates consistent with the system's macroscopic constraints [1]. As Chandler articulates, "The equivalence of a time average and an ensemble average, while sounding reasonable, is not at all trivial. Dynamical systems that obey this equivalence are said to be ergodic" [1]. This equivalence enables researchers to use molecular dynamics simulations to generate representative ensembles that capture the statistical behavior of complex biological systems.

Table 1: Fundamental Types of Statistical Ensembles in Molecular Simulations

| Ensemble Name | Fixed Thermodynamic Variables | Primary Applications | Thermodynamic Potential |
|---|---|---|---|
| Microcanonical (NVE) | Number of particles (N), Volume (V), Energy (E) | Studying isolated systems; energy conservation | Entropy S(E,V,N) |
| Canonical (NVT) | Number of particles (N), Volume (V), Temperature (T) | Most biomolecular simulations in solution | Helmholtz Free Energy F(T,V,N) |
| Isobaric-Isothermal (NPT) | Number of particles (N), Pressure (P), Temperature (T) | Simulating systems at laboratory conditions | Gibbs Free Energy G(T,P,N) |
| Grand Canonical (μVT) | Chemical potential (μ), Volume (V), Temperature (T) | Studying open systems; adsorption | Grand Potential |
| Gibbs Ensemble | Total N, V, T for coexisting phases | Direct simulation of phase equilibria | - |

Historical Foundations: The Gibbsian Framework

J. Willard Gibbs formulated the statistical mechanics of ensembles in the late 19th century, establishing the mathematical foundation for connecting microscopic states to thermodynamic observables [1]. Gibbs' fundamental insight was that a macroscopic system in thermodynamic equilibrium could be represented by an ensemble of virtual copies, each in a different microscopic state consistent with the macroscopic constraints [5]. The probabilities of these microstates are determined by the specific ensemble appropriate to the thermodynamic conditions.

A crucial mathematical development in Gibbs' framework was the recognition of the Legendre-Fenchel transform (LFT) relationship between entropy as a concave function of internal energy, S(E), and free energy as a concave function of temperature, F(T) [5]. This duality symmetry defines thermodynamic equilibrium, with its breakdown signaling nonequilibrium conditions. As articulated in neo-Gibbsian interpretations, "the LF duality symmetry is completely missing in the current teaching of statistical mechanics; the duality symmetry breaking implies nonequilibrium" [5].

Gibbs' original ensembles (microcanonical, canonical, and grand canonical) provided the foundation for simulating systems with different external constraints. The microcanonical ensemble (NVE) describes completely isolated systems, the canonical ensemble (NVT) describes systems in thermal contact with a heat bath, and the grand canonical ensemble (μVT) describes open systems that exchange both energy and particles with their surroundings [1].

Modern Interpretations and Extended Ensemble Methods

The Gibbs Ensemble for Phase Equilibria

A significant modern extension is the Gibbs ensemble Monte Carlo method, introduced by Panagiotopoulos for directly simulating phase equilibria without expensive chemical potential calculations [6]. This approach represents coexisting phases (e.g., vapor and liquid) with two separate simulation boxes that obey the conditions of phase coexistence: thermal equilibrium (Tᴵ = Tᴵᴵ), mechanical equilibrium (pᴵ = pᴵᴵ), and chemical equilibrium (μᴵ = μᴵᴵ) [6].

The simulation technique involves three types of moves: (1) particle displacement within each box; (2) volume fluctuations that redistribute volume between boxes while maintaining constant total volume; and (3) particle transfer between boxes to achieve chemical equilibrium [6]. The acceptance probabilities for these moves are derived from the phase density fɢ for the Gibbs ensemble:

fɢ(Nᴵ,Vᴵ,N,V,T) ∝ exp[ln(N!/Nᴵ!Nᴵᴵ!) + NᴵlnVᴵ + NᴵᴵlnVᴵᴵ - βUᴵ(Nᴵ) - βUᴵᴵ(Nᴵᴵ)]

where Nᴵ and Nᴵᴵ are particle numbers in each phase (with Nᴵ + Nᴵᴵ = N), Vᴵ and Vᴵᴵ are volumes (with Vᴵ + Vᴵᴵ = V), and Uᴵ and Uᴵᴵ are configurational energies [6].
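As a rough illustration of how an acceptance probability follows from the phase density above, the sketch below evaluates ln fɢ directly and applies the Metropolis rule to a particle-transfer move from box II to box I. The function names, argument order, and reduced units are assumptions made for this example; they are not taken from [6].

```python
import math


def log_gibbs_weight(n1, v1, n_total, v_total, beta, u1, u2):
    """Logarithm of the unnormalized Gibbs-ensemble phase density.

    n1, v1: particle number and volume of box I; box II holds the remainder.
    u1, u2: configurational energies of box I and box II.
    """
    n2 = n_total - n1
    v2 = v_total - v1
    # ln(N! / (N_I! N_II!)) via log-gamma to avoid overflowing factorials
    log_comb = (math.lgamma(n_total + 1)
                - math.lgamma(n1 + 1)
                - math.lgamma(n2 + 1))
    return log_comb + n1 * math.log(v1) + n2 * math.log(v2) - beta * (u1 + u2)


def transfer_acceptance(n1, v1, n_total, v_total, beta,
                        u1_old, u2_old, u1_new, u2_new):
    """Metropolis acceptance for moving one particle from box II to box I."""
    log_old = log_gibbs_weight(n1, v1, n_total, v_total, beta, u1_old, u2_old)
    log_new = log_gibbs_weight(n1 + 1, v1, n_total, v_total, beta, u1_new, u2_new)
    # Clamp the exponent at zero so that min(1, ratio) never overflows
    return math.exp(min(0.0, log_new - log_old))
```

Taking the ratio of the two weights analytically gives the familiar closed form min(1, [Nᴵᴵ Vᴵ / ((Nᴵ + 1) Vᴵᴵ)] exp(-βΔU)), which this numerical version should reproduce.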

Generalized Ensembles for Enhanced Sampling

Modern molecular dynamics research has developed specialized ensembles to overcome sampling limitations:

The grand-isobaric adiabatic ensemble (μ, p, R) describes systems with constant chemical potential, pressure, and Ray energy (R), while volume and particle number fluctuate [1]. The probability of a microstate in this ensemble is given by:

Pν(q,N,V) = (bV)ᴺΓ(3N/2)/Q(μ,p,R) × [R - pV + μN - U(q)]³ᴺ/²⁻¹

where b = (2πm/h²)³/², m is molecular mass, h is Planck's constant, Γ() is the gamma function, and Q(μ,p,R) is the ensemble partition function [1].

Extended ensemble methods like replica exchange (parallel tempering) enable enhanced sampling by simulating multiple copies of a system at different temperatures or Hamiltonian parameters, allowing exchanges between them [2]. This approach helps overcome energy barriers that trap conventional MD simulations in local minima.
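For temperature replica exchange, the swap between two replicas is usually accepted with the Metropolis probability min(1, exp[(βᵢ - βⱼ)(Uᵢ - Uⱼ)]). The following is a minimal sketch of that criterion; the function name and the choice of kJ/mol energy units are assumptions for illustration and do not correspond to any particular MD package.

```python
import math

K_B = 0.0083144626  # Boltzmann constant in kJ/(mol K); unit choice is an assumption


def swap_probability(u_i, u_j, t_i, t_j):
    """Acceptance probability for exchanging configurations between two replicas.

    u_i, u_j: potential energies (kJ/mol) of the configurations currently
              held at temperatures t_i and t_j (K).
    """
    beta_i = 1.0 / (K_B * t_i)
    beta_j = 1.0 / (K_B * t_j)
    delta = (beta_i - beta_j) * (u_i - u_j)
    # Clamp at zero: any delta >= 0 is accepted with probability 1
    return math.exp(min(0.0, delta))
```

A swap that hands the lower-energy configuration to the colder replica is always accepted; the reverse swap is accepted only with a Boltzmann-weighted probability, which is why closely spaced temperatures are typically needed for good exchange rates.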

Table 2: Modern Enhanced Sampling Methods Based on Extended Ensembles

| Method | Ensemble Type | Key Mechanism | Primary Application |
|---|---|---|---|
| Replica Exchange | Multiple canonical ensembles at different temperatures | Exchanges configurations between temperatures to escape local minima | Sampling complex energy landscapes |
| Metadynamics | Extended ensemble with bias potential | Adds history-dependent bias to discourage visited states | Exploring free energy surfaces |
| Accelerated MD | Extended ensemble with modified potential | Applies boost potential to smooth energy barriers | Enhancing conformational sampling |
| Integrated Tempering Sampling | Extended ensemble with temperature scaling | Modifies potential to sample multiple temperatures simultaneously | Efficient barrier crossing |
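To make the "history-dependent bias" entry for metadynamics concrete, here is a small sketch of the bias potential in its simplest form: a sum of fixed-height Gaussians deposited at previously visited values of one collective variable. The function name and parameters are hypothetical, and refinements such as well-tempered height scaling are deliberately left out.

```python
import math


def metadynamics_bias(s, centers, height, width):
    """Bias potential at collective-variable value s.

    centers: values of the collective variable where hills were deposited.
    height:  Gaussian height (energy units); width: Gaussian standard deviation.
    """
    return sum(
        height * math.exp(-((s - c) ** 2) / (2.0 * width ** 2))
        for c in centers
    )
```

Each revisit to the same region adds another hill, so the bias grows where the system lingers and gradually pushes it towards unexplored states.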

Statistical Ensembles in Molecular Dynamics and Drug Discovery

Practical Implementation in Molecular Dynamics

In molecular dynamics simulations, statistical ensembles provide the thermodynamic constraints that govern the equations of motion. While early MD simulations primarily used the microcanonical (NVE) ensemble, modern biomolecular simulations typically employ the canonical (NVT) or isobaric-isothermal (NPT) ensembles to mimic experimental conditions [4]. Thermostats and barostats algorithmically enforce these ensemble constraints by modifying the equations of motion.

The choice of ensemble significantly impacts which thermodynamic properties can be directly calculated and which require more complex estimation methods. For instance, free energies—crucial for predicting binding affinities in drug discovery—cannot be directly computed from standard MD trajectories but require specialized methods like free energy perturbation or thermodynamic integration [4].
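As an illustration of why free energies need a dedicated estimator, free energy perturbation is commonly written in the Zwanzig form ΔF = -k_BT ln⟨exp(-ΔU/k_BT)⟩₀, where the average runs over configurations sampled in the reference state. The sketch below implements that exponential average with a log-sum-exp shift for numerical stability; the function name and input layout are assumptions for this example.

```python
import math


def fep_free_energy(delta_u, kt):
    """Zwanzig estimate of the free energy difference between two states.

    delta_u: energy differences U_1(x) - U_0(x) evaluated on configurations x
             sampled from state 0 (same energy units as kt).
    kt:      thermal energy k_B * T.
    """
    exponents = [-du / kt for du in delta_u]
    shift = max(exponents)  # log-sum-exp shift keeps exp() from overflowing
    mean_exp = sum(math.exp(x - shift) for x in exponents) / len(exponents)
    return -kt * (shift + math.log(mean_exp))
```

Because the average is dominated by rare low-ΔU configurations, the estimate converges slowly when the two states overlap poorly, which is one reason such calculations are usually split into many intermediate states.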

Application to Drug Discovery Challenges

In structure-based drug discovery, molecular dynamics simulations leverage statistical ensembles to address the critical challenge of target flexibility [3]. Proteins sample multiple conformational states under physiological conditions, and different ligands may stabilize distinct conformations. By generating conformational ensembles through MD simulations, researchers can identify "cryptic pockets" and allosteric sites not visible in static crystal structures [2] [3].

The Relaxed Complex Method represents a powerful application of ensemble thinking, where representative target conformations from MD simulations are selected for docking studies [3]. This approach accounts for binding-pocket dynamics and has proven valuable in cases like the development of HIV integrase inhibitors, where MD simulations revealed significant flexibility in the active site region [3].

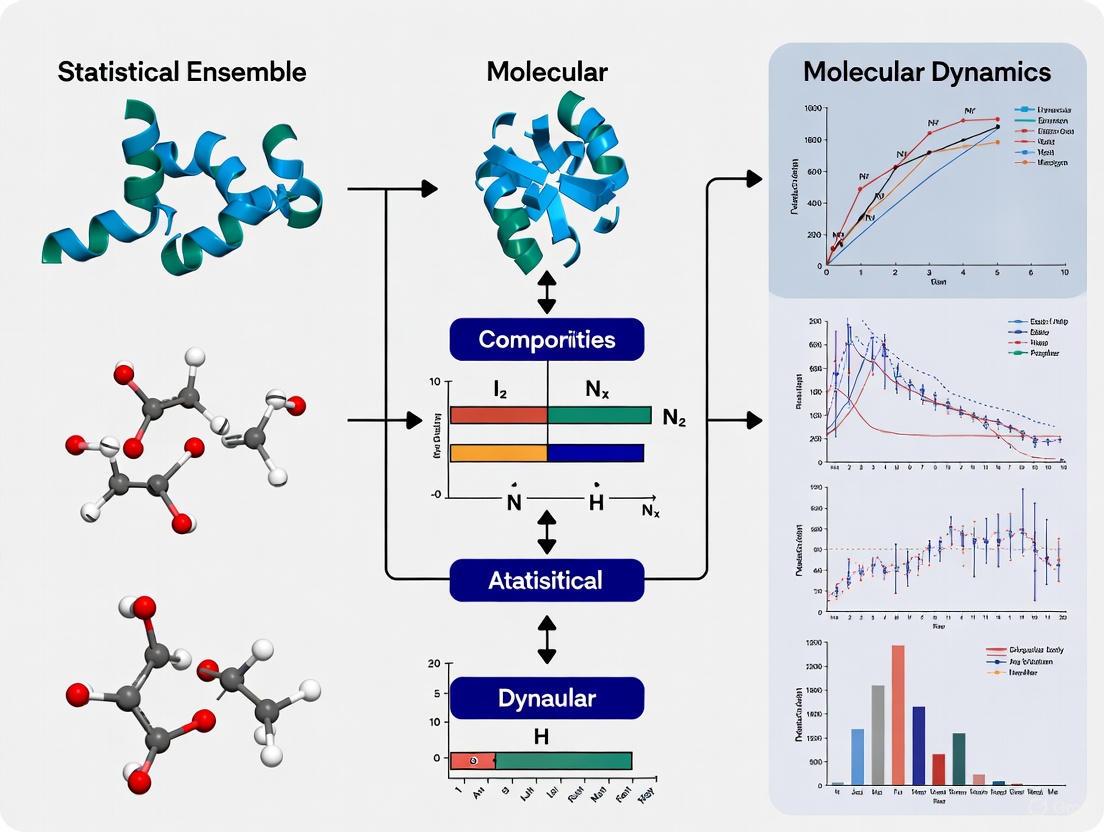

Diagram 1: Ensemble-based drug discovery workflow. The process begins with a protein structure, generates conformational ensembles via MD simulation, identifies representative structures through clustering, performs docking against multiple conformations, and finally identifies promising drug candidates through binding analysis.

Table 3: Essential Computational Resources for Ensemble-Based Molecular Simulations

| Resource Category | Specific Examples | Function in Ensemble Generation |
|---|---|---|
| MD Software Packages | GROMACS, AMBER, NAMD, CHARMM [4] | Implement equations of motion with ensemble constraints; provide analysis tools |
| Force Fields | AMBER, CHARMM, OPLS-AA [4] | Define empirical potential energy functions for different molecule types |
| Enhanced Sampling Algorithms | Replica Exchange, Metadynamics, aMD [2] [3] | Accelerate barrier crossing and improve ensemble convergence |
| Specialized Hardware | GPUs, Anton Supercomputers [2] | Enable longer timescales (µs-ms) for better ensemble representation |
| Conformational Analysis Tools | Clustering algorithms, PCA, tICA [2] | Identify representative structures from ensemble simulations |

Recent Advances and Future Perspectives

Machine Learning Enhancements

Recent advances integrate machine learning with ensemble methods to address sampling challenges. Surrogate Model-Assisted Molecular Dynamics (SMA-MD) leverages deep generative models to enhance sampling of slow degrees of freedom, generating more diverse and lower-energy ensembles than conventional MD [7]. Machine-learning force fields, such as ANI-2x, trained on quantum mechanical calculations, promise to bridge the accuracy gap between classical and quantum simulations while remaining computationally feasible for ensemble generation [2].

AlphaFold2, while revolutionary for structure prediction, often requires refinement through MD simulations to generate conformational ensembles. "Brief MD simulations can correct misplaced sidechains" in AlphaFold models, substantially improving subsequent ligand-binding predictions [2]. Modified AlphaFold pipelines that reduce evolutionary signals can predict entire conformational ensembles, which then serve as seeds for more efficient MD simulations [2].

Neo-Gibbsian Interpretations for Nonequilibrium Systems

Modern theoretical developments include neo-Gibbsian statistical energetics, which extends Gibbs' framework to nonequilibrium systems like living cells [5]. This approach introduces an irreversible thermodynamic potential ψ(T,E) ≡ {E - F(T)}/T - S(E) ≥ 0, where the second law emerges from the disagreement between E and T as a dual pair [5]. Such developments are particularly relevant for pharmaceutical applications involving active transport and nonequilibrium cellular processes.
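The non-negativity of ψ can be seen in one step if one assumes, in line with the Legendre-Fenchel relationship described earlier, that F(T) is obtained from a concave S(E) by minimizing E' - T S(E') over E' at positive T. The short derivation below is a sketch under that assumption and is not quoted from [5].

```latex
\begin{aligned}
-\frac{F(T)}{T} &= \max_{E'} \left[ S(E') - \frac{E'}{T} \right], \qquad T > 0, \\[4pt]
\psi(T,E) &= \frac{E - F(T)}{T} - S(E)
           = \max_{E'} \left[ S(E') - \frac{E'}{T} \right]
             - \left[ S(E) - \frac{E}{T} \right] \;\ge\; 0 .
\end{aligned}
```

Equality holds only when E is the maximizer, that is, when 1/T = dS/dE, so ψ vanishes exactly when E and T form a matched dual pair and is positive otherwise.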

The identification of the classical thermodynamic limit as kʙ→0 provides a fresh perspective on ensemble interpretations, drawing a parallel with the ħ→0 limit in which quantum mechanics reduces to classical mechanics [5]. This viewpoint treats kʙ as the unit for entropy, representing thermal fluctuations, analogous to Planck's constant ħ for quantum fluctuations.

From Gibbs' original formulation to modern interpretations for nonequilibrium systems, statistical ensembles remain foundational to molecular dynamics research and drug discovery. The ensemble concept provides the crucial link between microscopic molecular behavior and macroscopic observables, enabling researchers to simulate and predict the properties of complex biological systems. As computational power continues to grow and algorithms become more sophisticated, ensemble-based methods will play an increasingly vital role in accelerating drug discovery and deepening our understanding of biomolecular function. The ongoing integration of machine learning methods with traditional ensemble approaches promises to further enhance sampling efficiency and predictive accuracy, ensuring that Gibbs' century-old conceptual framework continues to drive innovation in molecular simulation.

The microcanonical ensemble, also known as the NVE ensemble, is a fundamental statistical model in statistical mechanics used for describing isolated mechanical systems with a precisely specified total energy [8]. It represents a cornerstone concept, providing the foundational distribution from which other ensembles, like the canonical (NVT) and grand canonical (µVT) ensembles, can be derived [9] [8]. The core premise of this ensemble is that it models a system that is completely isolated from its environment, meaning it cannot exchange energy or particles with its surroundings [9] [8]. As a result, by the conservation of energy, the system's total energy does not change with time. The primary macroscopic variables that define the microcanonical ensemble are the total number of particles in the system (N), the system's volume (V), and the total energy in the system (E), all of which are held constant [8]. This makes the NVE ensemble the natural starting point for understanding the connection between microscopic mechanics and macroscopic thermodynamics.

The historical development of the microcanonical ensemble is deeply rooted in the work of pioneering physicists. The concept was first introduced by Ludwig Boltzmann in the late 19th century, whose work laid the very foundation for statistical mechanics [9]. Boltzmann's seminal connection between entropy and the number of microstates, encapsulated in his eponymous equation, is a direct product of microcanonical thinking [8] [10]. His ideas were later further developed and rigorously formalized by other luminaries such as Josiah Willard Gibbs and Max Planck [9] [8]. Gibbs, in particular, investigated the analogies between the microcanonical ensemble and thermodynamics with great care, even highlighting how these analogies can break down for systems with very few degrees of freedom [8]. This historical context underscores the microcanonical ensemble's role as a conceptual building block for the entire field of equilibrium statistical mechanics [8].

Fundamental Principles and Key Characteristics

Core Definition and Postulate

The microcanonical ensemble is built upon a few fundamental principles. The system is isolated, meaning it does not exchange energy or matter with its surroundings, leading to a fixed energy (E), volume (V), and number of particles (N) [9]. The ensemble is defined by assigning an equal probability to every microstate whose energy falls within a specified, infinitesimally narrow range centered at E [8]. All other microstates are assigned a probability of zero. This is a formal statement of the postulate of equal a priori probabilities. If W represents the number of microstates within the allowed energy range, then the probability P for any one of those microstates is simply the reciprocal, P = 1/W [8]. This uniform probability distribution is the defining characteristic of the ensemble and is the one that maximizes the information entropy for the given constraints [8].
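The postulate can be checked by brute force on a toy system. The sketch below enumerates every microstate of a handful of two-level units with a fixed number of excitations (a stand-in for fixed E), assigns each the probability 1/W, and compares the information entropy of that uniform distribution with a deliberately skewed one. The model and all names are illustrative assumptions.

```python
import itertools
import math


def microstates(n_sites, n_excited):
    """All configurations of n_sites two-level units with exactly n_excited excited."""
    return [
        state
        for state in itertools.product((0, 1), repeat=n_sites)
        if sum(state) == n_excited
    ]


def shannon_entropy(probs):
    """Information entropy -sum(p ln p) in units of k_B."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


states = microstates(6, 2)     # fixed "energy": 2 excitations among 6 sites
W = len(states)                # number of accessible microstates
uniform = [1.0 / W] * W        # equal a priori probabilities, P = 1/W

# A skewed distribution over the same microstates, for comparison
skewed = [0.5] + [0.5 / (W - 1)] * (W - 1)
```

For the uniform case the information entropy equals ln W, and any other normalized distribution over the same W states, such as `skewed`, gives a smaller value.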

Microstates, Macrostates, and the Density of States

A critical distinction in statistical mechanics is that between microstates and macrostates. A microstate is a specific, detailed configuration of a system, defined in classical mechanics by all the generalized coordinates and momenta of the constituent particles, and in quantum mechanics by a specific wavefunction [9]. A macrostate, in contrast, is a description of the system's macroscopic properties, such as its total energy, volume, and pressure [9]. For a given macrostate (e.g., defined by E, V, N), there is a vast number of possible microstates that are consistent with it. The number of these accessible microstates is quantified by the density of states, denoted as Ω(E, V, N) [9] [8]. In classical mechanics, this is related to the volume of the region of phase space where the system's Hamiltonian, \( H(\{q_i, p_i\}) \), equals the total energy E [9]. The density of states is the central quantity that connects the microscopic description to macroscopic thermodynamics in the microcanonical ensemble.

The Ergodic Hypothesis

The ergodic hypothesis is a key underlying assumption that justifies the use of statistical ensembles. It posits that over a sufficiently long period, an isolated system will explore all of its accessible microstates [9]. This means that the time-average of any property of the system (as would be measured in an experiment) is equal to the average of that property over all microstates in the ensemble (the ensemble average) [9]. The implication of this hypothesis is that the system, given enough time, will spend an equal amount of time in each of its accessible microstates, providing a dynamical justification for the postulate of equal a priori probabilities. The system's trajectory in phase space will eventually come arbitrarily close to every point in the energy shell, leading to the state of maximum entropy [9].

Thermodynamic Connections and Formal Definitions

Entropy and the Boltzmann Equation

The fundamental thermodynamic potential derived from the microcanonical ensemble is entropy [8]. The direct connection between the microscopic world (microstates) and the macroscopic thermodynamic property of entropy (S) is given by the Boltzmann equation:

\[ S = k_B \ln \Omega \]

where \( k_B \) is the Boltzmann constant and \( \Omega \) is the number of microstates accessible to the system at its fixed energy E [9] [10] [11]. This equation states that the entropy of an isolated system is a measure of the number of ways the internal energy of the system can be arranged among its constituent particles. A system with more accessible microstates has higher entropy, which corresponds to a greater degree of disorder or randomness [10]. An isolated system naturally evolves towards the macrostate with the highest number of microstates, which is the state of maximum entropy, providing a statistical foundation for the Second Law of Thermodynamics [10] [11].

Derived Thermodynamic Quantities

In the microcanonical ensemble, temperature is not an external control parameter but a derived quantity [8]. It is defined through the fundamental relationship between entropy and energy. Other thermodynamic quantities like pressure and chemical potential are similarly derived from entropy. The following table summarizes the key thermodynamic properties and their expressions in the microcanonical ensemble.

Table 1: Thermodynamic Properties in the Microcanonical Ensemble

| Property | Mathematical Expression | Interpretation |
|---|---|---|
| Entropy | \( S = k_B \ln \Omega(E, V, N) \) [9] [10] | The logarithm of the number of accessible microstates. |
| Temperature | \( \dfrac{1}{T} = \left( \dfrac{\partial S}{\partial E} \right)_{V,N} \) [9] [8] | The rate of change of entropy with respect to energy. |
| Pressure | \( \dfrac{P}{T} = \left( \dfrac{\partial S}{\partial V} \right)_{E,N} \) [9] [8] | The rate of change of entropy with respect to volume. |
| Chemical Potential | \( \dfrac{\mu}{T} = -\left( \dfrac{\partial S}{\partial N} \right)_{E,V} \) [8] | Related to the change in entropy upon adding a particle. |
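The temperature entry can be made tangible with a toy model in which Ω is known exactly. The sketch below takes N independent two-level units with excitation energy ε (a model chosen for this illustration, not taken from the cited sources), computes S = k_B ln Ω from the binomial count, and estimates 1/T as a finite-difference derivative of S with respect to E.

```python
import math


def entropy(n_units, n_excited, k_b=1.0):
    """S = k_B ln(Omega) with Omega = C(n_units, n_excited), via log-gamma."""
    return k_b * (
        math.lgamma(n_units + 1)
        - math.lgamma(n_excited + 1)
        - math.lgamma(n_units - n_excited + 1)
    )


def inverse_temperature(n_units, n_excited, eps=1.0, k_b=1.0):
    """Central-difference estimate of 1/T = dS/dE, with E = n_excited * eps."""
    ds = entropy(n_units, n_excited + 1, k_b) - entropy(n_units, n_excited - 1, k_b)
    return ds / (2.0 * eps)
```

For large N the estimate approaches the Stirling-approximation result (k_B/ε) ln[(N - n)/n]. It also turns negative once more than half of the units are excited, because Ω then decreases with energy.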

Entropy can be defined in several subtly different ways, which lead to different but related expressions for temperature. The Boltzmann entropy \( S_B \) depends on the derivative of the phase volume, \( dv/dE \), and on an arbitrary small energy width \( \omega \) [8]. The volume entropy \( S_v \) uses the phase volume \( v(E) \) directly, while the surface entropy \( S_s \) uses its derivative \( dv/dE \) [8]. These definitions yield slightly different "temperatures" (\( T_v \) and \( T_s \)) upon differentiation with respect to energy, a nuance that becomes significant for small systems [8].

Equilibrium and the Maximization of Entropy

The condition for thermal equilibrium between two systems emerges naturally from the microcanonical formalism. Consider two microcanonical systems, A and B, that are allowed to exchange energy (heat) but not particles or volume [11]. The total energy is fixed, \( U = U_A + U_B \). The most probable energy distribution between A and B is the one that maximizes the total entropy, \( S_{total} = S_A + S_B \) [11]. Because \( U \) is fixed, any change in \( U_A \) is compensated by \( dU_B = -dU_A \), so setting \( \partial S_{total} / \partial U_A = 0 \) leads to the equilibrium condition:

\[ \frac{\partial S_A}{\partial U_A} = \frac{\partial S_B}{\partial U_B} \]

which, by definition, implies \( 1/T_A = 1/T_B \), or \( T_A = T_B \) [11]. This demonstrates that equilibrium is reached when the temperatures of the two subsystems are equal. This principle can be generalized to the exchange of particles and volume, leading to conditions of equal chemical potential and pressure, respectively, at equilibrium.
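The equal-temperature condition can be verified numerically on a model with exactly countable microstates. The sketch below uses two Einstein solids (collections of identical quantum oscillators sharing a fixed number of energy quanta), a textbook model assumed here for illustration. It finds the energy split that maximizes the joint multiplicity and then compares the two inverse temperatures, in units where k_B and the energy quantum are both 1.

```python
import math


def multiplicity(n_osc, q):
    """Number of ways to distribute q energy quanta among n_osc oscillators."""
    return math.comb(q + n_osc - 1, q)


def most_probable_split(n_a, n_b, q_total):
    """Quanta in solid A that maximize Omega_A * Omega_B at fixed total energy."""
    return max(
        range(q_total + 1),
        key=lambda q_a: multiplicity(n_a, q_a) * multiplicity(n_b, q_total - q_a),
    )


def inverse_temperature(n_osc, q):
    """Forward-difference estimate of 1/T = d(ln Omega)/dq."""
    return math.log(multiplicity(n_osc, q + 1)) - math.log(multiplicity(n_osc, q))


q_a_star = most_probable_split(300, 200, 100)
beta_a = inverse_temperature(300, q_a_star)
beta_b = inverse_temperature(200, 100 - q_a_star)
```

With 300 and 200 oscillators sharing 100 quanta, the most probable split puts 60 quanta in the larger solid, and the two inverse temperatures agree to within the discretization error of the finite difference.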

The Microcanonical Ensemble in Molecular Dynamics Simulations

NVE Simulations as Numerical Realization

In computational chemistry and materials science, Molecular Dynamics (MD) simulations provide a direct numerical realization of statistical ensembles [12]. An NVE MD simulation models an isolated system by numerically solving Newton's equations of motion for all atoms in the system [12]. In this purest form of MD, the system's total energy is a conserved quantity, alongside the number of atoms and the volume, making it a direct analog of the microcanonical ensemble [12]. The numerical integration of the equations of motion, using algorithms like the velocity Verlet integrator, generates a trajectory of the system through phase space [12] [13]. This trajectory can be thought of as a sampling of the microstates that constitute the microcanonical ensemble, allowing for the computation of time-averaged properties that can be compared to ensemble averages via the ergodic hypothesis [14].
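A minimal sketch of this idea, assuming a one-dimensional harmonic oscillator in reduced units as a stand-in for a real force field, is shown below. It integrates Newton's equations with velocity Verlet, records the total energy along the trajectory, and compares the time average of x² with the value E/k expected on the constant-energy shell. All names and parameter values are illustrative.

```python
K_SPRING = 1.0  # force constant (reduced units, assumed)
MASS = 1.0      # particle mass (reduced units, assumed)


def harmonic_force(x):
    return -K_SPRING * x


def total_energy(x, v):
    return 0.5 * MASS * v * v + 0.5 * K_SPRING * x * x


def velocity_verlet(x, v, force, mass, dt, n_steps):
    """Propagate one particle and return the list of (x, v) after each step."""
    trajectory = []
    f = force(x)
    for _ in range(n_steps):
        v_half = v + 0.5 * dt * f / mass      # half-step velocity update
        x = x + dt * v_half                   # full-step position update
        f = force(x)                          # force at the new position
        v = v_half + 0.5 * dt * f / mass      # complete the velocity update
        trajectory.append((x, v))
    return trajectory


traj = velocity_verlet(1.0, 0.0, harmonic_force, MASS, dt=0.01, n_steps=20000)
energies = [total_energy(x, v) for x, v in traj]
mean_x2 = sum(x * x for x, _ in traj) / len(traj)
```

With these settings the total energy stays very close to its initial value of 0.5 without any systematic drift, and the time-averaged x² comes out near E/k = 0.5, as the virial argument for a harmonic oscillator suggests.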

Key Considerations for NVE MD Setup

Performing a numerically stable and physically meaningful NVE simulation requires careful attention to several parameters. The following table outlines essential "research reagents" or components for setting up an NVE MD experiment.

Table 2: Essential Components for an NVE Molecular Dynamics Experiment

| Component / Parameter | Function / Role | Typical Considerations |
|---|---|---|
| Initial Configuration | Defines the starting positions of all atoms. | Often an energy-minimized structure or a snapshot from an equilibrated NVT simulation. |
| Initial Velocities | Defines the starting momenta of all atoms, setting the initial kinetic energy and temperature. | Usually drawn randomly from a Maxwell-Boltzmann distribution at a desired initial temperature [12]. |
| Time Step (Δt) | The discrete interval for numerical integration of equations of motion [12]. | Must be small enough to conserve energy and capture fastest atomic vibrations (often 1 fs); too large a step causes energy drift and integration errors [12]. |
| Integrator Algorithm | The numerical method for updating positions and velocities over time. | The velocity Verlet algorithm is a common and symplectic (volume-preserving) choice [12] [13]. |
| Force Field / Calculator | Computes the potential energy and forces between atoms. | Can be based on classical potentials or quantum mechanical methods like Density Functional Theory (DFT) [12]. |
| Periodic Boundary Conditions | Mimics a macroscopic system by eliminating surface effects. | The simulation box should be large enough to avoid spurious self-interaction of atoms with their periodic images [12]. |
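The "Initial Velocities" entry can be sketched as follows: each Cartesian velocity component is drawn from a Gaussian with variance k_BT/m, the centre-of-mass motion is removed, and the result is rescaled so that the instantaneous kinetic temperature matches the target. The unit system (amu, nm/ps, kJ/mol) and the function names are assumptions for this example; production codes provide their own velocity generators.

```python
import math
import random

K_B = 0.0083144626  # kJ/(mol K); consistent with masses in amu and velocities in nm/ps


def kinetic_temperature(masses, velocities, n_dof):
    """Instantaneous temperature from sum(m v^2) = n_dof * k_B * T."""
    twice_ke = sum(
        m * (vx * vx + vy * vy + vz * vz)
        for m, (vx, vy, vz) in zip(masses, velocities)
    )
    return twice_ke / (n_dof * K_B)


def initial_velocities(masses, temperature, seed=0):
    """Maxwell-Boltzmann velocities with zero total momentum at the target temperature."""
    rng = random.Random(seed)
    vel = [
        [rng.gauss(0.0, math.sqrt(K_B * temperature / m)) for _ in range(3)]
        for m in masses
    ]
    # Remove centre-of-mass velocity so the system does not drift as a whole
    total_mass = sum(masses)
    for axis in range(3):
        v_com = sum(m * v[axis] for m, v in zip(masses, vel)) / total_mass
        for v in vel:
            v[axis] -= v_com
    # Rescale so the kinetic temperature matches the target exactly
    n_dof = 3 * len(masses) - 3  # three degrees of freedom removed with the COM motion
    scale = math.sqrt(temperature / kinetic_temperature(masses, vel, n_dof))
    return [[scale * c for c in v] for v in vel]
```

Rescaling is optional; without it, the initial temperature simply fluctuates around the target by an amount that shrinks as the number of atoms grows.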

Protocol for an NVE Molecular Dynamics Simulation

A typical workflow for running an NVE production simulation involves several key steps to ensure the reliability of the results.

1. System Preparation and Equilibration: The simulation begins with a stable initial atomic geometry, often in an orthogonal cell for simplicity [12]. The system is then equilibrated, typically in the canonical (NVT) ensemble, to bring it to the desired temperature. This is crucial because directly starting an NVE simulation from an arbitrary configuration can lead to poor energy conservation and non-equilibrium dynamics [12]. During NVT equilibration, a thermostat (e.g., Nosé-Hoover) maintains the temperature by allowing the system to exchange energy with a virtual heat bath.

2. NVE Production Run: Once equilibrated, the thermostat is removed, and the system is propagated in the NVE ensemble using a numerical integrator like velocity Verlet. The total energy, kinetic energy, and potential energy are monitored to verify that the total energy is conserved, which is the primary indicator of a correct NVE simulation [12]. Any significant drift in total energy suggests an unstable simulation, often remedied by using a smaller time step.

3. Trajectory Analysis and Property Calculation: After the simulation, the saved trajectory (snapshots of atomic positions and velocities recorded at intervals) is analyzed. Observables of interest, such as radial distribution functions, diffusion coefficients, or vibrational spectra, are computed as time averages over the trajectory. According to the ergodic hypothesis, these time averages should correspond to the microcanonical ensemble averages [14].
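The energy-conservation check in the production step can be quantified with a simple least-squares fit of total energy against time. The helper below is a hypothetical sketch that assumes the energies have already been extracted from the simulation output into plain Python lists; what counts as an acceptable drift depends on the system and is not specified by the sources cited here.

```python
def energy_drift(times, energies):
    """Least-squares slope of total energy versus time (energy units per time unit)."""
    n = len(times)
    if n < 2 or n != len(energies):
        raise ValueError("need at least two matching (time, energy) samples")
    t_mean = sum(times) / n
    e_mean = sum(energies) / n
    covariance = sum((t - t_mean) * (e - e_mean) for t, e in zip(times, energies))
    variance = sum((t - t_mean) ** 2 for t in times)
    return covariance / variance
```

A slope that is small compared with the size of the energy fluctuations indicates stable integration, whereas a steady trend in either direction is the usual cue to shorten the time step.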

Relationship to Other Ensembles and Conceptual Significance

The microcanonical ensemble is the most fundamental ensemble, but it is part of a family of statistical ensembles used to model different physical situations. The following diagram illustrates the logical relationships between the primary statistical ensembles and the conditions they represent.

Diagram 1: Relationships between Statistical Ensembles

Comparison with Canonical and Grand Canonical Ensembles

The canonical (NVT) ensemble describes a system that is in thermal contact with a much larger heat reservoir at a constant temperature T [14]. Unlike the microcanonical ensemble, the energy of an NVT system can fluctuate, as it can exchange energy with the reservoir. The grand canonical (µVT) ensemble goes a step further, describing an open system that can exchange both energy and particles with a reservoir, leading to fluctuations in both energy and particle number [9]. These ensembles are often more convenient for theoretical calculations and are more directly applicable to many experimental conditions, such as a solute in a solvent bath [8] [14]. The microcanonical ensemble can be considered the foundation because the canonical ensemble can be derived by considering a small system (the system of interest) coupled to a very large microcanonical system (the heat bath) [14].

Phase Transitions and Small System Behavior

A significant conceptual distinction lies in the treatment of phase transitions. Under a strict definition, phase transitions—which correspond to non-analytic behavior in the thermodynamic potential—can occur in finite systems within the microcanonical ensemble [8]. This contrasts with the canonical and grand canonical ensembles, where true non-analyticities and phase transitions can only occur in the thermodynamic limit (i.e., for systems with infinitely many degrees of freedom) [8]. The reservoirs in the canonical and grand canonical ensembles introduce fluctuations that smooth out any non-analytic behavior in the free energy of finite systems. This makes the microcanonical ensemble particularly important for the theoretical analysis of small systems where finite-size effects are significant [8] [10].

Conceptual Challenges and Limitations

While fundamental, the microcanonical ensemble presents some conceptual challenges. The definitions of entropy (Boltzmann, volume, surface) are not entirely equivalent, leading to ambiguities in the definitions of derived quantities like temperature for small systems [8]. These different definitions can lead to counter-intuitive results, such as the inability to predict energy flow between two combined systems based on the initial values of the surface temperature \( T_s \), or the appearance of spurious negative temperatures when the density of states is a decreasing function of energy [8]. For these reasons, and because most real-world systems are not perfectly isolated, the canonical or grand canonical ensembles are often preferred for practical theoretical calculations [8]. Nevertheless, the NVE ensemble remains crucial for fundamental understanding and is directly implemented in MD to study the natural, unthermostatted dynamics of systems.

Applications in Research and Biomolecular Studies

Analysis of Isolated Systems and Fundamental Derivations

The microcanonical ensemble is essential for analyzing truly isolated systems, which are encountered in various areas of physics. For instance, it can be used to model the behavior of a gas in a perfectly insulated container or to study the thermodynamic properties of black holes [9]. Its primary application, however, is often conceptual: it provides the simplest framework for deriving thermodynamic properties from the underlying microscopic behavior of a system [9]. By starting with the basic postulates of the microcanonical ensemble, one can derive fundamental relationships for entropy, temperature, and pressure, thereby building a bridge between mechanics and thermodynamics.

Role in Biomolecular Recognition and Drug Development

In the context of drug development, understanding biomolecular recognition—the specific non-covalent interaction between a drug candidate (ligand) and its biological target (e.g., a protein)—is paramount [14]. Molecular dynamics simulations are a powerful tool for studying these processes. While production simulations for binding free energy calculations often use the canonical (NVT) or isothermal-isobaric (NPT) ensembles to mimic laboratory conditions, the microcanonical NVE ensemble plays a critical role in specific contexts [14]. NVE simulations are particularly valuable for studying the natural dynamics of a biomolecular system without the interference of a thermostat, which can sometimes artificially suppress or alter certain motions [12]. For example, after equilibration in NVT, a switch to NVE can be used to compute dynamical properties like vibrational spectra or to study energy flow within a protein, which can provide insights into allostery and conformational changes relevant to drug binding [12]. The analysis of configurational entropy, a key component of the binding free energy, also finds its most direct conceptual link to the number of accessible microstates through the microcanonical view of entropy [14].

In statistical mechanics, a statistical ensemble is an idealization consisting of a large number of virtual copies of a system, considered all at once, each representing a possible state that the real system might be in [15]. This conceptual framework, formally introduced by J. Willard Gibbs in 1902, provides the foundation for deriving the thermodynamic properties of systems from the laws of classical and quantum mechanics [15]. In molecular dynamics (MD) research, ensembles define the specific thermodynamic conditions under which a simulation is performed, determining which macroscopic variables—such as energy, temperature, or pressure—are held constant [16] [17]. The choice of ensemble is crucial because it determines how a system exchanges energy and matter with its surroundings, ranging from completely isolated systems (microcanonical ensemble) to completely open ones (grand canonical ensemble) [16].

The canonical ensemble, also known as the NVT ensemble, describes systems in thermal equilibrium with a heat bath at a fixed temperature, maintaining a constant number of particles (N), constant volume (V), and constant temperature (T) [18]. This ensemble is particularly valuable for simulating systems where energy exchange with the environment is permitted, but particle exchange and volume changes are not, making it one of the most widely used ensembles in molecular dynamics simulations [16] [17].

Theoretical Foundation of the Canonical Ensemble

Fundamental Principles and Mathematical Definition

The canonical ensemble represents the possible states of a mechanical system in thermal equilibrium with a heat bath at a fixed temperature [18]. The principal thermodynamic variable governing the probability distribution of states in this ensemble is the absolute temperature (T) [18]. The system can exchange energy with the heat bath, meaning the states of the system differ in total energy, unlike the microcanonical ensemble where total energy is fixed [18].

The canonical ensemble assigns a probability P to each distinct microstate according to the following exponential relationship:

- Probability Expression: P = e^((F - E)/(kT)) = (1/Z) e^(-E/(kT)) [18]

- Partition Function: Z = e^(-F/(kT)) [18]

where E is the total energy of the microstate, k is the Boltzmann constant, T is the absolute temperature, F is the Helmholtz free energy, and Z is the canonical partition function [18]. The Helmholtz free energy F serves as a normalization constant, ensuring that the probabilities over all microstates sum to unity [18].
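As a minimal sketch of the two expressions above, the following Python snippet evaluates the canonical probabilities for a small set of discrete energy levels and checks that the form written with F agrees with the form written with Z. The three-level system and the function name `canonical_distribution` are illustrative assumptions, not part of the source.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K


def canonical_distribution(energies, temperature):
    """Return (probabilities, ln Z, F) for a list of microstate energies in joules."""
    kT = K_B * temperature
    e_min = min(energies)
    # Shift by the lowest energy so the exponentials cannot overflow.
    weights = [math.exp(-(e - e_min) / kT) for e in energies]
    z_shifted = sum(weights)
    probabilities = [w / z_shifted for w in weights]
    ln_z = math.log(z_shifted) - e_min / kT  # undo the shift: Z = z_shifted * exp(-e_min/kT)
    free_energy = -kT * ln_z                 # F = -kT ln Z, equivalent to Z = exp(-F/kT)
    return probabilities, ln_z, free_energy


# Hypothetical three-level system with levels at 0, 1 and 2 kT (T = 300 K).
kT = K_B * 300.0
energies = [0.0, 1.0 * kT, 2.0 * kT]
probabilities, ln_z, free_energy = canonical_distribution(energies, 300.0)

for e, p in zip(energies, probabilities):
    # Both forms of the probability expression should agree.
    p_from_f = math.exp((free_energy - e) / kT)
    print(f"E = {e / kT:.1f} kT   P = {p:.4f}   exp((F - E)/kT) = {p_from_f:.4f}")
```

Working with ln Z rather than Z itself is a common numerical choice, since Z can span many orders of magnitude for realistic systems.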

Thermodynamic Relationships and Fluctuations

The canonical ensemble provides direct access to key thermodynamic quantities through partial derivatives of the Helmholtz free energy F(N, V, T) [18]:

Table 1: Thermodynamic Relationships in the Canonical Ensemble

| Thermodynamic Quantity | Mathematical Expression | Physical Interpretation |
|---|---|---|
| Average Pressure | ⟨p⟩ = -∂F/∂V | Mechanical force per unit area |
| Gibbs Entropy | S = -k⟨log P⟩ = -∂F/∂T | Measure of molecular disorder |
| Average Energy | ⟨E⟩ = F + ST | Total internal energy of the system |
| Energy Fluctuations | ⟨E²⟩ - ⟨E⟩² = kT² ∂⟨E⟩/∂T | Variance in energy due to thermal effects |

The Helmholtz free energy F(V, T) for a given N has the exact differential dF = -S dT - ⟨p⟩ dV, which leads to a formulation similar to the first law of thermodynamics: d⟨E⟩ = T dS - ⟨p⟩ dV [18]. Unlike the microcanonical ensemble where total energy is strictly conserved, the canonical ensemble allows energy fluctuations around an average value, with the magnitude of these fluctuations governed by the system's heat capacity [18].
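The fluctuation relation in Table 1 can be checked numerically on a toy model. The sketch below assumes a hypothetical three-level system in reduced units (k = 1) and compares the energy variance with kT² ∂⟨E⟩/∂T, where the derivative is taken by central finite difference; the level spacings and the helper name `mean_and_variance` are assumptions made for illustration.

```python
import math


def mean_and_variance(energies, temperature):
    """Canonical mean energy and energy variance in reduced units (k = 1)."""
    weights = [math.exp(-e / temperature) for e in energies]
    z = sum(weights)
    mean = sum(e * w for e, w in zip(energies, weights)) / z
    mean_sq = sum(e * e * w for e, w in zip(energies, weights)) / z
    return mean, mean_sq - mean * mean


energies = [0.0, 1.0, 2.5]  # hypothetical energy levels
T = 1.2                     # reduced temperature
h = 1e-5                    # step for the finite-difference derivative

mean, variance = mean_and_variance(energies, T)
mean_plus, _ = mean_and_variance(energies, T + h)
mean_minus, _ = mean_and_variance(energies, T - h)
dE_dT = (mean_plus - mean_minus) / (2.0 * h)  # heat capacity at constant volume
rhs = T * T * dE_dT                           # k T^2 d<E>/dT with k = 1

print(f"variance = {variance:.8f}, k T^2 d<E>/dT = {rhs:.8f}")
```

The same identity is what allows a heat capacity to be estimated from the energy fluctuations of an NVT trajectory.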

Practical Implementation in Molecular Dynamics

Temperature Control Methods (Thermostats)

In NVT molecular dynamics simulations, maintaining a constant temperature is achieved through algorithmic methods known as thermostats [16]. These work by adjusting the kinetic energy of the system to match the desired temperature [16]. The most basic approach involves simple velocity scaling, but more sophisticated methods include:

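- Berendsen Thermostat: Efficiently controls temperature by weakly coupling the system to an external heat bath, providing rapid convergence to the target temperature [19]. Because it suppresses kinetic-energy fluctuations, it does not sample a rigorously canonical distribution and is generally better suited to equilibration than to production runs.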

- Nosé-Hoover Thermostat: An extended system method that introduces additional degrees of freedom representing the heat bath, generating a correct canonical distribution [19].
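To make the weak-coupling idea concrete, the sketch below applies the standard Berendsen velocity-scaling factor, λ = sqrt(1 + (Δt/τ)(T₀/T − 1)), to an instantaneous temperature and shows the resulting exponential relaxation toward the target. Forces are deliberately left out, so this is only the thermostat step in isolation; the function names and the parameter values are illustrative assumptions.

```python
import math


def berendsen_lambda(t_inst, t_target, dt, tau):
    """Velocity scaling factor for one step of Berendsen weak coupling."""
    return math.sqrt(1.0 + (dt / tau) * (t_target / t_inst - 1.0))


def relax_temperature(t_start, t_target, dt, tau, n_steps):
    """Apply only the thermostat scaling (no forces) and record the temperature."""
    temperature = t_start
    history = [temperature]
    for _ in range(n_steps):
        lam = berendsen_lambda(temperature, t_target, dt, tau)
        # Velocities scale by lambda, so kinetic energy and T scale by lambda^2.
        temperature *= lam * lam
        history.append(temperature)
    return history


# Hypothetical values: 2 fs time step, 0.1 ps coupling constant (both in ps).
history = relax_temperature(t_start=250.0, t_target=300.0, dt=0.002, tau=0.1, n_steps=500)
print(f"after 1 step: {history[1]:.2f} K, after 500 steps: {history[-1]:.3f} K")
```

A smaller τ pulls the temperature to the target faster, at the cost of a stronger perturbation of the dynamics.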

The NVT ensemble is particularly appropriate for conformational searches of molecules in vacuum without periodic boundary conditions, as volume, pressure, and density are not defined in such systems [20]. Even with periodic boundary conditions, NVT provides the advantage of less perturbation to the trajectory compared to constant-pressure ensembles, making it valuable when pressure is not a significant factor [20].

Simulation Workflow and Protocol

A typical molecular dynamics simulation employs multiple ensembles in sequence to properly equilibrate the system before production runs [16]. The standard procedure often begins with an energy minimization step, followed by:

- NVT Equilibration: The system is brought to the desired temperature while maintaining constant volume [16]. This step is crucial even if the ultimate goal is to simulate at constant pressure, as it establishes proper temperature distribution before allowing volume fluctuations [16].

- NPT Equilibration: After temperature stabilization, the system transitions to constant pressure conditions to achieve proper density [16].

- Production Run: The final simulation in the desired ensemble (which may be NVT, NPT, or NVE) where data is collected for analysis [16].

Table 2: Comparison of Major Thermodynamic Ensembles in Molecular Dynamics

| Ensemble | Constant Parameters | Physical Situation | Common Applications |
|---|---|---|---|
| Microcanonical (NVE) | N, V, E | Isolated system | Studying energy conservation; fundamental mechanics |
| Canonical (NVT) | N, V, T | System in thermal contact with heat bath | Most common for equilibration; fixed-volume studies |
| Isothermal-Isobaric (NPT) | N, P, T | System in thermal and mechanical contact with reservoir | Mimicking laboratory conditions; density calculations |
| Grand Canonical (μVT) | μ, V, T | System exchanging particles and energy | Open systems; adsorption studies |

Applications in Drug Discovery and Biomolecular Research

Conformational Sampling and Ensemble Generation

In computer-aided drug discovery (CADD), molecular dynamics simulations in the NVT ensemble are valuable tools for investigating the conformational diversity of ligand binding pockets [2]. Proteins are highly dynamic in solution, and ligand binding pockets often sample many pharmacologically relevant conformations [2]. A given small-molecule ligand may bind to and stabilize only a subset of conformations that complement its shape and specific arrangement of interacting functional groups [2].

By clustering the many conformations sampled during an NVT simulation, researchers can generate a condensed yet diverse set of representative pocket conformations, known as a conformational ensemble [2]. This ensemble can then be used in subsequent virtual screening and docking studies to identify structurally diverse small-molecule ligands that bind to dynamic binding pockets, including the opening and closing of transient druggable subpockets that are challenging to detect experimentally [2] [3].
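The clustering step can be illustrated with a deliberately simple scheme. The sketch below uses a leader-style (distance-cutoff) clustering rather than the k-means, hierarchical, or DBSCAN methods commonly used in practice, and it operates on hypothetical two-dimensional pocket descriptors instead of real trajectory frames; in a real workflow the distance would typically be an RMSD over pocket atoms.

```python
import math


def distance(a, b):
    """Euclidean distance between two descriptor vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def leader_cluster(frames, cutoff):
    """Assign each frame to the first representative within `cutoff`,
    or start a new cluster with the frame as its representative."""
    representatives = []  # frame indices chosen as cluster representatives
    members = []          # members[c] lists the frame indices in cluster c
    for i, frame in enumerate(frames):
        for c, rep in enumerate(representatives):
            if distance(frame, frames[rep]) <= cutoff:
                members[c].append(i)
                break
        else:
            representatives.append(i)
            members.append([i])
    return representatives, members


# Hypothetical per-frame pocket descriptors (e.g., two pocket distances in nm).
frames = [(0.0, 0.1), (0.1, 0.0), (5.0, 5.1), (5.2, 4.9), (0.05, 0.05), (9.0, 0.0)]
representatives, members = leader_cluster(frames, cutoff=1.0)
print("representative frames:", representatives)
print("cluster members:", members)
```

The representative frames returned here play the role of the condensed conformational ensemble that is passed on to docking.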

The Relaxed Complex Method

The NVT ensemble plays a crucial role in the Relaxed Complex Method, a systematic approach for representing variation in potential binding sites [3]. In this method, representative target conformations—often including novel, cryptic binding sites—are selected from MD simulations for use in docking studies [3]. This approach addresses one of the major limitations of traditional structure-based drug design: target flexibility [3].

An early successful application of this approach was the development of the first FDA-approved inhibitor of HIV integrase [3]. MD simulations starting with x-ray crystallographic structures of the core domain of this integrase provided early indications of significant flexibility in the active site region, ultimately leading to effective inhibitor design [3].

Advanced Methodologies and Recent Advances

Enhanced Sampling Techniques

While standard NVT simulations are powerful, they often struggle to cross substantial energy barriers within practical simulation timescales [2]. To address this limitation, researchers have developed enhanced sampling techniques that can be implemented within the canonical ensemble:

- Parallel Tempering (Replica Exchange): Multiple copies of the system are simulated at different temperatures simultaneously, with occasional exchanges between temperatures based on Metropolis criteria [2].

- Accelerated Molecular Dynamics (aMD): Adds a non-negative boost potential to the system's potential energy surface, decreasing energy barriers and accelerating transitions between different low-energy states [3].

- Metadynamics and Umbrella Sampling: Enhance sampling along predefined collective variables (reaction coordinates) to explore specific conformational changes [2].

These advanced sampling methods have proven particularly valuable for studying rare events and capturing complex conformational changes relevant to drug binding and protein function [2] [3].
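For parallel tempering, the Metropolis criterion for swapping the configurations of two replicas takes the standard form min(1, exp[(β_i − β_j)(E_i − E_j)]). The sketch below evaluates that acceptance probability; the energies, temperatures, and function names are hypothetical, and energies are assumed to be in kcal/mol.

```python
import math
import random

K_B = 0.0019872041  # Boltzmann constant, kcal/(mol K)


def exchange_probability(e_i, e_j, t_i, t_j):
    """Metropolis acceptance probability for swapping replicas i and j."""
    beta_i = 1.0 / (K_B * t_i)
    beta_j = 1.0 / (K_B * t_j)
    delta = (beta_i - beta_j) * (e_i - e_j)
    return 1.0 if delta >= 0.0 else math.exp(delta)


def attempt_exchange(e_i, e_j, t_i, t_j, rng=random):
    """Return True if the swap is accepted for this random draw."""
    return rng.random() < exchange_probability(e_i, e_j, t_i, t_j)


# Hypothetical potential energies for replicas at 300 K and 310 K.
p_favourable = exchange_probability(e_i=-100.0, e_j=-105.0, t_i=300.0, t_j=310.0)
p_unfavourable = exchange_probability(e_i=-105.0, e_j=-100.0, t_i=300.0, t_j=310.0)
print(f"cold replica has higher energy: p = {p_favourable:.3f}")
print(f"cold replica has lower energy:  p = {p_unfavourable:.3f}")
```

Swaps that hand the lower-energy configuration to the colder replica are always accepted, while the reverse swap is accepted only with a Boltzmann-weighted probability, which is what preserves the canonical distribution at each temperature.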

Integration with Machine Learning Approaches

Recent advances have integrated NVT simulations with machine learning to enhance binding-pocket sampling [2]. For example, researchers have coupled MD with AlphaFold, a machine-learning approach for protein structure prediction [2]. While AlphaFold can predict static structures, brief NVT simulations can correct misplaced sidechains and induce transitions to biologically relevant conformations [2]. These simulations, which may involve placing a crystallographic ligand within the pocket to encourage transitions, can substantially improve the accuracy of subsequent ligand-binding predictions [2].

Modified AlphaFold pipelines can also overcome the default implementation's tendency to converge on a single conformation, making it possible to predict entire conformational ensembles that can serve as seeds for NVT simulations [2]. This integration bypasses the need for long-timescale simulations that would otherwise be required to transition between conformational states [2].

Visualization of Concepts and Workflows

Figure: Position of NVT in the Ensemble Hierarchy

Figure: NVT Simulation Workflow in Drug Discovery

Essential Research Tools and Reagents

Table 3: Research Reagent Solutions for NVT Ensemble Simulations

| Tool/Reagent | Function/Purpose | Examples/Implementation |
|---|---|---|
| Thermostat Algorithms | Maintain constant temperature | Berendsen, Nosé-Hoover, Velocity Rescaling [16] [19] |
| Force Fields | Describe interatomic interactions | CHARMM, AMBER, OPLS, Martini [2] |
| Enhanced Sampling Methods | Overcome energy barriers | Parallel Tempering, aMD, Metadynamics [2] [3] |
| Conformational Clustering | Identify representative structures | k-means, Hierarchical, DBSCAN [2] |
| MD Software Packages | Simulation execution | GROMACS, AMBER, NAMD, LAMMPS [16] [2] |
| Analysis Tools | Extract thermodynamic properties | VMD, MDAnalysis, PyTraj, MDTraj [2] |

The canonical ensemble (NVT) represents a cornerstone of molecular dynamics research, providing the theoretical foundation and practical framework for simulating systems in thermal equilibrium with constant temperature, volume, and particle number. Its implementation through thermostat algorithms enables researchers to mimic experimental conditions where temperature control is essential while maintaining fixed system boundaries. In drug discovery and biomolecular research, NVT simulations have proven invaluable for generating conformational ensembles, capturing protein flexibility, and identifying cryptic binding pockets through methods like the Relaxed Complex Method. As molecular dynamics continues to evolve with advances in enhanced sampling, machine learning integration, and specialized hardware, the canonical ensemble remains an essential tool for understanding thermodynamic properties and molecular behavior across diverse scientific disciplines.

Within the framework of molecular dynamics (MD) research, a statistical ensemble defines the specific thermodynamic conditions under which a system is studied, determining which macroscopic quantities (e.g., energy, volume, temperature, pressure) are held constant. The choice of ensemble dictates the rules for sampling microscopic states and connecting them to macroscopic observables. While the microcanonical (NVE) ensemble conserves energy and is fundamental to Newtonian dynamics, most experimental conditions correspond to different thermodynamic environments. The isothermal-isobaric (NPT) ensemble, which maintains constant particle number (N), pressure (P), and temperature (T), is particularly crucial as it mirrors the vast majority of real-world laboratory and biological conditions where systems are in thermal and mechanical equilibrium with their surroundings [21] [20]. This ensemble allows for energy exchange with a heat bath and volume fluctuations against a constant external pressure, making it indispensable for studying biological processes, phase transitions, and material properties under realistic conditions [22] [21].

Theoretical Foundations of the NPT Ensemble

The NPT Partition Function

The foundation of the NPT ensemble is its partition function, denoted as Δ(N,P,T), which encapsulates the statistical properties of the system. For a system of N particles at constant pressure P and constant temperature T, the partition function is derived from the canonical partition function, Q(N,V,T), by integrating over all possible volumes, weighted by the Boltzmann factor for the pressure-volume work [22] [21]:

[ \Delta(N, P, T) = \frac{1}{V_0} \int_{0}^{\infty} e^{-\beta P V} Q(N, V, T) \, dV ]

[ \text{where } Q(N, V, T) = \frac{1}{N! \Lambda^{3N}} \int \exp[-\beta U(\mathbf{r}^N)] \, d\mathbf{r}^N ]

Here, β = 1/(k_B T), where k_B is Boltzmann's constant, Λ is the thermal de Broglie wavelength, U(r^N) is the potential energy function of the system, and V_0 is a volume scale factor that ensures dimensional consistency and addresses the problem of redundant configuration counting in continuous systems [23]. The exponential term, e^(−βPV), represents the influence of the pressure bath: it penalizes large volumes, so that, combined with Q(N, V, T), the most probable volume is the one that minimizes the Gibbs free energy.

Connection to Thermodynamics: Gibbs Free Energy

The characteristic thermodynamic potential for the NPT ensemble is the Gibbs free energy (G), which is directly related to the partition function [22] [21]:

[ G(N, P, T) = -k_B T \ln \Delta(N, P, T) ]

The Gibbs free energy encompasses both the system's internal energy and the work done against the external pressure, and its minimization determines the equilibrium state of the system at constant T and P. This connection enables the calculation of all other thermodynamic quantities through appropriate derivatives of G or Δ. For instance, the average volume ⟨V⟩ is given by ⟨V⟩ = (∂G/∂P)_{N,T}, and the system's enthalpy is H = ⟨E⟩ + P⟨V⟩, where ⟨E⟩ is the average internal energy [21].
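The average-volume relation follows in two lines from the definitions of Δ and G given above. The short derivation below is a sketch that uses only those definitions, differentiating under the integral sign at fixed N and T:

```latex
\begin{aligned}
\left(\frac{\partial \ln \Delta}{\partial P}\right)_{N,T}
  &= \frac{1}{\Delta}\,\frac{1}{V_0}\int_{0}^{\infty} (-\beta V)\, e^{-\beta P V}\, Q(N,V,T)\, dV
   = -\beta \langle V \rangle , \\
\left(\frac{\partial G}{\partial P}\right)_{N,T}
  &= -k_B T \left(\frac{\partial \ln \Delta}{\partial P}\right)_{N,T}
   = \langle V \rangle .
\end{aligned}
```

The first line uses the fact that the normalized weight of a volume V in the ensemble is e^{-\beta P V} Q(N,V,T) / (V_0 \Delta).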

Table 1: Key Thermodynamic Quantities in the NPT Ensemble

| Quantity | Statistical Mechanical Expression | Thermodynamic Relation |
|---|---|---|
| Gibbs Free Energy | ( G = -k_B T \ln \Delta ) | Definition |
| Average Volume | ( \langle V \rangle = -\frac{1}{\beta} \left( \frac{\partial \ln \Delta}{\partial P} \right)_{N, T} ) | ( \langle V \rangle = \left( \frac{\partial G}{\partial P} \right)_{N, T} ) |
| Enthalpy | ( H = \langle E \rangle + P \langle V \rangle ) | ( H = G + TS ) |
| Entropy | ( S = -\left( \frac{\partial G}{\partial T} \right)_{N, P} ) | ( S = \frac{H - G}{T} ) |
| Isothermal Compressibility | ( \kappa_T = \frac{ \langle V^2 \rangle - \langle V \rangle^2 }{ k_B T \langle V \rangle } ) | ( \kappa_T = -\frac{1}{V} \left( \frac{\partial V}{\partial P} \right)_T ) |
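The fluctuation formula for the isothermal compressibility in the last row can be applied directly to a volume time series from an NPT run. The sketch below assumes volumes in nm³ and expresses k_B in bar·nm³/K so that the result comes out in bar⁻¹; the synthetic two-value volume series is purely illustrative and stands in for real trajectory data.

```python
import statistics

K_B_BAR_NM3 = 0.1380649  # Boltzmann constant in bar nm^3 / K (1 bar nm^3 = 1e-22 J)


def isothermal_compressibility(volumes_nm3, temperature):
    """kappa_T = (<V^2> - <V>^2) / (k_B T <V>), returned in bar^-1."""
    mean_v = statistics.fmean(volumes_nm3)
    var_v = statistics.pvariance(volumes_nm3, mu=mean_v)
    return var_v / (K_B_BAR_NM3 * temperature * mean_v)


# Synthetic series: a 100 nm^3 box fluctuating by +/- 0.43 nm^3 (hypothetical).
volumes = [100.0 - 0.43, 100.0 + 0.43] * 50
kappa = isothermal_compressibility(volumes, temperature=300.0)
print(f"kappa_T = {kappa:.3e} bar^-1")
```

With real data the estimate is only meaningful if the barostat reproduces correct volume fluctuations and the series is long compared with the volume correlation time.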

Barostat Methods for Constant Pressure Control

In molecular dynamics simulations, maintaining constant pressure requires algorithms that adjust the system volume dynamically. These algorithms, known as barostats, are crucial for sampling the NPT ensemble correctly.

The Parrinello-Rahman Barostat

The Parrinello-Rahman method is an extended system approach that treats the simulation cell vectors as dynamic variables with fictitious masses, allowing the cell's shape and size to fluctuate in response to the imbalance between the internal and external stress [19]. The equations of motion couple particle coordinates with the cell degrees of freedom, providing a rigorous path for sampling the NPT ensemble. This method is highly versatile as it can handle anisotropic deformations, making it suitable for studying crystal phase transitions under stress. A key parameter is the pfactor, which is related to the square of the pressure control time constant (τP) and the system's bulk modulus (B): pfactor ≈ τP²B [19]. For crystalline systems like metals, values in the range of 10⁶ to 10⁷ GPa·fs² have been found to provide good convergence and stability [19].
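The relation pfactor ≈ τP²B is simple arithmetic, but the units are easy to get wrong. The sketch below evaluates it for illustrative values that are not taken from the source: a 100 fs pressure time constant and a bulk modulus of 140 GPa, which is of the order expected for a typical metal.

```python
def pfactor(tau_p_fs, bulk_modulus_gpa):
    """Estimate pfactor ~ tau_P^2 * B in GPa fs^2."""
    return tau_p_fs ** 2 * bulk_modulus_gpa


# Hypothetical inputs: tau_P = 100 fs, B = 140 GPa.
value = pfactor(tau_p_fs=100.0, bulk_modulus_gpa=140.0)
print(f"pfactor = {value:.2e} GPa fs^2")
```

For these inputs the estimate lands inside the 10⁶ to 10⁷ GPa·fs² range quoted above for crystalline metals.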

The Berendsen Barostat

The Berendsen barostat scales the coordinates and box dimensions to weakly couple the system to a pressure bath, providing an efficient method for pressure control that leads to rapid convergence [19]. Unlike the Parrinello-Rahman method, the standard Berendsen barostat typically preserves the cell shape, allowing only the volume to change. It requires the user to specify the compressibility of the material and a time constant τP for the pressure coupling [19]. While efficient for equilibration, it does not generate a rigorously correct NPT ensemble as it suppresses the natural volume fluctuations [21].
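The coordinate scaling described above can be sketched with the standard Berendsen formula, μ = [1 − (κ_T Δt/τP)(P₀ − P)]^(1/3), applied isotropically to the box lengths and particle positions. The function names, the tiny two-particle system, and the numerical values below are illustrative assumptions; a real MD code would compute the instantaneous pressure from the virial.

```python
def berendsen_mu(p_inst, p_target, dt, tau_p, compressibility):
    """Isotropic length scaling factor for one Berendsen barostat step."""
    return (1.0 - compressibility * dt / tau_p * (p_target - p_inst)) ** (1.0 / 3.0)


def scale_system(box_lengths, positions, mu):
    """Scale box lengths and particle coordinates by the same factor mu."""
    new_box = tuple(mu * length for length in box_lengths)
    new_positions = [tuple(mu * x for x in r) for r in positions]
    return new_box, new_positions


# Hypothetical step: internal pressure 101 bar, target 1 bar, water-like compressibility.
mu = berendsen_mu(p_inst=101.0, p_target=1.0, dt=0.002, tau_p=2.0, compressibility=4.5e-5)
box, positions = scale_system((5.0, 5.0, 5.0), [(1.0, 2.0, 3.0), (4.0, 4.0, 4.0)], mu)
print(f"mu = {mu:.9f}, new box edge = {box[0]:.7f} nm")
```

Because the internal pressure exceeds the target, μ is slightly greater than one and the box expands; the volume changes by a factor of μ³.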

Table 2: Comparison of Common Barostat Algorithms in Molecular Dynamics

| Barostat Method | Type | Key Control Parameters | Typical Applications | Advantages/Limitations |
|---|---|---|---|---|
| Parrinello-Rahman [19] [24] | Extended System | pfactor (GPa·fs²) | Solids, anisotropic materials, phase transitions | Advantages: Rigorous, allows full cell fluctuations. Limitations: Requires careful parameter tuning. |
| Berendsen [19] [21] | Weak Coupling | τ_P (fs), compressibility (bar⁻¹) | Rapid equilibration, simple liquids | Advantages: Fast convergence. Limitations: Does not generate correct fluctuation properties. |
| Langevin Hull [25] | Stochastic Boundary | Solvent viscosity, facet geometry | Non-periodic systems, nanoparticles, biomolecules | Advantages: No periodic box needed, good for inhomogeneous systems. Limitations: Computationally intensive hull calculation. |
| Shell Particle [23] | Geometric Constraint | Mass of the shell particle | Small systems, rigorous NPT sampling | Advantages: No arbitrary piston mass; system-controlled dynamics. Limitations: Less common in standard MD packages. |

Advanced Barostats for Specialized Applications

For non-periodic systems like nanoparticles or isolated biomolecules in implicit solvent, traditional barostats that rely on affine transformations of a periodic box are problematic. The Langevin Hull method addresses this by applying external pressure and a Langevin thermostat directly to the facets of the convex hull surrounding the system [25]. This allows for realistic pressure control and thermal conductivity without the artificial constraints of a periodic box, making it particularly suitable for simulating heterogeneous mixtures with different compressibilities [25].

Another innovative approach is the shell particle method, which reformulates the NPT ensemble to avoid redundant configuration counting by defining the system volume through the position of a specific "shell" particle [23]. This eliminates the need for an arbitrary piston mass, as the system itself controls the timescale of volume fluctuations via the known mass of the shell particle [23].

The NPT Ensemble in Biological Applications

The NPT ensemble is exceptionally well-suited for biological simulations because living organisms typically exist at constant temperature and pressure. This alignment with physiological conditions enables researchers to study biomolecular structure, dynamics, and function in a realistic thermodynamic context.

Protein Folding and Dynamics

Proteins and other biomolecules maintain their native structures and functions under isothermal-isobaric conditions. NPT-MD simulations are therefore essential for studying protein folding, conformational changes, and structural stability [21]. The allowance for volume fluctuations is critical, as these processes often involve subtle changes in molecular packing and solvation that would be artificially constrained in a constant-volume simulation. Furthermore, the NPT ensemble is crucial for investigating the effects of external pressure on protein stability, providing insights relevant to deep-sea biology and high-pressure food processing [21].

Biomolecular Solvation and Interactions

Most biological processes occur in aqueous environments. NPT simulations allow the density of the solvent (water and ions) to adjust naturally to the simulation temperature and pressure, ensuring correct solvation shell structures and thermodynamics [25]. This is vital for accurately modeling ligand binding, protein-protein interactions, and the formation of molecular complexes, as these events often involve significant changes in hydration and volume.

Diagram 1: A generalized workflow for setting up and running an NPT molecular dynamics simulation for a biological system, showing the sequential equilibration and production stages.

Practical Implementation and Protocols

A Protocol for NPT Simulation of a Protein

The following protocol outlines a typical workflow for simulating a solvated protein system, such as Lysozyme, under NPT conditions using a common MD package.

System Preparation: Obtain the protein's initial coordinates (e.g., from the Protein Data Bank, PDB code: 1LYZ). Place it in a periodic box (e.g., a rhombic dodecahedron) with a buffer of at least 1.0-1.5 nm from the box edges. Solvate the system with water molecules (e.g., TIP3P, SPC) and add ions (e.g., Na⁺, Cl⁻) to neutralize the system's charge and achieve a desired physiological concentration (e.g., 150 mM NaCl) [25].

Energy Minimization: Perform an energy minimization (e.g., using the steepest descent algorithm) to remove any bad contacts and steric clashes introduced during the solvation process. This is a crucial step to ensure numerical stability before starting dynamics.

NVT Equilibration: Run a short MD simulation (e.g., 100-500 ps) in the NVT ensemble to equilibrate the system's temperature. Use a thermostat (e.g., Nosé-Hoover, velocity rescale) with a coupling constant (e.g., τT = 0.1-1.0 ps) to maintain the target temperature (e.g., 300 K). Restrain the heavy atoms of the protein to their initial positions to allow the solvent to relax around the protein.

NPT Equilibration: Run a subsequent MD simulation (e.g., 100-500 ps) in the NPT ensemble to equilibrate the system's density. Use the same thermostat and add a barostat (e.g., Parrinello-Rahman with pfactor ~ 1-10, or Berendsen with τP ~ 1-5 ps and compressibility ~ 4.5×10⁻⁵ bar⁻¹ for water). The protein restraints can be gradually released during this stage.

Production NPT-MD: Once the system energy, temperature, and density have stabilized, run a long, unrestrained production simulation (e.g., 100 ns to 1 µs) in the NPT ensemble. The trajectory from this stage is used for all subsequent analyses of structural and dynamic properties.
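Deciding when energy, temperature, and density "have stabilized" is often done by eye, but a crude automatic check is easy to sketch. The heuristic below compares the mean of the first and second halves of a time series and reports whether they agree within a relative tolerance; the function name `is_stable`, the tolerance, and the synthetic density series (in kg/m³) are all assumptions for illustration, not a recommended criterion.

```python
import statistics


def is_stable(series, rel_tol=0.005):
    """Return True if the first-half and second-half means agree within rel_tol."""
    half = len(series) // 2
    first = statistics.fmean(series[:half])
    second = statistics.fmean(series[half:])
    return abs(second - first) <= rel_tol * abs(second)


# Hypothetical density traces: one still drifting upward, one on a plateau.
density_relaxing = [950.0 + 0.5 * i for i in range(100)]
density_plateau = [997.0 + (0.3 if i % 2 else -0.3) for i in range(100)]

print("relaxing series stable:", is_stable(density_relaxing))
print("plateau series stable: ", is_stable(density_plateau))
```

In practice the same check would be applied to the tail of the NPT equilibration run before the production stage is started, alongside visual inspection of the traces.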

The Scientist's Toolkit: Essential Reagents and Parameters

Table 3: Key Research Reagent Solutions for NPT-MD of Biological Systems

| Item / Parameter | Function / Role | Example Choices / Typical Values |
|---|---|---|
| Force Field | Defines the potential energy function (U) for the system. | CHARMM36, AMBER/GAFF, OPLS-AA |
| Water Model | Represents the solvent environment and its properties. | TIP3P, SPC/E, TIP4P/2005 |
| Thermostat | Maintains constant temperature by controlling kinetic energy. | Nosé-Hoover, Langevin, Velocity Rescale |
| Barostat | Maintains constant pressure by controlling system volume. | Parrinello-Rahman, Berendsen (see Table 2) |
| Pressure (P) | The target external pressure. | 1.013 bar (1 atm) for standard conditions. |
| Time Constant (τ_P) | Coupling strength for the barostat; larger values mean weaker coupling. | 1-5 ps (Berendsen); related to pfactor (Parrinello-Rahman) |
| Compressibility (κ_T) | System's isothermal compressibility; input for some barostats. | 4.5×10⁻⁵ bar⁻¹ for water (Berendsen barostat) |

Comparison with Other Statistical Ensembles

While the NPT ensemble is highly relevant for biological applications, other ensembles serve complementary purposes in molecular dynamics research.

NVE Ensemble (Microcanonical): This is the most fundamental ensemble, generated by integrating Newton's equations without temperature or pressure control. Although energy is conserved, NVE is not recommended for equilibration because, without a thermostat, it cannot drive the system to a desired temperature. It is, however, useful for production runs after equilibration when calculating properties like infrared spectra that require unperturbed dynamics [26] [20].

NVT Ensemble (Canonical): This ensemble maintains a constant number of particles, volume, and temperature. It is suitable for studying systems with fixed boundaries, such as a protein in vacuum or a solution in a rigid container. However, for condensed phase systems, fixing the volume can prevent the system from finding its natural density at a given temperature and pressure, which is a significant limitation for simulating realistic biological environments [26] [20].

NPT vs. NVT: The key distinction is that NPT allows volume fluctuations, which is essential for obtaining correct densities and for studying pressure-induced phenomena. In practice, for large systems in the thermodynamic limit, NVT and NPT often yield similar results for many structural properties, but properties related to volume and density will differ [26].

NPT vs. Grand Canonical (μVT): The grand canonical ensemble fixes the chemical potential μ, the volume, and the temperature, so the particle number fluctuates through exchange with a reservoir while the volume stays constant. This makes it suitable for studying open systems such as adsorption or permeation. In contrast, the NPT ensemble keeps the particle number fixed and lets the volume fluctuate, making it more appropriate for closed systems [21].

The isothermal-isobaric ensemble represents a cornerstone of modern molecular dynamics, providing the essential link between simulation and experiment for systems under constant temperature and pressure. Its theoretical foundation, rooted in the partition function and connected to the Gibbs free energy, provides a robust framework for calculating thermodynamic averages. For biological applications, from protein dynamics to drug binding, the NPT ensemble is arguably the most relevant statistical ensemble, as it faithfully replicates the natural environment of biomolecules. The continuous development of advanced barostat algorithms, such as those for non-periodic systems and rigorous shell-particle methods, ensures that NPT-MD will remain a powerful and evolving tool for uncovering the mechanisms of life at the atomic level.

In molecular dynamics (MD) research, a statistical ensemble is a fundamental theoretical construct that enables the connection between the microscopic behavior of atoms and molecules and macroscopic thermodynamic properties observable in experiments. An ensemble is defined as an idealization consisting of a large number of virtual copies of a system, considered simultaneously, with each copy representing a possible state that the real system might inhabit [15]. This collection of systems shares identical macroscopic parameters (such as temperature, energy, or particle number) but may differ in their microscopic configurations—the specific positions and momenta of all constituent particles [27]. The power of this approach lies in its ability to predict bulk material properties through statistical averages over all possible microstates in the ensemble, effectively bridging atomic-scale interactions with continuum-scale phenomena [15] [27].

The ensemble concept, formally introduced by J. Willard Gibbs in 1902, provides the mathematical foundation for statistical mechanics [15]. In practical molecular dynamics simulations, researchers leverage this framework to compute thermodynamic properties that would be impossible to derive from single configurations alone. As a system evolves over time during an MD simulation, it samples different regions of phase space, and the resulting trajectory represents a time-dependent exploration of the ensemble [28]. The validity of equating time averages from single simulations to ensemble averages relies on the ergodic hypothesis—a fundamental assumption stating that over sufficiently long time periods, a system will visit all possible states consistent with the imposed constraints [27]. This principle enables MD practitioners to extract meaningful thermodynamic information from computational experiments.

Phase Space: The Mathematical Foundation

Definition and Structure of Phase Space

Phase space provides the complete mathematical framework for describing all possible states of a mechanical system. For a classical system of N particles, phase space is a 6N-dimensional space where each point represents a unique microstate of the entire system, completely specified by the positions (q₁, q₂, ..., q_{3N}) and momenta (p₁, p₂, ..., p_{3N}) of all particles [29]. This comprehensive parameterization allows for a complete description of the system's mechanical state at any instant, with the 3N position coordinates spanning what is known as "configuration space" and the 3N momentum coordinates defining "momentum space" [29].

The dimensionality of phase space grows linearly with the number of particles and quickly becomes very large. For a system of 50,000 atoms, a relatively modest MD simulation by modern standards, the corresponding phase space comprises 300,000 dimensions (3 position and 3 momentum coordinates per atom) [28]. While this high dimensionality presents visualization challenges, it provides a mathematically rigorous foundation for statistical mechanics. The evolution of a system over time traces a phase space trajectory, representing the continuous transformation of the system's microstate according to Hamilton's equations of motion [29] [30]. For conservative systems, this trajectory is constrained to a fixed energy hypersurface, with the representative point flowing through phase space like an incompressible fluid [30].

Phase Space Visualizations and Representations

Different graphical representations of phase space provide unique insights into system behavior. State space plots (qᵢ versus q̇ᵢ) illustrate correlations between configuration variables and their time derivatives, while true phase space plots (qᵢ versus pᵢ) offer more fundamental representations based on canonical coordinates [30]. For simple systems like the one-dimensional harmonic oscillator, phase space trajectories form elegant elliptical paths, with the area enclosed by the ellipse proportional to the total energy of the system [30].
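The harmonic-oscillator picture can be reproduced with a few lines of code. The sketch below integrates a one-dimensional oscillator with the velocity Verlet scheme, checks that the (q, p) points stay close to the constant-energy ellipse p²/(2m) + kq²/2 = E, and evaluates the enclosed area, which for this system equals 2πE/ω. The mass, spring constant, and time step are arbitrary illustrative values in reduced units.

```python
import math


def velocity_verlet(q, p, mass, k_spring, dt, n_steps):
    """Integrate a 1D harmonic oscillator and return the (q, p) trajectory."""
    trajectory = [(q, p)]
    force = -k_spring * q
    for _ in range(n_steps):
        p_half = p + 0.5 * dt * force   # half-step momentum update
        q = q + dt * p_half / mass      # full-step position update
        force = -k_spring * q           # force at the new position
        p = p_half + 0.5 * dt * force   # second half-step momentum update
        trajectory.append((q, p))
    return trajectory


def energy(q, p, mass, k_spring):
    """Total energy: kinetic plus harmonic potential."""
    return p * p / (2.0 * mass) + 0.5 * k_spring * q * q


mass, k_spring = 1.0, 4.0  # hypothetical reduced units
trajectory = velocity_verlet(q=1.0, p=0.0, mass=mass, k_spring=k_spring, dt=0.01, n_steps=1000)

e0 = energy(*trajectory[0], mass, k_spring)
max_drift = max(abs(energy(q, p, mass, k_spring) - e0) for q, p in trajectory)

# Semi-axes of the constant-energy ellipse and the area it encloses.
q_max = math.sqrt(2.0 * e0 / k_spring)
p_max = math.sqrt(2.0 * mass * e0)
area = math.pi * q_max * p_max
omega = math.sqrt(k_spring / mass)
print(f"E = {e0:.3f}, max energy deviation = {max_drift:.2e}, area = {area:.4f}")
```

The small, bounded energy deviation is characteristic of a symplectic integrator, which is why schemes of this type are the usual choice for NVE dynamics.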

Figure 1: Phase Space Structure. A 6N-dimensional mathematical space that completely describes all possible states of an N-particle system.

Major Thermodynamic Ensembles in Molecular Dynamics

The Primary Ensemble Types

Molecular dynamics simulations utilize several fundamental ensembles, each corresponding to different physical conditions and experimental constraints. The three primary ensembles form the foundation for most MD applications:

Microcanonical Ensemble (NVE): Describes completely isolated systems with fixed Number of particles (N), constant Volume (V), and fixed total Energy (E) [15] [17]. In this ensemble, the system cannot exchange energy or matter with its surroundings, making it the most fundamental ensemble from a theoretical perspective. The microcanonical ensemble represents the natural evolution of Newton's equations of motion without external perturbations [17].

Canonical Ensemble (NVT): Appropriate for closed systems that can exchange energy with a thermal reservoir at fixed temperature [15] [17]. This ensemble maintains constant Number of particles (N), constant Volume (V), and constant Temperature (T). The canonical ensemble is particularly important for calculating the Helmholtz free energy of a system, representing the maximum work attainable at constant volume and temperature [17].

Isothermal-Isobaric Ensemble (NpT): Models systems that exchange both energy and volume with their surroundings, maintaining constant Number of particles (N), constant Pressure (p), and constant Temperature (T) [17]. This ensemble plays a crucial role in chemistry because most chemical reactions of practical interest occur under constant-pressure conditions. The isothermal-isobaric ensemble provides the framework for determining the Gibbs free energy, which represents the maximum non-expansion work available at constant pressure and temperature [17].

Ensemble Characteristics and Applications

Table 1: Characteristics of Primary Thermodynamic Ensembles in Molecular Dynamics

| Ensemble Type | Fixed Parameters | Fluctuating Quantities | Physical Situation | Primary Applications |
|---|---|---|---|---|
| Microcanonical (NVE) | N, V, E | Temperature, Pressure | Isolated systems | Fundamental Newtonian dynamics; Total energy conservation studies |
| Canonical (NVT) | N, V, T | Energy, Pressure | System in thermal contact with heat bath | Helmholtz free energy calculations; Constant-volume simulations |
| Isothermal-Isobaric (NpT) | N, p, T | Energy, Volume | System in thermal and mechanical contact with reservoir | Gibbs free energy calculations; Most chemical and biological processes |
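For reference, the three ensembles in Table 1 correspond to the following phase space weights. These are the standard textbook forms rather than expressions taken from the cited sources. Here Γ denotes a point in phase space (all positions and momenta), H is the Hamiltonian, and p is the external pressure; normalization constants are omitted.

```latex
\begin{align}
\rho_{NVE}(\Gamma) &\propto \delta\big(H(\Gamma) - E\big) \\
\rho_{NVT}(\Gamma) &\propto \exp\big[-\beta H(\Gamma)\big], \qquad \beta = \frac{1}{k_B T} \\
\rho_{NpT}(\Gamma, V) &\propto \exp\big[-\beta \big(H(\Gamma) + pV\big)\big]
\end{align}
```

The fixed parameters in each row of the table appear as constants in the corresponding weight, while the fluctuating quantities are those that vary from one sampled microstate to the next.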

Connecting Microscopic States to Macroscopic Properties

The Ensemble Average Framework

The connection between microscopic states and macroscopic observables occurs through the mathematical formalism of ensemble averaging. For any mechanical quantity X, which can be expressed as a function of the system's phase space coordinates, the macroscopic observable corresponds to the ensemble average ⟨X⟩, calculated as an integral over the entire phase space weighted by the probability density function ρ [15]:

$$\langle X \rangle = \sum_{N} \int \rho \, X \; dp_1 \cdots dp_{3N} \, dq_1 \cdots dq_{3N}$$

This integration extends over all momenta and positions, with possible summation over variable particle numbers in the case of grand canonical ensembles [15]. In practical molecular dynamics simulations, this continuous integral is approximated by a discrete sum over successive configurations generated during the simulation:

⟨X⟩ ≈ (1/M) ∑ᵢ X(tᵢ)

where M represents the number of time steps in the simulation [27]. This approach implicitly relies on the ergodic hypothesis, which equates the ensemble average with the time average over a sufficiently long trajectory [27].
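As a rough illustration of this discrete average, the sketch below computes the mean of a time series together with a block-averaged standard error. Successive MD frames are correlated, so the naive standard error usually understates the true uncertainty. The synthetic AR(1) series stands in for a real observable, and all names and parameter values are assumptions made for illustration.

```python
import math
import random
import statistics

def time_average(samples):
    """Approximate the ensemble average by the mean over sampled configurations."""
    return sum(samples) / len(samples)

def block_standard_error(samples, n_blocks=10):
    """Standard error of the mean estimated from the scatter of block means."""
    block_len = len(samples) // n_blocks
    block_means = [
        time_average(samples[i * block_len:(i + 1) * block_len])
        for i in range(n_blocks)
    ]
    return statistics.stdev(block_means) / math.sqrt(n_blocks)

def correlated_series(n_samples, mean=300.0, phi=0.9, seed=0):
    """Synthetic stand-in for an MD observable: an AR(1) series around `mean`."""
    rng = random.Random(seed)
    x, series = 0.0, []
    for _ in range(n_samples):
        x = phi * x + rng.gauss(0.0, 1.0)  # each value remembers the previous one
        series.append(mean + x)
    return series

series = correlated_series(20000)
# Naive error treats every frame as independent, which correlated data are not.
naive_error = statistics.stdev(series) / math.sqrt(len(series))
block_error = block_standard_error(series)
print(time_average(series), naive_error, block_error)
```

For a correlated series like this one, the block estimate comes out noticeably larger than the naive one, provided each block is much longer than the correlation time.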

Practical Implementation in Molecular Dynamics

In operational terms, molecular dynamics simulations proceed by numerical integration of the equations of motion, generating a new configuration of atoms at each time step along with instantaneous values for thermodynamic properties [27]. The true thermodynamic value emerges only after averaging over many such configurations, as individual measurements exhibit fluctuations around the mean value [27]. This averaging process effectively projects the complex, high-dimensional information contained in phase space onto meaningful macroscopic observables.
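Temperature is a convenient example of an instantaneous property that fluctuates from frame to frame. The sketch below assumes SI units, an argon-like atomic mass, and no constraints (3N degrees of freedom). Velocities are drawn independently from a Maxwell-Boltzmann distribution as a stand-in for real trajectory frames. The kinetic temperature is computed as T = 2·KE / (N_dof·k_B).

```python
import math
import random

K_B = 1.380649e-23  # Boltzmann constant in J/K

def instantaneous_temperature(masses, velocities):
    """Kinetic temperature T = 2*KE / (N_dof * k_B), assuming N_dof = 3N."""
    kinetic = sum(
        0.5 * m * (vx * vx + vy * vy + vz * vz)
        for m, (vx, vy, vz) in zip(masses, velocities)
    )
    return 2.0 * kinetic / (3 * len(masses) * K_B)

def maxwell_boltzmann_velocities(masses, temperature, rng):
    """Draw each velocity component from a Gaussian of variance k_B*T/m."""
    velocities = []
    for m in masses:
        sigma = math.sqrt(K_B * temperature / m)
        velocities.append(tuple(rng.gauss(0.0, sigma) for _ in range(3)))
    return velocities

rng = random.Random(1)
masses = [6.63e-26] * 500  # 500 atoms with an argon-like mass in kg (illustrative)
frames = [
    instantaneous_temperature(masses, maxwell_boltzmann_velocities(masses, 300.0, rng))
    for _ in range(200)
]
mean_temperature = sum(frames) / len(frames)
print(mean_temperature, min(frames), max(frames))
```

Individual frames scatter by several kelvin around the target for a system this small, and only the average over many frames approaches 300 K. The relative size of these fluctuations shrinks as the number of atoms grows.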

Figure 2: From Microstates to Macroscopic Properties. The ensemble averaging framework connects atomic-level descriptions to thermodynamic observables.

Experimental Protocols and Computational Methodologies

Molecular Dynamics Simulation Workflow

Implementing ensemble concepts in molecular dynamics follows a systematic workflow that transforms theoretical constructs into practical computations:

System Initialization: Define initial atomic positions and velocities corresponding to a single point in phase space [28]. For complex biomolecular systems, this typically begins with experimental structures from Protein Data Bank (PDB) files or with constructed model geometries.

Force Field Selection: Employ appropriate potential energy functions parameterized to reproduce experimental observables and quantum mechanical calculations. These force fields mathematically describe the potential energy surface governing atomic interactions.

Ensemble Assignment: Implement algorithmic constraints corresponding to the desired ensemble—energy conservation for NVE, thermostating algorithms for NVT, and combined thermostating/barostating for NpT simulations.

Equation Integration: Numerically solve Newton's equations of motion using finite difference methods (e.g., Verlet or leapfrog algorithms) with typical time steps of 1-2 femtoseconds for accurate trajectory generation.

Configuration Sampling: Collect system snapshots at regular intervals throughout the simulation, ensuring sufficient decorrelation between sampled states while maintaining adequate resolution of relevant dynamics.

Property Calculation: Compute instantaneous values of thermodynamic properties for each sampled configuration, then perform statistical analysis to determine ensemble averages and fluctuations [27].
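The workflow above can be sketched end to end on a toy system. The example below is not a production protocol: it uses a single particle in a harmonic potential as a stand-in for a force field, reduced units, and the velocity Verlet integrator in the NVE ensemble. The function names and default parameters are illustrative assumptions.

```python
def harmonic_force(q, k=1.0):
    """Toy 'force field': force from the potential U(q) = (1/2) k q^2."""
    return -k * q

def run_nve(q0=1.0, p0=0.0, mass=1.0, k=1.0, dt=0.01, n_steps=10000, sample_every=10):
    """Velocity Verlet integration with periodic sampling of (kinetic, potential) energy."""
    q, p = q0, p0                      # system initialization: one phase space point
    force = harmonic_force(q, k)
    samples = []
    for step in range(1, n_steps + 1):
        p += 0.5 * dt * force          # half-step momentum update
        q += dt * p / mass             # full-step position update
        force = harmonic_force(q, k)   # force at the new position
        p += 0.5 * dt * force          # second half-step momentum update
        if step % sample_every == 0:   # configuration sampling
            samples.append((p * p / (2.0 * mass), 0.5 * k * q * q))
    return samples

# Property calculation: averages over the sampled configurations.
samples = run_nve()
total_energies = [ke + pe for ke, pe in samples]
mean_kinetic = sum(ke for ke, _ in samples) / len(samples)
mean_potential = sum(pe for _, pe in samples) / len(samples)
energy_drift = max(abs(e - 0.5) for e in total_energies)
print(mean_kinetic, mean_potential, energy_drift)
```

With no thermostat applied, the total energy stays close to its initial value of 0.5, which is the practical signature of an NVE run. An NVT or NpT simulation would add a thermostat or barostat step inside the same loop.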

Research Reagent Solutions for Ensemble Studies

Table 2: Essential Computational Tools for Ensemble-Based Molecular Dynamics Research

| Tool Category | Specific Examples | Function in Ensemble Studies |
|---|---|---|
| Simulation Software | LAMMPS, GROMACS, NAMD, AMBER | Numerical integration of equations of motion with ensemble constraints |
| Force Fields | CHARMM, AMBER, OPLS, Martini | Mathematical description of potential energy surfaces governing atomic interactions |
| Analysis Packages | MDTraj, VMD, MDAnalysis | Extraction of ensemble averages and fluctuations from trajectory data |
| Thermostats | Nosé-Hoover, Berendsen, Langevin | Temperature regulation for canonical and isothermal-isobaric ensembles |
| Barostats | Parrinello-Rahman, Berendsen | Pressure control for isothermal-isobaric ensembles |
| Enhanced Sampling | Metadynamics, Replica Exchange | Improved phase space sampling for complex systems and rare events |

Applications in Drug Development and Biomolecular Research

Conformational Ensemble Modeling in Drug Discovery

The ensemble concept proves particularly valuable in pharmaceutical research where proteins and drug targets exist as dynamic ensembles of interconverting conformations rather than static structures [31]. Experimental evidence confirms that many proteins and protein complexes sample multiple conformational states with similar energies, making their functional representation necessarily an ensemble rather than a single structure [31]. This conformational variability directly influences drug binding, allosteric regulation, and molecular recognition processes central to pharmaceutical development.

In practical applications, researchers use paramagnetic relaxation enhancement (PRE) measurements from NMR spectroscopy to characterize low-populated conformations that might be crucial for drug binding [31]. The strong distance dependence (r⁻⁶) of PRE effects means that even minor conformations where nuclei approach paramagnetic centers can be detected, providing sensitive probes of transient structural states [31]. This approach enables mapping of conformational landscapes that determine pharmacological activity.
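A short worked example shows why the r⁻⁶ dependence makes minor states visible. The sketch assumes a simple two-state model in fast exchange, so that the observed effect is the population-weighted average of r⁻⁶. The populations and distances are invented for illustration and are not taken from [31].

```python
def ensemble_averaged_r6(populations, distances):
    """Population-weighted average of r^-6 over conformational states."""
    return sum(w * r ** -6 for w, r in zip(populations, distances))

def effective_distance(populations, distances):
    """Single distance that would produce the same averaged r^-6."""
    return ensemble_averaged_r6(populations, distances) ** (-1.0 / 6.0)

# Hypothetical two-state ensemble: a 95% major state far from the
# paramagnetic center and a 5% minor state close to it (distances in angstroms).
populations = [0.95, 0.05]
distances = [30.0, 8.0]

total = ensemble_averaged_r6(populations, distances)
minor_share = populations[1] * distances[1] ** -6 / total
mean_distance = sum(w * r for w, r in zip(populations, distances))
print(minor_share, effective_distance(populations, distances), mean_distance)
```

In this invented case the 5% state contributes more than 99% of the averaged r⁻⁶ signal. The effective distance is roughly 13 Å, far shorter than the population-weighted mean distance of about 29 Å.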

Ensemble-Averaged Experimental Observables

Experimental techniques in structural biology increasingly incorporate ensemble concepts to interpret data from dynamic systems. Paramagnetic residual dipolar couplings (RDCs) provide particularly powerful constraints for ensemble modeling, as they average over molecular motions occurring on timescales faster than approximately 10 milliseconds [31]. The analysis of motionally averaged RDCs allows researchers to determine a "mean tensor" that represents the generalized order parameter for domain motions, quantifying the extent of conformational sampling [31].

The mathematical relationship for these averaged observables follows the form:

Δν_RDC ∝ ⟨PχP⟩ = P₀⟨RχR⟩P₀ = P₀χ̃P₀

where χ̃ represents the averaged magnetic susceptibility tensor over the ensemble of orientations [31]. This formalism enables quantitative characterization of conformational heterogeneity directly relevant to drug-target interactions.

Challenges and Future Directions

Current Methodological Limitations

Despite the powerful framework provided by ensemble concepts, significant challenges remain in practical implementations. The "ill-posed inverse problem" of recovering structural ensembles from averaged experimental data admits infinitely many solutions, requiring careful application of Occam's razor to select the most parsimonious ensemble consistent with observations [31]. Additionally, achieving adequate sampling of high-dimensional phase spaces remains computationally demanding, particularly for large biomolecular systems with slow collective motions.

Molecular dynamics practitioners must remain vigilant about broken ergodicity, where simulations become trapped in localized regions of phase space and fail to sample all accessible configurations representatively [28]. This sampling limitation can lead to inaccurate predictions of thermodynamic properties and biased characterization of conformational landscapes. Technical implementations must carefully balance computational efficiency with physical accuracy when applying thermostat and barostat algorithms to maintain ensemble conditions.

Emerging Approaches and Innovations

Recent methodological advances address these challenges through enhanced sampling algorithms, integrative structural biology approaches, and machine learning applications. Ensemble machine learning methods show promise for analyzing complex biomolecular simulations: by combining multiple models, they can compensate for the errors of individual models and achieve higher prediction performance than single models [31].

Advanced computational strategies now leverage experimental data as constraints in molecular dynamics simulations, guiding conformational sampling toward experimentally relevant regions of phase space. This integrative approach combines the atomic-resolution detail of simulations with the experimental validation of observational data, creating more accurate and biologically relevant ensemble models for drug discovery applications.

In the annals of theoretical physics, few conceptual frameworks have proven as enduringly influential as the statistical ensemble, formally introduced by Josiah Willard Gibbs in his 1902 monograph Elementary Principles in Statistical Mechanics [32]. This work represented a profound synthesis of earlier efforts by luminaries such as Ludwig Boltzmann and James Clerk Maxwell, distilling thousands of pages of nineteenth-century thermodynamic research into a cohesive mathematical structure that has survived the revolutionary transitions from classical to quantum mechanics [32] [33]. Gibbs's ensemble concept provided the missing theoretical foundation that connected microscopic mechanical laws to macroscopic thermodynamic behavior through a systematic probabilistic approach, ultimately transforming statistical mechanics from a collection of insightful techniques into a rigorous scientific discipline [34] [32].

For researchers in molecular dynamics and drug development, Gibbs's ensemble concept remains indispensable, providing the theoretical justification for simulating molecular behavior through finite sampling of possible system states [14] [20]. This article examines the historical context and intellectual development of Gibbs's ensemble concept, its mathematical formulation, and its enduring significance for modern computational science, particularly in the realm of biomolecular recognition and drug design.

Gibbs's Intellectual Trajectory and Scientific Context

Historical and Scientific Background

Josiah Willard Gibbs (1839-1903) spent his entire academic career at Yale College, where in 1871 he was appointed Professor of Mathematical Physics—the first such professorship in the United States [34]. Working in relative isolation from the European scientific centers, Gibbs produced a remarkable body of work that would eventually earn him the Copley Medal of the Royal Society in 1901 and praise from Albert Einstein as "the greatest mind in American history" [34] [35].

Gibbs's work on statistical mechanics emerged from his deep engagement with thermodynamics, culminating in his monumental 1875-1878 monograph "On the Equilibrium of Heterogeneous Substances," which has been described as "the Principia of thermodynamics" [34]. This work established the theoretical framework for physical chemistry but operated primarily at the macroscopic level. Gibbs's transition to statistical mechanics represented a natural extension of this work into the microscopic realm, seeking to establish thermodynamics on mechanical first principles [33].

Table: Chronological Development of Gibbs's Key Contributions

| Year | Work | Significance |
|---|---|---|
| 1863 | First American PhD in engineering [34] | Early demonstration of mathematical prowess |
| 1873 | First published work on thermodynamics [34] | Geometric representation of thermodynamic quantities |
| 1875-1878 | "On the Equilibrium of Heterogeneous Substances" [34] | Established foundations of physical chemistry |
| 1902 | Elementary Principles in Statistical Mechanics [32] | Systematic development of ensemble theory |

The Pre-Gibbsian Landscape of Statistical Mechanics