Statistical Accuracy in Molecular Dynamics: Advancing Conformational Ensemble Prediction for Drug Discovery


Abstract

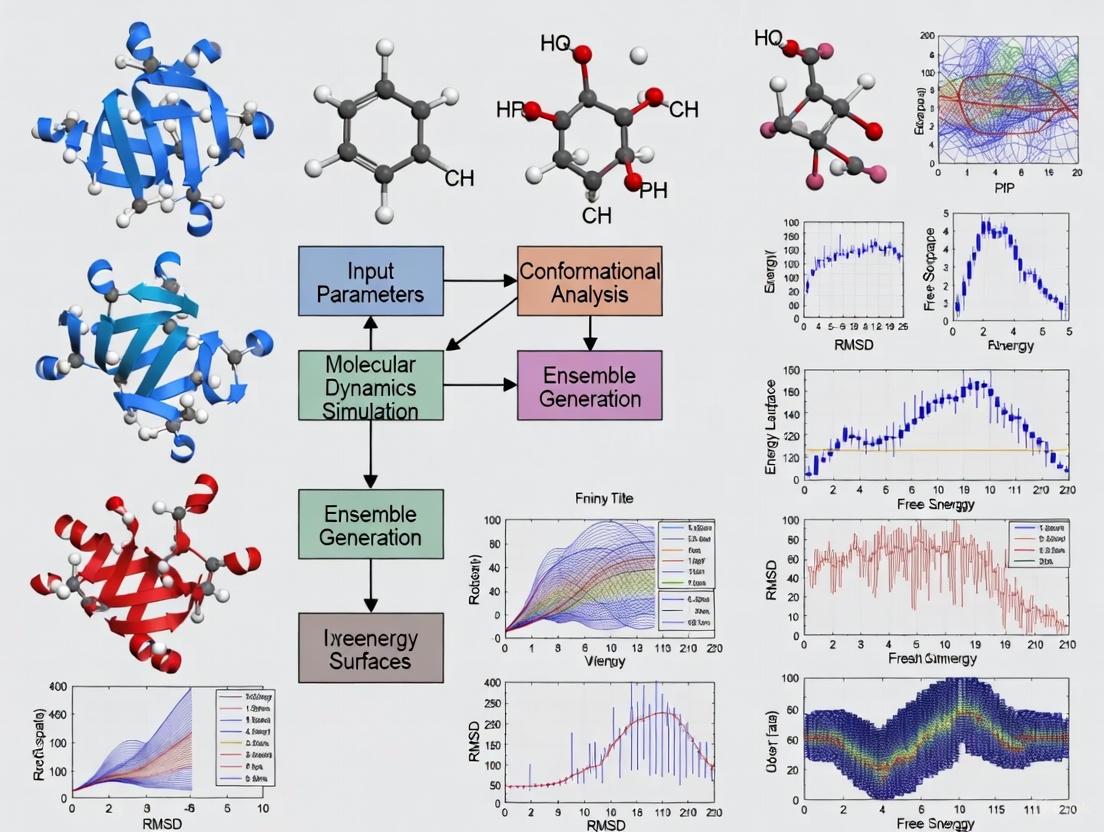

Accurately characterizing the conformational ensembles of intrinsically disordered proteins (IDPs) and highly dynamic systems is a central challenge in structural biology and drug development. This article explores the statistical accuracy of molecular dynamics (MD) simulations in sampling these ensembles, addressing both foundational principles and cutting-edge advancements. We examine the limitations of traditional MD force fields and the rise of integrative methods that combine simulation with experimental data from techniques such as NMR and SAXS. We also cover enhanced sampling protocols, the disruptive potential of AI and generative deep learning models for efficient sampling, and robust validation frameworks. Finally, we provide a comparative analysis of MD against emerging AI-based and hybrid approaches, offering a practical guide for researchers seeking to generate physically accurate, statistically robust conformational ensembles for therapeutic design.

The Conformational Ensemble Paradigm: Why Accuracy Matters in Dynamic Protein Systems

Frequently Asked Questions (FAQs) and Troubleshooting Guides

FAQ 1: What are the primary computational methods for generating conformational ensembles of IDPs?

Answer: The main computational approaches are Molecular Dynamics (MD) simulations, enhanced sampling techniques, and novel probabilistic methods. Standard MD simulations explore conformational space using physics-based force fields but can be limited by sampling timescale. Enhanced sampling methods, like Replica Exchange Solute Tempering (REST) and Metadynamics, accelerate the exploration of energy landscapes [1] [2]. Novel protocols like Probabilistic MD Chain Growth (PMD-CG) build ensembles extremely quickly by combining tripeptide MD data with chain growth algorithms, showing good agreement with REST results [1] [3]. Another approach, FiveFold, uses protein structure fingerprint technology (PFSC-PFVM) to predict multiple conformational 3D structures from sequence alone [4].

FAQ 2: How can I assess the statistical accuracy and convergence of my conformational ensemble?

Answer: Proper assessment is crucial for reliable ensembles. Key strategies include:

- Block Averaging: Use this standard technique to obtain robust estimates of statistical errors in your simulations [2].

- Independent Replicates: Perform multiple, independent simulation replicates. This often provides higher statistical precision than a single long trajectory [2].

- Effective Ensemble Size: Monitor the Kish ratio (K), which measures the fraction of conformations with significant statistical weights. A Kish ratio of 0.10, for example, corresponds to an effective ensemble size of about 3000 structures from a 30,000-frame simulation and helps prevent overfitting [5].
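The two quantitative checks above can be sketched in a few lines of Python. This is a minimal illustration rather than the implementation used in the cited work: the function names are hypothetical, and the Kish ratio is assumed to follow the standard definition K = (Σw)² / (N·Σw²), which the text itself does not spell out.

```python
import math


def block_average_error(samples, n_blocks):
    """Return (mean, standard error) estimated from n_blocks contiguous blocks."""
    if n_blocks < 2:
        raise ValueError("need at least 2 blocks")
    block_len = len(samples) // n_blocks
    if block_len < 1:
        raise ValueError("not enough samples for the requested number of blocks")
    # Mean of each contiguous block; trailing frames that do not fill a block are dropped.
    block_means = [
        sum(samples[b * block_len:(b + 1) * block_len]) / block_len
        for b in range(n_blocks)
    ]
    grand_mean = sum(block_means) / n_blocks
    # Sample variance of the block means, then standard error of their mean.
    var = sum((m - grand_mean) ** 2 for m in block_means) / (n_blocks - 1)
    return grand_mean, math.sqrt(var / n_blocks)


def kish_ratio(weights):
    """Assumed definition: K = (sum w)^2 / (N * sum w^2); K * N is the effective size."""
    total = sum(weights)
    total_sq = sum(w * w for w in weights)
    return total * total / (len(weights) * total_sq)
```

With uniform weights the ratio is 1. If only 3000 of 30,000 frames carry equal non-zero weight, the ratio is 0.10, matching the example in the text. In practice, the block count should be varied until the error estimate plateaus, since blocks shorter than the correlation time underestimate the error.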

FAQ 3: My MD ensemble disagrees with experimental data. How can I reconcile them?

Answer: Integrative approaches that combine simulations with experimental data are highly effective.

- Maximum Entropy Reweighting: This is a robust and automated procedure to reweight your MD ensemble to match extensive experimental data from techniques like NMR and SAXS. It introduces minimal perturbation to the simulation, ensuring the final ensemble remains physically realistic while agreeing with experiments [5].

- Metadynamics Metainference (M&M): This method simultaneously enhances sampling and restrains the simulation to match experimental data within a Bayesian framework [2].

FAQ 4: What metrics should I use to compare two different conformational ensembles?

Answer: Traditional root-mean-square deviation (RMSD) is often unsuitable for flexible ensembles. Instead, use superimposition-free, distance-based metrics [6].

- ens_dRMS: This global metric is the root mean-square difference between the medians of the Cα-Cα distance distributions of two ensembles (see Formula 1).

- Difference Matrices: These matrices visualize local and statistically significant differences between the distance distributions of residue pairs in two ensembles, helping pinpoint specific regions of variation [6].

Formula 1: Ensemble Distance Root Mean Square (ens_dRMS)

\[ \text{ens\_dRMS} = \sqrt{ \frac{1}{n} \sum_{i,j} \left[ d_{\mu}^{A}(i,j) - d_{\mu}^{B}(i,j) \right]^2 } \]

Where \(d_{\mu}^{A}(i,j)\) and \(d_{\mu}^{B}(i,j)\) are the medians of the Cα-Cα distance distributions for residue pair (i, j) in ensembles A and B, and n is the number of residue pairs [6].
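A direct transcription of Formula 1 into Python may help make the metric concrete. This is a minimal sketch under stated assumptions: each ensemble is a plain list of conformers, each conformer is a list of Cα (x, y, z) tuples with the same residue count, and the function names are hypothetical rather than taken from any published tool.

```python
import math
from statistics import median


def median_distance_matrix(ensemble):
    """Median Cα-Cα distance across conformers for every residue pair i < j."""
    n_res = len(ensemble[0])
    medians = {}
    for i in range(n_res):
        for j in range(i + 1, n_res):
            dists = [math.dist(conf[i], conf[j]) for conf in ensemble]
            medians[(i, j)] = median(dists)
    return medians


def ens_drms(ensemble_a, ensemble_b):
    """Root-mean-square difference between the median distance matrices of A and B."""
    med_a = median_distance_matrix(ensemble_a)
    med_b = median_distance_matrix(ensemble_b)
    n_pairs = len(med_a)
    sq_sum = sum((med_a[pair] - med_b[pair]) ** 2 for pair in med_a)
    return math.sqrt(sq_sum / n_pairs)
```

Because only internal distances enter the calculation, no superimposition of the two ensembles is required. The per-pair differences `med_a[pair] - med_b[pair]` are also the raw material for a difference matrix, although assessing their statistical significance needs an additional test that is not shown here.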

FAQ 5: Are there public databases for standardized MD trajectories of flexible proteins?

Answer: Yes, databases of standardized simulations are invaluable for comparison. ATLAS is a database of all-atom MD simulations for a representative set of proteins, performed using a uniform protocol to ensure comparability [7]. It includes analyses of global and local flexibility, and special datasets for proteins with unique dynamics, such as those containing chameleon sequences or Dual Personality Fragments (DPFs) [7].

Troubleshooting Common Problems

Problem: Inadequate Sampling of Key Conformational States

- Symptoms: Poor reproducibility between simulation replicates; failure to match ensemble-averaged experimental observables like SAXS profiles or NMR J-couplings.

- Solutions:

- Employ Enhanced Sampling: Switch from standard MD to enhanced sampling methods like REST or Metadynamics to overcome energy barriers [1] [2].

- Leverage Probabilistic Methods: For very fast initial sampling, consider protocols like PMD-CG, which can quickly generate a starting ensemble that can be further refined [1] [3].

- Check Database References: Consult databases like ATLAS to see the typical scale of fluctuations for proteins with similar folds [7].

Problem: Force Field Dependence and Inaccuracy

- Symptoms: Different force fields produce ensembles with systematically different properties (e.g., overly compact or extended chains); persistent disagreement with experimental data even after good sampling.

- Solutions:

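- Use Modern Force Fields: Utilize state-of-the-art force fields such as CHARMM36m or a99SB-disp, which are better balanced for disordered and folded states [7] [5] [8]. AMBER ff99SB-ILDN remains a reasonable choice for well-ordered proteins, but it was not parameterized with disordered states in mind.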

- Apply Maximum Entropy Reweighting: Integrate your simulation with experimental data using reweighting. This can produce highly similar, force-field-independent ensembles from different starting points, provided the initial agreement with data is reasonable [5].

Problem: Overfitting to Experimental Data During Refinement

- Symptoms: The refined ensemble fits the experimental data perfectly but shows unphysical structural distortions; the ensemble is dominated by a very small number of conformations.

- Solutions:

- Control the Kish Ratio: Use the maximum entropy reweighting approach and set a lower bound for the Kish ratio (e.g., K=0.1). This ensures a sufficiently large effective ensemble size and prevents over-reliance on a handful of conformations [5].

- Use Restraints Judiciously: When using flexible fitting methods (e.g., MDFF), apply harmonic restraints to preserve secondary structure elements and prevent unphysical deformations [9].

Key Experimental Observables and Integration Protocols

Table 1: Experimental Techniques for Characterizing Conformational Ensembles

| Technique | Provides Information On | Key Considerations for IDPs |

|---|---|---|

| NMR Spectroscopy [10] | Chemical shifts (secondary structure), residual dipolar couplings (long-range order), relaxation rates (dynamics on ps-ns and μs-ms timescales). | Spectral overcrowding can be mitigated with 13C detection and non-uniform sampling. |

| Small-Angle X-Ray Scattering (SAXS) [5] [10] | Global shape and dimensions (radius of gyration, Rg). | Provides ensemble-averaged low-resolution information that is highly sensitive to the size distribution. |

| Single-Molecule FRET [10] | Distance distributions between specific residue pairs. | Probes heterogeneity directly but requires labeling, which might perturb the system. |

| Atomic Force Microscopy (AFM) [10] | Surface topography and mechanical properties. | Can visualize individual molecules under near-physiological conditions. |

Workflow 1: Determining Accurate Conformational Ensembles

The Scientist's Toolkit: Research Reagent Solutions

| Resource / Tool | Type | Primary Function | Key Feature |

|---|---|---|---|

| GROMACS [2] [7] | MD Software | High-performance molecular dynamics simulation. | Optimized for both CPU and GPU clusters; widely used. |

| PLUMED [2] | MD Plugin | Enhanced sampling and free-energy calculations. | Implements metadynamics, replica exchange, and other advanced algorithms. |

| CHARMM36m [7] [5] | Force Field | Molecular mechanics energy function for proteins. | Optimized for folded and intrinsically disordered proteins. |

| a99SB-disp [5] | Force Field | Molecular mechanics energy function with disp water model. | Designed for accurate protein disorder and solvent interactions. |

| ATLAS Database [7] | Database | Repository of standardized MD trajectories. | Allows comparison of protein dynamics using a uniform simulation protocol. |

| Protein Ensemble Database (PED) [6] | Database | Repository of conformational ensembles of IDPs. | Stores ensembles that have been fit to experimental data. |

Workflow 2: Comparing Different Conformational Ensembles

Quantitative Data and Methodologies

Table 3: Key Parameters for Ensemble Generation and Validation

| Parameter | Description | Exemplary Value / Threshold | Reference |

|---|---|---|---|

| Kish Ratio (K) | Effective ensemble size after reweighting. | K = 0.10 (retains ~3000 structures from 30,000) | [5] |

| ens_dRMS | Global similarity metric between two ensembles. | Lower values indicate more similar ensembles. | [6] |

| Replica Count (M&M) | Number of replicas for statistical accuracy. | 100 replicas recommended for optimal heterogeneity capture. | [2] |

| Simulation Length (ATLAS) | Standardized MD run time per replicate. | 100 ns (x3 replicates) | [7] |

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: What is the core trade-off between statistical accuracy and computational cost in Molecular Dynamics (MD) simulations? The core trade-off lies in the choice between using highly accurate but computationally expensive ab initio quantum mechanical methods versus faster but less precise empirical force fields. Ab initio methods provide precise results but scale cubically with the number of electrons, making large-scale or long-time simulations impractical. Machine-learned interatomic potentials (MLIPs) have emerged as a promising alternative, offering near-quantum mechanical accuracy while scaling linearly with the number of atoms [11].

Q2: How can I improve the sampling of rare conformational transitions without prohibitive computational cost? Enhanced sampling methods focus computational power on the transitions between states rather than on thermal fluctuations within metastable states. Techniques like Transition Path Sampling (TPS) can sample the transition path ensemble without requiring pre-defined collective variables. Furthermore, integrating machine learning with quantum computing offers a novel approach, using a quantum annealer to generate uncorrelated transition paths efficiently, thus addressing a key sampling challenge [12].

Q3: My MD ensemble does not match experimental NMR data. How can I reconcile them? This is a common challenge due to force field inaccuracies or sampling limitations. A best practice is to integrate the two methods: use experimental NMR data as restraints or reweighting criteria for your MD simulations. Recent advancements include statistical reweighting techniques and AI-assisted methods to enhance sampling efficiency and ensemble construction, yielding a more accurate and complete understanding of dynamic conformational ensembles [13].

Q4: What strategies exist for building accurate and computationally efficient Machine-Learned Interatomic Potentials (MLIPs)? Building an application-specific MLIP involves a multi-objective optimization. Key strategies include:

- Training Set Precision: Using reduced-precision Density Functional Theory (DFT) calculations for training data can drastically reduce costs with minimal accuracy loss if energy and force contributions are appropriately weighted during training [11].

- Training Set Size: Employing systematic sub-sampling techniques to identify the most informative atomic configurations, thereby reducing the required training set size [11].

- Model Complexity: Choosing a less complex MLIP architecture (like a linear Atomic Cluster Expansion) can significantly reduce evaluation cost, which is crucial for large-scale or long-time simulations [11].
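The idea of weighting energy and force contributions during training can be illustrated with a simple loss function. This is a generic sketch, not the loss used in [11]: the per-atom energy normalization, the default weights, and the function name are all assumptions made for illustration.

```python
def weighted_energy_force_loss(e_pred, e_ref, f_pred, f_ref, n_atoms,
                               w_energy=1.0, w_force=1.0):
    """Weighted sum of mean squared per-atom energy error and mean squared force error.

    e_pred, e_ref: total energies, one per configuration.
    f_pred, f_ref: per configuration, a list of (fx, fy, fz) tuples, one per atom.
    n_atoms: number of atoms in each configuration.
    """
    # Energy term: errors are divided by atom count so large cells do not dominate.
    e_term = sum(
        ((ep - er) / n) ** 2 for ep, er, n in zip(e_pred, e_ref, n_atoms)
    ) / len(e_ref)

    # Force term: mean squared error over every Cartesian force component.
    f_sq, f_count = 0.0, 0
    for fp_cfg, fr_cfg in zip(f_pred, f_ref):
        for fp, fr in zip(fp_cfg, fr_cfg):
            for a, b in zip(fp, fr):
                f_sq += (a - b) ** 2
                f_count += 1
    f_term = f_sq / f_count

    return w_energy * e_term + w_force * f_term
```

Raising `w_force` relative to `w_energy` shifts the fit toward the force labels. Which balance suits reduced-precision training data is an empirical question that has to be answered by validation against a higher-precision test set.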

Q5: Can a general-purpose neural network potential be accurate for specific high-energy materials (HEMs)? Yes. Studies have shown that a general neural network potential (NNP) for C, H, N, and O-based HEMs can be developed using transfer learning. This approach leverages a pre-trained model and minimal new data from DFT calculations to achieve DFT-level accuracy in predicting structures, mechanical properties, and decomposition characteristics for a wide range of specific HEMs [14].

Troubleshooting Guides

Problem: Inadequate Sampling of Rare Events

| Symptom | Possible Cause | Solution |

|---|---|---|

| The system gets trapped in a metastable state and fails to observe the transition of interest within the simulation timeframe. | The free energy barrier between states is too high for spontaneous crossing at the simulated time scale. | Implement an enhanced sampling method. Use Transition Path Sampling (TPS) to focus on reactive trajectories without defining collective variables [12]. For very complex systems, explore hybrid ML/Quantum computing algorithms to generate uncorrelated transition paths [12]. |

Problem: Discrepancy Between Simulated Conformational Ensemble and Experimental Data

| Symptom | Possible Cause | Solution |

|---|---|---|

| The structural ensemble generated by MD simulations is inconsistent with ensemble-averaged, site-specific data from techniques like NMR spectroscopy. | Force field inaccuracies or incomplete sampling of the conformational landscape. | Integrate MD with experimental data. Use NMR data as restraints in simulations or apply statistical reweighting techniques to bias the ensemble toward structures that match the experimental observables [13]. |

Problem: High Computational Cost of Ab Initio Accuracy

| Symptom | Possible Cause | Solution |

|---|---|---|

| Achieving quantum-mechanical accuracy for large systems or long time scales is computationally prohibitive. | The cubic scaling of ab initio methods with the number of electrons limits their application. | Adopt Machine-Learned Interatomic Potentials (MLIPs). For application-specific needs, optimize the trade-off by considering a less complex MLIP architecture and a smaller, lower-precision DFT training set to reduce overall computational cost [11]. |

Quantitative Data and Methodologies

The following table summarizes how different levels of precision in Density Functional Theory (DFT) calculations impact the computational cost for generating training data for MLIPs. This illustrates the direct trade-off between precision and cost [11].

| Precision Level | k-point spacing (Å⁻¹) | Energy cut-off (eV) | Average Simulation Time per Configuration (seconds) |

|---|---|---|---|

| 1 (Lowest) | Gamma Point only | 300 | 8.33 |

| 2 | 1.00 | 300 | 10.02 |

| 3 | 0.75 | 400 | 14.80 |

| 4 | 0.50 | 500 | 19.18 |

| 5 | 0.25 | 700 | 91.99 |

| 6 (Highest) | 0.10 | 900 | 996.14 |
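To see what these timings mean for a full training set, the arithmetic can be scripted. The per-configuration times below are copied from the table; the training-set size passed to `training_set_hours` is a hypothetical input chosen by the user, not a value from [11].

```python
# Average DFT time per configuration in seconds, copied from the table above.
time_per_config = {1: 8.33, 2: 10.02, 3: 14.80, 4: 19.18, 5: 91.99, 6: 996.14}


def speedup_vs_highest(level):
    """How many times cheaper a precision level is than level 6."""
    return time_per_config[6] / time_per_config[level]


def training_set_hours(level, n_configs):
    """Total DFT time in hours for n_configs configurations (n_configs is hypothetical)."""
    return time_per_config[level] * n_configs / 3600.0


for level in sorted(time_per_config):
    print(f"level {level}: {speedup_vs_highest(level):7.1f}x cheaper than level 6")
```

The step from level 5 to level 6 alone is roughly a tenfold increase in cost, which is why the choice of precision level matters so much when thousands of configurations are needed.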

Experimental Protocol: Integrating MD and NMR for IDP Ensembles

Aim: To characterize the structural and dynamic properties of Intrinsically Disordered Proteins (IDPs) [13].

- Data Generation:

- Perform all-atom Molecular Dynamics (MD) simulations to generate atomically detailed trajectories.

- Acquire ensemble-averaged, site-specific structural and dynamic information using Nuclear Magnetic Resonance (NMR) spectroscopy.

- Integration and Analysis:

- Use the NMR data as restraints for subsequent MD simulations or as criteria to reweight the generated MD ensemble.

- Apply statistical reweighting techniques or AI-assisted methods to refine the ensemble, ensuring it agrees with the experimental data.

- Validation:

- The accuracy of the final conformational ensemble is validated by its ability to back-calculate the experimental NMR parameters.
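The validation step can be sketched as a comparison between weighted ensemble averages and the measured values. This is a minimal sketch: it assumes the per-frame observables have already been back-calculated by an external predictor (not shown), and the function names and the use of a reduced chi-square as the agreement score are illustrative choices rather than the protocol of [13].

```python
def ensemble_average(per_frame_values, weights=None):
    """Weighted average of one observable over all frames (uniform weights by default)."""
    if weights is None:
        weights = [1.0] * len(per_frame_values)
    total = sum(weights)
    return sum(w * v for w, v in zip(weights, per_frame_values)) / total


def reduced_chi_square(calculated, experimental, sigma):
    """Mean squared deviation in units of the combined uncertainty sigma per data point."""
    terms = [
        ((c - e) / s) ** 2 for c, e, s in zip(calculated, experimental, sigma)
    ]
    return sum(terms) / len(terms)
```

A value near 1 indicates agreement within the stated uncertainties. Values far below 1 on the data used for fitting can signal overfitting, which is one reason to hold out part of the experimental data for validation.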

Experimental Protocol: Sampling Transitions with a Quantum Computer

Aim: To sample rare conformational transition paths efficiently using a hybrid quantum-classical algorithm [12].

- Exploration of Configuration Space:

- Use Intrinsic Map Dynamics (iMapD), a method combining MD and machine learning (diffusion maps), to perform an uncharted exploration and generate a sparse set of molecular configurations on the intrinsic manifold.

- Coarse-Graining:

- Derive a coarse-grained representation of the molecular dynamics based on the explored configurations. This defines transition paths as sequences of visited sub-regions on the manifold.

- Quantum Path Sampling:

- Encode the path sampling problem onto a quantum annealer (e.g., a D-Wave machine).

- The quantum computer generates new, uncorrelated transition paths.

- Classical Acceptance:

- A classical computer accepts or rejects the proposed quantum-generated paths based on a Metropolis criterion, combining the statistical mechanics of the path ensemble with the physics of the quantum annealer.
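The classical acceptance step follows the general Metropolis-Hastings pattern, which can be sketched as below. This is a generic textbook form, not the specific rule from [12]: how the path probability and the annealer's proposal probabilities are actually computed is not described in the text, so they appear here as hypothetical log-probability inputs.

```python
import math
import random


def metropolis_accept(log_p_new, log_p_old,
                      log_q_forward=0.0, log_q_reverse=0.0, rng=random):
    """Accept a proposed path with probability min(1, ratio).

    log_p_new, log_p_old: log statistical weights of the proposed and current paths.
    log_q_forward, log_q_reverse: log proposal probabilities (old -> new, new -> old);
    leaving both at 0.0 corresponds to a symmetric proposal.
    """
    log_ratio = (log_p_new - log_p_old) + (log_q_reverse - log_q_forward)
    if log_ratio >= 0.0:
        return True
    return rng.random() < math.exp(log_ratio)
```

Working in log space avoids overflow when path weights span many orders of magnitude. Passing a seeded `random.Random` instance as `rng` makes a run reproducible.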

Visual Workflows and Pathways

Workflow for a Hybrid Quantum-Classical MD Sampling Algorithm

Optimization Strategy for Machine-Learned Interatomic Potentials

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Reagent | Function in Research |

|---|---|

| Density Functional Theory (DFT) | Provides high-accuracy reference data for energies and forces used to train MLIPs. The precision of its numerical parameters (cut-off energy, k-points) is a primary lever in the accuracy/cost trade-off [11]. |

| Machine-Learned Interatomic Potentials (MLIPs) | Serves as a force field for MD simulations, aiming for near-DFT accuracy at a fraction of the computational cost. They are trained on DFT data and can be tailored for specific applications [14] [11]. |

| Deep Potential (DP) | A specific and scalable framework for building neural network potentials (NNPs) capable of modeling complex reactive processes and large-scale systems with DFT-level precision [14]. |

| Quadratic Spectral Neighbor Analysis Potential (qSNAP) | A specific type of MLIP that uses linear and quadratic combinations of bispectrum components as descriptors. It offers a good balance between accuracy and computational efficiency [11]. |

| Nuclear Magnetic Resonance (NMR) Spectroscopy | Provides experimental, ensemble-averaged, and site-specific data on protein structure and dynamics. This data is crucial for validating and refining conformational ensembles generated by MD simulations [13]. |

Your Troubleshooting Guide for Ensemble Validation

This guide provides solutions for researchers validating molecular dynamics (MD) conformational ensembles of intrinsically disordered proteins (IDPs) against key experimental data from Nuclear Magnetic Resonance (NMR) and Small-Angle X-ray Scattering (SAXS).

Common Problems & Solutions

Problem: Poor agreement between your MD ensemble and NMR chemical shifts.

- Potential Cause 1: Inaccuracies in the molecular mechanics force field. Some force fields may over- or under-stabilize certain secondary structure elements in IDPs [5].

- Solution: Utilize a maximum entropy reweighting procedure. Integrate your simulation with experimental NMR data by reweighting the ensemble to match the data with minimal bias, improving accuracy without re-running simulations [5].

- Potential Cause 2: Inadequate conformational sampling.

- Solution: Consider enhanced sampling methods like Replica Exchange Solute Tempering (REST), which can provide a more robust reference ensemble for validation [1].

Problem: Your SAXS-derived radius of gyration (Rg) does not match the value back-calculated from your MD ensemble.

- Potential Cause 1: The ensemble may be too compact or too expanded compared to the true solution state [15].

- Solution: Use the SAXS data as a restraint during ensemble generation or refinement. Methods like AlphaFold-Metainference incorporate predicted distances into simulations to generate ensembles that agree with SAXS data [15].

- Potential Cause 2: The SAXS data was processed or back-calculated incorrectly.

- Solution: Use dedicated software like SasView to process your SAXS data and calculate the distance distribution function P(r) and Rg. Ensure your back-calculation from the simulation trajectory uses the same parameters [16].
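For a quick consistency check, the radius of gyration can be computed directly from trajectory coordinates. The sketch below makes several assumptions that should be stated plainly: it uses bare atomic coordinates with no hydration layer or scattering contrast, it averages as the root mean square over frames (the quantity a Guinier analysis reports for conformers of equal mass), and the function names are hypothetical.

```python
import math


def radius_of_gyration(coords, masses=None):
    """Mass-weighted Rg of one frame; coords is a list of (x, y, z) tuples."""
    if masses is None:
        masses = [1.0] * len(coords)
    m_tot = sum(masses)
    # Center of mass, one Cartesian component at a time.
    com = [sum(m * c[k] for m, c in zip(masses, coords)) / m_tot for k in range(3)]
    msd = sum(
        m * sum((c[k] - com[k]) ** 2 for k in range(3))
        for m, c in zip(masses, coords)
    ) / m_tot
    return math.sqrt(msd)


def ensemble_rg(frames, weights=None):
    """Root-mean-square Rg over frames, sqrt(<Rg^2>), with optional frame weights."""
    if weights is None:
        weights = [1.0] * len(frames)
    total = sum(weights)
    mean_sq = sum(
        w * radius_of_gyration(f) ** 2 for w, f in zip(weights, frames)
    ) / total
    return math.sqrt(mean_sq)
```

A purely geometric Rg usually differs somewhat from the SAXS-derived value because of the hydration shell, so a small systematic offset is not by itself evidence of a poor ensemble. Back-calculating the full scattering profile with a dedicated forward model is the more rigorous comparison.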

Problem: AlphaFold2's single structure output is a poor representation of your IDP.

- Potential Cause: AlphaFold2 is trained on folded proteins and often outputs a single, static structure, which is inappropriate for describing a heterogeneous conformational ensemble [15] [17].

- Solution: Use the AlphaFold-Metainference approach. This method uses inter-residue distances predicted by AlphaFold2 as soft restraints in MD simulations to generate a structural ensemble that is consistent with the deep learning model's information [15].

Problem: Poor shimming results in broad NMR lineshapes, reducing data quality.

- Potential Cause: Inhomogeneous magnetic field due to sample issues (e.g., air bubbles, insoluble substances) or poor shim settings [18].

- Solution:

- Ensure your sample volume is sufficient and homogeneous.

- Start from a good shim file. Use the command rsh to load the latest 3D shim file for your probe [18].

- Manually optimize higher-order shims (e.g., Z, X, Y, XZ, YZ) if automated shimming fails [18].

- For a quantitative lineshape test, the full width at half height (50%) should be below 1.0 Hz [19].

Problem: ADC overflow error during NMR data acquisition.

- Potential Cause: The receiver gain (RG) was set too high [18].

- Solution:

Key Experimental Observables for Validation

The following table summarizes the primary experimental observables used to validate and refine conformational ensembles.

| Observable | Experimental Technique | Key Benchmarking Application | Considerations for Integration |

|---|---|---|---|

| Chemical Shifts [5] | NMR | Sensitive probes of local backbone conformation and secondary structure propensity. | Can be back-calculated from ensembles using tools like CamShift [15]. |

| Scalar Couplings [5] | NMR | Provides information on backbone dihedral angles (e.g., φ-angles). | Used as structural restraints in ensemble generation and validation. |

| Paramagnetic Relaxation Enhancement (PRE) [15] | NMR | Reports on long-range distances and transient contacts in an ensemble. | The presence of spin labels can potentially perturb the native ensemble [15]. |

| Residual Dipolar Couplings (RDCs) [5] | NMR | Provides information on the global orientation of bond vectors. | Requires the protein to be partially aligned in a medium, which may affect the IDP [5]. |

| Radius of Gyration (Rg) [15] | SAXS | A single parameter describing the global compactness of the molecule. | Easily calculated from an MD ensemble for direct comparison. |

| Pair-wise Distance Distribution, P(r) [15] | SAXS | Provides a histogram of all atom-atom distances within the molecule, offering a rich source of structural information. | Can be directly compared to the P(r) function derived from a SAXS profile [15]. |

Essential Research Reagent Solutions

This table lists key materials, software, and methods crucial for conducting research in this field.

| Item | Function / Application | Specifications / Examples |

|---|---|---|

| SAXS Analysis Software [16] | Analyzes SAXS data to determine parameters like Rg and the pair-distance distribution function P(r). | SasView: Fits models to SAS data; calculates scattering length densities and distance distribution functions [16]. |

| MD Reweighting Protocol [5] | Integrates experimental data with MD simulations to produce a more accurate conformational ensemble. | Maximum Entropy Reweighting: A robust, automated procedure that uses NMR and SAXS data to reweight an existing MD ensemble with minimal bias [5]. |

| NMR Test Samples [19] | Used for routine quality assurance and quality control (QA/QC) of the NMR spectrometer to ensure optimal performance for data collection. | 0.1% Ethylbenzene in CDCl3: For 1H sensitivity measurement. 1% CHCl3 in Acetone-d6: For 1H lineshape measurement [19]. |

| Enhanced Sampling MD [1] | Improves the sampling of conformational space for complex systems like IDPs. | Replica Exchange Solute Tempering (REST): A method that enhances conformational sampling and can serve as a reference for validating faster protocols [1]. |

| Ensemble Generator [1] | Rapidly generates initial conformational ensembles for IDPs. | Probabilistic MD Chain Growth (PMD-CG): Builds ensembles using statistical data from tripeptide MD trajectories, providing a quick starting point for refinement [1]. |

| Deep Learning Integration [15] | Generates structural ensembles of disordered proteins using deep learning predictions. | AlphaFold-Metainference: Uses AlphaFold-predicted distances as restraints in MD simulations to construct ensembles [15]. |

Workflow: Integrating MD and Experiment

This diagram illustrates the core workflow for determining accurate conformational ensembles by integrating molecular dynamics simulations with experimental data.

NMR Spectrometer Troubleshooting

This flowchart provides a systematic approach to diagnosing and resolving common NMR spectrometer performance issues.

Frequently Asked Questions (FAQs)

Q1: What is the "Force Field Dilemma" in Molecular Dynamics simulations?

The Force Field Dilemma refers to the fundamental challenge in molecular dynamics (MD) simulations that the accuracy of the resulting conformational ensembles depends heavily on the quality of the physical models (force fields) used to describe interatomic interactions. MD simulations can provide atomistic detail of protein dynamics, but their predictive power is limited by the approximate mathematical descriptions of physical and chemical forces, which can yield biologically meaningless results. This creates an ongoing tension between computational efficiency and physical accuracy in biomolecular modeling. [20]

Q2: How do different force fields affect conformational sampling?

Different force fields can produce distinct conformational distributions even when they reproduce experimental averages equally well. Research shows that four major MD packages (AMBER, GROMACS, NAMD, and ilmm) reproduced various experimental observables for proteins like engrailed homeodomain and RNase H equally well overall at room temperature, but revealed subtle differences in underlying conformational distributions and sampling extent. These differences become more pronounced when studying larger amplitude motions, such as thermal unfolding processes, where some packages fail to allow proper unfolding or provide results conflicting with experiment. [20]

Q3: What methods exist to improve force field accuracy for disordered proteins?

For intrinsically disordered proteins (IDPs), accuracy can be improved through integrative approaches that combine MD simulations with experimental data. Recent advances include:

- Maximum entropy reweighting: A robust procedure that integrates all-atom MD simulations with experimental data from NMR spectroscopy and small-angle X-ray scattering (SAXS) to determine accurate atomic-resolution conformational ensembles. [5]

- Enhanced sampling algorithms: Methods like replica exchange solute tempering (REST) and probabilistic MD chain growth (PMD-CG) that improve sampling of the huge conformational space available to IDPs. [1]

- Machine-learned force fields: Approaches like symmetrized gradient-domain machine learning (sGDML) that enable direct construction of flexible molecular force fields from high-level ab initio calculations. [21]

Q4: How can researchers validate force field accuracy?

Force field validation should involve comparison with multiple experimental observables, including:

- NMR chemical shifts and J-couplings

- SAXS profiles

- Residual dipolar couplings

- Hydrogen-deuterium exchange rates

The most compelling measure of force field accuracy is its ability to recapitulate and predict these experimental observables. However, researchers should note that correspondence between simulation and experiment doesn't necessarily validate the entire conformational ensemble, as multiple diverse ensembles may produce averages consistent with experiment. [20]

Troubleshooting Guides

Problem: Discrepancies Between Simulation and Experimental Data

Symptoms:

- Simulated structures consistently deviate from experimental reference data

- Poor agreement with NMR chemical shifts or SAXS profiles

- Incorrect population of secondary structure elements

Diagnosis and Solutions:

Force Field Selection

- Issue: Using an inappropriate force field for your specific system (e.g., applying folded protein force fields to disordered proteins)

- Solution: Switch to modern force fields specifically parameterized for your biomolecular system. Recent improvements include:

Integrative Refinement

- Issue: Force field inaccuracies leading to systematic errors

- Solution: Apply maximum entropy reweighting to refine ensembles against experimental data [5]

Sampling Enhancement

- Issue: Inadequate sampling of relevant conformational space

- Solution: Implement enhanced sampling protocols:

- Replica exchange molecular dynamics (REMD)

- Gaussian accelerated MD (GaMD)

- Replica exchange solute tempering (REST) [1]

Problem: Force Field Selection Confusion

Symptoms:

- Uncertainty about which force field to choose for a specific application

- Contradictory recommendations in literature

- Inconsistent results across different force fields

Decision Framework:

System Characteristics

- Ordered proteins: CHARMM36, AMBER ff99SB-ILDN

- Intrinsically disordered regions: a99SB-disp, CHARMM36m

- Mixed folded/disordered systems: CHARMM36m

Validation Protocol

- Always validate against multiple experimental observables

- Compare conformational distributions, not just averages

- Assess convergence through multiple independent simulations

Experimental Protocols

Protocol 1: Maximum Entropy Reweighting for IDP Ensembles

Purpose: Determine accurate atomic-resolution conformational ensembles of intrinsically disordered proteins by integrating MD simulations with experimental data. [5]

Materials:

- MD Simulation Software: GENESIS, AMBER, GROMACS, or NAMD [22]

- Force Fields: a99SB-disp, CHARMM36m, or CHARMM22* [5]

- Experimental Data: NMR chemical shifts, residual dipolar couplings, SAXS profiles

- Analysis Tools: Maximum entropy reweighting code (available from https://github.com/paulrobustelli/BorthakurMaxEntIDPs_2024/) [5]

Methodology:

System Preparation

- Generate initial coordinates for the IDP of interest

- Solvate in appropriate water model (TIP3P for CHARMM, a99SB-disp water for a99SB-disp)

- Neutralize system with ions

MD Simulation

- Run extended MD simulations (≥30 μs recommended)

- Maintain constant temperature (298 K, i.e., room temperature) and pressure

- Use multiple independent replicates

Reweighting Procedure

- Calculate experimental observables for each simulation frame

- Apply maximum entropy principle to adjust weights

- Target Kish ratio of K = 0.10 (retaining ~3000 structures from 29,976) [5]

- Validate with independent experimental data

Expected Results: Force-field independent conformational ensembles that show exceptional agreement with extensive experimental datasets and minimal overfitting.

Protocol 2: Validation Against Experimental Observables

Purpose: Quantitatively assess force field accuracy against experimental measurements [20].

Materials:

- Test Proteins: Engrailed homeodomain (EnHD, 54 residues) and RNase H (155 residues) [20]

- Force Fields: AMBER ff99SB-ILDN, CHARMM36, Levitt et al. force field [20]

- Software: Multiple MD packages (AMBER, GROMACS, NAMD, ilmm) [20]

Methodology:

Simulation Setup

- Obtain initial coordinates from PDB (1ENH for EnHD, 2RN2 for RNase H)

- Solvate in explicit water with 10 Å padding

- Apply periodic boundary conditions

Production Simulations

- Run triplicate 200 ns simulations for each force field/package combination

- Maintain experimental conditions (pH 7.0 for EnHD, pH 5.5 for RNase H)

- Use "best practice parameters" for each package [20]

Analysis

- Compare simulations to experimental data

- Assess conformational distributions

- Evaluate sampling completeness

Quantitative Data Tables

Table 1: Force Field Performance for IDP Conformational Ensemble Determination

| Force Field | Water Model | Initial Agreement with Experiment | Convergence After Reweighting | Recommended Use Cases |

|---|---|---|---|---|

| a99SB-disp | a99SB-disp water | Reasonable | High similarity across force fields | IDPs with mixed secondary structure |

| Charmm22* | TIP3P | Reasonable | High similarity across force fields | Disordered regions with helical propensity |

| Charmm36m | TIP3P | Reasonable | High similarity across force fields | Large IDPs and folded-disordered complexes |

Data based on reweighting results for Aβ40, drkN SH3, ACTR, PaaA2, and α-synuclein, which converged to highly similar conformational distributions after reweighting in favorable cases [5].

Table 2: Comparison of MD Packages and Force Fields for Ordered Proteins

| MD Package | Force Field | Water Model | Agreement with Experiment (EnHD) | Agreement with Experiment (RNase H) | Sampling Efficiency |

|---|---|---|---|---|---|

| AMBER | ff99SB-ILDN | TIP4P-EW | Good | Good | Moderate |

| GROMACS | ff99SB-ILDN | SPC/E | Good | Good | High |

| NAMD | CHARMM36 | TIP3P | Good | Good | Moderate |

| ilmm | Levitt et al. | TIP3P | Good | Good | Variable |

Data based on 200 ns simulations of Engrailed homeodomain and RNase H, which show overall good agreement with experimental observables but subtle differences in conformational distributions [20].

Research Reagent Solutions

Essential Materials for Force Field Validation Studies

| Reagent/Software | Function | Application Notes |

|---|---|---|

| GENESIS MD Software | Highly-parallel MD simulator with enhanced sampling algorithms | Supports QM/MM, atomistic force fields, and coarse-grained models [22] |

| a99SB-disp Force Field | Protein force field with disp water model | Specifically optimized for disordered proteins [5] |

| CHARMM36m Force Field | Modified protein force field | Improved accuracy for membrane proteins and IDPs [5] |

| Maximum Entropy Reweighting Code | Integrative refinement tool | Available from GitHub; automates ensemble refinement with experimental data [5] |

| REST (Replica Exchange Solute Tempering) | Enhanced sampling method | Improves conformational sampling of disordered regions [1] |

Workflow Diagrams

Force Field Selection and Validation Workflow

Conformational Ensemble Validation Protocol

From REST to AI: A Toolkit for Enhanced Conformational Sampling

Frequently Asked Questions (FAQs)

Q1: What is the key difference between REST1 and REST2, and why is REST2 often preferred?

REST2 uses a modified Hamiltonian scaling that specifically lowers energy barriers for the solute, leading to more efficient sampling of large conformational changes, such as protein folding. The key difference lies in the scaling of the protein-water interaction term (Epw). This change, along with the selective scaling of dihedral angles, results in a better acceptance probability and more effective exploration of the protein's conformational landscape compared to REST1 [23].

Q2: My GaMD simulation is not implemented in my main MD software (e.g., GROMACS). What are my options?

GaMD is not natively implemented in GROMACS, and existing independent branches may be outdated [24]. You have two primary options:

- Use a PLUMED Workaround: You can attempt to use the PLUMED plugin for GROMACS by setting the total system potential energy as a collective variable for well-tempered metadynamics, which can mimic the GaMD ensemble. A significant limitation is that current public versions typically only allow biasing the potential energy of the entire system, not specific components such as the dihedral energies of a protein motif [24].

- Switch Software: For a standard, supported implementation of GaMD, it is recommended to use software like NAMD or AMBER, where the method is fully implemented and validated [24].

Q3: How can I make my conformational ensemble accurate and force-field independent?

To achieve a force-field independent conformational ensemble, integrate your MD simulations with experimental data. A robust method is to use a maximum entropy reweighting procedure. This approach automatically adjusts the weights of structures from an MD simulation to achieve the best agreement with experimental data (e.g., from NMR and SAXS) while introducing minimal bias. When initial MD ensembles are in reasonable agreement with experiments, this reweighting can make ensembles from different force fields converge to highly similar conformational distributions, effectively removing the force field's bias [5].

Q4: What does a low Kish ratio indicate in a reweighted ensemble, and how can I fix it?

A low Kish ratio indicates that only a very small number of conformations from your original simulation are being heavily weighted to match the experimental data. This is a sign of overfitting and poor statistical robustness, meaning your final ensemble is not representative and may have lost the structural diversity sampled by the MD simulation [5]. To fix this:

- Use a higher Kish ratio threshold during reweighting to retain a larger effective ensemble size.

- Ensure your initial MD simulation is long enough to adequately sample the conformational space relevant to the experimental data you are using.

- Review the quality and appropriateness of the experimental data and the forward models used to calculate them from the structures [5].
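A short sketch of how the Kish ratio can be computed from a set of weights may help when diagnosing this problem. It assumes the common definition K = N_eff / N with N_eff = (Σw)² / Σw²; the function name `kish_ratio` and the example weights are illustrative.

```python
import numpy as np

def kish_ratio(weights):
    """Kish effective sample size divided by the number of frames."""
    w = np.asarray(weights, dtype=float)
    n_eff = w.sum() ** 2 / np.sum(w ** 2)
    return n_eff / w.size

# Uniform weights: every frame contributes equally, so K = 1.
uniform = np.ones(1000)

# Skewed weights: 10 frames dominate, which signals likely overfitting.
skewed = np.r_[np.full(10, 100.0), np.ones(990)]

print(kish_ratio(uniform))
print(kish_ratio(skewed))

# With K = 0.10 and 29,976 frames, the effective ensemble is about 3,000 frames.
print(round(0.10 * 29976))
```

The skewed example falls well below 0.10, the kind of value that would prompt the corrective steps listed above.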

Troubleshooting Guides

Problem: Poor Replica Exchange Acceptance Rates in REST2

A low acceptance rate defeats the purpose of enhanced sampling.

- Cause 1: The spacing between the effective solute temperatures of adjacent replicas is too large.

- Solution: Reduce the effective temperature difference between neighboring replicas, adding replicas if needed. The number of replicas required in REST2 scales with the square root of the number of solute degrees of freedom, far fewer than standard temperature replica exchange needs, but the replicas must still be spaced appropriately [23].

- Cause 2: Inadequate simulation time between exchange attempts.

- Solution: Ensure that the MD run time between exchange attempts is long enough for the replicas to decorrelate.

Problem: Inefficient or Unphysical Sampling in REST1

The simulation gets trapped, or higher-temperature replicas sample unrealistic conformations.

- Cause: The original REST1 scaling does not effectively lower energy barriers for large solutes undergoing big conformational changes [23].

- Solution: Switch to the REST2 protocol. If you must use REST1, consider a variant like Replica Exchange with Flexible Tempering (REFT), where only a specific, functionally relevant part of the protein is "heated," which can improve acceptance and sampling for that region [23].

Problem: GaMD Implementation is Not Available or Too Complex

You want to use GaMD but lack a straightforward implementation.

- Cause: GaMD is not a standard feature in all MD packages, and its implementation requires modifying potential energy terms, which is non-trivial [24].

- Solution: As a practical alternative, investigate the Essential Dynamics Sampling method in GROMACS, which is based on a similar conformational flooding formalism. Be aware that this code is dated and not extensively tested. For reliable GaMD results, the most straightforward path is to use AMBER or NAMD [24].

Quantitative Data and Methodologies

Table 1: Key Differences Between REST1 and REST2

| Feature | REST1 (Original) | REST2 (Improved) |

|---|---|---|

| Hamiltonian Scaling | Scales Epp, Epw, and Eww with different factors [23] | Scales Epp by βm/β0 and Epw by √(βm/β0); leaves Eww unscaled [23] |

| Effective Solute Temperature | Increased [23] | Increased, with lowered barriers [23] |

| Epw Scaling Factor | (β0 + βm)/(2βm) [23] | √(βm/β0) [23] |

| Acceptance Probability | Depends on fluctuation of Epp + 1/2 Epw [23] | Depends on fluctuation of Epp + (√β0/(√βm + √βn)) Epw [23] |

| Performance | Less efficient for large conformational changes [23] | Greatly improved efficiency for folding and large-scale changes [23] |

Table 2: Maximum Entropy Reweighting Parameters and Results

This table summarizes the methodology and outcomes from a study that reweighted ensembles of five IDPs using a maximum entropy approach [5].

| Parameter / Result | Description / Value |

|---|---|

| Initial Ensemble Size | 29,976 structures from 30 µs MD simulations [5] |

| Force Fields Tested | a99SB-disp, Charmm22* (C22*), Charmm36m (C36m) [5] |

| Reweighting Metric | Kish Ratio (K) [5] |

| Target Kish Ratio | K = 0.10 [5] |

| Final Ensemble Size | ~3,000 structures [5] |

| Key Outcome | For 3 of 5 IDPs, reweighted ensembles from different force fields converged to highly similar distributions [5] |

Experimental Protocols and Workflows

Workflow: Determining an Accurate Conformational Ensemble

The following diagram illustrates the integrative process of combining MD simulations with experimental data to produce a refined conformational ensemble [5].

Protocol: Setting Up and Running a REST2 Simulation

A typical workflow for a REST2 simulation, adapted for modern biomolecular simulation packages, involves the following steps [23]:

- System Preparation: Create the initial structure of the protein solvated in a water box. Add necessary ions to neutralize the system.

- Replica Parameterization:

- Choose a temperature of interest (T0).

- Select a set of scaling factors (βm/β0) that define the effective temperatures for your replicas. The number of replicas should be chosen to ensure good exchange rates and scales with sqrt(fp), where fp is the number of solute degrees of freedom.

- In practice, for replica m, scale the solute's dihedral force constants and Lennard-Jones ε parameters by βm/β0 and the solute charges by √(βm/β0), so that solute-solute interactions scale by βm/β0 and solute-water interactions by √(βm/β0).

- Equilibration: Run standard energy minimization and equilibration procedures for each replica.

- Production Run:

- Run molecular dynamics for each replica in parallel using its scaled Hamiltonian.

- Periodically attempt to swap the coordinates of neighboring replicas m and n based on the acceptance probability determined by the energy difference Δmn(REST2) = (βm - βn)[(Epp(Xn) - Epp(Xm)) + (√β0/(√βm + √βn))(Epw(Xn) - Epw(Xm))] [23].

- Analysis: Analyze the combined trajectory from the T0 replica (or use weighted analysis from all replicas) to compute thermodynamic and structural properties.
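The replica parameterization and exchange steps above can be sketched in a few lines. This is an illustration under stated assumptions, not the implementation of any MD package: the geometric spacing of effective temperatures is a common but assumed choice, the function names are hypothetical, and the exchange term is derived from a REST2 Hamiltonian in which Epp is scaled by βm/β0 and Epw by √(βm/β0).

```python
import math
import random

KB = 0.0019872041  # Boltzmann constant in kcal/(mol K)

def rest2_ladder(t0, t_max, n_replicas):
    """Geometric ladder of effective solute temperatures (assumed spacing).

    Returns (T_m, beta_m/beta_0) pairs; beta_m/beta_0 equals T0/T_m.
    """
    ratio = (t_max / t0) ** (1.0 / (n_replicas - 1))
    temps = [t0 * ratio ** m for m in range(n_replicas)]
    return [(t, t0 / t) for t in temps]

def rest2_delta(beta0, beta_m, beta_n, epp_m, epw_m, epp_n, epw_n):
    """Exchange exponent for swapping configurations X_m and X_n.

    epp_*/epw_* are the unscaled solute-solute and solute-water energies
    of the configuration currently held by replica m or n.
    """
    pw_factor = math.sqrt(beta0) / (math.sqrt(beta_m) + math.sqrt(beta_n))
    return (beta_m - beta_n) * ((epp_n - epp_m) + pw_factor * (epw_n - epw_m))

def accept_swap(delta, rng=random):
    """Metropolis criterion: accept with probability min(1, exp(-delta))."""
    return delta <= 0 or rng.random() < math.exp(-delta)

ladder = rest2_ladder(300.0, 600.0, 8)
for temp, scale in ladder:
    print(f"T_eff = {temp:6.1f} K   beta_m/beta_0 = {scale:.3f}")

beta0 = 1.0 / (KB * ladder[0][0])
beta_m = 1.0 / (KB * ladder[1][0])
beta_n = 1.0 / (KB * ladder[2][0])
# Illustrative energies in kcal/mol, not taken from a real simulation.
delta = rest2_delta(beta0, beta_m, beta_n, -100.0, -310.0, -120.0, -300.0)
print("delta =", delta, "accepted:", accept_swap(delta, random.Random(0)))
```

In a production run the ladder would be tuned until neighboring replicas exchange at a roughly uniform rate.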

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Force Fields for Advanced Sampling

| Item | Function / Description |

|---|---|

| GROMACS | A high-performance MD software package widely used for simulating biomolecules. It supports many enhanced sampling methods via its own routines or plugins like PLUMED [24]. |

| PLUMED | A versatile plugin that enables a vast array of enhanced sampling methods and collective variable analysis in conjunction with MD codes like GROMACS and NAMD [24]. |

| AMBER/NAMD | Alternative MD software packages that offer native support for methods like GaMD, providing a more straightforward implementation path for these specific protocols [24]. |

| a99SB-disp | A protein force field and water model combination shown to provide accurate conformational ensembles for intrinsically disordered proteins (IDPs) [5]. |

| Charmm36m | A widely used protein force field, often combined with the TIP3P water model, known for its good performance for both folded and disordered proteins [5]. |

| MaxEnt Reweighting Code | Custom code (e.g., from GitHub repositories associated with published studies) used to integrate MD simulations with experimental data via the maximum entropy principle [5]. |

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: What is the core principle behind Probabilistic MD Chain Growth (PMD-CG) and how does it accelerate conformational sampling?

PMD-CG is a novel protocol that rapidly constructs conformational ensembles of proteins, especially Intrinsically Disordered Regions (IDRs), by leveraging pre-computed statistical data. Its core principle involves breaking down the protein sequence into all possible consecutive tripeptides. For each unique tripeptide, a comprehensive conformational pool is generated using molecular dynamics (MD) simulations [1] [3]. The full-length protein ensemble is then grown by probabilistically stitching together these local tripeptide conformations. This method is extremely fast because the computationally expensive MD sampling is performed only once for each tripeptide fragment, bypassing the need for lengthy, continuous simulations of the entire protein chain [1].

Q2: My PMD-CG ensemble shows poor agreement with NMR chemical shifts. What could be the source of error?

Disagreement with experimental data like NMR chemical shifts can stem from several sources. First, examine the foundational elements of your protocol. The accuracy of PMD-CG depends heavily on the quality of the initial tripeptide conformational pools, so ensure that the MD simulations used to generate these pools are sufficiently converged and use a modern, accurate force field [25]. Second, review the chain growth logic: the probabilistic selection of fragments must correctly reflect the sequence context and the conformational preferences of overlapping tripeptides. Finally, consider refining your ensemble with a maximum entropy reweighting procedure. This approach minimally adjusts the weights of conformations in your PMD-CG ensemble to achieve optimal agreement with experimental data, such as NMR chemical shifts and SAXS profiles, thereby refining the initial model [5].

Q3: When comparing my conformational ensemble to a reference, what metrics should I use beyond the Root Mean Square Deviation (RMSD)?

For flexible and heterogeneous systems like IDPs, the traditional RMSD is often inadequate because it requires structural superimposition, which is not meaningful for ensembles without a stable core [6]. Instead, you should use superimposition-free, distance-based metrics. A key global metric is the ensemble distance Root Mean Square Deviation (ens_dRMS), which calculates the root mean square difference between the medians of Cα-Cα distance distributions for all residue pairs in two ensembles [6]. For local comparisons, you can analyze difference matrices that show how the distance distributions of specific residue pairs vary between ensembles, assessing the statistical significance of these differences with non-parametric tests [6].
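The ens_dRMS definition given above can be sketched directly with NumPy. The function names are illustrative, the random-walk ensembles stand in for real Cα coordinates, and averaging over the unique residue pairs (i < j) is an assumed normalization.

```python
import numpy as np

def median_distance_matrix(ensemble):
    """ensemble: (n_conformers, n_residues, 3) C-alpha coordinates.

    Returns the (n_residues, n_residues) matrix of median pairwise distances.
    """
    diff = ensemble[:, :, None, :] - ensemble[:, None, :, :]
    dists = np.linalg.norm(diff, axis=-1)
    return np.median(dists, axis=0)

def ens_drms(ens_a, ens_b):
    """RMS difference between the median distance maps of two ensembles."""
    da = median_distance_matrix(ens_a)
    db = median_distance_matrix(ens_b)
    i, j = np.triu_indices(da.shape[0], k=1)  # unique residue pairs only
    return float(np.sqrt(np.mean((da[i, j] - db[i, j]) ** 2)))

rng = np.random.default_rng(1)
# Toy ensembles: 200 random-walk chains of 20 "residues" each.
compact = np.cumsum(rng.normal(size=(200, 20, 3)), axis=1)
expanded = 1.5 * compact  # same shapes, uniformly more extended

print(ens_drms(compact, compact))
print(ens_drms(compact, expanded))
```

Because only internal distances enter, no structural superimposition is needed, which is the property that makes the metric suitable for disordered ensembles.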

Q4: How do tripeptide-based methods like the robotics-inspired approach enhance Monte Carlo sampling?

Tripeptide-based methods represent the protein backbone as a series of interconnected kinematic chains, each corresponding to a tripeptide fragment. This representation enables the use of efficient inverse kinematics solvers from robotics to perform complex backbone moves that preserve bond geometry [26]. Within a Monte Carlo framework, this allows for the implementation of sophisticated "move classes," such as perturbing a single torsion angle and using inverse kinematics to compute a new conformation for the subsequent tripeptide that keeps the ends of the segment fixed (ConRot move). These fixed-end moves are larger and more physically realistic than simple torsion pivots, leading to a higher acceptance rate and a more efficient exploration of conformational space [26].

Troubleshooting Guides

Problem 1: Inefficient Sampling in Monte Carlo Simulations

- Symptoms: The simulation gets trapped in certain conformational states, low acceptance rate for trial moves, or slow convergence of ensemble-averaged properties.

- Possible Causes and Solutions:

- Cause: Over-reliance on simple move classes like single torsion angle perturbations (OneTorsion moves).

- Solution: Implement advanced, tripeptide-based move classes. Combine multiple move classes, such as ConRot, OneParticle, and Hinge moves, which operate on a tripeptide representation and use inverse kinematics to perform larger, concerted motions that maintain local geometry [26].

- Cause: Incorrect perturbation step-sizes (δ parameters) for move classes.

- Solution: Calibrate step-sizes so that different move classes produce trial moves with similar magnitudes of atomic displacement. This ensures a balanced and efficient exploration. Refer to established parameters, for example, a backbone torsion step-size (δb) of 0.02-0.025 radians or a particle rotation (δpr) of 0.003-0.02 radians [26].

Problem 2: Discrepancies Between Simulated and Experimental Ensembles

- Symptoms: Computed observables (e.g., radius of gyration, chemical shifts, SAXS profiles) from your simulation ensemble deviate significantly from experimental measurements.

- Possible Causes and Solutions:

- Cause: Inaccuracies in the underlying molecular mechanics force field.

- Solution: Use a state-of-the-art force field that has been specifically validated for IDPs, such as a99SB-disp, Charmm36m, or Charmm22* [5]. If possible, employ a machine-learned potential energy surface (ML-PES) trained on high-level quantum chemical data for tripeptide fragments to improve accuracy [25].

- Cause: The simulation ensemble, while physically plausible, does not perfectly match the experimental conditions.

- Solution: Apply a maximum entropy reweighting procedure. This is a fully automated, integrative method that adjusts the statistical weights of conformations in your pre-computed ensemble (from MD or PMD-CG) to achieve the best possible agreement with a comprehensive set of experimental data (NMR, SAXS) without drastically altering the sampled structures [5].

Table 1: Comparison of Sampling Techniques for a 20-residue p53-CTD IDR

| Method | Computational Speed | Key Principle | Agreement with REST (Reference) | Best For |

|---|---|---|---|---|

| PMD-CG | Extremely Fast [1] [3] | Probabilistic chain growth from tripeptide pools [1] [3] | Good agreement with experimental observables [1] [3] | Rapid generation of initial ensembles |

| REST (Replica Exchange Solute Tempering) | Slow (Reference Method) | Enhanced sampling via temperature/solute replicas [1] [3] | Reference method [1] [3] | Generating high-quality reference ensembles |

| Tripeptide-Based Monte Carlo | Fast [26] | Robotics-inspired inverse kinematics on tripeptides [26] | N/A (Study used different test systems) | Efficiently exploring conformational space around a starting structure |

Table 2: Key Diagnostic Metrics for Conformational Ensembles

| Metric | Description | Application | Interpretation |

|---|---|---|---|

| ens_dRMS [6] | Root mean square difference between median Cα-Cα distances of two ensembles [6] | Global ensemble similarity | Lower values indicate more similar ensembles. A value of 0 means identical median distance maps. |

| Difference Matrix [6] | Matrix showing differences in distance distributions for each residue pair [6] | Local, residue-level ensemble comparison | Identifies specific protein regions contributing to global differences. |

| Kish Ratio (K) [5] | Effective ensemble size relative to the total number of conformations N: K = (Σwi)² / (N Σwi²) [5] | Assessing reweighting robustness | Measures the fraction of conformations that carry significant weight. A high K (e.g., >0.1) indicates minimal overfitting. |

| Radius of Gyration (Rg) | Measure of overall compactness [6] | Characterizing global chain dimensions | Can be calculated from Cα atoms alone and compared with SAXS data. |

Experimental Protocols

Protocol 1: Setting Up a Probabilistic MD Chain Growth (PMD-CG) Simulation

- Tripeptide Identification: Decompose the target protein sequence into every possible consecutive three-residue fragment (tripeptide).

- Conformational Pool Generation: For each unique tripeptide sequence, run an all-atom MD simulation (or enhanced sampling simulation) in explicit solvent to thoroughly sample its conformational space. It is critical to use a well-benchmarked force field for this step [25].

- Database Creation: Store the resulting conformational trajectories for each tripeptide in a database, characterizing the distributions of dihedral angles and distances.

- Chain Assembly: To build a full-length conformation, start from the N-terminus. Probabilistically select a conformation for the first tripeptide from its pool. For the next tripeptide (residues 2-4), select a conformation that is structurally compatible with the C-terminal portion of the previous fragment, ensuring a smooth backbone connection. Repeat this process until the entire chain is built.

- Ensemble Generation: Repeat Step 4 thousands of times to generate a large and statistically representative ensemble of full-length protein conformations.
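The decomposition and assembly steps can be illustrated with a deliberately simplified toy. It is not the published PMD-CG code: the sequence is arbitrary, the pools hold random (phi, psi) pairs in place of MD-derived conformations, and the structural compatibility check between overlapping fragments described in the Chain Assembly step is omitted. All names are hypothetical.

```python
import random

def tripeptides(sequence):
    """All consecutive three-residue fragments of a sequence."""
    return [sequence[i:i + 3] for i in range(len(sequence) - 2)]

def grow_chain(sequence, pools, rng):
    """Draw one (phi, psi) pair per fragment from that fragment's pool.

    Simplification: draws are independent; a real protocol would condition
    each draw on compatibility with the previously placed fragment.
    """
    return [rng.choice(pools[tri]) for tri in tripeptides(sequence)]

rng = random.Random(0)
sequence = "ACDEFGACD"  # arbitrary toy sequence
fragments = tripeptides(sequence)

# Placeholder pools: 50 random (phi, psi) pairs per unique tripeptide.
# In practice each pool would come from an MD simulation of that tripeptide.
pools = {
    tri: [(rng.uniform(-180, 180), rng.uniform(-180, 180)) for _ in range(50)]
    for tri in sorted(set(fragments))
}

ensemble = [grow_chain(sequence, pools, rng) for _ in range(1000)]
print(fragments)
print(len(pools), "unique pools for", len(fragments), "fragments")
```

The repeated fragment ACD needs only one pool, which is the source of the speed-up: the expensive sampling is done once per unique tripeptide and reused.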

Protocol 2: Integrating Experimental Data with Maximum Entropy Reweighting

- Generate a Prior Ensemble: Produce an initial conformational ensemble using any method (e.g., long-timescale MD, PMD-CG, Monte Carlo).

- Calculate Observables: Use forward models (software that predicts experimental data from atomic coordinates) to compute the experimental observables (e.g., chemical shifts, J-couplings, SAXS profile, Rg) for every conformation in your prior ensemble [5].

- Define Target and Uncertainty: Specify the experimentally measured values for these observables and their associated experimental uncertainties.

- Run Reweighting Algorithm: Employ a maximum entropy reweighting algorithm. The goal is to find a new set of weights for each conformation in the prior ensemble such that:

- The reweighted ensemble's averaged observables match the experimental targets within uncertainty.

- The Kullback-Leibler divergence (a measure of information loss) from the prior ensemble is minimized, meaning the solution is the least biased one possible.

- Validate the Ensemble: Check the Kish ratio of the reweighted ensemble to ensure it has not overfitted the data. A robust ensemble should retain a significant effective sample size (e.g., K > 0.1) [5]. Analyze the new ensemble to extract biologically relevant insights.
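The reweighting and validation steps can be sketched with NumPy alone. This is a generic maximum entropy solver under stated assumptions, not the code released with [5]: weights take the form w_i ∝ w_i⁰ exp(-Σ_j λ_j O_ij), experimental errors enter as a Gaussian regularization term, and the Lagrange multipliers are found by Newton iterations. The function names and the synthetic Rg data are illustrative.

```python
import numpy as np

def _weights(obs, w0, lam):
    """Normalized weights w_i proportional to w0_i * exp(-obs_i . lam)."""
    logw = np.log(w0) - obs @ lam
    w = np.exp(logw - logw.max())  # subtract max for numerical stability
    return w / w.sum()

def maxent_reweight(obs, target, sigma, prior=None, n_iter=50, tol=1e-10):
    """obs: (n_frames, n_obs) back-calculated observables per frame."""
    obs = np.asarray(obs, dtype=float)
    target = np.asarray(target, dtype=float)
    sigma2 = np.asarray(sigma, dtype=float) ** 2
    n_frames, n_obs = obs.shape
    if prior is None:
        w0 = np.full(n_frames, 1.0 / n_frames)
    else:
        w0 = np.asarray(prior, dtype=float) / np.sum(prior)
    lam = np.zeros(n_obs)
    for _ in range(n_iter):
        w = _weights(obs, w0, lam)
        mean = w @ obs
        grad = target + lam * sigma2 - mean  # gradient of the dual function
        if np.max(np.abs(grad)) < tol:
            break
        centered = obs - mean
        hess = (centered * w[:, None]).T @ centered + np.diag(sigma2)
        lam = lam - np.linalg.solve(hess, grad)  # Newton step
    return _weights(obs, w0, lam), lam

rng = np.random.default_rng(0)
rg = rng.normal(2.0, 0.3, 5000)  # synthetic per-frame Rg values (nm)
weights, lam = maxent_reweight(rg[:, None], target=[2.1], sigma=[0.02])

kish = 1.0 / (len(weights) * np.sum(weights ** 2))
print("prior mean:     ", rg.mean())
print("reweighted mean:", weights @ rg)
print("Kish ratio:     ", kish)
```

Here the prior already lies close to the target, so the Kish ratio stays high. A value far below the chosen threshold would indicate that a handful of frames carry the fit.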

Workflow and Relationship Diagrams

PMD-CG and Ensemble Refinement Workflow

Conformational Ensemble Comparison Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Methods

| Item / Resource | Function / Description | Relevance to PMD-CG & Tripeptide Methods |

|---|---|---|

| Molecular Dynamics Engine (e.g., GROMACS, CHARMM, AMBER) | Performs all-atom simulations to generate conformational pools. | Used to sample the conformational space of individual tripeptides, forming the foundational database for PMD-CG [1] [25]. |

| Machine-Learned Potential (ML-PES) | Provides a highly accurate potential energy surface trained on quantum chemistry data. | Can be used to generate ultra-accurate tripeptide conformational pools, improving the physical realism of the PMD-CG starting point [25]. |

| Maximum Entropy Reweighting Software (e.g., custom scripts from [5]) | Integrates simulation ensembles with experimental data. | Refines initial PMD-CG or MD ensembles to achieve quantitative agreement with NMR and SAXS data, ensuring statistical accuracy [5]. |

| Ensemble Comparison Metrics (ens_dRMS, Difference Matrices) | Quantifies similarity between different conformational ensembles. | Essential for validating PMD-CG ensembles against reference methods (e.g., REST) and for benchmarking against experimental data [6]. |

| Tripeptide Conformational Database | A curated collection of sampled structures for all possible tripeptides. | The core "reagent" that enables the rapid assembly phase of the PMD-CG protocol [1] [3]. |

Troubleshooting Guide: Common ICoN Implementation Issues

This section addresses specific technical challenges researchers may face when deploying the Internal Coordinate Net (ICoN) model for sampling conformational ensembles of highly dynamic proteins.

Table 1: Common ICoN Errors and Solutions

| Problem Description | Potential Cause | Solution Steps | Verification Method |

|---|---|---|---|

| High reconstruction RMSD in generated conformations, particularly for larger proteins (>60 residues). | Insufficient training data or model complexity. The dimension of the latent space may be too small for the protein size [27]. | 1. Increase training dataset size to at least 20-30% of a long MD simulation [27]. 2. Scale latent space dimension with protein size (e.g., ~0.75 × number of residues) [27]. 3. For proteins like ChiZ (64 residues), ensure reconstruction RMSD is below ~8.3 Å [27]. | Calculate RMSD between a subset of generated structures and reference MD simulation frames. |

| Poor agreement with experimental data (e.g., SAXS, NMR) after reweighting. | The generated ensemble may not fully cover the biologically relevant conformational space sampled in solution [5]. | 1. Integrate the ensemble using a maximum entropy reweighting procedure with a Kish Ratio threshold (e.g., K=0.10) to match experimental data [5]. 2. Use multiple experimental restraints (NMR chemical shifts, SAXS) concurrently to improve accuracy [5]. | Check the χ² value between experimental data and back-calculated data from the reweighted ensemble [5]. |

| Latent space interpolation produces unrealistic or non-physical conformations. | Linear interpolation in latent space may traverse regions not supported by the training data's underlying distribution [28]. | 1. Select interpolating data points by modeling the latent space as a multivariate Gaussian distribution [27]. 2. Sample new latent vectors directly from this defined Gaussian distribution rather than using simple linear paths [27]. | Visually inspect interpolated conformations for steric clashes or unnatural bond angles using molecular visualization software. |

| Inability to identify novel conformations not present in the training MD data. | The model may be under-sampling the latent space or overfitting to the training set [28]. | 1. Systematically sample from the extremes of the latent Gaussian distribution [28]. 2. Analyze generated clusters for distinct sidechain rearrangements and validate with orthogonal data like EPR studies [28]. | Compare generated synthetic conformations with training set frames using clustering analysis (e.g., RMSD-based). |
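The Gaussian latent-space sampling mentioned in the table can be sketched as follows. It assumes latent vectors are already available from a trained encoder; random data stands in for them here, and the function names are hypothetical. Decoding the sampled vectors back into conformations requires the trained ICoN decoder, which is not shown.

```python
import numpy as np

def fit_latent_gaussian(latent):
    """Fit a multivariate Gaussian to latent vectors of shape (n, d)."""
    mean = latent.mean(axis=0)
    cov = np.cov(latent, rowvar=False)
    return mean, cov

def sample_latent(mean, cov, n_samples, rng):
    """Draw new latent vectors from the fitted Gaussian."""
    return rng.multivariate_normal(mean, cov, size=n_samples)

rng = np.random.default_rng(0)
# Stand-in for encoder output: 2000 frames in a 3-dimensional latent space.
latent_train = rng.normal(size=(2000, 3)) * [1.0, 0.5, 0.2] + [0.0, 1.0, -1.0]

mean, cov = fit_latent_gaussian(latent_train)
latent_new = sample_latent(mean, cov, 500, rng)
print(latent_new.shape)
print(latent_new.mean(axis=0))
```

Sampling from the fitted distribution keeps new points in regions supported by the training data, unlike straight-line interpolation between two latent vectors.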

Frequently Asked Questions (FAQs)

Q1: What is the minimum amount of Molecular Dynamics (MD) data required to effectively train ICoN for a new protein?

The required MD data depends on the protein's size and intrinsic disorder. For smaller IDPs like a 15-residue polyglutamine (Q15), training on as little as 5-10% of an MD simulation (corresponding to ~95-190 ns) can yield reasonable results (average reconstruction RMSD <5 Å). For medium-sized proteins like Aβ40 (40 residues), using 20% of the MD data for training is recommended to achieve an average reconstruction RMSD of ~6.0 Å. For larger proteins like the 64-residue ChiZ, more extensive training data is necessary [27].

Q2: How can I validate that the conformational ensemble generated by ICoN is physically accurate and not just an artifact of the model?

Validation should be a multi-faceted process:

- Internal Validation: Ensure the generated ensemble covers the conformational space of a long, reference MD simulation that was not used for training [27].

- Experimental Validation: Compare your results with experimental data. A robust method is to use a maximum entropy reweighting procedure to integrate the ensemble with experimental observables from NMR spectroscopy and SAXS. In favorable cases, ensembles reweighted with extensive data converge to highly similar, force-field-independent distributions, indicating physical accuracy [5].

- Statistical Validation: Use the Kish ratio (a measure of the effective ensemble size) to ensure the reweighted ensemble does not overfit the experimental data and retains statistical robustness. A Kish ratio of 0.10 is a typical target, meaning the final ensemble effectively contains about 10% of the original frames [5].

Q3: Our goal is drug discovery. Can ICoN-generated ensembles be used for structure-based drug design on dynamic targets?

Yes, this is a primary application. For dynamic proteins like the SARS-CoV-2 Spike protein, deep learning models that analyze conformational ensembles (like ICoN) can discriminate subtle conformational changes induced by point mutations. These changes are linked to functional impacts like increased infectivity and reduced immunogenicity. Identifying these patterns helps in anticipating high-risk variants and can inform the design of therapeutics and vaccines that target specific conformational states [29].

Experimental Protocol: Building an ICoN Workflow

This protocol outlines the key steps for generating and validating a conformational ensemble using the ICoN framework, integrating methodologies from recent literature [28] [5] [27].

1. Data Preparation and MD Simulation

- Objective: Generate a foundational conformational ensemble for training.

- Procedure:

- Run all-atom Molecular Dynamics (MD) simulations of the target protein using a modern force field (e.g., a99SB-disp, Charmm36m) [5].

- Ensure simulation length is sufficient to capture relevant dynamics. For IDPs, this often requires tens of microseconds [27].

- Extract frames at regular intervals (e.g., every 10-20 ps) to create the training dataset.

2. ICoN Model Training

- Objective: Train the deep learning model to learn the internal coordinates and physical principles of conformational changes from the MD data.

- Procedure:

- Represent protein conformations in internal coordinates (dihedrals, angles, bonds) as input for ICoN [28].

- Project the high-dimensional conformational data into a reduced-dimensional latent space.

- Train the model to minimize the reconstruction error, allowing it to learn a compressed representation of the protein's dynamics.

3. Conformation Generation and Sampling

- Objective: Generate a comprehensive, synthetic conformational ensemble.

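- Procedure:

- Model the latent space learned from the training data as a multivariate Gaussian distribution and sample new latent vectors from it, rather than interpolating linearly between points [27].

- Decode the sampled latent vectors back into full conformations to build the synthetic ensemble.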

4. Integrative Reweighting with Experimental Data

- Objective: Refine the generated ensemble to achieve maximum accuracy and agreement with real-world data.

- Procedure:

- Collect experimental data, such as NMR chemical shifts and SAXS profiles [5].

- Use a maximum entropy reweighting procedure to adjust the weights of conformations in your generated ensemble. The goal is to achieve the best possible agreement with the experimental data while making the minimal necessary perturbation to the original ensemble [5].

- Use a target Kish ratio (e.g., K=0.10) to automatically balance the restraints from different experimental datasets and prevent overfitting [5].

5. Ensemble Validation and Analysis

- Objective: Confirm the biological relevance and utility of the final ensemble.

- Procedure:

- Cluster Analysis: Perform clustering (e.g., based on RMSD) on the reweighted ensemble to identify dominant conformational states [28].

- Rationalize Findings: Correlate identified clusters with experimental findings. For example, specific clusters may explain EPR data or the effects of amino acid substitutions [28].

- Identify Novel States: Search the generated ensemble for conformations with distinct structural features (e.g., unique side-chain interactions) that were not present in the original MD training data [28].

ICoN Experimental Workflow

Research Reagent Solutions

Table 2: Essential Computational Tools and Resources

| Item | Function in Workflow | Examples & Notes |
|---|---|---|
| MD Simulation Software | Generates the initial atomic-resolution conformational ensemble for training. | GROMACS, AMBER, CHARMM, NAMD. Use with modern force fields like a99SB-disp or CHARMM36m for IDPs [5]. |
| Generative Deep Learning Framework | Provides the environment to build, train, and deploy the ICoN model. | TensorFlow, PyTorch. Custom code is required to implement the ICoN architecture and latent space sampling [28]. |
| Experimental Data (NMR, SAXS) | Serves as experimental restraints for integrative modeling and validation. | NMR chemical shifts, J-couplings, residual dipolar couplings (RDCs), and SAXS profiles are commonly used [5] [27]. |
| Integrative Reweighting Software | Refines the computational ensemble to achieve optimal agreement with experimental data. | In-house scripts implementing maximum entropy reweighting; PLUMED; other bespoke pipelines [5]. |
| Analysis & Visualization Suite | Used for analyzing trajectories, clustering, and visualizing 3D structures. | MDTraj, PyMOL, VMD, UCSF Chimera. Critical for analyzing generated ensembles and comparing to MD data [29]. |

Frequently Asked Questions (FAQs)

Q1: What is the core principle behind maximum entropy reweighting of molecular dynamics (MD) simulations? Maximum entropy reweighting is a computational technique that refines a conformational ensemble obtained from an MD simulation by integrating experimental data. The core principle is to introduce the minimal perturbation to the original simulation-derived weights so that the recalculated ensemble averages of experimental observables (e.g., from NMR or SAXS) agree with the measured data. This approach ensures the final ensemble is as statistically close as possible to the original simulation while being consistent with experiments [30] [31].
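The minimal-perturbation idea can be illustrated for the simplest case of a single observable and uniform initial weights. Under those assumptions the maximum entropy solution has the exponential form w_j ∝ exp(-λ f_j), and λ is chosen so that the reweighted average equals the target. The sketch below finds λ by bisection; the function names and the search bracket of ±50 are hypothetical choices, and experimental error is ignored here.

```python
import math

def tilted_weights(values, lam):
    """Normalized weights proportional to exp(-lam * value)."""
    exponents = [-lam * v for v in values]
    shift = max(exponents)  # subtract the maximum for numerical stability
    raw = [math.exp(e - shift) for e in exponents]
    norm = sum(raw)
    return [r / norm for r in raw]

def weighted_mean(weights, values):
    return sum(w * v for w, v in zip(weights, values))

def maxent_reweight(values, target, lam_lo=-50.0, lam_hi=50.0, n_iter=200):
    """Weights closest to uniform whose average of `values` equals `target`."""
    if not min(values) < target < max(values):
        raise ValueError("target must lie strictly inside the sampled range")
    for _ in range(n_iter):
        lam = 0.5 * (lam_lo + lam_hi)
        # The reweighted average decreases monotonically as lam increases.
        if weighted_mean(tilted_weights(values, lam), values) > target:
            lam_lo = lam
        else:
            lam_hi = lam
    return tilted_weights(values, 0.5 * (lam_lo + lam_hi))

# Hypothetical per-frame values of one observable and a measured average.
per_frame = [1.0, 2.0, 3.0, 4.0]
weights = maxent_reweight(per_frame, target=2.0)
```

Every conformation keeps a non-zero weight; the weights shift smoothly toward frames that pull the average in the required direction, which is what "minimal perturbation" means in practice.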

Q2: In the context of statistical accuracy, why is reweighting often necessary for conformational ensembles of Intrinsically Disordered Proteins (IDPs)? MD simulations of IDPs are prone to inaccuracies due to force field limitations and finite sampling. Even with state-of-the-art force fields, simulations can sample regions of conformational space that are inconsistent with experimental data. Reweighting corrects the statistical weights of the conformations in the ensemble, leading to a more accurate representation of the true solution ensemble without discarding conformational states, thereby improving the statistical accuracy of the ensemble properties [32] [5] [30].

Q3: My reweighted ensemble fits the experimental data perfectly but has a very low effective ensemble size (Kish ratio). What does this indicate? A low effective ensemble size, often measured by the Kish ratio, indicates that only a very small subset of conformations from the original simulation is assigned significant weight. This is a classic sign of overfitting. The model has likely over-interpreted the experimental data, including its noise, and has become overly specific. To address this, you should relax the restraints or use a Bayesian framework that incorporates uncertainties in the experimental data to prevent the ensemble from collapsing onto too few structures [5] [30] [31].

Q4: How do I choose the appropriate experimental data and the strength of the restraints for reweighting? The choice of data should be guided by the system and the scientific question. NMR chemical shifts, J-couplings, and SAXS data are commonly used. The key is to use multiple independent data types to avoid overfitting to a single observable. For restraint strength, modern automated protocols can balance the influence of different datasets based on a single parameter, such as the desired effective ensemble size, eliminating the need for manual tuning [5]. The Bayesian Maximum Entropy (BME) approach also provides a framework to incorporate experimental uncertainties naturally, which helps determine the optimal restraint strength [31].

Q5: Can maximum entropy reweighting create new conformations that were not present in the original MD simulation? No, a fundamental limitation of reweighting methods is that they cannot generate new conformations. They can only adjust the statistical weights of the conformations already present in the initial ensemble. Therefore, the initial MD simulation must be comprehensive and sample a sufficiently diverse conformational space that includes the biologically relevant states. If key conformations are missing from the initial ensemble, reweighting cannot recover them [30] [33].

Q6: What are the indicators of a successful and statistically robust reweighting procedure? A successful reweighting procedure is indicated by:

- Good agreement with experimental data: The reweighted ensemble should accurately back-calculate the experimental data used for reweighting.

- Reasonable effective ensemble size: The Kish ratio should not be too low, indicating that a meaningful number of conformations contribute to the ensemble.

- Validation with unused data: The ensemble should be able to predict experimental observables that were not used in the reweighting process.

- Force-field convergence: In favorable cases, reweighting simulations from different force fields with the same experimental data should lead to highly similar conformational distributions, suggesting the result is force-field independent [32] [5].
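The agreement checks in this list are typically quantified with a reduced chi-squared, computed once for the data used in reweighting and once for held-out data. The sketch below is a minimal version under stated assumptions: `calc[i][j]` holds observable i back-calculated for frame j, averaging is linear, and all names are hypothetical.

```python
def ensemble_average(weights, per_frame_values):
    """Weighted linear average of one observable over all frames."""
    total = sum(weights)
    return sum(w * v for w, v in zip(weights, per_frame_values)) / total

def reduced_chi2(weights, calc, exp, sigma):
    """Mean squared deviation from experiment in units of the error.

    calc[i][j] is observable i computed for frame j; exp[i] and sigma[i]
    are the measured value and its uncertainty.
    """
    terms = []
    for calc_i, exp_i, sigma_i in zip(calc, exp, sigma):
        avg = ensemble_average(weights, calc_i)
        terms.append(((avg - exp_i) / sigma_i) ** 2)
    return sum(terms) / len(terms)

# Hypothetical two-frame ensemble with two held-out observables.
weights = [0.5, 0.5]
held_out_calc = [[1.0, 3.0], [2.0, 4.0]]
chi2_validation = reduced_chi2(weights, held_out_calc, exp=[3.0, 3.0], sigma=[1.0, 1.0])
```

A validation value that stays close to the training value suggests the reweighting generalizes; a training value near zero paired with a much larger validation value points to overfitting.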

Troubleshooting Guides

Issue 1: Poor Agreement with Experimental Data After Reweighting

Problem: After performing the reweighting procedure, the calculated averages from the ensemble still show significant disagreement with the target experimental data.

Possible Causes and Solutions:

- Cause 1: Inadequate initial sampling. The original MD simulation did not sample the conformational space relevant to the experimental conditions.

- Cause 2: Systematic force field bias. The physical model (force field) used for the simulation has a fundamental inaccuracy that cannot be corrected by reweighting a single simulation.

- Cause 3: Incorrect forward model. The function used to calculate the experimental observable from an atomic structure is inaccurate or uses an inappropriate averaging scheme.

- Solution: Verify the forward model. For example, ensure that Nuclear Overhauser Effect (NOE)-derived distances are averaged with an $r^{-6}$ scheme, while chemical shifts are averaged linearly [30].
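The difference between the two averaging schemes is easy to see numerically. The sketch below compares a linear average with an $r^{-6}$ average for one interatomic distance; the function names and the example distances are hypothetical.

```python
def linear_average(weights, values):
    """Weighted arithmetic mean, appropriate for chemical shifts."""
    total = sum(weights)
    return sum(w * v for w, v in zip(weights, values)) / total

def noe_effective_distance(weights, distances):
    """Effective distance from r^-6 averaging: (<r^-6>)^(-1/6)."""
    total = sum(weights)
    mean_inv6 = sum(w * d ** -6 for w, d in zip(weights, distances)) / total
    return mean_inv6 ** (-1.0 / 6.0)

# Hypothetical distances (in angstroms) for the same atom pair in two frames.
weights = [0.5, 0.5]
distances = [3.0, 6.0]
r_linear = linear_average(weights, distances)       # 4.5
r_noe = noe_effective_distance(weights, distances)  # about 3.36
```

The $r^{-6}$ average is dominated by the frames in which the atoms are close, so applying a linear average to NOE-derived distances overestimates the effective distance and can produce apparent disagreement that reweighting cannot fix.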

Issue 2: Overfitting and Ensemble Collapse

Problem: The reweighted ensemble achieves excellent agreement with the experimental data but has a very low effective ensemble size (Kish ratio), meaning it is dominated by a handful of conformations.

Possible Causes and Solutions:

- Cause 1: Restraints are too strong. The parameters controlling the strength of the experimental restraints are set too high, forcing the algorithm to select a few conformations that perfectly fit the data, including its noise.

- Solution: Use a method that automatically balances restraints, such as setting a target for the effective ensemble size (Kish ratio) [5]. In a Bayesian framework, ensure that experimental uncertainties (errors) are properly set, as this naturally prevents overfitting by making the restraints softer [31].

- Cause 2: Experimental data is over-interpreted. The assumed experimental error is smaller than the true uncertainty.

- Solution: Re-evaluate the experimental error estimates. Increase the uncertainties in the reweighting procedure to allow for a more diverse ensemble that still agrees with the data within its error margins [31].

Issue 3: Inconsistent Results with Different Initial Ensembles

Problem: Reweighting different MD simulations (e.g., from different force fields) for the same system leads to significantly different final ensembles.

Possible Causes and Solutions:

- Cause 1: Divergent initial conformational sampling. The different force fields sample fundamentally different regions of conformational space, and the experimental data is insufficient to guide them to a consensus.

- Solution: This indicates a challenging system. Increase the amount and type of experimental data used for reweighting. If the ensembles remain divergent, it may highlight a genuine force field issue, and the ensemble that shows the best agreement with a broad set of validation data should be trusted [5].

- Cause 2: Insufficient experimental restraints. The experimental data used is too sparse to uniquely define the conformational ensemble.

Experimental Protocols & Workflows

Protocol 1: Determining an Accurate Conformational Ensemble for an IDP

This protocol is adapted from the integrative method demonstrated by Borthakur et al. [32] [5].

- System Preparation: Obtain or create the atomic model of the IDP.

- Molecular Dynamics Simulation:

- Perform long-timescale (e.g., microseconds) all-atom MD simulations using a state-of-the-art force field for disordered proteins (e.g., a99SB-disp, CHARMM36m).

- Ensure adequate sampling by using enhanced sampling techniques like REST if necessary [1].

- Save thousands of snapshots to build the initial conformational ensemble.

- Experimental Data Collection:

- Acquire ensemble-averaged experimental data. For IDPs, this typically includes:

- NMR spectroscopy: Chemical shifts, scalar J-couplings, Residual Dipolar Couplings (RDCs), and Paramagnetic Relaxation Enhancement (PRE) data.

- Small-Angle X-Ray Scattering (SAXS): Scattering profile providing information on overall shape and dimensions.

- Calculating Observables: Use appropriate forward models to calculate the theoretical value for each experimental observable from every snapshot in the MD ensemble.

- Maximum Entropy Reweighting:

- Apply a maximum entropy reweighting algorithm to adjust the weights of each snapshot.

- The goal is to minimize the discrepancy between the calculated (ensemble-averaged) and experimental observables while maximizing the entropy relative to the original simulation.

- Use a single parameter, such as a target Kish effective sample size, to automatically balance the restraints [5].

- Validation: Validate the final reweighted ensemble by checking its agreement with experimental data not used in the reweighting process and by ensuring the ensemble remains structurally and dynamically plausible.
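The idea of controlling the reweighting with a single target Kish value can be sketched for a toy case. The example below is not the protocol from [5], which balances many datasets at once; it assumes one observable, uniform initial weights, and weights of the form exp(-λ f_j), and it searches for the strongest tilt λ that still keeps the Kish ratio at or above the target. All names, the positive sign of λ, and the upper bound `lam_max` are hypothetical.

```python
import math

def tilted_weights(values, lam):
    """Normalized weights proportional to exp(-lam * value)."""
    exponents = [-lam * v for v in values]
    shift = max(exponents)  # subtract the maximum for numerical stability
    raw = [math.exp(e - shift) for e in exponents]
    norm = sum(raw)
    return [r / norm for r in raw]

def kish_ratio(weights):
    """Kish effective sample size divided by the number of conformations."""
    total = sum(weights)
    return (total * total) / (len(weights) * sum(w * w for w in weights))

def strongest_tilt_for_kish(values, target_kish, lam_max=50.0, n_iter=100):
    """Largest lam in [0, lam_max] whose weights keep kish_ratio >= target_kish."""
    if kish_ratio(tilted_weights(values, lam_max)) >= target_kish:
        return lam_max
    lo, hi = 0.0, lam_max  # lam = 0 gives uniform weights and a ratio of 1
    for _ in range(n_iter):
        mid = 0.5 * (lo + hi)
        if kish_ratio(tilted_weights(values, mid)) >= target_kish:
            lo = mid
        else:
            hi = mid
    return lo

# Hypothetical per-frame observable values for a 100-frame ensemble.
per_frame = [i / 10.0 for i in range(100)]
lam = strongest_tilt_for_kish(per_frame, target_kish=0.5)
final_weights = tilted_weights(per_frame, lam)
```

The bisection relies on the Kish ratio decreasing as the tilt grows, which holds for exponential weights of this form. The target must exceed 1/N, the value reached when a single frame dominates.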

The workflow for this integrative modeling approach is summarized in the diagram below:

Protocol 2: Bayesian/Maximum Entropy (BME) Reweighting

This protocol outlines the steps for the BME reweighting approach, as described by Bottaro et al. [31].

- Generate Initial Ensemble: Run an MD simulation to generate an initial ensemble of structures.

- Define Experimental Data and Errors: Compile experimental observables ($O_{exp}$) and their associated uncertainties ($\sigma_{exp}$).

- Calculate Reference Values: Compute the observables from the initial simulation ensemble ($O_{calc}$).

- Optimize Weights: Minimize a loss function that combines the $\chi^2$ agreement with experiment and a relative-entropy term, scaled by the parameter $\theta$, that penalizes large deviations from the initial weights.

- $L = \chi^2 - \theta S$, where $S = -\sum_j w_j \ln(w_j / w_j^0) \le 0$ is the relative entropy of the new weights $w_j$ with respect to the initial weights $w_j^0$. Larger values of $\theta$ keep the weights closer to the initial ensemble.

- Cross-Validation: Use cross-validation (e.g., leaving out parts of the data) to determine the optimal value of the hyperparameter $\theta$ that balances fit and ensemble size.

- Analyze Output: Use the final optimized weights to analyze all other properties of interest from the ensemble.
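The optimization step can be sketched in a few lines. This example assumes the dual formulation commonly used for BME-style reweighting, in which one multiplier λ_i per observable defines the weights w_j ∝ w_j^0 exp(-Σ_i λ_i F_i(x_j)) and the function Γ(λ) = ln Z(λ) + Σ_i λ_i F_i^exp + (θ/2) Σ_i λ_i² σ_i² is minimized. It is not the reference BME implementation: plain gradient descent stands in for a proper optimizer, θ is assumed positive, and all function names are hypothetical.

```python
import math

def bme_weights(calc, lambdas, prior):
    """w_j proportional to prior_j * exp(-sum_i lambda_i * calc[i][j])."""
    exponents = [
        -sum(lam * calc_i[j] for lam, calc_i in zip(lambdas, calc))
        for j in range(len(prior))
    ]
    shift = max(exponents)  # subtract the maximum for numerical stability
    raw = [p * math.exp(e - shift) for p, e in zip(prior, exponents)]
    norm = sum(raw)
    return [r / norm for r in raw]

def averages(weights, calc):
    return [sum(w * c for w, c in zip(weights, calc_i)) for calc_i in calc]

def bme_reweight(calc, exp, sigma, theta, prior=None, n_iter=2000):
    """Minimize the dual objective by gradient descent; calc[i][j] is
    observable i for frame j. Assumes theta > 0 and sigma > 0."""
    n_frames = len(calc[0])
    if prior is None:
        prior = [1.0 / n_frames] * n_frames
    # Step size from an upper bound on the curvature: each variance is at
    # most range^2 / 4, plus the quadratic regularization term.
    curvature = sum((max(c) - min(c)) ** 2 / 4.0 for c in calc)
    curvature += theta * max(s * s for s in sigma)
    step = 1.0 / curvature
    lambdas = [0.0] * len(calc)
    for _ in range(n_iter):
        avg = averages(bme_weights(calc, lambdas, prior), calc)
        grad = [e - a + theta * s * s * lam
                for e, a, s, lam in zip(exp, avg, sigma, lambdas)]
        lambdas = [lam - step * g for lam, g in zip(lambdas, grad)]
    return bme_weights(calc, lambdas, prior)

# Hypothetical data: two observables back-calculated for four frames.
calc = [[0.0, 1.0, 1.0, 0.0], [1.0, 0.0, 1.0, 0.0]]
w_soft = bme_reweight(calc, exp=[0.7, 0.4], sigma=[0.1, 0.1], theta=1.0)
w_stiff = bme_reweight(calc, exp=[0.7, 0.4], sigma=[0.1, 0.1], theta=100.0)
```

Comparing `w_soft` and `w_stiff` shows the role of θ: the larger value leaves the weights closer to the initial ensemble and the averages further from the experimental targets, which is the trade-off that the cross-validation step is meant to tune.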

Research Reagent Solutions

The following table details key computational and experimental "reagents" essential for successful integrative modeling studies.

Table 1: Essential Research Reagents for Integrative Modeling

| Category | Item/Software | Function/Benefit |
|---|---|---|
| MD Simulation Engines | GROMACS [34] | A high-performance molecular dynamics package for simulating biomolecular systems. Widely used for generating initial conformational ensembles. |
| Enhanced Sampling Methods | Replica Exchange Solute Tempering (REST) [1] | An enhanced sampling technique that improves the efficiency of conformational sampling, especially useful for IDPs. |
| Reweighting Software & Code | Bayesian/Maximum Entropy (BME) [31], Custom GitHub Scripts [5] | Software implementations that perform the maximum entropy reweighting of simulation ensembles against experimental data. |