Resolving Inaccurate Conformational Populations in MD Simulations: A Troubleshooting Guide for Biomedical Researchers

Abstract

Molecular dynamics (MD) simulations are a powerful tool for studying protein dynamics, but their predictive power is often limited by inaccuracies in conformational sampling and population estimates. This article provides a comprehensive troubleshooting framework for researchers and drug development professionals facing these challenges. It explores the foundational causes of inaccuracies, from force field limitations to insufficient sampling. The guide then details advanced methodological solutions, including enhanced sampling techniques and integrative approaches that leverage experimental data. A dedicated troubleshooting section offers practical optimization protocols, and the article concludes with robust strategies for validating and comparing conformational ensembles against experimental observables. By synthesizing the latest research, this work aims to equip scientists with the knowledge to generate more reliable, quantitatively accurate conformational ensembles for therapeutic design and mechanistic studies.

Understanding the Root Causes of Inaccurate Conformational Sampling

Frequently Asked Questions (FAQs)

Q1: My simulation seems to be stuck in one conformational state. How can I tell if this is a sampling problem or an issue with my force field?

A1: Distinguishing between sampling and force field issues is a critical first step. To diagnose this, run multiple independent simulations starting from different conformational states. If all simulations become trapped in the same state regardless of starting conformation, this suggests a potential force field problem that over-stabilizes that particular state. However, if simulations remain stuck in their respective starting states and fail to transition between them, this indicates a clear sampling problem where the simulation cannot overcome the energy barriers separating the states [1] [2].
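
The diagnostic logic above can be written as a small decision rule. This is a minimal sketch, assuming each independent run has already been reduced to a starting state, a final state, and a count of observed transitions; the function name, the tuple layout, and the returned labels are illustrative and not part of any MD package.

```python
def diagnose_runs(runs):
    """Classify the outcome of several independent simulations.

    `runs` is a list of (start_state, final_state, n_transitions) tuples,
    one per simulation (a hypothetical bookkeeping format).
    """
    starts = {start for start, _, _ in runs}
    finals = {final for _, final, _ in runs}

    # The test only makes sense if runs began in different states.
    if len(starts) < 2:
        return "inconclusive"

    # Every run stayed where it started: barriers are not being crossed.
    if all(start == final and n == 0 for start, final, n in runs):
        return "sampling"

    # Runs from different starts all ended in one state: that state may be
    # over-stabilized by the force field.
    if len(finals) == 1:
        return "force_field"

    # Transitions occur and several states persist: check convergence next.
    return "mixed"
```

For example, `diagnose_runs([("open", "open", 0), ("closed", "closed", 0)])` returns `"sampling"`. A `"force_field"` result is only a hint: a single deep but correct minimum would produce the same pattern, so it should be checked against experimental populations.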

Q2: What are the signs that my simulation has not reached equilibrium and my conformational populations are inaccurate?

A2: Several key indicators suggest non-equilibrium sampling:

- Progressive Drift in Observables: When running averages of essential properties (such as RMSD, radius of gyration, or key distances) do not plateau but instead show continuous directional drift over time [2].

- Lack of Reversibility: The system transitions between states but shows asymmetric behavior—transitions occur readily in one direction but rarely or never in the reverse direction [3].

- Inconsistent Distributions: Histograms of essential structural parameters (e.g., dihedral angles) show significant differences between the first and second halves of the simulation trajectory [3].
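
One way to quantify the "inconsistent distributions" check is to histogram a structural parameter separately for each half of the trajectory and compare the two histograms. The sketch below uses the Jensen-Shannon divergence (0 for identical halves, 1 for completely disjoint halves when computed in bits). The choice of metric, the bin count, and the function names are assumptions made for illustration, and periodic wrapping of dihedral angles is not handled.

```python
import math

def histogram(values, lo, hi, n_bins):
    """Normalized histogram of `values` over [lo, hi); out-of-range values are clamped."""
    counts = [0] * n_bins
    width = (hi - lo) / n_bins
    for v in values:
        idx = int((v - lo) / width)
        idx = max(0, min(idx, n_bins - 1))
        counts[idx] += 1
    total = len(values)
    return [c / total for c in counts]

def js_divergence(p, q):
    """Jensen-Shannon divergence in bits between two discrete distributions."""
    m = [(a + b) / 2 for a, b in zip(p, q)]

    def kl(x, y):
        # Bins with zero probability in x contribute nothing.
        return sum(a * math.log2(a / b) for a, b in zip(x, y) if a > 0)

    return 0.5 * kl(p, m) + 0.5 * kl(q, m)

def half_trajectory_divergence(series, lo, hi, n_bins=36):
    """Compare the first and second halves of a time series."""
    half = len(series) // 2
    first = histogram(series[:half], lo, hi, n_bins)
    second = histogram(series[half:], lo, hi, n_bins)
    return js_divergence(first, second)
```

A value close to zero is necessary but not sufficient for convergence: two halves can agree while both miss a state that is never visited.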

Q3: For studying intrinsically disordered proteins (IDPs), what special sampling considerations should I take?

A3: IDPs present unique sampling challenges due to their flatter energy landscapes with many local minima [1]. Standard molecular dynamics often fails to generate representative ensembles. You should:

- Implement enhanced sampling methods like replica exchange molecular dynamics (REMD) to ensure adequate exploration of their highly flexible conformational space [1].

- Extend simulation times significantly beyond what is typical for folded proteins, as convergence for IDPs can require exceptionally long timescales even with advanced hardware [1].

- Validate against multiple experimental observables (NMR, SAXS, FRET), since any single ensemble-averaged measurement is insufficient to define the heterogeneous ensemble [1].

Q4: How does the choice of collective variables (CVs) impact the effectiveness of enhanced sampling methods?

A4: The selection of CVs is arguably the most critical factor in enhanced sampling. Poor CV choice leads to several problems:

- Inefficient Sampling: Even with aggressive biasing, the simulation may fail to discover relevant conformational states if the CVs don't capture the true reaction coordinates [4].

- Hidden Barriers: The system may encounter "hidden" free energy barriers in degrees of freedom not covered by the CVs, preventing proper sampling despite apparent convergence in the CV space [4].

- Slow Convergence: Inadequate CVs can cause the simulation to spend excessive time exploring irrelevant regions of configuration space [5].

Recent approaches use machine learning to discover optimal CVs directly from simulation data [5].

Troubleshooting Guides

Problem 1: Inadequate Sampling of Rare Events

Symptoms:

- Failure to observe known biological transitions or conformational changes within the simulation timeframe.

- Poor statistical precision in calculated free energy differences.

- Asymmetric transition rates between states.

Solutions:

Step 1: Implement Accelerated Molecular Dynamics (aMD)

aMD applies a bias potential to reduce the time spent in energy minima, accelerating transitions over energy barriers without requiring pre-defined reaction coordinates [6].

Protocol:

- Perform a short conventional MD simulation to estimate the average potential energy and dihedral energy.

- Set the acceleration parameters (boost energy E and tuning parameter α). For dihedral boosting, typical values are E = 1.2-1.3 × Vavg and α = 0.2-0.3 × Vavg, where Vavg is the average dihedral energy [6].

- Run the aMD production simulation using software like NAMD or Amber that supports aMD.

- Apply reweighting algorithms to recover canonical ensemble averages from the biased simulation [6].
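
The reweighting step in the protocol can be illustrated with plain exponential reweighting, where each frame is weighted by exp(ΔV/kT) using the boost ΔV it received. This is a minimal sketch under stated assumptions: energies in kcal/mol, a per-frame boost already extracted from the aMD log, and an illustrative function name. For large boosts the exponential average becomes very noisy, which is why approximations such as cumulant expansions are often used in practice instead.

```python
import math

KB_KCAL = 0.0019872041  # Boltzmann constant in kcal/(mol K)

def reweight_average(observable, boosts, temperature=300.0):
    """Boltzmann-reweighted average of `observable` from a boosted trajectory.

    observable: per-frame values of the property of interest
    boosts:     per-frame boost potential dV in kcal/mol (same length)
    """
    beta = 1.0 / (KB_KCAL * temperature)
    # Subtract the largest boost before exponentiating for numerical stability;
    # the shift cancels in the ratio below.
    shift = max(boosts)
    weights = [math.exp(beta * (dv - shift)) for dv in boosts]
    total = sum(weights)
    return sum(a * w for a, w in zip(observable, weights)) / total
```

Frames that received a large boost were under-represented relative to the unbiased ensemble, so they receive the largest weights.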

Step 2: Utilize Metadynamics

For transitions with known progress variables, metadynamics uses a history-dependent bias to push the system away from already visited states [4].

Workflow:

- Identify 1-3 collective variables (CVs) that describe the transition.

- Define the CVs and their parameters in an enhanced sampling plugin (e.g., PLUMED) coupled to your MD engine.

- Set the deposition stride, initial height, and width of the Gaussian biases.

- Run well-tempered metadynamics for better convergence properties.

- Reconstruct the free energy surface from the bias potential.
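
To make the workflow concrete, here is a toy well-tempered metadynamics run on a one-dimensional double-well model. It is a sketch only: the dynamics are replaced by Metropolis Monte Carlo moves rather than MD, the potential, step size, and all parameter values are invented for illustration, and energies are in units of kT. The two ingredients it shows are the well-tempered height rule, w = w0 · exp(−V_bias(s) / (kT(γ − 1))), and the free energy estimate F(s) ≈ −γ/(γ − 1) · V_bias(s).

```python
import math
import random

def bias(s, hills, sigma):
    """Sum of deposited Gaussians (center, height) evaluated at CV value s."""
    return sum(h * math.exp(-((s - c) ** 2) / (2 * sigma ** 2)) for c, h in hills)

def double_well(s):
    """Toy potential with minima at s = -1 and s = +1 and a barrier of 5 kT."""
    return 5.0 * (s ** 2 - 1.0) ** 2

def run_wt_metad(n_steps=5000, stride=50, w0=0.5, sigma=0.2,
                 gamma=10.0, kT=1.0, seed=0):
    """Return the list of deposited hills as (center, height) pairs."""
    rng = random.Random(seed)
    s = -1.0
    hills = []
    for step in range(1, n_steps + 1):
        # Metropolis move on the biased potential (stand-in for MD).
        trial = s + rng.uniform(-0.1, 0.1)
        d_e = (double_well(trial) + bias(trial, hills, sigma)
               - double_well(s) - bias(s, hills, sigma))
        if d_e <= 0 or rng.random() < math.exp(-d_e / kT):
            s = trial
        # Deposit a hill whose height shrinks where bias has already built up.
        if step % stride == 0:
            height = w0 * math.exp(-bias(s, hills, sigma) / (kT * (gamma - 1.0)))
            hills.append((s, height))
    return hills

def free_energy(s, hills, sigma, gamma):
    """Well-tempered estimate of the free energy at s (up to a constant)."""
    return -(gamma / (gamma - 1.0)) * bias(s, hills, sigma)
```

Calling `hills = run_wt_metad()` and evaluating `free_energy` on a grid between -1.5 and 1.5 gives a profile that can be compared with `double_well`; how close it gets depends on run length and parameters.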

Step 3: Combine Multiple Methods

Hybrid approaches can overcome limitations of individual methods. For instance, combine aMD (which doesn't require CVs) with CV-based methods like metadynamics to handle both known and unknown barriers [4].

Problem 2: Non-Converged Equilibrium Properties

Symptoms:

- Average properties (e.g., RMSD, energy) continue to drift with simulation time.

- Statistical uncertainties do not decrease with increasing simulation length.

- Property distributions differ significantly between trajectory halves.

Solutions:

Step 1: Quantify Convergence with Structural Decorrelation Time

Calculate the structural decorrelation time (τdec) to determine how frequently your simulation generates independent configurations [3].

Protocol:

- Cluster your trajectory structures to define microstates.

- Calculate the autocorrelation function of the state populations.

- Determine τdec as the time required for this correlation to decay to a chosen threshold (for example, 1/e of its initial value).

- Ensure your total simulation time is at least 10-20 times τdec for reliable statistics [3].
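
The protocol can be sketched for a single microstate as follows. The code assumes the trajectory has already been clustered into per-frame state labels; it builds a 0/1 indicator for one state, computes its normalized autocorrelation, and reports the first lag at which the correlation drops below a threshold. The 1/e default and the function names are illustrative choices, and this simplified autocorrelation estimate is not necessarily the exact procedure of reference [3].

```python
import math

def autocorrelation(indicator, lag):
    """Normalized autocorrelation of a numeric series at the given lag."""
    n = len(indicator)
    mean = sum(indicator) / n
    var = sum((x - mean) ** 2 for x in indicator) / n
    if var == 0:
        return 1.0  # the state never changes, so the series never decorrelates
    cov = sum((indicator[t] - mean) * (indicator[t + lag] - mean)
              for t in range(n - lag)) / (n - lag)
    return cov / var

def decorrelation_time(state_labels, state, dt, threshold=math.exp(-1)):
    """First lag time at which the state indicator's autocorrelation falls
    below `threshold`, or None if it never does within half the trajectory."""
    indicator = [1.0 if s == state else 0.0 for s in state_labels]
    for lag in range(1, len(indicator) // 2):
        if autocorrelation(indicator, lag) < threshold:
            return lag * dt
    return None

def is_long_enough(total_time, tau_dec, factor=10):
    """Check the 10-20 x tau_dec rule of thumb from the protocol above."""
    return tau_dec is not None and total_time >= factor * tau_dec
```

A return value of `None` means the trajectory is too short to estimate τdec for that state, which is itself a sign of insufficient sampling.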

Step 2: Apply Multi-Ensemble Approaches

Use replica exchange molecular dynamics (REMD) to enhance conformational sampling:

Implementation:

- Run parallel simulations at different temperatures (for T-REMD) or with different Hamiltonian parameters (for H-REMD).

- Periodically attempt exchanges between adjacent replicas based on Metropolis criteria.

- Ensure sufficient overlap between replica ensembles for acceptable exchange rates (20-40%).

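- Analyze the replica at the target condition for thermodynamic properties: the lowest-temperature replica in T-REMD, or the replica with the unmodified Hamiltonian in H-REMD.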
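
For temperature REMD, the Metropolis criterion for swapping replicas i and j is p = min(1, exp[(β_i − β_j)(E_i − E_j)]), with β = 1/(k_B T). The sketch below evaluates that probability and builds a geometrically spaced temperature ladder, a common starting point for obtaining roughly uniform exchange rates. Units (kcal/mol, K) and function names are assumptions for illustration; production ladders are usually tuned further.

```python
import math

KB_KCAL = 0.0019872041  # Boltzmann constant in kcal/(mol K)

def exchange_probability(e_i, e_j, t_i, t_j):
    """Metropolis acceptance probability for swapping two T-REMD replicas.

    e_i, e_j: potential energies of the configurations currently at t_i, t_j
    """
    beta_i = 1.0 / (KB_KCAL * t_i)
    beta_j = 1.0 / (KB_KCAL * t_j)
    delta = (beta_i - beta_j) * (e_i - e_j)
    return 1.0 if delta >= 0 else math.exp(delta)

def geometric_ladder(t_min, t_max, n_replicas):
    """Temperatures spaced by a constant ratio between t_min and t_max."""
    ratio = (t_max / t_min) ** (1.0 / (n_replicas - 1))
    return [t_min * ratio ** k for k in range(n_replicas)]
```

If measured acceptance between neighbours falls well below the 20-40% range mentioned above, the energy distributions overlap too little and more replicas (or a narrower temperature range) are needed.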

Step 3: Analyze with Advanced Tools

Utilize specialized tools like gmx_RRCS to detect subtle conformational changes that traditional metrics (RMSD, RMSF) might miss by quantitatively analyzing residue-residue contact dynamics [7].

Problem 3: Poor Force Field Transferability

Symptoms:

- Over-stabilization of certain secondary structure elements.

- Incorrect compactness compared to experimental data (e.g., SAXS).

- Failure to reproduce known conformational equilibria.

Solutions:

Step 1: Select Appropriate Force Fields

Choose modern force fields parameterized for both folded and disordered states:

- CHARMM36m with modified TIP3P water for proteins [1]

- Latest Amber force fields (e.g., ff19SB) with OPC water model [1]

- Force fields specifically optimized for IDPs [1]

Step 2: Validate Against Experimental Data

Compare multiple simulation-derived observables with experimental data:

- NMR chemical shifts and J-couplings

- SAXS scattering profiles

- FRET efficiency distributions

- Spin-label ESR distances

Step 3: Utilize Machine Learning-Accelerated Parameterization

For specific systems, consider machine learning approaches to accelerate force field optimization, which can speed up parameter searches by an order of magnitude [8].

Research Reagent Solutions

Table 1: Essential Software Tools for Addressing Sampling Problems

| Tool Name | Primary Function | Application Context |

|---|---|---|

| PLUMED | Enhanced sampling, CV analysis | CV-based methods (metadynamics, umbrella sampling) [4] |

| gmx_RRCS | Residue-residue contact analysis | Detecting subtle conformational changes [7] |

| SSAGES | Advanced sampling and analysis | Multiple enhanced sampling methods [4] |

| Self-Organising Maps (SOM) | Dimensionality reduction for trajectory analysis | Mapping conformational landscapes [9] |

Table 2: Key Methodological Approaches for Different Sampling Challenges

| Method | Mechanism | Best For | Requirements |

|---|---|---|---|

| Accelerated MD (aMD) | Raises energy minima using a bias potential | Systems with unknown reaction coordinates [6] | Boost parameters (E, α) [6] |

| Metadynamics | Fills energy wells with repulsive bias | Transitions with known collective variables [4] | Pre-defined CVs [4] |

| Replica Exchange | Parallel simulations at different temperatures | Overcoming rough energy landscapes [1] | Significant computational resources [1] |

| Umbrella Sampling | Restraining potential along a CV | Calculating free energy along a path [4] | Reaction path, windowing setup [4] |

Workflow Visualization

[Figure: Sampling Problem Diagnosis Workflow]

[Figure: Enhanced Sampling Strategy Selection]

Frequently Asked Questions (FAQs)

Q1: Why are my simulation's conformational populations inaccurate, and how can I troubleshoot this?

Inaccurate conformational populations often stem from inherent force field limitations, primarily the treatment of electronic polarization. Most widely used force fields are "additive" or "non-polarizable," meaning they use fixed atomic charges that cannot adapt to changes in the molecular environment [10]. This can lead to poor intramolecular conformational energies and an incorrect balance between intra- and intermolecular interactions, causing errors in polymorph stability rankings that can exceed 6-8 kJ mol⁻¹ [11]. This is a critical issue, as about half of all polymorph pairs are separated by less than 2 kJ mol⁻¹ in lattice energy [11].

Troubleshooting Guide:

- Identify the Nature of the Error: Determine if the inaccuracy is due to incorrect relative energies of different molecular conformers (intramolecular) or flawed packing of correctly modeled conformers (intermolecular).

- Verify Parametrization: For small molecules, ensure parameters were generated for your specific force field. Do not mix parameters from different force fields, as this can cause unphysical behavior [10].

- Consider Advanced Methods: If errors persist, the system may require a polarizable force field or higher-level electronic structure methods like fragment-based MP2D, which have been shown to correct stability rankings for challenging conformational polymorphs [11].

Q2: What is the fundamental difference between additive and polarizable force fields?

The core difference lies in how they model electrostatic interactions.

- Additive (Non-polarizable) Force Fields: Use static, fixed partial atomic charges. The electrostatic energy is a simple sum of Coulombic interactions between these fixed charges. This implicitly treats polarization in a mean-field way, often by overestimating gas-phase dipole moments, but fails to capture the dynamic response of electron density to a changing environment [10].

- Polarizable Force Fields: Explicitly include electronic polarization in their energy function. One approach is the classical Drude oscillator model, where auxiliary particles attached to atoms respond to the local electric field. This allows the charge distribution of a molecule to change adaptively, such as when a ligand binds to a protein or passes through a membrane, providing a more accurate physical representation [10].

Table 1: Comparison of Additive and Polarizable Force Fields

| Feature | Additive Force Fields | Polarizable Force Fields |

|---|---|---|

| Electrostatics | Static, fixed atomic charges | Dynamic, responsive charge distributions |

| Polarization | Treated implicitly, on average | Treated explicitly (e.g., via Drude oscillators) |

| Computational Cost | Lower | Significantly higher |

| Transferability | Limited across different dielectric environments | Better, as charges adapt to the local environment |

| Example Use Case | Standard biomolecular simulation in a uniform solvent | Simulations involving interfaces (e.g., membrane permeation, protein-ligand binding) [10] |

Q3: My simulation fails with atomic clashes or SHAKE convergence errors. What are common causes?

These are common numerical failures in MD simulations. Atomic clashes occur when atom pairs are too close, leading to unrealistically high energies. SHAKE algorithm failures often indicate problems with bond constraints, frequently due to:

- Insufficient Equilibration: The system may not be properly relaxed before production runs.

- Problematic Initial Structures: The starting coordinates may contain steric clashes or strained geometries.

- Inappropriate Input Parameters: An incorrect timestep or constraint settings can prevent convergence [12].
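
A quick pre-flight check for steric clashes in the starting coordinates can catch many of these failures before a run is launched. The sketch below is a brute-force pairwise distance scan; the 0.8 Å cutoff, the function name, and the `excluded` argument for bonded pairs are illustrative assumptions, and the O(N²) loop is only practical for small selections (real tools use neighbour lists and account for periodic boundaries).

```python
import math
from itertools import combinations

def find_clashes(coords, cutoff=0.8, excluded=()):
    """Return (i, j, distance) for atom pairs closer than `cutoff`.

    coords:   list of (x, y, z) positions in angstrom
    excluded: index pairs to ignore, e.g. covalently bonded atoms
    """
    skip = {tuple(sorted(pair)) for pair in excluded}
    clashes = []
    for i, j in combinations(range(len(coords)), 2):
        if (i, j) in skip:
            continue
        d = math.dist(coords[i], coords[j])
        if d < cutoff:
            clashes.append((i, j, d))
    return clashes
```

Any pair reported here is a candidate for energy minimization with restraints, or for rebuilding the affected residues, before equilibration starts.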

Q4: How should I parametrize a novel small molecule or ligand for simulation?

Parametrizing a new molecule is a critical and non-trivial task. The process involves assigning atomic charges, bond parameters, angle parameters, and dihedral parameters based on the molecule's chemical structure.

- Choose a Target Force Field: Select a force field (e.g., CGenFF, GAFF) compatible with the rest of your system (e.g., protein, water) [10].

- Use Automated Parametrization Tools: Leverage specialized programs to generate initial parameters. Examples include ParamChem (for CGenFF), AnteChamber (for GAFF), and SwissParam [10].

- Validation is Crucial: Automated parameters are a starting point and must be validated. This involves comparing computed quantum mechanical (QM) and force field (MM) properties, such as conformational energies and dipole moments, and ensuring the molecule behaves physically in short test simulations [10].
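
The QM versus MM comparison of conformational energies can be summarized with a single number. The sketch below references both energy profiles to the QM global-minimum conformer and reports the root-mean-square error; the function name and the use of kcal/mol are assumptions for illustration, and acceptable error levels depend on the application.

```python
import math

def conformer_rmse(qm_energies, mm_energies):
    """RMSE between QM and MM relative conformational energies.

    Both lists hold energies for the same conformers in the same order.
    Each profile is shifted so the QM-minimum conformer sits at zero.
    """
    if len(qm_energies) != len(mm_energies):
        raise ValueError("QM and MM lists must describe the same conformers")
    ref = qm_energies.index(min(qm_energies))
    qm_rel = [e - qm_energies[ref] for e in qm_energies]
    mm_rel = [e - mm_energies[ref] for e in mm_energies]
    sq = [(q - m) ** 2 for q, m in zip(qm_rel, mm_rel)]
    return math.sqrt(sum(sq) / len(sq))
```

A constant offset between the two methods does not matter, because only relative energies are compared. Conformers with large individual deviations usually point to the dihedral terms that need refitting.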

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Key Resources for Force Field Development and Validation

| Resource / Tool | Function | Key Features / Notes |

|---|---|---|

| CGenFF [10] | An additive force field for drug-like molecules. | Compatible with the CHARMM biomolecular force field; parameters for novel molecules can be generated via the ParamChem website. |

| GAFF [10] | An additive force field for organic molecules. | Compatible with the AMBER biomolecular force field; parameters can be generated with AnteChamber. |

| Drude Polarizable FF [10] | A polarizable force field based on the classical Drude oscillator. | Provides a more accurate physical model for environments with varying polarity. |

| MP2D [11] | A fragment-based electronic structure method with a dispersion correction. | Used for highly accurate polymorph stability rankings where standard DFT methods fail; overcomes limitations in intramolecular conformational energies. |

| SwissParam [10] | Online tool for parameter generation. | Provides topologies and parameters for small molecules, compatible with the CHARMM force field, for use with the CHARMM and GROMACS simulation packages. |

Experimental Protocols & Workflows

Workflow 1: Diagnosing Conformational Population Inaccuracies

This workflow outlines a systematic approach to identify the source of inaccuracies in conformational sampling.

Workflow 2: Parametrization of a Novel Small Molecule

This diagram details the established protocol for deriving reliable force field parameters for a new molecule.

Frequently Asked Questions (FAQs)

FAQ 1: What does an "ensemble-averaged observation" mean, and why is it a challenge for MD simulations? Most experimental techniques in structural biology, such as Small-Angle X-Ray Scattering (SAXS) and Förster Resonance Energy Transfer (FRET), do not measure the structure of a single molecule. Instead, the observed signal is an average across millions of molecules in the sample [13]. The challenge is that different underlying conformational distributions can produce the same experimental average. A single, static structure might represent the data well if the ensemble is tightly clustered, but a poor fit can indicate a broad or even multi-modal distribution of conformations that the simulation must capture to be accurate [13].

FAQ 2: My MD simulation seems stable, but its average properties disagree with experiments. Where should I start troubleshooting? Begin by systematically checking your simulation's equilibration and stability, then assess the force field. A poorly equilibrated system, where key properties like density have not stabilized, will produce non-physical ensembles [14]. Ensure that fundamental properties like system density match expected values (e.g., ~1 g/cm³ for water) before trusting conformational sampling [14]. If equilibration is correct, the inaccuracy may stem from the force field's inherent approximations, which can be addressed by using machine-learning force fields [15] or integrating experimental data directly into the simulation [16].
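
The density check mentioned above takes only a few lines. This sketch assumes an orthorhombic box with edge lengths in nanometres and a total system mass given as the sum of atomic masses in g/mol; the function names and the 1% stability tolerance are illustrative choices.

```python
AVOGADRO = 6.02214076e23  # particles per mole

def density_g_per_cm3(total_mass_g_per_mol, box_nm):
    """Mass density of an orthorhombic box with edges (a, b, c) in nm."""
    a, b, c = box_nm
    volume_cm3 = a * b * c * 1e-21  # 1 nm^3 = 1e-21 cm^3
    return total_mass_g_per_mol / AVOGADRO / volume_cm3

def halves_agree(series, rel_tol=0.01):
    """True if the means of the first and second half differ by less than
    rel_tol (relative), a crude test that a property has stopped drifting."""
    half = len(series) // 2
    first = sum(series[:half]) / half
    second = sum(series[half:]) / (len(series) - half)
    return abs(first - second) <= rel_tol * abs((first + second) / 2)
```

As a sanity check, 1000 water molecules (about 18015 g/mol in total) in a 3.1 nm cubic box give a density close to 1.0 g/cm³.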

FAQ 3: How can I identify if my simulation is failing to sample a key conformational state? A key sign is the inability to reproduce experimental data that reports on specific states, such as the distance between two residues measured by FRET or the overall molecular dimensions from a SAXS curve [13]. Technically, you can also monitor the time evolution of key structural metrics (e.g., root-mean-square deviation (RMSD), radius of gyration). If these values plateau and never fluctuate beyond a certain range, it suggests the simulation is trapped in a local energy minimum and is not sampling the full conformational landscape [17].

FAQ 4: What are cryptic pockets, and how can MD simulations help find them? Cryptic pockets are potential binding sites on a protein that are not visible in static, experimental structures but can open up due to protein dynamics [17]. They represent crucial drug targets. MD simulations, particularly enhanced sampling methods like accelerated Molecular Dynamics (aMD), can smooth the energy landscape and help the protein transition between states, thereby revealing these hidden pockets that would be missed by standard docking into a single structure [17].

Troubleshooting Guides

Guide 1: Diagnosing and Resolving Inadequate Conformational Sampling

| Symptom | Potential Root Cause | Recommended Solution | Key Tools/Methods |

|---|---|---|---|

| Simulation is trapped in a single conformational state; key observables do not match experiment. | High energy barriers between states; simulation timescale is too short to observe rare transitions [16] [17]. | Implement Enhanced Sampling techniques to accelerate barrier crossing [15]. | Metadynamics, Accelerated MD (aMD), Parallel Tempering [15] [17]. |

| Sampled ensemble is narrow and misses known functional states. | Poor choice of initial structure or insufficient simulation time [15]. | Use Multiple Diverse Starting Structures or perform Extended Sampling [15]. | AlphaFold-predicted conformational ensembles, long-timescale simulations on specialized hardware (e.g., Anton) [15]. |

| Uncertainty in which structural features to bias for enhanced sampling. | Lack of a pre-defined Reaction Coordinate (RC) or Collective Variable (CV) that describes the transition of interest [16]. | Employ Machine Learning to identify relevant CVs from preliminary simulation data [16]. | Time-lagged Independent Component Analysis (tICA), Autoencoders [16]. |

| The simulation reproduces some experimental data but fails on others. | The force field may have systematic inaccuracies for your specific system [16]. | Use Experimental Data Integration to refine the force field or guided sampling [16]. | Maximum Entropy Principle, Bayesian Inference [16]. |

Workflow for Applying Enhanced Sampling: The diagram below outlines a general protocol for setting up an enhanced sampling simulation to overcome inadequate sampling.

Guide 2: Reconciling Simulation Ensembles with Experimental Data

| Symptom | Experimental Technique | Potential Interpretation Challenge | Computational Reconciliation Strategy |

|---|---|---|---|

| Average molecular size/volume from simulation does not match experiment. | SAXS [13] | The same average size can arise from a single compact state, a broad ensemble, or multiple distinct states [13]. | Use Multi-Conformation SAXS Fitting: compute theoretical SAXS curves for simulation snapshots and select/fit a weighted ensemble that matches the experimental curve [13]. |

| Measured distance distribution between two sites differs from simulation. | FRET [13] | A single average distance can hide multiple underlying conformational sub-states. | Project the MD ensemble onto the FRET donor-acceptor distance coordinate and compare the full distance distribution, not just the mean [13]. |

| Key functional states are not populated in the simulated ensemble. | Hydroxyl Radical Footprinting (HRF), HDX-MS [18] | The simulation may lack a key conformational state that is chemically labeled or exchanges in experiment. | Use Experimental Data Integration: introduce biases (e.g., Maximum Entropy) to force the simulation to match experimental labeling/uptake data, thus guiding it towards the correct ensemble [16]. |

| Uncertainty in the biological relevance of the simulated ensemble. | Cryo-EM, Crystallography | A single experimental structure may be an average that is not representative of any major state [13]. | Use the Relaxed Complex Method: dock small molecules into multiple snapshots from the MD ensemble to identify cryptic pockets and validate against binding assays [17]. |
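
The Maximum Entropy idea referenced in the table can be shown in its simplest form: reweighting an existing ensemble so that one averaged observable matches its experimental value while changing the weights as little as possible. For a single observable f, the solution has the form w_i ∝ exp(−λ f_i), and λ can be found by bisection. The sketch below ignores experimental error and assumes uniform initial weights; the function names are hypothetical.

```python
import math

def _weights(values, lam):
    """Normalized weights proportional to exp(-lam * value)."""
    exponents = [-lam * v for v in values]
    top = max(exponents)  # subtract the maximum to avoid overflow
    raw = [math.exp(x - top) for x in exponents]
    z = sum(raw)
    return [r / z for r in raw]

def _average(values, weights):
    return sum(w * v for w, v in zip(weights, values))

def maxent_reweight(values, target, tol=1e-10, max_iter=200):
    """Per-frame weights whose weighted mean of `values` equals `target`."""
    if not min(values) < target < max(values):
        raise ValueError("target must lie strictly inside the sampled range")
    # The weighted mean decreases monotonically with lam, so bracket the root.
    lo, hi = -1.0, 1.0
    while _average(values, _weights(values, lo)) < target:
        lo *= 2.0
    while _average(values, _weights(values, hi)) > target:
        hi *= 2.0
    weights = _weights(values, 0.0)
    for _ in range(max_iter):
        mid = 0.5 * (lo + hi)
        weights = _weights(values, mid)
        avg = _average(values, weights)
        if abs(avg - target) < tol:
            break
        if avg > target:
            lo = mid  # mean too high: need a larger lam
        else:
            hi = mid
    return weights
```

If the experimental value lies outside the range sampled by the simulation, no reweighting can fix the ensemble, and the `ValueError` signals that more sampling or a different force field is needed.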

Workflow for Integrating SAXS Data: This diagram illustrates the process of using experimental SAXS data to validate and refine a conformational ensemble from MD simulations.

Experimental Protocols

Protocol 1: Hydroxyl Radical Protein Footprinting (HRF) for Conformational Validation

Hydroxyl Radical Protein Footprinting is a powerful mass spectrometry-based method that provides a "snapshot" of protein solvent accessibility, useful for validating conformational states from MD simulations [18].

1. Principle: Freely diffusing hydroxyl radicals generated in situ oxidize amino acid side chains at a rate proportional to their solvent-accessible surface area. Differences in the apparent oxidation rate between protein samples indicate conformational differences [18].

2. Step-by-Step Methodology:

- Step 1: Oxidation Reaction. Mix the protein of interest with hydroxyl radicals generated by a Van de Graaff accelerator (electron pulse radiolysis) or a KrF excimer laser (photolysis of hydrogen peroxide). The reaction is completed in sub-microseconds, creating a "snapshot" before the protein can conformationally change [18].

- Step 2: Digestion and Clean-up. After oxidation, enzymatically digest the protein with a protease (e.g., trypsin) into peptides. Use liquid chromatography (LC) to clean up the sample and separate peptides [18].

- Step 3: LC-MS/MS Analysis. Analyze the digested peptides using Liquid Chromatography coupled to Tandem Mass Spectrometry.

- Step 4: Data Interpretation. Compare the oxidation patterns (footprints) of your protein sample to a reference standard (e.g., a known stable therapeutic protein), and compare the measured per-residue oxidation with the solvent accessibility predicted by your simulated ensemble. Confirm differences with a secondary technique like circular dichroism spectroscopy [18].

3. Key Advantages:

- Overcomes the back-exchange problem of Hydrogen-Deuterium Exchange (HDX) [18].

- Reaction products are stable, allowing flexible sample handling and archiving [18].

- Applicable to a wide variety of therapeutic proteins and formulations [18].

Protocol 2: The Relaxed Complex Scheme for Drug Discovery

The Relaxed Complex Scheme is an MD-based methodology that explicitly accounts for receptor flexibility to improve the discovery of small-molecule ligands, helping to bridge the gap between static structures and dynamic ensembles [17].

1. Principle: Instead of docking into a single static protein structure, the method uses a diverse ensemble of protein conformations extracted from an MD simulation. This accounts for natural flexibility and reveals cryptic binding pockets [17].

2. Step-by-Step Workflow:

- Step 1: Run MD Simulation. Perform a molecular dynamics simulation of the target protein, preferably using enhanced sampling (e.g., aMD) to ensure adequate exploration of conformational space [17].

- Step 2: Cluster and Select Snapshots. Analyze the resulting trajectory and cluster structurally similar snapshots to identify a set of representative conformations that capture the protein's dynamics [17].

- Step 3: Ensemble Docking. Dock a virtual library of small molecules into the binding site of each representative protein conformation [17].

- Step 4: Analyze and Select Hits. Rank compounds based on their predicted binding affinity (docking score) and, crucially, their frequency of binding across multiple receptor conformations. A compound that binds well to many different conformations may have a higher chance of being a successful hit [17].
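
Step 4 can be sketched as a simple ranking over a table of docking scores. The code assumes one score per compound per receptor conformation, with more negative scores being better; the −7.0 hit threshold, the data layout, and the function name are illustrative and not taken from any docking program.

```python
def rank_compounds(scores, hit_threshold=-7.0):
    """Rank compounds by how often they dock well across the ensemble.

    scores: dict mapping compound name -> list of docking scores,
            one per receptor conformation (more negative = better)
    Returns (name, best_score, hit_fraction) tuples, sorted by hit
    fraction (descending) and then by best score (ascending).
    """
    summary = []
    for name, vals in scores.items():
        best = min(vals)
        hit_fraction = sum(v <= hit_threshold for v in vals) / len(vals)
        summary.append((name, best, hit_fraction))
    return sorted(summary, key=lambda row: (-row[2], row[1]))
```

With this ordering, a compound that scores moderately well against every snapshot is ranked above one that scores very well against a single conformation only. Whether that preference is appropriate depends on how the snapshots were selected.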

The Scientist's Toolkit: Research Reagent Solutions

| Category | Item | Function/Benefit |

|---|---|---|

| Software & Algorithms | PLUMED | An open-source plugin that enables the implementation of various enhanced sampling methods, including Metadynamics and Umbrella Sampling, by defining Collective Variables (CVs) [16]. |

| | AlphaFold2 | A machine-learning system for highly accurate protein structure prediction. Its predicted models or ensembles can serve as excellent starting points for MD simulations [15] [17]. |

| | Markov State Models (MSMs) | A framework for building a quantitative model of the protein's conformational dynamics from many short, distributed MD simulations, helping to resolve long-timescale processes [16]. |

| Force Fields & Potentials | Machine-Learning Force Fields (e.g., ANI-2x) | A general-purpose force field trained on quantum mechanical data that can model electronic effects and chemical reactions, offering higher accuracy than classical force fields [15]. |

| | Maximum Entropy Restraints | A method to incorporate experimental data (e.g., from SAXS or HRF) as soft restraints in a simulation, biasing the ensemble toward agreement with experiment without forcing a single structure [16]. |

| Hardware & Computing | Graphics Processing Units (GPUs) | Essential for dramatically accelerating the calculations required for MD, making long-timescale and large-system simulations feasible [15]. |

| | Specialized Hardware (e.g., Anton) | Supercomputers purpose-built for MD simulations, offering orders-of-magnitude speedups for sampling biologically relevant timescales [15]. |

| Experimental Validation | Hydroxyl Radical Footprinting (HRF) | Provides a stable, irreversible chemical "snapshot" of solvent accessibility to validate conformational states identified in simulations [18]. |

| | SAXS | Provides an ensemble-averaged measurement of a protein's overall size and shape in solution, used to validate the global properties of a simulated conformational ensemble [13]. |

Frequently Asked Questions (FAQs)

FAQ 1: Why does my simulation fail to capture the correct conformational population of my protein? The root cause is often insufficient sampling of the protein's energy landscape. Proteins exist as ensembles of conformations separated by energy barriers [19]. Standard molecular dynamics (MD) simulations typically span nanoseconds to microseconds and can remain trapped in a local energy minimum for that entire time, which is often too short to observe transitions to other functionally important states [6]. This is particularly problematic for proteins with complex, multi-well energy landscapes.

FAQ 2: How does the protein type itself (intrinsic factor) influence its dynamics? The protein's inherent structural properties critically determine its dynamic behavior.

- Fold and Topology: A protein's three-dimensional structure defines its potential energy landscape, including the locations of energy minima and the heights of the barriers between them [19].

- Intrinsically Disordered Proteins (IDPs): Unlike folded proteins, IDPs lack a stable tertiary structure and sample a heterogeneous ensemble of conformations with low energy barriers between them [19]. Their dynamics are characterized by high flexibility and a lack of deep energy minima.

- Multi-Domain Proteins: In proteins with multiple domains, the linker sequences between domains can encode specific dynamic properties. Some linkers favor particular relative orientations, making information transfer between domains more efficient than a random walk [19].

FAQ 3: What environmental (extrinsic) factors most significantly impact dynamics? The simulation environment must accurately represent physiological conditions to produce realistic dynamics.

- Solvent and Ions: The choice of water model and ion concentration can significantly affect protein stability and conformational sampling. An inaccurate solvent model can distort the protein's energy landscape.

- Membrane Environment: For membrane proteins, the lipid composition is a critical extrinsic factor. The lipid bilayer provides a specific chemical and physical environment that influences protein orientation, stability, and function [6].

- Ligands and Post-Translational Modifications (PTMs): Binding of ligands or the addition of PTMs can alter the protein's conformational landscape by stabilizing specific states (population shift) or even creating new energy minima (induced-fit) [19].

FAQ 4: My simulation shows correlated motions, but how do I know if they are biologically relevant? Correlated motions can form dynamic cross-correlation networks that transmit information through the protein structure [20]. To assess their relevance, you can:

- Compare with Experimental Data: Use NMR data, if available, to validate the observed dynamic networks [19] [20].

- Identify Functional Sites: Check if the correlated residues connect known functional sites, such as an allosteric and an active site, which suggests a potential role in allostery [20].

- Perturb the System: Introduce a mutation or a ligand and re-run the simulation. If the perturbation alters the correlation network in a way that explains a change in function, it reinforces the network's biological relevance [20].
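
The correlation networks discussed above are usually built from the dynamic cross-correlation between atomic displacements, C_ij = ⟨Δr_i · Δr_j⟩ / sqrt(⟨Δr_i²⟩⟨Δr_j²⟩), which ranges from −1 (anti-correlated) to +1 (correlated). The sketch below computes this for one pair of atoms from per-frame coordinates. It assumes the trajectory has already been aligned to remove overall rotation and translation, and that both atoms actually move; the function name is illustrative.

```python
import math

def cross_correlation(traj_i, traj_j):
    """Normalized displacement cross-correlation between two atoms.

    traj_i, traj_j: lists of (x, y, z) positions, one entry per frame,
                    taken from a trajectory aligned to a reference.
    """
    n = len(traj_i)
    mean_i = [sum(frame[k] for frame in traj_i) / n for k in range(3)]
    mean_j = [sum(frame[k] for frame in traj_j) / n for k in range(3)]
    dot = norm_i = norm_j = 0.0
    for fi, fj in zip(traj_i, traj_j):
        di = [fi[k] - mean_i[k] for k in range(3)]
        dj = [fj[k] - mean_j[k] for k in range(3)]
        dot += sum(a * b for a, b in zip(di, dj))
        norm_i += sum(a * a for a in di)
        norm_j += sum(b * b for b in dj)
    # Division fails if either atom never moves (zero variance).
    return dot / math.sqrt(norm_i * norm_j)
```

Evaluating this for every pair of Cα atoms gives the full correlation matrix; comparing matrices from wild-type and perturbed simulations is one way to carry out the perturbation test described above.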

Troubleshooting Guides

Problem 1: Inadequate Conformational Sampling

Symptoms:

- The simulation does not transition between known conformational states.

- Root-mean-square deviation (RMSD) that keeps drifting over a trajectory without reaching a plateau.

- Calculated thermodynamic properties (e.g., free energy) do not converge.

Solutions:

- Increase Simulation Time: Extend the simulation length to increase the probability of overcoming energy barriers. This is the most straightforward but computationally expensive solution.

- Use Enhanced Sampling Methods: Employ techniques like Accelerated Molecular Dynamics (aMD). aMD adds a bias potential to the true potential energy when the system is below a defined energy threshold, effectively "filling" energy wells and allowing the system to cross barriers more frequently [6].

- Protocol: The bias potential in aMD is defined as ΔV(r) = (E - V(r))² / (α + (E - V(r))), where E is the boost energy and α is a tuning parameter that determines the roughness of the modified landscape [6].

- Considerations: Parameters E and α must be chosen carefully. An overly aggressive boost (high E, low α) can lead to a distorted energy landscape and a "random walk," while a weak boost may not provide sufficient acceleration [6].

- Replicate Simulations: Run multiple independent simulations (replicas) starting from different initial velocities. This helps in sampling different regions of the energy landscape and provides statistics on the reproducibility of observed events.
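As a minimal sketch of the aMD bias potential given in the protocol above, the following NumPy function evaluates ΔV(r) = (E - V(r))² / (α + (E - V(r))) for a set of potential energies and returns zero wherever V(r) is at or above E. The function name, argument names, and example energies are illustrative assumptions and are not taken from any MD package.

```python
import numpy as np

def amd_boost(v, e_threshold, alpha):
    """Return the aMD bias dV for potential energies v.

    All quantities must share one energy unit. alpha must be positive.
    """
    v = np.asarray(v, dtype=float)
    # The bias acts only where V(r) < E, so clip negative gaps to zero.
    gap = np.maximum(e_threshold - v, 0.0)
    return gap ** 2 / (alpha + gap)

# Hypothetical energies: two frames below the threshold, one above it.
energies = np.array([-120.0, -100.0, -80.0])
print(amd_boost(energies, e_threshold=-90.0, alpha=20.0))
```

Scanning a few values of E and α with a function like this before a production run gives a quick feel for how strongly low-energy frames would be lifted.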

Problem 2: Unrealistic Dynamics Due to Force Field Selection

Symptoms:

- Protein unfolds or adopts non-native conformations under conditions where it should be stable.

- Unphysical interactions between side chains.

- Discrepancy between simulated flexibility and experimental B-factors or NMR data.

Solutions:

- Choose an Appropriate Force Field: Select a force field that has been validated for your specific protein class.

- Class 1 Force Fields: Include AMBER, CHARMM, GROMOS, and OPLS. They use harmonic potentials for bonds and angles and are standard for most biomolecular simulations [21].

- Class 2 Force Fields: Include MMFF94 and UFF. They add anharmonic terms and cross-terms for more accuracy [21].

- Class 3 Force Fields (Polarizable): Include AMOEBA and DRUDE. They explicitly model electronic polarization, which is critical in environments with strong electrostatic fields, but are computationally expensive [21].

- Consult Recent Literature: Investigate which force fields have been successfully used in recent studies on proteins similar to yours.

Problem 3: Incorrect Representation of the Biological Environment

Symptoms:

- A membrane protein that becomes unstable or denatures.

- A soluble protein that fails to bind a ligand due to incorrect protonation states.

- General instability not attributable to the force field or sampling.

Solutions:

- For Membrane Proteins: Embed the protein in a realistic lipid bilayer. Ensure the membrane composition matches the biological context as closely as possible [6].

- Determine Protonation States: Use tools to calculate the protonation states of titratable residues (e.g., Asp, Glu, His, Lys) at the desired simulation pH. Incorrect protonation can lead to unrealistic electrostatic interactions.

- Use Correct Ion Concentrations: Add physiological concentrations of ions to neutralize the system's charge and mimic the ionic strength of the cellular environment.
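The ion-count arithmetic behind the last step can be sketched as follows. This is a rough estimate under stated assumptions: a monovalent salt, the full box volume used in place of the solvent volume, and a hypothetical helper name. System-building tools apply their own conventions, so treat the numbers as a sanity check only.

```python
AVOGADRO = 6.02214076e23      # particles per mole
LITERS_PER_NM3 = 1e-24        # 1 nm^3 expressed in liters

def monovalent_ion_counts(box_volume_nm3, salt_molar, net_charge):
    """Estimate (cations, anions) for a monovalent salt plus neutralizing ions.

    net_charge is the total charge of the solute in units of e.
    """
    n_pairs = round(salt_molar * box_volume_nm3 * LITERS_PER_NM3 * AVOGADRO)
    n_cations = n_pairs + max(-net_charge, 0)   # extra cations for a negative solute
    n_anions = n_pairs + max(net_charge, 0)     # extra anions for a positive solute
    return n_cations, n_anions

# Hypothetical system: 8 x 8 x 8 nm box, 0.15 M salt, solute charge of -6 e.
print(monovalent_ion_counts(512.0, 0.15, -6))
```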

Key Data for Troubleshooting

Table 1: Comparison of Force Field Classes

| Force Field Class | Examples | Key Features | Best Use Cases |

|---|---|---|---|

| Class 1 | AMBER, CHARMM, GROMOS, OPLS [21] | Harmonic bonds/angles; computational efficiency [21] | Standard simulations of proteins, DNA, lipids [21] |

| Class 2 | MMFF94, UFF [21] | Anharmonic terms; cross-terms [21] | More accurate small molecule energetics [21] |

| Class 3 (Polarizable) | AMOEBA, DRUDE [21] | Explicit polarization; higher accuracy [21] | Systems with strong electrostatic fields [21] |

Table 2: Accelerated MD (aMD) Parameters and Their Effects

| Parameter | Description | Impact on Simulation |

|---|---|---|

| Boost Energy (E) | Energy threshold below which the bias is applied, i.e., when V(r) < E [6] | High E: More aggressive acceleration, risk of landscape distortion. Low E: Less acceleration, possible trapping [6] |

| Tuning Parameter (α) | Controls the depth of the modified potential energy basin [6] | High α: Preserves landscape shape. Low α: Creates flatter basins, more acceleration [6] |

| Boost Factor | ⟨exp(βΔV[r(t_i)])⟩, measure of acceleration [6] | A higher boost factor indicates greater acceleration but requires more care in reweighting for accurate thermodynamics [6] |

Table 3: Key Environmental Factors in Simulation Setup

| Extrinsic Factor | Consideration | Impact on Protein Dynamics |

|---|---|---|

| Water Model | TIP3P, SPC/E, TIP4P-EW | Affects solvation, ion coordination, and protein stability |

| Ions | Type (Na+, K+, Cl-, Ca2+) and concentration | Neutralizes charge, screens electrostatic interactions, can be structurally important |

| Lipid Bilayer | Composition (e.g., POPC, DOPC) | Provides a native environment for membrane proteins, influences protein orientation and dynamics [6] |

| Temperature & Pressure | Controlled by thermostats and barostats | Maintains correct thermodynamic ensemble; incorrect coupling can cause denaturation or collapse |

Experimental Protocols

Protocol 1: Setting Up and Running an Accelerated MD (aMD) Simulation

This protocol outlines the key steps for performing an aMD simulation to improve conformational sampling, using a neurotransmitter transporter as an example [6].

System Preparation:

- Obtain the initial protein structure (e.g., from PDB).

- Place the protein in a suitable environment (e.g., solvate it in a water box or embed it in a lipid bilayer for a membrane protein) [6].

- Add ions to neutralize the system and achieve a physiological salt concentration.

Equilibration:

- Perform energy minimization to remove steric clashes.

- Run a short classical MD simulation with position restraints on the protein backbone to equilibrate the solvent and ions around the protein.

- Release the restraints and equilibrate the entire system at the desired temperature and pressure.

aMD Parameter Calculation:

- Run a short classical MD simulation (e.g., 5-10 ns) to estimate the average potential and dihedral energies of the system.

- Set the aMD boost energy (E) based on these averages. A common practice is to set E = V_avg + (0.2 × N_atoms) for the total potential boost, where V_avg is the average potential energy from the classical simulation and N_atoms is the number of atoms in the system [6].

- Set the tuning parameter (α). A typical starting point is α = 0.2 * N_atoms for the total potential boost [6].
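The two rules of thumb above reduce to a few lines of arithmetic. The sketch below assumes that the 0.2 factor is expressed per atom in the same energy unit as V_avg; the function name and the example numbers are hypothetical.

```python
def amd_total_boost_parameters(v_avg, n_atoms, per_atom=0.2):
    """Return (E, alpha) for the total-potential boost.

    E     = V_avg + per_atom * N_atoms
    alpha = per_atom * N_atoms
    """
    alpha = per_atom * n_atoms
    e_threshold = v_avg + alpha
    return e_threshold, alpha

# Hypothetical system: 80,000 atoms with an average potential energy of -250,000.
print(amd_total_boost_parameters(v_avg=-250000.0, n_atoms=80000))
```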

Production aMD Simulation:

Reweighting (Optional):

- To recover canonical ensemble averages from the biased aMD simulation, apply reweighting algorithms, such as the Maclaurin series expansion, to the collected data [6].
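A minimal sketch of Maclaurin-series reweighting along a single collective variable is shown below. It approximates the per-bin average ⟨exp(βΔV)⟩ by the truncated series Σ_k β^k ⟨ΔV^k⟩ / k! and multiplies it by the biased bin count. The function names, the choice of a 1D histogram, and the truncation order are assumptions made for illustration; β must be given in the inverse of the energy unit used for ΔV.

```python
import math
import numpy as np

def maclaurin_boost_average(dv, beta, order=10):
    """Truncated Maclaurin estimate of <exp(beta * dV)> over the given frames."""
    dv = np.asarray(dv, dtype=float)
    return sum(beta ** k / math.factorial(k) * np.mean(dv ** k)
               for k in range(order + 1))

def reweighted_histogram(cv, dv, n_bins, beta, order=10):
    """Return (probabilities, bin_edges) of a CV after aMD reweighting."""
    cv = np.asarray(cv, dtype=float)
    dv = np.asarray(dv, dtype=float)
    edges = np.linspace(cv.min(), cv.max(), n_bins + 1)
    # Map each frame to a bin index in 0..n_bins-1 (the maximum goes in the last bin).
    idx = np.clip(np.digitize(cv, edges) - 1, 0, n_bins - 1)
    weights = np.zeros(n_bins)
    for j in range(n_bins):
        in_bin = idx == j
        if in_bin.any():
            weights[j] = in_bin.sum() * maclaurin_boost_average(dv[in_bin], beta, order)
    return weights / weights.sum(), edges
```

A free energy profile then follows as -ln(p)/β per bin. Bins with few frames give noisy estimates, so it is worth checking the bin populations before interpreting the result.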

Protocol 2: Dynamic Cross-Correlation Matrix (DCCM) Analysis

This protocol is used to identify correlated and anti-correlated motions within a protein from an MD trajectory [20].

Simulation Trajectory:

- Perform a classical or enhanced MD simulation to generate a trajectory file containing the structural ensembles over time.

Trajectory Processing:

- Use software like GROMACS or the Bio3D R package to process the trajectory. This typically involves aligning all frames to a reference structure to remove global rotation and translation.

Calculation of Cross-Correlations:

- Calculate the cross-correlation coefficient C(i,j) for pairs of atoms (typically Cα atoms) using the formula: C(i,j) = ⟨Δr_i · Δr_j⟩ / (⟨Δr_i²⟩^(1/2) ⟨Δr_j²⟩^(1/2)) [20]. Here, Δr_i is the displacement vector of atom i from its average position, and the angle brackets denote an ensemble average over all trajectory frames.

Visualization:

- Plot the matrix C(i,j) as a heat map (DCCM). Positive values (correlated motion) and negative values (anti-correlated motion) will appear as off-diagonal cross-peaks [20].

- The results can be mapped onto the 3D protein structure to visualize the communication pathways.
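The cross-correlation formula in this protocol can be sketched directly in NumPy. The function below assumes the trajectory has already been aligned to a reference and is available as an array of shape (frames, atoms, 3); loading and alignment are left to the analysis package of your choice. The function name and the synthetic test trajectory are illustrative assumptions.

```python
import numpy as np

def dccm(coords):
    """Dynamic cross-correlation matrix C(i,j) from aligned coordinates.

    coords has shape (n_frames, n_atoms, 3).
    """
    coords = np.asarray(coords, dtype=float)
    disp = coords - coords.mean(axis=0)                  # displacement from the mean position
    # <dr_i . dr_j>: sum over frames and xyz, divided by the number of frames.
    cov = np.einsum("fix,fjx->ij", disp, disp) / len(coords)
    norm = np.sqrt(np.diag(cov))                         # <dr_i^2>^(1/2)
    return cov / np.outer(norm, norm)

# Synthetic example: atoms 0 and 1 move together, atom 2 moves in the opposite direction.
rng = np.random.default_rng(0)
d = rng.normal(size=(50, 3))
base = np.array([[0.0, 0.0, 0.0], [5.0, 0.0, 0.0], [0.0, 5.0, 0.0]])
trajectory = base[None] + np.stack([d, d, -d], axis=1)
print(np.round(dccm(trajectory), 2))
```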

Essential Diagrams for Troubleshooting

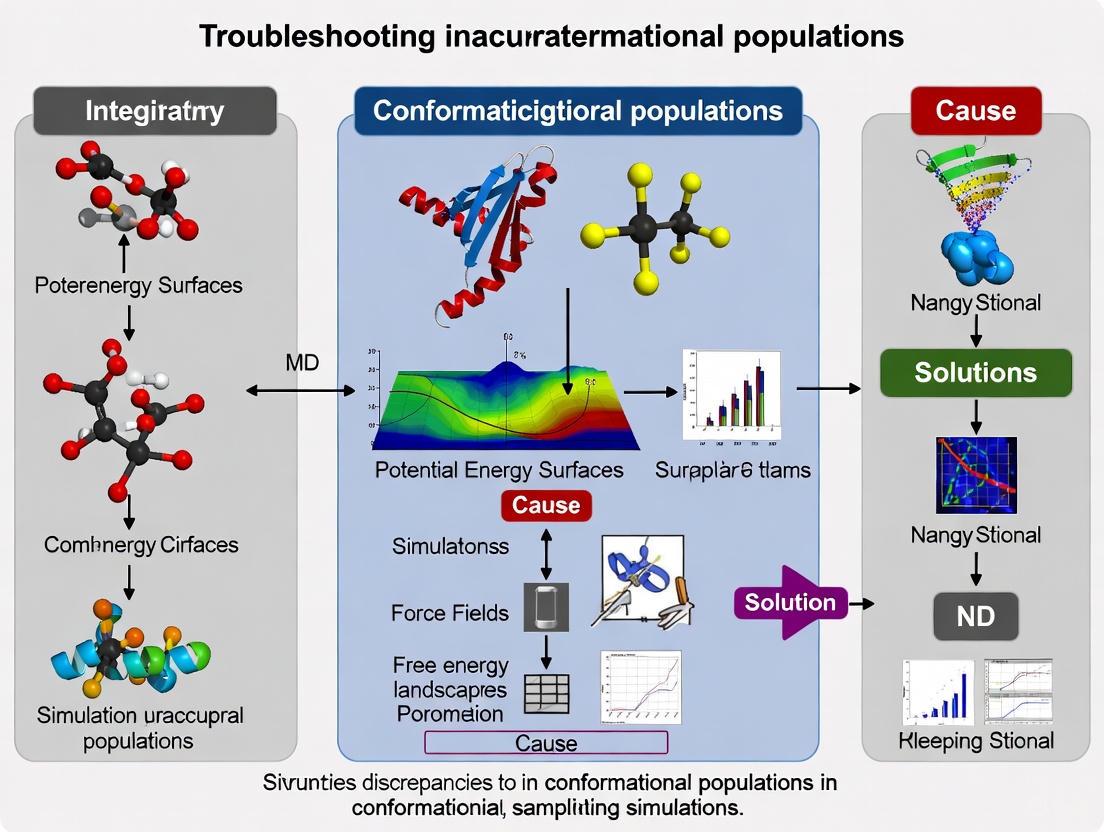

Diagram 1: Troubleshooting Inaccurate Conformational Populations

Diagram 2: The Energy Landscape and Sampling Problem

Diagram 3: Dynamic Cross-Correlation Network Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Software and Analysis Tools

| Tool Name | Function | Use Case |

|---|---|---|

| GROMACS | MD simulation software [20] | Performing high-performance MD simulations [20] |

| AMBER | MD simulation software [6] | Performing MD and aMD simulations [6] |

| NAMD | MD simulation software [6] | Performing MD simulations, particularly scalable on parallel computers [6] |

| Bio3D (R Package) | Analysis of biomolecular simulation data [20] | Calculating dynamic cross-correlation matrices (DCCM) [20] |

| MD-TASK | Suite for coarse-grained analysis of MD simulations [22] | Dynamic residue network analysis and perturbation-response scanning [22] |

| MODE-TASK | Suite for essential dynamics and normal mode analysis [22] | Analyzing global motion and conformational changes [22] |

Troubleshooting Guide: Force Field Selection and Validation

FAQ: How do I choose an appropriate force field for simulating Intrinsically Disordered Proteins (IDPs)?

The Challenge: Most traditional molecular dynamics (MD) force fields were developed and parameterized for folded, globular proteins. When applied to IDPs, they tend to overestimate intramolecular attraction, leading to overly compact structures that don't match experimental observations [23].

Troubleshooting Steps:

- Identify Protein Class: First, determine if your protein is fully disordered, contains disordered regions, or is a folded protein on the verge of disorder (like some LEA proteins) [23].

- Select Specialized Force Fields: For IDPs, prefer force fields specifically developed or optimized for disordered states. These often modify protein-water interactions or torsion parameters to improve hydration and conformational sampling [23].

- Run Short Validation Simulations: Perform multiple short (e.g., 200 ns) simulations from different initial structures to assess convergence and compare against available experimental data [23].

- Validate Against Experimental Data: Compare simulation results with Small-Angle X-Ray Scattering (SAXS) data for general compactness and Nuclear Magnetic Resonance (NMR) data for local structural features and dynamics [23].

Performance Table of Selected Force Fields for IDPs (COR15A Case Study) [23]:

| Force Field | Water Model | Performance Summary for IDPs (COR15A) |

|---|---|---|

| DES-amber | TIP4P-D | Best overall performance; captured helicity differences between WT and mutant and adequately reproduced NMR relaxation dynamics [23]. |

| ff99SBws | TIP4P/2005s | Captured helicity differences but overestimated overall helicity; reasonable performance for structural properties [23]. |

| ff99SB-disp | a99SB-disp | Performance varies; requires validation against specific experimental data for your system [23]. |

| CHARMM36m | mTIP3P/TIP4P | Improved for IDPs over earlier CHARMM versions, but may still over-stabilize certain secondary structures in some sequences [23]. |

| OPLS-AA | TIP4P | Not recommended for IDPs without modification; tends to overestimate compactness [23]. |

FAQ: My simulations of a globular protein show unrealistic structural drift. Is this a force field issue?

The Challenge: Even for globular proteins, force field choice is critical. Some force fields may introduce instabilities in loops, secondary structures, or at binding sites, leading to unrealistic deformation during simulation.

Troubleshooting Steps:

- Verify Starting Structure Quality: Ensure your initial model is high-quality. MD refinement works best for fine-tuning reliable models, not correcting poor ones [24].

- Check Simulation Stability: Monitor Root-Mean-Square Deviation (RMSD) and potential energy during an equilibration period. The RMSD should plateau, indicating that the system has relaxed from the starting structure and is sampling a stable conformational basin [23].

- Optimize Simulation Length: For refinement of already-good models, short simulations (10-50 ns) are often sufficient. Longer simulations (>50 ns) can induce structural drift and reduce fidelity to the experimental starting structure [24].

- Use a Standard Benchmark: Test your force field on a well-characterized globular protein with known experimental dynamics to establish a performance baseline before applying it to your protein of interest.

FAQ: How can I validate my force field choice against experimental data?

The Challenge: Force field performance can be system-dependent. Rigorous validation is required to ensure your simulations accurately reflect reality.

Validation Protocol:

- Compare Global Properties: Calculate the radius of gyration (Rg) or the pair distribution function P(r) from your simulation ensemble and compare it to SAXS data [23].

- Validate Local Structure: Calculate chemical shifts or J-couplings from simulations and compare to NMR data. For IDPs, ensure the force field can capture subtle structural differences, such as those caused by point mutants [23].

- Check Dynamic Properties: Compare order parameters or NMR relaxation times derived from simulations with experimental NMR data to assess if the dynamics are accurately reproduced [23].

- Two-Step Approach: Consider an initial screening with short simulations (e.g., 200 ns) against SAXS data, followed by extended simulations (e.g., 1+ μs) of the best-performing models for detailed validation against NMR data [23].

Diagram 1: Force field selection and validation workflow for troubleshooting conformational populations.

Key Research Reagent Solutions

Specialized Force Fields for Biomolecular Simulations:

| Reagent/Resource | Function & Application | Key Considerations |

|---|---|---|

| DES-amber | An IDP-optimized force field. Best for capturing conformational landscapes and dynamics of disordered proteins, as validated for COR15A [23]. | Use with the TIP4P-D water model. Excellent for proteins on the verge of folding. |

| ff99SBws | A water-scaling force field for IDPs. Strengthens protein-water interactions, which weakens effective protein-protein attraction and prevents overly compact structures [23]. | May overestimate secondary structure propensity (e.g., helicity) in some systems [23]. |

| CHARMM36m | A modern, widely used force field updated for both folded proteins and disordered systems [25]. | A good general-purpose choice, but always validate for your specific protein. |

| Amber with χOL3 | RNA-specific force field. Recommended for RNA structure refinement and stability testing in MD simulations [24]. | Used in CASP15 RNA refinement benchmarks. Best for fine-tuning reliable RNA models [24]. |

Critical Databases for Dynamic Conformational Data:

| Database | Content & Application | Access Link |

|---|---|---|

| ATLAS | MD simulations of ~2000 representative proteins for protein dynamics analysis [25]. | https://www.dsimb.inserm.fr/ATLAS |

| GPCRmd | MD trajectories focused on G Protein-Coupled Receptors for functionality and drug discovery [25]. | https://www.gpcrmd.org/ |

| SARS-CoV-2 DB | MD simulations of coronavirus proteins to support drug discovery [25]. | https://epimedlab.org/trajectories |

Experimental Protocols

Protocol 1: Initial Force Field Screening for IDPs

This protocol is adapted from the systematic comparison study of COR15A [23].

1. System Preparation:

- Generate 10 independent initial structures for your protein to sample different starting conformations.

- Solvate the protein in a cubic water box with at least 38,000 water molecules.

- Add ions (e.g., 6 Na⁺) to neutralize the system charge.

2. Simulation Setup:

- Test a panel of force fields (e.g., DES-amber, ff99SBws, CHARMM36m, etc.) with their recommended water models.

- Use a thermostat like Bussi-Donadio-Parrinello (BDP) with a coupling constant (τT) of 0.1 ps.

- Use a barostat like Parrinello-Rahman (PR) with a coupling constant (τp) of 2.0 ps.

3. Production and Analysis:

- For each force field, run 10 independent simulations of 200 ns from the different initial structures.

- Treat the first 120 ns as an extended equilibration period. Use only the final 80 ns for production analysis [23].

- Calculate the radius of gyration (Rg) and compare the average against experimental SAXS data to identify the best-performing force fields.
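The analysis step can be sketched as below: a mass-weighted radius of gyration per frame, followed by an average over the production window only. The function names and array layout are assumptions. Note also that a geometric Rg computed from atomic coordinates is not identical to a SAXS-derived Rg, which includes solvation-layer contributions, so the comparison is approximate.

```python
import numpy as np

def radius_of_gyration(coords, masses):
    """Mass-weighted Rg per frame.

    coords has shape (n_frames, n_atoms, 3); masses has shape (n_atoms,).
    """
    coords = np.asarray(coords, dtype=float)
    masses = np.asarray(masses, dtype=float)
    total_mass = masses.sum()
    com = np.einsum("a,fax->fx", masses, coords) / total_mass   # center of mass per frame
    sq_dist = ((coords - com[:, None, :]) ** 2).sum(axis=2)     # squared distance to the COM
    return np.sqrt((sq_dist * masses).sum(axis=1) / total_mass)

def production_mean_rg(rg_per_frame, times_ns, equilibration_ns=120.0):
    """Average Rg over frames at or after the equilibration cutoff."""
    rg = np.asarray(rg_per_frame, dtype=float)
    t = np.asarray(times_ns, dtype=float)
    return rg[t >= equilibration_ns].mean()
```

Averaging `production_mean_rg` over the 10 replicas of each force field gives one number per force field to set against the experimental value.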

Protocol 2: MD Refinement for RNA Structures

This protocol is based on the CASP15 RNA refinement benchmark [24].

1. Input Model Selection:

- Crucial Step: This method is only effective for high-quality starting models. MD refinement can provide modest improvements by stabilizing stacking and non-canonical base pairs. Poorly predicted models rarely benefit and often deteriorate [24].

- Use models from accurate prediction tools as a starting point.

2. Simulation Execution:

- Use the Amber suite with the RNA-specific χOL3 force field.

- Run short simulations of 10-50 ns. Early dynamics (first few ns) reveal the model's stability and refinement potential.

- Avoid long simulations: Simulations longer than 50 ns typically induce structural drift and reduce model fidelity [24].

3. Analysis:

- Monitor the stability of base pairs and stacking interactions.

- Use metrics like RMSD to assess if the simulation is refining or distorting the initial model. The goal is fine-tuning, not large-scale correction [24].
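For the RMSD check in the analysis step, a minimal sketch of RMSD after optimal superposition (the Kabsch algorithm) is given below. It assumes two coordinate arrays with identical atom ordering and equal weights; the function name is illustrative, and trajectory analysis packages provide their own, more complete implementations.

```python
import numpy as np

def kabsch_rmsd(mobile, reference):
    """RMSD between two (n_atoms, 3) coordinate sets after optimal superposition."""
    mobile = np.asarray(mobile, dtype=float)
    reference = np.asarray(reference, dtype=float)
    p = mobile - mobile.mean(axis=0)          # remove translation
    q = reference - reference.mean(axis=0)
    u, _, vt = np.linalg.svd(p.T @ q)         # SVD of the covariance matrix
    d = np.sign(np.linalg.det(vt.T @ u.T))    # guard against an improper rotation
    rotation = vt.T @ np.diag([1.0, 1.0, d]) @ u.T
    p_rot = p @ rotation.T                    # rotate mobile onto reference
    return np.sqrt(((p_rot - q) ** 2).sum() / len(p))
```

Tracking this value against both the starting model and, where available, the experimental structure shows whether a run is refining the model or drifting away from it.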

Diagram 2: MD refinement protocol for improving high-quality RNA models.

Advanced Sampling and Integrative Methods for Accurate Ensembles

Frequently Asked Questions (FAQs)

Q1: What are the primary causes of inaccurate conformational populations in MD simulations? Inaccurate conformational populations primarily stem from two sources: insufficient sampling of conformational space due to limited simulation timeframes and inaccuracies in the molecular mechanics force fields used to describe atomic interactions. Even with enhanced sampling, the accuracy of the resulting ensembles is highly dependent on the quality of the physical models, or force fields, used. Discrepancies between simulations run with different state-of-the-art force fields can persist, leading to varying conformational distributions [26].

Q2: How can I integrate experimental data to improve the accuracy of my conformational ensembles? Integrative approaches, such as maximum entropy reweighting, allow you to refine computational ensembles with experimental data. This method introduces the minimal perturbation to a computational model required to match a set of experimental data, such as NMR spectroscopy and Small-Angle X-Ray Scattering (SAXS) data. This combines the atomistic detail of simulations with the validation provided by experimental observables, helping to achieve force-field independent conformational ensembles [26].

Q3: My λ-dynamics simulation is not sampling chemical space effectively. What could be wrong? Ineffective sampling in λ-dynamics can occur if the biasing potentials in λ-space are not optimal. A technique called Adaptive Landscape Flattening (ALF) can be used to find optimal fitting parameters for the λ-dependent biasing potentials, which helps to flatten the free energy landscape in λ-space and enables adequate sampling of different chemical species. It's important to note that while an optimal bias is beneficial, it is not always strictly necessary to achieve sufficient sampling [27].

Q4: What is a robust method for estimating statistical errors in my simulation results? For statistically robust error estimation, especially when dealing with correlated data from MD trajectories, it is recommended to use a combination of multiple independent simulations and block averaging [28].

- Run multiple independent simulations (e.g., 100 runs) with different initial seeds.

- For each simulation, use block averaging to compute the mean of the quantity of interest and its statistical error, which accounts for serial correlations within the trajectory.

- The final estimate is the average of the means from all simulations, with the final error calculated by propagating the individual errors from each simulation (e.g., standard error propagation assuming independent errors) [28].
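The three steps above can be sketched as follows. The block count is a free choice in this illustration; in practice each block should be longer than the correlation time of the quantity, which is usually checked by repeating the analysis with several block lengths. Function names are assumptions.

```python
import numpy as np

def block_average(series, n_blocks=5):
    """Return (mean, standard error) of a time series using block averaging."""
    x = np.asarray(series, dtype=float)
    block_len = len(x) // n_blocks
    # Drop any trailing frames that do not fill a complete block.
    blocks = x[:block_len * n_blocks].reshape(n_blocks, block_len).mean(axis=1)
    return blocks.mean(), blocks.std(ddof=1) / np.sqrt(n_blocks)

def combine_runs(means, errors):
    """Average independent runs and propagate their errors.

    For the mean of n independent estimates, the error is sqrt(sum(err_i^2)) / n.
    """
    means = np.asarray(means, dtype=float)
    errors = np.asarray(errors, dtype=float)
    return means.mean(), np.sqrt((errors ** 2).sum()) / len(means)
```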

Q5: I am getting "Residue 'XXX' not found in residue topology database" errors when setting up my system. How do I resolve this?

This error in tools like GROMACS's pdb2gmx indicates that the residue or molecule you are trying to simulate is not defined in the chosen force field's database. Your options are to:

- Check for naming: See if the residue exists in the database under a different name and rename your molecule accordingly.

- Use a different force field: Another force field may already have parameters for your molecule.

- Find existing parameters: Search the literature for published parameters compatible with your force field.

- Parameterize it yourself: Create the topology and parameters for the new molecule, which is a complex task even for experts [29].

Troubleshooting Guides

Guide: Troubleshooting Force Field Inaccuracies and Ensemble Errors

Problem: Your simulated conformational ensemble does not agree with experimental data, such as NMR chemical shifts, SAXS profiles, or order parameters.

Diagnosis and Solutions:

Step 1: Validate against experimental data.

- Action: Compare your simulation's back-calculated observables (e.g., NMR chemical shifts, relaxation rates, SAXS intensities) directly to experimental values. Do not rely solely on structural intuition.

- Protocol: Use tools like ABSURDer or implement a maximum entropy reweighting protocol to quantitatively assess the agreement. A significant mismatch indicates either insufficient sampling or force field inaccuracy [26] [30].

Step 2: Apply integrative structural biology methods.

- Action: If a reasonable initial agreement exists but is not perfect, use experimental data to refine your ensemble.

- Protocol: Employ a maximum entropy reweighting procedure. This statistical method reweights the frames of your MD simulation so that the averaged back-calculated observables match the experimental data, with minimal perturbation to the simulated ensemble [26].

- Workflow:

- Run a long, unbiased MD simulation or use an enhanced sampling method to generate a diverse conformational ensemble.

- For each frame in the trajectory, use a forward model to calculate the expected experimental observable (e.g., chemical shift).

- Use a maximum entropy algorithm to determine new statistical weights for each frame so that the reweighted ensemble averages match the experiment.

- Use the Kish ratio to ensure the effective ensemble size remains large enough to be statistically representative and avoid overfitting [26].
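A simplified sketch of the reweighting and Kish-ratio steps is shown below. It finds weights of the form w_i ∝ exp(-Σ_k λ_k f_k(i)) whose averages match the target values exactly, using Newton iterations on the convex dual function. This deliberately omits experimental uncertainties and any regularization strength, both of which a production protocol would include, and it assumes the targets lie within the range spanned by the simulated values. Function names are assumptions.

```python
import numpy as np

def _weights(calc, lam):
    """Normalized maximum entropy weights for Lagrange multipliers lam."""
    logw = -calc @ lam
    w = np.exp(logw - logw.max())     # subtract the maximum for numerical stability
    return w / w.sum()

def maxent_reweight(calc, target, n_iter=100, tol=1e-10):
    """Reweight frames so that averaged observables match the targets.

    calc has shape (n_frames, n_observables); target has shape (n_observables,).
    """
    calc = np.asarray(calc, dtype=float)
    target = np.asarray(target, dtype=float)
    lam = np.zeros(calc.shape[1])
    for _ in range(n_iter):
        w = _weights(calc, lam)
        avg = w @ calc
        grad = target - avg                           # gradient of the dual function
        if np.abs(grad).max() < tol:
            break
        centered = calc - avg
        hess = (centered * w[:, None]).T @ centered   # weighted covariance matrix
        lam = lam - np.linalg.solve(hess, grad)       # Newton step
    return _weights(calc, lam)

def kish_ratio(weights):
    """Effective sample size divided by the number of frames (1.0 for uniform weights)."""
    w = np.asarray(weights, dtype=float)
    w = w / w.sum()
    return 1.0 / (len(w) * np.sum(w ** 2))
```

A Kish ratio that collapses toward zero means a handful of frames dominate the reweighted ensemble, which is the overfitting signal described above.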

Step 3: Test multiple force fields.

- Action: The accuracy of MD simulations is highly force-field dependent. If reweighting fails or the initial agreement is poor, the force field may be the source of error.

- Protocol: Run comparable simulations with different, modern force fields (e.g., a99SB-disp, CHARMM36m, CHARMM22*) and compare their inherent agreement with experimental data before reweighting. Studies show that ensembles from different force fields can converge to highly similar distributions after reweighting with sufficient experimental data, providing a more force-field independent result [26].

Guide: Troubleshooting Sampling Issues in Alchemical and λ-Dynamics

Problem: Poor sampling of ligand binding modes or inefficient exploration of chemical space in alchemical methods like λ-dynamics.

Diagnosis and Solutions:

Solution 1: Implement advanced λ-dynamics frameworks.

- Problem: You need to compute free energy landscapes for multiple chemical species (e.g., different ligands or mutations) and find running separate simulations for each one inefficient.

- Protocol: Use the `MSλD+US` framework, which combines umbrella sampling (US) with multisite λ-dynamics [27].

- Workflow:

- System Setup: Represent multiple ligands explicitly in a single simulation system, tethered together by harmonically restraining their common core atoms.

- Run Coupled Simulation: In each umbrella sampling window, run a λ-dynamics simulation. The λ variables, which control the alchemical state, are treated as dynamic variables with their own mass and can evolve continuously in response to the environment.

- Apply Biasing Potentials: Use a set of λ-dependent biasing potentials (`U_fixed`, `U_quadratic`, `U_end`, `U_skew`) to discourage unphysical states and improve sampling. Optimize these biases using Adaptive Landscape Flattening (ALF) before production runs [27].

- Analysis: Use the Multistate Bennett Acceptance Ratio (MBAR) to recover the potential of mean force (PMF) for each chemical species from the single, combined simulation [27].

Solution 2: Use Grand Canonical Monte Carlo for fragment binding.

- Problem: Spontaneous binding of fragments in standard MD is too rare, and identifying multiple binding modes is difficult.

- Protocol: Employ Grand Canonical Nonequilibrium Candidate Monte Carlo (GCNCMC) [31].

- Workflow:

- Define Region: Specify the region of interest on the protein where fragments can be inserted or deleted.

- Propose Moves: Randomly attempt insertion, deletion, or movement of fragments within the region.

- Nonequilibrium Switching: Each insertion or deletion move is performed gradually over several alchemical steps, allowing the protein and solvent to adapt (induced fit).

- Monte Carlo Test: The proposed move is accepted or rejected based on the Metropolis criterion, ensuring sampling of the grand canonical ensemble.

- Benefit: This method efficiently finds occluded binding sites, samples multiple binding modes, and can calculate binding affinities without the need for complex restraints [31].

Guide: Resolving Common Software Errors in GROMACS

Problem: Energy minimization fails with "Energy minimization has stopped because the force on at least one atom is not finite. This usually means atoms are overlapping." [32]

Diagnosis and Solutions:

Step 1: Identify the problematic atom.

- Action: The error log will specify the atom number (e.g., "Fmax= inf, atom= 1251"). Use visualization software (e.g., VMD, PyMOL) to inspect this atom and its immediate environment for severe clashes, often with other parts of the protein, ligand, or solvent [32].

Step 2: Check for topology-coordinate mismatch.

- Action: This is a common cause. The atom names and/or residue names in your coordinate file (e.g., `.gro` or `.pdb`) must exactly match those defined in your topology file (`.top`/`.itp`).

- Protocol: Manually compare the atom names in the ligand's residue in the coordinate file against the definition in the force field residue topology database (`.rtp`) or your ligand's `.itp` file. Correct any naming discrepancies [29] [32].
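The manual comparison can be partly automated. The sketch below reads atom names for one residue from `.gro`-formatted text (fixed-width columns) and from the `[ atoms ]` section of an `.itp` file, then reports positions where the names differ. It assumes a single-residue ligand and a single `[ atoms ]` section, and it compares only as many atoms as both lists contain; all function names are hypothetical.

```python
def gro_atom_names(gro_text, resname):
    """Atom names of one residue from .gro text (columns 6-10 residue, 11-15 atom)."""
    lines = gro_text.splitlines()
    n_atoms = int(lines[1])                       # second line holds the atom count
    return [line[10:15].strip()
            for line in lines[2:2 + n_atoms]
            if line[5:10].strip() == resname]

def itp_atom_names(itp_text):
    """Atom names (fifth column) from the [ atoms ] section of .itp text."""
    names, in_atoms = [], False
    for raw in itp_text.splitlines():
        line = raw.split(";", 1)[0].strip()       # drop comments
        if not line:
            continue
        if line.startswith("["):
            in_atoms = line.strip("[] ").lower() == "atoms"
            continue
        if in_atoms:
            names.append(line.split()[4])
    return names

def name_mismatches(gro_text, itp_text, resname):
    """Return (position, coordinate_name, topology_name) for each differing atom."""
    coord = gro_atom_names(gro_text, resname)
    topo = itp_atom_names(itp_text)
    return [(i + 1, c, t) for i, (c, t) in enumerate(zip(coord, topo)) if c != t]
```

An empty list means the names agree in order; a differing atom count between the two files is a separate problem worth checking first.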

Step 3: Manually resolve clashes.

- Action: If the topology is correct, the structure itself may have overlapping atoms.

- Protocol:

- Slightly shift the position of the offending ligand or residue to eliminate the clash.

- Use a molecular modeling program like MOE or Chimera to perform a quick, preliminary energy minimization on the problematic region before setting up the GROMACS simulation [32].

Problem: grompp fails with "Invalid order for directive..." [29]

Diagnosis and Solutions:

- Cause: The directives in your topology (`.top`) and include (`.itp`) files must appear in a specific order, as defined by GROMACS.

- Solution: Restructure your topology file. The general order is: `[ defaults ]`, `[ atomtypes ]`, `[ moleculetype ]`, then, for the entire system, `[ system ]` and `[ molecules ]`.

- Ensure that all `[ *types ]` directives (e.g., `[ atomtypes ]`, `[ bondtypes ]`) appear before the first `[ moleculetype ]` directive. This means force field parameters must be fully defined before any molecules are described [29].

Problem: grompp fails with "Found a second defaults directive" [29]

Diagnosis and Solutions:

- Cause: The `[ defaults ]` directive appears more than once in your topology, which is invalid.

- Solution: The `[ defaults ]` directive should only be set once, typically when you `#include` your main force field. If you are including other `.itp` files (e.g., for a ligand) that contain their own `[ defaults ]` section, comment out or delete the redundant `[ defaults ]` directives in those secondary files [29].
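Both topology errors above can be caught before running grompp with a small checker. The sketch below scans topology text for bracketed directives and flags a repeated `[ defaults ]` as well as any `[ *types ]` directive that appears after the first `[ moleculetype ]`. It does not follow `#include` statements, so it is best applied to a fully expanded topology (for example, the processed topology that grompp can write with its `-pp` option). Function names are hypothetical.

```python
import re

DIRECTIVE = re.compile(r"^\s*\[\s*(\w+)\s*\]")

def topology_directives(text):
    """Return directive names in order of appearance, ignoring comments."""
    names = []
    for line in text.splitlines():
        match = DIRECTIVE.match(line.split(";", 1)[0])
        if match:
            names.append(match.group(1).lower())
    return names

def check_directive_order(text):
    """Return a list of human-readable problems (empty if none were found)."""
    names = topology_directives(text)
    problems = []
    if names.count("defaults") > 1:
        problems.append("more than one [ defaults ] directive")
    if "moleculetype" in names:
        first_mol = names.index("moleculetype")
        late = [n for n in names[first_mol:] if n.endswith("types")]
        if late:
            problems.append("parameter directives after the first [ moleculetype ]: "
                            + ", ".join(late))
    return problems
```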

Research Reagent Solutions

This table details key computational tools and methods used in advanced enhanced sampling studies.

Table 1: Key Research Reagents and Computational Tools

| Item Name | Function/Brief Explanation | Example Application Context |

|---|---|---|

| Maximum Entropy Reweighting | A statistical method to refine MD ensembles by minimally adjusting frame weights to match experimental data [26]. | Correcting inaccurate conformational populations in IDP simulations to match NMR data [26]. |

| Multisite λ-Dynamics (MSλD) | An alchemical method that allows multiple chemical species (e.g., different ligands) to be simulated simultaneously in a single system by treating λ as a dynamic variable [27]. | Efficiently computing PMFs for multiple ligands binding to a protein from one set of simulations [27]. |

| Grand Canonical NCMC (GCNCMC) | A Monte Carlo method that allows for the insertion and deletion of molecules in a defined region during an MD simulation, facilitating the discovery of binding sites and modes [31]. | Identifying fragment binding sites and multiple binding modes in fragment-based drug discovery [31]. |

| Umbrella Sampling (US) | An enhanced sampling technique that uses harmonic biases along collective variables (CVs) to force the system to sample high-energy states and reconstruct free energy profiles [27]. | Calculating the potential of mean force for processes like ligand unbinding or protein folding [27]. |

| Adaptive Landscape Flattening (ALF) | An automated procedure to optimize the biasing potentials in λ-dynamics simulations, which improves the sampling efficiency in λ-space [27]. | Overcoming poor sampling of different chemical states in a multisite λ-dynamics simulation [27]. |

| Multistate Bennett Acceptance Ratio (MBAR) | A statistically optimal method for analyzing data from multiple equilibrium samples (e.g., from umbrella sampling) to compute free energies and PMFs [27]. | Recovering unbiased free energy profiles from a set of biased umbrella sampling simulations [27]. |

Workflow and Relationship Visualizations

The following diagram illustrates the integrative workflow for determining accurate conformational ensembles, combining molecular dynamics simulations with experimental data.

Integrative Workflow for Conformational Ensembles

This diagram outlines the protocol for using advanced λ-dynamics to simultaneously study multiple chemical species.

MSλD+US Protocol for Multiple Species

Leveraging True Reaction Coordinates for Efficient Barrier Crossing

Troubleshooting Guide: Identifying and Validating Reaction Coordinates

This guide addresses common challenges researchers face when identifying True Reaction Coordinates (tRCs) and their impact on obtaining accurate conformational populations in Molecular Dynamics (MD) simulations.

Table: Troubleshooting Common RC-Related Problems

| Problem Symptom | Potential Cause | Diagnostic Steps | Solution & References |

|---|---|---|---|

| 1. Ineffective Enhanced Sampling: Biased simulations show no significant acceleration of conformational changes. | Hidden Barriers: The biased Collective Variables (CVs) are orthogonal to the true reaction coordinates, leaving the actual activation barrier unsampled [33]. | Perform a committor analysis on configurations with the biased CV held at its transition state value. A broad committor distribution indicates a poor RC [34]. | Re-identify CVs using methods that target the committor, such as the generalized work functional (GWF) method [35]. |

| 2. Non-Physical Trajectories: Accelerated transition paths deviate from expected mechanistic understanding. | Incorrect Collective Variables: Empirically chosen CVs (e.g., RMSD, principal components) do not capture the essential dynamics of the process [33] [35]. | Compare the biased trajectory pathway to a known natural reactive trajectory (NRT) or experimental data. Check for unrealistic atomic clashes or geometries [35]. | Replace intuitive CVs with tRCs identified via physics-based methods like energy flow theory, which generate natural transition pathways [33] [35]. |

| 3. Force Field Dependent Results: Conformational ensembles differ significantly when using different force fields. | Incomplete Sampling & Force Field Bias: Under-sampling combined with inherent force field inaccuracies leads to ensembles trapped in different local minima [26] [36]. | Validate the unbiased simulation ensemble against multiple experimental observables (NMR, SAXS). Use the Kish ratio to check for effective ensemble size after reweighting [26]. | Integrate simulations with experimental data using maximum entropy reweighting to obtain force-field independent conformational ensembles [26]. |

| 4. Failure to Generate Transition Paths: Transition path sampling (TPS) cannot find an initial reactive trajectory or transition state configurations. | High Barrier & Rare Events: The system is trapped in the reactant basin, and no initial transition state conformation is available to seed TPS [35]. | Launch short, unbiased trajectories from the sampled configurations and estimate their committor values. If every trajectory returns to the reactant basin (estimated pB = 0 for all configurations), the system is trapped and no transition state seeds are available. | Use enhanced sampling biased on pre-computed tRCs to efficiently generate trajectories that pass through the transition state region (pB~0.5), providing initial states for TPS [35]. |

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between a collective variable (CV) and a true reaction coordinate (tRC)?

A CV is any function of atomic coordinates used to describe the progress of a process. A tRC is a special type of CV that exactly determines the committor probability for any given system configuration [35]. While many CVs can be proposed, only a tRC guarantees that the dynamics projected onto it will correctly describe the reaction mechanism and kinetics. Using a non-optimal CV leads to the "hidden barrier" problem, whereas biasing a tRC provides optimal sampling efficiency [33].

Q2: How can I objectively test if my proposed coordinate is a good reaction coordinate?

The most rigorous validation is the committor test (or committor histogram test) [34].

- Define your reactant (A) and product (B) states.

- Select configurations from your simulation where your proposed RC has a value corresponding to the transition state (e.g., the top of the free energy barrier).

- For each of these configurations, launch multiple, short, unbiased MD trajectories with random initial velocities.

- Calculate the committor, pB, as the fraction of trajectories that reach B before A.

If your proposed coordinate is a good RC, the histogram of pB values for these configurations will be sharply peaked at pB = 0.5. A broad histogram indicates the coordinate is insufficient [33] [34].
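Because each pB value is estimated from a finite number of trial trajectories, it carries binomial sampling error, and that error sets a floor on how sharp the histogram can look. The following minimal sketch shows the arithmetic for a single configuration. The function name and the example counts (27 of 50 trajectories reaching B) are hypothetical and chosen only for illustration.

```python
import math


def committor_estimate(n_reached_b: int, n_trials: int) -> tuple[float, float]:
    """Return (p_B, standard error) from shooting outcomes for one configuration."""
    if n_trials <= 0:
        raise ValueError("n_trials must be positive")
    p_b = n_reached_b / n_trials
    # Binomial standard error of an estimated proportion.
    std_err = math.sqrt(p_b * (1.0 - p_b) / n_trials)
    return p_b, std_err


# Hypothetical outcome: 27 of 50 trial trajectories reached B before A.
p_b, err = committor_estimate(n_reached_b=27, n_trials=50)
print(f"p_B = {p_b:.2f} +/- {err:.3f}")
```

Near pB = 0.5 the standard error is roughly 0.5 divided by the square root of the number of trajectories, so a histogram built from 50 trajectories per configuration cannot be narrower than about 0.07 even for a perfect coordinate.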

Q3: My enhanced sampling simulation converges to a free energy profile, but the predicted population of a key intermediate is inconsistent with experiment. What could be wrong?

This is a classic symptom of an inaccurate conformational ensemble. The issue may not be the free energy profile along your chosen CV, but rather that the conformational ensemble at each point along the CV is incorrect. This can be caused by:

- Force Field Inaccuracies: The physical model favors incorrect conformations [26] [36].

- Insufficient Sampling: The simulation is not long enough to explore the full conformational space orthogonal to your CV, leading to a biased ensemble.

Solution: Integrate experimental data directly into your ensemble using maximum entropy reweighting. This method minimally adjusts the weights of your simulation frames to match experimental observables (e.g., NMR chemical shifts, J-couplings, SAXS profiles), resulting in a more accurate and force-field independent conformational ensemble [26].
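The reweighting idea can be sketched in a few lines of NumPy. This is a simplified illustration, not the procedure of reference [26]: it assumes error-free experimental averages, omits the regularization that practical implementations use to account for experimental and forward-model uncertainty, and uses synthetic data. The function names `maxent_reweight` and `kish_ratio` are hypothetical. Weights take the form w_i ∝ exp(-λ · f_i), and the Lagrange multipliers λ are found with Newton steps on the convex dual function.

```python
import numpy as np


def _weights(calc, lam):
    """Normalized weights w_i proportional to exp(-lam . calc_i)."""
    log_w = -calc @ lam
    log_w -= log_w.max()  # avoid overflow in exp
    w = np.exp(log_w)
    return w / w.sum()


def maxent_reweight(calc, target, n_iter=100, tol=1e-10):
    """calc: (n_frames, n_obs) per-frame observables; target: (n_obs,) averages."""
    calc = np.asarray(calc, dtype=float)
    target = np.asarray(target, dtype=float)
    lam = np.zeros(calc.shape[1])
    for _ in range(n_iter):
        w = _weights(calc, lam)
        avg = w @ calc
        grad = target - avg  # gradient of the dual function
        if np.max(np.abs(grad)) < tol:
            break
        centered = calc - avg
        # Hessian of the dual is the weighted covariance of the observables.
        cov = (w[:, None] * centered).T @ centered
        cov += 1e-12 * np.eye(calc.shape[1])
        lam -= np.linalg.solve(cov, grad)  # Newton step
    return _weights(calc, lam)


def kish_ratio(w):
    """Effective sample size divided by the number of frames."""
    w = np.asarray(w, dtype=float)
    return w.sum() ** 2 / (w.size * np.sum(w ** 2))


# Synthetic example: one observable, simulation average near 0, target 0.3.
rng = np.random.default_rng(0)
calc = rng.normal(loc=0.0, scale=1.0, size=(5000, 1))
target = np.array([0.3])
weights = maxent_reweight(calc, target)
print("reweighted average:", float(weights @ calc[:, 0]))
print("Kish ratio:", kish_ratio(weights))
```

A Kish ratio close to 1 means the experimental data required only a mild correction; a value close to 0 means a handful of frames dominate the reweighted ensemble, which usually signals that the simulation did not sample the relevant conformations.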

Q4: What are the latest methods for systematically identifying tRCs without relying on intuition?

Recent advances provide physics-based, systematic approaches:

- Energy Flow Theory and Generalized Work Functional (GWF): This method identifies tRCs as the optimal channels for energy flow during a conformational change. It calculates the Potential Energy Flow (PEF) through individual coordinates and uses GWF to generate an orthonormal set of "singular coordinates," with the tRCs being the ones with the highest PEFs [33] [35]. A key advantage is that tRCs can be computed from energy relaxation simulations, which are easier to perform than waiting for a rare reactive event [35].

- Machine Learning (ML) and Committor-Based Analysis: ML models can be trained to approximate the committor function directly from a large set of structural descriptors. The features most important to the ML model's prediction are strong candidates for the tRC [37] [34].

Experimental Protocols

Protocol 1: Identifying tRCs Using the Generalized Work Functional Method

This protocol is based on the method described in Nature Communications 16, 786 (2025) [35].

1. System Preparation:

- Start with a single protein structure (e.g., from AlphaFold or crystal structure).

- Solvate and equilibrate the system using standard MD protocols.

2. Energy Relaxation Simulation:

- Instead of running a long, unbiased MD and waiting for a rare event, initiate a short simulation from a structure of interest.

- The tRCs will govern the energy relaxation process. Collect snapshots and coordinate trajectories from this simulation.

3. Calculate Potential Energy Flows (PEF):

- For each coordinate ( q_i ) (e.g., dihedral angle, distance), compute the PEF over a time interval ( [t_1, t_2] ) using: ( \Delta W_i(t_1, t_2) = -\int_{q_i(t_1)}^{q_i(t_2)} \frac{\partial U(\mathbf{q})}{\partial q_i} \, dq_i ), where ( U(\mathbf{q}) ) is the potential energy of the system [35].

- This measures the energy cost of the motion of each coordinate.

4. Apply the Generalized Work Functional:

- The GWF algorithm is applied to the simulation data to generate a set of orthonormal singular coordinates (SCs).

- This transformation disentangles the coordinates, maximizing the PEF through individual SCs.

5. Identify tRCs:

- The SCs with the highest PEFs are identified as the tRCs. These are the few essential coordinates that control the conformational change and energy activation.
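Step 3 of this protocol amounts to a line integral along the trajectory of each coordinate, which can be approximated by a discrete sum over saved frames. The sketch below illustrates only that step. It assumes that the coordinate values and the potential energy gradients with respect to those coordinates are already available as arrays, and that periodic coordinates such as dihedrals have been unwrapped. The function name and the two-coordinate harmonic toy system are hypothetical, and the GWF transformation of step 4 is not implemented here.

```python
import numpy as np


def potential_energy_flow(q, dU_dq):
    """Discrete PEF per coordinate over a whole trajectory.

    q, dU_dq: arrays of shape (n_frames, n_coords) holding coordinate values
    and the matching partial derivatives of the potential energy.
    Returns Delta W_i = -integral (dU/dq_i) dq_i using the trapezoid rule.
    """
    q = np.asarray(q, dtype=float)
    dU_dq = np.asarray(dU_dq, dtype=float)
    dq = np.diff(q, axis=0)                       # coordinate increments
    mid_grad = 0.5 * (dU_dq[1:] + dU_dq[:-1])     # gradient at segment midpoints
    return -np.sum(mid_grad * dq, axis=0)


# Toy system (illustrative only): U = 0.5*k1*q1^2 + 0.5*k2*q2^2.
k = np.array([10.0, 1.0])
t = np.linspace(0.0, 1.0, 201)[:, None]
q_start = np.array([1.0, 1.0])
q_end = np.array([0.0, 0.5])
q = q_start + t * (q_end - q_start)   # straight-line relaxation path
dU_dq = k * q                         # analytic gradient of the toy potential

pef = potential_energy_flow(q, dU_dq)
ranking = np.argsort(-pef)            # coordinates ordered by decreasing PEF
print("PEF per coordinate:", pef)
print("ranking:", ranking)
```

In this toy case the stiff coordinate releases far more potential energy than the soft one, so it ranks first. In a real application the ranking would be applied to the singular coordinates produced in step 4 rather than to the raw coordinates.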

Protocol 2: Validating an RC with Committor Analysis

This is the gold-standard test for any proposed RC [33] [34].

1. Define Stable States:

- Clearly define the reactant (A) and product (B) basins in terms of your chosen RC or other structural metrics.

2. Harvest Putative Transition States:

- Run an umbrella sampling or metadynamics simulation biased along your proposed RC.

- From this simulation, select 50-100 configurations where the RC value corresponds to the maximum of the free energy barrier (the putative transition state).

3. Compute Committor Values:

- For each selected configuration:

  - Launch 50-100 short, unbiased MD trajectories.

  - Draw the initial atomic velocities for each trajectory independently from a Maxwell-Boltzmann distribution at the simulation temperature.

  - For each trajectory, monitor whether it reaches state B or state A first.

  - The committor ( p_B ) is the fraction of trajectories that commit to B.

4. Analyze the Histogram:

- Construct a histogram of all computed ( p_B ) values.

- A successful RC will yield a histogram sharply peaked at ( p_B = 0.5 ). A broad distribution (e.g., roughly uniform between 0 and 1) or a bimodal distribution with peaks near 0 and 1 indicates a poor RC.
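Steps 3 and 4 of this protocol can be sketched as follows, assuming the outcome of every trial trajectory has already been recorded as a boolean (True if it reached B first). The data here are synthetic: a "good" coordinate is mimicked by configurations whose true committor is 0.5, and a "poor" one by configurations whose true committor is spread uniformly between 0 and 1. The function name and array layout are hypothetical.

```python
import numpy as np


def committor_histogram(outcomes, n_bins=10):
    """outcomes: (n_configs, n_trials) boolean array, True = reached B first.

    Returns per-configuration p_B estimates, histogram counts, and bin edges.
    """
    outcomes = np.asarray(outcomes, dtype=bool)
    p_b = outcomes.mean(axis=1)
    counts, edges = np.histogram(p_b, bins=n_bins, range=(0.0, 1.0))
    return p_b, counts, edges


rng = np.random.default_rng(1)
n_configs, n_trials = 80, 100

# Synthetic "true" committors for the two scenarios (illustrative only).
p_true_good = np.full(n_configs, 0.5)
p_true_poor = rng.random(n_configs)

good = rng.random((n_configs, n_trials)) < p_true_good[:, None]
poor = rng.random((n_configs, n_trials)) < p_true_poor[:, None]

p_good, counts_good, _ = committor_histogram(good)
p_poor, counts_poor, _ = committor_histogram(poor)

# Width expected from binomial sampling noise alone at p_B = 0.5.
noise_floor = np.sqrt(0.25 / n_trials)
print("good RC: std of p_B =", p_good.std(), "noise floor =", noise_floor)
print("poor RC: std of p_B =", p_poor.std())
print("good RC histogram counts:", counts_good)
print("poor RC histogram counts:", counts_poor)
```

Comparing the observed spread of pB with the binomial noise floor helps separate a coordinate that is truly poor from one that only looks broad because too few trajectories were launched per configuration.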

The Scientist's Toolkit

Table: Essential Reagents and Methods for tRC Research

| Tool / Reagent | Function / Description | Key Application in tRC Studies |

|---|---|---|

| Committor (pB) | The probability that a trajectory initiated from a given configuration reaches the product before the reactant [33] [34]. | The definitive metric for validating a proposed reaction coordinate. The "true" RC is the coordinate that perfectly predicts pB [35]. |

| Energy Flow Theory | A physics-based framework that describes how energy flows through specific coordinates to drive conformational changes [33]. | Provides a physical interpretation of tRCs as the "optimal channels of energy flow" and forms the basis for the GWF method [33] [35]. |

| Generalized Work Functional (GWF) | A computational method that generates an orthonormal coordinate system to disentangle tRCs from other degrees of freedom [35]. | Used to systematically identify tRCs from simulation data by maximizing the potential energy flow through individual singular coordinates [35]. |

| Maximum Entropy Reweighting | A statistical framework to integrate MD simulations with experimental data by minimally adjusting conformational weights [26]. | Corrects for force field inaccuracies and insufficient sampling to generate accurate conformational ensembles for validating and studying tRCs [26]. |

| Transition Path Sampling (TPS) | A suite of algorithms to harvest true, unbiased reactive trajectories between two stable states [35]. | Generates Natural Reactive Trajectories (NRTs), which are essential for both testing tRCs and providing initial data for some tRC identification methods [35]. |

Method Comparison & Workflow

Table: Comparison of Key RC Identification Methods

| Method | Underlying Principle | Required Input | Strengths | Weaknesses |

|---|---|---|---|---|

| GWF / Energy Flow [35] | Physics of energy transfer; identifies coordinates with highest energy cost. | A single structure (for energy relaxation) or pre-computed NRTs. | High physical interpretability; can be predictive from a single structure. | Relatively new method; requires implementation of specialized analysis. |

| Committor-Based ML [37] [34] | Machine learning to approximate the committor function. | A large dataset of configurations with known committor values. | Systematically screens thousands of candidate coordinates; no intuition needed. | Requires many expensive committor calculations to generate training data. |

| Dimensionality Reduction (e.g., TICA, DiffMap) [37] | Identifies the slowest degrees of freedom in a simulation. | Long, unbiased MD simulation data. | Good for initial exploration and identifying slow modes. | Identifies slow coordinates, which are not necessarily the tRCs for a specific process. |
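To make the last row concrete, the sketch below shows what TICA computes: it solves a generalized eigenvalue problem between the time-lagged and instantaneous covariance matrices and returns the most slowly decorrelating linear combinations of the input features. This is a bare NumPy illustration on synthetic data, not the implementation of any particular analysis package; the function name `tica` and the two-feature test signal are assumptions made for the example.

```python
import numpy as np


def tica(X, lag, n_components=2):
    """Minimal TICA: X is (n_frames, n_features); lag is in frames."""
    X = np.asarray(X, dtype=float)
    X = X - X.mean(axis=0)
    X0, Xt = X[:-lag], X[lag:]
    n = len(X0)
    # Symmetrized instantaneous and time-lagged covariance estimates.
    C0 = (X0.T @ X0 + Xt.T @ Xt) / (2 * n)
    Ct = (X0.T @ Xt + Xt.T @ X0) / (2 * n)
    # Whiten with C0, then diagonalize the lagged covariance.
    s, U = np.linalg.eigh(C0)
    keep = s > 1e-10 * s.max()
    W = U[:, keep] / np.sqrt(s[keep])
    evals, evecs = np.linalg.eigh(W.T @ Ct @ W)
    order = np.argsort(evals)[::-1][:n_components]
    return evals[order], W @ evecs[:, order]


# Synthetic data: feature 0 is slow but small, feature 1 is fast but large.
rng = np.random.default_rng(2)
n = 20000
noise = rng.normal(scale=0.1, size=n)
slow = np.zeros(n)
for i in range(1, n):
    slow[i] = 0.99 * slow[i - 1] + noise[i]
fast = rng.normal(scale=3.0, size=n)
X = np.column_stack([slow, fast])

timescale_evals, modes = tica(X, lag=10)
print("leading eigenvalues:", timescale_evals)
print("leading mode:", modes[:, 0])
```

The leading mode picks out the slow feature even though the fast feature has much larger variance. That is the strength of the method, and also its limitation as noted in the table: the slowest motion in the data is not guaranteed to be the coordinate that controls the transition of interest.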

FAQs: Addressing Common Challenges in Integrative Modeling

Q1: My molecular dynamics (MD) simulations of an intrinsically disordered protein (IDP) do not match my experimental NMR data. What could be wrong?

The discrepancy often stems from inaccuracies in the molecular mechanics force field used in the simulations. Force fields can have an imbalance between protein-protein and protein-water interactions, leading to conformations that are more compact or extended than in reality [38]. The recommended solution is to apply a maximum entropy reweighting procedure. This method uses your experimental NMR data to reweight the structures from your simulation, creating a new conformational ensemble that is consistent with both the simulation data and the experimental restraints, thereby correcting for force field inaccuracies [26].

Q2: How can I reliably combine sparse SAXS data with MD simulations without overfitting?

SAXS data has low information content (typically only 5-30 independent data points), making overfitting a significant risk [39]. To mitigate this, integrate SAXS data directly into your MD simulation as an energetic restraint using a method like SAXS-driven MD [39]. This approach uses the physical information encoded in the MD force field to restrain the simulation to conformations that are both physically realistic and compatible with the experimental SAXS curve. The force field acts as a powerful regularizer, greatly reducing the risk of overinterpreting the sparse data.
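The number of independent data points in a SAXS curve is commonly estimated as the number of Shannon channels, N_s = (q_max - q_min) · D_max / π, where D_max is the maximum dimension of the particle and q is the momentum transfer. The short sketch below evaluates this estimate. The function name and the numerical values (a q-range of 0.01 to 0.30 Å⁻¹ and D_max of 100 Å) are hypothetical and chosen only to illustrate the arithmetic.

```python
import math


def shannon_channels(q_min: float, q_max: float, d_max: float) -> float:
    """Estimate the number of independent SAXS data points.

    q_min, q_max: limits of the measured q-range (inverse Angstrom).
    d_max: maximum particle dimension (Angstrom).
    """
    if q_max <= q_min or d_max <= 0:
        raise ValueError("require q_max > q_min and d_max > 0")
    return (q_max - q_min) * d_max / math.pi


# Hypothetical measurement: q from 0.01 to 0.30 1/Angstrom, D_max = 100 Angstrom.
n_s = shannon_channels(q_min=0.01, q_max=0.30, d_max=100.0)
print(f"approximately {n_s:.1f} independent data points")
```

Comparing this estimate with the number of adjustable parameters in an ensemble (for example, one weight per conformer) gives a quick sense of how easily the data could be overfitted without a regularizing force field.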

Q3: What does a "spiky baseline" in my NMR spectrum indicate, and how does it affect integrative modeling?

A spiky, somewhat symmetrical baseline in an NMR spectrum can be a sign of a mechanical failure in the NMR instrument, such as a malfunctioning lift on one of the magnet's legs [40]. This introduces vibrational noise into the data. For integrative modeling, which relies on high-quality, quantitative experimental data like NMR chemical shifts and paramagnetic relaxation enhancement (PRE), such artifacts can lead to incorrect calculation of conformational ensembles. Always collect data for standard compounds to verify instrument performance before conducting experiments for modeling [40].

Q4: How do I choose an initial structural model for a multidomain protein with flexible linkers when no single structure exists?