Predicting Molecular Mechanics Parameters with Graph Neural Networks: A New Paradigm for Force Field Development


Abstract

This article explores the transformative role of Graph Neural Networks (GNNs) in predicting Molecular Mechanics (MM) force field parameters, a critical task for accurate and efficient molecular dynamics simulations in drug discovery and materials science. We cover the foundational principles of GNNs and MM, detail cutting-edge methodological architectures and their specific applications, address key challenges and optimization strategies, and provide a comparative analysis of model performance and validation. Aimed at researchers and development professionals, this review synthesizes recent advances to guide the selection, implementation, and future development of GNN-driven force fields, highlighting their potential to achieve near-chemical accuracy at traditional MM computational cost.

From Atoms to Graphs: Foundational Principles of GNNs and Molecular Mechanics

Molecular mechanics (MM) force fields are foundational computational tools that use classical physics to model the potential energy surface of molecular systems. Their application is crucial for molecular dynamics simulations, which are extensively used in drug discovery to study protein-ligand interactions, conformational dynamics, and other phenomena relevant to pharmaceutical development. The accuracy of these simulations is intrinsically tied to the quality of the force field parameters. The core challenge, known as the parameterization problem, involves determining the optimal numerical values for these parameters—which govern bonded interactions (bonds, angles, dihedrals) and non-bonded interactions (van der Waals, electrostatic)—so that the force field reliably reproduces reference data, typically from quantum mechanical (QM) calculations or experimental measurements [1] [2].
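For orientation, the bonded and non-bonded parameters named above typically enter a potential energy function of the following generic Class I form. This is a common textbook form given as a sketch, not the exact functional form of any force field cited here; individual force fields differ in details such as improper torsions, scaling of 1-4 interactions, and combination rules for \( \sigma_{ij} \) and \( \varepsilon_{ij} \).

\[ E_{\text{MM}} = \sum_{\text{bonds}} \frac{k_b}{2} (r - r_0)^2 + \sum_{\text{angles}} \frac{k_\theta}{2} (\theta - \theta_0)^2 + \sum_{\text{dihedrals}} \sum_{n} k_{\phi,n} \left[ 1 + \cos(n\phi - \delta_n) \right] + \sum_{i<j} \left\{ 4 \varepsilon_{ij} \left[ \left( \frac{\sigma_{ij}}{r_{ij}} \right)^{12} - \left( \frac{\sigma_{ij}}{r_{ij}} \right)^{6} \right] + \frac{q_i q_j}{4 \pi \varepsilon_0 r_{ij}} \right\} \]

Parameterization then means choosing the force constants \( k_b, k_\theta, k_{\phi,n} \), the equilibrium values \( r_0, \theta_0 \), the phases \( \delta_n \), the Lennard-Jones parameters \( \sigma_{ij}, \varepsilon_{ij} \), and the partial charges \( q_i \).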

This challenge is multifaceted. First, the parameter space is high-dimensional and interdependent, where adjusting one parameter can necessitate recalibration of others. Second, traditional methods often rely on "look-up tables" of parameters based on discrete atom types, which struggle to cover the vastness of synthetically accessible, drug-like chemical space [1]. Furthermore, conventional parameter fitting can become suboptimal when inconsistencies exist between the equilibrium geometries of QM and MM models, a common issue when parameters are transferred from one molecule to another [3]. Finally, the process of refining parameters against experimental data must robustly handle the sparse, noisy, and ensemble-averaged nature of that data [4].

Modern Data-Driven and Machine Learning Approaches

To overcome these limitations, the field is rapidly shifting toward data-driven and machine learning (ML) approaches. These methods leverage large, diverse datasets and advanced algorithms to create more accurate, transferable, and automatable parameterization schemes.

Graph Neural Networks for Parameter Prediction

Graph Neural Networks (GNNs) are particularly well-suited for molecular modeling as they natively operate on graph representations of molecules, where atoms are nodes and bonds are edges. A leading approach involves training GNNs on expansive QM datasets to predict MM parameters directly from molecular structure.

The ByteFF force field exemplifies this paradigm. Its development involved generating a massive dataset containing 2.4 million optimized molecular fragment geometries and 3.2 million torsion profiles at the B3LYP-D3(BJ)/DZVP level of theory [1]. An edge-augmented, symmetry-preserving GNN was then trained on this dataset to simultaneously predict all bonded and non-bonded parameters for drug-like molecules. This end-to-end, data-driven approach allows ByteFF to achieve state-of-the-art accuracy across a broad chemical space, covering geometries, torsional profiles, and conformational energies [1].

Innovations in GNN architecture are further pushing the boundaries of performance and interpretability. Kolmogorov-Arnold GNNs (KA-GNNs) integrate Kolmogorov-Arnold networks (KANs) into the core components of GNNs: node embedding, message passing, and readout [5]. By using learnable, Fourier-series-based univariate functions instead of fixed activation functions, KA-GNNs demonstrate superior expressivity and parameter efficiency. This translates to higher accuracy in molecular property prediction and offers improved interpretability by highlighting chemically meaningful substructures [5].

Automated and Multi-Objective Optimization Algorithms

For systems where high-fidelity parameterization is required, automated iterative optimization against QM data or experimental observables is key. Modern algorithms make this process less tedious and more robust.

One advanced framework combines the Simulated Annealing (SA) and Particle Swarm Optimization (PSO) algorithms, augmented with a Concentrated Attention Mechanism (CAM). This hybrid method performs a multi-objective search of the parameter space, with the CAM strategically weighting key data points (like optimal structures) to enhance accuracy. This approach has been successfully applied to optimize parameters for reactive force fields (ReaxFF), demonstrating higher efficiency and accuracy compared to using either SA or PSO alone [6].

When the goal is to match ensemble-averaged experimental data (e.g., from NMR or free energy measurements), Bayesian methods are highly effective. The Bayesian Inference of Conformational Populations (BICePs) algorithm treats experimental uncertainty as a nuisance parameter sampled alongside conformational populations [4]. It uses a replica-averaged forward model to predict observables and can employ specialized likelihood functions to automatically detect and down-weight outliers or systematic errors. The BICePs score serves as a differentiable objective function, enabling robust variational optimization of force field parameters against complex experimental data [4].

Quantitative Comparisons of Parameterization Methods

The table below summarizes the key performance characteristics of contemporary force field parameterization methods discussed in this note.

Table 1: Comparison of Modern Force Field Parameterization Approaches

| Method / Framework | Core Approach | Key Advantages | Demonstrated Application |
|---|---|---|---|
| ByteFF GNN [1] | Graph Neural Network trained on QM data | Simultaneous parameter prediction; broad coverage of drug-like chemical space; state-of-the-art accuracy on multiple benchmarks. | General organic molecules / drug-like compounds |
| KA-GNNs [5] | GNN with Kolmogorov-Arnold network modules | Enhanced prediction accuracy & parameter efficiency; improved interpretability of learned chemical patterns. | Molecular property prediction |
| SA+PSO+CAM [6] | Hybrid metaheuristic optimization | High accuracy; avoids local minima; more efficient than sequential or single-algorithm methods. | Reactive force fields (ReaxFF) for specific systems (e.g., H/S) |
| BICePs Optimization [4] | Bayesian inference with replica averaging | Robust handling of noisy/sparse experimental data; automatic treatment of uncertainty and outliers. | Lattice models, polymers, and neural network potentials |
| ML Surrogate Models [7] | Replacing MD simulations with ML | Speeds up parameter optimization by a factor of ~20 while retaining force field quality. | Multi-scale parameter optimization (e.g., bulk density) |

Detailed Experimental Protocols

Protocol: GNN-Based Force Field Parameterization (e.g., ByteFF)

This protocol outlines the key steps for developing a GNN-based force field like ByteFF [1].

Dataset Curation:

- Objective: Assemble an expansive and chemically diverse dataset of small molecules and molecular fragments.

- Procedure: a. Select molecules representative of the target chemical space (e.g., drug-like compounds). b. Perform quantum mechanical calculations for all selected molecules. For ByteFF, this involved geometry optimization and frequency calculation at the B3LYP-D3(BJ)/DZVP level of theory to obtain energies, gradients, and Hessian matrices. c. Perform torsional scans for relevant dihedral angles to generate conformational energy profiles.

- Output: A QM dataset containing millions of data points (e.g., optimized geometries with energies/Hessians, torsion profiles).

Model Architecture and Training:

- Objective: Train a GNN to map molecular structures to MM parameters.

- Procedure: a. Representation: Represent each molecule as a graph with atoms as nodes and bonds as edges. Node features can include atom type, hybridization, formal charge, etc. b. Architecture: Implement a symmetry-preserving GNN. The ByteFF approach used an edge-augmented GNN to process structural information. c. Output Layer: Design the final network layer to predict all necessary MM parameters (equilibrium bond lengths, angle values, force constants, partial charges, etc.) simultaneously. d. Training: Train the model by minimizing a loss function that quantifies the difference between MM-calculated energies/properties (using the predicted parameters) and the reference QM data. This requires a differentiable MM engine within the training loop.
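The training step above depends on the MM energy being differentiable with respect to the predicted parameters. The following minimal sketch illustrates that idea for a single harmonic bond term with analytic gradients. It is not the ByteFF implementation: the function names, the synthetic reference scan, and the numerical values are hypothetical, and a real pipeline would backpropagate these gradients further into the GNN weights through an automatic differentiation framework.

```python
import numpy as np

def bond_energy(r, k, r0):
    """Harmonic bond energy E = 0.5 * k * (r - r0)**2 for bond lengths r."""
    return 0.5 * k * (r - r0) ** 2

def loss_and_grads(r, e_ref, k, r0):
    """Mean squared error between MM and reference energies, plus analytic
    gradients of the loss with respect to the parameters k and r0."""
    resid = bond_energy(r, k, r0) - e_ref
    loss = np.mean(resid ** 2)
    dE_dk = 0.5 * (r - r0) ** 2       # derivative of the energy w.r.t. k
    dE_dr0 = -k * (r - r0)            # derivative of the energy w.r.t. r0
    # Chain rule: dL/dp = mean(2 * resid * dE/dp)
    grad_k = np.mean(2.0 * resid * dE_dk)
    grad_r0 = np.mean(2.0 * resid * dE_dr0)
    return loss, grad_k, grad_r0

# Hypothetical reference data: a bond scan generated from known parameters,
# standing in for QM energies along a bond stretch.
r_scan = np.linspace(1.3, 1.7, 21)              # bond lengths in Angstrom
e_ref = bond_energy(r_scan, k=600.0, r0=1.5)

# Parameters as a GNN might predict them early in training (made-up values).
loss, grad_k, grad_r0 = loss_and_grads(r_scan, e_ref, k=400.0, r0=1.45)
print(f"loss={loss:.3f}  dL/dk={grad_k:.5f}  dL/dr0={grad_r0:.3f}")
```

Because the loss is an explicit function of `k` and `r0`, its gradients can be checked against finite differences, which is a useful sanity test before wiring such a term into a larger training loop.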

Validation and Benchmarking:

- Objective: Assess the performance and transferability of the resulting force field.

- Procedure: a. Evaluate the force field on held-out benchmark molecules. b. Key metrics include accuracy in predicting relaxed molecular geometries, torsional energy profiles, conformational energies, and atomic forces [1]. c. Compare performance against existing traditional and machine-learning force fields.

Protocol: Fine-Tuning a GNN Force Field to Experimental Data

This protocol describes how to refine a pre-trained GNN force field using experimental free energy data, as demonstrated with espaloma [8].

Foundation Model and Data Preparation:

- Objective: Establish a starting model and collect experimental data for fine-tuning.

- Procedure: a. Start with a pre-trained GNN force field (e.g., espaloma-0.3.2) as the foundation model. b. Gather a limited set of experimental free energy measurements, such as hydration free energies from the FreeSolv database. c. Prepare the molecular structures for the compounds in the dataset.

Efficient One-Shot Fine-Tuning:

- Objective: Adjust the model's parameters to better match experiment without costly re-simulation.

- Procedure: a. Low-Rank Projection: To improve data efficiency, project the GNN's high-dimensional atom embedding vectors to a lower-dimensional space for fine-tuning. b. Reweighting: Use an exponential (Zwanzig) reweighting estimator to compute the effect of changing force field parameters on the simulated free energies. This allows for parameter optimization using existing simulation data from the initial model. c. Effective Sample Size (ESS) Regularization: Apply regularization during optimization to ensure the fine-tuned force field retains sufficient overlap with the initial model, maintaining statistical reliability.
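The reweighting and ESS steps above can be sketched as follows. This is a minimal illustration, assuming per-sample energy differences between the perturbed and original parameters are already available; it is not the espaloma code. The function names and the form of the penalty in `ess_penalty` are hypothetical, and the effective sample size uses the standard Kish estimate.

```python
import numpy as np

def zwanzig_reweight(delta_u, observable, beta=1.0):
    """Reweight samples drawn with the original parameters to perturbed ones.

    delta_u    : U_new(x_i) - U_old(x_i) for each sample x_i
    observable : value of the observable for each sample
    beta       : inverse temperature, in units matching delta_u
    Returns (reweighted expectation, free energy change, effective sample size).
    """
    delta_u = np.asarray(delta_u, dtype=float)
    observable = np.asarray(observable, dtype=float)
    log_w = -beta * delta_u
    shift = log_w.max()                      # subtract the max for stability
    w = np.exp(log_w - shift)
    # Zwanzig: dF = -(1/beta) * ln < exp(-beta * dU) >_old
    delta_f = -(shift + np.log(w.mean())) / beta
    w_norm = w / w.sum()
    expectation = float(np.sum(w_norm * observable))
    ess = float(1.0 / np.sum(w_norm ** 2))   # Kish effective sample size
    return expectation, float(delta_f), ess

def ess_penalty(ess, n_samples, min_fraction=0.1, strength=1.0):
    """Hypothetical regulariser: penalise an ESS below a fraction of n_samples."""
    shortfall = max(0.0, min_fraction * n_samples - ess)
    return strength * shortfall ** 2

# Small perturbation: weights stay nearly uniform, so the ESS stays high.
rng = np.random.default_rng(0)
dU = rng.normal(scale=0.1, size=1000)
obs = rng.normal(size=1000)
mean_new, dF, ess = zwanzig_reweight(dU, obs)
print(round(ess), ess_penalty(ess, len(dU)))
```

When a parameter update moves the force field far from the one used for sampling, a few samples dominate the weights and the ESS collapses toward 1, which is the situation the regularization is meant to discourage.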

Validation:

- Objective: Confirm that fine-tuning improves prediction without degrading other properties.

- Procedure: Test the fine-tuned model on the target experimental property (e.g., hydration free energy) and related free energy predictions to assess transferability and overall improvement [8].

Table 2: Key Computational Tools and Resources for Force Field Parameterization

| Item / Resource | Function / Description | Relevance in Parameterization |
|---|---|---|
| Quantum Chemical Software (e.g., Gaussian, ORCA, PSI4) | Performs electronic structure calculations to generate high-quality reference data. | Provides target energies, forces, and Hessians for fitting bonded parameters and torsional profiles [1] [3]. |
| Differentiable MM Engine | A molecular mechanics engine that allows gradients to be backpropagated from the energy to the parameters. | Essential for the end-to-end training of GNN-based force field parameter predictors [1] [8]. |
| Automation & Optimization Libraries (e.g., for SA, PSO, GA) | Provides algorithms for automated parameter search and multi-objective optimization. | Enables efficient and robust parameter fitting against complex QM or experimental targets [2] [6]. |
| Bayesian Inference Software (e.g., BICePs) | Implements statistical reweighting and uncertainty quantification for ensemble-averaged data. | Used to refine parameters and conformational ensembles against noisy experimental data with unknown error [4]. |
| ML Surrogate Models | A machine learning model trained to predict simulation outcomes. | Dramatically speeds up parameter optimization loops by replacing expensive MD simulations [7]. |
| Amber/CHARMM Parameter Files | Standardized file formats for storing force field parameters. | The output target for new parameterization methods; ensures compatibility with major simulation packages [1] [3]. |

Workflow Diagrams

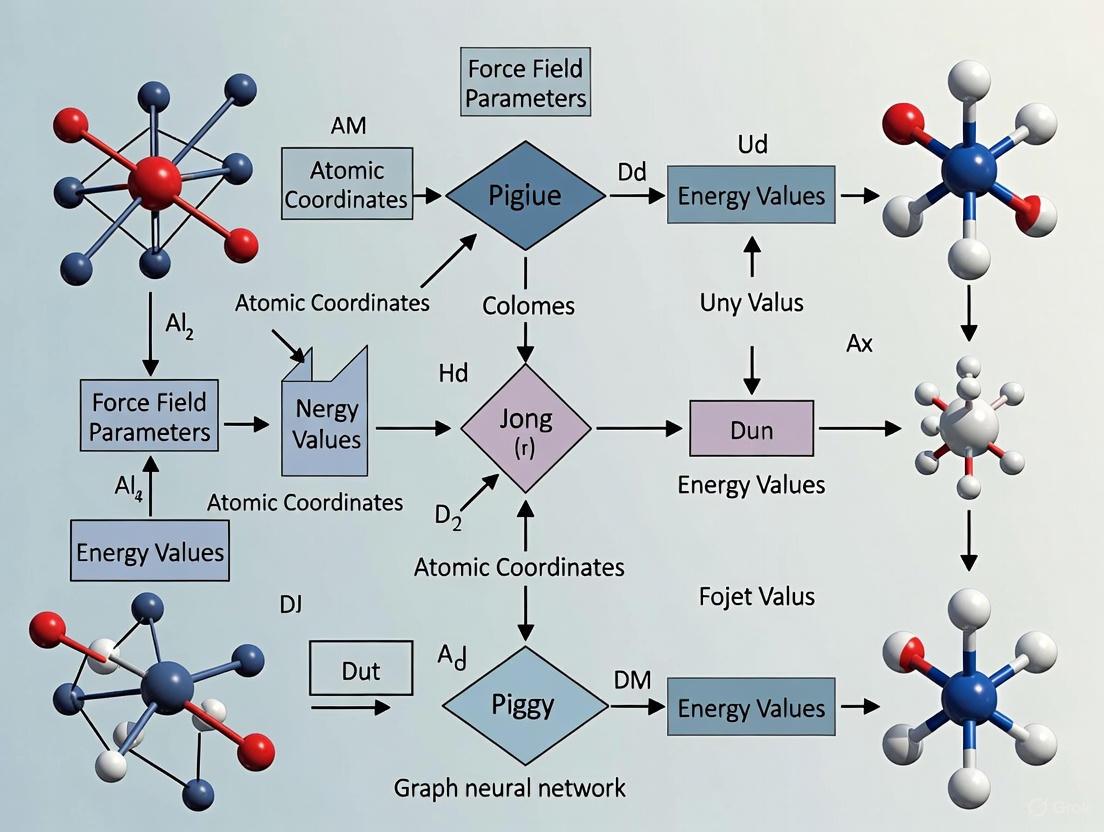

GNN Parameterization Workflow

Iterative Parameter Refinement

Why Graphs? Representing Molecules as Nodes and Edges

In computational chemistry and drug discovery, the translation of molecular structures into a machine-readable format is a foundational step. The graph-based representation, which models atoms as nodes and bonds as edges, has emerged as a particularly powerful and intuitive method [9]. This approach directly mirrors the fundamental structure of molecules, providing a natural bridge between chemical reality and computational analysis. For researchers focused on predicting molecular mechanics parameters using Graph Neural Networks (GNNs), this representation is indispensable because it preserves the topological and relational information that governs molecular properties and interactions [5] [10]. Unlike simpler string-based representations like SMILES, graph structures natively encode the connectivity and local environments of atoms, which are critical for accurate physical property prediction [11] [9].

Theoretical Foundation: The Molecular Graph

Mathematical Formalism

A molecular graph is formally defined as a tuple \( G = (V, E) \), where:

- \( V \) is the set of nodes (atoms)

- \( E \) is the set of edges (bonds) connecting pairs of nodes [9].

This abstract mathematical structure is implemented computationally using matrices. The adjacency matrix \( A \) encodes connectivity, where element \( a_{ij} = 1 \) indicates a bond between atoms \( i \) and \( j \). The node feature matrix \( X \) contains atom-level attributes (e.g., atom type, formal charge), and the edge feature matrix \( E \) describes bond characteristics (e.g., bond type, bond length) [9]. This structured data format is ideally suited for processing by graph neural networks.
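As a concrete illustration of these matrices, the sketch below builds them by hand for the heavy-atom graph of ethanol. The choice of feature columns is an assumption made for this example, and the edge feature matrix is named `E_feat` to keep it distinct from the edge set.

```python
import numpy as np

# Heavy-atom graph of ethanol (CH3-CH2-OH): node 0 = C, node 1 = C, node 2 = O.
atoms = ["C", "C", "O"]
bonds = [(0, 1), (1, 2)]           # undirected edges as (i, j) index pairs

n = len(atoms)
A = np.zeros((n, n), dtype=int)
for i, j in bonds:
    A[i, j] = A[j, i] = 1          # symmetric, because bonds have no direction

# Node feature matrix X: one row per atom, with illustrative columns
# [atomic number, formal charge, number of attached hydrogens].
X = np.array([[6, 0, 3],
              [6, 0, 2],
              [8, 0, 1]])

# Edge feature matrix: one row per bond, here only the bond order.
E_feat = np.array([[1.0],
                   [1.0]])

degrees = A.sum(axis=1)            # number of heavy-atom neighbours per atom
print(A)
print(degrees)
```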

Advantages for Molecular Mechanics

Graph representations offer several distinct advantages for predicting molecular mechanics parameters:

- Structural Fidelity: They explicitly represent the connectivity and topology of a molecule, which directly determines its spatial conformation, vibrational modes, and steric interactions [9].

- Feature Integration: Both atomic properties (e.g., mass, hybridization) and bond properties (e.g., order, conjugation) can be incorporated as features, providing a comprehensive description of the molecular system [5] [10].

- Size Independence: The same representation scheme applies to molecules of any size and complexity, since a larger molecule simply yields a graph with more nodes and edges. This makes it suitable for modeling diverse chemical spaces [9].

Experimental Protocols: From Molecules to Predictions

Protocol 1: Constructing a Molecular Graph from a SMILES String

Objective: Convert a Simplified Molecular-Input Line-Entry System (SMILES) string into a structured molecular graph for GNN-based property prediction [10].

Materials:

- SMILES string of the target molecule.

- Cheminformatics library (e.g., RDKit).

Methodology:

- SMILES Parsing: Input the SMILES string into a parser to generate a 2D molecular structure.

- Node Identification: Identify each atom in the structure. Populate the node feature matrix \( X \) with atomic features (e.g., atom type, degree, formal charge, hybridization state).

- Edge Identification: Identify all covalent bonds between atoms. Construct the adjacency matrix \( A \), and populate the edge feature matrix \( E \) with bond features (e.g., bond type, conjugation).

- Graph Validation: Visually inspect the resulting graph against the original 2D structure to ensure fidelity.

Table 1: Default Node and Edge Features for Molecular Mechanics Studies

| Feature Type | Feature Description | Data Format | Role in Molecular Mechanics |
|---|---|---|---|
| Node Features | Atom type (e.g., C, N, O) | One-hot encoding | Defines element-specific properties |
| | Atomic number | Integer | Correlates with van der Waals radius |
| | Hybridization (sp, sp², sp³) | One-hot encoding | Determines bond angles and geometry |
| | Partial charge | Continuous float | Influences electrostatic interactions |
| | Number of bonded hydrogens | Integer | Affects local steric environment |
| Edge Features | Bond type (single, double, triple, aromatic) | One-hot encoding | Determines bond length and strength |
| | Bond length (if 3D data available) | Continuous float (Å) | Critical for strain energy and conformation |
| | Bond stereochemistry | One-hot encoding | Affects chiral centers and isomerism |
| | Graph distance between nodes | Integer | Captures long-range intramolecular interactions |
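The node features in the table can be assembled into a single vector per atom. The following is a minimal sketch, assuming a small illustrative vocabulary for elements and hybridization states. The helper names and the example partial charge are hypothetical, and in practice the raw values would come from a cheminformatics library such as RDKit.

```python
import numpy as np

ELEMENTS = ["C", "N", "O"]                 # illustrative vocabulary only
HYBRIDIZATIONS = ["sp", "sp2", "sp3"]

def one_hot(value, vocabulary):
    """Return a one-hot vector; raises ValueError for values not in the vocabulary."""
    vec = np.zeros(len(vocabulary))
    vec[vocabulary.index(value)] = 1.0
    return vec

def atom_features(symbol, atomic_number, hybridization, partial_charge, num_h):
    """Concatenate the node features from the table into one flat vector."""
    return np.concatenate([
        one_hot(symbol, ELEMENTS),              # atom type
        [float(atomic_number)],                 # atomic number
        one_hot(hybridization, HYBRIDIZATIONS), # hybridization
        [float(partial_charge)],                # partial charge
        [float(num_h)],                         # number of bonded hydrogens
    ])

# A carbonyl oxygen with a made-up partial charge, for illustration only.
x_o = atom_features("O", 8, "sp2", -0.5, 0)
print(x_o)
```

Stacking one such vector per atom gives the node feature matrix; bond features can be encoded the same way, with one row per edge.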

Protocol 2: Implementing a GNN for Property Prediction

Objective: Train a Graph Neural Network to predict a target molecular property (e.g., dipole moment, HOMO-LUMO gap) [12] [10].

Materials:

- Dataset of molecular graphs (e.g., QM9 [12] [10]).

- Deep learning framework with GNN support (e.g., PyTorch Geometric).

Methodology:

- Data Preparation: Split the dataset into training, validation, and test sets (common ratio: 80/10/10).

- Model Selection: Choose a GNN architecture suitable for the task, for example a Graph Convolutional Network (GCN), which consists of the following components:

- Message Passing: In each layer, nodes aggregate feature information from their neighbors. For a GCN, the update for node \( i \) is: \[ h_i^{(l+1)} = \sigma \left( \sum_{j \in \mathcal{N}(i) \cup \{i\}} \frac{1}{\sqrt{\hat{d}_i \hat{d}_j}} h_j^{(l)} W^{(l)} \right) \] where \( \mathcal{N}(i) \) are the neighbors of \( i \), \( \hat{d}_i \) is the degree of node \( i \) (counting its self-loop), \( h_j^{(l)} \) are the features of node \( j \) at layer \( l \), \( W^{(l)} \) is a learnable weight matrix, and \( \sigma \) is a non-linear activation [10].

- Readout / Pooling: After \( L \) message-passing layers, generate a single graph-level embedding \( h_G \) from the set of node embeddings \( \{h_1^{(L)}, \dots, h_n^{(L)}\} \) for molecular property prediction.

- Training and Evaluation: Train the model using a regression loss (e.g., Mean Squared Error) and evaluate performance on the test set using metrics like Root Mean Square Error (RMSE) and Mean Absolute Error (MAE) [10].
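The message-passing and readout steps above can be sketched in a few lines of NumPy. This is a minimal forward-pass illustration of the GCN update rule with self-loops, assuming ReLU as the activation and sum pooling as the readout. A practical model would use a framework such as PyTorch Geometric and train the weights. The random weight matrix here is a placeholder.

```python
import numpy as np

def gcn_layer(A, H, W):
    """One GCN layer: symmetric-normalised aggregation over neighbours and self."""
    A_hat = A + np.eye(A.shape[0])                     # add self-loops
    d_hat = A_hat.sum(axis=1)                          # degrees including self-loop
    A_norm = A_hat / np.sqrt(np.outer(d_hat, d_hat))   # 1 / sqrt(d_i * d_j)
    return np.maximum(A_norm @ H @ W, 0.0)             # ReLU as the activation

def sum_readout(H):
    """Graph-level embedding: sum over nodes, independent of node order."""
    return H.sum(axis=0)

# Three-atom chain (e.g., the heavy atoms of ethanol) with 2 input features.
A = np.array([[0, 1, 0],
              [1, 0, 1],
              [0, 1, 0]], dtype=float)
H0 = np.array([[1.0, 0.0],
               [0.0, 1.0],
               [1.0, 1.0]])
rng = np.random.default_rng(0)
W0 = rng.normal(size=(2, 4))       # placeholder for a learned weight matrix

H1 = gcn_layer(A, H0, W0)
h_G = sum_readout(H1)
print(h_G.shape)   # (4,)
```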

Diagram 1: GNN Workflow for Molecular Property Prediction.

Advanced Architectures and Applications

Enhanced GNN Frameworks

Recent research has developed advanced GNN frameworks that integrate novel components to boost performance in molecular tasks:

Kolmogorov–Arnold GNNs (KA-GNNs): These models integrate Kolmogorov–Arnold networks (KANs) into the core components of GNNs—node embedding, message passing, and readout [5]. KA-GNNs use learnable univariate functions (e.g., based on Fourier series) on edges instead of fixed activation functions on nodes. This leads to improved expressivity, parameter efficiency, and interpretability compared to standard GNNs based on multi-layer perceptrons (MLPs) [5]. Experimental results show that KA-GNNs consistently outperform conventional GNNs in prediction accuracy and computational efficiency across multiple molecular benchmarks [5].

Quantized GNNs: To address the high computational and memory demands of GNNs, quantization techniques represent model weights and activations using fewer bits [10]. Applying the DoReFa-Net quantization algorithm to GNN models can significantly reduce memory footprint and inference latency while maintaining predictive performance, making deployment on resource-constrained devices feasible [10]. Performance is maintained well at 8-bit precision for tasks like predicting quantum mechanical properties, though aggressive 2-bit quantization can lead to significant degradation [10].
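To make the bit-width trade-off concrete, the sketch below applies the DoReFa-Net weight quantization formula to a random weight vector and compares the rounding error at 8 and 2 bits. It covers only the forward quantization of weights; the straight-through gradient estimator used during training is omitted, and how the cited work integrates quantization into GNN layers is not shown here. The code assumes the weight array contains at least one non-zero value.

```python
import numpy as np

def quantize_k(x, k):
    """Uniformly quantize values in [0, 1] to k bits (2**k levels)."""
    n = 2 ** k - 1
    return np.round(x * n) / n

def dorefa_weights(w, k):
    """DoReFa-style k-bit weight quantization; the output lies in [-1, 1]."""
    t = np.tanh(w)
    x = t / (2.0 * np.max(np.abs(t))) + 0.5   # squash weights into [0, 1]
    return 2.0 * quantize_k(x, k) - 1.0

rng = np.random.default_rng(0)
w = rng.normal(size=1000)                      # placeholder weights
reference = np.tanh(w) / np.max(np.abs(np.tanh(w)))   # unquantized target

for bits in (8, 2):
    err = np.max(np.abs(dorefa_weights(w, bits) - reference))
    print(bits, "bits -> max rounding error", err)
```

At 2 bits only four distinct weight values remain, which gives some intuition for why aggressive quantization can degrade accuracy.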

Table 2: Performance Comparison of GNN Architectures on Molecular Tasks

| Model Architecture | Dataset | Target Property | Key Metric | Reported Performance | Key Advantage |
|---|---|---|---|---|---|
| KA-GNN [5] | Multiple Molecular Benchmarks | Various Properties | Accuracy | Consistent Improvement over baselines | High accuracy & interpretability |
| GNN + Quantization (8-bit) [10] | QM9 | Dipole Moment (μ) | RMSE | Comparable to Full-Precision | High computational efficiency |
| GNN + Quantization (2-bit) [10] | QM9 | Dipole Moment (μ) | RMSE | Significant performance degradation | (High compression) |
| DIDgen (Inverse Design) [12] | QM9 | HOMO-LUMO Gap | Success Rate (within 0.5 eV of target) | Comparable or better than genetic algorithms | Direct molecular generation |

Application: Inverse Molecular Design

A powerful application of GNNs is inverse molecular design, where the goal is to generate novel molecular structures with desired target properties. The DIDgen (Direct Inverse Design Generator) method leverages the differentiability of a pre-trained GNN property predictor [12]. It starts from a random graph or existing molecule and performs gradient ascent on the input graph (adjusting the adjacency and feature matrices) to optimize the target property, while enforcing chemical validity constraints [12]. This approach can generate diverse molecules with specific electronic properties, such as HOMO-LUMO gaps, verified by density functional theory (DFT) calculations [12].

Diagram 2: Inverse Design via GNN Gradient Ascent.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Computational Reagents for Molecular Graph Research

| Item / Resource | Category | Function / Application | Example / Note |
|---|---|---|---|
| RDKit | Cheminformatics Library | Construction, manipulation, and featurization of molecular graphs from SMILES or other formats. | Open-source; provides features for nodes (atoms) and edges (bonds). |
| PyTorch Geometric (PyG) | Deep Learning Library | Implements popular GNN architectures (GCN, GIN, GAT) and provides molecular graph datasets. | Standard library for building and training GNN models. |
| QM9 Dataset | Benchmark Dataset | Contains 130k+ small organic molecules with quantum mechanical properties (e.g., dipole moment, HOMO-LUMO gap). | Used for training and benchmarking models for molecular property prediction [12] [10]. |
| MoleculeNet | Benchmark Suite | A collection of diverse molecular datasets for property prediction tasks. | Includes ESOL (solubility), FreeSolv (hydration energy), Lipophilicity, etc. [10]. |
| DIDgen Framework | Generative Model | Enables inverse molecular design by optimizing graph inputs via gradient ascent on a pre-trained GNN. | Generates molecules with targeted electronic properties [12]. |
| DoReFa-Net Algorithm | Quantization Tool | Reduces the memory footprint and computational cost of GNNs by quantizing weights and activations to low-bit precision. | Enables deployment on resource-constrained hardware [10]. |

Graph Neural Networks (GNNs) have become indispensable tools in computational chemistry and drug discovery, providing a powerful framework for learning from molecular graph structures in which atoms are represented as nodes and chemical bonds as edges [13]. Among the various GNN architectures, Message Passing Neural Networks (MPNNs), Graph Attention Networks (GATs), and Graph Convolutional Networks (GCNs) constitute the foundational pillars for molecular property prediction [14] [15]. These architectures naturally operate on the graph-structured representation of molecules, enabling end-to-end learning of task-specific molecular representations that capture intricate atomic interactions and topological patterns [13] [14]. The significance of these core architectures lies in their ability to overcome limitations of traditional hand-crafted molecular descriptors by directly learning from molecular topology and features [14] [10].

The MPNN framework provides a universal formulation that generalizes many GNN variants, operating through iterative message exchange between connected nodes [14]. GCNs implement spectral graph convolutions with normalized aggregation of neighbor information [15], while GATs incorporate attention mechanisms to weigh the importance of neighboring nodes dynamically [5] [15]. Recent advances have further enhanced these architectures through Kolmogorov-Arnold network (KAN) integrations, bidirectional message passing, and spatial descriptor incorporations, pushing the boundaries of predictive performance for molecular mechanics parameters [5] [15].

Theoretical Foundations of Core GNN Architectures

Mathematical Formulations

The Message Passing Neural Network (MPNN) framework provides a generalized mathematical structure that encompasses many GNN variants [14]. The core MPNN operates through three fundamental phases: message passing, feature update, and readout. For a molecular graph \(G\) with node features \(h_v\) and edge features \(e_{vw}\), the message passing at step \(t+1\) is defined as:

\[ m_v^{t+1} = \sum_{w \in N(v)} M_t(h_v^t, h_w^t, e_{vw}) \]

where \(M_t\) is the message function and \(N(v)\) denotes the neighbors of node \(v\) [14]. The node update function then computes:

\[ h_v^{t+1} = U_t(h_v^t, m_v^{t+1}) \]

where \(U_t\) is the update function [14]. After \(T\) message passing steps, a readout function generates the graph-level representation:

\[ \hat{y} = R(\{h_v^T \mid v \in G\}) \]

where R must be permutation invariant to handle variable node orderings [13] [14].
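The three phases can be sketched generically as follows. This is a minimal illustration in which the message and update functions are passed in as plain callables. The simple functions used here (a bond-order-scaled neighbor state and an additive update) are hypothetical stand-ins for the learned networks \(M_t\) and \(U_t\), and the sketch assumes messages have the same dimension as the node states.

```python
import numpy as np

def mpnn_step(h, edges, e_feat, message_fn, update_fn):
    """One message-passing step over undirected edges (v, w)."""
    m = np.zeros_like(h)                        # aggregated messages m_v
    for (v, w), e_vw in zip(edges, e_feat):
        m[v] += message_fn(h[v], h[w], e_vw)    # message arriving at v from w
        m[w] += message_fn(h[w], h[v], e_vw)    # message arriving at w from v
    return np.stack([update_fn(h[v], m[v]) for v in range(len(h))])

def readout(h):
    """Permutation-invariant readout: sum over all node states."""
    return h.sum(axis=0)

# Three-atom chain with one scalar feature per node and bond orders 1 and 2.
h0 = np.array([[1.0], [2.0], [3.0]])
edges = [(0, 1), (1, 2)]
e_feat = [1.0, 2.0]

message_fn = lambda h_v, h_w, e_vw: e_vw * h_w   # stand-in for a learned M_t
update_fn = lambda h_v, m_v: h_v + m_v           # stand-in for a learned U_t

h1 = mpnn_step(h0, edges, e_feat, message_fn, update_fn)
y_hat = readout(h1)
print(h1.ravel(), y_hat)
```

Swapping in different `message_fn` and `update_fn` callables recovers specific architectures, which is the sense in which the MPNN formulation generalizes many GNN variants.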

Graph Convolutional Networks (GCNs) implement a specific instantiation of this framework using a convolution-like aggregation function with normalized feature propagation [15]. The layer-wise propagation rule in GCNs is:

\[ h_v^{t+1} = \sigma \left( \sum_{w \in N(v) \cup \{v\}} \frac{1}{\sqrt{d_v d_w}} h_w^t W^t \right) \]

where \(d_v\) and \(d_w\) are node degrees (counting the self-loop), and \(W^t\) is a learnable weight matrix [15]. This symmetric normalization balances the contribution of highly connected nodes and keeps feature magnitudes stable as information propagates across the graph. It does not by itself prevent over-smoothing, which can still arise when many layers are stacked.

Graph Attention Networks (GATs) enhance the basic MPNN framework by incorporating attention mechanisms that assign learnable importance weights to neighbors [15]. The attention mechanism in GATs computes:

\[ \alpha_{vw} = \frac{\exp\left(\text{LeakyReLU}\left(a^T [W h_v^t \,\|\, W h_w^t]\right)\right)}{\sum_{k \in N(v)} \exp\left(\text{LeakyReLU}\left(a^T [W h_v^t \,\|\, W h_k^t]\right)\right)} \]

where \(a\) is a learnable attention vector, \(W\) is a weight matrix, and \(\|\) denotes concatenation [15]. The node update then becomes:

\[ h_v^{t+1} = \sigma \left( \sum_{w \in N(v)} \alpha_{vw} W h_w^t \right) \]

This attention mechanism enables dynamic, task-specific weighting of neighbor influences, improving model expressivity and interpretability [15].
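A single-head version of this attention update can be sketched in NumPy as below. This is an illustrative forward pass only: the weights are random placeholders rather than trained values, `tanh` is used as an arbitrary choice for \(\sigma\), and the code assumes every node appears in `neighbors` with at least one neighbor.

```python
import numpy as np

def leaky_relu(x, slope=0.2):
    return np.where(x > 0, x, slope * x)

def gat_layer(h, neighbors, W, a):
    """Single-head graph attention layer.

    h         : (n, d_in) node features
    neighbors : dict mapping node index v to a list of neighbour indices
    W         : (d_out, d_in) weight matrix
    a         : (2 * d_out,) attention vector
    Returns the updated features and the attention weights per node.
    """
    z = h @ W.T                                  # W h_v for every node
    out = np.zeros_like(z)
    alphas = {}
    for v, nbrs in neighbors.items():
        scores = np.array([leaky_relu(a @ np.concatenate([z[v], z[w]]))
                           for w in nbrs])
        scores = scores - scores.max()           # stabilise the softmax
        alpha = np.exp(scores) / np.exp(scores).sum()
        alphas[v] = alpha
        out[v] = alpha @ z[nbrs]                 # attention-weighted sum
    return np.tanh(out), alphas

rng = np.random.default_rng(0)
h = rng.normal(size=(3, 2))
neighbors = {0: [1, 2], 1: [0], 2: [0]}
W = rng.normal(size=(4, 2))                      # placeholder weights
a = rng.normal(size=8)                           # placeholder attention vector

h_next, alphas = gat_layer(h, neighbors, W, a)
print(alphas[0], alphas[0].sum())
```

The per-neighbor weights in `alphas` are what is usually inspected when attention is used for interpretation.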

Architectural Properties and Molecular Applications

These core GNN architectures maintain critical properties essential for molecular modeling. Permutation invariance ensures that graph-level predictions are unchanged by node reordering, while permutation equivariance guarantees that node-level features are reordered in the same way as the input nodes [15]. The relational inductive bias inherent in MPNNs preferentially learns from connected nodes, making them particularly suitable for detecting functional groups associated with chemical properties [15].
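Both properties can be checked numerically. The sketch below relabels the atoms of a random graph with a permutation matrix and compares the outputs of a simple sum-aggregation layer and a sum readout. The layer is a deliberately minimal stand-in chosen for this check, not one of the architectures above.

```python
import numpy as np

def layer(A, H, W):
    """Minimal message-passing layer: sum over neighbours and self, then tanh."""
    return np.tanh((A + np.eye(len(A))) @ H @ W)

def readout(H):
    """Sum pooling over nodes."""
    return H.sum(axis=0)

rng = np.random.default_rng(0)
n = 5
A = np.triu(rng.integers(0, 2, size=(n, n)), 1)
A = A + A.T                                # random symmetric adjacency matrix
H = rng.normal(size=(n, 3))
W = rng.normal(size=(3, 4))

perm = rng.permutation(n)
P = np.eye(n)[perm]                        # permutation matrix
A_p, H_p = P @ A @ P.T, P @ H              # the same graph with relabelled atoms

# Equivariance: node outputs are permuted exactly like the inputs.
equivariant = np.allclose(layer(A_p, H_p, W), P @ layer(A, H, W))
# Invariance: the graph-level output does not change at all.
invariant = np.allclose(readout(layer(A_p, H_p, W)), readout(layer(A, H, W)))
print(equivariant, invariant)
```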

For molecular applications, these architectures effectively capture both local connectivity and global structural information. Graph Isomorphism Networks (GIN), a variant of MPNNs, have demonstrated exceptional capability in capturing molecular topology, achieving up to 92.7% accuracy in molecular point group prediction tasks [16]. The bidirectional message passing schemes in modern MPNNs better reflect the symmetric nature of covalent bonds, enhancing molecular representation fidelity [15].

Table 1: Core GNN Architectural Properties for Molecular Applications

| Architecture | Key Mechanism | Molecular Advantage | Computational Consideration |
|---|---|---|---|
| MPNN | Message functions + node updates | Flexible framework for complex atomic interactions | High parameter count, moderate complexity |
| GCN | Normalized neighborhood aggregation | Efficient feature propagation | Fastest among GNNs, potential over-smoothing |
| GAT | Attention-weighted aggregation | Dynamic neighbor importance weighting | Doubled parameters vs GCN, enhanced interpretability |

Advanced Architectural Variants and Performance

Integrated and Enhanced Architectures

Recent research has developed sophisticated integrations that combine strengths across architectural families. The Kolmogorov-Arnold GNN (KA-GNN) framework integrates KAN modules into all three core GNN components: node embedding, message passing, and readout [5]. KA-GNN replaces standard multilayer perceptrons (MLPs) with Fourier-series-based univariate functions, enhancing function approximation capabilities and theoretical expressiveness [5]. This integration has spawned variants like KA-GCN and KA-GAT, which consistently outperform conventional GNNs in prediction accuracy and computational efficiency across multiple molecular benchmarks [5].

The Edge-Set Attention (ESA) architecture represents another significant advancement, treating graphs as sets of edges and employing purely attention-based learning [17]. ESA vertically interleaves masked and vanilla self-attention modules to learn effective edge representations while addressing potential graph misspecifications [17]. Despite its simplicity, ESA outperforms tuned message passing baselines and complex transformer models across more than 70 node and graph-level tasks, demonstrating exceptional scalability and transfer learning capability [17].

Bidirectional message passing with attention mechanisms has emerged as a particularly effective strategy, surpassing more complex models pre-trained on external databases [15]. This approach eliminates artificial directionality in molecular graphs, better reflecting the symmetric nature of covalent bonds. Simpler architectures that exclude redundant self-perception and employ minimalist message formulations have shown higher class separability, challenging the assumption that increased complexity always improves performance [15].

Quantitative Performance Comparison

Table 2: Performance Comparison of GNN Architectures on Molecular Benchmarks

| Architecture | QM9 Accuracy (MAE) | Point Group Prediction | Computational Efficiency | Key Advantage |
|---|---|---|---|---|
| Standard GCN | Baseline | N/A | Fastest | Simplicity, speed |
| Standard GAT | +5-10% vs GCN | N/A | Moderate | Dynamic weighting |
| KA-GNN | +15-20% vs GCN [5] | N/A | High | Expressivity, parameter efficiency |
| GIN | N/A | 92.7% accuracy [16] | Moderate | Captures graph isomorphisms |
| Bidirectional MPNN | Superior to classical MPNN [15] | N/A | Moderate | Molecular symmetry preservation |
| ESA | Outperforms tuned baselines [17] | N/A | High scalability | Transfer learning capability |

Experimental Protocols for Molecular Property Prediction

Protocol 1: KA-GNN Implementation for Molecular Property Prediction

Purpose: To implement a Kolmogorov-Arnold Graph Neural Network for enhanced molecular property prediction accuracy and interpretability [5].

Materials and Reagents:

- Dataset: QM9 dataset (130,831 molecules with 19 quantum mechanical properties) [10]

- Software: PyTorch Geometric, RDKit for molecular featurization

- Hardware: GPU with ≥8GB memory (NVIDIA RTX 2080+ recommended)

- KA-GNN Implementation: Fourier-based KAN layers for node embedding, message passing, and readout

Procedure:

Data Preprocessing:

- Convert SMILES representations to molecular graphs with node and edge features

- Initialize atom features (atomic number, radius, hybridization) and bond features (bond type, conjugation)

- Split dataset into training (80%), validation (10%), and test (10%) sets

Model Architecture Configuration:

- Implement Fourier-KAN layers with basis functions: ( \phi(x) = \sum_{k=1}^{K} \left( a_k \cos(kx) + b_k \sin(kx) \right) )

- Construct KA-GCN variant with KAN-based node embedding initialization

- Configure message passing with 4-6 layers of residual KAN modules

- Implement graph-level readout using attention-based KAN pooling

Training Protocol:

- Initialize parameters using Xavier uniform distribution

- Use the Adam optimizer with an initial learning rate of 0.001, multiplied by a decay factor of 0.95 every 50 epochs

- Train with mean absolute error (MAE) loss function for 500 epochs

- Apply early stopping with patience of 30 epochs based on validation loss

Interpretation and Analysis:

- Extract attention weights from KA-GAT layers to identify chemically significant substructures

- Visualize Fourier basis functions to understand frequency patterns captured

- Compare predictive performance against baseline GCN and GAT models

Troubleshooting: If training instability occurs, reduce learning rate to 0.0005 or decrease KAN network width. For overfitting, apply edge dropout (0.1 rate) during message passing.
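The training schedule in Protocol 1 can be sketched in a few lines of plain Python. This is a minimal illustration, not the KA-GNN authors' code: the names `step_decay_lr`, `EarlyStopper`, and `run_schedule` are hypothetical, and the validation losses are supplied as a list rather than produced by a real model.

```python
def step_decay_lr(epoch, base_lr=1e-3, gamma=0.95, step=50):
    """Learning rate at `epoch`: base_lr multiplied by gamma once every `step` epochs."""
    return base_lr * gamma ** (epoch // step)


class EarlyStopper:
    """Signals a stop once validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=30):
        self.patience = patience
        self.best = float("inf")
        self.bad_epochs = 0

    def should_stop(self, val_loss):
        if val_loss < self.best:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


def run_schedule(val_losses, max_epochs=500):
    """Walk through per-epoch validation losses; return (last_epoch, last_lr)."""
    stopper = EarlyStopper(patience=30)
    epoch, lr = 0, step_decay_lr(0)
    for epoch, loss in enumerate(val_losses[:max_epochs]):
        lr = step_decay_lr(epoch)  # rate that would be used for this epoch
        if stopper.should_stop(loss):
            break
    return epoch, lr
```

In a PyTorch training script the same decay is usually obtained with `torch.optim.lr_scheduler.StepLR(optimizer, step_size=50, gamma=0.95)`; the hand-written version above only makes the arithmetic explicit.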

Protocol 2: Bidirectional MPNN with Spatial Descriptors

Purpose: To implement a bidirectional message passing network with 3D molecular descriptors for improved molecular mechanics parameter prediction [15].

Materials and Reagents:

- Dataset: Target-specific molecular dataset (e.g., MD17 for molecular dynamics)

- Spatial Descriptors: van der Waals radius, electronegativity, dipole polarizability

- Software: RDKit for 3D conformation generation and descriptor calculation

Procedure:

Molecular Graph Construction:

- Generate 3D conformations for all molecules using RDKit MMFF94 force field optimization

- Extract element-like 2D features (atomic number, hybridization, bond types)

- Calculate spatial descriptors: van der Waals interactions, electronegativity differences

- Construct bidirectional edges for all covalent bonds

Model Architecture:

- Implement bidirectional message passing without self-perception connections

- Integrate attention mechanism with 4 attention heads

- Exclude convolution normalization factors based on target dataset characteristics

- Add residual connections between MPNN layers to prevent over-smoothing

Training Configuration:

- Use combined loss function: MAE for properties + auxiliary node classification

- Apply gradient clipping with maximum norm of 1.0

- Implement learning rate warmup for first 10 epochs

- Use batch size of 32 with graph-based batching

Validation and Interpretation:

- Visualize node-level predictions using colormaps on molecular structures

- Analyze attention weights to identify key molecular substructures

- Compare performance with and without spatial descriptors

Troubleshooting: If performance plateaus, increase spatial descriptor dimensionality or add multi-hop edges (2-hop, 3-hop) to capture angular information.
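Two graph-construction steps from Protocol 2, bidirectional edges and the optional multi-hop edges mentioned under troubleshooting, can be illustrated with the standard library alone. The function names `bidirectional_edges` and `k_hop_pairs` are hypothetical and the propane skeleton is only a toy input; a real pipeline would take the bond list from RDKit.

```python
from collections import deque


def bidirectional_edges(bonds):
    """Expand undirected bonds (i, j) into directed edges i->j and j->i, without self-loops."""
    edges = set()
    for i, j in bonds:
        if i != j:
            edges.add((i, j))
            edges.add((j, i))
    return sorted(edges)


def k_hop_pairs(num_atoms, bonds, k):
    """Return sorted pairs (i, j), i < j, whose shortest bond-path length is exactly k."""
    neighbors = {a: set() for a in range(num_atoms)}
    for i, j in bonds:
        neighbors[i].add(j)
        neighbors[j].add(i)
    pairs = set()
    for start in range(num_atoms):
        dist = {start: 0}
        queue = deque([start])
        while queue:
            node = queue.popleft()
            if dist[node] == k:  # do not expand beyond k hops
                continue
            for nxt in neighbors[node]:
                if nxt not in dist:
                    dist[nxt] = dist[node] + 1
                    queue.append(nxt)
        pairs.update((start, j) for j, d in dist.items() if d == k and start < j)
    return sorted(pairs)


# Heavy-atom skeleton of propane: C0-C1-C2
bonds = [(0, 1), (1, 2)]
edges = bidirectional_edges(bonds)   # both directions for every bond
two_hop = k_hop_pairs(3, bonds, 2)   # atom pairs that span an angle
```

The 2-hop pairs correspond to the end atoms of a bond angle and the 3-hop pairs to the end atoms of a torsion, which is why adding them gives the network access to angular information.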

Research Reagent Solutions

Table 3: Essential Research Reagents for GNN Molecular Applications

| Reagent / Resource | Specifications | Application Context | Access Source |

|---|---|---|---|

| QM9 Dataset | 130,831 molecules, 19 quantum mechanical properties [10] | Benchmarking quantum property prediction | PyTorch Geometric MoleculeNet |

| ESOL Dataset | 1,128 molecules with water solubility data [10] | Solubility and pharmacokinetic prediction | PyTorch Geometric MoleculeNet |

| FreeSolv | 642 molecules with hydration free energy [10] | Solvation property prediction | PyTorch Geometric MoleculeNet |

| Lipophilicity Dataset | 4,200 molecules with octanol/water distribution coefficient [10] | Drug permeability and ADMET prediction | PyTorch Geometric MoleculeNet |

| RDKit | Cheminformatics library with 3D conformation generation | Molecular featurization and graph construction | Open-source Python package |

| PyTorch Geometric | GNN library with pre-built molecular graph layers | Model implementation and training | Open-source Python library |

| DoReFa-Net Quantization | Algorithm for model compression with flexible bit-widths [10] | Deployment on resource-constrained devices | Research implementation |

Architectural Visualizations

Message Passing Neural Network Framework

GNN Architecture Comparison

The core GNN architectures—Message Passing Neural Networks, Graph Attention Networks, and Graph Convolutional Networks—provide the fundamental building blocks for molecular property prediction in drug discovery and materials science. While each architecture offers distinct advantages, the emerging trend integrates their strengths into hybrid models like KA-GNNs and bidirectional MPNNs with attention mechanisms [5] [15]. These advanced architectures demonstrate superior performance by combining expressive power with computational efficiency while maintaining chemical interpretability.

Future directions point toward simplified yet powerful architectures that eliminate redundant components while incorporating essential molecular descriptors. The integration of 3D spatial information with 2D topological graphs provides a balanced approach that preserves predictive performance while reducing computational costs by over 50% [15], making these architectures particularly advantageous for high-throughput virtual screening campaigns in drug discovery pipelines.

Molecular Mechanics (MM) force fields are the computational cornerstone for simulating large biological systems, such as proteins and nucleic acids, over physiologically relevant timescales. Traditional MM force fields operate by using a pre-defined set of atom types to assign parameters for the energy function via lookup tables. This approach, while computationally efficient, often lacks the accuracy and transferability required for exploring diverse regions of chemical space. The molecular graph—a representation where atoms are nodes and bonds are edges—naturally encodes the topology and connectivity of a molecule. This makes it an ideal foundation for rethinking parameter assignment. Framing MM parameter prediction as a graph learning task harnesses the power of Graph Neural Networks (GNNs) to learn complex, non-linear relationships between a molecule's structure and its optimal force field parameters, creating a synergistic partnership between physical modeling and data-driven learning.

This paradigm shift, exemplified by next-generation force fields like Grappa, moves away from hand-crafted rules and instead uses GNNs to predict MM parameters directly from the molecular graph [18] [19]. The synergy lies in the marriage of MM's computationally efficient energy functional form with the expressive power and accuracy of modern graph learning, enabling simulations that are both fast and highly accurate.
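For contrast, table-based assignment in a traditional force field can be sketched as a dictionary lookup keyed on atom types. The table name, the atom-type labels, and the numerical values below are illustrative placeholders and are not taken from any published force field.

```python
# Hypothetical atom-typed bond table: (type_a, type_b) -> (force constant, equilibrium length).
# Values are placeholders for illustration only.
BOND_TABLE = {
    ("CT", "CT"): (300.0, 1.53),
    ("CT", "HC"): (340.0, 1.09),
}


def lookup_bond(type_a, type_b, table=BOND_TABLE):
    """Return bond parameters for a pair of atom types, independent of their order."""
    key = tuple(sorted((type_a, type_b)))
    if key not in table:
        # A lookup table has no answer for chemistry it was not built for.
        raise KeyError(f"no bond parameters for atom types {key}")
    return table[key]
```

The `KeyError` branch is the limitation the graph-learning approach targets: a learned model returns parameters for any molecular graph, while a table covers only the atom-type combinations that were enumerated in advance.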

Graph Learning Architectures for Molecular Mechanics

The application of GNNs to molecular graphs relies on a message-passing framework, where nodes iteratively aggregate information from their neighbors to build rich representations of their local chemical environment [20]. Two advanced architectures demonstrate the cutting edge of this approach.
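The message-passing idea can be shown with a deliberately simple, non-learned update in which each atom averages its own feature vector with those of its bonded neighbors. This is a sketch under that assumption; real GNN layers replace the plain mean with learned message and update functions, and the function name `message_passing_step` is hypothetical.

```python
def message_passing_step(features, neighbors):
    """One round: each atom's new feature is the mean of its own and its neighbors' features."""
    updated = {}
    for atom, h in features.items():
        stacked = [h] + [features[n] for n in neighbors[atom]]
        updated[atom] = [sum(col) / len(stacked) for col in zip(*stacked)]
    return updated


# Ethanol heavy atoms: C0 - C1 - O2, with one-hot features [is_carbon, is_oxygen].
neighbors = {0: [1], 1: [0, 2], 2: [1]}
features = {0: [1.0, 0.0], 1: [1.0, 0.0], 2: [0.0, 1.0]}

h1 = message_passing_step(features, neighbors)  # C1 now differs from C0
h2 = message_passing_step(h1, neighbors)        # C0 now carries a trace of the oxygen
```

The two carbons start with identical features, yet after one round they are distinguishable because only C1 is bonded to oxygen. After a second round, information from the oxygen has reached C0, two bonds away, which is how stacked layers widen each atom's view of its chemical environment.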

Grappa: A Graph Attention and Transformer-Based Approach

Grappa is a machine-learned molecular mechanics force field that employs a graph attentional neural network to generate atom embeddings from the molecular graph, capturing the local chemical environment without hand-crafted features [18] [19]. A key innovation is its use of a transformer with symmetry-preserving positional encoding to predict the MM parameters (bond force constants k_ij, equilibrium bond lengths r^(0)_ij, etc.) from these embeddings [18].

Critically, the Grappa architecture is designed to respect the inherent permutation symmetries of the MM energy function. For instance, the energy contribution from a bond between atoms i and j must be invariant to the order of the atoms (ξ(bond)_ij = ξ(bond)_ji). Grappa builds these physical constraints directly into the model, ensuring that predicted parameters are physically meaningful [18].

Kolmogorov-Arnold Graph Neural Networks (KA-GNNs)

An emerging alternative enhances GNNs using the Kolmogorov-Arnold representation theorem. KA-GNNs replace standard linear transformations and activation functions in GNNs with learnable univariate functions, often based on Fourier series or B-splines, leading to improved expressivity and parameter efficiency [5].

KA-GNNs can be integrated into all core components of a GNN:

- Node Embedding: Initial atom features are transformed using KAN layers.

- Message Passing: Message aggregation and updating functions are handled by KAN modules.

- Readout: Graph-level representations are constructed using KANs [5].

This architecture has shown superior performance in molecular property prediction tasks, suggesting its potential for capturing the complex functional relationships required for accurate MM parameter assignment [5].

Application Notes: Protocols and Performance

Experimental Workflow for a Grappa-Based Force Field

The following diagram outlines the end-to-end workflow for developing and deploying a machine-learned MM force field like Grappa.

Quantitative Performance Comparison

The table below summarizes the performance of Grappa against traditional and other machine-learned force fields on benchmark tasks. The data demonstrates that Grappa achieves state-of-the-art accuracy while maintaining the computational cost of traditional MM force fields [18] [19].

Table 1: Performance Comparison of Molecular Mechanics Force Fields

| Force Field | Type | Small Molecule Energy Accuracy (RMSE) | Peptide/RNA Performance | Computational Cost | Transferability to Macromolecules |

|---|---|---|---|---|---|

| Grappa | Machine-Learned (GNN) | Outperforms tabulated & ML MM FFs [19] | State-of-the-art MM accuracy; agrees with expt. J-couplings [18] | Same as traditional MM FFs [18] | Demonstrated (proteins, virus particle) [18] |

| Traditional MM (e.g., AMBER) | Tabulated (Atom Types) | Baseline | Good, may require corrections like CMAP [18] | Baseline (Highly efficient) | Excellent |

| Espaloma | Machine-Learned (GNN) | Lower accuracy than Grappa on benchmark [18] | Good | Same as traditional MM FFs | - |

| E(3)-Equivariant NN | Machine-Learned (Geometric) | Very High | N/A | Several orders of magnitude higher than MM [18] | Often limited by cost |

Detailed Experimental Protocol

Protocol 1: Training a Grappa Model for Protein Simulation

This protocol details the steps for training a Grappa force field model to predict energies and forces for peptides and proteins.

Objective: To train a GNN model that predicts MM parameters for a given molecular graph, minimizing the discrepancy between MM-calculated and reference quantum mechanical (QM) energies and forces.

Materials and Input Data:

- Training Dataset: A curated set of molecular graphs and their corresponding conformations with reference QM energies and forces. Example: The Espaloma dataset, containing over 14,000 molecules and more than one million conformations for small molecules, peptides, and RNA [18].

- Model Architecture: Grappa's graph attentional network and symmetric transformer architecture [18].

- Software Framework: A deep learning framework like PyTorch or TensorFlow, and a molecular dynamics engine (GROMACS, OpenMM) for energy evaluation [18].

- Hardware: Modern GPUs for efficient model training.

Procedure:

Data Preparation:

- Assemble a dataset of molecular graphs and their diverse conformations.

- Generate reference QM energies and atomic forces for each conformation using a QM software package (e.g., Gaussian, ORCA).

Model Configuration:

- Initialize the Grappa model, which consists of a graph attentional network for generating atom embeddings and a symmetric transformer for predicting MM parameters (ξ) for bonds, angles, and dihedrals [18].

- The model output is a complete set of MM parameters for the input molecular graph.

End-to-End Training:

- The training loop is as follows:

a. For a given molecular graph and conformation, the model predicts MM parameters ξ.

b. The MM energy function E_MM(x, ξ) is evaluated using the predicted parameters and the molecular conformation x [18].

c. The loss function is computed by comparing the predicted MM energies and forces to the reference QM energies and forces.

d. Model parameters are updated via backpropagation through the entire computational graph, including the differentiable MM energy function.
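Steps a to d can be made concrete with a toy problem: a single harmonic bond whose two parameters (k, r0) stand in for the network output ξ. This is a sketch under strong simplifying assumptions and not Grappa's implementation. The reference data are synthetic rather than QM, the gradients are derived by hand instead of by automatic differentiation, and all function names are hypothetical.

```python
def mm_energy_and_force(r, k, r0):
    """Harmonic bond: E = 0.5*k*(r - r0)^2 and force F = -dE/dr = -k*(r - r0)."""
    d = r - r0
    return 0.5 * k * d * d, -k * d


def loss_and_grads(params, data, force_weight=1.0):
    """Mean squared error on energies and forces, with analytic gradients w.r.t. (k, r0)."""
    k, r0 = params
    loss = grad_k = grad_r0 = 0.0
    for r, e_ref, f_ref in data:
        d = r - r0
        e, f = mm_energy_and_force(r, k, r0)
        res_e, res_f = e - e_ref, f - f_ref
        loss += res_e ** 2 + force_weight * res_f ** 2
        # dE/dk = 0.5*d^2, dF/dk = -d, dE/dr0 = -k*d, dF/dr0 = k
        grad_k += 2 * (res_e * 0.5 * d * d + force_weight * res_f * (-d))
        grad_r0 += 2 * (res_e * (-k * d) + force_weight * res_f * k)
    n = len(data)
    return loss / n, (grad_k / n, grad_r0 / n)


def train(data, params=(1.5, 0.95), lr=0.05, steps=5000):
    """Plain gradient descent on the two bond parameters."""
    initial_loss, _ = loss_and_grads(params, data)
    for _ in range(steps):
        _, (gk, gr0) = loss_and_grads(params, data)
        params = (params[0] - lr * gk, params[1] - lr * gr0)
    final_loss, _ = loss_and_grads(params, data)
    return params, initial_loss, final_loss


# Synthetic reference generated from k = 2.0, r0 = 1.0 (arbitrary units).
bond_lengths = [0.8, 0.9, 1.0, 1.1, 1.2]
reference = [(r, *mm_energy_and_force(r, 2.0, 1.0)) for r in bond_lengths]
(k_fit, r0_fit), loss_start, loss_end = train(reference)
```

In the real workflow the parameters are produced by the GNN for every bond, angle, and dihedral, and a deep learning framework propagates the loss gradient through the energy function back into the network weights. The structure of the loop stays the same.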

Validation and Testing:

- Validate the model on a held-out set of molecules from the training dataset.

- Test the model's transferability on unseen molecular systems, such as peptide radicals or small proteins like chignolin, by assessing its ability to reproduce experimental observables like J-couplings or folding free energies [18].

Deployment in MD Simulations:

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools and Resources for Developing Machine-Learned Force Fields

| Tool / Resource | Function | Application in MM Parameter Prediction |

|---|---|---|

| Graph Neural Network Libraries (PyTorch Geometric, DGL) | Provides pre-built modules for implementing GNN architectures. | Used to construct the core graph learning model (e.g., Grappa's graph attention layers) [18]. |

| Quantum Chemistry Software (Gaussian, ORCA) | Generates high-quality reference data. | Computes QM energies and forces for molecular conformations in the training dataset [18]. |

| Molecular Dynamics Engines (GROMACS, OpenMM) | Performs highly optimized energy and force calculations and MD simulations. | Serves as the backend to evaluate the MM energy function E_MM(x, ξ) with predicted parameters [18] [19]. |

| Benchmark Datasets (e.g., Espaloma Dataset) | Standardized datasets for training and evaluation. | Provides a diverse set of molecules (small molecules, peptides, RNA) for model development and comparison [18]. |

| Kolmogorov-Arnold Network (KAN) Modules | A promising alternative to MLPs for greater expressivity. | Can be integrated into GNNs (KA-GNNs) for node embedding, message passing, and readout, potentially improving accuracy [5]. |

Logical Framework of the Grappa Architecture

The following diagram details the core architecture of Grappa, illustrating how permutation symmetries are maintained during the prediction of different MM parameter types.

Framing MM parameter prediction as a graph learning task represents a paradigm shift with immediate and significant benefits. The synergy between the physical interpretability and efficiency of Molecular Mechanics and the accuracy and adaptability of Graph Neural Networks has been proven technically feasible and scientifically valuable by force fields like Grappa. This approach delivers state-of-the-art accuracy across small molecules, peptides, and RNA while retaining the computational efficiency necessary to simulate massive systems like an entire virus particle [18].

Future research will likely focus on several key areas: extending the graph representation to include non-covalent interactions explicitly, which has been shown to boost performance in other molecular property prediction tasks [5]; integrating geometric information (3D coordinates) alongside the topological graph to better capture steric and electrostatic effects; and the continued development of more expressive and efficient GNN architectures, such as KA-GNNs, to push further toward chemical accuracy. This synergistic framework is poised to remain a driving force in the next generation of biomolecular simulation.

Architectures in Action: Methodological Advances and Real-World Applications

Molecular Mechanics (MM) force fields are the empirical backbone of molecular dynamics (MD) simulations, enabling the study of biomolecular structure, dynamics, and interactions at scales inaccessible to quantum mechanical methods. Traditional MM force fields rely on discrete atom-typing rules and lookup tables for parameter assignment, a process that is both labor-intensive and limited in its ability to cover expansive chemical space [18] [21]. The emergence of graph neural networks (GNNs) has catalyzed a paradigm shift, allowing for the development of end-to-end machine learning frameworks that directly predict MM parameters from molecular graphs.

This Application Note details two pioneering GNN-driven frameworks: Grappa (Graph Attentional Protein Parametrization) and Espaloma (Extensible Surrogate Potential Optimized by Message Passing). These frameworks replace traditional atom-typing schemes with continuous learned representations of chemical environments, enabling accurate, transferable, and automated parameter prediction for diverse molecular classes [18] [21]. We provide a comprehensive technical comparison, detailed protocols for implementation, and resource guidance for researchers seeking to integrate these tools into their computational workflows.

Framework Architectures and Core Methodologies

The Grappa Architecture

Grappa employs a sophisticated two-stage architecture to predict molecular mechanics parameters, leveraging a graph neural network followed by a transformer model with specialized symmetry preservation.

- Stage 1: Atom Embedding Generation. A graph attentional neural network processes the 2D molecular graph to generate d-dimensional atom embeddings (( \nu_i \in \mathbb{R}^d )). These embeddings are designed to represent the local chemical environment of each atom, forming a continuous analogue to discrete atom types used in traditional force fields [18] [22] [23].

- Stage 2: Symmetry-Preserving Parameter Prediction. The atom embeddings are passed to a transformer model with symmetry-preserving positional encoding. This stage uses permutation-equivariant layers followed by symmetric pooling to predict the final MM parameters (( \xi^{(l)}_{ij\ldots} )) for each interaction type l (bonds, angles, torsions, impropers). The architecture is explicitly constrained to respect the required permutation symmetries of the MM energy function, ensuring physical consistency [18] [24]. For instance, bond parameters are symmetric (( \xi^{(\text{bond})}_{ij} = \xi^{(\text{bond})}_{ji} )), and torsion parameters are symmetric with respect to reversal (( \xi^{(\text{torsion})}_{ijkl} = \xi^{(\text{torsion})}_{lkji} )) [18].
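One simple way to obtain the symmetries described in Stage 2 is to average an order-dependent function over the allowed atom permutations. The sketch below uses that averaging trick as a stand-in; Grappa itself achieves the symmetry through permutation-equivariant layers and symmetric pooling, and the names `raw_score` and `symmetrize` are hypothetical.

```python
import math


def raw_score(*embeddings):
    """Order-dependent stand-in for a learned layer: each position gets a different weight."""
    total = 0.0
    for position, emb in enumerate(embeddings, start=1):
        total += position * sum(emb)
    return math.tanh(total)


def symmetrize(fn, permutations):
    """Wrap `fn` so that its output is averaged over the given index permutations."""
    def wrapped(*embeddings):
        values = [fn(*(embeddings[p] for p in perm)) for perm in permutations]
        return sum(values) / len(values)
    return wrapped


# Bonds are invariant under i <-> j; torsions under reversal ijkl -> lkji.
bond_param = symmetrize(raw_score, [(0, 1), (1, 0)])
torsion_param = symmetrize(raw_score, [(0, 1, 2, 3), (3, 2, 1, 0)])

# Toy two-dimensional atom embeddings.
nu = [[0.1, 0.2], [0.3, -0.1], [0.0, 0.5], [-0.2, 0.4]]
```

With these definitions, `bond_param(nu[0], nu[1])` equals `bond_param(nu[1], nu[0])` even though `raw_score` itself depends on argument order. Angles would use the permutations `(0, 1, 2)` and `(2, 1, 0)` in the same way.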

A key feature of Grappa is its separation of bonded and non-bonded parameter prediction. The current version of Grappa predicts only bonded parameters (force constants and equilibrium values for bonds, angles, and dihedrals), while non-bonded parameters (partial charges, Lennard-Jones) are sourced from a traditional force field. This hybrid approach combines the accuracy of machine-learned bonded terms with the proven stability of established non-bonded models [24].

The Espaloma Architecture

Espaloma also utilizes graph neural networks to create continuous atomic representations and predict MM parameters in an end-to-end differentiable manner.

- Chemical Perception via GNNs: Espaloma replaces rule-based atom-typing with a GNN that operates on the molecular graph. This network generates atomic representations that capture the chemical environment of each atom [21].

- Differentiable Parameter Assignment: These representations are fed into symmetry-preserving pooling layers and feed-forward neural networks to predict the full set of Class I MM force field parameters. The entire process—from graph to parameters—is differentiable, allowing the model to be trained end-to-end by minimizing the difference between MM and quantum chemical (QM) energies and forces [21].

- Self-Consistent Parametrization: A significant advantage of Espaloma is its ability to self-consistently parametrize heterogeneous systems, such as protein-ligand complexes, using a single unified model. This avoids the potential incompatibilities that can arise from combining separate force fields for different molecule classes [21].

Table 1: Comparative Overview of Grappa and Espaloma Frameworks

| Feature | Grappa | Espaloma |

|---|---|---|

| Core Architecture | Graph Attentional Network + Symmetric Transformer [18] | Graph Neural Networks + Symmetry-Preserving Pooling [21] |

| Parameter Scope | Bonds, Angles, Proper/Improper Dihedrals (Bonded only) [24] | Bonds, Angles, Dihedrals, Non-bonded (Charge, vdW) [21] |

| Symmetry Handling | Explicit permutation constraints via model architecture [18] | Symmetry-preserving pooling layers [21] |

| Key Innovation | High accuracy for peptides/proteins; no hand-crafted input features [18] | Self-consistent parametrization across diverse molecular classes [21] |

| MD Engine Integration | GROMACS, OpenMM [24] [25] | OpenMM, Interoperable via Amber/OpenFF formats [21] |

Diagram 1: Grappa's two-stage prediction architecture.

Performance and Benchmarking

Both Grappa and Espaloma have been rigorously benchmarked against traditional and machine-learned force fields, demonstrating significant advancements in accuracy.

Grappa's Performance: Grappa was evaluated on the Espaloma benchmark dataset, which contains over 14,000 molecules and more than one million conformations. It was shown to outperform traditional MM force fields and the machine-learned Espaloma force field in terms of accuracy [18] [22]. Notably, Grappa closely reproduces quantum mechanical (QM) potential energy landscapes and experimentally measured J-couplings for peptides. It has also demonstrated success in protein folding simulations, with MD simulations recovering the experimentally determined native structure of small proteins like chignolin from an unfolded state [18]. Grappa showcases its extensibility by accurately parametrizing peptide radicals, an area typically outside the scope of traditional force fields [18].

Espaloma's Performance: The espaloma-0.3 model was trained on a massive dataset of over 1.1 million QM energy and force calculations. It accurately reproduces QM energetic properties for small molecules, peptides, and nucleic acids [21]. The force field maintains QM energy-minimized geometries of small molecules and preserves the condensed-phase properties of peptides and folded proteins. Crucially, espaloma-0.3 can self-consistently parametrize proteins and ligands, leading to stable simulations and highly accurate predictions of protein-ligand binding free energies, a critical task in drug discovery [21].

Table 2: Key Quantitative Benchmark Results

| Framework | Training Data | Key Benchmark Result | Reported System Performance |

|---|---|---|---|

| Grappa | >1M conformations (Espaloma dataset) [18] | Outperforms traditional MM & Espaloma on Espaloma benchmark [18] | Folds small protein chignolin; stable MD of a virus particle [18] |

| Espaloma-0.3 | >1.1M QM energy/force calculations [21] | Reproduces QM energetics for small molecules, peptides, nucleic acids [21] | Stable simulations; accurate protein-ligand binding free energies [21] |

| ByteFF | 2.4M optimized fragments, 3.2M torsion profiles [26] | State-of-the-art on relaxed geometries, torsional profiles, conformational energies/forces [26] | Exceptional accuracy for intramolecular conformational PES [26] |

Diagram 2: Espaloma's end-to-end differentiable training process.

Application Protocols

Protocol: Using Grappa in GROMACS and OpenMM

This protocol outlines the steps to parametrize a molecular system with Grappa for subsequent MD simulation.

A. Prerequisites

- A molecular structure file (e.g., PDB).

- A working installation of GROMACS or OpenMM.

- Grappa installed in a CPU-mode environment (GPU not required for inference) [24].

B. GROMACS Workflow

- Initial Parametrization: Use gmx pdb2gmx with a traditional force field to generate initial topology and structure files. This step establishes the molecular graph and provides the non-bonded parameters.

- Grappa Parameter Prediction: Run the grappa_gmx command-line application to create a new topology file with Grappa-predicted bonded parameters. (The -t grappa-1.4 flag specifies the pretrained model version, and -p generates a plot of parameters for inspection.) [24]

- Proceed with Simulation: Continue the standard GROMACS simulation workflow (solvation, energy minimization, equilibration, production) using the new topology file (topology_grappa.top).

C. OpenMM Workflow

- Create a Classical System: Parametrize your system using OpenMM's ForceField with a traditional force field to obtain non-bonded parameters.

- Apply Grappa: Use the OpenmmGrappa wrapper class to replace the bonded parameters in the system with those predicted by Grappa. Alternatively, use the as_openmm function to get a ForceField object that calls Grappa automatically [24].

Protocol: Using Espaloma for Parametrization

Command-line protocols for espaloma-0.3 are documented in less detail than those for Grappa, but the general workflow implied by its design is as follows:

- System Preparation: Prepare the molecular system of interest as a chemical graph. Espaloma is designed to handle small molecules, peptides, nucleotides, and their complexes.

- Parameter Prediction: Run the Espaloma model on the molecular graph. The model outputs a complete set of Class I MM parameters, including valence and non-bonded terms, in a format compatible with OpenMM or other engines that support Amber/OpenFF parameters.

- Simulation Execution: Load the Espaloma-generated parameters into your MD engine of choice and run the simulation. The self-consistent nature of the parameters ensures stability across heterogeneous molecular systems [21].

The Scientist's Toolkit

Table 3: Essential Research Reagents and Software Solutions

| Item / Resource | Function / Description | Availability |

|---|---|---|

| Grappa (GitHub) | Primary library for training and applying Grappa models; includes integration code for GROMACS/OpenMM. | github.com/graeter-group/grappa [24] |

| Grappa Pretrained Models (e.g., grappa-1.4) | Off-the-shelf models for parameter prediction, covering peptides, small molecules, RNA, and radicals. | Downloaded automatically via the Grappa API [24] |

| Espaloma Package | Software implementation for training and deploying Espaloma force fields. | Open-source (repository linked in publication) [21] |

| OpenMM | A versatile, high-performance MD simulation toolkit with extensive scripting capabilities and GPU support. | openmm.org [24] |

| GROMACS | A widely used, high-performance MD simulation package. | gromacs.org [18] |

| Espaloma Benchmark Dataset | A public dataset of >14k molecules and >1M conformations for training and benchmarking ML force fields. | Referenced in original Espaloma publication [18] [21] |

| ByteFF Training Dataset | A large-scale, diverse QM dataset of 2.4M optimized molecular fragments and 3.2M torsion profiles. | Described in PMC article; availability likely subject to authors' terms [26] |

Grappa and Espaloma represent a transformative advance in molecular mechanics, moving the field from hand-crafted, discrete rule-based parametrization to automated, continuous, and data-driven frameworks. Grappa excels in its high accuracy for biomolecular systems like proteins and peptides and its seamless integration into established simulation workflows. Espaloma stands out for its comprehensive self-consistent parametrization across diverse chemical domains and its end-to-end differentiability. The choice between them depends on the researcher's specific needs: Grappa for robust, high-accuracy biomolecular simulation with minimal computational overhead, and Espaloma for maximum consistency and coverage in heterogeneous chemical systems. Both frameworks significantly lower the barrier to obtaining accurate force field parameters, promising to accelerate research in drug discovery, materials science, and structural biology.

The accurate prediction of molecular mechanics parameters is a cornerstone of computational drug discovery, directly impacting the reliability of molecular dynamics simulations and virtual screening. Traditional methods often struggle with the expansive coverage of chemical space. The integration of two innovative architectures—Kolmogorov–Arnold Networks (KANs) with Graph Neural Networks (GNNs) and Graph Transformers (GTs)—offers a transformative framework for molecular property prediction. KA-GNNs enhance GNNs by replacing standard linear transformations with learnable, univariate functions, leading to superior parameter efficiency and interpretability [5] [27]. Concurrently, Graph Transformers, with their global self-attention mechanisms, provide a flexible and powerful alternative for modeling molecular structures [28]. This Application Note details the protocols for leveraging these architectures to advance the prediction of molecular mechanics force fields and related properties, providing a practical guide for researchers and scientists in the field.

Theoretical Foundation & Key Components

Kolmogorov-Arnold Networks (KANs) in a Nutshell

Inspired by the Kolmogorov-Arnold representation theorem, KANs present a radical departure from traditional Multi-Layer Perceptrons (MLPs). While MLPs apply fixed, non-linear activation functions on nodes, KANs place learnable univariate functions on edges [5] [27]. A multivariate continuous function can be represented as a composition of these simpler univariate functions and additions:

( f(\mathbf{x}) = \sum_{q} \Phi_q \left( \sum_{p} \phi_{q,p}(x_p) \right) )

The functions (ϕ, Φ) are parameterized using basis functions. Initial implementations used B-splines [29], but recent advances propose Fourier-series-based formulations (equation 4, 5 in [5]) which are particularly effective at capturing both low-frequency and high-frequency patterns in molecular graphs, providing strong theoretical approximation guarantees backed by Carleson’s theorem and Fefferman’s multivariate extension [5].
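A Fourier-parameterized univariate function and a single KAN-style layer built from such functions can be written directly from the definitions above. This is a minimal sketch with hand-set coefficients rather than learned ones; the names `fourier_phi` and `fourier_kan_layer` and the nested-list coefficient layout are assumptions made for illustration.

```python
import math


def fourier_phi(x, a, b):
    """phi(x) = sum over k = 1..K of a[k-1]*cos(k*x) + b[k-1]*sin(k*x)."""
    return sum(a_k * math.cos(k * x) + b_k * math.sin(k * x)
               for k, (a_k, b_k) in enumerate(zip(a, b), start=1))


def fourier_kan_layer(x, coeff_a, coeff_b):
    """Map n inputs to m outputs: out[q] = sum over p of phi_{q,p}(x[p]).

    coeff_a[q][p] and coeff_b[q][p] hold the K Fourier coefficients of phi_{q,p}.
    """
    return [sum(fourier_phi(x_p, a_qp, b_qp)
                for x_p, a_qp, b_qp in zip(x, a_q, b_q))
            for a_q, b_q in zip(coeff_a, coeff_b)]


# Two inputs, two outputs, K = 1 harmonic per edge function.
coeff_a = [[[1.0], [2.0]], [[0.5], [0.5]]]
coeff_b = [[[0.0], [0.0]], [[0.0], [0.0]]]
out = fourier_kan_layer([0.0, 0.0], coeff_a, coeff_b)
```

Every input-output pair owns its own function, which is the sense in which KANs place learnable functions on edges. Stacking a second layer of the same kind on `out` supplies the outer functions in the composition.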

Graph Neural Networks (GNNs) and Graph Transformers (GTs)

GNNs operate on graph-structured data through a message-passing paradigm, where nodes aggregate information from their neighbors to build meaningful representations [30]. Standard GNNs use MLPs for updating node features during this process.

Graph Transformers adapt the self-attention mechanism to graphs, allowing each node to attend to all other nodes, thereby capturing long-range dependencies that local message-passing might miss [28]. They often incorporate structural and spatial information, such as topological distances or 3D geometries, through bias terms or positional encodings.
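The effect of a structural bias term can be seen in a stripped-down single-head attention in which every node attends to every other node and each score is shifted by the topological distance between the pair. This sketch omits the learned query, key, and value projections, and the linear `bias_per_hop` form is an assumption chosen for simplicity; published Graph Transformers typically learn the bias.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def biased_self_attention(h, hop_distance, bias_per_hop=-1.0):
    """Global attention over node features `h` with an additive distance bias.

    Queries, keys and values are the raw features (learned projections omitted).
    Returns the updated features and the attention weights.
    """
    dim = len(h[0])
    out, weights = [], []
    for i, h_i in enumerate(h):
        scores = [sum(a * b for a, b in zip(h_i, h_j)) / math.sqrt(dim)
                  + bias_per_hop * hop_distance[i][j]
                  for j, h_j in enumerate(h)]
        w = softmax(scores)
        weights.append(w)
        out.append([sum(w_j * h_j[c] for w_j, h_j in zip(w, h)) for c in range(dim)])
    return out, weights


# Three-atom chain with identical features, so only the bias shapes the weights.
h = [[1.0, 0.0], [1.0, 0.0], [1.0, 0.0]]
hops = [[0, 1, 2], [1, 0, 1], [2, 1, 0]]
_, attn = biased_self_attention(h, hops)
```

With a negative bias the first atom attends most strongly to itself, less to its bonded neighbor, and least to the atom two bonds away, while still receiving some signal from it in a single layer. Setting `bias_per_hop=0.0` recovers uniform attention for this input.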

Protocol: Implementing KA-GNNs for Molecular Property Prediction

This protocol outlines the steps for constructing and training a KA-GNN model, specifically the KA-GCN variant, for predicting molecular mechanics parameters.

Model Architecture: KA-GCN

The following diagram illustrates the flow of information through the KA-GCN architecture, highlighting the integration of KAN layers into the core components of a Graph Convolutional Network.

Step-by-Step Experimental Procedure

Step 1: Data Preparation and Molecular Graph Construction

- Input Data: Utilize a large-scale, high-quality dataset of molecular structures with associated quantum mechanical properties. The OMol25 dataset is a prime example, containing over 100 million calculations at the ωB97M-V/def2-TZVPD level of theory, covering biomolecules, electrolytes, and metal complexes [31].

- Graph Representation: Represent each molecule as a graph ( G=(V, E) ), where:

- Nodes ((v_i \in V)): Represent atoms. Initialize node features ((h_i^{(0)})) using atomic properties (e.g., atomic number, charge, number of hydrogens). The protocol from [32] suggests enriching these features using a circular algorithm inspired by Extended-Connectivity Fingerprints (ECFPs) that incorporates information from an atom's r-hop neighborhood.

- Edges ((e_{ij} \in E)): Represent chemical bonds (covalent) or non-covalent interactions (within a cut-off distance, e.g., 5 Å). Initialize edge features with bond type, bond length, or other interatomic spatial information [5] [27].
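The graph construction in Step 1 can be sketched as follows. This is an illustrative, standard-library-only version: the function name `build_molecular_graph`, the dictionary-based feature layout, and the example coordinates are assumptions, and a real pipeline would typically obtain atoms and bonds from a cheminformatics toolkit.

```python
import math

def build_molecular_graph(atoms, bonds, cutoff=5.0):
    """Return node features and typed edges for one molecule.

    atoms:  list of (atomic_number, (x, y, z)), coordinates in angstrom.
    bonds:  set of index pairs (i, j) with i < j for covalent bonds.
    cutoff: distance threshold (angstrom) for non-covalent edges.
    """
    nodes = [{"atomic_number": z} for z, _ in atoms]
    edges = []
    for i in range(len(atoms)):
        for j in range(i + 1, len(atoms)):
            dist = math.dist(atoms[i][1], atoms[j][1])
            if (i, j) in bonds:
                edges.append((i, j, {"kind": "covalent", "length": dist}))
            elif dist <= cutoff:
                edges.append((i, j, {"kind": "non-covalent", "length": dist}))
    return nodes, edges

# Water plus a distant argon atom (illustrative coordinates).
atoms = [
    (8, (0.000, 0.000, 0.000)),
    (1, (0.957, 0.000, 0.000)),
    (1, (-0.240, 0.927, 0.000)),
    (18, (8.000, 0.000, 0.000)),
]
nodes, edges = build_molecular_graph(atoms, bonds={(0, 1), (0, 2)})
```

The two O-H bonds become covalent edges, the H-H pair falls within the 5 Å cutoff and becomes a non-covalent edge, and the argon atom stays unconnected because it lies beyond the cutoff from every other atom.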

Step 2: Node and Edge Embedding with KANs

- Pass the initial atom features (e.g., atomic number, radius) through a Fourier-KAN layer to generate the initial node embeddings.

- For models incorporating explicit edge embeddings, pass bond features (e.g., bond type, length) through a separate Fourier-KAN layer [5].

Step 3: KAN-Augmented Message Passing

- For each node, aggregate messages from its neighboring nodes. In KA-GCN, this follows a standard GCN aggregation scheme.

- Update the node features using a residual Fourier-KAN layer instead of an MLP: ( h_i^{(l+1)} = h_i^{(l)} + \text{KAN}\left(h_i^{(l)} + \sum_{j \in N(i)} h_j^{(l)}\right) ). This formulation helps maintain network expressivity and mitigates oversmoothing in deeper architectures [5] [29].
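The residual update in Step 3 can be illustrated with a small sketch. The `kan` argument is a placeholder callable standing in for a trained Fourier-KAN layer, and the neighbor sum is left unnormalized to match the formula as written; degree normalization, as used in standard GCNs, is omitted here and would be an additional design choice.

```python
def kan_message_passing(h, neighbors, kan):
    """One residual update: h_i <- h_i + kan(h_i + sum_{j in N(i)} h_j).

    h:         list of node feature vectors (lists of floats).
    neighbors: neighbors[i] lists the indices adjacent to node i.
    kan:       callable mapping a feature vector to a vector of equal length
               (a stand-in for a Fourier-KAN layer).
    """
    updated = []
    for i, h_i in enumerate(h):
        agg = list(h_i)
        for j in neighbors[i]:
            agg = [a + b for a, b in zip(agg, h[j])]
        delta = kan(agg)
        # Residual connection: add the transformed aggregate to the old state.
        updated.append([x + d for x, d in zip(h_i, delta)])
    return updated

# Three-atom chain 0-1-2 with a toy elementwise function in place of the KAN.
h = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]]
neighbors = [[1], [0, 2], [1]]
h_next = kan_message_passing(h, neighbors, kan=lambda v: [0.5 * x for x in v])
```

Because the old state is added back unchanged, a `kan` that outputs zeros leaves the node features untouched, which is the property the residual form relies on in deep stacks.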

Step 4: KAN-Based Graph Readout

- After (L) layers of message passing, generate a graph-level representation by pooling all node embeddings (e.g., using a mean or sum operation).

- Pass this pooled representation through a final Fourier-KAN module to produce the prediction for the target molecular property (e.g., energy, force field parameter) [5].
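A minimal sketch of the readout in Step 4 is given below. The `head` callable is a hypothetical stand-in for the final Fourier-KAN module, and the function name `readout` is an assumption.

```python
def readout(node_embeddings, head, mode="mean"):
    """Pool node embeddings into one vector and apply a prediction head."""
    dim = len(node_embeddings[0])
    pooled = [sum(h[d] for h in node_embeddings) for d in range(dim)]
    if mode == "mean":
        pooled = [p / len(node_embeddings) for p in pooled]
    return head(pooled)

embeddings = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
toy_head = lambda v: sum(v)  # placeholder for the final KAN module

y_mean = readout(embeddings, toy_head, mode="mean")
y_sum = readout(embeddings, toy_head, mode="sum")
y_shuffled = readout(list(reversed(embeddings)), toy_head, mode="mean")
```

Both sum and mean pooling ignore the order of the nodes, so reordering the atoms leaves the graph-level prediction unchanged.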

Step 5: Model Training and Evaluation

- Loss Function: Use a mean-squared error (MSE) loss for regression tasks like force field parametrization: ( \mathcal{L} = \frac{1}{N} \sum_{i=1}^N (y_i - \hat{y}_i)^2 ). For conservative force fields, ensure the loss penalizes deviations in both predicted forces and energies [31].

- Training Strategy: Employ a two-phase training strategy if predicting conservative forces: first, train a direct-force model, then use it to initialize a conservative-force model for fine-tuning, which significantly reduces wall-clock time [31].

- Benchmarking: Evaluate model performance on held-out test sets and standard benchmarks. Compare against state-of-the-art GNNs and GTs to quantify performance gains.
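The loss described in Step 5 can be sketched as a weighted sum of an energy MSE and a force MSE. The weights `w_energy` and `w_force` and the function names are assumptions for illustration; the source does not specify how the two terms are balanced.

```python
def mse(pred, target):
    """Mean-squared error over two equally long sequences."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)

def energy_force_loss(e_pred, e_ref, f_pred, f_ref, w_energy=1.0, w_force=1.0):
    """Weighted sum of energy MSE and per-component force MSE.

    e_pred, e_ref: per-structure energies.
    f_pred, f_ref: per-atom force vectors as (fx, fy, fz) tuples.
    """
    flat_pred = [c for f in f_pred for c in f]
    flat_ref = [c for f in f_ref for c in f]
    return w_energy * mse(e_pred, e_ref) + w_force * mse(flat_pred, flat_ref)

# Toy values: one energy is off by 2, one force component is off by 1.
loss = energy_force_loss(
    e_pred=[1.0, 2.0], e_ref=[1.0, 4.0],
    f_pred=[(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    f_ref=[(0.0, 0.0, 0.0), (0.0, 0.0, 0.0)],
    w_force=6.0,
)
```

In this toy case the energy term is 2.0 and the force term is 1/6 before weighting, so the chosen force weight of 6 brings the total to about 3.0.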

Protocol: Implementing Graph Transformers for Molecular Representation

This protocol describes the use of Graph Transformer models, which serve as a powerful alternative or complementary approach to KA-GNNs.

Model Architecture: 3D Graph Transformer

The diagram below outlines the architecture of a 3D Graph Transformer, which uses spatial distances to bias the self-attention mechanism.

Step-by-Step Experimental Procedure

Step 1: Data Preparation and Feature Engineering

- Use the same molecular graph construction as in Step 1 of the KA-GNN protocol above, but ensure access to 3D molecular conformers [28].

- For each atom, compute its 3D spatial coordinates.

Step 2: Node Feature Initialization and Structural Encoding

- Project initial atom features into a dense embedding vector.

- Compute the shortest path distance (for 2D-GT) or the real-space Euclidean distance (for 3D-GT) between all atom pairs.

- For 3D-GT, bin these distances (e.g., bin width of 0.5 Å, with a spherical cutoff of 5 Å) to create a discrete spatial bias matrix [28].
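The distance binning in Step 2 can be sketched as below, using the example values of a 0.5 Å bin width and a 5 Å cutoff. Placing all pairs beyond the cutoff in a single overflow bin is an assumption, as is the function name `spatial_bias_bins`; in a full model each bin index would typically select a learnable scalar bias.

```python
import math

def spatial_bias_bins(coords, bin_width=0.5, cutoff=5.0):
    """Return an N x N matrix of distance-bin indices.

    Distances in [0, cutoff) map to bins 0 .. n_bins - 1; all pairs at or
    beyond the cutoff share the overflow bin n_bins.
    """
    n_bins = int(round(cutoff / bin_width))
    bins = []
    for r_i in coords:
        row = []
        for r_j in coords:
            d = math.dist(r_i, r_j)
            row.append(min(int(d // bin_width), n_bins))
        bins.append(row)
    return bins

# Three atoms on a line (illustrative coordinates in angstrom).
coords = [(0.0, 0.0, 0.0), (1.2, 0.0, 0.0), (7.0, 0.0, 0.0)]
bins = spatial_bias_bins(coords)
```

The pair 1.2 Å apart falls in bin 2, while both pairs involving the third atom exceed the cutoff and share the overflow bin.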

Step 3: Graph Transformer Layer with Structural Bias

- The self-attention score between nodes (i) and (j) is computed as: ( \alpha_{ij} = \frac{\exp(Q_i K_j^\top + B_{ij})}{\sum_{k} \exp(Q_i K_k^\top + B_{ik})} ), where (B_{ij}) is the bias term derived from the binned spatial distance between atoms (i) and (j). This integrates crucial 3D geometric information directly into the attention mechanism [28].
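The biased attention in Step 3 amounts to a row-wise softmax over the query-key dot products plus the bias. The sketch below follows the formula as written, so the usual scaling by the square root of the embedding dimension is omitted; the function name `biased_attention` and the toy inputs are assumptions.

```python
import math

def biased_attention(Q, K, B):
    """Row-wise softmax of Q_i . K_j + B_ij."""
    weights = []
    for q_i, b_i in zip(Q, B):
        logits = [
            sum(a * b for a, b in zip(q_i, k_j)) + b_ij
            for k_j, b_ij in zip(K, b_i)
        ]
        m = max(logits)  # subtract the max for numerical stability
        exps = [math.exp(l - m) for l in logits]
        total = sum(exps)
        weights.append([e / total for e in exps])
    return weights

# Two nodes with orthogonal queries/keys; the bias favors the pair (0, 1).
Q = [[1.0, 0.0], [0.0, 1.0]]
K = [[1.0, 0.0], [0.0, 1.0]]
B = [[0.0, 1.0], [0.0, 0.0]]
alpha = biased_attention(Q, K, B)
```

For node 0 the bias of 1.0 exactly offsets the missing dot-product contribution, so it attends equally to both nodes; node 1 has no bias and attends mostly to itself.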

Step 4: Context-Enriched Training (Optional)

- To boost performance, especially in low-data regimes, employ context-enriched training [28]. This can involve:

- Pre-training the model on auxiliary, quantum-mechanically derived atomic-level properties.

- Multi-task learning with auxiliary losses on related properties during the main task training.

Step 5: Property Prediction and Model Evaluation

- Use a dedicated node or a pooling operation on the final node embeddings to get a graph-level representation.

- Pass this representation through a regression head to predict the target molecular mechanics parameter.

- Benchmark the model's performance against GNN baselines and other state-of-the-art methods.

Performance Benchmarking

The following tables summarize the performance of KA-GNNs and Graph Transformers as reported in recent literature.

Table 1: Performance of KA-GNN variants on molecular property benchmarks.

| Model Variant | Architecture Base | Key Innovation | Reported Performance | Citation |
|---|---|---|---|---|
| KA-GCN | Graph Convolutional Network | Fourier-KAN in embedding, message passing, and readout | Consistently outperforms conventional GNNs in accuracy and efficiency on seven molecular benchmarks | [5] |
| KANG | Message-Passing GNN | Spline-based KAN with data-aligned initialization | Outperforms GCN, GAT, and GIN on node (Cora, PubMed) and graph classification (MUTAG, PROTEINS) tasks | [29] |
| KA-GNN (Fourier) | General GNN | Fourier-series basis functions, non-covalent interactions | Surpasses existing state-of-the-art pre-trained models on several benchmark tests | [27] |

Table 2: Comparative performance of Graph Transformer (GT) and GNN models on specific molecular tasks.

| Dataset | Task | 3D-GT Performance (MAE) | GNN Performance (MAE) | Notes | Citation |
|---|---|---|---|---|---|
| BDE | Binding Energy Estimation | On par with best GNNs | Varies by model (e.g., PaiNN, SchNet) | GT models offer advantages in speed and flexibility | [28] |
| Kraken | Sterimol Parameters | On par with best GNNs | Varies by model (e.g., ChIRo) | Context-enriched training (pretraining) boosts GT performance | [28] |
| tmQMg | Property Prediction for TMCs | Competitive performance | Baseline set by GIN-VN, ChemProp | GT demonstrates strong generalization for transition metal complexes | [28] |

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key computational tools and datasets for implementing KA-GNNs and Graph Transformers.

| Tool/Resource | Type | Function in Research | Relevance |
|---|---|---|---|
| OMol25 Dataset | Dataset | Provides high-quality, massive-scale QM data for training and benchmarking. | Essential for training robust models on expansive chemical space [31]. |
| ByteFF | Benchmark/Model | An Amber-compatible force field predicted by a GNN; a benchmark for force field accuracy. | Target for property prediction; benchmark for model performance [1]. |
| Fourier-KAN Layer | Software Layer | Learnable activation function using Fourier series to capture complex molecular patterns. | Core component of KA-GNNs for enhanced expressivity [5] [27]. |
| Graphormer | Model Architecture | A leading Graph Transformer implementation that uses spatial biases. | Base architecture for GT protocols; highly flexible [28]. |
| RDKit | Cheminformatics Library | Handles molecule I/O, graph construction, and fingerprint generation. | Foundational for data preprocessing and feature extraction [32]. |
| eSEN / UMA | Model Architecture | State-of-the-art equivariant NNPs; benchmarks for energy and force prediction. | Represents the current state-of-the-art for end-to-end NNP performance [31]. |

The accurate prediction of molecular mechanics parameters is a cornerstone of modern computational chemistry and drug discovery. In this domain, Graph Neural Networks (GNNs) have emerged as transformative tools by natively processing molecular structures represented as graphs, where atoms correspond to nodes and chemical bonds to edges. However, a critical challenge persists: ensuring that these models produce predictions that are consistent with the fundamental laws of physics. This requires the deliberate incorporation of physical symmetries—specifically, E(3)-equivariance for Euclidean transformations (rotations, translations, and reflections) and permutation invariance for the interchange of identical particles.

E(3)-equivariance ensures that a model's predictions for a molecule's energy, forces, or Hamiltonian transform appropriately when the molecule itself is rotated or translated in space. For instance, the forces on atoms, which are vector quantities, should rotate in tandem with the molecular system. Permutation invariance guarantees that the model produces identical outputs for identical molecular structures, regardless of the arbitrary ordering of atoms in the input representation. The integration of these principles is not merely a theoretical exercise; it is essential for developing physically realistic, data-efficient, and generalizable models for molecular property prediction.

Theoretical Foundations and Key Concepts

E(3)-Equivariance in Molecular Systems

In molecular systems, several fundamental symmetries must be respected. A model's architecture must be:

- Translationally invariant: Predictions should not change if every atom in the system is moved by the same displacement vector.

- Rotationally equivariant: The model's vector-valued outputs (e.g., forces or dipole moments) should rotate correspondingly when the input molecule is rotated.

- Permutation invariant: Predictions must be unchanged when identical atoms (e.g., two hydrogen atoms in a methane molecule) are swapped in the input representation.

Formally, a function ( f ) that processes an atomic system is E(3)-equivariant if, for every element ( g ) of the E(3) group (any combination of a translation ( t ), a rotation ( R ), and a reflection), the following holds: ( f(T_g(x)) = T'_g(f(x)) ), where ( T_g ) and ( T'_g ) are the transformations associated with ( g ) acting on the input and output, respectively. For graph-level predictions such as energy, the requirement is often invariance, a special case of equivariance where ( T'_g ) is the identity transformation, meaning ( f(T_g(x)) = f(x) ) [33].
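The definition can be checked numerically on a toy model. The sketch below uses a simple harmonic pair potential (an assumption chosen only because its forces are easy to write down, not a model from the cited works) and verifies that the energy is unchanged and the forces rotate with the system after a rotation about the z-axis followed by a translation.

```python
import math

def energy_and_forces(coords, r0=1.0):
    """Toy harmonic pair potential: E = sum_{i<j} 0.5 * (d_ij - r0)^2."""
    n = len(coords)
    energy = 0.0
    forces = [[0.0, 0.0, 0.0] for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            diff = [a - b for a, b in zip(coords[i], coords[j])]
            d = math.sqrt(sum(c * c for c in diff))
            energy += 0.5 * (d - r0) ** 2
            for k in range(3):
                g = (d - r0) * diff[k] / d  # dE/dr_i along axis k
                forces[i][k] -= g           # force is the negative gradient
                forces[j][k] += g
    return energy, forces

def rotate_z(v, theta):
    """Rotate a 3-vector about the z-axis by angle theta."""
    c, s = math.cos(theta), math.sin(theta)
    return [c * v[0] - s * v[1], s * v[0] + c * v[1], v[2]]

coords = [[0.0, 0.0, 0.0], [1.5, 0.0, 0.0], [0.0, 0.8, 0.3]]
theta, shift = 0.7, [2.0, -1.0, 0.5]
moved = [[a + b for a, b in zip(rotate_z(r, theta), shift)] for r in coords]

e0, f0 = energy_and_forces(coords)
e1, f1 = energy_and_forces(moved)
energy_invariant = math.isclose(e0, e1, rel_tol=1e-9)
forces_equivariant = all(
    math.isclose(a, b, abs_tol=1e-9)
    for f_old, f_new in zip(f0, f1)
    for a, b in zip(rotate_z(f_old, theta), f_new)
)
```

Here ( T_g ) is the rotation plus translation applied to the coordinates, while ( T'_g ) is the identity for the energy and the rotation alone for the forces, since forces are unaffected by a uniform shift.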

Permutation Invariance and Equivariance

For graph data, a function ( f ) acting on an adjacency matrix ( A ) satisfies:

- Permutation invariance if ( f(PAP^\top) = f(A) ) for any permutation matrix ( P ).

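- Permutation equivariance if ( f(PAP^\top) = Pf(A)P^\top ) for matrix-valued (edge-level) outputs; for vector-valued (node-level) outputs, the corresponding condition is ( f(PAP^\top) = Pf(A) ).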

Invariance is typically required for graph-level properties (e.g., total energy), while equivariance is necessary for node-level (e.g., atomic forces) or edge-level predictions. Designing models that inherently possess these properties eliminates the need for data augmentation over all possible permutations and rotations, significantly improving sample efficiency and generalization [34].
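A small numerical check makes the two properties concrete. In this sketch, `edge_count` plays the role of a graph-level (invariant) function and `degrees` the role of a node-level (equivariant) function, whose output is reordered by the same permutation as the nodes; both are toy functions chosen for illustration rather than learned models.

```python
def permute(A, perm):
    """Return P A P^T, where row i of the result is row perm[i] of A."""
    return [[A[pi][pj] for pj in perm] for pi in perm]

def edge_count(A):
    """Graph-level output: number of undirected edges (invariant)."""
    return sum(map(sum, A)) // 2

def degrees(A):
    """Node-level output: degree of each node (equivariant)."""
    return [sum(row) for row in A]

# Star graph: node 0 is bonded to nodes 1 and 2.
A = [
    [0, 1, 1],
    [1, 0, 0],
    [1, 0, 0],
]
perm = [2, 0, 1]
A_perm = permute(A, perm)

invariant_ok = edge_count(A_perm) == edge_count(A)
equivariant_ok = degrees(A_perm) == [degrees(A)[p] for p in perm]
```

A model built only from such operations gives consistent answers for every atom ordering by construction, which is why no augmentation over permutations is needed.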

Quantitative Performance of Equivariant and Invariant Models

Performance Benchmarks for Molecular Property Prediction

Table 1: Performance comparison of E(3)-equivariant models on molecular property prediction tasks (MAE).

| Model | Dipole Moment | Polarizability | Hessian Matrix | Hyperpolarizability | Reference |
|---|---|---|---|---|---|
| EnviroDetaNet | Lowest MAE | Lowest MAE | 0.016 (MAE) | Lowest MAE | [35] |
| EnviroDetaNet (50% Data) | Slight increase | ~10% error increase | 0.032 (MAE) | Slight increase | [35] |
| DetaNet (Baseline) | Higher MAE | Higher MAE | Baseline MAE | Higher MAE | [35] |
| KA-GNN | Outperforms GCN/GAT | Outperforms GCN/GAT | - | - | [5] |
| NextHAM | - | - | - | - | [36] |
| E2GNN | Outperforms SchNet, MEGNet | Outperforms SchNet, MEGNet | - | - | [37] |

EnviroDetaNet, an E(3)-equivariant message-passing neural network, demonstrates state-of-the-art performance, achieving the lowest Mean Absolute Error (MAE) on properties like dipole moment and polarizability compared to other models like DetaNet. Remarkably, it maintains high accuracy even when trained with only 50% of the original data, showcasing its superior data efficiency and robust generalization capabilities. For instance, on the Hessian matrix prediction task, EnviroDetaNet's error only increased by 0.016 MAE with the halved dataset, remaining 39.64% lower than the original DetaNet model [35].

Table 2: Application-specific benchmarks for Hamiltonian and potential prediction.

| Model | Application | Key Metric | Performance | Reference |
|---|---|---|---|---|
| NextHAM | Materials Hamiltonian | R-space Hamiltonian Error | 1.417 meV | [36] |
| NextHAM | Materials Hamiltonian | SOC Block Error | sub-μeV scale | [36] |
| E2GNN | Interatomic Potentials | MD Simulation Accuracy | Achieves ab initio MD accuracy | [37] |
| Facet | Interatomic Potentials | Training Efficiency | >10x acceleration vs. SOTA | [38] |
| KA-GNN | Molecular Property Prediction | Accuracy & Efficiency | Superior to GCN/GAT baselines | [5] |