Overcoming Sampling Limitations in Force Field Validation: Strategies for Reliable Biomolecular Simulations

Abstract

Accurate molecular dynamics simulations are paramount for modern drug discovery and structural biology, yet their predictive power is critically limited by insufficient sampling and force field inaccuracies. This article provides a comprehensive framework for researchers and drug development professionals to navigate these challenges. We explore the fundamental origins of sampling limitations across diverse biological systems, from folded proteins to intrinsically disordered regions and RNA-ligand complexes. The review systematically evaluates advanced sampling methodologies, force field selection protocols, and robust validation techniques against experimental data. By synthesizing insights from recent benchmarking studies, we offer practical troubleshooting strategies and establish best practices for achieving statistically meaningful, experimentally validated simulation results that can reliably guide biomedical research.

Understanding the Fundamental Sampling Challenge in Biomolecular Simulations

The Critical Impact of Sampling Limitations on Simulation Reliability

FAQs: Understanding Sampling and Its Challenges

Q1: What is meant by "sampling" in molecular simulations, and why is it a critical issue? In molecular dynamics (MD) and Monte Carlo (MC) simulations, "sampling" refers to the process of generating a representative set of configurations (a trajectory) from the full range of possible states of the system, weighted by their correct Boltzmann probability [1]. The central challenge is that many molecular systems of interest are highly complex, with rough energy landscapes and many local minima. Even with modern computing resources, simulations may not run long enough to overcome energy barriers and visit all relevant states [1] [2]. The usefulness of any simulated result ultimately hinges on the ability to confidently report uncertainties alongside predictions, which requires adequate sampling [1].
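The trapping problem can be reproduced in a toy model. The following is a minimal sketch, assuming a one-dimensional symmetric double-well potential $U(x) = h(x^2 - 1)^2$ expressed in units of $k_BT$ (not a biomolecular system) and plain Metropolis Monte Carlo sampling; the barrier heights, step size, and run length are illustrative choices. By symmetry, the correct Boltzmann-weighted population of the right-hand well is 0.5 for any $h$, yet a run started in the left well with a high barrier never crosses over, so its estimate is wrong even though no individual sample looks wrong.

```python
import math

import numpy as np


def double_well(x, barrier):
    """Symmetric double well in units of k_B*T: minima at x = -1 and +1, height `barrier` at x = 0."""
    return barrier * (x * x - 1.0) ** 2


def metropolis(barrier, n_steps, step=0.5, x0=-1.0, seed=0):
    """Metropolis Monte Carlo sampling of the Boltzmann weight exp(-U(x))."""
    rng = np.random.default_rng(seed)
    moves = rng.uniform(-step, step, size=n_steps).tolist()  # trial displacements
    draws = rng.random(n_steps).tolist()                     # uniform numbers for acceptance tests
    x, u = x0, double_well(x0, barrier)
    samples = np.empty(n_steps)
    for i in range(n_steps):
        x_new = x + moves[i]
        u_new = double_well(x_new, barrier)
        # Accept with probability min(1, exp(-(U_new - U_old))).
        if u_new <= u or draws[i] < math.exp(u - u_new):
            x, u = x_new, u_new
        samples[i] = x
    return samples


for barrier in (1.0, 25.0):
    xs = metropolis(barrier, n_steps=200_000)
    # By symmetry the exact Boltzmann answer is 0.5 for any barrier height.
    print(f"barrier {barrier:4.1f} kT: fraction of samples in right well = {np.mean(xs > 0):.2f}")
```

With the low barrier the estimate lands close to 0.5; with the 25 $k_BT$ barrier it stays near 0, which is exactly the kind of silent sampling failure the checks below are meant to catch.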

Q2: How can I tell if my simulation has been inadequately sampled? Inadequate sampling can manifest in several ways. Key indicators include:

- Poor Convergence of Observables: The estimated value of a property (e.g., average energy or radius of gyration) does not stabilize but instead drifts as the simulation continues.

- High Statistical Uncertainty: Calculations of the "experimental standard deviation of the mean" (often called the standard error) yield large error bars, indicating low confidence in the reported average [1].

- Failure to Replicate Known Experimental Behavior: For example, a simulation of an intrinsically disordered protein (IDP) like amyloid-β might produce an over-structured, collapsed ensemble that contradicts experimental NMR and FRET data showing it is a random coil [2].

- Low Replica Exchange Efficiency: In enhanced sampling methods like Temperature Replica Exchange (TREx), low acceptance rates for swaps between adjacent replicas indicate that the sampling is not efficiently exploring configuration space [2].
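The exchange rate follows from the Metropolis criterion for swapping the configurations held by replicas at temperatures $T_i$ and $T_j$: $P_{\mathrm{acc}} = \min\{1, \exp[(\beta_i - \beta_j)(U_i - U_j)]\}$, with $\beta = 1/(k_BT)$. A minimal sketch, assuming a toy model in which the potential energy at each temperature is Gaussian with a constant heat capacity (the heat capacity, reference energy, and temperatures are illustrative values, not taken from any real system), shows how acceptance collapses once neighboring temperatures are too far apart for their energy distributions to overlap.

```python
import numpy as np

K_B = 0.0083144626  # Boltzmann constant, kJ/(mol K)


def swap_acceptance(u_i, u_j, t_i, t_j):
    """Metropolis probability of exchanging the configurations held at T_i and T_j.

    u_i, u_j: potential energies (kJ/mol) of the configurations currently at T_i and T_j.
    """
    beta_i = 1.0 / (K_B * t_i)
    beta_j = 1.0 / (K_B * t_j)
    delta = (beta_i - beta_j) * (np.asarray(u_i) - np.asarray(u_j))
    # min(1, exp(delta)), written so that exp never overflows
    return np.exp(np.minimum(delta, 0.0))


def mean_acceptance(t_i, t_j, heat_capacity=2.0, e_ref=-5.0e4, t_ref=300.0, n=5000, seed=0):
    """Average acceptance in a toy model with Gaussian energy distributions (an assumption).

    Mean energy grows linearly with T; the variance follows k_B * T^2 * C_v.
    heat_capacity (kJ/(mol K)), e_ref and t_ref are illustrative values.
    """
    rng = np.random.default_rng(seed)

    def sample(t):
        mean = e_ref + heat_capacity * (t - t_ref)
        std = np.sqrt(K_B * t**2 * heat_capacity)
        return rng.normal(mean, std, size=n)

    u_i, u_j = sample(t_i), sample(t_j)
    return float(np.mean(swap_acceptance(u_i, u_j, t_i, t_j)))


for t_j in (305.0, 320.0, 350.0):
    print(f"300 K <-> {t_j:.0f} K: mean acceptance = {mean_acceptance(300.0, t_j):.2f}")
```

In a real system the same logic indicates whether more replicas (closer temperatures) are needed, or whether a method that tempers only part of the system, such as REST, is the better choice.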

Q3: My simulation seems trapped in one conformational state. What can I do? This is a classic sampling problem. Solutions involve moving beyond standard MD:

- Employ Enhanced Sampling Methods: Techniques like Replica Exchange with Solute Tempering (REST) or metadynamics can help the system escape local energy minima [2] [3].

- Consider Advanced Algorithms: For systems with entropic barriers, such as IDPs, non-equilibrium methods like Temperature Cool Walking (TCW) have been shown to converge more quickly to the correct equilibrium distribution than TREx [2].

- Leverage Markov State Models (MSMs): Instead of one long simulation, run many shorter, independent simulations and use MSMs to reconstruct the long-timescale behavior and thermodynamics [2].
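The core of an MSM estimate fits in a few lines. This is a minimal illustration, assuming the trajectories have already been discretized into states (for example by clustering) and using a simple row-normalized count matrix at a single lag time; dedicated packages such as PyEMMA or deeptime add reversible estimators, lag-time validation, and uncertainty estimates. The three-state "true" transition matrix used to generate data is a made-up toy model. The point it illustrates is that many short trajectories started away from equilibrium still recover the correct stationary populations.

```python
import numpy as np


def count_transitions(dtrajs, n_states, lag):
    """Count i -> j transitions separated by `lag` steps, pooled over many short trajectories."""
    counts = np.zeros((n_states, n_states))
    for traj in dtrajs:
        traj = np.asarray(traj)
        np.add.at(counts, (traj[:-lag], traj[lag:]), 1.0)
    return counts


def estimate_msm(counts):
    """Row-normalized transition matrix, its stationary distribution, and its eigenvalues."""
    t = counts / counts.sum(axis=1, keepdims=True)
    evals, evecs = np.linalg.eig(t.T)             # left eigenvectors of t
    order = np.argsort(-np.abs(evals))            # eigenvalue 1 first
    evals, evecs = evals[order], evecs[:, order]
    pi = np.real(evecs[:, 0])
    return t, pi / pi.sum(), np.real(evals)


def implied_timescales(evals, lag):
    """t_k = -lag / ln|lambda_k| for every eigenvalue except the stationary one."""
    return -lag / np.log(np.abs(evals[1:]))


def simulate_chain(t_true, start, n_steps, rng):
    """Generate one discrete trajectory from a known transition matrix (toy data)."""
    cum = np.cumsum(t_true, axis=1)
    states = [start]
    for u in rng.random(n_steps - 1):
        nxt = int(np.searchsorted(cum[states[-1]], u, side="right"))
        states.append(min(nxt, len(t_true) - 1))
    return np.array(states)


rng = np.random.default_rng(1)
t_true = np.array([[0.98, 0.02, 0.00],
                   [0.01, 0.97, 0.02],
                   [0.00, 0.05, 0.95]])
# 500 short trajectories started from random states, i.e. not from equilibrium.
dtrajs = [simulate_chain(t_true, int(rng.integers(3)), 200, rng) for _ in range(500)]
t_est, pi_est, evals = estimate_msm(count_transitions(dtrajs, n_states=3, lag=1))
print("stationary distribution:", np.round(pi_est, 3))  # exact: (1, 2, 0.8) / 3.8
print("implied timescales (steps):", np.round(implied_timescales(evals, lag=1), 1))
```

In a real analysis the lag time is chosen by checking that the implied timescales no longer change with lag; here a lag of one step is adequate only because the toy data are Markovian by construction.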

Q4: How does the choice of force field interact with sampling limitations? Force field accuracy and sampling adequacy are deeply intertwined. An inaccurate force field will yield incorrect results regardless of sampling. Conversely, even a perfect force field is useless without sufficient sampling to generate a representative ensemble [2]. This creates a "combined force field–sampling problem," where a perceived force field failure might actually be a sampling failure. For instance, short simulations or inefficient sampling protocols might incorrectly suggest a force field over-stabilizes certain structures, while more extensive sampling with the same force field reveals agreement with experiment [2].

Q5: What are the best practices for quantifying and reporting uncertainty from my simulations? Best practices advocate a tiered approach [1]:

- Feasibility Checks: Perform back-of-the-envelope calculations to determine if the desired computation is feasible.

- Semi-Quantitative Checks: Before final estimation, check for signs of inadequate sampling.

- Uncertainty Quantification (UQ): Use robust statistical techniques to derive "error bars." The "experimental standard deviation of the mean" is a common measure, but it must be calculated while accounting for correlation time in the data. Simply using every data point as independent will underestimate the true uncertainty [1]. Communicating the methods and assumptions used in your UQ analysis is critical.
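Block averaging is one of the standard ways to obtain error bars from correlated time series. A minimal sketch follows: the trajectory is cut into non-overlapping blocks, and once blocks are longer than the correlation time their means are approximately independent, so the standard error computed from them plateaus. The synthetic AR(1) series stands in for a simulated observable and is purely illustrative.

```python
import numpy as np


def block_sem(x, block_size):
    """Standard error of the mean estimated from non-overlapping block averages."""
    x = np.asarray(x, dtype=float)
    n_blocks = x.size // block_size
    if n_blocks < 2:
        raise ValueError("need at least two blocks")
    block_means = x[: n_blocks * block_size].reshape(n_blocks, block_size).mean(axis=1)
    return block_means.std(ddof=1) / np.sqrt(n_blocks)


# Illustrative correlated data: an AR(1) process, x_t = phi * x_{t-1} + noise_t.
rng = np.random.default_rng(0)
phi, n = 0.9, 100_000
noise = rng.normal(size=n)
x = np.empty(n)
x[0] = noise[0]
for t in range(1, n):
    x[t] = phi * x[t - 1] + noise[t]

for b in (1, 10, 100, 1000):
    print(f"block size {b:5d}: SEM = {block_sem(x, b):.4f}")
```

Block size 1 reproduces the naive estimate that treats every frame as independent; the value at the plateau is the one to report. If no plateau appears before the blocks become too few, the trajectory is too short relative to its slowest relaxation.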

Troubleshooting Guides

Issue: Over-Structured Disordered Proteins

Problem: Simulations of intrinsically disordered proteins (IDPs) like amyloid-β or ACTR result in overly collapsed, rigid conformations with excess secondary structure, contradicting experimental evidence [2] [4].

Diagnosis: This is a common symptom of the combined force field–sampling problem. The force field may have a bias, or the sampling may be insufficient to escape low-energy kinetic traps.

Solutions:

- Validate with Multiple Experiments: Compare your simulated ensemble against a variety of experimental data, such as NMR J-couplings, chemical shifts, FRET efficiency, and radius of gyration (Rg) [2] [5].

- Upgrade Your Sampling Protocol: Move beyond simple MD. Implement enhanced sampling methods designed for flatter energy landscapes. Temperature Cool Walking (TCW) has shown success for Aβ peptides, and Markov State Models (MSMs) built from many simulations can yield correct random coil ensembles where shorter runs fail [2].

- Evaluate Your Force Field: Test a newer force field specifically optimized for disordered proteins (e.g., a99SB-disp) [4]. Be aware that implicit solvent models often exacerbate over-compaction and may require specific parameterization for IDPs [4].

Issue: Poor Convergence in Free Energy Calculations

Problem: Relative binding free energy (RBFE) calculations, used in drug discovery, show high variance and poor convergence, making it difficult to predict ligand affinity reliably [3].

Diagnosis: Inadequate sampling of relevant ligand and protein conformational states during the alchemical transformation. Large energy barriers can trap the system.

Solutions:

- Implement Hamiltonian Replica Exchange: Use methods like REST (Replica Exchange with Solute Tempering) to enhance sampling along the alchemical λ pathway, helping to overcome barriers between different conformations [3].

- Ensure Technical Accuracy: Use automated, well-validated workflows (e.g., with OpenMM) to minimize setup errors. Carefully prepare the protein, assign correct partial charges (AM1-BCC or RESP), and select an appropriate water model (e.g., TIP3P, SPC/E) [3].

- Extend Simulation Time: If uncertainty remains high after enabling replica exchange, increase the simulation time per λ window.
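To decide where extra time per λ window pays off, it helps to see how per-window uncertainties propagate into the final free energy. The sketch below uses thermodynamic integration (TI) with the trapezoidal rule, a swapped-in estimator chosen for simplicity (MBAR-based analyses are also widely used). The λ schedule and ⟨∂U/∂λ⟩ values are hypothetical inputs, not results. Assuming independent windows, the variance of ΔG is a weighted sum of per-window variances, so the window with the largest share is the first candidate for a longer run.

```python
import numpy as np


def ti_free_energy(lambdas, mean_dudl, sem_dudl):
    """Trapezoidal TI estimate of Delta G, its standard error, and each window's variance share.

    mean_dudl: per-window averages of dU/dlambda; sem_dudl: their standard errors
    (already corrected for time correlation). Windows are assumed independent.
    """
    lambdas = np.asarray(lambdas, dtype=float)
    mean_dudl = np.asarray(mean_dudl, dtype=float)
    sem_dudl = np.asarray(sem_dudl, dtype=float)
    d_lambda = np.diff(lambdas)
    weights = np.zeros_like(lambdas)
    weights[:-1] += d_lambda / 2.0      # trapezoid weights
    weights[1:] += d_lambda / 2.0
    delta_g = np.sum(weights * mean_dudl)
    var_terms = (weights * sem_dudl) ** 2
    error = np.sqrt(var_terms.sum())
    return delta_g, error, var_terms / var_terms.sum()


# Hypothetical 11-window schedule (arbitrary energy units) with one noisy window at lambda = 0.5.
lambdas = np.linspace(0.0, 1.0, 11)
mean_dudl = np.array([12.0, 9.5, 7.1, 5.0, 3.2, 1.0, -0.8, -2.1, -3.0, -3.6, -4.0])
sem_dudl = np.full(11, 0.2)
sem_dudl[5] = 1.5
dg, err, share = ti_free_energy(lambdas, mean_dudl, sem_dudl)
print(f"Delta G = {dg:.2f} +/- {err:.2f}; noisiest window: lambda = {lambdas[np.argmax(share)]:.1f}")
```

For an RBFE calculation the same analysis is done for the complex and solvent legs, and ΔΔG = ΔG_complex − ΔG_solvent, with the two independent errors combined in quadrature.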

Issue: Force Field Validation Yields Inconsistent Results

Problem: When validating a force field on a test system (e.g., a small protein), the results are highly variable between different simulation runs and do not consistently match experimental data.

Diagnosis: The sampling time is likely shorter than the slowest relaxation time of the system. What appears to be a force field error may be a lack of conformational sampling.

Solutions:

- Conduct Extensive Sampling: For force field validation, it is critical to sample the test systems as extensively as possible. Use long simulation times (e.g., 10+ µs for small folded proteins) to ensure the level of agreement with experiment is a true measure of force field accuracy [5] [6].

- Test on Multiple Systems: Validate force fields across a diverse set of proteins and peptides (folded proteins, helical peptides, β-hairpins) to check for biases toward specific secondary structures [5] [6].

- Quantify Statistical Uncertainty: Always report uncertainty estimates (e.g., standard error) for any simulated observable to communicate the confidence in your results [1].
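Before launching a validation campaign, the back-of-the-envelope feasibility check is simple arithmetic. The sketch below, assuming a hypothetical throughput in ns/day and a guessed slowest relaxation time, estimates the wall-clock cost of a set of runs and roughly how many independent observations of the slowest process each run provides, using $n_{\mathrm{eff}} \approx T_{\mathrm{sim}}/(2\tau)$. All numbers are placeholders to be replaced with benchmarks from your own hardware and system.

```python
def campaign_cost(length_us, ns_per_day, n_systems, n_force_fields, n_replicas=1, n_gpus=1):
    """Return (days per run, wall-clock days for all runs).

    Assumes one run per GPU at a time, runs spread evenly over GPUs, and no queueing delays.
    """
    days_per_run = length_us * 1000.0 / ns_per_day
    n_runs = n_systems * n_force_fields * n_replicas
    return days_per_run, days_per_run * n_runs / n_gpus


def independent_samples(length_us, slowest_tau_us):
    """Rough count of independent observations of the slowest process, n_eff ~ T / (2 tau)."""
    return length_us / (2.0 * slowest_tau_us)


# Placeholder numbers: 10 us per run at 500 ns/day, 4 proteins x 8 force fields, 16 GPUs.
per_run, total = campaign_cost(10.0, 500.0, n_systems=4, n_force_fields=8, n_gpus=16)
print(f"{per_run:.0f} days per run, {total:.0f} days for the campaign")
print(f"independent samples if tau = 1 us: {independent_samples(10.0, 1.0):.0f}")
```

If the estimated number of independent samples of the slowest process is only a handful, disagreement with experiment cannot yet be attributed to the force field.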

Quantitative Data on Sampling Impact

The table below summarizes quantitative findings from studies that highlight the direct impact of sampling methodology on simulation outcomes.

Table 1: Impact of Sampling Method on Simulated Protein Ensembles

| Protein System | Sampling Method | Key Result | Experimental Reference | Citation |
|---|---|---|---|---|
| Amyloid-β (Aβ42/Aβ43) | Temperature Replica Exchange (TREx) | Over-structured ensembles; poor agreement with NMR/FRET | Roche et al.; Meng et al. | [2] |
| Amyloid-β (Aβ42) | Temperature Cool Walking (TCW) | Random coil ensembles; very good agreement with NMR/FRET | Roche et al.; Meng et al. | [2] |
| Amyloid-β (Aβ42) | ~200 µs MD + Markov State Model (MSM) | Extended, largely unstructured conformations (~10-20% helix); good agreement with experiment | Roche et al.; Meng et al. | [2] |
| Amyloid-β (Aβ40) | Single 30 µs trajectory (Amber99ffsb-ildn*/TIP3P) | ~90% β-sheet structure; disagrees with experiment | Roche et al. | [2] |
| Folded Proteins (Ubiquitin, GB3) | 10 µs MD per force field | Native state stable in 7 of 8 force fields; one (CHARMM22) unfolded | NMR data | [5] [6] |

Experimental Protocols for Assessing Sampling

Protocol: Quantifying Statistical Uncertainty and Correlation Time

This methodology is essential for reporting reliable results from any MD or MC simulation [1].

- Run Production Simulation: Generate a continuous trajectory of your property of interest (e.g., potential energy, Rg).

- Check for Equilibration: Discard the initial non-equilibrated portion of the trajectory.

- Calculate the Arithmetic Mean: For $n$ observations $x_1, \ldots, x_n$, calculate $\bar{x} = \frac{1}{n}\sum_{j=1}^{n} x_j$.

- Calculate the Experimental Standard Deviation: $s(x) = \sqrt{\frac{1}{n-1}\sum_{j=1}^{n} (x_j - \bar{x})^2}$.

- Account for Correlation Time (τ): Time-series data from simulations are rarely independent. The correlation time is the longest separation at which observations are still correlated. Failing to account for this leads to underestimated errors.

- Calculate the Experimental Standard Deviation of the Mean (Standard Error): With correlation, the effective number of independent samples is $n_{\mathrm{eff}} = n/(2\tau)$, with τ expressed in units of the sampling interval (i.e., as a number of frames). The standard error is then $s(\bar{x}) = s(x)/\sqrt{n_{\mathrm{eff}}}$, and this $s(\bar{x})$ is your standard uncertainty for the reported mean.
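The procedure above can be sketched in Python with NumPy. This is a minimal illustration, not a reference implementation: it estimates the integrated autocorrelation time $\tau = \tfrac{1}{2} + \sum_{t \ge 1} \rho(t)$ (in frames) and truncates the sum at the first non-positive value of the normalized autocorrelation function $\rho(t)$, a simple heuristic. With this convention, uncorrelated data give τ = 1/2 and $n_{\mathrm{eff}} = n$. Dedicated tools, such as the timeseries module of pymbar, use more careful estimators.

```python
import numpy as np


def autocorrelation(x):
    """Normalized autocorrelation rho(t), t = 0..n-1, computed with a zero-padded FFT."""
    x = np.asarray(x, dtype=float)
    n = x.size
    dx = x - x.mean()
    spectrum = np.fft.rfft(dx, n=2 * n)              # padding avoids circular wrap-around
    acov = np.fft.irfft(spectrum * np.conj(spectrum), n=2 * n)[:n]
    acov /= np.arange(n, 0, -1)                      # lag t contains n - t pairs
    return acov / acov[0]


def integrated_autocorrelation_time(x):
    """tau = 1/2 + sum_{t>=1} rho(t), truncated at the first non-positive rho(t)."""
    rho = autocorrelation(x)
    nonpositive = np.flatnonzero(rho[1:] <= 0.0)
    cutoff = nonpositive[0] + 1 if nonpositive.size else rho.size
    return 0.5 + rho[1:cutoff].sum()


def mean_with_uncertainty(x):
    """Return (mean, naive SEM, correlation-corrected SEM, tau, n_eff)."""
    x = np.asarray(x, dtype=float)
    n = x.size
    s = x.std(ddof=1)                 # experimental standard deviation s(x)
    tau = integrated_autocorrelation_time(x)
    n_eff = n / (2.0 * tau)           # effective number of independent samples
    return x.mean(), s / np.sqrt(n), s / np.sqrt(n_eff), tau, n_eff


# Illustrative AR(1) series; its exact tau is (1 + phi) / (2 * (1 - phi)) = 9.5 for phi = 0.9.
rng = np.random.default_rng(0)
phi, n = 0.9, 100_000
noise = rng.normal(size=n)
x = np.empty(n)
x[0] = noise[0]
for t in range(1, n):
    x[t] = phi * x[t - 1] + noise[t]
mean, naive, sem, tau, n_eff = mean_with_uncertainty(x)
print(f"tau = {tau:.1f}, n_eff = {n_eff:.0f}, naive SEM = {naive:.4f}, corrected SEM = {sem:.4f}")
```

The ratio of corrected to naive standard error is $\sqrt{2\tau}$, so for this series the naive error bar is too small by roughly a factor of four.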

Protocol: Validating a Force Field for Disordered Proteins

This protocol uses a multi-faceted experimental comparison to rigorously test a force field and sampling method for IDPs [2] [4].

- System Preparation: Select an IDP with robust experimental data (e.g., Aβ42, ACTR). Set up the simulation with the chosen force field and water model.

- Enhanced Sampling Simulation: Run simulations using an enhanced sampling method (e.g., TCW, REST, or long MD with MSM analysis). For comparative studies, run the same system with multiple sampling protocols.

- Analyze Structural Ensemble:

- Calculate the radius of gyration (Rg) distribution.

- Calculate the secondary structure content over time.

- Identify persistent long-range contacts.

- Compare with Experimental Data:

- NMR: Back-calculate NMR chemical shifts and J-couplings from your ensemble and compare directly to experimental values [2] [5].

- FRET: Compute FRET efficiencies from your simulated distances and compare to single-molecule FRET data [2].

- SAXS: Calculate the theoretical SAXS profile from your ensemble and compare to the experimental scattering profile [2].

- Evaluate Agreement: A well-sampled simulation with an accurate force field will produce an ensemble that simultaneously agrees with all these experimental metrics, showing a dominant random coil character for canonical IDPs.
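Two of the comparisons above reduce to short formulas: the radius of gyration of each frame, and FRET efficiency from inter-dye distances via the Förster relation $E(r) = 1/[1 + (r/R_0)^6]$. The sketch assumes coordinates are already available as a NumPy array of shape (frames, atoms, 3) in nm (for example, exported from an MD analysis library) and treats the Förster radius $R_0$ and the label positions as inputs. The random-walk "ensemble" and the value $R_0$ = 5.4 nm are assumptions for illustration only.

```python
import numpy as np


def radius_of_gyration(coords, masses):
    """Mass-weighted Rg per frame; coords has shape (n_frames, n_atoms, 3)."""
    masses = np.asarray(masses, dtype=float)
    com = np.einsum("fai,a->fi", coords, masses) / masses.sum()   # center of mass per frame
    sq_dist = np.sum((coords - com[:, None, :]) ** 2, axis=2)
    return np.sqrt(sq_dist @ masses / masses.sum())


def fret_efficiency(distances, r0):
    """Förster efficiency E = 1 / (1 + (r / R0)^6) for each distance."""
    return 1.0 / (1.0 + (np.asarray(distances, dtype=float) / r0) ** 6)


# Toy ensemble: 500 random-walk "chains" of 40 beads with 0.38 nm steps (illustrative only).
rng = np.random.default_rng(0)
steps = rng.normal(size=(500, 40, 3))
steps *= 0.38 / np.linalg.norm(steps, axis=2, keepdims=True)
coords = np.cumsum(steps, axis=1)
masses = np.ones(40)
rg = radius_of_gyration(coords, masses)
r_ends = np.linalg.norm(coords[:, -1] - coords[:, 0], axis=1)   # hypothetical dye positions at chain ends
e_mean = fret_efficiency(r_ends, r0=5.4).mean()                  # R0 = 5.4 nm is an assumed value
print(f"<Rg> = {rg.mean():.2f} nm, <E> = {e_mean:.2f}")
```

Averaging E frame by frame, as done here, is only one averaging assumption; dye linkers, orientation factors, and the relative timescales of chain and dye dynamics all matter for a rigorous comparison with smFRET data.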

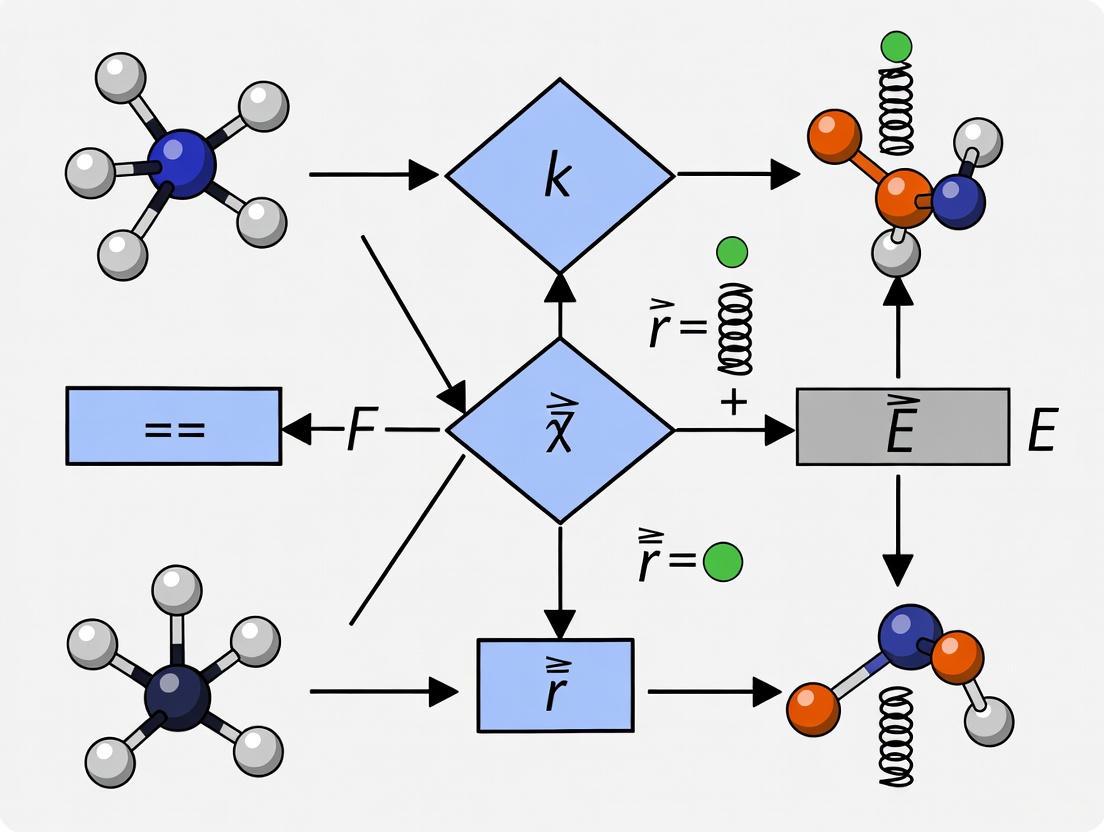

Workflow Diagram

The following diagram illustrates the integrated process of diagnosing and addressing sampling limitations in force field validation.

Diagram: Workflow for Addressing Sampling Limitations. This chart outlines the logical process for diagnosing sampling issues, implementing solutions, and validating the final result.

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools

| Tool/Solution | Function / Purpose | Example Use Case | Citation |
|---|---|---|---|
| Enhanced Sampling Algorithms | Accelerate barrier crossing and improve conformational sampling. | | |
| - Temperature Replica Exchange (TREx) | Parallel simulations at different temperatures swap configurations. | Common initial choice for IDP studies, but can be inefficient. | [2] |
| - Temperature Cool Walking (TCW) | Non-equilibrium method using one high-T replica to generate trial moves. | Can provide faster convergence for IDPs like amyloid-β. | [2] |
| - Replica Exchange with Solute Tempering (REST) | Reduces the number of replicas by tempering only the solute-solute and solute-solvent interactions. | Enhances sampling in binding sites for protein-ligand FEP calculations. | [3] |
| Analysis Frameworks | Extract thermodynamic and kinetic information from simulation data. | | |
| - Markov State Models (MSMs) | Build a kinetic model from many short simulations to describe long-timescale behavior. | Characterizing the full folding pathway of proteins and IDPs. | [2] |
| - Uncertainty Quantification (UQ) Tools | Statistical methods (e.g., block averaging, autocorrelation) to calculate standard error. | Essential for reporting statistically robust results in any publication. | [1] |
| Validation Data Sources | Experimental data used to validate the accuracy of simulated ensembles. | | |
| - NMR (J-couplings, NOEs, CS) | Provides atomic-level information on structure and dynamics. | Gold standard for validating folded state dynamics and IDP randomness. | [2] [5] |
| - Single-Molecule FRET | Measures distance distributions in biomolecules. | Ideal for validating the global dimensions of IDPs. | [2] |
| Specialized Force Fields | Parameter sets designed for specific challenges. | | |
| - a99SB-disp | Explicit solvent force field for folded and disordered proteins. | Used as a baseline for developing improved implicit solvent models. | [4] |
| - GB99dms | Improved implicit solvent force field for disordered proteins. | Studying protein aggregation with implicit solvent. | [4] |

Frequently Asked Questions (FAQs) and Troubleshooting Guides

FAQ: Core Concepts and Selection

Q1: What are the key limitations of current force fields when studying non-standard systems like RNA-ligand complexes or bacterial membranes?

Current force fields, while generally effective for standard protein structures, show specific limitations in more complex systems. For RNA-ligand complexes, state-of-the-art force fields can stabilize RNA structures but often at the expense of distorting the experimental model or failing to consistently maintain stable RNA-ligand interactions. Further refinements are needed to accurately reproduce experimental observations of binding stability [7]. For specialized systems like mycobacterial membranes, general force fields (GAFF, CGenFF, OPLS) lack the dedicated parameters for unique bacterial lipids, leading to inaccurate predictions of key membrane properties such as lipid tail rigidity and diffusion rates. Specialized force fields like BLipidFF are required to capture these biophysical characteristics accurately [8].

Q2: How do I choose an appropriate force field for simulating drug-like small molecules?

Validation studies against experimental data, such as osmotic coefficients, are crucial for selecting a force field for drug-like molecules. Several generalized force fields have been tested for this purpose [9]:

- Generally Reliable: GAFF, GAFF2, CGenFF, and OPLS-AA generally produce results in good agreement with experimental osmotic coefficients.

- Use with Caution: PRODRGFF often produces poor agreement with experimental data and should be avoided or its parameters carefully validated.

- Known Issues: All tested force fields (GAFF, CGenFF, OPLS-AA, PRODRGFF) poorly reproduced experimental results for purine-derived molecules (e.g., purine, caffeine), indicating a common challenge with this chemical class [9].

As an alternative to these general force fields, consider data-driven force fields such as ByteFF, which are trained on expansive, diverse quantum chemical datasets to provide broad coverage of drug-like chemical space [10].

Q3: What are the best practices for validating a force field for my specific system?

Force field validation should involve comparisons against relevant experimental data for your system of interest. The specific benchmarks depend on the molecular type:

- For Folded Proteins: Compare simulation results to experimental NMR data, including structural data (e.g., chemical shifts) and dynamical properties (e.g., order parameters). Testing a force field's bias towards different secondary structures using small peptides and assessing its ability to fold small proteins are also critical strategies [5].

- For Intrinsically Disordered Regions (IDRs): Validate against experimental data that reports on ensemble dimensions, such as the radius of gyration (Rg) from Small-Angle X-Ray Scattering (SAXS) or data from single-molecule FRET (smFRET). The accuracy of force fields like Mpipi and its variants is often judged by their ability to recapitulate a curated set of experimental Rg values [11].

- For Small Molecules: Calculate physical properties like osmotic coefficients from simulation and compare them directly to experimental measurements [9].
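Whichever observables are used, a common way to condense a comparison into one number per force field is a reduced $\chi^2$, $\chi^2 = \frac{1}{N}\sum_i \left(\frac{x_i^{\mathrm{sim}} - x_i^{\mathrm{exp}}}{\sigma_i}\right)^2$, where $\sigma_i$ combines the experimental error and any back-calculation (forward-model) uncertainty in quadrature. The sketch below assumes simulated and experimental values for the same observables are already in hand; the Rg values and the two force-field labels are hypothetical.

```python
import numpy as np


def reduced_chi2(sim, exp, sigma_exp, sigma_model=0.0):
    """Mean squared deviation normalized by combined experimental and model uncertainties."""
    sim = np.asarray(sim, dtype=float)
    exp = np.asarray(exp, dtype=float)
    sigma = np.sqrt(np.asarray(sigma_exp, dtype=float) ** 2 + np.asarray(sigma_model, dtype=float) ** 2)
    return float(np.mean(((sim - exp) / sigma) ** 2))


# Hypothetical Rg values (nm) for four IDRs: experiment with errors, and two force fields.
rg_exp = np.array([2.1, 3.4, 2.8, 4.0])
rg_err = np.array([0.1, 0.2, 0.15, 0.2])
rg_ff_a = np.array([2.0, 3.1, 2.9, 3.6])
rg_ff_b = np.array([1.6, 2.5, 2.2, 3.0])
for name, rg_sim in (("FF A", rg_ff_a), ("FF B", rg_ff_b)):
    print(name, round(reduced_chi2(rg_sim, rg_exp, rg_err), 2))
```

A value near 1 means deviations are comparable to the stated uncertainties; values far above 1 indicate disagreement, provided the simulated averages themselves are converged.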

FAQ: Troubleshooting Common Problems

Q1: My RNA-small molecule complex becomes unstable during simulation, with the ligand drifting away from its binding site. What could be wrong?

This is a recognized challenge. Your troubleshooting should consider:

- Force Field Choice: The latest RNA force fields may still not perfectly reproduce experimental binding stability. Consult recent systematic assessments to select the most reliable one for your specific complex [7].

- Initial Experimental Structure: Be cautious when assuming the experimental PDB structure is the definitive conformation. Some local distortions in the deposited model may result from refinement under limited experimental restraints. The force field might be attempting to correct these, leading to apparent instability. Analyze which parts of the structure are well-supported by direct experimental data [7].

- Parametrization of the Ligand: Ensure the small molecule parameters are derived from a robust protocol, such as using GAFF2 with RESP2 charges, to avoid introducing noise from poor ligand parametrization [7].

Q2: I am simulating a system with an intrinsically disordered protein (IDP), but the computed radius of gyration does not match experimental SAXS data. How can I resolve this?

A discrepancy between simulated and experimental Rg suggests a problem with the force field's description of the IDR's sequence-ensemble relationship.

- Switch to a Validated Coarse-Grained Force Field: All-atom force fields developed for folded proteins may not capture IDR physics correctly. Use a coarse-grained force field specifically designed and validated for disordered proteins, such as Mpipi or CALVADOS [11].

- Consider Force Field Fine-Tuning: Even specialized force fields may require minor adjustments. For example, the Mpipi-GG variant was created to improve agreement with known experimental trends for IDR dimensions [11].

- Use a Rapid Predictor for Hypothesis Testing: Tools like ALBATROSS can predict IDR conformational properties (Rg, end-to-end distance) directly from sequence in seconds. Use it to quickly test if your simulated Rg is an expected outcome of the sequence or a clear outlier, which would point to a force field issue [11].

Q3: Parameterizing a custom small molecule force field is tedious and expensive. Are there more efficient modern approaches?

Yes, traditional single-molecule force field fitting is being replaced by automated, data-driven methods.

- Iterative Optimization: New automated procedures can optimize parameters against quantum mechanical (QM) data, run dynamics to sample new conformations, add the new data to the training set, and repeat the optimization until convergence. This iterative process, using a validation set to prevent overfitting, is more efficient and thorough than manual methods [12].

- Graph Neural Networks (GNNs): Modern approaches use GNNs to predict all bonded and non-bonded force field parameters simultaneously. Models like ByteFF and Espaloma are trained on millions of QM-derived molecular geometries and torsion profiles, providing accurate parameters that respect chemical symmetry and cover a vast chemical space, often eliminating the need for custom parametrization [10].

Experimental Protocols and Methodologies

Detailed Workflow: Systematic Force Field Validation for Proteins

This protocol, adapted from a landmark study, provides a framework for rigorously testing protein force fields against experimental data [5].

1. Objective: To systematically evaluate the accuracy of multiple protein force fields in describing the structure, dynamics, and folding behavior of proteins and peptides.

2. Key Components of the Validation Suite:

- Folded Proteins: Simulate well-characterized, stable proteins (e.g., ubiquitin, GB3) for which high-quality NMR data is available.

- Secondary Structure Propensity: Use small peptides known to preferentially populate specific secondary structures (e.g., helical vs. sheet-forming).

- Folding Ability: Test the force field's ability to fold an α-helical and a β-sheet protein from an unfolded state.

3. Methodology:

- System Preparation:

- Obtain starting structures from the Protein Data Bank (PDB).

- Use simulation software such as AMBER, GROMACS, or CHARMM.

- Solvate the protein in an appropriate water box (e.g., TIP3P, OPC) and add ions to neutralize the system and achieve a physiological concentration.

- Simulation Details:

- Run multiple, long-timescale (microsecond to millisecond) unrestrained molecular dynamics simulations for each force field under test.

- Perform simulations under NPT conditions (constant Number of particles, Pressure, and Temperature), e.g., 1 atm and 298 K.

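- Use a thermostat (e.g., Langevin, velocity-rescale) and a barostat (e.g., Parrinello-Rahman); the Berendsen barostat does not generate a correct isothermal-isobaric ensemble and is best limited to equilibration.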

- Data Analysis and Comparison to Experiment:

- Folded State Stability: Calculate the backbone root-mean-square deviation (RMSD) relative to the experimental structure over the simulation trajectory.

- Structure and Dynamics: Compare simulation-derived NMR observables (e.g., chemical shifts, J-couplings, residual dipolar couplings, order parameters) directly to experimental NMR data.

- Contact Maps: Generate residue-residue contact maps from the simulation trajectory and compare them to those from the experimental structure.

- Folding Analysis: For folding tests, monitor metrics like RMSD and fraction of native contacts to determine if the protein folds to the native state.
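The stability and folding metrics in this protocol, backbone RMSD after optimal superposition and the fraction of native contacts Q, can be computed directly from coordinates. A minimal sketch, assuming coordinate arrays of matched atoms (e.g., Cα positions) in nm and using the Kabsch algorithm for superposition. The 0.8 nm contact cutoff, the minimum sequence separation of 4, and the 1.2 tolerance factor are illustrative choices for a hard-cutoff variant of Q (smoothed definitions are also common); analysis libraries such as MDAnalysis or MDTraj provide tested implementations.

```python
import numpy as np


def kabsch_rmsd(p, q):
    """RMSD between conformations p and q (n_atoms x 3) after optimal rigid-body superposition."""
    p = p - p.mean(axis=0)
    q = q - q.mean(axis=0)
    u, _, vt = np.linalg.svd(p.T @ q)                       # SVD of the 3x3 covariance matrix
    d = 1.0 if np.linalg.det(vt.T @ u.T) >= 0 else -1.0     # exclude reflections
    rot = vt.T @ np.diag([1.0, 1.0, d]) @ u.T
    diff = p @ rot.T - q
    return float(np.sqrt((diff ** 2).sum(axis=1).mean()))


def native_contacts(ref, cutoff=0.8, min_separation=4):
    """Pairs (i, j) with j - i >= min_separation that are closer than cutoff in the reference."""
    dist = np.linalg.norm(ref[:, None, :] - ref[None, :, :], axis=2)
    i, j = np.triu_indices(len(ref), k=min_separation)
    keep = dist[i, j] < cutoff
    return i[keep], j[keep]


def fraction_native_contacts(frame, contacts, ref, tolerance=1.2):
    """Fraction of native pairs whose distance is within tolerance x their reference distance."""
    i, j = contacts
    d_ref = np.linalg.norm(ref[i] - ref[j], axis=1)
    d = np.linalg.norm(frame[i] - frame[j], axis=1)
    return float(np.mean(d < tolerance * d_ref))


rng = np.random.default_rng(0)
ref = rng.normal(scale=0.5, size=(50, 3))       # stand-in for 50 C-alpha positions (nm)
theta = 0.7
rot_z = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                  [np.sin(theta), np.cos(theta), 0.0],
                  [0.0, 0.0, 1.0]])
moved = ref @ rot_z.T + np.array([1.0, -2.0, 0.5])   # rigid rotation plus translation
contacts = native_contacts(ref)
print(kabsch_rmsd(moved, ref), fraction_native_contacts(moved, contacts, ref))
```

A rigidly moved copy gives an RMSD of essentially zero and Q = 1; tracking both quantities along a trajectory distinguishes a stable native state from partial unfolding or a misfolded compact state.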

Detailed Workflow: Parameterizing a Force Field for Unique Bacterial Lipids

This protocol outlines the specialized process used to create the BLipidFF force field for mycobacterial membrane lipids [8].

1. Objective: To develop accurate atomic-level force field parameters for complex bacterial lipids that are poorly described by general force fields.

2. Methodology:

- Atom Type Definition:

- Define specialized atom types based on location and chemical environment (e.g., cT for tail carbon, cA for headgroup carbon, oS for ether oxygen).

- Partial Charge Calculation:

- Divide-and-Conquer: Fragment the large lipid molecule into smaller, manageable segments.

- Quantum Mechanics (QM) Calculations: For each segment:

- Perform geometry optimization at the B3LYP/def2-SVP level of theory.

- Derive partial charges using the Restrained Electrostatic Potential (RESP) fitting method at the B3LYP/def2-TZVP level.

- Averaging: Use multiple conformations (e.g., 25) from preliminary MD simulations for each segment and average the RESP charges to account for flexibility.

- Integration: Combine the charges of all segments, removing the capping groups, to obtain the partial charges of the full lipid.

- Torsion Parameter Optimization:

- Further subdivide the lipid molecule into small elements for torsion parameterization.

- Optimize torsion parameters (Vn, n, γ) to minimize the difference between the QM-calculated energy and the classical potential energy.

- For other parameters (bonds, angles), adopt them from an established general force field like GAFF.

- Validation:

- Run MD simulations of lipid bilayers using the new parameters.

- Compare simulation predictions (e.g., lateral diffusion coefficients, order parameters) to biophysical experimental data such as Fluorescence Recovery After Photobleaching (FRAP) and fluorescence spectroscopy measurements.
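The torsion-fitting step can be posed as a linear least-squares problem if the phases γ are restricted to 0° or 180° (a common simplification, assumed here), so that the sign of each $V_n$ absorbs the phase. With the AMBER/GAFF-style term $E(\phi) = \sum_n \frac{V_n}{2}[1 + \cos(n\phi - \gamma_n)]$, the fit target is the QM scan energy minus the MM energy computed with the torsion term switched off. The scan below is synthetic (generated from made-up $V_n$ values plus noise) and stands in for a real QM dihedral scan; it is a sketch, not the BLipidFF procedure itself.

```python
import numpy as np


def torsion_energy(phi, v, periodicities):
    """Sum over n of V_n / 2 * (1 + cos(n * phi)); a negative V_n is equivalent to gamma = 180 deg."""
    return sum(vn / 2.0 * (1.0 + np.cos(n * phi)) for vn, n in zip(v, periodicities))


def fit_torsion(phi, target, periodicities=(1, 2, 3)):
    """Least-squares V_n (plus a constant offset) reproducing target(phi) = E_QM - E_MM(no torsion)."""
    columns = [0.5 * (1.0 + np.cos(n * phi)) for n in periodicities]
    design = np.column_stack(columns + [np.ones_like(phi)])   # last column: arbitrary energy offset
    coeffs, *_ = np.linalg.lstsq(design, target, rcond=None)
    return coeffs[:-1], coeffs[-1]


rng = np.random.default_rng(0)
phi = np.radians(np.arange(0, 360, 15))          # 24-point dihedral scan
v_true = np.array([1.2, -0.8, 0.3])              # made-up V_n values (kcal/mol)
target = torsion_energy(phi, v_true, (1, 2, 3)) + 2.0 + rng.normal(scale=0.05, size=phi.size)
v_fit, offset = fit_torsion(phi, target)
rmse = np.sqrt(np.mean((torsion_energy(phi, v_fit, (1, 2, 3)) + offset - target) ** 2))
print("V_n:", np.round(v_fit, 2), "offset:", round(float(offset), 2), "RMSE:", round(float(rmse), 3))
```

Fitting against several conformations of each fragment, and checking the fitted profile on scans not used in the fit, guards against overfitting a single scan.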

Data Presentation

Table 3: Performance of Generalized Force Fields for Drug-like Molecules

Table based on testing against experimental osmotic coefficient data [9].

| Force Field | Overall Agreement with Experiment | Notes and Known Limitations |
|---|---|---|
| GAFF / GAFF2 | Good | GAFF2 shows slightly improved agreement over GAFF. Reliable for many drug-like molecules. |
| CGenFF | Good | Generally produces accurate osmotic coefficients. |
| OPLS-AA | Good | A robust choice for small molecule simulation. |
| PRODRGFF | Poor | Often produces poor results; not recommended without careful reparameterization. |
| All Tested FFs | Poor for purines | All force fields performed poorly for purine-derived molecules (e.g., caffeine). |

Table 4: Essential Research Reagents and Computational Tools

Compilation of key software, force fields, and resources mentioned in current literature.

| Reagent / Tool | Function / Purpose | Key Application Context |
|---|---|---|
| ByteFF | A data-driven, Amber-compatible small molecule force field. | Predicts MM parameters for drug-like molecules across expansive chemical space [10]. |
| BLipidFF | A specialized all-atom force field for bacterial lipids. | Accurately simulates mycobacterial outer membrane properties [8]. |
| ALBATROSS | A deep learning model for predicting IDR properties. | Rapidly predicts radius of gyration and other ensemble dimensions directly from sequence [11]. |
| Mpipi / Mpipi-GG | A coarse-grained force field for disordered proteins. | Simulating conformational ensembles of Intrinsically Disordered Regions (IDRs) [11]. |
| OMol25 Dataset | A massive dataset of high-accuracy quantum chemical calculations. | Training and validating next-generation neural network potentials (NNPs) [13]. |
| HARIBOSS | A curated database of RNA-small molecule complexes. | Provides structures for testing and validating RNA-ligand force fields [7]. |

Methodologies and Workflow Visualization

Diagram: Force Field Selection and Validation Workflow.

Diagram: Data-Driven Force Field Parameterization.

Troubleshooting Guide: Addressing Common Force Field Challenges

This guide helps users diagnose and resolve frequent issues encountered when simulating RNA-ligand complexes.

Problem 1: Overly Compact or Non-Native RNA Structures

- Problem Description: Simulations result in RNA structures that are excessively compact, show artificial base stacking, or generate intercalated structures rarely observed in experiments [2] [14].

- Potential Causes:

- Use of a standard protein force field not optimized for RNA, leading to a bias toward collapsed ensembles [2].

- Inadequate sampling of the RNA's conformational landscape, causing the simulation to be trapped in non-representative states [2].

- Improper description of the solvation environment or ion interactions for the highly charged RNA backbone [15].

- Solutions:

- Switch to a Refined RNA Force Field: Utilize modern, RNA-specific force fields like BSFF1, which was developed to mitigate overestimation of base-stacking and reduce artificial intercalated conformations [14].

- Implement Enhanced Sampling: Employ advanced sampling algorithms like Temperature Cool Walking (TCW) or Replica Exchange with Solute Tempering (REST2), which can provide better convergence to the correct equilibrium distribution than standard temperature replica exchange (TREx) for disordered systems [2] [14].

- Extend Sampling Time: If possible, combine extensive molecular dynamics (MD) simulations with Markov State Model (MSM) analysis to achieve more complete sampling [2].

Problem 2: Inaccurate Ligand Binding Poses and Affinities

- Problem Description: Docking or MD simulations fail to reproduce experimentally observed ligand binding modes or predict binding affinities inaccurately [15].

- Potential Causes:

- Insufficient RNA Flexibility: The RNA receptor is treated as rigid, ignoring ligand-induced conformational changes [15].

- Limitations in Scoring Functions: The scoring function does not adequately capture key interactions, such as those mediated by metal ions or the highly polar RNA environment [15].

- Neglect of Electrostatic and Solvation Effects: Inaccurate treatment of the strong electrostatic interactions involving the RNA backbone and bound ions [15] [16].

- Solutions:

- Incorporate Receptor Flexibility: Use docking protocols that allow for flexibility in the RNA target, or run MD simulations to generate an ensemble of RNA conformations for docking [15].

- Utilize Advanced Scoring: Consider physics-based or hybrid knowledge-based/physics-based scoring functions that can better handle the unique challenges of RNA-ligand binding [15].

- Explicitly Model Ions and Water: Ensure the simulation system includes necessary metal ions (e.g., Mg²⁺) and uses appropriate water models, as these are critical for stabilizing RNA structure and ligand binding [15].

Problem 3: Instability of RNA-Protein Complexes

- Problem Description: During simulations of RNA-protein complexes, the complex structure disintegrates, particularly in flexible loop regions [16].

- Potential Causes:

- Use of Polarizable Force Fields: While polarizable force fields (like AMOEBA) can better describe the strong electrostatic nature of RNA, they may also allow for excessive movement that can destabilize complex structures over longer timescales [16].

- Force Field Incompatibility: Using a combination of protein and RNA force fields that have not been rigorously tested together [16].

- Solutions:

- Test Multiple Force Fields: Begin simulations by testing different combinations of well-regarded non-polarizable force fields (e.g., Amber's ff19SB for protein with OL3 for RNA). If using polarizable force fields, closely monitor the structural integrity of the complex [16].

- Consider Polarizable Water Models: As a compromise, using a polarizable water model (like O3P) with non-polarizable force fields can improve the description of electrostatics without the full computational cost and potential instability of a fully polarizable force field [16].

Frequently Asked Questions (FAQs)

Q1: What are the main limitations of current force fields when simulating RNA-ligand complexes? The primary limitations include:

- Sampling Problem: The timescale of simulations is often insufficient to capture the full conformational landscape of flexible RNA molecules [14].

- Force Field Problem: The potential energy functions may have biases, such as overestimating base-stacking propensity and generating non-native intercalated structures [14].

- Electrostatics: The highly charged RNA backbone requires an accurate description of interactions with metal ions and solvent, which is challenging for non-polarizable force fields [15] [16].

- Data Scarcity: Compared to proteins, there are fewer experimentally determined RNA and RNA-ligand complex structures, which limits the development of knowledge-based parameters [15].

Q2: My simulation of an Aβ peptide is too structured, contradicting experimental data. What should I do? This is a known issue. The problem likely stems from a combination of force field and sampling limitations [2]. Solutions include:

- Re-evaluate Your Sampling Protocol: Standard Temperature Replica Exchange (TREx) may be insufficient. Consider switching to non-equilibrium methods like Temperature Cool Walking (TCW), which has been shown to produce more accurate, random-coil-like ensembles for Aβ peptides [2].

- Test Newer Force Fields: Explore the use of newer fixed-charge force fields that have been specifically optimized for intrinsically disordered proteins (IDPs) and unfolded states [2].

Q3: What advanced parameterization methods can make force fields more accurate? Bayesian inference methods are powerful for refining force fields against experimental data.

- Bayesian Sampling: This approach explores the parameter landscape with respect to physical properties, treating uncertainty in experimental data as a part of the optimization process. It can use surrogate models (like Gaussian Processes) to speed up the evaluation of physical properties from simulation [17].

- BICePs (Bayesian Inference of Conformational Populations): This algorithm refines force field parameters against sparse or noisy ensemble-averaged experimental data. It samples the full posterior distribution of uncertainties and can automatically detect and down-weight outlier data points, leading to more robust parameterization [18].

Key Experimental Protocols and Data

Development of the BSFF1 RNA Force Field

The BSFF1 force field was developed to address over-stacking and intercalation artifacts [14].

- Objective: To improve the conformational sampling of RNA by optimizing parameters within the framework of the ff99bsc0χOL3 force field [14].

- Methodology: A two-step strategy was employed:

- Nonbonded Parameter Optimization: The Lennard-Jones epsilon (ε) parameters for heavy atoms in nucleobases were base-specifically adjusted. Optimization was performed using a reweighting method combined with a Monte Carlo Simulated Annealing (MCSA) procedure, based on REST2-enhanced sampling simulations of tetranucleotides. The goal was to minimize the ratio of non-native intercalated conformations [14].

- Introduction of a CMAP Term: A grid-based energy correction map (CMAP) term was added to better reproduce the distributions of the ζ/α dihedral angles [14].

- Validation Systems: The force field was tested on a variety of RNA systems, including tetranucleotides, tetraloops, single-strand RNA, kink-turn, duplex, and a riboswitch [14].
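The reweighting step in the methodology above can be illustrated with standard exponential reweighting. Frames sampled with the reference parameters are weighted by exp(−βΔU), where ΔU is the per-frame energy change caused by the trial ε values. This estimates the population of intercalated conformations under the trial parameters without rerunning the simulation. The minimal Python sketch below is not the BSFF1 code. It assumes that per-frame ΔU values (kcal/mol) and a per-frame intercalation label are already available from the unscaled REST2 replica. The function name and the synthetic data are hypothetical, and the Monte Carlo simulated annealing search over ε is not shown.

```python
import numpy as np

KB_KCAL = 0.0019872041  # Boltzmann constant in kcal/(mol K)

def reweighted_fraction(delta_u, is_intercalated, temperature=300.0):
    """Population of a labelled state under trial parameters, by exponential reweighting.

    delta_u:         per-frame U_trial - U_ref (kcal/mol) for frames sampled with the
                     reference parameters.
    is_intercalated: per-frame boolean label (hypothetical classifier output).
    Returns (fraction, effective_sample_size).
    """
    beta = 1.0 / (KB_KCAL * temperature)
    log_w = -beta * np.asarray(delta_u, dtype=float)
    log_w -= log_w.max()                 # avoid overflow in exp
    w = np.exp(log_w)
    w /= w.sum()                         # normalised frame weights
    labels = np.asarray(is_intercalated, dtype=bool)
    fraction = float(w[labels].sum())
    ess = float(1.0 / np.sum(w**2))      # Kish effective sample size
    return fraction, ess

# Hypothetical data: ~30% intercalated frames that the trial parameters destabilise.
rng = np.random.default_rng(0)
labels = rng.random(5000) < 0.3
delta_u = np.where(labels, 0.4, 0.0) + rng.normal(0.0, 0.3, 5000)
frac, ess = reweighted_fraction(delta_u, labels)
print(f"reweighted intercalated fraction = {frac:.3f}, ESS = {ess:.0f} of {labels.size}")
```

An effective sample size that is small relative to the number of frames signals poor overlap between the reference and trial parameters. In that case the reweighted estimate should not be trusted, and new sampling with the trial parameters is needed.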

Table 1: Key Parameter Changes in BSFF1 vs. ff99bsc0χOL3 [14]

| Nucleobase Heavy Atom | ff99bsc0χOL3 ε (kcal/mol) | BSFF1 ε (kcal/mol) |
|---|---|---|
| N1(A), N3(C) | 0.20 | 0.14 |
| C2(A) | 0.20 | 0.17 |
| C4(U) | 0.20 | 0.15 |
| O2(C) | 0.20 | 0.15 |

Assessing Polarizability in RNA-Protein Complexes

A study compared force fields for simulating RNA-protein complexes [16].

- Objective: To determine how polarizable and non-polarizable force fields differ in simulating the dynamics and stability of RNA-protein complexes [16].

- Systems Studied: Three complexes: Argonaute 2 (Ago2), CasPhi-2 (Cas12j), and Retinoic acid-inducible gene I (RIG-I) [16].

- Force Fields Tested:

- Non-polarizable: Amber ff14SB/OL3, ff19SB/OL3, OPLS4.

- Polarizable: AMOEBA.

- Hybrid: ff19SB/OL3 with the polarizable O3P water model [16].

- Key Finding: Non-polarizable force fields tended to produce compact and stable complexes. In contrast, polarizable force fields (AMOEBA) and the hybrid model (with O3P water) allowed significantly more movement, which in some cases led to the disintegration of the complex, especially in proteins with longer loops [16].

Table 2: Force Field Performance in RNA-Protein Complex Simulations [16]

| Force Field Type | Example Force Fields | Complex Stability | Conformational Flexibility | Notes & Recommendations |
|---|---|---|---|---|
| Non-Polarizable | ff19SB/OL3, OPLS4 | High | Lower | Produces compact, stable complexes. A reliable starting point. |
| Fully Polarizable | AMOEBA | Variable (Lower) | Higher | Allows more movement but may destabilize complexes. Monitor structural integrity closely on long timescales. |
| Polarizable Solvent | ff19SB/OL3 with O3P water | Moderate | Moderate | A compromise; improves electrostatic description without the full cost/instability of a polarizable force field. |

[Figure: Workflow and Relationship Diagrams]

Table 3: Key Computational Tools for RNA-Ligand Simulation Research

| Item Name | Type/Category | Primary Function |
|---|---|---|
| AMBER | MD Software Suite | Provides tools (tleap, sander, pmemd) for running simulations with various force fields like ff19SB/OL3. |
| GROMACS | MD Software Suite | A highly optimized package for performing MD simulations, including enhanced sampling methods. |
| OpenFF Toolkit & Evaluator | Force Field Parameterization | A suite for developing and testing force field parameters against physical property data [17]. |
| BICePs | Analysis/Refinement Algorithm | Bayesian inference for refining conformational ensembles and force field parameters against experimental data [18]. |
| PLUMED | Enhanced Sampling Plugin | A library for implementing various enhanced sampling methods, such as metadynamics, in MD simulations. |
| ff99bsc0χOL3 | RNA Force Field | A previously standard RNA force field, often used as a baseline for comparison and development [14]. |
| BSFF1 | RNA Force Field | A base-specifically optimized force field that reduces over-stacking and intercalation artifacts [14]. |
| AMOEBA | Polarizable Force Field | A force field that includes polarizability for a more accurate description of electrostatic interactions [16]. |

Frequently Asked Questions (FAQs)

What is the "Timescale Problem" in molecular simulation? The Timescale Problem, also known as the Sampling Problem, refers to the critical challenge in molecular dynamics (MD) where many processes of interest occur on timescales that are inaccessible to standard simulations due to high computational cost. This happens when high energy barriers separate metastable states, making transitions between them rare events. Consequently, a simulation started in one state may remain trapped there, unable to explore other relevant configurations within a practical simulation time [19].
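To make "rare events" concrete, the short Python sketch below computes how much an activated transition slows down when its barrier grows. It assumes an Arrhenius-like rate, k ∝ exp(−ΔG‡/k_BT), with a fixed prefactor. This is a simplification: real conformational transitions also depend on friction and on the shape of the landscape. The function name is illustrative.

```python
import math

KB_KCAL = 0.0019872041  # Boltzmann constant in kcal/(mol K)

def rate_slowdown(delta_barrier_kcal, temperature=300.0):
    """Factor by which raising a barrier by delta_barrier_kcal slows an activated
    transition, assuming k ∝ exp(-dG_barrier / kT) with an unchanged prefactor."""
    kt = KB_KCAL * temperature
    return math.exp(delta_barrier_kcal / kt)

kt300 = KB_KCAL * 300.0
print(f"kT at 300 K = {kt300:.3f} kcal/mol")
for ddg in (1.0, 5.0, 10.0):
    print(f"+{ddg:4.1f} kcal/mol barrier -> {rate_slowdown(ddg):.3g}x slower")
```

At 300 K, every additional k_BT ln 10 ≈ 1.37 kcal/mol of barrier costs roughly one order of magnitude in waiting time. A barrier only a few kcal/mol higher can therefore push a transition out of reach of a microsecond trajectory.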

How does the choice of Force Field impact sampling and validation? A force field defines the energy landscape that a simulation samples, so even perfectly converged sampling of an inaccurate force field yields the wrong ensemble. Force fields are computational models that describe the forces between atoms within molecules or between molecules. Their parameters for calculating potential energy are derived from laboratory experiments, quantum mechanics calculations, or both [20]. Inaccuracies in these parameters, particularly concerning electrostatic interactions and desolvation energies, can lead to incorrect predictions of system behavior. For example, pKa values of protein sidechains calculated from constant pH simulations can be sensitive to the underlying protein force field and water model, potentially leading to errors such as the undersolvation of neutral histidines or overstabilization of salt bridges [21]. Validating force fields against experimental data is therefore essential to ensure they accurately represent the true energy landscape.

What are the most common symptoms of inadequate sampling in my simulation? The most common symptom is the inability to observe transitions between different metastable states of your system. For example, if you are simulating a protein-ligand binding process, you might only see the ligand in the unbound state throughout your entire simulation run, never witnessing the binding event or the reverse dissociation. This indicates your simulation is trapped in one free energy minimum and cannot cross the barrier to other states within the simulated time [19].

My simulation isn't converging. Could poor sampling be the cause? Yes, inadequate sampling is a primary reason for non-convergence in simulations. If your simulation cannot sufficiently explore the configuration space—especially all the relevant low-energy states and the transition paths between them—then computed properties (like free energy differences or average structural properties) will not settle to a stable value. This is often directly linked to the presence of high free energy barriers that the simulation cannot overcome in the allotted time [19] [22].

Troubleshooting Guides

Diagnosis: Is Your Simulation Suffering from the Timescale Problem?

Follow the flowchart below to diagnose the core issue.

[Figure: Timescale Problem Diagnosis Flowchart]

Solution: Implementing Enhanced Sampling with Metadynamics

This guide provides a step-by-step protocol for setting up a Well-Tempered Metadynamics simulation, a popular enhanced sampling method, using the PLUMED plugin [19].

[Figure: Metadynamics Simulation Workflow]

Protocol Steps:

- Collective Variables (CVs) Selection: This is the most critical step. Identify a small number of CVs (1-3 is common) that are capable of distinguishing between the metastable states and describing the slow degrees of freedom of the process you want to study. Examples include a key atomic distance for a binding event, a dihedral angle for a conformational change, or a radius of gyration for folding [19].

- Parameters for Bias Potential: Configure the history-dependent bias potential in your input file (e.g., for PLUMED).

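- Gaussian Height: The energy of each deposited Gaussian. In Well-Tempered Metadynamics, the height of newly deposited Gaussians decreases as bias accumulates, at a rate set by the bias factor γ, which gives smoother convergence [19].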

- Gaussian Width: The spread of the Gaussian in CV space. This should be small enough to resolve features but large enough for efficient filling [19].

- Deposition Frequency: How often a Gaussian is added. A higher frequency speeds up exploration but requires careful tuning to avoid artifacts [19].

- System Equilibration: Perform a conventional MD simulation starting from a relevant configuration (e.g., a crystal structure) to equilibrate the system's temperature, pressure, and local structure before applying the bias.

- Production Metadynamics Run: Execute the simulation with the bias potential active. The software will periodically add Gaussian potentials, discouraging the system from revisiting sampled regions and pushing it to explore new ones.

- Free Energy Surface (FES) Calculation: After the simulation, the deposited Gaussians are summed (for example, with PLUMED's sum_hills utility) to obtain the final bias potential V(s). In standard metadynamics the FES is estimated as F(s) ≈ −V(s) at convergence. In Well-Tempered Metadynamics it is F(s) ≈ −(γ/(γ−1)) V(s), where γ is the bias factor [19].
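The protocol above can be illustrated on a toy problem before applying it to a biomolecule. The minimal Python sketch below is not PLUMED. It runs well-tempered metadynamics on a single collective variable s that moves by overdamped Langevin dynamics in a hypothetical double-well potential U(s) = 8(s² − 1)² (energies in units of k_BT). The walker starts trapped in the left well. Gaussians are deposited every `pace` steps with heights scaled by exp(−V/(k_BΔT)), and the FES is recovered with the well-tempered estimator F(s) = −(γ/(γ−1))V(s). All parameter values (height, width, pace, bias factor) are illustrative choices.

```python
import numpy as np

def run_wt_metad(n_steps=200_000, dt=1e-3, kt=1.0, barrier=8.0, height0=0.5,
                 sigma=0.1, pace=100, bias_factor=10.0, seed=1):
    """1D well-tempered metadynamics on U(s) = barrier * (s^2 - 1)^2 (energies in kT).

    The walker follows overdamped Langevin dynamics with unit friction; the bias is
    stored on a grid together with its derivative so forces are cheap to evaluate.
    """
    rng = np.random.default_rng(seed)
    grid = np.linspace(-2.0, 2.0, 801)
    bias = np.zeros_like(grid)             # V(s) on the grid
    dbias = np.zeros_like(grid)            # dV/ds on the grid
    k_delta_t = (bias_factor - 1.0) * kt   # k_B * DeltaT, since gamma = (T + DeltaT) / T
    noise = rng.standard_normal(n_steps) * np.sqrt(2.0 * kt * dt)
    s, s_max = -1.0, -1.0                  # start in the left well
    for step in range(n_steps):
        if step % pace == 0:
            # Well-tempered rule: new Gaussians shrink where bias has accumulated.
            h = height0 * np.exp(-np.interp(s, grid, bias) / k_delta_t)
            g = h * np.exp(-0.5 * ((grid - s) / sigma) ** 2)
            bias += g
            dbias -= g * (grid - s) / sigma**2
        # Physical force -dU/ds plus bias force -dV/ds.
        force = -4.0 * barrier * s * (s * s - 1.0) - np.interp(s, grid, dbias)
        s += force * dt + noise[step]
        s_max = max(s_max, s)
    # Well-tempered estimator: F(s) = -(gamma / (gamma - 1)) * V(s) + const.
    fes = -(bias_factor / (bias_factor - 1.0)) * bias
    return grid, fes - fes.min(), s_max

grid, fes, s_max = run_wt_metad()
barrier_est = fes[np.argmin(np.abs(grid))] - fes[grid < 0].min()
print(f"max s reached: {s_max:.2f}; estimated barrier: {barrier_est:.1f} kT (true: 8.0 kT)")
```

With an 8 k_BT barrier, unbiased dynamics of this length would rarely cross to the right well. The accumulated bias lets the walker diffuse between both wells, and the reconstructed barrier should approach the true value. As in the protocol, the quality of the result depends on the CV capturing the slow motion, which is trivially true here by construction.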

Research Reagent Solutions

Table 1: Essential Software and Computational Tools for Enhanced Sampling

| Item Name | Function/Application | Key Features |
|---|---|---|
| PLUMED [19] | An open-source library for enhanced sampling, free-energy calculations, and analysis of MD simulations. | Works with major MD engines (GROMACS, AMBER, OpenMM); provides a vast suite of methods including Metadynamics; extensive documentation and community. |
| GROMACS [19] | A high-performance MD simulation package. | Extremely fast for biomolecular systems; widely used; fully compatible with PLUMED for enhanced sampling. |
| AMBER [21] | A suite of biomolecular simulation programs. | Includes tools for MD simulations with various force fields; supports constant pH MD and other advanced protocols. |
| OpenMM [20] | A toolkit for molecular simulation using high-performance computing. | High flexibility and GPU optimization; cross-platform; can be used with PLUMED. |

Table 2: Comparison of Common Enhanced Sampling Methods

| Method | Primary Principle | Best For | Key Tuning Parameters |
|---|---|---|---|
| Metadynamics [19] | Fills free energy minima with a history-dependent bias potential to drive transitions. | Exploring unknown free energy surfaces; complex conformational changes. | Collective Variables (CVs), Gaussian height/width/frequency. |
| Well-Tempered Metadynamics [19] | A variant of Metadynamics where the Gaussian height reduces over time. | Achieving converged free energy estimates; systems with complex, rugged landscapes. | CVs, initial Gaussian height, bias factor (tempering). |
| Umbrella Sampling | Restrains the simulation at specific points along a reaction coordinate. | Calculating free energy profiles along a known, one-dimensional reaction coordinate. | Force constant for restraints, spacing of windows. |
| Replica Exchange | Runs multiple simulations in parallel at different temperatures or Hamiltonians and swaps configurations. | Improving sampling of systems with frustrated energy landscapes (e.g., protein folding). | Number of replicas, temperature range or Hamiltonian variants. |

Frequently Asked Questions

What are the main types of uncertainty in molecular simulations? There are two primary types [23]:

- Aleatory Uncertainty: inherent, irreducible randomness in a process or system. It's naturally measured by relative frequency and is attributed to stochastic behavior.

- Epistemic Uncertainty: arises from incomplete knowledge or expertise, such as limitations in the model or force field. It can be reduced by obtaining more information.

How can I tell if my simulation has been sampled adequately? A key step is to estimate the correlation time (τ) of your data [1]. This measures how many steps apart two data points must be before they can be considered statistically independent. If your simulation length is not significantly longer than the correlation time, your sampling is likely inadequate. Use a tiered approach: perform feasibility checks, run your simulation, conduct semi-quantitative checks for sampling quality, and only then calculate observables and uncertainties [1].
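One common way to estimate the correlation time is the integrated autocorrelation time, τ = 1 + 2 Σ ρ(k), where ρ(k) is the normalized autocorrelation at lag k. With this convention, N/τ is the effective number of independent samples (other conventions differ by a factor of 2). The Python sketch below truncates the sum with Sokal's self-consistent window, a widely used heuristic rather than the only choice. It checks the estimator on a synthetic AR(1) series whose exact τ is (1 + φ)/(1 − φ).

```python
import numpy as np

def integrated_autocorr_time(x, c=5.0):
    """Integrated autocorrelation time tau = 1 + 2 * sum_{k>=1} rho(k), in samples.

    The sum is truncated at the first lag M with M >= c * tau(M) (Sokal's window).
    """
    x = np.asarray(x, dtype=float)
    n = x.size
    dx = x - x.mean()
    nfft = 1 << (2 * n - 1).bit_length()   # zero-pad so the FFT autocovariance is not circular
    spec = np.fft.rfft(dx, n=nfft)
    acov = np.fft.irfft(spec * np.conj(spec), n=nfft)[:n] / n
    rho = acov / acov[0]
    tau = 1.0
    for lag in range(1, n):
        tau += 2.0 * rho[lag]
        if lag >= c * tau:
            break
    return tau

# Synthetic AR(1) series x_t = phi * x_{t-1} + noise; exact tau = (1 + phi) / (1 - phi).
rng = np.random.default_rng(42)
phi, n = 0.9, 200_000
eps = rng.standard_normal(n)
series = np.empty(n)
series[0] = eps[0]
for t in range(1, n):
    series[t] = phi * series[t - 1] + eps[t]

tau = integrated_autocorr_time(series)
print(f"tau = {tau:.1f} samples (exact {(1 + phi) / (1 - phi):.1f}); N_eff = {n / tau:.0f}")
```

Applied to an MD observable (for example, a distance sampled every frame), τ is in units of the saving interval. A run whose length is only a few τ should be treated as unconverged.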

What is the difference between accuracy and uncertainty? Accuracy is how close a prediction is to a known value. Uncertainty is a measure of how much predictions and target values can vary [23]. A model can be precise (low uncertainty) but inaccurate if it is consistently wrong, or accurate on average but with high uncertainty in its individual predictions.

Which force field should I use for my protein-ligand binding affinity calculations? There is no single "best" force field, and performance can depend on the specific system. Benchmarking on known data is crucial [24]. For example, in Free Energy Perturbation (FEP) calculations, different combinations of protein forcefields (AMBER ff14SB, ff15ipq), water models (SPC/E, TIP3P, TIP4P-EW), and charge models (AM1-BCC, RESP) have been evaluated, yielding different error metrics [24]. Cross-validation with data not used in parameterization is a recommended best practice [25].

What are some best practices for reporting uncertainties? Always report the standard uncertainty (experimental standard deviation of the mean) for your estimated observables [1]. Clearly state which UQ method was used (e.g., block averaging, bootstrap methods) and ensure your workflow includes checks for adequate sampling. Communicating the assumptions behind your UQ analysis is critical for others to interpret your results correctly [1].

Troubleshooting Guides

Problem: Large Statistical Uncertainty in Results

Symptoms:

- Error bars (standard uncertainty) on your key observables are unacceptably large.

- Results fluctuate significantly between different simulation runs or trajectory segments.

Solutions:

- Extend Simulation Time: The most straightforward solution. The experimental standard deviation of the mean scales as 1/√N, where N is the number of uncorrelated samples, so halving the error bar requires roughly four times as much sampling [1].

- Check for Inefficient Sampling:

- Calculate the integrated correlation time (τ) for your observables [1].

- If τ is large, the data is highly correlated, meaning you have fewer effective independent samples.

- Mitigation: Employ enhanced sampling techniques (e.g., Hamiltonian replica exchange, solute tempering) to overcome energy barriers and decorrelate your data faster [24].

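- Use UQ Methods Suited to Correlated Data: Block averaging, or resampling methods such as the bootstrap and jackknife, can provide reliable uncertainty estimates from correlated data, provided they are applied to blocks longer than the correlation time or to decorrelated subsamples. Naive resampling of correlated frames underestimates the uncertainty [1].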
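Block averaging, one of the approaches listed above, can be sketched in a few lines of Python. The trajectory is split into contiguous blocks, and the standard error is estimated from the scatter of the block means. Once blocks are much longer than τ, the estimate plateaus. The synthetic AR(1) data (statistical inefficiency 9) stands in for a real time series, and the block counts scanned are illustrative.

```python
import numpy as np

def block_sem(x, n_blocks):
    """Standard error of the mean from the scatter of n_blocks contiguous block means."""
    x = np.asarray(x, dtype=float)
    block_len = x.size // n_blocks
    means = x[: block_len * n_blocks].reshape(n_blocks, block_len).mean(axis=1)
    return means.std(ddof=1) / np.sqrt(n_blocks)

# Correlated synthetic data: AR(1) with phi = 0.8 (statistical inefficiency 9).
rng = np.random.default_rng(7)
phi, n = 0.8, 100_000
eps = rng.standard_normal(n)
data = np.empty(n)
data[0] = eps[0]
for t in range(1, n):
    data[t] = phi * data[t - 1] + eps[t]

naive = data.std(ddof=1) / np.sqrt(n)   # pretends every frame is independent
for n_blocks in (1000, 200, 100, 50):
    print(f"{n_blocks:5d} blocks: SEM = {block_sem(data, n_blocks):.4f} (naive {naive:.4f})")
```

Here the block estimate should be about √9 = 3 times the naive one. This is exactly the underestimation that occurs when correlated frames are treated as independent.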

Problem: Suspected Force Field Inaccuracy

Symptoms:

- Your simulation results consistently deviate from reliable experimental data, even when statistical uncertainties are small.

- The observed systematic error is larger than the estimated statistical uncertainty.

Solutions:

- Benchmark and Validate: Compare your simulation results against a benchmark set of experimental data that was not used in the force field's parameterization [25]. The JACS benchmark set is a common example for protein-ligand binding [24].

- Cross-Validation: Use techniques like k-fold cross-validation or leave-one-out cross-validation to test the force field's predictive performance on data it wasn't trained on [25].

- Systematic Parameter Evaluation: Test different combinations of force fields, water models, and charge assignment methods to identify the most accurate parameter set for your specific system [24].
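The cross-validation idea can be sketched with a deliberately simple stand-in model. In the Python example below, a linear calibration exp ≈ a·computed + b plays the role of the fitted parameters. It is fitted on k − 1 folds and scored by mean unsigned error (MUE) on the held-out fold, so every data point is predicted by a model that never saw it. The model, the data and the function name are hypothetical. In force field work, the "fit" step would be the parameterization itself.

```python
import numpy as np

def kfold_mue(computed, experimental, k=5, seed=0):
    """Held-out MUE of a linear calibration exp ~ a * computed + b, via k-fold CV."""
    computed = np.asarray(computed, dtype=float)
    experimental = np.asarray(experimental, dtype=float)
    folds = np.array_split(np.random.default_rng(seed).permutation(computed.size), k)
    errors = []
    for test_idx in folds:
        train = np.ones(computed.size, dtype=bool)
        train[test_idx] = False
        a, b = np.polyfit(computed[train], experimental[train], deg=1)  # fit on k-1 folds only
        errors.append(np.abs(a * computed[test_idx] + b - experimental[test_idx]))
    return float(np.concatenate(errors).mean())

# Hypothetical binding free energies (kcal/mol).
rng = np.random.default_rng(5)
calc = rng.uniform(-12.0, -6.0, 40)
expt = 0.8 * calc - 1.0 + rng.normal(0.0, 0.5, 40)
a, b = np.polyfit(calc, expt, deg=1)
print(f"in-sample MUE = {np.mean(np.abs(a * calc + b - expt)):.2f}; "
      f"5-fold CV MUE = {kfold_mue(calc, expt):.2f} kcal/mol")
```

The cross-validated error is the fairer estimate of predictive performance, because the in-sample error rewards fitting noise.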

[Figure: Uncertainty Quantification Troubleshooting Workflow]

Problem: How to Choose a UQ Method

Symptoms:

- Unsure which statistical technique to use for uncertainty quantification.

- Need to balance computational cost with robustness.

Solutions: The table below summarizes common UQ methods.

| Method | Description | Best For | Key Considerations |
|---|---|---|---|
| Block Averaging [1] | Divides data into blocks to estimate variance from correlated data. | Standard MD/MC simulations with time-series data. | A traditional method in molecular simulation. |
| Bootstrap Methods [1] | Resamples data with replacement to build an empirical distribution. | Complex observables, derived quantities. | Computationally intensive but very general. |
| Bayesian Inference [23] | Treats parameters as probability distributions, combining prior knowledge with data. | Incorporating prior information, rigorous uncertainty propagation. | Requires specifying a prior; often uses Markov Chain Monte Carlo (MCMC). |
| Conformal Prediction [23] | Provides distribution-free prediction intervals with coverage guarantees. | Black-box models, regression, and classification tasks. | Model-agnostic; the coverage guarantee assumes exchangeable data, which raw correlated trajectory frames are not. |
| Ensemble Methods [23] | Trains multiple models and quantifies uncertainty from their disagreement. | Machine learning potentials, neural networks. | High computational cost due to multiple training runs. |
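Of the methods in the table, split conformal prediction is the simplest to sketch. Absolute residuals on a held-out calibration set give a half-width q, set at the ⌈(n+1)(1−α)⌉-th smallest residual. Intervals ŷ ± q then cover new points with probability at least 1 − α, provided calibration and new points are exchangeable. The "model" (ŷ = 2x) and the noisy synthetic data below are hypothetical stand-ins, for example for a machine-learning potential's predictions.

```python
import numpy as np

def split_conformal_halfwidth(cal_residuals, alpha=0.1):
    """Half-width q of split-conformal intervals [y_hat - q, y_hat + q]."""
    scores = np.sort(np.abs(np.asarray(cal_residuals, dtype=float)))
    n = scores.size
    rank = int(np.ceil((n + 1) * (1.0 - alpha)))   # finite-sample corrected quantile
    return np.inf if rank > n else float(scores[rank - 1])

# Hypothetical model y_hat = 2x; true values carry heteroscedastic noise.
rng = np.random.default_rng(3)

def sample(m):
    x = rng.uniform(0.0, 1.0, m)
    y = 2.0 * x + rng.normal(0.0, 0.2 + 0.3 * x, m)
    return x, y

x_cal, y_cal = sample(500)
q = split_conformal_halfwidth(y_cal - 2.0 * x_cal, alpha=0.1)
x_new, y_new = sample(5000)
coverage = np.mean(np.abs(y_new - 2.0 * x_new) <= q)
print(f"half-width = {q:.3f}; empirical coverage = {coverage:.3f} (target >= 0.90)")
```

The guarantee is marginal (on average over inputs), not per input. Here the single symmetric width over-covers low-noise points and under-covers high-noise ones.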

The Scientist's Toolkit: Research Reagents & Materials

| Item | Function in Uncertainty Quantification |
|---|---|
| Benchmark Sets (e.g., JACS set) [24] | Standardized collections of molecules and properties to consistently evaluate and validate force field accuracy against experimental data. |
| Enhanced Sampling Algorithms (e.g., REST) [24] | Techniques like Replica Exchange with Solute Tempering (REST) enhance conformational sampling, reducing correlation times and improving statistical reliability. |
| Cross-Validation Protocols [25] | Statistical methods like k-fold cross-validation that assess how a model will generalize to an independent dataset, crucial for testing predictive power. |
| Correlation Time Analysis [1] | A diagnostic tool to determine the number of simulation steps between statistically independent samples, which is fundamental for calculating correct uncertainties. |
| Open-Source MD Packages (e.g., OpenMM) [24] | Software that enables reproducible and automated simulation workflows, allowing for systematic testing of different force fields and parameters. |

Advanced Sampling Methods and Force Field Selection Protocols

FAQs and Troubleshooting Guides

Gaussian Accelerated Molecular Dynamics (GaMD)

Q: How does GaMD improve sampling efficiency, and when should I use it? A: GaMD adds a harmonic boost potential whenever the system's potential energy falls below a threshold energy, which smooths the energy landscape and lowers energy barriers. This allows the system to transition more easily between conformational states, accelerating the sampling of rare events like ligand binding and protein folding. You should use GaMD when studying complex biomolecular processes that occur on timescales beyond what conventional MD can access, particularly when you lack predefined reaction coordinates. GaMD has been successfully applied to study protein-ligand binding mechanisms, such as in investigations of DPP4 inhibitors for diabetes treatment [26].

Q: My GaMD simulations are not converging. What could be wrong? A: Convergence issues in GaMD can stem from several factors:

- Insufficient simulation time: Despite enhanced sampling, GaMD still requires adequate time to explore the conformational space. Ensure your simulation length is appropriate for the biological process.

- Suboptimal boost potential: The applied boost potential must be properly calibrated. If too low, barriers remain; if too high, it can distort the energy landscape. Verify that your boost parameters follow the principles described in GaMD methodologies [27] [26].

- System preparation issues: As with any MD simulation, issues can arise from improper system setup, including incorrect protonation states, missing residues, or inappropriate water box size.

Q: What is the difference between GaMD and LiGaMD? A: LiGaMD (Ligand Gaussian accelerated Molecular Dynamics) is an extension of GaMD specifically designed to enhance sampling of ligand binding and dissociation events. While standard GaMD applies a boost to the entire system potential, LiGaMD selectively enhances the potential energy associated with ligand degrees of freedom, making it particularly effective for studying protein-ligand interactions and calculating binding free energies and kinetics [26].

Replica-Exchange Molecular Dynamics (REMD)

Q: How do I determine the optimal number and temperature distribution of replicas? A: The number and temperature spacing of replicas determine whether neighboring replicas exchange often enough. According to GROMACS documentation, for a system with N atoms, a relative temperature spacing ε = (T₂ − T₁)/T₁ ≈ 1/√N gives an exchange probability of about e⁻² ≈ 0.135. GROMACS provides an online REMD calculator to help determine appropriate temperature distributions based on your system size and desired temperature range [28].
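A geometric temperature ladder keeps the ratio T_{i+1}/T_i constant, which roughly equalizes acceptance between neighbors. The Python sketch below builds such a ladder and estimates the replica count implied by the ε ≈ 1/√N rule of thumb quoted above. It is a back-of-the-envelope helper and not the GROMACS calculator. The function names are illustrative, and N should count every atom in the box, solvent included.

```python
import math

def geometric_ladder(t_min, t_max, n_replicas):
    """Temperatures with a constant ratio between neighbouring replicas."""
    ratio = (t_max / t_min) ** (1.0 / (n_replicas - 1))
    return [t_min * ratio**i for i in range(n_replicas)]

def replicas_needed(t_min, t_max, n_atoms):
    """Replicas for a geometric ladder whose relative spacing is at most eps = 1/sqrt(N)."""
    eps = 1.0 / math.sqrt(n_atoms)
    return 1 + math.ceil(math.log(t_max / t_min) / math.log(1.0 + eps))

print([round(t, 1) for t in geometric_ladder(300.0, 400.0, 8)])
print(replicas_needed(300.0, 400.0, 25_000))
```

For a solvated system of 25,000 atoms spanning 300 to 400 K, this rule calls for 47 replicas. The replica count grows with √N because solvent atoms dominate N, which is one motivation for Hamiltonian variants such as REST2.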

Q: My REMD simulation shows low exchange rates between replicas. How can I improve this? A: Low exchange rates typically indicate that your temperature spacing is too wide. Try:

- Increasing the number of replicas to reduce the temperature difference between neighbors.

- Adjusting the temperature range to ensure better overlap in potential energy distributions.

- Considering Hamiltonian REMD (H-REMD) methods like REST2, which scale solute-solute and solute-solvent interactions and can be more efficient for biomolecular systems [29] [30].

Q: What is the difference between T-REMD and REST2? A: Temperature REMD (T-REMD) runs replicas at different temperatures, attempting exchanges based on the Metropolis criterion. REST2 (Replica Exchange with Solute Tempering) is a Hamiltonian-based approach where instead of heating the entire system, primarily the solute degrees of freedom are "heated" by scaling the solute-solute and solute-solvent interactions. REST2 is often more efficient for biomolecular systems as it reduces the number of replicas needed by focusing the enhanced sampling on the region of interest [29].
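Both ingredients of the answer above fit in a short Python sketch. The first function is the standard T-REMD Metropolis swap probability, min(1, exp[(β_i − β_j)(U_i − U_j)]). The second returns REST2-style scaling factors for a replica run at T₀ whose solute is effectively heated to T_eff, with λ = T₀/T_eff. Solute-solute terms are scaled by λ, solute-solvent terms by √λ, and solvent-solvent terms are left unscaled. The function names are illustrative. In REST2, exchanges are decided with the scaled Hamiltonians rather than the first function.

```python
import math

KB_KCAL = 0.0019872041  # Boltzmann constant in kcal/(mol K)

def tremd_acceptance(u_i, u_j, t_i, t_j):
    """Metropolis probability of swapping configurations between replicas at t_i and t_j."""
    beta_i = 1.0 / (KB_KCAL * t_i)
    beta_j = 1.0 / (KB_KCAL * t_j)
    delta = (beta_i - beta_j) * (u_i - u_j)
    return 1.0 if delta >= 0.0 else math.exp(delta)

def rest2_scaling(t0, t_eff):
    """REST2-style factors (solute-solute, solute-solvent, solvent-solvent)."""
    lam = t0 / t_eff
    return lam, math.sqrt(lam), 1.0

# Low-T replica holds the higher energy: swap always accepted.
print(tremd_acceptance(-1000.0, -1010.0, 300.0, 310.0))
# Reverse situation: accepted with probability below 1.
print(tremd_acceptance(-1010.0, -1000.0, 300.0, 310.0))
print(rest2_scaling(300.0, 600.0))
```

Because only solute terms are scaled, the energy differences entering the REST2 exchange criterion involve the solute rather than the whole box. This is why fewer replicas are needed than in T-REMD.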

Alchemical Free Energy Methods

Q: How do I choose between FEP and TI for my free energy calculations? A: Both FEP (Free Energy Perturbation) and TI (Thermodynamic Integration) are rigorous methods for calculating relative binding free energies. FEP estimates the free energy difference between each pair of adjacent fixed λ states from an exponential average of their energy difference (the Zwanzig relation) and sums these contributions. TI numerically integrates the ensemble average of the derivative of the Hamiltonian with respect to λ. The choice often depends on your specific system and software implementation. Recent studies using automated FEP workflows with OpenMM have shown that FEP can achieve mean unsigned errors around 0.8-1.0 kcal/mol for protein-ligand binding affinity predictions, making it suitable for drug discovery applications [24].
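The difference between the two estimators can be seen on a toy transformation with a known answer. In the Python sketch below, U_λ(x) = ½k(λ)x² with k(λ) = (1−λ)k₀ + λk₁, so the exact result is ΔA = ½k_BT ln(k₁/k₀). FEP sums exponential averages between adjacent windows. TI integrates ⟨∂U/∂λ⟩ with the trapezoidal rule. Exact Gaussian samples stand in for MD in each window, so the only errors are statistical noise and TI quadrature error. The system and function name are illustrative.

```python
import numpy as np

def fep_and_ti(k0=1.0, k1=4.0, kt=1.0, n_windows=11, n_samples=20_000, seed=0):
    """FEP (Zwanzig) and TI estimates for U_lam(x) = 0.5 * k(lam) * x**2."""
    rng = np.random.default_rng(seed)
    lams = np.linspace(0.0, 1.0, n_windows)
    ks = (1.0 - lams) * k0 + lams * k1
    da_fep, dudl = 0.0, []
    for i, k in enumerate(ks):
        # Exact Boltzmann samples of the window-i harmonic oscillator (stand-in for MD).
        x = rng.normal(0.0, np.sqrt(kt / k), n_samples)
        dudl.append(np.mean(0.5 * (k1 - k0) * x**2))          # <dU/dlambda>_i
        if i + 1 < n_windows:
            du = 0.5 * (ks[i + 1] - k) * x**2                 # U_{i+1} - U_i
            da_fep += -kt * np.log(np.mean(np.exp(-du / kt)))  # Zwanzig step i -> i+1
    dudl = np.asarray(dudl)
    da_ti = float(np.sum(0.5 * (dudl[1:] + dudl[:-1]) * np.diff(lams)))  # trapezoid rule
    exact = 0.5 * kt * np.log(k1 / k0)
    return da_fep, da_ti, exact

da_fep, da_ti, exact = fep_and_ti()
print(f"FEP = {da_fep:.3f}, TI = {da_ti:.3f}, exact = {exact:.3f} (units of kT)")
```

In practice, estimators such as BAR or MBAR, which combine samples from both neighboring windows, are usually preferred over one-directional exponential averaging.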

Q: My alchemical transformations show poor overlap between adjacent λ states. What should I do? A: Poor overlap between λ states is a common issue that can lead to inaccurate free energy estimates. Consider these solutions:

- Increase the number of intermediate λ states to create smaller transitions between states.

- Implement Hamiltonian replica exchange between λ states (λ-REMD) to improve sampling across the alchemical pathway.

- Extend simulation time for each λ window to ensure better equilibration and sampling.

- Check your force field parameters, as inaccurate parameters can exacerbate overlap issues [24].

Q: How does force field choice impact alchemical free energy calculations? A: Force field selection significantly impacts the accuracy of free energy calculations, though not all choices matter equally. In studies comparing AMBER ff14SB and ff15ipq with different water models (SPC/E, TIP3P, TIP4P-EW) and charge models, changing the water model shifted the MUE by less than 0.1 kcal/mol (0.82-0.89 kcal/mol for ff14SB with AM1-BCC charges). Switching from AM1-BCC to RESP charges with ff14SB and TIP3P, by contrast, raised the MUE from 0.82 to 1.03 kcal/mol [24].

Quantitative Data Tables

Table 1: Performance Comparison of Free Energy Methods and Force Fields

| Method | Force Field | Water Model | Charge Model | MUE (kcal/mol) | RMSE (kcal/mol) | R² |
|---|---|---|---|---|---|---|
| FEP+ [24] | OPLS2.1 | - | CM1A-BCC | 0.77 | 0.93 | 0.66 |
| AMBER TI [24] | AMBER ff14SB | SPC/E | RESP | 1.01 | 1.3 | 0.44 |
| Alchaware [24] | AMBER ff14SB | SPC/E | AM1-BCC | 0.89 | 1.15 | 0.53 |
| Alchaware [24] | AMBER ff14SB | TIP3P | AM1-BCC | 0.82 | 1.06 | 0.57 |
| Alchaware [24] | AMBER ff14SB | TIP4P-EW | AM1-BCC | 0.85 | 1.11 | 0.56 |
| Alchaware [24] | AMBER ff15ipq | SPC/E | AM1-BCC | 0.85 | 1.07 | 0.58 |
| Alchaware [24] | AMBER ff14SB | TIP3P | RESP | 1.03 | 1.32 | 0.45 |
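The three metrics in the table can be computed as in the Python sketch below. One assumption should be stated: R² is taken here as the squared Pearson correlation between predicted and experimental values, which is common in FEP benchmarks. Some studies instead report the coefficient of determination, which also penalizes systematic offsets. The example values are hypothetical.

```python
import numpy as np

def benchmark_metrics(predicted, experimental):
    """MUE, RMSE and R^2 (squared Pearson correlation) for binding free energies."""
    p = np.asarray(predicted, dtype=float)
    e = np.asarray(experimental, dtype=float)
    err = p - e
    mue = float(np.mean(np.abs(err)))
    rmse = float(np.sqrt(np.mean(err**2)))
    r2 = float(np.corrcoef(p, e)[0, 1] ** 2)
    return mue, rmse, r2

# Hypothetical predicted vs. experimental binding free energies (kcal/mol).
pred = [-9.1, -8.4, -10.2, -7.5, -8.8]
expt = [-9.6, -8.0, -9.9, -7.9, -9.5]
mue, rmse, r2 = benchmark_metrics(pred, expt)
print(f"MUE = {mue:.2f}, RMSE = {rmse:.2f} kcal/mol, R^2 = {r2:.2f}")
```

RMSE is always at least as large as MUE because it weights large errors more heavily, which is consistent with every row of the table.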

Table 2: Comparison of Enhanced Sampling Techniques for RNA Tetraloop Folding

| Method | System | Sampling Efficiency | Convergence Time | Key Findings |
|---|---|---|---|---|
| REST2 [30] | RNA GAGA TL | Low | No convergence after 120 μs/replica | Inadequate alone for complex folding |
| REST2 [30] | RNA UUCG TL | Low | No convergence after 120 μs/replica | Limited barrier crossing |
| REST2 + WT-MetaD [30] | RNA GAGA TL | High | 5-10 μs/replica | 100x improvement over REST2 alone |
| T-REMD [29] | RNA Tetraloops | Moderate | Varies with system | Better than standard MD but slow |
| WT-MetaD [29] | RNA Tetraloops | High | Depends on CV choice | Requires careful CV selection |

Table 3: GaMD Applications in Drug Discovery Studies

| Application | System | Method Variant | Key Outcomes |
|---|---|---|---|
| DPP4 Inhibitor Screening [26] | DPP4-FDA drug complexes | GaMD/LiGaMD | Revealed binding/unbinding mechanisms correlated with IC50 |
| Protein-Protein Binding [27] | CD2-CD58 (168,000 atoms) | GaMD-US/dual-water | PMF converged within 1 kcal/mol of experimental value |
| Large Biological Systems [27] | Polarizable FF simulations | Multi-level GaMD | Reduced time to convergence by 10-20x compared to US |

Experimental Protocols

Protocol: GaMD Simulation Setup for Protein-Ligand Binding

Objective: Study binding mechanisms of DPP4 inhibitors using GaMD [26].

Step-by-Step Methodology:

System Preparation:

- Obtain crystal structure of DPP4 with bound ligand (if available)

- Parameterize ligands using appropriate tools (GAFF2, CGenFF, etc.)

- Solvate system in TIP3P water box with 10-12 Å buffer

- Add ions to neutralize system and achieve physiological concentration (0.15 M NaCl)

Conventional MD Equilibration:

- Minimize energy using steepest descent (5,000 steps)

- Heat system gradually from 0 to 300 K over 100 ps with position restraints on heavy atoms

- Equilibrate without restraints for 50-100 ns until system stabilizes

- Monitor RMSD, potential energy, and temperature for stability

GaMD Enhancement:

- Calculate potential statistics (average, standard deviation, maximum, minimum) from conventional MD

- Set boost potential parameters according to GaMD theory:

- Threshold energy E: set it to its lower bound (E = Vmax) or its upper bound (E = Vmin + 1/k), where k is the harmonic force constant of the boost

- Upper limit on the standard deviation of the boost potential: σ₀P ≈ 1.0 kcal/mol, which keeps the boost distribution narrow enough for accurate reweighting

- Run dual-boost GaMD, applying separate harmonic boosts to the dihedral (torsional) energy and to the total potential energy
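The boost-parameter step can be sketched for a single boosted energy term, with the threshold at its lower bound. The Python example below follows the standard GaMD expressions: k₀ = min(1, (σ₀/σ_V)(V_max − V_min)/(V_max − V_avg)), k = k₀/(V_max − V_min), and ΔV = ½k(E − V)² for V < E. This is our transcription for illustration, not the AMBER implementation. The synthetic "cMD energies" and σ₀ = 6.0 kcal/mol are hypothetical inputs, and the upper-bound threshold and dual-boost bookkeeping are not shown.

```python
import numpy as np

def gamd_lower_bound(v_max, v_min, v_avg, v_std, sigma0):
    """Threshold E and force constant k for GaMD with E at its lower bound (E = Vmax)."""
    span = v_max - v_min
    k0 = min(1.0, (sigma0 / v_std) * span / (v_max - v_avg))
    return v_max, k0 / span

def gamd_boost(v, e, k):
    """Harmonic boost dV = 0.5 * k * (E - V)^2 where V < E, zero elsewhere."""
    v = np.asarray(v, dtype=float)
    return np.where(v < e, 0.5 * k * (e - v) ** 2, 0.0)

# Hypothetical cMD potential energies (kcal/mol) standing in for a real equilibration run.
rng = np.random.default_rng(11)
v_cmd = rng.normal(-50_000.0, 30.0, 20_000)
e, k = gamd_lower_bound(v_cmd.max(), v_cmd.min(), v_cmd.mean(), v_cmd.std(), sigma0=6.0)
boost = gamd_boost(v_cmd, e, k)
print(f"E = {e:.1f}, k = {k:.5f}; boost mean = {boost.mean():.2f}, std = {boost.std():.2f} kcal/mol")
```

Capping k₀ at 1 keeps k(E − V_min) ≤ 1, so the boosted potential preserves the energy ordering of states. Checking that the standard deviation of the boost stays near or below σ₀ is the practical test that reweighting will remain accurate.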

Production Simulation:

- Run GaMD production for 500 ns - 1 μs depending on system size

- Save frames every 100 ps for analysis

- Monitor boost potential to ensure it remains within theoretical bounds

Analysis:

- Identify binding poses using clustering analysis

- Calculate binding free energy profiles using the GaMD reweighting algorithm

- Identify key residues involved in binding through interaction analysis

Protocol: REST2 Simulations for RNA Folding

Objective: Enhance sampling of RNA tetraloop folding using REST2 [29] [30].

Step-by-Step Methodology:

System Preparation:

- Build RNA tetraloop structure (e.g., 5'-GAGA-3' or 5'-UUCG-3')

- Use an appropriate RNA force field (e.g., AMBER ff99bsc0χOL3, i.e., ff99 with the bsc0 and χOL3 modifications)

- Solvate in water box with 10 Å minimum padding

- Add ions (Na⁺, Cl⁻) to neutralize and achieve desired ionic concentration

Replica Setup:

- Determine number of replicas (typically 12-16 for small RNA systems)

- Set up temperature or Hamiltonian scaling factors using geometric progression

- For REST2, define solute atoms (RNA and essential ions) for scaling

- Set exchange attempt frequency (every 1-2 ps)

Simulation Parameters:

- Use PME for long-range electrostatics with an 8-10 Å real-space cutoff

- Constrain bonds involving hydrogen with SHAKE or LINCS

- Set integration time step of 2 fs

- Maintain temperature with Langevin dynamics

- Use isotropic pressure coupling for equilibration

Production Run:

- Run each replica for 100-500 ns depending on system complexity

- Monitor exchange rates (target: 20-30% between neighboring replicas)

- Check for replica diffusion through temperature space

Analysis:

- Calculate free energy surfaces using weighted histogram analysis method (WHAM)

- Identify folded and unfolded states using collective variables (RMSD, hydrogen bonds)

- Compute folding free energies (ΔG°fold) from the folded and unfolded populations

- Assess convergence by comparing estimates from the first and second halves of the simulation
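The last two analysis steps can be sketched together. The folding free energy follows from the populations as ΔG_fold = −k_BT ln(p_folded/p_unfolded), and repeating the estimate on each half of the trajectory gives a quick convergence check. The Python sketch assumes a per-frame folded/unfolded label from the unscaled (reference) replica, for example from an RMSD cutoff. The labels below are hypothetical.

```python
import numpy as np

KB_KCAL = 0.0019872041  # Boltzmann constant in kcal/(mol K)

def folding_free_energy(is_folded, temperature=300.0):
    """dG_fold = -kT ln(p_folded / p_unfolded) from per-frame folded/unfolded labels."""
    labels = np.asarray(is_folded, dtype=bool)
    p_f = labels.mean()
    if p_f in (0.0, 1.0):
        raise ValueError("both states must be sampled to estimate dG_fold")
    return float(-KB_KCAL * temperature * np.log(p_f / (1.0 - p_f)))

def half_split_check(is_folded, temperature=300.0):
    """dG_fold from the first and second halves of the trajectory."""
    labels = np.asarray(is_folded, dtype=bool)
    half = labels.size // 2
    return (folding_free_energy(labels[:half], temperature),
            folding_free_energy(labels[half:], temperature))

# Hypothetical labels from the reference replica (~70% folded frames).
labels = np.random.default_rng(2).random(10_000) < 0.7
print(f"dG_fold = {folding_free_energy(labels):.2f} kcal/mol; halves: {half_split_check(labels)}")
```

A substantial disagreement between the two halves, relative to the statistical uncertainty, indicates that the populations have not converged.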

Protocol: Alchemical FEP for Relative Binding Affinities

Objective: Calculate relative binding free energies for congeneric ligands using FEP [24].

Step-by-Step Methodology:

System Preparation:

- Prepare protein structure with co-crystallized ligand

- Generate ligand structures for transformation series

- Parameterize ligands using GAFF2 with AM1-BCC or RESP charges

- Align ligands for minimal perturbation during transformation

Topology Setup:

- Choose a dual-topology or hybrid single-topology representation for each transformation

- Define λ schedule (typically 12-16 windows with closer spacing near endpoints)

- Set up soft-core potentials for van der Waals interactions
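The λ schedule and the soft-core treatment can be illustrated with a short sketch. It uses a cosine spacing rule, which is one convenient way (not the only one) to place windows closer together near λ = 0 and λ = 1. It also uses a Beutler-style soft-core Lennard-Jones form with α = 0.5. Both the spacing rule and the parameter values are illustrative assumptions, and each MD engine defines its own soft-core variant.

```python
import math

def lambda_schedule(n_windows):
    """lambda_i = (1 - cos(pi*i/(n-1)))/2: windows cluster near 0 and 1."""
    return [0.5 * (1.0 - math.cos(math.pi * i / (n_windows - 1))) for i in range(n_windows)]

def softcore_lj(r, sigma, epsilon, lam, alpha=0.5):
    """Beutler-style soft-core LJ: 4*eps*lam*x*(x - 1), x = 1/(alpha*(1-lam) + (r/sigma)**6).

    Reduces to plain Lennard-Jones at lam = 1 and stays finite at r = 0 for lam < 1.
    """
    x = 1.0 / (alpha * (1.0 - lam) + (r / sigma) ** 6)
    return 4.0 * epsilon * lam * x * (x - 1.0)

lams = lambda_schedule(12)
print([round(l, 3) for l in lams])
print(softcore_lj(0.0, 3.4, 0.24, 0.5))  # finite even at r = 0
```

Removing the r⁻¹² singularity at intermediate λ prevents the "end-point catastrophe", where atoms that are nearly decoupled overlap and produce huge energies.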

Equilibration:

- Minimize each λ window separately

- Heat gradually to 300 K with restraints on protein and ligand heavy atoms

- Equilibrate without restraints for 2-5 ns per window

- Monitor volume and density for stability

Production Simulation:

- Run each λ window for 10-50 ns depending on system complexity

- Implement Hamiltonian exchange between λ windows (λ-REMD) every 1-2 ps

- Use Monte Carlo barostat for constant pressure

- Save energies frequently for overlap analysis

Analysis:

- Calculate free energy differences using MBAR or the Bennett acceptance ratio (BAR)

- Check for sufficient overlap between adjacent λ windows

- Estimate statistical uncertainty using block analysis or bootstrapping

- Validate with cycle closure: the ΔΔG values summed around each closed cycle in the transformation map should be close to zero
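The BAR estimator and the cycle-closure check can be sketched in pure Python. The sketch assumes work values in reduced units (kBT) collected from adjacent λ windows. `bar` solves Bennett's self-consistent equation by bisection, and `cycle_closure_error` sums ΔΔG around one cycle of a transformation map. Both names are hypothetical. Production analyses typically use a dedicated library such as pymbar, which also supplies uncertainty estimates.

```python
import math
import random

def _fermi(x):
    """Numerically stable 1 / (1 + exp(x))."""
    if x > 0.0:
        e = math.exp(-x)
        return e / (1.0 + e)
    return 1.0 / (1.0 + math.exp(x))

def bar(w_forward, w_reverse, tol=1e-8, max_iter=200):
    """Bennett acceptance ratio estimate of df = f1 - f0 in kT units.

    w_forward: u1(x) - u0(x) for x sampled in state 0 (reduced units)
    w_reverse: u0(x) - u1(x) for x sampled in state 1 (reduced units)
    """
    m = math.log(len(w_forward) / len(w_reverse))

    def g(df):  # increases monotonically with df; its root is the BAR estimate
        return (sum(_fermi(m + w - df) for w in w_forward)
                - sum(_fermi(-m + w + df) for w in w_reverse))

    lo, hi = -1.0, 1.0
    while g(lo) > 0.0:  # expand the bracket until it contains the root
        lo *= 2.0
    while g(hi) < 0.0:
        hi *= 2.0
    for _ in range(max_iter):  # bisection
        mid = 0.5 * (lo + hi)
        if g(mid) < 0.0:
            lo = mid
        else:
            hi = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)

def cycle_closure_error(edges, cycle):
    """Sum of ddG around a closed cycle; edges maps (a, b) -> ddG(a -> b)."""
    total = 0.0
    for a, b in zip(cycle, cycle[1:] + cycle[:1]):
        total += edges[(a, b)] if (a, b) in edges else -edges[(b, a)]
    return total

# Toy states u0 = x^2/2 and u1 = (x-1)^2/2 have equal partition functions (df = 0).
rng = random.Random(1)
w_f = [0.5 - rng.gauss(0.0, 1.0) for _ in range(5000)]  # u1 - u0 on state-0 samples
w_r = [rng.gauss(1.0, 1.0) - 0.5 for _ in range(5000)]  # u0 - u1 on state-1 samples
print(bar(w_f, w_r))
print(cycle_closure_error({("A", "B"): 1.0, ("B", "C"): 0.5, ("A", "C"): 1.6}, ["A", "B", "C"]))
```

The BAR estimate for the toy pair should lie close to zero, and the residual reflects sampling noise. A nonzero cycle-closure error flags inconsistency among the edges of a transformation map.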

Visualization Diagrams

Figures: GaMD workflow; REMD exchange mechanism; alchemical free energy pathway.

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Software Tools for Enhanced Sampling Simulations

| Tool Name | Type | Primary Function | Application Examples |
|---|---|---|---|
| OpenMM [24] | MD Engine | High-performance MD simulations | FEP calculations, GPU-accelerated sampling |
| Tinker-HP [27] | MD Package | Polarizable force field simulations | GaMD with AMOEBA force field |
| Alchaware [24] | FEP Workflow | Automated free energy calculations | Relative binding affinity predictions |
| GROMACS [28] | MD Engine | REMD simulations | Temperature and Hamiltonian replica exchange |
| pymd [26] | Analysis Toolkit | Protein-compound binding analysis | GaMD/LiGaMD trajectory analysis |
| AMBER [29] | MD Suite | Nucleic acid simulations | RNA folding with OL3 force field |

Table 5: Force Fields and Parameter Sets

| Force Field | Best For | Key Features | Recommended Usage |
|---|---|---|---|
| AMBER ff14SB [24] | Protein simulations | Optimized for standard proteins | General protein-ligand systems |
| AMBER ff15ipq [24] | High-accuracy proteins | Implicitly polarized charges | Systems requiring high accuracy |
| AMOEBA [27] | Polarizable simulations | Polarizable multipole electrostatics | Systems with strong polarization effects |
| AMBER OL3 [29] [30] | RNA simulations | bsc0 and χOL3 modifications | RNA folding and dynamics |
| GAFF2 [24] | Small molecules | General Amber Force Field | Ligand parameterization |
| OPLS2.1 [24] | Drug discovery | Optimized for binding affinity | Protein-ligand binding FEP |

Table 6: Specialized Sampling Methods

| Method | Best Application | Key Advantage | Implementation Tips |
|---|---|---|---|
| GaMD [27] [26] | Biomolecular dynamics | No predefined CVs needed | Use dual-boost for comprehensive sampling |
| LiGaMD [26] | Protein-ligand binding | Selective ligand enhancement | Ideal for binding kinetics |
| REST2 [29] [30] | Biomolecular folding | Reduced replica count | Define solute region carefully |
| WT-MetaD [29] [30] | Defined reaction pathways | Fast convergence with good CVs | Combine with REST2 for best results |
| FEP/λ-REMD [24] | Relative binding affinities | Alchemical transformations | Use closely spaced λ windows |

Frequently Asked Questions (FAQs)

Q1: What are the main advantages of using deep learning over traditional Molecular Dynamics (MD) for sampling conformational ensembles?

Deep learning (DL) offers several key advantages for sampling conformational ensembles, particularly for complex systems like Intrinsically Disordered Proteins (IDPs) [31]:

- Efficiency and Speed: DL models can generate statistically independent samples in seconds or minutes. They bypass the femtosecond-scale integration steps of MD, which can require very large amounts of GPU time to reach millisecond-scale transitions [32].

- Overcoming Sampling Limitations: DL models can capture rare, transient states that are biologically relevant but often missed by MD because of the large separation of timescales [31].

- Direct Ensemble Emulation: Generative models, such as diffusion models, can directly learn and sample the equilibrium distribution of conformations from data, providing a more end-to-end paradigm for exploring conformational landscapes [32].

Q2: My MD simulations struggle to adequately sample the diverse states of an IDP. Which AI method should I consider?

For IDPs, a promising approach is to use transferable generative models that leverage pre-trained protein folding networks. These models use architectural elements from systems like AlphaFold2, conditioned on multiple sequence alignments (MSAs), to produce a diverse distribution of structures [32]. This approach has been shown to improve the recall of conformational states observed in longer simulations and can generate ensembles with comparable accuracy to MD but greater diversity [32] [31].

Q3: How can I validate a deep learning-generated conformational ensemble to ensure it is physically realistic?

Validation should involve multiple complementary techniques [32] [25]:

- Comparison with Experimental Data: Validate against experimental ensemble-averaged data such as NMR chemical shifts, Residual Dipolar Couplings (RDCs), and Small-Angle X-ray Scattering (SAXS) profiles [31].

- Recovery of Known States: Check if the generated ensemble recovers known conformational states from the PDB or from long-reference MD simulations [32].

- Physical Property Calculation: Compute properties like root mean square fluctuation (RMSF) profiles, contact fluctuations, and solvent accessibilities, and compare them with those derived from MD simulations or physical expectations [32] [33].

- Kinetic and Thermodynamic Consistency: For methods involving coarse-grained ML potentials, the resulting simulations can be validated with standard physical checks. One example is confirming that particle velocities follow the Maxwell-Boltzmann distribution, which means the total kinetic energy follows the corresponding gamma distribution [33].
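The kinetic-energy check can be sketched as a simple moments test. The sketch assumes a canonical (NVT) ensemble, in which the total kinetic energy follows a gamma distribution with shape Ndof/2 and scale kBT. `n_dof` must account for constraints and for removed center-of-mass motion. The function name and the synthetic data are hypothetical. Dedicated tools such as the GROMACS physical validation suite perform more complete distribution tests.

```python
import numpy as np

def kinetic_energy_moment_ratios(ke_samples, n_dof, kT):
    """Ratios of observed to expected mean and variance of the total kinetic energy.

    In the canonical ensemble K ~ Gamma(shape=n_dof/2, scale=kT), so
    <K> = n_dof*kT/2 and Var(K) = n_dof*kT**2/2. Both ratios should be close to 1.
    """
    ke = np.asarray(ke_samples, dtype=float)
    expected_mean = 0.5 * n_dof * kT
    expected_var = 0.5 * n_dof * kT ** 2
    return ke.mean() / expected_mean, ke.var(ddof=1) / expected_var

# Synthetic check: draw K directly from the expected gamma distribution.
rng = np.random.default_rng(0)
n_dof, kT = 3000, 2.494  # kT in kJ/mol at about 300 K
ke = rng.gamma(shape=n_dof / 2, scale=kT, size=20000)
print(kinetic_energy_moment_ratios(ke, n_dof, kT))
```

A correct mean paired with a variance ratio far from 1 is a typical signature of a thermostat that fixes the average temperature without generating canonical fluctuations.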

Q4: What are the common failure modes when using constrained conformational sampling with AI-enhanced tools, and how can I troubleshoot them?

A common issue, as seen with tools like CREST, is the early termination of sampling or program crashes during the optimization of generated ensembles [34]. This can be due to several factors:

- Overly Restrictive Constraints: Applying too many or overly strong harmonic constraints can destabilize the sampling algorithm. Troubleshooting: Review the force constants and the number of constrained atoms; try a less restrictive setup.

- System Size and Complexity: Large cluster models (e.g., 200-400 atoms) push the limits of sampling algorithms. Troubleshooting: If possible, break down the problem or use a simpler model to first verify the sampling parameters.

- Software-Specific Bugs: The error "free(): invalid next size (fast)" indicates a potential memory management issue within the code itself [34]. Troubleshooting: Ensure you are using the latest stable version of the software and check issue trackers for known bugs and patches.

Troubleshooting Guides

Issue 1: Generated Conformational Ensemble Lacks Diversity

Problem: The AI model produces a very narrow set of conformations, failing to capture the known heterogeneity of the protein.

| Possible Cause | Diagnostic Steps | Solution |
|---|---|---|
| Insufficient or Biased Training Data | Check the diversity of the training set (e.g., PDB structures, MD trajectories). Is your system underrepresented? | Fine-tune a pre-trained model on diverse, system-specific MD data [32]. |
| Over-regularization or Poor Model Calibration | Monitor the training and validation loss curves. If both remain high, the model is likely underfitting and too constrained to represent the full distribution [35]. | Reduce regularization strength (e.g., L2 penalty, dropout) while monitoring the validation loss to ensure the model does not begin to overfit [35]. |
| Incorrect Sampling Parameters | Analyze the latent space or sampling noise parameters of the generative model. | Adjust the sampling temperature or noise schedules to explore a wider region of the conformational landscape. |

Issue 2: AI-Generated Ensembles Are Thermodynamically Implausible

Problem: The generated structures have high steric clashes, unrealistic torsion angles, or do not correspond to low-energy states.

| Possible Cause | Diagnostic Steps | Solution |
|---|---|---|
| Decoupling from Physical Potentials | The purely data-driven model has learned correlations that violate physical laws. | Use a hybrid approach: Employ a generative model for initial sampling, then refine structures with a short MD relaxation using a physical or machine learning force field [31]. |
| Poorly Validated Force Field | If using an ML potential, it may be inaccurate for your specific system. | Rigorously validate the force field by checking properties like energy conservation in NVE simulations or kinetic energy distributions in NVT simulations [33] [36]. |
| Lack of Energetic Ranking | The model generates structures but does not provide their relative energies. | Integrate a re-ranking step. Generate a diverse ensemble with the AI, then compute the relative energies of the conformers using a higher-level theory like DFT or a validated force field to obtain a Boltzmann-weighted ensemble [37]. |
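The Boltzmann-weighting step of the re-ranking approach can be sketched as follows, assuming relative energies in kcal/mol at 298.15 K. The helper and the energies are hypothetical. When vibrational frequencies are available, free energies are often used in the same formula instead of electronic energies.

```python
import math

KB_KCAL = 0.0019872041  # Boltzmann constant, kcal/(mol K)

def boltzmann_weights(energies_kcal, temperature=298.15):
    """Normalized Boltzmann populations from relative energies (kcal/mol)."""
    kT = KB_KCAL * temperature
    e_min = min(energies_kcal)  # shift by the minimum to avoid overflow/underflow
    factors = [math.exp(-(e - e_min) / kT) for e in energies_kcal]
    z = sum(factors)
    return [f / z for f in factors]

# Hypothetical re-ranked conformer energies (kcal/mol).
weights = boltzmann_weights([0.0, 0.4, 1.2, 3.0])
print([round(w, 3) for w in weights])
```

At room temperature kBT is only about 0.6 kcal/mol, so conformers more than about 3 kcal/mol above the minimum contribute less than 1% each.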

Issue 3: High Computational Cost of Refining AI-Generated Ensembles

Problem: Using high-level quantum mechanics (QM) methods to reoptimize and re-rank thousands of AI-generated conformers is prohibitively expensive.

Solution: Implement a multi-level workflow to reduce computational cost while maintaining accuracy, as demonstrated for molecular dimers [37].

Workflow Description:

- Initial Sampling: Use an efficient tool like CREST with a fast semi-empirical method (GFN2-xTB) to generate a broad, initial set of conformers [37].

- Intermediate Reoptimization: Reoptimize the entire initial ensemble using a low-cost but accurate composite quantum chemistry method (e.g., B97-3c). This step corrects major geometric and energetic errors from the initial sampling without the cost of hybrid DFT with large basis sets [37].

- Deduplication: Remove duplicate conformers that coalesce after the intermediate reoptimization. This can eliminate a large percentage (~90%) of structures, drastically reducing the number that proceed to expensive DFT [37].

- High-Level Refinement: Perform a final reoptimization and vibrational frequency calculation on the remaining unique conformers using a higher-level DFT method.

- Final Energetics: Compute high-accuracy single-point energies for the final conformers at an even higher level of theory (e.g., ωB97X-V/def2-QZVPP) to obtain precise relative energies for Boltzmann weighting [37].
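The deduplication step can be sketched by combining an energy threshold with an RMSD computed after Kabsch superposition. The thresholds (0.1 kcal/mol and 0.125 Å), the function names, and the test structures are illustrative assumptions. Tools such as CREST apply their own criteria, and more complete tools also account for atom-permutation symmetry, which this sketch ignores.

```python
import numpy as np

def kabsch_rmsd(p, q):
    """RMSD between two (N, 3) coordinate sets after optimal superposition (Kabsch)."""
    p = p - p.mean(axis=0)
    q = q - q.mean(axis=0)
    u, _, vt = np.linalg.svd(p.T @ q)
    d = 1.0 if np.linalg.det(vt.T @ u.T) >= 0.0 else -1.0  # exclude reflections
    rot = vt.T @ np.diag([1.0, 1.0, d]) @ u.T
    diff = p @ rot.T - q
    return float(np.sqrt((diff ** 2).sum(axis=1).mean()))

def deduplicate(coords, energies, e_thr=0.1, rmsd_thr=0.125):
    """Indices of unique conformers, lowest energy first.

    A conformer counts as a duplicate if it lies within e_thr (kcal/mol) AND
    within rmsd_thr (Å) of a conformer that has already been kept.
    """
    kept = []
    for i in np.argsort(energies):
        is_dup = any(
            abs(energies[i] - energies[j]) < e_thr
            and kabsch_rmsd(coords[i], coords[j]) < rmsd_thr
            for j in kept
        )
        if not is_dup:
            kept.append(int(i))
    return kept

rng = np.random.default_rng(7)
a = rng.normal(size=(12, 3))
theta = np.pi / 6
rz = np.array([[np.cos(theta), -np.sin(theta), 0.0],
               [np.sin(theta), np.cos(theta), 0.0],
               [0.0, 0.0, 1.0]])
a_rot = a @ rz.T + 2.0        # same structure, rotated and translated
b = rng.normal(size=(12, 3))  # a genuinely different structure
print(deduplicate([a, a_rot, b], [0.0, 0.02, 1.5]))  # [0, 2]
```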

This table details essential computational tools and datasets for AI-driven conformational ensemble generation.

| Resource Name | Type | Function & Application |
|---|---|---|
| CREST | Software | A Conformer-Rotamer Ensemble Sampling Tool that uses metadynamics with GFN2-xTB to extensively explore conformational space for molecules and non-covalent complexes [37]. |
| mdCATH & ATLAS | Dataset | Large-scale, publicly available Molecular Dynamics datasets providing thousands of microseconds of simulation data for training and validating generative models on folded proteins [32]. |
| AlphaFlow, UFConf, DiG | AI Model | Examples of transferable generative models (diffusion or flow-matching based), several of which build on AlphaFold2 and its MSA-based conditioning, that generate diverse conformational ensembles from a single sequence or structure [32]. |
| Martini3-IDP | Force Field | A specialized coarse-grained force field parameterized for Intrinsically Disordered Proteins, useful for running long simulations or as a target for ML potentials focused on IDPs [38]. |
| B97-3c / ωB97X-3c | Quantum Chemistry Method | Computationally efficient composite density functional theory methods ideal for intermediate reoptimization and re-ranking of large conformational ensembles [37]. |
| GROMACS Physical Validation Module | Validation Suite | A suite of tests integrated into the GROMACS MD package to check simulation results against physical expectations, such as integrator convergence and correct ensemble sampling [33]. |

Systematic Force Field Comparison Across Biological Systems