Optimizing Lennard-Jones Parameters with Condensed-Phase Data: A Guide for Robust Force Field Development


Abstract

This article provides a comprehensive guide for researchers and scientists in drug development on the advanced optimization of Lennard-Jones (LJ) parameters using condensed-phase target data. It covers the foundational principles of LJ and alternative potentials, explores automated workflows and machine-learning strategies for parameterization, addresses key challenges in balancing nanoscale and macroscale properties, and validates the transferability of optimized parameters across different thermodynamic conditions and systems. By integrating insights from recent methodological advances, this work serves as a critical resource for creating more accurate and predictive molecular models for biomedical simulation.

The Lennard-Jones Potential and Its Role in Modern Molecular Simulation

Revisiting the Classic Lennard-Jones and Mie Potentials

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between the Lennard-Jones potential and the more general Mie potential?

The standard Lennard-Jones (LJ) 12-6 potential uses fixed exponents: the repulsive term has an exponent of 12 and the attractive term has an exponent of 6 [1]. The Mie potential is a generalized form where these exponents are tunable parameters (λrep and λattr), offering greater flexibility to model the softness or hardness of interactions for different types of atoms and molecules [1] [2]. The n-6 Lennard-Jones potential is a specific case of the Mie potential where only the repulsive exponent n is variable [2].
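The relationship between the two forms can be checked numerically. The following is a minimal sketch in reduced units; the function names are illustrative and not taken from any particular simulation package. It shows that the Mie prefactor reduces to 4 for the 12-6 case and that the well depth stays at ε for other exponents.

```python
def mie(r, epsilon, sigma, n=12.0, m=6.0):
    """Mie potential with repulsive exponent n and attractive exponent m (n > m)."""
    # Prefactor chosen so that the minimum of the potential is exactly -epsilon.
    c = (n / (n - m)) * (n / m) ** (m / (n - m))
    return c * epsilon * ((sigma / r) ** n - (sigma / r) ** m)


def lennard_jones(r, epsilon, sigma):
    """Standard 12-6 Lennard-Jones potential."""
    return 4.0 * epsilon * ((sigma / r) ** 12 - (sigma / r) ** 6)


def mie_r_min(sigma, n=12.0, m=6.0):
    """Distance at which the Mie potential reaches its minimum."""
    return sigma * (n / m) ** (1.0 / (n - m))


# With n = 12 and m = 6 the Mie form coincides with the 12-6 LJ form.
print(mie(1.3, 1.0, 1.0), lennard_jones(1.3, 1.0, 1.0))
# A harder core (n = 14) shifts the minimum inward but keeps the depth at -epsilon.
print(mie_r_min(1.0, 14.0), mie(mie_r_min(1.0, 14.0), 1.0, 1.0, 14.0))
```

Keeping the well depth fixed by construction means that changing n alters only the shape of the potential, which makes fitted ε values easier to compare across exponents.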

Q2: When should I use the "full" Lennard-Jones potential versus the "truncated & shifted" (LJTS) version in my simulations?

The "full" Lennard-Jones potential has an infinite range, but in practice, simulations use a cut-off distance for computational efficiency [1]. The LJTS potential is explicitly truncated at a specified distance (r_end), and the entire potential is shifted so that the energy is exactly zero at that point, which removes the jump in potential energy at the cut-off [1]. The LJTS potential is computationally cheaper and often sufficient for capturing essential physical features like phase equilibria [1]. Use the "full" potential with long-range corrections for high-accuracy studies of equilibrium homogeneous fluids; choose LJTS for more complex systems where computational speed is a priority [1].
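As a small illustration of the truncation and shift, here is a sketch in reduced units. The function names and the default r_end = 2.5σ are assumptions made for this example.

```python
def lj(r, epsilon=1.0, sigma=1.0):
    """Full 12-6 Lennard-Jones potential."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)


def ljts(r, epsilon=1.0, sigma=1.0, r_end=None):
    """Lennard-Jones truncated and shifted at r_end (default 2.5 sigma)."""
    if r_end is None:
        r_end = 2.5 * sigma
    if r > r_end:
        return 0.0  # no interaction beyond the cut-off
    # Subtract the value at the cut-off so the energy is continuous there.
    return lj(r, epsilon, sigma) - lj(r_end, epsilon, sigma)


for r in (1.0, 2 ** (1 / 6), 2.0, 2.5, 3.0):
    print(f"r = {r:.4f}  LJ = {lj(r):+.6f}  LJTS = {ljts(r):+.6f}")
```

Because the shift raises the whole curve by a constant, the LJTS well is slightly shallower than the full LJ well. This is one reason parameters fitted with one form should not be reused unchanged with the other.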

Q3: My simulations are not reproducing experimental condensed-phase properties (e.g., density). Could the issue be with my Lennard-Jones parameters?

Yes, this is a common challenge. The LJ parameters (σ and ε) are typically optimized for specific atom types and a given force field [3]. If you are modeling a novel molecule or an atom in a unique chemical environment, the transferable parameters from a general force field may be inadequate [4] [3]. To address this, you should optimize the LJ parameters using a protocol that targets both quantum mechanical (QM) data (like interaction energies with noble gases) and key experimental condensed-phase data (such as density and heat of vaporization) [3]. This ensures the parameters are balanced and accurate for your specific system [4].

Q4: What are reduced units in the context of Lennard-Jones simulations, and why are they used?

Reduced units are a dimensionless system defined by the LJ parameters themselves [1]. Key reduced properties include:

| Property | Reduced Form |
|---|---|
| Length | r* = r / σ |
| Temperature | T* = k_B T / ε |
| Energy | U* = U / ε |
| Density | ρ* = ρ σ³ |
| Pressure | p* = p σ³ / ε |

This system simplifies equations, improves numerical stability by keeping values close to unity, and makes results scalable and independent of the specific σ and ε values used [1].
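The conversions in the table can be sketched as follows. The argon-like values of σ and ε/k_B and the state point are assumptions chosen only for illustration; they are not taken from the cited references.

```python
K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)

# Illustrative argon-like parameters; assumed for this example only.
SIGMA_NM = 0.3405     # sigma in nm
EPS_OVER_KB = 119.8   # epsilon / k_B in K


def to_reduced(temperature_k, number_density_nm3, pressure_pa,
               sigma_nm=SIGMA_NM, eps_over_kb=EPS_OVER_KB):
    """Convert T (K), number density (1/nm^3) and p (Pa) to reduced units."""
    epsilon_j = eps_over_kb * K_B   # well depth in joules
    sigma_m = sigma_nm * 1.0e-9     # sigma in metres
    return {
        "T*": temperature_k / eps_over_kb,             # k_B T / epsilon
        "rho*": number_density_nm3 * sigma_nm ** 3,    # rho sigma^3
        "p*": pressure_pa * sigma_m ** 3 / epsilon_j,  # p sigma^3 / epsilon
    }


# Hypothetical liquid-like state point.
state = to_reduced(temperature_k=119.8, number_density_nm3=21.0, pressure_pa=1.0e5)
print(state)
```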

Troubleshooting Guides

Issue 1: Poor Reproduction of Bulk Thermodynamic Properties

Problem: Your molecular dynamics simulations of a liquid (e.g., n-octane) yield inaccurate values for properties like density, heat of vaporization, or free energy of solvation.

Diagnosis and Solutions:

- Check Parameter Transferability: The Lennard-Jones parameters for your molecule's atom types might not be directly transferable from a general force field for your specific application [4] [3].

- Solution: Re-optimize the LJ parameters using a multi-scale approach.

- Gather Target Data: Use both QM data (interaction potential energy scans with noble gases like He or Ne) and experimental condensed-phase data (liquid density and heat of vaporization) [3].

- Employ Optimization Workflows: Utilize tools like FFParam-v2 or GrOW, which are designed to automate the parameter optimization process by minimizing the difference between simulation results and your target data [4] [3].

- Validate Extensively: After optimization, test the new parameters against other properties not included in the training set (e.g., surface tension, viscosity) and across a range of temperatures to ensure their transferability and robustness [4].
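The quantity that such workflows minimize can be illustrated with a generic weighted loss over relative deviations. This is a sketch of the general idea only, not the loss function implemented in FFParam-v2 or GrOW; the property names and numbers are placeholders.

```python
def weighted_relative_loss(simulated, target, weights=None):
    """Sum of weighted squared relative deviations between simulation and target."""
    weights = weights or {}
    loss = 0.0
    for name, reference in target.items():
        w = weights.get(name, 1.0)  # default weight of 1 for unlisted properties
        loss += w * ((simulated[name] - reference) / reference) ** 2
    return loss


# Placeholder numbers, not experimental data.
target = {"density": 1.00, "heat_of_vaporization": 40.0}
trial = {"density": 1.02, "heat_of_vaporization": 38.0}
print(weighted_relative_loss(trial, target, weights={"density": 2.0}))
```

Using relative deviations keeps properties with different units and magnitudes on a comparable footing; the weights then express how much each property should influence the fit.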

Table 1: Key Materials and Tools for LJ Parameter Optimization

| Name/Item | Function/Brief Explanation |
|---|---|
| FFParam-v2 | A software tool for optimizing CHARMM force field parameters, including LJ terms, using QM and condensed-phase target data [3]. |
| GrOW | A gradient-based optimization workflow for the automated refinement of force-field parameters using diverse target observables [4]. |
| Noble Gases (He, Ne) | Used in QM potential energy scans to probe van der Waals interactions without the complicating effects of electrostatic forces [3]. |
| Condensed Phase Data | Experimental properties (density, heat of vaporization) used to ensure parameters yield correct behavior in bulk systems [4] [3]. |

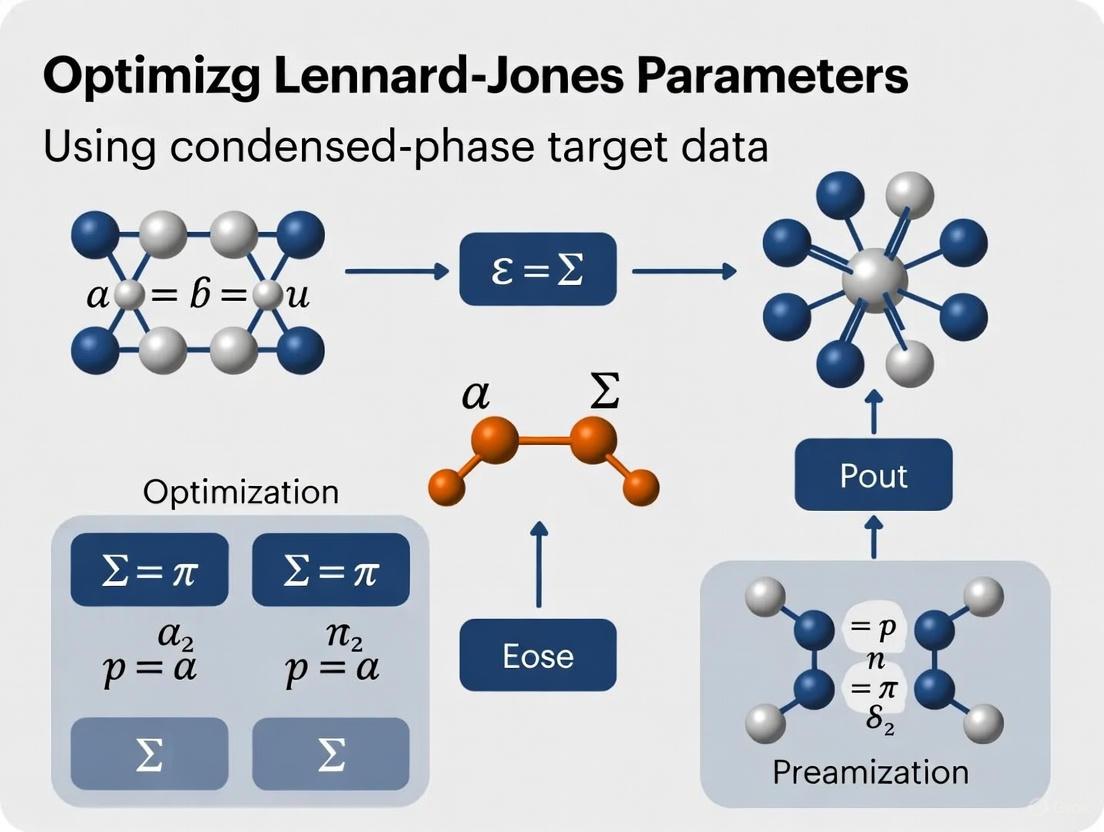

Diagram: LJ Parameter Optimization Workflow

Issue 2: Unphysical System Behavior Due to Cut-off Radius

Problem: Your simulation exhibits artifacts, such as unrealistic energy drift or forces, often related to how long-range van der Waals interactions are handled.

Diagnosis and Solutions:

- Diagnosis 1: Cut-off is too short. A short cut-off radius (e.g., below 2.5σ) can mean significant portions of the attractive part of the potential are ignored, leading to inaccurate energies and pressures [1].

- Solution: Increase the cut-off radius (r_c). A common choice for the LJTS potential is r_end = 2.5σ, but longer cut-offs (e.g., 3.5σ or more) may be necessary for better convergence to the "full" potential's properties [1].

- Diagnosis 2: Missing long-range corrections.

- Solution: For the "full" Lennard-Jones potential, always apply analytical long-range corrections (LRC) for energy and pressure to account for interactions beyond the cut-off radius. These corrections are essential for obtaining accurate thermodynamic properties [1].
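The standard analytical tail corrections for a homogeneous 12-6 LJ fluid, which assume g(r) = 1 beyond the cut-off, can be sketched as follows. The function names are illustrative, and the example uses reduced units.

```python
import math


def lj_tail_energy_per_particle(rho, r_c, epsilon=1.0, sigma=1.0):
    """Tail correction to the potential energy per particle, assuming g(r) = 1 for r > r_c."""
    sr3 = (sigma / r_c) ** 3
    return (8.0 / 3.0) * math.pi * rho * epsilon * sigma ** 3 * (sr3 ** 3 / 3.0 - sr3)


def lj_tail_pressure(rho, r_c, epsilon=1.0, sigma=1.0):
    """Tail correction to the pressure, under the same assumption."""
    sr3 = (sigma / r_c) ** 3
    return (16.0 / 3.0) * math.pi * rho ** 2 * epsilon * sigma ** 3 * (2.0 * sr3 ** 3 / 3.0 - sr3)


# Reduced density 0.8: the correction shrinks as the cut-off grows.
for r_c in (2.5, 3.5, 5.0):
    print(r_c, lj_tail_energy_per_particle(0.8, r_c), lj_tail_pressure(0.8, r_c))
```

At liquid-like densities the energy correction for a 2.5σ cut-off is several percent of a typical liquid potential energy per particle, so omitting it visibly biases fitted ε values.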

Table 2: Comparison of Common LJ Potential Forms

| Potential Form | Mathematical Form | Key Feature | Primary Use Case |
|---|---|---|---|
| Full LJ | V(r) = 4ε [(σ/r)¹² - (σ/r)⁶] | Infinite range; requires long-range corrections [1]. | High-accuracy studies of homogeneous fluids [1]. |
| LJ Truncated & Shifted (LJTS) | V(r) = { VLJ(r) - VLJ(r_end) for r ≤ r_end; 0 for r > r_end } [1] | Zero at cut-off; computationally efficient [1]. | Complex systems, exploratory studies where speed is crucial [1]. |
| Mie / n-6 LJ | V(r) = ε [ (n/(n-6)) (n/6)^{6/(n-6)} ] [ (σ/r)^n - (σ/r)⁶ ] [2] | Tunable repulsive exponent (n) for softer/harder cores [2]. | Achieving a more realistic representation for specific molecules [2]. |

Limitations of the Standard 12-6 Form and the Case for Parameter Optimization

Frequently Asked Questions

1. Why would I need to optimize Lennard-Jones (LJ) parameters if a standard set already exists for my atom types? Standard LJ parameter sets are often optimized for a specific purpose, such as molecular dynamics (MD) simulations with explicit solvent, and may not perform well in different computational frameworks or for all target properties. [5] For instance, parameters developed for MD can yield inaccurate results like distorted hydration free energies or ion-oxygen distances when used in integral equation theory methods like the Reference Interaction Site Model (RISM). [5] Optimizing parameters for your specific study ensures they are tailored to reproduce the condensed-phase properties (e.g., density, hydration free energy) most relevant to your research.

2. What are the consequences of using non-optimized LJ parameters? Using non-optimized parameters can introduce significant errors in the prediction of key physicochemical properties. This can manifest as inaccuracies in:

- Bulk liquid properties (e.g., density, surface tension, viscosity). [4]

- Thermodynamic quantities (e.g., hydration free energy, mean activity coefficients at finite concentrations). [5]

- System structure (e.g., incorrect ion-oxygen distances in solution). [5]

These inaccuracies ultimately reduce the predictive power and reliability of your simulations.

3. Can I optimize LJ parameters to work for multiple related molecules? Yes, a primary goal of parameter optimization is to achieve transferability. This means a single parameter set, optimized using data from one or a few molecules, can accurately reproduce properties for other chemically similar compounds. For example, one study optimized LJ parameters for n-octane and successfully tested them on other linear alkanes like n-hexane and n-heptane. [4] Transferability is a key benchmark for a force field's quality and broad applicability.

4. My simulations involve metal ions, and the standard LJ model performs poorly. Why? The standard 12-6 LJ model, combined with point charges, is a simplistic representation for metal ions like Zn²⁺. [6] It cannot fully capture the complex nature of metal-ligand bonding, including electronic effects and specific coordination geometry preferences. This often results in an underestimation of coordination strength and an inability to model dynamic ligand exchange accurately. [6] Advanced non-bonded models (like the 12-6-4 model that includes an r⁻⁴ term) or a full quantum mechanical/molecular mechanics (QM/MM) treatment may be necessary for these systems. [6]

Troubleshooting Guides

Problem: Inaccurate Bulk Properties in Liquid-Phase Simulations

- Symptoms: Your simulation results for properties like density, surface tension, or viscosity consistently deviate from experimental values across a range of temperatures.

- Potential Cause: The LJ parameters (σ and ε) for your model are not optimized for the condensed phase or the specific class of molecules you are studying.

- Solution:

- Define Objectives: Select key experimental target data from the condensed phase, such as liquid-phase density at a specific temperature. [4]

- Choose an Optimization Workflow: Employ an automated optimization toolkit. The table below summarizes methods mentioned in the literature:

Table: Global Optimization Methods for Parameterization

| Method | Brief Description | Key Feature |
|---|---|---|
| Gradient-based Optimization (GrOW) [4] | An automated toolbox using algorithms like steepest descent to iteratively improve parameters. | Efficient local optimization guided by gradients. |
| Surrogate-Assisted Global Optimization [7] | Combines a global evolutionary algorithm with a presampling phase and a surrogate model. | Reduces computational cost; good for initial parameter guess. |

- Validate Extensively: After optimization, test the new parameters against other properties (e.g., surface tension, viscosity) and for similar molecules not included in the training set to ensure transferability. [4]

Problem: Force Field Fails to Transfer Across Chemical Space

- Symptoms: Parameters that work well for one molecule perform poorly for a closely related molecule (e.g., a homologue).

- Potential Cause: The original parameterization was over-fitted to a narrow set of training data and lacks broader chemical relevance.

- Solution:

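- Use a Diverse Training Set: Incorporate target data from multiple scales into the optimization process, for example QM-level data such as relative conformational energies together with experimental condensed-phase properties such as liquid density. [4]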

- Multi-Scale Optimization: Utilize a workflow that can simultaneously handle both types of target data. This helps find a balanced parameter set that captures both intramolecular energetics and intermolecular packing effects. [4]

Problem: Poor Performance in Implicit Solvent Calculations

- Symptoms: When switching from explicit solvent MD simulations to implicit solvent methods like RISM, the results for ion hydration or activity coefficients become inaccurate.

- Potential Cause: The LJ parameters were optimized for explicit solvent MD and are not suitable for the approximations inherent in the implicit solvent model.

- Solution:

- Re-optimize for the Framework: Perform a new parameter optimization specifically within the target implicit solvent framework. [5]

- Refit to Key Data: Solve the 1D-RISM equations for a grid of ε and σ values, fitting the results to experimental data like ion-oxygen distance (IOD), hydration free energy (HFE), and mean activity coefficient. [5] A second optimization step may be needed to fine-tune cation-anion interactions. [5]

Problem: Modeling Systems with Divalent Cations (e.g., Zn²⁺)

- Symptoms: Unrealistic ligand binding geometries, underestimated binding affinities, or failure to reproduce known coordination numbers.

- Potential Cause: The standard 12-6 LJ potential cannot adequately describe the ion-induced dipole interactions and directionality of bonds involving metal ions. [6]

- Solution:

- Consider Advanced Non-Bonded Models: Implement a non-bonded model like the 12-6-4 potential, which includes an additional r⁻⁴ term to better describe ion-induced dipole interactions. [6]

- Adopt a Multiscale QM/MM Approach: For the highest accuracy in predicting binding modes, use a workflow that refines structures through QM/MM optimization with a density functional theory (DFT) method after docking and MD simulation. [6]
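The functional difference between the 12-6 and 12-6-4 non-bonded forms can be sketched as below. The coefficients are arbitrary placeholders rather than published ion parameters, and the electrostatic term that accompanies these potentials in a real force field is omitted.

```python
def lj_12_6(r, c12, c6):
    """12-6 non-bonded energy written with C12 and C6 coefficients."""
    return c12 / r ** 12 - c6 / r ** 6


def lj_12_6_4(r, c12, c6, c4):
    """12-6-4 form: the extra -C4/r^4 term mimics ion-induced dipole attraction."""
    return c12 / r ** 12 - c6 / r ** 6 - c4 / r ** 4


def coefficients_from_sigma_epsilon(sigma, epsilon):
    """Convert (sigma, epsilon) to (C12, C6) for the 12-6 form."""
    return 4.0 * epsilon * sigma ** 12, 4.0 * epsilon * sigma ** 6


# Placeholder values in arbitrary units.
c12, c6 = coefficients_from_sigma_epsilon(sigma=2.0, epsilon=0.1)
for r in (2.0, 2.5, 3.0, 4.0):
    print(r, lj_12_6(r, c12, c6), lj_12_6_4(r, c12, c6, c4=5.0))
```

The r⁻⁴ term decays more slowly than r⁻⁶, so it adds attraction mainly at first-shell distances and beyond, where the 12-6 form alone is too weak.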

Diagram: Multiscale Workflow for Metalloprotein Systems

The Scientist's Toolkit: Research Reagents & Solutions

Table: Essential Components for Lennard-Jones Parameter Optimization

| Item | Function in Optimization |
|---|---|
| Target Data | Experimental or high-level theoretical reference data used to fit the parameters. Examples: liquid density, hydration free energy, relative conformational energies. [4] [5] |
| Initial Parameter Guess | A starting set of LJ parameters (σ and ε), often taken from an established force field (e.g., Lipid14, OPLS). [4] |
| Optimization Workflow | Automated software to iteratively adjust parameters. Examples: GrOW (Gradient-based Optimization Workflow) or surrogate-assisted global optimization. [4] [7] |
| Molecular Dynamics (MD) Engine | Software to perform simulations using the trial parameters and compute the properties for comparison with target data. [4] |
| Closure Approximation (for RISM) | An essential component for integral equation theories. Example: Partial Series Expansion of order n (PSE-n). [5] |

FAQ: Understanding Alternative Interatomic Potentials

Q1: Why would I use an alternative to the standard Lennard-Jones potential?

While the Lennard-Jones (LJ) 12-6 potential is a cornerstone model in molecular simulations due to its computational simplicity, it may not accurately capture the repulsive forces for all substances. Alternatives can provide a more physically realistic representation of interactions, leading to more accurate simulation results. For instance, the repulsive exponent in the LJ form was historically set to 12 for mathematical convenience, but data for substances like argon suggest that values such as n ≈ 12.7 are more appropriate [8].

Q2: What is the core difference between the Buckingham and Lennard-Jones potentials?

The key difference lies in how they model the repulsive force at short interatomic distances. The Lennard-Jones potential uses a term proportional to 1/r^12, while the Buckingham potential replaces this with an exponential term, A * exp(-B * r) [9] [10]. The exponential decay is considered a more physically justified model for the repulsion arising from the interpenetration of electron shells [9] [10].

Q3: When should I avoid using the standard Buckingham potential?

The standard Buckingham potential has a significant weakness: as the distance r approaches zero, the attractive -C/r^6 term diverges to negative infinity, while the repulsive exponential term merely converges to the finite constant A. The potential therefore passes through a maximum at short range and then plunges, which can lead to an unphysical "collapse" at very short distances [9]. If your simulation involves high-energy collisions or systems where atoms can get extremely close, this potential is risky. The "exp-six" or other modified versions should be considered instead [9].
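The short-range behavior can be made concrete with a small scan. This is a sketch with arbitrary illustrative coefficients A, B, and C (chosen to give a well depth near 1 at r ≈ 1), not parameters for any real system.

```python
import math


def buckingham(r, a, b, c):
    """Buckingham potential: exponential repulsion minus r^-6 dispersion."""
    return a * math.exp(-b * r) - c / r ** 6


def spurious_maximum(a, b, c, r_lo=0.05, r_hi=1.0, n_points=2000):
    """Locate the short-range maximum by a simple grid scan over [r_lo, r_hi]."""
    best_r, best_v = r_lo, buckingham(r_lo, a, b, c)
    for i in range(1, n_points + 1):
        r = r_lo + (r_hi - r_lo) * i / n_points
        v = buckingham(r, a, b, c)
        if v > best_v:
            best_r, best_v = r, v
    return best_r, best_v


A, B, C = 9.0e5, 14.0, 1.75  # arbitrary illustrative values
r_max, v_max = spurious_maximum(A, B, C)
print("barrier at r =", r_max, "with height", v_max)
print("energy at r = 0.01:", buckingham(0.01, A, B, C))
```

Any pair that crosses the barrier at r_max is pulled toward r = 0 with unbounded energy gain. Monitoring the minimum interatomic distance against r_max is a cheap way to detect this during a run.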

Q4: What are SAAPx and continued fraction potentials, and when are they used?

The SAAPx (Deiters and Sadus) potential is a general functional form that requires fitting multiple coefficients (six for noble gases like Xe, Kr, Ar, and Ne, and seven for helium) to high-quality ab initio data [8]. Continued fraction approximations represent a new, data-driven method that expresses the unknown potential as a compact analytic continued fraction with integer coefficients, offering a computationally efficient way to closely approximate ab initio data for noble gases [8] [11].

Q5: How do I decide which potential is best for my system?

The choice depends on a balance of physical accuracy, computational cost, and numerical stability. For general purposes where the LJ form is acceptable, it remains a good choice. For more accurate repulsive forces, the Buckingham or Mie potentials are options, but beware of the Buckingham collapse. For the highest accuracy, especially when working with noble gases, the newer SAAPx or continued fraction methods are promising but may require more effort to parameterize [8].

Troubleshooting Guides

Problem: Unphysical energy minimization or simulation crash at short atomic distances.

- Potential Cause: You are likely using the standard Buckingham potential, which becomes infinitely attractive as r → 0 [9].

- Solution:

- Switch to a modified potential: Implement the "Modified Buckingham (Exp-Six)" potential, which is reparameterized to avoid this issue [9].

- Use a different repulsive form: Consider using the Mie potential (the generalized form of LJ) or the Lennard-Jones Truncated & Shifted (LJTS) potential, which do not suffer from this collapse [1] [8].

- Implement a hard cutoff: As a last resort, implement a short-range cutoff to prevent atoms from entering the unstable region, though this is not physically rigorous.

Problem: Inaccurate representation of fluid behavior or material properties despite fitting parameters.

- Potential Cause: The functional form of your chosen potential (e.g., the fixed exponents in the standard LJ potential) may be too rigid to capture the true interaction physics of your specific atoms or molecules [8].

- Solution:

- Use a more flexible potential: Refit your data using the generalized Mie potential, which allows both the repulsive and attractive exponents to vary [8].

- Explore data-driven forms: If high-quality ab initio data is available, consider using the SAAPx functional form or the new continued fraction approximation method to "learn" the potential directly from the data [8] [11].

- Re-evaluate your target data: Ensure that the experimental or ab initio data you are using for parameterization is appropriate for the condensed-phase conditions you are studying [12].

Problem: Long-range interaction corrections are causing significant errors in property calculation.

- Potential Cause: The Lennard-Jones potential has an infinite range. When a finite cut-off radius is used in simulations, the contributions from interactions beyond this cut-off must be accounted for with long-range corrections (LRC). An LRC scheme that is not converged or is applied incorrectly will introduce errors [1].

- Solution:

- Increase your cut-off radius: Test if your computed properties (e.g., energy, pressure) converge with a longer cut-off.

- Apply standard LRC formulas: Use established analytical expressions for correcting energy, pressure, and other properties for homogeneous systems. Ensure the assumptions behind the LRC (e.g., radial distribution function g(r) = 1 for r > cut-off) are valid for your system [1].

- Consider a truncated potential: For computational simplicity and to avoid LRC complexities, use the LJTS potential, which is shifted to zero at a specified cut-off distance, such as r_end = 2.5σ [1].

Comparison of Key Interatomic Potentials

The table below summarizes the mathematical forms and key characteristics of the discussed potentials to aid in selection.

| Potential Name | Mathematical Form | Key Parameters | Strengths | Weaknesses |
|---|---|---|---|---|
| Lennard-Jones (12-6) [1] | V(r) = 4ε [(σ/r)¹² - (σ/r)⁶] | σ, ε | Computationally efficient; widely used and understood. | Repulsive exponent (12) may not be physically accurate for all systems [8]. |
| Mie Potential [8] | V(r) = [n/(n-m)] (n/m)^(m/(n-m)) ε [(σ/r)ⁿ - (σ/r)ᵐ] | σ, ε, n, m | More flexible than LJ; exponents can be fitted to data. | More computationally intensive than LJ; requires fitting two exponents. |
| Buckingham [9] | V(r) = A exp(-B r) - C / r⁶ | A, B, C | More physically realistic repulsive term. | Prone to unphysical "collapse" at very short distances (r→0) [9]. |
| Modified Buckingham (Exp-Six) [9] | V(r) = ε/(1-6/α) * [ (6/α) exp(α(1-r/r_min)) - (r_min/r)⁶ ] | ε, α, r_min | Avoids the collapse of the standard Buckingham potential. | More complex parameterization. |
| SAAPx [8] | General functional form (multiple terms) | 6-7 coefficients | High accuracy for noble gases by fitting to ab initio data. | Complex form; requires fitting many parameters. |
| Continued Fraction [8] [11] | Represented as an analytic continued fraction, e.g., depending on n^{-r}. | Integer coefficients, base n | Compact, computationally efficient, data-driven approximation. | New method; applicability beyond noble gases under exploration. |

Experimental Protocol: Parameterizing a New Potential Using Ab Initio Data

This protocol outlines the steps for deriving a new interatomic potential, such as a continued fraction approximation, from high-quality ab initio data, as described in recent literature [8].

1. Data Acquisition and Preparation:

- Source: Obtain reference data for the two-body interatomic potential from reliable ab initio quantum chemistry calculations. Public datasets for noble gas dimers (Xe, Kr, Ar, Ne, He) are available [8] [11].

- Format: The data should consist of a set of points (r_i, V(r_i)), where r_i is the interatomic distance and V(r_i) is the interaction energy.

2. Selection of a Functional Form:

- Choose the mathematical structure you wish to fit. For a continued fraction approximation, the potential is represented as a truncated continued fraction, often with integer coefficients and a dependence on a variable like n^{-r} [8].

- The "depth" of the fraction (e.g., depth=1 or depth=2) determines the number of convergents and the complexity of the fit.

3. Non-Linear Optimization:

- Task: Find the optimal parameters (e.g., integer coefficients and base n) that minimize the difference between the model's predictions and the ab initio data.

- Method: This is a challenging non-linear optimization problem. Employ global optimization strategies such as Memetic Algorithms or other symbolic regression techniques to effectively search the parameter space and avoid local minima [8].

4. Validation and Testing:

- Internal Validation: Assess the quality of the fit by calculating residuals (e.g., Root-Mean-Square Error) against the training ab initio data.

- External Validation: Test the newly parameterized potential in molecular dynamics simulations and compare the predicted macroscopic properties (e.g., equation of state, phase behavior) against experimental data to ensure transferability.
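The fit-then-validate loop of this protocol can be illustrated with a much simpler functional form than a continued fraction. The sketch below swaps in a 12-6 LJ form written as V = C12/r¹² - C6/r⁶, which is linear in C12 and C6 and can therefore be fitted by ordinary least squares without a global optimizer. The data are synthetic points generated from a known LJ curve, not real ab initio results.

```python
def fit_lj_linear(r_values, v_values):
    """Least-squares fit of V = C12/r^12 - C6/r^6; returns (epsilon, sigma)."""
    saa = sab = sbb = sav = sbv = 0.0
    for r, v in zip(r_values, v_values):
        a, b = r ** -12, r ** -6
        saa += a * a
        sab += a * b
        sbb += b * b
        sav += a * v
        sbv += b * v
    # Solve the 2x2 normal equations for V ~ p*a + q*b by Cramer's rule.
    det = saa * sbb - sab * sab
    p = (sav * sbb - sab * sbv) / det
    q = (saa * sbv - sab * sav) / det
    c12, c6 = p, -q
    sigma = (c12 / c6) ** (1.0 / 6.0)
    epsilon = c6 ** 2 / (4.0 * c12)
    return epsilon, sigma


def rmse(r_values, v_values, epsilon, sigma):
    """Root-mean-square error of the fitted LJ curve against the data."""
    total = 0.0
    for r, v in zip(r_values, v_values):
        model = 4.0 * epsilon * ((sigma / r) ** 12 - (sigma / r) ** 6)
        total += (model - v) ** 2
    return (total / len(r_values)) ** 0.5


# Synthetic "reference" data from a known LJ curve (epsilon = 1, sigma = 1).
r_data = [0.95 + 0.05 * i for i in range(32)]
v_data = [4.0 * ((1.0 / r) ** 12 - (1.0 / r) ** 6) for r in r_data]
eps_fit, sigma_fit = fit_lj_linear(r_data, v_data)
print(eps_fit, sigma_fit, rmse(r_data, v_data, eps_fit, sigma_fit))
```

For forms that are nonlinear in their parameters, such as the Mie exponents or continued fraction coefficients, this closed-form step would be replaced by the global search described in step 3.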

The Scientist's Toolkit: Research Reagent Solutions

This table lists key computational "reagents" – the potential functions and methods – essential for research in this field.

| Item | Function / Purpose |
|---|---|
| Lennard-Jones (12-6) Potential | A foundational, computationally efficient model for simulating simple repulsive and attractive interactions in fluids and soft matter [1]. |
| Mie Potential | A generalized form used for developing more accurate potentials by calibrating both repulsive and attractive exponents to data [8]. |
| Buckingham Potential | Provides a more physically realistic model for repulsive forces due to electron shell overlap, used in molecular dynamics simulations [9] [10]. |
| Lennard-Jones Truncated & Shifted (LJTS) | A numerically stable alternative to the "full" LJ potential, commonly used in molecular simulations with a finite cut-off to avoid long-range corrections [1]. |
| Continued Fraction Approximation | A new, data-driven method for deriving compact and computationally efficient analytical potentials that closely match ab initio data [8] [11]. |
| Symbolic Regression Software | Software tools (e.g., TuringBot) used to "learn" the functional form of a potential directly from data, aiding in the discovery of new models like the continued fraction form [8]. |
| Ab Initio Reference Data | High-quality quantum mechanical calculation results used as the ground truth for parameterizing and validating classical interatomic potentials [8]. |

Workflow for Potential Optimization

The following diagram illustrates the logical workflow for selecting and optimizing an interatomic potential within a research project focused on using condensed-phase target data.

Why Condensed-Phase Target Data is Critical for Predictive Force Fields

Frequently Asked Questions

FAQ 1: Why can't I just use gas-phase quantum mechanical data to optimize my Lennard-Jones parameters? While gas-phase QM data is excellent for initial parameter estimates, it fails to capture the many-body effects and complex molecular environments present in liquids and solids. Force fields trained solely on gas-phase data often show systematic errors in predicting condensed-phase properties like density and enthalpy of vaporization because they miss the critical balance of intermolecular interactions that occur in dense environments [13] [14]. Using condensed-phase target data ensures your parameters are optimized for the actual conditions where they'll be applied.

FAQ 2: My simulated densities match experiment, but enthalpies of vaporization are consistently off. What's wrong? This is a common issue indicating a problem with your Lennard-Jones parameter balance. The density primarily constrains the distance parameter (Rmin or σ), while the enthalpy of vaporization constrains the well depth (ε). If you have good densities but poor enthalpies, your well depths likely need adjustment. This discrepancy can also arise from inadequate treatment of polarization differences between gas and liquid phases in fixed-charge force fields [14]. Consider using a polarizable force field or re-optimizing your ε parameters against condensed-phase enthalpic data.

FAQ 3: How do I know if my optimized parameters will transfer to new molecules? Robust parameter transferability requires validation against molecules not included in your training set. The standard protocol involves:

- Optimizing parameters using a diverse training set of 5-10 molecules per functional group

- Validating on a separate set of 3-5 molecules with the same functional groups

- Testing performance on thermodynamic properties like hydration free energies and mixture properties [13]

Parameters that perform well on both training and validation sets demonstrate good transferability. Including binary mixture data in training significantly improves transferability for solute-solvent interactions [14].

FAQ 4: What properties should I target for the most robust Lennard-Jones parameters? For comprehensive parameter optimization, target multiple properties simultaneously:

Table 1: Key Target Properties for Lennard-Jones Parameter Optimization

| Property Category | Specific Properties | Parameters Most Constrained |
|---|---|---|
| Energetics | Enthalpy of vaporization (ΔHvap), Enthalpy of mixing (ΔHmix) | Well depth (ε) |
| Volumetric | Density (ρ), Molecular volume (Vm) | Distance at minimum (Rmin) |
| Solvation | Hydration free energy (HFE) | Both ε and Rmin |
| Structural | Ion-Oxygen distance (IOD), Radial distribution functions | Rmin |

Combining pure liquid properties (density, ΔHvap) with mixture properties (ΔHmix) and solvation properties (HFE) provides the most robust parameter constraints [13] [14] [5].

Troubleshooting Guides

Problem: Poor Reproduction of Experimental Activity Coefficients or Mixing Enthalpies

Issue: Your force field accurately reproduces pure liquid properties but fails for binary mixtures.

Solution: Incorporate mixture data directly into your parameter optimization workflow.

Table 2: Troubleshooting Mixture Property Reproduction

| Symptom | Possible Cause | Solution |
|---|---|---|
| Systematic error in ΔHmix for specific functional group pairs | Inadequate A-B interaction parameters | Retrain LJ parameters for concerned atom types using ΔHmix data [14] |
| Poor activity coefficients across multiple mixtures | Limited training to pure properties only | Expand training set to include multiple binary mixtures with varying composition [14] |
| Good pure properties but poor solvation free energies | Missing solute-solvent interaction balance | Include hydration free energies in optimization targets [13] |

Experimental Protocol: When optimizing with mixture data:

- Select 3-5 binary mixtures containing the functional groups of interest

- Obtain experimental density and enthalpy of mixing data at multiple compositions

- Calculate properties using molecular dynamics simulations:

- For ΔHmix: Simulate mixture and pure components separately

- Apply formula: ΔHmix(x₁,x₂) = Hmix - x₁H₁ - x₂H₂

- Include these properties in your objective function during parameter optimization [14]
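The mixing-enthalpy formula above translates directly into code. This is a minimal sketch assuming all three enthalpies are molar averages from separate simulations at the same temperature and pressure; the numbers are placeholders, not simulation output.

```python
def enthalpy_of_mixing(h_mix, h_pure_1, h_pure_2, x1):
    """Delta H_mix = H_mix - x1*H_1 - x2*H_2, all as molar enthalpies."""
    if not 0.0 <= x1 <= 1.0:
        raise ValueError("mole fraction x1 must lie in [0, 1]")
    x2 = 1.0 - x1
    return h_mix - x1 * h_pure_1 - x2 * h_pure_2


# Placeholder molar enthalpies in kJ/mol for an equimolar mixture.
dh = enthalpy_of_mixing(h_mix=-34.5, h_pure_1=-40.0, h_pure_2=-30.0, x1=0.5)
print(dh)
```

ΔHmix is a small difference between large numbers, so the statistical uncertainty of each simulated enthalpy should be well below the target ΔHmix before the value enters the objective function.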

Problem: Parameter Correlation During Optimization

Issue: Multiple parameter combinations give similar quality for your target properties, making it difficult to identify the optimal set.

Solution: Implement a multi-stage optimization approach with enhanced sampling.

Experimental Protocol (Deep Learning-Optimized Workflow):

- Initial Sampling: Use Latin Hypercube Design to broadly sample LJ parameter space for multiple atom types simultaneously [13]

- Molecular Simulations: Run MD simulations for each parameter set to calculate condensed-phase properties (Vm, ΔHvap, ΔHsub)

- Deep Learning Training: Train neural networks to predict properties from parameters using the simulation data

- Parameter Screening: Use trained models to screen 10⁷+ parameter combinations, selecting those minimizing error against experimental data

- QM Validation: Validate top candidates using QM rare-gas interaction energies and geometries [13]
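The initial sampling step can be sketched with a small standard-library Latin hypercube sampler. The parameter bounds below are hypothetical ranges for one well depth and one Rmin value, not recommendations from the cited work.

```python
import random


def latin_hypercube(n_samples, bounds, seed=0):
    """Draw n_samples points; each dimension is split into n_samples strata, one point per stratum."""
    rng = random.Random(seed)
    columns = []
    for lo, hi in bounds:
        # One uniformly placed point inside each equal-width stratum.
        points = [lo + (hi - lo) * (i + rng.random()) / n_samples
                  for i in range(n_samples)]
        rng.shuffle(points)  # decouple the strata across dimensions
        columns.append(points)
    return [tuple(col[i] for col in columns) for i in range(n_samples)]


# Hypothetical bounds: (epsilon range, Rmin range) in arbitrary units.
samples = latin_hypercube(10, [(0.0, 1.0), (1.5, 2.5)])
for eps, rmin in samples:
    print(f"epsilon = {eps:.3f}, Rmin = {rmin:.3f}")
```

Each sampled tuple would then define one MD run in the next step. Compared with a regular grid, the stratification covers every parameter's full range with far fewer simulations.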

Deep Learning Optimization Workflow

Experimental Protocols

Protocol 1: Standard Condensed-Phase Property Calculation

This protocol details how to calculate key target properties from MD simulations for force field optimization.

Target Properties:

- Density (ρ) and Molecular Volume (Vm)

- Enthalpy of Vaporization (ΔHvap)

- Enthalpy of Sublimation (ΔHsub)

- Dielectric Constant (ε_r; distinct from the LJ well depth ε)

Procedure:

- System Setup:

- Build simulation boxes with 150-500 molecules depending on system size

- Apply standard energy minimization and equilibration protocols

Production Simulation:

Property Calculation:

- Density: Average from NPT simulation trajectory

- ΔHvap: Calculate using: ΔHvap = ⟨Ugas⟩ - ⟨Uliquid⟩ + PΔV

- ⟨Ugas⟩: Energy from gas-phase simulation of single molecule

- ⟨Uliquid⟩: Energy per molecule from liquid simulation

- PΔV: Work term, approximately RT when the vapor is treated as an ideal gas and the liquid molar volume is neglected [14]

- Dielectric Constant: Calculate from fluctuation theory of polarization
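As a minimal sketch of the ΔHvap expression in the procedure above, the function below assumes the PΔV term is replaced by RT and that the liquid energy is supplied as a box total. The function name and the example energies are hypothetical.

```python
R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)


def enthalpy_of_vaporization(u_gas, u_liquid_total, n_molecules, temperature):
    """dHvap = <U_gas> - <U_liquid> + RT, with energies in kcal/mol.

    u_gas          : average potential energy of one isolated molecule
    u_liquid_total : average potential energy of the whole liquid box
    n_molecules    : number of molecules in the liquid box
    temperature    : temperature in K (the P*dV term is approximated by RT)
    """
    u_liquid = u_liquid_total / n_molecules  # energy per molecule in the liquid
    return u_gas - u_liquid + R_KCAL * temperature


# Hypothetical averages from a gas-phase run and a 216-molecule liquid run.
dh_vap = enthalpy_of_vaporization(
    u_gas=2.0, u_liquid_total=-1800.0, n_molecules=216, temperature=298.15
)
print(f"dHvap = {dh_vap:.2f} kcal/mol")
```

Both averages should come from simulations run with the same force field, cutoff treatment, and temperature so that the difference is meaningful.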

Protocol 2: Multi-Stage Optimization with QM Validation

For highest accuracy, combine condensed-phase data with QM validation.

Stage 1: Initial Optimization

- Target experimental condensed-phase properties of training set molecules

- Use deep learning or gradient-based optimization methods [13] [16]

- Include multiple molecules per functional group (5-10 recommended)

Stage 2: QM Validation

- Calculate interaction energies between training molecules and rare-gas probes

- Compare QM (CCSD(T)/CBS) with force field results

- Ensure accurate reproduction of interaction energies and geometries [13]

Stage 3: Transferability Testing

- Validate on molecules not included in training set (3-5 minimum)

- Test reproduction of hydration free energies and mixture properties

- Confirm dielectric constants match experimental values [13]

The Scientist's Toolkit

Table 3: Essential Research Tools and Resources

| Tool/Resource | Function | Application in LJ Optimization |

|---|---|---|

| CHARMM/NAMD | Molecular dynamics simulation | Calculating condensed-phase properties from parameter sets [13] |

| Psi4 | Quantum chemical calculations | Generating QM target data for validation [13] |

| Deep Learning Frameworks (TensorFlow, PyTorch) | Neural network training | Building property prediction models for parameter screening [13] [17] |

| Latin Hypercube Sampling | Experimental design | Efficiently exploring multi-dimensional parameter space [13] |

| ThermoML Archive | Experimental data repository | Accessing curated thermodynamic properties for training [14] |

| Reference Interaction Site Model (RISM) | Integral equation theory | Calculating solvation properties without extensive sampling [5] |

Performance Metrics and Validation

Key Metrics for Force Field Assessment:

Table 4: Quantitative Performance Metrics from Recent Studies

| Force Field | Density Error (%) | ΔHvap Error (kcal/mol) | HFE Error (kcal/mol) | Reference |

|---|---|---|---|---|

| DL-Optimized Drude FF | ~1-2% | ~1-2% | ~1-2% | [13] |

| GAFF2 | 2.45% | 2.43 | Not reported | [16] |

| pGM (Optimized) | 1.69% | 1.39 | Not reported | [16] |

| RISM-Optimized Ions | Improved vs. previous | Improved HFE | Improved activity coefficients | [5] |

Best Practices for Validation:

- Always include both training and validation set performance

- Report multiple property types (energetic, volumetric, structural)

- Compare against experimental uncertainty when available

- Test across temperature and concentration ranges for transferability

- Validate against both pure and mixture properties [13] [14]

Advanced Workflows for Parameter Optimization: From Automation to Machine Learning

Frequently Asked Questions (FAQs)

1. What is the GrOW framework, and what is it used for? The Gradient-based Optimization Workflow (GrOW) is an automated optimization toolbox written in Python designed for force field (FF) parameterization [4]. It is used to optimize parameters, such as Lennard-Jones (LJ) parameters, by coupling molecular dynamics (MD) simulations with optimization algorithms to improve the reproduction of target data, which can include both quantum mechanical (QM) data and experimental observables [4].

2. Why would I use condensed-phase target data for optimizing Lennard-Jones parameters? Using condensed-phase (or macroscale) target data, like liquid-phase density, helps ensure that the optimized force field parameters are meaningful for simulating real-world conditions in liquids and solids [4]. This approach, especially when combined with gas-phase (nanoscale) data, can improve the overall accuracy and transferability of the force field across different properties and temperatures [4].

3. A common error is "Poor Reproduction of Ensemble Properties" (e.g., density). What should I check? This often indicates an issue with the non-bonded parameters or an imbalance in the optimization objectives. We recommend the following steps:

- Verify Parameter Ranges: Ensure the allowed ranges for eps (ε, well depth) and rmin (distance at minimum potential) in your parameter interface are physically reasonable and sufficiently broad for the optimization algorithm to explore. Initial values should be within these ranges [18].

- Check Training Data Quality: Confirm that your reference data (e.g., from dispersion-corrected DFTB calculations) is accurate and that the molecular dynamics simulations used to generate it are properly converged [18].

- Re-evaluate Objective Balance: The force field may be over-fitting to one type of observable. Consider adjusting the weighting of different targets in your objective function, for instance, balancing the importance of liquid density against conformational energies [4].
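One way to picture the balance between targets is a weighted sum of squared relative errors. The sketch below is illustrative only: the property names, weights, and values are hypothetical, and it is not the loss function implemented in GrOW.

```python
def weighted_loss(predicted, reference, weights):
    """Weighted sum of squared relative errors over all target properties."""
    total = 0.0
    for name, ref in reference.items():
        rel_err = (predicted[name] - ref) / ref
        total += weights[name] * rel_err ** 2
    return total


# Hypothetical values: liquid density (g/cm^3) and a conformational energy (kcal/mol).
reference = {"density": 0.700, "conf_energy": 0.600}
predicted = {"density": 0.710, "conf_energy": 0.630}

equal = weighted_loss(predicted, reference, {"density": 1.0, "conf_energy": 1.0})
density_heavy = weighted_loss(predicted, reference, {"density": 10.0, "conf_energy": 1.0})
print(equal, density_heavy)
```

Using relative rather than absolute errors keeps properties with different units on a comparable scale, so the weights express priority rather than compensating for magnitude.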

4. My optimization is not converging. What could be wrong?

- Initial Parameters: The optimization is highly sensitive to the starting values of the parameters. If the initial eps and rmin are too far from the optimal region, the algorithm may struggle to converge. Use values from a well-established force field as a starting point if possible [4].

- Algorithm Settings: Review the settings for the steepest descent algorithm or other optimizers within GrOW. The step size may be too large or too small [4].

- Objective Function: The loss function might be too complex or contain conflicting targets. Try simplifying the objective function to a single, well-defined property (like density) to see if the optimization converges, then gradually add more objectives [4].

5. How can I assess the transferability of my newly optimized parameters? To test transferability, use the optimized parameter set to simulate properties and systems that were not included in the training set. For example, if you optimized parameters on n-octane at 293.15 K, you should [4]:

- Test on Similar Molecules: Simulate properties like surface tension and viscosity for other linear alkanes (e.g., n-hexane, n-heptane).

- Test at Different Temperatures: Run simulations at temperatures not used during optimization (e.g., 315.15 K and 338.15 K).

- Compare to Reference Data: Evaluate how closely the simulation results match additional experimental or high-level computational data.

Troubleshooting Guide: Common Experimental Issues

| Problem Area | Specific Issue | Symptom | Likely Cause | Solution |

|---|---|---|---|---|

| Workflow Setup | Incorrect Parameter Interface | Jobs fail to start; parameters are not varied. | Parameter definitions (names, ranges, active status) in the parameter_interface.yaml file are misconfigured [18]. | Validate the YAML file structure. Ensure parameters like eps and rmin are marked as is_active: true and have sensible range values [18]. |

| Workflow Setup | Reference Data Mismatch | Large, consistent errors in energy/force comparisons. | The reference data (e.g., from DFTB) and the force field engine are modeling the system at different levels of theory or with inconsistent settings [18]. | Double-check that the methods and conditions used to generate your training set are consistent with how the force field is applied in the optimization loop. |

| Convergence & Accuracy | Overfitting to Training Set | Excellent reproduction of training data (e.g., n-octane density) but poor performance on test molecules or other properties (e.g., n-nonane viscosity) [4]. | The parameter set is too specialized to the limited data in the training set and lacks generalizability. | Expand the training set to include a more diverse set of observables, even from a limited number of thermodynamic state points [4]. |

| Convergence & Accuracy | Conflicting Objectives | Optimization stalls; improving one observable (e.g., conformational energy) drastically worsens another (e.g., liquid density) [4]. | The nanoscale and macroscale target data impose conflicting physical requirements on the parameters. | Implement a multi-objective optimization strategy or adjust the relative weights of the targets in the loss function to find a better compromise [4]. |

Research Reagent Solutions: Essential Components for LJ Parameter Optimization

The following table details the key "reagents" or components needed to set up an automated optimization experiment for Lennard-Jones parameters using a framework like GrOW.

| Item / Component | Function / Purpose in the Experiment |

|---|---|

| Initial Parameter Set | Provides the starting values for eps and rmin. Often taken from an existing force field (e.g., Lipid14) [4]. |

| Parameter Interface File (e.g., parameter_interface.yaml) | Defines the parameters to be optimized, their initial values, allowed ranges, and active/inactive status [18]. |

| Training Set (e.g., training_set.yaml) | Contains the reference data the model is trained against. This can include energies and forces from quantum calculations or experimental data like density [4] [18]. |

| Job Collection (e.g., job_collection.yaml) | A list of computational jobs (e.g., single-point energy and gradient calculations for specific molecular configurations) that define the systems used for optimization [18]. |

| Molecular Dynamics (MD) Engine | The software that performs simulations using the current force field parameters to generate predicted observables for comparison with the training set [4]. |

| Optimization Algorithm (e.g., Steepest Descent) | The core mathematical process that adjusts parameter values to minimize the difference between simulated results and reference data [4]. |

Detailed Experimental Protocol: A Proof-of-Concept Workflow

This protocol outlines the methodology for a proof-of-concept optimization of Lennard-Jones parameters, as described in relevant literature [4].

Objective: To optimize the Lennard-Jones parameters for n-octane using a combination of single-molecule conformational energies (nanoscale property) and liquid-phase density at 293.15 K (macroscale property) as optimization targets.

Methodology:

- System Selection: Select a series of flexible linear alkanes (n-hexane, n-heptane, n-octane, n-nonane) for testing transferability [4].

- Initialization:

- Initial Parameters: Choose a set of initial Lennard-Jones parameters for carbon and hydrogen atoms. The cited study used parameters from the Lipid14 force field as a starting point [4].

- Parameter Interface: Define these parameters in a parameter_interface.yaml file, specifying their initial values and the allowed range for optimization (e.g., eps between 1e-5 and 0.01) [18].

- Reference Data Generation:

- Nanoscale Data: Calculate the relative conformational energies for an isolated n-octane molecule using a high-level quantum mechanical (QM) method.

- Macroscale Data: Obtain the experimental liquid-phase density for n-octane at 293.15 K.

- Automated Optimization Loop (GrOW):

- The GrOW workflow automatically executes the following steps iteratively [4]:

- Simulation: Run molecular dynamics simulations of the target system (liquid n-octane) using the current LJ parameters.

- Property Calculation: From the simulation, extract the predicted properties (conformational energies and density).

- Loss Calculation: Calculate a loss function that quantifies the difference between the predicted properties and the reference data.

- Parameter Update: Using a gradient-based optimization algorithm (e.g., steepest descent), calculate a new, improved set of eps and rmin parameters.

- Convergence Check: The loop continues until the loss function is minimized to a satisfactory level or other convergence criteria are met.

- Validation and Transferability Testing:

- Use the final optimized parameters to simulate additional properties (e.g., surface tension, viscosity) for n-octane and other linear alkanes (n-hexane, n-heptane) at various temperatures (293.15 K, 315.15 K, 338.15 K) that were not part of the training set [4].

- Compare these results to experimental data to assess the transferability and overall accuracy of the optimized force field.
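The iterative loop in the methodology above can be sketched as a plain steepest-descent routine. This is a toy illustration, not GrOW itself: an analytic function stands in for the MM and MD steps, gradients are taken by finite differences, and every name, bound, and number is hypothetical.

```python
def toy_simulation(eps, rmin):
    """Analytic stand-in for the simulations: returns (density, conformational energy)."""
    density = 0.70 + 1.5 * (eps - 0.10) - 0.20 * (rmin - 1.90)
    conf_energy = 0.60 + 2.0 * (eps - 0.10) + 0.5 * (rmin - 1.90)
    return density, conf_energy


REFERENCE = (0.70, 0.60)  # hypothetical targets: density, conformational energy


def loss(params):
    """Sum of squared relative deviations from the reference data."""
    predicted = toy_simulation(*params)
    return sum(((p - r) / r) ** 2 for p, r in zip(predicted, REFERENCE))


def gradient(params, h=1e-5):
    """Central finite-difference gradient of the loss."""
    grad = []
    for k in range(len(params)):
        up, down = list(params), list(params)
        up[k] += h
        down[k] -= h
        grad.append((loss(up) - loss(down)) / (2.0 * h))
    return grad


def steepest_descent(start, bounds, step=0.03, tol=1e-10, max_iter=5000):
    """Iterate: evaluate loss, check convergence, step downhill, clip to bounds."""
    params = list(start)
    for iteration in range(max_iter):
        if loss(params) < tol:  # convergence check
            break
        grad = gradient(params)
        params = [min(max(p - step * g, lo), hi)
                  for p, g, (lo, hi) in zip(params, grad, bounds)]
    return params, loss(params), iteration


optimized, final_loss, n_steps = steepest_descent(
    start=(0.08, 2.00), bounds=[(0.01, 0.30), (1.5, 2.5)]
)
print(optimized, final_loss, n_steps)
```

With real MD simulations each loss evaluation is expensive and noisy, so the finite-difference step and the descent step size would need to be chosen with the statistical uncertainty of the simulated properties in mind.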

GrOW Optimization Workflow

The following diagram illustrates the automated, iterative process of force field parameter optimization using a framework like GrOW.

Relationship Between Optimization Objectives and Parameters

This diagram shows the logical relationship between different types of optimization targets and the parameters they influence.

Coupling Nanoscale and Macroscale Target Data in a Single Workflow

FAQs: Optimizing Lennard-Jones Parameters

Q1: Why should I combine nanoscale and macroscale data for Lennard-Jones (LJ) parameter optimization? Combining these data types creates a more robust and meaningful force field. Using only nanoscale data (e.g., quantum mechanical conformational energies) may not accurately capture bulk material behavior, while using only macroscale data (e.g., density) can overlook molecular-level details. A combined approach ensures the parameters are valid across scales, improving their predictive power and transferability to other systems and properties not included in the training set [4].

Q2: What are common pitfalls when coupling data from different scales? A major challenge is parameter corruption, where optimizing for a new observable degrades the accuracy of previously well-reproduced properties. This often occurs because the parameter space has multiple local minima, and the optimization algorithm may settle on one that improves the target observables at the expense of others. A careful balance must be struck between the different property domains to avoid this issue [4].

Q3: How transferable are LJ parameters optimized with this method? Transferability is a key goal. Parameters optimized for one molecule (e.g., n-octane) using this coupled approach have been shown to successfully predict properties like density and viscosity for other, chemically similar molecules (e.g., n-hexane, n-heptane, n-nonane) across a range of temperatures, demonstrating good transferability [4].

Q4: What software tools can facilitate this coupled optimization workflow? Automated optimization toolkits are essential. The Gradient-based Optimization Workflow (GrOW) is one such toolkit, written in Python. It can simultaneously leverage quantum mechanical (QM), molecular mechanics (MM), molecular dynamics (MD), and experimental target data to efficiently optimize force-field parameters using algorithms like the steepest descent method [4].

Troubleshooting Guides

Issue 1: Poor Transferability to Unseen Molecules or Temperatures

| Potential Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Over-fitting to the specific training set. | Check if the model performs well on training data but poorly on validation data (e.g., other alkane chains or different temperatures). | Expand the training set to include a more diverse set of molecules and state points, even if minimally. Incorporate a penalty term in the optimization objective function to prevent parameters from becoming overly specific [4]. |

| Insufficient training observables. | Verify the types of properties used for optimization. Relying on only one type of data (e.g., only density) is a key indicator. | Include a combination of nanoscale (e.g., relative conformational energies from QM) and macroscale (e.g., liquid density, surface tension) properties in the optimization objective to create a more generalizable parameter set [4]. |

Issue 2: Optimization Fails to Converge or Results in Parameter Corruption

| Potential Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Conflicting objectives between nanoscale and macroscale target data. | Observe if the optimization progress shows one property improving while another worsens significantly. | Use a multi-objective optimization strategy that seeks a Pareto-optimal solution, acknowledging the trade-offs between different observables. Adjust the weighting of different targets in the objective function based on their importance [4]. |

| Poor choice of initial parameters. | Restart the optimization from different, well-established initial parameter sets and compare the results. | Use initial parameters from a trusted, broadly validated force field (e.g., Lipid14, OPLS). Running multiple optimizations from different starting points can help identify a more globally optimal solution [4]. |
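To make the idea of a Pareto-optimal solution concrete, the sketch below filters a set of candidate parameter sets down to those that are not dominated in any error measure. The candidate labels and error values are hypothetical.

```python
def dominates(errs_a, errs_b):
    """True if a is no worse than b in every error and strictly better in at least one."""
    no_worse = all(a <= b for a, b in zip(errs_a, errs_b))
    strictly_better = any(a < b for a, b in zip(errs_a, errs_b))
    return no_worse and strictly_better


def pareto_front(candidates):
    """Return the labels of candidates that no other candidate dominates."""
    front = []
    for label, errs in candidates:
        if not any(dominates(other, errs) for _, other in candidates if other is not errs):
            front.append(label)
    return front


# Hypothetical (density error %, conformational-energy error %) per parameter set.
candidates = [
    ("A", (0.5, 3.0)),
    ("B", (1.0, 1.0)),
    ("C", (2.0, 0.4)),
    ("D", (1.5, 1.5)),  # worse than B in both errors, so it is dominated
]
print(pareto_front(candidates))
```

The remaining candidates represent different trade-offs between the nanoscale and macroscale targets; choosing among them is where the target weights or domain judgment come in.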

Issue 3: Inadequate Reproduction of Dynamic or Interfacial Properties

| Potential Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Training set lacks relevant properties. | Check if dynamic (e.g., viscosity) or interfacial (e.g., surface tension) properties were included in the optimization. | Explicitly include these challenging observables, such as surface tension and viscosity, in the training set. This directly guides the optimization to reproduce them, though it may slightly reduce accuracy for other properties [4]. |

| Limitations of the Class I force field. | Evaluate if inaccuracies persist across a wide range of properties despite thorough optimization. | Consider that simple two-body Lennard-Jones potentials have inherent physical limitations. For greater accuracy, explore more complex force fields that include polarization, many-body effects, or machine-learning potentials [4]. |

Experimental Protocols & Data Presentation

Detailed Methodology for Coupled LJ Parameter Optimization

The following protocol is adapted from a proof-of-concept study optimizing n-alkanes [4].

1. Objective Definition:

- Primary Goal: Optimize carbon and hydrogen LJ parameters (σ and ε) to simultaneously reproduce a nanoscale property (gas-phase relative conformational energies of n-octane) and a macroscale property (liquid-phase density of n-octane at 293.15 K).

2. System Setup:

- Molecules: n-octane (for optimization), n-hexane, n-heptane, n-nonane (for transferability testing).

- Software: An automated optimization toolkit like GrOW [4].

- Force Field: A Class I force field where LJ parameters are optimized, and other parameters (bonds, angles, dihedrals) are kept fixed.

3. Optimization Cycle:

a. Initialization: Start with an initial guess for the LJ parameters (e.g., from the Lipid14 force field).

b. Nanoscale Calculation: Perform molecular mechanics (MM) calculations to determine the relative conformational energies of n-octane and compare them to target quantum mechanical (QM) data.

c. Macroscale Calculation: Run molecular dynamics (MD) simulations of liquid n-octane at 293.15 K and calculate the equilibrium density.

d. Objective Function Evaluation: Calculate a combined error metric that quantifies the deviation from both the QM conformational energies and the experimental density.

e. Parameter Update: The optimization algorithm (e.g., steepest descent) uses the calculated error to propose a new, improved set of LJ parameters.

f. Iteration: Steps b-e are repeated until the objective function is minimized and convergence is achieved.

4. Validation:

- Use the finalized parameters to simulate properties not included in the training, such as:

- Density at other temperatures (e.g., 315.15 K, 338.15 K).

- Surface tension and viscosity for n-octane and other n-alkanes.

The table below exemplifies the kind of results obtained from a coupled optimization, showing how optimized parameters can improve accuracy across multiple properties [4].

Table 1: Exemplary performance of optimized Lennard-Jones parameters for n-alkanes.

| Property | Molecule | Temperature (K) | Initial Parameter Error (%) | Optimized Parameter Error (%) |

|---|---|---|---|---|

| Liquid Density | n-octane | 293.15 (Training) | ~2.5 | ~0.1 |

| Liquid Density | n-hexane | 293.15 (Validation) | ~1.8 | ~0.5 |

| Surface Tension | n-octane | 293.15 | ~15 | ~8 |

| Viscosity | n-octane | 293.15 | ~25 | ~15 |

Table 2: Key research reagents and computational solutions for LJ parameter optimization.

| Item Name | Function / Description |

|---|---|

| Linear Alkanes (n-Hexane to n-Nonane) | Serve as model systems due to their chemical simplicity, facilitating the clear evaluation of parameter transferability [4]. |

| Gradient-based Optimization Workflow (GrOW) | An automated Python-based toolkit that couples MD simulations and parameter optimization, enabling efficient and systematic parameter search [4]. |

| Quantum Mechanical (QM) Target Data | Provides high-accuracy nanoscale reference data, such as the relative conformational energies of a molecule, which the MM model aims to reproduce [4]. |

| Class I Force Field | The functional form upon which parameters are optimized; it typically includes bonds, angles, dihedrals, and non-bonded (LJ and Coulomb) interactions [4]. |

Workflow Visualization

SVR & PSO Troubleshooting Guide

Support Vector Regression (SVR) FAQs

Q1: My SVR model is performing poorly. What are the key hyperparameters I should tune? The core hyperparameters to tune in SVR are the Kernel, Regularization parameter (C), and Epsilon (ε) [19] [20].

- Kernel: Defines the feature space for the hyperplane. Common choices are Linear, Polynomial, and Radial Basis Function (RBF) [19].

- C (Regularization): Controls the trade-off between achieving a low error on training data and a simpler model. A high C fits the training data more closely, risking overfitting, while a low C promotes a more generalized model [19].

- Epsilon (ε): Defines the width of the epsilon-insensitive tube. No penalty is given for predictions within ε distance from the actual value. A proper ε makes the model robust to outliers [19] [20].

Q2: How should I pre-process my data before using SVR? Feature scaling is critical for SVR performance [21] [22]. It's recommended to scale all input features to the same interval, such as [0, 1] or [-1, 1], to prevent any single feature from dominating the kernel calculations [22].
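A minimal sketch of min-max scaling to [0, 1] follows, assuming the scaling ranges are learned from the training data only and then reused for new inputs. The function names and the feature values are hypothetical.

```python
def fit_min_max(rows):
    """Return the (min, max) of every feature column in the training data."""
    return [(min(column), max(column)) for column in zip(*rows)]


def apply_min_max(rows, ranges):
    """Scale each feature to [0, 1] using ranges learned from the training data."""
    scaled = []
    for row in rows:
        scaled.append([
            (value - low) / (high - low) if high > low else 0.0
            for value, (low, high) in zip(row, ranges)
        ])
    return scaled


# Hypothetical training inputs: (eps, rmin) pairs on very different scales.
train = [[0.05, 1.6], [0.20, 2.0], [0.10, 1.8]]
ranges = fit_min_max(train)
train_scaled = apply_min_max(train, ranges)
new_scaled = apply_min_max([[0.125, 1.7]], ranges)  # reuse the training ranges
print(train_scaled, new_scaled)
```

Fitting the ranges on the training set alone avoids leaking information from validation data into the model.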

Q3: When should I use SVR over other regression algorithms like Linear Regression? SVR is particularly powerful when your dataset is small, the data is non-linearly related, or the dataset contains outliers [20]. Its ability to use kernels to handle non-linear relationships and the epsilon-insensitive loss that ignores small errors makes it robust [19] [21]. For very large datasets, other algorithms might be computationally more efficient [20].

Particle Swarm Optimization (PSO) FAQs

Q4: My PSO algorithm is converging to a sub-optimal solution. How can I improve it? This is often due to premature convergence. You can address it by [23] [24] [25]:

- Adjusting the Inertia Weight (w): A higher inertia (e.g., 0.9) promotes global exploration of the search space, while a lower inertia (e.g., 0.4) favors local exploitation. You can decrease it linearly during the run for a good balance [23] [24].

- Reviewing Cognitive and Social Coefficients: Parameters c1 and c2 control the particle's movement toward its personal best (pBest) and the swarm's global best (gBest), respectively [23] [24]. Ensure neither is too dominant.

- Changing the Swarm Topology: Using a global best (star) topology can lead to fast but premature convergence. A local best (ring) topology, where particles only share information with neighbors, can improve exploration and help avoid local optima [23] [25].

Q5: How do I set the number of particles and iterations? There is no one-size-fits-all answer, as it depends on the problem's complexity. A common starting point is a swarm size of 20-40 particles [24]. The number of iterations should be sufficient for the fitness value to stabilize. Start with a few hundred to a thousand iterations and adjust based on observed convergence behavior [24].

Q6: What are the main advantages of PSO over other optimization algorithms like Genetic Algorithms (GA)? PSO is often favored for its conceptual simplicity, ease of implementation, and fewer parameters to tune compared to GA [24] [25]. It does not use evolutionary operators like crossover and mutation, instead relying on the social sharing of information to guide the search [25]. Studies have shown PSO can outperform GA on various optimization problems [25].

Technical Reference Tables

Table 1: SVR Kernel Comparison

| Kernel | Function | Typical Use Case | Key Parameter |

|---|---|---|---|

| Linear [19] | \( K(x_i, x_j) = x_i \cdot x_j \) | Linearly separable data | C |

| Polynomial [19] | \( K(x_i, x_j) = (x_i \cdot x_j + 1)^d \) | Captures polynomial relationships | degree (d) |

| Radial Basis Function (RBF) [19] [21] | \( K(x_i, x_j) = \exp(-\gamma \lVert x_i - x_j \rVert^2) \) | Default for non-linear relationships; handles complex structures | gamma (\(\gamma\)) |
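The three kernel functions in the table can be written out directly. This is a plain-Python sketch for illustration; the function names and example vectors are hypothetical, and library implementations may use additional scaling parameters.

```python
import math


def linear_kernel(x, y):
    """K(x, y) = x . y"""
    return sum(a * b for a, b in zip(x, y))


def polynomial_kernel(x, y, degree=2):
    """K(x, y) = (x . y + 1)^d"""
    return (linear_kernel(x, y) + 1.0) ** degree


def rbf_kernel(x, y, gamma=0.5):
    """K(x, y) = exp(-gamma * ||x - y||^2)"""
    squared_distance = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-gamma * squared_distance)


x, y = (1.0, 2.0), (2.0, 0.0)
print(linear_kernel(x, y))          # 2.0
print(polynomial_kernel(x, y))      # 9.0
print(round(rbf_kernel(x, y), 4))   # 0.0821
```

The RBF value depends only on the distance between the two points, which is why unscaled features with large numeric ranges can dominate it.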

Table 2: Key PSO Parameters and Their Impact

| Parameter | Description | Impact on Optimization |

|---|---|---|

| Inertia Weight (w) [23] [24] | Balances global and local search. | High: More exploration. Low: More exploitation. |

| Cognitive Coefficient (c1) [23] [24] | Attraction to particle's own best position. | High: Encourages individual learning. |

| Social Coefficient (c2) [23] [24] | Attraction to swarm's best position. | High: Promotes convergence and social learning. |

| Swarm Size [24] | Number of particles in the swarm. | Larger swarms explore more but increase computational cost. |

Experimental Protocols

Protocol 1: Hyperparameter Tuning for SVR with Grid Search

This protocol is used to find the optimal combination of SVR hyperparameters [20].

- Define Parameter Grid: Specify a set of values to try for the C, epsilon, and kernel parameters.

- Initialize Model: Create an SVR model object.

- Set Up GridSearchCV: Use cross-validation (e.g., 5-fold) to evaluate all combinations of parameters in the grid. A common scoring metric is R² [20].

- Fit and Extract: Fit the GridSearchCV object to your training data. The best parameters can be found in the best_params_ attribute, and the refitted best model in the best_estimator_ attribute [20].
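The grid-search protocol can be sketched with scikit-learn as follows. The example assumes NumPy and scikit-learn are installed, uses synthetic data in place of simulation results, and wraps the SVR in a scaling pipeline so that each cross-validation fold is scaled independently; the grid values are illustrative, not recommendations.

```python
import numpy as np
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import MinMaxScaler
from sklearn.svm import SVR

# Synthetic, hypothetical data: two input features and one noisy target.
rng = np.random.default_rng(0)
X = rng.uniform(0.0, 1.0, size=(60, 2))
y = np.sin(3.0 * X[:, 0]) + 0.5 * X[:, 1] + rng.normal(0.0, 0.02, size=60)

# make_pipeline names the SVR step "svr", hence the "svr__" prefixes below.
pipeline = make_pipeline(MinMaxScaler(), SVR())
param_grid = {
    "svr__kernel": ["linear", "rbf"],
    "svr__C": [1.0, 10.0, 100.0],
    "svr__epsilon": [0.01, 0.1],
}

search = GridSearchCV(pipeline, param_grid, cv=5, scoring="r2")
search.fit(X, y)

print(search.best_params_)            # winning hyperparameter combination
print(round(search.best_score_, 3))   # mean cross-validated R^2 of that combination
best_model = search.best_estimator_   # pipeline refitted on all training data
```

The grid grows multiplicatively with each added hyperparameter, so a coarse grid followed by a finer one around the best region is often more economical than a single dense grid.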

Protocol 2: Standard PSO Workflow for Parameter Optimization

This general workflow is adapted for optimizing force field parameters [26] [27].

- Problem Formulation: Define the fitness function (e.g., the root-mean-square error between calculated and experimental properties like density and heat of vaporization) [26].

- Initialize Swarm: Define the search space boundaries and randomly initialize particle positions (candidate parameters) and velocities within them [23].

- Iterative Optimization:

a. Evaluate Fitness: Calculate the fitness for each particle's position [23].

b. Update Bests: Compare each particle's fitness to its personal best (pBest) and the swarm's global best (gBest), updating them if a better solution is found [23] [24].

c. Update Velocity and Position: For each particle, calculate its new velocity and then update its position [23] [24].

- Termination: Repeat Step 3 until a stopping criterion is met (e.g., max iterations or sufficient fitness) [23].
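The PSO workflow above can be sketched with the standard library alone. This is a generic global-best PSO with a linearly decreasing inertia weight, not the implementation from [26] or [27]; the toy fitness function stands in for an MD-based error, and all coefficients and bounds are hypothetical.

```python
import random


def pso(fitness, bounds, n_particles=20, n_iter=200,
        w_start=0.9, w_end=0.4, c1=1.5, c2=1.5, seed=0):
    """Minimize fitness within bounds using global-best PSO."""
    rng = random.Random(seed)
    # Initialize positions inside the bounds and small random velocities.
    pos = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_particles)]
    vel = [[rng.uniform(-0.1, 0.1) * (hi - lo) for lo, hi in bounds]
           for _ in range(n_particles)]
    pbest = [p[:] for p in pos]
    pbest_fit = [fitness(p) for p in pos]
    g = min(range(n_particles), key=pbest_fit.__getitem__)
    gbest, gbest_fit = pbest[g][:], pbest_fit[g]

    for it in range(n_iter):
        # Inertia decreases linearly: exploration first, exploitation later.
        w = w_start - (w_start - w_end) * it / max(n_iter - 1, 1)
        for i in range(n_particles):
            for d, (lo, hi) in enumerate(bounds):
                r1, r2 = rng.random(), rng.random()
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (pbest[i][d] - pos[i][d])
                             + c2 * r2 * (gbest[d] - pos[i][d]))
                pos[i][d] = min(max(pos[i][d] + vel[i][d], lo), hi)
            f = fitness(pos[i])
            if f < pbest_fit[i]:            # update personal best
                pbest[i], pbest_fit[i] = pos[i][:], f
                if f < gbest_fit:           # update global best
                    gbest, gbest_fit = pos[i][:], f
    return gbest, gbest_fit


def toy_fitness(params):
    """Hypothetical error surface with its minimum at eps = 0.10, rmin = 1.90."""
    eps, rmin = params
    return (((eps - 0.10) / 0.10) ** 2 + ((rmin - 1.90) / 1.90) ** 2) ** 0.5


best_params, best_fit = pso(toy_fitness, bounds=[(0.01, 0.30), (1.5, 2.5)])
print(best_params, best_fit)
```

In a force field application each fitness call would launch one or more simulations, so the swarm size and iteration count directly set the computational budget.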

Workflow Visualization

SVR Hyperparameter Tuning

PSO in Force Field Optimization

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for LJ Parameter Optimization

| Item | Function in the Research Context |

|---|---|

| Experimental Thermodynamic Data [26] | Serves as the target for optimization. Includes properties like bulk density, heat of vaporization, and hydration free energy for a training set of molecules. |

| Initial Force Field Parameters [26] | Provides a starting point for the optimization, often derived from knowledge-based libraries (e.g., CGenFF) or quantum mechanical calculations. |

| Fitness Function [26] [27] | A quantitatively defined objective (e.g., error between simulation and experiment) that the PSO algorithm minimizes. |

| Molecular Dynamics (MD) Engine [26] | Software used to compute the thermodynamic properties from a given set of parameters, which are then fed into the fitness function. |

| SVR Model with RBF Kernel | A machine learning tool that can be used to create surrogate models or analyze relationships within the parameter space and resulting properties. |

Frequently Asked Questions (FAQs)

Q1: What is the core concept behind delta-learning in computational chemistry? Delta-learning is a machine learning strategy designed to bridge the accuracy gap between computationally expensive and highly accurate electronic structure methods, like CCSD(T), and faster but less precise methods. It works by training a model to predict the difference (or "delta") between a high-level target method and a lower-level baseline method. Once trained, the model can correct the results of the low-level calculation, achieving near-target accuracy at a fraction of the computational cost [28] [29].
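The delta-learning idea can be illustrated with a deliberately simple sketch: a straight-line model is fitted to the difference between two toy energy functions and then added back to the cheap baseline. Both functions are hypothetical stand-ins (neither is an electronic structure method), and a real application would use a far more expressive model.

```python
def low_level(x):
    """Hypothetical cheap baseline energy."""
    return x ** 2


def high_level(x):
    """Hypothetical expensive target energy, standing in for CCSD(T)."""
    return x ** 2 + 0.3 * x + 0.1


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    return slope, mean_y - slope * mean_x


# Only a few expensive calculations are needed to learn the correction.
train_x = [0.0, 0.5, 1.0, 1.5, 2.0]
deltas = [high_level(x) - low_level(x) for x in train_x]
slope, intercept = fit_line(train_x, deltas)


def corrected(x):
    """Baseline energy plus the learned delta correction."""
    return low_level(x) + slope * x + intercept


print(corrected(1.25), high_level(1.25))
```

The correction is usually smoother and smaller than the total energy, which is why it can be learned from far fewer high-level data points than the energy itself.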

Q2: Why is CCSD(T) accuracy considered the "gold standard" and why is it challenging to achieve for condensed phases? CCSD(T) (Coupled Cluster theory with Single, Double, and perturbative Triple excitations) is widely regarded as the most reliable method for predicting molecular electronic energies and interaction energies, often referred to as the "gold standard" [30] [31]. However, applying it to condensed-phase systems like liquid water is extremely challenging due to its prohibitive computational cost and scaling, which makes direct simulations of large systems or long timescales practically infeasible [31] [29].

Q3: How can delta-learning be integrated with the optimization of Lennard-Jones (LJ) parameters? Traditional force fields often rely on system-dependent LJ parameters to model dispersion interactions, which can be difficult to calibrate [28]. Delta-learning offers a path to more physics-informed parameters. For instance, it can be used to predict precise two-electron correlation energies from CCSD(T)-level calculations, which can then be used to inform or replace the empirical dispersion terms in a force field, leading to more robust and transferable LJ parameters [28]. Furthermore, machine learning potentials (MLPs) trained with delta-learning can provide CCSD(T)-level accuracy for bulk properties, creating a superior benchmark for optimizing LJ parameters against condensed-phase target data [31] [29].

Q4: What are the key requirements for generating a successful delta-learned machine learning potential (MLP)? Creating a reliable MLP involves several critical steps [31] [29]:

- High-Quality Training Data: A dataset of molecular configurations with energies and forces calculated at the high-level target theory (e.g., CCSD(T)) is essential.

- Robust MLP Architecture: A suitable machine learning model (e.g., neural networks) must be trained to map atomic configurations to the quantum-mechanical energies and forces.

- Nuclear Quantum Effects (NQEs): For accurate simulation of properties like the density of liquid water, it is crucial to include NQEs in the simulations.

- Constant-Pressure Simulation Capability: The MLP must be able to perform simulations in the isothermal-isobaric (NPT) ensemble to predict fundamental bulk properties like density correctly.

Troubleshooting Guides

Issue 1: Poor Transferability of Optimized Force Fields

Problem: A force field with newly optimized Lennard-Jones parameters performs well on its training data (e.g., liquid density of n-octane at 293.15 K) but fails to accurately predict properties of similar molecules (e.g., n-hexane, n-nonane) or other thermodynamic properties (e.g., surface tension, viscosity) [4].

| Potential Cause & Solution | Supporting Experimental Protocol |
|---|---|
| Cause: Limited optimization objectives. The parameter set was over-fitted to a narrow set of target observables. | Protocol for Multi-Objective Optimization: As a proof-of-concept, simultaneously optimize LJ parameters using a combination of nanoscale (e.g., relative conformational energies of an isolated molecule from QM calculations) and macroscale (e.g., liquid-phase density from ensemble MD simulations) target data [4]. This ensures the parameters capture both intramolecular and bulk-phase behavior. |
| Solution: Expand the training set. Include diverse target observables (e.g., relative conformational energies, densities of multiple liquids, surface tension) across several chemically similar molecules and a range of temperatures during the optimization process to enhance transferability [4]. | Evaluation: After optimization, test the parameters on a validation set containing molecules and properties not included in the training. For example, after training on n-octane, validate the parameters by simulating the density, surface tension, and viscosity of n-hexane, n-heptane, and n-nonane at multiple temperatures (e.g., 293.15 K, 315.15 K, 338.15 K) [4]. |

Issue 2: Inefficient Delta-Learning Model Training

Problem: Training a delta-learning model to predict CCSD(T)-level correlation energies is too slow or requires an impractically large amount of high-level training data [28].

Solution: Leverage a multi-fidelity learning strategy and efficient baselines.

- Cause: Directly training on only high-level CCSD(T) data is computationally demanding.

- Solution: Utilize a Δ-learning setup where the model learns the difference between a low-cost baseline method (e.g., a density functional theory (DFT) functional or the Müller approximation) and the high-level CCSD(T) target. This can be done with a small set of high-level calculations [28]. For example, one can use the Müller-approximated 2PDM calculated with a small basis set as the baseline and correct it to CCSD(T) accuracy with a large basis set [28].

Issue 3: Discrepancies Between Simulated and Experimental Bulk Properties

Problem: Even with a machine learning potential claiming CCSD(T)-level accuracy, simulated bulk properties (e.g., density of liquid water) do not match experimental measurements [29].

Solution: Ensure that all critical physical effects are included in the simulation protocol.

- Cause: Neglect of Nuclear Quantum Effects (NQEs). Protons are treated as classical particles, which is insufficient for systems like water.

- Solution: Incorporate NQEs into the molecular dynamics simulations. Studies have shown that combining a CCSD(T)-accurate MLP with NQEs brings structural and transport properties of liquid water into close agreement with experiment [29].

- Cause: Performing simulations at constant volume instead of constant pressure.

- Solution: Use the MLP to perform constant-pressure (NPT) simulations. This is essential for predicting fundamental isothermal-isobaric properties like the density of liquid water and its temperature of maximum density accurately [29].
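As a minimal sketch of the post-processing step behind a constant-pressure density estimate, the snippet below averages the instantaneous mass density over an NPT volume time series. The function name, the 20% equilibration cut, and the synthetic volume trace are illustrative assumptions; the numbers are not simulation output from [29].

```python
import numpy as np

AVOGADRO = 6.02214076e23  # mol^-1 (exact SI value)

def mean_density_g_cm3(volumes_nm3, n_molecules, molar_mass_g_mol,
                       discard_fraction=0.2):
    """Average mass density (g/cm^3) from an NPT box-volume time series.

    The first `discard_fraction` of frames is dropped as equilibration
    (an assumed, hypothetical choice).
    """
    volumes = np.asarray(volumes_nm3, dtype=float)
    start = int(len(volumes) * discard_fraction)
    production = volumes[start:]
    mass_g = n_molecules * molar_mass_g_mol / AVOGADRO
    # 1 nm^3 = 1e-21 cm^3
    densities = mass_g / (production * 1e-21)
    return densities.mean(), densities.std(ddof=1)

# Synthetic volume trace for 512 water molecules (placeholder, not real data)
rng = np.random.default_rng(0)
volumes = 15.32 + 0.05 * rng.standard_normal(1000)

rho, rho_std = mean_density_g_cm3(volumes, n_molecules=512,
                                  molar_mass_g_mol=18.015)
print(f"density = {rho:.4f} +/- {rho_std:.4f} g/cm^3")
```

In a constant-volume run the box volume is fixed by the user, so the density is an input rather than a prediction, which is why the NPT ensemble is needed here.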

Experimental Protocols & Workflows

Protocol 1: Delta-Learning for Dispersion Energy Correction

This protocol outlines the method used to obtain precise 2-electron correlation energies via delta-learning, which can be used to refine dispersion interactions in force fields [28].

- System Selection: Choose a set of representative molecular systems (e.g., a series of water trimers with weak intermolecular interactions).

- Baseline Calculation: For each configuration, calculate the 2-particle density matrix (2PDM) using a fast, approximate method (e.g., the Müller approximation) and a small basis set (e.g., 6-31+G(d)).

- Target Calculation: For the same configurations, calculate the pure 2-electron correlation energies using the high-level target method (e.g., CCSD(T)) and a large basis set (e.g., aug-cc-pVDZ).

- Model Training: Train a machine learning model (e.g., Gaussian Process Regression) to predict the difference (delta) between the high-level correlation energies and the results derived from the baseline 2PDM.

- Validation: Validate the model on a held-out test set, ensuring the maximum absolute error is within an acceptable chemical accuracy threshold (e.g., ~1.30 kJ/mol) [28].
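The training and validation steps above can be sketched on a toy problem. The example below is an assumption-laden illustration, not the model from [28]: `e_low` and `e_high` are hypothetical one-dimensional stand-ins for the baseline and target levels of theory, and the regressor is a bare-bones Gaussian process mean predictor written in NumPy rather than a production GPR implementation.

```python
import numpy as np

def rbf_kernel(a, b, length_scale=0.2):
    """Squared-exponential kernel between two sets of 1-D descriptors."""
    d2 = (a[:, None] - b[None, :]) ** 2
    return np.exp(-0.5 * d2 / length_scale**2)

def e_low(x):
    """Hypothetical cheap baseline energy (toy function)."""
    return 4.0 * (x**-12.0 - x**-6.0)

def e_high(x):
    """Hypothetical expensive target energy: baseline plus a smooth correction."""
    return e_low(x) + 0.3 * np.exp(-((x - 1.3) ** 2) / 0.1)

# A small set of "high-level" training points
x_train = np.linspace(0.95, 2.0, 12)
delta_train = e_high(x_train) - e_low(x_train)  # the learning target is the difference

# Gaussian process mean prediction with a small noise term for stability
noise = 1e-8
K = rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train))
alpha = np.linalg.solve(K, delta_train)

def predict_high(x):
    """Baseline energy plus the learned correction."""
    return e_low(x) + rbf_kernel(x, x_train) @ alpha

# Held-out validation: compare maximum absolute errors
x_test = np.linspace(1.0, 1.95, 200)
err_baseline = np.max(np.abs(e_high(x_test) - e_low(x_test)))
err_delta = np.max(np.abs(e_high(x_test) - predict_high(x_test)))
print(f"max |error| baseline: {err_baseline:.4f}")
print(f"max |error| delta-corrected: {err_delta:.4f}")
```

The point of the construction is that the correction is smoother and smaller than the total energy, so a handful of high-level points can be enough to learn it.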

Protocol 2: Multi-Scale Optimization of Lennard-Jones Parameters

This protocol describes a strategy for optimizing LJ parameters using target data from both quantum-mechanical (nanoscale) and ensemble (macroscale) domains [4].

- Define Objectives: Select nanoscale (e.g., relative conformational energies of an isolated n-octane molecule from QM calculations) and macroscale (e.g., liquid density of n-octane at 293.15 K from experiment or high-level simulation) target data.

- Initial Parameter Sampling: Employ a presampling phase in the parameter space, focusing initially on the less computationally expensive nanoscale property to narrow down promising regions [7].

- Surrogate-Assisted Optimization: Use a global optimization algorithm (e.g., an evolutionary algorithm) coupled with a surrogate model (e.g., a neural network) to efficiently explore the parameter space. The surrogate model is trained on-the-fly to predict the cost function, reducing the number of expensive MD simulations required [7].

- Iterative Refinement: The optimization algorithm iteratively proposes new parameters. The surrogate model's predictions are used to guide the search, and promising candidates are validated with full MD simulations to update the surrogate model.

- Validation of Transferability: Test the final optimized parameter set against a diverse validation set, including other molecules (n-hexane, n-heptane), different temperatures, and other thermodynamic properties (viscosity, surface tension) to assess its robustness [4].
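The presampling, surrogate, and refinement steps above can be illustrated with a toy loop. Everything here is a hypothetical stand-in: `expensive_cost` replaces the MD-based cost function with an analytic one, the parameter bounds are invented, and a quadratic least-squares fit is swapped in for the neural-network surrogate mentioned in [7] to keep the sketch dependency-free.

```python
import numpy as np

rng = np.random.default_rng(1)
LOWER = np.array([0.1, 0.30])  # hypothetical bounds on (epsilon, sigma)
UPPER = np.array([1.0, 0.45])

def expensive_cost(p):
    """Stand-in for an MD-based cost; a real workflow would run simulations here."""
    eps, sig = p
    return ((eps - 0.45) / 0.45) ** 2 + 25.0 * ((sig - 0.395) / 0.395) ** 2

def features(P):
    """Quadratic feature map used by the toy surrogate."""
    e, s = P[:, 0], P[:, 1]
    return np.column_stack([np.ones(len(P)), e, s, e * s, e**2, s**2])

def fit_surrogate(P, y):
    """Least-squares fit; returns a function predicting the cost for new points."""
    coef, *_ = np.linalg.lstsq(features(P), y, rcond=None)
    return lambda Q: features(Q) @ coef

# Presampling: a few expensive evaluations spread over the parameter space
P = rng.uniform(LOWER, UPPER, size=(10, 2))
y = np.array([expensive_cost(p) for p in P])

for generation in range(15):
    surrogate = fit_surrogate(P, y)
    parents = P[np.argsort(y)[:3]]  # best evaluated points so far
    # Mutation: Gaussian perturbations of the parents, clipped to the bounds
    children = np.repeat(parents, 50, axis=0)
    children = children + rng.normal(scale=0.05 * (UPPER - LOWER),
                                     size=children.shape)
    children = np.clip(children, LOWER, UPPER)
    # Screen all children with the cheap surrogate, evaluate only the top three
    promising = children[np.argsort(surrogate(children))[:3]]
    new_y = np.array([expensive_cost(p) for p in promising])
    P = np.vstack([P, promising])
    y = np.concatenate([y, new_y])

best = P[np.argmin(y)]
print(f"expensive evaluations: {len(y)}, best params: {best}, cost: {y.min():.5f}")
```

Because the toy cost is itself quadratic, the surrogate fits it almost exactly; with noisy simulation-derived costs the surrogate would be less reliable, which is why promising candidates are always re-evaluated with the expensive function before being trusted.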

Workflow Diagrams

[Diagram: Delta Learning & LJ Optimization]

[Diagram: Delta Learning Workflow]

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational methods, software, and data types essential for implementing delta-learning and optimizing force field parameters.

| Item Name | Type | Function/Brief Explanation |
|---|---|---|
| CCSD(T)/CBS | Computational Method | The "gold standard" quantum chemistry method used to generate benchmark-quality training and validation data for interaction energies and structures [30] [31]. |
| Delta-Learning (Δ-Learning) | Machine Learning Strategy | A framework to efficiently predict the correction from a fast, low-level quantum method to a high-accuracy method, drastically reducing computational costs [28] [29]. |
| Machine Learning Potentials (MLPs) | Software/Model | Machine-learned interatomic potentials trained on quantum mechanical data that can perform molecular dynamics simulations at near-CCSD(T) accuracy [31] [29]. |
| Improved Lennard-Jones (ILJ) | Analytical Potential | A refined version of the classic LJ potential with a physically meaningful parameter (β) that provides a more accurate representation of the potential well and dispersion forces [30] [32]. |
| Multi-Scale Target Data | Data Type | A combination of nanoscale (e.g., QM conformational energies) and macroscale (e.g., bulk density) observables used to guide force field parameter optimization for better transferability [4]. |
| Surrogate-Assisted Global Optimization | Optimization Algorithm | An efficient optimization method that uses a surrogate model (e.g., neural network) to approximate expensive-to-evaluate functions (like MD simulations), guiding the search for optimal parameters [7]. |
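For reference, the ILJ entry in the table above is commonly written in the literature, for interactions between neutral species, in the form below. The symbols ε (well depth) and r_m (position of the potential minimum) are not defined in this section and are assumed here to carry their usual meaning; β is the parameter named in the table.

```latex
V_{\mathrm{ILJ}}(r) = \varepsilon \left[
    \frac{6}{n(r) - 6} \left( \frac{r_m}{r} \right)^{n(r)}
  - \frac{n(r)}{n(r) - 6} \left( \frac{r_m}{r} \right)^{6}
\right],
\qquad
n(r) = \beta + 4 \left( \frac{r}{r_m} \right)^{2}
```

At r = r_m the bracket evaluates to -1, so the well depth is ε regardless of β; β only changes the shape of the well and of the repulsive wall.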

Frequently Asked Questions

Q1: What is the primary goal of simultaneously optimizing nanoscale and macroscale properties?

The goal is to create a more meaningful and transferable force field. Traditional approaches often optimize parameters using either gas-phase quantum mechanical data (nanoscale) or liquid-phase experimental bulk data (macroscale). Coupling these diverse training observables generates parameters that balance both individual molecular behavior and ensemble-level properties, improving overall accuracy even when using limited thermodynamic state points [4].
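One simple way to couple the two kinds of observables is a weighted sum of dimensionless error terms. The sketch below is an assumed form, not the cost function used in [4]: the weights, the tolerances, and all numerical values are hypothetical placeholders.

```python
import numpy as np

def combined_cost(e_conf_ff, e_conf_qm, rho_ff, rho_ref,
                  tol_energy=1.0, tol_density_rel=0.01,
                  w_nano=0.5, w_macro=0.5):
    """Weighted sum of a nanoscale and a macroscale error term.

    tol_energy      : energy deviation counted as "one unit" of error (assumed)
    tol_density_rel : relative density deviation counted as one unit (assumed)
    """
    e_conf_ff = np.asarray(e_conf_ff, dtype=float)
    e_conf_qm = np.asarray(e_conf_qm, dtype=float)
    # Nanoscale term: mean squared deviation of relative conformational energies
    nano = np.mean(((e_conf_ff - e_conf_qm) / tol_energy) ** 2)
    # Macroscale term: squared relative deviation of the liquid density
    macro = (((rho_ff - rho_ref) / rho_ref) / tol_density_rel) ** 2
    return w_nano * nano + w_macro * macro

# Placeholder numbers for illustration only (not data from the study)
e_qm = [0.0, 2.5, 5.0, 3.1]   # reference relative conformer energies
e_ff = [0.0, 2.9, 4.4, 3.3]   # force-field relative conformer energies
cost = combined_cost(e_ff, e_qm, rho_ff=0.71, rho_ref=0.70)
print(f"combined cost = {cost:.3f}")
```

Dividing each deviation by a tolerance makes the two terms comparable before weighting; without some normalization, whichever observable has the larger numerical magnitude would dominate the fit.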

Q2: Why might my optimized parameters work well for the training data but fail for other molecules or properties?

This is a classic issue of force field transferability. If the training set is too narrow (e.g., only one molecule or a single property), the parameters may become overspecialized. The solution is to use a diverse set of training observables (e.g., conformational energies and density) and validate the parameters against other molecules and properties not included in the training, such as surface tension and viscosity across a temperature range [4].

Q3: Standard parameter optimization fails to reproduce experimental melting curves for peptides. Is the functional form of the force field to blame?

This is a known challenge. Research suggests that for peptide folding, the standard force field functional form may be inherently limited in its ability to reproduce correct melting curves over a significant temperature range, even with highly sensitive torsion parameters. It is conjectured that no set of parameters for the usual functional form can achieve this when used with common water models like TIP3P [33].

Q4: How can I improve the accuracy of hydration free energies in my simulations?

Systematic errors in hydration free energies are often linked to the Lennard-Jones (LJ) parameters and combining rules. A highly effective method is to introduce pair-specific LJ parameters between solute heavy atoms and water oxygen atoms, which override the standard combining rules. This targeted optimization can greatly improve agreement with experimental values without altering the properties of the individual phases [34].

Troubleshooting Guides

Issue 1: Poor Transferability Across Chemically Similar Molecules

Problem: Parameters optimized for n-octane do not perform well for n-hexane or n-nonane.

- Potential Cause 1: The training set was insufficiently diverse, causing overfitting.

- Solution: Expand the optimization objectives. Train on several complementary observables at once, and add further molecules to the training set where data are available. For example, optimize against the relative conformational energies of an isolated n-octane molecule and the liquid-phase density of n-octane simultaneously [4].

- Solution: Test transferability post-optimization. Evaluate the optimized parameters on other substances (e.g., n-hexane, n-heptane, n-nonane) and for properties not included in the training (e.g., surface tension, viscosity) [4].

Problem: The force field cannot reproduce diverse observables (e.g., both density and diffusion) within the same phase.

- Potential Cause: The observables have varying sensitivities to different parameters, making it difficult to satisfy all at once.

- Solution: Perform a sensitivity analysis. Use algorithms that compute the gradient of the macroscopic observable with respect to the parameters. This identifies which parameters most influence a given property, guiding a more informed optimization [33].
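A minimal sketch of such a sensitivity analysis is shown below, using central finite differences as a simple substitute for the gradient algorithms referenced in [33]. The function `toy_observables` is a hypothetical analytic map from (epsilon, sigma) to (density, diffusion coefficient); in practice each evaluation would be a simulation, and statistical noise would make finite differences considerably harder to converge.

```python
import numpy as np

def toy_observables(params):
    """Hypothetical power-law map from (epsilon, sigma) to (density, diffusion)."""
    eps, sig = params
    density = 0.7 * (eps / 0.45) ** 0.2 * (sig / 0.395) ** -3.0
    diffusion = 2.0 * (eps / 0.45) ** -1.5 * (sig / 0.395) ** -1.0
    return np.array([density, diffusion])

def log_sensitivities(func, params, rel_step=1e-3):
    """Dimensionless sensitivities S[i, j] = d ln(O_i) / d ln(p_j)."""
    params = np.asarray(params, dtype=float)
    base = func(params)
    S = np.zeros((len(base), len(params)))
    for j in range(len(params)):
        step = rel_step * params[j]
        up, down = params.copy(), params.copy()
        up[j] += step
        down[j] -= step
        # Central difference for dO/dp_j, then rescale to a log-log derivative
        dO_dp = (func(up) - func(down)) / (2.0 * step)
        S[:, j] = dO_dp * params[j] / base
    return S

S = log_sensitivities(toy_observables, [0.45, 0.395])
print("rows: density, diffusion; columns: epsilon, sigma")
print(np.round(S, 3))
```

For this toy model the sensitivities simply recover the power-law exponents: density responds mainly to sigma while diffusion responds mainly to epsilon, which is the kind of information that tells you which parameter to adjust for which observable.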

Issue 2: Imbalance Between Hydrophilic and Hydrophobic Interactions

Problem: Simulated transfer free energy of water to hexadecane is overestimated compared to experiment, indicating poor modeling of hydrophobic interactions.

- Potential Cause: The lack of explicit polarizability in additive force fields unbalances interactions, especially in heterogeneous environments like water/alkane interfaces [35].

- Solution 1 (Long-term): Adopt a polarizable force field. Models like SWM4-NDP and SWM6 explicitly account for environmental polarization and can accurately reproduce transfer free energies [35].

- Solution 2 (Efficient alternative): Optimize pair-specific Lennard-Jones parameters. Develop an automated workflow to optimize the LJ parameters between water and alkane atoms, using the experimental transfer free energy as a target. This mimics desirable polarizable behavior within the additive framework [35].

Issue 3: Systematic Error in Hydration Free Energies

Problem: Calculated hydration free energies are systematically too favorable, i.e., more negative than the experimental values.

- Potential Cause: The dispersion interaction component of the LJ potential is a primary source of systematic error, potentially due to inaccuracies in the standard combining rules [34].

- Solution: Implement pair-specific LJ (NBFIX) parameters. Optimize the LJ parameters between solute heavy atoms and water oxygen atoms specifically to correct hydration free energies. This is an established protocol within the CHARMM Drude polarizable force field and can be adapted for other force fields [34].
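The override logic behind pair-specific parameters can be sketched as a lookup that falls back to the combining rule only when no explicit pair entry exists. The atom-type names and numerical values below are hypothetical placeholders, and the Lorentz-Berthelot rule is assumed as the default; this is not the CHARMM file format or any simulation package's API.

```python
import math

def lorentz_berthelot(type_i, type_j, params):
    """Default combining rule: geometric-mean epsilon, arithmetic-mean sigma."""
    eps_i, sig_i = params[type_i]
    eps_j, sig_j = params[type_j]
    return math.sqrt(eps_i * eps_j), 0.5 * (sig_i + sig_j)

def pair_parameters(type_i, type_j, params, overrides):
    """Return (epsilon, sigma) for a pair, preferring a pair-specific override."""
    key = frozenset((type_i, type_j))  # order-independent pair key
    if key in overrides:
        return overrides[key]
    return lorentz_berthelot(type_i, type_j, params)

# Hypothetical per-type parameters: (epsilon in kJ/mol, sigma in nm)
params = {"OW": (0.65, 0.316), "CT": (0.33, 0.350), "HC": (0.10, 0.240)}

# Hypothetical pair-specific entry for solute carbon with water oxygen
overrides = {frozenset(("CT", "OW")): (0.40, 0.335)}

print(pair_parameters("CT", "OW", params, overrides))  # uses the override
print(pair_parameters("HC", "OW", params, overrides))  # falls back to the rule
```

Because only the cross term is replaced, the like-like interactions (and therefore the pure-phase properties of water and of the solute) are left untouched, which is what allows the hydration free energy to be corrected in isolation.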

Experimental Data & Optimization Results

The following table summarizes key quantitative data from the case study on optimizing LJ parameters for n-alkanes, demonstrating performance against experimental targets [4].

Table 1: Key Observables and Performance Metrics for Linear Alkane Optimization

| Category | Specific Observable | Molecule(s) | Temperature (K) | Performance Note |
|---|---|---|---|---|
| Training Observables | Relative Conformational Energies | n-octane (isolated) | - | Primary optimization target [4] |
| | Liquid-Phase Density | n-octane | 293.15 | Primary optimization target [4] |
| Test Observables | Liquid-Phase Density | n-hexane, n-heptane, n-nonane | 293.15, 315.15, 338.15 | Used for transferability testing [4] |