Node-Embedding Approaches for Consistent Force Field Parameter Assignment: From Molecular Graphs to Accurate Simulations

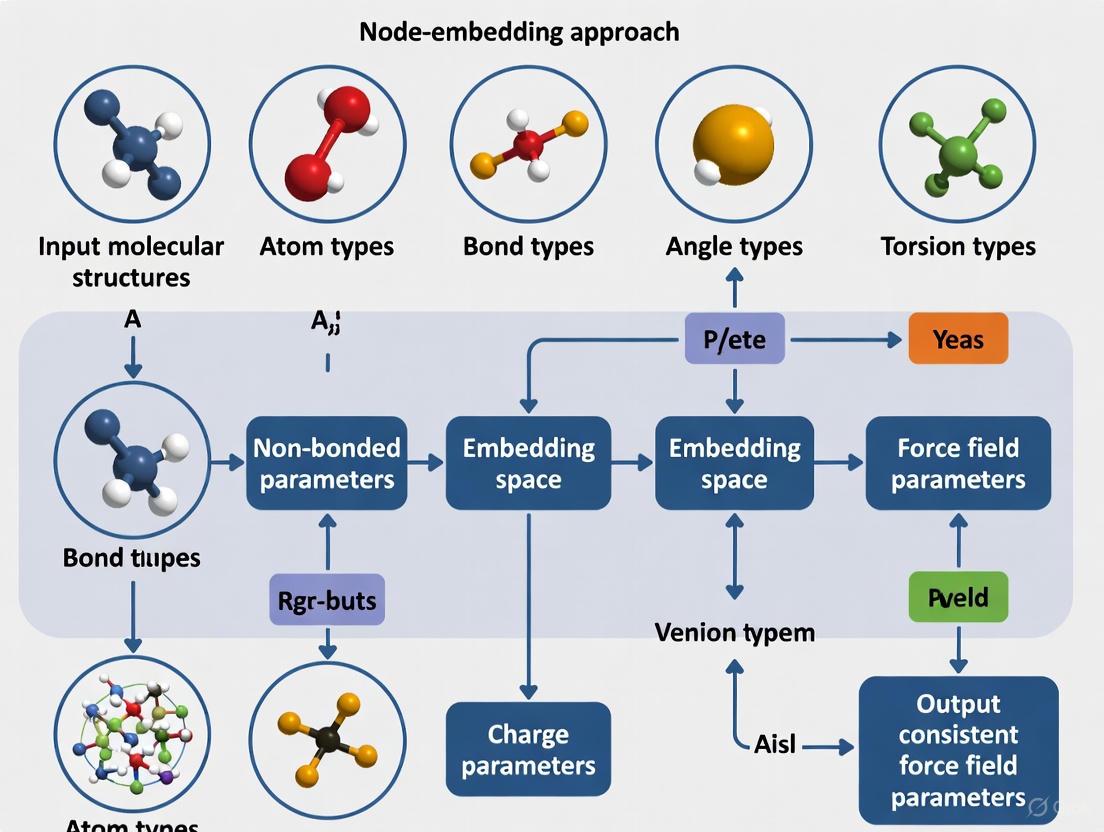



Abstract

This article explores the transformative shift in molecular mechanics force field development from traditional, discrete atom-typing to modern, data-driven node-embedding approaches. We detail how graph neural networks (GNNs) generate continuous, chemically aware atom embeddings that enable consistent and transferable parameter assignment across expansive chemical spaces, including drug-like molecules and complex biomolecules. The content covers the foundational principles of these methods, their implementation in tools like Grappa and ByteFF, strategies for troubleshooting and optimization, and rigorous validation against quantum mechanics and experimental data. Aimed at computational chemists, structural biologists, and drug discovery professionals, this review synthesizes how these advances are enhancing the accuracy and predictive power of molecular dynamics simulations for biomedical research.

The Foundation of Modern Force Fields: From Discrete Atom Types to Continuous Embeddings

The Limitations of Traditional Atom Typing in Classical Force Fields

In classical molecular mechanics force fields (FFs), atom typing is a foundational process where each atom in a molecular system is assigned a specific type based on its chemical identity and local environment. These types are essential for assigning parameters that govern bonded interactions (bonds, angles, dihedrals) and non-bonded interactions (van der Waals, electrostatics) during molecular dynamics (MD) simulations [1]. The accuracy and transferability of a force field are intrinsically linked to the completeness and logical consistency of its atom type library.

However, this traditional paradigm faces significant challenges in the modern era of computational chemistry and drug discovery. The exponentially expanding chemical space of drug-like molecules, coupled with the need to model complex biological phenomena such as post-translational modifications (PTMs) and protein-ligand interactions, has exposed critical limitations in static, look-up table-based approaches to atom typing [1] [2]. This application note details these limitations and frames them within the context of emerging, data-driven solutions based on node-embedding approaches that promise more consistent and transferable parameter assignment.

Core Limitations of Traditional Atom Typing

The conventional process of atom typing, which is often manual and reliant on expert knowledge, presents several major bottlenecks for the accuracy and scope of biomolecular simulations.

Table 1: Key Limitations of Traditional Atom Typing

| Limitation Category | Description | Impact on Force Field Development & Application |

|---|---|---|

| Manual & Labor-Intensive Process | Traditionally, atom typing was a manual process relying on researcher expertise and intuition [1]. | Becomes a critical bottleneck for exploring expansive chemical space; hinders high-throughput parametrization. |

| Limited Transferability & Scalability | Discrete, pre-defined atom types and chemical environment descriptions (e.g., SMIRKS patterns) have inherent limitations [2]. | Hampered transferability and scalability of force fields to novel molecular structures not explicitly in the parameter library. |

| Handling of Chemical Modifications | Over 76 types of PTMs have been identified, creating a vast set of non-standard amino acids [1]. | Traditional look-up tables struggle to cover this diversity, limiting simulations of functionally crucial biological processes. |

| Inconsistency in Parameter Assignment | Asynchronous development of parameters for small molecule ligands versus biomolecular FFs can lead to inconsistencies [1]. | Compromises accuracy in critical applications like free energy perturbation (FEP) calculations for binding affinity. |

Workflow Bottleneck and Coverage Gaps

The manual nature of traditional atom typing creates a fundamental scalability issue. As one review notes, "atom typing was a manual and labor-intensive process that relied on the expertise and intuition of researchers" [1]. This process is impractical for the vast and growing landscape of synthesizable molecules, often resulting in coverage gaps where parameters for novel chemical motifs are missing or suboptimal [2].

The Challenge of Complex Chemical Environments

The rigidity of fixed atom types struggles with chemically ambiguous environments. A prominent example is the handling of post-translational modifications (PTMs). With over 76 types of PTMs identified, representing "over 200 distinct chemical modifications of amino acids," the combinatorial complexity far exceeds what is feasibly captured in a pre-defined list of atom types [1]. This limitation obstructs the realistic simulation of many critical biological processes.

The Node-Embedding Approach: A Paradigm Shift

In response to these limitations, a new paradigm has emerged: leveraging graph-based node-embedding techniques to assign force field parameters directly from the molecular graph. This approach treats the molecule as a graph where atoms are nodes and bonds are edges. A machine learning model learns to generate continuous, numerical representations (embeddings) for each atom based on its chemical environment, and these embeddings are then mapped to specific force field parameters [3] [2].
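To make the idea concrete, here is a minimal sketch of how message passing turns a molecular graph into per-atom vectors. It is a toy and not the architecture of Grappa, Espaloma, or ByteFF: the one-hot features, the fixed `mix` weight, and the function names are assumptions chosen for illustration, and a real model would learn its update functions from data.

```python
# Toy message passing on a molecular graph (illustrative; not any published model).
# Ethanol: C0-C1-O2, with H3-H5 on C0, H6-H7 on C1, and H8 on O2.
ELEMENTS = ["C", "C", "O", "H", "H", "H", "H", "H", "H"]
BONDS = [(0, 1), (1, 2), (0, 3), (0, 4), (0, 5), (1, 6), (1, 7), (2, 8)]

# Hypothetical initial node features: one-hot over (C, O, H).
ONE_HOT = {"C": [1.0, 0.0, 0.0], "O": [0.0, 1.0, 0.0], "H": [0.0, 0.0, 1.0]}


def build_adjacency(n_atoms, bonds):
    """Return a neighbor list for an undirected molecular graph."""
    adj = [[] for _ in range(n_atoms)]
    for i, j in bonds:
        adj[i].append(j)
        adj[j].append(i)
    return adj


def embed_atoms(elements, bonds, rounds=2, mix=0.5):
    """Each round adds a scaled sum of neighbor states to every atom's state."""
    adj = build_adjacency(len(elements), bonds)
    h = [list(ONE_HOT[e]) for e in elements]
    for _ in range(rounds):
        h = [
            [h[i][k] + mix * sum(h[j][k] for j in adj[i]) for k in range(3)]
            for i in range(len(elements))
        ]
    return h


embeddings = embed_atoms(ELEMENTS, BONDS)
for idx, (element, vec) in enumerate(zip(ELEMENTS, embeddings)):
    print(idx, element, [round(v, 3) for v in vec])
```

After two rounds the three methyl hydrogens share one vector, the two methylene hydrogens share another, and the hydroxyl hydrogen differs from both. No atom type was declared anywhere; the distinction emerges from the graph alone, which is the property the node-embedding approach relies on.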

Key Advantages of the Node-Embedding Approach

This data-driven framework addresses the core shortcomings of traditional atom typing:

- Automation and Scalability: Once trained, the model can automatically parametrize any molecule within its learned chemical space, eliminating manual effort and accelerating high-throughput screening [2] [3].

- Continuous and Transferable Representations: The continuous vector representations of chemical environments are inherently more flexible and transferable than discrete atom types, enabling more accurate parametrization of novel compounds [2].

- Improved Consistency: By applying a single, unified model to both proteins and small molecules, this approach ensures greater internal consistency across the entire force field [3].

Table 2: Comparison of Traditional vs. Node-Embedding Approaches

| Feature | Traditional Atom Typing | Node-Embedding Approach |

|---|---|---|

| Basis of Assignment | Pre-defined lookup tables of discrete atom types. | Continuous vector embeddings generated by a model. |

| Transferability | Limited to pre-enumerated chemical groups. | High, due to continuous representation of chemical environment. |

| Automation Level | Manual or rule-based, requiring expert input. | Fully automated, end-to-end. |

| Handling of Novelty | Requires manual creation of new types/parameters. | Can generalize to novel structures within the trained chemical space. |

| Representative Examples | AMBER, CHARMM, GAFF, OPLS [1] [4]. | Grappa, Espaloma, ByteFF [3] [2]. |

Experimental Protocols for Node-Embedded Force Field Validation

The development and validation of a node-embedded force field involve a multi-stage workflow combining large-scale quantum chemistry data generation, model training, and rigorous benchmarking. The following protocol outlines the key steps, as exemplified by modern implementations like ByteFF and Grappa [2] [3].

Protocol 1: Data Generation and Model Training

Objective: To create a high-quality dataset and train a graph neural network (GNN) to predict molecular mechanics force field parameters.

Materials:

- Quantum Chemistry Software: ORCA, Gaussian, or PSI4 for generating reference data.

- Computational Resources: High-performance computing (HPC) cluster for large-scale quantum mechanics (QM) calculations.

Procedure:

- Dataset Curation: Generate a diverse dataset of drug-like molecules and molecular fragments. For instance, ByteFF was trained on a dataset containing "2.4 million optimized molecular fragment geometries with analytical Hessian matrices, along with 3.2 million torsion profiles" at the B3LYP-D3(BJ)/DZVP level of theory [2].

- Molecular Graph Featurization: Represent each molecule as a graph. Nodes (atoms) are initially featurized with basic chemical properties (e.g., element, formal charge). Bonds are represented as edges.

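- Model Architecture Selection: Employ a graph neural network architecture. For example, Grappa uses a graph attentional network to construct atom embeddings, followed by a transformer that predicts the MM parameters from those embeddings [3].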

- Model Training: Train the model to predict MM parameters (e.g., bond force constants, equilibrium lengths, partial charges) by minimizing the difference between the MM-calculated energies/forces and the reference QM data. This can involve a differentiable partial Hessian loss to better fit vibrational frequencies [2].
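The training objective in the last step can be written schematically as follows. This is a generic energy-and-force matching loss under assumed notation, not the exact loss of ByteFF or Grappa: θ denotes the network weights, G_m the graph of molecule m, C_m its set of reference conformations, ξ_θ(G_m) the predicted MM parameters, and w_F a hypothetical weight balancing the force term. The Hessian-based term mentioned above is not shown.

```latex
\mathcal{L}(\theta) = \sum_{m} \sum_{c \in \mathcal{C}_m}
  \Big[ \big( E_{\mathrm{MM}}(x_c;\, \xi_\theta(G_m)) - E_{\mathrm{QM}}(x_c) \big)^2
  + w_F \, \big\lVert F_{\mathrm{MM}}(x_c;\, \xi_\theta(G_m)) - F_{\mathrm{QM}}(x_c) \big\rVert^2 \Big],
\qquad F = -\nabla_x E
```

Because the MM energy is a differentiable function of the parameters, and the parameters are a differentiable function of θ, the whole expression can be minimized end to end with gradient-based optimizers.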

Protocol 2: Validation and Benchmarking

Objective: To assess the accuracy and transferability of the trained node-embedded force field.

Materials:

- MD Simulation Software: GROMACS, OpenMM, or AMBER.

- Benchmarking Datasets: Public datasets like the Espaloma benchmark (covering over 14,000 molecules and over one million conformations for small molecules, peptides, and RNA) [3].

Procedure:

- Geometry Prediction: Evaluate the force field's ability to reproduce optimized molecular geometries (bond lengths, angles) from QM calculations.

- Torsional Profile Accuracy: Compare the torsional energy profiles generated by the force field against high-level QM scans across a range of dihedral angles.

- Conformational Energy & Force Prediction: Calculate the mean absolute error (MAE) between the force field and QM energies and forces for a diverse set of molecular conformers.

- Specialized Property Validation: For protein force fields like Grappa, validate against experimental data such as NMR J-couplings and protein folding free energies (e.g., for the chignolin protein) [3].

- Molecular Dynamics Stability Test: Run multi-nanosecond MD simulations of proteins or other macromolecules to ensure stability and realistic dynamics.
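The error metrics in the conformational-energy step can be sketched as below. One assumption is made explicit: MM and QM energies have different zero points, so each set is mean-centered per molecule before comparison. The energy values are hypothetical placeholders, not benchmark results.

```python
import math


def center(values):
    """Subtract the mean so only relative conformer energies are compared."""
    mean = sum(values) / len(values)
    return [v - mean for v in values]


def mae(pred, ref):
    """Mean absolute error between two equally long sequences."""
    return sum(abs(p - r) for p, r in zip(pred, ref)) / len(ref)


def rmse(pred, ref):
    """Root-mean-square error between two equally long sequences."""
    return math.sqrt(sum((p - r) ** 2 for p, r in zip(pred, ref)) / len(ref))


# Hypothetical conformer energies (kcal/mol) for a single molecule.
qm_energies = [0.0, 1.2, 2.5, 0.7]
mm_energies = [10.1, 11.0, 12.9, 10.8]  # offset by an arbitrary constant

energy_mae = mae(center(mm_energies), center(qm_energies))
energy_rmse = rmse(center(mm_energies), center(qm_energies))
print(f"MAE = {energy_mae:.3f} kcal/mol, RMSE = {energy_rmse:.3f} kcal/mol")
```

Forces need no centering, since a constant energy offset has zero gradient; the same `mae` and `rmse` helpers can be applied to flattened force components.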

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Node-Embedded Force Field Research

| Tool / Reagent | Type | Function in Research |

|---|---|---|

| Grappa | Machine-Learned Force Field | A GNN-based model that predicts MM parameters for proteins and small molecules; compatible with GROMACS/OpenMM [3]. |

| Espaloma | Machine-Learned Force Field | An end-to-end workflow using GNNs to assign MM parameters, improving upon traditional look-up tables [2]. |

| ByteFF | Data-Driven MM Force Field | An Amber-compatible force field trained on a massive QM dataset using a graph neural network for expansive coverage [2]. |

| QM Reference Data | Dataset | High-quality quantum mechanical calculations (geometries, Hessians, torsion scans) used to train and validate the models [2]. |

| GROMACS / OpenMM | MD Simulation Engine | High-performance software that performs the molecular dynamics calculations using the generated parameters [3]. |

| Reactive INTERFACE FF (IFF-R) | Reactive Force Field | Demonstrates extension beyond harmonic potentials using Morse bonds for bond breaking, a challenge beyond standard typing [4]. |

Traditional atom typing has been a cornerstone of classical molecular dynamics, but its inherent limitations—manual processes, limited transferability, and poor scalability—are increasingly apparent in the face of modern scientific challenges. The node-embedding approach represents a paradigm shift, replacing discrete, hard-coded types with continuous, learned atomic representations. This methodology, as realized in force fields like Grappa, ByteFF, and Espaloma, enables automated, consistent, and transferable parameter assignment across vast and diverse chemical spaces. By embracing this data-driven framework, researchers can develop more accurate and robust force fields, ultimately enhancing the predictive power of molecular simulations in drug discovery and materials science.

Node embeddings are a fundamental paradigm in graph representation learning where nodes in a graph are mapped to vectors in a continuous, low-dimensional space [5]. This technique transforms abstract graph nodes without inherent coordinates into a structured metric space (typically ℝ^d), enabling downstream machine learning processing [5] [6]. The core objective is to preserve the graph's topological structure—neighborhood relationships, connectivity patterns, and structural roles—within the embedding space [7]. Nodes that are similar in the original graph (whether by proximity or structural role) should have similar vector representations [6] [7].

Molecular graph representations provide a computational framework for encoding chemical structures as graphs, where atoms constitute nodes and chemical bonds form edges [8] [9]. This representation has emerged as a powerful alternative to traditional string-based formats like SMILES (Simplified Molecular-Input Line-Entry System), offering a more natural and unambiguous depiction of molecular structure that explicitly captures atomic connectivity and relationships [10] [8] [9]. The integration of node embedding techniques with molecular graphs enables sophisticated AI-assisted drug discovery applications, including molecular property prediction, virtual screening, and lead optimization [10].

Comparative Analysis of Methods and Metrics

Table 1: Key Node Embedding Algorithms and Their Characteristics

| Algorithm | Core Methodology | Key Features | Molecular Applications |

|---|---|---|---|

| DeepWalk [5] [7] | Random walk + word2vec | Uniform random walks; precursor to node2vec | Social network analysis (e.g., Zachary's Karate Club) [7] |

| node2vec [5] [6] | Biased random walks | Balances breadth-first (homophily) and depth-first (structural equivalence) search | Flexible similarity notions for chemical networks [6] |

| Graph Neighbor Embedding (NE) [5] | Direct neighbor embedding | No random walks; pulls adjacent nodes together; strong local structure preservation | Potential for molecular graph layout and visualization [5] |

| Graph Isomorphism Network (GIN) [8] [11] | Graph neural network with neighborhood aggregation | Theoretical power equivalent to Weisfeiler-Lehman graph isomorphism test [8] | Molecular property prediction [8] and symmetry classification [11] |

Table 2: Molecular Representation Types and Their Applications

| Representation Type | Format | Advantages | Limitations |

|---|---|---|---|

| String-Based (SMILES) [10] [9] | Linear string | Compact, human-readable, database-friendly [10] | Limited complexity capture; ambiguous representations [10] [8] |

| Atom Graph [8] [9] | Graph (atoms=nodes, bonds=edges) | Natural representation; unique and unambiguous [8] | May overlook important substructure effects [8] |

| Substructure Graph [8] | Graph (substructures=nodes) | Encodes functional groups/pharmacophores; enhanced interpretability | Potential molecular structure information loss [8] |

| 3D-Aware GNNs [9] [12] | 3D graph with spatial coordinates | Captures spatial geometry critical for molecular interactions [9] | Requires accurate 3D data; computationally intensive [12] |

Table 3: Evaluation Metrics for Node Embeddings and Molecular Representations

| Metric Category | Specific Metrics | Application Context |

|---|---|---|

| Structural Preservation [5] | Local structure preservation, graph distance correlation | Evaluating node embedding quality [5] |

| Prediction Performance [8] [11] | Accuracy, F1-score, ROC-AUC | Molecular property prediction, point group classification [8] [11] |

| Computational Efficiency [8] | Runtime, memory usage, scaling with graph size | Model selection for large chemical databases [8] |

Experimental Protocols

Protocol: Node Embedding with node2vec

Purpose: To generate Euclidean vector representations of nodes in a molecular graph using the node2vec algorithm.

Materials:

- Molecular graph data (nodes = atoms, edges = bonds)

- Programming environment (Python recommended)

- NetworkX or similar graph manipulation library

- node2vec implementation (Python package available)

Procedure:

- Graph Preparation: Convert molecular structure to graph format with appropriate node and edge features.

- Parameter Configuration:

- Set number of walks per node to 80

- Set walk length to 10

- Set context window size ω = 10

- Set return parameter p = 1 and in-out parameter q = 1 (default values) [13]

- Execution:

- Generate random walks according to parameters

- Apply word2vec algorithm to walk sequences

- Output d-dimensional node embeddings

- Validation:

- Evaluate embedding quality through downstream tasks (node classification, link prediction)

- Assess chemical relevance of similarity relationships in embedding space

Technical Notes: For weighted molecular graphs, transition probabilities are modified such that higher link weights correspond to higher transition probabilities. Computational complexity is O(E + N·d·ω^2) for a network with N nodes and E edges [13].
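The walk-generation step can be sketched in plain Python as follows. This is an illustrative implementation of the second-order biased walk for an unweighted graph, not the code of any particular node2vec package; the function name and the ethanol adjacency are assumptions. The resulting walks would then be passed to a skip-gram (word2vec) model to obtain the embeddings.

```python
import random

# Ethanol as an unweighted graph: C0-C1-O2 plus hydrogens 3-8.
ADJ = {0: [1, 3, 4, 5], 1: [0, 2, 6, 7], 2: [1, 8],
       3: [0], 4: [0], 5: [0], 6: [1], 7: [1], 8: [2]}


def biased_walk(adj, start, length, p=1.0, q=1.0, rng=random):
    """Generate one node2vec-style walk of `length` nodes starting at `start`."""
    walk = [start]
    while len(walk) < length:
        cur = walk[-1]
        nbrs = adj[cur]
        if not nbrs:  # isolated node: nothing to walk to
            break
        if len(walk) == 1:  # first step has no previous node, so it is uniform
            walk.append(rng.choice(nbrs))
            continue
        prev = walk[-2]
        weights = []
        for nxt in nbrs:
            if nxt == prev:            # step back to the previous node
                weights.append(1.0 / p)
            elif nxt in adj[prev]:     # stay at distance 1 from the previous node
                weights.append(1.0)
            else:                      # move outward, to distance 2
                weights.append(1.0 / q)
        walk.append(rng.choices(nbrs, weights=weights, k=1)[0])
    return walk


rng = random.Random(0)  # fixed seed so the walks are reproducible
walks = [biased_walk(ADJ, node, length=10, rng=rng) for node in ADJ for _ in range(2)]
print(walks[0])
```

With p = q = 1 all three cases receive the same weight and the walk reduces to the uniform random walk used by DeepWalk. Lowering q favors outward exploration, while lowering p makes the walk backtrack more often and stay local.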

Protocol: Molecular Property Prediction with Graph Isomorphism Networks

Purpose: To predict molecular properties using a Graph Isomorphism Network (GIN) on molecular graph representations.

Materials:

- Molecular dataset with annotated properties (e.g., QM9 for quantum properties)

- RDKit or OpenBabel for chemical informatics operations

- Deep learning framework (PyTorch or TensorFlow)

- GIN implementation

Procedure:

- Data Preparation:

- Convert molecular structures to graph representations

- Split data into training, validation, and test sets (typical ratio: 80/10/10)

- Normalize molecular property labels if necessary

- Model Configuration:

- Implement GIN architecture with multiple layers (typically 3-5)

- Set hidden dimension appropriate for molecular complexity (typically 64-300)

- Choose readout function (sum, mean, or attention-based pooling)

- Training:

- Initialize model parameters

- Select optimization algorithm (Adam recommended)

- Train with appropriate loss function (MSE for regression, cross-entropy for classification)

- Apply early stopping based on validation performance

- Evaluation:

- Calculate performance metrics on test set

- Compare against baseline methods (traditional ML, other GNNs)

- Visualize learned representations for chemical interpretability

Technical Notes: GIN is particularly effective for molecular graphs due to its theoretical power in distinguishing graph structures, achieving state-of-the-art performance on benchmark molecular datasets [8] [11].
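The core of the model configuration, a GIN layer followed by a sum readout, can be sketched without a deep learning framework. The weights below are fixed, hypothetical numbers standing in for trained parameters, and the helper names are assumptions; in practice one would use a framework implementation such as the `GINConv` layer in PyTorch Geometric.

```python
def relu(x):
    return [max(0.0, v) for v in x]


def linear(x, weights, bias):
    """Dense layer: one output per row of `weights`."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def gin_layer(h, adj, w1, b1, w2, b2, eps=0.0):
    """GIN update: h_v <- MLP((1 + eps) * h_v + sum of neighbor states)."""
    out = []
    for v, nbrs in enumerate(adj):
        agg = [(1.0 + eps) * h[v][k] + sum(h[u][k] for u in nbrs)
               for k in range(len(h[v]))]
        out.append(linear(relu(linear(agg, w1, b1)), w2, b2))
    return out


def sum_readout(h):
    """Graph-level vector: element-wise sum over all node states."""
    return [sum(col) for col in zip(*h)]


# Hypothetical fixed weights (2 -> 3 -> 2) in place of trained parameters.
W1, B1 = [[0.5, -0.2], [0.1, 0.3], [-0.4, 0.6]], [0.0, 0.1, -0.1]
W2, B2 = [[0.2, 0.1, -0.3], [0.4, -0.5, 0.2]], [0.0, 0.0]

# Water with features [is_O, is_H], in two different atom orderings.
h_a, adj_a = [[1.0, 0.0], [0.0, 1.0], [0.0, 1.0]], [[1, 2], [0], [0]]
h_b, adj_b = [[0.0, 1.0], [0.0, 1.0], [1.0, 0.0]], [[2], [2], [0, 1]]

graph_a = sum_readout(gin_layer(h_a, adj_a, W1, B1, W2, B2))
graph_b = sum_readout(gin_layer(h_b, adj_b, W1, B1, W2, B2))
print(graph_a, graph_b)
```

Both orderings of the same molecule produce the same graph-level vector, because neighbor aggregation and the readout are sums and therefore do not depend on how atoms are numbered.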

Framework Integration for Force Field Parameter Assignment

The integration of node embedding approaches with molecular graph representations establishes a powerful framework for consistent force field parameter assignment. This approach replaces traditional hand-crafted atom typing rules with data-driven parameter prediction directly from molecular graphs [14].

Grappa Framework: The Grappa (Graph Attentional Protein Parametrization) framework exemplifies this paradigm by employing a graph attentional neural network to predict molecular mechanics parameters directly from molecular graphs [14]. The architecture comprises:

- Atom Embedding Generation: A graph neural network constructs d-dimensional atom embeddings (ν₁, ν₂, ..., νₙ) from the molecular graph.

- Parameter Prediction: A transformer architecture predicts MM parameters ξ for each interaction type l (bonds, angles, torsions, impropers) from the atom embeddings: ξ^(l)_ij... = ψ^(l)(ν_i, ν_j, ...)

- Energy Evaluation: The predicted parameters define a potential energy surface E(x) = E_MM(x, ξ) that can be evaluated for different molecular conformations x [14].
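For orientation, a common class-I functional form for E_MM is sketched below. It is a textbook form given here as an assumption, not a statement of Grappa's exact expression: prefactor conventions (k versus k/2), torsion phase handling, and the treatment of improper torsions differ between force fields, and the nonbonded term is left unexpanded.

```latex
E_{\mathrm{MM}}(x, \xi) =
  \sum_{(i,j) \in \mathrm{bonds}} \frac{k_{ij}}{2} \big( r_{ij} - r^{0}_{ij} \big)^2
  + \sum_{(i,j,k) \in \mathrm{angles}} \frac{k_{ijk}}{2} \big( \theta_{ijk} - \theta^{0}_{ijk} \big)^2
  + \sum_{(i,j,k,l) \in \mathrm{torsions}} \sum_{n} k^{(n)}_{ijkl} \cos\!\big( n\, \phi_{ijkl} \big)
  + E_{\mathrm{nonbonded}}(x)
```

Here the set ξ collects the force constants and equilibrium values (k_ij, r⁰_ij, k_ijk, θ⁰_ijk, k⁽ⁿ⁾_ijkl), each of which is an output of the corresponding ψ^(l).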

Force Field-Inspired Neural Networks: The FFiNet model incorporates physical priors by modeling intramolecular interactions analogous to force field terms: bond stretching, angle bending, torsion, and nonbonded interactions [12]. This approach organizes message passing by interaction hops:

- 1-hop nodes: Bond stretching interactions

- 2-hop nodes: Angle bending and nonbonded interactions

- 3-hop nodes: Torsion and nonbonded interactions [12]

The model calculates attention scores using functional forms derived from classical force fields (e.g., OPLS), embedding physical chemistry knowledge directly into the learning process [12].
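The hop-based grouping can be reproduced with a breadth-first search over the bond graph. The sketch below only shows how 1-, 2-, and 3-hop neighbor sets are obtained; it does not reproduce FFiNet's attention computation, and the function name is an assumption.

```python
from collections import deque


def hop_shells(adj, source, max_hops=3):
    """Group atoms by bond-count distance (1..max_hops) from `source`."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        u = queue.popleft()
        if dist[u] == max_hops:  # do not expand beyond the last shell
            continue
        for v in adj[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    shells = {k: [] for k in range(1, max_hops + 1)}
    for node, d in dist.items():
        if d > 0:
            shells[d].append(node)
    return {k: sorted(nodes) for k, nodes in shells.items()}


# Carbon skeleton of n-butane: C0-C1-C2-C3.
BUTANE = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
print(hop_shells(BUTANE, 0))
```

For atom 0 of the butane skeleton, atom 1 is the bond partner (1-hop), atom 2 closes an angle (2-hop), and atom 3 closes a torsion (3-hop), mirroring the interaction terms listed above.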

Molecular Representation to Force Field Pipeline

The Scientist's Toolkit

Table 4: Essential Research Reagents and Computational Tools

| Tool/Resource | Type | Function/Purpose | Application Context |

|---|---|---|---|

| RDKit [8] | Cheminformatics library | Molecular graph construction, substructure analysis, descriptor calculation | General molecular representation preprocessing [8] |

| GIN (Graph Isomorphism Network) [8] [11] | Neural network architecture | Molecular graph embedding with theoretical graph isomorphism guarantees | Molecular property prediction, symmetry classification [8] [11] |

| node2vec [5] [13] | Node embedding algorithm | Euclidean node embeddings via biased random walks | Graph-based similarity searching in chemical space [5] |

| Grappa [14] | Force field parameterization | Machine learning-based MM parameter prediction from molecular graphs | Force field development for unexplored chemical spaces [14] |

| QM9 Dataset [11] | Molecular dataset | Quantum chemical properties for ~134k small organic molecules | Benchmarking molecular representation methods [11] |

| OPLS Functional Forms [12] | Mathematical potentials | Basis for spatial information embedding in force field-inspired networks | Incorporating physical chemistry priors in neural networks [12] |

Node embeddings and molecular graph representations provide a powerful synergistic framework for advancing computational chemistry and force field development. The integration of these approaches enables data-driven parameter assignment that maintains physical consistency while exploring uncharted regions of chemical space. Methods like Grappa and FFiNet demonstrate how graph-based learning can capture complex molecular interactions through attention mechanisms inspired by force field energy terms. As molecular representation learning continues to evolve—incorporating 3D geometries, multi-modal data, and physical constraints—these techniques promise to accelerate drug discovery and materials design through more accurate, efficient, and interpretable molecular modeling.

Permutation Invariance and Equivariance in Machine Learning Force Fields

Molecular dynamics (MD) simulations are a cornerstone of computational chemistry, physics, and drug discovery. The accuracy of these simulations is fundamentally governed by the force field—the mathematical model that describes the potential energy of a molecular system. Traditional molecular mechanics (MM) force fields rely on fixed sets of atom types and lookup tables for parameter assignment. This approach, while computationally efficient, struggles with transferability across diverse chemical spaces and often requires laborious, expert-guided parameterization for novel molecules [15] [16].

The emergence of machine learning (ML) has revolutionized force field development. A critical advancement in this domain is the principled incorporation of physical symmetries—specifically, permutation invariance and equivariance. These properties ensure that a model's predictions transform consistently under fundamental symmetries present in molecular systems: the energy remains unchanged (invariant) when identical atoms are permuted, while the forces, which are negative gradients of the energy, transform accordingly (equivariant) [17]. Embedding these symmetries as inductive biases into ML models leads to superior data efficiency, generalizability, and robust physical predictions. This application note explores the key concepts of permutation invariance and equivariance, detailing their implementation in modern, node-embedding-based force fields and providing protocols for their practical application.

Key Concepts and Theoretical Foundations

Symmetries in Molecular Systems

Molecules possess inherent symmetries that must be respected by any physical model, including a force field.

- Permutation Invariance: The total potential energy of a molecule must remain unchanged if two identical atoms (e.g., two hydrogen atoms in a water molecule) are swapped. Formally, for a permutation π of identical atoms, the energy function E must satisfy E(π(x)) = E(x), where x represents the atomic coordinates [17].

- Permutation Equivariance: The forces acting on the atoms must transform in concert with any permutation of the atom identities. If the inputs (atom indices) are permuted, the outputs (forces on those atoms) should be permuted in the same way. Formally, if F(x) is the force function, then F(π(x)) = π(F(x)) [17].

- Rotational and Translational Invariance (E(3) Invariance): The energy of a system should not depend on its overall orientation or position in space. While crucial, this note focuses primarily on permutation symmetries, which are often handled at the level of the molecular graph before spatial coordinates are considered [18] [14].
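Both permutation properties can be checked numerically on a toy system. The sketch below uses a hypothetical pair potential, E = Σ (r_ij − 1)² over all pairs of identical atoms, chosen only because its forces are easy to write analytically; it is not a realistic force field.

```python
import math


def energy_and_forces(coords):
    """Toy pair potential over identical atoms, with analytic forces F = -dE/dx."""
    n = len(coords)
    energy = 0.0
    forces = [[0.0, 0.0, 0.0] for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            d = [coords[i][k] - coords[j][k] for k in range(3)]
            r = math.sqrt(sum(c * c for c in d))
            energy += (r - 1.0) ** 2
            g = 2.0 * (r - 1.0) / r  # dE/dr divided by r
            for k in range(3):
                forces[i][k] -= g * d[k]
                forces[j][k] += g * d[k]
    return energy, forces


coords = [[0.0, 0.0, 0.0], [1.2, 0.0, 0.0], [0.3, 0.9, 0.1]]
perm = [2, 0, 1]  # new atom a takes the position of old atom perm[a]
permuted = [coords[a] for a in perm]

e_ref, f_ref = energy_and_forces(coords)
e_perm, f_perm = energy_and_forces(permuted)

print("energy difference:", abs(e_ref - e_perm))
print("max force mismatch:",
      max(abs(f_perm[a][k] - f_ref[perm[a]][k]) for a in range(3) for k in range(3)))
```

The energy difference vanishes up to rounding (invariance), and the force on each relabeled atom equals the force on the atom it came from (equivariance).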

The Node-Embedding Paradigm

A powerful framework for achieving consistent parameter assignment replaces the concept of fixed atom types with dynamic atom embeddings. In this approach:

- A molecular graph G = (V, E) is constructed, where nodes V represent atoms and edges E represent bonds.

- A neural network processes this graph to generate a continuous, vector-valued embedding ν_i ∈ R^d for each atom i. This embedding encodes the atom's chemical environment based on the molecular graph [18] [14].

- Force field parameters for an interaction (e.g., a bond, angle, or torsion) are predicted by applying a second function, typically a transformer or multilayer perceptron (MLP), to the embeddings of the atoms involved in that interaction: ξ^(l)_ij... = ψ^(l)(ν_i, ν_j, ...) [14].

This paradigm directly enables a node-embedding approach for consistent force field parameter assignment, as atoms in similar chemical environments will naturally receive similar embeddings and, consequently, similar parameters, without the need for hard-coded atom types [16] [14].
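One simple way to make a parameter function respect the symmetry of its interaction is to sum an arbitrary function over the equivalent atom orderings. The sketch below does this for a bond; it illustrates the principle only and is not claimed to be the construction used inside Grappa. The function `phi` with its fixed weights is a hypothetical stand-in for a learned network.

```python
import math

# Hypothetical fixed weights standing in for a learned, order-sensitive network.
WEIGHTS = [0.3, -0.7, 0.5, 0.2, 0.9, -0.4]


def phi(nu_a, nu_b):
    """Order-sensitive function of two 3-dimensional atom embeddings."""
    x = nu_a + nu_b  # list concatenation: [nu_a..., nu_b...]
    return math.tanh(sum(w * v for w, v in zip(WEIGHTS, x)))


def bond_parameter(nu_i, nu_j):
    """Symmetrized output: identical for the orderings (i, j) and (j, i)."""
    return phi(nu_i, nu_j) + phi(nu_j, nu_i)


nu_i = [1.0, 0.0, 0.5]
nu_j = [0.2, 0.8, -0.3]

print("phi(i, j)  =", phi(nu_i, nu_j))
print("phi(j, i)  =", phi(nu_j, nu_i))
print("bond(i, j) =", bond_parameter(nu_i, nu_j))
print("bond(j, i) =", bond_parameter(nu_j, nu_i))
```

The same idea extends to angles, where the parameter should be unchanged under (i, j, k) → (k, j, i), and to torsions under (i, j, k, l) → (l, k, j, i).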

Implementation in Machine Learning Force Field Architectures

Architectures must be carefully designed to inherently respect permutation symmetries. The following table summarizes how leading models achieve this.

Table 1: Symmetry Handling in Modern ML Force Field Architectures

| Model | Architecture | Invariance/Equivariance Mechanism | Key Insight |

|---|---|---|---|

| Grappa [18] [14] | Graph Attention + Transformer | Embeds permutation symmetries directly into the parameter prediction function ψ. For a bond between atoms i and j, the bond parameter function is constrained so that ξ_(bond, ij) = ξ_(bond, ji). | Permutation symmetries are enforced by construction in the final parameter prediction layers, ensuring the MM energy function is invariant. |

| FreeCG [19] | Equivariant Graph Neural Network (EGNN) | Utilizes invariance transitivity. The Clebsch-Gordan transform is applied to permutation-invariant "abstract edges," freeing the internal design of the layer while maintaining overall permutation equivariance. | Decouples the requirement for intensive, edge-wise processing from the overall symmetry guarantee, boosting expressiveness and efficiency. |

| Symmetry-Invariant VQLM [17] | Variational Quantum Machine Learning | Constructs a quantum circuit with an invariant initial state, equivariant encoding layers, equivariant trainable layers, and an invariant observable. | Demonstrates the generality of symmetry principles, extending them to quantum machine learning models for chemistry. |

| FFiNet [12] | Force Field-Inspired GNN | Aggregates information from 1-hop, 2-hop, and 3-hop neighbors using attention mechanisms derived from the functional forms of bond, angle, torsion, and non-bonded potentials. | Directly incorporates the physics of the MM energy function to guide the message-passing scheme, inherently respecting chemical intuitions about locality and interaction. |

Workflow: Grappa Force Field Construction

The Grappa model exemplifies a complete, end-to-end symmetric force field construction pipeline. The following diagram visualizes this workflow from a molecular graph to a ready-to-use force field.

Quantitative Performance and Benchmarking

Adhering to symmetry principles not only provides theoretical soundness but also translates to superior empirical performance. The following table quantifies the performance of symmetric ML force fields against traditional and other machine-learned baselines on standard benchmarks.

Table 2: Benchmarking Performance of Symmetric ML Force Fields

| Model | Benchmark Dataset | Key Metric | Performance | Comparative Outcome |

|---|---|---|---|---|

| Grappa [18] [14] | Espaloma (14k+ molecules) | Force & Energy Accuracy | State-of-the-art MM accuracy | Outperforms traditional MM (GAFF, CGenFF) and ML-based (Espaloma) force fields. |

| Grappa [14] | Protein Folding (Chignolin) | Folding Free Energy | Improved calculation | More accurately recovers the experimentally determined folded structure from an unfolded state. |

| FreeCG [19] | MD17, rMD17, MD22 | Force Prediction | State-of-the-art (SOTA) | Achieves SOTA results, with several improvements exceeding 15% and the largest exceeding 20% over previous methods. |

| FFiNet [12] | PDBBind | Binding Affinity Prediction | Outperformed baselines | Achieves state-of-the-art performance on predicting protein-ligand binding affinity. |

| DPA-2 Optimization [20] | TYK2 Inhibitor, PTP1B | Free Energy Perturbation (FEP) | Improved results | On-the-fly optimization using a node-embedding-based similarity metric improved FEP results. |

Experimental Protocols

Protocol: Building a Grappa-Based Force Field for a Novel Molecule

This protocol details the steps to generate a complete set of molecular mechanics parameters for a novel small molecule or peptide using the Grappa framework [18] [14].

Research Reagent Solutions:

- Grappa Model Weights: Pre-trained neural network parameters for predicting MM coefficients.

- Molecular Graph Generator: Software like RDKit to convert a SMILES string or similar representation into a 2D molecular graph.

- MD Engine Integration: A compatible molecular dynamics engine (GROMACS, OpenMM) with a plugin or wrapper to load Grappa-predicted parameters.

Procedure:

- Input Preparation:

- Represent your target molecule as a molecular graph. The input can be a SMILES string, an InChI, or a list of atoms and bonds.

- No hand-crafted features (e.g., hybridization, formal charge) are required. The graph structure alone suffices.

- Atom Embedding Generation:

- Pass the molecular graph through the Grappa graph attentional network.

- This network uses multi-head dot-product attention on graph edges to generate a d-dimensional embedding vector for each atom, capturing its chemical environment.

- MM Parameter Prediction:

- For each interaction in the molecule (bonds, angles, proper torsions, improper torsions):

- Identify the set of atoms involved (e.g., atoms i, j for a bond; i, j, k, l for a torsion).

- Feed the corresponding atom embeddings into the dedicated transformer module ψ^(l).

- The transformer outputs the specific MM parameters (k, r₀, θ₀, etc.), with internal constraints ensuring permutation symmetry (e.g., ξ_(bond, ij) = ξ_(bond, ji)).

- Force Field Assembly and Simulation:

- Collect all predicted parameters into a complete parameter file.

- Load this parameter file, along with the molecular topology and coordinates, into your MD engine of choice (e.g., GROMACS, OpenMM).

- The subsequent energy and force evaluations are performed at the standard, highly optimized speed of classical molecular mechanics.
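The "identify the set of atoms involved" step of the protocol above can be illustrated with a minimal sketch that enumerates bonds, angles, and proper torsions from a bond list. The function name `enumerate_interactions` and the canonical-ordering convention are assumptions made for illustration; this is not Grappa's own code, and improper torsions are left out for brevity.

```python
from collections import defaultdict


def enumerate_interactions(bonds):
    """Enumerate unique bonds, angles, and proper torsions from a bond list.

    `bonds` is a list of (i, j) atom-index pairs. Each interaction is stored in
    one canonical orientation, so (i, j, k) and (k, j, i) are counted once.
    """
    neighbors = defaultdict(set)
    for i, j in bonds:
        neighbors[i].add(j)
        neighbors[j].add(i)

    bond_list = sorted({tuple(sorted(b)) for b in bonds})

    # An angle i-j-k exists for every pair of distinct neighbors of atom j.
    angles = set()
    for j, nbrs in neighbors.items():
        for i in nbrs:
            for k in nbrs:
                if i < k:
                    angles.add((i, j, k))

    # A proper torsion i-j-k-l is built around every central bond j-k.
    torsions = set()
    for j, k in bond_list:
        for i in neighbors[j] - {k}:
            for l in neighbors[k] - {j}:
                if i != l:  # skip degenerate cases in three-membered rings
                    t = (i, j, k, l)
                    torsions.add(min(t, t[::-1]))

    return bond_list, sorted(angles), sorted(torsions)


# Heavy-atom skeleton of n-butane: C0-C1-C2-C3
bonds, angles, torsions = enumerate_interactions([(0, 1), (1, 2), (2, 3)])
print(bonds)     # [(0, 1), (1, 2), (2, 3)]
print(angles)    # [(0, 1, 2), (1, 2, 3)]
print(torsions)  # [(0, 1, 2, 3)]
```

Each tuple returned here would index the atom embeddings passed to the corresponding parameter-prediction module.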

Protocol: Optimizing Dihedral Parameters with DPA-2 and Node Embedding Similarity

This protocol describes a method for optimizing specific dihedral parameters using a fine-tuned neural network potential and a node-embedding-based similarity metric to ensure transferability [20].

Procedure:

- Molecule Fragmentation:

  - Decompose a complex organic molecule into smaller fragments, each containing at least one rotatable bond of interest.

  - Use a tool like RDKit to systematically break bonds, capping the fragments with methyl groups or hydrogens to maintain valency. Preserve key chemical features like ring systems and functional groups.

- Torsion Scan with Fine-Tuned DPA-2-TB Model:

  - For each fragment, perform a flexible torsion scan. This involves rotating the dihedral angle in increments while allowing all other degrees of freedom (bond lengths, other angles) to relax.

  - Use the fine-tuned DPA-2-TB model, which employs delta-learning to correct a semi-empirical GFN2-xTB method, to generate high-accuracy quantum-mechanical-level potential energy surfaces at a fraction of the computational cost.

- Parameter Optimization via Similarity Matching:

  - Fingerprint Generation: Generate a topological fingerprint for each fragment based on the node embeddings of its constituent atoms. This fingerprint defines the "chemical environment" of the rotatable bond.

  - Library Cataloging: Store the optimized dihedral parameters for each fragment in a library, indexed by its fingerprint.

  - Parameter Matching: For a new molecule, identify its rotatable bonds, generate the corresponding fragment fingerprints, and match them to the closest entry in the library to assign consistent parameters automatically.
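The fingerprint, library, and matching steps above can be sketched as a nearest-neighbor lookup. Everything below is an illustrative assumption rather than the method of [20]: the fingerprint construction (summed central-atom and terminal-atom embeddings, so that reversing the torsion gives the same vector), the cosine-similarity metric, the 0.95 threshold, and the toy embeddings and parameter values.

```python
import math


def cosine_similarity(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm


def torsion_fingerprint(embeddings, torsion):
    """Hypothetical fingerprint: invariant to reading the torsion i-j-k-l backwards."""
    i, j, k, l = torsion
    central = [a + b for a, b in zip(embeddings[j], embeddings[k])]
    terminal = [a + b for a, b in zip(embeddings[i], embeddings[l])]
    return central + terminal


def match_parameters(fingerprint, library, threshold=0.95):
    """Return (parameters, score) of the most similar library entry, or (None, score)."""
    best_name, best_score = None, -1.0
    for name, (ref_fingerprint, _) in library.items():
        score = cosine_similarity(fingerprint, ref_fingerprint)
        if score > best_score:
            best_name, best_score = name, score
    if best_score < threshold:
        return None, best_score  # no sufficiently similar environment: re-optimize
    return library[best_name][1], best_score


# Toy two-dimensional node embeddings for a four-atom torsion (made-up values).
embeddings = {0: [1.0, 0.0], 1: [0.5, 0.5], 2: [0.5, 0.5], 3: [0.0, 1.0]}

# Toy library: fingerprint -> dihedral parameters (made-up values).
library = {
    "alkyl_like": ([1.0, 1.0, 1.0, 1.0], {"periodicity": 3, "k": 0.65}),
    "amide_like": ([1.0, 0.0, 0.0, 1.0], {"periodicity": 2, "k": 10.0}),
}

query = torsion_fingerprint(embeddings, (0, 1, 2, 3))
print(match_parameters(query, library))
```

Returning `None` below the threshold is one possible design choice: it flags environments that the library does not cover instead of silently assigning a poor match.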

The Scientist's Toolkit

Table 3: Essential Research Reagents and Software for Symmetric ML Force Fields

| Item Name | Type | Function / Application | Example / Source |

|---|---|---|---|

| Grappa | Software / Model | An end-to-end trainable model that predicts MM parameters from a molecular graph, enforcing permutation symmetries. | [18] [14] |

| OpenMM / GROMACS | MD Engine | High-performance simulation software that can integrate externally predicted parameters from models like Grappa. | [18] [14] |

| FreeCG | Software / Model | An EGNN that frees the design space of the Clebsch-Gordan transform while preserving equivariance. | [19] |

| DPA-2-TB | Neural Network Potential | A fine-tuned, high-accuracy model for rapid torsion scans and potential energy surface generation. | [20] |

| Node-Embedding Similarity Metric | Algorithm | Replaces hand-crafted atom types for consistent parameter assignment and transfer across molecules. | [20] |

| Force Field Toolkit (ffTK) | Software | A legacy plugin for VMD that facilitates traditional QM-to-MM parameterization, providing a useful comparison to modern ML methods. | [15] |

| FFiNet | Software / Model | A GNN that explicitly incorporates the functional forms of force field energy terms into its architecture. | [12] |

| RDKit | Cheminformatics Library | Used for molecule manipulation, fragmentation, and graph generation as a preprocessing step. | [20] |

The integration of permutation invariance and equivariance is not merely a theoretical exercise but a practical necessity for developing robust, accurate, and transferable machine-learned force fields. The node-embedding paradigm provides a powerful and flexible framework for achieving consistent force field parameter assignment, moving beyond the limitations of fixed atom types. As demonstrated by architectures like Grappa, FreeCG, and FFiNet, baking these physical symmetries directly into the model leads to state-of-the-art performance on tasks ranging from small molecule energetics to protein folding and ligand binding. The provided protocols and toolkits offer researchers a pathway to implement these advanced methods, accelerating progress in computational drug discovery and materials science.

The development of robust, transferable force fields is a cornerstone of accurate molecular dynamics (MD) simulations in computational chemistry and drug discovery. A persistent challenge in this field is the consistent and accurate assignment of force field parameters across expansive and novel chemical spaces. Traditional methods, which often rely on look-up tables of pre-defined parameters for specific atom types or chemical substructures, struggle with scalability and transferability [21]. The node-embedding approach presents a paradigm shift, leveraging machine learning to infer parameters directly from a molecule's topological and chemical environment, thereby promoting consistency for similar local structures [20]. The efficacy of any data-driven method, however, is fundamentally dependent on the quality, breadth, and chemical diversity of the data on which it is trained. This application note provides a detailed overview of essential quantum mechanics (QM) and crystal structure databases, along with protocols for their use in training and validating node-embedding models for force field parameter assignment.

Essential Databases for Data-Driven Force Field Development

The following databases provide the foundational data—both from quantum mechanical calculations and experimental crystal structures—required for developing and benchmarking models like node-embedding-based parameter assignment.

Table 1: Key Quantum Mechanics (QM) Datasets for Molecular Property Prediction

| Dataset Name | Description | Number of Structures/Conformers | Key Properties | Primary Use in Force Field Development |

|---|---|---|---|---|

| QM7/QM7b [22] | Small organic molecules (up to 7 heavy atoms: C, N, O, S). QM7b includes 13 additional properties. | 7,165 molecules (QM7); 7,211 molecules (QM7b) | Atomization energies (PBE0); Polarizability; HOMO/LUMO (ZINDO, SCS, PBE0, GW) [22] | Benchmarking model performance on molecular energy and electronic property prediction. |

| QM9 [22] | Small organic molecules (up to 9 heavy atoms: C, O, N, F). | ~134,000 stable molecules [22] | Geometric, energetic, electronic, and thermodynamic properties at B3LYP/6-31G(2df,p) level [22] | Large-scale training and validation for molecular property prediction models. |

| ByteFF Training Data [21] [2] | Expansive, drug-like molecular fragments curated from ChEMBL and ZINC20. | 2.4 million optimized molecular fragment geometries; 3.2 million torsion profiles [21] | Optimized geometries with analytical Hessian matrices; Torsional energy profiles at B3LYP-D3(BJ)/DZVP level [21] | Training and refining force field parameters for bonded and non-bonded terms across a broad chemical space. |

Table 2: Key Crystallographic and Structural Databases

| Database Name | Description | Number of Structures | Key Features | Primary Use in Force Field Development |

|---|---|---|---|---|

| Cambridge Structural Database (CSD) [23] | The world's largest curated repository of experimental organic and metal-organic crystal structures. | Over 1.3 million structures [23] | Validated 3D structures from X-ray/neutron diffraction; Includes polymorphs, melting points, bioactivity data [23] | Validating force field predictions against experimental geometries and intermolecular packing; understanding polymorphic behavior. |

| CrystaLLM Corpus [24] | A massive corpus of text-based representations of crystal structures in the Crystallographic Information File (CIF) format. | Millions of CIF files [24] | Autoregressively trained LLM for crystal generation; Focus on inorganic solid-state materials [24] | Training generative models for crystal structure prediction; learning effective models of crystal chemistry. |

Experimental Protocols for Data Utilization

Protocol 1: Data Generation for Node-Embedding Force Field Training

This protocol outlines the workflow for generating a high-quality QM dataset tailored for training a graph neural network (GNN) to predict molecular mechanics (MM) parameters, as exemplified by the development of ByteFF [21] [2].

1. Molecule Curation and Fragmentation:

- Objective: Assemble a highly diverse set of drug-like molecules and process them into smaller, manageable fragments that preserve critical local chemical environments.

- Procedure:

a. Source Molecules: Select molecules from chemical databases like ChEMBL and ZINC20 based on criteria such as aromatic ring count, polar surface area (PSA), and quantitative estimate of drug-likeness (QED) to maximize diversity [21].

b. Fragmentation Algorithm: Employ a graph-expansion algorithm to cleave molecules into fragments containing fewer than 70 atoms. The algorithm should traverse each bond, angle, and non-ring torsion, retaining relevant atoms and their conjugated partners. Capping cleaved bonds (e.g., with methyl groups) maintains valency [21] [20].

c. Protonation State Expansion: Use software like Epik to generate various protonation states for each fragment within a broad pKa range (e.g., 0.0 to 14.0) to cover probable states in aqueous solution [21].

d. Deduplication: Remove duplicate fragments to create a final, unique set for QM calculations.

2. Quantum Mechanics Calculation Workflow:

- Objective: Generate accurate reference data for molecular potential energy surfaces (PES).

- Procedure:

a. Method Selection: Choose a QM method that balances accuracy and computational cost, such as B3LYP-D3(BJ)/DZVP, which is commonly used for force field parametrization [21] [20].

b. Geometry Optimization Dataset:

i. Generate initial 3D conformers for all fragments using a tool like RDKit.

ii. Optimize these geometries at the chosen QM level using a reliable optimizer (e.g., geomeTRIC). The output is a dataset of optimized molecular fragment geometries and their analytical Hessian matrices, which provide information on vibrational frequencies and force constants [21].

c. Torsion Profile Dataset:

i. For each rotatable bond in the fragment library, perform a torsion scan.

ii. At each dihedral angle step, constrain the target torsion and allow all other structural degrees of freedom (bond lengths, angles) to relax (a "flexible scan") [20]. This yields a detailed torsional energy profile, which is critical for accurately parameterizing dihedral terms in the force field.

3. Model Training and Parameter Prediction:

- Objective: Train a symmetry-preserving GNN to predict all MM parameters from molecular topology and, optionally, geometry.

- Procedure:

a. Model Architecture: Employ an edge-augmented, symmetry-preserving GNN. The model takes molecular graphs as input, where nodes represent atoms and edges represent bonds. The architecture must be designed to be permutationally invariant and respect chemical symmetries [21].

b. Learning Task: The model learns to map the local chemical environment of an atom or bond to its corresponding MM parameters: bonded parameters (r0, θ0, k_r, k_θ, k_φ) and non-bonded parameters (partial charge q, vdW parameters σ, ε) [21] [2].

c. Loss Function: Implement a loss function that combines standard energy and force terms with a differentiable partial Hessian loss. This ensures the model accurately reproduces not just energies but also the vibrational characteristics derived from the QM Hessian [21].
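The combined loss described in this protocol can be sketched as a weighted sum of three mean-squared-error terms. This is a plain-Python illustration under stated assumptions, not the ByteFF loss: the weights `w_energy`, `w_force`, and `w_hessian`, the mean-centering of energies, and the flat-list data layout are all hypothetical, and a real implementation would use an automatic-differentiation framework so the loss can be backpropagated to the GNN.

```python
def mse(pred, ref):
    """Mean squared error between two equally long flat lists."""
    return sum((p - r) ** 2 for p, r in zip(pred, ref)) / len(pred)


def centered(values):
    """Subtract the mean, since conformational energies are defined up to a constant."""
    mean = sum(values) / len(values)
    return [v - mean for v in values]


def combined_loss(pred, ref, w_energy=1.0, w_force=1.0, w_hessian=0.1):
    """Weighted sum of energy, force, and partial-Hessian errors.

    `pred` and `ref` are dicts with flat lists under the keys
    "energy" (one value per conformer), "force", and "hessian".
    """
    energy_term = mse(centered(pred["energy"]), centered(ref["energy"]))
    force_term = mse(pred["force"], ref["force"])
    hessian_term = mse(pred["hessian"], ref["hessian"])
    return w_energy * energy_term + w_force * force_term + w_hessian * hessian_term


# Toy data: MM energies are offset from QM by a constant, forces differ slightly.
mm = {"energy": [1.0, 2.0, 3.0], "force": [0.0, 0.0], "hessian": [5.0, 5.0]}
qm = {"energy": [11.0, 12.0, 13.0], "force": [1.0, 1.0], "hessian": [5.0, 5.0]}
print(combined_loss(mm, qm))  # 1.0: only the force term contributes
```

Centering the energies reflects the fact that an MM force field and a QM method share no absolute energy zero, so only relative conformational energies are comparable.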

Protocol 2: Leveraging Crystal Structure Data for Validation

This protocol describes how to use experimental crystal structure data to validate the geometries and intermolecular interactions predicted by a force field parameterized via node-embedding.

1. Database Query and Structure Retrieval:

- Objective: Obtain a relevant set of experimental crystal structures for validation.

- Procedure:

a. Access: Use the Cambridge Structural Database (CSD) via its query interfaces (e.g., WebCSD) [23].

b. Target Selection: Query structures based on specific functional groups, molecular weights, or presence of intramolecular interactions (e.g., hydrogen bonding, halogen bonding) that are relevant to the drug-like molecules being studied.

c. Retrieve Data: Download Crystallographic Information Files (CIFs) for the selected structures. These files contain atomic coordinates, unit cell parameters, and space group symmetry.

2. Geometry Comparison and Validation:

- Objective: Quantify the agreement between force-field-predicted molecular geometries and experimental crystal structures.

- Procedure:

a. Molecular Extraction: Isolate a single molecule from the crystal lattice, disregarding crystal packing effects for initial bonded parameter validation.

b. Geometry Optimization: Using the node-embedding-predicted force field parameters, perform a gas-phase geometry optimization of the molecule, starting from the experimental coordinates.

c. Metric Calculation: Calculate root-mean-square deviations (RMSD) of atomic positions between the optimized structure and the experimental geometry. Specifically compare key internal coordinates:

* Bond lengths and deviations from equilibrium (r - r0).

* Bond angles and deviations from equilibrium (θ - θ0).

* Torsion angles and their populations.

3. Intermolecular Interaction Analysis:

- Objective: Assess the force field's ability to reproduce non-bonded interactions critical for modeling condensed phases.

- Procedure:

a. Lattice Energy Calculation: Use the predicted partial charges and vdW parameters to calculate the crystal lattice energy for a small set of structures.

b. Compare Packing: Compare the packing motifs (e.g., π-π stacking, hydrogen-bonding networks) observed in the experimental crystal structure with those stabilized in a molecular dynamics simulation of the crystal unit cell.

c. Polymorph Ranking: If multiple polymorphs exist for a compound, evaluate whether the force field correctly predicts the relative stability (ranking) of these polymorphs, a stringent test of its energy model [23].
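The geometry metrics in the comparison step of this protocol (RMSD, bond lengths, bond angles) can be computed with a few lines of standard-library Python. This sketch assumes that both structures list atoms in the same order and are already superimposed; a real workflow would first apply an optimal alignment (e.g., the Kabsch algorithm), which is omitted here. The function names and the toy coordinates are illustrative.

```python
import math


def rmsd(coords_a, coords_b):
    """Root-mean-square deviation between two pre-aligned coordinate lists."""
    squared = sum(
        (a - b) ** 2
        for atom_a, atom_b in zip(coords_a, coords_b)
        for a, b in zip(atom_a, atom_b)
    )
    return math.sqrt(squared / len(coords_a))


def bond_length(coords, i, j):
    return math.dist(coords[i], coords[j])


def bond_angle(coords, i, j, k):
    """Angle i-j-k in degrees, with atom j at the vertex."""
    v1 = [a - b for a, b in zip(coords[i], coords[j])]
    v2 = [a - b for a, b in zip(coords[k], coords[j])]
    cos_theta = sum(a * b for a, b in zip(v1, v2)) / (math.hypot(*v1) * math.hypot(*v2))
    return math.degrees(math.acos(max(-1.0, min(1.0, cos_theta))))


# Toy three-atom geometry (angstroms); "optimized" is shifted by 0.1 along x.
experimental = [(0.0, 0.0, 0.0), (0.96, 0.0, 0.0), (0.0, 0.96, 0.0)]
optimized = [(x + 0.1, y, z) for x, y, z in experimental]

print(rmsd(experimental, optimized))
print(bond_length(experimental, 0, 1), bond_angle(experimental, 1, 0, 2))
```

Because internal coordinates are invariant to rigid translation, the shifted structure gives a nonzero RMSD but identical bond lengths and angles, which is why both kinds of metric are worth reporting.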

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Databases for Node-Embedding Force Field Research

| Tool / Resource | Type | Primary Function | Relevance to Node-Embedding Force Fields |

|---|---|---|---|

| RDKit | Software Library | Cheminformatics and molecular manipulation. | Generating initial 3D conformers; molecular fragmentation; handling SMILES/SMARTS strings [21] [20]. |

| GAFF/OpenFF | Molecular Mechanics Force Field | Provides the analytical functional form for energy calculation. | Defines the target functional form (bond, angle, torsion, non-bonded) for which the GNN predicts parameters [21] [2]. |

| DPA-2 | Pre-trained Neural Network Potential (NNP) | High-accuracy potential energy surface prediction. | Can be fine-tuned to accelerate QM-level data generation (e.g., torsion scans) for dataset creation, reducing reliance on expensive QM calculations [20]. |

| Cambridge Structural Database (CSD) [23] | Curated Database | Repository of experimental crystal structures. | Gold standard for validating predicted molecular geometries and intermolecular interaction energies. |

| Quantum Machine Datasets (QM7, QM9) [22] | QM Dataset | Benchmark datasets of molecular structures and properties. | Standardized benchmarks for testing and comparing the accuracy of molecular property prediction models. |

| Graph Neural Network (GNN) Framework (e.g., PyTorch Geometric) | Software Library | Building and training graph neural networks. | Core infrastructure for implementing the node-embedding model that maps molecular graph to force field parameters [12] [21]. |

The transition from manual, look-up table-based force field parametrization to automated, data-driven approaches represents a significant advancement for computational drug discovery. The node-embedding approach stands out by offering a pathway to consistent, scalable, and accurate parameter assignment across the vast and growing chemical space. The reliability of this approach is inextricably linked to the foundational data upon which it is built. High-quality, diverse QM datasets—such as those used for ByteFF and the benchmark QM series—provide the essential targets for energy and forces. Furthermore, rich experimental repositories like the Cambridge Structural Database offer an indispensable ground truth for validating the resulting models. By adhering to the detailed protocols for data generation and validation outlined in this document, researchers can robustly develop and benchmark next-generation force fields, ultimately enhancing the predictive power of molecular simulations in rational drug design.

Implementing Node-Embedding Force Fields: Architectures and Workflows for Drug Discovery

The accurate prediction of molecular and system parameters is a cornerstone of research in fields ranging from drug discovery to hardware design. Traditional methods often rely on manual feature engineering or look-up tables, which struggle with scalability and transferability across expansive, unexplored spaces. Within the broader context of developing a node-embedding approach for consistent force field parameter assignment, two modern deep-learning architectures have come to the forefront: Graph Neural Networks (GNNs) and Transformers. GNNs inherently operate on graph-structured data, making them naturally suited for molecules and circuits, where they excel at capturing local topological interactions. Transformers, renowned for their success in sequential data processing, have been adapted for graph-based tasks through mechanisms that leverage dynamic attention to capture global dependencies. This article provides a detailed architectural comparison of these paradigms, supported by quantitative performance data and detailed protocols for their application in parameter prediction, with a specific focus on molecular mechanics force fields.

Architectural Comparison: GNNs vs. Transformers

Core Principles and Encoding Strategies

Graph Neural Networks (GNNs) are a class of neural networks specifically designed for graph-structured data. They learn node representations by iteratively aggregating features from a node's local neighbors, a process known as message passing. In this paradigm, the graph's edges serve as a static, pre-defined pathway for information flow. The core operation involves updating a node's hidden state by combining its current state with the aggregated states of its neighbors, allowing it to capture the local topological structure. This makes GNNs particularly powerful for tasks where the relational structure between entities is fixed and known a priori, such as in molecular graphs where atoms are nodes and bonds are edges. The adjacency matrix in GNNs can be viewed as a global, static attention map [25].
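A single round of message passing can be sketched without any learned weights. The update rule below (average of the node's own features and the mean of its neighbors' features) is a deliberately simplified assumption chosen for clarity; practical GNN layers apply trainable transformations and nonlinearities at this point.

```python
def message_passing_step(features, bonds):
    """One round of mean-aggregation message passing over a molecular graph."""
    neighbors = {i: [] for i in range(len(features))}
    for i, j in bonds:
        neighbors[i].append(j)
        neighbors[j].append(i)

    updated = []
    for i, own in enumerate(features):
        nbrs = neighbors[i]
        if nbrs:
            aggregated = [
                sum(features[n][d] for n in nbrs) / len(nbrs) for d in range(len(own))
            ]
        else:
            aggregated = [0.0] * len(own)
        # Combine the node's current state with the aggregated neighbor state.
        updated.append([0.5 * a + 0.5 * b for a, b in zip(own, aggregated)])
    return updated


# Heavy atoms of ethanol, C0-C1-O2, with one-hot features [is_carbon, is_oxygen].
features = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
bonds = [(0, 1), (1, 2)]
print(message_passing_step(features, bonds))
# [[1.0, 0.0], [0.75, 0.25], [0.5, 0.5]]
```

The two carbons start with identical features but end up with different vectors after one round, because only C1 is bonded to oxygen. Stacking further rounds widens the neighborhood each atom can see.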

Transformers, in contrast, were originally designed for sequential data. Their power derives from a self-attention mechanism that dynamically calculates the relevance of all elements (or tokens) in a sequence to every other element. For a given node, the Transformer computes a weighted sum of features from all other nodes, with the weights (attention scores) determined by the compatibility between a node's query vector and the key vectors of others. This Query-Key-Value (QKV) mechanism allows Transformers to capture long-range, global dependencies without being constrained by fixed, local connectivity. When applied to graphs, this enables the model to learn relationships between distant nodes that might not be connected by a short path in the graph structure [25].
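The Query-Key-Value computation can be written out in a few lines. This is a minimal single-head sketch with no learned projection matrices (the caller passes queries, keys, and values directly), which is an assumption made to keep the example short.

```python
import math


def softmax(scores):
    shift = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(queries, keys, values):
    """Scaled dot-product attention; returns (outputs, attention weights)."""
    dim = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(dim) for k in keys]
        w = softmax(scores)
        weights.append(w)
        outputs.append(
            [sum(wi * v[c] for wi, v in zip(w, values)) for c in range(len(values[0]))]
        )
    return outputs, weights


# Three nodes with two-dimensional features, used as queries, keys, and values.
features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(features, features, features)
for row in weights:
    print([round(w, 3) for w in row])
```

Every row of the weight matrix is dense and sums to one, so each node draws on all other nodes regardless of graph distance. This contrasts with the message-passing view, where a node only receives information from its bonded neighbors in each round.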

A critical distinction lies in their handling of relative positions. In sequential data like language, the order of tokens is paramount (e.g., "eat fish" vs. "fish eat"). Transformers can naturally encode this relative ordering through positional encodings and their dynamic attention, which recalculates relationships based on the current context. GNNs, relying on a static adjacency matrix, are generally unable to natively encode this type of relative position information, which can make them less suitable for strictly sequential tasks. However, for data that is inherently non-sequential or "position-agnostic"—such as a set of genes in single-cell transcriptomics or atoms in a molecular graph where 3D conformation matters more than a 1D sequence—this limitation diminishes, and GNNs can achieve performance competitive with Transformers while consuming significantly less computational memory [25].

Quantitative Performance Comparison

The following table summarizes the performance and characteristics of GNN and Transformer models across various parameter prediction tasks from recent literature.

Table 1: Performance Comparison of GNN and Transformer Models on Parameter Prediction Tasks

| Application Domain | Model Type | Specific Model | Key Performance Metric | Result | Computational Efficiency (vs. Baseline) |

|---|---|---|---|---|---|

| Force Field Param. [21] | GNN | ByteFF (Edge-augmented) | State-of-the-art on geometry, torsion, and conformational energy benchmarks | Exceptional accuracy & expansive chemical space coverage | N/A |

| Molecular Rep. [26] | 2D-Graph Transformer | Graphormer-based | Comparable accuracy to GNNs on sterimol, binding energy, and metal complexes | On par with GNNs | Training/Inference: 3.7s / 0.4s (Fastest in 2D category) |

| Molecular Rep. [26] | 3D-GNN | PaiNN | Strong performance on 3D structure-aware tasks | High accuracy | Training/Inference: 20.7s / 3.9s |

| Molecular Rep. [26] | 3D-Graph Transformer | Graphormer-based (3D) | Comparable accuracy to 3D-GNNs | On par with 3D-GNNs | Training/Inference: 3.9s / 0.4s (Fastest in 3D category) |

| Approximate Circuits [27] | GNN | ApproxGNN | Prediction Accuracy (Mean Square Error) | 50% improvement over conventional metrics; 30-54% better than statistical ML | Eliminates application-specific retraining |

| Single-Cell Data [25] | GNN | Custom GNN | Competitive performance with Transformer | Achieved competitive performance | ~1/8 memory & 1/4 to 1/2 computation of comparable Transformer |

Visualizing the Architectural Workflow

The following diagram illustrates the core information flow and contrasting mechanisms of GNNs and Transformers when processing a molecular graph for parameter prediction.

Case Study: GNNs for Molecular Mechanics Force Field Parameterization

The development of ByteFF serves as a premier example of a successful, scalable application of GNNs for a critical parameter prediction task: assigning molecular mechanics force field parameters across a vast chemical space [21].

Experimental Protocol: Building and Training a GNN for Force Field Prediction

Objective: To create a data-driven, transferable force field for drug-like molecules by training a GNN to predict all bonded and non-bonded parameters simultaneously.

1. Dataset Construction:

- Source Molecules: Curate an initial set of molecules from chemical databases like ChEMBL and ZINC20 based on diversity metrics (e.g., aromatic rings, polar surface area, QED) [21].

- Fragmentation: Use a graph-expansion algorithm to cleave molecules into smaller fragments (<70 atoms) that preserve local chemical environments. Cap cleaved bonds appropriately [21].

- Protonation State Expansion: Generate multiple protonation states for each fragment within a broad pKa range (e.g., 0.0-14.0) using tools like Epik [21].

- Quantum Chemical Calculations: Perform high-quality QM calculations on the final set of unique fragments.

- Optimization Dataset: Generate and optimize 3D conformations (e.g., at the B3LYP-D3(BJ)/DZVP level of theory), resulting in ~2.4 million optimized geometries with analytical Hessian matrices [21].

- Torsion Dataset: Systematically scan torsion angles to create ~3.2 million torsion profiles for accurate torsional energy parameterization [21].

2. Model Architecture & Training:

- Architecture: Employ an edge-augmented, symmetry-preserving molecular GNN. This design is critical for ensuring predictions are permutationally invariant and respect chemical symmetries (e.g., equivalent atoms in a carboxyl group receive identical parameters) [21].

- Input Features: Node (atom) features and edge (bond) features that encode chemical information.

- Output: The model simultaneously predicts all MM parameters: bonded (equilibrium bond length r0, angle θ0, force constants k_r, k_θ, k_φ) and non-bonded (van der Waals σ, ε, and partial charges q) [21].

- Loss Function: Implement a combined loss function. Crucially, include a differentiable partial Hessian loss to ensure the model learns to accurately reproduce vibrational frequencies and the curvature of the potential energy surface around the optimized geometry [21].

- Training Strategy: An iterative optimization-and-training procedure may be used to refine model performance [21].

3. Validation:

- Benchmark the predicted parameters and resulting molecular properties (e.g., relaxed geometries, torsional energy profiles, conformational energies and forces) against the QM reference data and existing force fields [21].

- Assess performance on held-out test molecules and external benchmark datasets to demonstrate generalizability and expansive chemical space coverage [21].
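To make the link between torsion profiles and dihedral force constants concrete, the sketch below fits a cosine series to a scanned profile and then scores the result. It is an illustration under simplifying assumptions, not the ByteFF procedure: phases are fixed at zero, the "reference" scan is synthetic rather than QM data, and the fit uses a discrete Fourier projection that is only valid on a uniform grid spanning one full period. In practice the dihedral term is fitted to what remains of the reference energy after the other MM terms are accounted for.

```python
import math


def scan_angles(step_deg=15):
    """Uniform dihedral grid in radians covering [-180, 180) degrees."""
    return [math.radians(a) for a in range(-180, 180, step_deg)]


def torsion_energy(phi, ks):
    """E(phi) = sum_n k_n * (1 + cos(n * phi)), with all phases set to zero."""
    return sum(k * (1.0 + math.cos(n * phi)) for n, k in enumerate(ks, start=1))


def fit_cosine_series(angles, energies, max_n=3):
    """Recover k_1..k_max_n by projecting the profile onto cos(n * phi)."""
    n_pts = len(angles)
    return [
        2.0 / n_pts * sum(e * math.cos(n * phi) for phi, e in zip(angles, energies))
        for n in range(1, max_n + 1)
    ]


def profile_rmse(reference, model):
    """RMSE between two profiles after shifting each to its own minimum."""
    ref = [e - min(reference) for e in reference]
    mod = [e - min(model) for e in model]
    return math.sqrt(sum((r - m) ** 2 for r, m in zip(ref, mod)) / len(ref))


angles = scan_angles()
# Synthetic stand-in for a reference scan (made-up force constants).
reference = [torsion_energy(phi, [1.2, 0.0, 0.3]) for phi in angles]
fitted = fit_cosine_series(angles, reference)
model = [torsion_energy(phi, fitted) for phi in angles]
print(fitted, profile_rmse(reference, model))
```

Shifting both profiles to their minima before computing the RMSE mirrors the usual practice of comparing relative rather than absolute torsional energies.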

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 2: Key Resources for GNN and Transformer-Based Parameter Prediction

| Category | Item / Software / Dataset | Function / Description |

|---|---|---|

| Chemical Databases | ChEMBL, ZINC20, PubChem | Sources of molecular structures for building training and test datasets [28] [21]. |

| Cheminformatics Tools | RDKit | Open-source toolkit for cheminformatics; used for SMILES parsing, molecular graph construction, and 3D conformation generation [28] [21]. |

| Quantum Chemistry Software | Gaussian, ORCA, Psi4 | Software packages used to generate high-quality reference data (e.g., optimized geometries, energies, Hessians) for training and validation [21]. |

| Deep Learning Frameworks | PyTorch, PyTorch Geometric, TensorFlow, JAX | Core frameworks for building, training, and evaluating GNN and Transformer models. |

| GNN Libraries | PyTorch Geometric (PyG), Deep Graph Library (DGL) | Provide pre-implemented GNN layers (GCN, GIN, GAT) and graph learning utilities, accelerating model development [29]. |

| Transformer Libraries | Hugging Face Transformers | Offers pre-trained Transformer models and building blocks, facilitating the adaptation of Transformers to graph and molecular tasks [30]. |

| Graph Transformer Models | Graphormer, Transformer-M | Specific Graph Transformer architectures that have shown strong performance on molecular representation learning tasks [26]. |

| Benchmark Datasets | Open Graph Benchmark (OGB), TUDataset, PDBbind | Standardized datasets for benchmarking model performance on graph property prediction, molecular tasks, and protein-ligand binding affinity [29] [31]. |

| Force Field Tools | OpenFF, AmberTools | Provide baseline force fields, parameterization tools, and simulation environments for validating new parameter prediction methods [21]. |

Workflow Visualization: Data-Driven Force Field Development

The end-to-end process for creating a data-driven force field like ByteFF is visualized below.

The architectural deep dive into GNNs and Transformers reveals a nuanced landscape for parameter prediction. GNNs, with their innate ability to process graph-structured data and enforce physical constraints like permutational and chemical symmetry, offer a powerful, efficient, and often more intuitive framework for tasks like molecular force field parameterization. The ByteFF case study underscores that with a large, high-quality dataset and a carefully designed model, GNNs can achieve state-of-the-art accuracy and broad coverage. Transformers, with their dynamic global attention, provide formidable performance and architectural flexibility, particularly evident in graph-based leaderboards. The choice between them is not always clear-cut and depends on the specific problem constraints: GNNs are a compelling choice for graph-native problems with strong relational inductive biases and where computational efficiency is prized, while Graph Transformers excel when capturing complex, long-range dependencies is critical and sufficient computational resources are available. For the specific thesis context of a node-embedding approach for consistent force field assignment, the GNN paradigm, as demonstrated by ByteFF and Espaloma, provides a robust and effective architectural foundation.

The accurate parametrization of molecular mechanics (MM) force fields is a cornerstone of reliable molecular dynamics (MD) simulations, which are critical for computational drug discovery and materials science. Traditional force fields rely on lookup tables of a finite set of atom types, a method that struggles to cover the expansive and rapidly growing chemical space of drug-like molecules [14] [21]. This protocol details an end-to-end workflow based on a node-embedding approach for consistent force field parameter assignment, a core theme of our broader thesis research. By leveraging graph neural networks (GNNs) to learn chemical environments directly from the 2D molecular graph, this methodology achieves a more accurate, data-efficient, and transferable parametrization, overcoming the limitations of hand-crafted rules and fixed atom types [14].

Theoretical Foundation: Node Embedding for Molecular Graphs

In this workflow, the 2D molecular graph \( G(V, E) \), where \( V \) represents atoms (nodes) and \( E \) represents bonds (edges), serves as the sole input. The core innovation is the use of a graph attentional neural network to generate a continuous, high-dimensional embedding (or feature vector) for each atom [14]:

\[ \nu_i = \text{GNN}(G) \]

These atom embeddings \( \nu_i \) encode the chemical environment of each atom based on the molecular graph. The embeddings are subsequently used to predict the full set of MM parameters \( \xi \) for all bonded interactions (bonds, angles, torsions, impropers) via a transformer model \( \psi^{(l)} \) that respects the required permutation symmetries [14]:

\[ \xi_{ij\ldots}^{(l)} = \psi^{(l)}(\nu_i, \nu_j, \ldots) \]

This two-step process (from graph to embeddings, then from embeddings to parameters) ensures that chemically equivalent atoms receive identical parameters, guaranteeing consistency. The resulting parameters define a potential energy surface that can be evaluated for any molecular conformation \( x \) using the standard MM energy function, \( E(x) = E_{\text{MM}}(x, \xi) \) [14]. As the model is differentiable, it can be trained end-to-end on quantum mechanical (QM) data to predict energies and forces.
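The permutation symmetry required of the parameter-prediction step can be illustrated by averaging an arbitrary function over the allowed atom orderings. This sketch swaps in plain permutation averaging for simplicity; it is not Grappa's symmetric transformer, and `toy_readout` is a made-up, deliberately order-dependent stand-in for a learned network.

```python
def symmetrize(readout, permutations):
    """Wrap `readout` so its output is invariant under the given index permutations."""
    def symmetric_readout(*embeddings):
        total = sum(readout(*[embeddings[p] for p in perm]) for perm in permutations)
        return total / len(permutations)
    return symmetric_readout


# Orderings that describe the same interaction.
BOND_PERMUTATIONS = [(0, 1), (1, 0)]
ANGLE_PERMUTATIONS = [(0, 1, 2), (2, 1, 0)]
TORSION_PERMUTATIONS = [(0, 1, 2, 3), (3, 2, 1, 0)]


def toy_readout(*embeddings):
    """Order-dependent toy function: each position gets a different weight."""
    return sum((pos + 1) * sum(e) for pos, e in enumerate(embeddings))


bond_parameter = symmetrize(toy_readout, BOND_PERMUTATIONS)
torsion_parameter = symmetrize(toy_readout, TORSION_PERMUTATIONS)

nu_i, nu_j = [1.0, 0.0], [0.0, 2.0]
print(toy_readout(nu_i, nu_j), toy_readout(nu_j, nu_i))        # 5.0 4.0
print(bond_parameter(nu_i, nu_j), bond_parameter(nu_j, nu_i))  # 4.5 4.5
```

The raw readout gives different values for the two orderings of the same bond, while the symmetrized version returns the same parameter either way, which is the property expressed by ξ_(bond, ij) = ξ_(bond, ji).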

Implemented Workflows and Architectures

The Grappa Force Field

Grappa employs a graph attentional neural network followed by a symmetry-preserving transformer to predict MM parameters [14]. Its key features are:

- Input: Only the 2D molecular graph, with no need for hand-crafted chemical features.

- Symmetry Preservation: The architecture explicitly respects the permutation symmetries required for the energy contributions of bonds, angles, and torsions [14].

- Computational Efficiency: Parameters are predicted once per molecule; subsequent energy evaluations have the same cost as traditional MM force fields, enabling simulation in standard MD engines like GROMACS and OpenMM [14].

The ByteFF Force Field

ByteFF utilizes an edge-augmented, symmetry-preserving molecular graph neural network trained on a massive, diverse QM dataset [21]. Its workflow includes:

- Dataset: 2.4 million optimized molecular fragment geometries and 3.2 million torsion profiles calculated at the B3LYP-D3(BJ)/DZVP level of theory [21].

- Differentiable Loss: Incorporates a differentiable partial Hessian loss to improve the accuracy of predicted vibrational frequencies [21].

- Comprehensive Parameterization: Predicts all bonded and non-bonded parameters (van der Waals and partial charges) simultaneously for drug-like molecules [21].

Automated Pipeline for Complex Materials

For parametrizing complex systems like amino acid-based metal-organic frameworks (MOFs), a hybrid, highly automated pipeline has been developed [32]. This approach combines:

- Cluster-to-Periodic Transfer: Deriving parameters from ab initio calculations on a finite, representative cluster model and seamlessly transferring them to the periodic system [32].

- GAFF Integration: Leveraging the Generalized Amber Force Field (GAFF) for dihedral parameters and employing tools like AmberTools and MCPB for parameter mapping and metal center parametrization [32].

The following diagram illustrates the unified logical workflow from a 2D molecular graph to MD-ready simulations, encapsulating the principles of the above approaches.

Experimental Protocols

Protocol 1: Training a Node-Embedding Force Field

This protocol outlines the steps for training a model like Grappa or ByteFF.

- Objective: To train a GNN model that predicts MM force field parameters directly from 2D molecular graphs.

- Input: 2D molecular structures (e.g., as SMILES strings).

- Output: A trained model capable of assigning parameters to novel molecules.

| Step | Procedure | Critical Parameters |

|---|---|---|

| 1. Dataset Curation | Generate a diverse set of small molecules and molecular fragments. Generate conformers and compute reference QM data (energy, forces, Hessians) for each [21]. | QM level of theory: B3LYP-D3(BJ)/DZVP (functional: B3LYP-D3(BJ); basis set: DZVP); optimized fragment geometries: ~2.4 million; torsion profiles: ~3.2 million [21]. |

| 2. Model Architecture | Implement a graph neural network (e.g., graph attention) to generate atom embeddings. A downstream transformer model maps embeddings to final parameters [14]. | Embedding dimension (d): 128; Number of attention heads: 8; Interaction blocks: 3 [14]. |

| 3. Model Training | Train the model end-to-end by minimizing the loss between the MM-calculated energies/forces (using predicted parameters) and the reference QM data [14]. | Loss function: Differentiable MM energy/force/Hessian loss; Optimizer: Adam; Learning rate: 1e-3 [14] [21]. |

| 4. Validation | Validate the trained model on a held-out test set of molecules. Evaluate accuracy on torsion energy profiles, relaxed geometries, and conformational energies [21]. | Metrics: Mean Absolute Error (MAE) in energy and forces; Torsion profile RMSD [21]. |
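
Step 3 of the protocol can be illustrated with a minimal loss function. The sketch below assumes a single harmonic bond as the entire MM model and compares mean-centred energies, since QM and MM energies generally differ by an arbitrary constant offset. The function names, the force weight `w_force`, and the synthetic reference data are assumptions for illustration; an actual training run would evaluate the full MM energy and obtain gradients through automatic differentiation rather than by hand.

```python
def mm_bond_energy_force(r, k, r0):
    """Harmonic bond: E = 0.5 * k * (r - r0)^2 and force along the bond coordinate F = -dE/dr."""
    energy = 0.5 * k * (r - r0) ** 2
    force = -k * (r - r0)
    return energy, force

def energy_force_loss(params, distances, ref_energies, ref_forces, w_force=1.0):
    """Mean squared error on mean-centred energies plus weighted mean squared error on forces."""
    k, r0 = params
    predictions = [mm_bond_energy_force(r, k, r0) for r in distances]
    e_pred = [e for e, _ in predictions]
    f_pred = [f for _, f in predictions]
    n = len(distances)
    # Subtract the mean so that a constant QM/MM energy offset does not contribute to the loss.
    e_pred_mean = sum(e_pred) / n
    e_ref_mean = sum(ref_energies) / n
    e_loss = sum(((ep - e_pred_mean) - (er - e_ref_mean)) ** 2
                 for ep, er in zip(e_pred, ref_energies)) / n
    f_loss = sum((fp - fr) ** 2 for fp, fr in zip(f_pred, ref_forces)) / n
    return e_loss + w_force * f_loss

# Synthetic "QM" reference generated from k = 300, r0 = 1.5 with an arbitrary energy offset.
distances = [1.3, 1.4, 1.5, 1.6, 1.7]
reference = [mm_bond_energy_force(r, 300.0, 1.5) for r in distances]
ref_energies = [e + 10.0 for e, _ in reference]
ref_forces = [f for _, f in reference]
print(energy_force_loss((300.0, 1.5), distances, ref_energies, ref_forces))   # matching parameters
print(energy_force_loss((250.0, 1.45), distances, ref_energies, ref_forces))  # mismatched parameters
```

Including forces in the loss gives 3N additional reference values per conformation, which is one reason force matching is commonly combined with energy matching when fitting to QM data.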

Protocol 2: Parametrizing a Novel Molecule for MD Simulation

This protocol describes the application of a pre-trained node-embedding model to parametrize a new molecule.

- Objective: To obtain a fully parameterized system for a novel molecule using a pre-trained GNN force field.

- Input: 2D structure of the target molecule.

- Output: Complete set of MM parameters and a ready-to-simulate topology file.

| Step | Procedure | Notes |

|---|---|---|

| 1. Graph Featurization | Convert the input molecule (e.g., from a SMILES string or 2D diagram) into a featurized molecular graph. | The graph should include nodes (atoms) and edges (bonds). |

| 2. Parameter Inference | Pass the molecular graph through the pre-trained GNN model. The model outputs the bonded MM parameters (bonds, angles, dihedrals) for the molecule [14]. | This step is computationally cheap and only needs to be performed once per molecule. |

| 3. Assign Non-Bonded Parameters | Assign non-bonded parameters (van der Waals, partial charges). These can be taken from an established force field or predicted by the model if it was trained to do so [14] [21]. | For Grappa, non-bonded parameters are taken from established force fields [14]. ByteFF predicts them simultaneously [21]. |

| 4. Generate Topology File | Assemble all parameters into a topology file compatible with an MD engine (e.g., GROMACS, OpenMM, AMBER). | This file defines the molecular system for simulation. |

| 5. System Setup and Simulation | Solvate the molecule, add ions, and perform energy minimization and equilibration followed by production MD. | Standard MD setup procedures apply. |
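
Between steps 1 and 2, the bonded interactions that need parameters have to be enumerated from the molecular graph. The sketch below shows one straightforward way to list bonds, angles, and proper torsions from a bond list; it is an illustration only, not the routine used by any particular package, and the function name `enumerate_interactions` is hypothetical. Improper torsions are omitted for brevity.

```python
def enumerate_interactions(n_atoms, bonds):
    """Return (bonds, angles, proper torsions) as index tuples derived from graph connectivity."""
    neighbours = [set() for _ in range(n_atoms)]
    for i, j in bonds:
        neighbours[i].add(j)
        neighbours[j].add(i)

    # Store each bond once with the lower index first.
    bond_list = sorted((min(i, j), max(i, j)) for i, j in bonds)

    # An angle i-j-k exists for every pair of distinct neighbours of a central atom j.
    angles = []
    for j in range(n_atoms):
        ns = sorted(neighbours[j])
        for a in range(len(ns)):
            for b in range(a + 1, len(ns)):
                angles.append((ns[a], j, ns[b]))

    # A proper torsion i-j-k-l exists for every central bond j-k with outer neighbours i and l.
    torsions = []
    for j, k in bond_list:
        for i in sorted(neighbours[j] - {k}):
            for l in sorted(neighbours[k] - {j}):
                if i != l:  # excludes degenerate tuples in three-membered rings
                    torsions.append((i, j, k, l))
    return bond_list, angles, torsions

# Heavy-atom skeleton of n-butane: C0-C1-C2-C3.
b, a, t = enumerate_interactions(4, [(0, 1), (1, 2), (2, 3)])
print(b)  # [(0, 1), (1, 2), (2, 3)]
print(a)  # [(0, 1, 2), (1, 2, 3)]
print(t)  # [(0, 1, 2, 3)]
```

Each tuple produced here is what a parameter-prediction model would receive, as the embeddings of the listed atoms, when assigning a force constant and equilibrium value to that interaction.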

Protocol 3: Validating Force Field Performance

This protocol provides a framework for validating the accuracy of the parameterized system.

- Objective: To assess the performance of the machine-learned force field against QM data and experimental observables.

- Input: Parameterized molecule and corresponding reference data.

| Step | Procedure | Validation Metrics |

|---|---|---|

| 1. Conformational Energy | Calculate the single-point energies of multiple conformers using the MM force field and compare to QM references [21]. | MAE of relative conformational energies (kcal/mol). |

| 2. Torsion Scans | Perform a relaxed torsion scan for key dihedral angles and compare the resulting energy profile to a high-level QM scan [21]. | Root-mean-square error (RMSE) of the torsion profile energy. |

| 3. Geometry Optimization | Optimize the molecular geometry using the MM force field and compare the resulting structure (bond lengths, angles) to the QM-optimized geometry [21]. | MAE of bond lengths (Å) and angles (degrees). |

| 4. Molecular Dynamics | Run MD simulations and compute experimental observables such as J-couplings or protein folding behavior for comparison with experimental data [14]. | Reproduction of experimental J-couplings; folding of small proteins (e.g., chignolin) [14]. |
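
The metrics in steps 1 and 2 are simple to compute once MM and QM energies are available for the same set of conformers. The sketch below assumes energies in kcal/mol and references every energy to the conformer with the lowest QM energy, which is one common convention but not the only one. The function names and the example numbers are illustrative, not taken from any benchmark.

```python
import math

def relative_energies(energies, reference_index):
    """Shift energies so that the chosen reference conformer sits at zero."""
    e_ref = energies[reference_index]
    return [e - e_ref for e in energies]

def mae(pred, ref):
    """Mean absolute error between two equally long sequences."""
    return sum(abs(p - r) for p, r in zip(pred, ref)) / len(ref)

def rmse(pred, ref):
    """Root-mean-square error between two equally long sequences."""
    return math.sqrt(sum((p - r) ** 2 for p, r in zip(pred, ref)) / len(ref))

# Made-up energies for four conformers (kcal/mol); absolute offsets differ between QM and MM.
qm = [-100.0, -98.8, -97.5, -99.2]
mm = [5.0, 6.0, 7.9, 5.6]

ref_idx = qm.index(min(qm))  # reference both sets to the QM global minimum
qm_rel = relative_energies(qm, ref_idx)
mm_rel = relative_energies(mm, ref_idx)

print(round(mae(mm_rel, qm_rel), 3))   # 0.2
print(round(rmse(mm_rel, qm_rel), 3))  # 0.245
```

The same `rmse` function applies to a torsion scan if the two lists hold the MM and QM energies at each scanned dihedral value, again after shifting both profiles to a common zero.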

Performance Benchmarks and Data Presentation

The following tables summarize the quantitative performance of node-embedding force fields as reported in the literature.

Table 1: Performance of Node-Embedding Force Fields on Benchmark Datasets.

| Force Field | Test System | Key Metric | Reported Performance | Reference |

|---|---|---|---|---|

| Grappa | Small Molecules, Peptides, RNA (Espaloma Benchmark) | Energy and force accuracy | Outperforms traditional and machine-learned (Espaloma) MM force fields; state-of-the-art MM accuracy. | [14] |

| Grappa | Peptide Dihedral Angles | Dihedral energy landscape; experimental J-couplings | Matches Amber FF19SB without requiring CMAP corrections; closely reproduces experimental J-couplings. | [14] |

| Grappa | Small Protein (Chignolin) | Folding free energy | Improves calculated folding free energy; recovers experimentally determined folded structure. | [14] |

| ByteFF | Drug-like Molecules | Torsional Energy Profile RMSE | State-of-the-art performance on various benchmarks. | [21] |

| ByteFF | Drug-like Molecules | Conformational Energy MAE | Excels in predicting relaxed geometries and forces. | [21] |

Table 2: Computational Efficiency Comparison.

| Method | Computational Cost for Energy Evaluation | Suitability for Large-Scale MD |

|---|---|---|

| Traditional MM (GAFF, AMBER) | Low (Baseline) | Excellent (Million-atom systems on a single GPU) [14]. |

| Node-Embedding MM (Grappa, ByteFF) | Low (Same as traditional MM) [14] | Excellent (Inherits MM efficiency; used for viruses) [14]. |

| E(3)-Equivariant MLFF | Several orders of magnitude higher than MM [14] | Limited (Requires thousands of GPUs for million-atom systems) [14]. |

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Software Tools and Resources for Node-Embedding Force Field Development and Application.

| Tool / Resource | Type | Primary Function | Relevance to Workflow |

|---|---|---|---|

| Grappa | Software Package | A machine-learned molecular mechanics force field that predicts parameters from a 2D graph [14]. | Core application for end-to-end parametrization. |

| GROMACS / OpenMM | MD Simulation Engine | High-performance software for running molecular dynamics simulations [14]. | Used to execute MD with the parameters generated by Grappa. |

| AMBER / GAFF | Force Field & Tools | A suite of programs for biomolecular MD simulation and force field parametrization [32]. | Provides a foundation for non-bonded parameters and validation; tools used in hybrid pipelines. |

| RDKit | Cheminformatics Library | Open-source toolkit for cheminformatics and molecule manipulation [21]. | Used for generating initial 3D conformations from 2D graphs during dataset creation. |

| Espaloma | Machine-Learned Force Field | A predecessor to Grappa that also uses GNNs for parameter assignment [14]. | Provides a benchmark and conceptual foundation for the node-embedding approach. |

| DPmoire | MLFF Software | A tool for constructing accurate machine learning force fields for complex moiré material systems [33]. | Demonstrates the transferability of the workflow concept to materials science. |

The accuracy of Molecular Dynamics (MD) simulations is fundamentally constrained by the underlying force fields. Traditional molecular mechanics (MM) force fields rely on fixed atom types and lookup tables for parameter assignment, which can limit their transferability and accuracy across diverse chemical environments [14]. In the context of a broader thesis on node-embedding approaches for consistent force field parameter assignment, this case study examines Grappa, a machine learning framework that leverages graph-based neural networks to predict MM parameters directly from the molecular graph [14] [34]. By replacing hand-crafted atom-typing rules with a learned, context-aware representation of chemical environments, Grappa aims to achieve state-of-the-art MM accuracy with the same computational efficiency as traditional force fields, enabling more reliable simulations of proteins and peptides [14].

Grappa's architecture is designed to be end-to-end differentiable and respects the fundamental permutation symmetries inherent to molecular mechanics. Its operation can be broken down into two primary stages.

Core Architecture: From Molecular Graph to Force Field Parameters

- Graph-Based Atom Embedding: Grappa employs a graph attentional neural network, inspired by transformer architectures, to process the 2D molecular graph. In this graph, nodes represent atoms and edges represent bonds. This network generates a d-dimensional embedding vector for each atom, which captures its chemical environment based on the molecular connectivity [14].

- Symmetry-Preserving Parameter Prediction: In the second stage, these atom embeddings are used to predict the specific MM parameters (e.g., force constants, equilibrium values) for every bonded interaction (bonds, angles, torsions, impropers) in the molecule. A key innovation of Grappa is its use of a transformer model with symmetry-preserving positional encoding to ensure the predicted parameters respect the required permutation symmetries of the MM energy function. For instance, the parameters for a bond between atoms i and j must be invariant to their order: ξ^(bond)_ij = ξ^(bond)_ji [14].
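
The permutation requirement can be demonstrated with a much simpler device than Grappa's symmetric positional encoding: explicit averaging over the allowed orderings. For bonds, angles, and proper torsions, the relevant symmetry is reversal of the atom tuple, so averaging an arbitrary readout over the tuple and its reverse yields an invariant prediction. The sketch below uses this swapped-in technique; `raw_readout` is a hypothetical placeholder for an order-dependent neural network, and impropers, which obey a different symmetry, are not covered.

```python
import math

def raw_readout(embeddings):
    """Placeholder for an order-dependent network: the result changes if the tuple is reversed."""
    total = 0.0
    for position, nu in enumerate(embeddings):
        total += sum((position + 1) * math.sin(x + position) for x in nu)
    return total

def symmetrized(readout, embeddings):
    """Average the readout over the tuple and its reverse, giving xi_ij... = xi_...ji."""
    forward = readout(tuple(embeddings))
    backward = readout(tuple(reversed(embeddings)))
    return 0.5 * (forward + backward)

# Made-up two-dimensional embeddings for four atoms.
nu_i, nu_j, nu_k, nu_l = [0.1, 0.2], [0.3, 0.4], [0.5, 0.6], [0.7, 0.8]

# The raw readout distinguishes (i, j, k) from (k, j, i); the symmetrized one does not.
print(raw_readout((nu_i, nu_j, nu_k)) == raw_readout((nu_k, nu_j, nu_i)))
print(symmetrized(raw_readout, (nu_i, nu_j, nu_k)) == symmetrized(raw_readout, (nu_k, nu_j, nu_i)))
print(symmetrized(raw_readout, (nu_i, nu_j, nu_k, nu_l)) == symmetrized(raw_readout, (nu_l, nu_k, nu_j, nu_i)))
```

Building the symmetry into the model, by either route, means the energy cannot depend on the arbitrary order in which a topology file happens to list the atoms of an interaction.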

This two-step process results in a complete set of MM parameters, ξ, for the molecule. The potential energy for any given spatial conformation, x, is then computed using the standard MM energy functional: E(x) = E_MM(x, ξ). Since the machine learning model is only used once per molecule to assign parameters, the subsequent energy evaluations are as computationally efficient as those of traditional MM force fields, allowing for seamless integration into MD engines like GROMACS and OpenMM [14] [34].
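
To show what evaluating E(x) = E_MM(x, ξ) involves once ξ is fixed, the sketch below implements a bare-bones bonded energy with harmonic bonds, harmonic angles, and periodic torsions. It omits impropers and all non-bonded terms, and the layout of the `params` dictionary is a hypothetical convention chosen for this example rather than any package's format. Units are arbitrary but must be consistent.

```python
import math

def _sub(a, b):
    return [p - q for p, q in zip(a, b)]

def _dot(a, b):
    return sum(p * q for p, q in zip(a, b))

def _cross(a, b):
    return [a[1] * b[2] - a[2] * b[1], a[2] * b[0] - a[0] * b[2], a[0] * b[1] - a[1] * b[0]]

def _norm(a):
    return math.sqrt(_dot(a, a))

def bond_length(x, i, j):
    return _norm(_sub(x[i], x[j]))

def bond_angle(x, i, j, k):
    u, v = _sub(x[i], x[j]), _sub(x[k], x[j])
    cosine = _dot(u, v) / (_norm(u) * _norm(v))
    return math.acos(max(-1.0, min(1.0, cosine)))  # clamp against rounding error

def dihedral(x, i, j, k, l):
    b1, b2, b3 = _sub(x[j], x[i]), _sub(x[k], x[j]), _sub(x[l], x[k])
    n1, n2 = _cross(b1, b2), _cross(b2, b3)
    m1 = _cross(n1, [c / _norm(b2) for c in b2])
    return math.atan2(_dot(m1, n2), _dot(n1, n2))

def mm_energy(x, params):
    """Bonded MM energy for coordinates x and a fixed parameter set (hypothetical layout)."""
    energy = 0.0
    for (i, j), (k_b, r0) in params["bonds"].items():
        energy += 0.5 * k_b * (bond_length(x, i, j) - r0) ** 2
    for (i, j, k), (k_a, theta0) in params["angles"].items():
        energy += 0.5 * k_a * (bond_angle(x, i, j, k) - theta0) ** 2
    for (i, j, k, l), terms in params["torsions"].items():
        phi = dihedral(x, i, j, k, l)
        for n, k_n, phase in terms:  # one entry per periodicity n
            energy += k_n * (1.0 + math.cos(n * phi - phase))
    return energy

# Four-atom chain with made-up parameters; the trans geometry is the minimum by construction.
params = {
    "bonds": {(0, 1): (300.0, 1.5), (1, 2): (300.0, 1.5), (2, 3): (300.0, 1.5)},
    "angles": {(0, 1, 2): (50.0, math.pi / 2), (1, 2, 3): (50.0, math.pi / 2)},
    "torsions": {(0, 1, 2, 3): [(3, 1.0, 0.0)]},
}
trans = [[0.0, 1.5, 0.0], [0.0, 0.0, 0.0], [1.5, 0.0, 0.0], [1.5, -1.5, 0.0]]
cis = [[0.0, 1.5, 0.0], [0.0, 0.0, 0.0], [1.5, 0.0, 0.0], [1.5, 1.5, 0.0]]
print(mm_energy(trans, params), mm_energy(cis, params))
```

Nothing in `mm_energy` refers to a neural network: the learned model only supplies the numbers in `params`, which is why the per-step simulation cost matches that of a conventional force field.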

Workflow Visualization

The following diagram illustrates the complete Grappa parameterization and simulation workflow.

Application to Proteins and Peptides: Experimental Protocols and Validation

Grappa has been rigorously validated on a range of systems relevant to drug development and biochemical research, from small peptides to entire virus particles.

Performance Benchmarking

The following table summarizes Grappa's performance on key benchmarks for peptides and small molecules as reported in the literature [14].

Table 1: Summary of Grappa's Performance on Key Benchmarks

| System Category | Test Description | Key Comparative Result |

|---|---|---|

| Small Molecules, Peptides & RNA | Prediction of energies and forces on the Espaloma dataset (>14,000 molecules, >1 million conformations) [14] | Outperformed traditional MM force fields and the machine-learned Espaloma force field [14]. |

| Peptide Dihedral Landscapes | Evaluation of potential energy landscape of dihedral angles [14] | Matched the performance of Amber FF19SB (a state-of-the-art protein force field) without requiring the additional complexity of CMAP corrections [14]. |

| Experimental Validation | Reproduction of experimentally measured J-couplings [14] | Closely reproduced experimental values, demonstrating physical accuracy beyond just QM energy fitting [14]. |

| Protein Folding | Calculation of folding free energy for the small protein Chignolin [14] | Showed improvement over established force fields [14]. |
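
For the protein folding row, one common way to estimate a folding free energy from an equilibrium trajectory is the two-state population ratio, ΔG_fold = -RT ln(p_folded / p_unfolded). The sketch below assumes that each frame has already been classified as folded or unfolded, for example by an RMSD cutoff to the native structure; that classification, the function name, and the 300 K default are assumptions for illustration, and the source does not state that this is the estimator used in [14].

```python
import math

R_KCAL_PER_MOL_K = 0.0019872041  # gas constant in kcal/(mol K)

def folding_free_energy(is_folded, temperature=300.0):
    """Two-state estimate: dG_fold = -R T ln(p_folded / p_unfolded), in kcal/mol."""
    n_frames = len(is_folded)
    n_folded = sum(1 for flag in is_folded if flag)
    if n_folded == 0 or n_folded == n_frames:
        raise ValueError("both states must be sampled to estimate a free energy difference")
    p_folded = n_folded / n_frames
    return -R_KCAL_PER_MOL_K * temperature * math.log(p_folded / (1.0 - p_folded))

# Made-up trajectory labels: 75% of frames classified as folded.
labels = [True] * 750 + [False] * 250
print(folding_free_energy(labels))  # negative value: the folded state is favoured
```

The estimate is only meaningful if the trajectory contains many folding and unfolding transitions; otherwise the populations reflect the starting structure rather than the force field.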

Protocol for Running an MD Simulation of a Peptide with Grappa

This protocol details the steps to parameterize a peptide and run a simulation using Grappa within the OpenMM ecosystem.

Table 2: Research Reagent Solutions for Grappa Simulations

| Item Name | Function / Description |

|---|---|

| Grappa Model Weights | Pre-trained machine learning model that predicts MM parameters from a molecular graph [14]. |

| OpenMM | A high-performance, open-source MD simulation toolkit used to evaluate energies and forces and integrate the equations of motion [14]. |

| OpenMM-Torch | An OpenMM plugin that allows the integration of PyTorch models, such as Grappa, into the simulation workflow. |

| AMBER Force Field (e.g., ff19SB) | Source for non-bonded parameters (van der Waals, charges). Grappa typically predicts only bonded parameters, relying on established force fields for non-bonded terms [14]. |