Microcanonical NVE Ensemble: Fundamental Theory, Molecular Dynamics Implementation, and Applications in Drug Delivery

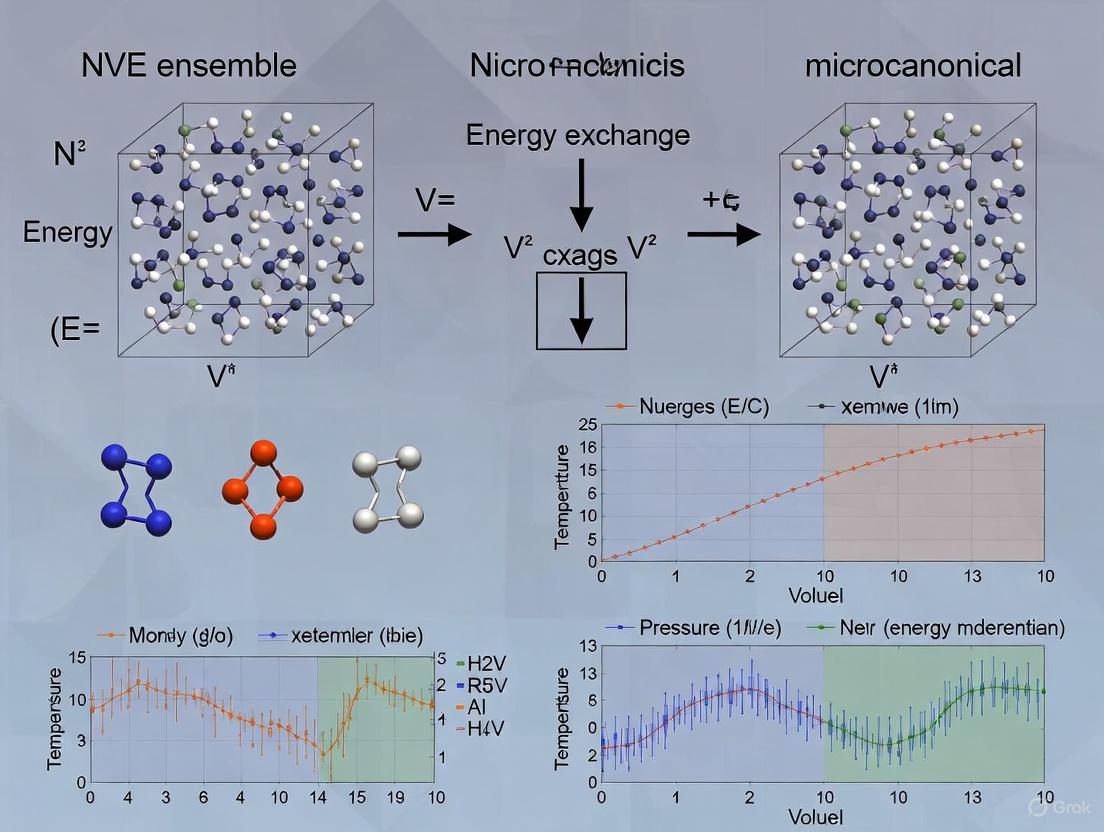

This article provides a comprehensive exploration of the microcanonical (NVE) ensemble, a cornerstone of statistical mechanics defined by constant particle number (N), volume (V), and energy (E).

Microcanonical NVE Ensemble: Fundamental Theory, Molecular Dynamics Implementation, and Applications in Drug Delivery

Abstract

This article provides a comprehensive exploration of the microcanonical (NVE) ensemble, a cornerstone of statistical mechanics defined by constant particle number (N), volume (V), and energy (E). Tailored for researchers, scientists, and drug development professionals, the content spans from foundational principles and entropy definitions to practical implementation in molecular dynamics (MD) simulations. It addresses common challenges and optimization techniques, compares the NVE ensemble with other statistical ensembles like NVT and NPT, and highlights its validation and application in cutting-edge research, including the development of machine learning interatomic potentials and drug delivery systems. By synthesizing theoretical knowledge with practical methodology, this guide serves as a vital resource for applying NVE ensemble simulations to realistic biomedical problems.

What is the NVE Ensemble? Mastering the Core Principles of Isolated Systems

The microcanonical ensemble, also known as the NVE ensemble, represents a cornerstone concept in statistical mechanics, providing the fundamental distribution for isolated mechanical systems. This ensemble is defined as a collection of identical systems, each characterized by the same fixed number of particles (N), confined within the same fixed volume (V), and possessing exactly the same total energy (E) [1] [2]. The system is considered isolated in the strictest sense: it cannot exchange energy or particles with its environment, leading to the conservation of the system's total energy over time, in accordance with the laws of mechanics [1]. This makes the NVE ensemble the conceptual starting point for equilibrium statistical mechanics, as it connects directly to the elementary postulates of the field [1].

The primary macroscopic variables, N, V, and E, are the defining parameters of the ensemble, and their constancy is the key postulate. Each of these quantities is assumed to be invariant for every system within the ensemble [1]. From a thermodynamic perspective, the fundamental potential derived from this ensemble is entropy (S), which is related to the number of microscopic states accessible to the system through Boltzmann's principle, \( S = k_B \log W \), where \( k_B \) is Boltzmann's constant and \( W \) is the number of microstates [2]. Other thermodynamic quantities, such as temperature and pressure, are not control parameters but are instead derived from the fundamental entropy relation [1] [2].

Fundamental Postulates and Theoretical Framework

The Postulate of Equal a Priori Probability

The entire framework of the microcanonical ensemble rests upon a fundamental postulate. This postulate states that for an isolated system with precisely specified energy (E), volume (V), and number of particles (N), all accessible microstates are equally probable [1] [2]. A microstate is a specific, detailed configuration of the system that is consistent with the macroscopic constraints. For a classical system, this is a specific point in phase space (the set of all possible positions and momenta of all particles). In quantum mechanics, it is a specific quantum state with energy E [1] [2].

The probability \( P_i \) of finding the system in a particular microstate \( i \) is given by:

\[ P_i = \frac{1}{W} \]

where \( W \) is the total number of microstates whose energy lies in a narrow range centered at \( E \) [1]. This uniform probability distribution is not derived from more basic principles; it is the foundational axiom of statistical mechanics for isolated systems. This assignment of equal probability is also the one that maximizes the information entropy of the ensemble for a given set of constraints [1].
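To make the postulate concrete, the sketch below uses a toy model that is not part of the sources: an Einstein solid of N quantized oscillators sharing q energy quanta, so the total energy is fixed at E = qħω (zero-point energy dropped). It enumerates the accessible microstates by brute force and assigns each the probability 1/W; the function name `einstein_microstates` is hypothetical.

```python
from itertools import product
from math import comb


def einstein_microstates(n_osc: int, q: int) -> list[tuple[int, ...]]:
    """Enumerate all ways to place q energy quanta on n_osc distinguishable oscillators.

    Each tuple (n_1, ..., n_N) with sum(n_i) == q is one microstate of the
    isolated solid at fixed total energy E = q * hbar * omega.
    """
    return [s for s in product(range(q + 1), repeat=n_osc) if sum(s) == q]


N_OSC, Q = 3, 4
states = einstein_microstates(N_OSC, Q)
W = len(states)

# Postulate of equal a priori probability: every accessible microstate gets 1/W.
P = {s: 1.0 / W for s in states}

print(W, comb(Q + N_OSC - 1, Q))  # prints: 15 15 (brute force matches the closed form)
```

The closed form W = C(q + N - 1, q) is the standard stars-and-bars count; brute-force enumeration is only feasible for tiny systems, which is why the formal treatment works with W as a function of (E, V, N) rather than an explicit list of states.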

Definitions of Entropy and Derived Thermodynamic Quantities

While the concept of entropy is unified, its precise mathematical definition in the microcanonical ensemble can vary, leading to different but related expressions. The most common definitions are summarized in the table below.

Table 1: Definitions of Entropy in the Microcanonical Ensemble

| Entropy Type | Mathematical Expression | Key Characteristics |
|---|---|---|
| Boltzmann Entropy (\( S_B \)) | \( S_B = k_B \log W = k_B \log\left(\omega \frac{dv}{dE}\right) \) | Depends on the density of states, \( \frac{dv}{dE} \), and an arbitrary small energy width, \( \omega \) [1]. |
| Volume Entropy (\( S_v \)) | \( S_v = k_B \log v(E) \) | Defined in terms of \( v(E) \), the number of states with energy less than \( E \) [1]. |
| Surface Entropy (\( S_s \)) | \( S_s = k_B \log \frac{dv}{dE} = S_B - k_B \log \omega \) | Defined in terms of the derivative of the volume function [1]. |

From the chosen definition of entropy, other thermodynamic quantities are derived as secondary properties rather than controlled parameters [1]. The temperature \( T \) of the system is defined through the derivative of entropy with respect to energy. Using the volume and surface entropies, one can define corresponding "temperatures":

\[ \frac{1}{T_v} = \frac{dS_v}{dE}, \qquad \frac{1}{T_s} = \frac{dS_s}{dE} \]

Similarly, the microcanonical pressure \( p \) and chemical potential \( \mu \) are given by [1]:

\[ \frac{p}{T} = \frac{\partial S}{\partial V}, \qquad \frac{\mu}{T} = -\frac{\partial S}{\partial N} \]

These derived definitions, while mathematically sound, can lead to conceptual challenges, such as non-intensive temperature behavior when two microcanonical systems are combined, or the appearance of negative temperatures when the density of states decreases with energy [1].
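The negative-temperature case can be illustrated with a minimal sketch, assuming a toy system of N independent two-level units with level spacing ε, in units where k_B = ε = 1 (an illustrative model, not one taken from [1]). With n units excited, E = nε and W = C(N, n), so the Boltzmann entropy grows with energy only up to half filling and then decreases; a central finite difference for 1/T = dS/dE changes sign accordingly. The helper names are hypothetical.

```python
from math import comb, log


def entropy(n_excited: int, n_total: int) -> float:
    """S / k_B = ln W with W = C(n_total, n_excited) for two-level units."""
    return log(comb(n_total, n_excited))


def inverse_temperature(n_excited: int, n_total: int, eps: float = 1.0) -> float:
    """Central finite difference for 1/(k_B T) = dS/dE, with E = n_excited * eps."""
    ds = entropy(n_excited + 1, n_total) - entropy(n_excited - 1, n_total)
    return ds / (2.0 * eps)


N = 100
for n in (10, 30, 50, 70, 90):
    beta = inverse_temperature(n, N)
    # beta > 0 below half filling, 0 at n = N/2, beta < 0 above it
    print(f"E = {n:3d} eps   1/(k_B T) = {beta:+.4f}")
```

Because C(N, n) = C(N, N - n), the entropy is symmetric about half filling, so 1/T at energy (N - n)ε is exactly the negative of its value at nε.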

Practical Implementation in Molecular Dynamics

The NVE Ensemble in Molecular Dynamics Simulations

In molecular dynamics (MD), the NVE ensemble is implemented by numerically solving Newton's equations of motion for all particles in the system. The forces on the particles are derived from a potential energy function (a force field), and these forces are used to update particle velocities and positions over discrete time steps, typically on the order of femtoseconds [3]. In this context, the total energy \( E \) is the sum of kinetic (\( K \)) and potential (\( U \)) energy, \( E = K + U \), and it is conserved by the equations of motion in the absence of external influences [2] [4]. In practice, a time-reversible, symplectic integrator such as velocity Verlet keeps the numerical total energy within small, bounded fluctuations around its initial value, provided the time step is small enough.
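The energy-conserving update can be sketched with velocity Verlet on a toy system assumed here for illustration: a periodic one-dimensional ring of unit masses joined by harmonic springs, in reduced units. The helper names (`forces`, `potential`, `velocity_verlet`) are hypothetical; production codes such as GROMACS or LAMMPS apply the same kind of integrator to real force fields with neighbor lists.

```python
import math


def forces(x, k_spring=1.0):
    """Forces on a periodic 1D ring with U = (k/2) * sum_i (x_{i+1} - x_i)^2."""
    n = len(x)
    f = [0.0] * n
    for i in range(n):
        j = (i + 1) % n
        stretch = x[j] - x[i]
        f[i] += k_spring * stretch   # spring pulls i toward j
        f[j] -= k_spring * stretch   # equal and opposite force on j
    return f


def potential(x, k_spring=1.0):
    n = len(x)
    return 0.5 * k_spring * sum((x[(i + 1) % n] - x[i]) ** 2 for i in range(n))


def velocity_verlet(x, v, dt, n_steps, mass=1.0):
    """Integrate Newton's equations with velocity Verlet; return E = K + U after each step."""
    x, v = list(x), list(v)
    f = forces(x)
    energies = []
    for _ in range(n_steps):
        v = [vi + 0.5 * dt * fi / mass for vi, fi in zip(v, f)]  # half kick
        x = [xi + dt * vi for xi, vi in zip(x, v)]                # drift
        f = forces(x)                                             # forces at new positions
        v = [vi + 0.5 * dt * fi / mass for vi, fi in zip(v, f)]  # half kick
        kinetic = 0.5 * mass * sum(vi * vi for vi in v)
        energies.append(kinetic + potential(x))
    return energies


N = 16
x0 = [0.1 * math.sin(2 * math.pi * i / N) for i in range(N)]  # one standing-wave mode
v0 = [0.0] * N
E = velocity_verlet(x0, v0, dt=0.05, n_steps=2000)
rel_dev = max(abs(e - E[0]) for e in E) / abs(E[0])
print(f"max relative energy deviation: {rel_dev:.2e}")
```

Changing dt is a quick diagnostic: for velocity Verlet the size of the energy fluctuation scales roughly with dt², while a systematic drift usually signals a time step that is too large or forces that are not consistent with the potential.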

A critical aspect of maintaining constant volume in MD is the treatment of the system's boundaries. The "volume" (V) is defined as the spatial domain within which particles are allowed to move. This is enforced through boundary conditions [4]. In a simple isolated system with no explicit boundaries, the volume is effectively infinite. More commonly, a finite volume is defined, such as a cubic box with side length L (V = L³). To prevent artifacts from surfaces, periodic boundary conditions are often applied, making the system virtually infinite and periodic. Alternatively, reflective boundaries (walls) can be used. In all cases, the size and shape of this box remain fixed throughout an NVE simulation, ensuring constant volume [4].
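For a cubic box of side L, the two operations that keep particles inside a fixed volume V = L³ under periodic boundary conditions can be sketched as follows (the helper names are hypothetical): wrapping coordinates back into the primary cell, and computing separations with the minimum-image convention, which assumes interaction cutoffs shorter than L/2.

```python
def wrap_position(x: float, box_length: float) -> float:
    """Map a coordinate back into the primary cell [0, L)."""
    return x % box_length


def minimum_image(dx: float, box_length: float) -> float:
    """Return the separation component to the nearest periodic image."""
    return dx - box_length * round(dx / box_length)


def distance_pbc(r1, r2, box_length: float) -> float:
    """Euclidean distance between two points in a cubic periodic box of side L."""
    d = [minimum_image(a - b, box_length) for a, b in zip(r1, r2)]
    return sum(c * c for c in d) ** 0.5


L = 10.0
print(L ** 3)                                              # fixed volume V = L^3
print(wrap_position(10.5, L), wrap_position(-0.5, L))      # prints: 0.5 9.5
print(distance_pbc((0.5, 0.5, 0.5), (9.5, 0.5, 0.5), L))   # prints: 1.0 (through the boundary)
```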

Protocols for NVE Ensemble Simulations

Setting up a proper NVE simulation requires careful preparation and parameter selection. The following table outlines a general protocol and key parameters as implemented in various MD software packages like GROMACS, VASP, and LAMMPS.

Table 2: NVE Simulation Protocol and Key Parameters

| Simulation Phase | Objective | Typical Methods & Parameters |
|---|---|---|
| System Preparation | Create initial atomic coordinates and box. | Define particle number (N), box size and shape (V), force field. |
| Energy Minimization | Remove bad contacts and high potential energy. | Use steepest descent or conjugate gradient algorithms [5] [6]. |
| Equilibration (NVT/NPT) | Bring system to desired temperature/pressure. | Use thermostats (e.g., v-rescale) and barostats. This is a preparatory step outside of NVE [7]. |
| NVE Production Run | Sample the microcanonical ensemble. | Integrator: leap-frog (integrator = md in GROMACS) or velocity Verlet (integrator = md-vv). Thermostat/Barostat: disabled (Tcoupl = no, Pcoupl = no in GROMACS) or set to zero coupling (e.g., ANDERSEN_PROB = 0.0 in VASP) [5] [7]. |

It is crucial to note that a true NVE ensemble in MD requires the absence of thermostats and barostats, as these devices exchange energy or volume with the system to maintain constant temperature or pressure [5] [7]. For instance, in GROMACS, this is achieved by setting Tcoupl = no and Pcoupl = no [5]. In VASP, one can use a thermostat algorithm but effectively disable it by setting its coupling parameter to zero (e.g., ANDERSEN_PROB = 0.0) [7].

Applications and a Research Case Study

Role of NVE in Research and a Drug Delivery Example

While the canonical (NVT) and isothermal-isobaric (NPT) ensembles are more commonly used to mimic common experimental conditions, the NVE ensemble has specific and important applications. It is vital for studying energy conservation properties of integrators and force fields, modeling isolated systems (e.g., clusters in vacuum, gas-phase reactions), and serving as the core integration method in complex simulations even when thermostats are applied to subsets of the system [1] [3] [8].
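One common way to quantify energy conservation in an NVE run, sketched here under the assumption that the total energy has already been extracted from the simulation log as a time series, is to fit a straight line to E(t): the slope measures systematic drift and the residuals measure short-time fluctuations. Normalizing the drift per atom makes runs of different size comparable. The function below is a hypothetical helper, not a tool from the cited sources, and the synthetic data stand in for a real log.

```python
import math


def energy_conservation_report(times, energies, n_atoms):
    """Return (drift per atom per unit time, RMS fluctuation) of a total-energy series.

    The drift is the least-squares slope of E(t); the fluctuation is the RMS of the
    residuals around that line. Units follow whatever the input series uses.
    """
    n = len(times)
    t_mean = sum(times) / n
    e_mean = sum(energies) / n
    sxx = sum((t - t_mean) ** 2 for t in times)
    sxy = sum((t - t_mean) * (e - e_mean) for t, e in zip(times, energies))
    slope = sxy / sxx
    intercept = e_mean - slope * t_mean
    residuals = [e - (intercept + slope * t) for t, e in zip(times, energies)]
    rms = math.sqrt(sum(r * r for r in residuals) / n)
    return slope / n_atoms, rms


# Synthetic series standing in for an NVE log: slow drift plus a small oscillation.
times = [0.01 * k for k in range(1000)]
energies = [-5000.0 + 0.2 * t + 0.05 * math.sin(50.0 * t) for t in times]
drift, rms = energy_conservation_report(times, energies, n_atoms=1000)
print(f"drift: {drift:.2e} per atom per time unit, RMS fluctuation: {rms:.3f}")
```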

A prime example of its use in advanced research is found in the study of drug solubilization in deep eutectic solvents (DES). Karimi et al. (2025) used molecular dynamics simulations to investigate the solvation and aggregation of heteroaromatic drugs (allopurinol, losartan, omeprazole) in reline, a DES [6]. In such studies, an NVE production run is often performed after careful equilibration in NVT and NPT ensembles. This allows researchers to observe the natural, energy-conserving dynamics of the system, free from the artificial influence of a thermostat, to analyze phenomena like drug-drug aggregation through π-stacking interactions [6]. The stability of these aggregates, with sizes ranging from ~2.6 to ~5.5 molecules on average, and interplanar distances of 0.36 to 0.47 nm, was further validated using Density Functional Theory (DFT) calculations, yielding dimer stabilization energies from -10 to -32 kcal mol⁻¹ [6].
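Aggregate sizes of the kind quoted above are typically obtained by clustering molecules with a distance criterion. The sketch below is not the analysis used by Karimi et al. [6]; it assumes that each drug molecule is represented by the centroid of its aromatic ring, that two molecules belong to the same aggregate when their centroids are closer than a chosen cutoff (using the minimum-image convention in a cubic box), and it groups them with union-find. The cutoff and coordinates in the usage example are made up for illustration.

```python
def cluster_sizes(centroids, box_length, cutoff):
    """Single-linkage clustering of ring centroids within `cutoff` (minimum image).

    Returns cluster sizes sorted from largest to smallest.
    """
    n = len(centroids)
    parent = list(range(n))

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]   # path halving keeps trees shallow
            i = parent[i]
        return i

    for i in range(n):
        for j in range(i + 1, n):
            d2 = 0.0
            for a, b in zip(centroids[i], centroids[j]):
                dx = a - b
                dx -= box_length * round(dx / box_length)   # minimum image
                d2 += dx * dx
            if d2 <= cutoff * cutoff:
                parent[find(i)] = find(j)                   # merge the two aggregates

    sizes = {}
    for i in range(n):
        root = find(i)
        sizes[root] = sizes.get(root, 0) + 1
    return sorted(sizes.values(), reverse=True)


# Hypothetical centroids (nm) in a 5 nm box: a 3-stack, a pair across the boundary, a monomer.
centroids = [(1.0, 1.0, 1.0), (1.0, 1.0, 1.4), (1.0, 1.0, 1.8),
             (3.0, 3.0, 3.0), (0.1, 2.5, 2.5), (4.8, 2.5, 2.5)]
sizes = cluster_sizes(centroids, box_length=5.0, cutoff=0.5)
print(sizes, sum(sizes) / len(sizes))  # prints: [3, 2, 1] 2.0
```

Averaging such sizes over trajectory frames gives a number-averaged aggregate size; the result depends on the cutoff, so any reported value should state the criterion used.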

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for MD Simulations Featuring NVE

| Item Name | Function/Description | Example from Literature |
|---|---|---|
| Force Field | Mathematical functions defining interatomic potentials. | GROMOS96 53A6 force field; used for its reliability in H-bonding and solvation behavior [6]. |
| Solvation Box | Defines the constant volume (V) and provides periodic boundary conditions. | Cubic box with periodic boundary conditions; volume is fixed during NVE production [6] [4]. |
| Thermostat (for equilibration) | Controls temperature during pre-equilibration phases. | Velocity-rescale thermostat; used in NVT equilibration prior to NVE production [5] [6]. |
| Software Package | Provides the engine for numerical integration of equations of motion. | GROMACS, VASP, LAMMPS; they implement algorithms like Verlet for NVE integration [5] [6] [7]. |
| Analysis Tools | Programs/scripts to compute properties from trajectory data. | Tools for H-bond analysis, radial/angular distribution functions, mean-squared displacement [6]. |

Conceptual Relationships and Workflow

The foundational principles and practical applications of the NVE ensemble are interconnected through a logical workflow, from its fundamental postulates to its role in modern computational research.

Diagram Title: Logical Flow from NVE Postulates to Application

The microcanonical NVE ensemble is built upon a simple yet powerful set of postulates: the constancy of particle number, volume, and energy, and the equal probability of all accessible microstates. This foundation allows for the derivation of thermodynamics from mechanical principles, with entropy as the central quantity. While conceptually fundamental, the practical use of the pure NVE ensemble in theoretical calculations can be mathematically cumbersome due to ambiguities in defining entropy and temperature [1]. Consequently, for many theoretical purposes, other ensembles like the canonical ensemble are often preferred [1].

In the realm of molecular dynamics simulations, the NVE ensemble remains highly relevant. It is the ensemble sampled by Newton's equations of motion when no thermostat or barostat is applied, and it is crucial for testing energy conservation and studying genuinely isolated systems. However, its applicability to real-world systems depends on the significance of energy fluctuations. For macroscopically large systems or those prepared with precisely known energy and maintained in near isolation, the microcanonical ensemble is an excellent model [1]. In most other cases, particularly where systems interact with an environment, ensembles like NVT or NPT that allow for energy exchange provide a more accurate representation [1] [9]. Thus, the NVE ensemble serves both as the fundamental bedrock of statistical mechanics and as a specialized, powerful tool in the computational scientist's toolkit for probing specific physical scenarios.

The Microcanonical Distribution and the Postulate of Equal a Priori Probabilities

The microcanonical ensemble, also known as the NVE ensemble, represents a cornerstone concept in statistical mechanics, providing the foundation for describing isolated mechanical systems. It characterizes systems that are completely isolated from their environment, possessing a fixed total number of particles (N), a fixed volume (V), and a precisely specified total energy (E) [1]. The defining Postulate of Equal a Priori Probabilities states that for such an isolated system in thermodynamic equilibrium, all microscopic states (microstates) accessible to the system are equally probable [1] [10] [11]. This means that if a system has a total of W accessible microstates consistent with the fixed N, V, and E, then the probability of finding the system in any one of these microstates is simply 1/W [1] [2].

This postulate is motivated by a lack of information about the detailed state of the system. With no reason to favor one state over another, the uniform probability distribution represents the least biased assumption [12]. The microcanonical ensemble is not just a theoretical construct; it finds application in specific numerical simulations like molecular dynamics [1] and provides the conceptual starting point from which other ensembles, like the canonical (NVT) and grand canonical (µVT) ensembles, can be derived [12].

Theoretical Foundation and Rationale

The Core Physical Picture

The rationale behind the equal probability postulate can be intuitively understood. Consider an isolated system, such as a rigid box with perfectly insulating walls, containing a fixed number of particles and a fixed total energy. This system will evolve dynamically over time, exploring different configurations (microstates) of particle positions and momenta. The core assumption is that, over long periods, the system will spend an equal amount of time in each of these accessible microstates [10] [11]. This dynamic exploration leads to the statistical conclusion that each microstate has an equal probability of being observed at a random instant in time.

This postulate finds rigorous support from modern statistical mechanics. Research has shown that if the initial probability distribution over microstates is not uniform, under very wide and commonly satisfied conditions—such as in ergodic systems—the distribution will relax to the uniform microcanonical distribution over time [13]. This result, derived from first principles using tools like the Fluctuation Theorem, is analogous to the classical Boltzmann H-theorem but applies more generally to dense fluids and allows for non-monotonic relaxation to equilibrium [13].
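The relaxation toward the uniform distribution can be illustrated with a toy stochastic model, which is an assumption here and not the Hamiltonian dynamics or Fluctuation Theorem analysis of [13]: quanta hop at random between the oscillators of a small Einstein solid, so every move conserves total energy and the move probabilities are symmetric. Starting with all probability on a single microstate, the exact probability vector is propagated with the transition matrix, and its total-variation distance from the uniform (microcanonical) distribution shrinks toward zero. All function names are hypothetical.

```python
from itertools import product


def microstates(n_osc, q):
    """All occupation tuples of n_osc oscillators holding q quanta in total."""
    return [s for s in product(range(q + 1), repeat=n_osc) if sum(s) == q]


def transition_matrix(states):
    """Energy-conserving toy dynamics: choose an ordered pair (i, j), i != j, uniformly;
    move one quantum from oscillator i to j if i has one, otherwise stay put."""
    index = {s: k for k, s in enumerate(states)}
    n_osc = len(states[0])
    pairs = [(i, j) for i in range(n_osc) for j in range(n_osc) if i != j]
    T = [[0.0] * len(states) for _ in states]
    for s in states:
        for i, j in pairs:
            if s[i] > 0:
                t = list(s)
                t[i] -= 1
                t[j] += 1
                target = index[tuple(t)]
            else:
                target = index[s]
            T[index[s]][target] += 1.0 / len(pairs)
    return T


def propagate(p, T):
    """One step of p_new[b] = sum_a p[a] * T[a][b]."""
    n = len(p)
    return [sum(p[a] * T[a][b] for a in range(n)) for b in range(n)]


states = microstates(3, 4)
T = transition_matrix(states)
p = [0.0] * len(states)
p[states.index((4, 0, 0))] = 1.0  # start far from equilibrium: all energy in one oscillator

uniform = 1.0 / len(states)
for step in range(500):
    p = propagate(p, T)
    if step in (0, 9, 99, 499):
        tv = 0.5 * sum(abs(pk - uniform) for pk in p)
        print(f"after {step + 1:3d} steps: distance from uniform = {tv:.2e}")
tv_distance = 0.5 * sum(abs(pk - uniform) for pk in p)
```

Because this Markov chain is symmetric and connected, the uniform distribution is its stationary state; unlike the more general result described above, this simple chain relaxes monotonically in total-variation distance.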

Why the Postulate is Unique to the Microcanonical Ensemble

A critical question is why this principle of indifference applies uniquely to the microcanonical ensemble and not to the canonical or grand canonical ensembles. The key differentiator is the nature of the constraints:

- Microcanonical Ensemble (NVE): The system is isolated. Its energy, volume, and particle number are strictly fixed. With energy exactly specified, every accessible microstate is, by definition, equiprobable as there is no variable upon which to base a differing probability [12].

- Canonical Ensemble (NVT): The system is in contact with a heat bath at temperature T. It can exchange energy with its surroundings. Therefore, its energy can fluctuate. The system can access states with different energies, and the principle of indifference must be weighted by how likely it is to borrow energy from the bath to occupy a higher energy state. This leads to the non-uniform Boltzmann distribution [12].

- Grand Canonical Ensemble (µVT): The system can exchange both energy and particles with a reservoir. This introduces a second fluctuating quantity (particle number), further moving the probability distribution away from uniformity [12].

In essence, the microcanonical ensemble is the only one of these three in which none of the macroscopic variables N, V, and E fluctuates. Any quantity that is not held fixed can vary, and the probability distribution must account for this, breaking the simple uniform probability of the microcanonical case [12].
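How a non-uniform, Boltzmann-like distribution emerges for a subsystem even though the whole isolated system is microcanonical can be checked in a small sketch. Assuming again a toy Einstein solid (not a model from [12]), the probability that one tagged oscillator holds n quanta is the number of ways the remaining N - 1 oscillators can share the leftover q - n quanta, divided by W. For large N the ratio P(n + 1)/P(n) is nearly constant, which is the geometric, exp(-βħω) form expected from the canonical ensemble, with the rest of the solid acting as the heat bath. The function name is hypothetical.

```python
from math import comb


def single_oscillator_distribution(n_osc: int, q: int) -> list[float]:
    """P(n) that one tagged oscillator holds n quanta when the isolated solid
    (n_osc oscillators, q quanta in total) is uniformly distributed over microstates."""
    total = comb(q + n_osc - 1, q)                      # W of the whole solid
    # the other n_osc - 1 oscillators share the remaining q - n quanta
    return [comb(q - n + n_osc - 2, q - n) / total for n in range(q + 1)]


P = single_oscillator_distribution(n_osc=400, q=400)
ratios = [P[n + 1] / P[n] for n in range(5)]
print([round(r, 4) for r in ratios])  # nearly constant ratio, i.e. P(n) ~ exp(-beta*n*hbar*omega)
```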

Mathematical Formalisms

The Microcanonical Partition Function and Probability

The total number of accessible microstates, W, serves as the microcanonical partition function. Its mathematical definition depends on whether the system is treated classically or quantum mechanically.

Quantum Mechanical System: For a system with a discrete energy spectrum, W is the number of quantum states with energy in a narrow range around E. Formally, one can write

\[ W(E) = \sum_i \delta_{E, E_i} \]

where the sum runs over all quantum states \( i \) and the Kronecker delta counts only those with \( E_i = E \). In practice, a small energy width ω is often introduced to ensure W is a smooth function [1]. The probability for a specific quantum microstate i is then:

\[ p_i = \frac{1}{W(E, V, N)} \]

Classical System: In classical mechanics, microstates form a continuum in phase space (the space of all possible particle positions r and momenta p). The number of states is replaced by a phase space volume. The classical microcanonical partition function is given by [1] [2]:

\[ W(E, V, N) = \frac{1}{N!\, h^{3N}} \int\!\!\int \delta\big(H(\mathbf{r}, \mathbf{p}) - E\big)\, d\mathbf{r}\, d\mathbf{p} \]

Here, \( H(\mathbf{r}, \mathbf{p}) \) is the Hamiltonian of the system, δ is the Dirac delta function enforcing the energy constraint, h is Planck's constant (a quantum-mechanical correction that makes the phase-space measure dimensionless), and N! accounts for the indistinguishability of identical particles (which may be omitted for solid systems) [2]. Because of the delta function, W defined this way is a density of states per unit energy; multiplying it by a small energy width ω gives a pure number of states. The probability density in phase space is:

\[ \rho(\mathbf{r}, \mathbf{p}) = \frac{\delta\big(H(\mathbf{r}, \mathbf{p}) - E\big)}{N!\, h^{3N}\, W} \]

Connection to Thermodynamics: Entropy

The bridge between the microscopic description of statistical mechanics and macroscopic thermodynamics is provided by the Boltzmann entropy (also known as the Boltzmann-Planck entropy formula) [1] [2] [10]:

S(E, V, N) = k_B ln W

where k_B is Boltzmann's constant. This equation is one of the most profound in physics, identifying thermodynamic entropy as a measure of the number of microscopic ways a macroscopic state can be realized. A system will naturally evolve toward the macrostate with the largest W, which corresponds to the maximum entropy, in accordance with the Second Law of Thermodynamics [10] [11].

Other definitions of entropy in the microcanonical ensemble exist, such as the "volume entropy" S_v = k_B log v(E), where v(E) is the volume of phase space with energy less than E, and the "surface entropy" S_s = k_B log (dv/dE). These can lead to subtle differences in the definition of derived quantities like temperature for small systems, but for large systems, they become equivalent [1].
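The convergence of the two temperatures can be checked for the classical monatomic ideal gas, assumed here as the test case with k_B = 1. There v(E) ∝ E^{3N/2} (the volume of a 3N-dimensional momentum-space ball) and dv/dE ∝ E^{3N/2 - 1}, so analytically k_B T_v = 2E/(3N) and k_B T_s = 2E/(3N - 2). The sketch drops E-independent constants, since they cancel in the derivative, and recovers both temperatures by finite differences; the function names are hypothetical.

```python
import math


def log_volume(E: float, n_particles: int) -> float:
    """ln v(E) up to an E-independent constant: v(E) is proportional to E**(3N/2)."""
    return 1.5 * n_particles * math.log(E)


def log_surface(E: float, n_particles: int) -> float:
    """ln(dv/dE) up to a constant: dv/dE is proportional to E**(3N/2 - 1)."""
    return (1.5 * n_particles - 1.0) * math.log(E)


def temperature(log_count, E: float, n_particles: int) -> float:
    """k_B T = (d ln(count) / dE)**-1 from a central finite difference (k_B = 1)."""
    h = 1e-6 * E
    slope = (log_count(E + h, n_particles) - log_count(E - h, n_particles)) / (2.0 * h)
    return 1.0 / slope


for n in (1, 2, 10, 1000):
    E = 1.5 * n                      # chosen so that k_B T_v = 2E/(3N) = 1
    t_v = temperature(log_volume, E, n)
    t_s = temperature(log_surface, E, n)
    print(f"N = {n:4d}   k_B T_v = {t_v:.4f}   k_B T_s = {t_s:.4f}")
```

For a single particle the two definitions differ by a factor of three, while for N = 1000 they agree to better than 0.1%.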

Derived Thermodynamic Quantities

Once the entropy S(E, V, N) is known, all other thermodynamic properties can be derived by taking appropriate partial derivatives, analogous to their definitions in classical thermodynamics [1] [2].

- Temperature (T): Defined from the fundamental thermodynamic relation dE = T dS - P dV + µ dN. The statistical mechanical definition is 1/T = (∂S / ∂E)_{V, N}.

- Pressure (P): The mechanical pressure is derived from P / T = (∂S / ∂V)_{E, N}.

- Chemical Potential (µ): The energy cost of adding a particle (at fixed S and V) is given by µ / T = - (∂S / ∂N)_{E, V}.

The following table summarizes these key thermodynamic relationships:

Table 1: Thermodynamic Quantities Derived from the Microcanonical Entropy

| Thermodynamic Quantity | Statistical Mechanical Definition | Variables Held Fixed |
|---|---|---|
| Entropy (S) | S = k_B ln W | None (S = S(E, V, N) is the function being differentiated) |
| Temperature (T) | 1/T = (∂S / ∂E)_{V,N} | Volume (V), Particle Number (N) |
| Pressure (P) | P / T = (∂S / ∂V)_{E,N} | Energy (E), Particle Number (N) |
| Chemical Potential (µ) | µ / T = - (∂S / ∂N)_{E,V} | Energy (E), Volume (V) |

Conceptual and Computational Framework

Logical Workflow of the Microcanonical Formalism

The overall logical structure of the microcanonical ensemble theory, from its fundamental postulate to its thermodynamic consequences, can be visualized as a coherent workflow. The following diagram maps out the key concepts and their relationships:

Diagram Title: Logical Pathway of the Microcanonical Ensemble

Mathematical Pathway from Postulate to Temperature

The derivation of temperature from the fundamental postulate involves an elegant argument that considers a partitioned system. The following diagram details the logical and mathematical steps in this derivation:

Diagram Title: Mathematical Derivation of Temperature from Postulate
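The partitioned-system argument can be reproduced numerically. The sketch assumes two toy Einstein solids A and B (not from the sources) that share a fixed total of q quanta. By the equal-probability postulate, the number of joint microstates with q_A quanta in A is Ω_A(q_A)·Ω_B(q - q_A); the most probable split is where this product peaks, and at that point the finite-difference estimates of ∂ln Ω/∂E for the two solids agree, which is the statement that their temperatures are equal. Function names are hypothetical.

```python
from math import comb, log


def omega(n_osc: int, q: int) -> int:
    """Number of microstates of an Einstein solid: C(q + N - 1, q)."""
    return comb(q + n_osc - 1, q)


def beta(n_osc: int, q: int) -> float:
    """1/(k_B T) in units of 1/(hbar*omega): d ln(Omega)/dq by central difference."""
    return (log(omega(n_osc, q + 1)) - log(omega(n_osc, q - 1))) / 2.0


N_A, N_B, Q_TOTAL = 300, 200, 1000

# Joint multiplicity for each split, using exact integer arithmetic.
weights = [omega(N_A, qa) * omega(N_B, Q_TOTAL - qa) for qa in range(Q_TOTAL + 1)]
q_star = max(range(Q_TOTAL + 1), key=weights.__getitem__)

print(q_star, round(beta(N_A, q_star), 4), round(beta(N_B, Q_TOTAL - q_star), 4))
```

The peak sits near q_A/N_A = q_B/N_B, that is, equal energy per oscillator, and it becomes relatively sharper as both solids grow.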

Research Applications and Protocols

The Scientist's Toolkit: Essential Concepts and "Reagents"

In computational studies, particularly those employing Monte Carlo methods, the microcanonical ensemble provides specific "tools" or conceptual reagents to tackle complex problems. The following table lists key items in a researcher's toolkit for working with the microcanonical ensemble and related computational frameworks.

Table 2: Research Reagent Solutions for Microcanonical and Related Computations

| Research Reagent / Concept | Function and Role in the Investigation |
|---|---|
| Microcanonical (NVE) Ensemble | The foundational statistical model for isolated systems; used as a starting point for theory and in molecular dynamics simulations [1]. |
| Daemons / Walkers | Auxiliary dynamic variables introduced in microcanonical Monte Carlo algorithms (e.g., MicSA) to facilitate energy exchange and reduce the need for random numbers, enabling massively parallel simulations [14]. |
| Microcanonical Simulated Annealing (MicSA) | A computational algorithm that generalizes simulated annealing to a microcanonical context, dramatically reducing the burden of random-number generation while maintaining compatibility with canonical results [14]. |
| Ising Spin-Glass Hamiltonian | Finding its ground state in three dimensions is a classic NP-hard problem, making it a demanding benchmark system used to test and validate the performance and accuracy of new microcanonical algorithms [14]. |
| Boltzmann's Constant (k_B) | The fundamental physical constant that links the statistical definition of entropy (ln W) to the thermodynamic scale of entropy (S) [2] [10]. |

Advanced Computational Protocols

The microcanonical ensemble is not merely a theoretical concept but is actively used and extended in modern computational physics. Recent research focuses on overcoming the limitations of traditional Monte Carlo methods, which consume very large quantities of (pseudo)random numbers, making large-scale parallel simulations challenging [14].

One advanced protocol is the Microcanonical Simulated Annealing (MicSA) formalism. This method uses an extended configuration space that includes the physical degrees of freedom (e.g., spins) and a set of auxiliary variables called "daemons" or "walkers" [14]. The algorithm alternates between:

- Microcanonical Steps: The system evolves while conserving the total energy shared between the physical system and the daemons.

- Annealing Steps: The total energy of the system is lowered by acting solely on the daemons at a controlled (logarithmic) rate.

This protocol has been successfully demonstrated on GPUs for the three-dimensional Ising spin glass, a standard benchmark for complex systems. Results show that after a simple time rescaling, the off-equilibrium dynamics of MicSA can be mapped onto the results obtained from standard, random-number-intensive canonical simulations, proving its utility for large-scale, parallel computation [14].
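The alternation of microcanonical and annealing steps can be sketched in a few lines. This is a simplified illustration, not MicSA itself: it assumes a two-dimensional ferromagnetic Ising model (rather than the three-dimensional spin glass of [14]), one Creutz-style demon per lattice site, sequential site updates, and a simple geometric cooling factor instead of the logarithmic schedule described above. During the microcanonical sweeps a spin flips only if its demon can pay the energy cost, so spin energy plus demon energy is conserved and no acceptance random numbers are drawn; the annealing step then removes energy from the demons only. All names and parameter values are hypothetical.

```python
import random


def ising_energy(spins, L, J=1.0):
    """E = -J * sum over nearest-neighbour bonds on an L x L periodic lattice."""
    e = 0.0
    for x in range(L):
        for y in range(L):
            e -= J * spins[x][y] * (spins[(x + 1) % L][y] + spins[x][(y + 1) % L])
    return e


def demon_sweep(spins, demons, L, J=1.0):
    """Sequential microcanonical sweep: site (x, y) may flip only if its own
    demon can pay the cost dE; spin energy + total demon energy is conserved."""
    for x in range(L):
        for y in range(L):
            s = spins[x][y]
            nb = (spins[(x + 1) % L][y] + spins[(x - 1) % L][y]
                  + spins[x][(y + 1) % L] + spins[x][(y - 1) % L])
            dE = 2.0 * J * s * nb
            if dE <= demons[x][y]:
                spins[x][y] = -s
                demons[x][y] -= dE          # demon absorbs or supplies the difference


def microcanonical_anneal(L=16, demon_start=4.0, n_stages=60,
                          sweeps_per_stage=5, cooling=0.8, seed=1):
    rng = random.Random(seed)               # used only for the initial spins
    spins = [[rng.choice((-1, 1)) for _ in range(L)] for _ in range(L)]
    demons = [[demon_start] * L for _ in range(L)]
    max_violation = 0.0
    for _ in range(n_stages):
        total = ising_energy(spins, L) + sum(map(sum, demons))
        for _ in range(sweeps_per_stage):
            demon_sweep(spins, demons, L)   # microcanonical steps
        new_total = ising_energy(spins, L) + sum(map(sum, demons))
        max_violation = max(max_violation, abs(new_total - total))
        demons = [[cooling * d for d in row] for row in demons]  # annealing step
    return spins, max_violation


spins, violation = microcanonical_anneal()
print(f"energy per spin: {ising_energy(spins, 16) / 256:.3f}, "
      f"largest conservation error in a microcanonical stage: {violation:.1e}")
```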

Critical Discussion and Limitations

While the microcanonical ensemble is a fundamental building block of statistical mechanics, it has certain limitations and conceptual subtleties.

- Ambiguities in Entropy and Temperature: The different definitions of entropy (S_B, S_v, S_s) lead to different definitions of temperature (T_s, T_v). For macroscopic systems, these differences are negligible, but they become significant for small systems with few degrees of freedom [1].

- Problematic Behavior of Microcanonical Temperature: The temperature derived from T_s = (∂S_s/∂E)^{-1} can exhibit non-intuitive behaviors. For instance, if the density of states decreases with energy (e.g., in some spin systems), the temperature can become negative. Furthermore, when two microcanonical systems with the same initial T_s are brought into thermal contact, energy may still flow between them, and the final temperature may differ from the initial one, contradicting the standard intuition of temperature as an intensive property [1].

- Applicability to Real Systems: Truly isolated systems with perfectly known energy are an idealization. Most real-world systems interact with their environment, leading to energy fluctuations. For this reason, the canonical or grand canonical ensembles are often preferred for theoretical calculations, as they provide a more realistic description and avoid the ambiguities of the microcanonical ensemble [1]. The microcanonical ensemble is most applicable to macroscopically large systems or those manufactured with precisely known energy and thereafter maintained in near-isolation [1].

- Phase Transitions: A significant theoretical advantage of the microcanonical ensemble is that strict phase transitions, defined as non-analytic behavior in the thermodynamic potential, can occur in systems of any size within this ensemble. This contrasts with the canonical and grand canonical ensembles, where true non-analyticities and phase transitions can only occur in the thermodynamic limit of infinitely many degrees of freedom [1].

In statistical mechanics, the microcanonical ensemble (or NVE ensemble) describes isolated systems with a constant number of particles (\(N\)), constant volume (\(V\)), and constant, precisely specified energy (\(E\)) [1] [15]. The fundamental postulate for this ensemble is that an isolated system in equilibrium is equally likely to be found in any of its accessible microstates [16]. A microstate is a complete microscopic description of a system, specifying the precise positions and momenta of all its constituent particles [17]. In contrast, a macrostate is described by a few macroscopic variables like temperature, pressure, or volume, and typically corresponds to a vast number of microstates [18] [17].

The framework for describing these microstates is phase space, a central concept for connecting the microscopic dynamics of particles to macroscopic thermodynamics. For a system of \(N\) particles in three dimensions, phase space is a \(6N\)-dimensional abstract space. Each of the \(N\) particles has three position coordinates (\(q_x, q_y, q_z\)) and three momentum coordinates (\(p_x, p_y, p_z\)) [17] [16]. A single point in this \(6N\)-dimensional space, denoted by the set of all coordinates \((\vec{q}_1, \vec{q}_2, \ldots, \vec{q}_N, \vec{p}_1, \vec{p}_2, \ldots, \vec{p}_N)\), defines a unique microstate of the entire system at a given instant [16]. As the system evolves in time, this point traces a trajectory in phase space [16].

The Energy Shell and Accessible Microstates

For an isolated system in the microcanonical ensemble, the total energy \(E\) is fixed. The Hamiltonian function \(\mathcal{H}(\vec{q}, \vec{p})\) represents the total energy, which is the sum of kinetic and potential energies [2]. Therefore, only the regions of phase space consistent with this energy constraint are accessible.

The set of all microstates with energy between \(E\) and \(E + \delta E\) forms a region in phase space known as the energy shell [19]. Although the system's energy is precisely \(E\) in principle, a small energy range \(\delta E\) is introduced for practical mathematical treatment, with the assumption that \(\delta E\) is macroscopically small but large enough to contain a vast number of microstates [1] [16]. The microcanonical ensemble is defined by assigning equal probability to every microstate within this energy shell and zero probability to all others [1].

The volume of the energy shell is given by the integral over phase space [17] [19]:

\[ \Omega(E, V, N) = \frac{1}{h_0^{3N}} \int \mathbf{1}_{\delta E}\big(\mathcal{H}(\vec{q}, \vec{p}) - E\big) \prod_{i=1}^{3N} dq_i \, dp_i \]

Here, \(h_0\) is a small constant with dimensions of action (e.g., Planck's constant \(h\) in quantum mechanics) introduced to make \(\Omega\) dimensionless and to provide a measure for counting states [17] [16]. The function \(\mathbf{1}_{\delta E}(x)\) is an indicator function that equals 1 when \(0 \le x \le \delta E\), that is, when the Hamiltonian lies in the energy range \([E, E + \delta E]\), and 0 otherwise. A factor \(1/N!\) is sometimes included for systems of indistinguishable particles to resolve the Gibbs paradox [18] [17].
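For a single free particle (N = 1) the shell volume can be checked directly. The sketch below assumes m = 1 and h₀ = 1 and estimates only the momentum part of the integral by Monte Carlo, comparing it with the exact volume between two spheres of radii √(2mE) and √(2m(E + δE)); the full Ω would multiply this by the box volume V from the position integral. Brute-force sampling like this becomes hopeless as the dimension 3N grows, because the shell occupies a vanishing fraction of the bounding hypercube. Function names are hypothetical.

```python
import math
import random


def shell_volume_exact(E: float, dE: float, mass: float = 1.0) -> float:
    """Momentum-space volume between spheres of radius sqrt(2mE) and sqrt(2m(E + dE))."""
    def ball(e: float) -> float:
        return 4.0 / 3.0 * math.pi * (2.0 * mass * e) ** 1.5
    return ball(E + dE) - ball(E)


def shell_volume_mc(E: float, dE: float, mass: float = 1.0,
                    n_samples: int = 100_000, seed: int = 0) -> float:
    """Monte Carlo estimate of the same volume: sample p uniformly in the bounding
    cube and count points whose kinetic energy p^2/2m lies in [E, E + dE]."""
    rng = random.Random(seed)
    p_max = math.sqrt(2.0 * mass * (E + dE))
    hits = 0
    for _ in range(n_samples):
        p2 = sum(rng.uniform(-p_max, p_max) ** 2 for _ in range(3))
        if E <= p2 / (2.0 * mass) <= E + dE:   # indicator function of the energy shell
            hits += 1
    return (2.0 * p_max) ** 3 * hits / n_samples


exact = shell_volume_exact(1.0, 0.5)
estimate = shell_volume_mc(1.0, 0.5)
print(f"exact {exact:.3f}  Monte Carlo {estimate:.3f}")
```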

The number of microstates \(\Omega(E, V, N)\) is directly linked to a fundamental thermodynamic property via the Boltzmann entropy [1] [2]:

\[ S(E, V, N) = k_B \ln \Omega(E, V, N) \]

where \(k_B\) is Boltzmann's constant. This equation, central to statistical mechanics, provides a microscopic interpretation of entropy: it is a measure of the number of ways a macrostate can be realized microscopically [18] [17]. A system with a greater number of accessible microstates has higher entropy.

Conceptual Diagram of Phase Space and the Energy Shell

The following diagram illustrates the structure of phase space and the concept of the energy shell for a microcanonical ensemble.

Quantitative Treatment and the Density of States

The density of states, \(\rho(E)\), is a crucial function that measures the number of microstates per unit energy range [16]. It is defined such that the number of states between \(E\) and \(E + \delta E\) is:

\[ \Omega(E) = \rho(E)\, \delta E \]

For a macroscopic system, the density of states is an extremely rapidly increasing function of energy because more energy allows for a greater number of ways to distribute energy among the particles [16].

The table below summarizes the key quantitative relationships in the microcanonical ensemble.

| Concept | Mathematical Expression | Thermodynamic Relation | Description |
|---|---|---|---|
| Phase Space Volume | \(\Omega(E) = \frac{1}{h^{3N} N!} \int_{E < \mathcal{H} < E + \delta E} d^{3N}q \, d^{3N}p\) | - | Count of accessible microstates within the energy shell. |
| Boltzmann Entropy | \(S(E, V, N) = k_B \ln \Omega(E)\) [1] [2] | Fundamental Relation | Connects microscopic states to macroscopic entropy. |
| Temperature | \(\frac{1}{T} = \left( \frac{\partial S}{\partial E} \right)_{V, N}\) [1] [19] | \(T\,dS = dE + P\,dV\) | A derived quantity, defined through the derivative of entropy with respect to energy. |
| Pressure | \(\frac{P}{T} = \left( \frac{\partial S}{\partial V} \right)_{E, N}\) [1] [2] | \(T\,dS = dE + P\,dV\) | The generalized force conjugate to volume. |

Example: The Classical Ideal Gas

The power of this formalism is illustrated by deriving the thermodynamic properties of a classical monatomic ideal gas, where the interatomic potential energy is zero [19]. The Hamiltonian is purely kinetic: (\mathcal{H} = \sum_{i=1}^{N} \frac{\vec{p}_i^{\,2}}{2m}).

The number of microstates for this system can be calculated, and the corresponding entropy is [19]: [ S(E, V, N) = k_B N \ln \left[ \frac{V}{N} \left( \frac{4 \pi m E}{3 h_0^2 N} \right)^{3/2} \right] + \frac{5}{2} k_B N ] Differentiating gives (\left( \frac{\partial S}{\partial E} \right)_{V, N} = \frac{3 N k_B}{2E}) and (\left( \frac{\partial S}{\partial V} \right)_{E, N} = \frac{N k_B}{V}). Inserting these into the thermodynamic definitions of temperature and pressure from the table above yields the familiar ideal gas equations of state [19]: [ E = \frac{3}{2} N k_B T \quad \text{and} \quad P V = N k_B T ] This derivation from first principles validates the statistical mechanical approach.
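The sketch below is a quick numerical check of this derivation. It evaluates the entropy above in reduced units, with k_B = m = h_0 = 1 assumed purely for illustration. It then recovers T and P from central finite differences of S with respect to E and V. The names `entropy`, `temperature`, and `pressure` are hypothetical helpers, not part of any library.

```python
import math

# Reduced units (assumption for illustration): k_B = m = h_0 = 1
K_B, M, H0 = 1.0, 1.0, 1.0

def entropy(E, V, N):
    """Microcanonical entropy S(E, V, N) of a classical monatomic ideal gas."""
    arg = (V / N) * (4.0 * math.pi * M * E / (3.0 * H0**2 * N)) ** 1.5
    return K_B * N * math.log(arg) + 2.5 * K_B * N

def temperature(E, V, N, rel_step=1e-6):
    """T from 1/T = (dS/dE)_{V,N}, using a central finite difference."""
    dE = rel_step * E
    dS_dE = (entropy(E + dE, V, N) - entropy(E - dE, V, N)) / (2.0 * dE)
    return 1.0 / dS_dE

def pressure(E, V, N, rel_step=1e-6):
    """P from P/T = (dS/dV)_{E,N}, using a central finite difference."""
    dV = rel_step * V
    dS_dV = (entropy(E, V + dV, N) - entropy(E, V - dV, N)) / (2.0 * dV)
    return temperature(E, V, N) * dS_dV

N, V, E = 100, 500.0, 150.0
T = temperature(E, V, N)
P = pressure(E, V, N)
print(f"T   = {T:.6f}   vs 2E/(3 N k_B) = {2.0 * E / (3.0 * N * K_B):.6f}")
print(f"P V = {P * V:.6f}   vs N k_B T      = {N * K_B * T:.6f}")
```

Take N = 100, V = 500 and E = 150. The finite-difference temperature should then agree with 2E/(3Nk_B) = 1 to many digits, and PV should match Nk_BT, as the analytic derivatives predict.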

The Scientist's Toolkit: Research Reagent Solutions

While the microcanonical ensemble is a theoretical framework, its principles are directly applied in computational experiments. The following table details key "reagents" or tools used in Molecular Dynamics (MD) simulations, a primary numerical application of the NVE ensemble [1] [19].

| Tool/Reagent | Function in NVE Simulation | Technical Specification & Purpose |
|---|---|---|
| Numerical Integrator | Propagates the system in time. | Algorithms like Velocity Verlet; solve Newton's equations (m_i \ddot{\vec{r}}_i = -\vec{\nabla}_i U(\vec{r}^N)) to generate a trajectory [19]. |
| Initial Condition Generator | Prepares the system's starting microstate. | Assigns initial positions (e.g., crystal lattice) and velocities such that the total energy (E) matches the desired value [19]. |
| Force Field | Defines the potential energy (U(\vec{r}^N)). | A set of mathematical functions and parameters (e.g., Lennard-Jones potential) modeling interatomic forces [19]. |
| Thermodynamic Analyzer | Measures macroscopic properties from the trajectory. | Calculates averages over the trajectory; e.g., temperature via (\langle K \rangle = \frac{3}{2} N k_B T), pressure via the virial theorem [19]. |

Workflow for a Microcanonical Molecular Dynamics Experiment

The typical protocol for an NVE MD simulation, which numerically explores the energy shell, is outlined below.

Detailed Protocol:

- System Preparation: Define the number of particles (N) and simulation box volume (V). Initialize particle positions (e.g., on a lattice) and assign velocities such that the total kinetic energy corresponds to the desired temperature, fixing the total energy (E) [19].

- Force Calculation: At each time step, compute the forces on every atom using the chosen force field: (\vec{F}_i = -\vec{\nabla}_i U(\vec{r}^N)) [19].

- Numerical Integration: Use a time-reversible integrator like the Velocity Verlet algorithm to update atomic positions and velocities for a small time step (\Delta t) (e.g., 1 femtosecond). This algorithm conserves energy well, making it suitable for NVE simulations [19].

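- Equilibration: Monitor the total energy (E) to confirm that it is conserved, fluctuating only slightly with no systematic drift. This checks the integrator and the chosen time step. The system has equilibrated on the energy shell when the kinetic and potential energies, and hence the instantaneous temperature, fluctuate around stable averages.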

- Data Production and Analysis: After equilibration, continue the simulation to sample microstates. Macroscopic properties are computed as time averages; for example, the instantaneous temperature (T(t)) is calculated from the kinetic energy (K(t)) via (K(t) = \frac{3}{2} N k_B T(t)), and the pressure is the average of the virial [19].
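The sketch below is a minimal NVE molecular dynamics implementation of the protocol above. Its assumptions are as follows:

- Lennard-Jones particles in reduced units (σ = ε = m = k_B = 1).
- A truncated-and-shifted potential with cutoff 2.5σ.
- Periodic boundaries with the minimum-image convention.
- Velocity Verlet integration.

The function names, the simple-cubic starting lattice, and the parameter values (N = 125, density 0.6, Δt = 0.005) are illustrative choices, not taken from the cited sources. The all-pairs O(N²) force evaluation is for clarity only; packages such as LAMMPS or GROMACS use neighbour lists.

```python
import numpy as np

def lj_forces(pos, box, rc=2.5):
    """Forces and potential energy for a truncated-and-shifted Lennard-Jones
    fluid in reduced units (sigma = epsilon = 1), minimum-image periodic boundaries."""
    d = pos[:, None, :] - pos[None, :, :]           # displacement r_i - r_j for every pair
    d -= box * np.round(d / box)                     # minimum-image convention (needs rc < box / 2)
    r2 = np.sum(d * d, axis=-1)
    np.fill_diagonal(r2, np.inf)                     # no self-interaction
    inside = r2 < rc * rc
    inv_r2 = np.where(inside, 1.0 / r2, 0.0)
    inv_r6 = inv_r2 ** 3
    shift = 4.0 * (rc ** -12 - rc ** -6)             # shift so that U(rc) = 0
    pair_u = np.where(inside, 4.0 * (inv_r6 ** 2 - inv_r6) - shift, 0.0)
    potential = 0.5 * pair_u.sum()                   # every pair appears twice in the matrix
    coef = 24.0 * (2.0 * inv_r6 ** 2 - inv_r6) * inv_r2   # |F_ij| / r_ij for each pair
    forces = np.sum(coef[:, :, None] * d, axis=1)
    return forces, potential

def initialize(n_side=5, density=0.6, temperature=1.0, seed=0):
    """Simple-cubic lattice positions and random velocities rescaled so that
    K = (3/2) N k_B T (m = k_B = 1), which fixes the total energy E."""
    n = n_side ** 3
    box = (n / density) ** (1.0 / 3.0)
    grid = (np.arange(n_side) + 0.5) * (box / n_side)
    pos = np.array(np.meshgrid(grid, grid, grid, indexing="ij")).reshape(3, -1).T.copy()
    rng = np.random.default_rng(seed)
    vel = rng.normal(size=(n, 3))
    vel -= vel.mean(axis=0)                          # remove centre-of-mass motion
    vel *= np.sqrt(1.5 * n * temperature / (0.5 * np.sum(vel ** 2)))
    return pos, vel, box

def run_nve(pos, vel, box, dt=0.005, n_steps=500):
    """Velocity Verlet integration (updates pos and vel in place); returns E after each step."""
    forces, potential = lj_forces(pos, box)
    energies = np.empty(n_steps)
    for step in range(n_steps):
        vel += 0.5 * dt * forces                     # half-step velocity update
        pos += dt * vel                              # full-step position update
        pos %= box                                   # wrap back into the periodic box
        forces, potential = lj_forces(pos, box)      # forces at the new positions
        vel += 0.5 * dt * forces                     # second half-step velocity update
        energies[step] = 0.5 * np.sum(vel ** 2) + potential
    return energies

pos, vel, box = initialize()
energies = run_nve(pos, vel, box)
spread = (energies.max() - energies.min()) / abs(energies.mean())
temp_final = np.sum(vel ** 2) / (3 * len(vel))       # from K = (3/2) N k_B T
print(f"relative spread of total energy: {spread:.2e}")
print(f"final instantaneous temperature: {temp_final:.3f}")
```

Velocity Verlet is time-reversible and symplectic, so the total energy should fluctuate without a systematic drift. Reducing Δt should shrink the spread, which is a useful sanity check before a production run.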

The concepts of phase space, accessible microstates, and the energy shell form the foundational core of the microcanonical ensemble. This framework provides a rigorous bridge from the microscopic mechanics of individual particles to the macroscopic laws of thermodynamics. The Boltzmann entropy formula (S = k_B \ln \Omega) is the keystone of this bridge, defining entropy as a measure of microscopic uncertainty. While the microcanonical ensemble can be mathematically cumbersome for complex analytical theories, it remains a conceptually vital model and is directly realized in modern computational methods like NVE molecular dynamics, allowing researchers to probe the statistical behavior of systems from simple gases to complex biomolecules.

This technical guide explores Boltzmann's principle, which establishes the fundamental connection between the thermodynamic entropy of a macrostate and the number of microstates compatible with it. Framed within the context of microcanonical (NVE) ensemble theory, this work examines the statistical mechanical foundations of entropy as formulated by Ludwig Boltzmann and later refined by Max Planck. We present the core theoretical framework, detailed methodologies for computing statistical quantities, and the critical link between microscopic descriptions and macroscopic thermodynamics. The discussion includes contemporary applications and computational approaches that leverage these principles for studying complex systems, providing researchers with both classical foundations and modern implementations for investigating systems with constant energy, volume, and particle number.

The microcanonical ensemble, also known as the NVE ensemble, represents a cornerstone of statistical mechanics, describing isolated systems with constant internal energy (U), volume (V), and particle number (N) [1] [2]. Within this framework, Ludwig Boltzmann's seminal contribution established entropy as a statistical concept rather than purely thermodynamic, creating a fundamental bridge between the microscopic world of atomic configurations and macroscopic thermodynamic observations [20] [21]. This statistical interpretation allows researchers to understand thermodynamic phenomena through the lens of probability and microscopic system configurations.

Boltzmann's work between 1872 and 1875 first articulated the logarithmic relationship between entropy and probability, though the equation in its modern form, S = k_B ln W, was ultimately cast by Max Planck around 1900 [20]. In this formulation, S represents the thermodynamic entropy, k_B is Boltzmann's constant (1.380649 × 10⁻²³ J K⁻¹, exact in the SI since 2019), and W denotes the number of microstates corresponding to a given macrostate [22] [21]. The equation is engraved on Boltzmann's tombstone in Vienna's Central Cemetery, a testament to its fundamental importance in physics [20].

The microcanonical ensemble provides the most natural framework for understanding Boltzmann's principle, as it considers isolated systems where all accessible microstates are equally probable, embodying the core assumption of statistical mechanics [1] [2]. This principle demonstrates that as the number of particles in a system increases, the probability of significant deviations from the equilibrium state diminishes exponentially, thereby explaining the statistical nature of the second law of thermodynamics [21].

Theoretical Framework of the Microcanonical Ensemble

Fundamental Definitions and Postulates

The microcanonical ensemble is defined as a collection of systems with identical particle number (N), volume (V), and total energy (E) [1] [2]. The fundamental postulate of statistical mechanics, also called the postulate of equal a priori probabilities, states that for an isolated system in equilibrium, all accessible microstates are equally probable [2]. In this framework:

- A microstate constitutes a complete specification of the system at the microscopic level, defining the exact positions and momenta of all constituent particles in classical mechanics, or the exact quantum state in quantum mechanical descriptions [20] [21].

- A macrostate describes the system through macroscopic, measurable variables such as temperature, pressure, and volume, which correspond to averages over microscopic properties [21].

- The number of microstates (W) accessible to a system with fixed N, V, and E is related to the entropy through Boltzmann's formula [20] [22].

In classical statistical mechanics, the microcanonical partition function measures the number of microstates available to a system at constant energy and is expressed as:

[ W = \frac{1}{N! h^{3N}} \int \int \delta(H(\mathbf{r}^N, \mathbf{p}^N) - E) d\mathbf{r}^N d\mathbf{p}^N ]

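where h is Planck's constant, δ is the Dirac delta function, and H is the Hamiltonian representing the total energy of the system [2]. Because of the delta function, this integral strictly has units of inverse energy. It is therefore a density of states, and multiplying it by a small energy width gives a dimensionless count of microstates. The factor of N! accounts for the indistinguishability of identical particles, which is essential for correct state counting when quantum particles are treated classically.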

Entropy Definitions in Microcanonical Ensemble

Within the microcanonical framework, several related but distinct definitions of entropy exist, differing primarily in how they handle the density of states [1]:

Table 1: Definitions of Entropy in Microcanonical Ensemble

| Entropy Type | Mathematical Expression | Characteristics | Applications |
|---|---|---|---|
| Boltzmann Entropy | ( S_B = k_B \log W = k_B \log\left(\omega \frac{dv}{dE}\right) ) | Depends on arbitrary energy width ω; most common formulation | General statistical mechanics; connects directly to thermodynamics |
| Volume Entropy | ( S_v = k_B \log v(E) ) | Uses cumulative number of states with energy less than E | Theoretical studies; avoids energy width dependence |
| Surface Entropy | ( S_s = k_B \log \frac{dv}{dE} = S_B - k_B \log \omega ) | Differs from the Boltzmann entropy by the additive constant k_B log ω | Specialized theoretical applications |

In these expressions, v(E) represents the number of quantum states with energy less than E, while dv/dE is the density of states at energy E [1]. The volume entropy S_v uses the cumulative state count. S_B and S_s instead work with the density of states in an energy shell, and S_B incorporates an arbitrary energy width ω so that the logarithm operates on a dimensionless quantity [1].

Methodologies and Computational Approaches

Calculating Microstates and Statistical Weights

The fundamental methodology for applying Boltzmann's principle involves counting the number of microstates (W) accessible to a system under given constraints. For a system of N distinguishable particles distributed among various energy states, with n_i particles in the i-th state, the statistical weight is given by:

[ W = \frac{N!}{n_1! \, n_2! \, n_3! \cdots} ]

This formula enumerates the number of ways to arrange N distinguishable particles with a specified distribution among states [22]. For systems with continuous degrees of freedom, the classical phase space integral provides the measure of available microstates [2].
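The combinatorial count above is most conveniently evaluated in logarithmic form, which is also the form that enters S = k_B ln W. The sketch below computes ln W exactly using the log-gamma function, since ln n! = lgamma(n + 1). It then compares the result with the large-N Stirling form, ln W ≈ −N Σ p_i ln p_i with p_i = n_i/N. The occupation numbers and the function names `ln_weight` and `ln_weight_stirling` are hypothetical, chosen only for illustration.

```python
import math

def ln_weight(occupations):
    """Exact ln W for W = N! / (n_1! n_2! ...), computed with log-gamma (ln n! = lgamma(n + 1))."""
    n_total = sum(occupations)
    return math.lgamma(n_total + 1) - sum(math.lgamma(n + 1) for n in occupations)

def ln_weight_stirling(occupations):
    """Large-N (Stirling) form: ln W ~ -N * sum_i p_i ln p_i with p_i = n_i / N."""
    n_total = sum(occupations)
    return -n_total * sum((n / n_total) * math.log(n / n_total) for n in occupations if n > 0)

print(round(math.exp(ln_weight([2, 1, 1]))))      # 4! / (2! 1! 1!) = 12

rel_errors = []
for scale in (10, 1000, 100000):
    occ = [5 * scale, 3 * scale, 2 * scale]       # N = 10 * scale particles over three states
    exact, approx = ln_weight(occ), ln_weight_stirling(occ)
    rel_errors.append((exact - approx) / exact)
    print(f"N = {sum(occ):>7d}: ln W = {exact:.6e}, Stirling = {approx:.6e}, rel. error = {rel_errors[-1]:.2e}")
```

The relative error of the Stirling form shrinks as N grows. This is why the entropy per particle of a macroscopic system depends only on the fractions p_i.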

Table 2: Methodologies for Microstate Enumeration

| System Type | Counting Method | Key Considerations | Example Application |
|---|---|---|---|
| Discrete Quantum Systems | Combinatorial analysis of state occupations | Particle distinguishability, quantum statistics | Ideal paramagnet, two-state systems |
| Classical Monatomic Gas | Phase space integral ( W = \frac{1}{N! h^{3N}} \int \int \delta(H - E) d\mathbf{r}^N d\mathbf{p}^N ) | N! for identical particles; h^{3N} for quantum correction | Ideal gas entropy calculation |
| Interacting Particle Systems | Approximation methods, simulation approaches | Interactions reduce accessible states; numerical techniques | Dense fluids, molecular systems |

Workflow for Entropy Calculation in NVE Ensemble

The following diagram illustrates the systematic methodology for determining entropy within the microcanonical ensemble framework:

Diagram 1: Entropy Calculation Workflow in NVE Ensemble. This workflow outlines the systematic approach for determining thermodynamic properties from microscopic descriptions in the microcanonical ensemble.

Partitioned Systems and Entropy Maximization

A fundamental methodology for understanding thermal equilibrium involves analyzing partitioned systems. Consider a system divided into two subsystems A and B by an imaginary rigid wall that allows energy exchange but prevents particle transfer [11]. The probability of finding subsystem A with energy U_A is:

[ p_A(U_A) = \frac{c_A(U_A) \, c_B(U - U_A)}{c(U)} ]

where c_A and c_B are the numbers of microstates accessible to each subsystem, and c(U) is the total number of microstates for the composite system [11]. Taking the logarithm and multiplying by Boltzmann's constant:

[ k_B \ln p_A(U_A) = S_A(U_A) + S_B(U - U_A) - k_B \ln c(U) ]

The equilibrium condition corresponds to the maximum of this probability, which occurs when:

[ \frac{1}{T_A} = \frac{\partial S_A}{\partial U_A} = \frac{\partial S_B}{\partial U_B} = \frac{1}{T_B} ]

This demonstrates that thermal equilibrium emerges from the maximization of total entropy, with equal temperatures across subsystems [11]. This methodology can be extended to systems exchanging particles and volume, leading to definitions of chemical potential and pressure through similar entropy maximization principles.
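The maximization argument can be checked numerically on a model where c_A and c_B are known exactly. The sketch below uses two Einstein solids, a model chosen here for illustration and not used elsewhere in this article. Each solid consists of N independent oscillators sharing q energy quanta, so c = C(q + N − 1, q). Units are k_B = ε = 1, so U = q. The sizes N_A = 300, N_B = 200 and the total Q = 1000 quanta are arbitrary assumptions. The sketch confirms three things:

- p_A is normalized.
- p_A peaks where q_A/N_A ≈ q_B/N_B.
- The finite-difference values of ∂S/∂U for the two subsystems agree at that peak.

```python
import math

def ln_c(n_osc, q):
    """ln of the number of microstates of an Einstein solid: C(q + N - 1, q)."""
    return math.lgamma(q + n_osc) - math.lgamma(q + 1) - math.lgamma(n_osc)

N_A, N_B, Q = 300, 200, 1000        # oscillators in A and B, total energy quanta (U = Q, epsilon = 1)

ln_joint = [ln_c(N_A, qa) + ln_c(N_B, Q - qa) for qa in range(Q + 1)]
ln_total = ln_c(N_A + N_B, Q)       # c(U): the sum over q_A equals C(Q + N_A + N_B - 1, Q)
p_A = [math.exp(x - ln_total) for x in ln_joint]

qa_star = max(range(Q + 1), key=lambda qa: p_A[qa])

def inv_temperature(n_osc, q):
    """1/T = dS/dU with k_B = epsilon = 1, by a central difference in q."""
    return 0.5 * (ln_c(n_osc, q + 1) - ln_c(n_osc, q - 1))

print("sum of p_A:", sum(p_A))                    # ~1 (normalization check)
print("most probable q_A:", qa_star)              # close to Q * N_A / (N_A + N_B) = 600
print("1/T_A, 1/T_B:", inv_temperature(N_A, qa_star), inv_temperature(N_B, Q - qa_star))
```

Working with ln c rather than c itself avoids overflow, since the counts here exceed the range of floating-point numbers.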

Connecting Statistical and Thermodynamic Descriptions

Temperature, Pressure, and Chemical Potential

Within the microcanonical ensemble, temperature is not an external control parameter but rather a derived quantity defined through the entropy [1] [11]. For the Boltzmann entropy, temperature is defined as:

[ \frac{1}{T} = \frac{\partial S_B}{\partial E} ]

with analogous definitions for other entropy formulations [1]. Similarly, pressure and chemical potential emerge from entropy derivatives:

[ \frac{p}{T} = \frac{\partial S}{\partial V}, \quad \frac{\mu}{T} = -\frac{\partial S}{\partial N} ]

These relationships demonstrate how macroscopic thermodynamic quantities originate from the statistical behavior of microscopic constituents [1] [2]. The following diagram illustrates the conceptual bridge between microscopic descriptions and macroscopic observables:

Diagram 2: Micro-Macro Connection in Statistical Mechanics. This diagram illustrates the conceptual pathway from microscopic system descriptions to macroscopic thermodynamic observables through Boltzmann's entropy formula and its derivatives.

The Second Law of Thermodynamics

Boltzmann's principle provides a statistical interpretation of the second law of thermodynamics. While the total entropy of an isolated system (thermodynamic universe) cannot decrease, the entropy of a subsystem can decrease at the expense of a greater entropy increase in its surroundings [21]. This statistical perspective explains why processes proceed spontaneously in certain directions – systems evolve toward macrostates with larger numbers of accessible microstates, as these are statistically more probable [21].

For example, with a hundred particles in a box, the number of microstates corresponding to approximately equal distribution between left and right halves vastly exceeds the single microstate with all particles confined to one half [21]. As system size increases, the probability of significant deviations from the maximum entropy state becomes vanishingly small, making the second law essentially deterministic for macroscopic systems despite its statistical origins [21].
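The counting in the hundred-particle example is easy to reproduce. The sketch below assigns each particle only to the left or right half, a coarse labeling of microstates assumed here for illustration. The number of arrangements with k particles on the left is then the binomial coefficient C(100, k), out of 2^100 equally likely arrangements.

```python
import math

N = 100
total = 2 ** N                                   # each particle independently in the left or right half
all_in_one_half = 2                              # every particle on the left, or every particle on the right
even_split = math.comb(N, N // 2)                # exactly 50 particles on each side
near_even = sum(math.comb(N, k) for k in range(40, 61))   # between 40 and 60 particles on the left

print(f"arrangements with a 50/50 split: {even_split:.3e}")
print(f"P(all particles in one half):    {all_in_one_half / total:.3e}")
print(f"P(40 to 60 particles on the left): {near_even / total:.4f}")
```

Even at N = 100, almost all arrangements lie within ten particles of an even split. The all-in-one-half configuration has a probability of order 10⁻³⁰.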

Research Reagents and Computational Tools

Table 3: Essential Research Tools for Microcanonical Ensemble Studies

| Tool/Reagent | Function | Application Context |
|---|---|---|
| Molecular Dynamics Simulation | Numerical integration of Newton's equations at constant energy | Direct simulation of NVE ensemble; validation of statistical mechanics predictions |
| Discrete Boltzmann Method (DBM) | Mesoscopic computational approach for fluid dynamics | Simulation of reactive flows with hydrodynamic and thermodynamic nonequilibrium |
| Skeletal Hydrogen-Air Mechanism | Reduced chemical reaction scheme with 9 reactive species | Incorporation of realistic chemistry into Boltzmann framework for combustion studies |
| High-Performance Computing Clusters | Parallel processing for complex system simulations | Enabling statistically significant sampling of microstates in large systems |
| Multiple-Relaxation-Time (MRT) Scheme | Enhanced numerical stability in lattice Boltzmann methods | Accurate capture of chemical reaction dynamics, diffusion, and heat transfer |

Contemporary Applications and Research Directions

Cosmological Applications

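The Boltzmann transport equation is a kinetic equation for particle distribution functions, distinct from the entropy formula S = k_B ln W. It provides a powerful framework for describing the evolution of particle distributions in cosmological contexts, particularly for analyzing departures from thermal equilibrium in the early universe [23]. Key applications include: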

- Dark Matter Abundance: The Boltzmann equation tracks dark matter relic abundance through freeze-out processes, where dark matter particles depart from thermal equilibrium with the primordial plasma [23].

- Baryogenesis: Scenarios explaining matter-antimatter asymmetry utilize Boltzmann equations to model violation of CP symmetry and baryon number non-conservation in the early universe [23].

- Big Bang Nucleosynthesis (BBN): The framework describes how initial protons and neutrons form light elements, predicting abundances consistent with observational constraints [23].

Complex Fluid Dynamics and Reactive Flows

Modern extensions of Boltzmann's approach enable simulation of complex fluid systems with chemical reactions and thermodynamic nonequilibrium:

- Discrete Boltzmann Method (DBM): This approach incorporates skeletal chemical mechanisms (e.g., 12-step hydrogen-air combustion) into a mesoscopic framework, capturing both hydrodynamic and thermodynamic nonequilibrium behaviors in multi-component reactive flows [24].

- Hybrid Kinetic Models: Recent work combines discrete Boltzmann equations with species transport equations, achieving both physical fidelity and computational efficiency for complex compressible reacting flows [24].

Numerical Analysis and High-Order Computational Methods

The kinetic representation provided by the Boltzmann equation enables advanced numerical techniques:

- Solution Bounding: Kinetic representations allow limiters designed for linear advection to be extended directly to the Euler equations, providing analytic expressions for admissible regions of particle distribution functions [25].

- Positivity Preservation: Approaches derived from Boltzmann formulations maintain positivity of density, pressure, and internal energy while resolving strong discontinuities in gas dynamics simulations [25].

Boltzmann's principle, S = k_B ln W, remains a cornerstone of statistical mechanics, providing the fundamental connection between microscopic configurations and macroscopic thermodynamics. Within the microcanonical ensemble framework, this principle reveals entropy as an emergent property of statistical distributions rather than an intrinsic quality of matter. The continuing relevance of Boltzmann's insight is evident in its applications across diverse domains, from foundational cosmological studies to cutting-edge computational fluid dynamics.

Contemporary research continues to extend Boltzmann's original conception, developing increasingly sophisticated numerical methods that leverage the kinetic theory foundation while addressing complex multi-physics problems. The integration of detailed chemical mechanisms into discrete Boltzmann frameworks represents just one example of how this century-old principle continues to enable new scientific advances. For researchers investigating isolated systems or processes where energy conservation is paramount, the microcanonical ensemble and Boltzmann's entropy formula provide an indispensable theoretical and computational foundation.

The microcanonical ensemble, or NVE ensemble, describes isolated thermodynamic systems with constant particle number (N), volume (V), and total energy (E) [1] [11]. It is a foundational concept in statistical mechanics, providing a framework for deriving macroscopic thermodynamic properties from the microscopic states of a system. Central to this framework is the concept of entropy, which quantifies the number of microscopic configurations accessible to the system under these macroscopic constraints.

While the thermodynamic entropy is unique, its statistical mechanical definition within the microcanonical ensemble has been the subject of long-standing discussion and debate [26] [27]. Several distinct but related definitions have been proposed, primarily differing in how they count the microstates associated with a given total energy E. This whitepaper examines the three principal definitions:

- the Gibbs (or volume) entropy;
- the Boltzmann (or surface) entropy;
- the Boltzmann entropy defined with a finite energy width.

For each, it details the theoretical foundations, thermodynamic properties, and the contexts in which its use is appropriate. Understanding these distinctions is crucial for accurate thermodynamic modeling, especially in systems that are small, possess long-range interactions, or exhibit negative heat capacities.

Theoretical Foundations and Definitions

In the microcanonical ensemble, the fundamental assumption is that all microstates consistent with the given N, V, and E are equally probable [1] [11]. The entropy is defined through the number of these accessible microstates. The differences arise in the precise definition of "accessible."

The Phase Space Volume Function and Energy Shell

A key mathematical object is the phase space volume function, v(E), which counts the number of microstates with energy less than E [1]:

v(E) = number of microstates with H < E

From this, one can define the density of states, Ω(E). It is the derivative of the volume function with respect to energy and represents the number of states per unit energy at a specific energy E:

Ω(E) = dv(E)/dE

In practice, for classical systems, one often considers a thin "energy shell" of width ω centered at E. Here ω is small compared to E but large enough to contain many microstates [28] [1]. The number of microstates in this shell is given by W(E) = Ω(E) ω.

The Three Entropy Definitions

The three primary entropy definitions use these quantities differently, as summarized in the table below.

Table 1: Core Definitions of Microcanonical Entropy

| Entropy Name | Mathematical Definition | Key Physical Interpretation |
|---|---|---|
| Gibbs (Volume) Entropy | $S_v = k_B \, \log v(E)$ | Measures the total number of microstates with energy up to E (a cumulative volume in phase space) [1]. |
| Boltzmann (Surface) Entropy | $S_s = k_B \, \log \Omega(E) = k_B \, \log \left( \frac{dv}{dE} \right)$ | Measures the density of microstates at the precise energy E (a surface in phase space) [1] [27]. |
| Boltzmann Entropy (with width) | $S_B = k_B \, \log W(E) = k_B \, \log \left( \omega \frac{dv}{dE} \right)$ | Measures the number of microstates within a small, finite energy shell of width ω around E [1]. This differs from $S_s$ only by an additive constant, $k_B \log \omega$. |

For large systems with many degrees of freedom, these definitions yield results that are equivalent up to an additive constant of order log N or smaller, which is negligible compared to the total entropy, which is of order N [28]. However, for small systems or those with specific energy spectra, the differences can have significant physical consequences.

Comparative Analysis of Thermodynamic Properties

The choice of entropy definition directly impacts derived thermodynamic quantities, most notably temperature.

Definition of Temperature

In thermodynamics, temperature is defined as the reciprocal of the partial derivative of entropy with respect to energy, at constant volume and particle number. The different entropy definitions lead to different temperature definitions [1]:

- Volume Temperature: $T_v = \left( \frac{\partial S_v}{\partial E} \right)^{-1}$

- Surface Temperature: $T_s = \left( \frac{\partial S_s}{\partial E} \right)^{-1} = \left( \frac{\partial S_B}{\partial E} \right)^{-1}$
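The classical ideal gas from the earlier example makes the difference between the two temperatures concrete. There, v(E) ∝ E^{3N/2} and Ω(E) ∝ E^{3N/2−1}, so k_B T_v = 2E/(3N) while k_B T_s = 2E/(3N − 2). The sketch below evaluates both definitions by central finite differences. It assumes k_B = 1 and drops E-independent constants, which do not affect the derivatives. The helper names are hypothetical.

```python
import math

def ln_v(E, N):
    """ln v(E) for a classical ideal gas, dropping E-independent constants: v proportional to E^(3N/2)."""
    return 1.5 * N * math.log(E)

def ln_omega(E, N):
    """ln Omega(E) = ln(dv/dE), again up to a constant: Omega proportional to E^(3N/2 - 1)."""
    return (1.5 * N - 1.0) * math.log(E)

def temperature(ln_count, E, N, rel_step=1e-5):
    """k_B T = (d ln(count)/dE)^(-1) by central difference, with k_B = 1."""
    dE = rel_step * E
    return 2.0 * dE / (ln_count(E + dE, N) - ln_count(E - dE, N))

results = {}
for N in (1, 2, 10, 1000):
    E = 1.5 * N                                   # chosen so that T_v = 1
    T_v, T_s = temperature(ln_v, E, N), temperature(ln_omega, E, N)
    results[N] = (T_v, T_s)
    print(f"N = {N:4d}: T_v = {T_v:.4f}, T_s = {T_s:.4f} (analytic 2E/(3N - 2) = {2 * E / (3 * N - 2):.4f})")
```

The two temperatures differ by a factor of three for a single particle, but they agree to better than 0.1% for N = 1000.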

Thermodynamic Consistency and Paradoxes

The thermodynamic behavior predicted by these definitions can be compared across several key criteria.

Table 2: Thermodynamic Properties and Behaviors of Different Entropy Definitions

| Property / Behavior | Gibbs (Volume) Entropy | Boltzmann (Surface) Entropy |
|---|---|---|
| General Equivalence | Equivalent to Boltzmann entropy for large systems with a monotonically increasing density of states [28]. | Equivalent to Gibbs entropy for large systems with a monotonically increasing density of states [28]. |
| Negative Temperatures | Cannot occur. $S_v$ is a monotonically increasing function of *E*, so $T_v$ is always positive [1] [27]. | Can occur. If the density of states Ω(E) decreases with energy, $S_s$ decreases, leading to a negative $T_s$ [1] [27]. |
| Additivity for Composite Systems | Properly additive. Maximizing $S_v$ for a composite system leads to the equalization of $T_v$ between subsystems [11]. | Not strictly additive. Can lead to anomalies when combining systems, such as energy flow between systems already at the same $T_s$ [1]. |
| Second Law of Thermodynamics | Can be violated in certain systems, such as those with negative heat capacity, where it may decrease during energy exchange [29]. | Can be violated in certain systems, such as those with long-range interactions, where it may decrease during energy exchange [29]. |
| Applicability to Small Systems | Can produce unphysical results for systems with few degrees of freedom [1]. | Can produce unphysical results for systems with few degrees of freedom (e.g., a one-degree-of-freedom offset) [1]. |

A recent comparative microscopic study of entropy production confirmed these violations, showing that for systems exhibiting negative heat capacity, the Gibbs entropy can violate the second law, while for systems with long-range interactions, the Boltzmann entropy can do the same [29]. In such cases, the associated temperature for the violating entropy definition becomes "meaningless" [29].

The Canonical Entropy as a Proposed Solution

Due to the ambiguities and potential paradoxes associated with microcanonical definitions, some researchers have argued that the fundamental relation S=S(U,V,N) should be calculated using the canonical ensemble [27]. This "canonical entropy" is derived from the system's Helmholtz free energy rather than by counting microstates at a fixed energy. It has been shown that this definition provides physically reasonable predictions for all thermodynamic properties across a wide range of models, including those with first-order phase transitions or decreasing density of states, where microcanonical definitions fail [27]. The preference for the canonical ensemble is further supported by the argument that a system that has ever been in thermal contact with a reservoir cannot be described by a perfectly sharp energy value, making the canonical ensemble more physically realistic than the microcanonical one [27].

Experimental and Computational Protocols

The theoretical differences between entropy definitions can be investigated through specific computational experiments.

Protocol: Comparing Entropy Definitions in Model Systems

This protocol outlines a general method for comparing the predictions of $S_v$, $S_s$, and $S_B$ for a given model.

- System Definition: Define the model Hamiltonian (e.g., Ising model, ideal gas, system with bounded spectrum) and its parameters.

- Density of States Calculation:

  - For a quantum system with a discrete spectrum, numerically diagonalize the Hamiltonian to obtain the energy eigenvalues $E_i$.

  - For a classical system with a continuous spectrum, use computational methods like Wang-Landau Monte Carlo [27] or molecular dynamics to compute the density of states Ω(E).

- Entropy Computation:

  - Compute the cumulative number of states: $v(E) = \sum_{E_i < E} 1$ (quantum) or $v(E) = \frac{1}{N! \, h^{3N}} \int_{H < E} d\mathbf{r}^N d\mathbf{p}^N$ (classical).

  - Compute the Gibbs entropy: $S_v(E) = k_B \log v(E)$.

  - Compute the density of states: $\Omega(E) = dv/dE$ (or, for discrete spectra, use a smoothing procedure [1] [27]).

  - Compute the Boltzmann entropy: $S_s(E) = k_B \log \Omega(E)$.

  - (Optional) Define an energy width ω and compute $S_B(E) = k_B \log( \omega \Omega(E) )$.

- Temperature and Thermodynamic Analysis:

  - Numerically differentiate $S_v(E)$ and $S_s(E)$ to obtain $1/T_v$ and $1/T_s$.

  - Analyze the behavior of these temperatures. Check for regions where $T_s$ becomes negative (where Ω(E) decreases).

  - For a composite system, simulate thermal contact and observe the flow of energy and the evolution of total entropy according to each definition [29].
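The sketch below is a minimal instance of this protocol for the simplest bounded spectrum: N independent two-level units with level spacing ε. It assumes N = 50 and k_B = ε = 1. The degeneracy of the level with n excited units is the binomial coefficient C(N, n), so neither diagonalization nor smoothing is needed. Two further choices are made for illustration. First, the Boltzmann entropy uses a shell width ω = ε, which is one level. Second, the cumulative count includes the level at E itself, which avoids log 0 in the ground state.

```python
import math

N = 50                                   # number of two-level units (assumption); spacing eps = 1, k_B = 1
g = [math.comb(N, n) for n in range(N + 1)]          # degeneracy of level E = n * eps
v = [sum(g[: n + 1]) for n in range(N + 1)]          # cumulative count: states with energy <= E

S_B = [math.log(x) for x in g]       # Boltzmann entropy with shell width omega = eps (one level)
S_v = [math.log(x) for x in v]       # Gibbs (volume) entropy

def temperatures(S):
    """T(E) = (dS/dE)^(-1) from central differences on interior levels; inf where the slope vanishes."""
    T = {}
    for n in range(1, N):
        slope = 0.5 * (S[n + 1] - S[n - 1])
        T[n] = math.inf if slope == 0 else 1.0 / slope
    return T

T_B, T_v = temperatures(S_B), temperatures(S_v)
for n in (10, 20, 30, 40):
    print(f"E = {n:2d}: T_B = {T_B[n]:8.3f}   T_v = {T_v[n]:10.3f}")
```

Above half filling (E > N ε / 2), the level degeneracy falls with energy. T_B therefore turns negative there, while T_v stays positive and grows large, reproducing the contrast summarized in Table 2.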

Protocol: Testing the Second Law

This protocol, based on [29], tests for violations of the second law.

- Setup: Model two systems, A and B, initially isolated at energies $E_A$ and $E_B$. Systems can be chosen from different classes:

  - Class A: Two normal systems.

  - Class B: A normal system and a negative temperature system (requires a bounded spectrum).

  - Class C: A normal system and a system with negative heat capacity (e.g., with long-range interactions).

- Time Evolution: Allow the systems to exchange energy. This can be modeled using:

  - Quantum Mechanics: Solve the time-dependent Schrödinger equation for the combined system, starting from a specific pure initial state (e.g., a local energy eigenstate) [29].

  - Stochastic Dynamics: Use a master equation or stochastic thermodynamics to model the energy exchange process.

- Entropy Tracking: For each entropy definition ($S_v$, $S_s$, $S_{\text{canonical}}$, etc.), calculate its value throughout the time evolution.

- Result Analysis: A violation of the second law is indicated if the total entropy for a given definition is observed to decrease over time. Studies have shown that $S_v$ can violate the second law for Class C systems, while $S_s$ can violate it for Class B systems [29].

Research Reagents and Computational Tools

Table 3: Essential Reagents and Tools for Computational Studies of Entropy

| Tool / Reagent | Type | Function in Research |
|---|---|---|
| Wang-Landau Monte Carlo Algorithm | Computational Algorithm | Efficiently calculates the density of states Ω(E) directly, without needing thermodynamic integration [27]. |
| Molecular Dynamics (MD) Software | Software (e.g., LAMMPS, GROMACS) | Simulates the time evolution of classical many-body systems, from which thermodynamic properties can be extracted. |
| Random Matrix Theory (RMT) | Theoretical/Computational Model | Used to model complex quantum systems and study the dynamics of energy exchange and entropy production between subsystems [29]. |
| Two-level Systems / Ising Model | Theoretical Model | A fundamental model for testing entropy definitions and temperature scales in systems with a bounded, discrete energy spectrum [27]. |
| Potts Model (e.g., 12-state) | Theoretical Model | A classic model used to study first-order phase transitions in the microcanonical ensemble and test the validity of different entropy definitions [27]. |
| "Zentropy" Theory | Theoretical Framework | A parameter-free framework that links statistical probabilities of distinct configurations to macroscopic observables like free energy and entropy [30]. |
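The Wang-Landau entry in the table can be illustrated on a model whose density of states is known exactly. For a one-dimensional Ising ring with J = 1, E = −Σ s_i s_{i+1} and an even number of spins N, the density of states is g(E) = 2 C(N, k), where k = (E + N)/2 is the number of domain walls. The sketch below is a plain-Python illustration with assumed parameters: N = 10, a flatness threshold of 0.8, and a final ln f of 10⁻⁵. The function name `wang_landau_ising_ring` is hypothetical. Real studies use larger systems and more careful convergence checks.

```python
import math
import random

def wang_landau_ising_ring(n_spins=10, ln_f_final=1e-5, flatness=0.8, check_every=1000, seed=1):
    """Wang-Landau estimate of ln g(E) for a 1D Ising ring, E = -sum_i s_i s_{i+1} (J = 1, n_spins even)."""
    rng = random.Random(seed)
    spins = [1] * n_spins
    energy = -n_spins                                    # start fully aligned (ground state)
    levels = range(-n_spins, n_spins + 1, 4)             # E = -N + 2k with an even number k of domain walls
    ln_g = {e: 0.0 for e in levels}
    hist = {e: 0 for e in levels}
    ln_f = 1.0
    while ln_f > ln_f_final:
        for _ in range(check_every):
            i = rng.randrange(n_spins)
            new_energy = energy + 2 * spins[i] * (spins[i - 1] + spins[(i + 1) % n_spins])
            # accept the flip with probability min(1, g(E_old) / g(E_new))
            if rng.random() < math.exp(min(0.0, ln_g[energy] - ln_g[new_energy])):
                spins[i] = -spins[i]
                energy = new_energy
            ln_g[energy] += ln_f                         # update the current level whether or not the move was accepted
            hist[energy] += 1
        if min(hist.values()) > flatness * sum(hist.values()) / len(hist):
            ln_f /= 2.0                                  # histogram is flat: refine the modification factor
            hist = {e: 0 for e in levels}
    # fix the arbitrary additive constant so that sum_E g(E) = 2^n_spins
    top = max(ln_g.values())
    ln_sum = top + math.log(sum(math.exp(x - top) for x in ln_g.values()))
    return {e: x - ln_sum + n_spins * math.log(2.0) for e, x in ln_g.items()}

n = 10
estimate = wang_landau_ising_ring(n)
for e in sorted(estimate):
    exact = math.log(2 * math.comb(n, (e + n) // 2))     # g(E) = 2 C(N, k), k = (E + N) / 2
    print(f"E = {e:4d}: ln g (Wang-Landau) = {estimate[e]:7.3f}   exact = {exact:7.3f}")
```

Wang-Landau sampling determines ln g(E) only up to an additive constant. Here that constant is fixed by requiring the total number of states to equal 2^N.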

Conceptual Relationships and Pathways

The following diagram illustrates the logical and mathematical relationships between the key concepts and definitions discussed in this whitepaper.

The existence of multiple definitions for entropy within the microcanonical ensemble—Gibbs (volume), Boltzmann (surface), and the related Boltzmann entropy with a finite energy shell—highlights a subtle but important complexity in statistical mechanics. For macroscopic systems with a monotonically increasing density of states, these definitions are practically equivalent. However, for finite systems, systems with bounded energy spectra, or systems with long-range interactions, their predictions diverge significantly, leading to different temperatures and, in some cases, violations of the second law of thermodynamics.

The ongoing research in this area, including the proposal of a canonical entropy, suggests that the microcanonical ensemble, while conceptually fundamental, may not always be the most robust tool for defining entropy, especially for small or complex systems. Researchers in fields ranging from drug development studying small molecular systems to physicists exploring negative temperature states must be aware of these distinctions. The choice of entropy definition should be guided by the specific physical context of the system under study to ensure accurate and thermodynamically consistent results.

The microcanonical ensemble, also known as the NVE ensemble, provides the fundamental statistical mechanical description of an isolated system. It is defined as a collection of systems, each with an identical number of particles (N), confined within an identical volume (V), and possessing an identical total energy (E) [1] [2]. This ensemble is foundational because it connects directly to the elementary postulates of equilibrium statistical mechanics, most notably the postulate of equal a priori probabilities [1]. Within this framework, all microscopic states that are compatible with the fixed macroscopic constraints (N, V, E) are considered equally probable [2]. The primary role of statistical mechanics is to bridge the microscopic world of atoms and molecules and the macroscopic world of classical thermodynamics. In the microcanonical ensemble, that bridge is the entropy, given by Boltzmann's principle, from which the other thermodynamic properties such as temperature, pressure, and chemical potential are derived [2].

The strict isolation of the system implies that it cannot exchange energy or particles with its environment [1]. Consequently, while the system's internal dynamics are complex and involve energy transfer between various degrees of freedom, the total energy remains a constant of motion. Although the microcanonical ensemble is conceptually straightforward and forms the basis for molecular dynamics simulation algorithms [1] [31], it can be mathematically cumbersome for theoretical calculations of real-world systems. Furthermore, the definitions of derived quantities like temperature can exhibit ambiguities not present in other ensembles [1]. Despite these challenges, the NVE ensemble remains a vital conceptual tool and is directly relevant for understanding the dynamics of isolated systems, such as those simulated in many molecular dynamics (MD) studies where total energy is conserved [31].

Entropy: The Bridge from Microscopic States to Thermodynamics

In the microcanonical ensemble, the fundamental thermodynamic potential is the entropy [1]. The link between the microscopic description of the system and its macroscopic entropy is provided by the Boltzmann entropy formula:

\[ S = k \ln W \]

Here, \(k\) is Boltzmann's constant, and \(W\) is the number of distinct microscopic states (microstates) accessible to the system at the given energy \(E\) [2]. A microstate is a specific, detailed configuration of the system. For a classical system of \(N\) particles, it is a single point in phase space, specified by all the particles' positions \(\mathbf{r}^N\) and momenta \(\mathbf{p}^N\) [2].
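As a minimal illustration of \(S = k \ln W\), consider a toy system of \(N\) independent two-level particles in which every excitation costs the same energy \(\varepsilon\). Fixing \(E = n\varepsilon\) then fixes the number of excited particles \(n\), and \(W\) is the binomial coefficient \(\binom{N}{n}\). The model choice and the function name `boltzmann_entropy` are illustrative assumptions and do not come from the cited sources.

```python
import math

K_B = 1.380649e-23  # Boltzmann's constant in J/K (exact SI value)


def boltzmann_entropy(n_particles: int, n_excited: int, k: float = K_B) -> float:
    """Return S = k ln W for N independent two-level particles with n excited.

    W = C(N, n) counts the ways of choosing which particles are excited,
    i.e. the microstates compatible with the fixed energy E = n * epsilon.
    """
    w = math.comb(n_particles, n_excited)
    return k * math.log(w)


# Units of k (k = 1): N = 4 particles with 2 excited gives W = 6 microstates.
print(boltzmann_entropy(4, 2, k=1.0))       # ln 6, about 1.7918
# SI units for a larger system: math.log accepts the very large integer W directly.
print(boltzmann_entropy(10_000, 2_500))     # entropy in J/K
```

Only the count of microstates enters. Which particles are excited does not matter energetically, so every one of the \(W\) arrangements is equally probable under the postulate of equal a priori probabilities.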

However, the precise mathematical definition of (W) and, consequently, entropy, requires careful consideration, leading to multiple definitions in the literature. Table 1 summarizes the common definitions of entropy in the microcanonical ensemble.

Table 1: Definitions of Entropy in the Microcanonical Ensemble

| Entropy Type | Mathematical Expression | Description |
|---|---|---|
| Boltzmann Entropy | \( S_B = k_B \log \left( \omega \frac{dv}{dE} \right) = k_B \log W \) | Depends on the number of states \(W\) within a small energy range \(\omega\) [1]. |
| Volume Entropy | \( S_v = k_B \log v(E) \) | Based on the volume \(v(E)\) of phase space with energy less than \(E\) [1]. |
| Surface Entropy | \( S_s = k_B \log \frac{dv}{dE} = S_B - k_B \log \omega \) | Based on the density of states \(\frac{dv}{dE}\) at energy \(E\) [1]. |

In these definitions, \(v(E)\) is the phase-space volume with energy less than \(E\), and \(\frac{dv}{dE}\) is the density of states [1]. The volume and surface entropies do not depend on the arbitrary energy width \(\omega\), but the two choices have different thermodynamic implications, particularly for small systems [1]. The logical flow from the fundamental postulate to the derivation of thermodynamics is outlined in Figure 1 below.

Figure 1: Logical pathway for deriving thermodynamics from the postulates of the microcanonical ensemble.
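The difference between the volume and surface definitions in Table 1 can be seen in a system simple enough to solve by hand. The sketch below assumes \(N\) classical one-dimensional harmonic oscillators, for which the phase-space volume below \(E\) scales as \(v(E) = C E^N\). The constant \(C\) is set to 1 because it does not affect any temperature. Working in units with \(k_B = 1\), the sketch differentiates \(S_v\) and \(S_s\) numerically, and it should recover the analytic results \(k_B T_v = E/N\) and \(k_B T_s = E/(N-1)\). The function names are hypothetical.

```python
import math


def log_phase_volume(energy: float, n_osc: int) -> float:
    """S_v / k_B = ln v(E) with v(E) = E**n_osc (constant C set to 1)."""
    return n_osc * math.log(energy)


def log_density_of_states(energy: float, n_osc: int) -> float:
    """S_s / k_B = ln(dv/dE) with dv/dE = n_osc * E**(n_osc - 1)."""
    return math.log(n_osc) + (n_osc - 1) * math.log(energy)


def temperature(entropy_fn, energy: float, n_osc: int, h: float = 1e-6) -> float:
    """Return k_B T = 1 / (dS/dE), with dS/dE from a central finite difference."""
    ds_de = (entropy_fn(energy + h, n_osc) - entropy_fn(energy - h, n_osc)) / (2 * h)
    return 1.0 / ds_de


for n in (2, 10, 1000):
    e = float(n)  # chosen so that the analytic k_B T_v = E / N equals 1
    t_v = temperature(log_phase_volume, e, n)       # analytic: E / N
    t_s = temperature(log_density_of_states, e, n)  # analytic: E / (N - 1)
    print(f"N = {n:4d}   k_B T_v = {t_v:.4f}   k_B T_s = {t_s:.4f}")
```

For \(N = 2\) the two temperatures differ by a factor of two. For \(N = 1000\) they agree to about 0.1%, which is consistent with the definitions coinciding only for large systems.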

Derivation of Temperature, Pressure, and Chemical Potential

Temperature as a Derived Quantity

In the microcanonical ensemble, temperature is not an external control parameter but a derived statistical quantity that emerges from the energy dependence of the entropy [1]. It follows from the fundamental thermodynamic relation [2]:

\[ T\,dS = dE + p\,dV - \mu\,dN \]

From this, the temperature is identified through the partial derivative of entropy with respect to energy at constant volume and particle number, \(1/T = (\partial S/\partial E)_{V,N}\). The specific value, however, depends on the choice of entropy. For the volume entropy \(S_v\) and the surface entropy \(S_s\), the corresponding temperatures are defined as [1]:

\[ \frac{1}{T_v} = \frac{dS_v}{dE} \quad \text{and} \quad \frac{1}{T_s} = \frac{dS_s}{dE} \]

This definition matches the intuitive picture of temperature as a measure of how rapidly a system's number of accessible states grows with its internal energy. A steep increase of \(S\) with \(E\) corresponds to a low temperature, because a small energy input opens up many new states. A gentle increase corresponds to a high temperature.

It is crucial to note that these definitions are not always thermodynamically equivalent. For instance, \(T_s\) can behave counterintuitively. It becomes negative when the density of states decreases with energy, and it does not always correctly predict the direction of heat flow when two microcanonical systems are brought into thermal contact [1]. This underscores the conceptual subtleties inherent in the microcanonical definition of temperature.
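The negative temperatures predicted by density-of-states definitions such as \(T_s\) can be reproduced with a two-level toy model. For \(N\) particles with \(n\) excitations of energy \(\varepsilon\), \(S/k = \ln\binom{N}{n}\) decreases with energy once more than half of the particles are excited. The sketch below is illustrative. It assumes \(k = \varepsilon = 1\), treats a central difference in \(n\) as the derivative \(dS/dE\), and uses hypothetical helper names.

```python
import math


def entropy_two_level(n_particles: int, n_excited: int) -> float:
    """S / k = ln C(N, n), evaluated with log-gamma so large N does not overflow."""
    return (math.lgamma(n_particles + 1)
            - math.lgamma(n_excited + 1)
            - math.lgamma(n_particles - n_excited + 1))


def temperature_two_level(n_particles: int, n_excited: int) -> float:
    """k T / epsilon from 1/T = dS/dE, using a central difference in n (E = n * epsilon).

    Requires 0 < n_excited < n_particles; T diverges at n_excited = n_particles / 2.
    """
    ds_dn = (entropy_two_level(n_particles, n_excited + 1)
             - entropy_two_level(n_particles, n_excited - 1)) / 2.0
    return 1.0 / ds_dn


N = 1000
for n in (100, 300, 700, 900):
    print(f"n = {n:3d}   E/(N eps) = {n / N:.1f}   kT/eps = {temperature_two_level(N, n):+.3f}")
```

The temperature diverges as \(n \to N/2\), where \(S\) is maximal, and changes sign beyond that point. A volume-type entropy, which counts all states up to \(E\), would instead keep increasing with energy and stay positive.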

Pressure and Chemical Potential

The definitions of pressure and chemical potential in the NVE ensemble follow a similar logic, deriving from the fundamental thermodynamic relation. The pressure is conjugate to the volume and is related to the derivative of entropy with respect to volume, at constant energy and particle number [1] [2]:

\[ \frac{p}{T} = \left( \frac{\partial S}{\partial V} \right)_{E, N} \]

This derivative measures how the number of accessible microstates changes as the system's volume is altered, providing a statistical mechanical definition of pressure.

Similarly, the chemical potential, which is conjugate to the particle number, is defined by how the entropy changes when particles are added to the system at constant energy and volume [1]:

\[ \frac{\mu}{T} = -\left( \frac{\partial S}{\partial N} \right)_{E, V} \]

Because of the minus sign, a positive temperature combined with an entropy that increases when a particle is added at fixed \(E\) and \(V\) implies a negative chemical potential. This is the typical situation for a dilute classical gas. Table 2 provides a consolidated summary of these key thermodynamic definitions within the NVE ensemble.

Table 2: Thermodynamic Quantities Derived from the Microcanonical Entropy

| Thermodynamic Quantity | Definition in NVE Ensemble | Thermodynamic Relation |
|---|---|---|
| Temperature (T) | \( \frac{1}{T} = \left( \frac{\partial S}{\partial E} \right)_{V, N} \) | Conjugate to energy |
| Pressure (p) | \( \frac{p}{T} = \left( \frac{\partial S}{\partial V} \right)_{E, N} \) | Conjugate to volume |
| Chemical Potential (μ) | \( \frac{\mu}{T} = -\left( \frac{\partial S}{\partial N} \right)_{E, V} \) | Conjugate to particle number |
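The definitions in Table 2 can be checked numerically on a case with a known answer. The sketch below assumes a classical monatomic ideal gas described by the Sackur-Tetrode entropy and uses reduced units \(k_B = h = m = 1\). It also treats \(N\) as a continuous variable so that the \(N\)-derivative can be taken by finite differences. The results should match \(E = \tfrac{3}{2} N k_B T\), \(pV = N k_B T\), and \(\mu = k_B T \ln(n\lambda^3)\), where \(n = N/V\) and \(\lambda\) is the thermal de Broglie wavelength. The helper names are hypothetical.

```python
import math


def sackur_tetrode(E: float, V: float, N: float) -> float:
    """S / k_B for a classical monatomic ideal gas, in reduced units k_B = h = m = 1."""
    return N * (math.log((V / N) * (4.0 * math.pi * E / (3.0 * N)) ** 1.5) + 2.5)


def partial(f, args, i, rel_step=1e-6):
    """Central-difference partial derivative of f with respect to args[i]."""
    h = rel_step * args[i]
    up, down = list(args), list(args)
    up[i] += h
    down[i] -= h
    return (f(*up) - f(*down)) / (2.0 * h)


E, V, N = 1500.0, 1.0e5, 1000.0
state = (E, V, N)
T = 1.0 / partial(sackur_tetrode, state, 0)   # 1/T  =  (dS/dE)_{V,N}
p = T * partial(sackur_tetrode, state, 1)     # p/T  =  (dS/dV)_{E,N}
mu = -T * partial(sackur_tetrode, state, 2)   # mu/T = -(dS/dN)_{E,V}

lam = 1.0 / math.sqrt(2.0 * math.pi * T)      # thermal wavelength h / sqrt(2 pi m k_B T)
print(f"T  = {T:.6f}   vs 2E/(3N)            = {2 * E / (3 * N):.6f}")
print(f"pV = {p * V:.3f}   vs N T                = {N * T:.3f}")
print(f"mu = {mu:.6f}   vs T ln(N lam^3 / V)  = {T * math.log(N * lam**3 / V):.6f}")
```

The relative step keeps each finite difference well scaled even though \(E\), \(V\), and \(N\) differ by orders of magnitude.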

Methodologies and Computational Protocols

Molecular Dynamics in the NVE Ensemble

The most direct computational method for studying the NVE ensemble is Molecular Dynamics (MD) simulation. In a standard NVE-MD simulation, the system's time evolution is determined by numerically integrating Newton's equations of motion for all particles [31]:

\[ \frac{d^2\mathbf{r}_i}{dt^2} = \frac{\mathbf{F}_i}{m_i} \]

where \(\mathbf{r}_i\) and \(m_i\) are the position and mass of particle \(i\), and \(\mathbf{F}_i = -\frac{\partial V}{\partial \mathbf{r}_i}\) is the force on it derived from the potential energy function \(V\) (here \(V\) denotes the potential energy, not the volume) [31]. The global MD algorithm, as implemented in packages like GROMACS, follows a discrete stepping procedure [31]:

- Initialization: Input of initial particle positions, velocities (e.g., from a Maxwell-Boltzmann distribution), and the potential interaction function [31].

- Force Computation: Calculation of forces on all atoms from non-bonded and bonded interactions.

- Configuration Update: Numerical integration of Newton's equations to update particle positions and velocities.

- Output: Writing of trajectory data (positions, velocities) and thermodynamic properties (energies, temperature, pressure) at desired intervals.
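The four steps above can be sketched in a few dozen lines. This is a minimal illustration, not GROMACS code. It assumes a small 8-atom Lennard-Jones cluster in reduced units (\(\epsilon = \sigma = m = 1\)) with no periodic boundaries or cutoffs. It also uses the velocity Verlet integrator, which GROMACS offers as `md-vv`; the GROMACS default `md` integrator is leap-frog. Function names and parameter values are illustrative assumptions.

```python
import numpy as np


def lj_forces_and_energy(pos, epsilon=1.0, sigma=1.0):
    """All-pairs Lennard-Jones forces F_i = -dV/dr_i and potential energy V (no cutoff, no PBC)."""
    forces = np.zeros_like(pos)
    energy = 0.0
    for i in range(len(pos) - 1):
        d = pos[i] - pos[i + 1:]                     # r_i - r_j for all j > i
        r2 = np.sum(d * d, axis=1)
        sr6 = (sigma * sigma / r2) ** 3
        energy += float(np.sum(4.0 * epsilon * (sr6 * sr6 - sr6)))
        f_over_r = 24.0 * epsilon * (2.0 * sr6 * sr6 - sr6) / r2
        f_ij = d * f_over_r[:, None]                 # force on i due to each j
        forces[i] += f_ij.sum(axis=0)
        forces[i + 1:] -= f_ij                       # Newton's third law
    return forces, energy


def run_nve(pos, vel, mass=1.0, dt=0.002, n_steps=2000, out_every=500):
    """Velocity Verlet NVE run; returns (step, kinetic, potential, total) at output steps."""
    pos, vel = pos.copy(), vel.copy()
    forces, pot = lj_forces_and_energy(pos)          # force computation at t = 0
    log = []
    for step in range(n_steps + 1):
        if step % out_every == 0:                    # output
            kin = 0.5 * mass * float(np.sum(vel * vel))
            log.append((step, kin, pot, kin + pot))
        if step == n_steps:
            break
        vel += 0.5 * dt * forces / mass              # half-step velocity update
        pos += dt * vel                              # full-step position update
        forces, pot = lj_forces_and_energy(pos)      # forces at the new positions
        vel += 0.5 * dt * forces / mass              # second half-step velocity update
    return log


# Initialization: 8 atoms on a cube with edge near the LJ minimum 2**(1/6) sigma,
# velocity components drawn from a Maxwell-Boltzmann distribution at k_B T = 0.1.
rng = np.random.default_rng(seed=1)
pos0 = 1.12 * np.array([[x, y, z] for x in (0, 1) for y in (0, 1) for z in (0, 1)], dtype=float)
kT, mass = 0.1, 1.0
vel0 = rng.normal(0.0, np.sqrt(kT / mass), size=pos0.shape)
vel0 -= vel0.mean(axis=0)                            # remove centre-of-mass drift

log = run_nve(pos0, vel0, mass=mass)
for step, kin, pot, tot in log:
    print(f"step {step:5d}   K = {kin:8.4f}   U = {pot:9.4f}   E = {tot:9.4f}")
```

In the printed log, kinetic and potential energy exchange freely while their sum stays nearly constant. Velocity Verlet was chosen for this sketch because positions and velocities are available at the same time step, which makes the total-energy check straightforward.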

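In exact dynamics this procedure conserves the total energy of the system (the sum of kinetic and potential energy), making it a direct physical realization of the microcanonical ensemble. In practice, the finite time step of the numerical integrator makes the total energy fluctuate around a constant value and possibly drift slowly, so monitoring energy conservation is a standard check of an NVE run. The temperature in such a simulation is not imposed but computed from the average kinetic energy of the particles through equipartition, \( \langle K \rangle = \tfrac{1}{2} N_{\mathrm{df}} k T \), where \(N_{\mathrm{df}}\) is the number of degrees of freedom.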
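Computing the instantaneous temperature from the kinetic energy is a direct application of equipartition. The sketch below assumes \(N_{\mathrm{df}} = 3N - N_{\mathrm{c}} - 3\), a common convention that subtracts \(N_{\mathrm{c}}\) constraints and the three centre-of-mass degrees of freedom when centre-of-mass motion is removed. The function name and the test values are hypothetical.

```python
import numpy as np


def instantaneous_temperature(vel, masses, k_B=1.0, n_constraints=0, com_removed=True):
    """Return T = 2 K / (N_df k_B) with N_df = 3N - n_constraints - (3 if COM motion removed)."""
    kinetic = 0.5 * float(np.sum(masses[:, None] * vel * vel))
    n_df = 3 * len(vel) - n_constraints - (3 if com_removed else 0)
    return 2.0 * kinetic / (n_df * k_B)


# Usage: draw Maxwell-Boltzmann velocities at k_B T = 1.5 (reduced units) and read T back.
rng = np.random.default_rng(seed=0)
masses = np.full(10_000, 2.0)
target_kT = 1.5
vel = rng.normal(0.0, 1.0, size=(len(masses), 3)) * np.sqrt(target_kT / masses)[:, None]
com_velocity = (masses[:, None] * vel).sum(axis=0) / masses.sum()
vel -= com_velocity                                   # remove centre-of-mass motion
print(instantaneous_temperature(vel, masses))         # close to 1.5, up to sampling noise
```

In an NVE run this instantaneous value fluctuates from step to step. The reported temperature is its time average.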

Computational research in this field relies on a suite of software tools and theoretical "reagents." The following table details key resources for conducting NVE ensemble simulations and analysis.

Table 3: Research Reagent Solutions for NVE Ensemble Simulations

| Name / Resource | Type | Primary Function in NVE Research |
|---|---|---|
| GROMACS | Software Package | High-performance MD software; includes algorithms for NVE integration and property calculation [31]. |
| LAMMPS | Software Package | Versatile MD simulator; can be used with NVE integration and various force fields [32]. |
| ms2 | Software Package | Molecular simulation tool for calculating thermodynamic properties across multiple ensembles [33]. |
| Andersen Thermostat | Algorithm | A stochastic thermostat sometimes used for initialization before NVE production runs [34]. |
| OPLS Force Field | Parameter Set | A family of potential energy functions used to describe atomic interactions in organic molecules and polymers [32]. |
| Boltzmann's Constant (k) | Fundamental Constant | Connects statistical mechanical quantities (like entropy) to macroscopic thermodynamics (temperature) [2]. |

Challenges and Conceptual Considerations

While the microcanonical ensemble is conceptually pure, its application comes with several important challenges and subtleties. A significant issue is the ambiguity in the definition of entropy discussed above. The choice between \(S_B\), \(S_v\), and \(S_s\) is not merely academic, because it leads to different definitions of temperature (\(T_v\) vs. \(T_s\)) that are not equivalent [1]. For example, when two systems described by \(T_s\) are brought into thermal contact, energy may flow in counterintuitive ways, even when their initial \(T_s\) values are equal [1]. This contradicts the zeroth-law expectation that systems at equal temperature exchange no net energy. It is a primary reason why the canonical ensemble, which has an unambiguous temperature, is often preferred for practical calculations [1].

Another key consideration is the treatment of phase transitions. In the strict thermodynamic sense, phase transitions are marked by non-analytic behavior in the thermodynamic potential. Under this definition, phase transitions can occur in the microcanonical ensemble for systems of any size [1]. This contrasts with the canonical and grand canonical ensembles, where true non-analyticities and phase transitions can only occur in the thermodynamic limit (i.e., for systems with infinitely many degrees of freedom) [1]. The energy fluctuations inherent in the canonical ensemble smooth out the free energy of finite systems. For sufficiently large systems, this difference becomes negligible, but it can be critical in the theoretical analysis of small systems [1].

Finally, strict energy conservation makes the microcanonical ensemble difficult to apply to the many experimental conditions in which a system is in thermal contact with its environment. For systems that are not macroscopically large or not perfectly isolated, fluctuations in energy (and, for open systems, in particle number) make ensembles such as the canonical (NVT) or grand canonical (μVT) more appropriate and mathematically tractable [1].

Implementing NVE in Molecular Dynamics: A Practical Guide for Simulations

In statistical mechanics, the microcanonical ensemble, also known as the NVE ensemble, describes the possible states of an isolated mechanical system where the total number of particles (N), the system's volume (V), and the total energy (E) are all constant [1]. This ensemble represents a foundational concept in equilibrium statistical mechanics, built upon the postulate of equal a priori probabilities. In this framework, for a system with a precisely specified energy, every microstate within a narrow energy range is considered equally probable [1].

Within molecular dynamics (MD), an NVE simulation is achieved by integrating Newton's equations of motion without any temperature or pressure control mechanisms, thereby conserving the system's total energy [35]. This contrasts with other ensembles like NVT (canonical) or NPT (isothermal-isobaric), which employ artificial coupling to thermal or pressure baths to mimic experimental conditions. The NVE ensemble is particularly valuable for exploring the constant-energy surface of conformational space without the perturbations introduced by such couplings [35]. It is the ensemble generated by a straightforward application of Newton's second law, making it the most basic and historically significant ensemble for MD simulations [36].
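The contrast between a thermostatted run and an NVE run can be illustrated with a deliberately simple system. The sketch assumes 1000 independent unit-mass harmonic oscillators in reduced units. It uses Andersen-style collisions, in which each velocity is redrawn from a Maxwell-Boltzmann distribution with probability \(\nu\,\Delta t\) per step, during equilibration only. This mirrors the equilibrate-then-NVE workflow listed for the Andersen thermostat in Table 3. All names and parameter values are illustrative.

```python
import numpy as np


def velocity_verlet_step(x, v, dt, omega2=1.0):
    """Advance unit-mass 1D harmonic oscillators (force = -omega2 * x) by one step, in place."""
    v += 0.5 * dt * (-omega2 * x)
    x += dt * v
    v += 0.5 * dt * (-omega2 * x)


def total_energy(x, v, omega2=1.0):
    """Kinetic plus potential energy summed over all oscillators."""
    return float(np.sum(0.5 * v * v + 0.5 * omega2 * x * x))


def andersen_collisions(v, kT, nu, dt, rng):
    """Redraw each velocity from a Maxwell-Boltzmann distribution with probability nu * dt."""
    hit = rng.random(v.shape) < nu * dt
    v[hit] = rng.normal(0.0, np.sqrt(kT), size=int(hit.sum()))


rng = np.random.default_rng(seed=0)
n, dt, kT = 1000, 0.01, 2.0
x, v = np.zeros(n), np.zeros(n)       # start with zero energy, far from k_B T = 2

# Equilibration: thermostatted dynamics, total energy is NOT conserved.
for _ in range(5000):
    velocity_verlet_step(x, v, dt)
    andersen_collisions(v, kT, nu=1.0, dt=dt, rng=rng)
e_start = total_energy(x, v)

# Production: plain velocity Verlet (NVE), total energy is a constant of motion.
energies = [total_energy(x, v)]
for _ in range(5000):
    velocity_verlet_step(x, v, dt)
    energies.append(total_energy(x, v))

print(f"after equilibration: E = {e_start:.1f}  (canonical average N k_B T = {n * kT:.1f})")
print(f"NVE production: max |E - E_start| / E_start = "
      f"{max(abs(e - e_start) for e in energies) / e_start:.2e}")
```

During equilibration the collisions drive the total energy toward the canonical average \(N k_B T\), since each oscillator carries on average \(k_B T/2\) of kinetic and \(k_B T/2\) of potential energy. Once the collisions are switched off, the total energy stays essentially constant apart from the small oscillations introduced by the finite time step.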

Theoretical Foundation of the NVE Ensemble

Formal Definition and Thermodynamic Relations