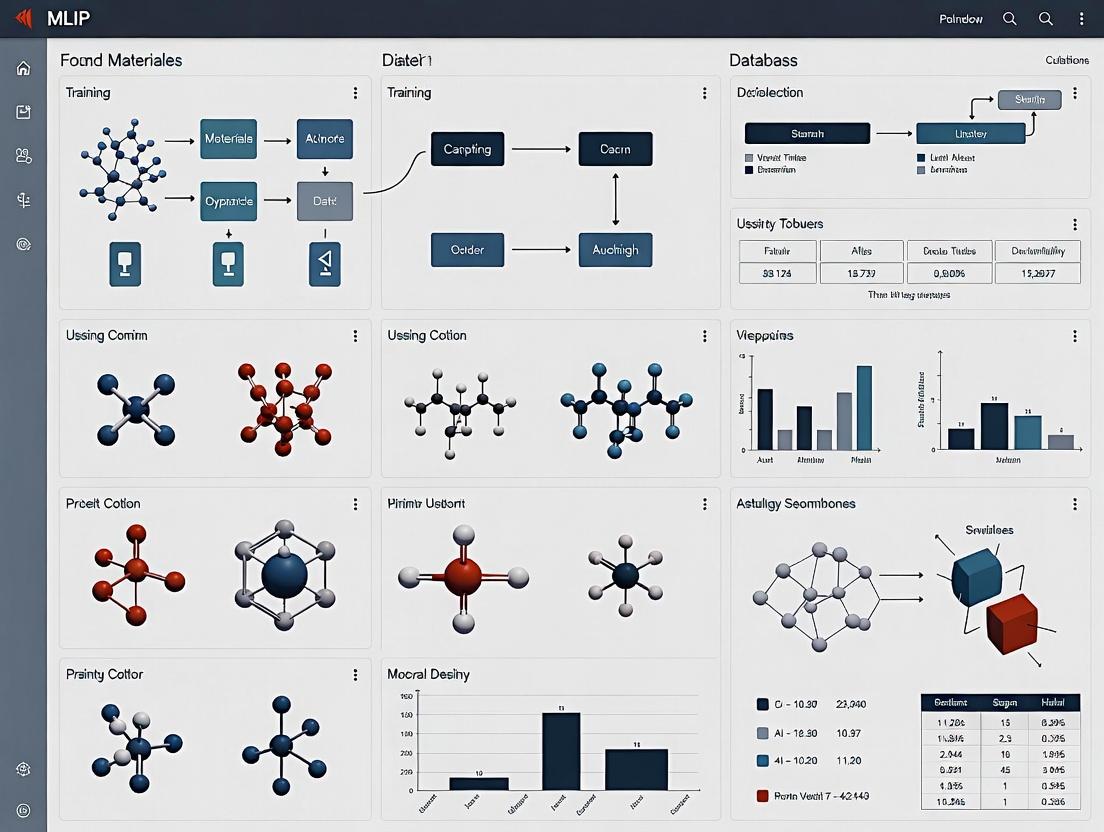

Mastering the MLIP Database: A Complete Training Guide for Biomedical Researchers & Drug Developers

Abstract

This comprehensive guide provides biomedical researchers and drug development professionals with structured training on the Materials Project (MP) and its machine learning interatomic potential (MLIP) database. It covers foundational principles, data exploration, advanced computational workflows, common troubleshooting, and validation techniques. Learn how to use this informatics platform to accelerate materials discovery, predict drug-material interactions, and optimize biomaterials for clinical applications.

What is the MLIP Database? Core Concepts for Biomedical Researchers

The Materials Project (MP) is a core, open-access database in computational materials science, providing calculated properties for over 150,000 inorganic compounds. Its Machine Learning Interatomic Potentials (MLIP) database extends this resource by enabling large-scale atomistic simulations at near-DFT accuracy, which accelerates materials discovery and design in areas such as energy storage, catalysis, and semiconductors.

Core Infrastructure of The Materials Project

The Materials Project is built on a high-throughput computing framework, systematically generating materials data using density functional theory (DFT).

Table 1: Key Quantitative Metrics of The Materials Project Core Database (as of 2024)

| Metric | Value | Description |

|---|---|---|

| Total Materials | > 150,000 | Unique inorganic crystal structures. |

| Properties Calculated | > 1.2 Billion | Individual data points including energy, band gap, elasticity. |

| Active Users | > 400,000 | Registered researchers worldwide. |

| Annual Calculations | ~10 Million | DFT calculations performed to expand/update data. |

| API Queries/Day | > 2 Million | Programmatic access requests. |

Key Computational Workflow

Protocol 1: High-Throughput DFT Calculation Protocol

- Input Curation: Structures sourced from the Inorganic Crystal Structure Database (ICSD) and theoretically predicted prototypes.

- Structure Optimization: Geometry relaxation using the Vienna Ab initio Simulation Package (VASP) with the PBE functional and projector-augmented wave (PAW) pseudopotentials.

- Property Calculation: A sequential workflow calculates:

  - Final energy and optimized geometry.

  - Electronic band structure and density of states.

  - Elastic tensor (for sufficiently stable materials).

  - Phonon dispersion (for a subset).

  - Surface energies and Wulff shapes.

- Data Storage: Results are stored in a MongoDB database with a defined API for querying.

The MLIP Database: Principles and Architecture

The MLIP database addresses the computational cost bottleneck of DFT by providing pre-trained machine learning interatomic potentials.

MLIP Methodology

Machine Learning Interatomic Potentials are statistical models that map atomic configurations (positions, species) to total energy and forces. The MP MLIP database primarily leverages the moment tensor potential (MTP) formalism and graph neural network (GNN) approaches.

Protocol 2: MLIP Training and Validation Protocol

- Training Set Generation: Select diverse configurations from:

  - DFT-MD (molecular dynamics) trajectories at varying temperatures.

  - Perturbed crystal structures (phonon displacements).

  - Surface and defect configurations.

- Feature Representation: Encode atomic environments using descriptors like:

  - MTP: Basis functions of interatomic distances and angles.

  - GNN: Graph with atoms as nodes and bonds as edges.

- Model Training: Minimize the loss function \( L = \lVert E_{\mathrm{DFT}} - E_{\mathrm{MLIP}} \rVert + \alpha \lVert F_{\mathrm{DFT}} - F_{\mathrm{MLIP}} \rVert \), where \( \alpha \) weights the force term relative to the energy term.

- Active Learning: Iteratively run MD with the MLIP, identify configurations with high predictive uncertainty (σ), compute DFT for those, and add them to the training set.

- Validation: Test on held-out DFT data for energy, force, and property accuracy (e.g., lattice dynamics, diffusion barriers).
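The active-learning step above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the database's actual implementation: the uncertainty σ is approximated by the spread of energy predictions across a small committee of models, and the function name `select_uncertain`, the threshold, and all numbers are hypothetical.

```python
from statistics import pstdev


def select_uncertain(committee_energies, threshold):
    """Return indices of configurations whose committee spread exceeds threshold.

    committee_energies: one list per configuration, holding the energy
    (eV/atom) predicted by each committee member. The population standard
    deviation of those predictions stands in for the uncertainty sigma.
    """
    selected = []
    for idx, preds in enumerate(committee_energies):
        sigma = pstdev(preds)
        if sigma > threshold:
            selected.append(idx)
    return selected


# Hypothetical predictions from a 3-model committee for 4 MD snapshots.
predictions = [
    [-3.501, -3.502, -3.500],  # models agree
    [-3.40, -3.55, -3.47],     # models disagree: candidate for DFT labeling
    [-3.610, -3.611, -3.609],  # models agree
    [-3.20, -3.35, -3.05],     # models disagree: candidate for DFT labeling
]

# Snapshots above the (hypothetical) 10 meV/atom threshold go back to DFT.
to_label = select_uncertain(predictions, threshold=0.01)
print(to_label)
```

In a full loop, the selected snapshots would be recomputed with DFT, appended to the training set, and the committee retrained before the next MD run.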

Table 2: Performance Benchmarks of Example MLIPs in the Database

| Material System | MLIP Type | Energy MAE (meV/atom) | Force MAE (meV/Å) | Speed-up vs. DFT |

|---|---|---|---|---|

| Li-Si (Battery Anodes) | MTP | 2.5 | 85 | ~10^5 |

| SiO2 (Amorphous) | GNN (M3GNet) | 4.8 | 110 | ~10^4 |

| High-Entropy Alloy | MTP | 3.1 | 95 | ~10^5 |

| MoS2 (2D Layer) | GNN (CHGNet) | 2.2 | 78 | ~10^4 |

Database Structure and Access

The MLIP database is accessible via the MP API. Key data objects include:

- Potential Object: Contains model weights, descriptor parameters, and convergence data.

- Training Set: The DFT-calculated configurations used.

- Validation Metrics: Table of accuracy benchmarks (as in Table 2).

Integration in MLIP Training Research Workflow

Within a thesis on MLIP database training research, the MP MLIP ecosystem serves as both a source of training data and a benchmark platform.

Key Research Reagent Solutions

Table 3: Essential Toolkit for MLIP Development and Validation Research

| Research 'Reagent' / Tool | Function in MLIP Research | Example/Note |

|---|---|---|

| VASP / Quantum ESPRESSO | Generates ab initio ground-truth data for training and testing. | Primary DFT engines. |

| MLIP Frameworks (fitkit, Allegro) | Software to train MTPs or GNN-based potentials from data. | |

| Atomic Simulation Environment (ASE) | Python scripting interface for setting up, running, and analyzing atomistic simulations. | Universal tool for workflow automation. |

| LAMMPS / GPUMD | High-performance MD simulators with MLIP plug-in support. | For running large-scale simulations with trained potentials. |

| pymatgen | Python library for materials analysis; core dependency of MP. | Used for structure manipulation, phase diagram analysis, and accessing MP API. |

| MP API Key | Enables programmatic querying and downloading of structures, DFT data, and MLIPs. | Obtained via free registration on materialsproject.org. |

| Active Learning Controller | Custom code to manage the iterative training loop, querying uncertainty. | Often built on ASE and MLIP framework APIs. |

Validation Experiment Protocol

Protocol 3: Protocol for Validating a New MLIP Against MP Benchmarks

- Benchmark Selection: From the MP MLIP database, download:

  - The standard training/validation set for a target system (e.g., Li-Si).

  - The published benchmark metrics (Table 2).

- Model Training: Train your novel MLIP architecture on the identical training set.

- Property Calculation: Use the trained potential to compute:

  - Equation of state (energy vs. volume).

  - Phonon dispersion spectrum.

  - Lithium diffusion barrier via nudged elastic band (NEB) method.

- Comparison: Compare your results to both:

  - The DFT validation data.

  - The existing MLIP benchmark data from the MP database.

- Reporting: Document mean absolute error (MAE) and computational efficiency relative to the established baselines.
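The reporting step reduces to two numbers per property: a mean absolute error against the DFT reference and a speed-up factor. The sketch below shows one way to compute them; the function names, energies, and timings are hypothetical placeholders rather than values from the database.

```python
def mean_absolute_error(reference, predicted):
    """Mean absolute error between two equally long sequences of numbers."""
    if len(reference) != len(predicted) or not reference:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(r - p) for r, p in zip(reference, predicted)) / len(reference)


def speedup(dft_seconds, mlip_seconds):
    """Wall-time ratio of a DFT evaluation to an MLIP evaluation."""
    return dft_seconds / mlip_seconds


# Hypothetical per-atom energies (eV/atom) for four held-out configurations.
e_dft = [-3.512, -3.498, -3.455, -3.520]
e_new = [-3.510, -3.501, -3.450, -3.523]

# Convert eV/atom to meV/atom to match the units used in Table 2.
mae_mev = 1000 * mean_absolute_error(e_dft, e_new)

# Hypothetical timings: one hour per DFT step vs. 36 ms per MLIP step.
factor = speedup(dft_seconds=3600.0, mlip_seconds=0.036)

print(f"Energy MAE: {mae_mev:.2f} meV/atom, speed-up: {factor:.0e}")
```

The same `mean_absolute_error` helper applies to flattened force components, which gives the force MAE column.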

The Materials Project's MLIP database is a foundational resource that shifts the research paradigm from single-point DFT calculation to high-fidelity, large-scale atomistic simulation. For the MLIP training researcher, it provides standardized datasets, performance benchmarks, and a dissemination platform. Future evolution involves more diverse chemical spaces (e.g., molecular systems relevant to drug development), automated training pipelines, and tighter integration with in silico characterization experiments.

Within the domain of Machine Learning Interatomic Potentials (MLIP) trained on Materials Project data, the foundational step is the systematic encoding of atomic systems into computable data types. This guide details the core data structures, their associated properties, and the critical calculations that transform raw atomic coordinates into feature-rich datasets for training robust MLIPs. This process is central to the broader thesis that high-fidelity, scalable MLIPs are contingent on rigorous, standardized data representation and featurization protocols.

Core Data Structures in MLIP Development

The primary data object representing an atomic system must encapsulate both structural and chemical information.

Table 1: Core Data Structures for Atomic Systems

| Data Structure | Primary Components | Description | Common File Format |

|---|---|---|---|

| Atomic Configuration | positions (Nx3 matrix), cell (3x3 matrix), atomic_numbers (N vector), pbc (Periodic Boundary Conditions) | A snapshot of N atoms in a defined space, the fundamental unit for single-point calculations. | Extensible XYZ, POSCAR (VASP) |

| Trajectory / Dataset | Sequence of Atomic Configurations, energies, forces (Nx3 matrix per config), stresses (optional) | A collection of configurations with corresponding quantum-mechanical labels, forming the training/validation set. | ASE .db, .hdf5, .npz |

| Graph Representation | Nodes (atom features), Edges (bond/pair features), Global state | A connectivity-aware representation critical for message-passing neural network potentials. | |
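The Atomic Configuration row of the table above maps naturally onto a small container type. The following is a minimal sketch using only the standard library; the class layout and the optional label fields are assumptions for illustration, and production workflows would more likely use the structure objects provided by ASE or pymatgen.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

Vector3 = Tuple[float, float, float]


@dataclass
class AtomicConfiguration:
    """One snapshot of N atoms, with optional DFT labels attached."""

    positions: List[Vector3]                  # N x 3 Cartesian coordinates (Å)
    cell: Tuple[Vector3, Vector3, Vector3]    # 3 x 3 lattice vectors (Å)
    atomic_numbers: List[int]                 # length-N vector of Z values
    pbc: Tuple[bool, bool, bool] = (True, True, True)
    energy: Optional[float] = None            # total (extensive) energy in eV
    forces: Optional[List[Vector3]] = None    # N x 3 forces in eV/Å

    def __post_init__(self):
        # Guard against mismatched array lengths before any training step.
        if len(self.positions) != len(self.atomic_numbers):
            raise ValueError("positions and atomic_numbers must have equal length")
        if self.forces is not None and len(self.forces) != len(self.positions):
            raise ValueError("forces must have one entry per atom")

    @property
    def n_atoms(self) -> int:
        return len(self.atomic_numbers)


# Illustrative two-atom cubic cell; the numbers are placeholders.
config = AtomicConfiguration(
    positions=[(0.0, 0.0, 0.0), (2.0, 2.0, 2.0)],
    cell=((4.0, 0.0, 0.0), (0.0, 4.0, 0.0), (0.0, 0.0, 4.0)),
    atomic_numbers=[55, 17],
)
print(config.n_atoms)
```

A Trajectory / Dataset is then simply a list of such objects whose `energy` and `forces` fields are filled in.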

Title: MLIP Data Processing Pipeline

Essential Properties and Their Calculations

Key properties fall into two groups: target labels for training (scalar energy, vector forces, tensor stress) and derived features that serve as model inputs.

Table 2: Essential Target Properties (Labels) for MLIP Training

| Property | Symbol | Type | Calculation Source | Purpose in Training |

|---|---|---|---|---|

| Total Energy | E | Scalar | DFT (e.g., VASP, Quantum ESPRESSO) | Primary supervised target; must be extensive. |

| Atomic Forces | F_i | Vector (N x 3) | Negative gradient of E w.r.t. atomic positions. | Constrains model to correct physics, crucial for dynamics. |

| Stress Tensor | σ_αβ | Tensor (3x3 or 6) | Derivative of E w.r.t. strain. | Essential for training on deformed cells. |
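The relationship between the first two rows, forces as the negative gradient of the energy, can be checked numerically. The sketch below uses a toy harmonic pair potential (an assumption chosen for simplicity, not a model from the database) and central finite differences; the same check is a common sanity test for any energy model before it is used for dynamics.

```python
import math


def pair_energy(positions, k=1.0, r0=1.5):
    """Total energy (eV) of harmonic springs between all atom pairs.

    k is the spring constant in eV/Å^2 and r0 the rest length in Å;
    both are illustrative values.
    """
    energy = 0.0
    for i in range(len(positions)):
        for j in range(i + 1, len(positions)):
            r = math.dist(positions[i], positions[j])
            energy += 0.5 * k * (r - r0) ** 2
    return energy


def numerical_forces(positions, energy_fn, h=1e-5):
    """Forces F_i = -dE/dr_i from central finite differences (eV/Å)."""
    forces = []
    for i in range(len(positions)):
        f_i = []
        for axis in range(3):
            plus = [list(p) for p in positions]
            minus = [list(p) for p in positions]
            plus[i][axis] += h
            minus[i][axis] -= h
            f_i.append(-(energy_fn(plus) - energy_fn(minus)) / (2 * h))
        forces.append(f_i)
    return forces


# A stretched dimer: separation 2.0 Å against a rest length of 1.5 Å.
positions = [[0.0, 0.0, 0.0], [2.0, 0.0, 0.0]]
forces = numerical_forces(positions, pair_energy)

# Each atom is pulled toward the other, and the net force vanishes.
print(forces)
```

For this dimer the analytic result is dE/dr = k (r - r0) = 0.5 eV/Å, so the atoms feel equal and opposite forces of that magnitude along x.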

Table 3: Common Atomic Environment Features (Inputs)

| Feature Type | Description | Calculation Formula / Method | Dimensionality |

|---|---|---|---|

| Atom-centered Symmetry Functions (ACSF) | Radial and angular descriptors encoding local environment. | \( G_i^R = \sum_{j\neq i} e^{-\eta (R_{ij} - R_s)^2} \cdot f_c(R_{ij}) \) \( G_i^a = 2^{1-\zeta} \sum_{j,k\neq i} (1+\lambda \cos\theta_{ijk})^\zeta \cdot e^{-\eta (R_{ij}^2+R_{ik}^2+R_{jk}^2)} \cdot f_c(R_{ij}) f_c(R_{ik}) f_c(R_{jk}) \) | Set of ~50-100 scalars per atom. |

| Smooth Overlap of Atomic Positions (SOAP) | Spectral descriptor based on the neighbor density kernel. | \( \rho_i(\mathbf{r}) = \sum_{j} \exp\left(-\frac{\lvert \mathbf{r} - \mathbf{r}_{ij} \rvert^2}{2\sigma^2}\right) f_c(r_{ij}) \), projected onto spherical harmonics and a radial basis. | Vector of length ~\( n_{\max}^2 \, l_{\max} \). |

| One-hot / Atomic Number | Basic chemical identity. | ( Z_i \in \mathbb{N} ) | Integer or one-hot vector. |
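As a concrete instance of the radial ACSF in the table, the sketch below evaluates \( G_i^R \) for a small non-periodic cluster. The table does not define the cutoff function \( f_c \); a cosine cutoff is assumed here, and the parameter values are illustrative. Libraries such as dscribe provide optimized, periodic-aware implementations.

```python
import math


def cosine_cutoff(r, r_cut):
    """Assumed cutoff f_c: decays smoothly from 1 at r=0 to 0 at r=r_cut."""
    if r >= r_cut:
        return 0.0
    return 0.5 * (math.cos(math.pi * r / r_cut) + 1.0)


def radial_symmetry_function(i, positions, eta, r_s, r_cut):
    """G_i^R = sum over j != i of exp(-eta (R_ij - R_s)^2) * f_c(R_ij)."""
    total = 0.0
    for j, pos_j in enumerate(positions):
        if j == i:
            continue
        r_ij = math.dist(positions[i], pos_j)
        total += math.exp(-eta * (r_ij - r_s) ** 2) * cosine_cutoff(r_ij, r_cut)
    return total


# Three atoms on a line; the third lies outside the 6 Å cutoff of atom 0.
cluster = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (10.0, 0.0, 0.0)]
g_radial = radial_symmetry_function(0, cluster, eta=0.5, r_s=1.5, r_cut=6.0)

# Translating the whole cluster leaves the descriptor unchanged.
shifted = [(x + 3.0, y - 1.0, z + 2.0) for x, y, z in cluster]
g_shifted = radial_symmetry_function(0, shifted, eta=0.5, r_s=1.5, r_cut=6.0)
print(g_radial, g_shifted)
```

Because the descriptor depends only on interatomic distances, it is invariant to translation and rotation, which is the property that makes it suitable as a model input.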

Title: Atom-Centered Feature Construction

Experimental Protocol: Generating a MLIP Training Dataset

A standard workflow for curating a dataset suitable for training a generalizable MLIP.

Protocol: Ab-Initio Molecular Dynamics (AIMD) Sampling for MLIP Training

System Preparation:

- Select representative structures (bulk, surfaces, defects, clusters) from the target phase space.

- Use tools like ASE (Atomic Simulation Environment) or pymatgen to generate initial Atomic Configuration objects.

- Define simulation cell size ensuring convergence of relevant properties.

First-Principles Calculations:

- Perform Density Functional Theory (DFT) calculations using codes like VASP or Quantum ESPRESSO.

- Single-point Calculations: Compute E, F for diverse, randomly perturbed structures.

- AIMD Trajectories: Run MD simulations at relevant temperatures (e.g., 300K, 600K, 1200K) using an NVT or NPT ensemble to sample thermal configurations. Use a time step of 0.5-2.0 fs.

- Explicit Deformations: Apply isotropic/anisotropic strains, shear, and tensile deformations to the cell, computing E, F, and stress (σ) for each.

Data Extraction & Labeling:

- Extract atomic positions, cell vectors, atomic numbers, total energy, forces, and stresses from calculation outputs.

- Assemble into a trajectory Dataset object. Ensure energy is extensive (not normalized per atom).

Dataset Curation & Splitting:

- Deduplication: Use a similarity metric (e.g., SOAP kernel) to remove near-identical configurations.

- Stratified Splitting: Split data into training (80%), validation (10%), and test (10%) sets. Ensure splits preserve distribution across temperatures, pressures, and structural motifs. The test set should be held out completely for final model evaluation.
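The stratified 80/10/10 split can be sketched as follows. This is a minimal standard-library version that stratifies on a single key (temperature, here); the record layout and the function name `stratified_split` are hypothetical, and a real dataset would typically stratify on several attributes at once.

```python
import random
from collections import defaultdict


def stratified_split(records, key, fractions=(0.8, 0.1, 0.1), seed=0):
    """Split records into train/val/test while preserving the distribution of key."""
    rng = random.Random(seed)  # fixed seed keeps the split reproducible
    groups = defaultdict(list)
    for rec in records:
        groups[rec[key]].append(rec)

    train, val, test = [], [], []
    for label in sorted(groups):
        members = groups[label][:]
        rng.shuffle(members)
        n_train = round(fractions[0] * len(members))
        n_val = round(fractions[1] * len(members))
        train += members[:n_train]
        val += members[n_train:n_train + n_val]
        test += members[n_train + n_val:]  # remainder is held out
    return train, val, test


# Hypothetical dataset: 20 AIMD snapshots at each of three temperatures.
records = [
    {"id": f"{t}K-{i}", "temperature": t}
    for t in (300, 600, 1200)
    for i in range(20)
]
train, val, test = stratified_split(records, key="temperature")
print(len(train), len(val), len(test))
```

Splitting within each temperature group, rather than over the pooled data, guarantees that every temperature appears in all three sets in the same proportion.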

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Software & Libraries for MLIP Data Handling

| Tool / Library | Primary Function | Key Utility in MLIP Pipeline |

|---|---|---|

| ASE (Atomic Simulation Environment) | Python library for setting up, running, and analyzing atomistic simulations. | Universal I/O for Atomic Configurations, calculator interface, built-in analysis tools. |

| pymatgen | Python library for materials analysis. | Advanced structure generation, analysis, and transformation. |

| DeePMD-kit / AMPTorch | Deep learning toolkits for atomistic systems. | Provide high-level APIs for featurization (ACSF, etc.) and model training. |

| JAX / PyTorch Geometric | Numerical computing / Graph Neural Network libraries. | Enables custom, high-performance implementation of featurization and graph models. |

| Advanced Scientific Data Format (ASDF) or HDF5 | Binary file formats for hierarchical scientific data. | Efficient storage of large Trajectory / Dataset objects with metadata. |

| SOAPify / dscribe | Specialized descriptor calculation libraries. | Efficient computation of SOAP, ACSF, and other symmetry-invariant features. |

Title: MLIP Development and Validation Workflow

Navigating the Web Interface and API for Efficient Browsing

The Materials Project (MP) database is a cornerstone for high-throughput computational materials science, enabling the discovery and design of novel compounds. Within the broader thesis on Machine Learning Interatomic Potentials (MLIP) training research, efficient navigation of the MP's web interface and API is critical. This guide provides a technical roadmap for researchers, scientists, and drug development professionals to programmatically access and analyze data for training and validating next-generation MLIPs, which require extensive, high-fidelity datasets of structural and energetic properties.

Core Architecture & Data Access Points

The MP ecosystem consists of a public web interface (https://materialsproject.org) and a RESTful API (api.materialsproject.org). The API provides structured access to over 150,000 inorganic crystal structures, formation energies, band structures, elastic tensors, and more.

Table 1: Primary MP Data Endpoints for MLIP Training

| API Endpoint | Key Data Returned | Relevance to MLIP Training |

|---|---|---|

| /materials/summary/ | Core material identifiers, formulas, space groups, volumes. | Dataset curation and filtering. |

| /materials/thermo/ | Formation energy, energy above hull, stability. | Label generation for potential energy surfaces. |

| /materials/elasticity/ | Elastic tensor, bulk/shear modulus, Poisson's ratio. | Training on mechanical property derivatives. |

| /materials/surface_properties/ | Surface energies, Wulff shapes. | Critical for nanoparticle/catalytic MLIPs. |

| /materials/xas/ | Theoretical X-ray Absorption Spectra. | Electronic structure validation. |

Experimental Protocol: Building a Curated Dataset via the API

A standard protocol for acquiring training data for an MLIP focused on battery cathode materials is detailed below.

Methodology:

- Authentication: Obtain an API key from the MP dashboard. Use it in request headers: {"X-API-KEY": "<YOUR_KEY>"}.

- Targeted Query: Use the /materials/summary/ endpoint with POST requests for bulk filtering, for example to select layered oxide cathodes.

- Data Enrichment: For returned material_id values, fetch complementary thermodynamic (/thermo/) and elastic (/elasticity/) data via parallel GET requests.

- Structure Processing: Parse the returned CIF or JSON crystal structures into framework-specific objects (e.g., Pymatgen Structure). Apply standard symmetrization and primitive cell reduction.

- Validation Split: Use the energy_above_hull field to segregate stable (hull < 0.05 eV/atom) and metastable phases, creating distinct training and validation sets.
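The authentication and validation-split steps can be sketched without touching the network. In the snippet below the header name and the 0.05 eV/atom threshold come from the protocol above, while the helper names and the example records are hypothetical placeholders, not real Materials Project entries. The actual HTTP calls are deliberately left out; in practice they would go through a client such as MPRester.

```python
def build_headers(api_key):
    """Request headers carrying the API key, as described in the protocol."""
    return {"X-API-KEY": api_key}


def split_by_stability(docs, threshold=0.05):
    """Separate stable from metastable entries using energy_above_hull (eV/atom)."""
    stable = [d for d in docs if d["energy_above_hull"] < threshold]
    metastable = [d for d in docs if d["energy_above_hull"] >= threshold]
    return stable, metastable


# Hypothetical summary documents, shaped like the fields named in the text.
docs = [
    {"material_id": "mp-hypothetical-1", "energy_above_hull": 0.000},
    {"material_id": "mp-hypothetical-2", "energy_above_hull": 0.032},
    {"material_id": "mp-hypothetical-3", "energy_above_hull": 0.081},
    {"material_id": "mp-hypothetical-4", "energy_above_hull": 0.050},
]

headers = build_headers("<YOUR_KEY>")
stable, metastable = split_by_stability(docs)
print([d["material_id"] for d in stable])
print([d["material_id"] for d in metastable])
```

Note that an entry sitting exactly at the threshold is treated as metastable here, because the protocol specifies a strict inequality for the stable set.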

Title: API Workflow for MLIP Training Data Acquisition

Quantitative Data: Benchmarking Computational Properties

The reliability of MLIP predictions depends on the quality of underlying Density Functional Theory (DFT) data from MP. Key benchmarks are summarized below.

Table 2: Benchmark Accuracy of Core MP DFT Data (PBE-GGA)

| Property Type | Mean Absolute Error (MAE) vs. Experiment | Typical Range in MP Database | Relevance to MLIP |

|---|---|---|---|

| Formation Energy | ~0.08 eV/atom [1] | -5 to 0 eV/atom | Primary training target. |

| Lattice Parameter | ~1-2% | 2-20 Å | Critical for structural fidelity. |

| Band Gap (PBE) | ~40% (underestimated) | 0-10 eV | Electronic property learning. |

| Bulk Modulus | ~10-15% | 10-300 GPa | Mechanical response learning. |

[1] S. P. Ong et al., Comput. Mater. Sci., 2013, 68, 314–319.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Programmatic MP Navigation & MLIP Training

| Tool / Solution | Function | Key Feature for MLIP Research |

|---|---|---|

| MPRester (Pymatgen) | Python wrapper for the MP API. | Simplifies data retrieval and converts API responses to Pymatgen objects. |

| Pymatgen | Python materials analysis library. | Core structure manipulation, symmetry analysis, and file I/O (CIF, POSCAR). |

| ASE (Atomic Simulation Environment) | Python simulation toolkit. | Interface for converting MP structures to formats for MLIP codes (e.g., AMPTorch, MACE). |

| Jupyter Notebook | Interactive computing platform. | Essential for exploratory data analysis, visualization, and sharing workflows. |

| FireWorks/Atomate | Workflow automation. | Automates complex high-throughput DFT calculations to augment MP data. |

Advanced Pathway: From Database Query to Trained Potential

The logical flow from accessing raw database entries to deploying a functional MLIP involves several integrated stages.

Title: Pathway from MP Data to Deployed MLIP

Efficient navigation of the Materials Project's web and API interfaces is a foundational skill for building the large, high-quality datasets required for robust Machine Learning Interatomic Potentials. By leveraging the structured protocols and tools outlined in this guide, researchers can accelerate the cycle of data acquisition, model training, and validation, directly contributing to the advancement of predictive materials science for energy storage, catalysis, and beyond.

The systematic development of next-generation biomaterials, drug carriers, and implants is being revolutionized by high-throughput computational screening and machine learning interatomic potential (MLIP) training. This whitepaper details the experimental and computational workflows essential for validating MLIP model predictions from databases like the Materials Project, focusing on translational biomedical applications. The integration of MLIP-driven discovery with rigorous experimental validation forms a closed-loop research paradigm, accelerating the design of materials with tailored biological responses.

Core Material Classes: Properties and Quantitative Benchmarks

Biomaterials for Tissue Engineering

Materials must exhibit biocompatibility, appropriate mechanical properties, and surface characteristics that direct cellular behavior.

Table 1: Key Properties of Common Biomaterial Classes

| Material Class | Example Materials | Young's Modulus (GPa) | Degradation Time in vivo | Protein Adsorption Capacity (µg/cm²) | Primary Clinical Use |

|---|---|---|---|---|---|

| Bioceramics | Hydroxyapatite (HA), β-Tricalcium Phosphate (TCP) | 40 - 117 | 6 - 24 months | 1.2 - 2.5 | Bone grafts, coatings |

| Bioactive Glasses | 45S5 Bioglass, 13-93 | 35 - 75 | 1 - 12 months | 2.0 - 3.5 | Bone regeneration, wound healing |

| Biopolymers | PCL, PLA, PLGA | 0.2 - 3.0 | 3 months - 2+ years | 0.8 - 1.8 | Sutures, scaffolds, carriers |

| Metallic Alloys | Ti-6Al-4V, Nitinol, Mg alloys | 55 - 110 | Non-degradable / 6-12 mos (Mg) | 1.5 - 2.2 | Orthopedic/dental implants, stents |

| Hydrogels | Alginate, GelMA, PEGDA | 0.001 - 0.1 | Days - months | 0.5 - 2.0 | Drug delivery, soft tissue models |

Drug Carrier Systems

Carrier efficacy is quantified by drug loading capacity, release kinetics, and targeting efficiency.

Table 2: Performance Metrics of Nanoscale Drug Carriers

| Carrier Type | Typical Size (nm) | Avg. Drug Loading (wt%) | Typical Release Half-life (in vitro) | Active Targeting Ligand Functionalization Efficiency (%) |

|---|---|---|---|---|

| Liposomes | 80 - 200 | 5 - 10% | 2 - 24 hours | 60 - 85% |

| Polymeric NPs (PLGA) | 50 - 300 | 10 - 25% | 1 - 14 days | 70 - 90% |

| Mesoporous Silica NPs | 50 - 200 | 15 - 30% | 6 - 48 hours | 80 - 95% |

| Dendrimers (PAMAM) | 5 - 15 | 5 - 15% | 1 - 12 hours | >90% |

| Micelles | 20 - 100 | 5 - 20% | 2 - 48 hours | 50 - 75% |

Implantable Devices

Long-term performance depends on corrosion resistance, fatigue strength, and interfacial bonding.

Table 3: Comparative Data for Permanent Implant Materials

| Material | Corrosion Rate (µm/year) | Fatigue Strength (MPa) | Bone-Implant Contact (%) after 12 wks | Wear Rate (mm³/million cycles) |

|---|---|---|---|---|

| Ti-6Al-4V (ELI) | <0.1 | 500 - 600 | 50 - 70% | N/A (bearing surfaces not typical) |

| CoCrMo Alloy | <0.1 | 400 - 550 | 30 - 50% | 0.05 - 0.15 |

| 316L Stainless Steel | ~1.0 | 250 - 400 | 20 - 40% | ~0.5 |

| PEEK Polymer | N/A | 70 - 100 | 10 - 25% | 1.0 - 5.0 |

| Oxinium (Oxidized Zr) | <0.1 | >500 | 55 - 75% | <0.01 |

Experimental Protocols for Validation of MLIP-Predicted Materials

Protocol: Hydroxyapatite (HA) Synthesis & Characterization (Predicted Dopant Effects)

Objective: Validate MLIP-predicted enhancement of HA mechanical properties via ionic doping (e.g., Sr²⁺, Zn²⁺, Si⁴⁺).

Materials: Calcium nitrate tetrahydrate, Ammonium phosphate dibasic, Strontium nitrate, Zinc nitrate, Tetraethyl orthosilicate, Ammonium hydroxide.

Method:

- Wet Chemical Precipitation: For Sr-doped HA (10 at%), prepare 0.5M Ca(NO₃)₂ and 0.3M (NH₄)₂HPO₄ solutions. Substitute Sr(NO₃)₂ for 10% of the Ca on a molar basis in the cation solution. Adjust pH to 10-11 with NH₄OH. Add the phosphate solution dropwise to the cation solution at 90°C under stirring. Age the precipitate for 24h.

- Washing & Drying: Centrifuge, wash with DI water and ethanol, dry at 80°C for 24h.

- Calcination: Sinter at 1100°C for 2h (ramp: 5°C/min).

- Characterization:

  - XRD: Confirm phase purity and calculate crystallite size via Scherrer equation.

  - FTIR: Identify phosphate and hydroxyl bands.

  - SEM/EDS: Analyze morphology and confirm dopant presence.

  - Nanoindentation: Measure Young's modulus and hardness (minimum 15 indents).
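The crystallite-size estimate in the XRD step follows the Scherrer equation, D = Kλ / (β cos θ). The sketch below is a worked example under common assumptions that the protocol does not state: a shape factor K = 0.9, Cu Kα radiation (λ = 0.15406 nm), and no correction for instrumental broadening. The peak position and width are illustrative numbers.

```python
import math


def scherrer_size(two_theta_deg, fwhm_deg, wavelength_nm=0.15406, shape_factor=0.9):
    """Crystallite size D (nm) from peak position 2θ and FWHM, both in degrees."""
    theta = math.radians(two_theta_deg / 2.0)  # Bragg angle is half of 2θ
    beta = math.radians(fwhm_deg)              # peak width must be in radians
    return shape_factor * wavelength_nm / (beta * math.cos(theta))


# Illustrative peak: 2θ = 25.9° with a full width at half maximum of 0.2°.
size_nm = scherrer_size(two_theta_deg=25.9, fwhm_deg=0.2)
print(f"Estimated crystallite size: {size_nm:.1f} nm")
```

Since D scales inversely with β, halving the peak width doubles the estimated size, which is why instrumental broadening should be subtracted before applying the formula to narrow peaks.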

Protocol: PLGA Nanoparticle Fabrication & Drug Release Kinetics

Objective: Experimentally determine drug loading and release profiles for an MLIP-modeled polymer-drug system.

Materials: PLGA (50:50, 24kDa), Docetaxel, Polyvinyl alcohol (PVA), Dichloromethane (DCM), Phosphate Buffered Saline (PBS, pH 7.4).

Method (Double Emulsion - W/O/W):

- Internal Aqueous Phase: Dissolve 5 mg drug in 0.5 mL DCM.

- Oil Phase: Dissolve 100 mg PLGA in 2 mL DCM.

- Primary Emulsion: Combine drug and polymer solutions, sonicate (30% amplitude, 30s) to form W/O emulsion.

- Secondary Emulsion: Add primary emulsion to 10 mL of 2% w/v PVA solution, homogenize at 10,000 rpm for 2 min to form W/O/W emulsion.

- Solvent Evaporation: Stir emulsion overnight at room temperature to evaporate DCM.

- Purification: Centrifuge at 18,000 rpm for 30 min, wash pellets with DI water 3x.

- Lyophilization: Freeze at -80°C and lyophilize for 48h.

- Analysis:

  - Size/Zeta: Dynamic Light Scattering (DLS).

  - Drug Loading: Dissolve 5 mg NPs in DCM, extract into acetonitrile, analyze via HPLC. Calculate Loading Capacity (%) = (Mass of drug in NPs / Total mass of NPs) x 100.

  - Release Study: Suspend 10 mg NPs in 10 mL PBS + 0.1% Tween 80 at 37°C. At timepoints, centrifuge, sample supernatant (replenish medium), and quantify drug via HPLC.
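The two calculations in the analysis step can be written out as a short worked example. The loading-capacity formula is the one given above; the cumulative-release function assumes the entire supernatant is removed and replaced at each timepoint, so that released amounts simply add up. All masses are hypothetical.

```python
def loading_capacity(drug_mass_mg, nanoparticle_mass_mg):
    """Loading Capacity (%) = mass of drug in NPs / total mass of NPs x 100."""
    return drug_mass_mg / nanoparticle_mass_mg * 100.0


def cumulative_release(released_mg, total_drug_mg):
    """Cumulative release (%) per timepoint, assuming full medium replacement."""
    profile, running = [], 0.0
    for amount in released_mg:
        running += amount
        profile.append(running / total_drug_mg * 100.0)
    return profile


# Hypothetical HPLC result: 0.42 mg drug recovered from 5 mg of nanoparticles.
lc_percent = loading_capacity(drug_mass_mg=0.42, nanoparticle_mass_mg=5.0)

# 10 mg of NPs at that loading carry 0.84 mg of drug in the release study.
total_drug = 10.0 * lc_percent / 100.0
profile = cumulative_release([0.084, 0.126, 0.210], total_drug)
print(lc_percent, profile)
```

If only an aliquot is withdrawn at each timepoint, the running total needs a dilution correction instead of a plain sum.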

Protocol: In Vitro Biocompatibility Assessment (ISO 10993-5)

Objective: Validate MLIP-predicted biocompatibility of a novel implant alloy surface coating.

Materials: MC3T3-E1 osteoblast cells, Dulbecco's Modified Eagle Medium (DMEM), Fetal Bovine Serum (FBS), Penicillin/Streptomycin, MTT reagent, Test material discs (10mm diameter).

Method (MTT Assay):

- Material Preparation: Sterilize material discs by autoclaving or UV irradiation for 1h per side.

- Cell Seeding: Place discs in a 24-well plate and seed cells onto them at 2 x 10⁴ cells/well in complete DMEM.

- Incubation: Culture for 1, 3, and 7 days at 37°C, 5% CO₂.

- MTT Incubation: At endpoint, replace medium with 300 µL serum-free DMEM + 30 µL MTT solution (5 mg/mL). Incubate 3h.

- Solubilization: Remove medium, add 300 µL DMSO to dissolve formazan crystals.

- Quantification: Transfer 100 µL to 96-well plate, read absorbance at 570 nm with 650 nm reference. Calculate cell viability relative to tissue culture plastic control.
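The quantification step amounts to a reference-corrected ratio of mean absorbances. The following sketch assumes the correction is a simple subtraction of the 650 nm reading from the 570 nm reading and omits blank wells; the absorbance values are hypothetical.

```python
from statistics import mean


def corrected_absorbance(wells):
    """Mean of (A570 - A650) over replicate wells given as (a570, a650) pairs."""
    return mean(a570 - a650 for a570, a650 in wells)


def viability_percent(sample_wells, control_wells):
    """Cell viability relative to the tissue culture plastic control, in percent."""
    return corrected_absorbance(sample_wells) / corrected_absorbance(control_wells) * 100.0


# Hypothetical triplicate readings as (A570, A650) pairs.
sample = [(0.82, 0.05), (0.78, 0.04), (0.80, 0.06)]
control = [(0.95, 0.05), (0.97, 0.05), (0.93, 0.05)]

viability = viability_percent(sample, control)
print(f"Viability: {viability:.1f}% of control")
```

Running the same calculation at days 1, 3, and 7 gives the proliferation trend on the test material relative to the control surface.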

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Reagents for Biomaterials Synthesis and Testing

| Reagent / Material | Supplier Examples | Function & Critical Notes |

|---|---|---|

| PLGA (50:50, 24kDa) | Sigma-Aldrich, Lactel, Corbion | Biodegradable polymer backbone for NPs/implants. Ratio & MW dictate degradation rate. |

| High Purity Titanium Powder (<45µm) | TLS Technik, AP&C | Raw material for additive manufacturing of porous implants. Oxygen content critical. |

| Fetal Bovine Serum (FBS) | Gibco, HyClone | Essential cell culture supplement. Batch testing for specific cell lines required. |

| MTT (Thiazolyl Blue Tetrazolium Bromide) | Thermo Fisher, Abcam | Yellow tetrazolium salt reduced to purple formazan by living cell mitochondria. |

| Polyvinyl Alcohol (PVA, 87-90% hydrolyzed) | Sigma-Aldrich, Alfa Aesar | Common stabilizer/surfactant in NP formulation. Degree of hydrolysis affects performance. |

| RGD Peptide (Arg-Gly-Asp) | Bachem, Tocris | Integrin-binding motif for covalent grafting to materials to enhance cell adhesion. |

| DAPI (4',6-Diamidino-2-Phenylindole) | Thermo Fisher, Sigma-Aldrich | Blue-fluorescent nuclear counterstain for cell viability/attachment assays on materials. |

| Simulated Body Fluid (SBF) | Biorelevant.com, prepared in-house | Ion concentration similar to human blood plasma; tests bioactivity (apatite-forming ability). |

| Lipofectamine 3000 | Thermo Fisher | Transfection reagent for introducing siRNA/plasmid into cells on biomaterial surfaces (gene expression studies). |

| AlamarBlue (Resazurin) | Thermo Fisher, Bio-Rad | Fluorescent oxidation-reduction indicator for non-destructive, long-term cell proliferation tracking. |

Visualization of Core Concepts and Workflows

Title: MLIP-Driven Closed-Loop Biomaterials Research

Title: Targeted Drug Carrier Intracellular Trafficking Pathway

Understanding Computational Data (DFT, ML Potentials) and its Reliability

The development of robust Machine Learning Interatomic Potentials (MLIPs) for large-scale materials databases, such as the Materials Project, represents a paradigm shift in computational materials science and drug development. This whitepaper examines the foundational computational data sources—Density Functional Theory (DFT) and ML Potentials—and critically assesses their reliability. The core thesis is that the accuracy and predictive power of any MLIP model trained on a massive materials database are intrinsically bounded by the fidelity, consistency, and systematic error profile of the underlying DFT training data. Reliability is therefore not an inherent property of the MLIP but a transferable characteristic from its quantum mechanical foundation.

Density Functional Theory: The Foundational Data Source

DFT provides the first-principles data used to train most MLIPs. Its reliability is governed by the choice of exchange-correlation functional and computational parameters.

2.1 Key DFT Methodologies & Protocols

- Protocol for High-Throughput DFT (as used in Materials Project):

  - Software: VASP (Vienna Ab initio Simulation Package).

  - Pseudopotentials: Projector Augmented-Wave (PAW) potentials.

  - Functional: Primarily the Perdew-Burke-Ernzerhof (PBE) generalized gradient approximation (GGA).

  - Energy Cutoff: Set to 1.3 times the maximum ENMAX specified in the POTCAR files.

  - k-point Density: A uniform Γ-centered k-point mesh with spacing of ~0.25 Å⁻¹.

  - Convergence Criteria: Electronic steps converged to 10⁻⁵ eV; ionic relaxation until forces are below 0.01 eV/Å.

  - Magnetic Ordering: Spin-polarized calculations initialized with high magnetic moments.

  - DFT+U: A Hubbard U correction is applied for certain transition metal oxides to better localize d and f electrons.
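Two of the parameters above are derived quantities and can be computed directly. The sketch below is illustrative only: the ENMAX values are placeholders, the cell is assumed orthorhombic, and the k-point spacing is interpreted as including the 2π factor (reciprocal length 2π/a). Conventions differ between codes, so the mesh rule should be checked against the input generator actually used.

```python
import math


def energy_cutoff(enmax_by_element, factor=1.3):
    """Plane-wave cutoff (eV): factor times the largest ENMAX among the species."""
    return factor * max(enmax_by_element.values())


def kpoint_mesh(cell_lengths, spacing=0.25):
    """Gamma-centered mesh for an orthorhombic cell.

    Uses N_i = ceil(|b_i| / spacing) with |b_i| = 2*pi / a_i (assumed
    convention), and never fewer than one point per direction.
    """
    return tuple(
        max(1, math.ceil((2.0 * math.pi / a) / spacing)) for a in cell_lengths
    )


# Placeholder ENMAX values (eV) for two hypothetical species "A" and "B".
encut = energy_cutoff({"A": 400.0, "B": 250.0})

# Orthorhombic cell with lattice parameters in Å.
mesh = kpoint_mesh((4.0, 4.0, 12.0))
print(encut, mesh)
```

The long cell axis receives fewer k-points because its reciprocal vector is shorter, which keeps the sampling density roughly uniform in reciprocal space.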

2.2 Quantitative Reliability of Common DFT Functionals

The following table summarizes the typical performance of standard DFT functionals against experimental benchmarks.

Table 1: Performance Metrics of Common DFT Exchange-Correlation Functionals

| Functional (Type) | Lattice Constant Error (Typical) | Cohesive/Binding Energy Error (Typical) | Band Gap Error (Typical) | Computational Cost (Relative to PBE) | Primary Use Case in MLIP Training |

|---|---|---|---|---|---|

| PBE (GGA) | ~1% overestimation | ~10-20% underestimation | Severe underestimation (often 50-100%) | 1x (Baseline) | High-throughput structural, elastic, vibrational properties. |

| PBEsol (GGA) | <1% (improved for solids) | Similar to PBE | Similar to PBE | ~1x | Improved lattice geometries. |

| SCAN (meta-GGA) | <1% | ~5-10% improvement | Moderate improvement | ~3-5x | Higher accuracy for diverse bonding. |

| HSE06 (Hybrid) | Excellent (~0.5%) | Good improvement | Dramatic improvement (~0.3 eV mean error) | ~50-100x | Electronic properties, defect formation energies. |

2.3 Research Reagent Solutions for DFT Calculations

Table 2: Essential "Research Reagent" Toolkit for DFT Data Generation

| Item/Software | Function & Role in the Pipeline |

|---|---|

| VASP / Quantum ESPRESSO / ABINIT | Core Simulation Engine: Solves the Kohn-Sham equations to compute total energy, electron density, and derived properties. |

| PseudoDojo / GBRV / SG15 Pseudopotentials | Electron-ion Interaction: Pre-calculated potentials that replace core electrons, drastically reducing computational cost while maintaining accuracy. |

| PBE / SCAN / HSE06 Functionals | Exchange-Correlation Kernel: The critical approximation defining the quantum mechanical accuracy of the calculation. |

| FINDSYM / spglib | Symmetry Analysis: Identifies crystal symmetry from atomic coordinates, essential for correct k-point sampling and property derivation. |

| pymatgen / ASE | Python Frameworks: Scripting and automation of high-throughput calculation workflows, input file generation, and output parsing. |

Machine Learning Potentials: Extending the Reach

MLIPs are trained on DFT data to achieve near-DFT accuracy at orders-of-magnitude lower computational cost, enabling molecular dynamics and large-scale simulations.

3.1 Core MLIP Architectures & Training Protocol

- Generic Protocol for Training an MLIP on a Materials Project Database:

- Data Curation: Extract diverse structures (bulk, defects, surfaces, disordered) and their DFT-computed energies/forces/stresses from the database.

- Descriptor Generation: Convert atomic environments into invariant mathematical representations (e.g., atom-centered symmetry functions, smooth overlap of atomic positions (SOAP), or atomic cluster expansion).

- Model Selection: Choose an architecture (e.g., Neural Network, Gaussian Process, Graph Neural Network like MEGNet, or equivariant model like NequIP).

- Training Split: Divide data into training (≈80%), validation (≈10%), and hold-out test (≈10%) sets. Ensure compositional/structural diversity in each.

- Loss Function: Minimize a combined loss: L = w_E * MSE(E) + w_F * MSE(F) + w_S * MSE(S), where E, F, and S are energies, forces, and stresses, and w_E, w_F, and w_S are the corresponding weights.

- Active Learning/Uncertainty Quantification: Iteratively sample new configurations from exploratory molecular dynamics where model uncertainty is high, compute them with DFT, and add them to the training set.

- Validation: Test on unseen phases, diffusion barriers, phonon spectra, and liquid properties not included in training.
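The combined loss in the protocol above can be sketched in a few lines of plain Python. This is a minimal illustration, not the loss used by any particular MLIP package: the default weights and the toy numbers are assumptions chosen only to make the arithmetic easy to follow, and real training codes operate on batched tensors.

```python
def mse(pred, ref):
    """Mean squared error between two equal-length sequences of floats."""
    if len(pred) != len(ref) or not pred:
        raise ValueError("pred and ref must be non-empty and of equal length")
    return sum((p - r) ** 2 for p, r in zip(pred, ref)) / len(pred)


def combined_loss(e_pred, e_ref, f_pred, f_ref, s_pred, s_ref,
                  w_e=1.0, w_f=10.0, w_s=0.1):
    """L = w_E * MSE(E) + w_F * MSE(F) + w_S * MSE(S) on flattened values.

    The default weights are illustrative assumptions, not recommended settings.
    """
    return (w_e * mse(e_pred, e_ref)
            + w_f * mse(f_pred, f_ref)
            + w_s * mse(s_pred, s_ref))


# Toy data: two energies, three force components, two stress components.
loss = combined_loss(
    e_pred=[-3.10, -2.95], e_ref=[-3.00, -3.05],
    f_pred=[0.10, -0.20, 0.00], f_ref=[0.00, -0.20, 0.10],
    s_pred=[1.0, 2.0], s_ref=[1.0, 1.0],
)
print(f"combined loss = {loss:.4f}")
```

Forces are often weighted more heavily than energies because each configuration contributes many force components but only one energy; the right balance is system-dependent.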

3.2 Quantitative Reliability Benchmarks for MLIPs

Table 3: Benchmarking MLIP Performance on Typical Materials Properties

| Property | Target DFT Accuracy | Typical High-Quality MLIP Accuracy (on Test Set) | Critical Factor for Reliability |

|---|---|---|---|

| Static Energy (meV/atom) | N/A (Reference) | 1-10 meV/atom | Diversity of training data (energy landscape coverage). |

| Interatomic Forces (eV/Å) | N/A (Reference) | 0.03-0.1 eV/Å | Local environment sampling in training. |

| Lattice Parameters (Å) | ±0.02 Å (PBE) | ±0.01-0.03 Å | Inclusion of stress tensor data in training. |

| Elastic Constants (GPa) | ±10% (PBE) | ±5-15% | Inclusion of deformed configurations. |

| Phonon Frequencies (THz) | ±0.5 THz (DFT) | ±0.1-0.3 THz | Inclusion of finite-displacement supercells. |

| Diffusion Barrier (eV) | ±0.05 eV (DFT) | ±0.05-0.15 eV | Active learning around saddle points. |

The Reliability Pathway: From DFT to MLIP Predictions

The reliability of a final MLIP property prediction hinges on a chain of approximations. The following diagram maps this dependency.

Diagram 1: Sources of Error in MLIP Prediction Pipeline

Experimental Validation Protocol

Computational data must be validated against experiment where possible. A rigorous protocol is essential.

- Protocol for Validating an MLIP for Molecular Dynamics (MD) of a Pharmaceutical Crystal:

- Target Properties: Select key experimentally accessible properties (e.g., lattice parameters at finite temperature, thermal expansion coefficient, Raman spectrum, elastic tensor).

- MLIP-MD Simulation: Perform isothermal-isobaric (NPT) MD using the trained MLIP for a system size and timescale (~100-1000 atoms, >100 ps) inaccessible to ab initio MD.

- Property Extraction: From the MD trajectory, calculate the target properties (e.g., average lattice parameters, vibrational density of states via Fourier transform of velocity autocorrelation).

- Experimental Comparison: Acquire corresponding experimental data (e.g., X-ray diffraction, Brillouin scattering).

- Error Attribution: Discrepancies must be analyzed through a defined decision tree: Is the error from (a) the MLIP's failure to reproduce reference DFT dynamics, (b) the reference DFT's known systematic error (e.g., PBE lattice constant), or (c) approximations in the experimental analysis or idealization of the simulation?
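The error-attribution step can be made explicit as a small decision function. The sketch below is one possible encoding of the three branches described above; the function name, the tolerance parameters, and the example lattice-parameter values are all hypothetical and would need to be set per property.

```python
def attribute_error(mlip, dft, exp, tol_mlip, tol_exp, dft_bias=0.0):
    """Classify a discrepancy between MLIP, reference DFT, and experiment.

    dft_bias is the expected systematic (DFT - experiment) offset for this
    property, e.g. a known lattice-constant overestimation of the functional.
    """
    if abs(mlip - dft) > tol_mlip:
        return "mlip_vs_dft"                 # (a) MLIP fails to reproduce its reference
    if abs(dft - exp) <= tol_exp:
        return "consistent"                  # nothing to attribute
    if abs((dft - exp) - dft_bias) <= tol_exp:
        return "dft_systematic"              # (b) explained by the known DFT bias
    return "experiment_or_idealization"      # (c) look at measurement or model setup


# Hypothetical lattice parameters in angstrom.
verdict = attribute_error(mlip=10.12, dft=10.11, exp=10.00,
                          tol_mlip=0.03, tol_exp=0.02, dft_bias=0.10)
print(verdict)
```

Checking the MLIP against its own reference first keeps model error from being mistaken for a functional or experimental issue.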

The reliability of computational data in the context of MLIP training for materials databases is a multi-faceted concept. It originates from the controlled errors of DFT, which are then compounded by the representational and sampling errors of the machine learning model. For drug development professionals leveraging these databases, critical attention must be paid to the provenance of the training data (DFT functional used) and the documented performance boundaries of the MLIP. The future of reliable high-throughput materials discovery lies in systematic uncertainty quantification at every stage of this pipeline, transforming MLIPs from black-box predictors into tools with well-understood confidence intervals.

Step-by-Step Workflows: From Data Retrieval to Predictive Modeling

Building Effective Search Queries for Biomedical Materials

When assembling training and validation data for Machine Learning Interatomic Potentials (MLIPs), precise search queries are essential. They enable the systematic retrieval of the data needed to train robust models that predict biomaterial properties, degradation, and bio-interfacial interactions. Effective queries bridge structured databases and unstructured literature, feeding high-quality, annotated datasets into MLIP pipelines.

Core Principles of Query Construction

A biomedical materials search strategy must balance specificity with recall. Key principles include:

- Conceptual Layering: Combine terms for the material class (e.g., hydrogel, metal-organic framework), properties (e.g., compressive modulus, porosity), biological target (e.g., osteogenesis, angiogenesis), and application (e.g., drug delivery, bone scaffold).

- Synonym and Jargon Expansion: Account for variant terminology (e.g., "TiO2" vs. "titanium dioxide," "bioceramic" vs. "calcium phosphate").

- Hierarchical Structuring: Use database-specific thesauri (e.g., MeSH for PubMed) to nest broader and narrower terms.

- Experimental Protocol Filters: Incorporate methodology terms (e.g., "electrospinning," "3D bioprinting," "MTT assay") to find relevant experimental data for model validation.
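Conceptual layering and synonym expansion map naturally onto a Boolean query string: synonyms within a layer are joined with OR, and layers are joined with AND. The helper names below are hypothetical, and the sketch assumes a PubMed-style syntax with quoted phrases.

```python
def or_group(terms):
    """Quote each synonym and join them with OR (parenthesized if more than one)."""
    quoted = [f'"{term}"' for term in terms]
    if len(quoted) == 1:
        return quoted[0]
    return "(" + " OR ".join(quoted) + ")"


def build_query(*layers):
    """Join concept layers with AND; each layer is a list of synonyms."""
    return " AND ".join(or_group(layer) for layer in layers if layer)


query = build_query(
    ["gelatin methacryloyl", "GelMA"],   # material class, with synonym
    ["Young's modulus"],                 # property
    ["vascularization"],                 # biological target
)
print(query)
```

Keeping each layer as a separate list makes it easy to widen recall (add synonyms to a layer) or tighten precision (add another layer) without rewriting the query by hand.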

Quantitative Analysis of Search Strategies

The following table summarizes the performance of different query strategies in retrieving relevant records for MLIP training from PubMed and the Materials Project database over a defined period.

Table 1: Efficacy of Different Query Formulations for Biomedical Materials Data Retrieval

| Search Strategy & Query Example | Database | Total Returns | Precision (%) | Key Metrics Retrieved for MLIP |

|---|---|---|---|---|

| Basic Single Concept: "hydrogel" AND "mechanical properties" | PubMed | 12,500 | 31 | Qualitative property descriptions; limited numbers |

| Advanced Conceptual Layering: ("gelatin methacryloyl" OR "GelMA") AND ("Young's modulus") AND ("vascularization") | PubMed | 287 | 78 | Quantitative modulus values, biological response |

| Property-Focused with Jargon: "piezoelectric" AND ("polyvinylidene fluoride" OR "PVDF") AND "nanofiber" AND "stem cell" | PubMed | 94 | 82 | Voltage output, cell differentiation rates |

| Crystallographic Structure Search: "perovskite" AND "band gap" < 2.0 eV | Materials Project | 650 | 95 | CIF files, calculated band structures, space groups |

| Synthesis-Filtered: "MOF" AND "drug delivery" AND "solvothermal synthesis" AND "loading capacity" > 20 wt% | PubMed/Patents | 420 | 65 | Synthesis parameters, drug loading/release curves |

Detailed Experimental Protocol for Data Extraction and Curation

This protocol is essential for generating clean datasets from search returns for MLIP training.

Title: Protocol for Extraction of Quantitative Biomaterial Property Data from Literature for MLIP Database Curation

Objective: To systematically identify, extract, and structure quantitative material property and biological performance data from scientific literature retrieved via optimized search queries.

Materials:

- Access to bibliographic databases (PubMed, Web of Science, IEEE Xplore).

- Text mining/Data extraction software (e.g., ChemDataExtractor, custom Python scripts using spaCy).

- Structured database or spreadsheet software.

Methodology:

- Query Execution & Initial Filtering: Execute the optimized search query from Table 1. Export all results, including title, abstract, DOI, and metadata.

- Automated Full-Text Acquisition: Use authorized API access (e.g., PubMed Central, publisher APIs) to download full-text articles of likely relevant records based on abstract screening.

- Named Entity Recognition (NER) Processing: Process full text through a trained NER pipeline to identify and tag material names, numerical values, property names (e.g., "adhesion strength: 15.6 kPa"), and experimental conditions.

- Relationship Mapping: Employ rule-based or machine learning models to associate numerical values with their correct properties and units (e.g., linking "1200" and "MPa" to "compressive strength").

- Manual Verification & Standardization: For a representative subset (20%), manually verify automated extractions. Standardize all units to SI units. Map material names to canonical identifiers (e.g., InChIKey, SMILES for polymers, standard formulas for ceramics).

- Structured Data Compilation: Compile extracted, verified data into a structured table with columns: `Material_ID`, `Property_Name`, `Property_Value`, `Unit`, `Experimental_Method`, `Biological_Test_System`, `DOI`.

- Data Integration into MLIP Pipeline: Format the structured table for direct ingestion into the MLIP project database, linking each data point to its source publication.
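The relationship-mapping and standardization steps can be illustrated with a deliberately simple rule-based extractor. This sketch is not a substitute for a trained NER pipeline such as ChemDataExtractor: it assumes text of the form "property: value unit", handles pressure units only, and uses placeholder identifiers (`MAT-0001`, a dummy DOI) that are not real records.

```python
import re

# Conversion factors to pascals; extend for other unit families as needed.
TO_PASCAL = {"Pa": 1.0, "kPa": 1e3, "MPa": 1e6, "GPa": 1e9}

# Matches phrases of the form "<property name>: <number> <pressure unit>".
PATTERN = re.compile(
    r"(?P<prop>[A-Za-z][A-Za-z' -]*?):\s*"
    r"(?P<value>\d+(?:\.\d+)?)\s*"
    r"(?P<unit>GPa|MPa|kPa|Pa)\b"
)


def extract_records(text, material_id, doi):
    """Return one structured record per 'property: value unit' match in text."""
    records = []
    for match in PATTERN.finditer(text):
        factor = TO_PASCAL[match.group("unit")]
        records.append({
            "Material_ID": material_id,
            "Property_Name": match.group("prop").strip().lower(),
            "Property_Value": float(match.group("value")) * factor,
            "Unit": "Pa",                      # standardized SI unit
            "Experimental_Method": None,       # filled in during manual verification
            "Biological_Test_System": None,    # filled in during manual verification
            "DOI": doi,
        })
    return records


sample = "adhesion strength: 15.6 kPa; compressive strength: 1200 MPa"
records = extract_records(sample, material_id="MAT-0001", doi="10.0000/placeholder")
for record in records:
    print(record["Property_Name"], record["Property_Value"], record["Unit"])
```

Converting to SI at extraction time, rather than later, keeps every row of the compiled table directly comparable.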

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Biomaterial Synthesis & Characterization Featured in Searches

| Item Name (Example) | Function in Biomedical Materials Research |

|---|---|

| Gelatin Methacryloyl (GelMA) | Photocrosslinkable hydrogel precursor for 3D bioprinting and tissue engineering scaffolds. |

| Poly(lactic-co-glycolic acid) (PLGA) | Biodegradable polymer used for controlled drug delivery microparticles and implants. |

| Hydroxyapatite Nanopowder | Calcium phosphate ceramic mimicking bone mineral, used in composite scaffolds for osteogenesis. |

| RGD Peptide (Arg-Gly-Asp) | Cell-adhesive peptide ligand grafted onto material surfaces to enhance specific cellular integration. |

| CCK-8 Assay Kit | Colorimetric kit for quantifying cell viability and proliferation on material surfaces. |

| Recombinant Human VEGF-165 | Growth factor incorporated into materials to induce endothelial cell migration and angiogenesis. |

Visualization of Search Query Logic and Data Curation Workflow

Title: Biomaterial Data Search and Curation Workflow for MLIP

Title: From Query to Predictive MLIP Model

Using pymatgen and MP-API for Automated Data Extraction

The development of Machine Learning Interatomic Potentials (MLIPs) relies on access to large, high-quality datasets of calculated material properties. The Materials Project (MP) database is a cornerstone resource, providing computed properties for over 150,000 inorganic compounds. Within a broader thesis on MLIP training research, automated and reproducible data extraction from MP is not a convenience but a necessity. It enables the construction of tailored datasets for specific MLIP applications, such as simulating drug delivery materials or catalytic surfaces in pharmaceutical development. This technical guide details the use of the pymatgen library and the MP-API for this critical data pipeline step.

Core Components and Setup

Research Reagent Solutions

| Item | Function in Automated Data Extraction |

|---|---|

| MP-API Key | Unique authentication token granting programmatic access to the Materials Project REST API. Essential for querying data. |

| pymatgen Library | Python library for materials analysis. Provides high-level objects (Structure, Composition) and direct interfaces to the MP-API. |

| MPRester Class | The client class that handles all communication with the Materials Project API. It is provided by the mp-api package (mp_api.client.MPRester) and is also importable through pymatgen. |

| Jupyter Notebook / Python Script | Environment for developing, documenting, and executing the data extraction workflow, ensuring reproducibility. |

| Pandas Library | Used to structure extracted quantitative data into DataFrames for cleaning, analysis, and export. |

| NumPy Library | Supports numerical operations on extracted arrays of data (e.g., elastic tensors, band gaps). |

Setup Protocol:

- Obtain an API key from https://materialsproject.org/open.

- Install required packages: `pip install pymatgen mp-api pandas`.

- Set the API key as an environment variable `MP_API_KEY` or pass it directly to `MPRester`.

Automated Data Extraction Methodology

Protocol 1: Basic Compound Data Retrieval

This protocol fetches fundamental properties for a list of material IDs.
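A minimal sketch of such a retrieval is shown below. It assumes the `mp-api` client is installed and that `MP_API_KEY` is set; the helper names (`doc_to_row`, `fetch_basic_properties`) are hypothetical. The network call is wrapped in a function and the client is imported lazily, so the flattening logic can be checked offline with a stand-in document whose values are placeholders, not Materials Project data.

```python
import os
from types import SimpleNamespace

BASIC_FIELDS = [
    "material_id", "formula_pretty", "formation_energy_per_atom",
    "band_gap", "volume", "density", "symmetry",
]


def doc_to_row(doc):
    """Flatten one summary document into a plain dict (missing values become None)."""
    symmetry = getattr(doc, "symmetry", None)
    return {
        "material_id": str(doc.material_id),
        "formula": getattr(doc, "formula_pretty", None),
        "formation_energy_per_atom": getattr(doc, "formation_energy_per_atom", None),
        "band_gap": getattr(doc, "band_gap", None),
        "volume": getattr(doc, "volume", None),
        "density": getattr(doc, "density", None),
        "space_group_number": getattr(symmetry, "number", None),
    }


def fetch_basic_properties(material_ids, api_key=None):
    """Query the MP summary endpoint (needs network access and a valid key)."""
    from mp_api.client import MPRester  # lazy import: helpers above work offline
    key = api_key or os.environ["MP_API_KEY"]
    with MPRester(key) as mpr:
        docs = mpr.materials.summary.search(
            material_ids=list(material_ids), fields=BASIC_FIELDS
        )
    return [doc_to_row(doc) for doc in docs]


# Offline check of the flattening step with a stand-in document.
fake_doc = SimpleNamespace(
    material_id="mp-0000", formula_pretty="X2Y",
    formation_energy_per_atom=-1.0, band_gap=1.5, volume=50.0, density=3.0,
    symmetry=SimpleNamespace(number=225),
)
row = doc_to_row(fake_doc)
print(row)
```

With a valid key, `fetch_basic_properties(["mp-149"])` would return a list of such rows, which can be passed directly to `pandas.DataFrame`.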

Protocol 2: Criteria-Based Search for Dataset Curation

This protocol constructs a dataset based on physicochemical criteria relevant to a specific MLIP training goal.
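One way to express such a search is sketched below, assuming the `mp-api` summary endpoint and its range-style filters. The band-gap window and the 0.05 eV/atom hull threshold are illustrative assumptions, and `passes_criteria` is a hypothetical helper that re-checks rows locally so the selection logic stays testable without network access.

```python
import os

SEARCH_FIELDS = ["material_id", "formula_pretty", "band_gap",
                 "energy_above_hull", "theoretical"]


def in_range(value, bounds):
    """True if value is present and lies within the inclusive bounds."""
    low, high = bounds
    return value is not None and low <= value <= high


def passes_criteria(row, band_gap=(0.1, 2.0), max_e_hull=0.05):
    """Local re-check of the selection criteria on an already flattened row."""
    return (in_range(row.get("band_gap"), band_gap)
            and in_range(row.get("energy_above_hull"), (0.0, max_e_hull)))


def search_semiconductors(band_gap=(0.1, 2.0), max_e_hull=0.05, api_key=None):
    """Criteria-based search (needs network access and a valid key)."""
    from mp_api.client import MPRester  # lazy import: helpers above work offline
    with MPRester(api_key or os.environ["MP_API_KEY"]) as mpr:
        docs = mpr.materials.summary.search(
            band_gap=band_gap,
            energy_above_hull=(0.0, max_e_hull),
            fields=SEARCH_FIELDS,
        )
    rows = [{field: getattr(doc, field, None) for field in SEARCH_FIELDS}
            for doc in docs]
    for row in rows:
        row["material_id"] = str(row["material_id"])
    return [row for row in rows if passes_criteria(row, band_gap, max_e_hull)]


# Offline check of the filter on hand-written rows.
demo_rows = [
    {"band_gap": 1.49, "energy_above_hull": 0.000},
    {"band_gap": 1.57, "energy_above_hull": 0.087},
    {"band_gap": None, "energy_above_hull": 0.000},
]
kept = [row for row in demo_rows if passes_criteria(row)]
print(len(kept), "of", len(demo_rows), "rows pass")
```

Filtering on energy above hull at query time keeps clearly unstable entries out of the training set; whether metastable phases should be included depends on the MLIP's intended use.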

Protocol 3: Advanced Property and Electronic Structure Data

This protocol retrieves dense data types essential for training advanced MLIPs.
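As a hedged illustration, the sketch below retrieves a band structure and density of states through the `mp-api` convenience methods and reduces the band structure to a few scalars. `summarize_band_structure` and the stub class are hypothetical helpers; the stub exists only so the summarizing step can be exercised offline, and its values are placeholders.

```python
import os


def summarize_band_structure(bs):
    """Reduce a pymatgen-style band structure object to scalar descriptors."""
    gap = bs.get_band_gap()  # dict with "energy", "direct", "transition"
    return {
        "band_gap_eV": gap["energy"],
        "is_direct": gap["direct"],
        "is_metal": bs.is_metal(),
    }


def fetch_electronic_structure(material_id, api_key=None):
    """Download band structure and DOS (needs network access and a valid key)."""
    from mp_api.client import MPRester  # lazy import: helper above works offline
    with MPRester(api_key or os.environ["MP_API_KEY"]) as mpr:
        bs = mpr.get_bandstructure_by_material_id(material_id)
        dos = mpr.get_dos_by_material_id(material_id)
    summary = summarize_band_structure(bs) if bs is not None else None
    return summary, dos


class _StubBandStructure:
    """Offline stand-in exposing the two methods used above (placeholder values)."""

    def get_band_gap(self):
        return {"energy": 1.2, "direct": False, "transition": "G-X"}

    def is_metal(self):
        return False


summary = summarize_band_structure(_StubBandStructure())
print(summary)
```

Band structures and densities of states are large objects, so it is usually better to store reduced descriptors in the dataset table and keep the full objects in separate files.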

Table 1: Example Extracted Basic Properties for Selected Compounds

| Material ID | Formula | Formation Energy (eV/atom) | Band Gap (eV) | Volume (ų) | Density (g/cm³) | Space Group |

|---|---|---|---|---|---|---|

| mp-149 | Si | -0.102 | 0.61 | 40.04 | 2.33 | 227 |

| mp-3001 | TiO2 | -2.13 | 2.96 | 62.37 | 4.23 | 136 |

| mp-5239 | CsPbI3 | -0.83 | 1.57 | 250.2 | 4.51 | 221 |

Table 2: Criteria-Based Search Results (Semiconductors, 0.1 < Eg < 2.0 eV)

| Material ID | Formula | Band Gap (eV) | Energy Above Hull (eV/atom) | Is Theoretical |

|---|---|---|---|---|

| mp-10734 | Cu2ZnSnS4 | 1.49 | 0.000 | False |

| mp-1565 | CdTe | 1.50 | 0.000 | False |

| mp-2490 | GaAs | 0.42 | 0.000 | False |

| mp-21721 | CH3NH3PbI3 | 1.57 | 0.087 | True |

Integration into MLIP Training Workflow

Automated data extraction is the first node in a larger MLIP development pipeline. The extracted structures and properties serve as the input for generating training (energies, forces, stresses) and validation sets.

Diagram Title: MLIP Training Pipeline with Automated MP Data Extraction

Experimental Protocol for a Reproducible Extraction Study

Title: Protocol for Building a Dielectric Material Dataset for MLIP Training.

Objective: To create a reproducible script that extracts all stable, inorganic materials with calculated dielectric constant data from the Materials Project for training an MLIP on polarizability.

Methodology:

- Initialization: Import `MPRester` and `pandas`. Load the API key.

- Search Query: Use `mpr.materials.summary.search()` with criteria: `is_stable=True`, `has_property="dielectric"`, `theoretical=False`. Exact keyword names vary between mp-api versions (recent releases expose the property filter as `has_props`), so check them against the installed client.

- Field Specification: Request fields: `material_id`, `formula_pretty`, `structure`, `dielectric.total`, `dielectric.ionic`, `dielectric.electronic`, `band_gap`, `volume`.

- Data Parsing: Iterate through the returned `SummaryDoc` objects. Extract the total, ionic, and electronic dielectric tensors. Compute the average scalar dielectric constant as one third of the trace of the total tensor.

- Data Structuring: Compile data into a Pandas DataFrame. Handle missing data (`None` values) by marking them as `NaN`.

- Export & Versioning: Save the DataFrame to a structured format (e.g., `JSON` or `CSV`). The script must be version-controlled (e.g., Git) and include a metadata header specifying the API endpoint version and date of extraction.
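The data-parsing and missing-value steps reduce to a short helper. The sketch assumes each tensor arrives as a 3x3 nested list (or `None` when absent); the example tensor is made up for illustration.

```python
import math


def mean_dielectric_constant(tensor):
    """Return trace/3 of a 3x3 dielectric tensor, or NaN if the tensor is missing."""
    if tensor is None:
        return math.nan
    return (tensor[0][0] + tensor[1][1] + tensor[2][2]) / 3.0


# Illustrative tensor for a uniaxial material (values are placeholders).
total = [
    [10.0, 0.0, 0.0],
    [0.0, 10.0, 0.0],
    [0.0, 0.0, 13.0],
]
eps_avg = mean_dielectric_constant(total)
eps_missing = mean_dielectric_constant(None)
print(eps_avg, eps_missing)
```

Returning `NaN` rather than raising keeps the row in the DataFrame, so incomplete entries can be counted and filtered explicitly later.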

Diagram Title: Workflow for Reproducible MP Data Extraction Study

The development of Machine Learning Interatomic Potentials (MLIPs) trained on expansive materials databases, such as the Materials Project, has created a paradigm shift in materials discovery. This research enables high-throughput, in silico screening of vast compositional spaces with near-first-principles accuracy. This whitepaper provides a practical guide to applying this framework to a critical biomedical challenge: the rapid identification of novel biocompatible coatings or alloy surfaces that minimize inflammatory response, a key hurdle in implantable devices and drug delivery systems.

Core Hypothesis and Screening Strategy

We hypothesize that surface properties dictating protein adsorption—the critical first step in the foreign body response—can be predicted from MLIP-simulated electronic and structural descriptors. The screening workflow integrates MLIP-driven simulation with targeted in vitro validation.

Key Screening Descriptors (Computable via MLIP/Materials Project Data):

- Surface Energy: Lower energy often correlates with reduced protein adhesion.

- Work Function: Influences charge-transfer interactions with biological molecules.

- Elastic Modulus (Young's Modulus): Should match target tissue to reduce mechanical mismatch.

- Oxide Formation Energy & Band Gap: Predicts passive film stability and electrochemical behavior in vivo.

- Hydrophilicity/Hydrophobicity (simulated via water adsorption energy): Drives initial protein orientation and adhesion.
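A simple way to combine such descriptors is a weighted score over min-max normalized values. The sketch below ranks a few oxides and hydroxyapatite using surface energy and Young's modulus values of the kind tabulated in this section; the equal weights and the 20 GPa target modulus are hypothetical placeholders, not recommendations, and a real screen would include more descriptors.

```python
def min_max(values):
    """Scale values to [0, 1]; a constant list maps to all zeros."""
    low, high = min(values), max(values)
    span = high - low
    return [0.0 if span == 0 else (v - low) / span for v in values]


def rank_candidates(candidates, target_modulus, w_surface=0.5, w_modulus=0.5):
    """Rank materials by a weighted penalty (lower is better).

    Penalizes high surface energy and large mismatch to the target modulus.
    """
    names = list(candidates)
    surface = min_max([candidates[n]["surface_energy"] for n in names])
    mismatch = min_max(
        [abs(candidates[n]["youngs_modulus"] - target_modulus) for n in names]
    )
    scores = {n: w_surface * s + w_modulus * m
              for n, s, m in zip(names, surface, mismatch)}
    return sorted(scores.items(), key=lambda item: item[1])


candidates = {
    "TiO2": {"surface_energy": 0.90, "youngs_modulus": 283},   # J/m^2, GPa
    "ZrO2": {"surface_energy": 1.25, "youngs_modulus": 200},
    "Ta2O5": {"surface_energy": 1.10, "youngs_modulus": 185},
    "HA": {"surface_energy": 0.75, "youngs_modulus": 100},
}
ranking = rank_candidates(candidates, target_modulus=20.0)  # assumed target, GPa
for name, score in ranking:
    print(f"{name}: {score:.3f}")
```

Normalizing each descriptor before weighting prevents the property with the largest numerical range from dominating the score.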

Table 1: Computed Properties for Candidate Biocompatible Alloy Elements/Compounds (Representative Data)

| Material | Surface Energy (J/m²) | Young's Modulus (GPa) | Oxide Formation Energy (eV/atom) | Simulated Water Contact Angle (°) |

|---|---|---|---|---|

| TiO₂ (Rutile) | 0.90 | 283 | -4.98 | ~20 (Hydrophilic) |

| ZrO₂ | 1.25 | 200 | -5.20 | ~30 (Hydrophilic) |

| Ta₂O₅ | 1.10 | 185 | -4.75 | ~45 (Moderate) |

| 316L Stainless Steel | 1.85 | 200 | -1.82 (Cr₂O₃) | ~65 (Hydrophobic) |

| Ti-6Al-4V (Oxidized) | 1.50 | 114 | -4.98 (TiO₂) | ~55 (Moderate) |

| Nitinol (NiTi) | 1.70 | 75 | -2.10 (TiO₂) | ~70 (Hydrophobic) |

| Hydroxyapatite (HA) | 0.75 | 100 | - | ~15 (Highly Hydrophilic) |

Table 2: In Vitro Cell Response to Selected Coating Candidates (Example Experimental Outcomes)

| Coating Material | Fibroblast Viability (%) at 72h | Macrophage TNF-α Secretion (pg/mL) vs. Control | Platelet Adhesion Density (particles/µm²) |

|---|---|---|---|

| Uncoated 316L SS | 78 ± 5 | 450 ± 80 (Elevated) | 12.5 ± 2.1 |

| TiO₂ Nanotube | 98 ± 3 | 150 ± 30 (Reduced) | 4.2 ± 1.0 |

| ZrO₂ Thin Film | 95 ± 4 | 180 ± 40 (Reduced) | 5.8 ± 1.3 |

| Amorphous Ta₂O₅ | 102 ± 2 | 120 ± 25 (Reduced) | 3.5 ± 0.8 |

| HA Coating | 105 ± 4 | 110 ± 20 (Reduced) | 7.0 ± 1.5 |

Detailed Experimental Protocol for In Vitro Validation

Protocol 1: High-Throughput Macrophage Inflammatory Response Assay

- Objective: Quantify pro-inflammatory cytokine release from macrophages (e.g., THP-1 cell line) in response to material candidates.

- Methodology:

- Sample Preparation: Coat 96-well plate with candidate materials via sputter deposition or sol-gel. Sterilize (UV or ethanol).

- Cell Seeding & Differentiation: Seed THP-1 monocytes at 50,000 cells/well. Differentiate into macrophages using 100 ng/mL PMA for 48 hours.

- Stimulation: Replace medium with serum-free RPMI. Optionally add a mild stimulant (e.g., 1 ng/mL LPS) to model challenged environment.

- Cytokine Quantification: Collect supernatant after 24h. Quantify TNF-α or IL-1β using a commercial ELISA kit per manufacturer's instructions.

- Analysis: Normalize cytokine concentration to total protein content (BCA assay) per well. Compare to positive (LPS on tissue culture plastic) and negative (unstimulated) controls.
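The normalization in the analysis step is simple arithmetic, sketched here with hypothetical readings. The function names and all numbers are illustrative; the only assumption about units is that the ELISA result is in pg/mL and the BCA result in mg/mL for the same well.

```python
def normalize_cytokine(cytokine_pg_per_ml, protein_mg_per_ml):
    """Return cytokine release in pg per mg of total protein."""
    if protein_mg_per_ml <= 0:
        raise ValueError("protein concentration must be positive")
    return cytokine_pg_per_ml / protein_mg_per_ml


def fold_change(sample, control):
    """Ratio of a normalized sample value to a normalized control value."""
    if control <= 0:
        raise ValueError("control value must be positive")
    return sample / control


# Hypothetical well readings: ELISA in pg/mL, BCA in mg/mL.
coated = normalize_cytokine(150.0, 0.5)              # candidate coating
negative = normalize_cytokine(100.0, 0.5)            # unstimulated control
relative_to_negative = fold_change(coated, negative)
print(coated, negative, relative_to_negative)
```

Normalizing to protein content corrects for differences in the number of adherent cells between surfaces, which would otherwise be mistaken for differences in inflammatory response.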

Protocol 2: Static Platelet Adhesion Assay

- Objective: Assess thrombogenicity of screened surfaces.

- Methodology:

- Surface Incubation: Immerse material coupons in freshly drawn, citrate-anticoagulated human whole blood for 60 minutes at 37°C under static conditions.

- Fixation & Washing: Rinse gently with PBS to remove non-adherent cells. Fix adherent platelets with 2.5% glutaraldehyde for 1 hour.

- Imaging & Quantification: Dehydrate using ethanol series, critical point dry, and sputter coat for SEM imaging. Count platelets in 10 random fields at 5000x magnification.

- Morphology Scoring: Classify adherent platelets (Stage 1: dendritic, 2: spread dendritic, 3: fully spread) to assess activation degree.

Visualizations: Pathways and Workflow

Diagram 1: MLIP-Driven Screening & Foreign Body Response Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for Validation Experiments

| Item/Reagent | Function & Application | Key Considerations |

|---|---|---|

| THP-1 Human Monocyte Cell Line | Standardized model for macrophage differentiation and cytokine response studies. | Maintain in log-phase growth; use low-passage cells for consistency. |

| PMA (Phorbol 12-Myristate 13-Acetate) | Differentiates THP-1 monocytes into adherent macrophage-like cells. | Optimize concentration (typically 50-100 ng/mL) and duration (48-72h). |

| LPS (Lipopolysaccharide) | Positive control stimulant to induce a robust inflammatory cytokine response. | Use ultrapure, same source/batch for comparative studies. |

| Human ELISA Kits (TNF-α, IL-1β, IL-10) | Quantify specific pro- and anti-inflammatory cytokines from cell supernatant. | Choose high-sensitivity kits; ensure dynamic range covers expected values. |

| Citrate Anticoagulated Human Whole Blood | For platelet adhesion and hemocompatibility testing. | Use fresh blood (<2 hours old) for biologically relevant results. |

| Glutaraldehyde (2.5% in Buffer) | Fixes adherent cells and platelets for SEM imaging while preserving morphology. | Handle in fume hood; prepare fresh or use sealed aliquots. |

| Critical Point Dryer (CPD) | Removes liquid from fixed biological samples without surface tension damage. | Essential for accurate SEM imaging of delicate platelet structures. |

| Sputter Coater (Au/Pd) | Applies a thin, conductive metal layer to non-conductive samples for SEM. | Use fine grain targets; coat evenly to prevent charging artifacts. |

Integrating MLIP Data with Molecular Dynamics (MD) Simulations

This whitepaper details a core methodology for a broader thesis on Machine Learning Interatomic Potential (MLIP) materials project database training research. The central challenge in modern computational materials science and drug development is bridging the accuracy of quantum mechanics with the scale of classical molecular dynamics. This guide provides a technical framework for integrating curated data from MLIP training databases directly into robust MD simulation workflows, enabling high-throughput, accurate modeling of material properties and biomolecular interactions.

Foundational Concepts and Current State

Machine Learning Interatomic Potentials (MLIPs) are trained on datasets derived from quantum mechanical calculations (e.g., DFT). Integrating this data into MD simulations allows researchers to perform simulations with near-quantum accuracy at significantly lower computational cost, facilitating the study of complex phenomena over longer timescales and larger systems.

Recent MLIP architectures such as MACE, NequIP, and Allegro emphasize equivariant (symmetry-aware) representations and high data efficiency. The critical integration step is converting the trained potential into a format compatible with MD engines such as LAMMPS, GROMACS, or OpenMM.

Quantitative Comparison of Prevalent MLIP Frameworks

The following table summarizes key performance metrics and characteristics of leading MLIP frameworks, crucial for selecting a model for MD integration.

Table 1: Comparison of Modern MLIP Frameworks for MD Integration

| Framework | Key Architecture | Target System Types | Typical Training Set Size | Speed (atoms/step/sec)* | Integrated MD Engines | Reported Error (MAE) on Test Sets |

|---|---|---|---|---|---|---|

| MACE | Higher-order equivariant message passing | Materials, Molecules | 1k - 50k configurations | ~10⁴ (CPU) | LAMMPS, ASE | 1-5 meV/atom |

| NequIP | E(3)-equivariant NN | Molecules, Solids | 1k - 10k configurations | ~10³ (CPU) | LAMMPS | 2-8 meV/atom |

| Allegro | Equivariant, strictly local | Bulk Materials, Interfaces | 5k - 100k configurations | ~10⁵ (GPU) | LAMMPS | 1-4 meV/atom |

| ANI (ANI-2x, etc.) | Atomic neural networks | Organic Molecules, Drug-like | Millions of conformations | ~10⁵ (GPU) | ASE, OpenMM, GROMACS (via interface) | ~1.5 kcal/mol (energy) |

| PINN | Physically-informed neural networks | Multiscale Systems | Variable, often smaller | Varies widely | Custom, LAMMPS (plugin) | System-dependent |

*Speed is highly dependent on system size, hardware, and model complexity. Values are approximate for medium-sized systems (~100 atoms).

Core Experimental Protocol: From Database to Production MD

This protocol outlines the steps for integrating an MLIP, trained on a materials project database, into an MD simulation.

Protocol 1: MLIP Training and MD Integration Pipeline

Objective: To train an MLIP on a targeted dataset from a materials database and deploy it for molecular dynamics simulations to predict thermodynamic and kinetic properties.

Materials & Software:

- Hardware: High-performance computing cluster with GPU nodes (e.g., NVIDIA A100/V100) recommended for training.

- Quantum Chemistry Database: e.g., Materials Project, OQMD, ANI-2x, SPICE.

- MLIP Training Code: e.g., MACE, NequIP, or Allegro repository.

- MD Engine: LAMMPS (with `mliap` or `pair_style` support) or GROMACS/OpenMM with appropriate interface.

- Analysis Tools: ASE, MDTraj, VMD, Ovito.

Procedure:

Phase 1: Data Curation and Preparation

- Query Database: Extract relevant atomic structures (e.g., bulk crystals, molecular conformations, defect structures) and their corresponding quantum mechanical labels (energy, forces, stress tensors) using the database's API.

- Data Wrangling: Convert all structures to a consistent format (e.g., extended XYZ, ASE database). Apply filters for data quality (e.g., convergence criteria, energy cutoffs).

- Dataset Splitting: Partition the data into training (∼80%), validation (∼10%), and test sets (∼10%). Ensure no data leakage between sets (e.g., separate crystal prototypes or molecular scaffolds).
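Leakage between sets is easiest to avoid by assigning whole groups (for example, crystal prototypes or molecular scaffolds) to a single split. The sketch below is one simple greedy way to do this; the function name, the group labels, and the fixed seed are assumptions for illustration.

```python
import random
from collections import defaultdict


def group_split(groups, fractions=(0.8, 0.1, 0.1), seed=0):
    """Assign whole groups to train/validation/test so no group spans two sets.

    groups[i] is the group label of sample i; returns three lists of indices.
    """
    by_group = defaultdict(list)
    for index, label in enumerate(groups):
        by_group[label].append(index)

    labels = sorted(by_group)
    random.Random(seed).shuffle(labels)   # reproducible shuffle of group labels

    targets = [round(f * len(groups)) for f in fractions]
    splits = ([], [], [])
    current = 0
    for label in labels:
        # Move on to the next split once the current one has reached its target.
        while current < 2 and len(splits[current]) >= targets[current]:
            current += 1
        splits[current].extend(by_group[label])
    return splits


# 100 samples drawn from 10 hypothetical crystal prototypes.
groups = [f"prototype_{i % 10}" for i in range(100)]
train, val, held_out = group_split(groups)
print(len(train), len(val), len(held_out))
```

With unevenly sized groups the realized fractions will deviate from the targets; that is usually an acceptable price for a leak-free test set.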

Phase 2: Model Training and Validation

- Configuration: Set up the MLIP training configuration file (YAML/JSON). Key hyperparameters include: radial cutoff (e.g., 5.0 Å), network architecture (width, depth), batch size, and learning rate schedule.

- Training Loop: Execute the training script. Monitor the loss (energy, forces) on both training and validation sets to prevent overfitting. Employ early stopping if validation loss plateaus.

- Model Validation: Evaluate the final model on the held-out test set. Calculate key metrics: Mean Absolute Error (MAE) for energy and forces, and optionally, stress MAE. Perform inference on unseen but relevant structures (e.g., random perturbations, different phases) to assess generalizability.
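The test-set metrics named above can be computed with a few helper functions. This sketch uses plain Python lists and made-up numbers; it assumes total energies in eV and forces in eV/Å as per-atom `[fx, fy, fz]` triples.

```python
def mae(pred, ref):
    """Mean absolute error between two equal-length sequences."""
    if len(pred) != len(ref) or not pred:
        raise ValueError("pred and ref must be non-empty and of equal length")
    return sum(abs(p - r) for p, r in zip(pred, ref)) / len(pred)


def energy_mae_mev_per_atom(e_pred, e_ref, n_atoms):
    """Energy MAE in meV/atom from total energies (eV) and atom counts."""
    pred = [e / n for e, n in zip(e_pred, n_atoms)]
    ref = [e / n for e, n in zip(e_ref, n_atoms)]
    return 1000.0 * mae(pred, ref)


def force_mae(f_pred, f_ref):
    """Force MAE in eV/angstrom over all Cartesian components."""
    flat_pred = [c for atom in f_pred for c in atom]
    flat_ref = [c for atom in f_ref for c in atom]
    return mae(flat_pred, flat_ref)


# Two toy structures with 2 and 4 atoms.
e_err = energy_mae_mev_per_atom(
    e_pred=[-10.02, -20.00], e_ref=[-10.00, -20.04], n_atoms=[2, 4]
)
f_err = force_mae(
    f_pred=[[0.1, 0.0, 0.0], [0.0, 0.0, -0.1]],
    f_ref=[[0.0, 0.0, 0.0], [0.0, 0.0, 0.0]],
)
print(f"energy MAE = {e_err:.2f} meV/atom, force MAE = {f_err:.4f} eV/A")
```

Reporting energies per atom makes errors comparable across structures of different size.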

Phase 3: Deployment in MD Simulations

- Model Export: Convert the trained model to a format compatible with the target MD engine. For LAMMPS, this is typically a compiled library (`.so` file) or a TorchScript model produced with `torch.jit.script` and saved to disk.

- MD Engine Integration:

- For LAMMPS: In the input script, specify the pair style provided by the model's LAMMPS interface (e.g., `pair_style mliap`, `pair_style nequip`, or `pair_style allegro`) together with a matching `pair_coeff * * ...` line that names the model file and element list. The exact arguments depend on the interface. Ensure LAMMPS is compiled with the corresponding package (e.g., ML-IAP).

- For GROMACS/OpenMM: Use an interface such as `openmm-ml` or `openmm-torch` (for OpenMM, including ANI models) or a custom plugin to evaluate the MLIP energy and forces at each step.

- Simulation Setup: Construct the initial simulation cell. Define the ensemble (NVT, NPT), thermostat/barostat (e.g., Nosé-Hoover, Langevin), timestep (typically 0.5-1.0 fs for accurate force evaluation), and total simulation time.

- Production Run & Analysis: Execute the MD simulation. Trajectory analysis includes calculating radial distribution functions, mean squared displacement (for diffusion coefficients), vibrational density of states, and potential of mean force via enhanced sampling techniques (e.g., metadynamics).
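As an example of the trajectory analysis mentioned above, a diffusion coefficient can be estimated from the mean squared displacement through the Einstein relation, MSD(t) = 2·d·D·t in d dimensions. The sketch below fits the slope by ordinary least squares on synthetic data; in practice only the linear (diffusive) regime of the MSD curve should be fitted.

```python
def linear_slope(x, y):
    """Least-squares slope of y versus x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    return sxy / sxx


def diffusion_coefficient(times_ps, msd_A2, dim=3):
    """Einstein relation: D = slope / (2 * dim), in angstrom^2 per ps."""
    return linear_slope(times_ps, msd_A2) / (2 * dim)


# Synthetic, perfectly linear MSD data (angstrom^2 versus ps).
times = [0.0, 1.0, 2.0, 3.0, 4.0]
msd = [0.0, 0.6, 1.2, 1.8, 2.4]
d_A2_per_ps = diffusion_coefficient(times, msd)
d_cm2_per_s = d_A2_per_ps * 1e-4   # 1 angstrom^2/ps = 1e-4 cm^2/s
print(d_A2_per_ps, d_cm2_per_s)
```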

Workflow Visualization

Title: MLIP Training and MD Simulation Integration Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools and Resources for MLIP-MD Integration

| Item Category | Specific Tool/Resource | Function & Relevance |

|---|---|---|

| MLIP Training Software | MACE, NequIP, Allegro, AMPTorch | Provides the codebase to architect, train, and optimize the machine-learned interatomic potential from quantum data. |

| MD Simulation Engine | LAMMPS, GROMACS, OpenMM | Core software to perform molecular dynamics simulations. Must have an interface or plugin to evaluate the MLIP. |

| Quantum Chemistry Database | Materials Project, ANI-2x, SPICE, QM9 | Source of ground-truth data (energies, forces) for training and benchmarking MLIPs. |

| High-Performance Computing (HPC) | GPU Cluster (NVIDIA), Cloud Computing (AWS/GCP) | Essential for training large MLIP models and running large-scale or long-time MD simulations. |

| Interfacing & Wrapper Library | Atomic Simulation Environment (ASE), JuliaMolSim | Provides unified Python interfaces to manipulate atoms, run calculations, and connect different codes (e.g., MLIP to MD engine). |

| Model Deployment Kit | TorchScript, LibTorch, LAMMPS-ML-IAP package | Converts a trained PyTorch model into a serialized format that can be loaded efficiently by C++-based MD engines during simulation. |

| Enhanced Sampling Suite | PLUMED, SSAGES | Software for implementing advanced sampling techniques (metadynamics, umbrella sampling) within MLIP-driven MD to study rare events. |

| Trajectory Analysis Package | MDTraj, MDAnalysis, Ovito, VMD | Used to process MD trajectory files, compute observables (RDF, MSD, etc.), and visualize atomic dynamics. |

Advanced Integration: Enhanced Sampling and Active Learning

For the thesis research, a closed-loop active learning cycle is paramount.

Protocol 2: Active Learning Loop for Database Expansion

Objective: To identify and incorporate new, informative configurations into the training database by running MLIP-driven MD simulations, improving model robustness.

Procedure:

- Initialization: Start with an MLIP trained on a seed database.

- Exploratory Simulation: Run MD simulations (often with enhanced sampling) to probe regions of configuration space not well-represented in the training data (e.g., phase transitions, reaction pathways).

- Uncertainty Quantification: During simulation, use metrics like the committee model variance or the latent space distance (e.g., with a Gaussian Mixture Model) to flag configurations where the MLIP prediction is uncertain.

- Query and Label: Select the most uncertain configurations. Perform first-principles calculations (DFT) on these structures to obtain accurate energy and forces.

- Database Update & Retraining: Append the newly labeled data to the training database. Retrain or fine-tune the MLIP on the expanded dataset.

- Iteration: Repeat steps 2-5 until model performance and uncertainty metrics converge across the relevant phase space.
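The uncertainty-quantification and query steps can be sketched with a committee of models: configurations where the members disagree most are sent to DFT. The function names, the threshold, and the toy predictions below are assumptions; production workflows often use force rather than energy disagreement.

```python
from statistics import pstdev


def committee_uncertainty(predictions):
    """Standard deviation across committee members for each configuration.

    predictions[m][i] is the energy predicted by member m for configuration i.
    """
    return [pstdev(column) for column in zip(*predictions)]


def select_for_labeling(predictions, threshold, max_queries):
    """Indices of the most uncertain configurations above the threshold."""
    sigma = committee_uncertainty(predictions)
    flagged = [i for i, s in enumerate(sigma) if s > threshold]
    flagged.sort(key=lambda i: sigma[i], reverse=True)
    return flagged[:max_queries]


# Three committee members, three configurations (energies in eV/atom, made up).
predictions = [
    [-3.00, -2.50, -1.00],
    [-3.01, -2.70, -1.00],
    [-2.99, -2.30, -1.01],
]
to_label = select_for_labeling(predictions, threshold=0.05, max_queries=2)
print(to_label)
```

Capping the number of queries per iteration keeps the DFT cost of each active-learning cycle predictable.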

Title: Active Learning Loop for MLIP Database Expansion

The integration of MLIP data with MD simulations represents a paradigm shift in computational molecular science, forming the computational core of the proposed thesis. By following the protocols outlined—from careful data curation and model training to deployment in production MD and active learning loops—researchers can construct robust, high-fidelity simulation frameworks. This approach directly feeds back into the growth and refinement of the MLIP materials project database, enabling the predictive modeling of complex materials behavior and drug-target interactions with unprecedented accuracy and scale.

The integration of Machine Learning Interatomic Potentials (MLIPs) with expansive materials databases, such as the Materials Project, has revolutionized the predictive modeling of material properties. This case study situates the challenge of predicting degradation rates of bio-implant materials within this paradigm. The core thesis is that by training MLIPs on high-fidelity experimental and computational degradation data within a curated project database, we can accelerate the discovery and design of next-generation, durable implant alloys and polymers.

Table 1: Experimental Degradation Rates of Common Implant Materials in Simulated Body Fluid (SBF)

| Material | Form | Test Duration (Days) | Degradation Rate (mm/year) | Measurement Method | Key Reference |

|---|---|---|---|---|---|

| Pure Mg | Cast | 30 | 1.8 - 2.5 | Hydrogen Evolution | Witte et al., 2008 |

| AZ31 Mg Alloy | Wrought | 14 | 0.7 - 1.2 | Mass Loss / ICP-MS | Zhao et al., 2017 |

| WE43 Mg Alloy | Cast | 28 | 0.3 - 0.6 | Electrochemical Impedance | Kirkland et al., 2012 |

| 316L Stainless Steel | Polished | 365 | <0.001 | Potentiodynamic Polarization | Virtanen et al., 2008 |

| Ti-6Al-4V ELI | Grade 5 | 365 | ~0.0001 | Electrochemical (Rp) | Geetha et al., 2009 |

| PLLA (Poly-L-lactic acid) | Amorphous Film | 180 | N/A (reported as 100% mass loss) | GPC / Mass Loss | Weir et al., 2004 |

Table 2: Feature Set for ML Model Training from MLIP Database

| Feature Category | Specific Descriptor | Data Type | Relevance to Degradation |

|---|---|---|---|

| Atomic/Electronic | Electronegativity difference | Scalar | Corrosion potential |

| Atomic/Electronic | d-band center (for alloys) | Scalar | Surface reactivity |

| Atomic/Electronic | Formation energy | Scalar | Thermodynamic stability |

| Microstructural | Grain size | Scalar | Galvanic corrosion sites |

| Microstructural | Second-phase volume fraction | Scalar | Localized corrosion driver |

| Environmental | Local pH (predicted) | Scalar | Chemical dissolution rate |

| Environmental | Chloride ion concentration | Scalar | Pitting corrosion initiation |

Detailed Experimental Protocols

Protocol A: Standard Immersion Test for Metallic Implants (ASTM G31-12a)

- Sample Preparation: Cut material into 10mm x 10mm x 2mm coupons. Sequentially grind with SiC paper up to 2000 grit. Clean ultrasonically in acetone, ethanol, and deionized water. Dry in a nitrogen stream.

- Solution Preparation: Prepare 500 mL of simulated body fluid (SBF) per the Kokubo recipe (ion concentrations nearly equal to those of human blood plasma). Maintain at 37.0 ± 0.5 °C in a thermostatic bath. Pre-bubble with 5% CO₂/balance air for 1 hour to stabilize the pH at 7.4.

- Immersion & Monitoring: Immerse pre-weighed sample (W₀) in SBF using a PTFE holder at a 1 cm²/20 mL ratio. Seal the container to limit evaporation. At pre-defined intervals (e.g., 1, 3, 7, 14 days):

  - Extract solution for inductively coupled plasma mass spectrometry (ICP-MS) to measure ion release (Mg²⁺, Al³⁺, etc.).

  - Measure evolved hydrogen gas using a graduated burette for Mg alloys.

  - Record pH changes.

- Post-Test Analysis: After 14 days, remove the sample, gently remove corrosion products (chromic acid solution for Mg alloys), wash, dry, and weigh (W₁). Calculate the degradation rate via mass loss: Rate (mm/y) = (K × ΔW) / (A × T × ρ), where K = 8.76 × 10⁴, ΔW = W₀ - W₁ (g), A = exposed surface area (cm²), T = immersion time (h), and ρ = density (g/cm³).
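As a worked illustration of the mass-loss formula in Protocol A, the sketch below evaluates it for the 10 mm x 10 mm x 2 mm coupon geometry described above. The function name and the sample masses are hypothetical; the density of 1.74 g/cm³ is the commonly quoted value for pure Mg.

```python
def degradation_rate_mm_per_year(w0_g, w1_g, area_cm2, time_h, density_g_cm3):
    """Mass-loss corrosion rate: Rate = (K * dW) / (A * T * rho), in mm/year."""
    K = 8.76e4  # unit-conversion constant for g, cm^2, h, g/cm^3 -> mm/year
    delta_w = w0_g - w1_g
    return (K * delta_w) / (area_cm2 * time_h * density_g_cm3)

# Total surface of a 10 mm x 10 mm x 2 mm coupon, in cm^2:
# two 1.0 cm^2 faces plus four 0.2 cm^2 edges.
area = 2 * (1.0 * 1.0) + 4 * (1.0 * 0.2)

# Illustrative (not measured) masses before and after a 14-day immersion.
rate = degradation_rate_mm_per_year(
    w0_g=0.3480,
    w1_g=0.3380,
    area_cm2=area,
    time_h=14 * 24,
    density_g_cm3=1.74,
)
print(f"Degradation rate: {rate:.3f} mm/year")
```

Note that the time must be entered in hours and the mass loss in grams for K = 8.76 × 10⁴ to yield mm/year.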

Protocol B: Electrochemical Impedance Spectroscopy (EIS) for Polymer Degradation

- Electrode Setup: Use a standard three-electrode cell (Pt counter, Ag/AgCl reference, polymer-coated working electrode) in phosphate-buffered saline (PBS) at 37°C.

- Measurement: Apply a sinusoidal potential perturbation of 10 mV amplitude over a frequency range of 100 kHz to 10 mHz at the open-circuit potential.

- Data Modeling: Fit EIS spectra to an equivalent circuit model (e.g., R(C(R(CR)))) representing solution resistance, coating capacitance, pore resistance, double-layer capacitance, and charge transfer resistance. Monitor the decrease in pore resistance (R_po) over time as a direct indicator of hydrolytic degradation and water uptake.
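To make the equivalent circuit concrete, the following sketch computes the complex impedance of the nested R(C(R(CR))) model over the frequency range used in the measurement step. It assumes ideal capacitors rather than constant-phase elements, and every parameter value is an illustrative placeholder, not fitted data. A real analysis would fit these parameters to measured spectra with a dedicated fitting tool.

```python
import math

def parallel(z1, z2):
    """Impedance of two elements in parallel."""
    return (z1 * z2) / (z1 + z2)

def capacitor(c_farad, omega):
    """Impedance of an ideal capacitor at angular frequency omega."""
    return 1.0 / (1j * omega * c_farad)

def coating_impedance(freq_hz, r_s, c_c, r_po, c_dl, r_ct):
    """Z = R_s + [C_c || (R_po + [C_dl || R_ct])], i.e. R(C(R(CR)))."""
    omega = 2.0 * math.pi * freq_hz
    z_interface = parallel(capacitor(c_dl, omega), r_ct)
    z_coating = parallel(capacitor(c_c, omega), r_po + z_interface)
    return r_s + z_coating

# Hypothetical parameters (ohm and farad) for an intact coating.
params = dict(r_s=50.0, c_c=1e-9, r_po=1e6, c_dl=1e-6, r_ct=1e7)

# 36 log-spaced frequencies from 100 kHz down to 10 mHz.
freqs = [10 ** (5 - 7 * i / 35) for i in range(36)]
spectrum = [coating_impedance(f, **params) for f in freqs]

for f, z in zip(freqs[::7], spectrum[::7]):
    print(f"{f:10.3e} Hz  |Z| = {abs(z):.3e} ohm")
```

Re-running the model with a smaller `r_po` lowers the impedance at low and intermediate frequencies, which is the signature the protocol tracks over time.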

Visualizations

Title: MLIP-Enhanced Degradation Prediction Workflow

Title: Key Pathways in Implant Material Degradation

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function & Relevance |

|---|---|

| Simulated Body Fluid (SBF) | An inorganic solution with ion concentrations nearly equal to human blood plasma, used as a standard in vitro environment for degradation testing. |

| Phosphate-Buffered Saline (PBS) | A buffered saline solution used extensively for testing polymer degradation and biomolecule release profiles. Maintains physiological pH. |

| Dulbecco's Modified Eagle Medium (DMEM) | A cell culture medium sometimes used in more biologically relevant degradation studies, containing amino acids and vitamins that can influence corrosion. |

| Chromium Trioxide (CrO₃) Solution | Used to chemically remove corrosion products from magnesium alloy surfaces post-immersion without attacking the base metal, enabling accurate mass loss measurement. |

| Tris(hydroxymethyl)aminomethane (TRIS) | A common pH buffer agent used in SBF preparation to stabilize the pH at the physiological level of 7.4. |

| Fluorescent Dyes (e.g., Calcein-AM) | Used in live/dead assays to visualize and quantify cell viability on degrading implant surfaces, linking material corrosion to biological response. |

| ICP-MS Calibration Standards | Certified reference solutions for elements like Mg, Al, Ti, and V, essential for quantifying ion release rates from degrading materials. |

Solving Common MLIP Challenges: Data Gaps, Errors, and Workflow Hurdles

Handling Missing or Incomplete Property Data for Your Target Material

In developing Machine Learning Interatomic Potentials (MLIPs) for a comprehensive materials database, missing or incomplete property data is a critical bottleneck. The predictive power and generalizability of MLIPs depend directly on the quality and completeness of their training datasets. This section outlines a systematic, tiered approach that researchers and drug development professionals can use to address data gaps for target materials and support robust model development.

A Hierarchical Framework for Data Imputation and Acquisition

A tiered strategy is recommended, moving from computational methods (Tiers 1-3) to targeted high-fidelity experiments (Tier 4).

Table 1: Tiered Strategy for Handling Missing Property Data

| Tier | Method Category | Typical Properties Addressed | Computational/Experimental Cost | Expected Uncertainty |

|---|---|---|---|---|

| 1 | First-Principles & High-Throughput Calculations | Formation energy, band gap, elastic constants, vibrational spectra | High (Comp.) | Low (1-5%) |

| 2 | Transfer Learning & Surrogate Models | Thermodynamic stability, solubility, surface energy | Medium (Comp.) | Medium (5-15%) |

| 3 | Physics-Informed & Semi-Empirical Methods | Thermal conductivity, diffusivity, creep resistance | Low-Medium (Comp.) | Medium-High (10-25%) |

| 4 | Focused High-Fidelity Experimentation | In-vitro dissolution rate, in-vivo bioavailability, complex toxicity | Very High (Exp.) | Low (2-10%) |

Detailed Experimental and Computational Protocols

Protocol for Tier 1: Density Functional Theory (DFT) Calculation of Electronic Band Gap

This protocol fills a common gap for novel semiconductor or photocatalyst materials.

- Structure Preparation: Obtain the crystal structure (e.g., from ICSD, Materials Project) or build it from known symmetry. Perform geometry optimization using a generalized gradient approximation (GGA) functional like PBE to relax ionic positions and cell parameters. Convergence criteria: force < 0.01 eV/Å, energy < 1e-5 eV/atom.

- Electronic Structure Calculation: Using the optimized structure, perform a static single-point energy calculation with a hybrid functional (e.g., HSE06) to obtain an accurate electronic density of states (DOS) and band structure. Use a dense k-point mesh (e.g., spacing < 0.03 Å⁻¹).

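- Analysis: Identify the valence band maximum (VBM) and conduction band minimum (CBM) from the DOS and band structure. Their energy difference, E(CBM) - E(VBM), is the fundamental band gap. To classify the gap as direct or indirect, compare the k-point locations of the VBM and CBM in the band structure plot: the gap is direct if they coincide and indirect otherwise.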
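The analysis step can be illustrated with a small, code-agnostic sketch. It assumes the eigenvalues have already been extracted from the DFT output into a plain dictionary keyed by k-point label. The function name, the data layout, and the toy eigenvalues are hypothetical and not tied to any specific DFT package.

```python
def band_gap(bands, n_occupied):
    """Locate the VBM and CBM and classify the gap.

    bands maps a k-point label to its eigenvalues in eV, sorted ascending.
    n_occupied is the number of fully occupied bands at each k-point.
    """
    # VBM: highest energy of the top occupied band across all k-points.
    vbm_k, vbm = max(
        ((k, e[n_occupied - 1]) for k, e in bands.items()), key=lambda kv: kv[1]
    )
    # CBM: lowest energy of the first empty band across all k-points.
    cbm_k, cbm = min(
        ((k, e[n_occupied]) for k, e in bands.items()), key=lambda kv: kv[1]
    )
    gap = max(cbm - vbm, 0.0)  # overlapping bands are reported as zero gap
    return {"gap_eV": gap, "vbm_k": vbm_k, "cbm_k": cbm_k, "direct": vbm_k == cbm_k}

# Toy eigenvalues at three k-points (illustrative only).
toy_bands = {
    "Gamma": [-5.2, -1.0, 0.0, 3.4, 5.0],
    "X": [-4.8, -2.1, -0.9, 1.2, 4.1],
    "L": [-5.0, -1.6, -0.4, 2.0, 4.6],
}
result = band_gap(toy_bands, n_occupied=3)
print(result)
```

In this toy case the VBM sits at Gamma and the CBM at X, so the gap is classified as indirect.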

Title: DFT Workflow for Band Gap Prediction

Protocol for Tier 2: Transfer Learning for Solubility Prediction

This protocol estimates aqueous solubility for pharmaceutical crystals using a pre-trained model.

- Descriptor Generation: For the target molecule, compute a set of molecular descriptors (e.g., Morgan fingerprints, logP, topological polar surface area, number of rotatable bonds) using RDKit or a similar cheminformatics library.

- Model Adaptation: Employ a pre-trained graph neural network (GNN) model (e.g., trained on the AqSolDB dataset). Freeze the initial feature extraction layers and retrain (fine-tune) the final regression layers using a small, high-quality dataset (<100 points) of measured solubility for chemically similar compounds.

- Prediction and Uncertainty Quantification: Input the target material's descriptors into the fine-tuned model. Use Monte Carlo dropout or ensemble methods during inference to provide a mean prediction and a standard deviation, quantifying epistemic uncertainty.
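The ensemble route to uncertainty quantification mentioned in the last step can be sketched without any deep-learning dependencies. This example deliberately swaps the fine-tuned GNN for a one-descriptor linear model and builds the ensemble by bootstrap resampling of a small calibration set. The logP and logS values are invented for illustration, and all function names are hypothetical.

```python
import random
from statistics import mean, stdev

def fit_line(xs, ys):
    """Ordinary least-squares fit y = slope * x + intercept."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar

def bootstrap_ensemble(xs, ys, n_models=50, seed=0):
    """Train one model per bootstrap resample of the calibration data."""
    rng = random.Random(seed)
    models = []
    while len(models) < n_models:
        idx = [rng.randrange(len(xs)) for _ in xs]
        if len({xs[i] for i in idx}) < 2:
            continue  # skip degenerate resamples that cannot define a slope
        models.append(fit_line([xs[i] for i in idx], [ys[i] for i in idx]))
    return models

def predict_with_uncertainty(models, x):
    """Ensemble mean and standard deviation of the predictions at x."""
    preds = [slope * x + intercept for slope, intercept in models]
    return mean(preds), stdev(preds)

# Invented calibration set: descriptor (logP) vs. measured logS.
logp = [0.5, 1.0, 1.4, 2.0, 2.6, 3.1, 3.5, 4.0]
logs = [-1.1, -1.6, -2.2, -2.5, -3.3, -3.6, -4.2, -4.4]

models = bootstrap_ensemble(logp, logs)
mu, sigma = predict_with_uncertainty(models, x=2.2)
print(f"Predicted logS = {mu:.2f} +/- {sigma:.2f}")
```

The spread across ensemble members serves as a proxy for epistemic uncertainty. With a neural network, the same `predict_with_uncertainty` pattern applies to independently fine-tuned models or to repeated stochastic forward passes under Monte Carlo dropout.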

Protocol for Tier 4: High-Throughput Experimental Measurement of Dissolution Rate

This protocol generates critical, hard-to-calculate data for drug formulation.

- Sample Preparation: Compact the target API (Active Pharmaceutical Ingredient) material into a standardized mini-disc (e.g., 3mm diameter) using a hydraulic press at a controlled pressure.

- Dissolution Setup: Use a USP-IV flow-through cell apparatus. Place the disc in the cell. Maintain a controlled biorelevant medium (e.g., FaSSIF, pH 6.5) at 37 °C, flowing at a constant rate (e.g., 16 mL/min).

- Real-Time Monitoring: Use fiber-optic UV probes or automated sample collection coupled with HPLC-UV to measure the API concentration in the effluent stream as a function of time.

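- Data Analysis: Plot concentration vs. time and convert it to the amount of API dissolved (concentration multiplied by the medium volume, or by the flow rate for an open-loop flow-through cell). The initial slope of the amount-dissolved curve, normalized by the disc surface area, provides the intrinsic dissolution rate (IDR) in mg/(min·cm²).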
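The data-analysis step reduces to a linear fit over the initial, approximately linear part of the dissolution curve. The sketch below assumes the measured concentrations have already been converted to cumulative mass dissolved (mg) and that only one flat face of the 3 mm disc is exposed to the medium. Both are assumptions of this example, and the data points are invented.

```python
import math

def least_squares_slope(xs, ys):
    """Slope of the ordinary least-squares line through (xs, ys)."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    return sxy / sxx

def intrinsic_dissolution_rate(times_min, dissolved_mg, disc_diameter_cm):
    """IDR in mg/(min*cm^2): initial dissolution slope divided by exposed area."""
    area_cm2 = math.pi * (disc_diameter_cm / 2.0) ** 2  # one exposed circular face
    return least_squares_slope(times_min, dissolved_mg) / area_cm2

# Invented early-time data: cumulative API mass dissolved vs. time.
times = [0.0, 2.0, 4.0, 6.0, 8.0]            # min
dissolved = [0.00, 0.10, 0.21, 0.29, 0.40]   # mg

idr = intrinsic_dissolution_rate(times, dissolved, disc_diameter_cm=0.3)
print(f"IDR = {idr:.3f} mg/(min*cm^2)")
```

Restricting the fit to early time points matters: once the disc surface changes or sink conditions are lost, the curve bends and the slope no longer reflects the intrinsic rate.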

Title: USP-IV Dissolution Rate Experimental Setup

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Tools for Addressing Material Data Gaps

| Item / Reagent | Function / Role | Example Vendor/Software |

|---|---|---|

| VASP / Quantum ESPRESSO | First-principles electronic structure calculations for Tier 1 property generation. | VASP Software GmbH, Open Source |

| RDKit | Open-source cheminformatics for descriptor calculation in QSAR/solubility models. | Open Source |

| Materials Project API | Access to pre-computed DFT data for ~150k materials for validation and transfer learning. | LBNL Materials Project |

| Schrödinger Materials Science Suite | Integrated platform for molecular modeling, crystal structure prediction, and property calculation. | Schrödinger |

| USP-IV (Flow-Through) Apparatus | Gold-standard equipment for measuring intrinsic dissolution rates of pharmaceutical materials. | Sotax, Pharma Test |

| FaSSIF/FeSSIF Powders | Biorelevant dissolution media simulating intestinal fluids for predictive in-vitro testing. | Biorelevant.com |

| High-Throughput Crystallization Robot | Automates the generation of polymorphs and co-crystals for solid-form screening. | Chemspeed Technologies |

| Automated Gas Sorption Analyzer | Measures BET surface area, pore volume, and gas adsorption isotherms (e.g., for MOFs). | Micromeritics |

| MLIP Training Code (e.g., AMPTorch, DeePMD-kit) | Frameworks to create MLIPs using the newly completed dataset for MD simulations. | Open Source |

Debugging API Connection and pymatgen Script Errors

The development of Machine Learning Interatomic Potentials (MLIPs) for high-throughput materials discovery relies on large-scale, curated datasets from sources like the Materials Project (MP) database. Efficient programmatic data extraction via the MP API using libraries such as pymatgen is foundational to this research pipeline. Connection failures, authentication errors, and data parsing inconsistencies directly impede model training cycles, making robust debugging a critical competency. This guide details systematic protocols for diagnosing and resolving these issues within an MLIP training workflow built on Materials Project data.

Common API & Script Error Categories and Diagnostics

Table 1: Quantitative Summary of Common pymatgen/MP API Error Types (Based on 2024 Community Forum Analysis)

| Error Category | Frequency (%) | Typical Root Cause | Impact on MLIP Training |

|---|---|---|---|

| Authentication & Rate Limiting | 35% | Invalid API key, exceeded request quota. | Halts data fetching pipeline. |

| Network & Connection | 25% | Unstable internet, proxy/firewall, outdated API endpoint. | Causes incomplete or corrupted datasets. |

| pymatgen Data Parsing | 20% | Unexpected data structure from API, missing required keys. | Introduces silent errors into training data. |

| Dependency Version | 15% | Version mismatch between pymatgen, requests, other libs. | Leads to inconsistent behavior across systems. |

| Server-Side (MP) Issues | 5% | Database maintenance, temporary server errors. | Unavoidable pipeline delays. |

Experimental Protocol: Isolating API Connection Failures

Objective: Determine if the failure originates from the client environment or the remote server.

Methodology:

- Direct Endpoint Ping: Use curl or requests to call a simple API endpoint without pymatgen.