Mastering MD Trajectory Analysis: Best Practices and Cutting-Edge Visualization for Drug Discovery

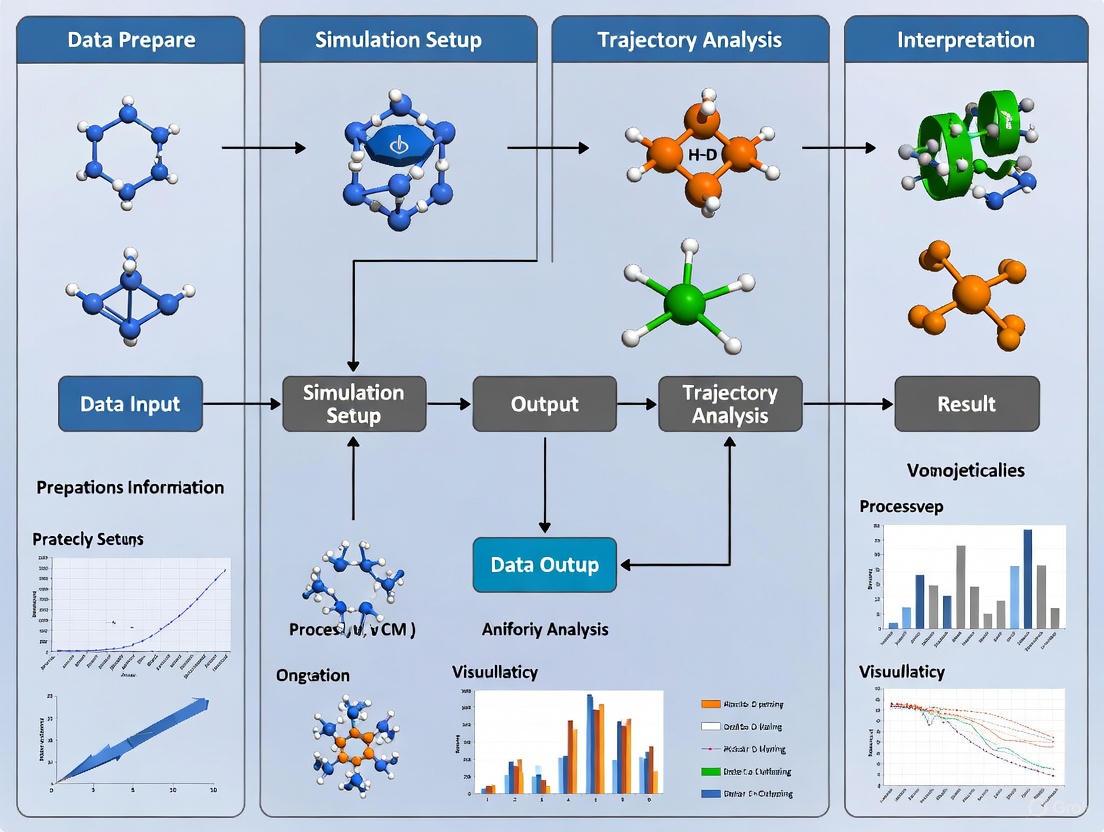

This comprehensive guide addresses the critical challenges in molecular dynamics (MD) trajectory analysis faced by researchers and drug development professionals.


Abstract

This comprehensive guide addresses the critical challenges in molecular dynamics (MD) trajectory analysis faced by researchers and drug development professionals. As MD simulations generate increasingly massive datasets from complex biological systems, effective analysis and visualization become paramount for extracting meaningful insights. The article covers foundational principles of MD analysis, practical methodologies using modern tools like MDAnalysis and X3DNA, optimization techniques for handling large-scale data, and validation approaches to ensure scientific rigor. By integrating established best practices with emerging trends in AI-powered analysis and immersive visualization, this resource provides a structured framework for accelerating biomedical research and therapeutic development through robust MD data interpretation.

Understanding MD Trajectory Fundamentals: From Raw Data to Biological Insights

The Growing Challenge of MD Data Complexity in Modern Research

Molecular Dynamics (MD) simulation has become an indispensable tool in modern scientific research, from drug development to materials science. The core of MD involves constructing a particle-based description of a system and propagating it to generate a trajectory—a sequence of stored configurations or "frames" that describe its evolution over time [1]. As hardware innovations have made microsecond-length simulations routine, researchers now regularly grapple with trajectories comprising millions of frames, creating a massive data challenge [2] [1]. This data deluge complicates every step, from storage and processing to analysis and interpretation. This application note addresses these challenges by providing structured protocols and best practices for managing and extracting meaningful insights from complex MD data within the broader context of trajectory analysis and visualization research.

The Data Complexity Landscape

The complexity of modern MD data stems from multiple interconnected factors. The sheer volume of data generated poses significant storage and computational hurdles, with systems now routinely containing hundreds of thousands to millions of atoms [1]. This is compounded by the multidimensional nature of the data, where each frame contains spatial coordinates for all particles, and the temporal dimension adds another layer of complexity as researchers seek to understand dynamic processes occurring across multiple timescales [1]. Furthermore, data often exists in silos across various systems, hindering integration and unified analysis—a challenge recognized across data-intensive fields [3].

Effective management of this complexity requires more than just computational resources; it demands systematic approaches to data reduction, feature selection, and intelligent sampling. Without these strategies, researchers risk either being overwhelmed by data volume or missing crucial biological insights through inadequate sampling of conformational space.

Essential Visualization Software

Choosing appropriate visualization tools is crucial for interpreting MD trajectories. The table below compares popular visualization programs capable of handling MD data:

Table 1: Molecular Visualization Software for MD Trajectories

| Software | Trajectory Format Support | Key Features | Platform Compatibility |
|---|---|---|---|
| VMD | Native GROMACS (.xtc, .trr) | 3D graphics, built-in scripting, analysis of large biomolecular systems | Unix, Windows, macOS |
| PyMOL | Requires format conversion (e.g., to PDB); reads trajectories when compiled with VMD plugins | High-quality rendering, crystallography support, animations | Unix, Windows, macOS |
| Chimera | Native GROMACS trajectories | Python-based, full-featured, extensive plugin ecosystem | Cross-platform |
| OVITO | Various particle-based formats | Specialized for particle simulations, real-time interactive exploration, Python integration | Cross-platform |
| NGLView | Multiple formats via MDAnalysis | Jupyter notebook integration, web-based visualization | Web-based, Jupyter environments |
| MolecularNodes | MD trajectories via MDAnalysis | Blender integration, high-quality scientific rendering | Blender plugin |

Each visualization tool employs different heuristics for determining chemical bonds based solely on coordinate files, meaning rendered bonds may not always match topology definitions in your topology (.top) or run input (.tpr) files [4]. This discrepancy can lead to misinterpretation if not properly understood.

Specialized tools like OVITO Pro offer advanced capabilities for large-scale data (100M+ particles), including path-tracing rendering engines, multi-viewport comparative visualizations, and extensive Python scripting support for automated, reproducible analysis workflows [5]. The MolecularNodes plugin for Blender provides a bridge between structural biology data and high-quality rendering capabilities, enabling publication-quality visuals and animations [6].

Key Analysis Techniques and Tools

Dimensionality Reduction Methods

Dimensionality reduction techniques are essential for simplifying complex trajectory data while preserving structurally and functionally relevant information.

Principal Component Analysis (PCA): This linear technique identifies the orthogonal directions (principal components) that capture the greatest variance in the data, effectively reducing dimensionality while preserving global structure. PCA is particularly valuable for initial exploratory analysis of conformational landscapes [7].
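
As a minimal sketch of the idea, assuming the trajectory has already been aligned and loaded into a NumPy array of shape (frames, atoms, 3), PCA can be written as a singular value decomposition of the mean-centred coordinate matrix. The synthetic `coords` array and the helper name `pca_project` are illustrative assumptions, not part of any library named in this guide.

```python
import numpy as np

def pca_project(coords, n_components=2):
    """Project aligned coordinates (n_frames, n_atoms, 3) onto principal components."""
    X = coords.reshape(len(coords), -1)       # flatten to (n_frames, 3 * n_atoms)
    X = X - X.mean(axis=0)                    # centre each Cartesian coordinate
    # SVD of the centred data: rows of Vt are the principal axes
    U, S, Vt = np.linalg.svd(X, full_matrices=False)
    explained = S**2 / np.sum(S**2)           # fraction of variance per component
    projection = X @ Vt[:n_components].T      # (n_frames, n_components)
    return projection, explained[:n_components]

# Synthetic stand-in for a trajectory: 200 frames, 10 atoms, one dominant motion
rng = np.random.default_rng(0)
mode = rng.normal(size=(10, 3))
amplitude = np.sin(np.linspace(0, 4 * np.pi, 200))
coords = amplitude[:, None, None] * mode + 0.05 * rng.normal(size=(200, 10, 3))

projection, explained = pca_project(coords)
print(projection.shape, explained.round(3))
```

Plotting the first two columns of `projection` against each other gives the familiar conformational-landscape scatter plot; the `explained` fractions indicate how much of the total variance those axes capture.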

t-distributed Stochastic Neighbor Embedding (t-SNE): Unlike PCA, t-SNE focuses on preserving local similarities, making it well suited to cluster visualization and identifying distinct conformational states. However, it is computationally intensive and may not preserve global data structure, so distances between well-separated clusters in the embedding should not be over-interpreted [7].

Time-lagged Independent Component Analysis (tICA): This method identifies slow, functionally relevant motions by seeking components that maximize autocorrelation at a specified lag time. While powerful for kinetic analysis, tICA requires careful parameter selection and can behave as a "black box," producing components that lack intuitive physical interpretation [2].
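
To make the "maximize autocorrelation at a lag time" statement concrete, the following sketch solves the generalized eigenvalue problem C(τ)v = λC(0)v with plain NumPy. It is an illustration under simplifying assumptions (a single trajectory, symmetrized covariance estimates, a hypothetical helper named `tica`) and is not the implementation of any particular package.

```python
import numpy as np

def tica(X, lag, n_components=1):
    """Minimal tICA for features X of shape (n_frames, n_features)."""
    X = X - X.mean(axis=0)
    X0, Xt = X[:-lag], X[lag:]
    n = len(X0)
    # Symmetrized instantaneous and time-lagged covariance matrices
    C0 = (X0.T @ X0 + Xt.T @ Xt) / (2 * n)
    Ct = (X0.T @ Xt + Xt.T @ X0) / (2 * n)
    # Whiten with C0 so the generalized problem becomes an ordinary symmetric one
    s, U = np.linalg.eigh(C0)
    keep = s > 1e-10 * s.max()
    W = U[:, keep] / np.sqrt(s[keep])
    lam, V = np.linalg.eigh(W.T @ Ct @ W)
    order = np.argsort(lam)[::-1][:n_components]
    return lam[order], W @ V[:, order]

# Synthetic features: one slow low-variance coordinate, one fast high-variance one
rng = np.random.default_rng(1)
t = np.arange(5000)
slow = np.sin(2 * np.pi * t / 1000)
fast = 5.0 * rng.normal(size=t.size)
X = np.column_stack([slow, fast])

eigenvalues, components = tica(X, lag=10)
weights = np.abs(components[:, 0])
print(eigenvalues, weights)
```

In this toy case PCA would rank the noisy coordinate first because it has the larger variance, whereas the leading tICA component loads on the slow coordinate. The result still depends on the chosen lag, which is the parameter-selection issue noted above.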

Clustering Algorithms

Clustering groups structurally similar frames to identify distinct conformational states and representative structures.

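K-Means Clustering: This partition-based algorithm divides frames into a predetermined number of clusters (k) by assigning each frame to its nearest cluster centroid. Because centroids are averages, it operates on feature vectors (for example aligned coordinates, whose Euclidean distance is proportional to RMSD, or principal-component projections) rather than on a precomputed pairwise RMSD matrix. It is computationally efficient for large datasets but requires prior knowledge of the appropriate number of clusters [7].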
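
A minimal sketch of Lloyd's algorithm, the standard iteration behind k-means, is shown below. It assumes frames have already been converted to feature vectors (here a synthetic two-dimensional array `X`); the function name `kmeans` and its parameters are illustrative rather than taken from a specific library.

```python
import numpy as np

def kmeans(X, k, n_iter=100, seed=0):
    """Cluster feature vectors X (n_frames, n_features) into k groups."""
    rng = np.random.default_rng(seed)
    centers = X[rng.choice(len(X), size=k, replace=False)]  # random initial centroids
    for _ in range(n_iter):
        # Assign every frame to its nearest centroid (Euclidean distance)
        d = np.linalg.norm(X[:, None, :] - centers[None, :, :], axis=2)
        labels = d.argmin(axis=1)
        # Recompute centroids; keep the old one if a cluster became empty
        new_centers = np.array([
            X[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
            for j in range(k)
        ])
        if np.allclose(new_centers, centers):
            break
        centers = new_centers
    return labels, centers

# Two well-separated synthetic "conformational states"
rng = np.random.default_rng(4)
X = np.vstack([rng.normal(0.0, 0.5, size=(100, 2)),
               rng.normal(10.0, 0.5, size=(100, 2))])
labels, centers = kmeans(X, k=2)
print(np.bincount(labels))
```

For real trajectories the result can depend on the initial centroids, so running several seeds and comparing the partitions is a common precaution.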

Hierarchical Clustering: This approach builds a tree-like structure (dendrogram) illustrating relationships between frames at multiple levels of similarity. It does not require pre-specifying cluster count and provides intuitive visualizations of conformational relationships [7].

RMSD-based Clustering: This physically intuitive approach uses root-mean-square deviation of atomic positions as the similarity metric. The workflow involves: (1) calculating a pairwise RMSD matrix between all frames, (2) clustering based on these distances, and (3) selecting representative structures from each cluster [2].
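
Step (1) of this workflow can be sketched with NumPy alone. The example assumes each frame is an (atoms, 3) array and uses the Kabsch superposition, so that each pairwise value is the minimum RMSD after optimal rotation and translation. The helper names `kabsch_rmsd` and `pairwise_rmsd` are hypothetical, and the double loop is only practical for a few thousand frames.

```python
import numpy as np

def kabsch_rmsd(P, Q):
    """Minimum RMSD between two (n_atoms, 3) coordinate sets after superposition."""
    P = P - P.mean(axis=0)                     # remove translation
    Q = Q - Q.mean(axis=0)
    U, S, Vt = np.linalg.svd(P.T @ Q)          # SVD of the covariance matrix
    d = 1.0 if np.linalg.det(U @ Vt) >= 0 else -1.0   # exclude reflections
    # Minimal squared deviation expressed through the singular values
    msd = (np.sum(P**2) + np.sum(Q**2) - 2.0 * (S[0] + S[1] + d * S[2])) / len(P)
    return np.sqrt(max(msd, 0.0))

def pairwise_rmsd(coords):
    """Symmetric N x N matrix of pairwise RMSD values for coords (N, n_atoms, 3)."""
    n = len(coords)
    D = np.zeros((n, n))
    for i in range(n):
        for j in range(i + 1, n):
            D[i, j] = D[j, i] = kabsch_rmsd(coords[i], coords[j])
    return D

# Three synthetic frames: reference, rotated and shifted copy, perturbed copy
rng = np.random.default_rng(2)
ref = rng.normal(size=(20, 3))
c, s = np.cos(0.7), np.sin(0.7)
Rz = np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])
frames = np.stack([ref, ref @ Rz.T + 3.0, ref + 0.5 * rng.normal(size=ref.shape)])
D = pairwise_rmsd(frames)
print(D.round(3))
```

The rotated copy has an RMSD of essentially zero to the reference, which is the behaviour needed before clustering: rigid-body motion must not count as structural change.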

Feature Selection in Multivariate Analysis

Feature selection is critical for building interpretable models that focus on the most relevant structural descriptors. Key methods include:

Forward Selection: Begins with no features and incrementally adds the most statistically significant contributors to model performance.

Backward Elimination: Starts with all features and iteratively removes the least significant ones.

Recursive Feature Elimination: Systematically eliminates the weakest features through multiple modeling iterations, refining the feature set based on model performance [7].

These techniques help mitigate the "curse of dimensionality" by focusing analysis on the most informative structural parameters, reducing computational overhead, and improving model interpretability.
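
As an illustration of forward selection, the sketch below greedily adds the feature that most reduces the residual sum of squares of an ordinary least-squares fit. Using the residual as the selection criterion is an assumption made for brevity (a significance test or cross-validated score could be substituted), and `forward_selection` is a hypothetical helper name.

```python
import numpy as np

def forward_selection(X, y, n_select):
    """Greedily pick n_select columns of X that best explain y (least squares)."""
    selected = []
    remaining = list(range(X.shape[1]))
    for _ in range(n_select):
        best_feature, best_rss = None, np.inf
        for f in remaining:
            # Fit y on the already selected features plus candidate f (with intercept)
            A = np.column_stack([np.ones(len(X)), X[:, selected + [f]]])
            coef, *_ = np.linalg.lstsq(A, y, rcond=None)
            rss = np.sum((y - A @ coef) ** 2)
            if rss < best_rss:
                best_feature, best_rss = f, rss
        selected.append(best_feature)
        remaining.remove(best_feature)
    return selected

# Synthetic descriptors: only columns 2 and 4 actually drive the response
rng = np.random.default_rng(5)
X = rng.normal(size=(300, 6))
y = 3.0 * X[:, 2] - 2.0 * X[:, 4] + 0.1 * rng.normal(size=300)
selected = forward_selection(X, y, n_select=2)
print(selected)
```

Backward elimination follows the same pattern in reverse: start from all columns and repeatedly drop the one whose removal increases the residual least.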

Experimental Protocols

Protocol 1: RMSD-Based Clustering for State Identification

This protocol provides a robust method for identifying major conformational states in MD trajectories through RMSD-based clustering [2].

Workflow Diagram: RMSD Clustering Protocol

Step-by-Step Methodology:

Pairwise RMSD Matrix Calculation: For an N-frame trajectory, compute the all-atom or backbone RMSD between every pair of frames (i, j), generating an N×N symmetric matrix where each element represents the structural distance between frames i and j. Visually inspect the heatmap representation of this matrix, where dark regions indicate structural similarity and bright bands indicate large deviations; block-like structures along the diagonal suggest stable conformational basins [2].

Hierarchical Clustering: Apply agglomerative hierarchical clustering to the RMSD matrix using an appropriate linkage method (e.g., Ward's method; note that Ward linkage formally assumes Euclidean distances, so average linkage is a common alternative for RMSD matrices). This progressively merges the most similar frames and clusters into a tree structure. Generate a dendrogram to visualize the clustering, where the vertical axis represents RMSD distance at merger and colored branches highlight distinct conformational basins [2].

Medoid Identification and Sampling: For each cluster, identify the medoid, the frame with the smallest average RMSD to all other cluster members, which represents the most central structure. For comprehensive representation, sample frames from each cluster in proportion to cluster population. Provided the trajectory itself samples the equilibrium ensemble, cluster populations approximate Boltzmann weights, so the final ensemble reflects the relative stability (probability) of each conformational state [2].
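
The clustering and medoid steps can be sketched on any precomputed distance matrix. The example below uses a deliberately naive average-linkage implementation in NumPy so that it stays self-contained; average linkage is swapped in here for simplicity and is not the Ward linkage mentioned above. The function names are hypothetical, and a dedicated library routine would be preferable for more than a few hundred frames.

```python
import numpy as np

def average_linkage(D, n_clusters):
    """Naive agglomerative clustering on a precomputed distance matrix D (n, n)."""
    clusters = [[i] for i in range(len(D))]
    while len(clusters) > n_clusters:
        best = (np.inf, 0, 1)
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                # Average distance between all members of the two clusters
                d = D[np.ix_(clusters[a], clusters[b])].mean()
                if d < best[0]:
                    best = (d, a, b)
        _, a, b = best
        clusters[a] = clusters[a] + clusters[b]   # merge the closest pair
        del clusters[b]
    labels = np.empty(len(D), dtype=int)
    for k, members in enumerate(clusters):
        labels[members] = k
    return labels

def medoids(D, labels):
    """Return {cluster label: frame index with the lowest mean distance to its cluster}."""
    result = {}
    for k in np.unique(labels):
        members = np.flatnonzero(labels == k)
        sub = D[np.ix_(members, members)]
        result[int(k)] = int(members[sub.mean(axis=1).argmin()])
    return result

# Toy distance matrix built from 1D "structures" forming two obvious groups
x = np.array([0.0, 1.0, 2.0, 50.0, 51.0, 52.0])
D = np.abs(x[:, None] - x[None, :])
labels = average_linkage(D, n_clusters=2)
centers = medoids(D, labels)
print(labels, centers)
```

With a real trajectory, `D` would be the pairwise RMSD matrix from the first step, and the medoid indices identify the frames to export as representative structures.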

Protocol 2: Trajectory Clustering for Periodic Boundary Condition Correction

This protocol corrects for artifacts introduced by periodic boundary conditions, which is essential for accurate calculation of properties like radius of gyration [4].

Workflow Diagram: PBC Correction Protocol

Step-by-Step Methodology:

Initial Clustering Step: Process your trajectory using `gmx trjconv -f input.xtc -o clustered.gro -e 0.001 -pbc cluster`. This writes a single frame in which the molecules of the selected group are clustered together rather than split across periodic boundaries. Use the first frame of your trajectory for this step, as other timepoints may not work correctly [4].

Regenerate Run Input File: Create a new run input file using `gmx grompp -f parameters.mdp -c clustered.gro -o new_input.tpr`. This ensures the reference structure in the run input file matches the newly clustered structure [4].

Apply Continuous Correction: Process the full trajectory using the new input file: `gmx trjconv -f input.xtc -o corrected_trajectory.xtc -s new_input.tpr -pbc nojump`. This produces a final trajectory without periodic boundary jumps, enabling accurate property calculations [4].
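
To illustrate what removing periodic jumps means, the sketch below unwraps coordinates frame by frame using the minimum-image displacement. It is a conceptual illustration only, not the GROMACS implementation, and it assumes a constant orthorhombic box and per-frame displacements smaller than half a box length. The name `unwrap_nojump` is hypothetical.

```python
import numpy as np

def unwrap_nojump(positions, box):
    """Unwrap positions (n_frames, n_atoms, 3) for an orthorhombic box of lengths box (3,)."""
    unwrapped = positions.astype(float).copy()
    for t in range(1, len(positions)):
        step = positions[t] - positions[t - 1]
        step -= box * np.round(step / box)        # minimum-image displacement
        unwrapped[t] = unwrapped[t - 1] + step    # accumulate continuous motion
    return unwrapped

# One atom drifting steadily along x and being wrapped back into a unit box
box = np.array([1.0, 1.0, 1.0])
true_x = 0.1 + 0.4 * np.arange(5)
wrapped = np.zeros((5, 1, 3))
wrapped[:, 0, 0] = true_x % 1.0
unwrapped = unwrap_nojump(wrapped, box)
print(unwrapped[:, 0, 0])
```

The wrapped x coordinate falls from 0.9 to 0.3 between the third and fourth frames; after unwrapping the atom continues smoothly to 1.3. Properties such as radius of gyration need this continuity.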

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Essential Software Tools for MD Trajectory Analysis

| Tool Name | Type/Category | Primary Function |
|---|---|---|
| GROMACS | MD Simulation Engine | High-performance MD simulation with extensive analysis utilities |
| MDAnalysis | Python Library | Trajectory analysis and manipulation, extensible framework for custom analyses |
| PyContact | Analysis Tool | Analysis of non-covalent interactions in MD trajectories [6] |
| LiPyphilic | Analysis Tool | Analysis of lipid membrane simulations [6] |
| MDTraj | Python Library | Fast trajectory manipulation and analysis |
| BioEn | Analysis Tool | Refinement of structural ensembles by integrating experimental data [6] |
| Grace | Plotting Tool | 2D plotting of analysis data (e.g., from GROMACS .xvg files) |
| Matplotlib | Python Library | Flexible data visualization and plotting in Python |
| GNUMAT | Statistical Tool | Statistical computing and graphics for advanced analysis |
| VMD | Visualization | Molecular visualization, animation, and analysis |
| OVITO | Visualization | Scientific visualization and analysis of particle-based data |
| MDAKits | Toolkit Ecosystem | Community-developed tools extending MDAnalysis functionality [6] |

The growing complexity of MD data presents both challenges and opportunities for modern research. Effectively managing this complexity requires a multifaceted approach combining appropriate visualization tools, robust analysis techniques, and systematic protocols. By implementing the strategies outlined in this application note—including intelligent sampling through RMSD clustering, proper trajectory correction, and leveraging the expanding ecosystem of analysis tools—researchers can extract meaningful biological insights from even the most complex MD datasets. As the field continues to evolve with increasingly sophisticated simulations, these foundational practices for trajectory analysis and visualization will remain essential for advancing scientific discovery in structural biology and drug development.

Essential Structural and Dynamic Parameters for Analysis

Molecular dynamics (MD) simulations generate vast amounts of data in the form of trajectories, which record the evolution of atomic coordinates and velocities over time. The analysis of these trajectories transforms raw simulation data into meaningful insights about biological processes, structural stability, and dynamic behavior. For researchers in structural biology and drug development, extracting essential parameters from MD trajectories provides the critical link between atomic-level movements and macromolecular function. This document outlines standardized protocols for calculating key structural and dynamic parameters, ensuring reproducibility and reliability in computational biophysical studies. The parameters and methods described herein form the foundation for interpreting simulations of biomolecular systems, from small proteins to large complexes.

Essential Analytical Parameters

The quantitative analysis of MD trajectories focuses on parameters that describe structural features, fluctuations, and interactions. The following table summarizes the essential parameters, their analytical significance, and typical applications in drug discovery research.

Table 1: Essential Structural and Dynamic Parameters for MD Trajectory Analysis

| Parameter | Mathematical Definition | Structural Interpretation | Research Application |
|---|---|---|---|
| Root Mean Square Deviation (RMSD) | $\text{RMSD}(t) = \sqrt{\frac{1}{N}\sum_{i=1}^{N} \lVert \vec{r}_i(t) - \vec{r}_i^{\text{ref}} \rVert^2}$ | Measures structural drift from reference conformation | Simulation equilibration assessment, conformational stability |
| Root Mean Square Fluctuation (RMSF) | $\text{RMSF}(i) = \sqrt{\frac{1}{T}\sum_{t=1}^{T} \lVert \vec{r}_i(t) - \langle\vec{r}_i\rangle \rVert^2}$ | Quantifies per-residue flexibility | Identifying flexible/rigid regions, binding site characterization |
| Radius of Gyration (Rg) | $R_g(t) = \sqrt{\frac{1}{M}\sum_{i=1}^{N} m_i \lVert \vec{r}_i(t) - \vec{r}_{\text{COM}}(t) \rVert^2}$ | Measures structural compactness | Protein folding studies, denaturation monitoring |
| Radial Distribution Function (RDF) | $g(r) = \frac{\langle \rho(r) \rangle}{\langle \rho \rangle_{\text{local}}} = \frac{N(r)}{V(r) \cdot \rho_{\text{tot}}}$ [8] | Describes particle density variation with distance | Solvation analysis, ion distribution around biomolecules |
| Hydrogen Bonds | $E_{\text{HB}} = -C \cdot F(\theta) \cdot \left[\left(\frac{\sigma}{r}\right)^{12} - \left(\frac{\sigma}{r}\right)^6\right]$ | Counts specific donor-acceptor pairs within cutoff | Protein-ligand interaction stability, secondary structure integrity |
| Torsion Angles | $\phi, \psi = \text{dihedral}(\vec{r}_{i-1}, \vec{r}_i, \vec{r}_{i+1}, \vec{r}_{i+2})$ | Defines protein backbone and side-chain conformations | Ramachandran plot analysis, conformational state identification |

These parameters enable researchers to quantify conformational changes, stability, and interactions that underlie biological function. For instance, RMSD provides a global measure of structural stability, while RMSF reveals local flexibility patterns often crucial for understanding allosteric regulation or binding site dynamics. The radial distribution function is particularly valuable for analyzing solvation structure and the ion atmosphere around nucleic acids or proteins. In its formula, the histogram of distances $N(r)$ is converted to a relative density by dividing by the volume of the spherical shell $V(r)$ and the total system density $\rho_{\text{tot}}$ [8].
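
The definitions for RMSD, RMSF, radius of gyration, and torsion angles translate almost line by line into NumPy. The sketch below assumes coordinates are already superposed onto the reference, since the RMSD formula as written contains no fitting step, and uses small synthetic arrays. All function names are illustrative.

```python
import numpy as np

def rmsd(frame, ref):
    """RMSD between one frame and a reference, both (n_atoms, 3), already superposed."""
    return np.sqrt(np.mean(np.sum((frame - ref) ** 2, axis=1)))

def rmsf(traj):
    """Per-atom RMSF for a trajectory of shape (n_frames, n_atoms, 3)."""
    mean_pos = traj.mean(axis=0)
    return np.sqrt(np.mean(np.sum((traj - mean_pos) ** 2, axis=2), axis=0))

def radius_of_gyration(frame, masses):
    """Mass-weighted radius of gyration of one frame."""
    com = np.average(frame, axis=0, weights=masses)
    sq_dist = np.sum((frame - com) ** 2, axis=1)
    return np.sqrt(np.sum(masses * sq_dist) / np.sum(masses))

def dihedral(p0, p1, p2, p3):
    """Signed dihedral angle in degrees defined by four points."""
    b0, b1, b2 = p0 - p1, p2 - p1, p3 - p2
    b1 = b1 / np.linalg.norm(b1)
    v = b0 - np.dot(b0, b1) * b1      # component of b0 perpendicular to the central bond
    w = b2 - np.dot(b2, b1) * b1      # component of b2 perpendicular to the central bond
    return np.degrees(np.arctan2(np.dot(np.cross(b1, v), w), np.dot(v, w)))

ref = np.array([[-1.0, 0.0, 0.0], [1.0, 0.0, 0.0]])
shifted = ref + np.array([1.0, 0.0, 0.0])
traj = np.array([[[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]],
                 [[0.0, 0.0, 0.0], [-1.0, 0.0, 0.0]]])

rmsd_value = rmsd(shifted, ref)
rmsf_values = rmsf(traj)
rg_value = radius_of_gyration(ref, masses=np.array([1.0, 1.0]))
angle = dihedral(np.array([0.0, 1.0, 0.0]), np.array([0.0, 0.0, 0.0]),
                 np.array([1.0, 0.0, 0.0]), np.array([1.0, 0.0, 1.0]))
print(rmsd_value, rmsf_values, rg_value, angle)
```

In practice these quantities are usually obtained from the analysis packages listed in this guide. Writing them out once is mainly useful for checking units, atom selections, and whether mass weighting is applied.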

Experimental Protocols

Protocol 1: Calculating Radial Distribution Functions

Purpose: To determine the spatial organization of particles around specific sites, such as water molecules around protein residues or ions around DNA.

Materials and Reagents:

- MD trajectory files (XTC, TRR, or other compatible formats)

- Topology file (TPR, PSF, or equivalent)

- Analysis software (GROMACS, MDAnalysis, AMS)

- Visualization tools (VMD, PyMOL, Chimera)

Procedure:

- Trajectory Preparation: Ensure the trajectory is properly aligned and periodic boundary conditions have been correctly handled. For non-periodic systems, explicitly define the maximum radius using the `Range` keyword of the AMS analysis input [8].

- Atom Selection: Define the two sets of atoms between which the RDF will be calculated using `AtomsFrom` and `AtomsTo` blocks, selecting by element, region, or atom indices [8].

- Parameter Configuration:

  - Set the number of bins (`NBins`) for the distance histogram (default: 1000) [8]

  - Define the maximum distance range (`Range`) for non-periodic systems

  - For NPT simulations, note that the volume is averaged over the trajectory

- Execution: Run the RDF calculation using appropriate commands for your analysis package:

  - GROMACS: `gmx rdf`

  - MDAnalysis: `InterRDF` analysis class

  - AMS: `Task RadialDistribution` [8]

- Interpretation: Plot g(r) versus distance r. Peaks indicate distances where particle density is higher than the system average, suggesting preferential coordination.
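
The normalization behind g(r) can be checked with a short NumPy sketch. It assumes a single frame of identical particles in a cubic periodic box and limits distances to half the box length so that the minimum-image convention is valid. The helper `rdf` is hypothetical and does not reproduce the algorithms of the packages listed above; for a trajectory the histogram would additionally be averaged over frames.

```python
import numpy as np

def rdf(positions, box_length, n_bins=50, r_max=None):
    """Radial distribution function for positions (n, 3) in a cubic periodic box."""
    n = len(positions)
    r_max = box_length / 2 if r_max is None else r_max
    delta = positions[:, None, :] - positions[None, :, :]
    delta -= box_length * np.round(delta / box_length)       # minimum image
    dist = np.linalg.norm(delta, axis=2)[np.triu_indices(n, k=1)]  # unique pairs
    counts, edges = np.histogram(dist, bins=n_bins, range=(0.0, r_max))
    shell_volume = 4.0 / 3.0 * np.pi * (edges[1:] ** 3 - edges[:-1] ** 3)
    n_pairs = n * (n - 1) / 2
    expected = n_pairs * shell_volume / box_length ** 3      # ideal-gas pair count
    r = 0.5 * (edges[1:] + edges[:-1])
    return r, counts / expected

# Uniformly random points behave like an ideal gas, so g(r) should hover around 1
rng = np.random.default_rng(3)
box_length = 10.0
positions = rng.uniform(0.0, box_length, size=(500, 3))
r, g = rdf(positions, box_length, n_bins=25)
print(np.round(g[5:], 2))
```

Deviations from 1 in a real system, such as a sharp first peak for water oxygens around a charged side chain, are what the interpretation step looks for.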

Troubleshooting Tips:

- For systems with varying numbers of atoms, ensure proper normalization of particle densities

- For accurate solvation shell analysis, use a sufficient number of frames to achieve statistical significance

- When analyzing protein-water RDFs, focus on the first hydration shell (typically 2.5-3.5 Å)

Protocol 2: Stability Analysis via RMSD and RMSF

Purpose: To assess protein structural stability and identify regions of high flexibility during simulation.

Materials and Reagents:

- Equilibrated MD trajectory

- Reference structure (typically the starting structure or experimental coordinates)

- Analysis software (GROMACS, MDAnalysis, CPPTRAJ)

Procedure:

- System Setup: Extract protein atoms from the trajectory, removing solvent and ions for protein-focused analysis.

- Structural Alignment: Superpose each frame to a reference structure using rotational and translational fitting to remove global motions.

- RMSD Calculation: Compute the root mean square deviation of atomic positions after alignment (optionally mass-weighted):

  - For backbone atoms to assess global structural stability

  - For specific domains to identify relative motions

- RMSF Calculation: Calculate residue-specific fluctuations after alignment to quantify local flexibility:

  - Per residue for identifying flexible loops or binding sites

  - Per atom for specific functional groups

- Time Series Analysis: Plot RMSD versus time to identify equilibration periods and stable simulation phases.

- Visualization: Map RMSF values onto protein structures to create flexibility maps.

Troubleshooting Tips:

- High initial RMSD values may indicate inadequate equilibration

- Sudden RMSD jumps may correspond to conformational transitions

- Compare RMSF patterns with experimental B-factors for validation

Visualization and Data Integration

Visualization Tools and Techniques

Effective visualization is essential for interpreting MD trajectories and communicating insights. The following table catalogs widely-used visualization packages and their specific capabilities for trajectory analysis.

Table 2: Molecular Visualization Software for MD Trajectory Analysis

| Software | Trajectory Format Support | Strengths | Accessibility |
|---|---|---|---|
| VMD | Reads GROMACS trajectories (XTC, TRR) natively [4] | Advanced scripting (Tcl/Python), extensive analysis modules [4] | Free academic use, cross-platform |
| PyMOL | Requires format conversion (e.g., to PDB); can read TRR/XTC with VMD plugins [4] | High-quality rendering, crystallography tools, publication-ready images [4] | Freemium model, cross-platform |
| Chimera | Reads GROMACS trajectories natively [4] | User-friendly interface, Python scripting, integrative modeling [4] | Free academic use, cross-platform |
| MDAnalysis | Supports GROMACS (GRO, XTC, TRR), AMBER, CHARMM, LAMMPS formats [9] | Programmatic analysis, Python API, extensive analysis library [9] | Open source, Python library |

When visualizing analytical results, careful attention to color selection enhances interpretation and accessibility. For categorical data distinguishing discrete entities (e.g., different protein chains or ligand types), use a palette with maximized contrast between neighboring colors [10]. For sequential data representing intensity or magnitude (e.g., RMSF values mapped onto a structure), employ monochromatic scales where darker tones represent higher values in light themes [10]. Ensure all visual elements maintain sufficient color contrast, with a minimum ratio of 4.5:1 for standard text and 3:1 for large text [11].
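
The contrast thresholds can be verified programmatically. The sketch below assumes the WCAG 2.x definitions of relative luminance and contrast ratio, which is where the 4.5:1 and 3:1 figures originate. The function names are illustrative.

```python
def relative_luminance(rgb):
    """Relative luminance of an sRGB colour given as 0-255 integers (WCAG 2.x)."""
    def linearize(channel):
        c = channel / 255.0
        return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4
    r, g, b = (linearize(c) for c in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def contrast_ratio(foreground, background):
    """Contrast ratio between two colours, always >= 1."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)), reverse=True
    )
    return (lighter + 0.05) / (darker + 0.05)

black_on_white = contrast_ratio((0, 0, 0), (255, 255, 255))
grey_on_white = contrast_ratio((118, 118, 118), (255, 255, 255))
print(round(black_on_white, 2), round(grey_on_white, 2))
```

Checking axis labels, legends, and annotation text against the figure background in this way is a quick safeguard before exporting plots or rendered structures.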

Data Integration Frameworks

Modern MD analysis increasingly combines computational and experimental data through integrative structural biology approaches. Three principal frameworks enable this integration:

Independent Approach: Computational and experimental protocols are performed separately, with results compared post-hoc. This method can reveal unexpected conformations but may struggle with rare events [12].

Guided Simulation: Experimental data are incorporated as restraints during simulation, directly guiding conformational sampling. This approach efficiently explores experimentally-relevant regions but requires implementation of appropriate restraint potentials [12].

Search and Select: A large ensemble of conformations is generated first, then filtered against experimental data. This method allows integration of multiple data types but requires comprehensive initial sampling [12].

These integrative approaches are particularly valuable for drug discovery, where experimental data on ligand binding can constrain docking simulations, or cryo-EM density maps can guide flexible fitting of atomic models.

Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for MD Analysis

| Tool/Resource | Type | Function | Access |
|---|---|---|---|
| GROMACS | Software Suite | Molecular dynamics simulation and analysis [4] | Open source |
| MDAnalysis | Python Library | Trajectory analysis and data reduction [9] | Open source |
| CHARMM22* | Force Field | Empirical energy function for MD simulations [13] | Academic license |
| mdCATH Dataset | Reference Data | Large-scale MD trajectories for 5,398 protein domains [13] | CC BY 4.0 license |
| MoDEL Database | Reference Data | Database of protein molecular dynamics trajectories [14] | Public web server |
| VMD | Visualization | Molecular visualization and trajectory analysis [4] | Free academic |

The standardized protocols and parameters outlined in this document provide a robust framework for extracting biologically meaningful information from MD trajectories. By applying these methods consistently and with attention to analytical details, researchers can reliably connect atomic-level dynamics to macromolecular function. The integration of computational analysis with experimental data further strengthens the interpretative power of MD simulations, particularly in drug discovery applications where understanding flexible binding sites and allosteric mechanisms is crucial. As MD datasets continue to grow in scale and accessibility, exemplified by resources like mdCATH [13] and MoDEL [14], the adoption of standardized analysis protocols will become increasingly important for ensuring reproducibility and facilitating cross-study comparisons in computational biophysics.

In molecular dynamics (MD) simulations, the transition from raw trajectory data to meaningful scientific insight is mediated by visualization. Advances in high-performance computing now enable simulations of biological systems involving millions to billions of atoms over increasingly long timescales, generating enormous amounts of complex trajectory data [15]. Effective visualization techniques are therefore crucial for interpreting the structural and dynamic information contained within these simulations. This document examines the core principles of static versus dynamic representations within MD trajectory analysis, providing a framework for researchers and drug development professionals to select appropriate visualization strategies based on their analytical goals. The choice between these representation modes represents a fundamental decision point in the scientific workflow, influencing what features of molecular behavior can be effectively observed and communicated.

Core Conceptual Framework: Static vs. Dynamic Representations

Static and dynamic visualizations serve complementary roles in MD analysis, each with distinct advantages for revealing different aspects of molecular behavior.

Static representations capture the molecular system at a single point in time, typically as a single frame or structure extracted from a trajectory. These representations excel at highlighting specific structural features, molecular interfaces, or precise atomic arrangements. They provide a fixed reference for detailed examination of particular conformational states, binding sites, or molecular surfaces. Common static representations include cartoon diagrams of secondary structure, space-filling models, ball-and-stick models, and surface representations.

Dynamic representations encompass the temporal evolution of the molecular system across multiple time points, preserving information about molecular motion, conformational changes, and transition pathways. These representations include trajectory animations, interactive molecular dynamics, and collective motion visualizations. Dynamic representations are essential for understanding processes such as protein folding, ligand binding, allosteric transitions, and functional mechanisms that emerge from molecular motions rather than static snapshots.

The integration of both approaches creates a powerful analytical framework where static snapshots provide structural context and dynamic visualizations reveal functional mechanisms, together enabling comprehensive understanding of biomolecular systems.

Quantitative Comparison of Representation Approaches

Table 1: Characteristic Comparison of Static and Dynamic Visualization Approaches

| Feature | Static Representations | Dynamic Representations |
|---|---|---|
| Temporal Resolution | Single time point | Multiple time points across trajectory |
| Data Volume | Low (single structure) | High (thousands to millions of frames) |
| Primary Analytical Value | Structural detail, specific interactions | Motions, pathways, transitions, kinetics |
| Common Visualization Forms | Cartoon diagrams, surface representations, electron density maps | Trajectory animations, interactive MD, pathline diagrams |
| Information Compression | None | Temporal averaging, dimensionality reduction |
| Computational Requirements | Low to moderate | High (processing, memory, rendering) |
| Suitable for Publication | Excellent | Limited (static images from dynamics) |

Table 2: Software Tools for Molecular Visualization

| Software | Representation Type | MD Format Support | Key Capabilities | Platform |
|---|---|---|---|---|
| VMD | Both static & dynamic | Native GROMACS support [4] | 3D graphics, built-in scripting, trajectory analysis | Cross-platform |
| PyMOL | Primarily static | Requires format conversion [4] | High-quality rendering, crystallography | Cross-platform |
| Chimera | Both static & dynamic | Reads GROMACS trajectories [4] | Python-based, extensive feature set | Cross-platform |
| Web-based Tools | Both static & dynamic | Varies by implementation [15] | Accessibility, collaboration, notebook integration | Web browser |

Experimental Protocols

Protocol 1: Generating Representative Structures via RMSD Clustering

Purpose: To identify and extract structurally distinct conformational states from MD trajectories using a physically intuitive metric.

Principle: This method groups trajectory frames based on structural similarity measured by Root-Mean-Square Deviation (RMSD), effectively identifying major conformational states without the mathematical complexity of kinetic analysis methods like tICA [2].

Methodology:

- Calculate pairwise RMSD matrix: Compute the backbone RMSD between every pair of frames in the trajectory, generating an N×N distance matrix where N is the number of frames.

- Cluster analysis: Apply a clustering algorithm (e.g., hierarchical clustering) to group frames with low pairwise RMSD into structurally similar clusters.

- Identify representative structures: For each cluster, select the medoid - the frame with the lowest average RMSD to all other cluster members.

- Weighted sampling: Sample frames proportionally to cluster size to maintain Boltzmann-weighted representation of states [2].
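
The weighted-sampling step can be sketched as follows, assuming cluster labels are already available for every frame. The helper `sample_by_population` is hypothetical; it allocates a quota to each cluster in proportion to its population and then draws frames at random within each cluster.

```python
import numpy as np

def sample_by_population(labels, n_samples, seed=0):
    """Pick n_samples frame indices so that clusters are represented by population."""
    rng = np.random.default_rng(seed)
    labels = np.asarray(labels)
    clusters, counts = np.unique(labels, return_counts=True)
    ideal = n_samples * counts / counts.sum()
    quotas = np.floor(ideal).astype(int)
    # Hand any leftover samples to the clusters with the largest fractional share
    for k in np.argsort(ideal - quotas)[::-1][: n_samples - quotas.sum()]:
        quotas[k] += 1
    chosen = []
    for cluster, quota in zip(clusters, quotas):
        members = np.flatnonzero(labels == cluster)
        chosen.extend(rng.choice(members, size=quota, replace=False))
    return np.sort(np.array(chosen, dtype=int))

# 100 frames split 70/20/10 between three conformational states
labels = np.array([0] * 70 + [1] * 20 + [2] * 10)
frames = sample_by_population(labels, n_samples=10)
print(frames, np.bincount(labels[frames]))
```

The selected ensemble keeps the 7:2:1 ratio of the original populations. This only matches Boltzmann weights if the trajectory itself is a fair sample of the equilibrium distribution.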


Protocol 2: Dynamic Visualization of Trajectory Data

Purpose: To create dynamic visualizations that accurately represent molecular motions and transitions across simulation timescales.

Principle: Dynamic representations preserve temporal relationships between conformational states, enabling observation of transition pathways, correlated motions, and mechanistic insights [15].

Methodology:

- Trajectory preprocessing: Ensure trajectory continuity using tools like `gmx trjconv` with `-pbc cluster` and `-pbc nojump` options to correct for periodic boundary artifacts [4].

- Representation selection: Choose appropriate visualization styles (cartoon, licorice, surface) balanced against computational performance for smooth animation.

- Animation parameters: Set appropriate frame sampling rates to capture relevant motions without excessive data volume.

- Interactive exploration: Utilize tools like VMD or Chimera for real-time manipulation of trajectory playback, including speed adjustment, rotation, and focused analysis on specific regions [4] [15].


Table 3: Essential Resources for MD Visualization and Analysis

| Resource Category | Specific Tools/Solutions | Primary Function | Application Context |
|---|---|---|---|
| Visualization Software | VMD, PyMOL, Chimera [4] | 3D molecular visualization and trajectory rendering | Static and dynamic representation of molecular structures |
| Trajectory Analysis | GROMACS (gmx trjconv, gmx traj) [4] | Trajectory processing, transformation, and data extraction | Preprocessing, clustering, and feature calculation |
| Dimensionality Reduction | tICA, RMSD clustering [2] | Identification of key conformational states and motions | State identification, kinetic analysis, and sampling |
| Programming Environments | Python with Matplotlib, NumPy [4] | Custom analysis, plotting, and data processing | Quantitative analysis and visualization of trajectory data |
| Specialized Analysis | Custom clustering scripts, dimensionality reduction | Identification of metastable states and conformational landscapes | Advanced analysis of complex dynamics |

Integrated Analysis Workflow: From Raw Data to Scientific Insight

Purpose: To provide a comprehensive framework for transitioning from raw trajectory data to scientifically meaningful insights through an integrated visualization strategy.

Principle: Effective MD analysis requires iterative application of both static and dynamic visualization approaches, with each informing the other in a cyclic workflow [15] [2].

Methodology:

- Initial trajectory assessment: Use dynamic visualization to gain overview of system behavior, identify major conformational transitions, and assess simulation stability.

- Key frame identification: Apply RMSD clustering or similar approaches to extract representative structures from dominant conformational states.

- Static structural analysis: Examine representative structures in detail using multiple static representations to understand specific molecular interactions, binding interfaces, or structural features.

- Dynamic context validation: Return to dynamic visualization to confirm the functional relevance and transition pathways between structurally identified states.

- Quantitative correlation: Integrate quantitative measurements from trajectory analysis with visual observations to build mechanistic models.

Workflow Diagram:

Advanced Applications and Future Directions

The field of MD visualization continues to evolve with several emerging technologies enhancing both static and dynamic representation capabilities. Virtual reality (VR) environments provide immersive exploration of complex molecular dynamics, allowing researchers to naturally interact with and manipulate molecular structures in three-dimensional space [15]. Web-based visualization tools have improved accessibility and collaboration potential, enabling researchers to share and analyze trajectories through browser-based interfaces without specialized local software [15]. Deep learning approaches are being integrated into visualization pipelines, allowing for photorealistic rendering of molecular representations and automated identification of relevant features within trajectories [15]. These advancements are particularly valuable for drug development professionals who require intuitive access to complex dynamic processes underlying molecular recognition and binding mechanisms.

Molecular Dynamics (MD) simulation is a cornerstone technique in computational chemistry and drug development, generating vast amounts of trajectory data that require sophisticated analysis to extract meaningful biological and physical insights. The process of analyzing MD trajectories is often fragmented, requiring researchers to chain together multiple software tools and write bespoke scripts for routine structural and dynamic analyses [16]. This workflow complexity creates a significant barrier to efficiency, standardization, and reproducibility, particularly for non-specialists and in high-throughput settings [16]. Within the context of best practices for MD trajectory analysis and visualization techniques research, this application note provides a structured framework for selecting appropriate software tools based on specific analysis requirements. We present a comprehensive comparison of widely used MD analysis tools, detailed experimental protocols for common analyses, and visualization best practices to ensure research quality and reproducibility.

Analysis Requirements and Software Capabilities

The MD analysis software ecosystem comprises specialized tools with complementary strengths. The selection strategy must align computational requirements with scientific objectives, considering factors such as performance, interoperability, and learning curve.

Table 1: Core MD Analysis Tools and Their Primary Applications

| Software Tool | Primary Analysis Capabilities | Interface/Environment | Key Strengths |

|---|---|---|---|

| FastMDAnalysis [16] | Automated end-to-end analysis (RMSD, RMSF, Rg, HB, SASA, SS, PCA, clustering) | Python library with unified interface | Reproducibility, >90% code reduction for standard workflows, high-performance |

| MDAnalysis [17] | Flexible trajectory analysis, reading/writing multiple formats | Python library | Extensible API, integration with NumPy/SciPy, custom analysis development |

| MDTraj [17] | Fast RMSD calculations, geometric analyses (bonds, angles, dihedrals, HB, SS, NMR) | Python library | Speed with large datasets, efficient common analysis tasks |

| VMD [17] | Visualization, animation, structural analysis | Graphical with Tcl/Python scripting | Interactive visualization, extensive format support, powerful scripting |

| CPPTRAJ [17] | Trajectory processing, wide range of analyses | AmberTools command-line | Extensive analysis/manipulation functions, scripting for automation |

| GROMACS Tools [17] | Analysis of GROMACS trajectories | Suite of utilities | Highly optimized performance, seamless GROMACS workflow integration |

| PyEMMA [17] | Markov state models, kinetic analysis, TICA | Python library | MSM estimation/validation, advanced kinetic analysis |

| PLUMED [17] | Enhanced sampling, free energy calculations, analysis | Library interfacing with MD codes | Advanced sampling algorithms, free energy methods |

| Free Energy Landscape-MD [17] | Free energy landscape analysis and visualization | Python package | 3D FEL plots, PCA-based landscape generation, minima identification |

| gmx_MMPBSA [17] | MM/PBSA and MM/GBSA binding free energy calculations | Python-based tool | Easy-to-use interface, GROMACS integration |

For researchers requiring automated, reproducible workflows for standard MD analyses, FastMDAnalysis provides a unified framework that significantly reduces scripting overhead [16]. In a case study analyzing a 100 ns simulation of Bovine Pancreatic Trypsin Inhibitor, FastMDAnalysis with a single command performed a comprehensive conformational analysis, including root-mean-square deviation (RMSD), root-mean-square fluctuation (RMSF), radius of gyration (Rg), hydrogen bonding (HB), solvent accessible surface area (SASA), secondary structure assignment (SS), principal component analysis (PCA), and hierarchical clustering, producing results in under 5 minutes [16]. For specialized analyses such as free energy calculations or Markov state modeling, domain-specific tools like PLUMED and PyEMMA offer advanced capabilities that can be integrated into broader workflows [17].

Experimental Protocols for Key Analyses

Protocol 1: Comprehensive Protein Trajectory Analysis Using FastMDAnalysis

This protocol describes an automated workflow for comprehensive protein dynamics analysis, suitable for characterizing conformational changes, flexibility, and structural stability.

Research Reagent Solutions:

- MD Trajectory File: Container for atomic coordinates over time (format: XTC, TRR, DCD, etc.)

- Topology File: Molecular structure definition (format: PDB, PRMTOP, etc.)

- Solvent Model: Explicit or implicit solvent representation

- Force Field Parameters: Atomic interaction potentials (e.g., OPLS4, AMBER, CHARMM)

- FastMDAnalysis Environment: Python installation with required dependencies (MDTraj, scikit-learn)

Procedure:

- Software Installation: Install FastMDAnalysis from the GitHub repository (https://github.com/aai-research-lab/fastmdanalysis) following the provided installation guide [16].

- Environment Setup: Initialize the analysis environment with trajectory and topology file paths.

- Analysis Configuration: Specify analysis parameters in a configuration file or script:

- RMSD reference structure (e.g., initial frame or average structure)

- Hydrogen bond distance and angle cutoffs

- SASA probe radius

- PCA components to retain

- Clustering algorithm parameters

- Workflow Execution: Execute the comprehensive analysis with a single command or short script.

- Output Generation: Review publication-quality figures and machine-readable data files for all analyses.

Expected Outputs:

- Time-series data for RMSD, RMSF, Rg, SASA

- Hydrogen bond occupancy and lifetime statistics

- Secondary structure assignment timelines

- Principal components and projection of trajectories

- Cluster populations and representative structures

- Execution log with parameters for reproducibility
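To make the first three outputs concrete, the following minimal NumPy sketch shows what RMSD, RMSF, and Rg measure. It is not the FastMDAnalysis implementation: it assumes the frames have already been superimposed on the reference, uses synthetic coordinates, and all function names are illustrative.

```python
import numpy as np

def rmsd_to_reference(traj, ref):
    """Per-frame RMSD to `ref`; assumes frames are already superimposed."""
    diff = traj - ref                      # shape (n_frames, n_atoms, 3)
    return np.sqrt((diff ** 2).sum(axis=2).mean(axis=1))

def rmsf(traj):
    """Per-atom fluctuation around the time-averaged position."""
    diff = traj - traj.mean(axis=0)
    return np.sqrt((diff ** 2).sum(axis=2).mean(axis=0))

def radius_of_gyration(traj, masses):
    """Mass-weighted radius of gyration for every frame."""
    w = masses / masses.sum()
    com = (traj * w[None, :, None]).sum(axis=1, keepdims=True)
    sq_dist = ((traj - com) ** 2).sum(axis=2)
    return np.sqrt((sq_dist * w).sum(axis=1))

# Synthetic trajectory: 100 frames of 20 atoms jittering around a reference.
rng = np.random.default_rng(1)
ref = rng.normal(size=(20, 3))
traj = ref + 0.05 * rng.normal(size=(100, 20, 3))
masses = np.full(20, 12.0)

rmsd_series = rmsd_to_reference(traj, ref)   # one value per frame
rmsf_profile = rmsf(traj)                    # one value per atom
rg_series = radius_of_gyration(traj, masses)
```

RMSD and Rg yield time series (one value per frame), whereas RMSF yields a per-atom profile, which is why the first two are plotted against time and the last against residue or atom index.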

Protocol 2: Free Energy Landscape Analysis

This protocol describes the calculation and visualization of free energy landscapes from MD trajectories to identify metastable states and transition pathways.

Research Reagent Solutions:

- Dimensionality Reduction Method: Principal Component Analysis or Time-Lagged Independent Component Analysis

- Free Energy Estimator: Probability distribution from histogram or kernel density estimation

- Visualization Package: FreeEnergyLandscape-MD or similar Python package

- Clustering Algorithm: For identifying minima basins

Procedure:

- Trajectory Preprocessing: Remove rotational and translational degrees of freedom.

- Collective Variable Selection: Identify appropriate degrees of freedom (e.g., RMSD, dihedral angles, distances).

- Dimensionality Reduction: Apply PCA or TICA to identify slow modes of dynamics.

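- Probability Calculation: Project the trajectory onto the first two components from the previous step (principal components or time-lagged independent components) and compute the 2D probability distribution.

- Free Energy Calculation: Convert probability to free energy using ΔG = -kBT ln(P), where kB is the Boltzmann constant, T is the simulation temperature, and P is the probability of each bin; values are usually reported relative to the most populated bin.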

- Landscape Visualization: Generate 2D or 3D free energy contour plots.

- Minima Identification: Locate free energy minima and extract representative structures.

- Transition Analysis: Identify lowest free energy paths between minima.

Expected Outputs:

- Free energy landscape as function of collective variables

- Identification of metastable states and their relative populations

- Representative structures for each free energy minimum

- Transition pathways between stable states
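The probability and free energy steps of the procedure can be sketched as follows. This is a minimal illustration under stated assumptions: the two input arrays stand for any pair of collective variables (for example, projections on two components), the histogram estimator is used rather than kernel density estimation, and the function name and bin count are hypothetical.

```python
import numpy as np

KB_KJ_PER_MOL_K = 0.0083144626  # Boltzmann constant in kJ/(mol K)

def free_energy_surface(x, y, bins=30, temperature=300.0):
    """Return (F, x_edges, y_edges) with F in kJ/mol and its minimum at 0."""
    counts, x_edges, y_edges = np.histogram2d(x, y, bins=bins)
    prob = counts / counts.sum()
    free_energy = np.full(prob.shape, np.inf)   # unvisited bins stay at +inf
    visited = prob > 0
    free_energy[visited] = -KB_KJ_PER_MOL_K * temperature * np.log(prob[visited])
    free_energy -= free_energy[visited].min()   # shift the global minimum to 0
    return free_energy, x_edges, y_edges

# Synthetic two-state data standing in for projected trajectory frames.
rng = np.random.default_rng(0)
x = np.concatenate([rng.normal(-1.0, 0.3, 3000), rng.normal(1.0, 0.3, 1000)])
y = np.concatenate([rng.normal(0.0, 0.3, 3000), rng.normal(0.5, 0.3, 1000)])
fes, x_edges, y_edges = free_energy_surface(x, y)
```

Bins that were never visited have zero estimated probability and therefore no finite free energy; keeping them at infinity (or masking them when plotting) avoids presenting unsampled regions as high-energy plateaus.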

Protocol 3: Binding Free Energy Calculation Using MM/PBSA

This protocol describes the application of Molecular Mechanics/Poisson-Boltzmann Surface Area method to estimate protein-ligand binding affinities.

Research Reagent Solutions:

- MD Trajectories: Separate simulations of complex, receptor, and ligand

- Molecular Mechanics Force Field: Compatible with GROMACS (e.g., OPLS-AA, AMBER)

- Continuum Solvation Model: PBSA or GBSA implementation

- Entropy Estimation Method: Normal mode or quasi-harmonic approximation

Procedure:

- System Preparation: Generate topology files for complex, receptor, and ligand.

- Trajectory Generation: Perform MD simulations for all three systems.

- Frame Extraction: Select uncorrelated trajectory frames for energy calculation.

- Energy Calculations: Compute gas-phase and solvation energies for each component.

- Binding Energy Calculation: Combine components using MM/PBSA formula.

- Statistical Analysis: Calculate mean and standard error of binding energies.

- Decomposition Analysis: Identify residue-specific contributions to binding.

Expected Outputs:

- Average binding free energy with its standard deviation and standard error

- Energy components (electrostatic, van der Waals, polar and nonpolar solvation)

- Per-residue energy decomposition

- Correlation with experimental binding affinities
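The binding energy and statistics steps reduce to simple arithmetic once the per-frame energies are available. In the usual MM/PBSA formulation the binding free energy is the difference ΔG_bind = G_complex - G_receptor - G_ligand, evaluated frame by frame. The sketch below assumes the per-frame totals have already been computed by an external tool; the numbers are hypothetical values in kcal/mol chosen only to show the calculation.

```python
import numpy as np

def binding_free_energy(g_complex, g_receptor, g_ligand):
    """Per-frame dG_bind plus its mean and standard error of the mean."""
    dg = np.asarray(g_complex) - np.asarray(g_receptor) - np.asarray(g_ligand)
    mean = dg.mean()
    # The SEM formula assumes the selected frames are uncorrelated.
    sem = dg.std(ddof=1) / np.sqrt(dg.size)
    return dg, mean, sem

# Hypothetical per-frame free energies (kcal/mol) for four frames.
g_complex = [-1250.0, -1248.0, -1252.0, -1250.0]
g_receptor = [-1000.0, -999.0, -1001.0, -1000.0]
g_ligand = [-220.0, -221.0, -219.0, -220.0]

dg_frames, dg_mean, dg_sem = binding_free_energy(g_complex, g_receptor, g_ligand)
```

The standard error shrinks with the number of frames only if those frames are statistically independent, which is why the frame extraction step selects uncorrelated snapshots.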

Visualization Best Practices for MD Data

Effective visualization of MD results is essential for interpretation and communication of findings. The following practices ensure clarity, accuracy, and accessibility.

Table 2: Data Visualization Best Practices for MD Analysis Results

| Practice | Application to MD Data | Implementation Guidelines |

|---|---|---|

| Choose Right Chart Type [18] [19] | Time-series (RMSD, Rg): Line charts | Match visualization to data structure: line charts for temporal trends, bar charts for discrete comparisons, scatter plots for correlations, histograms for distributions |

| Maintain Data-Ink Ratio [18] [20] | Remove chart junk from trajectory plots | Eliminate heavy gridlines, redundant labels, decorative elements; focus on data trends |

| Use Color Strategically [18] [19] | Differentiate protein chains | Use sequential palettes for magnitude, diverging for positive/negative, categorical for distinct states; ensure colorblind accessibility |

| Ensure Accessibility [21] [22] | Sufficient contrast in charts and graphs | Maintain minimum 4.5:1 contrast ratio for normal text, 3:1 for large text; test with colorblindness simulators |

| Provide Clear Context [20] | Label molecular representations clearly | Use descriptive titles, axis labels with units, annotations for key events, data source citations |

| Establish Visual Hierarchy [19] [20] | Emphasize key findings in dashboards | Use size, position, and contrast to guide attention to most important insights first |

For MD data specifically, visualization choices should reflect the molecular nature of the data. Time-series data (RMSD, RMSF, Rg) are best represented with line charts, while structural properties may benefit from molecular visualization software like VMD [17]. When creating free energy landscapes, use color strategically with sequential palettes to represent energy values, ensuring sufficient contrast for interpretation [18] [19]. All visualizations should include clear context about the simulation parameters, including force field, water model, simulation time, and analysis method to ensure reproducibility.
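The contrast thresholds listed in Table 2 can be checked programmatically before a figure is finalized. The sketch below assumes the WCAG 2.x definition of relative luminance for 8-bit sRGB colors; the function names and the example color are illustrative.

```python
def _linearize(channel):
    """Convert one 8-bit sRGB channel to its linear-light value."""
    c = channel / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(rgb):
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def contrast_ratio(rgb_a, rgb_b):
    """Contrast ratio between two colors, from 1 (identical) to 21."""
    la, lb = relative_luminance(rgb_a), relative_luminance(rgb_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)

# Example: an axis-label color on a white background (illustrative values).
label_color = (31, 119, 180)
background = (255, 255, 255)
passes_normal_text = contrast_ratio(label_color, background) >= 4.5
```

The same check applies to annotation text drawn over a free energy colormap, where contrast varies across the plot and the worst-case background color is the relevant one.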

Strategic selection of MD analysis tools based on specific research requirements significantly enhances efficiency and reproducibility in computational drug development. FastMDAnalysis provides an integrated solution for routine analyses, reducing scripting overhead by >90% while maintaining numerical accuracy [16]. For specialized applications including free energy calculations, Markov state modeling, and binding affinity estimation, domain-specific tools offer advanced capabilities. By combining appropriate software selection with rigorous experimental protocols and visualization best practices, researchers can maximize insights from MD simulations while ensuring robust, reproducible results. This structured approach to tool selection and analysis enables more effective utilization of MD simulations in drug discovery and biomolecular research.

Molecular dynamics (MD) simulations generate complex trajectory data that capture the time-dependent evolution of biomolecular systems. Establishing a robust and reproducible workflow for loading, visualizing, and initially assessing these trajectories is a foundational prerequisite for any meaningful scientific investigation in computational biology, structural biology, and drug development. This protocol outlines best practices for this initial phase of MD analysis, providing researchers with a standardized framework that ensures data integrity from the outset and enables reliable extraction of biophysical insights. A well-defined workflow is critical for transforming raw trajectory data into scientifically valid conclusions, particularly in drug discovery where molecular interactions inform lead optimization efforts. The procedures detailed herein emphasize accessible tools and rigorous methodologies suitable for both expert and non-expert practitioners.

The Scientist's Toolkit: Research Reagent Solutions

The following table catalogues essential software tools and resources required for establishing a complete MD trajectory analysis workflow. These represent the fundamental "research reagents" for computational structural biology.

Table 1: Essential Software Tools for MD Trajectory Analysis

| Tool Name | Primary Function | Key Features and Applications |

|---|---|---|

| VMD [4] [23] | Molecular Visualization & Analysis | A comprehensive program for displaying, animating, and analyzing large biomolecular systems using 3-D graphics and built-in scripting. It natively reads common GROMACS trajectory formats (.xtc, .trr). |

| PyMOL [4] [23] | Molecular Visualization | A powerful molecular viewer renowned for its high-quality rendering and animation capabilities. It typically requires trajectory conversion to PDB or similar formats unless compiled with specific VMD plugins. |

| Chimera [4] [23] | Molecular Visualization & Analysis | A full-featured, Python-based visualization program that reads GROMACS trajectories and offers a wide array of analysis features across multiple platforms. |

| mdciao [23] | Trajectory Analysis & Visualization | An open-source Python API and command-line tool designed for accessible, one-shot analysis and production-ready representation of MD data, focusing on residue-residue contact frequencies. |

| GROMACS Utilities [4] | Trajectory Processing & Analysis | A suite of built-in tools (e.g., gmx trjconv, gmx traj, gmx dump) for essential trajectory operations like format conversion, imaging, clustering, and data extraction. |

| MDTraj [23] | Trajectory Analysis | A popular Python library that provides a wide range of fast trajectory analysis capabilities, forming a core component of many custom analysis scripts and workflows. |

| Grace/gnuplot [4] | Data Plotting | Standard tools for graphing data from GROMACS-generated .xvg files and other numerical outputs from analysis programs. |

Experimental Protocols

Protocol 1: Trajectory Loading and System Visualization

This protocol describes the initial steps of loading a molecular topology and trajectory into visualization software for system inspection.

Methodology:

- Software Selection and Preparation: Choose a visualization tool based on project needs. VMD is recommended for its extensive native support of MD trajectories and integrated analysis features [4]. Ensure the software is correctly installed and, if using PyMOL without plugins, have a trajectory conversion workflow ready.

- File Preparation: Gather the necessary input files:

- Topology File: The molecular structure file (e.g., `.tpr`, `.gro`, `.pdb`) defining atom connectivity.

- Trajectory File: The time-series coordinate data (e.g., `.xtc`, `.trr`).

- Data Loading:

- In VMD, use the `File -> New Molecule` menu. Browse and load the topology file first. Then, with the new molecule selected, use `File -> Load Data Into Molecule` in the VMD Main window to browse and load the trajectory file [4].

- In Chimera, use the `File -> Open` menu to directly open the trajectory files [4].

- Initial System Inspection:

- Visually inspect the trajectory using the animation controls to ensure the simulation appears physically reasonable (e.g., no exploding molecules, expected global motion).

- Render the system using different representations (e.g., `Lines` for the entire system, `Licorice` for a protein binding site, `Surf` for a ligand) to assess structural quality.

- Critical Note: Be aware that visualization software determines chemical bonds for rendering directly from atomic coordinates and distances, not from the original simulation topology. Minor discrepancies in bond rendering may occur compared to the topology definitions in your `.top` or `.tpr` file [4].

Protocol 2: Trajectory Preprocessing and System Integrity Check

Prior to quantitative analysis, trajectories often require preprocessing to correct for periodic boundary conditions (PBC) artifacts and ensure the molecular system is intact.

Methodology:

Clustering for PBC Imaging (e.g., for a micelle or protein):

- Purpose: To ensure that a single, continuous molecule (like a protein or a micelle) is not split across the periodic box, which would invalidate calculations like radius of gyration.

- Procedure:

a. Use `gmx trjconv` with the `-pbc cluster` flag on the first frame to obtain a reference frame in which the molecule is whole and correctly clustered [4].

b. Generate a new run input file (`.tpr`) from this clustered frame.

c. Process the entire trajectory with `-pbc nojump` using the new `.tpr` file.

- Validation: Visually inspect the processed trajectory in VMD to confirm the molecule remains whole and centered.
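The idea behind a "no jump" correction can be illustrated with a short NumPy sketch. This is not the GROMACS implementation: it assumes an orthorhombic box of constant size and that no atom moves more than half a box edge between consecutive frames, and the function name is hypothetical.

```python
import numpy as np

def remove_jumps(positions, box):
    """Unwrap a wrapped trajectory in a fixed orthorhombic box.

    positions: array (n_frames, n_atoms, 3); box: array (3,) of edge lengths.
    """
    positions = np.asarray(positions, dtype=float)
    box = np.asarray(box, dtype=float)
    steps = np.diff(positions, axis=0)
    # A step longer than half a box edge is treated as a boundary crossing
    # and shifted back by a whole number of box lengths.
    steps -= box * np.round(steps / box)
    unwrapped = np.empty_like(positions)
    unwrapped[0] = positions[0]
    unwrapped[1:] = positions[0] + np.cumsum(steps, axis=0)
    return unwrapped

# One atom drifting along x by 0.3 per frame in a unit box, stored wrapped.
true_x = 0.1 + 0.3 * np.arange(5)
wrapped = np.zeros((5, 1, 3))
wrapped[:, 0, 0] = true_x % 1.0
unwrapped = remove_jumps(wrapped, box=[1.0, 1.0, 1.0])
```

Because each frame is reconstructed from the first one, the quality of the result depends on that first frame being intact, which is why the procedure above clusters the reference frame before applying the correction.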

Essential System Checks:

- Root-Mean-Square Deviation (RMSD): Calculate the protein backbone RMSD relative to the first frame to confirm structural stability.

- Visual Inspection: Confirm the absence of unrealistic bond lengths or angles in the visualized trajectory.

Protocol 3: Initial Quantitative Assessment with Contact Frequency Analysis

This protocol uses mdciao to perform an initial, informative assessment of residue-residue interactions across the trajectory [23].

Methodology:

Installation and Setup:

- Install `mdciao` via pip: `pip install mdciao`.

- Ensure all dependencies (MDTraj, NumPy, Matplotlib) are installed in your Python environment.

Define Residues of Interest:

- Identify key molecular fragments, such as a protein-protein interface, a ligand-binding pocket, or specific mutated residues.

Compute Contact Frequencies:

- The core metric is the residue-residue contact frequency, calculated for a pair of residues (A, B) in a trajectory i as:

$$f^{i}_{AB,\delta} = \frac{\sum_{j} C_\delta\left(d^{i}_{AB}(t_j)\right)}{N^{i}_{t}}$$

where $C_\delta$ is the contact function (1 if the distance $d_{AB} \le \delta$, else 0) and $N^{i}_{t}$ is the number of frames in trajectory i [23].

- The global average frequency over all trajectories is:

$$F_{AB,\delta} = \frac{\sum_{i}\sum_{j} C_\delta\left(d^{i}_{AB}(t_j)\right)}{\sum_{i} N^{i}_{t}}$$

- Default Parameters: `mdciao` uses a cutoff (δ) of 4.5 Å and a "closest heavy-atom" distance scheme as a robust starting point [23].
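The two frequency definitions can be written out directly in NumPy. The sketch below is not mdciao code: it assumes the per-frame distances between residues A and B have already been computed, and the function names and distance values are hypothetical.

```python
import numpy as np

def contact_frequency(distances, cutoff=4.5):
    """Per-trajectory frequency: fraction of frames with d_AB <= cutoff."""
    d = np.asarray(distances, dtype=float)
    return np.count_nonzero(d <= cutoff) / d.size

def global_contact_frequency(distances_per_traj, cutoff=4.5):
    """Global frequency: contacts summed over all trajectories / total frames."""
    contacts = sum(
        np.count_nonzero(np.asarray(d, dtype=float) <= cutoff)
        for d in distances_per_traj
    )
    frames = sum(len(d) for d in distances_per_traj)
    return contacts / frames

# Hypothetical closest heavy-atom distances (in Å) for one residue pair.
traj_1 = [3.9, 4.2, 4.6, 5.1]
traj_2 = [4.0, 4.4]

f_1 = contact_frequency(traj_1)
f_2 = contact_frequency(traj_2)
f_global = global_contact_frequency([traj_1, traj_2])
```

The global value weights each trajectory by its number of frames, so it generally differs from the plain average of the per-trajectory frequencies when trajectories have different lengths.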

Execute Analysis:

- Command-Line Interface (CLI): Run the mdciao command-line tool for a one-shot analysis of the residues of interest.

- Python API: Integrate the analysis into a custom Jupyter Notebook script for greater flexibility.

Interpretation:

- Examine the generated contact map and flare plot to identify persistent interactions.

- High-frequency contacts suggest stable interactions, while transient contacts may indicate more dynamic regions.

Workflow Visualization and Data Presentation

The logical flow of the established workflow, from data loading to initial assessment, is depicted in the following diagram.

The quantitative data generated from the initial assessment phase, particularly contact frequency analysis, can be summarized as follows for clear reporting and comparison.

Table 2: Example Summary of Initial Assessment Metrics

| Analysis Metric | Target/Region | Average Value ± SD | Interpretation & Notes |

|---|---|---|---|

| Backbone RMSD (nm) | Protein (backbone) | 0.15 ± 0.03 | System stabilized after 50 ns; value indicates structural integrity. |

| Residue-Residue Contact Frequency | Ligand Binding Site | 0.85 | Persistent interaction; key for binding stability. |

| Residue-Residue Contact Frequency | Loop Region (Res 100-110) | 0.45 | Highly dynamic region; transient contacts observed. |

| Radius of Gyration, Rg (nm) | Protein (whole) | 2.05 ± 0.08 | Compact and stable fold throughout the simulation. |

Practical Implementation: Analysis Workflows and Visualization Techniques That Deliver Results

Molecular dynamics (MD) simulations generate complex, time-dependent data requiring sophisticated analytical approaches for meaningful interpretation. MDAnalysis, a Python library for MD trajectory analysis, provides researchers with robust tools for processing, analyzing, and visualizing simulation data through seamless integration with the scientific Python ecosystem. This application note details protocols for leveraging MDAnalysis within computational research workflows, emphasizing its capabilities for trajectory transformation, Path Similarity Analysis (PSA), convergence evaluation, and visualization. We present structured methodologies, quantitative comparisons, and standardized workflows to enable researchers and drug development professionals to extract biologically relevant insights from MD simulations efficiently, supporting the broader thesis that systematic analytical approaches are essential for advancing MD trajectory analysis and visualization techniques.

MDAnalysis provides an object-oriented framework for working with molecular dynamics data, treating atoms, groups of atoms, and trajectories as distinct Python objects with defined operations and attributes. The fundamental architecture centers on the Universe object, which serves as the primary interface for accessing simulation data. A Universe is typically initialized with a topology file (defining system structure) and a trajectory file (containing coordinate frames), supporting over 30 common MD file formats including PSF, PDB, DCD, XTC, and GRO files [24]. This design creates an abstraction layer that enables portable analysis code across diverse simulation platforms and force fields.

The core objects within MDAnalysis include Atom, AtomGroup, and Timestep classes, which provide methods for geometric calculations, atom selection, and trajectory iteration. For example, an AtomGroup can compute properties like center of mass or radius of gyration, while selection commands enable the creation of specific molecular subgroups using CHARMM-style selection syntax [24]. This architecture facilitates interactive exploration in IPython environments and seamless integration with NumPy arrays, enabling researchers to apply the extensive computational capabilities of the scientific Python stack to molecular simulation data.

Core Analytical Capabilities and Implementation

Trajectory Transformation Framework

MDAnalysis implements an "on-the-fly" transformation system that modifies trajectory data as it is read, without altering the original files. Transformations are functions that take a Timestep object, modify its coordinates, and return the modified object [25]. These transformations can be organized into workflows - ordered sequences of transformation functions that execute automatically as trajectories are processed.

The transformation framework supports essential preprocessing operations including:

- Periodic boundary condition (PBC) corrections: Wrapping or unwrapping molecules across periodic images

- Structural alignment: Superimposing structures to a reference frame to remove global rotation and translation

- Coordinate manipulation: Translating, rotating, or filtering coordinate data

- Custom transformations: User-defined functions for specialized processing needs

Workflows can be associated with trajectories either during Universe initialization or afterward using the add_transformations() method [25]. A typical workflow translates the coordinates and then applies PBC corrections, with each transformation executed in the order listed.

Custom transformations can be implemented as Python functions following specific patterns. For transformations requiring parameters beyond the Timestep object, either wrapped functions or functools.partial can be used to create the necessary interface [25].
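The two patterns for parameterized transformations, and the ordered execution of a workflow, can be sketched without any MD files. The `Frame` class below is a hypothetical stand-in for a Timestep (it only holds positions and box edges), and `translate_by`, `wrap_into_box`, and `apply_workflow` are illustrative names rather than MDAnalysis functions; in MDAnalysis itself, built-in transformations are provided by the MDAnalysis.transformations module and attached to a trajectory with add_transformations().

```python
from dataclasses import dataclass
from functools import partial

import numpy as np

@dataclass
class Frame:
    """Hypothetical stand-in for a Timestep: coordinates plus box edges."""
    positions: np.ndarray   # shape (n_atoms, 3)
    box: np.ndarray         # shape (3,), orthorhombic edge lengths

def translate_by(vector):
    """Wrapped-function pattern: the outer call fixes the parameter."""
    vector = np.asarray(vector, dtype=float)

    def transform(ts):
        ts.positions = ts.positions + vector
        return ts

    return transform

def wrap_into_box(ts, origin):
    """Takes an extra argument, so functools.partial must bind it first."""
    ts.positions = origin + np.mod(ts.positions - origin, ts.box)
    return ts

def apply_workflow(ts, workflow):
    """Run the transformations in order, as a reader would for each frame."""
    for transform in workflow:
        ts = transform(ts)
    return ts

workflow = [
    translate_by([1.0, 0.0, 0.0]),
    partial(wrap_into_box, origin=np.zeros(3)),
]
frame = Frame(positions=np.array([[9.5, 2.0, 3.0]]), box=np.array([10.0, 10.0, 10.0]))
frame = apply_workflow(frame, workflow)
```

Both patterns end up with a callable that accepts only the frame object and returns it, which is the interface a transformation workflow requires. The order matters here: translating first moves the atom past the box edge, and the subsequent wrap brings it back inside.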

Path Similarity Analysis (PSA)

Path Similarity Analysis provides quantitative comparison of conformational transitions by calculating pairwise distances between trajectories. MDAnalysis implements PSA through the MDAnalysis.analysis.psa module, which supports multiple distance metrics including the discrete Fréchet distance and Hausdorff distance [26]. These metrics capture differences in the shape and progression of structural transitions, enabling clustering of trajectories based on similarity.

The computational intensity of PSA scales with the product of frames in compared trajectories and the total number of pairwise comparisons. For N trajectories, the number of required comparisons is M = N(N-1)/2, resulting in 79,800 comparisons for 400 trajectories [26]. To manage this computational load, MDAnalysis supports a block decomposition strategy where the distance matrix is partitioned into submatrices for parallel computation.
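The comparison count and the idea of splitting the work into independent units can be checked with the standard library alone. Note that this sketch chunks the flat list of trajectory pairs rather than partitioning the distance matrix into submatrices, which is a simpler scheme than the block decomposition described above; the function name and block size are hypothetical.

```python
from itertools import combinations

def pairwise_blocks(n_trajectories, block_size):
    """Yield lists of (i, j) index pairs with i < j, at most block_size long."""
    block = []
    for pair in combinations(range(n_trajectories), 2):
        block.append(pair)
        if len(block) == block_size:
            yield block
            block = []
    if block:
        yield block

blocks = list(pairwise_blocks(400, block_size=1000))
n_comparisons = sum(len(b) for b in blocks)   # N(N-1)/2 for N = 400
```

Each block lists trajectory pairs that can be evaluated independently, so blocks can be handed to separate workers and the resulting distances written back into the symmetric matrix afterward.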

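The PSA protocol consists of three steps:

- Trajectory preparation: Load each trajectory to be compared together with its topology.

- Reference structure alignment: Superimpose every frame on a common reference structure so that path distances reflect conformational change rather than rigid-body motion.

- Coordinate extraction and distance calculation: Extract the coordinates of the selected atoms as arrays and compute the pairwise path distances.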

Table 1: PSA Distance Metrics in MDAnalysis

| Metric | Method | Application | Interpretation |

|---|---|---|---|

| Discrete Fréchet | psa.discrete_frechet() | Global path similarity | RMSD between Fréchet pair frames |

| Hausdorff | psa.hausdorff() | Maximum separation between paths | Maximum nearest-neighbor distance |

| Weighted RMSD | psa.wrmsd() | Local structural similarity | Frame-pairwise weighted RMSD |
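To show what the first two metrics in the table compute, the following NumPy sketch implements the Hausdorff and discrete Fréchet distances with RMSD as the frame-to-frame metric. It is an independent illustration rather than the MDAnalysis.analysis.psa code, it assumes both paths are already aligned and contain the same atoms, and the toy paths are synthetic.

```python
import numpy as np

def rmsd_matrix(P, Q):
    """RMSD between every frame of path P and every frame of path Q.

    P: (n_p, n_atoms, 3), Q: (n_q, n_atoms, 3); assumes prior alignment.
    """
    diff = P[:, None, :, :] - Q[None, :, :, :]
    return np.sqrt((diff ** 2).sum(axis=3).mean(axis=2))

def hausdorff(P, Q):
    """Largest nearest-neighbor distance, taken in both directions."""
    d = rmsd_matrix(P, Q)
    return max(d.min(axis=1).max(), d.min(axis=0).max())

def discrete_frechet(P, Q):
    """Dynamic-programming discrete Fréchet distance (frame order matters)."""
    d = rmsd_matrix(P, Q)
    n_p, n_q = d.shape
    ca = np.empty_like(d)
    ca[0, 0] = d[0, 0]
    for i in range(1, n_p):
        ca[i, 0] = max(ca[i - 1, 0], d[i, 0])
    for j in range(1, n_q):
        ca[0, j] = max(ca[0, j - 1], d[0, j])
    for i in range(1, n_p):
        for j in range(1, n_q):
            ca[i, j] = max(min(ca[i - 1, j], ca[i, j - 1], ca[i - 1, j - 1]), d[i, j])
    return ca[-1, -1]

# Toy one-atom paths: P moves along x at y = 0, Q moves along x at y = 1.
P = np.array([[[0.0, 0.0, 0.0]], [[1.0, 0.0, 0.0]], [[2.0, 0.0, 0.0]]])
Q = P + np.array([0.0, 1.0, 0.0])
Q_reversed = Q[::-1]

h_same, f_same = hausdorff(P, Q), discrete_frechet(P, Q)
h_rev, f_rev = hausdorff(P, Q_reversed), discrete_frechet(P, Q_reversed)
```

Reversing one path leaves the Hausdorff distance unchanged because it treats each path as an unordered set of frames, while the discrete Fréchet distance increases because it respects the order in which frames are visited.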

Convergence Analysis

Evaluating trajectory convergence determines whether simulation sampling adequately represents the conformational ensemble. MDAnalysis provides convergence analysis through the encore module, which implements two complementary approaches: Clustering Ensemble Similarity (CES) and Dimension Reduction Ensemble Similarity (DRES) [27].

The convergence analysis protocol involves:

- Window Definition: The trajectory is divided into increasing windows (e.g., if window_size=3 for a 13-frame trajectory, windows would contain 3, 6, 9, and 12 frames) [27]

- Ensemble Comparison: Conformational ensembles from each window are compared using similarity metrics

- Similarity Tracking: The rate at which similarity values approach zero indicates convergence

Implementation example:
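A minimal sketch of the windowing and comparison logic is given below. It is a simplified illustration of the clustering-based idea, not the encore implementation: it assumes every frame has already been assigned a cluster label, compares each growing window with the full trajectory using the Jensen-Shannon divergence of cluster populations, and uses hypothetical function names and labels. In MDAnalysis, the corresponding entry points are encore.ces_convergence and encore.dres_convergence.

```python
import numpy as np

def window_ends(n_frames, window_size):
    """Frame counts of the growing windows: window_size, 2*window_size, ..."""
    return list(range(window_size, n_frames + 1, window_size))

def js_divergence(p, q):
    """Jensen-Shannon divergence (natural log) between two distributions."""
    p = np.asarray(p, dtype=float) / np.sum(p)
    q = np.asarray(q, dtype=float) / np.sum(q)
    m = 0.5 * (p + q)

    def kl(a, b):
        mask = a > 0            # terms with a == 0 contribute nothing
        return np.sum(a[mask] * np.log(a[mask] / b[mask]))

    return 0.5 * kl(p, m) + 0.5 * kl(q, m)

def convergence_profile(cluster_labels, window_size, n_clusters):
    """JS divergence of each window's cluster populations vs. the full run."""
    labels = np.asarray(cluster_labels)
    full = np.bincount(labels, minlength=n_clusters)
    return [
        js_divergence(np.bincount(labels[:end], minlength=n_clusters), full)
        for end in window_ends(len(labels), window_size)
    ]

# Hypothetical cluster labels for a 13-frame trajectory with two states.
labels = [0, 0, 0, 0, 1, 1, 0, 1, 1, 1, 0, 1, 0]
ends = window_ends(len(labels), window_size=3)
profile = convergence_profile(labels, window_size=3, n_clusters=2)
```

A profile that decays toward zero indicates that adding more frames no longer changes the populations of the conformational states appreciably.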

Table 2: Convergence Analysis Methods in MDAnalysis

| Method | Approach | Output Metric | Parameters |

|---|---|---|---|

| CES | Clustering ensemble comparison | Jensen-Shannon divergence | Number of clusters, clustering algorithm |

| DRES | Dimension reduction comparison | Jensen-Shannon divergence | Subspace dimension, reduction method |

| Harmonic similarity | Covariance matrix comparison | Overlap integral | - |

Visualization and Specialized Analysis

Streamplot Generation for 2D Flow Fields

MDAnalysis provides specialized visualization capabilities for analyzing molecular motion patterns, particularly in membrane systems. The streamlines module generates 2D flow fields from MD trajectories, enabling visualization of collective motion such as lipid diffusion [28].

The generate_streamlines() function processes trajectory data to produce displacement arrays on a 2D grid.
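The underlying idea, averaging particle displacements on a 2D grid, can be sketched in NumPy. This is a simplified illustration rather than the MDAnalysis streamlines code: it bins particles by their starting cell between two frames, and the function name, argument names, and coordinates are hypothetical.

```python
import numpy as np

def grid_displacements(start_xy, end_xy, xmin, xmax, ymin, ymax, grid_spacing):
    """Average per-particle displacement inside each square grid cell.

    Returns (dx, dy) arrays indexed [ix, iy]; empty cells are left at 0.
    """
    start_xy = np.asarray(start_xy, dtype=float)
    disp = np.asarray(end_xy, dtype=float) - start_xy
    nx = int(np.ceil((xmax - xmin) / grid_spacing))
    ny = int(np.ceil((ymax - ymin) / grid_spacing))
    # Assign every particle to the cell that contains its starting position.
    ix = np.clip(((start_xy[:, 0] - xmin) // grid_spacing).astype(int), 0, nx - 1)
    iy = np.clip(((start_xy[:, 1] - ymin) // grid_spacing).astype(int), 0, ny - 1)
    dx = np.zeros((nx, ny))
    dy = np.zeros((nx, ny))
    counts = np.zeros((nx, ny))
    np.add.at(dx, (ix, iy), disp[:, 0])
    np.add.at(dy, (ix, iy), disp[:, 1])
    np.add.at(counts, (ix, iy), 1)
    occupied = counts > 0
    dx[occupied] /= counts[occupied]
    dy[occupied] /= counts[occupied]
    return dx, dy

# Three particles (e.g., lipid centroids) in a 2 x 2 area with unit cells.
start = [[0.5, 0.5], [0.6, 0.4], [1.5, 1.5]]
end = [[1.5, 0.5], [0.6, 1.4], [1.5, 1.5]]
dx, dy = grid_displacements(start, end, 0.0, 2.0, 0.0, 2.0, grid_spacing=1.0)
```

The resulting pair of arrays describes a vector field that a plotting routine can render as streamlines; the grid spacing controls the trade-off between spatial resolution and statistical noise per cell.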

Noncovalent Interaction Analysis with PyContact

While not directly part of MDAnalysis, PyContact complements the ecosystem by providing analysis of noncovalent interactions in MD trajectories [29]. It identifies hydrogen bonds, ionic interactions, hydrophobic contacts, and π-π stacking, with seamless integration to Visual Molecular Dynamics (VMD) for interactive visualization. This specialized analysis reveals interaction networks governing molecular recognition, binding affinity, and structural stability - critical information for drug development applications.

Integrated Workflow for MD Analysis

The following diagram illustrates a comprehensive MDAnalysis workflow incorporating trajectory transformation, analysis, and visualization:

MD Analysis Workflow: Integrated pipeline from raw trajectories to quantitative insights and visualization.

Research Reagent Solutions

Table 3: Essential Components for MDAnalysis Workflows

| Component | Function | Example Specifications |

|---|---|---|

| Topology Files | Define atomic structure and connectivity | PSF, PDB, GRO formats [24] |

| Trajectory Files | Contain coordinate frames over time | DCD, XTC, TRR formats [24] |

| Atom Selection Language | Create molecular subgroups | CHARMM-style syntax ('name CA', 'backbone') [24] |

| Transformation Functions | Modify trajectory coordinates | translate(), rotateby(), wrap() [25] |

| Analysis Modules | Implement specific analytical methods | psa, encore, contacts [26] [27] |

| Visualization Tools | Generate structural and quantitative plots | streamlines, matplotlib integration [28] |

Performance Optimization Strategies

Memory Management

For analysis of large trajectories, MDAnalysis provides the transfer_to_memory() method, which loads entire trajectories into RAM, significantly accelerating data access compared to reading from disk for each operation [26]. This approach is particularly beneficial for PSA and convergence analysis requiring repeated trajectory access.
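Before loading a trajectory into memory, it is worth estimating whether it will fit. The sketch below assumes coordinates are held as single-precision (4-byte) floats and counts coordinates only, ignoring velocities, forces, and Python overhead; the function name and system size are illustrative.

```python
def trajectory_memory_gib(n_frames, n_atoms, bytes_per_value=4):
    """Approximate RAM for coordinates only: frames x atoms x 3 x bytes."""
    return n_frames * n_atoms * 3 * bytes_per_value / 1024 ** 3

# Hypothetical system: 10,000 frames of 50,000 atoms.
needed = trajectory_memory_gib(n_frames=10_000, n_atoms=50_000)
```

If the estimate approaches the available RAM, loading only every n-th frame or only the atoms needed for the analysis reduces the footprint proportionally.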

Parallel Processing

MDAnalysis supports parallel execution for computationally intensive tasks. The streamplot generation function implements multiprocessing, and the PSA framework enables block-based parallelization through tools like RADICAL.pilot [26] [28]. This distributed approach partitions the distance matrix into blocks processed independently across computing resources.
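The block-based idea can be sketched in plain Python: split the upper triangle of the pairwise matrix into blocks of index pairs, evaluate each block independently, and assemble the symmetric result. This is a minimal illustration, not the PSA or RADICAL.pilot implementation. All function names are hypothetical, a thread pool stands in for distributed workers, and the toy metric (absolute difference of numbers) stands in for a real path metric such as the Hausdorff or Fréchet distance.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import combinations


def partition_pairs(n_items, block_size):
    """Split the upper triangle of an n_items x n_items matrix into blocks."""
    pairs = list(combinations(range(n_items), 2))
    return [pairs[k:k + block_size] for k in range(0, len(pairs), block_size)]


def compute_block(block, items, metric):
    """Evaluate the metric for every (i, j) pair in one block."""
    return [(i, j, metric(items[i], items[j])) for i, j in block]


def blockwise_distance_matrix(items, metric, block_size=2, max_workers=2):
    """Fill a symmetric distance matrix by processing blocks independently."""
    n = len(items)
    blocks = partition_pairs(n, block_size)
    matrix = [[0.0] * n for _ in range(n)]
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        results = pool.map(lambda block: compute_block(block, items, metric), blocks)
        for block_result in results:
            for i, j, value in block_result:
                matrix[i][j] = matrix[j][i] = value
    return matrix


# Toy example: "paths" are plain numbers, the metric is their absolute difference.
toy_matrix = blockwise_distance_matrix([0.0, 1.0, 3.0, 6.0], lambda a, b: abs(a - b))
```

Because no block depends on another, the same partitioning carries over to process pools or cluster schedulers; only the executor changes.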

MDAnalysis establishes a robust framework for MD trajectory analysis through deep integration with the scientific Python ecosystem. The protocols detailed in this application note - encompassing trajectory transformation, Path Similarity Analysis, convergence evaluation, and visualization - provide researchers with standardized methodologies for extracting quantitative insights from complex simulation data. As MD simulations continue to grow in scale and complexity, such systematic analytical approaches become increasingly essential for advancing our understanding of biomolecular dynamics and interactions, particularly in drug development applications where precise characterization of molecular behavior is critical. The continued development of MDAnalysis, including recent projects to implement transport property calculations and parallel analysis backends [30], ensures its ongoing relevance for the evolving needs of the computational structural biology community.

Molecular dynamics (MD) simulations generate complex trajectory data, necessitating advanced visualization toolkits for effective analysis. This Application Note details three specialized platforms—NGL Viewer, MolecularNodes, and MDsrv—that address the critical need for interactive, collaborative, and publication-quality molecular visualization. These tools empower researchers to move beyond static structural analysis, enabling dynamic investigation of biomolecular function, interaction, and motion, which is fundamental to fields like structural biology and drug development.

The featured toolkits serve complementary roles within the scientific workflow. NGL Viewer provides a web-based, WebGL-powered graphics library for viewing proteins and nucleic acids [31]. MolecularNodes is a Blender add-on that leverages industry-standard rendering technology to import, style, and animate structural biology data, including MD trajectories [32] [33]. MDsrv is a web server that streams MD trajectories for interactive visual analysis and collaborative sharing directly in browsers [31] [34].

Table 1: Core Feature Comparison of NGL Viewer, MolecularNodes, and MDsrv

| Feature | NGL Viewer | MolecularNodes | MDsrv |
|---|---|---|---|
| Primary Environment | Web browser, Jupyter notebooks [31] [35] | Blender Desktop Software [32] [33] | Web browser (server-client) [36] [34] |
| Core Strength | Web-based molecular graphics & embedding [31] [37] | High-quality rendering & animation [33] [38] | Trajectory streaming & online sharing [31] [34] |
| Key Representations | Cartoon, ball+stick, surface, density [31] [37] | Custom styles via Geometry Nodes [32] [33] | Preset & customizable views [34] |
| Trajectory Support | Yes (via multiple backends) [35] | Yes (via MDAnalysis/Biotite) [33] [6] | Yes (primary function) [36] [34] |
| Collaboration Features | Embedding in websites [37] | Sharing .blend files & animations | Session sharing with preset views [34] |
| Analysis Integration | Basic selection & coloring [37] | Future Jupyter-MDAnalysis integration [38] | On-the-fly RMSD, distances, alignments [34] |

Table 2: Technical Specifications and Data Format Support

| Aspect | NGL Viewer | MolecularNodes | MDsrv |
|---|---|---|---|
| Input Formats (Structure) | PDB, mmCIF [37] | PDB, mmCIF, Cryo-EM maps [32] | PDB, mmCIF (via NGL) [36] |
| Input Formats (Trajectory) | Compatible with pytraj, MDTraj, MDAnalysis [35] | Wide variety via MDAnalysis & Biotite [33] [6] | XTC, TRR (with random access) [36] |
| Programming Languages | JavaScript, Python (Jupyter) [31] [35] | Python (Blender) | Python (Server), JavaScript (Client) [36] |
| License & Cost | Open-Source [31] | Open-Source [33] | Open-Source [36] |

Essential Research Reagents and Computational Tools

A well-equipped computational research environment is foundational for effective molecular visualization and analysis.

Table 3: Essential Research Reagent Solutions for MD Visualization

| Item Name | Function/Application | Example/Note |
|---|---|---|
| NGL Library (ngl.js) | Core JavaScript library for embedding molecular viewers in web pages and applications [37]. | Available via CDN (e.g., Unpkg) or direct download [37]. |
| MolecularNodes Blender Add-on | Enables Blender to import and manipulate molecular structures and trajectories [32] [33]. | Installed via Blender's "Get Extensions" menu [33]. |
| MDsrv Server Package | Python-based server for streaming MD trajectory data to a web client [36]. | Can be deployed with Apache mod_wsgi [36]. |
| Python Analysis Stack | Backend for trajectory handling and analysis in NGLView and MolecularNodes. | Includes MDAnalysis [6], MDTraj [35], or pytraj [35]. |
| WebGL-Capable Browser | Client-side rendering of molecular graphics for NGL Viewer and MDsrv. | Requires Chrome >27, Firefox >29, or Safari >8; EXT_frag_depth extension recommended [36]. |

Protocols for Visualization and Analysis

Protocol: Interactive Trajectory Analysis in Jupyter with NGLView

This protocol enables interactive exploration of MD trajectories directly within a Jupyter notebook, ideal for rapid, iterative analysis.

Procedure:

- Environment Setup: Install nglview and a compatible trajectory analysis package (e.g., MDAnalysis, pytraj, or mdtraj) using pip or conda.

- Data Loading: Within a Jupyter notebook cell, load your trajectory data using your chosen backend.

- Representation and Styling: Add and customize molecular representations. The widget supports interactive manipulation, or it can be controlled programmatically.

- Interactive Visualization: Display the widget to begin interactive analysis.
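The procedure above can be sketched as follows, assuming MDAnalysis as the backend. The file names, the helper names `load_view` and `apply_representations`, and the chosen representations are placeholders for illustration. The third-party imports sit inside the loader so the helpers can be imported even where nglview is not installed.

```python
def load_view(topology_path, trajectory_path):
    """Build an NGLView widget from an MDAnalysis Universe.

    Requires the third-party packages MDAnalysis and nglview.
    """
    import MDAnalysis as mda
    import nglview as nv

    universe = mda.Universe(topology_path, trajectory_path)
    return nv.show_mdanalysis(universe)


def apply_representations(view, representations):
    """Replace the default styling with (representation, selection) pairs."""
    view.clear_representations()
    for rep_type, selection in representations:
        view.add_representation(rep_type, selection=selection)
    return view


# Assumed styling: cartoon for the protein, ball+stick for any ligand.
REPRESENTATIONS = [("cartoon", "protein"), ("ball+stick", "ligand")]

# In a notebook cell (file names are placeholders):
# view = apply_representations(load_view("system.pdb", "traj.xtc"), REPRESENTATIONS)
# view  # the last expression in a cell displays the widget
```

The selection strings use NGL's selection language rather than the backend's, so a selection that works in MDAnalysis may need rewording before it is passed to the widget.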

Protocol: Production-Quality Molecular Animation with MolecularNodes

This protocol outlines the process of creating high-fidelity, publication-ready animations from MD trajectories using Blender and MolecularNodes.

Procedure:

- Add-on Installation:

a. Open Blender (version >=4.2 recommended).

b. Navigate to Edit > Preferences > Extensions.

c. Search for "MolecularNodes" and install the official extension [33].

- Data Import:

a. Use the Molecular Nodes panel in the 3D Viewport sidebar.

b. Import a structure from the PDB or AFDB, or load a local trajectory file (e.g., XTC, TRR). The topology and trajectory files can be specified separately.

- Styling and Scene Setup:

a. A Geometry Nodes setup is automatically created. Modify the node tree to change representations (e.g., cartoon, surface, licorice).

b. Utilize Blender's materials, lighting, and camera tools to craft a visually compelling scene.

- Trajectory Playback and Rendering:

a. The trajectory frames are mapped to Blender's timeline.

b. Scrub through the timeline to preview the animation.

c. Use Blender's Render Settings and Render Animation to output the final video.

Protocol: Web-Based Trajectory Sharing and Collaboration with MDsrv

This protocol enables the setup of a server to stream and share MD trajectories with collaborators, allowing them to view and analyze simulations without specialized software.

Procedure:

- Server Setup:

a. Install the mdsrv package.

b. (Optional) Configure app.cfg to set the data directory and access restrictions [36].

- Trajectory Preparation: Place topology (e.g., PDB) and trajectory (e.g., XTC, TRR) files in the server's accessible data directory.

- Server Deployment: Start the server. For production use, deployment via Apache with mod_wsgi is recommended [36].

- Access and Collaboration:

a. Provide collaborators with the server URL.

b. Upon access, the NGL Viewer interface loads. Trajectories can be played and representations customized.

c. Use the "Create Session" feature to generate a URL with preset representations and camera orientation, which can be shared for collaborative analysis [34].

Integrated Workflow for MD Trajectory Visualization

The following diagram illustrates a synergistic workflow integrating NGLView, MolecularNodes, and MDsrv, highlighting their complementary roles from initial exploration to final dissemination.

NGL Viewer, MolecularNodes, and MDsrv form a complementary toolkit that spans exploration, presentation, and sharing of MD trajectories. Researchers can use NGLView for rapid, interactive exploration in Jupyter notebooks, use MolecularNodes in Blender to produce publication-quality renderings and animations, and employ MDsrv to share results with collaborators through a browser. This multi-platform approach lets researchers choose the tool suited to each stage of their workflow, from initial discovery to final communication.

X3DNA-DSSR (Dissecting the Spatial Structure of RNA) represents an integrated computational tool specifically designed to streamline the analysis and annotation of three-dimensional nucleic acid structures. Supported by the NIH as an NIGMS National Resource (grant R24GM153869), this sophisticated software has become indispensable for researchers investigating DNA and RNA architectures [39]. Unlike generic molecular visualization tools, DSSR incorporates specialized algorithms that automatically identify and characterize nucleic-acid-specific structural features, including modified nucleotides, stacked bases, canonical and non-canonical base pairs, higher-order base associations, pseudoknots, various loop types, and G-quadruplexes [40]. This capability is particularly valuable for molecular dynamics (MD) studies where understanding conformational transitions and stability of nucleic acids is crucial.

The utility of DSSR extends beyond static structure analysis to dynamic simulations, making it highly relevant for MD trajectory investigation. Recent studies have demonstrated its effectiveness in analyzing complex nucleic acid systems, such as DNA three-way junctions (3WJs), through MD simulations [41]. As noted in the Singh et al. paper (2025), "We have utilized the X3DNA program to analyze, recreate, and visualize three-dimensional nucleic acid structures. X3DNA is capable of both the antiparallel and the parallel double-helices, the single-stranded structures, the triplexes, the quadruplexes, as well as the other intricate tertiary motifs which is present within the DNA and the RNA structures" [41]. This versatility makes DSSR an essential component in the structural bioinformatics toolkit for researchers studying nucleic acid dynamics, folding, and function.

MD Trajectory Analysis Capabilities

Specialized Features for Dynamics Studies

DSSR offers specific functionality that makes it particularly suited for analyzing MD trajectories of nucleic acids. The software can process structural ensembles through its --nmr (or --md) option, which automates the analysis of multiple conformations from simulations [41]. This capability is essential for tracking conformational changes, stability, and compactness of nucleic acid structures over time. For MD practitioners, DSSR provides detailed characterization of local conformational parameters, including helicoidal parameters and torsion-angle parameters, which are essential for comprehending the folding and rotation of bases during dynamical pathways [41].

A significant advantage of DSSR in MD analysis is its lack of external dependencies and detailed structural characterization capabilities. As noted on the X3DNA website, "Although there are specialized software tools designed for analyzing MD trajectories, the features available in DSSR appear to be adequate for applications like those described in the Singh et al. paper. DSSR has no external dependencies, is easy to use, and provides a more detailed characterization of nucleic acid structures than other tools" [41]. Furthermore, the active maintenance and support ensure compatibility with current computational methodologies.

Technical Implementation for Trajectory Processing

The practical implementation of DSSR for MD trajectory analysis requires conversion of simulation outputs to compatible formats. While popular MD packages (AMBER, GROMACS, CHARMM, etc.) utilize specialized binary formats for trajectories, DSSR is designed to work with standard PDB or mmCIF formats [41]. The input coordinates must be formatted with each model delineated by MODEL/ENDMDL tags in PDB or have associated model numbers in mmCIF records.
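A minimal sketch of the conversion step is shown below. The first function only illustrates the MODEL/ENDMDL layout on lines that are already PDB-formatted. The second shows one possible route from a binary trajectory using MDAnalysis, whose PDB writer emits one model per frame when `multiframe=True`. The function names, the `nucleic` selection, and the stride of 100 frames are assumptions for this example, not part of DSSR.

```python
def assemble_multimodel_pdb(frames):
    """Join per-frame PDB coordinate records into one multi-model PDB string.

    frames: iterable of lists of ATOM/HETATM lines, one list per snapshot.
    """
    lines = []
    for model_number, atom_lines in enumerate(frames, start=1):
        # Record name padded to column 10, model serial right-justified in 11-14.
        lines.append(f"{'MODEL':<10}{model_number:>4d}")
        lines.extend(line.rstrip("\n") for line in atom_lines)
        lines.append("ENDMDL")
    lines.append("END")
    return "\n".join(lines) + "\n"


def write_ensemble_with_mdanalysis(topology, trajectory, out_pdb, step=100):
    """Write every `step`-th frame of the nucleic acid atoms as one PDB model.

    Requires MDAnalysis; the 'nucleic' selection and the stride are
    illustrative choices for this sketch.
    """
    import MDAnalysis as mda

    universe = mda.Universe(topology, trajectory)
    nucleic = universe.select_atoms("nucleic")
    with mda.Writer(out_pdb, multiframe=True) as writer:
        for _ in universe.trajectory[::step]:
            writer.write(nucleic)
```

Striding keeps the text-format ensemble manageable, since PDB files are far larger per frame than compressed binary trajectories.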

The combination of the --nmr and --json options facilitates the programmatic parsing of output from multiple models, making DSSR directly accessible to the MD community [41]. This JSON-structured output enables researchers to efficiently extract and analyze structural parameters across hundreds or thousands of simulation snapshots, revealing dynamics that might be obscured in static structures.
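As a sketch of what such programmatic parsing might look like, the example below tallies base pairs per model from a JSON report such as one produced by a command along the lines of `x3dna-dssr -i=ensemble.pdb --nmr --json -o=ensemble.json` (file names are placeholders). The JSON layout used here, a top-level `models` list whose entries carry a `model` number and a `parameters` object with `num_pairs`, is an assumption and should be checked against the output of your DSSR version. The function name `pairs_per_model` is hypothetical.

```python
import json


def pairs_per_model(dssr_json_text):
    """Map each model number to its reported number of base pairs.

    Assumed layout (verify against your DSSR version):
    {"models": [{"model": 1, "parameters": {"num_pairs": 12, ...}}, ...]}
    Models without a num_pairs entry are counted as having zero pairs.
    """
    report = json.loads(dssr_json_text)
    counts = {}
    for entry in report.get("models", []):
        parameters = entry.get("parameters", {})
        counts[entry["model"]] = parameters.get("num_pairs", 0)
    return counts


# Hypothetical, heavily trimmed stand-in for DSSR output on a 3-model ensemble.
example_json = json.dumps({
    "models": [
        {"model": 1, "parameters": {"num_pairs": 12}},
        {"model": 2, "parameters": {"num_pairs": 11}},
        {"model": 3, "parameters": {}},
    ]
})
pair_counts = pairs_per_model(example_json)
```

The resulting per-model counts can be plotted against simulation time to spot frames where base pairing is lost or regained.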