Machine Learning vs. Traditional Force Fields: A New Paradigm for Molecular Simulation and Drug Discovery

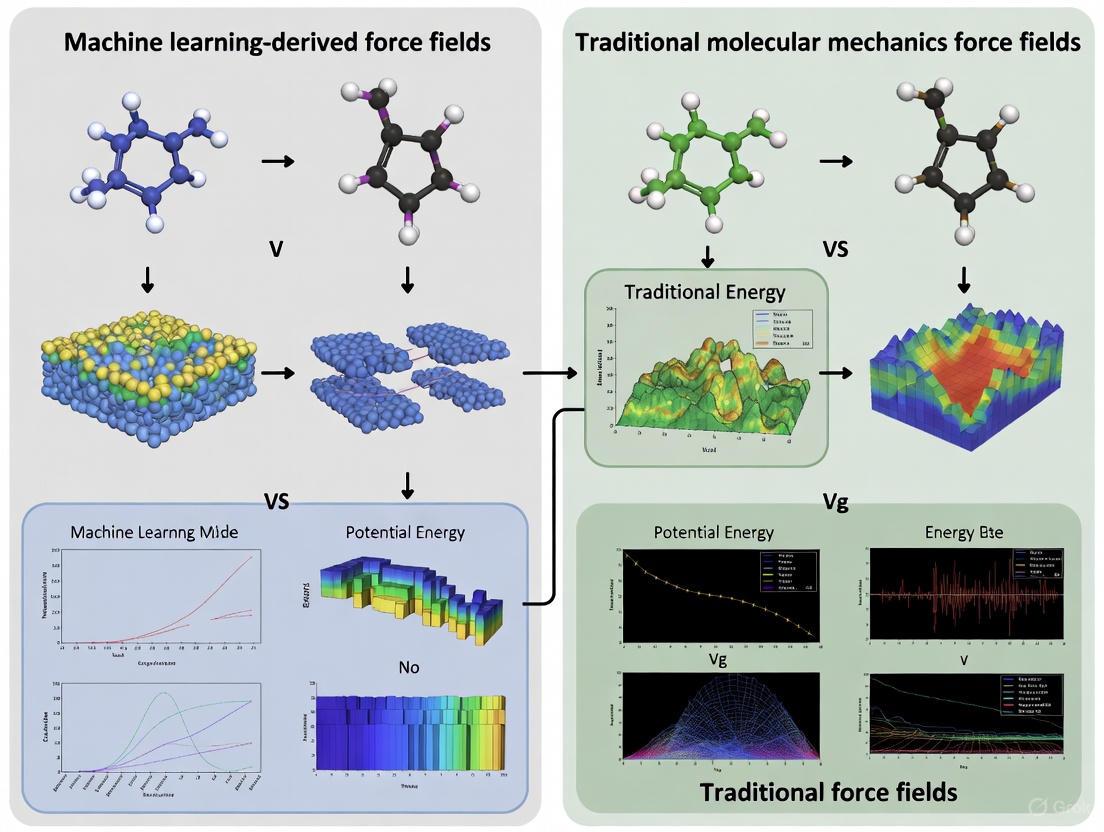

This article provides a comprehensive comparison between emerging machine learning-derived force fields and traditional molecular mechanics force fields, tailored for researchers and professionals in computational chemistry and drug development.

Abstract

This article provides a comprehensive comparison between emerging machine learning-derived force fields and traditional molecular mechanics force fields, tailored for researchers and professionals in computational chemistry and drug development. It explores the foundational principles of both approaches, detailing how ML force fields like Grappa and Vivace use graph neural networks to predict parameters directly from molecular structures, moving beyond the fixed atom types of traditional force fields. The content covers key methodological differences, practical applications in simulating biomolecules and polymers, and tackles central challenges such as data requirements, computational cost, and transferability. Finally, it synthesizes validation strategies and performance benchmarks, offering a forward-looking perspective on how ML force fields are set to enhance the accuracy and scope of molecular simulations in biomedical research.

From Fixed Parameters to Learned Potentials: Understanding Force Field Fundamentals

The Core Principles of Traditional Molecular Mechanics Force Fields

Molecular Mechanics (MM) force fields are the cornerstone of computational molecular modeling, providing the mathematical framework that enables the simulation of biological macromolecules and drug-like molecules at an atomistic level. These computational models describe the potential energy of a system as a function of nuclear coordinates, approximating the quantum mechanical energy surface with a classical mechanical model to decrease computational cost by orders of magnitude [1]. In the context of drug discovery, MM force fields remain the method of choice for protein simulations and protein-ligand binding studies, as they facilitate the simulation of entire proteins in aqueous environments over relevant timescales [1]. This article examines the fundamental principles, functional forms, parametrization strategies, and limitations of traditional MM force fields, providing a foundational comparison for evaluating emerging machine-learning alternatives.

Fundamental Components and Functional Forms

The core architecture of traditional MM force fields decomposes the total potential energy into distinct contributions from bonded and non-bonded interactions [2] [1]. This additive approach allows for computationally efficient evaluation of energy and forces, enabling molecular dynamics simulations of large systems.

Bonded Interactions

Bonded terms describe the energy associated with the covalent structure of molecules and are typically represented by simple analytical functions [2] [1].

Bond Stretching: The energy required to stretch or compress a chemical bond from its equilibrium length is most commonly modeled using a harmonic potential, analogous to a spring obeying Hooke's law [2]: \( E_{\text{bond}} = \sum_{\text{bonds}} K_b (b - b_0)^2 \) where \( K_b \) is the bond force constant, \( b \) is the actual bond length, and \( b_0 \) is the reference equilibrium bond length [1]. While a Morse potential provides a more realistic description that allows for bond breaking, it is computationally more expensive and rarely used in standard biomolecular force fields [2].

Angle Bending: The energy associated with the deviation of valence angles from their equilibrium values is also typically represented by a harmonic term [1]: \( E_{\text{angle}} = \sum_{\text{angles}} K_\theta (\theta - \theta_0)^2 \) where \( K_\theta \) is the angle force constant, \( \theta \) is the actual angle, and \( \theta_0 \) is the reference equilibrium angle.

Torsional Rotations: The energy barrier associated with rotation around chemical bonds is described by a periodic function [1]: \( E_{\text{dihedral}} = \sum_{\text{dihedrals}} \sum_{n=1}^{6} K_{\phi,n} \left( 1 + \cos(n\phi - \delta_n) \right) \) where \( K_{\phi,n} \) is the torsional force constant, \( n \) is the multiplicity, \( \phi \) is the dihedral angle, and \( \delta_n \) is the phase angle. Proper parametrization of dihedral terms is particularly crucial for accurately reproducing conformational energetics [1].

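Improper Dihedrals: These terms enforce out-of-plane bending, typically to maintain the planarity of aromatic rings and other conjugated systems [2] [1]: \( E_{\text{improper}} = \sum_{\text{improper dihedrals}} K_\varphi (\varphi - \varphi_0)^2 \) where \( K_\varphi \) is the improper force constant, \( \varphi \) is the improper dihedral angle, and \( \varphi_0 \) is its reference value.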
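The bonded terms above can be sketched in a few lines of Python. This is a minimal illustration that follows the conventions of the formulas in this section (no factor of 1/2 in the harmonic terms); the function names and all numerical parameters are hypothetical and are not taken from any published force field.

```python
import math

def harmonic_energy(k, x, x0):
    """Harmonic term K * (x - x0)^2, the form used for bonds, angles, and impropers."""
    return k * (x - x0) ** 2

def dihedral_energy(terms, phi):
    """Periodic torsion: sum over (K_n, n, delta_n) of K_n * (1 + cos(n*phi - delta_n))."""
    return sum(k * (1.0 + math.cos(n * phi - delta)) for k, n, delta in terms)

# Hypothetical parameters, chosen only for illustration.
bond = harmonic_energy(k=300.0, x=1.10, x0=1.09)       # bond stretched by 0.01 length units
torsion = dihedral_energy([(0.2, 3, 0.0)], math.pi)    # single threefold term at phi = 180 degrees

print(f"bond energy:    {bond:.4f}")
print(f"torsion energy: {torsion:.4f}")
```

Because every term is a closed-form function of one internal coordinate, the cost of evaluating all bonded terms grows linearly with the number of bonds, angles, and dihedrals.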

Non-Bonded Interactions

Non-bonded terms describe interactions between atoms that are not directly connected by covalent bonds and primarily govern intermolecular interactions and long-range intramolecular effects [2].

Electrostatics: The classical Coulomb potential describes electrostatic interactions between atomic partial charges [2] [1]: \( E_{\text{electrostatic}} = \sum_{\text{nonbonded pairs } ij} \frac{q_i q_j}{4\pi D r_{ij}} \) where \( q_i \) and \( q_j \) are partial charges, \( r_{ij} \) is the interatomic distance, and \( D \) is the dielectric constant. The assignment of atomic charges is typically based on heuristic approaches using quantum mechanical calculations [2].

van der Waals Forces: The Lennard-Jones potential captures both attractive (dispersion) and repulsive (electron cloud overlap) components of van der Waals interactions [1]: \( E_{\text{vdW}} = \sum_{\text{nonbonded pairs } ij} \varepsilon_{ij} \left[ \left( \frac{R_{\min,ij}}{r_{ij}} \right)^{12} - 2 \left( \frac{R_{\min,ij}}{r_{ij}} \right)^{6} \right] \) where \( \varepsilon_{ij} \) represents the well depth and \( R_{\min,ij} \) defines the distance at which the potential reaches its minimum [1].
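The two non-bonded pair terms can be written as a short Python sketch. It assumes energies in kcal/mol, distances in Å, and charges in units of the elementary charge, so that the prefactor \( 1/(4\pi\varepsilon_0) \) becomes roughly 332.06 kcal·Å/(mol·e²); the function names and the example parameters are hypothetical.

```python
# Approximate value of 1/(4*pi*eps0) in kcal*Å/(mol*e^2); individual MD codes
# use slightly different values for this constant.
COULOMB_CONST = 332.06

def coulomb(q_i, q_j, r_ij, dielectric=1.0):
    """Coulomb energy between two fixed partial charges."""
    return COULOMB_CONST * q_i * q_j / (dielectric * r_ij)

def lennard_jones(eps_ij, rmin_ij, r_ij):
    """Lennard-Jones energy in the R_min form: the minimum is -eps_ij at r_ij = rmin_ij."""
    ratio6 = (rmin_ij / r_ij) ** 6
    return eps_ij * (ratio6 ** 2 - 2.0 * ratio6)

# Hypothetical pair parameters for illustration only.
for r in (3.0, 3.5, 5.0):
    e_lj = lennard_jones(eps_ij=0.1, rmin_ij=3.5, r_ij=r)
    e_el = coulomb(q_i=0.4, q_j=-0.4, r_ij=r)
    print(f"r = {r:.1f} Å  LJ = {e_lj:+.4f}  Coulomb = {e_el:+.4f}")
```

In a full simulation these functions would be summed over all non-bonded pairs, which is why non-bonded evaluation dominates the cost of a Class I force field.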

Table 1: Core Energy Terms in Class I Additive Force Fields

| Energy Component | Functional Form | Key Parameters | Physical Basis |
|---|---|---|---|
| Bond Stretching | \( K_b (b - b_0)^2 \) | \( K_b \), \( b_0 \) | Covalent bond vibration |
| Angle Bending | \( K_\theta (\theta - \theta_0)^2 \) | \( K_\theta \), \( \theta_0 \) | Valence angle deformation |
| Proper Dihedral | \( K_{\phi,n} \left( 1 + \cos(n\phi - \delta_n) \right) \) | \( K_{\phi,n} \), \( n \), \( \delta_n \) | Torsional rotation barrier |
| Improper Dihedral | \( K_\varphi (\varphi - \varphi_0)^2 \) | \( K_\varphi \), \( \varphi_0 \) | Out-of-plane bending |
| Electrostatics | \( \frac{q_i q_j}{4\pi D r_{ij}} \) | \( q_i \), \( q_j \) | Coulomb interaction between partial charges |
| van der Waals | \( \varepsilon_{ij} \left[ \left( \frac{R_{\min,ij}}{r_{ij}} \right)^{12} - 2 \left( \frac{R_{\min,ij}}{r_{ij}} \right)^{6} \right] \) | \( \varepsilon_{ij} \), \( R_{\min,ij} \) | Dispersion and exchange-repulsion |

Diagram 1: Architecture of traditional molecular mechanics force fields showing the decomposition of total potential energy into bonded and non-bonded components.

The development of accurate force fields requires careful parameterization, where functional forms are combined with specific parameter sets to describe interactions at the atomistic level [2]. This process represents a significant challenge in force field development.

Parameter Determination Strategies

Force field parameters are derived through two primary approaches, often used in combination [2]:

Quantum Mechanical Calculations: High-quality quantum mechanical data on molecular geometries, vibrational frequencies, and torsion energy profiles provide target data for parametrizing bonded interactions and atomic charges [2] [3]. For example, the ByteFF force field was trained on QM data for 2.4 million optimized molecular fragment geometries with analytical Hessian matrices [3].

Experimental Data: Macroscopic experimental properties such as enthalpy of vaporization, enthalpy of sublimation, dipole moments, and liquid densities are used to refine parameters, particularly for non-bonded interactions [2]. This approach ensures the force field reproduces bulk material properties accurately.

Atom Typing and Transferability

A fundamental concept in traditional force fields is atom typing, where atoms are classified not only by element but also by their chemical environment [2]. For instance, oxygen atoms in water and oxygen atoms in carbonyl functional groups are assigned different force field types with distinct parameters [2]. This approach enables limited transferability, where parameters developed for small molecules can be applied to larger systems with similar chemical motifs [3]. However, this transferability is constrained by the predefined atom types and may fail for novel chemical structures not represented in the training set.
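Atom typing amounts to a table lookup, which the following Python sketch makes concrete. The type names, the table contents, and the `lookup_bond` helper are hypothetical placeholders; real force fields define far larger tables and more elaborate matching rules.

```python
# Hypothetical bond table: (type_a, type_b) -> (force constant, equilibrium length).
# Values are illustrative placeholders, not a published parameter set.
BOND_PARAMS = {
    ("OW", "HW"): (450.0, 0.9572),   # oxygen and hydrogen typed as "water"
    ("C", "O"): (570.0, 1.229),      # carbon and oxygen typed as "carbonyl"
}

def lookup_bond(type_a, type_b):
    """Return bond parameters for a pair of atom types, in either order."""
    for key in ((type_a, type_b), (type_b, type_a)):
        if key in BOND_PARAMS:
            return BOND_PARAMS[key]
    # A chemical environment outside the table simply has no parameters.
    raise KeyError(f"no bond parameters for atom types {type_a!r}-{type_b!r}")

print(lookup_bond("HW", "OW"))       # same element (O), different type than carbonyl O
try:
    lookup_bond("C", "Se")           # a type pair the table never anticipated
except KeyError as err:
    print("lookup failed:", err)
```

The failure branch is the transferability limit described above: a molecule containing an unlisted combination of types cannot be simulated until someone adds parameters for it.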

Table 2: Comparison of Force Field Parametrization Approaches

| Parametrization Aspect | Traditional Heuristic Approach | Modern Data-Driven Approach |
|---|---|---|
| Parameter Assignment | Look-up tables based on atom types | Graph neural networks predicting parameters [3] |
| Chemical Environment Handling | SMIRKS patterns [3] | Continuous learned representations [3] |
| Training Data Source | Combination of QM calculations and experimental data [2] | Large-scale QM datasets (millions of molecules) [3] |
| Transferability | Limited by predefined atom types and chemical patterns | Potentially broader coverage of chemical space [3] |
| Dihedral Treatment | Predefined torsion parameters with limited coverage | Extensive torsion profiles (e.g., 3.2 million in ByteFF) [3] |

Classification and Generations of Force Fields

Traditional force fields can be categorized into different classes based on their complexity and the physical phenomena they incorporate [1] [4].

Class I Force Fields

Class I potential energy functions represent the most widely used category in biomolecular simulations [1]. These employ simple harmonic potentials for bond and angle terms, periodic functions for dihedrals, and pairwise additive non-bonded interactions [1]. Popular Class I force fields include AMBER, CHARMM, OPLS, and GROMOS, which form the backbone of contemporary molecular dynamics simulations in drug discovery [1]. Their computational efficiency makes them suitable for simulating large systems over extended timescales, but they lack explicit treatment of electronic polarization and may struggle with accurately modeling heterogeneous environments.

Class II and III Force Fields

More sophisticated force fields incorporate additional physical effects to improve accuracy [1]:

Anharmonicity: Class II and III force fields include cubic and/or quartic terms in the potential energy for bonds and angles, allowing for more accurate reproduction of quantum mechanical potential energy surfaces and experimental vibrational spectra [1].

Cross Terms: These force fields incorporate coupling between internal coordinates, such as bond-bond, bond-angle, and angle-torsion cross terms, to better model vibrational spectra and subtle structural effects [1].

Polarizability: Advanced force fields include explicit polarization effects through methods such as fluctuating charges, Drude oscillators, or induced dipoles, though these come with significantly increased computational cost [2] [1].

Diagram 2: Traditional force field development workflow showing the iterative process of parameter optimization against quantum mechanical and experimental target data.

Limitations and Challenges

Despite their widespread success, traditional molecular mechanics force fields face several fundamental limitations that impact their accuracy and transferability.

Fixed Functional Forms

The predetermined analytical forms used in MM force fields inherently limit their ability to capture the full complexity of quantum mechanical potential energy surfaces [3]. This is particularly problematic for systems where non-pairwise additivity of non-bonded interactions is significant or where the simple functional forms cannot adequately represent complex bonding situations [3].

Limited Transferability

Traditional force fields struggle with transferability—the ability to accurately simulate conditions beyond those for which they were specifically optimized [5]. This limitation becomes particularly evident when exploring the vast chemical space of drug-like molecules or synthetic polymers, where chemical environments may differ significantly from the training data used for parameterization [5].

Fixed Charge Models

The additive electrostatic model used in most biomolecular force fields employs fixed partial charges that cannot respond to changes in their electrostatic environment [1]. This limitation affects the accuracy of simulations in heterogeneous environments such as protein-ligand binding sites or membrane interfaces, where polarization effects can be substantial [1].

Inability to Model Bond Breaking

Standard Class I force fields cannot simulate chemical reactions because their harmonic bond potentials do not allow for bond dissociation [5]. While reactive force fields such as ReaxFF have been developed to address this limitation, they require laborious reparameterization for different chemical systems [6].
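The difference between a harmonic and a dissociative bond potential is easy to see numerically. The sketch below compares the harmonic form used in this article with a Morse potential whose curvature at the minimum matches it (in the \( K(b - b_0)^2 \) convention this requires \( K = D_e a^2 \)); the well depth, width, and bond length are hypothetical values.

```python
import math

def harmonic_bond(r, r0, k):
    """Harmonic bond energy K * (r - r0)^2; grows without bound as the bond stretches."""
    return k * (r - r0) ** 2

def morse_bond(r, r0, d_e, a):
    """Morse bond energy; approaches the dissociation energy d_e at large r."""
    return d_e * (1.0 - math.exp(-a * (r - r0))) ** 2

# Hypothetical parameters for illustration only.
D_E, A, R0 = 100.0, 2.0, 1.0
K = D_E * A ** 2          # matches the Morse curvature at r0

for r in (1.01, 1.5, 3.0, 10.0):
    print(f"r = {r:5.2f}  harmonic = {harmonic_bond(r, R0, K):10.3f}  "
          f"Morse = {morse_bond(r, R0, D_E, A):8.3f}")
```

Near the minimum the two curves agree, but only the Morse form levels off at a finite dissociation energy, which is why a purely harmonic bond can never break.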

Table 3: Key Resources for Traditional Force Field Research and Application

| Resource Category | Specific Examples | Function and Application |
|---|---|---|
| Force Field Databases | OpenKIM [2], TraPPE [2], MolMod [2] | Collections of parameter sets for different molecular systems |
| Parameterization Tools | FFBuilder [3], SMIRKS patterns [3] | Assist in developing and refining force field parameters |
| Quantum Chemistry Codes | Gaussian, ORCA, PSI4 | Generate reference data for force field parametrization |
| Molecular Dynamics Engines | GROMACS, AMBER, NAMD, OpenMM | Perform simulations using force field parameters |
| Experimental Reference Data | Enthalpy of vaporization, liquid densities, vibrational spectra | Experimental validation of force field accuracy [2] |

Traditional molecular mechanics force fields provide a computationally efficient framework for simulating molecular systems through their decomposition of potential energy into physically intuitive bonded and non-bonded terms. The Class I additive potential energy function, with its harmonic bond and angle terms, periodic torsions, and pairwise non-bonded interactions, has proven remarkably successful across diverse applications in drug discovery and materials science. However, fundamental limitations arising from fixed functional forms, limited transferability, and the inability to model chemical reactions and polarization effects have motivated the development of machine-learning approaches. Understanding these core principles and limitations provides essential context for evaluating the performance and advancements of emerging machine learning force fields in computational chemistry and drug design.

Molecular dynamics (MD) simulations are a cornerstone of modern computational science, enabling the study of material properties and biomolecular processes at the atomic level. The underlying engine of these simulations is the force field (FF)—a mathematical model that describes the potential energy surface and forces acting within a molecular system. For decades, traditional molecular mechanics force fields have dominated this landscape, operating under a fundamental constraint: the trade-off between computational efficiency and physical accuracy. While highly optimized for simulating large systems over extended timescales, these conventional FFs often lack the quantum-mechanical precision required for predictive modeling. The emergence of machine learning force fields (MLFFs) represents a paradigm shift, offering a path to reconcile this long-standing compromise. This guide provides a comprehensive comparison between these approaches, examining their theoretical foundations, performance benchmarks, and practical applications in contemporary research.

Fundamental Concepts and Comparison Framework

Traditional Molecular Mechanics Force Fields

Traditional force fields employ physics-inspired analytical functions with pre-defined parameters to describe interatomic interactions. The total potential energy is typically decomposed into bonded terms (bond stretching, angle bending, dihedral torsions) and non-bonded terms (van der Waals, electrostatic interactions):

\[ E_{\text{total}} = E_{\text{bond}} + E_{\text{angle}} + E_{\text{torsion}} + E_{\text{vdW}} + E_{\text{electrostatic}} \]

These additive all-atom FFs assign fixed partial charges to each atom and calculate non-bonded interactions using a pairwise additive approximation [7]. Their efficiency stems from these simplified functional forms, but this very simplification limits their ability to capture complex quantum mechanical effects such as polarization, charge transfer, and bond formation/breaking.

A significant limitation of traditional FFs is their reliance on atom typing—a manual classification system where parameters are assigned based on chemical identity and local environment. This process is labor-intensive and inherently limited to chemical spaces covered by existing parameter sets [7]. Furthermore, traditional FFs typically require reparameterization for different conditions or molecule types, lacking true transferability across diverse chemical environments [5].

Machine Learning Force Fields

MLFFs replace the pre-defined functional forms of traditional FFs with flexible, data-driven models trained on high-fidelity quantum mechanical calculations or experimental data. Unlike traditional FFs with their fixed mathematical expressions, MLFFs learn the relationship between atomic configurations and potential energies/forces directly from reference data [8].

Two primary architectural paradigms have emerged:

End-to-End MLFFs: These models directly map atomic configurations to energies and forces using sophisticated neural network architectures such as Graph Neural Networks (GNNs) or equivariant networks [8] [5] [9]. Examples include MACE-OFF and Vivace, which demonstrate remarkable transferability across organic molecules and polymers, respectively.

ML-Augmented Molecular Mechanics: This hybrid approach retains the computational efficiency of traditional FF functional forms but uses machine learning to predict their parameters. Grappa exemplifies this strategy, employing a graph neural network to predict MM parameters directly from molecular structure, thereby eliminating the need for manual atom typing [10].

Table 1: Fundamental Characteristics of Traditional vs. Machine Learning Force Fields

| Feature | Traditional Force Fields | Machine Learning Force Fields |
|---|---|---|
| Functional Form | Pre-defined, physics-based analytical functions | Flexible, data-driven models (e.g., neural networks) |
| Parameterization | Manual atom typing and empirical fitting | Learned automatically from reference data (QM or experimental) |
| Computational Cost | Very low | Moderate to high (but significantly cheaper than QM) |
| Accuracy | Limited by functional form; system-dependent | Can approach quantum mechanical accuracy |
| Transferability | Limited to parameterized chemical spaces | High; can generalize to unseen molecules |
| Bond Breaking/Forming | Generally not possible without reparameterization | Can be modeled inherently by some architectures |
| Long-Range Interactions | Approximated via fixed charges or polarizable models | Varies; some include explicit long-range treatments |

Experimental Performance Benchmarks

Quantitative Accuracy Comparisons

Rigorous benchmarking against experimental data and quantum mechanical references reveals significant performance differences between traditional and machine learning FFs.

Organic Molecules and Biomolecules: The MACE-OFF force field demonstrates exceptional capability in reproducing gas and condensed-phase properties of organic molecules. It accurately predicts dihedral torsion scans of unseen molecules, describes molecular crystals and liquids reliably (including quantum nuclear effects), and determines free energy surfaces in explicit solvent [9]. Notably, MACE-OFF successfully simulates the folding dynamics of peptides and enables nanosecond-scale simulation of fully solvated proteins, achieving accuracy previously inaccessible to traditional FFs at comparable computational cost [9].

Polymer Systems: A recent study introduced PolyArena, a benchmark for evaluating MLFFs on experimentally measured polymer properties including densities and glass transition temperatures (Tgs) [5]. The Vivace MLFF significantly outperformed established classical FFs in predicting polymer densities and captured second-order phase transitions, enabling accurate estimation of polymer Tgs—a longstanding challenge in molecular modeling [5].

Broad Chemical Space Evaluation: The UniFFBench framework systematically evaluated six state-of-the-art UMLFFs against approximately 1,500 experimentally determined mineral structures [11]. This comprehensive assessment revealed that while the best-performing MLFFs achieve mean absolute percentage errors below 10% for density and lattice parameters, they still systematically exceed the experimentally acceptable density variation threshold of 2-5% required for practical applications [11]. This "reality gap" highlights remaining challenges in bridging computational accuracy with experimental precision.
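The mean absolute percentage error (MAPE) quoted in such benchmarks is straightforward to compute. The sketch below uses the standard definition of MAPE; the helper name and the density values are hypothetical and are not taken from UniFFBench.

```python
def mape(predicted, reference):
    """Mean absolute percentage error, in percent, of predictions against reference values."""
    if len(predicted) != len(reference) or not reference:
        raise ValueError("inputs must be non-empty and of equal length")
    total = sum(abs(p - r) / abs(r) for p, r in zip(predicted, reference))
    return 100.0 * total / len(reference)

# Hypothetical simulated vs. experimental densities (g/cm^3), for illustration only.
simulated = [2.70, 3.10, 4.00]
measured = [2.65, 3.20, 4.20]

error = mape(simulated, measured)
print(f"density MAPE: {error:.2f}%")
print("within a 2% tolerance" if error <= 2.0 else "outside a 2% tolerance")
```

Comparing the result against a fixed tolerance, as in the last line, mirrors how the benchmark contrasts model error with the 2-5% variation that is acceptable experimentally.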

Table 2: Performance Comparison of Force Fields Across Different Material Classes

| Material System | Traditional FF Performance | MLFF Performance | Key Metrics |
|---|---|---|---|
| Organic Molecules | Moderate accuracy for equilibrium properties; poor transferability | High accuracy for torsion barriers, crystal properties, and solvation free energies [9] | Dihedral scans, lattice parameters, free energy surfaces |
| Proteins/Peptides | Adequate for folded state stability; limitations in conformational sampling | Accurate folding dynamics of small peptides; stable ns-scale simulations of fully solvated proteins [9] | Folding pathways, J-coupling constants, stability metrics |
| Polymers | Limited transferability; unable to predict Tg from first principles | Accurate density prediction (<5% error); captures glass transition phenomena [5] | Density, Tg, thermal expansion coefficients |
| Complex Minerals | Often unstable or inaccurate for multi-element systems | Variable performance; best models achieve <10% MAPE for lattice parameters [11] | Density, lattice parameters, elastic tensors |

Data Fusion Strategies

A particularly promising approach for enhancing MLFF accuracy involves fusing data from both quantum mechanical calculations and experimental measurements. Research on titanium systems demonstrates that ML potentials can be concurrently trained on Density Functional Theory (DFT) calculations and experimentally measured mechanical properties and lattice parameters [8]. This fused data learning strategy satisfies all target objectives simultaneously, resulting in molecular models with higher accuracy compared to models trained on a single data source [8]. The inaccuracies of DFT functionals for target experimental properties were corrected through this approach, while off-target properties were generally unaffected or mildly improved [8].

Diagram 1: Fused Data Training Workflow for Enhanced MLFF Accuracy

Detailed Experimental Protocols

Fused Data Learning Methodology

The integrated training of MLFFs using both computational and experimental data follows a structured protocol:

DFT Data Generation: Perform high-throughput DFT calculations to generate a diverse dataset of atomic configurations with corresponding energies, forces, and virial stresses. For titanium, this involved 5,704 samples including equilibrated, strained, and randomly perturbed structures across multiple phases (hcp, bcc, fcc), along with configurations from high-temperature MD simulations [8].

Experimental Data Curation: Collect experimentally measured properties under well-defined conditions. For the titanium case study, researchers used temperature-dependent elastic constants of hcp titanium measured at 22 different temperatures (4-973 K) and corresponding lattice constants [8].

Alternating Training Protocol: Implement an iterative training scheme that alternates between:

- DFT Trainer: Optimizes ML potential parameters to match DFT-calculated energies, forces, and virial stresses using standard regression.

- EXP Trainer: Employs the Differentiable Trajectory Reweighting (DiffTRe) method to optimize parameters such that properties from ML-driven simulations match experimental values, avoiding backpropagation through entire MD trajectories [8].

Model Selection: Train models for a fixed maximum number of epochs, with early stopping based on validation performance. Comparative approaches include DFT pre-trained models (DFT trainer only), DFT-EXP sequential models (a DFT pre-trained model further trained with the EXP trainer only), and DFT & EXP fused models (alternating trainers) [8].
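The reweighting idea behind the EXP trainer can be illustrated with a small Python sketch. It assumes the usual thermodynamic-perturbation form, in which configurations sampled with a reference potential are reweighted by \( \exp[-(U_{\text{new}} - U_{\text{ref}})/k_B T] \) to estimate an observable under updated parameters without rerunning the simulation. The function name, the entropy-based effective sample size, and all numbers are assumptions made for illustration; this is not the DiffTRe implementation used in [8].

```python
import math

def reweighted_average(observables, u_ref, u_new, kT):
    """Estimate <O> under a new potential from samples generated with a reference potential.

    Returns the reweighted average and an effective sample size that indicates
    how far the new potential has drifted from the reference one.
    """
    log_w = [-(un - ur) / kT for un, ur in zip(u_new, u_ref)]
    shift = max(log_w)                              # subtract the max for numerical stability
    weights = [math.exp(lw - shift) for lw in log_w]
    norm = sum(weights)
    weights = [w / norm for w in weights]
    average = sum(w * o for w, o in zip(weights, observables))
    # Entropy-based effective sample size; equals len(weights) for uniform weights.
    n_eff = math.exp(-sum(w * math.log(w) for w in weights if w > 0.0))
    return average, n_eff

# Hypothetical per-frame observable and energies (same units as kT).
obs = [1.0, 2.0, 3.0]
u_reference = [0.0, 0.1, 0.2]
u_updated = [0.0, 0.3, 0.8]

avg, n_eff = reweighted_average(obs, u_reference, u_updated, kT=0.6)
print(f"reweighted <O> = {avg:.3f}, effective samples = {n_eff:.2f} of {len(obs)}")
```

When the effective sample size drops well below the number of frames, the reference trajectory no longer represents the updated potential and a new trajectory would need to be generated.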

ML-Augmented Molecular Mechanics Parameterization

The Grappa framework implements a specialized protocol for machine-learned molecular mechanics:

Molecular Graph Representation: Represent the molecular system as a graph where nodes correspond to atoms and edges represent chemical bonds.

Atom Embedding Generation: Process the molecular graph using a graph attentional neural network to generate d-dimensional atom embeddings that encode chemical environments [10].

MM Parameter Prediction: For each interaction type (bonds, angles, torsions, impropers), predict MM parameters using transformer modules that operate on the embeddings of participating atoms, respecting appropriate permutation symmetries [10].

Energy Evaluation: Compute the potential energy using standard molecular mechanics energy functions with the predicted parameters, enabling compatibility with existing MD software such as GROMACS and OpenMM [10].

End-to-End Optimization: Differentiably optimize the model parameters to reproduce quantum mechanical energies and forces, leveraging the differentiability of the entire mapping from molecular graph to potential energy [10].
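The permutation symmetry mentioned in the parameter-prediction step can be illustrated with a toy Python sketch: a bond parameter must not depend on the order in which its two atoms are listed. Here symmetry is obtained by summing the two atom embeddings before a linear map, which is a deliberately simple stand-in and not Grappa's actual transformer architecture; the embeddings, weights, and function names are all hypothetical.

```python
import math

def softplus(x):
    """Smooth map onto positive values, so predicted constants and lengths stay > 0."""
    return math.log1p(math.exp(x))

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def predict_bond_params(emb_i, emb_j, w_k, w_r):
    """Predict (force constant, equilibrium length) from two atom embeddings.

    Summing the embeddings first makes the result invariant to swapping i and j.
    """
    pooled = [a + b for a, b in zip(emb_i, emb_j)]
    return softplus(dot(w_k, pooled)), softplus(dot(w_r, pooled))

# Hypothetical 3-dimensional atom embeddings and weight vectors.
emb_c = [0.2, -0.1, 0.5]
emb_o = [0.7, 0.3, -0.4]
W_K = [1.0, 0.5, -0.2]
W_R = [0.1, 0.3, 0.2]

print(predict_bond_params(emb_c, emb_o, W_K, W_R))
print(predict_bond_params(emb_o, emb_c, W_K, W_R))   # identical to the line above
```

Because the predicted numbers are ordinary MM parameters, they can be written into a standard topology once and the simulation itself then runs at the cost of a conventional force field.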

Research Reagent Solutions

Table 3: Essential Computational Tools for Force Field Development and Validation

| Tool Name | Type | Primary Function | Application Context |
|---|---|---|---|
| Grappa [10] | ML-FF framework | Predicts MM parameters from molecular graphs | Biomolecular simulations with MM efficiency and enhanced accuracy |
| MACE-OFF [9] | Transferable ML-FF | Short-range potential for organic molecules | Drug discovery, peptide folding, material property prediction |
| Vivace [5] | Polymer ML-FF | Specialized architecture for polymer systems | Prediction of polymer densities and glass transition temperatures |
| DiffTRe [8] | Training algorithm | Enables gradient-based training on experimental data | Fusing experimental observations into ML potential training |
| UniFFBench [11] | Benchmarking framework | Evaluates MLFFs against experimental measurements | Systematic validation of force field reliability and transferability |
| PolyArena [5] | Benchmark dataset | Experimental polymer properties for validation | Performance assessment on industrially relevant polymer systems |

The longstanding compromise between accuracy and efficiency in molecular simulations is being fundamentally transformed by machine learning approaches. Traditional force fields, while computationally efficient and deeply integrated into biomolecular simulation workflows, face inherent limitations in accuracy and transferability due to their simplified functional forms and dependency on manual parameterization. Machine learning force fields demonstrate superior accuracy in reproducing quantum mechanical and experimental observations across diverse systems—from organic molecules and polymers to complex minerals—while maintaining computational costs orders of magnitude lower than quantum mechanical methods.

Nevertheless, important challenges remain for MLFFs. Computational expense relative to traditional FFs still limits their application to extremely large systems or millisecond timescales. Benchmarking studies reveal a persistent "reality gap" between quantum mechanical accuracy and experimental precision [11]. The most promising paths forward include continued development of fused data learning strategies that integrate both computational and experimental information [8], architectural innovations that balance expressivity with computational efficiency [5] [9], and comprehensive benchmarking frameworks grounded in experimental measurements [11]. As these technologies mature, MLFFs are positioned to enable truly predictive molecular simulations across chemistry, materials science, and drug discovery.

The Rise of Machine Learning in Molecular Modeling

Molecular modeling stands as a cornerstone of modern scientific inquiry, enabling researchers to probe the structure, dynamics, and function of molecules at an atomic level. For decades, this field has been governed by a fundamental compromise: researchers could prioritize either computational efficiency or quantum-level accuracy, but not both simultaneously. Traditional molecular mechanics (MM) force fields, with their fixed functional forms and predefined parameters, offered the computational speed necessary to simulate large biological systems like proteins over biologically relevant timescales. However, this efficiency came at the cost of reduced accuracy, particularly for systems where electronic effects dominate. Conversely, quantum mechanical (QM) methods provide high accuracy but at computational costs that render them prohibitive for systems exceeding a few hundred atoms or simulations longer than nanoseconds [10].

The emergence of machine learning force fields (MLFFs) represents a paradigm shift, offering a path to reconcile this longstanding trade-off. By leveraging the pattern recognition capabilities of neural networks trained on quantum mechanical data, MLFFs learn the underlying potential energy surface of molecular systems, achieving accuracy approaching that of their QM training data at a small fraction of the QM cost [10] [8]. Hybrid models such as Grappa, which predict parameters for a standard MM energy function, run at the same cost as traditional MM force fields [10], whereas end-to-end MLFFs remain more expensive. This capability is reshaping computational chemistry, materials science, and drug discovery, enabling researchers to explore molecular phenomena with greater fidelity and at larger scale. This guide provides a comprehensive comparison of ML-derived force fields against traditional molecular mechanics approaches, examining their respective architectures, performance metrics, and applicability across diverse scientific domains.

Technical Foundations: Architectural Comparison

Traditional Molecular Mechanics Force Fields

Traditional MM force fields employ physics-inspired functional forms with parameters derived from experimental data and quantum calculations. The total potential energy is typically decomposed into bonded terms (bonds, angles, dihedrals) and non-bonded terms (van der Waals, electrostatic) [10]:

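\[
E_{\text{MM}} = \sum_{\text{bonds}} k_{ij}\left(r_{ij}-r_{ij}^{(0)}\right)^2 + \sum_{\text{angles}} k_{ijk}\left(\theta_{ijk}-\theta_{ijk}^{(0)}\right)^2 + \sum_{\text{torsions}} \sum_n k_{ijkl}^{(n)}\left[1+\cos\left(n\phi_{ijkl}-\phi_{ijkl}^{(0)}\right)\right] + \sum_{i<j} \left[ E_{\text{vdW}}(r_{ij}) + E_{\text{electrostatic}}(r_{ij}) \right]
\]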

These force fields rely on a finite set of atom types characterized by chemical properties, with parameters assigned via lookup tables. This approach provides excellent computational efficiency and interpretability but suffers from limited transferability and accuracy, particularly for chemical environments not well-represented in the parameterization set [10] [4].

Machine Learning Force Fields

MLFFs replace the fixed functional forms of traditional approaches with flexible neural network architectures that learn the relationship between atomic configurations and potential energy. Most modern MLFFs adopt graph-based representations where atoms constitute nodes and chemical bonds form edges, with message-passing operations enabling information exchange across the molecular structure [10] [5].

The Grappa force field exemplifies this approach, employing a graph attentional neural network to construct atom embeddings from molecular graphs, followed by a transformer with symmetry-preserving positional encoding to predict MM parameters [10]. This architecture respects the permutation symmetries inherent in molecular systems while learning chemically aware representations directly from data.

For complex materials systems, models like Vivace implement strictly local SE(3)-equivariant graph neural networks, so that predicted energies are invariant under rotations and translations and predicted forces rotate with the system, while remaining computationally efficient for large-scale simulations [5]. The fundamental distinction is that MLFFs learn the energy function from data rather than relying on predetermined physical approximations.

Multi-Fidelity and Hybrid Approaches

Recent advances have introduced multi-fidelity MLFF frameworks that integrate diverse data sources of varying accuracy levels. These architectures employ a shared graph neural network backbone with dedicated output heads and composite loss functions to harmonize low-cost computational data (e.g., non-magnetic DFT) with high-fidelity references (e.g., CCSD(T) or experimental measurements) [12]. By simultaneously leveraging abundant low-fidelity and scarce high-fidelity data, these approaches achieve chemical accuracy with minimal reliance on prohibitively expensive reference calculations, significantly enhancing data efficiency for complex materials systems [12].

Performance Comparison: Quantitative Benchmarks

Accuracy Metrics on Standardized Benchmarks

Rigorous evaluation through community benchmarks provides critical insights into the relative performance of MLFFs versus traditional approaches. The TEA Challenge 2023 conducted comprehensive assessments of modern MLFFs including MACE, SO3krates, sGDML, SOAP/GAP, and FCHL19* across diverse molecular systems, interfaces, and periodic materials [13].

Table 1: Force Field Performance Comparison

| Force Field | System Type | Energy MAE (meV/atom) | Force MAE (meV/Å) | Reference |

|---|---|---|---|---|

| Grappa (ML) | Small molecules | ~43 (chemical accuracy) | ~80 | [10] |

| Traditional MM | Small molecules | >100 | >150 | [10] |

| Vivace (ML) | Polymers | N/A | ~40-60 | [5] |

| Classical FF | Polymers | N/A | >100 | [5] |

| DFT & EXP fused | Titanium | ~43 | ~80 | [8] |

| DFT-only | Titanium | ~43 | ~80 | [8] |

The data in Table 1 show MLFF errors that are roughly half or less of those of traditional force fields, with several models reaching chemical accuracy (1 kcal/mol, or approximately 43 meV, quoted here per atom), which has long been considered the target accuracy in computational chemistry [10] [8]. For polymer systems, MLFFs like Vivace show substantially lower force errors, which translates directly into more reliable molecular dynamics simulations and property predictions [5].

Experimental Property Reproduction

Beyond quantum mechanical accuracy, the true test for any force field lies in its ability to reproduce experimentally measurable properties. Recent studies have evaluated MLFFs against critical experimental benchmarks including densities, glass transition temperatures, reduction potentials, and electron affinities [5] [8] [14].

Table 2: Experimental Property Prediction Accuracy

| Property | System | MLFF Performance | Traditional FF Performance | Reference |

|---|---|---|---|---|

| Density | Various polymers | ~2-5% error | ~5-15% error | [5] |

| Glass transition | Various polymers | Captures transition | Varies significantly | [5] |

| J-couplings | Peptides | Closely reproduces | Requires correction maps | [10] |

| Reduction potential | Organometallics | MAE: 0.262-0.365 V | MAE: 0.414 V (B97-3c, a DFT baseline rather than a force field) | [14] |

| Elastic constants | Titanium | Matches experiment | Deviates from experiment | [8] |

For polymer property prediction, MLFFs capture complex phenomena like glass transitions, which require an accurate description of both local and non-local interactions across multiple length and time scales [5]. In electrochemical applications, OMol25-trained neural network potentials predict reduction potentials for organometallic species more accurately than the low-cost DFT method B97-3c (MAE of 0.262-0.365 V versus 0.414 V), despite not explicitly modeling Coulombic interactions in their architecture [14].

Methodologies: Experimental Protocols

Training Workflows for Machine Learning Force Fields

The development of accurate MLFFs follows carefully designed training protocols that vary depending on data availability and target applications. Two primary paradigms have emerged: bottom-up learning from quantum mechanical data and top-down learning from experimental observations, with fused approaches combining both strategies [8].

Bottom-up learning employs high-fidelity quantum calculations—typically density functional theory or coupled cluster theory—to generate energies, forces, and virial stresses for diverse atomic configurations [8]. These data serve as training targets for the neural network, with models typically optimized using composite loss functions that balance energy, force, and stress errors:

\[ \mathcal{L} = \lambda_E \ell_H(E_{\text{pred}} - E_{\text{DFT}}) + \lambda_F \ell_H(\mathbf{F}_{\text{pred}} - \mathbf{F}_{\text{DFT}}) + \lambda_\sigma \ell_H(\boldsymbol{\sigma}_{\text{pred}} - \boldsymbol{\sigma}_{\text{DFT}}) \]

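where \(\ell_H\) is the Huber loss, which is quadratic for small residuals (like MSE) and linear for large ones (like MAE), and \(\lambda_E\), \(\lambda_F\), and \(\lambda_\sigma\) weight the energy, force, and stress terms [12].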
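The composite loss above can be sketched in a few lines of NumPy. The threshold `delta`, the default weights, and the dictionary layout of the predictions are assumptions made for this example; real training code would use an automatic-differentiation framework so that gradients of the loss are available.

```python
import numpy as np

def huber(residual, delta=1.0):
    """Quadratic for |r| <= delta, linear beyond; averaged over all elements."""
    r = np.abs(np.asarray(residual, dtype=float))
    quadratic = 0.5 * r ** 2
    linear = delta * (r - 0.5 * delta)
    return float(np.mean(np.where(r <= delta, quadratic, linear)))

def composite_loss(pred, ref, lam_e=1.0, lam_f=10.0, lam_s=0.1):
    """Weighted sum of Huber losses on energy, forces, and stress."""
    return (lam_e * huber(pred["energy"] - ref["energy"])
            + lam_f * huber(pred["forces"] - ref["forces"])
            + lam_s * huber(pred["stress"] - ref["stress"]))

# Hypothetical single configuration with two atoms.
ref = {"energy": np.array([-10.0]),
       "forces": np.zeros((2, 3)),
       "stress": np.zeros((3, 3))}
pred = {"energy": np.array([-9.5]),
        "forces": np.full((2, 3), 0.1),
        "stress": np.zeros((3, 3))}
print(composite_loss(pred, ref))
```

The linear tail keeps a few badly fitted configurations from dominating the gradient, which is the practical reason for preferring a Huber loss over plain MSE here.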

Top-down learning directly incorporates experimental measurements like elastic constants, lattice parameters, and thermodynamic properties into the training process through differentiable trajectory reweighting techniques [8]. This approach circumvents limitations of quantum methods while ensuring agreement with empirical observations.
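The reweighting idea behind this kind of training can be illustrated with a short NumPy sketch. It re-estimates an ensemble average under a perturbed potential from samples generated with a reference potential, using Boltzmann weights, and reports an entropy-based effective sample size as a rough check on when the estimate stops being trustworthy. This is a simplified stand-in, not the published algorithm: the function name `reweight` and the toy numbers are assumptions, and an actual implementation would be written in an automatic-differentiation framework so that the reweighted average can be differentiated with respect to the model parameters.

```python
import numpy as np

def reweight(observable, u_new, u_ref, kT):
    """Estimate <O> under u_new from samples that were drawn with u_ref."""
    log_w = -(np.asarray(u_new, dtype=float) - np.asarray(u_ref, dtype=float)) / kT
    log_w -= log_w.max()                 # stabilize the exponentials
    w = np.exp(log_w)
    w /= w.sum()
    n_eff = np.exp(-np.sum(w * np.log(w + 1e-300)))  # effective sample size
    return float(np.sum(w * np.asarray(observable, dtype=float))), float(n_eff)

# Hypothetical per-frame data: an observable and energies under two potentials.
obs = np.array([1.0, 2.0, 3.0, 4.0])
u_ref = np.array([0.0, 0.1, 0.2, 0.3])
u_new = u_ref + np.array([0.0, 0.05, 0.10, 0.15])
estimate, n_eff = reweight(obs, u_new, u_ref, kT=0.593)
print(estimate, n_eff)
```

When the effective sample size drops well below the number of frames, the reference trajectory no longer represents the perturbed potential and a new simulation is needed.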

Fused data learning strategies, as demonstrated for titanium systems, alternate between DFT and experimental trainers, enabling simultaneous reproduction of quantum mechanical predictions and experimental measurements [8]. This hybrid approach corrects known DFT inaccuracies while maintaining the comprehensive coverage provided by quantum training data.

Simulation and Validation Protocols

Robust validation of force fields requires standardized simulation protocols and comprehensive benchmarking against diverse properties. For biomolecular force fields like Grappa, validation includes:

- Energy and force accuracy on held-out quantum mechanical datasets [10]

- Dihedral angle potential energy scans compared to high-level quantum calculations [10]

- J-couplings comparison to experimental NMR measurements [10]

- Protein folding stability through molecular dynamics simulations of fast-folding proteins [10]

- Transferability assessments on chemically distinct systems like peptide radicals [10]

For materials-focused force fields, validation typically includes:

- Lattice constant prediction across temperature ranges [8]

- Elastic constant calculation compared to experimental measurements [8]

- Phase behavior through analysis of phase transitions [5]

- Bulk property prediction including densities and thermal expansion [5] [8]

Molecular dynamics simulations for validation are typically performed using highly optimized engines like GROMACS, OpenMM, or LAMMPS, with simulation parameters carefully controlled to enable direct comparison between different force fields [10] [13].

Software and Computational Infrastructure

Table 3: Essential Research Tools for MLFF Development and Application

| Tool | Function | Application |

|---|---|---|

| GROMACS | Molecular dynamics engine | High-performance biomolecular simulation [10] |

| OpenMM | GPU-accelerated MD | Rapid force field validation [10] |

| PyTorch, JAX | Deep learning frameworks | ML model development and training [10] [5] |

| Allegro, MACE | MLFF architectures | Equivariant neural network potentials [5] [13] |

| Differentiable Trajectory Reweighting | Gradient calculation through MD | Training on experimental data [8] |

Benchmark Datasets and Training Data

The development of accurate MLFFs relies on high-quality, diverse datasets for training and evaluation:

- OMol25: Over 100 million computational chemistry calculations at ωB97M-V/def2-TZVPD level for general molecular applications [14]

- PolyData: Specifically designed polymer datasets including packed structures (PolyPack), dissociated chains (PolyDiss), and molecular fragments (PolyCrop) [5]

- Espaloma dataset: Over 14,000 molecules and one million conformations covering small molecules, peptides, and RNA [10]

- PolyArena: Experimental densities and glass transition temperatures for 130 polymers under standard conditions [5]

Applications and Transferability

Biomolecular Simulations

Grappa demonstrates exceptional capability in biomolecular modeling, accurately predicting energies and forces for small molecules, peptides, and RNA at state-of-the-art MM accuracy [10]. The force field reproduces experimentally measured J-couplings without requiring correction maps like CMAP used in traditional protein force fields. Most significantly, Grappa exhibits remarkable transferability to macromolecular systems, enabling stable molecular dynamics simulations from small fast-folding proteins up to complete virus particles, with the same computational cost as established protein force fields [10].

Polymer and Materials Science

Machine learning force fields have shown particular promise in polymer science, where traditional force fields often struggle with transferability across diverse chemical structures. Vivace accurately predicts polymer densities and captures second-order phase transitions, enabling prediction of glass transition temperatures that have long challenged computational models [5]. For complex materials systems, multi-fidelity MLFF frameworks have demonstrated accurate prediction of alloy mixing energies and ionic conductivities even when high-fidelity training data is sparse or unavailable [12].

Chemical Space Exploration

The data-driven nature of MLFFs facilitates extension into uncharted regions of chemical space without requiring manual parameterization. Grappa's simple input features and high data efficiency make it well-suited for modeling exotic chemical species, as demonstrated for peptide radicals [10]. Similarly, foundational MLFFs like those trained on the OMol25 dataset exhibit surprising accuracy for charge-related properties of organometallic species despite not explicitly considering Coulombic physics in their architecture [14].

Limitations and Future Directions

Despite remarkable progress, machine learning force fields face several important challenges. Long-range noncovalent interactions remain problematic for many MLFF architectures, requiring special caution in simulations where such interactions dominate [13]. The computational cost of MLFFs that evaluate a neural network at every simulation step, while far lower than that of quantum methods, still exceeds that of traditional MM force fields by at least an order of magnitude, though this gap continues to narrow with architectural improvements [10] [5]. MLFFs that only predict MM parameters, such as Grappa, avoid this overhead [10].

The field is rapidly evolving toward multi-fidelity approaches that leverage diverse data sources, foundation models pretrained on extensive chemical spaces, and improved architectures for capturing long-range interactions and electronic effects [12]. As benchmark methodologies mature and standardized evaluation protocols emerge, the integration of machine learning force fields into mainstream research workflows is expected to accelerate, potentially transforming computational molecular modeling across chemistry, materials science, and drug discovery.

Machine learning force fields represent a transformative advancement in molecular modeling, effectively bridging the longstanding divide between computational efficiency and quantum-level accuracy. Through comprehensive benchmarking across diverse molecular systems, MLFFs consistently demonstrate superior accuracy compared to traditional molecular mechanics approaches while maintaining the computational performance necessary for biologically and industrially relevant simulations.

As the field matures, the combination of bottom-up learning from quantum data and top-down learning from experimental observations promises to deliver force fields of unprecedented accuracy and transferability. For researchers and developers, this evolving landscape offers powerful new tools to probe molecular phenomena with fidelity that was previously inaccessible, potentially accelerating discovery across domains from drug development to advanced materials design.

The development of molecular mechanics force fields (FFs) has long been governed by empirical parametrization and fixed functional forms, creating a persistent trade-off between accuracy and computational efficiency. Machine learning-derived force fields (MLFFs) are disrupting this paradigm by leveraging data-driven approaches to achieve quantum-level accuracy while maintaining the speed of classical simulations. This guide provides an objective comparison of MLFFs against traditional FFs, detailing their performance, underlying methodologies, and practical applications in drug discovery. Supported by experimental data, we demonstrate that MLFFs represent a significant advancement, enabling more reliable predictions of biomolecular interactions, ligand binding, and solvation phenomena.

Molecular dynamics (MD) simulations are indispensable in computational chemistry and drug discovery, enabling the study of biomolecular structure, dynamics, and interactions at atomic resolution. The accuracy of these simulations hinges entirely on the quality of the force field (FF)—the mathematical model that describes the potential energy of a system as a function of its atomic coordinates.

Traditional molecular mechanics (MM) FFs, such as those in the AMBER, CHARMM, and OPLS families, employ pre-defined physical functional forms for bonded and non-bonded interactions, with parameters assigned based on a finite set of atom types. While highly efficient, this approach sacrifices accuracy and transferability, particularly for chemically diverse molecules or non-equilibrium configurations [10]. The limitations of traditional FFs are especially apparent in the simulation of RNA–ligand complexes, where maintaining structural fidelity and stable binding poses remains challenging [15].

Machine learning-derived force fields (MLFFs) represent a paradigm shift. Instead of relying on fixed functional forms, MLFFs learn the relationship between molecular structure and potential energy directly from reference quantum mechanical (QM) data or even experimental observations [16] [8]. This data-driven approach bypasses many approximations inherent in traditional FFs, offering a path to quantum accuracy at a fraction of the computational cost of ab initio MD. This guide objectively compares the performance, methodologies, and applications of this new generation of force fields against established alternatives.

Comparative Performance Data

The following tables summarize key quantitative comparisons between MLFFs and traditional FFs across various benchmarks, including energy and force accuracy, torsional profile reproduction, and performance in free energy calculations.

Table 1: Overall Accuracy Benchmarks for Small Molecules and Peptides

| Force Field | Type | Energy MAE (meV) | Force MAE (meV/Å) | Torsion Energy MAE | Reference |

|---|---|---|---|---|---|

| Grappa | MLFF (MM-based) | Not Specified | Not Specified | Outperforms FF19SB (no CMAP) | [10] |

| ByteFF | MLFF (MM-based) | State-of-the-art | State-of-the-art | State-of-the-art | [17] |

| Organic_MPNICE | MLFF (MLP) | Not Specified | Not Specified | Not Specified | [18] |

| AMBER FF19SB | Traditional MM | Not Applicable | Not Applicable | Reference (requires CMAP) | [10] |

| DFT Pre-trained MLP | MLFF (MLP) | < 43 | Reported | Not Specified | [8] |

Table 2: Performance in Free Energy and Binding Calculations

| Force Field / Method | HFE MAE (kcal/mol) | Application Notes | Reference |

|---|---|---|---|

| Organic_MPNICE (MLFF) | < 1.0 | 59 diverse organic molecules; outperforms classical FFs and implicit solvation | [18] |

| State-of-the-art Classical FF | > 1.0 | Fundamentally limited by simplified functional forms | [18] |

| DFT Implicit Solvation | > 1.0 | Less accurate than the MLFF workflow | [18] |

| Current RNA FFs (e.g., OL3) | N/A | Struggles with consistently stable RNA-ligand complexes | [15] |

Table 3: Performance in Reproducing Experimental Observables

| Force Field / Approach | Lattice Parameters | Elastic Constants | Phase Diagram | Reference |

|---|---|---|---|---|

| DFT & EXP Fused MLP | Accurate | Accurate | Improved | [8] |

| DFT-only MLP | Inaccurate | Inaccurate | Often Deviates | [8] |

| Classical MEAM Potential | Inaccurate | Inaccurate | Not Specified | [8] |

Experimental Protocols and Validation

The superior performance of MLFFs is validated through rigorous and standardized computational experiments. Below are the detailed methodologies for key benchmark tests cited in this guide.

Hydration Free Energy (HFE) Calculations

The accurate prediction of HFEs is a critical test for any force field in drug discovery, as it directly relates to solvation and binding.

- Workflow: A robust and general protocol was used, combining the broadly trained Organic_MPNICE MLFF with enhanced sampling techniques [18].

- System Preparation: A diverse set of 59 organic molecules was selected. The MLFF was applied to both solute and solvent molecules in explicit water simulations.

- Sampling: The solute-tempering technique was employed to achieve sufficient conformational and statistical sampling, which is crucial for converging the free energy estimate.

- Free Energy Calculation: The free energy perturbation (FEP) method was used to compute each HFE, which was then compared with the experimental value.

- Result: The MLFF-based workflow achieved a sub-kcal/mol mean absolute error, a significant improvement over state-of-the-art classical FFs [18].
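The core of a free energy perturbation estimate is the exponential average of energy differences. The sketch below implements that single-step (Zwanzig) estimator with NumPy; it is a simplification under stated assumptions, since production workflows typically split the transformation into many intermediate states and often use more robust estimators. The function name, the synthetic energy differences, and the temperature are illustrative only.

```python
import numpy as np

KB = 0.0019872041  # Boltzmann constant in kcal/(mol*K)

def zwanzig_free_energy(delta_u, kT):
    """dF = -kT * ln < exp(-dU / kT) >_0, using a log-sum-exp for stability."""
    x = -np.asarray(delta_u, dtype=float) / kT
    x_max = x.max()
    log_mean = x_max + np.log(np.mean(np.exp(x - x_max)))
    return float(-kT * log_mean)

# Synthetic energy differences U_1 - U_0 (kcal/mol) sampled in state 0.
rng = np.random.default_rng(1)
delta_u = rng.normal(loc=2.0, scale=0.5, size=5000)
kT = KB * 298.15
print(f"dF estimate: {zwanzig_free_energy(delta_u, kT):.2f} kcal/mol")
```

The estimate is dominated by the rare low-energy-difference samples, which is why sufficient sampling matters so much for convergence.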

RNA–Ligand Complex Stability

This benchmark assesses the ability of FFs to maintain experimental structures and stable interactions in challenging biomolecular systems.

- System Selection: 10 RNA–small molecule structures were curated from the HARIBOSS database, encompassing diverse RNA topologies (double helices, hairpins) and binding modes (groove binding, intercalation) [15].

- Simulation Protocol: Each system was simulated for 1 μs using multiple state-of-the-art FFs (e.g., OL3, DES-AMBER) in AMBER20 or GROMACS2023. Ligands were parametrized with GAFF2 and RESP2 charges for a direct comparison [15].

- Analysis Metrics:

  - RMSD and LoRMSD: Measured the structural drift of the entire RNA and the ligand relative to the RNA backbone, respectively.

  - Contact Map Analysis: Quantified the stability of native RNA-ligand contacts and the formation of non-native interactions over the simulation trajectory [15].

- Key Finding: Current traditional FFs, while improving, still show ligand mobility and contact instability [15]. MLFFs like Grappa, which reproduces experimentally measured J-couplings for peptides and QM energies and forces for RNA, show promise as an alternative [10].
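A contact analysis of this kind can be sketched as follows. The 4.5 Å distance cutoff, the function names, and the toy coordinates are assumptions made for illustration and are not the settings of the cited study; the sketch only shows how native contacts are defined from a reference structure and then tracked in later frames.

```python
import numpy as np

def contact_pairs(rna_xyz, lig_xyz, cutoff=4.5):
    """Set of (rna_atom, ligand_atom) index pairs closer than cutoff (Å)."""
    d = np.linalg.norm(rna_xyz[:, None, :] - lig_xyz[None, :, :], axis=-1)
    return set(zip(*np.nonzero(d < cutoff)))

def native_contact_fraction(native, frame):
    """Fraction of the native contacts that are still present in a frame."""
    return len(native & frame) / len(native) if native else 0.0

# Toy system: two RNA atoms and a one-atom ligand that drifts away.
rna = np.array([[0.0, 0.0, 0.0], [10.0, 0.0, 0.0]])
lig_native = np.array([[0.0, 0.0, 3.0]])
lig_later = np.array([[10.0, 0.0, 3.0]])

native = contact_pairs(rna, lig_native)
later = contact_pairs(rna, lig_later)
print(native_contact_fraction(native, native))
print(native_contact_fraction(native, later))
print(len(later - native), "non-native contact(s) formed")
```

Tracking both the surviving native contacts and the newly formed non-native ones distinguishes a ligand that merely fluctuates in its pocket from one that relocates.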

Fused Data Learning for Materials Properties

This innovative protocol addresses the inaccuracies of DFT-based training by directly incorporating experimental data.

- Training Loop: The method alternates between two trainers [8]:

  - DFT Trainer: Performs standard regression to match QM-calculated energies, forces, and virial stress from a database of atomic configurations.

  - EXP Trainer: Uses the Differentiable Trajectory Reweighting (DiffTRe) method to optimize the MLFF so that properties (e.g., elastic constants) computed from MD simulations match experimental values.

- Target Data: For titanium, training used a DFT database (5,704 configurations) and experimental hcp elastic constants measured at four temperatures (23–923 K) [8].

- Validation: The "fused" model was validated on out-of-target properties like phonon spectra and liquid phase properties, showing mostly positive transferability [8].

The following diagram illustrates the fused data learning workflow.

The Scientist's Toolkit: Essential Research Reagents

This section catalogs key software tools, datasets, and force fields that constitute the essential "research reagents" in the MLFF landscape.

Table 4: Key Research Reagents in MLFF Development

| Tool / Resource | Type | Function & Application | Reference |

|---|---|---|---|

| Grappa | Machine Learned MM Force Field | Predicts MM parameters from molecular graphs; offers high accuracy with standard MD efficiency in GROMACS/OpenMM. | [10] |

| ByteFF | Machine Learned MM Force Field | Amber-compatible FF for drug-like molecules; trained on massive QM dataset for expansive chemical space coverage. | [17] |

| Q-Force | Automated Parameterization Toolkit | Systematically derives bonded coupling terms for force fields, enabling novel treatments of 1-4 interactions. | [19] |

| DiffTRe | Differentiable Learning Algorithm | Enables gradient-based optimization of MLFFs directly from experimental data without backpropagating through entire MD trajectories. | [8] |

| HARIBOSS | Curated Structural Database | A collection of RNA-small molecule complex structures used for rigorous validation of force fields in drug-binding contexts. | [15] |

| Espaloma Dataset | Benchmark QM Dataset | Contains over 14,000 molecules and 1M+ conformations for training and testing MLFFs on small molecules, peptides, and RNA. | [10] |

The evidence from comparative benchmarks indicates that machine learning-derived force fields constitute a genuine paradigm shift in molecular simulation. MLFFs consistently demonstrate superior accuracy in predicting energies, forces, and critical drug discovery properties like hydration free energies, while also showing unique capabilities in integrating both computational and experimental data sources. Although traditional force fields remain viable for well-trodden applications, the expanding coverage, improving efficiency, and demonstrable accuracy of MLFFs position them as the future cornerstone for high-fidelity simulations in computational chemistry and rational drug design.

Molecular mechanics (MM) force fields are the computational engines that power molecular dynamics simulations, enabling the study of structural, dynamic, and functional properties of biomolecules and materials. The accuracy of these simulations is critically dependent on the force field—the mathematical model used to approximate atomic-level forces. A foundational aspect differentiating modern force fields lies in how they represent and assign parameters to atoms based on their chemical context. This comparison guide examines the two dominant paradigms: the traditional approach using hand-crafted atom types and the emerging machine learning (ML)-driven approach employing learned chemical environments.

The established methodology, utilized by force fields such as AMBER, CHARMM, and OPLS-AA, relies on expert-defined atom types—a finite set of atom classifications characterized by the atom's chemical properties and those of its bonded neighbors. Parameters are then assigned via lookup tables. In contrast, machine learning force fields like Grappa and Espaloma replace this scheme by learning to assign parameters directly from the molecular graph, creating dynamic, data-driven representations of chemical environments. This guide provides an objective, data-driven comparison of these methodologies, detailing their fundamental principles, performance, and practical implications for research.

Fundamental Principles and Definitions

Hand-Crafted Atom Types: A Rule-Based System

Traditional MM force fields express the potential energy of a system as a sum of bonded (bonds, angles, dihedrals) and non-bonded interactions. The parameters for these interactions (e.g., force constants and equilibrium values) are not assigned to individual atoms directly but to atom types [20].

- Philosophy: This approach relies on human expertise to predefine a finite set of atom types. Each type is characterized by hand-crafted rules based on the atom's element, hybridization state, and local bonding environment (e.g., an sp³ carbon in a methyl group versus an sp² carbon in an aromatic ring) [20].

- Parameterization Process: The molecular graph is analyzed, and each atom is assigned one or more specific types from a fixed lookup table. The MM parameters for all possible combinations of these types (e.g., the bond parameters for a type C.3 - O.2 interaction) are pre-tabulated based on fits to quantum mechanical data and experimental observations [20] [21].

- Implicit Representation: The chemical environment is implicitly captured by the chosen atom type. The representation is static; an atom's type and, consequently, its parameters do not change based on a more extensive, non-local chemical context.
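The lookup-table scheme described above amounts to a dictionary keyed on pairs of atom types. In the following sketch the type names follow the C.3 / O.2 style used in the text, while the table contents and the function name `assign_bond_params` are hypothetical and are not taken from any real force field.

```python
# Hypothetical bond parameters: (force constant in kcal/mol/Å^2, r0 in Å).
BOND_PARAMS = {
    ("C.3", "C.3"): (310.0, 1.53),
    ("C.3", "O.2"): (320.0, 1.41),
}

def assign_bond_params(atom_types, bonds):
    """Look up parameters for each bond from the types of its two atoms."""
    params = {}
    for i, j in bonds:
        key = tuple(sorted((atom_types[i], atom_types[j])))  # order-independent
        if key not in BOND_PARAMS:
            raise KeyError(f"no tabulated parameters for bond type {key}")
        params[(i, j)] = BOND_PARAMS[key]
    return params

atom_types = ["C.3", "C.3", "O.2"]
print(assign_bond_params(atom_types, [(0, 1), (2, 1)]))
```

The `KeyError` branch is the practical face of limited transferability: a molecule containing a combination of types that nobody tabulated cannot be parameterized until an expert extends the table.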

Learned Chemical Environments: A Data-Driven Representation

Machine learning force fields reframe parameter assignment as a learning problem, replacing lookup tables with a function (a neural network) that maps the molecular graph to MM parameters.

- Philosophy: Instead of using fixed types, these models learn to create a continuous, numerical representation (an embedding) for each atom that captures its chemical environment directly from data [20].

- Parameterization Process: Models like Grappa employ a graph neural network to process the entire molecular graph. This network generates a d-dimensional embedding vector, ν, for each atom. This embedding represents the atom's chemical environment based on the structure of the molecular graph. A subsequent transformer network then predicts the MM parameters for an interaction (a bond, angle, etc.) as a function of the embeddings of the atoms involved:

\[ \xi_{ij\ldots}^{(l)} = \psi^{(l)}(\nu_i, \nu_j, \ldots) \] [20]

- Explicit Representation: The chemical environment is explicitly and dynamically represented by the atom embedding, which is constructed from the molecular graph. This allows for a more nuanced and continuous representation of chemical space, as the embeddings can capture subtle differences that might be lost in a discrete atom-typing scheme.
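One requirement on the readout function ψ is that a bond between atoms i and j must receive the same parameters as the bond between j and i. The sketch below enforces this with simple symmetric pooling of the two embeddings followed by a linear layer. This is a substitute chosen for brevity, not the transformer with symmetry-preserving positional encoding described for Grappa, and the names, shapes, and random weights are assumptions.

```python
import numpy as np

def bond_params_from_embeddings(nu_i, nu_j, weights, bias):
    """Permutation-symmetric readout: psi(nu_i, nu_j) == psi(nu_j, nu_i)."""
    sym = nu_i + nu_j        # unchanged when i and j are swapped
    prod = nu_i * nu_j       # also unchanged under the swap
    raw = np.concatenate([sym, prod]) @ weights + bias
    return np.exp(raw)       # keeps force constant and bond length positive

d = 4                                    # embedding dimension (assumed)
rng = np.random.default_rng(2)
weights = rng.normal(scale=0.1, size=(2 * d, 2))
bias = np.array([np.log(300.0), np.log(1.5)])   # hypothetical starting scale
nu_a, nu_b = rng.normal(size=d), rng.normal(size=d)

k_ab, r0_ab = bond_params_from_embeddings(nu_a, nu_b, weights, bias)
print(k_ab, r0_ab)
```

Because the embeddings are continuous, two chemically similar atoms yield similar parameters, in contrast to the all-or-nothing behavior of discrete atom types.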

Comparative Analysis: Performance and Experimental Data

The transition from hand-crafted rules to learned representations is driven by demonstrable improvements in accuracy and transferability. The following sections compare the performance of both approaches across key benchmarks.

Accuracy on Quantum Mechanics (QM) and Experimental Benchmarks

Extensive testing on diverse molecular sets reveals that ML-derived force fields can achieve superior accuracy while maintaining the computational efficiency of traditional MM.

Table 1: Performance Comparison on Benchmark Datasets

| Metric / Benchmark | Traditional MM (e.g., AMBER ff19SB) | Machine Learned MM (Grappa) | Notes & Experimental Protocol |

|---|---|---|---|

| QM Energy & Forces (Espaloma dataset: >14,000 molecules, >1M conformations) | Lower accuracy | Outperforms tabulated and other machine-learned MM force fields [20] | Protocol: Models are trained to predict QM energies and forces. Accuracy is evaluated by comparing force field predictions to reference QM calculations on a held-out test set of small molecules, peptides, and RNA [20]. |

| Peptide Dihedral Landscapes | Matched by Grappa, but requires additional CMAP corrections [20] | Closely reproduces QM potential energy landscapes without needing CMAP [20] | Protocol: Torsion energy profiles for peptide dihedral angles are calculated with the force field and compared against high-level QM reference data [20]. |

| J-Couplings (NMR) | Good agreement with experiment | Closely reproduces experimentally measured J-couplings [20] | Protocol: Long-timescale MD simulations are performed. J-couplings are calculated from the simulated ensemble and compared directly to experimental NMR data [20] [21]. |

| Protein Folding (Chignolin) | Calculates folding free energy with some error | Improves upon the calculated folding free energy [20] | Protocol: Multiple simulations are run from folded and unfolded states. The free energy difference between states is computed and compared to the experimental value [20] [21]. |

| Transferability | Limited to pre-defined atom types; struggles with "uncharted" chemistry (e.g., radicals) | High transferability; demonstrated on peptide radicals without re-parameterization [20] | Protocol: The model is applied to chemical systems (e.g., molecules with radicals) not present in the training data. Performance is assessed by its ability to produce stable simulations and reasonable geometries/energies [20]. |

A foundational study systematically validating traditional force fields highlights their capabilities and limitations. The 2012 study by Lindorff-Larsen et al. evaluated eight protein force fields (e.g., Amber ff99SB-ILDN, CHARMM22*, OPLS-AA) by comparing multi-microsecond simulations to experimental data. It found that while force fields had improved and could describe many structural and dynamic properties of folded proteins, they exhibited biases and deficiencies, such as instability in certain native states and imbalances in secondary structure propensities [22] [21]. This underscores the need for the improvements shown by ML approaches.

Computational Efficiency and Scalability

A key advantage of both traditional and ML-derived molecular mechanics force fields is their high computational efficiency compared to both ab initio methods and more complex machine learning potentials.

Table 2: Computational Workflow and Efficiency Comparison

| Aspect | Hand-Crafted Atom Types | Learned Chemical Environments |

|---|---|---|

| Parameter Assignment | Instantaneous via table lookup | Requires one-time inference pass of the neural network per molecule |

| Energy/Force Evaluation Cost | Very low (standard MM cost) | Identically low (standard MM cost after parameter assignment) [20] |

| Simulation Engine Compatibility | Directly compatible with GROMACS, OpenMM, etc. | Directly compatible (parameters are generated once, then simulation runs natively) [20] |

| Scalability to Large Systems | Excellent (e.g., millions of atoms) | Excellent; demonstrated on a million-atom virus particle on a single GPU [20] |

| Cost vs. E(3)-Equivariant NN Potentials | N/A (MM is the baseline) | ~4 orders of magnitude faster than E(3)-equivariant neural network potentials [20] |

The workflow difference is critical: after Grappa's neural network predicts the MM parameters for a given molecule, those parameters are fixed. The subsequent molecular dynamics simulation uses the standard, highly optimized MM energy function, so the ML model adds no ongoing computational overhead [20].

Methodologies and Experimental Protocols

To ensure reproducibility and provide context for the data presented, here are detailed methodologies for key experiments cited.

Protocol for Force Field Validation against NMR Data

This is a standard protocol for assessing a force field's ability to describe the structure and dynamics of folded proteins [21].

- System Preparation: A protein with a high-quality solution NMR structure and extensive J-coupling or order parameter data is selected (e.g., Ubiquitin, GB3). The protein is solvated in a water box with ions to neutralize the system.

- Simulation: Multiple, independent, long-timescale (microsecond to millisecond) MD simulations are performed using the force field under evaluation.

- Analysis: From the simulated trajectory, the following are computed:

  - Backbone RMSD: The root-mean-square deviation of the protein backbone from the experimental structure to assess structural stability.

  - J-Couplings: Scalar J-couplings are calculated from the simulated ensemble using the Karplus relationship.

  - Order Parameters: S² order parameters are calculated from the molecular reorientation dynamics.

- Comparison: The computed values are directly compared to the experimental NMR data. A more accurate force field will show better agreement across all metrics.
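
The three analysis quantities in this protocol can be sketched as follows. This is a minimal illustration, not the analysis code used in [21]. It assumes that frames are already superimposed on the reference, that the Karplus coefficients are one commonly quoted set for the ³J(HN,Hα) coupling (A = 6.51, B = -1.76, C = 1.60 Hz, phase -60°), and that S² is taken as the long-time limit for unit bond vectors with overall tumbling removed. None of these choices comes from the source.

```python
import numpy as np

def backbone_rmsd(coords, ref):
    """RMSD between two (N, 3) arrays, assuming they are already superimposed."""
    return float(np.sqrt(np.mean(np.sum((coords - ref) ** 2, axis=1))))

def karplus_j(phi_deg, A=6.51, B=-1.76, C=1.60, offset_deg=-60.0):
    """Karplus relationship J = A cos^2(theta) + B cos(theta) + C, theta = phi + offset.

    The default coefficients are an assumed parameter set for 3J(HN,HA), in Hz.
    """
    theta = np.deg2rad(np.asarray(phi_deg, dtype=float) + offset_deg)
    return A * np.cos(theta) ** 2 + B * np.cos(theta) + C

def ensemble_j(phi_traj_deg):
    """Ensemble J-coupling: average J over frames, not J of the average angle."""
    return float(np.mean(karplus_j(phi_traj_deg)))

def order_parameter_s2(unit_vectors):
    """S^2 = 1.5 * sum_ij <u_i u_j>^2 - 0.5 for a (T, 3) array of unit bond vectors.

    Assumes the trajectory was aligned so that overall tumbling is removed.
    """
    u = np.asarray(unit_vectors, dtype=float)
    second_moment = np.einsum("ti,tj->ij", u, u) / len(u)
    return float(1.5 * np.sum(second_moment ** 2) - 0.5)

# Toy inputs: a rigid reference, a uniformly shifted copy, and a fixed bond vector.
ref = np.array([[0.0, 0.0, 0.0], [1.5, 0.0, 0.0], [3.0, 0.0, 0.0]])
shifted = ref + np.array([1.0, 0.0, 0.0])
rigid_vectors = np.tile([0.0, 0.0, 1.0], (100, 1))
```

A fully rigid bond vector gives S² = 1, and isotropic motion gives S² = 0, which is the range against which simulated and experimental values are compared.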

Protocol for Benchmarking on the Espaloma Dataset

This protocol evaluates a force field's generalizability across a broad chemical space [20].

- Dataset: The Espaloma benchmark dataset is used, containing over 14,000 molecules and more than one million conformations, covering small molecules, peptides, and RNA.

- Training/Test Split: The dataset is split into training, validation, and test sets, ensuring no data leakage.

- Model Training (for ML-FFs): The machine-learned force field (e.g., Grappa, Espaloma) is trained to predict QM energies and atomic forces. The training is typically done end-to-end, with the loss function incorporating both energy and force terms.

- Evaluation: The trained model (or the traditional force field) is used to predict energies and forces for all conformations in the held-out test set.

- Metrics: The accuracy is reported using metrics like Mean Absolute Error (MAE) or Root Mean Square Error (RMSE) for energies and forces, comparing the force field's predictions to the reference QM data.
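
The metrics and the combined training objective named in this protocol can be sketched as follows. The mean-squared form of the loss and the `force_weight` parameter are illustrative assumptions. The source states only that the loss incorporates both energy and force terms.

```python
import numpy as np

def mae(pred, ref):
    """Mean absolute error over all elements."""
    return float(np.mean(np.abs(np.asarray(pred, dtype=float) - np.asarray(ref, dtype=float))))

def rmse(pred, ref):
    """Root mean square error over all elements."""
    diff = np.asarray(pred, dtype=float) - np.asarray(ref, dtype=float)
    return float(np.sqrt(np.mean(diff ** 2)))

def energy_force_loss(e_pred, e_ref, f_pred, f_ref, force_weight=1.0):
    """Illustrative loss: energy MSE plus a weighted force MSE.

    force_weight is a hypothetical hyperparameter, not a value from the source.
    """
    e_term = np.mean((np.asarray(e_pred, dtype=float) - np.asarray(e_ref, dtype=float)) ** 2)
    f_term = np.mean((np.asarray(f_pred, dtype=float) - np.asarray(f_ref, dtype=float)) ** 2)
    return float(e_term + force_weight * f_term)

# Toy example: two conformations, one atom each (forces have shape (2, 1, 3)).
e_ref = np.array([1.0, 4.0])
e_pred = np.array([1.0, 2.0])
f_ref = np.zeros((2, 1, 3))
f_pred = np.zeros((2, 1, 3))
loss = energy_force_loss(e_pred, e_ref, f_pred, f_ref)
```

On a held-out test set, `mae` and `rmse` would be applied separately to energies and to force components, against the reference QM values.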

Visualization of Workflows

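The fundamental difference between the two parameterization approaches is best understood through their workflows. A traditional force field assigns each atom a hand-crafted atom type and reads its parameters from lookup tables, whereas Grappa predicts the MM parameters directly from the molecular graph. In both cases the resulting parameters are then passed to a standard MD engine.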

The Scientist's Toolkit: Essential Research Reagents

This section details key software, datasets, and computational tools essential for research and application in this field.

Table 3: Key Research Reagents and Tools

| Item Name | Type | Function/Brief Explanation |
|---|---|---|
| Grappa | Machine Learned Force Field | An ML framework that predicts MM parameters directly from the molecular graph using a graph attentional network and transformer. Offers high accuracy without hand-crafted features [20]. |
| Espaloma | Machine Learned Force Field | A predecessor to Grappa that also learns MM parameters from a graph representation, but relies on some hand-crafted chemical features as input [20]. |
| AMBER | Traditional Force Field Suite | A family of widely used force fields (e.g., ff19SB) and simulation tools that rely on hand-crafted atom types and lookup tables for proteins and nucleic acids [21]. |
| CHARMM | Traditional Force Field Suite | Another major family of force fields (e.g., CHARMM22, CHARMM27) using expert-defined atom types, often enhanced with corrections like CMAP for backbone accuracy [21]. |
| GROMACS | MD Simulation Engine | A high-performance software package for performing MD simulations; compatible with both traditional and ML-generated MM parameters [20]. |
| OpenMM | MD Simulation Engine | A flexible, open-source toolkit for MD simulations that supports a wide variety of force fields and hardware platforms [20]. |
| Espaloma Dataset | Benchmark Dataset | A large-scale dataset containing over 14,000 molecules and more than one million conformations with QM reference data, used for training and benchmarking ML force fields [20]. |
| DPA-2 | Large Atomic Model (LAM) | A multi-task pre-trained model for molecular modeling that represents the trend towards large, foundational models in atomistic simulation, beyond classical MM force fields [23]. |

Architectures and Actions: How ML Force Fields Work and Where They Excel

The development of accurate and efficient force fields is a cornerstone of molecular modeling, directly impacting the reliability of molecular dynamics (MD) simulations. Traditional molecular mechanics (MM) force fields, while computationally inexpensive, often struggle with transferability and accurately describing reactive processes and complex quantum mechanical effects [24]. The emergence of machine learning (ML) has introduced a new paradigm, with ML-derived force fields promising to bridge the gap between the quantum-level accuracy of ab initio methods and the computational efficiency of classical MM force fields [8] [25]. Among the various ML architectures, Graph Neural Networks (GNNs) and Transformers have recently come into sharp focus. This guide provides an objective comparison of these two pioneering architectures, evaluating their performance, data efficiency, and applicability against traditional MM force fields and each other, supported by current experimental data.

GNNs and Transformers approach the problem of approximating molecular potential energy surfaces from fundamentally different starting points. The table below summarizes their core characteristics and how they contrast with traditional MM force fields.

Table 1: Fundamental Characteristics of Force Field Architectures

| Feature | Traditional MM Force Fields | Graph Neural Networks (GNNs) | Transformer-based Models |
|---|---|---|---|
| Architectural Principle | Pre-defined analytical potential functions with fitted parameters [26]. | Message-passing on molecular graphs defined by atomic connectivity or proximity [27] [28]. | Self-attention mechanism applied to sequences or sets of atoms, often without a pre-defined graph [29] [27]. |
| Physical Inductive Biases | Explicitly built-in via functional forms (e.g., harmonic bonds, Lennard-Jones potentials) [26]. | Explicitly built-in via graph structure, radial cutoffs, and often rotational equivariance [27]. | Minimal; biases like distance-based interactions must be learned from data [27]. |
| Handling of Long-Range Interactions | Typically limited to pre-defined cutoffs, with Ewald sums for electrostatics. | Limited by the graph's receptive field, which is constrained by the number of message-passing layers [28]. | Naturally global receptive field via self-attention; can attend to any atom in the system [27]. |
| Computational Efficiency | Very high (fastest). | Moderate (slower than MM, faster than Transformers in some implementations). | Can be higher than GNNs for inference on modern hardware due to dense matrix operations [27]. |
| Data Efficiency | High for systems within their parameterized domain, low for new chemistries. | High, especially when geometric symmetries are incorporated [25]. | Potentially lower for small datasets, but exhibits predictable scaling with data and model size [27]. |
| Representative Examples | AMBER, CHARMM, GAFF | SchNet, NequIP, MACE, Grappa [26] [28] | Graph-Free Transformers [27], Molecular LLMs [30] |
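
The receptive-field contrast in the table can be made concrete with a small sketch. It uses a toy linear chain of eight atoms in which each atom is connected only to its immediate neighbors, and counts which atoms can influence atom 0 after a given number of message-passing layers. The chain topology and the helper name `receptive_field` are illustrative assumptions.

```python
import numpy as np

def receptive_field(adjacency, n_layers):
    """Boolean matrix: entry (i, j) is True if atom j can influence atom i
    after n_layers rounds of nearest-neighbor message passing."""
    n = len(adjacency)
    one_hop = (adjacency.astype(int) + np.eye(n, dtype=int)) > 0
    reach = np.eye(n, dtype=bool)
    for _ in range(n_layers):
        reach = (reach.astype(int) @ one_hop.astype(int)) > 0
    return reach

# Toy molecular graph: a linear chain 0-1-2-...-7.
n = 8
adjacency = np.zeros((n, n), dtype=int)
idx = np.arange(n - 1)
adjacency[idx, idx + 1] = 1
adjacency[idx + 1, idx] = 1

gnn_reach_2 = receptive_field(adjacency, n_layers=2)      # atom 0 sees atoms 0, 1, 2
gnn_reach_7 = receptive_field(adjacency, n_layers=n - 1)  # whole chain is covered
attention_reach = np.ones((n, n), dtype=bool)             # one self-attention layer sees all atoms
```

In this toy graph a message-passing model needs as many layers as the graph diameter before the two chain ends can exchange information, while a single self-attention layer connects every pair of atoms directly.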

Quantitative benchmarks are essential for a meaningful comparison. The following table compiles reported performance metrics from recent studies on standardized tasks.

Table 2: Experimental Performance Comparison on Benchmark Tasks

| Model (Architecture) | Test System | Energy MAE | Force MAE | Key Experimental Finding | Source |
|---|---|---|---|---|---|
| EMFF-2025 (GNN-based NNP) | 20 C/H/N/O HEMs | ~0.1 eV/atom | ~2.0 eV/Å | Achieved DFT-level accuracy for structure, mechanics, and decomposition of energetic materials. | [24] |
| Grappa (GNN-based MM) | Small molecules, peptides, RNA | N/A (MM accuracy) | N/A (MM accuracy) | Outperformed other MM force fields, reproduced experimental J-couplings, transferable to a whole virus particle. | [26] |
| Graph-Free Transformer (Transformer) | OMol25 dataset | Competitive with SOTA GNN | Competitive with SOTA GNN | Achieved similar errors to a SOTA equivariant GNN under matched compute; learned inverse-distance attention. | [27] |
| BIGDML (Kernel-based Global MLFF) | 2D/3D semiconductors, metals, adsorbates | << 1 meV/atom | N/A | Unprecedented data efficiency, achieving meV/atom accuracy with only 10-200 training geometries. | [25] |
| GNN MLFF (GNN) | Lennard-Jones Argon | N/A | N/A | Successfully predicted phonon spectra and vacancy migration rates in solids for configurations absent from training data. | [28] |
| Fused Data Model (GNN) | Titanium | < 43 meV/atom | Lower than DFT pre-trained model | Concurrently satisfied DFT and experimental targets (lattice parameters, elastic constants) with high accuracy. | [8] |

Detailed Experimental Protocols and Workflows

To ensure reproducibility and provide a clear framework for benchmarking, this section details the methodologies from key experiments cited in this guide.

Protocol: Benchmarking a Transformer against a GNN on the OMol25 Dataset

A pivotal study [27] directly compared a standard Transformer architecture with a state-of-the-art equivariant GNN, providing a clear protocol for a fair architectural comparison.

- Dataset: The Open Molecules 2025 (OMol25) dataset, a large-scale dataset of molecular configurations with DFT-computed energies and forces.

- Model Training:

  - Transformer: An unmodified Transformer architecture was trained directly on Cartesian atomic coordinates. No pre-defined molecular graph or physical inductive biases (e.g., rotational equivariance) were built into the model.

  - GNN: A modern equivariant GNN was trained on the same data, utilizing its inherent biases like local message-passing and rotational equivariance.

- Control Variable: The computational budget for training was matched for both models to ensure a fair comparison.

- Primary Metrics:

  - Mean Absolute Error (MAE) on energy predictions (eV/atom).

  - MAE on force predictions (eV/Å).

  - Inference wall-clock time.

- Analysis:

  - Energy and force errors were compared between the two models.

  - The learned attention maps of the Transformer were analyzed to determine whether physically meaningful patterns (e.g., decay of attention with interatomic distance) emerged from the data.
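
One way to carry out the attention-map analysis in the last step is to bin attention weights by interatomic distance and inspect the resulting profile. The sketch below is an assumption about how such an analysis could look, not the procedure used in [27]. The attention matrix is synthetic (a row-wise softmax of negative distances), standing in for weights extracted from a trained model.

```python
import numpy as np

def attention_distance_profile(attention, coords, bin_edges):
    """Mean attention weight per interatomic-distance bin (self-attention excluded).

    attention: (N, N) matrix of attention weights for one head.
    coords:    (N, 3) atomic positions.
    Returns an array of length len(bin_edges) - 1; empty bins are NaN.
    """
    dist = np.linalg.norm(coords[:, None, :] - coords[None, :, :], axis=-1)
    off_diag = ~np.eye(len(coords), dtype=bool)
    d, a = dist[off_diag], attention[off_diag]
    which = np.digitize(d, bin_edges) - 1
    n_bins = len(bin_edges) - 1
    profile = np.full(n_bins, np.nan)
    for b in range(n_bins):
        mask = which == b
        if mask.any():
            profile[b] = a[mask].mean()
    return profile

# Synthetic stand-in for a learned attention map: softmax over negative distances.
coords = np.array([[float(i), 0.0, 0.0] for i in range(6)])
dist = np.linalg.norm(coords[:, None, :] - coords[None, :, :], axis=-1)
logits = -dist
attention = np.exp(logits) / np.exp(logits).sum(axis=1, keepdims=True)

bin_edges = np.array([0.5, 1.5, 2.5, 3.5])
profile = attention_distance_profile(attention, coords, bin_edges)
```

A profile that decreases monotonically across bins is the kind of distance-decay pattern the protocol looks for. With real model weights the profile would be computed per layer and per head.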

Protocol: Validating Generalizability of a GNN Force Field for Solid-State Properties

This protocol [28] assesses a GNN's ability to extrapolate to solid-state phenomena not seen during training.

- Training Data Generation:

  - An MD simulation of a perfect face-centered cubic (FCC) crystal of Lennard-Jones Argon at various thermodynamic states is performed.

  - Atomic configurations, forces, and energies are collected to form the training dataset. Crucially, configurations containing crystal defects like vacancies are excluded.

- Model Training: A GNN-based MLFF is trained on the collected data to predict atomic forces.

- Validation on Unseen Configurations:

  - Phonon Density of States (PDOS): The Hessian matrix is computed from the MLFF-predicted forces using numerical differentiation (see Equation 2 in [28]). The PDOS and phonon dispersion curves for a perfect FCC crystal at zero and finite temperatures are then calculated and compared to reference data.

  - Vacancy Migration: The energy barrier for vacancy migration is computed using the string method in an imperfect crystal containing a vacancy. The vacancy jump rate is also extracted from direct MD simulations using the MLFF.

- Success Criterion: The MLFF's predictions for PDOS and vacancy migration rates/barriers must show good agreement with results from the original reference potential, demonstrating transferability beyond its training distribution.
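
The Hessian step of this protocol can be sketched with central finite differences of the forces. This is a generic scheme chosen for illustration and is not necessarily the form of Equation 2 in [28]. An analytical Lennard-Jones force function in reduced units (ε = σ = 1, unit masses) stands in for the MLFF force prediction, and a single dimer replaces the FCC crystal to keep the example short.

```python
import numpy as np

def lj_forces(flat_coords):
    """Lennard-Jones forces in reduced units (epsilon = sigma = 1).

    Stand-in for an MLFF force call; takes and returns flattened (3N,) arrays.
    """
    x = flat_coords.reshape(-1, 3)
    forces = np.zeros_like(x)
    for i in range(len(x)):
        for j in range(i + 1, len(x)):
            rij = x[i] - x[j]
            r = np.linalg.norm(rij)
            dv_dr = 4.0 * (-12.0 / r**13 + 6.0 / r**7)
            fij = -dv_dr * rij / r  # force on atom i due to atom j
            forces[i] += fij
            forces[j] -= fij
    return forces.ravel()

def numerical_hessian(force_fn, flat_coords, h=1e-4):
    """Hessian H_ij = -dF_i/dx_j from central differences of the forces."""
    n = flat_coords.size
    hess = np.zeros((n, n))
    for j in range(n):
        step = np.zeros(n)
        step[j] = h
        hess[:, j] = -(force_fn(flat_coords + step) - force_fn(flat_coords - step)) / (2.0 * h)
    return 0.5 * (hess + hess.T)  # symmetrize to suppress numerical noise

# Dimer at the LJ minimum, r = 2^(1/6).
r_min = 2.0 ** (1.0 / 6.0)
coords = np.array([0.0, 0.0, 0.0, r_min, 0.0, 0.0])

hessian = numerical_hessian(lj_forces, coords)
eigenvalues = np.linalg.eigvalsh(hessian)
# With unit masses, vibrational frequencies are sqrt of the non-negative eigenvalues.
frequencies = np.sqrt(np.clip(eigenvalues, 0.0, None))
```

For a crystal, the same Hessian (mass-weighted and Fourier-transformed over lattice vectors) yields the dynamical matrix from which phonon dispersion curves and the PDOS are obtained. That step is omitted here.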

Workflow Visualization

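A generalized workflow for developing and benchmarking an ML force field, integrating elements from the protocols above, runs from reference data generation through model training and held-out error evaluation to validation on properties outside the training distribution.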

The Scientist's Toolkit: Essential Research Reagents

Building, training, and validating modern ML force fields requires a suite of software tools and data resources. The table below lists key "research reagents" for practitioners in the field.

Table 3: Essential Tools and Resources for ML Force Field Research

| Tool/Resource Name | Type | Primary Function | Relevance to GNNs/Transformers |
|---|---|---|---|
| DP-GEN [24] | Software Framework | Active learning platform for generating training data and building ML potentials. | Used with Deep Potential (GNN) models; relevant for robust dataset generation for any architecture. |
| OMol25 Dataset [27] | Dataset | A large-scale dataset of molecular configurations with quantum mechanical labels. | Serves as a key benchmark for training and comparing GNN and Transformer models. |
| DiffTRe [8] | Method / Algorithm | Differentiable Trajectory Reweighting; enables training ML potentials directly on experimental data. | Allows GNNs or Transformers to be trained against experimental observables, correcting for DFT inaccuracies. |
| GROMACS / OpenMM [26] | MD Simulation Engine | High-performance software for running molecular dynamics simulations. | MM parameters predicted by MLFFs like Grappa run natively in these engines, allowing efficient production MD runs. |
| Equivariant GNN Architectures (e.g., NequIP, MACE) | Model Architecture | GNNs that build in rotational equivariance, improving data efficiency and accuracy. | Represent the state of the art in GNN-based MLIPs and are often used as a performance benchmark. |
| Global Descriptors (e.g., sGDML, BIGDML [25]) | Model Architecture | Kernel-based methods that treat the entire molecular system as a whole, avoiding locality approximations. | Provide an alternative, highly data-efficient approach and a different point of comparison for GNNs/Transformers. |