Iterative Optimization for Force Field Development: A Modern Guide to Enhanced Accuracy and Efficiency

Abstract

This article provides a comprehensive guide to iterative optimization procedures for developing molecular mechanics force fields, a critical tool for computational drug discovery and materials science. Tailored for researchers and scientists, it explores the foundational principles of force field parameterization, details cutting-edge methodological workflows, and offers practical strategies for troubleshooting and optimization. By comparing validation techniques across different force field types—from classical to machine learning potentials—this review synthesizes best practices for achieving robust, accurate, and transferable force fields, ultimately aiming to accelerate reliable molecular simulations.

The Core Principles and Critical Need for Iterative Force Field Training

Defining Iterative Optimization in the Force Field Landscape

In molecular dynamics (MD) simulations, a force field is a computational model that describes the potential energy of a system of atoms as a function of their positions, enabling the study of complex systems that are prohibitively large for quantum mechanical (QM) treatment [1]. The accuracy of these simulations is fundamentally dependent on the quality of the force field parameters employed [2]. Iterative optimization has emerged as a powerful paradigm for force field development, representing a cyclic process of parameter refinement that systematically reduces the discrepancy between force field predictions and reference data, which can originate from both QM calculations and experimental measurements. This approach is particularly crucial for addressing the vast and expanding chemical space relevant to modern computational drug discovery [3]. This article delineates the core principles, protocols, and applications of iterative optimization within force field development, providing a structured guide for researchers and scientists.

Core Principles and Methodologies of Iterative Optimization

The foundational goal of iterative optimization is to escape local minima in parameter space and achieve a robust, transferable, and physically meaningful force field. This is accomplished through a self-correcting loop that integrates simulation, validation, and parameter adjustment.

The Basic Iterative Workflow

At its simplest, the iterative optimization workflow involves several key stages, elegantly captured by tools like the Force FieLd Optimization Workflow (FFLOW) [4]. The process begins with the initial parameterization of the force field, often informed by QM calculations on small molecular fragments or heuristic rules. Subsequently, MD simulations are run using these parameters to sample molecular conformations and properties. The results of these simulations—such as conformational energies, forces, or bulk properties—are then compared against target data (QM reference or experimental data). A loss function quantifying the difference is minimized by an optimization algorithm, which generates a new, improved set of parameters for the next iteration. The critical step that differentiates modern approaches is the use of a separate validation set to monitor convergence and prevent overfitting to the training data [2].
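As a minimal sketch of the compare-and-minimize step described above, the snippet below fits the two parameters of a harmonic bond term to synthetic reference energies. The functional form, the toy reference data, the derivative-free coordinate search, and all function names are illustrative assumptions; this is not FFLOW's optimizer or API.

```python
def mm_energy(r, k, r0):
    """Harmonic bond energy E = k * (r - r0)**2 (illustrative functional form)."""
    return k * (r - r0) ** 2


def loss(params, data):
    """Mean squared difference between model and reference energies."""
    k, r0 = params
    return sum((mm_energy(r, k, r0) - e_ref) ** 2 for r, e_ref in data) / len(data)


def coordinate_search(params, data, steps, n_iter=500):
    """Derivative-free search: try +/- one step per parameter, halve steps when stuck."""
    params, steps = list(params), list(steps)
    best = loss(params, data)
    for _ in range(n_iter):
        improved = False
        for i in range(len(params)):
            for sign in (1.0, -1.0):
                trial = list(params)
                trial[i] += sign * steps[i]
                value = loss(trial, data)
                if value < best:
                    params, best, improved = trial, value, True
                    break
        if not improved:
            steps = [s * 0.5 for s in steps]
    return params, best


# Synthetic reference energies generated from k = 300, r0 = 1.09 (toy values)
distances = [1.00 + 0.02 * i for i in range(10)]
reference = [(r, 300.0 * (r - 1.09) ** 2) for r in distances]

fitted, final_loss = coordinate_search([250.0, 1.12], reference, steps=[10.0, 0.01])
print(fitted, final_loss)
```

In a real workflow the reference list would hold QM or experimental targets, and the search would typically be replaced by a gradient-based or Bayesian optimizer.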

Advanced Integration of Machine Learning

Machine learning (ML) has profoundly enhanced iterative optimization protocols. Two primary applications are:

- Surrogate Models: ML models can be trained to predict the outcomes of expensive MD simulations or QM calculations. For instance, a neural network can learn the mapping between Lennard-Jones parameters and bulk-phase density, reducing the required computation time by a factor of ≈20 while maintaining accuracy [4]. This substitution allows for a much more rapid exploration of the parameter space.

- End-to-End Parameter Prediction: Graph Neural Networks (GNNs) can be trained to directly predict force field parameters for a given molecule. As demonstrated by the ByteFF force field, an edge-augmented, symmetry-preserving GNN can be trained on a massive dataset of QM calculations (e.g., 2.4 million optimized geometries and 3.2 million torsion profiles) to predict all bonded and non-bonded parameters simultaneously [3]. This data-driven approach ensures expansive coverage of chemical space and inherent transferability.

Table 1: Key Components of an Iterative Optimization Workflow

| Component | Description | Example Techniques |
|---|---|---|
| Target Data Generation | Producing high-quality reference data for parameter fitting and validation. | QM calculations (B3LYP-D3(BJ)/DZVP), experimental liquid properties (density, heat of vaporization) [5] [3]. |
| Parameter Optimization | The algorithm that adjusts force field parameters to minimize a loss function. | Gradient-based optimization, genetic algorithms, Bayesian optimization [4]. |
| Sampling & Data Augmentation | Using dynamics to explore new conformations for QM computation, enriching the training dataset. | Boltzmann sampling at elevated temperatures (e.g., 400 K) [2]. |
| Validation & Convergence | Assessing model performance on unseen data to determine when to halt the iterative cycle. | Tracking error on a separate validation set to flag overfitting [2]. |
| Surrogate Modeling | Replacing computationally expensive simulations with fast ML models during optimization. | Neural networks to predict bulk-phase density from Lennard-Jones parameters [4]. |

Detailed Experimental Protocols

This section outlines two specific protocols that exemplify the iterative optimization paradigm in practice.

Protocol 1: Automated Iterative Force Field Parameterization with Validation

This protocol, as described in [2], is designed for fitting custom single-molecule force fields and emphasizes the prevention of overfitting.

1. Initial Dataset Preparation:

- Select a target molecule (e.g., a tri-alanine peptide or a photosynthesis cofactor).

- Generate an initial dataset of QM calculations. This involves:

  - Generating multiple conformers of the target molecule.

  - Performing QM geometry optimizations and frequency calculations (e.g., at the B3LYP-D3(BJ)/DZVP level) to obtain energies, forces, and Hessian matrices [3].

- Split the data into a training set and a validation set.

2. Initial Parameter Optimization:

- Parameterize an initial force field using the training set. This can be done by directly fitting parameters to reproduce QM energies and forces.

3. Iterative Loop:

- Step 1 - Run Dynamics: Perform molecular dynamics simulations using the current force field parameters. To ensure adequate sampling of the potential energy surface, use Boltzmann sampling at an elevated temperature (e.g., 400 K) [2].

- Step 2 - Sample New Conformations: Extract new, statistically independent conformations from the MD trajectory.

- Step 3 - Compute New QM Data: Perform QM single-point energy and force calculations on these new conformations.

- Step 4 - Augment Dataset: Add the new QM data (conformations, energies, forces) to the existing training dataset.

- Step 5 - Re-optimize Parameters: Refit the force field parameters against the newly augmented training dataset.

- Step 6 - Validate: Compute the error of the newly parameterized force field on the held-out validation set.

4. Convergence Check:

- The iterative process is terminated when the error on the validation set converges to a minimum, indicating that further iterations would lead to overfitting. This is a key differentiator from earlier methods that lacked robust validation [2].
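The loop in Protocol 1 can be sketched on a toy one-dimensional surface. Everything here is an assumption made for illustration: `qm_energy` stands in for a QM code, a degree-4 polynomial stands in for the force field, Metropolis sampling stands in for MD at elevated temperature, and the stopping rule (two iterations without improvement) is one possible reading of "validation error converges".

```python
import numpy as np

rng = np.random.default_rng(0)


def qm_energy(x):
    """Stand-in for an expensive QM calculation (toy 1D surface)."""
    return x**4 - 2.0 * x**2 + 0.5 * np.sin(3.0 * x)


def fit_mm(x, e, degree=4):
    """Toy 'force field': polynomial coefficients fitted by least squares."""
    return np.polyfit(x, e, degree)


def rmse(coeffs, x, e):
    return float(np.sqrt(np.mean((np.polyval(coeffs, x) - e) ** 2)))


def sample_md(coeffs, n_steps=2000, kT=1.0, lo=-2.0, hi=2.0):
    """Metropolis sampling on the current model surface (stand-in for MD)."""
    x, traj = 0.0, []
    for _ in range(n_steps):
        trial = x + rng.normal(scale=0.3)
        if lo <= trial <= hi:
            dE = np.polyval(coeffs, trial) - np.polyval(coeffs, x)
            if dE <= 0 or rng.random() < np.exp(-dE / kT):
                x = trial
        traj.append(x)
    return np.array(traj[::200])  # thin the trajectory to reduce correlation


# 1. Initial dataset, split into training and validation sets
x0 = rng.uniform(-2.0, 2.0, size=12)
train_x, val_x = x0[:8], x0[8:]
train_e, val_e = qm_energy(train_x), qm_energy(val_x)

# 2. Initial parameter optimization
coeffs = fit_mm(train_x, train_e)
history = [rmse(coeffs, val_x, val_e)]
best_coeffs, best_rmse = coeffs, history[0]

# 3. Iterative loop, with 4. a convergence check on the validation set
for iteration in range(10):
    new_x = sample_md(coeffs)                          # run dynamics, sample
    train_x = np.concatenate([train_x, new_x])         # augment dataset
    train_e = np.concatenate([train_e, qm_energy(new_x)])
    coeffs = fit_mm(train_x, train_e)                  # re-optimize
    val = rmse(coeffs, val_x, val_e)                   # validate
    history.append(val)
    if val < best_rmse:
        best_coeffs, best_rmse = coeffs, val
    elif len(history) >= 3 and min(history[-2:]) > best_rmse:
        break  # no improvement for two iterations: keep the best parameters

print(len(history) - 1, best_rmse)
```

Keeping `best_coeffs` rather than the last fit means the returned parameters are always the ones with the lowest validation error seen so far.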

Protocol 2: Surrogate Model-Assisted Optimization (SMAOpt)

This protocol, based on [4], focuses on dramatically speeding up the optimization process by replacing costly MD simulations with a machine learning model.

1. Define Feasible Parameter Space:

- Identify the force field parameters to be optimized (e.g., Lennard-Jones parameters σ and ε for carbon and hydrogen atoms).

- Set physically reasonable lower and upper bounds for each parameter to ensure numerical stability and physical meaning [4].

2. Generate Training Data for the Surrogate Model:

- Sample hundreds to thousands of parameter sets from the defined feasible space. Sampling strategies can include grid-based or random sampling.

- For each unique parameter set, run a full MD simulation to compute the target property (e.g., the bulk-phase density of n-octane at 293.15 K and 1 bar). This step is computationally expensive but is a one-time cost.

3. Train and Validate the ML Surrogate Model:

- Using the generated data (parameters -> target property), train a machine learning model. Model selection (e.g., Linear Regression, Random Forest, Neural Network) and hyperparameter tuning are critical.

- The model's performance should be evaluated using metrics like Mean Absolute Percentage Error (MAPE) and the coefficient of determination (R²) against a test set [4]. Neural networks have been shown to be effective for this task.

4. Execute the Optimization Loop:

- Integrate the trained and validated surrogate model into an optimization workflow (e.g., FFLOW). In each iteration, instead of running an MD simulation, the surrogate model is queried to predict the target property for a given parameter set.

- The optimizer uses these fast predictions to guide the parameter search. The modularity of this approach allows for the surrogate model to be reused in different optimization contexts [4].

5. Final Validation:

- Once the optimization loop converges, the final optimized parameter set should be validated by running an actual MD simulation and comparing the results to the surrogate model's prediction to ensure fidelity.
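The five steps above can be sketched end to end on a toy problem. The sketch makes several assumptions that are not from [4]: `run_md_density` is a closed-form stand-in for an MD simulation, the parameter bounds and the target density are illustrative, and the surrogate is a quadratic least-squares model rather than a neural network.

```python
import numpy as np

rng = np.random.default_rng(1)
BOUNDS = {"sigma": (3.3, 3.7), "epsilon": (0.05, 0.15)}  # illustrative bounds


def run_md_density(sigma, epsilon):
    """Stand-in for a full MD simulation returning a bulk density (toy formula)."""
    return 0.703 * (3.5 / sigma) ** 3 * (1.0 + 2.0 * (epsilon - 0.1))


def features(p):
    """Quadratic feature map used by the least-squares surrogate."""
    s, e = p[:, 0], p[:, 1]
    return np.column_stack([np.ones_like(s), s, e, s * s, e * e, s * e])


# Steps 1-2: sample the feasible space and run the "expensive" simulations once
lo = np.array([b[0] for b in BOUNDS.values()])
hi = np.array([b[1] for b in BOUNDS.values()])
params = rng.uniform(lo, hi, size=(300, 2))
density = run_md_density(params[:, 0], params[:, 1])

# Step 3: train on 240 samples, evaluate MAPE and R^2 on the remaining 60
w, *_ = np.linalg.lstsq(features(params[:240]), density[:240], rcond=None)
pred, true = features(params[240:]) @ w, density[240:]
mape = float(np.mean(np.abs((pred - true) / true)) * 100.0)
r2 = float(1.0 - np.sum((true - pred) ** 2) / np.sum((true - true.mean()) ** 2))

# Step 4: optimize against the surrogate only (dense grid search for simplicity)
target = 0.703  # illustrative target density in g/cm^3
grid = np.array([(s, e)
                 for s in np.linspace(lo[0], hi[0], 101)
                 for e in np.linspace(lo[1], hi[1], 101)])
best = grid[np.argmin(np.abs(features(grid) @ w - target))]

# Step 5: confirm the surrogate optimum with one real "simulation"
check = run_md_density(*best)
print(mape, r2, best, check)
```

With a single target property and two free parameters the optimum is not unique; in practice additional targets (such as conformational energies) constrain the search.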

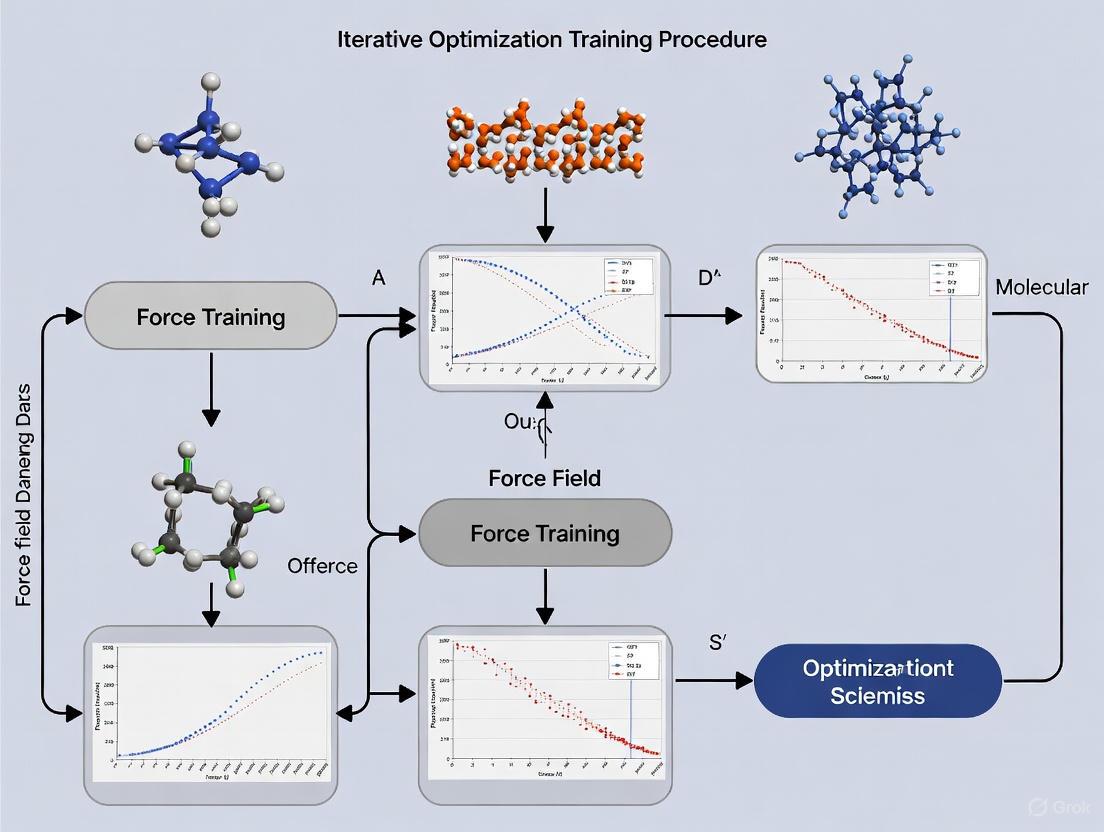

Diagram 1: Surrogate Model-Assisted Optimization (SMAOpt) workflow. The costly MD simulations are a one-time cost for training, while the fast ML surrogate model is used inside the iterative loop.

Application Notes and Performance Benchmarks

Iterative optimization protocols have been successfully applied to a range of challenging problems, demonstrating their efficacy and accuracy.

Table 2: Performance Benchmarks of Iteratively Optimized Force Fields

| Force Field / Method | System / Application | Key Results and Performance |
|---|---|---|
| Iterative Protocol with Validation [2] | Tri-alanine peptide; 31 photosynthesis cofactors. | Boltzmann sampling at 400 K enabled fitting on a rugged potential energy surface. Successfully generated a parameter library for complex cofactors. |
| ByteFF (GNN-based) [3] | Drug-like molecules from ChEMBL and ZINC20 databases. | State-of-the-art performance predicting relaxed geometries, torsional profiles, and conformational energies/forces across a broad chemical space. |
| SMAOpt with Neural Network [4] | n-Octane bulk density and conformational energies. | Reduced optimization time by a factor of ≈20 while producing force fields of similar quality to the standard method. |
| QM-to-MM Mapping Protocols [5] | Small organic molecules (liquid properties). | Best model used only 7 fitting parameters, achieving errors of 0.031 g cm⁻³ (density) and 0.69 kcal mol⁻¹ (heat of vaporization) vs. experiment. |

Notes for Practitioners:

- Data Quality and Diversity: The performance of any iterative method, especially those driven by ML, is contingent on the quality and diversity of the training data. The use of large, diverse datasets (e.g., 2.4 million optimized molecular fragment geometries) is critical for ensuring transferability [3].

- Validation is Non-Negotiable: The use of a separate validation set is the most reliable way to ensure that the optimized force field generalizes well and is not overfitted to the specific configurations in the training set [2].

- Balancing Cost and Accuracy: The choice between running full QM/MD simulations at every iteration versus using a surrogate model depends on the available computational resources and the number of optimization parameters. For complex, multi-parameter optimizations, the initial investment in training a surrogate model often yields significant long-term savings [4].

The Scientist's Toolkit: Essential Research Reagents and Software

Table 3: Key Software and Resources for Iterative Force Field Development

| Tool / Resource | Type | Primary Function and Application |
|---|---|---|
| FFLOW [4] | Software Toolkit | A modular, multiscale force field optimization workflow that facilitates iterative parameter refinement against multiple target properties. |
| QUBEKit [5] [6] | Software Suite | Automates the derivation of force field parameters from quantum mechanical calculations (QM-to-MM mapping), reducing the number of parameters needing experimental fitting. |
| ByteFF [3] | Data-Driven Force Field | An Amber-compatible force field for drug-like molecules whose parameters are predicted by a graph neural network trained on a massive QM dataset. |
| OpenMM | Molecular Dynamics Engine | A high-performance, open-source toolkit for MD simulations, often used as a backend for running the dynamics steps in iterative loops. |
| geomeTRIC [3] | Optimizer | A geometry optimization code used in conjunction with QM software to generate optimized molecular structures and Hessians for training data. |
| OpenFF [3] | Force Field & Infrastructure | A family of force fields and open-source software designed for small molecule drug discovery, providing tools and benchmarks. |
| Espaloma [3] | Graph Neural Network Model | A proof-of-concept GNN for end-to-end force field parameterization, paving the way for models like ByteFF. |

Diagram 2: Generic iterative force field optimization workflow, integrating key tools and the critical validation step.

Molecular dynamics (MD) simulations provide atomic-level insights into the behavior of biological systems, playing a pivotal role in modern computational drug discovery. The accuracy of these simulations hinges on the underlying force field—a mathematical model that describes the potential energy surface of a molecular system. Despite advances in computational power, a fundamental trade-off persists: quantum mechanical (QM) methods offer high accuracy but are computationally prohibitive for large systems and long timescales, while traditional molecular mechanics (MM) force fields offer speed but often lack the precision required for reliable predictions. This application note examines contemporary strategies for bridging this accuracy gap, with a specific focus on iterative optimization training procedures for force field development. We frame these methodologies within the context of drug development, highlighting protocols and resources that enable researchers to leverage these advances in their workflows.

The Force Field Landscape: From Molecular Mechanics to Neural Network Potentials

The choice of potential energy model dictates the balance between computational speed and physical accuracy in MD simulations. Two dominant paradigms are evolving in parallel.

Data-Driven Molecular Mechanics Force Fields

Conventional MM force fields, such as GAFF and OPLS, decompose potential energy into analytical terms for bonded and non-bonded interactions. Their functional forms are computationally efficient but can suffer from inaccuracies due to oversimplification. The field is now shifting towards data-driven parameterization to expand coverage of chemical space. For instance, ByteFF represents a modern MM force field developed by training an edge-augmented, symmetry-preserving graph neural network (GNN) on a massive QM dataset encompassing 2.4 million optimized molecular fragment geometries and 3.2 million torsion profiles [3]. This approach maintains the computational efficiency of the Amber/GAFF functional form while achieving superior accuracy in predicting geometries, torsional profiles, and conformational energies by learning parameters directly from QM data [3].
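For reference, the Amber/GAFF-style decomposition into bonded and non-bonded terms is commonly written as shown here. The notation is the standard textbook one and is not taken from [3]: $k_b$, $k_\theta$ and $V_n$ are force constants, $r_0$ and $\theta_0$ are equilibrium values, $\gamma_n$ is the torsional phase, $A_{ij}$ and $B_{ij}$ are Lennard-Jones coefficients, $q_i$ are partial charges, and $\epsilon$ is the dielectric constant.

$$
E = \sum_{\text{bonds}} k_b (r - r_0)^2
  + \sum_{\text{angles}} k_\theta (\theta - \theta_0)^2
  + \sum_{\text{dihedrals}} \sum_n \frac{V_n}{2}\left[1 + \cos(n\phi - \gamma_n)\right]
  + \sum_{i<j} \left[\frac{A_{ij}}{r_{ij}^{12}} - \frac{B_{ij}}{r_{ij}^{6}} + \frac{q_i q_j}{\epsilon\, r_{ij}}\right]
$$

Data-driven parameterization in this setting keeps the functional form fixed and changes only how the constants are obtained.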

Neural Network Potentials (NNPs)

In contrast, NNPs represent a more radical departure, using machine learning to map atomic configurations directly to energies and forces, bypassing pre-defined functional forms. They offer near-QM accuracy with a significant reduction in computational cost. Recent breakthroughs, such as Meta's Open Molecules 2025 (OMol25) dataset and associated models like eSEN and the Universal Model for Atoms (UMA), have dramatically advanced the state of the art [7] [8]. The OMol25 dataset provides over 100 million DFT calculations at the ωB97M-V/def2-TZVPD level of theory, covering an unprecedented diversity of chemical structures, including biomolecules, electrolytes, and metal complexes [7]. Models trained on this dataset demonstrate performance that matches high-accuracy DFT on standard benchmarks [7].

Table 1: Comparison of Modern Force Field Development Strategies

| Development Strategy | Key Example | Underlying Methodology | Key Advantage | Applicability in Drug Discovery |
|---|---|---|---|---|
| Data-Driven MMFF | ByteFF [3] | GNN-trained on expansive QM dataset of drug-like fragments | Amber compatibility; High computational efficiency; Broad chemical space coverage | High: Directly parameterized for drug-like molecules |
| General-Purpose NNP | OMol25 Models (eSEN, UMA) [7] [8] | Neural network potentials trained on massive, diverse dataset (OMol25) | Near-DFT accuracy across expansive chemical space | High: Covers biomolecules, protein-ligand interfaces, and metal complexes |
| Specialized NNP | EMFF-2025 [9] | Transfer learning from a pre-trained model for specific material class (energetic materials) | DFT-level accuracy for specialized applications with minimal data | Medium: Framework can be adapted for specific drug classes |
| Multireference NNP | WASP [10] | Integrates multiconfiguration QM with ML-potentials for transition metals | Accurate description of complex electronic structures (e.g., in catalysts) | Medium: Critical for metalloenzymes and organometallic drug candidates |

The Iterative Optimization Framework for Force Field Development

A powerful paradigm emerging in force field development is the iterative optimization procedure, which closes the loop between simulation and QM data generation to achieve self-consistent refinement. This framework directly addresses the challenge of creating accurate and generalizable force fields.

Conceptual Workflow

The core of the iterative approach involves a cyclic process of parameter optimization, molecular dynamics sampling, quantum mechanical validation, and dataset expansion [2]. This workflow ensures that the force field is continuously refined against a growing and relevant set of QM data, preventing overfitting to a static initial dataset and improving transferability across conformational space.

Protocol: Automated Iterative Force Field Parameterization

This protocol outlines the steps for implementing an iterative parameterization procedure, as demonstrated in recent research [2].

Objective: To automatically generate a highly accurate, system-specific force field by iteratively refining parameters against a dynamically expanding QM dataset, using a validation set to prevent overfitting and determine convergence.

Materials and Software:

- Quantum Chemistry Software: ORCA, Gaussian, or PSI4 for QM energy and force calculations.

- MD Engine: OpenMM, NAMD [11], or LAMMPS [12] for running dynamics.

- Parameter Optimization Script: A custom script (e.g., in Python) to adjust force field parameters to minimize the difference between MM and QM energies/forces.

- Initial Structures: 3D coordinates of the target molecule(s) in a standard format (PDB, MOL2).

Procedure:

Initialization:

- Generate an initial set of diverse molecular conformations for the target system (e.g., via conformational search or high-temperature MD).

- Perform QM single-point energy (and optionally, force) calculations on these conformations to create the initial training dataset.

- Randomly split the data into a training set (e.g., 80%) and a validation set (e.g., 20%).

Parameter Optimization Loop:

- Step 1: Optimize Parameters. Fit the MM force field parameters (e.g., bond, angle, torsion force constants, and equilibrium values) to the current training dataset. The loss function is typically the weighted sum of squared differences between MM and QM energies and forces.

- Step 2: Sample with New Parameters. Run an MD simulation (e.g., Boltzmann sampling at a relevant temperature like 400 K) using the newly optimized parameters to explore new regions of conformational space.

- Step 3: QM Calculation on New Samples. Select new, geometrically distinct conformations from the MD trajectory and compute their QM energies and forces.

- Step 4: Expand Dataset and Validate. Add the new QM calculations to the training dataset. Crucially, evaluate the current force field's accuracy on the held-out validation set.
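The loss in Step 1 can be sketched as follows. The weights and the choice to compare energies relative to their mean (so that an arbitrary constant offset between QM and MM energies does not contribute) are assumptions for illustration, not details taken from [2].

```python
import numpy as np


def weighted_loss(e_mm, e_qm, f_mm, f_qm, w_energy=1.0, w_force=0.1):
    """Weighted sum of squared MM-vs-QM differences in energies and forces.

    Energies are shifted by their mean before comparison (an assumption),
    because absolute QM and MM energies differ by an arbitrary constant.
    """
    e_mm = np.asarray(e_mm, dtype=float)
    e_qm = np.asarray(e_qm, dtype=float)
    de = (e_mm - e_mm.mean()) - (e_qm - e_qm.mean())
    df = np.asarray(f_mm, dtype=float) - np.asarray(f_qm, dtype=float)
    return float(w_energy * np.sum(de**2) + w_force * np.sum(df**2))


# Toy usage: three conformations of a three-coordinate system
energies = [1.0, 2.0, 3.0]
forces = np.zeros((3, 3))
print(weighted_loss(energies, energies, forces, forces + 1.0))
```

The relative size of `w_energy` and `w_force` sets how strongly the fit prioritizes forces, which are far more numerous than energies for all but the smallest molecules.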

Convergence Check:

- Monitor the error on the validation set. The procedure is considered converged when the validation error plateaus or falls below a pre-defined threshold over several iterations.

- If the validation error begins to increase significantly while the training error continues to decrease, this indicates overfitting, and the parameters from the previous iteration should be used.
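One way to turn the convergence check into code is a small helper that inspects the error histories after each iteration. The function name, the default thresholds (`patience`, `tol`, `rise`), and the three return labels are hypothetical choices for this sketch.

```python
def check_convergence(train_errors, val_errors, threshold=None,
                      patience=3, tol=1e-3, rise=0.10):
    """Classify the state of the loop as 'converged', 'overfitting', or 'continue'."""
    latest, best = val_errors[-1], min(val_errors)

    # Converged: validation error is below a pre-defined threshold
    if threshold is not None and latest < threshold:
        return "converged"

    # Overfitting: validation error rose well above its best value
    # while the training error is still going down
    train_improving = len(train_errors) >= 2 and train_errors[-1] < train_errors[-2]
    if latest > best * (1.0 + rise) and train_improving:
        return "overfitting"

    # Converged: validation error has plateaued over the last few iterations
    if len(val_errors) > patience:
        window = val_errors[-(patience + 1):]
        if max(window) - min(window) < tol:
            return "converged"

    return "continue"


print(check_convergence([1.0, 0.5, 0.4, 0.3], [1.0, 0.6, 0.5, 0.7]))
```

When the helper reports overfitting, the caller would restore the parameter set that produced the lowest validation error rather than the most recent one.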

Output:

- The final, validated set of force field parameters in a standard format (e.g., Amber .frcmod or CHARMM .str files) compatible with major MD engines.

Applications: This protocol has been successfully applied to fit custom force fields for complex systems such as tri-alanine peptides and a library of 31 photosynthesis cofactors [2], demonstrating its utility for achieving superior accuracy compared to general force fields.

Practical Implementation and Tools for Researchers

Transitioning from theoretical advances to practical application requires robust software tools and accessible platforms.

Scalable Simulation with ML Potentials

The integration of machine learning interatomic potentials (MLIPs) into established MD workflows is key to their adoption. The ML-IAP-Kokkos interface for LAMMPS enables this by providing a streamlined pathway to run PyTorch-based models at scale [12]. The core steps involve:

- Implementing a Python class that inherits from `MLIAPUnified` and defines the `compute_forces` function.

- Serializing the model and its weights into a `.pt` file.

- Configuring LAMMPS to use the `mliap unified` pair style, pointing to this model file [12].

This interface handles efficient data transfer between LAMMPS and the PyTorch model, leveraging GPU acceleration for large-scale simulations [12].

Accessibility for Experimentalists

For researchers seeking to utilize MD simulations without deep computational expertise, tools like drMD lower the barrier to entry. drMD is an automated pipeline built on the OpenMM toolkit that requires only a single configuration file to set up and run publication-quality simulations, including enhanced sampling through metadynamics [13]. It automates system setup, solvation, and minimization, and includes features like real-time progress updates and error recovery, making MD more accessible to a broader scientific audience [13].

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Software and Data Resources for Modern Force Field Applications

| Resource Name | Type | Function in Research | Access/URL |
|---|---|---|---|
| OMol25 Dataset [7] [8] | Training Data | Massive, high-accuracy QM dataset for training or benchmarking general-purpose NNPs. | Meta FAIR |
| ByteFF [3] | Data-Driven MMFF | Amber-compatible force field for drug-like molecules, offering broad chemical space coverage. | ByteDance Research |
| WASP [10] | Method/Algorithm | Enables accurate ML potentials for transition metal systems by ensuring wavefunction consistency. | GitHub (/GagliardiGroup/wasp) |
| ML-IAP-Kokkos [12] | Software Interface | Connects PyTorch ML models to LAMMPS for scalable, GPU-accelerated MD simulations. | LAMMPS Distribution |
| drMD [13] | Simulation Pipeline | Automated workflow for running MD simulations in OpenMM, designed for non-experts. | GitHub (/wells-wood-research/drMD) |
| NAMD [11] | MD Simulation Engine | High-performance, parallel MD code for simulating large biomolecular systems. | University of Illinois |

Comparative Analysis of Strategic Approaches

The choice between an iteratively parameterized molecular mechanics force field (MMFF) and a general-purpose NNP is not trivial and depends on the research goals. The decision logic for selecting the appropriate strategy can be summarized as follows.

Decision Workflow Explanation:

- For common, drug-like molecules, a pre-trained, data-driven MMFF like ByteFF is often the most efficient choice, offering an excellent balance of accuracy, speed, and compatibility with existing drug discovery pipelines [3].

- If the system is not well-represented in standard MMFFs, the next consideration is reactivity. For simulating reactions or systems with transition metals (common in catalysts and metalloenzymes), a specialized NNP like those generated by the WASP protocol is necessary to accurately capture complex electronic structures [10].

- For non-reactive but chemically diverse systems, the choice hinges on the trade-off between accuracy and setup cost. A general-purpose NNP (e.g., models trained on OMol25) provides near-DFT accuracy out-of-the-box for a vast range of molecules [7]. However, if the highest possible accuracy for a specific molecule is required and one is willing to invest computational resources in setup, then an iterative MMFF parameterization can yield a bespoke, highly accurate force field [2].
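The decision logic above can be written as a short function. The function name, the three boolean inputs, and the returned labels are hypothetical; they simply encode the order of the questions in the text.

```python
def choose_strategy(well_covered_drug_like, reactive_or_transition_metal,
                    bespoke_accuracy_worth_setup_cost):
    """Map the three questions from the decision workflow to a strategy label."""
    if well_covered_drug_like:
        return "pre-trained data-driven MMFF (e.g., ByteFF)"
    if reactive_or_transition_metal:
        return "specialized NNP (e.g., WASP protocol)"
    if bespoke_accuracy_worth_setup_cost:
        return "iterative MMFF parameterization"
    return "general-purpose NNP (e.g., OMol25-trained model)"


# A non-reactive, unusual molecule where setup cost should stay low
print(choose_strategy(False, False, False))
```

The order of the checks matters: reactivity is considered only once the system is known to fall outside the coverage of standard force fields.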

The gap between quantum mechanical accuracy and scalable molecular dynamics is closing rapidly, driven by data-centric and iterative methodologies. The emergence of massive, high-quality datasets like OMol25, coupled with sophisticated GNNs and iterative optimization frameworks, provides researchers with a powerful toolkit. Whether through the use of refined, data-driven molecular mechanics force fields or the direct application of neural network potentials, these advances enable more reliable predictions of molecular behavior, binding affinities, and dynamical properties. For drug development professionals, adopting these strategies and tools promises to enhance the reliability of computational screens and mechanistic studies, ultimately accelerating the journey from target identification to viable therapeutic candidates.

The development of accurate molecular mechanics force fields is foundational to reliable molecular dynamics (MD) simulations in computational chemistry and drug discovery. These force fields, which approximate the potential energy surface of a system, enable the study of biomolecular processes at an atomistic level. However, creating a robust force field is fraught with challenges, primarily stemming from the interdependence of parameters, the limited transferability of parameters across diverse chemical spaces, and the prohibitive computational cost associated with high-quality quantum mechanical (QM) reference data generation. These issues are deeply intertwined; optimizing one parameter in isolation can inadvertently degrade the accuracy of another, and fitting to a narrow set of chemical motifs often results in poor performance for novel molecular structures. This application note details these core challenges and presents contemporary, iterative optimization protocols designed to address them, providing researchers with actionable methodologies for next-generation force field development.

The table below summarizes the core challenges, their impact on force field development, and the quantitative evidence as documented in recent literature.

Table 1: Core Challenges in Force Field Development

| Challenge | Impact on Force Field Development | Evidence from Literature |
|---|---|---|
| Parameter Interdependence | Non-bonded parameters (charges, LJ) and torsional bonded parameters are co-dependent; optimizing them independently leads to inaccuracies in the potential energy surface. [14] | Using standard OPLS-AA bonded parameters with bespoke QUBE nonbonded terms limited accuracy, necessitating refitting of torsional parameters. [14] |
| Limited Transferability | Force fields parameterized on small molecule fragments or pure liquid properties fail to accurately predict the behavior of complex biomolecules or chemical mixtures. [15] [16] | Force fields trained only on pure liquid densities (ρl) and enthalpies of vaporization (ΔHvap) showed systematic errors in predicting solvation free energies and mixture properties. [15] |
| High Computational Cost | Generating QM reference data for torsion scans and Hessian matrices for large molecules or expansive chemical spaces is computationally prohibitive, acting as a major bottleneck. [17] [3] | A single flexible torsion scan using traditional QM methods can take days. Generating a dataset of 3.2 million torsion profiles at the B3LYP-D3(BJ)/DZVP level requires massive computational resources. [17] [3] |

Iterative Optimization Protocols

To overcome the challenges outlined above, the field is moving towards automated, iterative optimization procedures that simultaneously refine multiple parameter types against diverse and expansive datasets.

Protocol 1: Bayesian Optimization with the BICePs Algorithm

Bayesian Inference of Conformational Populations (BICePs) is a refinement algorithm that reconciles simulated ensembles with sparse or noisy experimental observables by sampling the full posterior distribution of conformational populations and experimental uncertainty. [18]
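In symbols, the posterior that BICePs samples follows from Bayes' theorem. The factorization below uses the same quantities as the procedure that follows ($X$ for conformational states, $\sigma$ for the uncertainty parameter, $D$ for the data, $f_j(X)$ for the forward-model prediction of observable $j$). The Gaussian form of the likelihood is the common case mentioned in the text, and the specific noninformative prior $p(\sigma) \propto 1/\sigma$ is an assumption of this sketch.

$$
p(X, \sigma \mid D) \;\propto\; p(D \mid X, \sigma)\, p(X)\, p(\sigma),
\qquad
p(D \mid X, \sigma) = \prod_j \frac{1}{\sqrt{2\pi\sigma^2}} \exp\!\left[-\frac{\left(d_j - f_j(X)\right)^2}{2\sigma^2}\right]
$$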

Objective: To optimize force field parameters against ensemble-averaged experimental measurements while automatically accounting for uncertainty and systematic error.

Detailed Procedure:

Define the Prior and Likelihood:

- Prior (`p(X)`): Obtain a theoretical model of conformational state populations, typically from an MD simulation using an initial force field. [18]

- Likelihood (`p(D|X,σ)`): Construct a function quantifying the agreement between forward model predictions `f(X)` and experimental data `D`. A Gaussian likelihood is common, but BICePs can employ more robust models like the Student's-t distribution to automatically detect and down-weight outliers and data points subject to systematic error. [18]

Sample the Posterior Distribution:

- Use Markov Chain Monte Carlo (MCMC) sampling to explore the Bayesian posterior distribution `p(X,σ|D)`, which represents the conformational populations and uncertainty parameters given the experimental data. [18]

- Employ a replica-averaged forward model to approximate ensemble averages, ensuring no adjustable regularization parameters are needed. [18]

Compute the BICePs Score and Optimize:

- Calculate the BICePs score, a free energy-like quantity that reflects the total evidence for a model. [18]

- Use variational minimization to optimize force field parameters by minimizing the BICePs score. This process leverages the first and second derivatives of the score for efficient convergence. [18]
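The posterior-sampling step can be illustrated with a toy two-state system. This is a sketch under stated assumptions and does not use the BICePs package: the prior populations, forward-model values, and measurements are invented, the likelihood is Gaussian, sigma lives on a log-spaced grid (so a 1/σ prior becomes uniform over grid points), and the BICePs score and its variational minimization are not covered.

```python
import math
import random

random.seed(0)

prior = {"A": 0.7, "B": 0.3}       # p(X): toy populations from a simulation
forward = {"A": 4.0, "B": 8.0}     # f(X): toy predicted observable per state
data = [7.6, 8.3, 7.9]             # D: toy measurements
sigmas = [0.1 * 1.2 ** i for i in range(30)]  # log-spaced uncertainty grid


def log_posterior(state, i_sigma):
    """log p(X, sigma | D) up to a constant, with a Gaussian likelihood.

    A 1/sigma prior is uniform over the points of a log-spaced grid,
    so it adds no explicit term here.
    """
    sigma = sigmas[i_sigma]
    sq = sum((d - forward[state]) ** 2 for d in data)
    log_like = -len(data) * math.log(sigma) - sq / (2.0 * sigma ** 2)
    return log_like + math.log(prior[state])


state, i_sigma = "A", 15
counts = {"A": 0, "B": 0}
n_steps, burn_in = 20000, 2000
for step in range(n_steps):
    new_state, new_i = state, i_sigma
    if random.random() < 0.5:
        new_state = "B" if state == "A" else "A"       # propose a state flip
    else:
        new_i = i_sigma + random.choice((-1, 1))       # propose a sigma move
    if 0 <= new_i < len(sigmas):
        log_ratio = log_posterior(new_state, new_i) - log_posterior(state, i_sigma)
        if log_ratio >= 0 or random.random() < math.exp(log_ratio):
            state, i_sigma = new_state, new_i          # Metropolis acceptance
    if step >= burn_in:
        counts[state] += 1

posterior_B = counts["B"] / (n_steps - burn_in)
print(posterior_B)
```

Although state A dominates the prior, the data agree with state B's prediction, so the sampled posterior shifts almost entirely to B; this reweighting of simulated populations by experimental evidence is the behavior the procedure relies on.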

Key Research Reagents:

Table 2: Essential Components for BICePs Optimization

| Item | Function / Description |
|---|---|
| Initial Force Field & MD Engine | Provides the initial conformational prior, p(X) (e.g., CHARMM, AMBER, OpenMM). |
| Experimental Observables (D) | Sparse or noisy ensemble-averaged data for refinement (e.g., NMR scalar (J) couplings, distance measurements). |
| BICePs Software Package | Implements the core algorithm for posterior sampling and score calculation. [18] |
| Gradient-Based Optimizer | A variational optimization algorithm that minimizes the BICePs score. |

Protocol 2: Active Learning-Driven Force Field Optimization

This protocol leverages machine learning and active learning to iteratively expand force field coverage across a vast chemical space, directly addressing transferability and computational cost.

Objective: To generate a highly accurate and transferable force field for drug-like molecules by creating a massive, diverse QM dataset and training a graph neural network (GNN) to predict parameters.

Detailed Procedure:

Dataset Construction and Molecular Fragmentation:

- Curate a diverse set of drug-like molecules from databases like ChEMBL and ZINC. [3]

- Use a graph-expansion algorithm to cleave molecules into smaller fragments (e.g., <70 atoms). This preserves local chemical environments and makes QM calculations feasible. Cap cleaved bonds with methyl groups or other linkers to maintain valency. [3]

High-Throughput QM Calculations:

- Perform quantum chemical calculations on the generated fragments to create two primary datasets:

- Optimization Dataset: Geometry optimize each fragment and compute the analytical Hessian matrix at a level of theory such as B3LYP-D3(BJ)/DZVP. This dataset is used to fit bond and angle parameters. [3]

- Torsion Dataset: Perform flexible torsion scans on all non-ring rotatable bonds in the fragments at the same QM level. This involves fixing the dihedral angle and optimizing all other degrees of freedom to generate a relaxed potential energy profile. [3]

Graph Neural Network Training:

- Train a symmetry-preserving Graph Neural Network (GNN) on the massive QM dataset. [3]

- The model learns to map the molecular graph (atoms and bonds) directly to all MM force field parameters (bonded and non-bonded) simultaneously. [3]

- Employ a differentiable partial Hessian loss to ensure the predicted parameters accurately reproduce the QM vibrational frequencies. [3]

Validation and Iteration:

- Validate the resulting force field (e.g., ByteFF) on benchmark datasets by comparing its performance against existing force fields in predicting relaxed geometries, torsional energy profiles, and conformational energies. [3]

- The model can be iteratively refined by incorporating new molecules that explore under-represented regions of chemical space, following an active learning paradigm.

Key Research Reagents:

Table 3: Essential Components for Data-Driven Force Field Development

| Item | Function / Description |

|---|---|

| Chemical Databases (ChEMBL, ZINC) | Source for diverse, drug-like molecular structures. [3] |

| Fragmentation Software (e.g., RDKit) | Tools to systematically cleave large molecules into chemically meaningful fragments. |

| Quantum Chemistry Software | Software (e.g., Gaussian, Q-Chem, ORCA) to generate high-quality reference data (optimized geometries, Hessians, torsion scans). |

| GNN Framework (e.g., PyTorch Geometric) | Framework for building and training the graph neural network model for parameter prediction. |

The challenges of parameter interdependence, transferability, and computational cost are significant but not insurmountable. The protocols detailed herein—Bayesian optimization with BICePs for refining parameters against experimental data, and active learning with GNNs for generating transferable, high-coverage force fields—represent the cutting edge in moving beyond traditional, manual parameterization. By adopting these iterative, data-driven, and automated approaches, researchers can develop more robust and accurate force fields. This will enhance the predictive power of molecular simulations, ultimately accelerating progress in fields like drug discovery and materials science.

In computational chemistry and materials science, a force field refers to the functional form and parameter sets used to calculate the potential energy of a system of atoms or molecules [1]. Force fields are essential for molecular dynamics and Monte Carlo simulations, enabling the study of system behavior and properties from the atomistic level up to micrometre scales [19] [1]. The core challenge in force field development lies in the trade-off between computational efficiency and accuracy, particularly in modeling complex processes like chemical reactions. This review provides a comprehensive overview of the three main classes of force fields—Classical, Reactive, and Machine Learning potentials—framed within the context of iterative optimization training procedures. We present detailed protocols for their application and evaluation, supporting ongoing research in automated force field development.

Classification and Core Characteristics of Force Fields

Comparative Analysis of Force Field Types

The table below summarizes the fundamental characteristics of the three primary force field types, highlighting their differing parameter complexities, applicabilities, and computational demands.

Table 1: Key Characteristics of Major Force Field Types

| Feature | Classical Force Fields | Reactive Force Fields (ReaxFF) | Machine Learning Potentials (MLIPs) |

|---|---|---|---|

| Typical Number of Parameters | 10 - 100 [19] [20] | 100 - 500 [19] [20] | Can exceed 100,000 (data-dependent) [19] |

| Parameter Interpretability | High (clear physical meaning) [19] [20] | Moderate (mix of physical and empirical terms) [19] [20] | Low ("black box" models) [19] |

| Ability to Model Bond Breaking/Formation | No (fixed bonds) [21] [1] | Yes (via bond order) [21] [19] | Yes (trained on reactive data) [22] |

| Computational Cost | Low [19] | High (~30x classical) [21] | Variable (high training, lower inference) [22] |

| Primary Application Scope | Non-reactive molecular dynamics, structural properties [19] [1] | Chemical reactions, combustion, material failure [21] [19] | Complex, system-specific PES with near-DFT accuracy [22] |

| Key Functional Forms | Harmonic bonds, Lennard-Jones, Coulomb [1] | Bond-order dependent potentials [21] [19] | Neural networks, Gaussian processes [19] |

Detailed Force Field Descriptions

Classical Force Fields: These are the most established type, calculating a system's energy using simplified physical potential functions. The total energy is typically a sum of bonded terms (bond stretching, angle bending, dihedral torsions) and non-bonded terms (van der Waals described by Lennard-Jones potential and electrostatic interactions described by Coulomb's law) [1]. Their functional forms are simple and numerically efficient, making them suitable for simulating large systems over long timescales. However, their fixed bonding topology prevents them from simulating chemical reactions [21] [19].

Reactive Force Fields (ReaxFF): ReaxFF addresses the limitation of classical force fields by introducing a bond-order formalism, which dynamically describes the strength of a bond based on the instantaneous interatomic distance [21] [19]. This allows bonds to break and form during a simulation. While this capability is powerful, it comes at a cost: the potential function contains a large number of additional energy terms (e.g., for over-coordination, lone pairs, and conjugation), making it computationally expensive—about 30 times slower than a comparable classical force field simulation [21]. Its parametrization is complex, involving a mix of physical and empirical terms [19].

Machine Learning Potentials (MLIPs): MLIPs represent a paradigm shift. Instead of using pre-defined physical equations, they use machine learning models (e.g., neural networks) to learn the relationship between atomic configurations and the system's energy and forces from reference quantum mechanical (QM) data [22]. This allows them to achieve accuracy close to the QM method they were trained on, while being much faster for molecular dynamics simulations. A key challenge is their lack of interpretability and their tendency to be unstable when simulating configurations far outside their training data [22].
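As an illustration of the classical functional forms named above, the following minimal sketch evaluates a harmonic bond, a 12-6 Lennard-Jones term, and a Coulomb term. The function names and the example parameters are hypothetical. Units are assumed to be kcal/mol, Å, and elementary charges, and the harmonic term is written with an explicit factor of 1/2, which some force fields absorb into the force constant.

```python
COULOMB_CONSTANT = 332.0637  # kcal*Angstrom/(mol*e^2), assumed unit system

def harmonic_bond(r, k, r0):
    """Bond stretching energy, E = 0.5 * k * (r - r0)^2."""
    return 0.5 * k * (r - r0) ** 2

def lennard_jones(r, epsilon, sigma):
    """12-6 Lennard-Jones energy with well depth epsilon and diameter sigma."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)

def coulomb(r, qi, qj):
    """Electrostatic energy between two point charges at distance r."""
    return COULOMB_CONSTANT * qi * qj / r

def nonbonded_pair_energy(r, epsilon, sigma, qi, qj):
    """Sum of the van der Waals and electrostatic terms for one atom pair."""
    return lennard_jones(r, epsilon, sigma) + coulomb(r, qi, qj)

# Illustrative values only, not taken from any published parameter set.
print(harmonic_bond(1.10, k=680.0, r0=1.09))
print(nonbonded_pair_energy(3.8, epsilon=0.1, sigma=3.4, qi=0.2, qj=-0.2))
```

Every term is a closed-form function of a distance and a handful of parameters, which is why these models scale to large systems but cannot describe a change in bonding topology.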

Iterative Optimization Workflow in Force Field Development

The development of accurate and reliable force fields relies heavily on robust iterative optimization procedures. These workflows are designed to systematically refine force field parameters to minimize the discrepancy between simulated properties and target data, which can come from both QM calculations and experimental measurements.

Diagram 1: Generalized Parameter Optimization Workflow

Protocol: Gradient-Based Parameter Optimization

This protocol is adapted from the GROW (GRadient-based Optimization Workflow) methodology for automating force field parameterization [23].

Problem Definition:

- Define the loss function \( F(x) \), which quantifies the difference between simulated and target data. A common form is a weighted sum of squared relative errors: \( F(x) = \sum_{i=1}^{n} w_i \left( \frac{f_i^{exp} - f_i^{sim}(x)}{f_i^{exp}} \right)^2 \), where \( x \) is the parameter vector, \( n \) is the number of properties, \( f_i^{exp} \) is the target value, \( f_i^{sim} \) is the simulated value, and \( w_i \) is the weight [23].

- Select target properties (e.g., densities, energies, elastic constants) and their reference values.
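The loss defined above translates directly into code. The sketch below is a minimal illustration: the function name and the two example properties (a density and an enthalpy of vaporization with made-up simulated values) are assumptions, not part of GROW.

```python
def relative_squared_loss(simulated, target, weights):
    """Weighted sum of squared relative errors between simulated and target values."""
    if not (len(simulated) == len(target) == len(weights)):
        raise ValueError("simulated, target and weights must have the same length")
    return sum(w * ((t - s) / t) ** 2 for s, t, w in zip(simulated, target, weights))

# Hypothetical example: density (g/cm^3) and enthalpy of vaporization (kJ/mol).
target = [0.997, 44.0]
simulated = [0.990, 42.0]
weights = [1.0, 1.0]
print(relative_squared_loss(simulated, target, weights))
```

Dividing by the target value makes properties with different units and magnitudes comparable, so the weights only need to express relative importance.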

Initialization:

- Provide an initial parameter vector \( x_0 \). This starting point should be reasonably close to the expected minimum for faster convergence [23].

Iterative Optimization Loop:

- Simulation & Analysis: Run molecular simulations (e.g., Molecular Dynamics or Monte Carlo) using the current parameter set \( x_k \). Calculate the physical properties \( f_i^{sim}(x_k) \) from the simulation trajectories [23].

- Loss Evaluation: Compute the value of the loss function \( F(x_k) \) [23].

- Convergence Check: If a stopping criterion is met (e.g., \( F(x_k) \leq \tau \), where \( \tau \) is a predefined tolerance, or the change in \( F(x) \) is negligible), exit the loop and finalize the parameters [23].

- Parameter Update: If not converged, calculate the gradient of the loss function with respect to the parameters. Use a gradient-based optimization algorithm (e.g., Steepest Descent, Conjugate Gradient, or quasi-Newton methods) to determine a search direction and update the parameters to \( x_{k+1} \) [23]. Repeat the loop.
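The loop above can be sketched in a few lines. This is a toy illustration, not the GROW code: `simulate` is a hypothetical analytic stand-in for an MD or Monte Carlo run, the gradient is estimated by central finite differences, and the update uses steepest descent with a simple backtracking line search. In a real workflow each loss evaluation would require new simulations, and statistical noise would have to be handled.

```python
def simulate(x):
    """Hypothetical stand-in for a simulation: maps two parameters to two properties."""
    eps, sig = x
    return [eps * sig, sig / eps]

TARGET = [3.0, 3.0]   # properties produced by the reference parameters (1.0, 3.0)
WEIGHTS = [1.0, 1.0]

def loss(x):
    """Weighted sum of squared relative errors for parameter vector x."""
    return sum(w * ((t - s) / t) ** 2 for s, t, w in zip(simulate(x), TARGET, WEIGHTS))

def gradient(x, h=1e-6):
    """Central finite-difference estimate of the loss gradient."""
    grad = []
    for i in range(len(x)):
        up, down = list(x), list(x)
        up[i] += h
        down[i] -= h
        grad.append((loss(up) - loss(down)) / (2.0 * h))
    return grad

def optimize(x0, tol=1e-8, max_iter=500):
    """Steepest descent with backtracking; stops once the loss is at or below tol."""
    x = list(x0)
    for _ in range(max_iter):
        current = loss(x)
        if current <= tol:            # convergence check
            break
        g = gradient(x)
        g2 = sum(gi * gi for gi in g)
        step = 1.0
        trial = x
        while step > 1e-12:           # shrink the step until the loss decreases enough
            trial = [xi - step * gi for xi, gi in zip(x, g)]
            if loss(trial) <= current - 1e-4 * step * g2:
                break
            step *= 0.5
        x = trial                     # parameter update
    return x, loss(x)

x_opt, f_opt = optimize([0.8, 3.4])
print(x_opt, f_opt)
```

Steepest descent is used here only because it is the shortest to write down; conjugate gradient or quasi-Newton updates slot into the same loop by changing how the search direction is computed.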

Advanced Optimization: Machine Learning Force Field Pre-Training

A modern approach to optimizing MLIPs involves a two-stage pre-training and fine-tuning strategy to improve stability and data efficiency [22].

Diagram 2: MLIP Pre-Training and Fine-Tuning Workflow

Pre-Training Stage:

- Data Generation: Use "rattling" methods to generate a large and diverse set of molecular configurations, including high-energy, unphysical states. This comprehensively samples the potential energy surface (PES) [22].

- Training: Train the MLIP on this large dataset, using energies and forces labeled by a simple, non-reactive classical force field. This provides a physically reasonable, though not highly accurate, PES with correct limiting behaviors, "smoothing" the landscape and preventing "holes" that cause simulation instability [22].

Fine-Tuning Stage:

- Data Curation: Compile a smaller, targeted set of high-quality ab initio (e.g., DFT) data that focuses on chemically relevant regions (equilibrium structures, transition states) [22].

- Fine-Tuning: Continue training the pre-trained MLIP on this high-quality dataset. This stage specializes the model for chemical accuracy in the relevant regions while maintaining the stability learned during pre-training [22].
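The data generation step of the pre-training stage can be sketched as follows, assuming that "rattling" means adding independent Gaussian displacements to every atomic coordinate, as is common usage. The function names, the approximate water geometry, and the displacement widths are illustrative assumptions. Labeling the resulting structures with a classical force field, and the later fine-tuning on ab initio data, are outside the scope of this sketch.

```python
import random

def rattle(positions, stdev, rng):
    """Return a copy of the structure with Gaussian noise added to each coordinate."""
    return [tuple(c + rng.gauss(0.0, stdev) for c in atom) for atom in positions]

def rattled_dataset(positions, stdevs, n_per_stdev, seed=0):
    """Generate n_per_stdev rattled copies for each displacement width in stdevs."""
    rng = random.Random(seed)
    return [rattle(positions, s, rng) for s in stdevs for _ in range(n_per_stdev)]

# Approximate water geometry in Angstrom (illustrative only).
water = [(0.0, 0.0, 0.0), (0.9572, 0.0, 0.0), (-0.2400, 0.9266, 0.0)]

# Small widths sample near-equilibrium structures; large widths deliberately
# produce distorted, high-energy configurations.
dataset = rattled_dataset(water, stdevs=[0.05, 0.3], n_per_stdev=4, seed=42)
print(len(dataset), "configurations generated")
```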

Experimental Protocols for Force Field Evaluation

Protocol: Benchmarking Energetics and Elastic Properties

This protocol outlines a high-throughput method for evaluating the transferability of classical and reactive potentials, based on the methodology from the NIST interatomic potential repository project [24].

Structural Data Acquisition:

High-Throughput Calculation Setup:

- Use a computational framework like `MPinterfaces` to automate the generation of input files for simulation packages like LAMMPS [24].

- For each material and force field pair, set up calculations to compute:

a. Energy vs. Volume: Perform structural relaxations over a range of volumes to obtain the equation of state.

b. Elastic Constants: Use the built-in LAMMPS `compute elastic` command (applying small strains, e.g., 10⁻⁶) to calculate the full elastic constant matrix (C₁₁, C₁₂, C₄₄, etc.) [24].

Execution and Analysis:

- Run the high-throughput calculations on a compute cluster.

- For energetics: Calculate the cohesive energy and, for multi-component systems, generate convex hull plots to assess phase stability using tools like `pymatgen` [24].

- For elastic properties: Extract the elastic constants and moduli from the LAMMPS output.

Benchmarking and Validation:

- Compare the force field results with reference data from density functional theory (DFT) (e.g., from the Materials Project) and experimental data, where available [24].

- Perform statistical analysis (e.g., Principal Component Analysis - PCA) on the relative errors to identify correlated deficiencies in the force field predictions [24].

Protocol: Simulating Bond Dissociation and Material Failure with a Reactive Force Field

This protocol describes how to simulate bond-breaking processes using a reactive force field, specifically employing the IFF-R method which uses Morse potentials [21].

System Preparation:

- Construct the initial atomic structure (e.g., a polymer chain, carbon nanotube, or metal crystal).

- For IFF-R, substitute harmonic bond potentials in the force field with Morse potentials for the bonds intended to be reactive. The Morse potential is \( E_{Morse} = D_{ij} \left[ 1 - e^{-\alpha_{ij}(r_{ij} - r_{0,ij})} \right]^2 \), where \( D_{ij} \) is the dissociation energy, \( \alpha_{ij} \) controls the width of the potential well, and \( r_{0,ij} \) is the equilibrium bond length [21].

Parameterization:

- Obtain \( r_{0,ij} \) from the original harmonic potential or experimental data.

- Set \( D_{ij} \) using experimental bond dissociation energies or high-level QM calculations (e.g., CCSD(T) or MP2) [21].

- Fit \( \alpha_{ij} \) to match the vibrational frequencies (from IR/Raman spectroscopy) or to align the Morse curve with the harmonic curve near equilibrium [21].
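One way to align the Morse curve with the harmonic curve near equilibrium is to match their curvatures at the equilibrium bond length. The sketch below assumes the harmonic bond is written as E = K (r - r0)^2 without a factor of 1/2, so its second derivative is 2K. The Morse second derivative at r0 is 2 D α^2, which gives α = sqrt(K / D). The function names and the C-C-like numbers are hypothetical, and this is not presented as the exact IFF-R fitting procedure.

```python
import math

def morse_energy(r, d_e, alpha, r0):
    """Morse potential, E = D * (1 - exp(-alpha * (r - r0)))^2."""
    return d_e * (1.0 - math.exp(-alpha * (r - r0))) ** 2

def harmonic_energy(r, k_harm, r0):
    """Harmonic bond in the assumed convention E = K * (r - r0)^2."""
    return k_harm * (r - r0) ** 2

def alpha_from_harmonic(k_harm, d_e):
    """Alpha that reproduces the harmonic curvature at r0 (assumed convention)."""
    return math.sqrt(k_harm / d_e)

# Illustrative values: K in kcal/mol/A^2, D in kcal/mol, r0 in Angstrom.
k_harm, d_e, r0 = 300.0, 85.0, 1.53
alpha = alpha_from_harmonic(k_harm, d_e)

for r in (1.43, 1.53, 1.63, 2.50, 5.00):
    print(f"r = {r:.2f}  harmonic = {harmonic_energy(r, k_harm, r0):8.2f}  "
          f"Morse = {morse_energy(r, d_e, alpha, r0):8.2f}")
```

Near r0 the two curves agree closely, while at large separation the Morse energy levels off at the dissociation energy instead of rising without bound, which is what allows the bond to break.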

Simulation of Mechanical Failure:

- Energy Minimization: First, minimize the energy of the system to a force tolerance (e.g., 10⁻¹⁰ eV/Å) [24].

- Equilibration: Run an NVT or NPT simulation to equilibrate the system at the desired temperature and pressure.

- Deformation: Apply a uniaxial strain to the simulation box at a constant strain rate.

- Data Collection: Monitor the stress tensor, potential energy, and atomic coordinates throughout the deformation. Track the breaking of individual bonds as distances exceed the critical bond length defined by the Morse potential.

Essential Research Reagents and Computational Tools

The table below lists key software, databases, and tools essential for force field development, evaluation, and application.

Table 2: Key Research Reagents and Computational Tools

| Tool / Resource Name | Type | Primary Function | Relevance to Force Field Development |

|---|---|---|---|

| LAMMPS | Software | Molecular Dynamics Simulator | A highly versatile and widely used MD code for evaluating energies, elastic constants, and running reactive simulations with various force fields [24] [21]. |

| Materials Project (MP) | Database | DFT-Computed Material Properties | Provides a vast source of reference data (structures, energies, elastic constants) for force field parameterization and benchmarking [24]. |

| NIST IPR | Database | Interatomic Potential Repository | A curated collection of tested potential parameter files for download, facilitating evaluation and dissemination [24]. |

| GROW | Software | Gradient-based Optimization Workflow | An automated tool for performing iterative, gradient-based optimization of force field parameters against target data [23]. |

| IFF-R | Force Field | Reactive INTERFACE Force Field | An extension of a classical force field using Morse potentials to enable bond breaking with high computational efficiency (~30x faster than ReaxFF) [21]. |

| ReaxFF | Force Field | Reactive Force Field | A bond-order potential for modeling complex chemical reactions in large systems, though with higher computational cost [21] [19]. |

Step-by-Step Workflows and Real-World Application Pipelines

Within the iterative optimization paradigm for modern force field development, the ability to systematically generate, manage, and utilize high-quality quantum chemical (QC) reference data is paramount. The process requires not only robust computational methodologies but also automated, scalable, and reproducible pipelines to handle the vast chemical space of drug-like molecules. The Open Force Field (OpenFF) initiative addresses this need through an integrated software ecosystem, enabling the generation of extensive datasets that are critical for training and validating force field parameters. This ecosystem has been instrumental in the development of force fields like OpenFF Sage (version 2.0.0), which incorporates valence parameters trained against a large database of quantum chemical calculations and improved Lennard-Jones parameters refined using condensed-phase mixture data [25]. This application note details the protocols for leveraging the QCSubmit and QCFractal packages to construct such quantum chemical datasets, providing a practical guide for researchers engaged in force field parameterization.

The Automated Data Generation Ecosystem

The automated data generation workflow is built upon a suite of interoperable software tools, primarily from the QC* suite developed by the Molecular Sciences Software Institute (MolSSI) and the OpenFF toolkit. QCFractal serves as a distributed compute and database platform for quantum chemistry, functioning as the central backbone that manages calculation workloads and results [26] [27]. QCSubmit provides the automated tools for curating molecular datasets and submitting them to a QCFractal instance [28] [27]. Underlying these are QCEngine, which provides a unified interface to various quantum chemistry programs (e.g., Psi4, Gaussian), and QCSchema, which defines a standard for representing quantum chemical data [26]. The culmination of this infrastructure is the Quantum Chemistry Archive (QCArchive), a public repository that, as of June 2022, housed over 98 million molecules and 104 million calculations, representing a massive community resource [26].

The logical workflow and the relationship between these components are illustrated below.

Research Reagent Solutions: Essential Software Tools

The following table details the key software components required to establish an automated quantum chemical data generation pipeline.

Table 1: Essential Software Tools for Automated QC Data Generation

| Tool Name | Primary Function | Key Features | Computation Type |

|---|---|---|---|

| QCSubmit [28] [27] | Dataset Curation & Submission | Creates dataset "factories" (Basic, Optimization, TorsionDrive), validates inputs, handles molecule standardization. | Dataset Preparation |

| QCFractal [26] [27] | Distributed Compute Management | Manages job queues, interfaces with HPC schedulers (SLURM, PBS), orchestrates calculations across multiple clusters. | Job Management & Execution |

| QCEngine [26] | Program Execution Interface | Parses QCSchema, provides a unified interface to 20+ QM/MM programs (Psi4, GAMESS, XTB, etc.). | Calculation Execution |

| QCArchive [26] | Data Storage & Repository | Public/private data storage, ~100M+ calculations, provides API for querying and retrieving datasets. | Data Storage & Access |

| Geometric [29] | Geometry Optimization | Used as the backend for OptimizationDataset calculations, implements efficient optimization algorithms. | Structure Optimization |

| Psi4 [26] | Electronic Structure Code | Open-source quantum chemistry program; often used as the workhorse for QM calculations in OpenFF pipelines. | Quantum Chemical Calculation |

Core Dataset Types and Generation Protocols

The OpenFF infrastructure supports several types of datasets, each tailored for specific force field parameterization tasks. The quantitative characteristics of these core dataset types are summarized below.

Table 2: Core Quantum Chemical Dataset Types for Force Field Development

| Dataset Type | Force Field Target | Key Outputs | Typical QM Method |

|---|---|---|---|

| TorsionDriveDataset [27] | Torsion parameters | 1D/2D torsion potential energy surfaces, optimized geometries at each grid point. | B3LYP-D3BJ/DZVP [27] |

| OptimizationDataset [29] | Bond, Angle, and Van der Waals parameters | Optimized equilibrium geometries, single-point energies, harmonic frequencies, multipole moments. | B3LYP-D3BJ/DZVP [29] |

| BasicDataset (Single-point) [26] | General benchmarking, charge models | Single-point energies, molecular properties (dipole, quadrupole), optionally Hessians. | Varies by target property |

| Dataset Mixtures (e.g., QCML) [30] | Machine-learned potentials (MLFFs) | Energies, atomic forces, multipole moments, Kohn-Sham matrices for both equilibrium and off-equilibrium structures. | DFT and Semi-empirical |

Protocol: Generating a TorsionDriveDataset

Torsion drive scans are critical for refining the torsional parameters of a force field. The following protocol outlines the steps to create a dataset of torsion scans [27].

- Initialize Factory and Specification: Create a `TorsiondriveDatasetFactory` and define the quantum chemistry specification. The `QCSpec` object specifies the method (e.g., `'B3LYP-D3BJ'`), basis set (e.g., `'DZVP'`), and program (e.g., `'psi4'`) [27].

- Define Torsion Scan Workflow: Configure the workflow components. The `ScanEnumerator` is used with a `Scan1D` component, which specifies the central torsion to scan using a SMARTS pattern (e.g., `"[*:1]-[#6:2]-[#6:3]-[*:4]"` for a C-C bond), the scan range (e.g., `(-150, 180)` degrees), and the increment (e.g., `30` degrees) [27].

- Prepare Input Molecules: Generate the input molecules from SMILES strings or structure files. A `StandardConformerGenerator` component is typically added to the workflow to generate multiple initial conformers for each molecule [27].

- Create and Submit Dataset: Call the `create_dataset` method of the factory with the prepared molecules, a dataset name, and a description. The resulting dataset object is then submitted to a QCFractal instance (local or public) using the `submit` method [27].

Protocol: Generating an OptimizationDataset

Geometry optimization datasets are used for training valence parameters (bonds, angles) and for benchmarking [26] [29].

- Dataset Initialization: Instantiate an `OptimizationDataset` object, providing a `dataset_name`, `description`, and other metadata [29].

- Configure Quantum Chemistry: Add one or more quantum chemistry specifications via the `add_qc_spec` method. The specification includes method, basis set, program, and optional settings like `implicit_solvent` or `maxiter` [29].

- Set Optimization Procedure: The dataset uses an `optimization_procedure`, typically with the `'geometric'` program and a specified convergence set (e.g., `'GAU'` for Gaussian-style convergence) [29].

- Add Molecules and Submit: Populate the dataset with molecules using the `add_molecule` method. Each molecule entry requires a unique index and a 3D structure. Finally, submit the dataset to a QCFractal instance using the `submit` method [29].

Data Management, Submission, and the Dataset Lifecycle

Managing the lifecycle of a dataset submission is crucial for reproducibility and tracking. The OpenFF community uses a GitHub-based pipeline (qca-dataset-submission repository) with an integrated lifecycle model [26] [31]. The diagram below illustrates the stages a dataset passes through from submission to archival.

- Submission and Validation: Datasets are submitted via a pull request to the `qca-dataset-submission` repository. GitHub Actions automatically validate the submission, checking for errors in the dataset structure [31].

- Computation and Error Cycling: Once merged, the dataset is submitted to QCArchive. Calculations are dispatched to HPC resources. A key feature is automated error cycling, where a bot periodically identifies and restarts failed calculations, significantly improving completion rates [26] [31].

- Data Retrieval and Archiving: Completed datasets can be retrieved programmatically using QCSubmit. Once a dataset reaches an acceptable completion level (e.g., >99%), it is manually reviewed and moved to an "Archived/Complete" state [26] [31].

Application Example: Building a Training Set for Alkane Torsions

This practical example demonstrates the creation of a small torsion drive dataset for linear alkanes, a common step in an iterative force field training cycle [27].

This example creates a dataset that will perform a torsion drive around every central C-C bond in ethane, propane, and butane, scanning at 30-degree increments. The resulting energy profiles provide the direct reference data needed to fit and validate the torsional parameters in the force field for these molecules.
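Once such an energy profile is available, fitting the torsional term can be sketched with the standard library alone. The example below does not use QCSubmit or QCArchive. It assumes a symmetric profile sampled on a uniform 30-degree grid covering the full circle, and an OPLS-style three-term cosine series; the "reference" energies are synthetic values generated from made-up coefficients, standing in for QM scan results. On a uniform grid the cosine terms are orthogonal, so the coefficients follow from simple projections.

```python
import math

def scan_grid(start=-150, stop=180, step=30):
    """Dihedral grid in degrees, e.g. -150, -120, ..., 180 (12 points)."""
    return list(range(start, stop + 1, step))

def opls_torsion(phi_deg, v1, v2, v3):
    """Three-term cosine series commonly used for torsions (assumed form)."""
    phi = math.radians(phi_deg)
    return (0.5 * v1 * (1.0 + math.cos(phi))
            + 0.5 * v2 * (1.0 - math.cos(2.0 * phi))
            + 0.5 * v3 * (1.0 + math.cos(3.0 * phi)))

def fit_torsion(angles_deg, energies):
    """Recover (v1, v2, v3) by projecting the profile onto cos(n*phi), n = 1..3."""
    n_pts = len(angles_deg)
    a = [(2.0 / n_pts) * sum(e * math.cos(n * math.radians(phi))
                             for phi, e in zip(angles_deg, energies))
         for n in (1, 2, 3)]
    return 2.0 * a[0], -2.0 * a[1], 2.0 * a[2]

angles = scan_grid()
# Synthetic stand-in for a QM torsion scan (hypothetical coefficients, kcal/mol).
reference = [opls_torsion(phi, 1.4, -0.3, 3.2) for phi in angles]
v1, v2, v3 = fit_torsion(angles, reference)
print(f"V1 = {v1:.3f}, V2 = {v2:.3f}, V3 = {v3:.3f}")
```

With real scan data the fit would usually be done on the difference between the QM profile and the molecular mechanics energy computed with the torsion term switched off, and a least-squares solver would replace the projection when the grid is incomplete.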

The development of accurate molecular force fields represents a cornerstone of reliable computational research in material science and drug design. The process of force-field parameter (FFParam) optimization is an ongoing endeavor, crucial for enhancing the predictive power of molecular simulations [32]. Traditional methodologies often treat parameterization, conformational sampling, and data management as separate challenges. However, a paradigm shift is underway towards integrated, iterative workflows. This protocol details the application of such an iterative loop, which synergistically combines advanced parameter optimization algorithms, enhanced conformational sampling techniques, and strategic data augmentation to accelerate and improve the force field development process. By framing these elements as interconnected components of a cyclic refinement process, researchers can achieve more robust, accurate, and transferable molecular models.

The following diagram illustrates the core iterative loop that integrates parameter fitting, conformational sampling, and data augmentation for force field development.

Experimental Protocols

Protocol 1: Force Field Parameter Optimization

This protocol outlines the steps for optimizing force field parameters using a combination of metaheuristic algorithms and machine learning (ML) surrogate models to improve efficiency.

Research Reagent Solutions

Table 1: Key Computational Reagents for Parameter Optimization

| Reagent/Tool | Type | Primary Function |

|---|---|---|

| Simulated Annealing (SA) [33] | Algorithm | Explores parameter space widely to escape local minima. |

| Particle Swarm Optimization (PSO) [33] | Algorithm | Efficiently converges towards optimal regions based on swarm intelligence. |

| Concentrated Attention Mechanism (CAM) [33] | Algorithm | Prioritizes fitting to key, representative data points (e.g., transition states). |

| Machine Learning Surrogate Model [32] | Model | Replaces computationally expensive MD calculations for rapid evaluation. |

| Bayesian Inference of Conformational Populations (BICePs) [18] | Algorithm | Refines parameters against noisy, ensemble-averaged experimental data. |

Detailed Methodology

- Objective Function Definition: Define the objective function as the weighted sum of squared differences between simulation outcomes and target data (e.g., quantum mechanical energies, experimental densities, NMR observables) [32] [18].

- Hybrid Algorithm Setup: Implement a hybrid optimizer combining Simulated Annealing (SA) and Particle Swarm Optimization (PSO).

- Initialize a population of parameter sets.

- Allow SA to perform broad exploration in early stages, accepting some suboptimal moves to avoid local minima.

- Use PSO to refine promising parameter sets, where each particle adjusts its position based on its own experience and the swarm's best-found solution [33].

- Integration of Concentrated Attention Mechanism (CAM): Modify the objective function to assign higher weights to errors associated with critical molecular configurations, such as transition states or optimally bonded structures, to improve the physical accuracy of the refined force field [33].

- Surrogate Model Deployment: For iterative protocols requiring thousands of evaluations, train an ML surrogate model (e.g., a neural network) on initial molecular dynamics (MD) data. This model learns the mapping from force field parameters to target properties, replacing the need for full MD simulations in subsequent optimization steps, which can speed up the process by a factor of ~20 [32].

- Bayesian Refinement (Optional): For integration with experimental ensemble data, use the BICePs algorithm. Minimize the BICePs score, which accounts for uncertainty in both the experimental data and the forward model, to robustly optimize parameters against sparse or noisy observables [18].
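The hybrid optimizer described in this methodology can be sketched as two stages acting on the same population. This is a generic illustration, not the published SA/PSO/CAM implementation: the objective is a hypothetical multimodal test function standing in for a force field loss, and the cooling schedule, move size, and PSO coefficients are arbitrary choices.

```python
import math
import random

def objective(x):
    """Hypothetical multimodal stand-in for a force field loss; zero at (1.0, 3.0)."""
    return sum((xi - ci) ** 2 + 1.0 - math.cos(6.0 * (xi - ci))
               for xi, ci in zip(x, (1.0, 3.0)))

def clamp(value, low, high):
    return max(low, min(high, value))

def hybrid_sa_pso(func, bounds, n_particles=12, sa_steps=200, pso_steps=100, seed=1):
    rng = random.Random(seed)
    swarm = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_particles)]
    pbest = [list(p) for p in swarm]
    pbest_f = [func(p) for p in swarm]
    initial_best = min(pbest_f)

    # Stage 1: simulated annealing lets each particle explore broadly.
    for i, p in enumerate(swarm):
        f_cur = pbest_f[i]
        for step in range(sa_steps):
            temperature = 1.0 - step / sa_steps + 1e-3   # linear cooling
            trial = [clamp(xi + rng.gauss(0.0, 0.3), lo, hi)
                     for xi, (lo, hi) in zip(p, bounds)]
            f_trial = func(trial)
            # Always accept improvements; accept uphill moves with Boltzmann probability.
            if f_trial <= f_cur or rng.random() < math.exp(-(f_trial - f_cur) / temperature):
                p[:] = trial
                f_cur = f_trial
                if f_cur < pbest_f[i]:
                    pbest[i], pbest_f[i] = list(p), f_cur

    # Stage 2: particle swarm refinement around the best regions found so far.
    g = min(range(n_particles), key=pbest_f.__getitem__)
    gbest, gbest_f = list(pbest[g]), pbest_f[g]
    velocity = [[0.0] * len(bounds) for _ in range(n_particles)]
    for _ in range(pso_steps):
        for i, p in enumerate(swarm):
            for d, (lo, hi) in enumerate(bounds):
                r1, r2 = rng.random(), rng.random()
                velocity[i][d] = (0.7 * velocity[i][d]
                                  + 1.5 * r1 * (pbest[i][d] - p[d])
                                  + 1.5 * r2 * (gbest[d] - p[d]))
                p[d] = clamp(p[d] + velocity[i][d], lo, hi)
            f_p = func(p)
            if f_p < pbest_f[i]:
                pbest[i], pbest_f[i] = list(p), f_p
                if f_p < gbest_f:
                    gbest, gbest_f = list(p), f_p
    return gbest, gbest_f, initial_best

best_x, best_f, start_f = hybrid_sa_pso(objective, [(-2.0, 4.0), (0.0, 6.0)])
print(best_x, best_f, start_f)
```

A concentrated attention mechanism would enter through `objective`, by raising the weights of residuals that belong to critical configurations, and a surrogate model would replace the expensive evaluation inside it.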

Protocol 2: Enhanced Conformational Sampling

This protocol describes methods for generating comprehensive conformational ensembles, which are essential for evaluating and training force fields against ensemble-averaged experimental data.

Research Reagent Solutions

Table 2: Key Reagents for Enhanced Conformational Sampling

| Reagent/Tool | Type | Primary Function |

|---|---|---|

| Molecular Dynamics (MD) [34] | Simulation Method | Computes physical trajectories of atoms over time. |

| Generalized Ensemble Methods (GEPS) [35] | Enhanced Sampling | Enhances sampling by modulating system parameters (e.g., charges in ALSD, REST2). |

| Zero-Multipole Summation Method (ZMM) [35] | Electrostatic Calculator | Accelerates electrostatic calculations by assuming local neutrality. |

| Coarse-grained ML Potentials [36] | Machine Learning Force Field | Provides a smoother energy landscape for faster exploration. |

| Generative Models (e.g., Diffusion Models) [36] [34] | AI Sampler | Directly samples diverse, statistically independent structures from the equilibrium distribution. |

Detailed Methodology

- System Preparation: Obtain an initial protein structure from databases like the PDB or from AI-based predictors like AlphaFold2. Prepare the system using standard tools (e.g., `pdb2gmx` in GROMACS), then solvate it in a water box and add the necessary ions.

- Sampling Technique Selection:

- Option A (Physics-based MD with Enhanced Sampling): Run MD simulations using packages like GROMACS, AMBER, or OpenMM. Integrate generalized ensemble methods (GEPS) like REST2 to improve sampling efficiency. For large systems, combine GEPS with the Zero-Multipole Summation Method (ZMM) to accelerate electrostatic calculations without introducing significant bias, provided the system is not highly polarized [35].

- Option B (AI-based Generative Models): Use pre-trained generative models like AlphaFlow or UFConf. Input the protein sequence or a single structure, and run the model to generate a diverse set of plausible conformations. This approach bypasses the long timescales of MD by directly sampling the equilibrium distribution [36] [34].

- Option C (Coarse-grained ML Potentials): For specific protein systems, employ a transferable coarse-grained ML potential (e.g., from Majewski et al. or Charron et al.). These potentials, trained on extensive MD data, offer a smoother energy landscape, enabling faster exploration of folding pathways and free energy landscapes [36].

- Ensemble Generation and Analysis: Run the chosen sampling method to produce a trajectory or a set of discrete structures. Analyze the resulting ensemble using metrics like Root Mean Square Fluctuation (RMSF), radius of gyration, or dihedral angle distributions to characterize the conformational landscape.
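Two of the ensemble metrics mentioned above are simple enough to sketch without a trajectory library. The functions below are illustrative and make two assumptions: all atoms have equal mass in the radius of gyration, and the frames passed to `rmsf` have already been aligned to a common reference. In practice a package such as MDAnalysis or MDTraj would handle trajectory reading, alignment, and mass weighting.

```python
import math

def radius_of_gyration(coords):
    """Radius of gyration of one structure, assuming equal atomic masses."""
    n = len(coords)
    center = [sum(atom[k] for atom in coords) / n for k in range(3)]
    msd = sum(sum((atom[k] - center[k]) ** 2 for k in range(3)) for atom in coords) / n
    return math.sqrt(msd)

def rmsf(frames):
    """Per-atom root mean square fluctuation over pre-aligned frames."""
    n_frames, n_atoms = len(frames), len(frames[0])
    result = []
    for i in range(n_atoms):
        mean = [sum(frame[i][k] for frame in frames) / n_frames for k in range(3)]
        msd = sum(sum((frame[i][k] - mean[k]) ** 2 for k in range(3))
                  for frame in frames) / n_frames
        result.append(math.sqrt(msd))
    return result

# Tiny hypothetical ensemble: two atoms, two frames.
frames = [
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    [(0.0, 0.0, 0.0), (3.0, 0.0, 0.0)],
]
print([radius_of_gyration(frame) for frame in frames])
print(rmsf(frames))
```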

Protocol 3: Data Augmentation for Surrogate Model Training

This protocol covers the creation of augmented datasets to improve the performance and robustness of ML surrogate models used within the iterative loop.

Research Reagent Solutions

Table 3: Key Reagents for Data Augmentation in Molecular Modeling

| Reagent/Tool | Type | Primary Function |

|---|---|---|

| Classical MD Trajectories [32] | Primary Data Source | Provides initial, physically accurate data for training and augmentation. |

| Generative AI (GANs, Diffusion Models) [37] [36] | Augmentation Tool | Creates realistic synthetic molecular configurations to expand data diversity. |

| Geometric Transformations [37] | Augmentation Technique | Applies rotations, translations, and scaling to existing structures. |

| Noise Injection [37] [38] | Augmentation Technique | Adds random noise to atomic coordinates or simulation conditions to improve model robustness. |

| pydub & librosa [38] | Software Library | Implements various audio/data augmentation strategies (adaptable for molecular data). |

Detailed Methodology

- Initial Data Acquisition: Generate the primary dataset by running a limited number of MD simulations across a carefully chosen range of force field parameters. The target properties from these simulations (e.g., density, conformational energies) form the labels for the surrogate model [32].

- Data Augmentation Execution:

- Geometric Transformations: Apply random rotations and translations to the molecular configurations in the dataset. This ensures the surrogate model is invariant to the global orientation and position of the molecule [37].

- Synthetic Data Generation: Use generative models (e.g., GANs or diffusion models) trained on the primary data to create novel, yet physically plausible, molecular configurations and their corresponding properties. This is particularly valuable for sampling rare events or conformational states [37] [36].

- Parameter Space Perturbation: Slightly perturb the input force field parameters and use the physical force field to quickly generate corresponding new data points, effectively creating more examples around the boundaries of the initial parameter set.

- Noise Introduction: Add small, random noise to the atomic coordinates of structures in the training set. This helps prevent overfitting and makes the resulting surrogate model more tolerant to numerical uncertainties [37] [38].

- Surrogate Model Training and Validation: Train a neural network or other ML model on the combined original and augmented dataset. Validate the model's predictions on a held-out test set of high-fidelity MD simulation results that were not used in training or augmentation [32].
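The geometric-transformation and noise-injection steps above can be sketched as follows. This is an illustrative NumPy example under stated assumptions: coordinates are stored as an (atoms, 3) array, and the function names, shift range, and noise level are hypothetical choices rather than values from the cited work.

```python
import numpy as np

def random_rotation(rng):
    """Random proper rotation matrix from the QR decomposition of a Gaussian matrix."""
    q, r = np.linalg.qr(rng.normal(size=(3, 3)))
    q = q * np.sign(np.diag(r))     # fix column signs of the QR factor
    if np.linalg.det(q) < 0:        # enforce det = +1 (rotation, not reflection)
        q[:, 0] = -q[:, 0]
    return q

def augment(coords, rng, max_shift=5.0, noise_sigma=0.0):
    """Rotate, translate, and optionally add Gaussian noise to one configuration."""
    rot = random_rotation(rng)
    shift = rng.uniform(-max_shift, max_shift, size=3)
    noise = noise_sigma * rng.normal(size=coords.shape)
    return coords @ rot.T + shift + noise

def pairwise_distances(coords):
    """All interatomic distances, used here to check rigid-body invariance."""
    diff = coords[:, None, :] - coords[None, :, :]
    return np.linalg.norm(diff, axis=-1)

rng = np.random.default_rng(1)
coords = rng.normal(size=(6, 3))                     # hypothetical 6-atom configuration

rigid_copy = augment(coords, rng)                    # rotation + translation only
noisy_copy = augment(coords, rng, noise_sigma=0.01)  # plus small coordinate noise

print(np.allclose(pairwise_distances(coords), pairwise_distances(rigid_copy)))
```

Rigid-body copies can reuse scalar labels such as energy or density unchanged, whereas vector labels such as forces would need to be rotated by the same matrix. Noisy copies change the geometry slightly, so reusing the original label for them is an approximation that is only reasonable for small noise levels.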

Integrated Application Note

The synergistic integration of these three protocols creates a powerful, accelerated workflow for force field development. The core of this integration is the substitution of the most time-consuming component—direct MD simulation—with an ML surrogate model that has been trained on both physical simulation data and augmented samples [32]. In practice, an initial set of parameters is used to generate a conformational ensemble via Protocol 2. This data is then fed into Protocol 3 to create a robust, augmented dataset for training a fast-executing surrogate model. Protocol 1 then operates using this surrogate model to evaluate candidate parameters, drastically reducing the optimization time. The refined parameters from this cycle can be used to initiate a new, higher-fidelity iteration of the loop, further improving the force field until convergence is achieved. This closed-loop approach, which leverages the strengths of physical simulation, AI-based sampling, and data augmentation, represents a state-of-the-art methodology for tackling the complex challenge of force field parameterization [36].
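The closed loop described above can be illustrated with a deliberately small toy problem. In this sketch everything is an assumption made for illustration: `expensive_simulation` is a hypothetical analytic stand-in for an MD run, the surrogate is a quadratic least-squares fit rather than a neural network, and the optimizer is a grid search on the surrogate.

```python
import numpy as np

TARGET = 0.9  # hypothetical reference value of the target property

def expensive_simulation(theta):
    """Hypothetical stand-in for an MD run: one parameter in, one property out."""
    return 1.2 * (1.0 - np.exp(-theta))

def fit_surrogate(thetas, values, degree=2):
    """Cheap surrogate model: least-squares polynomial in the parameter."""
    return np.polynomial.Polynomial.fit(thetas, values, degree)

def optimize_on_surrogate(surrogate, lo=0.1, hi=3.0, n_grid=2001):
    """Pick the parameter whose surrogate prediction is closest to the target."""
    grid = np.linspace(lo, hi, n_grid)
    return grid[np.argmin((surrogate(grid) - TARGET) ** 2)]

# Initial high-fidelity data from a few parameter values.
thetas = [0.2, 1.0, 2.0, 3.0]
values = [expensive_simulation(t) for t in thetas]
initial_error = min(abs(v - TARGET) for v in values)

for iteration in range(5):
    surrogate = fit_surrogate(np.array(thetas), np.array(values))
    candidate = optimize_on_surrogate(surrogate)   # optimization uses the surrogate only
    value = expensive_simulation(candidate)        # one high-fidelity check per cycle
    thetas.append(candidate)                       # feed the result back into the data
    values.append(value)

errors = [abs(v - TARGET) for v in values]
best_index = int(np.argmin(errors))
best_theta, best_error = thetas[best_index], errors[best_index]
print(best_theta, best_error)
```

The design point is that the expensive function is called once per cycle, while the search over candidate parameters runs entirely on the surrogate. Each high-fidelity result is added back to the training data, which is what makes the loop self-correcting.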

The development of accurate molecular force fields represents a cornerstone of computational chemistry and drug discovery, enabling the prediction of molecular interactions, stability, and binding affinities. The accuracy of these force fields hinges on the optimization algorithms used to parameterize them. This article explores advanced optimization algorithms—from population-based methods like Particle Swarm Optimization (PSO) to gradient-based techniques and metaheuristics like Simulated Annealing—within the context of iterative optimization training procedures for force field development. We provide a detailed examination of their applications, comparative performance, and practical protocols for implementation, specifically framed for researchers and professionals engaged in computational drug development.

The table below catalogues key resources frequently employed in force field optimization and molecular simulation workflows.

Table 1: Key Research Reagent Solutions for Force Field Optimization and Molecular Simulation

| Item Name | Function/Application | Relevance to Optimization |

|---|---|---|

| Cambridge Structural Database (CSD) [39] | A repository of experimentally determined small molecule crystal structures. | Provides the experimental structural data used as a benchmark for training and validating force fields. |

| Rosetta Molecular Modelling Suite [39] | A comprehensive software platform for macromolecular structure prediction and design. | Implements energy functions (e.g., RosettaGenFF) and algorithms (e.g., GALigandDock) for force field optimization and docking. |

| Neural Network Potentials (NNPs) [40] [41] | Machine learning models trained to approximate quantum-mechanical potential energy surfaces. | Serves as a high-accuracy, computationally efficient target for force field parameterization and validation. |

| Atomic Simulation Environment (ASE) [40] | A set of tools and Python modules for setting up, manipulating, running, visualizing, and analyzing atomistic simulations. | Provides implementations of standard optimizers (L-BFGS, FIRE) and an interface for applying them to molecular systems. |

| geomeTRIC [40] | An optimization library specializing in geometry optimization for molecular systems. | Implements advanced internal coordinates (TRIC) for more efficient and robust convergence to minimum-energy structures. |

| Sella [40] | An open-source package for geometry optimization, including transition states and minima. | Uses internal coordinates and a rational function optimization approach for precise location of equilibrium geometries. |

Comparative Performance of Optimization Algorithms

The selection of an optimization algorithm significantly impacts the success of molecular geometry optimizations. The following table summarizes quantitative performance data for various optimizer and Neural Network Potential (NNP) pairings on a benchmark of 25 drug-like molecules.

Table 2: Optimizer and NNP Performance Benchmarking for Molecular Geometry Optimization [40]

| Optimizer | Neural Network Potential (NNP) | Success Rate (/25) | Avg. Steps to Converge | Minima Found (/25) |

|---|---|---|---|---|

| ASE/L-BFGS | OrbMol | 22 | 108.8 | 16 |

| ASE/L-BFGS | OMol25 eSEN | 23 | 99.9 | 16 |

| ASE/L-BFGS | AIMNet2 | 25 | 1.2 | 21 |

| Sella (internal) | OrbMol | 20 | 23.3 | 15 |

| Sella (internal) | OMol25 eSEN | 25 | 14.9 | 24 |

| Sella (internal) | AIMNet2 | 25 | 1.2 | 21 |

| geomeTRIC (tric) | OMol25 eSEN | 20 | 114.1 | 17 |

| geomeTRIC (tric) | GFN2-xTB | 25 | 103.5 | 23 |

Key Performance Insights:

- AIMNet2 demonstrated exceptional robustness, successfully optimizing all 25 molecules with all tested optimizers. [40]

- Sella with internal coordinates consistently achieved high success rates with low average step counts, indicating high efficiency. [40]

- Algorithms using internal coordinates (e.g., Sella internal, geomeTRIC-tric) generally outperformed their Cartesian-based counterparts in finding true local minima (fewer imaginary frequencies). [40]

- The performance of an optimizer is highly dependent on the specific NNP, underscoring the need for empirical testing. [40]
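The efficiency claim can be checked directly against Table 2 with a short tabulation. The sketch below copies the table rows verbatim and computes an unweighted mean of the per-pairing step averages for each optimizer; since each pairing converged a different number of molecules, this is a rough summary rather than a per-molecule statistic.

```python
from collections import defaultdict

# (optimizer, potential, converged of 25, average steps, minima found of 25), from Table 2
rows = [
    ("ASE/L-BFGS", "OrbMol", 22, 108.8, 16),
    ("ASE/L-BFGS", "OMol25 eSEN", 23, 99.9, 16),
    ("ASE/L-BFGS", "AIMNet2", 25, 1.2, 21),
    ("Sella (internal)", "OrbMol", 20, 23.3, 15),
    ("Sella (internal)", "OMol25 eSEN", 25, 14.9, 24),
    ("Sella (internal)", "AIMNet2", 25, 1.2, 21),
    ("geomeTRIC (tric)", "OMol25 eSEN", 20, 114.1, 17),
    ("geomeTRIC (tric)", "GFN2-xTB", 25, 103.5, 23),
]

steps_by_optimizer = defaultdict(list)
for optimizer, potential, converged, avg_steps, minima in rows:
    steps_by_optimizer[optimizer].append(avg_steps)

# Unweighted mean of the per-pairing averages for each optimizer.
mean_steps = {opt: sum(v) / len(v) for opt, v in steps_by_optimizer.items()}

# Fraction of the 25 benchmark molecules that ended in a true minimum.
minima_fraction = {(opt, pot): minima / 25 for opt, pot, _, _, minima in rows}

for opt, value in sorted(mean_steps.items(), key=lambda item: item[1]):
    print(f"{opt:18s} mean steps per pairing: {value:6.1f}")
```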

Application Notes and Experimental Protocols

Protocol 1: Force Field Parameterization via Crystal Structure Discrimination

This protocol details the procedure for optimizing a generalized force field (RosettaGenFF) using small-molecule crystal structures from the Cambridge Structural Database (CSD), as described in the literature. [39]

1. Objective: To simultaneously optimize 444 force field parameters (175 non-bonded and 269 torsional parameters) such that the native crystal lattice arrangements have lower energies than alternative decoy arrangements. [39]

2. Materials and Software:

- Source Data: 1,386 small molecule crystal structures from the CSD. [39]

- Software: Rosetta molecular modelling suite with extended symmetry machinery for handling crystallographic space groups. [39]

- Computing: High-performance computing cluster for intensive sampling (~50 CPU hours for 870 molecules, run thousands of times). [39]

3. Procedure:

- Step 1: Decoy Lattice Generation

- For each of the 1,386 small molecules, run thousands of independent Metropolis Monte Carlo with minimization (MCM) simulations.

- Randomize initial conditions: assign a random crystallographic space group, randomize lattice parameters (cell volume, angles), and randomize the ligand conformation by sampling all rotatable dihedrals. [39]

- In each MCM cycle, perturb one of the following degrees of freedom: molecular translation/rotation, a single dihedral angle, or all lattice lengths/angles. Subsequently, perform minimization on all degrees of freedom. [39]

- Collect the lowest energy conformation from each simulation run, resulting in a vast ensemble of decoy crystal packings for each molecule. [39]

- Step 2: Parameter Optimization with dualOptE

- Define an objective function that maximizes the energy gap between the native crystal structure and all generated decoy structures. [39]

- Use the Simplex-search-based dualOptE algorithm to iteratively adjust the 444 force field parameters to optimize this objective. [39]

- Embed thermodynamic data and protein-ligand complex structural data into the objective function to ensure balanced parameterization for both crystal lattices and biomolecular complexes. [39]

- Step 3: Iterative Refinement

4. Validation:

- The final optimized force field (RosettaGenFF) is validated by testing its performance on a cross-docking benchmark of 1,112 protein-ligand complexes. A >10% improvement in success rate for recapitulating bound structures was reported. [39]
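The idea behind Step 2 of the procedure, adjusting parameters so that the native structure scores below its decoys, can be sketched on synthetic data. This is not the dualOptE algorithm: SciPy's Nelder-Mead simplex search is swapped in as a stand-in, the energy is assumed to be linear in four hypothetical weights, and the hinge-style loss with a restraint term is an illustrative choice of objective.

```python
import numpy as np
from scipy.optimize import minimize

rng = np.random.default_rng(0)
n_params, n_decoys = 4, 200

# Hypothetical per-term energy components; total energy = components . weights
native_terms = rng.normal(loc=-1.0, scale=0.3, size=n_params)
decoy_terms = rng.normal(loc=-0.8, scale=0.5, size=(n_decoys, n_params))
initial_weights = np.ones(n_params)

def gap_loss(weights, margin=0.5, restraint=0.05):
    """Hinge loss that vanishes once every decoy lies `margin` above the native."""
    e_native = native_terms @ weights
    e_decoys = decoy_terms @ weights
    violations = np.maximum(0.0, margin - (e_decoys - e_native))
    # The restraint keeps weights near their starting values, so the gap
    # cannot be widened simply by scaling all weights up.
    return violations.mean() + restraint * np.sum((weights - initial_weights) ** 2)

def fraction_above_native(weights):
    """Fraction of decoys whose energy is higher than the native energy."""
    return float(np.mean(decoy_terms @ weights > native_terms @ weights))

result = minimize(
    gap_loss,
    initial_weights,
    method="Nelder-Mead",  # derivative-free simplex search
    options={"xatol": 1e-4, "fatol": 1e-6, "maxiter": 2000},
)
optimized_weights = result.x

print("loss before:", gap_loss(initial_weights), "after:", gap_loss(optimized_weights))
print("decoys above native:", fraction_above_native(optimized_weights))
```

A derivative-free search is a natural fit here because, in the real protocol, the decoy set is regenerated as the parameters change, so the objective is noisy and has no convenient analytic gradient.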

The following workflow diagram illustrates this iterative parameterization process:

Protocol 2: Iterative Training of Machine Learning Force Fields for Molecular Liquids

This protocol outlines the process for developing a robust Gaussian Approximation Potential (GAP) for a binary solvent mixture, addressing the challenge of scale separation between intra- and inter-molecular interactions. [41]

1. Objective: To create a stable and accurate ML force field for a molecular liquid mixture (e.g., EC:EMC electrolyte) that reproduces thermodynamic properties like density in NPT ensemble simulations. [41]

2. Materials and Software:

- Target System: Binary solvent mixture, e.g., Ethylene Carbonate (EC) and Ethyl Methyl Carbonate (EMC) in a 3:7 molar ratio. [41]

- Reference Data: Energies, forces, and virials computed using an ab initio method (e.g., PBE-D2). [41]

- Software: GAP framework with SOAP descriptors; classical force field (e.g., OPLS) for initial sampling. [41]

3. Procedure:

- Step 1: Initial Data Generation (Failure of Static Sets)

- Use a classical force field (OPLS) to sample diverse molecular configurations across a wide range of densities and temperatures. [41]

- Select uncorrelated configurations from these trajectories and compute their ab initio energies/forces.

- Attempt to train an ML potential on this fixed dataset. Note: Models trained this way often fail in NPT dynamics, leading to unphysical collapse of the density due to poor description of inter-molecular interactions, despite appearing stable in NVT/NVE ensembles. [41]

- Step 2: Iterative Training and Data Augmentation

- Iteration Loop:

- Train a new GAP model on the current training set. [41]

- Run an NPT molecular dynamics simulation using the newly trained GAP.

- Monitor for instabilities (e.g., spontaneous bubble formation, large density fluctuations). [41]

- Extract new configurations from these unstable trajectories and compute their ab initio properties.

- Augment the training set with these new, relevant configurations. [41]

- Data Augmentation Strategies:


4. Validation:

- The success of the final ML potential is determined by its ability to produce stable, long NPT trajectories and accurately reproduce the density predicted by the reference ab initio method. [41]
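The train, explore, label, and augment cycle of this protocol can be sketched in one dimension. Every component below is a labeled stand-in: a Morse-like curve plays the role of the ab initio reference, a polynomial fit plays the role of GAP training, and a distance-to-training-set novelty score replaces the monitoring of NPT instabilities. Only the loop structure is meant to carry over.

```python
import numpy as np

def reference_energy(x):
    """Hypothetical stand-in for the ab initio reference: a Morse-like curve."""
    return (1.0 - np.exp(-1.5 * (x - 1.0))) ** 2

def train_model(x_train, y_train, degree=4):
    """Stand-in for GAP training: a least-squares polynomial fit."""
    return np.polynomial.Polynomial.fit(x_train, y_train, degree)

def most_novel_configuration(candidates, x_train):
    """Stand-in for spotting unstable frames: the candidate farthest from the training set."""
    nearest = np.min(np.abs(candidates[:, None] - x_train[None, :]), axis=1)
    return candidates[np.argmax(nearest)]

def max_error(model, grid):
    """Largest deviation from the reference over the explored range."""
    return float(np.max(np.abs(model(grid) - reference_energy(grid))))

candidates = np.linspace(0.6, 3.0, 49)   # configurations a simulation might visit
x_train = np.linspace(0.9, 1.3, 8)       # narrow, static initial training set
y_train = reference_energy(x_train)

initial_error = max_error(train_model(x_train, y_train), candidates)

for iteration in range(6):
    model = train_model(x_train, y_train)                   # train on the current set
    new_x = most_novel_configuration(candidates, x_train)   # find a poorly covered region
    x_train = np.append(x_train, new_x)                     # label it with the reference
    y_train = np.append(y_train, reference_energy(new_x))   # and augment the training set

final_error = max_error(train_model(x_train, y_train), candidates)
print(f"max error: {initial_error:.3f} -> {final_error:.3f}")
```

The static initial set describes the region near the minimum well but extrapolates badly outside it, which mirrors the failure of fixed datasets noted in Step 1. Adding a handful of targeted configurations is what repairs the model over the full range.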

The iterative workflow for training a robust ML force field is shown below:

PSO is a population-based stochastic optimization technique inspired by the social behavior of bird flocking. [42]

Core Algorithm:

- Initialization: A swarm of particles is initialized with random positions (\( \vec{x}_0^i \)) and velocities (\( \vec{v}_0^i \)) in the d-dimensional search space. Each particle's best known position (\( \vec{p}^i \)) is initially its own position. [42]