From Microseconds to AI: The Evolution of Protein Molecular Dynamics Simulations in Drug Discovery

This article traces the transformative journey of molecular dynamics (MD) simulations from their rudimentary beginnings to their current status as an indispensable computational microscope in life sciences.


Abstract

This article traces the transformative journey of molecular dynamics (MD) simulations from their rudimentary beginnings to their current status as an indispensable computational microscope in life sciences. Aimed at researchers and drug development professionals, it explores the foundational milestones that enabled atomic-scale observation of proteins, the methodological breakthroughs in hardware and software that expanded accessible timescales, and the critical application of these tools in structure-based drug design. It further investigates contemporary challenges and the innovative troubleshooting strategies being deployed, including machine learning force fields and enhanced sampling. Finally, it provides a comparative analysis of simulation methods against experimental data and quantum chemistry benchmarks, validating their accuracy and forecasting a future where AI-powered, ab initio-accurate simulations routinely guide the development of novel therapeutics.

The Dawn of a Computational Microscope: Foundational Principles and Early Milestones in Protein MD

The 1970s marked a pivotal turning point in computational biology, bridging the gap between theoretical concepts and practical simulation tools. For the first time, researchers could move beyond static structural snapshots to observe the dynamic motion of biological macromolecules. The first molecular dynamics (MD) simulation of a protein, conducted in 1977, represented a revolutionary achievement that would eventually be recognized with a Nobel Prize. This article examines the historical context, methodological foundations, and technical execution of these pioneering simulations, which established the foundation for modern computational studies of protein dynamics, folding, and function [1] [2].

Historical and Scientific Context

The Pre-1970s Landscape

Before the first protein simulations, molecular simulations were limited to simple substances such as water and liquids formed from noble gases [2]. The theoretical foundations for molecular mechanics calculations had been established in the 1960s, notably through the work of Shneior Lifson's group at the Weizmann Institute of Science, where future Nobel laureates Arieh Warshel and Michael Levitt contributed to developing "consistent force field" (CFF) methodologies [3]. Meanwhile, Martin Karplus, initially focused on quantum chemistry and reaction dynamics, began his transition toward biological applications during a 1969-1970 sabbatical at the Weizmann Institute, where he connected with Lifson's group [1].

Converging Scientific Developments

Several key developments set the stage for the first protein simulations:

- Force Field Development: The Lifson group extended parameterization to include side-chain components necessary for proteins and pioneered testing on crystal structures of hydrocarbons and peptides [3]

- Early Biomolecular Calculations: In 1969, Levitt and Lifson performed the first energy minimizations for entire proteins (myoglobin and lysozyme) using a united-atom model [3]

- Hybrid QM/MM Approaches: Work by Warshel and Karplus (1972) and later by Warshel and Levitt (1976) on mixed quantum and molecular mechanical calculations provided crucial methodological advances [3]

These developments created the necessary theoretical and computational framework for tackling protein dynamics.

Methodological Foundations

Fundamental Principles of MD Simulations

Molecular dynamics simulations are based on applying Newton's laws of motion to atomic systems [4]. The core concept involves calculating the force exerted on each atom by all other atoms in the system, then using these forces to predict atomic positions over time. The mathematical foundation relies on classical mechanics, with forces derived from empirical potential energy functions known as force fields [5] [6].

The basic algorithm involves:

- Force Calculation: Computing forces on each atom based on its position relative to all other atoms

- Integration Step: Updating atomic positions and velocities using Newton's equations of motion

- Iteration: Repeating this process for millions of time steps to generate a trajectory

Table 1: Core Components of Early MD Force Fields

| Force Field Component | Physical Basis | Mathematical Form | Function |
|---|---|---|---|
| Bond stretching | Hooke's law | Harmonic potential | Maintain covalent bond lengths |
| Angle bending | Elastic deformation | Harmonic potential | Maintain bond angles |
| Torsional terms | Periodic barriers | Sinusoidal potential | Control rotation around bonds |
| van der Waals | Electron cloud repulsion/dispersion | Lennard-Jones 12-6 potential | Model short-range interactions |
| Electrostatics | Charge-charge interactions | Coulomb's law | Handle long-range interactions |

Computational Challenges

Early MD simulations faced significant computational barriers [1] [4]. Numerical stability required femtosecond time steps (1-2 fs), so simulating even a single nanosecond meant 500,000 to 1,000,000 integration steps. Because every atom interacts with every other atom through non-bonded terms, the number of pairwise calculations per step grows quadratically (not exponentially) with system size: a system of a few thousand atoms already involves millions of atom pairs. These constraints limited early simulations to picosecond timescales and relatively small systems [6].
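To make this arithmetic concrete, the short Python sketch below counts integration steps per nanosecond for 1 and 2 fs time steps, along with the number of unique atom pairs, N(N-1)/2, that a naive all-pairs force evaluation must visit. It assumes no cutoffs or neighbor lists. That is the worst case, not what modern codes do.

```python
# Back-of-the-envelope cost estimate for a naive MD simulation.
# Assumption: every non-bonded pair is evaluated at every step (no cutoffs).

def steps_needed(simulated_time_s: float, time_step_s: float) -> int:
    """Number of integration steps required to cover simulated_time_s."""
    return round(simulated_time_s / time_step_s)

def pair_count(n_atoms: int) -> int:
    """Unique atom pairs, N(N-1)/2, which grows as N^2."""
    return n_atoms * (n_atoms - 1) // 2

one_ns = 1e-9
for dt_fs in (1.0, 2.0):
    print(f"{dt_fs} fs step -> {steps_needed(one_ns, dt_fs * 1e-15):,} steps per ns")

for n in (1_000, 2_000, 4_000):
    # Doubling the atom count roughly quadruples the pair count.
    print(f"{n} atoms -> {pair_count(n):,} pairs per force evaluation")
```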

The Landmark Experiment: 1977 BPTI Simulation

The Research Team and Publication

The first MD simulation of a protein was published in Nature in 1977 by J. Andrew McCammon, Bruce Gelin, and Martin Karplus [1] [2]. This landmark study simulated bovine pancreatic trypsin inhibitor (BPTI), a small 58-residue protein [6] [7]. The work was conducted at Harvard University using cutting-edge computing resources available at the time, including IBM System/370 Model 168 computers [1].

System Setup and Technical Specifications

The BPTI simulation established technical protocols that would become standard in the field:

- Simulation System: The protein was represented in full atomic detail, though early simulations used simplified solvent representations or vacuum conditions [1]

- Time Scale: The initial simulation tracked 9.2 picoseconds of dynamics [6] [7]

- Time Step: 1-2 femtoseconds, required for numerical stability [5] [4]

- Force Field: Based on the consistent force field approach with parameters for proteins [3]

Table 2: Technical Specifications of the 1977 BPTI Simulation

| Parameter | Specification | Significance |
|---|---|---|
| Protein | Bovine Pancreatic Trypsin Inhibitor (BPTI) | Small, stable, well-characterized protein |
| Number of residues | 58 amino acids | Computationally tractable size |
| Simulation duration | 9.2 picoseconds | Limited by computational resources |
| Time step | 1-2 femtoseconds | Required to capture fastest atomic vibrations |
| Computational resources | IBM System/370 Model 168 | State-of-the-art for the era |
| Key observation | Proteins are flexible with continuous motion | Overturned view of proteins as static structures |

Key Findings and Implications

The BPTI simulation revealed that proteins are flexible molecules with continuous internal motions at room temperature, countering the prevailing view of proteins as relatively static structures [1]. This demonstration that statistical fluctuations are intrinsic to protein structure established the importance of dynamics for understanding biological function [1] [7].

Experimental Protocols and Methodologies

Simulation Workflow

The general protocol for MD simulations established in these early studies remains fundamentally unchanged today, comprising several critical stages as shown in the workflow below:

Key Research Reagents and Computational Tools

Table 3: Essential Research "Reagents" for Early Protein MD Simulations

| Component | Type/Example | Function/Role |
|---|---|---|
| Protein Structure | BPTI (PDB entry not available in 1977) | Initial atomic coordinates for simulation |
| Force Field | Consistent Force Field (CFF) | Empirical potential energy function |
| Integration Algorithm | Verlet or leap-frog | Numerical integration of equations of motion |
| Solvent Model | Simplified/implicit representations | Mimic aqueous environment |
| Computational Hardware | IBM System/370 Model 168 | Mainframe computer for calculations |
| Simulation Software | Custom-developed codes | Precursor to modern packages like CHARMM |

Technical Implementation Details

The first protein simulations employed specific technical approaches to manage computational limitations:

- Initialization: Starting structures were typically obtained from experimental (X-ray) structures [7]

- Energy Minimization: Essential to remove strong van der Waals clashes and structural distortions that could destabilize simulations [7]

- Solvation: Early simulations used simplified solvent representations due to computational constraints [1]

- Temperature Control: Systems were gradually heated from low initial temperatures to the target temperature (typically 300 K) [7]

- Equilibration: Simulations were run until system properties (energy, temperature, pressure) stabilized before production phases [7]
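One simple way to implement the gradual-heating step is to rescale velocities so that the instantaneous kinetic temperature matches a rising target. The sketch below illustrates the idea under stated assumptions and does not reproduce the original studies' protocol. It uses GROMACS-style units (amu, nm/ps, kJ/mol) and counts 3N degrees of freedom. It also omits the MD steps that would run between temperature increments. The names `kinetic_temperature` and `rescale_velocities` are hypothetical, and modern workflows usually rely on thermostats rather than direct rescaling.

```python
import numpy as np

K_B = 0.0083144626  # Boltzmann constant in kJ/(mol*K); masses in amu, velocities in nm/ps

def kinetic_temperature(velocities, masses):
    """Instantaneous temperature from equipartition, T = 2*KE / (N_dof * k_B).
    Simplification: N_dof = 3N, ignoring constraints and center-of-mass motion."""
    kinetic = 0.5 * np.sum(masses[:, None] * velocities**2)
    n_dof = velocities.size
    return 2.0 * kinetic / (n_dof * K_B)

def rescale_velocities(velocities, masses, target_temperature):
    """Scale all velocities by sqrt(T_target / T_current)."""
    current = kinetic_temperature(velocities, masses)
    return velocities * np.sqrt(target_temperature / current)

rng = np.random.default_rng(seed=0)
masses = np.full(100, 12.011)                      # hypothetical system: 100 carbon atoms
velocities = rng.normal(scale=0.1, size=(100, 3))

for target in np.linspace(50.0, 300.0, 6):         # 50 -> 300 K in 50 K increments
    velocities = rescale_velocities(velocities, masses, target)
    # ...a block of MD steps would run here before the next increase...
    print(f"target {target:5.1f} K, measured {kinetic_temperature(velocities, masses):5.1f} K")
```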

Impact and Evolution

Immediate Scientific Impact

The 1977 BPTI simulation fundamentally altered biochemical perception by demonstrating that proteins exhibit significant structural fluctuations at physiological temperatures [1] [7]. This provided a new paradigm for understanding how protein dynamics influence function, including:

- Allosteric regulation based on transitions between conformational states [5]

- Enzyme mechanism and catalytic efficiency [5] [3]

- Molecular recognition and binding processes [5]

Methodological Evolution

The pioneering work inspired rapid methodological advances throughout the 1980s and beyond:

- Timescale Extension: By 1988, BPTI simulations reached 210 ps with explicit solvent [7]

- Force Field Refinement: Development of specialized biomolecular force fields (CHARMM, AMBER, GROMOS) [7]

- Solvent Treatment: Shift toward explicit solvent models for more realistic simulations [5]

- Hardware Advances: Specialized supercomputers and GPUs dramatically increased simulation capabilities [5] [4]

Connection to Modern Applications

The foundations established in the 1970s enabled contemporary applications of MD across biological sciences:

- Drug Discovery: MD simulations provide insights into ligand-target interactions and facilitate structure-based drug design [6] [4]

- Protein Folding: Simulations reveal folding pathways and intermediates that are difficult to observe experimentally [8] [9]

- Disease Mechanisms: Studies of protein misfolding and aggregation in neurodegenerative diseases [8] [4]

- Functional Analysis: Understanding how conformational changes mediate biological function [5] [4]

Timeline of Key Developments

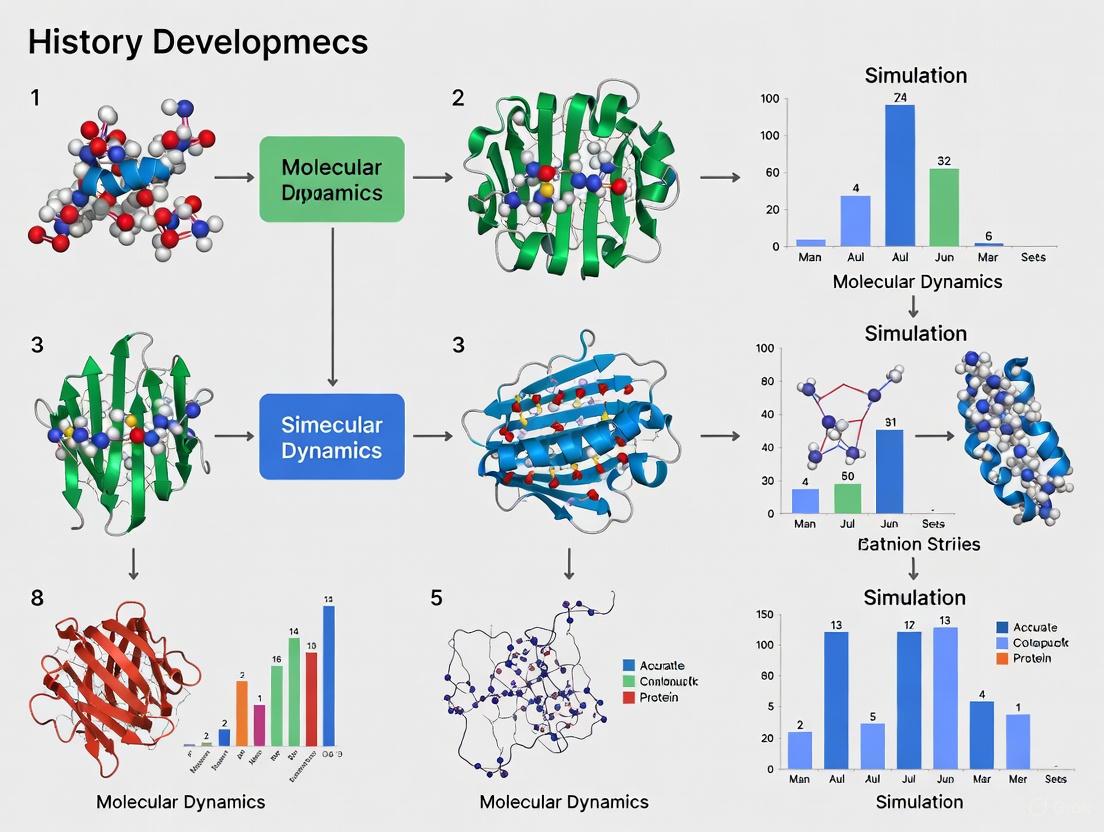

The emergence of protein MD simulations involved multiple interconnected breakthroughs across different institutions, as visualized in the following timeline:

The first protein molecular dynamics simulations in the 1970s represented a transformative achievement in computational biology, successfully merging computational statistical mechanics with biochemistry [1]. Despite severe computational limitations, these pioneering studies established the fundamental methodologies that would evolve into an essential tool for modern structural biology and drug discovery [6] [4]. The demonstration that proteins are inherently dynamic molecules, with motions critical to their function, created a new paradigm that continues to influence contemporary research across molecular biology, pharmaceutical development, and structural bioinformatics [5] [4]. The 2013 Nobel Prize in Chemistry awarded to Karplus, Levitt, and Warshel recognized the profound impact of these early contributions, which created a foundation for understanding complex chemical systems through computational approaches [3] [10].

Molecular dynamics (MD) simulation has established itself as an indispensable computational technique for probing the structure, dynamics, and function of biological macromolecules, most notably proteins. By simulating the physical motions of every atom in a system over time, MD provides atomic-level insights into processes that are often difficult to observe experimentally. The predictive power of any MD simulation rests upon two foundational pillars: the accuracy of the force field, which describes the potential energy of the system, and the faithful numerical integration of Newton's equations of motion. This technical guide details these core principles, their mathematical underpinnings, and their practical implementation, framed within the context of their historical development and critical importance to modern protein research and drug development.

Molecular dynamics is a computer simulation method for analyzing the physical movements of atoms and molecules over time. In the most common version, the trajectories of atoms and molecules are determined by numerically solving Newton's equations of motion for a system of interacting particles, where forces between the particles and their potential energies are calculated using interatomic potentials or molecular mechanical force fields [11]. The method is now extensively applied in structural biology and biophysics to study the motions of proteins and nucleic acids, which is crucial for interpreting experimental results and modeling interactions, such as in ligand docking [5] [11].

The traditional approach to understanding the influence of conformation on macromolecular function was to accumulate experimental structures. However, proteins are flexible entities whose dynamics are central to their function: they undergo significant conformational changes during their catalytic cycles, allosteric regulation, and molecular recognition [5]. MD simulation has evolved into a mature technique that can now simulate systems on biologically relevant timescales, shifting the paradigm of structural bioinformatics from studying single static structures to analyzing conformational ensembles [5]. This capacity to simulate the dynamic nature of proteins has made MD an invaluable tool for researchers and drug development professionals, enabling the study of complex processes like protein folding, ligand binding, and allosteric mechanisms.

The Force Field: A Molecular Mechanics Description of Potential Energy

A force field is a computational model that describes the forces between atoms within molecules or between molecules. In essence, it refers to the functional form and parameter sets used to calculate the potential energy of a system of atoms and molecules [12]. The parameters for these energy functions are derived from experimental data, quantum mechanical calculations, or a combination of both [12]. The accuracy of a force field is paramount, as it directly determines the reliability of the simulation in representing real molecular behavior.

Fundamental Components of a Force Field

The total potential energy in a typical, additive force field for molecular systems can be decomposed into bonded and non-bonded interaction terms [12] [13]:

E_total = E_bonded + E_nonbonded

Where:

E_bonded = E_bond + E_angle + E_dihedral

E_nonbonded = E_electrostatic + E_van der Waals

The following sections break down each of these components.

Bonded Interactions

Bonded interactions describe the energy associated with the covalent bond geometry of the molecule.

Bond Stretching: This term describes the energy cost associated with stretching or compressing a covalent bond from its equilibrium length. It is most commonly represented by a harmonic potential, which assumes the bond behaves like a spring [12]:

$$E_{\text{bond}} = \frac{k_{ij}}{2}\left(l_{ij} - l_{0,ij}\right)^2$$

Here, k_ij is the force constant, l_ij is the actual bond length, and l_0,ij is the equilibrium bond length between atoms i and j.

Angle Bending: This term represents the energy required to bend the angle between two adjacent covalent bonds away from its equilibrium value. It is also typically modeled using a harmonic potential [13].
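The harmonic bond term, and the analogous harmonic angle term, translate directly into code. This sketch assumes the k/2 convention used in the bond equation (some force fields fold the factor of 1/2 into the force constant) and treats atom j as the vertex of the angle. The function names and the numbers in the example call are illustrative only.

```python
import numpy as np

def harmonic_bond_energy(r_i, r_j, k_ij, l0_ij):
    """E_bond = k_ij/2 * (l_ij - l0_ij)^2, with l_ij the current i-j distance."""
    l_ij = np.linalg.norm(np.asarray(r_j, dtype=float) - np.asarray(r_i, dtype=float))
    return 0.5 * k_ij * (l_ij - l0_ij) ** 2

def harmonic_angle_energy(r_i, r_j, r_k, k_theta, theta0):
    """Harmonic angle term k_theta/2 * (theta - theta0)^2; atom j is the vertex, angles in radians."""
    a = np.asarray(r_i, dtype=float) - np.asarray(r_j, dtype=float)
    b = np.asarray(r_k, dtype=float) - np.asarray(r_j, dtype=float)
    cos_theta = np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b))
    theta = np.arccos(np.clip(cos_theta, -1.0, 1.0))  # clip guards against round-off outside [-1, 1]
    return 0.5 * k_theta * (theta - theta0) ** 2

# Illustrative numbers only: a bond stretched 0.1 length units past equilibrium
print(harmonic_bond_energy([0, 0, 0], [1.1, 0, 0], k_ij=600.0, l0_ij=1.0))
```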

Dihedral Torsions: This term describes the energy associated with rotation around a central bond, connecting two atoms to two others. The functional form for dihedral energy is a periodic function that reflects the inherent periodicity of a rotation [12]. Additionally, "improper torsional" terms may be added to enforce the planarity of aromatic rings and other conjugated systems [12].
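Evaluating a torsion term requires two pieces: the dihedral angle φ defined by four bonded atoms, and a periodic energy function of φ. The sketch below uses the common form k_φ[1 + cos(nφ - δ)], where n is the periodicity and δ is a phase. This specific form and the parameter names are assumptions for illustration, because force fields differ in their exact torsion conventions.

```python
import numpy as np

def dihedral_angle(p0, p1, p2, p3):
    """Signed dihedral angle (radians) of atoms p0-p1-p2-p3 about the central p1-p2 bond."""
    p0, p1, p2, p3 = (np.asarray(p, dtype=float) for p in (p0, p1, p2, p3))
    b0 = p0 - p1
    b1 = p2 - p1
    b2 = p3 - p2
    b1_unit = b1 / np.linalg.norm(b1)
    # Project the outer bonds onto the plane perpendicular to the central bond
    v = b0 - np.dot(b0, b1_unit) * b1_unit
    w = b2 - np.dot(b2, b1_unit) * b1_unit
    x = np.dot(v, w)
    y = np.dot(np.cross(b1_unit, v), w)
    return np.arctan2(y, x)

def torsion_energy(phi, k_phi, n, delta):
    """Periodic torsion term k_phi * (1 + cos(n*phi - delta))."""
    return k_phi * (1.0 + np.cos(n * phi - delta))

# Cis arrangement (phi = 0) gives the maximum of a threefold term with delta = 0
phi = dihedral_angle([0, 1, 0], [0, 0, 0], [1, 0, 0], [1, 1, 0])
print(phi, torsion_energy(phi, k_phi=1.5, n=3, delta=0.0))
```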

Non-Bonded Interactions

Non-bonded interactions describe the energy between atoms that are not directly connected by covalent bonds. These are computationally the most intensive part of the energy evaluation [11].

Van der Waals Interactions: These short-range forces account for attractive (London dispersion) and repulsive (electron cloud overlap) interactions. They are typically described by the Lennard-Jones potential [12] [11]:

$$E_{\text{van der Waals}} = 4\varepsilon\left[\left(\frac{\sigma}{r}\right)^{12} - \left(\frac{\sigma}{r}\right)^{6}\right]$$

Where ε is the well depth, σ is the distance at which the inter-particle potential is zero, and r is the interatomic distance.

Electrostatic Interactions: These are long-range forces between atoms bearing partial electrical charges. They are calculated using Coulomb's law [12]:

$$E_{\text{Coulomb}} = \frac{1}{4\pi\varepsilon_0}\,\frac{q_i q_j}{r_{ij}}$$

Where q_i and q_j are the partial charges on atoms i and j, r_ij is the distance between them, and ε_0 is the permittivity of free space.
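The non-bonded terms can be sketched as a direct double loop over all atom pairs. The loop visits N(N-1)/2 pairs, which is why these terms dominate the cost. This minimal Python version makes several simplifying assumptions:

- a single ε and σ shared by every atom
- no exclusions for bonded neighbors and no cutoff
- energies in kcal/mol, distances in Å, and charges in units of e, so 1/(4πε_0) becomes approximately 332.06 kcal·Å/(mol·e²)

```python
import itertools
import math

COULOMB_CONSTANT = 332.0637  # 1/(4*pi*eps0) in kcal*Å/(mol*e^2), approximate

def lennard_jones(r, epsilon, sigma):
    """12-6 Lennard-Jones energy: zero at r = sigma, minimum of -epsilon at 2**(1/6)*sigma."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)

def coulomb(r, q_i, q_j):
    """Coulomb energy between two point charges in vacuum."""
    return COULOMB_CONSTANT * q_i * q_j / r

def nonbonded_energy(coords, charges, epsilon, sigma):
    """Sum LJ and Coulomb energies over all unique pairs: an O(N^2) double loop.
    Simplifications: one shared epsilon/sigma, no bonded exclusions, no cutoff."""
    e_vdw = 0.0
    e_elec = 0.0
    for i, j in itertools.combinations(range(len(coords)), 2):
        r = math.dist(coords[i], coords[j])
        e_vdw += lennard_jones(r, epsilon, sigma)
        e_elec += coulomb(r, charges[i], charges[j])
    return e_vdw, e_elec

coords = [(0.0, 0.0, 0.0), (3.5, 0.0, 0.0), (0.0, 3.5, 0.0)]
charges = [0.4, -0.2, -0.2]
print(nonbonded_energy(coords, charges, epsilon=0.1, sigma=3.0))
```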

Force Field Types and Parameterization

Force fields can be categorized based on their level of detail and intended scope.

Table 1: Classification of Molecular Force Fields

| Classification | Description | Common Applications |
|---|---|---|
| All-Atom | Provides parameters for every atom in a system, including hydrogen [12]. | High-accuracy simulation of specific molecular interactions. |
| United-Atom | Treats hydrogen and carbon atoms in methyl and methylene groups as a single interaction center [12]. | Increased computational efficiency for larger systems. |
| Coarse-Grained | Represents groups of atoms as a single "bead," sacrificing chemical details for speed [12]. | Long-time simulations of large macromolecular complexes [12]. |

The process of parameterization—determining the numerical values for the force field functions—is crucial for its accuracy and reliability. Parameters for biological macromolecules are often derived or transferred from observations of small organic molecules, which are more accessible for experimental studies and quantum calculations [12]. Key parameter sets include values for atomic mass, atomic charge, Lennard-Jones parameters for every atom type, and equilibrium values for bond lengths, angles, and dihedral angles [12].

Newton's Laws of Motion in a Molecular Context

MD simulation is, at its core, a computer simulation of Newton's Laws for a collection of particles (atoms) [14]. The application of these classical laws to each atom in the system allows for the prediction of its dynamical evolution.

Mathematical Formulation

For a system of N atoms, the motion of each atom i with mass m_i is governed by Newton's second law:

F_i = m_i * a_i

Where F_i is the total force acting on the atom, and a_i is its acceleration [15].

The force is also equal to the negative gradient of the potential energy V with respect to the atom's coordinates r_i [15]:

F_i = - ∂V / ∂r_i

The acceleration is the second derivative of the position with respect to time [15]:

a_i = ∂²r_i / ∂t²

Therefore, the core equation of motion for MD becomes:

m_i * (∂²r_i / ∂t²) = - ∂V / ∂r_i

The potential energy V is precisely the energy computed by the force field, E_total, thus creating the direct link between the force field description and the resulting dynamics.
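Because the force is the negative gradient of the force-field energy, an analytical force can be checked against a finite-difference derivative of the energy. The Python sketch below performs this check for a single Lennard-Jones pair. The ε and σ values are arbitrary illustrative numbers, and the central-difference step h is an assumed value suited to double precision.

```python
import numpy as np

def lj_pair_energy(r_i, r_j, epsilon=0.2, sigma=3.4):
    """Lennard-Jones energy V of one atom pair."""
    r = np.linalg.norm(r_i - r_j)
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6**2 - sr6)

def lj_force_on_i(r_i, r_j, epsilon=0.2, sigma=3.4):
    """Analytical F_i = -dV/dr_i = -(dV/dr) * (r_i - r_j) / r."""
    d = r_i - r_j
    r = np.linalg.norm(d)
    sr6 = (sigma / r) ** 6
    dV_dr = 4.0 * epsilon * (-12.0 * sr6**2 + 6.0 * sr6) / r
    return -dV_dr * d / r

def numerical_force_on_i(r_i, r_j, h=1e-6, **params):
    """Central finite-difference estimate of -dV/dr_i, one Cartesian component at a time."""
    force = np.zeros(3)
    for k in range(3):
        step = np.zeros(3)
        step[k] = h
        e_plus = lj_pair_energy(r_i + step, r_j, **params)
        e_minus = lj_pair_energy(r_i - step, r_j, **params)
        force[k] = -(e_plus - e_minus) / (2.0 * h)
    return force

r_i = np.array([0.0, 0.0, 0.0])
r_j = np.array([3.0, 1.0, 0.5])
print(lj_force_on_i(r_i, r_j))
print(numerical_force_on_i(r_i, r_j))
```

The two printed vectors should agree to several significant figures. This kind of check is a common way to catch sign or derivative errors when implementing a new energy term.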

The MD Integration Algorithm

The analytical solution to the equations of motion is not obtainable for a system composed of more than two atoms. Therefore, MD uses numerical integration methods, specifically the class of finite difference methods [15]. The main idea is to divide the total simulation time into many small, finite time steps δt, typically 1-2 femtoseconds (10⁻¹⁵ s). The step must be short compared with the period of the fastest vibrations in the system, such as bond stretches involving hydrogen atoms [5] [15] [11].
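The reason for femtosecond steps can be checked numerically. Assume a C-H stretching frequency of about 3000 cm⁻¹, a typical textbook value that does not come from this article's sources. The vibrational period is then roughly 11 fs, so a 1 fs step samples each oscillation about 11 times, while a 2 fs step samples it only between 5 and 6 times.

```python
SPEED_OF_LIGHT_CM_PER_S = 2.99792458e10

def vibrational_period_fs(wavenumber_cm: float) -> float:
    """Period of a vibration given its wavenumber in cm^-1: T = 1 / (c * wavenumber)."""
    frequency_hz = SPEED_OF_LIGHT_CM_PER_S * wavenumber_cm
    return 1e15 / frequency_hz

period = vibrational_period_fs(3000.0)  # assumed C-H stretch wavenumber
for dt in (1.0, 2.0):
    print(f"dt = {dt} fs -> {period / dt:.1f} steps per C-H vibration (period {period:.1f} fs)")
```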

A standard MD algorithm proceeds as follows [15]:

- Initialization: Define an initial configuration of the system (atomic positions r_i) and assign initial velocities v_i to all atoms.

- Force Calculation: For the current configuration, compute the potential energy V and the forces F_i acting on each atom. This is the most computationally demanding step.

- Integration: Using the current positions, velocities, and forces, solve Newton's equations of motion to update the positions and velocities of all atoms for the next time step, t + δt.

- Iteration: Update the time (t = t + δt) and repeat from the force calculation step for the desired number of time steps.
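The four steps can be sketched in Python for a hypothetical toy system: two atoms joined by a harmonic bond, in arbitrary units. The names `compute_forces` and `run_md` are illustrative. Velocity Verlet, written in its equivalent half-kick form, serves as the integrator here only because it is a common choice, and any of the integrators described in the next section could be substituted.

```python
import numpy as np

# Hypothetical toy system in arbitrary units: two atoms joined by a harmonic bond.
K_BOND = 100.0
BOND_LENGTH_0 = 1.0
MASSES = np.array([1.0, 1.0])

def compute_forces(positions):
    """Force calculation: potential energy and per-atom forces for the current configuration."""
    d = positions[1] - positions[0]
    length = np.linalg.norm(d)
    potential = 0.5 * K_BOND * (length - BOND_LENGTH_0) ** 2
    force_on_1 = -K_BOND * (length - BOND_LENGTH_0) * d / length  # F = -dV/dr for atom 1
    return np.array([-force_on_1, force_on_1]), potential

def run_md(positions, velocities, dt, n_steps):
    inv_mass = 1.0 / MASSES[:, None]
    forces, potential = compute_forces(positions)
    trajectory, energies = [positions.copy()], []
    for _ in range(n_steps):                                    # iteration
        velocities = velocities + 0.5 * dt * forces * inv_mass  # half kick with F(t)
        positions = positions + dt * velocities                 # drift to t + dt
        forces, potential = compute_forces(positions)           # force calculation at t + dt
        velocities = velocities + 0.5 * dt * forces * inv_mass  # half kick with F(t + dt)
        kinetic = 0.5 * np.sum(MASSES[:, None] * velocities**2)
        trajectory.append(positions.copy())
        energies.append(kinetic + potential)
    return np.array(trajectory), np.array(energies)

# Initialization: bond stretched 10% beyond equilibrium, atoms at rest.
x0 = np.array([[0.0, 0.0, 0.0], [1.1, 0.0, 0.0]])
v0 = np.zeros_like(x0)
trajectory, energies = run_md(x0, v0, dt=0.001, n_steps=5000)
print(f"energy range over the run: {energies.max() - energies.min():.2e}")
```

With a time step well below the bond's vibrational period, the total energy should fluctuate slightly without drifting, which makes the printed energy range a quick sanity check on the time step.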

Integration Algorithms

Several numerical integration algorithms are commonly used in MD simulations.

The Verlet Algorithm: This widely used method calculates the new positions r(t + δt) using the current positions r(t), the current accelerations a(t), and the positions from the previous step r(t - δt) [15]:

$$r(t + \delta t) = 2r(t) - r(t - \delta t) + \delta t^2\, a(t)$$

While numerically stable, its drawback is that velocities are not explicitly calculated, though they can be estimated [15].

The Velocity Verlet Algorithm: This is one of the most commonly used integrators because it explicitly incorporates velocities [15]. In exact arithmetic it produces the same positions as the basic Verlet algorithm. It has two practical advantages: it keeps positions and velocities synchronized at every step, and it is less prone to round-off error. Each step involves a two-part update:

$$r(t + \delta t) = r(t) + \delta t\, v(t) + \tfrac{1}{2}\delta t^2\, a(t)$$

$$v(t + \delta t) = v(t) + \tfrac{1}{2}\delta t\left[a(t) + a(t + \delta t)\right]$$

This algorithm uses the current position, velocity, and acceleration to update the position; then uses the new positions to compute the new acceleration; and finally updates the velocity using the average of the current and new accelerations [15].

The Leap-Frog Algorithm: In this method, velocities and positions are updated at times offset by half a time step. The velocities are calculated at half-integer time steps and are used to update the positions at the next integer time step [15]:

$$v(t + \tfrac{1}{2}\delta t) = v(t - \tfrac{1}{2}\delta t) + \delta t\, a(t)$$

$$r(t + \delta t) = r(t) + \delta t\, v(t + \tfrac{1}{2}\delta t)$$
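The three update rules can be compared on a hypothetical test system: a one-dimensional harmonic oscillator with unit frequency. With matching initial conditions, basic Verlet, velocity Verlet, and leap-frog generate the same positions up to floating-point round-off. This illustrates that they are different bookkeeping of the same underlying scheme. Basic Verlet needs r(t - δt), so here it is bootstrapped with a second-order Taylor step, which is an assumption of this sketch.

```python
import numpy as np

OMEGA = 1.0  # hypothetical 1D harmonic oscillator: a(x) = -OMEGA**2 * x

def accel(x):
    return -OMEGA**2 * x

def basic_verlet(x0, v0, dt, n):
    # First step bootstrapped with a Taylor expansion because r(-dt) is unknown
    xs = [x0, x0 + dt * v0 + 0.5 * dt**2 * accel(x0)]
    for _ in range(n - 1):
        xs.append(2 * xs[-1] - xs[-2] + dt**2 * accel(xs[-1]))
    return np.array(xs)

def velocity_verlet(x0, v0, dt, n):
    xs, x, v, a = [x0], x0, v0, accel(x0)
    for _ in range(n):
        x = x + dt * v + 0.5 * dt**2 * a
        a_new = accel(x)
        v = v + 0.5 * dt * (a + a_new)  # average of old and new accelerations
        a = a_new
        xs.append(x)
    return np.array(xs)

def leap_frog(x0, v0, dt, n):
    v_half = v0 + 0.5 * dt * accel(x0)  # v(+dt/2) from the initial conditions
    xs, x = [x0], x0
    for _ in range(n):
        x = x + dt * v_half             # r(t + dt) from v(t + dt/2)
        v_half = v_half + dt * accel(x) # v(t + 3dt/2) from a(t + dt)
        xs.append(x)
    return np.array(xs)

dt, n = 0.05, 1000
t = dt * np.arange(n + 1)
x_v, x_vv, x_lf = (f(1.0, 0.0, dt, n) for f in (basic_verlet, velocity_verlet, leap_frog))
print(np.max(np.abs(x_v - x_vv)), np.max(np.abs(x_lf - x_vv)))  # differences between schemes
print(np.max(np.abs(x_vv - np.cos(t))))                          # error vs the exact solution cos(t)
```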

Table 2: Comparison of Common MD Integration Algorithms

| Algorithm | Advantages | Disadvantages | Integration Error |
|---|---|---|---|
| Velocity Verlet | Explicit velocity calculation; good energy conservation (symplectic) [14]. | Requires a two-step calculation. | O(δt²) [14] |
| Leap-Frog | Computationally efficient; good energy conservation. | Velocities and positions are not synchronized at the same time [15]. | O(δt²) |
| Semi-implicit Euler | Simple to implement. | Poor energy conservation; less accurate [14]. | O(δt) [14] |
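The energy-conservation column of the table can be illustrated on a hypothetical harmonic oscillator with unit mass and unit spring constant. At an identical time step, semi-implicit Euler (first order) shows much larger energy fluctuations than velocity Verlet (second order). The step size and run length below are arbitrary choices, and the Euler variant shown updates the velocity first and then the position.

```python
import numpy as np

def energy(x, v):
    return 0.5 * v**2 + 0.5 * x**2  # unit mass and unit spring constant

def semi_implicit_euler(x, v, dt, n):
    energies = []
    for _ in range(n):
        v = v - dt * x  # velocity from the force at the current position
        x = x + dt * v  # position from the updated velocity
        energies.append(energy(x, v))
    return np.array(energies)

def velocity_verlet(x, v, dt, n):
    energies = []
    a = -x
    for _ in range(n):
        x = x + dt * v + 0.5 * dt**2 * a
        a_new = -x
        v = v + 0.5 * dt * (a + a_new)
        a = a_new
        energies.append(energy(x, v))
    return np.array(energies)

dt, n = 0.1, 2000
e0 = energy(1.0, 0.0)
for name, method in [("semi-implicit Euler", semi_implicit_euler), ("velocity Verlet", velocity_verlet)]:
    err = np.max(np.abs(method(1.0, 0.0, dt, n) - e0)) / e0
    print(f"{name:>20}: max relative energy error {err:.1e}")
```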

The following diagram illustrates the logical workflow and data dependencies of a molecular dynamics simulation, from system setup to trajectory analysis.

The Scientist's Toolkit: Essential Components for MD Simulations

Table 3: Key Research Reagents and Computational Tools for Molecular Dynamics

| Item / Software | Type | Primary Function |
|---|---|---|
| AMBER [5] | Software Suite | A package of molecular simulation programs, including force fields for biomolecules. |
| CHARMM [5] | Software Suite | A versatile program for classical MD simulation with a comprehensive force field. |
| GROMACS [5] | Software Suite | A high-performance MD engine, known for its extreme speed and efficiency. |
| NAMD [5] | Software Suite | A parallel MD code designed for high-performance simulation of large biomolecular systems. |
| VMD [16] | Molecular Viewer | A tool for visualizing, analyzing, and animating large biomolecular systems. |
| Explicit Solvent (e.g., TIP3P) [11] | Model | Explicitly models solvent molecules (e.g., water) for accurate solvation effects [5]. |
| Implicit Solvent [11] | Model | Models solvent as a continuous dielectric medium for faster computation. |
| Lennard-Jones Potential [12] [11] | Mathematical Function | Describes van der Waals (attractive and repulsive) interactions between atoms. |
| SHAKE Algorithm [11] | Computational Method | Constrains the lengths of bonds involving hydrogen, allowing for a larger integration time step. |
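The SHAKE entry in the table can be illustrated with a minimal, single-constraint sketch. After an unconstrained integration step, SHAKE moves each atom along the previous bond vector, weighted by its inverse mass, until the bond length matches its target. The function `shake_bond` is hypothetical and handles only one bond, whereas production SHAKE implementations iterate over many coupled constraints. The tolerance and iteration limit are arbitrary choices.

```python
import numpy as np

def shake_bond(r_i, r_j, r_ij_old, m_i, m_j, d, tol=1e-10, max_iter=100):
    """Single-bond SHAKE sketch.

    r_i, r_j : unconstrained positions after an integration step
    r_ij_old : bond vector r_i - r_j from the previous (constrained) step
    d        : target bond length
    Returns corrected positions with |r_i - r_j|^2 equal to d^2 within tol.
    """
    r_i = np.array(r_i, dtype=float)
    r_j = np.array(r_j, dtype=float)
    r_ij_old = np.asarray(r_ij_old, dtype=float)
    inv_mass_sum = 1.0 / m_i + 1.0 / m_j
    for _ in range(max_iter):
        r_ij = r_i - r_j
        residual = d**2 - np.dot(r_ij, r_ij)  # constraint violation
        if abs(residual) < tol:
            break
        # Linearized estimate of the constraint correction along the old bond vector
        g = residual / (2.0 * inv_mass_sum * np.dot(r_ij, r_ij_old))
        r_i += g * r_ij_old / m_i             # lighter atoms move more
        r_j -= g * r_ij_old / m_j
    return r_i, r_j

# Hypothetical C-H-like pair: previous bond along x with length 1.0
old_i, old_j = np.array([0.0, 0.0, 0.0]), np.array([1.0, 0.0, 0.0])
new_i, new_j = np.array([0.05, 0.02, 0.0]), np.array([1.08, -0.01, 0.0])
fixed_i, fixed_j = shake_bond(new_i, new_j, old_i - old_j, m_i=12.0, m_j=1.0, d=1.0)
print(np.linalg.norm(fixed_i - fixed_j))
```

Because the two corrections are equal and opposite once multiplied by the atomic masses, the pair's center of mass is unchanged by the constraint step.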

The synergy between accurate force fields and the robust application of Newtonian mechanics forms the bedrock of molecular dynamics simulation. The continued development of more refined, chemically accurate force fields, coupled with algorithmic advances that extend the accessible timescales of simulation, will further solidify the role of MD as a critical tool in the molecular sciences. For researchers in protein science and drug development, a deep understanding of these core principles is essential for designing meaningful simulations, critically evaluating results, and leveraging MD to uncover the dynamic mechanisms that drive biological function and facilitate rational drug design.

Molecular dynamics (MD) simulations provide an atomic-resolution "computational microscope" for observing protein behavior, connecting structure to function by exploring conformational space and energy landscapes [7]. The evolution of this powerful tool is a story of confronting a central, formidable limitation: the severe constraint of simulation timescales. For decades, the biological relevance of MD simulations was limited by the gulf between the computationally accessible time and the biologically relevant time. While many critical biological processes—including protein folding, conformational changes, and ligand binding—occur on timescales of microseconds to milliseconds, the pioneering MD simulations of the 1970s struggled to reach even nanoseconds [1] [7]. This temporal chasm defined the primary challenge for the field, driving innovations in hardware, software, and algorithmic approaches that have collectively extended simulation times by orders of magnitude, progressively bridging the gap between computational feasibility and biological reality.

The Pioneering Era: Picosecond Simulations

The First Protein MD Simulation

The field of biomolecular MD simulations was inaugurated in 1977 with a landmark study by McCammon, Gelin, and Karplus on the bovine pancreatic trypsin inhibitor (BPTI) protein [1] [7]. This simulation, published in Nature, was groundbreaking not only for its subject matter but for its technical achievements and limitations. It demonstrated for the first time that proteins are not static structures but dynamic entities with continuous atomic motions at room temperature, a revelation that merged computational statistical mechanics with biochemistry [1].

Table 1: The First Protein MD Simulations (1977-1988)

| Simulation Feature | 1977 BPTI Simulation [1] [7] | 1988 BPTI Simulation [7] |
|---|---|---|
| Protein System | Bovine Pancreatic Trypsin Inhibitor (BPTI) | Bovine Pancreatic Trypsin Inhibitor (BPTI) |
| Simulation Duration | 9.2 picoseconds | 210 picoseconds |
| Solvation Model | Not specified (likely implicit or vacuum) | Explicit water molecules |
| Key Achievement | First observation of protein motions via simulation | Good correlation with X-ray structure |
| Impact | Established MD as a tool for studying protein dynamics | Demonstrated importance of explicit solvent and longer timescales |

Hardware Landscape and Methodological Framework

The early MD simulations were performed on mainframe computers such as the IBM System/370 Model 168, which represented the cutting edge of computational hardware in the 1970s [1]. Their computational intensity stemmed from the need to calculate the force on each atom from molecular mechanics equations. The non-bonded terms, which must be evaluated for every pair of atoms, grow as the square of the number of atoms (N²) and therefore form the most computationally expensive summation in the potential energy calculation [7].

The functional form of these equations included:

- Bonded forces: Interactions between atoms connected by covalent bonds, modeled as simple harmonic motions

- Van der Waals forces: Treated with 12/6 Lennard-Jones potentials with user-defined cutoff distances

- Electrostatic forces: Modeled using Coulomb's law, computed using approximate methods like Ewald summations to account for long-range effects [7]

Diagram 1: Early MD simulation workflow

The Hardware-Software Coevolution: Breaking Temporal Barriers

The Computational Scaling Problem

For decades after MD was introduced, simulations improved with computing power along three principal dimensions: accuracy, atom count (spatial scale), and duration (temporal scale) [17]. However, since the mid-2000s, general-purpose computer platforms have failed to provide strong scaling for MD. Scale-out CPU and GPU platforms substantially increase the spatial scale that can be simulated but do not deliver proportional increases in temporal scale [17]. This scaling problem left important scientific problems inaccessible to direct simulation and prompted increasingly sophisticated algorithms, which brought their own complexity, accuracy, and efficiency challenges.

The simulation time of biomolecular complexes has scaled up by about three orders of magnitude every decade, faster than Moore's Law, which projected a doubling every two years [18]. This progress was driven by the biomolecular simulation community's relentless exploitation of state-of-the-art technology, with landmark simulations keeping pace with the world's fastest computers [18].
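A quick calculation shows the size of this gap. A doubling every two years compounds to 2⁵ = 32× per decade, whereas three orders of magnitude per decade corresponds to a doubling roughly every year.

```python
import math

moore_per_decade = 2 ** (10 / 2)  # doubling every two years -> 32x per decade
md_per_decade = 10 ** 3           # about three orders of magnitude per decade

# Years needed to double under the MD growth rate
md_doubling_years = 10 * math.log(2) / math.log(md_per_decade)

print(f"Moore's Law: {moore_per_decade:.0f}x per decade")
print(f"MD simulation time: {md_per_decade}x per decade, doubling every {md_doubling_years:.2f} years")
```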

Specialized Hardware Solutions

The pursuit of longer simulation timescales led to the development of specialized hardware designed specifically for MD simulations. The most notable achievement in this domain came with Anton, a special-purpose supercomputer designed for MD that enabled millisecond-scale simulations [7]. In a landmark demonstration of this capability, Anton was used to run a millisecond (1,000 microsecond) simulation of the BPTI protein—the same protein used in the 1977 pioneering simulation, but now simulated for a duration 100 million times longer [7].

More recently, a computing architecture based on the Cerebras wafer scale engine has been reported to change this scaling picture. It delivers simulation rates of up to 1.144 million steps per second for a 200,000-atom system, enabling direct simulations of molecular evolution over millisecond timescales on general-purpose programmable hardware [17].
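The throughput figures in the two paragraphs above can be checked with simple arithmetic. The sketch confirms that 1 ms is roughly 10⁸ times the 9.2 ps of the 1977 run. It also converts 1.144 million steps per second into simulated time per day, assuming a 2 fs time step; the text does not state the time step used for that result.

```python
# Duration ratio between the 1977 and Anton BPTI simulations
first_bpti_s = 9.2e-12  # 9.2 ps (1977)
anton_bpti_s = 1.0e-3   # 1 ms (Anton)
ratio = anton_bpti_s / first_bpti_s
print(f"Anton run / 1977 run: {ratio:.2e}")  # roughly 1e8, i.e. about 100 million

# Throughput conversion; the 2 fs time step is an ASSUMPTION, not given in the text.
steps_per_second = 1.144e6
time_step_s = 2e-15
simulated_us_per_day = steps_per_second * time_step_s * 86_400 * 1e6
print(f"~{simulated_us_per_day:.0f} microseconds of simulated time per wall-clock day")
```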

Table 2: Hardware Platforms and Their Impact on MD Timescales

| Hardware Era | Representative System | Typical Simulation Timescale | Key Advancement |
|---|---|---|---|
| 1970s Mainframes | IBM System/370 Model 168 [1] | Picoseconds (9.2 ps for BPTI) [7] | First protein simulations |
| 1980s-1990s Supercomputers | Not specified | Nanoseconds | Explicit solvent models |
| 2000s CPU Clusters | Scale-out CPU platforms [17] | Hundreds of nanoseconds to microseconds | Increased accessibility |
| 2010s Specialized Hardware | Anton supercomputer [7] | Milliseconds | First millisecond-scale simulations |
| Modern Architectures | Cerebras wafer scale engine [17] | Milliseconds for larger systems | High rates on general-purpose hardware |

Table 3: Key Research Reagents and Computational Tools in MD Simulations

| Tool Category | Specific Tools/Reagents | Function and Application |
|---|---|---|
| Force Fields | CHARMM [7], AMBER [7], GROMOS [7] | Empirical parameters describing atomic forces governing MD simulations |
| Simulation Software | GROMACS [19], DESMOND [19], AMBER [19], NAMD [7] | MD simulation packages leveraging tested force fields |
| Enhanced Sampling Methods | Metadynamics [20], Parallel Tempering [18] | Algorithms to accelerate sampling of rare events |
| Specialized Hardware | Anton [7], Cerebras Wafer Scale Engine [17] | Purpose-built processors for dramatically increased simulation rates |
| Automation Tools | drMD [20] | User-friendly automation pipeline reducing barrier to entry for non-experts |

Current Frontiers: Reaching Biologically Relevant Timescales

Millisecond Simulations and Biological Insights

The extension of MD simulations to millisecond timescales has revealed protein behavior that was previously inaccessible to computational observation. In the landmark millisecond simulation of BPTI on the Anton supercomputer, researchers observed that the protein transitioned reversibly among a small number of structurally distinct states, with two states accounting for 82% of the trajectory. This behavior agreed well with experimental data but could not have been observed on shorter (microsecond) timescales [7]. The result underscored the importance of adequate simulation length and has driven continued innovation in hardware and algorithms.

Integration with Emerging Computational Approaches

Recent advances have highlighted the growing synergy between traditional MD simulations and artificial intelligence (AI) methods. While MD simulations provide physics-based trajectories of protein motions, AI approaches—particularly deep learning models—can efficiently sample conformational ensembles, especially for challenging systems like intrinsically disordered proteins (IDPs) [21]. These AI methods leverage large-scale datasets to learn complex, non-linear, sequence-to-structure relationships, allowing for modeling of conformational ensembles without the constraints of traditional physics-based approaches [21].

Diagram 2: Evolution of MD simulation timescales

Experimental Protocols: Methodologies for Extended Timescales

Standard MD Protocol for Protein Simulations

The common protocol for performing MD simulations of proteins consists of multiple carefully orchestrated steps [7]:

- Initial Structure Preparation: An X-ray crystal structure or NMR structure is obtained from the Protein Data Bank, or a theoretical structure is developed by homology modeling.

- Energy Minimization: The structure undergoes energy minimization to remove strong van der Waals interactions that might lead to local structural distortion and unstable simulation.

- Solvation: Explicit water molecules are added to solvate the system, followed by another energy minimization with the macromolecule fixed to allow water molecules to readjust around the protein.

- Initial Velocity Assignment: Initial velocities at low temperature are assigned to each atom of the system following a Maxwell-Boltzmann distribution.

- Heating Phase: The simulation proceeds through a heating phase where velocities are periodically reassigned at slightly higher temperatures until the desired temperature (typically 300-310 K) is reached.

- Equilibration Dynamics: The simulation continues until properties such as structure, pressure, temperature, and energy become stable with respect to time.

- Production Phase: The final simulation runs for the desired time length (from nanoseconds to milliseconds), during which trajectories are collected for analysis.
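The velocity-assignment and heating steps of this protocol can be sketched in a few lines. This is a minimal NumPy illustration, not any MD package's implementation: the function names, the 50 K increment, and the GROMACS-style units (nm, ps, g/mol, kJ/mol) are assumptions, and the MD integration that would run between velocity reassignments is omitted.

```python
import numpy as np

# Boltzmann constant in kJ/(mol K). With masses in g/mol, sqrt(kB*T/m) is in nm/ps.
KB = 0.0083144626


def maxwell_boltzmann_velocities(masses, temperature, rng):
    """One velocity vector per atom; each Cartesian component ~ Normal(0, kB*T/m)."""
    masses = np.asarray(masses, dtype=float)
    sigma = np.sqrt(KB * temperature / masses)
    return rng.normal(size=(masses.size, 3)) * sigma[:, None]


def instantaneous_temperature(masses, velocities):
    """T = 2*KE / (3N * kB); ignores constraints and centre-of-mass removal."""
    masses = np.asarray(masses, dtype=float)
    kinetic = 0.5 * np.sum(masses[:, None] * velocities**2)
    return 2.0 * kinetic / (3 * masses.size * KB)


def heating_schedule(start, target, increment):
    """Temperatures at which velocities are reassigned, ending exactly at the target."""
    temps = [float(t) for t in np.arange(start, target, increment)]
    return temps + [float(target)]


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    masses = np.full(5_000, 12.011)  # toy system: 5,000 carbon-mass particles
    for temp in heating_schedule(50, 300, 50):
        v = maxwell_boltzmann_velocities(masses, temp, rng)
        # A real protocol would integrate a short MD segment at this temperature here.
        print(f"target {temp:5.1f} K -> sampled {instantaneous_temperature(masses, v):6.1f} K")
```

Reassigning velocities in small increments, rather than starting at 300 K, mirrors the heating phase described above: the structure is never given a sudden burst of kinetic energy.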

Enhanced Sampling Techniques

To address the challenge of capturing rare, biologically relevant events, several enhanced sampling methods have been developed:

- Metadynamics: Implemented in tools like drMD, this approach enhances sampling by adding a history-dependent bias potential that discourages the system from revisiting already sampled configurations [20].

- Parallel Tempering with Well-Tempered Ensembles: This combination has revealed how phosphorylation of intrinsically disordered proteins regulates their binding to interacting partners [18].

- Gaussian Accelerated MD (GaMD): Used for studying intrinsically disordered proteins, this method captures events like proline isomerization that occur on timescales difficult to access with conventional MD [21].
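The central quantities behind these three methods are compact enough to write out. This is a toy sketch, not drMD's or any MD engine's implementation: the metadynamics part drives a Metropolis walker on a one-dimensional double well in units of kT instead of running real MD, and every name and parameter value is hypothetical. The replica-exchange function uses the standard Metropolis swap criterion, and the GaMD function applies the harmonic boost $\Delta V = \tfrac{1}{2}k(E - V)^2$ only when $V < E$.

```python
import math
import random


def metadynamics_bias(s, centers, height, width):
    """History-dependent bias: a sum of Gaussians deposited at previously visited CV values."""
    return sum(height * math.exp(-((s - c) ** 2) / (2.0 * width**2)) for c in centers)


def run_metadynamics(n_steps=5_000, stride=50, height=0.2, width=0.2, seed=0):
    """Toy Metropolis walker on the double well U(s) = (s^2 - 1)^2, in units of kT."""
    rng = random.Random(seed)
    s, centers = -1.0, []

    def total_energy(x):
        return (x * x - 1.0) ** 2 + metadynamics_bias(x, centers, height, width)

    for step in range(1, n_steps + 1):
        trial = s + rng.uniform(-0.1, 0.1)
        # Metropolis acceptance on the biased surface: min(1, exp(-(E_trial - E_current)))
        if rng.random() < math.exp(min(0.0, total_energy(s) - total_energy(trial))):
            s = trial
        if step % stride == 0:
            centers.append(s)  # deposit a Gaussian where the walker currently sits
    return centers


def replica_exchange_accept(beta_i, beta_j, energy_i, energy_j):
    """Parallel tempering swap probability: min(1, exp[(beta_i - beta_j)(E_i - E_j)])."""
    delta = (beta_i - beta_j) * (energy_i - energy_j)
    return 1.0 if delta >= 0 else math.exp(delta)


def gamd_boost(potential, threshold, force_constant):
    """GaMD harmonic boost: 0.5 * k * (E - V)^2 when V < E, zero otherwise."""
    if potential >= threshold:
        return 0.0
    return 0.5 * force_constant * (threshold - potential) ** 2


if __name__ == "__main__":
    hills = run_metadynamics()
    print(f"{len(hills)} hills; bias at s = -1: {metadynamics_bias(-1.0, hills, 0.2, 0.2):.2f} kT")
```

Because each deposited Gaussian raises the energy of configurations already visited, the bias gradually fills the occupied well, which is the "discourages revisiting" behavior described for metadynamics.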

The journey from picosecond to microsecond and millisecond MD simulations represents one of the most significant triumphs in computational structural biology. This progression, driven by co-advances in hardware architecture, software algorithms, and theoretical approaches, has transformed MD from a specialized tool for experts into an increasingly accessible method that provides unprecedented insights into protein dynamics [20] [7]. The field continues to evolve with the integration of artificial intelligence methods that complement traditional MD, particularly for sampling complex conformational landscapes of intrinsically disordered proteins [21]. As hardware architectures like the Cerebras wafer scale engine begin to deliver millisecond-scale simulations for larger systems on general-purpose programmable hardware [17], and automation tools like drMD lower barriers for non-experts [20], MD simulations are poised to become even more transformative in protein science and drug discovery. The continued collaboration between hardware engineers, software developers, and biological researchers ensures that the remarkable historical trajectory of MD simulations—outpacing even Moore's Law—will continue to yield new insights into the dynamic nature of biological systems.

The evolution of molecular dynamics (MD) simulations from picosecond-scale observations of small systems in the 1980s to millisecond-scale simulations of complex biological assemblies today has fundamentally transformed structural biology [5] [16]. This progression from studying single, static structures to analyzing conformational ensembles has necessitated the development of robust quantitative metrics to extract meaningful biological insights from simulation data [5]. Among these, three techniques have emerged as fundamental analytical pillars: Root Mean Square Deviation (RMSD), Root Mean Square Fluctuation (RMSF), and Solvent Accessible Surface Area (SASA). These metrics provide complementary perspectives on protein dynamics, stability, and solvation, enabling researchers to quantify conformational changes, flexibility, and environment interactions that underlie biological function. Their development and refinement have paralleled advances in MD methodology itself, evolving from simple visual inspection of trajectories to sophisticated algorithms that can process massive datasets generated by modern simulation hardware [16] [22].

Root Mean Square Deviation (RMSD): Quantifying Structural Evolution

Fundamental Principles and Mathematical Definition

RMSD provides a quantitative measure of the average distance between atoms in superimposed protein structures [23]. In structural biology, it is most commonly used to compare the similarity of three-dimensional protein structures by measuring the deviation of atomic positions after optimal rigid body superposition. The mathematical definition of RMSD between two sets of atomic coordinates (v and w) is given by:

$$\mathrm{RMSD}(v,w) = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\lVert v_i - w_i \rVert^2} = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left[(v_{ix}-w_{ix})^2 + (v_{iy}-w_{iy})^2 + (v_{iz}-w_{iz})^2\right]}$$

where $n$ is the number of atoms being compared, and $v_i$ and $w_i$ are the coordinates of atom $i$ in the two structures [23]. The calculation typically uses backbone heavy atoms (C, N, O, Cα) or only the Cα atoms. A rigid superposition that minimizes the RMSD is normally performed before the calculation, and this minimum value is reported as the RMSD [23].
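Both the raw definition and the superposition step can be sketched with NumPy. Here `rmsd` evaluates the equation directly, while `kabsch_rmsd` first removes translation and then applies the optimal rotation obtained from the Kabsch (SVD) algorithm. Both names are hypothetical, and the two coordinate arrays are assumed to be matched atom for atom (for example, the same Cα atoms in both structures).

```python
import numpy as np


def rmsd(v, w):
    """Direct evaluation of the RMSD equation for matched (n, 3) coordinate arrays."""
    diff = np.asarray(v, dtype=float) - np.asarray(w, dtype=float)
    return float(np.sqrt((diff**2).sum(axis=1).mean()))


def kabsch_rmsd(v, w):
    """Minimum RMSD after optimal rigid-body superposition (Kabsch algorithm)."""
    v_c = np.asarray(v, dtype=float)
    w_c = np.asarray(w, dtype=float)
    v_c = v_c - v_c.mean(axis=0)  # remove translation by centering both sets
    w_c = w_c - w_c.mean(axis=0)
    u, _, vt = np.linalg.svd(v_c.T @ w_c)  # SVD of the 3x3 covariance matrix
    # Flip the last axis if needed so the result is a proper rotation, not a reflection.
    d = 1.0 if np.linalg.det(vt.T @ u.T) >= 0 else -1.0
    rotation = vt.T @ np.diag([1.0, 1.0, d]) @ u.T  # maps centered v onto centered w
    return rmsd(v_c @ rotation.T, w_c)
```

A rotated and translated copy of a structure gives a `kabsch_rmsd` of essentially zero, while `rmsd` on the same pair is large; this is why the minimized value is the one normally reported.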

Biological Applications and Interpretation

RMSD serves multiple critical functions in MD analysis. It is routinely plotted as a function of simulation time to assess system stability and equilibration, where leveling of the RMSD curve suggests the protein has reached a stable conformational state [24]. In protein folding studies, RMSD functions as a reaction coordinate to quantify progression between folded and unfolded states [23]. The metric also provides a quantitative measure for assessing the quality of structural predictions in initiatives like CASP (Critical Assessment of Structure Prediction), where lower RMSD values indicate better agreement with experimentally determined target structures [23]. Additionally, RMSD is valuable for evaluating evolutionary similarity between proteins and the quality of sequence alignments [23].

Table 1: Key Applications of RMSD in Protein MD Simulations

| Application Domain | Specific Use Case | Typical Reference Structure | Interpretation Guidelines |
|---|---|---|---|
| Simulation Equilibration | Assessing system stability over time | Initial simulation frame | Curve plateau indicates equilibration |
| Folding Studies | Quantifying native state similarity | Experimentally determined native structure | Lower values indicate more native-like character |
| Structural Prediction | Validating computational models | Experimental target structure | <2-3 Å often indicates high accuracy |
| Conformational Changes | Characterizing large-scale motions | Representative starting structure | Spikes indicate transitions; shifts indicate drifts |

Advanced RMSD Methodologies

Recent methodological advances have expanded RMSD applications beyond conventional usage. The moving RMSD (mRMSD) approach eliminates dependency on a fixed reference structure by calculating the RMSD between frames of the same trajectory separated by a fixed time interval $\Delta t$ [22]. For a structure at time $t$ and a reference at time $t-\Delta t$, mRMSD is defined as:

$$\mathrm{mRMSD}(t) = \mathrm{RMSD}\big(v(t),\, w(t-\Delta t)\big)$$

This approach is particularly valuable for analyzing proteins with unknown native structures and can identify regions of stable and metastable states in long simulations [22]. Research suggests that time intervals ≥20 ns are appropriate for investigating protein dynamics using mRMSD [22]. The optimal rigid body transformation that minimizes RMSD can be efficiently computed using quaternion-based methods or the Kabsch algorithm [23].
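As an alternative to the SVD route, the quaternion-based method obtains the minimal RMSD from the largest eigenvalue of a 4×4 matrix built from the coordinate correlation matrix (Horn's formulation), without constructing the rotation explicitly. The sketch below uses it to compute an mRMSD series. The function names are hypothetical, frames are assumed to be a NumPy array of shape (frames, atoms, 3), and the lag is given in frames, so a 20 ns interval with frames saved every 0.1 ns would correspond to `lag=200`.

```python
import numpy as np


def quaternion_rmsd(a, b):
    """Minimal RMSD via the largest eigenvalue of Horn's 4x4 quaternion key matrix."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    a = a - a.mean(axis=0)  # superposition is about the centroids
    b = b - b.mean(axis=0)
    s = a.T @ b  # 3x3 correlation matrix, s[x, y] = sum_i a_ix * b_iy
    sxx, sxy, sxz = s[0]
    syx, syy, syz = s[1]
    szx, szy, szz = s[2]
    k = np.array([
        [sxx + syy + szz, syz - szy, szx - sxz, sxy - syx],
        [syz - szy, sxx - syy - szz, sxy + syx, szx + sxz],
        [szx - sxz, sxy + syx, -sxx + syy - szz, syz + szy],
        [sxy - syx, szx + sxz, syz + szy, -sxx - syy + szz],
    ])
    lam_max = np.linalg.eigvalsh(k)[-1]  # eigvalsh returns eigenvalues in ascending order
    msd = ((a**2).sum() + (b**2).sum() - 2.0 * lam_max) / len(a)
    return float(np.sqrt(max(msd, 0.0)))  # clamp tiny negative round-off


def moving_rmsd(frames, lag):
    """mRMSD(t) = RMSD(x(t), x(t - lag)) for every frame t >= lag; lag is in frames."""
    frames = np.asarray(frames, dtype=float)
    if lag < 1:
        raise ValueError("lag must be at least one frame")
    return np.array([quaternion_rmsd(frames[t], frames[t - lag]) for t in range(lag, len(frames))])
```

Plotting the returned series against time would show low, flat stretches where the protein sits in a stable or metastable state and peaks where it moves between states.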

Root Mean Square Fluctuation (RMSF): Mapping Regional Flexibility

Theoretical Foundation

While RMSD quantifies global structural changes, RMSF measures local flexibility by calculating the average deviation of individual atoms or residues from their mean positions over time [24]. RMSF is defined mathematically as:

$$\mathrm{RMSF} = \sqrt{\frac{1}{T}\sum_{t=1}^{T} \lVert x_t - \bar{x} \rVert^2}$$

where $x_t$ is the position at time $t$, $\bar{x}$ is the mean position, and $T$ is the number of frames (time points) in the analyzed trajectory [25]. This metric captures the amplitude of atomic fluctuations around the mean positions, providing a residue-by-residue map of protein flexibility.

Practical Implementation and Analysis

In practice, RMSF is typically calculated for Cα atoms after aligning trajectories to a reference structure to remove global rotational and translational motion [24]. The resulting per-residue values are commonly plotted against residue number to visualize flexibility patterns across the protein sequence. This analysis reveals critical structural information, identifying rigid elements like secondary structure cores (characterized by low RMSF values) and flexible regions such as solvent-exposed loops, termini, and functionally important hinged domains (exhibiting high RMSF values) [24]. In allosteric proteins, RMSF can pinpoint regions involved in signal transmission, while in enzyme-substrate complexes, it can identify flexible active site residues that undergo conformational changes during catalysis.
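Once the frames have been superposed onto a reference (for instance with a Kabsch fit), the RMSF equation reduces to a few array operations. In this minimal sketch the input is assumed to be an already aligned array of Cα coordinates with shape (frames, atoms, 3); `rmsf` is a hypothetical helper, not the Cpptraj or MDTraj routine.

```python
import numpy as np


def rmsf(frames):
    """Per-atom RMSF from an already aligned trajectory of shape (T, n_atoms, 3)."""
    frames = np.asarray(frames, dtype=float)
    mean_pos = frames.mean(axis=0)  # x-bar for each atom, shape (n_atoms, 3)
    sq_dev = ((frames - mean_pos) ** 2).sum(axis=2)  # ||x_t - x-bar||^2, shape (T, n_atoms)
    return np.sqrt(sq_dev.mean(axis=0))  # average over the T frames, then square root
```

Skipping the alignment step would fold global tumbling and drift of the whole protein into every residue's value, masking the local flexibility pattern the plot is meant to show.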

Table 2: Comparative Analysis of RMSD and RMSF in Protein Simulations

| Characteristic | RMSD | RMSF |
|---|---|---|
| Primary Focus | Global structural deviation | Local residue flexibility |
| Reference Frame | Single reference structure | Average structure over trajectory |
| Typical Plot | Time series (RMSD vs. Time) | Residue index (RMSF vs. Residue Number) |
| Biological Insights | System stability, large conformational changes, folding state | Flexible regions, allosteric sites, functional dynamics |
| Calculation Scale | Entire molecule or protein backbone | Per-residue or per-atom basis |
| Equilibration Indicator | Plateau of time-series values | Stable pattern of fluctuations |

Emerging Computational Approaches

Recent advances include machine learning approaches like RMSF-net, a neural network model that predicts RMSF values from cryo-electron microscopy maps and associated atomic models [25]. This method achieves correlation coefficients of 0.746-0.765 with MD-derived RMSF values while reducing computation time from days to seconds, demonstrating the potential of combining experimental data with artificial intelligence to accelerate dynamic analysis [25]. Traditional calculation methods using tools like Cpptraj involve trajectory alignment followed by atomic fluctuation computation, typically focusing on backbone atoms to reduce noise while capturing essential dynamics [24].

Solvent Accessible Surface Area (SASA): Measuring Solvent Exposure

Algorithmic Foundations and Physical Significance

SASA quantifies the surface area of a biomolecule accessible to solvent molecules, providing critical insights into solvation effects and hydrophobic interactions that drive protein folding and molecular recognition [26]. The concept was first introduced by Lee and Richards, with the widely adopted Shrake-Rupley algorithm implementing a numerical approach that places points on a sphere around each atom and counts those not occluded by neighboring atoms [27] [26]. The SASA for an atom (i) is calculated as:

$$\mathrm{SASA}_i = 4\pi\,(R_i + R_{\mathrm{probe}})^2 \times \frac{N_{\mathrm{accessible}}}{N_{\mathrm{total}}}$$

where $R_i$ is the atom's van der Waals radius, $R_{\mathrm{probe}}$ is the solvent probe radius (typically 1.4 Å for water), and $N_{\mathrm{accessible}}/N_{\mathrm{total}}$ is the fraction of surface points not occluded by neighboring atoms [27]. This geometric measure is directly related to the environment free energy, which encompasses hydrophobic effects, hydrogen bonding, and van der Waals interactions [26].
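A minimal NumPy sketch of the Shrake-Rupley procedure follows. Test points are spread over each atom's probe-expanded sphere, points falling inside any neighbor's expanded sphere are discarded, and the accessible fraction is scaled by $4\pi(R_i + R_{\mathrm{probe}})^2$. The golden-spiral point placement, the 960-point default, the brute-force neighbor search, and the function names are illustrative choices; radii and the probe are in Å.

```python
import numpy as np


def sphere_points(n):
    """Roughly uniform points on the unit sphere (golden-section spiral)."""
    k = np.arange(n) + 0.5
    polar = np.arccos(1.0 - 2.0 * k / n)
    azimuth = np.pi * (1.0 + 5.0**0.5) * k
    return np.column_stack([
        np.cos(azimuth) * np.sin(polar),
        np.sin(azimuth) * np.sin(polar),
        np.cos(polar),
    ])


def shrake_rupley(coords, radii, probe=1.4, n_points=960):
    """Per-atom SASA (Å^2): 4*pi*(R_i + R_probe)^2 times the fraction of unoccluded points."""
    coords = np.asarray(coords, dtype=float)
    expanded = np.asarray(radii, dtype=float) + probe  # R_i + R_probe for every atom
    unit = sphere_points(n_points)
    index = np.arange(len(coords))
    sasa = np.zeros(len(coords))
    for i in index:
        points = coords[i] + expanded[i] * unit  # test points on atom i's expanded sphere
        dist = np.linalg.norm(coords - coords[i], axis=1)
        neighbors = index[(index != i) & (dist < expanded[i] + expanded)]
        accessible = np.ones(n_points, dtype=bool)
        for j in neighbors:
            # A point is occluded if it lies inside neighbor j's expanded sphere.
            accessible &= np.linalg.norm(points - coords[j], axis=1) >= expanded[j]
        sasa[i] = 4.0 * np.pi * expanded[i] ** 2 * accessible.mean()
    return sasa
```

For two atoms of radius 1.7 Å placed 2 Å apart, each expanded sphere is about one-third buried by the other, so each atom's SASA falls to roughly two-thirds of its isolated value.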

Methodological Considerations and Approximations

Accurate SASA calculation is computationally demanding due to its non-pairwise decomposable nature [26]. This challenge has spurred development of numerous approximations balancing speed and accuracy, particularly for protein structure prediction applications where thousands of models require evaluation [26]. These include neighborhood density methods counting atoms within spherical cutoffs, the "Neighbor Vector" algorithm accounting for spatial distribution of neighbors, and analytical methods like the Street-Mayo approach using scaled two-body burial approximations [26]. The SASA model in CHARMM implements a probabilistic approximation with atom-specific parameters and connectivity terms, achieving significant computational efficiency while maintaining reasonable accuracy [28].
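The two cheapest approximations named above can be sketched as follows. The neighbor count is a plain density measure, while the neighbor vector averages weighted unit vectors pointing to the neighbors, so a position surrounded on all sides scores near 0 and an exposed one near 1. The logistic distance weight, its 9 Å midpoint, the 10 Å count cutoff, and the use of one representative atom per residue are illustrative assumptions, not the published parameterizations.

```python
import numpy as np


def neighbor_count(coords, i, cutoff=10.0):
    """Neighborhood-density burial measure: other positions within `cutoff` (Å) of position i."""
    coords = np.asarray(coords, dtype=float)
    dist = np.linalg.norm(coords - coords[i], axis=1)
    others = np.arange(len(coords)) != i
    return int(np.count_nonzero(others & (dist < cutoff)))


def neighbor_vector(coords, i, midpoint=9.0, steepness=1.0):
    """Weighted mean of unit vectors from position i to every other position.

    Near 0: neighbors surround i on all sides (buried).
    Near 1: neighbors all lie on one side (exposed).
    Assumes no two positions coincide.
    """
    coords = np.asarray(coords, dtype=float)
    offsets = np.delete(coords, i, axis=0) - coords[i]
    dist = np.linalg.norm(offsets, axis=1)
    weights = 1.0 / (1.0 + np.exp(steepness * (dist - midpoint)))  # ~1 close by, ~0 far away
    units = offsets / dist[:, None]
    resultant = (weights[:, None] * units).sum(axis=0)
    return float(np.linalg.norm(resultant) / weights.sum())
```

The count is pairwise decomposable and therefore very fast, but it cannot tell a buried residue from one sitting next to a dense cluster on one side; the directional sum in the neighbor vector is what separates those two cases.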

Biological Applications and Energetic Correlations

SASA analysis provides multiple biological insights, including identification of hydrophobic cores in folded proteins (residues with consistently low SASA), detection of binding interfaces (reductions in SASA upon complex formation), and characterization of folding/unfolding transitions (correlated increases in SASA) [27] [26]. SASA values also enable estimation of solvation free energies using linear relationships, typically employing transfer-free energies per unit area [29]. For example, GROMACS implements this approach with the -odg option, calculating solvation free energy as:

$$\Delta G_{\mathrm{solv}} = \sum_i \mathrm{SASA}_i \times \sigma_i$$

where $\sigma_i$ represents the atomic solvation parameter for atom $i$ [29].
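The linear model is a weighted sum over atoms. The sketch below assumes per-atom SASA values have already been computed (in nm², the unit GROMACS reports); the per-element σ values are hypothetical placeholders for illustration only, not the parameters GROMACS uses.

```python
import numpy as np


def solvation_free_energy(sasa, sigma):
    """Linear solvation model: Delta G_solv = sum_i SASA_i * sigma_i."""
    sasa = np.asarray(sasa, dtype=float)
    sigma = np.asarray(sigma, dtype=float)
    if sasa.shape != sigma.shape:
        raise ValueError("need exactly one solvation parameter per atom")
    return float(np.dot(sasa, sigma))


# Hypothetical per-element parameters in kJ mol^-1 nm^-2 (illustration only):
# positive for apolar carbon, negative for polar nitrogen and oxygen.
SIGMA_BY_ELEMENT = {"C": 0.5, "N": -0.3, "O": -0.3}

if __name__ == "__main__":
    elements = ["C", "C", "N", "O"]
    sasa_nm2 = [0.20, 0.35, 0.10, 0.15]  # hypothetical per-atom SASA values
    sigma = [SIGMA_BY_ELEMENT[e] for e in elements]
    print(f"{solvation_free_energy(sasa_nm2, sigma):.3f} kJ/mol")
```

Since the energy is linear in SASA, a per-frame value can be computed by applying the same function to each frame's per-atom SASA array.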

Table 3: SASA Calculation Methods and Their Characteristics

| Method | Algorithm Type | Key Features | Computational Efficiency | Primary Applications |
|---|---|---|---|---|
| Shrake-Rupley | Numerical surface point | Gold standard for accuracy | Moderate to Slow | Reference calculations, detailed analysis |
| Neighborhood Density | Pairwise decomposable | Count-based burial approximation | Very Fast | High-throughput screening, early folding stages |
| Neighbor Vector | Directional density | Accounts for spatial neighbor distribution | Fast | Protein structure prediction |
| MSMS | Analytical surface | Constructs molecular surface mesh | Moderate | Visualization, binding site analysis |
| CHARMM SASA | Probabilistic analytical | Parameterized connectivity terms | Fast | Implicit solvation simulations |

Integrated Analytical Workflows and Computational Tools

Standard Implementation Protocols

Effective implementation of RMSD, RMSF, and SASA analyses requires structured workflows within popular MD analysis packages. For RMSD analysis using MDTraj, the protocol involves trajectory loading, atom selection (typically protein backbone), structural alignment, and RMSD calculation relative to a reference frame [24]. RMSF analysis often employs Cpptraj with input scripts specifying trajectory alignment followed by atomic fluctuation calculation [24]. SASA analysis using GROMACS requires careful parameter selection including probe radius (typically 0.14 nm), surface point density, and periodic boundary condition handling [29].

Analysis Workflow Integration

The computational ecosystem for these analyses includes specialized software tools and libraries. Key resources include MDTraj (Python library for RMSD/RMSF analysis), Cpptraj (AmberTools module for advanced trajectory analysis), GROMACS gmx sasa (specialized SASA calculation with solvation energy estimation), CHARMM SASA module (implicit solvation implementation), and VMD (visualization and analysis with plugin architecture) [27] [24] [28]. These tools integrate into broader MD workflows, enabling researchers to move seamlessly from simulation to analysis and visualization.

Table 4: Essential Computational Tools for MD Analysis

| Tool/Software | Primary Function | Key Strengths | Implementation Example |
|---|---|---|---|
| MDTraj | Python trajectory analysis | Integration with Python data science stack | md.rmsd(trajectory, reference) |
| Cpptraj | Advanced trajectory analysis | Extensive analysis algorithms, efficiency | atomicfluct out rmsf.dat @C,CA,N |
| GROMACS gmx sasa | SASA calculation | Fast implementation, solvation energy estimation | gmx sasa -f trajectory.xtc -s topology.tpr |
| CHARMM SASA | Implicit solvation model | Parameters for peptides, reversible folding | SASA atom-selection [parameters] |
| VMD | Visualization & analysis | Graphical interface, plugin ecosystem | Multi-platform standalone application |

RMSD, RMSF, and SASA represent complementary analytical frameworks that collectively provide a comprehensive picture of protein dynamics and solvation. When integrated, these metrics reveal structure-dynamics-function relationships inaccessible through static structures alone. RMSD tracks global conformational changes, RMSF maps local flexibility patterns, and SASA quantifies solvent interactions and hydrophobic driving forces. Their continued development and application—from fundamental algorithmic improvements to emerging machine learning approaches—will remain essential for extracting mechanistic insights from the increasingly complex and lengthy MD simulations made possible by computational advances. As molecular dynamics continues to evolve toward millisecond timescales and million-atom systems, these established analysis techniques will maintain their fundamental role in translating trajectory data into biological understanding.

Simulating Life's Machinery: Methodological Leaps and Expanding Applications in Biomedicine

Molecular dynamics (MD) simulations are an indispensable computational tool for studying the behavior of proteins and other biological macromolecules at an atomic level, providing insights into mechanisms that are often impossible to observe experimentally [5]. The fundamental challenge traditional MD simulations faced was the tremendous computational power required to simulate biologically relevant timescales. While experimental structures provide static snapshots, proteins are dynamic entities whose function often depends on conformational changes occurring on microsecond to millisecond timescales or longer [5]. Before the advent of specialized hardware, MD codes running on general-purpose parallel computers with hundreds or thousands of processor cores achieved simulation rates of only a few hundred nanoseconds per day for even moderately sized systems [30]. This timescale gap between what was computationally feasible and what was biologically relevant significantly limited the scientific questions that could be addressed. This article explores the hardware revolution that transformed this landscape, focusing on two complementary approaches: the adaptation of general-purpose GPUs and the development of specialized supercomputers like Anton.

Specialized Supercomputers: The Anton Paradigm

Anton's Architectural Innovation

Anton, developed by D. E. Shaw Research, represents a paradigm shift in MD simulation through its specialized, application-specific architecture. Unlike general-purpose supercomputers, Anton is a special-purpose system designed from the ground up specifically for molecular dynamics simulations of proteins and other biological macromolecules [30]. The machine, named after Anton van Leeuwenhoek, the father of microscopy, consists of a large number of application-specific integrated circuits (ASICs) interconnected by a specialized high-speed, three-dimensional torus network [30]. A key architectural differentiator from earlier special-purpose systems is that Anton runs its computations entirely on specialized ASICs rather than dividing computation between specialized chips and general-purpose host processors [30].

Each Anton ASIC contains two computational subsystems: the High-Throughput Interaction Subsystem (HTIS) and the Flexible Subsystem [30]. The HTIS contains 32 deeply pipelined modules running at 800 MHz, arranged much like a systolic array, and handles most calculations of electrostatic and van der Waals forces [30]. The Flexible Subsystem contains four general-purpose Tensilica cores and eight specialized but programmable geometry cores for the remaining calculations, including bond forces and fast Fourier transforms [30]. This specialized architecture enables exceptional performance: a 512-node Anton machine achieved over 17,000 nanoseconds of simulated time per day for a protein-water system of 23,558 atoms, orders of magnitude faster than contemporary general-purpose systems [30].

Anton's Evolution and Capabilities

The Anton architecture has evolved through multiple generations, with each iteration expanding capabilities. Anton 3 represents the current state-of-the-art, hosted at the Pittsburgh Supercomputing Center and operational since April 2025 [31]. This third-generation system is the first and only resource available to the community capable of simulating multiple millions of atoms at speeds of microseconds per day, finally enabling routine access to biologically relevant timescales for large systems [31]. This performance level facilitates investigations of significant biological phenomena that were previously impractical, including protein folding, aggregation, membrane deformations, and the dynamics of large complexes like viruses and ribosomes [31].

The GPU Revolution: Democratizing High-Performance MD

From Graphics to General Purpose Computing

While Anton provided unprecedented performance, its specialized nature and limited accessibility created opportunities for alternative approaches. The graphics processing unit emerged as an unexpectedly powerful platform for accelerating MD simulations. Initially designed for computer graphics, GPUs evolved into general-purpose, fully programmable, high-performance processors that represented a major technical breakthrough for atomistic MD [5] [32]. Modern GPUs far exceed CPUs in terms of raw computing power, with one early implementation demonstrating over 700 times faster performance than a conventional implementation running on a single CPU core [32].

The adaptation of MD codes to GPUs required significant algorithmic rethinking due to fundamental architectural differences. GPUs contain hundreds or thousands of slower processing units compared to CPUs' limited number of fast cores [32]. This massive parallelism meant that for small or medium-sized proteins, the number of math units might be comparable to the number of atoms, shifting optimization priorities from traditional scaling considerations to utilization of available processing resources [32]. Additional challenges included memory access patterns, communication latency between CPU and GPU, and immature development tools [32].

Software Ecosystem and Performance Optimization

The MD software ecosystem rapidly adapted to leverage GPU acceleration. Popular simulation codes including AMBER, CHARMM, GROMACS, and NAMD implemented GPU support, with some like ACEMD written specifically for GPUs [5]. NAMD's development philosophy exemplifies this transition—designed for practical supercomputing on affordable hardware, it transitioned from workstation clusters in the 1990s to Linux clusters in the 2000s, and finally to GPU acceleration in the 2010s [33].

A significant advancement in GPU utilization for MD came with NVIDIA's Multi-Process Service, which addresses underutilization problems when simulating smaller systems [34]. MPS allows multiple simulations to run concurrently on the same GPU by eliminating context-switching overhead and enabling kernels from different processes to run concurrently [34]. This approach can more than double total throughput for smaller system sizes, with further optimization possible through the CUDA_MPS_ACTIVE_THREAD_PERCENTAGE environment variable [34]. The performance uplift is most dramatic for smaller systems, but even larger systems like the 408K-atom Cellulose benchmark show approximately 20% higher throughput on high-end GPUs [34].

Performance Comparison: Quantitative Analysis

Table 1: Performance Comparison of MD Hardware Platforms

| Hardware Platform | Benchmark System | System Size (atoms) | Simulation Rate | Key Applications |
|---|---|---|---|---|
| Anton (first generation, 512-node) | DHFR | 23,558 | >17,000 ns/day | Protein-water systems [30] |
| Anton 3 | Large biological systems | Millions | Microseconds/day | Viruses, ribosomes, protein folding [31] |
| GPU (single, without MPS) | Villin headpiece | 576 | ~700x single-CPU-core speed [32] | Small to medium proteins [32] |
| GPU (with MPS, 8 processes) | DHFR | ~23,000 | Approaches 5 μs/day in total [34] | High-throughput small system screening [34] |
| CPU Clusters (Historical) | DHFR | 23,558 | Few hundred ns/day [30] | Benchmark comparisons |

Table 2: Technical Specifications of Specialized vs. General Purpose Hardware

| Architectural Feature | Anton ASIC | Traditional GPU | CPU Cluster |
|---|---|---|---|
| Processing Cores | HTIS (32 pipelined modules) + Flexible Subsystem (4 general-purpose + 8 geometry cores) [30] | Hundreds to thousands of streaming processors [32] | Few to dozens of high-clock-rate cores |
| Memory Architecture | Dedicated DRAM per ASIC with specialized network [30] | High-bandwidth GDDR with small cache [32] | Hierarchical cache with moderate bandwidth |
| Interconnect | 3D torus network (607.2 Gbit/s total bandwidth) [30] | PCIe bus (potential bottleneck) [32] | InfiniBand, Ethernet, or proprietary HPC interconnects [33] |
| Optimization Strategy | Application-specific circuitry for force calculations [30] | Massive parallelism with careful memory access patterns [32] | Spatial decomposition with MPI parallelism [5] |
| Power Efficiency | Highly optimized for specific MD workloads | Moderate to high FLOPs/watt | Lower FLOPs/watt |

Experimental Protocols and Methodologies

Benchmarking GPU Performance with MPS

To evaluate and optimize GPU performance using NVIDIA's Multi-Process Service, researchers follow specific benchmarking protocols. The following methodology outlines the process for measuring throughput improvements:

Environment Setup: Enable MPS with root privileges using the command `nvidia-cuda-mps-control -d`. Test environments typically use OpenMM 8.2.0, CUDA 12, and Python 3.12 [34].

Process Configuration: Determine the number of concurrent simulations based on system size and GPU memory. For smaller systems like DHFR (23K atoms), researchers might test with 2, 4, or 8 concurrent processes [34].

Thread Allocation Optimization: Set the `CUDA_MPS_ACTIVE_THREAD_PERCENTAGE` environment variable to optimize resource allocation. Testing indicates that a value of `200 / NSIMS` (where `NSIMS` is the number of concurrent processes) often yields optimal throughput, increasing performance by 15-25% for 8 DHFR simulations [34].

Execution and Data Collection: Launch multiple simulation instances concurrently using a benchmarking script. The benchmark measures total simulation throughput across all concurrent processes [34].

Analysis: Compare total nanoseconds simulated per day across different configurations. Note that individual simulations may run slower with MPS enabled, but the aggregate throughput should increase significantly [34].
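The process-configuration and thread-allocation steps can be scripted. This sketch is an illustration under stated assumptions: it presumes the MPS daemon has already been started with `nvidia-cuda-mps-control -d`, applies the `200 / NSIMS` heuristic (rounding down and capping at 100 are choices made here, not part of the source), and launches whatever command you supply. The benchmark script itself is not reproduced in the source, so the self-check uses a harmless stand-in process, and all function names are hypothetical.

```python
import os
import subprocess
import sys


def mps_thread_percentage(nsims: int) -> int:
    """200 / NSIMS heuristic, rounded down and capped at 100 (the cap is an assumption)."""
    if nsims < 1:
        raise ValueError("nsims must be >= 1")
    return min(100, 200 // nsims)


def launch_concurrent(cmd, nsims):
    """Start `nsims` copies of `cmd` at once, each with the MPS thread limit set.

    Returns each process's stdout once all of them have finished.
    """
    env = dict(os.environ, CUDA_MPS_ACTIVE_THREAD_PERCENTAGE=str(mps_thread_percentage(nsims)))
    procs = [subprocess.Popen(cmd, env=env, stdout=subprocess.PIPE, text=True) for _ in range(nsims)]
    return [p.communicate()[0] for p in procs]  # wait for every process and collect output


def aggregate_ns_per_day(per_process_rates):
    """Total throughput across concurrent runs, the quantity compared between configurations."""
    return sum(per_process_rates)


if __name__ == "__main__":
    # Stand-in for a real benchmark: each child just reports the limit it was given.
    probe = [sys.executable, "-c", "import os; print(os.environ['CUDA_MPS_ACTIVE_THREAD_PERCENTAGE'])"]
    print(launch_concurrent(probe, nsims=4))
```

In a real run, each child would print its own ns/day figure; parsing those values and passing them to `aggregate_ns_per_day` gives the aggregate number used in the analysis step.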

Specialized Hardware Allocation and Usage

Access to specialized resources like Anton follows distinct protocols compared to general-purpose HPC systems:

Allocation Process: Anton time is allocated annually via a Request for Proposals process, with proposals reviewed by a committee convened by the National Research Council at the National Academies of Sciences, Engineering, and Medicine [31].

Eligibility Requirements: Principal investigators must be faculty or staff members at US academic or not-for-profit research institutions. The system is available without cost for non-commercial research [31].

Proposal Preparation: Successful proposals typically demonstrate how the research will leverage Anton's unique capabilities to address biological questions requiring long timescales or large systems that would be impractical on general-purpose HPC resources [31].

Technical Implementation: Researchers with allocations access Anton through the Pittsburgh Supercomputing Center, with documentation available through authenticated portals [31].

Visualization of Hardware Architectures and Workflows

Table 3: Research Reagent Solutions for Hardware-Accelerated Molecular Dynamics

| Resource Category | Specific Tools & Platforms | Function & Application | Access Model |
|---|---|---|---|
| Specialized Hardware | Anton 3 (PSC) | Microsecond/day simulations of million-atom systems [31] | Competitive allocation for academic researchers [31] |
| GPU Software | OpenMM, NAMD, GROMACS, ACEMD | MD simulation engines optimized for GPU acceleration [5] [32] [33] | Open-source or free for academic use |
| Performance Tools | NVIDIA MPS, CUDA_MPS_ACTIVE_THREAD_PERCENTAGE | Increases GPU utilization for multiple concurrent simulations [34] | Included with NVIDIA GPU drivers |
| Benchmarking Systems | DHFR (23K atoms), ApoA1 (92K atoms), Cellulose (408K atoms) | Standardized systems for performance comparison [34] | Publicly available in MD packages |
| Analysis & Visualization | VMD, PyMOL, Chimera | Trajectory analysis and molecular graphics [16] | Open-source or free for academics |

The hardware revolution in molecular dynamics continues to evolve with emerging architectures. Special-purpose MD processing units represent the next frontier, promising to reduce time and power consumption by approximately $10^3$ times for machine-learning MD and $10^9$ times for ab initio MD compared to state-of-the-art CPU/GPU systems while maintaining ab initio accuracy [35]. These architectures address the fundamental "memory wall" and "power wall" bottlenecks of von Neumann architectures through computing-in-memory approaches based on FPGA and ASIC technologies [35].

The integration of machine learning with specialized hardware further accelerates this trend. Recent advances combine cutting-edge machine learning algorithms with purpose-built hardware, creating systems capable of simulating complex physical properties with quantum-mechanical accuracy at dramatically improved speeds [35]. As these technologies mature, they promise to further democratize access to long-timescale simulations, potentially making capabilities that once required specialized supercomputers available on departmental or even individual researcher workstations.

In conclusion, the hardware revolution in molecular dynamics has fundamentally transformed the scope of scientific questions that can be addressed through simulation. The complementary paths of specialized supercomputers like Anton and general-purpose GPU acceleration have collectively enabled researchers to bridge the timescale gap from nanoseconds to microseconds and milliseconds, making biologically relevant simulation timescales increasingly routine. This progress continues to push the boundaries of computational biophysics, enabling unprecedented insights into protein function, drug binding, and other essential biological processes at atomic resolution.

Molecular dynamics (MD) has established itself as an indispensable computational microscope, enabling researchers to probe the dynamic behavior of proteins and other biomolecules at atomic resolution. Since its first application to a protein in 1977, MD has progressed from picosecond-to-nanosecond simulations of small proteins and peptides to routine microsecond-scale modeling of large biomolecular complexes [36]. Despite these advances, a fundamental challenge persists. Many biologically critical processes, including protein folding, conformational changes in allosteric regulation, and ligand binding/unbinding, occur on timescales (microseconds to seconds) that remain prohibitively expensive for standard MD simulations [37] [38]. This timescale problem has driven the development of enhanced sampling techniques. These techniques accelerate the exploration of configurational space while still allowing unbiased thermodynamic (and, for some methods, kinetic) properties to be recovered.

The evolution of MD can be traced through several transformative developments. The 1990s saw simulations of small peptides for several nanoseconds, then considered a significant achievement [37]. CPU-based supercomputers later extended capabilities to protein complexes, with trajectories reaching tens of nanoseconds [37]. A paradigm shift came with affordable high-performance GPUs and specialized software, which made microsecond-scale simulations of systems with hundreds of thousands of atoms routine [37]. Meanwhile, quantum chemistry methods such as density functional theory (DFT) offered quantum-mechanical accuracy but scaled too poorly with system size to treat large biomolecules [39]. This historical context frames the need for the enhanced sampling methods that form the core of this technical guide.

Theoretical Foundations of Enhanced Sampling

Enhanced sampling methods share a common goal: to facilitate the escape from local energy minima and efficiently explore the free energy landscape of biomolecular systems. These techniques can be broadly categorized into methods that rely on predefined collective variables (CVs) and those that operate without them. CVs are functions of atomic coordinates, such as distances, angles, or root-mean-square deviation (RMSD), designed to capture the slow modes of a biological process [40].
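To make the definition concrete, the following NumPy sketch computes three common CVs for a single frame: an interatomic distance, a dihedral angle, and the RMSD to a reference after optimal (Kabsch) superposition. It assumes coordinates are stored as an `(n_atoms, 3)` array. The function names are illustrative, and production work would normally rely on the CV implementations in a package such as PLUMED.

```python
import numpy as np


def distance(x, i, j):
    """Distance between atoms i and j; x has shape (n_atoms, 3)."""
    return float(np.linalg.norm(x[i] - x[j]))


def dihedral(x, i, j, k, m):
    """Signed dihedral angle (radians) defined by atoms i-j-k-m."""
    b0 = x[i] - x[j]
    b1 = x[k] - x[j]
    b2 = x[m] - x[k]
    b1 = b1 / np.linalg.norm(b1)
    # Components of the outer bonds perpendicular to the central j-k bond.
    v = b0 - np.dot(b0, b1) * b1
    w = b2 - np.dot(b2, b1) * b1
    y = np.dot(np.cross(b1, v), w)
    return float(np.arctan2(y, np.dot(v, w)))


def kabsch_rmsd(x, ref):
    """RMSD between x and ref after optimal rigid-body superposition (Kabsch)."""
    p = x - x.mean(axis=0)
    q = ref - ref.mean(axis=0)
    u, _, vt = np.linalg.svd(p.T @ q)
    # Flip the last axis if needed so the result is a rotation, not a reflection.
    d = 1.0 if np.linalg.det(vt.T @ u.T) > 0 else -1.0
    rot = vt.T @ np.diag([1.0, 1.0, d]) @ u.T
    diff = p @ rot.T - q
    return float(np.sqrt((diff**2).sum() / len(x)))
```

Evaluating these functions frame by frame over a trajectory yields the CV time series that biasing and analysis methods operate on.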

The theoretical underpinning of many CV-based methods lies in the statistical mechanics of free energy estimation. The free energy along a CV is related to its equilibrium probability density by $F(s) = -k_B T \ln P(s)$, so the probability of a configuration falls exponentially with its free energy. When high energy barriers separate metastable states, transitions between them become rare events on simulation timescales. Enhanced sampling methods overcome this limitation by modifying the effective energy landscape: adding bias potentials, altering the underlying Hamiltonian, or sampling from non-Boltzmann distributions.
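The relation $F(s) = -k_B T \ln P(s)$ translates directly into code. The sketch below assumes NumPy and energies in kcal/mol. It estimates $F(s)$ from a histogram of sampled CV values. Optionally, it reweights samples drawn under a static bias $V(s)$ by $e^{+V(s)/k_B T}$, which is how biased sampling is mapped back to the unbiased ensemble. The function name and the bin layout are illustrative assumptions.

```python
import numpy as np

KB = 0.0019872041  # Boltzmann constant, kcal/(mol K)


def free_energy_profile(s, edges, temperature=300.0, bias=None):
    """Estimate F(s) = -kT ln P(s) from CV samples s on the given bin edges.

    If `bias` holds the static bias energy V(s) (kcal/mol) felt by each sample,
    samples are reweighted by exp(+V/kT) to recover the unbiased distribution.
    """
    s = np.asarray(s, dtype=float)
    edges = np.asarray(edges, dtype=float)
    kt = KB * temperature
    if bias is None:
        weights = np.ones_like(s)
    else:
        bias = np.asarray(bias, dtype=float)
        # Subtracting the maximum only rescales all weights (numerical safety).
        weights = np.exp((bias - bias.max()) / kt)
    hist, _ = np.histogram(s, bins=edges, weights=weights, density=True)
    centers = 0.5 * (edges[:-1] + edges[1:])
    with np.errstate(divide="ignore"):
        f = -kt * np.log(hist)
    return centers, f - f[np.isfinite(f)].min()  # global minimum set to zero
```

Empty bins come out as infinite free energy rather than raising an error. This flags regions the simulation never reached.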

Table 1: Classification of Enhanced Sampling Methods

| Method Category | Representative Techniques | Key Principle | Typical Applications |
|---|---|---|---|
| Collective Variable-Based | Umbrella Sampling, Metadynamics, Steered MD | Biasing along predefined reaction coordinates | Free energy calculations, ligand binding, conformational transitions |
| Collective Variable-Free | Parallel Tempering, Stochastic Resetting | Temperature scaling or trajectory restarting | Systems with unknown reaction coordinates, protein folding |
| Accelerated Dynamics | Gaussian Accelerated MD (GaMD) | Adding harmonic boost potential | Biomolecular conformational changes, drug design |
| Path-Based | Adaptive Steered MD (ASMD) | Staging along pulling coordinate | Force-induced unfolding, nonequilibrium processes |

Methodological Deep Dive: Core Techniques

Umbrella Sampling

Umbrella Sampling (US) is a classic technique for calculating free energy profiles along a designated reaction coordinate [37]. The method operates by partitioning the reaction coordinate into multiple windows and running independent simulations in each window, with a harmonic biasing potential (umbrella) restraining the system to specific values of the coordinate [37] [40]. The unbiased free energy profile is subsequently reconstructed by combining data from all windows using statistical mechanical techniques, typically the Weighted Histogram Analysis Method (WHAM).

Experimental Protocol:

- Define Reaction Coordinate: Identify a CV (e.g., a distance, angle, or dihedral angle) that distinguishes the initial and final states and captures the transition mechanism.

- System Setup: Prepare the solvated, neutralized molecular system using standard MD protocols with explicit or implicit solvent models [36].

- Window Selection: Divide the reaction coordinate into overlapping windows (typically 20-50) with spacing ensuring adequate phase space overlap.

- Biasing Potential Application: Apply harmonic restraints with force constants (typically 10-100 kcal/mol/Å² for distance CVs) strong enough to hold the system near the window center while still allowing fluctuations that overlap with neighboring windows.

- Equilibration and Production: For each window, run energy minimization, equilibration, and production MD (typically 10-100 ns per window).

- Free Energy Reconstruction: Use WHAM or a similar estimator to remove the bias from each window's distribution and combine the windows into the potential of mean force (PMF) along the reaction coordinate.
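The window-and-WHAM workflow can be sketched end to end in one dimension, assuming NumPy and energies in kcal/mol. To keep the example self-contained, each window's "simulation" is replaced by exact sampling from the biased Boltzmann distribution of a hypothetical double-well potential on a fine grid. In practice the histograms would come from the production MD of each window. Window spacing, force constant, and sample counts are illustrative, not recommendations.

```python
import numpy as np

KT = 0.596  # kcal/mol, approximately k_B T at 300 K


def harmonic_bias(x, center, k):
    """Umbrella restraint w(x) = 0.5 * k * (x - center)**2, in kcal/mol."""
    return 0.5 * k * (x - center) ** 2


def wham(counts, bias, kt=KT, tol=1e-7, max_iter=20000):
    """Iterate the 1D WHAM equations to self-consistency.

    counts: (K, B) histogram of each window's samples over B bins.
    bias:   (K, B) bias energy of window k at each bin center.
    Returns unbiased bin probabilities p and window free energies f.
    """
    n_k = counts.sum(axis=1)          # samples per window
    total = counts.sum(axis=0)        # pooled counts per bin
    boltz = np.exp(-bias / kt)        # exp(-w_k(b) / kT)
    f = np.zeros(len(counts))
    for _ in range(max_iter):
        # P(b) = sum_k n_k(b) / sum_k N_k exp(-(w_k(b) - f_k) / kT)
        denom = (n_k[:, None] * boltz * np.exp(f[:, None] / kt)).sum(axis=0)
        p = total / denom
        p /= p.sum()
        # exp(-f_k / kT) = sum_b P(b) exp(-w_k(b) / kT)
        f_new = -kt * np.log(boltz @ p)
        f_new -= f_new[0]             # fix the arbitrary additive constant
        done = np.max(np.abs(f_new - f)) < tol
        f = f_new
        if done:
            break
    return p, f


def sample_window(rng, grid, u, center, k, n):
    """Stand-in for one window's MD: exact draws from exp(-(U + w) / kT) on a grid."""
    e = u + harmonic_bias(grid, center, k)
    w = np.exp(-(e - e.min()) / KT)
    return rng.choice(grid, size=n, p=w / w.sum())


def toy_pmf(seed=0):
    """Umbrella sampling + WHAM on a hypothetical double well U(x) = 2 (x^2 - 1)^2."""
    rng = np.random.default_rng(seed)
    grid = np.linspace(-1.5, 1.5, 3001)
    u = 2.0 * (grid**2 - 1.0) ** 2           # 2 kcal/mol barrier at x = 0
    edges = np.linspace(-1.5, 1.5, 61)
    mids = 0.5 * (edges[:-1] + edges[1:])
    centers = np.linspace(-1.4, 1.4, 15)     # 15 windows, 0.2 apart
    k = 20.0                                 # kcal/mol per (CV unit)^2
    counts = np.array([
        np.histogram(sample_window(rng, grid, u, c, k, 5000), bins=edges)[0]
        for c in centers
    ])
    bias = np.array([harmonic_bias(mids, c, k) for c in centers])
    p, _ = wham(counts, bias)
    with np.errstate(divide="ignore"):
        pmf = -KT * np.log(p)
    return mids, pmf - pmf[np.isfinite(pmf)].min()
```

Comparing the returned PMF with the known toy potential is a quick sanity check on window overlap. If the spacing is widened or `k` stiffened until neighboring histograms barely overlap, the relative window free energies, and hence the PMF, become poorly determined.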

US has been particularly valuable in studying binding processes and large conformational changes [37]. Recent methodological developments have addressed challenges related to the reliability of sampling along a single path and the completeness of conformational coverage [37].

Metadynamics

Metadynamics (MetaD), first introduced by Laio and Parrinello in 2002, enhances sampling by discouraging the system from revisiting previously sampled states [41] [40]. The method achieves this by depositing repulsive Gaussian potentials along selected CVs in regions of configuration space that the system has already visited [41]. Over time, these Gaussians "fill" the free energy basins, pushing the system to explore new regions [40].

The time-dependent bias potential in MetaD is expressed as:

$$V_{\text{bias}}(\vec{s}, t) = \sum_{t' < t} W(t') \exp\left(-\frac{|\vec{s} - \vec{s}(t')|^2}{2\sigma^2}\right)$$

where $\vec{s}$ represents the collective variables, $W(t')$ is the height of the Gaussian deposited at time $t'$, and $\sigma$ determines the Gaussian width [41]. In the long-time limit, the bias potential fluctuates around the negative of the underlying free energy, $V_{\text{bias}}(\vec{s}, t \to \infty) \approx -F(\vec{s}) + C$ [41] [40].

Well-Tempered Metadynamics, a widely used variant, addresses the convergence issues of standard MetaD by gradually reducing the Gaussian height as the simulation proceeds [40]:

$$W(t) = W_0 \exp\left(-\frac{V_{\text{bias}}(\vec{s}(t), t)}{k_B \Delta T}\right)$$

where $W_0$ is the initial Gaussian height, $k_B$ is Boltzmann's constant, and $\Delta T$ is an adjustable parameter with dimension of temperature [40]. Because the deposition rate decays, the bias converges to $-\frac{\Delta T}{T + \Delta T} F(\vec{s}) + C$, so the free energy is recovered by rescaling the bias by $-(T + \Delta T)/\Delta T$.

Experimental Protocol:

- Collective Variable Selection: Identify 1-3 CVs that capture the slow degrees of freedom relevant to the process under study.

- Gaussian Parameter Selection: Choose appropriate Gaussian height (0.1-1.0 kJ/mol), width (typically 5-10% of CV range), and deposition stride (100-1000 steps).

- Simulation Setup: Implement using plugins such as PLUMED integrated with MD engines like GROMACS, NAMD, or AMBER.

- Convergence Monitoring: Track the bias potential and the CV time series. Sampling is adequate once the system recrosses repeatedly between states and the free energy differences between them stop drifting.

- Free Energy Extraction: Reconstruct free energy surfaces from the accumulated bias potential.
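The protocol above can be sketched as well-tempered metadynamics on a one-dimensional double well with overdamped Langevin dynamics. The sketch uses reduced units ($k_B T = 1$, unit diffusion coefficient). It deposits Gaussians every `stride` steps using the well-tempered height rule and stores the bias on a grid for speed. It then recovers the free energy by rescaling the bias with $-(T + \Delta T)/\Delta T = -\gamma/(\gamma - 1)$, where $\gamma$ is the bias factor. The potential, parameter values, and function name are assumptions chosen for illustration. A real study would use PLUMED coupled to an MD engine.

```python
import numpy as np


def wt_metad_1d(n_steps=100_000, dt=1e-3, bias_factor=5.0, w0=0.3,
                sigma=0.1, stride=100, seed=0):
    """Well-tempered metadynamics for overdamped Langevin dynamics on a 1D double well.

    Reduced units: k_B T = 1 and diffusion coefficient D = 1.
    Hypothetical potential U(s) = 5 (s^2 - 1)^2, i.e. a 5 k_B T barrier at s = 0.
    Returns the grid, the free energy estimate, and the range of s visited.
    """
    rng = np.random.default_rng(seed)
    grid = np.linspace(-2.5, 2.5, 1001)
    v_grid = np.zeros_like(grid)          # bias V(s, t) stored on a grid
    dv_grid = np.zeros_like(grid)         # its derivative dV/ds
    delta_t = bias_factor - 1.0           # k_B DeltaT, since gamma = (T + DeltaT) / T
    noise = rng.normal(size=n_steps) * np.sqrt(2.0 * dt)
    s = -1.0
    s_min = s_max = s
    for step in range(n_steps):
        if step % stride == 0:
            # Well-tempered height: W = W0 exp(-V(s(t), t) / (k_B DeltaT)).
            height = w0 * np.exp(-np.interp(s, grid, v_grid) / delta_t)
            g = height * np.exp(-((grid - s) ** 2) / (2.0 * sigma**2))
            v_grid += g
            dv_grid -= g * (grid - s) / sigma**2
        force = -20.0 * s * (s**2 - 1.0) - np.interp(s, grid, dv_grid)
        s += force * dt + noise[step]     # Euler-Maruyama step (D = k_B T = 1)
        s_min, s_max = min(s, s_min), max(s, s_max)
    # Well-tempered estimate: F(s) = -(T + DeltaT) / DeltaT * V(s) + C.
    f = -bias_factor / (bias_factor - 1.0) * v_grid
    return grid, f - f.min(), (s_min, s_max)
```

With these settings the walker should hop between the wells many times. The barrier read from the rescaled bias should then come out close to the true 5 $k_B T$. Shorter runs or larger `w0` give noisier estimates.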

Recent advances include high-dimensional variants using machine learning to overcome the dimensionality limitation of traditional MetaD [41]. Neural networks are employed to approximate the bias potential, enabling efficient sampling with more CVs [41]. The On-the-fly Probability Enhanced Sampling (OPES) method, introduced as an evolution of MetaD, offers faster convergence and more robust parameters [41].

Gaussian Accelerated Molecular Dynamics (GaMD)

Gaussian Accelerated Molecular Dynamics (GaMD) is an enhanced sampling technique that adds a harmonic boost potential to smooth the underlying energy landscape [42]. Unlike MetaD, GaMD requires no predefined CVs. Its non-negative boost potential is tuned so that its distribution stays close to Gaussian, which allows the simulation to be reweighted with a cumulant expansion to recover unbiased thermodynamic and kinetic properties.

The method works by: (1) collecting the maximum, minimum, average, and standard deviation of the system potential energy during a short conventional MD simulation; (2) constructing a harmonic boost potential, $\Delta V = \frac{1}{2} k (E - V)^2$ with force constant $k$, that is added only when the potential energy $V$ falls below a threshold energy $E$; and (3) running production simulations with the modified potential. GaMD has proven particularly valuable in biomolecular studies, such as characterizing conformational states of PD-1 induced by ligand binding [42].
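The boost and reweighting steps can be sketched as follows, assuming NumPy and energies in kcal/mol. The parameter recipe shown is the lower-bound threshold choice ($E = V_{\max}$), with $\sigma_0$ (6 kcal/mol here) as an upper limit on the boost's standard deviation. Treat these as assumptions rather than the settings used in [42]. The cumulant function returns the second-order estimate of $k_B T \ln\langle e^{\Delta V / k_B T}\rangle$ for the frames in one CV bin. This term is subtracted from $-k_B T \ln p^*$ when building a reweighted free energy profile.

```python
import numpy as np


def gamd_boost_params(v_max, v_min, v_avg, v_std, sigma0=6.0):
    """Lower-bound GaMD parameters (threshold E = V_max); energies in kcal/mol.

    sigma0 caps the standard deviation of the boost so that its distribution
    stays narrow enough for accurate cumulant-expansion reweighting.
    """
    e = v_max
    k0 = min(1.0, (sigma0 / v_std) * (v_max - v_min) / (v_max - v_avg))
    return e, k0 / (v_max - v_min)


def gamd_boost(v, e, k):
    """Harmonic boost dV = 0.5 * k * (E - V)^2, applied only where V < E."""
    v = np.asarray(v, dtype=float)
    return np.where(v < e, 0.5 * k * (e - v) ** 2, 0.0)


def cumulant_correction(dv, kt=0.596):
    """Second-order cumulant estimate of kT ln<exp(dV / kT)> over one bin's frames."""
    dv = np.asarray(dv, dtype=float)
    beta = 1.0 / kt
    return kt * (beta * dv.mean() + 0.5 * beta**2 * dv.var())
```

The second-order truncation is exact when $\Delta V$ is Gaussian. Keeping the boost distribution near Gaussian is therefore what makes this cheap estimate reliable.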

Stochastic Resetting and Other Emerging Approaches

Stochastic Resetting (SR) is a CV-free approach that periodically restarts simulations from their initial conditions [38]. This simple yet powerful idea accelerates sampling when transition times are broadly distributed. For a low restart rate, restarting shortens the mean transition time whenever the coefficient of variation (standard deviation divided by mean) of the transition times exceeds one. Recent research demonstrates that combining SR with MetaD can yield greater acceleration than either method alone, even compensating for suboptimal CV selection in MetaD [38].
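The benefit of restarting can be estimated directly from first-passage times collected without resetting. The sketch below assumes sharp (fixed-interval) restarts every $\tau$ and uses the standard renewal result $\langle T_\tau \rangle = E[\min(T, \tau)] / P(T \le \tau)$. The lognormal and exponential samples are synthetic stand-ins for simulated transition times, and the function names are illustrative.

```python
import numpy as np


def mfpt_with_restart(fpt, tau):
    """Mean first-passage time if every attempt is restarted after time tau.

    fpt: first-passage times observed WITHOUT restarting. Renewal argument:
    failed attempts cost tau each, so <T_tau> = E[min(T, tau)] / P(T <= tau).
    """
    fpt = np.asarray(fpt, dtype=float)
    p_success = np.mean(fpt <= tau)
    if p_success == 0.0:
        return np.inf
    return np.mean(np.minimum(fpt, tau)) / p_success


def coefficient_of_variation(fpt):
    """Standard deviation over mean of the first-passage-time distribution."""
    fpt = np.asarray(fpt, dtype=float)
    return fpt.std() / fpt.mean()


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    broad = rng.lognormal(mean=0.0, sigma=2.0, size=100_000)   # heavy-tailed
    memoryless = rng.exponential(scale=1.0, size=100_000)
    for name, t in [("lognormal", broad), ("exponential", memoryless)]:
        best = min(mfpt_with_restart(t, tau) for tau in np.logspace(-2, 2, 41))
        print(name, coefficient_of_variation(t), t.mean(), best)
```

For the exponential samples, whose coefficient of variation is one, the restarted estimate stays close to the plain mean for any $\tau$, reflecting memoryless kinetics. The broad lognormal distribution, by contrast, benefits substantially from a well-chosen restart interval.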

Table 2: Performance Comparison of Enhanced Sampling Methods

| Method | Computational Overhead | Optimal Number of CVs | Convergence Time | Kinetics Recovery |
|---|---|---|---|---|
| Umbrella Sampling | Moderate (multiple windows) | 1-2 | System-dependent | Possible with additional analysis |
| Metadynamics | Low to Moderate | 1-3 | Hours to days | Possible with well-tempered variant |
| GaMD | Low | 0 | Hours to days | Accurate with reweighting |
| Stochastic Resetting | Very Low | 0 | System-dependent | Direct access to mean first-passage time (MFPT) |
| AI2BMD | High (initial training) | N/A (AI-based) | Minutes to hours | Built-in ab initio accuracy |

Machine learning force fields (MLFFs) represent a paradigm shift in ab initio biomolecular dynamics [39]. Systems like AI2BMD use protein fragmentation schemes and ML potentials to achieve DFT-level accuracy at dramatically reduced computational cost [39]. For instance, AI2BMD can compute one simulation step for a 281-atom protein in 0.072 seconds, compared with 21 minutes for DFT, enabling hundreds of nanoseconds of dynamics with quantum accuracy [39].
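Taken at face value, the per-step timings quoted for AI2BMD correspond to a speedup over DFT of roughly four orders of magnitude for this system:

$$\frac{21\ \text{min} \times 60\ \text{s/min}}{0.072\ \text{s}} = \frac{1260\ \text{s}}{0.072\ \text{s}} = 1.75 \times 10^{4}$$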

Integrated Workflows and Advanced Applications

Advanced Sampling in Drug Discovery

Enhanced sampling techniques have become indispensable in structure-based drug design, particularly for characterizing allosteric inhibition and binding mechanisms. A recent study on the PD-1/PD-L1 immune checkpoint pathway exemplifies this application [42]. Researchers employed an integrated computational pipeline combining Supervised MD (SuMD) to track ligand binding pathways, Umbrella Sampling to characterize binding free energies, and GaMD to identify inhibitory conformations of PD-1 [42]. This multi-technique approach revealed how ligand binding to an allosteric site in the C'D loop induces conformational changes that disrupt protein-protein interaction, providing a rational basis for novel immunotherapeutic development [42].

AI-Enhanced Sampling and Automation

The integration of artificial intelligence with enhanced sampling is accelerating biomolecular simulations. AI-driven approaches like AlphaFold have revolutionized static structure prediction, but capturing dynamics remains challenging [43]. Recent developments include generative models using diffusion and flow matching techniques to predict conformational ensembles [43]. These methods aim to sample equilibrium distributions efficiently, generating diverse and functionally relevant structures that conventional MD would struggle to reach on accessible timescales.

Automated pipelines now combine enhanced sampling with machine learning to identify optimal CVs, a longstanding bottleneck in methods like MetaD. Nonlinear data-driven CVs, Sketch-Map, and essential coordinates algorithms help extract relevant degrees of freedom from simulation data [41]. Furthermore, neural networks can approximate high-dimensional bias potentials, extending MetaD to more complex systems [41].
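Essential-coordinate CVs can be prototyped with principal component analysis (PCA) of aligned Cartesian coordinates, the linear baseline that nonlinear methods such as Sketch-Map go beyond. The sketch below assumes NumPy and a trajectory array of shape `(n_frames, n_atoms, 3)` whose frames have already been superposed on a common reference. It is not an implementation of Sketch-Map or of neural-network CVs, and the function name is illustrative.

```python
import numpy as np


def pca_collective_variables(traj, n_components=2):
    """Linear data-driven CVs (essential dynamics / PCA).

    traj: (n_frames, n_atoms, 3) coordinates, assumed already superposed so that
    overall rotation and translation are removed.
    Returns each frame's projection onto the top principal components and the
    fraction of total variance those components explain.
    """
    x = traj.reshape(len(traj), -1)
    x = x - x.mean(axis=0)
    # SVD of the centered data matrix: rows of vt are the principal directions.
    _, s, vt = np.linalg.svd(x, full_matrices=False)
    var = s**2 / (len(traj) - 1)
    proj = x @ vt[:n_components].T
    return proj, var[:n_components] / var.sum()
```

A large explained-variance fraction for the first few components suggests they are reasonable candidate CVs. Slow, low-amplitude motions can still be missed, which is one motivation for the nonlinear and time-lagged alternatives.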

Diagram 1: Enhanced Sampling Workflow. This flowchart illustrates the integrated computational pipeline combining experimental data, simulation techniques, and applications.