Force Field Validation with Statistical Ensembles: Methods, Challenges, and Applications in Drug Discovery

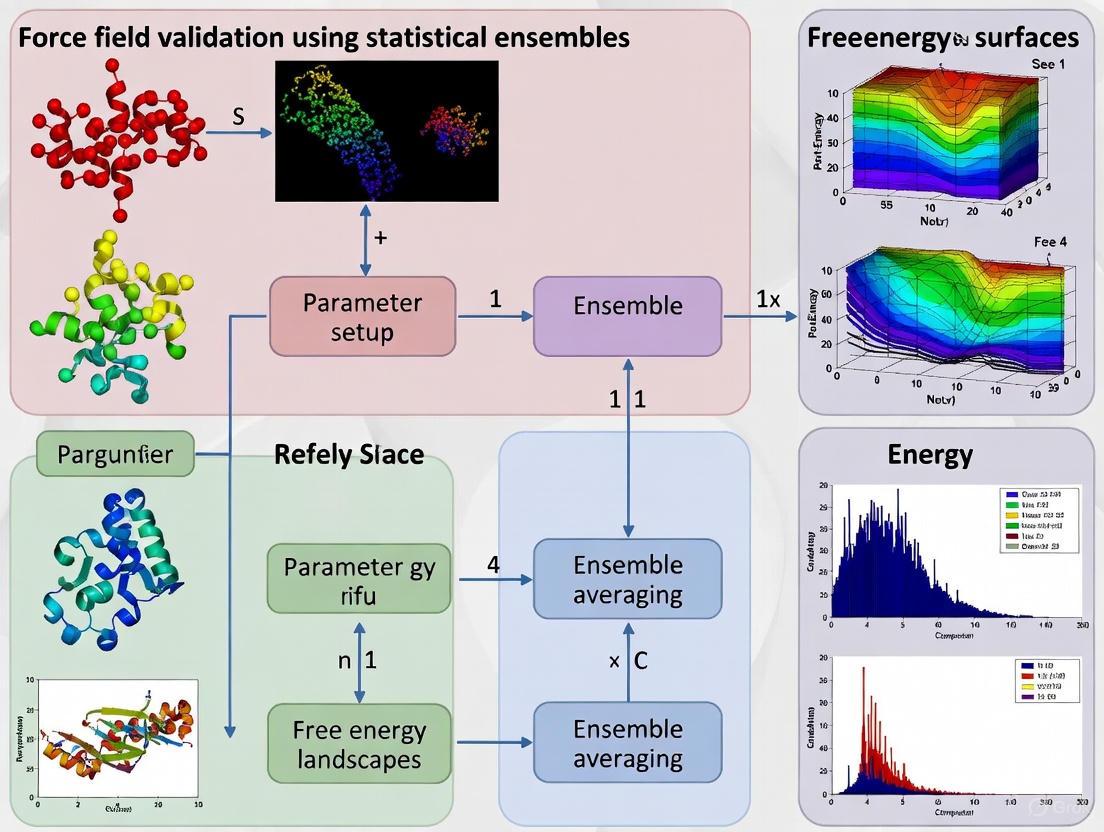

Abstract

This article provides a comprehensive guide to force field validation using statistical ensembles, a critical process for ensuring the reliability of molecular simulations in biomedical research. We cover foundational principles, exploring the necessity of validation for intrinsically disordered proteins and advanced peptidomimetics. The review details cutting-edge methodological approaches, including maximum entropy reweighting that integrates simulation with experimental data. We address key troubleshooting and optimization strategies to overcome sampling limitations and force field selection challenges. Finally, we present a rigorous framework for the comparative analysis of different force fields, highlighting their performance across diverse biological systems. This resource is tailored for researchers and drug development professionals seeking to implement robust validation protocols for their computational studies.

The Critical Role of Statistical Ensembles in Biomolecular Force Field Validation

The Force Field Concept and Its Parametrization Dilemma

In molecular dynamics (MD) simulations, a force field (FF) refers to the mathematical model and associated parameters that describe the potential energy of a system as a function of its atomic coordinates [1]. These empirical models use simple analytical functions to represent interatomic interactions, enabling the study of processes ranging from peptide folding to functional motions of large protein complexes [2]. The most common functional form includes terms for bonded interactions (bonds, angles, dihedrals) and non-bonded interactions (electrostatics and van der Waals forces) [1].
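For orientation, one widely used additive functional form with these terms can be written as below. The notation is generic textbook notation assumed here for illustration, not the definition of any specific force field discussed in [1]; individual families differ in details such as improper torsions, cross terms, and scaling of 1-4 interactions.

$$
U(\mathbf{r}) = \sum_{\text{bonds}} k_b\,(b - b_0)^2
+ \sum_{\text{angles}} k_\theta\,(\theta - \theta_0)^2
+ \sum_{\text{dihedrals}} \sum_{n} k_{\phi,n}\,\bigl[1 + \cos(n\phi - \delta_n)\bigr]
+ \sum_{i<j} \left\{ 4\varepsilon_{ij}\left[\left(\frac{\sigma_{ij}}{r_{ij}}\right)^{12} - \left(\frac{\sigma_{ij}}{r_{ij}}\right)^{6}\right] + \frac{q_i q_j}{4\pi\varepsilon_0\, r_{ij}} \right\}
$$

Here $b$, $\theta$, and $\phi$ are bond lengths, angles, and dihedral angles; $r_{ij}$ is the distance between atoms $i$ and $j$; and the force constants, reference values, Lennard-Jones parameters ($\varepsilon_{ij}$, $\sigma_{ij}$), and partial charges ($q_i$) are the quantities adjusted during parametrization.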

The fundamental challenge in force field development lies in the parametrization process. Force field parametrization is a poorly constrained problem where some properties exhibit exquisite sensitivity to small parameter variations while others appear quite insensitive [2]. The parameters within a given force field are also highly correlated, meaning that alternative parameter combinations can yield similar results, and varying one parameter may render other parameters suboptimal [2]. This complexity creates what is often termed a "parametrization dilemma," where improvements in agreement for one property may come at the expense of accuracy in another [2].

Historical Evolution of Validation Approaches

The validation of protein force fields has evolved significantly, with early studies suffering from limited statistical power. In seminal work from 1995, the validation of the AMBER ff94 force field relied heavily on a single 180 ps simulation of ubiquitin in water, where a root-mean-square deviation (RMSD) difference of 0.05 nm was claimed as significant improvement despite being within uncertainty [2]. Subsequent studies by Smith et al. (1995) utilized three 1 ns simulations of hen egg lysozyme but highlighted the difficulty of obtaining sufficient convergence for meaningful conclusions [2].

The early 2000s saw modest improvements. The 2003 AMBER release was validated based on the ability to distinguish experimental structures from decoys for 54 proteins using 10 ps simulations with implicit solvation [2]. Van der Spoel and Lindahl (2003) conducted one of the first validation studies with extended sampling (28 × 50 ns simulations) but still struggled to distinguish force fields even for simple systems [2]. By 2007, Villa et al. attempted to address poor statistics by simulating 31 proteins in triplicate for 5-10 ns but remained unable to demonstrate statistically significant differences between force fields due to variations between proteins and replicates [2].

A significant advancement came in 2012 with a systematic evaluation of eight different protein force fields using multi-microsecond simulations, allowing more robust comparison with experimental NMR data [3] [4] [5]. This study established that force fields could be categorized into distinct performance tiers and provided evidence for continued improvements in accuracy [4] [5].

Current State of Force Field Performance

Modern force fields have demonstrated progressively better performance across diverse protein systems, though significant challenges remain. The table below summarizes the performance characteristics of major force field families based on recent validation studies:

Table 1: Performance Characteristics of Major Force Field Families

| Force Field Family | Strengths | Limitations | Representative Versions |
|---|---|---|---|
| AMBER | Accurate collagen dihedrals and SAXS data [6]; Good for folded proteins and IDPs [7] [1] | Early versions (ff94, ff99) showed limited sampling [2] | ff14ipq, ff15ipq, ff19SB [1] |
| CHARMM | Good performance on folded proteins [4] [5] | Systematic shifts in collagen ϕ/ψ dihedrals [6]; Overstructuring of peptides [6] | CHARMM22*, CHARMM27, CHARMM36m [4] [6] |
| GROMOS | Validation using lysozyme NMR data [2] | Performance varies significantly by version [2] | 43A1, 45A3, 53A5, 53A6 [2] |
| OPLS | Reasonable short-timescale agreement [4] | Substantial conformational drift in long simulations [4] | OPLS-AA, OPLS-AA/L [4] |

Recent validation studies have revealed that force fields can be ranked into different performance tiers. For folded proteins like ubiquitin and GB3, CHARMM22*, CHARMM27, Amber ff99SB-ILDN, and Amber ff99SB*-ILDN demonstrated reasonably good agreement with experimental NMR data, while Amber ff03 and ff03* showed intermediate agreement, and OPLS and CHARMM22 exhibited substantial conformational drift [4] [5]. For intrinsically disordered proteins (IDPs), a99SB-disp, CHARMM22*, and CHARMM36m have shown promising results, though their performance varies across different disordered systems [7].

The performance of force fields is highly system-dependent. In collagen triple helix simulations, AMBER force fields accurately reproduced dihedrals, side-chain torsions, and SAXS data, while CHARMM force fields systematically shifted backbone dihedrals and overstructured the peptides [6]. For IDPs like COR15A, a 2025 study found that only DES-amber adequately reproduced both structure and dynamics, while ff99SBws captured helicity differences but overestimated them [8].

Key Methodologies in Force Field Validation

Experimental Observables for Validation

Validation of force fields relies on comparing simulation outcomes with experimental data. The choice of target properties presents a significant challenge, as parameters adjusted to reproduce conformational properties in one environment may fail in different environments [2]. Experimental data can be categorized as direct (quantities directly observed) or derived (quantities inferred from experimental data) [2].

Table 2: Key Experimental Data Used in Force Field Validation

| Experimental Method | Measured Observables | Advantages | Limitations |
|---|---|---|---|
| X-ray Crystallography | High-resolution protein structures [2] | Atomic-level structural details | Crystal packing effects; Static picture |
| NMR Spectroscopy | J-coupling constants, NOE intensities, chemical shifts, residual dipolar couplings, relaxation parameters [2] [7] | Solution-state data; Dynamic information | Interpretation model-dependent [2] |
| Small-Angle X-Ray Scattering (SAXS) | Ensemble-averaged structural parameters [7] [8] | Solution-state under native conditions; Low requirements | Sparse data; Multiple structural interpretations |
| Vibrational Spectroscopy | Bond vibrations and energies [1] | Information on local bonding | Limited structural information |

Statistical and Computational Frameworks

Robust validation requires sufficient sampling to distinguish force field deficiencies from statistical uncertainties. The essential subspace analysis using Principal Component Analysis (PCA) provides a method to compare structural ensembles across different force fields [4] [5]. The Root Mean Square Inner Product (RMSIP) quantifies the similarity between regions of conformational space sampled by different trajectories [5].
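As a minimal sketch of the RMSIP calculation, the snippet below takes two sets of orthonormal principal components (for example, the leading PCA modes from two trajectories) and computes the root mean square of all pairwise inner products, normalized by the number of modes. The function names and the toy three-dimensional vectors are illustrative assumptions; real analyses typically use the first ten or so modes from a PCA of aligned Cα coordinates.

```python
import math


def dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def rmsip(modes_a, modes_b):
    """Root mean square inner product between two sets of orthonormal modes.

    RMSIP = sqrt( (1/D) * sum_i sum_j (a_i . b_j)^2 ), with D modes per set.
    A value of 1 means the two subspaces are identical; 0 means orthogonal.
    """
    if len(modes_a) != len(modes_b):
        raise ValueError("both sets must contain the same number of modes")
    d = len(modes_a)
    total = sum(dot(u, v) ** 2 for u in modes_a for v in modes_b)
    return math.sqrt(total / d)


# Toy example: unit vectors in 3D standing in for principal components.
ex, ey, ez = [1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]
identical = rmsip([ex, ey], [ex, ey])  # same subspace
partial = rmsip([ex, ey], [ex, ez])    # subspaces share one direction
print(identical, partial)
```

Because RMSIP between two halves of the same trajectory is usually below 1, it is common to compare the cross-force-field value against this within-trajectory baseline rather than against 1 directly.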

Integrative approaches that combine experimental data with simulations have grown increasingly popular, especially for IDPs [7]. The maximum entropy principle provides a framework for reweighting MD simulations with experimental data, introducing minimal perturbation to computational models required to match experimental datasets [7]. Automated parameter optimization methods like ForceBalance have enabled more systematic parameter fitting using both quantum mechanical and experimental target data [1].
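The idea behind maximum entropy reweighting can be sketched for the simplest case of one ensemble-averaged observable with no experimental error. Under that assumption, the minimally perturbed weights take the form w_i ∝ exp(−λ f_i), where f_i is the observable predicted for frame i and λ is chosen so that the reweighted average matches the experimental value. The function names, the bisection bracket, and the toy data are assumptions made for illustration; the procedure in [7] handles many observables, experimental uncertainties, and restraint balancing, none of which is reproduced here.

```python
import math


def maxent_weights(values, lam):
    """Normalized weights w_i proportional to exp(-lam * f_i), uniform prior."""
    exponents = [-lam * v for v in values]
    shift = max(exponents)  # subtract the maximum for numerical stability
    raw = [math.exp(e - shift) for e in exponents]
    norm = sum(raw)
    return [r / norm for r in raw]


def weighted_mean(values, weights):
    return sum(v * w for v, w in zip(values, weights))


def solve_lambda(values, target, lo=-50.0, hi=50.0, tol=1e-10):
    """Find lam by bisection so the reweighted mean equals the target.

    The reweighted mean decreases monotonically as lam increases, so a
    simple bracket search is sufficient for a single observable.
    """
    if not min(values) < target < max(values):
        raise ValueError("target must lie strictly inside the sampled range")
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if weighted_mean(values, maxent_weights(values, mid)) > target:
            lo = mid  # mean still too high: increase lam
        else:
            hi = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)


# Toy data: per-frame predictions of one observable and a hypothetical target.
predicted = [1.0, 2.0, 3.0, 4.0]
experimental = 2.0
lam = solve_lambda(predicted, experimental)
weights = maxent_weights(predicted, lam)
print(lam, weights, weighted_mean(predicted, weights))
```

Enforcing an exact match as done here is only reasonable for noise-free toy data; with real measurements, an error model or regularization term limits how far the weights may move from the prior.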

Experimental Protocols for Force Field Validation

Protocol for Validating Folded Proteins

The validation of force fields for folded proteins typically follows a multi-step process that emphasizes comparison with experimental NMR data.

A typical validation protocol for folded proteins involves:

Test Set Selection: Curate a diverse set of high-resolution protein structures (e.g., 52 structures including 39 X-ray and 13 NMR-derived structures as in [2]).

Extended MD Simulations: Perform multiple long-timescale simulations (microsecond to millisecond) to ensure sufficient sampling and statistical precision [4]. For example, 10-microsecond simulations of ubiquitin and GB3 were used to evaluate eight different force fields [4] [5].

Comparison with NMR Data: Calculate experimental observables from simulations and compare with:

- J-coupling constants: Sensitive to backbone dihedral angles [2]

- Nuclear Overhauser Effect (NOE) intensities: Provide interproton distance information [2]

- Residual dipolar couplings (RDCs): Report on molecular orientation and dynamics [2]

- Order parameters (S²): Characterize bond vector flexibility [4]

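Structural Metrics Analysis: Compute ensemble-level structural properties, for example through essential subspace analysis (PCA) and RMSIP comparisons between trajectories [4] [5].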

Statistical Significance Testing: Determine if observed differences between force fields are statistically significant rather than resulting from sampling limitations [2].
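One simple way to carry out the significance-testing step is an exact permutation test on a per-replica metric. The sketch below assumes each force field has been run as several independent replicas, each summarized by a single number such as a mean backbone RMSD. The test choice, function name, and data values are illustrative assumptions, not the procedure used in [2].

```python
from itertools import combinations
from statistics import mean


def permutation_p_value(group_a, group_b):
    """Exact two-sided permutation test on the difference of group means.

    Enumerates every way of relabeling the pooled replicas and counts how
    often the relabeled difference is at least as large as the observed one.
    """
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    observed = abs(mean(group_a) - mean(group_b))
    extreme = 0
    total = 0
    for indices in combinations(range(len(pooled)), n_a):
        chosen = set(indices)
        relabeled_a = [pooled[i] for i in chosen]
        relabeled_b = [pooled[i] for i in range(len(pooled)) if i not in chosen]
        if abs(mean(relabeled_a) - mean(relabeled_b)) >= observed - 1e-12:
            extreme += 1
        total += 1
    return extreme / total


# Hypothetical per-replica mean RMSD values (nm) for two force fields.
ff_a = [0.10, 0.12, 0.11, 0.13, 0.12]
ff_b = [0.20, 0.22, 0.19, 0.21, 0.23]
p_separated = permutation_p_value(ff_a, ff_b)

# Replicas that overlap heavily should not be distinguishable.
ff_c = [0.10, 0.20, 0.15]
ff_d = [0.12, 0.18, 0.16]
p_overlap = permutation_p_value(ff_c, ff_d)
print(p_separated, p_overlap)
```

With only three replicas per force field, the smallest achievable two-sided p-value is 2/20 = 0.1, which illustrates why triplicate simulations can be unable to demonstrate significant differences regardless of how distinct the force fields are.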

Protocol for Validating Intrinsically Disordered Proteins

Validating force fields for IDPs presents unique challenges due to their heterogeneous conformational ensembles. The maximum entropy reweighting approach has emerged as a powerful method for determining accurate conformational ensembles of IDPs.

The protocol for IDP force field validation involves:

Initial Ensemble Generation: Perform long-timescale all-atom MD simulations (e.g., 30 μs as in [7]) using different force fields (a99SB-disp, CHARMM22*, CHARMM36m).

Experimental Data Collection: Obtain extensive experimental datasets, typically from NMR spectroscopy (chemical shifts, J-couplings, paramagnetic relaxation enhancements) and small-angle X-ray scattering (SAXS) [7].

Forward Model Application: Use mathematical models to predict experimental observables from each conformation in the MD ensemble [7].

Maximum Entropy Reweighting: Apply a reweighting procedure that introduces minimal perturbation to the initial ensemble while maximizing agreement with experimental data [7]. The effective ensemble size is controlled using the Kish ratio (typically K=0.10, retaining ~3000 structures) [7].

Convergence Assessment: Determine if reweighted ensembles from different initial force fields converge to similar conformational distributions, indicating a force-field independent approximation of the true solution ensemble [7].
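The Kish ratio used to control the effective ensemble size can be computed directly from the frame weights. The sketch below uses the standard Kish effective sample size, N_eff = (Σw)² / Σw², divided by the number of frames; the function names are illustrative and are not taken from the software used in [7].

```python
def kish_effective_size(weights):
    """Kish effective sample size: (sum w)^2 / sum(w^2)."""
    total = sum(weights)
    total_sq = sum(w * w for w in weights)
    return total * total / total_sq


def kish_ratio(weights):
    """Fraction of frames that effectively contribute after reweighting."""
    return kish_effective_size(weights) / len(weights)


uniform = [0.25, 0.25, 0.25, 0.25]  # unperturbed ensemble
collapsed = [1.0, 0.0, 0.0, 0.0]    # all weight on a single frame
print(kish_ratio(uniform), kish_ratio(collapsed))

# A Kish ratio of 0.10 on 29,976 frames corresponds to about 3000 structures.
n_frames = 29976
effective_frames = round(0.10 * n_frames)
print(effective_frames)
```

A ratio near 1 means the reweighting barely changed the simulation ensemble, while a ratio near 1/N means a handful of frames dominate, which is the overfitting regime the threshold is meant to avoid.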

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Tools and Resources for Force Field Validation

| Tool/Resource | Type | Function in Validation | Examples/References |
|---|---|---|---|
| MD Software Packages | Software | Perform molecular dynamics simulations | GROMACS, AMBER, CHARMM, NAMD [6] |
| ForceBalance | Automated fitting | Optimize force field parameters against QM and experimental data | Used in AMBER ff15-FB development [1] |
| Maximum Entropy Reweighting | Computational method | Integrate MD simulations with experimental data | IDP ensemble determination [7] |
| Protein Data Bank | Structural database | Source of experimental structures for validation | Curated test sets [2] |
| NMR Data | Experimental | Validate structural ensembles and dynamics | Chemical shifts, J-couplings, NOEs [7] |
| SAXS | Experimental | Validate global structural properties | IDP compaction, ensemble properties [7] [8] |

Force field validation has progressed from qualitative assessments based on limited sampling to rigorous statistical comparisons using extensive simulation datasets and diverse experimental observables. The field has developed frameworks for evaluating force fields across different protein classes, including folded proteins, intrinsically disordered proteins, and specialized systems like collagen triple helices.

Despite these advances, fundamental challenges remain. No single force field currently excels across all protein types and properties, and the risk of overfitting to specific validation targets persists [2]. The integration of experimental data directly into parameter optimization, the development of polarizable force fields, and the use of automated fitting methods represent promising directions for future improvement [1]. Recent methodologies that enable the determination of accurate, force-field independent conformational ensembles of IDPs suggest the field may be maturing toward true atomic-resolution integrative structural biology [7].

The central challenge of validation continues to drive innovation in both force field development and assessment methodologies, with the ultimate goal of creating transferable parameters that accurately reproduce structural, dynamic, and thermodynamic properties across diverse biological systems.

Why Statistical Ensembles are Non-Negotiable for Accurate Biomolecular Modeling

Statistical ensembles have emerged as a foundational component in biomolecular modeling, transforming the field from qualitative visualization to quantitative, predictive science. This guide compares the performance of ensemble-based approaches against single-trajectory simulations, demonstrating through experimental data how ensembles are indispensable for robust force field validation, reliable free energy estimation, and accurate characterization of dynamic biological processes. The integration of ensemble methods with experimental data and advanced sampling algorithms represents a paradigm shift in computational biophysics, enabling researchers to achieve statistically significant results and avoid erroneous conclusions that plague insufficiently sampled simulations.

The Statistical Imperative: Why Single Simulations Fail

Biomolecular systems are inherently dynamic, sampling vast conformational landscapes that directly influence their function. Traditional molecular dynamics (MD) simulations relying on single trajectories are fundamentally limited for studying these complex systems due to several critical factors:

Statistical Fluctuations: Computational simulations, akin to wet lab experimentation, are subject to statistical fluctuations that must be quantified through uncertainty estimates. Without sufficient sampling, these fluctuations can lead to substantially erroneous interpretation of simulation data and wrong overall conclusions [9].

Sampling Deficiencies: Considering the stochastic nature of molecular dynamics sampling algorithms, biomolecular trajectories represent multidimensional random walks especially prone to suffering from sampling deficiencies. Relevant protein conformations are often not sampled in single trajectories, creating substantial associated errors in estimated thermodynamic and kinetic properties [9].

Force Field Validation Challenges: Assessing force field accuracy requires extensive sampling across diverse molecular systems. Single simulations provide inadequate data for meaningful force field comparison or validation against experimental observables [7].

The critical importance of statistical ensembles becomes evident when examining case studies where initial findings based on limited sampling were later refuted with proper statistical treatment. One prominent example involves claims about simulation box size effects on thermodynamic quantities, which subsequent ensemble studies demonstrated disappeared with increased sampling [9]. This scientific discussion highlights how insufficient statistics can lead to unfounded claims about physical phenomena.

Performance Comparison: Ensemble vs. Single Trajectory Approaches

Table 1: Quantitative Comparison of Simulation Approaches for Key Biomolecular Modeling Tasks

| Modeling Task | Single Trajectory Performance | Ensemble Approach Performance | Experimental Validation |
|---|---|---|---|
| Hydration Free Energy (Small Molecule) | Erroneous trends (upward/downward) appearing in individual runs [9] | Box-size independence confirmed (Mean ΔG: -8.5 ± 0.3 kcal/mol across 20 replicates) [9] | Consistent with experimental hydration values |
| Protein Solvation Free Energy | Highly variable results depending on starting structure | Statistically consistent values across box sizes when properly sampled | Requires integration with experimental techniques |
| IDP Conformational Sampling | Limited structural diversity, force-field dependent biases | Converged ensembles across force fields after maximum entropy reweighting [7] | Agreement with NMR and SAXS data (χ² improvement > 70%) [7] |
| Kinetic Parameter Estimation | Poor convergence of transition rates | Robust estimation through Markov State Models [10] | Validated through experimental kinetics |
| Force Field Validation | Inconclusive or misleading comparisons | Quantitative assessment across multiple properties | Direct experimental comparability |

Table 2: Statistical Reliability Assessment Across Sampling Methods

| Statistical Metric | Single Long Trajectory | Basic Ensemble (10 trajectories) | Advanced Adaptive Ensemble |
|---|---|---|---|
| Uncertainty Quantification | Limited to block averaging | Robust confidence intervals | Bayesian uncertainty estimates |
| Phase Space Coverage | Incomplete, path-dependent | Moderate improvement | Comprehensive exploration |
| Convergence Assessment | Challenging to verify | Statistical tests applicable | Automated convergence detection |
| Computational Efficiency | Low for rare events | Moderate | High (100-1000x improvement) [10] |
| Force Field Discrimination | Poor sensitivity | Moderate discrimination power | High sensitivity to force field differences |

Experimental Protocols and Methodologies

Ensemble-Based Free Energy Calculations

Protocol for Hydration Free Energy Validation [9]

System Setup: Create multiple independent simulation systems for the target molecule (e.g., anthracene) solvated in water boxes of varying sizes (473 to 5334 water molecules)

Replica Generation: Generate 20 independent replicates per box size with different initial random seeds

Alchemical Sampling: Perform free energy calculations using Hamiltonian replica exchange with 32 discrete λ-windows between coupled and decoupled states

Convergence Monitoring: Track statistical uncertainties through:

- Standard deviations across replicates

- Confidence interval calculations (95% confidence level)

- Time-series analysis of free energy estimates

Statistical Testing: Apply hypothesis testing to identify significant trends versus random fluctuations

Key Experimental Insight: When all replicates (N=20) are considered, no trend in computed hydration free energy is observed as a function of simulation box size. However, reliance on single realizations can produce any type of trend (upward, downward, or non-monotonic), illustrating how anecdotal evidence leads to erroneous conclusions [9].
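The replicate-based uncertainty estimate in this protocol can be sketched as a mean with a 95% confidence interval per box size. The data below are synthetic draws from a single distribution (so there is no true box-size dependence), the intermediate box size is hypothetical, and the Student-t critical value is hard-coded for N = 20 replicates; none of these numbers are results from [9].

```python
import math
import random
from statistics import mean, stdev

# Two-sided 95% Student-t critical value for 19 degrees of freedom (N = 20).
T_CRIT_95_DF19 = 2.093


def mean_with_ci(samples, t_crit=T_CRIT_95_DF19):
    """Return (mean, lower, upper) of a t-based confidence interval."""
    center = mean(samples)
    half_width = t_crit * stdev(samples) / math.sqrt(len(samples))
    return center, center - half_width, center + half_width


random.seed(0)
box_sizes = [473, 1500, 5334]  # water molecules; 1500 is a made-up midpoint
replicates = {
    n: [random.gauss(-8.5, 0.3) for _ in range(20)]  # synthetic ΔG, kcal/mol
    for n in box_sizes
}

summary = {n: mean_with_ci(values) for n, values in replicates.items()}
for n in box_sizes:
    center, lower, upper = summary[n]
    single_run = replicates[n][0]  # what one realization alone would report
    print(f"{n:5d} waters: mean {center:.2f} [{lower:.2f}, {upper:.2f}], "
          f"single run {single_run:.2f}")
```

Comparing the `single run` column with the confidence intervals shows how an apparent trend across box sizes can arise from one realization per condition even when the underlying distribution is identical.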

Workflow for Determining Accurate IDP Conformational Ensembles

Methodology Details:

Initial Ensemble Generation:

- Run 30 μs all-atom MD simulations using three different protein force field/water model combinations (a99SB-disp/a99SB-disp water, CHARMM22*/TIP3P, CHARMM36m/TIP3P)

- Collect 29,976 structures from each unbiased MD ensemble

Experimental Data Integration:

- Acquire nuclear magnetic resonance (NMR) data including chemical shifts, J-couplings, and residual dipolar couplings

- Obtain small-angle X-ray scattering (SAXS) data providing global structural parameters

- Calculate experimental observables from ensemble structures using established forward models [7]

Maximum Entropy Reweighting:

- Apply the minimal perturbation to computational models required to match experimental data

- Automatically balance restraint strengths from different experimental datasets

- Use Kish ratio (K=0.10) to maintain effective ensemble size (~3000 structures)

- Optimize weights to maximize agreement while preserving statistical robustness

Performance Outcome: For three of five IDPs studied (Aβ40, drkN SH3, and ACTR), ensembles derived from different force fields converged to highly similar conformational distributions after reweighting, demonstrating force-field independent ensemble determination [7].

Protocol for Enhanced Kinetics Estimation:

Initial Exploration: Launch multiple parallel simulations from diverse starting conformations

Progress Monitoring: Track collective variables or state assignments in real-time

Adaptive Resampling: Dynamically allocate computational resources to under-sampled regions

Model Building: Construct Markov State Models or weighted ensemble frameworks

Iterative Refinement: Continuously improve sampling based on intermediate results

Efficiency Gains: Adaptive ensemble algorithms can increase simulation efficiency by greater than a thousand-fold compared to traditional single-trajectory approaches [10].
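The model-building and adaptive-resampling steps can be illustrated with a deliberately simple sketch: estimate a row-normalized transition matrix from discretized trajectories, then pick the least-visited states as seeds for the next round of simulations. Both the naive count-based estimator and the least-counts seeding rule are assumptions chosen for brevity; production tools use more careful estimators (for example, reversible maximum-likelihood) and selection criteria, and this is not the specific algorithm of [10].

```python
from collections import Counter


def transition_matrix(trajectories, n_states, lag=1):
    """Row-normalized transition counts at the given lag time."""
    counts = [[0] * n_states for _ in range(n_states)]
    for traj in trajectories:
        for start, end in zip(traj[:-lag], traj[lag:]):
            counts[start][end] += 1
    matrix = []
    for row in counts:
        row_total = sum(row)
        matrix.append([c / row_total if row_total else 0.0 for c in row])
    return matrix


def least_sampled_states(trajectories, n_states, k):
    """States with the fewest visits, used as seeds for new simulations."""
    visits = Counter(state for traj in trajectories for state in traj)
    return sorted(range(n_states), key=lambda s: (visits[s], s))[:k]


# Toy discretized trajectories over three conformational states.
trajs = [
    [0, 0, 1, 0, 0, 1, 1],
    [1, 1, 2, 1, 0, 0],
]
T = transition_matrix(trajs, n_states=3)
seeds = least_sampled_states(trajs, n_states=3, k=1)
print(T)
print(seeds)
```

In an adaptive workflow these two functions would be called after every batch of short simulations, with new trajectories launched from structures belonging to the returned seed states.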

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Computational Tools for Ensemble-Based Biomolecular Modeling

| Tool/Category | Specific Examples | Function/Purpose | Performance Considerations |
|---|---|---|---|
| MD Simulation Engines | GROMACS [9], AMBER, CHARMM, NAMD [10] | Core molecular dynamics propagation | Optimized for ensemble execution on HPC resources |
| Enhanced Sampling Algorithms | Replica Exchange MD, Weighted Ensemble, Metadynamics [10] | Accelerate barrier crossing and rare events | Tradeoffs between scalability and system size |
| Ensemble Analysis Frameworks | Markov State Models, Milestoning [10] | Extract kinetics and thermodynamics from ensemble data | Model quality depends on state definition and sampling |
| Experimental Integration Tools | Maximum Entropy Reweighting [7], Bayesian Inference | Combine simulation with experimental data | Manages experimental uncertainty and force field errors |
| Adaptive Execution Platforms | Copernicus, Ensemble Toolkit, Swift/T [10] | Dynamically control ensemble simulations based on intermediate results | Requires sophisticated workflow management |
| Force Fields | a99SB-disp, CHARMM36m, CHARMM22* [7] | Molecular interaction potentials | Ensemble approaches reveal force field limitations |

Force Field Validation Through Statistical Ensembles

Statistical ensembles provide the essential framework for rigorous force field validation, moving beyond qualitative assessment to quantitative statistical comparison. The integration of experimental data with ensemble simulations has revealed that in favorable cases, IDP ensembles obtained from different MD force fields converge to highly similar conformational distributions after maximum entropy reweighting [7].

Force Field Validation Through Ensemble Convergence

Key Validation Insights:

Convergence Testing: When ensembles from different force fields converge to similar distributions after experimental reweighting, this indicates force-field independent approximation of the true solution ensemble [7].

Discrimination Power: For IDPs where unbiased MD simulations with different force fields sample distinct conformational regions, ensemble reweighting clearly identifies the most accurate representation of the true solution ensemble [7].

Statistical Significance: Ensemble approaches enable proper statistical testing to determine whether differences between force fields exceed natural variability and sampling limitations.

The experimental evidence comprehensively demonstrates that statistical ensembles are fundamental requirements—not optional enhancements—for accurate biomolecular modeling. The comparative data reveals several unequivocal conclusions:

Statistical Reliability: Ensemble methods provide a statistically sound basis for quantifying uncertainties in computed biomolecular properties; without such uncertainty estimates, conclusions remain suspect [9].

Force Field Development: Modern force field validation absolutely requires ensemble approaches to assess performance across diverse molecular systems and conditions [7].

Computational Efficiency: Adaptive ensemble simulations can achieve thousand-fold improvements in sampling efficiency compared to single-trajectory methods [10].

Experimental Integration: Maximum entropy and similar ensemble-based frameworks provide the most robust methodology for integrating simulation with experimental data [7] [11].

For researchers in computational biophysics and drug development, embracing statistical ensembles represents an essential paradigm shift from qualitative observation to quantitative, statistically rigorous biomolecular modeling. The experimental comparisons clearly demonstrate that ensemble approaches consistently outperform single-trajectory methods across all metrics of reliability, accuracy, and efficiency, making them truly non-negotiable for cutting-edge research in the field.

Conformational Ensembles, Sampling, and the Force Field Fitting Problem

Molecular dynamics (MD) simulations provide a powerful vehicle for capturing the structures, motions, and interactions of biological macromolecules in full atomic detail, serving as a computational microscope for researchers and drug development professionals [12]. The accuracy of such simulations, however, is critically dependent on the force field—the mathematical model used to approximate the atomic-level forces acting on the simulated molecular system [12]. The "force field fitting problem" refers to the fundamental challenge of developing energy functions that accurately reproduce the true potential energy surface of diverse molecular systems, from folded proteins to intrinsically disordered regions and macrocyclic therapeutics. This challenge is particularly acute for modeling conformational ensembles—the collections of interconverting structures that flexible molecules adopt in solution. Recent advances in sampling algorithms and force field parameterization have progressively improved the accuracy of these computational models, yet significant limitations remain, especially for complex systems with heterogeneous dynamics [13].

Force Field Comparison: Performance Across Molecular Systems

Performance Benchmarking for Macrocycles

Macrocycles represent a promising class of therapeutic compounds for difficult drug targets due to their favorable combination of properties, including improved binding affinity compared to their linear counterparts and reduced conformational flexibility [14]. A 2024 benchmark study evaluated four different force fields for macrocyclic compounds by performing replica exchange with solute tempering (REST2) simulations of 11 macrocyclic compounds and comparing conformational ensembles to nuclear Overhauser effect (NOE) distance bounds from NMR experiments [14]. The results demonstrated that modern force fields, particularly OpenFF 2.0 and XFF, yielded the best performance, outperforming established force fields like GAFF2 and OPLS/AA [14].

Table 1: Force Field Performance for Macrocyclic Compounds

| Force Field | Overall Performance | Strengths | Limitations |
|---|---|---|---|
| OpenFF 2.0 (Sage) | Good to excellent | Accurate ensembles for most macrocycles | Varies by specific compound |
| XFF | Good to excellent | Good performance with DASH partial charges | Recently developed, less extensively tested |
| GAFF2 | Moderate | Widely adopted, AM1-BCC charges | Underperforms vs. modern alternatives |
| OPLS/AA | Moderate to poor | Established history | Lower accuracy for macrocyclic ensembles |

However, the study also highlighted that for certain compounds, all examined force fields failed to produce ensembles satisfying experimental constraints, indicating persistent challenges in force field accuracy [14]. This underscores that while force fields have improved, the "fitting problem" remains partially unsolved, particularly for specialized molecular systems.
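Checking an ensemble against NOE distance bounds can be sketched as follows. The snippet assumes the common r⁻⁶ averaging convention for the NOE-effective distance, ⟨r⁻⁶⟩^(−1/6), and flags proton pairs whose effective distance exceeds the experimental upper bound. The averaging convention, the pair labels, and all distances are illustrative assumptions; the exact scoring used in [14] may differ.

```python
def noe_effective_distance(distances, weights=None):
    """Ensemble NOE-effective distance, <r^-6>^(-1/6).

    Short distances dominate the average, so a pair that is close in only
    part of the ensemble can still satisfy an upper bound.
    Weights, if given, are assumed to sum to 1.
    """
    n = len(distances)
    if weights is None:
        weights = [1.0 / n] * n
    avg_inv6 = sum(w * r ** -6 for r, w in zip(distances, weights))
    return avg_inv6 ** (-1.0 / 6.0)


def upper_bound_violations(pair_distances, upper_bounds, tolerance=0.0):
    """Map each violated pair to the amount by which it exceeds its bound."""
    violations = {}
    for pair, distances in pair_distances.items():
        excess = noe_effective_distance(distances) - upper_bounds[pair]
        if excess > tolerance:
            violations[pair] = excess
    return violations


# Hypothetical per-frame distances (in Å) for two proton pairs.
pair_distances = {
    "H1-H5": [2.0, 10.0],  # close in one frame, far in the other
    "H2-H9": [6.0, 6.0],   # always far apart
}
upper_bounds = {"H1-H5": 5.0, "H2-H9": 5.0}

r_eff = noe_effective_distance(pair_distances["H1-H5"])
violated = upper_bound_violations(pair_distances, upper_bounds)
print(r_eff, violated)
```

The first pair passes even though its arithmetic mean distance is 6 Å, which is why NOE comparisons are sensitive to minor populations of compact conformers and why ensemble-level rather than single-structure evaluation is needed.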

Performance for Intrinsically Disordered Proteins

Intrinsically disordered proteins (IDPs) represent a particularly challenging case for force fields due to their lack of stable tertiary structure and existence as dynamic conformational ensembles [7] [15]. Recent studies have evaluated force fields by comparing simulations to experimental data from NMR spectroscopy and small-angle X-ray scattering (SAXS) [7].

Table 2: Force Field Performance for Intrinsically Disordered Proteins

| Force Field | Performance for IDPs | Key Characteristics |
|---|---|---|
| a99SB-disp | Good overall | Specifically designed for disordered proteins |
| CHARMM36m (C36m) | Good overall | Refined to reduce overpopulation of left-handed helices |
| CHARMM22* | Variable | Improved backbone parameters |
| a99SB-ILDN | Poor for IDPs | Optimized for folded proteins, predicts overly compact ensembles |

A 2025 study demonstrated that through maximum entropy reweighting—integrating MD simulations with experimental data—ensembles from different force fields could be made to converge to highly similar conformational distributions [7]. This suggests that in favorable cases where initial agreement with experiments is reasonable, reweighted ensembles can provide force-field independent approximations of true solution ensembles [7].

Performance for Folded Proteins and Peptides

Early systematic validation studies compared eight protein force fields through extensive simulations of folded proteins, secondary structure elements, and folding events [12]. These investigations revealed that while all force fields had strengths and weaknesses, some—particularly Amber ff99SB-ILDN and CHARMM22*—provided the best overall agreement with experimental NMR data for folded proteins like ubiquitin and GB3 [12]. The study also highlighted specific deficiencies, such as the inability of CHARMM22 to maintain the native state of GB3, which unfolded during simulation [12].

Advanced Sampling and Validation Methodologies

Enhanced Sampling Techniques

Accurate determination of conformational ensembles requires adequate sampling of the accessible conformational space, which can be computationally prohibitive using standard MD simulations [16]. Enhanced sampling techniques have been developed to address this challenge:

Replica Exchange with Solute Tempering (REST2): Scales down dihedral angle terms and intramolecular nonbonded interactions of the solute to accelerate transitions while maintaining high replica-exchange acceptance probability [14]. Studies have shown that including bond-angle terms in REST2 is necessary for proper sampling of compounds with strained ring systems [14].

Gaussian Accelerated MD (GaMD): Adds a harmonic boost potential that smooths the energy landscape and accelerates barrier crossing, while still allowing the conformational distributions to be reweighted to recover unbiased statistics. It has been successfully applied to study proline isomerization in disordered proteins [16].

Replica-Exchange MD (REMD): Multiple copies of the system are simulated at different temperatures, and periodic exchanges between replicas help the system overcome energy barriers [14].
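The exchange step in temperature REMD is usually governed by a Metropolis criterion. The sketch below illustrates that standard criterion only; the function name, the example energies, and the choice of kcal/mol units are assumptions made for illustration and are not taken from the cited studies.

```python
import math

K_B = 0.0019872041  # Boltzmann constant in kcal/(mol*K); unit choice is an assumption


def exchange_probability(energy_i, energy_j, temp_i, temp_j):
    """Metropolis acceptance probability for swapping replicas i and j.

    Implements P = min(1, exp[(beta_i - beta_j) * (E_i - E_j)]),
    with energies in kcal/mol and temperatures in kelvin.
    """
    beta_i = 1.0 / (K_B * temp_i)
    beta_j = 1.0 / (K_B * temp_j)
    delta = (beta_i - beta_j) * (energy_j - energy_i)
    return 1.0 if delta <= 0.0 else math.exp(-delta)


# Hypothetical potential energies for two neighbouring replicas
p_swap = exchange_probability(-105.0, -100.0, 300.0, 310.0)
```

Closely spaced temperatures keep `delta` small, which is why replica ladders are chosen so that neighbouring energy distributions overlap.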

Integrative Approaches Combining Simulation and Experiment

Due to limitations in both force field accuracy and conformational sampling, integrative approaches that combine computational models with experimental data have emerged as powerful methodologies:

Maximum Entropy Reweighting: A robust procedure that introduces the minimal perturbation to a computational model that is required to match experimental data [7]. This approach automatically balances restraints from different experimental datasets based on the desired effective ensemble size, producing statistically robust ensembles with minimal overfitting [7].

Quality Evaluation Based Simulation Selection (QEBSS): A protocol that combines MD simulations with NMR-derived protein backbone ¹⁵N spin relaxation times (T1 and T2) and hetNOE values to identify conformational ensembles with realistic dynamics [13]. QEBSS quantitatively evaluates simulation quality and systematically selects ensembles that best reproduce experimental observations [13].

Table 3: Experimental Techniques for Force Field Validation

| Experimental Method | Information Provided | Applications in Validation |
|---|---|---|
| NMR Spectroscopy | Interatomic distances, dynamics, secondary structure | NOE distance bounds, chemical shifts, spin relaxation |
| Small-Angle X-Ray Scattering (SAXS) | Global dimensions, shape | Radius of gyration, Kratky plots |
| Förster Resonance Energy Transfer (FRET) | Inter-domain distances, dynamics | Distance distributions between fluorophores |
| Circular Dichroism (CD) | Secondary structure content | Helical, sheet, and random coil proportions |

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 4: Essential Research Tools for Conformational Ensemble Determination

| Tool/Reagent | Function/Role | Examples/Notes |
|---|---|---|
| MD Simulation Software | Generate conformational ensembles | GROMACS, AMBER, OpenMM, Desmond |
| Force Field Parameters | Define energy functions | OpenFF 2.0, CHARMM36m, a99SB-disp, GAFF2 |
| Enhanced Sampling Algorithms | Improve conformational sampling | REST2, GaMD, REMD, Metadynamics |
| Experimental Data | Validate and refine ensembles | NMR (NOE, relaxation), SAXS, FRET |
| Reweighting/Bayesian Methods | Integrate simulations with experiments | Maximum entropy, Bayesian inference |
| Analysis Tools | Quantify ensemble properties | MDTraj, MDAnalysis, VMD |

Workflow and Signaling Pathways

Flowchart for Conformational Ensemble Determination

Integrative Structural Biology Approach

The accurate determination of conformational ensembles remains challenging due to the intertwined problems of force field accuracy and adequate sampling. Recent advances in force field development, particularly OpenFF 2.0, XFF, a99SB-disp, and CHARMM36m, have demonstrated improved performance for diverse molecular systems including macrocycles, IDPs, and folded proteins [14] [7]. Enhanced sampling methods like REST2 and integrative approaches such as maximum entropy reweighting and QEBSS provide pathways to more accurate ensembles by combining computational and experimental data [14] [7] [13].

Emerging methods using artificial intelligence show promise in overcoming limitations of traditional MD simulations by learning complex sequence-to-structure relationships from large datasets [16]. However, these approaches still face challenges including dependence on training data quality and limited interpretability [16]. The most promising future direction appears to be hybrid approaches that integrate physics-based simulations with AI methods and experimental data, potentially leading to more accurate, efficient, and force-field independent determination of conformational ensembles for drug development and molecular design.

The Critical Importance for Intrinsically Disordered Proteins (IDPs)

Intrinsically Disordered Proteins (IDPs) and regions (IDRs) represent a substantial fraction of eukaryotic proteomes, playing critical roles in cellular signaling, transcriptional regulation, and dynamic protein-protein interactions [17]. Unlike structured proteins, IDPs lack a stable three-dimensional structure under physiological conditions, existing instead as dynamic conformational ensembles [18]. This structural flexibility allows them to participate in vital biological processes but also makes them exceptionally challenging to characterize and target. IDPs are frequently associated with major human diseases, including cancer, cardiovascular diseases, and neurodegenerative disorders such as Alzheimer's and Parkinson's disease [18] [17]. Their prevalence in disease pathways, coupled with their lack of stable binding pockets, has historically rendered many IDPs "undruggable" [17]. However, recent advances in computational structural biology and force field development are now enabling researchers to accurately model IDP conformational ensembles, opening new avenues for therapeutic intervention targeting these critical proteins.

Force Field Performance Comparison for IDP Simulations

Molecular dynamics (MD) simulations provide atomistically detailed structural ensembles of IDPs, but their accuracy depends critically on the force fields used. Recent developments have yielded several force fields specifically optimized for IDP simulations. The table below summarizes the performance characteristics of contemporary force fields validated against experimental data from nuclear magnetic resonance (NMR) spectroscopy and small-angle X-ray scattering (SAXS).

Table 1: Comparison of Modern Force Fields for IDP Simulations

| Force Field | Base Force Field | Key Improvements | Performance Summary | Known Limitations |
|---|---|---|---|---|
| DES-Amber [19] | Amber ff99SB | Reparameterized dihedral and non-bonded interactions using osmotic pressure data | Best performer for COR15A dynamics; captures helicity differences between wild-type and mutant | Does not perfectly reproduce all experimental data |
| Amber ff99SBws [20] | Amber ff99SB | Protein-water interactions scaled up by 10%, with TIP4P/2005 water | Improved IDP chain dimensions; maintains folded protein stability | Overestimates helicity in some systems [19] |
| Amber ff03w-sc [20] | Amber ff03 | Selective protein-water interaction scaling | Accurate IDP dimensions and secondary structure propensities | Improves folded protein stability over ff03ws |
| CHARMM36m [18] [15] | CHARMM36 | Refined CMAP potentials and added NBFIX for salt bridges | Balanced performance for folded/disordered proteins; correct Aβ16-22 aggregation | Initial versions overpopulated left-handed helices [15] |
| a99SB-disp [7] | Amber ff99SB | Modified TIP4P-D water with enhanced backbone hydrogen bonding | State-of-the-art performance in multiple IDP benchmarks | Overestimates protein-water interactions in some cases [20] |

Quantitative validation studies reveal that in favorable cases where different force fields show reasonable initial agreement with experimental data, reweighted ensembles converge to highly similar conformational distributions [7]. For example, in a comprehensive assessment of five IDPs (Aβ40, drkN SH3, ACTR, PaaA2, and α-synuclein), three force fields (a99SB-disp, CHARMM22*, and CHARMM36m) produced highly similar conformational distributions after maximum entropy reweighting with extensive NMR and SAXS datasets [7].

Experimental Protocols for Force Field Validation

Maximum Entropy Reweighting Protocol

A robust methodology for determining accurate atomic-resolution conformational ensembles integrates MD simulations with experimental data using a maximum entropy reweighting procedure [7]. The workflow involves:

Initial Ensemble Generation: Running long-timescale (e.g., 30 μs) all-atom MD simulations of IDPs using different protein force field and water model combinations (e.g., a99SB-disp with a99SB-disp water, CHARMM22* with TIP3P water, CHARMM36m with TIP3P water) [7].

Experimental Data Collection: Acquiring extensive experimental datasets, primarily from NMR spectroscopy (chemical shifts, scalar couplings, relaxation data) and SAXS, which provide ensemble-averaged structural information [7].

Observable Prediction: Using forward models to predict experimental observables from each frame of the unbiased MD ensemble [7].

Reweighting Procedure: Applying maximum entropy reweighting to introduce the minimal perturbation to the computational model that is required to match the experimental data, typically yielding ensembles of ~3000 structures at a Kish ratio threshold of K = 0.10 [7].
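The Kish ratio used as a threshold in the reweighting step can be computed directly from the frame weights. The sketch below uses the standard Kish effective-sample-size formula divided by the number of frames; the function name and the example weights are illustrative assumptions.

```python
import numpy as np


def kish_ratio(weights):
    """Kish effective sample size divided by the number of frames.

    K = (sum w)^2 / (N * sum w^2); K = 1 for uniform weights and
    K = 1/N when a single frame carries all the weight.
    """
    w = np.asarray(weights, dtype=float)
    w = w / w.sum()
    return float(1.0 / (w.size * np.sum(w ** 2)))


# Hypothetical example: four frames, one of them dominating after reweighting
k = kish_ratio([0.7, 0.1, 0.1, 0.1])
n_effective = k * 4  # effective number of frames contributing to averages
```

A low Kish ratio signals that only a small part of the original simulation supports the reweighted averages, which is why a lower bound on K guards against overfitting.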

The following diagram illustrates this integrative structural biology workflow:

Force Field Validation on Challenging Systems

Rigorous validation involves testing force fields against IDPs with specific characteristics. A recent study evaluated 20 MD models on COR15A, "an IDP just on the verge of folding," using a staged validation approach [19]:

Primary Screening: Initial validation of short 200-ns simulations against SAXS data to identify promising candidates.

Detailed Evaluation: Extended 1.2-μs MD simulations of the six best-performing models against NMR data, including a single-point mutant with slightly increased helicity.

Dynamic Assessment: Analysis of NMR relaxation times at different magnetic field strengths to evaluate conformational dynamics.

This systematic approach revealed that only DES-Amber adequately reproduced both structural and dynamic properties of COR15A, highlighting the importance of rigorous, multi-faceted force field validation [19].
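A screening step against SAXS data, like the primary screening described above, needs a score for each model. The text does not state which scoring function the study used, so the sketch below assumes a common choice: a χ² per data point with a least-squares scale factor between calculated and experimental intensities. All names and the synthetic data are hypothetical.

```python
import numpy as np


def saxs_chi2(i_calc, i_exp, sigma):
    """Chi-squared per data point between a calculated and an experimental profile.

    The calculated intensities are multiplied by the scale factor that
    minimises the weighted squared residuals (closed-form least squares).
    """
    i_calc = np.asarray(i_calc, dtype=float)
    i_exp = np.asarray(i_exp, dtype=float)
    sigma = np.asarray(sigma, dtype=float)
    scale = np.sum(i_calc * i_exp / sigma**2) / np.sum(i_calc**2 / sigma**2)
    residuals = (i_exp - scale * i_calc) / sigma
    return float(np.mean(residuals**2)), float(scale)


# Synthetic Guinier-like profiles for two hypothetical models
q = np.linspace(0.01, 0.3, 50)
i_exp = np.exp(-(q * 20.0) ** 2 / 3.0)
sigma = np.full_like(q, 0.01)
profiles = {
    "model_A": 5.0 * np.exp(-(q * 20.0) ** 2 / 3.0),  # same shape, different scale
    "model_B": np.exp(-(q * 30.0) ** 2 / 3.0),        # too expanded
}
ranking = sorted(profiles, key=lambda name: saxs_chi2(profiles[name], i_exp, sigma)[0])
```

Because the scale factor is fitted, the score compares profile shapes rather than absolute intensities, which are rarely on a common scale between simulation and experiment.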

Therapeutic Targeting Strategies for IDPs

Biomolecular Condensates as Therapeutic Targets

IDPs frequently drive the formation of biomolecular condensates through liquid-liquid phase separation (LLPS), and abnormal condensates are implicated in cancer and neurodegenerative diseases [17]. Therapeutic strategies have evolved to target these assemblies through several mechanisms:

Table 2: Classification of Condensate-Modifying Drugs (c-mods)

| Category | Mechanism of Action | Example Compound | Therapeutic Effect |
|---|---|---|---|
| Dissolvers | Dissolve or prevent condensate formation | ISRIB | Reverses stress granule formation and restores translation [17] |
| Inducers | Trigger condensate formation to alter reaction rates | Tankyrase inhibitors | Promote degradation condensates that reduce beta-catenin [17] |
| Localizers | Alter subcellular localization of condensate components | Avrainvillamide | Restores NPM1 to nucleus/nucleolus in AML [17] |
| Morphers | Modify condensate morphology and material properties | Cyclopamine | Alters RSV condensate properties, inhibiting replication [17] |

AI-Driven Binder Design for IDPs

Recent breakthroughs in AI-based protein design have enabled targeting of disordered proteins previously considered undruggable. Two complementary strategies have emerged:

'Logos' Approach: A design strategy that creates binders by assembling proteins from a library of 1,000 pre-made parts, successfully generating tight binders for 39 of 43 tested disordered targets [21].

RFdiffusion-Based Method: Uses generative AI to produce proteins that wrap around flexible targets, achieving high-affinity binders (3-100 nM) for targets including amylin, pathogenic prion core, and IL-2 receptor γ-chain [21].

These approaches have demonstrated promising functional outcomes, including blocking pain signaling, dismantling toxic aggregates, and disabling prion seeds in cell-based tests [21].

The following diagram illustrates the therapeutic targeting strategies for IDPs and biomolecular condensates:

Table 3: Key Research Reagents and Computational Tools for IDP Research

| Resource Category | Specific Tools | Function and Application |
|---|---|---|
| Force Fields | DES-Amber, CHARMM36m, ff99SBws, ff03w-sc | Provide physical models for MD simulations of IDPs [19] [20] |
| Water Models | TIP4P/2005, TIP4P-D, TIP3P (modified) | Critical for balancing protein-water and protein-protein interactions [18] [20] |
| Reweighting Software | Maximum entropy reweighting protocols | Integrate MD simulations with experimental data [7] |
| IDP Prediction Tools | IDP-FSP, IDP-EDL, FusionEncoder | Predict disordered regions from sequence [22] [23] |
| Experimental Data | NMR chemical shifts, J-couplings, SAXS profiles | Validate and refine computational models [7] [19] |
| AI Design Platforms | RFdiffusion, 'Logos' method | Design binders to target disordered proteins [21] |

The field of IDP research is rapidly advancing, with recent progress in force field development, integrative structural biology, and therapeutic targeting strategies. Force fields such as DES-Amber and ff03w-sc demonstrate that balanced parameterization can simultaneously describe folded domains and disordered regions with improved accuracy [19] [20]. Integrative approaches that combine MD simulations with experimental data through maximum entropy reweighting are enabling determination of force-field independent conformational ensembles [7]. Most promisingly, AI-driven methods for designing binders to disordered targets are overcoming historical barriers to targeting IDPs therapeutically [21]. As these computational and experimental methodologies continue to mature, researchers are increasingly positioned to exploit the critical importance of IDPs in both fundamental biology and drug development, potentially unlocking new treatments for cancer, neurodegenerative diseases, and other disorders linked to disordered proteins.

In the last two decades, non-natural peptidic compounds have demonstrated remarkable structural diversity and widespread applicability across numerous fields [24]. Among these, β-peptides—composed of β-amino acids with an extra backbone carbon atom—have emerged as particularly promising scaffolds for biomolecular engineering. These foldamers can adopt diverse secondary structures including helical conformations, sheet-like formations, hairpins, and even higher-ordered oligomers and nanofibers [24]. The growing interest in β-peptides stems from their unique structural properties and broad potential applications in nanotechnology, biomedical fields, biopolymer surface recognition, catalysis, and biotechnology [24] [25]. Unlike natural peptides, β-peptides possess important structural differences primarily arising from the properties of the amino acid backbone, which may enable functions so far unseen for natural biomolecules [24].

Computer-assisted study and design of these non-natural peptidomimetics has become increasingly important, with molecular dynamics (MD) simulations playing a crucial role in accurately describing both monomeric and oligomeric states [24]. However, the accuracy of these computational predictions hinges on the quality of the empirical force fields used to describe atomic interactions. This case study examines the current state of force field performance for β-peptides, comparing the accuracy of three major force field families and their ability to predict both secondary structure and oligomerization behavior.

Force Field Performance: A Comparative Analysis

Quantitative Comparison of Force Field Accuracy

Recent research has systematically evaluated the performance of three major force field families specifically tailored for β-peptides: CHARMM, Amber, and GROMOS [24]. A 2023 comparative study tested these force fields across seven different β-peptide sequences with diverse structural characteristics, simulating each system for 500 nanoseconds and testing multiple starting conformations [24].

Table 1: Force Field Performance Across β-Peptide Systems

| Force Field | Successfully Modeled Peptides | Experimental Structure Reproduction | Oligomer Formation & Stability |
|---|---|---|---|
| CHARMM | 7/7 sequences | Accurate in all monomeric simulations | Correctly described all oligomeric examples |
| Amber | 4/7 sequences | Successful for β-peptides with cyclic β-amino acids | Maintained pre-formed associates but failed at spontaneous oligomer formation |
| GROMOS | 4/7 sequences | Lowest performance in structure reproduction | Limited oligomerization capabilities |

The results demonstrated clear performance differences among the force fields. The CHARMM force field extension, developed through torsional energy path matching against quantum-chemical calculations, performed best overall, accurately reproducing experimental structures in all monomeric simulations and correctly describing all oligomeric systems [24]. In contrast, the Amber force field successfully modeled only four of the seven β-peptide sequences, particularly those containing cyclic β-amino acids, while the GROMOS force field also handled only four sequences and showed the lowest performance in reproducing experimental secondary structures [24].

Specialized Force Field Extensions for β-Peptides

Each major force field family has undergone specific extensions to accommodate β-peptides:

CHARMM: The Cui group initially extended CHARMM for β-peptides [24], with subsequent improvements by Wacha et al. involving rigorous study of backbone torsions to eliminate correlations between dihedral angle parameters [24]. This resulted in better reconstruction of the ab initio potential energy surface and closer matching of experimentally determined structural quantities.

Amber: Two separate extension attempts exist in the literature—the AMBER*C variant validated for cyclic β-amino acids by the Gellman group, and the extension by the Martinek research group for both cyclic and acyclic β-amino acids [24].

GROMOS: This was the first force field to support β-peptides "out of the box" as early as 1997, developed by the original van Gunsteren group [24]. The 54A7 and 54A8 versions both support β-amino acids without further modification, though derivation of some residues by analogy is sometimes required [24].

Methodological Framework for Force Field Validation

Experimental Protocols and Simulation Methodologies

The comparative analysis of force field performance followed rigorous methodological standards to ensure impartiality and reproducibility [24]. Each simulation employed consistent protocols across all tested force fields:

Table 2: Key Research Reagents and Computational Tools

| Research Tool | Specific Type/Version | Function in β-Peptide Research |
|---|---|---|
| MD Engine | GROMACS 2019.5 | Common simulation platform for impartial force field comparison |
| Force Fields | CHARMM36m (Mar 2017), Amber ff03, GROMOS 54A7/54A8 | Empirical interaction potentials with β-amino acid parameters |
| Topology Generation | pdb2gmx (CHARMM/Amber), make_top/OutGromacs (GROMOS) | Generate molecular topologies and interaction parameters |
| Visualization & Modeling | PyMOL 2.3.0 with pmlbeta extension | Molecular graphics and β-peptide model construction |
| Analysis Package | gmxbatch Python package | Trajectory analysis and run preparation |

Simulation Workflow: Molecular models of β-peptides were built using PyMOL with specialized extensions for β-peptides [24]. After initial energy minimization in vacuo, peptide molecules were folded by setting backbone torsion angles to values corresponding to desired secondary structures. The folded peptides were solvated in appropriate solvents (water, methanol, or DMSO) in a cubic box with proper peptide-wall distances [24]. For oligomerization studies, eight copies of the solvated peptide were assembled in a 2×2×2 cube after applying random rotations to each chain [24]. The systems underwent energy minimization with position restraints on peptide heavy atoms, followed by a 100 ps MD run in the NVT ensemble for temperature coupling at 300 K [24].

Special Considerations: For short peptides, terminal groups profoundly influence folding behavior, requiring careful attention to correct termini application as reported in literature [24]. This presented challenges for some force fields—Amber lacked neutral N- and C-termini, while GROMOS was missing neutral amine and N-methylamide C-termini, limiting their applicability for certain β-peptide sequences [24].
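The oligomerization setup in the simulation workflow, eight randomly rotated copies arranged in a 2×2×2 cube, can be sketched with plain NumPy. This is an illustration of the geometry only, not the authors' preparation script: it places bare peptide coordinates rather than solvated boxes, and the function names, the `spacing` parameter, and the toy coordinates are assumptions.

```python
import numpy as np


def random_rotation(rng):
    """Draw a random proper rotation matrix via QR decomposition of a Gaussian matrix."""
    q, r = np.linalg.qr(rng.normal(size=(3, 3)))
    q = q * np.sign(np.diag(r))   # fix column signs so the draw is uniform
    if np.linalg.det(q) < 0:      # flip one axis to guarantee det = +1
        q[:, 0] = -q[:, 0]
    return q


def assemble_cube(coords, spacing, rng):
    """Place eight randomly rotated copies of `coords` on a 2x2x2 grid.

    coords  : (n_atoms, 3) array for a single peptide
    spacing : distance between neighbouring grid points (same units as coords)
    Returns an array of shape (8, n_atoms, 3).
    """
    centered = coords - coords.mean(axis=0)
    copies = []
    for ix in range(2):
        for iy in range(2):
            for iz in range(2):
                rotated = centered @ random_rotation(rng).T
                copies.append(rotated + spacing * np.array([ix, iy, iz]))
    return np.stack(copies)


rng = np.random.default_rng(0)
peptide = rng.normal(size=(30, 3))   # toy coordinates standing in for a real peptide
system = assemble_cube(peptide, spacing=4.0, rng=rng)
```

Randomizing the orientation of each chain avoids starting every copy in the same relative pose, so any association observed later is less likely to be an artifact of the initial arrangement.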

Test System Diversity

The force field validation employed seven diverse β-peptide sequences representing various structural motifs [24]:

Peptide I: A common benchmark that folds in methanol into a left-handed 3₁₄ helix with approximately three β-amino acid residues per turn [24].

Peptides II & III: Test cases for Amber-compatible parameter derivation; Peptide II prefers 3₁₄ helical conformation in aqueous media, while Peptide III is disordered in water [24].

Peptide IV: Among the first β-peptides composed exclusively of acyclic β-amino acids adopting stable 3₁₄ conformation in water, designed as protein-protein interaction inhibitors [24].

Peptide V: Designed to adopt hairpin-like conformations in aqueous solution [24].

Peptide VI: Forms elongated strands in DMSO and assembles into nanostructured sheet-mimicking fibers in methanol and water [24].

Peptide VII (Zwit-EYYK): Designed to form stable octameric bundles in the shape of two cupped hands with four "fingers" of 3₁₄ helices each [24].

This diversity ensured comprehensive assessment of force field performance across different secondary structures and association behaviors.

Figure 1: Molecular Dynamics Workflow for β-Peptide Force Field Validation

Advanced Applications: β-Peptide Self-Assembly and Functional Materials

Supramolecular Self-Assembly and Stimuli-Responsive Materials

Beyond monomeric structures, β-peptides demonstrate remarkable supramolecular self-assembly capabilities, forming well-defined nanostructures with applications in tissue engineering, cell culture, and drug delivery [25]. These foldectures—self-assembled molecular architectures of β-peptide foldamers—exhibit uniform alignment in response to external magnetic fields and show instantaneous orientational motion in dynamic magnetic fields [26]. This magnetotactic behavior stems from amplified anisotropy of diamagnetic susceptibilities resulting from well-ordered molecular packing, reminiscent of magnetosomes in magnetotactic bacteria [26].

The magnetic alignment of foldectures can be explained by collective diamagnetic anisotropy in their ordered molecular packing. Theoretical calculations of diamagnetic susceptibilities along orthogonal crystallographic axes reveal that foldectures align their easy magnetization axis (the direction with the largest, least negative diamagnetic susceptibility) parallel to applied static magnetic fields [26]. For instance, rhombic rod foldectures (F1) from BocNH-ACPC₆-OH align their longitudinal axes parallel to the field direction, while rectangular plates (F2) from BocNH-ACPC₈-OBn align their minor axes parallel to the field [26]. This precise control over molecular orientation enables design of stimuli-responsive molecular systems capable of undergoing mechanical work, providing inspiration for next-generation biocompatible peptide-based molecular machines [26].

Challenges in Modeling Self-Assembly

Computational modeling of β-peptide self-assembly presents significant challenges, as accurate prediction requires capturing the collective balance of non-covalent interactions that drive association under different conditions [25]. Molecular modeling can provide crucial insights into self-assembly mechanisms and atomistic models of the resulting materials, but in the comparative study only CHARMM both maintained pre-formed associates and yielded spontaneous oligomer formation in simulations [24]. Amber held together already formed associates but did not produce spontaneous oligomer formation, while GROMOS showed limited oligomerization capabilities [24].

Emerging Methodologies in Force Field Optimization

Bayesian Inference for Force Field Refinement

Recent advances in force field optimization leverage Bayesian inference methods to address challenges in parameterizing models against experimental data. The Bayesian Inference of Conformational Populations (BICePs) algorithm provides a robust framework for refining force field parameters against ensemble-averaged experimental measurements that are often sparse and/or noisy [27]. BICePs samples the full posterior distribution of conformational populations and experimental uncertainty, treating uncertainty in observables as nuisance parameters [27].

The algorithm uses a replica-averaged forward model that becomes a maximum-entropy reweighting method in the limit of large replica numbers [27]. This approach employs specialized likelihood functions, including Student's likelihood models, that automatically detect and down-weight data points subject to systematic error—a significant advantage when working with experimental measurements containing unknown random and systematic errors [27]. The BICePs score, a free energy-like quantity reflecting total evidence for a model, serves as an objective function for variational optimization of force field parameters [27].
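The way a heavy-tailed likelihood down-weights outliers can be seen by comparing negative log-likelihoods. The sketch below uses a generic Student's t density with a scale parameter; it is not the specific likelihood implemented in BICePs, and the function names and the choice of ν = 2 are assumptions for illustration.

```python
import math


def gaussian_nll(residual, sigma):
    """Negative log-likelihood of a residual under a Gaussian error model."""
    return 0.5 * math.log(2.0 * math.pi * sigma**2) + 0.5 * (residual / sigma) ** 2


def student_t_nll(residual, sigma, nu):
    """Negative log-likelihood under a Student's t model with scale sigma and nu degrees of freedom."""
    z2 = (residual / sigma) ** 2
    log_norm = (math.lgamma((nu + 1.0) / 2.0) - math.lgamma(nu / 2.0)
                - 0.5 * math.log(nu * math.pi * sigma**2))
    return -log_norm + 0.5 * (nu + 1.0) * math.log1p(z2 / nu)


# Extra penalty incurred by a 10-sigma outlier relative to a perfect fit
gauss_penalty = gaussian_nll(10.0, 1.0) - gaussian_nll(0.0, 1.0)
student_penalty = student_t_nll(10.0, 1.0, 2.0) - student_t_nll(0.0, 1.0, 2.0)
```

The Gaussian penalty grows quadratically with the residual, whereas the Student's t penalty grows only logarithmically, so a single data point with a large systematic error cannot dominate the fit.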

Future Directions in Force Field Development

The extension of BICePs for automated force field refinement represents a promising direction for robust parameterization of molecular potentials [27]. By efficiently optimizing complex parameter spaces through calculation of first and second derivatives of the BICePs score, this approach enables automatic force field optimization against ensemble-averaged observables [27]. Such methodologies may address current limitations in β-peptide modeling, particularly for challenging systems like self-assembling foldectures and complex oligomeric bundles.

Future force field development will likely focus on improving transferability across diverse β-peptide sequences, accuracy in predicting association behavior, and compatibility with enhanced sampling methods. As β-peptides continue to find applications in designing functional nanomaterials and biomedical constructs, reliable computational models will remain indispensable for molecular-level understanding and rational design.

This case study demonstrates that accurate modeling of β-peptides and non-natural foldamers remains challenging but achievable with carefully parameterized force fields. The CHARMM family, particularly with recent improvements in backbone torsion parameters, currently provides the most reliable performance across diverse β-peptide systems [24]. However, limitations persist in modeling spontaneous oligomerization, an area where further force field refinement is needed.

The synergy between experimental and computational approaches continues to drive progress in this field, enabling fully atomistic models of β-peptide materials and their functional properties [25]. Emerging methodologies like Bayesian inference for force field optimization offer promising avenues for addressing current challenges, particularly in handling experimental uncertainty and systematic errors [27]. As computational power increases and algorithms improve, molecular dynamics simulations will play an increasingly vital role in unlocking the potential of β-peptides for designing novel biomaterials with tailored structures and functions.

Methodologies for Integrating Simulations and Experiments for Robust Ensembles

The characterization of biomolecular conformational ensembles, particularly for intrinsically disordered proteins (IDPs), represents a significant challenge in structural biology and drug development. IDPs, which lack a stable three-dimensional structure and instead populate a heterogeneous ensemble of conformations, are implicated in a wide range of biological processes and human diseases [28] [29]. The accurate description of these conformational ensembles is crucial for understanding their biological functions and for rational drug design efforts targeting these proteins [30].

In this landscape, the Maximum Entropy Reweighting framework has emerged as a powerful approach for integrating experimental data with computational models to determine accurate conformational ensembles. This framework enables researchers to refine ensembles derived from molecular dynamics (MD) simulations by incorporating experimental measurements while introducing minimal bias [28] [30] [29]. The core principle of maximum entropy reweighting is to find the least biased adjustment to a simulated ensemble that improves agreement with experimental data, thereby preserving the physical realism of the original simulation while correcting for force field inaccuracies or sampling limitations [29].

This guide provides a comprehensive comparison of maximum entropy reweighting against alternative methods for force field validation and conformational ensemble determination, with specific emphasis on applications for IDPs. We present experimental data, detailed methodologies, and practical resources to assist researchers in selecting and implementing the most appropriate integration strategy for their specific research needs.

Key Integration Approaches for Conformational Ensemble Determination

Multiple computational strategies have been developed to integrate experimental data with simulations for conformational ensemble determination, each with distinct theoretical foundations and practical implications.

Table 1: Comparison of Integrative Methods for Conformational Ensemble Determination

| Method | Theoretical Basis | Key Advantages | Limitations | Representative Applications |
|---|---|---|---|---|
| Maximum Entropy Reweighting | Information theory; minimal perturbation principle | Preserves original simulation diversity; minimal bias introduction; handles multiple data types | Dependent on quality of initial sampling; cannot generate new conformations | IDP ensembles with NMR/SAS data [31] [30] [32] |
| Bayesian/Maximum Entropy (BME) | Bayesian inference with maximum entropy prior | Accounts for experimental and prediction errors; systematic uncertainty quantification | Hyperparameter (θ) selection requires careful validation [31] | IDP ensembles with NMR chemical shifts [31] [32] |
| Maximum Entropy Optimized Force Fields | Iterative parameter optimization with maximum entropy biases | Creates transferable force fields; enables de novo prediction | Requires multiple proteins for parameterization; linear approximation limitations | MOFF force field for IDPs [33] |
| HDX Ensemble Reweighting (HDXer) | Maximum entropy applied to hydrogen-deuterium exchange data | Specifically tailored for HDX-MS data; handles exchange-competent states | Dependent on accuracy of protection factor prediction model | Membrane proteins like LeuT [34] |

Maximum Entropy Reweighting: Core Theoretical Framework

The maximum entropy reweighting framework operates on the principle of minimizing the perturbation to the original simulated ensemble while maximizing agreement with experimental data. Mathematically, this is achieved by optimizing the weights \(w_t\) of individual conformations in the ensemble to minimize the function:

\[ L = \sum_t w_t \ln \frac{w_t}{w_t^0} + \sum_i \lambda_i \left( \langle O_i^{calc} \rangle - O_i^{exp} \right) \]

where \(w_t^0\) are the original weights from the simulation (typically uniform), \(\lambda_i\) are Lagrange multipliers that enforce agreement with experimental observables, \(\langle O_i^{calc} \rangle\) is the ensemble-averaged calculated value of observable \(i\), and \(O_i^{exp}\) is the corresponding experimental value [28] [29]. This formulation ensures that the relative entropy (Kullback-Leibler divergence) between the initial and reweighted ensembles is minimized while satisfying the experimental constraints.
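Setting the derivative of this function with respect to each weight to zero gives weights of the form w_t ∝ w_t^0 exp(−Σ_i λ_i O_i(t)), so the problem reduces to finding the Lagrange multipliers. The sketch below solves for them with Newton's method on the convex dual function; it enforces the experimental averages exactly and ignores experimental error, and the function names and toy data are assumptions rather than any published implementation.

```python
import numpy as np


def _weights(lam, obs_calc, log_w0):
    """Normalised weights w_t proportional to w0_t * exp(-lam . O_t)."""
    log_w = log_w0 - obs_calc @ lam
    log_w -= log_w.max()            # numerical stability before exponentiation
    w = np.exp(log_w)
    return w / w.sum()


def maxent_reweight(obs_calc, obs_exp, w0=None, max_iter=100, tol=1e-10):
    """Find maximum entropy weights whose averages match obs_exp.

    obs_calc : (n_frames, n_obs) per-frame calculated observables
    obs_exp  : (n_obs,) experimental target values
    Returns (weights, lambdas).
    """
    obs_calc = np.asarray(obs_calc, dtype=float)
    if obs_calc.ndim == 1:
        obs_calc = obs_calc[:, None]
    obs_exp = np.atleast_1d(np.asarray(obs_exp, dtype=float))
    n_frames, n_obs = obs_calc.shape
    w0 = np.full(n_frames, 1.0 / n_frames) if w0 is None else np.asarray(w0, dtype=float)
    log_w0 = np.log(w0 / w0.sum())
    lam = np.zeros(n_obs)
    for _ in range(max_iter):
        w = _weights(lam, obs_calc, log_w0)
        avg = w @ obs_calc
        grad = obs_exp - avg                     # gradient of the dual function
        if np.max(np.abs(grad)) < tol:
            break
        centered = obs_calc - avg
        hess = (centered * w[:, None]).T @ centered   # weighted covariance
        lam = lam - np.linalg.solve(hess + 1e-12 * np.eye(n_obs), grad)
    return _weights(lam, obs_calc, log_w0), lam


# Toy example: one observable sampled uniformly on [-1, 1], target average 0.2
obs = np.linspace(-1.0, 1.0, 201)
weights, lambdas = maxent_reweight(obs, 0.2)
```

Plain Newton steps work here because the target lies well inside the sampled range; targets near or beyond the sampled extremes would need a line search or a regularized variant that accounts for experimental error.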

The Bayesian extension of maximum entropy (BME) incorporates uncertainties in both experimental measurements and forward model predictions through a hyperparameter θ that balances the trust between the prior simulation and experimental data [31] [32]:

\[ \chi^2 = \sum_i \frac{\left( \langle O_i^{calc} \rangle - O_i^{exp} \right)^2}{\sigma_i^2} + \frac{1}{\theta} \sum_t w_t \ln \frac{w_t}{w_t^0} \]

where \(\sigma_i\) represents the uncertainty in experimental measurements and forward model predictions [31] [32]. The optimal value of θ is typically determined through validation methods, such as using a subset of experimental data not included in the reweighting procedure [31].
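The role of θ can be illustrated with a small Python sketch for a single observable, following the objective exactly as written above (so a larger θ gives the experimental term more weight; note that some implementations define θ with the opposite sense). Minimizing that objective over normalized weights gives weights proportional to w_t^0 exp(-λ O_t) with the self-consistency condition λ = 2θ(⟨O^calc⟩ - O^exp)/σ², which is solved here by bisection. All function names and the toy numbers are assumptions made for illustration, not the API of the BME software.

```python
import math

def weights_for(obs, w0, lam):
    """Weights w_t proportional to w0_t * exp(-lam * obs_t), normalized to one."""
    logw = [math.log(p) - lam * o for p, o in zip(w0, obs)]
    shift = max(logw)
    unnorm = [math.exp(v - shift) for v in logw]
    z = sum(unnorm)
    return [u / z for u in unnorm]

def average(w, obs):
    return sum(wi * o for wi, o in zip(w, obs))

def bme_objective(w, w0, obs, target, sigma, theta):
    """Chi-square term plus (1/theta) times the relative entropy, as in the equation above."""
    chi2 = (average(w, obs) - target) ** 2 / sigma ** 2
    kl = sum(wi * math.log(wi / p) for wi, p in zip(w, w0) if wi > 0.0)
    return chi2 + kl / theta

def bme_reweight(obs, target, sigma, theta, w0=None, n_iter=200):
    """Minimize the objective for one observable via its stationarity condition."""
    n = len(obs)
    if w0 is None:
        w0 = [1.0 / n] * n

    def g(lam):
        # g is strictly increasing in lam, so it has a single root.
        mean = average(weights_for(obs, w0, lam), obs)
        return lam - 2.0 * theta * (mean - target) / sigma ** 2

    lo, hi = -1.0, 1.0
    while g(lo) > 0.0:
        lo *= 2.0
    while g(hi) < 0.0:
        hi *= 2.0
    for _ in range(n_iter):
        mid = 0.5 * (lo + hi)
        if g(mid) > 0.0:
            hi = mid
        else:
            lo = mid
    return weights_for(obs, w0, 0.5 * (lo + hi))

# Toy scan over theta (hypothetical data): the prior average is 2.5, the target 3.0.
obs_per_frame = [1.0, 2.0, 3.0, 4.0]
for theta in (0.001, 1.0, 100.0):
    w = bme_reweight(obs_per_frame, target=3.0, sigma=0.2, theta=theta)
    print(theta, round(average(w, obs_per_frame), 3))
```

In this toy scan the reweighted average moves from near the prior value toward the experimental target as θ increases, which is the trade-off that validation against held-out data is meant to tune.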

Experimental Protocols and Validation

Standard Protocol for Maximum Entropy Reweighting

The implementation of maximum entropy reweighting follows a systematic workflow that can be applied to various biological systems and experimental data types:

Generation of Initial Conformational Ensemble: Perform extensive MD simulations using state-of-the-art force fields to sample the conformational space. For IDPs, this typically involves microsecond-timescale simulations with force fields such as a99SB-disp, CHARMM36m (C36m), or Amber ff03ws (a03ws) [31] [30].

Selection and Calculation of Experimental Observables: Identify appropriate experimental measurements for reweighting, such as NMR chemical shifts, residual dipolar couplings, J-couplings, or SAXS profiles. Calculate these observables from each conformation in the ensemble using appropriate forward models [30] [29].

Application of Reweighting Algorithm: Optimize conformational weights using maximum entropy or Bayesian maximum entropy algorithms to improve agreement between calculated and experimental ensemble averages while minimizing the perturbation to the original ensemble [31] [30].

Validation of Reweighted Ensemble: Assess the quality of the reweighted ensemble through statistical measures such as the Kish ratio (the effective sample size expressed as a fraction of the total number of conformations) and cross-validation with experimental data not included in the reweighting process [31] [30].

Analysis of Conformational Properties: Examine the structural and dynamic properties of the reweighted ensemble, including secondary structure propensity, radius of gyration, and transient structural elements [30].
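The Kish ratio mentioned in the validation step can be computed directly from the conformational weights. The sketch below uses the standard Kish effective sample size, (Σw)² / Σw², divided by the number of frames; the function names are illustrative assumptions.

```python
def kish_effective_size(weights):
    """Kish effective sample size: (sum w)^2 / sum(w^2)."""
    total = sum(weights)
    return total * total / sum(w * w for w in weights)

def kish_ratio(weights):
    """Effective sample size as a fraction of the number of frames (between 1/N and 1)."""
    return kish_effective_size(weights) / len(weights)

print(kish_ratio([0.25, 0.25, 0.25, 0.25]))  # uniform weights -> 1.0
print(kish_ratio([1.0, 0.0, 0.0, 0.0]))      # one dominant frame -> 0.25
```

A ratio close to 1 indicates that the experimental data required only a mild perturbation of the simulated ensemble, while a very small ratio means a handful of frames carry most of the weight and the reweighted averages rest on few conformations.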

Experimental Data Supporting Method Efficacy

Recent studies have provided robust quantitative evidence demonstrating the effectiveness of maximum entropy reweighting for determining accurate conformational ensembles:

Table 2: Experimental Validation of Maximum Entropy Reweighting for IDP Ensemble Determination

| System Studied | Experimental Data | Force Fields Compared | Key Result | Reference |
|---|---|---|---|---|
| ACTR (71 residues) | NMR chemical shifts | a99SB-disp, a03ws, C36m | BME reweighting improved agreement with target ensemble; consistent results across force fields after reweighting | [31] [32] |
| Aβ40, drkN SH3, ACTR, PaaA2, α-synuclein | NMR chemical shifts, J-couplings, RDCs, SAXS | a99SB-disp, C22*, C36m | Converged ensembles obtained for 3/5 IDPs after reweighting; force-field independent ensembles achieved | [30] |
| LeuT (membrane transporter) | HDX-MS data | Multiple simulation conditions | HDXer correctly identified relevant conformational states from artificial data | [34] |

A particularly compelling demonstration comes from a 2025 study that applied maximum entropy reweighting to five IDPs using three different force fields [30]. This research found that for three of the five IDPs (Aβ40, ACTR, and drkN SH3), the reweighted ensembles converged to highly similar conformational distributions regardless of the initial force field used. This convergence suggests that with sufficient experimental data, maximum entropy reweighting can produce force-field independent approximations of the true solution ensembles [30]. For the remaining two IDPs (PaaA2 and α-synuclein), where initial force fields sampled distinct regions of conformational space, the reweighting procedure clearly identified the most accurate representation of the solution ensemble [30].

Visualization and Workflow

Maximum Entropy Reweighting Workflow

[Figure: standard workflow for maximum entropy reweighting of molecular dynamics simulations with experimental data]

Theoretical Basis of Maximum Entropy Principle

[Figure: maximum entropy reweighting balances agreement with experiment against minimal perturbation to the original simulation]

Research Reagent Solutions

Implementation of maximum entropy reweighting requires specific computational tools and resources. The following table outlines essential components for successful application of these methods:

Table 3: Essential Research Reagents for Maximum Entropy Reweighting

| Resource Type | Specific Examples | Function and Application | Availability |
|---|---|---|---|
| Molecular Dynamics Engines | GROMACS, AMBER, CHARMM, OpenMM | Generate initial conformational ensembles through MD simulation | Open source and commercial |
| Forward Model Software | SPARTA+, SHIFTX2, PPM, PALES | Calculate NMR observables (chemical shifts, RDCs) from structures | Open source |
| Reweighting Algorithms | BME, HDXer, PLUMED | Implement maximum entropy and Bayesian reweighting protocols | Open source |
| Benchmark IDP Systems | ACTR, Aβ40, α-synuclein, drkN SH3 | Test and validate reweighting methodologies | Protein Ensemble Database |
| Experimental Data Repositories | BMRB, SASBDB, PED | Provide experimental data for reweighting and validation | Public databases |

The maximum entropy reweighting framework is a robust approach for integrating experimental data with molecular simulations to determine accurate conformational ensembles of biomolecules, particularly for challenging systems such as IDPs. The comparison with alternative integration methods indicates that maximum entropy reweighting balances respect for the physical realism of simulations against the incorporation of experimental constraints.

The experimental data and protocols presented in this guide highlight the method's ability to produce convergent, force-field independent ensembles when sufficient experimental data are available. As structural biology continues to grapple with the characterization of heterogeneous and dynamic biomolecules, maximum entropy reweighting enables statistically rigorous integration of diverse experimental data sources for force field validation and conformational ensemble determination.

For researchers embarking on studies of IDPs and other flexible systems, the implementation of maximum entropy reweighting with the reagents and protocols outlined here provides a pathway to determining accurate atomic-resolution ensembles that can inform biological mechanism and drug discovery efforts.

Utilizing Experimental Restraints from NMR, SAXS, and other Biophysical Techniques

Molecular dynamics (MD) simulations have become an indispensable tool for studying biological macromolecules at atomic resolution. The accuracy of these simulations, however, is critically dependent on the empirical force fields that describe interatomic interactions. The validation and refinement of these force fields against experimental data is a fundamental challenge in computational biophysics. Within this framework, experimental restraints from techniques such as Nuclear Magnetic Resonance (NMR) spectroscopy and Small-Angle X-Ray Scattering (SAXS) provide essential data for assessing and improving the accuracy of force field parameters. These techniques offer highly complementary information: NMR yields atomic-resolution detail on local structure and dynamics for moderately sized biomolecules, while SAXS provides low-resolution information on overall shape, size, and flexibility over a wide range of particle sizes. The joint application of these techniques facilitates comprehensive characterization of biomacromolecular solutions and creates a robust benchmark for validating the statistical ensembles generated by MD simulations.

Comparative Performance of Modern Force Fields

Extensive validation studies have been conducted to evaluate the performance of different protein force fields against experimental data from NMR and other biophysical techniques. The tables below summarize key findings from systematic comparisons.

Table 1: Summary of Force Field Performance in Folded State Simulations [2] [12]

| Force Field | Backbone RMSD (Å) | Native Hydrogen Bonds | Radius of Gyration | J-coupling Constants | Side-Chain χ₁ Angles |
|---|---|---|---|---|---|
| Amber ff99SB-ILDN | 1.5-2.5 | Good agreement | Slight compaction | Good agreement | Improved agreement |
| CHARMM22* | 1.4-2.3 | Good agreement | Good agreement | Good agreement | Good agreement |
| CHARMM27 | 1.6-2.8 | Slight deviations | Moderate expansion | Moderate deviations | Significant deviations |
| CHARMM36m | 1.3-2.2 | Best agreement | Good agreement | Best agreement | Good agreement |
| OPLS-AA | 1.7-2.9 | Moderate deviations | Moderate expansion | Moderate deviations | Moderate deviations |

Table 2: Performance Assessment with Intrinsically Disordered Proteins (IDPs) [7]

| Force Field | SAXS Profile Agreement (χ²) | NMR Chemical Shifts | Ensemble Diversity | Convergence after Reweighting |
|---|---|---|---|---|
| a99SB-disp | 0.8-1.5 | Excellent | Accurate | High |
| CHARMM22* | 1.2-2.1 | Good | Slightly overcompact | Moderate |
| CHARMM36m | 1.0-1.8 | Very Good | Accurate | High |
| Amber ff99SB-ILDN | 1.5-3.0 | Moderate | Overcompact | Low |

Table 3: Agreement with Specific NMR Observables [2] [12]

| Force Field | Backbone NOEs | Side-Chain NOEs | RDCs (Residual Dipolar Couplings) | ³JHNα-Couplings | Order Parameters (S²) |
|---|---|---|---|---|---|
| CHARMM22* | >95% satisfied | >90% satisfied | Q-factor: 0.25-0.35 | RMSD: 0.8-1.2 Hz | Good correlation |
| CHARMM36m | >97% satisfied | >92% satisfied | Q-factor: 0.20-0.30 | RMSD: 0.7-1.0 Hz | Excellent correlation |
| Amber ff99SB-ILDN | >92% satisfied | >85% satisfied | Q-factor: 0.30-0.40 | RMSD: 1.0-1.5 Hz | Moderate correlation |
| OPLS-AA | >90% satisfied | >82% satisfied | Q-factor: 0.35-0.45 | RMSD: 1.2-1.8 Hz | Moderate correlation |

The validation data reveal that while modern force fields have improved significantly, they exhibit distinct strengths and weaknesses. CHARMM36m and a99SB-disp generally show excellent agreement with experimental data for both folded proteins and intrinsically disordered proteins. The performance gaps are more pronounced for IDPs, where some force fields tend to produce overly compact structures. Recent versions that incorporate additional backbone and side-chain corrections generally outperform their predecessors.

Experimental Protocols and Methodologies

NMR Data Collection for Structural Validation

NMR provides multiple types of experimental parameters for force field validation. The standard protocol involves:

Sample Preparation: Protein samples (typically 0.5-1.0 mM) in appropriate buffers are prepared with uniform ¹⁵N and/or ¹³C labeling for multidimensional NMR experiments [12].

Data Collection:

- NOESY Spectra: Nuclear Overhauser Effect spectroscopy provides distance restraints (typically 1.8-6.0 Å) between proton pairs [2].

- Residual Dipolar Couplings (RDCs): Measured in weakly aligning media, RDCs provide orientational restraints relative to a molecular frame [35].

- J-coupling Constants: Three-bond J-couplings (³JHNα) report on backbone dihedral angles [12].

- Chemical Shifts: Particularly sensitive to secondary structure and conformational dynamics [7].

- Relaxation Measurements: ¹⁵N R₁, R₂, and heteronuclear NOE provide information on dynamics across various timescales [12].

Data Analysis: Experimental data are compared with back-calculated values from MD simulations using specialized software such as SHIFTX2 for chemical shifts and PALES for RDCs [7].
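The comparison step can be sketched in Python as follows, assuming the per-frame back-calculated values have already been produced by a forward model such as SHIFTX2 or PALES (their invocation is not shown). The Q-factor definition used here, the RMS deviation normalized by the RMS of the experimental couplings, is one common convention and is an assumption, since the text does not define it; the function names are likewise illustrative.

```python
import math

def ensemble_average(per_frame, weights=None):
    """Average each observable over frames; per_frame[t][i] is observable i in frame t."""
    n_frames = len(per_frame)
    if weights is None:
        weights = [1.0 / n_frames] * n_frames  # unweighted trajectory average
    total = sum(weights)
    n_obs = len(per_frame[0])
    return [sum(w * frame[i] for w, frame in zip(weights, per_frame)) / total
            for i in range(n_obs)]

def rmsd(calc, exp):
    """Root-mean-square deviation between calculated and experimental values."""
    return math.sqrt(sum((c - e) ** 2 for c, e in zip(calc, exp)) / len(exp))

def q_factor(calc, exp):
    """RDC quality factor: sqrt(sum (calc - exp)^2 / sum exp^2)."""
    return math.sqrt(sum((c - e) ** 2 for c, e in zip(calc, exp))
                     / sum(e * e for e in exp))

# Hypothetical back-calculated couplings (Hz) for two frames and two bond vectors.
calc_per_frame = [[1.0, 2.0], [3.0, 4.0]]
calc_avg = ensemble_average(calc_per_frame)  # [2.0, 3.0]
print(rmsd(calc_avg, [2.5, 3.5]), q_factor(calc_avg, [2.5, 3.5]))
```

Averaging the observable over frames before comparing, rather than comparing frame by frame, matches the ensemble-averaged nature of the NMR measurement; passing reweighted conformational weights to `ensemble_average` gives the corresponding reweighted comparison.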

SAXS Data Acquisition and Processing

SAXS provides low-resolution structural information in solution. The standard experimental workflow includes:

Sample Preparation and Data Collection:

- Highly pure, monodisperse protein solutions at multiple concentrations (typically 1-10 mg/mL) are required [36].

- Measurements are performed at synchrotron beamlines or with laboratory sources, with exposure times optimized to minimize radiation damage [36].

- Data are collected over a momentum transfer range of 0.01 < q < 5 nm⁻¹, where q = 4πsin(θ)/λ, 2θ is the scattering angle, and λ is the X-ray wavelength [36].

Primary Data Analysis:

- Radius of Gyration (Rg): Determined from the Guinier plot (ln[I(q)] vs. q²) at low angles (q·Rg < 1.3) [36].

- Molecular Mass: Estimated from the forward scattering I(0) by comparison with standard proteins [36].

- Distance Distribution Function: p(r) computed via indirect Fourier transformation of I(q) using programs such as GNOM [36].

- Kratky Plot: (q²I(q) vs. q) used to assess the degree of foldedness and flexibility [7].
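The Guinier analysis in the list above amounts to a straight-line fit of ln I against the squared momentum transfer, whose slope is -Rg²/3 and whose intercept is ln I(0). The Python sketch below is a minimal illustration under stated assumptions: data sorted by increasing momentum transfer, positive intensities, no error weighting, and an iterative choice of the fitting range so that the largest fitted point satisfies the 1.3 limit. The function names and the synthetic profile are hypothetical.

```python
import math

def linear_fit(x, y):
    """Ordinary least-squares slope and intercept of y against x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx

def guinier_fit(q, intensity, limit=1.3, n_start=5, max_iter=20):
    """Estimate Rg and I(0) from ln I = ln I(0) - (Rg^2 / 3) q^2 at low angles."""
    n_pts = n_start
    for _ in range(max_iter):
        x = [v * v for v in q[:n_pts]]
        y = [math.log(i) for i in intensity[:n_pts]]
        slope, intercept = linear_fit(x, y)
        if slope >= 0.0:
            raise ValueError("non-negative Guinier slope; check data quality")
        rg = math.sqrt(-3.0 * slope)
        # Keep only points inside the Guinier region for the next pass.
        new_n = max(n_start, sum(1 for v in q if v * rg < limit))
        if new_n == n_pts:
            break
        n_pts = new_n
    return rg, math.exp(intercept)

# Synthetic, noise-free Guinier profile with Rg = 2.0 nm and I(0) = 10 (hypothetical).
q = [0.04 * k for k in range(1, 26)]  # nm^-1
intensity = [10.0 * math.exp(-(v * 2.0) ** 2 / 3.0) for v in q]
rg, i0 = guinier_fit(q, intensity)
```

On real data the fit would normally be weighted by the experimental errors, and the lowest-angle points are often excluded if they show aggregation or interparticle interference.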

Validation Against Simulations: Theoretical scattering profiles are computed from MD trajectories using methods such as CRYSOL and compared with experimental data [7].
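Once a theoretical profile has been computed, the comparison with experiment is usually summarized as a χ² value. The sketch below assumes the calculated and experimental intensities are on the same grid of scattering angles and fits only an overall scale factor by weighted least squares; programs such as CRYSOL also adjust hydration-related parameters, which is not attempted here. The function name and the normalization by the number of points are assumptions.

```python
def saxs_chi2(i_exp, sigma, i_calc):
    """Return (chi2, scale) after fitting the scale factor c in I_exp ~ c * I_calc."""
    # Closed-form weighted least-squares solution for the scale factor.
    num = sum(e * c / s ** 2 for e, c, s in zip(i_exp, i_calc, sigma))
    den = sum(c * c / s ** 2 for c, s in zip(i_calc, sigma))
    scale = num / den
    chi2 = sum(((e - scale * c) / s) ** 2
               for e, c, s in zip(i_exp, i_calc, sigma)) / len(i_exp)
    return chi2, scale

# Hypothetical two-point example: the fitted scale is 2 and each residual is one sigma.
chi2, scale = saxs_chi2(i_exp=[1.0, 3.0], sigma=[1.0, 1.0], i_calc=[1.0, 1.0])
print(chi2, scale)  # 1.0 2.0
```

For an MD ensemble, `i_calc` would be the average of the per-frame profiles, optionally weighted, and a χ² near 1 indicates agreement within the experimental errors.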

The most powerful validation strategies combine data from multiple experimental techniques:

Maximum Entropy Reweighting: This approach integrates MD simulations with experimental data by minimizing the perturbation to the simulated ensemble while maximizing agreement with experiments [7]. The protocol involves:

- Generating an initial ensemble from extended MD simulations.

- Calculating experimental observables for each frame using appropriate forward models.

- Optimizing conformational weights to satisfy experimental restraints while maintaining maximum entropy [7].

Bayesian Inference of Conformational Populations (BICePs): This method samples the full posterior distribution of conformational populations and experimental uncertainty, providing robust validation even with sparse or noisy data [27].