Force Field Parameters for Energy Minimization: A Modern Guide for Biomedical Researchers


Abstract

This article provides a comprehensive guide to force field parameters for energy minimization in molecular dynamics simulations, tailored for researchers and professionals in drug development. It covers the foundational principles of molecular mechanics force fields, explores cutting-edge automated and data-driven parametrization methodologies, and addresses common troubleshooting and optimization challenges. The content further details rigorous validation protocols and comparative analyses of popular force fields, synthesizing key insights to enhance the accuracy and reliability of computational studies in biomedical research.

Understanding Force Field Fundamentals: The Building Blocks of Molecular Simulations

In computational chemistry and materials science, the Potential Energy Surface (PES) is a fundamental concept for investigating chemical reaction processes and the physicochemical properties of materials. The PES represents the total energy of a system as a function of the spatial coordinates of its constituent atoms [1] [2]. From a geometric perspective, this energy landscape over the configuration space allows researchers to explore atomic structure properties, determine minimum energy configurations, and calculate reaction rates [2]. The practical utility of the PES extends to dynamic simulations based on Newton's laws, where the force on each atom, required for numerical integration of the equations of motion, is derived as the negative gradient of the potential energy with respect to atomic position (Fi = -∂E/∂ri) [2]. This relationship is crucial for geometric optimization algorithms that identify critical points on the PES, such as local minima (stable states) and saddle points (transition states) [2].
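The relation between force and potential energy can be checked numerically. The following is a minimal sketch, assuming a one-dimensional harmonic bond with illustrative constants `K` and `R0` (hypothetical values, not taken from any published force field). It compares the analytic force with a central finite-difference estimate of the negative energy derivative.

```python
# Illustrative constants (assumptions, not from any published force field).
K = 1000.0   # force constant, kJ mol^-1 nm^-2
R0 = 0.15    # equilibrium distance, nm


def energy(r: float) -> float:
    """Harmonic potential energy E(r) = K * (r - R0)^2."""
    return K * (r - R0) ** 2


def analytic_force(r: float) -> float:
    """Force as the negative derivative of the energy: F = -dE/dr."""
    return -2.0 * K * (r - R0)


def numerical_force(r: float, h: float = 1e-6) -> float:
    """Central finite-difference estimate of -dE/dr."""
    return -(energy(r + h) - energy(r - h)) / (2.0 * h)


if __name__ == "__main__":
    for r in (0.14, 0.15, 0.17):
        print(f"r={r:.2f} nm  analytic={analytic_force(r):9.3f}  "
              f"numeric={numerical_force(r):9.3f}")
```

The force is positive (pushing the atoms apart) when the bond is compressed, zero at `R0`, and negative when the bond is stretched, which is the behavior a minimizer relies on when it follows the force downhill on the PES.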

The primary challenge in computational simulations lies in constructing the PES both efficiently and accurately. Quantum mechanical (QM) methods, such as density functional theory (DFT), can provide highly accurate PES descriptions but are computationally prohibitive for all but the smallest systems due to their scaling with the number of atoms [1] [2]. Force field methods address this limitation by establishing a mapping between system energy and atomic positions/charges using simplified functional relationships parameterized from QM data [1] [2]. This approach significantly reduces computational complexity, enabling the simulation of large-scale systems—including polymers, biomolecules, and heterogeneous catalysts—at scales orders of magnitude beyond the practical reach of pure QM methods [1] [2]. This technical guide provides an in-depth examination of force field methodologies, their mathematical foundations, and their application in energy minimization research.

Force Field Classifications and Mathematical Formulations

Force fields can be broadly categorized into three distinct types based on their functional forms and applicable systems: classical force fields, reactive force fields, and machine learning force fields. The following table summarizes their key characteristics:

Table 1: Comparison of Major Force Field Types

| Force Field Type | Typical Number of Parameters | Interpretability | Computational Cost | Primary Applications |
|---|---|---|---|---|
| Classical Force Fields | 10 - 100 [2] | High (parameters often correspond to physical quantities) [2] | Low [2] | Non-reactive molecular dynamics, adsorption, diffusion, structure prediction [1] [2] |
| Reactive Force Fields (ReaxFF) | 100 - 500 [2] | Moderate (some terms abstracted from physical meaning) [2] | Moderate [2] | Bond formation/breaking, combustion, catalytic reactions, decomposition processes [3] [2] |
| Machine Learning Force Fields | 10^4 - 10^7 [2] | Low (complex relationships within neural networks) [2] | Variable (lower during application, high during training) [1] [2] | Complex reactive processes with near-DFT accuracy [3] [2] |

Classical Force Fields

Classical force fields calculate a system's energy using simplified interatomic potential functions designed for modeling non-reactive interactions [2]. The general functional form typically includes terms for bonded interactions (within a certain bonding range) and non-bonded interactions (between atoms further apart) [2]:

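\[ E_{\text{total}} = E_{\text{bonded}} + E_{\text{non-bonded}} \]

\[ E_{\text{bonded}} = \sum_{\text{bonds}} K_r (r - r_{eq})^2 + \sum_{\text{angles}} K_\theta (\theta - \theta_{eq})^2 + \sum_{\text{dihedrals}} \frac{V_n}{2} [1 + \cos(n\phi - \gamma)] \]

\[ E_{\text{non-bonded}} = \sum_{i<j} \left\{ 4\epsilon_{ij} \left[ \left(\frac{\sigma_{ij}}{r_{ij}}\right)^{12} - \left(\frac{\sigma_{ij}}{r_{ij}}\right)^{6} \right] + \frac{q_i q_j}{4\pi\epsilon_0 r_{ij}} \right\} \]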

The bonded terms often employ harmonic potentials for bond stretching and angle bending, while non-bonded interactions typically include Lennard-Jones potentials for van der Waals dispersion forces and Coulomb's law for electrostatic interactions [2]. The parameters in these equations (force constants, equilibrium values, partial charges) are derived from QM calculations and/or experimental data [2]. Although this formulation cannot model chemical reactions involving bond breaking/formation, its computational efficiency enables simulations of systems at length scales of 10–100 nm and time scales from nanoseconds to microseconds [2].

Reactive Force Fields (ReaxFF)

Reactive force fields address the fundamental limitation of classical force fields by introducing a bond-order formalism that dynamically describes bond formation and breaking [3] [2]. In ReaxFF, the bond order—calculated from interatomic distances—governs the bonding energy and updates continuously during simulation as atoms move [3]. This approach incorporates bond-order-dependent polarization charges to model both reactive and non-reactive atomic interactions through complex functional forms parameterized against QM data [3] [2]. While ReaxFF has been successfully applied to study decomposition and combustion processes of various materials, it often struggles to achieve full DFT-level accuracy in describing reaction potential energy surfaces, particularly for new molecular systems where parameter sets may exhibit significant deviations [3].

Machine Learning Force Fields (MLFFs)

Machine learning force fields represent a paradigm shift, using statistical models trained on QM data to predict potential energy without explicit physical functional forms [3] [2]. MLFFs, particularly those based on neural network potentials (NNPs) and graph neural networks (GNNs), can overcome the traditional trade-off between computational accuracy and efficiency [3]. For instance, the Deep Potential (DP) scheme provides atomic-scale descriptions of complex reactions with near-DFT precision while being significantly more efficient than traditional QM calculations [3]. Recent advances, such as the EMFF-2025 model for C, H, N, O-based high-energy materials, demonstrate how transfer learning strategies can create general-purpose NNPs that predict both mechanical properties and chemical behavior with minimal new training data [3]. These models typically operate by using atomic local environments as inputs to a neural network that outputs atomic energies, with the total potential energy being the sum of these atomic contributions [3].

Force Field Parameterization and Optimization Methodologies

Parameter optimization is crucial for developing accurate and transferable force fields. This process involves adjusting force field parameters to minimize the discrepancy between simulation results and reference data, which can include both QM calculations and experimental measurements [4] [5].

Traditional Parameter Optimization

Traditional parameter optimization employs gradient-based or global optimization algorithms to refine parameters against target properties [4]. For example, a multiscale optimization might adjust Lennard-Jones parameters for carbon and hydrogen atoms to reproduce target properties such as a molecule's relative conformational energies and its bulk-phase density [4]. This process typically involves:

- Defining an Objective Function: A function that quantifies the difference between simulated and target properties [4] [5].

- Running Iterative Simulations: Conducting molecular dynamics simulations with candidate parameter sets to compute the objective function [4].

- Updating Parameters: Using optimization algorithms to generate new parameter sets that minimize the objective function [4] [5].

This approach can be computationally intensive, as it requires numerous expensive MD simulations during the optimization loop [4].
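The loop described above can be sketched as follows. This is a minimal illustration under stated assumptions: `simulate` is a hypothetical stand-in for an expensive MD run (here a simple linear formula), the target values and weights are invented for the example, and a derivative-free coordinate search is used in place of whichever optimizer a real study would choose.

```python
def simulate(params):
    """Hypothetical stand-in for an expensive MD run.

    Maps (sigma, epsilon) to two mock properties. A real workflow would
    launch a simulation here and analyse its trajectory.
    """
    sigma, epsilon = params
    density = 700.0 + 900.0 * (0.35 - sigma) + 40.0 * epsilon
    conf_energy = 3.0 + 5.0 * (sigma - 0.34) - 2.0 * epsilon
    return density, conf_energy


TARGETS = (703.0, 2.6)                          # mock reference values
WEIGHTS = (1.0 / 703.0 ** 2, 1.0 / 2.6 ** 2)    # make each term dimensionless


def objective(params):
    """Weighted sum of squared deviations between simulated and target values."""
    simulated = simulate(params)
    return sum(w * (s - t) ** 2 for w, s, t in zip(WEIGHTS, simulated, TARGETS))


def pattern_search(start, step=0.05, tol=1e-6, max_iter=100_000):
    """Coordinate search: try +/- step on each parameter, keep any
    improvement, and halve the step when no move improves the objective."""
    best = list(start)
    best_val = objective(best)
    for _ in range(max_iter):
        improved = False
        for i in range(len(best)):
            for delta in (step, -step):
                trial = list(best)
                trial[i] += delta
                val = objective(trial)
                if val < best_val:
                    best, best_val, improved = trial, val, True
        if not improved:
            step *= 0.5
            if step < tol:
                break
    return best, best_val


best_params, best_value = pattern_search((0.34, 0.30))

if __name__ == "__main__":
    print("optimized (sigma, epsilon):", best_params)
    print("objective:", best_value)
```

Every call to `objective` triggers one call to `simulate`, which is why the cost of this loop is dominated by the number of simulations when `simulate` is a real MD run.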

Machine Learning-Accelerated Optimization

Recent research demonstrates that machine learning surrogate models can dramatically accelerate force field parameter optimization. In one study, a ML model was trained to predict target properties (conformational energies and bulk density) directly from force field parameters, replacing the need for most MD simulations during optimization [4]. This approach reduced the required optimization time by a factor of approximately 20× while producing force fields of similar quality to those obtained through traditional methods [4]. The workflow for implementing such an acceleration involves:

- Training Data Acquisition: Running a limited set of MD simulations across the parameter space to generate input-output pairs for training [4].

- Data Preparation and Model Selection: Processing simulation data and selecting appropriate ML architectures (e.g., neural networks) [4].

- Surrogate Model Deployment: Using the trained ML model within the optimization loop to rapidly evaluate candidate parameters [4].
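The three steps above can be sketched in a few lines. This is an illustration only: a quadratic least-squares fit stands in for the neural network used in the cited study, `expensive_md_density` is a hypothetical mock of an MD run, and the target density and parameter range are invented for the example.

```python
CALLS = {"md": 0}  # counts how often the mock "simulation" is invoked


def expensive_md_density(epsilon: float) -> float:
    """Hypothetical stand-in for an MD run returning a bulk density."""
    CALLS["md"] += 1
    return 650.0 + 400.0 * epsilon - 300.0 * epsilon ** 2


def solve_linear(a, b):
    """Solve a small dense system a x = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def fit_quadratic(xs, ys):
    """Least-squares fit y ~ c0 + c1*x + c2*x^2 via the normal equations."""
    basis = [[1.0, x, x * x] for x in xs]
    ata = [[sum(row[i] * row[j] for row in basis) for j in range(3)] for i in range(3)]
    aty = [sum(row[i] * y for row, y in zip(basis, ys)) for i in range(3)]
    return solve_linear(ata, aty)


# 1) Training data: a handful of "simulations" across the parameter range.
train_eps = [0.1, 0.2, 0.3, 0.4, 0.5]
train_rho = [expensive_md_density(e) for e in train_eps]

# 2) Surrogate model fitted to the training pairs.
c0, c1, c2 = fit_quadratic(train_eps, train_rho)


def surrogate(epsilon: float) -> float:
    """Cheap prediction of the density from the force field parameter."""
    return c0 + c1 * epsilon + c2 * epsilon ** 2


# 3) The optimization loop evaluates only the surrogate (1001 cheap calls).
TARGET_DENSITY = 730.0
grid = [0.1 + 0.0004 * i for i in range(1001)]
best_eps = min(grid, key=lambda e: (surrogate(e) - TARGET_DENSITY) ** 2)

# 4) One final "simulation" validates the optimized parameter.
validated = expensive_md_density(best_eps)
```

In this toy setting the search uses 1001 surrogate evaluations but only six calls to the mock simulation (five for training, one for validation), which is the source of the speed-up the approach aims for.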

Bayesian Inference for Conformational Populations (BICePs)

For refinement against experimental data, Bayesian methods offer a robust framework for force field parameterization. The BICePs algorithm samples the full posterior distribution of conformational populations while accounting for uncertainties in both experimental measurements and forward models [5]. This approach is particularly valuable when working with sparse or noisy experimental observables, as it incorporates specialized likelihood functions that can automatically detect and down-weight data points subject to systematic error [5]. The BICePs score, a free energy-like quantity, serves as an objective function for model selection and parameter optimization, enabling automated force field refinement while simultaneously sampling uncertainty distributions [5].

Experimental Protocols and Validation Frameworks

Protocol: ML-Accelerated Parameter Optimization

This protocol outlines the methodology for accelerating force field parameter optimization using machine learning surrogate models, based on recent research [4].

Define Optimization Target:

- Identify specific properties for parameterization (e.g., n-octane's relative conformational energies and bulk-phase density) [4].

- Establish reference data (QM calculations or experimental measurements).

Generate Training Dataset:

- Perform initial molecular dynamics simulations across a designed set of parameter combinations (e.g., varying Lennard-Jones parameters for carbon and hydrogen) [4].

- Record the resulting target properties for each parameter set to create input-output pairs.

Develop ML Surrogate Model:

- Prepare data through normalization and feature scaling.

- Train a neural network or other ML model to predict target properties directly from force field parameters [4].

- Validate model accuracy against held-out simulation data.

Execute Optimization Loop:

- Replace MD simulations with surrogate model predictions during parameter optimization [4].

- Use gradient-based optimization algorithms to find parameters that minimize the difference between predicted and target properties.

Final Validation:

- Run full MD simulations with the optimized parameters to verify they reproduce the target properties with the expected quality [4].

Protocol: Validation of General Neural Network Potentials

For validating general-purpose neural network potentials like the EMFF-2025 model, the following protocol ensures predictive accuracy and transferability [3].

Model Training with Transfer Learning:

Energy and Force Accuracy Assessment:

Property Prediction Benchmarking:

Chemical Space Analysis:

- Integrate the validated NNP with analytical methods such as Principal Component Analysis (PCA) and correlation heatmaps to explore intrinsic relationships and formation mechanisms of structural motifs in the chemical space [3].

Performance Metrics and Validation

The EMFF-2025 model demonstrates the capabilities of modern MLFFs, achieving DFT-level accuracy in predicting energies and forces for 20 different high-energy materials [3]. As shown in validation studies, the model's energy predictions closely align with DFT calculations along the diagonal, with mean absolute errors predominantly within ±0.1 eV/atom, and force MAEs mainly within ±2 eV/Å [3]. This level of accuracy enables reliable large-scale MD simulations of complex processes such as thermal decomposition, revealing surprisingly similar high-temperature decomposition mechanisms across most HEMs—a finding that challenges conventional views of material-specific behavior [3].

Table 2: Essential Computational Tools for Force Field Research and Development

| Tool / Resource | Category | Primary Function | Application Example |
|---|---|---|---|
| DP-GEN [3] | Software Framework | Automated generation of neural network potentials via active learning | Developing general-purpose NNPs for materials systems [3] |
| BICePs [5] | Algorithm | Bayesian inference for force field refinement against experimental data | Parameterizing potentials using sparse/noisy ensemble-averaged measurements [5] |
| Lennard-Jones Parameters [4] | Force Field Parameters | Describing van der Waals dispersion/repulsion interactions | Optimizing non-bonded interactions for organic molecules [4] |
| ReaxFF [3] [2] | Reactive Force Field | Modeling bond formation/breaking in chemical reactions | Simulating decomposition mechanisms of high-energy materials [3] |
| Quantum Chemistry Codes (VASP, CP2K, Gaussian) [2] | Reference Calculator | Generating training data via QM calculations | Providing energy/force labels for MLFF training [3] [2] |
| ML Surrogate Models [4] | Acceleration Tool | Predicting properties to avoid expensive MD simulations | Speeding up force field parameter optimization by ~20× [4] |

Force fields serve as indispensable mathematical models that bridge the critical gap between computational tractability and physical accuracy in atomic-level simulations. The evolution from classical to reactive and now to machine learning force fields represents a continuous effort to expand the applicability and improve the fidelity of these models. Classical force fields remain effective for non-reactive processes in large systems, while reactive force fields enable the investigation of chemical reactions, though with potential accuracy limitations. Machine learning force fields, particularly those leveraging transfer learning and surrogate models, are emerging as powerful tools that can achieve near-DFT accuracy while maintaining computational efficiency for large-scale simulations. As optimization methodologies continue to advance—incorporating Bayesian inference, ML acceleration, and automated parameterization—force fields will play an increasingly vital role in energy minimization research, catalyst design, and materials discovery, providing researchers with unprecedented capabilities to explore and manipulate matter at the atomic level.

In the realm of molecular dynamics (MD) and energy minimization research, force fields serve as the fundamental mathematical models that describe the potential energy of a molecular system as a function of its atomic coordinates [6]. The accuracy and computational efficiency of these simulations hinge on the precise formulation and parameterization of the force field's components. The prevailing approach in biomolecular force fields involves a clear separation of the total potential energy into bonded and non-bonded interactions [7] [8]. This separation is not merely organizational; it reflects the distinct physical origins of these interactions and enables the development of specialized, efficient computational algorithms for energy and force calculations. For researchers and drug development professionals, a deep understanding of this dichotomy is essential for interpreting simulation results, troubleshooting unstable dynamics, and developing novel parameters for new molecular species.

The general form of the potential energy function in an additive force field can be summarized as \( E_{\text{total}} = E_{\text{bonded}} + E_{\text{non-bonded}} \) [7] [6], where the bonded energy term is further decomposed into \( E_{\text{bonded}} = E_{\text{bond}} + E_{\text{angle}} + E_{\text{dihedral}} + E_{\text{improper}} \), and the non-bonded energy comprises \( E_{\text{non-bonded}} = E_{\text{electrostatic}} + E_{\text{van der Waals}} \) [8] [6]. This article provides an in-depth technical examination of these core components, their functional forms, parameterization strategies, and their critical roles in energy minimization protocols within computational drug discovery.

Bonded Interaction Terms

Bonded interactions describe the energy associated with the covalent connectivity between atoms within a molecule. These terms constrain the internal geometry and are characterized by their high force constants, necessitating relatively small integration time steps in MD simulations [8]. The bonded component is a sum of several specific potentials, each governing a different internal degree of freedom.

Table 1: Summary of Bonded Interaction Terms in Molecular Force Fields

| Interaction Type | Mathematical Form | Key Parameters | Physical Description |
|---|---|---|---|
| Bond Stretching | \( V_{\text{Bond}} = \frac{k_{ij}}{2}(l_{ij} - l_{0,ij})^2 \) [8] [6] | Force constant \( k_{ij} \), equilibrium distance \( l_{0,ij} \) [6] | Energetic cost of stretching or compressing a covalent bond from its ideal length, modeled as a harmonic spring [6]. |
| Angle Bending | \( V_{\text{Angle}} = \frac{k_{\theta}}{2}(\theta_{ijk} - \theta_{0})^2 \) [8] | Force constant \( k_{\theta} \), equilibrium angle \( \theta_{0} \) [8] | Energetic cost of bending the angle between two adjacent bonds from its equilibrium value [8]. |
| Torsional Dihedral | \( V_{\text{Dihedral}} = k_\phi (1 + \cos(n\phi - \delta)) \) [8] | Force constant \( k_\phi \), periodicity \( n \), phase angle \( \delta \) [8] | Energy barrier for rotation around a central bond, describing conformational preferences [8]. |
| Improper Dihedral | \( V_{\text{Improper}} = \frac{k_\psi}{2}(\psi - \psi_0)^2 \) [8] | Force constant \( k_\psi \), equilibrium angle \( \psi_0 \) [8] | Enforces planarity in aromatic rings and other sp²-hybridized systems (e.g., out-of-plane bending) [8]. |
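The four functional forms in the table translate directly into code. The sketch below is illustrative: the function names and the numerical values in the usage lines are assumptions chosen for the example, and angles are taken in radians.

```python
import math


def bond_energy(l, l0, k):
    """Harmonic bond stretching: V = k/2 * (l - l0)^2."""
    return 0.5 * k * (l - l0) ** 2


def angle_energy(theta, theta0, k_theta):
    """Harmonic angle bending: V = k_theta/2 * (theta - theta0)^2."""
    return 0.5 * k_theta * (theta - theta0) ** 2


def dihedral_energy(phi, k_phi, n, delta):
    """Periodic torsion: V = k_phi * (1 + cos(n*phi - delta))."""
    return k_phi * (1.0 + math.cos(n * phi - delta))


def improper_energy(psi, psi0, k_psi):
    """Harmonic improper dihedral: V = k_psi/2 * (psi - psi0)^2."""
    return 0.5 * k_psi * (psi - psi0) ** 2


def bonded_energy(bonds, angles, dihedrals, impropers):
    """Sum all bonded contributions; each argument is a list of argument tuples."""
    return (sum(bond_energy(*b) for b in bonds)
            + sum(angle_energy(*a) for a in angles)
            + sum(dihedral_energy(*d) for d in dihedrals)
            + sum(improper_energy(*i) for i in impropers))


if __name__ == "__main__":
    # Illustrative values only (nm, rad, kJ/mol units assumed).
    total = bonded_energy(
        bonds=[(0.16, 0.15, 2.0e5)],
        angles=[(math.radians(112.0), math.radians(109.5), 400.0)],
        dihedrals=[(math.radians(60.0), 5.0, 3, 0.0)],
        impropers=[(0.02, 0.0, 150.0)],
    )
    print(f"bonded energy = {total:.3f} kJ/mol")
```

A threefold torsion with zero phase (`n = 3`, `delta = 0`) has its maxima at 0°, 120°, and 240° and its minima at 60°, 180°, and 300°, which is why the dihedral term in the usage example contributes almost nothing at 60°.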

Advanced Bonded Potentials and Cross-Terms

While the harmonic potential for bond stretching is sufficient for most simulations near equilibrium, more advanced potentials exist for specialized applications. The Morse potential provides a more realistic description that allows for bond breaking at high stretching, making it essential for reactive force fields, though it is computationally more expensive [6]. Class 2 and Class 3 force fields incorporate additional complexity through anharmonic terms (cubic, quartic) for bonds and angles, and cross-terms that account for coupling between different internal coordinates, such as bond stretching and angle bending [8]. One common example is the Urey-Bradley potential, which acts as a spring between the outer atoms of a bonded triplet (1-3 interaction), effectively coupling bond length and bond angle dynamics [8].

Non-Bonded Interaction Terms

Non-bonded interactions act between all atoms in a system, regardless of their covalent connectivity. These are computationally dominant in MD simulations because they scale with the square of the number of atoms, though efficient cut-off schemes and algorithms like Particle Mesh Ewald (PME) make these calculations tractable for large systems [9] [8]. Non-bonded terms capture intermolecular forces and intra-molecular long-range effects, primarily consisting of van der Waals and electrostatic interactions.

Van der Waals Interactions

Van der Waals (vdW) interactions encompass short-range attractive (dispersion) and repulsive (Pauli exclusion) forces. The most common model is the Lennard-Jones (LJ) 12-6 potential:

\[ V_{LJ}(r_{ij}) = 4\epsilon_{ij} \left[ \left(\frac{\sigma_{ij}}{r_{ij}}\right)^{12} - \left(\frac{\sigma_{ij}}{r_{ij}}\right)^{6} \right] \]

where \( \epsilon_{ij} \) is the depth of the potential well, \( \sigma_{ij} \) is the finite distance at which the inter-particle potential is zero, and \( r_{ij} \) is the distance between atoms *i* and *j* [9] [8]. The repulsive term (\( r^{-12} \)) models the sharp energy increase when electron clouds overlap, while the attractive term (\( r^{-6} \)) models induced dipole-dipole dispersion forces [8].
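A short numerical check makes the roles of the two parameters concrete. The sketch below uses illustrative parameter values (assumptions, not taken from a specific force field) and verifies that the potential is zero at the distance sigma and reaches its minimum of minus epsilon at 2^(1/6) times sigma, where the force vanishes.

```python
def lj_energy(r, sigma, epsilon):
    """Lennard-Jones 12-6 potential energy."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 ** 2 - sr6)


def lj_force(r, sigma, epsilon):
    """Radial force F = -dV/dr (positive means repulsive)."""
    sr6 = (sigma / r) ** 6
    return 24.0 * epsilon * (2.0 * sr6 ** 2 - sr6) / r


# Illustrative parameters (assumed units: nm and kJ/mol).
sigma, epsilon = 0.34, 0.65
r_min = 2.0 ** (1.0 / 6.0) * sigma  # location of the energy minimum

if __name__ == "__main__":
    print(f"V(sigma) = {lj_energy(sigma, sigma, epsilon):.6f}")
    print(f"V(r_min) = {lj_energy(r_min, sigma, epsilon):.6f}")
    print(f"F(r_min) = {lj_force(r_min, sigma, epsilon):.2e}")
```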

An alternative to the LJ potential is the Buckingham (exp-6) potential:

\[ V_{B}(r_{ij}) = A_{ij} \exp(-B_{ij} r_{ij}) - \frac{C_{ij}}{r_{ij}^{6}} \]

which replaces the \( r^{-12} \) repulsion with a more physically realistic exponential term [9] [8]. However, it is computationally more expensive and carries a risk of "Buckingham catastrophe" where the potential goes to negative infinity at very short distances if not properly handled [8].
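The short-range failure mode is easy to reproduce numerically. In the sketch below, the parameters `A`, `B`, and `C` are invented for illustration only. The exponential term stays finite as the distance shrinks while the dispersion term diverges, so the potential is repulsive at intermediate distances but becomes strongly attractive once two atoms get too close.

```python
import math

# Illustrative parameters (assumptions): A in kJ/mol, B in nm^-1, C in kJ mol^-1 nm^6.
A, B, C = 1.0e5, 30.0, 0.005


def buckingham(r, a=A, b=B, c=C):
    """Buckingham exp-6 potential: V = a*exp(-b*r) - c/r^6."""
    return a * math.exp(-b * r) - c / r ** 6


if __name__ == "__main__":
    for r in (0.40, 0.30, 0.15, 0.10, 0.05, 0.02):
        print(f"r = {r:4.2f} nm   V = {buckingham(r):14.3f} kJ/mol")
```

With these values the energy is positive (repulsive) at 0.30 nm and 0.15 nm, but turns negative again below roughly 0.10 nm. A minimizer that starts from a badly overlapping structure could therefore drive atoms together instead of apart.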

Electrostatic Interactions

Electrostatic interactions between partially charged atoms are described by Coulomb's law:

\[ V_{\text{Coulomb}}(r_{ij}) = \frac{1}{4\pi\epsilon_0} \frac{q_i q_j}{\epsilon_r r_{ij}} \]

where \( q_i \) and \( q_j \) are the partial charges on atoms *i* and *j*, \( \epsilon_r \) is the relative dielectric constant, and \( r_{ij} \) is their separation [9] [8]. These interactions are long-ranged because the potential decays slowly (\( r^{-1} \)), necessitating special treatment like Ewald summation, Particle Mesh Ewald (PME), or reaction field methods to handle them efficiently in periodic systems [9] [8].
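As a small worked example, the sketch below evaluates Coulomb's law in the unit system commonly used by MD codes (nm, elementary charges, kJ/mol), in which the prefactor 1/(4πε₀) is approximately 138.935 kJ mol⁻¹ nm e⁻². The function name and the charge values are assumptions made for illustration.

```python
# 1/(4*pi*eps0) expressed in kJ mol^-1 nm e^-2.
COULOMB_CONSTANT = 138.935458


def coulomb_energy(qi, qj, r, eps_r=1.0):
    """Coulomb pair energy for charges in units of e and distance in nm."""
    return COULOMB_CONSTANT * qi * qj / (eps_r * r)


if __name__ == "__main__":
    # Opposite unit charges in vacuum and in a water-like dielectric (eps_r = 80).
    for r in (0.5, 1.0, 2.0, 4.0):
        print(f"r = {r:3.1f} nm   vacuum = {coulomb_energy(1, -1, r):9.3f}"
              f"   eps_r=80 = {coulomb_energy(1, -1, r, 80.0):7.3f} kJ/mol")
```

Doubling the distance only halves the energy, whereas the Lennard-Jones dispersion term drops by a factor of 64 over the same change. This slow decay is why a plain cut-off is usually inadequate for electrostatics.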

Combination Rules for Non-Bonded Parameters

To avoid a combinatorial explosion of parameters for every possible pair of atom types, force fields use combination rules to calculate the interaction parameters \( \sigma_{ij} \) and \( \epsilon_{ij} \) between different atom types from their individual parameters \( \sigma_{ii} \), \( \sigma_{jj} \), \( \epsilon_{ii} \), and \( \epsilon_{jj} \) [7] [9] [8]. The choice of rule is force field-specific.

Table 2: Common Combination Rules for Lennard-Jones Parameters

| Rule Name | Formulation | Common Usage |
|---|---|---|
| Geometric | \( C_{12,ij} = \sqrt{C_{12,ii} \cdot C_{12,jj}} \); \( C_{6,ij} = \sqrt{C_{6,ii} \cdot C_{6,jj}} \) or equivalently \( \sigma_{ij} = \sqrt{\sigma_{ii} \cdot \sigma_{jj}} \); \( \epsilon_{ij} = \sqrt{\epsilon_{ii} \cdot \epsilon_{jj}} \) [9] [8] | GROMOS (C6/C12 form, rule 1 in GROMACS); OPLS (\( \sigma \)/\( \epsilon \) form, rule 3 in GROMACS) [8] |
| Lorentz-Berthelot | \( \sigma_{ij} = \frac{\sigma_{ii} + \sigma_{jj}}{2} \); \( \epsilon_{ij} = \sqrt{\epsilon_{ii} \cdot \epsilon_{jj}} \) [9] [8] | AMBER, CHARMM (rule 2 in GROMACS) [8] |
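The two rules differ only in how the size parameter is averaged. The sketch below, with illustrative per-type parameters chosen for the example, implements both rules and checks that taking geometric means of C6 and C12 gives the same pair parameters as taking geometric means of sigma and epsilon, using the standard relations C6 = 4εσ⁶ and C12 = 4εσ¹².

```python
import math


def lorentz_berthelot(sigma_i, eps_i, sigma_j, eps_j):
    """Arithmetic mean for sigma, geometric mean for epsilon."""
    return 0.5 * (sigma_i + sigma_j), math.sqrt(eps_i * eps_j)


def geometric(sigma_i, eps_i, sigma_j, eps_j):
    """Geometric mean for both sigma and epsilon."""
    return math.sqrt(sigma_i * sigma_j), math.sqrt(eps_i * eps_j)


def to_c6_c12(sigma, eps):
    """Convert (sigma, epsilon) to (C6, C12) with C6 = 4*eps*sigma^6, C12 = 4*eps*sigma^12."""
    return 4.0 * eps * sigma ** 6, 4.0 * eps * sigma ** 12


def geometric_c6_c12(sigma_i, eps_i, sigma_j, eps_j):
    """Geometric means of C6 and C12, converted back to (sigma, epsilon)."""
    c6_i, c12_i = to_c6_c12(sigma_i, eps_i)
    c6_j, c12_j = to_c6_c12(sigma_j, eps_j)
    c6, c12 = math.sqrt(c6_i * c6_j), math.sqrt(c12_i * c12_j)
    return (c12 / c6) ** (1.0 / 6.0), c6 ** 2 / (4.0 * c12)


if __name__ == "__main__":
    # Illustrative per-type parameters (assumed units: nm, kJ/mol).
    a, b = (0.30, 0.5), (0.40, 2.0)
    print("Lorentz-Berthelot:", lorentz_berthelot(*a, *b))
    print("Geometric        :", geometric(*a, *b))
    print("Geometric C6/C12 :", geometric_c6_c12(*a, *b))
```

For these values the two rules agree on the well depth (1.0) but give different contact distances (0.35 versus about 0.346), which is one reason non-bonded parameters should not be mixed across force fields that use different rules.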

Parameterization and the Atom Type Concept

The set of parameters \( k \), \( l_0 \), \( \theta_0 \), \( \sigma \), \( \epsilon \), and atomic charges \( q \) defines a specific force field [7]. A foundational concept for managing these parameters is the atom type, which assigns unique parameters to atoms based on their element and chemical environment (e.g., hybridization, involvement in functional groups) [7]. For instance, an sp³ carbon in an alkyl chain and a carbon in a carbonyl group are assigned different atom types because their chemical behaviors differ significantly [7]. This enables the transferability of parameters across molecules sharing similar chemical moieties.

Parameterization is the process of determining these numerical values, and it is crucial for the force field's accuracy and reliability [6]. The two primary data sources for parameterization are:

- Quantum Mechanical (QM) Calculations: Used to derive bonded parameters, torsional profiles, and atomic charges from high-level electronic structure calculations [6] [10].

- Experimental Data: Macroscopic properties like liquid densities, enthalpies of vaporization, and free energies of solvation are used to refine parameters, particularly for non-bonded interactions [6].

Modern, data-driven approaches are now being employed to expand chemical space coverage. For example, the ByteFF force field uses a graph neural network (GNN) trained on a massive QM dataset of 2.4 million optimized molecular fragments and 3.2 million torsion profiles to predict parameters for drug-like molecules, demonstrating state-of-the-art performance [10].

Energy Minimization and Experimental Protocols

Energy minimization, or geometry optimization, is a fundamental computational experiment that uses the force field to find the nearest local minimum energy configuration of a molecular system. This process relieves steric clashes and bad contacts, providing a stable initial structure for molecular dynamics simulations [11]. The forces required for minimization are derived as the negative gradient of the total potential energy: \( \vec{F} = -\nabla E_{\text{total}} \) [8].

Key Methodologies for Energy Minimization

The choice of algorithm for energy minimization depends on the system size and the desired balance between speed and robustness.

- Steepest Descent: A robust, first-order method that moves atomic coordinates in the direction of the negative energy gradient (\( -\nabla E \)). It is stable and efficient for initially removing large steric clashes but converges poorly near the energy minimum [11].

- Conjugate Gradient (CG): A more efficient first-order method that uses information from previous steps to choose conjugate search directions. It converges faster than Steepest Descent after the initial stages and is suitable for medium to large systems [11].

- Limited-memory Broyden–Fletcher–Goldfarb–Shanno (L-BFGS): A quasi-Newton method that builds an approximation of the Hessian (matrix of second derivatives) from a limited history of previous steps. It typically converges faster than Conjugate Gradient but may not be fully parallelized in all MD software [11].
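To make the first of these algorithms concrete, the following is a minimal one-dimensional sketch of steepest descent applied to a single Lennard-Jones pair. The step-size rule (grow the step by 1.2 after an accepted move, halve it after a rejected one, stop when the force falls below `emtol`) is patterned after the scheme described in the GROMACS manual. The parameter values, function names, and the reduction to one coordinate are assumptions made for illustration.

```python
SIGMA, EPSILON = 0.34, 1.0  # illustrative LJ parameters (nm, kJ/mol)


def pair_energy(r):
    """Lennard-Jones 12-6 energy of one atom pair."""
    sr6 = (SIGMA / r) ** 6
    return 4.0 * EPSILON * (sr6 * sr6 - sr6)


def pair_force(r):
    """F = -dV/dr for the 12-6 potential (kJ mol^-1 nm^-1)."""
    sr6 = (SIGMA / r) ** 6
    return 24.0 * EPSILON * (2.0 * sr6 * sr6 - sr6) / r


def steepest_descent(r, emtol=1.0, emstep=0.01, nsteps=1000):
    """Move along the force direction with an adaptive maximum displacement.

    Returns the final distance, its energy, and the number of steps taken.
    """
    energy = pair_energy(r)
    step = emstep
    for n in range(nsteps):
        force = pair_force(r)
        if abs(force) < emtol:           # converged: force below tolerance
            return r, energy, n
        trial = r + step * force / abs(force)
        trial_energy = pair_energy(trial)
        if trial_energy < energy:        # accept and become more ambitious
            r, energy = trial, trial_energy
            step *= 1.2
        else:                            # reject and become more cautious
            step *= 0.5
    return r, energy, nsteps


# Start from a clashing geometry well inside the repulsive wall.
r_final, e_final, n_used = steepest_descent(0.25)

if __name__ == "__main__":
    print(f"converged to r = {r_final:.4f} nm, E = {e_final:.4f} kJ/mol "
          f"after {n_used} steps")
```

The large initial force is removed within a few steps, which reflects the robustness noted above. The later steps are spent shrinking the step size around the minimum, the regime in which conjugate gradient or L-BFGS is typically more efficient.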

Protocol for System Preparation and Minimization

A typical energy minimization protocol for a solvated protein-ligand system involves the following steps:

- Hydrogen Atom Placement: Add hydrogen atoms using `pdb2gmx` or a similar tool, selecting the appropriate force field.

- System Solvation: Place the solute in a pre-equilibrated water box (e.g., TIP3P, SPC) using tools like `gmx solvate`, ensuring a minimum distance between the solute and the box edge.

- Neutralization: Add counterions (e.g., Na⁺, Cl⁻) to achieve a neutral net charge for the system using tools like `gmx genion`.

- Initial Minimization with Steepest Descent: Perform an initial minimization (e.g., 50-500 steps) with the Steepest Descent integrator to remove severe steric clashes. A typical force tolerance (`emtol`) for this stage is 1000 kJ·mol⁻¹·nm⁻¹ [11].

- Convergence with Conjugate Gradient/L-BFGS: Continue minimization using the Conjugate Gradient or L-BFGS algorithm until the desired tolerance is reached. A common convergence criterion for the maximum force is `emtol = 10.0` kJ·mol⁻¹·nm⁻¹ [11].
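The two minimization stages in this protocol differ only in a few run parameters. The sketch below generates minimal parameter-file text for each stage using the GROMACS `.mdp` option names `integrator`, `emtol`, `emstep`, and `nsteps`. The helper `make_em_mdp` is hypothetical, and the `emstep` value and the step limit for the second stage are assumptions. A production file would also need the non-bonded and cut-off settings appropriate to the chosen force field.

```python
def make_em_mdp(integrator: str, emtol: float, nsteps: int, emstep: float = 0.01) -> str:
    """Return minimal .mdp text for an energy minimization stage.

    emtol is the maximum-force tolerance in kJ mol^-1 nm^-1 and
    emstep the initial step size in nm (assumed value).
    """
    allowed = {"steep", "cg", "l-bfgs"}
    if integrator not in allowed:
        raise ValueError(f"integrator must be one of {sorted(allowed)}")
    lines = [
        "; energy minimization settings (illustrative sketch)",
        f"integrator = {integrator}",
        f"emtol      = {emtol}",
        f"emstep     = {emstep}",
        f"nsteps     = {nsteps}",
    ]
    return "\n".join(lines) + "\n"


# Stage 1: steepest descent with a loose tolerance to remove severe clashes.
stage1 = make_em_mdp("steep", emtol=1000.0, nsteps=500)

# Stage 2: conjugate gradient with a tight tolerance (step limit is an assumption).
stage2 = make_em_mdp("cg", emtol=10.0, nsteps=5000)

if __name__ == "__main__":
    print(stage1)
    print(stage2)
```

Keeping the two stages as separate parameter sets makes it easy to confirm from the output of the first run that the worst clashes are gone before spending time on tight convergence.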

Table 3: The Scientist's Toolkit: Essential Software and Parameters

| Tool/Parameter | Function/Description | Example/Default Value |
|---|---|---|
| GROMACS `gmx mdrun` | MD engine that performs the energy minimization calculation [11]. | N/A |
| Integrator `steep` | Invokes the steepest descent algorithm for initial minimization [11]. | `integrator = steep` |
| Integrator `cg` | Invokes the conjugate gradient algorithm for efficient convergence [11]. | `integrator = cg` |
| `emtol` | Convergence tolerance; minimization stops when the maximum force is below this value [11]. | `emtol = 10.0` (kJ·mol⁻¹·nm⁻¹) |
| `nsteps` | Maximum number of minimization steps to perform [11]. | `nsteps = 500` |

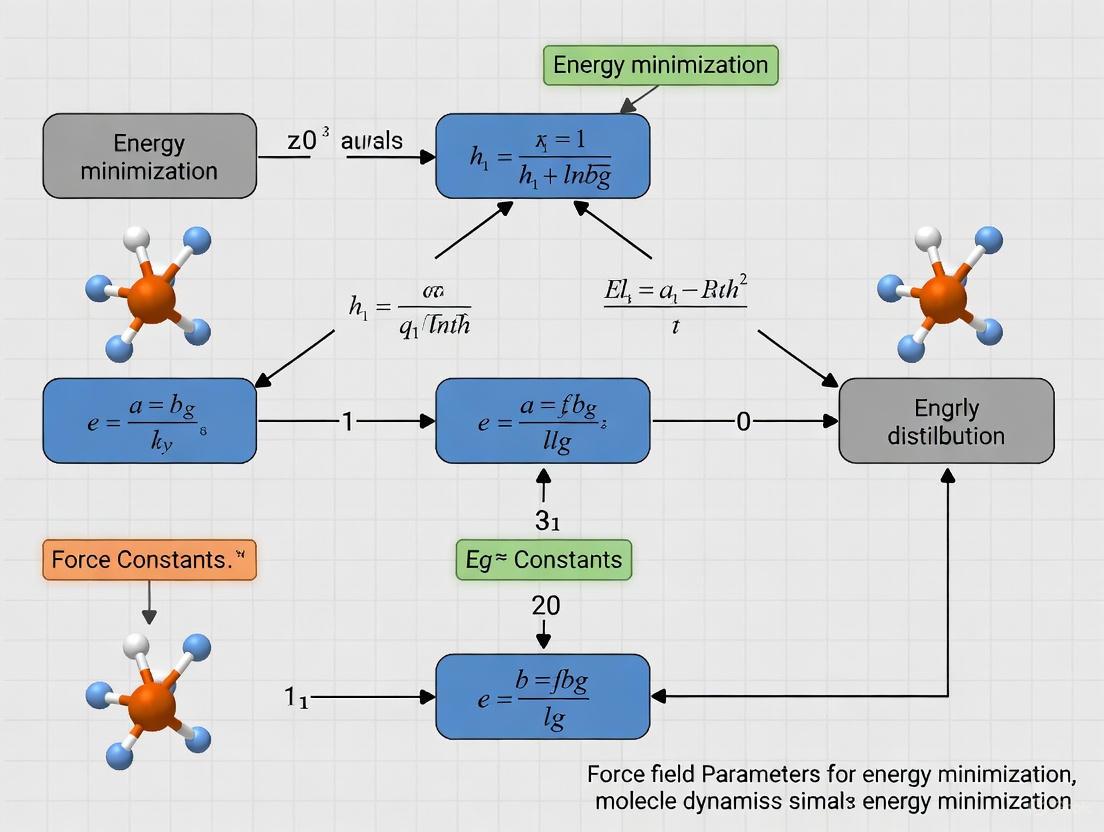

Visualizing Force Field Components and Workflows

The following diagrams illustrate the logical structure of a molecular mechanics force field and a standard parameterization workflow.

[Diagram: Force Field Energy Decomposition]

[Diagram: Data-Driven Force Field Parameterization Workflow]

The separation of a force field's potential energy into bonded and non-bonded components provides a powerful and computationally efficient framework for modeling molecular systems. Bonded terms maintain structural integrity, while non-bonded terms capture essential intermolecular and long-range intramolecular forces. The accuracy of simulations in energy minimization and dynamics—especially in critical applications like computational drug discovery—is directly contingent upon the quality of the functional forms and the precision of the parameters for these terms. Emerging data-driven methodologies, which leverage large-scale quantum mechanical data and machine learning, are pushing the boundaries of force field accuracy and transferability, enabling more reliable exploration of expansive chemical spaces. A thorough understanding of these core components, their parameterization, and their implementation in minimization protocols remains indispensable for researchers aiming to leverage molecular modeling as a robust tool in scientific discovery.

In molecular simulations, a force field refers to the functional form and parameter sets used to calculate the potential energy of a system of atoms or particles. The accuracy of molecular dynamics (MD) simulations and energy minimization studies is fundamentally tied to the force field's capacity to represent physical interactions. These computational models are indispensable for studying molecular structure, dynamics, and interactions, with applications ranging from drug design to materials science. This guide details the three predominant atomistic representations—all-atom, united-atom, and coarse-grained models—framed within the context of force field parameterization for energy minimization research. Understanding the trade-offs between computational cost and biological fidelity is crucial for selecting the appropriate model for a given scientific inquiry.

Fundamental Concepts of Force Fields

The Energy Function

A force field's potential energy is typically decomposed into bonded and nonbonded interaction terms. The general form for an additive force field can be written as [6]:

\[ E_{\text{total}} = E_{\text{bonded}} + E_{\text{nonbonded}} \]

where:

- \( E_{\text{bonded}} = E_{\text{bond}} + E_{\text{angle}} + E_{\text{dihedral}} \)

- \( E_{\text{nonbonded}} = E_{\text{electrostatic}} + E_{\text{van der Waals}} \)

The bonded terms describe interactions between atoms connected by covalent bonds. \( E_{\text{bond}} \) is often modeled as a harmonic potential, \( E_{\text{bond}} = \frac{k_{ij}}{2}(l_{ij}-l_{0,ij})^2 \), where \( k_{ij} \) is the force constant, \( l_{ij} \) is the bond length, and \( l_{0,ij} \) is the equilibrium bond length [6]. \( E_{\text{angle}} \) represents the energy associated with the angle between three bonded atoms, also frequently using a harmonic form. \( E_{\text{dihedral}} \) describes the energy related to the torsion angle around a bond, which is crucial for proper conformational sampling.

The nonbonded terms describe interactions between atoms not directly bonded. \( E_{\text{electrostatic}} \) is typically represented by Coulomb's law, \( E_{\text{Coulomb}} = \frac{1}{4\pi\varepsilon_{0}}\frac{q_i q_j}{r_{ij}} \), where \( q_i \) and \( q_j \) are partial atomic charges and \( r_{ij} \) is the interatomic distance [6]. \( E_{\text{van der Waals}} \) accounts for dispersion and repulsion forces, most commonly using a Lennard-Jones potential [6].

Force Field Parameterization

Parameterization involves determining numerical values for the constants that appear in the energy function. Parameters include atomic masses, partial charges, Lennard-Jones parameters, and equilibrium values for bonds, angles, and dihedrals [6]. These are derived from multiple sources:

- Quantum mechanical calculations provide data on conformational energies, torsional profiles, and charge distributions [12].

- Experimental data, such as enthalpies of vaporization, densities, and various spectroscopic properties, serve as critical validation targets [6] [13].

- Decoy-based optimization methods create an energy gap between native-like and non-native conformations to improve a force field's discriminative power [14].

A significant challenge in parameterization is transferability—ensuring parameters work well for diverse molecules not included in the training set [14] [6]. Automated optimization frameworks like ForceBalance have been developed to systematically fit parameters to reference data while preventing overfitting through regularization terms [15].

Classification of Force Field Types

Molecular force fields are primarily categorized based on their representation of atoms and their grouping into interaction sites. The selection of model type involves a fundamental trade-off between computational efficiency and the level of chemical detail required.

Figure 1: Classification of major force field types showing their fundamental characteristics and trade-offs between resolution and computational efficiency.

All-Atom Force Fields

All-atom (AA) force fields provide the most detailed representation by explicitly including every atom in the system, including all hydrogen atoms [6]. This explicit representation allows for a more physical description of molecular geometry and interactions, particularly important for modeling hydrogen bonding, steric effects, and other directional interactions. The explicit treatment of hydrogens is crucial for studies of protein-ligand binding, enzyme catalysis, and other processes where atomic-level precision is required.

Parameterization strategies for all-atom force fields often rely on quantum mechanical (QM) calculations and experimental data. For instance, specialized force fields like BLipidFF for mycobacterial membranes derive atomic charges using a two-step QM protocol: geometry optimization at the B3LYP/def2SVP level followed by charge derivation via the Restrained Electrostatic Potential (RESP) fitting method at the B3LYP/def2TZVP level [12]. Torsion parameters are optimized to minimize the difference between QM-calculated energies and classical potential energies [12].

Applications and limitations: All-atom force fields are the preferred choice when atomic detail is critical. However, this high resolution comes at significant computational cost, limiting the system sizes and simulation timescales that can be practically studied.

United-Atom Force Fields

United-atom (UA) force fields represent hydrogen atoms implicitly by combining them with their parent heavy atoms into single interaction centers, particularly for aliphatic CHₙ groups [6] [13]. This representation significantly reduces the number of particles in the system—approximately halving the particle count for typical organic molecules—which leads to substantial computational savings [16].

Performance characteristics: Studies comparing force fields for n-alkanes have demonstrated that united-atom models can achieve comparable or even better accuracy than all-atom models in reproducing liquid-phase properties such as density, heat of vaporization, and viscosity [13]. The GROMOS united-atom force field, for instance, has shown systematic superiority in reproducing liquid-phase properties of alkanes of varying chain lengths [13]. The enhanced performance of UA models for these systems stems from the effective averaging of interactions, which can better capture collective behavior in condensed phases.

Applications: United-atom models are particularly valuable for simulating large systems where all-atom resolution is computationally prohibitive, such as lipid bilayers, polymers, and other soft materials. Their efficiency allows for longer simulations and better statistical sampling of conformational space.

Coarse-Grained Force Fields

Coarse-grained (CG) force fields represent the most drastic simplification, where multiple heavy atoms (typically 3-5) are grouped into a single interaction site or "bead" [15] [6]. This reduction in degrees of freedom can accelerate molecular dynamics simulations by 10 to 100 times compared to all-atom models [16]. The MARTINI force field is one of the most popular coarse-grained models, particularly for biomolecular simulations [17].

Mapping and parameterization: The process of coarse-graining involves defining how atoms are mapped to CG beads. In Martini 3, guidelines include respecting molecular symmetry, avoiding division of specific chemical groups between beads, and using different bead types (Regular, Small, Tiny) depending on the fragment being represented [17]. Parameterization often targets thermodynamic properties such as free energies of solvation and partitioning [15]. The ForceBalance software package enables automated optimization of CG force fields using free energy gradients derived from atomistic simulations as targets [15].
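The mapping step can be illustrated with a short sketch that collapses groups of atoms into beads. It assumes that each bead is placed at the mass-weighted center of its atoms, which is one common convention. Mapping schemes differ between CG models, so the published guidelines for the chosen model should take precedence. The function name and the toy coordinates are hypothetical.

```python
def map_to_beads(coords, masses, mapping):
    """Return one (x, y, z) position per bead.

    coords  : list of (x, y, z) atom positions
    masses  : list of atomic masses, same order as coords
    mapping : list of atom-index groups, one group per bead
    """
    beads = []
    for atom_indices in mapping:
        total_mass = sum(masses[i] for i in atom_indices)
        centre = tuple(
            sum(masses[i] * coords[i][axis] for i in atom_indices) / total_mass
            for axis in range(3)
        )
        beads.append(centre)
    return beads


# Toy example: eight equal-mass heavy atoms on a line, mapped 4-to-1.
atom_coords = [(float(i), 0.0, 0.0) for i in range(8)]
atom_masses = [12.011] * 8
bead_mapping = [[0, 1, 2, 3], [4, 5, 6, 7]]
print(map_to_beads(atom_coords, atom_masses, bead_mapping))
```

Keeping the mapping as an explicit list of index groups makes it easy to check that no chemical group is split between beads and that every atom is assigned exactly once.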

Advantages and limitations: The primary advantage of CG models is their ability to access larger spatiotemporal scales, enabling the study of processes like membrane remodeling, protein aggregation, and polymer phase separation [16]. However, this comes at the cost of lost atomic detail, making them unsuitable for studying processes where atomic resolution is essential. The smoothed energy landscape of CG models can also affect dynamic properties and barriers to conformational changes [15].

Comparative Analysis

Table 1: Quantitative comparison of force field types for molecular simulation

| Characteristic | All-Atom (AA) | United-Atom (UA) | Coarse-Grained (CG) |
|---|---|---|---|
| Atomic Resolution | Highest (all atoms explicit) | Medium (heavy atoms + polar hydrogens) | Lowest (multiple atoms per bead) |
| Computational Speed | 1x (reference) | ~2x faster [16] | 10-100x faster [16] |
| Typical System Size | < 100,000 atoms | 100,000 - 1,000,000 atoms | > 1,000,000 atoms |
| Simulation Timescale | Nanoseconds to microseconds | Nanoseconds to microseconds | Microseconds to milliseconds |
| Parameterization Complexity | High (many parameters per atom) | Medium (reduced parameter set) | Low (fewer interaction sites) |
| Accuracy for Liquid Properties | Variable (CHARMM-AA shows deviations) [13] | High (GROMOS excels for alkanes) [13] | Lower (but reasonable for large scales) |
| Hydrogen Bonding Description | Explicit and directional | Limited (implicit for aliphatic groups) | Implicit in bead interactions |
| Explicit Treatment of Hydrogens | Yes | Partial (only polar hydrogens) | No |

Table 2: Performance comparison of specific force fields for n-alkane liquid properties (relative ranking) [13]

| Force Field | Type | Density | Heat of Vaporization | Surface Tension | Viscosity | Overall Ranking |
|---|---|---|---|---|---|---|
| GROMOS | UA | 1 | 1 | 1 | 2 | 1 |
| CHARMM-UA | UA | 2 | 3 | 3 | 1 | 2 |
| TraPPE-EH | AA (explicit hydrogen) | 3 | 2 | 2 | 3 | 3 |
| L-OPLS | AA | 4 | 4 | 4 | 4 | 4 |
| NERD | UA | 5 | 5 | 5 | 5 | 5 |
| CHARMM-AA | AA | 6 | 6 | 6 | 6 | 6 |
| PYS | UA | 7 | 7 | 7 | 7 | 7 |

Parameterization Methodologies

Decoy-Based Optimization for All-Atom Force Fields

Decoy-based optimization methods leverage Anfinsen's thermodynamic hypothesis, which states that a protein's native state is the conformation with the lowest free energy [14]. This approach creates a free energy gap between sets of native-like and non-native conformations.

Protocol:

- Decoy Generation: Generate large sets of decoy conformations for multiple nonhomologous proteins with different architectural motifs [14].

- Parameter Space Search: Systematically search parameter space to maximize the energy gap between native and non-native structures [14].

- Validation: Test the optimized force field on independent protein sets not included in training to assess transferability [14].

This method has been successfully applied to optimize torsional and solvation parameters in the ECEPP05 force field coupled with an implicit solvent model, resulting in improved discrimination of near-native conformations [14].
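The parameter search in this protocol can be sketched with a toy scoring function. The example below assumes an energy that is a weighted sum of two terms and scans the weight of the second term to maximize a Z-score, defined here as the gap between the mean decoy energy and the native energy divided by the spread of decoy energies. The Z-score is used instead of a raw gap because a raw gap can be inflated simply by scaling all weights. This choice, the function names, and all numbers are illustrative assumptions, not the procedure of reference [14].

```python
import statistics


def total_energy(terms, weights):
    """Weighted sum of per-term energies for one conformation."""
    return sum(w * t for w, t in zip(weights, terms))


def gap_z_score(native_terms, decoy_terms, weights):
    """(mean decoy energy - native energy) / std of decoy energies."""
    e_native = total_energy(native_terms, weights)
    e_decoys = [total_energy(d, weights) for d in decoy_terms]
    return (statistics.mean(e_decoys) - e_native) / statistics.pstdev(e_decoys)


def scan_weight(native_terms, decoy_terms, candidates):
    """Keep the first weight fixed at 1 and pick the best second weight."""
    return max(
        candidates,
        key=lambda w: gap_z_score(native_terms, decoy_terms, (1.0, w)),
    )


# Made-up (torsion, solvation) energies for one native state and three decoys.
NATIVE = (-10.0, -5.0)
DECOYS = [(-9.0, -1.0), (-11.0, 0.0), (-10.0, -2.0)]
best = scan_weight(NATIVE, DECOYS, [0.0, 0.5, 1.0, 2.0, 4.0])
print("best solvation weight:", best)
```

In practice the scan would run over many proteins and many more parameters, and the selected weights would then be tested on proteins held out of the training set, as the validation step requires.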

Free Energy Gradient Optimization for Coarse-Grained Models

ForceBalance implements an automated approach for optimizing coarse-grained force fields using free energy gradients [15].

Workflow:

- Reference Data Generation: Run atomistic simulations to compute hydration free energy gradients, \( \langle \Delta U \rangle_\alpha \), at selected coupling parameter (α) values [15].

- Objective Function Definition: Minimize the objective function, \( L(k) = \sum_m L_m(k) + w_{\text{reg}} \), where \( L_m(k) \) represents the weighted sum of squared differences between atomistic and CG free energy gradients for target \( m \), and \( w_{\text{reg}} \) is the regularization term [15].

- Parameter Optimization: Use a trust-radius Newton-Raphson optimizer to iteratively adjust parameters until convergence [15].

- Validation: Perform full free energy calculations with the optimized CG model and compare to atomistic and experimental results [15].

This approach has been used to optimize the SIRAH CG protein force field, improving the agreement with atomistic hydration free energies [15].
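The objective function in the workflow above can be sketched as follows. The per-target loss follows the description in the text. The regularization term is written here as a quadratic penalty on the parameter displacement from its starting value, which is an assumption made for illustration. The function names and numbers are hypothetical and this is not ForceBalance's own code.

```python
def target_loss(cg_gradients, aa_gradients, weights):
    """Weighted sum of squared differences between CG and atomistic gradients."""
    return sum(
        w * (cg - aa) ** 2
        for w, cg, aa in zip(weights, cg_gradients, aa_gradients)
    )


def regularization(param_shift, w_reg):
    """Assumed quadratic penalty: w_reg * |k - k_start|^2."""
    return w_reg * sum(dk * dk for dk in param_shift)


def objective(targets, param_shift, w_reg):
    """L(k) = sum_m L_m(k) + regularization term.

    targets     : list of (cg_gradients, aa_gradients, weights) tuples,
                  evaluated at the current parameters
    param_shift : current parameters minus their starting values
    """
    data_term = sum(target_loss(cg, aa, w) for cg, aa, w in targets)
    return data_term + regularization(param_shift, w_reg)


# One hypothetical target with gradients at two coupling-parameter values.
targets = [([1.0, 2.0], [1.5, 2.5], [1.0, 1.0])]
print(objective(targets, param_shift=[0.1, -0.2], w_reg=2.0))
```

The penalty discourages large parameter changes that are only weakly supported by the reference data, which is the overfitting safeguard that regularization is meant to provide.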

Quantum Mechanics-Based Parameterization

For specialized applications, such as developing the BLipidFF for mycobacterial membranes, a rigorous QM-based parameterization is employed [12]:

Charge Parameter Calculation:

- Segmentation: Divide large lipids into manageable segments using a divide-and-conquer strategy [12].

- QM Optimization: Perform geometry optimization at the B3LYP/def2SVP level [12].

- Charge Derivation: Calculate RESP charges at the B3LYP/def2TZVP level using multiple conformations [12].

- Charge Integration: Combine segment charges to derive the total molecular charge distribution [12].

Torsion Parameter Optimization:

- Further Subdivision: Divide molecules into smaller elements for tractable QM calculations [12].

- Energy Matching: Optimize torsion parameters to minimize differences between QM and classical potential energies [12].
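The energy-matching step can be illustrated with a small least-squares fit. The sketch below assumes the torsion term is a cosine series \( c_0 + \sum_n k_n \cos(n\phi) \) with zero phase, and that the target profile (QM energy minus the classical energy without the torsion term) is sampled on a uniform dihedral grid covering a full turn. Under those assumptions the least-squares coefficients reduce to simple Fourier projections. The function names and the synthetic profile are illustrative and are not the fitting procedure of reference [12].

```python
import math


def uniform_dihedral_grid(n_points):
    """Dihedral angles (radians) evenly spaced over [0, 2*pi)."""
    return [2.0 * math.pi * i / n_points for i in range(n_points)]


def fit_torsion(phis, residual_energies, max_n=3):
    """Least-squares cosine-series fit on a uniform grid.

    Returns (offset, [k_1, ..., k_max_n]). Valid while max_n < len(phis) / 2.
    """
    n_points = len(phis)
    offset = sum(residual_energies) / n_points
    ks = [
        (2.0 / n_points)
        * sum(e * math.cos(n * phi) for phi, e in zip(phis, residual_energies))
        for n in range(1, max_n + 1)
    ]
    return offset, ks


# Synthetic residual profile standing in for (E_QM - E_classical_without_torsion).
grid = uniform_dihedral_grid(36)
profile = [1.0 + 2.0 * math.cos(p) + 0.5 * math.cos(3.0 * p) for p in grid]
offset, coefficients = fit_torsion(grid, profile)
print(offset, coefficients)
```

For scans on a non-uniform grid, or for series with fitted phases, a general linear or nonlinear least-squares solver would be needed instead of the projection shortcut used here.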

Figure 2: Workflow of force field parameterization methodologies showing different optimization strategies for all-atom, coarse-grained, and QM-based approaches.

Table 3: Essential software tools and resources for force field development and application

| Tool/Resource | Type | Primary Function | Application in Energy Minimization |
|---|---|---|---|
| ForceBalance [15] | Optimization Software | Automated parameter optimization using multiple data sources | Systematic force field refinement against experimental and QM data |
| AmberTools [18] | MD Software Suite | Force field assignment and molecular simulation | Energy minimization with ff14SB and GAFF parameters |
| GROMACS [13] | MD Engine | High-performance molecular dynamics | Efficient energy minimization and dynamics with various FFs |
| CHARMM-GUI | Web Portal | Biomolecular system setup | Preparation of simulation systems with CHARMM force fields |
| LigParGen [17] | Web Server | OPLS-AA parameter generation | Creating initial force field parameters for small molecules |
| CGbuilder [17] | Mapping Tool | Coarse-grained mapping visualization | Defining atom-to-bead mappings for CG model development |
| Antechamber [18] | Parameterization Tool | GAFF parameter assignment for nonstandard residues | Deriving force field parameters for small organic molecules |
| Multiwfn [12] | QM Analysis | Electron density and wavefunction analysis | RESP charge fitting for force field parameterization |

The selection of an appropriate force field type—all-atom, united-atom, or coarse-grained—represents a critical decision point in molecular simulation research that balances computational feasibility with the required level of detail. All-atom force fields provide the highest resolution for studying atomic interactions but at the greatest computational cost. United-atom models offer an effective compromise for many applications, particularly for organic molecules and liquid systems. Coarse-grained models enable the exploration of large-scale biomolecular processes inaccessible to higher-resolution approaches.

Future directions in force field development include more automated parameterization approaches, improved treatment of polarization effects, and the development of multi-scale methods that seamlessly transition between resolutions. For energy minimization research specifically, continued refinement of force fields through decoy-based optimization and free energy matching will enhance their discriminative power for identifying native-like structures. The integration of machine learning approaches with physical force fields represents a promising frontier for capturing complex molecular interactions while maintaining computational efficiency. As these methodologies mature, they will expand the scope and accuracy of molecular simulations across diverse research domains, from drug discovery to materials design.

Force fields are the fundamental mathematical models that describe the potential energy of a molecular system as a function of its atomic coordinates. They serve as the foundation for molecular dynamics (MD) and Monte Carlo (MC) simulations, enabling the study of molecular processes across physics, chemistry, materials science, and drug discovery [19] [20]. The parameterization of these force fields—the process of determining optimal numerical values for the constants within their mathematical expressions—represents a significant challenge that directly influences the reliability and scope of molecular simulations.

The core challenge lies in balancing three competing objectives: accuracy (the ability to reproduce experimental or quantum mechanical data), transferability (the applicability across diverse molecules and state conditions), and computational efficiency (the feasibility of simulating large systems over biologically or industrially relevant timescales) [19] [20]. This whitepaper examines the methodologies, trade-offs, and recent advancements in force field parameterization, providing researchers with a framework for selecting and developing force fields for energy minimization and molecular simulation research.

Force Field Fundamentals and Classification

Functional Forms and Components

Classical force fields typically decompose the total potential energy (\(E_{\text{total}}\)) of a system into contributions from bonded and non-bonded interactions, governed by a series of parametric equations [20].

\[ E_{\text{total}} = E_{\text{bond}} + E_{\text{angle}} + E_{\text{torsion}} + E_{\text{vdW}} + E_{\text{electrostatic}} \]

The bonded interactions include harmonic potentials for bond stretching (\(E_{\text{bond}}\)) and angle bending (\(E_{\text{angle}}\)), along with periodic functions for torsional dihedral angles (\(E_{\text{torsion}}\)). Non-bonded interactions are generally described by the Lennard-Jones potential for van der Waals forces (\(E_{\text{vdW}}\)) and Coulomb's law for electrostatic interactions (\(E_{\text{electrostatic}}\)) between partial atomic charges [21] [20].

Classification of Force Fields

Force fields can be systematically classified based on their modeling approach, level of detail, and parameterization philosophy, as outlined in the ontology below [19].

Figure 1: An ontology for the classification of molecular force fields, covering modeling approach, level of detail, and parameterization strategy. Adapted from Scientific Data [19].

Table 1: Comparison of Major Force Field Types

| Force Field Type | Typical Number of Parameters | Parameter Interpretability | Computational Cost | Primary Applications |
|---|---|---|---|---|
| Classical (Non-reactive) | 10 - 100 [20] | High (clear physical meaning) [20] | Low to Moderate | Protein folding, membrane properties, phase equilibria [12] [22] |
| Reactive (ReaxFF) | 100+ [20] | Moderate (bond-order based) [23] | Moderate to High | Chemical reactions, combustion, catalysis [20] [23] |
| Machine Learning (ML) | 1,000 - 1,000,000+ [20] | Low (black-box nature) [20] | High (training) / Low (inference) | Complex potential energy surfaces, accelerated dynamics [20] |

The Parameterization Trilemma: Accuracy, Transferability, and Cost

The development of any force field is an exercise in navigating a trilemma, where optimizing for two objectives often requires compromises in the third.

Accuracy vs. Transferability

Component-specific force fields are parameterized for a single substance, often achieving high accuracy for that specific compound but lacking general applicability [19]. In contrast, transferable force fields use generalized building blocks (e.g., atom types, functional groups) that can be combined to model a wide range of substances. The Transferable Potentials for Phase Equilibria (TraPPE) force field exemplifies this approach, where parameters for a methyl group (-CH₃) are first fitted using ethane and then transferred to verify predictions for butane [21]. This transferability comes at a potential cost to absolute accuracy for any specific molecule, as the parameters represent averages across chemical environments [19].
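The building-block idea can be made concrete with a short sketch in which per-site Lennard-Jones parameters are stored once and reused for every molecule containing that site. Unlike-pair parameters are obtained here with the Lorentz-Berthelot combining rules. The numerical values below are quoted from memory of the TraPPE-UA alkane parameters and should be treated as illustrative and checked against the official parameter tables before use. The dictionary layout and function names are hypothetical.

```python
import math

# Per-site parameters: sigma in angstrom, epsilon / k_B in kelvin.
# Illustrative values recalled from TraPPE-UA; verify before any real use.
SITE_PARAMETERS = {
    "CH3": {"sigma": 3.75, "epsilon": 98.0},
    "CH2": {"sigma": 3.95, "epsilon": 46.0},
}

# Molecules are just sequences of transferable site types.
MOLECULES = {
    "ethane": ["CH3", "CH3"],
    "butane": ["CH3", "CH2", "CH2", "CH3"],
}


def pair_parameters(site_a, site_b):
    """Lorentz-Berthelot rules: arithmetic mean sigma, geometric mean epsilon."""
    a, b = SITE_PARAMETERS[site_a], SITE_PARAMETERS[site_b]
    sigma = 0.5 * (a["sigma"] + b["sigma"])
    epsilon = math.sqrt(a["epsilon"] * b["epsilon"])
    return sigma, epsilon


def site_types(molecule):
    """Distinct site types that a molecule draws from the shared table."""
    return sorted(set(MOLECULES[molecule]))


print(site_types("butane"))
print(pair_parameters("CH3", "CH2"))
```

Because butane reuses the methyl parameters fitted on ethane, only the methylene site needs new parameters, and agreement with butane's experimental properties then serves as a check on transferability.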

Accuracy vs. Computational Cost

The level of atomic detail directly impacts both accuracy and computational cost. All-atom force fields explicitly represent every hydrogen atom and are often necessary for modeling specific electrostatic interactions or structural details [21] [22]. United-atom force fields, such as TraPPE-UA, merge hydrogen atoms into the carbon atoms they are bonded to, reducing the number of interaction sites and increasing computational efficiency, which is particularly valuable for simulating large systems like polymers [21]. The most abstract, coarse-grained models, such as TraPPE-CG, merge multiple atoms into single interaction sites, enabling simulations of even larger systems and longer timescales [21] [19].

Transferability vs. Computational Cost

Developing a highly transferable force field requires parameterization against an extensive dataset of diverse molecules and properties, which is a computationally expensive and time-consuming process [24] [23]. Furthermore, more complex force fields designed for broad applicability, such as polarizable or reactive force fields, inherently carry a higher computational cost per simulation step compared to simpler, non-reactive classical force fields [21] [20].

Parameterization Methodologies and Protocols

Force field parameters are derived by fitting to two primary classes of reference data:

- Theoretical Data: High-level ab initio quantum mechanical (QM) calculations provide data on energies, forces, charge distributions, and torsional energy profiles [12] [24].

- Experimental Data: Macroscopic experimental data, such as vapor-liquid coexistence curves, densities, enthalpies of vaporization, and radial distribution functions, serve as critical benchmarks, particularly for thermodynamic properties [21] [24].

Step-by-Step Parameterization Protocols

Protocol 1: Charge Parameterization for Complex Lipids

A recent study on developing the BLipidFF for mycobacterial membranes details a robust protocol for deriving partial atomic charges [12]:

- Modular Segmentation: Divide large, complex lipid molecules (e.g., PDIM, SL-1) into smaller, manageable molecular segments.

- Geometry Optimization: For each segment, perform geometry optimization in vacuum using Density Functional Theory (DFT) at the B3LYP/def2SVP level.

- Electrostatic Potential Calculation: Calculate the electrostatic potential for the optimized structure at the B3LYP/def2TZVP level.

- RESP Charge Fitting: Derive partial charges using the Restrained Electrostatic Potential (RESP) fitting method.

- Conformational Averaging: Repeat the geometry optimization, electrostatic potential calculation, and RESP fitting steps for multiple (e.g., 25) conformations sampled from MD trajectories and use the average charge values to reduce conformational bias.

- Charge Integration: Assemble the final charges for the complete molecule by integrating the charges from all segments after removing the capping groups [12].
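The last two steps of this protocol, conformational averaging and charge integration, amount to simple bookkeeping once the per-conformation RESP charges exist. The sketch below assumes charges are plain lists of floats and that any residual charge left after removing the capping atoms is spread uniformly over the remaining atoms so the molecule reaches its target net charge. The uniform redistribution is an illustrative assumption, not necessarily the scheme used in reference [12], and all names and numbers are hypothetical.

```python
def average_charges(conformer_charges):
    """Average per-atom charges over conformations (one list per conformation)."""
    n_conf = len(conformer_charges)
    return [sum(column) / n_conf for column in zip(*conformer_charges)]


def integrate_segments(segments, target_charge=0.0):
    """Merge segment charges after dropping capping atoms.

    segments : list of (charges, cap_indices) pairs, one per segment
    The leftover charge is spread evenly (an assumed, simple choice).
    """
    merged = []
    for charges, cap_indices in segments:
        caps = set(cap_indices)
        merged.extend(q for i, q in enumerate(charges) if i not in caps)
    correction = (target_charge - sum(merged)) / len(merged)
    return [q + correction for q in merged]


# Two made-up conformations of a three-atom segment; atom 2 is a cap.
segment_a = average_charges([[0.25, -0.15, 0.05], [0.15, -0.05, 0.05]])
segment_b = [-0.20, 0.30, -0.05]  # atom 0 is a cap
final = integrate_segments([(segment_a, [2]), (segment_b, [0])])
print(final, sum(final))
```

Checking that the final charges sum to the intended net charge is a cheap guard against bookkeeping errors when many segments are stitched together.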

Protocol 2: Automated Force Field Optimization with ForceBalance

The ForceBalance method demonstrates a systematic approach for optimizing parameters against a diverse set of target data [24]:

- Objective Function Definition: Define an objective function as a sum of squared differences between simulated and reference data (experimental and/or QM), normalized by the variance of the reference data.

- Parameter Mapping: Establish a mapping between mathematical optimization parameters and physical force field parameters to ensure a well-behaved minimization problem.

- Property and Derivative Calculation: Run molecular simulations to compute target properties. Use thermodynamic fluctuation formulas to calculate accurate parametric derivatives of these properties without requiring multiple independent simulations.

- Iterative Optimization: Employ gradient-based or stochastic optimization algorithms to minimize the objective function. Adaptively adjust simulation length to enhance efficiency, performing longer simulations for higher precision as the optimum is approached [24].
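The derivative step in this protocol rests on a thermodynamic fluctuation formula. For a canonical-ensemble average of an observable \( A \) and a force field parameter \( k \), one standard form is \( \partial\langle A\rangle/\partial k = \langle \partial A/\partial k\rangle - \beta\left(\langle A\,\partial U/\partial k\rangle - \langle A\rangle\langle \partial U/\partial k\rangle\right) \). The sketch below evaluates that expression from per-frame samples. The function name and inputs are hypothetical, and ForceBalance's own implementation may differ in ensemble and detail.

```python
def fluctuation_derivative(a_samples, da_dk_samples, du_dk_samples, beta):
    """Estimate d<A>/dk from a single trajectory.

    a_samples     : observable A in each frame
    da_dk_samples : explicit derivative dA/dk in each frame
    du_dk_samples : derivative of the potential energy dU/dk in each frame
    beta          : 1 / (k_B * T) in units consistent with U
    """
    n = len(a_samples)
    mean_a = sum(a_samples) / n
    mean_da = sum(da_dk_samples) / n
    mean_du = sum(du_dk_samples) / n
    mean_a_du = sum(a * du for a, du in zip(a_samples, du_dk_samples)) / n
    covariance = mean_a_du - mean_a * mean_du
    return mean_da - beta * covariance


# Tiny made-up data set, just to show the call signature.
print(fluctuation_derivative([1.0, 2.0, 3.0], [0.0, 0.0, 0.0], [1.0, 2.0, 3.0], beta=1.0))
```

A useful check is a one-dimensional harmonic well \( U = kx^2/2 \) with \( A = x^2 \): the analytic result is \( -1/(\beta k^2) \), and the estimator reproduces it when fed Gaussian samples of \( x \), since \( \partial U/\partial k = x^2/2 \).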

Protocol 3: Reactive Force Field (ReaxFF) Optimization

Parameterizing a reactive force field like ReaxFF, which contains hundreds of parameters, presents a distinct challenge. A recent improved framework combines multiple algorithms [23]:

- Multi-Objective Optimization: Define an objective function that simultaneously minimizes errors for multiple QM-derived properties (e.g., atomic charges, bond energies, reaction energies, valence angles).

- Hybrid Algorithm Search: Utilize a hybrid optimization strategy combining a Simulated Annealing (SA) algorithm for global exploration and a Particle Swarm Optimization (PSO) algorithm for efficient local search.

- Concentrated Attention Mechanism (CAM): Introduce a weighting scheme that pays more attention to representative key data, such as equilibrium structures, during the optimization process to improve the physical realism of the final parameters [23].
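Two ingredients of this framework, a weighted multi-property error and a stochastic global search, can be sketched compactly. The example below implements only a plain simulated annealing loop with a weighted squared-error objective. It omits the particle swarm stage entirely, and the fixed weights are only a stand-in for the attention-style weighting, so it is not the algorithm of reference [23]. The toy model, function names, and settings are hypothetical.

```python
import math
import random


def weighted_error(params, model, reference, weights):
    """Sum of weighted squared deviations between model and reference data."""
    predicted = model(params)
    return sum(w * (p - r) ** 2 for w, p, r in zip(weights, predicted, reference))


def simulated_annealing(cost, start, step=0.1, t_start=1.0, cooling=0.95,
                        n_steps=500, seed=0):
    """Metropolis-style search that tracks the best parameters seen so far."""
    rng = random.Random(seed)
    current, current_cost = list(start), cost(start)
    best, best_cost = list(current), current_cost
    temperature = t_start
    for _ in range(n_steps):
        trial = [p + rng.uniform(-step, step) for p in current]
        trial_cost = cost(trial)
        delta = trial_cost - current_cost
        # Always accept improvements; accept uphill moves with Boltzmann odds.
        if delta <= 0.0 or rng.random() < math.exp(-delta / temperature):
            current, current_cost = trial, trial_cost
            if current_cost < best_cost:
                best, best_cost = list(current), current_cost
        temperature *= cooling
    return best, best_cost


def toy_model(params):
    """Stand-in for a force field evaluation returning two 'properties'."""
    return [params[0] + params[1], params[0] - params[1]]


reference_data = [3.0, 1.0]
weights = [5.0, 1.0]  # heavier weight on the first, 'key' data point
cost = lambda p: weighted_error(p, toy_model, reference_data, weights)
best_params, best_cost = simulated_annealing(cost, start=[0.0, 0.0])
print(best_params, best_cost)
```

Raising the weight on selected reference points steers the search toward parameter sets that reproduce those points first, which is the intent of emphasizing data such as equilibrium structures.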

The following workflow diagram synthesizes these methodologies into a generalized parameterization pipeline.

Figure 2: A generalized workflow for force field parameterization, illustrating the iterative cycle of simulation, comparison, and parameter optimization.

The Scientist's Toolkit: Key Reagents and Computational Tools

Table 2: Essential Tools and "Reagents" for Force Field Development and Validation

| Tool / Reagent | Type | Primary Function | Example Uses |
|---|---|---|---|
| Quantum Chemistry Software (Gaussian, Q-Chem) [12] [20] | Software | Generate reference data for parameterization. | Calculating electrostatic potentials for charge fitting, torsional energy profiles, and training data for ML force fields. |
| Molecular Simulation Engines (GROMACS, TINKER, OpenMM, LAMMPS) [24] [23] | Software | Perform MD/MC simulations to compute properties and test parameters. | Evaluating target properties during parameterization; running production simulations for scientific discovery. |
| Optimization Algorithms (ForceBalance, SA, PSO, GA) [24] [23] | Software/Method | Automate the search for optimal force field parameters. | Systematically minimizing the difference between simulated and reference data. |
| Validated Benchmark Datasets | Data | Provide standardized references for testing force field accuracy and transferability. | Assessing performance on protein folding [22] [25], phase equilibria [21], and secondary structure propensities [25]. |
| Water Models (TIP3P, TIP4P, TIP4P-D, OPC) [24] [22] [25] | Force Field Component | Solvate molecules and profoundly influence simulation outcome. | TIP4P-D combined with protein force fields improves reliability for disordered proteins [22]. |

Application-Specific Parameterization: Case Studies

Biomolecular Simulations: The Water Model Problem

The choice of water model is a critical parameterization decision that significantly impacts the outcome of biomolecular simulations. Traditional models like TIP3P, when combined with protein force fields, have been shown to cause an artificial collapse of intrinsically disordered proteins (IDPs) [22]. This highlights a failure in balancing intramolecular and solvent interactions. Advanced models like TIP4P-D, which includes dispersion corrections, significantly improve the reliability of simulations for proteins containing both structured and disordered regions, better reproducing experimental NMR relaxation parameters and radii of gyration [22]. Independent assessments confirm that while modern force fields have improved, finding the right balance between noncovalent attraction and repulsion remains an ongoing challenge, particularly for multi-protein systems and aggregation-prone peptides [25].

Materials and Industrial Chemistry: The TraPPE Philosophy

The TraPPE force field family prioritizes transferability and accuracy in predicting thermophysical properties for materials and industrial applications [21]. Its parameterization strategy involves fitting nonbonded parameters for simple, representative molecules to reproduce experimental phase equilibrium data (e.g., vapor-liquid coexistence curves). These parameters are then transferred as building blocks to construct more complex molecules, with the transferability validated by predicting properties of other compounds [21]. This approach ensures robustness across different state points, compositions, and properties, making it suitable for screening materials like zeolites and polymers.

Specialized Membrane Lipids: Addressing a Gap

General force fields often lack parameters for unique biological components. The development of BLipidFF for mycobacterial membrane lipids illustrates a targeted parameterization to address a specific research gap [12]. Using the modular QM-based protocol, researchers created an all-atom force field for complex lipids like mycolic acids. MD simulations using BLipidFF successfully captured key membrane properties, such as high tail rigidity and lateral diffusion rates, that were poorly described by general force fields, and showed excellent agreement with fluorescence spectroscopy and FRAP experiments [12].

Emerging Trends and Optimization

The field of force field parameterization is being advanced by several key trends:

- Automation and Machine Learning: Tools like ForceBalance automate and systematize parameterization [24], while graph-based neural networks are being explored to predict parameters directly from chemical structures, enhancing transferability [26].

- Reactive Force Field Optimization: New hybrid algorithms (e.g., SA+PSO) with concentrated attention mechanisms are improving the efficiency and accuracy of optimizing the hundreds of parameters in ReaxFF [23].

- Standardization and FAIR Data: Initiatives like the TUK-FFDat data scheme aim to create machine-readable, reusable, and interoperable formats for transferable force fields, promoting reproducibility and easier integration into workflows [19].

Force field parameterization remains a central challenge in computational molecular science. The core trilemma of balancing accuracy, transferability, and computational cost necessitates a careful, application-driven selection of the parameterization strategy and final force field. Future progress hinges on the continued development of automated, systematic, and data-driven parameterization methods, coupled with community-wide standards for force field development and validation. By addressing these challenges, the next generation of force fields will expand the frontiers of molecular simulation, enabling more reliable and predictive studies of complex systems in drug development, materials design, and beyond.

In molecular dynamics (MD) simulations, the accuracy of the force field (FF) is the cornerstone for obtaining physically meaningful results. Force fields are mathematical models that describe the potential energy surface of a molecular system as a function of atomic positions, forming the physical basis for MD methods [27] [10]. The reliability of simulations in computational drug discovery, particularly for predicting molecular behavior and interactions, hinges on the force field's ability to accurately reproduce key experimental physical properties. These properties—including density, shear viscosity, and solvation free energy—serve as critical benchmarks during the parameterization and validation of force fields [27]. A force field that fails to accurately capture these properties cannot provide trustworthy predictions for more complex phenomena, such as protein-ligand binding affinities or ion transport through membranes. This guide details the methodologies for evaluating these essential properties, providing a framework for force field selection and validation within energy minimization research.

Force Field Benchmarking: A Comparative Analysis of Key Physical Properties

Evaluating force field performance requires comparing simulation results against experimentally determined physical properties. The following quantitative analysis highlights how different all-atom force fields perform in predicting the density and viscosity of a model compound, diisopropyl ether (DIPE), a task relevant for modeling liquid ion-selective membranes [27].

Table 1: Comparison of Force Field Performance for Diisopropyl Ether (DIPE) Density and Viscosity at 293 K [27]

| Force Field | Predicted Density (g/cm³) | Deviation from Experiment | Predicted Viscosity (mPa·s) | Deviation from Experiment |
|---|---|---|---|---|
| GAFF | ~0.73 | +3 to +5% Overestimation | ~0.48 | +60 to +130% Overestimation |
| OPLS-AA/CM1A | ~0.73 | +3 to +5% Overestimation | ~0.48 | +60 to +130% Overestimation |
| CHARMM36 | ~0.71 | Quite Accurate | ~0.23 | Quite Accurate |
| COMPASS | ~0.71 | Quite Accurate | ~0.23 | Quite Accurate |

This comparative study demonstrates that the CHARMM36 and COMPASS force fields provide superior accuracy for DIPE, closely matching experimental density and viscosity values. In contrast, GAFF and OPLS-AA/CM1A significantly overestimate these properties, making them less suitable for simulating systems where transport properties like viscosity are critical [27]. Beyond thermodynamic and transport properties, accurately describing solvation behavior is paramount. The solvation free energy, which is closely linked to solubility and partition coefficients, determines how molecules distribute between different phases, such as an ether layer and an aqueous phase in a liquid membrane system [27]. Accurate prediction of this property is therefore essential for modeling phenomena like ion selectivity.

Experimental Protocols for Force Field Validation

Density Calculations (pvT Properties)

To calculate equilibrium density using molecular dynamics, a common and robust protocol involves using a large number of molecules in multiple independent simulations to ensure statistical reliability [27].

- System Setup: Construct multiple (e.g., 64 different) cubic unit cells containing a large number of molecules (e.g., 3375 DIPE molecules). This provides a balance between the magnitude of fluctuations and computational complexity, and the multiple cells are needed for subsequent viscosity calculations [27].

- Initialization: Set initial configurations in the form of a simple cubic lattice. Perform energy minimization on these initial configurations to remove any bad contacts or unrealistic geometry [27].

- Equilibration: Run simulations in the NpT (isothermal-isobaric) ensemble. Use a Nosé–Hoover thermostat and barostat to maintain constant temperature and pressure (e.g., 1 atm). The duration of this phase must be sufficient for the system density to stabilize [27].

- Production Run: Continue the NpT simulation to collect trajectory data for analysis. The density is calculated as an average over the production phase of the simulation [27].

- Analysis: The final density value is obtained by averaging the results from all independent simulation cells to improve statistical accuracy [27].
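The averaging steps above can be sketched in a few lines of Python. This is a minimal illustration, not the analysis code used in [27]: the function names, the number of discarded frames, and the synthetic density values are all assumptions made for the example.

```python
import numpy as np

def production_average(density_series, n_equil_frames):
    """Mean density of one cell after discarding the first n_equil_frames frames."""
    series = np.asarray(density_series, dtype=float)
    return series[n_equil_frames:].mean()

def combine_cells(cell_means):
    """Mean and standard error of the mean across independent simulation cells."""
    values = np.asarray(cell_means, dtype=float)
    mean = values.mean()
    # Sample standard deviation (ddof=1) divided by sqrt(number of cells).
    sem = values.std(ddof=1) / np.sqrt(values.size)
    return mean, sem

# Synthetic stand-in for NpT density time series (g/cm^3); values are illustrative only.
rng = np.random.default_rng(0)
cells = [0.71 + 0.002 * rng.standard_normal(500) for _ in range(8)]
means = [production_average(series, n_equil_frames=100) for series in cells]
density, error = combine_cells(means)
print(f"density = {density:.4f} +/- {error:.4f} g/cm^3")
```

Discarding an initial block of frames mirrors the equilibration phase; in practice the cutoff would be chosen by inspecting where the density time series stabilizes.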

Shear Viscosity Calculations

Shear viscosity, a transport property, can be determined using the Green-Kubo formalism, which relates it to the time integral of the autocorrelation function of the off-diagonal (shear) components of the pressure tensor.

- System Preparation: Utilize the equilibrated configurations obtained from the density calculations as starting points [27].

- Equilibration in NVE Ensemble: A short equilibration run in the microcanonical (NVE) ensemble is performed for each configuration [27].

- Production Run for Stress Tensor Data: Conduct a production run in the NVE ensemble. During this run, the components of the pressure tensor are recorded at every time step (e.g., every 2 fs) [27].

- Autocorrelation Function Analysis: Calculate the autocorrelation function of the traceless symmetric part of the pressure tensor for each independent configuration [27].

- Integration and Averaging: Integrate the autocorrelation function to obtain a viscosity value for each independent run. The final viscosity is the average across all runs, and the standard error is calculated from the variance between them [27].
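As a rough sketch of the last two steps, the Green-Kubo relation can be written as η = V/(k_B T) ∫ ⟨P_αβ(0) P_αβ(t)⟩ dt and evaluated numerically. The code below is a simplified illustration under stated assumptions: it uses a single off-diagonal component rather than the full traceless symmetric tensor, SI units throughout, and synthetic noise in place of real pressure data. The function names are hypothetical.

```python
import numpy as np

K_B = 1.380649e-23  # Boltzmann constant, J/K

def autocorrelation(x, max_lag):
    """Time-origin-averaged <x(0) x(t)> for lags 0 .. max_lag-1 (unbiased normalization)."""
    x = np.asarray(x, dtype=float)
    n = x.size
    return np.array([np.dot(x[: n - lag], x[lag:]) / (n - lag) for lag in range(max_lag)])

def green_kubo_viscosity(p_offdiag, dt, volume, temperature, max_lag):
    """Running integral eta(t) = V / (kB T) * int_0^t <P(0) P(t')> dt' (trapezoid rule)."""
    acf = autocorrelation(p_offdiag, max_lag)
    running = np.concatenate(([0.0], np.cumsum(0.5 * (acf[1:] + acf[:-1]) * dt)))
    return volume / (K_B * temperature) * running

# Synthetic stand-in for P_xy time series (Pa) from four independent NVE runs.
rng = np.random.default_rng(0)
runs = [rng.normal(0.0, 1.0e5, size=2000) for _ in range(4)]
etas = np.array([
    green_kubo_viscosity(p, dt=2.0e-15, volume=1.0e-25, temperature=298.15, max_lag=200)[-1]
    for p in runs
])
eta_mean = etas.mean()
eta_sem = etas.std(ddof=1) / np.sqrt(etas.size)  # standard error between runs
print(eta_mean, eta_sem)
```

With real data, the running integral would be inspected for a plateau before a value is read off, since the tail of the autocorrelation function is dominated by noise.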

Solvation Free Energy and Related Thermodynamic Properties

For liquid membrane systems, key thermodynamic properties include mutual solubility, interfacial tension, and partition coefficients.

- Mutual Solubility of Ether and Water: This can be estimated using MD simulations by calculating the free energy of solvation. The methodology involves computing the chemical potential of the solute (e.g., water in ether or ether in water) using techniques such as thermodynamic integration or free energy perturbation [27].

- Interfacial Tension between DIPE and Water:

  - Construct a simulation box containing both DIPE and water phases, allowing a clear interface to form.

  - Perform a long equilibration run to stabilize the interface.

  - Calculate the interfacial tension from the difference between the normal and tangential components of the pressure tensor relative to the interface, using the Kirkwood-Buff formula [27].

- Partition Coefficients (e.g., for Ethanol in DIPE + Ethanol + Water systems): The partition coefficient of a solute (Psolute) is determined by the difference in its solvation free energy in the two phases (ΔGsolvation(organic) and ΔGsolvation(water)): log10(Psolute) = -(ΔGsolvation(organic) - ΔGsolvation(water)) / (2.303 RT), where the factor 2.303 ≈ ln 10 converts from the natural to the base-10 logarithm. These solvation free energies are calculated directly using alchemical free energy methods [27].
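The two closed-form relations in this list can be illustrated with a short sketch. It assumes a slab geometry with the interface normal along z and two interfaces in the periodic box, for which the Kirkwood-Buff expression reduces to γ = (L_z / 2)(P_N − P_T), and it takes the logarithm of the partition coefficient as base 10, which introduces a factor of ln 10 in the denominator. Function names and all input numbers are hypothetical.

```python
import math

R_GAS = 8.314462618e-3  # gas constant, kJ/(mol K)

def interfacial_tension(p_xx, p_yy, p_zz, box_length_z, n_interfaces=2):
    """Kirkwood-Buff estimate for planar interfaces normal to z.

    Pressures in Pa and length in m give a tension in N/m.
    """
    p_normal = p_zz
    p_tangential = 0.5 * (p_xx + p_yy)
    return box_length_z / n_interfaces * (p_normal - p_tangential)

def log10_partition_coefficient(dg_organic, dg_water, temperature):
    """log10 P (organic/water) from solvation free energies in kJ/mol."""
    return -(dg_organic - dg_water) / (R_GAS * temperature * math.log(10))

# Illustrative inputs only; these are not values from [27].
gamma = interfacial_tension(p_xx=-4.0e6, p_yy=-4.0e6, p_zz=1.0e5, box_length_z=1.0e-8)
log_p = log10_partition_coefficient(dg_organic=-18.0, dg_water=-21.0, temperature=298.15)
print(f"gamma = {gamma * 1e3:.1f} mN/m, log10 P = {log_p:.2f}")
```

A more negative solvation free energy in water than in the organic phase gives a negative log10 P, meaning the solute prefers the aqueous phase.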

Specialized Parameterization for Complex Systems: The Mycobacterial Membrane Case

The development of specialized force fields is necessary when studying systems with unique chemical components not adequately covered by general force fields. A prime example is the mycobacterial outer membrane (MOM) of Mycobacterium tuberculosis, which contains complex lipids like phthiocerol dimycocerosate (PDIM) and mycolic acids [12].

The BLipidFF (Bacteria Lipid Force Fields) was developed using a rigorous, multi-step parameterization strategy [12]:

- Atom Type Definition: Atoms are categorized based on location and chemical environment. A dual-character definition is used: a lowercase letter for the elemental category (e.g., 'c' for carbon) and an uppercase letter for the chemical environment (e.g., 'T' for lipid tail, 'A' for headgroup). Specialized types like cX for cyclopropane carbons address unique mycobacterial motifs [12].

- Quantum Mechanical Charge Calculation:

  - Modular Division: Large, complex lipids are divided into smaller, manageable segments.

  - QM Geometry Optimization: Each segment's geometry is optimized in vacuum at the B3LYP/def2SVP level of theory.

  - RESP Charge Derivation: Partial atomic charges are derived using the Restrained Electrostatic Potential (RESP) fitting method at the B3LYP/def2TZVP level.

  - Conformational Averaging: Charges are calculated for 25 conformations of each lipid (randomly selected from long MD trajectories), and the final charge for each segment is the arithmetic average across all conformations, which reduces the dependence of the charges on any single conformation.

  - Segment Integration: The charges of all segments are integrated to derive the total charge of the entire molecule after removing capping groups [12].

- Torsion Parameter Optimization: The torsion parameters (Vn, n, γ) are optimized by minimizing the difference between the energies calculated by quantum mechanics and the classical potential. Due to the large size of the molecules, further subdivision is required for these high-level calculations. All torsion parameters consisting of heavy atoms are parameterized, while bond and angle parameters are typically adopted from established force fields like GAFF [12].
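The torsion-fitting step can be illustrated with a small least-squares sketch. It assumes the common functional form E(φ) = Σ_n (V_n / 2)[1 + cos(nφ − γ_n)] with the periodicities n and phases γ_n held fixed, so that the barrier heights V_n enter linearly. This is a simplification for illustration and not necessarily the fitting procedure used for BLipidFF [12]; the function names and the synthetic "QM" profile are hypothetical.

```python
import numpy as np

def torsion_energy(phi, barriers, periodicities, phases):
    """Sum over terms of V_n/2 * (1 + cos(n*phi - gamma_n)); phi in radians."""
    phi = np.asarray(phi, dtype=float)[:, None]
    v = np.asarray(barriers, dtype=float)
    n = np.asarray(periodicities, dtype=float)
    gamma = np.asarray(phases, dtype=float)
    return np.sum(0.5 * v * (1.0 + np.cos(n * phi - gamma)), axis=1)

def fit_torsion_barriers(phi, target_energy, periodicities=(1, 2, 3), phases=(0.0, 0.0, 0.0)):
    """Linear least-squares fit of V_n plus a constant energy offset."""
    phi = np.asarray(phi, dtype=float)[:, None]
    n = np.asarray(periodicities, dtype=float)
    gamma = np.asarray(phases, dtype=float)
    basis = 0.5 * (1.0 + np.cos(n * phi - gamma))
    # The last column absorbs the arbitrary zero of the QM energy scale.
    design = np.hstack([basis, np.ones((phi.shape[0], 1))])
    coeffs, *_ = np.linalg.lstsq(design, np.asarray(target_energy, dtype=float), rcond=None)
    return coeffs[:-1], coeffs[-1]

# Synthetic dihedral scan: the "target" is the QM energy minus the MM energy without the torsion term.
phi_scan = np.deg2rad(np.arange(0, 360, 10))
target = torsion_energy(phi_scan, [1.2, 0.5, 2.0], (1, 2, 3), (0.0, 0.0, 0.0)) + 0.3
barriers, offset = fit_torsion_barriers(phi_scan, target)
print(barriers, offset)
```

Fitting the phases as well would make the problem nonlinear, which is one setting where the metaheuristic optimizers discussed later become relevant.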

Diagram: Force Field Parameterization Workflow for Complex Lipids.

Table 2: Essential Computational Tools and Resources for Force Field Development and Validation

| Item/Reagent | Function / Role in Research |
|---|---|
| Quantum Chemistry Software (e.g., Gaussian) | Performs high-level quantum mechanical calculations (geometry optimization, RESP charge derivation) essential for deriving accurate force field parameters [12]. |
| MD Simulation Engines (e.g., GROMACS, AMBER, NAMD) | Software platforms that run the molecular dynamics simulations using the developed force field parameters to compute physical properties [12] [27]. |
| Force Field Parameter Sets (e.g., CHARMM36, GAFF, BLipidFF) | Pre-defined sets of parameters (bonds, angles, torsions, non-bonded interactions) that determine the accuracy of the simulation for a given class of molecules [12] [27]. |
| System Builder Tools (e.g., PACKMOL) | Assists in constructing the initial simulation boxes, including solvated systems and lipid bilayers for membrane simulations [12]. |
| Analysis Tools (e.g., VMD, MDAnalysis) | Used to visualize simulation trajectories and calculate key properties, such as lateral diffusion rates, order parameters, and density profiles [12]. |
| Validation Data (Experimental Biophysical Data) | Experimental measurements (e.g., from Fluorescence Recovery After Photobleaching (FRAP) or spectroscopy) used as a gold standard to validate the predictions of the MD simulations [12]. |

Reliable molecular dynamics simulations in drug discovery and materials science depend on rigorous benchmarking of force fields against key physical properties. As demonstrated, density, shear viscosity, and solvation free energy are critical tests of a force field's accuracy. For specialized systems like the mycobacterial membrane, a dedicated parameterization process, involving quantum mechanical calculations and validation against biophysical experiments, is not merely beneficial but essential. The methodologies and benchmarks outlined in this guide provide a framework for researchers engaged in force field development and application, so that subsequent energy minimization and dynamics studies rest on a physically accurate representation of molecular interactions.

Advanced Parametrization Methods: From Automation to Machine Learning

Automated Parametrization with Evolutionary Algorithms and Particle Swarm Optimization

In computational chemistry and materials science, the accuracy of molecular dynamics (MD) simulations is fundamentally dependent on the quality of the force field parameters used to describe interatomic interactions. These parameters are critical for energy minimization studies, which seek to find the most stable molecular configurations. Traditional manual parametrization is a tedious, time-consuming process that often requires deep expert knowledge and can be prone to human error, creating a major bottleneck in research. Automated parametrization methods, particularly those leveraging evolutionary algorithms (EAs) and particle swarm optimization (PSO), have emerged as powerful solutions to these challenges. These metaheuristic algorithms efficiently navigate high-dimensional parameter spaces to find optimal values that reproduce target experimental or quantum mechanical data. The application of these methods is transforming force field development, enabling more accurate and reliable simulations for applications ranging from drug discovery to the design of new materials. This section describes the core methodologies, protocols, and tools driving this innovation.

Algorithmic Foundations

Particle Swarm Optimization (PSO)

PSO is a population-based optimization technique inspired by the collective behavior of biological systems, such as bird flocking or fish schooling. In the context of force field parametrization, each "particle" in the swarm represents a candidate set of force field parameters. The swarm explores the high-dimensional parameter space collaboratively.

The algorithm operates by iteratively updating the position \( \mathbf{x}_{i}^{k+1} \) and velocity of each particle \( i \) at iteration \( k \). A common update rule for the position is:

\[ \mathbf{x}_{i}^{k+1} = \mathbf{x}_{i}^{k} + w \cdot \mathbf{p}_{i}^{k} + \mathbf{F}_{i}^{k} \]

where \( \mathbf{p}_{i}^{k} \) is the momentum term, \( w \) is an inertial weight coefficient, and \( \mathbf{F}_{i}^{k} \) is a "force" term that attracts the particle toward promising regions of the search space [28].

The force term is typically calculated as:

\[ \mathbf{F}_{i}^{k} = c_{1} \cdot r_{1} \cdot (\hat{\mathbf{x}}_{i}^{k} - \mathbf{x}_{i}^{k}) + c_{2} \cdot r_{2} \cdot (\hat{\hat{\mathbf{x}}}^{k} - \mathbf{x}_{i}^{k}) \]

where:

- \( \hat{\mathbf{x}}_{i}^{k} \) is the best position ever found by particle \( i \) (the personal best, or pbest).

- \( \hat{\hat{\mathbf{x}}}^{k} \) is the best position found by any particle in the swarm (the global best, or gbest) or within a neighborhood [28] [29].

- \( c_{1} \) and \( c_{2} \) are cognitive and social weights, respectively, often set to values around 2.

- \( r_{1} \) and \( r_{2} \) are random numbers uniformly distributed between 0 and 1, introducing stochasticity into the search [28].

The inertial weight \( w \) is crucial for balancing exploration and exploitation. A common strategy is to start with a higher value (e.g., 0.9) to promote global exploration and linearly decrease it (e.g., to 0.4) over the optimization to refine the solution [30] [28]. After each iteration, the particle's momentum is updated as \( \mathbf{p}_{i}^{k+1} = \mathbf{x}_{i}^{k+1} - \mathbf{x}_{i}^{k} \) [28].
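The update rules above translate fairly directly into code. The sketch below is a minimal global-best PSO written for illustration, not the implementation from [28]. It assumes box bounds enforced by clipping, independent random numbers per parameter dimension, and a toy quadratic objective standing in for a real force field error function. The function name, defaults, and target values are hypothetical.

```python
import numpy as np

def pso_minimize(objective, lower, upper, n_particles=30, n_iter=200,
                 c1=2.0, c2=2.0, w_start=0.9, w_end=0.4, seed=0):
    """Minimize objective(x) inside [lower, upper] with a momentum-form PSO."""
    rng = np.random.default_rng(seed)
    lower = np.asarray(lower, dtype=float)
    upper = np.asarray(upper, dtype=float)
    x = rng.uniform(lower, upper, size=(n_particles, lower.size))
    p = np.zeros_like(x)                      # momentum p_i^k, zero at the start
    pbest = x.copy()
    pbest_val = np.array([objective(xi) for xi in x])
    g = int(np.argmin(pbest_val))
    gbest, gbest_val = pbest[g].copy(), float(pbest_val[g])
    for k in range(n_iter):
        # Linearly decreasing inertia weight: exploration first, refinement later.
        w = w_start + (w_end - w_start) * k / max(n_iter - 1, 1)
        r1 = rng.random(x.shape)
        r2 = rng.random(x.shape)
        force = c1 * r1 * (pbest - x) + c2 * r2 * (gbest - x)
        x_new = np.clip(x + w * p + force, lower, upper)
        p = x_new - x                         # p_i^{k+1} = x_i^{k+1} - x_i^k
        x = x_new
        vals = np.array([objective(xi) for xi in x])
        improved = vals < pbest_val
        pbest[improved] = x[improved]
        pbest_val[improved] = vals[improved]
        g = int(np.argmin(pbest_val))
        if pbest_val[g] < gbest_val:
            gbest, gbest_val = pbest[g].copy(), float(pbest_val[g])
    return gbest, gbest_val

# Toy objective: squared distance to hypothetical target parameters.
target = np.array([1.0, -2.0])
best, best_val = pso_minimize(lambda v: float(np.sum((v - target) ** 2)),
                              lower=[-5.0, -5.0], upper=[5.0, 5.0])
print(best, best_val)
```

In a real parametrization, each objective evaluation would involve running or reweighting simulations, so the swarm size and iteration count are usually chosen with that cost in mind.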

Hybrid and Enhanced PSO Variants

Standard PSO can be enhanced with additional mechanisms to improve its performance on complex force field parametrization problems, which often involve a mix of continuous and discrete parameters and possess many local minima.

- Mixed-Variable PSO: For force fields like Martini 3, parametrization involves optimizing continuous variables (e.g., bond lengths) alongside discrete variables (e.g., predefined bead types). Mixed-variable PSO algorithms are designed to handle this combined search space efficiently [31].

- Hybrid SA-PSO Algorithm: Combining Simulated Annealing (SA) with PSO leverages the strengths of both methods. The SA component, with its probabilistic acceptance of worse solutions, helps escape local minima, while PSO efficiently guides the swarm toward promising regions based on social information. One study reported that an SA+PSO hybrid was faster and more accurate than traditional metaheuristic methods for optimizing ReaxFF parameters [23].

- Adaptive Hierarchical Filtering PSO (AHFPSO): This variant introduces a hierarchical filtering mechanism where the swarm is partitioned into sub-swarms that evolve independently. The best-performing sub-swarms are promoted for further refinement, while others are eliminated. An adaptive adjustment mechanism also dynamically tunes algorithm parameters during the optimization process, enhancing performance on ill-posed, high-dimensional problems [30].

Genetic Algorithms (GAs)

As another class of evolutionary algorithms, GAs are inspired by the process of natural selection. A population of candidate solutions (called chromosomes) evolves over generations through selection, crossover (recombination), and mutation operations.

- Selection: The tournament selection method is commonly used. Here, \( N_{\text{tour}} \) chromosomes are randomly drawn from the population, and the one with the best fitness (e.g., the lowest error in reproducing target data) is selected as a parent with a certain probability \( P_{\text{tour}} \) [28].

- Crossover and Mutation: Selected parents are recombined to produce offspring, inheriting parameter combinations from their parents. Mutation then randomly alters some parameters in the offspring with a low probability, introducing new genetic material and helping to maintain population diversity, which is crucial for exploring the parameter space effectively [28].
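The three operators can be sketched as follows. This is an illustrative implementation under stated assumptions, not the scheme from [28]: when the tournament winner is not chosen (probability 1 − P_tour), one of the other drawn chromosomes is picked at random; crossover is uniform; mutation adds Gaussian noise. All function names, default values, and the toy objective are hypothetical.

```python
import numpy as np

def tournament_select(population, fitness, n_tour, p_tour, rng):
    """Draw n_tour chromosomes; return the fittest with probability p_tour."""
    fitness = np.asarray(fitness, dtype=float)
    idx = rng.choice(len(population), size=n_tour, replace=False)
    ranked = idx[np.argsort(fitness[idx])]    # lowest error first
    if n_tour == 1 or rng.random() < p_tour:
        return population[ranked[0]]
    return population[rng.choice(ranked[1:])]  # assumed fallback: a random non-winner

def uniform_crossover(parent_a, parent_b, rng):
    """Each parameter is inherited from one parent with equal probability."""
    mask = rng.random(parent_a.size) < 0.5
    return np.where(mask, parent_a, parent_b)

def mutate(child, p_mut, scale, rng):
    """Perturb each parameter with probability p_mut by Gaussian noise."""
    mask = rng.random(child.size) < p_mut
    return child + mask * rng.normal(0.0, scale, size=child.size)

def next_generation(population, fitness, rng, n_tour=3, p_tour=0.9, p_mut=0.1, scale=0.05):
    """Build a new population of the same size from selected parents."""
    children = []
    for _ in range(len(population)):
        a = tournament_select(population, fitness, n_tour, p_tour, rng)
        b = tournament_select(population, fitness, n_tour, p_tour, rng)
        children.append(mutate(uniform_crossover(a, b, rng), p_mut, scale, rng))
    return np.array(children)

# Toy run: evolve two parameters toward hypothetical target values.
rng = np.random.default_rng(1)
target = np.array([0.25, 3.4])
population = rng.uniform(0.0, 5.0, size=(20, 2))
for _ in range(50):
    fitness = np.sum((population - target) ** 2, axis=1)
    population = next_generation(population, fitness, rng)
print(population.mean(axis=0))
```

This sketch omits elitism, so the best chromosome of one generation is not guaranteed to survive into the next; many practical implementations copy it over unchanged.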

Workflow and Implementation

The general workflow for automated force field parametrization using EAs and PSO follows a systematic sequence, from problem definition to final validation. The diagram below illustrates this process and the key decision points.

Defining the Optimization Problem

The first step is to define the optimization problem formally. This involves identifying the force field parameters to be optimized and collecting the target reference data against which the fitness of candidate parameters will be evaluated.