Force Field Drift in MD Simulations: Causes, Solutions, and Validation for Biomedical Research

Abstract

Long-timescale Molecular Dynamics (MD) simulations are pivotal for drug discovery and understanding biomolecular mechanisms, but their predictive power is often limited by force field drift—a gradual deviation from accurate physical representation. This article provides a comprehensive analysis for researchers and drug development professionals, exploring the fundamental roots of this drift, from inadequate parameterization to the inherent limitations of traditional molecular mechanics. We examine cutting-edge methodological solutions, including machine-learned force fields, and offer a practical guide for troubleshooting and optimizing simulation stability. Finally, we establish a framework for validating force field accuracy against quantum mechanical and experimental data, ensuring reliable results for biomedical and clinical applications.

The Roots of Instability: Understanding What Causes Force Field Drift

In the context of molecular dynamics (MD) simulations, a force field refers to the computational model and empirical energy functions used to calculate the potential energy of a system of atoms or molecules, from which the forces acting on each particle are derived [1]. These force fields are the foundation for simulating biological processes, material properties, and drug-target interactions, enabling scientists to observe molecular phenomena that are difficult or impossible to capture experimentally. The accuracy of these simulations is entirely dependent on the force field's ability to realistically represent atomic interactions.

Despite continuous refinement, a significant challenge persists in long-timescale MD simulations: force field drift. This phenomenon can be defined as the gradual deviation of a simulated system from physically accurate behavior, manifesting as evolving inaccuracies in energy distributions, atomic coordinates, and ultimately, molecular conformations. Force field drift represents a critical limitation in achieving predictive simulations, particularly for complex biomolecular processes like protein folding and ligand binding that occur over microsecond to millisecond timescales. Understanding its origins, which span functional forms, parameter limitations, and environmental dependencies, is essential for advancing the reliability of computational molecular science.

The Fundamental Causes of Force Field Drift

Force field drift arises from inherent approximations and limitations in the construction of the empirical energy functions. These limitations become increasingly pronounced as simulation time increases, leading to a gradual divergence from realistic behavior.

Limitations in Functional Forms and Electrostatic Treatment

The standard functional form of an additive force field decomposes the total potential energy into bonded and non-bonded terms [1] [2]. A fundamental limitation is the use of simplified mathematical functions that cannot fully capture the complexity of quantum mechanical potential energy surfaces.

- Harmonic Approximations: Bond stretching and angle bending are typically modeled using harmonic (quadratic) potentials [1] [2]. While computationally efficient, these potentials cannot describe bond breaking and become poor approximations at large displacements from equilibrium, unlike more realistic but more expensive forms such as the Morse potential [1].

- Inadequate Polarization Models: Traditional fixed-charge force fields do not account for electronic polarization—the response of a molecule's electron density to its changing environment [3] [4]. This is a significant source of drift, as the same fixed atomic charges are applied in different dielectric environments (e.g., a protein's hydrophobic core versus a hydrophilic solvent-exposed surface), leading to inaccurate electrostatic interactions over time [4]. Polarizable force fields, such as the Drude model and AMOEBA, seek to address this by explicitly modeling how charge distributions change [3] [4].

- Anisotropy and Charge Penetration: Atom-centered point charges cannot represent anisotropic charge distributions, such as sigma-holes (responsible for halogen bonding) or lone pairs [4]. Furthermore, they fail to model the charge penetration effect that occurs when electron clouds overlap, which is critical for accurate short-range electrostatics [4].

Parametrization Inconsistencies and Transferability Issues

The process of determining force field parameters is a major source of potential drift.

- Parametrization Strategies: Parameters are derived from various sources, including quantum mechanical calculations on small molecules and experimental data such as enthalpies of vaporization and vibrational frequencies [1]. This can lead to inconsistencies, as different parameter subsets may be optimized against different target data, creating an inherent lack of balance [5].

- The Transferability Problem: Force fields often assume that parameters are transferable between similar chemical contexts [1] [5]. However, a torsion parameter fit for a specific small molecule may perform poorly when the same chemical moiety is placed in a different molecular environment, a common occurrence in complex biomolecular simulations [5]. This limits the force field's generalizability and can cause slow conformational drift.

Accumulation of Errors in Long-Timescale Simulations

In long simulations, the limitations above cease to be mere approximations and become active drivers of system evolution.

- Error Propagation: Small, systematic inaccuracies in the energies of individual interactions are applied at every timestep, and long simulations run for millions to billions of timesteps. These micro-errors accumulate, leading to macro-level deviations in the simulated system's properties and behavior.

- Conformational Sampling Bias: Inaccuracies in the backbone and side-chain dihedral potentials can result in a biased preference for certain conformations over others [3]. For example, early versions of the CHARMM force field were found to favor misfolded states for certain proteins because of small inaccuracies in the backbone potential energy surface [3]. This bias can steer a simulation away from the true native state and toward non-physical low-energy states.
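To make the sampling-bias point concrete, the following minimal sketch converts a free energy difference into two-state Boltzmann populations. The free energy values are hypothetical and chosen only for illustration; they are not taken from any of the cited studies.

```python
import math

R_KCAL = 0.0019872041  # gas constant in kcal/(mol*K)

def population_ratio(delta_g_kcal, temperature_k=298.15):
    """Boltzmann ratio p_B / p_A for a free energy difference G_B - G_A."""
    return math.exp(-delta_g_kcal / (R_KCAL * temperature_k))

def two_state_fraction(delta_g_kcal, temperature_k=298.15):
    """Fraction of state B in a two-state system with G_B - G_A = delta_g_kcal."""
    ratio = population_ratio(delta_g_kcal, temperature_k)
    return ratio / (1.0 + ratio)

# Hypothetical scenario: the reference landscape favours the native state (A)
# by 1.0 kcal/mol, but a 1.5 kcal/mol torsional error makes the misfolded
# state (B) appear 0.5 kcal/mol more stable than the native state.
true_misfolded = two_state_fraction(+1.0)
biased_misfolded = two_state_fraction(-0.5)
print(f"misfolded fraction, reference: {true_misfolded:.2f}")
print(f"misfolded fraction, biased:    {biased_misfolded:.2f}")
```

Under these assumed numbers, an error of about 1.5 kcal/mol is enough to turn a minority state into the dominant one, which is why small dihedral inaccuracies can matter over long trajectories.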

Table 1: Primary Sources of Force Field Drift and Their Manifestations

| Source of Drift | Underlying Cause | Observed Manifestation in Simulation |
|---|---|---|
| Fixed-Point Charges | Inability to adapt to changing dielectric environments [4]. | Inaccurate protein-ligand binding affinities; unrealistic membrane-protein interactions. |
| Harmonic Potentials | Poor representation of potential energy surface far from equilibrium [1]. | Inability to model chemical reactivity; unrealistic bond/angle strain in crowded conformations. |
| Inadequate Dihedral Potentials | Improper balance of rotamer populations and backbone ϕ/ψ preferences [3]. | Misfolding of proteins; drift towards non-native conformational basins. |
| Non-Transferable Parameters | Parameters optimized for small molecules perform poorly in macromolecular contexts [5]. | Gradual distortion of ligand geometry; loss of specific interaction geometries (e.g., H-bonds). |

Quantitative and Qualitative Manifestations of Drift

Force field drift reveals itself through both quantitative metrics and qualitative structural changes, providing researchers with diagnostic tools for identifying its presence.

Energetic and Structural Divergence

The most direct signature of force field drift is a progressive deviation from expected energetic and structural benchmarks.

- Energy Divergence: The total potential energy of the system, or its components (e.g., torsional energy, non-bonded energy), may drift to values inconsistent with known stable states. This can be identified by comparing against reference quantum mechanical (QM) calculations or experimental thermodynamic data.

- Non-Physical Conformations: Simulated molecules may adopt highly strained or sterically clashed conformations that are energetically forbidden in reality. This includes distorted protein secondary structures, unphysical bond lengths or angles, and improper ring puckering in ligands or sugars [5].

- Deviation from Native States: In protein simulations, drift can cause a folded protein to slowly unfold or a protein in its native state to exhibit increasing root-mean-square deviation (RMSD) from the experimental crystal structure without any external perturbation. Conversely, an unfolded peptide might fail to fold into its known native state, instead populating misfolded or aggregated states that the force field incorrectly stabilizes [3].
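The diagnostics above can be sketched as a simple trend test on a time series such as RMSD or potential energy. This is a minimal illustration, not a published protocol: the function names, the synthetic data, and the slope tolerance are all assumptions, and the RMSD routine omits the structural superposition that a real analysis would perform first.

```python
import math

def rmsd(coords_a, coords_b):
    """RMSD between two equally sized lists of (x, y, z) tuples, without superposition."""
    if len(coords_a) != len(coords_b):
        raise ValueError("coordinate sets must have the same length")
    total = 0.0
    for (xa, ya, za), (xb, yb, zb) in zip(coords_a, coords_b):
        total += (xa - xb) ** 2 + (ya - yb) ** 2 + (za - zb) ** 2
    return math.sqrt(total / len(coords_a))

def linear_slope(times, values):
    """Ordinary least-squares slope of values versus times."""
    n = len(times)
    mean_t = sum(times) / n
    mean_v = sum(values) / n
    cov = sum((t - mean_t) * (v - mean_v) for t, v in zip(times, values))
    var = sum((t - mean_t) ** 2 for t in times)
    return cov / var

def shows_drift(times, values, slope_tolerance):
    """Flag a series whose fitted slope magnitude exceeds the chosen tolerance."""
    return abs(linear_slope(times, values)) > slope_tolerance

# Synthetic RMSD traces (angstrom) sampled once per nanosecond.
times_ns = list(range(101))
stable = [1.5 + 0.1 * math.sin(t) for t in times_ns]
drifting = [1.5 + 0.02 * t + 0.1 * math.sin(t) for t in times_ns]
TOLERANCE = 0.005  # hypothetical threshold in angstrom per ns

print("stable run flagged:  ", shows_drift(times_ns, stable, TOLERANCE))
print("drifting run flagged:", shows_drift(times_ns, drifting, TOLERANCE))
```

A slope test only detects monotonic trends; a steadily rising RMSD can also reflect genuine conformational change, so a flag of this kind is a prompt for closer inspection rather than proof of force field error.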

Case Studies from Biomolecular Simulations

Documented instances in the literature highlight the real-world impact of force field drift.

- Protein Folding Inaccuracies: With the CHARMM C22/CMAP force field, long simulations of the fast-folding Villin headpiece did reach the native state, but the folding mechanism differed from experimental results. More critically, for the pin WW domain, free energy calculations revealed that misfolded states were incorrectly assigned lower free energies than the native folded state, a clear indication of force field imbalance [3].

- Challenges in Drug Discovery: Inaccuracies in torsional parameters, highly relevant for drug-like molecules, can lead to incorrect predictions of a ligand's bound conformation (pose) within a protein's active site [5]. This drift in conformational preference directly impacts the accuracy of virtual screening and binding affinity calculations, limiting the predictive power of structure-based drug design.

Table 2: Experimental Protocols for Detecting and Quantifying Force Field Drift

| Protocol Name | Methodology | Key Measurable Outputs |
|---|---|---|
| Long-Timescale Folding/Unfolding | Running multiple, ultra-long MD simulations of a protein starting from folded and unfolded states [3]. | Population of native vs. misfolded states; folding/unfolding rates; free energy difference between states. |
| Crystal Lattice Prediction | Generating thousands of alternative crystal packing arrangements for a small molecule and testing if the native crystal structure has the lowest energy [5]. | Lattice energy ranking; ability to discriminate native structure from decoys. |
| Dihedral Angle Scans | Comparing the force field's potential energy surface for key dihedral angles against high-level QM calculations [5]. | Torsional energy profiles; rotamer populations; identification of spurious minima or barriers. |
| Free Energy Perturbation (FEP) | Calculating the relative binding free energy of a congeneric ligand series or solvation free energies and comparing to experimental values [6]. | Error in predicted binding affinities (ΔΔG) or solvation free energies (ΔG_solv). |

Emerging Solutions: Mitigating Drift with Advanced Force Fields

The research community is actively developing next-generation force fields and parametrization strategies to combat drift, focusing on greater physical fidelity and improved parameter balance.

Polarizable Force Fields

A major advancement is the move beyond fixed-charge models to explicitly include electronic polarization.

- Drude Oscillator Model: This model attaches auxiliary charged "Drude" particles to atoms via harmonic springs [3] [4]. The particles are massless in the self-consistent formulation, while extended-Lagrangian implementations typically assign them a small mass taken from the parent atom. The displacement of these particles in an electric field creates induced dipoles, allowing the molecular charge distribution to respond to its environment. The CHARMM Drude FF has been developed for proteins, lipids, and nucleic acids [3].

- Inducible Point Dipoles: The AMOEBA force field uses permanent atomic multipoles (charge, dipole, quadrupole) and inducible point dipoles to achieve a more realistic description of electrostatics and polarization [3] [4]. This model better captures anisotropic effects like lone pairs and σ-holes.

- Fluctuating Charge Models: Also known as charge equilibration (CHEQ), these models allow atomic charges to fluctuate to equalize electronegativity across the molecule, effectively modeling charge transfer [4].
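For the Drude oscillator entry above, the link between spring constant, Drude charge, and induced dipole can be sketched in one dimension. At equilibrium the spring force balances the electric force, which gives a displacement of q_D E / k_D and an effective polarizability of q_D^2 / k_D. The numerical values below are arbitrary and in unspecified but consistent units; they are assumptions for illustration, not parameters from the CHARMM Drude force field.

```python
def drude_displacement(q_drude, k_spring, field):
    """Equilibrium displacement where the spring force k*d balances the electric force q*E."""
    return q_drude * field / k_spring

def induced_dipole(q_drude, k_spring, field):
    """Induced dipole: Drude charge times its displacement from the parent atom."""
    return q_drude * drude_displacement(q_drude, k_spring, field)

def polarizability(q_drude, k_spring):
    """Effective isotropic polarizability of a single Drude oscillator."""
    return q_drude ** 2 / k_spring

# Arbitrary illustrative values in consistent units.
Q_DRUDE = -1.0
K_SPRING = 500.0
FIELD = 2.0

mu = induced_dipole(Q_DRUDE, K_SPRING, FIELD)
alpha = polarizability(Q_DRUDE, K_SPRING)
print(f"induced dipole = {mu:.4f}, alpha * E = {alpha * FIELD:.4f}")
```

The induced dipole is linear in the applied field and independent of the sign of the Drude charge, which is the behavior a fixed-charge model cannot reproduce.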

Machine Learning-Driven Approaches

Machine learning (ML) is revolutionizing force field development by enabling more accurate and transferable parameter assignment.

- ML-Parameterized Molecular Mechanics: Frameworks like Grappa and Espaloma use neural networks to predict MM parameters (bonds, angles, dihedrals) directly from the molecular graph [7]. This replaces the traditional system of hand-crafted atom types and lookup tables. The resulting force fields demonstrate improved accuracy on small molecules, peptides, and proteins while retaining the computational efficiency of traditional MM [7].

- End-to-End Training: These models can be trained end-to-end on quantum mechanical data, learning a mapping from molecular graph to a full set of MM parameters that best reproduce QM energies and forces for a vast array of conformations [7]. This holistic approach promotes internal consistency and reduces parametric conflicts.

- Crystal Structure-Informed Optimization: New methods optimize force field parameters by requiring that experimentally determined crystal structures have lower energy than all alternative packing arrangements [5]. This uses the rich structural information in thousands of small-molecule crystal structures to train a balanced and transferable energy model, as demonstrated by the improved performance of the resulting RosettaGenFF in ligand docking [5].

Advanced Parametrization Strategies

Refinements in how parameters are derived also contribute to stability.

- System-Wide Optimization: Instead of fitting different parameter classes independently, newer protocols perform more holistic optimizations. For example, the CHARMM36 force field involved a simultaneous refit of backbone (CMAP) and side-chain dihedral parameters to ensure a balanced contribution to protein structure and dynamics [3].

- Leveraging Diverse Data Sources: Integrating data from multiple levels of theory and experiment—from QM energies and experimental vibrational frequencies to liquid densities and crystallographic data—helps create a more robust and generalizable parameter set [1] [5].

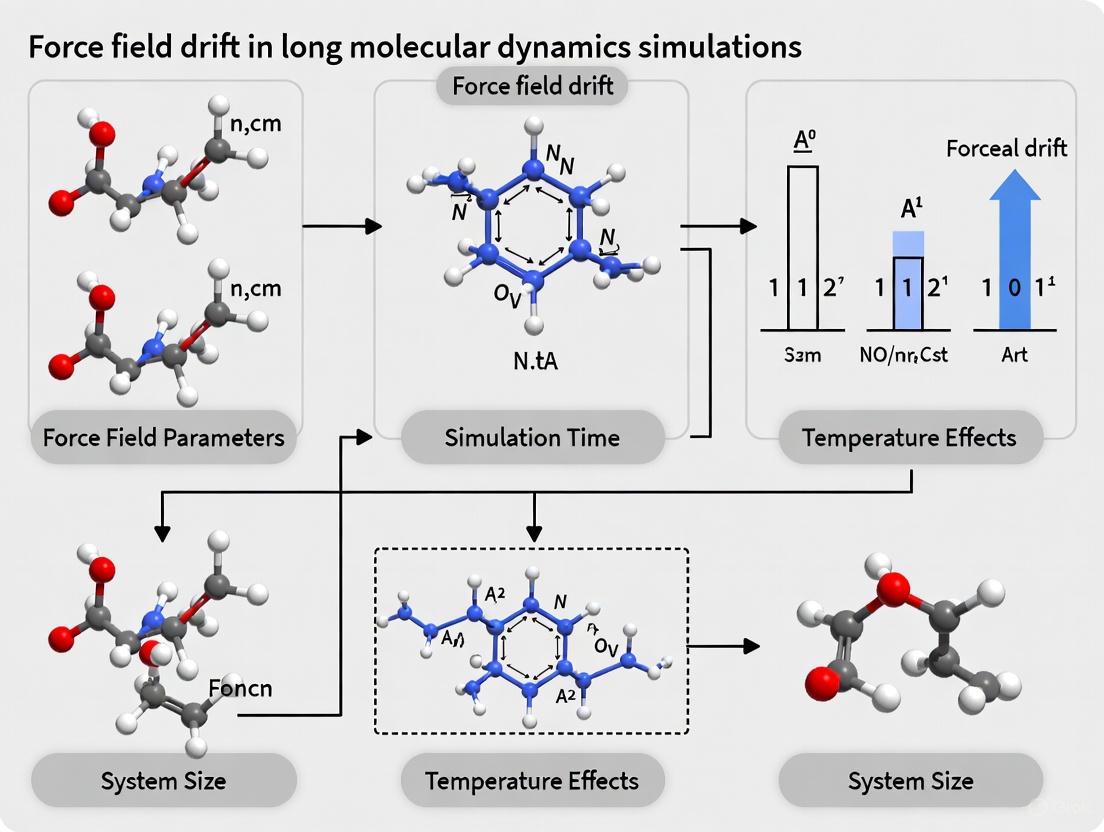

Diagram 1: A map of force field drift illustrating the relationship between its root causes and the emerging computational solutions designed to mitigate them.

Table 3: Research Reagent Solutions for Force Field Development and Validation

| Tool / Resource | Type | Primary Function in Force Field Work |
|---|---|---|
| CHARMM [3] | MD Software & Force Field | Suite for simulation and force field development; includes additive (C36) and polarizable (Drude) FFs. |
| AMBER [3] | MD Software & Force Field | Suite for biomolecular simulation; includes the ff19SB protein force field. |
| OpenMM [3] [7] | MD Simulation Toolkit | Highly optimized, cross-platform library for MD, supporting both traditional and ML-driven FFs. |
| GROMACS [7] [6] | MD Software Engine | High-performance MD package for running simulations with traditional and new FFs like Grappa. |
| RosettaGenFF [5] | Energy Function & Force Field | A generalized force field optimized using crystal structure data for small molecule and drug discovery applications; used by the Rosetta GALigandDock docking protocol. |
| Grappa [7] | Machine Learning Force Field | A framework that predicts molecular mechanics parameters from molecular graphs for state-of-the-art accuracy. |
| Cambridge Structural Database (CSD) [5] | Experimental Data Repository | A database of small molecule crystal structures used for force field training and validation. |
| DPmoire [8] | MLFF Software | An open-source tool for constructing accurate machine learning force fields for complex moiré materials. |

Diagram 2: A generalized workflow for developing and validating a new force field, showing the critical stages from parameter training to final application benchmarking.

Force field drift, characterized by a simulation's gradual progression toward energetically divergent and non-physical states, remains a central challenge in molecular dynamics. Its roots are multifaceted, stemming from the simplified functional forms of energy terms, the non-transferability of empirically derived parameters, and the critical omission of physical effects like electronic polarization. The manifestations of this drift—from protein misfolding to inaccurate ligand-binding poses—directly impact the predictive power of computational models in pharmaceutical and materials development.

The path forward is being shaped by several promising strategies. The adoption of polarizable force fields addresses a fundamental physical oversight in traditional models. Simultaneously, the integration of machine learning, as seen in approaches like Grappa and crystal-informed optimization, is enabling a more consistent and data-driven parametrization process that minimizes internal conflicts. As these next-generation force fields continue to mature and be validated against a broader range of experimental and quantum mechanical data, they hold the promise of significantly reducing force field drift. This progress will be crucial for achieving truly predictive simulations of complex biomolecular processes, ultimately enhancing the role of computational science in drug discovery and molecular engineering.

In molecular dynamics (MD) simulations, the force field serves as the fundamental mathematical model that defines the potential energy of a system based on its atomic coordinates. This model decomposes the total energy into contributions from bonded interactions (chemical bonds, angles, and dihedrals) and nonbonded interactions (van der Waals and electrostatic forces) [1]. The accuracy of these parameter sets directly determines the reliability of simulation predictions across diverse applications, from drug discovery to materials science [9] [10]. The "parameterization problem" refers to the systematic errors introduced when these terms imperfectly represent true interatomic forces, leading to force field drift—the gradual deviation of simulation trajectories from physically accurate behavior, particularly problematic in long-timescale simulations [11].

This parameterization challenge persists despite advances in force field development due to the inherent complexity of capturing quantum mechanical realities with classical approximations. As MD simulations extend to biologically relevant timescales (microseconds to milliseconds) and larger systems, once-minor inaccuracies in parameter sets accumulate into significant deviations, compromising the predictive value of simulations [11] [10]. Understanding the sources and impacts of these inaccuracies is thus crucial for researchers relying on MD for drug development, biomolecular engineering, and molecular biology research.

Mathematical Foundations and Functional Forms

Bonded interactions in molecular mechanics force fields are typically modeled using simple analytical functions that describe the energy costs associated with deviations from ideal geometry. The bond stretching energy between two atoms is most commonly represented by a harmonic potential: \(E_{\text{bond}} = \frac{k_{ij}}{2}(l_{ij}-l_{0,ij})^2\), where \(k_{ij}\) is the force constant, \(l_{ij}\) is the actual bond length, and \(l_{0,ij}\) is the equilibrium bond length [1]. Similarly, angle bending is modeled with a harmonic term for the energy required to deform an angle from its equilibrium value. While these simple approximations work well near equilibrium geometries, they fail to capture the anharmonicity of potential energy surfaces further from equilibrium, and cannot model bond breaking or formation [1].
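The gap between the harmonic form and an anharmonic alternative can be seen numerically. The sketch below compares the harmonic expression above with a Morse potential whose width parameter is chosen so that both curves share the same curvature at the equilibrium length. All parameter values are illustrative assumptions and are not taken from any published force field.

```python
import math

def harmonic_bond(r, k, r0):
    """Harmonic bond energy E = (k / 2) * (r - r0)^2."""
    return 0.5 * k * (r - r0) ** 2

def morse_bond(r, d_e, r0, k):
    """Morse bond energy with width a chosen to match the harmonic curvature k at r0."""
    a = math.sqrt(k / (2.0 * d_e))
    return d_e * (1.0 - math.exp(-a * (r - r0))) ** 2

# Illustrative values only: k in kcal/(mol*A^2), lengths in angstrom, D_e in kcal/mol.
K = 680.0
R0 = 1.09
D_E = 105.0

for r in (1.10, 1.20, 1.60, 3.00):
    e_h = harmonic_bond(r, K, R0)
    e_m = morse_bond(r, D_E, R0, K)
    print(f"r = {r:.2f} A  harmonic = {e_h:9.2f}  Morse = {e_m:7.2f} kcal/mol")
```

Close to the equilibrium length the two forms nearly coincide, but the harmonic energy grows without bound while the Morse energy levels off at the dissociation energy, which is the behavior the harmonic term cannot represent.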

For dihedral angles, the functional form becomes more complex, typically employing a periodic function such as: \(E_{\text{dihedral}} = k_\phi(1 + \cos(n\phi - \delta))\), where \(k_\phi\) is the force constant, \(n\) is the periodicity, \(\phi\) is the dihedral angle, and \(\delta\) is the phase shift [12] [1]. These terms describe the energy barriers to rotation around bonds and are crucial for capturing conformational preferences. The dihedral parameters are particularly challenging to optimize as they must reproduce complex torsional profiles that dictate molecular flexibility and conformational sampling [13].
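As a small illustration of the periodic dihedral term, the sketch below evaluates a sum of such terms for one torsion. The tuple layout and the single threefold term are assumptions made for this example; real force fields often combine several periodicities per dihedral.

```python
import math

def dihedral_energy(phi, terms):
    """Sum of k * (1 + cos(n * phi - delta)) over (k, n, delta) tuples; angles in radians."""
    return sum(k * (1.0 + math.cos(n * phi - delta)) for k, n, delta in terms)

# Hypothetical single threefold term with zero phase shift (k in kcal/mol).
THREEFOLD = [(1.4, 3, 0.0)]

for degrees in (0, 60, 120, 180):
    energy = dihedral_energy(math.radians(degrees), THREEFOLD)
    print(f"phi = {degrees:3d} deg  E = {energy:.2f} kcal/mol")
```

With zero phase shift and n = 3, the maxima of height 2k fall at 0, 120, and 240 degrees and the minima at 60, 180, and 300 degrees, so the barrier height and the positions of the preferred rotamers are set directly by k, n, and the phase.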

Parameterization Methodologies and Limitations

The traditional approach to deriving bonded parameters relies on fitting to quantum mechanical (QM) calculations of small molecule fragments or model compounds [13]. This process involves:

- Target Data Generation: Performing QM calculations (typically at the HF or DFT level of theory) to generate potential energy scans for bond stretching, angle bending, and torsion rotations [13].

- Parameter Optimization: Iteratively adjusting force constants and equilibrium values until the molecular mechanics (MM) energy surface best reproduces the QM reference data [13].

- Transferability Assessment: Validating parameters across multiple chemical contexts to ensure broad applicability [13].

The Force Field Toolkit (ffTK) provides a semi-automated workflow for this process, implementing optimization algorithms that minimize the difference between MM and QM potential energy surfaces [13]. However, several fundamental limitations persist:

- Inadequate Sampling of Chemical Space: Parameterization typically uses small model compounds that may not represent the diverse environments in biomacromolecules [13].

- Limited Representation of Electronic Effects: Classical force fields cannot capture polarization, charge transfer, or other electronic effects that depend on molecular context [13] [11].

- Functional Form Limitations: The simple mathematical forms cannot accurately describe complex potential energy surfaces, particularly for distorted geometries or conjugated systems [1].
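The parameter-optimization step and the functional-form limitation can be illustrated together with a toy torsion fit. This sketch is not the ffTK algorithm: it assumes a uniform 10 degree scan over a full rotation with all phases fixed at zero, in which case the least-squares force constants reduce to a closed-form cosine projection. The "QM" reference is synthetic, generated from known force constants plus a small fourfold component that the three-term MM form cannot represent.

```python
import math

def torsion_energy(phi, force_constants):
    """E(phi) = sum over n of k_n * (1 + cos(n * phi)); force_constants[0] is k_1."""
    return sum(k * (1.0 + math.cos(n * phi))
               for n, k in enumerate(force_constants, start=1))

def fit_force_constants(phis, energies, max_periodicity):
    """Least-squares k_n for a uniform full-period scan, via orthogonal cosine projection."""
    n_points = len(phis)
    return [
        2.0 / n_points * sum(e * math.cos(n * p) for p, e in zip(phis, energies))
        for n in range(1, max_periodicity + 1)
    ]

def rmse(values_a, values_b):
    """Root mean square difference between two equally long sequences."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(values_a, values_b)) / len(values_a))

# Synthetic reference scan (kcal/mol): known constants plus a fourfold term
# standing in for features the MM functional form cannot capture.
phis = [math.radians(10.0 * i) for i in range(36)]
TRUE_K = [0.8, 0.3, 1.2]
reference = [torsion_energy(p, TRUE_K) + 0.05 * math.cos(4 * p) for p in phis]

fitted_k = fit_force_constants(phis, reference, max_periodicity=3)
mm_energies = [torsion_energy(p, fitted_k) for p in phis]
residual = rmse(mm_energies, reference)

print("fitted k_n:", [round(k, 3) for k in fitted_k])
print(f"residual RMSE: {residual:.3f} kcal/mol")
```

The fit recovers the three force constants, yet a residual remains because the fourfold component lies outside the chosen functional form. In practice, phases are also fitted and scans are relaxed geometries, so iterative optimizers are used instead of this closed-form shortcut.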

Table 1: Benchmark of Force Field, Water Model, and Charge Model Combinations for Binding Affinity Prediction

| Force Field | Water Model | Charge Model | Mean Unsigned Error (kcal/mol) | RMSE (kcal/mol) | Correlation (R²) |
|---|---|---|---|---|---|
| AMBER ff14SB | SPC/E | AM1-BCC | 0.89 | 1.15 | 0.53 |
| AMBER ff14SB | TIP3P | AM1-BCC | 0.82 | 1.06 | 0.57 |
| AMBER ff14SB | TIP4P-EW | AM1-BCC | 0.85 | 1.11 | 0.56 |
| AMBER ff15ipq | SPC/E | AM1-BCC | 0.85 | 1.07 | 0.58 |
| AMBER ff14SB | TIP3P | RESP | 1.03 | 1.32 | 0.45 |
| AMBER ff15ipq | TIP4P-EW | AM1-BCC | 0.95 | 1.23 | 0.49 |
| OPLS2.1 (FEP+) | - | - | 0.77 | 0.93 | 0.66 |
| AMBER TI | - | - | 1.01 | 1.30 | 0.44 |

Data derived from validation studies on JACS benchmark set (199 compounds) showing performance of different parameter combinations for binding affinity prediction [9].

Figure 1: Workflow for bonded parameters optimization showing iterative process of quantum mechanical target data generation and parameter refinement.

Nonbonded Term Parameterization: Critical Challenges

Electrostatic Interactions and Charge Assignment

Electrostatic interactions are typically modeled using Coulomb's law: \(E_{\text{Coulomb}} = \frac{1}{4\pi\varepsilon_0}\frac{q_i q_j}{r_{ij}}\), where \(q_i\) and \(q_j\) are partial atomic charges and \(r_{ij}\) is the interatomic distance [1]. The assignment of these partial atomic charges represents one of the most significant challenges in force field development, with different philosophical approaches employed by major force fields:

- AMBER/GAFF: Typically uses RESP (Restrained Electrostatic Potential) charges derived from fitting to the quantum mechanical electrostatic potential [9] [13].

- CHARMM/CGenFF: Employs water-interaction profiles to optimize charges by reproducing quantum mechanical interaction energies with water molecules [13].

- OPLS: Derives charges directly by fitting to experimentally measured condensed phase properties [13].

Each approach carries distinct limitations. RESP charges can be conformation-dependent and may overpolarize molecules, while CHARMM's water-interaction method depends heavily on the choice of interaction sites and orientations [13]. The recently introduced AMBER ff15ipq force field attempts to address some charge transfer and polarization effects through implicitly polarized charges derived in the presence of explicit solvent, showing improved performance in some benchmark tests [9].
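Because the Coulomb term is linear in each charge, modest differences between charge models translate directly into pair energies. The sketch below evaluates the Coulomb expression in units common to biomolecular force fields (charges in elementary charges, distances in angstrom, energies in kcal/mol). The two charge sets are hypothetical and do not correspond to actual RESP or AM1-BCC output.

```python
COULOMB_KCAL = 332.0637  # 1 / (4 * pi * eps0) in kcal*A/(mol*e^2)

def coulomb_energy(q_i, q_j, r_ij):
    """Coulomb pair energy in kcal/mol for charges in e and distance in angstrom."""
    return COULOMB_KCAL * q_i * q_j / r_ij

# Hypothetical donor/acceptor pair at 3.0 A under two illustrative charge assignments.
energy_model_a = coulomb_energy(+0.40, -0.50, 3.0)
energy_model_b = coulomb_energy(+0.45, -0.50, 3.0)

print(f"model A: {energy_model_a:.2f} kcal/mol")
print(f"model B: {energy_model_b:.2f} kcal/mol")
print(f"difference: {energy_model_b - energy_model_a:.2f} kcal/mol")
```

In this assumed case a shift of 0.05 e on one atom changes a single pair interaction by almost 3 kcal/mol in vacuum. Such differences are partly compensated by other interactions and by solvent screening, but the example shows why the choice of charge model appears as a separate variable in the benchmarks.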

Van der Waals Interactions and Combination Rules

Van der Waals interactions model attractive dispersion forces and repulsive electron cloud overlap, most commonly using the Lennard-Jones 12-6 potential: \(E_{\text{vdW}} = 4\epsilon_{ij}\left[\left(\frac{\sigma_{ij}}{r_{ij}}\right)^{12} - \left(\frac{\sigma_{ij}}{r_{ij}}\right)^{6}\right]\), where \(\epsilon_{ij}\) is the well depth and \(\sigma_{ij}\) is the collision diameter [12] [1].

The parameters for unlike atom pairs (i.e., atoms of different types) are typically generated using combination rules rather than direct parameterization. The most common approach is the Lorentz-Berthelot rules: \(\sigma_{ij} = \frac{\sigma_i + \sigma_j}{2}\) and \(\epsilon_{ij} = \sqrt{\epsilon_i \epsilon_j}\) [12]. These rules introduce systematic errors because real van der Waals interactions between different atom types do not necessarily follow these mathematical approximations. Specialized NBFIX corrections are sometimes implemented for specific problematic atom pairs, but this approach is not comprehensive [14].
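The Lennard-Jones form and the Lorentz-Berthelot rules above can be combined in a few lines. The per-atom parameters below are hypothetical values chosen for illustration and are not drawn from any specific force field.

```python
import math

def lorentz_berthelot(sigma_i, eps_i, sigma_j, eps_j):
    """Arithmetic mean for sigma and geometric mean for epsilon."""
    return 0.5 * (sigma_i + sigma_j), math.sqrt(eps_i * eps_j)

def lennard_jones(r, sigma, epsilon):
    """Lennard-Jones 12-6 energy at separation r."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)

# Hypothetical atom types: sigma in angstrom, epsilon in kcal/mol.
sigma_ij, eps_ij = lorentz_berthelot(3.4, 0.086, 3.0, 0.170)
r_min = 2.0 ** (1.0 / 6.0) * sigma_ij  # location of the energy minimum

print(f"sigma_ij = {sigma_ij:.3f} A, epsilon_ij = {eps_ij:.4f} kcal/mol")
print(f"E(r_min = {r_min:.3f} A) = {lennard_jones(r_min, sigma_ij, eps_ij):.4f} kcal/mol")
```

The cross term is fully determined by the two like-atom parameter sets, so any error in the rule itself cannot be corrected without a pair-specific override of the kind the NBFIX approach provides.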

Table 2: Impact of Force Field Components on Binding Affinity Prediction Accuracy

| Force Field Component | Variations Tested | Best Performing | Performance Impact (MUE) |
|---|---|---|---|
| Protein Force Field | AMBER ff14SB, ff15ipq | AMBER ff14SB | ±0.06-0.18 kcal/mol |
| Water Model | SPC/E, TIP3P, TIP4P-EW | TIP3P | ±0.07 kcal/mol |
| Charge Model | AM1-BCC, RESP | AM1-BCC | ±0.21 kcal/mol |
| Overall Best Combination | AMBER ff14SB/TIP3P/AM1-BCC | - | 0.82 kcal/mol |

Data synthesized from free energy perturbation validation studies showing relative importance of different force field components [9].

Force Field Drift: Accumulation and Impact in Long Simulations

Mechanisms of Error Accumulation

Force field drift manifests as gradual deviations in protein conformations, ligand binding poses, membrane properties, or solvent structure over the course of extended MD simulations. This drift occurs through several interconnected mechanisms:

- Error Propagation: Small energy errors at each timestep accumulate over millions of steps, steering the system toward incorrect regions of conformational space [11].

- Conformational Bias: Inaccuracies in torsional potentials systematically favor incorrect rotameric states or backbone conformations, which then propagate through dependent structural elements [9].

- Nonbonded Drift: Imperfect electrostatics or van der Waals parameters gradually alter protein solvation, ion distributions, and molecular association patterns [15].

- Cascading Errors: Initial inaccuracies trigger structural adjustments that create new environments where force field limitations become more pronounced [11].

In drug discovery applications, these errors directly impact the accuracy of binding free energy predictions, with studies showing mean unsigned errors of 0.8-1.0 kcal/mol even for well-validated force fields—sufficient to mislead lead optimization efforts [9].

Experimental Validation and Error Quantification

Rigorous validation against experimental data is essential for quantifying force field drift. The JACS benchmark set—encompassing BACE, CDK2, JNK1, MCL1, P38, PTP1B, Thrombin and TYK2—provides a standard for assessing prediction accuracy across diverse protein targets [9]. Key metrics include:

- Mean Unsigned Error (MUE): Mean absolute difference between predicted and experimental binding affinities (typically 0.7-1.0 kcal/mol for current force fields) [9].

- Root Mean Square Error (RMSE): Square root of the mean squared error, which penalizes large deviations more heavily than the MUE [9].

- Correlation Coefficients: Measure of how well computational predictions rank compounds by affinity [9].

Recent validation studies reveal that different force field parameter sets yield statistically significant variations in prediction accuracy. In the benchmark table above, the RMSE exceeds the 1.0 kcal/mol threshold often quoted for chemical accuracy in seven of the eight combinations, while the MUE exceeds it in only two [9].
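The three metrics listed above can be computed with a few lines of standard-library Python. The predicted and experimental values below are made-up numbers for illustration and are not taken from the JACS benchmark set.

```python
import math

def mean_unsigned_error(predicted, experimental):
    """Mean absolute difference between paired values."""
    return sum(abs(p - e) for p, e in zip(predicted, experimental)) / len(predicted)

def root_mean_square_error(predicted, experimental):
    """Square root of the mean squared difference between paired values."""
    return math.sqrt(sum((p - e) ** 2 for p, e in zip(predicted, experimental)) / len(predicted))

def pearson_r_squared(predicted, experimental):
    """Squared Pearson correlation coefficient between paired values."""
    n = len(predicted)
    mean_p = sum(predicted) / n
    mean_e = sum(experimental) / n
    cov = sum((p - mean_p) * (e - mean_e) for p, e in zip(predicted, experimental))
    var_p = sum((p - mean_p) ** 2 for p in predicted)
    var_e = sum((e - mean_e) ** 2 for e in experimental)
    return cov * cov / (var_p * var_e)

# Hypothetical binding free energies in kcal/mol.
predicted = [-9.1, -8.4, -10.2, -7.5, -8.9]
experimental = [-9.8, -8.0, -9.5, -7.9, -9.6]

mue = mean_unsigned_error(predicted, experimental)
rmse_value = root_mean_square_error(predicted, experimental)
r2 = pearson_r_squared(predicted, experimental)
print(f"MUE = {mue:.2f}, RMSE = {rmse_value:.2f}, R^2 = {r2:.2f}")
```

The RMSE is never smaller than the MUE for the same data, so reporting both gives a sense of how much of the error comes from a few large outliers.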

Emerging Solutions and Parameterization Protocols

Machine Learning and Automated Parameterization

Machine learning approaches are revolutionizing force field development by enabling direct fitting of potential energy surfaces to high-level quantum mechanical data [11]. The DeePMD framework uses deep neural networks to represent potential energy with near-quantum accuracy while maintaining computational efficiency comparable to classical force fields [11]. These methods can achieve root mean square errors below 0.4 kcal/mol for diverse molecular systems, significantly outperforming traditional parameterization for specific applications [11].

Complementing these ML potentials, new parameterization tools like the Force Field Toolkit (ffTK) semi-automate the traditional parameterization process, making rigorous parameter development more accessible to non-specialists [13]. ffTK implements the CHARMM/CGenFF parameterization philosophy through a structured workflow encompassing charge optimization, bonded parameter derivation, and dihedral fitting [13].

Advanced Chemical Perception

Traditional force fields rely on atom typing—classifying atoms into discrete types based on element, hybridization, and local chemical environment. This approach suffers from chemical resolution limitations and cannot represent continuous electronic effects [16]. The emerging SMIRNOFF (SMIRKS Native Open Force Field) approach uses direct chemical perception based on SMIRKS patterns, allowing more nuanced parameter assignment that better captures chemical context [16].

This approach enables the development of end-to-end differentiable force fields where graph convolutional networks perceive chemical environments and assign parameters in a manner differentiable with respect to model parameters, allowing force field optimization through backpropagation [16].

Figure 2: Error propagation pathway from initial parameter inaccuracies to force field drift, with mitigation strategies.

Table 3: Research Reagent Solutions for Force Field Development and Validation

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| Force Field Toolkit (ffTK) | Software Plugin | Semi-automated parameterization | CHARMM-compatible parameter development for novel molecules [13] |
| OpenMM | MD Software Package | Molecular dynamics engine | Flexible, hardware-accelerated MD simulations with multiple force fields [9] |
| Alchaware | FEP Workflow | Automated free energy calculations | Validation of force fields through binding affinity prediction [9] |
| DeePMD | ML Framework | Neural network potentials | Machine learning force field training and deployment [11] |
| ParamChem | Web Server | Automated parameter assignment | Initial parameter generation for novel compounds via analogy [13] |
| SMIRNOFF | Force Field Format | Direct chemical perception | Modern force field specification avoiding atom types [16] |
| AMBER/GAFF | Force Field | Biomolecular simulation | Traditional force field for proteins, nucleic acids, and drug-like molecules [9] |
| CHARMM/CGenFF | Force Field | Biomolecular simulation | Alternative philosophy with optimized condensed-phase properties [13] |
| JACS Benchmark Set | Validation Set | Standardized test cases | Performance assessment across multiple protein targets [9] |
| ThermoML Archive | Experimental Database | Physical property data | Force field validation against thermodynamic measurements [16] |

The parameterization problem remains a fundamental challenge in molecular dynamics, with inaccuracies in both bonded and nonbonded terms introducing systematic errors that propagate through long simulations and limit predictive accuracy. Traditional approaches relying on atom typing and combination rules face inherent limitations in capturing complex chemical environments, particularly for novel molecular structures encountered in drug discovery [9] [13].

Promising paths forward include machine learning potentials that bypass traditional functional forms entirely [11], more sophisticated chemical perception methods that better capture molecular context [16], and automated parameterization pipelines that make rigorous parameter development more accessible [13]. Additionally, community-wide standardization of validation protocols and benchmarks would support more robust assessment of force field performance [9] [16].

For researchers employing MD simulations in drug development and molecular biology, awareness of these parameterization challenges is essential for critical interpretation of simulation results and appropriate application of computational methods. As force fields continue to evolve toward greater accuracy and transferability, they should become increasingly reliable guides for experimental work and predictors of molecular behavior.

Classical molecular dynamics (MD) simulations are indispensable tools in computational chemistry and drug discovery, enabling the study of biological processes at atomic resolution. However, their predictive power is fundamentally constrained by the empirical force fields (FFs) that govern interatomic interactions. These FFs intentionally sacrifice quantum mechanical accuracy for the computational efficiency required to simulate large systems over biologically relevant timescales. This trade-off introduces systematic errors that accumulate over the course of long simulations, leading to a phenomenon known as force field drift, where sampled configurations progressively deviate from realistic behavior. This whitepaper examines the inherent limitations of classical FFs, their manifestation as drift in long-time simulations, and the emerging computational strategies designed to mitigate these deficiencies without wholly compromising computational tractability.

Molecular dynamics simulations provide a dynamic, particle-based description of molecular systems by numerically solving equations of motion to generate trajectories over time [17]. The heart of any MD simulation is its force field—a mathematical model that computes the potential energy of a system as a function of its atomic coordinates. Force fields are typically based on molecular mechanics (MM), which treats atoms as spheres and bonds as springs, using a large set of empirical parameters fitted to experimental or quantum mechanical (QM) data [17].
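To make the "spheres and springs" picture concrete, the minimal sketch below evaluates three terms that commonly appear in molecular mechanics energy functions: a harmonic bond stretch, a 12-6 Lennard-Jones interaction, and a fixed-charge Coulomb interaction. The function names, the choice of nm and kJ/mol units, and the example parameter values are illustrative assumptions and are not taken from any specific force field.

```python
# Electric conversion factor in kJ mol^-1 nm e^-2 (assumed unit system: nm, kJ/mol, e).
COULOMB_CONST = 138.935458

def harmonic_bond(r, r0, k):
    """Bond as a spring: 0.5 * k * (r - r0)^2."""
    return 0.5 * k * (r - r0) ** 2

def lennard_jones(r, sigma, epsilon):
    """12-6 Lennard-Jones energy for two atoms separated by r."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)

def coulomb(r, qi, qj):
    """Coulomb energy between two fixed point charges (in units of e)."""
    return COULOMB_CONST * qi * qj / r

# Hypothetical example values, chosen only to exercise the functions.
e_bond = harmonic_bond(r=0.105, r0=0.100, k=250000.0)
e_lj_min = lennard_jones(r=2 ** (1 / 6) * 0.3, sigma=0.3, epsilon=0.5)
e_coul = coulomb(r=1.0, qi=1.0, qj=-1.0)
```

Every quantity entering these functions (`r0`, `k`, `sigma`, `epsilon`, the charges) is an empirical parameter, which is why parameter quality determines how faithfully the resulting energy surface tracks the QM reference.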

The primary compromise in classical FFs is the neglect of explicit electronic degrees of freedom. This simplification allows simulations to access microsecond to millisecond timescales for systems comprising hundreds of thousands of atoms, scales that are currently prohibitive for quantum mechanical methods [17]. However, this computational efficiency comes at the cost of reduced physical fidelity, particularly for processes involving electron transfer, bond breaking/formation, and polarization effects in complex charged fluids [18]. These limitations are the root cause of force field drift, where inaccuracies in the energy landscape cause simulations to sample increasingly unphysical states over time.

Fundamental Limitations of Classical Force Fields

The Fixed-Charge Approximation and Polarization Deficiencies

A central limitation of most classical FFs is their use of fixed partial atomic charges. In reality, electronic distributions respond instantaneously to their local chemical environment, a phenomenon known as polarization. Fixed-charge FFs cannot capture this effect, leading to systematic errors in describing electrostatic interactions, especially in heterogeneous environments like protein-ligand complexes or ionic liquids [18].

To overcome this, polarizable FFs have been developed that explicitly model electronic response, but at a significantly increased computational cost [18]. An alternative strategy has been to use non-polarizable FFs with reduced charges, which can improve the prediction of dynamic properties but often at the cost of structural accuracy [18].
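The effect of reduced charges follows directly from Coulomb's law: because the pair energy is proportional to the product of the two charges, scaling every charge by a factor s scales all electrostatic pair energies by s squared. The sketch below illustrates this with a scaling factor of 0.8, which is an assumed illustrative value, as are the function names and units.

```python
def scale_charges(charges, factor):
    """Uniformly scale a list of partial charges."""
    return [q * factor for q in charges]

def coulomb_pair_energy(qi, qj, r, k_e=138.935458):
    """Coulomb pair energy in kJ/mol for charges in e and distance in nm."""
    return k_e * qi * qj / r

charges = [1.0, -1.0]   # idealized cation/anion pair
distance = 0.5          # nm, hypothetical separation

full = coulomb_pair_energy(charges[0], charges[1], distance)
scaled = scale_charges(charges, 0.8)  # assumed illustrative scaling factor
reduced = coulomb_pair_energy(scaled[0], scaled[1], distance)

ratio = reduced / full  # equals 0.8 ** 2
```

Weakening every ion-ion interaction by the same factor speeds up the dynamics, but it does so uniformly rather than in response to the local environment, which is one way to see why structural accuracy can suffer.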

Inability to Model Chemical Reactivity

Classical MD simulations generally maintain constant molecular topology throughout a simulation, meaning bond breaking and forming are not allowed [17]. This restriction precludes the study of chemical reactions, proton transfer processes, and other phenomena involving changes in chemical identity. While specialized reactive force fields such as ReaxFF have been developed to bridge this gap, they remain more computationally demanding than traditional FFs and require extensive parameterization [19].

Transferability and Parameterization Challenges

The concept of transferability—where a single set of parameters for a given atom type describes its behavior across diverse molecular contexts—faces particular challenges for small molecules [13]. The vast chemical space of drug-like molecules, often containing "exotic" functional groups and complex aromatic systems, runs counter to the principles of transferability [13]. Parameterization of novel chemical entities remains a significant bottleneck, with tools like the Force Field Toolkit (ffTK) aiming to minimize barriers through automation and guided workflows [13].

Table 1: Core Limitations of Classical Force Fields and Their Consequences

| Limitation | Physical Principle Compromised | Impact on Simulation Accuracy |

|---|---|---|

| Fixed Partial Charges | Electronic Polarization | Inaccurate electrostatic interactions in heterogeneous environments; poor description of dielectric response |

| Rigid Bond Connectivity | Chemical Reactivity | Inability to simulate bond breaking/formation, proton transfer, or reaction mechanisms |

| Functional Form | Quantum Mechanical Effects | Approximate potential energy surfaces; missing non-bonded terms (e.g., charge penetration, charge transfer) |

| Transferability Assumption | Chemical Specificity | Reduced accuracy for molecules with unique functional groups or complex electronic effects |

Force Field Drift: Causes and Consequences in Long Simulations

Force field drift refers to the progressive deviation of simulation trajectories from physically accurate sampling, becoming more pronounced in long timescale simulations. This phenomenon arises from the accumulation of small errors in the force field's description of the underlying potential energy surface.

Pathological Deficiencies in Complex Charged Fluids

In ionic liquids and other complex charged systems, classical FFs exhibit systematic pathologies that become increasingly evident in long simulations. These include:

- Inaccurate description of weak hydrogen bonds due to fixed charge distributions

- Hindered dynamics from the absence of electronic polarization effects

- Overprediction of viscosity and underprediction of conductivity and other transport rates, consistent with the hindered dynamics [18]

These deficiencies directly affect the simulation of technologically relevant processes such as electrolyte behavior in batteries, where accurate description of ion transport is critical [18].

Error Accumulation in Free Energy Calculations

The accuracy of free energy calculations depends critically on the FF's ability to reproduce the true potential energy surface. When FFs contain systematic biases, these errors compound over simulation time, leading to:

- Inadequate sampling of high-energy states essential for accurate free energy estimation

- Poor extrapolation to regions of configuration space not well-represented in training data

- Divergence from experimental observables as simulation time increases [20]

Machine learning force fields (MLFFs) show promise in addressing these issues but face challenges in creating comprehensive training datasets that adequately represent the free energy surface [20].

Table 2: Manifestations of Force Field Drift in Extended Simulations

| Simulation Property | Short-Time Scale Behavior | Long-Time Scale Drift Manifestation |

|---|---|---|

| Structural Parameters | Reasonable agreement with experimental structures | Gradual deviation from reference structures; sampling of unphysical conformations |

| Dynamic Properties | Order-of-magnitude agreement with experiment | Progressive slowing or acceleration of dynamics; artificial trapping in metastable states |

| Energy Conservation | Well-conserved in microcanonical (NVE) ensemble | Energy drift due to force miscalculations and integration errors |

| Free Energy Estimates | Reasonable for small perturbations | Increasing systematic error for large conformational changes |

Emerging Solutions and Next-Generation Force Fields

Neural Network Force Fields

Neural network force fields (NNFFs) represent a paradigm shift, using machine learning to map atomic configurations to energies and forces with near-QM accuracy while maintaining much of the computational efficiency of classical FFs [18]. Frameworks like NeuralIL for ionic liquids have demonstrated capability to:

- Replicate molecular structures with improved accuracy

- Better predict weak hydrogen bonds and their dynamics

- Model proton transfer reactions inaccessible to classical FFs [18]

While NNFFs are approximately 10-100 times slower than classical FFs, they remain orders of magnitude faster than full ab initio MD, positioning them as a promising intermediate solution [18].

Reactive Force Fields (ReaxFF)

ReaxFF incorporates bond order formalism to enable dynamic bond formation and breaking within the MD framework, bridging the gap between quantum chemistry and classical MD [19]. Parameterized using QM calculations and experimental data, ReaxFF has been successfully deployed to study:

- Pyrolysis and oxidation processes in various fuel types

- Catalytic reaction mechanisms

- Soot formation and nanoparticle synthesis [19]
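The bond order formalism can be illustrated with the uncorrected sigma-bond term, in which the bond order decays smoothly from near one at short distances to zero at long distances instead of being fixed by a topology. The sketch below is a simplified single-term version; the parameter values are hypothetical and do not correspond to any published ReaxFF parameter set, and the full formalism adds pi-bond terms and over-coordination corrections that are omitted here.

```python
import math

def sigma_bond_order(r, r0, p_bo1, p_bo2):
    """Uncorrected sigma bond order: exp(p_bo1 * (r / r0) ** p_bo2)."""
    return math.exp(p_bo1 * (r / r0) ** p_bo2)

# Illustrative values only (hypothetical, not a published parameter set).
R0 = 1.4       # reference bond length in angstrom
P_BO1 = -0.1   # negative, so the bond order decays with distance
P_BO2 = 6.0    # controls how sharply the bond order falls off

distances = (1.2, 1.4, 2.0, 3.0)  # angstrom
bond_orders = [sigma_bond_order(r, R0, P_BO1, P_BO2) for r in distances]
```

Because the bond order is a continuous function of distance, energy terms that depend on it switch off smoothly as atoms separate, which is what allows bonds to break and form during a trajectory.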

Advanced Parameterization Methodologies

Improved parameterization tools like the Force Field Toolkit (ffTK) address drift by enabling more rigorous development of CHARMM-compatible parameters [13]. ffTK implements a structured workflow that includes:

- Charge optimization from water-interaction profiles

- Bond and angle parameterization through fitting to QM potential energy surfaces

- Dihedral fitting using targeted optimization procedures [13]

Such methodologies help minimize parameter transferability errors that contribute to long-term drift.

Force Field Selection and Validation Workflow: This diagram illustrates the iterative process of selecting, parameterizing, and validating different classes of force fields against quantum mechanical or experimental data.

Table 3: Key Software Tools for Force Field Development and Validation

| Tool/Resource | Primary Function | Application Context |

|---|---|---|

| Force Field Toolkit (ffTK) | Guided parameterization workflow | CHARMM-compatible parameter development for small molecules [13] |

| NeuralIL | Neural network force field | Simulation of ionic liquids with QM accuracy [18] |

| ReaxFF | Reactive force field | Combustion, catalysis, and bond-breaking processes [19] |

| ParamChem/CGenFF | Automated parameter assignment | Initial parameter estimation by analogy [13] |

| Equivariant Graph Neural Networks | ML force field for free energy | Enhanced sampling and free energy surface prediction [20] |

The inherent limitations of classical force fields—particularly their fixed-charge approximation, inability to model chemical reactivity, and transferability constraints—represent a fundamental trade-off between computational efficiency and physical accuracy. These limitations directly contribute to force field drift in long molecular dynamics simulations, where systematic errors accumulate and lead to progressive deviation from physically realistic sampling.

Next-generation approaches, including neural network potentials and reactive force fields, show significant promise in mitigating these issues while maintaining reasonable computational cost. However, these advanced methods introduce their own challenges in parameterization, training data requirements, and computational overhead. The ongoing development of tools like ffTK that streamline parameterization and validation processes will be crucial for maximizing the predictive power of molecular simulations in drug discovery and materials design.

As computational resources continue to grow and machine learning methodologies mature, the gap between quantum mechanical accuracy and molecular dynamics efficiency will narrow, ultimately reducing the prevalence and impact of force field drift in long-timescale simulations.

In molecular dynamics (MD) simulations, predicting a system's time evolution from a potential energy expressed as a function of its atomic coordinates is fundamental to studying processes ranging from protein folding and ligand binding to enzyme catalysis [3]. However, a central challenge in these simulations is the accumulation of numerical and physical inaccuracies over time, leading to significant drift that can compromise the validity of long-timescale results. This drift manifests as unphysical deviations in energy, temperature, structural properties, or thermodynamic observables, ultimately limiting the predictive power of simulation data.

Force fields—the set of potential energy functions from which atomic forces are derived—represent a primary source of these accumulating errors [3]. While current additive protein force fields have reached a mature state after 35 years of development, the next major step in advancing their accuracy requires an improved representation of the molecular energy surface, specifically through the inclusion of electronic polarization effects [3]. The accumulation of slight inaccuracies in these fundamental energy functions, combined with numerical integration errors, creates a compounding effect that becomes particularly problematic for the long simulations needed to study rare events or achieve proper conformational sampling.

This technical guide examines the fundamental causes of force field drift in MD simulations, presents quantitative analyses of error accumulation, details methodological approaches for error mitigation, and provides practical protocols for researchers seeking to minimize drift in their investigations. By addressing these issues systematically, the reliability of long-timescale molecular simulations for drug development and basic research can be significantly enhanced.

Force Field Limitations as a Primary Source of Systematic Drift

Additive vs. Polarizable Force Fields

Traditional additive force fields, while computationally efficient, inherently contain approximations that lead to systematic errors. These force fields use fixed atomic partial charges that cannot respond to changes in the electrostatic environment, such as those occurring during conformational transitions, binding events, or solvation changes [3]. This limitation becomes particularly significant in long simulations where sampling diverse electrostatic environments is essential.

Table 1: Comparison of Additive and Polarizable Force Fields

| Feature | Additive Force Fields | Polarizable Force Fields |

|---|---|---|

| Electrostatic Representation | Fixed atomic charges | Environment-responsive charges |

| Computational Cost | Lower | Significantly higher |

| Accuracy in Heterogeneous Environments | Limited | Improved |

| Parameterization Complexity | Moderate | High |

| Treatment of Many-Body Effects | Approximate | Explicit |

| Representative Examples | CHARMM36, AMBER ff99SB | CHARMM Drude, AMOEBA |

The CHARMM additive force field has undergone significant refinement, with the C36 version representing improvements through a new backbone CMAP potential optimized against experimental data on small peptides and folded proteins, and new side-chain dihedral parameters [3]. Similarly, the AMBER force field has seen continual improvements, with variants like ff99SB-ILDN-Phi introducing perturbations to the ϕ backbone dihedral potential to improve sampling in aqueous environments [3]. Despite these advances, the fundamental limitation of fixed electrostatics remains in additive force fields.

The Polarizable Force Field Solution

Polarizable force fields address key limitations of additive models by explicitly accounting for electronic polarization. The Drude polarizable force field in CHARMM, developed since 2001, incorporates electronic degrees of freedom through Drude particles (charged virtual sites) attached to atoms [3]. This approach allows the electronic structure to respond to changing electrostatic environments, more accurately capturing many-body effects.
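The Drude picture can be sketched with a single oscillator: a charge q_D tethered to its parent atom by a harmonic spring of force constant k_D is displaced by an external field until the spring force balances the electric force, which gives an induced dipole proportional to the field with polarizability q_D^2 / k_D. The code below is a one-dimensional illustration in arbitrary consistent units; the function names and numerical values are assumptions for the example, not CHARMM Drude parameters.

```python
def drude_polarizability(q_d, k_d):
    """Isotropic polarizability of a Drude oscillator: alpha = q_d^2 / k_d."""
    return q_d ** 2 / k_d

def induced_dipole(q_d, k_d, field):
    """Induced dipole from the force balance k_d * d = q_d * E."""
    displacement = q_d * field / k_d
    return q_d * displacement

# Hypothetical values in arbitrary consistent units.
q_d, k_d = -1.5, 1000.0
weak_field, strong_field = 0.5, 2.0

mu_weak = induced_dipole(q_d, k_d, weak_field)
mu_strong = induced_dipole(q_d, k_d, strong_field)
alpha = drude_polarizability(q_d, k_d)
```

The dipole grows with the local field, which is the environment response that a fixed-charge model cannot provide.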

The AMOEBA (Atomic Multipole Optimized Energetics for Biomolecular Applications) force field represents another polarizable approach, using atomic multipoles (rather than just partial charges) and explicit polarization [3]. For the Drude model, development has included implementation of appropriate integrators for computationally efficient extended Lagrangian MD simulations, optimization of water models (SWM4-DP and SWM4-NDP), and parametrization of small molecules covering functional groups commonly found in biomolecules [3].

Quantitative Analysis of Error Accumulation in Molecular Dynamics

Numerical Integration and Error Propagation

In MD simulations, the explicit time integration of Newton's equations of motion uses knowledge of the current state to compute the next state, with each calculation introducing small errors that propagate to following states [21]. This compounding effect creates a significant challenge for long-time-scale calculations regardless of the size of the time step. In MD, where time steps are on the order of femtoseconds, reaching microsecond timescales requires on the order of 10^9 steps, so the accumulated error can become substantial without appropriate mitigation strategies [21].
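How per-step errors compound depends strongly on the integrator. The sketch below integrates a one-dimensional harmonic oscillator (unit mass and force constant, an assumed toy system) with explicit Euler and with velocity Verlet, then compares the final total energy with the initial value. The function names and step counts are illustrative choices.

```python
def energy(x, v, k=1.0, m=1.0):
    """Total energy of a 1D harmonic oscillator."""
    return 0.5 * m * v * v + 0.5 * k * x * x

def run_euler(x, v, dt, n_steps, k=1.0, m=1.0):
    """Explicit Euler: position and velocity both advance from the old state."""
    for _ in range(n_steps):
        a = -k * x / m
        x, v = x + v * dt, v + a * dt
    return x, v

def run_velocity_verlet(x, v, dt, n_steps, k=1.0, m=1.0):
    """Velocity Verlet: a symplectic, second-order scheme."""
    a = -k * x / m
    for _ in range(n_steps):
        x = x + v * dt + 0.5 * a * dt * dt
        a_new = -k * x / m
        v = v + 0.5 * (a + a_new) * dt
        a = a_new
    return x, v

e0 = energy(1.0, 0.0)
e_euler = energy(*run_euler(1.0, 0.0, 0.01, 100_000))
e_vv = energy(*run_velocity_verlet(1.0, 0.0, 0.01, 100_000))
```

For this system each explicit Euler step multiplies the energy by (1 + dt^2), so the error grows exponentially, whereas the velocity Verlet energy oscillates within a narrow band around the initial value.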

Higher-order integration methods (e.g., Runge-Kutta) can reduce the error at each time step, although MD codes generally favor symplectic second-order schemes such as leapfrog or velocity Verlet because they keep the energy error bounded over long runs. For truly long-scale calculations, additional approaches are necessary [21]. These include careful numerical design (guarding against subtractive cancellation and error magnification), use of fused multiply-add operations, compensated computations (for dot products and polynomial evaluation), and quadruple- or arbitrary-precision computation when necessary [21].
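Compensated computation can be illustrated with Kahan summation, which carries a running correction term that recovers the low-order bits lost in each floating-point addition. The sketch below compares it with a naive running sum over one million identical terms, using `math.fsum` as a correctly rounded reference. The test data are an arbitrary choice for illustration.

```python
import math

def kahan_sum(values):
    """Compensated (Kahan) summation of a sequence of floats."""
    total = 0.0
    compensation = 0.0  # running estimate of the lost low-order bits
    for value in values:
        y = value - compensation
        t = total + y
        compensation = (t - total) - y  # what was lost when forming t
        total = t
    return total

values = [0.1] * 1_000_000

naive = 0.0
for v in values:
    naive += v

reference = math.fsum(values)  # correctly rounded sum
naive_error = abs(naive - reference)
kahan_error = abs(kahan_sum(values) - reference)
```

The same idea applies when accumulating forces or energies over many atoms, where the order and precision of summation affect the result.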

Table 2: Error Accumulation and Mitigation in Numerical Integration

| Error Source | Impact on Long-Timescale Simulations | Mitigation Strategies |

|---|---|---|

| Finite Time Step | Truncation errors accumulating over millions of steps | Smaller time steps; symplectic integrators (e.g., velocity Verlet, leapfrog); higher-order methods where cost allows |

| Floating-Point Precision | Energy drift due to rounding errors in force calculations | Compensated summation algorithms, mixed-precision approaches |

| Force Calculation Approximations | Systematic deviations in molecular mechanics | Increased real-space cutoffs, improved lattice summation methods |

| Constraint Algorithms | Artificial energy redistribution over time | More accurate constraint solvers (e.g., LINCS, SHAKE) |

| Parallelization Artifacts | Non-deterministic force evaluations in parallel computing | Reproducible reduction algorithms, careful domain decomposition |

Energy Drift as a Diagnostic Metric

Energy drift in MD simulations serves as a key indicator of numerical stability and physical accuracy. In the NVE (microcanonical) ensemble, the total energy should remain constant, with fluctuations around a stable mean. A systematic drift in total energy indicates numerical problems or insufficient convergence of force calculations. Monitoring this drift provides researchers with a crucial diagnostic for simulation quality, particularly for long timescales where small per-step errors accumulate into significant deviations.
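One simple way to quantify systematic drift is to fit a straight line to the total energy time series and report the slope per atom. The sketch below does this with NumPy on synthetic data; the function name, the synthetic energies, and the atom count are assumptions made for the example rather than output from a real simulation.

```python
import numpy as np

def energy_drift_per_atom(time_ns, total_energy_kj_mol, n_atoms):
    """Least-squares slope of total energy vs. time, in kJ/mol/ns per atom."""
    slope, _intercept = np.polyfit(time_ns, total_energy_kj_mol, 1)
    return slope / n_atoms

# Synthetic NVE-like series: a slow linear drift plus random fluctuations.
rng = np.random.default_rng(0)
time_ns = np.linspace(0.0, 100.0, 1001)
true_slope = 2.0  # kJ/mol/ns, hypothetical
energy = -5.0e5 + true_slope * time_ns + rng.normal(0.0, 5.0, time_ns.size)

drift = energy_drift_per_atom(time_ns, energy, n_atoms=50_000)
```

A linear fit separates the systematic trend from fluctuations around the mean; in practice the fit would be applied to the energy output of the MD engine after equilibration.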

Methodological Approaches for Drift Mitigation

Advanced Force Calibration Techniques

Recent methodological advances in single-molecule force spectroscopy provide insights relevant to MD simulations. The Hadamard variance (HV) method has emerged as a robust approach for force calibration in magnetic tweezers measurements, demonstrating particular strength in mitigating drift effects [22]. This method exhibits similar or higher precision and accuracy compared to established techniques like power spectral density (PSD) and Allan variance (AV) analyses, yielding lower force estimation errors across a wide range of signal-to-noise ratios and drift speeds [22].

The HV method's significance lies in its drift-invariant properties—it maintains consistent uncertainty levels across diverse experimental conditions, making it particularly valuable for long-timescale measurements where drift becomes inevitable [22]. This approach remains robust against common sources of additive noise, such as white and pink noise, which often complicate experimental data analysis [22].
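The drift insensitivity can be seen from the structure of the estimator: the Hadamard variance is built from second differences of consecutive bin averages, and the second difference of a linear trend is zero. The sketch below implements the standard non-overlapping form on synthetic data; it is an illustration of the general estimator, and the exact procedure used in [22] may differ.

```python
import numpy as np

def hadamard_variance(samples, bin_size):
    """Non-overlapping Hadamard variance from second differences of bin means."""
    samples = np.asarray(samples, dtype=float)
    n_bins = samples.size // bin_size
    if n_bins < 3:
        raise ValueError("need at least three bins")
    means = samples[: n_bins * bin_size].reshape(n_bins, bin_size).mean(axis=1)
    second_diff = means[2:] - 2.0 * means[1:-1] + means[:-2]
    return np.sum(second_diff ** 2) / (6.0 * (n_bins - 2))

# Synthetic signal: white noise with and without an added linear drift.
rng = np.random.default_rng(1)
noise = rng.normal(0.0, 1.0, 10_000)
linear_drift = 0.01 * np.arange(noise.size)

hv_clean = hadamard_variance(noise, 100)
hv_drift = hadamard_variance(noise + linear_drift, 100)
```

The two values agree to within floating-point rounding, whereas an ordinary variance of the drifting signal would be dominated by the trend.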

Evidence Accumulation Models for Error Analysis

Evidence Accumulation Models (EAMs) provide a powerful framework for understanding how errors accumulate in decision-making processes relevant to simulation analysis. The Drift Diffusion Model (DDM) conceptualizes behaviors as resulting from the noisy accumulation of evidence until a decision boundary is reached [23]. In MD simulations, analogous processes occur in conformational sampling and state transitions.

Research has shown that different components of these accumulation processes change on distinct timescales. In perceptual decision-making tasks, drift rate (sensitivity of evidence accumulation) was found to change as a continuous, exponential function of cumulative trial number, while response boundary (threshold for decision) changed within each daily session independently across sessions [23]. This separation of timescales suggests similar distinctions might exist in MD simulations, with some parameters evolving continuously while others reset between simulation segments.

Experimental Protocols for Drift-Robust Molecular Dynamics

Protocol 1: Force Field Validation and Benchmarking

Purpose: To establish baseline performance metrics for force fields and identify potential sources of systematic drift before embarking on production simulations.

Materials:

- Benchmark protein set: Include both folded and intrinsically disordered proteins

- Reference data: Experimental NMR measurements, crystallographic B-factors, and thermodynamic data

- Simulation software: Compatible with both additive and polarizable force fields

- Analysis tools: For calculating conformational properties, energy distributions, and time-correlation functions

Procedure:

- Initial Structure Preparation: Obtain and minimize starting structures for benchmark proteins

- Solvated System Setup: Place proteins in appropriate water boxes with physiological ion concentrations

- Equilibration Protocol: Perform gradual heating and equilibration with position restraints

- Production Simulations: Run multiple independent replicas (≥ 3) of 100-500 ns each

- Property Monitoring: Track backbone dihedral distributions, hydrogen bonding patterns, and salt bridge stability

- Drift Quantification: Calculate energy drift rates and structural deviation metrics

- Comparative Analysis: Evaluate performance against experimental references and between force fields

Validation Metrics:

- Backbone root-mean-square deviation (RMSD) stability over time

- Drift rate in total energy (should be < 0.01 kJ/mol/ns per atom)

- Reproduction of experimental conformational preferences

- Convergence of structural properties across independent replicas

Protocol 2: Long-Timescale Simulation with Intermediate Validation

Purpose: To enable extended simulation timescales while monitoring and correcting for force field drift.

Materials:

- Validated initial structure: From Protocol 1 or experimental source

- Checkpointing system: For simulation restart capability

- Reference snapshots: Experimentally determined structures when available

- Analysis framework: For periodic assessment of simulation quality

Procedure:

- Simulation Segmentation: Divide long simulations into manageable segments (50-100 ns)

- Intermediate Validation: After each segment, compare key structural metrics to reference data

- Drift Correction: Apply rotational and translational alignment to reference structures when appropriate

- Energy Redistribution: Monitor potential energy components for unphysical redistribution

- Constraint Stability: Verify bond length and angle constraints are not introducing artificial forces

- Continual Assessment: Use multiple metrics to detect subtle drift patterns

- Documentation: Maintain detailed records of all parameters and adjustments
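The alignment and comparison steps in this procedure can be sketched with the Kabsch algorithm, which finds the rotation that best superimposes a trajectory frame on a reference before the RMSD is computed. The code below is a minimal NumPy version operating on plain coordinate arrays; the function name and the synthetic test coordinates are assumptions for illustration, and analysis packages provide equivalent routines.

```python
import numpy as np

def kabsch_rmsd(mobile, reference):
    """RMSD after optimal rigid-body superposition of mobile onto reference."""
    mobile = np.asarray(mobile, dtype=float)
    reference = np.asarray(reference, dtype=float)
    p = mobile - mobile.mean(axis=0)        # remove translation
    q = reference - reference.mean(axis=0)
    u, _s, vt = np.linalg.svd(p.T @ q)      # SVD of the covariance matrix
    d = np.sign(np.linalg.det(vt.T @ u.T))  # guard against improper rotation
    rotation = vt.T @ np.diag([1.0, 1.0, d]) @ u.T
    p_rot = p @ rotation.T
    return float(np.sqrt(np.mean(np.sum((p_rot - q) ** 2, axis=1))))

# Synthetic check: a rotated and translated copy should give an RMSD near zero.
rng = np.random.default_rng(2)
ref = rng.normal(size=(20, 3))
theta = 0.7
rot_z = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                  [np.sin(theta),  np.cos(theta), 0.0],
                  [0.0, 0.0, 1.0]])
moved = ref @ rot_z.T + np.array([1.0, -2.0, 0.5])
rmsd_rigid = kabsch_rmsd(moved, ref)
rmsd_perturbed = kabsch_rmsd(moved + rng.normal(0.0, 0.1, moved.shape), ref)
```

Alignment only removes overall rotation and translation for analysis purposes. A steadily increasing aligned RMSD across segments is a symptom to investigate, and superposition itself does not correct the underlying force field.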

Visualization of Error Accumulation Pathways

Diagram 1: Pathways of error accumulation in molecular dynamics simulations, showing how small initial inaccuracies compound into significant deviations.

Diagram 2: Comprehensive drift mitigation strategy spanning pre-simulation, during simulation, and post-simulation phases.

Table 3: Research Reagent Solutions for Drift Mitigation

| Resource Category | Specific Tools | Function in Drift Mitigation |

|---|---|---|

| Force Fields | CHARMM Drude, AMOEBA | Incorporate polarization for improved electrostatic response |

| Validation Databases | Protein Data Bank, NMR data repositories | Provide experimental reference data for force field validation |

| Specialized Software | NAMD, AMBER, GROMACS, OpenMM | Enable efficient implementation of advanced integration algorithms |

| Analysis Tools | MDAnalysis, VMD, PyTraj | Facilitate monitoring of simulation stability and drift metrics |

| Benchmark Systems | Fast-folding proteins, well-characterized peptides | Offer standardized test cases for force field evaluation |

| Uncertainty Quantification Tools | Bootstrapping libraries, statistical analysis packages | Enable rigorous assessment of simulation reliability |

Force field drift in long molecular dynamics simulations represents a fundamental challenge that requires multifaceted solutions. The accumulation of small errors—from force field approximations, numerical integration limitations, and sampling constraints—can compound into significant deviations that compromise predictive accuracy. Through the systematic implementation of advanced force fields incorporating polarization, robust validation protocols, careful numerical practices, and continuous monitoring strategies, researchers can significantly mitigate these effects.

The development of drift-invariant analysis methods, such as the Hadamard variance approach adapted from single-molecule force spectroscopy, provides promising directions for future methodological improvements [22]. Similarly, insights from evidence accumulation models about separate timescales for parameter evolution offer conceptual frameworks for understanding how different types of errors manifest over time [23]. As MD simulations continue to push toward longer timescales and more complex biological questions, addressing the fundamental challenge of error accumulation will remain essential for producing reliable, predictive results in drug development and basic research.

Next-Generation Solutions: Machine Learning and Advanced Parameterization

Molecular dynamics (MD) simulations are indispensable for studying biomolecular mechanisms, drug binding, and protein folding. The success of these simulations hinges on the accuracy of the underlying force field—the computational model that describes the potential energy of a system and the forces acting on its atoms. Traditional molecular mechanics (MM) force fields, which use physics-inspired functional forms, provide exceptional computational efficiency but are inherently approximate, trading accuracy for speed [24] [25]. This compromise manifests as force field drift in long-timescale simulations, where small inaccuracies in the energy function accumulate over time, steering the system toward unphysical states or incorrect thermodynamic equilibria. This drift often stems from an imperfect balance between different energy terms (e.g., backbone dihedrals) or an insufficient description of complex electronic effects like polarization [3].

The gold standard for accuracy is quantum mechanics (QM), particularly density functional theory (DFT), which explicitly treats electrons. However, QM calculations are computationally prohibitive for large systems and long timescales. Machine learning force fields (MLFFs), particularly those leveraging graph neural networks (GNNs), have emerged as a transformative technology that bridges this accuracy-efficiency gap [25] [26]. By learning the potential energy surface directly from large-scale QM data, GNN-based force fields offer a path to simulations that are both accurate and efficient, thereby directly addressing the fundamental causes of force field drift.

Graph Neural Networks: A Natural Framework for Molecular Representation

Graph Neural Networks (GNNs) are a class of deep learning models designed to operate on graph-structured data. This architecture is exceptionally well-suited for molecular systems, where atoms naturally constitute nodes and chemical bonds form edges [27]. The GNN's core operation is message passing, where each atom iteratively collects information from its neighboring atoms, building a rich numerical representation (an embedding) that encodes its local chemical environment [27] [28].

Table 1: Core Components of a Message-Passing Graph Neural Network

| Component | Mathematical Function | Role in Molecular Modeling |

|---|---|---|

| Node Embedding | \( h_v^0 = f_a(\text{OneHot}(\text{Type}(v))) \) | Initial representation of an atom based on its element type. |

| Edge Embedding | \( e_{vw} = h_{(v,w)}^0 = G(d(v,w)) \) | Represents the bond or interatomic distance, often using a Gaussian filter. |

| Message Passing | \( m_v^{t+1} = \sum_{w \in N(v)} M_t(h_v^t, h_w^t, e_{vw}) \) | Aggregates information from neighboring atoms \( w \) to atom \( v \). |

| Node Update | \( h_v^{t+1} = U_t(h_v^t, m_v^{t+1}) \) | Updates the state of atom \( v \) based on the received messages. |

| Readout | \( y = R(\{h_v^K \mid v \in G\}) \) | Pools all atom embeddings to a graph-level prediction (e.g., total energy). |

This message-passing framework allows GNNs to learn complex, high-dimensional relationships between atomic structure and potential energy without relying on hand-crafted features, enabling a more accurate and data-driven representation of the quantum mechanical energy landscape [27] [28].
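A single round of message passing followed by a sum readout can be sketched in a few lines of NumPy. The example below uses a water-like graph with one-hot element embeddings and a Gaussian distance feature on each edge; the random weight matrices stand in for trained parameters, and the specific message and update functions (a `tanh` of a linear map) are simplifying assumptions rather than the form used by any particular published model.

```python
import numpy as np

def message_passing_step(h, edges, edge_feat, w_msg, w_upd):
    """m_v = sum_w tanh([h_v, h_w, e_vw] W_msg); h_v' = tanh([h_v, m_v] W_upd)."""
    messages = np.zeros((h.shape[0], w_msg.shape[1]))
    for (v, w), e_vw in zip(edges, edge_feat):
        messages[v] += np.tanh(np.concatenate([h[v], h[w], e_vw]) @ w_msg)
    return np.tanh(np.concatenate([h, messages], axis=1) @ w_upd)

def readout(h, w_out):
    """Sum-pool atom embeddings, then map to a scalar (e.g., an energy)."""
    return float(h.sum(axis=0) @ w_out)

rng = np.random.default_rng(0)
h0 = np.array([[1.0, 0.0], [0.0, 1.0], [0.0, 1.0]])  # one-hot: O, H, H
edges = [(0, 1), (1, 0), (0, 2), (2, 0)]              # directed O-H bonds
dist = np.array([0.096, 0.096, 0.096, 0.096])         # nm, hypothetical
edge_feat = np.exp(-((dist[:, None] - 0.1) ** 2) / 0.01)  # Gaussian filter

# Untrained random weights, used only to demonstrate shapes and data flow.
w_msg = rng.normal(size=(5, 4))
w_upd = rng.normal(size=(6, 2))
w_out = rng.normal(size=2)

h1 = message_passing_step(h0, edges, edge_feat, w_msg, w_upd)
y = readout(h1, w_out)
```

Because messages are summed over neighbors and the readout sums over atoms, the two hydrogens receive identical embeddings and the prediction does not depend on atom ordering.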

GNN Architectures for Force Fields: From Energy Prediction to Direct Parametrization

Several specialized GNN architectures have been developed to construct machine-learned force fields. They primarily fall into two categories: those that learn the potential energy and differentiate it to obtain forces, and those that predict forces directly.
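For the first category, forces are the negative gradient of the predicted energy with respect to atomic positions. The sketch below uses central finite differences on a two-atom Lennard-Jones energy as a stand-in for a learned energy model; real MLFF implementations typically use automatic differentiation instead, and the function names and parameter values here are illustrative assumptions.

```python
import numpy as np

def lj_energy(positions, sigma=0.34, epsilon=1.0):
    """Lennard-Jones energy of a two-atom system (stand-in for a learned model)."""
    r = np.linalg.norm(positions[0] - positions[1])
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 ** 2 - sr6)

def forces_from_energy(energy_fn, positions, h=1e-6):
    """F = -dE/dx by central finite differences over every coordinate."""
    forces = np.zeros_like(positions)
    for i in range(positions.shape[0]):
        for k in range(positions.shape[1]):
            plus = positions.copy()
            minus = positions.copy()
            plus[i, k] += h
            minus[i, k] -= h
            forces[i, k] = -(energy_fn(plus) - energy_fn(minus)) / (2.0 * h)
    return forces

positions = np.array([[0.0, 0.0, 0.0], [0.4, 0.0, 0.0]])  # nm, hypothetical
forces = forces_from_energy(lj_energy, positions)
```

Forces obtained this way derive from a single scalar energy and are therefore conservative by construction, which matters for energy conservation in long runs. Direct force predictors avoid the differentiation cost but do not carry this guarantee automatically.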

End-to-End Force Prediction (GNNFF, GAMD)

Architectures like the Graph Neural Network Force Field (GNNFF) bypass the computational bottleneck of energy differentiation by predicting atomic forces directly from automatically extracted, rotationally-covariant features of the local atomic environment [28]. Similarly, GNN Accelerated Molecular Dynamics (GAMD) directly maps the system's state (atom positions and types) to atomic forces, completely bypassing the explicit calculation of potential energy [29]. This direct approach leads to significant computational acceleration.

Machine-Learned Molecular Mechanics (Grappa, Espaloma)

An alternative strategy is to use GNNs to predict the parameters of a conventional molecular mechanics (MM) force field. Frameworks like Grappa and Espaloma employ graph neural networks (a graph attentional network in the case of Grappa) to analyze the molecular graph and assign optimal MM parameters (e.g., equilibrium bond lengths and force constants, and in some frameworks partial charges) [24] [30]. The resulting force field retains the computationally efficient functional form of traditional MM, ensuring compatibility with highly optimized MD software like GROMACS and OpenMM, but with significantly enhanced accuracy [24].

Quantitative Performance: Benchmarking GNN Force Fields

Extensive benchmarking demonstrates that GNN-based force fields can achieve quantum-chemical accuracy while maintaining the computational efficiency of classical molecular mechanics.

Table 2: Benchmarking Performance of Selected GNN Force Fields

| GNN Force Field | Key Architectural Feature | Reported Performance | Reference System |
|---|---|---|---|
| GNNFF | Direct force prediction with covariant features | 16% higher force accuracy and 1.6× faster than SchNet; Li diffusivity within 14% of AIMD [28]. | Organic molecules (ISO17), Li₇P₃S₁₁ |
| Grappa | Predicts MM parameters from molecular graph | Outperforms traditional MM/ML-MM force fields; matches experimental J-couplings & folding free energies [24]. | Small molecules, peptides, RNA |
| Espaloma-0.3 | Differentiable MM parameter assignment | Reproduces QM energies/geometries; stable simulations with accurate binding free energies [30]. | Small molecules, peptides, nucleic acids |
| GAMD | Direct force prediction, agnostic to system scale | Competitive performance with production-level MD software on large-scale simulation [29]. | Lennard-Jones system, Water system |

A critical advantage of MLFFs is their scalability. For instance, GNNFF trained on a small supercell of a lithium-ion conductor (1×2×1) was able to accurately predict the forces in a larger system (1×2×2) with only a 3% drop in accuracy [28]. This demonstrates the model's ability to learn generalizable local interactions, a key requirement for mitigating force field drift in large biomolecular systems.

Table 3: Essential Tools for Developing and Applying GNN Force Fields

| Tool / Resource | Type | Function in Research |
|---|---|---|
| QM Reference Data (DFT) | Data | Provides high-accuracy energy and force labels for training and validating MLFFs [8] [30]. |
| VASP MLFF Module | Software | An on-the-fly MLFF algorithm used to generate training data from ab initio MD simulations [8]. |
| Allegro / NequIP | Software | High-accuracy MLFF training frameworks capable of achieving errors of a fraction of a meV/atom [8]. |
| DPmoire | Software | Open-source package for constructing MLFFs specifically tailored for complex moiré material systems [8]. |
| GROMACS / OpenMM | Software | Highly optimized MD engines where parameterized ML-MM force fields (e.g., Grappa) can be deployed [24]. |
| MPNICE (Schrödinger) | Pretrained Model | A commercial MLFF architecture that explicitly incorporates equilibrated atomic charges and long-range electrostatics [26]. |

Experimental Protocol: Constructing a Robust GNN Force Field

The following methodology, inspired by tools like DPmoire and research on Grappa/Espaloma, outlines a general protocol for building a transferable GNN force field [24] [8].

Step 1: Training Dataset Curation

- Structure Generation: Construct a diverse set of molecular structures covering the relevant chemical space. For biomolecules, this includes dipeptides, tripeptides, and small folded proteins.

- Configuration Sampling: Use classical MD or enhanced sampling to generate a wide range of conformations for each structure, ensuring coverage of relevant metastable states and transitions.

- QM Single-Point Calculations: Perform high-level QM calculations (e.g., DFT with an appropriate van der Waals correction) on all sampled configurations to obtain reference energies and atomic forces.

Step 2: Model Training and Validation

- Architecture Selection: Choose a GNN architecture (e.g., direct force prediction or MM parametrization) based on the desired trade-off between computational speed and physical interpretability.

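- Symmetry Preservation: Ensure the model architecture respects E(3) symmetry, so that predicted energies are invariant under rotation, translation, and reflection while predicted forces rotate with the coordinates (equivariance), and that it respects the required permutation symmetries of the energy function [24].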

- Loss Function Definition: Train the model by minimizing a loss function that penalizes deviations from QM energies and forces, for example: ( L = \lambda_E (E_{\text{pred}} - E_{\text{QM}})^2 + \lambda_F \sum_i \lVert F_{i,\text{pred}} - F_{i,\text{QM}} \rVert^2 ).

- Validation: Rigorously test the trained model on a held-out test set of molecular structures and conformations not seen during training.
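The energy-plus-force loss from Step 2 can be written out directly. The sketch below evaluates it for one configuration; the function name, the example numbers, and the weights `lambda_e` and `lambda_f` are hypothetical, and real training code would average over a batch and use an autodiff framework.

```python
import numpy as np

def energy_force_loss(e_pred, e_qm, f_pred, f_qm, lambda_e=1.0, lambda_f=1.0):
    """Weighted squared error on the energy plus summed squared force errors.

    e_pred, e_qm : scalar energies for one configuration
    f_pred, f_qm : (n_atoms, 3) arrays of atomic forces
    """
    energy_term = (e_pred - e_qm) ** 2
    # Squared Euclidean norm of the force error, summed over all atoms.
    force_term = np.sum((f_pred - f_qm) ** 2)
    return lambda_e * energy_term + lambda_f * force_term

# Made-up numbers for a two-atom configuration (arbitrary units).
e_qm, e_pred = -76.40, -76.38
f_qm = np.array([[0.0, 0.0, 0.1], [0.0, 0.0, -0.1]])
f_pred = np.array([[0.0, 0.0, 0.2], [0.0, 0.0, -0.1]])

loss = energy_force_loss(e_pred, e_qm, f_pred, f_qm, lambda_e=1.0, lambda_f=10.0)
```

The relative size of the two weights is a tuning choice. Forces supply 3N labels per configuration against a single energy label, so the force term is often weighted to dominate.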

Step 3: Production MD Simulation and Validation

- Deployment: Integrate the trained MLFF into an MD engine (e.g., using OpenMM for Grappa or LAMMPS for GNNFF).

- Stability Test: Run a short MD simulation of a small system and monitor total energy and structural integrity to check for numerical instabilities.

- Property Validation: Compute experimentally measurable properties (e.g., J-couplings, diffusion coefficients, folding free energies) from the MLFF-driven simulation and validate them against experimental data to ensure physical correctness [24] [28].
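One simple way to quantify the stability test in Step 3 is to fit a line to the total energy of a constant-energy (NVE) run and report the slope per atom. The sketch below uses a synthetic energy trace; the units (kJ/mol, ps) and the function name are assumptions, and what counts as an acceptable drift depends on the system, integrator, and time step.

```python
import numpy as np

def energy_drift_per_ns(time_ps, total_energy, n_atoms):
    """Least-squares slope of total energy vs. time, per nanosecond per atom."""
    slope_per_ps, _intercept = np.polyfit(time_ps, total_energy, deg=1)
    return slope_per_ps * 1000.0 / n_atoms

# Synthetic NVE trace: 100 ps with a small linear drift plus noise.
rng = np.random.default_rng(1)
time_ps = np.linspace(0.0, 100.0, 1001)
total_energy = -5000.0 + 0.002 * time_ps + rng.normal(scale=0.05, size=time_ps.size)

drift = energy_drift_per_ns(time_ps, total_energy, n_atoms=1000)
```

In practice `time_ps` and `total_energy` would come from the MD engine's energy log rather than from a random generator.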

Force field drift in long-timescale molecular dynamics simulations is a direct consequence of the approximations inherent in traditional molecular mechanics force fields. Graph Neural Network force fields present a paradigm shift, offering a path to quantum chemical accuracy without prohibitive computational cost. By learning the complex relationship between atomic structure and potential energy from vast QM datasets, GNNs directly address the root causes of inaccuracy. As these models continue to improve in data efficiency, generalization, and handling of long-range interactions, they are poised to become the standard for high-fidelity biomolecular simulation, ultimately enabling more reliable predictions in drug discovery and materials science.

Molecular dynamics (MD) simulations are indispensable for studying biomolecular structure and function, but their predictive power is limited by the accuracy of the underlying molecular mechanics (MM) force fields [4]. A significant challenge in long-timescale simulations is force field drift, where inaccuracies in the parametrized potential energy surface cause simulations to deviate from realistic physical behavior over time. This in-depth technical guide examines the machine learning (ML) frameworks Grappa and Espaloma, which aim to mitigate this drift by replacing traditional, hand-crafted parameter assignment with end-to-end learning of MM parameters directly from molecular graphs. We explore their architectures, provide detailed experimental protocols for their application and evaluation, and quantitatively analyze their performance. By achieving state-of-the-art accuracy while maintaining the computational efficiency of traditional MM, these force fields set the stage for more stable and reliable biomolecular simulations.

In classical MD, the potential energy of a system is calculated using a molecular mechanics force field, a computational model with a fixed functional form and empirical parameters [1]. Established force fields rely on lookup tables with a finite set of atom types, characterized by hand-crafted rules based on the chemical properties of an atom and its bonded neighbors [24] [31]. This approach, while computationally efficient, has limitations. The finite set of atom types cannot fully capture the diverse chemical environments found in complex biomolecules, leading to approximations and inaccuracies in the potential energy surface.

These inaccuracies are a primary source of force field drift in long simulations. Small, systematic errors in energy and force calculations can accumulate over nanoseconds to microseconds, causing the simulated system to gradually sample unrealistic conformations or deviate from experimentally observed dynamics. This drift undermines the reliability of simulations for predictive tasks, such as drug development, where accurate characterization of molecular interactions is critical.

The advent of geometric deep learning has enabled a new paradigm: machine-learned force fields. While E(3)-equivariant neural networks can achieve high accuracy, they remain orders of magnitude more computationally expensive than MM force fields, making them unsuitable for simulating large biomolecular systems over long time scales [24]. The frameworks Grappa (Graph Attentional Protein Parametrization) and Espaloma represent a hybrid approach. They retain the computationally efficient functional form of traditional MM but replace the lookup-table parameter assignment with a neural network that predicts parameters directly from the molecular graph [24] [31]. This end-to-end learning from data promises a more accurate and transferable description of the potential energy surface, thereby addressing the root cause of force field drift.

## 2 Molecular Mechanics and Parameter Prediction Fundamentals

The Molecular Mechanics Energy Function

In MM, the potential energy ( E ) of a molecular system is a sum of bonded and non-bonded interactions [24] [31] [2]. The general form is:

[ E_{\text{total}} = E_{\text{bonded}} + E_{\text{nonbonded}} ]

[ E_{\text{bonded}} = E_{\text{bond}} + E_{\text{angle}} + E_{\text{dihedral}} ]

[ E_{\text{nonbonded}} = E_{\text{electrostatic}} + E_{\text{van der Waals}} ]

The bonded interactions are typically modeled as follows [31] [2]:

- Bond Stretching: ( E_{\text{bond}} = \sum k_{ij}(r_{ij} - r_{ij}^{(0)})^2 )

- Angle Bending: ( E_{\text{angle}} = \sum k_{ijk}(\theta_{ijk} - \theta_{ijk}^{(0)})^2 )

- Dihedral Torsions: ( E_{\text{dihedral}} = \sum k_{ijkl} \cos(n\phi_{ijkl} - \delta) )

The parameters for these functions—the force constants ( k ) and equilibrium values ( r^{(0)} ), ( \theta^{(0)} )—constitute the MM parameter set ( \bm{\xi} ) that Grappa and Espaloma are designed to predict [31].
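The bonded terms translate directly into array operations. The sketch below evaluates them for made-up internal coordinates and parameters; every numerical value is hypothetical and carries no physical meaning, and the functional forms follow the expressions above (with no factor of 1/2 in the harmonic terms).

```python
import numpy as np

def bond_energy(r, k, r0):
    """Harmonic bond term: sum of k_ij * (r_ij - r0_ij)^2."""
    return float(np.sum(k * (r - r0) ** 2))

def angle_energy(theta, k, theta0):
    """Harmonic angle term: sum of k_ijk * (theta_ijk - theta0_ijk)^2."""
    return float(np.sum(k * (theta - theta0) ** 2))

def dihedral_energy(phi, k, n, delta):
    """Periodic torsion term: sum of k_ijkl * cos(n * phi_ijkl - delta)."""
    return float(np.sum(k * np.cos(n * phi - delta)))

# Hypothetical internal coordinates and parameters (arbitrary units, radians).
e_bond = bond_energy(r=np.array([1.10, 1.00]),
                     k=np.array([300.0, 300.0]),
                     r0=np.array([1.00, 1.00]))
e_angle = angle_energy(theta=np.array([2.0]),
                       k=np.array([50.0]),
                       theta0=np.array([1.9]))
e_dihedral = dihedral_energy(phi=np.array([np.pi]),
                             k=np.array([2.0]),
                             n=np.array([3.0]),
                             delta=np.array([0.0]))

e_bonded = e_bond + e_angle + e_dihedral
```

The arrays passed as `k`, `r0`, `theta0`, `n`, and `delta` are the quantities that a lookup table supplies in a traditional force field and that a learned model would supply in the graph-based approach.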

Molecular mechanics energy decomposition into bonded and non-bonded terms. [1] [2]

From Atom Typing to Graph-Based Prediction

Traditional force fields use atom typing, where each atom is assigned a type based on its element and local chemical environment (e.g., an sp³ carbon vs. an aromatic carbon). Parameters are then assigned from fixed tables for all combinations of atom types involved in a bond, angle, or dihedral [24]. This method is limited by its reliance on human-expert-crafted rules and the finite nature of the type sets.

In contrast, Grappa and Espaloma treat the molecule as a graph ( \mathcal{G} = (\mathcal{V}, \mathcal{E}) ), where nodes ( \mathcal{V} ) represent atoms and edges ( \mathcal{E} ) represent bonds [24] [31]. A neural network maps this graph directly to the MM parameter set ( \bm{\xi} ). This data-driven approach captures chemical environments more flexibly and continuously, eliminating the discretization inherent in atom typing and improving transferability to molecules or functional groups not seen during force field development.

## 3 The Grappa and Espaloma Architectures

The Grappa Framework

Grappa is a machine learning framework that predicts MM parameters in two steps [24] [32]:

- Atom Embedding: A graph attentional neural network processes the molecular graph to generate a d-dimensional embedding ( \nu_i ) for each atom ( i ). This embedding aims to represent the atom's local chemical environment.

- Parameter Prediction: For each interaction type ( l ) (bond, angle, torsion, improper), a transformer ( \psi^{(l)} ) maps the embeddings of the involved atoms to the specific MM parameters: ( \xi_{ij\ldots}^{(l)} = \psi^{(l)}(\nu_i, \nu_j, \ldots) ).

A critical feature of Grappa's architecture is its incorporation of physical symmetries. The models ( \psi^{(l)} ) are designed to be invariant under permutations of atoms that leave the respective internal coordinate invariant (e.g., ( \xi_{ijk}^{\text{(angle)}} = \xi_{kji}^{\text{(angle)}} )), ensuring that the predicted energy is physically sensible [24].
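The angle symmetry can be illustrated with a generic construction: evaluate an order-dependent predictor on both equivalent atom orderings and average the results. This is only a sketch of the invariance property, not Grappa's actual symmetric transformer; `raw_angle_net`, its weight matrix `W`, and the embedding size are hypothetical.

```python
import numpy as np

def raw_angle_net(nu_i, nu_j, nu_k, W):
    """Hypothetical order-dependent map from three atom embeddings to two outputs."""
    return np.tanh(np.concatenate([nu_i, nu_j, nu_k]) @ W)

def symmetric_angle_params(nu_i, nu_j, nu_k, W):
    """Average over the two orderings (i, j, k) and (k, j, i) of the same angle."""
    forward = raw_angle_net(nu_i, nu_j, nu_k, W)
    backward = raw_angle_net(nu_k, nu_j, nu_i, W)
    return 0.5 * (forward + backward)

rng = np.random.default_rng(2)
d = 8                                  # embedding dimension (assumed)
nu_i, nu_j, nu_k = rng.normal(size=(3, d))
W = rng.normal(size=(3 * d, 2))        # two outputs, e.g. (k, theta0)

xi_ijk = symmetric_angle_params(nu_i, nu_j, nu_k, W)
xi_kji = symmetric_angle_params(nu_k, nu_j, nu_i, W)
```

Here `xi_ijk` and `xi_kji` agree even though the raw network gives different outputs for the two orderings, so the predicted angle energy does not depend on the direction in which the three atoms are listed.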

Grappa's two-step architecture: molecular graph to atom embeddings to MM parameters. [24]

Espaloma and the Shift to ML-Parameterized MM

Espaloma, the predecessor to Grappa, demonstrated that machine learning could be used to assign MM parameters from a molecular graph [24] [31]. A key distinction is that Espaloma's graph neural network relied on hand-crafted chemical features as node and edge inputs, such as orbital hybridization states and formal charge [24] [33]. Grappa builds upon this foundation by eliminating the need for these expert-derived features, learning a representation of chemical environment directly from the graph structure, which facilitates easier extension to new regions of chemical space [24].

## 4 Experimental Protocols and Performance Benchmarking

Training and Evaluation Methodology

Dataset: Both Grappa and Espaloma are trained and evaluated on the Espaloma benchmark dataset [24] [31]. This extensive dataset contains over 14,000 molecules and more than one million conformations, covering small molecules, peptides, and RNA [24]. The dataset includes quantum mechanical (QM) reference data for energies and forces.

Training Objective: The models are trained end-to-end to minimize the difference between the forces and energies computed from the predicted MM parameters and the reference QM data [24]. This is visualized in the workflow below.

End-to-end training workflow for machine-learned MM force fields. [24]

Key Experiments:

- Energy and Force Accuracy: The primary benchmark is the accuracy of energies and forces on held-out test molecules from the Espaloma dataset [24].

- Dihedral Scans: The accuracy of the potential energy landscape for dihedral angles in peptides is evaluated and compared to traditional force fields like Amber ff19SB [24].