Expectation-Maximization Algorithms in Biomolecular Systems: From Foundational Theory to Advanced Drug Discovery Applications

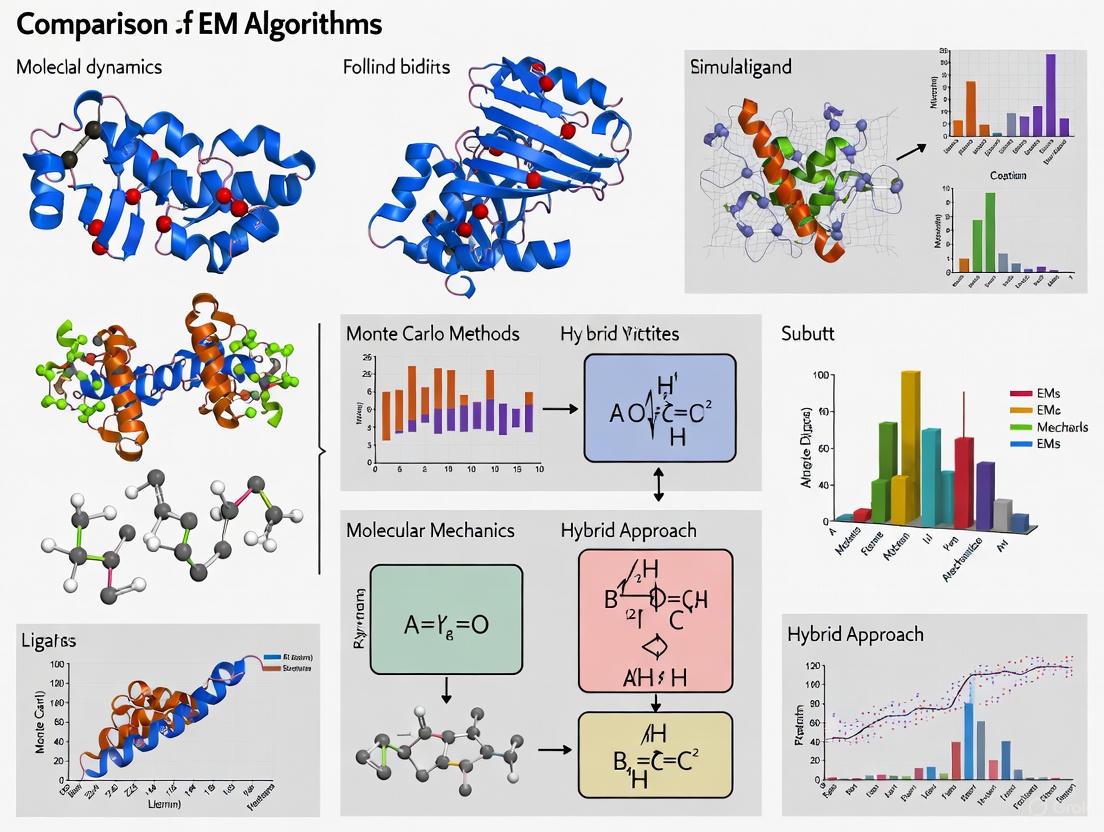


Abstract

This article provides a comprehensive exploration of Expectation-Maximization (EM) algorithms and their critical role in computational biology and drug discovery. It establishes foundational principles of EM methodology for researchers and scientists working with biomolecular systems, detailing specific implementations for integrating molecular simulations with experimental data. The content covers practical optimization strategies to overcome sampling and convergence challenges, and presents rigorous validation frameworks comparing EM performance against alternative computational approaches. With the growing integration of AI in pharmaceutical research, this resource offers essential insights for professionals leveraging computational methods to accelerate biomolecular analysis and therapeutic development.

Understanding EM Algorithms: Core Principles for Biomolecular Analysis

Theoretical Foundations of Expectation-Maximization in Computational Biology

The Expectation-Maximization (EM) algorithm represents a cornerstone computational methodology in statistical estimation, particularly for problems involving incomplete data or latent variables. In computational biology, where multi-scale data and hidden patterns are ubiquitous, EM provides a powerful framework for extracting meaningful biological insights from complex datasets. EM operates through an iterative two-step process: the Expectation step (E-step) computes the expected complete-data log-likelihood, averaging over the latent variables given the current parameters, while the Maximization step (M-step) updates the parameter estimates to maximize this expectation. This formulation is particularly useful in biomolecular systems research, where it enables researchers to navigate the inherent complexities of biological data across multiple spatial and temporal scales [1].

Recent advances have highlighted both the versatility and specialization of EM approaches in biological contexts. While the classical EM algorithm relies on calculating conditional expectations, the broader Minorization-Maximization (MM) principle demonstrates how alternative surrogate functions can be constructed through clever inequality manipulation, sometimes yielding algorithms with more favorable computational properties for specific biological problems [1]. This theoretical foundation has proven essential for addressing core challenges in computational biology, including multi-scale integration, missing data imputation, and probabilistic modeling of complex biological systems, positioning EM methodologies as indispensable tools for modern biological research.

Algorithmic Frameworks: EM and Its Variants

Core Theoretical Principles

The EM algorithm's theoretical foundation rests on its ability to systematically handle latent variables and missing data through iterative refinement. Formally, given observed data X and latent variables Z, the algorithm aims to find parameters θ that maximize the likelihood p(X|θ). Since direct maximization is often computationally intractable, EM iteratively constructs a lower bound on the log-likelihood using the current parameter estimates. The E-step computes the expected complete-data log-likelihood Q(θ|θ(t)) = E[log p(X,Z|θ)|X,θ(t)], while the M-step updates parameters by maximizing this expectation: θ(t+1) = argmax_θ Q(θ|θ(t)) [1]. This process guarantees that the observed data likelihood never decreases from one iteration to the next, with convergence to a stationary point (typically a local optimum) under relatively general conditions.
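To make the E-step and M-step concrete, the following minimal sketch runs EM on a two-component, one-dimensional Gaussian mixture. The mixture model, the simulated data, and the function name `em_gmm_1d` are illustrative assumptions and are not taken from [1]; the sketch only shows the generic iteration and records the observed-data log-likelihood so that its non-decreasing behaviour can be inspected.

```python
import numpy as np

def em_gmm_1d(x, n_iter=50):
    """EM for a two-component 1D Gaussian mixture (illustrative sketch)."""
    w = np.array([0.5, 0.5])                    # mixing weights
    mu = np.array([x.min(), x.max()])           # deterministic initial means
    var = np.array([x.var(), x.var()])          # initial variances
    trace = []
    for _ in range(n_iter):
        # Component densities weighted by the current mixing weights
        dens = w * np.exp(-0.5 * (x[:, None] - mu) ** 2 / var) / np.sqrt(2 * np.pi * var)
        # Observed-data log-likelihood at the current parameters
        trace.append(np.log(dens.sum(axis=1)).sum())
        # E-step: responsibilities r[i, k] = p(Z_i = k | x_i, theta_t)
        r = dens / dens.sum(axis=1, keepdims=True)
        # M-step: closed-form maximizer of Q(theta | theta_t)
        nk = r.sum(axis=0)
        w = nk / len(x)
        mu = (r * x[:, None]).sum(axis=0) / nk
        var = (r * (x[:, None] - mu) ** 2).sum(axis=0) / nk
    return w, mu, var, np.array(trace)

# Simulated data (assumption): two well-separated clusters
rng = np.random.default_rng(1)
x = np.concatenate([rng.normal(-2.0, 1.0, 300), rng.normal(3.0, 0.5, 200)])
w, mu, var, trace = em_gmm_1d(x)
print("means:", np.round(mu, 2))
print("log-likelihood never decreased:", bool(np.all(np.diff(trace) >= -1e-8)))
```

In this toy case the M-step has a closed form; the later discussion of the Dirichlet-Multinomial model concerns exactly the situation where it does not.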

The relationship between EM and MM algorithms reveals important theoretical insights. While all EM algorithms are special cases of MM principles, not all MM algorithms can be naturally formulated as EM procedures. The distinction lies in the construction of surrogate functions: EM relies specifically on conditional expectations derived from statistical models of missing data, whereas MM employs general inequality techniques to create minorizing functions [1]. This theoretical nuance has practical implications for biological applications, as problems lacking natural missing-data interpretations may benefit more directly from MM approaches, while those with clear latent variable structures often align more naturally with classical EM formulations.

Computational Considerations in Biological Applications

The implementation of EM algorithms in computational biology must address several domain-specific computational challenges. Convergence rates vary significantly across biological applications, with some problems requiring hundreds of iterations to reach satisfactory solutions [1]. The per-iteration computational cost represents another critical factor, particularly for large-scale biological datasets where E-step expectations or M-step optimizations become computationally demanding. Practical implementations often employ acceleration techniques, approximate E-steps, or early termination criteria to balance statistical precision with computational feasibility.

The EM-MM hybrid approach has emerged as a promising strategy for balancing convergence speed with per-iteration complexity. By applying MM principles to the M-step of an EM algorithm, this hybrid approach can overcome challenging maximization problems while maintaining the stability and convergence guarantees of the EM framework [1]. For the Dirichlet-Multinomial distribution, this hybrid strategy demonstrated superior performance compared to pure EM or MM approaches in certain parameter regimes, highlighting the importance of algorithmic selection based on specific problem characteristics in biological applications.

Performance Comparison: EM Applications in Biomolecular Research

Multi-Scale Biomolecular Interaction Prediction

The MUSE framework exemplifies the innovative application of EM principles to one of computational biology's most challenging problems: integrating information across multiple biological scales. By implementing a variational EM approach, MUSE effectively addresses the optimization imbalance that plagues many multi-scale learning methods, where algorithms tend to disproportionately rely on information from a single scale while under-utilizing others [2]. This balanced approach demonstrates substantially improved performance across multiple biomolecular interaction tasks, as detailed in Table 1.

Table 1: Performance Comparison of MUSE Against State-of-the-Art Methods on Biomolecular Interaction Tasks

| Task | Dataset | Method | AUROC | AUPRC | Key Improvement |
|---|---|---|---|---|---|
| Protein-Protein Interactions (PPI) | Multiple splits | MUSE | - | - | +13.81% (BFS), +13.06% (DFS), +7.69% (Random) vs HIGH-PPI |
| Drug-Protein Interactions (DPI) | Benchmark | MUSE | 0.915 | 0.922 | +2.67% over ConPlex |
| Drug-Drug Interactions (DDI) | Benchmark | MUSE | 0.993 | 0.998 | +5.05% over CGIB |
| Protein Interface Prediction | DIPS-Plus | MUSE | 0.920 | - | P@L/5: 0.23 vs 0.17 (DeepInteract) |
| Protein Binding Sites | ScanNet | MUSE | 0.938 | 0.811 | +1.76% AUPRC over PeSTO |

MUSE's architectural innovation lies in its mutual supervision and iterative optimization between atomic structure (E-step) and molecular network (M-step) perspectives. The E-step utilizes structural information of individual biomolecules to learn effective structural representations, while the M-step incorporates interaction network topology and structural embeddings from the E-step to predict new interactions [2]. This iterative process, with carefully calibrated learning rates at each scale, enables effective information fusion that outperforms both single-scale methods and joint optimization approaches across diverse biological interaction types.

Microbial Source Tracking with EM

In microbial ecology, EM algorithms power advanced source tracking methods that quantify the contributions of different microbial environments to sink microbiomes. The FEAST framework implements an expectation-maximization approach to efficiently estimate these source proportions, though it remains limited in computational efficiency on high-dimensional data and cannot infer the direction of source-sink relationships [3]. The recently developed FastST tool addresses these limitations while maintaining the core EM approach, achieving over 90% accuracy in directionality inference across tested scenarios while offering superior computational efficiency compared to earlier implementations [3].

Table 2: Performance Comparison of Microbial Source Tracking Methods

| Method | Algorithmic Approach | Key Strengths | Limitations | Accuracy |
|---|---|---|---|---|
| SourceTracker2 | Bayesian | Proven reliability, widely adopted | Computationally intensive with high-dimensional data | - |
| FEAST | Expectation-Maximization | Efficient implementation | Cannot infer directionality | - |
| FastST | Optimized EM | Infers directionality, computationally efficient | - | >90% directionality inference |

The experimental protocol for evaluating these microbial source tracking methods typically involves several key steps: (1) Data simulation with known source contributions across varying numbers of sources and complexity levels; (2) Algorithm application to estimate source proportions; (3) Performance evaluation by comparing estimated contributions with known ground truth; and (4) Directionality assessment for methods claiming this capability [3]. This rigorous validation framework ensures that performance claims are substantiated through controlled experimental conditions.
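Steps (1) to (3) of this protocol can be sketched on simulated data. The example below is a deliberately simplified mixture model, not the FEAST or FastST implementation: it assumes the source taxon profiles are known exactly, that every taxon has nonzero abundance in at least one source, and that there is no unknown source. The function name `estimate_source_proportions` and all simulation settings are hypothetical.

```python
import numpy as np

def estimate_source_proportions(sink_counts, sources, n_iter=500, tol=1e-10):
    """EM for mixing proportions with source profiles treated as known (sketch)."""
    k = sources.shape[0]
    pi = np.full(k, 1.0 / k)                    # start from equal contributions
    total = sink_counts.sum()
    for _ in range(n_iter):
        # E-step: probability that a read of taxon v originated from source k
        joint = pi[:, None] * sources           # shape (k, n_taxa)
        resp = joint / joint.sum(axis=0, keepdims=True)
        # M-step: expected fraction of sink reads attributed to each source
        new_pi = (resp * sink_counts).sum(axis=1) / total
        converged = np.abs(new_pi - pi).max() < tol
        pi = new_pi
        if converged:
            break
    return pi

# (1) Simulate sources and a sink with known contributions
rng = np.random.default_rng(0)
sources = rng.dirichlet(np.ones(200), size=3)   # 3 source profiles over 200 taxa
true_pi = np.array([0.6, 0.3, 0.1])
sink = rng.multinomial(50_000, true_pi @ sources)

# (2) Apply the algorithm, (3) compare with ground truth
est_pi = estimate_source_proportions(sink, sources)
print("estimated:", np.round(est_pi, 3))
print("max abs error:", float(np.abs(est_pi - true_pi).max()))
```

Step (4), directionality assessment, is not covered here because it depends on method-specific details that the text does not describe.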

Experimental Protocols and Methodologies

Multi-Scale Biomolecular Interaction Analysis

The experimental protocol for evaluating MUSE's performance on biomolecular interaction tasks exemplifies rigorous methodology in computational biology. For protein-protein interaction prediction, researchers employed three distinct dataset splits: Breadth-First Search (BFS), Depth-First Search (DFS), and Random splits, each testing different aspects of model generalization [2]. The training process alternated between E-steps (structural representation learning) and M-steps (interaction prediction) with mutual supervision between scales, typically running for multiple iterations until convergence.

For atomic-scale interface and binding site predictions, the experimental workflow involved several critical stages. As visualized in the diagram below, this process integrates structural information with network-level constraints through iterative refinement:

Diagram 1: MUSE Framework Experimental Workflow

Performance quantification employed standard metrics including Area Under Receiver Operating Characteristic curve (AUROC), Area Under Precision-Recall Curve (AUPRC), and position-based precision measures (P@L/5, P@L/10) for interface predictions. Statistical significance was assessed through multiple runs with different initializations, with comparative analysis against single-scale baselines and state-of-the-art multi-scale methods [2].
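For readers less familiar with these metrics, the sketch below computes them with NumPy on a tiny hand-made example. It assumes that AUPRC is estimated as average precision and that L in P@L/5 is a length parameter such as sequence length; the evaluation code actually used in [2] may differ, and the function names are illustrative.

```python
import numpy as np

def auroc(y_true, scores):
    """Probability that a random positive outranks a random negative (ties count 0.5)."""
    pos = scores[y_true == 1]
    neg = scores[y_true == 0]
    diff = pos[:, None] - neg[None, :]
    return ((diff > 0).sum() + 0.5 * (diff == 0).sum()) / (len(pos) * len(neg))

def average_precision(y_true, scores):
    """Mean of the precision values measured at the rank of each true positive."""
    order = np.argsort(-scores, kind="stable")
    hits = y_true[order]
    precision_at_rank = np.cumsum(hits) / np.arange(1, len(hits) + 1)
    return (precision_at_rank * hits).sum() / hits.sum()

def precision_at_top(y_true, scores, length, divisor=5):
    """Precision among the top length // divisor predictions (P@L/5 when divisor=5)."""
    k = max(1, length // divisor)
    top = np.argsort(-scores, kind="stable")[:k]
    return y_true[top].mean()

# Toy labels and scores (assumption), already sorted by decreasing score
y = np.array([1, 0, 1, 1, 0, 0, 1, 0, 0, 0])
s = np.array([0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1, 0.05])

print("AUROC:", auroc(y, s))
print("AUPRC (average precision):", average_precision(y, s))
print("P@L/5 with L=10:", precision_at_top(y, s, length=10))
```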

Medical Imaging Reconstruction Protocol

In medical imaging applications, the experimental comparison between Maximum-Likelihood Expectation-Maximization (MLEM) and Filtered Backprojection (FBP) algorithms follows a carefully controlled protocol. Researchers typically use standardized phantoms (e.g., the National Electrical Manufacturers Association NU 4-2008 image-quality phantom) with known ground truth structures [4]. The protocol involves: (1) generating noiseless projections through analytical calculation without pixel discretization; (2) incorporating Poisson-distributed noise to simulate real-world emission imaging conditions; (3) reconstructing images with both MLEM and windowed FBP across multiple iteration parameters; and (4) matching spatial resolution between methods by comparing line profiles across reconstructed lesions [4].

Critical to this protocol is the careful matching of reconstruction parameters to enable fair comparison. For MLEM, researchers typically test iteration counts from 5 to 45 in increments of 5, while for windowed FBP, they identify matching parameter k values (ranging from 3,800 to 43,000) that produce equivalent spatial resolution [4]. This meticulous parameter matching ensures that performance differences reflect algorithmic characteristics rather than arbitrary parameter choices. Quantitative evaluation focuses on both image resolution (measured via line profiles across lesions) and noise performance (calculated as normalized standard deviation in uniform background regions), providing comprehensive assessment of the trade-offs between these competing objectives.
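The MLEM side of this comparison follows the standard multiplicative update for Poisson data, which can be sketched as follows. The random system matrix, the image size, and the function name `mlem` are assumptions made for illustration; the sketch does not model a real scanner geometry or the NU 4-2008 phantom, and it omits the windowed FBP counterpart.

```python
import numpy as np

def mlem(system_matrix, counts, n_iter=45):
    """MLEM for counts ~ Poisson(system_matrix @ image); returns image and log-likelihood trace."""
    sensitivity = system_matrix.sum(axis=0)           # A^T 1, per-pixel sensitivity
    image = np.ones(system_matrix.shape[1])           # strictly positive start
    trace = []
    for _ in range(n_iter):
        expected = system_matrix @ image              # forward projection
        # Poisson log-likelihood up to a constant, evaluated before the update
        trace.append(np.sum(counts * np.log(expected) - expected))
        ratio = counts / np.maximum(expected, 1e-12)  # guard against division by zero
        image = image / sensitivity * (system_matrix.T @ ratio)  # multiplicative update
    return image, np.array(trace)

# Simulated acquisition (assumption): random positive system matrix, Poisson noise
rng = np.random.default_rng(0)
A = rng.random((60, 12))
truth = rng.uniform(1.0, 10.0, 12)
counts = rng.poisson(A @ truth).astype(float)

image, trace = mlem(A, counts)
print("non-negative image:", bool(np.all(image >= 0)))
print("projected counts match measured total:", bool(np.isclose((A @ image).sum(), counts.sum())))
```

Stopping after a fixed number of iterations, as in the 5 to 45 range mentioned above, acts as an implicit regularizer: more iterations sharpen resolution but amplify noise.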

Successful implementation of EM methodologies in computational biology requires both specialized computational frameworks and carefully curated biological datasets. Table 3 details key resources that form the essential toolkit for researchers working in this domain.

Table 3: Essential Research Resources for EM in Computational Biology

| Resource Name | Type | Primary Function | Application Context |
|---|---|---|---|
| MUSE Framework | Software Framework | Multi-scale representation learning | Biomolecular interaction prediction |
| FastST | Computational Tool | Microbial source tracking | Microbiome analysis |
| Dirichlet-Multinomial | Statistical Model | Handling over-dispersed count data | Multivariate biological count data |
| DrugBank Database | Data Resource | Drug-target-disease associations | Drug repurposing studies |
| DIPS-Plus Benchmark | Dataset | Protein interface structures | Protein-protein interaction prediction |
| NU 4-2008 Phantom | Standardized Data | Medical imaging quality assessment | Algorithm validation in imaging |

The MUSE framework represents particularly notable infrastructure, implementing the variational EM approach for multi-scale biomolecular analysis. Its architecture specifically addresses the imbalanced nature and inherent greediness of multi-scale learning, which often causes models to rely disproportionately on a single scale [2]. By providing both E-step and M-step components with mutual supervision mechanisms, MUSE enables researchers to effectively integrate atomic structural information with molecular network data without manual balancing efforts.

Specialized Biological Datasets

Curated biological datasets form another critical component of the research toolkit, enabling standardized evaluation and comparison across methodological approaches. For biomolecular interaction studies, the DIPS-Plus benchmark provides comprehensive protein interface structures with carefully verified interaction annotations [2]. For drug-disease association research, networks compiled from multiple sources (including textual databases, machine-readable resources, and manual curation) enable robust link prediction validation [5]. These datasets typically undergo extensive preprocessing, including natural language processing extraction and hand curation, to ensure quality and consistency for computational analysis.

In microbial ecology, standardized simulated communities with known source proportions enable rigorous benchmarking of source tracking methods like FastST [3]. These simulated datasets systematically vary critical parameters including the number of sources, complexity of source-sink relationships, and abundance distributions, allowing comprehensive evaluation of algorithmic performance across diverse ecological scenarios. The availability of these carefully constructed biological datasets significantly accelerates methodological development by providing common benchmarks for objective performance comparison.

Future Directions and Emerging Applications

The evolving landscape of EM applications in computational biology points toward several promising research directions. Multi-scale integration represents a particularly fertile area, with frameworks like MUSE demonstrating the potential for EM methodologies to effectively fuse information across biological scales from atomic interactions to organism-level phenotypes [2]. The extension of these approaches to incorporate additional data types, including temporal dynamics and spatial organization, could further enhance their utility for modeling complex biological systems.

The integration of EM with deep learning architectures presents another compelling direction. While current EM methodologies primarily operate on curated biological networks or structural features, combining the statistical rigor of EM with the representation learning capabilities of deep neural networks could yield more powerful and adaptive analytical frameworks. Similarly, the development of specialized EM variants with improved convergence properties for specific biological problem classes represents an active research frontier, building on insights from comparative studies of EM, MM, and hybrid approaches [1].

As computational biology continues its evolution toward data-centric research paradigms [6], EM methodologies are poised to play an increasingly central role in extracting biological insight from complex, multi-scale datasets. Their theoretical foundation in statistical estimation, combined with practical utility across diverse biological domains, ensures that Expectation-Maximization approaches will remain essential components of the computational biologist's toolkit for the foreseeable future.

Q-functions, Minorization, and Convergence Properties

Table of Contents

- Theoretical Foundations of Q-functions and Minorization

- EM vs. MM: A Direct Comparison of Operating Characteristics

- Experimental Protocols and Convergence Analysis

- Biomolecular Applications and Research Toolkit

Theoretical Foundations of Q-functions and Minorization

The Expectation-Maximization (EM) algorithm and the more general Minorization-Maximization (MM) principle are iterative optimization methods central to maximum likelihood estimation, particularly in problems with latent variables or complex objective functions. Understanding their mechanics requires a deep dive into their core components: Q-functions and minorization.

Q-functions are the cornerstone of the EM algorithm. In the context of statistical models involving unobserved latent variables, the EM algorithm seeks to find maximum likelihood estimates where direct optimization is intractable. Given a statistical model with parameters θ, observed data X, and latent variables Z, the algorithm iterates between two steps. The Expectation step (E-step) calculates the expected value of the complete-data log-likelihood, given the observed data and the current parameter estimate θ(t). This expectation is the Q-function [7]:

Q(θ | θ(t)) = E_{Z|X,θ(t)} [ log p(X, Z | θ) ].

The subsequent Maximization step (M-step) maximizes this Q-function to obtain a new parameter update: θ(t+1) = argmax_θ Q(θ | θ(t)). This process guarantees that the observed data log-likelihood never decreases from one iteration to the next [7].

Minorization is the broader principle that generalizes the EM algorithm. A function g(θ | θ(t)) is said to minorize a function f(θ) at θ(t) if two conditions hold [8]:

- g(θ | θ(t)) ≤ f(θ) for all θ

- g(θ(t) | θ(t)) = f(θ(t))

The MM algorithm, which includes EM as a special case, proceeds by constructing such a minorizing function g(θ | θ(t)) and then maximizing it to find the next iterate θ(t+1). This step ensures that the objective function f(θ) never decreases, because f(θ(t+1)) ≥ g(θ(t+1) | θ(t)) ≥ g(θ(t) | θ(t)) = f(θ(t)). The key difference between EM and MM lies in the construction of the surrogate function: EM uses conditional expectation, while MM leverages analytical inequalities, providing more flexibility [1] [9].

The following diagram illustrates the core logical relationship and workflow shared by both EM and MM algorithms, highlighting their two-step iterative nature.

EM vs. MM: A Direct Comparison of Operating Characteristics

While the EM algorithm is a specific instance of the MM principle, deriving algorithms from both perspectives for the same problem can lead to distinct implementations with unique performance characteristics. A canonical case study is the estimation of parameters for the Dirichlet-Multinomial distribution, a model frequently encountered in biomolecular research for analyzing over-dispersed multivariate count data [9]. The comparison below summarizes the core differences.

Table 1: Comparison of EM and MM Algorithms for Dirichlet-Multinomial Estimation

| Feature | EM Algorithm | MM Algorithm |
|---|---|---|
| Surrogate Function Construction | Relies on calculating conditional expectations of latent variables [9]. | Based on crafty use of inequalities (e.g., Jensen's inequality, supporting hyperplane) [1] [9]. |
| M-step Complexity | The Q-function contains digamma and trigamma functions, making the M-step complex. It often requires an iterative, multivariate Newton's method [9]. | The surrogate function is simpler, often leading to closed-form, trivial updates that are computationally cheap per iteration [9]. |
| Stability & Simplicity | Stable but can be complex to implement due to the difficult M-step [9]. | Highly stable and simple to implement due to straightforward, analytical updates [1]. |
| Scalability | High-dimensional M-step maximization can be a bottleneck [9]. | Highly scalable to high-dimensional data because parameters are separated and updated independently [9]. |

The performance trade-offs between EM and MM algorithms are further quantified by their local convergence rates and empirical performance on real datasets. The convergence rate, which measures how fast the algorithm converges near the optimum, is a key differentiator.

Table 2: Experimental Performance on the HS76-1 Mutagenicity Dataset

| Metric | EM Algorithm | MM Algorithm | EM-MM Hybrid Algorithm |
|---|---|---|---|
| Number of Iterations to Convergence | Fewer (e.g., ~20) [9] | More (e.g., hundreds) [9] | Intermediate (fewer than pure MM) [9] |
| Computational Cost per Iteration | High (requires nested Newton iterations) [9] | Very Low (simple, analytical updates) [9] | Moderate (simplified M-step) [9] |
| Final Log-Likelihood Achieved | -777.79 (MLE) [9] | -777.79 (MLE) [9] | -777.79 (MLE) [9] |
| Theoretical Local Convergence Rate | Linear (rate constant further from 1, i.e., faster) [1] | Linear (rate constant closer to 1, i.e., slower) [1] | Linear (between EM and MM) [1] |

The convergence behavior can be visualized as follows, demonstrating the trade-off between iteration cost and the total number of iterations required.

Experimental Protocols and Convergence Analysis

Experimental Protocol for Dirichlet-Multinomial Estimation

The comparative performance data presented in Table 2 was generated using a standardized experimental protocol [9]:

- Data: The HS76-1 dataset from a mutagenicity study, containing counts of dead and survived implants for 524 female mice.

- Objective: Maximize the Dirichlet-Multinomial log-likelihood (Equation 4 in the source) to estimate the parameters α = (α₁, α₂).

- Algorithms:

  - EM: The E-step computes conditional expectations of the latent Dirichlet variables. The M-step requires maximizing a Q-function containing digamma functions, implemented via multivariate Newton's method.

  - MM: The minorizing function is constructed using the concavity of the log-gamma function, leading to a surrogate where parameters are separated. The M-step yields a simple, iterative update.

  - Hybrid: The Q-function from the EM algorithm is minorized further using a supporting hyperplane inequality, simplifying the M-step while retaining some of EM's fast convergence.

- Convergence Criterion: Iterations continue until the relative change in the log-likelihood falls below a predefined tolerance.

- Convergence Rate Calculation: The local convergence rate of each algorithm is derived theoretically as the spectral radius of the differential of the update map at the optimum; all three methods converge linearly [1].
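The MM branch of this protocol can be illustrated with the widely used fixed-point update for the Dirichlet-Multinomial model, obtained by applying Jensen's inequality to the ln(α_j + k) terms and a supporting hyperplane to the -ln(|α| + k) terms of the log-likelihood. Whether this matches the exact iteration used in [9] is an assumption, and the data below are simulated rather than the HS76-1 counts; the function names are illustrative.

```python
import numpy as np

def dm_loglik(alpha, counts):
    """Dirichlet-Multinomial log-likelihood, omitting the multinomial coefficient."""
    a_sum = alpha.sum()
    total = 0.0
    for row in counts:
        for a_j, x_ij in zip(alpha, row):
            total += np.log(a_j + np.arange(x_ij)).sum()    # sum_k ln(alpha_j + k)
        total -= np.log(a_sum + np.arange(row.sum())).sum()  # sum_k ln(|alpha| + k)
    return total

def mm_update(alpha, counts):
    """One MM step: each alpha_j is updated separately by a simple ratio."""
    a_sum = alpha.sum()
    numer = np.zeros_like(alpha)
    denom = 0.0
    for row in counts:
        for j, x_ij in enumerate(row):
            numer[j] += (1.0 / (alpha[j] + np.arange(x_ij))).sum()
        denom += (1.0 / (a_sum + np.arange(row.sum()))).sum()
    return alpha * numer / denom

# Simulated two-category counts (assumption), 12 trials per observation
rng = np.random.default_rng(0)
probs = rng.dirichlet([2.0, 5.0], size=150)
counts = np.array([rng.multinomial(12, p) for p in probs])

alpha = np.ones(2)
trace = [dm_loglik(alpha, counts)]
for _ in range(100):
    alpha = mm_update(alpha, counts)
    trace.append(dm_loglik(alpha, counts))

print("alpha after 100 MM steps:", np.round(alpha, 3))
print("log-likelihood never decreased:", bool(np.all(np.diff(trace) >= -1e-8)))
```

Each step is cheap and needs no special functions or matrix inversion, which is the per-iteration advantage attributed to MM above; the price is that many such steps may be needed.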

Broader Convergence Properties

The convergence of iterative algorithms like EM and MM is a rich field of study. Several key properties are generally observed:

- Monotonicity: Both EM and MM are designed to ensure that the observed data log-likelihood increases monotonically with each iteration. However, it is the likelihood that must increase, not the Q-function itself. The Q-function Q(θ | θ(t)) is not guaranteed to be monotonic over iterations t because it is defined relative to a changing previous parameter θ(t) [10].

- Rate of Convergence: The EM algorithm typically exhibits a linear convergence rate. For the MM algorithm, the convergence rate is also linear but can be slower (i.e., the convergence constant is closer to 1) than a well-designed EM algorithm, explaining the higher number of iterations observed in practice [1]. This is in contrast to Newton's method, which can achieve a quadratic convergence rate but is less stable [8].

- Stability and General Conditions: Both algorithms are numerically stable and naturally handle parameter constraints. The DPG algorithm, a decentralized variant, has been shown to converge sublinearly at a rate of O(log T / T) for convex problems, and to stationary points for nonconvex problems, given a diminishing step size [11].
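A linear convergence rate can be estimated empirically from the ratio of successive step sizes, which settles near the convergence constant as the iterates approach the optimum. The sketch below does this for a one-parameter EM problem, the weight of the first component in a mixture of two Gaussians with known means and variances. The model and simulated data are assumptions chosen for simplicity and are unrelated to the Dirichlet-Multinomial experiments in [1] and [9].

```python
import numpy as np

# Simulated data (assumption): 40% from N(0, 1), 60% from N(2, 1)
rng = np.random.default_rng(0)
n = 2000
from_first = rng.random(n) < 0.4
x = np.where(from_first, rng.normal(0.0, 1.0, n), rng.normal(2.0, 1.0, n))

# Component densities up to a shared constant, which cancels in the E-step
f0 = np.exp(-0.5 * x ** 2)
f1 = np.exp(-0.5 * (x - 2.0) ** 2)

w = 0.5                      # initial guess for the weight of the first component
path = [w]
for _ in range(20):
    r = w * f0 / (w * f0 + (1.0 - w) * f1)   # E-step: responsibilities
    w = r.mean()                              # M-step: average responsibility
    path.append(w)

steps = np.abs(np.diff(path))                 # |w_{t+1} - w_t|
ratios = steps[1:] / steps[:-1]               # approaches the linear rate constant
print("final weight:", round(path[-1], 4))
print("last step ratios:", np.round(ratios[-5:], 3))
```

A ratio close to 1 signals slow convergence, whereas a ratio near 0 signals fast convergence; quadratic methods such as Newton's would instead show ratios that shrink toward 0.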

Biomolecular Applications and Research Toolkit

The concepts of Q-functions and minorization are not merely theoretical; they underpin practical tools used in computational biology and drug development. The Dirichlet-Multinomial model, for instance, is directly applicable to several omics technologies.

Key Biomolecular Applications

- Genetic Variant Discovery: Modeling over-dispersed allele counts in population sequencing studies to accurately call single nucleotide polymorphisms (SNPs) and infer allele frequencies [9].

- Toxicology and Mutagenesis: Analyzing counts of dead and survived implants in animal studies (like the HS76-1 dataset) to assess the mutagenic potential of chemical compounds [9].

- Protein Homology Detection: Classifying protein sequences into families based on count data derived from multiple sequence alignments, where the Dirichlet-Multinomial model accounts for evolutionary divergence [9].

- Language Modeling for Bioinformatics: Applied in natural language processing for bio-medical literature mining and modeling "bursty" word occurrences in scientific text [9].

The Scientist's Computational Toolkit

For researchers implementing or applying these algorithms in biomolecular systems, the following "reagents" are essential.

Table 3: Essential Research Reagent Solutions for Algorithm Implementation

| Item | Function in Analysis |
|---|---|
| Multivariate Count Data | The fundamental input data, such as allele counts, mutation spectra, or gene expression bins, which often exhibit over-dispersion relative to a simple multinomial model [9]. |
| Dirichlet-Multinomial Log-Likelihood | The objective function to be maximized; it accurately models the variance-inflated nature of multivariate count data common in genomics [9]. |
| Digamma and Trigamma Functions | Special functions that appear in the E-step and the score function of the Dirichlet-Multinomial model. Their evaluation is a computational cornerstone of the EM algorithm's M-step [9]. |
| Numerical Optimizer (Newton's Method) | A critical component for the inner loop of the EM algorithm when the M-step cannot be solved in closed form, required for updating parameters [9]. |
| Inequalities (Jensen's, Supporting Hyperplane) | The foundational "reagents" for constructing minorizing functions in the MM algorithm, enabling the derivation of simple, iterative updates [1] [9]. |

In computational statistics and biomolecular systems research, optimization algorithms are paramount for extracting meaningful patterns from complex data. The Expectation-Maximization (EM) and Minorization-Maximization (MM) algorithms represent two powerful iterative approaches for obtaining maximum likelihood estimates in models with latent variables or complex objective functions [1] [9]. While these algorithms share a similar iterative structure of constructing surrogate functions, they differ fundamentally in their derivation and operational characteristics. The EM algorithm was formally introduced in 1977 by Dempster, Laird, and Rubin, in what has become one of the most cited papers in statistics [7] [12]. In recent years, it has been recognized that EM is actually a special case of the more general MM principle [1] [9] [12]. This comparative framework examines both algorithms' theoretical foundations, performance characteristics, and applications in biomolecular research to guide researchers in selecting appropriate methodologies for their specific computational challenges.

Theoretical Foundations

The EM Algorithm Framework

The Expectation-Maximization algorithm operates by iteratively applying two steps to compute maximum likelihood estimates in the presence of missing data or latent variables [7]. Given a statistical model that generates a set of observed data X, unobserved latent data Z, and parameters θ, the algorithm aims to maximize the marginal likelihood L(θ;X) = p(X|θ) [7].

Expectation Step (E-step): Calculate the expected value of the complete-data log-likelihood with respect to the conditional distribution of Z given X under the current parameter estimate θ(t): Q(θ|θ(t)) = E_{Z∼p(·|X,θ(t))}[log p(X,Z|θ)] [7]

Maximization Step (M-step): Find the parameters that maximize the Q function from the E-step: θ(t+1) = argmax_θ Q(θ|θ(t)) [7]

This process iterates until convergence, with each iteration guaranteed not to decrease the log-likelihood [7] [13]. The EM algorithm is particularly valuable in scenarios like Gaussian Mixture Models, where latent variables define cluster memberships [14] [13].

The MM Algorithm Framework

The Minorization-Maximization algorithm represents a more general optimization principle that extends beyond likelihood maximization [1] [12]. For maximizing an objective function f(θ), the MM algorithm involves:

First M Step (Minorization): Create a surrogate function g(θ|θ(t)) that minorizes f(θ) at the current iterate θ(t), satisfying: g(θ(t)|θ(t)) = f(θ(t)) and g(θ|θ(t)) ≤ f(θ) for all θ [1] [12]

Second M Step (Maximization): Find the parameters that maximize the minorizing function: θ(t+1) = argmax_θ g(θ|θ(t)) [1] [12]

The MM principle can be adapted for minimization by majorizing and then minimizing the surrogate function [12]. The art of devising an MM algorithm lies in constructing tractable surrogate functions that hug the objective function as tightly as possible [12].

Relationship Between EM and MM

The EM algorithm is a special case of the MM principle in which the Q function derived in the E-step serves, up to an additive constant that does not depend on θ, as the minorizing function [1] [9] [12]. This relationship emerges from the information inequality, which shows that the Q function satisfies the domination condition Q(θ|θ(t)) - Q(θ(t)|θ(t)) ≤ f(θ) - f(θ(t)) for all θ, where f is the observed-data log-likelihood [1] [12]. While EM always qualifies as an MM algorithm, the reverse is not necessarily true: many MM algorithms cannot be naturally recast as EM algorithms [1] [9]. This distinction becomes particularly evident in problems lacking an apparent missing data structure, such as random graph models, discriminant analysis, and image restoration [1] [9].
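The domination condition can be checked numerically on a small example. The sketch below uses a two-component Gaussian mixture with known component densities and a single unknown weight w, evaluates both sides of the inequality on a grid, and confirms that the gap is non-negative and vanishes at the anchor point. The model, the simulated data, and the anchor value 0.7 are assumptions chosen purely for illustration.

```python
import numpy as np

# Simulated data (assumption): 40% from N(0, 1), 60% from N(2, 1)
rng = np.random.default_rng(0)
n = 400
from_first = rng.random(n) < 0.4
x = np.where(from_first, rng.normal(0.0, 1.0, n), rng.normal(2.0, 1.0, n))
f0 = np.exp(-0.5 * x ** 2) / np.sqrt(2 * np.pi)
f1 = np.exp(-0.5 * (x - 2.0) ** 2) / np.sqrt(2 * np.pi)

def loglik(w):
    """Observed-data log-likelihood f(w)."""
    return np.log(w * f0 + (1.0 - w) * f1).sum()

def q_function(w, w_t):
    """Expected complete-data log-likelihood Q(w | w_t)."""
    r = w_t * f0 / (w_t * f0 + (1.0 - w_t) * f1)   # responsibilities at w_t
    return (r * np.log(w * f0) + (1.0 - r) * np.log((1.0 - w) * f1)).sum()

w_t = 0.7                                   # anchor point theta(t)
grid = np.linspace(0.01, 0.99, 99)          # includes a point at approximately 0.70
gap = np.array([
    (loglik(w) - loglik(w_t)) - (q_function(w, w_t) - q_function(w_t, w_t))
    for w in grid
])
print("smallest gap on grid:", float(gap.min()))
print("gap at anchor:", float(gap[69]))
```

The gap equals a sum of Kullback-Leibler divergences between the latent-variable posteriors at θ(t) and θ, which is why it cannot be negative.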

Table 1: Fundamental Comparisons Between EM and MM Algorithms

| Aspect | EM Algorithm | MM Algorithm |
|---|---|---|
| Theoretical Basis | Conditional expectations via missing data framework [7] | Crafty use of inequalities (Jensen, Cauchy-Schwarz) [1] [12] |
| Surrogate Function Construction | E-step calculates expected complete-data log-likelihood [7] | First M-step uses mathematical inequalities to create minorizing function [1] |
| Scope | Special case of MM principle [1] [9] | Generalization of EM principle [1] [12] |
| Latent Variable Requirement | Requires missing data structure [7] | No latent variables needed [1] [9] |
| Applicability | Models with natural latent structure [14] [13] | Broad range of optimization problems [1] [12] |

Performance Comparison in Statistical Estimation

Case Study: Dirichlet-Multinomial Distribution

The Dirichlet-Multinomial distribution provides an illuminating case study where EM and MM derivations yield distinct algorithms with contrasting performance profiles [1] [9]. When estimating parameters for this distribution, which is commonly used for over-dispersed multivariate count data in genetics, toxicology, and language modeling [1] [9], the two approaches demonstrate notable operational differences.

For the EM algorithm applied to this problem, the Q function becomes fraught with special functions (digamma and trigamma), and the M-step resists analytical solution, requiring an iterative multivariate Newton's method [1] [9]. This results in a computationally expensive M-step, though the algorithm converges in relatively few iterations. Conversely, the MM algorithm produces a simpler surrogate function that yields trivial updates in the M-step, making each iteration computationally cheap but requiring more iterations to converge [1] [9].

Convergence Characteristics

The local convergence rates of EM and MM algorithms can be studied theoretically from the unifying MM perspective [1] [9]. For the Dirichlet-Multinomial problem, analysis reveals that neither algorithm dominates the other across all parameter regimes [1] [9]. The EM algorithm typically exhibits faster convergence near the optimum but requires more computational effort per iteration [1] [9]. The MM algorithm converges more slowly but with significantly simpler iterations [1] [9].

This trade-off inspired the development of an EM-MM hybrid algorithm that partially resolves the difficulties in the M-step of the standard EM approach [1] [9]. This hybrid leverages the supporting hyperplane inequality to separate parameters in the Q function's lnΓ term, resulting in independent parameter optimization [1]. Numerical experiments demonstrate this hybrid can achieve faster convergence than pure MM in certain parameter regimes while remaining more computationally tractable than standard EM [1] [9].

Table 2: Performance Comparison for Dirichlet-Multinomial Parameter Estimation

| Algorithm | Convergence Rate | Computation per Iteration | Implementation Complexity |
|---|---|---|---|
| EM | Fast [1] [9] | High (multivariate Newton's method) [1] [9] | High (non-trivial M-step) [1] [9] |
| MM | Slow [1] [9] | Low (trivial updates) [1] [9] | Low (simple surrogate) [1] [9] |
| EM-MM Hybrid | Intermediate (depends on parameters) [1] [9] | Intermediate [1] [9] | Intermediate [1] [9] |

Diagram 1: Algorithm Performance Trade-offs

Applications in Biomolecular Systems Research

Biomolecular Simulation and QM/MM

In biomolecular research, hybrid Quantum Mechanical/Molecular Mechanical (QM/MM) methods have become indispensable tools for investigating enzyme reaction mechanisms, designing catalysts, and understanding evolutionary relationships between enzymes [15]. Although QM/MM is unrelated to the statistical MM algorithm despite the shared abbreviation, QM/MM simulations face analogous computational challenges in balancing accuracy and efficiency. These methods partition systems into quantum mechanical regions (where chemical reactions occur) and molecular mechanical regions (where classical force fields suffice), requiring sophisticated optimization to study biomolecular systems [15].

Semi-empirical QM methods like DFTB3 and extended tight-binding (xTB) have proven particularly valuable in QM/MM simulations when proper calibration is performed [15]. These approaches enable sampling of multi-dimensional free energy surfaces necessary for analyzing complex reaction pathways and characterizing mechanochemical coupling [15]. For instance, DFTB3/MM simulations have facilitated extensive sampling requiring >150 ns of simulation time, which would be prohibitively expensive with ab initio QM/MM approaches [15].

Microbiome Analysis and Ecological Modeling

The EM algorithm has demonstrated significant utility in microbiome research, particularly through the BEEM (Biomass Estimation and EM algorithm) framework for inferring generalized Lotka-Volterra models (gLVMs) of microbial community dynamics [16]. This approach addresses a critical limitation in microbiome analysis by coupling biomass estimation with model inference, eliminating the need for experimental biomass data that often introduces substantial error [16].

In practice, BEEM alternates between estimating scaling factors (biomass) and gLVM parameters, effectively applying the EM principle to ecological modeling [16]. Performance evaluations demonstrate that BEEM-estimated parameters achieve accuracy comparable to those derived from noise-free biomass data, significantly outperforming approaches using experimentally determined biomass estimates with their inherent technical variations (mean CV = 51%) [16]. This application highlights how EM algorithms enable accurate ecological modeling from high-throughput sequencing data despite compositionality biases [16].

Population Pharmacokinetic-Pharmacodynamic Modeling

The EM algorithm has been successfully implemented in population pharmacokinetic-pharmacodynamic (PKPD) modeling using platforms like ACSLXTREME [17]. This implementation uses an approximation in which the E-step computes the maximum a posteriori (MAP) estimate of each individual's random effects (ηi) rather than their full conditional expectation, reducing runtime while maintaining estimation quality [17].

Comparative studies demonstrate that EM-based parameter estimation produces results comparable to industry-standard tools like NONMEM, providing a valuable alternative methodology for variance modeling of PKPD data [17]. The EM framework proves particularly advantageous in handling complex variance structures and incomplete data, common challenges in clinical data analysis [17].

Table 3: Biomolecular Research Applications of EM and Related Algorithms

| Application Domain | Algorithm Type | Key Function | Performance |
|---|---|---|---|
| Microbiome Modeling (BEEM) [16] | EM variant | Infers microbial interaction parameters from relative abundance data | Eliminates need for experimental biomass data; accurate parameter estimation [16] |
| Population PK/PD Modeling [17] | EM algorithm | Estimates population parameters from sparse clinical data | Comparable to NONMEM results; efficient handling of complex variance structures [17] |
| Biomolecular QM/MM [15] | Not statistical MM | Partitions system for efficient simulation | Enables simulation of complex biomolecular systems; balance of accuracy and efficiency [15] |

Experimental Protocols and Implementation

EM Algorithm for Gaussian Mixture Models

Gaussian Mixture Models (GMMs) represent a canonical application of the EM algorithm, where latent variables define cluster memberships [14] [13]. The implementation protocol follows these steps:

Initialization: Randomly initialize parameters (means, covariances, mixture weights) or use k-means initialization [14] [13]

E-step: Calculate the probability that each data point belongs to each mixture component using current parameter estimates: p(zₙₘ = 1|xₙ) = wₘg(xₙ|μₘ,Σₘ) / ∑ⱼ wⱼg(xₙ|μⱼ,Σⱼ) where g(xₙ|μₘ,Σₘ) is the Gaussian density [14]

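M-step: Update parameters using the probabilities computed in the E-step. Writing rₙₘ = p(zₙₘ = 1|xₙ) and Nₘ = ∑ₙ rₙₘ, the standard updates are wₘ = Nₘ/N, μₘ = (1/Nₘ) ∑ₙ rₙₘ xₙ, and Σₘ = (1/Nₘ) ∑ₙ rₙₘ (xₙ - μₘ)(xₙ - μₘ)ᵀ [14]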

Convergence Check: Evaluate log-likelihood and repeat until improvement falls below threshold [14] [13]
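The four steps above can be sketched for the one-dimensional case as follows. This is a minimal illustration rather than a reference implementation: the function names `gaussian_pdf` and `fit_gmm_1d`, the quantile-based initialization (chosen for reproducibility instead of random or k-means initialization), and the default tolerance are all assumptions made for this example.

```python
import numpy as np


def gaussian_pdf(x, mean, var):
    """Univariate Gaussian density g(x | mean, var)."""
    return np.exp(-0.5 * (x - mean) ** 2 / var) / np.sqrt(2.0 * np.pi * var)


def fit_gmm_1d(x, n_components=2, max_iter=200, tol=1e-8):
    """EM for a 1-D Gaussian mixture; returns weights, means, variances, log-likelihood trace."""
    x = np.asarray(x, dtype=float)
    n = x.size
    # Initialization (assumption): place the means at evenly spaced interior quantiles.
    means = np.quantile(x, np.linspace(0.0, 1.0, n_components + 2)[1:-1])
    variances = np.full(n_components, x.var())
    weights = np.full(n_components, 1.0 / n_components)
    log_liks = []
    for _ in range(max_iter):
        # E-step: responsibilities r[n, m] = w_m g(x_n) / sum_j w_j g(x_n).
        dens = weights * gaussian_pdf(x[:, None], means, variances)  # shape (n, k)
        total = dens.sum(axis=1, keepdims=True)
        resp = dens / total
        # Convergence check on the log-likelihood of the current parameters.
        log_liks.append(float(np.log(total).sum()))
        if len(log_liks) > 1 and log_liks[-1] - log_liks[-2] < tol:
            break
        # M-step: weighted updates of mixture weights, means, and variances.
        n_k = resp.sum(axis=0)
        weights = n_k / n
        means = (resp * x[:, None]).sum(axis=0) / n_k
        variances = (resp * (x[:, None] - means) ** 2).sum(axis=0) / n_k
    return weights, means, variances, log_liks
```

The returned log-likelihood trace should be non-decreasing, which is a convenient sanity check when adapting the sketch. For real data, a variance floor and log-domain density computations would likely be needed to guard against collapsing components and numerical underflow.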

Diagram 2: EM Algorithm Workflow

MM Algorithm for Power Series Distributions

For power series distributions (including binomial, Poisson, and negative binomial families), the MM algorithm follows this protocol [12]:

Initialization: Select initial parameter value θ₀ [12]

Minorization Step: Apply the supporting hyperplane inequality to the convex function -ln(q(θ)) to create a minorizing function: -ln(q(θ)) ≥ -ln(q(θₙ)) - [q'(θₙ)/q(θₙ)](θ - θₙ) [12]

Maximization Step: Update parameter using the minorizing function: θₙ₊₁ = x̄ q(θₙ)/q'(θₙ), where x̄ is sample mean [12]

Convergence Check: Repeat until |θₙ₊₁ - θₙ| < tolerance [12]

This approach works particularly well when the power series distribution has log-concave q(θ), which occurs when (k+1)cₖ₊₁/cₖ is decreasing in k [12].
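As a small illustration of this protocol, the sketch below applies the update θₙ₊₁ = x̄ q(θₙ)/q'(θₙ) to a zero-truncated Poisson model, for which q(θ) = e^θ - 1 and ln q(θ) is concave for θ > 0. The choice of example distribution, the function names `mm_power_series`, `q_ztp`, and `dq_ztp`, and the default tolerance are assumptions made for this example.

```python
import math


def mm_power_series(xbar, q, dq, theta0, tol=1e-10, max_iter=1000):
    """Iterate theta <- xbar * q(theta) / q'(theta) until successive iterates agree."""
    theta = theta0
    for iteration in range(1, max_iter + 1):
        theta_new = xbar * q(theta) / dq(theta)
        if abs(theta_new - theta) < tol:
            return theta_new, iteration
        theta = theta_new
    return theta, max_iter


def q_ztp(theta):
    """Normalizing series of the zero-truncated Poisson: q(theta) = exp(theta) - 1."""
    return math.expm1(theta)


def dq_ztp(theta):
    """Derivative q'(theta) = exp(theta)."""
    return math.exp(theta)


# Sample mean of 2.0 is a made-up value for illustration.
theta_hat, n_iter = mm_power_series(2.0, q_ztp, dq_ztp, theta0=1.0)
```

At the fixed point the estimate satisfies θ/(1 - e^(-θ)) = x̄, the usual likelihood equation for the zero-truncated Poisson. For the ordinary Poisson, q(θ) = q'(θ) = e^θ, so the same update returns θ = x̄ after a single step.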

The Scientist's Toolkit

Table 4: Essential Research Reagents for Algorithm Implementation

| Research Reagent | Function | Application Context |
|---|---|---|
| ACSLXTREME Software [17] | Implements EM algorithm for PK/PD modeling | Population pharmacokinetic-pharmacodynamic modeling [17] |
| BEEM Framework [16] | Expectation-Maximization for gLVM inference | Microbiome ecological modeling from sequencing data [16] |
| NONMEM Software [17] | Industry standard for population modeling | Benchmarking EM algorithm performance [17] |
| Dirichlet-Multinomial Model [1] [9] | Statistical model for over-dispersed counts | Comparing EM vs MM algorithm performance [1] [9] |
| Gaussian Mixture Model Package [13] | Python implementation using sklearn | Prototyping and testing EM algorithms [13] |
| Semi-empirical QM Methods (DFTB3/xTB) [15] | Approximate quantum mechanical methods | Biomolecular QM/MM simulations [15] |

This comparative framework demonstrates that both EM and MM algorithms offer powerful approaches for optimization challenges in biomolecular research, each with distinct strengths and limitations. The EM algorithm provides a principled framework for problems with natural latent variable structures, as evidenced by its successful application in microbiome modeling and pharmacokinetics [17] [16]. The more general MM algorithm offers flexibility for a broader range of optimization problems, often yielding simpler updates but potentially slower convergence [1] [9] [12]. The relationship between these algorithms—with EM as a special case of MM—provides researchers with a unified perspective for developing customized solutions to complex computational problems in biomolecular systems [1] [9] [12]. Selection between these approaches should be guided by specific problem structure, computational constraints, and convergence requirements, with hybrid strategies offering promising middle ground in many applications [1] [9].

The Role of Latent Variables in Biomolecular Data Structures

In the life sciences, technological advancements have created a paradoxical situation: while it is possible to simultaneously measure an ever-increasing number of system parameters, the resulting data are becoming increasingly difficult to interpret directly [18]. Biomolecular data acquired through techniques like single-cell omics, molecular imaging, and cryo-electron microscopy (cryo-EM) are typically high-dimensional, sparse, and exhibit complex correlation structures [18] [19]. Latent Variable Models (LVMs) address this challenge by inferring non-measurable hidden variables—termed latent variables—from empirical observations, serving as a compressed representation that captures the essential biological structure within high-dimensional datasets [18] [20].

The primary role of latent variables is to allow a complicated distribution over observed variables to be represented in terms of simpler conditional distributions, often from the exponential family [20]. In practical biomolecular applications, this translates to distinguishing healthy from diseased patient states by integrating various clinical measurements [18], resolving distinct molecular conformations from cryo-EM image datasets [21], or understanding relationships among multiple, correlated species responses in ecological studies [22]. The estimation of parameters in these models, where the model depends on unobserved latent variables, frequently relies on the Expectation-Maximization (EM) algorithm and its variants, which provide a robust iterative framework for finding maximum likelihood estimates in the presence of missing or hidden data [7] [23].

Table 1: Key Latent Variable Model Types in Biomolecular Research

| Model Type | Key Characteristics | Typical Biomolecular Applications |
|---|---|---|
| Factor Analysis (FA) | Linear model with Gaussian noise; factors follow standard normal distribution [18]. | Multi-omics integration (MOFA) [18], modeling single-cell RNA-seq dropout effects (ZIFA) [18]. |
| Gaussian Mixture Models (GMM) | Probabilistic clustering via linear superposition of Gaussian components [20]. | Cell type identification, soft clustering of expression data [20]. |
| Gaussian Process LVMs (GP-LVM) | Non-parametric model for complex, non-linear data structures [18]. | Modeling complex molecular conformation landscapes [18]. |
| Variational Autoencoders (VAEs) | Deep learning approach using neural networks as probabilistic encoders/decoders [18]. | Disentangling molecular conformations in cryo-EM [21], learning interpretable representations. |

Computational Frameworks: EM Algorithms and Their Biomolecular Applications

The Expectation-Maximization Algorithm: Core Mechanism

The EM algorithm is an iterative method for finding (local) maximum likelihood or maximum a posteriori (MAP) estimates of parameters in statistical models where the model depends on unobserved latent variables [7]. The algorithm proceeds in two repeating steps: the Expectation step (E-step), which creates a function for the expectation of the log-likelihood evaluated using the current parameter estimates, and the Maximization step (M-step), which computes new parameters maximizing the expected log-likelihood found in the E-step [7]. These parameter estimates then determine the distribution of latent variables in the next E-step.

Formally, given a statistical model that generates a set $\mathbf{X}$ of observed data and $\mathbf{Z}$ of unobserved latent data, the EM algorithm seeks to maximize the marginal likelihood $L(\boldsymbol{\theta}; \mathbf{X}) = p(\mathbf{X} \mid \boldsymbol{\theta})$ by iteratively applying [7]:

- E-step: Define $Q(\boldsymbol{\theta} \mid \boldsymbol{\theta}^{(t)}) = E_{\mathbf{Z} \sim p(\cdot \mid \mathbf{X}, \boldsymbol{\theta}^{(t)})} [\log p(\mathbf{X}, \mathbf{Z} \mid \boldsymbol{\theta})]$

- M-step: Compute $\boldsymbol{\theta}^{(t+1)} = \underset{\boldsymbol{\theta}}{\operatorname{arg\,max}}\; Q(\boldsymbol{\theta} \mid \boldsymbol{\theta}^{(t)})$

The following diagram illustrates the complete EM algorithm workflow and its interaction with observed and latent variables:

Advanced EM Variants for Complex Biomolecular Data

Standard EM algorithm assumptions often prove insufficient for complex biomolecular data, leading to specialized variants:

Stochastic EM (SEM) introduces randomness in the E-step, making it particularly useful for problems with multiple interactions in linear mixed-effects models (LMEMs) in the presence of incomplete data [23]. In psychological and psycholinguistic research, where reaction time data often contains missing or censored values exceeding 20% in some studies, SEM has demonstrated superiority over alternatives like Stochastic Approximation EM (SAEM) and Markov Chain Monte Carlo (MCMC) methods [23].

Monte Carlo EM (MCEM) replaces the expectation calculation in the E-step with Monte Carlo simulation, while Stochastic Approximation EM (SAEM) uses stochastic approximation, making it particularly valuable for nonlinear mixed-effects models (NLMEMs) [23].
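To make the idea of a stochastic E-step concrete, the sketch below applies it to a deliberately simple problem: estimating the rate of an exponential distribution from right-censored observations. This toy model is an assumption chosen for illustration and is not the mixed-effects setting of [23]; the function name `stochastic_em_exponential` and its defaults are likewise hypothetical. Each iteration imputes the censored values by sampling from their conditional distribution (censoring time plus a fresh exponential draw, by memorylessness) and then applies the complete-data maximum likelihood update.

```python
import numpy as np


def stochastic_em_exponential(times, censored, n_iter=500, burn_in=100, seed=0):
    """Stochastic EM for an exponential rate with right-censored observations.

    times    : observed event time, or the censoring time where censored is True
    censored : boolean mask marking right-censored entries
    Returns the average of the rate iterates after burn-in.
    """
    rng = np.random.default_rng(seed)
    times = np.asarray(times, dtype=float)
    censored = np.asarray(censored, dtype=bool)
    n_censored = int(censored.sum())
    rate = 1.0 / times.mean()  # crude start: treat censoring times as event times
    kept = []
    for iteration in range(n_iter):
        # Stochastic E-step: draw one completed value per censored observation
        # from its conditional distribution given the current rate.
        completed = times.copy()
        completed[censored] += rng.exponential(1.0 / rate, size=n_censored)
        # M-step: complete-data maximum likelihood estimate of the rate.
        rate = completed.size / completed.sum()
        if iteration >= burn_in:
            kept.append(rate)
    return float(np.mean(kept))
```

Because the iterates fluctuate rather than converge to a point, the sketch averages them after a burn-in period. For this model the result can be compared against the closed-form estimate (number of uncensored events divided by total observed time), which makes it a useful check before moving to models with no closed form.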

The challenge of benchmarking EM performance is significant, as initialization methods (random, hierarchical clustering, k-means++), stopping criteria, and model specifications (e.g., covariance structures) dramatically affect runtime and results [24]. For text-like biomolecular data (e.g., sequences), implementations assuming multivariate Gaussian mixture models with full covariance matrices may be inappropriate due to computational demands (O(k·d³) time for inversion) and poor fit to the actual data distribution [24].

Comparative Performance Analysis of EM Algorithms in Biological Applications

Experimental Protocols for Algorithm Evaluation

Robust evaluation of EM algorithms in biomolecular contexts requires carefully designed experimental protocols that account for the unique characteristics of biological data:

Nested Resampling for In Vivo Data: When applying latent-variable methods such as Partial Least Squares Regression (PLSR) to in vivo data with high animal-to-animal variability, nested resampling strategies that preserve the tissue-animal hierarchy of individual replicates are essential [19]. This approach involves:

- Jackknife Resampling: Randomly omitting one biological replicate (animal) from each condition, removing all observations from that replicate as a group to maintain nesting relationships

- Subsampling: Randomly selecting observations from one biological replicate per condition to build n-of-one datasets

- Model Building: Recalculating averages and building resampled models over hundreds of iterations to assess robustness [19]

This protocol was applied to molecular-phenotypic data from mouse aorta and colon, revealing that interpretation of decomposed latent variables (LVs) changed when PLSR models were resampled, with lagging LVs proving unstable despite statistical improvement in global-average models [19].
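The jackknife step of such a protocol can be sketched as below. The function name `leave_one_animal_out`, the flat-array data layout, and the use of per-condition means as the recomputed summary are assumptions for illustration; the cited study rebuilds full PLSR models at this point. The sketch also assumes at least two animals per condition.

```python
import numpy as np


def leave_one_animal_out(condition, animal, values, n_iter=200, seed=0):
    """Nested jackknife: per iteration, drop one random animal from each condition.

    All observations from the dropped animal are removed together, so the
    observation-within-animal nesting is preserved. Returns the condition labels
    and an (n_iter, n_conditions) array of resampled condition means.
    """
    rng = np.random.default_rng(seed)
    condition = np.asarray(condition)
    animal = np.asarray(animal)
    values = np.asarray(values, dtype=float)
    conditions = np.unique(condition)
    means = np.empty((n_iter, conditions.size))
    for iteration in range(n_iter):
        for j, cond in enumerate(conditions):
            in_cond = condition == cond
            # Pick one biological replicate of this condition to omit as a group.
            dropped = rng.choice(np.unique(animal[in_cond]))
            keep = in_cond & (animal != dropped)
            means[iteration, j] = values[keep].mean()
    return conditions, means
```

The spread of each column of the returned array gives a rough view of how sensitive a condition average is to any single animal, which is the kind of instability the resampled models are meant to expose.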

Model Selection Criteria: For Gaussian mixture models, direct comparison of log-likelihood values is insufficient for selecting the optimal number of components, as models with more parameters inevitably describe data better [25]. Standardized protocols should instead utilize:

- Information Criteria: Akaike Information Criterion (AIC) or Bayesian Information Criterion (BIC) with minimum value selection

- Bayesian Model Selection: Incorporating prior distributions through methods described in resources like "Machine Learning - A Probabilistic Perspective"

- Cross-Validated Likelihood: Using held-out data to evaluate model performance, though computationally intensive [25]
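The information criteria above follow the standard definitions AIC = 2p - 2 ln L and BIC = p ln N - 2 ln L, where p is the number of free parameters and N the number of samples. The sketch below applies them to a full-covariance GMM; the helper names and the log-likelihood values in the usage lines are made up purely for illustration.

```python
import math


def gmm_n_params(n_components, n_features):
    """Free parameters of a full-covariance GMM: weights + means + covariances."""
    k, d = n_components, n_features
    return (k - 1) + k * d + k * d * (d + 1) // 2


def aic(log_lik, n_params):
    return 2 * n_params - 2 * log_lik


def bic(log_lik, n_params, n_samples):
    return n_params * math.log(n_samples) - 2 * log_lik


def best_by_criterion(log_liks, n_features, n_samples):
    """log_liks maps number of components -> maximized log-likelihood."""
    scores = {}
    for k, log_lik in log_liks.items():
        p = gmm_n_params(k, n_features)
        scores[k] = (aic(log_lik, p), bic(log_lik, p, n_samples))
    best_aic = min(scores, key=lambda k: scores[k][0])
    best_bic = min(scores, key=lambda k: scores[k][1])
    return best_aic, best_bic, scores


# Hypothetical log-likelihoods for 1-D data with 600 samples (illustrative numbers only).
example = {1: -1500.0, 2: -1200.0, 3: -1195.0}
best_aic, best_bic, scores = best_by_criterion(example, n_features=1, n_samples=600)
```

With these illustrative numbers the raw log-likelihood keeps improving as components are added, while BIC's stronger penalty favors a smaller model than AIC does, which is the behavior that motivates using a penalized criterion rather than the likelihood alone.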

Table 2: Performance Comparison of EM Algorithm Variants on Biological Data

| Algorithm | Theoretical Basis | Advantages | Limitations | Reported Performance |
|---|---|---|---|---|
| Standard EM | Iterative MLE via E and M steps [7] | Guaranteed monotonic increase in likelihood [7]; Simple implementation | Sensitive to initialization; Only finds local optima [7] [24] | Standard for GMM; ~75% prediction accuracy for in vivo PLSR models [19] |
| SEM | Stochastic E-step [23] | Handles missing/censored data well; Better for LMEMs with interactions [23] | Introduces variance in estimates | Superior to SAEM and MCMC for psychological data with >20% missingness [23] |
| GP-LVM | Gaussian process priors [18] | Non-parametric; Handles complex nonlinearities [18] | Computationally intensive; Interpretation challenges | Effective for molecular conformation landscapes [18] |
| VAE | Variational inference + neural networks [18] [21] | Flexible deep learning architecture; Scalable to large datasets [18] | Black-box nature; Requires careful tuning | Promising for cryo-EM disentanglement [21] |

Case Study: Interpretable Cryo-EM Through Disentangled Latent Spaces

Cryo-electron microscopy (cryo-EM) generates massive datasets of individual molecule images, where molecules exist in different conformations (shapes) that determine biological function [21]. Latent variable models, particularly variational autoencoders (VAEs), have shown promise for capturing these diverse conformations, but interpreting learned latent spaces remains challenging [21].

In this application, the observed data $x$ (cryo-EM images) is modeled as being generated by a ground truth process $x = g^*(z^*, \phi^*)$, where $z^*$ represents ground truth conformation parameters and $\phi^*$ represents pose parameters (orientation) [21]. The EM algorithm and its variants help estimate these parameters, but a fundamental challenge is disentanglement: ensuring that individual latent dimensions correspond to interpretable, biologically meaningful degrees of freedom (e.g., independent movement of molecular domains) [21].

Experimental protocols for this domain involve:

- Axis Traversal: Systematically varying individual latent dimensions while keeping others fixed to visualize their effect on reconstructed molecular structures

- Intervention-Based Metrics: Quantifying how well perturbations in latent space correspond to isolated structural changes

- Temporal Information: Leveraging time-resolved single-particle imaging to provide natural temporal structure for disentanglement [21]

Research findings indicate that without specific constraints, standard VAEs learn entangled representations where traversing a latent dimension produces complex, mixed motions of molecular components rather than interpretable, isolated movements [21].

Essential Research Toolkit for Biomolecular Latent Variable Modeling

Table 3: Key Research Reagents and Computational Tools for Biomolecular LVM

| Resource Category | Specific Tools/Frameworks | Primary Function | Application Context |
|---|---|---|---|
| Statistical Software | R with lme4, lmerTest packages [23] | Fitting linear mixed-effects models | Psycholinguistic data analysis, reaction time modeling [23] |
| Bayesian Modeling | MCMCpack, MCMCglmm [23] | Bayesian inference via MCMC | Generalized linear mixed models, biological parameter detection [23] |
| Specialized EM | ELKI [24] | Benchmarking EM implementations | Comparative performance analysis [24] |
| Deep Learning | Custom VAE/neural network frameworks [21] | Disentangled representation learning | Cryo-EM conformation analysis [21] |
| Multimodal Integration | MOFA, GFA [18] | Multi-omics factor analysis | Integrating transcriptomic, proteomic, imaging data [18] |

The experimental workflow for disentangling latent representations of molecular conformations from cryo-EM data can be visualized as follows:

The integration of latent variable models with EM algorithms continues to evolve toward addressing key challenges in biomolecular data analysis. Future methodological developments will likely focus on enhancing identifiability—ensuring that learned latent representations correspond to biologically meaningful factors rather than arbitrary transformations [21]. This pursuit connects with the theoretical framework of nonlinear Independent Component Analysis (ICA), which aims to discover true conformation changes of molecules in nature [21].

Additionally, the field is moving toward multimodal integration methods that combine different OMIC measurements with image or genome variation data [26]. As these computational approaches mature from neural network-based curve fitting to mathematically grounded methods that provide genuine biological insight, they promise to significantly advance drug discovery and structural biology by unraveling complex biological processes and facilitating targeted interventions [21].

Integrating EM with Molecular Dynamics and Experimental Data

The Expectation-Maximization (EM) algorithm serves as a powerful computational framework for estimating parameters in models with incomplete data, a common challenge in biomolecular systems research. When integrated with Molecular Dynamics (MD) simulations and experimental data, EM algorithms enable researchers to construct accurate ecological and structural models of complex biological systems, from microbial communities to protein structures. This integration is particularly valuable for addressing the "compositionality bias" inherent in high-throughput sequencing data, where absolute cell density measurements are unavailable but crucial for accurate modeling [16].

The fundamental value of this integration lies in its ability to refine predictive models and uncover system dynamics that are not directly observable through experimental methods alone. For instance, MD simulations capture protein behavior in full atomic detail at fine temporal resolution, but their accuracy depends on proper initial parameters and validation against experimental findings [27]. Similarly, ecological modeling of microbial communities requires scaling relative abundance data to absolute scales, which EM algorithms can facilitate without additional experimental biomass measurements [16]. This synergistic approach provides a more complete picture of biomolecular systems, enabling advances in drug discovery, microbiome research, and protein engineering.

Methodological Framework: EM-Driven Integration Approaches

Core Theoretical Foundation

The integration of EM with MD and experimental data typically follows a cyclic refinement process where each component informs and validates the others. The EM algorithm operates by iterating between two steps: the Expectation step (E-step) calculates the expected value of the latent variables (e.g., biomass scaling factors), while the Maximization step (M-step) estimates parameters that maximize the likelihood given the expected values [16]. When applied to biomolecular systems, this framework enables researchers to address critical bottlenecks such as the lack of absolute cell density measurements in microbiome sequencing data.

For generalized Lotka-Volterra models (gLVMs) used in microbial ecology, the standard formulation requires absolute cell densities $x_i(t)$ and models the growth rate of each species $i$ as:

$$\frac{dx_i(t)}{dt} = \mu_i x_i(t) + \sum_{j=1}^{p} \beta_{ij} x_i(t) x_j(t)$$

where $\mu_i$ represents the intrinsic growth rate parameter and $\beta_{ij}$ captures interaction effects between species [16]. However, when only relative abundance data is available, the model can be reformulated by introducing scaling factors $\gamma(t)$ that relate absolute cell densities to relative abundances ($\tilde{x}_i(t) = x_i(t)/\gamma(t)$), which the EM algorithm can then estimate jointly with the gLVM parameters.
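The relationship between absolute densities, relative abundances, and the scaling factor can be illustrated with a small simulation. This is a sketch under stated assumptions: the two-species parameter values are invented, the integrator is a simple log-space Euler scheme, and γ(t) is taken to be the total abundance so that the relative abundances sum to one. The names `simulate_glv` and `to_relative` are hypothetical.

```python
import numpy as np


def simulate_glv(x0, mu, beta, dt=0.01, n_steps=5000):
    """Integrate dx_i/dt = x_i (mu_i + sum_j beta_ij x_j) with a log-space Euler step."""
    x = np.empty((n_steps + 1, len(x0)))
    x[0] = x0
    for t in range(n_steps):
        per_capita_growth = mu + beta @ x[t]
        # Stepping in log space keeps every abundance strictly positive.
        x[t + 1] = x[t] * np.exp(dt * per_capita_growth)
    return x


def to_relative(x):
    """Split absolute abundances into relative abundances and scaling factor gamma(t)."""
    gamma = x.sum(axis=1, keepdims=True)
    return x / gamma, gamma[:, 0]


# Invented two-species competitive community (illustrative values only).
mu = np.array([1.0, 0.8])
beta = np.array([[-1.0, -0.2],
                 [-0.3, -1.0]])
absolute = simulate_glv(np.array([0.1, 0.2]), mu, beta)
relative, gamma = to_relative(absolute)
```

Sequencing yields only something like `relative`; the dynamics, however, are defined on `absolute`. Recovering `gamma` is therefore what makes gLVM inference from relative abundance data possible.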

BEEM: A Case Study in EM-MD-Experimental Data Integration

The BEEM (Biomass Estimation and EM Algorithm) approach exemplifies the successful integration of EM with ecological modeling and experimental validation [16]. BEEM was specifically designed to infer gLVMs from microbiome relative abundance data without requiring experimental biomass measurements, which are often technically challenging to obtain or unavailable for existing datasets. The algorithm alternates between learning scaling factors (biomass estimates) and gLVM parameters through an expectation-maximization framework, effectively addressing the compositionality problem in longitudinal microbiome sequencing data.

BEEM's performance has been rigorously validated against both synthetic datasets with known parameters and real data with gold-standard flow cytometry cell counts [16]. In synthetic data experiments where absolute cell density was precisely known, BEEM-estimated gLVM parameters were as accurate as those estimated with noise-free biomass values, and significantly more accurate than parameters derived from experimentally determined biomass estimates based on 16S rRNA qPCR. When applied to a freshwater microbial community with flow cytometry-based cell counts, BEEM demonstrated good concordance with the gold standard and outperformed existing normalization techniques.

Table 1: Performance Comparison of BEEM Against Alternative Methods

| Method | Median Relative Error | AUC-ROC for Interactions | Biomass Requirement |
|---|---|---|---|
| BEEM | <20% | ~90% | None (estimated from data) |
| MDSINE with noise-free biomass | <20% | ~90% | Experimental (error-free) |
| MDSINE with qPCR biomass (1 replicate) | >70% | ~60% | Experimental (1 qPCR replicate) |
| MDSINE with qPCR biomass (3 replicates) | >70% | ~65% | Experimental (3 qPCR replicates) |
| Relative Abundance Only | >60% | Comparable to random | None |
| LIMITS | >60% | Comparable to random | None |

Experimental Protocols and Implementation

BEEM Algorithm Implementation

The BEEM algorithm implementation involves several critical steps for proper application to microbiome data [16]:

Data Preprocessing: Collect longitudinal relative abundance data from high-throughput sequencing (e.g., 16S rRNA amplicon sequencing or shotgun metagenomics). Normalize sequencing depth across samples and filter low-abundance taxa to reduce noise.

Initialization: Initialize scaling factors (γ(t)) for each time point using reasonable defaults (e.g., based on library size or uniformly set to 1). Alternatively, use available experimental biomass measurements if partially available.

E-step: Given current gLVM parameters, estimate the expected value of the scaling factors γ(t) for each time point by maximizing the likelihood of observed relative abundances.

M-step: Given the current scaling factors, solve the gLVM parameter estimation problem using a penalized regression framework to handle the high-dimensional parameter space.

Convergence Check: Iterate between E-step and M-step until convergence of the likelihood function or parameter estimates.

Model Validation: Use cross-validation or hold-out validation to assess model performance and avoid overfitting.

The BEEM algorithm has been implemented in R and is publicly available, allowing researchers to apply it to their microbiome time-series datasets without requiring extensive computational expertise [16].
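For readers who want a feel for the M-step in isolation, the sketch below fits gLVM parameters by ridge regression when absolute abundances (or current biomass-scaled estimates) are given. It is not BEEM's actual implementation: the finite-difference linearization d ln x_i/dt ≈ μ_i + Σ_j β_ij x_j, the ridge penalty, and the function name `fit_glv_ridge` are assumptions chosen to illustrate the general idea of penalized regression for this model.

```python
import numpy as np


def fit_glv_ridge(x, dt, ridge=1e-8):
    """Estimate gLVM parameters from an abundance time series by ridge regression.

    x  : array of shape (T + 1, p) with strictly positive absolute abundances
    dt : time between consecutive rows
    Returns mu of shape (p,) and beta of shape (p, p).
    """
    x = np.asarray(x, dtype=float)
    # Response: finite-difference approximation of d ln x_i / dt for each species.
    dlog = np.diff(np.log(x), axis=0) / dt                        # (T, p)
    # Design matrix: intercept column (for mu) followed by abundances (for beta).
    design = np.hstack([np.ones((x.shape[0] - 1, 1)), x[:-1]])    # (T, p + 1)
    penalty = ridge * np.eye(design.shape[1])
    penalty[0, 0] = 0.0  # leave the intrinsic growth rates unpenalized
    coef = np.linalg.solve(design.T @ design + penalty, design.T @ dlog)  # (p + 1, p)
    mu = coef[0]
    beta = coef[1:].T  # beta[i, j] is the effect of species j on species i
    return mu, beta
```

In an alternating scheme of the kind described above, a function like this would be called with abundances rescaled by the current biomass estimates, and the biomass estimates would then be updated given the fitted parameters. A larger `ridge` value would be a natural choice when the number of species is large relative to the number of time points.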

MD Simulation Refinement Protocol

Molecular dynamics simulations can be enhanced through integration with experimental data using EM-like approaches, particularly for protein structure prediction and refinement [28]. The following protocol outlines a typical workflow:

Initial Structure Modeling: Generate initial protein structures using computational tools such as AlphaFold2, Robetta-RoseTTAFold, or template-based modeling with MOE or I-TASSER [28].

Experimental Data Integration: Incorporate experimental constraints from cryo-EM, X-ray crystallography, or NMR spectroscopy as positional restraints or in scoring functions.

MD Simulation Setup:

Iterative Refinement:

- Run production MD simulations

- Compare simulation properties with experimental observables

- Adjust parameters to minimize discrepancy

- Iterate until convergence

Validation:

Table 2: Key Research Reagents and Computational Tools

| Tool/Reagent | Type | Function | Example Applications |
|---|---|---|---|
| GROMACS | Software | Molecular dynamics simulation | Analyzing molecular properties influencing drug solubility [29] |
| LAMMPS | Software | Molecular dynamics simulation | Grain growth simulation in polycrystalline materials [30] |
| BEEM | Algorithm | Biomass estimation and model inference | Inferring microbial community models from sequencing data [16] |
| AlphaFold2 | Software | Protein structure prediction | Initial structure modeling for viral capsid proteins [28] |
| I-TASSER | Software | Protein structure prediction | Template-based structure modeling [28] |
| GROMOS 54a7 | Force Field | Molecular interaction parameters | Modeling drug molecules for solubility prediction [29] |

Comparative Analysis: EM-Integrated Approaches vs. Alternatives

Performance Metrics Across Methodologies

The integration of EM algorithms with MD simulations and experimental data provides significant advantages over approaches that rely on single methodologies. The comparative performance can be evaluated across several dimensions:

Table 3: Comprehensive Method Comparison for Biomolecular System Modeling

| Methodology | Data Requirements | Computational Complexity | Accuracy | Limitations |
|---|---|---|---|---|
| EM-Integrated (BEEM) | Relative abundance time-series | Moderate | High (Median error <20%) | Requires longitudinal data [16] |
| Experimental Biomass (qPCR) | Relative abundance + qPCR measurements | Low | Low-Moderate (High technical noise) | 51% mean CV in technical replicates [16] |
| Relative Abundance Only | Relative abundance time-series | Low | Low (Median error >60%) | Fails to recover true parameters [16] |
| MD Simulation Only | Initial structure, force field parameters | High | Variable (Depends on system) | May converge to local minima [28] |
| Experimental Structure Only | Resolved 3D structure | N/A | High resolution but static | Limited dynamic information [28] |

Application-Specific Performance

The effectiveness of EM-integrated approaches varies across different biomolecular research applications:

Microbial Community Modeling: BEEM significantly outperforms methods that rely on experimental biomass measurements, particularly when applied to human gut microbiome data [16]. In one analysis of long-term human gut microbiome time series, BEEM enabled the construction of personalized ecological models revealing individual-specific dynamics and keystone species. This approach uncovered an emergent model where relatively low-abundance species may play disproportionate roles in maintaining gut homeostasis.

Protein Structure Prediction and Refinement: MD simulations have proven valuable for refining protein structures predicted using computational tools [28]. In a study comparing viral protein structure predictions, MD simulations enabled the generation of "compactly folded protein structures, which were of good quality and theoretically accurate" [28]. The integration of MD with initial predictions from tools like Robetta and trRosetta (which outperformed AlphaFold2 for the specific viral capsid protein studied) produced more reliable structural models than any approach used in isolation.

Drug Solubility Prediction: Machine learning analysis of MD-derived properties has shown comparable performance to structure-based models in predicting drug solubility [29]. Key MD properties including logP, Solvent Accessible Surface Area (SASA), Coulombic and Lennard-Jones interaction energies, Estimated Solvation Free Energies (DGSolv), RMSD, and solvation shell characteristics were highly effective in predicting solubility, with Gradient Boosting algorithms achieving R² = 0.87 and RMSE = 0.537 [29].
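
The two figures of merit quoted above, R² and RMSE, can be computed from paired observed and predicted values as in the minimal sketch below. The function name and the example numbers are hypothetical and are not data from [29]; the sketch only illustrates how the metrics are defined.

```python
import numpy as np

def r2_and_rmse(y_true, y_pred):
    """Return (R^2, RMSE) for predicted vs. observed values."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    residual = y_true - y_pred
    ss_res = np.sum(residual ** 2)                   # unexplained variation
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)   # total variation
    rmse = np.sqrt(np.mean(residual ** 2))
    return 1.0 - ss_res / ss_tot, rmse

# Hypothetical log-solubility values, for illustration only
observed = [-2.0, -3.5, -1.0, -4.2]
predicted = [-2.3, -3.1, -1.4, -4.0]
r2, rmse = r2_and_rmse(observed, predicted)
print(f"R^2 = {r2:.3f}, RMSE = {rmse:.3f}")
```

RMSE is in the units of the predicted quantity, whereas R² is unitless, so the two are usually reported together.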

Visualization of Workflows and Relationships

BEEM Algorithm Workflow

Integrated MD-EM-Experimental Data Framework

The integration of Expectation-Maximization algorithms with Molecular Dynamics simulations and experimental data represents a powerful paradigm for advancing biomolecular systems research. This synergistic approach enables researchers to overcome fundamental limitations inherent in each methodology when used in isolation, particularly the compositionality problem in sequencing data and the parameter estimation challenges in MD simulations.

The BEEM algorithm demonstrates how EM frameworks can successfully bridge the gap between relative abundance data and absolute-scale ecological models without requiring error-prone experimental biomass measurements [16]. Similarly, MD simulations serve as valuable tools for refining protein structures predicted by computational methods, with metrics like RMSD, RMSF, and radius of gyration providing quantitative assessment of structural quality and convergence [28]. As these integrative approaches continue to evolve, they promise to unlock new capabilities in personalized microbiome therapeutics, drug discovery, and protein engineering by providing more accurate, dynamic models of complex biological systems.
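
As a rough illustration of the structural metrics named above, the sketch below computes RMSD, RMSF, and radius of gyration with NumPy. It assumes coordinates are already superimposed on the reference (no fitting step is performed), and the function names and the synthetic trajectory are hypothetical; dedicated MD analysis tools would normally handle alignment and file parsing.

```python
import numpy as np

def rmsd(coords, ref):
    """RMSD between two (n_atoms, 3) coordinate sets, assumed pre-aligned."""
    return np.sqrt(np.mean(np.sum((coords - ref) ** 2, axis=1)))

def rmsf(traj):
    """Per-atom RMSF over a (n_frames, n_atoms, 3) trajectory, assumed pre-aligned."""
    mean_pos = traj.mean(axis=0)
    return np.sqrt(np.mean(np.sum((traj - mean_pos) ** 2, axis=2), axis=0))

def radius_of_gyration(coords, masses=None):
    """Mass-weighted radius of gyration; equal masses are assumed if none are given."""
    masses = np.ones(len(coords)) if masses is None else np.asarray(masses, dtype=float)
    center = np.average(coords, axis=0, weights=masses)
    sq_dist = np.sum((coords - center) ** 2, axis=1)
    return np.sqrt(np.average(sq_dist, weights=masses))

# Synthetic trajectory for illustration: 50 frames of 20 atoms jittering around a reference
rng = np.random.default_rng(0)
reference = rng.normal(size=(20, 3))
trajectory = reference + 0.1 * rng.normal(size=(50, 20, 3))

rmsd_per_frame = np.array([rmsd(frame, reference) for frame in trajectory])
rg_per_frame = np.array([radius_of_gyration(frame) for frame in trajectory])
rmsf_per_atom = rmsf(trajectory)
```

A plateau in the per-frame RMSD and radius of gyration is commonly read as a sign that the simulation has equilibrated, while per-atom RMSF highlights flexible regions.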

For researchers implementing these methodologies, success depends on appropriate parameterization, validation against experimental data, and careful interpretation of results within biological contexts. The continuing development of computational tools and algorithms ensures that these integrated approaches will become increasingly accessible to the broader scientific community, accelerating discoveries across biomolecular research domains.

Practical Implementation: EM Algorithms for Biomolecular Simulation and Drug Discovery

Calibration of Complex Biomolecular Simulators Using EM-GP Frameworks

The accuracy of biomolecular simulations is paramount for applications in drug discovery and basic research. Simulators are complex, often incorporating numerous physical parameters and approximations that require careful calibration to ensure their outputs faithfully represent biological reality. This process, known as simulator calibration, involves adjusting uncertain input parameters so that the simulator's output matches real-world experimental observations. Among the various statistical approaches available, Expectation-Maximization (EM) algorithms coupled with Gaussian Process (GP) frameworks (EM-GP) have emerged as a powerful tool for this task, offering a structured way to handle noise, uncertainty, and computational expense. This guide provides a comparative analysis of the EM-GP framework against other prominent calibration methodologies, detailing their experimental performance, protocols, and applicability in biomolecular research.

Comparative Analysis of Calibration Frameworks

The following table summarizes the core characteristics, strengths, and weaknesses of several key calibration approaches relevant to biomolecular simulation.

Table 1: Comparison of Calibration and Ensemble Refinement Methods for Biomolecular Simulations

| Method | Core Principle | Key Advantages | Key Limitations | Typical Applications |

|---|---|---|---|---|

| EM-GP Frameworks | Uses Gaussian Processes as emulators of complex simulators within an iterative EM optimization loop. | High computational efficiency vs. original simulator [31]; built-in uncertainty quantification [32] [33]; can integrate multi-fidelity data [32] | GP uncertainty can be overconfident in high-error regions [33]; requires careful model specification and validation | Calibrating computationally expensive stochastic simulators (e.g., agent-based models) [31]. |

| Bayesian/Maximum Entropy (BME) Reweighting | Reweights configurations from a pre-generated simulation ensemble to maximize agreement with experimental data. | Does not require on-the-fly simulation; relatively straightforward to implement [34] | Limited to the conformational space sampled by the prior simulation; can suffer from overfitting without sufficient regularization [34] | Interpreting static experimental data (NMR, SAXS) via conformational ensembles [34]. |

| Metaheuristic Optimization (e.g., GA, PSO) | Uses population-based stochastic search algorithms to find parameter sets that minimize a cost function. | No derivatives required; good for global search in complex parameter spaces; highly flexible | Can require thousands of simulator runs [32]; no native uncertainty estimation on parameters; computationally prohibitive for very expensive simulators | General-purpose parameter fitting for models with cheap-to-evaluate simulators. |

| Multi-Fidelity Bayesian Optimization (MFBO) | Leverages cheaper, low-fidelity models (e.g., coarse-grained simulations) to accelerate the optimization of high-fidelity models. | Dramatically reduces the number of expensive high-fidelity runs [32]; strategic balancing of exploration and exploitation | Requires access to models of varying fidelities; increased complexity in setup and management | Selecting optimal calibration points in complex systems; combining coarse-grained and all-atom simulations [32]. |
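
To make the reweighting idea in the table concrete, the sketch below shows the simplest maximum-entropy case: a single observable whose ensemble average must match a target exactly. This is not the BME procedure of [34], which additionally accounts for experimental error through regularization; the function name, the bisection bounds, and the synthetic data are all assumptions made for illustration. The reweighted weights take the form w_i ∝ w0_i·exp(−λ·f_i), and λ is found by bisection because the weighted average decreases monotonically with λ.

```python
import numpy as np

def maxent_reweight(f, target, w0=None, lam_bounds=(-50.0, 50.0), tol=1e-10):
    """Minimally perturb prior weights so the weighted mean of f equals target."""
    f = np.asarray(f, dtype=float)
    if not f.min() < target < f.max():
        raise ValueError("target must lie strictly inside the sampled range of f")
    w0 = np.full(f.size, 1.0 / f.size) if w0 is None else np.asarray(w0, dtype=float) / np.sum(w0)

    def weights(lam):
        log_w = np.log(w0) - lam * f
        log_w -= log_w.max()              # guard against overflow in exp
        w = np.exp(log_w)
        return w / w.sum()

    lo, hi = lam_bounds
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if weights(mid) @ f > target:
            lo = mid                      # average still too high: increase lambda
        else:
            hi = mid
        if hi - lo < tol:
            break
    lam = 0.5 * (lo + hi)
    return weights(lam), lam

# Synthetic per-frame observable from a hypothetical prior ensemble
rng = np.random.default_rng(0)
f_sim = rng.normal(10.0, 2.0, size=1000)
w, lam = maxent_reweight(f_sim, target=11.0)

# Fraction of the ensemble that effectively survives, exp(-KL(w || w0))
w0_uniform = 1.0 / f_sim.size
phi_eff = float(np.exp(-np.sum(w * np.log(w / w0_uniform))))
```

A small effective fraction signals that only a few frames carry most of the weight, which is one symptom of the overfitting and limited-sampling issues listed in the table.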

Performance Benchmarking

Quantitative benchmarks are critical for selecting an appropriate calibration framework. The following table consolidates performance data from various applications.

Table 2: Experimental Performance Data of Calibration Frameworks

| Method | Simulator / System | Key Performance Metric | Result | Comparative Improvement |

|---|---|---|---|---|

| Gaussian Process Emulator | Agent-based model for HIV & NCDs in SSA (inMODELA) [31] | Computational Speed | ~3 seconds per run on a standard laptop [31] | 13-fold faster (95% CI: 8–22) than the original simulator on an HPC cluster [31] |

| Multi-Fidelity Bayesian Optimization (GP-MFBO) | Temperature & Humidity Calibration Chamber [32] | Optimization Quality (Uniformity Score) | Temp: 0.149, Humidity: 2.38 [32] | Up to 81.7% improvement in temperature uniformity vs. standard methods [32] |

| Gaussian Process-based MLIPs | Molecular Energy Prediction [33] | Uncertainty Calibration | Good global calibration, but biased in high-uncertainty regions [33] | Identifies high-error predictions but cannot quantitatively define error range [33] |

| Machine-Learned Coarse-Grained Model | Protein Folding (e.g., Chignolin, Villin) [35] | Computational Speed vs. All-Atom MD | Orders of magnitude faster than all-atom MD [35] | Accurately predicts metastable folded/unfolded states and folding mechanisms [35] |

Experimental Protocols for Key Frameworks

Protocol for Gaussian Process Emulator Development and Calibration

This protocol is adapted from the tutorial on emulating complex HIV/NCD simulators [31].

- Design Point Selection: Abstract the most influential parameters from the complex simulator to form the emulator's input space (design points). For a chronic disease model, this could include initial disease prevalence, transmission rates, and intervention coverage.

- Training Data Generation: Run the original simulator a large number of times (e.g., 1000 runs) across the input parameter space to generate a training dataset of input-output pairs.

- Emulator Fitting: Use a Gaussian Process to model the relationship between the input parameters and the simulator's outputs. A GP defines a distribution over functions and is fully specified by a mean function and a covariance (kernel) function. The posterior mean of the GP serves as the fast approximation, while the posterior variance quantifies prediction uncertainty. This can be implemented in R with the GauPro package [31].

- Validation:

- Bayesian Posterior Predictive Checks: Simulate new data from the fitted emulator's posterior predictive distribution and compare it to the original simulator's output to assess descriptive accuracy.

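- Leave-One-Out Cross-Validation (LOO-CV): Systematically omit one data point, refit the emulator, and predict the omitted point to estimate the emulator's predictive accuracy [31]. When LOO-CV is approximated by importance sampling rather than explicit refitting, Pareto k diagnostics flag observations for which the approximation is unreliable.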

- Calibration: Use the trained and validated emulator in an optimization loop (e.g., Maximum Likelihood Estimation or Markov Chain Monte Carlo) to find the input parameters that make the emulator's output best match the target experimental data.
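
The protocol above can be sketched end to end in a few lines. The example below is a minimal NumPy illustration, not the GauPro-based workflow of [31]: it assumes a one-dimensional input, a zero-mean GP with a squared-exponential kernel and fixed (hypothetical) hyperparameters, a toy function standing in for the expensive simulator, explicit leave-one-out refits for validation, and a simple grid search in place of MLE or MCMC for calibration.

```python
import numpy as np

LENGTH, SIGNAL_VAR, JITTER = 0.3, 1.0, 1e-6  # hypothetical fixed hyperparameters

def rbf(a, b):
    """Squared-exponential covariance between 1-D input arrays a and b."""
    return SIGNAL_VAR * np.exp(-0.5 * (a[:, None] - b[None, :]) ** 2 / LENGTH ** 2)

def fit_gp(x, y):
    """Precompute the Cholesky factor and weights of a zero-mean GP."""
    chol = np.linalg.cholesky(rbf(x, x) + JITTER * np.eye(x.size))
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y))
    return x, chol, alpha

def predict_gp(gp, x_new):
    """Posterior mean (the fast approximation) and variance (its uncertainty)."""
    x, chol, alpha = gp
    k_star = rbf(x_new, x)
    v = np.linalg.solve(chol, k_star.T)
    return k_star @ alpha, np.maximum(SIGNAL_VAR - np.sum(v ** 2, axis=0), 0.0)

def slow_simulator(theta):
    """Hypothetical stand-in for an expensive simulator (monotonic in theta)."""
    return theta + 0.3 * np.sin(3.0 * theta)

# Training data generation: a handful of simulator runs across the input space
x_train = np.linspace(0.0, 2.0, 15)
y_train = slow_simulator(x_train)
gp = fit_gp(x_train, y_train)

# Validation: omit each design point in turn, refit, and predict it
loo_err = []
for i in range(x_train.size):
    keep = np.arange(x_train.size) != i
    mean_i, _ = predict_gp(fit_gp(x_train[keep], y_train[keep]), x_train[i:i + 1])
    loo_err.append(mean_i[0] - y_train[i])
loo_rmse = float(np.sqrt(np.mean(np.square(loo_err))))

# Calibration: find the input whose emulated output best matches the target
target = float(slow_simulator(1.3))   # pretend this is the experimental observation
grid = np.linspace(0.0, 2.0, 2001)
mean, var = predict_gp(gp, grid)
theta_hat = float(grid[np.argmin((mean - target) ** 2)])
```

Because each emulator evaluation is a small matrix product, the dense grid search costs far less than a single run of a realistic simulator; in higher dimensions the grid would be replaced by an optimizer or sampler, and the kernel hyperparameters would be estimated rather than fixed.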

Protocol for Multi-Fidelity Bayesian Optimization

This protocol is based on the GP-MFBO framework for calibration point selection [32].

- Hierarchical Model Establishment: Create a multi-fidelity modeling system. For example:

- Low-Fidelity: Fast, analytical physical models or coarse-grained simulations.

- Mid-Fidelity: More detailed Computational Fluid Dynamics (CFD) simulations.

- High-Fidelity: Actual physical experiments or all-atom molecular dynamics simulations [32].

- Uncertainty Quantification: Establish a system to quantify uncertainty from various sources, including model approximation error, parameter variation, and observational noise [32].

- Sequential Sampling & Optimization:

- Initialization: Run a small number of evaluations at different fidelity levels.

- Modeling: Construct a multi-fidelity Gaussian process model that correlates the different data sources.

- Acquisition: Use an acquisition function (e.g., one that balances information gain and uncertainty penalty) to select the next sample point and its fidelity level. The goal is to maximize the information per unit cost [32].

- Iteration: Repeat the modeling and acquisition steps until convergence, progressively using higher-fidelity data to refine the solution.
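
The acquisition step can be illustrated with a deliberately simplified scoring rule. The sketch below is not the acquisition function of [32]: it assumes each fidelity level is a noisier view of the same underlying function, so that observing a candidate with current posterior variance σ² at a fidelity with noise variance τ² yields an information gain of ½·ln(1 + σ²/τ²), and it picks the (candidate, fidelity) pair with the highest gain per unit cost. The fidelity names, noise variances, and costs are hypothetical.

```python
import numpy as np

def info_gain(post_var, noise_var):
    """Information gained about a Gaussian quantity from one noisy observation."""
    return 0.5 * np.log1p(post_var / noise_var)

def select_next(post_var, fidelities):
    """Return (candidate index, fidelity name, score) maximizing gain per unit cost.

    post_var   : posterior variance of the surrogate at each candidate point
    fidelities : dict mapping name -> (noise variance, cost per evaluation)
    """
    best = None
    for name, (noise_var, cost) in fidelities.items():
        score = info_gain(post_var, noise_var) / cost
        i = int(np.argmax(score))
        if best is None or score[i] > best[2]:
            best = (i, name, float(score[i]))
    return best

# Hypothetical fidelity levels: (noise variance, relative cost)
fidelities = {"coarse_grained": (0.25, 1.0), "all_atom": (0.01, 10.0)}

early = select_next(np.array([0.01, 0.5, 0.2]), fidelities)   # surrogate still uncertain
late = select_next(np.array([0.001, 0.002]), fidelities)      # surrogate nearly converged
print(early[:2], late[:2])
```

With these illustrative numbers the cheap model is chosen while the surrogate is still uncertain, and the expensive model takes over once the remaining uncertainty falls below what the cheap model can resolve, mirroring the progressive use of higher-fidelity data described in the iteration step.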

Workflow Visualization

The following diagram illustrates the logical structure and iterative process of a generic EM-GP calibration framework, integrating elements from the discussed protocols.

The Scientist's Toolkit: Essential Research Reagents and Solutions

This table details key computational tools and materials essential for implementing the calibration frameworks discussed in this guide.

Table 3: Key Research Reagent Solutions for Biomolecular Simulator Calibration

| Item Name | Function / Description | Relevance to Calibration |

|---|---|---|

| Amber Molecular Dynamics Package | A suite of programs for simulating biomolecular systems, featuring the GPU-accelerated pmemd engine [36]. | Provides the high-fidelity simulator (forward model) that often requires calibration. Its GPU acceleration makes generating training data for emulators feasible [36]. |

| GauPro (R Package) | An R package for fitting Gaussian process regression models [31]. | Core engine for building a fast, statistical emulator of a complex simulator, which is the "GP" in the EM-GP framework [31]. |

| Coarse-Grained (CG) Force Fields (e.g., Martini, AWSEM) | Simplified molecular models that group multiple atoms into single interaction sites (beads) to speed up simulations [35]. | Acts as a lower-fidelity model in a Multi-Fidelity Bayesian Optimization setup, providing cheap, approximate data to guide the calibration of all-atom simulators [32] [35]. |

| rstanarm (R Package) | An R package providing a front-end to the Stan probabilistic programming language for Bayesian applied regression modeling [31]. | Useful for performing the full Bayesian inference required in the calibration step, allowing for robust uncertainty quantification on the estimated parameters [31]. |

| Experimental Observables Database (e.g., NMR Relaxation, SAXS) | Curated repositories of experimental data such as NMR spin relaxation rates and small-angle X-ray scattering profiles [34]. | Serves as the "ground truth" target against which the simulator is calibrated. Essential for validating the predictive power of the calibrated model [34]. |