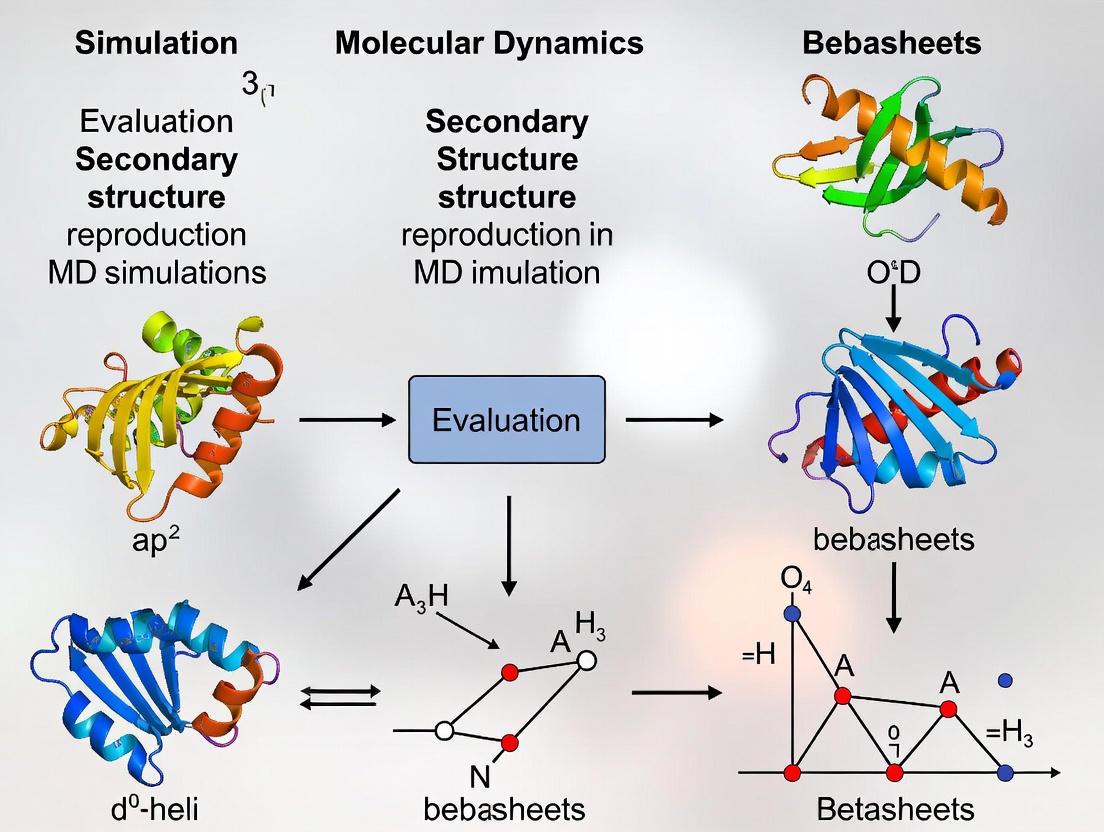

Evaluating Secondary Structure Reproduction in MD Simulations: A Guide for Biomolecular Researchers

Abstract

Molecular dynamics (MD) simulation is a powerful computational technique for studying protein structure and dynamics at the atomistic level. For researchers and drug development professionals, a critical step in ensuring the reliability of these simulations is validating their ability to reproduce known or predicted secondary structural elements. This article provides a comprehensive guide on this evaluation process. It covers the foundational principles connecting MD to secondary structure, details practical methodologies and analysis techniques, addresses common challenges and optimization strategies, and finally, outlines robust validation and comparative frameworks against experimental and bioinformatics data. This resource aims to equip scientists with the knowledge to conduct more accurate and trustworthy MD simulations for applications in drug design and structural biology.

The Foundation: Linking Physical Laws to Protein Folding in MD Simulations

Molecular Dynamics (MD) simulation is a powerful computational technique that describes how a molecular system evolves in time by integrating Newton's equations of motion [1]. For researchers in structural biology and drug development, MD provides an indispensable tool for investigating biomolecular structure, dynamics, and interactions at atomic resolution—information often inaccessible through experimental means alone. The core principle underpinning all MD simulations is Newton's second law of motion, which establishes the fundamental relationship between the forces acting on atoms and their resulting motion [2]. This physical foundation enables researchers to predict how molecular structures will change over time, allowing them to visualize dynamic processes such as protein folding, ligand binding, and membrane permeation that are crucial for understanding biological function and designing therapeutic interventions.

In Newtonian mechanics, the second law states that the net force on a body equals its mass multiplied by its acceleration [2]. For molecular systems, this is expressed as $F_i = m_i a_i$, where $F_i$ is the force acting on atom $i$, $m_i$ is its mass, and $a_i$ is its acceleration [1]. Since acceleration is the second derivative of position with respect to time, this relationship becomes a differential equation that can be solved numerically to track atomic positions over time. The forces themselves are derived from potential energy functions ($V$) that describe interatomic interactions: $F_i = -\frac{\partial V}{\partial r_i}$ [1] [3]. This connection between force and potential energy is what enables MD simulations to translate the chemical information encoded in force fields into realistic structural dynamics.
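As a minimal illustration of $F_i = -\partial V / \partial r_i$, the sketch below assumes a single Lennard-Jones pair potential with hypothetical reduced-unit parameters (`EPSILON`, `SIGMA`, not taken from any force field) and checks the analytic radial force against a central-difference derivative of the potential.

```python
# Hypothetical Lennard-Jones parameters in reduced units (illustrative only).
EPSILON = 1.0
SIGMA = 1.0


def lj_potential(r: float) -> float:
    """Pair potential V(r) = 4*eps*[(sigma/r)^12 - (sigma/r)^6]."""
    sr6 = (SIGMA / r) ** 6
    return 4.0 * EPSILON * (sr6 * sr6 - sr6)


def lj_force(r: float) -> float:
    """Analytic radial force F(r) = -dV/dr (positive values are repulsive)."""
    sr6 = (SIGMA / r) ** 6
    return 24.0 * EPSILON * (2.0 * sr6 * sr6 - sr6) / r


def numerical_force(r: float, h: float = 1e-6) -> float:
    """Central-difference estimate of -dV/dr, used to check lj_force."""
    return -(lj_potential(r + h) - lj_potential(r - h)) / (2.0 * h)


for r in (0.95, 2 ** (1 / 6), 1.5):
    print(f"r={r:.4f}  analytic={lj_force(r):+.6f}  numerical={numerical_force(r):+.6f}")
```

The force vanishes at the potential minimum $r = 2^{1/6}\sigma$, is repulsive at shorter separations, and attractive beyond it.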

Core Algorithmic Framework: Integrating the Equations of Motion

Numerical Integration Methods

An analytical solution to Newton's equations of motion cannot, in general, be obtained for systems of more than two interacting atoms [1]. Therefore, MD simulations employ numerical integration techniques known as finite difference methods, in which the integration is divided into many small, finite time steps ($\delta t$), typically on the order of femtoseconds (10⁻¹⁵ s), to accurately capture molecular vibrations and other fast motions [1]. The integration procedure follows a fundamental algorithmic sequence:

- Initialization: The system is defined by atomic positions ($r_i$) and velocities ($v_i$) at time $t$

- Force Calculation: Forces on each atom are computed from the potential energy: $F_i = -\frac{\partial V}{\partial r_i}$

- Integration: New positions and velocities at time $t + \delta t$ are calculated using Newton's equations

- Iteration: The process repeats for thousands to millions of steps to generate a trajectory [1]

Table 1: Key Components of MD Integration Algorithms

| Component | Mathematical Expression | Physical Significance |
|---|---|---|
| Force | $F_i = -\frac{\partial V}{\partial r_i}$ | Negative gradient of potential energy |
| Acceleration | $a_i = \frac{F_i}{m_i}$ | Determined from force and atomic mass |
| Position Update | $r(t+\delta t) = r(t) + \delta t\, v(t) + \frac{1}{2}\delta t^2 a(t) + \cdots$ | Taylor expansion for new positions |
| Velocity Update | $v(t+\delta t) = v(t) + \delta t\, a(t) + \frac{1}{2}\delta t^2 b(t) + \cdots$ | Taylor expansion for new velocities; $b(t)$ is the time derivative of $a(t)$ |

The Verlet Family of Algorithms

Most integration algorithms in MD approximate future atomic positions using Taylor expansions of the current positions and dynamical properties [1]. The Verlet algorithm, one of the most widely used integrators, employs this approach by writing equations for both future and past positions:

$$r(t+\delta t) = r(t) + \delta t\, v(t) + \frac{1}{2} \delta t^2 a(t) + \cdots$$

$$r(t-\delta t) = r(t) - \delta t\, v(t) + \frac{1}{2} \delta t^2 a(t) + \cdots$$

Adding these equations cancels the odd-order terms in $\delta t$ and yields the basic Verlet algorithm, whose local error is of order $\delta t^4$:

$$r(t + \delta t) = 2r(t) - r(t - \delta t) + \delta t^2 a(t)$$

This formulation uses the current position $r(t)$, the previous position $r(t - \delta t)$, and the current acceleration $a(t)$ to predict the future position [1]. While computationally efficient, the basic Verlet algorithm has significant limitations: it does not incorporate velocities explicitly (requiring additional calculations for kinetic energy), and it is not self-starting (it needs a previous position at $t = 0$) [1].
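A minimal sketch of the basic Verlet recursion for a one-dimensional particle, assuming unit mass and a user-supplied acceleration function (`verlet_positions` and `central_difference_velocities` are hypothetical helper names). It makes both limitations visible: the first step has to be bootstrapped with a Taylor step because no $r(t - \delta t)$ exists at $t = 0$, and velocities must be reconstructed afterwards by central differences.

```python
import math


def verlet_positions(x0, v0, accel, dt, n_steps):
    """Basic Verlet: x(t+dt) = 2 x(t) - x(t-dt) + dt^2 a(t).

    Not self-starting, so x(dt) is bootstrapped from x0 and v0 with a
    second-order Taylor step. Returns positions at t = 0, dt, ..., n_steps*dt.
    """
    xs = [x0, x0 + dt * v0 + 0.5 * dt * dt * accel(x0)]
    for _ in range(n_steps - 1):
        x_prev, x_curr = xs[-2], xs[-1]
        xs.append(2.0 * x_curr - x_prev + dt * dt * accel(x_curr))
    return xs


def central_difference_velocities(xs, dt):
    """Velocities are never propagated; estimate v(t) = [x(t+dt) - x(t-dt)] / (2 dt)
    for the interior points t = dt, ..., (n-1)*dt."""
    return [(xs[i + 1] - xs[i - 1]) / (2.0 * dt) for i in range(1, len(xs) - 1)]


# Harmonic oscillator with k = m = 1, x(0) = 1, v(0) = 0; exact x(t) = cos(t).
xs = verlet_positions(1.0, 0.0, lambda x: -x, dt=0.01, n_steps=1000)
vs = central_difference_velocities(xs, dt=0.01)
print(xs[-1], math.cos(10.0))
```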

Velocity Verlet Algorithm

The Velocity Verlet algorithm addresses the limitations of the basic Verlet method by explicitly incorporating velocities at each time step [1]. This widely-used formulation consists of two key equations:

$$r(t + \delta t) = r(t) + \delta t\, v(t) + \frac{1}{2} \delta t^2 a(t)$$

$$v(t + \delta t) = v(t) + \frac{1}{2}\delta t \left[a(t) + a(t + \delta t)\right]$$

The implementation follows a three-step procedure:

- Use current position, velocity, and acceleration to compute the next position $r(t + \delta t)$

- Compute the new acceleration $a(t + \delta t)$ from forces at the new position

- Update the velocity using both current and new accelerations [1]

This approach provides numerical stability while maintaining time-reversibility, making it particularly suitable for biomolecular simulations where energy conservation is important.
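The three-step procedure above can be sketched for a single particle in one dimension, assuming unit mass and a harmonic test force (the helper names are illustrative). For this test system the total energy fluctuates within a small bounded range instead of drifting away, which is the practical payoff of the scheme's time-reversibility.

```python
def velocity_verlet(x, v, accel, dt, n_steps):
    """Velocity Verlet for one particle in 1D with unit mass.

    Per step: (1) advance x with the current v and a, (2) evaluate
    a(t + dt) at the new x, (3) advance v with the mean of old and new a.
    Returns a list of (x, v) pairs at t = 0, dt, ..., n_steps*dt.
    """
    a = accel(x)
    traj = [(x, v)]
    for _ in range(n_steps):
        x = x + dt * v + 0.5 * dt * dt * a
        a_new = accel(x)
        v = v + 0.5 * dt * (a + a_new)
        a = a_new
        traj.append((x, v))
    return traj


def harmonic_energy(x, v, k=1.0, m=1.0):
    """Total energy of a 1D harmonic oscillator."""
    return 0.5 * m * v * v + 0.5 * k * x * x


traj = velocity_verlet(1.0, 0.0, lambda x: -x, dt=0.01, n_steps=10_000)
drift = max(abs(harmonic_energy(x, v) - 0.5) for x, v in traj)
print(f"max |E - E0| over 100 time units: {drift:.2e}")
```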

Leapfrog Integration Algorithm

The Leapfrog algorithm employs a different strategy by updating velocities and positions at offset time intervals [1]. The algorithm uses these core equations:

$$v\left(t + \tfrac{1}{2}\delta t\right) = v\left(t - \tfrac{1}{2}\delta t\right) + \delta t \, a(t)$$

$$r(t + \delta t) = r(t) + \delta t \, v\left(t + \tfrac{1}{2}\delta t\right)$$

In this method, velocities are computed at half-time steps (e.g., $t + \frac{1}{2}\delta t$) while positions are computed at full-time steps (e.g., $t + \delta t$) [1]. This creates a "leapfrogging" effect where velocities and positions alternately jump ahead of each other. While potentially offering computational advantages for certain systems, the Leapfrog method cannot simply be swapped for Velocity Verlet partway through a simulation, because the two schemes store velocities at different time points [1].
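A sketch of the Leapfrog update under the same assumptions (unit mass, 1D, illustrative helper names). Starting the scheme requires shifting the initial full-step velocity back by half a step, and full-step velocities (needed for the kinetic energy, or before handing a state to a Velocity Verlet integrator) have to be reconstructed by averaging neighboring half-step values. With this initialization the positions coincide algebraically with those produced by Velocity Verlet.

```python
import math


def leapfrog(x, v0, accel, dt, n_steps):
    """Leapfrog for one particle in 1D with unit mass.

    v0 is the full-step velocity at t = 0; it is shifted to
    v(-dt/2) = v(0) - (dt/2) a(0) so the half-step recursion can start.
    Returns positions at t = 0, dt, ... and velocities at t = dt/2, 3dt/2, ...
    """
    v_half = v0 - 0.5 * dt * accel(x)
    xs, v_halves = [x], []
    for _ in range(n_steps):
        v_half = v_half + dt * accel(x)  # v(t - dt/2) -> v(t + dt/2)
        x = x + dt * v_half              # r(t) -> r(t + dt)
        xs.append(x)
        v_halves.append(v_half)
    return xs, v_halves


def full_step_velocities(v_halves):
    """v(t) ~ [v(t - dt/2) + v(t + dt/2)] / 2 for t = dt, 2dt, ..."""
    return [0.5 * (a + b) for a, b in zip(v_halves, v_halves[1:])]


xs, v_halves = leapfrog(1.0, 0.0, lambda x: -x, dt=0.01, n_steps=1000)
v_full = full_step_velocities(v_halves)
print(xs[-1], math.cos(10.0), v_full[0], -math.sin(0.01))
```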

Performance Comparison of Major MD Software Packages

Benchmarking Methodologies

Evaluating the performance of MD software requires standardized benchmarking protocols that assess simulation speed, computational efficiency, and scalability across different hardware architectures. Performance is typically measured in nanoseconds of simulation completed per day (ns/day), with careful attention to CPU efficiency—the ratio of actual to theoretically optimal speedup [4]. Key considerations include:

- System Size: Performance varies significantly with the number of atoms in the simulation

- Hardware Configuration: CPU vs. GPU implementations show different scaling behavior

- Time Step: Techniques that permit longer time steps (e.g., 4 fs with hydrogen mass repartitioning) can dramatically improve throughput [4]

Proper benchmarking requires knowing the serial simulation speed first, then comparing expected 100% efficient speedup (speed on 1 CPU × N) with actual speed on N CPUs [4]. This approach identifies the optimal computational resources for a given system size, avoiding scenarios where adding more CPUs actually decreases performance.
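The efficiency check described above reduces to a few lines of arithmetic. The sketch below uses hypothetical timings (in ns/day) and an assumed 80% efficiency cut-off; neither comes from the cited benchmarks, and the function names are illustrative.

```python
def parallel_efficiency(serial_ns_per_day, measured):
    """Map CPU count -> efficiency = actual speed / (serial speed * N)."""
    return {n: speed / (serial_ns_per_day * n) for n, speed in measured.items()}


def largest_efficient_count(serial_ns_per_day, measured, threshold=0.8):
    """Largest CPU count whose efficiency is at least `threshold` (None if none)."""
    eff = parallel_efficiency(serial_ns_per_day, measured)
    ok = [n for n, e in eff.items() if e >= threshold]
    return max(ok) if ok else None


# Hypothetical measurements: ns/day on N CPUs for a system running 1.0 ns/day serially.
measured = {2: 1.9, 4: 3.6, 8: 6.6, 16: 10.4, 32: 12.8, 64: 11.0}
for n, e in parallel_efficiency(1.0, measured).items():
    print(f"{n:3d} CPUs: {measured[n]:5.1f} ns/day, efficiency {e:.0%}")
print("largest count at >= 80% efficiency:", largest_efficient_count(1.0, measured))
```

In this made-up data, going from 32 to 64 CPUs lowers throughput, which is exactly the situation the serial-baseline comparison is meant to catch.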

GROMACS Performance Analysis

GROMACS demonstrates exceptional performance on both CPU and GPU architectures, with particularly strong scaling on NVIDIA hardware [5] [4]. Recent implementations using the SYCL programming model for vendor-agnostic GPU support show promising results, though CUDA implementations still maintain performance advantages on NVIDIA hardware [5]. Benchmark tests across different NVIDIA GPU chipsets (P100, V100, A100) reveal expected performance progression with newer architectures [5].

Table 2: GROMACS Performance Across Hardware Configurations

| Hardware Configuration | Performance Characteristics | Optimal Use Cases |
|---|---|---|
| CPU-only (Multi-core) | Good strong scaling up to ~16 cores, then efficiency decreases | Small to medium systems, limited GPU availability |
| Single GPU + CPU cores | Highest efficiency for most system sizes, utilizes GPU for non-bonded, PME, and update | Standard biomolecular systems |
| Multiple GPUs | Improved performance for very large systems, but communication overhead | Large membrane systems, complexes with >500,000 atoms |

AMBER and NAMD Performance Profiles

AMBER's PMEMD demonstrates excellent performance on single GPU configurations, though its multi-GPU implementation is primarily designed for replica exchange simulations rather than single trajectory acceleration [4]. Notably, "a single simulation does not scale beyond 1 GPU" in standard PMEMD [4]. NAMD 3 shows significant performance improvements with multi-GPU support, particularly on modern A100 architectures [4].

Table 3: Comparative Performance of Major MD Packages

| Software | Strengths | Limitations | Optimal Hardware |
|---|---|---|---|
| GROMACS | Excellent single-GPU performance, strong scaling on CPUs | Complex workflow for multi-GPU setup | NVIDIA A100/V100, Multi-core CPUs |
| AMBER | Optimized for biomolecules, efficient single-GPU implementation | Limited multi-GPU support for single trajectories | Single high-end GPU + CPU cores |
| NAMD 3 | Good multi-GPU scaling, flexible configuration | Lower single-GPU efficiency than GROMACS | Multiple GPUs, A100 clusters |

Experimental Protocols for Performance Assessment

GROMACS Benchmarking Setup

To execute standardized benchmarks for GROMACS, the following protocol is recommended [4]:

System Preparation: Extend the simulation to 10,000 steps using:

CPU Execution Script:

Single GPU Execution Script:

AMBER and NAMD Benchmarking Protocols

For AMBER PMEMD benchmarks [4]:

For NAMD 3 benchmarks [4]:

Performance Optimization Techniques

A key optimization strategy applicable to all major MD packages is hydrogen mass repartitioning (HMR), which allows the time step to be increased from 2 fs to 4 fs without sacrificing stability [4]. This technique increases hydrogen masses and correspondingly decreases the masses of the bonded heavy atoms so that the total mass is unchanged, which lowers the frequencies of the fastest vibrations that limit the time step. Implementation in AMBER tools via the parmed utility:

This optimization can potentially double simulation throughput with minimal impact on physical accuracy.
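The bookkeeping behind hydrogen mass repartitioning can be sketched in plain Python. This is not the parmed command referred to above: `repartition_hydrogen_masses` is a hypothetical helper, and the 3.024 Da target is an assumed, commonly used value. The reduced-mass helper shows why heavier hydrogens help: in a simple two-body picture, a bond's vibrational frequency scales as $1/\sqrt{\mu}$.

```python
def repartition_hydrogen_masses(masses, bonds, is_hydrogen, h_mass=3.024):
    """Hypothetical HMR helper: set every bonded H to h_mass (Da) and subtract
    the added mass from its heavy-atom partner, keeping the total mass fixed.

    masses: atomic masses in Da; bonds: (i, j) index pairs; is_hydrogen: bools.
    Rigid water and other special cases are not handled here.
    """
    new = list(masses)
    for i, j in bonds:
        if is_hydrogen[i] == is_hydrogen[j]:
            continue  # skip heavy-heavy (and H-H) bonds
        h, heavy = (i, j) if is_hydrogen[i] else (j, i)
        new[heavy] -= h_mass - masses[h]
        new[h] = h_mass
    return new


def reduced_mass(m1, m2):
    """mu = m1*m2/(m1+m2); a bond's vibrational frequency scales as 1/sqrt(mu)."""
    return m1 * m2 / (m1 + m2)


# Methyl-like fragment: one carbon bonded to three hydrogens.
masses = [12.011, 1.008, 1.008, 1.008]
bonds = [(0, 1), (0, 2), (0, 3)]
new = repartition_hydrogen_masses(masses, bonds, [False, True, True, True])
ratio = (reduced_mass(new[0], new[1]) / reduced_mass(masses[0], masses[1])) ** 0.5
print(new, sum(masses), sum(new))
print(f"C-H stretch frequency lowered by a factor of about {ratio:.2f}")
```

Production tools usually leave rigid water models untouched; this sketch does not special-case water.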

Research Reagent Solutions: Computational Tools for MD

Successful molecular dynamics research requires both software tools and specialized computational resources. The table below details essential "research reagents" for modern MD simulations.

Table 4: Essential Research Reagents for Molecular Dynamics

| Tool/Resource | Function | Application Context |
|---|---|---|
| GROMACS | Highly optimized MD package with strong GPU acceleration | General biomolecular simulations, high-throughput screening |
| AMBER PMEMD | Specialized MD with extensive biomolecular force fields | Detailed protein-DNA/RNA studies, explicit solvent models |
| NAMD 3 | Scalable MD for large systems and complex boundaries | Membrane systems, massive complexes (viruses, ribosomes) |
| Hydrogen Mass Repartitioning | Enables 4 fs time steps by modifying hydrogen masses | Throughput optimization for all simulation types |
| SYCL Programming Model | Vendor-agnostic GPU programming framework | Cross-platform deployment (AMD, Intel, NVIDIA GPUs) |
| CUDA | NVIDIA GPU computing platform | Maximum performance on NVIDIA hardware architectures |

The foundation of molecular dynamics in Newton's equations of motion provides a robust physical framework for predicting structural changes in biomolecular systems. The continuing evolution of integration algorithms and their implementation in major MD packages has dramatically enhanced our ability to simulate biologically relevant timescales and system sizes. For researchers investigating secondary structure reproduction and dynamics, understanding the performance characteristics of different MD software is crucial for designing efficient simulation campaigns.

The benchmark comparisons summarized here indicate that GROMACS generally offers superior performance for single-GPU simulations, while NAMD provides better scaling for very large systems on multiple GPUs. AMBER remains valuable for its specialized biomolecular force fields and analysis capabilities. As MD simulations continue to integrate with experimental techniques such as cryo-EM, X-ray scattering, and NMR [6] [7], the computational efficiency of these packages becomes increasingly important for achieving sufficient sampling to interpret and complement experimental data. The ongoing development of vendor-agnostic programming models like SYCL promises to maintain performance portability across future hardware architectures, ensuring that MD simulations remain an indispensable tool for drug development and structural biology.

Molecular dynamics (MD) simulation has become an indispensable tool for studying biomolecular structure and dynamics, offering atomic-level insights that are often difficult to obtain experimentally. However, a fundamental challenge persists: ensuring that simulations have adequately sampled the conformational space to reach a converged, thermally equilibrated state before data collection begins. This "sampling problem" is particularly acute for studies of secondary structure formation, where inaccuracies in force fields or insufficient simulation timescales can lead to misleading conclusions about folding mechanisms and structural stability.

The reliability of MD simulations hinges on two interdependent factors: the accuracy of the force field and the completeness of conformational sampling. Recent advances in computational power and algorithmic development have progressively extended accessible timescales, yet researchers must carefully evaluate whether their simulations have captured a representative ensemble of structures. This guide systematically compares approaches for assessing convergence in secondary structure simulations, providing researchers with practical frameworks for evaluating their computational methodologies.

Force Field Performance in Secondary Structure Formation

Comparative Analysis of Force Fields

The choice of force field significantly influences a simulation's ability to accurately reproduce secondary structure elements. Different force fields exhibit distinct biases toward specific structural propensities, making selection a critical methodological consideration.

Table 1: Comparison of Force Field Performance in β-Hairpin Formation

| Force Field | Native-like β-Hairpin Formation | Key Characteristics | Experimental Agreement |
|---|---|---|---|
| Amber ff99SB-ILDN | Yes | Modified backbone dihedral potentials and side-chain torsions | Good |
| Amber ff99SB*-ILDN | Yes | Combination of ILDN modifications with updated backbone parameters | Good |
| Amber ff99SB | Yes | Standard version without special modifications | Moderate |
| Amber ff03 | Yes | Alternative parameter set with different treatment of electrostatics | Moderate |
| GROMOS96 43a1p | Yes | United atom approach; includes phosphorylated amino acid parameters | Good |
| GROMOS96 53a6 | Yes | Updated united atom parameter set | Good |
| CHARMM27 | Variable (in some elevated temperature simulations) | Includes CMAP correction for backbone | Moderate |
| OPLS-AA/L | No | Updated dihedral parameters from original distribution | Poor |

A comprehensive study comparing 10 biomolecular force fields revealed striking differences in their ability to fold a 16-residue β-hairpin peptide derived from the Nrf2 protein. The researchers performed 37.2 μs of cumulative simulation time, with individual trajectories of 1-2 μs, providing extensive data on force field performance [8].

The results demonstrated that Amber ff99SB-ILDN, Amber ff99SB\*-ILDN, Amber ff99SB, Amber ff99SB\*, Amber ff03, Amber ff03\*, GROMOS96 43a1p, and GROMOS96 53a6 all successfully folded the peptide into a native-like β-hairpin structure at 310 K. In contrast, CHARMM27 only formed native hairpins in some elevated temperature simulations, while OPLS-AA/L did not yield native hairpin structures at any temperature tested [8].

Special Considerations for Intrinsically Disordered Regions

Accurate simulation of intrinsically disordered regions (IDRs) presents unique challenges for force field performance. The conformational heterogeneity and lack of stable secondary structure elements in IDRs require specialized force fields and extensive sampling times. Recent optimizations of force fields specifically for IDRs have improved their reliability when statistical sampling is adequate [9].

For IDRs, the conformational ensemble must be characterized through experimental observables such as NMR chemical shifts, scalar couplings, residual dipolar couplings, and SAXS data. The convergence of these observables provides a more meaningful assessment of sampling quality than traditional structural metrics alone [9].

Timescales for Convergence in Biomolecular Simulations

Empirical Data on Required Simulation Times

Convergence timescales vary significantly across different biological systems, ranging from microseconds for simple secondary structure elements to milliseconds for complex folding events.

Table 2: Empirically Determined Convergence Timescales for Different Systems

| System Type | Convergence Timescale | Key Metrics | Supporting Evidence |
|---|---|---|---|
| Hydrated amorphous xylan | ~1 μs | Structural and dynamical heterogeneity | Phase separation observed despite stable density/energy [10] |
| DNA duplex (d(GCACGAACGAACGAACGC)) | 1-5 μs (internal helix) | RMSD decay, Kullback-Leibler divergence | Comparison of AMBER and Anton simulations up to 44 μs [11] |
| β-hairpin peptides | 1-2 μs | Formation of native hydrogen bonding patterns | Multiple force field comparison [8] |
| Intrinsically disordered p53-CTD | Computationally prohibitive for complete ensemble | NMR and SAXS observables | REST simulations as reference [9] |

For hydrated amorphous xylan systems, simulations show that despite standard indicators of equilibrium (density and energy) remaining constant, clear evidence of phase separation into water-rich and polymer-rich phases only emerges at the microsecond timescale. This demonstrates that traditional equilibrium metrics may be insufficient for detecting incomplete sampling of structural heterogeneity [10].

In DNA systems, research comparing AMBER simulations with those performed on the specialized Anton MD engine revealed that the structure and dynamics of the DNA helix (excluding terminal base pairs) converge on the 1-5 μs timescale. This conclusion was supported by assessing the decay of average RMSD values and the Kullback-Leibler divergence of principal component projection histograms [11].
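To make the Kullback-Leibler comparison concrete, the sketch below histograms a one-dimensional projection (for example, onto the first principal component) from two trajectory segments and computes $D_{\mathrm{KL}}(P \parallel Q) = \sum_i p_i \ln(p_i / q_i)$. The binning, the small `eps` that guards against empty bins, the helper names, and the toy data are assumptions rather than the exact protocol of [11]; a divergence that shrinks toward zero as the segments lengthen is consistent with convergence.

```python
import math


def histogram(values, lo, hi, n_bins):
    """Normalized histogram of 1D values on [lo, hi); values outside are dropped."""
    counts = [0] * n_bins
    width = (hi - lo) / n_bins
    for x in values:
        k = math.floor((x - lo) / width)
        if 0 <= k < n_bins:
            counts[k] += 1
    total = sum(counts)
    return [c / total for c in counts]


def kl_divergence(p, q, eps=1e-10):
    """D_KL(P || Q) = sum_i p_i ln(p_i / q_i); eps guards bins where Q is empty."""
    return sum(pi * math.log(pi / (qi + eps)) for pi, qi in zip(p, q) if pi > 0)


# Hypothetical PC1 projections (arbitrary units) from two halves of a trajectory.
first_half = [0.1, 0.3, 0.6, 0.8]
second_half = [0.1, 0.2, 0.3, 0.9]
p = histogram(first_half, 0.0, 1.0, 2)
q = histogram(second_half, 0.0, 1.0, 2)
print(p, q, kl_divergence(p, q))
```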

The Combinatorial Challenge of Disordered Systems

The sampling problem becomes particularly severe for intrinsically disordered proteins and peptides. A simple calculation illustrates this challenge: for an IDR with 20 residues, each adopting just three coarse-grained conformational states, the total number of molecular conformations is approximately 3.5 billion (3²⁰). With residue conformational transitions occurring on timescales of tens of picoseconds, visiting each conformation just once would require milliseconds of simulation time, which is computationally prohibitive with current resources [9].

This combinatorial explosion necessitates dimensionality reduction strategies. Instead of seeking complete conformational sampling, researchers focus on generating reduced ensembles that reproduce experimentally measurable quantities such as NMR and SAXS data [9].
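The back-of-the-envelope estimate above can be reproduced directly. The 10 ps dwell time per conformation is an assumed round number standing in for "tens of picoseconds", and the helper names are illustrative.

```python
def conformation_count(n_residues, states_per_residue=3):
    """Number of coarse-grained chain conformations: states ** residues."""
    return states_per_residue ** n_residues


def visit_once_time_ms(n_conformations, dwell_ps=10.0):
    """Simulation time (ms) to visit every conformation once, dwell_ps each."""
    return n_conformations * dwell_ps * 1e-9  # 1 ps = 1e-9 ms


n = conformation_count(20)
print(f"{n:,} conformations, about {visit_once_time_ms(n):.0f} ms at 10 ps each")
```

Even this lower bound ignores revisits, which are the norm in unbiased simulations, so the real cost is higher still.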

Methodologies for Assessing Convergence

Standard Convergence Metrics

Traditional metrics for assessing convergence include:

- Stability of density and potential energy – These thermodynamic properties should fluctuate around stable values when the system reaches equilibrium [10].

- Root mean square deviation (RMSD) – The decay of average RMSD values over longer time intervals indicates structural stabilization [11].

- Root mean square fluctuation (RMSF) – Fluctuations of individual atoms (e.g., Cα atoms) provide insights into local flexibility and stability [12].

However, recent research indicates that these standard metrics may be insufficient. In xylan simulations, density and energy remained constant while structural and dynamical heterogeneity continued to evolve on microsecond timescales [10].

Advanced Statistical Approaches

More sophisticated methods for assessing convergence include:

- Kullback-Leibler divergence of principal component projections – This measures the similarity between probability distributions of essential dynamics modes sampled in different trajectory segments [11].

- Energy landscape analysis – Mapping potential energy versus density reveals stable states in molecular assembly systems [13].

- Replica exchange solute tempering (REST) – This enhanced sampling method provides a reference for comparing the statistical convergence of other methods [9].

- Markov state models (MSMs) – These models enable the construction of kinetic networks from multiple shorter simulations, helping identify metastable states and assess sampling completeness [9].
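As a minimal illustration of how an MSM is built from simulation data, the sketch below counts transitions at a chosen lag time in a discretized trajectory, row-normalizes the counts into a transition matrix, and obtains the stationary distribution by power iteration. It assumes conformations have already been assigned to discrete states and omits what practical MSM software adds (reversibility constraints, lag-time validation, error estimates); the helper names and the toy trajectory are hypothetical.

```python
def transition_matrix(dtraj, n_states, lag=1):
    """Row-stochastic matrix T[i][j] ~ P(state j at t + lag | state i at t),
    estimated by counting transitions (no detailed-balance constraint)."""
    counts = [[0.0] * n_states for _ in range(n_states)]
    for a, b in zip(dtraj[:-lag], dtraj[lag:]):
        counts[a][b] += 1.0
    T = []
    for row in counts:
        total = sum(row)
        T.append([c / total for c in row] if total > 0 else [0.0] * n_states)
    return T


def stationary_distribution(T, n_iter=1000):
    """Power iteration pi <- pi T from a uniform start; assumes T is ergodic."""
    n = len(T)
    pi = [1.0 / n] * n
    for _ in range(n_iter):
        pi = [sum(pi[i] * T[i][j] for i in range(n)) for j in range(n)]
    return pi


# Hypothetical discretized trajectory over two states (0 = unfolded, 1 = folded).
dtraj = [0, 0, 1, 1, 1, 0, 0, 1, 1, 1]
T = transition_matrix(dtraj, n_states=2)
pi = stationary_distribution(T)
print(T, pi)
```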

For IDRs, assessment typically involves comparing computed ensemble averages with experimental data, particularly from NMR and SAXS. The ability to reproduce multiple independent experimental observables provides strong evidence for adequate sampling [9].

Figure 1: Workflow for Assessing Convergence in Secondary Structure Simulations

Protocols for Enhanced Sampling

Specialized Sampling Methods

To address the sampling challenge in secondary structure formation, researchers have developed specialized protocols:

Replica Exchange Solute Tempering (REST): REST simulations enhance conformational sampling by reducing effective energy barriers. In this approach, the solute temperature is elevated in replicas while the solvent remains at the target temperature, improving sampling efficiency for biomolecules [9].

Probabilistic MD Chain Growth (PMD-CG): This novel protocol combines flexible-meccano and hierarchical chain growth approaches using statistical data from tripeptide MD trajectories. PMD-CG rapidly generates conformational ensembles after computing the conformational pool for all peptide triplets in the sequence. For a 20-residue p53-CTD region, this method produced ensembles that agreed well with REST simulations while being computationally more efficient [9].

Ensemble Approaches: Running multiple independent simulations starting from different initial conditions provides better sampling than single long trajectories. Aggregating results from these ensembles can match the sampling achieved in long timescale simulations on specialized hardware like Anton [11].
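For the REST protocol described above, one way to make "heating only the solute" concrete is the scaling used in the REST2 variant (an assumption here; the cited work may use a different formulation): for a replica with effective solute temperature $T_m$ and target temperature $T_0$, solute-solute energies are scaled by $T_0/T_m$, solute-solvent energies by $\sqrt{T_0/T_m}$, and solvent-solvent energies are left unchanged. The geometric ladder and the 310-450 K range below are illustrative choices.

```python
import math


def geometric_ladder(t_min, t_max, n_replicas):
    """Effective solute temperatures spaced geometrically from t_min to t_max."""
    ratio = (t_max / t_min) ** (1.0 / (n_replicas - 1))
    return [t_min * ratio ** k for k in range(n_replicas)]


def rest2_scaling(t0, t_eff):
    """REST2-style energy scale factors for one replica (solvent stays at t0)."""
    lam = t0 / t_eff
    return {
        "solute_solute": lam,
        "solute_solvent": math.sqrt(lam),
        "solvent_solvent": 1.0,
    }


for t in geometric_ladder(310.0, 450.0, 6):
    s = rest2_scaling(310.0, t)
    print(f"T_eff = {t:6.1f} K  solute-solute x{s['solute_solute']:.3f}  "
          f"solute-solvent x{s['solute_solvent']:.3f}")
```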

Practical Implementation Considerations

When designing simulation experiments for secondary structure studies:

- Simulation length should be determined by system complexity rather than arbitrary thresholds. Simple secondary elements may converge in microseconds, while complex or disordered systems require longer sampling [10] [11].

- Multiple replicates from different starting structures provide more reliable assessment of convergence than single long trajectories [11].

- Enhanced sampling methods like REST should be considered for systems with high energy barriers or extensive conformational landscapes [9].

- Experimental validation remains essential for verifying simulation results, particularly through NMR, SAXS, or other biophysical techniques [9] [12].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Secondary Structure Simulations

| Tool/Category | Specific Examples | Primary Function | Application Context |
|---|---|---|---|
| MD Software Packages | GROMACS, AMBER, Desmond | Biomolecular simulation engines | General MD simulations with rigorously tested force fields [14] |
| Specialized MD Engines | Anton | Ultra-long timescale simulations | Reference simulations for convergence testing [11] |
| Enhanced Sampling Methods | REST, MSM, PMD-CG | Improved conformational sampling | IDRs and systems with high energy barriers [9] |
| Force Fields | Amber ff99SB-ILDN, CHARMM27, GROMOS96 53a6 | Molecular interaction potentials | Secondary structure formation with varying performance [8] |
| Analysis Tools | VADAR, Ramachandran plot analysis | Structural quality assessment | Validation of simulated structures [15] [12] |

The convergence of secondary structure in MD simulations remains a fundamental challenge that requires careful consideration of force field selection, simulation timescales, and assessment methodologies. Empirical evidence indicates that microsecond timescales are typically necessary for even relatively simple secondary structure elements to reach convergence, with more complex systems requiring substantially longer sampling periods.

Force field selection critically influences outcomes, with certain parameter sets demonstrating superior performance for specific structural elements. The development of specialized sampling protocols like REST and PMD-CG provides promising avenues for enhancing conformational sampling, particularly for challenging systems like intrinsically disordered regions.

Moving forward, researchers should adopt a multi-faceted approach to assessing convergence, combining traditional metrics with advanced statistical analyses and experimental validation. Ensemble approaches using multiple independent simulations provide more robust sampling than single long trajectories. As computational resources continue to expand and methods evolve, the gap between simulation timescales and biologically relevant folding times will continue to narrow, enhancing the predictive power of molecular dynamics for secondary structure studies.

Molecular dynamics (MD) simulations provide unparalleled insight into the structural behavior and temporal evolution of biological macromolecules, serving as a computational microscope for researchers. The accurate evaluation of secondary structure reproduction in these simulations is paramount, as it directly correlates with a protein's biological function. This assessment relies heavily on three essential metrics: Root Mean Square Deviation (RMSD), which quantifies global structural drift; Root Mean Square Fluctuation (RMSF), which measures local residue flexibility; and Radius of Gyration (Rg), which assesses structural compactness. Together, this triad of metrics provides a comprehensive framework for evaluating the stability and structural integrity of proteins throughout MD simulations, enabling researchers to validate the quality of their simulations and draw meaningful biological conclusions about system behavior, especially regarding the preservation of secondary structural elements like α-helices and β-sheets.

Metric Definitions and Structural Significance

Root Mean Square Deviation (RMSD)

RMSD measures the root-mean-square distance between corresponding atoms of superimposed structures, providing a quantitative assessment of global conformational stability over time. It is calculated after aligning each trajectory frame to a reference structure: a low, stable RMSD indicates that the protein maintains its overall fold, while significant drifts suggest substantial conformational changes. For $N$ atoms, $\mathrm{RMSD} = \sqrt{\frac{1}{N}\sum_{i=1}^{N} \lVert r_i - r_i^{\mathrm{ref}} \rVert^2}$, usually evaluated over the protein backbone for structural assessment. For example, one study treated systems with RMSD values below 0.3 nm as well converged and stable [16]. RMSD is particularly sensitive to large-scale structural rearrangements and is therefore essential for determining the equilibration phase of a simulation and the overall stability of the production run.
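A minimal sketch of the calculation for one frame, assuming coordinates are supplied as matching (N, 3) NumPy arrays (for example, backbone atoms in nm, so the result is directly comparable to a 0.3 nm threshold). It performs an unweighted superposition with the Kabsch algorithm; MD analysis packages provide their own, often mass-weighted, implementations, and `kabsch_rmsd` is an illustrative name.

```python
import numpy as np


def kabsch_rmsd(P, Q):
    """RMSD between two (N, 3) coordinate sets after optimal superposition.

    Both sets are centered, the rotation minimizing the deviation is found
    from the SVD of the 3x3 covariance matrix (Kabsch algorithm), and the
    RMSD is sqrt(mean over atoms of |R p_i - q_i|^2).
    """
    P = np.asarray(P, dtype=float)
    Q = np.asarray(Q, dtype=float)
    P = P - P.mean(axis=0)
    Q = Q - Q.mean(axis=0)
    H = P.T @ Q
    U, S, Vt = np.linalg.svd(H)
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0  # avoid a reflection
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    diff = P @ R.T - Q
    return float(np.sqrt(np.mean(np.sum(diff ** 2, axis=1))))


# Usage: a rotated and translated copy of a structure has RMSD ~ 0.
P = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]])
Rz = np.array([[0.0, -1.0, 0.0], [1.0, 0.0, 0.0], [0.0, 0.0, 1.0]])  # 90 deg about z
Q = P @ Rz.T + np.array([5.0, 5.0, 5.0])
print(kabsch_rmsd(P, Q))
```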

Root Mean Square Fluctuation (RMSF)

RMSF quantifies the deviation of individual residues from their average positions, serving as a residue-specific measure of local flexibility and dynamics. This metric is exceptionally valuable for identifying mobile regions such as flexible loops, termini, and functionally important dynamic domains. For instance, in studies of all-β proteins, minimum RMSF values are consistently located on Cα atoms participating in stable β-sheets, confirming this secondary structure's rigidity, while terminal residues often show heightened fluctuations due to greater freedom of movement [17]. RMSF analysis can also reveal conserved dynamic patterns, with some studies showing visible periodicity in RMSF values corresponding to repeated secondary structural elements [17]. This makes RMSF indispensable for understanding functional motions, allosteric regulation, and binding mechanisms.
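Per-atom RMSF follows directly from its definition, $\mathrm{RMSF}_i = \sqrt{\langle \lVert r_i(t) - \langle r_i \rangle \rVert^2 \rangle_t}$. The sketch assumes the frames have already been superimposed onto a common reference (otherwise overall rotation and translation inflate every value) and takes plain lists of (x, y, z) tuples, typically for Cα atoms; `rmsf` is an illustrative name.

```python
def rmsf(frames):
    """Per-atom RMSF from an already-superimposed trajectory.

    frames: list of frames, each a list of (x, y, z) tuples in the same atom order.
    Returns sqrt(<|r_i(t) - <r_i>|^2>) for every atom i, averaged over frames.
    """
    n_frames = len(frames)
    n_atoms = len(frames[0])
    result = []
    for i in range(n_atoms):
        mean = [sum(f[i][k] for f in frames) / n_frames for k in range(3)]
        msd = sum(sum((f[i][k] - mean[k]) ** 2 for k in range(3)) for f in frames) / n_frames
        result.append(msd ** 0.5)
    return result


# Atom 0 stays put; atom 1 oscillates between x = +1 and x = -1.
frames = [[(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
          [(0.0, 0.0, 0.0), (-1.0, 0.0, 0.0)]]
print(rmsf(frames))
```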

Radius of Gyration (Rg)

Radius of Gyration describes the compactness of a protein structure and is calculated as the mass-weighted root-mean-square distance of atoms from their common center of mass, $R_g = \sqrt{\sum_i m_i \lVert r_i - r_{\mathrm{com}} \rVert^2 / \sum_i m_i}$. A stable Rg value suggests maintenance of native-like compactness, while a decreasing trend may indicate compaction or folding, and an increasing trend often signifies unfolding or loss of structural integrity. Research has demonstrated a clear relationship between secondary structure content and Rg: proteins with higher β-sheet ratios achieve smaller radii of gyration due to more efficient packing and increased hydrogen bonding within the protein [17]. In the same study, Rg increased linearly with chain length, with longer proteins displaying larger spatial dimensions [17]. This metric is therefore crucial for assessing folding stability, identifying collapse events, and characterizing intrinsically disordered proteins.
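The mass-weighted definition translates directly into code. This is a sketch assuming one frame of coordinates and matching masses supplied as plain Python lists (`radius_of_gyration` is an illustrative name); evaluating it for every frame gives the Rg time series whose trend is interpreted above.

```python
def radius_of_gyration(coords, masses):
    """Mass-weighted Rg = sqrt( sum_i m_i |r_i - r_com|^2 / sum_i m_i )."""
    total = sum(masses)
    com = [sum(m * r[k] for m, r in zip(masses, coords)) / total for k in range(3)]
    s = sum(m * sum((r[k] - com[k]) ** 2 for k in range(3)) for m, r in zip(masses, coords))
    return (s / total) ** 0.5


# Two equal masses 2 units apart: centre of mass at the origin, Rg = 1.
print(radius_of_gyration([(1.0, 0.0, 0.0), (-1.0, 0.0, 0.0)], [1.0, 1.0]))
```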

Comparative Analysis of Metrics Across Protein Systems

Table 1: Characteristic Values and Interpretations of Key Stability Metrics

| Metric | Stable Range | High Value Interpretation | Low Value Interpretation | Dependence on Secondary Structure |
|---|---|---|---|---|
| RMSD | System-dependent; < 0.3 nm for small proteins [16] | Large conformational change, domain movement, or unfolding | Stable fold maintenance | Affected by loss/gain of secondary structure; β-sheet proteins show lower deviation [17] |
| RMSF | Variable per residue; lower in structured regions | High flexibility (loops, termini); unstable regions | Structural rigidity (secondary elements); stable core | Minimum values at β-sheet residues [17]; α-helices show moderate fluctuations |
| Rg | Comparable to native state crystal structures | Unfolding, loss of compactness, expansion | Compaction, dense packing, folding | Higher β-sheet content correlates with smaller Rg [17]; linear increase with chain length |

Table 2: Applied Examples from Recent Research Studies

| Study System | Simulation Time | Key RMSD Findings | Key RMSF Findings | Key Rg Findings |
|---|---|---|---|---|
| Influenza PB2 Cap-Binding Domain [18] | 500 ns | Used to evaluate complex stability with inhibitors | Not explicitly reported | Rg-RMSD-based free energy landscape revealed binding stability |
| Mycobacterium tuberculosis Inhibitors [16] | 500 ns | All systems < 0.3 nm, indicating convergence | Not explicitly reported | Highly compact systems with no major fluctuations |
| All-β Proteins with Varying Chain Lengths [17] | Broad temperature range | Increased with temperature, especially longer chains | Visible periodicity matching repeated units; minima at β-sheet Cα atoms | Increased linearly with residue count; smaller with higher β-sheet ratio |
| HIV-1 Protease [19] | Not specified | Assessed conformational stability | Flap and flap elbow motions highly correlated | Not explicitly reported |
| HCV Core Protein Structures [20] | Not specified | Monitored structural changes and convergence | Calculated for Cα atoms to monitor fluctuations | Calculated to monitor structural compactness |

Experimental Protocols for Metric Calculation

Standard MD Workflow for Stability Analysis

The following diagram illustrates the comprehensive workflow for conducting MD simulations and calculating key stability metrics:

Detailed Methodological Framework

System Setup and Simulation Parameters:

- Initial Structure Preparation: Begin with experimentally resolved structures or high-quality homology models. For example, studies on HCV core protein utilized structures predicted by AlphaFold2, Robetta, and trRosetta when experimental structures were unavailable [20].

- Force Field Selection: Employ specialized force fields like the generalized Amber force field (GAFF) for small molecules [18] or specific protein force fields (AMBER, CHARMM, GROMOS) compatible with your system.

- Solvation and Neutralization: Solvate the system in explicit water models (TIP3P is commonly used [18]) within an appropriate periodic box with sufficient margin. Add ions to neutralize system charge and simulate physiological concentration.

- Energy Minimization: Perform steepest descent or conjugate gradient minimization to remove steric clashes and bad contacts, typically for 5,000-50,000 steps until the maximum force falls below a specified threshold.

Equilibration Protocol:

- Temperature Equilibration (NVT): Gradually heat the system from 0 K to the target temperature (typically 300 K) over 100-500 ps using algorithms like the Langevin thermostat [18].

- Pressure Equilibration (NPT): Further equilibrate for 1-5 ns using a barostat (Berendsen or Parrinello-Rahman) to achieve correct density at constant pressure (1 atm) [18].

Production Simulation:

- Run production dynamics for long enough to observe the biological processes of interest, typically 100 ns to 1 μs, with a timestep of 1-2 fs. Save trajectory frames at appropriate intervals (e.g., every 10-100 ps) for subsequent analysis. Saving frames too frequently can degrade performance; one benchmark reported up to a 4× slowdown with inappropriate output intervals [21].
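The unit bookkeeping behind these settings (run length, timestep, output interval) is easy to get wrong, so a small helper can make the trade-off explicit. The helper name and the storage estimate below are illustrative assumptions and not part of any MD package. In GROMACS, for instance, the corresponding .mdp settings include `nsteps`, `dt`, and output-frequency parameters such as `nstxout-compressed`.

```python
def production_run_plan(length_ns, timestep_fs, save_interval_ps, n_atoms=None):
    """Integration steps, saved frames and steps between saves for one run."""
    steps = round(length_ns * 1_000_000 / timestep_fs)      # 1 ns = 1e6 fs
    frames = round(length_ns * 1_000 / save_interval_ps)    # 1 ns = 1e3 ps
    steps_per_frame = round(save_interval_ps * 1_000 / timestep_fs)
    plan = {"steps": steps, "frames": frames, "steps_per_frame": steps_per_frame}
    if n_atoms is not None:
        # Upper bound for uncompressed single-precision coordinates
        # (3 floats x 4 bytes per atom); compressed formats such as XTC are smaller.
        plan["raw_coordinate_gb"] = n_atoms * 3 * 4 * frames / 1e9
    return plan


# 100 ns at 2 fs, saving every 10 ps, for a hypothetical 50,000-atom system
print(production_run_plan(100, 2, 10, n_atoms=50_000))
```

Lengthening the save interval reduces both disk usage and output overhead, at the cost of coarser time resolution in later analyses.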

Trajectory Processing and Analysis:

- Trajectory Correction: Process raw trajectories to correct for periodic boundary conditions using tools like `gmx trjconv` in GROMACS: `gmx trjconv -s md.tpr -f md.xtc -o md_pbc.xtc -pbc whole`, followed by passes with `-pbc nojump` and centering [22].

- Metric Calculation:

  - RMSD: Calculate after optimal superposition to a reference structure, typically using backbone atoms.

  - RMSF: Compute per-residue fluctuations relative to the average structure.

  - Rg: Determine using mass-weighted atomic distances from the center of mass.

- Advanced Analyses: Implement free energy landscape analysis using Rg-RMSD plots [18], principal component analysis (PCA) [19] [16], and secondary structure analysis using tools like STRIDE in VMD [17] or DSSP.
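RMSD after optimal superposition can be computed without explicitly building a rotation matrix. The minimum RMSD follows from the largest eigenvalue of a 4x4 symmetric "key matrix" built from the centered coordinates, which is the quaternion formulation used by Horn and by Theobald's QCP method. The sketch below is a dependency-free illustration under these assumptions:

- It finds that eigenvalue by shifted power iteration instead of QCP's Newton-Raphson step.
- It weights all atoms equally.
- Both coordinate sets list the same atoms (e.g., backbone atoms) in the same order.

For real trajectories, `gmx rms`, MDTraj, or MDAnalysis are the practical choices.

```python
import math


def _centered(coords):
    """Translate a coordinate set so its centroid sits at the origin."""
    n = len(coords)
    centroid = [sum(p[k] for p in coords) / n for k in range(3)]
    return [[p[k] - centroid[k] for k in range(3)] for p in coords]


def superposed_rmsd(a, b, iterations=500):
    """Minimum RMSD between two matched coordinate sets after optimal
    translation and rotation (quaternion key-matrix formulation)."""
    if not a or len(a) != len(b):
        raise ValueError("coordinate sets must be non-empty and the same length")
    x, y = _centered(a), _centered(b)
    n = len(x)
    # 3x3 correlation matrix: s[i][j] = sum over atoms of x_i * y_j
    s = [[sum(p[i] * q[j] for p, q in zip(x, y)) for j in range(3)] for i in range(3)]
    (sxx, sxy, sxz), (syx, syy, syz), (szx, szy, szz) = s
    # Symmetric 4x4 key matrix; its largest eigenvalue is the best achievable overlap
    k = [
        [sxx + syy + szz, syz - szy, szx - sxz, sxy - syx],
        [syz - szy, sxx - syy - szz, sxy + syx, szx + sxz],
        [szx - sxz, sxy + syx, -sxx + syy - szz, syz + szy],
        [sxy - syx, szx + sxz, syz + szy, -sxx - syy + szz],
    ]
    # Shifting by the Frobenius norm makes every eigenvalue non-negative,
    # so power iteration converges towards the largest eigenvalue of k.
    shift = math.sqrt(sum(v * v for row in k for v in row))
    vec = [1.0, 0.3, 0.2, 0.1]  # arbitrary non-zero start vector
    for _ in range(iterations):
        w = [sum(k[i][j] * vec[j] for j in range(4)) + shift * vec[i] for i in range(4)]
        norm = math.sqrt(sum(t * t for t in w))
        if norm == 0.0:
            break
        vec = [t / norm for t in w]
    # Rayleigh quotient of the unshifted matrix approximates lambda_max
    norm_sq = sum(t * t for t in vec)
    lam = sum(vec[i] * k[i][j] * vec[j] for i in range(4) for j in range(4)) / norm_sq
    ga = sum(t * t for p in x for t in p)
    gb = sum(t * t for p in y for t in p)
    return math.sqrt(max(0.0, (ga + gb - 2.0 * lam) / n))


ref = [(1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0), (-1.0, -1.0, -1.0)]
# Same shape rotated 90 degrees about z and shifted by (5, 5, 5)
moved = [(5.0, 6.0, 5.0), (4.0, 5.0, 5.0), (5.0, 5.0, 6.0), (6.0, 4.0, 4.0)]
print(superposed_rmsd(ref, moved))  # close to 0.0
```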

Essential Research Reagents and Computational Tools

Table 3: Key Research Reagent Solutions for MD Stability Analysis

| Tool Category | Specific Tools/Packages | Primary Function | Application Example |
|---|---|---|---|
| MD Simulation Engines | GROMACS [22], AMBER [18], OpenMM [21] | Performing molecular dynamics simulations | GROMACS used for 500 ns simulation of M. tuberculosis inhibitors [16] |
| Analysis Suites | VMD [17], MDTraj, GROMACS analysis tools | Trajectory analysis and metric calculation | VMD used for secondary structure analysis with STRIDE algorithm [17] |
| Visualization Software | PyMol [23], VMD, Chimera [18] | Molecular graphics and visualization | PyMol used to visualize docked protein structures [23] |
| Specialized Analysis | MMPBSA.py [18], PCA tools, STRIDE/DSSP | Binding energy calculations, motion analysis, secondary structure assignment | MMPBSA.py used for free energy calculations in AMBER [18] |
| Neural Network Potentials | eSEN models, UMA models [24] | Accelerated and accurate energy calculations | OMol25-trained models providing accurate energies for large systems [24] |

Interplay Between Metrics and Secondary Structure Stability

The relationship between RMSD, RMSF, Rg, and secondary structure integrity is complex and interdependent. In simulations of all-β proteins, β-sheet content influenced all three metrics. Higher β-sheet ratios produced more compact structures with lower Rg values, reduced RMSF in β-sheet regions, and generally more stable RMSD profiles [17]. This stability is attributed to the extensive hydrogen-bonding networks of β-sheet architecture, which restrict atomic fluctuations and maintain structural compactness.

Temperature dependence represents another critical factor in metric interpretation. Studies on all-β proteins revealed that both RMSD and RMSF increase with temperature, with this effect being particularly pronounced in longer protein chains [17]. This temperature sensitivity underscores the importance of simulation conditions when evaluating stability metrics and comparing results across different studies.

The functional implications of these metrics are particularly evident in proteins like HIV-1 protease, where specific dynamic regions termed "dynamozones" – particularly the flap regions that control substrate access to the active site – exhibit characteristic fluctuation patterns essential for biological function [19]. These functional dynamics highlight that low fluctuation is not always desirable; instead, the appropriate flexibility in specific regions is often crucial for protein function.

For researchers focusing on secondary structure reproduction, combining RMSD, RMSF, and Rg with dedicated secondary structure assignment tools provides the most comprehensive assessment. The STRIDE algorithm implementation in VMD, for example, allows metric stability to be correlated directly with the persistence of α-helices and β-sheets throughout a simulation [17]. This makes it possible to distinguish overall structural drift from the loss or gain of specific secondary structure elements.

Molecular dynamics (MD) simulations provide atomistic insight into protein function, drug discovery, and structural biology. This guide objectively compares the performance and limitations of modern MD approaches, focusing on their capability to handle proteins of varying size and complexity, and establishes realistic expectations for simulating secondary structure.

Protein Size Distribution in Nature and Simulation Feasibility

The table below summarizes the average protein sizes across different biological kingdoms and the practical feasibility of simulating them with all-atom MD.

Table 1: Real-World Protein Sizes and Simulation Feasibility

| Organism Group or Size Class | Average or Typical Size (amino acids) | Representative Example | Simulation Feasibility for All-Atom MD |
|---|---|---|---|
| Archaea | 283 [25] | - | Routine (System size: ~50k-100k atoms) |
| Bacteria | 320 [25] | - | Routine (System size: ~100k-150k atoms) |
| Eukaryotes | 472 [25] | - | Challenging (System size: ~200k-500k atoms) |
| Viridiplantae | 392 [25] | - | Challenging (System size: ~150k-300k atoms) |
| Alveolata | 628 [25] | - | Very Challenging (System size: ~300k+ atoms) |
| Small Peptide | 16-60 [8] [26] | Nrf2 peptide, Amyloid-β | Highly Feasible (Extensive sampling possible) |
| Medium Protein | ~50-150 | GB3 (56 aa) [26], RNase H (155 aa) [27] | Routine (Adequate sampling for many processes) |
| Large Protein | 150-500 | α-Synuclein (140 aa) [26] | Challenging (Limited by sampling and force field accuracy) |
| Very Large Complex | 1000+ | Serpins (350-400 aa) [28] | Frontier Research (Requires advanced models/supercomputing) |

Performance Comparison of MD Simulation Software and Hardware

The choice of software and hardware directly impacts the scale and speed of simulations. Performance varies significantly based on the molecular system size.

Table 2: MD Software, Hardware Performance, and Cost-Efficiency Benchmarking

| MD Software | Key Characteristics | Representative Performance (ns/day) | Recommended GPU (for performance/cost) |
|---|---|---|---|
| NAMD | Excellent for large systems & complexes; simpler scripting [29] | Varies greatly with system size [29] | NVIDIA RTX 6000 Ada (Large simulations) [30] |
| GROMACS | High performance, open-source, extensive analysis tools [29] | ~650 (61k atoms, L40S GPU) [21] | NVIDIA RTX 4090 (Computationally intensive) [30] |
| AMBER | Optimized for biomolecules, particularly with NVIDIA GPUs [29] | - | NVIDIA RTX 6000 Ada (Large-scale) [30] |
| CHARMM | High flexibility & analysis; steep learning curve [29] | - | NVIDIA RTX 6000 Ada [30] |
| OpenMM | GPU-accelerated, library for custom algorithms [21] | 103-555 (44k atoms, various GPUs) [21] | NVIDIA L40S (Best value) [21] |

| GPU Model | VRAM | ~Speed (ns/day) for 44k atoms [21] | Relative Cost per 100 ns (vs. T4 baseline) [21] |
|---|---|---|---|
| NVIDIA T4 | 16 GB | 103 | 1.00 (Baseline) |
| NVIDIA V100 | 16-32 GB | 237 | 1.77 |
| NVIDIA A100 | 40-80 GB | 250 | 0.82 |
| NVIDIA L40S | 48 GB | 536 | 0.41 |
| NVIDIA H100 | 80 GB | 450 | 0.66 |
| NVIDIA H200 | 141 GB | 555 | 0.87 |
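The benchmark figures above are given in ns/day, so converting them into wall-clock time for a planned trajectory is simple arithmetic. The sketch reuses the 44k-atom numbers from the table [21]. These will not transfer directly to larger systems or other engines. A cost estimate would additionally need an hourly price per GPU, which is deliberately left out here.

```python
# ns/day for the ~44k-atom benchmark, copied from the GPU table above [21]
GPU_NS_PER_DAY = {"T4": 103, "V100": 237, "A100": 250,
                  "L40S": 536, "H100": 450, "H200": 555}


def wall_clock_hours(target_ns, ns_per_day):
    """Hours of wall-clock time needed to simulate target_ns at a given throughput."""
    return target_ns / ns_per_day * 24.0


# Fastest GPU first
for gpu, speed in sorted(GPU_NS_PER_DAY.items(), key=lambda item: -item[1]):
    print(f"{gpu:>5}: {wall_clock_hours(100, speed):5.1f} h per 100 ns")
```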

Comparative Analysis of Force Fields and Sampling Techniques

The accuracy of an MD simulation is fundamentally linked to the force field and the sampling method used. Performance varies significantly between ordered and disordered proteins.

Table 3: Force Field and Sampling Technique Comparison

| Force Field | Secondary Structure Bias / Performance Notes | Recommended Sampling Method |
|---|---|---|
| AMBER ff99SB-ILDN | Folds Nrf2 β-hairpin successfully; good for disordered proteins (Aβ40) [8] [26] | MD, REMD [26] |
| AMBER ff03 | Folds Nrf2 β-hairpin successfully [8] | MD [8] |
| CHARMM36m | Improved backbone torsion sampling; good for loops & disordered Aβ40 [31] [26] | MD, REMD [31] [26] |
| CHARMM27 | Can form native hairpins at elevated temperatures [8] | High-Temperature MD [8] |
| GROMOS96 43a1/53a6 | Folds Nrf2 β-hairpin successfully [8] | MD [8] |
| OPLS-AA/L | Did not yield native Nrf2 hairpin structures in tests [8] | - |

| Sampling Method | Principle | Applicability |
|---|---|---|
| Standard MD | Direct numerical integration of Newton's equations [29] | Good for small systems & stable, ordered proteins (e.g., GB3) [26] |
| Temperature-REMD (T-REMD) | Parallel simulations at different temperatures swap configurations to overcome barriers [26] | Crucial for disordered proteins (e.g., Aβ40, α-synuclein) [26] |
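Two pieces of T-REMD bookkeeping can be illustrated briefly: choosing the replica temperatures, and deciding whether a proposed swap is accepted. The sketch makes two assumptions. First, it uses a geometric temperature ladder, which is a common heuristic rather than something prescribed by the studies cited above. Second, it applies the standard Metropolis criterion min(1, exp[(β_i - β_j)(E_i - E_j)]) with potential energies in kcal/mol. Function names are illustrative.

```python
import math

K_B = 0.0019872041  # Boltzmann constant, kcal/(mol*K)


def geometric_ladder(t_min, t_max, n_replicas):
    """Replica temperatures spaced geometrically from t_min to t_max."""
    if n_replicas < 2:
        raise ValueError("need at least two replicas")
    ratio = (t_max / t_min) ** (1.0 / (n_replicas - 1))
    return [t_min * ratio ** i for i in range(n_replicas)]


def exchange_probability(e_i, t_i, e_j, t_j):
    """Metropolis probability of swapping the configurations of replicas i and j.

    e_i, e_j: potential energies in kcal/mol; t_i, t_j: temperatures in K.
    """
    beta_i = 1.0 / (K_B * t_i)
    beta_j = 1.0 / (K_B * t_j)
    delta = (beta_i - beta_j) * (e_i - e_j)
    return 1.0 if delta >= 0.0 else math.exp(delta)


print([round(t, 1) for t in geometric_ladder(300.0, 400.0, 5)])
```

A swap that moves the higher-energy configuration to the higher temperature is always accepted. The reverse move is accepted with a probability that falls as the energy gap or the temperature spacing grows, which is why replicas must be spaced closely enough for their energy distributions to overlap.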

Experimental Protocols for Key Studies

Protocol for MD-Based Protein Structure Refinement (CASP12)

This protocol aims to refine template-based models closer to their native structure [31].

- Initial Model Preparation: The starting structure is first processed with locPREFMD to improve local stereochemistry and fix clashes [31].

- System Setup: The protein is solvated in a periodic cubic box with a TIP3P water model and a minimum 9 Å solvent buffer. The system is neutralized with Na+/Cl- counterions [31].

- Equilibration: The system undergoes energy minimization and gradual heating to 298 K [31].

- Production Simulation - Round 1: Multiple independent MD runs (totaling ~5.6 μs) are performed using the CHARMM36m force field under NVT conditions (298 K) with a 2 fs timestep. Weak harmonic restraints (0.025 kcal/mol/Ų) are applied to Cα atoms to guide sampling [31].

- Enhanced Sampling via Clustering: Thousands of conformations from the initial trajectories are clustered. For each cluster, an averaged structure is generated, and new MD simulations are initiated from these averages using even weaker restraints (0.005 kcal/mol/Ų) [31].

- Ensemble Generation and Averaging: Structures from the simulations are pooled. A subset of low-energy structures is selected and averaged to create a single, refined model [31].

- Iterative Refinement (Optional): A second round of MD (200 ns) can be initiated from the refined model, often without restraints. Conformations that continue to refine in the same direction as the first round are selected for final averaging [31].
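The selection-and-averaging step of this protocol can be sketched in a few lines. The sketch rests on three assumptions:

- The conformations are already superposed onto a common frame, since averaging unaligned coordinates is meaningless.
- Lower scores are better.
- The 10% cutoff is a placeholder, since the subset size used in [31] is not given here.

Averaged coordinates can contain distorted local geometry, so in practice they are usually passed through a further local refinement or minimization step.

```python
def average_low_energy_structures(structures, energies, fraction=0.1):
    """Average the coordinates of the lowest-energy fraction of an ensemble.

    structures: list of conformations, each a list of (x, y, z) tuples,
    assumed already superposed onto a common reference.
    energies: one score per conformation (lower is better).
    fraction: hypothetical share of the ensemble to keep (at least one structure).
    """
    if not structures or len(structures) != len(energies):
        raise ValueError("need one energy per structure")
    n_keep = max(1, int(len(structures) * fraction))
    # Indices of conformations sorted from lowest to highest energy
    order = sorted(range(len(structures)), key=lambda i: energies[i])
    kept = [structures[i] for i in order[:n_keep]]
    n_atoms = len(kept[0])
    # Per-atom mean position over the kept conformations
    return [
        tuple(sum(s[a][k] for s in kept) / n_keep for k in range(3))
        for a in range(n_atoms)
    ]


ensemble = [[(0.0, 0.0, 0.0)], [(2.0, 0.0, 0.0)], [(10.0, 0.0, 0.0)], [(20.0, 0.0, 0.0)]]
scores = [5.0, 1.0, 9.0, 2.0]
print(average_low_energy_structures(ensemble, scores, fraction=0.5))  # [(11.0, 0.0, 0.0)]
```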

The following workflow diagram illustrates this multi-stage protocol:

Protocol for Benchmarking Force Fields on β-Hairpin Folding

This protocol tests a force field's ability to fold a peptide into a specific secondary structure [8].

- Starting Structure: An extended conformation of the 16-residue Nrf2 peptide (sequence: AQLQLDEETGEFLPIQ) is generated. A structure that does not resemble the native β-hairpin is deliberately selected as the starting point to avoid bias [8].

- System Setup: The peptide is solvated in explicit water, using the water model specified for the force field being tested [8].

- Production Simulation: Multiple, independent microsecond-long MD simulations are run for each force field (e.g., AMBER ff99SB-ILDN, CHARMM27, OPLS-AA/L) at 310 K, using identical parameters [8].

- Analysis: The trajectories are analyzed using tools like DSSP to determine the percentage of simulation time the peptide spends in a native-like β-hairpin conformation compared to its known bound-state structure [8].
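The DSSP-based measure in the last step reduces to counting frames. The sketch below assumes DSSP output has already been converted to one string per frame, with one character per residue and "E" for β-strand. The residue indices and the rule that all selected residues must be in a strand are illustrative choices. They are not necessarily the criterion used in [8], which compared against the known bound-state structure.

```python
def fraction_in_target_ss(dssp_frames, residues, allowed="E", min_fraction=1.0):
    """Fraction of frames in which the chosen residues adopt the target state.

    dssp_frames: one DSSP string per frame (one character per residue).
    residues: 0-based indices of residues expected in the target state (hypothetical).
    allowed: DSSP codes that count as the target (e.g. "E", or "EB" to add bridges).
    min_fraction: share of the chosen residues that must match for a frame to count.
    """
    hits = 0
    for ss in dssp_frames:
        matched = sum(1 for r in residues if ss[r] in allowed)
        if matched >= min_fraction * len(residues):
            hits += 1
    return hits / len(dssp_frames)


def per_residue_fraction(dssp_frames, allowed="E"):
    """Fraction of frames in which each residue is assigned one of the allowed codes."""
    n_res = len(dssp_frames[0])
    return [sum(ss[r] in allowed for ss in dssp_frames) / len(dssp_frames)
            for r in range(n_res)]


toy = ["CEEEC", "CEECC", "CCCCC", "CEEEC"]  # toy 5-residue trajectory
print(fraction_in_target_ss(toy, [1, 2, 3]))  # 0.5
print(per_residue_fraction(toy))              # [0.0, 0.75, 0.75, 0.5, 0.0]
```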

Protocol for Validating MD Simulations Against Experimental Data

This protocol assesses whether an MD simulation produces a physically realistic and biologically relevant conformational ensemble [27].

- System Preparation: Initial coordinates are taken from a high-resolution crystal or NMR structure (e.g., PDB IDs: 1ENH, 2RN2). Crystallographic solvents are removed [27].

- Multi-Package/Force Field Simulation: The same system is simulated using different MD packages (e.g., AMBER, GROMACS, NAMD, ilmm) and force fields (e.g., ff99SB-ILDN, CHARMM36), each following its own "best practices" for parameters, water models, and algorithms [27].

- Comparison to Experiments: The resulting conformational ensembles from each simulation are compared to a diverse set of experimental data, which can include NMR chemical shifts, order parameters (S²), scalar couplings, and hydrogen-deuterium exchange rates [27].

- Convergence and Discrepancy Analysis: The level of agreement between different simulation setups and with experimental data is quantified to identify force field or algorithm-specific biases [27].
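Of the observables listed above, the NMR order parameter S² has a compact definition that can be evaluated directly from a trajectory. A common estimator is the long-time limit of the internal correlation function, S² = (3/2) Σ_ij <μ_i μ_j>² - 1/2. Here μ is the unit bond vector (e.g., backbone N-H) after overall rotation has been removed by superposition. The sketch assumes such pre-aligned vectors. The resulting per-residue values could then be compared with experimental S² through a simple error measure such as RMSE.

```python
import math


def order_parameter_s2(vectors):
    """Generalized order parameter S^2 for one bond vector (e.g. backbone N-H).

    vectors: (x, y, z) bond vectors, one per frame, from a trajectory that has
    been superposed to remove overall rotation.
    """
    units = []
    for v in vectors:
        norm = math.sqrt(sum(c * c for c in v))
        units.append([c / norm for c in v])
    n = len(units)
    total = 0.0
    for i in range(3):
        for j in range(3):
            # Time average of the product of Cartesian components i and j
            avg = sum(u[i] * u[j] for u in units) / n
            total += avg * avg
    return 1.5 * total - 0.5


# A vector that never moves is perfectly ordered
print(order_parameter_s2([(0.0, 0.0, 1.0)] * 10))  # 1.0
```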

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Computational "Reagents" for MD Simulations

| Item / "Reagent" | Function / Purpose |
|---|---|
| Force Fields (e.g., CHARMM36m, AMBER ff99SB-ILDN) | Empirical potential energy functions that define the interactions between all atoms in the system; critical for accuracy [31] [26]. |
| Explicit Solvent Models (e.g., TIP3P, TIP4P) | Explicitly represent water molecules to model solvation effects and hydrophobic interactions realistically [31] [27]. |
| Periodic Boundary Conditions | Simulate a macroscopic system by placing the solvated protein in a unit cell that is repeated in space, avoiding surface artifacts [27]. |
| Particle Mesh Ewald (PME) | An algorithm for accurately and efficiently calculating long-range electrostatic interactions in periodic systems [31] [26]. |
| Thermostat (e.g., Langevin) | A computational algorithm to maintain a constant temperature during the simulation by coupling the system to a heat bath [31] [26]. |
| Markov State Models (MSMs) | A framework for analyzing many short MD simulations to build a model of the long-timescale conformational dynamics of a protein [31]. |
| Structure-Based Models (Gō Models) | Simplified, native-centric models used to study protein folding and large conformational changes by biasing the simulation toward the known native structure [28]. |

Methodology in Action: Setting Up and Analyzing Simulations for Secondary Structure

Molecular dynamics (MD) simulations have become indispensable tools in structural biology and drug discovery, providing atomic-level insights into biomolecular function and dynamics that complement experimental approaches [32]. The impact of MD simulations has expanded dramatically in recent years due to major improvements in simulation speed, accuracy, and accessibility [32] [33]. This guide examines the complete workflow from initial model preparation to production simulation, with particular focus on evaluating how well different computational approaches reproduce secondary structure elements—a critical benchmark for assessing simulation quality and reliability.

Stage 1: Initial System Setup and Model Building

Structure Acquisition and Preparation

The MD workflow begins with obtaining a high-quality initial structure, which serves as the foundation for all subsequent simulations [34] [35].

- Experimental Structures: The Protein Data Bank (PDB) remains the primary source for experimentally determined structures of proteins and nucleic acids obtained through X-ray crystallography, cryo-EM, or NMR [34] [36]. These structures provide reliable starting points but often require preprocessing to add missing atoms, residues, or loops.

- Computational Predictions: For targets without experimental structures, computational methods like AlphaFold2 have revolutionized structure prediction [37] [35]. These predicted structures can be further refined using brief MD simulations to correct potential sidechain positioning errors and improve structural quality [37].

Force Field Selection and Topology Generation

The choice of force field fundamentally influences a simulation's ability to maintain native secondary structures. Force fields approximate the physics governing interatomic interactions using parameterized mathematical functions [32] [36].

Table 1: Common Force Fields for Biomolecular Simulations

| Force Field | Best Applications | Secondary Structure Performance | Key References |
|---|---|---|---|
| AMBER99SB | Proteins, nucleic acids | Excellent α-helix and β-sheet stability | [34] [36] |
| CHARMM36 | Membrane proteins, lipids | Balanced performance across diverse systems | [36] |
| GAFF | Small molecules, drug-like compounds | Compatible with AMBER for ligand parameterization | [34] |
| GROMOS | Fast sampling (united-atom representation) | Variable performance on specific secondary elements | [36] |

Topology generation must be handled separately for proteins and small molecules because of their different structural complexities [34]. Proteins are built from standardized amino acid building blocks, while small molecules require individual parameterization, for example with the GAFF force field via tools such as ACPYPE [34].

Solvation and Ionization

Biomolecules function in aqueous environments, making proper solvation critical for realistic simulations.

- Explicit Solvent Models: Water molecules (e.g., TIP3P, SPC/E) are explicitly included in the simulation box, providing the most accurate representation of solvation effects but increasing system size and computational cost [34] [38].

- Implicit Solvent Models: Water is represented as a continuous dielectric medium, reducing computational demands but potentially sacrificing accuracy in modeling specific water-mediated interactions [38].

- Ion Addition: Ions are added to neutralize system charge and mimic physiological ionic strength, which can influence secondary structure stability through electrostatic screening [34].
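The ion counts needed to neutralize the system and reach physiological ionic strength follow from N = c · V · N_A. The sketch below is an approximation under these assumptions:

- It uses the full box volume. Setup tools may instead base the count on the solvent volume or the number of water molecules, which gives somewhat fewer ions.
- It assumes a 1:1 salt such as NaCl.
- It treats neutralization separately from the salt pairs.

Function names and the box size are hypothetical.

```python
AVOGADRO = 6.02214076e23  # 1/mol
LITERS_PER_NM3 = 1e-24    # 1 nm^3 = 1e-24 L


def salt_pairs_for_concentration(volume_nm3, molar=0.15):
    """Approximate number of cation/anion pairs for a target 1:1 salt concentration."""
    return round(molar * volume_nm3 * LITERS_PER_NM3 * AVOGADRO)


def neutralizing_ions(net_charge):
    """(cations, anions) needed to cancel an integer net charge of the solute."""
    if net_charge < 0:
        return -net_charge, 0
    return 0, net_charge


# Hypothetical 10 nm cubic box around a protein with net charge -5
print(salt_pairs_for_concentration(10.0 ** 3))  # 90
print(neutralizing_ions(-5))                     # (5, 0)
```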

Stage 2: Simulation Protocol and Equilibration

Energy Minimization

Before dynamics begin, the system undergoes energy minimization to remove steric clashes and unfavorable contacts introduced during setup [34]. This step ensures the system starts from a stable, low-energy configuration.

System Equilibration

A carefully designed equilibration protocol gradually relaxes the system to target thermodynamic conditions.

Table 2: Typical Multi-Stage Equilibration Protocol

| Stage | Ensemble | Key Restraints | Duration | Purpose |
|---|---|---|---|---|
| Solvent Relaxation | NVT | Heavy protein atoms | 50-100 ps | Stabilize temperature |
| Pressure Equilibration | NPT | Protein backbone | 100-200 ps | Achieve correct density |
| Full System Relaxation | NPT | Sidechains only | 50-100 ps | Release remaining restraints |

The progressive removal of positional restraints (from heavy atoms to backbone only) allows the solvent to equilibrate around the biomolecule while preserving the initial secondary structure until the production phase [34].

Stage 3: Production Simulation and Enhanced Sampling

Basic MD Parameters

Production simulations typically use integration time steps of 1-2 femtoseconds, short enough to resolve the fastest atomic vibrations [32] [35]. A 2 fs step generally relies on constraining bonds to hydrogen atoms (e.g., with LINCS or SHAKE). Temperature and pressure are held near their target values using coupling algorithms (e.g., Berendsen, Nosé-Hoover) to mimic experimental conditions [36]. Simulation length depends on the biological process of interest, and modern GPU-enabled systems routinely reach microsecond timescales [32] [37].

Enhanced Sampling Methods

Standard MD simulations may struggle to sample rare events or overcome significant energy barriers. Enhanced sampling techniques improve conformational sampling efficiency:

- Replica Exchange MD: Multiple copies of the system run at different temperatures, with occasional exchanges that prevent trapping in local energy minima [37].

- Metadynamics: History-dependent bias potentials are added to encourage exploration of new conformational states along predefined collective variables [37].

- Accelerated MD: The potential energy surface is modified to lower energy barriers, increasing transition rates between states [37].
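The accelerated MD idea has a simple closed form in its original formulation by Hamelberg and co-workers. When the potential energy V falls below a threshold E, a boost ΔV = (E - V)² / (α + E - V) is added. Frames are later reweighted by exp(βΔV). The sketch below only evaluates these expressions, with energies assumed to be in kcal/mol. Choosing E and α, and deciding whether to boost the dihedral or the total energy, are system-specific decisions not covered here.

```python
import math


def amd_boost(v, e_threshold, alpha):
    """Accelerated-MD boost energy for potential energy v (same units as E and alpha)."""
    if v >= e_threshold:
        return 0.0  # no boost above the threshold
    gap = e_threshold - v
    return gap * gap / (alpha + gap)


def reweight_factor(boost, temperature_k, k_b=0.0019872041):
    """Boltzmann factor exp(beta * dV) used to reweight aMD frames (kcal/mol units)."""
    return math.exp(boost / (k_b * temperature_k))


print(amd_boost(-100.0, -90.0, 10.0))  # 5.0: modified potential is -95, still below E
```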

MD Workflow: Model Building to Production Simulation

Performance Comparison: Secondary Structure Stability

Evaluating how well simulations maintain known secondary structures provides critical validation of force field accuracy and simulation protocols.

Quantitative Stability Metrics

- Root Mean Square Deviation (RMSD): Measures overall structural drift from the starting configuration. Values below 2-3 Å typically indicate stable simulations [36].

- Secondary Structure Timeline: Tracks the persistence of α-helices, β-sheets, and turns throughout the simulation trajectory.

- Hydrogen Bond Analysis: Quantifies the maintenance of backbone hydrogen bonds that stabilize secondary structure elements.

- Radius of Gyration: Monitors compactness and potential unfolding events.

Table 3: Secondary Structure Reproduction Across Force Fields

| Force Field | α-Helix Stability | β-Sheet Stability | Turn Formation | Known Limitations |
|---|---|---|---|---|
| AMBER99SB | Excellent (≥95%) | Excellent (≥92%) | Accurate | Slight over-stabilization of helices |
| CHARMM36 | Very Good (≥90%) | Excellent (≥94%) | Accurate | Occasional π-helix formation |
| GROMOS | Good (≥85%) | Moderate (≥80%) | Variable | β-sheet destabilization in some motifs |
| OPLS | Very Good (≥92%) | Good (≥88%) | Slightly rigid | Reduced conformational flexibility |

Data based on community-wide assessments and published validation studies [36].

Advanced Applications and Current Trends

Drug Discovery Applications

MD simulations provide critical insights for structure-based drug design by capturing protein flexibility and binding pocket dynamics that static structures miss [32] [37]. Simulations can reveal cryptic binding sites, characterize allosteric mechanisms, and estimate binding free energies through advanced sampling techniques [37] [36].

Machine Learning Integration

Recent advances combine MD with machine learning approaches:

- Machine Learning Force Fields: Neural network potentials trained on quantum mechanical calculations offer improved accuracy while maintaining computational efficiency [37] [35].

- Dimensionality Reduction: Techniques like Principal Component Analysis (PCA) identify essential collective motions from high-dimensional trajectory data [35].

- Automated Analysis: Machine learning classifiers can automatically identify and characterize secondary structure elements throughout simulation trajectories [37].

Experimental Protocols and Methodologies

Standard Protocol for Secondary Structure Assessment

- System Setup: Obtain PDB structure 1G5O (Hsp90 N-terminal domain) and separate protein and ligand coordinates [34].

- Parameterization: Generate protein topology using AMBER99SB force field and TIP3P water model. Parameterize ligand using GAFF force field with AM1-BCC charges [34].

- Solvation: Solvate in rectangular water box with 1.2 nm padding. Add Na⁺/Cl⁻ ions to 0.15 M concentration and neutralize system charge [34].

- Equilibration: Energy minimize using steepest descent algorithm. Equilibrate with positional restraints progressively relaxed (NVT: 100 ps, NPT: 200 ps) [34].

- Production: Run 100-500 ns simulation with 2 fs time step at 300 K temperature and 1 bar pressure [34] [36].

- Analysis: Calculate RMSD, RMSF, secondary structure content (DSSP), and hydrogen bond persistence using GROMACS analysis tools [34].

Enhanced Sampling Protocol for Rare Events

- Collective Variable Selection: Identify relevant coordinates describing structural transitions (e.g., backbone dihedrals, salt bridge distances) [37].

- Bias Potential Setup: Configure well-tempered metadynamics with Gaussian hill height of 0.1 kJ/mol and width of 0.05 radians for dihedral CVs [37].

- Convergence Monitoring: Run until the free energy landscape stops evolving significantly (typically 200-500 ns) [37].

- Reweighting Analysis: Extract unbiased probability distributions using trajectory reweighting techniques [37].
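The well-tempered bias can be illustrated with a toy one-dimensional implementation that reuses the stated hill height (0.1 kJ/mol) and width (0.05 rad). In well-tempered metadynamics, each new Gaussian is scaled by exp(-V(s)/(k_B ΔT)), with ΔT = T(γ - 1) for bias factor γ. The temperature of 300 K and γ = 10 below are assumptions. Angular differences are wrapped because the CV is a dihedral. This is a conceptual sketch, not a substitute for PLUMED or an engine's built-in implementation.

```python
import math

K_B = 0.0083144626  # Boltzmann constant, kJ/(mol*K)


class WellTemperedBias1D:
    """Toy well-tempered metadynamics bias on one periodic (dihedral) CV."""

    def __init__(self, height=0.1, sigma=0.05, temperature=300.0, bias_factor=10.0):
        self.height = height                                  # initial hill height, kJ/mol
        self.sigma = sigma                                    # Gaussian width, radians
        self.delta_t = temperature * (bias_factor - 1.0)      # DeltaT = T * (gamma - 1)
        self.centers = []
        self.heights = []

    @staticmethod
    def _periodic_diff(a, b):
        # Shortest signed angular difference, wrapped into (-pi, pi]
        d = (a - b) % (2.0 * math.pi)
        return d - 2.0 * math.pi if d > math.pi else d

    def value(self, s):
        """Current bias potential at CV value s (sum of deposited Gaussians)."""
        return sum(
            h * math.exp(-self._periodic_diff(s, c) ** 2 / (2.0 * self.sigma ** 2))
            for c, h in zip(self.centers, self.heights)
        )

    def deposit(self, s):
        """Add a hill at s, scaled down by the bias already present there."""
        h = self.height * math.exp(-self.value(s) / (K_B * self.delta_t))
        self.centers.append(s)
        self.heights.append(h)
        return h


bias = WellTemperedBias1D()
print(bias.deposit(0.0), bias.deposit(0.0))  # second hill is slightly lower than 0.1
```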

Trajectory Analysis Methods and Outputs

The Scientist's Toolkit: Essential Research Reagents

Table 4: Key Software and Computational Tools for MD Simulations

| Tool Name | Primary Function | Application in Workflow | Key Features |
|---|---|---|---|
| GROMACS | MD simulation engine | Production simulation, analysis | High performance, extensive analysis tools [34] [36] |
| AMBER | MD simulation suite | Production simulation, free energy calculations | Advanced sampling, nucleic acid expertise [36] |
| CHARMM | MD simulation program | Membrane systems, complex assemblies | Comprehensive force fields, multiscale modeling [36] |
| ACPYPE | Ligand parameterization | Small molecule topology generation | Automated GAFF parameterization [34] |
| VMD | Trajectory visualization | Analysis and rendering | Extensive plugin ecosystem, publication-quality images |
| MDAnalysis | Trajectory analysis | Python-based analysis toolkit | Programmatic analysis, custom metrics development |

A robust MD workflow from initial model building to production simulation requires careful attention at each stage to ensure reliable results, particularly for maintaining biologically relevant secondary structures. While modern force fields have significantly improved secondary structure reproduction, researchers must still select appropriate simulation parameters and validation metrics for their specific systems. The integration of enhanced sampling methods and machine learning approaches continues to extend the capabilities of MD simulations, providing increasingly accurate insights into biomolecular dynamics for drug discovery and basic research. As hardware and algorithms continue to advance, the timescales and complexity of addressable biological questions will further expand, solidifying MD's role as a fundamental tool in structural biology.

Molecular dynamics (MD) simulations serve as a cornerstone technique in computational biology and drug development, providing atomistic insights into protein structure, dynamics, and interactions. The predictive accuracy of these simulations hinges critically on the choice of the molecular force field—the empirical mathematical function describing potential energy within the system—and the accompanying water model. Force fields balance computational efficiency with physical accuracy by modeling bonded interactions (bond stretching, angle bending, torsional rotations) and non-bonded interactions (van der Waals forces and electrostatic interactions) [39]. Within the context of a broader thesis on evaluating secondary structure reproduction in MD simulations research, this guide objectively compares prominent force fields and water models, highlighting their performance in simulating both structured protein domains and intrinsically disordered regions (IDRs).
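The bonded and non-bonded terms listed above are usually combined into an additive (Class I) energy function. A generic AMBER-style form is shown below as an illustration. The exact terms differ by family: CHARMM, for example, adds Urey-Bradley, improper-dihedral, and CMAP terms, and GROMOS uses different bond and angle functional forms. In addition, 1-4 non-bonded interactions are typically scaled.

```latex
U(\mathbf{r}) = \sum_{\text{bonds}} k_b (b - b_0)^2
  + \sum_{\text{angles}} k_\theta (\theta - \theta_0)^2
  + \sum_{\text{dihedrals}} \frac{V_n}{2}\left[1 + \cos(n\phi - \gamma)\right]
  + \sum_{i<j} \left\{ 4\varepsilon_{ij}\left[\left(\frac{\sigma_{ij}}{r_{ij}}\right)^{12}
  - \left(\frac{\sigma_{ij}}{r_{ij}}\right)^{6}\right]
  + \frac{q_i q_j}{4\pi\varepsilon_0 r_{ij}} \right\}
```

The first three sums are the bonded terms (bond stretching, angle bending, torsions). The last sum holds the non-bonded van der Waals (Lennard-Jones) and electrostatic (Coulomb) interactions, whose balance against water interactions drives many of the differences discussed below.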

The challenge in modern force field development lies in achieving a balanced parameterization that simultaneously stabilizes folded proteins while accurately capturing the dynamic conformational ensembles of IDPs, which lack stable tertiary structures but play crucial roles in cellular signaling and neurodegenerative diseases [40] [41]. This balance is profoundly influenced by protein-water interactions, making water model selection an integral component of the simulation setup [42]. This guide synthesizes recent benchmarking studies to compare the performance of widely used force fields and water models, providing researchers with actionable insights for selecting optimal parameters for their specific systems.

Performance Comparison of Major Force Field Families

Modern biomolecular force fields fall into several major families, each with distinct parameterization philosophies and target applications. The AMBER, CHARMM, OPLS, and GROMOS families represent the most widely used parameter sets in biomolecular simulations [42]. Conventional force fields like AMBER ff14SB and CHARMM36 were primarily optimized for folded proteins and may produce overly compact conformations for IDPs [41]. In response, refined variants have been developed to achieve a more balanced description of both structured and disordered regions.

Table 1: Major Force Field Families and Their Characteristics

| Force Field Family | Representative Force Fields | Common Water Models | Primary Strengths | Known Limitations |
|---|---|---|---|---|
| AMBER | ff99SB, ff14SB, ff19SB, ff99SB-disp, ff03ws | TIP3P, TIP4P-D, OPC, TIP4P/2005 | Excellent for folded proteins; good transferability with newer variants [41] [42] | Older versions (ff99SB, ff14SB) overly compact IDPs; some variants (ff03ws) may destabilize folded domains [41] [42] |
| CHARMM | CHARMM22*, CHARMM36, CHARMM36m | TIP3P, modified TIP3P | Balanced protein-protein interactions; CHARMM36m improved for IDPs [43] [41] | Standard CHARMM36 may over-stabilize salt bridges and compact IDPs [40] |
| GROMOS | 53A6, 54A7, 54A8 | SPC | Thermodynamic parameterization against condensed-phase properties [44] | Can over-stabilize α-helices; system-specific limitations such as poor NaCl salt activity [44] |
| OPLS | OPLS-AA, OPLS-AA/CM1A | TIP3P, TIP4P-D | Good for small molecules and electrolytes [45] | Can overestimate density and viscosity for some systems (e.g., liquid membranes) [45] |

Quantitative Performance Benchmarking

Independent studies have benchmarked these force fields against experimental data such as the radius of gyration (Rg) derived from Small-Angle X-Ray Scattering (SAXS) and NMR spectroscopy observables (chemical shifts, residual dipolar couplings, and relaxation parameters). Performance varies significantly across protein types.
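Comparing a simulation with a SAXS-derived Rg first requires computing Rg from the trajectory. The sketch below is a minimal version, assuming each frame is already available as a list of (x, y, z) tuples (for example, after loading with an analysis library). The function names are hypothetical.

It reports the root-mean-square Rg over frames, sqrt(⟨Rg²⟩), rather than the mean of Rg. The reason is that the Guinier Rg of a heterogeneous ensemble reflects the average of Rg², assuming all conformers scatter with equal forward intensity. The sketch also ignores the hydration shell, which contributes to the experimental value. Profile calculators such as CRYSOL or FoXS account for it more rigorously.

```python
import math


def radius_of_gyration(coords, masses=None):
    """Mass-weighted radius of gyration of one frame.

    coords: sequence of (x, y, z) tuples; masses: optional weights
    (equal weights are used when omitted).
    """
    if masses is None:
        masses = [1.0] * len(coords)
    total = sum(masses)
    # Mass-weighted centre of mass
    com = [sum(m * c[k] for m, c in zip(masses, coords)) / total for k in range(3)]
    # Mass-weighted mean squared distance from the centre of mass
    sq = sum(m * sum((c[k] - com[k]) ** 2 for k in range(3))
             for m, c in zip(masses, coords))
    return math.sqrt(sq / total)


def ensemble_rg(trajectory, masses=None):
    """Root-mean-square Rg over frames, sqrt(<Rg^2>)."""
    sq = [radius_of_gyration(frame, masses) ** 2 for frame in trajectory]
    return math.sqrt(sum(sq) / len(sq))


# Toy two-frame "trajectory" (hypothetical coordinates, in Angstrom)
frames = [
    [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0)],
    [(0.0, 0.0, 0.0), (4.0, 0.0, 0.0)],
]
print(ensemble_rg(frames))
```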

Table 2: Force Field Performance Against Experimental Observables

| Force Field | Water Model | Structured Proteins (e.g., Ubiquitin) | Intrinsically Disordered Proteins/Regions | Key Supporting Evidence |
|---|---|---|---|---|
| CHARMM36m | Modified TIP3P | Stable | Rg and NMR relaxation in good agreement with experiment for several IDPs [43] [41] | Maintains fold of Ubiquitin; accurately describes conformational dynamics of FUS and other IDPs [41] |
| ff99SB-disp | Modified TIP4P-D | Stable | Excellent agreement with SAXS/NMR data for IDP chain dimensions [41] [42] | Reproduces experimental Rg for a range of disordered peptides and folded proteins [42] |
| ff19SB | OPC | Stable | Good agreement for IDP ensembles, improved over TIP3P [41] | Combination with OPC water model provides a balanced description [41] |
| ff99SB-ILDN | TIP4P-D | Slightly destabilized (~2 kcal/mol for Ubiquitin) [41] | Expanded conformations, good agreement with NMR/SAXS [41] | TIP4P-D water model crucial for achieving correct IDP ensemble [41] |
| ff03ws | TIP4P2005 | Significant instability observed [42] | Accurate for many IDPs, but overexpands some (e.g., RS peptide) [42] | Strengthened protein-water interactions can compromise folded domain stability [42] |
| DES-Amber | Modified TIP4P-D | Stable | Good for both structured and disordered regions [41] | Derived from ff99SB-disp, optimized for protein-protein association [41] |
| GROMOS 54A8 | SPC | Maintains protein secondary structure [44] | Not specifically parameterized for IDPs | Reproduces experimental NMR data for folded proteins; over-stabilizes α-helices [44] |
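Table 2 summarizes agreement qualitatively. To rank force field and water model combinations on the same footing, one common approach is a reduced χ² between back-calculated and experimental observables, normalized by their uncertainties.

The sketch below is illustrative only: the setup labels and numbers are hypothetical. Ideally the σ values should combine experimental error with the uncertainty of the forward model used to back-calculate each observable (for example, a chemical shift predictor). The sketch divides by the number of observables, assuming no parameters were fitted to the data.

```python
def reduced_chi2(simulated, experimental, errors):
    """Mean of squared, error-normalized deviations (no fitted parameters assumed)."""
    if not (len(simulated) == len(experimental) == len(errors)) or not simulated:
        raise ValueError("inputs must be non-empty and of equal length")
    terms = [((s - e) / sigma) ** 2
             for s, e, sigma in zip(simulated, experimental, errors)]
    return sum(terms) / len(terms)


def rank_combinations(results):
    """results maps a label to (simulated, experimental, errors); best first."""
    return sorted(results, key=lambda label: reduced_chi2(*results[label]))


# Hypothetical Rg values (nm) for three peptides under two setups
experimental = [1.50, 2.10, 2.80]
errors = [0.05, 0.08, 0.10]
results = {
    "setup_A": ([1.48, 2.05, 2.90], experimental, errors),
    "setup_B": ([1.30, 1.80, 2.30], experimental, errors),
}
print(rank_combinations(results))
```

The same function can be applied per observable type (Rg, chemical shifts, RDCs) so that a setup that does well on one observable but poorly on another is not hidden by a single pooled score.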

The Critical Role of Water Models

Water models are a foundational component of MD simulations, directly influencing the balance between protein-solvent and protein-protein interactions. Over 40 classical water potential models exist, typically classified by the number of interaction sites (three or four) and whether they are rigid or flexible [46].

Three-site models like TIP3P and SPC are computationally efficient and have historically been paired with many force fields. However, they tend to produce overly compact IDP ensembles and exhibit weak temperature-dependent cooperativity for protein folding [42]. Four-site models like TIP4P2005, OPC, and TIP4P-D offer a more accurate description of water's electrostatic distribution and better agreement with experimental diffraction data [46]. The TIP4P-D model, for instance, incorporates increased dispersion forces to enhance protein-water interactions, which is crucial for correctly modeling disordered proteins [41].
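The extra site in four-site models is a massless virtual site, M. It carries the negative charge and sits on the H-O-H bisector a short distance from the oxygen, which itself keeps only the Lennard-Jones interaction.

The sketch below places M for a given water geometry. The geometric values are the commonly quoted ones for these models:

- O-H bond length: 0.9572 Å.
- H-O-H angle: 104.52°.
- O-M distance: 0.15 Å for TIP4P and 0.1546 Å for TIP4P/2005.

Check these against the water topology files shipped with your MD package. TIP4P-D and OPC use their own parameters. The function names are hypothetical.

```python
import math


def water_geometry(r_oh=0.9572, hoh_deg=104.52):
    """Rigid water with O at the origin and the H-O-H bisector along +y (Angstrom)."""
    half = math.radians(hoh_deg) / 2.0
    o = (0.0, 0.0, 0.0)
    h1 = (r_oh * math.sin(half), r_oh * math.cos(half), 0.0)
    h2 = (-r_oh * math.sin(half), r_oh * math.cos(half), 0.0)
    return o, h1, h2


def m_site(o, h1, h2, d_om):
    """Place the massless charge site M on the H-O-H bisector, d_om from O."""
    # The sum of the two unit O->H vectors points along the bisector
    u1 = [(h1[k] - o[k]) / math.dist(h1, o) for k in range(3)]
    u2 = [(h2[k] - o[k]) / math.dist(h2, o) for k in range(3)]
    bis = [u1[k] + u2[k] for k in range(3)]
    norm = math.sqrt(sum(b * b for b in bis))
    return tuple(o[k] + d_om * bis[k] / norm for k in range(3))


o, h1, h2 = water_geometry()
print("TIP4P      M:", m_site(o, h1, h2, 0.15))
print("TIP4P/2005 M:", m_site(o, h1, h2, 0.1546))
```

Moving the negative charge off the oxygen in this way changes the molecular dipole and quadrupole relative to three-site models with the same geometry. This is one reason four-site models can describe water's electrostatics more faithfully.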

A comprehensive evaluation of 44 water models against neutron and X-ray diffraction data across a wide temperature range concluded that recent three-site models (OPC3, OPTI-3T) show considerable progress, but the best overall agreement was achieved with four-site TIP4P-type models [46]. Water-model choice is also not independent of the force field: force fields are often developed and validated with specific water models, and changing the default pairing requires careful validation.

Experimental Protocols for Validation

Benchmarking Workflow

To objectively evaluate force field and water model performance, researchers employ a standard workflow that compares simulation-derived metrics with experimental data. The following diagram illustrates this iterative benchmarking process.

Key Experimental Observables and Methodologies

The following experimental techniques are critical for validating the structural ensembles generated by MD simulations:

Small-Angle X-Ray Scattering (SAXS): This technique provides low-resolution structural information about proteins in solution, with the radius of gyration (Rg) serving as a key benchmark for global chain dimensions. For IDPs, the agreement between simulated and experimental Rg is a primary metric for force field accuracy [41] [42]. SAXS data collection involves exposing a purified protein sample to an X-ray beam and measuring the scattered intensity at low angles. The Rg is subsequently determined from the Guinier analysis of the scattering profile [43].
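Guinier analysis rests on the low-q approximation ln I(q) ≈ ln I(0) − (Rg²/3)q². Rg therefore follows from the slope of a straight-line fit of ln I against q².

The sketch below fits that line iteratively, keeping only points with q·Rg below a cutoff. The default cutoff of 1.3 is the usual rule of thumb for compact particles. For extended chains such as IDPs a narrower range is often advisable, so treat `qrg_max` as a parameter to tune rather than a fixed constant. The function names and the synthetic profile are hypothetical.

```python
import math


def linear_fit(x, y):
    """Ordinary least-squares slope and intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


def guinier_rg(q, intensity, qrg_max=1.3, rg_guess=None, iterations=5):
    """Estimate (Rg, I0) from the Guinier region of a SAXS profile.

    Points with q * Rg > qrg_max are excluded, using the current Rg estimate.
    With no rg_guess the first pass uses every point, so supply a guess for
    real data that deviates from Guinier behaviour at higher q.
    """
    rg = rg_guess
    intercept = None
    for _ in range(iterations):
        pts = [(qi * qi, math.log(ii)) for qi, ii in zip(q, intensity)
               if ii > 0 and (rg is None or qi * rg <= qrg_max)]
        if len(pts) < 3:
            raise ValueError("too few points in the Guinier region")
        slope, intercept = linear_fit([p[0] for p in pts], [p[1] for p in pts])
        if slope >= 0:
            raise ValueError("non-negative slope: check for aggregation or q-range")
        rg = math.sqrt(-3.0 * slope)
    return rg, math.exp(intercept)


# Synthetic, noise-free profile for a particle with Rg = 20 A and I(0) = 100
q = [0.005 * i for i in range(1, 41)]  # 1/Angstrom
intensity = [100.0 * math.exp(-(qi * 20.0) ** 2 / 3.0) for qi in q]
print(guinier_rg(q, intensity))
```

Upward curvature at the lowest q values usually signals aggregation or interparticle effects. Those points should be inspected rather than silently included in the fit.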

Nuclear Magnetic Resonance (NMR) Spectroscopy: NMR offers atomic-resolution data for proteins in solution. Several NMR observables are used for force field validation:

- Chemical Shifts: Sensitive to local backbone and sidechain environment, reporting on secondary structure propensity [43] [40].

- Residual Dipolar Couplings (RDCs): Provide long-range structural restraints on bond vector orientations relative to a global reference frame [43].

- Paramagnetic Relaxation Enhancement (PRE): Offers long-range distance restraints crucial for characterizing transient structures in IDPs [43].

- Spin Relaxation Rates (R1, R2) and Heteronuclear Overhauser Effect (NOE): Probe picosecond-to-nanosecond timescale dynamics, which are highly sensitive to force field choice, especially the water model [43].
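For secondary structure specifically, chemical shifts are often analyzed as secondary shifts: the deviation of observed Cα and Cβ shifts from random-coil reference values. The combination ΔδCα − ΔδCβ tends to be positive in helices and negative in β-strands.

Computing this quantity from both experimental shifts and shifts back-calculated from MD frames gives a residue-level check of whether the simulation reproduces the experimental secondary structure propensity. Back-calculation can use a predictor such as SPARTA+ or SHIFTX2.

In the sketch, the following are hypothetical placeholders:

- the function names;
- the random-coil values;
- the shift data;
- the ±1 ppm labelling threshold.

Use a published random-coil table appropriate to your sequence and conditions.

```python
def secondary_shifts(obs_ca, obs_cb, rc_ca, rc_cb):
    """Per-residue (delta_CA - delta_CB) in ppm, relative to random-coil values."""
    return [(oa - ra) - (ob - rb)
            for oa, ob, ra, rb in zip(obs_ca, obs_cb, rc_ca, rc_cb)]


def smooth(values, window=3):
    """Centred moving average; the window shrinks at the chain termini."""
    half = window // 2
    out = []
    for i in range(len(values)):
        seg = values[max(0, i - half): i + half + 1]
        out.append(sum(seg) / len(seg))
    return out


def propensity_labels(values, threshold=1.0):
    """'H' helix-like, 'E' strand-like, '-' coil; the threshold is illustrative."""
    return ["H" if v > threshold else "E" if v < -threshold else "-" for v in values]


# Hypothetical shifts (ppm) for a 5-residue segment: experiment vs. MD average
rc_ca = [52.5, 52.5, 52.5, 52.5, 52.5]
rc_cb = [19.1, 19.1, 19.1, 19.1, 19.1]
exp_ca = [55.0, 55.2, 54.9, 52.6, 51.0]
exp_cb = [18.2, 18.1, 18.3, 19.0, 21.0]
md_ca = [54.1, 54.0, 53.5, 52.4, 51.3]
md_cb = [18.6, 18.7, 18.8, 19.2, 20.6]

exp_ss = smooth(secondary_shifts(exp_ca, exp_cb, rc_ca, rc_cb))
md_ss = smooth(secondary_shifts(md_ca, md_cb, rc_ca, rc_cb))
print(propensity_labels(exp_ss))
print(propensity_labels(md_ss))
```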

Calculation of Thermodynamic and Transport Properties: For non-protein systems like liquid membranes, validation involves comparing simulated properties like density and shear viscosity against experimental measurements over a range of temperatures. This involves simulating systems with thousands of molecules (e.g., 3375 DIPE molecules) and using equilibrium and non-equilibrium MD methods to compute these bulk properties [45].
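For the equilibrium route to shear viscosity, a standard choice is the Green-Kubo relation, η = V/(k_B T) ∫₀^∞ ⟨P_xy(0) P_xy(t)⟩ dt. It integrates the autocorrelation of an off-diagonal pressure-tensor component. This is one common estimator, not necessarily the one used in [45].

The sketch below implements that integral with the trapezoid rule and computes mass density from the molecule count and box volume. It assumes SI units and a P_xy time series already extracted from the MD engine. The function names and box size are hypothetical.

In practice, the three off-diagonal components are averaged, several independent runs are combined, and the running integral is inspected for a plateau. The sketch omits all of these steps.

```python
K_B = 1.380649e-23   # Boltzmann constant, J/K
N_A = 6.02214076e23  # Avogadro constant, 1/mol


def autocorrelation(x, max_lag):
    """Time autocorrelation <x(0) x(t)>; assumes <x> = 0, as for P_xy at equilibrium."""
    n = len(x)
    return [sum(x[i] * x[i + lag] for i in range(n - lag)) / (n - lag)
            for lag in range(max_lag + 1)]


def green_kubo_viscosity(pxy, dt, volume, temperature, max_lag):
    """Shear viscosity in Pa s: pxy in Pa, dt in s, volume in m^3, temperature in K."""
    acf = autocorrelation(pxy, max_lag)
    integral = dt * (sum(acf) - 0.5 * (acf[0] + acf[-1]))  # trapezoid rule
    return volume / (K_B * temperature) * integral


def mass_density(n_molecules, molar_mass_g_per_mol, volume_m3):
    """Mass density in kg/m^3."""
    return n_molecules * molar_mass_g_per_mol * 1e-3 / N_A / volume_m3


# 3375 DIPE molecules (molar mass about 102.17 g/mol) in a hypothetical cubic box
box_edge = 9.25e-9  # m
print(mass_density(3375, 102.17, box_edge ** 3))
```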

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential computational tools and their functions, as featured in the cited benchmarking studies.

Table 3: Essential Research Reagents and Computational Tools

| Item/Reagent | Function in Research | Example Use in Context |
|---|---|---|
| AMBER, CHARMM, GROMOS, OPLS-AA Force Fields | Provide parameter sets (atom charges, bond lengths, torsion angles) to calculate potential energy in the system. | Simulating protein folding, IDP conformational dynamics, and protein-ligand interactions [45] [41] [42]. |
| TIP3P, TIP4P-D, OPC, SPC Water Models | Explicitly model water as a solvent to accurately represent solvation effects and hydrophobic interactions. | TIP4P-D used with ff99SB-disp to achieve correct IDP dimensions; SPC is the default for GROMOS [46] [44] [41]. |
| Specialized MD Hardware (Anton 2) | Supercomputer designed for extremely long-timescale MD simulations (microseconds to milliseconds). | Enabled 5-10 microsecond benchmarks of full-length FUS protein, revealing force-field dependent behaviors [41]. |
| Molecular Dynamics Software (NAMD, GROMACS, AMBER) | Software suites that perform the numerical integration of Newton's equations of motion for all atoms in the system. | GROMACS used for liquid membrane studies with various force fields; NAMD implementation validated for large condensate systems [45] [41]. |
| Automated Topology Builder (ATB) | Web server for generating force field parameters and molecular topologies for ligands or small molecules. | Parameterizing a ligand for simulation with the GROMOS 54A7 force field in a protein-ligand complex [47]. |

The selection of force fields and water models is a critical decision that directly dictates the structural accuracy of MD simulations. No single force field is universally superior, but the landscape has evolved significantly with the development of balanced force fields such as CHARMM36m, ff99SB-disp, and ff19SB. These perform reliably for both structured and disordered proteins when paired with their recommended water models (modified TIP3P, modified TIP4P-D, and OPC, respectively). Researchers must align their force field selection with the specific characteristics of their system (a folded domain, an IDP, or a hybrid protein). They must also rigorously validate simulation outcomes against available experimental data such as SAXS-derived Rg and NMR observables. This practice ensures that the insights gained from simulations are both biophysically meaningful and capable of guiding downstream drug development efforts.

Molecular Dynamics (MD) simulation has emerged as a cornerstone technique for studying biomolecular systems at atomic resolution, with applications ranging from small peptide dynamics to the behavior of massive complexes like the ribosome and virus capsids [48]. Despite its transformative impact, a fundamental limitation restricts its broader application: the sampling problem [48]. Biomolecular systems are characterized by rough energy landscapes with numerous local minima separated by high energy barriers, causing simulations to frequently become trapped in non-functional conformational states that fail to represent the full spectrum of biologically relevant dynamics [48]. This trapping prevents adequate sampling of all relevant conformational substates, particularly those connected to biological function such as large-scale conformational changes required for catalysis or transport through membranes [48].

Enhanced sampling algorithms have been developed precisely to address this limitation by facilitating more efficient exploration of configuration space. Among these techniques, Replica-Exchange Molecular Dynamics (REMD) has distinguished itself as one of the most popular and widely adopted approaches [49]. This guide provides a comprehensive comparison of REMD against other prominent enhanced sampling methods, evaluating their performance, applicability, and implementation for biomolecular simulations, with particular emphasis on their efficacy in reproducing accurate secondary and tertiary structural ensembles.

Replica-Exchange Molecular Dynamics (REMD)

REMD, also known as parallel tempering, employs multiple parallel simulations (replicas) of the same system running simultaneously under different thermodynamic conditions—most commonly at different temperatures [49] [50]. These replicas evolve independently for a number of steps before attempting to exchange configurations according to a Metropolis criterion based on potential energies and temperatures of adjacent replicas [48] [50]. This process creates a random walk in temperature space, allowing conformations to escape local energy minima when simulated at higher temperatures and subsequently refine at lower temperatures [49].
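The exchange step can be made concrete. Consider neighbouring replicas at temperatures T_i and T_j holding configurations with potential energies U_i and U_j. The Metropolis criterion accepts a swap with probability min{1, exp[(β_i − β_j)(U_i − U_j)]}, where β = 1/(k_B T).

The sketch below implements that criterion and one sweep of neighbour exchanges. Many implementations alternate between even and odd pairs on successive sweeps, which the `offset` argument mimics. This is a standalone illustration with hypothetical function names, not any MD package's code. Energies are assumed to be in kJ/mol.

```python
import math
import random

KB = 0.0083144626  # Boltzmann constant, kJ mol^-1 K^-1


def exchange_probability(u_i, u_j, t_i, t_j):
    """Metropolis acceptance for swapping configurations between T_i and T_j."""
    beta_i, beta_j = 1.0 / (KB * t_i), 1.0 / (KB * t_j)
    delta = (beta_i - beta_j) * (u_i - u_j)
    return 1.0 if delta >= 0 else math.exp(delta)


def exchange_sweep(energies, temps, rng, offset=0):
    """Attempt swaps on neighbour pairs (offset 0: pairs 0-1, 2-3, ...; 1: 1-2, 3-4, ...).

    energies[k] is the potential energy of the configuration at temps[k].
    Returns perm, where perm[k] is the index of the configuration now at temps[k].
    """
    perm = list(range(len(temps)))
    for k in range(offset, len(temps) - 1, 2):
        p = exchange_probability(energies[k], energies[k + 1], temps[k], temps[k + 1])
        if rng.random() < p:
            perm[k], perm[k + 1] = perm[k + 1], perm[k]
    return perm


rng = random.Random(42)
temps = [300.0, 310.0, 320.0, 330.0]
energies = [-5000.0, -4990.0, -5010.0, -4950.0]  # hypothetical, kJ/mol
print(exchange_sweep(energies, temps, rng, offset=0))
```

A swap is always accepted when the colder replica holds the higher-energy configuration. This is what lets trapped, high-energy conformations climb to temperatures where barriers are easier to cross.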

Key Variants: Several specialized REMD implementations have been developed to address specific sampling challenges:

- Temperature REMD (T-REMD): The original formulation using temperature as the exchange coordinate [48].

- Hamiltonian REMD (H-REMD): Utilizes different Hamiltonians or force fields across replicas, often through scaling of interaction terms [49].

- Multiplexed REMD (M-REMD): Employs multiple replicas per temperature level, potentially improving sampling quality at the cost of greater computational resources [48].

- Reservoir REMD (R-REMD): Couples the highest-temperature replica to a pre-generated reservoir of structures, aiming to improve convergence relative to traditional T-REMD [48].
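For Hamiltonian REMD at a common temperature, the acceptance test compares the energies of both configurations under both Hamiltonians. The energy difference is Δ = β[(U_i(x_j) + U_j(x_i)) − (U_i(x_i) + U_j(x_j))], and the swap is accepted with probability min{1, exp(−Δ)}. Each configuration must therefore be re-evaluated with the neighbouring replica's potential.

In the sketch, the function names, the one-dimensional double-well potential and the uniform scaling factor λ are hypothetical. They stand in for the partial interaction scaling (for example, of solute terms only) used in practice.

```python
import math


def hrex_probability(u_i, u_j, x_i, x_j, beta):
    """Acceptance probability for swapping x_i and x_j between Hamiltonians u_i and u_j."""
    delta = beta * ((u_i(x_j) + u_j(x_i)) - (u_i(x_i) + u_j(x_j)))
    return 1.0 if delta <= 0 else math.exp(-delta)


def scaled(base, lam):
    """Toy Hamiltonian: the base potential scaled by lam (lam < 1 lowers barriers)."""
    return lambda x: lam * base(x)


def double_well(x):
    """Hypothetical 1D potential with minima at x = +/-1 and a barrier of 1 at x = 0."""
    return (x * x - 1.0) ** 2


u_full, u_soft = scaled(double_well, 1.0), scaled(double_well, 0.5)
# Configuration at the barrier top in the full replica, at a minimum in the softened one
print(hrex_probability(u_full, u_soft, x_i=0.0, x_j=1.0, beta=1.0))
```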

Competing Enhanced Sampling Methods

Metadynamics discourages the system from revisiting previously sampled states by adding repulsive bias potentials along a small set of preselected collective variables, effectively "filling free energy wells with computational sand" to drive exploration of new regions [48]. The method depends critically on the choice of collective variables but has proven effective for studying protein folding, molecular docking, and conformational changes [48].
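The hill-deposition mechanism can be illustrated on a one-dimensional toy system. The sketch below runs overdamped Langevin dynamics on a double-well potential U(x) = 5(x² − 1)² and uses x itself as the collective variable. Every `stride` steps it adds a Gaussian hill of fixed height at the current position, so the accumulated bias V(x) = Σ w·exp[−(x − x_k)²/(2σ²)] gradually fills the starting well.

Everything here is a hypothetical choice for illustration: the potential, the hill height and width, the deposition stride, the reduced units with friction and k_BT set to 1, and the function names. This is standard metadynamics with constant hill height, not the well-tempered variant often preferred in production. It is not a substitute for a dedicated library such as PLUMED.

```python
import math
import random


def double_well_force(x):
    """-dU/dx for the hypothetical potential U(x) = 5 (x^2 - 1)^2."""
    return -20.0 * x * (x * x - 1.0)


def bias(x, hills, width):
    """Metadynamics bias: sum of Gaussian hills (centre, height) of a common width."""
    return sum(h * math.exp(-(x - c) ** 2 / (2.0 * width ** 2)) for c, h in hills)


def bias_force(x, hills, width):
    """-dV/dx of the Gaussian bias."""
    return sum(h * (x - c) / width ** 2 * math.exp(-(x - c) ** 2 / (2.0 * width ** 2))
               for c, h in hills)


def metadynamics(steps=10000, dt=1e-3, kT=1.0, height=0.5, width=0.2,
                 stride=50, seed=0):
    """Overdamped Langevin dynamics (friction = 1) with hills deposited every `stride` steps."""
    rng = random.Random(seed)
    x, hills, traj = -1.0, [], []  # start in the left well
    for step in range(steps):
        force = double_well_force(x) + bias_force(x, hills, width)
        x += force * dt + math.sqrt(2.0 * kT * dt) * rng.gauss(0.0, 1.0)
        if step % stride == 0:
            hills.append((x, height))  # deposit a hill at the current CV value
        traj.append(x)
    return hills, traj


hills, traj = metadynamics()
# Once the wells are filled, -V(x) fluctuates around the free energy profile (up to a constant)
profile = [(x, round(-bias(x, hills, 0.2), 2)) for x in (-1.0, 0.0, 1.0)]
print(len(hills), round(min(traj), 2), round(max(traj), 2), profile)
```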

Simulated Annealing draws inspiration from metallurgical annealing processes, employing a gradually decreasing artificial temperature parameter to guide the system toward low-energy configurations [48]. Variants include classical simulated annealing (CSA) and fast simulated annealing (FSA), with generalized simulated annealing (GSA) extending applicability to large macromolecular complexes at relatively low computational cost [48].
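A minimal simulated annealing loop combines Metropolis acceptance with a decreasing artificial temperature. The sketch below uses a geometric cooling schedule, T_k = T_0·α^k, on a hypothetical rugged one-dimensional energy function. Geometric cooling is a common practical schedule, not one of the specific schedules that define CSA, FSA, or GSA. The function names and parameter values are assumptions.

Applied to a molecule, x would be the full set of coordinates and the energy would come from the force field.

```python
import math
import random


def rugged_energy(x):
    """Hypothetical rugged 1D landscape: a broad bowl with superimposed ripples."""
    return 0.1 * x * x + math.sin(5.0 * x) + 1.0


def simulated_annealing(energy, x0, t0=5.0, alpha=0.995, steps=5000,
                        step_size=0.5, seed=0):
    """Metropolis moves under a geometric cooling schedule T_k = t0 * alpha**k."""
    rng = random.Random(seed)
    x, e = x0, energy(x0)
    best_x, best_e = x, e
    temps = []
    t = t0
    for _ in range(steps):
        trial = x + rng.uniform(-step_size, step_size)
        e_trial = energy(trial)
        # Always accept downhill moves; accept uphill moves with Boltzmann probability
        if e_trial <= e or rng.random() < math.exp(-(e_trial - e) / t):
            x, e = trial, e_trial
            if e < best_e:
                best_x, best_e = x, e
        temps.append(t)
        t *= alpha  # alpha closer to 1 cools more slowly and lowers the trapping risk
    return best_x, best_e, temps


best_x, best_e, temps = simulated_annealing(rugged_energy, x0=8.0)
print(round(best_x, 3), round(best_e, 3))
```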

Ultra-Coarse-Grained (UCG) Models represent a different strategic approach by dramatically simplifying molecular representations to access longer timescales. These models combine essential dynamics coarse graining with heterogeneous elastic network modeling, sometimes incorporating anharmonic modifications to capture global conformational changes [51].
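Elastic network models replace the atomic force field with springs between nearby residues. The sketch below builds the Kirchhoff (connectivity) matrix of the simplest homogeneous variant, the Gaussian network model, from Cα coordinates with a single distance cutoff.

Heterogeneous elastic networks instead fit individual spring constants, for example to fluctuations from an atomistic MD run, which this sketch does not do. The 7 Å cutoff is a commonly used value but remains an assumption, and the function name is hypothetical. Relative residue fluctuations are proportional to the diagonal of the pseudo-inverse of this matrix, which could be obtained with `numpy.linalg.pinv`.

```python
import math


def kirchhoff(coords, cutoff=7.0):
    """Gaussian network model Kirchhoff matrix from C-alpha coordinates (Angstrom).

    Off-diagonal: -1 for residue pairs within the cutoff, 0 otherwise.
    Diagonal: number of contacts of each residue, so every row sums to zero.
    """
    n = len(coords)
    gamma = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            if math.dist(coords[i], coords[j]) <= cutoff:
                gamma[i][j] = gamma[j][i] = -1.0
    for i in range(n):
        gamma[i][i] = -sum(gamma[i][j] for j in range(n) if j != i)
    return gamma


# Hypothetical straight chain with 3.8 A spacing between consecutive C-alpha atoms
chain = [(3.8 * i, 0.0, 0.0) for i in range(5)]
for row in kirchhoff(chain):
    print(row)
```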

Comparative Performance Analysis

Table 1: Comparison of Key Enhanced Sampling Methods

| Method | Computational Cost | System Size Suitability | Primary Strengths | Limitations |
|---|---|---|---|---|
| REMD | High (scales with replica count) | Small to medium proteins | Excellent for global conformational sampling; no need for predefined collective variables | Temperature range selection critical; high communication overhead in parallel implementation |
| Metadynamics | Medium | All sizes (depends on CV choice) | Direct free energy estimation; efficient for transitions along known coordinates | Quality depends entirely on appropriate collective variable selection |
| Simulated Annealing | Low to Medium | Small proteins to large complexes (with GSA) | Well-suited for very flexible systems; lower computational demand | Risk of trapping in local minima if cooling is too rapid |
| UCG Models | Low | Large complexes and systems | Access to microsecond-millisecond timescales; study of domain rearrangements | Loss of atomic detail; parameterization challenges |
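Temperature range selection is critical for REMD. A widely used starting point is a geometric ladder, T_k = T_min·(T_max/T_min)^(k/(N−1)), which gives roughly uniform neighbour acceptance when the heat capacity is approximately constant over the range.

System size matters too. Potential energy fluctuations grow only as the square root of system size, while the energy gap between neighbouring temperatures grows linearly. Explicitly solvated systems therefore need many more replicas to span the same temperature range, and acceptance rates should be checked after a short trial run. The function name and values below are hypothetical.

```python
def geometric_ladder(t_min, t_max, n_replicas):
    """Temperatures spaced geometrically from t_min to t_max inclusive."""
    if n_replicas < 2 or not 0 < t_min < t_max:
        raise ValueError("need >= 2 replicas and 0 < t_min < t_max")
    ratio = (t_max / t_min) ** (1.0 / (n_replicas - 1))
    return [t_min * ratio ** k for k in range(n_replicas)]


print([round(t, 1) for t in geometric_ladder(300.0, 450.0, 8)])
```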

Table 2: Performance on Specific Biomolecular Challenges