Enhanced Sampling Methods for Conformational Ensembles: A Comprehensive Guide for Biomedical Researchers

Capturing accurate conformational ensembles is crucial for understanding protein function and enabling rational drug design, yet it remains a formidable challenge due to the rugged energy landscapes of biomolecules.


Abstract

Capturing accurate conformational ensembles is crucial for understanding protein function and enabling rational drug design, yet it remains a formidable challenge due to the rugged energy landscapes of biomolecules. This article provides a comprehensive comparison of enhanced sampling methods, from established generalized-ensemble algorithms to cutting-edge AI-driven approaches. We explore foundational concepts of energy landscapes and sampling bottlenecks, detail methodological implementations including replica-exchange molecular dynamics and metadynamics, and address critical troubleshooting aspects like collective variable selection and force field limitations. The review further covers validation strategies through integration with experimental data and presents comparative analyses to guide method selection for specific biological systems, offering researchers a practical framework for advancing structural biology and therapeutic development.

Understanding Energy Landscapes and the Sampling Problem in Biomolecular Simulations

In structural biology and drug discovery, the "multiple-minima problem" represents a fundamental computational challenge. It describes the difficulty in identifying the stable, biologically relevant conformations of a protein or biomolecular complex from an astronomical number of possibilities. This arises from the rugged energy landscapes characteristic of biomolecules, where numerous local energy minima are separated by high barriers [1]. The Levinthal paradox illustrates this succinctly: a small 100-residue protein has at least 3¹⁰⁰ possible conformations, making an exhaustive conformational search impossible even on biological timescales [1]. This landscape ruggedness is particularly pronounced for intrinsically disordered proteins (IDPs), which lack a stable tertiary structure and instead exist as dynamic ensembles of interconverting conformations [2]. Accurately navigating this landscape is crucial for understanding biological function, predicting molecular interactions, and enabling rational drug design.
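To make the scale of the Levinthal estimate concrete, the short calculation below evaluates 3¹⁰⁰ and converts it into an enumeration time. The sampling rate of 10¹³ conformations per second is an illustrative assumption, not a value taken from [1].

```python
# Levinthal-style estimate: 3 states per residue, 100 residues.
n_residues = 100
states_per_residue = 3
n_conformations = states_per_residue ** n_residues  # exact integer arithmetic

# Assumed (hypothetical) sampling rate: one conformation every 0.1 ps.
rate_per_second = 1e13
seconds_per_year = 365.25 * 24 * 3600

years = n_conformations / rate_per_second / seconds_per_year
print(f"conformations: {n_conformations:.2e}")
print(f"years to enumerate: {years:.2e}")
```

Under this assumed rate the enumeration would take on the order of 10²⁷ years, which is why exhaustive search is not an option and guided sampling is needed.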

Comparative Analysis of Enhanced Sampling Methods

Various computational methods have been developed to overcome the multiple-minima problem. They can be broadly categorized into physics-based simulation methods and data-driven/AI approaches, each with distinct strengths and limitations in sampling conformational ensembles.

Table 1: Comparison of Enhanced Sampling Methods for Conformational Ensembles

| Method | Core Principle | Typical Application Scope | Key Advantages | Quantitative Performance & Limitations |
|---|---|---|---|---|
| Molecular Dynamics (MD) with Enhanced Sampling | Numerical integration of Newton's equations of motion with added bias potentials to accelerate barrier crossing [3]. | Atomistic detail for proteins, IDPs, and small molecule ligands; timescales from ns to μs [2]. | Physically rigorous model of interactions; can integrate experimental data via maximum entropy reweighting [4]. | Computationally expensive (μs-ms scales); force field inaccuracies can bias ensembles; struggles with rare events [2]. |
| λ-Meta Dynamics [3] | Combines λ-dynamics (a virtual coupling parameter) with meta-dynamics (history-dependent bias potentials). | Calculating absolute solvation free energy; probing high-dimension free energy landscapes. | Efficiently recovers Potential of Mean Force (PMF); accurate solvation free energies (errors within ±0.5 kcal/mol for small molecules) [3]. | Requires careful parameter tuning (Gaussian height/width); statistical fluctuations in PMF can be significant [3]. |
| AI/Deep Learning Co-folding (e.g., AF3, RFAA) [5] | Deep neural networks trained on known protein structures and sequences to predict complexes from input sequence. | High-throughput prediction of protein-ligand, protein-protein, and protein-nucleic acid complexes. | Extreme speed (seconds/minutes per prediction); high initial accuracy (e.g., AF3 ~81% native pose within 2Å) [5]. | Poor physical generalization; overfitting to training data; fails in adversarial tests (e.g., binding site mutagenesis) [5]. |
| Maximum Entropy Reweighting [4] | Integrates MD simulations with experimental data by minimally adjusting conformational weights to match data. | Determining accurate atomic-resolution conformational ensembles of IDPs. | Produces force-field independent ensembles; high agreement with NMR/SAXS data; automated and robust [4]. | Dependent on quality of initial MD ensemble and experimental data; requires sufficient ensemble size (Kish ratio) [4]. |

Experimental Protocols for Key Methods

This protocol computes the absolute solvation free energy of a small molecule, a fundamental property in drug discovery.

- System Setup: Place the solute molecule (described with QM) in a box of explicit TIP3P water molecules (described with MM).

- Hamiltonian Definition: Define the QM/MM Hamiltonian with two coupling parameters, λ_ele and λ_vdw, which scale the electrostatic and van der Waals interactions between the QM solute and MM solvent, respectively.

- Extended Dynamics: Treat the coupling parameters λ as additional dynamic variables with assigned virtual masses (m_λ).

- Meta-Dynamics Biasing: During simulation, add a history-dependent bias potential U*(λ,t) composed of Gaussian functions deposited along the trajectory to discourage revisiting sampled λ values.

- Free Energy Reconstruction: After sufficient simulation time, the bias potential converges to the negative of the Potential of Mean Force (PMF), i.e., ΔA(λ) = -U*(λ).

- Result Interpretation: The absolute solvation free energy, ΔA_solv, is obtained from the combined PMFs for λ_ele and λ_vdw. Benchmark results show statistical errors within ±0.5 kcal/mol for small organic molecules [3].
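The biasing and reconstruction steps above can be illustrated with a one-dimensional toy model. This is only a sketch under stated assumptions: a double-well potential stands in for the QM/MM free energy surface, overdamped Langevin dynamics replaces the extended-Lagrangian λ dynamics with virtual masses, and all parameter values (`height`, `width`, `stride`, `dt`) are hypothetical rather than taken from [3].

```python
import numpy as np

def run_metadynamics(n_steps=20000, dt=1e-3, kT=1.0,
                     height=0.1, width=0.1, stride=100, seed=0):
    """Overdamped Langevin walker on a double well with Gaussian bias deposition."""
    rng = np.random.default_rng(seed)
    grid = np.linspace(-2.0, 2.0, 401)
    bias = np.zeros_like(grid)       # history-dependent bias U*(s, t) on a grid
    bias_grad = np.zeros_like(grid)  # its derivative dU*/ds on the same grid
    s = -1.0                         # start in the left well
    for step in range(n_steps):
        if step % stride == 0:
            # Deposit one Gaussian centred on the current value of s.
            g = height * np.exp(-(grid - s) ** 2 / (2.0 * width ** 2))
            bias += g
            bias_grad += -g * (grid - s) / width ** 2
        # Toy potential U(s) = 5 (s^2 - 1)^2; total force = physical + bias.
        force = -20.0 * s * (s * s - 1.0) - np.interp(s, grid, bias_grad)
        s += force * dt + np.sqrt(2.0 * kT * dt) * rng.normal()
        s = float(np.clip(s, grid[0], grid[-1]))  # keep the walker on the grid
    return grid, bias

grid, bias = run_metadynamics()
pmf_estimate = -bias                # free energy estimate, up to a constant
pmf_estimate -= pmf_estimate.min()  # shift so the lowest point is zero
```

The last two lines mirror the reconstruction step: the accumulated bias is negated to estimate the free energy profile. In a real calculation the Gaussian height and width control the trade-off between filling speed and the statistical noise in the recovered profile.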

This protocol assesses the physical understanding and robustness of AI-based co-folding models like AlphaFold3 and RoseTTAFold All-Atom.

- System Selection: Select a protein-ligand complex with a known high-resolution structure (e.g., ATP bound to Cyclin-dependent kinase 2 (CDK2)).

- Baseline Prediction: Run the co-folding model with the wild-type protein and ligand sequence to establish baseline prediction accuracy (e.g., RMSD to crystal structure).

- Binding Site Mutagenesis:

  - Challenge 1 (Removal): Mutate all binding site residues to glycine, removing side-chain interactions.

  - Challenge 2 (Packing): Mutate all binding site residues to phenylalanine, removing favorable interactions and sterically occluding the pocket.

  - Challenge 3 (Perturbation): Mutate each binding site residue to a chemically and sterically dissimilar residue.

- Prediction & Analysis: Run the co-folding model for each mutated system and predict the protein-ligand complex structure.

- Evaluation:

  - Calculate the Root Mean Square Deviation (RMSD) of the predicted ligand pose versus the wild-type prediction.

  - Check for unphysical atomic clashes and loss of specific interactions.

  - A model that understands physics is expected to predict displacement of the ligand from the mutated site. Models showing overfitting will continue to place the ligand in the original, now non-functional pocket [5].
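The ligand RMSD used in the evaluation step can be computed as in the minimal sketch below. It assumes that both predictions list the ligand heavy atoms in the same order and that the two complexes have already been superposed on the protein, so no further alignment is done here. The function name and the example coordinates are illustrative only.

```python
import numpy as np

def ligand_rmsd(pose_a, pose_b):
    """RMSD (same units as the input) between two ligand poses.

    Assumes identical atom ordering and a shared reference frame
    (for example after superposing the protein chains).
    """
    a = np.asarray(pose_a, dtype=float)
    b = np.asarray(pose_b, dtype=float)
    if a.shape != b.shape:
        raise ValueError("poses must contain the same number of atoms")
    return float(np.sqrt(np.mean(np.sum((a - b) ** 2, axis=1))))

# Hypothetical three-atom ligand: wild-type pose and a rigidly shifted pose.
wild_type_pose = np.array([[0.0, 0.0, 0.0], [1.5, 0.0, 0.0], [1.5, 1.5, 0.0]])
mutant_pose = wild_type_pose + np.array([3.0, 4.0, 0.0])

rmsd = ligand_rmsd(wild_type_pose, mutant_pose)
```

A small RMSD after a disruptive mutation is the warning sign in this protocol, since it indicates that the model left the ligand in the original pocket.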

This protocol determines accurate atomic-resolution conformational ensembles of Intrinsically Disordered Proteins (IDPs).

- Initial Ensemble Generation: Perform long-timescale (e.g., 30 μs) all-atom Molecular Dynamics (MD) simulations of the IDP using state-of-the-art force fields (e.g., a99SB-disp, CHARMM36m).

- Experimental Data Collection: Acquire ensemble-averaged experimental data for the same IDP, such as NMR chemical shifts, J-couplings, and SAXS profiles.

- Forward Model Calculation: For each conformation in the MD ensemble, use "forward models" to predict the values of the experimental observables.

- Maximum Entropy Reweighting:

  - Apply the maximum entropy principle to find a new set of statistical weights for each MD conformation.

  - The goal is to maximize the entropy of the final ensemble (minimizing bias from the initial MD model) while maximizing the agreement with the experimental data.

  - Use a single free parameter (the desired effective ensemble size, defined by the Kish ratio) to automatically balance restraints from different experimental datasets.

- Ensemble Validation: Analyze the structural and statistical properties of the reweighted ensemble. A successful application shows convergence to highly similar conformational distributions from different initial force fields, providing a force-field independent approximation of the true solution ensemble [4].
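The effective ensemble size mentioned in the reweighting step is commonly quantified with the Kish ratio, K = (Σw)² / (N Σw²). The sketch below shows this standard definition; the function name is illustrative, and the exact way [4] uses K inside its optimization is not reproduced here.

```python
import numpy as np

def kish_ratio(weights):
    """Kish effective sample size divided by the number of frames."""
    w = np.asarray(weights, dtype=float)
    return float(w.sum() ** 2 / (w.size * np.sum(w ** 2)))

uniform = np.full(10, 0.1)                      # every frame counts equally
one_hot = np.array([1.0] + [0.0] * 9)           # a single frame dominates

k_uniform = kish_ratio(uniform)
k_one_hot = kish_ratio(one_hot)
```

Uniform weights give K = 1, while weights concentrated on one frame out of ten give K = 0.1, so a low K signals that the reweighted ensemble rests on few conformations.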

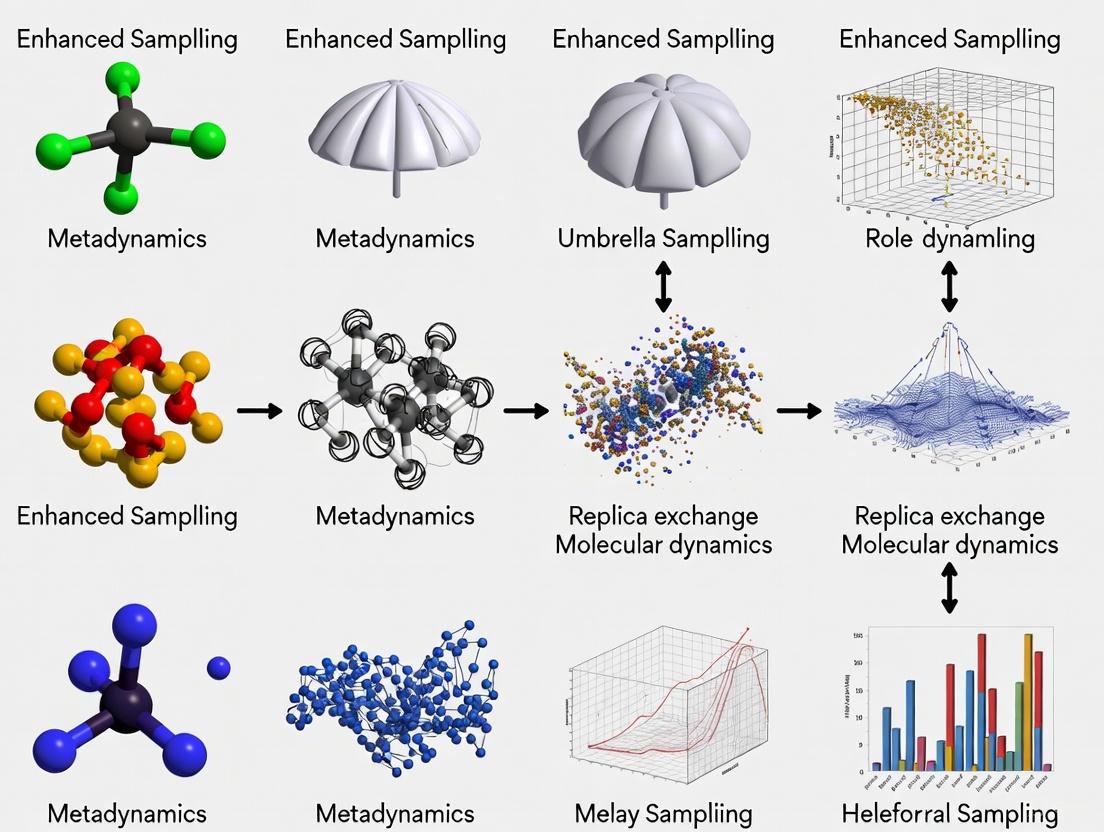

Workflow Visualization

Integrative Workflow for Ensemble Determination

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagents and Computational Tools for Conformational Ensemble Research

| Item / Resource | Function / Description | Example Use Case |
|---|---|---|
| Molecular Dynamics Software (GROMACS, AMBER, NAMD) | Software suites to perform MD and enhanced sampling simulations. | Simulating the atomistic dynamics of a protein-ligand complex in explicit solvent [4] [3]. |
| State-of-the-Art Force Fields (a99SB-disp, CHARMM36m) | Physical models defining energy terms for interatomic interactions. | Generating initial conformational ensembles for IDPs prior to reweighting [4]. |
| AI Co-folding Models (AlphaFold3, RoseTTAFold All-Atom) | Web servers or software for predicting biomolecular complex structures. | Rapid initial assessment of a protein-small molecule binding pose [5]. |
| Experimental Datasets (NMR chemical shifts, SAXS profiles) | Ensemble-averaged experimental measurements of structural properties. | Serving as restraints for maximum entropy reweighting of MD simulations [4]. |
| Reweighting & Analysis Software (Custom Python/MATLAB scripts) | Code for implementing maximum entropy reweighting and analyzing ensembles. | Integrating MD simulations of an IDP with NMR data to determine an accurate ensemble [4]. |
| Explicit Solvent Models (TIP3P, a99SB-disp water) | Computational models representing water molecules in simulations. | Creating a physiologically realistic environment for MD simulations [4] [3]. |

Conformational Ensembles and Their Biological Significance

Proteins are not static entities; their function emerges from a dynamic interplay of structure, motion, and interaction [6]. Conformational ensembles—sets of structures with associated probabilities or weights—provide a fundamental framework for understanding this dynamic nature, representing the populations of interconverting structures a protein samples under physiological conditions [7]. This paradigm is crucial for describing the behavior of a vast range of proteins, from structured proteins that undergo functional motions to intrinsically disordered proteins (IDPs) that lack a stable tertiary structure altogether [4] [2]. The biological significance of conformational ensembles is profound: they underpin mechanisms of allosteric regulation, enable ligand binding and recognition, and facilitate conformational selection and induced fit. For structured proteins like G protein-coupled receptors (GPCRs), distinct subsets of conformations within the broader ensemble are responsible for activating specific downstream signaling cascades, a concept central to biased agonism and functional selectivity [8]. For IDPs, which exist as dynamic ensembles by default, structural heterogeneity is a prerequisite for their functional versatility, allowing them to act as hubs in signaling networks and interact with numerous partners [4] [2]. Accurately determining these ensembles is therefore not merely an academic exercise but is essential for elucidating disease mechanisms and guiding the rational design of therapeutics.

Methodological Toolkit: Sampling and Determining Conformational Ensembles

Determining accurate conformational ensembles is a major challenge in structural biology. Experimental and computational methods each offer distinct advantages and face unique limitations, making their integration a powerful path forward.

Experimental Techniques for Probing Ensembles

Biophysical techniques provide essential, albeit often indirect, data on conformational states and dynamics.

- Nuclear Magnetic Resonance (NMR) Spectroscopy: A cornerstone for studying dynamics, NMR can measure parameters like chemical shifts, J-coupling constants, residual dipolar couplings (RDCs), and paramagnetic relaxation enhancement (PRE) [4] [7]. These data report on local and long-range structural properties, providing ensemble-averaged information on timescales from picoseconds to seconds.

- Small-Angle X-Ray Scattering (SAXS): SAXS provides low-resolution information about the global dimensions and shape of a protein in solution, such as the radius of gyration (Rg) [4]. It is particularly valuable for characterizing the compactness or extended nature of IDP ensembles.

- Single-Molecule FRET (smFRET) and DEER Spectroscopy: These techniques can probe distances and populations of conformations, offering insights into conformational heterogeneity and dynamics that may be obscured in ensemble-averaged measurements [8].

A key limitation of these experimental methods is that they typically report on averages over large numbers of molecules and time, and the data can be consistent with a vast number of possible conformational distributions [4] [7]. This is known as the degeneracy problem.

Computational Approaches for Generating Ensembles

Computational methods aim to generate atomic-resolution models of conformational states.

- Molecular Dynamics (MD) Simulations: MD simulations use physics-based force fields to model the motions of every atom in a molecule over time. In principle, a sufficiently long MD simulation can provide an atomistically detailed conformational ensemble [4] [2]. However, the accuracy of this ensemble is highly dependent on the quality of the force field, and the timescales required to observe biologically relevant rare events can be computationally prohibitive [8] [2].

- Enhanced Sampling Methods: To overcome the timescale limitation of standard MD, various enhanced sampling methods have been developed. These include techniques like Gaussian accelerated MD (GaMD), which can capture slow events like proline isomerization in IDPs [2]. The goal is to more efficiently explore the protein's energy landscape and identify metastable states [8].

- Generative Deep Learning (DL): Recently, AI-based methods have emerged as a transformative alternative. Models like the Internal Coordinate Net (ICoN) learn the principles of conformational changes from MD simulation data and can then rapidly generate novel synthetic conformations that remain physically reasonable, providing a comprehensive sampling of a protein's conformational landscape [9] [2]. These approaches can outperform MD in generating diverse ensembles with comparable accuracy, but they depend on the quality of the data used for training [2].
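As an illustration of how an enhanced sampling method such as GaMD modifies the energy landscape, the sketch below applies the harmonic boost commonly associated with GaMD, ΔV = ½ k (E − V)² for V < E and zero otherwise. This form is not given in the text above and is stated here as an assumption; the choice of threshold `E = V_max` and of `k0 = 1` is likewise illustrative, and production codes determine these from running statistics.

```python
import numpy as np

def gamd_boost(v, e_threshold, k):
    """Harmonic boost added only where the potential lies below the threshold."""
    v = np.asarray(v, dtype=float)
    return np.where(v < e_threshold, 0.5 * k * (e_threshold - v) ** 2, 0.0)

# Hypothetical potential energies sampled along a trajectory (arbitrary units).
v = np.linspace(-10.0, 0.0, 11)
v_min, v_max = v.min(), v.max()

k0 = 1.0                      # assumed scaling factor, 0 < k0 <= 1
k = k0 / (v_max - v_min)      # keeps the ordering of energies unchanged
boosted = v + gamd_boost(v, e_threshold=v_max, k=k)
```

With these settings the spread of energies is halved while low-energy states stay lower than high-energy ones, which is the sense in which the boost lowers barriers without reshuffling the landscape.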

Integrative Methods: The Power of Combination

No single method is sufficient to unequivocally determine a conformational ensemble. Integrative approaches combine computational and experimental data to overcome their individual limitations.

- The Maximum Entropy Principle: This is a leading framework for integration. It seeks to find the minimal perturbation to a computationally derived ensemble (e.g., from an MD simulation) required to achieve agreement with experimental data [6] [4] [7]. This is typically achieved by reweighting the structures in the initial ensemble.

- Maximum Entropy Reweighting: A simple, robust, and automated maximum entropy reweighting procedure can determine accurate atomic-resolution ensembles by integrating extensive experimental datasets from NMR and SAXS with all-atom MD simulations [4]. A key advance is the use of a single free parameter—the desired effective ensemble size—to automatically balance the restraints from different experimental datasets, minimizing subjective decisions and overfitting [4]. This approach can yield conformational ensembles that are highly similar even when starting from MD simulations using different force fields, representing progress toward force-field independent accurate ensembles [4].

The following workflow diagram illustrates a robust protocol for integrative ensemble determination.

Comparative Analysis of Enhanced Sampling Methodologies

Different enhanced sampling methods offer distinct advantages and are suited for different research questions. The table below provides a structured comparison of the three primary methodological categories.

Table 1: Comparison of Enhanced Sampling Methodologies for Conformational Ensembles

| Method Category | Key Principle | Computational Cost | Key Applications | Key Advantages | Key Limitations |
|---|---|---|---|---|---|
| Simulation-Based Enhanced Sampling (GaMD, Metadynamics) | Accelerates exploration of energy landscape by modifying the potential energy function [8] [2]. | High to Very High | GPCR activation [8], proline isomerization in IDPs [2]. | Physically detailed; can discover new states without prior knowledge; provides dynamical information. | Force-field dependent; requires expert parameter tuning; computationally intensive. |
| Integrative Modeling (Maximum Entropy Reweighting) | Reweights an initial MD ensemble to achieve best fit with experimental data [4] [7]. | Medium (after initial MD sampling) | Determining atomic-resolution IDP ensembles [4], resolving heterogeneity. | Produces ensembles validated by experiment; can overcome some force-field biases; automated protocols available [4]. | Limited to conformations sampled in the initial simulation; quality depends on initial sampling and experimental data. |
| AI-Driven Sampling (Generative Deep Learning, e.g., ICoN) | Learns the distribution of conformations from data (MD or experimental) to generate new structures [9] [2]. | Low (after training); Very High (for training) | Rapid sampling of highly dynamic proteins (e.g., Aβ42) [9], exploring large conformational spaces. | Extremely fast sampling post-training; can identify rare states; learns complex sequence-structure relationships [2]. | "Black box" nature; limited interpretability; dependent on quality and breadth of training data [2]. |

Quantitative Performance Benchmarking

The ultimate test of an ensemble generation method is its ability to produce physically realistic models that match experimental observations. Recent studies provide quantitative benchmarks.

Table 2: Quantitative Benchmarking of Ensemble Method Performance

| Study Focus | Method(s) Compared | Key Performance Metric | Result |
|---|---|---|---|
| IDP Ensemble Accuracy [4] | Maximum Entropy Reweighting applied to MD simulations from different force fields (a99SB-disp, C22*, C36m). | Convergence of reweighted ensembles to similar conformational distributions. | For 3 out of 5 IDPs (Aβ40, ACTR, PaaA2), reweighted ensembles from different force fields converged to highly similar distributions, indicating force-field independence [4]. |
| AI vs. MD Sampling [2] | Generative Deep Learning (DL) vs. Molecular Dynamics (MD). | Sampling efficiency and diversity for IDP conformational landscapes. | DL approaches can outperform MD in generating diverse ensembles with comparable accuracy and significantly lower computational cost after training [2]. |
| Deep Learning Validation [9] | Internal Coordinate Net (ICoN) model for Aβ42 monomer. | Ability to rationalize experimental findings and identify novel conformations. | Analysis of AI-sampled conformations revealed clusters that rationalized experimental EPR and amino acid substitution data, and identified distinct side-chain rearrangements [9]. |

Detailed Experimental Protocols

To ensure reproducibility and provide practical guidance, this section outlines detailed protocols for key experiments and calculations cited in this guide.

Protocol 1: Maximum Entropy Reweighting for IDP Ensembles

This protocol is adapted from the robust, automated procedure described in [4].

System Setup and MD Simulation:

- System Preparation: Generate an initial extended structure of the IDP. Place it in a simulation box with explicit water molecules and ions to neutralize the system charge.

- MD Production Run: Perform long-timescale (e.g., 30 µs) all-atom MD simulations using state-of-the-art force fields for disordered proteins (e.g., a99SB-disp, CHARMM36m). Run multiple replicas with different initial velocities to improve sampling. Save tens of thousands of snapshots (e.g., ~30,000 structures) to form the initial unbiased ensemble.

Experimental Data Collection:

- Acquire extensive experimental data for the IDP. The protocol in [4] used NMR data (chemical shifts, J-coupling constants, residual dipolar couplings, paramagnetic relaxation enhancements) and SAXS data. Ensure data is of high quality and represents a variety of structural constraints.

Forward Model Calculation:

- For every saved MD snapshot, use appropriate forward models to predict the value of each experimental observable. For example, use SHIFTX2 or similar for NMR chemical shifts [4]. This creates a dataset linking each conformation to its predicted experimental readout.

Maximum Entropy Optimization:

- The goal is to find a set of weights \( w_t \) for each conformation \( t \) that minimizes the discrepancy between the calculated ensemble averages and the experimental data while maximizing the entropy of the distribution (minimizing bias).

- The optimization is performed subject to the constraints \( \sum_{t=1}^{N} w_t \, O_{\mathrm{calc}}^{i}(t) = O_{\mathrm{exp}}^{i} \) for all experimental observables \( i \), and \( \sum_{t=1}^{N} w_t = 1 \), where \( O_{\mathrm{calc}}^{i}(t) \) is the forward-model prediction of observable \( i \) for conformation \( t \).

- Utilize a single free parameter, the target Kish ratio (the Kish effective sample size divided by the number of frames, e.g., K = 0.10), to automatically balance the restraints from different data types and prevent overfitting [4]. At K = 0.10 the reweighted ensemble has an effective size of roughly 10% of the original snapshots, i.e., about 3,000 of 30,000 frames.
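The constrained optimization above has a well-known exponential solution, w_t ∝ exp(−Σ_i λ_i O_calc^i(t)), where the Lagrange multipliers λ_i are chosen so that the reweighted averages match the targets. The sketch below solves for the multipliers with a plain Newton iteration. It is a simplified illustration, not the procedure of [4]: it assumes uniform prior weights, enforces exact agreement with no experimental error model, omits the Kish-ratio balancing, and uses synthetic radius-of-gyration values in place of real forward-model output.

```python
import numpy as np

def weights_from_multipliers(obs, lam):
    """w_t proportional to exp(-sum_i lam_i * O_i(t)), normalised to 1."""
    log_w = -obs @ lam
    log_w -= log_w.max()              # guard against overflow in exp
    w = np.exp(log_w)
    return w / w.sum()

def maxent_reweight(obs, target, n_iter=100, tol=1e-10):
    """Find weights whose averages of `obs` columns equal `target`."""
    obs = np.asarray(obs, dtype=float)        # shape (N frames, M observables)
    target = np.asarray(target, dtype=float)  # shape (M,)
    lam = np.zeros(obs.shape[1])
    for _ in range(n_iter):
        w = weights_from_multipliers(obs, lam)
        mean = w @ obs
        grad = target - mean                  # gradient of the dual function
        if np.max(np.abs(grad)) < tol:
            break
        centered = obs - mean
        hess = (centered * w[:, None]).T @ centered   # weighted covariance
        hess += 1e-12 * np.eye(lam.size)              # tiny regularisation
        lam = lam - np.linalg.solve(hess, grad)       # Newton step
    return weights_from_multipliers(obs, lam), lam

# Synthetic example: per-frame Rg values (nm) and a hypothetical target.
rng = np.random.default_rng(0)
rg = rng.normal(loc=2.0, scale=0.3, size=(5000, 1))
w, lam = maxent_reweight(rg, target=[2.1])
reweighted_rg = float((w @ rg)[0])
```

Because the target lies above the unweighted mean, the fitted multiplier is negative, which shifts weight toward more expanded frames while changing the distribution as little as possible.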

Validation and Analysis:

- Assess the agreement between the experimental data and the predictions from the reweighted ensemble (back-validation).

- Analyze the structural properties of the refined ensemble, such as radius of gyration, end-to-end distances, and secondary structure content.

- Compare ensembles derived from different initial force fields to assess convergence toward a force-field independent solution [4].
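The structural properties named in the analysis step can be computed directly from coordinates. The sketch below shows the radius of gyration and the end-to-end distance for a single conformation; the function names are illustrative, and equal masses are assumed unless masses are supplied.

```python
import numpy as np

def radius_of_gyration(coords, masses=None):
    """Mass-weighted radius of gyration of one conformation."""
    x = np.asarray(coords, dtype=float)
    m = np.ones(len(x)) if masses is None else np.asarray(masses, dtype=float)
    center = np.average(x, axis=0, weights=m)
    sq_dist = np.sum((x - center) ** 2, axis=1)
    return float(np.sqrt(np.average(sq_dist, weights=m)))

def end_to_end_distance(coords):
    """Distance between the first and last particle of the chain."""
    x = np.asarray(coords, dtype=float)
    return float(np.linalg.norm(x[-1] - x[0]))

# Hypothetical three-bead chain lying along the x axis.
chain = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [2.0, 0.0, 0.0]])
rg_value = radius_of_gyration(chain)
ree_value = end_to_end_distance(chain)
```

For a reweighted ensemble, the per-frame values would then be averaged with the final weights, for example with `np.average(values, weights=w)`.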

Protocol 2: Generative Deep Learning for Conformation Sampling

This protocol is based on the ICoN model described in [9].

Data Curation and Preprocessing:

- Training Data Collection: Assemble a large and diverse set of protein conformations. This can be sourced from long MD simulation trajectories of the protein of interest (e.g., Aβ42) or from databases of protein structures.

- Feature Representation: Represent each conformation in internal coordinates (e.g., dihedral angles), which are a more natural representation for neural networks to learn protein motions [9].
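As a concrete example of an internal coordinate, the sketch below computes a dihedral angle from four atom positions using a standard projection formula. It is a generic illustration and is not taken from the ICoN implementation in [9]; the function name and test coordinates are hypothetical.

```python
import numpy as np

def dihedral_deg(p0, p1, p2, p3):
    """Dihedral angle (degrees) defined by four points, in the range [-180, 180]."""
    p0, p1, p2, p3 = (np.asarray(p, dtype=float) for p in (p0, p1, p2, p3))
    b0 = p0 - p1
    b1 = p2 - p1
    b2 = p3 - p2
    b1 = b1 / np.linalg.norm(b1)          # unit vector along the central bond
    # Components of the outer bonds perpendicular to the central bond.
    v = b0 - np.dot(b0, b1) * b1
    w = b2 - np.dot(b2, b1) * b1
    x = np.dot(v, w)
    y = np.dot(np.cross(b1, v), w)
    return float(np.degrees(np.arctan2(y, x)))

# Central bond along z; the outer atoms define cis, perpendicular and trans cases.
cis = dihedral_deg([1, 0, 0], [0, 0, 0], [0, 0, 1], [1, 0, 1])
perpendicular = dihedral_deg([1, 0, 0], [0, 0, 0], [0, 0, 1], [0, 1, 1])
trans = dihedral_deg([1, 0, 0], [0, 0, 0], [0, 0, 1], [-1, 0, 1])
```

Dihedral angles are invariant to rigid translation and rotation of the molecule, which is one reason they are convenient inputs for a generative model.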

Model Architecture and Training:

- Model Design: Implement a deep generative model, such as a variational autoencoder (VAE) or generative adversarial network (GAN). The ICoN model uses an internal coordinate-based network [9].

- Training Loop: Train the model to learn the underlying probability distribution of the conformational data. The model learns to map input conformations to a lower-dimensional "latent space" and then reconstruct them, thereby capturing the essential features of the conformational landscape.

Conformation Generation and Sampling:

- Latent Space Sampling: Once trained, sample new points from the latent space. Interpolation between points in this space can generate novel synthetic conformations that remain physically reasonable and transition smoothly between states [9].

- Structure Decoding: Decode the sampled latent points back into full atomic-level protein conformations using the decoder part of the network.

Validation and Functional Analysis:

- Experimental Comparison: Validate the generated ensemble by comparing its average properties (e.g., calculated NMR chemical shifts, Rg from SAXS) with available experimental data [9] [2].

- Cluster Analysis: Perform clustering on the generated conformations to identify dominant states. Analyze these clusters to rationalize experimental findings, such as identifying conformations with specific side-chain interactions probed by EPR spectroscopy [9].

Table 3: Key Research Reagents and Computational Tools for Ensemble Studies

| Item/Tool Name | Type | Primary Function in Ensemble Studies |
|---|---|---|
| a99SB-disp [4] | Molecular Mechanics Force Field | A protein force field and water model combination optimized for disordered proteins, used to generate accurate initial MD ensembles. |
| GROMACS/AMBER | MD Simulation Software | High-performance software packages to run all-atom MD and enhanced sampling simulations. |
| CHARMM36m [4] | Molecular Mechanics Force Field | An alternative force field with improved accuracy for IDPs and membrane proteins, used for comparative MD studies. |
| SHIFTX2 [4] | Forward Model Software | Calculates NMR chemical shifts from protein structures, enabling comparison between MD ensembles and experimental NMR data. |
| NMR Chemical Shifts [4] | Experimental Data | Provides ensemble-averaged information on local backbone and side-chain conformation. |
| SAXS Data [4] | Experimental Data | Provides low-resolution information on the global shape and dimensions of a protein in solution. |
| ICoN (Internal Coordinate Net) [9] | AI Generative Model | A deep learning model that samples conformational ensembles by learning from MD data in internal coordinate space. |

The choice of an enhanced sampling method depends heavily on the specific biological question, available resources, and the system under study. The following decision diagram synthesizes the information in this guide to aid in method selection.

For researchers seeking the most physically detailed understanding of a conformational transition pathway, simulation-based enhanced sampling remains a powerful choice, despite its cost [8]. When the goal is to determine the most accurate possible equilibrium ensemble, particularly for challenging systems like IDPs, integrative modeling using maximum entropy reweighting is the state-of-the-art, as it directly incorporates experimental validation [4]. For large-scale exploration of conformational landscapes or when computational speed is paramount, AI-driven generative models offer an unparalleled advantage, though their interpretability and data dependence require careful consideration [9] [2]. Looking forward, the most powerful strategies will likely be hybrid approaches that integrate the physical rigor of MD, the data-driven efficiency of AI, and the grounding reality of experimental data [2]. This synergistic combination promises to unlock a deeper, more quantitative understanding of conformational ensembles and their profound biological significance.

Molecular dynamics (MD) simulations are a cornerstone of modern computational biology and drug discovery, providing atomic-level insight into biomolecular processes. However, a fundamental challenge limits their utility: the vast timescale gap between what is computationally feasible to simulate and the timescales of biologically critical events. Most MD simulations capture nanoseconds to microseconds, while functionally important conformational changes in proteins—such as folding, allosteric transitions, and ligand binding—often occur on millisecond to second timescales or longer [10] [11]. This discrepancy arises because molecular systems spend most of their time trapped in metastable states, separated by high free-energy barriers that are rarely crossed during short simulations [10]. This sampling bottleneck means standard MD often fails to explore the full conformational landscape, leading to incomplete or inaccurate understanding of biological function and hindering the reliability of computational predictions in drug development.

Enhanced sampling methods have been developed to overcome these barriers, effectively accelerating the exploration of configuration space while aiming to recover correct thermodynamic and kinetic properties. This guide provides a comparative analysis of leading enhanced sampling approaches, evaluating their performance, underlying mechanisms, and practical applications to help researchers select appropriate strategies for their specific sampling challenges.

Comparative Analysis of Enhanced Sampling Methods

Methodologies and Underlying Principles

Enhanced sampling techniques can be broadly categorized into collective-variable biasing and tempering methods. Collective-variable (CV) biasing methods, including metadynamics and the adaptive biasing force algorithm, rely on identifying a small set of relevant order parameters that describe the slow degrees of freedom of the system. These methods enhance sampling along these specific directions in configuration space [10]. In contrast, tempering methods, particularly parallel tempering (replica exchange), run multiple simulations at different temperatures or Hamiltonian parameters, allowing configurations to exchange and thereby overcome energy barriers more efficiently through higher-temperature replicas [10].

Metadynamics works by adding a history-dependent bias potential—typically composed of Gaussian functions—to the system's Hamiltonian along predefined CVs. This bias systematically discourages the system from revisiting previously sampled configurations, effectively "filling up" free energy minima and pushing the system to explore new regions [10]. The adaptive biasing force (ABF) algorithm takes a different approach, directly calculating and applying a bias equal to the negative of the mean force along a CV. This gradually flattens the free energy landscape along the chosen direction, enabling uniform sampling [10]. Parallel tempering employs multiple non-interacting copies (replicas) of the system simulated simultaneously at different temperatures. Periodic exchange attempts between adjacent replicas allow conformations to diffuse across temperatures, enabling systems to overcome barriers at high temperatures while accumulating properly weighted low-temperature states [10].
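The exchange step in parallel tempering can be made concrete with the standard Metropolis criterion for temperature replica exchange, P = min(1, exp[(β_i − β_j)(E_i − E_j)]) with β = 1/(k_B T). The sketch below implements this criterion together with a geometric temperature ladder, a common but not mandatory spacing choice. The function names, energies, and temperatures are illustrative assumptions.

```python
import math

K_B = 0.0083144626  # Boltzmann constant in kJ/(mol K)

def swap_probability(e_i, e_j, t_i, t_j):
    """Metropolis acceptance probability for exchanging replicas i and j."""
    beta_i = 1.0 / (K_B * t_i)
    beta_j = 1.0 / (K_B * t_j)
    delta = (beta_i - beta_j) * (e_i - e_j)
    return 1.0 if delta >= 0.0 else math.exp(delta)

def geometric_ladder(t_min, t_max, n_replicas):
    """Temperatures spaced by a constant ratio between t_min and t_max."""
    ratio = (t_max / t_min) ** (1.0 / (n_replicas - 1))
    return [t_min * ratio ** k for k in range(n_replicas)]

ladder = geometric_ladder(300.0, 450.0, 5)

# The cold replica holds the higher energy: the swap is always accepted.
p_favourable = swap_probability(-100.0, -110.0, 300.0, 310.0)
# The cold replica holds the lower energy: the swap is accepted only sometimes.
p_unfavourable = swap_probability(-110.0, -100.0, 300.0, 310.0)
```

Acceptance falls off quickly as the energy distributions of neighbouring replicas stop overlapping, which is why the number of replicas needed grows with system size.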

Quantitative Performance Comparison

The performance of various computational methods, including enhanced sampling approaches, has been rigorously assessed through blind challenges such as the Statistical Assessment of Modeling of Proteins and Ligands (SAMPL) series. The SAMPL9 host-guest challenge evaluated methods for predicting binding free energies using macrocyclic host systems [12]. The table below summarizes the performance of different methodological categories in this challenge:

Table 1: Performance of Computational Methods in SAMPL9 Host-Guest Binding Free Energy Prediction

| Method Category | Specific Method | Host System | RMSE (kcal mol⁻¹) | Key Findings |
|---|---|---|---|---|
| Machine Learning | Molecular Descriptors | WP6 | 2.04 | Highest accuracy among ranked methods for WP6 [12] |
| Docking | Not Specified | WP6 | 1.70 | Outperformed more computationally expensive MD methods [12] |
| MD/Force Fields | Various | WP6 | Varying | Generally better correlation with experiment than ML/docking [12] |
| MD/Force Fields | ATM | Cyclodextrins | <1.86 | Top performing method for cyclodextrin-phenothiazine systems [12] |

The data reveal several important trends. For the WP6 host system, docking approaches unexpectedly outperformed many MD-based methods despite their lower computational cost [12]. This suggests that adequate sampling, rather than force field accuracy, may be the primary limitation for some MD applications. For the cyclodextrin-phenothiazine systems, the Alchemical Transfer Method (ATM) achieved the best performance with RMSE below 1.86 kcal mol⁻¹ [12]. However, correlation metrics for ranked methods in this dataset were generally poorer than for WP6, highlighting the particular challenges posed by asymmetric host systems that can accommodate guests in multiple orientations [12].

Emerging AI-Based Approaches

A recent revolutionary approach, BioEmu, employs generative AI to overcome traditional sampling limitations [11]. This diffusion model-based system simulates protein equilibrium ensembles with approximately 1 kcal/mol accuracy while achieving a 4–5 orders of magnitude speedup compared to conventional MD [11]. BioEmu combines protein sequence encoding with a generative diffusion model, using AlphaFold2's Evoformer module to convert input sequences into structural representations. The system then generates independent structural samples in 30–50 denoising steps on a single GPU, bypassing the sequential bottleneck of MD simulations [11].

In rigorous benchmarks focusing on out-of-distribution generalization and distinct conformational states, BioEmu successfully sampled large-scale open-closed transitions with success rates of 55%-90% for known conformational changes, outperforming baselines like AFCluster and DiG [11]. This approach enables previously infeasible genome-scale protein function predictions on a single GPU, revealing substrate-induced free energy shifts and cryptic pockets for drug targeting.

Experimental Protocols and Workflows

Standard Enhanced Sampling Protocol

A typical workflow for conducting enhanced sampling simulations involves multiple stages of system preparation, equilibration, and production sampling with bias application. The diagram below illustrates this general protocol:

This workflow is implemented in widely used molecular dynamics packages such as NAMD and GROMACS, often with visualization and analysis conducted through VMD [13]. The Theoretical and Computational Biophysics Group provides comprehensive tutorials for these methods, including specialized guides for free energy calculations and enhanced sampling techniques [13].

Specialized Protocol: Binding Free Energy Calculation

For binding free energy calculations, as tested in the SAMPL challenges, specialized protocols are required. The workflow below details the process specifically for host-guest systems:

Key considerations for this protocol include adequate sampling of all relevant binding orientations. For cyclodextrin systems, for example, participants in the SAMPL9 challenge needed to account for the fact that "guest phenothiazine core traverses both the secondary and primary faces of the cyclodextrin hosts" [12]. Additionally, proper system preparation must address potential protonation states and tautomers, as these can significantly impact binding affinities.
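When binding orientations do not interconvert on the simulation timescale, one common way to account for all of them is to compute a free energy per orientation and combine them through their summed binding constants, ΔG = -k_BT ln Σ exp(-ΔG_i / k_BT). The sketch below assumes this treatment is appropriate for the system at hand; the function name and the example values are hypothetical and are not taken from the SAMPL9 submissions.

```python
import math

K_B = 0.0019872041  # Boltzmann constant in kcal/(mol K)

def combine_binding_modes(dg_modes, temperature=298.15):
    """Combine per-orientation binding free energies (kcal/mol) into one overall value."""
    kt = K_B * temperature
    dg_min = min(dg_modes)  # shift by the minimum for numerical stability
    total = sum(math.exp(-(dg - dg_min) / kt) for dg in dg_modes)
    return dg_min - kt * math.log(total)

# Hypothetical example: guest bound through the primary vs. secondary face
print(combine_binding_modes([-5.0, -4.2]))
```

The combined value is always at least as favorable as the best single orientation, and two equally favorable orientations add -k_BT ln 2 to the result.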

AI-Enhanced Sampling Protocol

The revolutionary BioEmu approach follows a distinctly different protocol centered around training a generative model:

Table 2: BioEmu Training and Implementation Protocol

| Training Stage | Data Input | Key Processes | Outcome |
|---|---|---|---|
| Pre-training | Processed AlphaFold Database (AFDB) | Data augmentation to link sequences to diverse structures | Enhanced generalization to conformational variations [11] |
| MD Integration | Thousands of protein MD datasets (>200 ms total) | Reweighting using Markov state models (MSM) for equilibrium distributions | Incorporation of dynamical information [11] |
| Property Prediction Fine-Tuning | 500,000 experimental stability measurements | Minimizing discrepancies between predicted and experimental values | Thermodynamic accuracy (<1 kcal/mol error) [11] |
| Inference | Single protein sequence | 30-50 denoising steps on a single GPU | Generation of equilibrium ensemble [11] |

This protocol enables "sampling thousands of structures per hour on a single GPU, compared to months on supercomputing resources" required for traditional MD [11]. The key innovation is the Property Prediction Fine-Tuning (PPFT) stage, which incorporates experimental observations directly into the diffusion training process, optimizing the ensemble distribution by minimizing discrepancies between predicted and experimental values [11].

Research Reagent Solutions and Tools

Successful implementation of enhanced sampling methods requires both software tools and carefully characterized model systems for validation. The table below outlines essential resources in this field:

Table 3: Essential Research Reagents and Computational Tools for Enhanced Sampling Studies

| Resource Type | Specific Tool/System | Key Features/Applications | Access/Reference |
|---|---|---|---|
| Software Packages | NAMD | Molecular dynamics with enhanced sampling methods [13] | http://www.ks.uiuc.edu/Research/namd/ |
| Software Packages | VMD | Visualization and analysis of MD simulations [13] | http://www.ks.uiuc.edu/Research/vmd/ |
| Software Packages | BioEmu | AI-powered equilibrium ensemble generation [11] | Requires implementation from original publication |
| Benchmark Systems | WP6 Host-Guest | Pillar[6]arene derivative with anionic carboxylate arms [12] | SAMPL9 Challenge [12] |
| Benchmark Systems | β-Cyclodextrin | Hydrophobic cavity with hydrophilic faces [12] | SAMPL9 Challenge [12] |
| Benchmark Systems | HbCD | Hexakis-2,6-dimethyl-β-cyclodextrin derivative [12] | SAMPL9 Challenge [12] |
| Educational Resources | TCBG Tutorials | Step-by-step guides for free energy methods [13] | http://www.ks.uiuc.edu/Training/Tutorials/ |

The SAMPL challenges provide particularly valuable benchmark systems that are "much smaller and more rigid than biomolecules," making them "reasonable surrogates for proteins to help test and improve computational methods for binding free energies" [12]. These well-characterized host-guest systems enable researchers to validate their methods before applying them to more complex biological targets.

The field of enhanced sampling is evolving rapidly, with traditional CV-based and tempering methods now complemented by revolutionary AI-based approaches. While classical methods like metadynamics and parallel tempering continue to see widespread use and improvement, the emergence of tools like BioEmu demonstrates the transformative potential of generative AI for overcoming sampling bottlenecks. Quantitative assessments through initiatives like the SAMPL challenges provide crucial benchmarking, revealing that method performance can vary significantly across different molecular systems and that adequate sampling of all relevant states remains a critical challenge.

Future advancements will likely focus on hybrid approaches that combine physics-based simulations with machine learning, improved automated CV discovery, and more efficient integration of experimental data directly into sampling algorithms. As these methods mature, they will increasingly enable accurate prediction of binding affinities for drug discovery, characterization of rare biological events, and ultimately bridge the timescale gap that has long limited molecular simulations.

The study of protein dynamics, folding, and function relies heavily on two powerful theoretical frameworks: the Principle of Minimal Frustration and Free Energy Landscape (FEL) characterization. These conceptual models provide complementary insights into the structural behavior of proteins and biomolecules. The Principle of Minimal Frustration posits that evolved protein sequences are selected to have energy landscapes where the native state is the most stable, with minimal energetic conflicts that might trap the protein in non-functional conformations [14]. This organization results in a funnel-like landscape that guides the protein toward its native structure. In contrast, Free Energy Landscape characterization provides a conceptual and computational framework for mapping the different conformational states accessible to a protein, their populations, and the pathways connecting them [15]. Together, these frameworks help rationalize a wide range of protein behaviors, from folding and allostery to function and dysfunction.

The integration of these theoretical frameworks with enhanced sampling methods has revolutionized computational biophysics, enabling researchers to bridge timescales and access molecular events that were previously computationally intractable. This guide compares the performance and applications of key experimental and computational approaches within these frameworks, providing researchers with objective data to inform their methodological choices.

Theoretical Foundation and Key Concepts

The Principle of Minimal Frustration

The Principle of Minimal Frustration represents a fundamental concept in energy landscape theory. It states that natural protein domains have evolved to minimize strong energetic conflicts in their native states, creating an overall funnel-like landscape that efficiently directs the protein toward its functional folded structure [14]. This organization is achieved through evolutionary selection of sequences that stabilize the native structure more than expected from random associations of residues.

However, local violations of this principle are crucial for protein function. These locally frustrated regions enable the complex multifunnel energy landscapes needed for large-scale conformational changes, allostery, and molecular recognition [14]. Approximately 10% of interactions in allosteric proteins are highly frustrated, while about 40% are minimally frustrated, creating a web of stable interactions that impart rigidity to much of the protein structure [14]. Highly frustrated clusters often colocate with regions that reconfigure between alternative structures, sometimes acting as specific hinges or "cracking" points where local stability is low [14].

Table 1: Frustration Distribution in Allosteric Protein Domains

| Frustration Type | Percentage of Contacts | Functional Role |
|---|---|---|
| Minimally Frustrated | ~40% | Imparts structural rigidity, forms stable core |
| Neutral | ~50% | No strong functional preference |
| Highly Frustrated | ~10% | Enables conformational changes, allostery, binding sites |

Free Energy Landscape Characterization

The Free Energy Landscape framework provides a physical description of how proteins and nucleic acids fold into specific three-dimensional structures [16]. Knowledge of the FEL topology is essential for understanding biochemical processes, as it reveals the conformers of a protein, their basins of attraction, and the hierarchical relationships among them [17]. The FEL formalism illustrates the different states accessible to biomolecules, their populations, and the pathways for interconversion [15].

In this framework, the conformational space is represented as a landscape with valleys (energy minima) corresponding to meta-stable states and mountains (energy barriers) representing transition states between them. The depth of the valleys relates to the stability of each state, while the height of the barriers determines the kinetics of transitions. Reconstruction of FELs can be achieved through various experimental and computational methods, including NMR-guided simulations [15], single-molecule force spectroscopy [16], and analysis of molecular dynamics trajectories [17].
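The link between populations and valley depths follows from F(s) = -k_BT ln P(s). As a minimal sketch, assuming unbiased equilibrium samples of a single collective variable, a one-dimensional profile can be estimated from a histogram; the function name and bin choice below are illustrative.

```python
import numpy as np

K_B = 0.0083144626  # Boltzmann constant in kJ/(mol K)

def free_energy_profile(samples, bins, temperature=300.0):
    """Estimate F(s) = -kT ln P(s) from CV samples; the lowest populated bin is set to zero."""
    counts, edges = np.histogram(samples, bins=bins)
    prob = counts / counts.sum()
    with np.errstate(divide="ignore"):
        f = -K_B * temperature * np.log(prob)  # empty bins become +inf
    f -= f[np.isfinite(f)].min()
    centers = 0.5 * (edges[:-1] + edges[1:])
    return centers, f

# Two states populated 90:10 differ in free energy by kT ln 9
samples = [0.25] * 90 + [0.75] * 10
centers, f = free_energy_profile(samples, bins=[0.0, 0.5, 1.0])
print(centers, f)
```

Samples from a biased simulation would need reweighting before this estimate applies.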

Methodological Approaches and Experimental Protocols

Frustration Analysis Protocol

The algorithm for localizing frustration involves calculating local frustration indices for each protein using a version of the global gap criterion [14]. The protocol involves:

- Energy Calculation: Using a low-resolution nonadditive water-mediated potential that is transferable and successful in ab initio protein structure prediction.

- Decoy Generation: Creating two types of decoy sets:

  - Structurally conservative mutants: Native interactions are compared to those present when other amino acids are placed at the same position (mutational frustration index).

  - Alternative compact structures: Native interactions are compared to those present in alternative structures of the same sequence (configurational frustration index).

- Z-score Calculation: The native energy is compared with the distribution of decoy energies using a Z-score criterion, defining a "frustration index."

- Classification: Interactions are classified as "minimally frustrated," "highly frustrated," or "neutral" based on cutoff values of the frustration indices.

This analysis can be applied to pairs of homologous proteins solved in different states to identify frustration patterns that enable conformational changes [14].
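The Z-score and classification steps above can be sketched as follows. The cutoff values (0.78 and -1) are those commonly quoted for this type of analysis and are treated here as assumptions, as are the function names; the decoy energies would in practice come from the mutational or configurational decoy sets.

```python
import numpy as np

MINIMAL_CUTOFF = 0.78  # assumed cutoff: above this, a contact is minimally frustrated
HIGH_CUTOFF = -1.0     # assumed cutoff: below this, a contact is highly frustrated

def frustration_index(native_energy, decoy_energies):
    """Z-score of the native energy relative to the decoy energy distribution."""
    decoys = np.asarray(decoy_energies, dtype=float)
    spread = decoys.std()
    if spread == 0.0:
        raise ValueError("decoy energies must not all be identical")
    # Positive when the native interaction is more stabilizing than the average decoy
    return (decoys.mean() - native_energy) / spread

def classify_contact(index):
    """Map a frustration index onto the three classes used in the text."""
    if index > MINIMAL_CUTOFF:
        return "minimally frustrated"
    if index < HIGH_CUTOFF:
        return "highly frustrated"
    return "neutral"

decoys = [-1.0, 0.0, 1.0]  # hypothetical decoy energies
for native in (-2.0, 0.0, 2.0):
    idx = frustration_index(native, decoys)
    print(native, round(idx, 2), classify_contact(idx))
```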

NMR-Guided Metadynamics for FEL Reconstruction

This approach incorporates NMR chemical shifts as collective variables (CVs) in metadynamics simulations to enhance sampling efficiency [15]:

- System Setup: Perform molecular dynamics simulations using packages like GROMACS with force fields such as AMBER99SB-ILDN.

- Enhanced Sampling: Apply bias-exchange metadynamics (BE-META) with multiple replicas, each with a different history-dependent potential acting on different CVs.

- Collective Variables: Include both structural and chemical shift CVs:

  - Secondary structure level: α-helical, antiparallel, and parallel β-sheet content

  - Tertiary structure level: Hydrophobic contacts, side-chain dihedral angles

  - Chemical shift CV: Difference between experimental and calculated chemical shifts (using CamShift method)

- Convergence Monitoring: Continue simulations until bias potentials become stationary (typically hundreds of nanoseconds).

- Landscape Reconstruction: Compute free-energy landscape as a function of key CVs after convergence.

This approach enhances sampling efficiency by two or more orders of magnitude compared to standard molecular dynamics, enabling free-energy estimation with kBT accuracy from trajectories of just a few microseconds [15].

Single-Molecule Force Spectroscopy Landscape Reconstruction

This experimental approach reconstructs FELs from non-equilibrium single-molecule force measurements [16]:

- Sample Preparation: DNA hairpins or RNA molecules with distinct, sequence-dependent folding landscapes.

- Force Application: Use optical tweezers to apply precisely controlled forces to individual molecules.

- Extension Measurement: Monitor force-extension curves during unfolding and refolding cycles.

- Nonequilibrium Work Measurement: Record work values from multiple pulling experiments at different rates.

- Landscape Reconstruction: Apply the Jarzynski equality or related nonequilibrium work relations to reconstruct the underlying free-energy landscape.

- Validation: Compare reconstructed landscapes with those from equilibrium probability distributions.

This method has been experimentally validated using DNA hairpins and applied to complex nucleic acids like riboswitch aptamers with multiple intermediate states [16].
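The Jarzynski equality used in the reconstruction step states that ΔF = -k_BT ln⟨exp(-W / k_BT)⟩, where the average runs over repeated pulling experiments. A minimal sketch of the estimator is given below; the function name and the work values are hypothetical, and the finite-sample estimate is known to be biased when few trajectories are available.

```python
import numpy as np

K_B = 0.0019872041  # Boltzmann constant in kcal/(mol K)

def jarzynski_free_energy(work_values, temperature=298.15):
    """Exponential-average estimate of the free energy difference from work values (kcal/mol)."""
    w = np.asarray(work_values, dtype=float)
    kt = K_B * temperature
    w_min = w.min()  # shift by the minimum so the exponentials stay well scaled
    return w_min - kt * np.log(np.mean(np.exp(-(w - w_min) / kt)))

# Hypothetical work values from repeated unfolding pulls
work = [10.2, 11.0, 9.7, 12.4, 10.5]
print(jarzynski_free_energy(work), np.mean(work))
```

By Jensen's inequality the estimate never exceeds the mean work, consistent with the second law; the difference is the average dissipated work.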

Conformational Markov Network Analysis

This computational technique translates molecular dynamics trajectories into network representations for FEL analysis [17]:

- Trajectory Generation: Run stochastic molecular dynamics simulations long enough to ensure ergodic sampling of the relevant conformational space.

- Space Discretization: Divide the conformational space into cells of equal volume (nodes in the network).

- Transition Counting: Count hops between different configurations to establish links between nodes, including self-loops for within-node transitions.

- Probability Assignment: Assign weights to nodes based on the fraction of trajectory time spent in those configurations.

- Network Analysis: Analyze the Conformational Markov Network to identify:

  - Basins of attraction corresponding to meta-stable conformers

  - Dwell times and rate constants between states

  - Hierarchical relationships among basins

This approach provides a mesoscopic description that bridges microscopic dynamics and macroscopic kinetics [17].
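The discretization, counting, and weighting steps above can be sketched for a one-dimensional trajectory, where cells of equal volume reduce to bins of equal width. The function name, the single coordinate, and the short example trajectory are simplifying assumptions for illustration only.

```python
import numpy as np

def build_markov_network(trajectory, n_cells, lo, hi):
    """Turn a 1D trajectory into node weights, transition counts, and a transition matrix."""
    traj = np.asarray(trajectory, dtype=float)
    # Assign every frame to one of n_cells equal-width cells (the network nodes)
    cells = ((traj - lo) / (hi - lo) * n_cells).astype(int)
    cells = np.clip(cells, 0, n_cells - 1)
    # Count hops between consecutive frames; a == b records a self-loop
    counts = np.zeros((n_cells, n_cells))
    for a, b in zip(cells[:-1], cells[1:]):
        counts[a, b] += 1
    # Node weight = fraction of trajectory frames spent in each cell
    weights = np.bincount(cells, minlength=n_cells) / len(cells)
    # Row-normalize the counts to obtain transition probabilities
    row_sums = counts.sum(axis=1, keepdims=True)
    transition = np.divide(counts, row_sums, out=np.zeros_like(counts), where=row_sums > 0)
    return weights, counts, transition

weights, counts, transition = build_markov_network(
    [0.1, 0.2, 0.8, 0.9, 0.1], n_cells=2, lo=0.0, hi=1.0
)
print(weights)
print(transition)
```

Basins and kinetic hierarchies would then be extracted from `transition` and `weights` with standard graph or spectral analysis.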

Comparative Performance of Enhanced Sampling Methods

Quantitative Comparison of Method Performance

Table 2: Performance Metrics of Enhanced Sampling Methods for FEL Characterization

| Method | Sampling Enhancement | System Size Limitations | Computational Cost | Key Applications |
|---|---|---|---|---|
| NMR-Guided Metadynamics | 2-3 orders of magnitude [15] | Medium proteins (e.g., GB3, 56 residues) [15] | ~380 ns/replica [15] | Protein folding, intermediate identification |
| Generative Diffusion (DDPM) | Significant computational savings [18] | Small to medium (20-140 residues) [18] | Training on short MD trajectories [18] | Conformational ensembles, novel conformation generation |
| String Method with Swarms | Path-focused enhancement [19] | Large systems (e.g., KcsA channel) [19] | Dependent on collective variables [19] | Ion channel gating, allosteric transitions |
| Conformational Markov Networks | Variable based on discretization [17] | Limited by MD trajectory length [17] | Network construction & analysis [17] | Landscape topology, kinetic hierarchy |

Accuracy and Limitations Across Methods

Table 3: Accuracy and Method-Specific Limitations in FEL Determination

| Method | Accuracy Validation | Key Limitations | Novel Conformation Sampling |
|---|---|---|---|
| NMR-Guided Metadynamics | Native structure reproduction (0.5-1.3 Å RMSD) [15] | Requires experimental NMR data [15] | Limited to force field biases |
| Generative Diffusion (DDPM) | Reproduces key structural features (RMSD, Rg) [18] | May overlook low-probability regions [18] | Generates novel transitions not in training data [18] |
| Single-Molecule Force Spectroscopy | Experimental validation against equilibrium distributions [16] | Low occupancy states difficult to resolve [16] | Limited to mechanically accessible pathways |
| String Method with Swarms | Force field dependent [19] | Pathway initialization sensitive [19] | Predefined reaction coordinates |

Research Reagent Solutions and Essential Materials

Key Research Reagents for FEL Studies

Table 4: Essential Research Reagents for Free Energy Landscape Studies

| Reagent/Software | Function/Purpose | Example Applications |
|---|---|---|
| AMBER99SB-ILDN Force Field | Protein force field for molecular dynamics [15] | GB3 folding simulations [15] |
| GROMACS Simulation Package | Molecular dynamics software [15] | Enhanced sampling simulations [15] |
| CHARMM Force Field | Alternative protein force field [19] | KcsA inactivation studies [19] |
| CamShift | Chemical shift calculation method [15] | NMR-guided metadynamics [15] |
| Bias-Exchange Metadynamics | Enhanced sampling technique [15] | Protein folding landscape determination [15] |
| Denoising Diffusion Probabilistic Model | Generative machine learning model [18] | Enhanced conformational sampling [18] |
| String Method with Swarms | Path-finding enhanced sampling [19] | Ion channel inactivation pathways [19] |

Signaling Pathways and Method Workflows

Allosteric Inactivation Pathway of KcsA Channel

[Figure: KcsA Channel Inactivation Pathway]

Free Energy Landscape Determination Workflow

[Figure: FEL Determination Workflow]

The comparative analysis presented in this guide demonstrates that both the Principle of Minimal Frustration and Free Energy Landscape characterization provide essential frameworks for understanding protein dynamics, but their effective application requires careful matching of methods to biological questions. NMR-guided metadynamics offers robust FEL determination for small to medium proteins when NMR data is available, while generative diffusion models show promise for augmenting MD simulations with significant computational savings, albeit with limitations in capturing low-populated states [18] [15]. The string method provides detailed pathways for complex transitions like ion channel inactivation, but results are force-field dependent [19].

Future methodological development should focus on integrating machine learning approaches with physical models, improving force field accuracy for conformational transitions, and developing multi-scale methods that combine experimental data with enhanced sampling. Such advances will further bridge theoretical frameworks with experimental observables, continuing to enhance our understanding of protein energy landscapes and their functional implications.

Enhanced Sampling Techniques: From Generalized-Ensemble Algorithms to AI-Driven Methods

Molecular dynamics (MD) simulations are a cornerstone of computational biology, enabling the study of biological systems at an atomic level of detail. However, a significant limitation of conventional MD is the sampling problem: biological molecules possess rough energy landscapes with many local minima separated by high-energy barriers, causing simulations to become trapped in non-representative conformational states [20] [21]. This problem is particularly pronounced in the study of complex processes like protein folding and peptide aggregation, where the relevant conformational space is vast. Enhanced sampling methods were developed specifically to overcome these limitations, and among these, Replica-Exchange Molecular Dynamics (REMD) has gained widespread popularity for its effectiveness and parallel efficiency [22] [20].

REMD is a hybrid approach that combines MD simulations with a Monte Carlo exchange algorithm, facilitating efficient exploration of a system's free energy landscape [22]. Its development was driven by the need to study "hardly-relaxing" systems in molecular dynamics, leading to its formal introduction for biomolecular studies by Sugita and Okamoto [20] [21]. The method has since proven invaluable for investigating biological phenomena that involve significant conformational changes, such as the aggregation of amyloidogenic peptides associated with Alzheimer's disease, Parkinson's disease, and type II diabetes [22]. The core strength of REMD lies in its ability to overcome high energy barriers efficiently, allowing researchers to sample conformational space more thoroughly than conventional MD simulations can within practical computational timeframes [22].

Fundamental Principles of REMD

The Generalized Ensemble and Replica Exchange Mechanism

The foundational concept of REMD involves simulating multiple non-interacting copies (replicas) of a system simultaneously, each running in parallel at different temperatures or with different Hamiltonians [22]. These replicas are essentially independent MD simulations that collectively form a "generalized ensemble." In the most common implementation, known as T-REMD (Temperature REMD), each replica is maintained at a unique temperature, with the temperatures typically spaced to ensure a sufficient exchange probability between adjacent replicas [22] [20].

The power of the method emerges from periodic Monte Carlo-style exchange attempts between neighboring replicas. Specifically, at regular intervals, the configurations of two replicas at adjacent temperatures (e.g., Tm and Tn, with Tm < Tn) are considered for swapping. The decision to accept or reject an exchange is based on the Metropolis criterion, which for REMD depends on the potential energies and temperatures of the two replicas [22]. The acceptance probability for swapping configurations between replicas at temperatures Tm and Tn is given by:

$$w(X \to X') = \min\left(1, e^{-\Delta}\right)$$

where $\Delta = (\beta_n - \beta_m)\left[V(q^{[i]}) - V(q^{[j]})\right]$, with $\beta = 1/(k_B T)$, and $V(q^{[i]})$ and $V(q^{[j]})$ are the potential energies of the configurations currently held at $T_m$ and $T_n$, respectively [22]. This formulation satisfies detailed balance, so each replica still samples the correct canonical ensemble at its own temperature. Because the momenta are rescaled when configurations are swapped, the kinetic energy terms cancel in the derivation, and only the potential energies are needed to compute the exchange probability [22].
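The acceptance rule translates directly into a few lines of code. The sketch below assumes energies in kJ/mol and temperatures in kelvin; the function names and example energies are hypothetical.

```python
import math
import random

K_B = 0.0083144626  # Boltzmann constant in kJ/(mol K)

def exchange_probability(v_i, v_j, t_m, t_n):
    """Metropolis probability of swapping the configuration at T_m (energy v_i) with the one at T_n (energy v_j)."""
    beta_m = 1.0 / (K_B * t_m)
    beta_n = 1.0 / (K_B * t_n)
    delta = (beta_n - beta_m) * (v_i - v_j)
    return 1.0 if delta <= 0.0 else math.exp(-delta)

def attempt_exchange(v_i, v_j, t_m, t_n, rng):
    """Return True if the swap is accepted for this random draw."""
    return rng.random() < exchange_probability(v_i, v_j, t_m, t_n)

rng = random.Random(1)
# Cold replica already lower in energy: the swap is accepted only with some probability
print(exchange_probability(-1000.0, -990.0, 300.0, 310.0))
# Cold replica higher in energy than the hot one: the swap is always accepted
print(exchange_probability(-990.0, -1000.0, 300.0, 310.0))
print(attempt_exchange(-1000.0, -990.0, 300.0, 310.0, rng))
```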

Overcoming Energy Barriers

The REMD method enables efficient sampling by allowing configurations to effectively "walk" in temperature space. A configuration that becomes trapped in a local energy minimum at low temperature can be exchanged to a higher temperature, where thermal energy is sufficient to cross the surrounding barriers. Once displaced, the configuration may continue to exchange through various temperatures, potentially returning to lower temperatures in a different conformational basin [22] [20].

This temperature-assisted barrier crossing allows REMD simulations to explore conformational space much more broadly than conventional MD. While a standard simulation might require impractically long timescales to observe transitions between metastable states, the replica exchange mechanism accelerates this process by periodically injecting thermal energy in a controlled, statistically valid manner [22]. The parallel nature of REMD makes it particularly suitable for modern high-performance computing clusters, where many processors can work simultaneously on different replicas [22].
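The random walk in temperature space can be illustrated with a toy replica-exchange run on a one-dimensional double well. This is a sketch under strong simplifications: Metropolis Monte Carlo moves stand in for the MD segments, temperatures are in reduced units with k_B = 1, and the potential, temperature ladder, and function names are all illustrative assumptions.

```python
import math
import random

def potential(x):
    """Toy double-well potential: minima at x = -1 and +1, barrier of height 5 at x = 0."""
    return 5.0 * (x * x - 1.0) ** 2

def metropolis_step(x, temperature, rng, step=0.25):
    """One Metropolis Monte Carlo move, used here as a stand-in for an MD segment."""
    trial = x + rng.uniform(-step, step)
    d_v = potential(trial) - potential(x)
    if d_v <= 0.0 or rng.random() < math.exp(-d_v / temperature):
        return trial
    return x

def run_toy_remd(temperatures, n_cycles=2000, steps_per_cycle=10, seed=0):
    """Return the coordinates visited at the lowest temperature and the exchange acceptance rate."""
    rng = random.Random(seed)
    coords = [-1.0] * len(temperatures)  # every replica starts in the left well
    cold_samples = []
    accepted = attempted = 0
    for cycle in range(n_cycles):
        # Propagate each replica independently at its own temperature
        for k, temp in enumerate(temperatures):
            for _ in range(steps_per_cycle):
                coords[k] = metropolis_step(coords[k], temp, rng)
        # Attempt swaps between neighbors, alternating even and odd pairs
        for k in range(cycle % 2, len(temperatures) - 1, 2):
            beta_m = 1.0 / temperatures[k]
            beta_n = 1.0 / temperatures[k + 1]
            delta = (beta_n - beta_m) * (potential(coords[k]) - potential(coords[k + 1]))
            attempted += 1
            if delta <= 0.0 or rng.random() < math.exp(-delta):
                coords[k], coords[k + 1] = coords[k + 1], coords[k]
                accepted += 1
        cold_samples.append(coords[0])
    return cold_samples, accepted / attempted

samples, rate = run_toy_remd([0.3, 0.6, 1.2, 2.4])
right_fraction = sum(x > 0 for x in samples) / len(samples)
print(f"acceptance rate: {rate:.2f}, fraction in right well at T=0.3: {right_fraction:.2f}")
```

At the lowest temperature the barrier is many times the thermal energy, so crossings seen there arise from configurations that crossed at higher temperature and were exchanged back down.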

REMD Variants and Methodological Extensions

As REMD gained popularity, several variants were developed to address specific challenges or improve efficiency for particular applications. The table below summarizes the key REMD variants and their characteristics.

Table 1: Variants of Replica-Exchange Molecular Dynamics

| Variant | Acronym | Exchange Parameter | Key Features | Typical Applications |
|---|---|---|---|---|
| Temperature REMD | T-REMD | Temperature | Original form; uses different temperatures [20] | Protein folding, peptide aggregation [22] |
| Hamiltonian REMD | H-REMD | Hamiltonian (force field) | Exchanges between different potential energy functions [20] | Improved sampling for complex systems |
| Reservoir REMD | R-REMD | Reservoir states | Uses pre-generated conformational reservoirs; better convergence [20] | Systems with slow conformational transitions |
| Multiplexed REMD | M-REMD | Multiple replicas per temperature | Uses multiple parallel runs at each temperature level [20] | Enhanced sampling in shorter simulation time |
| Lambda-REMD | λ-REMD | Thermodynamic coupling parameter | Exchanges along alchemical transformation pathways [20] | Free energy calculations, solvation studies |
| Constant pH REMD | pH-REMD | Protonation states | Samples different protonation states at constant pH [20] | pH-dependent phenomena, protonation equilibria |

Temperature REMD (T-REMD)

Temperature REMD (T-REMD) is the original and most widely implemented form of replica exchange. In T-REMD, replicas differ only in their simulation temperatures, with the highest temperature selected to be sufficiently elevated to overcome the relevant energy barriers in the system [20]. The choice of temperature distribution is critical for T-REMD efficiency, as it directly affects the exchange rates between adjacent replicas. If the temperature spacing is too wide, the exchange probability drops, reducing the effectiveness of the method. If too narrow, computational resources are wasted on unnecessary replicas [22].

The effectiveness of T-REMD has been shown to be strongly dependent on the system size and the activation enthalpy of the processes being studied. Interestingly, choosing the maximum temperature too high can actually make REMD less efficient than conventional MD, and a good strategy is to select the maximum temperature slightly above the point where the enthalpy for folding vanishes [20]. T-REMD has been successfully applied to study the free energy landscape and folding mechanisms of various peptides and proteins [20].
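A common starting point for the temperature distribution is a geometric ladder, in which adjacent temperatures share a constant ratio. This heuristic is an assumption of the sketch below rather than a prescription from the cited protocols, and the function name is hypothetical; real systems often need the spacing adjusted after measuring exchange rates.

```python
def geometric_ladder(t_min, t_max, n_replicas):
    """Temperatures spaced so that T[k+1] / T[k] is constant across the ladder."""
    if n_replicas < 2:
        raise ValueError("need at least two replicas")
    ratio = (t_max / t_min) ** (1.0 / (n_replicas - 1))
    return [t_min * ratio**k for k in range(n_replicas)]

# Example: 16 replicas spanning 300 K to 500 K
ladder = geometric_ladder(300.0, 500.0, 16)
print([round(t, 1) for t in ladder])
```

With a constant ratio, the absolute gap between neighbors widens toward high temperature, where energy distributions are broader and overlap more easily.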

Hamiltonian REMD (H-REMD) and Specialized Variants

Hamiltonian REMD (H-REMD) represents a more general form of replica exchange where different replicas employ modified Hamiltonians (potential energy functions) rather than different temperatures [20]. These modifications can include altered force field parameters, simplified potentials, or the introduction of biasing potentials. The advantage of H-REMD is that it can provide enhanced sampling in dimensions other than temperature, which can be more efficient for certain problems.

Among specialized variants, λ-REMD allows exchange along thermodynamic coupling parameters, which has been shown to help distribute side chain rotamers of proteins into different states and has been useful for calculating absolute binding free energies [20]. Constant pH REMD enables the simulation of pH effects by allowing replicas to sample different protonation states, which is particularly valuable for studying environmental effects on protein structure and function [20].

REMD in Practice: A Case Study of Peptide Aggregation

Experimental Protocol for hIAPP(11-25) Dimerization

To illustrate a practical application of REMD, we examine a case study investigating the dimerization of the 11-25 fragment of human islet amyloid polypeptide (hIAPP(11-25)), a process relevant to type II diabetes [22]. The following workflow diagram outlines the key stages of this REMD investigation:

The methodology begins with constructing an initial configuration of the hIAPP(11-25) dimer, with the peptide sequence RLANFLVHSSNNFGA, capped by an acetyl group at the N-terminus and an NH₂ group at the C-terminus to match experimental conditions [22]. The system is then solvated in a water box with counterions added to achieve electroneutrality. Energy minimization using the steepest descent algorithm follows to remove steric clashes, after which the system undergoes equilibration in both NVT (constant number of particles, volume, and temperature) and NPT (constant number of particles, pressure, and temperature) ensembles [22].

For the production REMD simulation, 16 replicas are typically used with temperatures ranging from 300 K to 500 K, although the exact number and range depend on system size. The simulation is conducted in the NPT ensemble for 100 nanoseconds or more, with exchange attempts between neighboring replicas occurring every 1-2 picoseconds [22]. The combination of MD simulation with the Monte Carlo exchange algorithm enables the system to overcome high energy barriers and sample conformational space sufficiently to map the free energy landscape of the dimerization process [22].

Successful implementation of REMD simulations requires specific computational tools and resources. The table below details key components of the research toolkit for REMD studies:

Table 2: Essential Research Reagents and Computational Resources for REMD

| Resource Category | Specific Tools | Function/Role in REMD |
|---|---|---|
| MD Software Packages | GROMACS [22], AMBER [20], CHARMM [22], NAMD [20] | Core simulation engines with REMD implementations |
| Visualization Software | VMD (Visual Molecular Dynamics) [22] | Molecular modeling, trajectory analysis, and structure visualization |
| Computing Infrastructure | HPC cluster with MPI [22] | Parallel computation of multiple replicas; typically 2 cores per replica recommended |
| Analysis Tools | GROMACS analysis suite [22], custom scripts | Free energy calculations, cluster analysis, trajectory processing |
| Force Fields | CHARMM, AMBER, OPLS | Molecular mechanics parameter sets for potential energy calculations |

The REMD simulations are highly parallel and computationally demanding, requiring a High Performance Computing (HPC) cluster installed with both the MD software (e.g., GROMACS) and a standard message passing interface (MPI) library [22]. For the case study described, approximately two cores per replica provided good productivity on clusters equipped with Intel Xeon X5650 CPUs or better [22]. For systems requiring specialized analysis, Linux shell scripts coded in Bash are often employed for data preparation and file processing [22].

Comparative Analysis with Other Enhanced Sampling Methods

REMD is one of several enhanced sampling methods available to computational researchers. The table below provides a systematic comparison of REMD with other prominent techniques:

Table 3: Comparison of Enhanced Sampling Methods in Molecular Dynamics

| Method | Key Principle | Advantages | Limitations | Ideal Use Cases |

|---|---|---|---|---|

| REMD | Parallel simulations at different temperatures with configuration exchanges [22] [20] | Parallel efficiency; no need for pre-defined reaction coordinates [22] | Scalability issues with large systems; temperature selection critical [20] | Protein folding, peptide aggregation, small to medium systems [22] |

| Metadynamics | "Fills" free energy wells with repulsive bias [20] | Direct free energy estimation; efficient for defined processes [20] | Requires careful selection of collective variables and tuning of bias deposition parameters [20] | Barrier crossing along known reaction coordinates, ligand binding [20] |

| Simulated Annealing | Gradual temperature cooling to find global minimum [20] [21] | Effective for finding low-energy states; applicable to large systems [20] [21] | Not a true equilibrium method; limited thermodynamic information [20] | Structure prediction, flexible macromolecular complexes [20] [21] |

When selecting an enhanced sampling method, researchers must consider both the biological and physical characteristics of their system, particularly its size [20] [21]. While REMD and metadynamics are the most widely adopted sampling methods for biomolecular dynamics, simulated annealing and its variant Generalized Simulated Annealing (GSA) are particularly well-suited for characterizing very flexible systems and can be employed at relatively low computational cost for large macromolecular complexes [20] [21].

The evolution of REMD-based methods has demonstrated their applicability across a broad spectrum of problems, from the smallest peptides to substantial molecular systems [21]. However, REMD appears most effective for systems with energy landscapes that are not excessively rough, while metadynamics excels in cases where local equilibration of intermediate simulation steps is particularly difficult [21].

Replica-Exchange Molecular Dynamics represents a powerful and versatile approach for enhancing conformational sampling in molecular simulations. Its core principle of running parallel simulations at different temperatures with periodic configuration exchanges enables efficient exploration of complex energy landscapes that would be inaccessible to conventional molecular dynamics. The development of various REMD variants, including Hamiltonian REMD, λ-REMD, and constant pH REMD, has further expanded its applicability to diverse biological problems.

While REMD faces scalability challenges for very large systems and requires careful parameter selection for optimal performance, it remains one of the most widely used enhanced sampling methods in computational biophysics and structural biology. Its strengths are particularly evident in studies of protein folding, peptide aggregation, and other conformational transitions where prior knowledge of reaction coordinates is limited. As computational resources continue to grow and methodological refinements emerge, REMD is poised to maintain its important role in the computational researcher's toolkit, contributing to our understanding of complex biological processes and facilitating drug discovery efforts targeting challenging molecular mechanisms.

Molecular Dynamics (MD) simulations have become an indispensable computational microscope, allowing researchers to observe biological and chemical processes at an atomic level. However, a significant limitation hinders their effectiveness: the rare event problem. Many processes of interest, such as protein folding, ligand binding, or conformational changes, occur on timescales that far exceed what conventional MD simulations can reach, often because the system becomes trapped in local energy minima separated by high energy barriers [20]. This results in inadequate sampling of conformational states, which in turn limits the ability to reveal functional properties of the systems being examined [20]. Enhanced sampling methods were developed precisely to address this sampling problem. Among these techniques, metadynamics has emerged as a powerful and widely adopted approach that "fills the free energy wells with computational sand" [20], thereby enabling efficient exploration of complex energy landscapes. This guide provides a comprehensive comparison of metadynamics against other enhanced sampling methods, evaluating their performance, applications, and implementation requirements for researchers in computational chemistry and drug development.

Understanding Metadynamics: Core Principles and Evolution

The Fundamental Mechanism

Metadynamics belongs to a class of biased-sampling techniques that add an external, history-dependent potential to encourage exploration of the free energy surface (FES). The core principle is to discourage the system from revisiting previously sampled states by depositing repulsive Gaussian potentials at regular intervals along strategically chosen collective variables (CVs) [20]. This process effectively "fills" the free energy wells, keeping a memory of explored regions and pushing the system toward unexplored parts of the conformational landscape. As described in the literature, "by discouraging that previously visited states be re-sampled, these and newer methods, like metadynamics, allow one to direct computational resources to a broader exploration of the free-energy landscape" [20].
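The deposition mechanism can be illustrated on a toy problem. The following is a minimal sketch, assuming a one-dimensional double-well potential, overdamped Langevin dynamics with unit friction, and fixed-height Gaussians; the potential, parameter values, and function names are hypothetical choices for illustration and do not come from the cited work.

```python
import math
import random


def double_well_force(x, barrier=5.0):
    """Force -dU/dx for the toy potential U(x) = barrier * (x^2 - 1)^2."""
    return -4.0 * barrier * x * (x * x - 1.0)


def bias_force(x, centers, height, sigma):
    """Force -dV/dx from the sum of Gaussians deposited so far."""
    force = 0.0
    for c in centers:
        d = x - c
        force += height * d / sigma ** 2 * math.exp(-d * d / (2.0 * sigma ** 2))
    return force


def run_metadynamics(n_steps=20000, dt=1e-3, kT=1.0, height=0.2,
                     sigma=0.1, stride=100, seed=0):
    """Overdamped Langevin dynamics in the CV x with a history-dependent bias.

    Every `stride` steps a Gaussian is centred on the current CV value, so
    regions that were already visited become progressively less favourable.
    Returns the trajectory and the list of Gaussian centres.
    """
    rng = random.Random(seed)
    x = -1.0                      # start in the left well
    centers, trajectory = [], []
    noise = math.sqrt(2.0 * kT * dt)
    for step in range(n_steps):
        if step % stride == 0:
            centers.append(x)     # deposit a new Gaussian at the current CV value
        force = double_well_force(x) + bias_force(x, centers, height, sigma)
        x += force * dt + noise * rng.gauss(0.0, 1.0)
        trajectory.append(x)
    return trajectory, centers


if __name__ == "__main__":
    traj, centers = run_metadynamics()
    print("Gaussians deposited:", len(centers))
    print("CV range explored:", min(traj), max(traj))
```

In this plain (non-tempered) form, the negative of the accumulated bias serves as an estimate of the free energy along the CV, up to an additive constant.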

Key Methodological Variants

The metadynamics algorithm has evolved significantly since its inception, with several important variants enhancing its applicability:

- Well-Tempered Metadynamics: This refinement gradually reduces the height of the deposited Gaussians as the simulation progresses, ensuring more controlled convergence and better estimation of free energies [23].

- Parallel Bias Metadynamics (PBMetaD): An extension designed to efficiently handle many CVs simultaneously by applying independent bias potentials to each CV [23].

- MetaDynamics Metainference (M&M): A hybrid approach that combines metadynamics with experimental data integration, using Bayesian inference to restrain simulations to match experimental observables [23].
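For the well-tempered variant, the height reduction is commonly written as w = w0 · exp(−V(s) / (k_B ΔT)), where V(s) is the bias already accumulated at the current CV value and k_B ΔT = (γ − 1) k_B T for a bias factor γ. The sketch below implements only this scaling rule; the symbols and the function name are introduced here for illustration and are not taken from [23].

```python
import math


def well_tempered_height(initial_height, current_bias, kT, bias_factor):
    """Height of the next Gaussian in well-tempered metadynamics.

    initial_height : starting Gaussian height w0 (energy units)
    current_bias   : bias V(s) already deposited at the current CV value
    kT             : thermal energy k_B * T in the same energy units
    bias_factor    : gamma = (T + dT) / T, must be greater than 1
    """
    if bias_factor <= 1.0:
        raise ValueError("bias_factor must be greater than 1")
    return initial_height * math.exp(-current_bias / ((bias_factor - 1.0) * kT))


if __name__ == "__main__":
    kT = 2.494  # approximately k_B * T at 300 K in kJ/mol
    for bias in (0.0, 10.0, 20.0, 40.0):
        print(bias, well_tempered_height(1.2, bias, kT, bias_factor=10.0))
```

A larger bias factor slows the decay of the Gaussian height, which lets the simulation cross higher barriers at the cost of slower convergence.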

Comparative Analysis of Enhanced Sampling Methods

To objectively evaluate metadynamics within the landscape of enhanced sampling techniques, we compare its performance, computational requirements, and applicability against other major methods.

Table 1: Comparison of Key Enhanced Sampling Methods

| Method | Core Principle | Key Advantages | Primary Limitations | Ideal Use Cases |

|---|---|---|---|---|

| Metadynamics | History-dependent bias along CVs; "fills" free energy wells | Provides direct FES reconstruction; intuitive tuning; multiple variants available | Quality heavily dependent on CV choice; risk of inaccurate FES with poor CVs | Ligand binding, conformational changes, protein folding [20] [24] |

| Replica-Exchange MD (REMD) | Parallel simulations at different temperatures with state exchanges | No need for predefined CVs; formally exact sampling | Requires substantial computational resources; temperature selection critical [20] | Protein folding, peptide structure sampling [20] |

| Alchemical Transformations | Non-physical paths between states using coupling parameter (λ) | Efficient for relative binding free energies; well-established protocols | Lacks mechanistic insights; limited to end-state comparisons [25] | Lead optimization in drug discovery [25] |

| Path-Based Methods | Physical paths along collective variables between states | Provides mechanistic insights and pathways; absolute binding free energies | Computationally demanding; path definition can be challenging [25] | Binding pathway analysis, transport mechanisms [25] |

Table 2: Performance Comparison for Binding Free Energy Calculations

| Method | System Evaluated | Performance Metrics | Computational Cost | Key References |

|---|---|---|---|---|

| Metadynamics | SARS-CoV-2 Mpro inhibitors | Kendall τ = 0.28; Pearson r² = 0.49 [24] | Medium-High (depends on CVs and system size) | Saar et al., 2023 [24] |

| Free Energy Perturbation (FEP) | SARS-CoV-2 Mpro inhibitors | Better accuracy than MetaD in this specific study [24] | High (requires many λ windows) | Saar et al., 2023 [24] |

| Ensemble Docking | SARS-CoV-2 Mpro inhibitors | Kendall τ = 0.18-0.21; Pearson r² = 0.55-0.73 [24] | Low (fastest method) | Saar et al., 2023 [24] |

| Path-Based with PCVs | General protein-ligand binding | Accurate absolute binding free energy estimates [25] | High (requires path definition and sampling) | Bertazzo et al., 2021 [25] |
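The ranking and correlation metrics reported in Table 2 can be computed from paired predicted and experimental binding free energies. The sketch below uses the tau-a form of Kendall's coefficient (no tie correction) and the squared Pearson coefficient; which tau variant the cited study used is not stated here, and the numbers in the usage example are invented purely for illustration.

```python
def kendall_tau(x, y):
    """Kendall tau-a: (concordant - discordant) / total number of pairs."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    concordant = discordant = 0
    for i in range(n):
        for j in range(i + 1, n):
            sign = (x[i] - x[j]) * (y[i] - y[j])
            if sign > 0:
                concordant += 1
            elif sign < 0:
                discordant += 1
    return (concordant - discordant) / (n * (n - 1) / 2)


def pearson_r2(x, y):
    """Squared Pearson correlation coefficient between x and y."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy * sxy / (sxx * syy)


if __name__ == "__main__":
    # Hypothetical predicted vs. experimental binding free energies (kcal/mol).
    predicted = [-7.1, -8.4, -6.0, -9.2, -7.8]
    experimental = [-6.8, -8.9, -6.5, -8.1, -8.3]
    print("Kendall tau:", kendall_tau(predicted, experimental))
    print("Pearson r^2:", pearson_r2(predicted, experimental))
```

Kendall's τ reflects how well a method ranks compounds, while r² reflects linear agreement, which is why the two metrics can disagree for the same method, as they do for ensemble docking in Table 2.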

Practical Performance Considerations

When selecting an enhanced sampling method, researchers must weigh practical aspects as well as theoretical performance. Metadynamics has proven useful for binding free energy calculations, though its accuracy may be slightly lower than that of specialized alchemical methods such as FEP for certain systems [24]. In return, metadynamics provides mechanistic insight into binding pathways that alchemical methods cannot offer [25]. Its computational expense depends strongly on the choice and number of CVs: simpler CVs require fewer computational resources but may miss important aspects of the transition mechanism.

Experimental Protocols and Implementation

Standard Metadynamics Protocol for Protein-Ligand Systems

Implementing metadynamics requires careful attention to several methodological aspects. The following protocol outlines key steps for a typical protein-ligand binding study:

Collective Variable Selection: Identify 2-4 CVs that capture the essential degrees of freedom for the process. Common choices include:

- Distance between protein binding site and ligand

- Ligand solvent-accessible surface area

- Number of protein-ligand contacts

- Root-mean-square deviation (RMSD) of ligand orientation

Gaussian Parameters: Set appropriate parameters for bias deposition, namely the Gaussian height, the Gaussian width along each CV, and the deposition interval.

Simulation Setup: Employ well-tempered metadynamics with bias factor of 10-30 and multiple walkers (typically 10 replicas) for improved sampling [23].

Convergence Monitoring: Assess convergence by monitoring the time evolution of the free energy estimate and CV distributions.
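One simple way to put the convergence check into practice is to track the free energy difference between two basins as the simulation lengthens and to test whether it has stopped drifting. The sketch below assumes a one-dimensional free energy profile on a uniform grid; the basin boundaries, window length, tolerance, and function names are hypothetical choices, not values prescribed by the protocol.

```python
import math


def basin_free_energy_difference(grid, fes, kT, basin_a, basin_b):
    """Return F_A - F_B from a 1D free energy profile on a uniform grid.

    Each basin is a (low, high) CV interval; its free energy is obtained by
    summing Boltzmann weights exp(-F/kT) over the grid points it contains.
    """
    def weight(low, high):
        return sum(math.exp(-f / kT) for s, f in zip(grid, fes) if low <= s <= high)

    return -kT * math.log(weight(*basin_a) / weight(*basin_b))


def is_converged(delta_f_series, window, tolerance):
    """True if the last `window` estimates span less than `tolerance`."""
    if len(delta_f_series) < window:
        return False
    tail = delta_f_series[-window:]
    return max(tail) - min(tail) < tolerance


if __name__ == "__main__":
    grid = [i / 10 for i in range(-15, 16)]
    fes = [5.0 * (s * s - 1.0) ** 2 for s in grid]  # toy symmetric profile
    print(basin_free_energy_difference(grid, fes, 1.0, (-1.5, -0.1), (0.1, 1.5)))
    # Hypothetical estimates taken at increasing simulation lengths.
    print(is_converged([3.0, 2.0, 1.2, 1.1, 1.15], window=3, tolerance=0.2))
```

A flat free energy difference is a necessary check rather than proof of convergence, so it is usually combined with inspection of the CV time series for repeated transitions between states.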

Advanced Implementation: Path Collective Variables

For complex transitions, Path Collective Variables (PCVs) provide a powerful approach. PCVs include S(x), measuring progression along a predefined pathway, and Z(x), quantifying orthogonal deviations [25]. The implementation involves:

- Defining a reference path between initial and final states

- Calculating S(x) and Z(x) using the formalism:

$$S(x) = \frac{\sum_{i=1}^{p} i \, e^{-\lambda \lVert x - x_i \rVert^2}}{\sum_{i=1}^{p} e^{-\lambda \lVert x - x_i \rVert^2}}$$

$$Z(x) = -\lambda^{-1} \ln \sum_{i=1}^{p} e^{-\lambda \lVert x - x_i \rVert^2}$$

where p denotes the number of reference configurations xᵢ along the path, λ is a smoothing parameter, and ||x - xᵢ||² quantifies the squared distance between the instantaneous and reference configurations [25].

- Biasing the simulation along these PCVs to enhance sampling of the pathway.
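The two path variables can be evaluated directly from their definitions. The sketch below is a minimal illustration that assumes configurations are plain coordinate tuples compared by squared Euclidean distance; production studies typically use an aligned RMSD or a similar metric instead, and the function names and the three-frame path are hypothetical.

```python
import math


def squared_distance(a, b):
    """Squared Euclidean distance between two configurations."""
    return sum((u - v) ** 2 for u, v in zip(a, b))


def path_cvs(x, reference_path, lam):
    """Return (S, Z) for configuration x, with reference frames indexed 1..p.

    S is the weighted average frame index (progress along the path) and
    Z = -(1/lam) * ln(sum_i exp(-lam * d_i^2)) measures the distance from the path.
    """
    d2 = [squared_distance(x, ref) for ref in reference_path]
    d2_min = min(d2)  # subtract the minimum to keep the exponentials well scaled
    weights = [math.exp(-lam * (d - d2_min)) for d in d2]
    total = sum(weights)
    s = sum(i * w for i, w in enumerate(weights, start=1)) / total
    z = d2_min - math.log(total) / lam
    return s, z


if __name__ == "__main__":
    path = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]  # hypothetical three-frame path
    print(path_cvs((1.0, 0.0), path, lam=50.0))   # on the path, at the middle frame
    print(path_cvs((1.0, 0.5), path, lam=50.0))   # displaced orthogonally from the path
```

The smoothing parameter λ is usually chosen in relation to the spacing between neighbouring frames, so that S changes smoothly as the system moves from one reference frame to the next.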

Metadynamics Simulation Workflow

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Software Tools for Enhanced Sampling Simulations

| Tool Name | Primary Function | Key Features | Compatibility/Requirements |

|---|---|---|---|

| PLUMED | CV analysis and enhanced sampling | Extensive CV library; multiple method implementations; community plugins | Interfaces with GROMACS, AMBER, NAMD, etc. [23] |

| PySAGES | Advanced sampling on GPUs | Full GPU acceleration; JAX-based; multiple backends | HOOMD-blue, OpenMM, LAMMPS, JAX MD [26] |

| GROMACS | Molecular dynamics engine | High performance; extensive force fields; active development | PLUMED, VMD, PyMOL [23] |

| SSAGES | Advanced ensemble simulations | Multiple enhanced sampling methods; cross-platform | LAMMPS, GROMACS, HOOMD-blue [26] |

Emerging Trends and Future Directions

The field of enhanced sampling is rapidly evolving, with several promising developments enhancing metadynamics applications:

Machine Learning Integration

Machine learning (ML) is profoundly reshaping metadynamics and other enhanced sampling methods [27]. Key advances include:

- Data-Driven Collective Variables: ML techniques such as autoencoders and nonlinear dimensionality reduction automatically identify relevant CVs from simulation data, reducing dependence on intuition-based CV selection [27].

- Optimized Biasing Strategies: Reinforcement learning approaches adaptively optimize bias deposition parameters during simulations, improving sampling efficiency [27].

- Generative Models: Novel generative approaches, including generative adversarial networks (GANs) and diffusion models, create synthetic transition paths that guide enhanced sampling [27].

Specialized Hardware Acceleration

The development of GPU-accelerated sampling tools like PySAGES enables dramatically faster simulations by leveraging modern graphics processors [26]. This advancement allows researchers to tackle more complex systems and access longer timescales, bridging the gap between computational feasibility and biologically relevant timescales.