Energy Minimization with Constraints and Restraints: Advanced Computational Strategies for Drug Discovery

Abstract

This article provides a comprehensive exploration of energy minimization techniques incorporating constraints and restraints, tailored for the computational drug discovery pipeline. We cover foundational principles from statistical mechanics and physics-based simulations like Free Energy Perturbation (FEP), detail modern methodologies integrating active learning and generative AI, and address critical troubleshooting for optimization challenges. A dedicated analysis of validation frameworks and comparative performance of algorithms against experimental data offers practical insights. Aimed at researchers and drug development professionals, this review synthesizes current computational strategies to enhance the efficiency and predictive accuracy of molecular optimization in biomedical research.

Core Principles: The Physics and Theory of Energy Minimization in Molecular Systems

Theoretical Foundations of Energy Minimization

Energy minimization represents a fundamental computational paradigm for predicting the stable states and evolution of physical systems. At its core, this approach identifies configurations that minimize a system's free energy, providing insights into equilibrium properties and dynamic behaviors across diverse scientific domains. The mathematical foundation begins with defining appropriate energy functionals that encode the system's physics, followed by the application of variational principles to locate minima on often complex, high-dimensional energy landscapes.

The double-well potential serves as the canonical model for understanding phase separation phenomena and bistable systems. This potential is described by the function ( F(u) = \frac{1}{4}(u^2 - 1)^2 ), which possesses two stable minima at ( u = \pm 1 ) separated by an energy barrier at ( u = 0 ) [1]. In the context of the Allen-Cahn equation, which describes phase separation processes, the total free energy functional incorporates both this potential and interfacial energy:

[ E(u) = \int_{\Omega} \frac{\epsilon^2}{2}|\nabla u|^2 + F(u) \, dx ]

where ( \epsilon ) is the interface parameter controlling the width of the transition region between phases, and ( u ) represents the phase field variable [1]. The system evolves toward equilibrium following the direction of steepest energy descent, mathematically represented as gradient flow.
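As a rough illustration of the gradient flow described above, the following sketch discretizes the energy on a 1D grid and takes explicit Euler steps of ( u_t = \epsilon^2 u_{xx} - F'(u) ). The grid size, time step, and initial profile are assumptions chosen only so that the explicit scheme stays stable; they are not taken from [1].

```python
import math

def double_well(u):
    """F(u) = (u^2 - 1)^2 / 4."""
    return 0.25 * (u * u - 1.0) ** 2

def energy(u, eps, h):
    """Discrete E(u): gradient term on cell edges plus double-well term on nodes."""
    grad_term = sum(0.5 * eps**2 * ((u[i + 1] - u[i]) / h) ** 2 for i in range(len(u) - 1))
    well_term = sum(double_well(v) for v in u)
    return h * (grad_term + well_term)

def gradient_flow_step(u, eps, h, dt):
    """One explicit Euler step of u_t = eps^2 u_xx - F'(u) with zero-flux ends."""
    n = len(u)
    new_u = []
    for i in range(n):
        left = u[i - 1] if i > 0 else u[i]        # mirrored ghost value: du/dn = 0
        right = u[i + 1] if i < n - 1 else u[i]
        laplacian = (left - 2.0 * u[i] + right) / (h * h)
        new_u.append(u[i] + dt * (eps**2 * laplacian - (u[i] ** 3 - u[i])))
    return new_u

# Assumed illustration parameters (not from the cited work).
n, eps, dt = 41, 0.1, 0.05
h = 2.0 / (n - 1)                                  # grid spacing on [-1, 1]
u = [0.1 * math.sin(math.pi * (-1.0 + i * h)) for i in range(n)]
energies = [energy(u, eps, h)]
for _ in range(500):
    u = gradient_flow_step(u, eps, h, dt)
    energies.append(energy(u, eps, h))
```

Each step is a plain gradient descent step on the discrete energy, so `energies` should decrease monotonically as the profile separates into the two phases near ( u = \pm 1 ).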

For quantum systems, the energy landscape exhibits even more complex features. Recent experiments with photon gases in optical microcavities have revealed regions of negative local kinetic energy in evanescent quantum states, where the relationship between energy and speed defies classical intuition [2]. This phenomenon challenges traditional interpretations and highlights the rich complexity of energy landscapes in quantum mechanical systems.

Computational Frameworks and Methodologies

Deep Learning for Energy Minimization

The Energy-Stabilized Scaled Deep Neural Network (ES-ScaDNN) framework represents a recent innovation for solving partial differential equations through direct energy minimization, bypassing traditional time-stepping approaches [1]. This architecture incorporates two key components:

- Scaling Layer: Constrains network output to physically meaningful ranges (e.g., [-1, 1] for the Allen-Cahn equation)

- Variance-Based Regularization: Promotes clear phase separation and prevents convergence to trivial solutions

The composite loss function combines the energy functional with stabilization terms:

[ \mathcal{L}_{total} = E(u) + \lambda_{var} \mathcal{R}_{var}(u) ]

where ( \mathcal{R}_{var} ) represents the variance-based regularization term with weighting factor ( \lambda_{var} ) [1]. Implementation considerations include selecting activation functions appropriate to problem dimensionality: ReLU for one-dimensional cases and tanh for two-dimensional problems to maintain solution smoothness.

Enhanced Sampling for Molecular Systems

In molecular simulations, energy minimization faces the challenge of navigating rough energy landscapes with numerous local minima. Enhanced sampling techniques accelerate the exploration of conformational space:

Table 1: Enhanced Sampling Methods for Complex Energy Landscapes

| Method | Key Principle | Application in Energy Minimization |
|---|---|---|
| Metadynamics | Deposits repulsive bias in collective variable space | Identifies cryptic allosteric sites by overcoming energy barriers [3] |
| Accelerated MD (aMD) | Modifies potential energy surface with boost potential | Captures millisecond-scale events in nanoseconds [3] |
| Replica Exchange MD (REMD) | Parallel simulations at different temperatures with exchanges | Samples diverse conformational states to escape local minima [3] |
| Umbrella Sampling | Applies harmonic potentials along reaction coordinates | Calculates free energy profiles for allosteric transitions [3] |

These methods enable researchers to overcome energy barriers that would be insurmountable with conventional molecular dynamics, revealing transient states and allosteric pockets critical for understanding protein function and drug binding [3].
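To make the exchange step of REMD concrete, the sketch below evaluates the standard Metropolis criterion for swapping configurations between two temperature replicas, ( p = \min(1, e^{(\beta_i - \beta_j)(E_i - E_j)}) ). The function name and the choice of kJ/mol units are assumptions made for illustration.

```python
import math

K_B = 0.0083144626  # Boltzmann constant in kJ/(mol K)

def remd_exchange_probability(energy_i, energy_j, temp_i, temp_j):
    """Metropolis probability of swapping the configurations of replicas i and j.

    energy_i, energy_j: potential energies (kJ/mol) of the current configurations.
    temp_i, temp_j: replica temperatures (K).
    """
    beta_i = 1.0 / (K_B * temp_i)
    beta_j = 1.0 / (K_B * temp_j)
    delta = (beta_i - beta_j) * (energy_i - energy_j)
    return 1.0 if delta >= 0.0 else math.exp(delta)

# The colder replica holds the lower-energy configuration: the swap is accepted only sometimes.
p_uphill = remd_exchange_probability(-1000.0, -990.0, 300.0, 310.0)
# The colder replica holds the higher-energy configuration: the swap is always accepted.
p_downhill = remd_exchange_probability(-990.0, -1000.0, 300.0, 310.0)
```

Closely spaced temperatures keep this probability high enough for configurations to diffuse across the temperature ladder.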

Application Notes: Case Studies Across Disciplines

Materials Science: Phase Separation Dynamics

The Allen-Cahn equation provides a framework for modeling phase separation in material systems. Implementation requires careful attention to parameters and boundary conditions:

- Domain Specification: Typically Ω = [-1,1] for 1D or Ω = [0,1]×[0,1] for 2D

- Boundary Conditions: Neumann conditions (( \frac{\partial u}{\partial n} = 0 )) prevent interface crossing at boundaries [1]

- Interface Parameter (ε): Controls transition sharpness; smaller ε values yield sharper interfaces

The ES-ScaDNN approach demonstrates how deep learning can directly minimize the energy functional, providing advantages for steady-state solutions where traditional time-dependent formulations are inefficient [1].

Drug Discovery: Binding Energy Optimization

In pharmaceutical applications, energy minimization principles underpin the prediction of protein-ligand binding affinities. Nonequilibrium switching (NES) methods have emerged as efficient approaches for calculating binding free energies, offering 5-10X higher throughput compared to traditional free energy perturbation and thermodynamic integration [4].

Table 2: Energy Minimization Platforms in Drug Discovery

| Platform/Company | Core Approach | Key Achievement | Therapeutic Area |
|---|---|---|---|
| Exscientia | Generative AI + automated precision chemistry | First AI-designed drug (DSP-1181) in Phase I trials [5] | Oncology, Immuno-oncology |
| Schrödinger | Physics-enabled molecular design | TYK2 inhibitor (zasocitinib) advanced to Phase III [5] | Immunology |
| Insilico Medicine | Generative chemistry + target discovery | TNIK inhibitor for IPF progressed to Phase II [5] | Fibrotic Diseases |

These platforms demonstrate how energy minimization principles, when integrated with AI and high-performance computing, can dramatically compress drug discovery timelines from the traditional 5 years to as little as 18 months for candidate progression to clinical trials [5].

Quantum Systems: Tunneling and Evanescent States

Recent experiments with quantum particles in coupled waveguide systems have revealed surprising energy-speed relationships in regions of negative kinetic energy [2]. The experimental protocol involves:

- System Preparation: Creating a high-finesse microcavity with nanostructured mirrors to form waveguide potentials

- Photon Injection: Using non-resonant laser pulses (26 ns FWHM) to create a quasi-stationary photon gas

- Speed Measurement: Determining particle speeds by comparing translational motion along the waveguide with population hopping between coupled waveguides

This approach has demonstrated that particles with more negative local kinetic energy paradoxically exhibit higher measured speeds inside potential steps—a finding that challenges Bohmian trajectory interpretations of quantum mechanics [2].

Experimental Protocols

Protocol 1: Deep Learning for Allen-Cahn Equation

Title: Energy Minimization for Phase Field Models Using ES-ScaDNN

Objective: Compute steady-state solutions to the Allen-Cahn equation through direct energy minimization.

Materials:

- Python 3.8+ with TensorFlow 2.8+ or PyTorch 1.11+

- Numerical computing environment (NumPy, SciPy)

- Visualization tools (Matplotlib, ParaView)

Procedure:

Network Architecture Design:

- Construct a fully connected neural network with 6-8 hidden layers

- Implement a scaling layer to enforce physical bounds on output

- Select activation functions: ReLU for 1D problems, tanh for 2D problems [1]

Loss Function Implementation:

- Code the Allen-Cahn energy functional: ( E(u) = \int_{\Omega} \frac{\epsilon^2}{2}|\nabla u|^2 + \frac{1}{4}(u^2 - 1)^2 \, dx )

- Add the variance regularization term ( \lambda_{var} \mathcal{R}_{var}(u) ), with ( \mathcal{R}_{var} ) computed from ( \text{Var}(u) )

- Implement boundary condition enforcement via penalty terms

Training Protocol:

- Generate training points using Latin Hypercube Sampling across domain

- Initialize weights using Glorot initialization

- Use Adam optimizer with learning rate 0.001

- Train for 10,000-50,000 epochs with batch size 128

- Implement learning rate reduction on plateau
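The Latin Hypercube Sampling step can be sketched without any external library. The helper below is a minimal illustration, not the sampler used in [1]; the function name, the seed, and the 2D unit-square domain are assumptions.

```python
import random

def latin_hypercube(n_points, bounds, seed=0):
    """Return n_points samples with exactly one sample per stratum in every dimension.

    bounds: list of (low, high) pairs, one per dimension.
    """
    rng = random.Random(seed)
    columns = []
    for low, high in bounds:
        width = (high - low) / n_points
        # Place one uniformly distributed point inside each equal-width stratum.
        column = [low + (k + rng.random()) * width for k in range(n_points)]
        rng.shuffle(column)  # decouple the stratum order between dimensions
        columns.append(column)
    return [tuple(col[i] for col in columns) for i in range(n_points)]

# 128 collocation points on the 2D domain [0, 1] x [0, 1].
points = latin_hypercube(128, [(0.0, 1.0), (0.0, 1.0)])
```

Compared with plain uniform sampling, this guarantees that every coordinate range is covered evenly, which helps when the energy integral is approximated by a sample mean.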

Validation:

- Compare with analytical solutions where available

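- Check interface sharpness; the standard Allen-Cahn equation does not conserve mass, so a mass-conservation check applies only to mass-conserving variants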

- Test sensitivity to parameter ε

Troubleshooting:

- For convergence issues, increase variance regularization weight

- If interface is diffuse, reduce ε or increase network capacity

- For numerical instability, add spectral normalization to network layers

Protocol 2: Nonequilibrium Switching for Binding Free Energy

Title: Rapid Binding Affinity Calculation via NES

Objective: Determine relative binding free energies between ligand pairs with high throughput.

Materials:

- Molecular dynamics software (GROMACS, OpenMM, NAMD)

- Ligand parameterization tools (CGenFF, ACPYPE)

- Cloud computing infrastructure for parallel execution

Procedure:

System Preparation:

- Parameterize ligand molecules using appropriate force fields

- Solvate protein-ligand complex in explicit water

- Add ions to neutralize system charge

Nonequilibrium Protocol Design:

- Define alchemical transformation pathway between ligands

- Set switching time of 50-500 ps (significantly shorter than equilibrium methods) [4]

- Configure bidirectional transformations (forward and reverse)

Execution:

- Launch 50-100 independent switching simulations in parallel

- Apply rapid transformation protocols

- Collect work values from all trajectories

Analysis:

- Calculate free energy differences using Jarzynski equality: ( \Delta G = -\beta^{-1} \ln \langle e^{-\beta W} \rangle )

- Estimate statistical uncertainty using bootstrap methods

- Validate with thermodynamic cycle consistency checks
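The analysis steps above can be sketched as follows. The estimator implements the Jarzynski equality as written, with a shift by the smallest work value for numerical stability, and a simple bootstrap for the uncertainty. Function names, units (kJ/mol), and the number of resamples are assumptions. Because the exponential average is dominated by rare low-work trajectories, bidirectional estimators (for example those based on the Crooks relation) are often preferred when both forward and reverse work values are available.

```python
import math
import random

K_B = 0.0083144626  # Boltzmann constant in kJ/(mol K)

def jarzynski_free_energy(work_values, temperature=298.15):
    """Delta G = -kT ln <exp(-W / kT)> for a list of work values in kJ/mol."""
    kT = K_B * temperature
    w_min = min(work_values)
    # Shift by the smallest work value so the exponentials cannot overflow.
    mean_exp = sum(math.exp(-(w - w_min) / kT) for w in work_values) / len(work_values)
    return w_min - kT * math.log(mean_exp)

def bootstrap_uncertainty(work_values, n_resamples=200, temperature=298.15, seed=0):
    """Standard deviation of the estimate over resamples drawn with replacement."""
    rng = random.Random(seed)
    n = len(work_values)
    estimates = []
    for _ in range(n_resamples):
        resample = [work_values[rng.randrange(n)] for _ in range(n)]
        estimates.append(jarzynski_free_energy(resample, temperature))
    mean = sum(estimates) / n_resamples
    return math.sqrt(sum((e - mean) ** 2 for e in estimates) / (n_resamples - 1))
```

By Jensen's inequality the estimate never exceeds the mean work, and the gap between the two gives a quick indication of how dissipative the switching protocol was.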

Advantages:

- 5-10X higher throughput versus traditional FEP/TI [4]

- Innately parallelizable and fault-tolerant

- Suitable for cloud-native implementation

Research Reagent Solutions

Table 3: Essential Computational Tools for Energy Minimization Research

| Tool/Category | Specific Examples | Function/Purpose |
|---|---|---|
| Molecular Dynamics Engines | GROMACS, AMBER, OpenMM, NAMD | Simulate molecular motion and calculate forces [3] |
| Enhanced Sampling Packages | PLUMED, Colvars | Implement metadynamics, umbrella sampling, etc. [3] |
| Deep Learning Frameworks | TensorFlow, PyTorch, JAX | Build and train neural networks for energy minimization [1] |
| Quantum Chemistry Software | Gaussian, ORCA, Q-Chem | Calculate electronic structure and energies |
| Free Energy Tools | FEP+, NES workflows, TI scripts | Compute binding affinities and energy differences [4] |
| Visualization & Analysis | VMD, PyMOL, Matplotlib, ParaView | Analyze and present structural and numerical results |

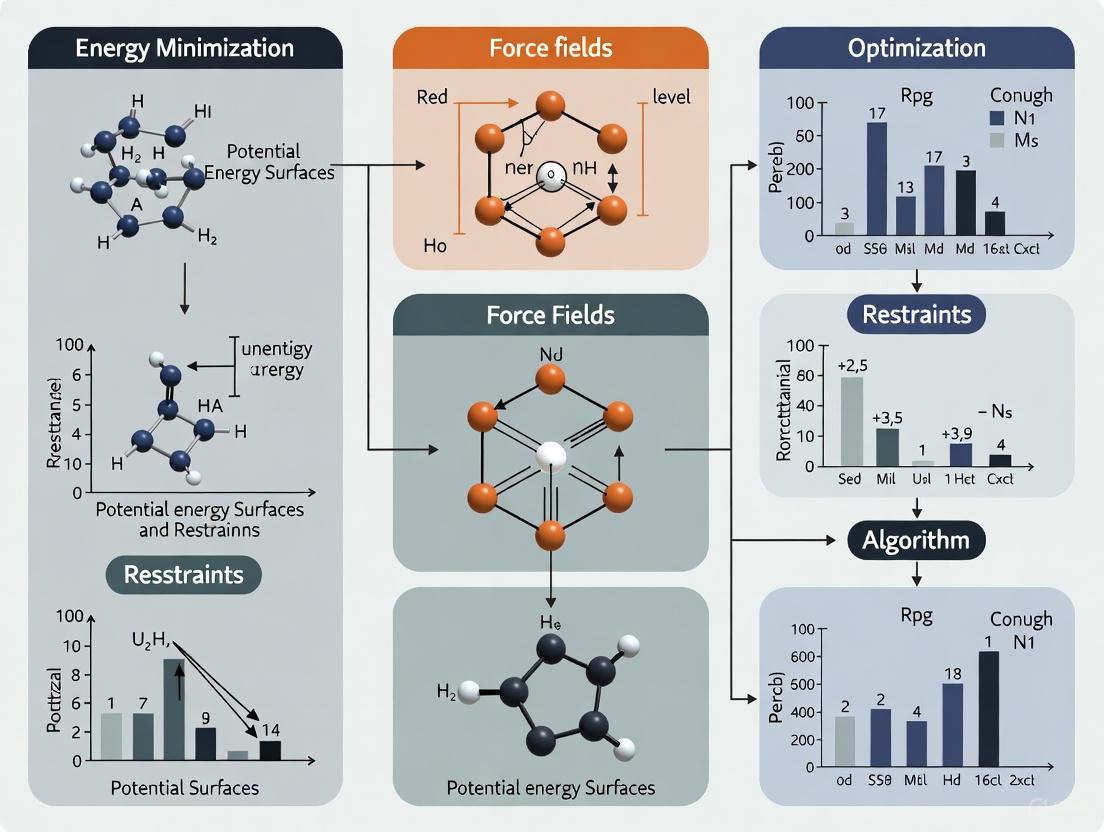

Visualizations of Key Concepts

Diagram 1: Double-Well Potential and Phase Separation

Diagram 2: Energy Minimization Computational Workflow

Diagram 3: Nonequilibrium Switching Protocol

In computational chemistry and drug development, achieving simulations that are both physically realistic and computationally feasible presents a significant challenge. The strategic application of constraints and restraints provides a powerful methodology to navigate this trade-off. Constraints typically refer to the complete removal of specific degrees of freedom (e.g., fixing bond lengths), while restraints involve the application of penalty functions that discourage, but do not entirely prevent, deviations from a reference value (e.g., harmonically restraining a distance between two atoms) [6]. These techniques are foundational to molecular dynamics (MD), free energy calculations, and structure-based drug design, enabling researchers to incorporate experimental data, maintain structural integrity, and guide simulations toward relevant conformational states. Within the broader context of energy minimization research, they effectively reduce the dimensionality of the potential energy surface, making the exploration of complex biomolecular processes tractable. This document outlines key protocols and applications, providing a practical guide for their implementation in modern computational research.

Theoretical Foundation and Energetic Penalties

The physical realism imposed by constraints and restraints is mathematically encoded through potential energy functions added to the system's Hamiltonian. The functional form and parameters of these potentials directly determine the energetic cost of deviations from the desired state.

Table 1: Common Potential Functions for Restraints and Constraints

| Type | Mathematical Form | Key Parameters | Primary Application |
|---|---|---|---|
| Position Restraint [6] | V_pr(r_i) = 1/2 * k_pr * ‖r_i - R_i‖² | k_pr: Force constant; R_i: Reference position | Equilibration, freezing non-essential parts of a system. |
| Flat-Bottomed Position Restraint [6] | V_fb(r_i) = 1/2 * k_fb * [d_g(r_i; R_i) - r_fb]² * H[d_g(...) - r_fb] | k_fb: Force constant; r_fb: Flat-bottom radius; g: Geometry (sphere, cylinder, layer); H: Heaviside step function | Restricting particles to a specific sub-volume (e.g., a binding pocket). |
| Distance Restraint [6] | V_dr(r_ij) = { 1/2 * k_dr * (r_ij - r₀)² for r_ij < r₀; 0 for r₀ ≤ r_ij < r₁; 1/2 * k_dr * (r_ij - r₁)² for r₁ ≤ r_ij < r₂; 1/2 * k_dr * (r₂ - r₁) * (2 r_ij - r₂ - r₁) for r₂ ≤ r_ij } | k_dr: Force constant; r₀, r₁, r₂: Lower/upper bounds | NMR refinement, imposing experimental distance measurements. |
| Dihedral Restraint [6] | V_dihr(ϕ') = { 1/2 * k_dihr * (ϕ' - Δϕ)² for ‖ϕ'‖ > Δϕ; 0 for ‖ϕ'‖ ≤ Δϕ } where ϕ' = (ϕ - ϕ₀) MOD 2π | k_dihr: Force constant; ϕ₀: Reference angle; Δϕ: Tolerance | Enforcing specific torsional angles in proteins or ligands. |
| Angle Restraint [6] | V_ar = k_ar * [1 - cos(n(θ - θ₀))] | k_ar: Force constant; θ₀: Equilibrium angle; n: Multiplicity | Restraining angles between atom pairs or with respect to an axis. |
| Constraint | N/A – Coordinate is fixed. | N/A | Typically applied to bonds involving hydrogen atoms to enable longer simulation time steps. |
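A few of the restraint potentials in the table can be written directly as functions, which makes the piecewise definitions easier to check. This is an illustrative sketch rather than any MD engine's implementation. Function names are hypothetical, units follow the kJ/mol and nm convention, and the linear continuation beyond r₂ follows the form used in the GROMACS reference manual.

```python
import math

def position_restraint(r, ref, k_pr):
    """V_pr = 1/2 k_pr |r - R|^2 for 3D points r and R."""
    return 0.5 * k_pr * sum((a - b) ** 2 for a, b in zip(r, ref))

def flat_bottomed_sphere(r, ref, k_fb, r_fb):
    """Zero inside a sphere of radius r_fb around ref, harmonic outside it."""
    d = math.dist(r, ref)
    return 0.5 * k_fb * (d - r_fb) ** 2 if d > r_fb else 0.0

def distance_restraint(r_ij, k_dr, r0, r1, r2):
    """Piecewise distance restraint with lower bound r0 and upper bounds r1 < r2."""
    if r_ij < r0:
        return 0.5 * k_dr * (r_ij - r0) ** 2
    if r_ij < r1:
        return 0.0                       # inside the allowed window: no penalty
    if r_ij < r2:
        return 0.5 * k_dr * (r_ij - r1) ** 2
    # Beyond r2 the penalty grows linearly, which caps the restoring force.
    return 0.5 * k_dr * (r2 - r1) * (2.0 * r_ij - r2 - r1)

# A 0.1 nm displacement under the common 1000 kJ/mol/nm^2 force constant costs 5 kJ/mol.
example_penalty = position_restraint((0.1, 0.0, 0.0), (0.0, 0.0, 0.0), 1000.0)
```

The quadratic and linear branches of the distance restraint agree at r₂, so the potential is continuous across the switch.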

Application Notes in Drug Discovery

The application of constraints and restraints is critical across the drug discovery pipeline, from initial target identification to lead optimization.

Target Identification and Validation using Structural Bioinformatics

In studying the Hepatitis C virus (HCV) proteome, structural bioinformatics approaches integrate homology modeling with MD simulations to identify and validate drug targets like the NS3 protease and NS5B polymerase [7]. Restraints are crucial in homology modeling, where the spatial positions of conserved residues from a known template structure are used to guide the modeling of a target sequence. Subsequent MD simulations of the modeled protein structures often employ positional restraints on the protein backbone to maintain the overall fold while allowing side chains in the binding pocket to relax, ensuring the stability of the model during analysis.

Free Energy Perturbation (FEP) for Lead Optimization

Free Energy Perturbation calculations have become a cornerstone for predicting relative binding affinities during lead optimization. The reliability of FEP is heavily dependent on the careful application of restraints [8].

- Handling Charge Changes: Perturbations that alter the formal charge of a ligand are notoriously challenging. To maintain accuracy, a counterion can be introduced to neutralize the system, and longer simulation times are required for the affected lambda windows to achieve adequate sampling [8].

- Managing Hydration: The hydration environment is critical. Inconsistent water placement between the forward and reverse transformations of a perturbation cycle can lead to hysteresis. Techniques like Grand Canonical Monte Carlo (GCMC) are used to sample water positions adequately, effectively restraining the system to a well-hydrated state [8].

- Torsional Restraints: When a ligand torsion is poorly described by the force field, resulting in unreliable dynamics, quantum mechanics (QM) calculations can be used to refine the torsion parameters. This applies a "knowledge-based" restraint, ensuring the ligand samples physically realistic conformations during the FEP simulation [8].

Absolute Binding Free Energy (ABFE) Calculations

Unlike relative FEP, Absolute Binding Free Energy calculations decouple a single ligand from its environment in both the bound and unbound states. A key feature of this protocol is the use of harmonic restraints to maintain the ligand's position and orientation within the binding site during the decoupling process. Without them, the decoupled ligand would drift out of the pocket and wander or rotate freely through the simulation box, which would lead to large statistical errors. ABFE results can also be sensitive to protein conformational changes that the simulation does not capture, a limitation that ongoing research seeks to address [8].

Experimental Protocols

Protocol: Setting Up a Position Restraint Simulation for Protein Equilibration

This protocol is used when a protein is introduced into a new environment (e.g., solvation box, membrane) to prevent large, unrealistic structural rearrangements during initial equilibration.

Workflow: Protein Equilibration with Restraints

Materials & Reagents:

- Software: GROMACS [6], AMBER [7], or related MD software.

- Input File: Protein structure file (e.g., PDB format).

- Force Field: e.g., AMBER ff14SB [7], CHARMM, OPLS.

Step-by-Step Procedure:

- Generate Reference Structure: The initial protein structure serves as the reference positions, R_i [6].

- Create Restraint File: Use software-specific tools (e.g., GROMACS `pdb2gmx` or `genrestr`) to generate a file listing the restrained atoms. In GROMACS this file holds atom indices and per-axis force constants, while the reference coordinates are read from a structure file passed to `grompp -r`.

- Define Force Constants: Set the force constant k_pr. In GROMACS it is stored in the restraint file, and the restraints are typically switched on from the MD parameter file (e.g., `define = -DPOSRES`). A typical starting value is 1000 kJ/mol/nm² for heavy atoms, which strongly discourages movement. Backbone atoms may be restrained more strongly than side chains.

- Run Restrained Simulation: Execute a short MD simulation (e.g., 100-500 ps) with the position restraints active. The solvent and ions will relax around the restrained protein.

- Analyze Results: Monitor the root-mean-square deviation (RMSD) of the protein backbone relative to the reference structure. The energy of the system should stabilize.

- Release Restraints: Once the system energy and protein position are stable, initiate a new production simulation without positional restraints or with significantly weaker restraints.
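For GROMACS specifically, the restraint file is a short topology fragment with one line per restrained atom. The helper below is a hypothetical convenience function that writes such a `[ position_restraints ]` section; it assumes 1-based atom indices within the molecule type and the same force constant along x, y, and z. Tools such as `genrestr` produce an equivalent file, so this sketch is only meant to show what the file contains.

```python
def position_restraint_section(atom_indices, k_pr=1000.0):
    """Build a GROMACS-style [ position_restraints ] section as text.

    atom_indices: 1-based atom numbers within the molecule type (assumed input).
    k_pr: force constant in kJ/mol/nm^2, applied equally along x, y, and z.
    """
    lines = ["[ position_restraints ]", "; atom  funct      fx      fy      fz"]
    for index in atom_indices:
        # Function type 1 is the harmonic position restraint.
        lines.append(f"{index:6d}  {1:5d}  {k_pr:6g}  {k_pr:6g}  {k_pr:6g}")
    return "\n".join(lines) + "\n"

# Example: restrain three hypothetical heavy atoms with the default force constant.
section_text = position_restraint_section([1, 5, 7])
```

Stronger backbone restraints can be expressed by calling the helper twice with different `k_pr` values and concatenating only the data lines.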

Protocol: Applying Distance Restraints for NMR Refinement

This protocol uses experimentally derived nuclear Overhauser effect (NOE) measurements as distance restraints to refine a molecular structure, ensuring it is consistent with empirical data.

Workflow: NMR-Driven Structure Refinement

Materials & Reagents:

- Experimental Data: List of NOE-derived atom-atom distances and their estimated bounds.

- Software: MD package with support for NMR-style restraints (e.g., GROMACS [6], AMBER, CNS).

- Initial 3D Model: A starting structure from homology modeling or ab initio prediction.

Step-by-Step Procedure:

- Translate NOEs to Restraints: Convert the list of NOE signals into a list of distance restraints between specific atom pairs. Each restraint is assigned a lower bound (r₀) and one or two upper bounds (r₁, r₂), defining a range of acceptable distances [6].

- Choose Force Constant: Select an appropriate force constant, k_dr. The value should be strong enough to enforce the experimental distances but not so strong as to cause numerical instabilities. Values are often on the order of 100-1000 kJ/mol/nm².

- Energy Minimization: Perform an initial energy minimization of the starting structure with the distance restraints active. This removes bad contacts while pulling the structure toward agreement with the NOE data.

- Restrained MD Simulation: Run a thermalized MD simulation (often in explicit solvent) with the distance restraints applied. This allows the structure to sample configurations consistent with both the force field and the experimental data.

- Ensemble Analysis: Typically, multiple independent simulations are run. The resulting trajectories are clustered to generate a representative ensemble of structures that satisfy the NOE restraints. The final ensemble should be validated using tools that analyze stereochemical quality.
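One simple check during ensemble analysis is to count how many restraints a structure still violates. The sketch below assumes coordinates in nm stored in a dictionary keyed by atom index and restraints given as `(i, j, lower, upper)` tuples; both data layouts and the function name are hypothetical.

```python
import math

def noe_violations(coords, restraints, tolerance=0.0):
    """Return (i, j, distance, excess) for atom pairs outside their distance bounds.

    coords: mapping atom index -> (x, y, z) in nm.
    restraints: iterable of (i, j, lower, upper) bounds in nm.
    tolerance: excess below this value is not reported.
    """
    violations = []
    for i, j, lower, upper in restraints:
        d = math.dist(coords[i], coords[j])
        excess = max(lower - d, d - upper, 0.0)  # distance outside the allowed window
        if excess > tolerance:
            violations.append((i, j, d, excess))
    return violations

# Toy structure: the 0-2 pair sits 0.2 nm beyond its upper bound.
toy_coords = {0: (0.0, 0.0, 0.0), 1: (0.3, 0.0, 0.0), 2: (0.0, 0.7, 0.0)}
toy_restraints = [(0, 1, 0.18, 0.35), (0, 2, 0.18, 0.50)]
found = noe_violations(toy_coords, toy_restraints)
```

Applying the same function to every cluster representative gives a compact summary of how well the ensemble agrees with the NOE data.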

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software and Computational Tools

| Tool / Resource | Type | Primary Function in Constraint/Restraint Work |
|---|---|---|
| GROMACS [6] [7] | Molecular Dynamics Software | Implements a wide range of restraint potentials (position, distance, angle, dihedral) and constraints (LINCS/SHAKE algorithms). |
| AMBER [7] | Molecular Dynamics Software | Used for MD simulations with restraints, particularly in conjunction with the AMBER force field and MMPBSA binding free energy calculations. |
| AutoDock Vina [7] | Docking Software | Utilizes an empirical scoring function that implicitly restrains ligand poses during docking simulations. |
| MODELLER [7] | Homology Modeling Software | Applies spatial restraints from template structures to generate 3D models of target protein sequences. |
| Open Force Field Initiative [8] | Force Field Development | Develops accurate, open-source force fields (e.g., OpenFF) for small molecules, which include optimized torsion parameters that reduce the need for manual restraints. |
| 3D-RISM / GIST [8] | Solvation Analysis | Identifies hydration sites in protein binding pockets, informing the placement of water molecules that may need to be restrained in FEP simulations. |
| Quantum Chemistry Software | QM Calculation | Used to generate improved torsion parameters for ligands when the classical force field description is inadequate [8]. |

In the field of computational science, particularly within drug discovery and structural biology, the challenge of energy minimization is paramount. This process is essential for predicting molecular structures, understanding protein-ligand interactions, and designing novel therapeutics. Algorithms such as Gradient Descent, Langevin Dynamics, and their stochastic variants form the computational backbone for navigating complex, high-dimensional energy landscapes. These methods enable researchers to locate low-energy states that correspond to stable molecular configurations, a task fundamental to rational drug design. The incorporation of constraints and restraints is critical in these applications, ensuring that simulations adhere to known physical laws or experimental data, thereby improving the biological relevance and accuracy of the models. This document provides application notes and detailed experimental protocols for employing these fundamental algorithms within a research framework focused on energy minimization.

Algorithmic Foundations and Comparative Analysis

Core Principles and Equations

- Gradient Descent (GD) is a first-order iterative optimization algorithm for finding a local minimum of a differentiable function. The update rule is ( x_{n+1} = x_n - \eta \nabla V(x_n) ), where ( \eta ) is the learning rate and ( \nabla V(x_n) ) is the gradient of the potential function. Its stochastic variant, Stochastic Gradient Descent (SGD), uses a noisy approximation of the gradient calculated from a random subset of data, which introduces implicit regularization and can help in escaping sharp local minima [9].

- Langevin Dynamics (LD) incorporates noise into the gradient updates, simulating the effect of a thermal bath at a given temperature. The dynamics are described by ( x_{n+1} = x_n - \eta \nabla V(x_n) + \sqrt{2 \eta T} \, \xi_n ), where ( T ) is the temperature and ( \xi_n ) is a random noise vector drawn from a standard normal distribution. This makes it particularly effective for sampling from multimodal distributions and for non-convex optimization problems [10] [9].

- Regularized Langevin Dynamics (RLD) is a recent advancement that addresses the tendency of LD to get trapped in local optima in discrete domains. It enforces an expected distance between the sampled and current solutions, encouraging more effective exploration of the solution space [10].
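The GD and LD update rules can be compared on a one-dimensional double-well potential ( V(x) = \frac{1}{4}(x^2 - 1)^2 ). This is a minimal sketch; the step sizes, temperature, and seed are assumptions chosen for illustration.

```python
import math
import random

def grad_double_well(x):
    """Gradient of V(x) = (x^2 - 1)^2 / 4."""
    return x**3 - x

def gradient_descent(x0, eta=0.1, n_steps=200):
    """Plain gradient descent: x <- x - eta * grad V(x)."""
    x = x0
    for _ in range(n_steps):
        x -= eta * grad_double_well(x)
    return x

def langevin(x0, eta=0.01, temperature=0.2, n_steps=5000, seed=0):
    """Langevin update: x <- x - eta * grad V(x) + sqrt(2 * eta * T) * xi."""
    rng = random.Random(seed)
    x, trajectory = x0, []
    for _ in range(n_steps):
        noise = rng.gauss(0.0, 1.0)  # xi_n drawn from a standard normal distribution
        x = x - eta * grad_double_well(x) + math.sqrt(2.0 * eta * temperature) * noise
        trajectory.append(x)
    return trajectory

x_gd = gradient_descent(0.5)                       # settles in the nearest well, x = 1
x_ld_cold = langevin(0.5, eta=0.1, temperature=0.0, n_steps=200)[-1]
```

With the temperature set to zero the noise term vanishes and LD reduces to GD; at finite temperature the trajectory can cross the barrier at ( x = 0 ), which GD never does.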

Quantitative Algorithm Comparison

The following table summarizes the key characteristics, strengths, and limitations of these algorithms in the context of energy minimization.

Table 1: Comparative Analysis of Energy Minimization Algorithms

| Algorithm | Key Mechanism | Best-Suited Applications | Computational Efficiency | Key Hyperparameters |
|---|---|---|---|---|
| Steepest Descent | Iteratively moves in the direction of the negative gradient [11]. | Initial stages of energy minimization; robust relaxation of highly strained structures [11]. | High speed in initial stages; slow convergence near minimum [11]. | Maximum displacement (h_0), force tolerance (ε) [11]. |
| Conjugate Gradient | Uses conjugate directions for successive line minimizations [11]. | Minimization prior to normal-mode analysis; requires flexible water models [11]. | Slower initial progress, but more efficient near minimum than Steepest Descent [11]. | Force tolerance (ε) [11]. |
| L-BFGS | Approximates the inverse Hessian using a limited memory history [11]. | Large-scale systems where Newton-type methods are prohibitive [11]. | Faster convergence than Conjugate Gradients; limited parallelization [11]. | Number of correction steps for Hessian approximation [11]. |
| Langevin Dynamics | Combines gradient information with injected thermal noise [10]. | Exploring complex, multimodal energy landscapes; combinatorial optimization [10]. | Guided exploration is more efficient than pure random sampling [10]. | Learning rate (η), effective temperature (T) [10] [9]. |
| Stochastic Gradient Descent | Uses a stochastic, noisy estimate of the true gradient [9]. | Training overparameterized neural networks; problems with large datasets [9]. | High per-iteration speed; may not converge to exact minimum without annealing [9]. | Learning rate (η), batch size [9]. |
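The two Steepest Descent hyperparameters in the table, maximum displacement and force tolerance, are easiest to understand from a small implementation. The sketch below follows the adaptive scheme described in the GROMACS documentation: step along the force scaled by its largest component, grow the displacement by 1.2 after an accepted step, and shrink it by 0.2 after a rejected one. The quadratic test potential is a made-up stand-in for a molecular force field.

```python
def steepest_descent(potential, gradient, x0, h0=0.01, f_tol=1e-3, max_steps=10000):
    """Steepest descent with an adaptive maximum displacement h."""
    x, h = list(x0), h0
    v = potential(x)
    for _ in range(max_steps):
        force = [-g for g in gradient(x)]
        f_max = max(abs(f) for f in force)
        if f_max < f_tol:                 # converged: largest force below tolerance
            break
        trial = [xi + h * fi / f_max for xi, fi in zip(x, force)]
        v_trial = potential(trial)
        if v_trial < v:                   # accept the step and be more ambitious
            x, v, h = trial, v_trial, 1.2 * h
        else:                             # reject the step and shorten the displacement
            h *= 0.2
    return x, v

# Hypothetical anisotropic bowl with its minimum at (1, -2).
def bowl(x):
    return (x[0] - 1.0) ** 2 + 10.0 * (x[1] + 2.0) ** 2

def bowl_gradient(x):
    return [2.0 * (x[0] - 1.0), 20.0 * (x[1] + 2.0)]

x_min, v_min = steepest_descent(bowl, bowl_gradient, [0.0, 0.0])
```

Because rejected steps only shorten the displacement, the method is very robust for strained starting structures, at the cost of slow progress once the forces become small.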

Application Note: Energy Minimization in Protein-Ligand Docking

Background and Objective

Accurate prediction of protein-ligand binding poses and affinities is a cornerstone of structure-based drug design. A significant challenge is accounting for the flexibility of the protein's binding site, as proteins exist in an ensemble of conformational states. Energy minimization is used to refine docked poses and generate plausible conformers for ensemble docking, which improves virtual screening outcomes [12]. The objective of this application is to generate a diverse set of low-energy protein conformers for a binding site of interest to more accurately rank ligand binding affinities.

Experimental Protocol

This protocol outlines a computationally efficient method to generate plausible protein conformers for ensemble docking, leveraging a mixed-resolution model and Anisotropic Network Model (ANM) [12].

Table 2: Research Reagent Solutions for Hybrid MD/ML Workflow

| Item Name | Function/Description | Application Context |
|---|---|---|
| Anisotropic Network Model (ANM) | Coarse-grained elastic model to predict low-frequency, large-scale protein motions [12]. | Generates initial ensemble of protein conformers based on global dynamics. |
| Mixed-Resolution Model | The protein binding site is modeled at atomistic resolution, while the remainder is coarse-grained [12]. | Drastically reduces computational cost while maintaining accuracy at the site of interest. |
| Molecular Dynamics (MD) Engine (e.g., Desmond) | Performs all-atom simulations to refine structures and calculate binding free energies [12]. | Refines ANM-generated conformers and assesses ligand stability. |
| Glide Docking Module | Performs molecular docking of ligands into generated protein conformers [12]. | Ranks ligands based on predicted binding affinity across the conformer ensemble. |
| MM-GBSA Module | Calculates binding free energies from MD trajectories using Molecular Mechanics/Generalized Born Surface Area approach [12]. | Provides endpoint binding affinity estimation for docked complexes. |

Procedure:

System Preparation:

- Obtain the initial protein structure (apo or holo form) from a database like the Protein Data Bank.

- Define the binding site region of interest (e.g., a catalytic site or protein-protein interface).

- Prepare the structure for a mixed-resolution simulation: the defined binding site is kept at full atomic resolution, while the rest of the protein is converted to a coarse-grained (CG) representation [12].

Conformer Generation with ANM:

- Use the Anisotropic Network Model (ANM) on the mixed-resolution structure to compute the slowest three normal modes related to the protein's functional dynamics [12].

- Apply six deformation parameters in both directions along these harmonic modes to derive a set of initial conformers (e.g., 36 structures) for the high-resolution binding site region [12].
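The conformer count follows from simple bookkeeping: three modes, six deformation amplitudes, and two directions give 3 × 6 × 2 = 36 structures. The sketch below displaces a set of coordinates along precomputed mode vectors. The coordinates, mode vectors, and amplitudes are placeholders; in a real workflow the modes would come from diagonalizing the ANM Hessian.

```python
def deform_along_modes(coords, modes, amplitudes):
    """Displace coordinates by +/- each amplitude along each mode.

    coords: list of (x, y, z) tuples.
    modes: list of modes, each a list of per-atom (dx, dy, dz) displacement vectors.
    amplitudes: positive deformation magnitudes applied in both directions.
    """
    conformers = []
    for mode in modes:
        for amplitude in amplitudes:
            for sign in (1.0, -1.0):
                conformers.append([
                    tuple(c + sign * amplitude * m for c, m in zip(atom, disp))
                    for atom, disp in zip(coords, mode)
                ])
    return conformers

# Placeholder two-atom system with three made-up modes and six amplitudes.
toy_coords = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0)]
toy_modes = [
    [(1.0, 0.0, 0.0), (-1.0, 0.0, 0.0)],
    [(0.0, 1.0, 0.0), (0.0, -1.0, 0.0)],
    [(0.0, 0.0, 1.0), (0.0, 0.0, -1.0)],
]
toy_amplitudes = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0]
conformers = deform_along_modes(toy_coords, toy_modes, toy_amplitudes)
```

Each resulting conformer would then be passed to the minimization and docking steps of the protocol.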

Energy Minimization and Docking:

- Subject each generated binding site conformer to energy minimization using a protocol like Steepest Descent or L-BFGS to remove steric clashes [12] [11].

- Perform molecular docking calculations (e.g., using Glide) with the ligand of interest into each minimized conformer [12].

- Identify the optimal extents of deformation that yield favorable binding poses.

Validation via Molecular Dynamics:

- Select the top docked complexes and solvate them in an explicit solvent box.

- Apply harmonic restraints of varying strengths (e.g., 0, 25, 35, and 50 kcal/mol/Å²) to different zones of the truncated structure to prevent disintegration during simulation [12].

- Run multiple replicates of all-atom MD simulations for each complex (e.g., 3 replicates of 100 ns each) to confirm the stability of the ligand in the binding site [12].

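Binding Affinity Calculation:

- Estimate binding free energies for the stable complexes from the MD trajectories using the MM-GBSA approach [12].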

The workflow for this protocol is visualized below.

Application Note: Regularized Langevin Dynamics for Combinatorial Optimization

Background and Objective

Combinatorial Optimization (CO) problems, such as molecular docking pose selection or protein folding, are NP-hard challenges central to drug development. Traditional methods can be slow and prone to local minima. Langevin Dynamics (LD) offers an efficient gradient-guided sampling paradigm, but its direct application in discrete spaces can lead to limited exploration. The objective is to leverage Regularized Langevin Dynamics (RLD), which enforces a constant expected Hamming distance between subsequent samples, to escape local optima more effectively and solve CO problems faster and more accurately [10].

Experimental Protocol

This protocol details the implementation of a Regularized Langevin Dynamics solver for combinatorial optimization, which can be built on either a simulated annealing (RLSA) or a neural network (RLNN) framework [10].

Procedure:

Problem Formulation:

- Define the combinatorial optimization problem in its penalty form: min_{x∈{0,1}^N} H(x) = a(x) + λ b(x), where a(x) is the optimization target and b(x) is a penalty term for constraint violation [10].

Algorithm Selection and Initialization:

- For RLSA: Initialize a random binary solution vector x_0. Set an initial temperature T_0 and a cooling schedule.

- For RLNN: Design a neural network (e.g., a graph neural network) to parameterize the solution distribution. Initialize the network weights.

RLD Sampling Loop:

- At each step t, compute the gradient of the problem's "energy" function, ∇H(x_t). In the neural network version, this gradient is obtained via backpropagation.

- The core RLD update involves sampling a new candidate solution x_{t+1} by flipping bits (changing discrete variables) with probabilities informed by the gradient and a regularization term that controls the expected change from the current state [10].

- In RLSA, the candidate is accepted or rejected based on a Metropolis criterion that considers the energy change and the current temperature [10].

- In RLNN, the network is trained using a local objective to predict low-energy states, leveraging the RLD samples.

Termination and Output:

- The loop continues for a fixed number of steps or until convergence.

- The output is the solution x with the lowest energy H(x) found during the process.
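The procedure above can be sketched loosely for the simulated-annealing variant. This is a simplified illustration, not the published algorithm in [10]: the example problem (maximum independent set), the flip-probability rule, the linear cooling schedule, and all function names are assumptions chosen to keep the code short. The regularization is imitated by rescaling the flip probabilities so that the expected number of flipped bits per step is roughly `d`.

```python
import math
import random


def energy(x, edges, lam):
    """Penalty-form energy H(x) = a(x) + lam * b(x) for maximum independent set."""
    a = -sum(x)                               # target: select as many nodes as possible
    b = sum(x[i] * x[j] for i, j in edges)    # penalty: both ends of an edge selected
    return a + lam * b


def gradient(x, edges, lam):
    """Gradient of H with respect to a relaxed (continuous) x."""
    g = [-1.0] * len(x)
    for i, j in edges:
        g[i] += lam * x[j]
        g[j] += lam * x[i]
    return g


def rlsa_sketch(edges, n, lam=2.0, d=1.0, t0=1.0, steps=200, seed=0):
    rng = random.Random(seed)
    x = [rng.randint(0, 1) for _ in range(n)]
    h = energy(x, edges, lam)
    best_x, best_h = x[:], h
    for t in range(steps):
        temp = t0 * (1.0 - t / steps) + 1e-3          # assumed linear cooling
        g = gradient(x, edges, lam)
        # Energy change of flipping bit i alone (exact here: H is linear in each x_i).
        delta = [(1 - 2 * x[i]) * g[i] for i in range(n)]
        d_min = min(delta)
        scores = [math.exp(-(dl - d_min) / (2.0 * temp)) for dl in delta]
        total = sum(scores)
        # Regularization stand-in: expected number of flips is roughly d.
        probs = [min(1.0, d * s / total) for s in scores]
        cand = [1 - xi if rng.random() < p else xi for xi, p in zip(x, probs)]
        h_cand = energy(cand, edges, lam)
        # Metropolis acceptance on the energy change at the current temperature.
        if h_cand <= h or rng.random() < math.exp(-(h_cand - h) / temp):
            x, h = cand, h_cand
            if h < best_h:
                best_x, best_h = x[:], h
    return best_x, best_h


cycle = [(0, 1), (1, 2), (2, 3), (3, 4), (4, 5), (5, 0)]
print(rlsa_sketch(cycle, n=6))
```

The acceptance test here ignores the asymmetry of the proposal, so the sketch should be read as a heuristic search rather than an exact sampler.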

The logical relationship and workflow of the RLD algorithm are shown in the following diagram.

A Thermodynamic Perspective on Training Dynamics

A powerful theoretical framework views Stochastic Gradient Descent (SGD) as a process of free energy minimization [9]. In this analogy, the algorithm does not merely minimize the training loss (internal energy, U) but a free energy function F = U - T S, where S is the entropy of the weight distribution and T is an effective temperature controlled by the learning rate and batch size [9]. This explains why high learning rates prevent convergence to a loss minimum—SGD is actively trading off lower loss for higher entropy (exploration), settling in a wider, and often more generalizable, region of the loss landscape. This principle is directly applicable to training neural network potentials for energy scoring in drug discovery, where controlling the "temperature" of SGD can lead to more robust models.
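The trade-off F = U - T S can be made concrete with a toy discrete system. The sketch below is an illustration under stated assumptions: a handful of states with fixed losses stands in for regions of the loss landscape, and `temp` is a generic temperature rather than a quantity derived from a learning rate or batch size. It shows that the Boltzmann distribution, which minimizes F, concentrates on the lowest-loss state at low temperature and spreads over the more numerous, slightly worse states at higher temperature.

```python
import math


def free_energy(p, u, temp):
    """F = U - T*S for a discrete distribution p over states with losses u."""
    mean_u = sum(pi * ui for pi, ui in zip(p, u))
    entropy = -sum(pi * math.log(pi) for pi in p if pi > 0.0)
    return mean_u - temp * entropy


def gibbs(u, temp):
    """Boltzmann weights p_i proportional to exp(-u_i / T); these minimize F."""
    u_min = min(u)
    w = [math.exp(-(ui - u_min) / temp) for ui in u]
    z = sum(w)
    return [wi / z for wi in w]


# One sharp low-loss state and three slightly worse states (a "wide" region).
losses = [0.0, 0.2, 0.2, 0.2]
for temp in (0.01, 0.5):
    p = gibbs(losses, temp)
    print(temp, [round(pi, 3) for pi in p], round(free_energy(p, losses, temp), 4))
```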

In computational chemistry and drug discovery, energy functionals and force fields are mathematical models that describe the potential energy of a molecular system as a function of the positions of its atoms [13]. These models are fundamental to molecular dynamics (MD) simulations, enabling researchers to study physical movements, interactions, and thermodynamic properties at an atomic level [14] [15]. Conventional molecular mechanics force fields (MMFFs) approximate the energy landscape using fixed analytical forms, balancing computational efficiency with chemical accuracy [14]. The general form of a molecular mechanics energy functional decomposes the total potential energy into contributions from bonded and non-bonded interactions: E_MM = E_bonded + E_non-bonded [14].

This decomposition includes energy terms for bonds (E_bond), angles (E_angle), proper torsions (E_torsion), improper torsions (E_improper), van der Waals forces (E_VDW), and electrostatic interactions (E_electrostatic) [14]. Force fields must adhere to critical physical constraints including permutational invariance, respect for chemical symmetries, and charge conservation [14]. With the rapid expansion of synthetically accessible chemical space, modern force field development has evolved from traditional look-up table approaches to data-driven parametrization using sophisticated machine learning techniques [14].
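A minimal sketch of this decomposition is given below. The functional forms (harmonic bonds and angles, periodic torsions, 12-6 Lennard-Jones, Coulomb) are common textbook choices and are assumptions here, since the text does not fix them; improper torsions are omitted for brevity. Units follow a GROMACS-style convention (kJ/mol, nm, elementary charges), and all function names are illustrative.

```python
import math

COULOMB_CONST = 138.935458  # kJ mol^-1 nm e^-2, electric conversion factor


def bond_energy(r, r0, k):
    """Harmonic bond stretch: 0.5 * k * (r - r0)^2."""
    return 0.5 * k * (r - r0) ** 2


def angle_energy(theta, theta0, k):
    """Harmonic angle bend: 0.5 * k * (theta - theta0)^2, angles in radians."""
    return 0.5 * k * (theta - theta0) ** 2


def torsion_energy(phi, k, n, phi0):
    """Periodic proper torsion: k * (1 + cos(n * phi - phi0))."""
    return k * (1.0 + math.cos(n * phi - phi0))


def lj_energy(r, sigma, eps):
    """12-6 Lennard-Jones van der Waals term."""
    sr6 = (sigma / r) ** 6
    return 4.0 * eps * (sr6 * sr6 - sr6)


def coulomb_energy(r, qi, qj):
    """Pairwise electrostatics between point charges."""
    return COULOMB_CONST * qi * qj / r


def total_energy(bonds, angles, torsions, pairs):
    """E_MM = E_bonded + E_non-bonded, each argument a list of parameter tuples."""
    e_bonded = (sum(bond_energy(*b) for b in bonds)
                + sum(angle_energy(*a) for a in angles)
                + sum(torsion_energy(*t) for t in torsions))
    e_nonbonded = sum(lj_energy(r, s, e) + coulomb_energy(r, qi, qj)
                      for r, s, e, qi, qj in pairs)
    return e_bonded + e_nonbonded
```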

Current Force Field Methodologies and Applications

Conventional vs. Machine Learning Force Fields

Molecular mechanics force fields remain the most reliable and commonly used tool for MD simulations of biological systems despite the emergence of machine learning alternatives [14]. Table 1 compares the fundamental characteristics of these approaches.

Table 1: Comparison of Force Field Types for Molecular Modeling

| Feature | Molecular Mechanics (MMFFs) | Machine Learning (MLFFs) |

|---|---|---|

| Functional Form | Fixed analytical forms | Neural networks without fixed functional limitations |

| Computational Efficiency | High | Relatively low |

| Chemical Space Coverage | Limited by parameter tables | Potentially expansive with sufficient data |

| Data Requirements | Moderate | Extremely large |

| Handling of Non-additive Interactions | Approximate | Can capture complex non-pairwise behaviors |

| Example Implementations | AMBER, GAFF, OPLS, ByteFF | Various neural network potentials |

Recent advances in MMFFs include OPLS3e's expansion to 146,669 torsion types and OpenFF's use of SMIRKS patterns to describe chemical environments [14]. The ByteFF force field represents a modern data-driven MMFF that leverages graph neural networks trained on 2.4 million optimized molecular fragment geometries and 3.2 million torsion profiles [14]. This approach maintains the computational efficiency of conventional MMFFs while achieving state-of-the-art performance across various benchmarks including relaxed geometries, torsional energy profiles, and conformational energies [14].

Force Field Parametrization Workflow

The development of accurate force fields requires rigorous quantum mechanical calculations and systematic parameter optimization. The following diagram illustrates the comprehensive parametrization workflow for data-driven force fields like ByteFF:

Diagram 1: Data-driven force field parametrization workflow illustrating the comprehensive process from molecular dataset preparation to final deployment.

Research Reagent Solutions

Table 2: Essential Computational Tools for Force Field Development and Application

| Tool/Category | Specific Examples | Primary Function |

|---|---|---|

| Force Fields | ByteFF, AMBER, GAFF, OPLS, OpenFF | Provide parameters for energy calculations |

| Quantum Chemistry Methods (levels of theory) | B3LYP-D3(BJ)/DZVP, ωB97M-V | Generate reference data for parametrization |

| Molecular Dynamics Engines | GROMACS, AMBER, NAMD, LAMMPS | Perform simulations using force fields |

| Docking Software | AutoDock Vina, Glide, GOLD, DOCK | Predict ligand-receptor binding poses |

| Optimization Algorithms | Manifold Optimization, geomeTRIC | Energy minimization and conformational search |

| Hardware Platforms | NVIDIA GPUs (RTX 4090, RTX 6000 Ada) | Accelerate computationally intensive calculations |

Protocols for Energy Minimization in Molecular Docking

Molecular Docking and Energy Minimization Framework

Molecular docking predicts the binding affinity of ligands to receptor proteins by searching for optimal binding poses and evaluating them using scoring functions [13]. The process involves two main steps: sampling ligand conformations within the protein's active site, and ranking these conformations using scoring functions [13]. Energy minimization plays a critical role in refining these poses to remove steric clashes and obtain more reliable energy values [16].

The following diagram illustrates the complete molecular docking workflow with integrated energy minimization steps:

Diagram 2: Comprehensive molecular docking workflow highlighting the critical decision point between all-atom and manifold optimization approaches for energy minimization.

Advanced Minimization Protocol: Manifold Optimization for Flexible Molecules

This protocol extends rigid body minimization on manifolds to problems involving flexible molecules with internal degrees of freedom, substantially improving efficiency over traditional all-atom optimization while producing comparable low-energy solutions [16]. The method is particularly valuable for docking small ligands to proteins and can be applied to multidomain proteins with flexible hinges [16].

Step-by-Step Procedure

System Preparation and Representation

- Represent the ligand as a set of rigid clusters connected by rotatable bonds (hinges)

- Form a topology graph G = (V, E) where nodes correspond to rigid clusters and edges represent rotatable bonds

- Select a root cluster and establish parent-child relationships for all clusters

- Assign coordinate frames to each hinge, initially parallel to a fixed reference frame

Tree Structure Establishment

- Ensure the graph is acyclic and connected (tree structure)

- Define hinges between parent-child cluster pairs

- Center each hinge coordinate frame on the end atom in the child cluster

Manifold Optimization Configuration

- Define the conformational space as a combination of rigid motion and internal coordinates

- For a system with m rigid clusters and k rotatable bonds, the search space dimension is 6 + k

- Initialize the algorithm with starting ligand position and torsion angles

Iterative Minimization Process

- Compute the energy gradient with respect to rotational, translational, and torsional degrees of freedom

- Determine search direction using manifold-aware optimization

- Perform line search along geodesics of the manifold

- Update ligand position and torsion angles

- Repeat until convergence criteria are met (gradient norm < threshold or maximum iterations)

Pose Evaluation and Validation

- Calculate final energy of minimized complex

- Compare with alternative minimization approaches

- Validate against experimental data when available
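The representation steps above (topology graph, tree check, parent-child assignment, and the 6 + k search dimension) can be sketched as follows. This covers only the bookkeeping, not the manifold optimization itself; cluster indices, the choice of cluster 0 as root, and the function names are illustrative assumptions.

```python
from collections import deque


def build_adjacency(n_clusters, hinges):
    """Adjacency list of the cluster graph; hinges are (cluster_a, cluster_b) pairs."""
    adj = {i: [] for i in range(n_clusters)}
    for a, b in hinges:
        adj[a].append(b)
        adj[b].append(a)
    return adj


def parent_map(n_clusters, hinges, root=0):
    """Breadth-first search from the root; returns {cluster: parent} for reachable clusters."""
    adj = build_adjacency(n_clusters, hinges)
    parents = {root: None}
    queue = deque([root])
    while queue:
        node = queue.popleft()
        for nxt in adj[node]:
            if nxt not in parents:
                parents[nxt] = node
                queue.append(nxt)
    return parents


def is_tree(n_clusters, hinges):
    """Connected and acyclic: exactly n - 1 hinges and every cluster reachable."""
    if n_clusters < 1 or len(hinges) != n_clusters - 1:
        return False
    return len(parent_map(n_clusters, hinges)) == n_clusters


def search_dimension(hinges):
    """Six rigid-body degrees of freedom plus one torsion per rotatable bond."""
    return 6 + len(hinges)


hinges = [(0, 1), (1, 2), (1, 3)]   # four rigid clusters, three rotatable bonds
print(is_tree(4, hinges), parent_map(4, hinges), search_dimension(hinges))
```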

Applications and Validation

This protocol has been successfully applied to three problems of increasing complexity: minimizing fragment-size ligands with single rotatable bonds for binding hot spot identification; docking flexible ligands to rigid protein receptors; and accounting for flexibility in both ligands and receptors [16]. Performance validation demonstrates substantial efficiency improvements over traditional all-atom optimization while producing comparable quality solutions [16].

Hardware Considerations for Energy Minimization Calculations

Optimal Hardware Configurations

Molecular dynamics simulations and energy minimization calculations require intensive computational resources to accurately model atomic interactions [15]. Table 3 provides specifications for hardware components optimized for these workloads.

Table 3: Recommended Hardware for Molecular Dynamics and Energy Minimization

| Component | Recommended Specifications | Performance Considerations |

|---|---|---|

| CPU | AMD Threadripper PRO 5995WX, AMD EPYC, Intel Xeon Scalable | Prioritize clock speed over core count; sufficient cores for parallelization |

| GPU | NVIDIA RTX 6000 Ada (48 GB), NVIDIA RTX 4090 (24 GB), NVIDIA RTX 5000 Ada | CUDA cores for parallel processing; VRAM for large systems |

| RAM | 128-256 GB DDR4/DDR5 | Capacity for large molecular systems and trajectory storage |

| Storage | High-speed NVMe SSD (2-4 TB) | Fast read/write for simulation checkpoints and trajectories |

| Cooling | Advanced liquid or precision air cooling | Maintain stability during continuous high-load computations |

For molecular dynamics workloads, CPU selection should prioritize processor clock speeds over core count, with well-suited choices including mid-tier workstation CPUs with balanced higher base and boost clock speeds [15]. Graphics Processing Units (GPUs) are particularly valuable for accelerating molecular dynamics simulations, with NVIDIA's offerings providing substantial performance benefits [15] [17].

Precision Requirements and Hardware Selection

The choice between consumer and data-center GPUs depends heavily on the precision requirements of the computational methods:

Mixed Precision Applications: Molecular dynamics packages including GROMACS, AMBER, and NAMD have mature GPU acceleration paths that operate efficiently in mixed precision [17]. These workloads benefit from cost-effective consumer GPUs like the RTX 4090 [17].

Double Precision (FP64) Applications: Density functional theory (DFT) and ab-initio codes including CP2K, Quantum ESPRESSO, and VASP often mandate true double precision throughout calculations [17]. These applications require data-center GPUs like the NVIDIA A100/H100 with strong FP64 performance [17].

Performance Benchmarking Protocol

To establish reliable performance metrics for energy minimization calculations:

System Preparation

- Select a representative molecular system relevant to research objectives

- Prepare input structures and parameter files

- Verify force field assignment and minimization parameters

Benchmark Execution

- Run minimization on a single GPU with controlled conditions

- Record wall-clock time and convergence iterations

- Monitor memory usage and temperature profiles

Metrics Calculation

- Compute performance metrics (ns/day for MD, iterations/second for minimization)

- Calculate cost efficiency (€/ns/day or €/10k ligands screened)

- Compare results across hardware configurations

Validation and Documentation

- Verify result accuracy against reference calculations

- Document complete system specifications in a "run card"

- Record solver versions, CUDA/driver versions, and input parameters
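The metrics in this protocol reduce to simple ratios. The sketch below assumes that "€/ns/day" means hardware cost divided by sustained throughput, which is one reasonable reading of the text; the function names and the example numbers are hypothetical.

```python
def ns_per_day(simulated_ns, wall_seconds):
    """MD throughput: simulated nanoseconds per 24 h of wall-clock time."""
    return simulated_ns * 86400.0 / wall_seconds


def iterations_per_second(n_iterations, wall_seconds):
    """Minimizer throughput."""
    return n_iterations / wall_seconds


def cost_per_throughput(hardware_cost_eur, throughput):
    """Cost efficiency, e.g. EUR per (ns/day); lower is better."""
    return hardware_cost_eur / throughput


# Hypothetical benchmark: 1 ns simulated in 864 s on a 2000 EUR GPU.
rate = ns_per_day(1.0, 864.0)
print(rate, cost_per_throughput(2000.0, rate))
```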

Applications in Drug Discovery and Challenges

Nutraceutical Research and Disease Management

Molecular docking has become an essential tool for identifying molecular targets of nutraceuticals in disease management [13]. This approach provides crucial information before in vitro investigations, helping authenticate the molecular targets of natural substances with therapeutic benefits [13]. Applications include target identification for nutraceuticals in disease models including cancer, cardiovascular disorders, gut diseases, reproductive conditions, and neurodegenerative disorders [13].

Limitations and Methodological Considerations

While energy minimization is crucial for refining molecular structures and obtaining reliable energy values, several important limitations must be considered:

Configuration Sampling: Energy minimization starting from a single protein structure can lead to major errors in calculating activation energies and binding free energies [18]. Extensive sampling of the protein's configurational space is essential for meaningful determination of enzymatic reaction energetics [18].

Precision Limitations: The reliance of many force fields on molecular mechanics' limited functional forms creates inherent approximations, particularly for non-pairwise additivity of non-bonded interactions [14].

Scoring Function Reliability: Challenges remain in the reliability of scoring functions for molecular docking, particularly for flexible receptor docking with backbone flexibility [13].

Recent approaches addressing these limitations include the development of data-driven force fields with expansive chemical space coverage [14], manifold optimization methods for efficient minimization [16], and integrated protocols combining multiple sampling and scoring approaches [13]. These advances continue to improve the accuracy and applicability of energy functionals and force fields for modeling molecular interactions and system energies in drug discovery research.

Optimization forms the backbone of computational problem-solving in fields ranging from machine learning to drug design. At its core, optimization involves finding the input values that minimize or maximize an objective function, often subject to various constraints. The landscape of this optimization can be broadly characterized by its convexity, which fundamentally determines the complexity of finding optimal solutions. A function is considered convex if the line segment between any two points on its graph lies above or on the graph itself, mathematically expressed as 𝑓(𝜆𝑥₁ + (1-𝜆)𝑥₂) ≤ 𝜆𝑓(𝑥₁) + (1-𝜆)𝑓(𝑥₂) for all 𝑥₁, 𝑥₂ and all 𝜆 ∈ [0,1] [19].

This mathematical property has profound implications for optimization. In convex optimization problems, any local minimum is automatically a global minimum, the optimal set is convex, and if the objective function is strictly convex, the problem has at most one optimal point [20] [19]. These characteristics make convex problems significantly more tractable, as they guarantee that algorithms can efficiently find the global optimum without getting stuck in suboptimal local minima. Conversely, non-convex optimization problems contain multiple local minima and maxima, creating an irregular landscape with many "peaks and valleys" that can trap optimization algorithms [21] [19]. This distinction is crucial for researchers designing energy minimization protocols, as the choice of algorithm and initialization strategy depends heavily on whether the underlying problem is convex or non-convex.
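The defining inequality can be probed numerically. The sketch below samples random point pairs and reports whether any of them violates 𝑓(𝜆𝑥₁ + (1-𝜆)𝑥₂) ≤ 𝜆𝑓(𝑥₁) + (1-𝜆)𝑓(𝑥₂). Finding a violation proves non-convexity on the interval; finding none is only evidence of convexity, not a proof. The function names and the sampling interval are illustrative choices.

```python
import random


def violates_convexity(f, lo, hi, trials=2000, seed=0, tol=1e-12):
    """Return True if a sampled triple (x1, x2, lam) breaks the convexity inequality."""
    rng = random.Random(seed)
    for _ in range(trials):
        x1, x2 = rng.uniform(lo, hi), rng.uniform(lo, hi)
        lam = rng.random()
        mid = lam * x1 + (1.0 - lam) * x2
        if f(mid) > lam * f(x1) + (1.0 - lam) * f(x2) + tol:
            return True
    return False


def quadratic(x):
    return x * x


def double_well(u):
    """F(u) = (1/4)(u^2 - 1)^2, with minima at u = -1 and u = +1."""
    return 0.25 * (u * u - 1.0) ** 2


print(violates_convexity(quadratic, -2.0, 2.0))
print(violates_convexity(double_well, -2.0, 2.0))
```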

Table 1: Key Characteristics of Convex vs. Non-Convex Optimization Problems

| Feature | Convex Functions | Non-Convex Functions |

|---|---|---|

| Definition | Line segment between any two points lies above or on the graph | Line segment may fall below the graph in some regions |

| Number of Minima | Every local minimum is a global minimum (unique if strictly convex) | Can have multiple local minima and maxima |

| Optimization Complexity | Easier to optimize; any local minimum found is global | Harder to optimize; can get stuck at local minima |

| Shape | Bowl-shaped (U-shaped), not necessarily smooth | Irregular, with multiple peaks and valleys |

| Application Examples | Linear Regression, Logistic Regression, SVM | Deep Learning, Complex Neural Networks |

The Local Minima Challenge in Energy Minimization

Defining the Problem

In non-convex optimization landscapes, the presence of local minima presents a significant challenge for energy minimization protocols. A local minimum is a point in parameter space where the objective function value is smaller than at all other nearby points, but potentially greater than at a distant point (the global minimum) [22]. In contrast, a global minimum is a point where the function value is smaller than at all other feasible points across the entire domain [22]. Gradient-based optimization methods, such as gradient descent, start at a randomly chosen point and iteratively move in the direction of the negative gradient, eventually converging to a local minimum [21] [23]. However, there is no guarantee that this local minimum will be the global minimum, potentially leading to suboptimal solutions in critical applications like molecular docking or protein folding.

The challenge intensifies with the concept of basins of attraction—regions in the parameter space where starting points lead gradient descent paths to the same local minimum [22]. In complex, high-dimensional energy landscapes typical of molecular systems, these basins can be numerous and intricately shaped. Constraints can further fracture a single basin of attraction into several disjoint pieces, adding to the complexity of navigating the landscape effectively [22]. Understanding this topography is essential for developing strategies to escape local minima and locate the globally optimal solution, particularly in energy-constrained systems where even small improvements can significantly impact experimental outcomes.

Statistical and Practical Implications

The prevalence of local minima in high-dimensional optimization spaces presents substantial practical challenges for computational researchers. Statistically, local minima are common in most real-world optimization problems, with numerous local minima existing in typical loss landscapes [23]. This multiplicity means that standard optimization approaches will likely converge to a local minimum unless specific precautions are taken. The quality of these local minima can vary dramatically, with the loss at a local minimum potentially being substantially higher than at the global minimum, directly translating to reduced accuracy or efficiency in practical applications [23].

The difficulty of finding the global minimum cannot be overstated; even with advanced optimization algorithms, locating the true global optimum in complex, non-convex spaces remains computationally challenging and often infeasible for large-scale problems [23]. This fundamental limitation necessitates the development of specialized strategies and algorithms specifically designed to navigate multimodal landscapes and overcome the curse of local minima in energy minimization tasks relevant to drug development and molecular simulation.

Algorithmic Strategies for Global Optimization

Standard Optimization Algorithms

Several core algorithms form the foundation of energy minimization approaches in computational research. Steepest descent represents one of the most straightforward optimization methods, where new positions are calculated by moving along the direction of the negative gradient with a carefully controlled step size [11]. Although not the most efficient algorithm for minimization, it is robust and straightforward to implement, making it suitable for initial minimization steps [11]. The algorithm proceeds by calculating forces and potential energy, then updating positions according to: x_{n+1} = x_n + (h_n / max(|F_n|)) * F_n, where h_n is the maximum displacement and F_n is the force (negative gradient of the potential) [11]. The step size is adaptively controlled—increased by 20% when energy decreases and reduced by 80% when energy increases, with iterations continuing until the maximum force component falls below a specified threshold [11].

Conjugate gradient methods offer improved efficiency for later stages of minimization. While slower than steepest descent in initial stages, conjugate gradient becomes more efficient closer to the energy minimum [11]. This algorithm navigates the energy landscape by generating search directions that are conjugate with respect to the Hessian matrix, theoretically converging in at most N steps for a quadratic function in N dimensions. However, conjugate gradient cannot be used with constraints in certain implementations, requiring flexible water models when simulating aqueous systems [11]. For more advanced optimization, the L-BFGS (Limited-memory Broyden-Fletcher-Goldfarb-Shanno) algorithm approximates the inverse Hessian matrix using a fixed number of corrections from previous steps [11]. This quasi-Newton method typically converges faster than conjugate gradients, with memory requirements proportional to the number of particles multiplied by the correction steps rather than the square of the number of particles as in full BFGS [11].
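The N-step property for quadratics can be checked directly. The sketch below is the textbook linear conjugate gradient method applied to f(x) = ½ xᵀA x - bᵀx with a symmetric positive-definite A. It is not the nonlinear variant used inside MD packages, and the matrix is an arbitrary example.

```python
def matvec(a, v):
    return [sum(aij * vj for aij, vj in zip(row, v)) for row in a]


def dot(u, v):
    return sum(ui * vi for ui, vi in zip(u, v))


def conjugate_gradient(a, b, x0, tol=1e-10):
    """Minimize 0.5*x^T A x - b^T x; stops after at most N = len(b) iterations."""
    x = x0[:]
    r = [bi - axi for bi, axi in zip(b, matvec(a, x))]   # residual = negative gradient
    p = r[:]                                             # first direction: steepest descent
    rs = dot(r, r)
    iters = 0
    while rs ** 0.5 > tol and iters < len(b):
        ap = matvec(a, p)
        alpha = rs / dot(p, ap)                          # exact line search along p
        x = [xi + alpha * pi for xi, pi in zip(x, p)]
        r = [ri - alpha * api for ri, api in zip(r, ap)]
        rs_new = dot(r, r)
        # New direction is conjugate to the previous ones with respect to A.
        p = [ri + (rs_new / rs) * pi for ri, pi in zip(r, p)]
        rs = rs_new
        iters += 1
    return x, iters


A = [[4.0, 1.0, 0.0], [1.0, 3.0, 1.0], [0.0, 1.0, 2.0]]
b = [1.0, 2.0, 3.0]
solution, n_iter = conjugate_gradient(A, b, [0.0, 0.0, 0.0])
print(solution, n_iter)
```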

Strategies for Escaping Local Minima

Overcoming the local minima problem requires specialized techniques designed to facilitate escape from suboptimal regions of the energy landscape. Random restarts involve repeatedly re-initializing the optimization algorithm from different random starting points, effectively sampling multiple basins of attraction to increase the probability of locating the global minimum [22] [23]. This approach can be implemented using regular grids of initial points, random points drawn from appropriate distributions, or identical initial points with added random perturbations [22]. For problems with bounded coordinates, uniform distributions are suitable, while normal, exponential, or other distributions work for unbounded components [22].
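A small multi-start example on a tilted double well illustrates the idea. The potential, the tilt of 0.3, the learning rate, and the regular grid of starting points are all illustrative assumptions. Starts on the right-hand side of the barrier settle in the shallower local minimum, while the grid as a whole recovers the deeper one.

```python
def f(u, tilt=0.3):
    """Tilted double well: two minima of unequal depth."""
    return 0.25 * (u * u - 1.0) ** 2 + tilt * u


def grad(u, tilt=0.3):
    return u ** 3 - u + tilt


def gradient_descent(u0, lr=0.05, steps=2000):
    """Plain gradient descent; converges to the minimum of the starting basin."""
    u = u0
    for _ in range(steps):
        u -= lr * grad(u)
    return u


def multistart(starts):
    """Run gradient descent from every start and keep the lowest-energy result."""
    results = [gradient_descent(u0) for u0 in starts]
    return min(results, key=f)


starts = [-2.0 + 0.5 * i for i in range(9)]   # regular grid on [-2, 2]
best = multistart(starts)
print(gradient_descent(2.0), best, f(best))
```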

Stochastic Gradient Descent (SGD) introduces noise into the optimization process by randomly sampling subsets of data points at each iteration, which helps the algorithm avoid getting stuck in shallow local minima by continually perturbing its path across the loss landscape [23]. Momentum-based approaches, including Nesterov momentum, enhance this capability by adding a fraction of the previous update (the accumulated velocity) to the current step, smoothing the optimization path and helping to carry the algorithm through small bumps in the energy landscape [23]. More advanced global optimization methods include simulated annealing, which incorporates a temperature parameter that gradually decreases, allowing initially large explorations of the energy landscape that become more focused over time [23]. Bayesian optimization constructs a probabilistic model of the objective function to strategically guide the search for the global optimum, while ensemble learning combines multiple models to improve overall optimization performance and robustness [23].

Emerging Global Optimization Frameworks

Recent research has introduced innovative approaches to address the fundamental challenges of global optimization. The Softmin Energy Minimization framework represents a novel gradient-based swarm particle optimization method designed to efficiently escape local minima and locate global optima [24]. This approach leverages a "Soft-min Energy" interacting function, J_β(𝐱), which provides a smooth, differentiable approximation of the minimum function value within a particle swarm [24]. The method defines a stochastic gradient flow in the particle space, incorporating Brownian motion for exploration and a time-dependent parameter β to control smoothness, analogous to temperature annealing in simulated annealing [24].

Theoretical analysis demonstrates that for strongly convex functions, these dynamics converge to a stationary point where at least one particle reaches the global minimum, while other particles maintain exploratory behavior [24]. This method facilitates faster transitions between local minima by reducing effective potential barriers compared to Simulated Annealing, with estimated hitting times of unexplored potential wells in the small noise regime comparing favorably with overdamped Langevin dynamics [24]. Numerical experiments on benchmark functions, including double wells and the Ackley function, validate these theoretical findings and demonstrate improved performance over Simulated Annealing in terms of escaping local minima and achieving faster convergence [24].

Application Notes for Constrained Energy Minimization

Constraint Handling Algorithms

Real-world energy minimization problems frequently involve constraints that must be satisfied throughout the optimization process. In molecular dynamics simulations, the SHAKE algorithm transforms unconstrained coordinates into coordinates that fulfill a set of distance constraints, using Lagrange multipliers [25]. The algorithm iteratively adjusts coordinates to satisfy a set of holonomic constraints expressed as σ_k(𝐫_1 … 𝐫_N) = 0 for k = 1 … K, continuing until all constraints are satisfied within a specified relative tolerance [25]. For the specialized case of rigid water molecules, which often constitute over 80% of simulation systems, the SETTLE algorithm provides an efficient solution [25]. The GROMACS implementation modifies the original SETTLE algorithm to avoid calculating the center of mass of water molecules, reducing both computational operations and rounding errors, which is particularly important for large systems where floating-point precision of constrained distances becomes critical [25].
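For a single distance constraint, the iterative correction can be sketched in a few lines. This is a minimal textbook-style illustration for one pair of particles, not the GROMACS implementation; the function name, argument layout, and tolerance convention (relative to the squared target distance) are assumptions.

```python
def shake_pair(r_i, r_j, ref_i, ref_j, d, m_i=1.0, m_j=1.0, rel_tol=1e-10, max_iter=100):
    """Restore |r_i - r_j| = d after an unconstrained update.

    ref_i and ref_j are the positions before the update, where the constraint held.
    Corrections are applied along the old bond vector, weighted by inverse mass.
    """
    r_i, r_j = list(r_i), list(r_j)
    ref = [a - b for a, b in zip(ref_i, ref_j)]
    for iteration in range(max_iter):
        s = [a - b for a, b in zip(r_i, r_j)]
        diff = d * d - sum(c * c for c in s)
        if abs(diff) <= rel_tol * d * d:
            return r_i, r_j, iteration
        # First-order Lagrange multiplier for this constraint.
        g = diff / (2.0 * (1.0 / m_i + 1.0 / m_j) * sum(a * b for a, b in zip(s, ref)))
        r_i = [a + g * c / m_i for a, c in zip(r_i, ref)]
        r_j = [a - g * c / m_j for a, c in zip(r_j, ref)]
    raise RuntimeError("SHAKE did not converge")


new_i, new_j, n_iter = shake_pair(
    (0.05, 0.10, 0.0), (1.20, -0.05, 0.0),      # positions after an unconstrained step
    (0.0, 0.0, 0.0), (1.0, 0.0, 0.0),           # positions before the step
    d=1.0,
)
print(new_i, new_j, n_iter)
```

With several coupled constraints, the same correction is applied to each constraint in turn and the sweep is repeated until all are satisfied.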

The LINCS (Linear Constraint Solver) algorithm offers a non-iterative alternative that resets bonds to their correct lengths after an unconstrained update, always utilizing two steps [25]. In the first step, projections of new bonds on old bonds are set to zero, while the second step corrects for bond lengthening due to rotation [25]. LINCS is based on matrix operations but avoids matrix-matrix multiplications, instead inverting the constraint coupling matrix through a power expansion: (𝐈 - 𝐀_n)^{-1} = 𝐈 + 𝐀_n + 𝐀_n² + 𝐀_n³ + … [25]. This method is generally more stable and faster than SHAKE but is limited to bond constraints and isolated angle constraints, with potential convergence issues in molecules with high connectivity due to coupled angle constraints [25].
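The power expansion can be applied to a vector using only matrix-vector products, which is why no matrix-matrix multiplication is needed. The sketch below demonstrates the series on a small example matrix; it is not a LINCS implementation, and the 2×2 coupling matrix is an arbitrary choice. The series converges only when all eigenvalues of the matrix have absolute value below one.

```python
def matvec(a, v):
    return [sum(aij * vj for aij, vj in zip(row, v)) for row in a]


def apply_inverse_series(a, v, order):
    """Approximate (I - A)^-1 v by v + A v + A^2 v + ... + A^order v."""
    total = v[:]
    term = v[:]
    for _ in range(order):
        term = matvec(a, term)                        # next power of A applied to v
        total = [t + s for t, s in zip(total, term)]
    return total


A = [[0.0, 0.3], [0.3, 0.0]]       # example coupling matrix, eigenvalues +0.3 and -0.3
v = [1.0, 0.0]
exact = [1.0 / 0.91, 0.3 / 0.91]   # closed-form (I - A)^-1 v for this A
for order in (1, 4, 40):
    approx = apply_inverse_series(A, v, order)
    print(order, approx, max(abs(x - y) for x, y in zip(approx, exact)))
```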

Research Reagent Solutions for Energy Minimization

Table 2: Essential Research Reagents and Algorithms for Energy Minimization

| Research Reagent/Algorithm | Function/Purpose |

|---|---|

| Steepest Descent Algorithm | Robust initial minimization using gradient direction with adaptive step size control [11] |

| Conjugate Gradient Algorithm | Efficient later-stage minimization with conjugate search directions [11] |

| L-BFGS Algorithm | Quasi-Newton method with limited memory requirements for intermediate systems [11] |

| SHAKE Algorithm | Iterative constraint satisfaction using Lagrange multipliers [25] |

| LINCS Algorithm | Non-iterative bond constraint algorithm with matrix inversion via power expansion [25] |

| SETTLE Algorithm | Specialized constraint algorithm for rigid water molecules [25] |

| Softmin Energy Framework | Swarm-based global optimization using differentiable approximation of minimum function [24] |

Experimental Protocols for Energy Minimization

Protocol 1: Basic Energy Minimization Using Steepest Descent

This protocol outlines the standard procedure for energy minimization using the steepest descent algorithm, suitable for initial structure optimization in molecular systems.

Materials and Setup:

- Molecular structure file in appropriate format (PDB, GRO, etc.)

- Force field parameter files

- Simulation software (e.g., GROMACS, AMBER, NAMD)

- High-performance computing resources for larger systems

Procedure:

- System Preparation: Prepare the molecular system, ensuring all necessary parameters are available in the force field.

- Parameter Configuration: Set the integrator to "steep" (or equivalent) for steepest descent minimization.

- Step Size Initialization: Define an initial maximum displacement (h₀), typically 0.01 nm for molecular systems.

- Force Calculation: Compute forces 𝐅 and potential energy for the current coordinates.

- Position Update: Calculate new positions using: 𝐱_{n+1} = 𝐱_n + (h_n / max(|𝐅_n|)) 𝐅_n

- Energy Evaluation: Compute potential energy for new positions.

- Step Size Adjustment:

- IF (V_{n+1} < V_n): Accept new positions and increase step size: h_{n+1} = 1.2 h_n

- IF (V_{n+1} ≥ V_n): Reject new positions and decrease step size: h_n = 0.2 h_n

- Convergence Check: Repeat from Force Calculation through Step Size Adjustment until the maximum force component is below the specified tolerance (ε) or the maximum number of iterations is reached.
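The procedure can be sketched as a short loop. This is a minimal illustration of the update and step-size rules described above, applied to a toy harmonic well rather than a real force field; the function names, the spring constant, and the starting coordinates are assumptions.

```python
def steepest_descent(x, energy_and_forces, h0=0.01, f_tol=10.0, max_steps=10000):
    """Steepest descent with adaptive maximum displacement h (nm).

    energy_and_forces(x) must return (potential_energy, forces) for coordinates x.
    Returns (coordinates, energy, steps_taken, converged).
    """
    v, f = energy_and_forces(x)
    h = h0
    for step in range(max_steps):
        f_max = max(abs(c) for c in f)
        if f_max < f_tol:                       # convergence: largest force component
            return x, v, step, True
        x_new = [xi + (h / f_max) * fi for xi, fi in zip(x, f)]
        v_new, f_new = energy_and_forces(x_new)
        if v_new < v:                           # accept and grow the step by 20%
            x, v, f = x_new, v_new, f_new
            h *= 1.2
        else:                                   # reject and shrink the step to 20%
            h *= 0.2
    return x, v, max_steps, False


def harmonic_well(x, k=1000.0):
    """Toy potential V = 0.5*k*|x|^2 (kJ/mol) with forces F = -k*x (kJ/mol/nm)."""
    return 0.5 * k * sum(c * c for c in x), [-k * c for c in x]


coords, energy, steps, converged = steepest_descent([0.1, -0.05], harmonic_well)
print(coords, energy, steps, converged)
```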

Troubleshooting Notes:

- For non-converging systems, consider reducing the initial step size or increasing the maximum number of iterations.

- If oscillation occurs, decrease the step size increase factor from 1.2 to a smaller value.

- The stopping criterion ε should be estimated based on the system; for molecular systems, values between 1 and 10 kJ mol⁻¹ nm⁻¹ are often acceptable [11].

Protocol 2: Global Optimization with Softmin Energy Minimization

This protocol describes the procedure for implementing the novel Softmin Energy Minimization approach for global optimization problems.

Materials and Setup:

- Objective function to be minimized

- Programming environment with automatic differentiation capabilities

- Multiple initial candidate points (particle swarm)

Procedure:

- Swarm Initialization: Initialize a set of particles with positions drawn from a distribution covering the parameter space.

- Softmin Energy Definition: Define the Softmin Energy function J_β(𝐱) = -(1/β) log(∑_{i=1}^N exp(-β f(𝐱_i))), where β is a smoothness parameter.

- Stochastic Dynamics Setup: Implement the stochastic gradient flow with Brownian motion for exploration.

- Annealing Schedule: Define a schedule for β that increases over time, gradually reducing the smoothness of the approximation.

- Iteration:

- Compute the Softmin Energy and its gradient for the current particle positions.

- Update particle positions using the gradient flow with stochastic noise.

- Adjust β according to the annealing schedule.

- Convergence Monitoring: Track the best solution found across all particles and monitor exploration metrics.

- Termination: Continue until computational budget is exhausted or convergence criteria are met.
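The procedure above can be sketched for a one-dimensional test problem as follows. This is a hedged illustration rather than the reference implementation of the method: the tilted double-well objective, the linear annealing schedule for β, the constant noise amplitude `sigma`, the Euler-Maruyama time step, and all function names are assumptions made for the example. The gradient of the Softmin Energy with respect to particle ( i ) is the softmax weight of that particle times ( \nabla f(\mathbf{x}_i) ), which follows directly from differentiating the definition.

```python
import numpy as np

def f(x):
    # Hypothetical objective: tilted double well with its global minimum near x = -1.09.
    return 0.25 * (x**2 - 1.0)**2 + 0.2 * x

def grad_f(x):
    return x * (x**2 - 1.0) + 0.2

def softmin_weights(x, beta):
    # w_i = exp(-beta f(x_i)) / sum_j exp(-beta f(x_j)), computed stably.
    a = -beta * f(x)
    w = np.exp(a - a.max())
    return w / w.sum()

def softmin_energy(x, beta):
    # J_beta = -(1/beta) * log(sum_i exp(-beta f(x_i))), via the log-sum-exp trick.
    a = -beta * f(x)
    return -(a.max() + np.log(np.exp(a - a.max()).sum())) / beta

def run_swarm(n_particles=20, n_steps=2000, dt=0.01, sigma=0.5,
              beta_start=0.1, beta_end=10.0, seed=0):
    rng = np.random.default_rng(seed)
    x = rng.uniform(-2.0, 2.0, size=n_particles)      # swarm initialization
    best_x = x[np.argmin(f(x))]
    for step in range(n_steps):
        # Annealing: beta grows linearly, sharpening the softmin approximation.
        beta = beta_start + (beta_end - beta_start) * step / n_steps
        grad_j = softmin_weights(x, beta) * grad_f(x)  # dJ/dx_i = w_i * f'(x_i)
        noise = sigma * np.sqrt(dt) * rng.standard_normal(n_particles)
        x = x - dt * grad_j + noise                    # stochastic gradient flow step
        candidate = x[np.argmin(f(x))]
        if f(candidate) < f(best_x):                   # track best solution so far
            best_x = candidate
    return best_x, f(best_x), softmin_energy(x, beta)

best_x, best_f, final_j = run_swarm()
print(best_x, best_f, final_j)
```

In this sketch, particles with low softmax weight feel almost no drift and mainly diffuse, so they act as explorers while the high-weight particles descend; whether the original method rescales the gradient differently is not specified here.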

Validation Steps:

- Test implementation on benchmark functions with known global minima (e.g., Ackley function, double wells).

- Compare performance with alternative methods like Simulated Annealing.

- Verify theoretical properties for strongly convex functions.

Integration with Broader Research Context

The challenges of optimization in energy minimization have parallels across scientific disciplines, highlighting the universal nature of these computational principles. In motor control neuroscience, research with rhesus monkeys performing reaching tasks has revealed that trajectory selection appears to balance energy efficiency with flexibility: rather than strictly minimizing kinetic energy, movements stay within a "safe kinetic energy range" in which small trajectory differences have little effect on energy expenditure [26]. This biological insight mirrors the computational approach of seeking sufficiently good solutions rather than pursuing the global optimum regardless of cost.

In traffic management systems, research connects optimization with physical constraints through control frameworks for connected and automated vehicles (CAVs) that address computational efficiency, scalability, and compatibility with partial differential equation (PDE) models capturing macroscopic traffic dynamics [27]. These systems employ constrained control approaches using control barrier functions (CBFs) extended from ordinary differential equations to infinite-dimensional PDE systems, formulating quadratic programs that ensure safety constraints while preserving closed-loop stability [27]. Such cross-disciplinary applications demonstrate how fundamental optimization principles adapt to diverse domains with varying constraints and objectives.

The integration of advanced optimization strategies with practical constraint handling enables more efficient and robust solutions to complex energy minimization problems across scientific computing, drug development, and molecular design. By understanding the theoretical foundations, algorithmic options, and practical implementation details outlined in these application notes, researchers can make informed decisions about appropriate optimization approaches for their specific energy minimization challenges.

Computational Methods and Practical Applications in Drug Design

Free Energy Perturbation (FEP) is a rigorous, physics-based computational method used to calculate the binding free energies of biomolecular complexes. As a cornerstone of computational chemistry and structure-based drug design, FEP employs molecular dynamics (MD) simulations within a statistical mechanics framework to predict how modifications to a molecular structure affect its binding affinity to a target protein. The core principle of FEP is grounded in statistical-mechanical perturbation theory: the free energy difference between two closely related states (e.g., a ligand and a slightly modified analog) can be determined from an exponential (Boltzmann-weighted) average of the energy differences sampled in equilibrium simulations of one of the states. By leveraging thermodynamic cycles, FEP allows for the calculation of both relative binding free energies (RBFE) between two ligands and absolute binding free energies (ABFE) of a single ligand, providing invaluable quantitative insights for optimizing molecular interactions. This methodology aligns with the broader thesis of energy minimization with constraints, as it computationally explores the free energy landscape of molecular systems to identify states of minimal free energy under the constraints of a binding pocket.

Theoretical Foundations and Thermodynamic Cycles

The theoretical underpinning of FEP lies in statistical mechanics, specifically the perturbation theory first formalized by Zwanzig. The free energy difference between two states, A and B, is given by the Zwanzig equation: ( \Delta G(A \rightarrow B) = -k_B T \ln \left\langle \exp\left( -\frac{E_B - E_A}{k_B T} \right) \right\rangle_A ), where ( k_B ) is Boltzmann's constant, ( T ) is the temperature, ( E_A ) and ( E_B ) are the potential energies of states A and B, and the angle brackets denote an ensemble average over configurations sampled from state A.
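As a concrete illustration of the Zwanzig equation, the following sketch evaluates the exponential average from a list of sampled energy differences ( E_B - E_A ). It is a minimal example under stated assumptions: the function name `zwanzig_delta_g`, the kcal/mol units, and the synthetic Gaussian energy differences are all hypothetical, and the log-sum-exp shift is simply a standard way to avoid numerical overflow. For Gaussian-distributed energy differences with mean ( \mu ) and variance ( \sigma^2 ), the exact result is ( \mu - \sigma^2 / (2 k_B T) ), which provides a check on the estimator.

```python
import numpy as np

K_B = 0.0019872041  # Boltzmann constant in kcal mol^-1 K^-1

def zwanzig_delta_g(delta_e, temperature=300.0):
    """Exponential-averaging estimate of dG(A->B) from E_B - E_A sampled in state A."""
    kt = K_B * temperature
    a = -np.asarray(delta_e, dtype=float) / kt
    a_max = a.max()
    # log of the ensemble average <exp(a)>, shifted by a_max for numerical stability
    log_mean = a_max + np.log(np.mean(np.exp(a - a_max)))
    return -kt * log_mean

# Synthetic (hypothetical) energy differences drawn from a Gaussian distribution.
rng = np.random.default_rng(0)
mu, sigma, temperature = 1.0, 0.3, 300.0
samples = rng.normal(mu, sigma, size=200_000)

estimate = zwanzig_delta_g(samples, temperature)
analytic = mu - sigma**2 / (2.0 * K_B * temperature)
print(estimate, analytic)
```

The exponential average is dominated by rare low-energy samples, so the estimate degrades quickly as the spread of ( E_B - E_A ) grows beyond a few ( k_B T ); this is the practical reason transformations are split into intermediate states.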

In practical drug discovery applications, this fundamental equation is applied within a thermodynamic cycle to compute binding free energies without simulating the physically complex binding and unbinding processes.

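Relative Binding Free Energy (RBFE) Calculations utilize a cycle that alchemically transforms one ligand (Ligand A) into another (Ligand B) in both the solvated and protein-bound states [28]. Closing the thermodynamic cycle gives the relative binding free energy as ( \Delta\Delta G_{\text{bind}} = \Delta G_{\text{bind}}^{B} - \Delta G_{\text{bind}}^{A} = \Delta G_{\text{protein}} - \Delta G_{\text{solvent}} ), where ( \Delta G_{\text{solvent}} ) is the free energy change for the A→B transformation in solution and ( \Delta G_{\text{protein}} ) is the free energy change for the same transformation in the protein binding site. With this convention, a negative ( \Delta\Delta G_{\text{bind}} ) means that Ligand B binds more tightly than Ligand A. This approach is highly effective for comparing congeneric series of compounds.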

Absolute Binding Free Energy (ABFE) Calculations employ a different cycle where the ligand is alchemically annihilated (i.e., decoupled from its environment) in both the binding site and in bulk solvent [28]. The absolute binding free energy is the difference between these two annihilation free energies. While computationally more demanding than RBFE, ABFE can be applied to non-congeneric molecules and does not require a reference compound.

Performance and Validation of FEP

The accuracy and reliability of FEP have been rigorously tested across diverse protein targets and chemical series. A comprehensive assessment of FEP in 18 drug discovery projects and eight benchmark systems established that a root-mean-square error (RMSE) of <1.3 kcal/mol between predicted and experimental RBFE for a set of ten or more ligands is a typical threshold for validating a protein system for FEP calculations [28]. In prospective applications across 12 targets with 19 chemical series, FEP achieved an average mean unsigned error (MUE) of 1.24 kcal/mol, with a range from 0.48 to 2.28 kcal/mol [28]. This level of accuracy is sufficient to guide lead optimization in many drug discovery campaigns.

Notably, FEP has been successfully applied to challenging systems beyond small molecule-protein interactions. In a study of protein-protein interactions between HIV-1 gp120 and broadly neutralizing antibodies, a systematic FEP protocol achieved an RMSE of 0.68 kcal/mol across 55 mutation cases, near experimental accuracy [29]. This demonstrates the method's growing applicability to complex biological interfaces.

Table 1: Performance Metrics of Prospective FEP Applications

| Application Context | Number of Cases/Chemical Series | Reported Error Metric | Error Value (kcal/mol) |
|---|---|---|---|
| General Drug Discovery Projects [28] | 19 series across 12 targets | Average Mean Unsigned Error (MUE) | 1.24 |
| General Drug Discovery Projects [28] | 19 series across 12 targets | Average Root-Mean-Square Error (RMSE) | 1.64 |
| Antibody-Protein Interaction (HIV gp120) [29] | 55 mutation cases | Root-Mean-Square Error (RMSE) | 0.68 |
| Scaffold Hopping (PDE5 Inhibitors) [30] | Not Specified | Mean Absolute Deviation (MAD) | < 2.0 |
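The error metrics reported above are straightforward to compute when validating a system against experimental data. The sketch below is illustrative only: the predicted and experimental values are made-up numbers, and the function names `error_metrics` and `fold_change` are assumptions. The second function converts a free energy error into the corresponding multiplicative error in binding affinity through ( \exp(\Delta / RT) ), which helps put a value in kcal/mol into perspective (an error of ( RT \ln 10 ), about 1.36 kcal/mol at 298 K, corresponds to a 10-fold error in affinity).

```python
import numpy as np

R_KCAL = 0.0019872041  # gas constant in kcal mol^-1 K^-1

def error_metrics(predicted, experimental):
    """Return (MUE, RMSE) between predicted and experimental free energies."""
    err = np.asarray(predicted, dtype=float) - np.asarray(experimental, dtype=float)
    mue = np.mean(np.abs(err))
    rmse = np.sqrt(np.mean(err**2))
    return mue, rmse

def fold_change(delta_kcal, temperature=300.0):
    """Multiplicative change in binding constant for a free energy shift."""
    return np.exp(delta_kcal / (R_KCAL * temperature))

# Hypothetical binding free energies in kcal/mol (not taken from any study).
predicted = [-9.1, -8.4, -10.2, -7.7]
experimental = [-8.5, -9.0, -10.0, -8.9]

mue, rmse = error_metrics(predicted, experimental)
print(mue, rmse, fold_change(mue))
```

RMSE is always at least as large as MUE and is more sensitive to outliers, which is why both are commonly reported together.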

Key Applications in Drug Discovery

Lead Optimization and Late-Stage Functionalization

FEP is extensively used to prioritize compounds for synthesis during lead optimization. By predicting the potency of proposed analogs, it helps focus medicinal chemistry efforts on the most promising candidates. For instance, in the late-stage functionalization of PRC2 methyltransferase inhibitors, FEP was used prospectively to explore a hydrophilic pocket, correctly predicting that F, Cl, and NH₂ substitutions would yield potent analogues, while larger moieties like OMe would lead to a substantial loss of binding [28]. This allows for the efficient exploration of chemical space and synthetic prioritization.

Scaffold Hopping

FEP can guide scaffold hopping, where the core structure of a molecule is altered to discover novel chemotypes, often to improve properties or to move into novel intellectual property space. A notable example is the discovery of novel phosphodiesterase 5 (PDE5) inhibitors. Starting from known inhibitors like tadalafil, researchers used an ABFE-FEP approach to arrive at a substantially altered scaffold with a predicted and experimentally confirmed IC₅₀ of 8 nM [28] [30]. This demonstrates FEP's ability to handle significant structural changes, including ring openings and closings, that are beyond the scope of simpler scoring functions.

Fragment-Based Drug Design

FEP has shown promise in fragment-based drug discovery by accurately predicting the binding affinities of small fragments. A systematic analysis of 90 fragments across eight protein systems reported an RMSE of 1.1 kcal/mol, which is close to the generally accepted accuracy limit for FEP and remarkable given the typically weaker binding and higher uncertainty of experimental measurements for fragments [28]. Furthermore, FEP has been used to elucidate the energetic contributions of fragment linking, revealing that the linker-protein interaction energy is a major determinant of the overall binding energy gain upon linking [28].

Protein-Protein Interactions

As evidenced by the study on HIV-1 gp120 and antibodies, FEP protocols can be adapted for protein-protein interactions [29]. This requires addressing specific challenges such as longer sampling times for large bulky residues (e.g., tryptophan), modeling of post-translational modifications like glycans, and using loop prediction protocols to improve conformational sampling around mutation sites.

Diagram 1: A generalized workflow for a Free Energy Perturbation (FEP) calculation, showing the key stages from system preparation to result validation.

Detailed Experimental Protocols

Protein and Ligand Preparation

A robust FEP study begins with careful system preparation. The protein structure (from X-ray crystallography, Cryo-EM, or a predicted model) must be processed by adding hydrogen atoms, defining disulfide bonds, and assigning protonation states of ionizable residues (e.g., using a tool like Epik at pH 7.0 ± 2.0) [31]. Missing side chains or loops should be added, for instance, using Prime or homology modeling [31]. The ligand must be assigned appropriate partial charges and parameters using a force field compatible with the protein force field (e.g., OPLS4). A final restrained minimization of the entire protein-ligand complex, converging heavy atoms to an RMSD of ~0.3 Å, is recommended to relieve steric clashes [31].

System Setup and Equilibration

The prepared complex is then solvated in an explicit solvent box (e.g., an orthorhombic box with a 10 Å buffer) using a water model such as SPC [31]. The system is neutralized by adding counterions (e.g., Na⁺/Cl⁻), and additional salt can be added to reach an ionic concentration of 0.15 M, mimicking physiological conditions. Following solvation, the system undergoes energy minimization followed by equilibration in the NPT ensemble (constant Number of particles, Pressure, and Temperature) at 300 K and 1 atm to stabilize the density and relieve any residual strain before production simulations [31].
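The number of ions implied by these settings follows from simple arithmetic: the number of salt pairs is the concentration times the box volume times Avogadro's number. The sketch below is a rough estimate under stated assumptions: it uses the full box volume (real setup tools may instead use the solvent volume or the number of water molecules), and the function names and the 80 Å cubic box are hypothetical.

```python
AVOGADRO = 6.02214076e23            # particles per mole
LITERS_PER_CUBIC_ANGSTROM = 1.0e-27  # 1 A^3 = 1e-30 m^3 = 1e-27 L

def salt_pairs(box_lengths_angstrom, concentration_molar=0.15):
    """Approximate number of salt ion pairs for a target concentration."""
    lx, ly, lz = box_lengths_angstrom
    volume_liters = lx * ly * lz * LITERS_PER_CUBIC_ANGSTROM
    return round(concentration_molar * volume_liters * AVOGADRO)

def neutralizing_ions(net_charge):
    """Counterions needed to cancel an integer net charge of the solute."""
    if net_charge > 0:
        return {"Na+": 0, "Cl-": net_charge}
    return {"Na+": -net_charge, "Cl-": 0}

# Hypothetical system: 80 A cubic box, solute net charge of -3.
n_pairs = salt_pairs((80.0, 80.0, 80.0))
counterions = neutralizing_ions(-3)
print(n_pairs, counterions)
```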

FEP/MD Production Simulation

The core of the calculation involves running MD simulations to sample the alchemical transformation. The transformation is divided into a series of intermediate non-physical states (λ windows). For each window, simulations are run to collect the energy differences needed for the free energy analysis. Enhanced sampling techniques, such as Replica Exchange Solute Tempering (REST), are often employed to improve conformational sampling and overcome energy barriers [29]. The necessary simulation time is system-dependent; for challenging residues like tryptophan or glycine in protein-protein interfaces, extended sampling times may be required [29].
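The role of λ windows can be illustrated on a toy system where the answer is known exactly. In the sketch below, state A and state B are one-dimensional harmonic oscillators with different force constants, the intermediate states use a linearly interpolated potential ( U_\lambda = (1 - \lambda) U_A + \lambda U_B ), and the total free energy change is accumulated window by window with exponential averaging. Everything here is an assumption made for illustration: reduced units with ( k_B T = 1 ), exact Gaussian sampling in place of an MD simulation, and the function name `staged_fep_harmonic`. The exact result for this system is ( \frac{1}{2} \ln(k_B^{\text{spring}} / k_A^{\text{spring}}) ), written in the code as `0.5 * log(k_b / k_a)`.

```python
import numpy as np

def staged_fep_harmonic(k_a=1.0, k_b=4.0, n_windows=11, n_samples=20_000, seed=0):
    """Sum of per-window free energy changes between two harmonic states (kT = 1)."""
    rng = np.random.default_rng(seed)
    lambdas = np.linspace(0.0, 1.0, n_windows)
    # Linear interpolation of the potential gives a linearly interpolated spring constant.
    k = (1.0 - lambdas) * k_a + lambdas * k_b
    total = 0.0
    for k_i, k_next in zip(k[:-1], k[1:]):
        # Exact Boltzmann sampling of window i (stands in for an MD simulation).
        x = rng.normal(0.0, 1.0 / np.sqrt(k_i), size=n_samples)
        # Energy difference to the next window, evaluated on window-i configurations.
        du = 0.5 * (k_next - k_i) * x**2
        total += -np.log(np.mean(np.exp(-du)))
    return total

estimate = staged_fep_harmonic()
exact = 0.5 * np.log(4.0 / 1.0)
print(estimate, exact)
```

Each window only has to bridge a small change in the potential, so neighboring states overlap well and the per-window exponential averages converge with far fewer samples than a single-step perturbation would need.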

Free Energy Analysis and Validation

The data from all λ windows are analyzed using methods like the Bennett Acceptance Ratio (BAR) or Multistate BAR (MBAR) to compute the free energy change. The calculated free energies must be validated. This can involve comparing the results to experimental data for a set of known compounds, assessing the hysteresis between the forward and backward transformations, and ensuring statistical error estimates are low (typically < 0.5 kcal/mol). For prospective applications, it is critical to establish the system's predictive accuracy with a validation set before relying on it for design [28].
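To make the BAR analysis and the hysteresis check concrete, the following sketch solves the BAR self-consistency condition for a single pair of neighboring states by bisection. It is a minimal illustration, not a replacement for an established analysis package: the function names, the reduced units (energies in ( k_B T )), the bracketing margin of 10, and the synthetic Gaussian work values are all assumptions. The synthetic forward and reverse distributions are constructed to be mutually consistent with a known free energy difference of 2.0, so the estimate can be checked.

```python
import numpy as np

def fermi(x):
    # Fermi function 1 / (1 + exp(x)), evaluated without overflow.
    return np.exp(-np.logaddexp(0.0, x))

def bar_delta_f(w_forward, w_reverse, tol=1e-8, max_iter=200):
    """BAR estimate of the free energy difference A->B in units of kT.

    w_forward: (U_B - U_A)/kT evaluated on configurations sampled in state A.
    w_reverse: (U_A - U_B)/kT evaluated on configurations sampled in state B.
    """
    w_f = np.asarray(w_forward, dtype=float)
    w_r = np.asarray(w_reverse, dtype=float)
    m = np.log(len(w_f) / len(w_r))

    def imbalance(df):
        # Monotonically increasing in df; its root is the BAR estimate.
        return fermi(m + w_f - df).sum() - fermi(-m + w_r + df).sum()

    lo = min(w_f.min(), -w_r.max()) - 10.0
    hi = max(w_f.max(), -w_r.min()) + 10.0
    for _ in range(max_iter):
        mid = 0.5 * (lo + hi)
        if imbalance(mid) > 0.0:
            hi = mid
        else:
            lo = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)

# Synthetic (hypothetical) data with a known answer of 2.0 kT.
rng = np.random.default_rng(0)
true_df, sigma, n = 2.0, 1.5, 5000
w_forward = rng.normal(true_df + 0.5 * sigma**2, sigma, size=n)
w_reverse = rng.normal(-true_df + 0.5 * sigma**2, sigma, size=n)

estimate = bar_delta_f(w_forward, w_reverse)
# Hysteresis: difference between one-sided forward and reverse estimates.
forward_only = -np.log(np.mean(np.exp(-w_forward)))
reverse_only = np.log(np.mean(np.exp(-w_reverse)))
hysteresis = forward_only - reverse_only
print(estimate, hysteresis)
```

A large hysteresis between the one-sided estimates indicates poor overlap between the two states and suggests adding λ windows or extending sampling before trusting the result.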

Table 2: The Scientist's Toolkit: Essential Reagents and Software for FEP

| Category | Item | Function/Description | Example Tools/Force Fields [31] |

|---|---|---|---|

| Structure Preparation | Protein Preparation Tool | Adds H, optimizes H-bonds, assigns protonation states. | Protein Preparation Wizard (Schrödinger) |

| Structure Preparation | Loop Modeling Tool | Adds missing residues or loops in a protein structure. | Prime, Homology Modeling |

| Force Fields | Protein Force Field | Defines energy parameters for protein atoms. | OPLS4, AMBER, CHARMM |

| Force Fields | Ligand Force Field | Assigns parameters and charges for small molecules. | OPLS4, GAFF |