Energy Minimization in MD Simulation: A Foundational Guide for Robust Biomolecular Research

Abstract

This article provides a comprehensive guide to the critical role of energy minimization within the molecular dynamics (MD) simulation workflow for researchers and drug development professionals. It covers foundational concepts, explaining why minimization is a non-negotiable first step to relieve atomic clashes and reach a stable equilibrium configuration on the potential energy surface. The piece delves into methodological specifics, comparing algorithms like Steepest Descent and Conjugate Gradients, and illustrates applications in preparing systems for free energy calculations. It further addresses common troubleshooting scenarios and optimization techniques to enhance efficiency, and concludes with a critical validation perspective, discussing the limitations of minimized structures and the necessity of thermal sampling for accurate thermodynamic properties.

The Critical First Step: Why Energy Minimization is Fundamental to MD Simulation

In computational chemistry and molecular dynamics (MD), energy minimization (EM), also referred to as geometry optimization or energy optimization, represents the foundational step in preparing molecular systems for subsequent simulation and analysis [1] [2]. This mathematical procedure systematically adjusts the spatial arrangement of a collection of atoms until the net interatomic force on each atom approaches zero, locating a stationary point on the Potential Energy Surface (PES) where the system reaches a stable or metastable state [1]. For researchers and drug development professionals, understanding and properly executing energy minimization is crucial for generating physically meaningful simulation results, as optimized structures correspond to molecular configurations that would naturally occur under experimental conditions [1] [2].

The motivation for performing energy minimization extends throughout the MD workflow. Without this critical preparation step, the high forces present in an initially constructed molecular system would cause unphysical movements and instabilities during subsequent dynamics simulations [2]. By relaxing the molecular geometry to a local or global energy minimum, researchers ensure that their simulations begin from a stable configuration, enabling the study of thermodynamics, chemical kinetics, spectroscopy, and biomolecular interactions with greater accuracy and reliability [1]. This technical guide explores the fundamental principles, methodologies, and practical implementations of energy minimization within the broader context of MD simulation workflows for drug discovery and materials science.

Theoretical Foundations of Energy Minimization

The Potential Energy Surface Concept

The Potential Energy Surface (PES) forms the fundamental theoretical framework for understanding energy minimization [2]. Mathematically, the PES describes the energy of a molecular system as a multidimensional function E(r) of the atomic positions, represented by a vector r containing the coordinates of all N atoms [1]. In this complex landscape, stable molecular configurations correspond to local minima, points where the energy is lower than at every neighboring configuration [1] [2]. Three features of this landscape are of particular interest:

- Global Minimum: The most stable configuration with the lowest possible energy on the PES.

- Local Minimum: A stable configuration that represents the lowest energy within a limited region of the PES but may not be the absolute lowest.

- Saddle Point: A first-order saddle point represents a transition state structure—a maximum along one direction but a minimum along all others [1].

The negative gradient of the potential energy, −∂E/∂r, gives the force acting on each atom [1]. At a minimum energy structure, these forces approach zero, indicating a balanced, stable configuration [1] [2]. The second derivative matrix, known as the Hessian matrix, describes the curvature of the PES and determines the vibrational frequencies of the system at the stationary point [1].

Mathematical Formulation and Optimization Problem

Energy minimization is fundamentally a mathematical optimization problem. Given a set of atoms and a vector r describing their positions, the procedure seeks to find the value of r for which E(r) reaches a local minimum [1]. This satisfies the condition that the derivative of the energy with respect to atomic positions (∂E/∂r) becomes approximately zero, while the Hessian matrix has all positive eigenvalues [1].

Most minimization algorithms employ an iterative approach with the general formula [2]: x~new~ = x~old~ + correction

Here, x~new~ refers to the molecular geometry at the next optimization step, x~old~ represents the current geometry, and the "correction" term is calculated differently by various algorithms to efficiently converge on a minimum [2]. The specific form of this correction term distinguishes the various optimization methods and determines their computational efficiency and convergence properties.

Computational Methodologies and Algorithms

Force Fields and Energy Functions

Molecular mechanics force fields provide the mathematical expressions for calculating the potential energy E(r) of a molecular system [2]. These force fields decompose the total energy into contributions from bonded interactions (bonds, angles, dihedrals) and non-bonded interactions (van der Waals, electrostatics) [2]. The Lennard-Jones potential is a fundamental component for modeling van der Waals interactions in many force fields, describing both attractive and repulsive forces between neutral atoms or molecules [2]:

\[ V = 4\epsilon \left[ \left(\frac{\sigma}{r}\right)^{12} - \left(\frac{\sigma}{r}\right)^{6} \right] \]

Where ε is the well depth, σ is the distance at which the intermolecular potential is zero, and r is the separation distance between particles [2]. Modern force fields such as OPLS4/5 used in Desmond provide sophisticated parameterizations for accurate energy calculations in biomolecular systems [3].
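The behavior of this potential is easier to see with numbers. The following is a minimal sketch that evaluates the Lennard-Jones energy and the corresponding radial force at a few separations. The argon-like values for ε and σ, the function names, and the units are assumptions chosen for illustration and are not taken from any specific force field discussed here.

```python
def lennard_jones(r, epsilon, sigma):
    """Return the Lennard-Jones potential energy V(r)."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)


def lennard_jones_force(r, epsilon, sigma):
    """Return the radial force F(r) = -dV/dr (positive means repulsive)."""
    sr6 = (sigma / r) ** 6
    return 24.0 * epsilon * (2.0 * sr6 * sr6 - sr6) / r


# Illustrative argon-like parameters (assumed values, not from the article).
EPSILON = 0.996  # well depth, kJ/mol
SIGMA = 0.34     # zero-crossing distance, nm

r_min = 2.0 ** (1.0 / 6.0) * SIGMA  # separation at the bottom of the well
for r in (0.30, SIGMA, r_min, 0.50):
    v = lennard_jones(r, EPSILON, SIGMA)
    f = lennard_jones_force(r, EPSILON, SIGMA)
    print(f"r = {r:.3f} nm   V = {v:8.4f} kJ/mol   F = {f:9.4f} kJ/mol/nm")
```

At r = σ the energy is zero, at the bottom of the well the energy equals −ε and the force vanishes, and at shorter separations the force becomes strongly repulsive. That steep repulsion is what minimization relieves when atoms overlap in an initial model.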

Energy Minimization Algorithms

Table 1: Comparison of Energy Minimization Algorithms

| Algorithm | Mathematical Basis | Computational Cost | Convergence Efficiency | Typical Applications |
|---|---|---|---|---|
| Steepest Descent [2] | First-order; moves along the negative gradient | Low per step | Slow near minimum; robust for initial steps | Initial relaxation of highly distorted structures |
| Conjugate Gradient [2] | First-order; combines current gradient with previous direction | Moderate | Faster than steepest descent; efficient for large systems | Medium to large biomolecular systems |
| Newton-Raphson [2] | Second-order; uses exact Hessian matrix | High per step | Very fast convergence; requires second derivatives | Small molecules and final precise minimization |

Steepest Descent Method

The steepest descent algorithm represents one of the simplest approaches to energy minimization. This method assumes a constant second derivative, so only the first derivative of the energy is needed, and updates the geometry according to [2]: x~new~ = x~old~ - γE'(x~old~)

Where γ is a constant step size. The method is named for its strategy of moving opposite to the gradient, the direction of steepest descent, at the current point [2]. While robust for early stages of minimization when far from the minimum, steepest descent tends to converge slowly as it approaches the minimum and often exhibits oscillatory behavior [2].
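The update rule can be illustrated on a toy energy function. The sketch below applies fixed-step steepest descent, stepping against the gradient, to a two-dimensional anisotropic harmonic well. The energy function, the starting point, and the values of `gamma` and `force_tol` are assumptions made for illustration, not parameters of any MD package.

```python
def energy(x, y):
    """Toy anisotropic harmonic well with its minimum at the origin."""
    return 0.5 * (x * x + 10.0 * y * y)


def gradient(x, y):
    """Analytic first derivatives (dE/dx, dE/dy) of the toy energy."""
    return x, 10.0 * y


def steepest_descent(x, y, gamma=0.15, force_tol=1e-6, max_steps=10_000):
    """Step against the gradient with a fixed step size until it is small."""
    for step in range(max_steps):
        gx, gy = gradient(x, y)
        if max(abs(gx), abs(gy)) < force_tol:
            return x, y, step
        x -= gamma * gx
        y -= gamma * gy
    return x, y, max_steps


x_min, y_min, n_steps = steepest_descent(2.0, 1.0)
print(f"converged to ({x_min:.2e}, {y_min:.2e}) in {n_steps} steps")
```

With this step size the stiff y coordinate changes sign on every iteration while the soft x coordinate shrinks by only 15% per step, which reproduces in miniature both the oscillation and the slow final convergence described above.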

Conjugate Gradient Method

The conjugate gradient method improves upon steepest descent by incorporating information from previous search directions. Rather than always moving along the current steepest descent direction, this technique mixes in a component of the previous search direction to avoid the oscillatory behavior that plagues steepest descent [2]. This approach allows more efficient convergence to the minimum, particularly for large molecular systems where computational efficiency is crucial [2].
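The mixing of the previous direction into the new one can be sketched for a quadratic energy, where the line minimum along each direction has a closed form. The example below uses the Fletcher-Reeves choice of mixing coefficient, which is one common variant and an assumption here, since the text does not specify one. The 2x2 matrix, helper names, and starting point are likewise illustrative.

```python
def matvec(a, v):
    """Multiply a 2x2 matrix (nested lists) by a length-2 vector."""
    return [a[0][0] * v[0] + a[0][1] * v[1], a[1][0] * v[0] + a[1][1] * v[1]]


def dot(u, v):
    """Dot product of two length-2 vectors."""
    return u[0] * v[0] + u[1] * v[1]


def conjugate_gradient(a, b, x, tol=1e-10, max_steps=50):
    """Minimize E(x) = 0.5 x.A.x - b.x for a symmetric positive-definite A."""
    ax = matvec(a, x)
    grad = [ax[0] - b[0], ax[1] - b[1]]
    p = [-grad[0], -grad[1]]  # the first direction is plain steepest descent
    for step in range(max_steps):
        gg = dot(grad, grad)
        if gg ** 0.5 < tol:
            return x, step
        ap = matvec(a, p)
        alpha = gg / dot(p, ap)  # exact line minimum along p
        x = [x[0] + alpha * p[0], x[1] + alpha * p[1]]
        grad = [grad[0] + alpha * ap[0], grad[1] + alpha * ap[1]]
        beta = dot(grad, grad) / gg  # Fletcher-Reeves mixing coefficient
        p = [-grad[0] + beta * p[0], -grad[1] + beta * p[1]]
    return x, max_steps


A = [[1.0, 0.0], [0.0, 10.0]]  # same anisotropic well shape as a stiff/soft pair
x_min, n_steps = conjugate_gradient(A, [0.0, 0.0], [2.0, 1.0])
print(f"converged to ({x_min[0]:.2e}, {x_min[1]:.2e}) in {n_steps} steps")
```

In exact arithmetic a two-dimensional quadratic needs at most two line searches, because each new direction is conjugate to the previous one and does not undo its progress. Real force fields are not quadratic, so production minimizers use approximate line searches and periodic restarts.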

Newton-Raphson Method

The Newton-Raphson method represents a more sophisticated approach that utilizes second derivatives of the energy. Based on a Taylor series expansion of the potential energy surface, this method provides more accurate step directions and typically achieves faster convergence [2]. However, the computational expense of calculating and inverting the Hessian matrix (second derivatives) for large systems often limits its practical application to smaller molecules or final stages of refinement [2].
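A one-dimensional sketch shows how second-derivative information shapes the step. The example below minimizes a Morse bond-stretch potential with the update r_new = r_old − E'(r_old)/E''(r_old). The Morse form and the parameter values `D_E`, `A`, and `R0` are assumptions chosen for illustration and are not taken from the text.

```python
import math

D_E = 400.0  # well depth, kJ/mol (illustrative)
A = 20.0     # width parameter, 1/nm (illustrative)
R0 = 0.10    # equilibrium bond length, nm (illustrative)


def morse_derivatives(r):
    """Return (E', E'') of E(r) = D_E * (1 - exp(-A * (r - R0)))**2."""
    e = math.exp(-A * (r - R0))
    first = 2.0 * D_E * A * e * (1.0 - e)
    second = 2.0 * D_E * A * A * e * (2.0 * e - 1.0)
    return first, second


def newton_raphson(r, grad_tol=1e-6, max_steps=50):
    """Iterate r_new = r_old - E'(r_old) / E''(r_old) until E' is small."""
    for step in range(max_steps):
        first, second = morse_derivatives(r)
        if abs(first) < grad_tol:
            return r, step
        if second <= 0.0:
            # Outside the convex region the Newton step no longer descends.
            raise ValueError("non-positive curvature at r = %g" % r)
        r -= first / second
    return r, max_steps


r_opt, n_steps = newton_raphson(0.11)
print(f"optimized bond length {r_opt:.6f} nm after {n_steps} steps")
```

The curvature check reflects a practical limitation: the Newton step is only reliable where the second derivative is positive. For N atoms the scalar E'' becomes a 3N x 3N Hessian that must be built and solved against at every step, which is the cost noted above.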

Energy Minimization in Molecular Dynamics Workflows

Integration with Broader Simulation Pipelines

Energy minimization serves as the critical first step in comprehensive molecular dynamics workflows, preparing the system for subsequent dynamics simulations and analysis [2]. A typical MD workflow incorporating energy minimization proceeds through several stages:

- System Building: Construction of the initial molecular geometry, often from experimental coordinates or molecular modeling [2] [3].

- Energy Minimization: Relaxation of the molecular geometry to remove steric clashes and high-energy distortions [2].

- Equilibration MD: Gradual heating and density equilibration under appropriate thermodynamic ensembles [3].

- Production MD: Extended simulation for data collection and analysis [3].

- Trajectory Analysis: Extraction of structural, dynamic, and thermodynamic properties [4].

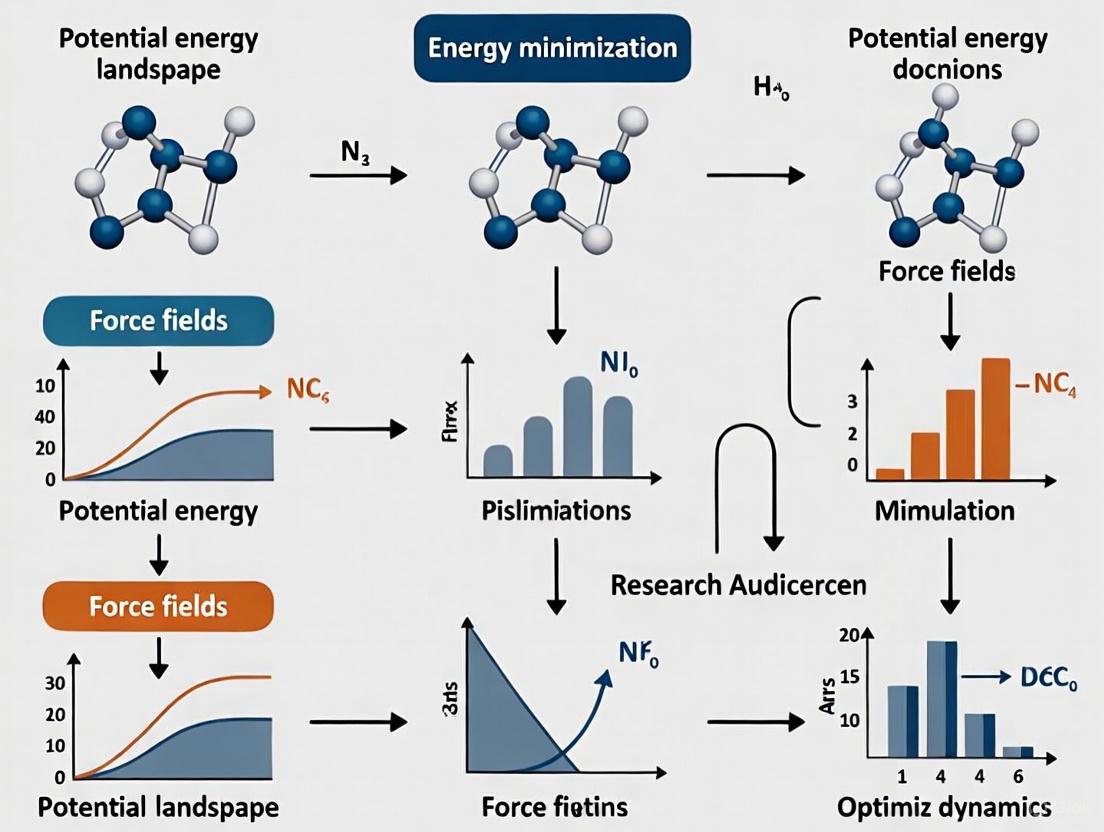

The following diagram illustrates this integrated workflow, highlighting the positioning of energy minimization within the complete simulation pipeline:

Diagram 1: MD Simulation Workflow

Practical Implementation Considerations

Coordinate Systems

The choice of coordinate system significantly impacts the efficiency of energy minimization. Cartesian coordinates, while straightforward, are redundant for molecular systems (3N coordinates for N atoms versus 3N-6 vibrational degrees of freedom) and often exhibit high correlation between variables [1]. Internal coordinates (bond lengths, angles, and dihedral angles) typically show less correlation but can be more challenging to implement, particularly for complex or symmetric systems [1]. Modern MD packages often include automated procedures for generating appropriate coordinate systems that balance these considerations [1].

Degree of Freedom Restriction

In many practical applications, certain degrees of freedom may be constrained during energy minimization. For example, positions of specific atoms (such as protein backbone atoms or crystal lattices) can be fixed while allowing other atoms to relax [1]. These "frozen degrees of freedom" maintain desired structural features while still permitting local relaxation, which is particularly useful in complex systems such as carbon nanotube simulations in which a subset of atoms is held fixed [1].
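One simple way to freeze degrees of freedom is to zero the force on the fixed coordinates before each update. The sketch below does this for a hypothetical one-dimensional chain of four beads joined by harmonic springs, with both end beads pinned. The chain model, spring constant, and step size are assumptions for illustration only.

```python
def spring_forces(x, k=1000.0, rest=0.15):
    """Forces on a 1D chain of beads joined by identical harmonic springs."""
    forces = [0.0] * len(x)
    for i in range(len(x) - 1):
        stretch = (x[i + 1] - x[i]) - rest
        forces[i] += k * stretch      # a stretched spring pulls bead i forward
        forces[i + 1] -= k * stretch  # and pulls bead i+1 backward
    return forces


def minimize_with_frozen(x, frozen, step=1e-4, force_tol=1e-6, max_steps=100_000):
    """Steepest descent that zeroes the force on every frozen coordinate."""
    x = list(x)
    for _ in range(max_steps):
        forces = [0.0 if frozen[i] else f for i, f in enumerate(spring_forces(x))]
        if max(abs(f) for f in forces) < force_tol:
            break
        x = [xi + step * f for xi, f in zip(x, forces)]
    return x


start = [0.00, 0.05, 0.22, 0.40]     # distorted chain, nm
frozen = [True, False, False, True]  # pin both ends in place
relaxed = minimize_with_frozen(start, frozen)
print([round(xi, 4) for xi in relaxed])
```

The pinned ends never move, while the two interior beads relax to equal spacing between them. Note that the convergence test also ignores the frozen coordinates, since the residual force on a fixed atom is generally nonzero.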

Research Reagents and Computational Tools

Essential Software Platforms

Table 2: Key Software Tools for Energy Minimization and MD Simulations

| Tool/Platform | Primary Function | Key Features | Applications in Research |
|---|---|---|---|
| Desmond [3] | High-performance MD engine | GPU acceleration; OPLS force fields; integration with Maestro | Drug discovery; protein-ligand complexes; explicit solvent simulations |
| LAMMPS [5] | Classical MD simulator | Open-source; highly customizable; extensive force fields | Materials science; polymers; coarse-grained systems |
| MDAnalysis [4] | Trajectory analysis | Python library; multiple file formats; extensible API | Analysis of MD trajectories; structural properties calculation |
| PLUMED [4] | Enhanced sampling | Plugin for multiple MD codes; free energy calculations | Metadynamics; umbrella sampling; analysis of collective variables |

Force Fields and Parameterization

Force fields represent crucial "research reagents" in computational chemistry, providing the mathematical parameters that define energy calculations [2]. Modern force fields like OPLS4/5 used in Desmond's MD engine offer comprehensive coverage of drug-like molecules and biomolecules, with careful parameterization for accurate energetics [3]. The selection of an appropriate force field depends on the specific system under investigation, with specialized force fields available for proteins, nucleic acids, lipids, organic materials, and polymers [5] [3].

Advanced Applications and Specialized Techniques

Transition State Optimization

While most energy minimizations target local energy minima, specialized techniques exist for locating transition states—first-order saddle points on the potential energy surface [1]. These saddle points represent maximum energy along the reaction coordinate but minima in all other directions, characterizing the transition state between reactants and products [1]. Methods for transition state optimization include:

- Local Search Methods: Require initial guesses close to the true transition state and follow the eigenvector with the most negative eigenvalue while minimizing in all other directions [1].

- Dimer Method: Uses two closely spaced images on the PES to find directions of negative curvature without prior knowledge of the final structure [1].

- Chain-of-State Methods: Generate a series of intermediate structures connecting reactant and product states, then optimize this path to locate the transition state [1].

Enhanced Sampling and Free Energy Calculations

Advanced sampling techniques often build upon foundational energy minimization approaches. Methods like Mixed Solvent Molecular Dynamics (MxMD) in Desmond enhance cryptic pocket identification by combining MD simulations with mixed solvent environments to probe protein surfaces [3]. Free energy perturbation (FEP+) calculations provide rigorous binding free energy estimates that rely on properly minimized starting structures [3].

The following diagram illustrates the decision process for selecting appropriate minimization strategies based on research objectives:

Diagram 2: Minimization Strategy Selection

Experimental Protocols and Best Practices

Standard Energy Minimization Protocol

A robust energy minimization protocol for biomolecular systems typically involves these methodical steps:

- System Preparation: Construct the initial molecular model, add missing hydrogen atoms, and assign appropriate protonation states for ionizable residues [3].

- Solvation and Ion Placement: Embed the system in an explicit solvent box (e.g., TIP3P water model) and add ions to achieve physiological concentration and system neutrality [3].

- Initial Minimization with Restraints: Perform an initial minimization (typically 500-1000 steps of steepest descent) with positional restraints on heavy atoms of the solute to relax solvent molecules and eliminate bad contacts [2].

- Full System Minimization: Conduct extensive minimization (typically 1000-5000 steps) without restraints using conjugate gradient or similar algorithms until the maximum force falls below a specified tolerance (e.g., 10 kJ/mol/nm) [2].

- Convergence Validation: Verify minimization success by monitoring the energy and gradient norms, ensuring both reach stable plateau values [2].

Troubleshooting Common Issues

- Slow Convergence: If minimization fails to converge within a reasonable number of steps, consider switching algorithms (from steepest descent to conjugate gradient) or checking for atomic clashes that may require additional initial restraint steps [2].

- Energy Increase: An increasing energy during minimization suggests numerical instability, potentially from overly large initial steps. Reducing the initial step size (maximum displacement) often resolves this issue [2].

- Distorted Geometry: Unphysical bond lengths or angles after minimization may indicate force field incompatibility or parameter assignment errors, requiring verification of the molecular mechanics parameterization [2] [3].

Energy minimization represents an indispensable component of the molecular dynamics workflow, providing the foundation upon which reliable simulations are built. By systematically navigating the potential energy surface to locate stable molecular configurations, this process enables researchers to generate physically meaningful starting structures for subsequent dynamics simulations and analysis. The continuing development of efficient minimization algorithms, accurate force fields, and user-friendly implementation in packages like Desmond and LAMMPS ensures that energy minimization remains a cornerstone technique in computational drug discovery and materials science [5] [3]. As MD simulations continue to address increasingly complex biological and materials systems, the fundamental principles of energy minimization will maintain their critical role in connecting atomic-scale interactions with macroscopic observable properties.

In computational chemistry and molecular dynamics, the Potential Energy Surface represents the energy of a molecular system as a multidimensional function of its atomic coordinates [6] [2]. The PES serves as the fundamental landscape upon which all molecular motions and interactions occur, determining the system's stable configurations, transition states, and reaction pathways. For a system of N atoms, the PES is defined in 3N-6 dimensions (3N-5 for linear molecules), where each point on this hyper-surface corresponds to a specific spatial arrangement of atoms and its associated potential energy [2]. The topology of this surface—characterized by minima, maxima, and saddle points—directly dictates the structural, dynamic, and functional properties of biomolecules, making its thorough understanding essential for researchers in drug development and molecular sciences.

The concept of the PES is intrinsically linked to the energy minimization procedures that form the critical first step in molecular dynamics (MD) simulation workflows [6]. Before productive MD simulation can begin, a molecular system must be relaxed from its initial coordinates to a stable equilibrium configuration located at a local minimum on the PES. This process of navigating the complex topography of the PES to identify low-energy configurations enables researchers to investigate biologically relevant properties, including the effects of mutations on protein structure and activity, and probing intermolecular interactions with small molecules or other macromolecules [7].

Mathematical Characterization of PES Critical Points

Defining Minima, Maxima, and Saddle Points

The critical points on a PES are characterized by specific mathematical conditions where the first derivative of the potential energy with respect to all nuclear coordinates vanishes [8]. Formally, for a molecular system with N atoms and potential energy U(𝐑^N), the gradient must satisfy:

∂U(𝐑^N)/∂𝐫_i = 0 for all i = 1,2,...,N

where 𝐫_i represents the position vector of atom i [8]. The nature of these stationary points is determined by the eigenvalues of the Hessian matrix (the matrix of second derivatives):

- Local Minima: All eigenvalues of the Hessian are positive. These points correspond to stable molecular configurations where small displacements result in restorative forces [2].

- Local Maxima: All eigenvalues are negative, representing energetically unfavorable configurations.

- Saddle Points: At least one eigenvalue is positive and one is negative. First-order saddle points, with exactly one negative eigenvalue, represent transition states between minima along reaction pathways [8].

Table 1: Mathematical Characterization of Critical Points on the PES

| Critical Point Type | Gradient Condition | Hessian Eigenvalues | Physical Significance |
|---|---|---|---|
| Local Minimum | ∇U = 0 | All λ > 0 | Stable equilibrium configuration |
| Local Maximum | ∇U = 0 | All λ < 0 | Unstable high-energy state |
| First-order Saddle Point | ∇U = 0 | One λ < 0, all others > 0 | Transition state between minima |
| Higher-order Saddle Point | ∇U = 0 | Multiple λ < 0 | Complex multidimensional transition |
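The classification in the table can be expressed as a short routine that counts the signs of the Hessian eigenvalues. The sketch below applies it to a hypothetical two-dimensional double-well surface, U(x, y) = (x² − 1)² + y², chosen because its stationary points are known analytically. The function names, the zero tolerance, and the test surface are assumptions for illustration.

```python
import math


def symmetric_2x2_eigenvalues(hxx, hxy, hyy):
    """Closed-form eigenvalues of the symmetric matrix [[hxx, hxy], [hxy, hyy]]."""
    mean = 0.5 * (hxx + hyy)
    radius = math.hypot(0.5 * (hxx - hyy), hxy)
    return mean - radius, mean + radius


def classify_stationary_point(eigenvalues, zero_tol=1e-8):
    """Label a stationary point from the signs of its Hessian eigenvalues."""
    negatives = sum(1 for lam in eigenvalues if lam < -zero_tol)
    positives = sum(1 for lam in eigenvalues if lam > zero_tol)
    if negatives + positives < len(eigenvalues):
        return "degenerate (zero eigenvalue)"
    if negatives == 0:
        return "local minimum"
    if positives == 0:
        return "local maximum"
    if negatives == 1:
        return "first-order saddle point"
    return "higher-order saddle point"


def double_well_hessian(x, y):
    """Hessian entries (Uxx, Uxy, Uyy) of U(x, y) = (x**2 - 1)**2 + y**2."""
    return 12.0 * x * x - 4.0, 0.0, 2.0


# The gradient of U vanishes at each of these three points.
for point in [(-1.0, 0.0), (0.0, 0.0), (1.0, 0.0)]:
    eigs = symmetric_2x2_eigenvalues(*double_well_hessian(*point))
    print(point, classify_stationary_point(eigs))
```

The two wells at x = ±1 are reported as local minima and the barrier top at the origin as a first-order saddle point. For a real molecule in Cartesian coordinates the Hessian also has zero eigenvalues for overall translation and rotation, which would need to be projected out before applying a test like this.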

The Role of Force Fields in PES Evaluation

The calculation of the PES relies critically on empirical force fields that mathematically describe the potential energy as a function of nuclear coordinates [2]. These force fields, such as CHARMM, AMBER, and GROMOS, decompose the total potential energy into bonded and non-bonded interaction terms [8]. The Lennard-Jones potential, a key component of non-bonded interactions, is given by:

V(r) = 4ε[(σ/r)^12 - (σ/r)^6]

where ε represents the well depth (measure of attraction strength), σ is the distance where intermolecular potential is zero, and r is the separation distance [2]. The (σ/r)^12 term describes repulsive forces while (σ/r)^6 denotes attraction [2]. The collection of these parameters constitutes a force field that restrains molecular systems to physically realistic configurations during energy minimization and MD simulations [2].
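As a worked step, the location and depth of the Lennard-Jones well follow from setting the derivative of V(r) to zero. The symbol r_min is introduced here for illustration and does not appear in the original formulation:

\[
\frac{dV}{dr} = 4\varepsilon\left[-\frac{12\,\sigma^{12}}{r^{13}} + \frac{6\,\sigma^{6}}{r^{7}}\right] = 0
\;\Longrightarrow\;
r_{\min} = 2^{1/6}\sigma \approx 1.122\,\sigma,
\qquad
V(r_{\min}) = 4\varepsilon\left[\tfrac{1}{4} - \tfrac{1}{2}\right] = -\varepsilon
\]

Pairs closer than r_min experience a net repulsion that grows steeply as r decreases, which is why overlapping atoms in an unminimized model produce very large forces.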

Energy Minimization Algorithms for Navigating the PES

Fundamental Minimization Approaches

Energy minimization algorithms provide the mathematical machinery to navigate the PES from high-energy initial configurations toward local minima where the net forces on atoms become negligible [2]. These methods employ iterative formulas of the type:

𝐱_new = 𝐱_old + correction

where 𝐱 represents the atomic coordinates [2]. The specific form of the correction term distinguishes different minimization algorithms, each with characteristic trade-offs between computational expense, convergence speed, and robustness [9] [2].

Diagram 1: Energy Minimization Workflow

Comparative Analysis of Minimization Algorithms

Table 2: Comparison of Energy Minimization Algorithms for PES Navigation

| Algorithm | Mathematical Formulation | Computational Efficiency | Convergence Properties | Best Use Cases |
|---|---|---|---|---|
| Steepest Descent | 𝐱_new = 𝐱_old − γ∇U(𝐱_old) [2] | Low cost per step, slow convergence [9] | Robust for high-energy starting structures, oscillates near minima [9] | Initial stages of minimization, poorly conditioned systems [9] |
| Conjugate Gradient | 𝐱_new = 𝐱_old + α𝐩, where 𝐩 = −∇U + β𝐩_previous [2] | Moderate cost, faster convergence than SD [9] | More efficient than SD near minima, can handle larger systems [9] | Systems where SD becomes inefficient, pre-normal-mode analysis [9] |
| L-BFGS | 𝐱_new = 𝐱_old − α𝐇∇U, where 𝐇 approximates the inverse Hessian [9] | Higher memory requirements, fastest convergence [9] | Superlinear convergence, efficient for large systems [9] | Production minimization when parallelization not required [9] |
| Newton-Raphson | 𝐱_new = 𝐱_old − [∇²U(𝐱_old)]⁻¹∇U(𝐱_old) [2] | Very high cost (Hessian calculation) [2] | Quadratic convergence near minima [2] | Small systems where exact Hessian is needed |

The stopping criterion for minimization algorithms is typically based on the maximum absolute value of the force components becoming smaller than a specified tolerance ε [9]. A reasonable value for ε can be estimated from the root mean square force of a harmonic oscillator at a given temperature, with values between 1 and 10 kJ mol⁻¹ nm⁻¹ generally being acceptable for biomolecular systems [9].
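The harmonic-oscillator estimate can be made concrete. The sketch below assumes the expression f = 2πν√(2mkT) for the root mean square force, as given in the GROMACS reference manual, and pairs it with a stopping test on the largest absolute force component. The function names, the example oscillator, and the sample force array are assumptions for illustration.

```python
import math

AVOGADRO = 6.02214076e23        # 1/mol
BOLTZMANN = 1.380649e-23        # J/K
SPEED_OF_LIGHT = 2.99792458e10  # cm/s
AMU = 1.66053906660e-27         # kg


def rms_oscillator_force(wavenumber_cm, mass_amu, temperature_k):
    """RMS force f = 2*pi*nu*sqrt(2*m*k*T), returned in kJ mol^-1 nm^-1."""
    nu = SPEED_OF_LIGHT * wavenumber_cm  # oscillator frequency in Hz
    force_newton = 2.0 * math.pi * nu * math.sqrt(
        2.0 * mass_amu * AMU * BOLTZMANN * temperature_k)
    # N is J/m per particle; convert to kJ/mol per nm.
    return force_newton * AVOGADRO * 1e-3 * 1e-9


def is_converged(forces, tolerance):
    """True when the largest absolute force component is below tolerance."""
    return max(abs(c) for atom in forces for c in atom) < tolerance


# A weak oscillator: 100 cm^-1, 10 atomic mass units, at 1 K.
print(f"{rms_oscillator_force(100.0, 10.0, 1.0):.1f} kJ/mol/nm")

# Hypothetical per-atom force vectors in kJ/mol/nm.
sample_forces = [[0.5, -2.0, 1.0], [3.2, 0.1, -0.4]]
print(is_converged(sample_forces, tolerance=10.0))
```

For the weak oscillator above the estimate comes out near 7.7 kJ mol⁻¹ nm⁻¹, which falls inside the 1 to 10 range quoted in the text. Tightening the tolerance far below this level mostly costs extra steps, because thermal motion in the subsequent dynamics produces forces of at least this size.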

Integration of Energy Minimization in MD Workflows

Pre-Simulation Structure Preparation

Energy minimization serves as the critical initialization step in molecular dynamics workflows, preparing molecular systems for stable and productive simulation [2]. Starting from a non-equilibrium molecular geometry, minimization reduces the net forces on atoms (the gradients of potential energy) until they become negligible, thus locating a stable minimum on the PES [2]. This process is essential because MD simulations initiated from high-energy configurations experience instabilities and numerical inaccuracies during numerical integration of Newton's equations of motion [2].

The GROMACS simulation package implements multiple minimization algorithms, including steepest descent, conjugate gradients, and L-BFGS, selected through the integrator run parameter (steep, cg, or l-bfgs) and executed by the mdrun program [9]. The steepest descent algorithm in GROMACS employs an adaptive step size approach, where new positions are calculated by:

𝐫_new = 𝐫_old + [h_n / max(|𝐅_n|)] 𝐅_n

where h_n is the maximum displacement and 𝐅_n is the force (negative gradient of the potential) [9]. A step is accepted only if it lowers the potential energy. The maximum displacement is increased by 20% after an accepted step and halved after a rejected one, providing a robust approach for navigating the PES topography [9].
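The accept/reject logic can be sketched in a few lines. The example below is a simplified illustration, not GROMACS code. It assumes the factors given in the GROMACS reference manual (multiply the maximum displacement by 1.2 after an accepted step and by 0.5 after a rejected one) and applies them to a toy two-dimensional energy function with a single particle. All names and parameter values are hypothetical.

```python
import math


def energy(x, y):
    """Toy anisotropic harmonic well standing in for a potential energy surface."""
    return 0.5 * (x * x + 10.0 * y * y)


def force(x, y):
    """Force on the particle: the negative gradient of the toy energy."""
    return -x, -10.0 * y


def adaptive_steepest_descent(x, y, h=0.01, force_tol=1e-3, max_steps=100_000):
    """Steepest descent with a maximum displacement h that adapts per step."""
    e_old = energy(x, y)
    for step in range(max_steps):
        fx, fy = force(x, y)
        if max(abs(fx), abs(fy)) < force_tol:
            return x, y, step
        f_max = math.hypot(fx, fy)  # largest force magnitude (one particle here)
        x_try = x + h / f_max * fx
        y_try = y + h / f_max * fy
        e_try = energy(x_try, y_try)
        if e_try < e_old:
            x, y, e_old = x_try, y_try, e_try  # accept and grow the step
            h *= 1.2
        else:
            h *= 0.5                           # reject and shrink the step
    return x, y, max_steps


x_fin, y_fin, n_steps = adaptive_steepest_descent(2.0, 1.0)
print(f"final point ({x_fin:.2e}, {y_fin:.2e}) after {n_steps} steps")
```

Because a trial step is only kept when the energy drops, the energy can never increase from one accepted configuration to the next. This is what makes the scheme tolerant of badly strained starting structures, at the price of many small steps near the minimum.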

Advanced Applications and Modeling Strategies

Beyond basic structure preparation, energy minimization techniques form the foundation for sophisticated modeling strategies when combined with external restraints and constraints [6]. By forcing specific atoms to overlap with template structures during minimization, researchers can quantitatively answer questions such as "how much energy is required for one molecule to adopt the shape of another" [6]. These approaches enable the calculation of conformational energy with respect to dihedral angles for proteins [6] and facilitate molecular docking studies by refining protein-peptide complex geometries, including bond lengths, angles, and inter-molecular interactions [8].

For protein-peptide docking, energy minimization refines initially randomized peptide conformations and orientations, optimizing hydrophobic interactions and hydrogen bonding geometry [8]. This refinement reveals potential molecular interactions that provide strong evidence for predicting protein-peptide binding affinities, with estimated scores after minimization more accurately reflecting actual binding energies [8].

Diagram 2: MD Simulation Workflow with Energy Minimization

Practical Implementation and Research Tools

The Scientist's Toolkit: Software Solutions

Contemporary research has produced specialized software tools that automate and streamline the energy minimization and MD simulation process, making these techniques more accessible to non-specialists while maintaining scientific rigor [10] [7].

Table 3: Research Reagent Solutions for PES Exploration and Energy Minimization

| Tool/Software | Primary Function | Key Features | Application Context |
|---|---|---|---|
| GROMACS [9] | Molecular dynamics simulator | Implementation of SD, CG, L-BFGS minimizers; High performance computing optimized | Production MD simulations of biomolecular systems |
| CHARMM [6] | Macromolecular energy minimization and dynamics | Program for macromolecular energy, minimization, and dynamics calculations | Detailed energy calculations and conformational analysis |
| drMD [7] | Automated MD pipeline | User-friendly automation; Single configuration file; Error handling and recovery | Accessible MD for experimentalists; Publication-quality simulations |
| FastMDAnalysis [10] | MD trajectory analysis | Unified, automated environment; Reproducibility focus; Reduced coding requirement | High-throughput analysis of simulation trajectories |
| OpenMM [7] | Molecular mechanics toolkit | GPU acceleration; Flexible force field support | Custom simulation workflows; Enhanced sampling methods |

Automated Workflows for Enhanced Reproducibility

Tools like drMD exemplify the trend toward automated pipelines that reduce the expertise required to run MD simulations while maintaining scientific rigor [7]. By using a single configuration file as input, drMD ensures reproducibility and provides real-time progress updates along with automated simulation "vitals" checks [7]. Similarly, FastMDAnalysis encapsulates core analyses into a coherent framework with detailed execution logging and standardized output of both publication-quality figures and machine-readable data files, addressing the critical challenge of reproducibility in computational research [10].

These automated approaches demonstrate a >90% reduction in the lines of code required for standard workflows while maintaining numerical accuracy against reference implementations, as validated on benchmark systems including ubiquitin and the Trp-cage miniprotein [10]. In a case study analyzing a 100 ns simulation of Bovine Pancreatic Trypsin Inhibitor, comprehensive conformational analysis was performed in under 5 minutes with a single command, dramatically reducing scripting overhead and technical barriers [10].

The thorough navigation and characterization of the Potential Energy Surface through energy minimization techniques represents the foundational step in molecular dynamics simulations and computational structural biology. The mathematical principles governing minima, maxima, and saddle points on the PES directly enable researchers to identify stable molecular configurations, understand transition states, and predict molecular behavior. As automated tools continue to lower accessibility barriers while maintaining scientific rigor, these fundamental concepts become increasingly relevant for drug development professionals seeking to investigate biologically relevant properties including mutation effects, small molecule interactions, and macromolecular binding. The ongoing development of optimized algorithms and user-friendly implementations ensures that energy minimization will remain the critical gateway procedure that enables reliable and interpretable molecular simulations across scientific disciplines.

Within the molecular dynamics (MD) simulation workflow, energy minimization (also known as energy optimization or geometry minimization) serves as a crucial preparatory step to ensure the physical realism and numerical stability of subsequent simulation phases [1] [11]. This process finds an arrangement in space of a collection of atoms where, according to a computational model of chemical bonding, the net inter-atomic force on each atom is acceptably close to zero and the position on the potential energy surface (PES) is a stationary point [1]. For macromolecular systems, the primary objectives of this minimization are twofold: first, to identify and relieve steric clashes—unphysical atomic overlaps introduced during model building or homology modeling; and second, to systematically reduce net atomic forces until they fall below acceptable thresholds, ensuring the system resides at a local energy minimum [12] [11]. Without this fundamental procedure, structures containing severe steric clashes may be rejected by refinement programs, and MD simulations may suffer from numerical instability or unphysical trajectories due to excessively high initial forces [12].

This technical guide examines the core principles, quantitative metrics, and practical methodologies for achieving these dual objectives, providing researchers and drug development professionals with validated protocols for optimizing molecular structures within the broader context of MD simulation research.

Quantitative Definition and Assessment of Steric Clashes

Energetic Definition of Steric Clashes

Steric clashes arise from the unnatural overlap of any two non-bonding atoms in a protein structure [12]. Unlike qualitative approaches that use simple distance cutoffs, a more physically meaningful definition quantifies clashes based on Van der Waals repulsion energy. A commonly adopted threshold in computational biochemistry defines a steric clash as any atomic overlap resulting in Van der Waals repulsion energy greater than 0.3 kcal/mol (0.5 kBT), with specific exceptions for bonded atoms, disulfide bonds, hydrogen bonds, and certain backbone interactions [12].

This energetic approach provides crucial context for understanding the severity of structural artifacts. Low-energy clashes may be tolerable and are occasionally present even in high-resolution experimental structures, while high-energy clashes indicate significant model-building artifacts that require resolution before proceeding with dynamics simulations [12].

The Clashscore Metric

To standardize clash assessment across proteins of different sizes, researchers have developed the clashscore metric, defined as the total clash-energy divided by the number of interatomic contacts evaluated [12]. This normalization makes the metric independent of protein size, enabling meaningful comparisons between systems.

Statistical analysis of high-resolution crystal structures has established benchmark values for acceptable clashscores. Based on the distribution of clashscores from 4,311 unique high-resolution protein chains, the acceptable clashscore threshold is determined to be 0.02 kcal·mol⁻¹·contact⁻¹ (approximately one standard deviation above the mean) [12]. Structures deviating significantly from this benchmark value likely contain artifacts requiring minimization.
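As a rough sketch of how the normalization works, the function below divides the summed energy of clashing pairs by the number of contacts evaluated and compares the result with the 0.02 kcal·mol⁻¹·contact⁻¹ benchmark. The input energies are hypothetical, and the bookkeeping of which contacts are "evaluated" is an assumption made for illustration.

```python
ACCEPTABLE_CLASHSCORE = 0.02  # kcal/mol per contact, benchmark quoted in the text
CLASH_THRESHOLD = 0.3         # kcal/mol, per-pair clash definition

def clashscore(pair_energies, threshold=CLASH_THRESHOLD):
    """Total clash energy divided by the number of contacts evaluated.

    pair_energies holds one Van der Waals energy (kcal/mol) per evaluated contact;
    only pairs above the threshold contribute to the clash energy.
    """
    if not pair_energies:
        raise ValueError("no contacts were evaluated")
    clash_energy = sum(e for e in pair_energies if e > threshold)
    return clash_energy / len(pair_energies)

# Hypothetical data: 1000 contacts, three of which are clashes.
energies = [-0.05] * 997 + [0.8, 2.5, 22.0]
score = clashscore(energies)
needs_minimization = score > ACCEPTABLE_CLASHSCORE
print(round(score, 4), needs_minimization)
```

Because the score is normalized per contact, a single severe overlap in a small system and many moderate overlaps in a large one can be compared on the same scale.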

Table 1: Quantitative Metrics for Steric Clash Assessment

| Metric | Definition | Calculation | Acceptable Threshold |
|---|---|---|---|
| Steric Clash | Unphysical atomic overlap | Van der Waals repulsion > 0.3 kcal/mol | Dependent on atom types [12] |
| Clash-Energy | Sum of repulsion energies | Σ(VdW repulsion of all clashes) | System-dependent [12] |
| Clashscore | Normalized clash severity | Clash-energy / Number of contacts | ≤ 0.02 kcal·mol⁻¹·contact⁻¹ [12] |

Methodologies for Steric Clash Resolution and Force Reduction

Algorithmic Approaches to Energy Minimization

Energy minimization employs iterative mathematical procedures to locate local minima on the potential energy surface [2] [1]. The general iterative formula follows:

\[ x_{\text{new}} = x_{\text{old}} + \text{correction} \]

where \( x_{\text{new}} \) refers to the value of the geometry at the next step, \( x_{\text{old}} \) refers to the geometry at the current step, and the correction term varies depending on the specific algorithm employed [2].

Steepest Descent Method

The steepest descent method employs a simplified approach that assumes the second derivative is constant [2]. The position update follows:

\[ x_{\text{new}} = x_{\text{old}} - \gamma E'(x_{\text{old}}) \]

where \( \gamma \) is a constant and \( E' \) is the gradient at the current position [2]. This method moves opposite to the direction of the largest gradient at the initial point, making it particularly effective for initially relieving severe steric clashes due to its robustness when far from the energy minimum [2] [13]. However, it typically requires more steps than more sophisticated methods as it approaches the minimum and may exhibit oscillatory behavior near the solution [2].
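The update rule can be tried on a toy surface. The sketch below assumes a fixed step constant and a two-dimensional model energy E(x, y) = x² + 10y², chosen only for illustration; real minimizers act on all atomic coordinates and usually adapt the step.

```python
def steepest_descent(grad, x0, gamma=0.1, tol=1e-6, max_steps=10_000):
    """Iterate x_new = x_old - gamma * E'(x_old) until the gradient norm drops below tol."""
    x = list(x0)
    for step in range(max_steps):
        g = grad(x)
        if sum(gi * gi for gi in g) ** 0.5 < tol:
            return x, step
        x = [xi - gamma * gi for xi, gi in zip(x, g)]
    return x, max_steps

# Toy energy surface E(x, y) = x^2 + 10 y^2: an elongated valley with its minimum at (0, 0).
def grad_E(p):
    x, y = p
    return [2.0 * x, 20.0 * y]

x_min, n_steps = steepest_descent(grad_E, [3.0, 1.0], gamma=0.09)
print(x_min, n_steps)
```

With gamma = 0.09 the stiff coordinate y is multiplied by 1 - 0.09 × 20 = -0.8 at every step, so it changes sign each iteration while shrinking. This is the oscillatory behavior near the minimum described above, and it is why many steps are needed even on such a simple surface.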

Conjugate Gradient Method

The conjugate gradient method enhances efficiency by incorporating information from previous search directions [2]. It begins with a step opposite the largest gradient direction (like steepest descent) but then mixes in a component of the previous direction in subsequent searches [2]. This approach reduces oscillatory behavior and typically converges more rapidly than steepest descent, especially as the system approaches the energy minimum [2] [13]. It is particularly well-suited for fine-grained optimization after severe clashes have been resolved.
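To illustrate the mixing of search directions, the following sketch applies conjugate gradient to a quadratic model energy E(x) = ½ xᵀAx - bᵀx, where an exact line search has a closed form. The quadratic form, the matrix `A`, and the Fletcher-Reeves mixing factor are assumptions chosen for a compact example; molecular force fields are not quadratic and need a numerical line search.

```python
def conjugate_gradient_quadratic(A, b, x0, tol=1e-10, max_steps=100):
    """Minimise E(x) = 0.5 x^T A x - b^T x for a symmetric positive-definite matrix A."""
    def matvec(M, v):
        return [sum(mij * vj for mij, vj in zip(row, v)) for row in M]

    def dot(u, v):
        return sum(ui * vi for ui, vi in zip(u, v))

    x = list(x0)
    r = [bi - ai for bi, ai in zip(b, matvec(A, x))]   # residual = negative gradient
    d = list(r)                                         # first direction: steepest descent
    for step in range(max_steps):
        rr = dot(r, r)
        if rr ** 0.5 < tol:
            return x, step
        Ad = matvec(A, d)
        alpha = rr / dot(d, Ad)                         # exact line search along d
        x = [xi + alpha * di for xi, di in zip(x, d)]
        r = [ri - alpha * adi for ri, adi in zip(r, Ad)]
        beta = dot(r, r) / rr                           # Fletcher-Reeves mixing factor
        d = [ri + beta * di for ri, di in zip(r, d)]    # new gradient plus previous direction
    return x, max_steps

# Same elongated valley as E(x, y) = x^2 + 10 y^2, written as a matrix.
A = [[2.0, 0.0], [0.0, 20.0]]
b = [0.0, 0.0]
x_min, n_steps = conjugate_gradient_quadratic(A, b, [3.0, 1.0])
print(x_min, n_steps)
```

In exact arithmetic a two-dimensional quadratic is minimized in two conjugate gradient steps, whereas fixed-step steepest descent on the same surface needs many more.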

Hybrid Minimization Protocols

Many practical implementations combine these algorithms in sequential protocols. For instance, the minimization tool in Chimera performs steepest descent minimization first to relieve highly unfavorable clashes, followed by conjugate gradient minimization to more effectively reach a local energy minimum after severe clashes have been resolved [13]. This hybrid approach balances computational efficiency with optimization effectiveness.

Specialized Protocols for Severe Clash Resolution

The Chiron Protocol for Severe Steric Clashes

For structures with severe steric clashes that conventional minimization may struggle to resolve, specialized protocols like Chiron have been developed [12]. This automated method uses discrete molecular dynamics (DMD) simulations with CHARMM19 non-bonded potentials, EEF1 implicit solvation parameters, and geometry-based hydrogen bond potentials [12]. Benchmark studies demonstrate its robustness in resolving severe clashes in low-resolution structures and homology models with minimal perturbation to the protein backbone, outperforming other widely used methods, especially for larger proteins [12].

Molecular Mechanics Force Field Minimization

Conventional molecular mechanics approaches using force fields like those in AMBER provide another reliable pathway for clash resolution [11]. The AMBER relaxation process typically employs the ff14SB force field for proteins and GAFF for small molecules to model interatomic interactions [11] [13]. This method iteratively adjusts atomic positions to reduce the overall potential energy, effectively removing unfavorable interactions introduced during model building [11]. Implementation through tools like AMBER's minimization module often includes constraints and restraints to maintain certain structural features during optimization [11].

Table 2: Comparison of Energy Minimization Methodologies

| Method | Key Features | Advantages | Limitations | Best Applications |
|---|---|---|---|---|
| Steepest Descent | Follows negative gradient direction; assumes constant second derivative [2] | Robust for initial steps; efficient for severe clashes [13] | Slow convergence near minimum; may oscillate [2] | Initial clash relief; highly distorted structures [13] |
| Conjugate Gradient | Incorporates previous search directions [2] | Faster convergence than steepest descent [2] | More memory-intensive; complex implementation [2] | Refinement after initial minimization [13] |
| Chiron Protocol | Uses discrete molecular dynamics (DMD) [12] | Handles severe clashes; minimal backbone perturbation [12] | Specialized implementation required [12] | Low-resolution structures; homology models [12] |
| AMBER Force Fields | ff14SB for proteins; GAFF for small molecules [11] [13] | Well-parameterized; widely validated [11] | May struggle with severe clashes alone [12] | General-purpose refinement; stable initial structures [11] |

Experimental Protocols and Implementation

Standard Energy Minimization Workflow

The following workflow diagram illustrates the sequential process for identifying and resolving steric clashes through energy minimization:

Practical Implementation Guide

Pre-Minimization Clash Detection

Before initiating minimization, conduct systematic clash detection using specialized tools:

- MolProbity: Provides Clashscore analysis, reported as the number of serious clashes per thousand atoms [14]. This widely validated tool is essential for objective quality assessment.

- Internal Scripting: For programmatic implementation, use VMD Tcl scripting with commands such as "noh and exwithin 0.5 of noh" to identify clashing atoms [14].

- Energy Calculation: Compute Van der Waals repulsion energy using CHARMM19 or similar force field parameters with a threshold of 0.3 kcal/mol to define clashes [12].

AMBER Minimization Protocol

For standard protein structures without severe clashes, implement this AMBER-based protocol:

- System Preparation: Add hydrogens and assign partial charges using the AddH and Add Charge utilities, selecting appropriate force fields (ff14SB for standard residues) [13].

- Constraint Definition: Apply positional restraints to preserve secondary structure elements or binding site geometries while allowing other regions to relax [11] [13].

- Steepest Descent Phase: Execute 100-500 steps of steepest descent minimization with a step size of 0.02 Å to relieve major steric clashes [13].

- Conjugate Gradient Phase: Perform 10-100 steps of conjugate gradient minimization with a step size of 0.02 Å to refine the structure toward a local minimum [13].

- Convergence Verification: Confirm the maximum force falls below threshold (e.g., 200 kJ·mol⁻¹·nm⁻¹) and clashscore meets acceptable criteria [12] [15].
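Force thresholds are quoted here in kJ·mol⁻¹·nm⁻¹, while AMBER reports energies in kcal/mol and distances in Å, so a conversion is often needed before comparing numbers. The helper names below are hypothetical; only the conversion factors (4.184 kJ per kcal, 10 Å per nm) are fixed.

```python
KJ_PER_KCAL = 4.184        # thermochemical calorie
ANGSTROM_PER_NM = 10.0

def kj_mol_nm_to_kcal_mol_angstrom(force):
    """Convert a force from kJ/(mol*nm) to kcal/(mol*Å)."""
    return force / (KJ_PER_KCAL * ANGSTROM_PER_NM)

def kcal_mol_angstrom_to_kj_mol_nm(force):
    """Convert a force from kcal/(mol*Å) to kJ/(mol*nm)."""
    return force * KJ_PER_KCAL * ANGSTROM_PER_NM

def is_converged(max_force_kcal_mol_angstrom, threshold_kj_mol_nm=200.0):
    """Compare a maximum force given in kcal/(mol*Å) against a kJ/(mol*nm) threshold."""
    return kcal_mol_angstrom_to_kj_mol_nm(max_force_kcal_mol_angstrom) < threshold_kj_mol_nm

threshold_kcal = kj_mol_nm_to_kcal_mol_angstrom(200.0)
print(round(threshold_kcal, 2))   # the 200 kJ/(mol*nm) example expressed in kcal/(mol*Å)
print(is_converged(3.0), is_converged(6.0))
```

The example threshold of 200 kJ·mol⁻¹·nm⁻¹ corresponds to about 4.78 kcal·mol⁻¹·Å⁻¹.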

Specialized Protocol for Severe Clashes

For structures with severe steric clashes (often encountered in homology models or low-resolution structures), employ this specialized protocol:

- Initial Assessment: Calculate initial clashscore; if severely elevated (>0.1 kcal·mol⁻¹·contact⁻¹), proceed with specialized resolution [12].

- Discrete Molecular Dynamics: Implement Chiron protocol using DMD simulations with CHARMM19 non-bonded potentials, EEF1 implicit solvation, and hydrogen bond potentials [12].

- Temperature Control: Begin with short simulations at elevated temperature (240-300 K) using the Andersen thermostat with velocity rescaling rates of 4-200 ps⁻¹ to overcome energy barriers [12].

- Iterative Refinement: Apply multiple cycles of DMD simulation and clash assessment until clashscore falls below acceptable threshold [12].

- Final Minimization: Conclude with conventional steepest descent and conjugate gradient minimization to ensure proper local geometry [12] [13].

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Essential Tools for Clash Detection and Resolution

| Tool/Resource | Type | Primary Function | Implementation Notes |
|---|---|---|---|
| MolProbity | Web server/Standalone | Clashscore calculation and structure validation [14] | Provides quantitative clash assessment; essential for quality control [14] |
| Chiron | Specialized server | Automated resolution of severe steric clashes [12] | Uses DMD; handles cases where conventional minimization fails [12] |
| AMBER | MD suite | Force field-based energy minimization [11] | Includes ff14SB (proteins) and GAFF (small molecules) parameters [11] [13] |
| UCSF Chimera | Visualization & Modeling | Integrated minimization with MMTK [13] | Combines steepest descent and conjugate gradient algorithms [13] |
| VMD Tcl Scripts | Programming interface | Custom clash detection algorithms [14] | Enables "noh and exwithin 0.5 of noh" queries for atom-level analysis [14] |
| CHARMM19 Force Field | Parameter set | Van der Waals energy calculation for clash definition [12] | Provides physical basis for quantitative clash assessment [12] |
| Rosetta | Modeling suite | Alternative refinement using knowledge-based potentials [12] | Effective for smaller proteins (<250 residues) [12] |

Energy minimization, serving the dual objectives of steric clash relief and atomic force reduction, is a foundational element of the MD simulation workflow. The quantitative metrics and specialized protocols detailed in this guide provide researchers with robust methodologies for preparing physically realistic structures for subsequent dynamics simulations. By implementing appropriate clash assessment techniques and selecting minimization algorithms matched to the severity of structural artifacts, scientists can significantly enhance the reliability and accuracy of their computational investigations. As molecular modeling continues to expand its role in structural biology and drug development, these fundamental procedures for structure optimization remain essential for generating biologically meaningful simulation results and advancing our understanding of molecular function.

Energy minimization is a foundational, non-negotiable step in the molecular dynamics (MD) simulation workflow. Its primary role is to relieve excessive atomic clashes and steep potential energy gradients inherent in initial molecular configurations, which, if unaddressed, would render the subsequent simulation physically meaningless and numerically unstable. In the broader context of MD research, minimization serves as the crucial bridge between a constructed molecular model and a thermodynamically valid simulation, ensuring the system possesses a reasonable initial energy before subjecting it to the heating and equilibration phases. Despite being computationally inexpensive relative to the full production run, skipping this step introduces severe risks that can compromise an entire simulation. This technical guide details the instabilities and integration failures that arise from neglecting proper minimization, providing researchers and drug development professionals with the evidence and methodologies to uphold rigorous simulation standards.

The core instability stems from the fact that initial molecular models, often derived from experimental structures or de novo prediction, are not in a state of minimal energy. Placing such a system directly into a dynamics simulation violates the fundamental assumptions of the integrators used in MD. As highlighted in studies of complex systems like asphalt, the initial model is purposefully built with a low density to ensure a random molecular distribution, which inherently creates local energy concentrations and excessive repulsive forces [16]. Energy minimization is explicitly used to eliminate these forces, "preventing excessive atomic velocities and ensuring dynamic stability at the start of the simulation" [16]. Bypassing this step forces the integrator to handle unrealistically high forces, leading to catastrophic failures.

The Consequences of Skipping Minimization

Numerical Instabilities and Integration Failures

MD integrators, such as the leap-frog or velocity Verlet algorithms, are designed to propagate atomic coordinates based on forces calculated from the potential energy function. These algorithms assume that forces and energies are within a range that allows for stable numerical integration over thousands of time steps.

- Catastrophic Energy Cascades: When minimization is skipped, atoms may be placed impossibly close, resulting in extremely high repulsive forces from the van der Waals term (often modeled by a Lennard-Jones potential). An integrator processing these forces can assign enormous velocities to atoms, causing them to "explode" or be ejected from the simulation box. This typically manifests as a simulation crash with error messages related to "constraint failure" or an uncontrollable increase in total energy.

- Distorted Bonded Terms: Similarly, distorted bond lengths and angles generate high energy from the harmonic or cosine potentials of the bonded terms. The integrator struggles with these large, instantaneous forces, leading to inaccurate trajectory propagation and a failure to conserve energy, even in microcanonical (NVE) ensembles.
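The scale of the problem can be seen from a back-of-the-envelope calculation. The sketch below assumes reduced Lennard-Jones units (ε = σ = mass = 1), a typical reduced time step of 0.005, and a hypothetical overlap at 0.5σ; it estimates how far an atom starting at rest would move in a single integration step.

```python
def lj_force(r, epsilon=1.0, sigma=1.0):
    """Magnitude of the radial Lennard-Jones force; positive values are repulsive."""
    sr6 = (sigma / r) ** 6
    return 24.0 * epsilon / r * (2.0 * sr6 * sr6 - sr6)

def first_step_displacement(r, dt=0.005, mass=1.0):
    """Displacement of an atom starting at rest after one step: 0.5 * (F / m) * dt^2."""
    return 0.5 * lj_force(r) / mass * dt * dt

r_minimum = 2.0 ** (1.0 / 6.0)   # separation where the LJ force vanishes
r_clash = 0.5                    # hypothetical overlap left by model building

f_clash = lj_force(r_clash)
dx_clash = first_step_displacement(r_clash)
dx_relaxed = first_step_displacement(1.1)
print(f_clash, dx_clash, dx_relaxed)
```

At the overlapping separation the force is several hundred thousand reduced units and the first step would move the atom by several σ, far beyond anything a fixed-step integrator can handle, whereas a pair near the minimum moves by a negligible fraction of σ. Minimization removes exactly these configurations before dynamics starts.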

Compromised Convergence and Invalid Thermodynamic Equilibrium

A system that avoids immediate catastrophic failure may still produce non-physical results that invalidate any scientific conclusions. The pursuit of thermodynamic equilibrium, a prerequisite for extracting meaningful thermodynamic and dynamic properties, becomes unattainable.

- Non-Equilibrium Starting Point: A simulation must begin from a structure that is at least locally energy-minimized to effectively explore the relevant conformational space during equilibration. Without minimization, the system starts from a high-energy, non-equilibrium state. As discussed in Communications Chemistry, the assumption that a system has reached equilibrium is often overlooked but is essential for validating results [17]. A system starting from a non-minimized state may never reach a true equilibrium, or the path to equilibrium may be so convoluted that the resulting trajectory is not representative of the system's true behavior.

- Misleading Convergence Metrics: Commonly used metrics like potential energy and density can converge rapidly in the initial stages of a simulation, giving a false impression of stability [16]. However, more critical properties, such as the radial distribution function (RDF) between key components (e.g., asphaltene-asphaltene in asphalt systems), converge much more slowly. Only when these slower-converging structural properties stabilize can the system be considered truly equilibrated [16]. A non-minimized system can exhibit seemingly stable energy while its structural properties remain in a non-equilibrium state, leading to incorrect analysis of intermolecular interactions and nanoscale structure.

Table 1: Comparison of Convergence Times for Different Properties in an Asphalt MD System [16]

| Property | Typical Convergence Time | Can Give False Positive of Equilibrium? |
|---|---|---|
| Density | Very Fast (ps-ns) | Yes |
| Potential Energy | Very Fast (ps-ns) | Yes |
| Pressure | Slower (ns+) | No |
| Radial Distribution Function (RDF) | Slowest (Multi-ns to μs) | No |
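One simple way to see why fast observables can mislead is a block-average check applied to two synthetic time series with different relaxation times. This is only a heuristic sketch: the exponential signals, the number of blocks, and the 1% tolerance are all assumptions, and it is not the analysis used in [16].

```python
import math

def block_means(series, n_blocks):
    """Average the series over n_blocks consecutive, equally sized blocks."""
    size = len(series) // n_blocks
    return [sum(series[k * size:(k + 1) * size]) / size for k in range(n_blocks)]

def looks_converged(series, n_blocks=5, rel_tol=0.01):
    """True when the last two block means agree to within rel_tol (relative)."""
    means = block_means(series, n_blocks)
    last, prev = means[-1], means[-2]
    return abs(last - prev) <= rel_tol * max(abs(last), abs(prev), 1e-12)

# Synthetic observables relaxing towards 1.0 over the same 1000-frame trajectory.
fast_property = [1.0 + math.exp(-t / 20.0) for t in range(1000)]    # density-like
slow_property = [1.0 + math.exp(-t / 2000.0) for t in range(1000)]  # RDF-peak-like

print(looks_converged(fast_property), looks_converged(slow_property))
```

Over the same trajectory length the fast signal passes the check while the slow one is still drifting, which mirrors the false-positive pattern summarized in the table.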

Experimental Protocols and Evidence

Case Study: Establishing Equilibrium in a Multi-Component Asphalt System

A detailed investigation into the convergence of MD simulations for asphalt systems provides a clear protocol and compelling evidence for the necessity of thorough initialization, including minimization [16].

- Objective: To determine the true equilibrium state of virgin, aged, and rejuvenated asphalt systems and identify reliable convergence metrics.

- System Preparation: A multi-component asphalt model was constructed using specific ratios of model molecules (asphaltenes, resins, aromatics, and saturates). The initial model was built in a large cubic box with a density much lower than the actual asphalt to ensure a random molecular distribution and prevent local energy concentrations [16].

- Simulation Workflow:

- Energy Minimization: This step was explicitly performed to "eliminate any excessive repulsive forces that may exist in the system, preventing excessive atomic velocities and ensuring dynamic stability at the start of the simulation" [16].

- Equilibration: The system's temperature and pressure were controlled to reach actual target values.

- Production MD: A long dynamic simulation was performed to achieve thermodynamic equilibrium.

- Key Findings: The study concluded that relying solely on rapid convergence of density and energy is "insufficient to fully demonstrate the system's equilibrium." True equilibrium was only achieved when the RDF curves for the slowest-moving components (asphaltene-asphaltene) had converged. This structural convergence is impossible if the simulation is initialized from a non-minimized, high-energy state prone to instabilities [16].

Case Study: Refining Predicted Protein Structures

MD simulations are often used to refine protein structures predicted by tools like AlphaFold2, Robetta, and I-TASSER. A study on the Hepatitis C virus core protein (HCVcp) exemplifies this protocol [18].

- Objective: To construct and refine a reliable 3D structure of HCVcp using computational predictions and MD simulation.

- Protocol:

- Structure Prediction: Initial structures were generated using de novo (AF2, Robetta, trRosetta) and template-based (I-TASSER, MOE) methods.

- System Preparation for MD: The predicted structures were prepared for MD simulation, which necessarily included an energy minimization step to remove steric clashes from the modeling process.

- MD Refinement: Production MD runs were performed.

- Analysis: The root mean square deviation (RMSD), radius of gyration (Rg), and other metrics were tracked. The study found that "MD simulations resulted in compactly folded protein structures, which were of good quality and theoretically accurate," highlighting that "the predicted structures of certain proteins must be refined to obtain reliable structural models" [18]. This refinement process is predicated on a stable MD simulation initiated from a minimized structure.

Essential Protocols for Robust Energy Minimization

Standard Minimization Workflow

A robust minimization protocol is integrated into a larger simulation workflow to ensure stability. The following diagram illustrates this standard process, where minimization is a critical early step.

Recommended Minimization Algorithms

Different algorithms offer trade-offs between speed and robustness. The choice depends on the system's initial state and size.

Table 2: Common Energy Minimization Algorithms and Their Use Cases

| Algorithm | Principle | Advantages | Limitations | Typical Use Case |
|---|---|---|---|---|
| Steepest Descent | Moves atoms in the direction of the negative gradient of the potential energy. | Robust, stable even for very poorly structured systems. | Slow convergence near the minimum. | Initial "cleaning" of highly distorted structures with bad steric clashes. |
| Conjugate Gradient | Builds on Steepest Descent by using conjugate directions for more efficient convergence. | Faster convergence than Steepest Descent for a similar memory footprint. | Can be less stable with very noisy energy landscapes. | General-purpose minimization for most biomolecular systems after initial Steepest Descent. |
| L-BFGS (Limited-memory Broyden–Fletcher–Goldfarb–Shanno) | A quasi-Newton method that approximates the Hessian matrix. | Very fast convergence near the minimum; memory efficient. | Requires a reasonably good starting structure to be stable. | Final minimization steps and geometry optimizations in QM/MM. |

A standard two-stage protocol is recommended for complex systems:

- Stage 1: Perform 50-500 steps of the Steepest Descent algorithm to handle large forces and gross steric clashes, stopping early if a coarse force tolerance (e.g., 1000 kJ/mol/nm) is reached.

- Stage 2: Switch to the Conjugate Gradient or L-BFGS algorithm for 1000-5000 steps or until convergence (e.g., a force tolerance of 100 kJ/mol/nm).
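The two-stage idea can be sketched on a toy energy function. Everything here is illustrative: the model energy, the adaptive-step steepest descent (grow the step after a downhill move, halve it otherwise), the Polak-Ribière directions with a backtracking line search, and the tolerances in arbitrary units stand in for what an MD package does internally with tolerances such as 1000 and 100 kJ/mol/nm.

```python
def norm(v):
    return sum(vi * vi for vi in v) ** 0.5

def steepest_descent(energy, grad, x, tol, step=0.01, max_steps=500):
    """Stage 1: move along -gradient; grow the step after a downhill move, halve it otherwise."""
    e = energy(x)
    for _ in range(max_steps):
        g = grad(x)
        gnorm = norm(g)
        if gnorm < tol:
            break
        trial = [xi - step * gi / gnorm for xi, gi in zip(x, g)]
        e_trial = energy(trial)
        if e_trial < e:
            x, e, step = trial, e_trial, step * 1.2
        else:
            step *= 0.5
    return x

def conjugate_gradient(energy, grad, x, tol, max_steps=5000):
    """Stage 2: Polak-Ribiere directions with a backtracking (Armijo) line search."""
    g = grad(x)
    d = [-gi for gi in g]
    for _ in range(max_steps):
        if norm(g) < tol:
            break
        slope = sum(gi * di for gi, di in zip(g, d))
        if slope >= 0.0:                       # not a descent direction: restart
            d = [-gi for gi in g]
            slope = -sum(gi * gi for gi in g)
        alpha, e0 = 1.0, energy(x)
        while (energy([xi + alpha * di for xi, di in zip(x, d)]) > e0 + 1e-4 * alpha * slope
               and alpha > 1e-12):
            alpha *= 0.5
        x = [xi + alpha * di for xi, di in zip(x, d)]
        g_new = grad(x)
        beta = max(0.0, sum(gn * (gn - go) for gn, go in zip(g_new, g)) / sum(go * go for go in g))
        d = [-gn + beta * di for gn, di in zip(g_new, d)]
        g = g_new
    return x

# Toy anharmonic energy with its minimum at (1, -2); units are arbitrary.
def energy(p):
    u, v = p[0] - 1.0, p[1] + 2.0
    return u ** 4 + u ** 2 + 10.0 * v ** 2

def grad(p):
    u, v = p[0] - 1.0, p[1] + 2.0
    return [4.0 * u ** 3 + 2.0 * u, 20.0 * v]

start = [4.0, 1.0]
coarse = steepest_descent(energy, grad, start, tol=10.0)     # loose tolerance, robust method
final = conjugate_gradient(energy, grad, coarse, tol=1e-4)   # tight tolerance, efficient method
print(coarse, final)
```

The design point is the hand-off: the robust method only has to bring the gradient below a loose tolerance, after which the more efficient method refines the structure to the tight one.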

The Scientist's Toolkit: Software and Force Fields

The choice of software and force fields is critical for implementing a reliable minimization and simulation protocol.

Table 3: Research Reagent Solutions: Key Software for MD Simulations [19] [20] [21]

| Tool / Reagent | Function | Key Features Relevant to Minimization & Stability |
|---|---|---|
| GROMACS | High-performance MD engine. | Extremely fast minimization and MD; highly optimized for CPUs and GPUs; supports all major force fields; ideal for high-throughput biomolecular simulations [20] [21]. |
| AMBER | MD software suite with optimized force fields. | Well-validated biomolecular force fields; robust GPU-accelerated minimization and simulation (PMEMD); popular for free energy calculations [20]. |
| CHARMM | MD program and force field family. | Extensive set of force fields and simulation methods; scriptable via its own control language for complex protocols [20]. |
| LAMMPS | Highly versatile MD simulator. | Flexibility for materials modeling, soft matter, and coarse-grained systems; easily extended with plugins [21]. |
| OpenMM | High-performance, scriptable MD toolkit. | Exceptional GPU acceleration and flexibility; allows for easy implementation of custom potentials and integrators [19] [20]. |
| AMBER Force Fields | Parameter sets for biomolecules. | Provides bonded and non-bonded parameters for proteins, DNA, and lipids; essential for accurate energy calculation during minimization [20]. |
| CHARMM Force Fields | Parameter sets for biomolecules. | Another widely used family of force fields; continuously updated and validated against experimental data [20]. |
| CHARMM-GUI | Web-based system builder. | Simplifies the complex process of building, minimizing, and equilibrating systems like membranes or protein-ligand complexes [20]. |

Energy minimization is a computationally inexpensive yet indispensable safeguard in the MD workflow. Its omission, in an effort to save trivial computational resources, introduces severe risks of numerical instability, integration failure, and fundamentally non-physical results that can invalidate an entire simulation. As demonstrated by rigorous studies on system convergence, a minimized structure is the prerequisite for a simulation that can legitimately reach thermodynamic equilibrium, allowing for the accurate calculation of structural and dynamic properties. For researchers in drug development and materials science, adhering to a robust minimization protocol is not merely a technical formality but a core tenet of responsible computational research, ensuring that conclusions drawn from the virtual world are built upon a stable and reliable foundation.

Molecular Dynamics (MD) simulation is a cornerstone technique in computational drug discovery, providing insights into the dynamical behavior and physical properties of molecular systems at an atomic level [22]. Within this workflow, energy minimization serves as the critical first step, addressing a fundamental problem: the initial molecular configurations, often generated from model building, can contain steric clashes or unrealistically high-energy interactions. If such configurations were used directly in full dynamics simulations, the resulting forces could be large enough to cause numerical instability and disrupt the integration of Newton's equations of motion. The primary role of energy minimization is to locate the nearest local minimum on the potential energy surface, providing a stable and physically realistic starting configuration for subsequent dynamics [23].

This process is inherently focused on the static energy landscape. It seeks a state where the net force on every atom is zero, corresponding to a local minimum where the potential energy is at a relative minimum with respect to small atomic displacements. This is conceptually distinct from full dynamics simulations, which aim to sample the conformational space of the system over time at a finite temperature, providing information about kinetics, thermodynamics, and dynamic processes [23]. Understanding this distinction—between optimizing a single snapshot and exploring an ensemble of states—is crucial for the effective application of MD in research. This guide delves into the technical comparison of these two approaches, framing them within the broader thesis that energy minimization is not a standalone task but an indispensable component of a robust MD simulation workflow, ensuring the reliability and efficiency of subsequent dynamical analysis.

Conceptual and Theoretical Foundations

The Goal of Energy Minimization

Energy minimization algorithms operate on the principle of optimization. They iteratively adjust atomic coordinates to minimize the potential energy of the system, which is calculated by a molecular mechanics force field [22]. The force field describes the potential energy surface (PES) as a function of atomic positions, decomposing it into bonded terms (bonds, angles, torsions) and non-bonded terms (electrostatics, van der Waals) [22]. The process stops when the root mean square (RMS) force falls below a specified threshold, indicating that a local minimum has been found. At this point, the structure is stable and free of severe steric strain, making it a suitable starting point for full MD simulation.
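The choice of force metric matters when judging convergence. The sketch below takes the RMS force to be the root mean square of the per-atom force magnitudes (definitions differ between packages, so this is an assumption) and compares it with the maximum per-atom force on hypothetical data.

```python
def force_magnitudes(forces):
    """Per-atom force magnitudes from a list of (fx, fy, fz) vectors."""
    return [(fx * fx + fy * fy + fz * fz) ** 0.5 for fx, fy, fz in forces]

def rms_force(forces):
    """Root mean square of the per-atom force magnitudes."""
    mags = force_magnitudes(forces)
    return (sum(m * m for m in mags) / len(mags)) ** 0.5

def max_force(forces):
    """Largest per-atom force magnitude."""
    return max(force_magnitudes(forces))

# Hypothetical system: 99 relaxed atoms and one atom still under strain (kJ/mol/nm).
forces = [(10.0, 0.0, 0.0)] * 99 + [(900.0, 0.0, 0.0)]
threshold = 100.0

rms_ok = rms_force(forces) < threshold
max_ok = max_force(forces) < threshold
print(round(rms_force(forces), 2), rms_ok, max_force(forces), max_ok)
```

In this example the RMS criterion is satisfied while a single atom still carries a force nine times the threshold, so it is worth checking both values before moving on to dynamics.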

The Goal of Full Dynamics Simulation

In contrast, full MD simulation integrates Newton's equations of motion to model the time-dependent behavior of the system. It does not seek a single energy minimum but instead samples the Boltzmann distribution of states at a given temperature. This allows researchers to observe fluctuations, rare events, and pathways between states—information that is entirely absent from a single minimized structure. Advanced free energy calculation techniques, such as Free Energy Perturbation (FEP) and Thermodynamic Integration (TI), are built upon full dynamics to compute binding affinities, a crucial metric in drug discovery [24] [23]. These methods often rely on alchemical transformations or path-based methods to connect different states, providing insights into binding mechanisms and kinetics that minimization alone cannot offer [23].
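The difference between a single minimum and a thermal ensemble can be made concrete with Boltzmann weights. The sketch assumes three discrete states with hypothetical energies and treats each as a single level, ignoring the entropy within each state.

```python
import math

K_B = 0.0019872041  # Boltzmann constant in kcal/(mol*K)

def boltzmann_populations(energies, temperature=300.0):
    """Equilibrium populations for states with the given energies (kcal/mol)."""
    beta = 1.0 / (K_B * temperature)
    e_min = min(energies)                      # shift energies for numerical stability
    weights = [math.exp(-beta * (e - e_min)) for e in energies]
    z = sum(weights)                           # partition function over the listed states
    return [w / z for w in weights]

# Hypothetical state energies: the global minimum plus two higher-lying conformers.
populations = boltzmann_populations([0.0, 0.5, 2.0])
print([round(p, 3) for p in populations])
```

At 300 K the lowest-energy state holds only about two thirds of the population under these assumptions, so a minimized structure alone would miss roughly a third of the ensemble that dynamics would sample.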

The table below summarizes the core distinctions between energy minimization and full dynamics simulations, highlighting their complementary roles in the MD workflow.

Table 1: A comparative overview of energy minimization and full dynamics simulations.

| Feature | Energy Minimization | Full Dynamics |
|---|---|---|
| Primary Objective | Find a local minimum on the PES; relieve steric strain [23] | Sample the thermodynamic ensemble; study time-evolving behavior [23] |
| System Representation | A single, static structure | An ensemble of configurations over time |
| Output | Optimized coordinates and final potential energy | Trajectory (time-series of coordinates), energies, and properties |
| Physical Insight | Static energy landscape, stable conformations | Dynamic processes, conformational entropy, kinetics, pathways [23] |
| Role in Workflow | Pre-processing step to prepare initial structure | Core simulation technique for production data |
| Computational Cost | Relatively low (no time integration) | High (scales with simulation time and system size) |
| Key Algorithms | Steepest Descent, Conjugate Gradient, L-BFGS | Leap-frog, Velocity Verlet integrators |

Methodologies and Protocols in Practice

A Standard Protocol for Energy Minimization

A typical energy minimization protocol is designed to efficiently and stably prepare a system for dynamics. The following steps outline a common procedure, often implemented with tools like DPmoire for complex systems or standard MD packages like GROMACS or AMBER [25].

- System Preparation: Construct the initial molecular system, including the protein, ligand, solvent, and ions. This structure may come from a database or a modeling tool and often contains atomic clashes.

- Force Field Selection: Choose an appropriate molecular mechanics force field (e.g., GAFF, OPLS, AMBER) or a machine learning force field (MLFF) for the system [22]. The accuracy of the force field is paramount, as it defines the PES [22].

- Minimization Execution:

- Initial Steepest Descent: Run an initial minimization (e.g., 500-1000 steps) using the Steepest Descent algorithm. This algorithm is robust for removing large forces and steric clashes from highly distorted initial structures.

- Refinement with Conjugate Gradient: Switch to the Conjugate Gradient or L-BFGS algorithm for further minimization (e.g., 1000-5000 steps) until convergence is achieved. These algorithms are more efficient for fine-tuning the structure once the largest strains have been relieved.

- Convergence Criteria: The minimization is considered converged when the RMS force (the root mean square of the forces on all atoms) is below a predefined threshold, typically 100-1000 kJ/mol/nm, indicating a local minimum has been reached.

A Standard Protocol for Free Energy Calculations with Full Dynamics

Full dynamics is used for production runs and advanced free energy calculations. The following protocol describes a setup for Absolute Binding Free Energy (ABFE) calculation using the double decoupling method [24] [23].

- System Preparation: Start from the energy-minimized structure. Solvate the protein-ligand complex in a water box and add ions to neutralize the system.

- Equilibration: Perform a series of short MD simulations to equilibrate the solvent, ions, and protein-ligand complex at the desired temperature and pressure. This typically involves positional restraints on the heavy atoms of the protein and ligand, which are gradually released.

- Alchemical Transformation Setup: Define the thermodynamic cycle for decoupling the ligand from its environment in both the bound and unbound states. This involves creating a series of non-physical intermediate states using a coupling parameter, λ [23].

- Production Simulation: Run multiple independent simulations at different λ values. For each window, collect sufficient sampling of the conformational space. Modern implementations may use advanced sampling techniques or non-equilibrium approaches to improve efficiency [23].

- Free Energy Analysis: Use an estimator, such as the Multistate Bennett Acceptance Ratio (MBAR) or Thermodynamic Integration (TI), to compute the free energy difference from the data collected across all λ windows [23]. The result is the absolute binding free energy (ΔG_b).
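The final analysis step can be illustrated with the simplest estimator. The sketch below performs thermodynamic integration with the trapezoidal rule over per-window averages of ∂U/∂λ; the λ schedule and the averages are hypothetical numbers, and production work would typically use more windows, error estimates, and often MBAR instead.

```python
def thermodynamic_integration(lambdas, dudl_means):
    """Trapezoidal estimate of the integral of <dU/dlambda> over lambda."""
    if len(lambdas) != len(dudl_means) or len(lambdas) < 2:
        raise ValueError("need matching lists with at least two lambda windows")
    total = 0.0
    for k in range(len(lambdas) - 1):
        width = lambdas[k + 1] - lambdas[k]
        total += 0.5 * (dudl_means[k] + dudl_means[k + 1]) * width
    return total

# Hypothetical window averages of dU/dlambda in kcal/mol for one decoupling leg.
lambdas = [0.0, 0.25, 0.5, 0.75, 1.0]
dudl_means = [-12.0, -9.0, -5.0, -2.0, -0.5]

delta_g_leg = thermodynamic_integration(lambdas, dudl_means)
print(delta_g_leg)
```

Each leg of the thermodynamic cycle (ligand in the complex and ligand in solvent) would be integrated in this way, and the binding free energy follows from the difference between the legs plus any restraint corrections.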

Integrating Minimization and Dynamics in a Research Workflow

The relationship between energy minimization and full dynamics is sequential and interdependent. Energy minimization provides the validated starting point that ensures the subsequent dynamics simulation is physically meaningful and numerically stable. This is especially critical for sensitive calculations like FEP, where the accuracy of the underlying force field and the stability of the initial pose are paramount [24] [22].

Diagram: A standard MD workflow highlighting the role of energy minimization.

The Scientist's Toolkit: Essential Research Reagents and Solutions

The following table details key computational "reagents" and tools essential for conducting research involving energy minimization and full dynamics simulations.

Table 2: Key computational tools and their functions in energy landscape studies.

| Tool / Reagent | Function / Purpose | Relevance to Workflow |
|---|---|---|
| Molecular Mechanics Force Fields (e.g., GAFF, OpenFF) [22] | Mathematical model defining the Potential Energy Surface (PES) using bonded and non-bonded terms. | Provides the energy function for both minimization and dynamics simulations [22]. |
| Machine Learning Force Fields (e.g., Allegro, NequIP) [25] | Neural network-based models that map atomic features to energies and forces, offering high accuracy. | Can replace traditional force fields for more accurate energy evaluations, especially in complex systems like moiré materials [25]. |
| Free Energy Perturbation (FEP) | An alchemical method for calculating relative binding free energies between similar ligands [24] [23]. | A key application of full dynamics, reliant on a stable, minimized starting structure for reliable results [24]. |
| Absolute Binding Free Energy (ABFE) | A method to compute the absolute binding affinity of a single ligand, often via the double-decoupling method [24] [23]. | A more computationally demanding application of full dynamics, requiring careful system preparation. |
| DPmoire [25] | An open-source software package for constructing accurate machine learning force fields for complex systems like twisted moiré structures. | Useful for preparing systems where traditional force fields may be inadequate, ensuring a reliable starting point for dynamics [25]. |
| Alchemical & Path-Based Methods [23] | Enhanced sampling techniques that use non-physical pathways (alchemical) or collective variables (path-based) to compute free energies. | Expands the scope of what can be studied with full dynamics, providing mechanistic insights alongside free energies [23]. |

Energy minimization and full dynamics are not competing techniques but rather complementary pillars of the molecular simulation workflow. Energy minimization provides a critical gateway to the static energy landscape, delivering a stable and physically reasonable structure. Full dynamics, in turn, uses this validated starting point to explore the rich, time-dependent behavior of the system, enabling the calculation of experimentally relevant observables like binding free energy. A deep understanding of both the static and dynamic aspects of the energy landscape is fundamental to advancing research in drug development and molecular science. The ongoing development of more accurate force fields and sampling algorithms continues to enhance the fidelity and predictive power of this integrated approach [22].

Choosing Your Tools: Algorithms, Force Fields, and Practical Applications in Biomedicine

Energy minimization is a fundamental component of molecular dynamics (MD) simulation workflows, serving as a critical preparatory step for subsequent analyses such as normal-mode investigations and ensuring physical realism in structural models [26] [27]. In computational chemistry and drug development, minimization algorithms are employed to locate a minimum on the potential energy surface, which corresponds to a stable molecular configuration [26] [28]. The gradient-based methods discussed here find the local minimum nearest the starting structure; they do not by themselves guarantee the global minimum. The process reduces the net forces on atoms until they become negligible, leading the system from a non-equilibrium state toward equilibrium [26]. The efficiency and robustness of these algorithms directly affect the feasibility and accuracy of large-scale MD simulations, particularly in structure-based virtual ligand screening, where thousands of molecular structures must be refined [28].

This guide provides a comprehensive technical comparison of three predominant energy minimization algorithms—Steepest Descent, Conjugate Gradient, and L-BFGS—within the context of MD simulation workflows. We examine their mathematical foundations, implementation specifics, convergence properties, and practical performance characteristics to inform researchers and drug development professionals in selecting appropriate methodologies for their computational challenges.

Fundamental Principles of Energy Minimization

The Potential Energy Surface

In molecular modeling, the potential energy surface (PES) represents the energy of a molecular system as a multidimensional function of its nuclear coordinates [26]. Critical points on this hypersurface have distinct physical meanings: minima correspond to stable molecular configurations, while saddle points represent transition states [26]. The mathematical expression of the PES incorporates both bonded interactions (bonds, angles, dihedrals) and non-bonded interactions, with the latter typically described by potentials such as the Lennard-Jones 12-6 potential for van der Waals forces and Coulomb's law for electrostatic interactions [26] [29].

The Lennard-Jones potential is given by:

[ V_{LJ}(r) = 4\epsilon \left[ \left(\frac{\sigma}{r}\right)^{12} - \left(\frac{\sigma}{r}\right)^{6} \right] ]

Where ( \epsilon ) is the well depth, ( \sigma ) is the distance at which the intermolecular potential is zero, and ( r ) is the separation distance [26]. This potential captures both attractive forces (the ( r^{-6} ) term) and repulsive forces (the ( r^{-12} ) term) between neutral atoms or molecules [26].
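As a quick check on the formula, the sketch below evaluates the Lennard-Jones energy and the corresponding radial force in reduced units. The function name `lennard_jones` and the default parameter values are illustrative assumptions, not part of any force field cited here.

```python
def lennard_jones(r, epsilon=1.0, sigma=1.0):
    """Return (energy, radial force) of the 12-6 Lennard-Jones potential.

    The force is -dV/dr: positive values push the two atoms apart,
    negative values pull them together.
    """
    sr6 = (sigma / r) ** 6
    sr12 = sr6 * sr6
    energy = 4.0 * epsilon * (sr12 - sr6)
    force = 24.0 * epsilon * (2.0 * sr12 - sr6) / r
    return energy, force

# The potential crosses zero at r = sigma and has its minimum of depth
# -epsilon at r = 2**(1/6) * sigma, where the force vanishes.
r_min = 2.0 ** (1.0 / 6.0)
for r in (0.95, 1.0, r_min, 1.5):
    v, f = lennard_jones(r)
    print(f"r = {r:.4f}  V = {v:+.4f}  F = {f:+.4f}")
```

The steep repulsive wall at separations below ( \sigma ) is what makes atomic clashes in an unminimized structure so costly: a small overlap produces a very large force, which is exactly the situation minimization is meant to relieve.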

Force Fields and Molecular Mechanics

Force fields provide the mathematical framework and parameters necessary to compute the potential energy of a molecular system [26]. They consist of collections of parameters that define optimal bond lengths, bond angles, torsional angles, and non-bonded interaction parameters, effectively restraining the search for energy minima to physically realistic configurations [26]. In MD simulations, force fields such as AMBER, UFF, and their optimized variants (e.g., MOPSA for AMMP) provide the necessary functional forms and parameters to compute potential energies and atomic forces [28].

Minimization Algorithms: Theory and Implementation

Steepest Descent Method

Algorithmic Foundation

The Steepest Descent method is one of the most straightforward minimization approaches, relying on the fundamental principle of moving atoms in the direction of the negative gradient of the potential energy [26] [27]. The algorithm updates atomic positions according to:

[ \mathbf{r}_{n+1} = \mathbf{r}_n + \gamma \mathbf{F}_n ]

Where ( \mathbf{r}_n ) represents the atomic coordinates at step ( n ), ( \mathbf{F}_n ) is the force vector (negative gradient of the potential energy), and ( \gamma ) is a step size parameter [26] [27]. In practical implementations like GROMACS, the force vector is scaled by its largest magnitude, so that the step length is controlled directly by a maximum displacement rather than by the raw size of the forces, which prevents overshooting:

[ \mathbf{r}_{n+1} = \mathbf{r}_n + \frac{\mathbf{F}_n}{\max (|\mathbf{F}_n|)} h_n ]

Where ( h_n ) is the maximum displacement at step ( n ) [27]. The algorithm employs a heuristic adjustment of this displacement parameter: if the potential energy decreases (( V_{n+1} < V_n )), the step is accepted and ( h_{n+1} = 1.2 h_n ) to accelerate convergence; if the energy increases (( V_{n+1} \geq V_n )), the step is rejected and ( h_n = 0.2 h_n ) to promote stability [27].
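The accept/reject heuristic can be sketched in a few lines of plain Python. This is an illustration of the update rule described above, not GROMACS code: the function names, the default tolerances, and the two-dimensional "narrow valley" test function are all assumptions made for the example, and the largest absolute force component stands in for the largest force.

```python
def steepest_descent(energy_and_force, x0, h0=0.01, f_tol=1e-4, max_steps=10000):
    """Steepest descent with a maximum displacement h that grows by 1.2
    after an accepted step and shrinks by 0.2 after a rejected one."""
    x = list(x0)
    h = h0
    v, f = energy_and_force(x)
    steps = 0
    while steps < max_steps:
        f_max = max(abs(c) for c in f)
        if f_max < f_tol:          # converged: largest force is negligible
            break
        trial = [xi + fi / f_max * h for xi, fi in zip(x, f)]
        v_new, f_new = energy_and_force(trial)
        if v_new < v:              # accept the step and lengthen the next one
            x, v, f = trial, v_new, f_new
            h *= 1.2
        else:                      # reject the step and shorten the next one
            h *= 0.2
        steps += 1
    return x, v, steps

def valley(x):
    """Toy energy surface: a harmonic valley ten times stiffer in y than x."""
    energy = 0.5 * (x[0] ** 2 + 10.0 * x[1] ** 2)
    force = [-x[0], -10.0 * x[1]]  # force = negative gradient
    return energy, force

x_final, v_final, n_steps = steepest_descent(valley, [1.0, 1.0])
print(f"converged in {n_steps} steps, energy {v_final:.2e}")
```

Because every trial step costs one energy and force evaluation whether or not it is accepted, the step count reported here includes rejected moves.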

Convergence Properties

The Steepest Descent method exhibits linear convergence and is particularly effective in the early stages of minimization when the system is far from the minimum [26] [27]. Its robustness stems from always moving in the direction of maximum energy decrease, but this same characteristic leads to inefficient oscillatory behavior near minima where the energy landscape resembles a narrow valley [26]. The method requires only gradient calculations (first derivatives), making it computationally inexpensive per iteration but generally requiring more iterations to reach convergence compared to more sophisticated methods [26].

Table 1: Key Characteristics of the Steepest Descent Method

| Aspect | Description |
|---|---|
| Mathematical Basis | First-order optimization using negative gradient direction |
| Computational Cost per Step | Low (requires only gradient evaluation) |
| Convergence Rate | Linear |
| Memory Requirements | Minimal (( O(N) )) |
| Best Application Context | Initial minimization stages with poor starting geometries |
| Implementation in MD Packages | Available in GROMACS, AMMOS |

Conjugate Gradient Method

Theoretical Framework

The Conjugate Gradient method addresses a key limitation of Steepest Descent by ensuring that each new search direction is conjugate to previous directions, preventing oscillation in narrow valleys of the potential energy surface [26] [30]. For a quadratic energy function of ( n ) variables, the method theoretically converges in at most ( n ) steps [30]. The algorithm generates search directions through an iterative process:

[ d_k = -g_k + \beta_k d_{k-1} ]

Where ( d_k ) is the new search direction, ( g_k ) is the gradient at step ( k ), and ( \beta_k ) is a scalar parameter that ensures conjugacy between successive search directions [30] [31]. Multiple formulas exist for calculating ( \beta_k ), including Fletcher-Reeves (FR), Polak-Ribière-Polyak (PRP), and Hestenes-Stiefel (HS) formulae [31].

In molecular dynamics applications, the Conjugate Gradient method often employs the Polak-Ribière-Polyak (PRP) formula:

[ \beta_k^{PRP} = \frac{g_k^T (g_k - g_{k-1})}{g_{k-1}^T g_{k-1}} ]

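This formulation gives the algorithm a built-in restart: when a step makes little progress and successive gradients are nearly equal, ( \beta_k^{PRP} ) approaches zero and the search direction reverts to steepest descent, which helps when the method moves through non-quadratic regions of the potential energy surface [31].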
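To make the direction update concrete, the following sketch applies the PRP formula to a small quadratic energy ( E(x) = \tfrac{1}{2} x^T A x - b^T x ), for which the gradient is ( g = A x - b ). Two simplifications are assumptions of this example: the step length is computed exactly, which is only possible because the function is quadratic, and the matrix `A` and vector `b` are arbitrary illustrative values. A molecular force field would require an inexact line search instead. The function name `cg_prp_quadratic` is hypothetical.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def matvec(A, x):
    return [dot(row, x) for row in A]

def cg_prp_quadratic(A, b, x0, tol=1e-10, max_steps=100):
    """Minimize 0.5 x^T A x - b^T x with PRP conjugate gradients.

    A must be symmetric positive definite. The step length alpha is the
    exact minimizer along d, which is valid only for a quadratic function.
    """
    x = list(x0)
    g = [ax - bi for ax, bi in zip(matvec(A, x), b)]   # gradient A x - b
    d = [-gi for gi in g]                              # first step: steepest descent
    steps = 0
    while dot(g, g) ** 0.5 > tol and steps < max_steps:
        Ad = matvec(A, d)
        alpha = -dot(g, d) / dot(d, Ad)                # exact line search
        x = [xi + alpha * di for xi, di in zip(x, d)]
        g_new = [gi + alpha * adi for gi, adi in zip(g, Ad)]
        diff = [gn - go for gn, go in zip(g_new, g)]
        beta = dot(g_new, diff) / dot(g, g)            # Polak-Ribiere-Polyak
        d = [-gn + beta * di for gn, di in zip(g_new, d)]
        g = g_new
        steps += 1
    return x, steps

A = [[4.0, 1.0, 0.0],
     [1.0, 3.0, 1.0],
     [0.0, 1.0, 2.0]]
b = [1.0, 2.0, 3.0]
x_min, n_steps = cg_prp_quadratic(A, b, [0.0, 0.0, 0.0])
print(f"minimum found in {n_steps} steps")
```

With three variables the loop terminates after at most three steps, up to floating-point rounding, which illustrates the finite-termination property stated above for quadratic functions.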

Advanced Variants and Convergence

Recent research has developed three-term conjugate gradient methods that incorporate restart strategies and hybrid parameters to improve computational efficiency and convergence stability [31]. These advanced variants approximate quasi-Newton directions while maintaining the low memory requirements of traditional CG methods [31]. The convergence of Conjugate Gradient methods is guaranteed for linear systems with symmetric positive-definite matrices, and for nonlinear systems under appropriate line search conditions [30] [31].

Table 2: Conjugate Gradient Variants and Their Properties

| Variant | Formula for ( \beta_k ) | Convergence Properties | Application Context |
|---|---|---|---|
| Fletcher-Reeves (FR) | ( \beta_k^{FR} = \frac{g_k^T g_k}{g_{k-1}^T g_{k-1}} ) | Global convergence but may exhibit jamming | Large-scale optimization |
| Polak-Ribière-Polyak (PRP) | ( \beta_k^{PRP} = \frac{g_k^T (g_k - g_{k-1})}{g_{k-1}^T g_{k-1}} ) | Good computational performance, may not always converge | Nonlinear problems |
| Hestenes-Stiefel (HS) | ( \beta_k^{HS} = \frac{g_k^T (g_k - g_{k-1})}{d_{k-1}^T (g_k - g_{k-1})} ) | Good performance with exact line searches | General unconstrained optimization |
| Hybrid Three-Term | Combination of multiple β parameters with restart strategy | Improved convergence speed and stability | Image restoration and large-scale problems |

L-BFGS Algorithm

Limited-Memory Approximation

The L-BFGS (Limited-Memory Broyden-Fletcher-Goldfarb-Shanno) algorithm belongs to the quasi-Newton family of optimization methods, which approximate the Hessian matrix (second derivatives of energy) using gradient information from previous steps [27] [32]. While the full BFGS method requires ( O(N^2) ) storage for N variables, L-BFGS achieves dramatically reduced memory requirements (( O(N) )) by storing only a limited number of previous position and gradient vectors to approximate the inverse Hessian [27] [32]. This makes it particularly suitable for large-scale MD simulations with thousands of atoms.

The L-BFGS update formula constructs the search direction as:

[ d_k = -H_k g_k ]

Where ( H_k ) is an approximation to the inverse Hessian built from the most recent ( m ) pairs of ( s_k = x_{k+1} - x_k ) and ( y_k = g_{k+1} - g_k ) vectors [32]. Typical values for the memory parameter ( m ) range from 5 to 20, balancing approximation quality with memory usage [32].
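In practice ( H_k ) is never formed explicitly. A common way to obtain the product ( H_k g_k ) from the stored pairs is the so-called two-loop recursion, sketched below in plain Python. The function name `lbfgs_direction`, the initial scaling by ( s^T y / y^T y ), and the numerical values of the stored pairs are assumptions of this illustration rather than details of any specific MD package.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def lbfgs_direction(grad, s_list, y_list):
    """Return d = -H * grad using the L-BFGS two-loop recursion.

    s_list and y_list hold the most recent position and gradient
    differences, ordered from oldest to newest. Each pair must satisfy
    the curvature condition dot(s, y) > 0.
    """
    q = list(grad)
    history = []                       # (rho, alpha), newest pair first
    for s, y in zip(reversed(s_list), reversed(y_list)):
        rho = 1.0 / dot(y, s)
        alpha = rho * dot(s, q)
        q = [qi - alpha * yi for qi, yi in zip(q, y)]
        history.append((rho, alpha))
    # Initial inverse-Hessian guess: a scaled identity (identity if no history).
    if s_list:
        gamma = dot(s_list[-1], y_list[-1]) / dot(y_list[-1], y_list[-1])
    else:
        gamma = 1.0
    r = [gamma * qi for qi in q]
    # Second loop runs from the oldest pair to the newest.
    for (s, y), (rho, alpha) in zip(zip(s_list, y_list), reversed(history)):
        beta = rho * dot(y, r)
        r = [ri + (alpha - beta) * si for ri, si in zip(r, s)]
    return [-ri for ri in r]

# Two stored pairs (m = 2) with made-up values that satisfy dot(s, y) > 0.
s_pairs = [[1.0, 0.0], [0.5, 1.0]]
y_pairs = [[2.0, 0.5], [1.0, 3.0]]
direction = lbfgs_direction([1.0, 3.0], s_pairs, y_pairs)
print(direction)
```

The memory cost is the ( m ) stored pairs, each of length ( N ), and the work per direction is a handful of dot products per pair. A useful sanity check is the secant condition: applying the approximate inverse Hessian to the newest ( y ) vector must return the newest ( s ) vector.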

Implementation in MD Packages

In GROMACS, L-BFGS is reported to converge faster in practice than Conjugate Gradient for energy minimization [27]. The choice between the two is also shaped by how water is treated: the GROMACS Conjugate Gradient minimizer cannot be combined with constraints, including the SETTLE algorithm for water, so a flexible water model is required when it is used [27]. The main limitation of L-BFGS is that its parallel implementation remains challenging because of the correction steps required for the inverse Hessian approximation [27].

Comparative Performance Analysis

Convergence Behavior

The three minimization algorithms exhibit distinct convergence profiles across different stages of the minimization process. Steepest Descent demonstrates rapid initial progress but slows significantly near minima, while Conjugate Gradient starts slower but achieves better final convergence [27]. L-BFGS typically outperforms both, especially for systems with complex energy landscapes, though it may require more careful parameter tuning [27] [32].

Table 3: Performance Comparison of Minimization Algorithms in MD Simulations

| Algorithm | Initial Convergence | Final Convergence | Memory Requirements | Computational Cost per Step |
|---|---|---|---|---|
| Steepest Descent | Fast | Slow | Low (( O(N) )) | Low |
| Conjugate Gradient | Moderate | Fast | Low (( O(N) )) | Moderate |
| L-BFGS | Moderate | Very Fast | Moderate (( O(mN) )) | Moderate to High |