Energy Minimization Convergence in Drug Discovery: A Practical Guide for Computational Scientists


Abstract

This article provides a comprehensive guide to energy minimization convergence criteria, addressing the critical needs of researchers and drug development professionals. It covers foundational principles of convergence and its impact on binding free energy calculations, explores methodological approaches including steepest descent and conjugate gradient algorithms, offers practical troubleshooting strategies for common convergence failures, and discusses validation techniques to ensure simulation reliability. By integrating current research and practical applications, this guide aims to enhance the accuracy and efficiency of computational workflows in pharmaceutical development.

Understanding Convergence: The Foundation of Reliable Energy Minimization

In computational biology and molecular dynamics (MD), the concept of convergence is not merely an abstract statistical ideal but a practical necessity for ensuring the physical relevance and predictive accuracy of simulations. Convergence assessment determines whether a simulation has sampled the conformational space of a biomolecule sufficiently to provide reliable estimates of its equilibrium properties [1]. This is critically important in fields such as drug development, where conclusions about molecular mechanisms, binding affinities, and stability often hinge on the results of such simulations [2].

The challenge lies in the fact that biomolecular systems exhibit a complex energy landscape with multiple minima, and simulations can become trapped in local basins for timescales that are computationally prohibitive to overcome [3]. Therefore, defining and quantifying convergence is essential for distinguishing between true physical phenomena and artifacts of inadequate sampling. This guide details the mathematical frameworks used to define convergence, its physical interpretation in the context of energy landscapes, and provides practical protocols for researchers to apply these principles.

Mathematical Foundations of Convergence

The mathematical definition of convergence in biomolecular simulations is rooted in statistical mechanics. A simulation is considered converged when it has visited all relevant states with probabilities consistent with the underlying Boltzmann distribution [1]. Several key quantitative metrics have been developed to operationalize this principle.

The Effective Sample Size and Structural Decorrelation Time

A powerful approach to assessing convergence is to calculate the effective sample size, which quantifies the number of statistically independent configurations within a correlated trajectory [1]. This method determines the structural decorrelation time, τ_dec, defined as the minimum time that must elapse between trajectory snapshots for them to be considered independent.

The mapping of a trajectory onto an effective sample size is achieved by analyzing the statistics of structurally defined bins. For a set of independent and identically distributed (i.i.d.) configurations, the fluctuations in the population of these bins will follow predictable statistical laws. By testing different time intervals between sampled configurations, one can identify the interval at which the bin population statistics match the expected i.i.d. behavior, thus determining τ_dec [1]. The effective sample size (N_eff) is then approximately given by:

N_eff ≈ t_sim / τ_dec

where t_sim is the total simulation time. The relative precision of an ensemble average for a property A is then roughly proportional to 1/√N_eff [1].
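As a quick numerical illustration of the two relations above, the following minimal sketch computes N_eff and the rough 1/√N_eff precision estimate. The function names and the example numbers (a 1000 ns run with a 10 ns decorrelation time) are illustrative assumptions, not values from [1].

```python
import math


def effective_sample_size(t_sim, tau_dec):
    """N_eff ~ t_sim / tau_dec, with both times in the same units."""
    if tau_dec <= 0:
        raise ValueError("tau_dec must be positive")
    return t_sim / tau_dec


def relative_precision(n_eff):
    """Rough 1/sqrt(N_eff) scaling of the relative error of an ensemble average."""
    return 1.0 / math.sqrt(n_eff)


# Hypothetical example: 1000 ns of simulation, 10 ns decorrelation time.
n_eff = effective_sample_size(t_sim=1000.0, tau_dec=10.0)
print(n_eff, relative_precision(n_eff))
```

With these assumed inputs the trajectory contains about 100 independent configurations, so ensemble averages carry a relative uncertainty on the order of 10%.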

Ensemble-Based and Histogram Methods

Another class of methods relies on comparing structural ensembles generated from different parts of a simulation. The trajectory is partitioned into segments, and each segment is classified into a structural histogram based on a set of reference structures [3]. The set of reference structures is generated by randomly selecting a structure from the trajectory, removing it and all structures within a cutoff distance, and repeating until all structures are clustered [3].

Convergence is assessed by comparing the populations of these structural bins from the first and second halves of the simulation. A converged simulation will show statistically indistinguishable populations between the two halves, indicating that the relative probabilities of different conformational substates have stabilized [3].

Practical Working Definition for MD Simulations

A practical, operational definition for convergence is: given a trajectory of length T and a property A_i extracted from it, let ⟨A_i⟩(t) be the running average of A_i from time 0 to t. The property is considered "equilibrated" if the fluctuations of ⟨A_i⟩(t) around the final average ⟨A_i⟩(T) remain small for a significant portion of the trajectory after a convergence time t_c (where 0 < t_c < T). If all individual properties of the system meet this criterion, the system can be considered fully equilibrated [2].
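The working definition above can be sketched in a few lines. The source leaves "small" and "significant portion" unquantified, so the `tolerance` and `min_fraction` parameters below are assumptions that a user would need to choose per property; the function names are likewise hypothetical.

```python
def running_average(values):
    """Running average <A>(t) for t = 1 .. len(values)."""
    total = 0.0
    averages = []
    for n, value in enumerate(values, start=1):
        total += value
        averages.append(total / n)
    return averages


def convergence_index(values, tolerance):
    """Earliest index t_c from which the running average stays within
    `tolerance` of the final average for the rest of the series."""
    averages = running_average(values)
    final = averages[-1]
    t_c = len(averages) - 1
    for i in range(len(averages) - 1, -1, -1):
        if abs(averages[i] - final) > tolerance:
            break
        t_c = i
    return t_c


def is_equilibrated(values, tolerance, min_fraction=0.5):
    """True if the stable stretch after t_c covers at least `min_fraction`
    of the trajectory (an assumed reading of "significant portion")."""
    t_c = convergence_index(values, tolerance)
    return (len(values) - t_c) / len(values) >= min_fraction


# Toy series: a short initial transient followed by a flat signal.
relaxing = [5.0, 3.0, 1.0] + [1.0] * 97
# Toy series that never settles: a steady drift.
drifting = [float(i) for i in range(100)]

print(convergence_index(relaxing, 0.1), is_equilibrated(relaxing, 0.1))
print(convergence_index(drifting, 0.1), is_equilibrated(drifting, 0.1))
```

Note that passing this check for one property says nothing about other properties, which is the partial-equilibrium caveat discussed in the text.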

Table 1: Key Quantitative Metrics for Assessing Convergence

| Metric | Mathematical Principle | Reports On | Key Advantage |
|---|---|---|---|
| Effective Sample Size (N_eff) [1] | Number of independent configurations, N_eff ≈ t_sim / τ_dec | Statistical independence of samples | General; directly probes configurational distribution without fitting parameters |
| Structural Decorrelation Time (τ_dec) [1] | Minimum time for configurations to become statistically independent | Timescale of global conformational sampling | Blind to energy degeneracy; detects slow structural timescales |
| Histogram Population Difference (ΔP_i) [3] | ΔP_i = \|p_i^(1) - p_i^(2)\|, where p_i is the population of structural bin i in each of the two trajectory halves | Convergence of relative populations of conformational substates | Directly probes structural sampling and population stability |
| Autocorrelation Function [2] | Self-similarity of a time-series property over different time lags | Timescale of specific observable fluctuations | Simple to compute for any order parameter |
| Ergodic Measure [1] | Fluctuations of an observable averaged over independent simulations | Timescale for an observable to appear ergodic | Based on fluctuations of averaged quantities |

Physical Significance in Biomolecular Contexts

The mathematical definitions of convergence are inextricably linked to the physical reality of biomolecular energy landscapes. Proteins and other biomolecules exist in a complex conformational ensemble, and their functions often depend on transitions between metastable states.

Convergence vs. Equilibrium

A critical distinction exists between a simulation that has reached a local equilibrium within a conformational basin and one that has achieved global equilibrium across the entire relevant conformational space. A system can be in partial equilibrium where some properties have converged while others have not [2]. For instance, local side-chain motions may equilibrate on a nanosecond timescale, while large-scale domain movements requiring microseconds or longer may remain under-sampled.

This has profound implications for the biological interpretation of simulations. Properties that are averages over highly probable regions of conformational space (e.g., the average radius of gyration of a protein) may converge relatively quickly. In contrast, properties that depend explicitly on low-probability regions, such as transition rates between conformational states or the population of a rare intermediate, require much longer simulation times to converge [2].

The Insensitivity of Energy-Based Metrics

Energy-based convergence measures, such as the stability of the total potential energy, can be misleading. They are often insensitive to slow timescales associated with large conformational changes, particularly when the energy landscape is nearly degenerate [1]. Figure 1 illustrates this concept: two distinct conformational states may have very similar energies (ΔE → 0), but the barrier between them may be high, leading to a slow transition timescale, t_slow. An energy-based metric would fail to detect that the simulation has not adequately sampled the transitions between these states, whereas a structure-based method like the effective sample size calculation would detect t_slow once the barrier is crossed [1].

Experimental and Computational Protocols

This section provides detailed methodologies for implementing the convergence tests discussed, enabling researchers to rigorously evaluate their own simulations.

Protocol for Effective Sample Size Determination

This protocol calculates the structural decorrelation time, τ_dec, and the effective sample size as described in [1].

- Trajectory Preparation: Gather the MD trajectory (e.g., in DCD, XTC format) and corresponding topology file. Ensure the trajectory is centered and imaged if periodic boundary conditions are used.

- Reference Structure Selection:

  - Select a set of reference structures, {S_i}, by randomly choosing a structure from the trajectory.

  - Remove this structure and all others within a predefined structural cutoff distance, d_c (e.g., 1.5-2.5 Å backbone RMSD).

  - Repeat until all structures in the trajectory are assigned to a reference structure. This guarantees that reference structures are at least d_c apart.

- Structural Histogram Construction: For the entire trajectory, classify every frame according to its nearest reference structure (based on a metric like RMSD). This creates a histogram of population counts for each reference state over time.

- Sub-sampling and Statistical Testing:

  - Hypothesize a range of possible decorrelation times, τ_hyp.

  - For each τ_hyp, create a sub-sampled trajectory by taking frames spaced τ_hyp apart.

  - Construct a structural histogram from this sub-sampled set.

  - Perform a statistical test (e.g., based on variance of bin populations) to check if the sub-sampled histogram exhibits the properties of an independent and identically distributed (i.i.d.) sample.

- Identify τ_dec: The smallest value of τ_hyp for which the sub-sampled trajectory passes the i.i.d. test is identified as the structural decorrelation time, τ_dec. The effective sample size is then N_eff = t_sim / τ_dec.
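The bookkeeping parts of this protocol (reference selection, nearest-reference classification, and sub-sampled histograms) can be sketched as follows. This is a toy illustration under stated assumptions: each "frame" is a single number and `distance` is an absolute difference standing in for backbone RMSD, and all function names are hypothetical. The i.i.d. statistical test itself is deliberately left out, since its exact form should follow [1].

```python
import random


def distance(a, b):
    # Stand-in for an RMSD between two structures (assumption: 1-D toy frames).
    return abs(a - b)


def select_references(frames, cutoff, seed=0):
    """Pick a random frame, drop it and everything within `cutoff`, repeat."""
    rng = random.Random(seed)
    remaining = list(range(len(frames)))
    references = []
    while remaining:
        ref = rng.choice(remaining)
        references.append(ref)
        remaining = [i for i in remaining
                     if distance(frames[i], frames[ref]) > cutoff]
    return references


def classify(frames, references):
    """Label every frame with the index of its nearest reference structure."""
    return [min(range(len(references)),
                key=lambda k: distance(frame, frames[references[k]]))
            for frame in frames]


def subsample_histogram(labels, n_bins, stride):
    """Bin counts using only every `stride`-th frame (a trial tau_hyp)."""
    counts = [0] * n_bins
    for label in labels[::stride]:
        counts[label] += 1
    return counts


# Toy trajectory hopping between two well-separated states near 0 and near 5.
frames = [0.0, 0.1, 5.0, 0.2, 5.1, 0.05, 5.2, 0.15]
refs = select_references(frames, cutoff=1.0)
labels = classify(frames, refs)
print(refs, labels, subsample_histogram(labels, len(refs), stride=2))
```

In a real analysis the frames would come from a trajectory library and the histograms for each trial stride would be passed to the variance-based test described in the protocol.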

Protocol for Ensemble-Based Convergence Analysis

This protocol uses structural clustering and population comparison, as outlined in [3].

- Generate Reference Set: Follow the trajectory preparation and reference structure selection steps of the effective sample size protocol above to generate a set of reference structures that span the structural diversity of the entire trajectory.

- Divide Trajectory: Split the trajectory into two halves (first half and second half). For multiple independent trajectories, combine the first halves of all runs into one ensemble and the second halves into another.

- Classify Trajectory Halves: Using the single set of reference structures from Step 1, classify every frame from the first-half ensemble and the second-half ensemble. This generates two population distributions, p_i^(1) and p_i^(2), over the same structural bins.

- Calculate Population Differences: For each structural bin i, compute the absolute difference in population, ΔP_i = |p_i^(1) - p_i^(2)|.

- Assess Convergence:

- Visual Inspection: Plot the two population distributions. A converged simulation will show a similar profile.

- Quantitative Measure: Calculate the root-mean-square deviation (RMSD) between the two population vectors. A low value indicates convergence.

  - State-Specific Analysis: Check ΔP_i for known biologically important states. Even if the global RMSD is low, a large ΔP_i for a key state indicates its population has not converged.
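The population comparison in the steps above reduces to simple arithmetic once every frame carries a bin label. The sketch below assumes the labels are already available as a list of integers (for example from a nearest-reference classification); the function names are hypothetical.

```python
import math


def populations(labels, n_bins):
    """Fractional population of each structural bin."""
    counts = [0] * n_bins
    for label in labels:
        counts[label] += 1
    return [count / len(labels) for count in counts]


def compare_halves(labels, n_bins):
    """Return p^(1), p^(2), the per-bin |p_i^(1) - p_i^(2)|, and their RMS."""
    half = len(labels) // 2
    p1 = populations(labels[:half], n_bins)
    p2 = populations(labels[half:], n_bins)
    delta = [abs(a - b) for a, b in zip(p1, p2)]
    rms = math.sqrt(sum(d * d for d in delta) / n_bins)
    return p1, p2, delta, rms


# Toy labels: the first half favors bin 0, the second half favors bin 1.
unconverged = [0, 0, 0, 1, 0, 1, 1, 1]
# Toy labels: both halves visit the two bins equally.
converged = [0, 1, 0, 1, 0, 1, 0, 1]

print(compare_halves(unconverged, 2))
print(compare_halves(converged, 2))
```

The per-bin differences are returned alongside the global RMS value so that a biologically important state can be inspected individually, as the state-specific check recommends.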

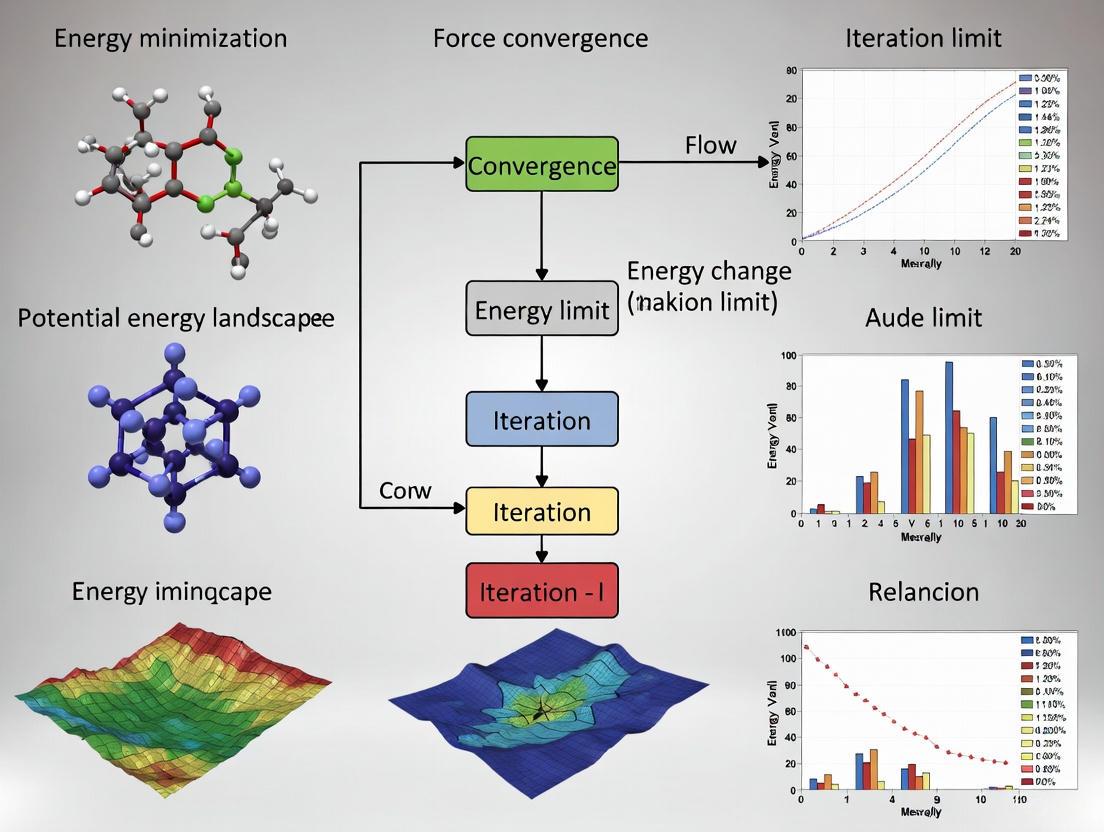

The following workflow diagram illustrates the steps involved in the ensemble-based convergence analysis.

The Scientist's Toolkit: Essential Research Reagents and Computational Tools

Table 2: Key Computational Tools and Resources for Convergence Analysis

| Tool/Resource | Type/Format | Primary Function in Convergence Analysis |
|---|---|---|
| MD Simulation Engine (e.g., GROMACS, AMBER, NAMD, OpenMM) | Software Package | Generates the primary simulation trajectory by numerically solving equations of motion. |
| Trajectory Analysis Suite (e.g., MDTraj, MDAnalysis, cpptraj) | Python Library / Standalone Tool | Handles trajectory I/O, structural alignment, and calculation of metrics like RMSD for clustering. |
| Reference Structure Set {S_i} | Data File (PDB format) | A set of structurally distinct conformations used to classify the trajectory into discrete states. |
| Structural Clustering Algorithm | Computational Method | Partitions conformational space into discrete states, forming the basis for structural histograms [3]. |
| Custom Scripts for Statistical Testing (e.g., Python, R) | Code | Implements the specific statistical tests for i.i.d. behavior and calculates effective sample size [1]. |
| Visualization Software (e.g., VMD, PyMOL) | Software Package | Provides visual inspection of reference structures, cluster centers, and representative conformations. |

Defining and rigorously assessing convergence is a cornerstone of reliable biomolecular simulation. The mathematical principles of effective sample size and ensemble comparison provide a robust framework to move beyond qualitative guesses about simulation quality. The physical significance of convergence lies in its direct connection to the true exploration of a biomolecule's energy landscape, which is a prerequisite for predicting equilibrium properties, transition rates, and ultimately, biological function.

As simulations tackle larger systems and more complex biological questions, the application of these quantitative convergence criteria will become increasingly critical. They are indispensable for validating new sampling methods, refining force fields, and ensuring that computational findings in drug discovery and basic research are built upon a solid statistical foundation.

The Critical Link Between Convergence and Binding Free Energy Calculations in Drug Discovery

The accurate prediction of binding free energy is a cornerstone of computational drug discovery, directly impacting the efficiency and success of lead compound identification and optimization [4]. These calculations estimate the strength of interaction between a potential drug molecule and its biological target, providing a quantitative basis for predicting biological activity. However, the reliability of these predictions is fundamentally dependent on a critical, yet often overlooked, principle: convergence [5].

In the context of molecular simulations, convergence refers to the point at which the calculated properties of a system, such as energy or structure, stabilize and cease to change significantly with additional simulation time. A failure to achieve convergence means that the simulation has not adequately sampled the biologically relevant conformational space, rendering subsequent free energy calculations numerically unstable and physically meaningless [5] [6]. This section explores the intrinsic link between convergence and binding free energy calculation, framing the discussion within the broader context of energy minimization criteria. It provides drug development professionals with a technical guide to understanding, assessing, and achieving convergence to enhance the reliability of computational drug design campaigns.

The Fundamental Challenge of Convergence in Molecular Simulations

Defining Convergence and Equilibrium

In molecular dynamics (MD) simulations, the system is typically started from an experimentally determined structure, which is often not in a state of thermodynamic equilibrium due to the non-physiological conditions of structure determination (e.g., crystallography) [5]. The simulation process aims to relax the system towards equilibrium, but confirming its arrival at this state is non-trivial.

A practical working definition of convergence for a given property is when the running average of that property, calculated from the start of the simulation to time t, exhibits only small fluctuations around the final average value for a significant portion of the trajectory after a "convergence time," t_c [5]. It is crucial to distinguish between partial equilibrium, where some properties have stabilized, and full equilibrium, which requires the convergence of all properties, including those dependent on infrequent transitions to low-probability conformations [5].

Why Convergence is Non-Trivial in Biomolecular Systems

Biomolecules are complex systems with rugged energy landscapes characterized by multiple local minima separated by energy barriers. The timescales required to cross these barriers and fully sample the conformational space can extend far beyond the capabilities of standard MD simulations, which are often limited to microseconds [7] [5]. One analysis of multi-microsecond trajectories revealed that while some biologically relevant properties may converge within this timeframe, others—particularly transition rates between conformational states—may not [5]. This has profound implications, as the resulting simulated trajectories may not be reliable in predicting equilibrium properties. Consequently, a simulation might appear converged for one property of interest while remaining unconverged for another, leading to inaccurate binding affinity predictions if the unconverged property is critical to the binding process.

Binding Free Energy Calculation Methods and Their Convergence Dependencies

Computational methods for estimating binding free energy exist on a spectrum of accuracy and computational cost. The choice of method dictates the specific convergence requirements and challenges.

Table 1: Key Methods for Binding Free Energy Calculation in Drug Discovery

| Method | Theoretical Basis | Key Output | Relative Computational Cost | Primary Convergence Concern |
|---|---|---|---|---|
| MM/PB(GB)SA | Molecular Mechanics, Implicit Solvent (Poisson-Boltzmann/Generalized Born) | Binding Free Energy (ΔG_bind) [7] | Medium | Adequate sampling of the protein-ligand complex, unbound protein, and unbound ligand conformations [7]. |
| Free Energy Perturbation (FEP) / Thermodynamic Integration (TI) | Alchemical Transformation (reversibly converting one ligand to another) | Relative Binding Free Energy (ΔΔG_bind) [7] | High | Complete sampling along the alchemical pathway (λ states) and conformational changes induced by the perturbed groups [7] [8]. |
| Absolute Binding Free Energy (ABFE) | Alchemical Decoupling (removing ligand from bound state and solution) | Absolute Binding Free Energy (ΔG_bind) [8] [6] | Very High | Sampling of all relevant ligand poses and protein conformations in both bound and unbound states; poor convergence leads to high variance and unstable results [6]. |
| Quantum Mechanics (QM) / Fragment Molecular Orbital (FMO) | Quantum Mechanical Principles, Electron Behavior | Interaction Energy components, which can be fit to Binding Free Energy (e.g., FMOScore) [4] | Very High (mitigated by fragmentation) | Convergence of the quantum mechanical calculation itself and, if combined with MD, the conformational sampling of the structure [4]. |

The Convergence Demands of Alchemical and Path-Based Methods

Alchemical methods, such as FEP and ABFE, are considered among the most accurate but are particularly susceptible to convergence issues. These methods calculate free energy differences by simulating a series of non-physical intermediate states along a pathway that connects two physical states (e.g., ligand bound vs. unbound) [9] [7]. If the simulation fails to sample each intermediate state adequately (a problem known as poor sampling efficiency), the resulting free energy estimate will be incorrect and may not converge even with longer simulation times [7]. Recent advances, such as the BFEE3 method for ABFE, aim to address this by designing thermodynamic cycles that minimize protein-ligand relative motion, thereby reducing the system perturbations that hinder convergence and yielding a multi-fold gain in efficiency [8]. Furthermore, poor choices of pose restraints can make ABFE calculations unstable, which has motivated protocols optimized for numerical stability and convergence in production-scale drug discovery [6].
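To make the role of the intermediate states concrete, consider thermodynamic integration, where the free energy difference is the integral of ⟨∂U/∂λ⟩ over λ from 0 to 1. The sketch below applies the trapezoidal rule to per-window averages. The λ schedule and the ⟨∂U/∂λ⟩ values are made-up placeholders for illustration only, and `ti_free_energy` is a hypothetical helper, not part of any package named in this guide.

```python
def ti_free_energy(lambdas, mean_dudl):
    """Trapezoidal estimate of the integral of <dU/dlambda> over lambda."""
    if len(lambdas) != len(mean_dudl) or len(lambdas) < 2:
        raise ValueError("need matching lists with at least two windows")
    total = 0.0
    for i in range(len(lambdas) - 1):
        width = lambdas[i + 1] - lambdas[i]
        total += 0.5 * width * (mean_dudl[i] + mean_dudl[i + 1])
    return total


# Placeholder data: five evenly spaced windows, averages in kcal/mol.
lambdas = [0.0, 0.25, 0.5, 0.75, 1.0]
mean_dudl = [10.0, 6.0, 3.0, 1.0, 0.0]
print(ti_free_energy(lambdas, mean_dudl))
```

Because every window average enters the sum, a single poorly sampled λ window biases the whole estimate, which is why each intermediate state needs its own convergence check.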

Assessing Convergence: Practical Methodologies and Criteria

Standard and Advanced Metrics for Convergence

A standard approach to check for equilibration is to monitor the time-evolution of key properties, looking for a plateau that indicates a stable average [5]. Common metrics include:

- Energetic metrics: The total potential energy of the system.

- Structural metrics: The root-mean-square deviation (RMSD) of the biomolecule relative to a reference structure.

However, reliance on RMSD alone can be misleading, as it does not guarantee the system has escaped a local energy minimum [5]. More sophisticated methods include:

- Analyzing time-autocorrelation functions (ACF) of properties to see if they decay to zero, indicating loss of memory of initial conditions [5].

- Monitoring cumulative averages of the property of interest (e.g., binding free energy) and ensuring the average does not drift beyond an acceptable error margin (e.g., 1 kcal/mol) over the latter part of the simulation.

- Performing statistical analysis such as the calculation of standard errors or hysteresis (the difference between forward and backward transformations in alchemical calculations) [8]. For instance, a hysteresis below 0.5 kcal mol⁻¹ can be an indicator of good convergence in a well-behaved ABFE calculation [8].
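Of the checks listed above, the time-autocorrelation function is straightforward to compute for any scalar time series. The following sketch uses a simple normalized estimator and reports the first lag at which the ACF falls to a threshold; the estimator choice, the zero threshold, and the function names are assumptions made for illustration.

```python
def autocorrelation(series, lag):
    """Normalized autocorrelation of `series` at an integer `lag` (1.0 at lag 0)."""
    n = len(series)
    mean = sum(series) / n
    variance_sum = sum((x - mean) ** 2 for x in series)
    if variance_sum == 0:
        raise ValueError("series is constant; autocorrelation is undefined")
    covariance_sum = sum((series[t] - mean) * (series[t + lag] - mean)
                         for t in range(n - lag))
    return covariance_sum / variance_sum


def decorrelation_lag(series, threshold=0.0):
    """First lag at which the ACF drops to or below `threshold`, else None."""
    for lag in range(1, len(series)):
        if autocorrelation(series, lag) <= threshold:
            return lag
    return None


# Toy series: a rapidly alternating signal and a slow two-state switch.
fast = [1.0, -1.0] * 4
slow = [0.0] * 50 + [1.0] * 50

print(autocorrelation(fast, 1), decorrelation_lag(fast))
print(autocorrelation(slow, 1), decorrelation_lag(slow))
```

The slow two-state series keeps a high ACF for many lags, which is the signature of a property whose memory of the initial state has not yet decayed on the timescale sampled.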

A Practical Workflow for Energy Minimization and Equilibration

Proper system preparation through energy minimization is a critical prelude to dynamics simulations. A typical protocol for relaxing a crystal-derived protein structure involves a staged approach with judicious use of restraints [10]:

- Minimize added atoms: Fix the heavy atoms of the crystal structure and minimize the positions of added hydrogens and solvent molecules using a robust algorithm like steepest descents until derivatives are well-behaved.

- Relax side chains: Tether or restrain the main-chain atoms while allowing side chains to adjust, again using steepest descents initially.

- Gradually release the backbone: Progressively reduce the tethering constants on the backbone atoms until the entire system can be minimized without restraints, switching to a more efficient algorithm like conjugate gradients as the system nears a minimum [10].

The following diagram illustrates the logical relationship between minimization, equilibration, and production simulations in ensuring convergence for binding free energy calculations:

Convergence criteria must be tailored to the objective. For instance, relaxing a structure prior to dynamics may only require minimizing until the maximum derivative is below 1.0 kcal mol⁻¹ Å⁻¹, whereas a normal mode analysis requires a maximum derivative below 10⁻⁵ kcal mol⁻¹ Å⁻¹ [10].

Table 2: Key Research Reagent Solutions for Free Energy Calculations

| Tool / Resource | Type | Primary Function | Relevance to Convergence & Binding Energy |
|---|---|---|---|
| NAMD [8] | Molecular Dynamics Software | Performs MD and free energy simulations. | Engineered for scalability on CPU/GPU architectures, enabling longer simulations to improve sampling. |
| BFEE2/BFEE3 [8] | Software Plugin/Module | Automates setup of Absolute Binding Free Energy (ABFE) calculations. | Implements optimized algorithms to enhance sampling efficiency and reduce convergence time. |
| AMBER [7] | Molecular Dynamics Suite | Includes tools for MD, FEP, and TI. | Features ongoing method development (e.g., λ-dependent weight functions) to improve alchemical sampling. |
| fastDRH [7] | Web Server | Integrates docking with MM/PBSA for binding affinity prediction. | Provides a streamlined, less computationally demanding endpoint method, trading some accuracy for speed. |
| FMOScore [4] | Scoring Function | A QM-based method using the Fragment Molecular Orbital (FMO) approach. | Bypasses classical force fields and some convergence issues by calculating gas-phase interaction energies from QM. |
| Schrödinger FEP+ [4] | Commercial Drug Discovery Suite | Implements automated FEP calculations. | Uses sophisticated algorithms and force fields to improve the convergence and accuracy of relative binding affinities. |

The path to reliable binding free energy predictions is inextricably linked to achieving convergence in molecular simulations. As computational methods advance, pushing towards high-throughput applications and the integration of artificial intelligence, the rigorous assessment of convergence must remain a non-negotiable standard [11] [12] [8]. By adopting robust equilibration protocols, carefully monitoring convergence using multiple metrics, and leveraging modern optimized algorithms, researchers can significantly improve the accuracy and predictive power of their computational drug discovery efforts. A disciplined focus on convergence is not merely a technical detail but a fundamental requirement for transforming computer-aided drug discovery from a supportive tool into a definitive driver of therapeutic innovation.

John Gamble Kirkwood (1907–1959) was a distinguished theoretical physicist whose seminal work created a solid theoretical underpinning for modern physical chemistry and statistical mechanics. His research on the statistical theory of fluids, particularly the Kirkwood superposition approximation, introduced a formalism that continues to resonate throughout condensed matter chemistry and physics [13]. Kirkwood's work established a mathematical approach to the statistical mechanics of fluids, demonstrating that calculating liquid properties in terms of intermolecular interactions involves solving a coupled hierarchy of equations. Although now superseded by more accurate approximations, his superposition approximation captured essential physical effects dominating liquid structure and properties, with his distribution function theory remaining a cornerstone of liquid state theory [13]. This foundation enables modern applications across diverse fields, including energy minimization in molecular dynamics (MD) simulations and computer-aided drug discovery, where convergence criteria ensure reliable predictions of molecular behavior and thermodynamic properties.

Theoretical Foundations and Mathematical Framework

Kirkwood's Superposition Approximation

The Kirkwood superposition approximation, introduced in 1935, provides a mathematical framework for representing discrete probability distributions. For a multivariate probability distribution P(x₁, x₂, ..., xₙ), the approximation expresses the complex joint probability through products of probabilities over all subsets of variables [14].

For the case of three variables (x₁, x₂, x₃), the approximation takes the form:

P'(x₁, x₂, x₃) = [p(x₁,x₂) p(x₂,x₃) p(x₁,x₃)] / [p(x₁) p(x₂) p(x₃)] [14]

This formulation represents the probabilistic counterpart of interaction information. While the approximation does not generally produce a properly normalized probability distribution, it becomes exact for decomposable probability distributions that admit a graph structure whose cliques form a tree [14]. In such cases, the numerator contains the product of intra-clique joint distributions while the denominator contains the product of clique intersection distributions.
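The three-variable formula can be checked numerically on small discrete distributions. The sketch below is only an illustration with hypothetical helper names: it shows that the approximation reproduces the joint distribution when the three variables are fully independent (a simple special case), and that it fails to normalize for three perfectly correlated binary variables.

```python
from itertools import product


def marginal(joint, keep):
    """Sum the joint distribution over all variables not listed in `keep`."""
    result = {}
    for state, prob in joint.items():
        key = tuple(state[i] for i in keep)
        result[key] = result.get(key, 0.0) + prob
    return result


def kirkwood(joint):
    """P'(x1,x2,x3) = p12 * p23 * p13 / (p1 * p2 * p3) for every joint state."""
    p1, p2, p3 = (marginal(joint, (i,)) for i in range(3))
    p12 = marginal(joint, (0, 1))
    p23 = marginal(joint, (1, 2))
    p13 = marginal(joint, (0, 2))
    approx = {}
    for a, b, c in joint:
        denom = p1[(a,)] * p2[(b,)] * p3[(c,)]
        numer = p12[(a, b)] * p23[(b, c)] * p13[(a, c)]
        approx[(a, b, c)] = numer / denom if denom > 0 else 0.0
    return approx


states = list(product((0, 1), repeat=3))

# Case 1: three independent binary variables.
px, py, pz = (0.3, 0.7), (0.6, 0.4), (0.5, 0.5)
independent = {(a, b, c): px[a] * py[b] * pz[c] for a, b, c in states}

# Case 2: three perfectly correlated binary variables (x1 = x2 = x3).
correlated = {s: (0.5 if s[0] == s[1] == s[2] else 0.0) for s in states}

print(sum(kirkwood(independent).values()))
print(sum(kirkwood(correlated).values()))
```

In the correlated case the approximate "probabilities" sum to 2 rather than 1, a concrete instance of the normalization problem noted above.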

Statistical Mechanics of Fluids and Solutions

Kirkwood's theoretical framework extended to rigorous treatments of fluid mixtures and ionic solutions. His work with Buff produced exact representations of mixture properties in terms of molecular distribution functions and molecular interactions, now widely known as the Kirkwood-Buff theory [13]. This theoretical approach enables the interpretation of experimental data for liquid solutions through statistical mechanical formalism.

Kirkwood's 1939 paper "The Dielectric Polarization of Polar Liquids" introduced the groundbreaking concept of orientational correlations between neighboring molecules, demonstrating how these correlations control dielectric behavior in liquids [13]. This recognition that molecular interactions in liquids require solving coupled hierarchical equations established the foundation for modern approaches that employ intuitive approximations to describe condensed phase systems.

Energy Minimization and Convergence Criteria

Theoretical Significance of Minimum-Energy Structures

In macromolecular optimization calculations, the theoretical significance of minimum-energy structures requires careful interpretation. The total potential energy of different molecules cannot be compared directly due to arbitrary energy zeros in forcefields. However, comparisons remain meaningful for different configurations of chemically identical systems [10].

The calculated energy of a fully minimized structure represents the classical enthalpy at absolute zero, ignoring quantum effects (particularly zero-point vibrational motion). For sufficiently small molecules, quantum corrections for zero-point energy and free energy at higher temperatures can be computed through normal mode analysis [10].

When estimating relative binding enthalpies for enzyme-substrate complexes, meaningful comparison requires consideration of a complete thermodynamic cycle. Additionally, entropy contributions neglected in minimization calculations introduce potential errors, though these can be estimated for systems small enough to compute normal mode frequencies [10].

Convergence Criteria in Molecular Minimization

Table 1: Convergence Criteria for Molecular Minimization

| Convergence Level | Maximum Derivative (kcal mol⁻¹ Å⁻¹) | Typical Applications |
|---|---|---|
| Preliminary Relaxation | 1.0 | Relaxing overlapping atoms before molecular dynamics |
| Standard Minimization | 0.02 - 0.5 | Most molecular modeling applications |
| High-Precision | 0.0002 | Protein-substrate systems requiring extensive minimization |
| Ultra-High Precision | < 10⁻⁵ | Normal mode analysis requiring precise frequencies |

Mathematically, a minimum is defined where function derivatives are zero and the second-derivative matrix is positive definite. In gradient minimizers, derivatives provide the most direct convergence assessment [10].

Atomic derivatives can be summarized as average, root-mean-square (rms), or maximum values. The rms derivative offers better convergence measurement than simple averages because it weights larger derivatives more heavily, reducing the likelihood that few large derivatives escape detection. However, the maximum derivative should always be verified regardless of the chosen convergence metric [10].

The appropriate convergence level depends on calculation objectives. While relaxing overlapping atoms before dynamics may require maximum derivatives of only 1.0 kcal mol⁻¹ Å⁻¹, normal mode analysis demands maximum derivatives below 10⁻⁵ kcal mol⁻¹ Å⁻¹ to prevent frequency shifts of several wavenumbers [10].
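The difference between average, rms, and maximum derivatives is easy to see numerically. The sketch below summarizes a flat list of derivative components and compares the maximum against the thresholds from the convergence table. The dictionary keys, the use of the upper end of the 0.02-0.5 range for the standard level, and the toy derivative values are assumptions made for illustration.

```python
import math

# Maximum-derivative thresholds in kcal mol^-1 A^-1, taken from the table above.
# Assumption: the "standard" level uses the upper end of its 0.02-0.5 range.
THRESHOLDS = {
    "preliminary": 1.0,
    "standard": 0.5,
    "high-precision": 0.0002,
    "ultra-high precision": 1e-5,
}


def summarize(derivatives):
    """Average, rms, and maximum of the absolute derivative components."""
    magnitudes = [abs(d) for d in derivatives]
    n = len(magnitudes)
    return {
        "average": sum(magnitudes) / n,
        "rms": math.sqrt(sum(m * m for m in magnitudes) / n),
        "maximum": max(magnitudes),
    }


def meets(derivatives, level):
    """True if the maximum derivative is below the threshold for `level`."""
    return summarize(derivatives)["maximum"] < THRESHOLDS[level]


# Toy gradient: 99 well-relaxed components and one badly strained one.
derivatives = [0.01] * 99 + [5.0]
print(summarize(derivatives))
print(meets(derivatives, "preliminary"))
```

In this toy case the average is about 0.06 and looks acceptable, the rms of about 0.5 hints at a problem, and only the maximum of 5.0 exposes the strained component, which is why the maximum derivative should always be checked.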

Algorithm Selection and Minimization Methodology

Table 2: Minimization Algorithms and Applications

| Algorithm | Best Application Context | Strengths | Limitations |
|---|---|---|---|
| Steepest Descents | Initial steps (10-100) far from minimum | Stable with non-quadratic surfaces | Slow convergence near minimum |
| Conjugate Gradients | Intermediate to final minimization | Efficient for large systems; minimal storage | Requires quadratic approximation |
| Newton-Raphson | Final minimization near convergence | Fast convergence near minimum | Requires Hessian matrix inversion |

The choice of minimization algorithm depends on system size and optimization state. When derivatives exceed 100 kcal mol⁻¹ Å⁻¹, the energy surface is far from quadratic, making algorithms that assume quadratic surfaces (Newton-Raphson, quasi-Newton-Raphson, conjugate gradients) potentially unstable [10].

The steepest descents algorithm typically works best for initial steps when far from a minimum, after which conjugate gradients or Newton-Raphson methods can complete minimization to convergence. For highly distorted structures, simplified force fields that omit cross terms and Morse bond potentials improve stability during initial minimization [10].

The conjugate gradients method requires convergence along each line search before continuing to the next direction. For non-harmonic systems, conjugate gradients may exhaust its set of conjugate directions without fully converging, in which case the algorithm may need to be restarted [10].
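
The hand-off from steepest descents to conjugate gradients can be sketched on a toy two-variable energy function. Everything here is illustrative: the energy function, the switch threshold of 10, the Armijo backtracking line search, and the Polak-Ribière update with periodic restarts are assumptions chosen for brevity, not the scheme of any particular package.

```python
def energy(p):
    x, y = p
    return (x - 1.0) ** 2 + 2.0 * (y + 0.5) ** 2 + 0.1 * (x - 1.0) ** 4

def gradient(p):
    x, y = p
    return [2.0 * (x - 1.0) + 0.4 * (x - 1.0) ** 3, 4.0 * (y + 0.5)]

def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

def backtrack(p, d, g, e0, alpha=1.0, c=1e-4):
    """Halve the step until the Armijo sufficient-decrease test passes."""
    slope = dot(g, d)
    while alpha > 1e-12:
        trial = [pi + alpha * di for pi, di in zip(p, d)]
        if energy(trial) <= e0 + c * alpha * slope:
            return trial
        alpha *= 0.5
    return p  # no acceptable step found; leave the point unchanged

def staged_minimize(p, switch_tol=10.0, final_tol=1e-4, max_steps=2000):
    phases = []
    g = gradient(p)
    d = [-gi for gi in g]
    steps_since_restart = 0
    for _ in range(max_steps):
        gmax = max(abs(gi) for gi in g)
        if gmax < final_tol:
            break
        far = gmax >= switch_tol
        # Use the plain downhill direction while far from the minimum,
        # on periodic restarts, or if the CG direction is not downhill.
        if far or steps_since_restart >= len(p) or dot(g, d) >= 0.0:
            d = [-gi for gi in g]
            steps_since_restart = 0
        phases.append("sd" if far else "cg")
        p = backtrack(p, d, g, energy(p))
        g_new = gradient(p)
        # Polak-Ribiere update, clipped at zero.
        diff = [a - b for a, b in zip(g_new, g)]
        beta = max(0.0, dot(g_new, diff) / dot(g, g))
        d = [-gn + beta * di for gn, di in zip(g_new, d)]
        g = g_new
        steps_since_restart += 1
    return p, phases

minimum, phases = staged_minimize([6.0, 4.0])
```

The `phases` list records which regime each step ran in, so the switch point can be inspected; in a real workflow the threshold and line search would come from the minimizer's own settings.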

Modern Applications: From Molecular Dynamics to Drug Discovery

Convergence in Molecular Dynamics Simulations

Molecular dynamics (MD) simulations complement experiments by providing atomic-level motion details, but they rely on the critical assumption that simulations reach thermodynamic equilibrium. Standard equilibration protocols involve energy minimization, heating, pressurization, and unrestrained simulation to relax the system [5].

A practical definition of equilibrium in MD states: "Given a system's trajectory of length T and property Aᵢ extracted from it, with ⟨Aᵢ⟩(t) representing the average between times 0 and t, the property is 'equilibrated' if fluctuations of ⟨Aᵢ⟩(t) with respect to ⟨Aᵢ⟩(T) remain small for a significant trajectory portion after some convergence time tc (0 < tc < T)" [5].
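
One way to make this definition operational is sketched below. The names `convergence_index` and `is_equilibrated`, and the parameters `tolerance` and `min_fraction`, are hypothetical: they stand in for "small" and "a significant trajectory portion", which the definition leaves to the analyst.

```python
def running_average(values):
    """Cumulative average <A>(t) evaluated at every frame."""
    averages, total = [], 0.0
    for count, value in enumerate(values, start=1):
        total += value
        averages.append(total / count)
    return averages

def convergence_index(values, tolerance):
    """Earliest frame index after which the running average stays
    within `tolerance` of the full-trajectory average <A>(T)."""
    averages = running_average(values)
    final = averages[-1]
    index = len(averages) - 1
    while index > 0 and abs(averages[index - 1] - final) <= tolerance:
        index -= 1
    return index

def is_equilibrated(values, tolerance, min_fraction=0.25):
    """True if the converged stretch covers at least `min_fraction`
    of the trajectory (an assumed, adjustable criterion)."""
    index = convergence_index(values, tolerance)
    return (len(values) - index) / len(values) >= min_fraction

# Synthetic series: a short initial transient followed by a flat signal.
series = [10.0] * 5 + [2.0] * 95
t_c = convergence_index(series, tolerance=0.15)
```

Note that the transient keeps influencing the running average long after the raw signal has settled, which is one reason the initial portion of a trajectory is often discarded before averaging.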

This definition acknowledges partial equilibrium, where some properties reach converged values while others have not. Average properties (such as distances between protein domains) that depend mainly on high-probability conformational regions may converge faster than properties (such as transition rates to unlikely conformations) that require thorough exploration of low-probability regions [5].

Research shows that biologically interesting properties tend to converge in multi-microsecond trajectories, though transition rates to low-probability conformations may require longer simulation times [5].

Computer-Aided Drug Discovery and AI Integration

The convergence of computer-aided drug discovery (CADD) with artificial intelligence represents a transformative advancement. AI-driven drug design (AIDD) accelerates critical stages including target identification, candidate screening, pharmacological evaluation, and quality control [11].

Hybrid AI-structure/ligand-based virtual screening and deep learning scoring functions significantly enhance hit rates and scaffold diversity. Machine learning models combined with public databases help overcome structural and data limitations for historically undruggable targets [11].

The integration of AI-driven in silico design with automated robotics for synthesis and validation, coupled with iterative model refinement, can substantially compress development timelines and reduce research risks and costs [11].

Experimental Protocols and Methodologies

System Preparation and Equilibration Protocol

Proper system preparation is essential for meaningful simulation results. The following protocol outlines a systematic approach for relaxing crystal-derived protein systems:

Initial Hydrogen and Solvent Minimization: Fix heavy atom crystal coordinates to allow added hydrogens and solvent to adjust to a static crystallographic environment. Use steepest descents until derivatives reach approximately 10 kcal mol⁻¹ Å⁻¹ [10].

Side Chain Relaxation: Fix or tether main chain atoms while side chains adjust, typically relaxing surface side chains before interior residues. Continue using steepest descents until derivatives fall below 10 kcal mol⁻¹ Å⁻¹ [10].

Backbone Relaxation: Gradually decrease tethering constants for backbone atoms until the system can be completely relaxed. Transition from steepest descents to more efficient algorithms (conjugate gradients) as system stability improves [10].

This staged approach prevents artifactual movement from large initial forces in strained crystal interactions while progressively relaxing the system toward an unperturbed conformation.

Workflow Visualization

Research Reagent Solutions

Table 3: Essential Computational Research Reagents

| Research Reagent | Function/Purpose | Application Context |
|---|---|---|
| Force Fields (CVFF) | Defines molecular mechanics potential energy | Energy minimization and MD simulations |
| Steepest Descents Algorithm | Initial minimization far from equilibrium | First 10-100 steps of minimization |
| Conjugate Gradients Algorithm | Efficient intermediate minimization | Intermediate minimization steps |
| Newton-Raphson Algorithm | Final precise minimization | Convergence near minimum |
| Thermodynamic Cycle | Relative binding enthalpy calculation | Enzyme-substrate complex comparison |
| Kirkwood Superposition Approximation | Many-body probability distribution | Theoretical liquid state modeling |
| Molecular Dynamics Framework | Atomic trajectory simulation | Biomolecular conformational sampling |

The theoretical foundations established by Kirkwood's formulations continue to underpin modern statistical mechanics applications across scientific disciplines. From the original superposition approximation for liquid state theory to contemporary molecular dynamics simulations and AI-enhanced drug discovery, these principles enable robust computational methodologies.

The critical importance of convergence criteria in energy minimization ensures reliable results across computational scales, from simple ligand docking to complex protein dynamics. Recent advances demonstrate that biologically relevant properties can achieve convergence in multi-microsecond trajectories, validating MD as a powerful tool for investigating equilibrium molecular properties [5].

The ongoing integration of Kirkwood's theoretical legacy with artificial intelligence and high-performance computing continues to drive innovation in molecular modeling and drug discovery, compressing development timelines while enhancing prediction accuracy. This convergence of theoretical rigor and computational power promises to accelerate scientific discovery across physical chemistry and biomedical research.

In molecular dynamics (MD) simulations, the assumptions of convergence and equilibrium form the bedrock upon which the validity of simulation results rests. Yet these concepts are often conflated or overlooked in practice, potentially rendering computational findings meaningless [2] [5]. The fundamental challenge lies in determining whether a simulated trajectory is sufficiently long for the system to have reached thermodynamic equilibrium and for measured properties to have converged to stable values [2]. This distinction carries profound implications—while many biologically interesting properties may converge within multi-microsecond trajectories, other properties, particularly those dependent on infrequent transitions to low-probability conformations, may require substantially more time [5]. This technical guide examines the critical distinctions between convergence and equilibrium within the broader context of energy minimization convergence criteria research, providing researchers with methodologies to rigorously validate their simulation protocols.

Theoretical Foundations: Statistical Mechanics Framework

The Statistical Mechanics Basis of MD

Molecular dynamics simulations derive their theoretical foundation from statistical mechanics, where physical properties of a system are determined by ensemble averages over accessible states [2] [5]. The conformational partition function, Z, represents the weighted volume of available conformational space (Ω):

$$Z=\int_{\Omega}\exp\left(-\frac{E(\mathbf{r})}{k_{B}T}\right)d\mathbf{r}$$ [2] [5]

For a property A, the ensemble average 〈A〉 is calculated as:

$$\langle A\rangle =\frac{1}{Z}\int_{\Omega}A(\mathbf{r})\exp\left(-\frac{E(\mathbf{r})}{k_{B}T}\right)d\mathbf{r}$$ [2] [5]

This mathematical framework reveals a crucial distinction: average properties like distances or angles depend primarily on high-probability regions of conformational space, while properties like free energy and entropy (derived from the partition function itself) require integration over all regions, including low-probability conformations [2]. This theoretical insight explains why different properties may converge at different rates during MD simulations.
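
To make the two integrals concrete, the sketch below evaluates Z and 〈A〉 numerically for a single coordinate using the trapezoid rule. The one-dimensional harmonic well, the grid limits, and the function name `boltzmann_average` are assumptions chosen so the result can be checked against the analytic value 〈r²〉 = k_B T / k; real conformational spaces are far too high-dimensional for direct quadrature.

```python
import math

K_B = 0.0019872  # Boltzmann constant in kcal mol^-1 K^-1

def boltzmann_average(energy, prop, lo, hi, temperature, n=20001):
    """Trapezoid-rule estimate of Z and <A> along one coordinate."""
    beta = 1.0 / (K_B * temperature)
    h = (hi - lo) / (n - 1)
    z = 0.0
    za = 0.0
    for i in range(n):
        r = lo + i * h
        weight = math.exp(-beta * energy(r)) * h
        if i == 0 or i == n - 1:
            weight *= 0.5  # end points carry half weight
        z += weight
        za += prop(r) * weight
    return z, za / z

# Harmonic well E(r) = 0.5 * k * r^2 with k = 1 kcal mol^-1 A^-2 (assumed).
z, mean_r2 = boltzmann_average(
    energy=lambda r: 0.5 * r * r,
    prop=lambda r: r * r,
    lo=-10.0,
    hi=10.0,
    temperature=300.0,
)
```

The average is dominated by grid points near the bottom of the well, where the Boltzmann weight is largest, whereas Z itself sums the weight of every point, which mirrors the distinction drawn above between averages and partition-function-derived quantities.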

Defining Convergence and Equilibrium

For practical application in MD simulations, we adopt the following working definitions:

Property-specific Convergence: Given a system's trajectory of length T and a property Aᵢ extracted from it, with 〈Aᵢ〉(t) representing the average of Aᵢ calculated between times 0 and t, the property is considered "converged" if fluctuations of 〈Aᵢ〉(t) with respect to 〈Aᵢ〉(T) remain small for a significant portion of the trajectory after some convergence time tc (where 0 < tc < T) [2].

System Equilibrium: A system is considered fully equilibrated only when each individual property of interest has reached convergence according to the above definition [2].

This framework acknowledges the possibility of partial equilibrium, where some properties have converged while others require further sampling, particularly those dependent on transitions to low-probability conformational states [2] [5].

Methodological Framework: Assessing Convergence and Equilibrium

Standard Equilibration Protocols

A typical MD simulation protocol begins with energy minimization of the system, followed by equilibration steps where the system is heated and pressurized to target values, culminating in an unrestrained production simulation to allow phase space exploration [2] [5]. For crystal-derived structures, a progressive relaxation approach is recommended:

- Fix heavy atoms to allow added hydrogens and solvent to adjust to the crystallographic environment using steepest descents minimization [10]

- Tether main chain atoms while side chains adjust, typically with surface side chains relaxed before interior residues [10]

- Gradually decrease tethering constants for backbone atoms until the system can be fully relaxed [10]

Table 1: Standard Energy Minimization Algorithms and Applications

| Algorithm | Best Use Case | Key Considerations | Convergence Indicators |
|---|---|---|---|
| Steepest Descents | Initial steps (first 10-100) with large gradients; highly distorted structures | Most robust for systems far from minimum; convergence slows near minimum [10] [15] | Derivatives ~10 kcal mol⁻¹ Å⁻¹ before switching algorithms [10] |
| Conjugate Gradient | Intermediate to final minimization stages; large systems | Requires storage of previous 3N gradients/directions; more complete line searches needed [10] [15] | Fletcher-Reeves or Polak-Ribière formulations; sensitive to non-harmonic systems [15] |
| Newton-Raphson | Final convergence near minimum; small systems | Requires Hessian matrix (N(N+1)/2 storage); ideal convergence in one step for quadratic systems [15] | Uses second derivatives to accelerate convergence; computationally expensive [15] |

Convergence Assessment Methodologies

Property Monitoring Approaches

The standard approach for assessing equilibration involves monitoring key properties as functions of simulation time, observing when they stabilize to relatively constant values (plateau regions) [2]. Essential monitoring metrics include:

- Energetic properties: Total potential energy, kinetic energy, enthalpy [10]

- Structural properties: Root-mean-square deviation (RMSD) from initial structure, radius of gyration [2]

- System properties: Temperature, pressure, density [16]

More sophisticated approaches include time-averaged mean-square displacements and autocorrelation functions of key properties, though these are more computationally intensive and less frequently employed [2].

Advanced Statistical Measures

For rigorous convergence assessment, particularly in research requiring quantitative accuracy, more advanced methodologies are recommended:

- Block averaging analysis: Calculating property averages over trajectory blocks of increasing length to identify when the block-based error estimate stabilizes

- Autocorrelation function decay: Assessing the timescale at which property autocorrelation functions decay to zero [2]

- Statistical inefficiency calculations: Quantifying how many correlated frames correspond to one effectively independent sample

These advanced methods help address the fundamental challenge that finite-time averages from MD simulations inevitably differ from theoretical infinite-time averages, with no certain method to determine the discrepancy when the true value is unknown [2].
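
The block-averaging and statistical-inefficiency measures listed above can be sketched in plain Python as follows. The function names are illustrative, the autocorrelation sum is truncated at its first non-positive value (one common convention among several), and the input is synthetic noise rather than simulation output.

```python
import math
import random

def block_standard_error(values, block_size):
    """Standard error of the mean from non-overlapping block means."""
    n_blocks = len(values) // block_size
    means = [
        sum(values[b * block_size:(b + 1) * block_size]) / block_size
        for b in range(n_blocks)
    ]
    grand = sum(means) / n_blocks
    variance = sum((m - grand) ** 2 for m in means) / (n_blocks - 1)
    return math.sqrt(variance / n_blocks)

def statistical_inefficiency(values):
    """g = 1 + 2 * (sum of the normalized autocorrelation function),
    truncated at the first non-positive value."""
    n = len(values)
    mean = sum(values) / n
    dev = [v - mean for v in values]
    var = sum(d * d for d in dev) / n
    g = 1.0
    for lag in range(1, n - 1):
        c = sum(dev[i] * dev[i + lag] for i in range(n - lag)) / ((n - lag) * var)
        if c <= 0.0:
            break
        g += 2.0 * c * (1.0 - lag / n)
    return g

# Synthetic data: white noise versus an AR(1) series whose successive
# values are strongly correlated (coefficient 0.9).
rng = random.Random(0)
white = [rng.gauss(0.0, 1.0) for _ in range(2000)]
correlated = [0.0]
for _ in range(1999):
    correlated.append(0.9 * correlated[-1] + rng.gauss(0.0, 1.0))

g_white = statistical_inefficiency(white)
g_correlated = statistical_inefficiency(correlated)
se_naive = block_standard_error(correlated, 1)
se_blocked = block_standard_error(correlated, 100)
```

For the correlated series the statistical inefficiency should come out well above 1 (the infinite-sample value for this AR(1) process is 19), and the blocked standard error should exceed the naive one, showing how treating correlated frames as independent understates the uncertainty.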

Quantitative Convergence Criteria

Energy Minimization Convergence Standards

The convergence criteria for energy minimization depend fundamentally on the research objectives, with different requirements for different applications:

Table 2: Energy Minimization Convergence Criteria for Different Applications

| Application Context | Recommended Maximum Derivative | Typical Iterations | Special Considerations |
|---|---|---|---|
| Pre-dynamics Relaxation | 1.0 kcal mol⁻¹ Å⁻¹ | 100-1,000 | Sufficient for removing bad contacts before MD [10] |
| Normal Mode Analysis | 10⁻⁵ kcal mol⁻¹ Å⁻¹ or less | 10,000+ | Essential for accurate frequency calculations [10] |
| Binding Energy Estimation | 0.02-0.5 kcal mol⁻¹ Å⁻¹ | 1,000-5,000 | Must consider complete thermodynamic cycle [10] |
| Protein System Minimization | 0.02-0.5 kcal mol⁻¹ Å⁻¹ | Varies by size | Example: DHFR-trimethoprim required ~14,000 iterations to 0.0002 kcal mol⁻¹ Å⁻¹ [10] |

Convergence should be assessed using multiple metrics simultaneously, with the root-mean-square (rms) derivative generally providing a better measure than simple averages, as it weights larger derivatives more heavily [10]. Critically, the maximum derivative should always be checked to ensure no localized high-force regions persist [10].
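
A simple way to apply the table is to check both the rms and the maximum derivative against the target for the intended application. The dictionary keys and the function `convergence_report` below are hypothetical names, and for the 0.02-0.5 ranges the stricter end is used as an assumption.

```python
# Target maximum derivatives in kcal mol^-1 A^-1, taken from the table
# above; for ranges, the stricter bound (0.02) is assumed.
MAX_DERIVATIVE_TARGETS = {
    "pre_dynamics_relaxation": 1.0,
    "binding_energy_estimation": 0.02,
    "protein_system_minimization": 0.02,
    "normal_mode_analysis": 1e-5,
}

def convergence_report(rms_derivative, max_derivative, application):
    """Compare rms and maximum derivatives with the application target."""
    target = MAX_DERIVATIVE_TARGETS[application]
    rms_ok = rms_derivative <= target
    max_ok = max_derivative <= target
    return {"rms_ok": rms_ok, "max_ok": max_ok, "converged": rms_ok and max_ok}

# Constructed case: acceptable rms, but one region still carries a large force.
report = convergence_report(0.3, 4.0, "pre_dynamics_relaxation")
```

Here the rms value alone would pass the pre-dynamics target, while the maximum reveals that a localized high-force region remains.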

Practical Convergence Assessment in MD

For production MD simulations, convergence assessment requires monitoring multiple properties across different temporal regimes:

Diagram 1: MD Convergence Assessment Workflow

Experimental Evidence and Case Studies

Dialanine: A Model System with Complex Behavior

The dialanine molecule, despite being a simple 22-atom system, reveals surprising complexity in convergence behavior. As a toy model of a protein, its small size suggests it should reach equilibrium within typical MD simulation timescales [2]. However, studies demonstrate that even in this minimal system, certain properties may remain unconverged while others appear stable—illustrating the concept of partial equilibrium and the property-dependent nature of convergence [2].

Real-World Implications for Biomolecular Simulations

Recent analysis of multi-microsecond to hundred-microsecond trajectories reveals that properties of greatest biological interest often do converge within achievable simulation timescales, validating many current MD applications [5]. However, significant challenges remain for:

- Transition rates to and from low-probability conformations [2] [5]

- Free energy calculations requiring thorough sampling of all conformational regions [2]

- Entropy estimates dependent on complete partition function evaluation [2] [10]

This evidence supports a nuanced perspective: while full thermodynamic equilibrium may rarely be achieved in practical MD simulations, partial equilibrium sufficient for many biological applications is often attainable [2].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Computational Tools for MD Convergence Studies

| Tool/Reagent | Function/Purpose | Implementation Considerations |
|---|---|---|
| GROMACS MD Suite | Open-source MD software with complete simulation workflow [16] | Supports major force fields; includes energy minimization, equilibration, production MD, and analysis tools [16] |
| Force Fields (ffG53A7) | Defines potential energy function and parameters for MD [16] | Must be appropriate for system; ffG53A7 recommended for proteins with explicit solvent in GROMACS 5.1 [16] |
| Molecular Topology File (.top) | Describes molecular parameters, bonding, force field, and charges [16] | Generated from PDB coordinates; defines system composition and interactions [16] |
| Parameter File (.mdp) | Defines simulation parameters and algorithms [16] | Controls integrator, thermostating, barostating, and output frequency [16] |
| Steepest Descents Minimizer | Initial minimization algorithm for distorted structures [10] [15] | Used for first 10-100 steps before switching to more efficient algorithms [10] |
| Conjugate Gradient Minimizer | Intermediate minimization for large systems [10] [15] | Requires storage of 3N previous gradients; efficient for protein-sized systems [15] |

Best Practices and Implementation Guidelines

Protocol for Reliable MD Simulations

A robust MD protocol should incorporate these essential steps for ensuring convergence:

- System Preparation

- Solvation and Neutralization

- Progressive Equilibration

- Convergence Validation

Common Pitfalls and Mitigation Strategies

- Insufficient sampling: Biological timescales may exceed practical simulation times; use enhanced sampling techniques for rare events [2] [5]

- Force field limitations: Different force fields may produce varying convergence behavior; consider force field validation for specific systems [17]

- Inadequate minimization: Poorly minimized starting structures can artifactually slow equilibration; follow progressive minimization protocols [10]

- Misinterpretation of plateaus: Apparent convergence may represent trapping in local minima; replicate findings with different initial conditions [2]

The critical distinction between convergence and equilibrium in molecular dynamics simulations demands careful attention in computational research. While full thermodynamic equilibrium may be an idealized state rarely achieved in practical biomolecular simulations, the concept of partial equilibrium—where biologically relevant properties reach convergence—provides a practical framework for validating MD results. By implementing rigorous convergence criteria, monitoring multiple properties across appropriate timescales, and understanding the statistical mechanical principles underlying these concepts, researchers can enhance the reliability and interpretability of their molecular simulations. As MD continues to grow in importance for drug development and molecular biology, robust methodologies for assessing convergence and equilibrium will remain essential for generating scientifically valid insights.

The accurate prediction of protein-ligand binding affinity is a cornerstone of computer-aided drug discovery. These predictions directly enable the ranking of candidate molecules, a critical step in prioritizing compounds for synthesis and further testing. However, the reliability of these rankings is fundamentally dependent on the convergence of the underlying computational methods. This technical guide explores the critical relationship between convergence criteria in energy minimization and sampling processes, and their ultimate impact on the accuracy of binding affinity predictions and subsequent drug candidate rankings. Framed within the context of energy minimization convergence criteria in molecular dynamics and emerging quantum-classical hybrid models, this review synthesizes current methodologies, benchmarks performance across techniques, and provides a detailed protocol for researchers to evaluate convergence in their own workflows, thereby supporting more robust and reliable decision-making in drug development pipelines.

In drug discovery, the computational ranking of candidate molecules relies on the accurate prediction of binding affinity, a quantitative measure of the strength of interaction between a small molecule (ligand) and a target protein. The free energy of binding (ΔG) is the key thermodynamic quantity sought, with more negative values indicating tighter binding [18]. Critically, binding affinity is a state function, and its accurate computation depends on sufficiently sampling the relevant conformational space of the ligand-receptor complex to achieve a converged ensemble average [19].

The failure to achieve convergence during energy minimization and molecular dynamics (MD) simulations introduces significant errors into binding affinity predictions. As noted in one analysis, "computational approaches for predicting the binding affinity of ligand–receptor complex structures often fail to validate experimental results satisfactorily due to insufficient sampling" [19]. This sampling insufficiency prevents the system from reaching a thermodynamic equilibrium, leading to unreliable free energy estimates and, consequently, a misleading ranking of drug candidates. Therefore, understanding, monitoring, and ensuring convergence is not merely a technical exercise but a fundamental prerequisite for generating trustworthy data in structure-based drug design.

The Convergence Challenge in Computational Methods

A wide spectrum of computational methods exists for binding affinity prediction, each with distinct convergence characteristics and trade-offs between computational speed and predictive accuracy.

Table 1: Comparison of Binding Affinity Prediction Methods and Their Convergence Properties

| Method | Typical Compute Time | Key Convergence Metric | Expected RMSE (kcal/mol) | Primary Convergence Challenge |
|---|---|---|---|---|
| Molecular Docking | Minutes (CPU) | Pose reproducibility and scoring function stability | 2.0 - 4.0 | Limited conformational sampling; simplistic scoring functions [18] |
| MM/PB(GB)SA | Hours - Days (GPU) | Stability of enthalpy and solvation terms over trajectory snapshots | ~1.5 - 3.0 | Inadequate sampling of complex conformational changes; noisy entropy estimates [18] |
| Alchemical Methods (FEP, TI, BAR) | Days (GPU) | Free energy difference over lambda windows; hysteresis between forward/backward transitions | ~1.0 - 2.0 | Overcoming high energy barriers between states; sufficient sampling of water positions [20] [19] |
| Hybrid Quantum-Classical ML | Hours - Days (GPU) | Model performance on validation set; parameter efficiency | Varies by system and model architecture | Expressivity of quantum neural networks; noise in NISQ devices [21] [22] |

The core challenge across all methods is that the calculated energies are often composed of large terms with opposing signs. For instance, in MM/GBSA calculations, the gas-phase enthalpy (ΔHgas) and solvation free energy (ΔGsolvent) can each be on the order of -200 to +100 kcal/mol, yet their sum yields a much smaller net binding affinity, typically in the range of -15 to -4 kcal/mol [18]. Because the net value is a small difference between large numbers, an error of only a few percent in the constituent terms can be comparable in size to the binding affinity itself, rendering the ranking of candidates unreliable.
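
The arithmetic behind this amplification can be illustrated with a short calculation. The component values (-110 and +100 kcal/mol) and the 2% relative error are hypothetical numbers chosen to fall inside the ranges quoted above, and the errors are assumed independent so that they add in quadrature.

```python
import math

def net_binding_estimate(dh_gas, dg_solv, relative_error):
    """Net binding energy and its propagated uncertainty.

    Assumes the two components carry independent errors of the same
    relative size, combined in quadrature.
    """
    net = dh_gas + dg_solv
    abs_error = math.sqrt(
        (relative_error * dh_gas) ** 2 + (relative_error * dg_solv) ** 2
    )
    return net, abs_error, abs_error / abs(net)

# Hypothetical components in kcal/mol with a 2% error on each.
net, abs_error, fraction = net_binding_estimate(-110.0, 100.0, 0.02)
```

With these inputs the net value is -10 kcal/mol with an uncertainty of roughly 3 kcal/mol, so a 2% error in each component becomes an error of about 30% in the quantity used for ranking.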

Quantitative Impact of Convergence on Prediction Accuracy

The direct correlation between convergence quality and prediction accuracy is evident in benchmark studies. A large-scale evaluation of Relative Binding Free Energy (RBFE) calculations across 22 protein targets and 598 ligands highlighted that prediction inaccuracies can be attributed not only to force field parameters but also significantly to the convergence of the simulations [20]. The study found that "large perturbations and nonconverged simulations lead to less accurate predictions," underscoring the necessity of adequate sampling for reliable results.

Similarly, advanced sampling protocols have demonstrated the tangible benefits of achieving convergence. A study on G-protein coupled receptors (GPCRs) utilized a modified Bennett Acceptance Ratio (BAR) method to ensure robust sampling. The results showed a "significant correlation with the experimental pK_D values, as evidenced by the high correlation (R² = 0.7893)" for agonists bound to the β1 adrenergic receptor, a level of accuracy that is only possible with well-converged simulations [19].

The emergence of hybrid quantum-classical machine learning models offers a new perspective on convergence and efficiency. These models aim to achieve performance comparable to classical deep learning models but with greater parameter efficiency, which can be viewed as a form of model convergence during training. One study demonstrated that a hybrid quantum-classical convolutional neural network could reduce the number of trainable parameters by 20% while maintaining performance, also leading to a 20-40% reduction in training time [22]. This parameter efficiency indicates a more optimized and effective path to a converged model state.

Experimental Protocols for Assessing Convergence

To ensure the reliability of binding affinity predictions, researchers must implement rigorous experimental protocols to assess convergence. The following methodologies are critical.

Protocol for Molecular Dynamics and Alchemical Simulations

This protocol is adapted from studies investigating binding free energies using the BAR method and MM/GBSA approaches [19] [18].

System Preparation:

- Pruning: Prune the protein structure to a fixed radius (e.g., 10-12 Å) around the binding site to reduce computational cost, unless simulating the entire protein.

- Solvation and Ions: Solvate the system in an explicit water model (e.g., TIP3P) within a periodic box. Add ions to neutralize the system's charge and achieve a physiological salt concentration (e.g., 150 mM).

- Energy Minimization: Perform energy minimization using a steepest descent algorithm until the maximum force is below a chosen threshold (e.g., 1000 kJ/mol/nm), ensuring no steric clashes.
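
Because the example threshold here is given in kJ/mol/nm while the convergence tables elsewhere in this guide use kcal mol⁻¹ Å⁻¹, a small conversion helper makes the two comparable. The function name is an illustrative choice; the conversion factors (4.184 kJ per kcal, 10 Å per nm) are standard.

```python
KJ_PER_KCAL = 4.184
ANGSTROM_PER_NM = 10.0

def kj_mol_nm_to_kcal_mol_angstrom(force):
    """Convert a force from kJ mol^-1 nm^-1 to kcal mol^-1 A^-1."""
    return force / KJ_PER_KCAL / ANGSTROM_PER_NM

threshold = kj_mol_nm_to_kcal_mol_angstrom(1000.0)  # about 23.9
```

A 1000 kJ/mol/nm threshold corresponds to roughly 24 kcal mol⁻¹ Å⁻¹, which is suitable for removing steric clashes but considerably looser than the 1.0 kcal mol⁻¹ Å⁻¹ pre-dynamics target listed earlier.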

Equilibration:

- NVT Ensemble: Heat the system gradually to the target temperature (e.g., 300 K) over a short simulation (e.g., 10-100 ps) using a thermostat.

- NPT Ensemble: Equilibrate the system density by running a simulation (e.g., 100 ps-1 ns) using a barostat to maintain constant pressure (e.g., 1 bar).

Production Simulation & Sampling:

- Run Production MD: Conduct an MD simulation in the NPT ensemble for a duration sufficient to sample relevant motions. For binding site residues, this often requires tens to hundreds of nanoseconds.

- Snapshot Extraction: After discarding the initial equilibration phase (e.g., the first 10-20% of the production run), extract snapshots at regular intervals (e.g., every 100 ps) for subsequent analysis.
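
The snapshot-extraction step can be expressed as a simple index calculation. The function `snapshot_indices` and its defaults (20% discarded, 100 ps stride) are illustrative assumptions based on the example values above; trajectory libraries offer their own slicing options.

```python
def snapshot_indices(n_frames, frame_interval_ps, discard_fraction=0.2,
                     stride_ps=100.0):
    """Frame indices to analyse after dropping the initial equilibration
    portion and thinning to one snapshot every `stride_ps`."""
    first = int(round(n_frames * discard_fraction))
    step = max(1, int(round(stride_ps / frame_interval_ps)))
    return list(range(first, n_frames, step))

# A 10 ns production run saved every 10 ps gives 1000 frames.
indices = snapshot_indices(n_frames=1000, frame_interval_ps=10.0)
```

For this example the first 200 frames are discarded and every tenth frame is kept, leaving 80 snapshots for the subsequent energy analysis.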

Convergence Diagnostics for MD:

- Root Mean Square Deviation (RMSD): Monitor the protein backbone and ligand RMSD relative to the initial structure. Convergence is suggested when the RMSD reaches a plateau and fluctuates around a stable average, although a plateau alone does not rule out trapping in a local minimum.

- Potential Energy and Density: Ensure the total potential energy and system density have stabilized without drift.

- Block Averaging: Calculate the property of interest (e.g., enthalpy) over blocks of increasing length. The standard error estimated from the block means should level off once the blocks are longer than the correlation time; an estimate that keeps growing with block length indicates that the trajectory is too short for statistical convergence.
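
A drift check on the potential energy or density can be sketched with an ordinary least-squares slope. The function names and the `max_total_drift` threshold are hypothetical, and the two series are synthetic; an acceptable drift depends on the property and its natural fluctuations.

```python
def drift_slope(times, values):
    """Least-squares slope of `values` against `times`."""
    n = len(times)
    mean_t = sum(times) / n
    mean_v = sum(values) / n
    sxx = sum((t - mean_t) ** 2 for t in times)
    sxy = sum((t - mean_t) * (v - mean_v) for t, v in zip(times, values))
    return sxy / sxx

def has_drift(times, values, max_total_drift):
    """True if the fitted change over the whole window exceeds the limit."""
    total = drift_slope(times, values) * (times[-1] - times[0])
    return abs(total) > max_total_drift

times_ps = [10.0 * i for i in range(100)]            # 0 to 990 ps
drifting = [-5000.0 + 0.02 * t for t in times_ps]    # steadily rising
steady = [-5000.0 + (1.0 if i % 2 else -1.0) for i in range(100)]
```

The fitted slope is compared over the whole analysis window rather than per frame, so fast fluctuations such as those in `steady` do not register as drift while the slow rise in `drifting` does.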

Protocol for Alchemical Free Energy Calculations (e.g., FEP, BAR)

- Lambda Window Setup: Divide the alchemical transformation path between the two end states (for example, the fully interacting and decoupled ligand, or two different ligands) into a sufficient number of intermediate λ states (e.g., 10-20 windows).

- Equilibration per Window: For each λ window, perform a full equilibration protocol (minimization, NVT, NPT) as described in the preceding protocol for molecular dynamics and alchemical simulations.

- Sampling per Window: Run production simulations for each λ window. The required time per window can vary significantly but must be long enough to sample transitions.

- Convergence Diagnostics for Alchemical Calculations:

- Overlap Analysis: Calculate the distribution overlap of the potential energy between adjacent λ windows. Poor overlap indicates insufficient sampling and a need for more windows or longer simulation times.

- Hysteresis Calculation: Perform the transformation in both the forward and reverse directions. The difference in the calculated free energy (hysteresis) should be close to zero for a fully converged simulation.

- Statistical Error Analysis: Use methods like the bootstrap method or analyze the standard error of the mean over time to estimate the uncertainty of the final free energy estimate.
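
Two of these diagnostics, hysteresis and a bootstrap error estimate, are simple enough to sketch directly. The function names and the sample values are hypothetical, and the bootstrap as written assumes the per-sample estimates are statistically independent, which for correlated MD data requires subsampling first.

```python
import random
import statistics

def hysteresis(dg_forward, dg_reverse):
    """Forward and reverse free energies should be equal and opposite,
    so their sum measures the hysteresis."""
    return abs(dg_forward + dg_reverse)

def bootstrap_standard_error(samples, n_resamples=1000, seed=0):
    """Standard deviation of the mean over resampled data sets."""
    rng = random.Random(seed)
    n = len(samples)
    means = [
        statistics.fmean(rng.choices(samples, k=n))
        for _ in range(n_resamples)
    ]
    return statistics.stdev(means)

# Hypothetical free energy estimates in kcal/mol from repeated runs.
estimates = [-8.2, -8.5, -8.1, -8.6, -8.3, -8.4, -8.0, -8.7]
gap = hysteresis(-8.4, 8.1)
error = bootstrap_standard_error(estimates)
```

A hysteresis that is large compared with the bootstrap error suggests a systematic sampling problem rather than statistical noise, and usually calls for longer windows or additional λ states.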

[Workflow diagram: key decision points in a convergence-focused simulation protocol]

Advanced and Emerging Approaches

Consensus Ranking to Mitigate Convergence Failures

Consensus strategies offer a powerful solution to mitigate the impact of poor convergence or methodological failures in individual docking or scoring programs. The underlying principle is that the collective performance of multiple independent methods is more robust than any single method. The Exponential Consensus Ranking (ECR) method has been shown to outperform traditional consensus approaches [23]. ECR combines results from multiple docking programs by assigning an exponential score to each molecule based on its rank in each individual program:

$$P(i)=\frac{1}{\sigma}\sum_{j}\exp\left(-\frac{r_{i}^{j}}{\sigma}\right)$$

where P(i) is the final consensus score for molecule i, r_i^j is the rank of molecule i in program j, and σ is a scaling parameter. This method effectively acts as a logical "OR", giving high scores to molecules that rank well in any of the programs, thus providing resilience against a single method's convergence failure.
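
The ECR score can be computed in a few lines. This is a sketch of the formula as stated above, not the reference implementation from [23]; the function name, the nested-dictionary input, and the three-ligand ranking data are hypothetical.

```python
import math

def exponential_consensus_rank(ranks_by_program, sigma):
    """Return (molecule, score) pairs sorted from best to worst.

    `ranks_by_program` maps program name -> {molecule: rank}, with
    rank 1 as the best. Molecules missing from a program contribute
    nothing for that program (an assumption of this sketch).
    """
    molecules = set()
    for ranks in ranks_by_program.values():
        molecules.update(ranks)
    scores = {}
    for molecule in molecules:
        total = sum(
            math.exp(-ranks[molecule] / sigma)
            for ranks in ranks_by_program.values()
            if molecule in ranks
        )
        scores[molecule] = total / sigma
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)

# Hypothetical ranks from three docking programs.
ranks = {
    "program_a": {"lig1": 1, "lig2": 2, "lig3": 3},
    "program_b": {"lig1": 2, "lig2": 1, "lig3": 3},
    "program_c": {"lig1": 1, "lig2": 3, "lig3": 2},
}
consensus = exponential_consensus_rank(ranks, sigma=1.0)
```

Because the exponential decays quickly, a top rank in one program contributes more to the score than several middling ranks, which is the behavior described above; a larger σ flattens this effect.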

Hybrid Quantum-Classical Machine Learning

Hybrid Quantum-Classical models represent a frontier in achieving performance convergence with greater efficiency. These models integrate parameterized quantum circuits with classical neural networks. The primary convergence-related advantage is their potential for strong expressivity with fewer parameters. Studies propose Hybrid Quantum Neural Networks (HQNNs) that can "reduce the number of parameters while maintaining or improving performance compared to classical counterparts" [21]. This parameter efficiency suggests a more direct path to a well-converged model. However, a key challenge is the feasibility of such models on current Noisy Intermediate-Scale Quantum (NISQ) hardware, where quantum noise can impede the convergence of the quantum circuit parameters [21].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Computational Tools for Binding Affinity and Ranking Studies

| Tool / Reagent | Type | Primary Function in Research | Application Context |
|---|---|---|---|
| GROMACS | Software Suite | Molecular dynamics simulation engine for running energy minimization, equilibration, and production MD. | MD, MM/PB(GB)SA, Alchemical FEP/TI/BAR [20] [19] |
| AutoDock Vina | Software Suite | Molecular docking program for fast pose prediction and initial scoring. | Docking, Virtual Screening [23] |
| OpenFF (Parsley, Sage) | Force Field | Provides small-molecule force field parameters for MD simulations. | MD, RBFE calculations [20] |
| PyTraj / MDTraj | Software Library | Python tools for analyzing MD trajectories (e.g., RMSD, SASA calculations). | Post-processing of MD simulations [18] |
| PDBbind Database | Database | Curated database of protein-ligand complexes with experimental binding affinity data. | Method benchmarking and training [21] [22] |
| Quantum Processing Unit (QPU) | Hardware | Executes parameterized quantum circuits as part of a hybrid machine learning model. | Hybrid Quantum-Classical ML for affinity prediction [21] |

Convergence is the linchpin of reliable binding affinity prediction and, by extension, successful drug candidate ranking. As computational methods evolve from traditional molecular dynamics to sophisticated alchemical calculations and hybrid quantum-classical models, the fundamental requirement for thorough sampling and model stabilization remains unchanged. Researchers must prioritize convergence diagnostics not as an optional post-processing step, but as an integral component of the simulation workflow. By adhering to rigorous protocols, leveraging consensus strategies, and critically evaluating emerging methods, scientists can significantly enhance the predictive power of their computational pipelines. This, in turn, accelerates the identification of viable drug candidates and de-risks the entire drug discovery process.

Convergence in Practice: Algorithms, Criteria, and Implementation Strategies

This guide provides an in-depth comparison of three fundamental optimization algorithms—Steepest Descent, Conjugate Gradient, and L-BFGS—within the context of energy minimization for scientific computing and drug development. Energy minimization represents a critical step in computational chemistry, molecular dynamics, and drug design workflows, where identifying stable molecular configurations directly impacts the accuracy of subsequent simulations. We present a technical analysis of each algorithm's mathematical foundation, convergence behavior, and computational characteristics, supplemented by structured performance comparisons and implementation protocols. By establishing clear selection criteria based on problem dimension, computational budget, and desired accuracy, this guide aims to equip researchers with the necessary knowledge to optimize their energy minimization workflows effectively.

Energy minimization is a foundational procedure in computational science and a common first step in molecular dynamics simulations, drug docking studies, and materials science research. The process involves finding atomic coordinates at a minimum of the system's potential energy function, which corresponds to a stable configuration of the molecular system [24]. In practical applications such as drug discovery, researchers must generate and minimize numerous conformations for each candidate molecule before docking them into protein binding sites to calculate binding affinity [24].

The efficiency and effectiveness of energy minimization algorithms directly impact research productivity and simulation accuracy. Within this context, three algorithms have emerged as fundamental tools: Steepest Descent provides robustness through simplicity, the Conjugate Gradient method offers balanced performance for medium-scale problems, and Limited-memory BFGS (L-BFGS) delivers superior convergence characteristics for large-scale systems [25] [26]. This guide examines these algorithms within the broader framework of energy minimization convergence criteria research, providing researchers and drug development professionals with a comprehensive reference for algorithm selection and implementation.

Theoretical Foundations

Energy Minimization Problem Formulation

In computational chemistry and molecular modeling, energy minimization is formulated as an optimization problem where the vector $\mathbf{r}$ represents all $3N$ atomic coordinates of a system containing $N$ atoms [25]. The objective is to find the coordinate set $\mathbf{r}^*$ that minimizes the potential energy function $V(\mathbf{r})$:

$$\mathbf{r}^* = \arg\min V(\mathbf{r})$$

This potential energy function $V(\mathbf{r})$ characterizes the interatomic interactions within the system, and its negative gradient $\mathbf{F} = -\nabla V(\mathbf{r})$ gives the forces acting on the atoms [25]. The minimization process drives the system toward equilibrium configurations where net forces approach zero, corresponding to local or global energy minima.

Convergence Criteria

Choosing appropriate convergence criteria is a critical part of energy minimization. The algorithm typically terminates when the maximum absolute value of the force components falls below a specified threshold $\epsilon$ [25]:

$$\max(|\mathbf{F}|) < \epsilon$$

A reasonable value for $\epsilon$ can be estimated from the root mean square force of a harmonic oscillator at temperature $T$ [25]:

$$f = 2\pi\nu\sqrt{2mkT}$$

where $\nu$ represents the oscillator frequency, $m$ the mass, and $k$ Boltzmann's constant. For molecular systems, $\epsilon$ values between 1 and 10 kJ mol$^{-1}$ nm$^{-1}$ are generally acceptable [25]. Recent research has further refined convergence assessment, particularly for free-energy calculations where the relationship between bias measures and sample variance informs stopping criteria [27].
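As a quick sanity check on the 1 to 10 kJ mol$^{-1}$ nm$^{-1}$ range, the oscillator formula can be evaluated numerically. The sketch below is illustrative only: the inputs (a weak oscillator at 100 cm$^{-1}$, a mass of 10 atomic mass units, and a temperature of 1 K) and the function name are assumptions chosen for the example, not values prescribed by this guide.

```python
import math

# Physical constants in SI units
K_B = 1.380649e-23        # Boltzmann constant, J/K
N_A = 6.02214076e23       # Avogadro constant, 1/mol
C_CM = 2.99792458e10      # speed of light, cm/s
AMU = 1.66053906660e-27   # atomic mass unit, kg


def rms_oscillator_force(wavenumber_cm, mass_amu, temperature_k):
    """Return f = 2*pi*nu*sqrt(2*m*k*T) in kJ mol^-1 nm^-1."""
    nu = wavenumber_cm * C_CM              # wavenumber -> frequency in Hz
    m = mass_amu * AMU                     # mass in kg
    f_newton = 2.0 * math.pi * nu * math.sqrt(2.0 * m * K_B * temperature_k)
    # N = J/m -> J/nm (x 1e-9) -> kJ/mol/nm (x N_A / 1000)
    return f_newton * 1e-9 * N_A / 1000.0


# Assumed, illustrative inputs: 100 cm^-1, 10 amu, 1 K
f_rms = rms_oscillator_force(100.0, 10.0, 1.0)
print(f"{f_rms:.1f} kJ mol^-1 nm^-1")   # prints "7.7 kJ mol^-1 nm^-1"
```

With these inputs the estimate lands inside the quoted range, which suggests why thresholds of that order are a sensible starting point.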

Algorithm Specifications

Steepest Descent

Mathematical Formulation

The Steepest Descent algorithm follows a straightforward iterative process where new positions are calculated according to [25]:

$$\mathbf{r}_{n+1} = \mathbf{r}_n + \frac{\mathbf{F}_n}{\max(|\mathbf{F}_n|)} h_n$$

Here, $h_n$ represents the maximum displacement and $\mathbf{F}_n$ is the force vector. The algorithm employs an adaptive step size control mechanism where $h_{n+1} = 1.2 h_n$ if $V_{n+1} < V_n$ (successful step), and $h_n = 0.2 h_n$ if $V_{n+1} \geq V_n$ (unsuccessful step) [25].
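The update rule and step-size control can be sketched in a few lines of Python. This is a minimal illustration, not a production minimizer: it assumes that an unsuccessful trial step is discarded before the step size is reduced, and the anisotropic harmonic test potential, its force constants, and all function names are hypothetical.

```python
import numpy as np


def steepest_descent(energy_and_force, r0, h0=0.01, f_tol=10.0, max_steps=10_000):
    """Adaptive-step steepest descent.

    energy_and_force(r) must return (V, F) with F = -grad V.
    Returns the final coordinates, energy, and number of steps used.
    """
    r = np.asarray(r0, dtype=float)
    v, f = energy_and_force(r)
    h = h0
    for step in range(max_steps):
        f_max = np.max(np.abs(f))
        if f_max < f_tol:                  # converged: max |F| < epsilon
            return r, v, step
        r_trial = r + f / f_max * h        # largest force component moves by h
        v_trial, f_trial = energy_and_force(r_trial)
        if v_trial < v:                    # successful step: accept and grow h
            r, v, f = r_trial, v_trial, f_trial
            h *= 1.2
        else:                              # unsuccessful step: discard and shrink h
            h *= 0.2
    return r, v, max_steps


# Hypothetical test potential: anisotropic harmonic well centered at the origin
k = np.array([500.0, 100.0, 50.0])         # illustrative force constants


def harmonic(r):
    return 0.5 * np.sum(k * r**2), -k * r


r_min, v_min, n_steps = steepest_descent(harmonic, [0.5, -0.3, 0.8], f_tol=1.0)
print(n_steps, np.max(np.abs(k * r_min)))
```

Because only energies and forces are needed, the loop carries a handful of vectors of length $3N$, which is the low memory footprint noted for this method.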

Characteristics and Applications

Steepest Descent demonstrates particular strength in the early stages of minimization and for systems far from equilibrium. Although not the most efficient algorithm for final convergence, its robustness and implementation simplicity make it valuable for initial minimization steps [25]. The method requires only gradient information and minimal memory storage, making it applicable to very large systems where memory constraints may prohibit using more sophisticated algorithms.

Conjugate Gradient Method

Mathematical Foundation

The Conjugate Gradient method improves upon Steepest Descent by incorporating information from previous search directions. For a quadratic objective function $f(\mathbf{x}) = \frac{1}{2}\mathbf{x}^T\mathbf{A}\mathbf{x} - \mathbf{x}^T\mathbf{b}$, the method generates search directions that satisfy the conjugacy property $\mathbf{p}_i^T\mathbf{A}\mathbf{p}_j = 0$ for $i \neq j$ [28].

The algorithm proceeds iteratively through the following steps [28]:

- Initialize: $\mathbf{x}_0$, $\mathbf{r}_0 = \mathbf{b} - \mathbf{A}\mathbf{x}_0$, $\mathbf{p}_0 = \mathbf{r}_0$

- For $k = 0, 1, \ldots$ until convergence:

  - Compute $\alpha_k = \frac{\mathbf{p}_k^T\mathbf{r}_k}{\mathbf{p}_k^T\mathbf{A}\mathbf{p}_k}$

  - Update $\mathbf{x}_{k+1} = \mathbf{x}_k + \alpha_k\mathbf{p}_k$

  - Compute $\mathbf{r}_{k+1} = \mathbf{b} - \mathbf{A}\mathbf{x}_{k+1}$

  - Compute $\beta_k = \frac{\mathbf{r}_{k+1}^T\mathbf{A}\mathbf{p}_k}{\mathbf{p}_k^T\mathbf{A}\mathbf{p}_k}$

  - Update $\mathbf{p}_{k+1} = \mathbf{r}_{k+1} - \beta_k\mathbf{p}_k$

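In nonlinear applications such as energy minimization, the Hessian of the potential energy function plays the role of $\mathbf{A}$ near a minimum. It is not formed explicitly: the step length $\alpha_k$ is obtained from a line search, and $\beta_k$ is computed from successive gradients, for example with the Fletcher-Reeves or Polak-Ribière formula.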
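For the quadratic case, the iteration listed above translates almost line by line into NumPy. The sketch below assumes a symmetric positive-definite matrix; the function name and the small 2×2 test system are illustrative and not taken from the cited references.

```python
import numpy as np


def conjugate_gradient(A, b, x0=None, tol=1e-10, max_iter=None):
    """Solve A x = b (equivalently, minimize 0.5 x^T A x - x^T b) for SPD A."""
    A = np.asarray(A, dtype=float)
    b = np.asarray(b, dtype=float)
    x = np.zeros_like(b) if x0 is None else np.asarray(x0, dtype=float).copy()
    r = b - A @ x                      # residual = negative gradient of f
    p = r.copy()                       # first search direction
    max_iter = len(b) if max_iter is None else max_iter
    for _ in range(max_iter):
        if np.linalg.norm(r) < tol:
            break
        Ap = A @ p
        pAp = p @ Ap
        alpha = (p @ r) / pAp          # exact minimizer along p
        x = x + alpha * p
        r = b - A @ x                  # recomputed residual, as in the listing
        beta = (r @ Ap) / pAp          # makes the next direction A-conjugate to p
        p = r - beta * p
    return x


# Illustrative 2x2 system; the exact solution is [1/11, 7/11]
A = np.array([[4.0, 1.0], [1.0, 3.0]])
b = np.array([1.0, 2.0])
x = conjugate_gradient(A, b)
print(x)
```

In exact arithmetic the loop terminates in at most $n$ iterations for an $n$-dimensional quadratic, which is why the default iteration cap here is the problem dimension.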

Characteristics and Applications

The Conjugate Gradient method typically converges more rapidly than Steepest Descent, particularly near energy minima [25]. A significant limitation in molecular dynamics applications is that standard Conjugate Gradient cannot be used with constraints, meaning flexible water models must be employed when simulating aqueous systems [25]. The method finds particular utility in minimization prior to normal-mode analysis, where high accuracy is required [25].

L-BFGS Algorithm

Mathematical Foundation

The Limited-memory BFGS algorithm approximates the BFGS method using a limited amount of computer memory. Instead of storing the full inverse Hessian matrix (which requires $O(n^2)$ memory for $n$ variables), L-BFGS maintains only the last $m$ (typically $m < 10$) pairs of position and gradient differences [26].

Let $\mathbf{s}_k = \mathbf{x}_{k+1} - \mathbf{x}_k$ and $\mathbf{y}_k = \nabla f_{k+1} - \nabla f_k$. The inverse Hessian approximation is updated using [26]:

$$\mathbf{H}_{k+1} = (\mathbf{I} - \rho_k\mathbf{s}_k\mathbf{y}_k^T)\mathbf{H}_k(\mathbf{I} - \rho_k\mathbf{y}_k\mathbf{s}_k^T) + \rho_k\mathbf{s}_k\mathbf{s}_k^T$$

where $\rho_k = \frac{1}{\mathbf{y}_k^T\mathbf{s}_k}$. The limited-memory approach efficiently computes the search direction $\mathbf{d}_k = -\mathbf{H}_k\nabla f_k$ without explicitly constructing $\mathbf{H}_k$ [26].
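One common way to obtain the search direction without building the matrix is the two-loop recursion, sketched below. The code is a hedged illustration rather than any particular library's implementation: the function name, the oldest-first ordering of the stored pairs, and the scaled-identity starting guess are assumptions made for the example.

```python
import numpy as np


def lbfgs_direction(grad, s_list, y_list):
    """Return d = -H grad using the two-loop recursion.

    s_list and y_list hold the most recent (s_k, y_k) pairs, oldest first.
    """
    q = np.array(grad, dtype=float)
    rhos = [1.0 / (y @ s) for s, y in zip(s_list, y_list)]
    alphas = []
    # First loop: newest pair to oldest
    for s, y, rho in zip(reversed(s_list), reversed(y_list), reversed(rhos)):
        a = rho * (s @ q)
        alphas.append(a)
        q = q - a * y
    # Starting inverse-Hessian guess: scaled identity gamma * I (assumed choice)
    if s_list:
        gamma = (s_list[-1] @ y_list[-1]) / (y_list[-1] @ y_list[-1])
    else:
        gamma = 1.0
    r = gamma * q
    # Second loop: oldest pair to newest
    for s, y, rho, a in zip(s_list, y_list, rhos, reversed(alphas)):
        b = rho * (y @ r)
        r = r + (a - b) * s
    return -r


# Illustrative data: gradient differences generated by a hypothetical quadratic
A_hess = np.array([[3.0, 1.0], [1.0, 2.0]])
s_pairs = [np.array([1.0, 0.0]), np.array([0.0, 1.0])]
y_pairs = [A_hess @ s for s in s_pairs]
g = np.array([1.0, -1.0])
d = lbfgs_direction(g, s_pairs, y_pairs)
print(d)
```

Each call costs a few dot products per stored pair, so the work and storage grow as $O(mn)$ rather than $O(n^2)$.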

Characteristics and Applications

L-BFGS typically converges faster than Conjugate Gradient methods and has become the "algorithm of choice" for many large-scale optimization problems in machine learning and computational chemistry [26] [29]. The method's approximation of curvature information through gradient differences enables superlinear convergence while maintaining manageable memory requirements. However, the algorithm may face challenges with non-differentiable components or when constraints are present, though variants like L-BFGS-B have been developed to handle simple bound constraints [26].

Performance Comparison

Theoretical Properties

Table 1: Algorithm Characteristics Comparison

| Characteristic | Steepest Descent | Conjugate Gradient | L-BFGS |
|---|---|---|---|
| Convergence Rate | Linear (slow) | Linear/Superlinear | Superlinear |
| Memory Requirements | $O(n)$ | $O(n)$ | $O(mn)$ |
| Computational Cost per Iteration | Low | Moderate | Moderate to High |
| Robustness | High | Moderate | High |
| Implementation Complexity | Low | Moderate | Moderate |
| Handling of Ill-conditioned Problems | Poor | Good | Excellent |

Practical Performance in Scientific Applications

Table 2: Experimental Performance in Real Applications

| Application Context | Steepest Descent Performance | Conjugate Gradient Performance | L-BFGS Performance |
|---|---|---|---|
| Drug Molecule Optimization (Diabetes drugs with rotatable bonds) | Higher final energy | Lower energy values for all molecules [24] | Not specifically reported |
| Cobalt-Copper Nanostructure (Energy tolerance = 0.001) | Did not reach lowest energy | 27 iterations, 363.03 seconds [24] | Not specifically reported |
| General Large-scale Problems | Slow convergence, gets "stuck" [29] | $n$ iterations ≈ one Newton step [29] | Fewer iterations than CG [29] |
| Pre-normal-mode Analysis | Not sufficiently accurate | Required for accuracy [25] | Not yet parallelized in some implementations [25] |

The reported results favor Conjugate Gradient over Steepest Descent when the goal is the lowest final energy; L-BFGS was not evaluated in these particular studies. In drug discovery applications involving small molecules with multiple rotatable bonds, Conjugate Gradient consistently achieved lower energy conformations than Steepest Descent [24]. Similarly, in materials science applications involving cobalt-copper nanostructures, Conjugate Gradient reached convergence in just 27 iterations with an energy tolerance of 0.001, while Steepest Descent failed to achieve the lowest energy configuration even with substantially more computational effort [24].

Implementation Considerations

Algorithm Selection Guidelines

Figure: Algorithm Selection Workflow

The selection of an appropriate energy minimization algorithm depends on multiple factors including system size, computational resources, accuracy requirements, and presence of constraints. For small systems (typically < 100 variables) where memory is not a constraint, L-BFGS generally provides the fastest convergence [29]. For large-scale systems where memory limitations exist, Conjugate Gradient methods are preferable due to their $O(n)$ memory requirements [28] [29]. When constraints are necessary in the molecular system (such as rigid water molecules), Steepest Descent may be the only viable option among these three algorithms [25].

Convergence Monitoring and Termination Criteria

Figure: Convergence Assessment Workflow

Effective convergence monitoring requires tracking multiple criteria to ensure the minimization has reached a genuine local minimum. The primary criterion is the maximum absolute value of force components, which should fall below a problem-specific threshold ε [25]. Additionally, energy changes between iterations and positional changes should be monitored to detect stagnation. For molecular systems, a reasonable ε value can be estimated from the root mean square force of a harmonic oscillator at the simulation temperature [25]. Recent research on free-energy calculations has established relationships between bias measures and sample variance, providing more sophisticated statistical approaches for convergence assessment [27].
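A per-step check combining these criteria might look like the following sketch. The force tolerance follows the criterion described above, while the energy and displacement thresholds, the function name, and the sample numbers are hypothetical values chosen only to illustrate the logic.

```python
import numpy as np


def check_convergence(forces, e_new, e_old, r_new, r_old,
                      f_tol=10.0, e_tol=1e-3, x_tol=1e-5):
    """Return (converged, stalled, diagnostics) for one minimization step.

    f_tol is the force criterion; e_tol and x_tol are illustrative
    stagnation thresholds, not values taken from the cited sources.
    """
    f_max = float(np.max(np.abs(forces)))
    d_e = abs(e_new - e_old)
    d_x = float(np.max(np.abs(np.asarray(r_new) - np.asarray(r_old))))
    converged = f_max < f_tol
    # Stagnation: energy and positions barely change while forces remain large
    stalled = (not converged) and d_e < e_tol and d_x < x_tol
    return converged, stalled, {"f_max": f_max, "dE": d_e, "dx_max": d_x}


# Made-up forces (kJ mol^-1 nm^-1), energies (kJ mol^-1), and positions (nm)
forces = np.array([[3.0, -8.5, 1.2], [0.4, 2.0, -6.1]])
ok, stalled, info = check_convergence(
    forces, -1250.4, -1250.1, np.zeros((2, 3)), np.full((2, 3), 0.001)
)
print(ok, stalled, info["f_max"])   # prints "True False 8.5"
```

Reporting the stalled flag separately helps distinguish a genuine minimum from a run that has stopped making progress while forces are still above tolerance.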

Experimental Protocols

Standard Implementation Parameters

For Steepest Descent implementations, initial maximum displacement $h_0$ is typically set to 0.01 nm, with scaling factors of 1.2 for successful steps and 0.2 for unsuccessful steps [25]. The algorithm requires specification of either maximum force evaluations or force tolerance ε for termination.

For Conjugate Gradient methods, implementation requires a line search procedure to determine the step size $\alpha_k$. The standard Wolfe conditions are commonly employed [30]:

$$f(\mathbf{x}_k + \alpha_k\mathbf{d}_k) \leq f(\mathbf{x}_k) + \delta\alpha_k\mathbf{g}_k^T\mathbf{d}_k$$

$$\mathbf{g}_{k+1}^T\mathbf{d}_k \geq \sigma\mathbf{g}_k^T\mathbf{d}_k$$

with $0 < \delta \leq \sigma < 1$.
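The two conditions are easy to test for a candidate step length. The sketch below assumes the objective and its gradient are available as Python callables; the parameter values $\delta = 10^{-4}$ and $\sigma = 0.1$, the quadratic test function, and the function names are illustrative assumptions.

```python
import numpy as np


def satisfies_wolfe(f, grad, x, d, alpha, delta=1e-4, sigma=0.1):
    """Check the sufficient-decrease and curvature conditions for step alpha."""
    g0_d = grad(x) @ d                     # directional derivative at x
    x_new = x + alpha * d
    sufficient_decrease = f(x_new) <= f(x) + delta * alpha * g0_d
    curvature = grad(x_new) @ d >= sigma * g0_d
    return bool(sufficient_decrease and curvature)


# Hypothetical test problem: f(x) = 0.5 * |x|^2 with a steepest-descent direction
def f(x):
    return 0.5 * float(x @ x)


def grad(x):
    return x


x0 = np.array([1.0, 1.0])
d0 = -grad(x0)
results = {a: satisfies_wolfe(f, grad, x0, d0, a) for a in (1e-3, 1.0, 2.5)}
print(results)   # prints "{0.001: False, 1.0: True, 2.5: False}"
```

The very short step fails the curvature condition and the overly long step fails sufficient decrease, which is the bracketing behavior a line search exploits.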

For L-BFGS, the critical parameter is the memory size $m$, typically between 3 and 20, which sets how many recent $(\mathbf{s}_k, \mathbf{y}_k)$ pairs are retained to approximate the inverse Hessian [26]. Larger values provide better approximations but increase memory usage. The initial inverse Hessian approximation is usually set to a scaled identity matrix $\gamma_k\mathbf{I}$, where $\gamma_k = \frac{\mathbf{s}_{k-1}^T\mathbf{y}_{k-1}}{\mathbf{y}_{k-1}^T\mathbf{y}_{k-1}}$ [26].

Research Reagent Solutions

Table 3: Essential Computational Tools for Energy Minimization Research