Energy Minimization Convergence Analysis: Techniques for Robust Biomolecular Simulations in Drug Development

Abstract

This article provides a comprehensive analysis of energy minimization convergence techniques, a critical prerequisite for stable and accurate biomolecular simulations in pharmaceutical research. We explore the foundational principles of convergence criteria and the impact of force fields on complex systems like protein-DNA complexes. The review systematically compares the performance of prevalent algorithms—Steepest Descent, Conjugate Gradient, and L-BFGS—and introduces advanced methodologies, including an enhanced Nonlinear Conjugate Gradient method for charged systems. A dedicated troubleshooting guide addresses common failure modes, such as force non-convergence and segmentation faults, while the final section establishes robust validation protocols to distinguish mathematical convergence from true thermodynamic equilibrium, ensuring the reliability of simulation results for downstream drug discovery applications.

Understanding Convergence: The Bedrock of Stable Biomolecular Simulations

In computational science, whether simulating a drug molecule binding to a protein or optimizing a renewable energy grid, the process of energy minimization seeks to find the most stable configuration of a system by locating the lowest point on its energy landscape. The concept of convergence—the point at which this search is considered complete—serves as a critical bridge between mathematical optimization and physical meaning. Properly defining and detecting convergence is not merely a computational formality but a fundamental determinant of simulation reliability. An optimization halted prematurely may yield unrealistic, high-energy configurations, while excessively strict convergence criteria waste computational resources without improving physical relevance.

This guide provides a comprehensive comparison of convergence criteria across energy minimization methodologies, examining how mathematical stopping parameters translate to physically meaningful system states. We analyze convergence standards in computational chemistry, molecular dynamics, and broader optimization contexts, supported by experimental data on algorithm performance and practical protocols for researchers engaged in drug development and molecular simulation.

Theoretical Foundations: Mathematical Criteria for Convergence

Fundamental Convergence Parameters

Across optimization algorithms, convergence is typically determined by monitoring the evolution of three fundamental quantities: the system's potential energy, the forces acting on its components, and the spatial displacement between successive iterations.

Force-Based Criteria: The most physically grounded convergence criterion assesses the root mean square (RMS) or maximum absolute value of forces (the negative energy gradient) acting on the system. When all forces fall below a specified threshold, the system is considered at or near a minimum where the net force is zero. In molecular simulations, a common target is for the maximum force to be smaller than a reference value, often chosen based on the physical context. For example, in GROMACS molecular dynamics simulations, a reasonable value can be estimated from the root mean square force a harmonic oscillator exhibits at a given temperature, with values between 10-100 kJ mol⁻¹ nm⁻¹ generally being acceptable [1].
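As a rough worked example of that estimate, the sketch below evaluates the root mean square force of a harmonic oscillator, f = 2πν√(2mkT), which is the expression given in the GROMACS reference manual. The oscillator parameters (100 cm⁻¹, reduced mass 10 u, 1 K) and the function name are illustrative choices rather than values taken from this article.

```python
import math

# Physical constants in SI units
BOLTZMANN = 1.380649e-23        # J/K
AVOGADRO = 6.02214076e23        # 1/mol
ATOMIC_MASS = 1.66053907e-27    # kg
LIGHT_SPEED_CM = 2.99792458e10  # cm/s


def harmonic_rms_force(wavenumber_cm, reduced_mass_u, temperature_k):
    """RMS force of a harmonic oscillator, in kJ mol^-1 nm^-1."""
    nu = wavenumber_cm * LIGHT_SPEED_CM   # oscillator frequency in Hz
    mass = reduced_mass_u * ATOMIC_MASS   # reduced mass in kg
    force_newton = 2.0 * math.pi * nu * math.sqrt(
        2.0 * mass * BOLTZMANN * temperature_k
    )
    # N per oscillator -> kJ/mol/nm: times N_A, J -> kJ (1e-3), per m -> per nm (1e-9)
    return force_newton * AVOGADRO * 1e-3 * 1e-9


# Hypothetical weak oscillator: 100 cm^-1, 10 u, 1 K
weak_oscillator_force = harmonic_rms_force(100.0, 10.0, 1.0)
print(f"{weak_oscillator_force:.1f} kJ/mol/nm")
```

Because the force scales with √T, the same oscillator at room temperature gives a value roughly 17 times larger, which is one way to see why thresholds in the 10-100 kJ mol⁻¹ nm⁻¹ range are usually tight enough before dynamics.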

Energy Change Criteria: This approach monitors the change in potential energy between successive minimization steps. Convergence is achieved when the energy decrease per step falls below a threshold, indicating no significant further energy reduction is possible. This criterion is computationally simple but can be sensitive to numerical noise, particularly when forces are truncated [1].

Displacement Criteria: Some algorithms consider the maximum or RMS change in atomic coordinates between iterations. When particles or design variables stop moving significantly, the algorithm is considered converged. This approach is often used alongside force or energy criteria for added robustness [2].
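The three criteria above can be evaluated together at each step. The following is a minimal sketch, assuming per-atom forces and coordinates are available as plain tuples; the function name, dictionary keys, and default tolerances are hypothetical and would need to be matched to the units and conventions of the simulation package in use.

```python
import math


def check_convergence(forces, energy_prev, energy_curr, coords_prev, coords_curr,
                      fmax_tol=10.0, de_tol=1e-3, dx_tol=1e-4):
    """Evaluate force, energy-change, and displacement criteria for one step.

    forces, coords_prev, coords_curr: lists of (x, y, z) tuples, one per atom.
    The tolerances are illustrative defaults, not recommendations.
    """
    force_norms = [math.sqrt(fx * fx + fy * fy + fz * fz) for fx, fy, fz in forces]
    fmax = max(force_norms)
    frms = math.sqrt(sum(f * f for f in force_norms) / len(force_norms))
    delta_e = abs(energy_curr - energy_prev)
    max_disp = max(math.dist(a, b) for a, b in zip(coords_prev, coords_curr))
    return {
        "fmax": fmax,
        "frms": frms,
        "delta_e": delta_e,
        "max_disp": max_disp,
        "force_ok": fmax < fmax_tol,
        "energy_ok": delta_e < de_tol,
        "disp_ok": max_disp < dx_tol,
    }


# Hypothetical two-atom snapshot
report = check_convergence(
    forces=[(3.0, 4.0, 0.0), (0.0, 0.0, 1.0)],
    energy_prev=-1000.0005,
    energy_curr=-1000.0010,
    coords_prev=[(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    coords_curr=[(0.0, 0.0, 0.00001), (1.0, 0.0, 0.0)],
)
converged = report["force_ok"] and report["energy_ok"] and report["disp_ok"]
```

Requiring all three flags at once is the conservative choice; a looser policy could accept the force criterion alone.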

Algorithm-Specific Convergence Behaviors

Different minimization algorithms exhibit characteristic convergence patterns that influence both criterion selection and interpretation:

Steepest Descent: This robust method quickly reduces energy and forces in initial iterations but typically slows dramatically near the minimum, exhibiting linear convergence. It is often used for initial minimization steps to quickly remove large steric clashes before switching to more efficient algorithms [1] [3].

Conjugate Gradient: Slower than steepest descent in the early stages, conjugate gradient methods become more efficient close to the minimum, where convergence can be superlinear. They suit final minimization stages, but some implementations (GROMACS, for example) cannot combine them with constraints [1].

Quasi-Newton Methods (L-BFGS): These methods build an approximation to the Hessian matrix (second derivatives) to achieve faster convergence. The L-BFGS variant limits memory usage while maintaining good convergence properties, often outperforming conjugate gradient methods [2] [1].

Table 1: Convergence Characteristics of Common Minimization Algorithms

| Algorithm | Initial Convergence | Final Convergence | Best Application Context |
|---|---|---|---|
| Steepest Descent | Fast initial progress | Slow (linear convergence) | Initial minimization of poorly structured systems |
| Conjugate Gradient | Moderate progress | Fast (superlinear) | Systems without constraints after initial minimization |
| L-BFGS | Fast | Fast (superlinear) | Large systems with limited memory resources |
| Hybrid Methods | Fast | Fast | Complex systems with multiple minima |
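The contrast between steepest descent and conjugate gradient near a minimum can be seen on a toy problem. The sketch below is an assumption-laden illustration: it uses an ill-conditioned two-dimensional quadratic energy and the linear conjugate gradient recurrence with exact line searches, not the nonlinear variants used on real molecular force fields. The matrix, starting point, and tolerance are hypothetical.

```python
def matvec(matrix, vector):
    return [sum(a * v for a, v in zip(row, vector)) for row in matrix]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def steepest_descent(A, b, x, fmax_tol, max_steps=10000):
    """Exact-line-search steepest descent on E(x) = 0.5 x^T A x - b^T x."""
    for step in range(max_steps):
        force = [bi - ax for bi, ax in zip(b, matvec(A, x))]  # force = -gradient
        if max(abs(f) for f in force) < fmax_tol:
            return x, step
        alpha = dot(force, force) / dot(force, matvec(A, force))
        x = [xi + alpha * fi for xi, fi in zip(x, force)]
    return x, max_steps


def conjugate_gradient(A, b, x, fmax_tol, max_steps=10000):
    """Linear conjugate gradient on the same quadratic energy."""
    force = [bi - ax for bi, ax in zip(b, matvec(A, x))]
    direction = list(force)
    for step in range(max_steps):
        if max(abs(f) for f in force) < fmax_tol:
            return x, step
        a_dir = matvec(A, direction)
        alpha = dot(force, force) / dot(direction, a_dir)
        x = [xi + alpha * di for xi, di in zip(x, direction)]
        new_force = [fi - alpha * ad for fi, ad in zip(force, a_dir)]
        beta = dot(new_force, new_force) / dot(force, force)
        direction = [nf + beta * di for nf, di in zip(new_force, direction)]
        force = new_force
    return x, max_steps


# Hypothetical stiff/soft pair of modes (condition number 100)
A = [[1.0, 0.0], [0.0, 100.0]]
b = [0.0, 0.0]
start = [100.0, 1.0]

_, sd_steps = steepest_descent(A, b, start, fmax_tol=1e-3)
_, cg_steps = conjugate_gradient(A, b, start, fmax_tol=1e-3)
print(f"steepest descent: {sd_steps} steps, conjugate gradient: {cg_steps} steps")
```

On this quadratic, steepest descent zig-zags between the stiff and soft directions and needs hundreds of steps, while conjugate gradient finishes in about as many steps as there are dimensions. Real force fields are not quadratic, so the gap is smaller in practice.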

Convergence in Practice: Domain-Specific Applications

Molecular Dynamics and Computational Chemistry

In molecular dynamics simulations, energy minimization is a crucial preparatory step before production runs. Convergence ensures the system is free of unrealistic high-energy configurations that could cause instability when dynamics begin. The GROMACS molecular dynamics package implements multiple minimization algorithms with convergence determined primarily by force thresholds [1].

A critical consideration in molecular simulations is that different system properties converge at different rates. Research on asphalt systems demonstrates that while energy and density may equilibrate rapidly, other indicators like pressure and radial distribution functions (RDF) require significantly longer simulation times to converge. In particular, RDF curves for complex components like asphaltene-asphaltene interactions may show considerable fluctuations and irregular peaks until full convergence is achieved, highlighting the risk of relying solely on energy stabilization as an equilibrium indicator [4].

Global Optimization and Metaheuristic Methods

For systems with complex energy landscapes containing multiple local minima, specialized approaches are required to distinguish local convergence from global optimality. Novel methods like Soft-min Energy minimization introduce a smooth, differentiable approximation of the minimum function value within a particle swarm, enabling gradient-based exploration of the global energy landscape [5].
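To make the idea of a smooth minimum concrete, the sketch below uses the standard log-sum-exp soft-min over the energies of a set of particles. This is one common smooth approximation of the minimum and is an assumption here; the cited method [5] may define its soft-min differently. The sharpness parameter `beta` and the sample energies are hypothetical.

```python
import math


def soft_min(values, beta):
    """Smooth lower bound on min(values): -(1/beta) * log(sum(exp(-beta * v))).

    Shifting by the true minimum keeps the exponentials numerically stable.
    """
    lowest = min(values)
    shifted_sum = sum(math.exp(-beta * (v - lowest)) for v in values)
    return lowest - math.log(shifted_sum) / beta


# Hypothetical energies of three particles in a swarm
particle_energies = [3.0, 1.0, 2.5]
smooth = soft_min(particle_energies, beta=1.0)   # blends all particles
sharp = soft_min(particle_energies, beta=50.0)   # close to the hard minimum
```

A small `beta` lets every particle contribute to the gradient, while a large `beta` concentrates on the current best particle.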

Hybrid algorithms that combine different optimization strategies often demonstrate superior convergence properties. In renewable energy microgrid optimization, hybrid methods like Gradient-Assisted PSO (GD-PSO) and WOA-PSO consistently achieved lower average costs with stronger stability compared to classical approaches, with statistical analyses confirming the robustness of these findings [6].

Table 2: Performance Comparison of Optimization Algorithms in a Microgrid Case Study [6]

| Algorithm | Average Cost | Stability | Computational Cost |
|---|---|---|---|
| ACO (Classical) | Higher | High variability | Moderate |
| PSO (Classical) | Moderate | Moderate | Low |
| WOA (Classical) | Moderate | Moderate | Moderate |
| GD-PSO (Hybrid) | Lowest | High stability | Moderate |
| WOA-PSO (Hybrid) | Low | High stability | Moderate |

Physical Meaning of Convergence Indicators

The mathematical criteria for convergence directly correspond to physically meaningful system states:

Force Convergence: A system with near-zero forces on all components has reached a mechanically stable configuration where all atoms experience minimal net forces. This represents a local minimum on the potential energy surface.

Energy Convergence: When the system energy stops decreasing significantly, the minimizer has reached a plateau on the potential energy surface. In molecular systems, this typically corresponds to a locally favorable conformation, which may be metastable rather than the global minimum.

Spatial Convergence: Minimal atomic displacement between iterations indicates structural stability, where the system geometry remains consistent over time.

The critical insight is that different physical properties converge at different rates, and relying on a single convergence criterion may yield misleading results. For example, in asphalt system simulations, energy stabilizes quickly while representative molecular interactions (as evidenced by RDF curves) may require orders of magnitude longer simulation times to fully converge [4].

Experimental Protocols and Assessment Methodologies

Standard Convergence Testing Protocol

Based on analyzed methodologies, the following protocol provides a robust approach for assessing convergence in energy minimization studies:

System Preparation

- Construct initial system configuration with appropriate boundary conditions

- Apply initial minimization with gentle algorithm (e.g., Steepest Descent) to remove severe steric clashes

- For molecular systems, ensure proper solvation and ionization state

Multi-Stage Minimization

- Begin with steepest descent (5,000-10,000 steps) or until maximum force < 1000 kJ mol⁻¹ nm⁻¹

- Switch to more efficient algorithm (conjugate gradient or L-BFGS) for finer convergence

- Apply progressively stricter convergence criteria in stages

Multi-Metric Monitoring

- Track maximum force and RMS force throughout minimization

- Monitor potential energy evolution

- Record coordinate changes between iterations

- For molecular systems, calculate additional metrics like radius of gyration or specialized order parameters

Convergence Validation

- Verify that all monitored metrics have stabilized

- Confirm physical reasonableness of final configuration (bond lengths, angles, etc.)

- For production simulations, ensure convergence is maintained across replicas
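The staged part of this protocol can be sketched on a toy system. The example below is a minimal illustration under stated assumptions: a two-dimensional harmonic well stands in for a real force field, the stage tolerances (1000, 100, 10) and starting point are hypothetical, and only steepest descent is used in every stage for brevity. The step rule (grow the step by 1.2 after an accepted move, halve it after a rejected one) follows the adaptive scheme described in the GROMACS documentation.

```python
def energy_and_forces(pos):
    """Toy 2-D anisotropic harmonic well (hypothetical stiffnesses)."""
    kx, ky = 5000.0, 50000.0
    x, y = pos
    return 0.5 * (kx * x * x + ky * y * y), (-kx * x, -ky * y)


def steepest_descent_stage(pos, fmax_tol, max_steps, step=0.01):
    """Adaptive steepest descent until the largest force component < fmax_tol."""
    energy, forces = energy_and_forces(pos)
    for n in range(max_steps):
        fmax = max(abs(f) for f in forces)
        if fmax < fmax_tol:
            return pos, energy, fmax, n
        # Move along the force, scaled so the largest displacement equals `step`
        trial = tuple(p + step * f / fmax for p, f in zip(pos, forces))
        trial_energy, trial_forces = energy_and_forces(trial)
        if trial_energy < energy:
            pos, energy, forces = trial, trial_energy, trial_forces
            step *= 1.2      # accepted: try a larger step next time
        else:
            step *= 0.5      # rejected: keep the old position, shrink the step
    return pos, energy, max(abs(f) for f in forces), max_steps


stage_tolerances = [1000.0, 100.0, 10.0]   # progressively stricter
pos = (0.5, 0.2)
history = []
for tol in stage_tolerances:
    pos, energy, fmax, used = steepest_descent_stage(pos, tol, max_steps=10000)
    history.append((tol, used, fmax))
    print(f"tolerance {tol:7.1f}: {used} steps, Fmax = {fmax:.3f}, E = {energy:.6f}")
```

In a real workflow the later stages would normally switch to conjugate gradient or L-BFGS, and each stage's log would also record the energy and displacement metrics listed above.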

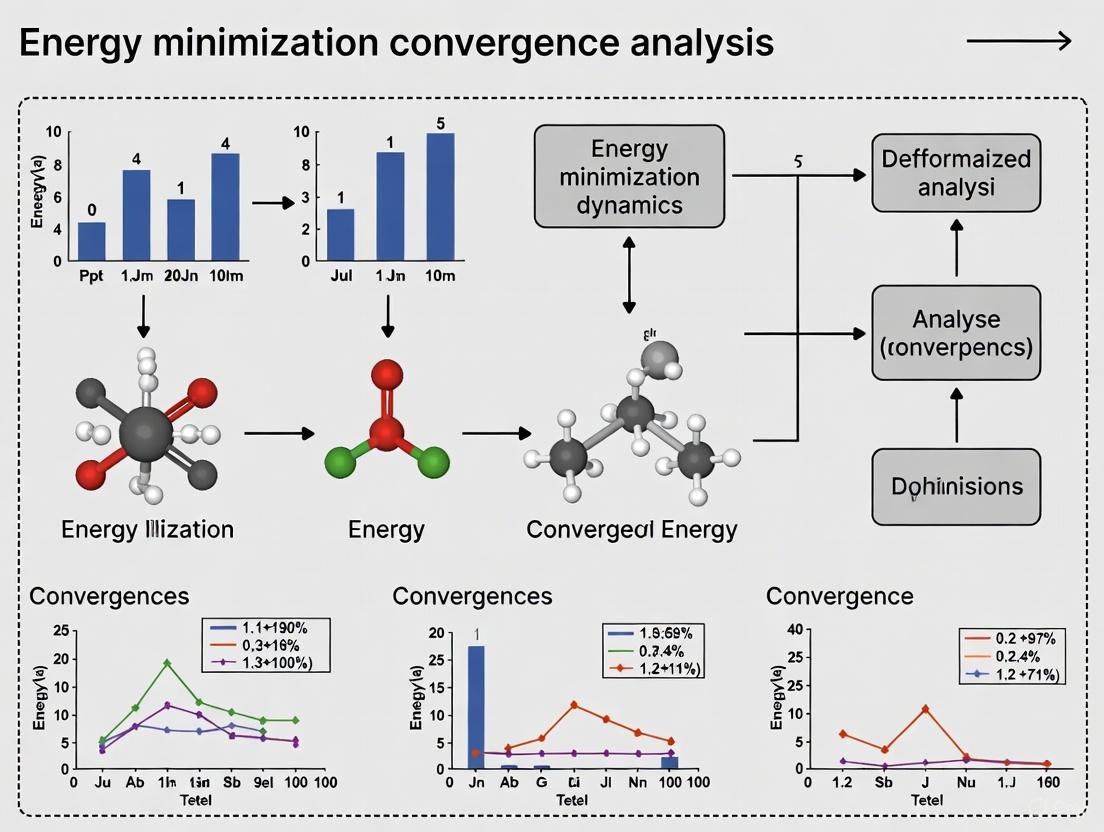

The following workflow diagram illustrates the sequential process for establishing robust convergence in energy minimization procedures:

Advanced Convergence Detection Methods

For complex systems where standard convergence metrics may be misleading, advanced detection methods provide greater reliability:

Radial Distribution Function (RDF) Convergence: In molecular systems, monitoring the stabilization of RDF curves between key components provides a more stringent convergence test than energy alone, particularly for interactions between large, slow-moving molecules like asphaltenes [4].

Statistical Convergence Tests: Implementing statistical tests on fluctuation patterns of energies and coordinates can distinguish true convergence from temporary plateaus.

Multi-Algorithm Verification: Running different minimization algorithms from independent starting points checks whether they converge to similar energy values and configurations.

Research demonstrates that temperature significantly impacts convergence rates, with elevated temperatures accelerating convergence but potentially compromising final configuration quality. In asphalt system simulations, raising temperature from 298 K to 498 K accelerated RDF curve convergence by approximately 50%, though the higher-temperature state represented a different physical regime [4].

Table 3: Research Reagent Solutions for Energy Minimization Studies

| Tool/Category | Specific Examples | Function in Convergence Analysis |
|---|---|---|
| Molecular Dynamics Software | GROMACS, NAMD, CHARMM | Provides implemented minimization algorithms and convergence checking routines |
| Visualization Tools | VMD, PyMol, Chimera | Visual inspection of minimized structures for physical reasonableness |
| Force Fields | CHARMM, AMBER, OPLS-AA | Determines energy landscape characteristics and convergence behavior |
| Analysis Packages | MDTraj, MDAnalysis, GROMACS tools | Calculate convergence metrics and analyze trajectory stability |
| Specialized Optimizers | L-BFGS, Hybrid metaheuristics | Advanced algorithms for challenging convergence landscapes |

Defining convergence in energy minimization requires careful consideration of both mathematical criteria and physical meaning. The most robust approaches implement multiple convergence metrics that monitor forces, energy changes, and structural displacements while verifying physical reasonableness of the final configuration. Algorithm selection significantly impacts convergence behavior, with hybrid methods often providing the best balance of initial progress and final convergence efficiency.

Critically, convergence timescales vary dramatically across different system properties, with fundamental structural interactions sometimes requiring orders of magnitude longer simulation time than energy stabilization. Researchers should therefore select convergence criteria that align with their specific investigative goals—while force-based convergence may suffice for stable molecular dynamics initiation, research focusing on molecular interactions or nanoscale structure requires more stringent, property-specific convergence validation.

The experimental data and methodologies presented in this guide provide a framework for researchers to implement appropriately rigorous convergence standards, ensuring that energy minimization procedures in drug development and molecular simulation yield physically meaningful, reproducible results that faithfully represent the underlying system energetics.

In computational science, whether for simulating molecular dynamics, optimizing material models, or minimizing the energy of a system, the principles governing force, energy, and step size tolerance are fundamental to achieving accurate and reliable results. Convergence analysis ensures that iterative algorithms terminate at a point that is sufficiently close to the true solution, balancing computational cost with numerical precision. These principles are particularly critical in fields like drug discovery and materials science, where the accurate description of molecular interactions and material behavior depends on the faithful representation of a system's energy landscape. Inefficient or inaccurate convergence can lead to significant errors in predicting molecular binding affinities, material properties, or fracture mechanics.

This guide provides a comparative analysis of different convergence techniques and tolerance criteria used in various computational domains. We objectively assess their performance based on experimental data, detailing the protocols used in cited studies, and summarize key reagents and computational tools essential for researchers.

Comparative Analysis of Convergence Techniques and Tolerance Criteria

Convergence Tests in Finite Element Analysis

In Finite Element Analysis, convergence tests measure how close an algorithm is to finding equilibrium at each time step. The common tests operate on the linearized system of equations \( \mathbf{K}_T \Delta \mathbf{U} = \mathbf{R} \), where \( \mathbf{R} \) is the residual (unbalanced force) vector and \( \Delta \mathbf{U} \) is the displacement increment vector [7].

Table 1: Convergence Tests in Finite Element Analysis [7]

| Convergence Test | Computational Formula | Tolerance Interpretation | Recommended Use Cases |
|---|---|---|---|
| Norm of Residual Vector (NormUnbalance) | \( \lVert \mathbf{R} \rVert_2 < \text{tol} \) | Acceptable Euclidean norm (root sum of squares) of the unbalanced forces. | General-purpose use, unless stiff elements are present. |
| Norm of Displacement Increment (NormDispIncr) | \( \lVert \Delta \mathbf{U} \rVert_2 < \text{tol} \) | Acceptable Euclidean norm (root sum of squares) of the displacement increments. | Models containing very stiff elements. |
| Absolute Energy Increment (EnergyIncr) | \( \lvert (\Delta \mathbf{U})^T \mathbf{R} \rvert < \text{tol} \) | Acceptable absolute value of the energy increment. | Rarely needed; not commonly recommended. |

A key insight is that the tolerance (tol) bounds the norm of the total error vector. For a model with N degrees of freedom (DOFs), if the equilibrium error e is identical for all DOFs, the error per DOF satisfies \( e \leq \text{tol}/\sqrt{N} \) [7]. A fixed tolerance therefore becomes effectively stricter per DOF as the model grows; to hold the per-DOF error constant across models of different sizes, the tolerance can be scaled with \( \sqrt{N} \).
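The relationship between the norm tolerance and the per-DOF error is simple arithmetic, sketched below. The function names and the sample model sizes are hypothetical; the only relation used is e ≤ tol/√N for a uniform error across N degrees of freedom.

```python
import math


def per_dof_error(tol, n_dof):
    """Largest uniform per-DOF error consistent with a 2-norm tolerance."""
    return tol / math.sqrt(n_dof)


def scaled_tolerance(per_dof_target, n_dof):
    """Norm tolerance that keeps the uniform per-DOF error at per_dof_target."""
    return per_dof_target * math.sqrt(n_dof)


# Same tolerance, two hypothetical model sizes
small_model_error = per_dof_error(1e-6, 100)       # 100 DOFs
large_model_error = per_dof_error(1e-6, 10_000)    # 10,000 DOFs

# Tolerance for the large model that matches the small model's per-DOF error
matched_tol = scaled_tolerance(small_model_error, 10_000)
```

With these numbers the same tolerance allows a per-DOF error ten times smaller in the larger model, and matching the small model's per-DOF error requires a tolerance ten times larger.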

Tolerance Criteria in Quantum Chemistry Geometry Optimization

In quantum chemistry packages like NWChem, geometry optimization involves tolerances on both gradients and the optimization step in Cartesian coordinates. The DRIVER module uses quasi-Newton methods with predefined tolerance sets [8].

Table 2: Standard Geometry Optimization Convergence Criteria in NWChem (Atomic Units) [8]

| Criterion | Description | LOOSE | DEFAULT | TIGHT |
|---|---|---|---|---|
| GMAX | Maximum gradient | 0.00450 | 0.00045 | 0.000015 |
| GRMS | Root mean square gradient | 0.00300 | 0.00030 | 0.00001 |
| XMAX | Maximum Cartesian step | 0.01800 | 0.00180 | 0.00006 |
| XRMS | Root mean square Cartesian step | 0.01200 | 0.00120 | 0.00004 |

The choice of coordinate system (Z-matrix, redundant internals, or Cartesians) can affect the convergence of GMAX and GRMS. However, the Cartesian criteria (XMAX and XRMS) ensure convergence to the same final geometry regardless of the coordinate system used [8].
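Because these thresholds are in atomic units (Hartree per Bohr for gradients), it can help to convert them to the kJ mol⁻¹ nm⁻¹ units used by molecular dynamics packages. The sketch below does this for the DEFAULT GMAX value from the table above; the function name is hypothetical and the conversion constants are rounded.

```python
HARTREE_KJ_PER_MOL = 2625.4996   # 1 Hartree in kJ/mol (rounded)
BOHR_NM = 0.052917721            # 1 Bohr in nm (rounded)


def gradient_au_to_kj_mol_nm(gradient_au):
    """Convert a gradient from Hartree/Bohr to kJ mol^-1 nm^-1."""
    return gradient_au * HARTREE_KJ_PER_MOL / BOHR_NM


default_gmax = gradient_au_to_kj_mol_nm(0.00045)   # DEFAULT GMAX from the table
print(f"DEFAULT GMAX = {default_gmax:.1f} kJ/mol/nm")
```

The result, roughly 22 kJ mol⁻¹ nm⁻¹, gives a sense of how a quantum chemistry gradient threshold compares with the force thresholds typically quoted for classical minimization.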

Energy Conservation in Adaptive Molecular Dynamics

A significant challenge in Molecular Dynamics (MD) with polarizable force fields is conserving energy while computing induced dipoles. The governing equation is \( \hat{T} P = E \), where \( P \) is the vector of induced dipoles, \( E \) is the electric field, and \( \hat{T} \) is a 3N×3N matrix [9]. Solving this linear system iteratively introduces numerical errors that can cause energy drift in NVE (constant number of particles, volume, and energy) simulations.

Advanced schemes have been developed to address this, such as the Preconditioned Conjugate Gradient with Local Iterations (LIPCG) and the use of a Multi-Order Extrapolation (MOE) for better initial guesses using historical dipole information [9]. A "peek" step, implemented via Jacobi Under-Relaxation (JUR), can further accelerate convergence. Evidence suggests that with these methods, energy convergence comparable to point-charge models can be achieved within a limited number of iterations [9].
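The effect of a good initial guess on an iterative dipole solve can be illustrated with a generic under-relaxed (damped) Jacobi iteration. The sketch below is not the LIPCG or MOE scheme of [9]: it is plain damped Jacobi on a small, hypothetical, diagonally dominant matrix, warm-started from the previous step's solution instead of a multi-order extrapolation. The matrix, field values, and relaxation factor `omega` are assumptions.

```python
def jacobi_under_relaxation(T, E, P0, omega=0.8, tol=1e-10, max_iter=500):
    """Solve T P = E by damped Jacobi; returns (solution, updates performed)."""
    P = list(P0)
    n = len(E)
    for iteration in range(max_iter):
        residual = [E[i] - sum(T[i][j] * P[j] for j in range(n)) for i in range(n)]
        if max(abs(r) for r in residual) < tol:
            return P, iteration
        # Under-relaxed update: only a fraction omega of the Jacobi correction
        P = [P[i] + omega * residual[i] / T[i][i] for i in range(n)]
    return P, max_iter


# Hypothetical 3x3 stand-in for the 3N x 3N dipole interaction matrix
T = [[4.0, 1.0, 0.0],
     [1.0, 4.0, 1.0],
     [0.0, 1.0, 4.0]]

field_step1 = [1.0, 2.0, 3.0]
field_step2 = [1.01, 2.0, 2.99]   # field changes only slightly between MD steps

dipoles_step1, _ = jacobi_under_relaxation(T, field_step1, [0.0, 0.0, 0.0])
_, cold_iters = jacobi_under_relaxation(T, field_step2, [0.0, 0.0, 0.0])
_, warm_iters = jacobi_under_relaxation(T, field_step2, dipoles_step1)
print(f"cold start: {cold_iters} iterations, warm start: {warm_iters} iterations")
```

Reusing the previous solution cuts the iteration count because the starting residual is already small; extrapolating from several past steps pushes the same idea further.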

Van der Waals Treatment and Free Energy Accuracy

The method used to handle the truncation of long-range van der Waals (vdW) interactions significantly impacts the accuracy of free energy calculations. Model system tests (Lennard-Jones spheres, anthracene in water, alkane chains in water) reveal that the choice of cutoff method introduces distinct behaviors [10].

Table 3: Impact of van der Waals Truncation Methods on Free Energy Calculations [10]

| Method | Core Principle | Effect on Free Energy of Solvation | Key Artifacts |
|---|---|---|---|
| Potential Switching | Gradually scales potential to zero between switch and cutoff distances. | Essentially independent of cutoff. | Produces a non-physical "bump" in the pairwise force. |
| Potential Shifting | Applies a constant offset to potential energy equal to its value at cutoff. | Introduces significant cutoff dependence. | Makes the potential continuous at the cutoff; the force is unchanged inside the cutoff and remains discontinuous there. |
| Force Switching | Algebraically modifies force to be continuous and monotonic, reducing to zero at cutoff. | Introduces significant cutoff dependence. | Modifies both force and potential. |
| LJ-Particle Mesh Ewald | Uses Ewald summation to split interactions into short- and long-range components. | Essentially independent of cutoff. | Eliminates truncation artifacts; higher computational cost. |

Studies show that for reliable free energy calculations, potential switching or LJ-Particle Mesh Ewald (LJ-PME) are preferred, as force switching and potential shifting can introduce cutoff-dependent errors significant enough to affect the utility of the calculations [10].
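The cutoff dependence introduced by potential shifting can be seen directly from the size of the constant offset. The sketch below evaluates a 12-6 Lennard-Jones potential in reduced units (epsilon = sigma = 1) and shifts it so that it vanishes at the cutoff; the two cutoff values are hypothetical examples, and the functions are illustrative rather than taken from any simulation package.

```python
def lj_potential(r, epsilon=1.0, sigma=1.0):
    """12-6 Lennard-Jones pair potential."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)


def shifted_lj_potential(r, cutoff, epsilon=1.0, sigma=1.0):
    """Lennard-Jones potential offset by its value at the cutoff."""
    if r >= cutoff:
        return 0.0
    return lj_potential(r, epsilon, sigma) - lj_potential(cutoff, epsilon, sigma)


# Offset applied to every pair inside the cutoff, for two hypothetical cutoffs
offset_short = lj_potential(2.5)
offset_long = lj_potential(3.0)

# Well depth seen by a pair at the potential minimum, r = 2^(1/6) sigma
r_min = 2.0 ** (1.0 / 6.0)
depth_short = shifted_lj_potential(r_min, cutoff=2.5)
depth_long = shifted_lj_potential(r_min, cutoff=3.0)
```

Every pair inside the cutoff carries the offset, so the accumulated energy difference between two cutoffs grows with the number of neighbors, which is consistent with the cutoff dependence reported for this method.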

Experimental Protocols and Workflows

Protocol: Validation of Hyperelastic Constitutive Laws with EUCLID

The EUCLID (Efficient Unsupervised Constitutive Law Identification and Discovery) framework was tested against conventional methods for discovering material models of natural rubber [11].

- Specimen Preparation and Testing: Natural rubber specimens with varying geometries (from simple to complex) were prepared.

- Data Collection: During mechanical tests, both global data (force-elongation) and local data (full-field displacement via Digital Image Correlation - DIC) were collected.

- Conventional Identification (Baseline): Parameters were identified for a priori selected material models (e.g., Mooney-Rivlin, Ogden) by minimizing the discrepancy between experimental and model-predicted quantities.

- EUCLID Discovery: The framework automatically selected a model from a wide library of candidates using sparse regression, unifying model selection and parameter identification without prior bias.

- Performance Assessment: The predictive accuracy and generalization of models from both routes were compared using global vs. local data, assessing their ability to predict behavior in unseen geometries [11].

Protocol: Assessing Energy-Preserving Adaptive Integrators

The performance of energy-preserving, adaptive time-step variational integrators was assessed using Kepler's two-body problem [12].

- System Setup: The conservative Kepler's two-body problem (e.g., a planet orbiting a star) was defined.

- Integrator Comparison:

  - Energy-Preserving Adaptive Algorithm: A variational integrator where the time-step is adapted to preserve energy exactly by solving a coupled nonlinear system at every step.

  - Standard Adaptive Variational Integrator: An integrator where time adaptation is motivated by computational efficiency, not strict energy conservation.

- Simulation and Measurement: Long-time simulations were run. Time adaptation behavior and energy error (deviation from the true constant energy) were tracked and compared.

- Backward Error Analysis: The numerical stability of the energy-preserving algorithm was investigated by analyzing the "modified equation" that the algorithm solves more accurately than it solves the original system [12].
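The energy-error measurement in this protocol can be sketched with a much simpler integrator. The example below uses fixed-step velocity Verlet, a basic variational (symplectic) integrator, on a planar Kepler orbit in reduced units (GM = 1). It is not the adaptive, exactly energy-preserving scheme of [12]; the initial conditions and step sizes are hypothetical.

```python
import math


def acceleration(x, y):
    """Gravitational acceleration toward the origin with GM = 1."""
    r3 = (x * x + y * y) ** 1.5
    return -x / r3, -y / r3


def total_energy(x, y, vx, vy):
    return 0.5 * (vx * vx + vy * vy) - 1.0 / math.hypot(x, y)


def max_energy_error(dt, n_steps, x=1.0, y=0.0, vx=0.0, vy=0.9):
    """Largest |E(t) - E(0)| seen along a velocity Verlet trajectory."""
    e0 = total_energy(x, y, vx, vy)
    ax, ay = acceleration(x, y)
    worst = 0.0
    for _ in range(n_steps):
        vx += 0.5 * dt * ax            # half kick
        vy += 0.5 * dt * ay
        x += dt * vx                   # drift
        y += dt * vy
        ax, ay = acceleration(x, y)
        vx += 0.5 * dt * ax            # second half kick
        vy += 0.5 * dt * ay
        worst = max(worst, abs(total_energy(x, y, vx, vy) - e0))
    return worst


# Same total time (40 units, several orbits) at two step sizes
coarse_error = max_energy_error(dt=0.02, n_steps=2000)
fine_error = max_energy_error(dt=0.01, n_steps=4000)
print(f"dt=0.02: {coarse_error:.2e}, dt=0.01: {fine_error:.2e}")
```

The energy error stays bounded and shrinks as the step is reduced, but it never vanishes; removing that residual oscillation is what the energy-preserving adaptive scheme is designed to do.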

The Scientist's Toolkit: Key Reagents and Computational Solutions

Table 4: Essential Research Reagents and Tools for Convergence Analysis

| Item / Software | Function / Description | Field of Application |
|---|---|---|
| Digital Image Correlation (DIC) | Measures full-field displacement data on deforming specimens. | Experimental Solid Mechanics, Material Model Discovery [11] [13] |
| EUCLID | Unsupervised framework for automated constitutive model discovery via sparse regression. | Hyperelastic Material Modeling [11] |
| Polarizable Gaussian Multipole (pGM) Model | A polarizable force field using Gaussian-shaped multipoles and dipoles for electrostatic interactions. | Molecular Dynamics (Biomolecules, Materials) [9] |
| LIPCG & MOE | Preconditioned Conjugate Gradient solver with Multi-Order Extrapolation for induced dipole calculation. | Polarizable MD Simulations [9] |
| LJ-Particle Mesh Ewald | Lattice summation method for accurate treatment of long-range van der Waals interactions. | Free Energy Calculations [10] |
| Variational Integrators | Structure-preserving numerical integrators derived from discretizing Hamilton's principle. | Long-time Dynamics (Astrophysics, MD) [12] |
| AlphaFold Database | Provides predicted protein structures for targets without experimental models. | Structure-Based Drug Discovery [14] |
| REAL Database | An ultra-large, commercially available on-demand library of virtual compounds. | Virtual Screening in Drug Discovery [14] |

The selection of appropriate convergence tolerances and algorithms is not merely a numerical detail but a critical decision that directly impacts the validity of scientific conclusions. As demonstrated, the optimal choice is highly context-dependent: residual norms may suffice for general finite element analysis, while tight gradient and step criteria are needed for quantum chemistry. For long-time MD stability, energy-preserving adaptive integrators and accurate treatment of long-range forces with LJ-PME are superior, albeit computationally more intensive. The emergence of automated discovery frameworks like EUCLID and the use of ultra-large screening libraries underscore the growing need for robust, efficient, and reliable convergence control across computational science and engineering.

The Critical Role of Force Fields and Parameter Sets in Convergence Behavior

In computational chemistry and structural biology, achieving convergence—the point at which simulated properties stabilize to a reproducible average—is a fundamental prerequisite for obtaining reliable insights from molecular simulations. The potential energy functions, or force fields, and their specific parameter sets are cornerstones of this process, critically determining the accuracy and efficiency with which a system explores its conformational landscape and reaches equilibrium. Within the broader context of energy minimization convergence analysis techniques, understanding how different force fields influence this journey is paramount. A force field that inaccurately describes molecular interactions can lead to a system becoming trapped in non-representative energy minima, resulting in poor convergence and biologically irrelevant results [15]. This guide provides an objective comparison of contemporary force fields, detailing their performance, underlying methodologies, and impact on the convergence behavior of molecular dynamics (MD) simulations.

Force Field Comparison and Performance Data

The predictive accuracy of molecular dynamics simulations is intrinsically linked to the force field employed. Different parameterization philosophies—ranging from generalized automated parameter assignment to highly specialized, quantum mechanics-derived parameters—lead to significant variations in the description of molecular structure, dynamics, and the convergence of key properties.

Table 1: Overview of Featured Force Fields and Their Primary Applications

| Force Field Name | Type | Primary Application Area | Key Differentiating Feature |
|---|---|---|---|
| BLipidFF [16] | Specialized All-Atom | Mycobacterial Outer Membrane Lipids | Parameters derived rigorously from QM calculations for unique bacterial lipids. |
| AMBER ff14SB [17] | General All-Atom | Proteins (often used with GAFF for ligands) | Standard protein force field; often benchmarked for free energy calculations. |
| AMBER ff15ipq [17] | General All-Atom | Proteins | Uses an Implicitly Polarized Charge (IPolQ) model for solvation. |
| CHARMM36m [16] | General All-Atom | Biological Membranes and Polymers | Extensively validated for lipid bilayer properties; accurate for various membrane systems. |
| OPLS2.1 [17] | General All-Atom | Protein-Ligand Binding (FEP+) | Refitted with additional QM data and validated on protein-ligand binding affinities. |
| GAFF/GAFF2 [16] [17] | General All-Atom | Small Organic Molecules | A general force field for drug-like molecules, often paired with AMBER protein FFs. |

The performance of these force fields can be quantitatively assessed through benchmarking studies against experimental data. For instance, in Relative Binding Free Energy (RBFE) calculations—a sensitive test of force field accuracy—different parameter sets yield distinct error margins.

Table 2: Benchmarking Performance in Relative Binding Free Energy Calculations [17]

| Force Field & Parameter Set | Water Model | Ligand Charge Model | Mean Unsigned Error (MUE) in Binding Affinity (kcal/mol) |
|---|---|---|---|
| AMBER ff14SB/GAFF2.11 | TIP3P | AM1-BCC | 1.04 |
| AMBER ff14SB/GAFF2.11 | TIP4P-Ewald | AM1-BCC | 1.04 |
| AMBER ff14SB/GAFF2.11 | SPC/E | AM1-BCC | 1.05 |
| AMBER ff14SB/GAFF2.11 | TIP3P | RESP | 1.10 |
| AMBER ff15ipq/GAFF2.11 | TIP3P | AM1-BCC | 1.13 |
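For reference, the mean unsigned error reported in the table is the average absolute difference between predicted and experimental values. The sketch below computes it for a made-up set of four relative binding free energies; the numbers are hypothetical and are not data from the benchmark in [17].

```python
def mean_unsigned_error(predicted, experimental):
    """Average of |predicted - experimental| over paired values."""
    if len(predicted) != len(experimental) or not predicted:
        raise ValueError("need two non-empty lists of equal length")
    total = sum(abs(p - e) for p, e in zip(predicted, experimental))
    return total / len(predicted)


# Hypothetical relative binding free energies in kcal/mol
predicted_ddg = [-1.2, 0.4, 2.1, -0.3]
experimental_ddg = [-0.5, 0.9, 1.0, -0.1]

mue = mean_unsigned_error(predicted_ddg, experimental_ddg)
```

Because the error is unsigned, over- and under-predictions do not cancel, which makes MUE a stricter summary than the mean signed error.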

Specialized force fields demonstrate their value by capturing physical properties that general force fields miss. For example, BLipidFF was shown to uniquely capture the high rigidity and slow diffusion rates of α-mycolic acid bilayers in mycobacterial membranes, a result consistent with fluorescence spectroscopy and FRAP experiments. In contrast, general force fields like GAFF, CGenFF, and OPLS failed to accurately describe these key properties, leading to a qualitatively incorrect representation of the membrane's behavior [16].

Detailed Experimental Protocols

The development and validation of force fields rely on rigorous, multi-step protocols. The following methodologies are representative of the approaches used to generate high-quality parameters and assess convergence.

Protocol for Specialized Force Field Parameterization (BLipidFF)

The development of BLipidFF for mycobacterial lipids exemplifies a robust parameterization strategy [16]:

- Atom Type Definition: Atoms are categorized into chemically distinct types based on element and local environment (e.g., `cT` for tail carbon, `cG` for trehalose carbon, `oS` for ether oxygen). This granularity allows for precise parameter assignment.

- Charge Derivation via Quantum Mechanics (QM):

  - Segmentation: Large, complex lipids are divided into smaller, manageable molecular segments.

  - QM Calculation: Each segment undergoes geometry optimization at the B3LYP/def2SVP level, followed by electrostatic potential calculation at the B3LYP/def2TZVP level.

  - RESP Fitting: The Restrained Electrostatic Potential (RESP) method is used to derive partial atomic charges. To account for conformational flexibility, this process is repeated over 25 snapshots from an MD simulation, with the final charge being the arithmetic average.

  - Reassembly: The charges of all segments are integrated to form the complete molecule, after removing capping groups used during the segmentation process.

- Torsion Parameter Optimization: Torsion parameters involving heavy atoms are optimized by minimizing the difference between the potential energy surface calculated by QM and the classical potential. This often requires further subdivision of the molecules to make high-level QM calculations computationally feasible.

- Validation: The finalized force field is validated by running MD simulations and comparing the results with biophysical experimental data, such as order parameters and lateral diffusion coefficients.
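The snapshot-averaging step in the charge derivation can be sketched as follows. This is a minimal illustration, assuming the per-snapshot charges have already been fitted elsewhere; the function name and the three-atom, three-snapshot data are hypothetical (the protocol above uses 25 snapshots).

```python
def average_charges(snapshot_charges):
    """Arithmetic mean of per-atom charges over conformational snapshots.

    snapshot_charges: list of lists, one inner list of atomic charges per snapshot.
    """
    n_snapshots = len(snapshot_charges)
    n_atoms = len(snapshot_charges[0])
    if any(len(charges) != n_atoms for charges in snapshot_charges):
        raise ValueError("every snapshot must list the same atoms")
    return [
        sum(charges[i] for charges in snapshot_charges) / n_snapshots
        for i in range(n_atoms)
    ]


# Hypothetical fitted charges for a three-atom segment in three snapshots
snapshots = [
    [-0.40, 0.15, 0.25],
    [-0.44, 0.17, 0.27],
    [-0.42, 0.16, 0.26],
]
mean_charges = average_charges(snapshots)
net_charge = sum(mean_charges)
```

Averaging is linear, so if every snapshot carries the same net charge the averaged set does too, up to floating-point rounding.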

Protocol for Assessing Convergence in MD Simulations

Determining whether a simulation has reached equilibrium is critical for reliable data analysis. The following protocol is recommended [15]:

- Property Selection: Select a set of structural and dynamical properties relevant to the biological question (e.g., Root-Mean-Square Deviation (RMSD), radius of gyration, specific interatomic distances, or dihedral angles).

- Running Average Calculation: For each property, calculate a cumulative moving average as a function of simulation time.

- Plateau Identification: Monitor the running averages to identify if they fluctuate around a stable value with small deviations. The time at which this plateau begins is the estimated convergence time (`t_c`).

- Statistical Checks: A system can be considered partially equilibrated for a specific property if the fluctuations of its running average remain small for a significant portion of the trajectory after `t_c`. Full equilibrium is approached when all properties of interest meet this criterion.

It is crucial to note that while properties dependent on high-probability conformational regions (e.g., average distances) may converge in multi-microsecond trajectories, properties reliant on rare events (e.g., transition rates between infrequent states) may require much longer simulation times [15].
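The running-average and plateau steps of this protocol can be sketched as follows. The plateau rule used here (the running average stays within a tolerance of its final value for at least a minimum fraction of the trajectory) is one possible heuristic chosen for illustration; the tolerance, the fraction, and the function names are assumptions rather than values prescribed by [15].

```python
import math

def running_average(values):
    """Cumulative average of a time series, one entry per frame."""
    averages, total = [], 0.0
    for count, value in enumerate(values, start=1):
        total += value
        averages.append(total / count)
    return averages

def estimate_convergence_index(values, tolerance, min_fraction=0.25):
    """Earliest frame t_c after which the running average stays within
    `tolerance` of its final value, or None if that plateau covers less
    than `min_fraction` of the trajectory."""
    averages = running_average(values)
    final = averages[-1]
    t_c = len(averages)
    # Walk backwards from the end until the running average leaves the band.
    for index in range(len(averages) - 1, -1, -1):
        if abs(averages[index] - final) > tolerance:
            break
        t_c = index
    plateau_fraction = (len(averages) - t_c) / len(averages)
    return t_c if plateau_fraction >= min_fraction else None

# Hypothetical RMSD-like series: one relaxes to a plateau, one keeps drifting.
relaxing = [2.0 * (1.0 - math.exp(-t / 50.0)) for t in range(1000)]
drifting = [0.01 * t for t in range(1000)]

print("relaxing series t_c:", estimate_convergence_index(relaxing, tolerance=0.05))
print("drifting series t_c:", estimate_convergence_index(drifting, tolerance=0.05))
```

A check like this should be applied per property; a series that passes for RMSD says nothing about a slowly relaxing dihedral or a rare transition.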

Workflow for Force Field Comparison in Protein Simulations

A standardized workflow for comparing force field performance, as applied in studies of ubiquitin and GB3, involves [18]:

- System Preparation: Starting from the same experimental protein structure (e.g., from the PDB), prepare identical simulation systems differing only in the force field applied.

- Extended Sampling: Run multiple, long-timescale MD simulations (on the order of microseconds per force field) using a consistent water model and simulation conditions.

- Ensemble Analysis: Use Principal Component Analysis (PCA) to project the structural ensembles from each simulation onto a common essential subspace, revealing the large-scale motions sampled.

- Experimental Validation: Compare a wide range of observables derived from the simulations (e.g., residual dipolar couplings, scalar couplings, order parameters) with NMR experimental data.

- Cross-Comparison: Quantitatively compare the conformational ensembles generated by different force fields to each other to identify systematic differences in sampled structural space.
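The ensemble analysis step can be illustrated with a minimal sketch that pools the frames from every force field, derives principal axes from the pooled data, and projects each ensemble onto those shared axes. It assumes the trajectories have already been superposed onto a common reference and flattened to one coordinate vector per frame; the random arrays and the force field labels are placeholders, not data from [18].

```python
import numpy as np

def project_onto_common_subspace(ensembles, n_components=2):
    """Project several ensembles onto principal axes computed from their union.

    ensembles: dict mapping a label to an array of shape (n_frames, n_features).
    Returns (projections, explained_variance_fraction).
    """
    labels = list(ensembles)
    pooled = np.vstack([ensembles[label] for label in labels])
    mean = pooled.mean(axis=0)
    # SVD of the centered pooled data; rows of vt are the principal axes.
    _, singular_values, vt = np.linalg.svd(pooled - mean, full_matrices=False)
    axes = vt[:n_components]
    explained = singular_values[:n_components] ** 2 / np.sum(singular_values ** 2)
    projections = {label: (ensembles[label] - mean) @ axes.T for label in labels}
    return projections, explained

# Placeholder ensembles: 200 frames x 30 coordinates per hypothetical force field.
rng = np.random.default_rng(0)
fake_ensembles = {
    "force_field_A": rng.normal(0.0, 1.0, size=(200, 30)),
    "force_field_B": rng.normal(0.3, 1.2, size=(200, 30)),
}
projections, explained = project_onto_common_subspace(fake_ensembles)
for label, points in projections.items():
    print(label, "mean position in PC space:", np.round(points.mean(axis=0), 3))
```

Computing the axes from the pooled data, rather than from each simulation separately, is what makes the projections directly comparable across force fields.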

Figure 1: Force Field Comparison Workflow. This diagram outlines the logical process for objectively comparing the performance of different molecular force fields.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful simulation studies depend on a suite of software tools and parameter databases. The table below details key resources relevant to force field application and convergence analysis.

Table 3: Key Research Reagents and Computational Tools

| Tool/Resource Name | Type | Primary Function | Relevance to Convergence |

|---|---|---|---|

| Gaussian & Multiwfn [16] | Software Suite | Quantum Mechanical Calculations & RESP Charge Fitting | Generates high-quality electronic structure data for deriving accurate force field parameters. |

| Alchaware & OpenMM [17] | MD Automation & Engine | Automated FEP Setup & Simulation | Provides an open-source platform for running free energy calculations and assessing force field performance. |

| AMBER Tools & Antechamber [17] | Parameterization Suite | Force Field Parameter Assignment | Used to generate GAFF parameters and AM1-BCC charges for small molecules. |

| BLipidFF Parameters [16] | Force Field Database | Specialized Parameters for Bacterial Lipids | Enables accurate simulation of complex bacterial membranes, which behave poorly with general FFs. |

| JACS Benchmark Set [17] | Curated Dataset | Validation Set for Free Energy Calculations | A standard set of 8 protein-target systems for benchmarking RBFE predictions across force fields. |

The choice of a force field and its parameter set is a critical determinant of convergence behavior and predictive accuracy in molecular simulations. As evidenced by benchmarking studies, general force fields can perform well for a wide range of systems but may fail to capture the unique physics of specialized components like bacterial membrane lipids. The emergence of rigorously parameterized, QM-derived force fields like BLipidFF demonstrates the significant gains in accuracy possible through targeted development. Convergence is not a universal guarantee; it must be verified for each property of interest, with the understanding that multi-microsecond trajectories may be necessary for biologically relevant properties to stabilize, while rare events may remain elusive. Therefore, a careful, purpose-driven selection of force fields, coupled with rigorous convergence analysis, is indispensable for producing reliable and meaningful simulation data in energy minimization and dynamics studies.

Analyzing the Energy Landscape of Complex Biomolecular Systems

The energy landscape perspective provides a fundamental conceptual and computational framework for predicting, understanding, and designing molecular properties of biological systems [19]. Every biomolecule possesses specific functional characteristics governed by its underlying energetics, which can be visualized as a multidimensional surface where elevation corresponds to energy and coordinates represent conformational degrees of freedom [20] [19]. For proteins and nucleic acids that have evolved to perform specific biological functions, these landscapes are typically "funneled," enabling rapid and reliable folding to native states while resolving Levinthal's paradox—the apparent contradiction between the vast conformational space and rapid folding times observed in nature [20] [19]. According to the principle of minimal frustration, native states are characterized by the formation of all native contacts required by the sequence, leading to a single dominant funnel in the potential energy landscape [19].

An emerging theme in modern biochemistry reveals that many biomolecules exhibit multifunnel energy landscapes, particularly those capable of performing multiple functions or existing in intrinsically disordered states [19]. Such landscapes contain distinct funnels corresponding to alternative stable configurations, allowing structural heterogeneity that enables different biological functions. This organization extends the principle of minimal frustration, where we expect the energy landscape to support several funnels associated with distinct functions, with populations that can be modulated by environmental conditions or cellular signals [19]. Understanding the topography of these complex landscapes—including the locations of minima, transition states, and barriers between them—provides critical insights into biomolecular folding, function, and dysfunction in disease states.

Computational Methodologies for Energy Landscape Analysis

Theoretical Foundations and Energy Landscape Theory

The energy landscape theory of biomolecular dynamics, grounded in statistical mechanics, has provided a comprehensive framework for understanding both the folding and functional properties of biomolecules [20]. This theoretical framework demonstrates that for proteins to be foldable, there must exist an ensemble of routes through which the molecule can reach its folded configuration, proceeding as a diffusive process over a relatively smooth energy landscape that lacks large kinetic traps [20]. The statistical-mechanical foundation of this approach draws important analogies from spin glass systems, where the relationships among order-disorder transitions, glassy dynamics, and energetic frustration are fundamental aspects of the energy landscape framework [20].

In highly frustrated systems with substantial energetic roughness relative to the stabilizing energy gap, thermal fluctuations become insufficient for escaping local minima, leading to divergent timescales in system dynamics [20]. Conversely, when the stabilizing energy gap between folded and unfolded ensembles is large compared to the energetic roughness, the landscape exhibits a funneled character where configurations are locally connected to states that are slightly more or less native-like [20]. This organization ensures that the diffusive walk toward the native state is guided by stabilizing energy and state connectivity, effectively averting the Levinthal paradox and enabling biological timescales for folding and function.

Key Computational Approaches and Tools

Various computational methodologies have been developed to explore and characterize biomolecular energy landscapes, each with distinct strengths and limitations for specific applications. The table below summarizes major computational approaches used in energy landscape analysis:

Table 1: Comparison of Computational Methods for Biomolecular Energy Landscape Analysis

| Method Category | Representative Tools | Key Features | Primary Applications | Limitations |

|---|---|---|---|---|

| Molecular Dynamics | GROMACS [21] [22], AMBER [21], NAMD [21], OpenMM [21] | High-performance MD, GPU acceleration, explicit/implicit solvation | Conformational sampling, free energy calculations, pathway analysis | Computationally expensive, limited timescales |

| Energy Landscape Exploration | PATHSAMPLE [22], disconnectionDPS [22] | Kinetic transition networks, discrete path sampling | Transition state identification, rate calculations, minimal energy path mapping | Requires initial structures, scaling to large systems |

| Structure Prediction & Design | FoldX [21], Rosetta (see [19]) | Energy calculations, protein design, stability predictions | Mutational analysis, protein engineering, stability design | Accuracy dependent on force field, limited conformational sampling |

| Specialized Analysis | DRIDmetric [22], freenet [22] | Dimensionality reduction, kinetic modeling, disconnectivity graphs | Landscape visualization, state decomposition, kinetics from MD | Specialized implementations, method-dependent parameters |

Molecular dynamics (MD) simulations provide a powerful framework for exploring structural and kinetic landscapes of conformational transitions, particularly through the calculation of free energy landscapes that encode both thermodynamic stability and kinetic behavior [22]. Specialized algorithms like basin-hopping global optimization and discrete path sampling (DPS) enable efficient characterization of potential energy landscapes using geometry optimization techniques, which focus sampling on discrete sets of structures through local minimization [19]. These approaches naturally coarse-grain the landscape representation into local minima and the transition states that connect them, facilitating analysis of kinetic properties through kinetic transition networks and graph transformation methods [19].

Recent methodological advances have incorporated machine learning and dimensionality reduction techniques to enhance energy landscape analysis. The Distribution of Reciprocal Interatomic Distances (DRID) metric, for instance, transforms high-resolution MD trajectories into low-dimensional structural fingerprints by capturing local structural environments around selected reference atoms [22]. This approach enables meaningful clustering of conformations into discrete states while preserving essential kinetic features, facilitating the construction of kinetic models and free energy landscapes from simulation data.

Comparative Analysis of Energy Minimization Techniques

Convergence Properties and Performance Metrics

The convergence behavior of energy minimization algorithms critically determines their effectiveness for biomolecular landscape exploration. Different methodologies exhibit distinct performance characteristics across various landscape topographies:

Table 2: Convergence Properties of Energy Minimization Techniques

| Method | Theoretical Basis | Convergence Guarantees | Local Minima Escape | Scalability to Large Systems |

|---|---|---|---|---|

| Traditional Gradient Descent | First-order optimization | Converges to a stationary point; reaches the global minimum only for convex functions | Poor, easily trapped | Moderate, depends on implementation |

| Stochastic Gradient Descent/Langevin Dynamics | Stochastic optimization with noise injection | Local convergence with probability 1 | Moderate, via noise term | Good for large parameter spaces |

| Simulated Annealing | Thermodynamic analogy with temperature schedule | Global convergence under specific cooling schedules | Good, through thermal fluctuations | Variable, can be computationally expensive |

| Soft-min Energy Minimization [5] | Gradient-based swarm optimization with softmin energy | For strongly convex functions, convergence to stationary points with at least one particle at global minimum | Excellent, via particle swarm and reduced effective barriers | Good, parallelizable particle dynamics |

| Basin-Hopping Global Optimization [19] | Algorithmic thermal relaxation with minimization steps | Empirical effectiveness for complex landscapes | Excellent, through acceptance of uphill moves | Moderate, requires numerous minimizations |

The Soft-min Energy minimization approach represents a novel gradient-based swarm optimization method that utilizes a smooth, differentiable approximation of the minimum function value within a particle swarm [5]. This method defines a stochastic gradient flow in particle space incorporating Brownian motion for exploration and a time-dependent parameter to control smoothness, analogous to temperature annealing in simulated annealing. Theoretical analysis demonstrates that for strongly convex functions, the dynamics converge to a stationary point where at least one particle reaches the global minimum, while other particles exhibit exploratory behavior [5]. Numerical experiments on benchmark functions demonstrate superior performance in escaping local minima compared to traditional simulated annealing, particularly when potential barriers exceed critical thresholds [5].
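To make the idea of a smooth approximation of the swarm minimum concrete, the sketch below uses the standard log-sum-exp soft-min. Whether [5] uses exactly this functional form is an assumption; the parameter `beta` plays the role of the smoothness control described above, and all names are illustrative.

```python
import math

def softmin(values, beta):
    """Smooth lower bound on min(values): -(1/beta) * log(sum(exp(-beta * v))).

    Shifting by the true minimum keeps the exponentials numerically stable.
    """
    lowest = min(values)
    shifted_sum = sum(math.exp(-beta * (v - lowest)) for v in values)
    return lowest - math.log(shifted_sum) / beta

def softmin_weights(values, beta):
    """Boltzmann-like weights; for this soft-min, the gradient with respect to
    particle i's position is weights[i] times the gradient of f at that particle."""
    lowest = min(values)
    raw = [math.exp(-beta * (v - lowest)) for v in values]
    total = sum(raw)
    return [w / total for w in raw]

particle_energies = [3.0, 1.0, 2.0]  # hypothetical f(x_i) for a three-particle swarm
for beta in (0.1, 1.0, 50.0):
    print(beta, round(softmin(particle_energies, beta), 4),
          [round(w, 3) for w in softmin_weights(particle_energies, beta)])
```

At small `beta` all particles feel a comparable pull, which favors exploration; at large `beta` the weight concentrates on the lowest-energy particle and the soft-min approaches the true minimum.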

Basin-hopping global optimization employs a different strategy, combining thermal activation effects with local minimization to overcome energy barriers [19]. In this approach, steps are taken between local minima based on potential or free energy differences and a parameter controlling the probability of accepting uphill moves. This method can be coupled with parallel tempering to sample high-energy regions of the landscape where barriers are generally smaller, providing effective strategies for addressing broken ergodicity problems common in complex biomolecular systems [19].
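A minimal one-dimensional sketch of this scheme is shown below: each trial perturbs the current minimum, relaxes it with a local minimizer, and accepts or rejects the new minimum with a Metropolis rule. The test function, step size, temperature, and the plain gradient-descent minimizer are all illustrative assumptions and do not reproduce the implementations cited in [19].

```python
import math
import random

def energy(x):
    """Toy rugged landscape: a shallow parabola with sinusoidal roughness."""
    return 0.05 * x * x + math.sin(3.0 * x)

def gradient(x):
    return 0.1 * x + 3.0 * math.cos(3.0 * x)

def local_minimize(x, step=0.01, tol=1e-8, max_iter=20000):
    """Plain gradient descent to the nearest local minimum."""
    for _ in range(max_iter):
        g = gradient(x)
        if abs(g) < tol:
            break
        x -= step * g
    return x

def basin_hopping(x0, n_steps=200, step_size=1.5, temperature=0.5, seed=0):
    rng = random.Random(seed)
    x = local_minimize(x0)
    e = energy(x)
    best_x, best_e = x, e
    for _ in range(n_steps):
        # Perturb, relax to a local minimum, then apply the Metropolis test
        # to the *minimized* energies.
        trial = local_minimize(x + rng.uniform(-step_size, step_size))
        e_trial = energy(trial)
        if e_trial <= e or rng.random() < math.exp(-(e_trial - e) / temperature):
            x, e = trial, e_trial
            if e < best_e:
                best_x, best_e = x, e
    return best_x, best_e

start = 6.0
print("local minimum from start:", round(energy(local_minimize(start)), 3))
best_x, best_e = basin_hopping(start)
print("basin-hopping result:", round(best_x, 3), round(best_e, 3))
```

The temperature here is the parameter that controls how readily uphill moves between minima are accepted; raising it broadens exploration at the cost of spending more steps in high-energy basins.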

Applications in Biomolecular Structure Prediction

Energy minimization techniques find critical applications in biomolecular structure prediction problems, where identifying global minimum energy configurations corresponds to determining native functional states. The multi-funneled landscapes observed for many biomolecules present particular challenges, as competing structures may be stabilized in distinct funnels, creating a landscape organization that supports multiple functions [19]. For example, recent analysis of how mutation affects the energy landscape for a coiled-coil protein, and transitions in helix morphology for a DNA duplex, revealed intrinsically multifunnel landscapes with the potential to function as biomolecular switches [23].

The energy landscape perspective has proven particularly valuable for understanding intrinsically disordered proteins (IDPs) and their complex conformational behavior [22]. For the Alzheimer's amyloid-β peptide (Aβ42), energy landscape characterization using extensive MD simulations and structural clustering based on the DRID metric revealed a "structurally inverted funnel" with disordered conformations occupying the global minimum [22]. Upon dimerization, this landscape shifts to a more standard folding funnel culminating in β-hairpin formation, illustrating how landscape topography evolves with oligomeric state and environmental context [22].

Experimental Protocols and Methodologies

Protocol for Energy Landscape Analysis of Intrinsically Disordered Proteins

Comprehensive analysis of biomolecular energy landscapes requires integration of multiple computational techniques and careful methodological implementation. The following protocol outlines a robust workflow for characterizing energy landscapes, particularly applicable to intrinsically disordered proteins like the Alzheimer's amyloid-β peptide:

System Preparation and Molecular Dynamics Simulations

- Force Field Selection: Employ biomolecular force fields such as CHARMM36m, which has proven suitable for modeling both monomeric amyloid-β and its aggregation behavior [22].

- Solvation and Ions: Solvate molecules in explicit solvent (e.g., TIP3P water) using a rectangular simulation box with minimum 1.2 nm distance from periodic boundaries. Add ions (e.g., Na+ and Cl-) to achieve physiological salt concentration (150 mM) and maintain charge neutrality [22].

- Equilibration: Perform careful system equilibration under NpT conditions (constant particle number, pressure, and temperature) at biologically relevant temperature (310 K) using appropriate thermostats (e.g., Nosé-Hoover) and barostats (e.g., Parrinello-Rahman) [22].

- Production Simulation: Conduct extended MD simulations (microsecond timescales) using an integration timestep of 2 fs, saving configurations at regular intervals (e.g., every 20 ps) for subsequent analysis [22].
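The numbers in the preparation steps above translate into simple bookkeeping, sketched below. The box dimensions and the solute net charge are hypothetical, and the ion count uses the full box volume rather than the solvent-accessible volume, which is a simplifying assumption.

```python
AVOGADRO = 6.02214076e23  # particles per mole

def salt_ion_counts(box_nm, salt_molar, solute_charge):
    """Na+ and Cl- counts for a rectangular box (edge lengths in nm).

    Adds salt pairs for the target concentration plus counterions that
    neutralize the solute's net charge.
    """
    volume_litres = box_nm[0] * box_nm[1] * box_nm[2] * 1e-24  # 1 nm^3 = 1e-24 L
    pairs = round(salt_molar * volume_litres * AVOGADRO)
    n_na = pairs + max(0, -solute_charge)
    n_cl = pairs + max(0, solute_charge)
    return n_na, n_cl

def sampling_budget(total_ns, timestep_fs, save_every_ps):
    """Integration steps and saved frames, using integer femtoseconds."""
    total_fs = total_ns * 1_000_000
    n_steps = total_fs // timestep_fs
    n_frames = total_fs // (save_every_ps * 1000)
    return n_steps, n_frames

# Hypothetical 8 nm cubic box, 150 mM salt, solute net charge of -3.
print(salt_ion_counts((8.0, 8.0, 8.0), 0.150, -3))          # (49, 46)
# 1 microsecond at a 2 fs timestep, saving every 20 ps.
print(sampling_budget(1000, timestep_fs=2, save_every_ps=20))  # (500000000, 50000)
```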

Dimensionality Reduction and State Discretization

- DRID Metric Calculation: Select two sets of atoms: (1) m centroids representing structurally important positions (typically Cα atoms), and (2) N reference atoms excluding covalently bonded atoms [22]. For each centroid, compute the distribution of reciprocal interatomic distances to reference atoms, characterizing the centroid environment using the first three moments (mean, variance, skewness) of this distribution [22].

- Structural Clustering: Apply regular space clustering in DRID space using packages like PyEMMA to group structures into discrete states, ensuring high structural similarity within states while maintaining kinetic consistency with the underlying MD trajectory [22].
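A compact sketch of the DRID calculation follows. It assumes the three descriptors per centroid are the mean, the square root of the second central moment, and the cube root of the third central moment of the reciprocal distances, and that structures are compared by a root-mean-square difference of their feature vectors; both conventions, the function names, and the random coordinates are assumptions made for illustration and should be checked against the DRIDmetric implementation cited in [22].

```python
import numpy as np

def drid_features(coords, centroids, references, bonded_pairs):
    """Three moment-based descriptors of reciprocal distances per centroid.

    coords: (n_atoms, 3) array; centroids and references: atom indices;
    bonded_pairs: set of frozenset({i, j}) pairs excluded from the distribution.
    """
    coords = np.asarray(coords, dtype=float)
    features = []
    for i in centroids:
        partners = [j for j in references
                    if j != i and frozenset((i, j)) not in bonded_pairs]
        inv_d = 1.0 / np.linalg.norm(coords[partners] - coords[i], axis=1)
        mu = inv_d.mean()
        dev = inv_d - mu
        features += [mu, np.sqrt(np.mean(dev ** 2)), np.cbrt(np.mean(dev ** 3))]
    return np.array(features)

def drid_distance(a, b):
    """Root-mean-square difference between two DRID feature vectors."""
    return float(np.sqrt(np.mean((a - b) ** 2)))

# Placeholder six-atom "structure" with two bonded pairs.
rng = np.random.default_rng(1)
xyz = rng.normal(size=(6, 3))
bonded = {frozenset((0, 1)), frozenset((2, 3))}
v = drid_features(xyz, [0, 2, 4], range(6), bonded)
v_shifted = drid_features(xyz + np.array([1.0, -2.0, 0.5]), [0, 2, 4], range(6), bonded)
print("feature vector length:", v.size)
print("distance after rigid translation:", drid_distance(v, v_shifted))
```

Because the descriptors depend only on interatomic distances, they are unchanged by rigid translation or rotation, which is why no structural alignment is needed before clustering in DRID space.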

Free Energy and Kinetic Analysis

- Free Energy Calculation: Compute free energies for each discrete state from equilibrium occupation probabilities using the relation F_i = -k_B T ln(p_i), where p_i is the probability of state i, k_B is Boltzmann's constant, and T is temperature [22].

- Transition State Estimation: Construct rate matrices from observed transition rates between states in the trajectory, then estimate free energy barriers using the Eyring-Polanyi formulation, averaging forward and backward barriers for consistency: F̂_jk = (F_jk + F_kj)/2 [22].

- Kinetic Modeling: Extract kinetic properties including mean first-passage times, committor probabilities, and reactive fluxes using graph transformation approaches applied to the kinetic transition network [19].
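The free energy and barrier estimates above reduce to a few lines of arithmetic, sketched here in molar units (R in place of k_B). The sketch assumes that F_jk denotes the transition state free energy obtained by adding the Eyring-Polanyi activation free energy for j to k onto F_j; that reading, the state counts, and the rates are illustrative assumptions.

```python
import math

R_KJ = 8.314462618e-3        # gas constant, kJ/(mol K)
K_B = 1.380649e-23           # Boltzmann constant, J/K
H_PLANCK = 6.62607015e-34    # Planck constant, J s

def state_free_energies(counts, temperature):
    """F_i = -RT ln(p_i), with p_i taken from state occupation counts."""
    total = sum(counts)
    return [-R_KJ * temperature * math.log(c / total) for c in counts]

def eyring_activation_free_energy(rate_per_s, temperature):
    """Invert k = (k_B T / h) * exp(-dF / RT) for the activation free energy dF."""
    prefactor = K_B * temperature / H_PLANCK
    return -R_KJ * temperature * math.log(rate_per_s / prefactor)

def transition_state_free_energy(f_j, f_k, k_jk, k_kj, temperature):
    """Average of the forward and backward estimates of the barrier top."""
    f_jk = f_j + eyring_activation_free_energy(k_jk, temperature)
    f_kj = f_k + eyring_activation_free_energy(k_kj, temperature)
    return 0.5 * (f_jk + f_kj)

T = 310.0
f = state_free_energies([800, 200], T)   # hypothetical two-state populations
# Hypothetical rates (1/s) chosen to satisfy detailed balance with those populations.
ts = transition_state_free_energy(f[0], f[1], k_jk=1.0e6, k_kj=4.0e6, temperature=T)
print("F_1 - F_0 (kJ/mol):", round(f[1] - f[0], 3))
print("transition state free energy (kJ/mol):", round(ts, 3))
```

When the observed rates obey detailed balance, the forward and backward estimates coincide and the averaging changes nothing; with finite sampling they differ, and the average gives a single symmetric barrier for the network.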

Visualization and Interpretation

- Disconnectivity Graphs: Visualize the hierarchical organization of the free energy landscape using disconnectivity graphs, which group minima into superbasins according to mutual accessibility through transition states below specified energy thresholds [22].

- Pathway Analysis: Identify dominant folding pathways and their associated timescales through analysis of the kinetic transition network, highlighting key mechanistic events such as salt bridge formation and development of hydrophobic contacts [22].
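The superbasin grouping that underlies a disconnectivity graph can be sketched with a union-find pass over the transition states: at a given threshold, two minima belong to the same superbasin if they are linked by a chain of transition states lying below it. The four-minimum network below is a made-up example, and the function name is hypothetical.

```python
def superbasins(n_minima, transition_states, threshold):
    """Group minima that are mutually accessible below `threshold`.

    transition_states: iterable of (minimum_i, minimum_j, energy) triples.
    """
    parent = list(range(n_minima))

    def find(x):
        # Follow parent links to the root, shortening the path as we go.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    for i, j, ts_energy in transition_states:
        if ts_energy < threshold:
            parent[find(i)] = find(j)

    groups = {}
    for minimum in range(n_minima):
        groups.setdefault(find(minimum), []).append(minimum)
    return sorted(groups.values())

# Hypothetical network: minima 0-1 and 2-3 joined by low barriers, 1-2 by a high one.
ts_list = [(0, 1, 2.0), (1, 2, 5.0), (2, 3, 3.0)]
for level in (1.0, 4.0, 6.0):
    print(level, superbasins(4, ts_list, level))
# 1.0 [[0], [1], [2], [3]]
# 4.0 [[0, 1], [2, 3]]
# 6.0 [[0, 1, 2, 3]]
```

Repeating the grouping over a ladder of thresholds and recording where groups merge yields the branching structure that a disconnectivity graph draws.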

Figure 1: Workflow for Biomolecular Energy Landscape Analysis

Research Reagent Solutions for Energy Landscape Studies

Successful energy landscape analysis requires specialized computational tools and methodologies that function as "research reagents" in computational experiments. The table below details essential resources for conducting comprehensive energy landscape studies:

Table 3: Essential Research Reagents for Biomolecular Energy Landscape Analysis

| Reagent Category | Specific Tools/Resources | Function/Purpose | Key Features |

|---|---|---|---|

| Simulation Engines | GROMACS [21] [22], AMBER [21], OpenMM [21] | Molecular dynamics trajectory generation | High performance, GPU acceleration, integration with analysis tools |

| Force Fields | CHARMM36m [22], AMBER force fields | Energetic parameterization of molecules | Optimized for proteins, nucleic acids, lipids, and carbohydrates |

| Analysis Packages | DRIDmetric [22], freenet [22], PyEMMA [22] | Dimensionality reduction, state discretization, kinetic modeling | Specialized metrics for biomolecules, kinetic consistency |

| Landscape Exploration | PATHSAMPLE [22], disconnectionDPS [22] | Transition state location, pathway analysis, kinetic networks | Stationary point characterization, rare event analysis |

| Visualization | VMD [21], disconnectivity graphs [22] | Structural visualization, landscape topography representation | Interactive analysis, hierarchical landscape representation |

Applications in Drug Discovery and Development

The energy landscape perspective provides powerful approaches for streamlining drug discovery by enabling more effective identification and optimization of therapeutic compounds. Computer-aided drug discovery has experienced a tectonic shift in recent years, with computational technologies being embraced in both academic and pharmaceutical settings [24]. This transformation is driven by expanding data on ligand properties and target structures, increased computing capacity, and the availability of virtual libraries containing billions of drug-like small molecules [24].

Energy landscape concepts are particularly valuable in structure-based virtual screening, where the binding affinity between drug candidates and therapeutic targets is optimized. Traditional approaches used binary classification to predict whether a drug-target interaction exists, but more informative methods now predict binding affinities (DTBA), which indicate the strength of interactions and potential efficacy [25]. Scoring functions that reflect binding affinity strength can be categorized as empirical, force field-based, or knowledge-based, with recent machine learning-derived scoring functions demonstrating improved ability to capture non-linear relationships in data [25].

Ultra-large virtual screening of chemical spaces containing billions of compounds represents a particularly promising application of energy landscape principles in early drug discovery [24]. These approaches leverage energy minimization concepts to efficiently navigate vast chemical spaces while identifying high-affinity ligands for therapeutic targets. For example, recent studies have demonstrated the rapid identification of highly diverse, potent, target-selective, and drug-like ligands to protein targets, potentially democratizing the drug discovery process and creating new opportunities for cost-effective development of safer small-molecule treatments [24].

The energy landscape perspective also provides insights into molecular mechanisms of disease, particularly for conditions associated with protein misfolding and aggregation. In Alzheimer's disease, for instance, energy landscape analysis of amyloid-β peptides has revealed how environmental factors such as lipid interactions or glycosaminoglycan binding reshape folding funnels and transition barriers, modulating structural preferences and aggregation pathways [22]. Such insights create opportunities for therapeutic interventions designed to alter energy landscape topography, steering molecular populations away from pathological states and toward harmless conformations.

The analysis of energy landscapes in complex biomolecular systems provides an essential framework for understanding structure-function relationships, folding mechanisms, and molecular recognition events in biological systems. Computational methodologies for energy landscape exploration have advanced significantly, enabling detailed characterization of global thermodynamics and kinetics for proteins and nucleic acids [19]. These approaches continue to evolve through algorithmic improvements such as local rigidification of selected degrees of freedom and implementations on graphics processing units, which accelerate the essential optimization procedures required for thorough landscape exploration [23].

Future developments in energy landscape analysis will likely focus on several key areas. More efficient sampling algorithms will be needed to address the growing complexity of biomolecular systems, particularly multi-protein complexes and cellular environments. Integration of experimental data with computational landscape exploration will provide richer constraints for modeling functional transitions. Multiscale approaches that connect detailed atomic-level landscapes with coarser-grained representations will extend the applicability of these methods to larger systems and longer timescales. Finally, machine learning techniques will increasingly complement traditional physics-based approaches, creating hybrid frameworks that leverage the strengths of both methodologies for enhanced predictive accuracy.

As these methodological advances continue, energy landscape analysis will play an increasingly central role in molecular biophysics, structural biology, and drug discovery. The conceptual framework provided by the energy landscape perspective unifies diverse experimental and computational findings, creating a coherent picture of biomolecular dynamics that connects fundamental physical principles with biological function. This unified understanding will ultimately enhance our ability to predict molecular behavior, design novel biomolecules with tailored functions, and develop more effective therapeutic strategies for treating human disease.

In computational structural biology, achieving convergence during energy minimization is a critical step for obtaining accurate, stable, and thermodynamically meaningful models of biomolecular systems. This process is particularly challenging for protein-DNA complexes, where the intricate balance of forces dictates biological function. Convergence failures in these systems often lead to inaccurate binding affinity predictions, flawed structural models, and ultimately, unreliable scientific conclusions or failed drug discovery efforts. The energy landscapes of protein-DNA complexes are typically highly nonconvex and ill-conditioned, dominated by long-range electrostatic interactions that create multiple local minima where optimization algorithms can become trapped [26]. Understanding why these systems fail to converge is essential for researchers developing predictive models in structural biology, gene regulation studies, and rational drug design.

The fundamental challenge lies in the complex physicochemical nature of protein-DNA interactions. These interfaces involve specific amino acid-nucleotide contacts, hydrogen bonding patterns, and shape complementarity that must be precisely captured in computational models. When energy minimization procedures fail to converge, researchers obtain incomplete or physically implausible models that cannot reliably inform experimental work. This article examines the root causes of convergence failure in protein-DNA systems, compares computational approaches for addressing these challenges, and provides practical guidance for researchers working at the intersection of computational biology and drug development.

Understanding the Energy Landscape of Protein-DNA Complexes

Key Factors Contributing to Convergence Failure

Protein-DNA complexes present unique challenges for energy minimization due to several intrinsic properties of their interaction interfaces. The highly nonconvex energy landscape emerges from the complex interplay between numerous degrees of freedom in both the protein and DNA components. Unlike simpler molecular systems, protein-DNA interfaces involve:

- Sequence-specific recognition patterns that create discrete energy wells

- Flexible DNA backbone conformations that can adopt multiple low-energy states

- Water-mediated hydrogen bonds that form and break dynamically

- Counterion distributions that screen electrostatic interactions

- Structural adaptations in both binding partners upon complex formation

These factors collectively create a rugged energy landscape with many local minima separated by significant energy barriers. Standard nonlinear conjugate gradient (NCG) methods frequently struggle in such landscapes because they cannot maintain sufficient descent directions when faced with poor curvature information and frequent oscillations in gradient directions [26]. The long-range nature of electrostatic forces in these charged systems further complicates the optimization process, as small structural adjustments can significantly alter distant interactions.
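For reference, a baseline nonlinear conjugate gradient loop is sketched below using the Polak-Ribière coefficient clipped at zero (PR+), a backtracking Armijo line search, and a restart whenever the search direction stops being a descent direction. This is a textbook variant shown only to make the role of the conjugate gradient coefficient concrete; it is not the enhanced method of [26], and the ill-conditioned quadratic test function is a stand-in for a real force field.

```python
import numpy as np

def ncg_minimize(f, grad, x0, tol=1e-6, max_iter=5000):
    """Nonlinear conjugate gradient with PR+ coefficient and Armijo backtracking."""
    x = np.asarray(x0, dtype=float)
    g = grad(x)
    d = -g
    for iteration in range(max_iter):
        if np.linalg.norm(g) < tol:
            return x, iteration
        if g @ d >= 0.0:
            d = -g  # direction lost the descent property: restart
        # Backtracking line search enforcing the Armijo sufficient-decrease rule.
        step, fx, slope = 1.0, f(x), g @ d
        while f(x + step * d) > fx + 1e-4 * step * slope and step > 1e-16:
            step *= 0.5
        x_new = x + step * d
        g_new = grad(x_new)
        # PR+ coefficient: the denominator g @ g is where unstable values arise
        # when gradients oscillate or curvature information is poor.
        beta = max(0.0, g_new @ (g_new - g) / (g @ g))
        d = -g_new + beta * d
        x, g = x_new, g_new
    return x, max_iter

# Ill-conditioned quadratic (condition number 100) as a toy landscape.
A = np.diag([1.0, 10.0, 100.0])
quad = lambda x: 0.5 * x @ A @ x
quad_grad = lambda x: A @ x

x_min, n_iter = ncg_minimize(quad, quad_grad, [1.0, 1.0, 1.0])
print("iterations:", n_iter, "final gradient norm:", np.linalg.norm(quad_grad(x_min)))
```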

Experimental structural biology techniques have revealed the complexity of these interfaces. Of the more than 221,000 structures in the Protein Data Bank, only approximately 13,000 are protein-nucleic acid complexes, highlighting both the difficulty of experimental determination and the relative scarcity of structural templates for computational modeling [27]. This scarcity, together with the structural diversity of the complexes that have been solved, underscores the challenge of developing universal minimization protocols that can handle the varied geometries and interaction patterns found across different protein-DNA complex families.

Comparative Analysis of Convergence Methods

Performance Benchmarking Across Methods

Table 1: Comparison of Energy Minimization Methods for Protein-DNA Systems

| Method | Key Approach | Convergence Rate | Handling of Nonconvexity | Computational Cost | Best Use Case |

|---|---|---|---|---|---|

| Standard Nonlinear Conjugate Gradient (NCG) | Gradient-based local optimization | Moderate | Poor - easily trapped in local minima | Low | Smooth, convex regions of landscape |

| Enhanced ELS-NCG Method [26] | Modified conjugate gradient coefficient with tunable denominator | High | Excellent - improved stability in poor curvature regions | Moderate | General protein-DNA complexes with rugged landscapes |

| Soft-min Energy Global Optimization [5] | Particle swarm with smooth approximation of minimum function | High for global optimum | Excellent - designed for multiple minima | High | Initial structure prediction and refinement |

| Simulated Annealing | Temperature-controlled stochastic search | Moderate | Good but slower transition between minima | High | Systems with deep local minima |

| AI-Based Structure Prediction (AlphaFold3) [27] | Deep learning from evolutionary and structural patterns | High for initial placement | Implicitly handled through training | Variable (high for training) | Rapid model generation without explicit minimization |

Quantitative Performance Assessment

Table 2: Experimental Performance Metrics for Protein-DNA Energy Minimization

| Method | Binding Affinity Prediction Accuracy (r) | Time to Convergence | Structural Deviation from Experimental (Å RMSD) | Success Rate on Difficult Complexes |

|---|---|---|---|---|

| IDEA Model (with experimental data) [28] | 0.79 (Pearson correlation with MITOMI measurements) | Not specified | Not specified | High for bHLH transcription factors |

| IDEA Model (de novo) [28] | 0.67 (Pearson correlation with MITOMI measurements) | Not specified | Not specified | Moderate for bHLH transcription factors |

| Enhanced ELS-NCG Method [26] | Not specified | ~60% faster than staged minimization in LAMMPS | Closely matches dynamics simulation | High - handles ill-conditioned systems |

| Standard NCG | Not specified | Baseline | Significant deviations | Low - frequent trapping in local minima |

| Soft-min Energy Method [5] | Not specified | Faster than Simulated Annealing in escaping local minima | Lower energy conformations achieved | High for benchmark global optimization |

Root Causes of Convergence Failure

Technical and Biophysical Limitations

Convergence failures in protein-DNA systems stem from interconnected technical and biophysical factors that challenge conventional optimization approaches:

Poor Local Curvature Information: The energy landscapes of protein-DNA complexes often contain regions with poorly defined curvature, causing standard NCG methods to compute unstable conjugate gradient parameters. This results in oscillatory behavior where the optimization process cycles through similar states without progressing toward lower energy configurations [26].

Multiple Deep Local Minima: The combination of specific base-amino acid interactions, DNA backbone flexibility, and side chain rotamer distributions creates numerous local energy minima separated by high barriers. Methods like gradient descent become trapped in these suboptimal states, unable to explore the broader landscape effectively [5].

Long-Range Electrostatic Dominance: The polyanionic nature of DNA creates strong, long-range electrostatic fields that dominate the early stages of minimization. These forces can mask finer-grained interactions essential for specific recognition, leading optimization toward physically unrealistic configurations that satisfy electrostatic requirements but violate steric or hydrogen-bonding constraints [26].

Insufficient Experimental Constraints: While computational methods can predict protein-DNA interactions, they often lack sufficient experimental constraints to guide the minimization process. For instance, ChIP-Seq and SELEX data provide binding specificity information but remain separate from structural refinement processes [28]. The integration of such experimental data directly into minimization protocols remains challenging.

The interpretable protein-DNA Energy Associative (IDEA) model demonstrates how combining structural data with sequence information can mitigate some convergence issues. By learning a family-specific interaction matrix that quantifies energetic interactions between individual amino acids and nucleotides, IDEA creates a more tractable energy landscape that better reflects the true physicochemical constraints of protein-DNA recognition [28].

Experimental Protocols for Assessing Convergence

Methodologies for Benchmarking Performance

Protocol 1: Binding Affinity Correlation Assessment

This protocol evaluates how well computational methods predict experimental binding measurements, serving as a key validation metric for convergence to biologically relevant states:

- Select Reference Data: Obtain quantitative binding affinity measurements for a well-characterized transcription factor such as MAX TF against a comprehensive set of DNA sequences (e.g., 255 DNA sequences with E-box motif mutations from MITOMI assays) [28].

- Generate Predictions: Compute binding affinities using the computational method being evaluated (e.g., IDEA model based on MAX crystal structure PDB ID: 1HLO).

- Statistical Comparison: Calculate Pearson and Spearman correlation coefficients between computational predictions and experimental measurements.

- Integration with Experimental Data: For enhanced protocols, incorporate SELEX-seq data during training to improve correlation performance [28].
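
The statistical comparison step of Protocol 1 can be sketched in a few lines. This is a minimal illustration in plain Python, not code from the IDEA study; the function names and the five sample values are hypothetical placeholders rather than MITOMI measurements.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def average_ranks(values):
    """Ranks starting at 1; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks

def spearman(x, y):
    """Spearman correlation: Pearson correlation of the rank vectors."""
    return pearson(average_ranks(x), average_ranks(y))

# Hypothetical placeholder values (arbitrary units), not experimental data
predicted = [-1.2, -0.8, -2.5, -0.1, -1.9]
measured = [-1.0, -0.9, -2.0, -0.3, -2.2]
print("Pearson r:", pearson(predicted, measured))
print("Spearman rho:", spearman(predicted, measured))
```

Reporting both coefficients is useful because Pearson is sensitive to the linearity of the relationship, while Spearman only tests whether the predicted ranking of sequences matches the measured one.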

Protocol 2: Energy Minimization Efficiency Assessment

This procedure quantifies the computational efficiency of minimization algorithms for reaching thermodynamically stable configurations:

- System Preparation: Initialize protein-DNA complex structures from experimental coordinates or homology models.

- Minimization Execution: Apply the target minimization algorithm (e.g., Enhanced ELS-NCG, Soft-min Energy method) with standardized parameters.

- Progress Monitoring: Track energy values, gradient norms, and coordinate changes over optimization iterations.

- Reference Comparison: Compare final energies and structures against reference data from molecular dynamics simulations or experimental structures.

- Performance Metrics: Calculate time to reach target energy thresholds, fraction of replicates achieving convergence, and deviation from reference configurations [26].
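
The monitoring and metric steps of Protocol 2 might look like the following sketch. It assumes a toy harmonic energy in place of a real force field and uses iteration count as a stand-in for wall-clock time; the function names, step size, and tolerances are illustrative choices, not values from [26].

```python
import numpy as np

def energy_and_gradient(x, k):
    """Toy anisotropic harmonic energy; stands in for a force-field call."""
    return 0.5 * float(np.sum(k * x**2)), k * x

def minimize_with_log(x0, k, step=0.05, gtol=1e-3, max_iter=500):
    """Fixed-step gradient descent that records the monitored quantities.

    Each log entry is (iteration, energy, gradient norm, max coordinate change).
    """
    x = np.asarray(x0, dtype=float).copy()
    log = []
    for it in range(max_iter):
        e, g = energy_and_gradient(x, k)
        gnorm = float(np.linalg.norm(g))
        if gnorm < gtol:
            log.append((it, e, gnorm, 0.0))
            return x, log, True
        x_new = x - step * g
        log.append((it, e, gnorm, float(np.max(np.abs(x_new - x)))))
        x = x_new
    return x, log, False

def steps_to_threshold(log, e_target):
    """First iteration at which the energy is at or below e_target, else None."""
    for it, e, _, _ in log:
        if e <= e_target:
            return it
    return None

# Replicates from different random starting coordinates
rng = np.random.default_rng(0)
k = np.array([1.0, 10.0])
results = [minimize_with_log(rng.normal(size=2), k) for _ in range(5)]
fraction_converged = sum(ok for _, _, ok in results) / len(results)
print("fraction converged:", fraction_converged)
print("steps to E <= 1e-3:", [steps_to_threshold(log, 1e-3) for _, log, _ in results])
```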

Protocol 3: Global Optimization Performance Assessment

This methodology evaluates the ability of algorithms to escape local minima and locate global optima on challenging benchmark landscapes:

- Test Functions: Apply methods to standardized nonconvex functions with known global minima (e.g., double wells, Ackley function).

- Particle Dynamics: For swarm-based methods like Soft-min Energy optimization, track particle distributions across the search space.

- Convergence Metrics: Measure the number of function evaluations required to locate the global minimum within a specified tolerance.

- Comparative Analysis: Benchmark performance against established methods like Simulated Annealing, particularly focusing on the ability to transition between local minima [5].
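
As a rough sketch of Protocol 3, the benchmark functions and an evaluation counter can be set up as below. The simulated annealing routine is a deliberately basic baseline with arbitrary, untuned parameters (temperature schedule, proposal width, evaluation budget); it is not the Soft-min Energy method of [5], and whether it reaches the tolerance depends on those settings.

```python
import math
import random

def double_well(x):
    """One-dimensional double well with minima at x[0] = -1 and x[0] = +1."""
    return (x[0] * x[0] - 1.0) ** 2

def ackley(x):
    """Ackley function; global minimum of 0 at the origin."""
    n = len(x)
    s1 = sum(v * v for v in x) / n
    s2 = sum(math.cos(2.0 * math.pi * v) for v in x) / n
    return -20.0 * math.exp(-0.2 * math.sqrt(s1)) - math.exp(s2) + 20.0 + math.e

class CountingFunction:
    """Wraps a function and counts how many times it is evaluated."""
    def __init__(self, f):
        self.f = f
        self.calls = 0

    def __call__(self, x):
        self.calls += 1
        return self.f(x)

def simulated_annealing(f, x0, tol, t0=1.0, cooling=0.995, sigma=0.5,
                        max_evals=20000, seed=0):
    """Return (best value found, evaluations used); stops once best <= tol."""
    rng = random.Random(seed)
    x = list(x0)
    fx = f(x)
    best = fx
    t = t0
    while f.calls < max_evals and best > tol:
        cand = [v + rng.gauss(0.0, sigma) for v in x]
        fc = f(cand)
        # Metropolis rule: always accept downhill, sometimes accept uphill
        if fc < fx or rng.random() < math.exp(-(fc - fx) / t):
            x, fx = cand, fc
            best = min(best, fx)
        t *= cooling
    return best, f.calls

counted = CountingFunction(ackley)
best, n_evals = simulated_annealing(counted, [2.0, -3.0], tol=1e-2)
print("best value:", best, "function evaluations:", n_evals)
```

The same `CountingFunction` wrapper can be passed to any other optimizer so that all methods are compared on an identical evaluation budget.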

Pathway to Convergence Failure

The diagram below illustrates the typical decision points and failure mechanisms encountered during energy minimization of protein-DNA complexes.

This pathway visualization reveals critical failure points where traditional minimization algorithms struggle with protein-DNA complexes. The electrostatic evaluation stage often leads to improper descent directions due to the dominance of long-range forces, while poor local curvature information causes oscillatory behavior that prevents stable convergence. When multiple local minima are present, standard methods frequently become trapped in suboptimal states. The successful pathway demonstrates how enhanced methods like ELS-NCG and Soft-min Energy optimization can overcome these pitfalls through improved stability handling and better exploration of the energy landscape.

Table 3: Essential Research Resources for Protein-DNA Convergence Studies

| Resource | Type | Primary Function | Application in Convergence Studies |

|---|---|---|---|

| Dockground Database [29] | Data Resource | Comprehensive repository of protein-DNA complexes | Provides structural templates and benchmarking datasets for method development |

| IDEA Model [28] | Computational Algorithm | Residue-level interpretable biophysical model | Predicts binding sites and affinities; benchmark for energy function accuracy |

| CSUBST Software [30] | Analysis Tool | Implements ωC metric for error-corrected convergence rates | Quantifies adaptive molecular convergence in evolutionary studies |

| AlphaFold3 [27] | AI Prediction Tool | Predicts protein structures with DNA and other ligands | Generates initial structural models for minimization protocols |

| DNA Curtains [31] | Experimental Platform | High-throughput single-molecule imaging of protein-DNA interactions | Provides experimental validation of binding kinetics and mechanisms |

| SELEX-seq Data [28] | Experimental Dataset | Systematic evolution of ligands by exponential enrichment | Enhances computational models when integrated into training protocols |

| MITOMI Assays [28] | Experimental Measurement | Microfluidic-based binding affinity quantification | Ground truth data for validating computational binding predictions |

The convergence failures encountered in protein-DNA complex energy minimization stem from fundamental limitations of traditional algorithms when confronted with the rugged, electrostatically complex landscapes of these biologically essential assemblies. The comparative data presented in this analysis demonstrates that enhanced optimization approaches like the ELS-NCG method and Soft-min Energy global optimization offer significant advantages over standard techniques, particularly through improved handling of poor curvature information and more effective navigation of multiple local minima. For researchers working in drug discovery and structural biology, selecting appropriate minimization strategies that match the complexity of their target systems is crucial for generating reliable, biologically meaningful models. As computational methods continue to evolve—especially through the integration of AI-based structure prediction with physics-based refinement—the persistent challenge of convergence failure in protein-DNA systems is likely to be mitigated, accelerating our understanding of gene regulation and creating new opportunities for therapeutic intervention.

Algorithm Deep Dive: From Steepest Descent to Enhanced Conjugate Gradient Methods

This guide provides an objective comparison of the Steepest Descent optimization algorithm against Conjugate Gradient and L-BFGS methods, with a focus on energy minimization applications in scientific computing and drug development.

Energy minimization is a foundational step in computational simulations, particularly in molecular dynamics where it is used to relieve unfavorable atomic clashes in initial structures prior to more expensive simulations [32]. The Steepest Descent (SD) method is one of the most straightforward optimization algorithms, characterized by its iterative steps taken in the direction of the negative gradient. Its update rule is defined as x_{k+1} = x_k + α_k * (-∇f(x_k)), where the step size α_k is often determined by a line search or a heuristic approach [32]. Despite its simplicity, SD forms the basis for understanding more complex optimization techniques and remains practically useful in specific scenarios.

The Conjugate Gradient (CG) method improves upon SD by incorporating information from previous search directions to build a set of conjugate vectors, which theoretically allows for convergence to a minimum of an n-dimensional quadratic function in at most n steps [33]. In contrast, the L-BFGS (Limited-memory Broyden–Fletcher–Goldfarb–Shanno) algorithm is a quasi-Newton method that approximates the inverse Hessian matrix using a limited history of updates, aiming to achieve a superlinear convergence rate without the high memory cost of the full BFGS method [32] [33].
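
The n-step property can be checked numerically on a small quadratic. The sketch below implements the textbook linear conjugate gradient iteration for f(x) = ½ xᵀAx − bᵀx with a symmetric positive definite A; the 3×3 matrix is an arbitrary example, and real molecular energy functions are not quadratic, so the finite-termination guarantee does not carry over to them.

```python
import numpy as np

def conjugate_gradient_quadratic(A, b, x0, tol=1e-10):
    """Minimize 0.5 x^T A x - b^T x for symmetric positive definite A.

    Returns the solution and the number of iterations used (at most n).
    """
    x = np.asarray(x0, dtype=float).copy()
    r = b - A @ x              # residual, equal to the negative gradient
    d = r.copy()               # first direction is steepest descent
    iterations = 0
    for _ in range(len(b)):
        if np.linalg.norm(r) < tol:
            break
        Ad = A @ d
        alpha = (r @ r) / (d @ Ad)         # exact line search along d
        x += alpha * d
        r_new = r - alpha * Ad
        beta = (r_new @ r_new) / (r @ r)   # Fletcher-Reeves form
        d = r_new + beta * d               # new direction, A-conjugate to the old ones
        r = r_new
        iterations += 1
    return x, iterations

A = np.array([[4.0, 1.0, 0.0],
              [1.0, 3.0, 1.0],
              [0.0, 1.0, 2.0]])
b = np.array([1.0, 2.0, 3.0])
x_min, n_iter = conjugate_gradient_quadratic(A, b, np.zeros(3))
print("iterations:", n_iter, "residual norm:", np.linalg.norm(A @ x_min - b))
```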

Table 1: Core Algorithm Characteristics and Best-Suited Applications

| Algorithm | Key Mechanism | Theoretical Convergence | Best-Suited Application Phase |

|---|---|---|---|

| Steepest Descent (SD) | Follows negative gradient direction [32] | Linear | Initial minimization, very rough starting points [32] |

| Conjugate Gradient (CG) | Builds conjugate directions from previous steps [33] | n-step for n-dim quadratic (theoretical) | Medium-scale problems, cannot be used with rigid water constraints (e.g., SETTLE) [32] |

| L-BFGS | Approximates inverse Hessian with update history [32] | Superlinear (under conditions) | Later minimization stages, smooth regions of energy landscape [32] |

Theoretical and Practical Performance Comparison

A key differentiator between these algorithms is their robustness, which refers to an algorithm's ability to make progress and converge reliably from poor initial guesses, even at the expense of slower asymptotic convergence speed.

The Steepest Descent algorithm is notably robust because it guarantees function value reduction when equipped with a proper line search. Its update procedure involves calculating new positions and accepting them only if the potential energy decreases (V_{n+1} < V_n); otherwise, the step size is reduced [32]. This inherent cautiousness makes it exceptionally reliable for the initial stages of minimization, where the energy landscape can be chaotic and gradients large.
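
The accept-or-shrink rule described above can be sketched as follows. The growth and shrink factors (1.2 and 0.5), the normalization of the step by the largest force, and the per-atom definition of the maximum force are assumptions modeled on the scheme documented for GROMACS; the harmonic test system and all parameter values are purely illustrative.

```python
import numpy as np

def steepest_descent(energy_and_forces, x0, h0=0.01, emtol=10.0, max_steps=1000):
    """Steepest descent with an adaptive maximum displacement h.

    x0 has shape (n_atoms, 3). A trial step is kept only if the energy drops.
    Returns (coordinates, energy, steps used, converged flag).
    """
    x = np.asarray(x0, dtype=float).copy()
    h = h0
    v, f = energy_and_forces(x)
    for step in range(max_steps):
        fmax = float(np.max(np.linalg.norm(f, axis=1)))  # largest per-atom force
        if fmax < emtol:
            return x, v, step, True
        x_trial = x + f / fmax * h       # the most-forced atom moves by exactly h
        v_trial, f_trial = energy_and_forces(x_trial)
        if v_trial < v:
            x, v, f = x_trial, v_trial, f_trial
            h *= 1.2                     # accepted: try a longer step next time
        else:
            h *= 0.5                     # rejected: retry from x with a shorter step
    return x, v, max_steps, False

# Toy system: two atoms tethered harmonically to reference positions
K = 1000.0
X_REF = np.zeros((2, 3))

def harmonic(x):
    d = x - X_REF
    return 0.5 * K * float(np.sum(d * d)), -K * d

x_start = np.array([[0.10, 0.0, 0.0], [0.0, 0.05, 0.0]])
x_min, v_min, n_steps, converged = steepest_descent(harmonic, x_start)
print("converged:", converged, "steps:", n_steps, "energy:", v_min)
```

Because a rejected step never changes the coordinates, the energy sequence is non-increasing by construction, which is the source of the robustness discussed in this section.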

Conversely, L-BFGS, while generally converging faster than CG in practice, relies on building a curvature approximation from previous iterations [32]. This approximation can be inaccurate when the algorithm is far from the minimum or when the energy function has sharp cut-offs, potentially leading to instability. CG methods, particularly classical variants such as Polak–Ribière–Polyak (PRP) and Hestenes–Stiefel (HS), lack guaranteed convergence for general nonlinear functions, though modern hybrid and three-term variants have been developed to improve robustness and performance in large-scale problems [34] [35].
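
For reference, the two classical conjugacy parameters named above, together with one common safeguard, can be written compactly. This sketch shows the standard PRP and HS formulas and the widely used PRP+ truncation with a steepest descent restart; it is not the hybrid or three-term schemes of [34] [35], and the function names are illustrative.

```python
import numpy as np

def beta_prp(g_new, g_old):
    """Polak-Ribiere-Polyak parameter."""
    return float(g_new @ (g_new - g_old) / (g_old @ g_old))

def beta_hs(g_new, g_old, d_old):
    """Hestenes-Stiefel parameter."""
    y = g_new - g_old
    return float(g_new @ y / (d_old @ y))

def beta_prp_plus(g_new, g_old):
    """PRP+ safeguard: negative values are truncated to zero."""
    return max(0.0, beta_prp(g_new, g_old))

def next_direction(g_new, g_old, d_old):
    """New search direction, with a restart if it is not a descent direction."""
    d = -g_new + beta_prp_plus(g_new, g_old) * d_old
    if g_new @ d >= 0.0:
        d = -g_new
    return d

g_old = np.array([1.0, 0.0])
g_new = np.array([0.0, 1.0])
d_old = -g_old
print("PRP:", beta_prp(g_new, g_old), "HS:", beta_hs(g_new, g_old, d_old))
print("next direction:", next_direction(g_new, g_old, d_old))
```

Truncating a negative parameter to zero makes that step a plain steepest descent step, which trades some speed for stability.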

Table 2: Quantitative Performance and Convergence Data

| Algorithm | Typical Memory Cost | Key Strengths | Key Limitations | Stopping Criterion (Max Force) |

|---|---|---|---|---|

| Steepest Descent | Low (O(n)) | High robustness, easy to implement, handles large initial forces [32] | Slow convergence in late stages [32] | A value between 1 and 10 kJ mol⁻¹ nm⁻¹ is reasonable [32] |

| Conjugate Gradient | Low (O(n)) | More efficient than SD close to minimum [32] | Can get "stuck"; CG cannot be used with constraints [32] | Same as Steepest Descent [32] |

| L-BFGS | Medium (O(m*n), m=history) | Fast convergence, almost as efficient as full BFGS [32] | Approximation can be poor with switched interactions; not yet parallelized [32] | Not explicitly stated, but typically tighter than SD |
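
The O(m·n) memory figure for L-BFGS in the table follows from how the inverse Hessian is applied: only the last m coordinate differences s and gradient differences y are stored, and their effect is reconstructed with the standard two-loop recursion. The sketch below is a generic textbook version, not the GROMACS implementation, and the initial scaling is one common choice.

```python
import numpy as np

def lbfgs_direction(grad, s_hist, y_hist):
    """Two-loop recursion: returns r approximating H^{-1} grad.

    s_hist and y_hist hold the last m pairs, oldest first, with
    s = x_{k+1} - x_k and y = g_{k+1} - g_k. The search direction is -r.
    """
    q = np.asarray(grad, dtype=float).copy()
    rhos = [1.0 / float(y @ s) for s, y in zip(s_hist, y_hist)]
    alphas = []
    # First loop: newest pair to oldest
    for s, y, rho in zip(reversed(s_hist), reversed(y_hist), reversed(rhos)):
        a = rho * float(s @ q)
        alphas.append(a)
        q -= a * y
    # Initial inverse Hessian guess: scaled identity from the newest pair
    if s_hist:
        gamma = float(s_hist[-1] @ y_hist[-1]) / float(y_hist[-1] @ y_hist[-1])
    else:
        gamma = 1.0
    r = gamma * q
    # Second loop: oldest pair to newest
    for s, y, rho, a in zip(s_hist, y_hist, rhos, reversed(alphas)):
        b = rho * float(y @ r)
        r += s * (a - b)
    return r

s = np.array([1.0, 2.0])
y = np.array([2.0, 1.0])
# With one stored pair the secant condition H y = s is reproduced
print(lbfgs_direction(y, [s], [y]))
```

With an empty history the routine returns the gradient itself, so the first iteration reduces to steepest descent.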

Experimental Protocols and Workflow Integration

A standard protocol for energy minimization in molecular dynamics, as seen in GROMACS, often prescribes an initial stage of Steepest Descent. For example, a protocol might specify "1000 steps of SD minimization with strong positional restraints applied to the heavy atoms of the large molecules using a force constant of 5.0 kcal/mol/Å²" [36]. This approach uses SD's robustness to quickly relax the system from a potentially highly non-equilibrium starting configuration (e.g., a docked ligand or a mutated protein) without causing drastic structural changes.

The following workflow diagram illustrates a typical energy minimization protocol that integrates different algorithms:

Diagram Title: Typical Staged Energy Minimization Workflow

This staged approach leverages the strengths of each algorithm: SD for initial rough minimization and CG or L-BFGS for finer convergence. The tolerances are often set differently, with a looser tolerance (e.g., 1000 kJ/mol/nm) for the initial SD phase and a tighter one for the final phase [36].
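
A two-stage setup of this kind could be expressed as a pair of GROMACS MDP parameter sets, generated here from Python for convenience. The parameter names (`integrator`, `emtol`, `emstep`, `nsteps`, `define`) are standard MDP options; the specific values, the second-stage step budget, and the `-DPOSRES` macro (which only has an effect if the topology contains a matching `#ifdef POSRES` block) are illustrative assumptions, not settings taken from [36].

```python
def mdp_text(params):
    """Render a dict of MDP options as 'key = value' lines."""
    return "\n".join(f"{key:<12}= {value}" for key, value in params.items()) + "\n"

# Stage 1: robust steepest descent with restraints and a loose tolerance
stage1 = {
    "define": "-DPOSRES",   # assumes the topology defines a POSRES block
    "integrator": "steep",
    "emtol": 1000.0,        # kJ mol^-1 nm^-1
    "emstep": 0.01,         # initial step size in nm
    "nsteps": 1000,
}

# Stage 2: conjugate gradient refinement with a tighter tolerance
stage2 = {
    "integrator": "cg",
    "emtol": 10.0,          # kJ mol^-1 nm^-1, illustrative choice
    "nsteps": 5000,         # illustrative step budget
}

print(mdp_text(stage1))
print(mdp_text(stage2))
```

Each rendered text would be saved as its own `.mdp` file and run in sequence, with the output coordinates of the first stage used as input to the second.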

Essential Research Reagents and Computational Tools

For researchers implementing these minimization protocols, familiarity with specific tools and parameters is essential.

Table 3: Key Research Reagent Solutions for Energy Minimization

| Reagent / Software / Parameter | Function / Purpose | Example Usage / Note |

|---|---|---|

| GROMACS mdrun | Molecular dynamics simulator performing energy minimization [32] | Select algorithm via integrator = steep/cg/l-bfgs in MDP file [32] |

Position Restraints (posre.itp) |