Electron Density Partitioning: A Critical Factor for Accurate Dispersion Parameters in Drug Discovery

Abstract

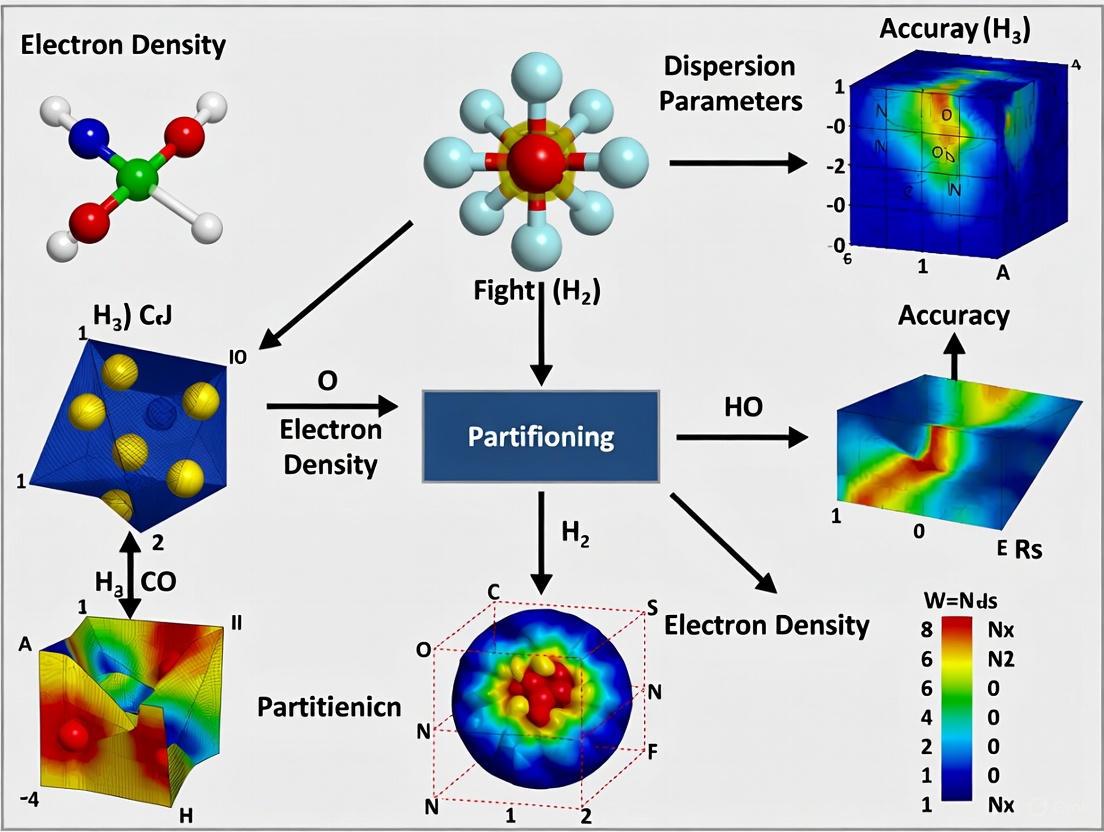

Accurately modeling dispersion interactions is crucial for predicting molecular binding in drug design, yet the accuracy of these parameters is intrinsically linked to how a molecule's electron density is partitioned into atomic contributions. This article explores the fundamental relationship between electron density partitioning schemes and the fidelity of derived dispersion parameters. We examine foundational partitioning theories like Atoms in Molecules (AIM) and Hirshfeld, detail their methodological implementation in quantum chemistry, and address common challenges in achieving parameter accuracy. By comparing the performance of different partitioning-based methods against robust quantum-mechanical benchmarks for biological ligand-pocket interactions, this review provides drug development researchers with a clear guide for selecting and validating computational approaches to improve the prediction of binding affinities and material properties.

The Quantum Mechanical Basis: Linking Electron Density to Dispersion Forces

In quantum chemistry, the ability to predict and rationalize noncovalent interactions is paramount for advancements in drug design, materials science, and crystal engineering. While the molecular electrostatic potential is well-established for interpreting "hard-type" charge-controlled interactions, the "soft-type" orbital-controlled interactions are governed by a molecule's ability to redistribute its electron density in response to an external electric field [1]. This phenomenon is quantitatively described by the polarizability density, a second-order tensor function that has emerged as a fundamental bridge connecting the abstract concept of molecular polarizability to tangible chemical reactivity and molecular recognition processes [1]. The accurate prediction of dispersion interactions—long-range attractive forces between atoms and molecules—depends critically on how we model the underlying electron density and its response properties [2].

This technical guide explores the central role of polarizability density in modern computational chemistry, with particular emphasis on how different schemes for partitioning molecular electron density into atomic components directly impact the accuracy of derived dispersion parameters. Because molecular polarizability is itself an integrated quantity, obtained by integrating the polarizability density over all space, the choice of partitioning scheme becomes crucial for developing accurate, transferable models for complex molecular systems [1]. Recent studies have demonstrated that van der Waals dispersion interactions can induce significant polarization in electron density, with the magnitude of this effect growing with system size [2]. This finding underscores the intimate connection between electron density, polarizability, and dispersion forces, a relationship that forms the core of this examination.

Theoretical Foundation of Polarizability Density

Mathematical Definition and Physical Interpretation

The polarizability density, denoted as χ(r), is formally defined as the field derivative of the total charge density distribution, constituting a second-order tensor with components given by:

[ \chi_{ij}(\mathbf{r}) = r_i \frac{\partial \rho_{\text{tot}}(\mathbf{r})}{\partial F_j(\mathbf{r})} ]

where ( r_i ) is the i-th component of the position vector ( \mathbf{r} ), and ( F_j(\mathbf{r}) ) is the j-th component of the electric field vector at position ( \mathbf{r} ) [1]. At each point in space, χ(r) is represented by a 3×3 matrix that describes how the electron density at that point responds to each component of an applied electric field.

The molecular polarizability tensor α is obtained by integrating the polarizability density over the entire molecular volume:

[ \alpha_{ij} = \int \chi_{ij}(\mathbf{r}) d^3r ]

This integral formulation establishes the fundamental relationship between the local response described by χ(r) and the global molecular property α [1]. The trace of the polarizability density, Tr[χ(r)], provides a scalar field that identifies regions of a molecule most susceptible to polarization, though it loses the directional information contained in the full tensor.
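
The relationship between the local response χ(r) and the integrated polarizability can be checked numerically on a deliberately simple model. The sketch below is a hypothetical one-dimensional Drude-like toy, not a quantum chemical calculation: a Gaussian electron cloud of charge -q is bound to a fixed nucleus by a spring of stiffness k, so a field F shifts the cloud by -qF/k and the exact polarizability is q²/k. All function names and parameter values are assumptions made for illustration. The field derivative of the density is taken by central finite differences and χ(x) = x ∂ρ/∂F is integrated with the trapezoidal rule.

```python
import math

def drude_density(x, field, q=1.0, k=2.0, sigma=0.5):
    """Electron charge density of a 1D Drude-like toy model (hypothetical).

    A Gaussian cloud of total charge -q sits at x0 = -q*field/k. The fixed
    nucleus does not respond to the field, so it is omitted here.
    """
    x0 = -q * field / k
    norm = 1.0 / (sigma * math.sqrt(2.0 * math.pi))
    return -q * norm * math.exp(-0.5 * ((x - x0) / sigma) ** 2)

def polarizability_density(x, h=1e-4, **model):
    """chi(x) = x * d(rho)/dF at zero field, by central finite difference."""
    d_rho = drude_density(x, +h, **model) - drude_density(x, -h, **model)
    return x * d_rho / (2.0 * h)

def integrated_polarizability(n_points=4001, half_width=6.0, **model):
    """Trapezoidal integral of chi(x) over the whole (1D) space."""
    dx = 2.0 * half_width / (n_points - 1)
    xs = [-half_width + i * dx for i in range(n_points)]
    chi = [polarizability_density(x, **model) for x in xs]
    return dx * (sum(chi) - 0.5 * (chi[0] + chi[-1]))

if __name__ == "__main__":
    # Compare with the analytic Drude result q**2 / k for the defaults.
    print(integrated_polarizability(), 1.0 ** 2 / 2.0)
```

In this toy model χ(x) is non-negative everywhere; the negative regions discussed for real molecules arise only when several interacting centers compete for the response.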

Key Features and Characteristics

The polarizability density exhibits several important characteristics that distinguish it from the unperturbed electron density:

- Spatial Distribution: The most polarizable regions occur primarily in the valence shells, as core electrons are more tightly bound to nuclei and consequently more difficult to polarize [1].

- Sign Asymmetry: Unlike the electron density which is strictly positive everywhere, Tr[χ(r)] can exhibit both positive and negative values in different molecular regions. Negative values indicate regions that polarize opposite to the applied field, representing a reaction against stronger polarization occurring elsewhere in the molecule [1].

- Tensor Asymmetry: The full χ(r) tensor is inherently asymmetric (( \chi_{ij}(\mathbf{r}) \neq \chi_{ji}(\mathbf{r}) ) for ( i \neq j )) except at points lying on molecular symmetry elements that impose constraints on the tensor components [1].

- Integrated Symmetry: Despite the local asymmetry of χ(r), the integrated molecular polarizability tensor α is necessarily symmetric and positive definite, as it derives from a second derivative of the energy with respect to applied electric field components [1].
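
The symmetry of the integrated tensor noted in the last point follows from the standard perturbative expansion of the energy in a uniform static field. The expansion below is a textbook relation rather than a formula quoted from the cited work; the symbol ( \mu_i^{(0)} ) for the permanent dipole is introduced here only for illustration. Because mixed second derivatives commute, exchanging i and j leaves the result unchanged.

```latex
E(\mathbf{F}) = E_0 - \sum_i \mu_i^{(0)} F_i - \frac{1}{2} \sum_{i,j} \alpha_{ij} F_i F_j + \dots
\qquad\Longrightarrow\qquad
\alpha_{ij} = -\left.\frac{\partial^2 E}{\partial F_i \, \partial F_j}\right|_{\mathbf{F}=0} = \alpha_{ji}
```

No such constraint applies to χ(r) itself, since it is a local quantity and not a second derivative of the total energy.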

Table 1: Comparative Features of Electrostatic Potential and Polarizability Density

| Feature | Electrostatic Potential φ(r) | Polarizability Density χ(r) |
|---|---|---|
| Physical Interpretation | Potential energy of a unit positive charge at point r | Response of electron density at point r to external electric field |
| Dominant Interaction Type | Hard-type (charge-controlled) | Soft-type (orbital-controlled) |
| Mathematical Nature | Scalar field | Second-order tensor field |
| Sign Definiteness | Can be positive or negative | Trace can be positive or negative |
| Computational Complexity | Relatively straightforward to compute | Requires response property calculations |
| Common Applications | Identifying reactive sites, hydrogen bonding | Understanding polarization, dispersion, donor-acceptor interactions |

Partitioning Schemes and Atomic Polarizabilities

Approaches to Electron Density Partitioning

The partitioning of molecular electron density into atomic contributions represents a cornerstone for understanding how molecular polarizability emerges from atomic and bond contributions. Currently, three principal schemes dominate the literature, each with distinct theoretical foundations and practical implications.

The Quantum Theory of Atoms in Molecules (QTAIM) approach, developed by Bader, partitions space into non-overlapping atomic basins bounded by zero-flux surfaces in the electron density gradient [1] [3]. This method provides well-defined atomic domains with clear physical interpretation based on topological analysis of the electron density. QTAIM generally produces atomic polarizabilities that demonstrate better transferability between chemical environments compared to other methods [3].

The Hirshfeld partitioning scheme employs a fuzzy boundary approach: at every point in space, each atom receives a share of the molecular electron density in proportion to its contribution to a reference promolecular density (the stockholder principle), and iterative variants update the reference atoms until self-consistency [3] [4]. Recent advances like the expHAR (exponential Hirshfeld partition scheme) have improved the accuracy of hydrogen positions and X-hydrogen bond lengths in Hirshfeld atom refinement [4].

The Voronoi partitioning method divides space into polyhedral cells around each atom based solely on nuclear coordinates, creating hard-space partitions without reference to the electron density distribution [1]. While computationally efficient, this approach often produces atomic volumes and polarizabilities with limited chemical interpretability.
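
The practical difference between hard and fuzzy boundaries can be seen on a one-dimensional toy density. The sketch below is illustrative only: the two Gaussian "atoms", their widths, and the 0.2-electron charge transfer are hypothetical values, and the hard boundary is a Voronoi-like midpoint cut, not a QTAIM zero-flux surface. The fuzzy populations use Hirshfeld-style stockholder weights built from a neutral promolecule.

```python
import math

def gaussian(x, center, width, electrons):
    """1D Gaussian density normalised to hold `electrons` electrons."""
    norm = electrons / (width * math.sqrt(2.0 * math.pi))
    return norm * math.exp(-0.5 * ((x - center) / width) ** 2)

X_A, X_B = 0.0, 1.5      # hypothetical nuclear positions
W_A, W_B = 0.40, 0.60    # hypothetical atomic widths

def reference_atoms(x):
    """Neutral free-atom densities forming the promolecule (1 and 3 electrons)."""
    return gaussian(x, X_A, W_A, 1.0), gaussian(x, X_B, W_B, 3.0)

def molecular_density(x):
    """Toy 'molecule': 0.2 electrons moved from A to B relative to the promolecule."""
    return gaussian(x, X_A, W_A, 0.8) + gaussian(x, X_B, W_B, 3.2)

def atomic_populations(n_points=6001, lo=-6.0, hi=8.0):
    """Return ([N_A, N_B] hard, [N_A, N_B] fuzzy) by simple quadrature."""
    dx = (hi - lo) / (n_points - 1)
    boundary = 0.5 * (X_A + X_B)          # Voronoi-like cut at the midpoint
    hard, fuzzy = [0.0, 0.0], [0.0, 0.0]
    for i in range(n_points):
        x = lo + i * dx
        electrons_here = molecular_density(x) * dx
        hard[0 if x < boundary else 1] += electrons_here
        ref_a, ref_b = reference_atoms(x)
        total_ref = ref_a + ref_b
        w_a = ref_a / total_ref if total_ref > 0.0 else 0.5   # stockholder weight
        fuzzy[0] += w_a * electrons_here
        fuzzy[1] += (1.0 - w_a) * electrons_here
    return hard, fuzzy

if __name__ == "__main__":
    print(atomic_populations())
```

Both schemes recover the same total number of electrons, but they assign it to the two atoms differently, which is the origin of scheme-dependent atomic properties.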

Impact on Atomic Polarizability Tensors

The choice of partitioning scheme significantly influences the calculated atomic polarizabilities and their physical interpretation. In the QTAIM framework, the atomic polarizability tensor for domain Ω is calculated as:

[ \alpha_{ij}(\Omega) = \int_{\Omega} \chi_{ij}(\mathbf{r}) d^3r ]

which can be equivalently expressed using the atomic dipole moment μ(Ω) as:

[ \alpha_{ij}(\Omega) = \frac{\partial \mu_i(\Omega)}{\partial F_j} ]

The atomic dipole moment itself contains two distinct contributions: the internal polarization arising from deformation of electronic charge within the atomic basin, and bond polarization resulting from charge transfer between atoms through chemical bonds [1].

Unlike the molecular polarizability tensor, atomic polarizability tensors are not guaranteed to be symmetric unless the atomic basin possesses specific symmetry elements [1]. This asymmetry reflects the anisotropic electronic environment experienced by atoms in molecules and has important implications for constructing transferable force fields and dispersion models.
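
A small numerical illustration of this point: individual atomic tensors may be asymmetric while their sum, the molecular tensor, is symmetric. The tensor entries below are made-up numbers chosen so that the antisymmetric parts cancel; they do not come from any calculation in the cited work.

```python
def add_tensors(tensors):
    """Element-wise sum of 3x3 tensors given as nested lists."""
    total = [[0.0] * 3 for _ in range(3)]
    for tensor in tensors:
        for i in range(3):
            for j in range(3):
                total[i][j] += tensor[i][j]
    return total

def max_asymmetry(tensor):
    """Largest |t_ij - t_ji|; zero for a symmetric tensor."""
    return max(abs(tensor[i][j] - tensor[j][i])
               for i in range(3) for j in range(3))

def isotropic_value(tensor):
    """One third of the trace, the orientation-averaged polarizability."""
    return (tensor[0][0] + tensor[1][1] + tensor[2][2]) / 3.0

# Hypothetical atomic polarizability tensors (arbitrary units)
ATOM_1 = [[4.0, 0.3, 0.0], [0.1, 3.0, 0.0], [0.0, 0.0, 3.0]]
ATOM_2 = [[6.0, 0.1, 0.0], [0.3, 5.0, 0.0], [0.0, 0.0, 5.0]]
MOLECULE = add_tensors([ATOM_1, ATOM_2])

if __name__ == "__main__":
    print(max_asymmetry(ATOM_1), max_asymmetry(MOLECULE), isotropic_value(MOLECULE))
```

For force field work, a common choice is to keep only the symmetric part or the isotropic value of each atomic tensor, at the cost of discarding some of the anisotropy information.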

Table 2: Comparison of Electron Density Partitioning Schemes for Polarizability Calculations

| Partitioning Scheme | Space Type | Boundary Definition | Atomic Polarizability Transferability | Computational Cost |

|---|---|---|---|---|

| QTAIM | Non-overlapping (hard) | Zero-flux surfaces in ∇ρ(r) | High | High |

| Hirshfeld | Overlapping (fuzzy) | Iterative stockholder partitioning | Moderate | Moderate |

| Voronoi | Non-overlapping (hard) | Nuclear coordinates only | Low | Low |

| Voronoi-Deformation Density (VDD) | Non-overlapping (hard) | Voronoi with deformation density correction | Moderate | Moderate |

Computational Methodologies and Protocols

Quantum Chemical Methods for Polarizability Calculation

The accurate computation of molecular polarizabilities and polarizability densities requires careful selection of both electronic structure methods and basis sets. Traditional approaches calculate the molecular polarizability tensor via finite differentiation of the molecular dipole moment with respect to applied electric field components:

[ \alpha_{ij} = \frac{\partial \mu_i}{\partial F_j} ]
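
The finite-field expression above can be sketched with central differences. In the example, `toy_dipole` is a hypothetical stand-in for a call to an electronic structure code that returns the dipole moment at a given applied field; its coefficients are invented for illustration. The quadratic term mimics a hyperpolarizability contribution, which cancels in the central difference because it is even in the field.

```python
def finite_field_polarizability(dipole_fn, step=1e-3):
    """alpha[i][j] = d mu_i / d F_j by central finite differences."""
    alpha = [[0.0] * 3 for _ in range(3)]
    for j in range(3):
        field_plus = [0.0, 0.0, 0.0]
        field_minus = [0.0, 0.0, 0.0]
        field_plus[j] = step
        field_minus[j] = -step
        mu_plus = dipole_fn(field_plus)
        mu_minus = dipole_fn(field_minus)
        for i in range(3):
            alpha[i][j] = (mu_plus[i] - mu_minus[i]) / (2.0 * step)
    return alpha

# Hypothetical model parameters (atomic units) standing in for a real molecule
TOY_ALPHA = [[10.0, 0.5, 0.0], [0.5, 12.0, 0.0], [0.0, 0.0, 8.0]]
TOY_MU0 = [0.2, 0.0, -0.1]
TOY_BETA = 30.0

def toy_dipole(field):
    """Dipole = permanent + linear response + an even (quadratic) term."""
    mu = []
    for i in range(3):
        linear = sum(TOY_ALPHA[i][j] * field[j] for j in range(3))
        mu.append(TOY_MU0[i] + linear + 0.5 * TOY_BETA * field[i] ** 2)
    return mu

if __name__ == "__main__":
    for row in finite_field_polarizability(toy_dipole):
        print(row)
```

In real calculations the step size is a compromise: too large and higher-order odd terms contaminate the result, too small and numerical noise in the converged dipole dominates.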

Density Functional Theory (DFT) with hybrid functionals like B3LYP and SCAN0 provides a reasonable balance between computational cost and accuracy for polarizability calculations [5]. However, benchmark studies reveal that even hybrid DFT functionals can exhibit substantial errors, with B3LYP showing a mean absolute error of 0.302 atomic units compared to coupled-cluster references for small organic molecules [5]. The linear response coupled cluster singles and doubles (LR-CCSD) method represents the gold standard for polarizability calculations, but its O(N⁶) scaling limits application to small molecules [5]. For the 7,211 molecules in the QM7b database, LR-CCSD with diffuse basis sets like d-aug-cc-pVDZ provides benchmark-quality polarizabilities [5].

Emerging machine learning (ML) approaches, particularly symmetry-adapted Gaussian process regression (SA-GPR), can predict polarizability tensors with errors approximately 50% smaller than hybrid DFT at negligible computational cost [5]. These models learn from high-quality reference data and can achieve coupled-cluster level accuracy for diverse molecular sets including conjugated systems, carbohydrates, small drugs, amino acids, and nucleobases [5].

Accounting for Nuclear Vibrations

Conventional polarizability calculations typically employ optimized molecular geometries, neglecting the impact of nuclear vibrations. However, recent investigations reveal that vibrational effects can introduce significant uncertainties—up to 6% in sensing devices and 50% in optical devices [6]. The Nuclear Ensemble (NE) method samples geometries according to the nuclear wavefunction and computes polarizabilities for each configuration, providing a distribution that reflects vibrational effects [6]. For molecules like CF₂Cl₂ and CF₃CH₂F used in refrigerant and detection technologies, the isotropic polarizability distribution shows standard deviations of 1.8-6.7% relative to the mean value [6].
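
The idea behind ensemble sampling can be sketched for a single vibrational mode. This is a minimal illustration under strong assumptions, not the Nuclear Ensemble implementation of the cited work: the ground-state distribution of one harmonic normal coordinate Q is taken as a Gaussian of width `sigma_q`, and the dependence of the isotropic polarizability on Q is a hypothetical quadratic model with invented coefficients. A real application would sample all normal modes and run an electronic structure calculation at each geometry.

```python
import random
import statistics

def alpha_of_q(q):
    """Hypothetical isotropic polarizability along one normal coordinate (a.u.)."""
    return 30.0 + 8.0 * q + 5.0 * q * q

def sample_polarizabilities(n_samples=20000, sigma_q=0.1, seed=0):
    """Draw Q from the Gaussian ground-state distribution and evaluate alpha(Q)."""
    rng = random.Random(seed)
    return [alpha_of_q(rng.gauss(0.0, sigma_q)) for _ in range(n_samples)]

def ensemble_statistics(values):
    """Mean, standard deviation, and relative spread (std / mean)."""
    mean = statistics.mean(values)
    std = statistics.stdev(values)
    return mean, std, std / mean

if __name__ == "__main__":
    print(ensemble_statistics(sample_polarizabilities()))
```

For this model the ensemble mean is shifted from the equilibrium value by the curvature term (5 σ² here), while the linear term controls most of the spread.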

The Zero-Point Vibrational Average (ZPVA) correction represents an alternative approach, expanding the electronic property as a function of normal mode coordinates via first and second derivatives at the equilibrium geometry [6]. For accurate modeling of polarizability-dependent phenomena, particularly in spectroscopy and sensing applications, incorporating vibrational corrections is essential.

Experimental Protocols for Validation

Computational predictions of polarizabilities require validation against experimental measurements. Several established experimental techniques provide reference data:

- Refractometry: Measures the refractive index of gases or liquids, related to the mean polarizability through the Lorentz-Lorenz equation.

- Dielectric Constant Measurements: Provides information on the polarizability in condensed phases.

- Depolarization Ratios in Rayleigh Scattering: Determines the anisotropy of the polarizability tensor.

- Electron Impact Ionization Cross Sections: Correlates with isotropic polarizability, particularly relevant for gas sensing applications [6].
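
As an example of how such measurements connect to theory, the Lorentz-Lorenz relation mentioned under refractometry can be inverted for the mean polarizability volume. The sketch uses the form (n² - 1)/(n² + 2) = (4π/3) N α', with N the number density and α' the polarizability volume. The refractive index and number density used for nitrogen gas are approximate textbook values assumed here for illustration, not data from the cited studies.

```python
import math

def polarizability_volume(refractive_index, number_density):
    """Invert Lorentz-Lorenz for alpha' (in m^3 if number_density is in m^-3)."""
    n2 = refractive_index ** 2
    return 3.0 / (4.0 * math.pi * number_density) * (n2 - 1.0) / (n2 + 2.0)

def refractive_index(alpha_volume, number_density):
    """Forward Lorentz-Lorenz: refractive index from alpha' and number density."""
    x = 4.0 * math.pi * number_density * alpha_volume / 3.0
    return math.sqrt((1.0 + 2.0 * x) / (1.0 - x))

# Assumed, approximate inputs for N2 gas near 0 degrees C and 1 atm
N2_REFRACTIVE_INDEX = 1.000298
N2_NUMBER_DENSITY = 2.687e25   # molecules per m^3

ALPHA_N2 = polarizability_volume(N2_REFRACTIVE_INDEX, N2_NUMBER_DENSITY)

if __name__ == "__main__":
    print(ALPHA_N2 * 1e30, "cubic angstrom")
```

With these inputs the result falls between 1.7 and 1.8 Å³, the expected order of magnitude for a small diatomic molecule.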

For the 23 drug molecules investigated in environmental partitioning studies, quantum mechanical calculations of polarizabilities and other physicochemical properties provide essential data where experimental measurements are limited by legal restrictions or molecular complexity [7].

Table 3: Research Reagent Solutions for Polarizability Density Calculations

| Tool/Category | Specific Examples | Function/Purpose | Key Considerations |
|---|---|---|---|
| Electronic Structure Codes | Gaussian, ORCA, CFOUR, Psi4 | Compute electron density, response properties, and polarizabilities | Support for LR-CCSD, E-field perturbations, analytical derivatives |
| Partitioning Software | AIMAll, Multiwfn, NoSpherA2 | Perform QTAIM, Hirshfeld, and Voronoi partitioning | Integration with crystallographic data, user-friendly visualization |
| Basis Sets | d-aug-cc-pVDZ, aug-cc-pVTZ, aug-cc-pVQZ | Ensure proper description of diffuse electron regions | Balance between computational cost and completeness |
| Machine Learning Frameworks | AlphaML, SA-GPR models | Predict polarizability tensors with coupled-cluster accuracy | Transferability to new chemical spaces, data requirements |
| Visualization Tools | VMD, Jmol, VESTA | Analyze and visualize polarizability density and molecular response | Tensor visualization capabilities, isosurface rendering |
| Benchmark Databases | QM7b, S12L, L7 | Provide reference data for method validation and ML training | Diversity of chemical structures, property availability |

Applications in Molecular Recognition and Materials Design

Secondary Interactions and Supramolecular Chemistry

Polarizability density analysis provides unique insights into secondary interactions that govern molecular recognition and supramolecular assembly. Unlike the electrostatic potential which identifies σ-holes and π-holes as regions of positive potential, the polarizability density reveals these as areas with enhanced polarizability, offering a complementary perspective on their reactivity [1] [3]. For electron donor-acceptor complexes and other molecular adducts, distributed atomic polarizabilities derived from polarizability density partitioning enable precise modeling of induction energies and polarization contributions to binding [1].

In halogen bonding, chalcogen bonding, and pnictogen bonding, the interplay between electrostatic and polarization components determines the strength and directionality of interactions. Polarizability density maps can predict which molecular regions are most susceptible to deformation during complex formation, providing a powerful tool for rational design of supramolecular systems [3].

Dispersion Interactions and van der Waals Forces

Dispersion interactions, responsible for long-range attraction between nonpolar molecules, are intrinsically linked to polarizability through the London dispersion formula. Recent advances demonstrate that van der Waals interactions themselves induce significant polarization in electron density, with the effect growing linearly with system size [2]. For polyaromatic hydrocarbons and supramolecular complexes, this dispersion-driven polarization can alter long-range electrostatic potentials by up to 4 kcal/mol and reshape noncovalent interaction surfaces [2].
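
The London formula referred to above can be written as a short function. This is the simple London approximation, C6 = (3/2) α_A α_B I_A I_B / (I_A + I_B), with the leading-order energy -C6/R⁶, all in atomic units. The argon polarizability and ionization energy used in the example are approximate literature values assumed for illustration, and the approximation is known to underestimate accurate C6 coefficients.

```python
def london_c6(alpha_a, alpha_b, ion_a, ion_b):
    """London approximation to the C6 coefficient (atomic units)."""
    return 1.5 * alpha_a * alpha_b * ion_a * ion_b / (ion_a + ion_b)

def dispersion_energy(c6, distance):
    """Leading-order dispersion energy -C6 / R^6 (atomic units)."""
    return -c6 / distance ** 6

# Approximate values for argon, assumed for illustration
ARGON_ALPHA = 11.08        # polarizability, bohr^3
ARGON_IONIZATION = 0.579   # first ionization energy, hartree

ARGON_C6 = london_c6(ARGON_ALPHA, ARGON_ALPHA, ARGON_IONIZATION, ARGON_IONIZATION)

if __name__ == "__main__":
    print(ARGON_C6, dispersion_energy(ARGON_C6, 7.0))
```

Because C6 scales with the product of the two polarizabilities, any error in how molecular polarizability is divided among atoms propagates directly into atom-atom dispersion coefficients.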

The Many-Body Dispersion (MBD) method, particularly the fully coupled and optimally tuned variant (MBD@FCO), accurately captures these polarization effects by modeling collective electron density fluctuations through coupled quantum Drude oscillators [2]. This approach bridges energy-based dispersion models with density-based chemical analysis, enabling more realistic modeling of noncovalent interactions in complex systems.
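
The coupled-oscillator picture can be illustrated with the smallest possible case: two identical isotropic quantum Drude oscillators coupled by the bare dipole-dipole interaction. This sketch is not the MBD@FCO method; it omits range separation, damping, and any tuning, and the parameter values are hypothetical. In the frame aligned with the interatomic axis, the normal-mode frequencies are ω√(1 ± c) with c = α/R³ for the two perpendicular directions and c = -2α/R³ along the axis, and the interaction energy is the change in zero-point energy.

```python
import math

def coupled_oscillator_energy(alpha, omega, distance):
    """Zero-point interaction energy of two dipole-coupled 3D oscillators (a.u.)."""
    x = alpha / distance ** 3
    couplings = (x, x, -2.0 * x)   # two perpendicular directions, one axial
    energy = 0.0
    for c in couplings:
        if abs(c) >= 1.0:
            raise ValueError("coupling too strong: oscillators too close")
        # coupled zero-point energy minus the uncoupled value (omega per direction)
        energy += 0.5 * omega * (math.sqrt(1.0 + c) + math.sqrt(1.0 - c)) - omega
    return energy

def leading_order_energy(alpha, omega, distance):
    """Second-order result -C6/R^6 with C6 = (3/4) * omega * alpha^2."""
    return -0.75 * omega * alpha ** 2 / distance ** 6

if __name__ == "__main__":
    for r in (6.0, 10.0, 20.0):
        print(r, coupled_oscillator_energy(10.0, 0.5, r), leading_order_energy(10.0, 0.5, r))
```

At large separation the exact coupled result approaches the pairwise -C6/R⁶ term; at shorter range it becomes more attractive, a first glimpse of the beyond-pairwise effects that many-body treatments are designed to capture.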

Environmental Partitioning of Drug Molecules

The polarizability of drug molecules directly influences their environmental partitioning behavior, governing distribution between air, water, and organic phases [7]. For the 23 prominent drug molecules studied in environmental monitoring, including fentanyl, cocaine, methamphetamine, and LSD, quantum chemical calculations of polarizabilities provide essential parameters for predicting partition coefficients (logKOW, logKOA, logKAW) when experimental data is unavailable due to legal restrictions or molecular complexity [7].

The relationship between polarizability and hexadecane/air partition coefficients (logKHdA) is particularly important for understanding the behavior of semi-volatile organic compounds in indoor environments, where partitioning between gas phase, particle phase, and house dust determines exposure pathways [7]. Accurate prediction of these partitioning coefficients requires reliable polarizability data, which in turn depends on the chosen electron density partitioning scheme.

Current Challenges and Future Perspectives

Despite significant advances in computational methods for calculating polarizability density and atomic polarizabilities, several challenges remain unresolved. The transferability of atomic polarizabilities between different chemical environments continues to be limited, particularly for atoms in conjugated systems or unusual coordination environments [1] [5]. The non-additivity of polarizabilities observed in ionic liquids and other complex systems presents additional complications for force field development [3].

The integration of machine learning approaches with high-quality quantum chemical reference data offers promising avenues for accurate polarizability prediction at minimal computational cost [8] [5]. For gold nanoclusters and other nanomaterials, ML models like artificial neural networks, Gaussian process regression, and kernel ridge regression have demonstrated excellent performance in predicting polarizabilities, outperforming conventional DFT approaches [8].

The emerging field of quantum crystallography combines advanced crystallographic techniques with quantum mechanical calculations to extract precise electron density distributions from experimental measurements [4]. Methods like Hirshfeld Atom Refinement (HAR) and the electron density-based multipole model provide experimental validation for computational predictions of electron density and its response properties [4]. As these techniques become more widespread, they will enable more rigorous testing and refinement of polarizability density models.

Future research directions will likely focus on improving the description of frequency-dependent polarizabilities, extending methods to larger systems including biomolecules and materials, and developing more sophisticated partitioning schemes that better capture the effects of chemical environment on atomic response properties. The integration of polarizability density analysis into automated computational workflows for drug discovery and materials design represents another promising frontier for this fundamental bridge between electron density and molecular polarizability.

The distribution of electrons, described by the electron charge density ( \rho(\mathbf{r}) ), is a fundamental quantity in chemistry, dictating chemical bonding, molecular interactions, and material properties. [1] Within the framework of Atoms in Molecules (AIM) theory, a rigorous method for partitioning a molecular system into its constituent atoms is provided by the topology of ( \rho(\mathbf{r}) ). This partitioning is not merely conceptual; it is essential for quantifying atomic properties, understanding intermolecular interactions, and predicting the behavior of molecules in complex environments. The accuracy of derived properties, including dispersion parameters critical for modeling weak van der Waals forces, is inherently linked to the method used to define an "atom" in a molecule.

This technical guide explores the core concepts of AIM theory—atomic basins and the zero-flux condition—and frames them within a critical research context: how the choice of electron density partitioning scheme impacts the accuracy of computed dispersion energies. Such energies are vital in fields like drug development, where reliable prediction of ligand-receptor binding, which often involves secondary interactions, depends on accurate physical models. [1]

Theoretical Foundations of AIM

The Topology of the Electron Density

The electron density ( \rho(\mathbf{r}) ) is a scalar function that exhibits characteristic features in a molecule. AIM theory, developed by Bader, uses the topology of ( \rho(\mathbf{r}) ) to partition molecular space. Key topological features include:

- Critical Points (CPs): Points in space where the gradient of the density, ( \nabla \rho(\mathbf{r}) ), vanishes.

- Atomic Basins: Regions of space bounded by surfaces through which the gradient flux is zero.

- Bond Paths: Lines of maximum electron density connecting bonded atomic nuclei, identified by the paths followed from bond critical points.

The Zero-Flux Condition and Atomic Basins

The cornerstone of AIM partitioning is the zero-flux condition. This condition defines the boundary between two atomic basins. For an atomic basin ( \Omega ), the boundary surface ( S(\Omega) ) satisfies:

[ \nabla \rho(\mathbf{r}) \cdot \mathbf{n}(\mathbf{r}) = 0 \quad \text{for all } \mathbf{r} \in S(\Omega) ]

where ( \mathbf{n}(\mathbf{r}) ) is the unit vector normal to the surface at point ( \mathbf{r} ). This condition ensures that no gradient paths of the electron density cross the boundary of the atomic basin; at the surface, ( \nabla \rho(\mathbf{r}) ) is everywhere tangential to it. Consequently, each atomic basin encompasses a single atomic nucleus and a well-defined share of the total electron density.

An atomic basin ( \Omega ) is thus defined as a region of space bounded by a zero-flux surface in the gradient vector field of ( \rho(\mathbf{r}) ) and containing one nucleus. The integrated properties within this basin, such as the electron population, energy, and volume, are assigned to that atom.
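
A one-dimensional analogue makes the zero-flux condition concrete. Along the internuclear axis of a model diatomic, the boundary between the two basins is the point where the derivative of the density vanishes, which corresponds to the bond critical point. The sketch below assumes a hypothetical density built from two exponential (cusped) atomic terms with invented amplitudes and exponents, and locates the boundary by bisection on a finite-difference derivative.

```python
import math

# Hypothetical model parameters: amplitudes, exponents, and bond length
AMP_A, EXP_A = 1.0, 2.0
AMP_B, EXP_B = 3.0, 2.5
BOND = 2.0   # nucleus A at x = 0, nucleus B at x = BOND

def density(x):
    """Sum of two exponential atomic densities with cusps at the nuclei."""
    return AMP_A * math.exp(-EXP_A * abs(x)) + AMP_B * math.exp(-EXP_B * abs(x - BOND))

def density_slope(x, h=1e-6):
    """Central finite-difference derivative of the density."""
    return (density(x + h) - density(x - h)) / (2.0 * h)

def zero_flux_point(tol=1e-10):
    """Bisection for d(rho)/dx = 0 strictly between the two nuclei."""
    lo, hi = 0.01, BOND - 0.01     # stay away from the nuclear cusps
    assert density_slope(lo) < 0.0 < density_slope(hi)
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if density_slope(mid) < 0.0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

if __name__ == "__main__":
    print(zero_flux_point())
```

The boundary lies closer to the atom with the smaller density contribution, so the basin of the more electron-rich center is larger than a midpoint cut would suggest.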

Partitioning Schemes and Their Impact on Property Prediction

The choice of partitioning scheme directly influences the computed atomic and molecular properties. Two primary approaches exist:

- Hard Space (Non-overlapping) Partitioning: Schemes like AIM define sharp, non-overlapping atomic boundaries via zero-flux surfaces. [1]

- Fuzzy (Overlapping) Partitioning: Schemes such as Hirshfeld or Mulliken populations define atoms with overlapping boundaries, often leading to different atomic charges and multipole moments. [1]

This distinction is critical for properties derived from the electron density response, such as polarizability. The molecular polarizability tensor ( \alpha ) describes how a molecule's electron density distorts under an external electric field. It can be partitioned into atomic contributions ( \alpha_{ij}(\Omega) ) by integrating the polarizability density ( \chi(\mathbf{r}) ) over an atomic basin ( \Omega ): [1]

[ \alpha_{ij}(\Omega) = \int_{\Omega} \chi_{ij}(\mathbf{r}) d^3r ]

where ( \chi_{ij}(\mathbf{r}) = r_i \frac{\partial \rho_{\text{tot}}(\mathbf{r})}{\partial F_j} ) is a tensor component of the polarizability density. [1] The atomic dipole moment ( \boldsymbol{\mu}(\Omega) ) and its response to the field can be similarly decomposed into internal polarization (charge deformation within the basin) and bond polarization (charge transfer between basins). [1] A key consequence of hard partitioning is that the resulting atomic polarizability tensors are not guaranteed to be symmetric unless the atomic basin itself possesses symmetry, a feature inherent to the asymmetric nature of ( \chi(\mathbf{r}) ). [1]

Connecting Atomic Polarizability to Dispersion Interactions

Dispersion interactions, also known as London forces, are soft, orbital-controlled interactions arising from the mutual instantaneous polarization of molecules. [1] The strength of these interactions is related to the molecular polarizability. When molecular polarizability is inaccurately partitioned into atomic terms, the resulting dispersion parameters in force fields or interaction models become less transferable and accurate. For drug molecules, which often engage in secondary interactions (e.g., in electron donor-acceptor complexes), the precise description of these dispersion forces is crucial for predicting binding affinity and selectivity. [1] AIM theory provides a rigorous, physically grounded framework for this partitioning, thereby offering a pathway to more accurate dispersion parameters compared to methods that rely on more arbitrary atomic definitions.
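
One concrete way in which a partitioning scheme feeds into dispersion parameters is volume scaling. The sketch below follows the Tkatchenko-Scheffler recipe, which is not described in this article and is used here only as an example: the free-atom polarizability is scaled by the ratio of the atom-in-molecule volume to the free-atom volume, and the free-atom C6 by the square of that ratio. The free-atom carbon values are approximate literature numbers, and the two volume ratios are hypothetical stand-ins for what two different partitioning schemes might return.

```python
def scale_by_volume(alpha_free, c6_free, volume_ratio):
    """Atom-in-molecule alpha and C6 from free-atom values and V_eff / V_free."""
    return alpha_free * volume_ratio, c6_free * volume_ratio ** 2

def combine_c6(c6_aa, c6_bb, alpha_a, alpha_b):
    """Heteroatomic C6 from homoatomic coefficients and polarizabilities."""
    return 2.0 * c6_aa * c6_bb / ((alpha_b / alpha_a) * c6_aa + (alpha_a / alpha_b) * c6_bb)

# Approximate free-atom reference values for carbon (atomic units), assumed here
CARBON_ALPHA_FREE = 12.0
CARBON_C6_FREE = 46.6

# Hypothetical volume ratios for the same carbon atom from two partitioning schemes
RATIO_SCHEME_1 = 0.80
RATIO_SCHEME_2 = 0.90

ALPHA_1, C6_1 = scale_by_volume(CARBON_ALPHA_FREE, CARBON_C6_FREE, RATIO_SCHEME_1)
ALPHA_2, C6_2 = scale_by_volume(CARBON_ALPHA_FREE, CARBON_C6_FREE, RATIO_SCHEME_2)

if __name__ == "__main__":
    print(C6_1, C6_2, C6_2 / C6_1)
    print(combine_c6(C6_1, C6_2, ALPHA_1, ALPHA_2))
```

Because C6 depends on the square of the volume ratio, a difference of ten percentage points in the assigned atomic volume changes the coefficient by roughly a quarter in this example, which shows how sensitive dispersion parameters can be to the partitioning choice.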

Computational Protocols for AIM Analysis

The following workflow outlines the key steps for performing an AIM analysis to obtain atomic properties and assess their impact on dispersion energy predictions.

Detailed Methodologies

Step 1: Molecular Geometry Optimization

- Protocol: Perform a geometry optimization using quantum chemical methods such as Density Functional Theory (DFT) or Hartree-Fock (HF) with a medium-sized basis set (e.g., 6-31G*). This ensures the molecular structure is at a minimum on the potential energy surface.

- Software: Gaussian, ORCA, GAMESS.

Step 2: Electron Density Calculation

- Protocol: Using the optimized geometry, perform a single-point energy calculation with a larger, higher-quality basis set (e.g., cc-pVTZ) to obtain an accurate electron density ( \rho(\mathbf{r}) ). The wavefunction file must be saved for subsequent AIM analysis.

Step 3: AIM Topological Analysis

- Protocol: Use an AIM analysis program (e.g., AIMAll) to process the wavefunction file. The software will calculate the gradient ( \nabla \rho(\mathbf{r}) ), locate all critical points (nuclear, bond, ring, cage), and identify the zero-flux surfaces that define the atomic basins.

Step 4: Property Integration and Polarizability Density Analysis

- Protocol: Within each atomic basin ( \Omega ), the software integrates the electron density to yield atomic charges, integrates the energy density to yield atomic energies, and, if calculated, integrates the polarizability density ( \chi(\mathbf{r}) ) to yield atomic polarizability tensors ( \alpha(\Omega) ). [1] The workflow for calculating ( \chi(\mathbf{r}) ) itself typically involves computing the response of the electron density to a finite or perturbative external electric field.

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Table 1: Key Computational Tools and Resources for AIM and Property Calculation

| Tool/Resource | Type | Primary Function in AIM Research |
|---|---|---|
| AIMAll | Software Suite | Performs comprehensive AIM analysis, including basin integration and critical point location. |
| ORCA | Quantum Chemistry Package | Calculates electron densities and wavefunctions for subsequent AIM analysis; can compute response properties. |
| Gaussian | Quantum Chemistry Package | Performs geometry optimization, frequency, and single-point calculations to generate electron densities. |
| Promolecular Density | Calculation Model | A reference density constructed from superimposed, spherically averaged atomic densities; used as a starting point in some fuzzy partitioning schemes. [1] |
| Polarizability Density ( \chi(\mathbf{r}) ) | Mathematical Function | The key function for analyzing and predicting soft, orbital-controlled interactions and for partitioning molecular polarizability. [1] |

Quantitative Data from Partitioning Schemes

The choice of partitioning scheme leads to quantitatively different atomic descriptions. The table below summarizes a comparison of key features, as discussed in the literature. [1]

Table 2: Comparison of Hard-Space (AIM) and Fuzzy Electron Density Partitioning Schemes

| Feature | Hard-Space (AIM) Partitioning | Fuzzy (e.g., Hirshfeld) Partitioning |
|---|---|---|
| Atomic Boundaries | Sharp, defined by zero-flux surfaces. | Smooth, overlapping boundaries. |
| Transferability | Generally high for atomic properties in similar chemical environments. | Can be variable. |
| Atomic Polarizability Symmetry | Atomic tensor ( \alpha(\Omega) ) is not inherently symmetric. [1] | Dependent on the specific fuzzy recipe. |
| Treatment of Charge Transfer | Explicitly accounted for via bond polarization contribution to dipole. [1] | Implicitly contained within the overlapping charge distributions. |
| Connection to Dispersion | Provides a rigorous foundation for deriving atom-atom dispersion coefficients from first principles. | Less direct connection; often relies on parameterization. |

Atoms in Molecules theory, with its rigorous definition of atomic basins via the zero-flux condition, provides a physically sound and non-arbitrary method for partitioning molecular electron density. The resulting atomic properties, including atomic polarizabilities obtained by integrating the polarizability density over each basin, are crucial for advancing the accuracy of molecular simulations. By establishing a direct and quantitative link between the topology of ( \rho(\mathbf{r}) ) and the parameters governing dispersion forces, AIM theory addresses a critical need in computational drug development: the reliable prediction of the secondary interactions that often determine the success or failure of a therapeutic candidate.

In computational chemistry and biomolecular modeling, the accurate description of dispersion forces remains a significant challenge. These forces, fundamental to molecular recognition, supramolecular interactions, and drug binding, are intimately connected to molecular polarizability—the ability of a molecule's electron density to distort in response to an external electric field [9]. While molecular polarizability is a well-defined quantum mechanical property, understanding and predicting dispersion interactions at the atomistic level requires partitioning this molecular response into atomic contributions. This partitioning is non-trivial and profoundly impacts the accuracy of dispersion parameters in polarizable force fields used for molecular dynamics simulations of biological systems [10] [11]. This technical guide examines the core challenge of deriving atomic polarizabilities from electron density, comparing partitioning schemes, and discussing the implications for the development of accurate force fields in drug development.

Theoretical Foundations of Polarizability

From Electron Density to Polarizability Density

The electron density distribution, ( \rho(\mathbf{r}) ), is a fundamental quantity in quantum chemistry, informing on chemical bonding and reactivity [9]. When a molecule is subjected to a uniform external electric field, its electron density redistributes. This response is captured by the polarizability density, ( \chi(\mathbf{r}) ), a second-order tensor function defined as the field derivative of the total charge density distribution [9] [1]:

[ \chi_{ij}(\mathbf{r}) = r_i \frac{\partial \rho_{\text{tot}}(\mathbf{r})}{\partial F_j(\mathbf{r})} ]

where ( F_j ) is the j-th component of the electric field vector. The trace of this tensor, ( \text{Tr} \chi(\mathbf{r}) ), identifies regions of a molecule that are most polarizable, playing a role for "soft" (orbital-controlled) interactions analogous to that of the electrostatic potential for "hard" (charge-controlled) interactions [9].

The molecular polarizability tensor, ( \alpha ), is obtained by integrating the polarizability density over the entire molecular volume [9] [1]:

[ \alpha_{ij} = \int \chi_{ij}(\mathbf{r}) d^3r = \frac{\partial \mu_i}{\partial F_j} ]

where ( \mu_i ) is the i-th component of the molecular dipole moment. Unlike the molecular polarizability tensor, which is symmetric and positive definite, the polarizability density ( \chi(\mathbf{r}) ) is inherently asymmetric and not positive everywhere, indicating that some molecular regions polarize oppositely to the applied field [9].

The Partitioning Problem

The central challenge is to decompose the integrated molecular polarizability into atomic contributions. If ( \Omega ) represents an atomic domain in space, the components of the polarizability tensor for that atom are [9]: [ \alpha_{ij}(\Omega) = \int_{\Omega} \chi_{ij}(\mathbf{r}) d^3r = \frac{\partial \mu_i(\Omega)}{\partial F_j} ] The atomic dipole moment ( \mu(\Omega) ) itself consists of two parts [9]: [ \mu(\Omega) = \mu_{\text{internal}}(\Omega) + \mu_{\text{bond}}(\Omega) ]

- Internal Polarization: Describes the deformation of the electronic charge distribution within the atomic basin. It is computed as ( \mu_{\text{internal}}(\Omega) = -\int_{\Omega} (\mathbf{r} - \mathbf{R}_{\Omega}) \rho(\mathbf{r}) d\mathbf{r} ) [9].

- Bond Polarization: Arises from charge transfer between atoms through bonds, calculated as ( \mu_{\text{bond}}(\Omega) = \sum_{\Omega'} [\mathbf{R}_{\Omega} - \mathbf{R}_{b(\Omega|\Omega')}] Q(\Omega|\Omega') ) [9].

This division highlights that the atomic response is not purely local but involves charge transfer between atoms. The lack of a unique way to partition the molecular electron density into atoms means there is no unique definition of atomic polarizabilities, leading to a variety of proposed partitioning schemes [9] [10].

Comparative Analysis of Partitioning Schemes

The choice of partitioning scheme significantly influences the calculated atomic contributions to polarizability and, consequently, the derived force field parameters. These schemes fall into several classes: those that partition the wavefunction over basis functions, those that partition the electron density in real space, and those that fit atomic charges to the electrostatic potential.

Table 1: Classification of Electron Density Partitioning Schemes

| Category | Specific Schemes | Basis of Partitioning | Key Characteristics |
|---|---|---|---|
| Class II: Wavefunction-Based | Mulliken [10], Löwdin [10], Natural Population Analysis (NPA) [10] | Partitioning of the wavefunction (density matrix) over the basis functions. | Often lead to overlapping atoms. Can be sensitive to the choice of basis set, particularly Mulliken analysis. |
| Class III: Real-Space Density | Atoms-in-Molecules (AIM) [10] [11], Hirshfeld [10], Iterative Hirshfeld (Hirshfeld-I) [10], Becke [10] | Partitioning of the total electron density in three-dimensional space into atomic basins. | AIM uses zero-flux surfaces for non-overlapping atoms. Hirshfeld uses a "stockholder" approach for fuzzy, overlapping atoms. |
| Class IV: Electrostatic Potential | CHELPG, Merz-Kollman (MK-ESP) [10] | Fitting atomic charges to reproduce the molecular electrostatic potential (ESP) outside the molecule. | Does not directly partition density but derives parameters from its external effect. Charges are highly dependent on the fitting procedure and conformation. |

Impact on Polarizability Components

The choice of partitioning scheme directly affects the relative importance of the two components that contribute to the molecular polarizability: the charge fluctuation term (related to ( \mu_{\text{bond}} )) and the induced atomic dipole term (related to ( \mu_{\text{internal}} )).

A numerical study of 21 small molecules found that the charge fluctuation contribution dominates the molecular polarizability, but its computed ratio is highly scheme-dependent, ranging from 59.9% with the Hirshfeld or CM5 scheme to 96.2% with the Mulliken scheme [10]. This indicates that force fields based purely on induced dipoles (like the Drude oscillator or induced multipole models) might be missing a significant part of the response if their parameters are derived from schemes like Hirshfeld, whereas fluctuating charge models align more naturally with Mulliken-type partitions [10].

Furthermore, atomic polarizabilities are found to be highly anisotropic for most atoms [10]. Using an isotropic atomic polarizability, a common simplification in many force fields, is often inadequate to capture the true response of the electron density, particularly for atoms in anisotropic chemical environments like carbonyl oxygens or aromatic carbons.

Table 2: Performance of Selected Partitioning Schemes in Polarizability Calculations

| Partitioning Scheme | Dominant Polarizability Component | Transferability of Atomic Parameters | Computational Cost | Key Challenges |
|---|---|---|---|---|
| Mulliken | Charge Fluctuation (96.2%) [10] | Low (high basis-set sensitivity) | Low | Overestimates charge transfer; can yield unphysical results. |
| Hirshfeld | Mixed (Charge Fluctuation ~60%) [10] | Moderate | Moderate | "Fuzzy" atoms; atomic tensors may not be symmetric [9]. |
| AIM (QTAIM) | Induced Atomic Dipoles | High (well-defined basins) | High | Non-overlapping atoms; requires careful integration. |
| CM5 | Mixed (Charge Fluctuation ~60%) [10] | High (fitted to reproduce dipole moments) | Low (post-process) | Corrects Hirshfeld charges, but is not a direct density partition. |

Methodologies and Protocols

Workflow for Calculating Distributed Polarizabilities

The following diagram outlines a general protocol for computing and partitioning molecular polarizability, integrating methodologies from the cited literature.

Key Computational Experiments

The finite-difference approach is a robust method for calculating molecular and atomic polarizabilities [10]. The protocol involves:

- System Preparation: Optimize the molecular geometry at a suitable level of theory (e.g., Density Functional Theory with a hybrid functional like B3LYP and a polarized basis set such as 6-311++G(d,p)).

- Field Application: Perform single-point energy and property calculations with and without an external, uniform electrostatic field. The field is applied in six different directions (±x, ±y, ±z) with a strength typically on the order of 0.001 atomic units to remain within the linear response regime.

- Molecular Polarizability Calculation: For each field direction, compute the change in the molecular dipole moment. The components of the polarizability tensor are then obtained via finite differences: ( \alpha_{ij} = \frac{\Delta \mu_i}{\Delta F_j} ).

- Electron Density Partitioning: Using the unperturbed electron density, partition the molecule into atomic fragments using the chosen scheme(s) (e.g., Hirshfeld, AIM).

- Atomic Dipole and Polarizability Calculation: For each atomic basin ( \Omega ) in the presence of the field, compute the atomic dipole moment ( \mu(\Omega) ). The atomic polarizability tensor components are then calculated as ( \alpha_{ij}(\Omega) = \frac{\Delta \mu_i(\Omega)}{\Delta F_j} ).
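The finite-difference steps above can be sketched in Python. This is a minimal illustration, not a production workflow: the function `dipole_in_field` is a hypothetical stand-in for a quantum chemistry single-point calculation, and the toy dipole and polarizability values (`MU0`, `ALPHA_TRUE`) are invented so that the recovered tensor can be checked against a known answer. The same central difference would apply to atomic dipoles ( \mu(\Omega) ) if a partitioning code supplied them for each field direction.

```python
import numpy as np

# Hypothetical stand-in for a QM single-point calculation: a toy molecule with
# a permanent dipole and a known symmetric polarizability tensor (atomic units).
MU0 = np.array([0.0, 0.0, 0.7])
ALPHA_TRUE = np.array([[9.0, 0.5, 0.0],
                       [0.5, 8.0, 0.0],
                       [0.0, 0.0, 12.0]])


def dipole_in_field(field):
    """Return the dipole of the toy model in a uniform field (linear response only)."""
    return MU0 + ALPHA_TRUE @ np.asarray(field, dtype=float)


def finite_difference_polarizability(dipole_fn, strength=0.001):
    """Central-difference estimate of alpha_ij = d mu_i / d F_j.

    Uses the six field directions (+/-x, +/-y, +/-z) described in the protocol.
    """
    alpha = np.zeros((3, 3))
    for j in range(3):
        field = np.zeros(3)
        field[j] = strength
        mu_plus = dipole_fn(field)     # field along +j
        mu_minus = dipole_fn(-field)   # field along -j
        alpha[:, j] = (mu_plus - mu_minus) / (2.0 * strength)
    return alpha


alpha = finite_difference_polarizability(dipole_in_field)
iso = np.trace(alpha) / 3.0  # isotropic (mean) polarizability
print(alpha)
print(iso)
```

For a real calculation the model function would be replaced by calls to an electronic structure package, and the field strength would need to be checked for linearity, since a real density also responds nonlinearly.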

Table 3: Essential Computational Tools for Polarizability and Partitioning Research

| Tool / Resource | Category | Function in Research | Example Software / Method |
|---|---|---|---|
| Quantum Chemistry Package | Software | Performs electronic structure calculations to obtain wavefunctions and electron densities. | Gaussian, GAMESS, ORCA, Psi4, CP2K |
| Partitioning Code | Software / Algorithm | Implements specific schemes to divide molecular electron density into atomic parts. | Multiwfn (for AIM, Hirshfeld), Hirshfeld (in CRYSTAL), built-in tools in major packages |
| Polarizable Force Field | Application | Uses atomic polarizabilities and charges to model dispersion and polarization in MD simulations. | AMOEBA, CHARMM Drude, OPLS/CM1A |
| Linear-Scaling DFT | Method | Enables QM calculations on large systems (e.g., proteins) for environment-specific parameterization [11]. | ONETEP, CONQUEST, methods in CP2K |
| Charge Model 5 (CM5) | Model | Derives atomic charges from Hirshfeld populations to better reproduce molecular dipole moments [10]. | Available in GAMESS and as a standalone code |

Implications for Dispersion Parameter Accuracy

The partitioning challenge sits at the heart of developing accurate polarizable force fields for biomolecular simulations. Dispersion (London) forces are correlated with polarizability, and their accurate description in molecular dynamics (MD) simulations is critical for predicting protein-ligand binding affinities, protein folding, and material properties [11].

Force fields like AMBER and CHARMM traditionally use atomic polarizabilities derived either from molecular refraction measurements or fitted to reproduce quantum chemical results [10]. However, these parameters are often isotropic and transferable, meaning the same polarizability is assigned to a given atom type regardless of its local chemical environment. This approach neglects the environment-specific polarization effects, where the presence of electron-withdrawing or donating groups can significantly alter an atom's electronic character [11].

The move towards environment-specific force fields, where charges and Lennard-Jones parameters (which govern dispersion) are derived directly from quantum mechanical calculations of the specific system (e.g., a protein-ligand complex), is a promising direction [11]. By using AIM or Hirshfeld partitioning on the full system's electron density, the derived atomic polarizabilities and other parameters inherently include the effects of the environment, potentially leading to more accurate and predictive models without the need for extensive parameter libraries [11]. This approach ensures consistency between the electrostatic and dispersion parameters, as both are derived from the same underlying electron density.

Partitioning the molecular electron density to obtain meaningful atomic polarizabilities remains a complex but essential task for advancing the accuracy of computational models in drug development and materials science. The choice of partitioning scheme is not merely a technical detail; it fundamentally influences the balance between charge transfer and local dipole induction, the anisotropy of atomic response, and the ultimate transferability of parameters to complex biological environments. No single scheme is universally superior, with each offering distinct trade-offs between physical rigor, computational cost, and practicality for force field development. Future progress in accurately modeling dispersion interactions will likely rely on a more nuanced understanding of these partitioning choices and a move towards environment-specific, quantum-mechanically informed parameters that can capture the exquisite sensitivity of electron density to its chemical context.

Dispersion Forces as a Quantum Mechanical Phenomenon

Dispersion forces, or London forces, are a category of weak, attractive intermolecular interactions arising from correlated instantaneous dipoles in electron densities. These quantum mechanical phenomena are fundamental to a wide range of chemical and biological processes, from molecular recognition to the stability of amorphous solid dispersions in pharmaceutical science. The accurate quantification of dispersion energies remains a central challenge in computational chemistry. This whitepaper details how advancements in electron density partitioning methodologies provide a rigorous physical basis for deriving highly accurate, system-specific dispersion parameters, thereby enhancing the predictive power of next-generation molecular models and force fields.

Dispersion forces are a quintessential quantum mechanical phenomenon. They arise from the correlated, instantaneous fluctuations in the electron clouds of atoms and molecules, even those with no permanent dipole moment. These fluctuations create transient dipoles that induce complementary dipoles in neighboring species, resulting in a net attractive interaction. From a computational perspective, dispersion interactions represent a component of the dynamic electron correlation energy, which is challenging to describe with simple models [12].

The accurate representation of these forces in molecular modeling is non-negotiable for predicting the behavior of complex molecular systems. Traditional molecular mechanics force fields often treat dispersion through simple Lennard-Jones potentials, whose parameters are typically fit to experimental data. However, this approach can neglect system-specific polarization effects [13]. A more rigorous pathway involves deriving dispersion parameters directly from the underlying quantum mechanical (QM) electron density. The core thesis of this work is that the method chosen to partition the total electron density of a system into atomic contributions is a critical determinant in the accuracy of the resulting dispersion parameters. This whitepaper explores the theoretical foundation, computational protocols, and practical applications of this electron-density-centric approach.

Theoretical Foundation: From Electron Density to Interaction Energy

Electron Density Partitioning Methods

The first step in deriving dispersion parameters from first principles is to decompose the total electron density into atomic fragments. Several robust atoms-in-molecules (AIM) methods exist for this purpose:

- Density Derived Electrostatic and Chemical (DDEC): This method partitions the electron density into approximately spherical atom-centered basins by integrating the atomic electron density over all space. A key advantage is its ability to derive chemically meaningful charges and electron densities for both surface and buried atoms [13].

- Hirshfeld Partitioning: This approach weights the electron density at each point in space based on the relative contribution of the neutral, spherically symmetric atoms. Its iterative variant, Iterative Hirshfeld, is used to derive polarizable force field models [14].

- Minimal Basis Iterative Stockholder (MBIS): This modern partitioning scheme is used to map atomic electron densities, particularly their decay constants, to atom-specific Lennard-Jones parameters [14].

These partitioning schemes provide the atomic electron densities necessary to compute key dispersion-related properties.

The Tkatchenko-Scheffler Relation and Beyond

Once atomic electron densities are obtained, the Tkatchenko-Scheffler (TS) method is a widely used approach to derive dispersion coefficients. The TS method calculates the C6 dispersion coefficient for an atom in a molecule based on its partitioned volume and the known C6 coefficient of its free atom [14]. The formula is given by:

[ C_{6,ii} = \left( \frac{V_i}{V_i^{0}} \right)^2 C_{6,ii}^{0} ]

where ( C_{6,ii}^{0} ) and ( V_i^{0} ) are the free-atom dispersion coefficient and volume, and ( V_i ) is the atom-in-molecule volume. This method naturally incorporates the chemical environment's effect on an atom's dispersion interaction. More advanced models extend this to include higher-order terms (C8, etc.) and atomic polarizabilities [14].
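As a worked illustration of the volume-ratio scaling, the short Python sketch below applies the relation to a single atom. The function name `ts_c6` and the numerical inputs are illustrative assumptions rather than values from the cited work; in practice the atom-in-molecule and free-atom volumes would come from the chosen partitioning scheme and a consistent free-atom reference calculation.

```python
def ts_c6(volume_aim, volume_free, c6_free):
    """Scale a free-atom C6 coefficient by the squared AIM/free-atom volume ratio."""
    if volume_free <= 0.0:
        raise ValueError("free-atom volume must be positive")
    ratio = volume_aim / volume_free
    return ratio ** 2 * c6_free


# Illustrative inputs (assumed for this example): an atom whose partitioned
# volume is 80% of its free-atom volume, with a free-atom C6 of 46.6 a.u.
c6_in_molecule = ts_c6(volume_aim=0.8, volume_free=1.0, c6_free=46.6)
print(c6_in_molecule)
```

Because the ratio enters squared, a 20% contraction of the atomic volume reduces C6 by 36%, which is why the choice of partitioning scheme carries through so strongly into the dispersion parameters.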

Correlating Electron Density with Interaction Energy

Quantum Theory of Atoms in Molecules (QTAIM) analysis allows for the extraction of properties at bond critical points (BCPs), which can be correlated with interaction energies. For halogen bonds (a type of interaction with significant dispersion character), strong correlations have been established between the interaction energy (Eint) and the kinetic (Gb) and potential (Vb) energy densities at the BCP [15].

Table 1: Correlations between Interaction Energy (Eint) and Electron Density Properties at the Bond Critical Point for Halogen Bonds [15].

| Interaction Type | Best-Fit Correlation | Coefficient of Determination (R²) | Mean Absolute Deviation (kcal/mol) |
|---|---|---|---|
| Cl···Cl | Eint = 0.49Vb ≈ -0.47Gb | Not Specified | Not Specified |
| Br···Br | Eint = 0.58Vb ≈ -0.57Gb | Not Specified | Not Specified |
| I···I | Eint = 0.68Vb ≈ -0.67Gb | Not Specified | Not Specified |
| All Homo-Halogen Bonds | -Eint = 0.128Gb² - 0.82Gb + 1.66 | 0.91 | 0.39 |

These relationships provide a powerful tool for the express estimation of interaction strengths directly from a system's electron topology, bypassing more computationally expensive calculations.
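The fitted relationships can be evaluated directly once Gb or Vb is known at a bond critical point. The sketch below simply encodes the coefficients listed in the table; the function names are illustrative, and the inputs are assumed to be in whatever units the fits in [15] were performed in, which this text does not specify. The input value used in the example is arbitrary.

```python
# Linear coefficients from the table: Eint = c * Vb for each homo-halogen pair.
LINEAR_VB_COEFFICIENTS = {"Cl": 0.49, "Br": 0.58, "I": 0.68}


def eint_from_vb(halogen, vb):
    """Estimate Eint from the potential energy density Vb at the BCP."""
    return LINEAR_VB_COEFFICIENTS[halogen] * vb


def eint_from_gb_quadratic(gb):
    """Estimate Eint from Gb using -Eint = 0.128*Gb**2 - 0.82*Gb + 1.66."""
    return -(0.128 * gb ** 2 - 0.82 * gb + 1.66)


# Arbitrary illustrative input, in the (unspecified) units of the original fit.
estimate = eint_from_gb_quadratic(10.0)
print(estimate)
```

Such estimates inherit the scatter of the underlying fit (a mean absolute deviation of 0.39 kcal/mol for the pooled quadratic), so they are best treated as a quick screen rather than a replacement for an explicit interaction energy calculation.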

Diagram 1: Workflow for deriving dispersion parameters and interaction energies from electron density. Two primary pathways are shown: one deriving C6 coefficients via the Tkatchenko-Scheffler method, and another estimating interaction energy via QTAIM property correlations.

Computational Protocols for Parameter Derivation

This section outlines detailed methodologies for deriving bespoke force field parameters, with a focus on dispersion.

The QUBEKit Workflow for Bespoke Force Fields

The Quantum Mechanical Bespoke (QUBE) force field and its associated software toolkit (QUBEKit) represent a comprehensive protocol for deriving system-specific force field parameters directly from QM calculations [13] [14]. The workflow is as follows:

- Quantum Mechanical Calculation: Perform an electronic structure calculation (typically Density Functional Theory with an appropriate functional like M06-2X) on the target molecule to obtain the wavefunction and electron density [15].

- Atoms-in-Molecule Analysis: Partition the electron density using a method like DDEC to obtain atomic charges and electron densities [13] [14].

- Bonded Parameter Derivation: Calculate bond and angle force constants from the QM Hessian matrix using the modified Seminario method [13].

- Non-Bonded Parameter Derivation:

- Atomic Charges: Derived directly from the DDEC-partitioned electron density [14].

- Lennard-Jones Parameters: The C6 dispersion coefficient is derived from the atomic electron densities using the Tkatchenko-Scheffler method. The repulsive (Rmin/σ) parameter is derived from atoms-in-molecule atomic radii [14].

- Torsion Parameter Fitting: Fit torsional parameters to reproduce QM dihedral potential energy scans [13].

- Refinement with Experimental Data: A small number of mapping parameters (e.g., free atom radii) can be tuned against experimental condensed-phase properties (e.g., liquid densities and enthalpies of vaporization) using tools like ForceBalance to ensure accuracy [14].
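To make the Lennard-Jones step of the workflow concrete, the sketch below converts a C6 coefficient and a minimum-energy separation Rmin into σ and ε. It assumes the standard 12-6 form, E(r) = 4ε[(σ/r)¹² - (σ/r)⁶], with the attractive coefficient 4εσ⁶ set equal to C6; that this matches the exact convention used inside QUBEKit is an assumption, as are the function names and the numerical inputs.

```python
def lj_from_c6_rmin(c6, rmin):
    """Return (sigma, epsilon) for a 12-6 potential with attractive term C6 / r**6.

    Derivation: the 12-6 minimum lies at rmin = 2**(1/6) * sigma, and matching
    4 * epsilon * sigma**6 = C6 gives epsilon = C6 / (2 * rmin**6).
    """
    sigma = rmin / 2.0 ** (1.0 / 6.0)
    epsilon = c6 / (2.0 * rmin ** 6)
    return sigma, epsilon


def lj_energy(r, sigma, epsilon):
    """Evaluate the 12-6 Lennard-Jones energy at separation r."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)


# Illustrative inputs in consistent (arbitrary) units, not taken from the source.
sigma, epsilon = lj_from_c6_rmin(c6=30.0, rmin=3.8)
print(sigma, epsilon, lj_energy(3.8, sigma, epsilon))
```

The well depth at Rmin equals -ε by construction, so once C6 is fixed by the partitioned density, the radius is the only remaining handle on the strength of the interaction. This is consistent with the workflow tuning a small number of radius-related mapping parameters against experiment.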

Table 2: Key Research Reagents and Software Solutions for Electron Density-Based Force Field Development.

| Reagent / Software | Function / Purpose | Application Context |
|---|---|---|
| DDEC Partitioning | Partitions electron density into atomic contributions for charge and dispersion derivation. | Core to QUBE force field; provides environment-specific atomic parameters [13] [14]. |
| Tkatchenko-Scheffler Method | Derives C6 dispersion coefficients from partitioned atomic volumes and electron densities. | Calculates the attractive part of the van der Waals interaction in bespoke force fields [14]. |
| Modified Seminario Method | Derives harmonic bond and angle force constants from the QM Hessian matrix. | Provides accurate bonded parameters without recourse to transferable libraries [13]. |
| QUBEKit | Software toolkit that automates the derivation of bespoke QM-based force fields. | Integrates the entire workflow from QM calculation to final force field parameter generation [14]. |
| ForceBalance | Software for systematic parameter optimization against QM and experimental target data. | Used to refine a small number of mapping parameters against liquid properties [14]. |

Protocol for Validating Dispersion Parameters in Amorphous Solid Dispersions

In pharmaceutical science, the physical stability of amorphous solid dispersions (ASDs) is heavily influenced by drug-polymer intermolecular interactions, including dispersion. The following protocol describes how to probe these interactions and their impact [16]:

- ASD Preparation: Prepare the amorphous solid dispersion using a method such as spray-drying or hot-melt extrusion.

- Solid-State Characterization:

- Differential Scanning Calorimetry (DSC): Measure the glass transition temperature (Tg) of the ASD. An increase in Tg and the absence of drug crystallization exotherms suggest strong drug-polymer interactions and good miscibility.

- X-ray Powder Diffraction (XRPD): Confirm the amorphous nature of the dispersion and monitor for physical instability (crystallization) over time under stressed storage conditions (e.g., elevated temperature and humidity).

- Solid-State Nuclear Magnetic Resonance (ssNMR): Probe specific drug-polymer interactions, such as hydrogen or halogen bonding, and measure molecular mobility, which is directly related to physical stability.

- Computational Validation:

- Model the drug-polymer system using a force field with bespoke, density-derived dispersion parameters.

- Run molecular dynamics (MD) simulations to calculate interaction energies and assess the homogeneity of the dispersion.

- Correlate the computed interaction energies with experimental stability data (e.g., the time to crystallization from XRPD studies).

Applications and Impact on Predictive Modeling

The integration of accurate, QM-derived dispersion parameters has a transformative impact across multiple fields.

Drug Design and Protein-Ligand Binding

Accurate dispersion forces are critical for predicting protein-ligand binding affinities. A study demonstrated the advantage of using bespoke, environment-specific force fields for a protein–ligand complex. The computed relative binding free energy of indole and benzofuran to the lysozyme protein using these force fields (-0.4 kcal/mol) was in excellent agreement with experiment (-0.6 kcal/mol) and substantially more accurate than standard force fields (-2.4 kcal/mol) [13]. This highlights how moving beyond transferable parameters to system-specific ones, which include native-state polarization, improves predictive accuracy in computer-aided drug design.

Stabilization of Amorphous Solid Dispersions

Intermolecular interactions, including van der Waals forces and halogen bonding, are crucial for stabilizing amorphous solid dispersions (ASDs) of poorly water-soluble drugs. Drug-polymer interactions provide an energy barrier that drug molecules must overcome to aggregate and crystallize [16]. The accurate quantification of these dispersion-driven interactions through electron density partitioning helps formulators select optimal polymer carriers and predict the maximum stable drug loading, thereby enhancing the development of robust pharmaceutical products.

Diagram 2: The role of intermolecular interactions, including dispersion forces, in determining the physical stability of amorphous solid dispersions (ASDs). Strong interactions lead to stability, while weak interactions result in phase separation and crystallization.

Dispersion forces, rooted in the quantum mechanical nature of electron correlation, are indispensable for realistic molecular modeling. The accuracy with which these forces are represented hinges on the methodology used to partition the molecular electron density. Approaches like the DDEC and Hirshfeld partitioning, coupled with the Tkatchenko-Scheffler method, provide a physically rigorous pathway to derive system-specific dispersion parameters directly from first principles. The integration of these parameters into bespoke force fields, such as QUBE, has already demonstrated superior performance in challenging applications like binding affinity prediction and the stabilization of amorphous pharmaceuticals. As these protocols become more automated and accessible through software like QUBEKit, they pave the way for a new generation of highly accurate, predictive molecular simulations across chemistry and drug discovery.

Implementing Partitioning Schemes: From Theory to Computational Practice

Hirshfeld Atom Refinement (HAR) represents a significant advancement in crystallographic structure determination from X-ray diffraction data. Unlike the traditional Independent Atom Model (IAM), which treats crystals as collections of spherical, non-interacting atomic densities, HAR utilizes an aspherical atom partitioning of tailor-made ab initio quantum mechanical molecular electron densities [17] [18]. This method was developed to address a critical limitation of conventional X-ray crystallography: the inaccurate determination of hydrogen atom parameters [17]. Hydrogen atoms pose a particular challenge for X-ray diffraction because they contribute minimally to overall scattering due to having only a single electron [17] [18]. Consequently, IAM typically shortens X-H bond lengths by approximately 0.1 Å compared to benchmark neutron diffraction values and often yields non-positive definite anisotropic displacement parameters (ADPs) for hydrogen atoms [19].

HAR overcomes these limitations through an iterative quantum-mechanical approach that calculates molecular electron densities and partitions them into atomic contributions using the Hirshfeld partition [17] [18]. This method provides aspherical atomic form factors that more accurately represent the electron density distribution in molecular crystals, leading to substantial improvements in the accuracy and precision of structural parameters, particularly for hydrogen atoms [17]. The implementation of HAR has transformed X-ray crystallography into a more powerful tool for obtaining accurate structural information that was previously only accessible through neutron diffraction experiments [17].

Fundamental Principles and Methodology

Theoretical Foundation

HAR is grounded in the concept of stockholder partitioning of electron density, originally proposed by Hirshfeld [19]. In this approach, the electron density of a molecule in a crystal is partitioned into atomic basins based on a promolecular reference density. The key theoretical principle states that the electron density at any point in space is assigned to atoms in proportion to their contribution to a promolecular density composed of non-interacting atoms [19] [20]. This partitioning scheme generates aspherical atomic form factors that realistically reflect the deformation of electron density due to chemical bonding and intermolecular interactions [17].

The mathematical formalism of HAR utilizes these aspherical atomic form factors calculated from quantum mechanical wavefunctions rather than relying on the spherical scattering factors employed in IAM [17] [18]. This approach properly accounts for the contraction of electron density toward bonds and more accurately represents the actual electron distribution in molecular crystals. The scattering factors derived from Hirshfeld-partitioned atoms thus incorporate chemical bonding effects explicitly, leading to a more physically realistic model for structure refinement against X-ray diffraction data [19] [17].

The HAR Workflow

The HAR methodology follows an iterative computational procedure that cycles through successive stages of electron density calculation, Hirshfeld atom scattering factor computation, and structural least-squares refinement [17] [18]. The workflow can be summarized in several key steps:

1. Initial Structure Model: The process begins with a conventional IAM-refined structure, which serves as the starting point for quantum mechanical calculations [21].

2. Wavefunction Calculation: A single-point quantum mechanical calculation is performed on a molecular cluster or the crystal asymmetric unit to obtain the molecular electron density [17] [22]. This step incorporates effects of the crystal environment through cluster charges or periodic boundary conditions.

3. Electron Density Partitioning: The total electron density is partitioned into atomic contributions using the Hirshfeld method, where the electron density at each point is assigned to atoms based on their contribution to a promolecular reference density [19] [20].

4. Scattering Factor Calculation: Aspherical atomic scattering factors are derived from the Fourier transform of the Hirshfeld-partitioned electron densities [17] [21].

5. Structure Refinement: The crystal structure is refined against X-ray diffraction data using the newly calculated aspherical scattering factors, updating atomic positions and displacement parameters [17] [18].

6. Convergence Check: The process repeats from step 2 using the newly refined structure until convergence is achieved, typically when changes in structural parameters fall below predetermined thresholds [17].

This iterative cycle ensures consistency between the quantum mechanical electron density model and the refined structural parameters, leading to progressively improved accuracy [17].

Table 1: Comparison of Crystallographic Refinement Methods

| Feature | Independent Atom Model (IAM) | Multipole Model | Hirshfeld Atom Refinement (HAR) |
|---|---|---|---|
| Electron Density Treatment | Spherical atoms | Spherical harmonics expansion | Hirshfeld-partitioned molecular wavefunction |
| Hydrogen Atom Accuracy | Poor (X-H bonds ~0.1 Å too short) | Improved but often requires constraints | High (comparable to neutron diffraction) |
| Data Resolution Requirements | Standard | High (typically ≤0.5 Å) | Standard (successful at 0.65 Å) |
| Computational Demand | Low | Moderate | High |
| Treatment of Crystal Environment | None | Indirect via multipole parameters | Direct via cluster charges or periodic calculation |
| Parameter Transferability | Not applicable | Required for database approaches | Not required; system-specific |

Workflow Diagram

The following diagram illustrates the iterative HAR procedure:

Electron Density Partitioning in HAR

The Hirshfeld Partition and Its Variants

The core of HAR lies in the method used to partition the molecular electron density into atomic contributions. The original Hirshfeld partition, also known as the stockholder partition, divides the electron density at each point in space among atoms in proportion to their contribution to a promolecular density composed of spherical, neutral atoms [19] [20]. This approach can be expressed mathematically as:

[ \rho_A(\mathbf{r}) = \frac{\rho_A^0(\mathbf{r})}{\sum_B \rho_B^0(\mathbf{r})} \rho_{\text{total}}(\mathbf{r}) ]

where (\rho_A(\mathbf{r})) is the assigned density for atom A at point (\mathbf{r}), (\rho_A^0(\mathbf{r})) is the promolecular atom density, and (\rho_{\text{total}}(\mathbf{r})) is the total molecular electron density [19].
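The stockholder formula can be illustrated with a one-dimensional toy model in Python. Everything numerical here is an assumption made for illustration: the two "atoms" use simple exponential pro-atom densities on a grid, and the "molecular" density is an invented combination that shifts some charge from one atom to the other. A real implementation would use spherically averaged free-atom densities and a three-dimensional integration grid.

```python
import numpy as np


def hirshfeld_weights(pro_atom_densities):
    """Return w_A(r) = rho_A^0(r) / sum_B rho_B^0(r), one row per atom."""
    pro = np.asarray(pro_atom_densities, dtype=float)
    promolecule = pro.sum(axis=0)  # promolecular density at each grid point
    return pro / promolecule


# Toy 1D system: two atoms at x = 0 and x = 2 with exponential pro-atom densities.
x = np.linspace(-6.0, 8.0, 2801)
dx = x[1] - x[0]
centers = np.array([0.0, 2.0])
pro = np.stack([np.exp(-2.0 * np.abs(x - c)) for c in centers])

# Invented "molecular" density: some charge moved from atom 2 to atom 1.
rho_total = 1.1 * pro[0] + 0.9 * pro[1]

weights = hirshfeld_weights(pro)
rho_atoms = weights * rho_total             # rho_A(r) for each atom
populations = rho_atoms.sum(axis=1) * dx    # simple Riemann-sum populations
print(populations)
```

Because the weights sum to one at every grid point, the atomic densities add up exactly to the total density, which is the defining property of a stockholder partition. Note that the populations differ from the raw 1.1 and 0.9 scale factors, because each atom shares the overlap region in proportion to the neutral reference atoms.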

Recent research has explored alternative partitioning schemes in an approach termed Generalized Atom Refinement (GAR) [19] [20]. These include:

- Iterative Hirshfeld: An extension where the promolecular density is updated iteratively based on the results of previous partitions [19] [20].

- Iterative Stockholder: A method that ensures the atomic densities integrate to the same populations as the original Hirshfeld scheme [19].

- Minimal Basis Iterative Stockholder (MBIS): Utilizes minimal basis sets to determine the partition [19] [20].

- Becke Partition: A space partitioning scheme based on atomic radii [19] [20].

Studies comparing these methods have found that while differences in R factors between partitions are small, systematic effects on bond lengths and ADP values of polar hydrogen atoms are observable [19] [20]. The Hirshfeld, iterative stockholder, and MBIS partitions have been identified as particularly promising for further optimization of GAR methodologies [19] [20].

Exponential Hirshfeld Partition

A recent innovation in electron density partitioning for HAR is the exponential Hirshfeld partition (expHAR) [23]. This modified approach introduces an adjustable parameter (n) that controls the overlap level of atomic electron densities:

[ w_A(\mathbf{r}) = \frac{[\rho_A^0(\mathbf{r})]^n}{\sum_B [\rho_B^0(\mathbf{r})]^n} ]

where (w_A(\mathbf{r})) is the weight function for atom A, and n is the adjustable parameter [23]. When n=1, the method reduces to the standard Hirshfeld partition. This parameterization provides additional flexibility in tailoring the electron density partition to specific chemical environments [23].
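The effect of the exponent can be seen in a small Python sketch that evaluates the weight function on a one-dimensional toy grid. The exponential pro-atom densities, the grid, and the function name `exp_hirshfeld_weights` are assumptions chosen for illustration only and are not taken from the expHAR implementation.

```python
import numpy as np


def exp_hirshfeld_weights(pro_atom_densities, n=1.0):
    """Return w_A(r) = [rho_A^0(r)]**n / sum_B [rho_B^0(r)]**n, one row per atom."""
    powered = np.asarray(pro_atom_densities, dtype=float) ** n
    return powered / powered.sum(axis=0)


# Toy 1D system: two identical exponential pro-atoms at x = 0 and x = 2.
x = np.linspace(-6.0, 8.0, 2801)
pro = np.stack([np.exp(-2.0 * np.abs(x - c)) for c in (0.0, 2.0)])

w_standard = exp_hirshfeld_weights(pro, n=1.0)  # standard Hirshfeld weights
w_sharper = exp_hirshfeld_weights(pro, n=2.0)   # less overlap between atoms

# Compare the weight of the first atom at a point closer to it (x = 0.5).
i = int(np.argmin(np.abs(x - 0.5)))
print(w_standard[0, i], w_sharper[0, i])
```

In this toy model, raising n above 1 pushes the weights toward 0 or 1, so each point is assigned more exclusively to the nearer atom, while n below 1 increases the overlap. The weights still sum to one everywhere for any positive n.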

Application of expHAR has demonstrated improvements in hydrogen atom parameters compared to standard HAR, particularly in cases with the highest deviations from neutron diffraction reference values [23]. For B3LYP-based refinements, X-H bond lengths and hydrogen ADPs improved for 9 out of 10 structures, while MP2-based refinements showed improvements in 8 out of 9 structures [23].

Partitioning Methods Relationship

[Figure: relationships between the electron density partitioning methods used in HAR]

Accuracy and Performance Assessment

Comparison with Neutron Diffraction

The gold standard for assessing hydrogen atom parameters in crystallography is neutron diffraction, as neutrons directly interact with atomic nuclei and provide accurate positions and displacement parameters for all atoms, including hydrogen [17] [18]. Multiple studies have validated HAR against neutron diffraction data, demonstrating remarkable agreement for both hydrogen atom positions and anisotropic displacement parameters (ADPs) [17].

In a comprehensive study on the dipeptide Gly-L-Ala at multiple temperatures (12, 50, 100, 150, 220, and 295 K), HAR with BLYP/cc-pVTZ calculations achieved an accuracy better than 0.009 Å for X-H bond lengths at temperatures of 150 K or below [17]. For hydrogen ADPs, the accuracy was better than 0.006 Ų as judged by mean absolute X-ray minus neutron differences [17]. These results represent some of the most accurate hydrogen parameters ever obtained from X-ray diffraction data [17].
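The accuracy metric used here, the mean absolute X-ray minus neutron difference, is simple to compute. The sketch below assumes paired lists of values for the same bonds. The bond lengths are invented placeholders chosen only to exercise the function and are not data from the cited study.

```python
def mean_absolute_difference(xray_values, neutron_values):
    """Mean of |X-ray value - neutron value| over paired parameters."""
    if not xray_values or len(xray_values) != len(neutron_values):
        raise ValueError("need two non-empty sequences of equal length")
    pairs = zip(xray_values, neutron_values)
    return sum(abs(x - n) for x, n in pairs) / len(xray_values)


# Hypothetical X-H bond lengths in angstrom (illustrative only).
har_lengths = [1.085, 1.092, 1.010]
neutron_lengths = [1.090, 1.095, 1.020]
print(mean_absolute_difference(har_lengths, neutron_lengths))
```

The same function applies to hydrogen ADP components, provided both refinements express them in the same units and coordinate system.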

Notably, the precision of hydrogen bond lengths and ADPs determined through HAR is comparable to that from neutron measurements, and it is achieved with X-ray data at a routinely attainable resolution of 0.65 Å [17] [18]. This represents a significant advancement over the traditional IAM, where X-H bond lengths are typically underestimated by approximately 0.1 Å and hydrogen ADPs are often problematic [19] [17].

Table 2: Accuracy of Hydrogen Parameters in HAR vs Reference Methods

| Refinement Method | X-H Bond Length Accuracy (Å) | Hydrogen ADP Accuracy (Ų) | Data Resolution Requirements |
|---|---|---|---|
| Independent Atom Model (IAM) | ~0.1 shorter than neutron | Often non-positive definite | Standard |
| Multipole Model (TAAM) | ~0.02 shorter than neutron [19] | Improved but may require restraints | High (≤0.5 Å) |
| HAR (BLYP/cc-pVTZ) | <0.009 difference from neutron [17] | <0.006 difference from neutron [17] | Standard (0.65 Å sufficient) |
| HAR/3D ED | Comparable to neutron [21] | Comparable to neutron [21] | Electron diffraction data |
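The "non-positive definite" entry in the table refers to an anisotropic displacement tensor that does not describe a physically meaningful ellipsoid. A minimal check is sketched below using Sylvester's criterion, assuming the ADPs are supplied as a symmetric 3x3 matrix. The function name and the example tensors are illustrative assumptions.

```python
def is_positive_definite(u):
    """Sylvester's criterion for a symmetric 3x3 ADP tensor (nested lists).

    The tensor is positive definite when all three leading principal
    minors are strictly positive.
    """
    u11, u12, u13 = u[0][0], u[0][1], u[0][2]
    u22, u23, u33 = u[1][1], u[1][2], u[2][2]
    minor1 = u11
    minor2 = u11 * u22 - u12 * u12
    minor3 = (
        u11 * (u22 * u33 - u23 * u23)
        - u12 * (u12 * u33 - u23 * u13)
        + u13 * (u12 * u23 - u22 * u13)
    )
    return minor1 > 0 and minor2 > 0 and minor3 > 0


# Hypothetical hydrogen ADP tensors in square angstrom (illustrative only).
physical = [[0.020, 0.002, 0.000], [0.002, 0.030, 0.001], [0.000, 0.001, 0.025]]
unphysical = [[0.020, 0.000, 0.000], [0.000, -0.001, 0.000], [0.000, 0.000, 0.030]]
print(is_positive_definite(physical), is_positive_definite(unphysical))
```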

Comparison with Transferable Aspherical Atom Models

Transferable Aspherical Atom Models (TAAM) represent an alternative approach to incorporating aspherical scattering factors in crystallographic refinement [19] [23]. TAAM utilizes predefined sets of multipole parameters for atoms in specific chemical environments, based on the transferability principle that atoms in similar environments have similar electron density distributions [19] [23].

Comparative studies have revealed that HAR generally produces bond lengths slightly closer to neutron diffraction values than TAAM [19]. The average bond length underestimation was 0.020 Å for TAAM compared to 0.014 Å for HAR in one study [19]. This advantage of HAR is attributed to its ability to calculate system-specific electron densities without relying on transferability approximations, and its capacity to more accurately account for crystal environment effects [19] [23].

However, TAAM remains less computationally intensive than HAR and may be preferable for high-throughput applications where the highest accuracy for hydrogen parameters is not critical [19] [23].

Experimental Protocols and Methodologies

Standard HAR Protocol for X-ray Diffraction Data

The following protocol outlines a typical HAR procedure for single-crystal X-ray diffraction data:

Data Collection: Collect X-ray diffraction data to at least 0.65 Å resolution, though successful refinements have been demonstrated with even lower resolution data [17]. Low-temperature measurements (150 K or below) are recommended for improved accuracy [17].
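To relate the resolution target to an experimental setting, Bragg's law (λ = 2d sin θ) gives the scattering angle needed to reach a given d-spacing. The sketch below is illustrative: the function name is hypothetical, and the wavelengths are the commonly quoted Mo Kα (0.71073 Å) and Cu Kα (1.54184 Å) values rather than settings taken from the cited studies.

```python
import math


def max_two_theta_deg(d_min, wavelength):
    """Scattering angle 2-theta (degrees) needed to reach resolution d_min.

    Uses Bragg's law: wavelength = 2 * d * sin(theta). Both arguments are
    in angstrom. Raises ValueError if d_min cannot be reached at all.
    """
    sin_theta = wavelength / (2.0 * d_min)
    if sin_theta > 1.0:
        raise ValueError("resolution not reachable with this wavelength")
    return 2.0 * math.degrees(math.asin(sin_theta))


MO_K_ALPHA = 0.71073  # angstrom
CU_K_ALPHA = 1.54184  # angstrom

print(max_two_theta_deg(0.65, MO_K_ALPHA))
try:
    max_two_theta_deg(0.65, CU_K_ALPHA)
except ValueError as error:
    print("Cu K-alpha:", error)
```

Under these assumptions, Mo Kα radiation can reach 0.65 Å, whereas Cu Kα cannot, because the required sin θ would exceed 1.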

Initial Refinement: Perform a conventional IAM refinement using standard crystallographic software to obtain initial atomic parameters for all atoms, including hydrogen [17] [22].

Wavefunction Calculation Setup:

- Select quantum chemical method (BLYP, B3LYP, or Hartree-Fock recommended) [17] [22]

- Choose basis set (cc-pVTZ or def2-SVP recommended) [17] [22]

- Define molecular cluster including the asymmetric unit and surrounding molecules to account for crystal environment effects [17]

- Implement crystal field representation via cluster multipoles or periodic calculations [19] [22]

Iterative HAR Procedure:

- Calculate molecular wavefunction using quantum chemical software [17] [18]

- Partition electron density using Hirshfeld or alternative method [19] [20]

- Compute aspherical atomic scattering factors from partitioned density [17] [21]

- Refine structure against X-ray data using new scattering factors [17] [18]

- Repeat until convergence (typically 3-5 cycles) [17]
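The control flow of the iterative procedure can be sketched as a plain loop. This is a schematic skeleton under stated assumptions, not the algorithm of any specific program: the four callables stand in for the wavefunction calculation, density partitioning, scattering-factor evaluation, and least-squares refinement, and convergence is judged here by the largest parameter shift, a simplification of the criteria real software applies.

```python
def hirshfeld_atom_refinement(params, compute_density, partition,
                              scattering_factors, refine,
                              tol=1e-4, max_cycles=20):
    """Schematic HAR loop; all four callables are hypothetical placeholders.

    Returns the converged parameters and the number of cycles used.
    """
    for cycle in range(1, max_cycles + 1):
        density = compute_density(params)          # quantum chemical step
        atomic_densities = partition(density)      # e.g. Hirshfeld partition
        factors = scattering_factors(atomic_densities)
        new_params = refine(params, factors)       # fit against X-ray data
        shift = max(abs(a - b) for a, b in zip(new_params, params))
        params = new_params
        if shift < tol:
            return params, cycle
    raise RuntimeError("HAR loop did not converge within max_cycles")


# Toy stand-ins: one X-H distance relaxing towards 1.09 angstrom.
def toy_refine(params, factors):
    return [p + 0.5 * (1.09 - p) for p in params]


result, cycles = hirshfeld_atom_refinement(
    [0.90],
    compute_density=lambda p: p,
    partition=lambda d: d,
    scattering_factors=lambda a: a,
    refine=toy_refine,
)
print(result, cycles)
```

Passing the steps in as callables keeps the loop independent of the quantum chemistry package and refinement engine, which is how the partition scheme can be swapped without touching the rest of the procedure.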

Validation: Compare geometric parameters, particularly X-H bond lengths and hydrogen ADPs, with expected values from neutron diffraction or computational chemistry [17].

HAR with 3D Electron Diffraction Data

The combination of HAR with 3D Electron Diffraction (3D ED) has emerged as a powerful approach for studying nanocrystalline materials [21] [24]. The protocol differs from standard X-ray HAR in several key aspects:

Data Collection: Acquire 3D ED data using continuous rotation method with minimal electron dose to reduce radiation damage [21].

Dynamical Refinement: Account for multiple scattering of electrons through dynamical refinement prior to HAR implementation [21].

Small Cluster Calculations: Due to stronger interaction of electrons with matter, smaller molecular clusters may be sufficient for accurate electron density calculations [21].

This approach has been successfully applied to organic compounds including paracetamol, L-tyrosine, and 1-methyluracil, yielding hydrogen positions and ADPs comparable to neutron diffraction references [21] [24]. The most significant improvements were observed for hydrogen bond lengths and low-resolution reflection R-factors [21].

Computational Aspects and Parameters

Quantum Chemical Method Selection

The choice of quantum chemical methodology significantly influences HAR results [22]. Systematic benchmarking studies have revealed that:

- Hartree-Fock (HF): Often outperforms density functional theory (DFT) for polar organic molecules, despite producing higher R factors in some cases [22]. HF tends to yield longer polar X-H bonds, which may be more accurate for certain systems [23].

- Density Functional Theory (DFT): Hybrid functionals containing roughly 20-25% Hartree-Fock exchange (such as B3LYP, which contains 20%) typically provide the lowest R factors [23] [22]. The amount of exact exchange systematically influences X-H bond lengths, with higher percentages generally leading to longer bonds [23].

- Post-HF Methods: Second-order Møller-Plesset perturbation theory (MP2) and coupled cluster singles and doubles (CCSD) have been tested but show no clear advantage over less computationally expensive methods [19] [23].

- Solvent Models: Incorporating implicit solvation systematically improves refinement results compared to gas-phase calculations for polar organic molecules [22].

Basis Set Selection

Basis set choice represents another critical parameter in HAR calculations [22]:

cc-pVDZ and cc-pVTZ: These correlation-consistent basis sets are commonly used in HAR. The larger cc-pVTZ generally provides more accurate results but at increased computational cost [17] [22].

def2-SVP and def2-TZVP: The Ahlrichs def2 series offers a cost-effective alternative with comparable performance to the cc-pVnZ sets [22].

Interestingly, smaller basis sets sometimes perform comparably or even better than larger ones in terms of the agreement with experimental data, possibly due to error cancellation effects [22].

Crystal Environment Treatment

Accurate representation of the crystal environment is crucial for successful HAR [19] [22]:

Cluster Charges: Placing point charges or multipoles on surrounding molecules to mimic the crystal field [19] [22].

Periodic Calculations: Using periodic boundary conditions to compute the wavefunction, which properly accounts for crystal periodicity but is computationally more demanding [22].

Fragmentation Approaches: Dividing large systems into smaller fragments for individual quantum chemical calculations [19] [21].

Studies indicate that accounting for intermolecular interactions improves HAR accuracy, particularly for systems with strong hydrogen bonds [19] [23].
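The cluster-charge idea can be illustrated with a minimal sketch: the surrounding molecules are reduced to point charges, and their combined electrostatic potential is evaluated at a position inside the central molecule. The function name, charges, and coordinates are hypothetical; atomic units are assumed, and higher multipoles are omitted for brevity.

```python
import math


def cluster_potential(point, charges):
    """Electrostatic potential (atomic units) at `point` from point charges.

    `charges` is a list of (charge, (x, y, z)) tuples representing atoms of
    the surrounding molecules; distances are in bohr.
    """
    potential = 0.0
    for charge, position in charges:
        distance = math.dist(point, position)
        if distance == 0.0:
            raise ValueError("evaluation point coincides with a charge")
        potential += charge / distance
    return potential


# Hypothetical environment: two neighbouring atoms with partial charges.
environment = [(-0.4, (4.0, 0.0, 0.0)), (0.4, (6.0, 0.0, 0.0))]
print(cluster_potential((0.0, 0.0, 0.0), environment))
```

In an actual HAR calculation this potential would enter the quantum chemical Hamiltonian of the central molecule. The sketch only shows how the field of the environment is assembled from the surrounding charges.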

Table 3: Essential Computational Tools for HAR Implementation

| Tool/Resource | Type | Function in HAR | Availability |
|---|---|---|---|
| OLEX2 | Software platform | Structure visualization, refinement, and analysis | Commercial with academic licenses |
| NoSpherA2 | HAR implementation | Native HAR inside OLEX2 without external dependencies | Integrated in OLEX2 |
| TONTO | Quantum crystallography software | Original HAR implementation with extensive features | Free for academic use |
| HARt | OLEX2 interface | Simplified access to basic HAR functionalities | Pre-installed in OLEX2 |
| ELMO Libraries | Database | Extremely localized molecular orbitals for fragmentation | Available with corresponding software |
| SHADE3 | ADP estimation | Hydrogen ADP prediction for constrained refinement | Web service |
| cc-pVnZ basis sets | Computational resource | Standard basis sets for quantum chemical calculations | Included in quantum chemistry packages |
| UBDB/MTAS | Aspherical scattering factor database | Transferable aspherical atom parameters for comparison | Publicly available |

Current Limitations and Future Directions

Despite its significant advantages, HAR faces several challenges and limitations:

Computational Demand: The need for repeated quantum mechanical calculations makes HAR computationally intensive, especially for large systems [19]. Fragmentation approaches and HAR-ELMO methods are being developed to address this limitation [19] [21].

Hydrogen ADP Accuracy: While greatly improved over IAM, hydrogen ADPs from HAR may still be less accurate than those derived from specialized estimation methods like SHADE3 or Normal Mode Refinement [23]. In some cases, fixing hydrogen ADPs to estimated values improves other refinement metrics [23].

Heavy Elements: Treatment of structures containing heavy metals remains challenging due to limited basis set availability and the need for relativistic methods [19].

Disorder Refinement: Proper handling of disordered regions in crystal structures is not yet fully implemented in most HAR software [19].

Macromolecular Applications: Extension to protein crystallography is still in development, though progress has been made with fragmentation techniques and database approaches [19] [21].

Future developments in HAR will likely focus on addressing these limitations through methodological improvements and computational optimizations. The integration of machine learning approaches for rapid electron density prediction, development of more efficient quantum chemical methods, and enhanced treatment of crystal environments represent promising research directions [19] [23] [22].

Hirshfeld Atom Refinement has substantially improved the accuracy of crystallographic structure determination, particularly for the hydrogen atom parameters that are essential for understanding molecular structure, bonding, and interactions. By incorporating system-specific aspherical scattering factors derived from quantum mechanical calculations, HAR achieves accuracy comparable to neutron diffraction while using routinely accessible X-ray diffraction data [17]. The ongoing development of alternative electron density partitioning schemes [19] [23] [20], improved treatment of crystal environments [22], and extension to emerging techniques like 3D electron diffraction [21] [24] promise to further enhance the applicability and accuracy of the method. As computational resources grow and methodologies are refined, HAR is poised to become an increasingly standard approach in crystallography and to give more detailed insight into molecular structure and properties.

Theoretical Foundations