Bridging the Reality Gap: A Practical Guide to Validating Force Field Parameters Against Experimental Observables

Abstract

Accurate force fields are the cornerstone of reliable molecular simulations in drug discovery and materials science. However, a significant 'reality gap' often exists between computational predictions and experimental results. This article provides a comprehensive framework for researchers and scientists to rigorously validate and optimize force field parameters against experimental data. Covering foundational principles, advanced methodologies like Bayesian inference and data fusion, practical optimization techniques, and robust validation benchmarks, we synthesize the latest strategies to enhance the predictive power of molecular simulations, ensuring they deliver tangible value in biomedical research and development.

The Why and What: Core Principles and the Experimental-Computational Gap

In the fields of computational chemistry and drug discovery, the "reality gap" represents a critical chasm between the performance of computational models on standardized benchmarks and their efficacy in real-world, experimental applications. This gap arises when models overfit simplified benchmark datasets but fail to capture the full complexity of biological and physical systems. Force fields—mathematical models describing the potential energy of a system of particles—are fundamental to molecular dynamics (MD) simulations, but their development and validation are often impaired by scarce and sometimes erroneous data, resulting in models that do not always agree with well-established experimental observations [1]. While computational benchmarks provide a necessary framework for initial validation, this article demonstrates through comparative data and experimental protocols why they are insufficient alone for ensuring real-world predictive accuracy, particularly in the context of force field parameter validation against experimental observables.

The Quantitative Reality Gap: Comparative Performance Data

Force Field Accuracy Across Different Physical Properties

The table below summarizes the performance of various force fields against experimental observables, illustrating the variable accuracy that characterizes the reality gap.

Table 1: Force Field Performance Against Experimental Observables

| Force Field | Target System/Property | Computational Benchmark Result | Experimental Validation Result | Reality Gap Identified |
|---|---|---|---|---|
| ML Potential (DFT-trained) [1] | Ti lattice parameters & elastic constants | Good agreement with underlying DFT data | Did not quantitatively reproduce experimental temperature-dependent properties | Deviation attributed to inaccuracies of the DFT functionals used for training |
| DFT & EXP Fused Model [1] | Ti mechanical properties | Slightly increased errors on DFT test data | Concurrently satisfied all target experimental mechanical properties and lattice parameters | Fused data learning strategy achieved higher real-world accuracy |
| PCFF, CVFF, SwissParam, CGenFF, GAFF, DREIDING [2] | Polyamide membrane density, porosity, Young's modulus | Most force fields predicted properties in dry state well | Only specific force fields (CVFF, SwissParam) accurately predicted pure water permeability at 100 bar | Many force fields failed under experimentally relevant hydration and pressure conditions |
| AMBER, GROMACS, NAMD, ilmm [3] | Engrailed homeodomain (EnHD) and RNase H native state dynamics | Good and similar reproduction of various experimental observables at 298 K | Underlying conformational distributions and sampling extent showed subtle differences | Ambiguity about which simulated conformational ensembles are correct |
| Multiple Packages [3] | Protein thermal unfolding at 498 K | Some packages failed to allow unfolding or produced results at odds with experiment | Larger amplitude motions exaggerated differences between simulation packages | Failure to capture correct behavior under non-ambient conditions |

The Drug Discovery Efficacy-Effectiveness Gap

The reality gap has a direct analogue in pharmaceutical development, known as the efficacy-effectiveness gap [4]. A comprehensive evaluation revealed that contemporary cancer therapies demonstrate a median overall survival difference of 5.2 months between clinical trial data and real-world evidence, with 97% of study indications showing worse survival outcomes in real-world settings [4]. This demonstrates that the challenge of translating in silico or controlled environment results to real-world performance is a pervasive issue across multiple scientific domains.

Experimental Protocols for Bridging the Gap

The Fused Data Learning Strategy

A promising methodology to bridge the reality gap in force field development is the fused data learning strategy, which concurrently trains models on both quantum mechanical simulation data and experimental data [1].

Protocol Overview:

- DFT Trainer: A standard regression where a Machine Learning potential takes an atomic configuration and predicts potential energy, forces, and virial stress. Parameters are optimized to match values in a Density Functional Theory (DFT) database.

- EXP Trainer: Optimization is performed such that properties computed from the ML-driven simulation's trajectory match experimental values. Gradients are computed using the Differentiable Trajectory Reweighting (DiffTRe) method [1].

- Iterative Training: The process alternates between the DFT and EXP trainers after each epoch, allowing the model to simultaneously satisfy both data sources.
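The alternation between the two trainers can be illustrated on a deliberately tiny toy problem. The sketch below is not the method of [1]: it assumes a one-parameter harmonic "potential" with spring constant `k`, a hypothetical DFT reference that is 20% too stiff, and an analytic mean-square displacement `kT/k` standing in for the simulation-plus-DiffTRe step. All names and numbers are illustrative assumptions.

```python
import numpy as np

KT = 1.0  # thermal energy in reduced units (toy assumption)

def dft_loss_grad(k, x, e_ref):
    """MSE between model energies 0.5*k*x^2 and reference energies, with d(loss)/dk."""
    resid = 0.5 * k * x**2 - e_ref
    return np.mean(resid**2), np.mean(2.0 * resid * 0.5 * x**2)

def exp_loss_grad(k, msd_exp):
    """Squared error on <x^2> = kT/k (analytic stand-in for an MD estimate), with d(loss)/dk."""
    resid = KT / k - msd_exp
    return resid**2, 2.0 * resid * (-KT / k**2)

def train_fused(k0, x, e_ref, msd_exp, epochs=200, lr=0.05):
    """Alternate one 'DFT epoch' and one 'EXP epoch' of plain gradient descent."""
    k = k0
    for _ in range(epochs):
        _, grad = dft_loss_grad(k, x, e_ref)   # DFT trainer step
        k -= lr * grad
        _, grad = exp_loss_grad(k, msd_exp)    # EXP trainer step
        k -= lr * grad
    return k

x = np.linspace(-1.0, 1.0, 21)      # hypothetical sampled displacements
k_dft, k_exp = 1.2, 1.0             # "DFT" is stiffer than "experiment" (made-up values)
e_ref = 0.5 * k_dft * x**2          # synthetic DFT energies
k_fused = train_fused(2.0, x, e_ref, msd_exp=KT / k_exp)
print(f"fused k = {k_fused:.3f} (DFT-only optimum {k_dft}, experiment {k_exp})")
```

In this toy the fused parameter settles between the two single-source optima, with a slightly larger DFT residual than a DFT-only fit would give. A real ML potential has enough capacity to fit both sources far more closely than a single parameter can.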

Key Experimental Setup:

- DFT Database: Consists of thousands of samples including equilibrated, strained, and randomly perturbed structures from various temperatures and phases [1].

- Experimental Targets: Include temperature-dependent elastic constants and lattice constants of the material (e.g., hcp Titanium) across a range of temperatures [1].

- Validation: The final model is tested on "out-of-target" properties such as phonon spectra and liquid phase structural properties to ensure generalizability [1].

Benchmarking Force Fields for Specific Applications

For specific applications like modeling polyamide membranes, a rigorous benchmarking protocol against experimental data is essential [2].

Protocol Overview:

- Membrane Preparation: Generate cross-linked polyamide membranes with a chemical composition (e.g., O/N ratio) comparable to experimentally synthesized membranes.

- Multi-State Simulation: Simulate membranes in:

  - Dry State: Compare predicted density, porosity, and Young's modulus against experimental data.

  - Hydrated State: Evaluate water uptake and swelling behavior.

  - Non-Equilibrium Reverse Osmosis: Simulate water permeability at experimentally relevant pressures (e.g., 100 bar).

- Validation Criterion: Identify the best-performing force field as the one whose predictions for key properties (e.g., pure water permeability) fall within the experimental confidence interval [2].
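The validation criterion amounts to a simple interval test. The following is a minimal sketch under stated assumptions: the force field names `FF-A` to `FF-C`, the predicted values, and the interval bounds are placeholders invented for illustration, not results from [2].

```python
from dataclasses import dataclass

@dataclass
class Prediction:
    force_field: str
    value: float  # predicted property, in the same units as the experiment

def select_force_fields(predictions, ci_low, ci_high):
    """Return force fields whose prediction lies inside [ci_low, ci_high],
    ordered by distance from the interval midpoint (closest first)."""
    midpoint = 0.5 * (ci_low + ci_high)
    passing = [p for p in predictions if ci_low <= p.value <= ci_high]
    passing.sort(key=lambda p: abs(p.value - midpoint))
    return [p.force_field for p in passing]

# Placeholder predictions in arbitrary units (not real data).
predictions = [
    Prediction("FF-A", 2.9),
    Prediction("FF-B", 2.4),
    Prediction("FF-C", 5.1),
]
accepted = select_force_fields(predictions, ci_low=2.0, ci_high=3.0)
print(accepted)
```

Ranking by distance from the midpoint is one possible tie-breaker when several force fields pass. Applying the same test to each target property in turn shows which candidates survive all of them.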

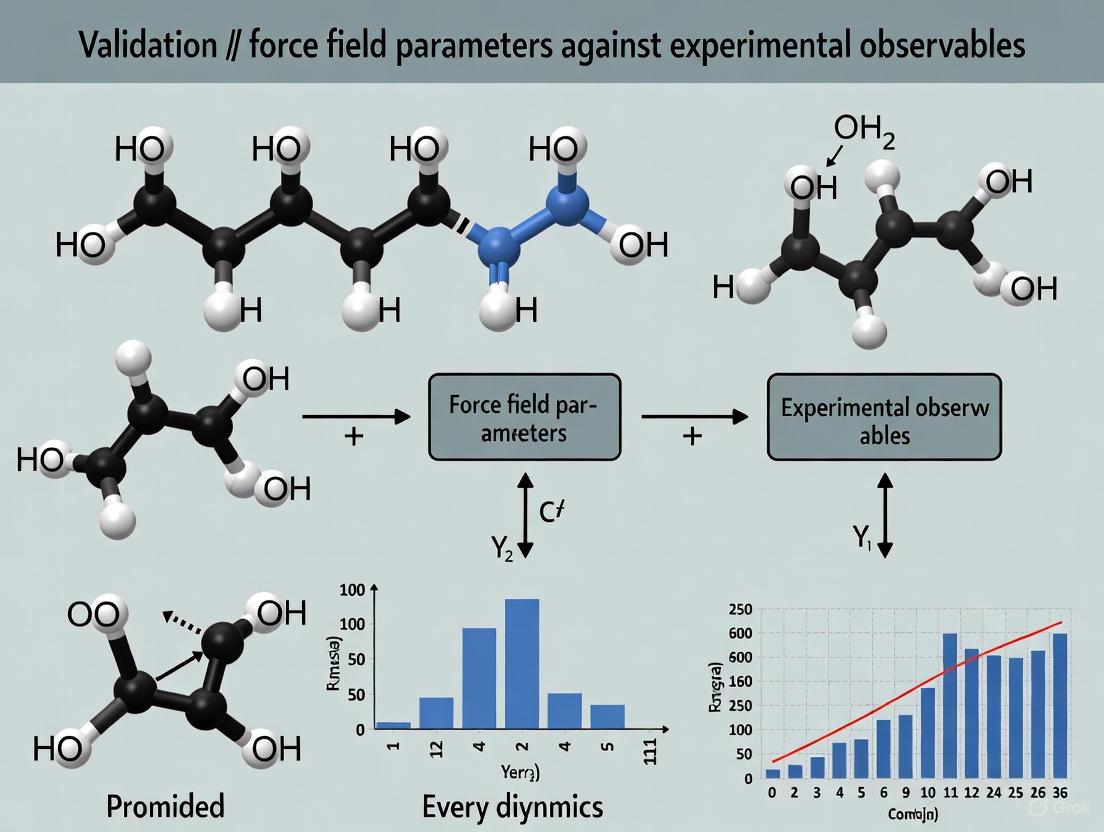

Visualizing the Integrated Workflow for Experimental Validation

The following diagram illustrates a comprehensive, iterative workflow for developing and validating force fields against experimental observables, integrating the protocols discussed above to directly address the reality gap.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 2: Key Research Reagents and Computational Tools for Force Field Validation

| Tool/Reagent | Function/Description | Role in Addressing Reality Gap |
|---|---|---|
| Differentiable Trajectory Reweighting (DiffTRe) [1] | A method that enables gradient-based optimization of force field parameters based on experimental data without backpropagating through entire simulations. | Enables direct training of models on experimental observables, fusing simulation and real-world data. |
| Standardized Benchmark Datasets [5] | Curated sets of experimental and quantum mechanical data for organic compounds, including halogens and common ions. | Provides a common ground for objective comparison of force fields, preventing cherry-picking of validation properties. |
| FFParam-v2.0 [6] | A comprehensive tool for optimizing CHARMM additive and polarizable force field parameters using both QM and condensed phase target data. | Allows parameter optimization against key experimental observables like heats of vaporization and free energies of solvation. |
| Graph Neural Networks (GNNs) [1] | A machine learning architecture used to develop high-capacity potentials that can learn complex relationships from diverse data. | Provides the flexible functional form needed to simultaneously reproduce multiple data sources (DFT and experiment). |
| Polarizable Continuum Model (PCM) [5] | A model used in computing atomic charges that accounts for the dielectric environment (e.g., water vs. vacuum). | Improves the transferability of force fields across different environments, a common source of the reality gap. |
| Clinical Practice Guidelines & Real-World Evidence (RWE) [7] [4] | Structured medical knowledge and data collected from routine clinical practice outside of controlled trials. | Used to create clinically grounded benchmarks (e.g., GAPS framework) and quantify the efficacy-effectiveness gap in drug discovery. |

The "reality gap" is not an insurmountable barrier but a call for a more rigorous, integrated approach to computational model validation. As the comparative data and experimental protocols presented here demonstrate, relying solely on computational benchmarks is a precarious strategy. The future of accurate prediction in materials science and drug development lies in methodologies that proactively fuse multiple data sources, such as the fused data learning strategy for force fields [1] and the incorporation of Real-World Evidence in pharmaceutical development [4]. By adopting these integrated validation frameworks, researchers can develop models that not only perform well on benchmarks but also reliably predict real-world behavior, thereby narrowing the gap between computational promise and experimental reality.

The development of reliable force fields represents a cornerstone of computational materials science and drug development, enabling the atomistic simulation of systems ranging from metallic alloys to complex biomolecules. However, a significant challenge persists: the "reality gap" between computationally predicted properties and actual experimental measurements [8]. Force fields trained exclusively on quantum mechanical data, such as from Density Functional Theory (DFT) calculations, often achieve impressive performance on computational benchmarks but fail to reproduce experimental observables with the fidelity required for practical applications [8]. This gap highlights the critical need for rigorous validation against experimental data—particularly fundamental mechanical and structural properties like lattice parameters and elastic constants—which serve as essential benchmarks for evaluating force field accuracy. These observables provide a crucial connection between atomic-scale simulations and macroscopic material behavior, offering a robust foundation for parameterizing and validating computational models across diverse chemical spaces [1] [8].

Recent comprehensive evaluations have revealed systematic limitations in universal machine learning force fields (UMLFFs), where even state-of-the-art models exhibit higher prediction errors for properties like density than the threshold required for practical applications [8]. This underscores the indispensable role of experimental validation in force field development. This guide objectively compares the performance of different force field approaches when validated against key experimental observables, providing researchers with a structured framework for evaluating force field reliability in real-world scenarios.

Force Field Paradigms: A Comparative Framework

Force fields can be broadly categorized into three distinct paradigms, each with characteristic strengths and limitations in reproducing experimental observables. The table below summarizes their key attributes:

Table 1: Comparison of Force Field Paradigms and Their Approach to Experimental Data

| Force Field Type | Parameter Source & Typical Count | Interpretability | Primary Training Data | Handling of Experimental Observables |
|---|---|---|---|---|
| Classical Force Fields (e.g., UFF, AMBER) [9] | 10-100 parameters; Mostly physical (bond lengths, angles, LJ terms) [9] | High (each term corresponds to a physical quantity) [9] | Traditional: Experimental data [1]. Modern: Often QM data [9] | Historically central to parameterization; now often indirect via QM data. |
| Reactive Force Fields (e.g., ReaxFF) [9] | 100+ parameters [9] | Moderate (complex bond-order formalism) [9] | Primarily QM datasets [10] | Not the primary calibration source; used for validation. |
| Machine Learning Force Fields (MLFFs) [1] [10] [8] | 100,000s+ parameters (complex neural networks) [10] | Low ("black box" models) [9] | Primarily large-scale QM calculations [1] [8] | Increasingly used in fused/fine-tuning strategies to correct QM inaccuracies [1]. |

Performance Comparison Against Experimental Benchmarks

Accuracy on Structural and Mechanical Properties

The most telling validation of a force field is its ability to predict structural and mechanical properties measured experimentally. The following table synthesizes performance data from systematic evaluations:

Table 2: Performance Comparison of Force Field Types Against Key Experimental Observables

| Experimental Observable | Classical Force Fields | Reactive Force Fields (ReaxFF) | Machine Learning Force Fields (MLFFs) | Validation Context |
|---|---|---|---|---|
| Lattice Parameters & Density | Variable accuracy; often parameterized for specific systems. | Limited published data on systematic benchmarking. | MAPE of ~10% or less for best UMLFFs (Orb, MatterSim); but often exceeds practical 2% threshold [8]. | Evaluation on ~1,500 mineral structures (UniFFBench) [8]. |
| Elastic Constants | Can be accurate if explicitly fitted; may lack transferability. | Not typically a primary target for validation. | Fused Data MLFFs: High accuracy for targeted system (e.g., Ti) [1]. UMLFFs: Errors correlate with training data representation [8]. | Target properties in fused data learning [1]; MinX-EM dataset in UniFFBench [8]. |
| Phase Stability | Highly dependent on functional form; can be challenging. | Can model phase transitions but with uncertain accuracy. | DFT-trained MLFFs often inherit DFT's phase diagram inaccuracies [1]. | Critical for predictive simulations [1]. |
| Chemical Reactivity | Generally incapable of modeling bond breaking/forming [9]. | Designed for this purpose; accuracy can be system-dependent [10] [9]. | High potential with transfer learning (e.g., EMFF-2025 for HEMs) [10]. | Essential for catalytic or decomposition studies [10]. |

Simulation Stability and Transferability

Beyond accuracy on specific properties, practical force field application requires robustness in molecular dynamics (MD) simulations.

- MD Simulation Stability: A systematic evaluation of UMLFFs revealed a pronounced hierarchy in simulation stability. On a benchmark of complex mineral structures, models like Orb and MatterSim achieved near-perfect completion rates, while others like CHGNet and M3GNet suffered failure rates exceeding 85%. These failures often stem from unphysical forces or memory overflow during simulation [8].

- The Disconnect Concern: A critical finding is the disconnect between simulation stability and mechanical property accuracy. A simulation that remains stable throughout does not guarantee accurate prediction of elastic constants or other target observables. Stability is therefore a necessary, but not sufficient, condition for a reliable force field [8].

- Data Representation Bias: The accuracy of UMLFFs correlates directly with how well a target system's chemistry is represented in their training data (e.g., MPtrj, OC22). This indicates that their "universality" is often limited by systematic biases in training data composition rather than the underlying model architecture [8].

Experimental Protocols for Force Field Validation

The Fused Data Learning Strategy

A promising methodology to bridge the reality gap is fused data learning, which concurrently trains an ML potential on both quantum mechanical (QM) data and experimental observables [1].

Diagram 1: Fused data learning workflow for ML potential training.

Protocol Workflow:

- Initialization: Start with an ML potential pre-trained on a diverse set of DFT calculations (energies, forces, virial stress) [1].

- DFT Training Epoch: For one epoch, optimize parameters to match the ML potential's predictions against the DFT database using a standard regression loss [1].

- Experimental Training Epoch: For one epoch, optimize parameters to match experimental observables. This involves:

  - Running MD simulations with the current ML potential.

  - Calculating target experimental properties (e.g., elastic constants) from the simulation trajectory.

  - Computing gradients using methods like Differentiable Trajectory Reweighting (DiffTRe), which avoids backpropagation through the entire simulation, thus enabling efficient learning from ensemble-averaged experimental data [1].

- Iteration: Alternate between the DFT and Experimental trainers for multiple epochs until convergence on both datasets [1].
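The reweighting idea behind the experimental training epoch can be sketched in a few lines. This is a minimal NumPy illustration under stated assumptions, not the implementation used in [1]: configurations sampled with a reference potential are reweighted to a perturbed potential with Boltzmann factors, and the effective sample size indicates when the reference trajectory has become too stale to reuse. The harmonic toy system and all parameter values are assumptions. In practice the reweighted average would be differentiated with respect to the potential parameters by an automatic differentiation framework, which this sketch omits.

```python
import numpy as np

def reweight(u_new, u_ref, observable, beta):
    """Estimate <O> under the perturbed potential from reference-potential samples.

    u_new, u_ref: potential energies of the same configurations under both potentials.
    Returns the reweighted average and the effective sample size.
    """
    log_w = -beta * (np.asarray(u_new) - np.asarray(u_ref))
    log_w -= log_w.max()                  # stabilize the exponentials
    w = np.exp(log_w)
    w /= w.sum()                          # normalized weights
    n_eff = np.exp(-np.sum(w * np.log(w + 1e-300)))  # entropy-based sample size
    return float(np.sum(w * observable)), float(n_eff)

# Toy system: 1D harmonic oscillator, samples drawn exactly from the reference ensemble.
rng = np.random.default_rng(0)
beta, k_ref, k_new, n_samples = 1.0, 1.0, 1.2, 200_000
x = rng.normal(0.0, np.sqrt(1.0 / (beta * k_ref)), size=n_samples)

msd_est, n_eff = reweight(0.5 * k_new * x**2, 0.5 * k_ref * x**2, x**2, beta)
msd_exact = 1.0 / (beta * k_new)          # analytic <x^2> for the perturbed potential
print(f"reweighted <x^2> = {msd_est:.4f}, exact = {msd_exact:.4f}, N_eff = {n_eff:.0f}")
```

When the effective sample size falls well below the number of stored configurations, the usual remedy is to generate a fresh trajectory with the updated potential before continuing.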

Application Example: This protocol was applied to develop a titanium ML potential. The resulting model concurrently satisfied DFT-derived targets and experimental temperature-dependent elastic constants and lattice parameters of hcp titanium, achieving higher accuracy than models trained on a single data source [1].

The UniFFBench Experimental Benchmarking Framework

To standardize evaluation, the UniFFBench framework provides a protocol for rigorous experimental validation of force fields against a hand-curated dataset of approximately 1,500 mineral structures [8].

Diagram 2: UniFFBench framework for experimental force field validation.

Benchmarking Protocol:

- Dataset Curation: The MinX dataset is organized into four subsets to probe different aspects of real-world performance [8]:

  - MinX-EQ: Structures under standard ambient conditions.

  - MinX-HTP: Structures under extreme temperatures and pressures.

  - MinX-POcc: Structures with partial atomic site occupancies (compositional disorder).

  - MinX-EM: Structures with experimentally measured elastic tensors.

- Standardized Simulation: Run defined computational experiments (e.g., MD simulations, energy minimizations) on these structures using the force field under evaluation [8].

- Metric Calculation: Evaluate force fields on a comprehensive set of metrics that go beyond energy/force errors, including [8]:

  - Structural Fidelity: Accuracy in predicting lattice parameters and density (MAPE).

  - Simulation Stability: Percentage of completed MD simulations without catastrophic failure.

  - Mechanical Properties: Accuracy of predicted elastic constants versus measured values.

  - Atomic Organization: Agreement of radial distribution functions and bond lengths.
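Two of these metrics are simple enough to state directly in code. The sketch below is an assumption-laden illustration rather than the UniFFBench implementation: the function names, the density values, and the step counts are all invented for the example. Only the definition of MAPE and the idea of a completion percentage come from the text.

```python
import numpy as np

def mape(predicted, experimental):
    """Mean absolute percentage error, in percent."""
    predicted = np.asarray(predicted, dtype=float)
    experimental = np.asarray(experimental, dtype=float)
    return float(100.0 * np.mean(np.abs((predicted - experimental) / experimental)))

def completion_rate(steps_completed, steps_requested):
    """Percentage of MD runs that reached the requested number of steps."""
    finished = np.asarray(steps_completed) >= steps_requested
    return float(100.0 * np.mean(finished))

# Hypothetical densities in g/cm^3 (placeholders, not benchmark data).
density_exp = [2.65, 3.21, 5.24]
density_pred = [2.70, 3.15, 5.50]
density_mape = mape(density_pred, density_exp)

# Hypothetical MD runs: one of four stopped early.
stability = completion_rate([1000, 1000, 412, 1000], steps_requested=1000)

PRACTICAL_THRESHOLD = 2.0  # percent, the practical threshold quoted in the text
print(f"density MAPE = {density_mape:.2f}% "
      f"({'exceeds' if density_mape > PRACTICAL_THRESHOLD else 'within'} threshold)")
print(f"completion rate = {stability:.1f}%")
```

Reporting both numbers side by side matters because, as noted above, a high completion rate says nothing by itself about property accuracy.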

Table 3: Key Computational Tools and Datasets for Force Field Validation

| Tool/Dataset Name | Type | Primary Function in Validation | Relevance to Experimental Observables |
|---|---|---|---|
| UniFFBench / MinX Dataset [8] | Benchmarking Framework & Dataset | Provides a standardized set of ~1,500 experimental mineral structures for systematic testing. | Core dataset for validating against lattice parameters, elastic constants, and phase stability under diverse conditions. |
| DiffTRe (Differentiable Trajectory Reweighting) [1] | Computational Method | Enables efficient gradient-based optimization of force field parameters directly from experimental observables. | Key for incorporating experimental data (like elastic constants) into ML force field training. |
| DP-GEN (Deep Potential Generator) [10] | Active Learning Platform | Automates the construction of ML potentials by iteratively generating training data via an active learning loop. | Helps build robust training sets that can improve a model's generalization, indirectly aiding experimental accuracy. |
| MPtrj Dataset [8] | Computational Dataset | A large-scale DFT dataset commonly used for training UMLFFs. | Serves as a base for training; its chemical biases highlight the need for complementary experimental validation [8]. |

The journey toward truly reliable and universal force fields hinges on moving beyond computational benchmarks and embracing rigorous, standardized validation against experimental observables. As demonstrated, while MLFFs can approach the accuracy of their quantum mechanical training data at a fraction of the cost, their performance on real-world experimental properties varies significantly. Fused data learning emerges as a powerful strategy to directly address the inaccuracies of DFT and create models that are firmly grounded in physical reality [1]. Furthermore, frameworks like UniFFBench provide the essential toolkit for the community to objectively quantify the "reality gap" [8]. For researchers in materials science and drug development, the critical takeaway is that the choice of, and trust in, a force field must be informed by its proven performance against a relevant set of experimental data, particularly fundamental measures like lattice parameters and elastic constants, which serve as the bedrock for connecting simulation to experiment.

The development of reliable force fields is a cornerstone of molecular simulation, with predictive accuracy being paramount for applications in drug discovery and materials science. This process fundamentally relies on two pillars: data from ab initio quantum mechanical calculations, primarily Density Functional Theory (DFT), and ensemble-averaged experimental measurements. Both sources, however, are fraught with inherent uncertainties that can compromise the validity of the resulting parameter sets. DFT, while powerful, suffers from systematic errors originating from exchange-correlation functional approximations and numerical settings, leading to inaccuracies in forces and energies [11] [12]. On the other hand, experimental data used for training and validation is often sparse, noisy, and subject to both random and systematic errors, the extent of which is frequently unknown a priori [13]. This guide objectively compares the nature and impact of these error sources and details modern methodologies designed to navigate them, providing a framework for researchers to critically assess force field parameterization protocols.

Understanding and Quantifying DFT Inaccuracies

The accuracy of force fields is intrinsically linked to the quality of the quantum mechanical data used for their parameterization. DFT, as the most common source of this data, introduces several layers of uncertainty.

Functional-Driven Errors and Material Dependence

The choice of exchange-correlation (XC) functional is a major source of error in DFT, and its impact is highly material-dependent. A comprehensive study on binary and ternary oxides revealed that the performance of different functionals varies significantly, with no single functional being universally superior [11]. The systemic errors manifest clearly in predictions of lattice constants, a fundamental geometric property.

Table 1: Mean Absolute Relative Error (MARE) of DFT Lattice Constants for Oxides [11]

| XC Functional | Type | Lattice Constant MARE | Remarks |
|---|---|---|---|
| LDA | Local Density Approximation | 2.21% | Systematic underestimation (overbinding) |
| PBE | Generalized Gradient Approximation | 1.61% | Systematic overestimation (underbinding) |
| PBEsol | Generalized Gradient Approximation | 0.79% | Improved for solids |
| vdW-DF-C09 | van der Waals Density Functional | 0.97% | Good performance with vdW interactions |

These errors are not random; they correlate with material chemistry, such as the presence of magnetic elements or specific metal-oxygen bonding and orbital hybridization environments [11]. This means the functional-induced error can be predicted to some extent using materials informatics, allowing for the placement of functional-specific "error bars" on DFT predictions.

Numerical Errors in Forces and Their Impact on MLIPs

For training Machine Learning Interatomic Potentials (MLIPs), the accuracy of DFT-calculated atomic forces is critical. Recent investigations have uncovered "unexpectedly large uncertainties" in forces within several widely used molecular datasets [12]. A clear indicator of numerical errors is a non-zero net force on a system, which should be zero in the absence of external fields.
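The net-force diagnostic is cheap to apply to any dataset before training. The following is a minimal sketch, assuming forces are stored as arrays of shape `(n_atoms, 3)`. The function names and the tolerance value are illustrative assumptions, not settings taken from [12].

```python
import numpy as np

def net_force_per_atom(forces):
    """Magnitude of the summed force vector divided by the number of atoms."""
    forces = np.asarray(forces, dtype=float)
    return float(np.linalg.norm(forces.sum(axis=0)) / len(forces))

def flag_suspect_frames(frames, tol):
    """Indices of frames whose net force per atom exceeds tol."""
    return [i for i, f in enumerate(frames) if net_force_per_atom(f) > tol]

# Two hypothetical diatomic frames (forces in eV/Å): the first is balanced,
# the second carries a spurious uniform offset on every component.
balanced = np.array([[0.10, 0.0, 0.0], [-0.10, 0.0, 0.0]])
offset = balanced + 0.01

TOL = 1e-3  # eV/Å per atom; an arbitrary illustrative tolerance
suspect = flag_suspect_frames([balanced, offset], tol=TOL)
print("frames with non-negligible net force:", suspect)
```

A vanishing net force does not prove the individual force components are accurate, so this check can only flag problems, not certify a dataset.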

Table 2: Force Component Errors in Common Molecular Datasets [12]

| Dataset | Reported RMSE in Force Components | Primary Source of Error |
|---|---|---|
| ANI-1x (def2-TZVPP) | 33.2 meV/Å | RIJCOSX approximation in older ORCA versions |
| Transition1x | Significant errors present | RIJCOSX approximation |
| AIMNet2 | Significant errors present | RIJCOSX approximation and mixed basis set data |
| SPICE | 1.7 meV/Å | Tighter settings, but some errors remain |
| OMol25 | Negligible | Well-converged settings, net forces ~zero |

These errors stem from suboptimal DFT settings, such as the use of the RIJCOSX approximation to accelerate integral evaluation and insufficiently dense integration grids [12] [14]. Given that state-of-the-art MLIPs now achieve force accuracies on the order of 10 meV/Å, these underlying errors in training data become a limiting factor for potential quality [12].

Common Numerical Pitfalls in DFT Calculations

Beyond functional choice, several numerical settings can introduce significant error:

- Integration Grids: Sparse grids, particularly for modern meta-GGA and double-hybrid functionals, yield unreliable energies and forces. The (75,302) "fine" grid in Gaussian can be insufficient; a (99,590) grid is recommended for accuracy and rotational invariance [14].

- Low-Frequency Vibrations: Treating quasi-rotational or quasi-translational modes as true vibrations in entropy calculations can lead to large errors in thermochemical predictions. Applying a correction (e.g., raising modes below 100 cm⁻¹ to 100 cm⁻¹) is recommended [14].

- Symmetry Numbers: Neglecting molecular symmetry numbers in entropy calculations introduces systematic errors in reaction thermochemistry, a common oversight in computational studies [14].
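The two entropy corrections in this list can be made concrete with the standard harmonic-oscillator and rigid-rotor expressions. The sketch below is illustrative only: the function names are assumptions, the 100 cm⁻¹ floor is the correction named above, and the example frequency is made up.

```python
import math

R = 8.314462618       # gas constant, J/(mol K)
H = 6.62607015e-34    # Planck constant, J s
C_CM = 2.99792458e10  # speed of light, cm/s
KB = 1.380649e-23     # Boltzmann constant, J/K

def s_vib_mode(wavenumber, temperature=298.15):
    """Harmonic-oscillator entropy of one mode (J/(mol K)); wavenumber in cm^-1."""
    x = H * C_CM * wavenumber / (KB * temperature)
    return R * (x / math.expm1(x) - math.log1p(-math.exp(-x)))

def s_vib(wavenumbers, temperature=298.15, floor=100.0):
    """Vibrational entropy with every mode below `floor` raised to `floor`."""
    return sum(s_vib_mode(max(w, floor), temperature) for w in wavenumbers)

def symmetry_entropy_correction(sigma):
    """Rotational entropy term from the symmetry number: -R ln(sigma)."""
    return -R * math.log(sigma)

soft_mode = [20.0]  # hypothetical quasi-rotational mode, cm^-1
uncorrected = s_vib(soft_mode, floor=0.0)
corrected = s_vib(soft_mode)
print(f"S_vib uncorrected: {uncorrected:.1f} J/(mol K), with floor: {corrected:.1f} J/(mol K)")
print(f"symmetry term for sigma = 12: {symmetry_entropy_correction(12):.1f} J/(mol K)")
```

Because the harmonic entropy diverges as the frequency approaches zero, a single soft mode can dominate the total, which is why the floor changes the result so strongly. The symmetry term is shown for a symmetry number of 12, the value for methane.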

Navigating the Challenges of Sparse and Noisy Experimental Data

Force field validation and refinement often rely on fitting to experimental observables, which presents its own set of challenges.

The Nature of Experimental Observables

Experimental data for biomolecular systems is typically ensemble-averaged, representing a population-weighted average over countless conformations, and sparse, with limited data points relative to the system's complexity [13]. Crucially, these measurements are susceptible to:

- Random Errors: Inherent noise in the measurement process.

- Systematic Errors: Biases due to instrumental miscalibration or flaws in the "forward model" used to connect atomic-level structures to the experimental observable [13]. The extent of these errors is often unknown, making it difficult to weight the influence of different data points appropriately during parameter optimization.

Integrated Methodologies for Robust Parameterization

Next-generation methods are being developed to simultaneously address the uncertainties from both computational and experimental sources.

Bayesian Inference for Uncertainty Quantification

Bayesian Inference of Conformational Populations (BICePs) is an algorithm that directly tackles the problem of sparse/noisy data and model error. BICePs samples a posterior distribution that reconciles simulation priors with experimental data while treating the level of experimental uncertainty as a nuisance parameter [13]. Its key features include:

- No Adjustable Regularization: It avoids arbitrary weighting between simulation and experiment, a common source of bias [13].

- Robust Likelihoods: Specialized likelihood functions (e.g., Student's t-model) automatically detect and down-weight data points subject to systematic error or outliers [13].

- The BICePs Score: A free energy-like quantity used for model selection and, recently, as an objective function for automated force field refinement through variational optimization [13].
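The effect of a robust likelihood can be seen by comparing how a Gaussian and a Student's t error model penalize the same residual. This is a textbook illustration, not the BICePs implementation: the Student's model in [13] may be parameterized differently, and the residuals, scale, and degrees of freedom below are arbitrary assumptions.

```python
import math

def gaussian_nll(residual, sigma):
    """Negative log-likelihood of a residual under a zero-mean Gaussian."""
    return 0.5 * math.log(2.0 * math.pi * sigma**2) + 0.5 * (residual / sigma) ** 2

def student_t_nll(residual, sigma, nu=3.0):
    """Negative log-likelihood under a scaled Student's t with nu degrees of freedom."""
    z = residual / sigma
    log_norm = (math.lgamma(0.5 * (nu + 1.0)) - math.lgamma(0.5 * nu)
                - 0.5 * math.log(nu * math.pi) - math.log(sigma))
    return -(log_norm - 0.5 * (nu + 1.0) * math.log1p(z * z / nu))

# Hypothetical residuals (forward model minus measurement); the last is an outlier.
residuals = [0.1, -0.2, 0.15, 5.0]
for r in residuals:
    print(f"residual {r:5.2f}: Gaussian {gaussian_nll(r, 1.0):6.2f}, "
          f"Student-t {student_t_nll(r, 1.0):6.2f}")
```

The Gaussian penalty grows quadratically with the residual, while the Student's t penalty grows only logarithmically, so a single outlier pulls far less on the inferred ensemble.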

BICePs Method Workflow: Integrating prior simulation data and sparse experiments to refine force fields.

Data-Driven Force Field Parameterization

To address the limitations of traditional look-up table methods for expansive chemical spaces, data-driven approaches using machine learning have emerged.

- ByteFF Workflow: This method involves generating a massive, diverse QM dataset (2.4 million optimized molecular fragments), training a symmetry-preserving Graph Neural Network (GNN) to predict all molecular mechanics parameters simultaneously, and employing a differentiable loss function for optimization [15].

- BLipidFF for Specialized Systems: For unique systems like the Mycobacterial membrane, specialized force fields are developed using rigorous, QM-based parameterization. This involves defining specialized atom types, calculating partial charges via RESP fitting, and optimizing torsion parameters against QM energy scans [16].
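Fitting torsion parameters to a QM energy scan reduces to linear least squares when the torsion term is written as a cosine series. The sketch below is generic and does not reproduce the BLipidFF or ByteFF procedures: the scan is synthetic, the function name is hypothetical, and a real workflow would first subtract the non-torsional MM energy from the QM profile.

```python
import numpy as np

def fit_torsion(phi, energy, max_n=3):
    """Fit E(phi) = c0 + sum_n k_n cos(n*phi - delta_n) by linear least squares.

    Uses k cos(n phi - delta) = a cos(n phi) + b sin(n phi) with
    a = k cos(delta), b = k sin(delta).
    Returns amplitudes k_n, phases delta_n, and the fitted energies.
    """
    columns = [np.ones_like(phi)]
    for n in range(1, max_n + 1):
        columns += [np.cos(n * phi), np.sin(n * phi)]
    design = np.column_stack(columns)
    coef, *_ = np.linalg.lstsq(design, energy, rcond=None)
    a, b = coef[1::2], coef[2::2]      # cosine and sine coefficients per periodicity
    return np.hypot(a, b), np.arctan2(b, a), design @ coef

# Synthetic "QM" scan at 15-degree increments (made-up parameters, kcal/mol).
phi = np.deg2rad(np.arange(-180.0, 180.0, 15.0))
e_scan = 1.5 * (1.0 + np.cos(phi)) + 0.4 * (1.0 + np.cos(3.0 * phi))

amplitudes, phases, e_fit = fit_torsion(phi, e_scan)
rmse = float(np.sqrt(np.mean((e_fit - e_scan) ** 2)))
print("fitted amplitudes:", np.round(amplitudes, 3), "RMSE:", round(rmse, 6))
```

Including both sine and cosine columns lets the fit recover arbitrary phases. Many force fields restrict the phase to 0 or 180 degrees, in which case the sine columns would be dropped.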

Table 3: "Research Reagent Solutions": Key Tools for Force Field Development

| Tool / Resource | Type | Function in Validation/Parameterization |
|---|---|---|
| BICePs Algorithm [13] | Software/Method | Bayesian inference to reconcile simulation ensembles with sparse/noisy experimental data. |
| ByteFF GNN Model [15] | Machine Learning Model | Predicts bonded/non-bonded MM parameters for drug-like molecules across broad chemical space. |
| BLipidFF Parameterization [16] | Specialized Force Field | Provides accurate parameters for complex bacterial lipids using QM-derived torsion and charge data. |
| ωB97M-V/def2-TZVPD [12] | QM Method & Basis Set | High-level DFT method used for generating well-converged reference data in modern datasets (e.g., OMol25). |
| RIJCOSX Approximation [12] | Computational Setting | A source of numerical error in forces; disabling it in ORCA improves accuracy at computational cost. |

Experimental Protocols for Method Validation

Protocol: BICePs-based Force Field Refinement

This protocol details the method for refining force field parameters against ensemble-averaged data using BICePs [13].

- Initial Simulation: Generate a prior conformational ensemble using molecular dynamics or Monte Carlo simulations with an initial force field.

- Define Observables: Identify the set of experimental observables \(D\) (e.g., NMR J-couplings, distance measurements) and compute the forward model \(f(X)\) for each conformation.

- BICePs Sampling: Sample the posterior distribution \(p(\mathbf{X}, \boldsymbol{\sigma} \mid D)\) using Markov Chain Monte Carlo (MCMC). This involves sampling conformational labels and the uncertainty parameters \(\sigma_j\) for each observable.

- Score Calculation: Compute the BICePs score for the model. This score represents the evidence for the force field given the data.

- Variational Optimization: Use the BICePs score as an objective function. Employ gradient-based optimization to adjust force field parameters to minimize the score, thus improving agreement with experiment while accounting for all uncertainties.

- Iterate: Return to the Initial Simulation step with the refined force field and repeat until convergence is achieved.
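The "Define Observables" step can be sketched as follows: a forward model maps each conformation to an observable, and the population-weighted average is what gets compared with the ensemble-averaged measurement. This is a minimal illustration, not BICePs code. The Karplus coefficients, the three states, and their populations are hypothetical placeholders chosen only to make the arithmetic easy to follow.

```python
import numpy as np

def karplus_j(theta_rad, a=7.0, b=-1.0, c=0.5):
    """Karplus-type forward model J = A*cos^2(theta) + B*cos(theta) + C.

    The coefficients are illustrative placeholders, not a published set.
    """
    cos_t = np.cos(theta_rad)
    return a * cos_t**2 + b * cos_t + c

def ensemble_average(values, populations):
    """Population-weighted average of a per-state observable."""
    populations = np.asarray(populations, dtype=float)
    populations = populations / populations.sum()  # normalise defensively
    return float(np.dot(populations, values))

# Hypothetical three-state ensemble: one dihedral angle per state (degrees)
dihedrals_deg = np.array([-60.0, 180.0, 60.0])
populations = np.array([0.6, 0.3, 0.1])

j_per_state = karplus_j(np.radians(dihedrals_deg))
j_avg = ensemble_average(j_per_state, populations)
print(f"per-state J (Hz): {j_per_state}, ensemble average: {j_avg:.3f}")
```

In a real refinement the dihedrals would come from the prior ensemble, and one such forward-model value would be stored per state and per observable.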

Protocol: Data-Driven Force Field Generation (ByteFF)

This protocol outlines the creation of a general-purpose, data-driven force field [15].

- Dataset Curation: Select a vast and diverse set of drug-like molecules from databases like ChEMBL and ZINC.

- Fragmentation: Cleave molecules into smaller fragments (<70 atoms) using a graph-expansion algorithm to preserve local chemical environments.

- Quantum Chemical Calculations: For each fragment, perform:

  - Geometry Optimization: Optimize structures at the B3LYP-D3(BJ)/DZVP level of theory and compute analytical Hessian matrices.

  - Torsion Scans: Run torsion-drive calculations (millions across the full dataset) to map rotational energy profiles.

- Model Training: Train a symmetry-preserving Graph Neural Network on the QM dataset. The model learns to map molecular graphs to all MM parameters (bonds, angles, torsions, van der Waals, charges).

- Loss Function: Employ a differentiable loss function that compares MM-predicted energies and Hessians to the QM reference data.

- Validation: Benchmark the force field on independent test sets for accuracy in predicting geometries, torsion profiles, and conformational energies.

Data-Driven Force Field Creation: From chemical databases to a trained parameter prediction model.
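To make the idea of fitting MM parameters to QM torsion profiles concrete, here is a deliberately simple stand-in: a linear least-squares fit of cosine-series force constants to a scan. It is not the GNN training described in [15]. The functional form (zero phase, three periodicities), the "true" constants, and the synthetic "QM" profile are all assumptions made for illustration.

```python
import numpy as np

def torsion_basis(phi_rad, n_terms=3):
    """Design matrix with columns 1 + cos(n*phi) for n = 1..n_terms."""
    return np.column_stack(
        [1.0 + np.cos(n * phi_rad) for n in range(1, n_terms + 1)]
    )

# Synthetic "QM" torsion scan on a 15-degree grid (assumed data, kcal/mol)
phi = np.radians(np.arange(-180, 180, 15))
k_true = np.array([1.2, 0.3, 2.0])          # hypothetical force constants
e_qm = torsion_basis(phi) @ k_true

# Least-squares fit of the MM force constants to the scan
k_fit, *_ = np.linalg.lstsq(torsion_basis(phi), e_qm, rcond=None)
rmse = float(np.sqrt(np.mean((torsion_basis(phi) @ k_fit - e_qm) ** 2)))
print("fitted force constants:", k_fit, "RMSE:", rmse)
```

A data-driven model replaces the per-molecule fit with a network that predicts the constants from the molecular graph, but the quantity being minimised is of the same kind: a discrepancy between MM and QM energies.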

Why Look Beyond Energy and Force Errors?

Force fields, the mathematical models that describe atomic interactions, are foundational to molecular dynamics (MD) simulations in drug discovery and materials science. Traditionally, the quality of these force fields has been assessed primarily by their accuracy in predicting energy and force values against quantum mechanical reference data, with low mean absolute errors (MAEs) often reported [17]. However, a growing body of evidence indicates that these conventional metrics alone are insufficient guarantees of real-world simulation reliability [18] [8].

Excellent performance on energy and force metrics can create a false sense of accuracy, as these are typically calculated for static configurations similar to those in the training data. They do not fully capture a model's performance during the long-timescale, out-of-equilibrium dynamics of actual simulations [18]. Consequently, a force field with low force MAE may still produce unstable simulations, fail to reproduce key experimental observables, or generate physically implausible atomic trajectories [17] [8]. This "reality gap" underscores the critical need for a broader, more robust set of validation metrics grounded in experimental and physical reality.

Key Validation Metrics and Experimental Protocols

A comprehensive validation strategy must assess how well force fields reproduce experimental measurements and maintain physical fidelity during simulation. The table below summarizes essential metrics beyond energy and force errors.

Table 1: Key Validation Metrics Beyond Energy and Force Errors

| Metric Category | Specific Metrics | Experimental/Computational Protocol | Significance |

|---|---|---|---|

| Simulation Stability | Simulation longevity, absence of catastrophic failures (e.g., bond breakage, atomic clashes) [17] [8]. | Run MD simulations for extended duration (e.g., ~300 ps [17]); monitor for unphysical forces or system disintegration. | Indicates robustness and reliability for practical MD applications [17]. |

| Structural Fidelity | Density, lattice parameters, radial distribution functions (RDF) or pair-distance distribution [17] [8]. | Compare MD-simulated structural properties against experimental X-ray crystallography or neutron scattering data [8]. | Ensures force field reproduces correct equilibrium structures and packing [8]. |

| Dynamic Properties | Diffusion coefficients, vibrational spectra, residence times [18]. | Calculate from MD trajectories and validate against experimental results (e.g., quasi-elastic neutron scattering, IR spectroscopy). | Probes accuracy in capturing atomic motion and kinetic properties [18]. |

| Mechanical/Thermodynamic Properties | Elastic constants (e.g., bulk modulus), enthalpy of vaporization, free energy profiles [8] [19]. | Compute properties from simulation and compare with experimental measurements (e.g., stress-strain tests, calorimetry) [19]. | Validates performance for predicting macroscopic material behavior and thermodynamics [19] [8]. |

| Rare Events & Defect Energetics | Vacancy/interstitial formation energies and migration barriers [18]. | Use enhanced sampling MD to compute energy barriers; validate against experimental activation energies or direct ab initio calculations [18]. | Tests force field transferability to non-equilibrium and defective configurations [18]. |

Special Considerations for Machine Learning Force Fields (MLFFs)

MLFFs introduce unique validation challenges. Their "black-box" nature means low average errors on standard test sets do not guarantee good performance during MD [18]. Specific issues include:

- Extrapolation Errors: MLFFs can predict extreme, non-physical forces for configurations outside their training data, leading to simulation collapse [17].

- Disconnect from Stability: Models with nearly identical force MAEs can have dramatically different simulation stability. For instance, pre-training on large, diverse datasets significantly improved GemNet-T model stability despite similar MAEs [17].

- RE-Based Metrics: For MLFFs, specific metrics focusing on forces acting on atoms during rare events (REs) like diffusion have been developed. These "force performance scores" better indicate a model's capability to accurately simulate atomic dynamics than bulk force MAE [18].

Experimental Workflows for Force Field Validation

A robust validation pipeline involves multiple stages, from initial parameterization to final assessment against complex experimental data. The diagram below outlines a comprehensive workflow integrating these stages.

Force Field Validation Workflow: This diagram outlines the multi-stage pipeline for rigorous force field validation, progressing from core parameterization to comprehensive experimental benchmarking.

Detailed Experimental Protocols

Protocol for Assessing MD Simulation Stability

Objective: To evaluate the robustness and longevity of MD simulations performed with a given force field [17] [8].

- System Preparation: Construct simulation cells for a diverse set of systems (e.g., small organic molecules, proteins, or periodic materials).

- Simulation Setup: Employ a velocity Verlet integrator with a suitable timestep (e.g., 0.5 fs). Use a thermostat (e.g., Nosé–Hoover) to maintain target temperature [17].

- Production Run: Execute a large number of MD steps (e.g., 600,000 steps corresponding to 300 ps) [17].

- Monitoring and Analysis:

  - Failure Logging: Record simulations that fail catastrophically, indicated by memory overflow from excessive graph edges (for MLFFs) or unphysically large forces (e.g., >100 eV/Å) [8].

  - Stability Metric: Calculate the simulation completion rate as a percentage for a benchmark set of structures [8].

  - Longevity Comparison: For models that complete, compare the sustained trajectory length before any divergence from physical behavior [17].
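The monitoring steps above can be sketched in a few lines. The example assumes that each run has already been reduced to a per-step series of the largest force magnitude (in eV/Å). That logging format and the four toy runs are hypothetical; only the 100 eV/Å threshold, the 0.5 fs timestep, and the 600,000-step run length come from the protocol.

```python
import numpy as np

FORCE_LIMIT_EV_PER_A = 100.0  # failure threshold quoted in the protocol

def first_unstable_step(max_force_per_step, limit=FORCE_LIMIT_EV_PER_A):
    """Index of the first step with a non-finite or too-large force, else None."""
    forces = np.asarray(max_force_per_step, dtype=float)
    bad = ~np.isfinite(forces) | (forces > limit)
    idx = np.flatnonzero(bad)
    return int(idx[0]) if idx.size else None

def completion_rate(runs, limit=FORCE_LIMIT_EV_PER_A):
    """Percentage of runs that never trip the failure criterion."""
    completed = sum(first_unstable_step(run, limit) is None for run in runs)
    return 100.0 * completed / len(runs)

# Sanity check on the run length: 600,000 steps at 0.5 fs per step
timestep_fs = 0.5
n_steps = 600_000
total_ps = n_steps * timestep_fs / 1000.0

# Hypothetical per-step maximum forces for four short runs
runs = [
    [1.2, 3.4, 2.8, 2.1],
    [1.0, 5.0, 250.0, 9.0e3],        # force blow-up
    [0.9, 1.1, float("nan"), 1.0],   # NaN treated as a failure
    [2.0, 2.2, 2.1, 1.9],
]
rate = completion_rate(runs)
print(f"trajectory length: {total_ps} ps, completion rate: {rate}%")
```

Recording the index returned by `first_unstable_step` also gives the sustained trajectory length needed for the longevity comparison.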

Protocol for Validating Structural Fidelity Against Experiment

Objective: To quantify the accuracy of a force field in reproducing experimentally determined structural properties [8].

- Reference Data Curation: Curate a set of high-resolution experimental structures. For broad validation, use diverse datasets like MinX for minerals or curated protein structures from the PDB [8] [20].

- Simulation: Run MD simulations for each structure under conditions matching the experiment (e.g., temperature, pressure).

- Property Calculation:

  - Density and Lattice Parameters: For crystalline materials, compute the average density and lattice parameters from the simulation trajectory and compare to experimental measurements. Report the Mean Absolute Percentage Error (MAPE) [8].

  - Pair-Distance Distribution / RDF: For molecules in solution or amorphous systems, calculate the pair-distance distribution function ( h(r) ) (for finite molecules) or the radial distribution function (for bulk systems) and compare to experimental X-ray/neutron scattering data [17].

- Validation: Assess if errors fall below application-specific thresholds (e.g., density errors <2-5% for practical materials design) [8].
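As a small worked example of the MAPE check, the sketch below compares trajectory-averaged densities with reference values and applies the stricter end (2%) of the threshold range mentioned above. The density values are illustrative numbers invented for this example, not measurements or simulation results from any cited study.

```python
import numpy as np

def mape(predicted, reference):
    """Mean Absolute Percentage Error, in percent."""
    predicted = np.asarray(predicted, dtype=float)
    reference = np.asarray(reference, dtype=float)
    return float(100.0 * np.mean(np.abs((predicted - reference) / reference)))

# Illustrative densities in g/cm^3 (made-up values for three structures)
density_reference = np.array([2.65, 3.98, 5.24])
density_simulated = np.array([2.60, 4.10, 5.20])

density_mape = mape(density_simulated, density_reference)
passes_threshold = density_mape < 2.0  # application-specific cut-off
print(f"density MAPE = {density_mape:.2f}%, passes 2% threshold: {passes_threshold}")
```

The same function applies unchanged to lattice parameters. Per-structure errors are worth inspecting as well, since a low mean can hide one badly reproduced structure.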

Protocol for Validating Rare Events and Defect Properties

Objective: To test the force field's accuracy for non-equilibrium configurations, such as defects, and the energy barriers of infrequent but critical events [18].

- Testing Set Creation: Generate a dedicated testing set (\mathcal{D}_{\text{RE-V/I Testing}}) comprising atomic snapshots of migrating vacancies or interstitials from ab initio MD (AIMD) simulations [18].

- Energy and Force Evaluation: Use the force field to predict energies and atomic forces for all configurations in this testing set.

- Metric Calculation:

  - Calculate root-mean-square errors (RMSE) for energies and forces, but note that low overall errors can mask large inaccuracies for the specific atoms undergoing migration [18].

  - Develop a force performance score that specifically evaluates the force errors on the subset of "RE atoms" directly involved in the rare event (e.g., the migrating atom and its immediate neighbors) [18].

- Barrier Calculation: If possible, use the force field in enhanced sampling simulations to compute the energy barrier for the rare event (e.g., vacancy migration) and compare directly to the DFT-calculated or experimentally derived barrier [18].
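The masking effect described under Metric Calculation is easy to demonstrate numerically. The sketch below compares a force RMSE over all atoms with one restricted to the "RE atoms". It is not the force performance score defined in [18]; it is only a restricted RMSE on synthetic forces, with the array shapes, noise levels, and choice of RE atoms assumed for illustration.

```python
import numpy as np

def force_rmse(f_pred, f_ref, atom_mask=None):
    """RMSE over force components, optionally restricted to a subset of atoms.

    Arrays have shape (n_frames, n_atoms, 3); atom_mask is a boolean
    array of length n_atoms.
    """
    err = np.asarray(f_pred, dtype=float) - np.asarray(f_ref, dtype=float)
    if atom_mask is not None:
        err = err[:, np.asarray(atom_mask, dtype=bool), :]
    return float(np.sqrt(np.mean(err**2)))

rng = np.random.default_rng(0)
n_frames, n_atoms = 20, 64

# Synthetic reference forces and a model with small noise on every atom
f_ref = rng.normal(size=(n_frames, n_atoms, 3))
f_pred = f_ref + 0.02 * rng.normal(size=f_ref.shape)

# Hypothetical RE atoms: the migrating atom and two neighbours
re_atoms = np.zeros(n_atoms, dtype=bool)
re_atoms[:3] = True
f_pred[:, re_atoms, :] += 0.5  # inject a large error on the RE atoms only

rmse_all = force_rmse(f_pred, f_ref)
rmse_re = force_rmse(f_pred, f_ref, re_atoms)
print(f"RMSE (all atoms) = {rmse_all:.3f}, RMSE (RE atoms) = {rmse_re:.3f}")
```

Because only 3 of 64 atoms carry the large error, the all-atom RMSE stays several times smaller than the RE-atom RMSE, which is the situation the protocol warns about.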

The Scientist's Toolkit: Essential Research Reagents and Solutions

This table lists key software and data resources essential for conducting thorough force field validation.

Table 2: Key Resources for Force Field Validation

| Tool/Resource Name | Type | Primary Function in Validation | Relevant Citation |

|---|---|---|---|

| ForceBalance | Software | Automated parameter optimization tool that fits force field parameters against experimental and QM target data simultaneously. | [19] [21] |

| QUBEKit | Software | Toolkit for deriving bespoke force field parameters directly from QM calculations (QM-to-MM mapping). | [19] |

| UniFFBench | Benchmarking Framework | A comprehensive framework for evaluating force fields, particularly UMLFFs, against experimental data. | [8] |

| MinX Dataset | Experimental Dataset | A hand-curated dataset of ~1,500 mineral structures with associated experimental data for validating structural, mechanical, and thermodynamic properties. | [8] |

| Curated Protein Test Set | Experimental Dataset | A set of high-resolution protein structures (e.g., 52 X-ray/NMR structures) for validating structural criteria like hydrogen bonding, SASA, and radius of gyration. | [20] |

| RE-Based Testing Sets | Computational Dataset | Snapshots of atomic configurations during rare events (e.g., vacancy/interstitial migration) from AIMD, used for testing force accuracy on migrating atoms. | [18] |

How-To: Advanced Strategies for Integrating Experimental Data

The development of machine learning force fields (MLFFs) promises to bridge the long-standing accuracy-efficiency gap in molecular simulations. Traditionally, MLFFs are trained in a bottom-up approach using data from Density Functional Theory (DFT) calculations, aiming to replicate quantum mechanical accuracy at a fraction of the computational cost [1]. However, these models often inherit the inaccuracies of the underlying DFT functionals and can struggle to reproduce key experimental observables, creating a "reality gap" between simulation and experiment [8] [1]. This guide examines the emerging paradigm of fused data learning, which concurrently utilizes DFT data and experimental measurements to train MLFFs, comparing its performance against traditional single-source approaches.

Core Methodology of Fused Data Learning

The fused data learning strategy employs an iterative training process that alternates between optimizing a model against quantum mechanical data and experimental observables. This method was effectively demonstrated in the development of a graph neural network (GNN) potential for titanium [1]. The following diagram illustrates this integrated workflow.

Figure 1: Workflow for concurrent DFT and experimental data training. The ML potential's parameters (θ) are iteratively refined using both a DFT trainer (bottom-up) and an experimental trainer (top-down) [1].

Key Components of the Workflow

- DFT Trainer: This is a standard regression task. The ML potential takes an atomic configuration as input and predicts the potential energy. Forces and virial stress are computed by differentiating the energy with respect to atomic positions. The model parameters are updated to match target values in the DFT database [1].

- EXP Trainer: This optimizer modifies parameters so that properties computed from ML-driven molecular dynamics (MD) trajectories match experimental values. The Differentiable Trajectory Reweighting (DiffTRe) method enables efficient gradient computation without backpropagating through the entire simulation, which is computationally prohibitive. The training targets often include temperature-dependent elastic constants and lattice parameters [1].

- Fused Training Loop: The training alternates between the DFT and EXP trainers, often on an epoch-by-epoch basis. This approach constrains the high-capacity ML model to simultaneously reproduce quantum mechanical data and macroscopic experimental reality, leading to a more robust and accurate potential [1].
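The alternating structure of the fused loop can be illustrated with a one-parameter toy problem. Here a spring constant `k` is fitted in turn to synthetic "DFT" energies and to a synthetic "experimental" mean-square displacement. Everything in the sketch is an assumption made for illustration: the harmonic model, the two target stiffnesses, and the analytic observable that stands in for the MD-plus-DiffTRe step of the real workflow [1].

```python
import numpy as np

K_DFT = 1.0   # stiffness implied by the synthetic "DFT" energies (assumed)
K_EXP = 1.2   # stiffness implied by the synthetic "experiment" (assumed)
KT = 1.0      # thermal energy in arbitrary units

x = np.linspace(-1.0, 1.0, 21)   # displacements in the "DFT" data set
e_dft = 0.5 * K_DFT * x**2       # reference energies
msd_exp = KT / K_EXP             # <x^2> = kT/k for a harmonic well

def grad_dft(k):
    """d/dk of mean((0.5*k*x^2 - e_dft)^2): the bottom-up trainer."""
    residual = 0.5 * k * x**2 - e_dft
    return float(np.mean(2.0 * residual * 0.5 * x**2))

def grad_exp(k):
    """d/dk of (kT/k - msd_exp)^2: the top-down trainer."""
    return float(2.0 * (KT / k - msd_exp) * (-KT / k**2))

def fused_training(k0, n_epochs=200, lr=0.5):
    """Alternate one DFT epoch and one EXP epoch of gradient descent."""
    k = k0
    for epoch in range(n_epochs):
        grad = grad_dft(k) if epoch % 2 == 0 else grad_exp(k)
        k -= lr * grad
    return k

k_fused = fused_training(k0=1.0)
print(f"fused stiffness: {k_fused:.3f} (DFT target {K_DFT}, EXP target {K_EXP})")
```

The fused parameter settles between the two single-source optima, a low-dimensional analogue of the compromise seen in the performance tables that follow. A high-capacity ML potential has far more freedom to satisfy both data sources at once than this single parameter does.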

Performance Comparison of Training Strategies

To objectively evaluate the fused data approach, we compare it against models trained solely on DFT data or fine-tuned only on experimental data. The following tables summarize quantitative performance data.

Table 1: Performance comparison of different training strategies for a titanium ML potential. Errors are reported on a DFT test dataset. Data sourced from [1].

| Training Strategy | Energy MAE (meV/atom) | Force MAE (eV/Å) | Virial MAE (meV/atom) |

|---|---|---|---|

| DFT Pre-trained (Bottom-up) | < 43 | 0.084 | 86 |

| DFT & EXP Fused (Concurrent) | 45 | 0.087 | 89 |

| DFT, EXP Sequential (Top-down) | 317 | 0.154 | 158 |

Table 2: Accuracy in predicting experimental elastic constants (C₁₁, C₁₂, C₁₃, C₃₃, C₄₄) for hcp titanium across different temperatures. Mean Absolute Percentage Error (MAPE) is shown. Data sourced from [1].

| Training Strategy | 23 K | 323 K | 623 K | 923 K |

|---|---|---|---|---|

| DFT Pre-trained | 13.5% | 14.8% | 16.1% | 18.9% |

| DFT & EXP Fused | 3.2% | 3.5% | 4.1% | 5.0% |

| DFT, EXP Sequential | 5.1% | 5.3% | 6.0% | 7.4% |

Analysis of Comparative Performance

- Accuracy on DFT Data: The DFT & EXP Fused model maintains near-chemical accuracy (energy MAE < 50 meV/atom) on the DFT test set, with errors only slightly higher than the DFT Pre-trained model. This indicates that incorporating experimental data does not significantly degrade the model's ability to reproduce the underlying quantum mechanical data [1].

- Accuracy on Experimental Data: The fused model demonstrates a decisive advantage in predicting experimental elastic constants, reducing the MAPE by over 70% compared to the DFT pre-trained model across all temperatures. This shows a direct correction of DFT functional inaccuracies [1].

- Robustness of Approach: The sequential model (fine-tuning on experiments only) also improves experimental accuracy but suffers a severe degradation in DFT-level performance (energy MAE over 300 meV/atom). The concurrent training strategy successfully balances both objectives, making it a more robust and general-purpose solution [1].
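The "over 70%" figure can be checked directly from the elastic-constant MAPE table. The short script below recomputes the relative reduction at each temperature using only the tabulated values.

```python
temperatures_k = [23, 323, 623, 923]
mape_dft = [13.5, 14.8, 16.1, 18.9]    # DFT Pre-trained
mape_fused = [3.2, 3.5, 4.1, 5.0]      # DFT & EXP Fused
mape_seq = [5.1, 5.3, 6.0, 7.4]        # DFT, EXP Sequential

def relative_reduction(baseline, improved):
    """Percentage reduction of each error relative to its baseline."""
    return [100.0 * (b - i) / b for b, i in zip(baseline, improved)]

fused_reduction = relative_reduction(mape_dft, mape_fused)
seq_reduction = relative_reduction(mape_dft, mape_seq)

for t, f, s in zip(temperatures_k, fused_reduction, seq_reduction):
    print(f"{t:>4} K  fused: {f:.1f}%  sequential: {s:.1f}%")
```

The fused model's reduction is roughly 73% to 76% at every temperature, while the sequential model's is roughly 61% to 64%.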

Experimental Protocols for Validation

Rigorous validation against experimental observables is crucial for establishing the reliability of any force field. The following protocols are essential benchmarks.

Mechanical Property Validation

Objective: To assess the force field's accuracy in predicting mechanical properties like elastic constants.

Methodology:

- Simulation Setup: Run MD simulations in the NVT ensemble. The simulation box size is set using experimentally determined lattice constants for the target material [1].

- Strain Application: Apply small, linear strains to the equilibrium structure to compute the second-order elastic constants tensor.

- Stress Calculation: The elastic constants (Cᵢⱼ) are calculated from the stress-strain relationship, where the stress is obtained from the virial tensor during simulations [1].

- Comparison: Compare the computed elastic constants against experimentally measured values across a temperature range.
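The stress-strain step amounts to a linear fit in the small-strain regime. The sketch below recovers a single elastic constant as the slope of average stress against applied strain. The uniaxial setup in Voigt notation (stress component 1 against strain component 1), the strain grid, and the synthetic stresses are assumptions made for illustration. In practice the stresses would be time averages of the virial stress from MD.

```python
import numpy as np

def elastic_constant_from_fit(strains, stresses):
    """Slope and intercept of a linear stress-strain fit.

    The slope is the elastic constant and the intercept is the
    residual stress at zero strain.
    """
    slope, intercept = np.polyfit(strains, stresses, 1)
    return float(slope), float(intercept)

# Small symmetric strains around the equilibrium cell
strains = np.array([-0.010, -0.005, 0.0, 0.005, 0.010])

# Synthetic average stresses in GPa, generated from an assumed constant
c11_assumed = 160.0       # hypothetical value, for illustration only
residual_stress = 0.2     # imperfectly relaxed cell
stresses = c11_assumed * strains + residual_stress

c11_fit, sigma0 = elastic_constant_from_fit(strains, stresses)
print(f"C11 = {c11_fit:.2f} GPa, residual stress = {sigma0:.2f} GPa")
```

Fitting a slope rather than dividing a single stress by a single strain makes the estimate insensitive to a small residual stress in the reference cell.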

Structural and Dynamic Stability Validation

Objective: To evaluate the force field's ability to maintain structural fidelity and stability during finite-temperature MD simulations.

Methodology:

- Simulation Stability: Run MD simulations for a curated set of structures (e.g., the MinX dataset for minerals) and record the simulation completion rate. Instabilities often manifest as unphysically large forces (> 100 eV/Å) leading to crashes [8].

- Structural Fidelity: For stable simulations, compute the Mean Absolute Percentage Error (MAPE) for predicted densities and lattice parameters against experimental crystal structures [8].

- Bond Length and RDF Analysis: Analyze radial distribution functions (RDF) and specific bond lengths to ensure the model maintains correct atomic-scale organization during dynamics [8].

The Scientist's Toolkit: Essential Research Reagents

This table lists key software and computational tools used in developing and validating machine learning force fields.

Table 3: Key software tools for ML force field development and validation.

| Tool Name | Type | Primary Function |

|---|---|---|

| DP-GEN [10] | Software Framework | An active learning platform for generating generalizable neural network potentials via the Deep Potential method. |

| DiffTRe [1] | Algorithm/Method | Enables gradient-based optimization of ML potentials directly from experimental data without backpropagating through MD. |

| ForceBalance [22] | Parameterization Tool | Versatile, open-source software for systematic force field optimization using both reference calculations and experimental data. |

| OpenMM [22] | MD Simulation Engine | A high-performance toolkit for molecular simulation, used as a backend for running MD with ML potentials. |

| UniFFBench [8] | Benchmarking Framework | A comprehensive framework for evaluating universal ML force fields against a large set of experimental mineral data. |

| QUBEKit [23] | Parameterization Tool | A software toolkit for deriving quantum mechanical bespoke (QUBE) force field parameters directly from electron density. |

The concurrent training of machine learning force fields on DFT and experimental data represents a significant advance in closing the "reality gap" between simulation and experiment. The fused data learning strategy produces models that correct known inaccuracies in DFT functionals while retaining quantum mechanical detail, resulting in force fields with superior predictive power for real-world material properties [1]. While challenges remain—including the need for diverse experimental training data and increased computational cost—this approach provides a more robust path toward next-generation force fields for applications in drug development, materials science, and beyond. As the field progresses, benchmarks like UniFFBench that rigorously test models against experimental complexity will be essential for driving improvements and ensuring reliability [8].

Leveraging Bayesian Inference of Conformational Populations (BICePs)

Accurate molecular simulations are paramount for modern drug development, enabling researchers to predict how candidate molecules behave at the atomic level. The reliability of these simulations, however, hinges on the quality of the force fields, the mathematical models describing interatomic potentials. Validating and refining these force fields against experimental data is a central challenge in computational chemistry and structural biology [24]. Bayesian Inference of Conformational Populations (BICePs) has emerged as a powerful algorithm designed specifically to reconcile theoretical predictions from simulation with sparse and/or noisy experimental measurements, providing a rigorous statistical framework for force field validation and parameterization [24] [25] [13]. This guide compares BICePs with alternative methods, details its core protocols, and presents quantitative data on its application.

BICePs Core Theory and Methodological Comparison

BICePs operates on a Bayesian framework to model the posterior distribution (P(X|D)) of conformational states (X), given experimental data (D). The core relationship is expressed by Bayes' theorem:

[ P(X|D) \propto Q(D|X) P(X) ]

Here, (P(X)) is the prior distribution of conformational populations obtained from theoretical models like molecular simulations, and (Q(D|X)) is the likelihood function quantifying how well a conformation (X) agrees with experimental data [24] [25].

Key Differentiators of BICePs

Two critical features distinguish BICePs from other ensemble refinement algorithms:

Reference Potentials: Experimental observables (e.g., distances) are low-dimensional projections of a high-dimensional conformational space. BICePs introduces a reference potential (Q_{ref}(r)) that represents the distribution of observables in the absence of experimental data. The weighting function becomes ([Q(r|D)/Q_{ref}(r)]), ensuring that only the informative component of a restraint influences the reweighting. This prevents unnecessary bias when using multiple restraints [24] [25] [26]. For instance, a distance restraint between two residues distant in sequence is highly informative, whereas the same restraint for nearby residues may contribute little new information [25].

The BICePs Score for Model Selection: BICePs computes a quantity known as the BICePs score, a free energy-like quantity derived from the integrated posterior evidence for a given model. Because it compares the evidence for a model against a reference, it plays the role of a (negative log) Bayes factor and enables objective model selection. A more negative BICePs score indicates a model that is more consistent with the experimental data [24] [13] [27].

Comparative Analysis of Alternative Methods

The table below compares BICePs against other major classes of algorithms used for reconciling simulations with experiments.

Table 1: Comparison of BICePs with Alternative Computational Methods

| Method | Category | Key Approach | Treatment of Uncertainty | Primary Use Case |

|---|---|---|---|---|

| BICePs | Bayesian Reweighting | Post-simulation reweighting of discrete states using Bayesian inference with reference potentials [25] [26]. | Samples nuisance parameters for experimental and forward model error; robust likelihoods for outliers [13] [28]. | Force field validation/parameterization; analysis of structured and semi-flexible ensembles [24]. |

| NAMFIS / DISCON | Maximum Parsimony | Enumerates conformers to find a minimal set compatible with NMR data [25] [26]. | Limited explicit treatment | NMR refinement of small organic molecules/peptides [25] [26]. |

| Maximum Entropy | Bias Potential | Adds bias potentials during simulation to satisfy ensemble-averaged constraints [25]. | Modified by Metainference to account for experimental error [25]. | Incorporating experimental data into simulation on-the-fly. |

| Metainference | Bayesian Inference | Restrains replica-averaged observables during simulation to account for heterogeneity and error [25]. | Explicitly models experimental and ensemble uncertainty [25]. | Characterizing highly disordered and heterogeneous ensembles [25]. |

Experimental Protocols and Workflows

A Standard BICePs Workflow

The following diagram illustrates the logical flow of a typical BICePs calculation for force field validation.

Detailed Methodological Steps:

Input Preparation:

- Theoretical Prior, (P(X)): Generate a conformational ensemble using molecular dynamics (MD) simulation with a candidate force field. The ensemble is discretized into a set of conformational states with initial populations [24] [25].

- Experimental Data, (D): Collect ensemble-averaged experimental observables, such as NMR chemical shifts, J-coupling constants, or distance measurements (e.g., from NOEs or FRET) [24].

BICePs Algorithm Execution:

- Likelihood Definition: Define a likelihood function, typically a normal distribution: (Q(D|X,\sigma) = \prod_j \frac{1}{\sqrt{2\pi\sigma^2}} \exp\left(-[r_j(X) - r_j^{exp}]^2 / 2\sigma^2\right)), where (r_j(X)) is the observable back-calculated from conformation (X), and (r_j^{exp}) is the experimental value [25].

- Reference Potential Application: Compute (Q_{ref}(r)) for each observable to reweight the likelihood based on its inherent information content [24] [26].

- Nuisance Parameter Sampling: Treat the uncertainty parameter (\sigma) as an unknown, sampling it alongside conformational states (X) using a non-informative Jeffreys prior, (P(\sigma) \sim 1/\sigma) [25] [13].

Posterior Sampling: Use Markov Chain Monte Carlo (MCMC) to sample the joint posterior distribution (P(X, \sigma | D)). The MCMC scheme typically involves:

- Monte Carlo moves that transition between discrete conformational states.

- Gibbs sampling or Metropolis-Hastings moves to update the uncertainty parameter(s) (\sigma) [25].

Output Analysis:

- Reweighted Populations: Analyze the marginal distribution (P(X|D) = \int P(X, \sigma|D) d\sigma) to obtain the refined conformational populations that best agree with experiments [25].

- BICePs Score Calculation: Compute the BICePs score, which is the negative logarithm of the marginalized posterior probability, serving as a free energy-like quantity for model selection [24] [13].
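The reweighting step can be illustrated on a toy problem small enough to integrate numerically. The sketch below combines prior populations, the Gaussian likelihood, and a Jeffreys prior on σ, then marginalises σ on a grid in place of MCMC. It is a simplified illustration rather than the BICePs implementation: reference potentials are omitted, a single σ is shared by all observables, and the three states, their back-calculated distances, and the "experimental" values are hypothetical.

```python
import numpy as np

def reweight_states(prior, r_model, r_exp, sigmas):
    """Marginal posterior P(X|D) for a Gaussian likelihood and P(sigma) ~ 1/sigma.

    prior:   (n_states,) prior populations
    r_model: (n_states, n_obs) back-calculated observables
    r_exp:   (n_obs,) experimental values
    sigmas:  (n_sigma,) increasing grid of uncertainty values
    """
    prior = np.asarray(prior, dtype=float)
    r_model = np.asarray(r_model, dtype=float)
    r_exp = np.asarray(r_exp, dtype=float)
    sigmas = np.asarray(sigmas, dtype=float)
    n_obs = r_model.shape[1]

    sq_dev = np.sum((r_model - r_exp) ** 2, axis=1)            # per state
    log_like = (-0.5 * n_obs * np.log(2.0 * np.pi * sigmas**2)[None, :]
                - sq_dev[:, None] / (2.0 * sigmas[None, :] ** 2))
    log_joint = np.log(prior)[:, None] + log_like - np.log(sigmas)[None, :]
    joint = np.exp(log_joint - log_joint.max())                # avoid underflow

    # Trapezoidal integration over sigma for each state
    widths = np.diff(sigmas)
    marginal = np.sum(0.5 * (joint[:, 1:] + joint[:, :-1]) * widths, axis=1)
    return marginal / marginal.sum()

# Hypothetical three-state ensemble with two distance observables (in Å)
prior = [0.5, 0.3, 0.2]
r_model = [[4.0, 6.0], [5.0, 7.0], [8.0, 9.0]]
r_exp = [5.1, 6.9]
sigmas = np.logspace(-1, 1, 400)

posterior = reweight_states(prior, r_model, r_exp, sigmas)
print("prior:    ", np.array(prior))
print("posterior:", np.round(posterior, 3))
```

Although the second state is not the most populated in the prior, it reproduces both distances closely and therefore dominates the posterior. With many states and per-observable uncertainties the grid integration becomes impractical, which is why the protocol samples the joint posterior with MCMC.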

Advanced Protocol: Automated Force Field Optimization

Recent advancements have extended BICePs from a validation tool to an engine for automated parameterization. The diagram below outlines this advanced workflow.

Key Steps for Automated Optimization:

- Objective Function: The BICePs score is used as a differentiable objective function to be minimized [13] [28].

- Variational Optimization: A variational method is employed to adjust force field parameters (θ). This can be achieved through:

  - Posterior Sampling of Parameters: Treating θ as nuisance parameters and sampling them within the full posterior distribution [28].

  - Gradient-Based Minimization: Using stochastic gradient descent, where parameter updates follow (\theta_{\text{trial}} = \theta_{\text{old}} - \text{lrate} \cdot \nabla u + \eta \cdot \mathcal{N}(0,1)), significantly speeding up convergence in high-dimensional spaces [28].

- Iterative Refinement: The process iterates until the BICePs score is minimized, indicating that the force field produces ensembles in maximal agreement with the experimental data [13].
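The gradient-based update rule can be written out directly. The sketch below applies it to a toy quadratic objective that stands in for the BICePs score. The objective, its minimum, and the hyperparameter values are assumptions, and every trial move is accepted, which may differ from the acceptance scheme used in [28].

```python
import numpy as np

def noisy_gradient_descent(grad_u, theta0, lrate=0.1, eta=0.01,
                           n_steps=500, seed=0):
    """Iterate theta <- theta - lrate * grad_u(theta) + eta * N(0, 1)."""
    rng = np.random.default_rng(seed)
    theta = np.array(theta0, dtype=float)
    for _ in range(n_steps):
        noise = rng.standard_normal(theta.shape)
        theta = theta - lrate * grad_u(theta) + eta * noise
    return theta

# Toy objective u(theta) = |theta - theta_opt|^2 (hypothetical stand-in)
theta_opt = np.array([1.5, -0.5])

def grad_u(theta):
    """Analytic gradient of the toy objective."""
    return 2.0 * (theta - theta_opt)

theta_final = noisy_gradient_descent(grad_u, theta0=[0.0, 0.0])
print("final parameters:", np.round(theta_final, 3))
```

The noise term keeps the parameters fluctuating in a narrow band around the minimum instead of freezing at a point. On a rugged objective the same term helps the search leave shallow local minima.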

Performance Data and Comparative Results

Toy Model Validation

In a foundational study, BICePs was tested on a 2D lattice protein model to evaluate its capability for force field parameterization. The goal was to select the correct value of an interaction energy parameter ((\epsilon)) using only ensemble-averaged experimental distance measurements [24] [27].

Table 2: BICePs Performance on a 2D Lattice Protein Toy Model [24] [27]

| Condition | Experimental Noise | Measurement Sparsity | BICePs Outcome |

|---|---|---|---|

| Fine-grained states | Varying levels added | Sparse (limited distances) | Successfully identified correct (\epsilon) parameter |

| Fine-grained states | Robust against noise | Robust against sparsity | Results remained accurate |

| Coarse-grained states | Low | Low | Reduced ability to discriminate correct parameter |

Key Findings: BICePs reliably selected the correct force field parameter even when the experimental data was sparse and noisy, provided the conformational states were sufficiently fine-grained. This demonstrates the method's robustness and its potential for parameterizing models where experimental data is limited [24].

All-Atom Simulation Evaluation

BICePs was applied to more biologically relevant systems, such as assessing force fields for all-atom simulations of designed beta-hairpin peptides against experimental NMR chemical shifts [24] [27].

Table 3: Force Field Evaluation for Beta-Hairpin Peptides using BICePs [24] [27]

| Force Field | BICePs Score (Relative) | Interpretation |

|---|---|---|

| Force Field A | More Negative | Higher consistency with NMR data |

| Force Field B | Less Negative | Lower consistency with NMR data |

Key Findings: The BICePs score successfully ranked different force fields by their accuracy in reproducing experimental observables, confirming its utility for model selection in the context of all-atom simulations [24].

Performance in the Presence of Error

A recent study on automated force field optimization highlights BICePs' resilience to errors, a critical feature for practical applications.

Table 4: Resilience of BICePs Optimization to Experimental Error [13]

| Error Type | BICePs Likelihood Treatment | Optimization Outcome |

|---|---|---|

| Random Error | Sampled via nuisance parameter (\sigma) | Robust parameter recovery |

| Systematic Error / Outliers | Student's likelihood model to down-weight outliers | Successful, accurate parameterization |

Key Findings: Equipped with specialized likelihood functions (e.g., the Student's model), BICePs can automatically detect and down-weight the influence of data points subject to systematic error, leading to more robust and reliable parameter optimization [13].

The Scientist's Toolkit: Essential Research Reagents

The following table details key computational tools and concepts essential for implementing BICePs in force field validation studies.

Table 5: Essential "Reagents" for BICePs Experiments

| Item / Concept | Function / Description | Example/Note |

|---|---|---|

| Molecular Dynamics (MD) Engine | Generates the initial conformational ensemble (prior (P(X))). | Software like GROMACS, AMBER, or OpenMM. |

| Discrete State Definitions | Partitions the MD trajectory into distinct conformational states for analysis. | Often derived from clustering in a space of dihedral angles or RMSD [25]. |

| Experimental Observables (D) | Provides the experimental data for Bayesian restraint. | NMR J-couplings, chemical shifts, NOE distances, or FRET efficiencies [24] [25]. |

| Reference Potential (Q_{ref}(r)) | Accounts for the intrinsic probability of an observable, preventing bias. | For a polymer chain, a Gaussian chain model for end-to-end distances [24] [26]. |

| Forward Model | A function (f(X)) that computes an experimental observable from a molecular structure. | Karplus equation for predicting J-coupling constants from dihedral angles [28]. |

| MCMC Sampler | The computational core that samples the Bayesian posterior (P(X, \sigma \mid D)). | Custom software implementations of the BICePs algorithm [25]. |

| BICePs Score | A free energy-like quantity used for objective model selection and validation. | More negative scores indicate models with higher evidence given the data [24] [13]. |

BICePs provides a statistically rigorous and robust framework for force field validation and parameterization. Its key advantages, the principled use of reference potentials and the quantitative BICePs score for model selection, differentiate it from maximum parsimony and maximum entropy-based alternatives. Quantitative studies demonstrate that BICePs can successfully identify correct force field parameters even when faced with sparse, noisy, and error-prone experimental data. The recent development of automated, gradient-based optimization protocols leveraging the BICePs score further solidifies its role as a powerful tool in the computational scientist's arsenal, promising to enhance the accuracy of molecular simulations in drug discovery and beyond.

Differentiable Trajectory Reweighting (DiffTRe) for Time-Independent Properties

The validation of force field parameters against experimental observables is a cornerstone of reliable molecular simulation. Accurate molecular dynamics (MD) simulations depend critically on the potential energy model that defines particle interactions. The parameterization of these models generally follows one of two paradigms: a bottom-up approach, which fits parameters to high-fidelity quantum mechanical data, or a top-down approach, which optimizes parameters directly against experimental observables [29]. While bottom-up methods have been the dominant strategy, particularly for machine learning interatomic potentials (MLIPs), they are inherently limited by the accuracy and availability of the underlying quantum mechanical data [1]. Top-down optimization circumvents this limitation but introduces significant numerical and computational challenges, primarily because experimental observables are only indirectly linked to the potential model via expensive MD simulations [29].

Differentiable Trajectory Reweighting (DiffTRe) has emerged as a powerful method to address these challenges for time-independent properties. By leveraging thermodynamic perturbation theory, DiffTRe enables the efficient computation of gradients needed to optimize force field parameters against experimental data, without the need for backpropagation through the entire simulation trajectory [29]. This guide provides a comprehensive comparison of DiffTRe against other leading parameterization methods, detailing their methodologies, performance, and ideal applications within force field validation research.

This section details the core operational principles of DiffTRe and contrasts it with other contemporary parameter optimization strategies. A comparative summary of these methods is provided in Table 1.

Table 1: Comparison of Force Field Parameterization Methods

| Method | Core Principle | Key Advantages | Key Limitations | Ideal Use Cases |
|---|---|---|---|---|
| Differentiable Trajectory Reweighting (DiffTRe) [29] | Uses thermodynamic perturbation theory to reweight trajectories from a reference simulation, avoiding differentiation through the MD. | ~100x speed-up in gradient computation vs. backpropagation; avoids exploding gradients; memory-efficient | Not applicable to time-dependent observables (e.g., diffusion coefficients); accuracy depends on the overlap between reference and target states | Training on thermodynamic, structural, and mechanical properties (e.g., RDF, elastic constants). |
| Reversible Simulation [30] | Explicitly calculates gradients by running the simulation backwards in time, using custom implementations. | Constant memory cost with trajectory length; applicable to time-dependent observables; more stable gradients than standard DMS | Requires custom implementation; not as widely available as other methods | Matching time-dependent properties (e.g., diffusion, viscosity, reaction rates). |
| Differentiable Simulation (DMS) [30] | Employs Automatic Differentiation (AD) to backpropagate gradients through the entire simulation trajectory. | Exact gradients with respect to the forward model; highly flexible for various loss functions | High memory consumption; prone to exploding gradients; computationally expensive | Short simulations with simple potentials where exact gradients are critical. |
| Bayesian Inference (BICePs) [13] | Uses Bayesian statistics to sample a posterior distribution of parameters and conformational populations, accounting for data uncertainty. | Robust to noisy/sparse data and outliers; provides uncertainty estimates; does not require differentiable potentials | Computationally intensive (uses MCMC); gradient-free optimization can scale poorly with parameter number | Refining ensembles with sparse/noisy experimental data and quantifying uncertainty. |
| Ensemble Reweighting (ForceBalance) [30] | Adjusts the weighting of configurations from a reference simulation to match new target observables. | Well-established methodology; applicable to a wide range of equilibrium properties | Not applicable to time-dependent properties; poor scaling with parameter number in sampling-based optimization | Optimizing classical force fields against a variety of equilibrium ensemble-averaged data. |

The DiffTRe Methodology

The DiffTRe method is designed to minimize a loss function ( L(\boldsymbol{\theta}) ) that quantifies the discrepancy between simulation results and experimental data. For a set of ( K ) experimental observables ( \tilde{O}_k ), the loss is typically the mean-squared error:

[ L(\boldsymbol{\theta}) = \frac{1}{K} \sum_{k=1}^{K} \left[ \langle O_k(U_{\boldsymbol{\theta}}) \rangle - \tilde{O}_k \right]^2 ]

where ( \langle O_k(U_{\boldsymbol{\theta}}) \rangle ) is the ensemble average of the observable computed with the potential ( U ) parameterized by ( \boldsymbol{\theta} ) [29].

The central challenge of top-down learning is computing the gradient of this loss with respect to the parameters, ( \nabla_{\boldsymbol{\theta}} L ). Instead of differentiating through the MD simulation, DiffTRe leverages a reference simulation run with a fixed parameter set ( \hat{\boldsymbol{\theta}} ). It then uses thermodynamic perturbation theory to estimate how the ensemble average ( \langle O_k \rangle ) would change for a new parameter set ( \boldsymbol{\theta} ) by reweighting the samples from the reference trajectory:

[ \langle O_k(U_{\boldsymbol{\theta}}) \rangle \simeq \sum_{i=1}^{N} w_i O_k(\mathbf{S}_i, U_{\boldsymbol{\theta}}) ]

The weights ( w_i ) are calculated based on the Boltzmann factor of the potential energy difference:

[ w_i = \frac{e^{-\beta (U_{\boldsymbol{\theta}}(\mathbf{S}_i) - U_{\hat{\boldsymbol{\theta}}}(\mathbf{S}_i))}}{\sum_{j=1}^{N} e^{-\beta (U_{\boldsymbol{\theta}}(\mathbf{S}_j) - U_{\hat{\boldsymbol{\theta}}}(\mathbf{S}_j))}} ]

where ( \beta = 1/(k_B T) ), ( k_B ) is Boltzmann's constant, ( T ) is temperature, and ( \mathbf{S}_i ) represents a sampled state (atomic positions and momenta) from the reference trajectory [29]. This reweighting scheme bypasses the need for a new simulation for every parameter update, leading to a dramatic speed-up in gradient computation.
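The reweighting step can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the reference DiffTRe implementation: the function names are hypothetical, per-sample energies and observables are assumed to be precomputed lists, and the effective sample size ( N_{\text{eff}} = \exp(-\sum_i w_i \ln w_i) ) is included as a commonly used diagnostic for deciding when the reference trajectory no longer overlaps enough with the target ensemble and should be regenerated.

```python
import math

def reweighting_weights(u_new, u_ref, beta):
    """Normalized weights w_i from per-sample energies under the new and
    reference potentials. The maximum exponent is subtracted before
    exponentiating for numerical stability; it cancels on normalization."""
    log_w = [-beta * (un - ur) for un, ur in zip(u_new, u_ref)]
    shift = max(log_w)
    unnorm = [math.exp(lw - shift) for lw in log_w]
    z = sum(unnorm)
    return [w / z for w in unnorm]

def reweighted_average(observable, weights):
    """Estimate of <O> under the new potential from reference samples."""
    return sum(w * o for w, o in zip(weights, observable))

def effective_sample_size(weights):
    """N_eff = exp(-sum_i w_i ln w_i); equals N for uniform weights."""
    entropy = -sum(w * math.log(w) for w in weights if w > 0.0)
    return math.exp(entropy)

def mse_loss(predicted, targets):
    """Mean-squared error between reweighted averages and experimental values."""
    return sum((p - t) ** 2 for p, t in zip(predicted, targets)) / len(targets)
```

When the new and reference parameters coincide, every weight equals ( 1/N ) and the estimate reduces to the plain trajectory average. As the parameters drift apart, a few samples dominate and ( N_{\text{eff}} ) drops, which is the overlap limitation listed for DiffTRe in Table 1.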

The following diagram illustrates the DiffTRe workflow and its logical position within the broader force field optimization landscape.

Diagram 1: The DiffTRe Optimization Workflow. The process avoids differentiating through the molecular dynamics simulation by using a reweighting approach on a fixed reference trajectory.

Performance and Experimental Data

Quantitative comparisons underscore the distinct advantages of DiffTRe. In a benchmark study, DiffTRe achieved an estimated two orders of magnitude speed-up in gradient computation compared to the direct reverse-mode automatic differentiation through the simulation, while also successfully avoiding the problem of exploding gradients [29].
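Why no backpropagation through the trajectory is needed can be seen by differentiating the reweighted average directly. The following worked derivation is a sketch that assumes the samples ( \mathbf{S}_i ) are held fixed and that only ( U_{\boldsymbol{\theta}} ) depends on the parameters, since the reference potential ( U_{\hat{\boldsymbol{\theta}}} ) is constant:

```latex
\begin{aligned}
\frac{\partial w_i}{\partial \boldsymbol{\theta}}
  &= -\beta\, w_i \left[
       \frac{\partial U_{\boldsymbol{\theta}}(\mathbf{S}_i)}{\partial \boldsymbol{\theta}}
       - \sum_{j=1}^{N} w_j \frac{\partial U_{\boldsymbol{\theta}}(\mathbf{S}_j)}{\partial \boldsymbol{\theta}}
     \right], \\[4pt]
\nabla_{\boldsymbol{\theta}} \langle O_k \rangle
  &\simeq \sum_{i=1}^{N} w_i \frac{\partial O_k(\mathbf{S}_i, U_{\boldsymbol{\theta}})}{\partial \boldsymbol{\theta}}
   - \beta \left[
       \sum_{i=1}^{N} w_i\, O_k(\mathbf{S}_i, U_{\boldsymbol{\theta}})
         \frac{\partial U_{\boldsymbol{\theta}}(\mathbf{S}_i)}{\partial \boldsymbol{\theta}}
       - \left( \sum_{i=1}^{N} w_i\, O_k(\mathbf{S}_i, U_{\boldsymbol{\theta}}) \right)
         \left( \sum_{j=1}^{N} w_j \frac{\partial U_{\boldsymbol{\theta}}(\mathbf{S}_j)}{\partial \boldsymbol{\theta}} \right)
     \right], \\[4pt]
\nabla_{\boldsymbol{\theta}} L
  &= \frac{2}{K} \sum_{k=1}^{K}
     \left[ \langle O_k \rangle - \tilde{O}_k \right]
     \nabla_{\boldsymbol{\theta}} \langle O_k \rangle .
\end{aligned}
```

The bracketed term is a weighted covariance between the observable and the parameter derivative of the potential energy. Evaluating it requires only per-sample energies and observables on stored configurations, so the cost and memory do not grow with the number of integration steps between samples. At ( \boldsymbol{\theta} = \hat{\boldsymbol{\theta}} ) all weights equal ( 1/N ), yet the gradient remains non-zero.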

Furthermore, DiffTRe has been successfully applied to train high-capacity graph neural network potentials. For instance, a DimeNet++ model was learned for an atomistic model of diamond based on its experimental stiffness tensor, and for a coarse-grained water model using experimental pressure, radial, and angular distribution functions [29]. This demonstrates its capability to handle both all-atom and coarse-grained systems.

Notably, DiffTRe also generalizes established bottom-up structural coarse-graining methods. It has been shown that iterative Boltzmann inversion, a popular method for deriving coarse-grained potentials, is a special case of the DiffTRe approach, which itself can handle arbitrary potentials and many-body correlation functions [29].

Experimental Protocols and Validation

This section outlines the key experimental workflows used to validate and benchmark methods like DiffTRe, providing a protocol for researchers.

Fused Data Training Protocol

A state-of-the-art protocol that incorporates DiffTRe is the fused data learning strategy, which combines bottom-up and top-down data to train highly accurate ML potentials. The workflow, depicted in Diagram 2 below, involves alternating between training on quantum mechanical data and experimental data.

Key Steps in the Protocol:

- DFT Pre-training: An ML potential is initially pre-trained on a large dataset of Density Functional Theory (DFT) calculations, which provides energies, forces, and virial stresses for diverse atomic configurations [1].

- EXP Trainer with DiffTRe: The pre-trained model is then fine-tuned using experimental data. In this phase:

  - MD simulations are run using the current ML potential.

  - Time-independent experimental observables (e.g., elastic constants, lattice parameters, radial distribution functions) are computed from the simulation trajectory.

  - A loss function measuring the mismatch with actual experimental data is constructed.

  - DiffTRe is used to compute the gradients of this loss with respect to the ML potential parameters, and the parameters are updated accordingly [1].

- Iteration: Steps of DFT training and EXP training are alternated (e.g., epoch by epoch) until the model converges and simultaneously reproduces both the quantum mechanical and experimental target properties [1].
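The alternating structure of these steps can be sketched on a deliberately small toy problem. Everything in the block below is an assumption made for illustration and is not the titanium workflow from [1]: the "ML potential" is a one-parameter harmonic well ( U_k(x) = \tfrac{1}{2} k x^2 ), the "DFT data" are reference forces, the "experimental observable" is ( \langle x^2 \rangle ), exact Gaussian sampling stands in for the MD run, and reduced units with ( \beta = 1 ) are used. The experimental gradient is computed with the covariance form of the reweighting gradient evaluated at the reference parameters.

```python
import random

BETA = 1.0  # 1/(k_B T) in reduced units (assumed for this toy example)

def sample_reference(k, n, rng):
    """Stand-in for an MD run: exact Boltzmann samples of U = 0.5*k*x^2."""
    sigma = (1.0 / (BETA * k)) ** 0.5
    return [rng.gauss(0.0, sigma) for _ in range(n)]

def exp_loss_and_grad(xs, target_x2):
    """Loss (<x^2> - target)^2 and its gradient with respect to k.

    d<O>/dk = -beta * cov(O, dU/dk), evaluated with uniform weights
    because the samples were drawn with the current parameter.
    """
    n = len(xs)
    obs = [x * x for x in xs]            # O(S_i) = x^2
    du_dk = [0.5 * x * x for x in xs]    # dU/dk at each sample
    mean_o = sum(obs) / n
    mean_du = sum(du_dk) / n
    cov = sum(o * d for o, d in zip(obs, du_dk)) / n - mean_o * mean_du
    resid = mean_o - target_x2
    return resid * resid, 2.0 * resid * (-BETA * cov)

def dft_loss_and_grad(k, configs, ref_forces):
    """Mean-squared force error against reference forces, and its k-gradient."""
    n = len(configs)
    resid = [(-k * x) - f for x, f in zip(configs, ref_forces)]
    loss = sum(r * r for r in resid) / n
    grad = sum(2.0 * r * (-x) for r, x in zip(resid, configs)) / n
    return loss, grad

def fused_training(k0, configs, ref_forces, target_x2,
                   epochs=200, lr=0.05, n_samples=2000, seed=0):
    """Alternate one bottom-up (DFT) and one top-down (EXP) update per epoch."""
    rng = random.Random(seed)
    k = k0
    for _ in range(epochs):
        _, g_dft = dft_loss_and_grad(k, configs, ref_forces)
        k -= lr * g_dft
        xs = sample_reference(k, n_samples, rng)  # fresh reference trajectory
        _, g_exp = exp_loss_and_grad(xs, target_x2)
        k -= lr * g_exp
    return k
```

If the reference forces imply a slightly different stiffness than the experimental target does, the alternating updates settle on a compromise between the two, which mirrors the idea of a fused model that has to satisfy both data sources at once.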

Validation: This approach was used to train a graph neural network potential for titanium. The resulting "fused model" successfully matched both the DFT-derived energies/forces and the experimental temperature-dependent elastic constants of hcp titanium, achieving higher overall accuracy than models trained on a single data source [1].

Diagram 2: Fused Data Training Workflow. This protocol alternates between training on quantum mechanical (DFT) data and experimental data using DiffTRe, resulting in a more accurate and robust machine learning potential.

Protocol for Comparing Gradient-Based Methods

To objectively compare DiffTRe against alternatives like reversible simulation, the following protocol can be employed:

- System Selection: Choose a well-defined test case, such as a molecular mechanics water model (e.g., TIP3P) or a simple solid like diamond.

- Target Observables: Define a set of target properties. For time-independent tests, use properties like enthalpy of vaporization or radial distribution functions. For time-dependent tests, use properties like diffusion coefficients [30].

- Gradient Benchmarking:

  - Calculate the gradients of a loss function with respect to force field parameters using different methods (DiffTRe, reversible simulation, ensemble reweighting).

  - Compare the numerical values and directions of the gradients obtained from each method.

  - Assess the computational cost (time and memory) for each method.

- Validation: Train a force field using each method and evaluate the final model's accuracy and time to convergence.
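The gradient-comparison step of this protocol can be supported with a few simple metrics. The helpers below are a hypothetical sketch, not code from [29] or [30]: they assume each method returns its gradient as a flat list of floats, one entry per force field parameter, and that repeats with different random seeds are collected as a list of such gradients.

```python
import math
import statistics

def cosine_similarity(g1, g2):
    """Directional agreement between two gradient vectors (1.0 = same direction)."""
    dot = sum(a * b for a, b in zip(g1, g2))
    n1 = math.sqrt(sum(a * a for a in g1))
    n2 = math.sqrt(sum(b * b for b in g2))
    if n1 == 0.0 or n2 == 0.0:
        raise ValueError("cosine similarity is undefined for a zero gradient")
    return dot / (n1 * n2)

def norm_ratio(g1, g2):
    """Ratio of gradient magnitudes, |g1| / |g2|."""
    n1 = math.sqrt(sum(a * a for a in g1))
    n2 = math.sqrt(sum(b * b for b in g2))
    if n2 == 0.0:
        raise ValueError("reference gradient has zero norm")
    return n1 / n2

def seed_spread(gradients_by_seed):
    """Per-parameter sample standard deviation across repeats with
    different random seeds (needs at least two repeats)."""
    return [statistics.stdev(component) for component in zip(*gradients_by_seed)]
```

Cosine similarity and the norm ratio separate disagreement in direction from disagreement in scale, while the per-parameter spread across seeds gives a rough measure of how stable each method's gradients are.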

Supporting Data: A study applying this protocol found that, while the gradients from DiffTRe and reversible simulation were correlated, reversible simulation provided more stable gradients across repeats with different random seeds and was uniquely capable of training models to match time-dependent diffusion data [30].

The Scientist's Toolkit

This section catalogs the essential computational tools and "reagents" required to implement DiffTRe and related force field validation experiments.

Table 2: Essential Research Reagents and Tools

| Item | Function in Research | Example Context |
|---|---|---|
| Graph Neural Network Potentials | A flexible ML model that represents atomic systems as graphs, capable of learning complex, multi-body interactions. | DimeNet++ used to learn potentials for diamond and water [29]. |
| Differentiable Trajectory Reweighting (DiffTRe) | The core algorithm for efficient gradient computation from experimental data for time-independent observables. | Top-down learning of stiffness tensor in diamond [29]. |
| Reversible Simulation | An alternative gradient calculation method with constant memory cost, suitable for time-dependent observables. | Learning water models and gas diffusion coefficients [30]. |
| Bayesian Inference of Conformational Populations (BICePs) | A reweighting algorithm that accounts for uncertainty in experimental data, robust to outliers. | Refining force field parameters against noisy ensemble-averaged measurements [13]. |
| Fused Data Training Loop | A computational protocol that systematically combines bottom-up (DFT) and top-down (experimental) training. | Creating a highly accurate ML potential for titanium [1]. |
| Reference Trajectory | A pre-computed, decorrelated MD simulation trajectory that serves as the sample set for the reweighting in DiffTRe. | Foundational input for the DiffTRe method [29]. |
| Thermodynamic Perturbation Theory | The underlying statistical mechanics principle that enables the reweighting of ensemble averages. | Theoretical basis for the weight calculation in DiffTRe [29]. |

The validation and parameterization of force fields against experimental data are critical for producing reliable molecular simulations. DiffTRe offers a computationally efficient and numerically stable route to top-down learning on time-independent observables, allowing machine learning potentials to be enriched with experimental data, especially where accurate quantum mechanical data is unavailable. When integrated into a fused data learning strategy that alternates between quantum mechanical and experimental training data, it produced a titanium potential with higher overall accuracy than models trained on a single data source [1].