Biomolecular Force Field Benchmarking: A Practical Guide for Stable and Predictive MD Simulations

Abstract

Molecular dynamics (MD) simulations are a cornerstone of modern computational biology and drug development, yet their predictive power is critically dependent on the choice and application of the biomolecular force field. This article provides a comprehensive framework for researchers and scientists to benchmark force fields for simulation stability. It covers the foundational principles of major force fields like AMBER, CHARMM, OPLS-AA, and GROMOS, explores methodological best practices for system setup and sampling, addresses common troubleshooting and optimization challenges, and establishes a rigorous protocol for validation against experimental data. By synthesizing recent benchmarking studies and current best practices, this guide aims to empower professionals to perform more robust, reproducible, and reliable simulations for biomedical research.

Understanding Biomolecular Force Fields: Principles, Evolution, and Key Challenges

Molecular dynamics (MD) simulations have become an indispensable tool for obtaining microscopic insights into the chemical and physical properties of biological systems, from simple solvents to complex macromolecular assemblies. [1] At the heart of every MD simulation lies the force field: a mathematical model and associated parameters that describe the potential energy of a system as a function of its atomic coordinates. [2] The accuracy of these simulations in predicting experimental observables depends fundamentally on the quality of the underlying force field. [3] Force fields are typically built from equations that describe the various components of molecular interactions; the functional form generally includes terms for bond stretching, angle bending, torsional rotations, and non-bonded interactions (both van der Waals and electrostatic). [2] Developing and refining these empirical models remains an ongoing challenge in computational chemistry and biology, as researchers strive to create parameter sets that are both physically accurate and transferable across diverse molecular systems.

The selection of an appropriate force field is particularly crucial in drug development, where simulations provide insights into protein-ligand interactions, solvation, crystallization, and polymorphism. [1] This guide compares four major biomolecular force field families (AMBER, CHARMM, OPLS-AA, and GROMOS), focusing on their performance in biomolecular stability research. AMBER, CHARMM, and OPLS-AA are all-atom force fields, whereas GROMOS uses a united-atom representation. We summarize data from recent benchmarking studies to help researchers select the most suitable force field for their specific applications.

Force Field Functional Forms: A Common Mathematical Foundation

Despite their differences in parameterization, most modern force fields share a common mathematical foundation for calculating potential energy. The total potential energy, \( U_{\text{total}} \), is typically expressed as a sum of bonded and non-bonded interaction terms:

\[ U_{\text{total}} = U_{\text{bond}} + U_{\text{angle}} + U_{\text{torsion}} + U_{\text{vdW}} + U_{\text{electrostatic}} \]

The specific implementation of these terms varies between force field families, but generally includes harmonic potentials for bonds and angles, periodic functions for dihedrals, and Lennard-Jones and Coulomb potentials for non-bonded interactions. [2] A critical consideration in force field selection is ensuring that the combination of equations and parameters forms a consistent set, as ad hoc modifications to subsets of parameters can disrupt the careful balance achieved during parameterization. [2]

Table 1: Common Functional Forms in Empirical Force Fields

| Energy Component | Mathematical Form | Description |
|---|---|---|
| Bond Stretching | \( U_{\text{bond}} = \sum_{\text{bonds}} \frac{1}{2} k_r (r - r_0)^2 \) | Harmonic potential between covalently bonded atoms |
| Angle Bending | \( U_{\text{angle}} = \sum_{\text{angles}} \frac{1}{2} k_\theta (\theta - \theta_0)^2 \) | Harmonic potential for angles between three connected atoms |
| Torsional Rotation | \( U_{\text{torsion}} = \sum_{\text{dihedrals}} \sum_n \frac{V_n}{2} [1 + \cos(n\phi - \gamma)] \) | Periodic potential for rotation around bonds |
| van der Waals | \( U_{\text{vdW}} = \sum_{i < j} 4\varepsilon_{ij} \left[ \left(\frac{\sigma_{ij}}{r_{ij}}\right)^{12} - \left(\frac{\sigma_{ij}}{r_{ij}}\right)^{6} \right] \) | Lennard-Jones potential for non-bonded interactions |
| Electrostatic | \( U_{\text{electrostatic}} = \sum_{i < j} \frac{q_i q_j}{4\pi\varepsilon_0 r_{ij}} \) | Coulomb's law for interaction between partial atomic charges |
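To make the functional forms in Table 1 concrete, the sketch below evaluates each term for single interactions. It is a minimal illustration, assuming energies in kcal/mol, distances in Å, and the 332.0636 conversion factor for Coulomb energies; the parameter values in the usage lines are placeholders, not taken from AMBER, CHARMM, OPLS-AA, or GROMOS. Production MD engines additionally handle neighbor lists, exclusions, 1-4 scaling, cutoffs, and long-range electrostatics, none of which are modeled here.

```python
import math

# Coulomb constant in kcal*Angstrom/(mol*e^2); assumes kcal/mol energies and Angstrom distances
COULOMB_K = 332.0636


def bond_energy(r, k_r, r0):
    """Harmonic bond term: 0.5 * k_r * (r - r0)^2."""
    return 0.5 * k_r * (r - r0) ** 2


def angle_energy(theta, k_theta, theta0):
    """Harmonic angle term (radians): 0.5 * k_theta * (theta - theta0)^2."""
    return 0.5 * k_theta * (theta - theta0) ** 2


def torsion_energy(phi, terms):
    """Fourier torsion: sum of V_n/2 * [1 + cos(n*phi - gamma)] over (V_n, n, gamma) tuples."""
    return sum(v_n / 2.0 * (1.0 + math.cos(n * phi - gamma)) for v_n, n, gamma in terms)


def lj_energy(r, epsilon, sigma):
    """12-6 Lennard-Jones term: 4*eps*[(sigma/r)^12 - (sigma/r)^6]."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)


def coulomb_energy(r, q_i, q_j):
    """Coulomb term for two partial charges (units of e) in vacuum; no cutoff or Ewald sum."""
    return COULOMB_K * q_i * q_j / r


# Placeholder parameters for illustration only (not from any published force field)
u_total = (
    bond_energy(1.12, 600.0, 1.09)
    + angle_energy(math.radians(112.0), 100.0, math.radians(109.5))
    + torsion_energy(math.radians(60.0), [(1.4, 3, 0.0)])
    + lj_energy(3.8, 0.1, 3.4)
    + coulomb_energy(3.8, 0.4, -0.4)
)
print(f"U_total = {u_total:.3f} kcal/mol")
```

Summing the terms this way also shows why ad hoc changes to one term can upset the balance noted above: every term contributes to the same total energy surface.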

Major Force Field Families: Characteristics and Parameterization Philosophies

AMBER

The AMBER (Assisted Model Building with Energy Refinement) force field is widely used for simulations of proteins, nucleic acids, and other biomolecules. Its parameterization emphasizes reproducing quantum mechanical calculations on small model systems and experimental data. Recent variants have introduced specific improvements; for example, the χOL3 modification provides enhanced accuracy for RNA simulations. [4] A systematic benchmark evaluating AMBER force fields against NMR data found that the ff99sb-ildn-nmr and ff99sb-ildn-phi variants achieved high accuracy in recapitulating experimental observables. [5] AMBER force fields have also demonstrated excellent performance in modeling collagen structure, accurately reproducing dihedrals, side-chain torsions, and small-angle X-ray scattering data. [6] The modular design of AMBER lipid force fields (Lipid14/Lipid21) enables seamless integration with parameters for proteins, nucleic acids, and carbohydrates, making them highly effective for simulating complex biomolecular assemblies. [3]

CHARMM

The CHARMM (Chemistry at HARvard Macromolecular Mechanics) force field employs a comprehensive parameterization strategy that targets both quantum mechanical data and experimental properties of small molecules and condensed phases. A distinguishing feature of recent CHARMM force fields is the implementation of the CMAP (correction map) term, which provides a grid-based energy correction for backbone dihedral angles to better reproduce protein secondary structures. [2] While CHARMM36 has demonstrated high accuracy in simulating various lipid bilayer properties and serves as an important foundation for membrane simulations, [3] studies on collagen model peptides have noted that it can systematically shift backbone ϕ and ψ dihedrals and overstructure certain motifs. [6] Research has shown that scaling the CMAP terms for specific residues (Pro, Hyp, and Gly) can significantly improve agreement with experimental data for collagen-like peptides. [6]
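The CMAP idea can be sketched as a lookup table on the (φ, ψ) plane. The function `cmap_energy` below is hypothetical: it interpolates a periodic grid bilinearly (CHARMM itself uses bicubic interpolation, typically on a 24 × 24 grid with 15° spacing) and multiplies the result by a `scale` factor, one simple way to express residue-specific CMAP scaling. The grid values are synthetic, and the exact scaling scheme used in [6] may differ.

```python
import numpy as np


def cmap_energy(phi_deg, psi_deg, grid, scale=1.0):
    """Hypothetical CMAP-style lookup by periodic bilinear interpolation.

    grid[i, j] is the correction at phi = -180 + i*dx and psi = -180 + j*dx
    (degrees), with dx = 360 / n for an n x n grid. CHARMM uses bicubic
    interpolation; bilinear is a simplification for illustration.
    `scale` multiplies the correction, e.g. for residue-specific scaling.
    """
    n = grid.shape[0]
    dx = 360.0 / n
    # Fractional grid coordinates in [0, n); the modulo handles angle periodicity
    x = ((phi_deg + 180.0) % 360.0) / dx
    y = ((psi_deg + 180.0) % 360.0) / dx
    i0, j0 = int(np.floor(x)) % n, int(np.floor(y)) % n
    i1, j1 = (i0 + 1) % n, (j0 + 1) % n  # wrap from +180 back to -180
    fx, fy = x - np.floor(x), y - np.floor(y)
    e = ((1 - fx) * (1 - fy) * grid[i0, j0] + fx * (1 - fy) * grid[i1, j0]
         + (1 - fx) * fy * grid[i0, j1] + fx * fy * grid[i1, j1])
    return float(scale * e)


# Synthetic 24 x 24 grid (15-degree spacing); real CMAP values come from force field files
grid = np.arange(24 * 24, dtype=float).reshape(24, 24)
print(cmap_energy(-60.0, -45.0, grid), cmap_energy(-60.0, -45.0, grid, scale=0.8))
```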

OPLS-AA

The OPLS-AA (Optimized Potentials for Liquid Simulations - All Atom) force field was originally parameterized to accurately reproduce thermodynamic and structural properties of liquids, with a focus on organic molecules. [1] Recent developments have seen OPLS-AA successfully extended to specialized systems. For instance, a 2025 study developed OPLS-AA parameters for organosulfur and organohalogen active pharmaceutical ingredients, validated against experimental sublimation enthalpies and single-crystal X-ray diffraction data. [1] Another 2025 study combined OPLS-AA with the CM5 charge model to dramatically improve the stability of cellulose Iβ simulations, enabling accurate modeling of surface-functionalized forms that were previously challenging. [7] In protein simulations, OPLS-AA has demonstrated strong performance; a benchmark of SARS-CoV-2 papain-like protease found that OPLS-AA with the TIP3P water model outperformed other force fields in reproducing the native fold over longer simulation times. [8]

GROMOS

The GROMOS (GROningen MOlecular Simulation) force field employs a united-atom approach, where aliphatic hydrogen atoms are not explicitly represented but are combined with the attached carbon atoms into extended atoms. This design choice prioritizes computational efficiency. [2] The GROMOS-96 force field represents a development of GROMOS-87 with improvements for proteins and small molecules, including a fourth-power bond stretching potential and an angle potential based on the cosine of the angle. [2] It is important to note that the GROMOS force fields were originally parameterized with a physically incorrect multiple-time-stepping scheme, and when used with modern, correct integrators, physical properties such as density might deviate from intended values. [2] While GROMOS is not generally recommended for long alkanes and lipids, [2] the GROMOS-CKP united-atom force field has been developed to enhance the description of lipid order parameters. [3]
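The GROMOS-96 functional forms mentioned above can be compared with their harmonic counterparts in a few lines. The sketch assumes the commonly documented forms \( \tfrac{1}{4} k_b (r^2 - b_0^2)^2 \) for bonds and \( \tfrac{1}{2} k_\theta (\cos\theta - \cos\theta_0)^2 \) for angles. Expanding \( r^2 - b_0^2 \approx 2 b_0 (r - b_0) \) near equilibrium shows that the quartic bond behaves like a harmonic bond with force constant \( 2 k_b b_0^2 \). Parameter values are placeholders.

```python
import math


def harmonic_bond(r, k_harm, b0):
    """Harmonic bond: 0.5 * k_harm * (r - b0)^2."""
    return 0.5 * k_harm * (r - b0) ** 2


def gromos96_bond(r, k_quartic, b0):
    """GROMOS-96 fourth-power bond: 0.25 * k_quartic * (r^2 - b0^2)^2."""
    return 0.25 * k_quartic * (r * r - b0 * b0) ** 2


def gromos96_angle(theta, k_theta, theta0):
    """GROMOS-96 cosine-based angle (radians): 0.5 * k_theta * (cos(theta) - cos(theta0))^2."""
    return 0.5 * k_theta * (math.cos(theta) - math.cos(theta0)) ** 2


def equivalent_harmonic_k(k_quartic, b0):
    """Near b0, r^2 - b0^2 ~ 2*b0*(r - b0), so the quartic form matches k_harm = 2*k_quartic*b0^2."""
    return 2.0 * k_quartic * b0 ** 2


# Placeholder values in nm and kJ/(mol nm^4), for illustration only
b0, k_q = 0.153, 7.0e6
k_h = equivalent_harmonic_k(k_q, b0)
for r in (0.150, 0.153, 0.156, 0.170):
    print(f"r={r:.3f} nm  quartic={gromos96_bond(r, k_q, b0):8.3f}  "
          f"harmonic={harmonic_bond(r, k_h, b0):8.3f} kJ/mol")
```

The two forms agree near \( b_0 \) but diverge at larger displacements, where the quartic potential is stiffer on stretching and softer on compression.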

Comparative Performance Analysis: Experimental Benchmarking Data

Recent systematic benchmarks provide valuable insights into the relative performance of different force field families across various biomolecular systems. The following tables summarize key experimental findings from recent studies.

Table 2: Force Field Performance in Protein and Peptide Simulations

| Force Field | Test System | Key Performance Metrics | Reported Results |
|---|---|---|---|
| AMBER ff99sb-ildn-nmr | Dipeptides, tripeptides, tetra-alanine, ubiquitin | Accuracy against 524 NMR measurements | Best overall performance; error comparable to experimental uncertainty [5] |
| AMBER ff99sb-ildn-phi | Dipeptides, tripeptides, tetra-alanine, ubiquitin | Accuracy against 524 NMR measurements | Best overall performance; error comparable to experimental uncertainty [5] |
| CHARMM27 | Dipeptides, tripeptides, tetra-alanine, ubiquitin | Accuracy against 524 NMR measurements | Moderate performance [5] |
| OPLS-AA | Dipeptides, tripeptides, tetra-alanine, ubiquitin | Accuracy against 524 NMR measurements | Moderate performance [5] |
| AMBER ff99sb | Collagen (POG)₁₀ homotrimer | Backbone dihedrals, side-chain torsions, SAXS data | Excellent agreement with experimental data [6] |
| CHARMM36 | Collagen (POG)₁₀ homotrimer | Backbone dihedrals, side-chain torsions, SAXS data | Systematic shifts in ϕ/ψ dihedrals; overstructured triple helix [6] |

Table 3: Performance in Specialized Systems and Recent Enhancements

| Force Field | Application Domain | Key Findings | Reference |
|---|---|---|---|
| OPLS-AA/CM5 | Cellulose Iβ | Unit cell parameters with <1.5% error vs. experiment; 90% tg conformations retained | Outperformed CHARMM36, GLYCAM06 [7] |
| OPLS-AA (custom) | Organosulfur/organohalogen APIs | Unit cell dimensions and sublimation enthalpies | Best accuracy with ChelpG charges and X-sites for σ-hole [1] |
| OPLS-AA/TIP3P | SARS-CoV-2 PLpro | Native fold retention in long simulations | Outperformed CHARMM27, CHARMM36, AMBER03 [8] |
| AMBER χOL3 | RNA structures | Refinement of high-quality starting models | Modest improvements for stable models; poor models deteriorated [4] |
| BLipidFF | Mycobacterial membranes | Membrane rigidity and diffusion rates | Captured properties poorly described by general FFs [3] |

Experimental Protocols for Force Field Validation

Validation Against NMR Observables

Comprehensive force field validation often involves comparison with nuclear magnetic resonance (NMR) experiments. A typical protocol involves: [5]

- System Selection: Choose model systems with available NMR data, including dipeptides, tripeptides, tetrapeptides, and well-characterized proteins like ubiquitin.

- Simulation Setup: Solvate the molecules in appropriate water models (e.g., TIP3P, TIP4P-EW, TIP4P/2005), neutralize with counterions, and minimize energy.

- Equilibration and Production: Equilibrate the system before running production simulations (typically 20-50 ns per system) at constant temperature and pressure matching experimental conditions.

- Observable Calculation: From the simulation trajectories, estimate J couplings using empirical Karplus relations and chemical shifts using programs such as SPARTA+.

- Statistical Analysis: Compare calculated and experimental values using uncertainty-weighted objective functions to quantify force field accuracy.
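The observable-calculation and statistical-analysis steps above can be sketched as follows. This assumes the ³J(HN,Hα) Karplus form \( J(\phi) = A\cos^2(\phi - 60^\circ) + B\cos(\phi - 60^\circ) + C \) with the Vuister and Bax coefficients (one common parameterization, not necessarily the one used in [5]), averages J over frames rather than computing J at the mean angle, and uses a reduced χ² as the uncertainty-weighted objective. In practice, σ would combine the experimental uncertainty with the known error of the Karplus or chemical-shift predictor; the φ samples and experimental value in the usage lines are hypothetical.

```python
import numpy as np

# Karplus coefficients (Hz) for 3J(HN,HA); Vuister & Bax values assumed as one common choice
A, B, C = 6.51, -1.76, 1.60


def karplus_j_hn_ha(phi_deg):
    """3J(HN,HA) from backbone phi (degrees): A cos^2(theta) + B cos(theta) + C, theta = phi - 60."""
    theta = np.radians(np.asarray(phi_deg, dtype=float) - 60.0)
    c = np.cos(theta)
    return A * c ** 2 + B * c + C


def trajectory_average_j(phi_trajectory_deg):
    """Average the coupling over frames (average of J, not J of the average angle)."""
    return float(np.mean(karplus_j_hn_ha(phi_trajectory_deg)))


def reduced_chi2(calculated, experimental, sigma):
    """Uncertainty-weighted objective: mean of ((calc - exp) / sigma)^2."""
    calc, exp_, sig = (np.asarray(v, dtype=float) for v in (calculated, experimental, sigma))
    return float(np.mean(((calc - exp_) / sig) ** 2))


phi_frames = np.array([-65.0, -70.0, -58.0, -120.0])  # hypothetical phi samples (degrees)
j_calc = trajectory_average_j(phi_frames)
print(f"<3J(HN,HA)> = {j_calc:.2f} Hz, chi2 = {reduced_chi2([j_calc], [5.8], [0.8]):.2f}")
```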

Validation Using Thermodynamic and Structural Data

For force fields targeting specific compound classes or material properties, validation often employs: [1] [9]

- Sublimation Enthalpy Measurement: Determine ΔsubHₘ⁰ using Calvet-drop sublimation microcalorimetry for crystalline materials.

- Crystallographic Analysis: Obtain reference structural data through single-crystal X-ray diffraction and powder X-ray diffraction (PXRD).

- Liquid Property Characterization: For liquid-phase parameterization, measure the pure-liquid density (ρ_liq) and the enthalpy of vaporization (ΔH_vap).

- Simulation Comparison: Run MD simulations using the force field and compare predicted unit cell parameters, densities, and energies with experimental values.
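For the simulation-comparison step, a common shortcut links a computed lattice energy to the measured sublimation enthalpy through \( \Delta_{\text{sub}}H \approx -E_{\text{latt}} - 2RT \). This approximation is assumed here; it neglects, among other things, differences in vibrational contributions between crystal and gas. The sketch computes a per-molecule lattice energy from hypothetical MD averages and reports percentage deviations of unit-cell parameters; all numbers in the usage lines are illustrative.

```python
R_KJ = 8.314462618e-3  # gas constant, kJ/(mol*K)


def lattice_energy_per_molecule(u_crystal_total, n_molecules, u_gas_molecule):
    """E_latt = <U_crystal>/N - <U_gas>, in kJ/mol (negative for a bound crystal)."""
    return u_crystal_total / n_molecules - u_gas_molecule


def sublimation_enthalpy(e_latt, temperature=298.15):
    """Approximate sublimation enthalpy: dsubH ~ -E_latt - 2RT (kJ/mol)."""
    return -e_latt - 2.0 * R_KJ * temperature


def percent_deviation(simulated, experimental):
    """Signed percentage deviation of a simulated value from experiment."""
    return 100.0 * (simulated - experimental) / experimental


# Hypothetical MD averages for a 16-molecule supercell (kJ/mol) and cell lengths (Angstrom)
e_latt = lattice_energy_per_molecule(-2000.0, 16, -5.0)
print(f"E_latt = {e_latt:.1f} kJ/mol, dsubH = {sublimation_enthalpy(e_latt):.1f} kJ/mol")
sim_cell = {"a": 7.92, "b": 10.41, "c": 10.35}
exp_cell = {"a": 7.85, "b": 10.38, "c": 10.42}
for axis, value in sim_cell.items():
    print(f"{axis}: {percent_deviation(value, exp_cell[axis]):+.2f} %")
```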

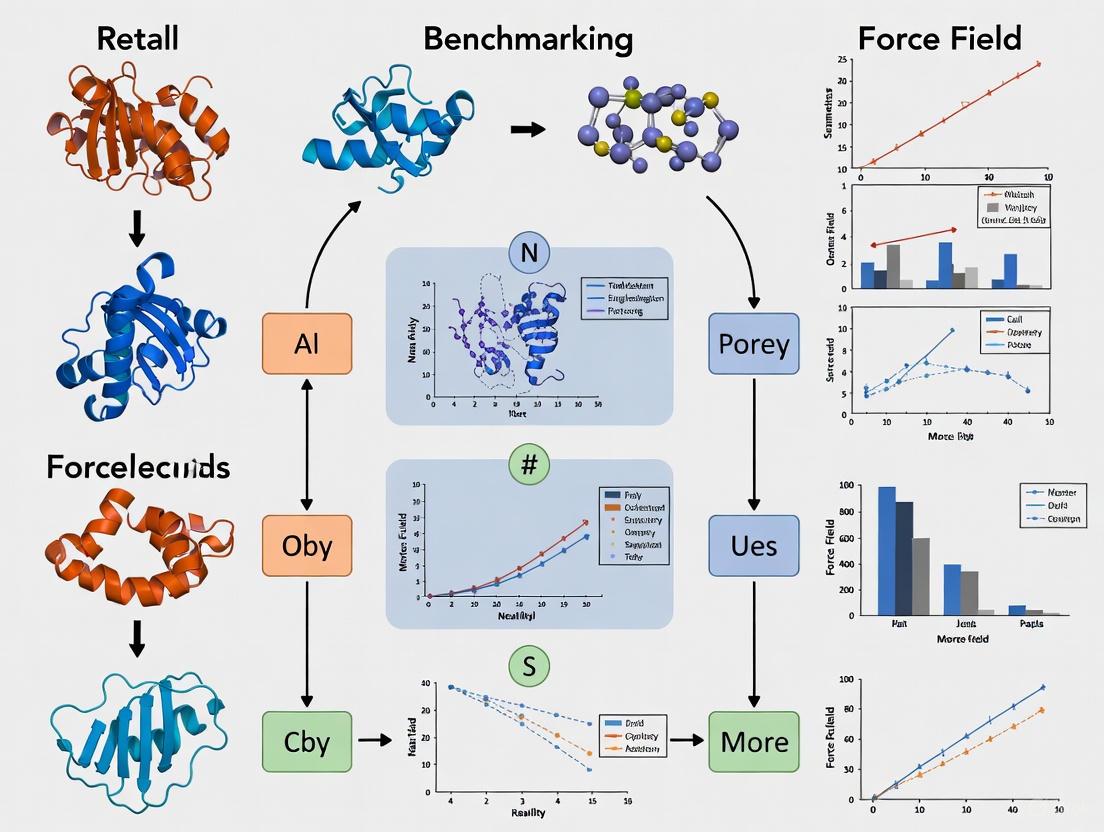

The following diagram illustrates a generalized workflow for force field development and validation, integrating the methodologies described above:

Diagram 1: Workflow for force field development and validation. The process involves iterative cycles of parameterization based on theoretical and experimental data, followed by rigorous validation against experimental observables.

Table 4: Key Software Tools and Resources for Force Field Applications

| Tool/Resource | Primary Function | Application Context |
|---|---|---|
| GROMACS | Molecular dynamics simulation package | High-performance MD simulations with various force fields [2] |
| Gaussian 09 | Quantum chemistry software package | Ab initio calculations for force field parameterization [3] |
| Multiwfn | Wavefunction analysis program | RESP charge fitting for atomic partial charges [3] |
| PyMOL | Molecular visualization system | Structure analysis and visualization of simulation results [5] |
| SPARTA+ | Chemical shift prediction | Calculation of NMR chemical shifts from structures [5] |
| CELREF | Powder pattern indexing | Indexing of PXRD patterns for crystal structure validation [1] |
| VOTCA | Coarse-graining toolkit | Systematic parameterization of coarse-grained force fields [2] |
| CombiFF | Automated parameterization workflow | Force field calibration against experimental liquid properties [9] |

The benchmarking data presented in this guide show that, while modern force fields reproduce many experimental observables well, their performance is highly system-dependent. The optimal choice of force field depends critically on the specific biological system and the properties of interest. In the studies summarized here, the AMBER ff99sb-ildn-nmr and ff99sb-ildn-phi variants performed best against NMR data for peptides and ubiquitin [5], OPLS-AA performed strongly for organic molecules and pharmaceutical compounds [1], CHARMM36 provided reliable results for lipid membranes [3], and specialized parameter sets such as BLipidFF addressed unique challenges in bacterial membrane simulations [3].

Future force field development will likely focus on addressing remaining limitations, including the accurate description of electronic polarization effects, improved transferability across diverse chemical space, and the development of more sophisticated validation protocols against emerging experimental data. The continued collaboration between computational and experimental scientists, facilitated by initiatives such as the COST Action CA22107 for crystal structure prediction, [1] promises to further refine these essential tools for molecular simulation. As force fields continue to evolve, rigorous benchmarking against experimental data will remain crucial for guiding their development and appropriate application in drug discovery and biomolecular research.

Molecular mechanics force fields are empirical models that calculate the potential energy and atomic forces of a system based on its nuclear coordinates, serving as the foundational component for molecular dynamics (MD) simulations in computational chemistry and drug discovery [10]. The core challenge in force field development lies in balancing two competing objectives: incorporating the physical accuracy derived from quantum mechanical (QM) principles and achieving agreement with experimental observational data. QM-based parametrization offers broad chemical space coverage and fundamental physical rigor but often fails to accurately reproduce collective condensed-phase properties. Experimental calibration ensures realism but introduces empiricism and is constrained by the limited availability of high-quality reference data for complex biomolecular systems [11]. This comparison guide examines current methodologies addressing this parametrization problem, evaluating their performance against key benchmarks relevant to molecular dynamics stability research.

Comparative Analysis of Modern Parametrization Strategies

The table below summarizes the core characteristics, advantages, and limitations of the primary force field parametrization approaches used in modern biomolecular simulation.

Table 1: Comparison of Modern Force Field Parametrization Strategies

| Parametrization Strategy | Core Methodology | Representative Examples | Key Advantages | Major Limitations |
|---|---|---|---|---|
| Traditional Empirical FFs | Parameters fitted to experimental data of small molecules | GAFF, CGenFF, OPLS [10] [12] | Proven reliability for condensed phases; Good computational efficiency | Limited chemical space coverage; Labor-intensive parameterization |
| System-Specific QM-Derived | Parameters derived directly from QM calculations of target system | QMDFF [13], QUBE [14] [11] | No transferability errors; Captures specific electronic effects | Computationally expensive; Inconsistent condensed-phase accuracy |
| Modern Data-Driven FFs | Systematic optimization against extensive QM datasets | OpenFF Parsley [10], ByteFF [15] | Broad, automated chemical space coverage; Excellent QM consistency | Potential experimental property deviations; Complex training requirements |
| Specialized Biomolecular FFs | Protein parameters optimized for structured/disordered domains | CHARMM36m [16], ff99SB*-ILDN/TIP4P-D [16], a99SB-disp [16] | Balanced protein/RNA interactions; Improved IDP description | Domain-specific applicability; Water model dependencies |

Quantitative Performance Benchmarking

Independent benchmarking studies provide crucial insights into the practical performance of these parametrization strategies across different simulation contexts and physical properties.

Table 2: Force Field Performance Benchmarks Across Biomolecular Systems

| Force Field | Liquid Properties Error (Density, Hvap) | Disordered Protein Rg Accuracy | Structured Protein Stability | Binding Free Energy Performance |
|---|---|---|---|---|
| OpenFF Parsley | Similar to GAFF [10] | Not Specifically Tested | Not Specifically Tested | Accurate across 199 protein-ligand systems [10] |
| QUBE (Bespoke) | MUE: 0.031 g/cm³, 0.69 kcal/mol [11] | Not Specifically Tested | Some structure loss in regions [14] | Excellent for specific complexes (-0.4 vs -0.6 kcal/mol exp) [14] |
| CHARMM36m | Not Reported | Within experimental range for FUS [16] | Stable | Not Reported |
| ff99SB*-ILDN/TIP4P-D | Not Reported | Within experimental range for FUS [16] | ~2 kcal/mol native structure destabilization [16] | Not Reported |
| a99SB-disp | Not Reported | Within experimental range for FUS [16] | Stable | Not Reported |
| GAFF | Established baseline [10] | Compact, globular conformations [16] | Stable fiber maintained [12] | Established baseline [10] |

Experimental Protocols for Force Field Validation

Liquid Property Prediction Accuracy

The accuracy of force fields for predicting bulk liquid properties remains a fundamental validation test, particularly for small molecule parameters. The standard protocol involves:

System Preparation: A periodic box containing several hundred molecules of the pure liquid is constructed, large enough to avoid artifacts from interactions with periodic images [11].

Simulation Parameters: Equilibrium simulations are conducted in the NPT ensemble at ambient temperature (298-300 K) and pressure (1 atm) using common thermostat and barostat algorithms (e.g., Berendsen, Nosé-Hoover), with production runs of 10-50 ns [11].

Property Calculation: Density is computed from the average simulation box volume, and the heat of vaporization is obtained as ΔH_vap = E_gas - E_liq/N + RT, where E_gas is the average potential energy of an isolated gas-phase molecule, E_liq the average potential energy of the liquid box, and N the number of molecules in that box [11].

Benchmarking: Results are compared against experimental measurements from databases like NIST, with mean unsigned errors (MUE) typically reported across a diverse set of 15-50 small organic molecules [11].
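The property calculations and the MUE benchmark described above can be sketched as follows, assuming energies in kcal/mol, an ideal-gas RT term, and a liquid-box potential energy that is divided by the number of molecules N. Function names and the usage numbers are hypothetical, and published protocols may add corrections (for example, for polarization or non-ideality) that are omitted here.

```python
import numpy as np

R_KCAL = 1.987204259e-3  # gas constant, kcal/(mol*K)
AVOGADRO = 6.02214076e23  # 1/mol


def heat_of_vaporization(e_gas, e_liquid_box, n_molecules, temperature=298.15):
    """dHvap = E_gas - E_liq/N + RT (kcal/mol); E_gas per isolated molecule, E_liq for the whole box."""
    return e_gas - e_liquid_box / n_molecules + R_KCAL * temperature


def density_g_cm3(n_molecules, molar_mass_g_mol, mean_volume_nm3):
    """Mass density from the time-averaged box volume (1 nm^3 = 1e-21 cm^3)."""
    mass_g = n_molecules * molar_mass_g_mol / AVOGADRO
    return mass_g / (mean_volume_nm3 * 1e-21)


def mean_unsigned_error(predicted, reference):
    """MUE over a benchmark set of molecules."""
    p = np.asarray(predicted, dtype=float)
    r = np.asarray(reference, dtype=float)
    return float(np.mean(np.abs(p - r)))


# Hypothetical results for three molecules (density in g/cm^3)
sim_rho, exp_rho = [0.791, 0.874, 1.102], [0.787, 0.867, 1.090]
print(f"MUE(density) = {mean_unsigned_error(sim_rho, exp_rho):.3f} g/cm^3")
```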

Intrinsically Disordered Protein (IDP) Conformational Sampling

Accurately modeling proteins containing intrinsically disordered regions presents distinct challenges for force field validation:

System Selection: The Fused in Sarcoma (FUS) protein serves as an exemplary test system containing both long disordered regions and structured RNA-binding domains [16].

Simulation Protocol: Multi-microsecond simulations (typically 1-10 μs) are performed using special-purpose hardware (Anton 2) or high-performance CPUs/GPUs, employing force fields coupled with various water models (TIP3P, TIP4P-D, OPC) [16].

Structural Metrics: The radius of gyration (Rg) provides the primary validation metric, compared against experimental dynamic light scattering or SAXS data. Secondary analysis includes solvent-accessible surface area (SASA) and residual dipolar couplings [16].

Performance Criteria: Successful force fields produce Rg values within experimental ranges without destabilizing structured protein domains, balancing accuracy for both ordered and disordered regions [16].
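The primary metric, Rg, is straightforward to compute per frame. The sketch below uses the mass-weighted definition and assumes coordinates are already unwrapped across periodic boundaries; the trajectory in the usage lines is a synthetic random walk, not FUS data. Note that dynamic light scattering reports a hydrodynamic radius rather than Rg, so comparing the two requires an additional conversion or model.

```python
import numpy as np


def radius_of_gyration(coords, masses):
    """Mass-weighted Rg = sqrt(sum_i m_i |r_i - r_com|^2 / sum_i m_i) for coords of shape (N, 3)."""
    x = np.asarray(coords, dtype=float)
    m = np.asarray(masses, dtype=float)
    com = (m[:, None] * x).sum(axis=0) / m.sum()  # center of mass
    sq_dist = ((x - com) ** 2).sum(axis=1)
    return float(np.sqrt((m * sq_dist).sum() / m.sum()))


def rg_time_series(trajectory, masses):
    """Rg for every frame of a trajectory array of shape (n_frames, N, 3)."""
    return np.array([radius_of_gyration(frame, masses) for frame in trajectory])


# Synthetic 3-frame trajectory of a 50-bead chain with unit masses (nm)
rng = np.random.default_rng(1)
traj = np.cumsum(rng.normal(scale=0.38, size=(3, 50, 3)), axis=1)  # random-walk chains
rg = rg_time_series(traj, np.ones(50))
print(f"mean Rg = {rg.mean():.2f} nm")
```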

Supramolecular Self-Assembly and Stability

Validation for self-assembling systems requires specialized protocols to assess hierarchical organization:

Spontaneous Assembly Tests: Multiple molecules (typically 8-24) are randomly distributed in aqueous solution and simulated for hundreds of nanoseconds, monitoring the formation of ordered structures through hydrogen bonding patterns and periodic stacking [12].

Pre-assembled Stability: Experimentally determined structures (e.g., from crystal data) are simulated for hundreds of nanoseconds, with stability quantified through maintenance of hydrogen bonds, SASA, and structural integrity [12].

Critical Assessment: Different force fields (GROMOS, CHARMM, GAFF, Martini) show varying capabilities, with some maintaining pre-built structures but failing in spontaneous assembly, highlighting the importance of multi-faceted testing [12].
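Hydrogen-bond maintenance, used as a stability metric in both tests above, is often computed with a geometric criterion. The sketch below counts D-H...A contacts using a donor-acceptor distance cutoff of 3.5 Å and a D-H...A angle of at least 150°; both cutoffs are assumptions (analysis tools use their own defaults), and the function name is hypothetical. Evaluating the count per frame gives a simple time series for tracking assembly or disassembly.

```python
import numpy as np


def count_hbonds(donors, hydrogens, acceptors, max_da=3.5, min_angle_deg=150.0):
    """Count D-H...A hydrogen bonds with a simple geometric criterion (assumed cutoffs).

    donors, hydrogens: (N, 3) arrays; row i of each forms one D-H pair.
    acceptors: (M, 3) array. Distances in Angstrom, angle in degrees.
    """
    d = np.asarray(donors, dtype=float)
    h = np.asarray(hydrogens, dtype=float)
    a = np.asarray(acceptors, dtype=float)
    count = 0
    for d_i, h_i in zip(d, h):
        for a_j in a:
            if np.linalg.norm(a_j - d_i) > max_da:
                continue  # donor-acceptor distance too long
            to_donor, to_acceptor = d_i - h_i, a_j - h_i  # vectors starting at H
            cos_angle = np.dot(to_donor, to_acceptor) / (
                np.linalg.norm(to_donor) * np.linalg.norm(to_acceptor))
            angle = np.degrees(np.arccos(np.clip(cos_angle, -1.0, 1.0)))
            if angle >= min_angle_deg:  # near-linear D-H...A geometry
                count += 1
    return count
```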

Force Field Selection Workflow: A decision pathway for selecting appropriate force fields based on research objectives and system characteristics.

Table 3: Essential Software Tools for Force Field Development and Validation

| Tool Name | Primary Function | Application Context | Key Features |
|---|---|---|---|
| ForceBalance [10] [11] | Automated parameter optimization | Systematic force field fitting against QM and experimental data | Reproducible parameterization; Multi-objective optimization |
| QUBEKit [14] [11] | Bespoke force field derivation | System-specific parameter generation from QM calculations | Direct QM-to-MM mapping; Automated workflow |
| LAMMPS [13] | Molecular dynamics simulation | Large-scale biomolecular systems | High performance; Custom force field support |
| OpenFF Toolkit [10] | SMIRNOFF-based parameter assignment | Modern small molecule force fields | Direct chemical perception; Extensible architecture |
| QCArchive [10] | Quantum chemistry data management | Reference data storage and retrieval | Distributed computing; Community datasets |
| LAMBench [17] | Large atomistic model benchmarking | Performance evaluation across domains | Standardized metrics; Cross-domain testing |

The force field parametrization landscape reflects an ongoing evolution toward hybrid methodologies that leverage both QM accuracy and experimental validation. Modern approaches like the Open Force Field initiative demonstrate that systematic optimization against extensive QM datasets can produce force fields with excellent coverage of chemical space and strong performance in binding free energy calculations [10]. Simultaneously, bespoke QM-derived force fields (QMDFF, QUBE) offer compelling advantages for specific systems where electronic effects dominate, though challenges remain in consistent condensed-phase performance [13] [14]. For complex biomolecular systems containing both structured and disordered regions, recently optimized force fields like CHARMM36m and a99SB-disp show promising capabilities when paired with appropriate water models [16].

Future progress will likely involve several key developments: (1) multi-fidelity modeling approaches that accommodate varying accuracy requirements across research domains [17], (2) increased integration of machine learning potentials with traditional force fields to expand applicability while maintaining efficiency [15], and (3) more sophisticated benchmarking platforms like LAMBench that assess generalizability across diverse chemical spaces and physical scenarios [17]. The ideal parametrization strategy ultimately depends on the specific research context, with different methodologies excelling in particular applications—highlighting the continued importance of rigorous, comparative validation against both QM benchmarks and experimental observables.

Molecular dynamics (MD) simulations have become a cornerstone of modern computational chemistry and biology, enabling researchers to study the structure, dynamics, and function of biomolecules at atomic resolution. The validity of these simulations is fundamentally determined by the accuracy of the underlying force fields—mathematical models that describe the potential energy of a system as a function of its atomic coordinates. For over five decades, classical empirical force fields have dominated biomolecular simulation, with parameter sets such as AMBER, CHARMM, and OPLS-AA serving as the workhorses for studying proteins, nucleic acids, and other biological systems. However, these traditional approaches inevitably involve trade-offs between accuracy, transferability, and computational efficiency, leading to systematic deficiencies in modeling certain biological phenomena.

The field currently stands at a transformative juncture. While classical force fields continue to mature, machine learning (ML) force fields are emerging as powerful alternatives that promise quantum-level accuracy at a fraction of the computational cost of ab initio methods. This evolution represents a paradigm shift from physics-based parametric equations to data-driven potential energy surfaces. This review examines how modern force fields have addressed historical deficiencies through specific improvements in parameterization strategies, functional forms, and integration of diverse data sources, with a particular focus on implications for biomolecular stability research.

Historical Deficiencies in Classical Force Fields

Traditional force fields have provided valuable insights into biomolecular systems but suffer from several well-documented limitations that affect their ability to accurately predict molecular stability and behavior.

Inherited Parameterization Limitations

Many contemporary force fields still rely on parameters derived decades ago, which fundamentally limits their accuracy. For nucleic acids, state-of-the-art force fields still use the same Lennard-Jones parameters derived 25 years ago, even though these parameters were generally not fitted for nucleic acids in the first place [18]. Similarly, the electrostatic parameters in many force fields are outdated, which may explain some of the deficiencies currently observed in molecular dynamics simulations [18]. These inherited, potentially suboptimal parameters limit the accuracy of even the most refined modern classical force fields.

Specific Biomolecular Challenges

Sugar-puckering in nucleic acids: Despite improvements in DNA double helix description, the representation of sugar-puckering remains a persistent problem for nucleic acids force fields [18].

Intrinsically disordered regions (IDRs): Conventional AMBER and CHARMM force fields, developed to reproduce the physical properties of folded proteins, are known to perform poorly for intrinsically disordered proteins (IDPs), typically producing compact, globular conformations with radii of gyration smaller than experimental values [16].

Salt bridge overstabilization: Force fields such as Amber ff19SB and ff14SB overstabilize salt bridges, leading to overestimated pKa downshifts for acidic residues involved in these interactions [19].

Undersolvation of buried residues: Recent constant pH molecular dynamics simulations reveal deficiencies in modeling buried histidines, with significant errors in pKa predictions due to undersolvation issues [19].
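The link between these deficiencies and pKa errors can be made concrete with the Henderson-Hasselbalch relation, which converts the deprotonated fraction S observed in a constant pH simulation at a given pH into a pKa. An overstabilized salt bridge keeps an Asp or Glu side chain deprotonated more of the time, raising S and therefore lowering the computed pKa. This single-pH estimate is a simplified sketch; constant pH studies usually fit S over several pH values (e.g., with a Hill equation), and the function names below are hypothetical.

```python
import math


def pka_from_fraction(ph, unprotonated_fraction):
    """Henderson-Hasselbalch: pKa = pH - log10(S / (1 - S)), S = deprotonated fraction at this pH."""
    s = unprotonated_fraction
    if not 0.0 < s < 1.0:
        raise ValueError("fraction must be strictly between 0 and 1")
    return ph - math.log10(s / (1.0 - s))


def pka_shift(pka_computed, pka_model):
    """Shift relative to a model-compound (solution) pKa; negative values are downshifts."""
    return pka_computed - pka_model


# Hypothetical Asp in a salt bridge: 90% deprotonated at pH 5.0
pka = pka_from_fraction(5.0, 0.9)
print(f"pKa = {pka:.2f}, shift vs. 3.9 = {pka_shift(pka, 3.9):+.2f}")
```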

Table 1: Historical Deficiencies in Classical Force Fields and Their Implications

| Deficiency Category | Specific Examples | Impact on Simulations |
|---|---|---|
| Parameterization | 25-year-old Lennard-Jones parameters in nucleic acids [18] | Limited accuracy in DNA/RNA simulations |
| Electrostatics | Deprecated electrostatic parameters [18] | Errors in ion binding and conformational sampling |
| IDP Modeling | Overly compact disordered regions [16] | Inaccurate phase behavior and binding properties |
| Solvation | Undersolvation of buried histidines [19] | Incorrect pKa values for key catalytic residues |
| Salt Bridges | Overstabilization in Amber force fields [19] | Errors in pH-dependent behavior and stability |

The Rise of Machine Learning Force Fields

Machine learning force fields represent a fundamental shift from the parametric equations of classical force fields to data-driven potential energy surfaces. ML-based force fields are attracting ever-increasing interest due to their capacity to span spatiotemporal scales of classical interatomic potentials at quantum-level accuracy [20].

ML Force Field Architectures and Approaches

Several architectural paradigms have emerged for ML force fields:

Graph Neural Networks (GNNs): Models like MACE-OFF utilize message-passing architectures to create transferable force fields for organic molecules [21] [22].

Fused Data Learning: Leveraging both Density Functional Theory (DFT) calculations and experimentally measured properties to train ML potentials, resulting in higher accuracy compared to models trained with a single data source [20].

Committee Models: Using multiple neural networks to estimate prediction uncertainty and improve accuracy through active learning approaches [23].
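The committee idea can be sketched in a few lines: several independently trained models predict the same configurations, and their disagreement serves as an uncertainty estimate that flags configurations for new reference calculations. The sketch works on energies for brevity (many implementations use force disagreement instead), and the threshold, data, and function names are hypothetical rather than taken from [23].

```python
import numpy as np


def committee_prediction(predictions):
    """Mean and spread of a committee's predictions.

    predictions: array of shape (n_models, n_configs). Returns (mean, std) per configuration.
    """
    p = np.asarray(predictions, dtype=float)
    return p.mean(axis=0), p.std(axis=0, ddof=1)


def select_for_labeling(predictions, threshold):
    """Indices of configurations whose committee disagreement exceeds the threshold."""
    _, std = committee_prediction(predictions)
    return np.flatnonzero(std > threshold)


# Hypothetical energies (eV) from 3 models for 4 configurations
preds = [[-1.00, -2.10, -0.50, -3.0],
         [-1.01, -2.60, -0.52, -3.0],
         [-0.99, -1.80, -0.49, -3.1]]
print(select_for_labeling(preds, threshold=0.05))  # candidates for new DFT labels
```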

The MACE-OFF framework exemplifies modern ML force field approaches, demonstrating remarkable capabilities by accurately predicting a wide variety of gas and condensed phase properties of molecular systems. It produces accurate, easy-to-converge dihedral torsion scans of unseen molecules, as well as reliable descriptions of molecular crystals and liquids, including quantum nuclear effects [21] [22].

Addressing Specific Deficiencies with ML Approaches

ML force fields specifically address historical deficiencies through their data-driven nature:

NeuralIL, an ML force field for complex charged fluids such as ionic liquids, predicts weak hydrogen bonds and their dynamics significantly better than classical force fields, because it is not hindered by the lack of electronic polarization that limits nonpolarizable classical models [23].

MACE-OFF has been shown to accurately describe both folded and disordered protein regions, overcoming a key limitation of classical force fields [21].

Fused data learning approaches simultaneously satisfy multiple target objectives, correcting inaccuracies of DFT functionals while maintaining accuracy on other properties [20].

Comparative Benchmarking: Classical vs. ML Force Fields

Rigorous benchmarking studies provide critical insights into how modern force fields address specific biomolecular simulation challenges.

Protein Folding and Stability

Benchmarking studies of the SARS-CoV-2 papain-like protease (PLpro) revealed significant differences in force field performance. Using structural criteria including the root-mean-square deviation (RMSD) and root-mean-square fluctuation (RMSF) of backbone atoms, researchers observed that most tested force fields (OPLS-AA, CHARMM27, CHARMM36, and AMBER03) reproduced the native "thumb-palm-fingers" fold over short time scales of a few hundred nanoseconds [8]. In longer MD simulations, however, OPLS-AA-based setups reproduced the fold of the catalytic domain more accurately than the other setups, while the other force fields exhibited local unfolding of the N-terminal Ubl segment [8].

Intrinsically Disordered Proteins

The simulation of proteins containing intrinsically disordered regions presents particular challenges for force fields. Using the Fused in sarcoma (FUS) protein as a test system, researchers benchmarked nine molecular force fields, finding that the choice of force field significantly affected the global conformation of the protein, self-interactions among its side chains, solvent accessible surface area, and the diffusion constant [16]. Several force fields produced FUS conformations with radii of gyration within the experimental range determined by dynamic light scattering [16].

Table 2: Force Field Performance Across Biomolecular Systems

| Force Field | PLpro Stability [8] | IDP Rg Accuracy [16] | Nucleic Acids [18] | Computational Cost |
|---|---|---|---|---|
| OPLS-AA | Best performance in long simulations | Variable | Moderate | Low |
| CHARMM36 | Local unfolding of N-terminal Ubl segment | Too compact | Good with corrections | Low |
| AMBER03 | Moderate | Too compact | Moderate | Low |
| AMBER ff19SB | Not tested | Improved with OPC water | Not tested | Low |
| MACE-OFF | Not fully tested | Accurate | Promising | Moderate-High |
| NeuralIL | Not tested | Not tested | Not tested | High |

Water Model Dependencies

Force field performance is intimately connected with water model selection. Studies have demonstrated that the combination of ff19SB with OPC water is significantly more accurate than ff14SB with TIP3P water for pKa calculations [19]. Similarly, four-point water models such as OPC and TIP4P-D have been shown to improve the description of intrinsically disordered proteins compared to equivalent simulations carried out with the TIP3P model [16].

Experimental Protocols for Force Field Validation

Constant pH Molecular Dynamics Protocol

The accuracy of force fields for protonation equilibria can be assessed through constant pH molecular dynamics:

System Preparation: Protein coordinates are retrieved from the PDB, and the termini are capped (N-terminus acetylated, C-terminus amidated) to avoid terminal charge effects [19].

Solvation and Ion Addition: The protein is solvated in a truncated octahedral water box with a minimum of 15 Å between protein heavy atoms and box edges, then neutralized and brought to physiological ionic strength [19].

Equilibration: Systems undergo energy minimization, heating from 100 to 300 K over 1 ns, and multi-stage equilibration in the NPT ensemble with gradually reduced restraints [19].

Production Simulations: pH replica-exchange simulations are performed with asynchronous exchange attempts between adjacent pH replicas every 2 ps according to the Metropolis criterion [19].

pKa Calculation: pKa values are determined from the titration curves after discarding initial equilibration periods (typically 10 ns per replica) [19].
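The two numerical steps of the production and analysis stages can be sketched as follows. This is a minimal illustration, not the code used in [19]: the exchange test assumes the standard semi-grand-canonical weighting 10^(-pH·N) for N bound titratable protons (so only the pH term differs between replicas), and the pKa fit assumes a Hill (generalized Henderson-Hasselbalch) titration curve fitted by linear regression. The function names are hypothetical; production CpHMD engines perform both steps internally.

```python
import math
import random

LN10 = math.log(10.0)


def accept_ph_exchange(ph_i, n_i, ph_j, n_j, rng=random):
    """Metropolis test for swapping configurations between replicas at pH_i and pH_j.

    n_i, n_j: total number of titratable protons bound in each replica.
    With weights proportional to 10**(-pH * N), the acceptance probability is
    min(1, exp(ln10 * (pH_i - pH_j) * (N_i - N_j))).
    """
    delta = LN10 * (ph_i - ph_j) * (n_i - n_j)
    if delta >= 0.0:              # swap is favorable or neutral: always accept
        return True
    return rng.random() < math.exp(delta)


def fit_pka(ph_values, frac_deprotonated):
    """Fit f = 1 / (1 + 10**(n * (pKa - pH))) via log10(f / (1 - f)) = n*pH - n*pKa.

    Fully protonated or deprotonated points (f == 0 or 1) are skipped because the
    linearized form is undefined there. Returns (pKa, Hill coefficient n).
    """
    xs, ys = [], []
    for ph, f in zip(ph_values, frac_deprotonated):
        if 0.0 < f < 1.0:
            xs.append(ph)
            ys.append(math.log10(f / (1.0 - f)))
    if len(xs) < 2:
        raise ValueError("need at least two partially titrated pH points")
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    hill_n = sxy / sxx                # slope of the linearized curve
    intercept = my - hill_n * mx      # equals -n * pKa
    return -intercept / hill_n, hill_n
```

In practice the deprotonated fractions come from the per-replica protonation-state time series after the equilibration period has been discarded, and a nonlinear fit is often preferred when points near full protonation or deprotonation are noisy.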

Protein Folding and Stability Assessment

Protocol for assessing force field performance on structured proteins:

System Setup: The protein is solvated in water, with different water models considered (TIP3P, TIP4P, TIP5P), and physiological conditions are mimicked by adding 100 mM NaCl and raising the temperature to 310 K [8].

Simulation Parameters: Multiple independent simulations are run for hundreds of nanoseconds to microseconds, depending on system size and available computational resources [8].

Structural Metrics: The root-mean-square deviation and fluctuation of backbone atoms are calculated, along with specific catalytic residue distances (e.g., Cα(Cys111)-Cα(His272) for PLpro) [8].

Analysis: Performance is evaluated based on the ability to maintain native fold, particularly in vulnerable regions like the N-terminal Ubl segment in PLpro [8].
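The structural metrics above reduce to a few array operations once backbone coordinates have been extracted. The NumPy sketch below assumes coordinates are already available as arrays in Å (frames × atoms × 3) and uses Kabsch superposition before computing RMSD and RMSF; RMSF is taken about the trajectory mean after superposition, which is one common convention. The atom indices for the Cys111/His272 Cα pair are placeholders you would look up in your own topology, and in practice a dedicated trajectory-analysis package would normally be used.

```python
import numpy as np


def kabsch_align(mobile, reference):
    """Return `mobile` (N x 3) optimally superimposed onto `reference` (N x 3)."""
    mob_c = mobile - mobile.mean(axis=0)
    ref_center = reference.mean(axis=0)
    ref_c = reference - ref_center
    h = mob_c.T @ ref_c                          # 3 x 3 covariance matrix
    u, _, vt = np.linalg.svd(h)
    d = 1.0 if np.linalg.det(vt.T @ u.T) >= 0 else -1.0   # avoid improper rotations
    rot = vt.T @ np.diag([1.0, 1.0, d]) @ u.T
    return mob_c @ rot.T + ref_center


def backbone_rmsd(frame, reference):
    """RMSD of one frame to the reference after optimal superposition."""
    aligned = kabsch_align(frame, reference)
    return float(np.sqrt(((aligned - reference) ** 2).sum(axis=1).mean()))


def backbone_rmsf(trajectory, reference):
    """Per-atom RMSF of a trajectory (T x N x 3), each frame first aligned onto `reference`."""
    aligned = np.array([kabsch_align(f, reference) for f in trajectory])
    mean_structure = aligned.mean(axis=0)
    return np.sqrt(((aligned - mean_structure) ** 2).sum(axis=2).mean(axis=0))


def ca_distance(frame, idx_a, idx_b):
    """Distance between two atoms, e.g. the Calpha atoms of a catalytic pair."""
    return float(np.linalg.norm(frame[idx_a] - frame[idx_b]))
```

Tracking the catalytic-pair distance per frame alongside RMSD helps separate a global drift from a local collapse of the active site.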

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Tools for Modern Force Field Development and Validation

| Tool Category | Specific Examples | Function/Purpose |
|---|---|---|
| Classical Force Fields | OPLS-AA, CHARMM36, AMBER ff19SB | Baseline biomolecular simulations with proven transferability |
| ML Force Fields | MACE-OFF, NeuralIL, ANI-2x | High-accuracy simulations with quantum chemical fidelity |
| Water Models | TIP3P, TIP4P, OPC, TIP4P-D | Solvation environment with varying accuracy/complexity trade-offs |
| Validation Benchmarks | PLpro stability, FUS Rg, BBL pKa | Standardized tests for specific force field deficiencies |
| Simulation Packages | AMBER, CHARMM, LAMMPS, OpenMM | MD engines with varying force field compatibility |
| Specialized Hardware | Anton 2, GPU clusters | Enable microsecond+ simulations for adequate sampling |

The evolution of force fields has progressively addressed historical deficiencies through both incremental improvements to classical frameworks and revolutionary machine learning approaches. While classical force fields like OPLS-AA and CHARMM36 continue to demonstrate strengths in specific applications such as protein folding stability, they face fundamental limitations in areas like intrinsically disordered protein modeling and accurate pKa prediction due to inherited parameterization schemes and functional forms.

Machine learning force fields represent a paradigm shift, offering accuracy approaching that of quantum chemistry at a cost far below ab initio methods and, in a growing number of cases, low enough for biomolecular simulations. Approaches like MACE-OFF and fused data learning have demonstrated remarkable capabilities in addressing specific deficiencies that have plagued classical force fields for decades. However, these ML approaches currently face challenges in computational cost relative to classical force fields, incorporation of long-range interactions, and comprehensive validation across diverse biomolecular systems.

The optimal choice of force field remains system-dependent and objective-specific. For routine simulation of folded proteins, classical force fields like OPLS-AA provide proven reliability, while for properties sensitive to electronic structure or systems with significant disorder, ML force fields can offer higher accuracy at increased computational cost. As the field progresses, the integration of physical principles with data-driven approaches appears most promising for developing next-generation force fields that overcome current trade-offs and enable predictive molecular simulation across the full spectrum of biomolecular complexity.

Molecular dynamics (MD) simulations are an indispensable tool in computational biology and drug discovery, providing atomistic insights into biomolecular processes. The accuracy of these simulations, however, is critically dependent on the empirical force fields used to approximate atomic-level forces. This guide objectively compares the performance of different biomolecular force fields, framing the discussion within the critical challenges of poor statistical sampling, overfitting, and the dangers of narrow validation. As force fields are progressively refined, a rigorous and multifaceted benchmarking approach is essential to assess their performance accurately and guide their development and application in stability research and drug development.

Performance Comparison of Biomolecular Force Fields

The table below summarizes quantitative data and key findings from recent studies benchmarking various force fields, highlighting their performance in reproducing biomolecular structure and dynamics.

Table 1: Benchmarking Performance of Key Biomolecular Force Fields

| Force Field | Test System(s) | Key Performance Metrics | Overall Findings and Limitations |
|---|---|---|---|
| OPLS-AA [8] | SARS-CoV-2 PLpro (native fold & inhibitor-bound form) | Backbone RMSD/RMSF, catalytic residue distance, native fold stability [8] | Superior in maintaining catalytic domain folding and N-terminal Ubl segment stability in longer simulations; OPLS-AA/TIP3P setup ranked highest [8]. |
| CHARMM27 [8] [24] | SARS-CoV-2 PLpro; Ubiquitin; GB3 | Backbone RMSD, structural stability, secondary structure retention [8] [24] | Exhibited local unfolding of N-terminal segment in PLpro [8]; GB3 unfolded during simulation, indicating stability issues [24]. |
| CHARMM36 [8] [25] | SARS-CoV-2 PLpro; 18 biological motifs | Structural criteria similar to CHARMM27; hydration structure, H-bond counts, ion-pair distances [8] [25] | Outperformed by OPLS-AA in PLpro folding stability [8]; Bayesian optimization of its charges showed systematic improvements, especially for anions [25]. |
| AMBER03 [8] | SARS-CoV-2 PLpro | Backbone RMSD/RMSF, catalytic residue distance [8] | Effectively reproduced native fold over short time scales but was outperformed by OPLS-AA in longer simulations [8]. |
| AMBER ff99SB-ILDN [24] | Ubiquitin; GB3; helical & sheet peptides; small folding proteins | NMR chemical shifts, J-couplings, NOEs, residual dipolar couplings (RDCs), folding capability [24] | Showed good overall agreement with experimental NMR data for folded proteins and improved performance in peptide and protein folding tests [24]. |
| GROMOS Family [26] | Curated set of 52 high-resolution protein structures | Backbone H-bonds, SASA, radius of gyration, secondary structure, J-couplings, NOEs, backbone dihedral distribution [26] | Statistically significant but often small differences between parameter sets; improvements in one metric often offset by losses in another [26]. |

Critical Challenges in Force Field Validation

Poor Statistical Sampling

Adequate sampling is a cornerstone for obtaining statistically meaningful results from MD simulations. The field has been historically plagued by simulations that were too short or too few to draw reliable conclusions.

- Insufficient Simulation Time and Replicates: Early force field validation studies were conducted with simulation times that are now considered extremely short—ranging from picoseconds to a few nanoseconds—making it difficult to observe biologically relevant conformational changes. [26] For instance, the validation of the AMBER ff94 force field relied partly on a single 180 ps simulation, where a small difference in RMSD was claimed as a significant improvement, a conclusion now recognized as statistically uncertain. [26] Similarly, a study involving 28 replicates of a 50 ns simulation of the villin headpiece found that variations between replicates made it difficult to reliably distinguish between two different force fields. [26]

- Inadequate System Diversity: Validation efforts have often focused on a narrow range of protein systems. For example, several versions of the GROMOS parameter sets were validated primarily on hen egg lysozyme (HEWL). [26] This creates a risk that a force field is only accurate for specific protein types and may not generalize well to other biomolecular systems with different structural features.
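One way to check whether replicate-to-replicate variation swamps a force field difference is to resample replicates, not frames, and ask whether the confidence interval on the difference excludes zero. The sketch below uses a percentile bootstrap, one simple choice among several; each input value is assumed to be one summary number per independent replicate (for example, the time-averaged backbone RMSD of that run), and the replicate values in the usage lines are hypothetical.

```python
import random
import statistics


def bootstrap_diff_ci(reps_a, reps_b, n_boot=10000, alpha=0.05, seed=0):
    """Percentile-bootstrap CI for mean(reps_a) - mean(reps_b).

    Replicates are resampled with replacement within each group, because
    frames from the same run are strongly correlated and are not independent.
    """
    rng = random.Random(seed)
    diffs = []
    for _ in range(n_boot):
        sample_a = rng.choices(reps_a, k=len(reps_a))
        sample_b = rng.choices(reps_b, k=len(reps_b))
        diffs.append(statistics.fmean(sample_a) - statistics.fmean(sample_b))
    diffs.sort()
    lo = diffs[int((alpha / 2) * n_boot)]
    hi = diffs[int((1 - alpha / 2) * n_boot) - 1]
    return statistics.fmean(reps_a) - statistics.fmean(reps_b), (lo, hi)


# Hypothetical per-replicate mean RMSD values (Angstrom) for two force fields.
ff_a = [1.0, 1.5, 0.8, 1.3]
ff_b = [1.1, 1.4, 0.9, 1.2]
diff, (lo, hi) = bootstrap_diff_ci(ff_a, ff_b)
print(f"difference {diff:+.3f} A, 95% CI [{lo:+.3f}, {hi:+.3f}]")
```

If the interval straddles zero, as it does for these overlapping values, the replicates do not support ranking one force field above the other, which is the situation described for the villin headpiece replicates.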

Overfitting

Overfitting occurs when force field parameters are optimized to reproduce a narrow set of target properties too closely, potentially at the expense of broader transferability and physical accuracy.

- The Peril of Narrow Observables: Force fields are sometimes refined to improve agreement with specific experimental observables, such as residual dipolar couplings (RDCs) or J-coupling constants. [26] While performance against these targets may improve, this can come at the cost of accuracy in other structural or thermodynamic properties. [26] This is because parameters become highly tuned to a limited dataset, failing to capture the underlying physical potential energy surface more generally.

- Parameter Correlations and Non-Uniqueness: Force field parametrization is a poorly constrained problem with highly correlated parameters. [26] This means that alternative parameter combinations can yield similar results for a specific test, making it difficult to identify the most physically correct set. This is particularly true for atomic partial charges, which have a "many-to-one" mapping to measurable properties. [25]

The Dangers of Narrow Validation

Relying on a limited set of validation criteria can create hidden biases and provide a false sense of a force field's accuracy and robustness.

- The Decoy of Root-Mean-Square Deviation (RMSD): Using a small RMSD from a starting crystal structure as the primary validation metric can be misleading. [26] A low RMSD may indicate stability but does not guarantee that the simulation is accurately sampling the true conformational ensemble or reproducing dynamic properties. It also risks reinforcing biases if the experimental structure itself was solved using the same force field. [26]

- The Need for a Multi-Faceted Validation Framework: As established in a 2024 study, while statistically significant differences between force fields can be detected, improvements in one metric (e.g., number of hydrogen bonds) are often offset by a loss of agreement in another (e.g., radius of gyration or dihedral distribution). [26] This highlights the danger of inferring force field quality based on a small subset of properties and underscores the need for a comprehensive benchmarking approach that evaluates a wide range of structural criteria across a diverse set of proteins. [26]
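When gains in one metric are offset by losses in another, a Pareto-dominance check makes the trade-off explicit instead of hiding it inside a single weighted score: a parameter set is only "better" if it is no worse on every metric and strictly better on at least one. The sketch below is an illustrative analysis, not the procedure of [26]; the force field names and error values are hypothetical, and each error is assumed to be an absolute deviation from experiment (lower is better).

```python
def dominates(errs_a, errs_b):
    """True if A is no worse than B on every metric and strictly better on at least one."""
    no_worse = all(errs_a[m] <= errs_b[m] for m in errs_b)
    strictly_better = any(errs_a[m] < errs_b[m] for m in errs_b)
    return no_worse and strictly_better


def pareto_front(results):
    """Names of parameter sets that no other set dominates."""
    return sorted(
        name for name, errs in results.items()
        if not any(dominates(other, errs) for o, other in results.items() if o != name)
    )


# Hypothetical per-metric deviations from experiment for three parameter sets.
results = {
    "ff_A": {"hbonds": 0.05, "rg": 0.30, "dihedral": 0.10},
    "ff_B": {"hbonds": 0.10, "rg": 0.10, "dihedral": 0.12},
    "ff_C": {"hbonds": 0.12, "rg": 0.35, "dihedral": 0.15},
}
print(pareto_front(results))
```

Here ff_C is dominated, but ff_A and ff_B trade H-bond accuracy against compactness, so neither can be declared superior without stating which property matters for the application.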

Detailed Experimental Protocols

To ensure reproducibility and provide a clear framework for benchmarking, this section outlines the key methodological details from the cited studies.

Protocol for Benchmarking Force Fields on a Folded Protein (SARS-CoV-2 PLpro)

This protocol is derived from a 2025 study that evaluated force fields on their ability to maintain the native structure of the SARS-CoV-2 papain-like protease (PLpro). [8]

- System Preparation: The initial coordinates of the PLpro structure were obtained from the Protein Data Bank (PDB). The protein was solvated in a periodic water box, and 100 mM NaCl was added to replicate physiological ionic strength.

- Simulation Parameters: Simulations were conducted using various force fields (OPLS-AA, CHARMM27, CHARMM36, AMBER03) coupled with different water models (TIP3P, TIP4P, TIP5P). Temperature was maintained at 310 K using a thermostat (e.g., Nose-Hoover). Long-range electrostatics were treated with the Particle Mesh Ewald (PME) method.

- Production Simulation and Analysis: Multiple production runs, ranging from hundreds of nanoseconds to microseconds, were performed for each force field/water model combination. Trajectories were analyzed using:

- Root-mean-square deviation (RMSD) of backbone atoms to assess global structural stability.

- Root-mean-square fluctuation (RMSF) of backbone atoms to quantify residual flexibility.

- Distance between Cα atoms of catalytic residues (Cys111 and His272) as a specific metric for active site integrity.

- Visual inspection of the "thumb-palm-fingers" fold, particularly the stability of the N-terminal Ubl domain.

Protocol for Systematic Validation Against NMR Data

This protocol is based on a landmark 2012 study that performed extensive validation of eight force fields using NMR data from folded proteins and peptides. [24]

- System Selection: Two small, well-characterized folded proteins (Ubiquitin and GB3) with extensive NMR data were selected. Two peptides that preferentially form alpha-helical or beta-sheet structures were also simulated.

- Simulation Details: For each force field, extensive MD simulations (10 µs per protein) were run on the Anton supercomputer to achieve unprecedented sampling. All simulations were performed in explicit solvent under physiological conditions.

- Comparison with Experiment: The conformational ensembles generated by the simulations were compared directly to a wide array of experimental NMR data:

- Structural Properties: Chemical shifts, J-coupling constants.

- Dynamic Properties: Nuclear Overhauser effect (NOE) intensities, residual dipolar couplings (RDCs), and order parameters.

- Secondary Structure Propensity: For the peptides, the population of helical or sheet-like conformations was quantified and compared to experiment.

- Folding Tests: The ability of the force fields to fold an alpha-helical and a beta-sheet protein from an unfolded state was also tested.
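For J-couplings, the comparison with experiment typically goes through a Karplus relation: each frame's backbone φ angle is converted to a coupling, and the couplings (not the angles) are averaged over the ensemble. The sketch below assumes the Vuister and Bax (1993) coefficients for 3J(HN,Hα) (A = 6.51, B = -1.76, C = 1.60 Hz, θ = φ - 60°); [24] may have used a different parameterization, and the error measure here (mean squared deviation normalized by an assumed uncertainty σ) is one simple choice.

```python
import math

# Assumed Karplus coefficients (Hz) for 3J(HN,HA), Vuister & Bax (1993).
KARPLUS_A, KARPLUS_B, KARPLUS_C = 6.51, -1.76, 1.60


def j_hn_ha(phi_deg):
    """3J(HN,HA) in Hz from phi: J = A cos^2(theta) + B cos(theta) + C, theta = phi - 60 deg."""
    c = math.cos(math.radians(phi_deg - 60.0))
    return KARPLUS_A * c * c + KARPLUS_B * c + KARPLUS_C


def ensemble_j(phi_trajectory_deg):
    """Average J over frames (not J of the average angle), as an ensemble comparison requires."""
    return sum(j_hn_ha(p) for p in phi_trajectory_deg) / len(phi_trajectory_deg)


def normalized_msd(predicted, experimental, sigma):
    """Mean squared deviation between predicted and measured couplings in units of sigma."""
    terms = [((p - e) / sigma) ** 2 for p, e in zip(predicted, experimental)]
    return sum(terms) / len(terms)


# A residue that samples helical (phi ~ -60) and extended (phi ~ -120) conformations equally.
print(f"{ensemble_j([-60.0, -120.0]):.2f} Hz")
```

Averaging couplings rather than angles matters because the Karplus curve is nonlinear: a residue switching between helical and extended states gives an average coupling that no single intermediate φ reproduces.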

Protocol for a Bayesian Force Field Optimization Framework

A 2025 study introduced a robust, data-driven method for optimizing force field parameters, specifically partial charges, using a Bayesian framework. [25]

- Reference Data Generation: Ab initio molecular dynamics (AIMD) simulations of 18 biologically relevant molecular fragments (e.g., carboxylates, phosphates) solvated in explicit water were performed. These simulations inherently capture electronic polarization effects.

- Quantity of Interest (QoI) Extraction: Structural QoIs, including radial distribution functions (RDFs) between solute and water, hydrogen bond counts, and ion-pair distance distributions, were computed from the AIMD trajectories to serve as the optimization target.

- Surrogate Model Training: A local Gaussian process (LGP) surrogate model was trained to map trial partial charge sets to the predicted QoIs, dramatically accelerating the parameter sampling process compared to running full classical MD for each candidate.

- Bayesian Inference: A Markov chain Monte Carlo (MCMC) engine was used to sample the posterior distribution of partial charges. This process balances the agreement with the AIMD QoIs (likelihood) against physically motivated prior knowledge, yielding not just a single "best" parameter set but also confidence intervals.
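The surrogate-plus-MCMC idea can be illustrated for a single partial charge. This is a compact sketch under loudly stated assumptions, not the framework of [25]: a plain global Gaussian process with a fixed squared-exponential kernel replaces the local GP; one scalar QoI with a Gaussian error stands in for RDFs, H-bond counts and ion-pair distances; `expensive_qoi` is a hypothetical linear stand-in for a classical MD run; and the reference value, prior mean and widths are invented for illustration.

```python
import numpy as np


def rbf(x1, x2, length=0.3, amp=1.0):
    """Squared-exponential kernel matrix between two 1-D arrays."""
    return amp * np.exp(-0.5 * ((x1[:, None] - x2[None, :]) / length) ** 2)


class GPSurrogate:
    """Global GP regression (constant mean, fixed hyperparameters); stand-in for a local GP."""

    def __init__(self, x_train, y_train, noise=1e-6):
        self.x = np.asarray(x_train, dtype=float)
        y = np.asarray(y_train, dtype=float)
        self.y_mean = y.mean()
        k = rbf(self.x, self.x) + noise * np.eye(len(self.x))
        self.alpha = np.linalg.solve(k, y - self.y_mean)

    def predict(self, q):
        """GP posterior-mean prediction of the QoI at a single charge value q."""
        return (rbf(np.array([q]), self.x) @ self.alpha)[0] + self.y_mean


def metropolis_posterior(surrogate, qoi_ref, sigma_qoi, prior_mu, prior_sd,
                         n_steps=20000, step=0.05, burn_in=2000, seed=1):
    """Random-walk Metropolis over one charge with a Gaussian likelihood and Gaussian prior."""
    rng = np.random.default_rng(seed)

    def log_post(q):
        log_like = -0.5 * ((surrogate.predict(q) - qoi_ref) / sigma_qoi) ** 2
        log_prior = -0.5 * ((q - prior_mu) / prior_sd) ** 2
        return log_like + log_prior

    q, lp = prior_mu, log_post(prior_mu)
    samples = []
    for _ in range(n_steps):
        q_new = q + step * rng.normal()
        lp_new = log_post(q_new)
        if np.log(rng.random()) < lp_new - lp:    # Metropolis acceptance test
            q, lp = q_new, lp_new
        samples.append(q)
    return np.array(samples[burn_in:])


def expensive_qoi(q):
    """Hypothetical stand-in for a classical MD run returning one QoI for charge q."""
    return 2.0 + 1.5 * q


q_train = np.linspace(-1.0, -0.4, 8)             # a handful of "expensive" trial charges
gp = GPSurrogate(q_train, [expensive_qoi(q) for q in q_train])
post = metropolis_posterior(gp, qoi_ref=0.95, sigma_qoi=0.05, prior_mu=-0.8, prior_sd=0.1)
print(f"posterior mean {post.mean():.3f}, 95% interval {np.percentile(post, [2.5, 97.5])}")
```

The chain calls only the cheap surrogate, which is the point of the design; the cost is that the surrogate is trustworthy only inside the range of trial charges it was trained on, so that range should comfortably cover the region where the posterior concentrates.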

The Scientist's Toolkit: Research Reagent Solutions

The table below details key computational tools and their functions, which are essential for conducting force field benchmarking and development studies.

Table 2: Essential Research Reagents and Computational Tools

| Tool/Reagent | Function in Force Field Research |
|---|---|
| Biomolecular Force Fields (e.g., OPLS-AA, CHARMM, AMBER, GROMOS) | Empirical mathematical models that define the potential energy function and parameters for bonded and non-bonded atomic interactions, forming the core of any MD simulation. [8] [26] [24] |
| Explicit Solvent Water Models (e.g., TIP3P, TIP4P, TIP5P) | Models that represent water molecules explicitly in the simulation, critical for accurately capturing solvation effects, hydrogen bonding, and dielectric properties. [8] |
| Particle Mesh Ewald (PME) | An algorithm for efficiently calculating long-range electrostatic interactions, which is essential for simulating charged biomolecules in solution. [26] |
| Thermostats/Barostats (e.g., Nose-Hoover, Berendsen) | Algorithms to maintain constant temperature (thermostat) and pressure (barostat) during simulations, enabling the study of systems in specific thermodynamic ensembles (NVT, NPT). [27] |
| Ab Initio MD (AIMD) | Simulations using density functional theory (DFT) to compute interatomic forces, providing high-accuracy reference data for force field parametrization that includes electronic polarization. [25] |
| Bayesian Inference Framework | A statistical approach that yields optimal force field parameters with confidence intervals, enhancing robustness and providing insight into parameter uncertainty and transferability. [25] |

Logical Workflow for Force Field Validation

The following diagram illustrates a robust, multi-stage workflow for force field validation, designed to mitigate the challenges of poor sampling and narrow validation.

Bayesian Force Field Parameterization

The diagram below outlines the innovative Bayesian framework for force field parameterization, which directly addresses the challenge of overfitting by quantifying parameter uncertainty.

Best Practices for Simulation Setup: From System Construction to Enhanced Sampling

Molecular dynamics (MD) simulation serves as a "computational microscope" for life sciences, enabling researchers to observe biomolecular processes at an atomic level. The fidelity of these simulations is fundamentally governed by the force field—a mathematical model describing the potential energy of a system as a function of its atomic coordinates. The choice of force field and accompanying water model creates a critical trade-off: highly accurate ab initio methods are computationally prohibitive for large systems, while fast molecular mechanics (MM) force fields may sacrifice chemical accuracy. This guide provides an objective comparison of contemporary force fields and water models, synthesizing recent experimental data to help researchers navigate this complex landscape for biomolecular stability research.

Force Field Architectures and Performance Benchmarks

Traditional Molecular Mechanics Force Fields

Established molecular mechanics force fields employ physics-inspired functional forms with parameters derived from experimental data and quantum mechanical calculations on small molecules. They remain the workhorses for simulating large biomolecular systems over biologically relevant timescales due to their computational efficiency.

- AMBER: This force field family is widely used for proteins and nucleic acids. Recent variants include AMBER99SB-ILDN and AMBER19SB, the latter incorporating amino-acid-specific energy correction maps (CMAP) to better reproduce backbone dihedral angles [2]. GROMACS includes native support for these and other AMBER force fields [2].

- CHARMM: The CHARMM force field covers proteins, lipids, and nucleic acids. CHARMM36 is a widely used version, and its CHARMM36m variant includes adjustments for more accurate simulation of intrinsically disordered proteins (IDPs) [2] [28]. Like AMBER19SB, it typically employs CMAP corrections [2].

- GROMOS: The GROMOS-96 force field is a united-atom parameter set sometimes recommended for united-atom setups [2]. However, users should note it was originally parameterized with a physically incorrect multiple-time-stepping scheme, which can affect physical properties like density when used with modern, correct integrators [2].

Table 1: Performance Benchmarking of Selected Force Fields on the R2-FUS-LC Intrinsically Disordered Region [28]

| Force Field (FF) & Water Model (WM) | Final Score | Rg Score (Compactness) | Contact Map Score | SSP Score | Performance Profile |
|---|---|---|---|---|---|
| c36m2021s3p (CHARMM36m2021, mTIP3P) | * | 0.91 | 0.72 | 0.51 | Most balanced; samples both compact and unfolded states well. |
| a99sb4pew (AMBER99SB, TIP4P-EW) | * | 0.89 | 0.70 | 0.42 | Tends to generate more compact conformations. |
| a19sbopc (AMBER19SB, OPC) | * | 0.92 | 0.67 | 0.40 | Consistent performance across reference Rg values. |
| c36ms3p (CHARMM36m, TIP3P) | * | 0.85 | 0.70 | 0.39 | Prefers flexible and extended conformations. |
| a14sb3p (AMBER14SB, TIP3P) | # | 0.19 | 0.67 | 0.67 | Good local contacts but poor global compactness. |
| c36m3pm (CHARMM36m, TIP3P-M) | # | 0.12 | 0.99 | 0.11 | Excellent contacts but severely biased towards unfolded states. |

Score Key: * Top Group, • Middle Group, # Bottom Group

Emerging Machine-Learned and Ab Initio Approaches

Machine learning has recently been harnessed to create new force fields that promise to bridge the accuracy-efficiency gap.

- Grappa: A machine-learned molecular mechanics force field that predicts MM parameters directly from the molecular graph using a graph attentional neural network [29]. Its key advantage is performing the machine learning prediction only once per molecule; subsequent energy evaluations use standard, highly optimized MM code in engines like GROMACS and OpenMM, achieving computational cost identical to traditional force fields [29]. It has demonstrated state-of-the-art accuracy for small molecules, peptides, and RNA, and can reproduce experimentally measured J-couplings [29].

- AI2BMD: An artificial intelligence-based ab initio biomolecular dynamics system that uses a protein fragmentation scheme and a machine learning force field (ViSNet) to simulate full-atom large biomolecules [30]. It achieves a massive computational speedup over density functional theory (DFT), reducing the time for a simulation step of a 746-atom protein from 92 minutes (DFT) to 0.125 seconds (roughly a 44,000-fold speedup), while maintaining high accuracy [30].

- NEP-MB-pol: A neuroevolution potential (NEP) trained on extensive reference data from the MB-pol many-body water model, which approaches coupled-cluster-level accuracy [31]. This framework is particularly notable for accurately predicting water's thermodynamic properties and challenging transport properties (self-diffusion coefficient, viscosity, thermal conductivity) across a broad temperature range, a task where many previous ML potentials struggled [31].

Table 2: Accuracy and Efficiency Comparison of Advanced Simulation Approaches

| Method | Representative System & Performance | Energy MAE | Force MAE | Computational Efficiency |
|---|---|---|---|---|
| Grappa [29] | Small molecules, peptides, RNA | Matches state-of-the-art MM accuracy | Matches state-of-the-art MM accuracy | Same cost as traditional MM force fields (e.g., in GROMACS). |
| AI2BMD [30] | Protein (746 atoms): vs. DFT | 0.038 kcal mol⁻¹ per atom | 1.974 kcal mol⁻¹ Å⁻¹ | > 4 orders of magnitude faster than DFT. |
| Traditional MM [30] | Protein (746 atoms): vs. DFT | ~0.2 kcal mol⁻¹ per atom | 8.094 kcal mol⁻¹ Å⁻¹ | Fastest, but significantly lower accuracy. |
| NEP-MB-pol [31] | Bulk water (vs. CCSD(T)) | 1.9 meV atom⁻¹ (training) | 47.7 meV Å⁻¹ (training) | Enables ns-scale MD with quantum chemistry accuracy. |
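Reading the MAE columns across rows requires care, because conventions differ between papers (energy normalized per atom or not, forces compared per Cartesian component or per atom-vector norm) and the rows use different units. A minimal NumPy sketch, assuming per-atom energy normalization and per-component force errors, plus the eV-to-kcal/mol factor needed to put the NEP-MB-pol row on the same scale as the others:

```python
import numpy as np

EV_TO_KCAL_PER_MOL = 23.0605   # 1 eV expressed in kcal/mol


def energy_mae_per_atom(e_pred, e_ref, n_atoms):
    """MAE of total energies divided by each structure's atom count."""
    e_pred, e_ref, n_atoms = (np.asarray(a, dtype=float) for a in (e_pred, e_ref, n_atoms))
    return float(np.mean(np.abs(e_pred - e_ref) / n_atoms))


def force_mae(f_pred, f_ref):
    """MAE over all Cartesian force components (arrays of shape frames x atoms x 3)."""
    return float(np.mean(np.abs(np.asarray(f_pred, dtype=float) - np.asarray(f_ref, dtype=float))))


# The NEP-MB-pol training energy MAE from the table, converted to kcal/mol per atom.
print(f"{1.9e-3 * EV_TO_KCAL_PER_MOL:.4f} kcal/mol per atom")
```

Even after unit conversion, the rows are not directly comparable: they refer to different systems (a protein versus bulk water), different reference methods, and training versus test errors.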

Water Model Comparison

The choice of water model is equally critical, as it significantly influences the simulation of solute conformation, stability, and dynamics.

Table 3: Comparison of Rigid Three-Site Water Models [32]

| Water Model | O-H bond (Å) | H-O-H angle (°) | Charge on H (e) | Key Characteristics |
|---|---|---|---|---|
| TIP3P | 0.9572 | 104.52 | +0.417 | Widely used; tends to exhibit excessive localization and reduced complexity in clusters [32]. |
| SPC | 1.0 | 109.47 | +0.410 | Simpler (tetrahedral) geometry; systematic underestimation of dielectric constant [32]. |
| SPC/ε | 1.0 | 109.45 | +0.445 | Refinement of SPC with optimized charges to match experimental dielectric constant; shows superior electronic structure representation [32]. |

Information-theoretic analysis of water clusters reveals that SPC/ε demonstrates an optimal entropy–information balance and enhanced complexity measures, which correlates with its improved experimental accuracy, while TIP3P's deficiencies become more pronounced with increasing cluster size [32].
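The geometry and charge columns of Table 3 combine directly into each model's permanent dipole moment, which helps explain why recharging SPC shifts its dielectric behavior. A worked check, assuming the standard three-site layout in which oxygen carries -2q_H and both hydrogens carry +q_H; only the tabulated values are used.

```python
import math

E_ANGSTROM_TO_DEBYE = 4.80320   # 1 e*Angstrom expressed in debye


def three_site_dipole(r_oh, angle_deg, q_h):
    """Dipole (D) of a rigid three-site water model with charges -2*q_h (O) and +q_h (each H).

    Each O-H vector contributes q_h * r_oh * cos(angle/2) along the HOH bisector;
    the perpendicular components cancel.
    """
    half = math.radians(angle_deg / 2.0)
    return 2.0 * q_h * r_oh * math.cos(half) * E_ANGSTROM_TO_DEBYE


for name, r, a, q in [("TIP3P", 0.9572, 104.52, 0.417),
                      ("SPC/eps", 1.0, 109.45, 0.445)]:
    print(f"{name:8s} {three_site_dipole(r, a, q):.2f} D")
```

The larger hydrogen charge of SPC/ε produces a larger fixed dipole, consistent with its reparameterization toward the experimental dielectric constant.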

Experimental Protocols and Methodologies

Benchmarking Force Fields for Intrinsically Disordered Proteins

The challenge of simulating IDPs was highlighted in a 2023 study that benchmarked 13 force fields on the R2-FUS-LC region, an IDP implicated in ALS [28].

Protocol:

- System Preparation: The system consisted of a trimer of 16-residue R2-FUS-LC peptides.

- Simulation Details: For each of the 13 FF/WM combinations, six independent MD simulations were conducted, each lasting 500 ns (totaling 3 μs per force field).

- Evaluation Metrics:

- Radius of Gyration (Rg): To assess global compactness, distributions were compared to reference values from folded (U-shaped: 10.0 Å, L-shaped: 14.4 Å) and unfolded (calculated via Flory's model) states.

- Intra-peptide Contact Map: To evaluate the local contact details within the cross-β state.

- Secondary Structure Propensity (SSP): To analyze local conformational preferences.

- Scoring: A final composite score was derived from the three metrics to rank the force fields [28].
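The Rg metric in this protocol needs two ingredients: Rg per frame, and a reference value for the unfolded state. The sketch below computes a (optionally mass-weighted) Rg and a Flory-type estimate Rg = R0·N^ν. The default R0 = 1.927 Å and ν = 0.598 are an assumption taken from the widely used fit to chemically denatured proteins; [28] may have used different parameters. `fraction_in_window` is a hypothetical simple agreement measure, not the scoring scheme of [28].

```python
import numpy as np


def radius_of_gyration(coords, masses=None):
    """Rg of an N x 3 coordinate array (same units as coords), mass-weighted if masses are given."""
    coords = np.asarray(coords, dtype=float)
    w = np.ones(len(coords)) if masses is None else np.asarray(masses, dtype=float)
    center = (w[:, None] * coords).sum(axis=0) / w.sum()
    sq_dist = ((coords - center) ** 2).sum(axis=1)
    return float(np.sqrt((w * sq_dist).sum() / w.sum()))


def flory_rg(n_residues, r0=1.927, nu=0.598):
    """Flory-type unfolded-state estimate Rg = r0 * N**nu in Angstrom (assumed parameters)."""
    return r0 * n_residues ** nu


def fraction_in_window(rg_values, target, tol=1.0):
    """Hypothetical metric: fraction of frames whose Rg lies within +/- tol of a reference value."""
    rg_values = np.asarray(rg_values, dtype=float)
    return float(np.mean(np.abs(rg_values - target) <= tol))


print(f"Flory estimate for a 16-residue chain: {flory_rg(16):.1f} A")
```

With these parameters the unfolded-state estimate for a 16-residue peptide lies close to the 10.0 Å U-shaped reference, so Rg alone may not separate those two states; the contact map and SSP scores supply the missing discrimination.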

Validating Ab Initio Accuracy with Fragmentation

The AI2BMD study established a generalizable protocol for achieving DFT-level accuracy for proteins of varying sizes [30].

Protocol:

- Fragmentation: Proteins were split into overlapping dipeptide units (21 types in total, containing 12-36 atoms).

- Data Generation: An extensive dataset of 20.88 million samples was created by scanning dihedrals and running AIMD simulations at the M06-2X/6-31G* level of theory.

- Model Training: The ViSNet model was trained on this dataset to predict energies and forces.

- Validation: The potential's accuracy was tested on 9 proteins (175 to 13,728 atoms) by comparing AI2BMD's energy and force predictions against those computed directly by DFT (for smaller proteins) or fragmented DFT (for larger proteins) [30].

Decision Workflow and Research Toolkit

Table 4: Key Software and Analysis Tools for Force Field Benchmarking

| Tool Name | Type | Primary Function | Relevance to Benchmarking |
|---|---|---|---|
| GROMACS [2] | MD Simulation Engine | High-performance MD simulations. | Native support for AMBER, CHARMM, GROMOS force fields; includes tools like pdb2gmx for topology generation. |
| OpenMM [29] | MD Simulation Library | Flexible, hardware-accelerated MD toolkit. | Compatible with new force fields like Grappa; enables rapid prototyping and production simulations. |
| VOTCA [2] | Coarse-graining Toolkit | Systematic coarse-graining of molecular systems. | Assists in parametrizing coarse-grained force fields and has a well-tested interface with GROMACS. |
| GPUMD [31] | MD Simulation Package | GPU-accelerated MD with NEP support. | High-performance platform for running simulations with machine-learned neuroevolution potentials. |

The landscape of force fields is evolving from fixed parameter tables toward dynamic, machine-learned approaches. For simulating large systems like viruses over long timescales, traditional MM force fields like AMBER19SB and CHARMM36m remain the most practical choice, with CHARMM36m showing particular strength for IDPs. For small to medium-sized systems where chemical accuracy is critical, ML-augmented frameworks like Grappa and AI2BMD offer a transformative advance, bridging the gap between quantum accuracy and molecular mechanics efficiency. When selecting a water model, three-site models like SPC/ε provide a good balance of efficiency and improved physical properties. Researchers must align their choice with the specific biological question, system size, and available computational resources, leveraging the provided workflow and benchmarking data to make an informed decision.

Molecular dynamics (MD) simulation serves as a computational microscope, enabling researchers to observe biomolecular processes at an atomic level. The fidelity of these simulations is critically dependent on the underlying physical model, particularly the treatment of non-bonded interactions. The methods used to handle cutoff distances and long-range electrostatics are not merely computational conveniences; they are fundamental choices that can dictate the thermodynamic and structural outcomes of a simulation. Inaccurate approximations can lead to distorted protein folding pathways, incorrect stability estimates, and unreliable representations of unfolded states, ultimately compromising the value of simulations in fields like drug development. This guide objectively compares the performance implications of different methodological approaches, providing supporting experimental data to inform researchers' choices in biomolecular force field benchmarking.

Comparative Analysis of Electrostatic Treatments and Cutoff Schemes

The computational expense of MD simulations often necessitates approximations in calculating non-bonded forces. A common approach is to ignore interactions beyond a specific cutoff distance. While generally acceptable for rapidly decaying van der Waals forces, this approach is problematic for electrostatic forces, which decay slowly. Simple truncation introduces significant artifacts, leading to the development of alternative schemes.

- Cutoff-Based Force-Shifting (SHIFT): This technique modifies the Coulomb interaction so that the force, and with it the potential, goes smoothly to zero at the cutoff distance, avoiding the discontinuity introduced by simple truncation. Interactions beyond the cutoff are ignored. While computationally efficient, this method inherently neglects long-range electrostatic contributions [33].

- Ewald Summation Methods: Techniques like the Gaussian split Ewald (GSE) method provide a more complete treatment by splitting electrostatic interactions into short-range and long-range components. The short-range component is calculated directly within a cutoff, while the long-range component is computed efficiently in reciprocal space using Fourier transforms, which requires a periodic system. Because the long-range part is retained, the electrostatic cutoff in an Ewald method mainly shifts computational work between the real-space and reciprocal-space calculations rather than discarding interactions [33].
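The difference between the two schemes is easiest to see at the level of the pair kernel. The sketch below is illustrative only: it uses a generic textbook force-shifted Coulomb form (the exact SHIFT variant implemented on Anton may differ), an approximate Coulomb constant of about 332 kcal·Å/(mol·e²), and a hypothetical Ewald splitting parameter `beta`. All function names are hypothetical.

```python
import math

# Approximate Coulomb constant in kcal*Å/(mol*e^2); exact values differ slightly between MD packages.
COULOMB_K = 332.06


def plain_coulomb_energy(r: float, qi: float, qj: float) -> float:
    """Untruncated Coulomb pair energy in kcal/mol (r in Å, charges in e)."""
    return COULOMB_K * qi * qj / r


def shifted_coulomb_energy(r: float, qi: float, qj: float, rc: float) -> float:
    """Generic force-shifted Coulomb energy: both energy and force reach zero at rc."""
    if r >= rc:
        return 0.0  # interactions beyond the cutoff are ignored entirely
    return COULOMB_K * qi * qj * (1.0 / r - 1.0 / rc + (r - rc) / rc**2)


def shifted_coulomb_force(r: float, qi: float, qj: float, rc: float) -> float:
    """Radial force -dV/dr of the force-shifted form (positive = repulsive)."""
    if r >= rc:
        return 0.0
    return COULOMB_K * qi * qj * (1.0 / r**2 - 1.0 / rc**2)


def ewald_kernel_split(r: float, beta: float) -> tuple[float, float]:
    """Split 1/r exactly into a short-range erfc part and a smooth long-range erf part."""
    short_range = math.erfc(beta * r) / r  # summed directly in real space, within the cutoff
    long_range = math.erf(beta * r) / r    # smooth part, evaluated in reciprocal space for periodic systems
    return short_range, long_range


if __name__ == "__main__":
    rc = 9.0     # Å, one of the cutoffs examined in the villin study
    beta = 0.35  # Å^-1, hypothetical splitting parameter
    for r in (3.0, 6.0, 8.5):
        print(f"r={r:4.1f} Å  plain={plain_coulomb_energy(r, 1.0, -1.0):8.2f}  "
              f"shifted={shifted_coulomb_energy(r, 1.0, -1.0, rc):8.2f} kcal/mol")
    short, long_part = ewald_kernel_split(rc, beta)
    # Fraction of the bare 1/r kernel still carried by the real-space term at the cutoff.
    print(f"erfc(beta*rc) = {short * rc:.2e}")
```

The shifted energy differs from the plain Coulomb energy even well inside the cutoff, which is one route by which such schemes can perturb conformational ensembles. The Ewald split, by contrast, is an exact decomposition (erf + erfc = 1), so the long-range part is retained regardless of where the real-space sum is truncated.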

The choice between these methods and the selection of a cutoff length are not merely technical details; they can significantly impact simulation outcomes, as revealed by controlled studies on protein folding.

Experimental Data on Force Field Treatment Impact

A landmark study systematically evaluated these approximations using the villin headpiece, a 35-residue protein domain, as a test system. Simulations were performed using the CHARMM22* force field on the Anton specialized supercomputer, allowing for statistically robust comparisons. The study compared the GSE method against the SHIFT technique across a range of cutoffs (8.0 Å to 12.0 Å) [33].

Table 1: Impact of Cutoff and Electrostatic Treatment on Villin Headpiece Folding

| Electrostatic Treatment | Cutoff Distance (Å) | Free Energy of Folding (sensitivity) | Unfolded-State Structural Properties (sensitivity) |
|---|---|---|---|
| k-space Gaussian split Ewald (GSE) | 8.0 | Relatively Insensitive | Stronger Dependence |
| k-space Gaussian split Ewald (GSE) | 9.0 | Relatively Insensitive | Stronger Dependence |
| k-space Gaussian split Ewald (GSE) | 12.0 | (Reference) | (Reference) |
| Cutoff-based force-shifting (SHIFT) | 8.0 | Relatively Insensitive | Stronger Dependence |
| Cutoff-based force-shifting (SHIFT) | 9.0 | Relatively Insensitive | Stronger Dependence |

Data adapted from Piana et al. (2012) [33]. The study found that the free energy of folding was relatively insensitive to the choice of cutoff beyond 9 Å and to the use of an Ewald method versus a force-shifting technique. In contrast, the structural properties of the unfolded state showed a stronger dependence on these approximations.

These data indicate that the free energy of folding, a key thermodynamic property, is surprisingly robust. The stability of the villin headpiece remained relatively unchanged with cutoffs as low as 9 Å, regardless of whether long-range electrostatics were included via GSE or neglected beyond the cutoff via SHIFT. This suggests that for studies focused primarily on overall protein stability, these approximations may be acceptable.

However, a different picture emerges for structural properties, particularly of the unfolded state. The study found that the structural ensemble of the unfolded protein was more sensitive to both the cutoff length and the treatment of electrostatics. This is a critical consideration for research into intrinsically disordered proteins, folding pathways, or any process where the configuration of the unfolded chain is important [33].

The broader context of force field benchmarking is illustrated by a 2025 study on SARS-CoV-2 papain-like protease (PLpro). This research benchmarked force fields like OPLS-AA, CHARMM27/36, and AMBER03, considering different water models (TIP3P, TIP4P, TIP5P) and physiological conditions. It concluded that the OPLS-AA/TIP3P setup outperformed others in reproducing the native fold and stability, especially over longer timescales and when complexed with an inhibitor [34]. This underscores that the choice of force field and its associated parameters—which inherently include methods for handling cutoffs and electrostatics—is system-dependent and crucial for obtaining reliable results.

Detailed Experimental Protocols

To ensure reproducibility and provide a clear framework for benchmarking, this section outlines the key methodological details from the cited studies.

Protocol for Evaluating Cutoffs and Electrostatics in Protein Folding

The comparative study on the villin headpiece provides a replicable protocol for evaluating force field approximations [33]:

- Test System: A fast-folding variant of the villin headpiece (35 amino acids) was solvated in a cubic box with 4,397 water molecules and 5 ions, resulting in a system of 13,773 atoms.

- Force Field and Simulation Engine: The CHARMM22* force field was used. All simulations were performed on the Anton special-purpose computer, enabling long timescales necessary for obtaining statistically meaningful data.

- Simulation Parameters: Simulations were conducted in the NVT (canonical) ensemble. The system was coupled to a Nosé-Hoover thermostat with a reference temperature of 360 K and a relaxation time of 10 ps. A RESPA multi-time step scheme was employed, with a 5.0 fs time step for long-range electrostatic interactions and a 2.0 fs time step for short-range interactions.

- Variable Parameters: Fourteen independent simulations were performed (two electrostatic treatments × seven cutoffs). The primary variables were:

  - Electrostatic Treatment: k-space Gaussian split Ewald (GSE) versus cutoff-based force-shifting (SHIFT).

  - Cutoff Distance: Seven values from 8.0 Å to 12.0 Å, applied to both electrostatic and van der Waals interactions.

- Analysis: The free energy of folding was calculated to assess thermodynamic stability. Structural properties, particularly of the unfolded state, were analyzed to determine the sensitivity of the conformational ensemble to the computational approximations.
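A common way to obtain a folding free energy from long equilibrium trajectories is the two-state population ratio, ΔG_fold = -k_B T ln(p_folded / p_unfolded). The sketch below assumes a hypothetical RMSD threshold (2.0 Å) for labelling frames as folded; the cited study's exact folded-state definition is not reproduced here, and the function names are hypothetical.

```python
import math
from typing import Sequence

KB_KCAL = 0.0019872041  # Boltzmann constant, kcal/(mol*K)


def classify_folded(rmsd_angstrom: Sequence[float], threshold: float = 2.0) -> list[bool]:
    """Hypothetical two-state assignment: a frame counts as folded if its RMSD is below threshold."""
    return [value < threshold for value in rmsd_angstrom]


def folding_free_energy(is_folded: Sequence[bool], temperature_k: float) -> float:
    """Two-state estimate dG_fold = -kB*T*ln(p_folded / p_unfolded), in kcal/mol."""
    n_total = len(is_folded)
    n_folded = sum(1 for flag in is_folded if flag)
    if n_folded == 0 or n_folded == n_total:
        # With no transitions observed the ratio is 0 or infinite, so no finite estimate exists.
        raise ValueError("Need both folded and unfolded frames for a finite estimate.")
    p_folded = n_folded / n_total
    return -KB_KCAL * temperature_k * math.log(p_folded / (1.0 - p_folded))
```

For example, a trajectory in which 75% of frames are classified as folded at 360 K gives ΔG_fold = -k_B T ln 3, roughly -0.79 kcal/mol, with the negative sign indicating that the folded state is favored. Because consecutive frames are correlated, such an estimate is only statistically meaningful when the trajectory contains many folding and unfolding transitions, which is why very long simulations were needed.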

Protocol for Benchmarking Force Fields on a Therapeutic Target

The benchmarking study on SARS-CoV-2 PLpro offers a protocol for evaluating force fields on a structurally complex, therapeutically relevant protein [34]:

- Test System: The native fold of the SARS-CoV-2 papain-like protease (PLpro) in aqueous solution. The impact of different water models (TIP3P, TIP4P, TIP5P) was also tested. Physiological conditions were approximated by adding 100 mM NaCl and setting the temperature to 310 K.

- Force Fields: Multiple popular biomolecular force fields were compared, including OPLS-AA, CHARMM27, CHARMM36, and AMBER03.

- Simulation and Analysis: Both short (hundreds of nanoseconds) and longer MD simulations were performed. Structural stability was assessed using:

  - Root mean square deviation (RMSD) of backbone atoms.

  - Root mean square fluctuation (RMSF) of backbone atoms.

  - Distance between the catalytic residues (Cα(Cys111)-Cα(His272)).

- Holocomplex Evaluation: The study also explored the folding and stability of the inhibitor-bound holo form of PLpro in complex with the non-covalent inhibitor XR8-89.
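The three stability metrics listed above can be computed from raw coordinate arrays in a few lines. The following is a minimal NumPy sketch, not the cited study's analysis code: it assumes coordinates have already been loaded into arrays by some trajectory reader, and any atom indices passed for the Cys111 and His272 Cα atoms are hypothetical placeholders that depend on the topology.

```python
import numpy as np


def kabsch_rmsd(coords: np.ndarray, ref: np.ndarray) -> float:
    """RMSD between two (N, 3) coordinate sets after optimal superposition (Kabsch algorithm)."""
    p = coords - coords.mean(axis=0)  # remove translation
    q = ref - ref.mean(axis=0)
    h = p.T @ q                       # 3x3 covariance matrix
    u, _, vt = np.linalg.svd(h)
    d = np.sign(np.linalg.det(vt.T @ u.T))  # guard against an improper rotation (reflection)
    rotation = vt.T @ np.diag([1.0, 1.0, d]) @ u.T
    p_fit = p @ rotation.T
    return float(np.sqrt(np.mean(np.sum((p_fit - q) ** 2, axis=1))))


def rmsf(traj: np.ndarray) -> np.ndarray:
    """Per-atom RMSF for a (T, N, 3) trajectory that is already superposed on a common reference."""
    mean_structure = traj.mean(axis=0)
    return np.sqrt(np.mean(np.sum((traj - mean_structure) ** 2, axis=2), axis=0))


def pair_distance(traj: np.ndarray, i: int, j: int) -> np.ndarray:
    """Per-frame distance between atoms i and j, e.g. the Cα atoms of two catalytic residues."""
    return np.linalg.norm(traj[:, i, :] - traj[:, j, :], axis=1)
```

A typical use would be `pair_distance(traj, ca_cys111, ca_his272)`, where both indices are hypothetical and must be looked up in the system topology. RMSF is computed here on a pre-aligned trajectory; in practice each frame would first be superposed on a reference, for example with the same Kabsch rotation used in `kabsch_rmsd`.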

Visualization of Methodological Impact and Workflows

The logical relationship between the choice of electrostatic treatment, the selected cutoff, and their subsequent effects on simulation outcomes can be visualized as a decision pathway. The diagram below maps these cause-and-effect relationships based on the experimental findings.

This workflow illustrates the primary finding that the free energy of folding is robust across a range of common approximations, while the structural details of the unfolded state are more sensitive to these choices. It also highlights the lower boundary (9 Å) below which cutoff-related artifacts may become significant.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Selecting appropriate computational tools is as critical as choosing laboratory reagents. The following table details key "research reagent solutions" — software, force fields, and models — essential for conducting rigorous MD simulations in the context of biomolecular stability.

Table 2: Key Reagents for Biomolecular Dynamics Simulations

| Item Name | Function / Role in Experiment | Key Considerations |
|---|---|---|
| CHARMM22* | A classical force field defining potential energy terms for proteins. | Used in the villin study; performance depends on treatment of long-range interactions [33]. |
| OPLS-AA | An all-atom classical force field for organic molecules and proteins. | Outperformed others in PLpro benchmarking for native fold retention over longer timescales [34]. |
| Gaussian split Ewald (GSE) | An Ewald summation method for accurate treatment of long-range electrostatic forces. | Provides a more accurate reference compared to cutoff-based methods [33]. |
| SHIFT | A cutoff-based force-shifting technique for approximating electrostatics. | Computationally efficient but ignores long-range interactions; can affect unfolded state structure [33]. |
| TIP3P | A 3-site water model commonly used with biomolecular force fields. | Part of the top-performing OPLS-AA setup in the PLpro benchmark [34]. |
| Anton | A special-purpose supercomputer designed for extremely long MD simulations. | Enabled the acquisition of statistically significant folding/unfolding data in the villin study [33]. |

The integrity of physical models in molecular dynamics is paramount. This comparison guide demonstrates that while thermodynamic properties like the free energy of folding can be robust to approximations such as cutoff distances as low as 9 Å and the use of force-shifting electrostatics, the structural fidelity of dynamic states is more fragile. Researchers focusing on native state stability may have flexibility in their choice of methods, but those investigating disordered states, folding mechanisms, or any process reliant on precise conformational sampling must prioritize accurate treatments like Ewald summation. As benchmarking studies on systems like villin headpiece and SARS-CoV-2 PLpro show, the optimal choice of force field and its associated parameters is context-dependent. Ultimately, aligning the computational methodology with the specific biological question is the key to ensuring physical model integrity and generating reliable, actionable data for drug development and basic research.

Molecular Dynamics (MD) simulation has emerged as a crucial computational technique for studying the structure, dynamics, and function of biological macromolecules with atomic resolution. However, a fundamental challenge limits its application: inadequate sampling of conformational states. Biomolecular systems are characterized by rough energy landscapes with numerous local minima separated by high-energy barriers, which can trap simulations in non-representative conformational substates, particularly those relevant to biological function [35]. The computational expense of MD simulations further exacerbates this problem, with even relatively small systems (approximately 25,000 atoms) requiring months of computation on multiple processors to reach microsecond timescales [35].

Within the specific context of benchmarking biomolecular force fields for stability research, adequate sampling becomes paramount. Force field validation requires thorough sampling of the conformational space to determine whether the observed stability, fluctuations, and transitions reflect the force field itself rather than limitations in sampling. Recent studies have demonstrated that proteins can become trapped in non-relevant conformations during long simulations without returning to biologically relevant states [35]. This review comprehensively compares strategies for achieving adequate sampling through enhanced simulation techniques and provides guidance for determining appropriate simulation length within force field benchmarking protocols.
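One widely used check on whether a trajectory is long enough is block averaging (in the spirit of Flyvbjerg and Petersen): the standard error of a time-averaged observable is re-estimated with progressively longer blocks. If the estimate plateaus, the blocks are effectively independent; if it keeps growing up to the longest usable block, the run is probably too short for that observable. The sketch below is a minimal illustration with hypothetical function names; the per-frame observable (e.g. backbone RMSD or radius of gyration) is an assumption.

```python
import numpy as np


def block_standard_error(series, block_len: int) -> float:
    """Standard error of the mean from non-overlapping blocks of length block_len."""
    x = np.asarray(series, dtype=float)
    n_blocks = len(x) // block_len
    if n_blocks < 2:
        raise ValueError("Need at least two complete blocks.")
    # Trailing frames that do not fill a complete block are discarded.
    blocks = x[: n_blocks * block_len].reshape(n_blocks, block_len)
    block_means = blocks.mean(axis=1)
    return float(block_means.std(ddof=1) / np.sqrt(n_blocks))


def block_error_curve(series, block_lengths) -> dict[int, float]:
    """Standard-error estimate versus block length; a plateau suggests independent blocks."""
    n = len(series)
    return {b: block_standard_error(series, b) for b in block_lengths if n // b >= 2}


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Hypothetical slowly correlated observable: an AR(1) process standing in for per-frame RMSD.
    x = np.zeros(20000)
    for t in range(1, len(x)):
        x[t] = 0.99 * x[t - 1] + rng.normal()
    for b, err in block_error_curve(x, [1, 10, 100, 1000]).items():
        print(f"block length {b:5d}: standard error {err:.3f}")
```

For correlated data the naive estimate (block length 1) is much smaller than the plateau value, which is exactly the kind of underestimated uncertainty that can make an under-sampled force field comparison look conclusive.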

Enhanced Sampling Methods: A Comparative Analysis

Several enhanced sampling methods have been developed to address the sampling problem in MD simulations. These methods can be broadly categorized into temperature-based, collective variable-based, and Hamiltonian-based approaches, each with distinct mechanisms, advantages, and limitations as summarized in Table 1.
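To make the temperature-based mechanism concrete, the sketch below shows two core ingredients of replica-exchange MD: a temperature ladder and the Metropolis criterion for swapping configurations between neighboring temperatures, accepted with probability min(1, exp[(β_i − β_j)(E_i − E_j)]). The geometric spacing is a common heuristic rather than a prescription, the 300 K to 450 K range and six replicas in the test are hypothetical, and the MD propagation between exchange attempts is omitted.

```python
import math
import random

KB_KCAL = 0.0019872041  # Boltzmann constant, kcal/(mol*K)


def geometric_ladder(t_min: float, t_max: float, n_replicas: int) -> list[float]:
    """Temperatures spaced geometrically between t_min and t_max (a common heuristic)."""
    if n_replicas < 2:
        raise ValueError("Replica exchange needs at least two replicas.")
    ratio = t_max / t_min
    return [t_min * ratio ** (k / (n_replicas - 1)) for k in range(n_replicas)]


def exchange_probability(e_i: float, e_j: float, t_i: float, t_j: float) -> float:
    """Metropolis acceptance for swapping configurations between temperatures t_i and t_j."""
    beta_i = 1.0 / (KB_KCAL * t_i)
    beta_j = 1.0 / (KB_KCAL * t_j)
    delta = (beta_i - beta_j) * (e_i - e_j)
    return 1.0 if delta >= 0.0 else math.exp(delta)


def attempt_neighbor_swaps(energies: list[float], temps: list[float], offset: int,
                           rng: random.Random) -> list[float]:
    """Attempt swaps between neighboring slots (k, k+1), k = offset, offset + 2, ...

    energies[k] is the potential energy (kcal/mol) of the configuration currently at temps[k];
    an accepted swap moves the configuration, and hence its energy, to the other temperature.
    """
    energies = list(energies)
    for k in range(offset, len(temps) - 1, 2):
        if rng.random() < exchange_probability(energies[k], energies[k + 1], temps[k], temps[k + 1]):
            energies[k], energies[k + 1] = energies[k + 1], energies[k]
    return energies
```

Alternating `offset` between 0 and 1 on successive attempts lets every neighboring pair exchange. Acceptance depends on the overlap of neighboring potential-energy distributions; because the average energy gap between temperatures grows with system size faster than the energy fluctuations do, larger solvated systems need more closely spaced temperatures, which is why REMD often requires many replicas.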

Table 1: Comparison of Enhanced Sampling Methods for Molecular Dynamics Simulations

| Method | Key Mechanism | Optimal Use Cases | Computational Cost | Limitations |
|---|---|---|---|---|
| Replica-Exchange MD (REMD) | Parallel simulations at different temperatures exchange configurations [35] | Protein folding studies, peptide conformation sampling [35] | High (scales with number of replicas) [35] | Efficiency sensitive to maximum temperature choice; many replicas needed [35] |
| Metadynamics | Fills free energy wells with "computational sand" to discourage revisiting states [35] | Protein folding, molecular docking, conformational changes [35] | Moderate to High | Depends on careful selection of a small set of collective coordinates [35] |
| Simulated Annealing | Artificial temperature decreases during simulation to reach global minimum [35] | Characterization of highly flexible systems; large macromolecular complexes [35] | Low to Moderate | Variants like Generalized Simulated Annealing (GSA) perform better for large systems [35] |
| Adaptive Biasing Force (ABF) | Applies biasing forces to overcome energy barriers [35] | Barrier crossing in condensed phases | Moderate | Requires predefined reaction coordinates |