Beyond the Timescale Barrier: Modern Strategies to Overcome Sampling Limits in Small Protein Simulations

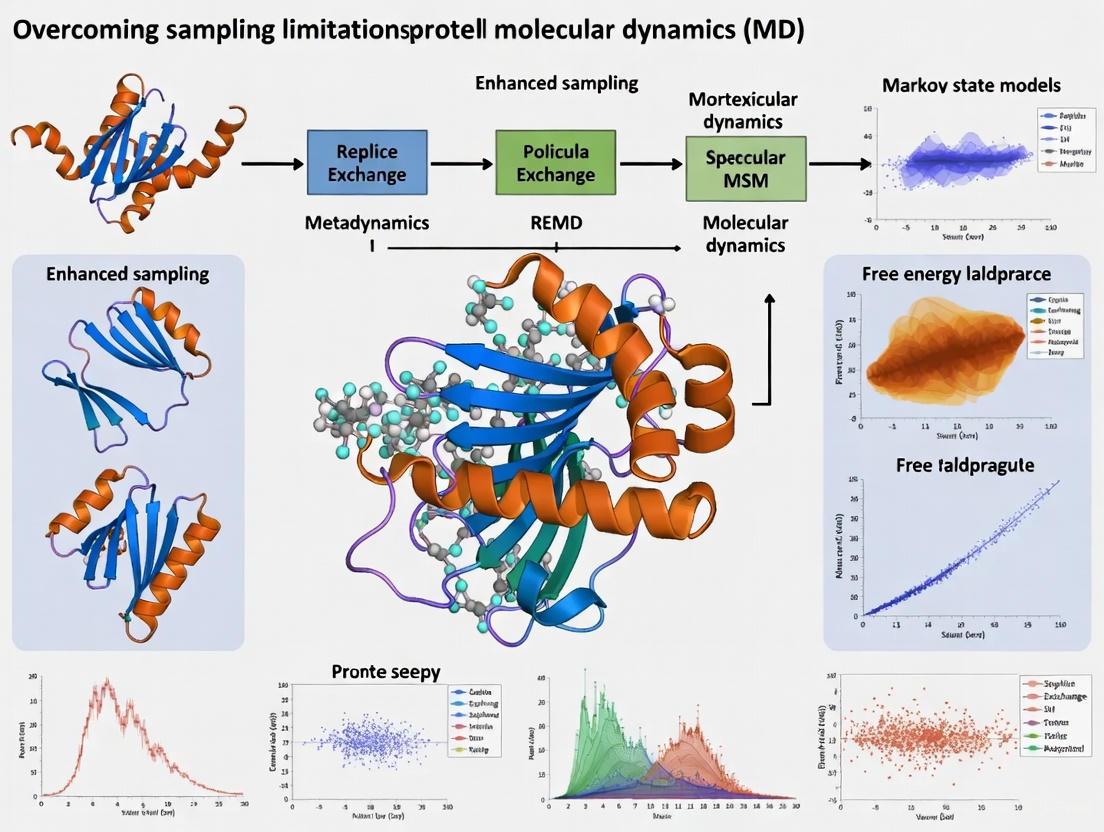

Abstract

Molecular dynamics (MD) simulations are a cornerstone of structural biology and drug discovery, yet their utility is fundamentally constrained by the limited timescales accessible for sampling the conformational landscapes of small proteins. This article provides a comprehensive guide for researchers and drug development professionals on overcoming these sampling limitations. We explore the foundational challenges of rare events and energy barriers, detail cutting-edge methodological solutions including enhanced sampling algorithms and AI-driven generative models, and present best practices for troubleshooting and quantitative validation. By comparing the performance and applications of these diverse approaches, this review serves as a strategic roadmap for obtaining statistically robust and biologically meaningful insights from protein simulations, thereby accelerating therapeutic discovery.

The Sampling Problem: Why Small Proteins Challenge Conventional MD

Technical Support Center

Frequently Asked Questions (FAQs)

1. What is the primary cause of the "timescale dilemma" in molecular dynamics simulations? The core issue is that biomolecular motion is governed by rough energy landscapes with many local minima separated by high-energy barriers [1]. The simulation timestep (typically 1–2 femtoseconds) must be short enough to resolve atomic vibrations, but functionally relevant conformational changes (such as protein folding or activation) often occur on microsecond to millisecond timescales or longer. As a result, a straightforward MD simulation can remain trapped in a non-functional state for most or all of the accessible simulation time, failing to sample all biologically important conformations [1].
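A quick calculation shows the size of the gap. The sketch below counts the integration steps a plain MD run needs to cover a given physical time, assuming a 2 fs timestep, and converts that to wall-clock days at a hypothetical throughput of 500 ns/day. Real throughput varies widely with system size and hardware.

```python
# Back-of-the-envelope cost of reaching long timescales with plain MD.
TIMESTEP_FS = 2.0              # assumed timestep (bonds to hydrogen constrained)
THROUGHPUT_NS_PER_DAY = 500.0  # hypothetical throughput, for illustration only


def steps_needed(target_seconds: float, timestep_fs: float = TIMESTEP_FS) -> int:
    """Integration steps required to cover target_seconds of simulated time."""
    return round(target_seconds / (timestep_fs * 1e-15))


def wall_days(target_seconds: float, ns_per_day: float = THROUGHPUT_NS_PER_DAY) -> float:
    """Wall-clock days needed at a fixed throughput (ns of simulation per day)."""
    return target_seconds * 1e9 / ns_per_day


for label, seconds in [("1 us", 1e-6), ("1 ms", 1e-3)]:
    print(f"{label}: {steps_needed(seconds):.1e} steps, ~{wall_days(seconds):,.0f} days")
```

Under these assumptions a single millisecond trajectory needs roughly 5 × 10¹¹ steps, or several years of wall-clock time.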

2. My simulations are not converging. How can I enhance sampling of rare events? Lack of convergence often indicates insufficient sampling across energy barriers. You should consider implementing enhanced sampling algorithms. The choice of method depends on your system and the property you are studying [1]:

- Replica-Exchange MD (REMD): Well-suited for a broad range of systems, this method runs parallel simulations at different temperatures and exchanges configurations, facilitating escape from local energy minima [1].

- Metadynamics: This method is effective for exploring free-energy landscapes by adding a bias potential that discourages the system from revisiting previously sampled states. It is particularly useful when you can define a small set of collective variables that describe the process of interest [1].

- Adaptive Sampling: This approach involves running multiple short, unbiased simulations and then using data from those runs to seed new simulations from promising states. This can be guided by metrics like evolutionary coupling distances to efficiently sample pathways like receptor activation or protein folding [2].
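The exchange step in temperature REMD is usually a Metropolis test on the potential energies of two neighbouring replicas, accepting a swap with probability $\min\{1, \exp[(\beta_i - \beta_j)(E_i - E_j)]\}$. The sketch below implements that standard criterion; the kcal/mol energy units and the example energies and temperatures are assumptions for illustration.

```python
import math
import random

K_B = 0.0019872041  # Boltzmann constant in kcal/(mol K)


def exchange_probability(E_i: float, T_i: float, E_j: float, T_j: float) -> float:
    """Metropolis acceptance probability for swapping the configurations of
    replica i (temperature T_i, potential energy E_i) and replica j."""
    beta_i, beta_j = 1.0 / (K_B * T_i), 1.0 / (K_B * T_j)
    delta = (beta_i - beta_j) * (E_i - E_j)
    return 1.0 if delta >= 0 else math.exp(delta)


def attempt_exchange(E_i, T_i, E_j, T_j, rng=random) -> bool:
    """Return True if the proposed swap is accepted."""
    return rng.random() < exchange_probability(E_i, T_i, E_j, T_j)


# Hypothetical energies (kcal/mol) for replicas at 300 K and 310 K
p = exchange_probability(E_i=-1000.0, T_i=300.0, E_j=-990.0, T_j=310.0)
print(f"acceptance probability: {p:.3f}")
```

A swap that moves the lower-energy configuration to the colder replica is always accepted; the reverse is accepted only with a Boltzmann-like probability, which is what keeps each temperature's ensemble correct.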

3. How do I choose appropriate collective variables for metadynamics? Collective variables (CVs) should be descriptors of the essential slow degrees of freedom involved in the conformational change. Using a small set of physically meaningful CVs is critical for the success of metadynamics [1]. Recent methods also leverage evolutionary coupling information; distances between residues identified as evolutionarily coupled can serve as excellent, physically motivated reaction coordinates to guide sampling towards functional states [2].

4. What is the computational cost of these enhanced sampling methods? The cost varies significantly:

- REMD requires running multiple replicas simultaneously, which can be computationally prohibitive for large systems due to the high number of replicas needed [1].

- Metadynamics typically runs a single simulation but adds a computational overhead for managing the bias potential.

- Adaptive Sampling strategies, like Evolutionary Couplings-guided Adaptive Sampling (ECAS), can greatly reduce the time required to observe events like protein folding or activation by focusing computational resources on under-sampled regions of conformational space [2].

Troubleshooting Guides

Problem: Simulation is trapped in a single conformational state. Solution:

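- Diagnose: Calculate the root-mean-square deviation (RMSD) over time. An RMSD profile that stays on a single flat plateau with only small fluctuations suggests trapping; confirm with other observables, because a stably folded protein can show the same signature.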

- Implement REMD: This is a robust first choice for escaping local minima. Ensure you select an appropriate temperature range. The maximum temperature should be chosen slightly above the temperature where the folding enthalpy vanishes for optimal efficiency [1].

- Alternative - Use Metadynamics: If you have a good hypothesis for the reaction coordinate, apply metadynamics to "fill" the free energy well you are currently trapped in, encouraging the system to explore new areas [1].
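A minimal NumPy sketch of the RMSD diagnosis, assuming you have already extracted backbone or Cα coordinates as arrays with an analysis library of your choice. Frames are optimally superposed with the Kabsch algorithm before the RMSD is computed. The 0.5 Å band in `looks_trapped` is a hypothetical threshold, and a narrow band is only a hint of trapping.

```python
import numpy as np


def kabsch_rmsd(P: np.ndarray, Q: np.ndarray) -> float:
    """RMSD between two (N, 3) coordinate sets after optimal superposition (Kabsch)."""
    P = P - P.mean(axis=0)            # remove translation
    Q = Q - Q.mean(axis=0)
    H = P.T @ Q                       # 3x3 covariance matrix
    U, _, Vt = np.linalg.svd(H)
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0   # avoid improper rotations
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T                # rotation taking P onto Q
    diff = P @ R.T - Q
    return float(np.sqrt((diff ** 2).sum() / len(P)))


def rmsd_series(traj: np.ndarray, ref: np.ndarray) -> np.ndarray:
    """RMSD of every frame in a (n_frames, N, 3) trajectory to a reference."""
    return np.array([kabsch_rmsd(frame, ref) for frame in traj])


def looks_trapped(rmsd: np.ndarray, tol: float = 0.5) -> bool:
    """Crude heuristic: True if the RMSD never leaves a band narrower than tol (Å)."""
    return float(rmsd.max() - rmsd.min()) < tol
```

Plotting `rmsd_series(traj, first_frame)` alongside a second observable (for example a key inter-residue distance) makes it easier to tell genuine trapping from a stable native state.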

Problem: Unable to observe a complete functional cycle (e.g., protein activation). Solution:

- Diagnose: Confirm you have a suitable starting structure and that your simulation time is theoretically sufficient (though it may not be practically feasible).

- Implement Path-Sampling Methods: Use adaptive sampling to iteratively explore the path to the active state. A specific protocol is outlined below:

- Step 1: Run an initial set of short, unbiased MD simulations.

- Step 2: For each simulation frame, calculate a score based on distances from a target functional state. The "change in the sum of evolutionary couplings pair distances (ΔSEC)" is a powerful metric for this [2].

- Step 3: Cluster the frames and select structures with the highest ΔSEC scores (i.e., those most different from the starting state) as starting points for the next round of simulations.

- Step 4: Repeat this process, building a Markov State Model (MSM) to understand the kinetics and identify the pathways of conformational change [2].

Problem: High computational cost of REMD for a large system. Solution:

- Consider Hamiltonian REMD (H-REMD): Instead of exchanging temperatures, run replicas with different Hamiltonians (e.g., different force field parameters). This can sometimes achieve similar sampling with fewer replicas [1].

- Use a Variant: Reservoir REMD (R-REMD) or multiplexed-REMD (M-REMD) can offer better convergence, though M-REMD has a high total computational cost [1].

- Switch Methods: For very large systems, generalized simulated annealing (GSA) can be a lower-cost alternative for characterizing flexible systems and large macromolecular complexes [1].

Experimental Protocols & Data

Quantitative Timescale Comparison

The table below summarizes the vast difference between the simulation timestep and the biologically relevant processes, creating the core "dilemma" [1] [3].

| Timescale | Range (s) | Relevant Molecular Process |
|---|---|---|
| Femtosecond | 10⁻¹⁵ to 10⁻¹² | Atomic bond vibrations; MD simulation timestep [1] [3]. |
| Picosecond | 10⁻¹² to 10⁻⁹ | Local side-chain motions; water dynamics. |
| Nanosecond | 10⁻⁹ to 10⁻⁶ | Loop motions; helix formation. |
| Microsecond | 10⁻⁶ to 10⁻³ | Allosteric transitions; small protein folding. |
| Millisecond | 10⁻³ to 1 | Large-domain motions; enzyme catalytic cycles; protein-protein association. |

Detailed Method: Evolutionary Couplings-Guided Adaptive Sampling (ECAS)

This protocol is designed to efficiently sample rare conformational transitions using bioinformatic data [2].

I. Pre-Simulation Setup

- Calculate Evolutionary Couplings: Use a server like EVCouplings with the pseudolikelihood maximization method on a multiple sequence alignment of your protein's homologs to obtain a list of evolutionarily coupled residue pairs [2].

- Define a Reference State: Select a known functional structure (e.g., an active-state crystal structure) as your reference.

II. Adaptive Sampling Loop

- Initial Seeding: Begin with one or more starting structures (e.g., an inactive state).

- Run Parallel Simulations: Launch multiple short, unbiased MD simulations from your current set of structures.

- Analyze Trajectories and Calculate ΔSEC: For every saved frame in all trajectories, calculate the ΔSEC score:

- Compute the sum of distances between all evolutionarily coupled residue pairs identified during pre-simulation setup (Section I).

- Subtract from this the same sum calculated for your reference state (also defined in Section I). This gives the ΔSEC value for that frame.

- Select New Starting Structures: Cluster all frames from the current round of simulations based on their structural properties. From these clusters, select the frames with the highest ΔSEC values. These represent conformations that are most different from your reference in the evolutionarily informed degrees of freedom and are therefore prime candidates for seeding simulations that will explore new regions of conformational space.

- Iterate: Use the selected structures as the starting points for the next round of simulations (return to the Run Parallel Simulations step). Repeat for several rounds.
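The ΔSEC scoring and seed-selection steps can be sketched in NumPy as follows. This assumes one representative atom per residue (e.g., Cα), 0-based residue indices for the coupled pairs, and cluster labels produced by any clustering method (the clustering itself is not shown). Taking the best frame per cluster and then ranking those representatives is one plausible reading of the selection step, not necessarily the exact ECAS procedure.

```python
import numpy as np


def sec(coords: np.ndarray, ec_pairs: np.ndarray) -> np.ndarray:
    """Sum of distances over evolutionarily coupled residue pairs, per frame.

    coords:   (n_frames, n_residues, 3), one atom per residue (e.g. C-alpha)
    ec_pairs: (n_pairs, 2) integer array of 0-based residue indices
    """
    a = coords[:, ec_pairs[:, 0], :]
    b = coords[:, ec_pairs[:, 1], :]
    return np.linalg.norm(a - b, axis=-1).sum(axis=1)


def delta_sec(coords, ref_coords, ec_pairs) -> np.ndarray:
    """ΔSEC per frame: SEC(frame) minus SEC(reference structure)."""
    return sec(coords, ec_pairs) - sec(ref_coords[None, ...], ec_pairs)[0]


def select_seeds(dsec, labels, n_seeds: int) -> list:
    """Take the highest-ΔSEC frame from each cluster, then return the indices
    of the n_seeds frames with the largest ΔSEC among those representatives."""
    best = {}
    for idx, label in enumerate(labels):
        if label not in best or dsec[idx] > dsec[best[label]]:
            best[label] = idx
    return sorted(best.values(), key=lambda i: dsec[i], reverse=True)[:n_seeds]
```

Picking one representative per cluster keeps the new seeds structurally diverse instead of drawing several near-identical frames from the same high-ΔSEC basin.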

III. Model Building and Analysis

- Construct a Markov State Model (MSM): Combine all simulation data from every round. Cluster the combined data to define discrete states, and then build an MSM to analyze the thermodynamics and kinetics of the processes you've sampled [2].

- Identify Pathways: Use the MSM to extract the dominant pathways connecting different functional states (e.g., from inactive to active).
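For intuition about what an MSM package such as MSMBuilder computes, here is a bare-bones NumPy sketch: count lagged transitions in a discretized trajectory, symmetrize the counts, and derive the stationary distribution and implied timescales $t_k = -\tau / \ln|\lambda_k|$. Symmetrizing the count matrix is an assumed shortcut for enforcing detailed balance, not a proper reversible maximum-likelihood estimator, and the code assumes every state is visited so that no row of the count matrix is empty.

```python
import numpy as np


def transition_matrix(dtraj, n_states: int, lag: int) -> np.ndarray:
    """Row-stochastic transition matrix from a discrete state trajectory.

    Transitions i -> j are counted between frames `lag` steps apart; the count
    matrix is symmetrized (C + C^T) as a simple way to impose detailed balance.
    """
    dtraj = np.asarray(dtraj)
    C = np.zeros((n_states, n_states))
    np.add.at(C, (dtraj[:-lag], dtraj[lag:]), 1.0)
    C = C + C.T
    return C / C.sum(axis=1, keepdims=True)


def stationary_distribution(T: np.ndarray) -> np.ndarray:
    """Left eigenvector of T with eigenvalue 1, normalized to sum to one."""
    evals, evecs = np.linalg.eig(T.T)
    pi = np.real(evecs[:, np.argmax(np.real(evals))])
    return pi / pi.sum()


def implied_timescales(T: np.ndarray, lag: int, dt: float = 1.0) -> np.ndarray:
    """-lag * dt / ln|lambda_k| for every eigenvalue except the stationary one."""
    evals = np.sort(np.abs(np.linalg.eigvals(T)))[::-1]
    return -lag * dt / np.log(evals[1:])
```

In practice the implied timescales are computed for several lag times; the lag is chosen where they stop changing, which indicates the model is approximately Markovian.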

Research Reagent Solutions

The table below lists key computational "reagents" for tackling sampling limitations.

| Item / Software | Function / Description |
|---|---|
| GROMACS | A high-performance MD software package that includes implementations of methods like REMD and metadynamics [1]. |
| NAMD | A parallel MD code designed for high-performance simulation of large biomolecular systems, also supporting enhanced sampling methods [1]. |
| Amber | A suite of biomolecular simulation programs with support for various REMD protocols and force fields [1]. |
| MSMBuilder | A software package for building and analyzing Markov State Models from MD simulation data [2]. |
| EVCouplings Server | A web platform for analyzing protein sequences and calculating evolutionary couplings to inform contact prediction and simulation guiding [2]. |
| PLUMED | A plugin library that works with many widely used MD codes to perform enhanced sampling simulations, including metadynamics and umbrella sampling. |
| Collective Variables (CVs) | Low-dimensional descriptors of slow processes (e.g., distances, angles, RMSD) used to bias simulations in methods like metadynamics [1]. |

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: My molecular dynamics simulation appears "stuck" in a single conformational state. What are my primary options to overcome this?

A: When a simulation is trapped in a metastable state, you have several enhanced sampling strategies at your disposal, each with different requirements and strengths. Biased MD methods, such as Metadynamics or Accelerated MD (aMD), modify the potential energy surface to encourage escape from energy wells [4] [5]. Alternatively, path-sampling approaches like Transition Path Sampling (TPS) or the Weighted Ensemble (WE) method focus computing power exclusively on the transition events themselves without introducing bias, generating an ensemble of transition pathways [6]. The choice depends on your goal: use biased MD for free energy landscapes or path sampling for mechanistic insights and rate constants.

Q2: How can I define a good Collective Variable (CV) for methods like Metadynamics, and what if I cannot find one?

A: A good CV should unambiguously distinguish between the initial, final, and transition states of your process [7]. It must be smooth and continuous, and it should capture the slowest dynamical modes of the transition. If identifying a single CV is challenging, consider machine learning approaches like the AMORE-MD or ISOKANN frameworks. These can learn a low-dimensional reaction coordinate directly from simulation data without requiring a priori knowledge of specific CVs [8]. Alternatively, for problems involving multiple simultaneous events (like bond dissociations), the Collective Variable-Driven Hyperdynamics (CVHD) method constructs a global CV from local structural variables, which is particularly useful for chemical reactions [7].

Q3: After running an accelerated simulation with a bias potential, my statistics seem distorted. How can I recover accurate thermodynamic information?

A: Recovering unbiased statistics from biased simulations is a crucial final step. For methods like Metadynamics and aMD, this is achieved through reweighting techniques. Each sampled configuration is assigned a statistical weight based on the bias it experienced; for aMD this is the Boltzmann factor $e^{\beta \Delta V}$, where $\Delta V$ is the boost applied to that configuration and $\beta = 1/k_B T$. Reweighting the sampled configurations in this way reconstructs the canonical ensemble [4]. Be aware that the effectiveness of reweighting depends on the quality of your sampling and the magnitude of the bias: when the bias is large, a few configurations carry most of the weight, and the resulting free energy estimates become noisy or inaccurate.
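As a concrete sketch of the aMD case, the function below reweights a histogram along one CV with the factor $e^{\beta \Delta V}$. It assumes you have saved, for every frame, the CV value and the boost energy $\Delta V$ in kcal/mol; the 300 K default and the binning are illustrative. This plain exponential average is the simplest estimator, and it is exactly the one that becomes noisy when $\Delta V$ is large.

```python
import numpy as np

K_B = 0.0019872041  # Boltzmann constant, kcal/(mol K)


def reweighted_pmf(cv, boost, temperature: float = 300.0, bins: int = 50):
    """Free-energy profile along a CV from an aMD run by exponential reweighting.

    cv:    CV value of each saved frame
    boost: boost energy Delta V (kcal/mol) applied in that frame
    Returns bin centers and the PMF (kcal/mol), shifted so its minimum is zero.
    """
    cv, boost = np.asarray(cv, dtype=float), np.asarray(boost, dtype=float)
    beta = 1.0 / (K_B * temperature)
    # Weight exp(beta * dV); subtracting the max avoids overflow and cancels on normalization
    w = np.exp(beta * (boost - boost.max()))
    hist, edges = np.histogram(cv, bins=bins, weights=w)
    centers = 0.5 * (edges[:-1] + edges[1:])
    with np.errstate(divide="ignore"):
        pmf = -np.log(hist / hist.sum()) / beta    # empty bins become +inf
    return centers, pmf - pmf[np.isfinite(pmf)].min()
```

Comparing the PMF from the first and second halves of the trajectory is a cheap way to see whether a few heavily weighted frames dominate the result.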

Q4: What specific sampling challenges should I anticipate when performing free energy calculations on protein:protein complexes?

A: Protein:protein interfaces present distinct challenges due to complex networks of protein and water interactions [9]. Mutations, particularly those involving charge changes, often introduce severe sampling problems. Slow degrees of freedom that are specific to the mutation can lead to inadequate sampling. To overcome this, employ advanced techniques like alchemical replica exchange or alchemical replica exchange with solute tempering, and ensure simulations are sufficiently long to achieve convergence [9].

Troubleshooting Common Problems

| Problem | Symptoms | Potential Solutions |
|---|---|---|
| Insufficient Sampling | Simulation trapped in one state; non-ergodic behavior; poor convergence of free energy estimate. | (1) Apply Well-Tempered Metadynamics with a carefully designed CV [5]; (2) use a path sampling method (e.g., Weighted Ensemble) to focus on transitions [6]; (3) increase the simulation temperature or use replica exchange [10]. |
| Poorly Chosen Collective Variable (CV) | The system transitions without the CV changing, or the CV changes without a meaningful transition. | (1) Employ interpretable deep learning (e.g., AMORE-MD) to discover a reaction coordinate [8]; (2) use a set of preliminary short simulations to test and validate candidate CVs. |
| Inefficient Reweighting | Large statistical error in free energy estimates; biased ensemble averages. | (1) Use the Well-Tempered Metadynamics variant for more convergent reweighting [5]; (2) for aMD, consider the modified boost potential (ΔVc) to prevent oversampling of high-energy regions [4]. |
| High Energy Barriers in Protein Refinement | Inability to correct structural defects in predicted protein models; models rapidly unfold. | (1) Use flat-bottom restraints to prevent large unfolding while allowing local transitions [10]; (2) initiate sampling from an ensemble of alternative initial models to explore broader conformational space [10]. |

Quantitative Data & Method Comparisons

Performance of Enhanced Sampling Methods

Table 1: Comparison of key enhanced sampling methodologies. This table summarizes the core principles, requirements, and outputs of different approaches to tackling rare events.

| Method | Core Principle | Required A Priori Knowledge | Primary Output | Key Reference |
|---|---|---|---|---|
| Metadynamics | Fills energy wells with a history-dependent bias potential to encourage escape. | Collective Variables (CVs) | Free Energy Surface (FES) | [5] |
| Accelerated MD (aMD) | Applies a non-negative boost potential to the potential energy surface, lowering barriers. | Boost energy (E) and acceleration parameter (α). | Accelerated trajectory, reweighted statistics. | [4] |
| Path Sampling (e.g., TPS, WE) | Samples ensembles of unbiased transition pathways between defined states. | Initial state (A) and final state (B). | Transition pathways, rate constants. | [6] |
| AMORE-MD / ISOKANN | Learns a reaction coordinate as a neural network membership function from simulation data. | None (learned from data). | Reaction coordinate, transition pathways, atomic sensitivities. | [8] |
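The aMD parameters in the table enter the widely used boost form $\Delta V = (E - V)^2 / (\alpha + E - V)$ for $V < E$, with no boost where $V \ge E$. The sketch below evaluates it on a toy double well, $U(x) = 5(x^2 - 1)^2$ in arbitrary energy units, with hypothetical parameters $E = 5$ and $\alpha = 1$. It does not cover the modified $\Delta V_c$ variant.

```python
import numpy as np


def amd_boost(V, E: float, alpha: float) -> np.ndarray:
    """aMD boost: (E - V)^2 / (alpha + E - V) where V < E, and 0 where V >= E."""
    gap = np.clip(E - np.asarray(V, dtype=float), 0.0, None)
    return gap ** 2 / (alpha + gap)


x = np.linspace(-1.5, 1.5, 301)
U = 5.0 * (x ** 2 - 1.0) ** 2          # toy double well, barrier of 5 at x = 0
U_boosted = U + amd_boost(U, E=5.0, alpha=1.0)

i_top, i_min = np.argmin(np.abs(x)), np.argmin(np.abs(x - 1.0))
print(f"barrier: {U[i_top] - U[i_min]:.2f} -> {U_boosted[i_top] - U_boosted[i_min]:.2f}")
```

Lowering α flattens the boosted surface further (as α approaches zero, every region below E is raised to E), which speeds up transitions but makes the later reweighting noisier.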

Empirical Data on Method Effectiveness

Table 2: Documented success of various methods in simulating long-timescale biological processes. This data illustrates the practical application and achievements of these techniques.

| Method | Biological Process Simulated | Timescale Accessed | Key Achievement | Reference |
|---|---|---|---|---|
| Milestoning | Conformational change in HIV reverse transcriptase. | Millisecond | Calculated rate constants consistent with experimental data. | [6] |
| Weighted Ensemble (WE) | Outward-to-inward transition in sodium symporter Mhp1. | Long (biologically relevant) | Generated full pathways using coarse-grained simulations. | [6] |
| DIMS | Conformational transition in lactose permease transporter. | Long (biologically relevant) | Generated atomistic transitions in implicit solvent. | [6] |
| Well-Tempered Metadynamics | Conformational landscape of 2D macromolecules. | N/A | Identified metastable states (flat, fold, scroll) and mapped FES. | [5] |

Detailed Experimental Protocols

Protocol 1: Protein Model Refinement using Restrained MD

This protocol is designed to improve the accuracy of predicted protein structures by balancing the need for conformational sampling with the risk of unfolding [10].

Initial Model Preprocessing:

- Begin with your initial predicted protein structure.

- Use a tool like locPREFMD to resolve stereochemical errors, including severe atomic clashes, incorrect cis-peptide bonds, and poor rotamer states.

System Setup:

- Solvate the preprocessed model in an explicit water box.

- Add ions to neutralize the system.

- Energy minimize the system to remove any remaining strain.

Equilibration:

- Gradually heat the system to the target simulation temperature (e.g., 300 K) under appropriate restraints on protein heavy atoms.

- Perform a short equilibration run in the NPT ensemble to stabilize system density.

Sampling with Restraints:

- Conduct production MD simulations using flat-bottom harmonic restraints applied to the protein's Cα atoms.

- Key Parameters: The flat-bottom potential allows unrestrained sampling within a defined radius (e.g., 2-3 Å) from the initial coordinates but applies a harmonic force beyond that, preventing large-scale unfolding while permitting necessary local rearrangements.

- Optional: To enhance sampling, the simulation can be run at a moderately elevated temperature (e.g., 400 K) in combination with the restraints [10].
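The flat-bottom restraint described above is zero within a radius $R$ of each reference position and equals $\tfrac{1}{2}k(d - R)^2$ beyond it, where $d$ is the atom's displacement from its reference. The NumPy sketch below evaluates that energy and the corresponding forces. The 2 Å radius and the force constant $k = 10$ kcal/mol/Å² are hypothetical defaults, and MD engines apply such restraints through their own input options rather than a function like this.

```python
import numpy as np


def flat_bottom_restraint(coords, ref, radius: float = 2.0, k: float = 10.0):
    """Energy and forces of a flat-bottom harmonic positional restraint.

    coords, ref: (N, 3) arrays of restrained atom positions (e.g. C-alpha), in Å
    radius:      half-width of the flat region, Å
    k:           force constant beyond the flat region, kcal/mol/Å^2
    """
    disp = np.asarray(coords, dtype=float) - np.asarray(ref, dtype=float)
    dist = np.linalg.norm(disp, axis=1)
    excess = np.clip(dist - radius, 0.0, None)    # zero inside the flat region
    energy = 0.5 * k * float(np.sum(excess ** 2))
    safe = np.where(dist > 0.0, dist, 1.0)         # avoid 0/0 for atoms exactly at ref
    forces = -k * (excess / safe)[:, None] * disp  # pulls only atoms beyond the radius
    return energy, forces
```

Because atoms inside the radius feel no force at all, local rearrangements are sampled without bias, while the harmonic wall only acts once an atom drifts far enough to signal incipient unfolding.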

Post-Sampling Analysis:

- From the sampled trajectory, select a sub-ensemble of low-energy structures using a scoring function (e.g., RWplus).

- Compute an ensemble-averaged structure from the selected conformations.

- Perform a final local energy minimization on the averaged structure to correct minor stereochemical irregularities.
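Once structures have been scored, the selection and averaging steps reduce to a few lines. This sketch assumes the frames are already superposed on a common reference (averaging unaligned coordinates is meaningless) and that lower scores are better, as for an energy-like function. Computing RWplus scores is outside its scope, and `n_select = 10` is an arbitrary example.

```python
import numpy as np


def ensemble_average(frames, scores, n_select: int = 10):
    """Average the coordinates of the n_select best-scoring frames.

    frames: (n_frames, N, 3) coordinates, already superposed on a common reference
    scores: one score per frame; lower is treated as better
    Returns the averaged (N, 3) structure and the indices of the selected frames.
    """
    frames = np.asarray(frames, dtype=float)
    chosen = np.argsort(scores)[:n_select]
    return frames[chosen].mean(axis=0), chosen
```

The averaged coordinates usually have distorted bond geometry, which is why the protocol finishes with a local energy minimization.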


Protocol 2: Exploring Conformational Landscapes with Well-Tempered Metadynamics

This protocol uses Well-Tempered Metadynamics to map the free energy landscape of a molecule, such as a 2D macromolecule or a small peptide [5].

Collective Variable (CV) Selection:

- Identify one or two CVs that best describe the transition of interest. For 2D macromolecules, this could be a radius of gyration or a shape descriptor. For alanine dipeptide, the canonical CVs are the Ramachandran dihedral angles Φ and Ψ.

System Preparation:

- Build and solvate your molecular system.

- Equilibrate using a standard MD protocol to stabilize temperature and pressure.

Well-Tempered Metadynamics Simulation:

- Initialize the simulation from a structure in a metastable state.

- Configure the bias deposition:

- Gaussian Height: Initial height (in energy units) of each deposited Gaussian.

- Gaussian Width: Determined by the expected fluctuation of your CV.

- Deposition Stride: Frequency (in simulation steps) for adding new Gaussians.

- Bias Factor: The bias factor $\gamma = (T + \Delta T)/T$ sets how quickly the Gaussian height decays as bias accumulates, $W = W_0 \, e^{-V(s,t)/k_B \Delta T}$, which makes the bias, and hence the FES estimate, converge.

Convergence Monitoring:

- Run the simulation until the free energy estimate for the key metastable states no longer exhibits a systematic drift and fluctuates around a stable value.

- Monitor the time evolution of the CVs to ensure adequate transitions between states.

Free Energy Analysis:

- The free energy surface is recovered from the long-time bias as $F(s) = -\frac{\gamma}{\gamma - 1} V(s,t) + C$, where $V(s,t)$ is the bias potential at time $t$, $\gamma$ is the bias factor, and $C$ is an arbitrary constant.

- Identify metastable states as deep minima on the FES and locate the transition states (saddle points) connecting them.
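To see the deposition rule and the FES reconstruction working together, here is a self-contained toy: well-tempered metadynamics on a 1D double well, $U(x) = 5(x^2 - 1)^2$ in units of $k_B T$, driven by overdamped Langevin dynamics. Everything about the system (potential, grid, unit diffusion coefficient, Gaussian height, width, stride, bias factor) is an illustrative assumption; production work would use a real engine with a plugin such as PLUMED.

```python
import numpy as np


def toy_wt_metad(n_steps=200_000, dt=1e-3, gamma=10.0, w0=0.1,
                 sigma=0.1, stride=50, seed=0):
    """Well-tempered metadynamics on the 1D double well U(x) = 5 (x^2 - 1)^2.

    Energies are in units of kT (kT = 1); the dynamics are overdamped Langevin
    with unit diffusion coefficient. Returns the grid and the FES estimate.
    """
    rng = np.random.default_rng(seed)
    grid = np.linspace(-2.0, 2.0, 401)
    dx = grid[1] - grid[0]
    bias = np.zeros_like(grid)               # V(s, t) tabulated on the grid
    kb_delta_t = gamma - 1.0                  # k_B * DeltaT = (gamma - 1) kT
    noise = np.sqrt(2.0 * dt) * rng.standard_normal(n_steps)
    x = -1.0                                  # start in the left well
    for step in range(n_steps):
        i = min(max(int(round((x - grid[0]) / dx)), 1), len(grid) - 2)
        if step % stride == 0:
            # Well-tempered rule: the height decays where bias has built up
            height = w0 * np.exp(-bias[i] / kb_delta_t)
            bias += height * np.exp(-((grid - x) ** 2) / (2.0 * sigma ** 2))
        dV = (bias[i + 1] - bias[i - 1]) / (2.0 * dx)   # bias gradient at nearest grid point
        dU = 20.0 * x * (x ** 2 - 1.0)                  # dU/dx
        x = x - (dU + dV) * dt + noise[step]
    fes = -gamma / (gamma - 1.0) * bias       # F(s) = -gamma/(gamma - 1) V(s, t)
    return grid, fes - fes.min()


grid, fes = toy_wt_metad()
barrier = fes[np.argmin(np.abs(grid))] - fes[np.argmin(np.abs(grid + 1.0))]
print(f"estimated barrier: {barrier:.2f} kT (exact: 5 kT)")
```

Running the toy with different `seed` values, or comparing the estimate at several simulation lengths, mirrors the convergence check above: the barrier estimate should stop drifting and fluctuate around a stable value.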

Method Workflow & Pathway Visualizations

Enhanced Sampling Method Selection Pathway

This diagram outlines a logical decision tree for selecting an appropriate enhanced sampling method based on the scientific question and available prior knowledge.

AMORE-MD Framework Workflow

This diagram illustrates the iterative, self-supervised workflow of the AMORE-MD framework, which connects deep-learned reaction coordinates to atomistic mechanisms [8].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential software tools and computational methods for investigating rare events in molecular dynamics.

| Tool / Method | Primary Function | Key Application in Rare Events |
|---|---|---|
| ISOKANN Algorithm | Learns a neural membership function representing the dominant slow process (reaction coordinate) from simulation data. | Discovers reaction coordinates without requiring a priori knowledge of Collective Variables [8]. |
| AMORE-MD Framework | Enhances interpretability of deep-learned reaction coordinates via sensitivity analysis and minimum-energy pathways. | Connects machine-learned reaction coordinates to atomistic mechanisms [8]. |
| Well-Tempered Metadynamics | Accelerates transitions and computes free energy surfaces by depositing a history-dependent bias potential. | Explores complex conformational landscapes and identifies metastable states [5]. |
| Weighted Ensemble (WE) Path Sampling | Improves sampling efficiency by running multiple trajectories in parallel and strategically splitting/merging them. | Generates unbiased transition pathways and calculates rate constants for rare events [6]. |
| Collective Variable-Driven Hyperdynamics (CVHD) | Uses a self-learning bias on local CVs to accelerate dynamics, with on-the-fly time reweighting. | Simulates rare chemical reactions, such as bond-breaking events in pyrolysis [7]. |
| Modified aMD Boost Potential (ΔVc) | A boost equation that prevents oversampling of high-energy regions by defining an acceleration window. | Provides enhanced sampling with improved statistical recovery in explicit solvent [4]. |

Frequently Asked Questions

Q1: What are the primary molecular dynamics (MD) sampling challenges for Intrinsically Disordered Proteins (IDPs)? IDPs do not adopt a single stable structure but exist as dynamic ensembles of interconverting conformations. Their conformational space is vast, and sampling this diversity requires simulations spanning microseconds to milliseconds, which is computationally intensive. Traditional MD simulations often get trapped in local energy minima and struggle to sample rare, transient states that are crucial for biological function [11].

Q2: How do enhanced sampling methods like Replica-Exchange MD (REMD) address these challenges? REMD runs multiple parallel simulations (replicas) at different temperatures. Periodically, it attempts to exchange the system states between these replicas based on their energies and temperatures. This allows the system to overcome high energy barriers by simulating at higher temperatures, facilitating a more efficient random walk through conformational space and preventing trapping in local minima [1].

Q3: My simulations of a large disordered protein show an overly compact ensemble. What could be the issue? This is a known limitation of some force fields. While improved force fields like CHARMM36IDPSFF (C36IDPSFF) perform well for many disordered systems, they can still underestimate the radius of gyration (Rg) for larger IDPs. This indicates a potential force field inaccuracy in balancing intra-molecular interactions for larger systems, leading to overly compact conformations [12].

Q4: Are AI methods a viable alternative to MD for sampling conformational ensembles? Yes, AI and deep learning (DL) offer a transformative alternative. DL models can learn complex sequence-to-structure relationships from large datasets, enabling efficient generation of diverse conformational ensembles without the computational cost of physics-based MD simulations. They have been shown to generate ensembles of accuracy comparable to MD more efficiently, though their reliability depends on the quality of the training data [11].

Q5: What is a key advantage of using metadynamics for studying protein folding or binding? Metadynamics enhances sampling by discouraging the revisiting of previously sampled states. It adds a history-dependent bias potential along predefined collective variables (e.g., root-mean-square deviation, number of native contacts), effectively "filling" free energy wells. This allows the system to explore new regions of the conformational landscape and helps in reconstructing free energy surfaces for processes like folding [1].

Troubleshooting Guides

Issue 1: Inadequate Sampling of Rare Events

Problem: Your simulation fails to observe a critical, functionally relevant conformational change that occurs on a timescale longer than your simulation can reach.

Solution:

- Implement Enhanced Sampling: Use methods like Gaussian accelerated MD (GaMD), metadynamics, or replica-exchange to help the system overcome energy barriers.

- Leverage AI-Generated Ensembles: Train or use existing deep learning models to generate initial conformational ensembles that include rare states, which can serve as starting points for more focused MD simulations [11].

- Extend Sampling with Hybrid Approaches: Combine AI and MD in a hybrid workflow. Use AI to rapidly explore conformational space and identify interesting states, then use MD to refine these states and validate their thermodynamic properties [11].

Issue 2: Force Field Inaccuracies for Disordered States

Problem: Simulated IDP ensembles are consistently too compact or too extended compared to experimental data (e.g., from SAXS or NMR).

Solution:

- Select a Specialized Force Field: Use force fields specifically parameterized for disordered proteins, such as CHARMM36IDPSFF, ff14IDPSFF, or a99SB-disp [12].

- Validate with Multiple Observables: Compare simulation results against a range of experimental data, including NMR chemical shifts, J-couplings, and SAXS profiles, not just a single metric like Rg [12].

- Test Force Field Compatibility: As demonstrated with C36IDPSFF, ensure the force field performs adequately for both disordered and folded domains if your system contains both [12].

Issue 3: High Computational Cost of Enhanced Sampling

Problem: Running a sufficient number of replicas for REMD or long enough bias deposition for metadynamics is computationally prohibitive for your system.

Solution:

- Optimize Replica Number and Placement: For REMD, carefully choose the temperature range and spacing. A maximum temperature that is too high can reduce efficiency. Tools like temperature_generator.py can help optimize replica placement [1].

- Use Variants like λ-REMD: For specific problems like absolute binding free energy calculations, Hamiltonian replica exchange (H-REMD), in which different replicas have different Hamiltonians (in λ-REMD, different values of the alchemical coupling parameter λ), can be more efficient than temperature-based REMD [1].

- Start from AI-Predicted Structures: Reduce the necessary simulation time by starting from conformations generated by fast AI models, which may already be close to biologically relevant states [11].
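For the replica-placement advice above, one simple starting point is a geometric ladder, $T_i = T_{\min} (T_{\max}/T_{\min})^{i/(n-1)}$, which tends to give roughly even exchange acceptance when the heat capacity does not vary much over the range. The sketch below is this heuristic only, not a reimplementation of temperature_generator.py, and the 300 to 450 K range with 8 replicas is purely illustrative.

```python
import numpy as np


def geometric_ladder(t_min: float, t_max: float, n_replicas: int) -> np.ndarray:
    """Replica temperatures (K) spaced geometrically between t_min and t_max."""
    i = np.arange(n_replicas)
    return t_min * (t_max / t_min) ** (i / (n_replicas - 1))


ladder = geometric_ladder(300.0, 450.0, 8)   # illustrative range and replica count
print(np.round(ladder, 1))
```

After a short test run, check the observed acceptance ratio between each neighbouring pair; a pair with much lower acceptance than the rest usually calls for an extra replica in that temperature interval.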

Experimental Protocols & Data

Table 1: Enhanced Sampling Methods for Protein Simulations

| Method | Key Principle | Best Suited For | Key Considerations |
|---|---|---|---|
| Replica-Exchange MD (REMD) [1] | Parallel simulations at different temperatures exchange configurations, overcoming barriers. | Biomolecular folding, exploring rough energy landscapes. | The number of replicas needed, and hence the computational cost, grows with system size. Efficiency is sensitive to the choice of maximum temperature. |
| Metadynamics [1] | History-dependent bias potential "fills" free energy wells along collective variables. | Protein folding, conformational changes, ligand binding. | Requires careful selection of a small number of collective variables. Accuracy depends on the choice of these variables. |
| Simulated Annealing [1] | System is heated and then gradually cooled to find low-energy states. | Characterizing very flexible systems, large macromolecular complexes. | A variant, Generalized Simulated Annealing (GSA), can be applied to large systems at a relatively low computational cost. |
| AI/Deep Learning [11] | Learns sequence-to-structure relationships from large datasets to generate ensembles. | Efficiently sampling conformational landscapes of IDPs, generating diverse starting states. | Dependent on quality and source of training data. Limited interpretability. Hybrid approaches with MD are often beneficial. |

| System Type | Example Protein | Simulation Length | Number of Replicas | Key Observable Measured |
|---|---|---|---|---|
| Short Disordered Peptide | Ala5 | 1 μs × 5 | N/A | Conformational preferences |
| Disordered Protein | Aβ40 | 1 μs × 5 | N/A | Radius of gyration, NMR data |
| Fast-Folding Protein | Villin | 500 ns × 36 | 36 (REMD) | Folding stability |
| Folded Protein | GB3 | 1 μs × 1 | N/A | Structural stability, NMR data |

This protocol outlines the steps for evaluating the performance of a force field like CHARMM36IDPSFF on an intrinsically disordered protein.

System Setup:

- Initial Structure: Obtain or generate an extended conformation of the protein sequence.

- Solvation: Solvate the protein in a cubic water box (e.g., with the TIP3P water model), leaving a minimum distance between the protein and the box edge.

- Neutralization: Add ions (e.g., Na⁺ or Cl⁻) to neutralize the system's net charge. Additional ions can be added to match physiological concentration.

Simulation Parameters (Based on GROMACS):

- Energy Minimization: Use the steepest descent algorithm (up to 50,000 steps) to remove steric clashes.

- Equilibration:

- Perform a 100 ps simulation in the NVT ensemble (constant Number of particles, Volume, and Temperature) using a velocity rescaling thermostat.

- Follow with a 100 ps simulation in the NPT ensemble (constant Number of particles, Pressure, and Temperature) using the Parrinello-Rahman barostat.

- Production MD:

- Run multiple (e.g., five) independent simulations of 1 μs each from different initial velocities to improve sampling and assess convergence.

- Use a 2 fs integration time step. Constrain covalent bonds involving hydrogen with the LINCS algorithm.

- Treat long-range electrostatics with the Particle Mesh Ewald (PME) method. Apply a 12 Å cutoff for both Coulomb and Lennard-Jones interactions.
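The production settings above correspond to standard GROMACS .mdp options roughly as sketched below. The helper function is hypothetical, and the thermostat and barostat time constants, reference pressure, and compressibility are assumed typical values that the protocol does not state; check every option against the documentation for your GROMACS version.

```python
def production_mdp(length_ns: float = 1000.0, dt_ps: float = 0.002,
                   temperature_k: float = 300.0) -> str:
    """Render the production settings above as GROMACS .mdp text (hypothetical helper)."""
    nsteps = round(length_ns * 1000.0 / dt_ps)     # 1 ns = 1000 ps
    params = {
        "integrator": "md",
        "dt": dt_ps,                                 # 2 fs
        "nsteps": nsteps,
        "constraints": "h-bonds",                    # bonds involving hydrogen
        "constraint_algorithm": "lincs",
        "cutoff-scheme": "Verlet",
        "coulombtype": "PME",
        "rcoulomb": 1.2,                             # nm, i.e. 12 Å
        "rvdw": 1.2,                                 # nm, i.e. 12 Å
        "tcoupl": "V-rescale",
        "tc-grps": "System",
        "tau_t": 0.1,                                # ps, assumed
        "ref_t": temperature_k,
        "pcoupl": "Parrinello-Rahman",
        "tau_p": 2.0,                                # ps, assumed
        "ref_p": 1.0,                                # bar, assumed
        "compressibility": 4.5e-5,                   # bar^-1, assumed (water)
    }
    return "\n".join(f"{key:<22} = {value}" for key, value in params.items())


print(production_mdp())
```

With a 2 fs step, each 1 μs replica corresponds to `nsteps = 500000000`.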

Validation and Analysis:

- Conformational Analysis: Calculate the radius of gyration (Rg) and compare with experimental SAXS data.

- NMR Comparison: Compute NMR chemical shifts and scalar couplings (³J-couplings) from the simulation trajectory and compare directly with experimental NMR data.

- Convergence Check: Ensure that properties like Rg and secondary structure content have stabilized over the simulation time and are consistent across independent runs.
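The Rg, ³J-coupling, and convergence checks above can be prototyped with NumPy. This sketch assumes coordinates have already been extracted from the trajectory as arrays in Å and backbone φ angles in degrees. The Karplus coefficients are the Vuister and Bax (1993) parameterization for ³J(HN,Hα), one of several published sets; all function names are illustrative rather than taken from any package.

```python
import numpy as np


def radius_of_gyration(coords, masses=None):
    """Mass-weighted radius of gyration (Å) for one frame of (n_atoms, 3) coordinates."""
    xyz = np.asarray(coords, dtype=float)
    m = np.ones(len(xyz)) if masses is None else np.asarray(masses, dtype=float)
    center = (m[:, None] * xyz).sum(axis=0) / m.sum()
    sq_dist = ((xyz - center) ** 2).sum(axis=1)
    return float(np.sqrt((m * sq_dist).sum() / m.sum()))


def karplus_3j_hnha(phi_deg, a=6.51, b=-1.76, c=1.60):
    """3J(HN,HA) in Hz: A cos^2(theta) + B cos(theta) + C with theta = phi - 60 degrees."""
    theta = np.deg2rad(np.asarray(phi_deg, dtype=float) - 60.0)
    return a * np.cos(theta) ** 2 + b * np.cos(theta) + c


def replica_agreement(per_replica_values):
    """Mean of an observable in each independent run, plus the spread between runs."""
    means = np.array([np.mean(v) for v in per_replica_values])
    return means, float(means.std(ddof=1))
```

The J-couplings should be averaged over all frames (rather than evaluated at the mean φ) before comparison with experiment. A between-replica spread that is large relative to the experimental uncertainty suggests the runs have not yet converged.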

The Scientist's Toolkit: Research Reagent Solutions

| Item / Resource | Function / Purpose | Key Notes |
|---|---|---|
| Specialized Force Fields (e.g., CHARMM36IDPSFF, a99SB-disp) [12] | Provide empirical parameters for MD simulations that are tuned to accurately model both folded proteins and IDPs. | Corrects biases in standard force fields that often over-stabilize folded or compact states. Essential for realistic IDP ensembles. |
| Enhanced Sampling Algorithms (e.g., REMD, Metadynamics) [1] | Integrated into MD software to overcome energy barriers and sample rare events more efficiently. | Critical for studying processes like folding and large-scale conformational changes that are beyond the reach of standard MD. |
| AI/Deep Learning Models [11] | Generate conformational ensembles of IDPs efficiently by learning from data, bypassing expensive physics-based sampling. | Useful for rapid exploration and generating starting states. Performance depends on training data quality. |
| MD Software Packages (e.g., GROMACS, AMBER, NAMD) [1] | Provide the computational engine to run MD and enhanced sampling simulations. | Include implementations of key methods like REMD and metadynamics. GROMACS is noted for its performance and wide use. |
| Lab Notebook & Scripting (e.g., RMarkdown, Python, Shell scripts) [13] | Ensure reproducibility and organization of computational experiments. | A chronologically organized lab notebook (electronic or wiki) and driver scripts (e.g., runall) that record every operation are crucial. |

Workflow and Pathway Diagrams

Enhanced Sampling MD Workflow

AI-MD Hybrid Sampling Strategy

Frequently Asked Questions

1. What defines the "computational cost barrier" in protein simulation? The barrier arises from the fundamental constraints of Molecular Dynamics (MD), where the femtosecond timestep required for stability makes simulating biologically relevant timescales (microseconds to seconds) prohibitively expensive for standard computational resources. This limits the conformational sampling needed to observe large-scale protein folding and function [14].
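A back-of-the-envelope calculation makes this barrier concrete. The sketch below assumes a 2 fs timestep and an illustrative throughput of 500 ns/day; real throughput depends heavily on system size and hardware.

```python
def steps_required(simulated_ns: int, dt_fs: int = 2) -> int:
    """Integration steps needed to cover simulated_ns at a dt_fs timestep."""
    return simulated_ns * 1_000_000 // dt_fs  # 1 ns = 1,000,000 fs


def wall_clock_days(simulated_ns: float, ns_per_day: float) -> float:
    """Days of computing at a given (assumed) throughput."""
    return simulated_ns / ns_per_day


if __name__ == "__main__":
    for label, ns in [("1 microsecond", 1_000), ("1 millisecond", 1_000_000)]:
        print(f"{label}: {steps_required(ns):.1e} steps, "
              f"{wall_clock_days(ns, 500.0):g} days at 500 ns/day")
```

One millisecond requires 5 × 10¹¹ steps, which at the assumed rate is about 2000 days (over five years) of continuous computing for a single trajectory.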

2. My MD simulations are not sampling the full conformational landscape. What are my options? You can consider shifting to or supplementing with Monte Carlo (MC) methods, which have no inherent timescale and can more rapidly explore conformational space using simplified moves [14]. Alternatively, implement hybrid methods like MDeNM or ClustENMD that combine the atomic detail of MD with the sampling efficiency of coarse-grained models or normal mode analysis to accelerate exploration [15].

3. How does the choice between explicit and implicit solvent models impact computational cost and results? Explicit solvent models are computationally expensive because they simulate every water molecule, but provide high accuracy for solvent-involved interactions. Implicit solvent models are significantly faster and well-suited for Monte Carlo or enhanced sampling, but may have limitations such as over-stabilizing salt bridges or incorrect ion distribution [14].
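Implicit solvent is cheap because Generalized Born replaces thousands of water molecules with a pairwise analytical term over solute atoms. This sketch implements the widely used Still et al. form of the GB polarization energy, ΔG_pol = -½ (1/ε_in - 1/ε_out) Σ_i Σ_j q_i q_j / f_GB(r_ij), with f_GB = sqrt(r_ij² + R_i R_j exp(-r_ij² / (4 R_i R_j))). It assumes the effective Born radii R_i are already known (computing them is the difficult part of real GB models) and omits the nonpolar SASA term; the function name is illustrative.

```python
import numpy as np

COULOMB_KCAL = 332.06  # kcal·Å/(mol·e^2), electrostatic conversion factor


def gb_polarization_energy(coords, charges, born_radii, eps_in=1.0, eps_out=78.5):
    """Still-type Generalized Born polarization energy in kcal/mol.

    coords: (n, 3) in Å; charges in units of e; born_radii: effective Born radii in Å.
    The i == j terms are the Born self-energies; the double sum with the 1/2
    prefactor counts each i != j pair once.
    """
    r = np.asarray(coords, dtype=float)
    q = np.asarray(charges, dtype=float)
    born = np.asarray(born_radii, dtype=float)
    r2 = ((r[:, None, :] - r[None, :, :]) ** 2).sum(axis=-1)  # squared distances
    rr = born[:, None] * born[None, :]                          # R_i * R_j
    f_gb = np.sqrt(r2 + rr * np.exp(-r2 / (4.0 * rr)))
    tau = 1.0 / eps_in - 1.0 / eps_out
    return float(-0.5 * tau * COULOMB_KCAL * (q[:, None] * q[None, :] / f_gb).sum())
```

For a single ion the expression reduces to the classical Born solvation energy, which is a quick sanity check; the cost scales with the square of the number of solute atoms only.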

4. What are the key metrics to validate that my sampling is adequate? Beyond achieving a low RMSD to a known native structure, you should analyze the free energy landscape to identify stable states and transition barriers. Compare your simulation's principal components (PCs) of structural change against ensembles of experimental structures. Good sampling should reproduce the known experimental conformational space [15].
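For an unbiased equilibrium simulation, the free energy along a chosen coordinate s (e.g., RMSD, Rg, or a principal component) follows from the sampled histogram as F(s) = -k_B T ln P(s). A minimal sketch, assuming unbiased sampling; trajectories from enhanced-sampling runs must be reweighted first. The function name is illustrative.

```python
import numpy as np

KB_KJ_PER_MOL_K = 0.008314462618  # Boltzmann constant in kJ/(mol·K)


def free_energy_profile(cv_values, bins=50, temperature_k=300.0):
    """Return bin centers and F(s) = -kT ln P(s) in kJ/mol, shifted so min(F) = 0.

    Empty bins get F = +inf (the coordinate was never sampled there).
    """
    hist, edges = np.histogram(cv_values, bins=bins, density=True)
    centers = 0.5 * (edges[:-1] + edges[1:])
    kT = KB_KJ_PER_MOL_K * temperature_k
    with np.errstate(divide="ignore"):
        free_energy = -kT * np.log(hist)
    free_energy -= free_energy[np.isfinite(free_energy)].min()
    return centers, free_energy
```

Minima of F(s) correspond to metastable states; if a barrier between them was never crossed within a run, the relative depths of the minima are not yet reliable.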

5. How can I visually analyze and present large, complex simulation trajectories? Effective visualization is crucial for interpretation. While traditional frame-by-frame visualization is common, new challenges require leveraging web-based tools for accessibility, interactive visual analysis systems for high-dimensional data, and even virtual reality (VR) for an immersive exploration of complex dynamics [16].

Quantitative Comparison of Sampling Methodologies

The table below summarizes the core characteristics, resource requirements, and outputs of different sampling methods, helping you choose the right approach for your project.

| Method | Computational Demand | Key Input Parameters | Primary Output | Best Suited For |
|---|---|---|---|---|
| Molecular Dynamics (MD) [14] | Very High (constrained by femtosecond timestep) | Force field, timestep, simulation temperature & duration | Time-dependent trajectory of atomic coordinates | Studying precise kinetic pathways with explicit solvent. |
| Monte Carlo (MC) [14] | Lower (no inherent timescale) | Force field, number of MC steps, move sets | Boltzmann-weighted ensemble of conformations (no physical time information) | Efficiently sampling thermodynamic states and free energy landscapes. |
| Hybrid Methods (e.g., MDeNM, ClustENMD) [15] | Moderate | Number of normal modes, RMSD thresholds, short MD runs for refinement | Ensemble of full-atomic conformers covering transitions | Rapidly exploring large-scale cooperative motions at atomic resolution. |

Experimental Protocols for Enhanced Sampling

Protocol 1: All-Atom Monte Carlo Simulation for Protein Folding

This protocol uses the AMBER99SB*-ILDN force field with a Generalized Born implicit solvent model [14].

- System Setup: Obtain the initial protein structure (folded or unfolded) from a database like the PDB.

- Parameter Configuration:

  - Force Field: AMBER99SB*-ILDN.

  - Solvent Model: Generalized Born with a solvent accessible surface area (SASA) term for nonpolar solvation.

  - Sampling: Set the number of MC steps (e.g., 200 million).

  - Temperature: Run simulations over a wide temperature range (e.g., 330–410 K) to characterize folding/unfolding equilibria.

- Execution: Run the MC simulation on available computational nodes (e.g., an AMD EPYC node using 15–30 cores).

- Analysis:

  - Calculate the free energy landscape as a function of reaction coordinates (e.g., RMSD, radius of gyration).

  - Identify transition states and energy barriers.

  - Determine per-residue stability across the sampled temperature range.
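At the heart of this protocol is the Metropolis acceptance rule. The generic sketch below samples a toy one-dimensional double-well energy with random-displacement moves; it is not the all-atom move set or code used in [14], and the energy function, step size, and reduced units are illustrative assumptions.

```python
import numpy as np


def metropolis(energy, x0, n_steps, step_size, kT, seed=0):
    """Metropolis Monte Carlo: propose a random displacement and accept it
    with probability min(1, exp(-dE / kT)). Returns samples and acceptance ratio."""
    rng = np.random.default_rng(seed)
    x = np.atleast_1d(np.asarray(x0, dtype=float)).copy()
    e = energy(x)
    samples = np.empty((n_steps, x.size))
    n_accepted = 0
    for i in range(n_steps):
        trial = x + rng.uniform(-step_size, step_size, size=x.shape)
        e_trial = energy(trial)
        # Downhill moves are always accepted; uphill moves with Boltzmann probability
        if e_trial <= e or rng.random() < np.exp(-(e_trial - e) / kT):
            x, e = trial, e_trial
            n_accepted += 1
        samples[i] = x  # a rejected move repeats the current state
    return samples, n_accepted / n_steps


def double_well(x):
    """Toy energy in reduced units with a barrier of 5 at x = 0."""
    return 5.0 * float(np.sum((x ** 2 - 1.0) ** 2))


if __name__ == "__main__":
    # Raising kT (analogous to scanning temperature) increases barrier crossings
    for kT in (1.0, 2.0):
        samples, acc = metropolis(double_well, [-1.0], 20_000, 0.5, kT)
        print(f"kT={kT}: acceptance={acc:.2f}, right-well fraction={np.mean(samples > 0):.2f}")
```

Successive MC samples are correlated (a rejected move repeats the previous state), so statistical errors should be estimated with block averaging rather than by treating every step as independent.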

Protocol 2: Hybrid Sampling with ClustENMD

This method efficiently generates diverse conformers at atomic resolution [15].

- Conformer Generation: Deform the initial protein structure along its low-frequency normal modes (calculated using an Elastic Network Model) to create a large pool of candidate conformers.

- Clustering: Group the generated conformers based on structural similarity (e.g., using RMSD) to identify unique representative structures.

- Refinement: Run short, all-atom MD simulations (with implicit or explicit solvent) for each cluster representative to relax and refine the structures, removing steric clashes and incorporating atomic-level details.

- Validation: Compare the final ensemble of conformers against experimental data by analyzing the principal components of structural change to see if the known experimental space is reproduced.
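The PCA-based validation step can be prototyped by fitting principal components on an ensemble of experimental structures and then projecting the generated conformers onto them. This sketch assumes all structures share the same atom ordering and have already been superposed onto a common reference; the function names are illustrative.

```python
import numpy as np


def fit_pca(reference_xyz, n_components=2):
    """PCA of (n_structures, n_atoms, 3) coordinates. Returns the mean, the
    principal axes, and the fraction of variance each axis explains."""
    X = np.asarray(reference_xyz, dtype=float).reshape(len(reference_xyz), -1)
    mean = X.mean(axis=0)
    _, singular_values, vt = np.linalg.svd(X - mean, full_matrices=False)
    variance = singular_values ** 2
    explained = variance / variance.sum()
    return mean, vt[:n_components], explained[:n_components]


def project(xyz, mean, components):
    """Coordinates of each structure along the fitted principal components."""
    X = np.asarray(xyz, dtype=float).reshape(len(xyz), -1)
    return (X - mean) @ components.T
```

Plotting the projections of the simulated and experimental ensembles together in the PC1/PC2 plane shows directly whether the simulation covers the experimentally observed conformational space.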

Workflow: Navigating Sampling Method Selection

The following diagram illustrates a logical pathway for selecting a sampling strategy based on your research goals and resources.

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Resource | Function / Description | Relevance to Sampling |
|---|---|---|
| AMBER99SB*-ILDN Force Field [14] | A high-quality, all-atom intramolecular force field. | Provides physically accurate energy calculations for both MD and MC simulations, crucial for correct folding behavior. |
| Generalized Born (GB) Implicit Solvent Model [14] | An approximate model that simulates solvent effects without explicit water molecules. | Drastically reduces computational cost, making extensive sampling in MC and hybrid methods feasible. |
| Elastic Network Model (ENM) [15] | A coarse-grained model that identifies a protein's collective, large-scale motions. | Used in hybrid methods to generate realistic deformation directions, guiding all-atom simulations to explore relevant conformational space. |
| Principal Component Analysis (PCA) [15] | A dimensionality reduction technique to identify major modes of structural variation. | A critical analytical tool to compare and validate that your simulation's sampled space matches experimental ensembles. |
| Visual Molecular Dynamics (VMD) [17] | A molecular visualization program for displaying, animating, and analyzing large biomolecular systems. | Essential for visual inspection of trajectories, analysis of structural dynamics, and creation of publication-quality renderings. |

A Toolkit of Solutions: Enhanced Sampling and AI-Driven Approaches

Troubleshooting Guides

FAQ 1: How do I resolve "hidden barriers" and poor sampling efficiency when my collective variables are not the true reaction coordinates?

| Problem | Root Cause | Diagnostic Steps | Solution |
|---|---|---|---|
| Ineffective acceleration and "hidden barriers" persist despite biasing chosen Collective Variables (CVs). | The selected CVs do not correspond to the true reaction coordinates (tRCs), missing the essential degrees of freedom that govern the conformational change [18]. | 1. Check if the biased trajectories follow non-physical pathways [18]. 2. Monitor if the system gets trapped in metastable states not described by the current CVs [1]. | 1. Identify True Reaction Coordinates (tRCs): Use methods like the generalized work functional (GWF) or potential energy flow (PEF) analysis computed from energy relaxation simulations to find optimal CVs [18]. 2. Use Adaptive Path CVs: Implement path collective variables (PCVs) that converge to the minimum free energy path during the simulation [19]. |

FAQ 2: How can I fix numerical instability and sampling artifacts in adaptive path collective variable simulations?

| Problem | Root Cause | Diagnostic Steps | Solution |
|---|---|---|---|
| Simulation crashes or shows large, non-physical fluctuations in the extended variable when using adaptive path CVs. | Discontinuities in the path CV value (s(z)) caused by path updates or the system "shortcutting" a curved path [19]. | 1. Observe sudden jumps in the value of the progress variable s in the output logs. 2. Check for large, abrupt changes in the bias potential or the extended variable (λ). | 1. Stabilization Algorithm: Implement a correction step for the extended variable's position before integration. If the change in the CV (s(z)) between steps exceeds a threshold (e.g., the thermal coupling width σ), correct the previous extended variable position: λ(t-1) = s(z(t)) [19]. 2. Confinement Potential: Apply a mild harmonic restraint on the distance from the path (d(z)) to reduce shortcutting [19]. |
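The stabilization rule can be expressed in a few lines. This is a minimal sketch, assuming a harmonic extended-system coupling with force constant k_B T/σ² (a common extended-Lagrangian convention) and k_B T ≈ 2.494 kJ/mol (300 K); the function names are hypothetical, and in practice the correction lives inside the sampling code rather than in user scripts.

```python
def coupling_force_on_lambda(lam, s, sigma, kT=2.494):
    """Force on the extended variable from the spring (kT / sigma^2) * (lam - s)^2 / 2."""
    return (kT / sigma ** 2) * (s - lam)


def stabilized_previous_lambda(lam_prev, s_prev, s_now, sigma):
    """If s(z) jumped by more than sigma between steps (e.g. after a path update
    or a shortcut across a curved path), reset lambda(t-1) = s(z(t))."""
    if abs(s_now - s_prev) > sigma:
        return s_now
    return lam_prev


if __name__ == "__main__":
    sigma = 0.05
    lam_prev, s_prev, s_now = 0.31, 0.30, 0.45  # s jumps after a path update
    raw = coupling_force_on_lambda(lam_prev, s_now, sigma)
    fixed = coupling_force_on_lambda(
        stabilized_previous_lambda(lam_prev, s_prev, s_now, sigma), s_now, sigma)
    print(f"spring force without correction: {raw:.1f}, with correction: {fixed:.1f}")
```

With a tight coupling width, even a modest jump in s produces a very large spring force; resetting λ removes that spurious impulse instead of letting it destabilize the integrator.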

FAQ 3: What should I do if my metadynamics or eABF simulation shows poor free energy convergence?

| Problem | Root Cause | Diagnostic Steps | Solution |
|---|---|---|---|
| The calculated free energy profile does not converge and changes significantly with simulation time. | 1. In metadynamics, the bias deposition rate is too high [1]. 2. The CV space is too high-dimensional, leading to slow filling [19]. 3. The chosen CVs are suboptimal, creating hidden barriers [18]. | 1. For metadynamics, monitor the time evolution of the bias potential; it should stabilize [1]. 2. For WTM-eABF, check the convergence of the adaptive biasing force [19]. | 1. Use Well-Tempered Metadynamics: This variant reduces the height of added Gaussian hills over time, ensuring smoother convergence [1] [19]. 2. Employ Hybrid Methods: Use the WTM-eABF algorithm, which combines the benefits of metadynamics and ABF for faster and more robust convergence [19]. 3. Post-Processing Reweighting: Use the multistate Bennett's acceptance ratio (MBAR) estimator on the trajectory to recover unbiased statistical weights and improve the free energy estimate [19]. |

FAQ 4: How can I generate an initial path for path collective variable methods when I lack prior mechanistic knowledge?

| Problem | Root Cause | Diagnostic Steps | Solution |
|---|---|---|---|
| No initial transition path is available to initialize adaptive path CV simulations. | The transition mechanism is unknown, and reactant/product states are separated by a high free energy barrier [18]. | The simulation cannot be started because an initial path connecting the two metastable states is required. | 1. Linear Interpolation: Create an initial path by linearly interpolating between the known reactant and product structures in the CV space [19]. 2. Use tRCs from a Single Structure: Apply methods that can compute true reaction coordinates from a single protein structure via energy relaxation simulations, then use these to guide path generation [18]. 3. High-Temperature MD: Run short MD simulations at elevated temperatures to prompt spontaneous transitions, though this is not guaranteed for large barriers [1]. |
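Option 1 (linear interpolation) can be sketched as below. It assumes the CVs are already scaled to comparable units; for angular CVs in radians, flagged through an illustrative `periodic` mask, it interpolates along the shorter arc so the path does not wind the long way around the circle. The function name is hypothetical.

```python
import numpy as np


def linear_initial_path(z_reactant, z_product, n_nodes=20, periodic=None):
    """Equidistant nodes on the straight line from reactant to product in CV space.

    periodic: optional boolean mask marking angular CVs (radians, period 2*pi).
    """
    a = np.asarray(z_reactant, dtype=float)
    b = np.asarray(z_product, dtype=float)
    delta = b - a
    mask = None
    if periodic is not None:
        mask = np.asarray(periodic, dtype=bool)
        # Take the shorter way around the circle for angular CVs
        delta[mask] = (delta[mask] + np.pi) % (2.0 * np.pi) - np.pi
    t = np.linspace(0.0, 1.0, n_nodes)[:, None]
    nodes = a + t * delta
    if mask is not None:
        # Wrap angular components back into [-pi, pi)
        nodes[:, mask] = (nodes[:, mask] + np.pi) % (2.0 * np.pi) - np.pi
    return nodes
```

A straight line in CV space may cross high-energy or sterically impossible regions, so it should be treated only as a starting guess for the adaptive path to refine.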

Experimental Protocols

Protocol 1: Setting Up a Well-Tempered Metadynamics Simulation with a Single Collective Variable

This protocol provides a methodology for accelerating the sampling of a conformational change using a one-dimensional CV, such as a distance or dihedral angle.

Application Context: This is suitable for studying processes where a reasonable one-dimensional reaction coordinate is known or hypothesized, such as a ligand dissociation or a domain hinge motion [1].

Detailed Methodology:

System Preparation:

- Prepare the protein-ligand complex topology and coordinate files using a tool like tleap for the AMBER suite. Solvate the system in a water box (e.g., TIP3P) and add ions to neutralize the charge [20].

- Perform a multi-step energy minimization to relieve steric clashes.

- Gradually heat the system to the target temperature (e.g., 300 K) under constant volume conditions with positional restraints on the protein heavy atoms.

- Equilibrate the system without restraints under constant pressure (NPT ensemble) until the density stabilizes [20].

Collective Variable Selection:

- Define a CV (ξ) that distinguishes the reactant and product states. Examples include the distance between two protein domains, a key dihedral angle, or the root-mean-square deviation (RMSD) from a reference structure.

Simulation Parameters for Well-Tempered Metadynamics:

- Bias Factor: Set to achieve a significantly higher "bias temperature" for the CV (e.g., an effective T + ΔT of roughly 1000–6000 K) to enhance barrier crossing. At T = 300 K this corresponds to a bias factor γ = (T + ΔT)/T of about 3–20.

- Gaussian Height (hG): Start with a value between 0.1 and 1.0 kJ/mol.

- Gaussian Width (σG): Choose based on the fluctuation of the CV in an unbiased short simulation.

- Deposition Rate: Add a Gaussian hill every 100–1000 simulation steps (0.2–2 ps with a 2 fs timestep).
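The roles of the bias factor, Gaussian height, width, and deposition pace can be explored on a toy model before running a real system. The sketch below is a self-contained one-dimensional illustration in reduced units (a double well with a 5 kT barrier, overdamped Langevin dynamics); it is not a substitute for a production implementation such as PLUMED, and every parameter value is an illustrative assumption. The final profile uses the standard well-tempered relation F(s) ≈ -(γ/(γ-1)) V(s).

```python
import numpy as np


def well_tempered_metad_1d(n_steps=40_000, dt=1e-3, kT=1.0, bias_factor=10.0,
                           height0=0.5, width=0.1, pace=100, seed=0):
    """Toy well-tempered metadynamics on U(x) = 5 (x^2 - 1)^2 (reduced units),
    propagated with overdamped Langevin dynamics (unit friction).

    Each new hill has height height0 * exp(-V(x) / (kB*dT)) with
    kB*dT = (bias_factor - 1) * kT, so hills shrink as the bias grows.
    """
    rng = np.random.default_rng(seed)
    k_delta_t = (bias_factor - 1.0) * kT
    centers, heights = [], []
    x = -1.0  # start in the left-hand well

    def bias_and_force(pos):
        """Bias V(pos) and its force -dV/dx from all hills deposited so far."""
        if not centers:
            return 0.0, 0.0
        c = np.asarray(centers)
        g = np.asarray(heights) * np.exp(-((pos - c) ** 2) / (2.0 * width ** 2))
        return float(g.sum()), float((g * (pos - c)).sum() / width ** 2)

    for step in range(n_steps):
        _, f_bias = bias_and_force(x)
        f_phys = -20.0 * x * (x * x - 1.0)  # -dU/dx
        x += (f_phys + f_bias) * dt + np.sqrt(2.0 * kT * dt) * rng.normal()
        if (step + 1) % pace == 0:
            v_here, _ = bias_and_force(x)
            heights.append(height0 * np.exp(-v_here / k_delta_t))
            centers.append(x)
    return np.asarray(centers), np.asarray(heights)


def wt_free_energy(grid, centers, heights, width, bias_factor):
    """Free-energy estimate F(s) = -(gamma / (gamma - 1)) V(s), shifted to min 0."""
    g = heights[None, :] * np.exp(-((grid[:, None] - centers[None, :]) ** 2) / (2.0 * width ** 2))
    free_energy = -bias_factor / (bias_factor - 1.0) * g.sum(axis=1)
    return free_energy - free_energy.min()
```

Comparing `wt_free_energy` profiles computed at successive times is a simple convergence check; a larger bias factor fills the wells faster but gives a noisier bias.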

Production Simulation:

Protocol 2: Implementing the Path-WTM-eABF Hybrid Method for Complex Transitions

This protocol describes a more advanced setup for sampling complex transitions where a one-dimensional CV is insufficient, using adaptive path collective variables biased by the WTM-eABF algorithm [19].

Application Context: Ideal for intricate biomolecular processes like enzymatic reactions or large-scale protein conformational changes with multiple slow degrees of freedom [19].

Detailed Methodology:

Initial System Preparation: Follow the same steps as in Protocol 1 for system minimization, heating, and equilibration.

Define the Collective Variable Space and Initial Path:

- Select a set of relevant CVs (e.g., several distances, angles, or dihedrals) that are thought to describe the transition. This forms the CV space z.

- Generate an initial path connecting the reactant and product states in this CV space. This can be done via linear interpolation or by using a zero-temperature path optimization method like the nudged elastic band [19].

Configure the Path WTM-eABF Simulation:

- Path Parameters: Define the path with a sufficient number of discrete, equidistant nodes (e.g., 20–50). Set a path update frequency (e.g., every N steps) and a half-life (τ) for the update weights.

- WTM-eABF Parameters:

  - Thermal Coupling Width (σ): Use a small value (e.g., 0.01–0.05) for tight coupling between the extended variable λ and the path progress s(z).

  - Bias Temperature (ΔT): Set for the WTM component.

  - Gaussian Parameters: Define the height and width for the WTM hills deposited in λ-space.

- Stabilization: Enable the correction algorithm for the extended variable λ to handle discontinuities from path updates, using the thermal coupling width σ as the threshold [19].

Production and Analysis:

- Run the simulation. The path will iteratively update towards the minimum free energy path.

- Monitor the convergence metric Δs(z), which should approach zero at the transition state for an ideal path [19].

- Use the MBAR estimator on the trajectory frames to compute the final converged free energy profile [19].
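For reference, the progress and distance variables can be computed for a fixed set of nodes with the widely used arithmetic path-CV definitions: s(z) = Σ_i (i/(N-1)) exp(-λ|z - z_i|²) / Σ_i exp(-λ|z - z_i|²) and d(z) = -(1/λ) ln Σ_i exp(-λ|z - z_i|²). This sketch does not implement the adaptive node updates of [19], whose exact definitions may differ, and the choice of λ as 2.3 divided by the mean squared spacing between consecutive nodes is a commonly quoted rule of thumb rather than a value from the source.

```python
import numpy as np


def path_cv(z, nodes, lam):
    """Progress s in [0, 1] and distance d (squared-distance units) of point z from a path."""
    z = np.asarray(z, dtype=float)
    nodes = np.asarray(nodes, dtype=float)
    d2 = ((nodes - z) ** 2).sum(axis=1)          # |z - z_i|^2 for every node
    w = np.exp(-lam * (d2 - d2.min()))            # shifted to avoid underflow
    progress = np.arange(len(nodes)) / (len(nodes) - 1)
    s = float((progress * w).sum() / w.sum())
    d = float(d2.min() - np.log(w.sum()) / lam)   # equals -(1/lam) ln sum exp(-lam d2)
    return s, d


def default_lambda(nodes):
    """Rule-of-thumb lambda: 2.3 / mean squared distance between consecutive nodes."""
    steps = np.diff(np.asarray(nodes, dtype=float), axis=0)
    return 2.3 / float((steps ** 2).sum(axis=1).mean())
```

The shift by the smallest squared distance leaves s and d mathematically unchanged but keeps the exponentials finite when z lies far from every node.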

| Item Name | Function / Purpose | Example Usage in Protocol |
|---|---|---|
| Molecular Dynamics Engine | Software to perform the MD and enhanced sampling simulations. | GROMACS [1], NAMD [1], AMBER [1] [20]. Used in all protocols for simulation execution. |
| Path Collective Variable (PCV) Implementation | A code or plugin that defines and manages adaptive path CVs. | PLUMED [19]. Essential for Protocol 2 to define the path and calculate the progress variable s(z). |
| Generalized Born (GB) Solvent Model | An implicit solvent model for faster computation of solvation effects. | Used in force field parameterization for continuum solvent [21] and can be used in production MD (e.g., igb=5 in AMBER [21]). |
| True Reaction Coordinate (tRC) Identification Tool | A method or code to compute optimal CVs from protein structures. | Generalized Work Functional (GWF) method [18]. Used to find the best CVs to bias, overcoming hidden barriers. |
| Multistate Bennett's Acceptance Ratio (MBAR) | A statistical reweighting estimator to compute unbiased free energies from biased simulations. | Used in post-processing of WTM-eABF trajectories to obtain accurate free energies [19]. |
| IEFPCM Solvent Model | A continuum solvation model used in quantum chemical calculations. | Used during force field parameterization to compute energies in liquid phase for more accurate parameters [21]. |

Signaling Pathways and Workflows

Frequently Asked Questions (FAQs) & Troubleshooting Guide

This guide addresses common challenges researchers face when implementing generative AI models for simulating small protein ensembles, providing targeted solutions to overcome sampling limitations.

FAQ 1: Why does my generated protein ensemble lack structural diversity and get stuck near the initial conformation?

- Problem: The model fails to explore conformational states far from the input structure, a common limitation noted in models like AlphaFlow and aSAM when dealing with complex multi-state proteins or long flexible elements [22].

- Solutions:

- High-Temperature Training: Incorporate high-temperature (e.g., 450 K) simulation data from datasets like mdCATH during training. This teaches the model to overcome energy barriers and explore a wider landscape [22].

- Architectural Check: Ensure your model uses an expressive, full-rank transformation. For flow-based models, consider residual connections (φ_k(x) = x + δ * u_k(x)) to improve the representational capacity of the learned transformation [23].

- Evaluation: Quantify diversity using the mean initial RMSD (initRMSD) of your generated ensemble and compare it to a reference MD simulation. Consistently lower values indicate poor exploration [22].
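The initRMSD diagnostic can be computed with a standard Kabsch superposition. A minimal NumPy sketch, assuming each generated structure and the initial structure are (n_atoms, 3) arrays of matching atoms (e.g., Cα); the helper names are illustrative.

```python
import numpy as np


def kabsch_rmsd(P, Q):
    """RMSD between (n, 3) coordinate sets after optimal superposition (Kabsch)."""
    P = np.asarray(P, dtype=float)
    Q = np.asarray(Q, dtype=float)
    P = P - P.mean(axis=0)                 # remove translation
    Q = Q - Q.mean(axis=0)
    U, _, Vt = np.linalg.svd(P.T @ Q)      # SVD of the 3x3 covariance matrix
    d = np.sign(np.linalg.det(Vt.T @ U.T)) # guard against improper rotations
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    diff = P @ R.T - Q
    return float(np.sqrt((diff ** 2).sum() / len(P)))


def mean_init_rmsd(ensemble, initial):
    """Mean RMSD of every generated structure to the initial (input) structure."""
    return float(np.mean([kabsch_rmsd(x, initial) for x in ensemble]))
```

Computing `mean_init_rmsd` for both the generated ensemble and the reference MD frames gives the comparison described above.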

FAQ 2: How can I improve the physical realism and stereochemical quality of generated atomistic structures?

- Problem: Generated structures, particularly side chains, may contain atom clashes or distorted bond geometries, even when global structures appear correct. This is a known issue in atomistic generators like aSAM [22].

- Solutions:

- Post-Processing Energy Minimization: Apply a brief, constrained energy minimization to the raw model outputs. Restraining backbone atoms to 0.15–0.60 Å RMSD during minimization can efficiently resolve clashes while preserving the overall ensemble structure [22].

- Physics-Informed Loss: Incorporate physics-based terms, such as bond length or angle restraints, directly into the model's loss function during training to encourage stereochemical validity [22].

- Quality Metrics: Use validation tools like MolProbity scores to assess clash scores and overall structural quality, comparing your results against established baselines [22].
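Before running full MolProbity validation, a quick screen for severe clashes can flag structures that need minimization. This crude sketch counts non-bonded heavy-atom pairs closer than an assumed 2.2 Å cutoff (angle-separated 1–3 pairs normally sit just beyond it). It is not the MolProbity clashscore, which uses all-atom contact analysis including hydrogens, and the caller must supply the covalent bond list; the function name is hypothetical.

```python
import numpy as np


def count_clashes(coords, bonded_pairs=(), cutoff=2.2):
    """Count non-bonded heavy-atom pairs closer than `cutoff` (Å).

    coords: (n_atoms, 3); bonded_pairs: iterable of (i, j) covalent bonds to ignore.
    """
    xyz = np.asarray(coords, dtype=float)
    dist = np.sqrt(((xyz[:, None, :] - xyz[None, :, :]) ** 2).sum(axis=-1))
    close = np.triu(dist < cutoff, k=1)  # each unordered pair once, no self-pairs
    for i, j in bonded_pairs:
        close[min(i, j), max(i, j)] = False
    return int(close.sum())
```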

FAQ 3: My model performs well on backbone atoms but poorly on side-chain conformations. How can I fix this?

- Problem: Some models are optimized for backbone (Cα) generation and treat side chains as a separate, less accurate post-processing step [22].

- Solutions:

- Latent Diffusion for Atoms: Implement a latent diffusion model (like aSAM) that encodes and generates heavy atom coordinates, forcing the model to learn joint distributions for both backbone and side-chain torsion angles [22].

- Focus on Torsions: Evaluate your model's performance on side-chain dihedral angles (χ distributions). A model specifically designed for atomistic generation should approximate these distributions from MD reference data much more effectively than Cα-only models [22].

FAQ 4: What is the fundamental connection between a denoising diffusion model and a classical Molecular Dynamics (MD) force field?

- Problem: The relationship between the learned denoising process and physical forces can seem abstract.

- Explanation: A denoising model trained to remove noise from atomic configurations is closely related to learning the physical force field. For a Boltzmann distribution p ∝ e^(-βE), the score function ∇ log p = -β∇E is proportional to the physical force -∇E, and in the limit of small noise the optimal denoiser approximates this score [24] [25]. The model learns to move atoms from a noisy (unstable) state back to a more stable one, which is what forces do in an MD simulation [24].
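The score-force relation can be checked numerically on a toy system. This sketch uses a harmonic energy E(x) = ½k|x|² (an assumption chosen because everything is analytic): it compares a finite-difference ∇ log p with βF, and shows that the ideal (posterior-mean) denoiser for Gaussian noise of width σ recovers the same quantity as σ → 0, via Tweedie's formula. The function names are illustrative.

```python
import numpy as np


def energy(x, k=2.0):
    """Harmonic toy energy E(x) = k |x|^2 / 2."""
    return 0.5 * k * float(np.sum(np.asarray(x, dtype=float) ** 2))


def force(x, k=2.0):
    """Physical force -grad E."""
    return -k * np.asarray(x, dtype=float)


def finite_difference_score(x, beta, k=2.0, h=1e-5):
    """Central-difference estimate of grad log p for p ∝ exp(-beta E);
    the normalization constant drops out of the gradient."""
    x = np.asarray(x, dtype=float)
    grad = np.zeros_like(x)
    for i in range(x.size):
        e = np.zeros_like(x)
        e[i] = h
        grad[i] = (-beta * energy(x + e, k) + beta * energy(x - e, k)) / (2.0 * h)
    return grad


def ideal_denoiser_score(x_noisy, beta, sigma, k=2.0):
    """Boltzmann samples of the harmonic well have variance 1/(beta k). For Gaussian
    noise of std sigma, the posterior-mean denoiser shrinks x_noisy toward 0, and by
    Tweedie's formula (denoised - x_noisy) / sigma^2 is the score of the noised density."""
    var_x = 1.0 / (beta * k)
    x_noisy = np.asarray(x_noisy, dtype=float)
    denoised = x_noisy * var_x / (var_x + sigma ** 2)
    return (denoised - x_noisy) / sigma ** 2
```

For larger σ the denoiser's score is that of the noise-smoothed density, -x/(1/(βk) + σ²), which is why diffusion models anneal from large to small noise levels.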

Experimental Protocols & Performance Data

The following table summarizes key quantitative results from benchmarking the aSAM model against AlphaFlow on the ATLAS dataset, highlighting their respective strengths and weaknesses in generating protein ensembles [22].

Table 1: Benchmarking aSAM versus AlphaFlow on ATLAS MD Dataset

| Evaluation Metric | Model | Performance Result | Interpretation & Implication |
|---|---|---|---|
| Cα RMSF Correlation (PCC) | AlphaFlow | 0.904 (Avg.) | Better at capturing the amplitude of local backbone fluctuations. |
| | aSAMc | 0.886 (Avg.) | Slightly lower, but still strong performance on local flexibility. |
| Cβ Ensemble Similarity (WASCO-global) | AlphaFlow | Higher Score | Significantly better at approximating the global ensemble diversity. |
| | aSAMc | Lower Score | Struggles more with multi-state proteins and large conformational changes. |
| Backbone Torsion Angles (WASCO-local) | aSAMc | Higher Score | Superior at learning the joint distribution of φ/ψ angles. |
| | AlphaFlow | Lower Score | Limited by training that uses only Cβ positions. |
| Side-Chain Torsion Angles (χ distributions) | aSAMc | High Accuracy | Effectively approximates χ distributions from MD data. |
| | AlphaFlow | Low Accuracy | Cannot model side-chain torsions directly. |
| Structural Quality (MolProbity Score) | AlphaFlow | Better (Lower) Score | Produces structurally cleaner outputs with fewer clashes. |
| | aSAMc | Worse (Higher) Score by 0.63 units | Requires post-generation energy minimization for improvement. |

Protocol: Implementing a Temperature-Conditioned Latent Diffusion Model (aSAMt)

This methodology enables the generation of structural ensembles conditioned on temperature, generalizing to unseen sequences and temperatures [22].

Data Preparation:

- Source: Use the mdCATH dataset, which contains MD simulations for thousands of globular protein domains at multiple temperatures (e.g., 320 K to 450 K) [22].

- Partition: Split the data into training and test sets, ensuring no protein sequence overlap.

Model Training:

- Step 1 - Autoencoder (AE): Train an autoencoder to learn a compressed, SE(3)-invariant latent representation of heavy atom protein coordinates. The decoder must accurately reconstruct 3D structures with a heavy atom RMSD of 0.3–0.4 Å [22].

- Step 2 - Latent Diffusion: Train a diffusion model to learn the probability distribution of the latent encodings. Condition this model on both an initial 3D structure and the target temperature [22].

Ensemble Generation & Refinement:

- Sampling: For a given input structure and temperature, sample multiple latent vectors from the diffusion model and decode them into 3D structures [22].

- Energy Minimization: To resolve minor atom clashes, apply a short energy minimization protocol that restrains backbone atoms. This step is crucial for achieving good (low) MolProbity scores [22].

Validation:

- Assess the temperature-dependent behavior of ensemble properties like flexibility (RMSF) and conformational diversity (PCA, initRMSD).

- Use WASCO-local scores to validate the accuracy of backbone (φ/ψ) and side-chain (χ) torsion angle distributions against reference MD data [22].
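The flexibility comparison used in Table 1 (Pearson correlation of per-residue Cα RMSF between a generated ensemble and reference MD) can be sketched as follows, assuming both ensembles are (n_frames, n_residues, 3) Cα arrays already superposed onto a common reference. The function names are illustrative.

```python
import numpy as np


def rmsf(traj):
    """Per-atom root-mean-square fluctuation about the ensemble mean structure."""
    X = np.asarray(traj, dtype=float)
    mean = X.mean(axis=0)
    return np.sqrt(((X - mean) ** 2).sum(axis=2).mean(axis=0))


def rmsf_pcc(generated, reference):
    """Pearson correlation between the RMSF profiles of two ensembles."""
    return float(np.corrcoef(rmsf(generated), rmsf(reference))[0, 1])
```

Running this on ensembles generated at several temperatures shows whether flexibility grows with temperature as it does in the reference MD.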

Workflow Visualization

The following diagram illustrates the core architecture and process of a temperature-conditioned latent diffusion model for protein ensemble generation.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Generative Protein Ensemble Research

| Resource Name | Type | Primary Function in Research |
|---|---|---|
| mdCATH Dataset [22] | Dataset | Provides MD simulations of thousands of globular protein domains at multiple temperatures for training and validating temperature-conditioned models. |
| ATLAS Dataset [22] | Dataset | A large dataset of protein simulations performed at 300 K, used for benchmarking ensemble generation methods. |
| aSAM / aSAMt [22] | Software Model | An atomistic latent diffusion model for generating all-heavy-atom protein ensembles, with a version (aSAMt) conditioned on temperature. |
| AlphaFlow [22] | Software Model | A generative model based on AlphaFold2 components, used as a state-of-the-art benchmark for Cβ and flexibility modeling. |
| BoltzGen [26] | Software Model | A generative AI model capable of de novo protein binder design, unifying both structure prediction and protein design tasks. |
| Energy Minimization Protocol [22] | Computational Method | A constrained optimization process applied to generated structures to resolve atom clashes and improve stereochemical quality without significantly altering the global conformation. |
| WASCO Score [22] | Analysis Metric | Quantifies the similarity between two structural ensembles (global) or their local torsion angle distributions (local). |
| Equivariant GNN (e.g., SE(3)-Transformer) [25] | Neural Network Architecture | A type of graph neural network that preserves symmetry under 3D rotations and translations, crucial for processing and generating 3D molecular structures. |

Leveraging Machine Learning for Data-Driven Collective Variables

Technical Support Center

Frequently Asked Questions (FAQs)

FAQ 1: What are data-driven collective variables (CVs) and how do they differ from traditional CVs? Traditional CVs are based on intuitive physical or chemical descriptors (e.g., distances, angles, radii of gyration). Data-driven CVs are instead learned from simulation data by machine learning algorithms. In the approach described in [27], they are one-dimensional, interpretable, and differentiable variables that generalize the path collective variable concept: they are obtained by kernel ridge regression of the committor probability, which encodes the progress of a transformation. This approach can uncover optimal descriptors for modeling transformations that are not apparent through physical intuition alone [27].
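To make the regression step concrete, here is a generic kernel ridge regression of committor values onto configuration descriptors. It is a minimal sketch, not the specific method of [27]: the Gaussian kernel, length scale, ridge parameter, and the synthetic sigmoidal committor in the example are all assumptions. In practice the committor labels would come from, for example, shooting trajectories, and the fitted function is smooth and differentiable in its inputs, which is what allows it to serve as a biasing CV.

```python
import numpy as np


def gaussian_kernel(A, B, length_scale):
    """k(a, b) = exp(-|a - b|^2 / (2 l^2)) for all pairs of rows of A and B."""
    d2 = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-d2 / (2.0 * length_scale ** 2))


def fit_committor_krr(X, p_b, length_scale=0.5, ridge=1e-3):
    """Fit q(x) ≈ p_B by kernel ridge regression; returns a predictor function."""
    X = np.asarray(X, dtype=float)
    K = gaussian_kernel(X, X, length_scale)
    alpha = np.linalg.solve(K + ridge * np.eye(len(X)), np.asarray(p_b, dtype=float))

    def predict(Z):
        Z = np.atleast_2d(np.asarray(Z, dtype=float))
        return gaussian_kernel(Z, X, length_scale) @ alpha

    return predict


if __name__ == "__main__":
    # Synthetic example: the committor rises sigmoidally along a 1D descriptor
    x = np.linspace(-1.0, 1.0, 41)[:, None]
    p_b = 1.0 / (1.0 + np.exp(-5.0 * x[:, 0]))
    q = fit_committor_krr(x, p_b)
    print(q([[-0.8], [0.0], [0.8]]))
```

The ridge term trades fit accuracy for smoothness; predictions are not constrained to [0, 1], so they may need clipping before being interpreted as probabilities.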

FAQ 2: Why is adequate sampling a critical challenge in Molecular Dynamics (MD) simulations of small proteins? Biological molecules possess rough energy landscapes with many local minima separated by high-energy barriers. This makes it easy for simulations to become trapped in non-functional conformational states for extended periods, preventing a meaningful characterization of the protein's dynamics and function. Large conformational changes essential for biological activity, such as those occurring during catalysis or substrate transport, often occur on timescales beyond the reach of conventional MD simulations [1].

FAQ 3: My ML-CV seems to perform poorly when applied to a larger system. What could be the cause? This is a common issue related to transferability. A machine-learned force field (and by extension, a CV derived from it) is only applicable to the phases of the material in which it was trained. If the larger system explores regions of phase space not included in the training data, the results will be unreliable. The best practice is to ensure the training data explores as much of the relevant phase space as possible. It is often recommended to train a force field on a system that is large enough for the relevant phonons or collective oscillations to fit into the supercell [28].

FAQ 4: During on-the-fly training, the number of reference configurations (ML_ABN) is low. How can I increase it?

A low number of selected reference configurations indicates that the algorithm is not encountering enough novel atomic environments. This can be addressed by adjusting the threshold for selecting new configurations. Lowering the default value of the ML_CTIFOR parameter (e.g., from 0.02 to a smaller value) makes the criterion for adding a new configuration to the training set more sensitive. The optimal value is system-dependent and should be determined through trial and error [28].

FAQ 5: How should I handle atoms of the same element in different chemical environments (e.g., bulk vs. surface oxygen)? Treating them as a single species can lead to higher errors. For improved accuracy, you can split a single elemental species into multiple subtypes. This involves:

- Grouping atoms by their subtype in the POSCAR file.

- Assigning each group a unique name (e.g., O1, O2).

- Updating the POTCAR file to include a separate entry for each new species name.

Be aware that this improves accuracy at the cost of computational efficiency, as the cost scales quadratically with the number of species [28].

Troubleshooting Guides

Problem: Poor Convergence of Free Energy Estimates with ML-CVs

| Symptom | Potential Cause | Solution |
|---|---|---|
| Hysteresis in free energy profiles | Inadequate sampling of the transition path or a poorly chosen CV. | Ensure the ML-CV is trained on sufficient data that includes configurations from the transition state region. Consider running multiple independent simulations or using a variant of metadynamics (e.g., well-tempered metadynamics) to improve convergence [1]. |
| Drifting free energy estimates | The system is exploring new phase space not captured by the original ML-CV. | Implement a re-training protocol where the ML model is updated with new data collected during the enhanced sampling simulation, or use an on-the-fly learning approach [27] [28]. |
| High variance in forces from MLFF | Insufficient training data or inaccurate ab-initio reference calculations. | Verify the convergence of the underlying ab-initio calculations (e.g., KPOINTS, ENCUT). Continue training in underrepresented regions of phase space (using ML_MODE=TRAIN with an existing ML_AB file) to expand the database [28]. |

Problem: Instabilities in On-the-Fly Machine Learning Force Field Training

| Symptom | Potential Cause | Solution |
|---|---|---|
| Non-converged electronic structures during ab-initio steps | Carrying the charge-density mixing history over between ionic steps (MAXMIX > 0) while the ions move substantially between calculations. | Do not set MAXMIX > 0 when training force fields. The large ionic displacements between first-principles calculations often cause the self-consistency cycle to fail [28]. |
| Unphysical cell deformation in NpT simulations | Extreme cell tilting, especially in fluids or systems with vacuum. | For fluids, use ICONST to constrain the cell shape and allow only volume changes; this prevents the cell from "collapsing." For systems with surfaces or isolated molecules, the stresses are not physically meaningful training targets; set ML_WTSIF to a very small value (e.g., 1E-10) and use the NVT ensemble [28]. |
| Poor exploration of phase space | Training in the NVE ensemble or starting at too high a temperature. | Avoid training in the NVE ensemble. Prefer the NpT ensemble (if applicable) or the NVT ensemble with a stochastic thermostat (e.g., Langevin) for better ergodicity. Start training at a low temperature and gradually increase it (ramp) to about 30% above the target application temperature [28]. |

Problem: Inaccurate or Non-Interpretable ML-CVs

| Symptom | Potential Cause | Solution |
|---|---|---|
| CV fails to distinguish between relevant states | The input features/descriptors lack the necessary information to describe the mechanism. | Use global descriptors that capture permutation invariance or other relevant symmetries. For solvation dynamics, ensure descriptors include information from the first solvation shell. In some cases, inertial effects may require more complex features than just atomic positions [27]. |
| CV is noisy and not smooth | Under-regularized model or noisy training data. | Adjust the regularization parameter in the kernel ridge regression. Ensure the forces from the ab-initio calculations are well-converged, as they are used in training the committor model [27] [28]. |

Experimental Protocols and Methodologies

Protocol 1: Data-Driven Path Collective Variable Generation

This methodology outlines the generation of a data-driven collective variable as described in France-Lanord et al. [27].

- Data Collection: Perform ab-initio MD simulations or select a diverse set of configurations from existing trajectories that span the transformation of interest (e.g., unfolded and folded states, associated and dissociated states).

- Feature Calculation: For each configuration, compute a set of atomic descriptors. These can be simple intuitive variables or high-dimensional global descriptors (e.g., permutation invariant vectors).

- Committor Analysis: Estimate the committor probability for each configuration in the dataset. The committor, pB, is the probability that a trajectory started from that configuration, with randomly drawn initial velocities, reaches state B (the product state) before state A (the reactant state).

- Model Training: Use a kernel ridge regression (KRR) algorithm to learn the functional relationship between the atomic descriptors and the committor probabilities.

- CV Validation: The resulting model provides a one-dimensional, differentiable, and interpretable CV. Validate its quality by projecting the transformation path onto this CV and ensuring it provides a clear and continuous reaction coordinate.
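The committor and regression steps can be sketched end to end on a toy problem. This is not the France-Lanord et al. implementation. It assumes a one-dimensional double well U(s) = (s^2 - 1)^2 in which the coordinate s doubles as the descriptor, overdamped Langevin "shooting" trajectories to estimate pB, and a Gaussian (RBF) kernel with hand-picked width `sigma` and regularization `lam`. All function names are hypothetical.

```python
import numpy as np

S_A, S_B = -1.0, 1.0  # toy state boundaries: A is s <= -1, B is s >= 1


def force(s):
    """-dU/ds for the toy double well U(s) = (s^2 - 1)^2."""
    return -4.0 * s * (s * s - 1.0)


def estimate_committor(s0, n_shots=500, dt=1e-3, beta=4.0, seed=0, max_steps=200_000):
    """Fraction of overdamped Langevin trajectories from s0 that reach B before A."""
    rng = np.random.default_rng(seed)
    s = np.full(n_shots, float(s0))
    active = (s > S_A) & (s < S_B)
    kick = np.sqrt(2.0 * dt / beta)
    for _ in range(max_steps):
        if not active.any():
            break
        sa = s[active]
        s[active] = sa + force(sa) * dt + kick * rng.standard_normal(sa.size)
        active = (s > S_A) & (s < S_B)
    return float(np.mean(s >= S_B))


def rbf(x, y, sigma):
    """Gaussian kernel matrix between 1D point sets x and y."""
    return np.exp(-((x[:, None] - y[None, :]) ** 2) / (2.0 * sigma**2))


def fit_krr(x, y, sigma=0.3, lam=1e-3):
    """Closed-form kernel ridge regression: alpha = (K + lam * I)^-1 y."""
    K = rbf(x, x, sigma)
    return np.linalg.solve(K + lam * np.eye(len(x)), y)


def krr_cv(s, x_train, alpha, sigma=0.3):
    """Learned CV and its analytic derivative at points s (needed for biasing)."""
    s = np.atleast_1d(s)
    k = rbf(s, x_train, sigma)
    value = k @ alpha
    grad = (k * (-(s[:, None] - x_train[None, :]) / sigma**2)) @ alpha
    return value, grad


# Training set: 13 configurations spanning the transition region.
x_train = np.linspace(-0.9, 0.9, 13)
p_b = np.array([estimate_committor(x, seed=i) for i, x in enumerate(x_train)])
alpha = fit_krr(x_train, p_b)
cv, dcv = krr_cv(np.array([-0.5, 0.0, 0.5]), x_train, alpha)
print("committor estimates:", np.round(p_b, 2))
print("CV at s = -0.5, 0, 0.5:", np.round(cv, 2), "dCV/ds:", np.round(dcv, 2))
```

Increasing `lam` smooths the fitted CV, which is the regularization knob mentioned in the troubleshooting table for noisy CVs. In a real system the descriptors would be high-dimensional (e.g., permutation invariant vectors) and the committor shots would be run with the MD engine rather than a toy integrator.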

Protocol 2: On-the-Fly Training of a Machine-Learned Force Field in VASP

This protocol summarizes the best practices for training an MLFF as per the VASP wiki [28], which can serve as the foundation for ML-CVs.

Initial Setup:

- Set ML_MODE = TRAIN. The presence or absence of an ML_AB file determines whether training starts from scratch or continues from an existing database.

- Configure the ab-initio settings in the INCAR file for electronic minimization. Crucially, set ISYM = 0 (turn off symmetry) and avoid setting MAXMIX > 0.

- For the MD part, set a suitable time step (POTIM): not exceeding 0.7 fs for hydrogen-containing compounds or 1.5 fs for oxygen-containing compounds.

- Prefer the NpT ensemble (ISIF = 3) for robustness, or NVT (ISIF = 2) with a Langevin thermostat. Avoid the NVE ensemble.
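The initial-setup checklist can be expressed as a small helper that assembles INCAR tags and flags deviations. This is a sketch, not VASP tooling: the function names are hypothetical, and the tags not named in the protocol (ML_LMLFF, IBRION, MDALGO, LANGEVIN_GAMMA, TEBEG, TEEND, NSW), together with all numeric values, are assumptions about a typical NVT training run that should be checked against the VASP wiki. An NpT run with a Langevin thermostat needs additional lattice-related tags that are not shown.

```python
def render_incar(tags):
    """Render a tag dictionary as INCAR text, one 'TAG = value' per line."""
    return "".join(f"{key} = {value}\n" for key, value in tags.items())


def check_training_incar(tags, has_hydrogen, has_oxygen):
    """Return deviations from the on-the-fly training checklist (empty list = OK)."""
    problems = []
    if tags.get("ML_MODE") != "TRAIN":
        problems.append("ML_MODE should be TRAIN")
    if tags.get("ISYM") != 0:
        problems.append("set ISYM = 0 (symmetry off)")
    if tags.get("MAXMIX", 0) > 0:
        problems.append("do not set MAXMIX > 0")
    # Time-step limits quoted in the protocol; the hydrogen limit is the stricter one.
    limit = 0.7 if has_hydrogen else (1.5 if has_oxygen else None)
    if limit is not None and tags.get("POTIM", float("inf")) > limit:
        problems.append(f"POTIM should not exceed {limit} fs")
    if tags.get("ISIF") not in (2, 3) or tags.get("MDALGO") != 3:
        problems.append("use ISIF = 3 (NpT) or 2 (NVT) with a Langevin thermostat (MDALGO = 3)")
    return problems


target_T = 300  # hypothetical application temperature in K
tags = {
    "ML_LMLFF": ".TRUE.",           # switch on machine learning (assumed tag)
    "ML_MODE": "TRAIN",             # on-the-fly training; continues if ML_AB is present
    "ISYM": 0,                      # symmetry off
    "IBRION": 0,                    # molecular dynamics
    "MDALGO": 3,                    # Langevin thermostat
    "LANGEVIN_GAMMA": "10.0 10.0",  # placeholder friction, one value per species
    "ISIF": 2,                      # NVT
    "POTIM": 0.5,                   # fs, below the 0.7 fs limit for H-containing systems
    "TEBEG": 100,                   # hypothetical low starting temperature
    "TEEND": round(1.3 * target_T), # ramp to ~30% above the target temperature
    "NSW": 20000,                   # hypothetical number of MD steps
}
print(render_incar(tags))
print(check_training_incar(tags, has_hydrogen=True, has_oxygen=True))  # []
```

The TEBEG/TEEND pair is intended to implement the low-to-high temperature ramp recommended in the troubleshooting table above.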

Systematic Training for Complex Systems:

- If the system has different components (e.g., a crystal surface and an adsorbing molecule), train them separately first. Train the bulk crystal, then the surface, then the isolated molecule, and finally the combined system.

Continual Learning and Refinement:

- To add new configurations to an existing database, run ML_MODE = TRAIN with the existing ML_AB file in the working directory.

- To generate a new force field from a precomputed ab-initio dataset (ML_AB file), use ML_MODE = SELECT. This mode ignores the old list of local reference configurations and creates a new set.
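In practice, VASP writes the updated database of a training run to ML_ABN, so continuing training means copying that file to ML_AB in the next run's directory. A minimal sketch of that bookkeeping follows, with a hypothetical helper name and directory layout. It also carries the POTCAR over unchanged, in line with the toolkit note that the POTCAR must not change between training sessions.

```python
import shutil
import tempfile
from pathlib import Path


def prepare_continuation(prev_run, new_run):
    """Seed a new training run with the database written by a previous one."""
    prev_run, new_run = Path(prev_run), Path(new_run)
    new_run.mkdir(parents=True, exist_ok=True)
    src = prev_run / "ML_ABN"
    if not src.exists():
        raise FileNotFoundError(f"{src} not found; did the previous run finish?")
    shutil.copyfile(src, new_run / "ML_AB")  # ML_ABN (output) becomes ML_AB (input)
    shutil.copyfile(prev_run / "POTCAR", new_run / "POTCAR")  # keep POTCAR identical
    return new_run / "ML_AB"


# Demonstration with throwaway files standing in for real VASP output.
with tempfile.TemporaryDirectory() as tmp:
    prev = Path(tmp, "run1")
    prev.mkdir()
    (prev / "ML_ABN").write_text("hypothetical database\n")
    (prev / "POTCAR").write_text("hypothetical potcar\n")
    ml_ab = prepare_continuation(prev, Path(tmp, "run2"))
    copied_ok = ml_ab.read_text() == "hypothetical database\n"
    potcar_ok = (Path(tmp, "run2") / "POTCAR").read_text() == "hypothetical potcar\n"
print(copied_ok, potcar_ok)
```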

Protocol 3: Enhanced Sampling with Metadynamics using ML-CVs

This protocol integrates the ML-CV into an enhanced sampling technique [1].

- Collective Variable Definition: Use the data-driven CV generated in Protocol 1 as the reaction coordinate for metadynamics.

- Simulation Setup: In your MD software, configure a metadynamics simulation and define the ML-CV as the biased collective variable. For GROMACS this is typically done through the PLUMED plugin; NAMD provides metadynamics through its Colvars module.

- Parameters: Set the Gaussian hill height and width, and the deposition rate. For well-tempered metadynamics, set an appropriate bias factor.

- Production Run: Run the metadynamics simulation. The history-dependent bias potential will "fill" the free energy wells, encouraging the system to escape local minima and explore new regions of the CV space.

- Analysis: The free energy surface (FES) as a function of the ML-CV can be reconstructed from the deposited bias potential.
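For well-tempered metadynamics, the bias converges to a scaled copy of the free energy, so the FES is recovered as F(s) = -(γ/(γ-1)) V(s) + C, where γ is the bias factor. The sketch below shows the mechanics on a toy system. It assumes a one-dimensional double well U(s) = 5 (s^2 - 1)^2 (in kT) whose coordinate s stands in for the ML-CV, overdamped Langevin dynamics with unit friction, and hand-picked hill height, width, pace, and bias factor. The function name is hypothetical; production runs would use PLUMED or Colvars rather than a hand-written loop.

```python
import numpy as np


def wt_metad_1d(n_steps=100_000, dt=2e-3, height=5.0, w0=0.5, sigma=0.1,
                pace=100, gamma=10.0, seed=1):
    """Well-tempered metadynamics on U(s) = height * (s^2 - 1)^2, energies in kT.

    Overdamped Langevin dynamics (unit friction) in the CV s itself, which stands
    in for a learned CV. Returns the grid, the final bias V(s) and the FES estimate.
    """
    rng = np.random.default_rng(seed)
    grid = np.linspace(-2.0, 2.0, 801)
    bias = np.zeros_like(grid)    # V(s) on the grid
    dbias = np.zeros_like(grid)   # dV/ds on the grid
    delta_t = gamma - 1.0         # k_B * DeltaT in units of kT
    noise = np.sqrt(2.0 * dt) * rng.standard_normal(n_steps)
    s = -1.0                      # start in the left basin
    for step in range(n_steps):
        if step % pace == 0:
            # Tempered hill height: shrinks where bias has already accumulated.
            w = w0 * np.exp(-np.interp(s, grid, bias) / delta_t)
            hill = w * np.exp(-((grid - s) ** 2) / (2.0 * sigma**2))
            bias += hill
            dbias -= hill * (grid - s) / sigma**2
        du = 4.0 * height * s * (s * s - 1.0)  # dU/ds
        s = s - (du + np.interp(s, grid, dbias)) * dt + noise[step]
    # Well-tempered estimator: F(s) = -gamma / (gamma - 1) * V(s) + const.
    fes = -gamma / (gamma - 1.0) * bias
    return grid, bias, fes - fes.min()


grid, bias, fes = wt_metad_1d()
left_basin = (grid > -1.2) & (grid < -0.8)
barrier = np.interp(0.0, grid, fes) - fes[left_basin].min()
print(f"estimated barrier: {barrier:.2f} kT (true value: 5 kT)")
```

Because the hill height decays as exp(-V/(k_B ΔT)), the bias stops growing uniformly and the scaled bias converges instead of oscillating, which is why the well-tempered variant is preferred in the troubleshooting table.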

Workflow and Relationship Diagrams

[Figure: Workflow for Developing and Using ML-CVs]

[Figure: Decision Tree for Sampling Method Selection]

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Tools for ML-CV Development and Enhanced Sampling

| Item/Reagent | Function/Brief Explanation | Key Considerations |
|---|---|---|
| VASP [28] | A software package for performing ab-initio quantum mechanical calculations using pseudopotentials and a plane wave basis set. Used to generate accurate training data (forces, energies) for MLFFs and ML-CVs. | Requires careful setup of INCAR parameters (e.g., ISYM=0, avoid MAXMIX). The POTCAR file must not change between training sessions. |
| AMBER99SB*-ILDN [14] | An all-atom force field for proteins. Provides intramolecular interaction parameters. Often combined with an implicit solvent model for faster sampling in Monte Carlo or MD simulations. | When used with implicit solvent, limitations include over-stabilized salt bridges and neglect of temperature dependence of solvation free energy. |
| GROMACS/NAMD [1] | High-performance molecular dynamics simulation packages. Used to run conventional and enhanced sampling MD simulations. They support plugins or built-in methods for metadynamics and replica-exchange. | Ensure compatibility with the ML-CV definition, which may require custom coding or the use of a PLUMED plugin to interface the ML model with the MD engine. |
| Metadynamics [1] | An enhanced sampling technique that accelerates the exploration of free energy landscapes by adding a history-dependent bias potential. Ideal for biasing data-driven CVs. | Effectiveness depends on the quality of the CV. Well-tempered metadynamics is often preferred to improve convergence. |
| Replica-Exchange MD (REMD) [1] | A sampling method that runs multiple parallel simulations at different temperatures (T-REMD) or with different Hamiltonians (H-REMD), periodically exchanging configurations. | Efficiency is sensitive to the choice of maximum temperature. Too high a maximum temperature can make REMD less efficient than conventional MD. |
| Generalized Born/SASA Model [14] | An implicit solvent model that approximates the electrostatic and non-polar contributions to solvation free energy. Significantly faster than explicit solvent for MC simulations. | Can have limited representation of hydrogen bonds and incorrect ion distributions compared to explicit solvent models. |
| Kernel Ridge Regression [27] | The machine learning algorithm used to create the data-driven CV by regressing atomic descriptors against the committor probability. | Produces a one-dimensional, differentiable, and interpretable CV suitable for biasing in enhanced sampling. |
| Permutation Invariant Vector [27] | A type of global atomic descriptor used as input for the ML model. It can capture complex structural information that simpler, intuitive variables might miss. | Can be essential for achieving high accuracy in systems where simple CVs are insufficient. |
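The REMD exchange step and the role of the maximum temperature can be sketched as follows. The Metropolis exchange criterion min(1, exp[(β_i - β_j)(E_i - E_j)]) is the standard T-REMD rule. The geometric temperature ladder is a common heuristic rather than something prescribed here, and the temperatures, energies, and function names are hypothetical. With a fixed number of replicas, raising T_max stretches the spacing between neighboring temperatures, which lowers the exchange acceptance.

```python
import math

K_B = 0.0019872041  # Boltzmann constant in kcal/(mol K)


def geometric_ladder(t_min, t_max, n_replicas):
    """Temperatures spaced geometrically from t_min to t_max (K)."""
    ratio = (t_max / t_min) ** (1.0 / (n_replicas - 1))
    return [t_min * ratio**i for i in range(n_replicas)]


def exchange_probability(e_i, e_j, t_i, t_j):
    """Metropolis acceptance for swapping the configurations held at t_i and t_j.

    e_i, e_j: potential energies (kcal/mol) of the configurations currently at t_i, t_j.
    """
    beta_i = 1.0 / (K_B * t_i)
    beta_j = 1.0 / (K_B * t_j)
    delta = (beta_i - beta_j) * (e_i - e_j)
    return 1.0 if delta >= 0.0 else math.exp(delta)


ladder = geometric_ladder(300.0, 450.0, 8)
print([round(t, 1) for t in ladder])
# Hypothetical energies: the colder replica holds the higher-energy configuration.
print(exchange_probability(-1000.0, -1010.0, ladder[0], ladder[1]))  # 1.0
```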

Molecular Dynamics (MD) simulation has become an indispensable tool for studying biomolecular processes, such as protein folding and drug binding. However, a fundamental challenge limits its effectiveness: numerical integration requires a femtosecond-scale timestep, while biologically relevant events often unfold over microseconds to milliseconds of physical time, so observing them demands immense computational resources [29]. This "timescale problem" fundamentally constrains the questions researchers can address about large-scale conformational changes with accessible computational hardware [14].
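A back-of-the-envelope calculation makes the scale concrete. The sketch assumes a 2 fs timestep (typical when bonds to hydrogen are constrained) and a hypothetical throughput of 100 ns of simulated time per day; both numbers are illustrative, not benchmarks.

```python
def steps_required(sim_time_s, timestep_s=2e-15):
    """Number of integration steps needed to cover sim_time_s of physical time."""
    return int(round(sim_time_s / timestep_s))


def days_required(sim_time_ns, ns_per_day):
    """Wall-clock days at a given throughput (ns of simulated time per day)."""
    return sim_time_ns / ns_per_day


for label, t_s in (("1 us", 1e-6), ("1 ms", 1e-3)):
    n = steps_required(t_s)
    days = days_required(t_s * 1e9, ns_per_day=100.0)  # hypothetical throughput
    print(f"{label}: {n:.1e} steps, ~{days:,.0f} days at 100 ns/day")
```

At that throughput a single millisecond trajectory would take on the order of 10,000 days, which is why brute-force MD rarely reaches such timescales.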

In this context, Monte Carlo (MC) methods emerge as a powerful alternative for thermodynamic characterization. Unlike MD, which simulates dynamics through time, MC methods rely on random sampling of conformational space and have no inherent timescale, which lets them sidestep the kinetic bottlenecks that limit MD [14]. This technical support guide explores how researchers can implement MC approaches to overcome sampling barriers in the study of small proteins, providing practical troubleshooting and methodological frameworks.

Understanding Monte Carlo: Key Concepts for Researchers

Fundamental Principles

Monte Carlo simulations generate a sequence of molecular conformations through random trial moves. Each move is accepted or rejected according to the Metropolis criterion: a move that changes the energy by ΔE is accepted with probability min(1, exp(-ΔE/k_BT)), so that conformations are visited with Boltzmann-weighted probabilities. This approach provides several distinct advantages for specific research applications:

- Thermodynamic Focus: MC simulations are designed to sample the Boltzmann distribution of states, making them ideal for calculating free energies, equilibrium populations, and stability parameters without being constrained by kinetic barriers [14].

- No Inherent Timescale: Because MC is not based on numerical integration of equations of motion, it does not simulate a physical trajectory through time. This allows it to "jump" between distant conformational states that would be separated by slow, barrier-crossing events in MD [14].

- Efficient Move Sets: Specialized moves can be designed that modulate particularly important degrees of freedom, such as dihedral angles for peptides, leading to more efficient exploration of conformational space per computational step [14].
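The Metropolis criterion and a dihedral move set can be illustrated on a single toy torsion. The energy function and its parameters are hypothetical and expressed in units of kT. Proposals rotate the dihedral by up to ±π, so the chain can hop between minima in a single step, which is the "no inherent timescale" property in miniature. The sampled population of the trans region is checked against direct Boltzmann integration.

```python
import math
import random

import numpy as np


def torsion_energy(phi):
    """Toy dihedral energy in units of kT (hypothetical parameters).

    Works on floats or NumPy arrays.
    """
    return 1.0 * (1.0 + np.cos(3.0 * phi)) + 0.5 * (1.0 - np.cos(phi))


def metropolis_dihedral(n_steps=100_000, max_move=math.pi, seed=0):
    """Metropolis MC with symmetric dihedral-rotation moves; returns samples and acceptance."""
    rng = random.Random(seed)
    phi = 0.0
    energy = torsion_energy(phi)
    samples = np.empty(n_steps)
    accepted = 0
    for i in range(n_steps):
        trial = phi + rng.uniform(-max_move, max_move)
        trial = (trial + math.pi) % (2.0 * math.pi) - math.pi  # wrap to [-pi, pi)
        trial_energy = torsion_energy(trial)
        delta = trial_energy - energy
        # Metropolis criterion: accept with probability min(1, exp(-delta / kT)).
        if delta <= 0.0 or rng.random() < math.exp(-delta):
            phi, energy = trial, trial_energy
            accepted += 1
        samples[i] = phi
    return samples, accepted / n_steps


samples, acceptance = metropolis_dihedral()
in_trans = np.abs(samples) > 2.0 * math.pi / 3.0  # region around 180 degrees
grid = np.linspace(-math.pi, math.pi, 20_001)[:-1]
weights = np.exp(-torsion_energy(grid))
p_exact = weights[np.abs(grid) > 2.0 * math.pi / 3.0].sum() / weights.sum()
print(f"acceptance {acceptance:.2f}, P(trans): MC {in_trans.mean():.3f} vs exact {p_exact:.3f}")
```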

Comparative Advantages Over Molecular Dynamics

Table: Key Differences Between Molecular Dynamics and Monte Carlo Simulation Approaches

| Feature | Molecular Dynamics | Monte Carlo |
|---|---|---|
| Timescale | Inherently linked to simulation time | No inherent timescale |
| Primary Output | Kinetic trajectories & time-dependent properties | Thermodynamic ensembles & equilibrium properties |
| Barrier Crossing | Limited by integration timestep & energy barriers | Can bypass barriers through discrete moves |
| Solvent Treatment | Efficient with explicit solvent | Typically uses implicit solvent models |
| Kinetic Information | Directly provides kinetic data | Requires reconstruction from thermodynamic data [14] |

Implementation Guide: Monte Carlo for Small Protein Folding

Research Reagent Solutions

Table: Essential Computational Components for Monte Carlo Protein Folding Studies

| Component | Function | Example Implementations |
|---|---|---|
| Intramolecular Force Field | Defines energy contributions from bonds, angles, torsions, and non-bonded interactions | AMBER99SB*-ILDN [14], CHARMM |
| Implicit Solvent Model | Approximates solvent effects without explicit water molecules, dramatically speeding up calculations | Generalized Born with Surface Area (GBSA) [14] |
| Sampling Algorithm | Governs how conformational moves are proposed and accepted | Metropolis Monte Carlo, Wang-Landau Sampling |
| Move Sets | Defines types of conformational changes attempted | Dihedral angle rotations, backbone moves, sidechain adjustments |
| Analysis Tools | Processes simulation output to extract thermodynamic information | Free energy estimators, cluster analysis, order parameters |

Experimental Protocol: Thermodynamic Characterization of Small Proteins