Beyond the Table: The Critical Limitations of Traditional Look-up Table Force Fields and the Rise of Machine Learning

Abstract

This article provides a comprehensive analysis of the limitations inherent in traditional look-up table-based force fields, a longstanding cornerstone of molecular dynamics simulations in drug development and biomolecular research. We explore the foundational principles of these methods, highlighting their rigidity and limited transferability to new chemical spaces. The discussion then transitions to modern machine learning (ML) alternatives, such as the Grappa framework, which learn parameters directly from molecular graphs. We address common troubleshooting issues and performance bottlenecks associated with traditional approaches and present a rigorous comparative validation against experimental data, revealing a significant 'reality gap.' Finally, the article concludes with future perspectives on how next-generation force fields are set to enhance the accuracy and scope of computational modeling in biomedical research.

The Rigid Framework: Understanding Traditional Look-up Table Force Fields and Their Inherent Limitations

Classical molecular mechanics force fields are foundational to computational chemistry, drug discovery, and materials science, providing the analytical functions and parameters that describe interatomic forces and enable molecular dynamics simulations [1]. Traditional force fields operate on a principle known as indirect chemical perception, where force field parameters are assigned to molecules through an intermediary step of atom typing [2]. In this paradigm, each atom in a molecule is assigned a discrete classification—an atom type—based on its local chemical environment. These atom types then serve as keys for looking up specific parameters in extensive predefined tables for bond stretching, angle bending, torsion potentials, and non-bonded interactions [2]. This method stands in contrast to emerging approaches utilizing direct chemical perception, where parameters are assigned directly based on chemical structure patterns without the intermediary atom type classification [2]. The traditional framework, while computationally efficient and deeply entrenched in simulation software, introduces specific limitations in reproducibility, extensibility, and accuracy that have motivated the development of next-generation alternatives. This technical guide examines the core mechanics of this established approach, framing its discussion within the inherent constraints of look-up table methodologies.

The Atom Typing Process: Defining Chemical Identity

The initial and most critical step in parameterizing a molecule with a traditional force field is atom typing. An atom type is a symbolic label that encodes information about an element's local chemical environment, including its hybridization state, bonded neighbors, and participation in specific functional groups [1]. The complexity of chemical systems necessitates a proliferation of these types; for example, the OPLS force field defines 347 distinct atom types for carbon alone [1].

The Challenge of Chemical Context Encoding

The fundamental challenge of atom typing lies in sufficiently encoding the chemical context that determines an atom's physicochemical behavior. Consider a carbon atom: it may be assigned one atom type if it is an sp³-hybridized carbon in a methane molecule, and an entirely different type if it is an sp²-hybridized carbon in a ketone group. This specificity ensures that the carbon in a methyl group and the carbonyl carbon receive different parameters for their bonds, angles, and van der Waals interactions. However, this can lead to a proliferation of atom types. In some cases, as highlighted by Mobley and colleagues, chemically identical atoms must be assigned different types merely to differentiate between single and double bonds, as the bond-stretch parameters are inferred from the atom types of the bonded partners [2].

Rule-Based Atom Typing and its Ambiguities

The process of assigning atom types can be manual or automated. Manual assignment is tedious, error-prone, and not reproducible for large-scale screening studies [1]. Automated tools use rule-based systems to assign types. These rules often rely on a rigid hierarchy, where more specific rules are applied before more general ones [1]. A significant reproducibility issue stems from the fact that the rules for applying a force field are not always disseminated in a machine-readable format. Often, they are described only in human-readable documentation, such as journal articles or force field manuals, leading to potential ambiguity and differing interpretations within the research community [1]. This ambiguity in the initial parameterization step can propagate through an entire simulation, compromising the reproducibility of computational studies.
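The rule hierarchy described above can be illustrated with a minimal sketch. The atom-type names, the `Atom` record, and the rules themselves are hypothetical and far simpler than those of any real force field; the point is only that rules are tried from most specific to most general, so the outcome depends on their order.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Atom:
    element: str
    neighbors: tuple  # elements of the directly bonded atoms


# Hypothetical rules, ordered from most specific to most general.
RULES = [
    ("C_carbonyl", lambda a: a.element == "C" and len(a.neighbors) == 3 and "O" in a.neighbors),
    ("C_sp2", lambda a: a.element == "C" and len(a.neighbors) == 3),
    ("C_sp3", lambda a: a.element == "C" and len(a.neighbors) == 4),
    ("C_generic", lambda a: a.element == "C"),
]


def assign_atom_type(atom, rules=RULES):
    """Return the name of the first rule that matches the atom."""
    for type_name, matches in rules:
        if matches(atom):
            return type_name
    raise LookupError(f"no atom-typing rule matches element {atom.element!r}")


methyl_carbon = Atom("C", ("C", "H", "H", "H"))
ketone_carbon = Atom("C", ("C", "C", "O"))
methyl_type = assign_atom_type(methyl_carbon)
ketone_type = assign_atom_type(ketone_carbon)
# Reversing the rule order makes the generic rule win for every carbon.
reversed_type = assign_atom_type(ketone_carbon, rules=list(reversed(RULES)))
```

Because the result depends on rule order, two implementations that read the same human-readable documentation but order or interpret the rules differently can assign different types to the same atom, which is the reproducibility problem the paragraph describes.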

Predefined Parameter Tables: The Force Field Look-up System

Once all atoms in a system are assigned types, the specific parameters for the energy function are retrieved from extensive, predefined parameter tables. The force field's total energy equation is a sum of various terms, and the parameters for each term are looked up based on combinations of atom types.
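For orientation, a generic functional form of this kind can be written as below. This is a sketch that uses the parameter symbols listed in Table 1; prefactor conventions (for example, whether a factor of 1/2 is folded into the force constants) and the exact non-bonded terms differ between force fields, so it should not be read as the definition used by any particular package.

$$
E_{\text{total}} = \sum_{\text{bonds}} k_b (r - r_0)^2
+ \sum_{\text{angles}} k_\theta (\theta - \theta_0)^2
+ \sum_{\text{torsions}} \sum_n \frac{V_n}{2}\left[1 + \cos(n\phi - \gamma)\right]
+ \sum_{i<j} \left\{ 4\varepsilon_{ij}\left[\left(\frac{\sigma_{ij}}{r_{ij}}\right)^{12} - \left(\frac{\sigma_{ij}}{r_{ij}}\right)^{6}\right] + \frac{q_i q_j}{4\pi\varepsilon_0 r_{ij}} \right\}
$$

Every constant in this expression is retrieved from a table keyed on one or more atom types, while the coordinates ($r$, $\theta$, $\phi$, $r_{ij}$) come from the simulated structure.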

Table 1: Core Energy Terms and Their Parameter Look-up Keys in Traditional Force Fields

| Energy Term | Physical Interaction | Look-up Key | Example Parameters |
|---|---|---|---|
| Bond Stretch | Vibration of covalent bonds | Pair of bonded atom types | Force constant (kb), Equilibrium length (r0) |
| Angle Bend | Bending between three bonded atoms | Triplet of sequentially bonded atom types | Force constant (kθ), Equilibrium angle (θ0) |
| Torsions | Rotation around a central bond | Quartet of sequentially bonded atom types | Barrier heights (Vn), Phase shifts (γ), Periodicity (n) |
| Van der Waals | Non-bonded dispersion and repulsion | Pair of atom types (any non-bonded) | Well depth (ε), Atomic radius (σ) |
| Electrostatics | Coulombic interaction | Single atom type | Partial atomic charge (q) |

The structure of a force field file reflects this organization. For instance, the ReaxFF force field format contains separate sections for General parameters, Atoms, Bonds, Off-diagonal terms, Angles, and Torsions, each with blocks of parameters indexed by atom type indices [3]. Similarly, the MacroModel force field file is organized into a Main Parameter Section with distinct subsections for stretching, bending, torsional, and van der Waals interactions [4].
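The look-up step itself can be sketched in a few lines. The table below is a hypothetical stand-in for a bond section of a parameter file: the type names and numerical values are illustrative only, and the harmonic form without a factor of 1/2 is an assumed convention.

```python
# Hypothetical bond table: key = sorted pair of atom types,
# value = (force constant in kcal/mol/Å^2, equilibrium length in Å).
BOND_TABLE = {
    ("C_sp3", "C_sp3"): (300.0, 1.53),
    ("C_sp3", "H"): (340.0, 1.09),
}


def bond_key(type_a, type_b):
    """Order-independent key, so (A, B) and (B, A) hit the same entry."""
    return tuple(sorted((type_a, type_b)))


def lookup_bond(type_a, type_b):
    key = bond_key(type_a, type_b)
    if key not in BOND_TABLE:
        # A missing entry is a hard failure: the molecule cannot be parameterized.
        raise KeyError(f"no bond parameters for atom types {key}")
    return BOND_TABLE[key]


def bond_energy(type_a, type_b, r):
    """Harmonic bond energy k_b * (r - r0)^2 for a bond of length r (Å)."""
    k_b, r0 = lookup_bond(type_a, type_b)
    return k_b * (r - r0) ** 2


stretched_cc = bond_energy("C_sp3", "C_sp3", 1.63)  # bond stretched by 0.10 Å
relaxed_ch = bond_energy("H", "C_sp3", 1.09)        # at equilibrium length
```

Angle and torsion terms follow the same pattern with triplet and quartet keys. The `KeyError` branch is where a molecule containing an uncatalogued combination of atom types stops the workflow.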

A Standard Workflow: From Molecule to Parameterized System

The following diagram illustrates the standard workflow for applying a traditional force field to a molecular system, highlighting the central role of indirect chemical perception.

This workflow, termed "indirect chemical perception" [2], creates a fundamental dependency on the correctness and completeness of both the atom typing rules and the parameter tables. Errors or ambiguities in either component lead to an incorrectly parameterized molecule.

Limitations of the Traditional Look-up Table Approach

The traditional framework of atom typing and parameter tables, while successful for decades, has significant limitations that hinder force field development and application, particularly in modern drug discovery, which explores expansive chemical spaces.

Reproducibility and Ambiguity

A key challenge is the lack of reproducibility. As noted in the development of the Foyer tool, "ambiguity in molecular models often stems from inadequate usage documentation of molecular force fields and the fact that force fields are not typically disseminated in a format that is directly usable by software" [1]. When atom-typing rules are embedded as heavily nested if/else statements within a software's source code, or described only in text, their exact logic can be opaque, making it difficult for different researchers to achieve the same parameterization for a given molecule.

Parameter Proliferation and Inflexibility

The indirect perception model inherently leads to a proliferation of parameters. Creating a new atom type to refine, for example, Lennard-Jones interactions for a specific chemical context, necessitates the creation of all associated bond, angle, and torsion parameters involving that new type [2]. This needlessly increases the force field's complexity and the dimensionality of any parameter optimization problem. It also makes force fields difficult to extend to new chemistries, as adding a single new atom type requires a subject-matter expert to carefully define dozens of new interconnected parameters [2].
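A short counting exercise shows how quickly the key space grows. The functions below count the distinct look-up keys that N atom types can form, treating a key and its reverse as the same entry. These are combinatorial upper bounds under that assumption; real force fields define only the chemically meaningful subset and often use wildcard entries, so the number of parameters actually required is far smaller.

```python
def n_bond_keys(n_types):
    """Unordered pairs of atom types (A-B is the same as B-A)."""
    return n_types * (n_types + 1) // 2


def n_angle_keys(n_types):
    """Triplets A-B-C with A-B-C equivalent to C-B-A."""
    return n_types * n_types * (n_types + 1) // 2


def n_torsion_keys(n_types):
    """Quartets A-B-C-D with A-B-C-D equivalent to D-C-B-A."""
    return (n_types ** 4 + n_types ** 2) // 2


def new_keys_from_one_type(n_types):
    """Additional possible keys created by going from n_types to n_types + 1."""
    return {
        "bonds": n_bond_keys(n_types + 1) - n_bond_keys(n_types),
        "angles": n_angle_keys(n_types + 1) - n_angle_keys(n_types),
        "torsions": n_torsion_keys(n_types + 1) - n_torsion_keys(n_types),
    }


growth = new_keys_from_one_type(10)
```

For a hypothetical force field with only 10 atom types, adding an eleventh opens up 11 possible bond keys, 176 angle keys, and 2331 torsion keys, even though the original motivation may have been to adjust a single Lennard-Jones parameter.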

Limited Coverage for Expanding Chemical Space

The look-up table approach is inherently limited by its predefined set of atom types and parameters. With the rapid expansion of synthetically accessible, drug-like chemical space, it becomes practically impossible for traditional force fields to maintain complete coverage [5]. This can be a critical bottleneck in computational drug discovery, where researchers frequently work with novel molecular scaffolds that may not be fully represented in existing force fields.

Experimental Validation and Benchmarking Protocols

Assessing the performance of a force field parameterized via traditional methods is a critical step. The following benchmark protocol, derived from a 2025 study on RNA-ligand force fields, provides a robust methodological template [6].

System Preparation and Simulation

- Structure Selection: Curate a diverse set of experimental structures from databases (e.g., HARIBOSS for RNA-ligand complexes [6]). The selection should cover a range of topologies and binding modes.

- Parameterization: Assign force field parameters using the standard atom-typing and table look-up procedure. Ligands are typically parameterized with a companion force field like GAFF, using tools such as ACpype to generate topology files [6].

- Simulation Setup: Solvate the system in an explicit water model (e.g., OPC, TIP4P-D), add ions to neutralize the charge and achieve physiological concentration, and minimize and equilibrate the system. Subsequently, run unrestrained production molecular dynamics (MD) simulations (e.g., 1 μs under NPT conditions at 298 K and 1 atm) using simulation packages like Amber or GROMACS [6].

Analysis Metrics

- Structural Stability: Calculate the root-mean-square deviation (RMSD) of the simulated structure relative to the experimental starting structure to assess overall drift.

- Interaction Fidelity: Analyze contact maps by defining a contact between residues or a ligand and its target when heavy atoms are within 4.5 Å. Track the occupancy of contacts present in the experimental structure and identify newly formed ("gained") contacts during the simulation [6].

- Ligand Dynamics: Compute the ligand-only RMSD (LoRMSD) by aligning the simulation trajectory on the target's backbone and measuring the RMSD of the ligand's heavy atoms, providing a measure of ligand binding stability [6].
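The three metrics above can be sketched with plain Python. The sketch assumes that every frame is a list of (x, y, z) coordinates in Å and that frames have already been superimposed on the target backbone by the analysis software; the function names and the toy coordinates are hypothetical.

```python
import math


def rmsd(coords, reference):
    """Root-mean-square deviation between two equally sized coordinate lists."""
    squared = sum(math.dist(p, q) ** 2 for p, q in zip(coords, reference))
    return math.sqrt(squared / len(reference))


def ligand_rmsd(frame, reference, ligand_idx):
    """LoRMSD: ligand heavy-atom RMSD, assuming the frame is pre-aligned on the target."""
    return rmsd([frame[i] for i in ligand_idx], [reference[i] for i in ligand_idx])


def in_contact(frame, group_a, group_b, cutoff=4.5):
    """True if any heavy atom of group_a lies within cutoff (Å) of any atom of group_b."""
    return any(math.dist(frame[i], frame[j]) <= cutoff for i in group_a for j in group_b)


def contact_occupancy(frames, group_a, group_b, cutoff=4.5):
    """Fraction of frames in which the two groups are in contact."""
    hits = sum(in_contact(frame, group_a, group_b, cutoff) for frame in frames)
    return hits / len(frames)


# Toy system: atom 0 belongs to the target, atom 1 to the ligand.
experimental = [(0.0, 0.0, 0.0), (3.0, 0.0, 0.0)]
drifted = [(0.0, 0.0, 0.0), (6.0, 0.0, 0.0)]
trajectory = [experimental, drifted]

occupancy = contact_occupancy(trajectory, [0], [1])
final_lormsd = ligand_rmsd(drifted, experimental, [1])
```

In this toy trajectory the ligand atom drifts 3 Å away in the second frame, so the experimental contact is present in half of the frames and the final LoRMSD is 3 Å. A production analysis would use a trajectory library for reading files and for the backbone superposition step, which is omitted here.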

Table 2: Key Analysis Metrics for Force Field Validation

| Metric | Calculation Method | What It Reveals |
|---|---|---|
| Heavy Atom RMSD | RMSD of all non-hydrogen atoms relative to a reference structure. | Overall structural preservation of the simulated complex. |
| Contact Occupancy | Fraction of simulation frames a specific interatomic contact is present. | Stability of key binding interactions (e.g., hydrogen bonds, hydrophobic contacts). |
| LoRMSD | RMSD of ligand atoms after aligning the receptor backbone. | Stability and mobility of the bound ligand within its binding site. |
| Δ Contact Map | Difference between simulation contact map and experimental contact map. | Systematic shifts in interaction networks (positive values = contacts gained; negative = contacts lost). |

Table 3: Key Software Tools for Traditional and Modern Force Field Application

| Tool / Resource | Function | Relevance to Traditional Force Fields |
|---|---|---|
| Foyer [1] | An open-source Python tool for defining and applying force field atom-typing rules in a human- and machine-readable XML format. | Improves reproducibility by providing a formal, unambiguous format for atom-typing rules, separating them from the software's source code. |
| SMIRNOFF [2] | A direct chemical perception format that uses SMIRKS patterns to assign parameters directly from chemical structure, bypassing atom types. | Represents the modern alternative to traditional force fields, highlighting their limitations related to parameter proliferation and inflexibility. |
| ACpype [6] | A tool for generating topologies and parameters for small molecules for use with AMBER and GROMACS, typically using the GAFF. | Aids in applying the traditional look-up table approach (GAFF) to drug-like ligands in biomolecular simulations. |
| ParmEd [1] | A library for facilitating interoperability between different simulation codes and manipulating molecular topology files. | Often used in conjunction with tools like Foyer to write syntactically correct input files for various simulation engines (e.g., OpenMM, GROMACS). |
| ByteFF [5] | A recently developed, data-driven force field for drug-like molecules parameterized using a graph neural network on a massive quantum chemical dataset. | Exemplifies the shift towards machine learning-driven parameterization to overcome the coverage limitations of traditional table-based methods. |

The traditional force field architecture, built upon the twin pillars of atom typing and predefined parameter tables, has been a powerful engine driving decades of advancement in molecular simulation. Its structured, look-up table-based approach provides a tractable method for estimating the complex potential energy surface of molecular systems. However, this very structure is the source of its principal limitations: ambiguity that hampers reproducibility, inflexibility that complicates extension and optimization, and incomplete coverage in the face of rapidly expanding chemical space. The emergence of new paradigms, such as the SMIRNOFF format with its direct chemical perception [2] and data-driven, machine-learning approaches like ByteFF [5], is a direct response to these constraints. These modern methodologies aim to systematize and automate force field development, moving beyond the limitations of human-defined atom types and static tables. While traditional force fields will undoubtedly remain in use for the foreseeable future, understanding their core mechanics and inherent limitations is crucial for researchers to critically evaluate simulation results and to embrace the next generation of more automated, reproducible, and broadly applicable molecular models.

The theoretical chemical space of plausible organic molecules is estimated to encompass over 10^60 unique structures with molecular weights under 500 Da [7] [8]. This staggering number represents both a universe of potential solutions for drug discovery and material science, and a fundamental challenge for computational chemistry. Current experimental methods capture only a minuscule fraction of this space; for instance, non-targeted analysis (NTA) methods used to identify chemicals of emerging concern have been shown to cover only about 2% of the relevant chemical space [7]. This limited coverage creates a critical bottleneck in fields from exposomics to drug development, where understanding chemical exposure and discovering new therapeutic agents requires navigating this uncharted territory.

The problem is further compounded by the limitations of traditional force fields in computational chemistry. Molecular mechanics (MM) force fields, while computationally efficient, employ simple functional forms and a finite set of atom types that cannot adequately represent the true complexity of quantum mechanical (QM) energy landscapes [9]. Even when coupled with trainable, flexible parametrization engines, these legacy force fields often cannot reach chemical accuracy, that is, an error below 1 kcal/mol, the empirical level required for qualitatively correct characterization of many-body systems [9]. This review examines how the finite set of atom types in traditional look-up table force fields creates a bottleneck for exploring chemical space, and surveys emerging methodologies that aim to overcome these limitations.

Quantifying the Chemical Space Bottleneck

The Unexplored Exposome and Drug Discovery Challenges

The concept of chemical space was initially introduced in drug discovery, where systematic exploration of drug-like structures is paramount [8]. However, the gap between known and potential chemicals is vast. While databases like PubChem contain over 115 million unique structures, this represents less than 0.001% of the possible chemical space for small organic molecules [8]. This exploration challenge is particularly acute in exposomics, where humans are exposed to countless chemicals—both natural and synthetic—throughout their lifetimes, yet only a tiny fraction have been identified or assessed for biological activity [8].

Table 1: Chemical Space Coverage in Current Research

| Domain | Known Structures | Theoretical Space | Coverage | Primary Limitations |
|---|---|---|---|---|
| Exposomics (NTA studies) | ~60,000 in NORMAN SusDat | ~10^60 | ~2% of the relevant chemical space [7] | Sample prep, chromatography, MS detection, data processing |
| Drug Discovery | <10 million in HTS libraries | >10^33 drug-like compounds | "A droplet by the ocean" [10] | Synthesis bottleneck, HTS library limitations |
| General Organic Structures | ~115 million in PubChem | >10^60 (MW <500 Da) | <0.001% [8] | Registration focus on human-made chemicals |

The situation in drug discovery is equally constrained. High-throughput screening (HTS), the foundation of current small-molecule discovery, relies on libraries containing less than 10 million distinct chemotypes, while the total number of synthesizable, drug-like compounds exceeds 10^33 [10]. As one researcher starkly noted, current drug discovery methods are "not even exploring a tide-pool by the side of the ocean; they're perhaps exploring a droplet!" [10]
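The scale of these gaps is easier to appreciate with the arithmetic written out. The sketch below uses only the figures quoted in this section; because the sources give them as bounds ("over", "less than", "exceeds"), the computed percentages are upper limits on coverage rather than estimates.

```python
import math


def coverage_percent(known_structures, theoretical_space):
    """Known structures as a percentage of the theoretical space."""
    return 100.0 * known_structures / theoretical_space


# PubChem: ~115 million structures vs. >10^60 molecules under 500 Da.
pubchem_coverage = coverage_percent(115e6, 1e60)
# HTS libraries: <10 million chemotypes vs. >10^33 drug-like compounds.
hts_coverage = coverage_percent(1e7, 1e33)

# Orders of magnitude are more readable than the raw percentages.
pubchem_exponent = math.floor(math.log10(pubchem_coverage))
hts_exponent = round(math.log10(hts_coverage))
```

On these numbers, PubChem covers on the order of 10^-50 percent of the theoretical space and HTS libraries about 10^-24 percent of drug-like space, so the quoted "<0.001%" figure is a very conservative bound.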

The Atom Typing Limitation in Molecular Mechanics

Traditional molecular mechanics force fields face a fundamental architectural constraint known as atom typing, where "atoms of distinct nature are forced to share parameters" [9]. This approach creates an inherent bottleneck in chemical space exploration because:

- Limited Representational Capacity: The fixed library of atom types cannot adequately capture the diverse electronic environments atoms experience in different molecular contexts [9].

- Parametrization Challenges: The process is "human-derived, labor-intensive, and inextendable" [9], relying on expert curation rather than systematic coverage.

- Functional Form Limitations: Even with perfect parameters, the simple functional forms of MM force fields cannot accurately fit QM energies and forces, especially in high-energy regions [9].

The result is that on limited chemical spaces and in low-energy regions, the energy disagreement between legacy force fields and QM is "far beyond the chemical accuracy of 1 kcal/mol" [9]—the threshold necessary for realistic chemical predictions.

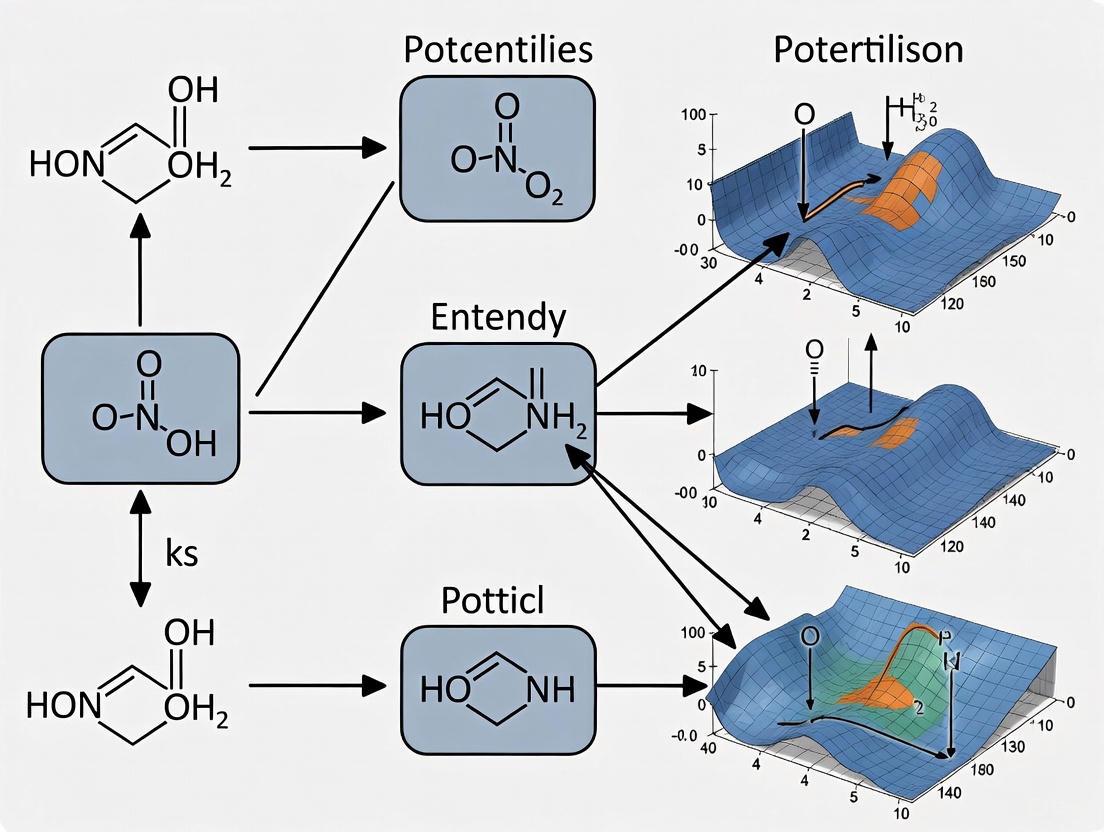

Figure 1: The Multi-stage Bottleneck in Chemical Space Exploration

Machine Learning Force Fields: Bridging the Accuracy Gap

The Machine Learning Paradigm Shift

Machine learning force fields (MLFFs) represent a fundamental shift from the traditional look-up table approach. Rather than relying on fixed atom types and functional forms, MLFFs use "differentiable neural functions parametrized to fit ab initio energies, and furthermore forces through automatic differentiation" [9]. This approach has demonstrated remarkable accuracy, with many recent variants achieving energy errors well below the chemical accuracy threshold of 1 kcal/mol on limited chemical spaces [9].

The architectural advantage of MLFFs lies in their ability to learn complex, high-dimensional relationships between atomic configurations and energies without being constrained by pre-defined atom types or interaction terms. This enables them to capture quantum mechanical effects that are fundamentally beyond the representational capacity of traditional force fields [9].
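The idea of obtaining forces by differentiating a learned energy function can be shown with a self-contained toy. The sketch below implements forward-mode automatic differentiation with dual numbers and applies it to a hypothetical one-hidden-unit "network" acting on a single interatomic distance. Production MLFFs use far larger models and typically reverse-mode differentiation from a deep learning framework; the weights here are arbitrary placeholders.

```python
import math
from dataclasses import dataclass


@dataclass
class Dual:
    """A value together with its derivative with respect to the input."""
    val: float
    der: float = 0.0

    def __add__(self, other):
        other = _lift(other)
        return Dual(self.val + other.val, self.der + other.der)

    __radd__ = __add__

    def __mul__(self, other):
        other = _lift(other)
        # Product rule.
        return Dual(self.val * other.val, self.der * other.val + self.val * other.der)

    __rmul__ = __mul__


def _lift(x):
    return x if isinstance(x, Dual) else Dual(float(x))


def tanh(x):
    t = math.tanh(x.val)
    return Dual(t, (1.0 - t * t) * x.der)  # chain rule


# Hypothetical weights of a one-hidden-unit model: E(r) = W2 * tanh(W1 * r + B).
W1, B, W2 = 1.5, -2.0, 0.8


def toy_energy(r):
    return W2 * tanh(W1 * r + B)


def energy_and_force(r):
    """Energy and force F = -dE/dr from a single differentiated evaluation."""
    out = toy_energy(Dual(r, 1.0))  # seed derivative dr/dr = 1
    return out.val, -out.der


energy, force = energy_and_force(1.2)
```

Because the force is the exact derivative of the model energy, the two are consistent by construction, which is the property that lets these models be trained on ab initio forces as well as energies.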

The Computational Bottleneck

Despite their superior accuracy, MLFFs face their own bottleneck: computational cost. While MLFFs are "magnitudes faster than QM calculations (and scale linearly w.r.t. the size of the system), they are still hundreds of times slower than MM force fields" [9]. This creates a critical speed-accuracy tradeoff that currently limits the practical application of MLFFs to biologically relevant systems.

Table 2: Performance Comparison: MM vs. ML Force Fields

| Characteristic | Molecular Mechanics (MM) | Machine Learning Force Fields (MLFF) |
|---|---|---|
| Functional Form | Simple, physics-based terms | Flexible neural functions |
| Accuracy | >1 kcal/mol error [9] | <1 kcal/mol error achievable |
| Speed | ~0.005 ms per evaluation (A100 GPU) [9] | ~1 ms per evaluation (A100 GPU) [9] |
| Scalability | O(N) for well-optimized modern codes [9] | O(N) but with larger prefactor |
| Parametrization | Human-curated atom typing [9] | Automated from QM data |
| Chemical Space Coverage | Limited by atom type library | Potentially broader with sufficient training |

For small molecule systems of up to 100 atoms, some of the fastest MLFFs still require approximately 1 millisecond per energy and force evaluation on an A100 GPU, compared to less than 0.005 milliseconds for MM force fields [9]. This performance gap becomes prohibitive when simulating biomolecular systems of considerable size over biologically relevant timescales.
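A back-of-the-envelope estimate makes the gap concrete. The per-evaluation costs below are the figures quoted above for systems of up to 100 atoms; the 2 fs timestep and the 1 μs target are assumptions chosen for illustration, and the estimate ignores every overhead other than the energy and force evaluation.

```python
MM_MS_PER_STEP = 0.005   # upper bound quoted for MM force fields [9]
MLFF_MS_PER_STEP = 1.0   # approximate figure quoted for fast MLFFs [9]


def wall_clock_hours(simulated_ns, timestep_fs, ms_per_step):
    """Wall-clock hours needed if each MD step costs one energy/force evaluation."""
    n_steps = simulated_ns * 1_000_000 / timestep_fs  # 1 ns = 1,000,000 fs
    return n_steps * ms_per_step / 1000.0 / 3600.0


# Assumed setup: 1 μs (1000 ns) of dynamics with a 2 fs timestep.
mm_hours = wall_clock_hours(1000, 2.0, MM_MS_PER_STEP)
mlff_hours = wall_clock_hours(1000, 2.0, MLFF_MS_PER_STEP)
slowdown = MLFF_MS_PER_STEP / MM_MS_PER_STEP
```

Under these assumptions a microsecond of dynamics takes well under an hour with the MM force field and roughly 139 hours (about 5.8 days) with the MLFF, a factor of about 200, and that is for a system of at most 100 atoms.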

Emerging Solutions and Methodologies

Multipole Featurization for Complex Systems

Recent research has addressed the scaling limitations of MLFFs for systems with diverse chemical elements. The smooth overlap of atomic positions (SOAP) descriptor, commonly used in MLFFs, scales quadratically with the number of unique chemical elements, "requiring additional computational resources and sometimes causing poor conditioning of the resulting design matrices" [11]. The normalized Gaussian multipole (GMP) descriptor addresses this by "implicitly embedding elemental identity through a Gaussian representation of atomic valence densities, leading to a fixed vector size independent of the number of chemical elements in the system" [11].

This approach demonstrates that the number of density functional theory (DFT) calls—a major computational bottleneck—can remain approximately independent of the number of chemical elements, in contrast to the increase required with SOAP [11]. This is particularly valuable for modeling complex alloys or catalytic systems with multiple elements.
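The scaling difference can be sketched by counting descriptor components. The SOAP count below follows one common power-spectrum convention, in which every pair of (element, radial basis) channels contributes one component per angular channel; exact counts differ between implementations. The GMP count is a deliberately simplified placeholder that assumes one feature per (Gaussian width, multipole order) pair; the only property taken from the text is that it does not depend on the number of elements.

```python
def soap_power_spectrum_size(n_elements, n_max, l_max):
    """Number of SOAP components under a common power-spectrum convention (assumed)."""
    n_channels = n_elements * n_max
    return n_channels * (n_channels + 1) // 2 * (l_max + 1)


def gmp_size(n_sigmas, n_orders):
    """Placeholder GMP length: independent of the number of chemical elements."""
    return n_sigmas * n_orders


# Hypothetical basis settings, held fixed while the element count grows.
N_MAX, L_MAX = 8, 6
soap_sizes = {n: soap_power_spectrum_size(n, N_MAX, L_MAX) for n in (1, 2, 4, 8)}
gmp_length = gmp_size(n_sigmas=6, n_orders=4)
```

With these settings the SOAP vector grows from 252 components for one element to 3696 for four, roughly quadrupling each time the element count doubles, while the GMP length stays constant.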

Workflow for MLFF Development and Validation

The development of robust MLFFs requires careful workflow design and validation. The following methodology has proven effective for creating reliable machine-learned force fields:

Figure 2: MLFF Development and Validation Workflow

Reference Data Generation: Perform ab initio molecular dynamics (AIMD) or select diverse configurations for QM energy and force calculations. Systems include bulk metals and alloys with varying numbers of elements to test scalability [11].

Active Learning Implementation:

- Utilize uncertainty quantification (UQ) methods, such as Bayesian estimates, to determine when a model needs updating [11].

- Interface with electronic structure software to generate additional reference data for edge cases [11].

- Employ on-the-fly training procedures that automatically form training sets during simulation [11].
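The control flow of such an on-the-fly loop can be sketched with a toy surrogate. Everything here is a stand-in: `reference_energy` replaces the electronic structure call, and the nearest-neighbor model with a distance-based uncertainty replaces the Bayesian estimates mentioned above. Only the logic (predict, check uncertainty, call the reference method and update when needed) mirrors the described procedure.

```python
import math


def reference_energy(x):
    """Stand-in for an expensive electronic structure calculation (hypothetical)."""
    return math.sin(x)


class NearestNeighborSurrogate:
    """Toy model: predicts the energy of the closest training point.

    The distance to that point serves as a crude uncertainty estimate.
    """

    def __init__(self):
        self.xs, self.ys = [], []

    def add(self, x, y):
        self.xs.append(x)
        self.ys.append(y)

    def predict(self, x):
        if not self.xs:
            return 0.0, math.inf  # no data yet: maximally uncertain
        i = min(range(len(self.xs)), key=lambda j: abs(self.xs[j] - x))
        return self.ys[i], abs(self.xs[i] - x)


def run_on_the_fly(trajectory, threshold):
    """Walk a trajectory, calling the reference method only when uncertain."""
    model = NearestNeighborSurrogate()
    energies, n_reference_calls = [], 0
    for x in trajectory:
        energy, uncertainty = model.predict(x)
        if uncertainty > threshold:
            energy = reference_energy(x)  # generate new reference data
            model.add(x, energy)          # update the training set
            n_reference_calls += 1
        energies.append(energy)
    return energies, n_reference_calls


trajectory = [0.1 * i for i in range(50)]
energies, n_calls = run_on_the_fly(trajectory, threshold=0.25)
```

In this toy run only about a third of the 50 configurations trigger a reference calculation; the threshold sets the trade-off between accuracy and the number of expensive calls.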

Validation Metrics:

Performance Benchmarks:

The Scientist's Toolkit: Essential Research Reagents and Computational Tools

Table 3: Research Reagent Solutions for Chemical Space Exploration

| Reagent/Resource | Function | Application Context |
|---|---|---|
| NORMAN SusDat Database | Reference database containing ~60K unique chemicals with PubChem CIDs [7] | Benchmarking chemical space coverage in NTA studies |
| Graphite Felt (GF) Support | Compressible support for single-atom catalysts in flow reactors [12] | Enhancing productivity in SAC-mediated reactions |
| Pt₁-MoS₂/Gr Catalyst | Single-atom catalyst with pyramidal Pt-3S structure resistant to metal leaching [12] | Continuous-flow chemoselective reduction reactions |
| SPARC DFT Code | Real-space formalism DFT code with minimal dependencies for rapid training data generation [11] | Implementing GMP-based on-the-fly potentials |
| Redox Flow Cell Reactor | Customized reactor for SAC-catalyzed reactions requiring high flow rates [12] | Overcoming quantitative conversion bottleneck in fine chemical production |

The exploration of chemical space remains fundamentally bottlenecked by the limitations of traditional molecular mechanics force fields and their dependence on finite atom types. While machine learning force fields have demonstrated unprecedented accuracy, their computational cost creates a new bottleneck that limits practical application to complex biological systems. The most promising path forward lies in the design space between MM and ML force fields—developing approaches that incorporate physical constraints and computational efficiency of molecular mechanics with the accuracy and flexibility of machine learning.

Emerging methodologies such as multipole featurization, active learning workflows, and specialized hardware implementations show potential for bridging this gap. As these approaches mature, they will enable researchers to navigate the uncharted regions of chemical space more effectively, accelerating discovery in drug development, materials science, and exposomics. The ultimate solution to the chemical space bottleneck will likely involve neither pure physics-based approaches nor purely data-driven models, but rather a thoughtful integration of both paradigms that balances accuracy, speed, and interpretability.

Biomolecular force fields (FFs) serve as the foundational mathematical models that describe the energetic interactions between atoms within molecular dynamics (MD) simulations, enabling scientists to study the structure, dynamics, and function of biological molecules. Traditional, fixed-charge FFs have been powerful workhorses for decades. However, their inherent inflexibility—particularly the use of static, precomputed parameters and lookup tables for atomic charges and interactions—poses significant limitations for modeling complex, dynamic, or chemically unique systems. This inflexibility becomes acutely problematic when simulating peptides with radical chemistries or intricate biomolecular complexes where electronic polarizability, charge transfer, and specific environmental effects are critical. The core issue lies in the lookup table paradigm itself: once an atom is assigned a type, its properties are largely fixed, making it difficult to adapt to novel molecular contexts or electronic states not originally envisioned by the force field developers [13] [14].

This whitepaper explores these limitations through specific case studies, demonstrating how the rigidity of traditional FFs hinders progress in targeted peptide design and the simulation of charged fluids. Furthermore, it examines the emerging solutions—polarizable FFs, machine learning potentials, and advanced sampling techniques—that are beginning to overcome these challenges. By framing this discussion within the context of modern drug discovery and biomolecular research, we aim to provide practitioners with a clear understanding of both the pitfalls of outdated methodologies and the practical pathways toward more accurate and predictive simulations.

Case Study: De Novo Design of Keap1-Targeting Peptides

Experimental Protocol and Workflow

The design of short peptides to bind the Kelch domain of Keap1 represents a compelling case where traditional methods are being superseded by more integrated, generative approaches. A novel computational framework combining deep generative modeling with in silico optimization exemplifies this shift [15]. The protocol can be summarized as follows:

- Backbone Generation with RFdiffusion: The RFdiffusion generative model was used to design short peptide backbones (3-10 residues) targeting specific hydrophobic "hotspot" residues (Y334, I461, F478, A556, Y525, Y572, F577) on the Keap1 surface (PDB ID: 2FLU). The "beta" model was employed to ensure topological diversity beyond default helical structures. The command used was:

  `docker run -it --rm --gpus 'device=0' -v /RFdiffusion/models:/app/models -v /RFdiffusion/inputs:/app/inputs -v /RFdiffusion/outputs:/app/outputs inference.output_prefix=/app/outputs/design_ppi_peptide-3-10 inference.model_directory_path=/app/models inference.ckpt_override_path=/app/models/Complex_beta_ckpt.pt inference.input_pdb=/app/inputs/2FLU.pdb inference.num_designs=1000 'contigmap.contigs=[X325-609/0 70-100]' 'ppi.hotspot_res=[X334,X461,X478,X556,X525,X572,X577]'` [15].

- Sequence Design with ProteinMPNN: Sequences were then designed for the generated backbone structures using ProteinMPNN. A three-step optimization process was run with 15 relaxation cycles and a temperature parameter of 0.5 to enhance sequence diversity, yielding 567 peptide sequences. The command used was:

  `./mpnn_fr/dl_interface_design.py -silent input.silent -relax_cycles 15 -seqs_per_struct 1 -temperature 0.5 -outsilent outputX.silent` [15].

- In Silico Peptide Screening: The resulting FASTA sequences were analyzed for critical drug-like properties using web servers, including ToxinPred3 (toxicity), AllerCatPro 2 (allergenicity), PlifePred (stability), and Innovagen (solubility) [15].

- Validation via Molecular Dynamics: Top candidates underwent extensive MD simulations to confirm stable binding to Keap1, assessing binding contacts and structural stability over time [15].

Quantitative Analysis of Peptide Properties

Table 1: Key characteristics of the identified antioxidant peptide NY9 from milk tofu cheese, which interacts with Keap1.

| Property | Value/Result | Method of Analysis |
|---|---|---|
| ABTS Radical Scavenging (IC₅₀) | 11.06 μmol/L | In vitro biochemical assay |
| Thermal & pH Stability | Excellent | Stability testing under varied conditions |
| Key Keap1 Binding Residues | Leu557, Leu365, Val465, Thr560, Gly464 | Molecular docking and dynamics |
| Primary Binding Interactions | Hydrogen bonding, Hydrophobic interactions | Molecular dynamics simulations |
| Cytoprotective Effect | Reduced ROS and MDA; increased CAT and GSH-Px | Cell experiments (HepG2 cells) |

This case study highlights a modern pipeline that bypasses many limitations of force field lookup tables. However, the final MD validation step remains dependent on the accuracy of the chosen FF. As noted in a systematic benchmark, "no single [force field] model performs optimally across all systems," and many exhibit "strong structural bias" when simulating peptides, underscoring the ongoing challenge [16].

The Limitation of Lookup Tables in Force Fields

The Fundamental Rigidity of Additive Force Fields

Traditional biomolecular FFs, such as AMBER, CHARMM, and OPLS, are primarily additive all-atom force fields [13]. Their core limitation is the use of static lookup tables for key parameters:

- Fixed Partial Charges: Each atom is assigned a permanent, context-independent partial charge. This model fails to account for electronic polarization, the phenomenon where the electron distribution of an atom changes in response to its local electrostatic environment [13] [14].

- Pre-defined Atom Types: Atoms are classified into types based on their chemical element and hybridization. The bonded (bonds, angles, dihedrals) and non-bonded (van der Waals) parameters for these types are stored in lookup tables. This system struggles with chemical modifications, such as post-translational modifications (PTMs) in proteins, which introduce non-standard amino acids not originally parameterized [13].

This architecture leads to a fundamental trade-off: lookup tables provide computational efficiency and simplicity but at the cost of physical accuracy and transferability for systems beyond their original parametrization scope [13].
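
The trade-off can be made concrete with a minimal sketch of a type-keyed parameter table. The table contents, the atom-type names, and the helper functions below are hypothetical illustrations, not the data or API of any real force field.

```python
# Hypothetical miniature parameter tables keyed by atom type. The type names
# and numbers are illustrative only and do not reproduce a real force field.
BOND_TABLE = {
    ("CT", "HC"): {"k": 340.0, "r0": 1.090},
    ("CT", "CT"): {"k": 310.0, "r0": 1.526},
}
CHARGE_TABLE = {"CT": -0.18, "HC": 0.06}


def bond_key(type_a, type_b):
    """Order the pair so that (A, B) and (B, A) hit the same table row."""
    return tuple(sorted((type_a, type_b)))


def lookup_bond(type_a, type_b):
    """Return bond parameters, or raise if the type pair was never tabulated."""
    key = bond_key(type_a, type_b)
    if key not in BOND_TABLE:
        raise KeyError(f"no bond parameters for atom types {key}")
    return BOND_TABLE[key]


def lookup_charge(atom_type):
    """Fixed charge: the same value regardless of the atom's surroundings."""
    return CHARGE_TABLE[atom_type]


print(lookup_bond("HC", "CT"))  # found: the pair is in the table
try:
    lookup_bond("CT", "SE")     # a type outside the tabulated chemical space
except KeyError as err:
    print("lookup failed:", err)
```

The lookup is a constant-time dictionary access, which is the efficiency the text refers to. The failure on an untabulated type pair is the transferability cost: nothing in the table can be interpolated to cover chemistry it was not built for.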

Practical Consequences in Biomolecular Simulations

The reliance on lookup tables and fixed charges produces several recurring deficiencies in simulations:

- Inaccurate Treatment of Charged and Polar Interactions: Nonpolarizable FFs with fixed charges often miscalculate the strength of hydrogen bonds and ion-pair interactions. This leads to systematic errors in simulating the dynamics of complex charged fluids like ionic liquids, where "calculated transport properties are generally lower than experimental measurements" [14].

- Inability to Model Charge Transfer and Chemical Reactivity: Classical FFs cannot simulate processes where electrons are redistributed, such as proton transfer reactions, because the atomic charges are fixed by the lookup table. This necessitates a quantum mechanical treatment [14].

- Poor Handling of Novel Molecules: Parametrizing a new molecule (e.g., a drug-like ligand or a modified residue) is a major undertaking. While automated tools exist, they often rely on extending existing parameters from the lookup table, which may be unsuitable. Users must then undertake rigorous validation—e.g., calculating dipole moments and water interactions for charge assignments—a process requiring deep expertise that is often overlooked [17].
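
One of the validation checks mentioned above, the molecular dipole moment implied by a set of fixed charges, can be sketched in a few lines. The function names are hypothetical; the charges and geometry are the widely used TIP3P water values, chosen here only as a familiar test case.

```python
import numpy as np

E_ANGSTROM_TO_DEBYE = 4.80320  # 1 e*Angstrom expressed in debye


def dipole_moment_debye(charges, coords):
    """Magnitude of sum_i q_i * r_i for a neutral set of point charges."""
    charges = np.asarray(charges, dtype=float)
    coords = np.asarray(coords, dtype=float)
    mu = (charges[:, None] * coords).sum(axis=0)  # units: e * Angstrom
    return float(np.linalg.norm(mu)) * E_ANGSTROM_TO_DEBYE


def water_geometry(r_oh=0.9572, angle_deg=104.52):
    """O at the origin, both H atoms in the xy-plane (TIP3P-style geometry)."""
    half = np.radians(angle_deg) / 2.0
    h1 = [r_oh * np.sin(half), r_oh * np.cos(half), 0.0]
    h2 = [-r_oh * np.sin(half), r_oh * np.cos(half), 0.0]
    return np.array([[0.0, 0.0, 0.0], h1, h2])


tip3p_charges = [-0.834, 0.417, 0.417]  # O, H, H in units of e
mu = dipole_moment_debye(tip3p_charges, water_geometry())
print(f"fixed-charge water dipole: {mu:.2f} D")
```

The result is noticeably larger than the gas-phase dipole of water (about 1.85 D). Fixed-charge models deliberately overpolarize the molecule to mimic the condensed phase on average, which is exactly the kind of baked-in, environment-independent choice that a newly parameterized ligand has to be checked against.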

Table 2: Comparison of traditional and modern force field approaches.

| Feature | Traditional Additive FFs (Lookup Tables) | Polarizable FFs | Machine Learning FFs |
|---|---|---|---|
| Atomic Charges | Fixed, assigned from a table | Fluctuate based on environment | Determined by a neural network |
| Parametrization | Manual, labor-intensive | Complex, requires polarizability parameters | Data-driven, trained on QM data |
| Transferability | Limited to predefined chemical space | Higher for varying environments | High, in principle, within training data domain |
| Computational Cost | Low (baseline) | About 10x higher than additive FFs [13] | 10-100x higher than additive FFs [14] |
| Key Limitation | Cannot model polarization or charge transfer | High computational cost; parameter complexity | Black-box nature; data dependency; computational speed |

Emerging Solutions to Overcome Lookup Table Inflexibility

Polarizable Force Fields

Polarizable FFs address the most significant shortcoming of additive models by incorporating electronic polarization. This is achieved through various methods, such as the Drude oscillator model or fluctuating charge models, which allow atomic charges to respond dynamically to changes in the molecular environment [13]. This enables a more physical description of interactions in heterogeneous environments like protein-ligand binding sites or membrane interfaces, where electrostatic effects are crucial. While they offer superior accuracy, their widespread adoption has been hindered because "polarizable FFs are computationally more expensive (about 10 times) than non-polarizable FFs" [13].
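
The Drude oscillator idea mentioned above can be illustrated with a minimal sketch: a charge q_D on a harmonic spring of stiffness k_D shifts in an external field until the spring force balances the electrostatic force, producing an induced dipole. The function names and the numerical values are hypothetical, and units are assumed to be mutually consistent.

```python
import numpy as np


def drude_displacement(q_drude, k_spring, field):
    """Equilibrium displacement where the spring force balances q_D * E."""
    return q_drude * np.asarray(field, dtype=float) / k_spring


def induced_dipole(q_drude, k_spring, field):
    """mu = q_D * d = (q_D**2 / k_D) * E."""
    return q_drude * drude_displacement(q_drude, k_spring, field)


def polarizability(q_drude, k_spring):
    """Isotropic polarizability implied by the oscillator: q_D**2 / k_D."""
    return q_drude**2 / k_spring


field = np.array([0.0, 0.0, 0.1])
print(induced_dipole(-1.0, 500.0, field))
print(polarizability(-1.0, 500.0) * field)  # same vector, via alpha * E
```

Because the induced dipole follows the local field, the effective charge distribution of an atom changes with its environment, which a fixed table entry cannot do. Production implementations additionally handle the dynamics or self-consistent relaxation of the Drude particles, which this static sketch omits.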

Machine Learning Force Fields (MLFFs)

MLFFs represent a paradigm shift, moving away from pre-defined mathematical functions and lookup tables toward models that learn the relationship between atomic structure and energy/forces directly from quantum mechanical (QM) data [14].

- Principles: Models like NeuralIL use neural networks to predict energies and forces with near-DFT accuracy but at a fraction of the computational cost of ab initio MD [14].

- Advantages: They can naturally handle charge transfer, polarization, and even chemical reactions, effectively bridging the gap between the accuracy of QM and the scale of classical MD [14].

- Current Status: While promising, MLFFs are "still much slower than their classical counterparts (10–100 times slower)" and their accuracy is contingent on the quality and breadth of their training data [14]. They are also perceived as "black boxes" compared to classical FFs.

Advanced Sampling and Validation Protocols

To mitigate sampling issues and ensure robustness, modern simulation practices recommend:

- Performing Replicate Simulations: Initiating multiple simulations from different starting points to better approximate ergodicity and avoid getting trapped in local energy minima [17].

- Using Biased Sampling Methods: Techniques like metadynamics and Gaussian-accelerated MD can drive the system to explore slow degrees of freedom that would be inaccessible in standard MD timescales [17].

- Rigorous Validation: Analyzing simulations with methods like root-mean-squared deviation (RMSD) clustering or principal-component analysis (PCA) to identify representative states, rather than "cherry-picking" snapshots [17].
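
As a starting point for the RMSD-based analysis mentioned above, the following sketch computes the RMSD after optimal superposition using the Kabsch algorithm, together with the pairwise matrix that a clustering step would consume. The function names are hypothetical, and established trajectory-analysis packages provide more complete implementations.

```python
import numpy as np


def kabsch_rmsd(mobile, reference):
    """RMSD after optimal rigid-body superposition (Kabsch algorithm)."""
    P = np.asarray(mobile, dtype=float)
    Q = np.asarray(reference, dtype=float)
    P = P - P.mean(axis=0)          # remove translation
    Q = Q - Q.mean(axis=0)
    H = P.T @ Q                     # 3x3 covariance matrix
    U, _S, Vt = np.linalg.svd(H)
    d = np.sign(np.linalg.det(Vt.T @ U.T))
    D = np.diag([1.0, 1.0, d])      # guard against improper rotations
    R = Vt.T @ D @ U.T              # rotation taking P onto Q
    diff = (R @ P.T).T - Q
    return float(np.sqrt((diff**2).sum() / len(P)))


def pairwise_rmsd(frames):
    """Symmetric RMSD matrix over a list of (n_atoms, 3) coordinate arrays."""
    n = len(frames)
    matrix = np.zeros((n, n))
    for i in range(n):
        for j in range(i + 1, n):
            matrix[i, j] = matrix[j, i] = kabsch_rmsd(frames[i], frames[j])
    return matrix
```

Feeding the pooled frames of all replicate simulations into one pairwise matrix, rather than analyzing each run separately, makes it easier to see whether the replicas visit the same states.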

Table 3: Key software tools and resources for modern force field research and peptide design.

| Tool/Resource | Primary Function | Relevance to Overcoming Lookup Table Limitations |
|---|---|---|
| RFdiffusion | De novo protein and peptide backbone design [15] | Generative design bypasses the need for template-based modeling. |
| ProteinMPNN | Protein sequence design and optimization [15] | Rapidly designs sequences for any given backbone structure. |
| AMBER/CHARMM | MD simulation suites with traditional additive FFs [13] | Baseline tools; their additive FFs exemplify the lookup table approach. |
| OpenMM | High-performance MD simulation toolkit | Facilitates the implementation of new FF types, including custom ML potentials. |
| Force Field Toolkit (fftk) | VMD plugin for parameter generation [17] | A guided interface for parametrizing new molecules, helping to navigate lookup table gaps. |
| NeuralIL | Neural network force field for ionic liquids [14] | An example of an MLFF that accurately models complex charged fluids. |
| ToxinPred3/AllerCatPro 2.0 | In silico prediction of peptide toxicity and allergenicity [15] | Critical for screening designed peptides for drug-like properties. |

The inflexibility of traditional lookup table-based force fields presents a significant bottleneck in the accurate simulation of peptide radicals, complex biomolecules, and reactive systems. The static nature of their parameters fails to capture essential physics like electronic polarization and charge transfer, limiting their predictive power for modern drug discovery and materials science. However, the field is undergoing a transformative shift. Through case studies in peptide design and charged fluids, we have seen how generative AI models (RFdiffusion, ProteinMPNN) can circumvent some design challenges, while polarizable force fields and machine learning potentials directly address the physical shortcomings of additive models. For researchers, the path forward involves a careful, critical approach: selecting force fields and simulation protocols with an awareness of their limitations, embracing replicate simulations and rigorous validation, and strategically adopting new ML-based tools where they offer the greatest benefit. As these advanced methods mature and become more computationally accessible, they are expected to open new possibilities for understanding and designing complex molecular systems.

In computational chemistry and materials science, force fields form the foundational mathematical models that describe the potential energy surfaces governing atomic interactions. The transferability problem refers to the critical limitation where parameters derived from a specific training dataset fail to accurately predict properties or behaviors in chemical environments beyond those represented in the training data. This challenge persists as a fundamental constraint in molecular simulations, particularly as researchers attempt to explore increasingly expansive chemical spaces for applications such as drug discovery and materials design [18] [19].

Traditional force field development has relied heavily on look-up table approaches, where parameters are assigned based on chemical group classifications. While these methods benefit from computational efficiency, they inherently struggle with transferability due to their limited functional forms and discrete descriptions of chemical environments [19] [20]. For instance, conventional molecular mechanics force fields (MMFFs) like AMBER, CHARMM, and OPLS employ fixed analytical forms that approximate the energy landscape through decomposition into bonded and non-bonded interactions. This simplification sacrifices accuracy, particularly when non-pairwise additivity of non-bonded interactions becomes significant, making these models susceptible to failures in unexplored chemical territories [19] [21].

The core issue stems from a fundamental trade-off: simplified physical models offer computational efficiency but lack the expressive power to capture the complex, multi-dimensional nature of quantum mechanical potential energy surfaces. As research increasingly focuses on drug-like molecules and complex materials, the limitations of traditional parameterization methods become more pronounced, necessitating more sophisticated approaches to force field development [19] [22].

Comparative Analysis of Force Field Approaches

The table below summarizes the key characteristics, transferability challenges, and representative examples of different force field paradigms:

Table 1: Comparison of Force Field Approaches and Their Transferability Characteristics

| Force Field Type | Parameterization Approach | Transferability Strengths | Transferability Limitations | Representative Examples |
|---|---|---|---|---|
| Traditional Look-up Table | Pre-defined parameters based on chemical group assignments [20] | Computational efficiency; Well-established for known chemical spaces [19] | Limited by fixed functional forms; Poor handling of unseen chemical environments [19] | AMBER, OPLS-AA, GAFF [19] [20] |
| Machine Learning Potentials | Neural networks trained on quantum mechanical data [22] | High accuracy near training data; Ability to capture complex interactions [18] | Susceptible to overfitting; Performance degradation on out-of-distribution systems [23] | MACE-OFF, ANI, AIMNet [22] |
| Graph Neural Network Parameterized | GNNs predict parameters from molecular graphs [19] [21] | Automatic parameter generation; Improved coverage of chemical space [19] | Training data requirements; Potential instability in MD simulations [19] | ByteFF, Espaloma [19] |
| Polarizable Force Fields | Include electronic response to environment [21] | Better description of electrostatic interactions [21] | Complex parameterization; Computational overhead [21] | AMOEBA, ByteFF-Pol [21] |

The comparative analysis reveals that while machine learning force fields (MLFFs) demonstrate superior accuracy for systems within their training domain, they face significant transferability barriers when applied to configurations, chemical elements, or system sizes not adequately represented during training [18] [24]. For example, universal MLFFs like CHGNet and ALIGNN-FF typically achieve energy errors of several tens of meV/atom, which may be insufficient for applications requiring high precision, such as moiré materials, where electronic band structures exhibit energy scales on the order of meV [25].

Fundamental Limitations of Look-up Table Approaches

Traditional look-up table force fields operate on a construction plan principle, where parameters are assigned to atoms based on their chemical context according to a predefined taxonomy [20]. This approach introduces several fundamental limitations that directly impact transferability:

Discrete Chemical Descriptors: Look-up tables rely on discrete chemical environment classifications (atom types) that cannot adequately represent the continuous nature of chemical bonding and electron density redistribution. The SMIRKS patterns used in modern implementations like OpenFF provide greater specificity but still struggle with chemical edge cases and unusual bonding situations [19].

Limited Functional Forms: Traditional molecular mechanics force fields employ simplified mathematical functions that cannot capture the complexity of quantum mechanical potential energy surfaces. This inherent approximation leads to inaccuracies, particularly for molecular properties strongly influenced by electron correlation effects [19] [21].

Data Scalability Issues: As synthetically accessible chemical space expands rapidly through advances in combinatorial chemistry and high-throughput screening, the number of required parameters in look-up tables grows combinatorially. For instance, OPLS3e increased its torsion types to 146,669 to enhance accuracy and expand chemical space coverage, demonstrating the scalability challenge of this approach [19].

The ByteFF development team highlighted these limitations, noting that "these discrete descriptions of the chemical environment have inherent limitations that hamper the transferability and scalability of these force fields" [19]. This recognition has driven the shift toward data-driven parameterization methods that can continuously adapt to chemical context rather than relying on discrete classifications.
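
The combinatorial growth described above can be checked with a short counting exercise. Assuming every combination of atom types could in principle require its own parameters (real force fields use wildcards and parameterize only a subset), the number of distinct bond, angle, and torsion types for n atom types follows from treating a pattern and its reverse as the same type. The function names are hypothetical.

```python
from itertools import product


def n_bond_types(n):
    """Unordered pairs a-b with repetition: n(n+1)/2."""
    return n * (n + 1) // 2


def n_angle_types(n):
    """a-b-c equals c-b-a: n central types times n(n+1)/2 outer pairs."""
    return n * n * (n + 1) // 2


def n_torsion_types(n):
    """a-b-c-d equals d-c-b-a: (n**4 + n**2) / 2."""
    return (n**4 + n**2) // 2


def count_torsions_brute_force(n):
    """Enumerate all quadruples and merge each with its reverse."""
    return len({min(t, t[::-1]) for t in product(range(n), repeat=4)})


for n in (10, 50, 100):
    print(n, n_bond_types(n), n_angle_types(n), n_torsion_types(n))
```

The torsion count grows with the fourth power of the number of atom types, so refining the type taxonomy to gain specificity quickly inflates the table that has to be fitted and maintained.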

Experimental Methodologies for Evaluating Transferability

Comprehensive Benchmarking Tests

Rigorous assessment of force field transferability requires going beyond conventional validation metrics. A comprehensive benchmarking suite should include:

Phase Transfer Tests: Evaluating performance across different phases (solid, liquid, interface) is crucial. Research has demonstrated that models trained exclusively on liquid configurations fail to accurately capture vibrational frequency distributions in the solid phase or liquid-solid phase transition behavior. This deficiency is only remedied when training data includes configurations sampled from both phases [18].

Multi-Scale Property Validation: Transferability should be assessed across diverse properties including radial distribution functions, mean-squared displacements, phonon density of states, melting points, and computational X-ray photon correlation spectroscopy (XPCS) signals. XPCS captures density fluctuations at various length scales in the liquid phase, providing valuable information beyond conventional metrics [18].

Chemical Space Extrapolation: Testing model performance on molecules with functional groups, element types, or bonding environments not represented in the training data. The MACE-OFF development team emphasized the importance of evaluating "unseen molecules" to truly assess transferability [22].

Table 2: Key Experiments for Evaluating Force Field Transferability

| Experiment Category | Specific Tests | Critical Metrics | Transferability Insights |
|---|---|---|---|
| Structural Properties | Radial distribution functions, Phonon density of states [18] | RMSE against reference data [25] | Accuracy in replicating spatial atomic distributions and vibrational properties |
| Thermodynamic Properties | Melting points, Liquid-solid phase transitions [18] | Transition temperatures, Enthalpy changes | Ability to capture phase behavior and temperature-dependent phenomena |
| Dynamic Properties | Mean-squared displacement, XPCS signals [18] | Diffusion coefficients, Relaxation times | Performance in predicting temporal evolution and transport properties |
| Chemical Transfer | Torsional energy profiles of unseen molecules [22] | Energy barrier RMSE, Conformational distributions | Generalization to new molecular structures and functional groups |
| Scale Transfer | System size scaling [25] | Energy/force errors vs. system size | Stability and accuracy when simulating larger systems than trained on |
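
For the dynamic properties listed in the table, a minimal sketch of the mean-squared displacement and the diffusion coefficient obtained from it via the Einstein relation is given below. The function names are hypothetical, and the sketch assumes unwrapped coordinates and a single time origin; production analyses usually average over many time origins and fit only the linear regime.

```python
import numpy as np


def mean_squared_displacement(positions):
    """MSD(t) relative to the first frame, averaged over atoms.

    positions: array of shape (n_frames, n_atoms, 3), unwrapped coordinates.
    """
    positions = np.asarray(positions, dtype=float)
    disp = positions - positions[0]
    return (disp**2).sum(axis=2).mean(axis=1)


def diffusion_coefficient(times, msd, dim=3):
    """Einstein relation: MSD(t) ~ 2 * dim * D * t in the diffusive regime."""
    slope, _intercept = np.polyfit(times, msd, 1)
    return slope / (2.0 * dim)
```

Comparing the diffusion coefficient from a candidate force field against a reference value, for a phase or composition the model was not trained on, gives one concrete number for the "Dynamic Properties" row.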

Workflow for Assessing Transferability

The following diagram illustrates a comprehensive experimental workflow for evaluating force field transferability:

Figure 1: Comprehensive workflow for evaluating force field transferability across multiple domains including phase behavior, system size, and chemical space.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Force Field Development and Transferability Research

| Tool/Category | Primary Function | Application in Transferability Research | Key Features |
|---|---|---|---|
| Graph Neural Networks (GNNs) | Parameter prediction from molecular graphs [19] [21] | Learning continuous representations of chemical environments | Symmetry preservation; Message passing [19] |
| ALMO-EDA Decomposition | Energy decomposition analysis [21] | Generating physically meaningful training labels | Separates interaction energy components [21] |
| DPmoire | MLFF construction for moiré systems [25] | Specialized transferability for twisted materials | Automated dataset generation [25] |
| TUK-FFDat Format | Standardized force field data scheme [20] | Enabling interoperable parameter exchange | SQL-based; Machine-readable [20] |
| Quantum Chemistry Codes | Reference data generation (e.g., VASP) [25] | Producing training data for MLFFs | DFT calculations with vdW corrections [25] |

Emerging Solutions and Forward-Looking Approaches

Advanced Machine Learning Architectures

Modern approaches to addressing transferability challenges leverage sophisticated machine learning architectures:

Equivariant Graph Neural Networks: Models like MACE and Allegro incorporate E(3) equivariance, ensuring that predictions transform correctly under rotation and translation. This geometric consistency improves transferability to diverse molecular configurations [22].

Polarizable Force Fields with ML-Parameterization: ByteFF-Pol represents a significant advancement by combining polarizable force field forms with GNN-based parameterization. This approach captures electronic response to environment while maintaining transferability across chemical space [21].

Differentiable Physical Constraints: Incorporating physical constraints directly into the ML training process enhances transferability. For example, the TNEP framework with atomic polarizability constraints improves predictions for larger molecular clusters by enforcing physically meaningful decomposition of molecular polarizabilities into atomic contributions [26].
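
The symmetry requirement behind equivariant models can be stated as a simple numerical test: under a rotation R and a translation, the energy must not change and the forces must rotate with the system. The sketch below runs this test on a toy pair potential that stands in for a learned model; the potential and the helper names are hypothetical, and the same check could be applied to any model that returns energies and forces.

```python
import numpy as np


def energy_and_forces(coords):
    """Toy pair potential E = sum_{i<j} (r_ij - 1)^2 with analytic forces."""
    coords = np.asarray(coords, dtype=float)
    n = len(coords)
    energy = 0.0
    forces = np.zeros_like(coords)
    for i in range(n):
        for j in range(i + 1, n):
            rij = coords[i] - coords[j]
            r = np.linalg.norm(rij)
            energy += (r - 1.0) ** 2
            grad = 2.0 * (r - 1.0) * rij / r  # dE/d(coords[i])
            forces[i] -= grad
            forces[j] += grad
    return energy, forces


def random_rotation(rng):
    """Proper rotation from the QR decomposition of a random matrix."""
    q, r = np.linalg.qr(rng.normal(size=(3, 3)))
    q = q * np.sign(np.diag(r))       # fix the sign ambiguity of each column
    if np.linalg.det(q) < 0:
        q[:, 0] = -q[:, 0]            # turn a reflection into a rotation
    return q


rng = np.random.default_rng(0)
coords = rng.normal(size=(5, 3))
rot = random_rotation(rng)
e1, f1 = energy_and_forces(coords)
e2, f2 = energy_and_forces(coords @ rot.T + np.array([1.0, -2.0, 0.5]))
print("energy invariant:", np.isclose(e1, e2))
print("forces equivariant:", np.allclose(f2, f1 @ rot.T))
```

A model built only from interatomic distances passes this test by construction. Equivariant architectures extend the guarantee to internal features that carry directional information, so the symmetry does not have to be learned from augmented data.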

Data Strategy Innovations

Addressing transferability requires not only algorithmic advances but also strategic data management:

Diverse Training Data Collection: Research shows that including both solid and liquid configurations in training data is essential for capturing material behavior across phases. Similarly, covering diverse chemical environments in the training set significantly improves transferability [18] [19].

Active Learning and Transfer Learning: DPmoire demonstrates the effectiveness of constructing MLFFs using non-twisted structures and then applying them to complex moiré systems. This approach combines initial training on simpler systems with targeted transfer learning for specific applications [25].

Committee Error Estimation: Implementing committee models to estimate prediction uncertainty helps identify regions of chemical space where transferability may be compromised, allowing for targeted data acquisition or model refinement [26].
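
Committee error estimation reduces to a small amount of bookkeeping once several independently trained models are available. The sketch below assumes their predictions have already been collected into one array; the function names and the threshold are hypothetical.

```python
import numpy as np


def committee_statistics(predictions):
    """Mean and spread over committee members.

    predictions: array of shape (n_models, n_samples).
    """
    predictions = np.asarray(predictions, dtype=float)
    mean = predictions.mean(axis=0)
    spread = predictions.std(axis=0, ddof=1)  # sample standard deviation
    return mean, spread


def flag_uncertain(predictions, threshold):
    """Indices of samples whose committee spread exceeds the threshold."""
    _mean, spread = committee_statistics(predictions)
    return np.flatnonzero(spread > threshold)
```

Configurations flagged this way are natural candidates for new reference calculations, which is how committee disagreement feeds an active learning loop. The spread is a heuristic indicator of extrapolation, not a calibrated error bar.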

The continued development of transferable force fields requires a multifaceted approach that combines physically motivated functional forms, advanced machine learning architectures, strategic data generation, and comprehensive validation protocols. As these methodologies mature, they promise to expand the accessible chemical space for computational discovery while maintaining the accuracy required for predictive modeling in materials science and drug discovery.

The Paradigm Shift: How Machine Learning is Redefining Molecular Parametrization

Accurate modeling of interatomic interactions is fundamental to understanding material properties and chemical processes at the atomic level. Traditional force fields, based on fixed functional forms and empirical parameterization, have long been used in molecular dynamics and Monte Carlo simulations. However, these classical approaches often fail to accurately describe complex systems, particularly those involving bond breaking and formation, complex electronic interactions, and environments far from equilibrium [27]. While quantum mechanical methods like Density Functional Theory provide the necessary accuracy, they are computationally prohibitive for large systems and long-time-scale simulations [27] [28]. In this context, Machine Learning Force Fields have emerged as a transformative tool, bridging the gap between computational efficiency and quantum-level accuracy by learning the Potential Energy Surface directly from quantum mechanical calculations [27].

Fundamental Concepts and Methodologies

Core Principles of MLFFs

Machine Learning Force Fields are structured to learn a specific function of the atomic coordinates: the Potential Energy Surface. These models are trained directly from quantum-mechanical calculations, using neural networks, Gaussian processes, and other advanced ML techniques to capture complex, high-dimensional relationships between atomic positions, energies, and forces without relying on predefined functional forms [27]. MLFFs maintain the linear scaling of classical force fields while approaching the accuracy of DFT, representing a powerful intermediate that enables new scientific insights by making large-scale and long-time-scale simulations feasible for reactive systems [28].

Key MLFF Architectures

The MLFF landscape encompasses several sophisticated architectures, each with distinct approaches to learning atomic interactions.

Neural Network Potentials

- SchNet: An end-to-end learning framework based on message-passing neural networks that employs continuous filter convolutional layers to model quantum interactions. This framework removed the need for hand-crafted descriptors and instead enabled learning relevant atomic representations directly from data [27].

- Message Passing Network with Iterative Charge Equilibration (MPNICE): An architecture that explicitly incorporates equilibrated atomic charges and long-range electrostatics, enabling representation of multiple charge states, ionic systems, and electronic response properties while simultaneously improving accuracy [28].

- Universal Models for Atoms (UMA): A suite of models offering high accuracy and good performance for reaction barrier heights for finite systems, covering the majority of the periodic table [28].

Kernel-Based Methods

- Gradient Domain Machine Learning (GDML): Uses a Hessian kernel to construct an analytically integrable force field that learns the total energy of the system without partitioning it into atomic contributions. Reference [27] describes GDML as the only global model published in the literature; its symmetrized (sGDML) and periodic (BIGDML) extensions retain this global representation.

- Bravais-Inspired Gradient-Domain Machine Learning (BIGDML): Extends the sGDML framework to include periodic systems with supercells containing up to roughly 200 atoms. BIGDML employs a global representation of the full system and uses the full translation and Bravais symmetry group for a given material, achieving meV/atom accuracy with only 10–200 training geometries [29].

- Gaussian Approximation Potential (GAP): Takes the classical approach of representing the system's total energy as a sum of atomic contributions, using the Smooth Overlap of Atomic Positions (SOAP) descriptor to represent local atomic environments [27].
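
The common core of these kernel-based methods, fitting a smooth function through reference energies by solving a regularized linear system, can be shown on a one-dimensional toy problem. The sketch below is plain kernel ridge regression on a Morse curve that stands in for quantum-mechanical data; it is not GDML, BIGDML, or GAP, and all names and hyperparameters are hypothetical.

```python
import numpy as np


def gaussian_kernel(a, b, length_scale):
    """k(a, b) = exp(-(a - b)^2 / (2 * length_scale^2)) for 1D inputs."""
    diff = a[:, None] - b[None, :]
    return np.exp(-0.5 * (diff / length_scale) ** 2)


def fit_krr(x_train, y_train, length_scale=0.3, reg=1e-8):
    """Solve (K + reg * I) alpha = y for the regression weights."""
    K = gaussian_kernel(x_train, x_train, length_scale)
    return np.linalg.solve(K + reg * np.eye(len(x_train)), y_train)


def predict_krr(x_new, x_train, alpha, length_scale=0.3):
    """Prediction is a kernel-weighted sum over the training points."""
    return gaussian_kernel(x_new, x_train, length_scale) @ alpha


def morse(r, depth=1.0, width=1.5, r_eq=1.0):
    """Reference curve standing in for quantum-mechanical energies."""
    return depth * (1.0 - np.exp(-width * (r - r_eq))) ** 2


r_train = np.linspace(0.7, 2.5, 20)
alpha = fit_krr(r_train, morse(r_train))
r_test = np.linspace(0.8, 2.4, 50)
max_err = np.max(np.abs(predict_krr(r_test, r_train, alpha) - morse(r_test)))
print(f"max interpolation error: {max_err:.2e}")
```

The methods listed above differ mainly in what replaces the scalar input r: GDML-type models use a descriptor of the whole system and learn in the force domain, while GAP applies the kernel to descriptors of local atomic environments and sums the resulting atomic energies.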

MLFF Development Workflow

The process of constructing robust Machine Learning Force Fields follows a systematic workflow encompassing data generation, model training, and validation.

MLFF Development and Application Workflow | This diagram illustrates the iterative process of creating and validating a Machine Learning Force Field.

Reference Data Generation

The initial phase involves generating a diverse set of atomic configurations and computing their energies and forces using high-level quantum mechanical methods like Density Functional Theory. For materials, it's crucial to choose a large enough structure so that phonons or collective oscillations "fit" into the supercell [30]. The electronic minimization must be thoroughly checked for convergence, including parameters such as the number of k-points, plane wave cutoff, and electronic minimization algorithm [30]. For layered materials, van der Waals interactions play a crucial role in determining DFT-calculated interlayer distances, making their inclusion indispensable [25].

Training Methodologies

In the VASP MLFF module, training is controlled through different operational modes [30]:

- TRAIN Mode: Used to start a training run. Depending on the existence of a valid ML_AB file, the training can start from zero or continue based on an existing structure database [30].

- SELECT Mode: In this mode, a new MLFF is generated from ab-initio data provided in the ML_AB file, but the list of local reference configurations is ignored and a new set is created. This is useful for generating MLFFs from precomputed or external ab-initio datasets [30].

Key considerations during training include exploring as much of the phase space of the material as possible by using appropriate molecular dynamics ensembles. The NpT ensemble is preferred for training as additional cell fluctuations improve the robustness of the resulting force field [30].

Validation and Testing

Rigorous validation against standard DFT results is essential to confirm the MLFF's efficacy in capturing complex atomic interactions [25]. The testing should include configurations not present in the training set, particularly for the intended application domains. For moiré systems, test sets are often constructed using large-angle moiré patterns subjected to ab initio relaxations [25].
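
The comparison against DFT is usually summarized by a force RMSE and a per-atom energy error on the held-out configurations. A minimal sketch, with hypothetical function names and assuming the predictions and references are already available as arrays, is:

```python
import numpy as np


def force_rmse(pred, ref):
    """Root-mean-square error over all force components (e.g. eV/Angstrom)."""
    diff = np.asarray(pred, dtype=float) - np.asarray(ref, dtype=float)
    return float(np.sqrt(np.mean(diff**2)))


def energy_mae_per_atom(pred_energies, ref_energies, n_atoms):
    """Mean absolute total-energy error per atom (e.g. eV/atom)."""
    pred = np.asarray(pred_energies, dtype=float)
    ref = np.asarray(ref_energies, dtype=float)
    n = np.asarray(n_atoms, dtype=float)
    return float(np.mean(np.abs(pred - ref) / n))
```

Reporting these metrics separately for the training-like and the application-like test sets makes overfitting to the training structures visible.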

Quantitative Performance Comparison of MLFF Approaches

Accuracy and Data Efficiency Metrics

Table 1: Comparison of MLFF Method Performance Characteristics

| Method | Reported Energy Error | Reported Force Error | Data Efficiency | Key Advantages |
|---|---|---|---|---|
| BIGDML [29] | Substantially below 1 meV/atom | Not specified | 10-200 geometries | Uses full symmetry group; global representation |
| DPmoire [25] | Not specified | 0.007-0.014 eV/Å (RMSE) | Moderate | Specifically tailored for moiré systems |
| Universal MLFFs (CHGNet) [25] | ~33 meV/atom | Not specified | High (pre-trained) | Broad applicability across materials |
| Universal MLFFs (ALIGNN-FF) [25] | ~86 meV/atom | Not specified | High (pre-trained) | Good for high-throughput screening |
| MPNICE [28] | Near-DFT accuracy | Not specified | Moderate | Includes atomic charges; 89 elements |

Computational Requirements

Table 2: Computational Characteristics of MLFF Methods

| Method | Computational Scaling | Maximum System Size | Key Limitations |
|---|---|---|---|
| BIGDML [29] | Favorable with system size | ~200 atoms | Limited by global representation |
| Local MLFFs [30] | Linear with atoms | Large systems | Limited by descriptor cutoff |
| SchNet/MPNN [27] | Linear with atoms | Large systems | Requires careful architecture design |
| On-the-fly Learning [30] | DFT cost during training | Limited by DFT | Initial training computationally expensive |

Advanced Technical Considerations

Treatment of Chemical Species and Environments

In complex systems, treating atoms of the same element in different environments as separate species within an MLFF can significantly improve accuracy. This is particularly important in structures where atoms can have different oxidation states, or where both surface and bulk atoms are present [30]. The implementation involves:

- Grouping atoms by subtype: In the POSCAR file, atoms of the same "subtype" are arranged together with specified numbers for each group

- Assigning unique names: Each species receives a distinct name (e.g., "O1" and "O2"), with names limited to two characters

- Updating POTCAR: Increasing the number of types listed in the POTCAR file, adding a separate entry for each new species [30]

The main disadvantage of this approach is decreased computational efficiency, as the cost scales quadratically with the number of species, though using reduced descriptors can mitigate this to some extent [30].

Molecular Dynamics Setup for Training

Appropriate molecular dynamics parameters are crucial for generating effective training data:

- Time step: Should not exceed 0.7 fs and 1.5 fs for hydrogen and oxygen-containing compounds, respectively. For heavy elements like silicon, a time step of 3 fs may work well [30]

- Temperature control: Gradually heating the system using a temperature ramp (setting TEEND higher than TEBEG) helps explore a larger portion of the phase space [30]

- Ensemble selection: Prefer molecular dynamics training runs in the NpT ensemble for improved robustness, though for fluids, only volume changes of the supercell should be allowed to prevent cell "collapse" [30]
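
The time-step rule of thumb and the temperature ramp can be encoded as small helpers when preparing many training runs. The sketch below uses the 0.7 fs, 1.5 fs, and 3 fs values quoted above; applying 3 fs to every system without hydrogen or oxygen is an assumption of this sketch rather than a recommendation from the source, and the function names are hypothetical.

```python
def suggest_max_timestep_fs(elements, heavy_default=3.0):
    """Upper bound on the MD time step from the element list.

    0.7 fs (hydrogen present) and 1.5 fs (oxygen present) follow the text;
    heavy_default is an ASSUMED fallback for everything else.
    """
    symbols = set(elements)
    if "H" in symbols:
        return 0.7
    if "O" in symbols:
        return 1.5
    return heavy_default


def temperature_ramp(t_begin, t_end, n_steps):
    """Linear target temperatures from t_begin to t_end (n_steps >= 2)."""
    return [t_begin + (t_end - t_begin) * i / (n_steps - 1) for i in range(n_steps)]


print(suggest_max_timestep_fs(["Si", "O"]))
print(temperature_ramp(300.0, 500.0, 3))
```

In a VASP run the ramp itself is requested through TEBEG and TEEND, so the second helper only illustrates what target temperature each step sees under a linear ramp.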

Handling Long-Range Interactions

Most MLFFs neglect long-range interactions, but the BIGDML approach addresses this challenge through a global representation that preserves periodicity using the minimum-image convention [29]. This approach:

- Employs a periodic Coulomb matrix descriptor that accounts for the full system

- Uses the full translation and Bravais symmetry group for the material

- Captures many-body correlations between atomic forces across the supercell

- Avoids the uncontrolled locality approximation inherent in atom-decomposed models [29]
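
The minimum-image convention at the heart of such a periodic descriptor is easy to sketch. The code below computes minimum-image distances and an inverse-distance matrix in the spirit of a periodic Coulomb-matrix descriptor; it is an illustration under stated assumptions, not the BIGDML implementation, and the function names are hypothetical.

```python
import numpy as np


def minimum_image_distances(frac_coords, cell):
    """Pairwise distances under the minimum-image convention.

    frac_coords: (n_atoms, 3) fractional coordinates.
    cell: (3, 3) matrix whose rows are the lattice vectors.
    Wrapping fractional differences into [-0.5, 0.5] is exact for
    orthorhombic cells; for strongly skewed cells it is only approximate.
    """
    frac = np.asarray(frac_coords, dtype=float)
    delta = frac[:, None, :] - frac[None, :, :]
    delta -= np.round(delta)                     # nearest periodic image
    cart = delta @ np.asarray(cell, dtype=float)
    return np.linalg.norm(cart, axis=-1)


def periodic_inverse_distance_matrix(frac_coords, cell):
    """Inverse minimum-image distances, zero on the diagonal."""
    d = minimum_image_distances(frac_coords, cell)
    inv = np.zeros_like(d)
    mask = d > 0
    inv[mask] = 1.0 / d[mask]
    return inv
```

Because every pair of atoms in the supercell enters the matrix, no cutoff radius is imposed, which is what distinguishes a global representation from the local descriptors used by atom-decomposed models.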

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Software Tools for MLFF Development and Application

| Tool/Resource | Function | Application Context |
|---|---|---|
| VASP MLFF Module [30] [25] | On-the-fly training during MD simulations | Materials science, periodic systems |
| DPmoire [25] | Automated MLFF construction for moiré systems | Twisted 2D materials, TMDs |
| Allegro/NequIP [25] | High-accuracy MLFF training frameworks | General materials, achieving meV accuracy |
| DeepMD [25] | Neural network potential training | Broad materials and molecules |
| sGDML/GDML [27] [29] | Kernel-based force field learning | Molecules and periodic systems (BIGDML) |
| ASE [25] | Atomistic simulation environment | General MD and analysis |
| LAMMPS [25] | Molecular dynamics simulator | Production MD with trained potentials |

Application Protocols: Moiré Material Case Study

The DPmoire package provides a robust methodology for constructing MLFFs specifically tailored for moiré structures, following this detailed experimental protocol [25]:

MLFF Construction for Moiré Materials | This workflow outlines the specialized protocol for creating force fields for twisted 2D material systems.

Step-by-Step Procedure

Initial Structure Generation: Construct 2×2 supercells of non-twisted bilayers and introduce in-plane shifts to generate various stacking configurations [25]

Constrained Structural Relaxation: Perform structural relaxations for each configuration while keeping the x and y coordinates of a reference atom from each layer fixed to prevent structural drift toward energetically favorable stackings. Maintain constant lattice constants throughout the simulations [25]

Molecular Dynamics Sampling: Conduct MD simulations under the aforementioned constraints to augment the training data pool using the VASP MLFF module. Initially establish a baseline MLFF using single-layer structures before proceeding with full simulations to ensure stability [25]

Selective Data Incorporation: Incorporate data solely from DFT calculation steps rather than all MD steps to maintain high data quality [25]

Test Set Construction: Build the test set using large-angle moiré patterns subjected to ab initio relaxations to ensure the MLFF's applicability to moiré systems and mitigate overfitting to non-twisted structures [25]

Model Training: Utilize the Allegro or NequIP frameworks for MLFF training, though other MLFF algorithms like DeepMD can also be effectively trained on these datasets [25]
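
The first step of the procedure above (rigid in-plane shifts of one layer to enumerate stacking configurations) can be sketched in a few lines of NumPy. This is a minimal illustration and not DPmoire's implementation: the function names, the uniform fractional grid, and the lattice constant and interlayer distance in the usage lines are all assumptions chosen for the example.

```python
import numpy as np

def stacking_shifts(a1, a2, n_grid):
    """Cartesian in-plane shift vectors on an n_grid x n_grid fractional mesh."""
    fracs = np.arange(n_grid) / n_grid
    return np.array([f1 * a1 + f2 * a2 for f1 in fracs for f2 in fracs])

def shifted_bilayers(bottom, top, a1, a2, n_grid):
    """Yield (shift, positions) with the top layer rigidly translated in-plane."""
    for shift in stacking_shifts(a1, a2, n_grid):
        yield shift, np.vstack([bottom, top + shift])

# Hypothetical hexagonal cell; the numbers are placeholders, not real data.
a = 3.0
a1 = np.array([a, 0.0, 0.0])
a2 = np.array([-0.5 * a, 0.5 * np.sqrt(3.0) * a, 0.0])
bottom = np.array([[0.0, 0.0, 0.0]])   # one atom per layer for brevity
top = np.array([[0.0, 0.0, 6.5]])

configs = list(shifted_bilayers(bottom, top, a1, a2, n_grid=3))
```

Each generated configuration would then be passed to the constrained relaxation and MD sampling steps; those require a DFT code and are not reproduced here.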

Challenges and Future Directions

Despite significant advances, MLFF development faces several challenges. Data requirements for training remain substantial, and transferability across different chemical environments needs improvement [27]. The interpretability of learned representations is another area requiring attention [27]. For universal MLFFs, precision may be insufficient for structural relaxation tasks in specialized systems like moiré materials, where energy scales of electronic bands are often on the order of meV [25].

Future developments are focusing on several key areas:

- Integration with multiscale modeling: Combining MLFFs with virtual cell models and coarse-grained representations to enable whole-cell multiscale simulations [31]

- Improved data efficiency: Approaches like BIGDML that achieve meV/atom accuracy with only 10-200 training geometries [29]

- Active learning frameworks: Enabling models to adaptively learn from new data and improve predictions in previously unexplored regions of chemical space [27]

- Beyond-DFT accuracy: Developing MLFFs trained on higher-level quantum mechanical methods to overcome DFT limitations [29]

As MLFF methodologies continue to mature, they are poised to dramatically expand the scope of atomistic simulations, enabling precise studies of complex systems that were previously computationally prohibitive.

For decades, molecular dynamics (MD) simulations have relied on Molecular Mechanics (MM) force fields to approximate the potential energy surfaces of atomic systems. These traditional force fields employ a physics-inspired functional form where the potential energy is expressed as a sum of contributions from bonded interactions (bonds, angles, dihedrals) and non-bonded interactions. The parameters governing these interactions—force constants, equilibrium values, and partial charges—are assigned based on a finite set of atom types characterized by the chemical properties of the atom and its bonded neighbors. This assignment is typically done via lookup tables, which inherently limits the description of chemical environments to those predefined types.

This lookup table approach faces fundamental limitations in accuracy and transferability. The hand-crafted rules for atom typing struggle to capture the complex, context-dependent nature of molecular interactions, particularly in uncharted regions of chemical space. Consequently, these force fields often trade accuracy for computational efficiency, limiting their predictive capability for diverse molecular systems including proteins, peptides, and novel drug candidates.
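
To make the limitation concrete, the following minimal sketch mimics a lookup-table parameter assignment. The atom-type labels and numerical values are illustrative placeholders rather than parameters from any published force field, and the function names are hypothetical.

```python
from typing import Dict, Tuple

BondKey = Tuple[str, str]

# Placeholder table: (force constant, equilibrium length) per atom-type pair.
BOND_TABLE: Dict[BondKey, Tuple[float, float]] = {
    ("CT", "CT"): (300.0, 1.53),
    ("CT", "HC"): (340.0, 1.09),
    ("C", "O"): (570.0, 1.23),
}

def lookup_bond(type_a: str, type_b: str) -> Tuple[float, float]:
    """Return (k, r0) for a bond between two atom types, order-independent."""
    key = (type_a, type_b)
    if key not in BOND_TABLE:
        key = (type_b, type_a)
    if key not in BOND_TABLE:
        raise KeyError(f"no bond parameters for atom types {type_a}-{type_b}")
    return BOND_TABLE[key]

def bond_energy(r: float, k: float, r0: float) -> float:
    """Harmonic bond term k * (r - r0)^2 (convention without the 1/2 factor)."""
    return k * (r - r0) ** 2

k, r0 = lookup_bond("HC", "CT")   # works: the pair is tabulated
# lookup_bond("CT", "SX")         # would raise KeyError: untabulated type
```

Any chemical environment that was not anticipated when the table was written either fails outright, as in the commented-out call, or has to be mapped onto the nearest existing type by hand.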

Theoretical Foundations: GNNs and Transformers as Graph Representation Learners

Graph Neural Networks for Molecular Systems

Graph Neural Networks (GNNs) provide a natural framework for representing molecular systems. In this representation, atoms correspond to nodes and chemical bonds represent edges in a molecular graph. GNNs build representations of nodes through neighborhood aggregation or message passing, where each node gathers features from its neighbors to update its representation of the local graph structure. Stacking multiple GNN layers enables the model to propagate each node's features across the molecular graph, capturing increasingly complex chemical environments.

The fundamental operation of a GNN layer for updating the hidden features \(h\) of node \(i\) at layer \(\ell\) can be expressed as:

\[ h_{i}^{\ell+1} = \sigma \Big( U^{\ell} h_{i}^{\ell} + \sum_{j \in \mathcal{N}(i)} \left( V^{\ell} h_{j}^{\ell} \right) \Big), \]

where \(U^{\ell}, V^{\ell}\) are learnable weight matrices, \(\sigma\) is a non-linearity, and \(\mathcal{N}(i)\) denotes the neighborhood of node \(i\). This formulation allows GNNs to capture the topological structure of molecules directly from their graph representation, eliminating the need for predefined atom types.
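
A direct NumPy transcription of this update rule may help. It is a sketch under simple assumptions: ReLU is used as the non-linearity, the weights are fixed by hand rather than learned, and the three-node chain is a toy graph.

```python
import numpy as np

def gnn_layer(h, neighbors, U, V):
    """One message-passing update: h_i' = relu(U h_i + sum_{j in N(i)} V h_j).

    h:         (n_nodes, d_in) array of node features
    neighbors: list where neighbors[i] holds the indices bonded to node i
    U, V:      (d_out, d_in) weight matrices
    """
    h_new = np.empty((h.shape[0], U.shape[0]))
    for i, nbrs in enumerate(neighbors):
        message = sum((V @ h[j] for j in nbrs), np.zeros(V.shape[0]))
        h_new[i] = np.maximum(0.0, U @ h[i] + message)  # ReLU as sigma
    return h_new

# Toy three-atom chain 0-1-2 with one-hot initial features.
neighbors = [[1], [0, 2], [1]]
h0 = np.eye(3)
U = np.eye(3)
V = 0.5 * np.eye(3)

h1 = gnn_layer(h0, neighbors, U, V)   # node 0 sees only node 1
h2 = gnn_layer(h1, neighbors, U, V)   # node 0 now also carries node 2's feature
```

After one layer the feature of node 0 has no contribution from node 2; after two layers it does, which is the sense in which stacking layers widens the chemical environment each atom can encode.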

Transformer Architectures as Graph Networks

The Transformer architecture, initially developed for natural language processing, has deep connections to GNNs. Transformers can be viewed as GNNs operating on fully-connected graphs of tokens, where the self-attention mechanism captures the relative importance of all tokens with respect to each other.

The self-attention mechanism updates the hidden feature \(h\) of the \(i\)-th element as:

\[ h_{i}^{\ell+1} = \sum_{j \in \mathcal{S}} w_{ij} \left( V^{\ell} h_{j}^{\ell} \right), \]

where \(w_{ij} = \text{softmax}_{j} \left( Q^{\ell} h_{i}^{\ell} \cdot K^{\ell} h_{j}^{\ell} \right)\), and \(\mathcal{S}\) denotes the set of all elements in the sequence.

This operation is mathematically similar to the neighborhood aggregation in GNNs, but considers all elements in the set as neighbors. The multi-head attention mechanism allows the model to jointly attend to information from different representation subspaces, enhancing its expressive power. Positional encodings provide hints about sequential ordering or molecular structure, making Transformers powerful set-processing networks for molecular representation learning.
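
The attention update can be written compactly in NumPy. This is a single-head sketch with random weights in place of learned ones, following the unscaled dot product of the formula as written here (the usual division by the square root of the feature dimension is omitted); positional encodings are also left out.

```python
import numpy as np

def self_attention(h, Q, K, V):
    """Single-head attention: h_i' = sum_j softmax_j(Qh_i . Kh_j) V h_j."""
    q, k, v = h @ Q.T, h @ K.T, h @ V.T          # (n, d) each
    scores = q @ k.T                              # scores[i, j] = Qh_i . Kh_j
    scores -= scores.max(axis=1, keepdims=True)   # numerical stability
    w = np.exp(scores)
    w /= w.sum(axis=1, keepdims=True)             # softmax over j
    return w @ v, w

rng = np.random.default_rng(0)
h = rng.normal(size=(4, 3))                       # four elements, three features
Q, K, V = (rng.normal(size=(3, 3)) for _ in range(3))
out, weights = self_attention(h, Q, K, V)

# Without positional encodings the layer is permutation-equivariant:
perm = np.array([2, 0, 3, 1])
out_perm, _ = self_attention(h[perm], Q, K, V)
is_equivariant = np.allclose(out_perm, out[perm])
```

The permutation check illustrates why Transformers are described as set-processing networks: reordering the inputs only reorders the outputs, so any notion of order or structure has to be supplied through positional encodings.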

Equivariant Graph Neural Networks for Force Fields

In atomistic simulations, equivariance—the property that model outputs transform predictably under symmetry operations—is crucial for physical accuracy. While energy is invariant to rotation and translation, forces are equivariant (they rotate with the system). Equivariant Graph Neural Networks (EGNNs) explicitly incorporate these symmetries through their architecture.

EGNNs employ a message-passing scheme equivariant to rotations, satisfying \(G(Rx) = R\,G(x)\), where \(R\) is a rotation and \(G\) is an equivariant transformation. This is typically achieved using spherical harmonics and tensor products, enabling rich representation of atomic environments while respecting physical symmetries. Several EGNN architectures have been developed for force fields, including NequIP, Allegro, BOTNet, MACE, Equiformer, and TorchMDNet.
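
The invariance of the energy and the equivariance of the forces can be verified numerically for any candidate model. The sketch below uses a toy pair potential as a stand-in for a learned model (an assumption made only to keep the example self-contained) and checks both properties under a rotation about the z axis.

```python
import numpy as np

def energy_and_forces(x):
    """Toy pair potential E = sum_{i<j} (|x_i - x_j| - 1)^2 and F = -dE/dx."""
    diff = x[:, None, :] - x[None, :, :]            # (n, n, 3) displacements
    dist = np.linalg.norm(diff, axis=-1)
    iu = np.triu_indices(len(x), k=1)
    energy = np.sum((dist[iu] - 1.0) ** 2)
    np.fill_diagonal(dist, 1.0)                     # avoid 0/0 on the diagonal
    # dE/dx_i = sum_j 2 (d_ij - 1) (x_i - x_j) / d_ij
    grad = np.sum(2.0 * (dist - 1.0)[..., None] * diff / dist[..., None], axis=1)
    return energy, -grad

theta = 0.7
c, s = np.cos(theta), np.sin(theta)
R = np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])

x = np.random.default_rng(0).normal(size=(5, 3))
e, f = energy_and_forces(x)
e_rot, f_rot = energy_and_forces(x @ R.T)           # rotate every atom by R

energy_invariant = np.isclose(e, e_rot)             # E(Rx) = E(x)
forces_equivariant = np.allclose(f_rot, f @ R.T)    # F(Rx) = R F(x)
```

A test of this kind is a cheap sanity check for any force field implementation: an architecture that is equivariant by construction passes it exactly (up to floating-point error), whereas a non-equivariant model only approximates it after training.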

Table 1: Comparison of Equivariant GNN Force Field Architectures

| Architecture | Key Features | Symmetry Handling | Computational Efficiency |
|---|---|---|---|
| NequIP | Based on tensor field networks | Equivariant through spherical harmonics | Moderate |
| Allegro | Uses Bessel functions for radial basis | Strictly equivariant | High |
| MACE | Higher-order body-ordered messages | Many-body equivariant | Moderate |
| Equiformer | Combines attention with equivariance | Rotationally equivariant | Lower for large systems |
| TorchMDNet | Optimized for MD simulations | Equivariant constraints | High |

Grappa: A Case Study in Modern Machine Learning Force Fields

Architecture and Design Principles

Grappa (Graph Attentional Protein Parametrization) represents a significant advancement in machine learning force fields by leveraging graph attentional neural networks and transformers to predict MM parameters directly from molecular graphs. The architecture consists of two main components:

Graph Attention Network: Constructs atom embeddings that represent local chemical environments based solely on the 2D molecular graph structure, without requiring hand-crafted chemical features.

Transformer with Symmetry-Preserving Positional Encoding: Predicts MM parameters from the atom embeddings while respecting the permutation symmetries inherent in molecular mechanics.

The mapping from molecular graph to energy parameters is differentiable with respect to both model parameters and spatial positions, enabling end-to-end optimization on quantum mechanical energies and forces. Crucially, the machine learning model prediction depends only on the molecular graph, not the spatial conformation, so it must be evaluated only once per molecule. Subsequent energy evaluations incur the same computational cost as traditional MM force fields.
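
The "predict once, evaluate many times" pattern can be illustrated with a toy example. The code below does not use Grappa's API: `predict_bond_parameters` is a hypothetical placeholder for a learned graph model, with parameters derived from atom degrees and arbitrary numbers, and only the harmonic bond term is shown.

```python
import numpy as np

def predict_bond_parameters(n_atoms, bonds):
    """Placeholder for a learned graph model: maps the bond graph to (k, r0).

    A real model would use learned atom embeddings; here the parameters
    depend on atom degrees only, to keep the sketch self-contained.
    """
    degree = np.bincount(np.asarray(bonds).ravel(), minlength=n_atoms)
    deg_sum = np.array([degree[i] + degree[j] for i, j in bonds], dtype=float)
    k = 300.0 + 10.0 * deg_sum        # arbitrary illustrative numbers
    r0 = 1.5 - 0.02 * deg_sum
    return k, r0

def bond_energy(positions, bonds, k, r0):
    """Harmonic bond energy sum_b k_b (r_b - r0_b)^2 for one conformation."""
    i, j = np.asarray(bonds).T
    r = np.linalg.norm(positions[i] - positions[j], axis=1)
    return float(np.sum(k * (r - r0) ** 2))

bonds = [(0, 1), (1, 2)]
k, r0 = predict_bond_parameters(3, bonds)      # graph only: run once per molecule

rng = np.random.default_rng(0)
conformations = rng.normal(size=(5, 3, 3))     # five conformations, three atoms
energies = [bond_energy(x, bonds, k, r0) for x in conformations]
```

The model call sits outside the loop over conformations, so the per-step cost during a simulation is that of an ordinary MM energy evaluation.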

Comparison with Traditional Lookup Table Approaches

Table 2: Lookup Tables vs. Grappa for Force Field Parameterization

| Aspect | Traditional Lookup Tables | Grappa (ML Approach) |
|---|---|---|
| Parameter Source | Fixed set of atom types with hand-crafted rules | Learned directly from molecular graph |
| Chemical Coverage | Limited to predefined atom types | Extensible to novel chemical environments |
| Feature Engineering | Requires expert knowledge (hybridization, formal charge) | Automatic feature learning from graph structure |
| Transferability | Poor for unseen chemical motifs | High, demonstrated for peptides, RNA, and radicals |
| Accuracy | Limited by fixed functional form | State-of-the-art MM accuracy across diverse molecules |

Grappa overcomes key limitations of traditional lookup table approaches by replacing the fixed set of atom types with a flexible graph representation that learns to capture chemical environments directly from data. This eliminates the need for hand-crafted features such as orbital hybridization states or formal charge, allowing the model to generalize to novel molecular structures including peptide radicals and complex biomolecules.

Experimental Protocols and Benchmarking Methodologies

Benchmarking EGraFF Models

The EGraFFBench study provides a comprehensive benchmarking framework for evaluating equivariant graph neural network force fields (EGraFFs). The protocol involves:

Dataset Curation: Utilizing 10 datasets including small molecules, peptides, and RNA, with two new challenging datasets (GeTe and LiPS20) specifically designed to test out-of-distribution generalization.

Model Training: Training 6 EGraFF models (NequIP, Allegro, BOTNet, MACE, Equiformer, TorchMDNet) on quantum mechanical data including energies and forces from density functional theory calculations.

Evaluation Metrics: Assessing models using traditional metrics (force and energy errors) and novel metrics that evaluate simulation quality, including:

- Structural accuracy (radial distribution functions)

- Stability of molecular dynamics trajectories

- Diffusion constants

- Performance on out-of-distribution tasks

Downstream Task Evaluation: Testing models on challenging scenarios including different crystal structures, temperatures, and novel molecules to assess generalization capability.

The benchmarking revealed that lower force or energy errors do not guarantee stable or reliable simulations, highlighting the importance of comprehensive evaluation beyond conventional metrics.
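
One of the simulation-quality metrics listed above, the radial distribution function, can be computed from trajectory frames as sketched below. The sketch assumes a cubic periodic box and `r_max` no larger than half the box length; the function name and the ideal-gas test configuration are choices made for this example and are not taken from EGraFFBench.

```python
import numpy as np

def radial_distribution(positions, box_length, r_max, n_bins):
    """g(r) for particles in a cubic periodic box (minimum-image convention)."""
    n = len(positions)
    diff = positions[:, None, :] - positions[None, :, :]
    diff -= box_length * np.round(diff / box_length)      # minimum image
    dist = np.linalg.norm(diff, axis=-1)[np.triu_indices(n, k=1)]
    counts, edges = np.histogram(dist, bins=n_bins, range=(0.0, r_max))
    shell_vol = 4.0 / 3.0 * np.pi * (edges[1:] ** 3 - edges[:-1] ** 3)
    # Expected pair count per shell for an ideal gas at the same density.
    expected = 0.5 * n * (n - 1) * shell_vol / box_length ** 3
    centers = 0.5 * (edges[1:] + edges[:-1])
    return centers, counts / expected

# Uniformly random positions behave like an ideal gas, so g(r) should be near 1.
rng = np.random.default_rng(0)
positions = rng.uniform(0.0, 10.0, size=(800, 3))
r, g = radial_distribution(positions, box_length=10.0, r_max=5.0, n_bins=25)
```

Comparing the g(r) of a model-driven trajectory against that of a reference trajectory gives a structural check that is independent of the per-frame force error, which is why it can expose failures that the conventional metrics miss.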

Grappa Training and Validation

The experimental protocol for developing and validating Grappa force fields includes:

Training Data: Utilizing the Espaloma dataset containing over 14,000 molecules and more than one million conformations covering small molecules, peptides, and RNA.

Training Procedure: Optimizing the graph neural network and transformer components to predict MM parameters that minimize the difference between MM-calculated and quantum mechanical energies and forces.

Validation Methods:

- Comparing potential energy landscapes of dihedral angles with reference data

- Evaluating agreement with experimentally measured J-couplings

- Assessing folding free energies of small proteins like chignolin

- Testing transferability to peptide radicals and macromolecules

Molecular Dynamics Simulations: Demonstrating transferability to macromolecular systems, including a complete virus particle, with computational performance comparable to established force fields and significantly improved accuracy.
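
The training objective described in the protocol (matching MM energies and forces to quantum mechanical references) can be sketched as a simple loss function. The mean-centring of energies and the `force_weight` parameter are assumptions made for this illustration; the exact loss used to train Grappa is not specified here.

```python
import numpy as np

def energy_force_loss(e_mm, e_qm, f_mm, f_qm, force_weight=1.0):
    """MSE on mean-centred energies plus weighted MSE on force components.

    e_*: (n_conformations,) energies of one molecule
    f_*: (n_conformations, n_atoms, 3) forces
    """
    # Centre energies per molecule: MM and QM energies differ by an
    # arbitrary constant offset, so only relative energies are compared.
    de = (e_mm - e_mm.mean()) - (e_qm - e_qm.mean())
    df = f_mm - f_qm
    return float(np.mean(de ** 2) + force_weight * np.mean(df ** 2))

e_qm = np.array([0.0, 1.0, 2.0])
e_mm = e_qm + 10.0                      # same landscape, shifted by a constant
f_qm = np.zeros((3, 2, 3))
loss_offset_only = energy_force_loss(e_mm, e_qm, f_qm, f_qm)
```

In an end-to-end setup this scalar would be differentiated with respect to the network weights through the predicted MM parameters, which requires an automatic differentiation framework and is outside the scope of the sketch.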

Diagram 1: Grappa's end-to-end training workflow.

Performance Analysis and Comparative Evaluation

Accuracy and Efficiency Metrics

Grappa demonstrates significant improvements over traditional force fields and other machine-learned approaches:

Energy and Force Accuracy: Outperforms traditional MM force fields and the machine-learned Espaloma force field on the comprehensive Espaloma benchmark dataset, achieving state-of-the-art MM accuracy for small molecules, peptides, and RNA.

Dihedral Parameterization: Accurately reproduces potential energy landscapes of peptide dihedral angles, matching the performance of Amber FF19SB without requiring correction maps (CMAPs).

Experimental Validation: Closely reproduces experimentally measured J-couplings and improves calculated folding free energies for the small protein chignolin.

Computational Efficiency: Maintains the same computational cost as traditional MM force fields when integrated with highly optimized MD engines like GROMACS and OpenMM, enabling simulation of million-atom systems on a single GPU.

Limitations and Failure Modes of Current Approaches

Despite these advances, current EGraFF models exhibit several important limitations:

Out-of-Distribution Generalization: Performance on out-of-distribution datasets (different crystal structures, temperatures, or novel molecules) remains unreliable, with no single model outperforming others across all datasets and tasks.

Simulation Stability: Lower errors on energy or force predictions do not guarantee stable molecular dynamics simulations, as models can suffer from trajectory explosions or poor structural reproduction.

Data Efficiency: Training accurate models still requires substantial quantum mechanical data, though equivariant architectures have improved data efficiency compared to non-equivariant approaches.