Beyond the Minimum: A Practical Guide to Validating Molecular Structures with Dynamics Simulations


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on validating energy-minimized molecular structures through Molecular Dynamics (MD) simulations. Moving beyond static models, we explore the foundational necessity of MD for assessing structural stability under realistic conditions. The content details practical methodologies, from force field selection to simulation protocols, and addresses common troubleshooting scenarios like inadequate equilibration. Finally, we present a framework for the rigorous validation of MD results, comparing them against experimental data and alternative computational models to ensure reliability for downstream applications in biomedical research and drug discovery.

From Static to Dynamic: Why Minimized Structures Need MD Validation

Molecular simulations provide powerful tools for researchers and drug development professionals to predict the structure, dynamics, and function of chemical and biological systems. Among these techniques, energy minimization (EM) serves as a fundamental approach for locating stable molecular configurations by navigating the potential energy surface (PES) to find local energy minima. However, this method contains an inherent limitation: it operates in a timeless context, devoid of the temporal dimension essential for understanding molecular behavior under physiological conditions. This article objectively compares the performance of energy minimization against molecular dynamics (MD) simulations, with a specific focus on how the missing time dimension in EM affects the validation of predicted structures against experimental data.

The core issue lies in the nature of the PES, a multidimensional landscape where each point represents the potential energy of a specific molecular geometry. This surface features numerous local minima: energetically stable configurations that may represent neither the global minimum nor a biologically relevant state. While EM algorithms efficiently locate these local minima, they cannot account for time-dependent phenomena such as protein flexibility, ligand binding and unbinding events, or entropic contributions to stability, all of which are critically important for accurate drug design. MD simulations are therefore an essential complement to EM: they supply the temporal dimension needed to assess whether EM-derived structures remain stable under simulated physiological conditions and whether they sample relevant conformational states.

Theoretical Foundation: Energy Landscapes and the Local Minima Problem

The Potential Energy Surface and Molecular Stability

The PES represents a fundamental concept in computational chemistry, mapping the potential energy of a molecular system as a function of its nuclear coordinates. Within this multidimensional hypersurface, local minima correspond to geometrically stable structures where any small displacement increases the energy [1]. These minima represent isomers, reactants, or products connected by transition states (first-order saddle points). The global minimum (GM) represents the most thermodynamically stable structure, which is the ultimate target of structure prediction efforts [1].

The complexity of navigating this surface cannot be overstated. Theoretical models indicate that the number of local minima scales exponentially with system size according to the relation \(N_{\min}(N) = \exp(\xi N)\), where \(\xi\) is a system-dependent constant [1]. This exponential relationship creates a formidable challenge for locating the global minimum, particularly for large, flexible molecules like proteins or drug-like compounds where the number of degrees of freedom creates an enormously complex energy landscape with countless local minima.
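
To get a feel for this scaling, the short sketch below evaluates \(N_{\min}(N) = \exp(\xi N)\) for a few system sizes. The value \(\xi = 0.5\) is a hypothetical choice made only for illustration; the source gives no numerical value, and real values depend on the system.

```python
import math


def estimated_minima(system_size: int, xi: float) -> float:
    """Number of local minima predicted by N_min(N) = exp(xi * N)."""
    return math.exp(xi * system_size)


# xi = 0.5 is an assumed, illustrative constant (not taken from the article)
for size in (10, 50, 100):
    print(f"N = {size:3d}: about {estimated_minima(size, xi=0.5):.2e} local minima")
```

Whatever the true constant is, the exponential form means that doubling the system size squares the estimated number of minima, which is why exhaustive enumeration quickly becomes impractical.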

How Energy Minimization Algorithms Work

Energy minimization algorithms employ various strategies to locate local minima on the PES. The most common approaches include:

Steepest Descent: This robust but inefficient algorithm moves atomic coordinates along the force \(\mathbf{F}_n\), that is, in the direction of the negative energy gradient. Step sizes are adjusted heuristically: increased by 20% when the energy decreases and reduced by 80% when the energy increases [2]. The method is reliable far from a minimum, but it often requires many steps and converges slowly near minima.

Conjugate Gradient: More efficient than steepest descent close to a minimum, this method searches along conjugate direction vectors rather than following the gradient directly. However, it cannot be used with constraints, which includes rigid water treated with the SETTLE algorithm [2].

L-BFGS: The limited-memory Broyden–Fletcher–Goldfarb–Shanno algorithm approximates the inverse Hessian matrix using corrections from previous steps, enabling faster convergence than conjugate gradients while maintaining reasonable memory requirements [2].
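
The step-size heuristic described for steepest descent can be sketched in a few lines. The version below is a minimal illustration, not the implementation of any particular package: the two-dimensional quadratic `energy` and `force` functions, the starting point, and the default parameters are all assumptions chosen for the example.

```python
from typing import Callable, List, Tuple

Vector = List[float]


def steepest_descent(
    energy: Callable[[Vector], float],
    force: Callable[[Vector], Vector],
    start: Vector,
    step: float = 0.01,
    force_tol: float = 1e-3,
    max_steps: int = 10_000,
) -> Tuple[Vector, int]:
    """Steepest descent with the 'grow by 20%, shrink by 80%' step rule."""
    pos = list(start)
    e_old = energy(pos)
    for n in range(max_steps):
        f = force(pos)
        f_max = max(abs(c) for c in f)
        if f_max < force_tol:  # converged: largest force component is small
            return pos, n
        # Move along the force; the largest displacement component equals `step`
        trial = [p + step * c / f_max for p, c in zip(pos, f)]
        e_new = energy(trial)
        if e_new < e_old:
            pos, e_old = trial, e_new
            step *= 1.2  # accepted: increase the step by 20%
        else:
            step *= 0.2  # rejected: reduce the step by 80%
    return pos, max_steps


def energy(p: Vector) -> float:
    """Toy anisotropic quadratic surface with its minimum at (1, -2)."""
    x, y = p
    return (x - 1.0) ** 2 + 10.0 * (y + 2.0) ** 2


def force(p: Vector) -> Vector:
    """Force is the negative gradient of the toy energy."""
    x, y = p
    return [-2.0 * (x - 1.0), -20.0 * (y + 2.0)]


minimum, n_steps = steepest_descent(energy, force, start=[0.0, 0.0])
print(minimum, n_steps)
```

On this toy surface the stiff direction converges quickly while the soft direction lags, which is the behaviour that makes steepest descent slow near minima.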

All EM algorithms share a fundamental characteristic: they terminate when the maximum force component falls below a specified threshold, typically between 1 and 10 kJ·mol⁻¹·nm⁻¹. This range is motivated by the estimate that a weak oscillator at 1 K would exhibit root-mean-square forces of approximately 7.7 kJ·mol⁻¹·nm⁻¹ [2].
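
The 7.7 kJ·mol⁻¹·nm⁻¹ figure can be reproduced with a short calculation. The sketch below assumes the harmonic-oscillator estimate \(f = 2\pi\nu\sqrt{2 m k T}\) and, as further assumptions not stated in this article, a wavenumber of 100 cm⁻¹ and a mass of 10 atomic mass units for the "weak oscillator".

```python
import math

BOLTZMANN = 1.380649e-23         # J/K
AVOGADRO = 6.02214076e23         # 1/mol
ATOMIC_MASS = 1.66053907e-27     # kg
LIGHT_SPEED_CM = 2.99792458e10   # cm/s


def rms_force_kj_mol_nm(wavenumber_cm: float, mass_u: float, temperature_k: float) -> float:
    """RMS force f = 2*pi*nu*sqrt(2*m*k*T), converted to kJ mol^-1 nm^-1."""
    nu = LIGHT_SPEED_CM * wavenumber_cm   # oscillator frequency in Hz
    mass = mass_u * ATOMIC_MASS           # mass in kg
    force_newton = 2.0 * math.pi * nu * math.sqrt(2.0 * mass * BOLTZMANN * temperature_k)
    # N = J/m; multiply by Avogadro, then convert J -> kJ and m -> nm
    return force_newton * AVOGADRO / 1e3 / 1e9


# Assumed oscillator: 100 cm^-1, 10 u, at 1 K
print(f"{rms_force_kj_mol_nm(100.0, 10.0, 1.0):.1f} kJ/mol/nm")
```

Because the estimate scales with \(\sqrt{T}\), the thermal forces at 300 K are roughly 17 times larger than this threshold, which is one way to see that a converged minimization describes a far "colder" structure than any room-temperature simulation.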

The Missing Time Dimension in Energy Minimization

Energy minimization operates exclusively in a timeless context, seeking static configurations with zero force components. This approach misses critical aspects of molecular behavior:

Temperature Effects: EM produces structures representative of 0 K, devoid of thermal fluctuations that enable barrier crossing and access to higher-energy conformations that may be biologically relevant at physiological temperatures.

Entropic Contributions: The free energy \(G = H - TS\) includes both enthalpy \(H\) and entropy \(S\). While EM addresses the enthalpy component through the potential energy, it completely ignores entropic contributions that significantly influence molecular stability and binding affinity at finite temperatures.

Kinetic Accessibility: EM cannot distinguish between kinetically accessible versus inaccessible states. A deep local minimum separated by high barriers may be less relevant biologically than a shallower minimum that is easily accessible under physiological conditions.

Dynamic Processes: Crucial phenomena like allosteric transitions, conformational selection, and induced fit mechanisms require understanding the temporal evolution of structures, which EM cannot provide.
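
A toy calculation makes the temperature and entropy points concrete. The sketch below compares two one-dimensional harmonic wells using the classical configurational weight \(\exp(-E/kT)\sqrt{2\pi kT/\kappa}\) of a well with minimum energy \(E\) and stiffness \(\kappa\). The well depths and stiffnesses are hypothetical values picked for illustration, and a real biomolecular landscape is neither one-dimensional nor harmonic.

```python
import math
from typing import List, Sequence, Tuple

KT_300K = 2.494  # k_B * T at 300 K in kJ/mol


def well_weight(e_min: float, stiffness: float, kt: float = KT_300K) -> float:
    """Classical configurational weight of a 1D harmonic well."""
    return math.exp(-e_min / kt) * math.sqrt(2.0 * math.pi * kt / stiffness)


def populations(wells: Sequence[Tuple[float, float]], kt: float = KT_300K) -> List[float]:
    """Normalized equilibrium populations of a set of (e_min, stiffness) wells."""
    weights = [well_weight(e, k, kt) for e, k in wells]
    total = sum(weights)
    return [w / total for w in weights]


# Hypothetical wells: (minimum energy in kJ/mol, stiffness in kJ/mol/nm^2)
deep_narrow = (0.0, 4000.0)
shallow_wide = (2.0, 40.0)

p_deep, p_wide = populations([deep_narrow, shallow_wide])
print(f"deep, narrow well:  {p_deep:.2f}")
print(f"shallow, wide well: {p_wide:.2f}")
```

Energy minimization would rank the deep, narrow well first, yet at 300 K the wider well carries most of the population in this example because its larger accessible volume (entropy) outweighs the 2 kJ/mol energy penalty.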

Comparative Analysis: Energy Minimization Versus Molecular Dynamics

Fundamental Methodological Differences

The table below summarizes the core distinctions between energy minimization and molecular dynamics simulations:

| Feature | Energy Minimization | Molecular Dynamics |
|---|---|---|
| Time Dimension | Absent (static) | Explicit (dynamic evolution) |
| Temperature | 0 K (no thermal fluctuations) | Controlled (NVT/NPT ensembles) |
| Sampling Scope | Local minimum on PES | Configurational space sampling |
| Output | Single structure | Structural trajectory |
| Barrier Crossing | Cannot escape local minima | Possible with sufficient kinetic energy |
| Entropy Estimation | Not possible | Possible through analysis |
| Computational Cost | Lower | Higher (requires longer simulation times) |
| Experimental Validation | Limited to static structures | Direct comparison with time-resolved data |

Molecular dynamics simulations address the limitations of EM by introducing the time dimension through numerical integration of Newton's equations of motion. A recent comprehensive review highlights that "MD simulations offer valuable insights into molecular orientations and interactions governing the interfacial behavior of water and organic molecules of liquid–liquid interfaces and those featuring nanoparticles on a molecular scale" [3]. This capability to model temporal evolution makes MD indispensable for studying processes like polymer adsorption at interfaces, protein-ligand binding, and membrane dynamics.
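
The idea of numerically integrating Newton's equations of motion can be illustrated with the velocity Verlet scheme, a time-reversible integrator widely used in MD codes. The sketch below is a minimal one-dimensional example; the harmonic force, unit mass, and time step are assumptions chosen so the result can be checked against the analytical solution.

```python
import math
from typing import Callable, List, Tuple


def velocity_verlet(
    force: Callable[[float], float],
    x0: float,
    v0: float,
    mass: float,
    dt: float,
    n_steps: int,
) -> List[Tuple[float, float]]:
    """Integrate m * d2x/dt2 = F(x) and return the (position, velocity) trajectory."""
    x, v = x0, v0
    f = force(x)
    trajectory = [(x, v)]
    for _ in range(n_steps):
        v_half = v + 0.5 * dt * f / mass   # half-step velocity update
        x = x + dt * v_half                # full-step position update
        f = force(x)                       # force at the new position
        v = v_half + 0.5 * dt * f / mass   # second half-step velocity update
        trajectory.append((x, v))
    return trajectory


# Assumed test system: harmonic oscillator with k = 1 and m = 1 (reduced units)
traj = velocity_verlet(lambda x: -x, x0=1.0, v0=0.0, mass=1.0, dt=0.01, n_steps=1000)
energies = [0.5 * v * v + 0.5 * x * x for x, v in traj]
print(f"final position: {traj[-1][0]:.4f} (analytical: {math.cos(10.0):.4f})")
print(f"largest energy error: {max(abs(e - 0.5) for e in energies):.2e}")
```

Unlike a minimizer, the integrator never drives the system to the bottom of the well: the total energy stays close to its initial value and the coordinate keeps oscillating, which is exactly the thermal motion that minimization discards.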

Performance Comparison: Optimization Success and Minimum Quality

Recent benchmark studies comparing optimization methods for neural network potentials provide quantitative insights into EM limitations. The table below summarizes results from testing 25 drug-like molecules with various optimizer-NNP combinations, limited to 250 optimization steps with convergence defined as maximum force < 0.01 eV/Å [4]:

| Optimizer | Successfully Optimized | Average Steps to Complete | True Minima Found | Avg. Imaginary Frequencies |
|---|---|---|---|---|
| L-BFGS | 22-25 | 1.2-120.0 | 16-21 | 0.16-0.35 |
| FIRE | 20-25 | 1.5-159.3 | 11-21 | 0.16-0.45 |
| Sella | 15-25 | 12.9-108.0 | 8-21 | 0-0.40 |
| Sella (internal) | 20-25 | 1.2-23.3 | 15-24 | 0.04-0.16 |
| geomeTRIC (cart) | 7-25 | 13.6-195.6 | 5-22 | 0.24-0.56 |
| geomeTRIC (tric) | 1-25 | 11-114.1 | 1-23 | 0.04-0.56 |

The data reveal several critical limitations of EM approaches. First, no optimizer located true local minima (structures with zero imaginary frequencies) in all 25 test cases. Second, efficiency varied widely, with some optimizer-NNP combinations requiring nearly 200 steps on average to converge. Third, many "optimized" structures retained imaginary frequencies, indicating that they were saddle points rather than true minima. These findings show that a structure can satisfy a force-based convergence criterion and still not be a stable minimum.
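
Why a force criterion alone cannot distinguish a minimum from a saddle point is easy to show on a toy surface. The sketch below uses the assumed two-dimensional function \(V(x, y) = (x^2 - 1)^2 + y^2\), estimates the gradient and Hessian by finite differences, and counts negative Hessian eigenvalues, which play the role of imaginary frequencies. A real frequency calculation would instead diagonalize the mass-weighted Hessian over all atomic coordinates.

```python
import math
from typing import Callable, Tuple

Surface = Callable[[float, float], float]


def max_force(v: Surface, x: float, y: float, h: float = 1e-4) -> float:
    """Largest force component from central finite differences."""
    fx = -(v(x + h, y) - v(x - h, y)) / (2.0 * h)
    fy = -(v(x, y + h) - v(x, y - h)) / (2.0 * h)
    return max(abs(fx), abs(fy))


def hessian_eigenvalues(v: Surface, x: float, y: float, h: float = 1e-4) -> Tuple[float, float]:
    """Eigenvalues of the 2x2 finite-difference Hessian (closed form)."""
    hxx = (v(x + h, y) - 2.0 * v(x, y) + v(x - h, y)) / h**2
    hyy = (v(x, y + h) - 2.0 * v(x, y) + v(x, y - h)) / h**2
    hxy = (v(x + h, y + h) - v(x + h, y - h) - v(x - h, y + h) + v(x - h, y - h)) / (4.0 * h**2)
    mean = 0.5 * (hxx + hyy)
    spread = math.sqrt((0.5 * (hxx - hyy)) ** 2 + hxy**2)
    return mean - spread, mean + spread


def count_negative_curvatures(v: Surface, x: float, y: float, tol: float = 1e-3) -> int:
    """Number of Hessian eigenvalues below -tol (0 for a true minimum)."""
    return sum(1 for ev in hessian_eigenvalues(v, x, y) if ev < -tol)


def double_well(x: float, y: float) -> float:
    """Toy surface: minima at (+1, 0) and (-1, 0), saddle point at (0, 0)."""
    return (x * x - 1.0) ** 2 + y * y


for label, point in (("minimum", (1.0, 0.0)), ("saddle", (0.0, 0.0))):
    print(label, max_force(double_well, *point), count_negative_curvatures(double_well, *point))
```

Both points pass a force threshold, but only the second-derivative check reveals that the origin is a saddle point.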

Case Study: Polymer Structure Prediction at Liquid-Liquid Interfaces

Research on polypyrrole (PPy) formation at water-chloroform interfaces demonstrates the critical importance of combining EM with MD. In this system, MD simulations revealed that "the charge-to-size ratio dictates the hydrophobic–amphiphilic–hydrophilic transition of PPy, which in turn controls the adsorption of the oligomers at the water–chloroform interface" [3]. This insight emerged from analyzing density profiles, electrostatic potential, and hydrogen bonding interactions over time—information completely inaccessible to pure EM approaches.

Morphological characterizations including FESEM, AFM, and HRTEM confirmed that well-defined two-dimensional PPy sheets formed only at intermediate oxidant concentrations corresponding to the optimum charge-to-size ratio identified through MD simulations [3]. This experimental validation underscores how MD can guide researchers toward experimentally realizable structures rather than theoretical minima that may not form under synthesis conditions.

Validation Protocols: Integrating Energy Minimization with Molecular Dynamics

A Framework for Validating Minimized Structures

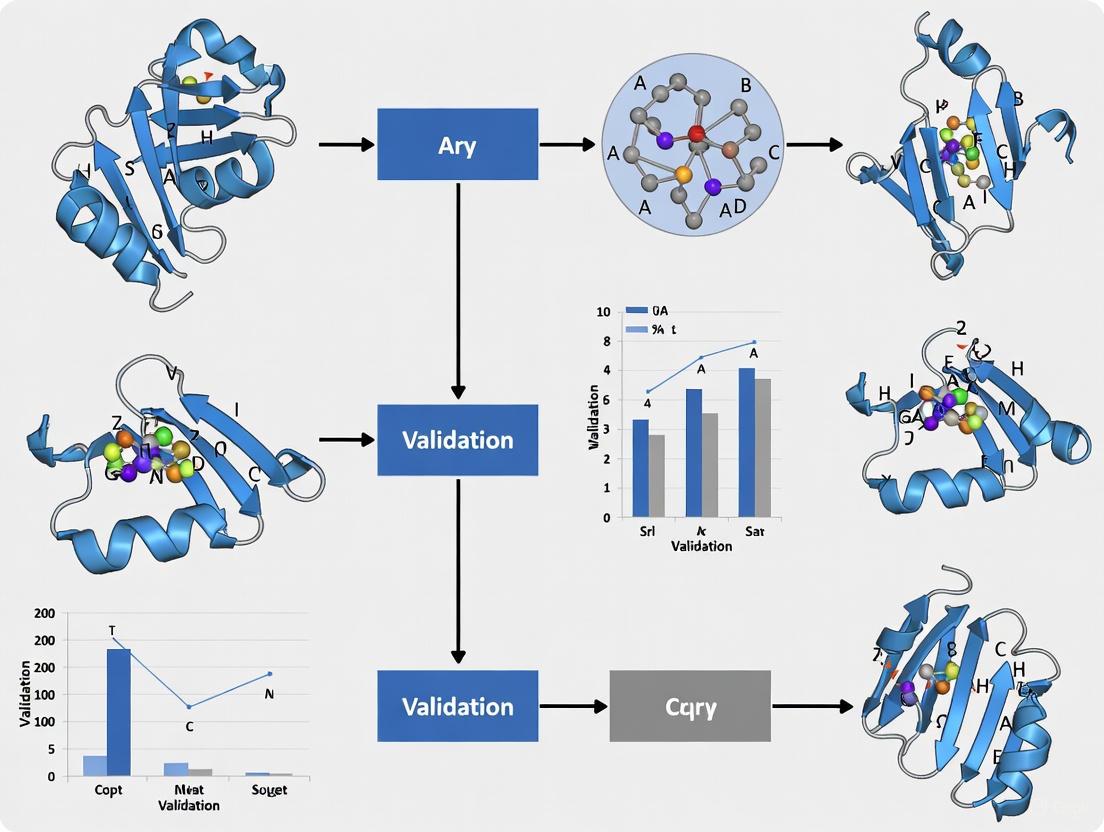

The following workflow illustrates a robust protocol for validating energy-minimized structures using molecular dynamics simulations:

This protocol implements a multistage approach that begins with energy minimization to remove steric clashes and unfavorable contacts, followed by gradual introduction of the time dimension through equilibration phases, ultimately leading to production MD simulations that sample the configurational space accessible at physiological conditions.

Experimental Validation of Simulated Structures

A critical challenge in molecular simulations lies in properly comparing simulated structures with experimental data. A specialized protocol has been developed "for comparing the structural properties of lipid bilayers determined by simulation with those determined by diffraction experiments, which makes it possible to test critically the ability of molecular dynamics simulations to reproduce experimental data" [5].

This model-independent method analyzes MD simulation data in the same manner as experimental data by determining structure factors and reconstructing transbilayer scattering-density profiles through Fourier transformation. When applied to dioleoylphosphatidylcholine (DOPC) bilayers, this approach revealed that "neither the GROMACS nor the CHARMM22/27 simulations reproduced experimental data within experimental error," with particularly strong disagreement in the widths of terminal methyl distributions [5]. This demonstrates that even MD simulations with current force fields may not fully capture experimental reality, highlighting the essential role of experimental validation.

Advanced Sampling Techniques for Complex Systems

For large or complex systems like supramolecular assemblages, enhanced sampling techniques become necessary. Recent research demonstrates innovative approaches where "the protein structure can be described at a mixed-resolution to perform atomistic modeling in the functional regions of the protein, and a lower-resolution modeling is applied in the remaining parts to reduce the computational cost" [6].

This methodology combines anisotropic network modeling (ANM) to generate plausible conformers, followed by docking calculations and all-atom MD simulations of truncated binding sites to estimate binding free energies. Application to triose phosphate isomerase (TIM) demonstrated that 100 ns MD simulations provided sufficient sampling to estimate binding affinities accurately, with comparable results between intact and truncated structures [6]. This approach offers a computationally efficient framework for validating minimized structures in biologically relevant contexts.

Essential Research Reagents and Computational Tools

The table below details key computational tools and methodologies referenced in the studies discussed, providing researchers with practical resources for implementing the protocols described:

| Research Tool | Function/Purpose | Application Context |
|---|---|---|
| GROMACS | Molecular dynamics simulation package | Polymer interfacial studies [3], lipid bilayer validation [5] |
| OPLS-AA | All-atom force field parameters | Polymer-solvent interactions [3] |
| Steepest Descent | Energy minimization algorithm | Initial structure relaxation [2] |
| L-BFGS | Quasi-Newton minimization | Efficient localization of minima [2] [4] |
| Sella | Geometry optimization package | Transition state and minimum optimization [4] |
| geomeTRIC | Optimization with internal coordinates | Molecular structure optimization [4] |
| ANM | Anisotropic Network Model | Efficient conformational sampling [6] |
| MM-GBSA | Molecular Mechanics/Generalized Born Surface Area | Binding free energy calculations [6] |

These tools represent the essential computational "reagents" required for implementing robust protocols that combine energy minimization with molecular dynamics validation. The selection of appropriate tools depends on system characteristics, with smaller systems benefiting from more exhaustive sampling methods, while larger systems may require mixed-resolution or enhanced sampling approaches to achieve sufficient conformational coverage within practical computational constraints.

Energy minimization remains an essential first step in molecular simulations, efficiently removing steric clashes and locating local energy minima. However, its fundamental limitations—particularly the missing time dimension and inability to escape local minima—make it insufficient as a standalone approach for predicting biologically relevant structures. The integration of EM with molecular dynamics simulations creates a powerful framework that combines the efficiency of local minimization with the physically realistic sampling provided by temporal evolution.

Validation against experimental data remains the ultimate test for any computational approach. The protocols discussed herein, particularly those that analyze MD trajectories using the same methods as experimental data [5], provide robust frameworks for assessing predictive accuracy. As force fields continue to improve and sampling methods become more efficient, the combination of EM and MD will increasingly enable researchers to bridge the gap between static molecular structures and dynamic biological function, ultimately accelerating drug discovery and materials design.

For researchers and drug development professionals, the practical implication is clear: energy-minimized structures should be viewed as starting points rather than final predictions. Subsequent molecular dynamics simulation and experimental validation are essential steps for developing reliable molecular models that accurately represent behavior under physiological conditions. This integrated approach moves computational research beyond the limitations of local minima and the timeless world of pure energy minimization into the dynamic realm of molecular reality.

Molecular dynamics (MD) simulations have become an indispensable tool in the computational molecular sciences, providing unparalleled insight into the atomistic motions and time-dependent behavior of biological systems. While static structures offer a snapshot of molecular architecture, they fail to capture the dynamic nature essential for function. MD simulations bridge this gap, serving as a powerful validation tool to probe the stability, flexibility, and realistic conformational landscapes of biomolecules. As the reliance on computationally predicted protein structures grows with advances in tools like AlphaFold2, the importance of MD for validating these models has increased correspondingly. This guide examines how MD simulations are applied to assess and refine molecular models, comparing various methodological approaches and their effectiveness across different protein systems and states.

Fundamental Validation Metrics in Molecular Dynamics

MD simulations can generate very large volumes of trajectory data, which researchers distill into quantitative metrics to assess structural stability, flexibility, and dynamic behavior. The most fundamental metrics provide insights into different aspects of protein dynamics and are routinely applied across diverse studies.

Table 1: Core Metrics for MD-Based Validation

| Metric | Description | Interpretation | Application Example |
|---|---|---|---|
| Root Mean Square Deviation (RMSD) | Measures average displacement of atoms relative to a reference structure | Lower values indicate stable convergence; rising trends suggest structural drift | FtsZ tense conformation showed 2.7-3.1 Å RMSD with GDP, indicating instability [7] |
| Root Mean Square Fluctuation (RMSF) | Quantifies per-residue flexibility throughout simulation | Peaks indicate flexible regions; valleys represent structural constraints | T6 and T7 loops in SH3 domain showed higher flexibility in GTP-bound states [8] [7] |
| Radius of Gyration (Rg) | Measures structural compactness | Decreasing values suggest compaction; increases may indicate unfolding | Used to assess folding quality in benchmark datasets [9] |
| Essential Dynamics (PCA) | Identifies collective motions from covariance matrices | Large eigenvalues represent functionally relevant motions | Differentiated conformational dynamics in SH3 domain mutants [8] |

These metrics enable researchers to move beyond qualitative assessment to quantitative validation of structural models. For instance, RMSD analysis of FtsZ proteins from Staphylococcus aureus and Bacillus subtilis revealed that relaxed conformations were stable (RMSD 1.2-1.6 Å) regardless of the bound nucleotide, while tense conformations occupied higher-energy states, particularly when bound to GDP (RMSD 2.7-3.1 Å) [7]. Such analyses establish whether a predicted or modeled structure remains stable under simulation conditions or exhibits problematic flexibility.
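
The definitions of RMSD and RMSF translate directly into code. The sketch below is a minimal, dependency-free illustration that assumes every frame has already been superposed onto the reference; production analyses normally perform that optimal alignment first, typically with an established trajectory-analysis library. The tiny two-atom trajectory is made-up data.

```python
import math
from typing import List, Sequence, Tuple

Coord = Tuple[float, float, float]
Frame = Sequence[Coord]


def rmsd(frame: Frame, reference: Frame) -> float:
    """Root-mean-square deviation between two pre-aligned structures."""
    squared = sum(
        (a - b) ** 2 for r, r_ref in zip(frame, reference) for a, b in zip(r, r_ref)
    )
    return math.sqrt(squared / len(reference))


def rmsf(trajectory: Sequence[Frame]) -> List[float]:
    """Per-atom root-mean-square fluctuation about the time-averaged position."""
    n_frames = len(trajectory)
    n_atoms = len(trajectory[0])
    result = []
    for i in range(n_atoms):
        mean = [sum(frame[i][k] for frame in trajectory) / n_frames for k in range(3)]
        msf = sum(
            (frame[i][k] - mean[k]) ** 2 for frame in trajectory for k in range(3)
        ) / n_frames
        result.append(math.sqrt(msf))
    return result


# Made-up trajectory: atom 0 is rigid, atom 1 swings between x = 0 and x = 2
reference = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
trajectory = [
    [(0.0, 0.0, 0.0), (0.0, 0.0, 0.0)],
    [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0)],
]
print([rmsd(frame, reference) for frame in trajectory])
print(rmsf(trajectory))
```

RMSD condenses each frame into one number relative to the reference, whereas RMSF condenses the whole trajectory into one number per atom, which is why the two are usually reported together.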

MD for Validation of Specific Molecular Systems

Validation of De Novo Designed Proteins

MD simulations play a crucial role in validating computationally designed proteins, assessing whether designed structures maintain their intended conformations and possess dynamics compatible with their functional goals. Studies have revealed that designed proteins often exhibit exceptional stability, sometimes exceeding naturally occurring proteins [10]. Through MD analysis, researchers have identified structural bases for this stability, including optimized core packing, increased burial of hydrophobic surface area, and favorable arrangements of charged residues.

For example, MD simulations of the designed protein AYEdes demonstrated decreased RMSD and RMSF values, decreased solvent-accessible surface area, increased secondary structure retention, and more favorable contact networks compared to reference structures [10]. Similarly, analysis of successful versus unsuccessful digoxigenin-binding protein designs revealed that successful designs had more rigid cavity entrances, better-organized hydrophobic cores, smaller cavity volumes, and preorganized ligand-binding side chains [10].

Assessment of Intrinsically Disordered Proteins

Validating models of intrinsically disordered proteins presents unique challenges due to their inherent flexibility and lack of stable tertiary structure. MD simulations have been successfully integrated with experimental data from NMR spectroscopy and small-angle X-ray scattering to determine accurate atomic-resolution conformational ensembles of IDPs [11]. The maximum entropy reweighting procedure has emerged as a powerful approach, introducing minimal perturbation to computational models required to match experimental data.

Studies on IDPs including Aβ40, drkN SH3, ACTR, PaaA2, and α-synuclein have demonstrated that in favorable cases, where IDP ensembles obtained from different MD force fields show reasonable initial agreement with experimental data, the reweighted ensembles converge to highly similar conformational distributions [11]. This approach represents substantial progress toward force-field independent integrative structural biology of disordered proteins.

Refinement of Predicted Structures

MD serves as a crucial refinement tool for structures predicted through computational methods. A study on hepatitis C virus core protein demonstrated that MD simulations yielded compactly folded versions of the structures predicted by various tools (AlphaFold2, Robetta, trRosetta, MOE, I-TASSER), improving the quality of the resulting models [12]. The root mean square deviation of backbone atoms, root mean square fluctuation of Cα atoms, and radius of gyration effectively monitored structural changes and convergence during the refinement process [12].

Table 2: MD Applications Across Protein States

| Protein State | Key MD Validation Approaches | Challenges | Notable Findings |
|---|---|---|---|
| Designed Proteins | Stability assessment (RMSD/RMSF), contact analysis, hydration analysis | Balancing stability with functional dynamics | Some designs show exceptional thermostability; successful designs preorganize functional sites [10] |
| Intrinsically Disordered Proteins | Maximum entropy reweighting with experimental data, ensemble comparison | Sparse experimental data, force field dependencies | Reweighted ensembles from different force fields can converge to similar distributions [11] |
| Predicted Structures | Post-prediction refinement, quality assessment via ERRAT and phi-psi plots | Initial model quality, sampling limitations | MD refinement improves model quality scores and produces more reliable structural models [12] |

Advanced Sampling and Machine Learning Approaches

Conventional MD simulations face limitations in sampling rare events due to temporal constraints. Enhanced sampling methods and machine learning approaches have emerged to address these challenges, providing more efficient exploration of conformational landscapes.

Generative and Committor-Guided Methods

The Gen-COMPAS framework represents a recent advancement, combining generative diffusion models with committor analysis to reconstruct transition pathways without predefined collective variables [13]. This approach generates physically realistic intermediates and uses committor-based filtering to identify transition states. As a result, transition-path ensembles converge within nanoseconds of simulation, whereas traditional enhanced-sampling methods require orders of magnitude more sampling [13].

Applied to systems ranging from miniproteins to ribose-binding proteins and mitochondrial carriers, Gen-COMPAS successfully retrieves committors, transition states, and free-energy landscapes efficiently, demonstrating the power of uniting machine learning with molecular dynamics for mechanistic insight [13].

Benchmarking and Weighted Ensemble Approaches

Standardized benchmarking has become increasingly important as MD methods diversify. A recent modular benchmarking framework systematically evaluates protein MD methods using weighted ensemble sampling based on progress coordinates derived from time-lagged independent component analysis [9]. This approach enables fast and efficient exploration of protein conformational space and supports both classical force fields and machine learning-based models.

The framework includes comprehensive evaluation of over 19 different metrics across diverse domains, applied to a dataset of nine proteins ranging from 10 to 224 residues that span various folding complexities and topologies [9]. Such standardized evaluation enables direct, reproducible comparisons across MD approaches, addressing a critical gap in the molecular simulation community.

Experimental Protocols and Workflows

Standard MD Validation Protocol

A typical MD-based validation protocol involves multiple stages of simulation and analysis. The process generally begins with system preparation, where the protein structure is solvated in explicit water and ions are added to neutralize the system. Energy minimization removes steric clashes, followed by gradual heating and equilibration phases to stabilize temperature and density. Production simulation then proceeds, typically for tens to hundreds of nanoseconds, during which coordinates are saved for subsequent analysis [8] [7].

For the α-spectrin SH3 domain and its mutants, researchers performed 40 ns simulations using GROMACS with the GROMOS96 43a2 force field, explicit SPC water model, and particle mesh Ewald method for long-range electrostatics [8]. Conformational analysis employed a two-level approach combining self-organizing maps and hierarchical clustering to identify functionally relevant conformations and highlight differences in conformational dynamics [8].

Figure: Standard MD Validation Workflow

Integrative Validation with Experimental Data

For higher-accuracy validation, particularly with challenging systems like IDPs, integrative approaches combine MD with experimental data. The maximum entropy reweighting procedure represents one such methodology, determining accurate atomic-resolution conformational ensembles by integrating all-atom MD simulations with experimental data from NMR spectroscopy and SAXS [11].

This workflow involves running long-timescale MD simulations with different force fields, predicting experimental observables for each simulation frame using forward models, and then reweighting the ensembles to achieve optimal agreement with experimental data while maintaining maximum entropy [11]. The approach automatically balances restraints from different experimental datasets based on the desired effective ensemble size, producing statistically robust ensembles with minimal overfitting.
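
The core of maximum entropy reweighting can be sketched for the simplest possible case: a single observable and an exact target value. Under those assumptions the maximum entropy weights take the form \(w_i \propto \exp(-\lambda f_i)\), and \(\lambda\) is tuned until the weighted average matches the target. The function names, the bisection solver, the made-up data, and the use of the Kish formula for the effective ensemble size are illustrative choices; the published procedure handles many observables together with their experimental uncertainties.

```python
import math
from typing import List, Sequence, Tuple


def maxent_weights(observable: Sequence[float], lam: float) -> List[float]:
    """Normalized weights w_i proportional to exp(-lam * f_i)."""
    exponents = [-lam * f for f in observable]
    shift = max(exponents)  # subtract the maximum for numerical stability
    raw = [math.exp(e - shift) for e in exponents]
    total = sum(raw)
    return [r / total for r in raw]


def weighted_average(observable: Sequence[float], weights: Sequence[float]) -> float:
    return sum(w * f for w, f in zip(weights, observable))


def reweight_to_target(
    observable: Sequence[float],
    target: float,
    lam_bounds: Tuple[float, float] = (-50.0, 50.0),
    tol: float = 1e-10,
) -> List[float]:
    """Find weights whose average of `observable` equals `target` by bisection."""
    lo, hi = lam_bounds
    avg_lo = weighted_average(observable, maxent_weights(observable, lo))
    avg_hi = weighted_average(observable, maxent_weights(observable, hi))
    if not (avg_hi <= target <= avg_lo):
        raise ValueError("target is not reachable within lam_bounds")
    # The weighted average decreases monotonically as lam increases
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if weighted_average(observable, maxent_weights(observable, mid)) > target:
            lo = mid
        else:
            hi = mid
    return maxent_weights(observable, 0.5 * (lo + hi))


def effective_size(weights: Sequence[float]) -> float:
    """Kish effective sample size for normalized weights."""
    return 1.0 / sum(w * w for w in weights)


# Made-up per-frame predictions of one observable and a made-up experimental value
predicted = [1.0, 2.0, 3.0, 4.0]
weights = reweight_to_target(predicted, target=2.0)
print([round(w, 3) for w in weights], round(effective_size(weights), 2))
```

The effective size falls below the number of frames as soon as the weights deviate from uniform, so it serves as a simple indicator of how strongly the experimental data had to perturb the simulated ensemble.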

Figure: Integrative Validation Workflow

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful MD validation requires appropriate tools and methodologies. The table below outlines key computational "reagents" essential for effective MD-based validation studies.

Table 3: Essential Research Reagents for MD Validation

| Tool Category | Specific Tools | Function | Application Notes |
|---|---|---|---|
| Simulation Engines | GROMACS, AMBER, OpenMM | Perform molecular dynamics calculations | GROMACS favored for speed; AMBER for force field variety; OpenMM for GPU acceleration [8] [9] [14] |
| Force Fields | CHARMM36m, a99SB-disp, GROMOS96 | Define molecular interactions and potentials | CHARMM36m and a99SB-disp show strong performance for IDPs; choice depends on system [11] |
| Analysis Suites | MDTraj, MDAnalysis, GROMACS tools | Process trajectories and calculate metrics | MDTraj offers Python API; MDAnalysis provides comprehensive analysis suite [8] [9] |
| Enhanced Sampling | GaMD, Metadynamics, WE | Accelerate rare event sampling | GaMD valuable for glycosidic linkage transitions; WE effective for protein folding [14] [9] |
| Benchmarking | MDBenchmark, WESTPA | Assess simulation performance and sampling | MDBenchmark optimizes computing resource use; WESTPA implements weighted ensemble [15] [9] |

Molecular dynamics simulations have evolved into sophisticated validation tools capable of probing the stability, flexibility, and conformational landscapes of biomolecules with atomic resolution. Through quantitative metrics like RMSD and RMSF, advanced sampling methods, and integration with experimental data, MD provides critical insights that complement static structural models. As computational protein design and prediction continue advancing, robust MD validation protocols will become increasingly essential for verifying structural models and ensuring their biological relevance. The continued development of standardized benchmarking frameworks and integrative methods promises to further enhance the reliability and applicability of MD as a validation tool across diverse biological systems.

In molecular dynamics (MD) research, validating the stability of minimized structures is a critical step for ensuring the reliability of computational models in drug development and structural biology. Stability metrics provide quantitative, time-dependent measures for assessing whether a simulated molecular system has reached a physiologically relevant equilibrium or is undergoing significant conformational change. Three metrics are especially important. The Root-Mean-Square Deviation (RMSD) measures the average atomic displacement of a structure relative to a reference and typically indicates global conformational stability. The Radius of Gyration (Rg) quantifies the compactness and overall dimensions of a molecular structure. Energy Fluctuations reflect the thermodynamic stability of the system through conservation of the total energy (the sum of potential and kinetic energy) or, in thermostatted ensembles, through the stability of the potential energy over time. These metrics are indispensable for benchmarking the performance of different modeling algorithms, such as AlphaFold, PEP-FOLD, and homology modeling, and for verifying that a minimized structure represents a stable, native-like conformation rather than a transient or unstable state [16]. This guide objectively compares these core stability metrics, detailing their experimental protocols, interpretation, and application in contemporary research.

Comparative Analysis of Key Stability Metrics

The table below provides a structured comparison of the three primary stability metrics, detailing their core functions, analytical focus, and interpretation guidelines.

Table 1: Comparative Analysis of Key Structural Stability Metrics

| Metric | Core Function & Analytical Focus | Data Interpretation Guidelines | Representative Values from Recent Studies |

|---|---|---|---|

| Root-Mean-Square Deviation (RMSD) | Measures global structural drift by calculating the average distance between atoms after optimal alignment to a reference structure [17]. | Low, stable RMSD: Conformational stability is achieved [18]. Sudden RMSD jumps: Possible conformational change or unfolding event. Gradual, continuous rise: System may not be equilibrated. | Protein backbone: ~1.5-3.0 Å (stable complex) [18] [17]. Ligand atoms: < 1.0 Å (stable binding) [17]. |

| Radius of Gyration (Rg) | Measures structural compactness and overall shape, calculated as the mass-weighted root mean square distance of atoms from the structure's center of mass [19]. | Decreasing Rg: System is collapsing or becoming more compact. Stable Rg: Maintains a consistent fold and packing. Increasing Rg: Structure is expanding or loosening, potentially unfolding. | Folded proteins: ( R_g(N) \propto N^{\nu} ), with ( \nu \sim 1/3 ) for a compact, globular state [19]. |

| Energy Fluctuations | Assesses thermodynamic stability by monitoring the conservation of total energy in the system (NVE ensemble) or the stability of potential energy over time (NVT/NPT ensembles) [20]. | Stable total energy (NVE): Validates the integrity of the simulation and energy conservation. Stable potential energy: Indicates the system is in a state of equilibrium. Large drifts suggest instability. | - |
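The interpretation guidelines in the table can be approximated with a simple automated check. The sketch below fits a least-squares line to the second half of a metric's time series and flags a drift when the fitted change over that window exceeds a tolerance. The function names, the choice of the second half as the analysis window, and the 0.5 Å tolerance are illustrative assumptions, not values from the cited studies.

```python
from statistics import mean

def linear_slope(times, values):
    """Least-squares slope of values against times."""
    t_bar, v_bar = mean(times), mean(values)
    num = sum((t - t_bar) * (v - v_bar) for t, v in zip(times, values))
    den = sum((t - t_bar) ** 2 for t in times)
    return num / den

def has_drift(times, values, tolerance):
    """Flag a series whose fitted change over its second half exceeds tolerance.

    The second-half window and the tolerance are assumptions for illustration.
    """
    half = len(times) // 2
    t_tail, v_tail = times[half:], values[half:]
    change = linear_slope(t_tail, v_tail) * (t_tail[-1] - t_tail[0])
    return abs(change) > tolerance

# Synthetic series (not simulation data): time in ns, RMSD in angstroms.
times = [float(t) for t in range(0, 101, 10)]
plateau = [0.0, 1.2, 1.8, 2.0, 2.1, 2.0, 2.1, 2.0, 2.1, 2.0, 2.1]
rising = [0.2 * i for i in range(11)]

print(has_drift(times, plateau, tolerance=0.5))  # False: fluctuates around a plateau
print(has_drift(times, rising, tolerance=0.5))   # True: still climbing steadily
```

The same check can be applied to Rg or potential energy by changing the tolerance to a value suited to that quantity's units.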

Experimental Protocols for Metric Analysis

A robust MD protocol is essential for obtaining reliable and reproducible metric data. The following workflow and detailed methodology are synthesized from current research practices.

System Preparation and Simulation

- Initial Structure Minimization: Begin with a modeled or experimentally derived structure (e.g., from PDB). Use steepest descent or conjugate gradient algorithms to remove steric clashes and bad contacts, ensuring the structure is at a local energy minimum [16].

- Solvation and Ionization: Place the molecule in a physiologically relevant solvent box (e.g., TIP3P water model). Add ions to neutralize the system's charge and achieve a desired ionic concentration, mimicking a biological environment.

- Equilibration Phases:

- NVT Ensemble: Equilibrate the system with a constant Number of particles, Volume, and Temperature (typically 300 K using a thermostat like Nosé-Hoover) for 100-500 ps. This allows the system to reach the target temperature.

- NPT Ensemble: Further equilibrate with a constant Number of particles, Pressure (1 bar using a barostat like Parrinello-Rahman), and Temperature for 100-500 ps. This allows the solvent density to adjust correctly and the box size to stabilize.

- Production MD Run: Perform an extended simulation using an integrator such as leap-frog, with bond constraints handled by an algorithm like LINCS, under the desired ensemble (NPT for stability studies). The duration must be sufficient to capture relevant biological dynamics; for protein folding or peptide stability, this can range from 100 ns to several microseconds [16] [18]. A 100 ns simulation is a common benchmark for initial stability validation [16].
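To make the durations in this protocol concrete, the following sketch converts them into integration step counts. The 2 fs timestep is an assumption (a common choice when bonds to hydrogen are constrained) and is not stated in the cited protocols; the function and dictionary names are hypothetical.

```python
def n_steps(duration_ps, timestep_fs=2.0):
    """Number of integration steps for a duration in ps at a timestep in fs."""
    return round(duration_ps * 1000.0 / timestep_fs)

# Durations taken from the protocol above; the 2 fs timestep is an assumption.
stages_ps = {
    "NVT equilibration (lower bound)": 100,
    "NPT equilibration (lower bound)": 100,
    "Production (100 ns)": 100_000,
}

for name, duration in stages_ps.items():
    print(f"{name}: {n_steps(duration):,} steps")
```

Under this assumption a 100 ps equilibration stage corresponds to 50,000 steps and a 100 ns production run to 50,000,000 steps, which is a useful sanity check when filling in a simulation input file.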

Trajectory Analysis and Metric Calculation

- Trajectory Processing: Before analysis, ensure trajectories are correctly aligned (e.g., to the protein backbone) to remove global rotation and translation.

- RMSD Calculation: The RMSD is calculated as: ( \text{RMSD}(t) = \sqrt{ \frac{1}{N} \sum_{i=1}^{N} \lvert \vec{r}_i(t) - \vec{r}_i^{\text{ref}} \rvert^2 } ) where ( \vec{r}_i(t) ) is the position of atom ( i ) at time ( t ), ( \vec{r}_i^{\text{ref}} ) is its position in the reference structure (often the minimized or starting structure), and ( N ) is the number of atoms [21] [18]. This is typically calculated for backbone atoms (C, Cα, N) to assess the core structural stability.

- Radius of Gyration (Rg) Calculation: The Rg is calculated as: ( R_g = \sqrt{ \frac{1}{M} \sum_{i=1}^{N} m_i \lvert \vec{r}_i - \vec{r}_{\text{com}} \rvert^2 } ) where ( m_i ) is the mass of atom ( i ), ( M ) is the total mass, ( \vec{r}_i ) is the position of atom ( i ), and ( \vec{r}_{\text{com}} ) is the center of mass of the molecule [19]. This can be calculated for the entire molecule or specific subchains to analyze internal packing.

- Energy Fluctuation Analysis: Monitor the total energy (in NVE simulations) and the potential energy (in NPT/NVT simulations) over time. The system is considered thermodynamically stable if these values fluctuate steadily around a constant mean without significant drifts [20].
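The RMSD and Rg definitions above translate directly into code. The following is a minimal sketch using only the Python standard library; it assumes the frame has already been aligned to the reference, as described under Trajectory Processing, and therefore performs no superposition itself. The function names and the toy coordinates are hypothetical.

```python
import math

def rmsd(coords, ref):
    """RMSD between two pre-aligned coordinate lists of (x, y, z) tuples."""
    if len(coords) != len(ref):
        raise ValueError("coordinate sets must have the same number of atoms")
    sq = sum(math.dist(a, b) ** 2 for a, b in zip(coords, ref))
    return math.sqrt(sq / len(coords))

def radius_of_gyration(coords, masses):
    """Mass-weighted radius of gyration about the center of mass."""
    total = sum(masses)
    com = tuple(
        sum(m * c[k] for m, c in zip(masses, coords)) / total for k in range(3)
    )
    sq = sum(m * math.dist(c, com) ** 2 for m, c in zip(masses, coords))
    return math.sqrt(sq / total)

# Toy three-atom example (not a real structure); coordinates in angstroms.
ref = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
frame = [(0.0, 1.0, 0.0), (1.0, 1.0, 0.0), (2.0, 1.0, 0.0)]
masses = [12.0, 12.0, 12.0]

print(rmsd(frame, ref))                 # 1.0: every atom is displaced by 1 angstrom
print(radius_of_gyration(ref, masses))  # sqrt(2/3), about 0.816
```

In practice, trajectory analysis packages apply these same formulas frame by frame after fitting; the sketch is intended only to show what the reported numbers mean.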

The Scientist's Toolkit: Essential Research Reagents and Software

The table below lists key computational tools and their functions, as utilized in the cited experimental studies.

Table 2: Essential Research Reagents & Software Solutions

| Tool Name | Primary Function | Application Example |

|---|---|---|

| GROMACS | A versatile package for performing MD simulations, including trajectory analysis [22]. | Used for all-atom MD simulations to predict crystal structures of bowl-shaped π-conjugated molecules and analyze their energy landscapes [22]. |

| Desmond | A high-performance MD simulator designed for biomolecular systems [18]. | Employed for 500 ns simulations of carbonic anhydrase XII with inhibitors to study binding stability and conformational changes [18]. |

| LAMMPS | A classical molecular dynamics code for modeling materials at the atomic scale [23]. | Applied to study the stratification behavior of methane-hydrogen mixtures under different gravitational fields [23]. |

| AutoDock Vina / InstaDock | Molecular docking and virtual screening tools for predicting ligand binding poses and affinities [24]. | Used for high-throughput virtual screening to identify natural inhibitors targeting the αβIII-tubulin isotype [24]. |

| AlphaFold / PEP-FOLD | Algorithms for predicting protein and peptide structures from amino acid sequences [16]. | Compared for their efficacy in modeling short, unstable antimicrobial peptides (AMPs) [16]. |

Integrated Interpretation: A Case Study in Cancer Drug Discovery

The power of these integrated metrics is exemplified in a 2025 study investigating carbonic anhydrase XII (CA XII) inhibitors for cancer therapy. Researchers combined RMSD, Rg, and energy analyses to validate the stability of a novel inhibitor (V35) bound to the target protein. Over a 500 ns simulation, the protein-ligand complex exhibited a stable backbone RMSD of around 1.5-2.0 Å, indicating minimal global structural drift. The Rg value remained constant, confirming that the protein maintained its compact, folded conformation without unfolding or significant expansion. Concurrently, stable potential energy and favorable MM/GBSA binding free energy calculations (-44.17 kcal/mol for V35) confirmed the thermodynamic stability of the complex. This multi-metric validation provided high confidence that the minimized structure of the V35-CA XII complex was stable and reliable for further analysis and design, underscoring the compound's potential as a lead therapeutic candidate [18].

RMSD, Rg, and energy fluctuations are non-redundant, complementary metrics that form the cornerstone of structural validation in molecular dynamics. While RMSD provides a macro-level view of conformational stability, and Rg offers insight into structural compactness, energy fluctuations underpin the thermodynamic validity of the simulation. As demonstrated in cutting-edge research, from validating AI-predicted peptide structures to designing isoform-selective drugs, the triangulation of data from these metrics is essential for benchmarking computational models and making confident decisions in rational drug design. Researchers are advised to always apply these metrics in concert, as their integrated story provides a far more reliable assessment of structural stability than any single metric alone.

Accurate prediction of short peptide structures represents a significant challenge in computational biology, with direct implications for therapeutic development, particularly for antimicrobial peptides (AMPs) and other bioactive sequences [16]. Short peptides are often highly flexible and can adopt numerous conformations in solution, making them difficult to model with traditional algorithms trained primarily on globular proteins [25]. This case study examines how Molecular Dynamics (MD) simulations have emerged as a crucial validation tool for assessing the performance of structure prediction algorithms, with a specific focus on revealing the complementary strengths of AlphaFold and PEP-FOLD when applied to short peptides.

The study is framed within a broader thesis on validating minimized structures with molecular dynamics research, demonstrating how computational validation pipelines can guide researchers in selecting appropriate tools based on peptide characteristics. By integrating MD simulations as a benchmark, researchers can move beyond static structural snapshots to evaluate conformational stability under physiologically relevant conditions [16] [25].

Methodology: Comparative Modeling and MD Validation Framework

Peptide Selection and Characterization

The foundational study selected 10 putative antimicrobial peptides randomly derived from the human gut metagenome, with lengths falling within the typical AMP range (12-50 amino acids) [16]. Prior to structure prediction, researchers conducted comprehensive physicochemical characterization using the ExPASy-ProtParam tool to calculate key properties including isoelectric point (pI), aromaticity, grand average of hydropathicity (GRAVY), and instability index [16]. This initial profiling enabled subsequent correlation between peptide properties and algorithmic performance.

Disordered regions were predicted using the RaptorX server, which employs a Deep Convolutional Neural Fields (DeepCNF) model to forecast structural disorder, secondary structure, and solvent accessibility [16]. This step was particularly important for interpreting MD results, as disordered regions typically exhibit higher flexibility during simulations.

Structure Prediction Algorithms

Four distinct modeling approaches were selected to represent the spectrum of current methodologies [16]:

- AlphaFold: Deep learning-based approach utilizing evolutionary constraints and attention mechanisms

- PEP-FOLD3: De novo fragment-assembly method based on structural alphabet letters

- Threading: Template-based approach leveraging known protein folds

- Homology Modeling: Comparative modeling using MODELLER with related structures

This strategic selection enabled direct comparison between template-based, de novo, and deep learning methods, providing insights into their respective strengths and limitations for short peptide modeling.

Validation Metrics and MD Protocol

The validation pipeline incorporated multiple complementary approaches [16]:

- Ramachandran plot analysis to assess backbone dihedral angle quality

- VADAR for comprehensive structural validation

- Molecular Dynamics simulations for stability assessment

For MD simulations, researchers performed 40 independent simulations (4 structures × 10 peptides), each with 100 ns duration using GROMACS software [16]. The simulation protocol included system solvation in TIP3P water models, application of the AMBER force field, and implementation of periodic boundary conditions to mimic physiological environments [26] [27]. Trajectory analysis focused on stability metrics, including RMSD, RMSF, and intramolecular interactions.
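Alongside RMSD, the per-atom root-mean-square fluctuation (RMSF) mentioned above measures how far each atom moves around its own time-averaged position. The sketch below uses the standard definition and assumes the frames are already aligned; the function name and the toy trajectory are hypothetical and do not come from the cited study.

```python
import math

def rmsf(frames):
    """Per-atom RMSF from pre-aligned frames.

    frames: list of frames, each a list of (x, y, z) tuples, one per atom.
    """
    n_frames = len(frames)
    n_atoms = len(frames[0])
    result = []
    for i in range(n_atoms):
        # Time-averaged position of atom i.
        mean_pos = tuple(
            sum(frame[i][k] for frame in frames) / n_frames for k in range(3)
        )
        # Mean squared fluctuation about that average.
        msf = sum(math.dist(frame[i], mean_pos) ** 2 for frame in frames) / n_frames
        result.append(math.sqrt(msf))
    return result

# Toy two-atom, two-frame trajectory (not simulation data).
frames = [
    [(0.0, 0.0, 0.0), (5.0, 0.0, 0.0)],
    [(0.0, 0.0, 0.0), (7.0, 0.0, 0.0)],
]
print(rmsf(frames))  # [0.0, 1.0]: the first atom is static, the second fluctuates
```

Residues predicted to be disordered would be expected to show larger RMSF values than structured regions, which is why the disorder prediction step helps in reading these profiles.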

Results: MD Reveals Algorithm-Specific Strengths

Performance Across Peptide Classes

Molecular dynamics simulations demonstrated that algorithmic performance was strongly influenced by peptide physicochemical properties, particularly hydrophobicity [16]. The stability of predicted structures during MD simulations revealed complementary strengths between the different approaches.

Table 1: Algorithm Performance Based on Peptide Properties

| Peptide Characteristic | Optimal Algorithm(s) | Key Observations from MD Simulations |

|---|---|---|

| High hydrophobicity | AlphaFold & Threading | Structures maintained compact folds with stable hydrophobic cores |

| High hydrophilicity | PEP-FOLD & Homology Modeling | Better solvation and stable hydrogen bonding networks |

| Mixed secondary structures | PEP-FOLD | More accurate β-turn and loop predictions |

| α-helical bias | AlphaFold | High accuracy in helix geometry and packing |

| Disordered regions | PEP-FOLD | More realistic flexibility in unstructured regions |

Quantitative Stability Metrics from MD

The root mean square deviation (RMSD) analysis throughout 100 ns simulations provided quantitative measures of structural stability. PEP-FOLD generated structures that generally exhibited lower RMSD fluctuations after equilibration, indicating greater conformational stability under simulation conditions [16]. AlphaFold predictions, while often initially more compact, in some cases showed higher RMSD values during simulations, suggesting structural adjustments toward more stable conformations [25].

Radius of gyration (Rg) measurements revealed that AlphaFold frequently produced more compact starting structures, while PEP-FOLD structures maintained more consistent compactness throughout simulations [16]. This observation suggests differences in how these algorithms handle the balance between intramolecular interactions and solvation effects.

Comparative Accuracy Assessment

Table 2: Algorithm Performance Benchmarking for Short Peptides

| Algorithm | Approach | Strength Areas | MD Validation Insights | Key Limitations |

|---|---|---|---|---|

| AlphaFold | Deep learning | α-helical peptides, compact folds [16] [28] | pLDDT scores don't always correlate with MD stability [25] [28] | Challenged by mixed secondary structures, orphan proteins [29] [25] |

| PEP-FOLD | De novo fragment assembly | Hydrophilic peptides, stable dynamics [16] [30] | Maintains structural stability during MD simulations [16] | Limited to 9-36 amino acids; lower Z-scores [30] [31] |

| Threading | Template-based | Hydrophobic peptides [16] | Complementary to AlphaFold for certain peptide classes [16] | Template dependency limits novel fold prediction |

| Homology Modeling | Comparative modeling | Hydrophilic peptides [16] | Moderate performance across peptide types [16] | Requires suitable templates |

Recent benchmarking studies involving 588 peptide structures confirmed that while AlphaFold predicts α-helical, β-hairpin, and disulfide-rich peptides with high accuracy, its pLDDT confidence metric does not always correlate with lowest RMSD to experimental structures [28]. This highlights the critical importance of MD validation for identifying the most accurate predicted conformations.

Integrated Workflow for Peptide Structure Prediction

The following workflow diagram illustrates the integrated approach for peptide structure prediction and validation revealed by the case study:

The Scientist's Toolkit: Essential Research Reagents

Table 3: Computational Tools for Peptide Structure Prediction and Validation

| Tool Category | Specific Tools | Function | Application Notes |

|---|---|---|---|

| Structure Prediction | AlphaFold 2/3, PEP-FOLD 4, I-TASSER 5.1 | Generate 3D structural models from sequence | AlphaFold excels for single-chain proteins; PEP-FOLD for de novo peptide folding [16] [31] |

| MD Simulation | GROMACS, AMBER | Simulate dynamic behavior and validate stability | GROMACS recommended for efficiency with peptide systems [16] [26] |

| Structure Validation | VADAR, PROCHECK, MolProbity | Assess stereochemical quality | Ramachandran analysis critical for backbone validation [16] |

| Physicochemical Analysis | ExPASy-ProtParam, Prot-pi | Calculate charge, hydropathicity, instability index | Essential for correlating properties with algorithm performance [16] |

| Disorder Prediction | RaptorX, DISOPRED3 | Identify intrinsically disordered regions | Important for interpreting MD fluctuations [16] |

Discussion: Implications for Rational Peptide Design

The integration of MD simulations as a validation tool has fundamentally transformed our understanding of computational peptide modeling. Rather than seeking a universal "best" algorithm, researchers can now adopt a property-informed selection strategy where peptide characteristics guide tool selection [16]. This approach recognizes that the biophysical properties of peptides significantly influence algorithmic performance.

The finding that AlphaFold and PEP-FOLD display complementary strengths based on peptide hydrophobicity has practical implications for drug development pipelines [16]. For hydrophobic peptides (common in membrane-active AMPs), an AlphaFold-first approach with MD validation may be optimal. For hydrophilic or mixed-charge peptides, PEP-FOLD may produce more stable structures. This paradigm shift from universal to context-dependent algorithm selection represents a significant advancement in computational structural biology.

Furthermore, the observation that AlphaFold's pLDDT scores do not consistently correlate with MD stability for short peptides highlights the necessity of dynamical validation [25] [28]. This suggests that confidence metrics from static prediction algorithms should be interpreted cautiously, and MD simulations provide essential complementary information about conformational stability under near-physiological conditions.

This case study demonstrates that Molecular Dynamics simulations serve as an essential tool for validating and benchmarking structure prediction algorithms for short peptides. Through comprehensive MD validation, researchers have discovered that AlphaFold and PEP-FOLD exhibit complementary strengths that correlate with peptide physicochemical properties.

The key insight is that algorithm performance is context-dependent – AlphaFold excels with hydrophobic peptides and compact folds, while PEP-FOLD generates more stable structures for hydrophilic peptides and maintains better dynamical stability during simulations. These findings support the development of integrated prediction pipelines where multiple algorithms are applied in parallel, with MD simulations serving as the final arbiter of structural quality.

For researchers pursuing rational design of therapeutic peptides, these findings suggest a strategic approach: (1) characterize peptide physicochemical properties, (2) select algorithms based on these properties, (3) generate multiple structural models, and (4) employ MD simulations to identify the most stable conformations. This property-informed modeling strategy, validated by molecular dynamics, will accelerate the development of peptide-based therapeutics by providing more reliable structural models for interaction analysis and functional studies.
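Steps (1) and (2) of this strategy can be sketched in a few lines. The example below computes GRAVY as the mean Kyte-Doolittle hydropathy over the sequence and maps the result to the algorithm pairs reported in the algorithm-performance table above. The cutoff of 0.0 between "hydrophobic" and "hydrophilic" is an assumption for illustration (the source gives no threshold), and the function names and example sequences are hypothetical.

```python
# Kyte-Doolittle hydropathy values, the scale used for GRAVY.
KYTE_DOOLITTLE = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5,
    "Q": -3.5, "E": -3.5, "G": -0.4, "H": -3.2, "I": 4.5,
    "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8, "P": -1.6,
    "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}

def gravy(sequence):
    """Grand average of hydropathicity: mean hydropathy per residue."""
    seq = sequence.upper()
    return sum(KYTE_DOOLITTLE[aa] for aa in seq) / len(seq)

def suggest_algorithms(sequence, threshold=0.0):
    """Map hydrophobicity to the algorithm pairs reported in the table above.

    The threshold is an assumption; the source does not specify a cutoff.
    """
    if gravy(sequence) > threshold:
        return ["AlphaFold", "Threading"]
    return ["PEP-FOLD", "Homology Modeling"]

# Hypothetical sequences chosen only to exercise both branches.
for seq in ("LLIVAVLG", "KKDESRNQ"):
    print(seq, round(gravy(seq), 2), suggest_algorithms(seq))
```

Whatever the suggestion, steps (3) and (4) still apply: generate models with more than one method and let the MD stability metrics decide between them.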

Executing Validation: A Step-by-Step MD Protocol for Robust Structure Assessment

In molecular dynamics (MD) research, the initial, energy-minimized structure of a biomolecule represents only a starting point. Validating this structure requires simulating its behavior in a realistic environment, a process central to bridging the gap between static structural data and biological function [32] [33]. The methods used to solvate the molecule, place ions, and define the surrounding medium directly determine the simulation's physical accuracy and its relevance to experimental observations. For drug discovery professionals, a correctly built system is indispensable for reliable investigations into mechanisms of action, stability, and molecular interactions [33] [34].

This guide objectively compares the predominant approaches for constructing these simulation environments: explicit solvent models, which simulate every water molecule and ion, and implicit solvent models, which represent the solvent as a dielectric continuum. We will evaluate their performance in the context of validating minimized structures, supported by experimental data and detailed protocols.

Core Concepts and Research Reagents

Before comparing methodologies, it is essential to understand the key components and tools that constitute the "simulation environment." The table below details the essential research reagents and software solutions used in this field.

Table 1: Key Research Reagents and Software Solutions for MD Simulations

| Item/Software | Type/Provider | Primary Function in Simulation Setup |

|---|---|---|

| TIP3P, SPC/E Water Models | Explicit Solvent Model | Provides an atomic-level representation of water molecules, capturing granular solvent effects and viscosity [35]. |

| Generalized Born (GB) Model | Implicit Solvent Model | Approximates solvation free energy as a sum of polar and nonpolar terms, significantly speeding up calculations by replacing explicit water [32]. |

| Molecular Operating Environment (MOE) | Software / Chemical Computing Group | An all-in-one platform for molecular modeling, cheminformatics, and structure-based drug design, useful for initial system building [36]. |

| AMBER | MD Package | A widely used MD software suite that provides tools for system building (e.g., tleap), explicit and implicit solvent simulation, and advanced analysis [32]. |

| GROMACS | MD Package | A high-performance MD software known for its speed, often optimized for GPU computing, used for simulating biomolecules in explicit solvent [34] [37]. |

| Schrödinger Suite | Software / Schrödinger | A comprehensive platform that integrates quantum chemical methods, machine learning, and MD for molecular modeling and free energy calculations [36]. |

Methodological Comparison: Explicit vs. Implicit Solvation

The choice between explicit and implicit solvent is a fundamental decision that trades off computational cost for physical detail. The following workflow outlines the standard protocol for building a simulation system, highlighting steps that differ between the two approaches.

Diagram 1: Workflow for Building an MD Simulation System.

The table below provides a direct performance comparison of these two methods, illustrating the core trade-offs.

Table 2: Performance Comparison of Explicit vs. Implicit Solvent Models

| Feature | Explicit Solvent (e.g., TIP3P) | Implicit Solvent (e.g., GB) |

|---|---|---|

| Physical Realism | High. Captures specific water structure, hydrogen bonding, and diffusion [32] [35]. | Low. Lacks explicit water molecules, missing atomic-level solvent details [32]. |

| Computational Cost | Very High. Can require simulating 10x more particles; dramatically slower [35]. | Low. Much faster, allowing for longer timescale simulations [32]. |

| Ion Effects | Explicitly models discrete ions and their dynamics [32] [38]. | Models ionic strength as a continuum, missing specific ion interactions [38]. |

| Salt Bridge Stability | Accurate. Can show solvent-separated states and realistic dynamics [38]. | Often over-stabilized. Contact pairs are typically too stable compared to explicit solvent [38]. |

| Ideal Use Case | Final validation of dynamics, studying specific solvent-ion interactions, and parameterizing coarse-grained models [32] [38]. | Initial stability checks, rapid sampling, and large-scale conformational changes [32]. |
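The computational cost row can be made tangible with a back-of-the-envelope estimate of how many water molecules an explicit box contains. The sketch below multiplies the box volume by the number density of bulk water (about 0.0334 molecules per cubic angstrom, equivalent to roughly 55.5 mol/L). The solute dimensions are hypothetical, the rectangular box shape is an assumption, and the estimate ignores the volume occupied by the solute, so it is an upper bound.

```python
WATER_NUMBER_DENSITY = 0.0334  # molecules per cubic angstrom, bulk water

def estimate_waters(solute_dims, buffer=12.0):
    """Rough count of water molecules in a rectangular box around the solute.

    Ignores the volume occupied by the solute, so it overestimates somewhat.
    """
    x, y, z = (d + 2.0 * buffer for d in solute_dims)
    return round(x * y * z * WATER_NUMBER_DENSITY)

# Hypothetical solute extent of 40 x 40 x 40 angstroms with a 12 angstrom buffer.
waters = estimate_waters((40.0, 40.0, 40.0))
print(waters, "waters, about", 3 * waters, "solvent atoms for a three-site model")
```

For this hypothetical case the box holds several thousand waters, so solvent atoms far outnumber those of a typical small solute, which is the origin of the cost difference relative to implicit models.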

Experimental Protocols and Validation Data

Detailed Protocol: Explicit Solvent System Setup

The following protocol, adapted for a system like an siRNA duplex, is typical for setting up an explicit solvent simulation using the AMBER package [32].

- Force Field and Topology Preparation: Load the initial PDB file into the `tleap` module. Apply a force field appropriate to the solute (e.g., `OL3` for RNA, with `ff14SB` for any protein components). This step assigns parameters for bonded and non-bonded interactions to every atom.

- Solvation in Explicit Water: The solute is immersed in a predefined box of explicit water molecules, such as TIP3P. A common command is `solvateBox <molecule> TIP3PBOX 12.0`, which places the solute in a box with a 12.0 Å buffer of water.

- Ion Placement: Ions are added to neutralize the system's net charge and to achieve a physiologically relevant ionic concentration (e.g., 0.15 M NaCl). In `tleap`, the command `addIons2 <molecule> Na+ 0` adds sodium ions until the system charge is neutralized. Further ions can be added to match the desired concentration.

- System Equilibration: The fully solvated system undergoes a multi-stage energy minimization and equilibration process. This involves:

- Minimization: Relaxing the solvent and ions around the fixed solute to remove steric clashes.

- Heating: Gradually increasing the system temperature to the target (e.g., 300 K) under positional restraints on the solute.

- Density Equilibration: A short simulation in the NPT ensemble (constant Number of particles, Pressure, and Temperature) to allow the solvent density to reach the correct value.

- Unrestrained Equilibration: A final equilibration run with all restraints removed to ensure the system is stable before production dynamics.
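The system-building steps of this protocol can be collected into a single `tleap` input file. The sketch below assembles such a file as a string and estimates how many extra NaCl pairs a 0.15 M concentration implies for a given box volume. The `leaprc` file names assume a recent AmberTools release, the PDB and output file names are placeholders, and the ion estimate ignores the solute volume; none of these details come from the cited study.

```python
AVOGADRO = 6.02214076e23
LITERS_PER_CUBIC_ANGSTROM = 1.0e-27

def ion_pairs_for_concentration(box_volume_A3, molar=0.15):
    """Ion pairs needed for a target molar concentration in a given box volume."""
    return round(molar * box_volume_A3 * LITERS_PER_CUBIC_ANGSTROM * AVOGADRO)

def build_tleap_input(pdb_path, n_pairs, buffer=12.0):
    """Assemble a tleap input script as a string (file names are placeholders)."""
    lines = [
        "source leaprc.RNA.OL3",        # RNA force field (assumed AmberTools naming)
        "source leaprc.water.tip3p",    # TIP3P water and matching ion parameters
        f"mol = loadpdb {pdb_path}",
        f"solvateBox mol TIP3PBOX {buffer}",
        "addIons2 mol Na+ 0",           # neutralize the net charge
        f"addIons2 mol Na+ {n_pairs} Cl- {n_pairs}",  # extra salt for ionic strength
        "saveamberparm mol system.prmtop system.inpcrd",
        "quit",
    ]
    return "\n".join(lines)

# Hypothetical 64 x 64 x 64 angstrom box.
pairs = ion_pairs_for_concentration(64.0 ** 3)
print(build_tleap_input("sirna.pdb", pairs))
```

The resulting text would be saved to a file and passed to `tleap` with its `-f` option; the minimization, heating, and equilibration stages listed above are then run with the MD engine itself.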

Experimental Validation: Linking Simulation to Physicochemical Properties

The ultimate test of a properly built and validated system is its ability to predict or correlate with experimental data. A compelling example comes from research that uses MD simulations to predict a key pharmaceutical property: aqueous solubility.

Experimental Methodology [34]:

- A dataset of 211 diverse drug molecules was compiled with experimental aqueous solubility (logS) data.

- Each compound underwent MD simulation in explicit water (using GROMACS with the GROMOS 54a7 force field) to extract dynamic properties.

- Key MD-derived properties were calculated from the trajectories, including:

- Solvent Accessible Surface Area (SASA)

- Coulombic and Lennard-Jones (LJ) interaction energies between the solute and water.

- Estimated Solvation Free Energy (DGSolv)

- These properties, along with the experimental octanol-water partition coefficient (logP), were used as features in machine learning models to predict solubility.

Results and Performance Data: The MD-derived properties demonstrated high predictive power. The Gradient Boosting machine learning algorithm achieved a predictive R² of 0.87 and a root mean square error (RMSE) of 0.537 for logS on a test set of compounds [34]. This performance is comparable to models based solely on chemical structure, confirming that simulations in a properly built explicit solvent environment can capture the essential molecular interactions governing solubility.
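For readers less familiar with the two reported figures, the sketch below shows how R² and RMSE are computed from paired experimental and predicted values. The numbers are placeholders chosen for easy arithmetic and are not data from the cited study; the function names are hypothetical.

```python
import math

def rmse(y_true, y_pred):
    """Root mean square error between observed and predicted values."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

# Placeholder logS values for illustration only, not data from the cited study.
experimental = [-1.0, -2.0, -3.0, -4.0]
predicted = [-1.5, -2.0, -3.0, -3.5]

print(rmse(experimental, predicted))       # about 0.354 log units
print(r_squared(experimental, predicted))  # about 0.9
```

RMSE is expressed in the units of the target (here log units of solubility), while R² is dimensionless and describes the fraction of variance in the experimental values that the model explains.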

Table 3: Key MD-Derived Properties for Predicting Aqueous Solubility [34]

| Property | Description | Influence on Solubility |

|---|---|---|

| logP | Octanol-water partition coefficient (experimental). | Well-established negative correlation; higher logP generally means lower solubility. |

| SASA | Solvent Accessible Surface Area. | Measures the compound's surface exposed to solvent; larger SASA often correlates with higher solubility. |

| Coulombic_t | Coulombic interaction energy over time. | Captures the strength of polar interactions with water; stronger favorable interactions increase solubility. |

| LJ | Lennard-Jones interaction energy. | Represents van der Waals dispersion forces with water; favorable interactions promote solubility. |

| DGSolv | Estimated Solvation Free Energy. | The overall free energy change of solvation; a more negative value favors dissolution. |

Selecting the right simulation environment is not a one-size-fits-all decision but a strategic choice based on the research question. Explicit solvent methods remain the gold standard for final validation studies, providing the detail necessary to compare directly with biophysical experiments and to interrogate specific solvent-mediated interactions. In contrast, implicit solvent models offer a powerful tool for high-throughput screening and studying events over longer timescales where computational cost is prohibitive.

The validation of a minimized structure is complete only when its dynamic behavior in a realistic environment aligns with experimental evidence. As demonstrated by the successful prediction of drug solubility, a rigorously built simulation system is the critical link that transforms a static, minimized model into a dynamic, biologically relevant entity, thereby solidifying the role of MD as a cornerstone of modern molecular research and drug development.

Accurate force field parameters, potential energy functions, and receptor-ligand models are indispensable for modeling the solvation and binding of drug-like molecules to biological receptors [39]. In the context of validating minimized structures with molecular dynamics (MD) research, the selection of an appropriate force field fundamentally determines the reliability and predictive power of the simulation outcomes. The rapidly expanding chemical space of medicinally relevant scaffolds demands that these mathematical models exhibit exceptional transferability across diverse molecular systems. Among the most widely utilized frameworks in biomolecular simulations are CHARMM (Chemistry at HARvard Macromolecular Mechanics), AMBER (Assisted Model Building with Energy Refinement), and their generalized counterparts for small molecules—CGenFF and GAFF [39] [40]. This guide provides an objective comparison of these force fields, drawing upon experimental and simulation data to inform researchers, scientists, and drug development professionals in selecting the optimal model for their specific molecular systems and research objectives.

Historical Context and Design Philosophies

The CHARMM and AMBER force fields emerged from distinct developmental pathways with different philosophical underpinnings. CHARMM was originally developed for simulations of proteins and nucleic acids, focusing on accurate description of structures and non-bonded energies [40]. AMBER shares similar biomolecular targets but employs a different approach to parameterization, where the use of RESP (Restrained Electrostatic Potential) charges is intended to reduce the need for extensive torsional potentials compared to models with empirical charge derivation [40]. The fundamental divergence lies in their treatment of electrostatic interactions, which profoundly impacts their performance across various chemical environments.

The need to simulate drug-like small molecules necessitated the development of generalized force fields compatible with these biomolecular frameworks. The CHARMM General Force Field (CGenFF) extended the CHARMM biomolecular force field to small drug-like molecules, allowing parameterization of arbitrary compounds to model interactions with biomolecular receptors in a manner consistent with the CHARMM force field [39]. Similarly, the General AMBER Force Field (GAFF) was developed to extend the application of the AMBER biomolecular force field to small drug-like molecules [39]. These generalized force fields employ different parameterization strategies rooted in their respective philosophical foundations.

Fundamental Differences in Parameterization Approaches

Electrostatic Treatment: CGenFF assigns charges expected to most accurately represent the Coulombic interaction of an atom with a proximal TIP3P water molecule, evaluated in the gas phase using HF/6-31G(d) level quantum mechanical (QM) calculations [39]. This approach arguably captures the polarization effects of the condensed phase more explicitly. In contrast, GAFF uses the AM1-BCC charge model designed to accurately reproduce the electrostatic surface potential (ESP) around a molecule, also evaluated in the gas phase using HF/6-31G* level of QM theory [39]. GAFF's methodology leverages the overestimated gas-phase dipole moment under the presumption that condensed phase polarization effects are fortuitously present.

Training Data and Validation: Both CGenFF and GAFF are trained on large, chemically diverse collections of model compounds that presumably contain atom-types and connectivities representing vast spaces of medicinally relevant compounds [39]. However, their validation against experimental data reveals differential performance across various molecular systems. CGenFF's parameterization emphasizes consistency with the CHARMM biomolecular force field, while GAFF prioritizes broad coverage of organic chemical space with automated parameterization capabilities.

Table 1: Fundamental Characteristics of Generalized Force Fields

| Feature | CGenFF | GAFF |
|---|---|---|
| Charge Model | HF/6-31G(d) Coulombic interaction with TIP3P water | AM1-BCC fitting to electrostatic surface potential |
| Biomolecular Compatibility | CHARMM | AMBER |
| Chemical Coverage | Drug-like small molecules | Organic and pharmaceutical molecules |
| Element Coverage | H, C, N, O, S, P, halogens | H, C, N, O, S, P, halogens |
| Automation Level | Moderate | High |
| Primary Strength | Condensed phase polarization | Gas phase electrostatic accuracy |

Performance Comparison: Quantitative Assessment Across Molecular Systems

Hydration Free Energy Predictions

Hydration free energy (HFE) represents a crucial property in drug design, measuring a compound's affinity for water and influencing binding affinity. A comprehensive analysis of over 600 molecules from the FreeSolv dataset revealed that both CGenFF and GAFF demonstrate comparable overall accuracy in predicting absolute HFEs [39]. However, significant differences emerge at the level of specific functional groups, highlighting specialized strengths and limitations for particular chemical contexts.

Functional Group-Specific Performance: Molecules containing nitro groups show divergent behavior: CGenFF over-solubilizes them in aqueous medium, while GAFF under-solubilizes them [39]. Amine-containing compounds are systematically under-solubilized by both force fields, more so by CGenFF than by GAFF [39]. Conversely, carboxyl groups are over-solubilized, to a greater extent in GAFF than in CGenFF [39]. These systematic errors give researchers working with these functional motifs concrete guidance, pointing to corrective strategies or an alternative force field choice based on molecular composition.

Thermodynamic Property Reproduction

Evaluation of force field performance for bio-relevant compounds like furfural, 2-methylfuran, 2,5-dimethylfuran, and 5-hydroxymethylfurfural reveals nuanced differences in thermodynamic property prediction [41]. In assessments of liquid density and vapor-liquid equilibria, different force fields demonstrate variable agreement with experimental data, with no single force field universally superior across all compounds and properties.

Liquid Density Predictions: For furfural, GAFF and CHARMM27 overestimate density across temperatures, while OPLS-AA shows better agreement with experimental data [41]. Similar compound-specific performance variations emerge for 2-methylfuran and 2,5-dimethylfuran (DMF), where different force fields show alternating superiority in predicting temperature-dependent density trends [41]. These findings underscore the importance of context-dependent force field selection, particularly for thermodynamic studies of biofuels and biomass-derived compounds.

Cross-Solvation Free Energy Accuracy

A systematic evaluation of nine condensed-phase force fields against experimental cross-solvation free energies provides a robust assessment of their relative accuracies [42]. This analysis considered a matrix of cross-solvation free energies for 25 small molecules representative of alkanes, chloroalkanes, ethers, ketones, esters, alcohols, amines, and amides, offering comprehensive insights into force field performance across diverse chemical environments.

Table 2: Force Field Performance Metrics for Cross-Solvation Free Energies

| Force Field | Correlation Coefficient | RMSE (kJ mol⁻¹) | Average Error (kJ mol⁻¹) |
|---|---|---|---|
| GROMOS-2016H66 | 0.88 | 2.9 | -0.2 |
| OPLS-AA | 0.87 | 2.9 | +0.2 |
| OPLS-LBCC | 0.86 | 3.3 | +0.3 |
| AMBER-GAFF2 | 0.84 | 3.3 | -0.3 |
| AMBER-GAFF | 0.83 | 3.6 | -0.6 |
| OpenFF | 0.82 | 3.6 | -1.5 |
| GROMOS-54A7 | 0.81 | 4.0 | +1.0 |
| CHARMM-CGenFF | 0.80 | 4.2 | -0.3 |
| GROMOS-ATB | 0.76 | 4.8 | -0.1 |

The data show that CHARMM-CGenFF performs moderately, with a correlation coefficient of 0.80 and an RMSE of 4.2 kJ mol⁻¹, while GAFF and GAFF2 are more accurate, with RMSE values of 3.6 and 3.3 kJ mol⁻¹, respectively [42]. These differences, while statistically significant, are distributed heterogeneously across compound classes, emphasizing the chemical context-dependence of force field performance.
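For readers who want to compute the same three metrics on their own data, the following is a minimal sketch in plain Python. The function name `error_metrics` and the sample values are illustrative and do not come from the cited study; the sign convention for the average error (predicted minus experimental) is also an assumption.

```python
import math


def error_metrics(predicted, experimental):
    """Return (pearson_r, rmse, mean_signed_error) for paired free energies.

    Assumption: errors are defined as predicted minus experimental.
    """
    if len(predicted) != len(experimental) or len(predicted) < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    n = len(predicted)
    errors = [p - e for p, e in zip(predicted, experimental)]
    mean_signed_error = sum(errors) / n
    rmse = math.sqrt(sum(err * err for err in errors) / n)

    # Pearson correlation coefficient from centered sums.
    mean_p = sum(predicted) / n
    mean_e = sum(experimental) / n
    cov = sum((p - mean_p) * (e - mean_e) for p, e in zip(predicted, experimental))
    var_p = sum((p - mean_p) ** 2 for p in predicted)
    var_e = sum((e - mean_e) ** 2 for e in experimental)
    pearson_r = cov / math.sqrt(var_p * var_e)
    return pearson_r, rmse, mean_signed_error


# Made-up solvation free energies in kJ/mol, for illustration only.
experimental = [-10.0, -20.0, -30.0, -40.0]
predicted = [-12.0, -19.0, -33.0, -40.0]

r, rmse, avg_error = error_metrics(predicted, experimental)
print(f"r = {r:.3f}, RMSE = {rmse:.2f} kJ/mol, average error = {avg_error:+.2f} kJ/mol")
```

A high correlation can coexist with a sizeable RMSE or a systematic offset, which is why the three columns are best read together.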

Binding Affinity Prediction: Critical Applications in Drug Discovery

Protein-Ligand Binding Affinity Calculations

Predicting binding affinity of ligands to protein targets represents a cornerstone application in computer-aided drug design. Alchemical relative binding free energy (ΔΔG) calculations have demonstrated remarkable accuracy in this domain, with both CHARMM and AMBER/GAFF achieving performance comparable to commercial implementations like Schrödinger's FEP+ [43]. A large-scale study encompassing 482 perturbations from 13 different protein-ligand datasets reported an average unsigned error (AUE) of 3.64 ± 0.14 kJ mol⁻¹ for combined AMBER and CHARMM force fields, effectively equivalent to FEP+'s AUE of 3.66 ± 0.14 kJ mol⁻¹ [43].

Consensus Approaches: Combining results from multiple force fields through simple averaging or weighted schemes can enhance prediction accuracy, potentially mitigating individual force field biases [43]. This strategy proves particularly valuable in lead optimization stages where reliable relative binding affinities guide medicinal chemistry efforts.
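A minimal sketch of the simple-averaging idea, assuming each force field supplies one ΔΔG estimate per perturbation. The function names, dictionary keys, and numbers are hypothetical and chosen only to show how errors of opposite sign can partially cancel.

```python
def consensus_ddg(estimates_by_ff, weights=None):
    """Weighted average of per-force-field ddG estimates for each perturbation.

    With weights=None every force field counts equally (simple averaging).
    """
    names = list(estimates_by_ff)
    if weights is None:
        weights = {name: 1.0 for name in names}
    n = len(estimates_by_ff[names[0]])
    if any(len(estimates_by_ff[name]) != n for name in names):
        raise ValueError("all force fields must cover the same perturbations")
    total_weight = sum(weights[name] for name in names)
    return [
        sum(weights[name] * estimates_by_ff[name][i] for name in names) / total_weight
        for i in range(n)
    ]


def average_unsigned_error(predicted, experimental):
    """Mean absolute deviation between predicted and experimental values."""
    return sum(abs(p - e) for p, e in zip(predicted, experimental)) / len(predicted)


# Hypothetical ddG values in kJ/mol for three perturbations.
estimates = {
    "amber_gaff": [2.0, -4.0, 1.0],
    "charmm_cgenff": [4.0, -2.0, -1.0],
}
experiment = [3.5, -3.0, 0.5]

consensus = consensus_ddg(estimates)
aue_consensus = average_unsigned_error(consensus, experiment)
aue_single = {name: average_unsigned_error(vals, experiment) for name, vals in estimates.items()}
print(consensus, aue_consensus, aue_single)
```

In this contrived case the two force fields err in opposite directions, so the average lands closer to experiment than either one alone. Real data sets need not behave this way, and a weighted scheme requires weights justified by independent validation.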

Membrane Protein Applications

The application of ΔΔG calculations to membrane proteins like G protein-coupled receptors (GPCRs) introduces additional complexities due to heterogeneous lipid environments, buried water molecules, and intricate system setup [44]. Recent research demonstrates successful application of both AMBER-TI and AToM-OpenMM with GAFF parameters for predicting binding affinities of ligands to GPCRs, showing good agreement with experimental data [44]. This expansion into membrane protein systems highlights the continuing evolution of these force fields for pharmaceutically relevant targets beyond soluble proteins.

Experimental Protocols and Methodologies

Hydration Free Energy Calculation Protocol

The calculation of hydration free energies follows well-established alchemical pathways implemented in MD packages like CHARMM [39]. The fundamental approach involves:

Thermodynamic Cycle: HFE is computed as ΔG_hydr = ΔG_vac − ΔG_solvent, where ΔG_vac and ΔG_solvent represent the free energy of turning off solute interactions in vacuo and in aqueous medium, respectively [39]. This corresponds to the change in standard chemical potential as a solute transfers from gas phase to aqueous phase at equilibrium.

Alchemical Transformation: A hybrid Hamiltonian H(λ) = λH₀ + (1-λ)H₁ connects the endpoint states (fully interacting and non-interacting solute) through a coupling parameter λ ∈ [0,1] [39]. The solute is progressively annihilated from its environment using multiple simulation blocks for water molecules, dummy particles, and the solute itself.

System Setup: The simulation system consists of the solute molecule in a cubic box of explicit TIP3P water with periodic boundary conditions, with at least 14 Å between the solute and the box edges [39]. Non-bonded interactions are typically truncated at 12 Å, and free energies are estimated with methods such as MBAR, BAR, or TI.
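The thermodynamic cycle and the role of the coupling parameter can be illustrated with a small thermodynamic integration (TI) sketch. It assumes the ensemble averages of ∂H/∂λ have already been collected at each window and that the windows are ordered from the fully interacting end state to the non-interacting one. The per-window values are synthetic, and the function names are illustrative rather than part of any MD package.

```python
def integrate_trapezoid(lambdas, dh_dlambda):
    """Trapezoid-rule estimate of the integral of <dH/dlambda> over lambda."""
    if len(lambdas) != len(dh_dlambda) or len(lambdas) < 2:
        raise ValueError("need matching lists with at least two windows")
    total = 0.0
    for i in range(len(lambdas) - 1):
        width = lambdas[i + 1] - lambdas[i]
        if width <= 0:
            raise ValueError("lambda values must be strictly increasing")
        total += 0.5 * width * (dh_dlambda[i] + dh_dlambda[i + 1])
    return total


def hydration_free_energy(dg_vac, dg_solvent):
    """Close the cycle: dG_hydr = dG_vac - dG_solvent (both are decoupling legs)."""
    return dg_vac - dg_solvent


# Synthetic <dH/dlambda> averages in kJ/mol; real values come from simulation output.
lambdas = [0.0, 0.5, 1.0]
vacuum_averages = [0.0, 5.0, 10.0]
solvent_averages = [30.0, 25.0, 20.0]

dg_vac = integrate_trapezoid(lambdas, vacuum_averages)
dg_solvent = integrate_trapezoid(lambdas, solvent_averages)
dg_hydr = hydration_free_energy(dg_vac, dg_solvent)
print(f"dG_vac = {dg_vac}, dG_solvent = {dg_solvent}, dG_hydr = {dg_hydr} kJ/mol")
```

Three windows are used only to keep the arithmetic easy to follow. Production calculations use many more windows, and estimators such as MBAR or BAR work from energy differences between windows rather than from ∂H/∂λ averages.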

Figure 1: Hydration Free Energy Calculation Workflow

Relative Binding Free Energy Protocol

Relative binding free energy calculations follow a dual transformation approach in complex and solvent environments [44] [43]:

System Preparation: Protein structures are prepared using tools like CHARMM-GUI Membrane Builder for membrane proteins or standard solvation for soluble proteins [44]. Ligands are parameterized using GAFF or CGenFF with charges calculated via AM1-BCC or RESP approaches.

Transformation Setup: The structural differences between ligand pairs are identified, and hybrid topologies are generated for alchemical transitions [43]. For each transformation, both electrostatic and van der Waals interactions are simultaneously scaled using softcore potentials to avoid singularities.

λ Scheduling: Multiple intermediate λ states (typically 12-16 windows) connect the physical end states [44]. Common λ values include 0.0, 0.0479, 0.1151, 0.2063, 0.3161, 0.4374, 0.5626, 0.6839, 0.7937, 0.8850, 0.9521, and 1.0, strategically distributed to ensure sufficient overlap between adjacent states.
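A quick sanity check of the schedule quoted above can be scripted before launching any simulations. The sketch below only inspects the spacing between adjacent windows. It does not measure phase-space overlap, which has to be assessed from the simulation data themselves, and the function names are illustrative.

```python
# The twelve lambda values listed in the text.
lambdas = [0.0, 0.0479, 0.1151, 0.2063, 0.3161, 0.4374,
           0.5626, 0.6839, 0.7937, 0.8850, 0.9521, 1.0]


def window_gaps(schedule):
    """Spacing between each pair of adjacent lambda windows."""
    return [b - a for a, b in zip(schedule, schedule[1:])]


def validate_schedule(schedule):
    """Check that the schedule spans [0, 1] and increases strictly; return the gaps."""
    if schedule[0] != 0.0 or schedule[-1] != 1.0:
        raise ValueError("schedule must start at 0.0 and end at 1.0")
    gaps = window_gaps(schedule)
    if any(gap <= 0 for gap in gaps):
        raise ValueError("lambda values must be strictly increasing")
    return gaps


gaps = validate_schedule(lambdas)
widest = max(gaps)
narrowest = min(gaps)
print(f"{len(lambdas)} windows, gaps from {narrowest:.4f} to {widest:.4f}")
```

For this schedule the windows are packed most tightly near the two end states and are widest around λ = 0.5.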

Simulation and Analysis: Equilibration is performed at each λ window followed by production simulations. Free energy differences are computed via thermodynamic integration or free energy perturbation, with statistical precision enhanced through independent replicates [44].

Figure 2: Relative Binding Free Energy Calculation Workflow

Table 3: Computational Tools for Force Field Applications

| Tool Name | Function | Compatibility |
|---|---|---|
| CHARMM/OpenMM | GPU-accelerated MD simulations with alchemical free energy methods | CHARMM/CGenFF |
| pmx | Hybrid topology generation for alchemical calculations with GROMACS | AMBER/CHARMM |
| CHARMM-GUI | Membrane protein system preparation | CHARMM/CGenFF |
| ANTECHAMBER | Automatic parameterization of organic molecules | AMBER/GAFF |
| pyCHARMM | Python framework embedding CHARMM's functionality | CHARMM/CGenFF |
| AMBER-TI | Thermodynamic integration implementation | AMBER/GAFF |
| AToM-OpenMM | Alchemical transfer method for binding affinity | AMBER/GAFF |
| Multiwfn | Wavefunction analysis for charge parameterization | All |

The comparative analysis of CHARMM/CGenFF and AMBER/GAFF reveals a nuanced landscape where each force field exhibits distinct strengths and limitations. CGenFF demonstrates advantages in modeling condensed phase polarization effects and compatibility with CHARMM biomolecular force fields, while GAFF offers robust automated parameterization and excellent performance across diverse organic compounds. For hydration free energy predictions, both force fields show comparable overall accuracy but display functional group-specific deviations that should inform context-dependent selection [39]. In binding affinity calculations, consensus approaches combining both force fields can achieve accuracy comparable to state-of-the-art commercial implementations [43].

Strategic force field selection should consider the specific research context: membrane protein systems may benefit from CGenFF's condensed-phase-oriented charge treatment and its consistency with the CHARMM biomolecular force field, while high-throughput screening of diverse compound libraries might leverage GAFF's automation capabilities. Critically, researchers should validate force field performance for their specific molecular systems against available experimental data, particularly when investigating compounds with functional groups known to present parameterization challenges. As force field development continues to evolve through the incorporation of polarizable models, machine learning approaches, and expanded training datasets, the guidelines presented here provide a foundation for informed decision-making in biomolecular simulation research.

In molecular dynamics (MD) simulations, the choice of statistical ensemble is not merely a technical detail but a fundamental decision that dictates the physical reality the simulation represents. An ensemble defines the collection of microscopic states that a system can explore, governed by macroscopic constraints like constant temperature (T), pressure (P), volume (V), or energy (E). The NVT (canonical) and NPT (isothermal-isobaric) ensembles are the workhorses of modern molecular simulation, particularly for biomolecular systems in aqueous environments. Proper equilibration within the correct ensemble is a prerequisite for obtaining thermodynamically meaningful results that can be correlated with experimental observations. This guide provides a comprehensive comparison of NVT and NPT ensembles, detailing their theoretical foundations, practical implementation protocols, and performance benchmarks, all framed within the critical context of validating energy-minimized structures for robust scientific research [45] [46].

The process often begins with an energy-minimized structure: a single, static configuration representing a local minimum on the potential energy surface. However, biological function and measurable properties arise from the dynamic exploration of conformational space around this minimum at a finite temperature. MD simulation is the technique that samples these metastable and transitional conformations [47]. The equilibration cascade, the careful process of bringing a minimized structure to a stable thermodynamic state, is therefore the essential bridge between a static model and a dynamic, physiologically relevant system [45].

Theoretical Foundations: NVT vs. NPT Ensembles

Defining the Ensembles and Their Physical Significance

The core distinction between the ensembles lies in their conserved quantities, which in turn determine their appropriate applications [46].

NVT Ensemble (Constant Number of particles, Volume, and Temperature): Also known as the canonical ensemble, it is the default choice in many simulation packages. In this ensemble, the system's volume is fixed, and the temperature is controlled to a set point through algorithms that act as a thermostat. This ensemble is ideal for conformational searching of molecules in vacuum or for studying systems where volume and density are not primary concerns, such as a protein in a fixed box of solvent. It offers the advantage of less perturbation to the trajectory due to the absence of coupling to a pressure bath [46].

NPT Ensemble (Constant Number of particles, Pressure, and Temperature): This ensemble provides control over both temperature and pressure. The system's volume is allowed to fluctuate to maintain a constant external pressure, regulated by a barostat. This is the ensemble of choice when accurate pressure, volume, and system density are critical. It most closely mimics standard experimental conditions (e.g., 1 atm pressure and 300 K temperature), making it essential for simulating condensed phases, especially biomolecules in solution, to achieve the correct equilibrium density [46] [48].
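Because the box volume fluctuates under NPT, a common first check is whether the resulting density is physically reasonable. The sketch below converts box volumes into mass densities for a pure-water box. The molecule count and volume series are hypothetical, and in practice the volumes would be read from the simulation's energy or log output.

```python
AVOGADRO = 6.02214076e23  # molecules per mole
NM3_TO_CM3 = 1e-21        # 1 nm^3 expressed in cm^3


def mass_density_g_per_cm3(n_molecules, molar_mass_g_per_mol, volume_nm3):
    """Mass density of a box holding n identical molecules at the given volume."""
    mass_g = n_molecules * molar_mass_g_per_mol / AVOGADRO
    return mass_g / (volume_nm3 * NM3_TO_CM3)


def mean_density(n_molecules, molar_mass_g_per_mol, volumes_nm3):
    """Average of the per-frame densities over a series of NPT box volumes."""
    densities = [
        mass_density_g_per_cm3(n_molecules, molar_mass_g_per_mol, v)
        for v in volumes_nm3
    ]
    return sum(densities) / len(densities)


# Hypothetical example: 1000 water molecules (18.015 g/mol) with
# box volumes in nm^3 fluctuating around 30 nm^3.
volumes = [30.2, 29.9, 30.0, 30.1, 29.8]
single_frame = mass_density_g_per_cm3(1000, 18.015, 30.0)
average = mean_density(1000, 18.015, volumes)
print(f"density at 30.0 nm^3: {single_frame:.4f} g/cm^3, series average: {average:.4f} g/cm^3")
```

Under NVT the same function returns a single constant value, since the volume never changes. That is why the density must already be correct before switching from NPT equilibration to a fixed-volume run.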

Table 1: Core Characteristics of NVT and NPT Ensembles

| Feature | NVT Ensemble | NPT Ensemble |

|---|---|---|