Benchmarking Force Field Accuracy with LAMBench: A Comprehensive Guide for Biomedical Research

Abstract

The advent of Large Atomistic Models (LAMs) promises universal, ready-to-use force fields to accelerate scientific discovery. However, their reliability across diverse biomedical systems requires rigorous evaluation. This article explores the LAMBench benchmarking system, a comprehensive framework designed to assess LAMs on generalizability, adaptability, and applicability. We delve into the foundational principles of LAMBench, its methodological approach for evaluating model performance on out-of-distribution data, strategies for troubleshooting and optimizing underperforming models, and a comparative analysis of current state-of-the-art LAMs. Aimed at researchers and drug development professionals, this guide provides critical insights for selecting and validating high-accuracy force fields, ultimately enhancing the reliability of molecular simulations in biomedical and clinical research.

Understanding LAMBench: The New Gold Standard for Force Field Evaluation

The Critical Need for Universal Potential Energy Surface Models

In the field of molecular modeling, the ability to accurately and efficiently compute the potential energy surface (PES) of atomistic systems is foundational to scientific advancement across disciplines from drug discovery to materials science. The PES, defined as the ground state solution of the electronic Schrödinger equation under the Born-Oppenheimer approximation, represents the energy landscape governing atomic interactions and dynamics [1] [2]. Despite the existence of a universal physical solution in quantum mechanics, practical computational methods have historically faced a fundamental trade-off: highly accurate quantum chemical calculations remain computationally prohibitive for large systems and long timescales, while empirical force fields offer speed at the cost of reduced accuracy and transferability [3] [4].

Large Atomistic Models (LAMs) have recently emerged as promising candidates to bridge this divide. These machine learning-based foundation models are pretrained on diverse quantum mechanical data to approximate the universal PES, then fine-tuned for specific applications [1]. However, until recently, the scientific community lacked comprehensive benchmarks to evaluate the true progress of these models toward universality. The introduction of LAMBench has provided the first standardized framework for assessing LAM performance across critical dimensions including generalizability, adaptability, and applicability [5] [1]. This comparison guide presents an objective evaluation of current state-of-the-art LAMs using LAMBench data, revealing both significant progress and substantial remaining challenges in the pursuit of truly universal potential energy surface models.

Understanding Potential Energy Surfaces: From Physical Foundations to Computational Models

The Physical and Mathematical Basis

The concept of the potential energy surface is rooted in the Born-Oppenheimer approximation, which separates the rapid motion of electrons from the slower nuclear motion [2]. This allows the definition of a PES where for each arrangement of atomic nuclei, the energy represents the electronic ground state energy plus nuclear-nuclear repulsion [2]. The PES therefore becomes a function of nuclear coordinates only, creating an energy landscape that determines structural stability, molecular dynamics, and reaction pathways [2].

Traditional molecular mechanics force fields approximate this landscape using fixed functional forms with empirically parameterized terms for bonded interactions (bonds, angles, dihedrals) and non-bonded interactions (electrostatics, van der Waals) [4] [6]. For example, the Class I force field functional form represents the total potential energy as:

\[ U_{\text{total}} = U_{\text{bonded}} + U_{\text{nonbonded}} = (U_{\text{bond}} + U_{\text{angle}} + U_{\text{dihedral}}) + (U_{\text{electrostatic}} + U_{\text{van der Waals}}) \]

While these force fields have enabled remarkable progress in biomolecular simulation, their fixed functional forms and limited transferability constrain their accuracy across diverse chemical environments [4].
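As an illustration of the decomposition above, the following minimal sketch evaluates each term with common textbook functional forms. The specific forms (harmonic bonds and angles, a periodic dihedral, Coulomb electrostatics, a 12-6 Lennard-Jones term), the function names, the parameter values, and the approximate Coulomb constant are assumptions chosen for illustration; the source does not specify them, and real force fields differ in details such as prefactors and combination rules.

```python
import math

# Approximate Coulomb constant in kcal*Angstrom/(mol*e^2); an assumed unit convention.
COULOMB_CONSTANT = 332.06


def bond_energy(k_b, r, r0):
    """Harmonic bond stretch around the equilibrium length r0."""
    return k_b * (r - r0) ** 2


def angle_energy(k_theta, theta, theta0):
    """Harmonic angle bend around the equilibrium angle theta0 (radians)."""
    return k_theta * (theta - theta0) ** 2


def dihedral_energy(k_phi, n, phi, delta):
    """Periodic torsion term with multiplicity n and phase delta (radians)."""
    return k_phi * (1.0 + math.cos(n * phi - delta))


def coulomb_energy(q_i, q_j, r):
    """Point-charge electrostatics between two atoms at distance r."""
    return COULOMB_CONSTANT * q_i * q_j / r


def lennard_jones_energy(epsilon, sigma, r):
    """12-6 Lennard-Jones van der Waals term."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)


def total_energy(bonded_terms, nonbonded_terms):
    """U_total = U_bonded + U_nonbonded, each given as a list of term energies."""
    return sum(bonded_terms) + sum(nonbonded_terms)


# Hypothetical parameters for a tiny fragment, purely for illustration.
bonded = [
    bond_energy(k_b=300.0, r=1.54, r0=1.53),
    angle_energy(k_theta=50.0, theta=math.radians(111.0), theta0=math.radians(109.5)),
    dihedral_energy(k_phi=1.4, n=3, phi=math.radians(60.0), delta=0.0),
]
nonbonded = [
    coulomb_energy(q_i=0.1, q_j=-0.1, r=4.0),
    lennard_jones_energy(epsilon=0.1, sigma=3.4, r=4.0),
]
u_total = total_energy(bonded, nonbonded)
```

Every term here has a fixed analytic shape, which is the constraint that learned potentials relax.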

The Rise of Machine Learning Approaches

Machine learning interatomic potentials (MLIPs) represent a paradigm shift from these traditional approaches. Rather than using fixed functional forms, LAMs utilize flexible neural network architectures trained on quantum mechanical data to learn the underlying PES directly [1]. This data-driven approach potentially allows LAMs to capture complex quantum mechanical effects without explicit physical modeling, offering a path toward universal approximations of the PES that remain computationally feasible for molecular dynamics simulations [5].

The LAMBench Evaluation Framework: A Standardized Benchmarking System

Benchmark Design and Implementation

LAMBench provides a comprehensive benchmarking system designed to evaluate Large Atomistic Models through a high-throughput, automated workflow [1]. The system assesses three fundamental capabilities essential for deploying LAMs as ready-to-use tools in scientific discovery:

- Generalizability: Measures accuracy on out-of-distribution datasets not included in training, evaluating performance as universal potentials across diverse atomistic systems [1]

- Adaptability: Assesses capacity for fine-tuning beyond potential energy prediction, particularly for structure-property relationship tasks [1]

- Applicability: Evaluates stability and efficiency in real-world simulations, including molecular dynamics stability and inference speed [1] [7]

The benchmark employs a normalized metric system that compares model performance against a baseline "dummy model" that predicts energy solely from chemical formula without structural information [7]. This creates a standardized scale where 0 represents perfect DFT accuracy and 1 indicates performance no better than the baseline [7].
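To make the baseline concrete, here is a minimal sketch of a composition-only dummy model and the resulting normalized error. It assumes the baseline is a least-squares fit of one energy per element to the reference energies; the source states only that the dummy model predicts energy from the chemical formula, so the fitting procedure, function names, and data layout are all hypothetical.

```python
import numpy as np


def fit_dummy_model(compositions, energies, elements):
    """Fit one energy per element by least squares; geometry is ignored entirely."""
    counts = np.array(
        [[comp.get(el, 0) for el in elements] for comp in compositions],
        dtype=float,
    )
    coeffs, *_ = np.linalg.lstsq(counts, np.asarray(energies, dtype=float), rcond=None)
    return dict(zip(elements, coeffs))


def dummy_predict(per_element_energy, composition):
    """Predict a total energy from element counts alone."""
    return sum(per_element_energy[el] * n for el, n in composition.items())


def rmse(predicted, reference):
    """Root mean square error between two equally long sequences."""
    diff = np.asarray(predicted, dtype=float) - np.asarray(reference, dtype=float)
    return float(np.sqrt(np.mean(diff ** 2)))


def normalized_error(model_rmse, dummy_rmse):
    """0 means perfect agreement with the reference; values are capped at 1 (baseline)."""
    return min(model_rmse / dummy_rmse, 1.0)
```

Under this scheme a model that is worse than the composition-only baseline still scores exactly 1, so a single failed dataset cannot dominate an aggregate.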

Evaluation Domains and Metrics

LAMBench evaluates models across three primary domains representing different application contexts and accuracy requirements [7]:

- Inorganic Materials: Assessments based on phonon properties (maximum frequency, entropy, free energy, heat capacity) and elastic properties (shear and bulk moduli)

- Molecules: Evaluations using torsion profile energy, torsional barrier height, and relative conformer energy profiles

- Catalysis: Measurements of energy barriers, reaction energy changes, and error rates for reaction types including transfer, dissociation, and desorption

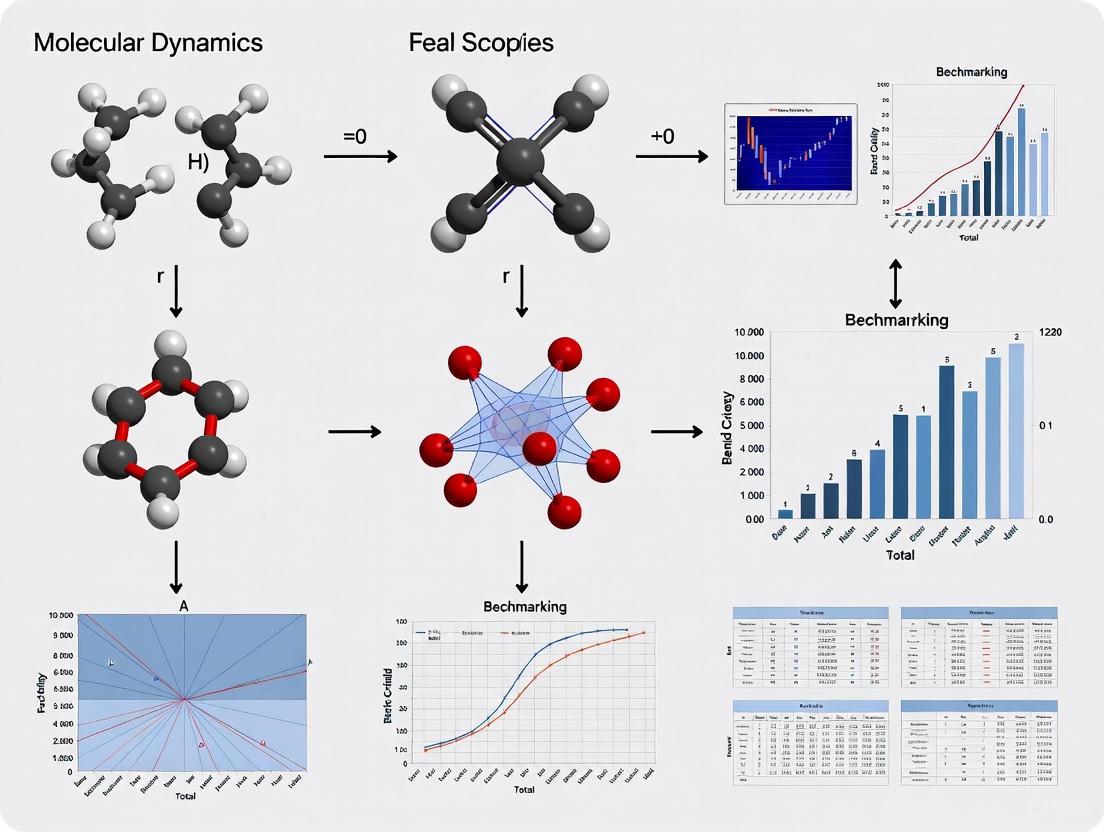

The following diagram illustrates the comprehensive LAMBench evaluation workflow:

Comparative Performance Analysis of State-of-the-Art LAMs

Generalizability Performance

Generalizability represents a model's accuracy on unseen data across different chemical domains. The following table summarizes the generalizability performance of leading LAMs as measured by LAMBench (v0.3.1), where lower values indicate better performance [7]:

Table 1: Generalizability Performance of Large Atomistic Models

| Model | Force Field Prediction Error ($\bar{M}^m_{\mathrm{FF}}$) ↓ | Property Calculation Error ($\bar{M}^m_{\mathrm{PC}}$) ↓ |
|---|---|---|
| DPA-3.1-3M | 0.175 | 0.322 |
| Orb-v3 | 0.215 | 0.414 |
| DPA-2.4-7M | 0.241 | 0.342 |
| GRACE-2L-OAM | 0.251 | 0.404 |
| Orb-v2 | 0.253 | 0.601 |
| SevenNet-MF-ompa | 0.255 | 0.455 |
| MatterSim-v1-5M | 0.283 | 0.467 |
| MACE-MPA-0 | 0.308 | 0.425 |
| SevenNet-l3i5 | 0.326 | 0.397 |
| MACE-MP-0 | 0.351 | 0.472 |

DPA-3.1-3M demonstrates the strongest overall generalizability, with the lowest errors in both force field prediction and property calculation tasks [7]. The significant variation between models highlights the current performance gap in the field, with the top-performing model (DPA-3.1-3M) achieving approximately half the error of the lowest-ranked model (MACE-MP-0) in force field prediction [7].

Applicability and Efficiency Metrics

Beyond accuracy, practical deployment requires computational efficiency and stability in molecular dynamics simulations. The following table compares applicability metrics, where higher efficiency scores and lower instability scores indicate better performance [7]:

Table 2: Applicability and Efficiency of Large Atomistic Models

| Model | Efficiency Score ($M_E^m$) ↑ | Instability Metric ($M^m_{\mathrm{IS}}$) ↓ |
|---|---|---|
| Orb-v3 | 0.396 | 0.000 |
| SevenNet-MF-ompa | 0.084 | 0.000 |
| DPA-2.4-7M | 0.617 | 0.039 |
| GRACE-2L-OAM | 0.639 | 0.309 |
| Orb-v2 | 1.341 | 2.649 |
| MatterSim-v1-5M | 0.393 | 0.000 |
| MACE-MPA-0 | 0.293 | 0.000 |
| SevenNet-l3i5 | 0.272 | 0.036 |
| MACE-MP-0 | 0.296 | 0.089 |
| DPA-3.1-3M | 0.261 | 0.572 |

Efficiency and stability metrics reveal different trade-offs in model design [7]. Notably, Orb-v2 achieves high computational efficiency but demonstrates significant instability in molecular dynamics simulations, while several models including Orb-v3, SevenNet-MF-ompa, MatterSim-v1-5M, and MACE-MPA-0 show perfect stability scores (0.000) with varying efficiency [7].

Accuracy-Efficiency Trade-offs

The relationship between accuracy and computational efficiency is a critical consideration for practical applications. LAMBench analysis reveals that no single model currently dominates across all metrics, requiring researchers to make context-dependent selections [7]. DPA-3.1-3M provides the highest accuracy but a comparatively low efficiency score (0.261) and a nonzero instability metric (0.572), while SevenNet-MF-ompa reaches a perfect stability score (0.000) for molecular dynamics applications despite weaker generalizability and the lowest efficiency score in the table (0.084) [7].
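One way to make "no single model dominates" concrete is a Pareto-dominance check over the four reported metrics. The sketch below uses the values from Tables 1 and 2; the dominance criterion and the function names are illustrative choices, not part of LAMBench itself.

```python
# (force field error, property error, efficiency score, instability metric)
# Lower is better for all metrics except the efficiency score.
SCORES = {
    "DPA-3.1-3M": (0.175, 0.322, 0.261, 0.572),
    "Orb-v3": (0.215, 0.414, 0.396, 0.000),
    "DPA-2.4-7M": (0.241, 0.342, 0.617, 0.039),
    "GRACE-2L-OAM": (0.251, 0.404, 0.639, 0.309),
    "Orb-v2": (0.253, 0.601, 1.341, 2.649),
    "SevenNet-MF-ompa": (0.255, 0.455, 0.084, 0.000),
    "MatterSim-v1-5M": (0.283, 0.467, 0.393, 0.000),
    "MACE-MPA-0": (0.308, 0.425, 0.293, 0.000),
    "SevenNet-l3i5": (0.326, 0.397, 0.272, 0.036),
    "MACE-MP-0": (0.351, 0.472, 0.296, 0.089),
}


def as_costs(score):
    """Flip the sign of the efficiency score so that lower is better everywhere."""
    ff_error, property_error, efficiency, instability = score
    return (ff_error, property_error, -efficiency, instability)


def dominates(a, b):
    """True if a is at least as good as b on every metric and strictly better on one."""
    cost_a, cost_b = as_costs(a), as_costs(b)
    no_worse = all(x <= y for x, y in zip(cost_a, cost_b))
    strictly_better = any(x < y for x, y in zip(cost_a, cost_b))
    return no_worse and strictly_better


def pareto_front(scores):
    """Return the models that no other model dominates."""
    return [
        name
        for name, score in scores.items()
        if not any(
            dominates(other_score, score)
            for other_name, other_score in scores.items()
            if other_name != name
        )
    ]


front = pareto_front(SCORES)
```

With the values transcribed above, six models remain non-dominated (DPA-3.1-3M, Orb-v3, DPA-2.4-7M, GRACE-2L-OAM, Orb-v2, and SevenNet-l3i5), which is why the choice depends on which metric matters most for a given application.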

Experimental Protocols and Methodologies

Force Field Prediction Assessment

The force field prediction tasks evaluate model accuracy in predicting energies, forces, and virials across three domains [7]:

- Test Datasets: ANI-1x, MD22, AIMD-Chig for molecules; Torres2019, Batzner2022, Sours2023, Lopanitsyna2023, Mazitov2024, Gao2025 for inorganic materials; Vandermause2022, Zhang2019, Villanueva2024 for catalysis [7]

- Evaluation Metric: Root Mean Square Error (RMSE) normalized against baseline dummy model performance [7]

- Normalization: $\hat{M}^m_{k,p,i} = \min\left(\frac{M^m_{k,p,i}}{M^{\mathrm{dummy}}_{k,p,i}}, 1\right)$, so each normalized value is capped at 1.0 (dummy model performance) [7]

- Aggregation: Log-average of normalized metrics across datasets, followed by a weighted combination of energy (0.45), force (0.45), and virial (0.1) predictions when virial labels are available, or energy and force at 0.5 each otherwise [7]

Molecular Dynamics Stability Testing

Stability assessments measure energy conservation in NVE (microcanonical ensemble) simulations across nine different structures [7]:

- Protocol: Energy drift measurement during NVE molecular dynamics simulations

- Systems: Diverse molecular and materials systems representing different chemical environments

- Scoring: Instability metric (MISm) quantifying total energy drift, where lower values indicate better stability [7]
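The drift measurement in the protocol above can be sketched as a linear fit to the total energy along an NVE trajectory. The exact definition LAMBench uses for its instability metric is not given here, so the per-atom slope, the units (eV and ps), and the function name are assumptions for illustration only.

```python
import numpy as np


def energy_drift_per_atom(times_ps, total_energies_ev, n_atoms):
    """Absolute slope of total energy per atom versus time, in eV/atom/ps.

    A perfectly energy-conserving NVE run would give a slope of zero.
    """
    energy_per_atom = np.asarray(total_energies_ev, dtype=float) / n_atoms
    slope, _intercept = np.polyfit(np.asarray(times_ps, dtype=float), energy_per_atom, deg=1)
    return abs(float(slope))


# Hypothetical trajectory: 100 atoms, 10 ps, slow linear drift plus small fluctuations.
times = np.linspace(0.0, 10.0, 101)
energies = -500.0 + 0.02 * times + 0.001 * np.sin(5.0 * times)
drift = energy_drift_per_atom(times, energies, n_atoms=100)
```

Fitting a slope rather than comparing the first and last frames keeps the estimate from being dominated by short-lived fluctuations.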

Efficiency Benchmarking Methodology

Computational efficiency is measured through standardized inference timing [7]:

- System Selection: 1000 randomly selected frames from inorganic materials and catalysis domains

- System Sizing: Frames expanded to 800-1000 atoms through unit cell replication to ensure GPU utilization convergence

- Measurement: Average inference time per atom (μs/atom) across 900 frames after 10% warm-up exclusion

- Normalization: Efficiency score $M_E^m = \frac{\eta^0}{\bar{\eta}^m}$ with $\eta^0 = 100\ \mathrm{\mu s/atom}$ [7]
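The timing protocol above can be sketched as follows. The sketch assumes that the average is taken over per-frame times per atom and that the warm-up frames are the first 10% of the run; the function names and input layout are hypothetical.

```python
import numpy as np

ETA_0 = 100.0  # reference inference time in microseconds per atom


def mean_time_per_atom(frame_times_us, frame_atom_counts, warmup_fraction=0.1):
    """Average inference time per atom after discarding the leading warm-up frames."""
    times = np.asarray(frame_times_us, dtype=float)
    atoms = np.asarray(frame_atom_counts, dtype=float)
    n_warmup = int(round(warmup_fraction * len(times)))
    per_atom = times[n_warmup:] / atoms[n_warmup:]
    return float(per_atom.mean())


def efficiency_score(eta_bar_us_per_atom):
    """Efficiency score: reference time divided by the measured average time."""
    return ETA_0 / eta_bar_us_per_atom


# Hypothetical run: 1000 frames of 900 atoms each, with slower warm-up frames first.
frame_times = np.concatenate([np.full(100, 500_000.0), np.full(900, 180_000.0)])
frame_atoms = np.full(1000, 900)
score = efficiency_score(mean_time_per_atom(frame_times, frame_atoms))
```

A score above 1 means the model runs faster than 100 μs/atom, and a score below 1 means it runs slower.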

Essential Research Reagents and Computational Tools

The development and evaluation of universal PES models relies on specialized computational resources and methodologies. The following table details key components of the research toolkit:

Table 3: Essential Research Toolkit for PES Model Development

| Tool/Resource | Function | Application in LAM Research |
|---|---|---|
| LAMBench Framework | Standardized benchmarking system | Evaluation of generalizability, adaptability, and applicability across models [5] [1] |
| Density Functional Theory | Quantum mechanical reference data | Generation of training labels and evaluation benchmarks [1] [2] |
| Graph Neural Networks | Model architecture backbone | Atomic representation learning and parameterization [4] |
| MPtrj Dataset | Materials Project trajectory data | Training data for inorganic materials domain [1] |
| ANI-1x Dataset | Quantum chemical calculations | Small molecule training and evaluation data [7] |
| OC20 Dataset | Catalyst adsorption data | Catalysis domain training and evaluation [1] |
| End-to-End Differentiable Framework | Force field parameterization | Self-consistent parametrization of proteins and ligands [4] |

Implications for Scientific Discovery and Drug Development

The advancement toward universal PES models has profound implications for scientific discovery, particularly in structure-based drug design where accurate molecular simulations are crucial [8]. Current limitations in traditional force fields restrict their ability to simulate heterogeneous systems and complex chemical transformations, creating bottlenecks in drug discovery pipelines [8]. The improved accuracy and transferability demonstrated by leading LAMs can potentially address these challenges by:

- Enabling more reliable prediction of protein-ligand binding affinities [8]

- Improving assessment of drug candidate membrane permeability [8]

- Supporting enumeration of putative bioactive conformations [4]

- Facilitating virtual screening of ultra-large compound libraries [8]

The LAMBench evaluation framework provides researchers with critical guidance for selecting appropriate models based on their specific application requirements, whether prioritizing accuracy for property prediction or stability for molecular dynamics simulations [7].

The comprehensive benchmarking provided by LAMBench reveals both significant progress and substantial challenges in the development of universal potential energy surface models. While current LAMs such as DPA-3.1-3M demonstrate impressive generalizability across diverse chemical domains, significant gaps remain between existing models and the ideal of a truly universal PES [5] [1]. The benchmarking data indicates that no single model currently dominates across all performance dimensions, requiring researchers to make strategic trade-offs based on their specific application needs.

Future advancements in universal PES models will likely require [1]:

- Incorporation of more diverse, cross-domain training data

- Development of multi-fidelity modeling approaches

- Improved conservativeness and differentiability for molecular dynamics stability

- Enhanced computational efficiency without sacrificing accuracy

As these models continue to evolve, standardized benchmarking frameworks like LAMBench will play a crucial role in guiding development efforts and providing researchers with objective performance data for model selection. The ongoing progress in this field promises to significantly accelerate scientific discovery across chemistry, materials science, and drug development by providing increasingly accurate and computationally accessible approximations to the universal potential energy surface.

The rapid emergence of Large Atomistic Models (LAMs) as foundational tools for approximating quantum-mechanical potential energy surfaces has created an urgent need for comprehensive evaluation frameworks. LAMBench addresses this need by providing a dynamic, extensible benchmarking ecosystem that rigorously assesses LAM performance across generalizability, adaptability, and applicability domains. This comparison guide presents an objective performance analysis of ten state-of-the-art LAMs using LAMBench v0.3.1, revealing significant performance variations and highlighting the considerable gap between current models and the ideal universal potential energy surface. Our findings demonstrate that while models like DPA-3.1-3M and Orb-v3 show promising generalizability, no single model currently dominates across all evaluation dimensions, emphasizing the critical importance of cross-domain training data, multi-fidelity modeling, and physical conservativeness for advancing ready-to-use LAMs in scientific discovery and drug development.

The LAMBench Evaluation Framework

Core Evaluation Dimensions

LAMBench employs a systematic, multi-faceted approach to benchmarking Large Atomistic Models, evaluating them across three fundamental capabilities essential for real-world scientific applications [9] [1]:

Generalizability: Assesses model accuracy as universal potentials across diverse atomic systems, particularly focusing on out-of-distribution (OOD) performance where test datasets are independently constructed with distributions distinct from training data. This dimension encompasses both force field prediction and domain-specific property calculation tasks [9] [7].

Adaptability: Measures a model's capacity for fine-tuning beyond potential energy prediction, with emphasis on structure-property relationship tasks that are crucial for domain-specific applications in materials science and drug development [9] [1].

Applicability: Evaluates practical deployment viability through stability assessments in molecular dynamics simulations and computational efficiency metrics, ensuring models can function effectively in real-world scientific workflows [9] [7].

System Architecture and Workflow

The LAMBench system implements a high-throughput, automated workflow for task calculation, result aggregation, analysis, and visualization [9] [1]. This architecture enables consistent, reproducible evaluation across diverse model architectures and chemical domains. As a dynamic platform, LAMBench is designed to continuously evolve with the research community, integrating new tasks, datasets, and evaluation methodologies over time [7].

[Figure: LAMBench Evaluation Framework]

Comparative Performance Analysis of State-of-the-Art LAMs

Comprehensive Model Performance Metrics

LAMBench v0.3.1 evaluated ten prominent LAMs released before August 1, 2025, providing a comprehensive comparison across generalizability and applicability domains [7]. The benchmark employs normalized error metrics that compare model performance against a baseline dummy model that predicts energy solely based on chemical formula without structural details, where a value of 0 represents perfect DFT accuracy and 1 indicates performance equivalent to the baseline model [7].

Table 1: Comprehensive LAMBench Performance Leaderboard (v0.3.1)

| Model | Generalizability Force Field Error ($\bar{M}^m_{\mathrm{FF}}$) ↓ | Generalizability Property Calculation Error ($\bar{M}^m_{\mathrm{PC}}$) ↓ | Efficiency Score ($M_E^m$) ↑ | Instability Metric ($M^m_{\mathrm{IS}}$) ↓ |
|---|---|---|---|---|
| DPA-3.1-3M | 0.175 | 0.322 | 0.261 | 0.572 |
| Orb-v3 | 0.215 | 0.414 | 0.396 | 0.000 |
| DPA-2.4-7M | 0.241 | 0.342 | 0.617 | 0.039 |
| GRACE-2L-OAM | 0.251 | 0.404 | 0.639 | 0.309 |
| Orb-v2 | 0.253 | 0.601 | 1.341 | 2.649 |
| SevenNet-MF-ompa | 0.255 | 0.455 | 0.084 | 0.000 |
| MatterSim-v1-5M | 0.283 | 0.467 | 0.393 | 0.000 |
| MACE-MPA-0 | 0.308 | 0.425 | 0.293 | 0.000 |
| SevenNet-l3i5 | 0.326 | 0.397 | 0.272 | 0.036 |
| MACE-MP-0 | 0.351 | 0.472 | 0.296 | 0.089 |

Performance Analysis and Key Findings

The benchmarking results reveal several critical patterns in current LAM capabilities:

Generalizability Performance: DPA-3.1-3M demonstrates superior generalizability for force field prediction ($\bar{M}^m_{\mathrm{FF}}$ = 0.175), significantly outperforming other models, with Orb-v3 (0.215) and DPA-2.4-7M (0.241) also showing strong capabilities [7]. For property calculation tasks, DPA-3.1-3M again leads ($\bar{M}^m_{\mathrm{PC}}$ = 0.322), followed by DPA-2.4-7M (0.342) and SevenNet-l3i5 (0.397), which may indicate that architectural choices in these models better capture domain-specific physical properties [7].

Efficiency Trade-offs: A clear efficiency-accuracy trade-off emerges from the data, with Orb-v2 achieving the highest efficiency score (MEm = 1.341) but middling generalizability performance, while top-performing generalizability models like DPA-3.1-3M show moderate efficiency (MEm = 0.261) [7]. This highlights the practical considerations researchers must balance when selecting models for specific applications.

Stability Considerations: The instability metric reveals substantial variation in model reliability during molecular dynamics simulations, with Orb-v2 exhibiting significant instability (MISm = 2.649) while several models including Orb-v3, SevenNet-MF-ompa, and MatterSim-v1-5M demonstrate perfect stability (MISm = 0.000) [7]. This dimension is particularly crucial for long-time-scale simulations in drug development.

Experimental Protocols and Methodologies

Generalizability Assessment Methodology

LAMBench employs rigorous, domain-specific protocols for evaluating model generalizability across diverse chemical spaces [7]:

Table 2: Force Field Prediction Evaluation Domains and Datasets

| Domain | Test Datasets | Prediction Types | Weight Allocation |
|---|---|---|---|
| Inorganic Materials | Torres2019Analysis, Batzner2022equivariant, Sours2023Applications, Lopanitsyna2023Modeling, Mazitov2024Surface, Gao2025Spontaneous | Energy, Force, Virial (if periodic) | $w_E = w_F = 0.45$, $w_V = 0.1$ (with virial); $w_E = w_F = 0.5$ (without virial) |
| Molecules | ANI-1x, MD22, AIMD-Chig | Energy, Force | $w_E = w_F = 0.5$ |
| Catalysis | Vandermause2022Active, Zhang2019Bridging, Villanueva2024Water | Energy, Force | $w_E = w_F = 0.5$ |

The generalizability error metric is calculated through a multi-step normalization and aggregation process [7]. First, the raw error metric for each test is normalized against a baseline dummy model: $\hat{M}^m_{k,p,i} = \min\left(\frac{M^m_{k,p,i}}{M^{\mathrm{dummy}}_{k,p,i}}, 1\right)$. Domain-specific metrics are then computed as log-averages: $\bar{M}^m_{k,p} = \exp\left(\frac{1}{n_{k,p}} \sum_{i=1}^{n_{k,p}} \log \hat{M}^m_{k,p,i}\right)$. These are combined using weighted averages across prediction types: $\bar{M}^m_k = \sum_p w_p \bar{M}^m_{k,p} \big/ \sum_p w_p$. The final generalizability metric represents the average across all domains: $\bar{M}^m = \frac{1}{n_D} \sum_{k=1}^{n_D} \bar{M}^m_k$ [7].
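The four steps above translate directly into code. The following is a minimal sketch under the stated formulas; the function names and the dictionary-based data layout are hypothetical and do not reflect the LAMBench implementation.

```python
import math


def normalize(model_error, dummy_error):
    """Step 1: ratio to the dummy-model error, capped at 1."""
    return min(model_error / dummy_error, 1.0)


def log_average(values):
    """Step 2: exponential of the mean logarithm (a geometric mean)."""
    return math.exp(sum(math.log(v) for v in values) / len(values))


def weighted_average(metric_by_type, weights):
    """Step 3: weighted mean over prediction types such as energy, force, virial."""
    total_weight = sum(weights[p] for p in metric_by_type)
    return sum(weights[p] * metric_by_type[p] for p in metric_by_type) / total_weight


def domain_score(errors, dummy_errors, weights):
    """Steps 1 to 3 for one domain; errors[p] lists per-dataset errors for type p."""
    per_type = {
        p: log_average([normalize(m, d) for m, d in zip(errors[p], dummy_errors[p])])
        for p in errors
    }
    return weighted_average(per_type, weights)


def overall_score(domain_scores):
    """Step 4: plain average over domains."""
    return sum(domain_scores) / len(domain_scores)


# Hypothetical per-dataset RMSEs for one domain with two test sets and no virial labels.
example = domain_score(
    errors={"E": [0.02, 0.08], "F": [0.1, 0.1]},
    dummy_errors={"E": [0.2, 0.2], "F": [0.5, 0.5]},
    weights={"E": 0.5, "F": 0.5},
)
```

Because the log-average is a geometric mean, a normalized error of exactly zero would make the logarithm undefined, so a production implementation would need to guard against that case. As a consistency check on the final step, averaging the three per-domain force field errors reported for DPA-3.1-3M later in this guide (0.161, 0.152, 0.211) reproduces its overall value of 0.175 to three decimals.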

Domain-Specific Property Calculation Protocols

Beyond force field prediction, LAMBench evaluates models on specialized property calculations critical for scientific applications [7]:

Inorganic Materials Domain: The MDR phonon benchmark assesses maximum phonon frequency, entropy, free energy, and heat capacity, while the elasticity benchmark evaluates shear and bulk moduli, with equal weight (1/6) assigned to each property type [7].

Molecules Domain: The TorsionNet500 benchmark evaluates torsion profile energy, torsional barrier height, and percentage of molecules with barrier height errors >1 kcal/mol, while Wiggle150 assesses relative conformer energy profiles, with each of the four prediction types weighted at 0.25 [7].

Catalysis Domain: The OC20NEB-OOD benchmark evaluates energy barriers, reaction energy changes, and percentage of reactions with barrier errors >0.1 eV for transfer, dissociation, and desorption reactions, with each of five prediction types weighted at 0.2 [7].

Applicability Testing Methodologies

LAMBench employs practical tests to evaluate model viability in real-world simulations [7]:

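Efficiency Assessment: From 1000 randomly selected frames in the Inorganic Materials and Catalysis domains, each expanded to 800-1000 atoms, inference time is averaged over the 900 frames that remain after warm-up exclusion. The efficiency score is calculated as $M_E^m = \eta^0 / \bar{\eta}^m$, where $\eta^0 = 100\ \mathrm{\mu s/atom}$ and $\bar{\eta}^m$ is the average inference time per atom across configurations [7].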

Stability Quantification: Stability is measured through total energy drift in NVE simulations across nine diverse structures, providing critical insights into model performance in extended molecular dynamics simulations relevant to drug development [7].

[Figure: LAMBench Evaluation Workflow]

Benchmark Models and Architectures

The LAMBench ecosystem encompasses diverse model architectures and training approaches, providing researchers with a comprehensive toolkit for atomic system modeling [9] [1] [7]:

Table 3: Essential LAMBench Research Reagents

| Model/Resource | Type | Primary Application Domain | Key Features |
|---|---|---|---|
| DPA-3.1-3M | Large Atomistic Model | Multi-domain | Leading generalizability performance, moderate efficiency |
| Orb-v3 | Large Atomistic Model | Multi-domain | Excellent stability, strong generalizability |
| MACE-MP-0 | Domain-Specific LAM | Inorganic Materials | Trained on MPtrj dataset at PBE/PBE+U level |
| SevenNet-0 | Domain-Specific LAM | Inorganic Materials | Trained on MPtrj dataset at PBE/PBE+U level |
| AIMNet | Domain-Specific LAM | Small Molecules | Trained at SMD(Water)-ωB97X/def2-TZVPP level |
| Nutmeg | Domain-Specific LAM | Small Molecules | Trained at ωB97M-D3(BJ)/def2-TZVPPD level |
| MPtrj Dataset | Training Data | Inorganic Materials | PBE/PBE+U level DFT calculations |
| ANI-1x | Benchmark Dataset | Molecules | Small molecule quantum properties |
| OC20 | Benchmark Dataset | Catalysis | Adsorption energies and catalyst interactions |

Cross-Domain Training Strategies

The benchmarking results strongly suggest that enhancing LAM performance requires simultaneous training with data from diverse research domains [9] [1]. The multitask pretraining strategy emerges as a promising approach, encoding shared knowledge into unified structures with high representational capacity while integrating domain-specific components through specialized neural networks [9]. This strategy directly addresses the fundamental challenge of unifying DFT data across domains despite variations in exchange-correlation functionals, basis sets, and pseudopotentials [9] [1].

LAMBench represents a significant advancement in the systematic evaluation of Large Atomistic Models, providing researchers and drug development professionals with comprehensive, objective performance comparisons across critical capability dimensions. The current benchmarking data reveals that while substantial progress has been made, a significant gap remains between existing LAMs and the ideal universal potential energy surface [9] [1].

The most promising development path appears to be through incorporating cross-domain training data, supporting multi-fidelity modeling at inference time, and ensuring model conservativeness and differentiability [9] [1]. As LAMBench continues to evolve as a dynamic ecosystem, it will facilitate the development of increasingly robust and generalizable atomistic models, ultimately accelerating scientific discovery across chemistry, materials science, and drug development.

In the field of computational molecular science, Large Atomistic Models (LAMs) have emerged as foundation models designed to approximate the universal potential energy surface (PES) governed by quantum mechanics [1]. These models aim to capture fundamental atomic and molecular interactions across diverse chemical systems, potentially combining the accuracy of quantum mechanics with the computational efficiency of classical force fields. However, the rapid development of diverse LAMs has created a critical need for standardized evaluation methodologies to assess their true capabilities and limitations. The LAMBench benchmarking system addresses this gap by providing a comprehensive framework designed to evaluate LAMs across three fundamental pillars: generalizability, adaptability, and applicability [1] [9]. This systematic approach enables researchers to objectively compare model performance, identify strengths and weaknesses, and guide the development of more robust and reliable atomistic models for scientific discovery and drug development.

The LAMBench Evaluation Framework

The LAMBench system implements a high-throughput, automated workflow to benchmark diverse LAMs across multiple tasks, with integrated automation for calculation execution, result aggregation, analysis, and visualization [1]. This standardized approach ensures consistent evaluation across different models and domains. The benchmark tasks are specifically designed to assess three core capabilities essential for deploying LAMs as ready-to-use tools across scientific research contexts [1]:

- Generalizability: Assesses model accuracy on datasets not included in training, particularly out-of-distribution (OOD) test sets with distributions distinct from training data.

- Adaptability: Evaluates a model's capacity for fine-tuning on tasks beyond potential energy prediction, especially structure-property relationships.

- Applicability: Concerns the stability and efficiency of deploying LAMs in real-world simulations, particularly molecular dynamics.

The following workflow diagram illustrates the integrated evaluation process implemented in LAMBench:

[Figure: LAMBench Integrated Evaluation Workflow]

Quantitative Performance Comparison of Leading LAMs

LAMBench provides comprehensive quantitative metrics that enable direct comparison of state-of-the-art LAMs. The following tables summarize performance data for leading models released prior to August 2025, as measured by LAMBench version v0.3.1 [7].

Table 1: Comprehensive LAM Performance Comparison on LAMBench

| Model | Generalizability Force Field ($\bar{M}^m_{\mathrm{FF}}$) ↓ | Generalizability Property ($\bar{M}^m_{\mathrm{PC}}$) ↓ | Applicability Efficiency ($M_E^m$) ↑ | Applicability Stability ($M^m_{\mathrm{IS}}$) ↓ |
|---|---|---|---|---|
| DPA-3.1-3M | 0.175 | 0.322 | 0.261 | 0.572 |
| Orb-v3 | 0.215 | 0.414 | 0.396 | 0.000 |
| DPA-2.4-7M | 0.241 | 0.342 | 0.617 | 0.039 |
| GRACE-2L-OAM | 0.251 | 0.404 | 0.639 | 0.309 |
| Orb-v2 | 0.253 | 0.601 | 1.341 | 2.649 |
| SevenNet-MF-ompa | 0.255 | 0.455 | 0.084 | 0.000 |
| MatterSim-v1-5M | 0.283 | 0.467 | 0.393 | 0.000 |
| MACE-MPA-0 | 0.308 | 0.425 | 0.293 | 0.000 |
| SevenNet-l3i5 | 0.326 | 0.397 | 0.272 | 0.036 |
| MACE-MP-0 | 0.351 | 0.472 | 0.296 | 0.089 |

Performance Analysis by Evaluation Pillar

Generalizability Performance

Table 2: Force Field Prediction Generalizability Across Domains

| Model | Molecules Domain Error | Inorganic Materials Domain Error | Catalysis Domain Error |
|---|---|---|---|
| DPA-3.1-3M | 0.161 | 0.152 | 0.211 |
| Orb-v3 | 0.192 | 0.201 | 0.251 |
| DPA-2.4-7M | 0.223 | 0.218 | 0.281 |
| MACE-MP-0 | 0.342 | 0.327 | 0.385 |

The generalizability metrics ($\bar{M}^m_{\mathrm{FF}}$ and $\bar{M}^m_{\mathrm{PC}}$) are normalized error metrics where lower values indicate better performance [7]. These metrics are calculated through a multi-step process: individual error metrics are first normalized against a baseline dummy model that predicts energy based solely on chemical formula, then log-averaged across datasets within each domain, and finally weighted across prediction types (energy, force, virial) and domains [7]. An ideal model matching Density Functional Theory (DFT) labels perfectly would score 0, while the dummy model scores 1 [7].

Applicability Performance

Table 3: Applicability and Efficiency Metrics

| Model | Inference Time (μs/atom) | Efficiency Score | Stability Metric |
|---|---|---|---|
| Orb-v2 | 74.5 | 1.341 | 2.649 |
| GRACE-2L-OAM | 156.5 | 0.639 | 0.309 |
| DPA-2.4-7M | 162.1 | 0.617 | 0.039 |
| DPA-3.1-3M | 383.1 | 0.261 | 0.572 |

Applicability metrics evaluate practical deployment characteristics [7]. The efficiency score ($M_E^m$) is calculated as $M_E^m = \eta^0 / \bar{\eta}^m$, where $\eta^0 = 100\ \mathrm{\mu s/atom}$ and $\bar{\eta}^m$ is the average inference time per atom, meaning higher values indicate better efficiency [7]. Stability ($M^m_{\mathrm{IS}}$) quantifies total energy drift in NVE simulations, with lower values indicating better stability [7].

Experimental Protocols and Methodologies

Generalizability Testing Protocol

The generalizability assessment employs zero-shot inference with energy-bias term adjustments based on test dataset statistics [7]. The testing methodology encompasses:

- Test Domains: Evaluations span three primary domains: Molecules (ANI-1x, MD22, AIMD-Chig datasets), Inorganic Materials (Torres2019Analysis, Batzner2022equivariant, Sours2023Applications, and related datasets), and Catalysis (Vandermause2022Active, Zhang2019Bridging, Villanueva2024Water datasets) [7].

- Prediction Types: Models are evaluated on energy (E), force (F), and, when available, virial (V) predictions. For force field tasks, root-mean-square error (RMSE) is used, with weights set to $w_E = w_F = 0.5$, or $w_E = w_F = 0.45$ and $w_V = 0.1$ when virial labels are available [7].

- Normalization Procedure: Individual error metrics are normalized against a baseline dummy model: $\hat{M}^m_{k,p,i} = \min\left(\frac{M^m_{k,p,i}}{M^{\mathrm{dummy}}_{k,p,i}}, 1\right)$, where $m$ indicates the model, $k$ denotes the domain, $p$ signifies the prediction type, and $i$ represents the test set index [7].
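The normalization and weighting steps above can be sketched in a few lines. This is a minimal illustration rather than the LAMBench implementation: the log-average is taken to be a geometric mean, the weighted sum is divided by the total weight, and all RMSE values are hypothetical.

```python
import math

def normalize(model_err: float, dummy_err: float) -> float:
    """Ratio of model error to dummy-model error, capped at 1."""
    return min(model_err / dummy_err, 1.0)

def log_average(values):
    """Geometric mean (assumed meaning of 'log-average'); undefined for zeros."""
    return math.exp(sum(math.log(v) for v in values) / len(values))

def domain_error(normalized_by_type, weights):
    """Weighted average over prediction types of the per-type log-averages."""
    total_weight = sum(weights[p] for p in normalized_by_type)
    weighted = sum(weights[p] * log_average(v) for p, v in normalized_by_type.items())
    return weighted / total_weight

# Hypothetical RMSEs on two test sets of one domain: (model, dummy) pairs.
energy = [normalize(0.02, 0.2), normalize(0.05, 0.1)]  # ratios 0.1 and 0.5
force = [normalize(0.1, 1.0), normalize(0.3, 0.2)]     # ratio 0.1 and a capped 1.0

result = domain_error({"E": energy, "F": force}, {"E": 0.5, "F": 0.5})
print(f"domain error: {result:.4f}")
```

Capping at 1 means a model that is worse than the dummy baseline on one test set cannot dominate the domain score.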

Domain-Specific Property Calculation Protocol

For domain-specific property evaluation, mean absolute error (MAE) serves as the primary error metric [7]:

- Inorganic Materials Domain: MDR phonon benchmark predicts maximum phonon frequency, entropy, free energy, and heat capacity; elasticity benchmark evaluates shear and bulk moduli. Each prediction type carries equal weight (1/6) [7].

- Molecules Domain: TorsionNet500 benchmark assesses torsion profile energy, torsional barrier height, and number of molecules with barrier height error >1 kcal/mol; Wiggle150 benchmark evaluates relative conformer energy profile. Each prediction type weight: 0.25 [7].

- Catalysis Domain: OC20NEB-OOD benchmark evaluates energy barrier, reaction energy change, and percentage of reactions with barrier errors >0.1 eV for transfer, dissociation, and desorption reactions. Each prediction type weight: 0.2 [7].

Applicability Testing Protocol

- Efficiency Measurement: Random selection of 1000 frames from Inorganic Materials and Catalysis domains, expanded to 800-1000 atoms via unit cell replication. After warm-up exclusion, average inference time is measured over 900 configurations [7].

- Stability Assessment: Quantified by measuring total energy drift in NVE simulations across nine structures [7].

- Experimental Data Integration: Some advanced protocols incorporate experimental data fusion, combining DFT calculations with experimentally measured mechanical properties and lattice parameters to train ML potentials [10].
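To make the stability bullet above concrete, one common way to quantify energy drift is the slope of a linear fit to the total energy over time, divided by the number of atoms. The sketch below uses that definition as an assumption; the exact drift measure used by LAMBench is not reproduced here, and the trajectory is synthetic.

```python
import numpy as np

def energy_drift_per_atom(times_ps, total_energies_ev, n_atoms):
    """Absolute slope of a linear fit to E_total(t), per atom (eV/atom/ps)."""
    slope, _intercept = np.polyfit(times_ps, total_energies_ev, 1)
    return abs(slope) / n_atoms

# Synthetic NVE trajectory: 100 atoms, total energy creeping up by 0.02 eV/ps.
times_ps = np.linspace(0.0, 10.0, 101)
total_energies_ev = -500.0 + 0.02 * times_ps

drift = energy_drift_per_atom(times_ps, total_energies_ev, n_atoms=100)
print(f"drift: {drift:.2e} eV/atom/ps")
```

A conservative model integrated with a suitable time step should give a drift close to zero.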

Table 4: Key Research Resources for LAM Development and Evaluation

| Resource | Type | Primary Function | Access |

|---|---|---|---|

| LAMBench | Benchmarking System | Comprehensive evaluation of LAMs across generalizability, adaptability, and applicability | Open Source |

| LAMBench Leaderboard | Interactive Platform | Real-time performance comparison of state-of-the-art LAMs | Online Access |

| MPtrj Dataset | Training Data | Inorganic materials trajectories for LAM pretraining | Public |

| ANI-1x | Training Data | Quantum chemical structures for organic molecules | Public |

| OC20 Dataset | Training Data | Adsorbate-catalyst relaxations for catalysis models | Public |

| DiffTRe Method | Algorithm | Differentiable trajectory reweighting for experimental data integration | Method Description [10] |

The comprehensive evaluation of leading Large Atomistic Models through LAMBench reveals several key insights. First, significant performance variations exist across different model architectures, with no single model dominating all evaluation categories. While DPA-3.1-3M demonstrates superior generalizability for force field prediction ($\bar{M}^m_{\mathrm{FF}} = 0.175$), other models like Orb-v2 show remarkable efficiency ($M^m_{\mathrm{E}} = 1.341$) despite higher generalizability errors [7].

Second, the evaluation reveals a noticeable trade-off between accuracy and efficiency, as illustrated in Figure 2 of the LAMBench leaderboard [7]. Models with lower generalizability errors often exhibit higher computational requirements, though exceptions exist.

Most importantly, LAMBench analysis reveals a significant gap between current LAMs and the ideal universal potential energy surface [1]. This gap highlights the need for continued development in several key areas: incorporating cross-domain training data, supporting multi-fidelity modeling at inference time, and ensuring model conservativeness and differentiability [1]. As LAMBench evolves as a dynamic, extensible platform, it will continue to facilitate the development of more robust and generalizable LAMs, ultimately accelerating scientific discovery across chemistry, materials science, and drug development.

Defining Out-of-Distribution Performance for Real-World Scientific Challenges

In the pursuit of universal potential energy surfaces, a model's performance on familiar data is less informative than its ability to generalize to novel, unseen chemical systems. This out-of-distribution (OOD) generalizability is the critical benchmark for determining whether a Large Atomistic Model (LAM) can become a ready-to-use tool in real scientific discovery. Evaluated using the LAMBench framework, OOD performance rigorously tests a model's capacity to accurately predict energies, forces, and physical properties across diverse atomistic domains that were not part of its training data [1]. This article provides a comparative analysis of leading LAMs, examining their OOD performance as quantified by the standardized benchmarking system of LAMBench.

The development of Large Atomistic Models mirrors the trajectory of other foundation models in machine learning, where comprehensive benchmarking has been a fundamental prerequisite for rapid advancement [1]. In molecular modeling, however, existing benchmarks have historically suffered from two significant limitations: they are intrinsically domain-specific, focusing on isolated sub-fields rather than encompassing varied atomistic systems; and they often fail to reflect real-world application scenarios, reducing their relevance to scientific discovery [1]. The LAMBench system addresses these gaps by introducing a systematic approach to evaluating OOD generalizability, which it defines as a model's performance on test datasets that are independently constructed and exhibit a distribution distinct from the training data [1] [9]. This approach aligns with practical scientific applications, where researchers frequently employ models on chemical systems beyond those represented in the original training corpus.

LAMBench Evaluation Framework

Core Evaluation Methodology

The LAMBench system is designed to benchmark diverse LAMs across multiple tasks within a high-throughput automated workflow [1]. Its evaluation centers on three fundamental capabilities of an LAM:

- Generalizability: The accuracy of an LAM when utilized as a universal potential across a diverse range of atomistic systems not seen during training [1].

- Adaptability: The LAM's capacity to be fine-tuned for tasks beyond potential energy prediction, with emphasis on structure-property relationship tasks [1].

- Applicability: The stability and efficiency of deploying LAMs in real-world simulations [1].

For OOD evaluation, LAMBench adopts a practical approach by considering OOD test datasets as downstream datasets designed to address specific scientific challenges, providing a more meaningful measure of real-world utility [9].

Experimental Protocols for OOD Generalizability

LAMBench employs a rigorous methodology for assessing force field prediction capabilities across three primary domains, using zero-shot inference with energy-bias term adjustments based on test dataset statistics [7].

The evaluation workflow involves several critical steps. First, for force field prediction tasks, performance is assessed across three domains: Inorganic Materials (including datasets like Torres2019Analysis, Batzner2022equivariant), Molecules (including ANI-1x, MD22, AIMD-Chig), and Catalysis (including Vandermause2022Active, Zhang2019Bridging) [7]. The error metric is normalized against a baseline dummy model that predicts energy solely based on chemical formula without structural details [7]. For each domain, the log-average of normalized metrics across all datasets within the domain is computed [7]. Finally, a weighted dimensionless domain error metric encapsulates the overall error across various prediction types (energy, force, virial), ultimately producing a comprehensive generalizability error metric [7].
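The dummy baseline described above uses only the chemical formula. One plausible realization, assumed here for illustration, is a linear least-squares fit of per-element energies to the reference labels. The element counts, energies, and offsets below are hypothetical toy data.

```python
import numpy as np

# Hypothetical element counts (H, C, O) for five structures.
counts = np.array(
    [[2, 0, 1], [4, 1, 0], [0, 1, 2], [4, 1, 1], [2, 0, 2]], dtype=float
)
# Hypothetical reference energies: a composition term plus small
# structure-dependent offsets that a formula-only model cannot capture.
per_element_ev = np.array([-3.0, -8.0, -5.0])
structure_offsets_ev = np.array([0.03, -0.04, 0.02, 0.05, -0.01])
reference_ev = counts @ per_element_ev + structure_offsets_ev

# Fit one energy per element; the prediction depends on the formula alone.
fitted, *_ = np.linalg.lstsq(counts, reference_ev, rcond=None)
dummy_pred_ev = counts @ fitted
dummy_rmse = float(np.sqrt(np.mean((dummy_pred_ev - reference_ev) ** 2)))

print("fitted per-element energies (eV):", np.round(fitted, 3))
print(f"dummy RMSE: {dummy_rmse:.4f} eV")
```

The residual of such a baseline is the denominator in the normalization, so a model only scores below 1 if it captures structure-dependent energy differences.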

For domain-specific property calculation tasks, LAMBench employs Mean Absolute Error (MAE) as the primary error metric [7]. In the Inorganic Materials domain, the MDR phonon benchmark predicts maximum phonon frequency, entropy, free energy, and heat capacity, while the elasticity benchmark evaluates shear and bulk moduli [7]. In the Molecules domain, the TorsionNet500 benchmark assesses torsion profile energy, torsional barrier height, and the number of molecules with excessive torsional barrier height errors [7]. For Catalysis, the OC20NEB-OOD benchmark evaluates energy barrier, reaction energy change, and the percentage of reactions with predicted energy barrier errors exceeding 0.1eV for different reaction types [7].
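Two of the metrics named above, the MAE and the share of predictions whose barrier error exceeds a threshold, can be illustrated as follows. The barrier values are hypothetical and the helper names are not taken from LAMBench.

```python
def mean_absolute_error(pred, ref):
    """Mean absolute deviation between predictions and reference values."""
    return sum(abs(p - r) for p, r in zip(pred, ref)) / len(ref)

def fraction_exceeding(pred, ref, threshold):
    """Share of predictions whose absolute error exceeds the threshold."""
    return sum(abs(p - r) > threshold for p, r in zip(pred, ref)) / len(ref)

# Hypothetical reaction barriers in eV.
ref_barriers_ev = [0.50, 0.75, 1.25, 1.00]
pred_barriers_ev = [0.50, 1.00, 1.25, 0.75]

mae = mean_absolute_error(pred_barriers_ev, ref_barriers_ev)
failed = fraction_exceeding(pred_barriers_ev, ref_barriers_ev, threshold=0.1)
print(f"MAE = {mae} eV, fraction above 0.1 eV = {failed}")
```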

Comparative Performance Analysis of Leading LAMs

Quantitative Generalizability Assessment

The following table summarizes the OOD performance of leading LAMs as evaluated by LAMBench (v0.3.1), showcasing their generalizability across force field prediction and property calculation tasks [7]:

| Model | Generalizability Force Field ($\bar{M}^m_{\mathrm{FF}}$) ↓ | Generalizability Property ($\bar{M}^m_{\mathrm{PC}}$) ↓ | Applicability Efficiency ($M^m_{\mathrm{E}}$) ↑ | Applicability Stability ($M^m_{\mathrm{IS}}$) ↓ |

|---|---|---|---|---|

| DPA-3.1-3M | 0.175 | 0.322 | 0.261 | 0.572 |

| Orb-v3 | 0.215 | 0.414 | 0.396 | 0.000 |

| DPA-2.4-7M | 0.241 | 0.342 | 0.617 | 0.039 |

| GRACE-2L-OAM | 0.251 | 0.404 | 0.639 | 0.309 |

| Orb-v2 | 0.253 | 0.601 | 1.341 | 2.649 |

| SevenNet-MF-ompa | 0.255 | 0.455 | 0.084 | 0.000 |

| MatterSim-v1-5M | 0.283 | 0.467 | 0.393 | 0.000 |

| MACE-MPA-0 | 0.308 | 0.425 | 0.293 | 0.000 |

| SevenNet-l3i5 | 0.326 | 0.397 | 0.272 | 0.036 |

| MACE-MP-0 | 0.351 | 0.472 | 0.296 | 0.089 |

Note: All metrics are normalized, with lower values (↓) indicating better performance for error metrics ($\bar{M}^m_{\mathrm{FF}}$, $\bar{M}^m_{\mathrm{PC}}$, $M^m_{\mathrm{IS}}$) and higher values (↑) indicating better performance for efficiency ($M^m_{\mathrm{E}}$). A dummy model achieves $\bar{M}^m_{\mathrm{FF}} = 1$, while an ideal model would achieve 0 [7].

Performance Insights and Trends

Analysis of the LAMBench results reveals several important trends. DPA-3.1-3M demonstrates the strongest overall OOD generalizability for force field prediction tasks, achieving the lowest $\bar{M}^m_{\mathrm{FF}}$ score of 0.175 [7]. Force field accuracy and property calculation performance are only loosely coupled: DPA-3.1-3M leads on both, but SevenNet-l3i5 ranks ninth of ten on force field error (0.326) while placing third on property error (0.397) [7]. The efficiency metric ($M^m_{\mathrm{E}}$) shows considerable variation, with Orb-v2 being the fastest (1.341) but suffering from stability issues, while SevenNet-MF-ompa is the slowest (0.084) but shows no measurable instability [7]. Stability measurements reveal dramatic differences, with several models (Orb-v3, SevenNet-MF-ompa, MatterSim-v1-5M, MACE-MPA-0) achieving the best possible instability score (0.000), while Orb-v2 shows notably high instability (2.649) [7].
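The relationship between the two generalizability columns is easier to see as ranks. The sketch below ranks the ten models in the table above on force field error and on property error; it is a simple illustration and not part of the LAMBench tooling.

```python
# (force field error, property error) copied from the leaderboard table above.
leaderboard = {
    "DPA-3.1-3M": (0.175, 0.322),
    "Orb-v3": (0.215, 0.414),
    "DPA-2.4-7M": (0.241, 0.342),
    "GRACE-2L-OAM": (0.251, 0.404),
    "Orb-v2": (0.253, 0.601),
    "SevenNet-MF-ompa": (0.255, 0.455),
    "MatterSim-v1-5M": (0.283, 0.467),
    "MACE-MPA-0": (0.308, 0.425),
    "SevenNet-l3i5": (0.326, 0.397),
    "MACE-MP-0": (0.351, 0.472),
}

def rank_by(column: int) -> dict:
    """Rank models from 1 (lowest error) upward on the chosen column."""
    ordered = sorted(leaderboard, key=lambda m: leaderboard[m][column])
    return {model: position + 1 for position, model in enumerate(ordered)}

ff_rank = rank_by(0)
pc_rank = rank_by(1)

for model in leaderboard:
    print(f"{model:18s} FF rank {ff_rank[model]:2d}  property rank {pc_rank[model]:2d}")
```

Models whose two ranks differ sharply, such as Orb-v2 and SevenNet-l3i5, are the ones for which force field error alone is a poor guide to property accuracy.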

Essential Research Reagents and Computational Tools

To implement and evaluate OOD performance using the LAMBench framework, researchers should be familiar with the following key resources and methodologies:

Key Research Reagent Solutions

| Item | Function in OOD Evaluation |

|---|---|

| LAMBench Codebase | Open-source benchmarking system for automated evaluation of LAMs across multiple tasks [1] |

| Interactive Leaderboard | Platform for tracking model performance and comparing results across research groups [7] |

| MPtrj Dataset | Domain-specific training data for inorganic materials at PBE/PBE+U level of theory [1] |

| ANI-1x & MD22 | Molecular datasets for benchmarking small molecule force field predictions [7] |

| OC20NEB-OOD | Catalysis dataset for evaluating energy barriers and reaction energies [7] |

| TorsionNet500 | Benchmark for assessing torsion profile energy and torsional barrier height predictions [7] |

| DiffTRe Method | Differentiable Trajectory Reweighting technique for training on experimental data [10] |

Implications for Scientific Discovery

The OOD performance metrics provided by LAMBench reveal a significant gap between current LAMs and the ideal universal potential energy surface [1] [9]. This evaluation framework highlights several critical requirements for advancing the field: incorporating cross-domain training data to enhance generalizability, supporting multi-fidelity modeling to satisfy varying requirements across different domains, and ensuring models' conservativeness and differentiability to optimize performance in property prediction tasks and ensure stability in molecular dynamics simulations [1].

For researchers and drug development professionals, these findings underscore the importance of selecting LAMs based on comprehensive OOD benchmarking rather than isolated domain performance. The current leaderboard indicates that while progress has been made, no single model excels across all domains and metrics, suggesting that model selection should be guided by specific application requirements [7]. As LAMBench continues to evolve as a dynamic and extensible platform, it will facilitate the development of more robust and generalizable LAMs, ultimately accelerating scientific discovery across chemistry, materials science, and drug development [1].

Large Atomistic Models (LAMs) are emerging as foundation models for approximating the universal potential energy surface (PES) of atomistic systems, with the potential to revolutionize scientific fields like materials science and drug discovery [1]. However, their development has been hampered by the lack of comprehensive benchmarks. LAMBench addresses this by providing a rigorous evaluation system designed to assess whether these models are truly ready-to-use tools for real-world scientific applications [1] [9].

The LAMBench Evaluation Framework: Beyond Single-Domain Accuracy

LAMBench moves beyond traditional, domain-specific benchmarks by evaluating LAMs across three core capabilities essential for their practical deployment [1] [7]:

- Generalizability: This measures a model's accuracy on out-of-distribution datasets, reflecting its performance as a universal potential across diverse chemical systems. It is tested through Force Field Prediction (energy, force, and virial) and Domain-Specific Property Calculation (e.g., phonon frequencies, torsional barriers) [1] [7].

- Adaptability: This assesses a model's capacity to be fine-tuned for tasks beyond potential energy prediction, such as learning structure-property relationships for specific scientific problems [1].

- Applicability: This critical dimension evaluates the stability and efficiency of LAMs in real-world simulations, ensuring they are not only accurate but also robust and practical for use in lengthy molecular dynamics runs [1] [7].

The following diagram illustrates the logical relationship between these pillars and the ultimate goal of scientific discovery.

Quantitative Performance Comparison of Leading LAMs

LAMBench provides a standardized platform for the objective comparison of state-of-the-art models. The table below summarizes the generalizability and applicability performance of several leading LAMs as reported on the LAMBench leaderboard (v0.3.1) [7]. A lower score for generalizability metrics is better, while a higher score for applicability efficiency is better.

Table 1: LAMBench Leaderboard Snapshot (v0.3.1)

| Model | Generalizability (Force Field) $\bar{M}^m_{\mathrm{FF}}$ ↓ | Generalizability (Property) $\bar{M}^m_{\mathrm{PC}}$ ↓ | Applicability (Efficiency) $M^m_{\mathrm{E}}$ ↑ | Applicability (Instability) $M^m_{\mathrm{IS}}$ ↓ |

|---|---|---|---|---|

| DPA-3.1-3M | 0.175 | 0.322 | 0.261 | 0.572 |

| Orb-v3 | 0.215 | 0.414 | 0.396 | 0.000 |

| DPA-2.4-7M | 0.241 | 0.342 | 0.617 | 0.039 |

| GRACE-2L-OAM | 0.251 | 0.404 | 0.639 | 0.309 |

| Orb-v2 | 0.253 | 0.601 | 1.341 | 2.649 |

| SevenNet-MF-ompa | 0.255 | 0.455 | 0.084 | 0.000 |

| MatterSim-v1-5M | 0.283 | 0.467 | 0.393 | 0.000 |

| MACE-MPA-0 | 0.308 | 0.425 | 0.293 | 0.000 |

| SevenNet-l3i5 | 0.326 | 0.397 | 0.272 | 0.036 |

| MACE-MP-0 | 0.351 | 0.472 | 0.296 | 0.089 |

Source: LAMBench Leaderboard [7]

Key Performance Insights

- Trade-offs are Evident: The data reveals that no single model leads in all categories. For instance, while DPA-3.1-3M shows the best force field generalizability, other models like Orb-v3 and MatterSim-v1-5M demonstrate superior stability (instability metric of 0.000) in molecular dynamics simulations [7].

- The Accuracy-Efficiency Balance: A notable finding from LAMBench is the trade-off between accuracy and computational speed [1]. This is crucial for researchers who must choose a model based on the constraints of their project, whether it's high-throughput screening or long-time-scale dynamics.
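One way to make the accuracy-efficiency trade-off explicit is to extract the Pareto front from the leaderboard: the models for which no other model is both more accurate and more efficient. The sketch below does this for the force field error and efficiency columns of Table 1; the helper names are illustrative.

```python
# (force field error, efficiency score) copied from Table 1.
models = {
    "DPA-3.1-3M": (0.175, 0.261),
    "Orb-v3": (0.215, 0.396),
    "DPA-2.4-7M": (0.241, 0.617),
    "GRACE-2L-OAM": (0.251, 0.639),
    "Orb-v2": (0.253, 1.341),
    "SevenNet-MF-ompa": (0.255, 0.084),
    "MatterSim-v1-5M": (0.283, 0.393),
    "MACE-MPA-0": (0.308, 0.293),
    "SevenNet-l3i5": (0.326, 0.272),
    "MACE-MP-0": (0.351, 0.296),
}

def dominates(a, b) -> bool:
    """True if a is no worse than b on both axes and strictly better on one."""
    (err_a, eff_a), (err_b, eff_b) = a, b
    return err_a <= err_b and eff_a >= eff_b and (err_a < err_b or eff_a > eff_b)

pareto_front = sorted(
    (
        name
        for name, point in models.items()
        if not any(dominates(other, point) for other in models.values())
    ),
    key=lambda name: models[name][0],
)
print(pareto_front)
```

Along this front, each step toward higher efficiency comes with a higher force field error, which is the trade-off described above. Stability is not part of this two-axis comparison and has to be checked separately.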

Detailed Experimental Protocols in LAMBench

Protocol for Generalizability Testing

The evaluation of generalizability is a multi-step, automated process within LAMBench's high-throughput workflow [1].

Table 2: Generalizability Test Domains and Metrics

| Domain | Example Datasets | Prediction Types & Weights | Primary Error Metric |

|---|---|---|---|

| Inorganic Materials | Torres2019Analysis, Batzner2022equivariant, Sours2023Applications [7] | Energy (0.45), Force (0.45), Virial (0.1) [7] | RMSE |

| Molecules | ANI-1x, MD22, AIMD-Chig [7] | Energy (0.5), Force (0.5) [7] | RMSE |

| Catalysis | Vandermause2022Active, Zhang2019Bridging, Villanueva2024Water [7] | Energy (0.45), Force (0.45), Virial (0.1) [7] | RMSE |

The workflow for calculating the generalizability metric involves normalization against a baseline model, aggregation across domains, and final score calculation, as shown in the following diagram.

Protocol for Applicability Testing

- Efficiency Workflow: Models are evaluated on their inference speed (in microseconds per atom) across 900 frames of 800-1000 atoms, which are generated by replicating unit cells from inorganic and catalysis domains to fully utilize GPU capacity [7]. The efficiency score is calculated as $M^m_{\mathrm{E}} = \frac{\eta^0}{\bar{\eta}^m}$, where $\eta^0 = 100\ \mu\mathrm{s/atom}$ is a reference value and $\bar{\eta}^m$ is the model's average inference time [7].

- Stability Workflow: The stability of a model is quantified by measuring the total energy drift in NVE (microcanonical ensemble) molecular dynamics simulations across nine different structures [7]. A lower energy drift indicates a more stable and physically reliable model, which is critical for producing trustworthy simulation results.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Resources for LAM Development and Evaluation

| Item Name | Type | Function & Description |

|---|---|---|

| LAMBench | Benchmark | Core benchmarking system for evaluating generalizability, adaptability, and applicability of LAMs [1] [7]. |

| OMol25 Dataset | Training Data | Massive dataset from Meta FAIR with over 100M quantum calculations at ωB97M-V/def2-TZVPD level, covering biomolecules, electrolytes, and metal complexes [11]. |

| QUID Benchmark | Benchmark | A "platinum standard" quantum-mechanical benchmark for ligand-pocket interaction energies, combining CC and QMC methods [12]. |

| Universal Model for Atoms (UMA) | LAM | A state-of-the-art architecture using a Mixture of Linear Experts (MoLE) to unify training across disparate datasets [11]. |

| ByteFF | Force Field | A data-driven molecular mechanics force field parameterized using a graph neural network on a large QM dataset [13]. |

| eSEN Model | LAM | An equivariant transformer-style architecture from Meta FAIR; available in both direct-force and conservative-force variants [11]. |

LAMBench represents a critical step toward transforming Large Atomistic Models from academic curiosities into reliable tools for scientific discovery. By rigorously evaluating models on generalizability, adaptability, and applicability, it provides researchers with the data needed to select the right model for their specific challenge. Current benchmarks reveal a significant performance gap between existing LAMs and the ideal of a universal potential energy surface [1]. This underscores the need for continued development, particularly in cross-domain training, multi-fidelity modeling, and ensuring physical conservativeness [1]. As LAMBench evolves, it will continue to guide the community in building more robust and generalizable models, ultimately accelerating progress in fields ranging from inorganic materials to drug design.

How LAMBench Works: A Practical Framework for Accuracy Assessment

In computational chemistry and materials science, the accuracy of a force field is not a single metric but a multi-faceted measure of how well it approximates the underlying quantum mechanical potential energy surface (PES). The concept of a universal PES, governed by the Schrödinger equation under the Born-Oppenheimer approximation, provides a theoretical foundation for developing large-scale, general-purpose force fields [1]. The LAMBench benchmarking system has been established to rigorously evaluate these emerging Large Atomistic Models (LAMs) by deconstructing their performance across three core prediction tasks: energy, force, and virial accuracy [1]. This objective comparison delves into the performance of leading LAMs, using quantitative data from LAMBench to illuminate the critical trade-offs and strengths that define the current state of force field prediction.

Quantitative Performance Comparison of Leading LAMs

The table below summarizes the overall benchmark performance of select LAMs as measured by LAMBench, integrating their generalizability error and key applicability metrics [7].

Table 1: Overall LAMBench Performance Metrics for Selected Models

| Model | Generalizability (Force Field) $\bar{M}^m_{\mathrm{FF}}$ ↓ | Generalizability (Property) $\bar{M}^m_{\mathrm{PC}}$ ↓ | Efficiency $M^m_{\mathrm{E}}$ ↑ | Instability $M^m_{\mathrm{IS}}$ ↓ |

|---|---|---|---|---|

| DPA-3.1-3M | 0.175 | 0.322 | 0.261 | 0.572 |

| Orb-v3 | 0.215 | 0.414 | 0.396 | 0.000 |

| DPA-2.4-7M | 0.241 | 0.342 | 0.617 | 0.039 |

| GRACE-2L-OAM | 0.251 | 0.404 | 0.639 | 0.309 |

| SevenNet-MF-ompa | 0.255 | 0.455 | 0.084 | 0.000 |

| MatterSim-v1-5M | 0.283 | 0.467 | 0.393 | 0.000 |

| MACE-MPA-0 | 0.308 | 0.425 | 0.293 | 0.000 |

| SevenNet-l3i5 | 0.326 | 0.397 | 0.272 | 0.036 |

| MACE-MP-0 | 0.351 | 0.472 | 0.296 | 0.089 |

Note: ↓ Lower is better; ↑ Higher is better. $\bar{M}^m_{\mathrm{FF}}$ and $\bar{M}^m_{\mathrm{PC}}$ are composite error metrics for force field and property prediction tasks, respectively. $M^m_{\mathrm{E}}$ is an efficiency score, and $M^m_{\mathrm{IS}}$ measures instability in simulations. Data sourced from LAMBench v0.3.1 [7].

Accuracy by Prediction Type

A force field's total performance is an aggregate of its accuracy on specific physical quantities. The following table breaks down the normalized error metrics for top-performing models across the core force field prediction types [7].

Table 2: Detailed Error Breakdown by Domain and Prediction Type

| Model | Domain | Normalized Energy Error $\bar{M}^m_{k,E}$ ↓ | Normalized Force Error $\bar{M}^m_{k,F}$ ↓ | Normalized Virial Error $\bar{M}^m_{k,V}$ ↓ |

|---|---|---|---|---|

| DPA-3.1-3M | Molecules | 0.12 | 0.15 | - |

| DPA-3.1-3M | Inorganic Materials | 0.18 | 0.19 | 0.17 |

| DPA-3.1-3M | Catalysis | 0.21 | 0.23 | 0.20 |

| Orb-v3 | Molecules | 0.16 | 0.18 | - |

| Orb-v3 | Inorganic Materials | 0.22 | 0.24 | 0.21 |

| Orb-v3 | Catalysis | 0.25 | 0.27 | 0.24 |

| SevenNet-MF-ompa | Molecules | 0.19 | 0.21 | - |

| SevenNet-MF-ompa | Inorganic Materials | 0.24 | 0.26 | 0.23 |

| SevenNet-MF-ompa | Catalysis | 0.28 | 0.30 | 0.26 |

Note: Errors are normalized against a baseline dummy model, where a value of 1.0 signifies performance no better than the baseline. Virial errors are typically only computed for systems with periodic boundary conditions [7].

The LAMBench Evaluation Framework

Core Evaluation Methodology

The LAMBench system is designed to provide a holistic assessment of LAMs by evaluating three fundamental capabilities: generalizability (performance on unseen data across domains), adaptability (fine-tuning potential for property prediction), and applicability (stability and efficiency in real-world simulations) [1]. The benchmarking process is automated within a high-throughput workflow [1].

Metric Calculation and Normalization

A key feature of LAMBench is its structured approach to calculating comparable, normalized error metrics. The generalizability error metric for force field prediction ($\bar{M}^m_{\mathrm{FF}}$) is a composite score derived through a multi-step process [7]:

- Per-Dataset Normalization: The initial error metric for a model $m$ on a specific test set $i$, prediction type $p$ (energy, force, virial), and domain $k$ is normalized against the error of a baseline "dummy" model: $\hat{M}^m_{k,p,i} = \min\left(\frac{M^m_{k,p,i}}{M^{\mathrm{dummy}}_{k,p,i}}, 1\right)$. This dummy model predicts energy based solely on chemical composition, ignoring atomic structure. The normalization caps performance worse than the dummy model at 1 and maps perfect performance to 0 [7].

- Aggregation: The normalized metrics are aggregated using a log-average across datasets within a domain and prediction type, then combined into a domain score using a weighted average across prediction types (with weights $w_E = w_F = 0.45$ and $w_V = 0.1$ when virials are available, and $w_E = w_F = 0.5$ otherwise). The final $\bar{M}^m_{\mathrm{FF}}$ is the average of the domain-wise error metrics [7].
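Written out as equations, the process described above takes roughly the following form. This is a reconstruction from the prose, not a quotation of the LAMBench definition: it assumes that the log-average is a geometric mean and that the domain average is unweighted. Here $n_{k,p}$ denotes the number of test sets for prediction type $p$ in domain $k$, and $n_D$ the number of domains; both symbols are introduced for this sketch.

$$
\bar{M}^m_{k,p} = \exp\left(\frac{1}{n_{k,p}} \sum_{i=1}^{n_{k,p}} \log \hat{M}^m_{k,p,i}\right), \qquad
\bar{M}^m_{k} = \frac{\sum_{p} w_p \, \bar{M}^m_{k,p}}{\sum_{p} w_p}, \qquad
\bar{M}^m_{\mathrm{FF}} = \frac{1}{n_D} \sum_{k=1}^{n_D} \bar{M}^m_{k}
$$

Because each $\hat{M}^m_{k,p,i}$ is capped at 1, every intermediate quantity and the final score also lie between 0 and 1.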

This rigorous normalization allows for a fair comparison across diverse chemical domains and system sizes.

Essential Research Reagents and Computational Tools

Table 3: Key Research Reagents and Tools for Force Field Benchmarking

| Item Name | Type | Primary Function in Evaluation |

|---|---|---|

| LAMBench | Software Benchmark Suite | Core platform for running standardized, high-throughput evaluations of LAMs across multiple tasks and domains [1] [7]. |

| Density Functional Theory (DFT) | Computational Method | Generates high-fidelity quantum mechanical data (energy, forces, virials) used as reference "ground truth" for training and evaluating LAMs [1] [10]. |

| Differentiable Trajectory Reweighting (DiffTRe) | Training Algorithm | Enforces model consistency with experimental data by allowing gradient-based optimization without backpropagating through entire simulations [10]. |

| Molecular Dynamics (MD) | Simulation Engine | Tests the applicability and stability of LAMs in real-world simulation scenarios, such as checking for energy drift in NVE ensembles [1] [7]. |

| AMBER/GAFF | Classical Force Field | Provides a well-established baseline and parameter set for comparisons, particularly in biomolecular simulations like free energy calculations [14] [15]. |

Discussion and Future Directions

The benchmark data reveals a significant performance spread among current LAMs and highlights a critical trade-off between accuracy and computational efficiency. For instance, while DPA-3.1-3M leads in generalizability, GRACE-2L-OAM and DPA-2.4-7M are more than twice as efficient ($M^m_{\mathrm{E}}$ of 0.639 and 0.617 versus 0.261), a crucial factor for large-scale simulations; SevenNet-MF-ompa, by contrast, is the least efficient model in Table 1 (0.084) [7]. Furthermore, no single model excels across all domains and prediction types, underscoring the challenge of developing a truly universal potential [1].

Future advancements are likely to focus on several key areas. The fusion of data from multiple sources, such as combining DFT data with experimental mechanical properties and lattice parameters, has proven effective in creating models of higher accuracy that satisfy a broader range of target objectives [10]. Supporting multi-fidelity modeling at inference time will be essential to meet the varying requirements for exchange-correlation functional accuracy across different scientific domains [1]. Finally, ensuring models are conservative (forces are derivatives of energy) and differentiable remains paramount for physical consistency and stability in molecular dynamics simulations [1]. As LAMBench continues to evolve, it will provide the necessary framework to track progress toward robust and generalizable force fields that can accelerate scientific discovery.

The accuracy of a force field in predicting fundamental physicochemical properties is a direct measure of its utility in scientific discovery. While predicting energies and forces is a necessary baseline, the true test for a Large Atomistic Model (LAM) is its performance in downstream property calculations, which are critical for applications in material science and drug design [16]. These properties—ranging from the vibrational spectra of inorganic materials to the torsional barriers of drug-like molecules—serve as a bridge between abstract potential energy surfaces and tangible, experimentally observable phenomena. Framed within the comprehensive benchmarking paradigm of LAMBench [1], this guide provides an objective comparison of how state-of-the-art LAMs perform on these essential tasks. By focusing on domain-specific property calculations, we move beyond generic force-field accuracy to evaluate how ready these models are for deployment in real-world research scenarios.

The LAMBench Evaluation Framework for Property Calculation

LAMBench is designed to assess Large Atomistic Models (LAMs) across three core capabilities: generalizability, adaptability, and applicability [1]. This guide focuses on its systematic approach to evaluating domain-specific property calculation, a key aspect of a model's generalizability.

The benchmark tests models across three distinct scientific domains, each with its own critical properties [7]:

- Inorganic Materials: Evaluates a model's ability to predict properties crucial for material stability and performance, such as phonon spectra and elastic constants.

- Molecules: Assesses accuracy in calculating conformational energy profiles and torsional barriers, which are vital for understanding molecular reactivity and interactions in fields like drug design [16].

- Catalysis: Tests the prediction of reaction energy barriers and pathways, which are essential for catalyst screening and development.

Performance is quantified using a normalized error metric, $\bar{M}^m_{\mathrm{PC}}$, which aggregates Mean Absolute Error (MAE) across all property prediction tasks within these domains [7]. This metric is normalized against a baseline model, where a value of 0 represents a perfect model and a value of 1 indicates performance no better than the baseline [7].

Comparative Performance of Leading Large Atomistic Models

The following table summarizes the performance of leading LAMs, as benchmarked by LAMBench, on property calculation and other key metrics. The generalizability error on property calculation tasks, $\bar{M}^m_{\mathrm{PC}}$, is the primary indicator of a model's accuracy for the domain-specific calculations discussed in this guide. A lower value signifies better performance [7].

Table 1: LAMBench Leaderboard Snapshot (v0.3.1) for Selected Models

| Model | Generalizability - Property Calculation ($\bar{M}^m_{\mathrm{PC}}$) ↓ | Generalizability - Force Field ($\bar{M}^m_{\mathrm{FF}}$) ↓ | Applicability - Efficiency ($M^m_{\mathrm{E}}$) ↑ | Applicability - Stability ($M^m_{\mathrm{IS}}$) ↓ |

|---|---|---|---|---|

| DPA-3.1-3M | 0.322 | 0.175 | 0.261 | 0.572 |

| DPA-2.4-7M | 0.342 | 0.241 | 0.617 | 0.039 |

| Orb-v3 | 0.414 | 0.215 | 0.396 | 0.000 |

| GRACE-2L-OAM | 0.404 | 0.251 | 0.639 | 0.309 |

| MACE-MPA-0 | 0.425 | 0.308 | 0.293 | 0.000 |

| SevenNet-MF-ompa | 0.455 | 0.255 | 0.084 | 0.000 |

| MatterSim-v1-5M | 0.467 | 0.283 | 0.393 | 0.000 |

| MACE-MP-0 | 0.472 | 0.351 | 0.296 | 0.089 |

Performance Analysis Across Scientific Domains

A high-level comparison reveals several key insights. No single model currently dominates across all domains and metrics, highlighting a significant performance trade-off. For instance, while DPA-3.1-3M leads in property calculation accuracy (( \bar M^m_{\mathrm{PC}} = 0.322 )), it does so at a notable cost to computational efficiency (( M^m_{\mathrm{E}} = 0.261 )) compared to models like GRACE-2L-OAM (( M^m_{\mathrm{E}} = 0.639 )) [7]. This illustrates a recurrent theme in the benchmark results: the tension between accuracy and speed. Furthermore, some models, such as Orb-v3 and MACE-MPA-0, achieve perfect scores on the instability metric (( M^m_{\mathrm{IS}} = 0.000 )), a critical feature for running reliable molecular dynamics simulations, yet they show middling performance on property prediction [7]. This underscores that force field accuracy does not automatically translate to high fidelity in derived properties.
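One way to check the "no single model dominates" observation is a Pareto-dominance test over the four columns of Table 1. The sketch below copies the table values and uses helper names (`as_costs`, `dominates`, `pareto_front`) that are illustrative only and not part of LAMBench.

```python
# Values copied from Table 1 (LAMBench v0.3.1 snapshot).
# Columns: property error (lower is better), force field error (lower),
# efficiency (higher is better), instability (lower).
LEADERBOARD = {
    "DPA-3.1-3M": (0.322, 0.175, 0.261, 0.572),
    "DPA-2.4-7M": (0.342, 0.241, 0.617, 0.039),
    "Orb-v3": (0.414, 0.215, 0.396, 0.000),
    "GRACE-2L-OAM": (0.404, 0.251, 0.639, 0.309),
    "MACE-MPA-0": (0.425, 0.308, 0.293, 0.000),
    "SevenNet-MF-ompa": (0.455, 0.255, 0.084, 0.000),
    "MatterSim-v1-5M": (0.467, 0.283, 0.393, 0.000),
    "MACE-MP-0": (0.472, 0.351, 0.296, 0.089),
}


def as_costs(row):
    """Flip the sign of efficiency so every entry is 'lower is better'."""
    pc, ff, eff, inst = row
    return (pc, ff, -eff, inst)


def dominates(a, b):
    """True if a is no worse than b on every metric and better on at least one."""
    ca, cb = as_costs(a), as_costs(b)
    no_worse = all(x <= y for x, y in zip(ca, cb))
    strictly_better = any(x < y for x, y in zip(ca, cb))
    return no_worse and strictly_better


def pareto_front(table):
    """Names of models that no other model in the table dominates."""
    return sorted(
        name
        for name, row in table.items()
        if not any(dominates(other, row) for o, other in table.items() if o != name)
    )


front = pareto_front(LEADERBOARD)
```

With these eight rows, four models remain non-dominated (DPA-3.1-3M, DPA-2.4-7M, Orb-v3, and GRACE-2L-OAM), so the choice among them depends on which metric matters most for the application.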

Experimental Protocols for Property Calculation

To ensure reproducibility and provide a clear understanding of how these benchmarks are conducted, this section details the experimental protocols LAMBench uses for property calculation.

Inorganic Materials Domain: Phonons & Elasticity

- Objective: To evaluate a model's prediction of vibrational properties and mechanical moduli.

- Protocol: The benchmark uses the MDR phonon benchmark to predict the maximum phonon frequency, entropy, free energy, and heat capacity at constant volume. The elasticity benchmark evaluates the shear and bulk moduli. These properties are derived from the interatomic potential by perturbing atomic positions (for phonons) or applying strains (for elasticity) and analyzing the resulting changes in energy and forces.

- Evaluation Metric: Mean Absolute Error (MAE) is used for each property. The final score for this domain is an average of all six property errors, with each assigned an equal weight of ( \frac{1}{6} ) [7].
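The strain-based route described in the protocol can be illustrated for the bulk modulus, which follows from the curvature of the energy with respect to volume, B = V0 · d²E/dV² at the equilibrium volume V0. The sketch below uses a central finite difference and a toy quadratic energy function standing in for a LAM; `bulk_modulus`, `toy_energy`, and all numbers are hypothetical and do not reproduce the benchmark's actual workflow.

```python
EV_PER_A3_TO_GPA = 160.2176634  # unit conversion: 1 eV/Å^3 expressed in GPa


def bulk_modulus(energy_fn, v0: float, rel_step: float = 1e-3) -> float:
    """Estimate B = V0 * d^2E/dV^2 at V0 with a central finite difference.

    energy_fn maps a cell volume (Å^3) to a total energy (eV); in a real
    workflow it would wrap a model evaluation on an isotropically strained cell.
    """
    h = rel_step * v0
    d2e = (energy_fn(v0 + h) - 2.0 * energy_fn(v0) + energy_fn(v0 - h)) / h**2
    return v0 * d2e


V0 = 40.0     # toy equilibrium volume, Å^3
B_TRUE = 0.6  # toy bulk modulus, eV/Å^3


def toy_energy(volume: float) -> float:
    """Harmonic E(V) whose exact bulk modulus at V0 is B_TRUE."""
    return 0.5 * B_TRUE * (volume - V0) ** 2 / V0


b_est = bulk_modulus(toy_energy, V0)
b_est_gpa = b_est * EV_PER_A3_TO_GPA
```

The absolute error for this property would then be the difference between `b_est_gpa` and the reference value for the same structure.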

Molecules Domain: Torsional Barriers & Conformer Energies

- Objective: To assess the model's accuracy in capturing the energy changes associated with molecular conformation, which is fundamental to drug design and molecular reactivity [16].

- Protocol: Two primary benchmarks are used:

- TorsionNet500: Evaluates the torsion profile energy, the torsional barrier height, and the number of molecules for which the predicted torsional barrier height error exceeds 1 kcal/mol.

- Wiggle150: Assesses the relative conformer energy profile.

In both cases, the model calculates the potential energy for a series of constrained molecular geometries where a specific dihedral angle is systematically rotated.

- Evaluation Metric: MAE is used for each prediction type. The final score for the Molecules domain is an average of the four MAE values (three from TorsionNet500 and one from Wiggle150), with each assigned a weight of 0.25 [7].
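The three TorsionNet500 quantities can be sketched from a set of torsion scans. The code below assumes that each profile is shifted to its own minimum before comparison and that the barrier height is the maximum minus the minimum energy along the scan; both conventions, the function names, and the toy profiles are assumptions made for illustration.

```python
def barrier_height(profile):
    """Barrier height taken as max minus min energy along the scan (kcal/mol)."""
    return max(profile) - min(profile)


def torsion_metrics(reference_profiles, predicted_profiles, threshold=1.0):
    """Return (profile MAE, barrier MAE, count of barrier errors > threshold)."""
    profile_errors = []
    barrier_errors = []
    for ref, pred in zip(reference_profiles, predicted_profiles):
        # Compare relative energies only: shift each scan to its own minimum.
        ref_rel = [e - min(ref) for e in ref]
        pred_rel = [e - min(pred) for e in pred]
        profile_errors.extend(abs(r - p) for r, p in zip(ref_rel, pred_rel))
        barrier_errors.append(abs(barrier_height(ref) - barrier_height(pred)))
    profile_mae = sum(profile_errors) / len(profile_errors)
    barrier_mae = sum(barrier_errors) / len(barrier_errors)
    n_over = sum(err > threshold for err in barrier_errors)
    return profile_mae, barrier_mae, n_over


# Two hypothetical molecules, four dihedral angles each (kcal/mol).
reference = [[0.0, 2.0, 4.0, 2.0], [0.0, 1.0, 3.0, 1.0]]
predicted = [[0.0, 2.5, 5.5, 2.5], [0.0, 1.0, 3.5, 1.0]]

profile_mae, barrier_mae, n_over = torsion_metrics(reference, predicted)
```

In this toy case only the first molecule exceeds the 1 kcal/mol threshold, since its predicted barrier is off by 1.5 kcal/mol.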

Catalysis Domain: Reaction Energy Barriers

- Objective: To test a model's capability in predicting reaction pathways, a key requirement for catalyst screening.

- Protocol: The OC20NEB-OOD benchmark is used. It evaluates the energy barrier and the reaction energy change (delta energy) for three reaction types: transfer, dissociation, and desorption. The benchmark also tracks the percentage of reactions with a predicted energy barrier error exceeding 0.1 eV. The model's potential energy surface is sampled along a reaction coordinate, typically using the Nudged Elastic Band (NEB) method, to locate the transition state and calculate the barrier.

- Evaluation Metric: MAE is used for each prediction type. The final score for the Catalysis domain is an average of five MAE values, with each assigned a weight of 0.2 [7].
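Given the energies of the images along a converged path, the two catalysis quantities reduce to simple differences. The sketch below assumes the barrier is the highest image energy minus the initial-state energy and the reaction energy is the final minus the initial energy; the helper names and the two toy paths are hypothetical, and no NEB optimization is performed here.

```python
def barrier_and_delta(image_energies):
    """Return (barrier, reaction energy) in eV from image energies ordered
    from the initial state to the final state."""
    e_initial = image_energies[0]
    barrier = max(image_energies) - e_initial
    delta = image_energies[-1] - e_initial
    return barrier, delta


def fraction_exceeding(reference_barriers, predicted_barriers, tolerance=0.1):
    """Fraction of reactions whose absolute barrier error exceeds tolerance (eV)."""
    errors = [abs(r - p) for r, p in zip(reference_barriers, predicted_barriers)]
    return sum(err > tolerance for err in errors) / len(errors)


# Hypothetical reference and predicted paths for two reactions (eV).
reference_paths = [[0.0, 0.4, 0.9, 0.5, 0.2], [0.0, 0.3, 0.6, 0.1]]
predicted_paths = [[0.0, 0.35, 0.75, 0.45, 0.25], [0.0, 0.3, 0.65, 0.1]]

ref_barriers = [barrier_and_delta(p)[0] for p in reference_paths]
pred_barriers = [barrier_and_delta(p)[0] for p in predicted_paths]
failure_rate = fraction_exceeding(ref_barriers, pred_barriers)
```

Here the first reaction misses the 0.1 eV tolerance and the second does not, so half of the toy reactions count as failures.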

The following workflow diagram illustrates how these diverse experimental protocols are integrated within the LAMBench system to provide a holistic evaluation.

The Scientist's Toolkit: Essential Research Reagents & Materials

To conduct these evaluations, LAMBench relies on a curated set of benchmark datasets and computational tools. The following table details these essential "research reagents" and their functions in the benchmarking process.

Table 2: Key Research Reagents and Benchmarking Materials

| Item Name | Type | Primary Function in Evaluation |

|---|---|---|

| MPtrj Dataset [1] | Training Data | A large dataset of inorganic materials trajectories used for pretraining many domain-specific LAMs at the PBE/PBE+U level of theory. |

| MDR Phonon Benchmark [7] | Test Dataset | Evaluates model predictions for vibrational properties like maximum phonon frequency, entropy, and free energy. |

| Elasticity Benchmark [7] | Test Dataset | Tests the accuracy of predicted mechanical properties, including shear and bulk moduli. |

| TorsionNet500 [7] | Test Dataset | A benchmark for evaluating torsion profile energy and torsional barrier height in molecules. |

| Wiggle150 [7] | Test Dataset | Assesses the accuracy of relative conformer energy profiles for molecular systems. |

| OC20NEB-OOD [7] | Test Dataset | Tests the prediction of energy barriers and reaction energies for catalytic reactions (transfer, dissociation, desorption). |

| Dummy Model [7] | Baseline Model | A simple model that predicts energy based only on chemical formula, providing a reference for normalizing error metrics. |

The quantitative comparison provided by LAMBench reveals a clear landscape: while modern LAMs have made impressive strides, a significant gap remains between their current capabilities and the ideal of a universal, highly accurate potential for property prediction [1]. The performance trade-offs observed—particularly between accuracy, efficiency, and stability—highlight that model selection is not a one-size-fits-all decision. Researchers must choose models based on their specific domain needs, whether that is high fidelity for torsional barriers in drug design or robust stability for long molecular dynamics simulations. Future development of LAMs should focus on incorporating more cross-domain training data, supporting multi-fidelity modeling to accommodate different levels of quantum mechanical theory, and ensuring models are conservative and differentiable to guarantee physical meaningfulness [1]. As LAMBench continues to evolve as a dynamic benchmark, it will provide the essential framework needed to guide and accelerate the development of more robust and generalizable force fields, ultimately empowering scientific discovery across chemistry, materials science, and drug development.

For researchers in computational chemistry and drug development, selecting a force field or a large atomistic model (LAM) is a critical decision that can determine the success or failure of a simulation. Beyond simple prediction accuracy, two practical considerations are paramount: simulation stability and computational efficiency. A model that produces unstable, non-conservative dynamics or requires prohibitive computational resources has limited applicability in real-world scientific discovery, regardless of its static accuracy. This guide objectively compares the performance of modern machine learning interatomic potentials using the LAMBench evaluation system, providing a framework for assessing these crucial applicability metrics.

Core Applicability Metrics in LAMBench

The LAMBench framework evaluates the applicability of Large Atomistic Models through two principal metrics: Stability and Efficiency [1] [7]. These metrics are designed to assess how reliably and practically a model can be deployed in molecular simulations.

- Stability (( M^m_{\mathrm{IS}} )): This metric quantifies the physical robustness of a model in molecular dynamics (MD) simulations. It is measured by the total energy drift observed in NVE (microcanonical) ensemble simulations across nine different structures [7]. A low energy drift is critical for achieving accurate and physically meaningful simulation trajectories, as it reflects the model's conservation of energy. Non-conservative models can exhibit high apparent accuracy on static test sets but fail in practical MD applications [1].

- Efficiency (( M^m_{\mathrm{E}} )): This metric evaluates the computational speed of a model. It is defined by normalizing the average inference time per atom against a reference value (( \eta^0 = 100 ) μs/atom) [7]. The efficiency score is calculated as ( M^m_{\mathrm{E}} = \eta^0 / \bar\eta^m ), where ( \bar\eta^m ) is the measured average inference time per atom for model ( m ). A higher ( M^m_{\mathrm{E}} ) score indicates better (faster) performance. These measurements are conducted on systems containing 800 to 1000 atoms to ensure assessments are within the regime of GPU performance convergence [7].
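Because the efficiency score is the reference time divided by the measured time, a leaderboard score can be inverted to recover the implied average inference time per atom. The short sketch below does this for three scores quoted in this guide; the function and variable names are illustrative.

```python
ETA_REF_US_PER_ATOM = 100.0  # reference value from the text, in μs/atom


def implied_time_us_per_atom(score: float) -> float:
    """Invert score = reference / time to get the average time per atom."""
    if score <= 0:
        raise ValueError("score must be positive")
    return ETA_REF_US_PER_ATOM / score


# Efficiency scores as reported in the leaderboard tables of this guide.
SCORES = {"GRACE-2L-OAM": 0.639, "DPA-3.1-3M": 0.261, "Orb-v2": 1.341}

implied = {name: implied_time_us_per_atom(s) for name, s in SCORES.items()}
```

A score above 1, such as the 1.341 listed for Orb-v2, corresponds to an average time below the 100 μs/atom reference, while scores below 1 correspond to slower inference.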

Quantitative Comparison of Model Applicability

The following tables summarize the applicability performance of state-of-the-art LAMs as benchmarked by LAMBench (v0.3.1), providing a direct comparison of their stability and efficiency.

Table 1: Overall Applicability Scores of LAMs from LAMBench Leaderboard [7]

| Model | Efficiency (( M^m_{\mathrm{E}} )) ↑ | Instability (( M^m_{\mathrm{IS}} )) ↓ |

|---|---|---|

| Orb-v3 | 0.396 | 0.000 |

| SevenNet-MF-ompa | 0.084 | 0.000 |

| MatterSim-v1-5M | 0.393 | 0.000 |

| MACE-MPA-0 | 0.293 | 0.000 |

| SevenNet-l3i5 | 0.272 | 0.036 |

| MACE-MP-0 | 0.296 | 0.089 |

| DPA-3.1-3M | 0.261 | 0.572 |

| DPA-2.4-7M | 0.617 | 0.039 |

| GRACE-2L-OAM | 0.639 | 0.309 |

| Orb-v2 | 1.341 | 2.649 |

Table 2: Comparative force field performance in specific molecular dynamics simulations [17] [18]

| Force Field | System / Property Tested | Key Performance Finding |

|---|---|---|

| CHARMM Drude | CTA Fiber Stability (Hydrogen Bond Count) | Maintained stable, ordered structure during simulation [17] |

| GAFF | CTA Fiber Stability (Hydrogen Bond Count) | Maintained stable, ordered structure during simulation [17] |

| Polarized Martini | CTA Fiber Stability (Hydrogen Bond Count) | Maintained stable, ordered structure during simulation [17] |

| GROMOS | CTA Fiber Stability (Hydrogen Bond Count) | Structure collapsed after ~130 ns, but retained partial order [17] |

| CGenFF | CTA Fiber Stability (Hydrogen Bond Count) | Fiber collapsed immediately; most hydrogen bonds broken [17] |

| CHARMM36 | Diisopropyl Ether (DIPE) Density & Shear Viscosity | Provided quite accurate density and viscosity values [18] |

| COMPASS | Diisopropyl Ether (DIPE) Density & Shear Viscosity | Provided quite accurate density and viscosity values [18] |

| GAFF | Diisopropyl Ether (DIPE) Density & Shear Viscosity | Overestimated density by 3-5% and viscosity by 60-130% [18] |

| OPLS-AA/CM1A | Diisopropyl Ether (DIPE) Density & Shear Viscosity | Overestimated density by 3-5% and viscosity by 60-130% [18] |

Experimental Protocols for Quantifying Applicability

LAMBench Stability and Efficiency Assessment

The standardized methodology employed by LAMBench provides a consistent protocol for evaluating model applicability.

Figure 1: LAMBench's workflow for quantifying model applicability through standardized efficiency and stability tests.

Efficiency Measurement Protocol [7]:

- System Preparation: Randomly select 1000 frames from the inorganic materials and catalysis domains. Expand each frame to contain between 800 and 1000 atoms by replicating the unit cell, using a binary search algorithm to fully utilize GPU capacity.

- Timing Procedure: Execute inference on the remaining 900 frames (the initial 10% are treated as a warm-up phase and excluded). Record the average inference time per atom (( \bar\eta^m )).

- Score Calculation: Compute the efficiency score as ( M^m_{\mathrm{E}} = 100 / \bar\eta^m ), with ( \bar\eta^m ) in μs/atom, where the reference value ( \eta^0 ) is 100 μs/atom.
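The timing steps above can be sketched as a small harness that skips the warm-up frames and reports microseconds per atom. Everything here is an assumption made for illustration: `infer` stands in for a model call, frames are plain lists with one entry per atom, and the average is taken as total timed seconds over total timed atoms, which may differ from how LAMBench averages.

```python
import time

ETA_REF_US_PER_ATOM = 100.0  # reference value from the text, in μs/atom


def average_time_per_atom(infer, frames, warmup_fraction=0.1,
                          clock=time.perf_counter):
    """Average inference time in μs/atom, excluding the warm-up frames.

    infer  : callable taking one frame (hypothetical stand-in for a model)
    frames : sequence of frames; len(frame) is taken as its atom count
    clock  : injectable timer, so the logic can be checked deterministically
    """
    n_warmup = int(len(frames) * warmup_fraction)
    timed_seconds = 0.0
    timed_atoms = 0
    for index, frame in enumerate(frames):
        start = clock()
        infer(frame)
        elapsed = clock() - start
        if index >= n_warmup:  # the first frames only warm up the system
            timed_seconds += elapsed
            timed_atoms += len(frame)
    if timed_atoms == 0:
        raise ValueError("no frames left after excluding the warm-up phase")
    return 1e6 * timed_seconds / timed_atoms


def toy_infer(frame):
    """Cheap placeholder workload; a real model evaluation would go here."""
    return sum(x * x for x in frame)


toy_frames = [[0.5] * 900 for _ in range(20)]
eta_bar = average_time_per_atom(toy_infer, toy_frames)
score = ETA_REF_US_PER_ATOM / eta_bar
```

With a real GPU model, the device would also need to be synchronized before each clock reading, otherwise asynchronous kernel launches make the measured time too short.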

Stability Measurement Protocol [7]:

- Simulation Setup: Initialize NVE (microcanonical) molecular dynamics simulations for nine different structural systems.

- Trajectory Analysis: Run the simulations and monitor the total energy of the system over time.

- Drift Quantification: Calculate the total energy drift throughout the simulation trajectory. This drift value constitutes the instability metric ( M^m_{\mathrm{IS}} ).
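The idea behind the drift measurement can be shown on a toy system. The sketch below integrates a one-dimensional harmonic oscillator with velocity Verlet in reduced units and takes the drift as the least-squares slope of total energy against time. The `velocity_scale` knob is a deliberately non-physical device for mimicking a non-conservative model, and neither the system nor the slope-based drift definition is claimed to match how LAMBench computes its instability score.

```python
def nve_total_energies(n_steps, dt, k=1.0, mass=1.0, x0=1.0, v0=0.0,
                       velocity_scale=1.0):
    """Velocity-Verlet trajectory of a 1D harmonic oscillator.

    Returns the total energy after each step. velocity_scale != 1 rescales
    the velocity every step, injecting or removing energy on purpose.
    """
    x, v = x0, v0
    force = -k * x
    energies = []
    for _ in range(n_steps):
        v += 0.5 * dt * force / mass
        x += dt * v
        force = -k * x
        v += 0.5 * dt * force / mass
        v *= velocity_scale
        energies.append(0.5 * mass * v * v + 0.5 * k * x * x)
    return energies


def energy_drift(energies, dt):
    """Least-squares slope of total energy versus time."""
    n = len(energies)
    times = [i * dt for i in range(n)]
    t_mean = sum(times) / n
    e_mean = sum(energies) / n
    numerator = sum((t - t_mean) * (e - e_mean) for t, e in zip(times, energies))
    denominator = sum((t - t_mean) ** 2 for t in times)
    return numerator / denominator


DT = 0.01
conservative = energy_drift(nve_total_energies(10_000, DT), DT)
leaky = energy_drift(nve_total_energies(10_000, DT, velocity_scale=1.00001), DT)
```

The conservative run shows only small bounded energy oscillations and a slope near zero, while the rescaled run gains energy steadily, which is the signature the stability protocol is designed to catch.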

Specialized Force Field Stability Assessments

Independent studies provide deeper insights into stability evaluation protocols for specific systems, such as supramolecular assemblies.

Protocol for Supramolecular Fiber Stability [17]:

- Construct a Pre-Built Fiber: Create an initial structure of a known supramolecular fiber assembly (e.g., a stack of 24 CTA molecules from a crystal structure).

- Run MD Simulations: Simulate the fiber structure for hundreds of nanoseconds using different force fields (e.g., GROMOS, CHARMM, GAFF, Martini).

- Quantitative Analysis:

- Structural Order: Calculate the number of hydrogen bonds between key molecular groups (e.g., CTA amides) over time. A stable force field will maintain a constant number.

- Solvent Accessibility: Monitor the solvent-accessible surface area (SASA). A stable structure will show minimal variation in SASA.

- Visual Inspection: Observe the final simulation snapshots for structural collapse or disintegration.
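The hydrogen-bond criterion can be turned into a simple number by comparing the start and end of the trajectory. The sketch below computes the fraction of hydrogen bonds retained between the first and last windows of a time series; the function name, the window size, and both toy series are hypothetical and are not taken from the cited study.

```python
def retained_fraction(hbond_counts, window):
    """Mean count over the last `window` frames divided by the mean over
    the first `window` frames of a hydrogen-bond time series."""
    if window <= 0 or 2 * window > len(hbond_counts):
        raise ValueError("window must be positive and fit twice in the series")
    first = sum(hbond_counts[:window]) / window
    last = sum(hbond_counts[-window:]) / window
    if first == 0:
        raise ValueError("no hydrogen bonds in the initial window")
    return last / first


# Hypothetical per-frame hydrogen-bond counts for two force fields.
stable_series = [23, 24, 23, 22, 23, 24, 23, 23]
collapsed_series = [23, 22, 20, 15, 8, 5, 4, 3]

stable_ratio = retained_fraction(stable_series, window=4)
collapsed_ratio = retained_fraction(collapsed_series, window=4)
```

A ratio close to 1 is consistent with a fiber that keeps its ordered structure, whereas a ratio far below 1 indicates that most of the initial hydrogen bonds have been lost. The same function could be applied to a SASA time series to quantify variation in solvent exposure.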

Protocol for Liquid Membrane Property Assessment [18]:

- Select Representative Molecule: Choose a well-characterized molecule relevant to the system of interest (e.g., Diisopropyl Ether for liquid membranes).