Benchmarking Ensemble Generation Methods for Disordered Proteins: From Molecular Simulations to Machine Learning

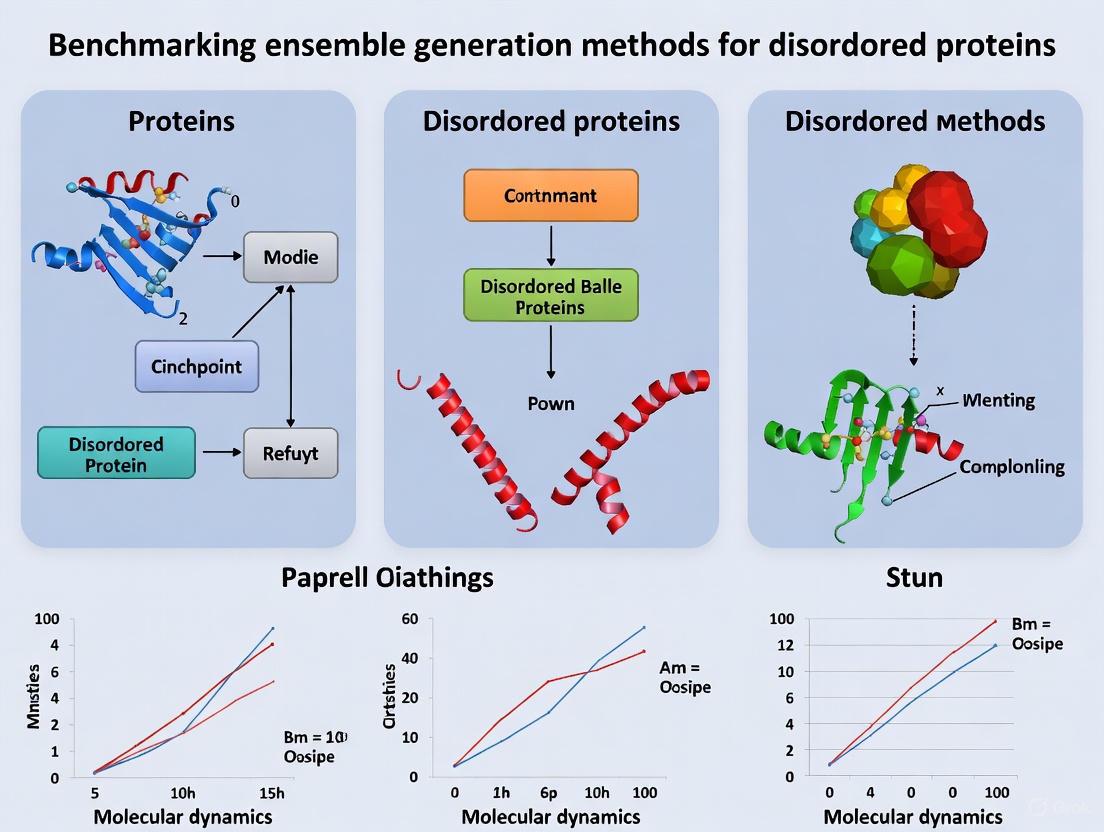



Abstract

This article provides a comprehensive benchmark of computational methods for generating conformational ensembles of intrinsically disordered proteins (IDPs). Aimed at researchers and drug development professionals, it explores the foundational principles of IDP ensemble characterization, compares traditional molecular dynamics with emerging machine learning techniques like generative adversarial networks and diffusion models, and outlines rigorous validation protocols. The review synthesizes insights from recent advances in integrative modeling, force-field comparisons, and AI-driven generation, offering a practical framework for selecting, optimizing, and validating ensemble generation methods to accelerate the study of IDP function and drug discovery.

Understanding Intrinsically Disordered Proteins: Why Conformational Ensembles Are Fundamental to Function

The Biological Significance of IDPs in Cellular Processes and Disease

Intrinsically disordered proteins (IDPs) and intrinsically disordered regions (IDRs) challenge the classical structure-function paradigm by performing crucial biological functions without adopting stable three-dimensional structures under physiological conditions [1]. These proteins are characterized by dynamic conformational ensembles, rapidly fluctuating between multiple states rather than maintaining a fixed architecture [2]. IDPs are highly abundant in eukaryotic proteomes, with estimates suggesting more than 30% of all eukaryotic proteins contain significant disordered segments [1]. Their unique biophysical properties, including high flexibility and structural plasticity, enable IDPs to participate in complex cellular processes that are inaccessible to their structured counterparts, particularly in signaling, regulation, and coordination of intricate interaction networks [2] [1].

The biological significance of IDPs extends across normal cellular physiology and disease pathogenesis. In healthy cells, IDPs function as crucial hubs in protein interaction networks, enabling precise control of transcriptional regulation, cell cycle progression, and signal transduction [2]. However, their structural flexibility also renders them susceptible to misfolding and aggregation, with devastating consequences in neurodegenerative diseases and cancer [3] [4]. This review examines the dual nature of IDPs in cellular processes and disease, with a specific focus on benchmarking the experimental and computational methods used to characterize these enigmatic proteins.

Structural and Functional Characteristics of IDPs

Sequence Determinants of Structural Disorder

The intrinsic disorder of IDPs is encoded in their amino acid sequences, which exhibit distinct compositional biases compared to structured proteins. IDPs display a characteristically low proportion of bulky hydrophobic amino acids (such as Trp, Tyr, Phe, Ile, and Leu) that form the stable cores of folded proteins, while being enriched in polar and charged residues (including Arg, Gln, Ser, and Glu) and in the structure-breaking residue Pro, collectively known as disorder-promoting amino acids [2] [1]. This distinct amino acid composition results in lower overall hydrophobicity and higher net charge, creating substantial barriers to spontaneous folding through reduced hydrophobic driving force and enhanced electrostatic repulsion [1]. Additionally, IDPs frequently possess lower sequence complexity and reduced evolutionary constraints, allowing for functional diversification through alternative splicing and post-translational modifications [2].

Table 1: Amino Acid Composition Bias in Ordered vs. Disordered Protein Regions

| Category | Amino Acids | Role in Structure Formation |
|---|---|---|
| Order-Promoting | C, W, Y, I, F, V, L | Depleted in disordered regions; form hydrophobic cores |
| Disorder-Promoting | M, K, R, S, Q, P, E | Enriched in disordered regions; prevent stable folding |
| Neutral | A, G, H, T, N, D | No strong preference for ordered or disordered regions |
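As a rough illustration of the composition bias described above, the following sketch scores a sequence using the residue classes from Table 1. The function name, the example sequence, and the simplified charge model (Lys/Arg as +1, Asp/Glu as -1, histidine and termini ignored) are assumptions made for this example and are not a published disorder predictor.

```python
# Residue classes copied from Table 1 (one-letter codes).
ORDER_PROMOTING = set("CWYIFVL")
DISORDER_PROMOTING = set("MKRSQPE")


def composition_profile(sequence: str) -> dict:
    """Return simple composition statistics for a protein sequence."""
    seq = sequence.upper()
    n = len(seq)
    if n == 0:
        raise ValueError("sequence must not be empty")
    order = sum(aa in ORDER_PROMOTING for aa in seq)
    disorder = sum(aa in DISORDER_PROMOTING for aa in seq)
    # Simplified net charge at neutral pH: K/R count as +1, D/E as -1.
    positive = sum(aa in "KR" for aa in seq)
    negative = sum(aa in "DE" for aa in seq)
    return {
        "fraction_order_promoting": order / n,
        "fraction_disorder_promoting": disorder / n,
        "net_charge_per_residue": (positive - negative) / n,
    }


# Hypothetical low-complexity segment, used only to exercise the function.
profile = composition_profile("MSEEKPRSQSPEKRSE")
print(profile)
```

A high disorder-promoting fraction combined with a low order-promoting fraction is only a coarse indicator; the dedicated predictors discussed later in the article use much richer sequence features.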

Functional Mechanisms of IDPs

IDPs employ diverse mechanistic strategies to perform their biological functions, leveraging their structural plasticity as a functional advantage rather than a limitation:

Molecular Recognition and Signaling: IDPs frequently undergo coupled folding and binding upon interaction with their biological targets, enabling high-specificity but low-affinity interactions that are ideal for dynamic signaling processes [2]. This mechanism allows the same disordered region to adopt different structures when binding to different partners, facilitating participation in multiple signaling pathways [2]. The kinetics of these interactions are particularly advantageous for cellular signaling, as IDPs often exhibit extremely fast association rates that allow rapid initiation and termination of signals [2].

Combinatorial Regulation: The accessibility of post-translational modification sites within disordered regions enables IDPs to function as molecular integrators of multiple signals [2]. Phosphorylation, acetylation, ubiquitination, and other modifications can serve as molecular switches that modulate IDP conformation and interaction properties, allowing precise temporal control of cellular processes [2] [1].

Liquid-Liquid Phase Separation (LLPS): Many IDPs drive the formation of membraneless organelles through LLPS, facilitating the spatial organization of cellular components without lipid bilayer encapsulation [2] [5]. These biomolecular condensates function as specialized reaction hubs that concentrate specific biomolecules while excluding others, enabling regulation of complex biochemical processes [5]. Proteins involved in LLPS can act as drivers (capable of autonomous phase separation) or clients (recruited into pre-existing condensates), with many IDPs functioning as drivers due to their multivalent interaction potential [5].

IDPs in Cellular Processes and Human Disease

Physiological Roles in Cellular Signaling and Regulation

IDPs serve crucial functions as central hubs in cellular interaction networks, particularly in signaling and regulatory pathways [2]. Their structural flexibility allows IDPs to interact with multiple partners, often functioning as scaffolds for the assembly of complex macromolecular machines [2]. In transcriptional regulation, disordered activation domains enable combinatorial control of gene expression through dynamic interactions with coactivators and chromatin remodeling complexes [2]. The CREB-binding protein (CBP) represents a paradigmatic example, with its disordered nuclear coactivator binding domain (NCBD) adopting different structures when bound to different transcription factors, thereby expanding its functional repertoire [2].

Cell cycle control provides another illustrative example of IDP functionality, with disordered proteins such as p27 serving as dynamic regulators of cyclin-dependent kinases [2]. The conformational flexibility of p27 allows it to interact with multiple cyclin-CDK complexes, with its biological activity directly mediated by the intrinsic helicity of a disordered linker region [2]. Similarly, the p53 tumor suppressor protein relies on disordered regions for its regulation and function, with the conformational ensemble of its N-terminal transactivation domain fine-tuning its interaction with the negative regulator Mdm2 [2]. Subtle alterations in the residual structure of disordered p53 regions can significantly impact its function, demonstrating the exquisite sensitivity of IDP-mediated regulatory mechanisms [2].

Pathological Roles in Neurodegeneration and Cancer

The structural plasticity of IDPs that enables their crucial physiological functions also renders them vulnerable to misfolding and pathological aggregation in disease states. In neurodegenerative disorders, specific IDPs undergo conformational transitions that lead to toxic aggregation and disruption of proteostasis mechanisms [3].

Neurodegenerative Diseases: Multiple neurodegenerative conditions are characterized by the accumulation of misfolded IDPs, including TDP-43 in amyotrophic lateral sclerosis (ALS), tau and Aβ in Alzheimer's disease, α-synuclein in Parkinson's disease, and huntingtin in Huntington's disease [3] [6]. These proteins typically undergo liquid-liquid phase separation under physiological conditions, but perturbations in cellular homeostasis can drive aberrant phase transitions toward solid-like aggregates that form toxic inclusions [3]. The failure of proteostasis mechanisms, including the ubiquitin-proteasome system, autophagy, and molecular chaperones, exacerbates this pathological process by allowing accumulation of misfolded IDPs [3].

Cancer: IDPs function as central regulators of oncogenic signaling pathways, with their dysregulation contributing to tumor pathogenesis [4]. Prominent examples include the c-Myc transcription factor, which controls cell growth, apoptosis, and metabolic processes, and p53, which serves as a critical tumor suppressor [4]. The structural flexibility of these proteins enables them to participate in complex interaction networks, but also makes them vulnerable to mutational disruption that can lead to oncogenic activation or loss of tumor suppressor function [4]. IDPs are also heavily implicated in programmed cell death pathways, including apoptosis, autophagy, and necroptosis, with disordered regions facilitating crucial protein-protein interactions in these regulatory networks [7].

Table 2: Disease-Associated Intrinsically Disordered Proteins

| Disease Category | Representative IDPs | Pathological Mechanisms |
|---|---|---|
| Neurodegenerative | TDP-43, Tau, α-synuclein, Aβ, Huntingtin | Aberrant phase transitions, toxic aggregation, proteostasis failure |
| Cancer | c-Myc, p53 | Dysregulated signaling, altered interaction networks |
| Programmed Cell Death | Proteins in apoptosis, autophagy, necroptosis | Disrupted protein-protein interactions in death signaling |

Benchmarking Methodologies for IDP Ensemble Generation

Experimental Approaches for IDP Characterization

The dynamic nature of IDPs necessitates specialized experimental approaches that can capture their heterogeneous conformational ensembles rather than providing single static structures [8]. Several biophysical techniques have been adapted or developed specifically for studying disordered proteins:

Nuclear Magnetic Resonance (NMR) Spectroscopy: NMR provides unparalleled insights into IDP conformational dynamics across multiple timescales, from fast local motions (ps-ns) to slower conformational exchanges (μs-ms) [8]. Advanced NMR strategies including ¹³C detection, non-uniform sampling, and segmental isotope labeling address the severe signal overlap caused by the narrow chemical-shift dispersion of IDPs, as well as their limited sample stability [8]. Parameters such as chemical shifts, hydrogen exchange rates, and relaxation measurements reveal transient secondary structures and dynamic properties within IDP ensembles [8].

Small-Angle X-Ray Scattering (SAXS): SAXS provides low-resolution information about the overall dimensions and shape characteristics of IDPs in solution, offering valuable constraints for validating computational models [6] [9]. The technique yields ensemble-averaged parameters such as the radius of gyration (Rg) and pairwise distance distributions that reflect the global properties of IDP conformational ensembles [6].

Single-Molecule Fluorescence Resonance Energy Transfer (smFRET): This technique enables quantification of distance distributions between specific sites within IDPs, providing insights into conformational heterogeneity that may be obscured in ensemble-averaged measurements [2] [8].
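To show how smFRET distances relate to an ensemble, the sketch below applies the Förster relation E = 1 / (1 + (r/R0)^6) to each conformer and averages the result. The Förster radius of 54 Å and the example distances are placeholder values chosen for illustration, and simple averaging of E assumes that conformers interconvert slowly relative to the fluorescence lifetime.

```python
def fret_efficiency(r: float, r0: float) -> float:
    """Foerster transfer efficiency for dye separation r and Foerster radius r0 (same units)."""
    return 1.0 / (1.0 + (r / r0) ** 6)


def mean_fret_efficiency(distances, r0, weights=None):
    """Population-weighted mean efficiency over an ensemble of inter-dye distances."""
    if weights is None:
        weights = [1.0] * len(distances)
    total = sum(weights)
    return sum(w * fret_efficiency(r, r0) for r, w in zip(distances, weights)) / total


# Placeholder inter-dye distances (in Angstrom) for three conformers.
distances = [30.0, 54.0, 90.0]
print(mean_fret_efficiency(distances, r0=54.0))
# The efficiency at the mean distance generally differs from the mean efficiency.
print(fret_efficiency(sum(distances) / len(distances), r0=54.0))
```

Because the relation is strongly nonlinear in r, the same mean efficiency can arise from different distance distributions, which is one reason smFRET is usually combined with other data.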

Integrative Approaches: No single experimental technique can fully characterize IDP structural ensembles, necessitating integrative approaches that combine data from multiple methods [8] [9]. Maximum entropy reweighting procedures have emerged as powerful strategies for determining accurate atomic-resolution conformational ensembles by integrating molecular dynamics simulations with experimental data from NMR and SAXS [9]. These approaches minimize bias toward initial computational models while ensuring consistency with experimental observations [9].

Figure: Workflow for IDP structural ensemble determination

Computational and AI-Based Approaches

Computational methods have become indispensable tools for predicting and characterizing IDP structural ensembles, complementing experimental approaches:

Molecular Dynamics (MD) Simulations: All-atom MD simulations provide atomic-resolution models of IDP conformational ensembles, but their accuracy depends heavily on the force fields used to describe interatomic interactions [9]. Recent improvements in force fields and water models have significantly enhanced the accuracy of MD simulations for IDPs, though discrepancies with experimental data persist [9]. Integrative approaches that combine MD simulations with experimental data through maximum entropy reweighting procedures have demonstrated particular promise for generating force-field independent conformational ensembles [9].

AlphaFold-Based Approaches: While initially developed for structured proteins, AlphaFold has shown surprising utility for predicting inter-residue distances in disordered proteins [6]. The AlphaFold-Metainference method leverages these predicted distances as structural restraints in molecular dynamics simulations to generate structural ensembles of IDPs [6]. This approach enables the transfer of distance information derived from folded proteins to the characterization of disordered proteins, addressing the challenge of limited high-resolution structural data for IDPs [6]. Validation against SAXS data and NMR measurements has demonstrated that AlphaFold-Metainference can generate accurate conformational ensembles for both highly disordered and partially disordered proteins [6].

Bioinformatics Predictors: Numerous computational tools have been developed for predicting intrinsic disorder from amino acid sequence, including DISOPRED, DISOclust, OnD-CRF, IUPred, ANCHOR, and ESpritz [2] [10]. These predictors analyze sequence features such as amino acid composition, complexity, and physicochemical properties to identify regions likely to be disordered [2] [10]. The D2P2 database provides a consensus of disorder predictions across multiple algorithms for the human proteome, facilitating comprehensive analysis of protein disorder [2].

Table 3: Comparison of Experimental and Computational Methods for IDP Ensemble Characterization

| Method | Resolution | Key Applications | Limitations |
|---|---|---|---|
| NMR Spectroscopy | Atomic | Site-specific dynamics, transient structures | Limited to smaller proteins, spectral complexity |
| SAXS | Global dimensions | Ensemble shape, size validation | Low resolution, ensemble averaging |
| smFRET | Inter-site distances | Conformational heterogeneity, subpopulations | Requires labeling, limited coverage |
| Molecular Dynamics | Atomic | Detailed conformational sampling | Force field dependencies, computational cost |
| AlphaFold-Metainference | Atomic | Ensemble generation from predicted distances | Limited to AlphaFold-confident regions |

Table 4: Research Reagent Solutions for IDP Studies

| Resource Category | Specific Tools | Function and Application |
|---|---|---|
| Experimental Databases | DisProt, pE-DB, LLPSDB | Structured information on disordered proteins and conformational ensembles |
| Bioinformatics Predictors | IUPred, ANCHOR, PONDR, DISOPRED | Disorder and binding region prediction from sequence |
| Integrated Datasets | D2P2, LLPSDatasets | Consensus predictions and standardized benchmarking data |
| Specialized Resources | PhaSePro, DrLLPS, FuzDB | Phase separation proteins and fuzzy interactions |

The study of intrinsically disordered proteins has transformed our understanding of protein structure-function relationships, revealing the profound biological significance of structural plasticity and dynamics. IDPs play essential roles in cellular signaling, regulation, and organization through mechanisms that are fundamentally different from those employed by structured proteins. Their involvement in human diseases, particularly neurodegeneration and cancer, highlights the therapeutic potential of targeting disordered regions and their interactions.

Methodological advances in both experimental and computational approaches have dramatically improved our ability to characterize IDP structural ensembles, with integrative strategies combining multiple data sources providing particularly powerful insights. The recent development of AlphaFold-Metainference and robust maximum entropy reweighting protocols represents significant progress toward accurate, force-field independent conformational ensembles at atomic resolution [6] [9]. As these methods continue to evolve, they will enhance our understanding of IDP functions in health and disease, potentially enabling new therapeutic strategies that target the unique properties of disordered proteins.

For decades, structural biology has operated under a paradigm dominated by static structures, seeking to resolve proteins into single, stable three-dimensional configurations. This approach has proven remarkably successful for well-folded globular proteins, with breakthroughs like AlphaFold2 providing unprecedented access to accurate structural models [11]. However, this single-structure framework fundamentally fails to capture the dynamic nature of a significant portion of the proteome—intrinsically disordered proteins (IDPs) and intrinsically disordered regions (IDRs). These proteins, which constitute approximately 30-40% of the human proteome, perform critical cellular functions in signaling, transcriptional regulation, and molecular recognition without adopting fixed structures [12] [11]. Instead, they exist as dynamic conformational ensembles—rapidly interconverting collections of structures that cannot be meaningfully represented by any single conformation.

The limitations of static models have become increasingly apparent as researchers recognize that protein plasticity is not an exception but a fundamental feature of biological systems. This recognition has catalyzed a paradigm shift from structural biology to ensemble biology, where the objective is no longer to determine a single "correct" structure but to characterize the complete landscape of accessible conformations and their populations. This shift is particularly crucial for drug discovery, as approximately 80% of human proteins remain "undruggable" by conventional methods, largely because many challenging targets require therapeutic strategies that account for conformational flexibility and transient binding sites [11]. This comparison guide benchmarks current ensemble generation methods, providing structural biologists and drug development professionals with experimental data and protocols to navigate this evolving landscape.

Benchmarking Ensemble Generation Methods: Experimental Data and Performance Metrics

The evaluation of ensemble methods requires diverse metrics that capture their ability to accurately represent dynamic conformational states. The table below summarizes quantitative performance data for key computational approaches discussed in this guide.

Table 1: Performance Benchmarks of Ensemble Generation Methods for Disordered Proteins

| Method | Type | Key Features | Reported Performance | Best For |
|---|---|---|---|---|
| PepENS [13] | Ensemble ML | Combines ProtT5 embeddings, PSSM, HSE features | Precision: 0.596, AUC: 0.860 (Dataset 1) | Protein-peptide binding residue prediction |
| FiveFold [11] | Algorithm Ensemble | Combines 5 structure prediction algorithms | Functional Score: 0.82 (composite metric) | Capturing conformational diversity in IDPs |
| MaxEnt Reweighting [9] | MD Integration | Integrates MD with NMR/SAXS via maximum entropy | Kish Ratio: 0.10 (~3000 structures retained) | Atomic-resolution ensembles with experimental validation |
| RFdiffusion [14] | Generative AI | Designs binders to IDP sequences | Kd: 3-100 nM for various IDP targets | Generating high-affinity binders to disordered proteins |
| IDP-EDL [12] | Ensemble Deep Learning | Integrates task-specific predictors | N/A (Framework review) | Disorder prediction and MoRF identification |

These benchmarks are not directly comparable, because each method reports a different metric on a different task, but together they illustrate a trade-off between predictive accuracy and structural diversity. Methods like PepENS demonstrate high precision in specific binding prediction tasks [13], while approaches like FiveFold excel at capturing broad conformational diversity [11]. The maximum entropy reweighting method strikes a balance by refining molecular dynamics simulations with experimental data to produce ensembles that are both accurate and diverse [9].

Experimental Protocols for Ensemble Method Validation

Maximum Entropy Reweighting with NMR and SAXS

The maximum entropy reweighting protocol represents a robust approach for determining accurate atomic-resolution conformational ensembles of IDPs by integrating molecular dynamics simulations with experimental data [9].

Detailed Protocol:

- Generate an initial conformational ensemble using long-timescale all-atom MD simulations (typically 30 μs) with state-of-the-art force fields such as a99SB-disp, CHARMM22*, or CHARMM36m [9].

- Collect experimental data including NMR chemical shifts, J-couplings, residual dipolar couplings, and SAXS curves.

- Apply forward models to predict experimental observables from each frame of the MD ensemble using established computational methods [9].

- Perform maximum entropy reweighting by minimizing the objective \( L(w) = \sum_i w_i \log w_i + \sum_j \lambda_j \left( \langle O_j \rangle - O_j^{\text{exp}} \right)^2 \), where \( w_i \) are conformation weights, \( \lambda_j \) are Lagrange multipliers, \( \langle O_j \rangle \) are ensemble-averaged observables, and \( O_j^{\text{exp}} \) are experimental values [9].

- Set the Kish ratio threshold (the effective sample size divided by the total number of frames) to 0.10, so that the final ensemble retains approximately 3000 structures with significant weights, balancing accuracy and diversity [9].

- Validate reweighted ensembles by comparing against experimental data not used in the reweighting process and assessing convergence across different force fields.
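The reweighting and Kish-ratio steps above can be sketched in a few lines. This is a minimal illustration, not the implementation from [9]: it uses the textbook constrained form of maximum entropy, in which weights take the shape w_i ∝ w_i⁰ exp(-Σ_j λ_j O_ij) and the multipliers are found by plain gradient descent on the dual function, and it ignores experimental and forward-model errors. The function names, step size, and toy data are assumptions made for the example.

```python
import math


def maxent_reweight(obs, targets, prior=None, lr=0.5, steps=2000):
    """obs[i][j]: observable j predicted for frame i; targets[j]: experimental value."""
    n, m = len(obs), len(targets)
    prior = prior or [1.0 / n] * n
    lam = [0.0] * m
    for _ in range(steps):
        # Maximum entropy weights: w_i proportional to prior_i * exp(-sum_j lam_j * O_ij).
        logw = [math.log(p) - sum(l * o for l, o in zip(lam, row))
                for p, row in zip(prior, obs)]
        shift = max(logw)  # subtract the maximum for numerical stability
        w = [math.exp(x - shift) for x in logw]
        z = sum(w)
        w = [x / z for x in w]
        avg = [sum(wi * row[j] for wi, row in zip(w, obs)) for j in range(m)]
        # Gradient of the dual function with respect to lam_j is (target_j - avg_j).
        lam = [l - lr * (t - a) for l, t, a in zip(lam, targets, avg)]
    return w, lam


def kish_ratio(weights):
    """Kish effective sample size divided by the number of frames."""
    s1 = sum(weights)
    s2 = sum(w * w for w in weights)
    return s1 * s1 / (len(weights) * s2)


# Toy example: four frames, one observable, experimental average of 3.0.
frames = [[1.0], [2.0], [3.0], [4.0]]
weights, multipliers = maxent_reweight(frames, [3.0])
print(weights, kish_ratio(weights))
```

A Kish ratio near 1 means the weights stay close to uniform, while a small value means a few frames dominate; stopping at a threshold such as 0.10 limits how far the reweighted ensemble can drift from the simulation.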

FiveFold Conformational Sampling Workflow

The FiveFold methodology generates conformational ensembles through a sophisticated consensus-building approach that leverages multiple prediction algorithms [11].

Detailed Protocol:

- Algorithmic prediction: Process the target sequence through five complementary structure prediction algorithms: AlphaFold2, RoseTTAFold, OmegaFold, ESMFold, and EMBER3D [11].

- Secondary structure assignment: Analyze each algorithm's output using the Protein Folding Shape Code system to assign standardized secondary structure elements [11].

- PFVM construction: Build a Protein Folding Variation Matrix by analyzing each 5-residue window across all five algorithms to capture local structural preferences and variations [11].

- Probabilistic sampling: Apply user-defined selection criteria to sample conformational diversity, ensuring minimum RMSD between conformations and appropriate secondary structure content ranges [11].

- Structure construction: Convert each PFSC string to 3D coordinates using homology modeling against the PDB-PFSC database [11].

- Quality assessment: Filter ensembles through stereochemical validation to ensure physically reasonable conformations [11].
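The minimum-RMSD criterion in the probabilistic sampling step can be illustrated with a simple greedy filter. This is a hypothetical sketch and not the FiveFold implementation: it assumes a precomputed symmetric pairwise RMSD matrix and keeps a conformation only if it differs from every conformation already kept by at least the chosen threshold.

```python
def select_diverse(rmsd, min_rmsd):
    """Greedy selection of conformer indices that are mutually at least min_rmsd apart.

    rmsd is a symmetric matrix (list of lists) of pairwise RMSD values.
    """
    kept = []
    for i in range(len(rmsd)):
        # Accept conformer i only if it is far enough from all accepted conformers.
        if all(rmsd[i][j] >= min_rmsd for j in kept):
            kept.append(i)
    return kept


# Placeholder RMSD matrix (in Angstrom) for three candidate conformations.
rmsd_matrix = [
    [0.0, 1.0, 5.0],
    [1.0, 0.0, 4.5],
    [5.0, 4.5, 0.0],
]
print(select_diverse(rmsd_matrix, min_rmsd=2.0))
```

The result depends on the order in which candidates are visited, so in practice candidates would usually be sorted first, for example by a quality score.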

RFdiffusion Binder Design for IDPs

RFdiffusion represents a groundbreaking approach for generating binders to intrinsically disordered proteins starting from sequence information alone [14].

Detailed Protocol:

- Input specification: Provide only the target IDP sequence without pre-specifying target geometry or conformation [14].

- Flexible target diffusion: Use RFdiffusion fine-tuned for flexible targets to generate complexes with varying conformations for both the IDP and designed binder [14].

- Two-sided partial diffusion: Implement partial diffusion that samples varied target and binder conformations simultaneously to enhance shape complementarity [14].

- Sequence design: Generate sequences for backbone structures using ProteinMPNN [14].

- Filtering: Screen designs using AlphaFold2 for monomer conformation and complex formation [14].

- Affinity maturation: Employ additional partial diffusion cycles to optimize binding affinity, selecting designs with increased hydrogen bonds between target and binder [14].

- Experimental validation: Test binding affinity using biolayer interferometry and assess thermostability through circular dichroism [14].
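To put measured affinities such as the 3-100 nM range reported for RFdiffusion binders on an energy scale, the dissociation constant can be converted to a standard binding free energy with ΔG° = RT ln(Kd / 1 M). The sketch below assumes a temperature of 298.15 K and a 1 M standard state; the function name is chosen for this example.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)


def binding_free_energy(kd_molar: float, temperature_k: float = 298.15) -> float:
    """Standard binding free energy in kcal/mol from a dissociation constant in mol/L."""
    if kd_molar <= 0:
        raise ValueError("Kd must be positive")
    return R_KCAL * temperature_k * math.log(kd_molar)


for kd in (3e-9, 100e-9):
    print(f"Kd = {kd:.0e} M -> dG = {binding_free_energy(kd):.2f} kcal/mol")
```

More negative values correspond to tighter binding, so improvements obtained during affinity maturation show up as a decrease in ΔG°.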

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful implementation of ensemble methods requires specific computational tools and resources. The table below catalogues essential solutions for researchers entering this field.

Table 2: Research Reagent Solutions for Ensemble Structural Biology

| Resource | Type | Function | Access |
|---|---|---|---|
| ProtT5/ESM-2 [13] [12] | Protein Language Model | Generates sequence embeddings for feature extraction | Publicly available |
| CHARMM36m/a99SB-disp [9] | Molecular Dynamics Force Field | Provides physical models for MD simulations | Publicly available |
| PSSM Profiles [13] | Evolutionary Feature | Captures evolutionary conservation patterns | Derived from multiple sequence alignments |
| Half-Sphere Exposure [13] | Structural Feature | Quantifies residue solvent accessibility in specific directions | Calculated from structural models |
| DeepInsight [13] | Feature Transformation | Converts tabular data into image-like formats for CNN processing | Publicly available |
| NMR Chemical Shifts [9] | Experimental Data | Provides residue-specific structural information | Experimental measurement |
| SAXS Curves [9] | Experimental Data | Reports on global dimensions and shape characteristics | Experimental measurement |

These tools enable researchers to capture different aspects of protein disorder and dynamics. Protein language models like ProtT5 and ESM-2 have proven particularly valuable, providing rich residue-level embeddings that capture evolutionary patterns relevant to disorder and molecular recognition [13] [12]. When combined with structural features like half-sphere exposure and evolutionary features from PSSM profiles, these embeddings form a powerful feature set for training ensemble machine learning models like PepENS [13].

The paradigm shift from static structures to dynamic ensembles represents more than just a methodological evolution—it fundamentally changes how we understand and manipulate biological systems. As the benchmarks and protocols in this guide demonstrate, ensemble methods are maturing from specialized tools into robust platforms for interrogating protein function. The convergence of machine learning, molecular simulations, and experimental biophysics has created an exciting trajectory where accurately determining force-field independent conformational ensembles of IDPs is becoming feasible [9].

The implications for drug discovery are profound. Ensemble approaches enable targeting of transient binding sites, allosteric pockets, and dynamic interaction networks that are invisible to static structure methods. Techniques like RFdiffusion for designing binders to IDPs [14] and FiveFold for mapping conformational landscapes [11] are already expanding the druggable proteome. As these methods continue to evolve, integrating better with experimental data and improving computational efficiency, they promise to unlock new therapeutic strategies for previously intractable targets.

The future of ensemble structural biology lies in tighter integration between methods—combining the strengths of AI-based prediction, physics-based simulation, and experimental validation to create multi-scale models that capture both atomic details and biological timescales. This integration will ultimately provide a more complete understanding of protein function, enabling precision interventions in health and disease.

Intrinsically disordered proteins (IDPs) and intrinsically disordered regions (IDRs) challenge the classical structure-function paradigm by performing crucial biological roles without adopting a single, stable three-dimensional conformation. Instead, they exist as dynamic structural ensembles, rapidly interconverting between multiple conformations in solution. Characterizing these heterogeneous ensembles is essential for understanding their functions in cellular signaling, regulation, and assembly, as well as their implications in neurodegenerative diseases and cancer. This guide provides a comparative analysis of three key experimental techniques for determining accurate conformational ensembles of IDPs: Nuclear Magnetic Resonance (NMR), Small-Angle X-Ray Scattering (SAXS), and Paramagnetic Relaxation Enhancement (PRE), which is itself an NMR-based measurement. Framed within the broader context of benchmarking ensemble generation methods, we objectively evaluate the performance, capabilities, and limitations of each technique to inform methodological choices in disordered protein research.

Fundamental Principles and Measurables

Each experimental technique probes different aspects of IDP conformational ensembles, providing complementary information that can be integrated for a more complete structural understanding.

Nuclear Magnetic Resonance (NMR) spectroscopy provides atomic-resolution information about local structural propensities and dynamics. Key observables include chemical shifts (sensitive to secondary structure propensity), scalar couplings (reporting on backbone dihedral angles), residual dipolar couplings (RDCs, providing orientational constraints), and relaxation parameters (characterizing picosecond-to-nanosecond dynamics). NMR is particularly powerful for identifying transient secondary structure and quantifying local flexibility within disordered chains [9].

Small-Angle X-Ray Scattering (SAXS) offers low-resolution but global information about overall molecular dimensions and shape. The primary measurables include the radius of gyration (Rg), which describes the overall size of the molecule, and the pair-wise distance distribution function P(r), which provides a histogram of all intra-molecular distances within the ensemble. SAXS is exceptionally valuable for detecting large-scale conformational changes and assessing compaction or expansion of IDPs under different conditions [15] [9].
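As a sketch of how these SAXS measurables relate to a structural ensemble, the code below computes Rg for a single conformer, the ensemble value as the root-mean-square over conformers (SAXS reports on the average of Rg²), and a simple histogram of pairwise distances as a stand-in for P(r). It assumes equally weighted scattering sites, such as one bead per residue, which is a simplification of the electron-density-weighted quantities measured experimentally; coordinates and function names are illustrative.

```python
import math
from itertools import combinations


def radius_of_gyration(coords):
    """Rg of equally weighted points given as (x, y, z) tuples."""
    n = len(coords)
    center = [sum(p[k] for p in coords) / n for k in range(3)]
    return math.sqrt(sum(math.dist(p, center) ** 2 for p in coords) / n)


def ensemble_rg(conformers, weights=None):
    """Root-mean-square Rg over an ensemble, optionally population-weighted."""
    weights = weights or [1.0] * len(conformers)
    mean_sq = sum(w * radius_of_gyration(c) ** 2
                  for w, c in zip(weights, conformers)) / sum(weights)
    return math.sqrt(mean_sq)


def pair_distance_histogram(coords, bin_width):
    """Counts of pairwise distances per bin; an unnormalized analogue of P(r)."""
    distances = [math.dist(a, b) for a, b in combinations(coords, 2)]
    counts = [0] * (int(max(distances) / bin_width) + 1)
    for d in distances:
        counts[int(d / bin_width)] += 1
    return counts


# Toy three-bead chain on a line (arbitrary length units).
chain = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (4.0, 0.0, 0.0)]
print(radius_of_gyration(chain), pair_distance_histogram(chain, bin_width=1.0))
```

Because the ensemble value averages Rg² rather than Rg, a small population of very expanded conformers raises the apparent Rg more than a simple mean would suggest.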

Paramagnetic Relaxation Enhancement (PRE) measures long-range distance restraints (up to ~35 Å) that are challenging to obtain by other methods. By introducing paramagnetic labels at specific sites and measuring their effects on nuclear relaxation rates, PRE provides information about transient contacts and long-range interactions within heterogeneous ensembles. This technique is particularly powerful for detecting low-populated compact states that might be invisible to other methods [6].
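The sensitivity of PRE to low-populated compact states follows from its steep distance dependence: in the fast-exchange limit the measured enhancement scales with the population-weighted average of r⁻⁶. The sketch below computes the corresponding effective distance, ⟨r⁻⁶⟩^(-1/6), for a toy two-state ensemble. Distances and populations are placeholder values, and the physical prefactor and internal dynamics are deliberately omitted.

```python
def pre_effective_distance(distances, populations):
    """Effective label-to-nucleus distance from an r^-6 weighted ensemble average."""
    total = sum(populations)
    mean_inv6 = sum(p * r ** -6 for r, p in zip(distances, populations)) / total
    return mean_inv6 ** (-1.0 / 6.0)


# Toy ensemble: 5% compact state at 10 Angstrom, 95% expanded state at 50 Angstrom.
r_eff = pre_effective_distance([10.0, 50.0], [0.05, 0.95])
r_mean = 0.05 * 10.0 + 0.95 * 50.0
print(f"effective PRE distance: {r_eff:.1f} A, linear mean: {r_mean:.1f} A")
```

In this example the effective distance lies far below the linear mean, which shows why a minor compact population can dominate the observed PRE.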

Table 1: Key Experimental Observables for IDP Ensemble Characterization

| Technique | Primary Observables | Spatial Resolution | Distance Range | Key Parameters |
|---|---|---|---|---|
| NMR | Chemical shifts, J-couplings, RDCs, relaxation rates | Atomic-level | Short-range (1-5 Å) | δ (ppm), J (Hz), R₁, R₂, NOE |
| SAXS | Rg, P(r) function, Kratky plot | Global/molecular | 10-100+ Å | Rg (Å), Dmax (Å), I(q) vs q |
| PRE | Paramagnetic relaxation rates (Γ₂) | Intermediate | Up to ~35 Å | Γ₂ (s⁻¹), distance restraints |

Technical Comparison and Benchmarking Data

When benchmarking ensemble generation methods, understanding the performance characteristics of each experimental technique is crucial for appropriate experimental design and data interpretation.

Information Content and Resolution

NMR provides the highest atomic-resolution data but primarily reports on local structure. The chemical shift is exquisitely sensitive to local environment and secondary structure propensity, with deviations from random coil values indicating transient structural formation. Recent advances in maximum entropy reweighting procedures have demonstrated how to integrate extensive NMR datasets with molecular dynamics simulations to determine accurate atomic-resolution conformational ensembles of IDPs [9].

SAXS delivers global structural parameters that are highly sensitive to overall chain dimensions and shape. The P(r) function provides a model-free description of the distance distribution within the molecule. However, SAXS data are ensemble-averaged and can be consistent with multiple conformational distributions, creating an inherent degeneracy in interpretation. Individual AlphaFold2 structures of disordered proteins agree poorly with SAXS data, which underscores the necessity of ensemble representations for IDPs [6].

PRE bridges local and global information by providing sparse but valuable long-range distance restraints. These measurements are particularly important for detecting and characterizing transient compact states that may be functionally relevant. However, PRE requires site-specific labeling and the introduction of paramagnetic probes that could potentially perturb the native conformational ensemble [6].

Throughput and Sample Requirements

SAXS offers the highest experimental throughput, requiring relatively short measurement times (seconds to minutes) and moderate sample concentrations (0.5-5 mg/mL). Modern automated sample changers enable high-throughput screening of multiple conditions, making SAXS ideal for studying environmental effects on IDP conformation.

NMR demands higher sample concentrations (0.1-1 mM) and longer acquisition times (hours to days), especially for multi-dimensional experiments. Recent advances in non-uniform sampling and sensitivity-enhanced probes have improved throughput, but NMR remains more time-intensive than SAXS.

PRE requires additional sample preparation for specific labeling with paramagnetic probes (typically MTSL or EDTA-derived tags), adding complexity and time to the experimental workflow. Each specific site of interest requires separate labeling and measurement.

Table 2: Technical Specifications and Benchmarking Performance

| Parameter | NMR | SAXS | PRE |

|---|---|---|---|

| Sample Amount | 50-500 μL (0.1-1 mM) | 10-50 μL (0.5-5 mg/mL) | 50-500 μL (0.1-1 mM) |

| Measurement Time | Hours to days | Seconds to minutes | Hours per site |

| Labeling Required | Optional (¹⁵N, ¹³C) | No | Yes (paramagnetic) |

| Information Type | Local structure, dynamics | Global dimensions, shape | Long-range distances |

| Maximum Range | Bond lengths to ~15 Å | 10 to several hundred Å | Up to ~35 Å |

| Key Strengths | Atomic resolution, site-specific, dynamics | Solution state, rapid, model-free | Long-range restraints, sparse states |

Experimental Protocols and Methodologies

NMR for IDP Ensemble Determination

Sample Preparation: Uniform ¹⁵N and/or ¹³C labeling is typically required for assignment and structural studies. IDP samples are prepared in appropriate buffers, often at lower concentrations than folded proteins to prevent aggregation (typically 0.1-0.5 mM). Reducing agents may be added to prevent cysteine oxidation.

Data Collection: Standard experiments include: 1) 2D ¹H-¹⁵N HSQC for assignment and fingerprinting; 2) ¹³C-detected experiments for low-sensitivity or aggregating samples; 3) T₁, T₂, and heteronuclear NOE measurements for dynamics; 4) Residual Dipolar Couplings (RDCs) in aligned media for orientation restraints.

Data Integration with Simulations: The maximum entropy reweighting approach has emerged as a powerful method for integrating NMR data with molecular dynamics simulations. As described in recent work, this procedure involves: "Using forward models to predict the values of the experimental measurements used as restraints in each frame of the unbiased MD ensemble" followed by reweighting to achieve agreement with experimental data while minimizing perturbation to the simulation force field [9].
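The reweighting step can be illustrated with a generic maximum entropy solver. This is a sketch under stated assumptions and not the code released with [9]. It assumes the forward-model predictions are already available as an array `calc` with one row per MD frame and one column per observable. It uses the common dual formulation in which Gaussian experimental errors `sigma` (which must be positive) enter as a quadratic penalty on the Lagrange multipliers. All function and variable names are hypothetical.

```python
import numpy as np

def _log_weights(calc, lam, w0):
    # Unnormalized log-weights: log w0_i - sum_k lam_k * calc[i, k]
    return np.log(w0) - calc @ lam

def _weights(calc, lam, w0):
    logw = _log_weights(calc, lam, w0)
    w = np.exp(logw - logw.max())  # subtract the max for numerical stability
    return w / w.sum()

def _dual(calc, exp_vals, sigma, lam, w0):
    # Convex dual objective whose minimum defines the multipliers.
    logw = _log_weights(calc, lam, w0)
    log_z = logw.max() + np.log(np.sum(np.exp(logw - logw.max())))
    return log_z + lam @ exp_vals + 0.5 * np.sum((sigma * lam) ** 2)

def maxent_reweight(calc, exp_vals, sigma, w0=None, max_iter=100, tol=1e-10):
    """Return (weights, multipliers) for frames given experimental targets."""
    calc = np.asarray(calc, dtype=float)
    exp_vals = np.asarray(exp_vals, dtype=float)
    sigma = np.asarray(sigma, dtype=float)
    n_frames, n_obs = calc.shape
    if w0 is None:
        w0 = np.full(n_frames, 1.0 / n_frames)  # unbiased MD: uniform prior
    else:
        w0 = np.asarray(w0, dtype=float) / np.sum(w0)
    lam = np.zeros(n_obs)
    for _ in range(max_iter):
        w = _weights(calc, lam, w0)
        avg = w @ calc
        grad = exp_vals - avg + sigma ** 2 * lam
        if np.max(np.abs(grad)) < tol:
            break
        centered = calc - avg
        hess = centered.T @ (centered * w[:, None]) + np.diag(sigma ** 2)
        step = np.linalg.solve(hess, grad)  # Newton direction
        t, f0 = 1.0, _dual(calc, exp_vals, sigma, lam, w0)
        # Backtracking keeps the Newton step from overshooting.
        while _dual(calc, exp_vals, sigma, lam - t * step, w0) > f0 and t > 1e-8:
            t *= 0.5
        lam = lam - t * step
    return _weights(calc, lam, w0), lam
```

With small `sigma` the reweighted averages `weights @ calc` approach the experimental values. Larger `sigma` relaxes the fit and keeps the weights closer to the original simulation.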

SAXS Data Collection and Analysis

Sample and Buffer Matching: SAXS measurements require careful buffer subtraction to extract the protein scattering signal. A matched reference buffer is measured before or after the protein sample. Ideally, multiple concentrations are measured to extrapolate to infinite dilution and eliminate effects of interparticle interference.

Data Collection Parameters: Modern synchrotron-based SAXS instruments typically use X-ray wavelengths of ~1 Å, with sample-to-detector distances calibrated to cover a q-range of approximately 0.01 to 5 nm⁻¹ (q = 4πsinθ/λ, where 2θ is the scattering angle). Exposure times are optimized to minimize radiation damage while maintaining a good signal-to-noise ratio.
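The definition of q can be turned into a short unit-aware helper. The sketch below assumes the wavelength is given in Å and returns q in nm⁻¹ to match the range quoted above. The function names are hypothetical, and the second helper uses the standard relation d = 2π/q to indicate the real-space length scale probed at a given q.

```python
import math

def scattering_vector(two_theta_deg, wavelength_angstrom):
    """q = 4*pi*sin(theta)/lambda in nm^-1; two_theta_deg is the full scattering angle."""
    theta = math.radians(two_theta_deg) / 2.0
    wavelength_nm = wavelength_angstrom / 10.0  # 1 nm = 10 Å
    return 4.0 * math.pi * math.sin(theta) / wavelength_nm

def real_space_scale(q_inv_nm):
    """Approximate real-space distance d = 2*pi/q, in nm."""
    return 2.0 * math.pi / q_inv_nm
```

For example, `real_space_scale(0.01)` gives the largest distance scale accessible at the low-q limit of the quoted range, which is the quantity that matters for expanded IDPs.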

Advanced SAXS Applications: The SAXS-A-FOLD website provides an automated pipeline for "ensemble modeling optimizing the fit of AlphaFold or user-supplied protein structures with flexible regions to SAXS data." The protocol involves: "A starting pool of typically 10-50 × 10³ conformations is generated using a Monte Carlo method that samples backbone dihedral angles along the chosen segments of potential flexibility in the protein structures," followed by ensemble selection using non-negative least squares (NNLS) optimization against experimental data [15].
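The ensemble selection step can be sketched as a non-negative least-squares fit of conformer populations to the measured curve. The code below is not the SAXS-A-FOLD implementation. It is a minimal projected-gradient solver written with NumPy only, assuming the theoretical profiles of the pool are stacked column-wise in a matrix `profiles` (one row per q point) and that `target` holds the experimental intensities on the same q grid. A library routine such as `scipy.optimize.nnls` could be used instead.

```python
import numpy as np

def nnls_projected_gradient(profiles, target, n_iter=5000):
    """Minimize ||profiles @ w - target||^2 subject to w >= 0.

    profiles: (n_q, n_conformers) theoretical intensities per conformer.
    target:   (n_q,) experimental intensities on the same q grid.
    """
    a = np.asarray(profiles, dtype=float)
    b = np.asarray(target, dtype=float)
    # Step size 1/L, where L is the Lipschitz constant of the gradient.
    step = 1.0 / np.linalg.norm(a, 2) ** 2
    w = np.zeros(a.shape[1])
    for _ in range(n_iter):
        grad = a.T @ (a @ w - b)
        w = np.maximum(0.0, w - step * grad)  # project onto w >= 0
    return w

def to_populations(w):
    """Normalize non-negative weights to fractional populations."""
    total = w.sum()
    return w / total if total > 0 else w
```

Conformers that receive zero weight drop out of the selected ensemble, which is why this kind of fit tends to return a small number of representative structures from a large pool.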

PRE Measurement and Interpretation

Spin Labeling: Cysteine residues are introduced at desired positions via site-directed mutagenesis, followed by modification with paramagnetic probes such as MTSL. Unlabeled cysteines should be removed, and labeling efficiency must be verified by mass spectrometry.

Data Collection: PRE rates (Γ₂) are measured by comparing signal intensities or relaxation rates in paramagnetic (oxidized) and diamagnetic (reduced) states. The difference in transverse relaxation rates (ΔR₂) between these states provides the Γ₂ value.

Ensemble Interpretation: PRE data are particularly challenging to interpret for heterogeneous ensembles because the measured Γ₂ values represent population-weighted averages of all conformations. Advanced computational methods, including ensemble reweighting and maximum entropy approaches, are required to derive structural models consistent with PRE data.
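The effect of population-weighted averaging can be made concrete with a short calculation. The sketch below assumes the widely used Solomon-Bloembergen form for Γ₂ with the constant commonly quoted for a nitroxide label acting on a proton, a single hypothetical correlation time, and fast exchange between conformers. None of these parameter values come from the text above, and label flexibility is ignored.

```python
import numpy as np

# Assumption: commonly quoted prefactor for a nitroxide spin label and 1H.
K_NITROXIDE = 1.23e-32  # cm^6 s^-2

def gamma2_from_distance(r_angstrom, tau_c=4e-9, larmor_hz=600e6):
    """Gamma2 (s^-1) for a label-to-nucleus distance; tau_c is hypothetical."""
    r_cm = np.asarray(r_angstrom, dtype=float) * 1e-8
    omega = 2.0 * np.pi * larmor_hz
    spectral = 4.0 * tau_c + 3.0 * tau_c / (1.0 + (omega * tau_c) ** 2)
    return K_NITROXIDE / r_cm ** 6 * spectral

def ensemble_gamma2(distances, weights=None, **kwargs):
    """Population-weighted average of per-conformer Gamma2 values."""
    rates = gamma2_from_distance(distances, **kwargs)
    return float(np.average(rates, weights=weights))

def effective_distance(distances, weights=None):
    """Distance that a single conformer would need to give the same <r^-6>."""
    r = np.asarray(distances, dtype=float)
    return float(np.average(r ** -6.0, weights=weights) ** (-1.0 / 6.0))
```

Calling `effective_distance([12.0, 40.0], weights=[0.05, 0.95])` returns a value far below the population-weighted mean distance, because the r⁻⁶ dependence lets a 5% compact state dominate the signal. This is the reason PRE is sensitive to low-populated compact states and also the reason single-structure interpretations of Γ₂ are misleading.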

Integrated Approaches and Workflows

No single technique provides a complete picture of IDP conformational landscapes. Integrated approaches that combine multiple experimental observables with computational methods have emerged as the most powerful strategy for determining accurate ensembles.

Figure 1: Integrative Workflow for IDP Ensemble Determination. Multiple experimental data sources are combined with computational sampling methods through ensemble reweighting approaches to generate validated structural ensembles.

The maximum entropy reweighting framework has proven particularly successful for integration. As demonstrated in recent work: "We demonstrate how to determine accurate atomic resolution conformational ensembles of IDPs by integrating all-atom MD simulations with experimental data from nuclear magnetic resonance (NMR) spectroscopy and small-angle x-ray scattering (SAXS) with a simple, robust and fully automated maximum entropy reweighting procedure" [9].

Similarly, AlphaFold-based approaches are being adapted for ensemble modeling: "We introduce the AlphaFold-Metainference method to use AlphaFold-derived distances as structural restraints in molecular dynamics simulations to construct structural ensembles of ordered and disordered proteins" [6].

Research Reagent Solutions

Successful characterization of IDP ensembles requires specialized reagents and computational resources. The following table details key solutions used in the featured experiments.

Table 3: Essential Research Reagents and Resources for IDP Ensemble Studies

| Category | Specific Resource | Function/Application | Example Use |

|---|---|---|---|

| Computational Tools | SAXS-A-FOLD (https://saxsafold.genapp.rocks) | Ensemble modeling of flexible regions against SAXS data | Optimizing fit of AlphaFold structures to SAXS data [15] |

| Computational Tools | WAXSiS | Calculating theoretical SAXS profiles from structures | Validating ensemble models against experimental I(q) [15] |

| Databases | Protein Ensemble Database | Repository of conformational ensembles | Accessing validated IDP ensembles for benchmarking [9] |

| Software | OpenFold | Trainable AlphaFold2 implementation | Fine-tuning with experimental restraints (DEERFold) [16] |

| Sample Prep | Isotopically labeled media (¹⁵N, ¹³C) | NMR sample preparation | Enabling multidimensional NMR studies of IDPs [9] |

| Probes | MTSL and similar compounds | Site-directed spin labeling | Introducing paramagnetic centers for PRE measurements [6] |

NMR, SAXS, and PRE each provide distinct and valuable insights into the conformational landscapes of intrinsically disordered proteins. NMR excels at providing atomic-resolution information about local structure and dynamics, SAXS delivers global parameters describing overall dimensions and shape, and PRE offers unique access to long-range interactions and sparsely populated states. The most accurate ensemble descriptions emerge from integrated approaches that combine multiple experimental observables with computational sampling through maximum entropy reweighting or similar Bayesian approaches. As the field advances, the development of automated pipelines like SAXS-A-FOLD and AlphaFold-Metainference, along with standardized benchmarking datasets, will increasingly enable researchers to determine force-field independent conformational ensembles of IDPs at atomic resolution. These advances will ultimately enhance our understanding of IDP function in health and disease, facilitating drug development strategies targeting these challenging but biologically crucial proteins.

Intrinsically disordered proteins (IDPs) and regions (IDRs) represent a significant portion of the human proteome and play crucial roles in cellular signaling, transcriptional regulation, and dynamic protein-protein interactions [12] [11]. Unlike folded proteins with stable three-dimensional structures, IDPs exist as dynamic structural ensembles of rapidly interconverting conformations under physiological conditions [9] [6]. This inherent flexibility makes them impossible to characterize with single static structures, presenting unique challenges for structural biologists and drug discovery professionals. Accurate ensemble generation—the computational process of constructing representative sets of protein conformations—has thus become paramount for understanding IDP function and dysfunction [11] [9].

The field faces three fundamental challenges that complicate ensemble determination. First, the degeneracy problem arises because infinitely many conformational ensembles can agree with any given set of experimental measurements within error margins [17]. Second, inadequate sampling occurs when computational methods fail to explore the full conformational landscape, missing rare but functionally important states [18] [19]. Third, force field inaccuracies introduce biases because the physical models used in simulations imperfectly represent atomic interactions, leading to ensembles that diverge from reality [9]. This review examines these interconnected challenges, compares current methodological approaches for addressing them, and provides a benchmarking framework based on recent experimental and computational advances.

The Degeneracy Problem: Multiple Solutions for Single Experimental Datasets

Nature of the Problem

Degeneracy presents a fundamental mathematical challenge in ensemble modeling of IDPs. As Hummer and Köfinger explicitly state, "there are generally several different sets of weights, say, w⃗1, ..., w⃗N, with w⃗i ≠ w⃗j, such that ξMi(w⃗l) is less than some threshold that defines reasonable agreement with experiment for all l" [17]. This means that for any given IDP under specific experimental conditions, multiple structurally distinct ensembles can reproduce the same experimental observables within acceptable error ranges. The problem is particularly pronounced with sparse experimental datasets, which are common in IDP characterization due to technical limitations [9].
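A small numerical example makes the degeneracy concrete. The sketch below assumes a toy ensemble in which each conformer contributes one scalar observable, such as a per-conformer Rg². It finds directions in weight space that leave both the normalization and the ensemble average unchanged. The function names and numbers are illustrative only.

```python
import numpy as np

def ensemble_average(values, weights):
    """Weighted ensemble average of a per-conformer observable."""
    return float(np.dot(weights, values))

def null_space_directions(values):
    """Directions d with sum(d) = 0 and d . values = 0.

    Adding any such direction to a weight vector changes the weights
    without changing the normalization or the ensemble average.
    """
    values = np.asarray(values, dtype=float)
    constraints = np.vstack([np.ones_like(values), values])
    _, singular, vt = np.linalg.svd(constraints)
    rank = int(np.sum(singular > 1e-12))
    return vt[rank:]

# Four conformers, one observable: two free directions remain.
values = np.array([10.0, 20.0, 30.0, 40.0])
uniform = np.full(4, 0.25)
shifted = uniform + 0.1 * null_space_directions(values)[0]
```

Here `uniform` and `shifted` are different, valid weight vectors with the same ensemble average. With N conformers and M independent observables, N - M - 1 such directions exist, which is why sparse data leave the weights so poorly determined.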

Computational Approaches to Mitigate Degeneracy

Table 1: Methods for Addressing Ensemble Degeneracy

| Method | Core Principle | Advantages | Limitations |

|---|---|---|---|

| Bayesian Weighting (BW) [17] | Estimates probability distribution over possible weights for conformers using Bayesian statistics | Provides built-in uncertainty quantification; combines experimental and theoretical information | Requires representative initial conformational sampling; computationally intensive |

| Maximum Entropy Reweighting [9] | Applies minimal perturbation to computational models to match experimental data | Preserves maximum information from initial sampling; automated balancing of multiple data sources | Dependent on quality of initial ensemble; may require extensive experimental data |

| FiveFold Consensus [11] | Combines predictions from five complementary algorithms to generate ensembles | Reduces individual algorithmic biases; captures broader conformational diversity | Computational resource intensive; complex implementation |

The Bayesian weighting formalism directly addresses degeneracy by reframing it as a statistical uncertainty problem. Instead of identifying a single "best fit" set of weights, BW calculates a probability density over all possible ways of weighting conformers in an ensemble, effectively quantifying the uncertainty in the estimates themselves [17]. This approach incorporates both experimental data and theoretical predictions through a likelihood function and prior distribution, typically centered on Boltzmann weights derived from potential energy calculations.

Maximum entropy methods provide an alternative framework where researchers "seek to introduce the minimal perturbation to a computational model required to match a set of experimental data" [9]. This principle ensures that the final ensemble retains as much information as possible from the initial computational model while satisfying experimental constraints. Recent implementations have automated the balancing of restraints from multiple experimental datasets, using the desired effective ensemble size as a single adjustable parameter [9].
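The effective ensemble size can be quantified from the weights alone. One common definition is the Kish effective sample size. Treating it as the quantity tuned in [9] is an assumption of this sketch, and the function names are hypothetical.

```python
import numpy as np

def kish_effective_size(weights):
    """Kish effective sample size: 1 / sum(w_i^2) for normalized weights."""
    w = np.asarray(weights, dtype=float)
    w = w / w.sum()
    return float(1.0 / np.sum(w ** 2))

def kish_ratio(weights):
    """Effective size as a fraction of the number of frames (between 0 and 1)."""
    return kish_effective_size(weights) / len(weights)
```

Uniform weights give a ratio of 1, and a single dominant frame drives the ratio toward 1/N. A low ratio after reweighting indicates that agreement with experiment was bought by discarding most of the simulation, so it is a useful check against overfitting.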

Consensus approaches like FiveFold tackle degeneracy through methodological diversity. By integrating predictions from five distinct algorithms—AlphaFold2, RoseTTAFold, OmegaFold, ESMFold, and EMBER3D—the method identifies common folding patterns while explicitly capturing variations through its Protein Folding Variation Matrix [11]. This ensemble strategy mitigates individual algorithmic limitations and generates multiple plausible conformations that collectively represent the protein's conformational landscape.

The Sampling Challenge: Exploring Vast Conformational Landscapes

Limitations of Traditional Sampling Methods

Molecular dynamics simulations face fundamental limitations in sampling the complete conformational landscape of IDPs. As Sun et al. explain, "MD trajectories are constrained by rugged energy landscapes whose high barriers render functional transitions rare on simulation timescales" [18]. Conventional runs consequently become trapped in local minima and undersample transient or high-energy states that are often functionally critical. This sampling inadequacy persists despite advances in computing power because the relevant timescales for functional transitions in IDPs can extend beyond what is computationally feasible with all-atom simulations [19].

The sampling problem is particularly acute for IDPs with specific structural preferences. For example, α-synuclein, associated with Parkinson's disease, contains regions with residual secondary structure and long-range contacts that occur transiently but may be crucial for its aggregation propensity [17]. Capturing these rare events requires sampling techniques that efficiently explore conformational space beyond local minima.

Advanced Sampling and Generative Approaches

Table 2: Methods for Enhanced Conformational Sampling

| Method | Sampling Strategy | Theoretical Basis | Representative Applications |

|---|---|---|---|

| Energy Preference Optimization (EPO) [18] | Online refinement using energy-ranking mechanism and list-wise preference optimization | Stochastic differential equation sampling; preference optimization | Tetrapeptides, ATLAS, Fast-Folding benchmarks |

| AlphaFold-Metainference [6] | Uses AF-predicted distances as restraints in MD simulations | Maximum entropy principle; metainference approach | Highly disordered proteins; TDP-43, ataxin-3, prion protein |

| Deep Generative Models (DGMs) [19] | Learn parametric model of equilibrium distribution from data | Variational autoencoders, GANs, normalizing flows, diffusion models | Conformation sampling beyond simulation timescales |

Energy Preference Optimization represents a novel approach that turns pretrained protein ensemble generators into energy-aware samplers without requiring additional MD trajectories [18]. EPO incorporates a physics-based energy ranking mechanism that employs listwise preference optimization to guide the generator toward diverse and physically realistic ensembles rather than single low-energy states. This method establishes a new state-of-the-art in nine evaluation metrics on Tetrapeptides, ATLAS, and Fast-Folding benchmarks, demonstrating that energy-only preference signals can efficiently steer generative models toward thermodynamically consistent conformational ensembles [18].

AlphaFold-Metainference addresses the sampling problem by leveraging deep learning predictions as restraints. The method uses AlphaFold-predicted inter-residue distances as structural restraints in molecular dynamics simulations to construct structural ensembles of ordered and disordered proteins [6]. This approach effectively transfers information from the extensive databases of folded proteins to the prediction of disordered protein ensembles, despite AlphaFold having been trained primarily on structured proteins from the PDB.

Deep generative models offer a fundamentally different approach to sampling protein conformational space. As described in a recent review of deep generative modeling of protein conformations, DGMs learn a parametric model of the equilibrium distribution of protein conformations directly from data, enabling rapid generation of diverse, independent structural samples [19]. This allows scalable exploration of conformational landscapes that are otherwise prohibitively expensive to access with conventional simulations, bridging a critical gap in our ability to model protein dynamics.

Figure 1: Workflow for Generating Accurate Protein Conformational Ensembles. This diagram illustrates the integrated approach required to address the major challenges in ensemble generation, combining multiple computational and experimental strategies.

Force Field Inaccuracies: The Physical Model Challenge

Assessing Force Field Performance

Force field inaccuracies remain a significant obstacle in generating accurate IDP ensembles. As Borthakur et al. demonstrate, "MD simulations are limited by the accuracy of the force fields used to describe the interactions between atoms in molecules" [9]. Despite recent improvements in molecular mechanics force fields and water models, discrepancies between simulations and experiments persist among the best performing force fields. These inaccuracies stem from approximations in the potential energy functions that simplify the complex quantum mechanical interactions governing atomic behavior.

Comparative studies have evaluated force fields such as a99SB-disp, Charmm22*, and Charmm36m against experimental data for IDPs including Aβ40, drkN SH3, ACTR, PaaA2, and α-synuclein [9]. The results show that different force fields can produce substantially different conformational distributions, with varying agreement with experimental measurements. This force field dependence introduces systematic biases that propagate through all downstream analyses and applications.

Integrative Methods for Force Field Improvement

Integrative approaches that combine MD simulations with experimental data provide a path toward force-field independent ensembles. The maximum entropy reweighting procedure introduced by Borthakur et al. enables the determination of accurate atomic-resolution conformational ensembles of IDPs by integrating all-atom MD simulations with extensive experimental datasets from NMR and SAXS [9]. This approach automatically balances restraints from multiple experimental sources using the desired effective ensemble size as a single parameter.

Remarkably, when applied to IDPs where initial force field ensembles show reasonable agreement with experimental data, reweighted ensembles from different force fields converge to highly similar conformational distributions [9]. This convergence suggests that with sufficient experimental data, it becomes possible to determine physically realistic atomic-resolution IDP ensembles with conformational properties that are independent of the initial force fields used to generate the computational models.

Table 3: Force Field Comparison in Ensemble Generation

| Force Field | Water Model | Key Strengths | Documented Limitations |

|---|---|---|---|

| a99SB-disp [9] | a99SB-disp water | Specifically optimized for disordered proteins | Potential overcompaction in certain sequences |

| Charmm22* [9] | TIP3P water | Balanced performance for folded and disordered regions | Underestimation of helical propensity in some IDPs |

| Charmm36m [9] | TIP3P water | Improved accuracy for membrane proteins and IDPs | Occasional overextension in highly charged regions |

Benchmarking Ensemble Generation Methods: Quantitative Comparisons

Performance Metrics and Experimental Validation

Rigorous benchmarking requires multiple complementary metrics to evaluate ensemble accuracy, diversity, and physical realism. The Functional Score used in FiveFold represents a composite metric evaluating conformational utility for drug discovery applications, incorporating structural diversity (0-1 scale), experimental agreement (0-1 scale), binding site accessibility (0-1 scale), and computational efficiency (0-1 scale) with weighted contributions [11].

Experimental validation remains essential for assessing ensemble accuracy. Small-angle X-ray scattering provides information about global dimensions and pairwise distance distributions, while nuclear magnetic resonance spectroscopy offers residue-specific structural and dynamic information [9] [6]. For the AlphaFold-Metainference approach, validation against SAXS data for 11 highly disordered proteins showed better agreement than either individual AlphaFold structures or CALVADOS-2 ensembles [6]. Similarly, maximum entropy reweighting demonstrated exceptional agreement with extensive NMR datasets for five IDPs, including Aβ40 and α-synuclein [9].

Comparative Performance Across Methods

Table 4: Benchmarking Results Across Ensemble Generation Methods

| Method | Experimental Agreement | Conformational Diversity | Computational Efficiency | Key Applications |

|---|---|---|---|---|

| Maximum Entropy Reweighting [9] | Exceptional agreement with NMR/SAXS | Preserves diversity from initial sampling | Moderate (requires initial MD) | Aβ40, α-synuclein, ACTR, drkN SH3, PaaA2 |

| AlphaFold-Metainference [6] | Good agreement with SAXS data | Captures flexibility in disordered regions | High (leverages pre-trained AF) | Highly disordered proteins; TDP-43, ataxin-3 |

| Energy Preference Optimization [18] | State-of-the-art in 9 distribution metrics | High diversity and physical realism | High after initial training | Tetrapeptides, ATLAS, Fast-Folding |

| FiveFold Consensus [11] | Good consensus across methods | High structural diversity | Low (five algorithms) | Alpha-synuclein, expanded druggable proteome |

Performance comparisons reveal method-specific strengths and limitations. Maximum entropy reweighting achieves exceptional experimental agreement but requires initial MD simulations, making it computationally demanding [9]. AlphaFold-Metainference provides efficient ensemble generation leveraging pre-trained deep learning models but may miss some conformational states not represented in the training data [6]. Energy Preference Optimization establishes new state-of-the-art performance across multiple distributional metrics while maintaining computational efficiency after initial training [18]. The FiveFold consensus approach generates highly diverse ensembles but requires running five separate structure prediction algorithms [11].

Table 5: Research Reagent Solutions for Ensemble Generation

| Resource | Type | Function | Implementation Examples |

|---|---|---|---|

| EnGens Pipeline [20] | Software Framework | Generation and analysis of representative conformational ensembles | Python package with Docker image; featurization via PyEMMA |

| PENSA [20] | Analysis Toolkit | Provides metrics for ensemble comparison (Jensen-Shannon Distance, Kolmogorov-Smirnov Statistic) | Comparison of generated ensembles from different methods |

| ProDy [20] | Dynamics Analysis | Algorithms for studying protein dynamics, including normal mode analysis | Dynamic dataset analysis alongside EnGens |

| SHIFTX [17] | Prediction Algorithm | Predicts chemical shifts from protein structures | Used in Bayesian weighting likelihood functions |

| CALVADOS-2 [6] | Coarse-Grained Model | Efficient sampling of disordered protein ensembles | Benchmark for AlphaFold-Metainference validation |

The computational tools available for ensemble generation have expanded significantly, providing researchers with specialized resources for different aspects of the workflow. The EnGens pipeline offers a unified framework for generating and analyzing protein conformational ensembles from both static datasets (e.g., experimental structures) and dynamic datasets (e.g., MD simulations) [20]. It provides customizable featurization through PyEMMA and incorporates both linear and nonlinear dimensionality reduction techniques.

Specialized analysis toolkits like PENSA provide different metrics for comparing generated ensembles, including Jensen-Shannon Distance, Kolmogorov-Smirnov Statistic, and Overall Ensemble Similarity [20]. These metrics enable quantitative comparisons between ensembles generated by different methods or against reference ensembles.
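Two of these metrics are simple enough to write out directly. The sketch below does not use the PENSA API. It is a from-scratch NumPy illustration, assuming each ensemble has been reduced to a one-dimensional sample of some feature, such as per-frame Rg or a backbone dihedral. The function names and the default bin count are arbitrary choices.

```python
import numpy as np

def jensen_shannon_distance(sample_a, sample_b, bins=50):
    """Jensen-Shannon distance (base 2, range 0 to 1) on a shared histogram grid."""
    a = np.asarray(sample_a, dtype=float)
    b = np.asarray(sample_b, dtype=float)
    edges = np.linspace(min(a.min(), b.min()), max(a.max(), b.max()), bins + 1)
    p = np.histogram(a, bins=edges)[0].astype(float)
    q = np.histogram(b, bins=edges)[0].astype(float)
    p, q = p / p.sum(), q / q.sum()
    m = 0.5 * (p + q)

    def kl(x, y):
        mask = x > 0  # terms with x = 0 contribute nothing
        return np.sum(x[mask] * np.log2(x[mask] / y[mask]))

    divergence = 0.5 * kl(p, m) + 0.5 * kl(q, m)
    return float(np.sqrt(max(divergence, 0.0)))

def ks_statistic(sample_a, sample_b):
    """Two-sample Kolmogorov-Smirnov statistic: max gap between empirical CDFs."""
    a = np.sort(np.asarray(sample_a, dtype=float))
    b = np.sort(np.asarray(sample_b, dtype=float))
    grid = np.concatenate([a, b])
    cdf_a = np.searchsorted(a, grid, side="right") / len(a)
    cdf_b = np.searchsorted(b, grid, side="right") / len(b)
    return float(np.max(np.abs(cdf_a - cdf_b)))
```

Both functions return 0 for identical samples and 1 for samples with no overlap. The Jensen-Shannon value depends on the binning, so the same grid should be used for every pair of ensembles in a comparison.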

For force field assessment and refinement, the maximum entropy reweighting code published alongside Borthakur et al.'s work provides a fully automated procedure for integrating MD simulations with extensive experimental datasets [9]. This resource facilitates the calculation of accurate, force-field independent conformational ensembles of IDPs at atomic resolution.

The field of ensemble generation for disordered proteins has made significant strides in addressing the fundamental challenges of degeneracy, sampling, and force field accuracy. Integrative approaches that combine computational models with experimental data have demonstrated particular promise, enabling the determination of accurate conformational ensembles that transcend the limitations of individual methods. The convergence of reweighted ensembles from different force fields to similar conformational distributions suggests that force-field independent ensemble determination is achievable with sufficient experimental data [9].

Future advancements will likely come from several directions. Improved physical models through continued force field refinement will enhance the accuracy of initial conformational sampling. More efficient sampling algorithms, particularly deep generative models, will enable broader exploration of conformational landscapes [19]. Enhanced experimental techniques will provide richer datasets for validating and refining computational ensembles. Finally, standardized benchmarking initiatives similar to the CAID2 program for disorder prediction will establish community-wide standards for evaluating ensemble generation methods [12].

As these developments converge, the field moves closer to routine determination of accurate atomic-resolution conformational ensembles for disordered proteins. This capability will fundamentally advance our understanding of IDP function and dysfunction, opening new opportunities for therapeutic intervention against challenging targets that have previously resisted drug discovery efforts [11] [9].

A Landscape of Computational Methods: From Physics-Based Simulations to AI-Driven Generation

Molecular dynamics (MD) simulations serve as an indispensable tool in computational biology and drug discovery, providing atomic-level insights into protein structure, dynamics, and interactions that complement experimental approaches [21]. The accuracy and reliability of these simulations are fundamentally governed by the force field—the mathematical model that describes the potential energy surface of a molecular system as a function of atomic positions [22]. While modern force fields have achieved considerable success in simulating structured proteins, accurately modeling intrinsically disordered proteins (IDPs) and regions (IDRs) presents unique challenges due to their structural heterogeneity and conformational flexibility [23] [24].

The development of force fields capable of simultaneously describing both structured domains and disordered regions remains an active area of research. This comparison guide objectively assesses current state-of-the-art force fields within the specific context of benchmarking ensemble generation methods for disordered proteins research. We evaluate force field performance based on their ability to reproduce experimental observables across diverse protein systems, with particular emphasis on IDP chain dimensions, secondary structure propensities, and the stability of folded domains when present in hybrid proteins containing both ordered and disordered regions [24] [25].

Current Challenges in IDP Force Field Development

Modeling IDPs with MD simulations presents distinct challenges not typically encountered with structured proteins. The energy landscapes of IDRs are weakly funneled, making conformational sampling extremely inefficient [25]. Furthermore, conventional force fields parameterized for globular proteins often produce overly compact IDP conformations with underestimated radii of gyration (Rg) compared to experimental measurements [26] [24]. This "collapsed" behavior arises primarily from imbalances between protein-protein and protein-water interactions, as well as inaccuracies in backbone dihedral potentials that favor structured states over disordered ensembles [23].

A significant complication in force field development is the system-dependent nature of performance. A force field that excels for one IDP may perform poorly for another, making transferability a key challenge [24]. This necessitates benchmarking across multiple protein systems with diverse sequence characteristics and structural features. Additionally, hybrid proteins containing both structured domains and disordered regions require force fields that can accurately capture both types of structural elements simultaneously—a demanding test that many force fields fail [25].

Force Field Comparison and Performance Assessment

Comprehensive Force Field Benchmarking Tables

Table 1: Performance Summary of Major Force Field Families for IDP Simulations

| Force Field | Base Family | Key Features/Modifications | Recommended Water Model | Strengths | Limitations |
|---|---|---|---|---|---|
| CHARMM36m [26] [24] | CHARMM | Modified torsional parameters; adjusted protein-water interactions | TIP3P-modified (CHARMM) | Balanced performance for folded/IDP regions; good Rg prediction [24] | May over-stabilize certain secondary structures [26] |
| ff99SB-disp [26] | AMBER | Pair with TIP4P-D water; enhanced dispersion interactions | TIP4P-D | Excellent IDP dimensions; good for many disordered systems [26] | May over-stabilize protein-water interactions [26] |
| DES-Amber [26] | AMBER | Optimized against osmotic pressure data | Modified TIP4P-D | Improved protein-protein association | Limited testing on diverse IDPs [26] |
| ff03ws [21] [25] | AMBER | Upscaled protein-water interactions; backbone torsional adjustments | TIP4P/2005 | Accurate IDP chain dimensions [21] | Can destabilize folded domains [21] |
| ff99SBws [21] | AMBER | Selective water scaling; torsional refinements | TIP4P/2005 | Maintains folded stability while sampling IDP ensembles [21] | Slightly expanded folded domains [21] |
| a99SB-ILDN [25] | AMBER | Sidechain torsional improvements (Ile, Leu, Asp, Asn) | TIP3P (standard) | Good sidechain rotamers | Overly compact IDPs without modified water models [25] |
| CHARMM22* [25] | CHARMM | Backbone dihedral adjustments | TIP3P (standard) | Improved helix-coil balance | Limited testing on complex hybrid proteins [25] |

Table 2: Quantitative Performance Metrics from Recent Benchmarking Studies

| Force Field | R2-FUS-LC Rg (Å) [24] | R2-FUS-LC SSP Score [24] | R2-FUS-LC Contact Score [24] | Overall Score [24] | Ubiquitin Stability [21] | Villin HP35 Stability [21] |
|---|---|---|---|---|---|---|
| c36m2021s3p | 10.0-14.4 (matched) | 0.71 | 0.69 | 0.73 | Stable | Stable |
| a19sbopc | 10.0-14.4 (matched) | 0.68 | 0.58 | 0.63 | Stable | Stable |
| a99sb4pew | 10.0 (biased) | 0.70 | 0.56 | 0.68 | Stable | Stable |
| c36ms3p | 14.4 (biased) | 0.65 | 0.57 | 0.66 | Stable | Stable |
| a03ws | Expanded | 0.27 | 0.29 | 0.19 | Unstable | Unstable |
| c27s3p | Variable | 0.26 | 0.26 | 0.17 | N/A | N/A |

Table 3: Performance of Force Fields on Hybrid Protein Systems [25]

| Force Field | Water Model | δRNAP Disordered Domain Rg | RD-hTH Transient Helix | MAP2c159-254 Helical Propensity | NMR Relaxation |
|---|---|---|---|---|---|
| CHARMM36m | TIP4P-D | Accurate | Retained | Accurate | Good agreement |
| Amber99SB-ILDN | TIP4P-D | Slightly compact | Retained | Moderate | Moderate agreement |
| CHARMM22* | TIP4P-D | Accurate | Not retained | Underestimated | Poor agreement |
| Amber99SB-ILDN | TIP3P | Overly compact | Retained | Overestimated | Poor agreement |
| CHARMM36m | TIP3P | Slightly compact | Retained | Accurate | Moderate agreement |

Key Findings from Comparative Assessments

Recent benchmarking studies reveal several important trends in force field performance. CHARMM36m consistently ranks among the top performers across multiple studies, demonstrating particular strength in maintaining the stability of folded domains while accurately sampling disordered regions [26] [24]. In comprehensive assessments of the R2-FUS-LC region, CHARMM36m with modified TIP3P water (c36m2021s3p) achieved the highest overall score by balancing performance across radius of gyration, secondary structure propensity, and contact map accuracy [24].

Amber-family force fields, particularly those utilizing four-site water models like TIP4P-D or specialized modifications (e.g., ff99SB-disp, ff03ws), excel at reproducing the expanded dimensions of IDPs but may compromise folded domain stability in hybrid proteins [21] [26]. For instance, ff03ws demonstrated significant instability in simulations of ubiquitin and villin headpiece, with unfolding events observed within microsecond timescales [21].

The choice of water model proves as important as the protein force field itself. Traditional three-site models like TIP3P tend to promote overly compact IDP conformations and artificially enhanced protein-protein interactions, while more modern four-site models (TIP4P/2005, TIP4P-D, OPC) significantly improve the balance between protein-solvent and protein-protein interactions [26] [25].

Experimental Protocols and Benchmarking Methodologies

Standard Benchmarking Workflow

Figure 1: Comprehensive force field benchmarking workflow incorporating multiple experimental validation metrics.

Key Methodological Considerations

System Selection and Preparation: Benchmarking should encompass diverse protein systems including fully structured proteins, fully disordered proteins, and hybrid proteins containing both structured and disordered regions [24] [25]. For IDP-focused assessments, the R2 region of FUS-LC has emerged as an important model system due to its biological relevance to ALS and availability of high-quality structural data [24]. Systems should be solvated in appropriate water models with ion concentrations matching experimental conditions, utilizing periodic boundary conditions and particle mesh Ewald electrostatics [25].
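Matching an experimental ion concentration comes down to simple arithmetic on the box volume. The sketch below is illustrative only: the function name and the example box size are assumptions, and the estimate uses the full box volume rather than the solvent-accessible volume, so it slightly overcounts ions for large solutes. In practice the simulation package's own ion-placement tool would normally be used.

```python
AVOGADRO = 6.02214076e23  # particles per mole


def ion_pairs_for_concentration(box_nm, conc_molar):
    """Estimate the number of salt ion pairs for a target molar concentration.

    box_nm     -- (lx, ly, lz) edge lengths of a rectangular box in nm
    conc_molar -- target salt concentration in mol/L
    """
    lx, ly, lz = box_nm
    volume_litres = lx * ly * lz * 1e-24  # 1 nm^3 = 1e-24 L
    return round(conc_molar * AVOGADRO * volume_litres)


# Hypothetical example: 150 mM salt in a 10 nm cubic box
n_pairs = ion_pairs_for_concentration((10.0, 10.0, 10.0), 0.15)
print(n_pairs)
```

Any counter-ions needed to neutralize the protein's net charge are added on top of this number.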

Simulation Protocols: Production simulations should extend to microsecond timescales, with multiple replicates (typically 3-6) to assess convergence and sample conformational diversity [24]. Temperature (typically 300-310 K) and pressure (1 atm) should be controlled with modern thermostats and barostats. Sufficient equilibration (100+ ns) before production data collection is critical.
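One simple way to use replicates for a convergence check is to discard the equilibration frames from each run, average an observable such as Rg per replicate, and report the spread between replicates. The following is a minimal sketch under those assumptions; the function name, the frame-based equilibration cutoff, and the toy numbers are all hypothetical.

```python
from math import sqrt
from statistics import mean, stdev


def replicate_summary(replicates, n_equil=0):
    """Summarize one observable across independent replicates.

    replicates -- list of per-frame value lists, one list per replicate
    n_equil    -- number of leading frames to discard as equilibration
    Returns (per-replicate means, grand mean, standard error of the mean).
    """
    rep_means = [mean(series[n_equil:]) for series in replicates]
    grand_mean = mean(rep_means)
    # Standard error computed from the scatter between replicate means
    sem = stdev(rep_means) / sqrt(len(rep_means))
    return rep_means, grand_mean, sem


# Toy Rg series (Å); the first frame of each replicate is treated as equilibration
toy = [[20.0, 12.0, 14.0], [20.0, 13.0, 15.0], [20.0, 11.0, 13.0]]
rep_means, grand_mean, sem = replicate_summary(toy, n_equil=1)
```

A large standard error relative to the experimental uncertainty suggests the replicates have not yet sampled the same ensemble.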

Validation Metrics and Experimental Comparison: A multi-faceted validation approach is essential, comparing simulation outputs with diverse experimental observables:

- Global dimensions: Radius of gyration (Rg) compared with SAXS data and polymer theory predictions [24]

- Secondary structure: Secondary structure propensities compared with NMR chemical shifts and scalar couplings [21]

- Local contacts: Contact maps compared with NMR paramagnetic relaxation enhancement (PRE) and chemical shift perturbations [24]

- Dynamics: NMR relaxation parameters (R1, R2, NOE) to assess conformational flexibility and timescales [25]

- Stability: Root mean square deviation (RMSD) and fluctuations (RMSF) for structured domains [21]

Statistical analysis should quantify agreement between simulation and experiment, with recent approaches incorporating Z-score based assessments for Rg distributions and correlation coefficients for contact maps [24].
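The global-dimension and contact-map comparisons above can be sketched in a few lines. This is a minimal illustration, not the scoring scheme of the cited studies: the Z-score definition used here (distance of the experimental Rg from the simulated mean, in units of the simulated standard deviation) is an assumption, and all function names are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev


def radius_of_gyration(coords, masses=None):
    """Mass-weighted radius of gyration for a list of (x, y, z) coordinates."""
    if masses is None:
        masses = [1.0] * len(coords)
    total = sum(masses)
    com = [sum(m * c[k] for m, c in zip(masses, coords)) / total for k in range(3)]
    sq = sum(m * sum((c[k] - com[k]) ** 2 for k in range(3))
             for m, c in zip(masses, coords))
    return sqrt(sq / total)


def rg_z_score(sim_rg_values, exp_rg):
    """Assumed definition: (experimental Rg - simulated mean) / simulated std."""
    return (exp_rg - mean(sim_rg_values)) / stdev(sim_rg_values)


def pearson(x, y):
    """Pearson correlation between two flattened contact maps."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)
```

For real trajectories, the per-frame Rg values would typically come from an analysis library such as MDTraj or MDAnalysis rather than from hand-written loops.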

Table 4: Key Computational Tools and Resources for Force Field Benchmarking

| Resource Category | Specific Tools/Resources | Primary Function | Application in Benchmarking |
|---|---|---|---|
| Simulation Software | GROMACS, NAMD, AMBER, OpenMM | Molecular dynamics engines | Production MD simulations |
| Force Fields | CHARMM36m, Amber ff19SB, DES-Amber | Molecular mechanics parameters | Governing interatomic interactions |
| Water Models | TIP3P, TIP4P/2005, TIP4P-D, OPC | Solvent representation | Balancing protein-solvent interactions |
| Analysis Tools | MDTraj, MDAnalysis, VMD | Trajectory analysis | Calculating Rg, contacts, structure |
| Validation Data | PDB, BMRB, SASBDB | Experimental reference data | Comparison with simulations |
| Specialized Hardware | Anton2, GPU clusters | Accelerated sampling | Enhanced conformational sampling |
| Benchmark Datasets | FUS R2 region [24], IDP test sets [26] | Standardized testing | Consistent performance assessment |

Comprehensive benchmarking studies reveal that modern force fields have significantly improved in their ability to model both structured and disordered protein regions, though perfect balance remains elusive. CHARMM36m currently represents the most consistently balanced choice for hybrid proteins, while specialized Amber variants (ff99SB-disp, ff03ws) excel for specific IDP applications but may compromise folded domain stability [21] [24]. Water model selection matters as much as the protein parameters, with four-site models generally outperforming traditional three-site alternatives for disordered protein systems [26] [25].

Future force field development is likely to draw increasingly on machine learning, as demonstrated by emerging data-driven parameterization methods like ByteFF [22]. These approaches leverage large-scale quantum chemical datasets and graph neural networks to predict force field parameters across expansive chemical spaces, potentially addressing transferability challenges. Additionally, continued refinement of protein-water interactions and torsional parameters remains essential, particularly for accurately capturing the subtle balance of interactions that govern IDP conformations and phase separation phenomena [26] [23].

For researchers investigating disordered proteins, the current recommendation is to select a force field according to the characteristics of the system: CHARMM36m or ff99SBws for hybrid proteins containing both structured and disordered regions, and specialized IDP force fields like ff99SB-disp for fully disordered systems. Validation against multiple experimental observables remains essential, with NMR relaxation parameters proving particularly sensitive to force field imperfections [25]. As force fields continue to evolve, the benchmarking methodologies outlined in this guide will remain essential for validating new developments in this rapidly advancing field.

Intrinsically disordered proteins (IDPs) and regions (IDRs) represent a significant challenge and opportunity in structural biology. Comprising around a third of the eukaryotic proteome, these proteins lack stable tertiary structure under physiological conditions yet play critical roles in cellular signaling, transcriptional regulation, and dynamic protein-protein interactions [27]. The conformational heterogeneity of IDPs necessitates describing them as ensembles of rapidly interconverting structures rather than as single static conformations [9].

Molecular dynamics (MD) simulations provide atomically detailed structural descriptions of IDP conformational states but face limitations in accuracy due to force field imperfections and sampling challenges [9] [28]. Experimental techniques like nuclear magnetic resonance (NMR) spectroscopy and small-angle X-ray scattering (SAXS) provide ensemble-averaged measurements but are consistent with numerous possible conformational distributions [9]. Integrative approaches that combine MD simulations with experimental data have emerged as powerful solutions to this challenge, with maximum entropy reweighting representing one of the most statistically rigorous methodologies [9] [29].

This guide objectively compares maximum entropy reweighting approaches against alternative methods for determining accurate conformational ensembles of disordered proteins, providing researchers with experimental data and protocols to inform their methodological selections.

Theoretical Foundation of Maximum Entropy Reweighting

Maximum entropy reweighting operates on the principle of introducing minimal perturbation to a computational ensemble to achieve agreement with experimental data. In the Bayesian/Maximum Entropy (BME) framework, the goal is to derive new weights (wⱼ) for each configuration in an ensemble by minimizing the function:

\[ \chi^2 - \theta S_{\text{rel}} \]

Where χ² quantifies agreement between experimental data and calculated observables, and S_rel measures the deviation between original ensemble weights (wⱼ⁰) and reweighted weights (wⱼ) [30]. The hyperparameter θ balances these terms, determining confidence in the prior simulation versus experimental data [29].
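For concreteness, the two terms are commonly written as follows. This is a sketch of one standard convention rather than a formula taken from the cited works: the symbols F_i(x_j) (observable i calculated from configuration x_j), F_i^exp (its experimental value), and σ_i (its combined uncertainty) are notation assumed here, and some implementations include additional normalization factors in front of χ².

\[
\chi^2 = \sum_{i=1}^{m} \frac{\left( \sum_{j=1}^{n} w_j F_i(x_j) - F_i^{\text{exp}} \right)^2}{\sigma_i^2},
\qquad
S_{\text{rel}} = -\sum_{j=1}^{n} w_j \ln \frac{w_j}{w_j^0}
\]

With this sign convention S_rel is at most zero and equals zero only when the weights are unchanged, so a large θ keeps the reweighted ensemble close to the simulation, while a small θ lets the experimental data dominate.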

This approach preserves the maximum possible information from the original simulation while incorporating experimental constraints, avoiding overfitting through careful determination of the θ parameter [29] [30]. The methodology has been successfully applied to integrate diverse experimental data including NMR chemical shifts, SAXS profiles, and hydrogen-deuterium exchange mass spectrometry (HDX-MS) measurements [31] [9] [29].
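The minimal-perturbation idea can be illustrated for a single ensemble-averaged observable. In the limiting case where the experimental average is matched exactly (no error model and no θ, a simplification assumed here and not the full BME protocol), the maximum entropy weights take the form w_j ∝ w_j⁰ exp(−λ f_j), and the single multiplier λ can be found by bisection. The function name and the toy values are hypothetical.

```python
from math import exp


def maxent_reweight(values, target, prior=None, lam_lo=-50.0, lam_hi=50.0, tol=1e-10):
    """Reweight frames so the weighted mean of `values` equals `target`.

    Weights have the maximum entropy form w_j ∝ prior_j * exp(-lam * values_j).
    """
    n = len(values)
    prior = prior or [1.0 / n] * n

    def weights(lam):
        shift = max(-lam * v for v in values)  # avoids overflow in exp
        raw = [p * exp(-lam * v - shift) for p, v in zip(prior, values)]
        z = sum(raw)
        return [r / z for r in raw]

    def average(lam):
        return sum(w * v for w, v in zip(weights(lam), values))

    # The weighted average decreases monotonically as lam increases
    if not (average(lam_hi) <= target <= average(lam_lo)):
        raise ValueError("target lies outside the range reachable by reweighting")
    while lam_hi - lam_lo > tol:
        mid = 0.5 * (lam_lo + lam_hi)
        if average(mid) > target:
            lam_lo = mid  # need a larger multiplier to lower the average
        else:
            lam_hi = mid
    return weights(0.5 * (lam_lo + lam_hi))


# Toy example: four frames with Rg values (Å), target ensemble average 12 Å
rg = [10.0, 12.0, 14.0, 16.0]
w = maxent_reweight(rg, 12.0)
```

Here the uniform prior gives an average of 13 Å, so the reweighting shifts weight smoothly toward the more compact frames instead of discarding the expanded ones.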

Comparative Analysis of Reweighting Implementations

Table 1: Comparison of Maximum Entropy Reweighting Implementations

| Method | Key Features | Experimental Data Supported | Automation Level | Validation Status |
|---|---|---|---|---|
| HDXer [31] | Maximum-entropy bias applied post hoc to MD ensembles | HDX-MS peptide deuteration levels | Moderate (requires peptide mapping) | Validated on binding protein conformational states |
| BME Protocol [29] [30] | Bayesian framework balancing experimental and prior errors | NMR chemical shifts, SAXS, J-couplings | Manual θ determination required | Applied to α-synuclein, ACTR; synthetic data validation |
| Automated MaxEnt [9] | Single free parameter (Kish threshold); automated restraint balancing | Multi-source NMR, SAXS | High (fully automated) | Tested on 5 IDPs; force-field independence demonstrated |

Table 2: Performance Comparison on IDP Ensemble Determination

| Method | Ensemble Size Preservation | Force Field Dependence | Computational Efficiency | Key Limitations |
|---|---|---|---|---|
| HDXer [31] | Degrades with reduced sequence coverage | Not assessed | Fast post-processing | Sequence coverage limitations; HDX prediction model accuracy |
| BME [29] | Controlled via θ parameter; ~30% retention typical | Reduces but does not eliminate dependence | Minutes to hours for reweighting | Subjective θ determination; potential overfitting |
| Automated MaxEnt [9] | Fixed via Kish ratio (K=0.1); ~10% retention | Achieves force-field independence in favorable cases | Efficient ensemble processing | Requires reasonable initial force field agreement |
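The ensemble-size figures in the table can be related to the Kish effective sample size, which measures how many frames effectively contribute after reweighting. A minimal sketch, assuming the Kish ratio is defined as the effective sample size divided by the total number of frames (the function name is hypothetical):

```python
def kish_ratio(weights):
    """Kish effective sample size as a fraction of the number of frames.

    K = (sum w)^2 / (N * sum w^2); equals 1.0 for uniform weights and
    1/N when a single frame carries all the weight.
    """
    total = sum(weights)
    total_sq = sum(w * w for w in weights)
    return total * total / (len(weights) * total_sq)


uniform = kish_ratio([1.0] * 10)          # every frame contributes equally
collapsed = kish_ratio([1.0] + [0.0] * 9)  # one frame dominates
```

Under this definition a threshold of K = 0.1 corresponds to an effective ensemble of roughly 10% of the original frames.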

Experimental Protocols and Workflows

Bayesian/Maximum Entropy Reweighting Protocol

The BME protocol follows a systematic workflow [30]:

- Ensemble Generation: Perform long-timescale MD simulations (typically 30+ μs) using state-of-the-art force fields such as a99SB-disp, CHARMM36m, or Amber ff03ws