Benchmarking Energy Minimization in Biomedical Systems: From Algorithms to Clinical Applications

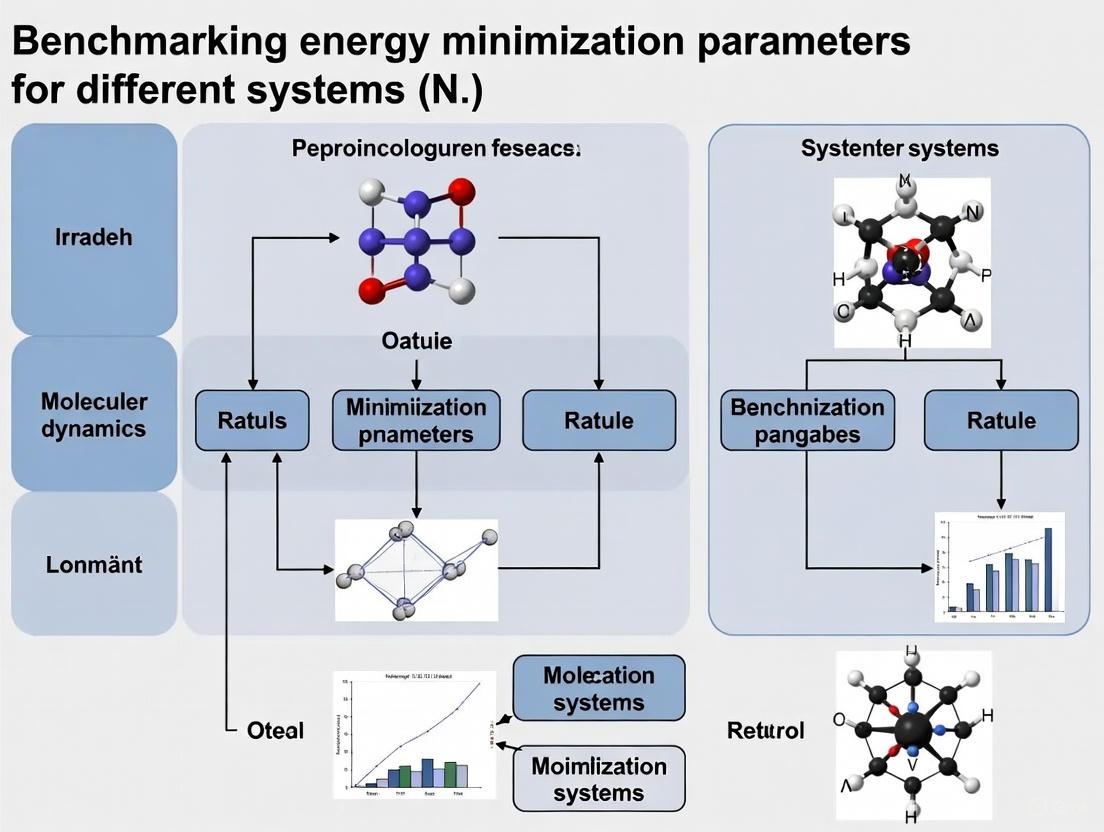

Abstract

This article provides a comprehensive framework for benchmarking energy minimization parameters across diverse biomedical systems, tailored for researchers and drug development professionals. It explores the fundamental principles of energy minimization algorithms like steepest descent and conjugate gradients, and their critical role in molecular dynamics and free energy calculations for drug discovery. The content details methodological applications in predicting molecular solubility, optimizing polymer heart valves, and performing binding free energy calculations. It addresses common troubleshooting challenges and optimization strategies for force fields, sampling, and handling charged systems. Finally, it establishes validation protocols and comparative benchmarking frameworks that integrate functional accuracy with emerging sustainability metrics, providing a holistic approach for evaluating computational methods in biomedical research and development.

Understanding Energy Minimization: Core Principles and Biomedical Significance

Defining Energy Minimization in Computational Biomedicine

Energy minimization is a foundational computational technique used to find the lowest energy conformation of a molecular structure by iteratively adjusting the positions of its atoms. The primary goal is to locate a stable, low-energy state that avoids steric clashes and promotes experimentally observed geometries, corresponding to a local minimum on the potential energy surface [1]. In computational biomedicine, this process is critical for obtaining realistic molecular structures, which are essential for accurate downstream analyses such as molecular docking, drug design, and molecular dynamics (MD) simulations [2] [1].

The concept is rooted in the physical principle that a lower-energy state is statistically favored and more likely to correspond to the natural state of a structure, whether it be a protein, a small molecule ligand, or a protein-ligand complex [2] [3]. By refining molecular geometries, energy minimization increases the reliability of computational models, thereby enhancing the prediction of biological activity and binding affinity in drug discovery projects [1].

Fundamental Principles and Algorithms

The Energy Landscape and the Multiple-Minima Problem

The potential energy of a molecular system is a function of the coordinates of all its atoms. This multidimensional space, known as the potential energy surface, contains numerous local minima and saddle points. The "multiple-minima problem" refers to the significant challenge of locating the global minimum—the most stable conformation—amongst a vast number of local energy minima [4]. This is particularly acute for flexible molecules like peptides and proteins, where the number of possible conformations grows exponentially with the number of rotatable bonds. Advanced methods like the Monte Carlo-minimization hybrid approach have been developed to overcome this by combining Metropolis Monte Carlo sampling with energy minimization, thereby helping the system escape local minima and traverse the energy landscape more effectively [4].
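The Monte Carlo-minimization idea can be illustrated on a toy problem. The sketch below is not the implementation from [4]; it assumes a hypothetical one-dimensional energy function with several minima, uses plain gradient descent as the local minimizer, and applies the Metropolis criterion to the minimized energies. All names and parameter values (`kick`, `kT`, `n_cycles`) are illustrative assumptions.

```python
import math
import random

def energy(x):
    # Hypothetical 1D landscape with several local minima (illustration only).
    return 0.1 * x * x + math.sin(3.0 * x)

def gradient(x):
    return 0.2 * x + 3.0 * math.cos(3.0 * x)

def local_minimize(x, step=0.01, tol=1e-8, max_iter=10000):
    # Plain gradient descent stands in for the energy minimization stage.
    for _ in range(max_iter):
        g = gradient(x)
        if abs(g) < tol:
            break
        x -= step * g
    return x

def mc_minimization(x0, n_cycles=200, kick=1.5, kT=0.5, seed=0):
    rng = random.Random(seed)
    x = local_minimize(x0)
    e = energy(x)
    best_x, best_e = x, e
    for _ in range(n_cycles):
        # Random perturbation followed by local minimization.
        trial = local_minimize(x + rng.uniform(-kick, kick))
        e_trial = energy(trial)
        # Metropolis acceptance on the minimized energies.
        if e_trial <= e or rng.random() < math.exp(-(e_trial - e) / kT):
            x, e = trial, e_trial
        if e < best_e:
            best_x, best_e = x, e
    return best_x, best_e

# Start in a high-lying basin; the walk should find the lowest basin.
best_x, best_e = mc_minimization(4.0)
```

Because each trial is minimized before the acceptance test, the walk moves between basins rather than between arbitrary points, which is what lets it escape a local minimum that a pure minimizer would stay in.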

Mathematical Formulation and Force Fields

The total potential energy \(E_{\text{total}}\) in a typical additive force field is calculated as the sum of bonded \(E_{\text{bonded}}\) and nonbonded \(E_{\text{nonbonded}}\) interactions [5]:

\[E_{\text{total}} = E_{\text{bonded}} + E_{\text{nonbonded}}\]

where the bonded terms are further decomposed as:

\[E_{\text{bonded}} = E_{\text{bond}} + E_{\text{angle}} + E_{\text{dihedral}}\]

and the nonbonded terms are:

\[E_{\text{nonbonded}} = E_{\text{electrostatic}} + E_{\text{van der Waals}}\]

The bonded interactions describe the energy associated with the covalent structure of the molecule [5]:

- Bond Stretching \(E_{\text{bond}}\): Often modeled by a harmonic potential, \(E_{\text{bond}} = \frac{k_{ij}}{2}(l_{ij} - l_{0,ij})^2\), where \(k_{ij}\) is the force constant, \(l_{ij}\) is the current bond length, and \(l_{0,ij}\) is the equilibrium bond length [5].

- Angle Bending \(E_{\text{angle}}\): Describes the energy penalty for deviating from an equilibrium bond angle.

- Dihedral/Torsional \(E_{\text{dihedral}}\): Represents the energy barrier for rotation around a central bond.

The nonbonded interactions describe how atoms that are not directly bonded interact with each other [5]:

- van der Waals \(E_{\text{van der Waals}}\): Typically modeled by a Lennard-Jones potential to account for short-range repulsion and long-range attraction.

- Electrostatic \(E_{\text{electrostatic}}\): Calculated using Coulomb's law, \(E_{\text{Coulomb}} = \frac{1}{4\pi\varepsilon_{0}} \frac{q_i q_j}{r_{ij}}\), where \(q_i\) and \(q_j\) are atomic charges and \(r_{ij}\) is the distance between them [5].

The parameters for these functions—such as equilibrium bond lengths, force constants, and atomic charges—are collectively known as a force field [5]. A wide variety of force fields exist, including AMBER, CHARMM, and GROMOS, each parameterized for specific types of molecules and simulations [2] [5] [1].
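As a minimal sketch of how these terms are evaluated, the functions below compute the harmonic bond energy and the Coulomb energy exactly as written above. The 12-6 form of the Lennard-Jones potential, the function names, and the unit convention (kJ mol⁻¹, nm, elementary charges) are assumptions made for illustration; a production force field adds angle and dihedral terms, exclusions, and cutoff handling.

```python
# Electric conversion factor 1/(4*pi*eps0) in kJ mol^-1 nm e^-2.
COULOMB_CONSTANT = 138.935458

def bond_energy(k_ij, l_ij, l0_ij):
    """Harmonic bond stretching: (k_ij / 2) * (l_ij - l0_ij)^2."""
    return 0.5 * k_ij * (l_ij - l0_ij) ** 2

def lennard_jones_energy(epsilon, sigma, r_ij):
    """12-6 Lennard-Jones potential (assumed functional form)."""
    sr6 = (sigma / r_ij) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)

def coulomb_energy(q_i, q_j, r_ij):
    """Coulomb's law between two point charges."""
    return COULOMB_CONSTANT * q_i * q_j / r_ij

# Illustrative values only: one stretched bond plus one nonbonded pair.
e_bonded = bond_energy(k_ij=1000.0, l_ij=0.11, l0_ij=0.10)
e_nonbonded = (lennard_jones_energy(epsilon=0.5, sigma=0.3, r_ij=0.3)
               + coulomb_energy(q_i=1.0, q_j=-1.0, r_ij=1.0))
e_total = e_bonded + e_nonbonded
```

The Lennard-Jones term vanishes at `r_ij == sigma` and reaches its minimum of `-epsilon` at `r_ij = 2**(1/6) * sigma`, which is a quick sanity check for any implementation.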

Core Minimization Algorithms

Several algorithms are employed to navigate the energy landscape, each with distinct advantages and trade-offs between computational cost and convergence efficiency. The following table summarizes the key characteristics of popular algorithms:

Table 1: Comparison of Core Energy Minimization Algorithms

| Algorithm | Principle of Operation | Convergence Speed | Stability & Best Use Cases |
|---|---|---|---|
| Steepest Descent [6] [1] | Moves atoms in the direction of the negative gradient (steepest energy descent). | Fast initial progress, but slow near the minimum. | Very stable, even from high-energy structures. Ideal for initial, crude minimization. |
| Conjugate Gradient [6] [1] | Uses the gradient history to generate non-interfering (conjugate) search directions. | Faster than Steepest Descent near the minimum. | More efficient than Steepest Descent for finer minimization. Requires a good starting structure. |
| L-BFGS [6] | A quasi-Newton method that uses an approximation of the Hessian (second derivative) matrix. | Very fast convergence. | Often the fastest for large systems; may have parallelization limitations. |
| FIRE [7] | An MD-based algorithm with adaptive time steps and velocity modifications. | Fast inertial relaxation. | Efficient for finding local minima; used in ABACUS and other MD packages. |
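To make the FIRE row concrete, the following is a simplified sketch of the fast inertial relaxation scheme with unit masses and a semi-implicit Euler integrator. It is not the ABACUS implementation; the default parameter values follow commonly quoted FIRE settings and, like the function and variable names, should be treated as assumptions.

```python
import math

def fire_minimize(grad, x0, dt=0.05, dt_max=0.5, n_min=5, f_inc=1.1,
                  f_dec=0.5, alpha_start=0.1, f_alpha=0.99,
                  f_tol=1e-6, max_steps=10000):
    x = list(x0)
    v = [0.0] * len(x)
    alpha = alpha_start
    steps_since_reset = 0
    for step in range(max_steps):
        f = [-g for g in grad(x)]
        f_norm = math.sqrt(sum(fi * fi for fi in f))
        if f_norm < f_tol:
            return x, step
        power = sum(fi * vi for fi, vi in zip(f, v))
        if power > 0.0:
            # Moving downhill: steer the velocity toward the force direction.
            v_norm = math.sqrt(sum(vi * vi for vi in v))
            v = [(1.0 - alpha) * vi + alpha * v_norm * fi / f_norm
                 for vi, fi in zip(v, f)]
            steps_since_reset += 1
            if steps_since_reset > n_min:
                dt = min(dt * f_inc, dt_max)   # adaptive time step
                alpha *= f_alpha
        elif power < 0.0:
            # Moving uphill: stop, shrink the time step, reset the mixing.
            v = [0.0] * len(x)
            dt *= f_dec
            alpha = alpha_start
            steps_since_reset = 0
        # Semi-implicit Euler MD step with unit masses.
        v = [vi + dt * fi for vi, fi in zip(v, f)]
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x, max_steps

def quadratic_grad(x):
    # Gradient of the toy energy 0.5 * (x0^2 + 10 * x1^2).
    return [x[0], 10.0 * x[1]]

x_min, n_steps = fire_minimize(quadratic_grad, [1.0, 1.0])
```

The time step only grows after several consecutive downhill steps and is cut immediately on an uphill step, which is the "adaptive time steps and velocity modifications" behavior summarized in the table.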

Benchmarking Methodologies and Performance

Benchmarking studies are crucial for evaluating the performance of different energy minimization protocols in realistic scenarios. The following experimental data, synthesized from recent literature, provides a comparative view.

Performance in Molecular Docking

A 2023 study on acetylacetone-based oxindole derivatives compared the impact of different energy minimization tools on molecular docking outcomes, specifically against the Indoleamine 2,3-Dioxygenase target [1]. The results highlight how the choice of minimization tool can influence predicted binding energies.

Table 2: Docking Score Comparison After Energy Minimization with Different Tools

| Minimization Tool | Reported Binding Energy (kcal/mol) | Key Interactions Observed |
|---|---|---|
| AMBER [1] | -9.8 | Strong hydrogen bonding and pi-pi stacking |
| GROMACS [1] | -9.5 | Moderate hydrogen bonding |
| CHARMM [1] | -9.3 | Van der Waals interactions dominant |
| Schrödinger Suite [1] | -10.1 | Comprehensive hydrophobic and polar interactions |

Algorithmic Efficiency

A 2023 paper introducing the Gradual Optimization Learning Framework (GOLF) provided data on the performance of traditional algorithms versus the novel neural network-based approach for energy minimization on diverse drug-like molecules [1].

Table 3: Efficiency Comparison of Minimization Algorithms on Drug-like Molecules

| Minimization Method | Average Number of Steps to Convergence | Computational Time (Relative Units) |
|---|---|---|
| Steepest Descent [1] | 5,200 | 1.00 |
| Conjugate Gradient [1] | 1,550 | 0.35 |
| L-BFGS [1] | 980 | 0.25 |
| GOLF (Neural Network) [1] | 950 | 0.18 |

Integrated MD and Minimization Protocols

A 2018 study on virtual screening post-processing protocols compared the application of the MM-PBSA (Molecular Mechanics Poisson-Boltzmann Surface Area) method for binding affinity prediction on minimized versus non-minimized conformations [1]. The research concluded that applying MM-PBSA on energy-minimized conformations achieved significant computational time reductions (approximately 40% less) while maintaining comparable accuracy to more expensive protocols, demonstrating the value of minimization in streamlining workflows.

Experimental Protocols for Benchmarking

To ensure reproducible and meaningful benchmarking of energy minimization parameters, a standardized experimental protocol is essential. The following workflow details the key steps.

Detailed Methodology

Structure Preparation: The initial 3D structure of the biomolecule (e.g., a protein or protein-ligand complex) is obtained from a source like the Protein Data Bank (PDB). This structure is then preprocessed to add missing hydrogen atoms, assign correct protonation states for amino acids at the desired pH, and potentially fix missing loops or residues using modeling software [2].

Force Field Selection and Assignment: An appropriate force field (e.g., AMBER14, CHARMM36) is selected based on the system under study [2] [5]. The force field parameters, including atom types, bonded terms, and partial atomic charges, are assigned to every atom in the system. Tools like YASARA's AutoSMILES can automate this process, performing pH-dependent bond order assignment and charge calculations to ensure accuracy [2].

Solvation and Neutralization: The biomolecule is placed in a simulation box (e.g., a cubic or rhombic dodecahedron box) filled with explicit water molecules (e.g., TIP3P model). Counterions (e.g., Na⁺ or Cl⁻) are added to neutralize the system's total charge, and additional salt can be added to approximate physiological ionic strength [6].

Initial Minimization: A short minimization using the Steepest Descent algorithm is typically performed. This step aims to remove any severe steric clashes introduced during the solvation and ionization process, which is crucial for stabilizing the system before more refined techniques are applied [6] [1]. This is often done with positional restraints on the heavy atoms of the solute to allow the solvent to relax first.

Primary Benchmarking Minimization: This is the core comparative step. The system is subjected to energy minimization using different algorithms slated for benchmarking (e.g., Conjugate Gradient, L-BFGS, FIRE) from the same starting point and with identical simulation parameters (cutoffs, etc.) [7] [6]. Key parameters to monitor include the integration time step (for MD-based minimizers like FIRE) and convergence tolerance.

Convergence Analysis: The performance of each algorithm is tracked by monitoring the potential energy of the system and, more importantly, the maximum force acting on any atom over the course of the minimization. A common convergence criterion is that the maximum force falls below a specified threshold (e.g., 1000 kJ mol⁻¹ nm⁻¹, a loose tolerance often used in GROMACS to relax a system before MD; tighter thresholds are needed when the minimized structure itself is the object of study) [6]. The number of steps and computational time required to reach convergence are recorded for efficiency comparisons.
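The convergence test described here can be sketched in a few lines. The example assumes forces are available as a list of per-atom `(fx, fy, fz)` tuples in kJ mol⁻¹ nm⁻¹ and takes the "maximum force" to be the largest per-atom force magnitude; some codes instead use the largest absolute force component, so the convention should be checked for the package being benchmarked. Function names are hypothetical.

```python
import math

def max_force(forces):
    """Largest per-atom force magnitude from a list of (fx, fy, fz) tuples."""
    return max(math.sqrt(fx * fx + fy * fy + fz * fz) for fx, fy, fz in forces)

def has_converged(forces, tolerance):
    """True when the maximum force is below the chosen tolerance."""
    return max_force(forces) < tolerance

# Illustrative forces on two atoms.
example_forces = [(3.0, 4.0, 0.0), (0.0, 0.0, 1.0)]
f_max = max_force(example_forces)
```

Logging `f_max` at every step alongside the potential energy gives the two curves needed to compare algorithms from the same starting structure.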

Output and Downstream Application: The final minimized structure is saved. Its quality can be assessed by examining the root-mean-square deviation (RMSD) from the initial structure, the stability in subsequent MD simulations, or its performance in docking and scoring experiments [1].
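A minimal sketch of the RMSD check follows. It assumes both structures list the same atoms in the same order and are already in a common frame, so no superposition is performed; for freely rotating or translating solutes an alignment step would be needed first. The function name and example coordinates are illustrative.

```python
import math

def rmsd(coords_a, coords_b):
    """Root-mean-square deviation between two equally ordered coordinate lists."""
    if len(coords_a) != len(coords_b) or not coords_a:
        raise ValueError("coordinate lists must be non-empty and equal in length")
    squared = sum((xa - xb) ** 2 + (ya - yb) ** 2 + (za - zb) ** 2
                  for (xa, ya, za), (xb, yb, zb) in zip(coords_a, coords_b))
    return math.sqrt(squared / len(coords_a))

# Two atoms; the second moved by 2 length units along z.
initial = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
minimized = [(0.0, 0.0, 0.0), (1.0, 0.0, 2.0)]
deviation = rmsd(initial, minimized)
```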

Essential Research Tools and Reagents

A well-equipped computational toolkit is fundamental for conducting energy minimization research. The table below lists key software and force fields.

Table 4: Essential Research Toolkit for Energy Minimization

| Tool / Resource | Type | Primary Function & Relevance |
|---|---|---|
| GROMACS [6] [1] | Software Suite | Open-source MD package highly optimized for performance; includes robust steepest descent, conjugate gradient, and L-BFGS minimizers. |
| AMBER [2] [1] | Software Suite | A comprehensive suite widely used for simulating biomolecules; includes the pmemd program renowned for efficient minimization and MD. |
| CHARMM [5] [1] | Software Suite | A versatile program for macromolecular simulation with a wide array of minimization algorithms and detailed force fields. |
| OpenMM [5] [1] | Software Toolkit | A high-performance, GPU-accelerated toolkit for molecular simulation that offers powerful energy minimization capabilities. |
| YASARA [2] | Software Suite | A modeling and simulation tool integrated with SeeSAR; offers automated force field assignment (AutoSMILES) and flexible/rigid minimization options. |
| ABACUS [7] | Software Platform | A materials simulation platform that includes the FIRE minimization algorithm and supports first-principles and machine learning potentials (DeePMD-kit). |
| AMBER Force Fields [2] [5] | Force Field | A family of widely used force fields (e.g., AMBER14, AMBER15FB) for proteins, nucleic acids, and organic molecules. |
| YAMBER/YASARA2 [2] | Force Field | Custom force fields developed for the YASARA suite, which have demonstrated high performance in protein structure prediction challenges. |

Energy minimization remains an indispensable step in computational biomedicine, directly impacting the accuracy and reliability of subsequent modeling and simulation outcomes. Benchmarking studies consistently reveal that there is no single "best" algorithm or force field for all scenarios. The optimal choice is highly system-dependent.

Performance trade-offs are clear: while Steepest Descent offers robustness for poorly structured starting models, advanced algorithms like L-BFGS and hybrid methods like FIRE provide superior convergence efficiency for more refined minimization. Furthermore, the integration of minimization into broader workflows—such as its role in preparing structures for docking or its combination with cosolvent MD techniques—continues to enhance its utility in driving structure-based drug discovery [1]. As the field progresses, the development of automated parametrization tools and machine learning-enhanced frameworks like GOLF promises to further streamline the minimization process, making it faster and more accessible for tackling complex biological questions and accelerating drug development.

In the field of computational science and molecular modeling, energy minimization represents a fundamental process for determining the stable states of molecular systems. This procedure is critical across numerous disciplines, including drug design, materials science, and computational biology, where identifying low-energy configurations of molecules enables researchers to predict molecular behavior, stability, and function. The efficiency and effectiveness of energy minimization algorithms directly impact the feasibility and accuracy of such simulations, particularly as systems increase in size and complexity.

This guide provides a comprehensive comparative analysis of three fundamental gradient-based optimization algorithms—Steepest Descent, Conjugate Gradient, and L-BFGS—within the context of benchmarking energy minimization parameters for different molecular systems. These algorithms form the computational backbone of widely used simulation packages like GROMACS, employed by researchers and drug development professionals to solve complex optimization problems where analytical solutions are intractable. Each algorithm possesses distinct characteristics, performance profiles, and implementation requirements that must be carefully matched to specific research objectives and computational constraints.

The following sections detail the underlying mathematical principles of each algorithm, present experimental performance data from controlled studies, outline standardized testing protocols for benchmarking, and provide practical implementation guidelines. By establishing a structured framework for algorithm evaluation, this guide aims to equip researchers with the necessary knowledge to select appropriate minimization techniques for their specific molecular systems, ultimately enhancing the reliability and efficiency of computational investigations in scientific research and drug development.

Algorithm Fundamentals and Mathematical Principles

Steepest Descent Algorithm

The Steepest Descent algorithm represents one of the simplest and most intuitive approaches to gradient-based optimization. The fundamental principle driving this method is the consistent movement in the direction of the negative gradient of the objective function, which corresponds to the direction of steepest local descent. In energy minimization problems, this translates to updating atomic positions in proportion to the forces acting upon them, as forces are defined as the negative gradient of the potential energy function.

The algorithm operates through an iterative process where new positions are calculated using the formula: r_{n+1} = r_n + (h_n / max(|F_n|)) * F_n, where r_n represents the current atomic coordinates, F_n is the force vector (negative gradient), max(|F_n|) is the largest force magnitude on any atom, and h_n is a dynamically adjusted maximum displacement parameter [8]. A key feature of this method is its adaptive step-size control: if a step decreases the potential energy (V_{n+1} < V_n), it is accepted and the displacement parameter is increased by 20% for the next iteration (h_{n+1} = 1.2 h_n); if the step does not decrease the energy (V_{n+1} ≥ V_n), it is rejected and the displacement parameter is halved (h_n = 0.5 h_n) [8]. This conservative approach ensures stability but contributes to slower convergence than more sophisticated methods.
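The update rule and step-size control can be sketched as follows. This is an illustration on a toy two-variable energy, not GROMACS code: coordinates are a flat list, max(|F_n|) is approximated by the largest absolute force component, and the growth and shrink factors are exposed as parameters (defaults 1.2 and 0.5, the values given in the GROMACS reference manual). All names are hypothetical.

```python
def steepest_descent(energy, forces, r0, h0=0.01, grow=1.2, shrink=0.5,
                     f_tol=1e-3, max_steps=10000):
    r = list(r0)
    h = h0
    v = energy(r)
    for step in range(max_steps):
        f = forces(r)
        f_max = max(abs(fi) for fi in f)
        if f_max < f_tol:
            return r, v, step
        # r_{n+1} = r_n + (h_n / max|F_n|) * F_n
        trial = [ri + h * fi / f_max for ri, fi in zip(r, f)]
        v_trial = energy(trial)
        if v_trial < v:
            r, v = trial, v_trial   # accept and allow a larger displacement
            h *= grow
        else:
            h *= shrink             # reject and retry with a smaller one
    return r, v, max_steps

def toy_energy(r):
    return 0.5 * (r[0] ** 2 + 10.0 * r[1] ** 2)

def toy_forces(r):
    # Forces are the negative gradient of the energy.
    return [-r[0], -10.0 * r[1]]

r_final, v_final, n_steps = steepest_descent(toy_energy, toy_forces, [1.0, 1.0])
```

Because the displacement is normalized by the largest force, `h` directly bounds how far any coordinate moves in one step, which is why the method tolerates very large initial forces.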

Conjugate Gradient Algorithm

The Conjugate Gradient method addresses a fundamental limitation of Steepest Descent—its tendency to oscillate in narrow valleys of the energy landscape—by generating a sequence of non-interfering search directions. In this context, "conjugate" means that these search directions remain orthogonal under a transformation by the Hessian matrix, ensuring that minimization along one direction preserves the minimality achieved along previous directions [9].

For quadratic functions of the form f(x) = 1/2 xᵀAx - bᵀx + c, where A is a symmetric positive definite matrix, the conjugate gradient method theoretically converges to the exact solution in at most n iterations (where n is the problem dimensionality) [9]. In non-quadratic energy minimization problems, which are common in molecular modeling, the algorithm generalizes through line search techniques and restarting strategies. Practical implementations use formulas such as Fletcher-Reeves (β_k = (g_{k+1}ᵀg_{k+1})/(g_kᵀg_k)) or Polak-Ribière (β_k = (g_{k+1}ᵀ(g_{k+1} - g_k))/(g_kᵀg_k)) to calculate the update parameters that maintain conjugacy while adapting to the local energy landscape [9]. A significant implementation constraint in molecular dynamics packages like GROMACS is that Conjugate Gradient cannot be used with constraints, including the SETTLE algorithm for rigid water, so flexible water must be used instead [8].
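The n-step convergence property on a quadratic can be checked with a short linear conjugate gradient sketch. On a quadratic the gradient is g = Ax - b, so the Fletcher-Reeves ratio reduces to a ratio of squared residual norms. The 2×2 system, helper names, and tolerance below are illustrative assumptions.

```python
def dot(u, v):
    return sum(ui * vi for ui, vi in zip(u, v))

def matvec(a, x):
    return [dot(row, x) for row in a]

def conjugate_gradient(a, b, x0, tol=1e-20, max_iter=None):
    """Minimize 0.5 x^T A x - b^T x for symmetric positive definite A."""
    x = list(x0)
    r = [bi - axi for bi, axi in zip(b, matvec(a, x))]  # residual = -gradient
    d = list(r)
    rr = dot(r, r)
    iterations = 0
    limit = max_iter if max_iter is not None else 10 * len(b)
    while rr > tol and iterations < limit:
        ad = matvec(a, d)
        alpha = rr / dot(d, ad)                 # exact line search on a quadratic
        x = [xi + alpha * di for xi, di in zip(x, d)]
        r = [ri - alpha * adi for ri, adi in zip(r, ad)]
        rr_new = dot(r, r)
        beta = rr_new / rr                      # Fletcher-Reeves ratio
        d = [ri + beta * di for ri, di in zip(r, d)]
        rr = rr_new
        iterations += 1
    return x, iterations

A = [[4.0, 1.0], [1.0, 3.0]]
b = [1.0, 2.0]
solution, n_iter = conjugate_gradient(A, b, [2.0, 1.0])
```

For this two-dimensional system the loop terminates after two iterations, as the theory predicts; on real force fields the energy is not quadratic, so restarts and inexact line searches are needed.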

L-BFGS Algorithm

The Limited-memory Broyden-Fletcher-Goldfarb-Shanno algorithm belongs to the quasi-Newton family of optimization methods, which progressively build an approximation to the inverse Hessian matrix using only gradient information. This approximate Hessian enables more informed search directions that account for the local curvature of the energy landscape, typically resulting in faster convergence compared to first-order methods [8].

The standard BFGS method would require storing a dense n×n matrix (where n is the number of parameters), becoming computationally prohibitive for large molecular systems with thousands of atoms. L-BFGS circumvents this limitation through a sliding-window technique that retains only a fixed number of vector pairs from previous iterations, implicitly representing the inverse Hessian approximation without explicit matrix storage [8]. This limited-memory approach makes L-BFGS particularly suitable for large-scale molecular optimization problems where the number of variables can reach millions. The algorithm has been observed to demonstrate improved convergence when potential functions employ switched or shifted interactions rather than sharp cut-offs, as discontinuous changes in potential energy can degrade the quality of the Hessian approximation built from historical gradient information [8].
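The implicit inverse-Hessian representation is usually realized with the two-loop recursion, sketched below. This is a generic textbook form rather than the code of any particular package; the initial scaling by s·y / y·y and the function names are assumptions, and a full minimizer would add a line search and history management.

```python
def dot(u, v):
    return sum(ui * vi for ui, vi in zip(u, v))

def lbfgs_direction(grad, s_history, y_history):
    """Return -H * grad, with H represented only by stored (s, y) pairs.

    s_k = x_{k+1} - x_k and y_k = g_{k+1} - g_k, ordered oldest to newest.
    """
    q = list(grad)
    stack = []
    # First loop: newest pair to oldest.
    for s, y in zip(reversed(s_history), reversed(y_history)):
        rho = 1.0 / dot(y, s)
        alpha = rho * dot(s, q)
        q = [qi - alpha * yi for qi, yi in zip(q, y)]
        stack.append((rho, alpha, s, y))
    # Initial inverse-Hessian guess: scaled identity.
    if s_history:
        gamma = dot(s_history[-1], y_history[-1]) / dot(y_history[-1], y_history[-1])
    else:
        gamma = 1.0
    r = [gamma * qi for qi in q]
    # Second loop: oldest pair to newest.
    for rho, alpha, s, y in reversed(stack):
        beta = rho * dot(y, r)
        r = [ri + si * (alpha - beta) for ri, si in zip(r, s)]
    return [-ri for ri in r]

# With one stored pair, the secant condition H * y = s must hold.
s_pair = [1.0, 0.0]
y_pair = [2.0, 1.0]
direction = lbfgs_direction(y_pair, [s_pair], [y_pair])
```

Only the m most recent pairs are kept, so storage grows as O(mn) rather than O(n²), and no matrix is ever formed.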

Table 1: Core Characteristics of Energy Minimization Algorithms

| Algorithm | Update Mechanism | Memory Requirements | Convergence Properties | Implementation Constraints |
|---|---|---|---|---|
| Steepest Descent | Direction of negative gradient | Low (only current position and gradient) | Linear convergence; robust but slow | None; suitable for all molecular systems |
| Conjugate Gradient | Conjugate directions using gradient history | Low (few vectors: position, gradient, search direction) | Superlinear for quadratic problems; n-step convergence for n-dimensional quadratics | Cannot be used with constraints or rigid water in GROMACS |
| L-BFGS | Quasi-Newton with approximate inverse Hessian | Moderate (stores m previous correction pairs) | Superlinear convergence; faster than CG in practice | Works best with switched/shifted potentials; limited parallelization |

Performance Benchmarks and Experimental Data

Comparative Convergence Studies

Rigorous benchmarking of optimization algorithms provides crucial insights for researchers selecting appropriate minimization strategies. In a specialized study comparing nonlinear Conjugate Gradient and L-BFGS methods for optimal control of turbulent channel flow based on Direct Numerical Simulation (DNS), a computationally intensive problem analogous to complex molecular systems, researchers observed dramatic performance differences. The damped L-BFGS method, when combined with a cubic line search, demonstrated a fourfold speedup in convergence compared to the standard Polak-Ribière Conjugate Gradient algorithm [10]. This performance advantage was attributed to L-BFGS's ability to incorporate curvature information, which yields more effective step directions and lengths than the conjugate gradient approach [10].

Further evidence from the CUTEst optimization test set (a standardized collection of optimization problems) reinforces these findings. When solving 130 nonlinear bound-constrained and unconstrained problems, a modern limited-memory nonlinear Conjugate Gradient implementation (e04kf) demonstrated competitive performance with L-BFGS-B, requiring approximately half the memory while maintaining similar convergence rates in terms of gradient evaluations [11]. Specifically, both solvers used roughly the same number of gradient evaluations, but the Conjugate Gradient implementation solved 70% of the problems faster in terms of computational time [11]. This highlights the important balance between memory efficiency and computational speed in algorithm selection.

Performance Across Problem Domains

The relative performance of these algorithms varies significantly based on problem characteristics, particularly dimensionality and ill-conditioning. For small-scale optimization problems (typically fewer than 100 parameters), Newton or quasi-Newton methods are generally preferred due to their rapid convergence [9]. However, as problem size increases, the memory requirements of standard BFGS become prohibitive, making limited-memory approaches like L-BFGS and Conjugate Gradient more practical [9].

In ill-conditioned problems where the energy landscape contains valleys of sharply varying curvature, Conjugate Gradient methods with preconditioning techniques can outperform alternatives [9]. The convergence rate of Conjugate Gradient methods depends heavily on the condition number of the Hessian matrix (κ(A)), with better conditioning leading to faster convergence [9]. For large-scale molecular systems where evaluating second-order derivatives is computationally prohibitive or outright impossible, both CG and L-BFGS present attractive alternatives as they avoid explicit Hessian computation and storage [11].
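The dependence on the condition number can be made explicit with the standard worst-case bounds for a quadratic objective with symmetric positive definite Hessian A, stated here as textbook results rather than findings of [9]. With \(\|e\|_A = \sqrt{e^{\top} A e}\) and \(x^*\) the minimizer, steepest descent with exact line search and conjugate gradient satisfy

\[\|x_k - x^*\|_A \le \left(\frac{\kappa(A) - 1}{\kappa(A) + 1}\right)^{k} \|x_0 - x^*\|_A \quad \text{(steepest descent)}\]

\[\|x_k - x^*\|_A \le 2\left(\frac{\sqrt{\kappa(A)} - 1}{\sqrt{\kappa(A)} + 1}\right)^{k} \|x_0 - x^*\|_A \quad \text{(conjugate gradient)}\]

For \(\kappa(A) = 100\), the contraction factor per iteration is about 0.98 for steepest descent but about 0.82 for conjugate gradient, which is why preconditioning (lowering the effective \(\kappa\)) pays off so strongly in ill-conditioned systems.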

Table 2: Experimental Performance Comparison Across Problem Types

| Problem Type | Steepest Descent | Conjugate Gradient | L-BFGS |
|---|---|---|---|
| Small-scale NLP (<100 params) | Slow convergence; not recommended | Competitive with good line search | Fast convergence; often preferred |
| Large-scale NLP (>10,000 params) | Impractical due to slow convergence | Excellent due to low memory requirements | Best convergence with sufficient memory |
| Ill-conditioned systems | Poor convergence; zigzagging behavior | Good with preconditioning | Better with full curvature approximation |
| Turbulent channel flow control | Not tested | Baseline performance | 4x faster than CG [10] |
| CUTEst test problems | Not tested | 70% solved faster than L-BFGS-B [11] | Competitive; better gradient efficiency |

Molecular Energy Minimization Performance

In the specific context of molecular energy minimization, as implemented in the GROMACS molecular dynamics package, each algorithm serves distinct purposes. Steepest Descent remains valuable in initial minimization stages where robustness is prioritized over efficiency, particularly for badly positioned starting structures with steric clashes or distorted geometries [8]. Its simplicity and robustness make it suitable for preparing systems for more refined minimization.

The Conjugate Gradient algorithm demonstrates stronger performance in later stages of minimization closer to the energy minimum, though it converges slower than Steepest Descent in early iterations [8]. This algorithm is particularly valuable for minimization preceding normal-mode analysis, which requires high accuracy and cannot be performed with constraints [8]. For most other purposes where constraints are needed, the efficiency advantages may not justify the implementation limitations.

L-BFGS has been found to converge faster than Conjugate Gradients in molecular minimization contexts, making it generally preferable when available [8]. Its quasi-Newton approach leveraging approximate curvature information typically requires fewer iterations to reach equivalent precision compared to conjugate gradient methods, though the per-iteration computational cost is slightly higher due to the maintenance of the limited-memory correction pairs.
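For a like-for-like comparison of the three GROMACS minimizers, the run parameters other than the algorithm should be identical. The sketch below generates three `.mdp` parameter texts that differ only in `integrator`. The option names (`integrator`, `emtol`, `emstep`, `nsteps`, `define`) are GROMACS `.mdp` options; the values are illustrative assumptions, and `-DFLEXIBLE` only has an effect if the topology's water model honors that macro.

```python
# Settings shared by every run so that only the algorithm differs.
SHARED_SETTINGS = {
    "emtol": 10.0,          # force tolerance, kJ mol^-1 nm^-1 (illustrative)
    "emstep": 0.01,         # initial step size, nm (illustrative)
    "nsteps": 50000,        # upper bound on minimization steps
    "define": "-DFLEXIBLE", # flexible water, since cg cannot use constraints
}

def build_mdp(integrator, shared=SHARED_SETTINGS):
    """Render a minimal .mdp text for one minimization algorithm."""
    options = {"integrator": integrator, **shared}
    return "".join(f"{key} = {value}\n" for key, value in options.items())

mdp_files = {name: build_mdp(name) for name in ("steep", "cg", "l-bfgs")}
```

Writing each entry of `mdp_files` to its own file and preprocessing it against the same starting structure and topology keeps the benchmark inputs reproducible.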

Experimental Protocols for Benchmarking

Standardized Testing Methodologies

To ensure reproducible and meaningful comparison of energy minimization algorithms, researchers should adhere to standardized testing protocols. The following methodology provides a structured approach for benchmarking performance across different molecular systems:

Test Problem Selection: Curate a diverse set of optimization problems representing various challenges encountered in computational chemistry and molecular dynamics. The CUTEst collection provides a standardized set of test problems widely used in optimization algorithm development [11]. Additionally, include real-world molecular systems of varying complexity, from small organic molecules to large biomolecular complexes.

Convergence Criteria Definition: Establish consistent termination conditions to enable fair cross-algorithm comparisons. Common criteria include:

- Gradient norm tolerance: Optimization terminates when the maximum absolute value of force components falls below a specified threshold (e.g., 1-10 kJ mol⁻¹ nm⁻¹ for molecular systems) [8].

- Function value change threshold: Stop when the relative change in objective function between iterations falls below a predefined limit.

- Maximum iteration count: Establish an upper bound on computational effort regardless of convergence.

Initialization Standardization: Use identical starting points for all algorithms to enable direct performance comparison. For molecular systems, include both "well-behaved" starting structures and deliberately distorted configurations to test robustness.

Performance Metrics Collection: Record multiple quantitative measures for comprehensive assessment:

- Number of iterations until convergence

- Total computational time

- Number of function and gradient evaluations

- Final objective function value achieved

- Memory utilization during optimization

Statistical Validation: Perform multiple runs with varying initial conditions where applicable, and report statistical measures (mean, standard deviation) to account for performance variability.
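The metrics listed above can be collected with a small harness such as the one sketched here. It assumes a hypothetical minimizer interface (a callable taking an objective wrapper and a start point, returning the final point and iteration count) and uses a fixed-step gradient descent only as a stand-in algorithm; peak memory is measured with Python's `tracemalloc`, which tracks Python-level allocations only.

```python
import time
import tracemalloc

class CountingObjective:
    """Wraps energy and gradient callables and counts evaluations."""
    def __init__(self, func, grad):
        self._func, self._grad = func, grad
        self.n_func = 0
        self.n_grad = 0

    def value(self, x):
        self.n_func += 1
        return self._func(x)

    def gradient(self, x):
        self.n_grad += 1
        return self._grad(x)

def fixed_step_descent(objective, x0, step=0.05, g_tol=1e-6, max_iter=10000):
    # Stand-in minimizer; any algorithm with this signature can be benchmarked.
    x = list(x0)
    for iteration in range(max_iter):
        g = objective.gradient(x)
        if max(abs(gi) for gi in g) < g_tol:
            return x, iteration
        x = [xi - step * gi for xi, gi in zip(x, g)]
    return x, max_iter

def benchmark(minimizer, func, grad, x0, **kwargs):
    objective = CountingObjective(func, grad)
    tracemalloc.start()
    start = time.perf_counter()
    x_final, iterations = minimizer(objective, list(x0), **kwargs)
    elapsed = time.perf_counter() - start
    _, peak = tracemalloc.get_traced_memory()
    tracemalloc.stop()
    return {"iterations": iterations, "wall_time_s": elapsed,
            "n_func": objective.n_func, "n_grad": objective.n_grad,
            "final_value": func(x_final), "peak_memory_bytes": peak}

def toy_energy(x):
    return 0.5 * (x[0] ** 2 + 10.0 * x[1] ** 2)

def toy_gradient(x):
    return [x[0], 10.0 * x[1]]

result = benchmark(fixed_step_descent, toy_energy, toy_gradient, [1.0, 1.0])
```

Running `benchmark` for each algorithm from the same `x0`, and repeating over several starting structures, yields the per-metric means and standard deviations called for in the statistical validation step.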

Evaluation in DNS-Based Optimal Control

For problems involving extremely expensive function evaluations, such as Direct Numerical Simulation (DNS) of turbulent flows or complex molecular dynamics simulations, a modified benchmarking approach is necessary:

Efficiency Measurement: Focus on the number of functional and gradient evaluations required rather than just iteration count, as these dominate computational cost in DNS-based optimization [10].

Line Search Integration: Evaluate algorithm performance in combination with different line search strategies (bisection, quadratic interpolation, cubic interpolation), as line search efficiency significantly impacts overall performance [10].

Relative Improvement Tracking: Monitor cost functional improvement per unit of computational expense, as optimization algorithms are often stopped well before formal convergence in these expensive applications [10].

The following workflow diagram illustrates the recommended experimental protocol for comprehensive algorithm benchmarking:

Diagram 1: Experimental benchmarking workflow for comparing optimization algorithms, following standardized protocols to ensure reproducible results.

Implementation Guidelines and Research Reagents

Researchers have access to numerous well-established software implementations of the algorithms discussed in this guide. These "research reagents" provide the essential computational tools for energy minimization across various scientific domains:

Table 3: Essential Computational Resources for Optimization Research

| Tool/Resource | Algorithm Implementation | Application Context | Key Features |
|---|---|---|---|
| GROMACS | Steepest Descent, Conjugate Gradient, L-BFGS | Molecular dynamics and energy minimization | Highly optimized for biomolecular systems; automated convergence detection |
| nAG e04kf | Limited-memory Nonlinear Conjugate Gradient | Large-scale nonlinear optimization | Bound constraints; low memory footprint; competitive with L-BFGS-B [11] |
| L-BFGS-B | Limited-memory BFGS with Bound Constraints | General nonlinear optimization | Handles box constraints; widely used in machine learning [12] |
| optim (R) | BFGS, L-BFGS-B, Conjugate Gradient | Statistical modeling and estimation | Multiple algorithm options; Hessian computation for standard errors [12] |
| nlminb (R) | PORT (Quasi-Newton) | Nonlinear regression | Constrained optimization; gradient validation feature [12] |

Practical Implementation Considerations

Successful implementation of energy minimization algorithms requires careful attention to several practical aspects:

Gradient Computation: The choice between analytical and numerical gradients significantly impacts performance and accuracy. When available, analytical gradients provide superior precision and computational efficiency. For molecular force fields, analytical gradients are typically accessible, while in custom optimization problems, numerical differentiation might be necessary despite its increased computational cost and potential precision issues [13].

Memory Allocation: For large-scale problems, memory requirements often dictate algorithm selection. Standard BFGS requires O(n²) memory, making it infeasible for high-dimensional problems. Both L-BFGS (O(mn), where m is the number of correction pairs) and Conjugate Gradient (O(n)) offer more memory-efficient alternatives [9] [11]. The NAG e04kf solver requires approximately half the memory of L-BFGS-B, making it particularly attractive for problems with millions of variables [11].
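
The scaling arguments above translate into concrete numbers with a short back-of-the-envelope calculation. The sketch below assumes double-precision storage, m = 10 correction pairs, and roughly four work vectors for Conjugate Gradient; real implementations carry additional workspace, so the figures are order-of-magnitude estimates only.

```python
def optimizer_memory_bytes(n_vars, m_pairs=10, bytes_per_float=8):
    """Rough storage estimates for the optimizer state (not the force field)."""
    return {
        # Dense approximation of the (inverse) Hessian: n x n entries.
        "BFGS": n_vars * n_vars * bytes_per_float,
        # m pairs of (s, y) vectors, each of length n.
        "L-BFGS": 2 * m_pairs * n_vars * bytes_per_float,
        # Assumed: position, gradient, previous gradient, search direction.
        "CG": 4 * n_vars * bytes_per_float,
    }


n_vars = 3 * 100_000  # 100,000 atoms, three Cartesian coordinates each
for name, nbytes in optimizer_memory_bytes(n_vars).items():
    print(f"{name:8s} {nbytes / 1e9:12.4f} GB")
```

For this hypothetical 100,000-atom system the dense BFGS matrix alone would need about 720 GB, whereas L-BFGS and Conjugate Gradient stay in the megabyte range.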

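Constraint Handling: When molecular systems require constraints (e.g., fixed bond lengths, rigid water geometries), algorithm compatibility becomes crucial. Standard Conjugate Gradient implementations typically cannot handle constraints, while L-BFGS-B and specialized constrained optimizers like PORT support bound constraints [8] [12]. Bound (box) constraints limit the range of individual variables and are distinct from the holonomic constraints used to fix bond lengths, so support for one does not imply support for the other.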

Termination Criteria Selection: Setting appropriate convergence thresholds requires balancing precision with computational effort. For molecular systems, a reasonable gradient norm tolerance can be estimated from the root mean square force of a harmonic oscillator at a given temperature, typically between 1 and 10 kJ mol⁻¹ nm⁻¹ [8]. Excessively tight tolerances should be avoided due to numerical noise in force calculations.
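
One commonly quoted form of the harmonic-oscillator estimate, given for example in the GROMACS reference manual, is f = 2πν√(2·m·k_B·T). The sketch below evaluates it for a weak oscillator; the wavenumber, mass, and temperature are illustrative inputs, and the function name is an assumption.

```python
import math

K_B = 1.380649e-23           # Boltzmann constant, J/K
AMU = 1.66053906660e-27      # atomic mass unit, kg
C_CM_PER_S = 2.99792458e10   # speed of light, cm/s
N_A = 6.02214076e23          # Avogadro constant, 1/mol


def rms_force_harmonic(wavenumber_cm, mass_amu, temperature_k):
    """RMS force f = 2*pi*nu*sqrt(2*m*k_B*T), returned in kJ mol^-1 nm^-1."""
    nu = wavenumber_cm * C_CM_PER_S  # oscillator frequency in Hz
    f_newton = 2.0 * math.pi * nu * math.sqrt(
        2.0 * mass_amu * AMU * K_B * temperature_k
    )
    # N per particle -> J/(mol m) -> kJ/(mol m) -> kJ/(mol nm)
    return f_newton * N_A * 1e-3 * 1e-9


# Weak oscillator: 100 cm^-1, 10 amu, 1 K (illustrative values).
f_tol = rms_force_harmonic(100.0, 10.0, 1.0)
print(f"estimated force tolerance: {f_tol:.2f} kJ mol^-1 nm^-1")
```

With these inputs the estimate is roughly 7.7 kJ mol⁻¹ nm⁻¹, which falls inside the 1 to 10 kJ mol⁻¹ nm⁻¹ range quoted above.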

Hybrid Approaches: Combining algorithms can leverage their respective strengths. A common strategy employs Steepest Descent for initial rapid improvement followed by switching to L-BFGS or Conjugate Gradient for refined convergence [8]. This approach is particularly effective for poorly conditioned starting structures with significant steric clashes or distorted geometries.
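
The two-stage strategy can be sketched in plain Python on a toy two-dimensional surface. The energy function, step-size rules (grow by 1.2 on success, halve on failure), and the Polak-Ribière(+) Conjugate Gradient with backtracking line search are illustrative choices made for this example and do not reproduce the implementation in any particular package.

```python
import math


def energy_and_gradient(x):
    """Toy anisotropic surface standing in for a force-field energy (hypothetical)."""
    a, b = x
    energy = 0.5 * a * a + 25.0 * b * b + 0.25 * a ** 4
    return energy, [a + a ** 3, 50.0 * b]


def norm(v):
    return math.sqrt(sum(c * c for c in v))


def steepest_descent(x, n_steps, step=0.01):
    """Fixed-length moves along -gradient; grow the step on success, halve on failure."""
    e, g = energy_and_gradient(x)
    for _ in range(n_steps):
        g_norm = norm(g)
        if g_norm == 0.0:
            break
        trial = [xi - step * gi / g_norm for xi, gi in zip(x, g)]
        e_new, g_new = energy_and_gradient(trial)
        if e_new < e:
            x, e, g, step = trial, e_new, g_new, step * 1.2
        else:
            step *= 0.5
    return x


def conjugate_gradient(x, tol=1e-6, max_iter=2000):
    """Polak-Ribiere(+) nonlinear CG with a backtracking (Armijo) line search."""
    e, g = energy_and_gradient(x)
    d = [-gi for gi in g]
    for _ in range(max_iter):
        if norm(g) < tol:
            break
        slope = sum(gi * di for gi, di in zip(g, d))
        if slope >= 0.0:  # not a descent direction: restart along -gradient
            d = [-gi for gi in g]
            slope = -sum(gi * gi for gi in g)
        t = 1.0
        while True:
            trial = [xi + t * di for xi, di in zip(x, d)]
            e_new, g_new = energy_and_gradient(trial)
            if e_new <= e + 1e-4 * t * slope or t < 1e-16:
                break
            t *= 0.5
        beta = max(0.0, sum(gn * (gn - go) for gn, go in zip(g_new, g))
                   / sum(go * go for go in g))
        x, e, g = trial, e_new, g_new
        d = [-gi + beta * di for gi, di in zip(g, d)]
    return x


start = [3.0, 2.0]
e_start = energy_and_gradient(start)[0]
x_sd = steepest_descent(start, n_steps=50)   # stage 1: robust initial relaxation
e_sd = energy_and_gradient(x_sd)[0]
x_final = conjugate_gradient(x_sd)           # stage 2: refined convergence
e_final = energy_and_gradient(x_final)[0]
print(f"start: {e_start:.3f}  after SD: {e_sd:.6f}  after CG: {e_final:.3e}")
```

The first stage never accepts an uphill move, which is the property that makes Steepest Descent a safe choice for clashing structures, while the second stage reuses gradient history to converge quickly once the surface is locally well behaved.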

Based on the comprehensive analysis of algorithmic characteristics, performance benchmarks, and implementation considerations, the following recommendations guide researchers in selecting appropriate energy minimization strategies:

For initial minimization stages or poorly conditioned starting structures, the robustness and simplicity of Steepest Descent make it a reliable choice, despite its slower convergence [8]. Its stability when dealing with significant steric clashes or distorted molecular geometries provides a solid foundation for subsequent refinement with more advanced methods.

For medium to large-scale molecular systems where memory constraints are significant, Conjugate Gradient methods offer an attractive balance between efficiency and resource requirements [9] [11]. Their superiority over Steepest Descent in later minimization stages and minimal memory footprint (only a few vectors required) make them particularly suitable for systems with thousands of atoms or when using computational resources with limited memory capacity.

For problems where the computational expense of gradient evaluations dominates the optimization cost, L-BFGS generally provides the fastest convergence, as demonstrated by its fourfold speedup over Conjugate Gradient in DNS-based optimal control studies [10]. When sufficient memory is available and the potential energy surface is reasonably smooth, L-BFGS typically achieves satisfactory results with fewer iterations, offsetting its slightly higher per-iteration cost.

For very large-scale optimization with millions of parameters, modern limited-memory Conjugate Gradient implementations (e.g., NAG e04kf) provide competitive alternatives to L-BFGS-B, with the advantage of reduced memory requirements [11]. These approaches are particularly valuable in statistical applications, machine learning, and massive molecular systems where both computational efficiency and memory footprint are critical considerations.

The field of optimization continues to evolve, with emerging hybrid approaches and specialized implementations offering enhanced performance for specific problem classes. Researchers should consider maintaining a toolkit of multiple algorithms and perform preliminary benchmarking on representative systems to identify the most effective approach for their specific molecular systems and research objectives. As computational methods advance, the ongoing development of optimization algorithms will continue to enable more sophisticated and accurate molecular simulations across scientific disciplines.

The Critical Role of Force Fields and Parameterization

Molecular dynamics (MD) simulations have become an indispensable tool in computational chemistry and drug discovery, providing atomic-level insights into the behavior of biological systems. The predictive accuracy of these simulations fundamentally relies on the potential energy functions, or force fields, that describe the interatomic interactions within the system [14]. Force field parameterization—the process of deriving and optimizing the numerical constants in these equations—represents a critical challenge in the field. With the rapid expansion of synthetically accessible chemical space, traditional parameterization approaches face significant limitations in coverage and accuracy [15]. This guide provides an objective comparison of contemporary force fields and their parameterization strategies, offering researchers a framework for selecting appropriate models for their specific molecular systems.

Force Field Parameterization Methodologies

Traditional and Data-Driven Parameterization Approaches

Force field development has evolved from expert-driven manual parameterization to increasingly automated, data-intensive approaches. Traditional parameterization typically employs a combination of quantum mechanical (QM) calculations and experimental data, using least-squares minimization algorithms to optimize parameters [16]. This approach is exemplified by force fields like GAFF (General Amber Force Field), OPLS (Optimized Potentials for Liquid Simulations), and CHARMM (Chemistry at HARvard Macromolecular Mechanics) [15].

In contrast, modern data-driven approaches leverage machine learning and large-scale QM datasets to automate parameter discovery. The emergence of graph neural networks (GNNs) has enabled end-to-end force field parameterization, where models predict parameters directly from molecular structures [15]. These approaches address the scalability limitations of traditional methods while maintaining the computational efficiency of molecular mechanics force fields.

Table 1: Comparison of Force Field Parameterization Strategies

| Parameterization Approach | Key Features | Representative Force Fields | Advantages | Limitations |
|---|---|---|---|---|
| Traditional Look-up Table | Pre-defined parameters based on chemical environment | GAFF, CHARMM36, AMBER Lipid14/Lipid21 | High interpretability, well-established | Limited chemical space coverage, labor-intensive updates |
| SMIRKS-Based | Chemical perception via SMIRKS patterns | OpenFF | Systematic parameter assignment, extensible | Discrete chemical descriptions limit transferability |
| Graph Neural Network | End-to-end parameter prediction from molecular structure | ByteFF, Espaloma | Broad chemical coverage, continuous representation | Complex training process, data-intensive |
| Specialized Modular | Target-specific parameters with QM derivation | BLipidFF | High accuracy for specialized systems | Limited transferability to other molecular classes |

Optimization Algorithms for Parameterization

The selection of optimization algorithms significantly impacts parameterization efficacy. Studies comparing multi-start local optimization algorithms (Simplex, Levenberg-Marquardt, POUNDERS) with global optimization approaches (genetic algorithms) reveal that algorithm performance varies depending on the target properties and parameter space [16]. For complex force fields with multiple minima, genetic algorithms often demonstrate superior effectiveness in reaching lower error solutions, though they typically require more function evaluations than local methods [16].

Recent advances introduce Bayesian calibration methods using Gaussian Process surrogate models, which efficiently quantify parameter uncertainties while reducing the computational cost of parameter optimization [17]. This approach is particularly valuable for top-down parameterization against experimental data, where simulation costs would otherwise be prohibitive.

Comparative Performance Analysis of Modern Force Fields

Accuracy Across Chemical Space

Comprehensive benchmarking studies evaluate force field performance using metrics including conformity to QM geometries, torsional energy profiles, and conformational energies. Based on these criteria, recent evaluations provide quantitative comparisons of popular force fields:

Table 2: Performance Benchmarking of Small Molecule Force Fields

| Force Field | Relative Energy Error (ddE) | Geometric Accuracy (RMSD) | Torsional Profile Fidelity | Chemical Coverage |
|---|---|---|---|---|
| OPLS3e | Lowest error | Best performance | Excellent | Broad (146,669 torsion types) |
| OpenFF 1.2.0 | Low error | Good performance (0.4-0.5 Å) | Very good | Moderate |
| ByteFF | Very low error | High geometric accuracy | Excellent | Very broad |
| GAFF2 | Moderate error | Moderate performance (~0.6 Å) | Good | Moderate |
| MMFF94S | Moderate error | Moderate performance | Good | Limited |
| BLipidFF | Specialized for bacterial lipids | N/A | Excellent for target systems | Narrow but deep |

Performance assessments consistently place OPLS3e at the top tier for small molecule force fields, with OpenFF 1.2.0 ranking as the best publicly available alternative [18]. The recently developed ByteFF demonstrates state-of-the-art performance across multiple benchmarks, excelling in predicting relaxed geometries, torsional energy profiles, and conformational energies [15].

Specialized force fields like BLipidFF illustrate the value of domain-specific parameterization. For mycobacterial membrane lipids, BLipidFF uniquely captures the high rigidity and slow diffusion rates that general force fields fail to reproduce, showing excellent agreement with biophysical experiments like Fluorescence Recovery After Photobleaching (FRAP) [14].

Performance in Biomolecular Simulations

Beyond small molecules, force field selection critically impacts simulations of biological macromolecules. For double-stranded DNA, systematic comparisons reveal significant differences in predicted mechanical properties between AMBER family force fields (bsc1, OL15) and CHARMM36 [19]. These variations highlight the importance of force field selection for specific biomolecular systems and target properties.

Experimental Protocols for Force Field Evaluation

Quantum Mechanics Reference Data Generation

High-quality parameterization requires rigorous QM reference data. The ByteFF methodology exemplifies modern best practices, employing the B3LYP-D3(BJ)/DZVP level of theory to generate an expansive dataset of 2.4 million optimized molecular fragment geometries with analytical Hessian matrices, plus 3.2 million torsion profiles [15]. This level of theory provides an optimal balance between accuracy and computational cost for molecular conformational potential energy surfaces.

Molecular fragmentation employs graph-expansion algorithms that traverse each bond, angle, and non-ring torsion, retaining relevant atoms and their conjugated partners before capping cleaved bonds [15]. This approach preserves local chemical environments while managing computational complexity. For membrane-specific force fields like BLipidFF, a divide-and-conquer strategy segments large lipids into manageable modules for QM calculations, with careful capping to maintain electronic continuity [14].

Charge and Torsion Parameterization

Partial charge derivation typically employs a two-step QM protocol: initial geometry optimization at B3LYP/def2SVP level followed by charge derivation via the Restrained Electrostatic Potential (RESP) fitting method at B3LYP/def2TZVP level [14]. To enhance statistical reliability, charges are averaged across multiple conformations (e.g., 25 conformations randomly selected from MD trajectories).

Torsion parameter optimization minimizes the difference between QM-calculated energies and classical potential energies. For complex lipids like PDIM in BLipidFF, this requires further molecular subdivision—PDIM was divided into 31 different elements—to make high-level torsion calculations computationally feasible [14].
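
The fitting step can be illustrated with a deliberately simplified sketch. It assumes a cosine-only series E(φ) = c₀ + Σ kₙ cos(nφ) with zero phase offsets and a uniform torsion scan over a full 360° period; under those assumptions the cosine terms are orthogonal on the grid, so the least-squares coefficients reduce to simple projections. The function name and the synthetic target data are hypothetical, and production workflows such as the one described for BLipidFF typically also fit phases and apply weighting.

```python
import math


def fit_torsion_cosine_series(angles_deg, target_energies, n_max=3):
    """Least-squares fit of c0 + sum_n k_n cos(n*phi) on a uniform 360-degree scan."""
    n_pts = len(angles_deg)
    offset = sum(target_energies) / n_pts
    coeffs = []
    for n in range(1, n_max + 1):
        projection = sum(
            e * math.cos(n * math.radians(a))
            for a, e in zip(angles_deg, target_energies)
        )
        coeffs.append(2.0 * projection / n_pts)
    return offset, coeffs


# Synthetic "QM minus non-torsional MM" target on a 15-degree scan (made-up data).
angles = [15.0 * i for i in range(24)]
true_k = [1.2, -0.4, 0.8]
target = [
    2.0 + sum(k * math.cos((n + 1) * math.radians(a)) for n, k in enumerate(true_k))
    for a in angles
]

offset, fitted = fit_torsion_cosine_series(angles, target)
print("offset:", round(offset, 6))
print("fitted k_n:", [round(k, 6) for k in fitted])
```

Because the synthetic target was generated from the same functional form, the fit recovers the input coefficients; with real QM data the residual after fitting indicates how well the chosen series can represent the torsion profile.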

Validation Methodologies

Comprehensive force field validation employs multiple complementary approaches:

- Conformational energetics: Comparing relative energies between conformers against QM reference data using ddE metrics [18]

- Geometric accuracy: Assessing atom-positional root mean square deviation (RMSD) and torsional fingerprint deviation (TFD) between force field- and QM-optimized structures [18]

- Bulk properties: Evaluating performance in predicting experimental properties like density, viscosity, and phase equilibria [17]

- Specialized biophysical validation: For membrane systems, comparing against FRAP measurements of diffusion rates and order parameters [14]
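
The first two metrics in the list above can be computed with a few lines of Python. This sketch assumes that ddE is taken relative to the conformer with the lowest QM energy and that the two structures are already superimposed with identical atom ordering; the function names and the example numbers are illustrative, not data from the cited benchmarks.

```python
import math


def relative_energy_errors(e_ff, e_qm):
    """ddE per conformer: FF relative energy minus QM relative energy.

    Both relative energies are referenced to the QM-lowest conformer (assumption).
    """
    ref = min(range(len(e_qm)), key=lambda i: e_qm[i])
    return [(ef - e_ff[ref]) - (eq - e_qm[ref]) for ef, eq in zip(e_ff, e_qm)]


def rmsd(coords_a, coords_b):
    """Atom-positional RMSD without superposition (structures assumed pre-aligned)."""
    sq = sum(
        (a - b) ** 2
        for pa, pb in zip(coords_a, coords_b)
        for a, b in zip(pa, pb)
    )
    return math.sqrt(sq / len(coords_a))


# Made-up energies (kcal/mol) for three conformers of one molecule.
e_qm = [0.0, 1.5, 3.0]
e_ff = [10.0, 12.0, 12.5]
dde = relative_energy_errors(e_ff, e_qm)
print("ddE:", dde)

# Made-up coordinates (angstrom) for a two-atom fragment.
qm_geom = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
ff_geom = [(0.0, 0.0, 0.3), (1.0, 0.4, 0.0)]
print("RMSD:", round(rmsd(qm_geom, ff_geom), 4))
```

Absolute force-field energies carry an arbitrary offset, which is why only differences between conformers enter the ddE metric.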

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Tools for Force Field Development and Application

| Tool Category | Specific Tools | Primary Function | Application Context |
|---|---|---|---|
| Quantum Chemistry Software | Gaussian09, ORCA | Reference data generation via QM calculations | Charge derivation, torsion profiling, geometry optimization |
| Force Field Packages | AMBER, CHARMM, OpenMM, GROMACS | MD simulation engines | Force field implementation and validation |
| Parameterization Tools | GAFF, CGenFF, FFBuilder, Antechamber | Parameter assignment for novel molecules | System setup for non-standard molecules |
| Data Analysis & Visualization | Multiwfn, VMD, MDAnalysis | RESP charge fitting, trajectory analysis | Parameter derivation, simulation analysis |
| Specialized Force Fields | BLipidFF, ByteFF, OpenFF | Target-specific parameter sets | Specialized applications (membranes, drug discovery) |
| Benchmarking Datasets | OpenFF Full Optimization Benchmark, QCArchive | Standardized performance assessment | Force field validation and comparison |

Force field selection remains a critical decision point in molecular simulations, with significant implications for predictive accuracy. Based on current benchmarking studies, researchers should consider OPLS3e for small molecule simulations where accessible, with OpenFF 1.2.0 and the newer ByteFF as excellent open alternatives providing broad chemical coverage [15] [18]. For membrane systems, particularly mycobacterial studies, BLipidFF offers specialized parameters that accurately capture unique biophysical properties [14]. The field continues to evolve toward data-driven parameterization approaches that balance the computational efficiency of molecular mechanics with increasingly accurate coverage of expansive chemical spaces, promising enhanced predictive power for drug discovery and materials design.

Free energy calculations are indispensable tools in computational chemistry and drug discovery, providing critical insights into molecular interactions, stability, and binding affinities. The accurate prediction of free energy differences governs the balance of chemical species and available chemical work, enabling computational design of new chemical entities that could revolutionize pharmaceutical development and materials science [20]. Among the diverse computational strategies developed, two distinct families of methods have emerged as particularly important: the alchemical route and the geometrical (path-based) route [21].

This guide provides an objective comparison of these fundamental approaches, framing their performance within broader research on benchmarking energy minimization parameters. Understanding the relative strengths, limitations, and optimal application domains for each method is essential for researchers selecting computational tools for predicting biomolecular recognition, solvation thermodynamics, and other critical phenomena.

Core Methodological Principles

Alchemical Free Energy Methods

Alchemical free energy methods calculate free energy differences using non-physical pathways defined by a coupling parameter (λ). This parameter continuously modulates the system's Hamiltonian between initial and final states via intermediate "alchemical" states [22] [23]. The hallmark of these methods is their use of "bridging" potential energy functions representing intermediate states that cannot exist as real chemical species [23].

Key Formulations:

- Free Energy Perturbation (FEP): Based on the Zwanzig relationship, FEP estimates free energy differences from ensemble averages of energy differences: $\Delta A = -k_B T \ln \langle \exp[-(U_1 - U_0)/k_B T] \rangle_0$ [22].

- Thermodynamic Integration (TI): Employs numerical integration of the derivative of the Hamiltonian with respect to λ: $\Delta A = \int_0^1 \langle \partial U(\lambda)/\partial \lambda \rangle_\lambda \, d\lambda$ [22].

These methods implement soft-core potentials and advanced sampling techniques to avoid singularities and improve convergence [22]. Alchemical transformations are typically applied within thermodynamic cycles to compute relative binding free energies or through multi-stage processes for absolute binding free energies [23].
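
Both estimators can be checked against a case with a known answer. The sketch below uses a one-dimensional harmonic oscillator whose force constant is changed from K0 to K1, for which the exact result is ΔA = ½·k_BT·ln(K1/K0). The system, the reduced units, the linear interpolation of the force constant, and the sampling settings are all assumptions made for illustration; in a real calculation the ensemble averages come from MD or Monte Carlo sampling rather than from closed-form expressions.

```python
import math
import random

KT = 1.0            # thermal energy in reduced units (assumption)
K0, K1 = 1.0, 4.0   # force constants of the end states (assumption)


def exact_delta_a():
    """Analytic free energy difference for the harmonic toy system."""
    return 0.5 * KT * math.log(K1 / K0)


def ti_estimate(n_windows=21):
    """Thermodynamic integration with the trapezoid rule.

    With U = 0.5*k(lambda)*x^2 and k(lambda) = (1-lambda)*K0 + lambda*K1,
    <dU/dlambda> = 0.5*(K1-K0)*<x^2> and <x^2> = KT/k(lambda).
    """
    lambdas = [i / (n_windows - 1) for i in range(n_windows)]
    dudl = [0.5 * (K1 - K0) * KT / ((1.0 - lam) * K0 + lam * K1) for lam in lambdas]
    return sum(
        0.5 * (dudl[i] + dudl[i + 1]) * (lambdas[i + 1] - lambdas[i])
        for i in range(n_windows - 1)
    )


def fep_estimate(n_samples=20000, seed=0):
    """Zwanzig estimator using samples drawn from state 0."""
    rng = random.Random(seed)
    sigma0 = math.sqrt(KT / K0)  # Boltzmann distribution of x in state 0
    total = 0.0
    for _ in range(n_samples):
        x = rng.gauss(0.0, sigma0)
        total += math.exp(-0.5 * (K1 - K0) * x * x / KT)
    return -KT * math.log(total / n_samples)


print(f"exact: {exact_delta_a():.4f}")
print(f"TI:    {ti_estimate():.4f}")
print(f"FEP:   {fep_estimate():.4f}")
```

The perturbation here narrows the distribution, which is the favorable direction for the Zwanzig estimator; running the same perturbation in reverse would converge more slowly, one reason intermediate λ states are used in practice.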

Path-Based Free Energy Methods

Path-based (geometrical route) methods compute free energy differences along a physical, real-space pathway connecting the initial and final states [21]. Unlike alchemical approaches, these methods simulate physical processes like ligand dissociation from a receptor.

Key Implementations:

- Potential of Mean Force (PMF): Computes the free energy profile along a chosen reaction coordinate, often requiring restraints on ligand conformation and orientation [21].

- Path Collective Variables (PCV): Maps the entire separation process onto a curvilinear pathway constructed using the string method, defined as $s = \frac{\sum_k k \, e^{-\lambda \lVert X - X^{(k)} \rVert^2}}{\sum_k e^{-\lambda \lVert X - X^{(k)} \rVert^2}}$, where $X^{(k)}$ represents the coordinates of the images along the path [21].

- Optimized Path Methods: Construct smooth, short transition paths using harmonic restraints on dihedrals or other coordinates, with equilibrium parameters gradually changed from initial to final states [24].

These methods can demonstrate superior efficiency for certain systems, with studies reporting self-convergent and cross-convergent results in peptide transitions [24].
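
The path collective variable defined above, together with the companion distance-from-path variable Z = -(1/λ)·ln Σₖ exp(-λ‖X - X^(k)‖²), can be evaluated with a short sketch. The helper names, the two-dimensional toy path, and the rule of thumb λ = 2.3 / (mean squared distance between adjacent images) are assumptions for illustration; the squared Euclidean distance stands in for whatever metric (for example, RMSD after alignment) a real setup would use.

```python
import math


def sq_dist(a, b):
    """Squared Euclidean distance between two coordinate vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def lambda_from_path(images):
    """Rule of thumb (assumption): 2.3 / mean squared distance of adjacent images."""
    gaps = [sq_dist(images[k], images[k + 1]) for k in range(len(images) - 1)]
    return 2.3 / (sum(gaps) / len(gaps))


def path_cvs(x, images, lam):
    """Return (s, z) for configuration x; images are indexed k = 1..M."""
    # Note: far from every image all weights underflow to zero and log() fails.
    weights = [math.exp(-lam * sq_dist(x, img)) for img in images]
    total = sum(weights)
    s = sum(k * w for k, w in enumerate(weights, start=1)) / total
    z = -math.log(total) / lam
    return s, z


# Toy path of four equally spaced images on a straight line.
images = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0)]
lam = lambda_from_path(images)

s_on, z_on = path_cvs((1.5, 0.0), images, lam)    # midway between images 2 and 3
s_off, z_off = path_cvs((1.5, 1.0), images, lam)  # same progress, displaced off-path
print(f"lambda = {lam:.3f}")
print(f"on path:  s = {s_on:.3f}, z = {z_on:.3f}")
print(f"off path: s = {s_off:.3f}, z = {z_off:.3f}")
```

The variable s reports progress along the path, while z grows as the configuration moves away from it, which is what allows a restraint on z to keep sampling close to the reference pathway.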

Comparative Performance Analysis

Table 1: Fundamental characteristics of alchemical and path-based free energy methods

| Characteristic | Alchemical Methods | Path-Based Methods |
|---|---|---|
| Core Principle | Non-physical pathway via coupling parameter λ [22] [23] | Physical pathway in real-space [21] |
| Transformation Type | "Alchemical" intermediate states [23] | Geometrical separation or transition [21] |
| Primary Pathway | Hamiltonian interpolation [22] | Ligand dissociation or conformational change [21] |
| Typical Applications | Relative binding affinities, solvation free energies, protein mutations [23] | Absolute binding free energies, conformational transitions [24] [21] |
| Computational Efficiency | High for small-molecule binding in deep pockets [21] | Superior for superficial binding or large molecules [21] |

Table 2: Quantitative performance comparison for binding free energy calculations

| Performance Metric | Alchemical Methods | Path-Based Methods |
|---|---|---|
| Typical Accuracy Range | 1-2 kcal/mol with optimized protocols [22] | Self-convergent and cross-convergent results demonstrated [24] |
| Challenging Cases | Perturbations with \|ΔΔG\| > 2.0 kcal/mol show increased errors [25] | Efficient for beta-hairpin structures like trpzip2 [24] |
| Sampling Requirements | Sub-nanosecond simulations sufficient for some systems [25] | More efficient than conventional MD for some peptides [24] |
| System Size Limitations | Efficient for small to moderate ligands [21] | Suitable for association of large molecules [21] |

Detailed Experimental Protocols

Alchemical Free Energy Calculation Workflow

Protocol for Relative Binding Free Energy Calculation [23]:

System Preparation:

- Generate protein-ligand complex structures using molecular docking if needed

- Solvate the system in explicit solvent, add counterions for neutralization

- Employ appropriate force fields (e.g., AMBER, CHARMM, OPLS)

Equilibration:

- Perform energy minimization using steepest descent and conjugate gradient algorithms

- Gradually heat the system to target temperature (e.g., 300 K) over 100-200 ps

- Conduct NPT equilibration until density stabilizes (typically 1-5 ns)

Alchemical Transformation Setup:

- Define λ values for transformation (typically 12-24 intermediates)

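- Implement soft-core potentials for van der Waals interactions: $U_{LJ}(r_{ij};\lambda) = 4\varepsilon_{ij}\lambda \left( \frac{1}{[\alpha(1-\lambda) + (r_{ij}/\sigma_{ij})^6]^2} - \frac{1}{\alpha(1-\lambda) + (r_{ij}/\sigma_{ij})^6} \right)$ [22]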

- Use Hamiltonian replica exchange (HREX) between λ windows to enhance sampling

Production Simulation:

Free Energy Analysis:

- Apply multistate estimators such as MBAR or BAR for optimal precision

- Compute statistical uncertainties using block analysis or bootstrap methods

- Implement cycle closure algorithms to improve consistency [25]
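
The role of the soft-core potential in the transformation setup step can be made concrete with a small numerical check. The sketch below evaluates the soft-core Lennard-Jones form with denominator α(1-λ) + (r/σ)⁶ and compares it with the standard potential; the value α = 0.5 and the reduced units are illustrative assumptions.

```python
def softcore_lj(r, lam, epsilon=1.0, sigma=1.0, alpha=0.5):
    """Soft-core Lennard-Jones energy with denominator alpha*(1-lam) + (r/sigma)^6."""
    denom = alpha * (1.0 - lam) + (r / sigma) ** 6
    return 4.0 * epsilon * lam * (1.0 / denom ** 2 - 1.0 / denom)


def plain_lj(r, epsilon=1.0, sigma=1.0):
    """Standard Lennard-Jones energy, singular at r = 0."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 ** 2 - sr6)


for lam in (0.0, 0.5, 1.0):
    values = ", ".join(f"{softcore_lj(r, lam):10.3f}" for r in (0.5, 0.9, 1.2))
    print(f"lambda = {lam:.1f}: U(r = 0.5, 0.9, 1.2) = {values}")

# At full overlap the intermediate state stays finite.
print("U(r = 0, lambda = 0.5) =", softcore_lj(0.0, 0.5))
```

At λ = 1 the expression reduces to the standard Lennard-Jones potential, at λ = 0 the interaction vanishes, and at intermediate λ the energy remains finite even when two atoms overlap, which is what prevents the singularities mentioned earlier.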

Path-Based Free Energy Calculation Workflow

Protocol for Absolute Binding Free Energy Using Path Collective Variables [21]:

Path Generation:

- Define initial (holo) and final (apo) states of the system

- Use the string method with 20-40 images to generate a curvilinear dissociation pathway [21]

- Optimize the path through iterative refinement until convergence

Path Collective Variable Setup:

- Define the PCV $s$ using the formula $s = \frac{\sum_{k=1}^{M} k \, e^{-\lambda \lVert X - X^{(k)} \rVert^2}}{\sum_{k=1}^{M} e^{-\lambda \lVert X - X^{(k)} \rVert^2}}$ [21]

- Set λ parameter based on mean distance between adjacent images

- Define the orthogonal coordinate $Z = -\frac{1}{\lambda} \ln \sum_{k} e^{-\lambda \lVert X - X^{(k)} \rVert^2}$

Simulation with Biasing Potentials:

- Apply biasing potential u_⊥(Z) to maintain the system near the path

- Sample the PCV using metadynamics or umbrella sampling

- For ligand-receptor systems, ensure proper sampling of rotational and conformational degrees of freedom

Potential of Mean Force Calculation:

- Compute PMF along the s coordinate using weighted histogram analysis method (WHAM) or similar techniques

- Account for restraining potentials in final free energy estimate

- Calculate the standard binding free energy using $\Delta G^{\circ}_{bind} = -k_B T \ln[K_{eq} C^{\circ}]$, where $C^{\circ}$ is the standard concentration ($1/1661\ \text{Å}^{-3}$, corresponding to 1 M) [21]

Convergence Validation:

- Verify self-convergence through multiple independent simulations

- Assess cross-convergence with different initial conditions [24]

- Compare with alternative methods when possible
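
The standard-state conversion in the PMF step of this protocol is a one-line calculation once the equilibrium constant has been obtained from the PMF. The sketch below assumes K_eq is expressed in Å³ and reports energies in kcal/mol; the function name and the example value of K_eq are hypothetical.

```python
import math

K_B_KCAL = 0.0019872041      # Boltzmann constant, kcal mol^-1 K^-1
STANDARD_VOLUME_A3 = 1661.0  # volume per molecule at 1 M, cubic angstroms


def standard_binding_free_energy(k_eq_a3, temperature_k=300.0):
    """Delta G_bind = -k_B*T*ln(K_eq * C_standard), with K_eq in cubic angstroms."""
    c_standard = 1.0 / STANDARD_VOLUME_A3
    return -K_B_KCAL * temperature_k * math.log(k_eq_a3 * c_standard)


# Hypothetical equilibrium constant giving K_eq * C_standard = 1e6.
dg = standard_binding_free_energy(1661.0e6)
print(f"standard binding free energy: {dg:.2f} kcal/mol")
```

A dimensionless product K_eq·C° of 10⁶ corresponds to micromolar affinity, which at 300 K gives about -8.2 kcal/mol.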

Workflow Visualization

Free Energy Calculation Workflows: Alchemical vs. Path-Based Methods

Research Reagent Solutions

Table 3: Essential computational tools for free energy calculations

| Tool Category | Representative Solutions | Primary Function |
|---|---|---|
| Simulation Packages | AMBER [25], GROMACS, CHARMM, OpenMM [23] | Molecular dynamics engine with free energy capabilities |
| Path Sampling Tools | PLUMED, Colvars | Implementation of path collective variables and metadynamics |
| Analysis Libraries | alchemlyb [25], pymbar, alchemical-analysis [23] | Free energy estimation from simulation data |
| Workflow Managers | BioSimSpace [26] | Modular, interoperable workflow construction |
| Benchmarking Resources | Soft Benchmarks [27] | Standardized test sets for method validation |

Alchemical and path-based free energy methods offer complementary approaches with distinct advantages for different scenarios. Alchemical methods typically excel for computing relative binding affinities of small molecules in deep binding pockets and benefit from extensive protocol optimization and automation [21] [25] [23]. Path-based approaches demonstrate superior performance for systems with superficial binding poses, large molecular associations, and absolute binding free energy calculations where physical pathways are preferred [24] [21].

The choice between methodologies should be guided by system characteristics, available computational resources, and specific research questions. As both approaches continue to evolve through integration with machine learning, quantum corrections, and enhanced sampling algorithms [22], their accuracy, efficiency, and application scope will further expand, solidifying their role as indispensable tools in computational molecular sciences.

Energy Minimization in Drug-Target Binding and Affinity Prediction

In computational drug design, energy minimization is a foundational technique used to find the most stable, low-energy conformation of a molecular structure by iteratively adjusting the positions of its atoms. The primary goal is to locate a local minimum on the potential energy surface (PES), which corresponds to a stable conformation of the molecule that is more likely to represent its natural state [2] [1]. This process is critical for refining molecular geometries, eliminating unfavorable steric clashes, and producing more accurate and realistic structures for subsequent computational analyses [1] [28]. The importance of energy minimization extends to key applications in drug discovery, including the preparation of ligands and proteins for molecular docking, improving the accuracy of binding pose predictions, increasing the efficiency of molecular dynamics simulations, and enhancing the prediction of binding affinity [1].

The underlying concept is the Potential Energy Surface (PES), a multidimensional landscape describing how the potential energy of a system changes with its atomic positions. The "minima" on this surface—including the global minimum (the most stable conformation) and various local minima (semi-stable conformations)—are of particular interest. A critical challenge is that the biologically active form of a drug, its bioactive conformation, which it adopts when bound to its target, may not always correspond to the global minimum but rather to a local minimum stabilized by the target's binding pocket [28].
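
The distinction between local and global minima can be seen on a one-dimensional toy surface. The asymmetric double-well function, the fixed step size, and the starting points below are illustrative assumptions; the point of the sketch is that a downhill-only minimizer ends in whichever basin contains its starting structure.

```python
def energy(x):
    """Asymmetric double well: global minimum near x = -1.04, local near x = 0.96."""
    return x ** 4 - 2.0 * x ** 2 + 0.3 * x


def gradient(x):
    return 4.0 * x ** 3 - 4.0 * x + 0.3


def minimize(x, step=0.01, n_steps=2000):
    """Plain gradient descent: always moves downhill, never crosses a barrier."""
    for _ in range(n_steps):
        x -= step * gradient(x)
    return x


x_left = minimize(-1.5)
x_right = minimize(1.5)
print(f"start -1.5 -> x = {x_left:.4f}, E = {energy(x_left):.4f}")
print(f"start +1.5 -> x = {x_right:.4f}, E = {energy(x_right):.4f}")
```

Both runs converge, but only the one started on the left reaches the global minimum. This is the sense in which a minimized structure reflects its starting conformation, and why a bioactive conformation in a local minimum can be preserved by local minimization.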

Comparative Analysis of Energy Minimization Methods

Various algorithms have been developed to navigate the PES, each with distinct strengths, weaknesses, and optimal application scenarios. The choice of method often depends on the trade-off between computational cost, speed of convergence, and the need to avoid becoming trapped in local minima. The table below provides a structured comparison of the most common energy minimization methods.

Table 1: Comparison of Common Energy Minimization Methods

| Method | Key Principle | Advantages | Disadvantages | Typical Use Case |
|---|---|---|---|---|
| Steepest Descent [28] | Moves atomic positions downhill along the direction of the most negative energy gradient. | Fast initial convergence; effective for removing severe steric clashes; computationally simple. | Becomes inefficient near the minimum; often gets trapped in local minima. | Initial, rough optimization of structures with high-energy clashes. |
| Conjugate Gradient [28] | Uses information from previous gradients to determine a conjugate direction for movement. | Faster convergence than Steepest Descent near the minimum; more efficient for structure refinement. | More computationally expensive in early stages than Steepest Descent. | Secondary refinement of structures after initial minimization. |
| Newton-Raphson [28] | Uses both the first (gradient) and second (Hessian matrix) derivatives of the energy function. | Highly accurate; very fast convergence near a minimum. | Calculating the Hessian matrix is computationally expensive for large systems. | High-precision minimization of small to medium-sized systems. |
| Simulated Annealing [28] | Mimics the physical process of heating and slow cooling to overcome energy barriers. | Can escape local minima; effective for finding the global minimum in complex systems. | Computationally time-consuming; result quality depends on the cooling schedule. | Global minimization for large molecules or complexes where local methods fail. |
| Genetic Algorithms [28] | Based on principles of natural selection, evolving a population of conformations over generations. | Effective global exploration; can be parallelized for speed. | Computationally intensive; performance depends on algorithm parameters (e.g., population size). | Exploring a wide conformational space to find low-energy structures. |

Benchmarking Performance in Drug-Target Affinity Prediction

The ultimate test for energy minimization protocols is their performance in practical drug discovery tasks, particularly in predicting drug-target binding affinity (DTA). Recent research benchmarks traditional physics-based methods against modern deep learning (DL) approaches, revealing a complex performance landscape.

Performance Tiers of Docking Methods

A comprehensive 2025 study systematically evaluated nine molecular docking methods, categorizing them into four distinct performance tiers based on their success rates in pose prediction (RMSD ≤ 2 Å) and physical validity (PB-valid) across diverse benchmark datasets (Astex diverse set, PoseBusters set, and DockGen) [29]:

- Tier 1: Traditional Methods – Methods like Glide SP consistently excelled in producing physically valid poses, maintaining PB-valid rates above 94% across all datasets. This highlights the enduring robustness of well-established physics-based approaches [29].

- Tier 2: Hybrid AI Methods – Approaches that integrate traditional conformational searches with AI-driven scoring functions, such as Interformer, offered a balanced performance between pose accuracy and physical validity [29].

- Tier 3: Generative Diffusion Models – Methods like SurfDock demonstrated "exceptional pose accuracy," achieving RMSD ≤ 2 Å success rates exceeding 70% on all benchmarks. However, their physical validity scores were suboptimal, indicating a tendency to generate poses with issues like steric clashes or improper hydrogen bonding [29].

- Tier 4: Regression-Based Models – These purely DL-based methods, such as KarmaDock and QuickBind, often failed to produce physically plausible poses, resulting in the lowest combined success rates [29].

Table 2: Performance Benchmarking of Docking Methods on the PoseBusters Set [29]

| Method Category | Example Method | Pose Accuracy (RMSD ≤ 2 Å) | Physical Validity (PB-valid) | Combined Success (RMSD ≤ 2 Å & PB-valid) |
|---|---|---|---|---|
| Traditional | Glide SP | Data Not Explicitly Shown | >97% | Data Not Explicitly Shown |
| Hybrid AI | Interformer | Data Not Explicitly Shown | Data Not Explicitly Shown | Data Not Explicitly Shown |
| Generative Diffusion | SurfDock | 77.34% | 45.79% | 39.25% |
| Regression-Based | QuickBind | Data Not Explicitly Shown | Data Not Explicitly Shown | Data Not Explicitly Shown |

The Role of Energy Minimization in Enhancing Predictions

Energy minimization is often employed as a critical post-processing step to address the limitations of docking methods. For instance, minimizing the energy of a protein-ligand complex can resolve steric clashes and promote favorable interactions, leading to a refined structure with lower free energy [2]. This is particularly useful for refining poses generated by DL models that have high accuracy but poor physical validity. Studies indicate that applying energy minimization after pose prediction can improve the ligand's score and provide greater confidence in the predicted binding mode, especially for smaller, fragment-like molecules [2]. Furthermore, energy minimization can be used in an "induced fit" protocol, where both the ligand and the protein backbone are allowed to flexibly adapt to each other, effectively expanding a binding site that is too narrow to host a ligand [2].

Experimental Protocols for Benchmarking

Robust benchmarking requires standardized experimental protocols. Below is a detailed methodology for a typical evaluation of energy minimization and docking protocols, synthesized from recent literature.

Diagram 1: Experimental workflow for benchmarking energy minimization protocols in structure-based drug design.

Detailed Methodology

System Preparation and Minimization:

- Input Structures: The process begins with high-quality protein-ligand complex structures from sources like the Protein Data Bank (PDB) [30]. For a rigorous test of generalization, datasets should include known complexes (e.g., Astex diverse set), unseen complexes (e.g., PoseBusters set), and novel binding pockets (e.g., DockGen) [29].

- Structure Preparation: Hydrogen atoms are added, protonation states are assigned, and force field parameters are assigned to all atoms using tools like AutoSMILES in YASARA, which provides pH-dependent bond order assignment and charge calculation [2].

- Energy Minimization Protocol: The minimization is performed using selected algorithms (see Table 1). Protocols may vary, such as keeping the protein backbone rigid (for rigid-body docking refinement) or flexible (to simulate induced fit) [2]. Multiple force fields (e.g., AMBER, YAMBER, CHARMM) should be tested for robustness [2].

Docking and Affinity Prediction:

- The minimized structures are used as input for various docking programs, including traditional (Glide SP, AutoDock Vina), deep learning-based (SurfDock, DiffBindFR), and hybrid (Interformer) methods [29].

- For binding affinity prediction, advanced free energy calculations can be performed. For example, the re-engineered Bennett Acceptance Ratio (BAR) method uses molecular dynamics (MD) simulations and explicit membrane models (for membrane proteins like GPCRs) to calculate binding free energies across multiple intermediate states (lambda values) [31].

Validation Metrics:

- Pose Accuracy: Measured by Root-Mean-Square Deviation (RMSD) of the ligand heavy atoms from the experimentally determined crystal structure, with a threshold of ≤ 2.0 Å often considered a successful prediction [29].

- Physical Validity: Assessed by tools like the PoseBusters toolkit, which checks for chemical and geometric consistency, including bond lengths, angles, stereochemistry, and the absence of severe protein-ligand clashes [29].

- Interaction Recovery: The ability of a method to recapitulate key molecular interactions (e.g., hydrogen bonds, hydrophobic contacts) observed in the crystal structure is critically evaluated [29].

- Binding Affinity Correlation: The calculated binding free energies (e.g., from BAR or scoring functions) are compared with experimental values (e.g., pKᵢ, IC₅₀), and agreement is summarized by the squared correlation coefficient (R²) [31].
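The pose-accuracy and affinity-correlation metrics above, together with the combined success rate reported in Table 2, can be sketched in a few lines of NumPy. This is a minimal illustration, not the PoseBusters implementation. It assumes that predicted and reference ligand atoms are already matched one-to-one in the receptor frame (no superposition or symmetry correction), and that the PB-valid flags come from an external validity check. All function names are hypothetical.

```python
import numpy as np


def ligand_rmsd(pred_xyz, ref_xyz):
    """Heavy-atom RMSD (in Å) between matched (N, 3) coordinate arrays."""
    pred = np.asarray(pred_xyz, dtype=float)
    ref = np.asarray(ref_xyz, dtype=float)
    if pred.shape != ref.shape:
        raise ValueError("coordinate arrays must have the same shape")
    return float(np.sqrt(np.mean(np.sum((pred - ref) ** 2, axis=1))))


def combined_success_rate(rmsds, pb_valid, cutoff=2.0):
    """Fraction of poses that are both within the RMSD cutoff and flagged physically valid."""
    rmsds = np.asarray(rmsds, dtype=float)
    pb_valid = np.asarray(pb_valid, dtype=bool)
    return float(np.mean((rmsds <= cutoff) & pb_valid))


def r_squared(calculated, experimental):
    """Squared Pearson correlation between calculated and experimental affinities."""
    r = np.corrcoef(calculated, experimental)[0, 1]
    return float(r ** 2)


if __name__ == "__main__":
    ref = [[0.0, 0.0, 0.0], [1.5, 0.0, 0.0]]
    pred = [[1.0, 0.0, 0.0], [2.5, 0.0, 0.0]]
    print("RMSD:", ligand_rmsd(pred, ref))
    print("combined success:", combined_success_rate([1.0, 1.5, 3.0, 0.5],
                                                     [True, False, True, True]))
```

Reporting the combined rate alongside the RMSD-only rate makes it visible when a method reaches accurate poses that are nonetheless physically implausible.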

The Scientist's Toolkit: Essential Research Reagents and Solutions

A successful benchmarking study relies on a suite of specialized software tools and databases. The following table details key resources used in the featured experiments and the broader field.

Table 3: Essential Research Tools for Energy Minimization and Binding Affinity Prediction

| Tool Name | Category | Primary Function | Application in Research |
|---|---|---|---|
| YASARA [2] | Molecular Modeling & Simulation | A versatile software for molecular modeling, dynamics, and energy minimization. Supports multiple force fields. | Used for refining protein-ligand complexes via energy minimization, with options for rigid or flexible backbones to simulate induced fit. |
| AutoDock Vina [29] | Molecular Docking | A widely used traditional docking program that uses a scoring function and search algorithm to predict binding poses. | Serves as a standard baseline for comparison against newer deep learning-based docking methods in benchmarking studies. |
| Glide SP [29] | Molecular Docking | A high-performance traditional docking tool known for its accurate pose prediction and rigorous scoring. | Often used as a high-quality benchmark in comparative studies due to its consistent performance in producing physically valid poses. |
| SurfDock [29] | Deep Learning Docking | A state-of-the-art generative diffusion model for molecular docking. | Exemplifies the modern DL approach that achieves high pose accuracy but may require post-processing for physical validity. |
| GROMACS [31] | Molecular Dynamics | An open-source package for MD simulations, which includes energy minimization capabilities. | Used to run MD simulations for equilibration and as an engine for binding free energy calculations with methods like BAR. |
| PDBbind [30] | Database | A curated database collecting protein-ligand complex structures and their experimental binding affinity data. | Provides the essential primary data for training machine learning models and for benchmarking docking and affinity prediction methods. |
| PoseBusters [29] | Validation Tool | A toolkit to systematically validate the physical plausibility and chemical correctness of docking predictions. | A critical tool for modern benchmarking, moving beyond RMSD to assess the real-world utility of predicted molecular structures. |

Diagram 2: Tool interaction in a drug discovery pipeline, showing the relationship between different software categories.

The benchmarking of energy minimization parameters and docking methods reveals a nuanced landscape where no single approach is universally superior. Traditional physics-based methods like Glide SP remain gold standards for producing physically plausible results, while modern deep learning approaches like generative diffusion models show remarkable promise in pose prediction accuracy but require further development to ensure physical realism. Energy minimization serves as a critical bridge, refining initial predictions into more stable and physically valid structures. The choice of protocol should be guided by the specific goal: rapid initial screening, high-precision refinement, or exploration of novel binding pockets. As the field advances, the integration of robust energy minimization into end-to-end deep learning pipelines, alongside standardized multi-dimensional benchmarking as illustrated in this guide, will be crucial for developing more reliable and efficient computational tools for drug discovery.

Implementing Energy Minimization: Techniques for Drug Discovery and Biomedical Engineering

Free Energy Perturbation (FEP) for Relative Binding Affinities

Free Energy Perturbation (FEP) represents a class of rigorous, physics-based computational methods for predicting the relative binding affinities of molecules to biological targets. As a cornerstone of structure-based drug design, FEP calculations utilize molecular dynamics (MD) simulations to compute free energy differences between related systems through alchemical transformations [32] [33]. The fundamental principle underpinning FEP is the statistical relationship that allows calculation of free energy differences between two states (e.g., a ligand and its modified analog) based on simulations that sample their conformational spaces [34]. This approach has transitioned from a theoretical methodology to an essential tool in drug discovery pipelines due to substantial advancements in force fields, sampling algorithms, and computational hardware, particularly graphics processing units (GPUs) [32] [33].

The accuracy of FEP methods has been demonstrated to approach experimental reproducibility, with root-mean-square errors (RMSE) often around 1 kcal/mol for diverse protein-ligand systems [33] [35]. This level of precision enables researchers to prioritize synthetic efforts effectively, explore vast chemical spaces computationally, and optimize multiple molecular properties simultaneously, including potency, selectivity, and solubility [35]. FEP methodologies have expanded beyond simple R-group modifications to address complex challenges in drug discovery, including scaffold hopping, macrocyclization, covalent inhibitors, and protein-protein interactions [32] [33].

Key FEP Platforms and Methodologies

Commercial and Academic FEP Implementations

Several FEP implementations have been developed across commercial, academic, and open-source domains, each with distinctive features and capabilities. These platforms share a common theoretical foundation but differ in their force fields, sampling algorithms, automation levels, and application domains.

Schrödinger's FEP+ platform represents one of the most widely adopted commercial implementations, utilizing the OPLS force field and enhanced sampling techniques to achieve high accuracy across diverse protein classes and perturbation types [33] [35]. The platform offers comprehensive workflows for various drug discovery applications, including hit discovery, lead optimization, and protein engineering [35].

Amber-based FEP implementations provide academic and research alternatives with customizable parameters and algorithms. As demonstrated in antibody design projects, these implementations incorporate Hamiltonian replica exchange to improve sampling and include specialized protocols for estimating statistical uncertainties [34]. The Amber software package facilitates large-scale automated FEP calculations for evaluating both binding affinity and structural stability impacts of mutations [34].

Uni-FEP represents an automated and scalable workflow developed by ATOMBEAT, designed for consistent and reproducible FEP simulations from minimal inputs [36] [37]. This platform has been validated on an extensive benchmark dataset comprising approximately 1000 protein-ligand systems with around 40,000 ligands, making it one of the most comprehensively tested implementations [36].

QUELO's FEP platform offers unique capabilities for running calculations with both molecular mechanics (MM) and quantum mechanics/molecular mechanics (QM/MM) force fields [38]. Its AI-based parametrization of ligands seamlessly integrates with receptor and solvent force field terms, trained to mimic quantum mechanical behavior [38]. This approach enables hundreds of atoms to be treated at the QM level without sacrificing sampling quality.

Theoretical Foundations and Computational Methodologies

FEP calculations rely on statistical mechanics principles to compute free energy differences between thermodynamic states. The fundamental equation for the free energy difference between two systems (e.g., a ligand and its modified analog) is given by:

$$A_j - A_i = -k_B T \ln \left\langle \exp\left[-\frac{E_j(\vec{X}) - E_i(\vec{X})}{k_B T}\right] \right\rangle_i$$

where $A_j$ and $A_i$ represent the free energies of states $j$ and $i$, $k_B$ is Boltzmann's constant, $T$ is temperature, and the angle brackets denote an ensemble average over configurations $\vec{X}$ sampled from state $i$ [34]. This exponential averaging (EXP) method, while theoretically exact, requires sufficient overlap between the configurational spaces of the two states for practical convergence.
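As a minimal sketch of the EXP estimator, the function below evaluates the exponential average from a set of energy differences $E_j - E_i$ collected on configurations sampled from state $i$. It is an illustration under stated assumptions, not the code of any FEP package: energies are taken to be in kcal/mol, the default temperature of 300 K is arbitrary, and the function name is hypothetical. The average is computed in log space to avoid overflow.

```python
import numpy as np

K_B = 0.0019872041  # Boltzmann constant in kcal/(mol*K)


def exp_free_energy(delta_e, temperature_k=300.0):
    """EXP (Zwanzig) estimate of A_j - A_i from E_j - E_i sampled in state i."""
    delta_e = np.asarray(delta_e, dtype=float)
    kt = K_B * temperature_k
    x = -delta_e / kt
    x_max = x.max()
    # log<exp(x)> evaluated stably by factoring out the largest exponent
    log_mean = x_max + np.log(np.mean(np.exp(x - x_max)))
    return float(-kt * log_mean)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    kt = K_B * 300.0
    # Synthetic test case: Gaussian energy differences with mean 2 kT and width 1 kT.
    # For Gaussian data the exact answer is mean - variance / (2 kT) = 1.5 kT.
    samples = rng.normal(loc=2.0 * kt, scale=kt, size=200_000)
    print("EXP estimate (kT):", exp_free_energy(samples) / kt)
```

The synthetic Gaussian case converges easily because the distribution is narrow. As the spread of $E_j - E_i$ grows beyond a few $k_B T$, the average becomes dominated by rare samples, which is the overlap problem described above.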

To address sampling challenges, modern FEP implementations employ advanced techniques such as replica exchange solute tempering (REST) or Hamiltonian replica exchange, which enhance conformational sampling by reducing energy barriers between states [32] [34]. The Bennett Acceptance Ratio (BAR) method provides improved statistical accuracy when equilibrium sampling is available for both states [34]. Most practical FEP calculations utilize intermediate "window" states between the endpoints to ensure smooth transitions and adequate overlap [34].
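The BAR estimator for a single pair of adjacent windows can be sketched as a one-dimensional root search. The example below is illustrative only and is not taken from Amber, GROMACS, or any other package. It assumes `w_forward` holds $E_j - E_i$ evaluated on samples from state $i$, `w_reverse` holds $E_i - E_j$ evaluated on samples from state $j$, and both are in the same units as `kt`. The bisection solver and all names are choices made for this sketch.

```python
import numpy as np


def _fermi(x):
    """Numerically stable 1 / (1 + exp(x))."""
    return 0.5 * (1.0 - np.tanh(0.5 * x))


def bar_free_energy(w_forward, w_reverse, kt=1.0, tol=1e-10, max_iter=200):
    """Solve the BAR self-consistency equation for A_j - A_i by bisection."""
    w_f = np.asarray(w_forward, dtype=float) / kt
    w_r = np.asarray(w_reverse, dtype=float) / kt
    m = np.log(len(w_f) / len(w_r))  # accounts for unequal sample counts

    def imbalance(delta):
        # Monotonically increasing in delta; zero at the BAR estimate.
        return _fermi(m + w_f - delta).sum() - _fermi(-m + w_r + delta).sum()

    # Bracket chosen wide enough that the imbalance changes sign.
    lo = min(w_f.min(), (-w_r).min()) - 50.0
    hi = max(w_f.max(), (-w_r).max()) + 50.0
    for _ in range(max_iter):
        mid = 0.5 * (lo + hi)
        if imbalance(mid) < 0.0:
            lo = mid
        else:
            hi = mid
        if hi - lo < tol:
            break
    return float(kt * 0.5 * (lo + hi))


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    # Synthetic Gaussian data in units of kT, constructed so the exact answer is 1.5 kT.
    w_f = rng.normal(loc=2.0, scale=1.0, size=20_000)
    w_r = rng.normal(loc=-1.0, scale=1.0, size=20_000)
    print("BAR estimate (kT):", bar_free_energy(w_f, w_r))
```

For a full transformation, the per-window estimates would be summed over all adjacent pairs of intermediate states. Because BAR uses samples from both end states of each pair, it typically needs fewer samples than EXP for the same precision.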

Table 1: Key Methodological Components in Modern FEP Implementations

| Component | Description | Implementation Examples |
|---|---|---|
| Force Fields | Mathematical functions describing potential energy | OPLS4 [33], Amber [34], AI-parametrized [38] |
| Sampling Enhancement | Techniques to improve conformational sampling | REST/REST2 [32], Hamiltonian RE [34] |
| Free Energy Estimators | Algorithms for calculating ΔG from simulation data | BAR [34], MBAR, EXP [34] |
| System Preparation | Protocols for modeling protein, ligands, and solvent | Automated pipelines [35] [38], homology modeling [32] |
| Uncertainty Estimation | Methods for quantifying statistical errors | Automated analysis [34], confidence intervals |

Performance Benchmarking of FEP Platforms

Accuracy Assessment Against Experimental Data

The performance of FEP methodologies is rigorously assessed through large-scale benchmark studies comparing computational predictions with experimental binding affinity measurements. These benchmarks provide critical insights into the accuracy, reliability, and limitations of different FEP approaches across diverse biological systems and chemical transformations.

The Uni-FEP Benchmarks represent one of the most extensive public datasets for FEP validation, comprising approximately 1000 protein-ligand systems with around 40,000 ligands [36] [37]. This benchmark was specifically designed to reflect real-world drug discovery challenges, including scaffold replacements, charge changes, and other complex modifications commonly encountered in medicinal chemistry [36]. Performance across this diverse dataset demonstrates the robustness of modern FEP methods, with many targets showing RMSE values below 1.0 kcal/mol and squared correlation coefficients (R²) exceeding 0.5 [37].

A comprehensive assessment published in Communications Chemistry surveyed the maximal achievable accuracy of rigorous protein-ligand binding free energy calculations [33]. This study established that when careful preparation of protein and ligand structures is undertaken, FEP can achieve accuracy comparable to experimental reproducibility [33]. The research highlighted the importance of considering experimental variability when assessing computational methods, reporting that reproducibility between independent binding affinity measurements ranges from 0.56 to 0.69 pKi units (0.77 to 0.95 kcal/mol) [33].
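The conversion between pKi units and kcal/mol in the reproducibility range above follows from ΔG = RT ln(10) · ΔpKi. The short sketch below shows the arithmetic. The temperature of 300 K is an assumption, chosen because it approximately reproduces the quoted values (the source does not state the temperature), and the function name is hypothetical.

```python
import math

R_KCAL = 0.0019872041  # gas constant in kcal/(mol*K)


def pk_to_kcal(delta_pk, temperature_k=300.0):
    """Convert a difference in pKi (or pKd) units to a free energy difference in kcal/mol."""
    return R_KCAL * temperature_k * math.log(10.0) * delta_pk


if __name__ == "__main__":
    for delta_pk in (0.56, 0.69):
        print(f"{delta_pk:.2f} pKi units -> {pk_to_kcal(delta_pk):.2f} kcal/mol")
```

At this temperature one pKi unit corresponds to roughly 1.37 kcal/mol, which puts the often-quoted 1 kcal/mol FEP accuracy target in context: it is close to the spread between independent experimental measurements.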