Automated Force Field Optimization with Bayesian Inference: A Robust Workflow for Biomolecular Modeling and Drug Discovery

Abstract

This article provides a comprehensive guide to automated force field parameter optimization using Bayesian inference, a transformative approach for computational researchers and drug development professionals. We explore the foundational Bayesian principles that enable robust parameter estimation by explicitly accounting for uncertainty in both experimental data and computational models. The content details cutting-edge methodological workflows, including the use of the BICePs score for variational optimization and the treatment of forward model parameters. Practical guidance is offered for troubleshooting systematic errors and optimizing computational efficiency. Finally, the article presents rigorous validation protocols and comparative analyses across different biomolecular systems, demonstrating how these automated workflows enhance the predictive accuracy of molecular simulations for reliable drug design.

Bayesian Foundations: The Statistical Framework for Robust Force Field Parameterization

Bayesian inference provides a powerful statistical framework for automating and improving the parameterization of molecular force fields. By formally treating model parameters as probability distributions, it enables researchers to integrate prior knowledge with new experimental and computational data, quantify uncertainties, and make robust decisions between competing models. This approach is particularly valuable for force field optimization, a long-standing challenge where high-dimensional, interdependent parameters must be determined from sparse, noisy observables [1] [2]. This document outlines core Bayesian methodologies and provides detailed application protocols for researchers developing automated parameterization workflows.

Core Bayesian Concepts for Molecular Modeling

Theoretical Foundation

At the heart of Bayesian inference is Bayes' theorem, which updates prior beliefs about model parameters (θ) after observing data (D):

Posterior ∝ Likelihood × Prior

- Prior Distribution (P(θ)): Encodes existing knowledge or physical constraints about force field parameters before considering new data [1].

- Likelihood Function (P(D|θ)): Measures the probability of observing the data given a specific set of parameters [3].

- Posterior Distribution (P(θ|D)): Represents the updated belief about the parameters after combining the prior with the observed data [3] [1].

This framework naturally handles uncertainty and allows for the integration of diverse data sources, from quantum mechanical calculations to ensemble-averaged experimental measurements [4] [5].
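As a minimal numerical illustration of "Posterior ∝ Likelihood × Prior", the sketch below evaluates all three terms on a grid for a single parameter. The parameter, the prior centre and width, the data values, and the noise level are all made-up assumptions chosen only to show the mechanics.

```python
import numpy as np

# Hypothetical one-parameter problem: infer a scalar force field parameter
# `theta` from a few noisy observations. All numbers are illustrative.
theta_grid = np.linspace(0.0, 2.0, 2001)           # candidate parameter values
d_theta = theta_grid[1] - theta_grid[0]

# Prior P(theta): Gaussian centred on an assumed prior estimate of 1.0.
prior = np.exp(-0.5 * ((theta_grid - 1.0) / 0.3) ** 2)

# Synthetic data D with an assumed, known noise level.
data = np.array([1.21, 1.17, 1.25, 1.19])
noise_sd = 0.05

# Likelihood P(D | theta): product of independent Gaussians, built in log space.
log_like = -0.5 * (((data[:, None] - theta_grid[None, :]) / noise_sd) ** 2).sum(axis=0)
likelihood = np.exp(log_like - log_like.max())     # rescaled for numerical stability

# Posterior ∝ Likelihood × Prior, normalised on the grid.
posterior = likelihood * prior
posterior /= posterior.sum() * d_theta

post_mean = (theta_grid * posterior).sum() * d_theta
post_sd = np.sqrt((((theta_grid - post_mean) ** 2) * posterior).sum() * d_theta)
print(f"posterior mean = {post_mean:.4f}, posterior sd = {post_sd:.4f}")
```

The posterior mean lands between the prior centre and the data mean, pulled strongly toward the data because four precise observations outweigh a broad prior.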

Key Bayesian Methods in Force Field Development

Table 1: Key Bayesian Methods and Their Applications in Molecular Modeling

| Method | Key Feature | Primary Application in Force Fields | Representative Algorithm/Tool |
|---|---|---|---|
| Bayesian Model Selection | Compares competing models while penalizing unnecessary complexity [1]. | Selecting optimal functional forms (e.g., number of atom types, inclusion of multipoles) [1]. | Bayes Factors, Reversible Jump MCMC [1] |
| Hierarchical Bayesian Models | Models multi-level data structure and shares information across groups [3]. | Parameterizing molecular fragments for transferable force fields [5]. | Empirical Bayes [3] |
| Markov Chain Monte Carlo (MCMC) | Samples from complex posterior distributions [3]. | Estimating distributions of force field parameters and conformational populations [4] [5]. | Metropolis-Hastings, Gibbs Sampling [3] |
| Bayesian Active Learning | Uses model uncertainty to select the most informative data points [6]. | On-the-fly training of machine learning force fields on representative configurations [6]. | Mapped Gaussian Process (MGP) [6] |

Detailed Application Notes

Workflow for Automated Bayesian Force Field Optimization

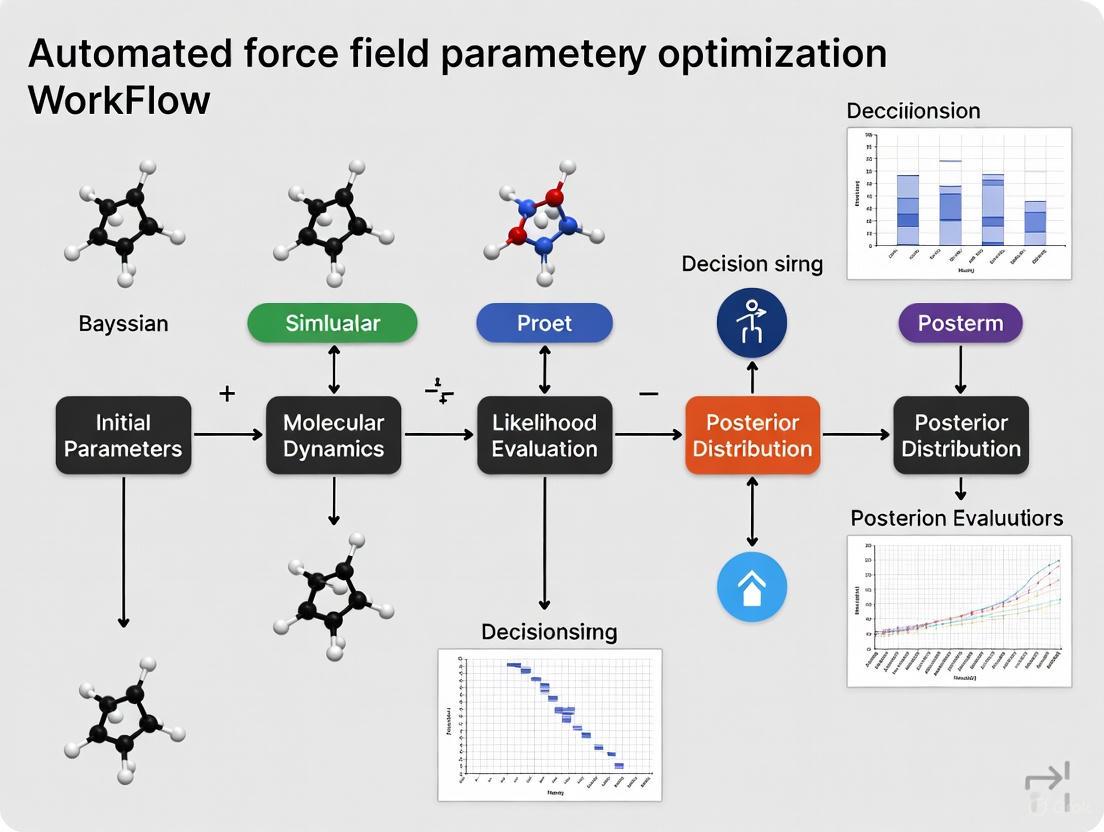

The following diagram illustrates a generalized, automated workflow for Bayesian force field optimization, integrating elements from several research efforts.

Automated Bayesian Force Field Optimization

Protocol 1: Bayesian Optimization of Partial Charges

This protocol is adapted from methods that learn partial charge distributions from ab initio molecular dynamics (AIMD) data [5].

Define System and Prepare Reference Data

- Select Molecular Fragments: Choose small, chemically meaningful fragments representative of the larger system (e.g., acetate, methylammonium) [5].

- Generate AIMD Reference Data: Perform AIMD simulations of each solvated fragment. From the trajectories, extract condensed-phase structural Quantities of Interest (QoIs), such as:

- Radial distribution functions (RDFs) between solute and solvent atoms.

- Hydrogen bond counts or order parameters.

- Ion-pair distance distributions (for charged species) [5].

- Enforce Physical Constraints: Define a truncated normal prior for partial charges, with bounds based on typical force field values and a constraint to maintain the total molecular charge [5].

Build a Surrogate Model

- Run Initial Force Field Simulations: Execute multiple classical molecular dynamics (FFMD) simulations, sampling partial charges from the prior distribution.

- Train Local Gaussian Process (LGP) Surrogate: For each QoI, train an LGP model that maps a set of partial charges to the predicted QoI. This surrogate dramatically accelerates posterior sampling by replacing costly FFMD simulations [5].

Sample the Posterior via MCMC

- Define Likelihood: Use a multivariate Gaussian to quantify the agreement between LGP-predicted QoIs and the AIMD reference data.

- Execute MCMC Sampling: Use a sampler (e.g., No-U-Turn Sampler) to draw samples from the posterior distribution of partial charges. The LGP surrogate enables the efficient evaluation of thousands of candidate parameter sets [5].

- Validate Parameters: Select multiple posterior samples and run full FFMD simulations to confirm they reproduce the AIMD reference data within acceptable error margins (e.g., RDF errors < 5%) [5].
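The three stages of Protocol 1 can be sketched end to end on a toy problem. This is an illustration under stated assumptions, not the published method: `expensive_simulation` is a made-up stand-in for an FFMD run, a plain Gaussian process with an RBF kernel replaces the local Gaussian process (LGP), a single scalar QoI replaces RDFs, and random-walk Metropolis replaces the No-U-Turn Sampler.

```python
import numpy as np

rng = np.random.default_rng(0)

def expensive_simulation(q):
    """Hypothetical stand-in for an FFMD run: partial charge -> one scalar QoI."""
    return 2.0 + 1.5 * np.tanh(3.0 * (q - 0.5))

def rbf(a, b, length=0.15):
    """Squared-exponential kernel between two 1-D arrays."""
    return np.exp(-0.5 * ((a[:, None] - b[None, :]) / length) ** 2)

# 1) Train the surrogate on a few "simulations" spread over the prior range.
q_train = np.linspace(0.0, 1.0, 12)
y_train = expensive_simulation(q_train)
y_mean = y_train.mean()
K = rbf(q_train, q_train) + 1e-8 * np.eye(q_train.size)    # jitter for stability
alpha = np.linalg.solve(K, y_train - y_mean)

def surrogate(q):
    """GP posterior mean of the QoI at a scalar charge q."""
    return y_mean + (rbf(np.atleast_1d(q), q_train) @ alpha)[0]

# 2) Truncated-normal prior on the charge, Gaussian likelihood on the QoI.
Q_LO, Q_HI, PRIOR_MU, PRIOR_SD = 0.0, 1.0, 0.5, 0.3         # assumed bounds
qoi_ref, qoi_sd = expensive_simulation(0.62), 0.05          # synthetic "AIMD" target

def log_posterior(q):
    if not (Q_LO <= q <= Q_HI):
        return -np.inf                                      # outside prior bounds
    log_prior = -0.5 * ((q - PRIOR_MU) / PRIOR_SD) ** 2
    log_like = -0.5 * ((surrogate(q) - qoi_ref) / qoi_sd) ** 2
    return log_prior + log_like

# 3) Random-walk Metropolis; every evaluation uses the cheap surrogate.
q, lp = 0.5, log_posterior(0.5)
chain = []
for _ in range(6000):
    q_new = q + 0.05 * rng.normal()
    lp_new = log_posterior(q_new)
    if np.log(rng.random()) < lp_new - lp:
        q, lp = q_new, lp_new
    chain.append(q)
samples = np.array(chain[1000:])                            # discard burn-in
print(f"posterior charge: {samples.mean():.3f} +/- {samples.std():.3f}")
```

In a real workflow the final validation step would rerun full simulations at several posterior samples rather than trusting the surrogate.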

Protocol 2: Model Selection for the 2CLJQ Fluid Model

This protocol uses Bayesian model selection to determine the justified level of complexity in a molecular model, as demonstrated with the two-centered Lennard-Jones + quadrupole (2CLJQ) model [1].

Define Model Hierarchy

Establish a set of nested models with increasing complexity:

- Model M1 (United Atom): Fixed bond length (L) and fixed zero quadrupole moment (Q).

- Model M2 (Anisotropic United Atom): Variable bond length (L) and fixed zero quadrupole moment (Q).

- Model M3 (Anisotropic United Atom + Quadrupole): Variable bond length (L) and variable quadrupole moment (Q) [1].

Specify Priors and Likelihood

- Priors: Assign informative prior distributions to parameters based on previous knowledge or chemical intuition. For example, use a normal distribution for the bond length L centered on a known value from quantum chemistry [1].

- Likelihood: Assume experimental errors are independent and identically distributed, using a Gaussian likelihood to compare simulation outputs to experimental data (e.g., saturated liquid density, vapor pressure, surface tension) [1].

Calculate Bayes Factors via RJMC

- Implement Reversible Jump Monte Carlo (RJMC): Use RJMC to sample simultaneously across the different models and their parameter spaces. This algorithm allows transitions between models while maintaining detailed balance [1].

- Compute Bayes Factors: Estimate the evidence for each model by calculating the Bayes factor (B32) between two models (e.g., M3 and M2). A B32 > 1 supports the more complex model (M3). Interpretation follows scales like Kass and Raftery's, in which a B32 between 3 and 20 counts as positive evidence and a B32 above 20 as strong evidence [3] [1].

- Decision: Adopt the most complex model only if the Bayes factor provides positive or strong evidence in its favor, ensuring additional parameters are justified by the data [1].
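The logic of the Bayes factor comparison can be shown on a toy pair of nested models. This sketch does not use RJMC; it computes each model's evidence by direct grid integration, which is only feasible in one or two dimensions. The models, data, prior ranges, and noise level are assumptions, and the labels follow the Kass and Raftery categories.

```python
import numpy as np

# Toy data from the simpler model (b = 0) with a fixed, alternating "noise".
x = np.linspace(0.1, 1.0, 10)
noise_sd = 0.05
y = 2.0 * x + 0.03 * (-1.0) ** np.arange(x.size)

def log_likelihood(a, b):
    """Gaussian i.i.d. log-likelihood of y = a*x + b*x**2 (a, b broadcast)."""
    a = np.asarray(a, dtype=float)[..., None]
    b = np.asarray(b, dtype=float)[..., None]
    resid = y - (a * x + b * x ** 2)
    return (-0.5 * np.sum((resid / noise_sd) ** 2, axis=-1)
            - x.size * np.log(noise_sd * np.sqrt(2.0 * np.pi)))

# Uniform priors on assumed ranges: a in [0, 4], b in [-2, 2].
a_grid = np.linspace(0.0, 4.0, 401)
b_grid = np.linspace(-2.0, 2.0, 401)
da, db = a_grid[1] - a_grid[0], b_grid[1] - b_grid[0]
prior_a, prior_b = 1.0 / 4.0, 1.0 / 4.0

# Evidence of the simpler model (b fixed at 0): 1-D integral over a.
evidence_m2 = np.sum(np.exp(log_likelihood(a_grid, 0.0))) * prior_a * da

# Evidence of the more complex model: 2-D integral over (a, b).
A, B = np.meshgrid(a_grid, b_grid, indexing="ij")
evidence_m3 = np.sum(np.exp(log_likelihood(A, B))) * prior_a * prior_b * da * db

bayes_factor_32 = evidence_m3 / evidence_m2

def kass_raftery_label(bf):
    """Evidence category for a Bayes factor in favour of the complex model."""
    if bf < 1.0:
        return "favours the simpler model"
    if bf < 3.0:
        return "not worth more than a bare mention"
    if bf < 20.0:
        return "positive"
    if bf < 150.0:
        return "strong"
    return "very strong"

print(f"B32 = {bayes_factor_32:.3f}: {kass_raftery_label(bayes_factor_32)}")
```

Because the extra parameter does not improve the fit, the complex model pays an Occam penalty for spreading its prior over a wide range of `b`, and the Bayes factor falls below 1.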

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools

| Item / Software | Function / Purpose | Application Example | Key Features |
|---|---|---|---|
| Stan / PyMC3 | Probabilistic programming languages for flexible Bayesian modeling [3]. | Specifying custom likelihoods and priors for force field parameter posteriors. | Hamiltonian Monte Carlo, No-U-Turn Sampler [3] |
| LGP Surrogate Model | Fast emulator for molecular simulation observables [5]. | Accelerating MCMC sampling by predicting RDFs from charges without full MD. | Interpretable, physics-informed priors [5] |
| Replica-Averaged Forward Model | Bayesian reweighting algorithm for ensemble-averaged data [4]. | Refining force field parameters against sparse/noisy experimental observables. | Handles systematic error, infers uncertainty [4] |
| Mapped Gaussian Process (MGP) | Accelerated Bayesian machine learning force field [6]. | Large-scale MD with quantum accuracy and on-the-fly active learning. | Built-in uncertainty, constant evaluation cost [6] |
| Ab Initio MD (AIMD) Data | High-level reference data for condensed-phase behavior [5]. | Providing target RDFs and HB statistics for charge optimization. | Captures many-body and polarization effects [5] |

Advanced Methodologies

MCMC Sampling for Conformational Populations

The internal mechanism of MCMC sampling for a method like Bayesian Inference of Conformational Populations (BICePs) can be visualized as follows [4]:

BICePs MCMC Sampling Process

This diagram shows the core cycle of an MCMC algorithm used in methods like BICePs [4]. It samples both conformational states (X) and uncertainty parameters (σ), evaluating the posterior probability which balances a simulation-derived prior against the likelihood of matching experimental data. The process iterates, building up the posterior distribution used for force field validation and refinement [4].
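A stripped-down version of this sampling cycle is sketched below for a hypothetical three-state system with one observable. The populations, predicted values, measurement, σ range, and the choice of a log-spaced σ grid (so that uniform moves in grid index correspond to a prior proportional to 1/σ) are all assumptions of this illustration, not details taken from the BICePs implementation.

```python
import numpy as np

rng = np.random.default_rng(2)

# Hypothetical system: prior populations and one predicted observable per state.
prior_pop = np.array([0.6, 0.3, 0.1])        # p(X) from a simulation (made up)
predicted = np.array([4.0, 6.0, 8.0])        # forward-model value f(X) per state
d_exp = 6.2                                  # single "experimental" measurement

# Log-spaced sigma grid: uniform steps in log(sigma) realise p(sigma) ∝ 1/sigma.
sigma_grid = np.exp(np.linspace(np.log(0.1), np.log(10.0), 50))

def log_post(state, s_idx):
    """Unnormalised log p(X, sigma | D) on the discrete (state, sigma) grid."""
    sigma = sigma_grid[s_idx]
    log_like = -np.log(sigma) - 0.5 * ((predicted[state] - d_exp) / sigma) ** 2
    return log_like + np.log(prior_pop[state])

state, s_idx = 0, 25
lp = log_post(state, s_idx)
n_steps = 40000
state_counts = np.zeros(prior_pop.size)
for _ in range(n_steps):
    # Symmetric proposal: random new state, sigma index moved by -1, 0, or +1.
    new_state = rng.integers(prior_pop.size)
    new_idx = s_idx + rng.integers(-1, 2)
    if 0 <= new_idx < sigma_grid.size:       # out-of-range proposals are rejected
        lp_new = log_post(new_state, new_idx)
        if np.log(rng.random()) < lp_new - lp:
            state, s_idx, lp = new_state, new_idx, lp_new
    state_counts[state] += 1

posterior_pop = state_counts / n_steps
print("prior populations:    ", prior_pop)
print("posterior populations:", np.round(posterior_pop, 3))
```

The state whose prediction sits closest to the measurement gains population at the expense of the state the prior favoured, which is the reweighting behaviour the diagram describes.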

Core Challenges in Traditional Force Field Optimization

Force fields are fundamental to molecular simulations, enabling the study of material properties and dynamic processes from the atomic to the mesoscopic scale. They establish a mathematical relationship between a system's energy and the positions of its atoms, creating a potential energy surface (PES) that is crucial for exploring chemical reactions and material characteristics [7]. The accuracy of this PES directly determines the reliability of simulation outcomes in applications such as drug design, catalyst development, and material science. Traditional force field optimization involves refining parameters to ensure the model faithfully reproduces reference data, which can come from high-level quantum mechanical (QM) calculations or experimental measurements [4] [8]. However, this process is fraught with challenges, including the high dimensionality of parameter space, the competing demands of accuracy and transferability, and the need to properly account for errors and uncertainties in reference data. This document outlines the core challenges within the context of developing automated, robust optimization workflows, with a specific focus on the role of Bayesian inference methods in addressing these issues.

Classification of Force Fields and Their Parameterization Landscape

Understanding the different types of force fields is essential for appreciating their respective optimization challenges. They can be broadly categorized into three groups, each with a distinct approach to modeling the PES.

Table 1: Classification and Characteristics of Major Force Field Types

| Force Field Type | Typical Number of Parameters | Interpretability | Primary Optimization Targets | Key Limitations |
|---|---|---|---|---|
| Classical Force Fields (e.g., CHARMM, OPLS-AA, GAFF) [9] [7] | 10 - 100 [7] | High (parameters often have direct physical meaning) [7] | Liquid densities, vaporization enthalpies, vibrational frequencies [8] | Cannot describe bond breaking/formation; limited accuracy for properties not explicitly fitted [7] |
| Reactive Force Fields (e.g., ReaxFF) [10] [7] | 100+ [7] | Medium (complex functional forms) [10] | Reaction energies, barriers, DFT-level structures [10] | Struggles to achieve DFT-level accuracy for new systems; complex parameterization [10] |
| Machine Learning Force Fields (MLFFs) (e.g., EMFF-2025, Neural Network Potentials) [10] [7] | 100,000+ (network weights) | Low ("black box" models) | DFT-level energies and forces for diverse configurations [10] | High computational cost for training; data hunger; limited transferability outside training data [10] |

The parameterization strategy differs significantly across these types. Classical force fields are typically optimized using least-squares minimization or global optimization algorithms (e.g., the Levenberg-Marquardt algorithm used for methane [11]) against a limited set of condensed-phase experimental data, such as liquid density (\( \rho_{\text{liq}} \)) and vaporization enthalpy (\( \Delta H_{\text{vap}} \)) [8]. In contrast, reactive force fields and machine learning force fields are heavily parameterized against large datasets derived from Quantum Mechanical (QM) calculations, such as Density Functional Theory (DFT), which provide target values for energies, forces, and charges [10] [7]. The EMFF-2025 potential, for instance, was developed using a transfer learning strategy that leverages a pre-trained model and incorporates minimal new data from DFT calculations, achieving chemical accuracy for a wide range of high-energy materials [10].

Core Challenges in Force Field Optimization

The Accuracy-Transferability Trade-off and Parametric Complexity

A fundamental challenge is the inherent trade-off between a force field's accuracy for specific systems and its transferability to novel compounds or different physical states. Traditional force fields parameterized solely on fluid-phase thermodynamic properties often fail to accurately predict solid-liquid equilibria (SLE). For example, the TraPPE force field, while accurate for liquid-phase properties of methane, exhibited significant deviations (over 17 K) in predicting melting points [11]. Similarly, the OPLS force field has been shown to underestimate the melting point of benzene by 23 K and overestimate that of methanol by approximately 20% [11]. This lack of transferability underscores a critical challenge: a model's performance is inherently limited by the data used for its parameterization.

The complexity of the optimization landscape escalates with the number of parameters. Although classical force fields have a relatively low-dimensional parameter space (10-100 parameters), those parameters remain highly correlated and interdependent [4]. Changing a single parameter, such as the Lennard-Jones well depth (\( \epsilon \)) or radius (\( \sigma \)), affects multiple physical properties simultaneously, which makes it difficult to tune any one property in isolation [11]. This interdependence is even more pronounced in complex reactive force fields and is extreme in machine learning force fields, where hundreds of thousands to millions of non-physical parameters (network weights) must be optimized [10] [7].

Data-Related Challenges: Uncertainty, Error, and Sparse Observables

Force field refinement must contend with significant uncertainties in both the reference data and the forward models used to predict observables.

Uncertainty in Reference Data: Both QM calculations and experimental measurements are subject to random and systematic errors [4]. QM methods have intrinsic errors based on the chosen functional and basis set, while experimental data can be sparse and noisy [4] [12]. Many force field optimization algorithms lack a robust mechanism to integrate these multiple sources of uncertainty, leading to overfitting to potentially erroneous data points.

Sparse and Ensemble-Averaged Data: Experimental observations, such as those from NMR or scattering techniques, are typically ensemble-averaged, representing a weighted mean across a vast number of conformational states [4] [12]. Optimizing a force field against such data is an ill-posed inverse problem, as many different microscopic parameter sets could potentially yield the same macroscopic average. This is further complicated when the experimental data is sparse, providing an incomplete set of restraints for the optimization.

The High Computational Cost of Validation

A force field's performance cannot be assessed solely on its optimization targets; rigorous validation against non-target properties is essential. This process is computationally demanding. For example, validating a force field for liquid membrane applications requires extensive molecular dynamics (MD) simulations to compute properties like density, shear viscosity, interfacial tension, and mutual solubility, which are then benchmarked against experimental data [9]. This validation often reveals shortcomings, as seen with the GAFF and OPLS-AA/CM1A force fields, which overestimated the viscosity of diisopropyl ether (DIPE) by 60-130% [9]. Similarly, the CombiFF-optimized force fields showed good agreement with experiment for many non-target properties but exhibited larger discrepancies for shear viscosity and dielectric permittivity, likely due to the united-atom representation and implicit treatment of electronic polarization [8]. This highlights that the physical formulation of a force field ultimately constrains its achievable accuracy, regardless of parameter tuning.

A Bayesian Inference Protocol for Robust Force Field Optimization

Bayesian Inference of Conformational Populations (BICePs) provides a powerful statistical framework to address the challenges of uncertainty and sparse data [4] [12]. The following protocol outlines its application for force field refinement.

Theoretical Foundation

BICePs samples the full posterior distribution of parameters, which is proportional to the likelihood of the experimental data given the parameters, multiplied by the prior distributions of the parameters [4]:

p(X, σ | D) ∝ p(D | X, σ) p(X) p(σ)

Where:

- X represents the conformational states.

- σ represents the uncertainty parameters (nuisance parameters).

- D is the experimental data.

- p(D | X, σ) is the likelihood function.

- p(X) is the prior population from a theoretical model (e.g., a molecular simulation).

- p(σ) is a non-informative prior for the uncertainty.

A key innovation is the use of a replica-averaged forward model [4] [12]. Instead of comparing data to a single simulation snapshot, the forward model prediction is an average over multiple replicas of the system: f_j(X) = (1/N_r) * ∑_{r=1}^{N_r} f_j(X_r). This makes BICePs a maximum-entropy reweighting method that naturally balances experimental information with the prior without needing adjustable regularization parameters [4].
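The replica average itself is a plain mean of the forward model over replicas. The sketch below shows it for a made-up forward model that returns two inter-atomic distances; the function names and coordinates are hypothetical and serve only to make the formula concrete.

```python
import numpy as np

def replica_averaged_prediction(forward_model, replicas):
    """f_j(X) = (1/N_r) * sum_r f_j(X_r), evaluated for every observable j."""
    per_replica = np.array([forward_model(x_r) for x_r in replicas], dtype=float)
    return per_replica.mean(axis=0)

def toy_forward_model(coords):
    """Hypothetical forward model: two distances computed from 3-D coordinates."""
    return np.array([np.linalg.norm(coords[0] - coords[1]),
                     np.linalg.norm(coords[0] - coords[2])])

# Two replicas, each a conformation of three atoms (coordinates are made up).
replicas = [np.array([[0, 0, 0], [3, 0, 0], [0, 4, 0]], dtype=float),
            np.array([[0, 0, 0], [5, 0, 0], [0, 6, 0]], dtype=float)]

f_avg = replica_averaged_prediction(toy_forward_model, replicas)
print(f_avg)   # one averaged prediction per observable
```

It is this averaged vector, not any single replica's prediction, that enters the likelihood and is compared with the experimental data.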

Detailed Experimental Protocol

Step 1: System Setup and Prior Ensemble Generation

- Procedure: Choose the molecular system and the force field parameters to be optimized. Run extensive molecular dynamics (MD) or Monte Carlo (MC) simulations using an initial force field to generate a theoretical prior ensemble. This ensemble provides the initial guess for the conformational populations, p(X).

- Critical Notes: The quality of the prior ensemble affects how quickly sampling converges, and BICePs can reweight the ensemble substantially. Reweighting cannot, however, populate conformational states that the prior ensemble never sampled, so the prior must cover the relevant states. For a lattice model test system, this might involve exhaustive enumeration of states [4].

Step 2: Define Observables and Forward Model

- Procedure: Identify the ensemble-averaged experimental observables D to be used as restraints (e.g., NMR J-couplings, distance measurements from FRET, thermodynamic data). Define the forward model f(X) that calculates the theoretical value of an observable from a given molecular configuration X [12].

- Example: For optimizing Karplus parameters for J-coupling constants, the forward model is the Karplus relation ^3J(φ) = A cos²(φ) + B cos(φ) + C, where φ is the dihedral angle and A, B, C are the parameters to be optimized [12].
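The Karplus forward model is simple enough to write out directly. In the sketch below the coefficient values, dihedral angles, and populations are placeholders chosen for easy arithmetic, not a published parameterization; only the functional form comes from the text.

```python
import numpy as np

def karplus_j3(phi_rad, A, B, C):
    """Karplus relation: 3J(phi) = A*cos^2(phi) + B*cos(phi) + C, phi in radians."""
    cos_phi = np.cos(phi_rad)
    return A * cos_phi ** 2 + B * cos_phi + C

# Placeholder coefficients (hypothetical, in Hz); these are what gets optimized.
A, B, C = 7.0, -1.0, 0.5

# Ensemble-averaged prediction over three conformational states.
phi_deg = np.array([-60.0, -120.0, 180.0])    # dihedral angle of each state
populations = np.array([0.5, 0.3, 0.2])       # state populations (sum to 1)

j_per_state = karplus_j3(np.deg2rad(phi_deg), A, B, C)
j_ensemble = float(np.sum(populations * j_per_state))
print(j_per_state, j_ensemble)
```

Because the relation is differentiable in A, B, and C, the same function can be used unchanged inside a gradient-based optimization of those coefficients.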

Step 3: Configure BICePs and Sample the Posterior

- Procedure: Use the BICePs algorithm to sample the posterior distribution p(X, σ | D).

- Likelihood Selection: Choose an appropriate likelihood function. The Student's likelihood model is robust to outliers and systematic errors because it marginalizes over the uncertainties of individual observables, which reduces the number of uncertainty parameters that must be sampled [4].

- MCMC Sampling: Perform Markov Chain Monte Carlo (MCMC) sampling to explore the space of conformational populations X and uncertainty parameters σ. Gradients of the BICePs score with respect to force field parameters can be used to accelerate convergence [4] [12].

- Critical Notes: The number of replicas N_r should be sufficiently large to reduce the standard error of the mean in the replica-averaged forward model.

Step 4: Variational Optimization and Model Selection

- Procedure: Use the BICePs score, a free energy-like quantity that reports the evidence for a model, as the objective function for variational optimization.

- Optimization: Employ stochastic gradient descent to minimize the BICePs score with respect to the force field parameters θ. The parameter update can be formulated as θ_trial = θ_old - l_rate ⋅ ∇u + η ⋅ N(0,1), where l_rate is the learning rate and η scales the random noise added to escape local minima [4] [12].

- Validation: The optimized force field must be validated against a held-out set of experimental data or properties not used in the optimization (e.g., transport properties, SLE data) to ensure its transferability [11] [9] [8].
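The noisy update rule can be written as a one-line function. The sketch below applies it to a made-up quadratic objective whose gradient is known in closed form; in the real protocol the gradient would be that of the BICePs score, and the learning rate and noise scale shown here are arbitrary assumptions.

```python
import numpy as np

def noisy_gradient_step(theta, grad_u, l_rate, eta, rng):
    """theta_trial = theta_old - l_rate * grad(u) + eta * N(0, 1)."""
    return theta - l_rate * grad_u + eta * rng.standard_normal(theta.shape)

# Hypothetical objective u(theta) = |theta - target|^2 standing in for the score.
target = np.array([1.0, -2.0])

def grad_u(theta):
    return 2.0 * (theta - target)

rng = np.random.default_rng(3)
theta = np.zeros(2)
for _ in range(500):
    theta = noisy_gradient_step(theta, grad_u(theta), l_rate=0.1, eta=0.01, rng=rng)

print(theta)   # fluctuates around `target` with a spread set by eta
```

With a nonzero η the iterate never settles exactly; it hovers near the minimum, so in practice the noise scale is usually reduced as the optimization proceeds.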

Table 2: Research Reagent Solutions for Bayesian Force Field Optimization

| Reagent / Resource | Type | Function in the Protocol | Example Use Case |
|---|---|---|---|
| BICePs Algorithm | Software | Core engine for sampling the posterior and computing the BICePs score [4]. | Force field validation and refinement against ensemble data [4]. |
| Replica-Averaged Forward Model | Computational Method | Predicts ensemble-averaged observables from multiple conformational replicas, reducing overfitting [4] [12]. | Calculating expected J-coupling constants from an ensemble of protein structures [12]. |
| Student's Likelihood Model | Statistical Model | Likelihood function robust to outliers and systematic error; limits number of uncertainty parameters [4]. | Down-weighting the influence of erroneous or inconsistent data points during refinement [4]. |
| QM Reference Data (e.g., from DFT) | Dataset | Provides high-accuracy target data for parameterizing reactive and ML force fields [10] [7]. | Training the EMFF-2025 neural network potential for energetic materials [10]. |
| Experimental Ensemble Data (e.g., NMR, Thermodynamic) | Dataset | Provides physical restraints for refining force field parameters against real-world observations [4] [8]. | Optimizing force fields to reproduce liquid densities and vaporization enthalpies [8]. |

Diagram 1: Workflow for Bayesian Force Field Optimization. The process integrates prior simulation data with experimental observations to sample a posterior distribution, which is then used to variationally optimize force field parameters (θ), followed by essential validation.

The optimization of traditional force fields is constrained by significant challenges, including the accuracy-transferability trade-off, high-dimensional and correlated parameter spaces, and the pervasive presence of uncertainty in reference data. The Bayesian Inference of Conformational Populations (BICePs) protocol presented here offers a robust, automated framework to address these issues. By explicitly sampling posterior distributions and leveraging replica-averaged forward models with robust likelihoods, BICePs facilitates force field refinement that is resilient to data sparsity and errors. This approach, which can be integrated with both classical and machine learning potentials, represents a promising path toward more reliable and predictive molecular simulations for drug development and materials science.

Bayesian Inference of Conformational Populations (BICePs) is a statistically rigorous algorithm designed to reconcile theoretical predictions of molecular conformational ensembles with sparse and/or noisy experimental measurements [13]. Developed to address the challenges of characterizing structurally heterogeneous molecules, BICePs operates within a Bayesian framework to reweight conformational state populations as a post-simulation processing step [13] [14]. This approach has proven particularly valuable for studying natural product macrocycles, peptidomimetics, and other foldamers where conventional structural determination methods face limitations [13].

A key innovation of BICePs is its ability to perform objective model selection through a quantity known as the BICePs score, which reflects the integrated posterior evidence for a given model [14]. This capability, combined with proper implementation of reference potentials, distinguishes BICePs from earlier Bayesian inference methods like Inferential Structure Determination (ISD) [13]. The algorithm has evolved significantly since its inception, with BICePs v2.0 now supporting diverse experimental observables and providing user-friendly tools for data preparation, processing, and posterior analysis [15] [16].

Theoretical Foundation

Bayesian Inference Framework

BICePs models a posterior distribution \( P(X \mid D) \) of conformational states \( X \), given experimental data \( D \). According to Bayes' theorem, this posterior probability is proportional to the product of a likelihood function and a prior distribution [13]:

\[ P(X \mid D) \propto Q(D \mid X)\, P(X) \]

Here, \( P(X) \) represents the prior distribution of conformational populations derived from theoretical modeling, such as molecular simulations. The likelihood function \( Q(D \mid X) \) quantifies how well a given conformation \( X \) agrees with experimental measurements and typically assumes a normally-distributed error model [13]:

\[ Q(D \mid X,\sigma) = \prod_j \frac{1}{\sqrt{2\pi\sigma^2}} \exp\left( -\frac{[r_j(X) - r_j^{\text{exp}}]^2}{2\sigma^2} \right) \]

where \( r_j(X) \) are observables back-calculated from the theoretical model, \( r_j^{\text{exp}} \) are experimental values, and \( \sigma \) represents uncertainty parameters that account for both experimental error and conformational heterogeneity [13].
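The Gaussian likelihood above is normally evaluated in log space to avoid underflow when many observables are multiplied. The short sketch below does this for a single shared σ; the observable values are invented for illustration.

```python
import numpy as np

def gaussian_log_likelihood(r_model, r_exp, sigma):
    """ln Q(D | X, sigma) for independent Gaussian errors with one shared sigma."""
    r_model = np.asarray(r_model, dtype=float)
    r_exp = np.asarray(r_exp, dtype=float)
    n_obs = r_exp.size
    return float(-0.5 * n_obs * np.log(2.0 * np.pi * sigma ** 2)
                 - np.sum((r_model - r_exp) ** 2) / (2.0 * sigma ** 2))

# Made-up experimental distances and two candidate conformations.
r_exp = [3.0, 5.0, 4.2]
close_conformation = [3.1, 4.9, 4.0]
far_conformation = [4.5, 6.5, 2.0]

ll_close = gaussian_log_likelihood(close_conformation, r_exp, sigma=0.5)
ll_far = gaussian_log_likelihood(far_conformation, r_exp, sigma=0.5)
print(ll_close, ll_far)   # the conformation nearer the data scores higher
```

Note the role of σ: a larger value flattens the difference between conformations, which is why BICePs samples σ rather than fixing it.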

Reference Potentials

A crucial advancement in BICePs is the proper implementation of reference potentials \( Q_{\text{ref}}(r) \), which account for the information content of experimental restraints [13] [14]. The modified posterior probability becomes [13]:

\[ P(X \mid D) \propto \left[ \frac{Q(r(X) \mid D)}{Q_{\text{ref}}(r(X))} \right] P(X) \]

The reference potential represents the distribution of observables in the absence of experimental information, ensuring that restraints are weighted according to their informativeness [14]. For example, a distance restraint between residues far apart in sequence provides more information than one between nearby residues, and appropriate reference potentials prevent introduction of unnecessary bias when multiple non-informative restraints are used [13] [14].

The BICePs Score for Model Selection

The BICePs score is a Bayes factor-like quantity that enables quantitative model selection [14]. It is computed as the free energy of introducing experimental restraints:

\[ \text{BICePs score} = -\ln Z_{\text{posterior}} + \ln Z_{\text{prior}} \]

where \( Z_{\text{posterior}} \) and \( Z_{\text{prior}} \) are the partition functions of the posterior and prior distributions, respectively [14]. This score represents the integrated evidence for a model given the experimental data, with more negative values indicating models that better agree with experiments [14] [4].
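To make the score concrete, the sketch below evaluates \( -\ln Z_{\text{posterior}} + \ln Z_{\text{prior}} \) for a toy two-state system by summing over states and a discrete σ grid. It is a simplified illustration: reference potentials are omitted, the σ prior is an assumed uniform weight on a log-spaced grid, and the function name and numbers are hypothetical rather than part of the BICePs package.

```python
import numpy as np

def biceps_like_score(prior_pop, predicted, d_exp, sigma_grid):
    """-ln Z_posterior + ln Z_prior for discrete states and a discrete sigma grid."""
    prior_pop = np.asarray(prior_pop, dtype=float)
    predicted = np.asarray(predicted, dtype=float)     # shape (n_states, n_obs)
    d_exp = np.asarray(d_exp, dtype=float)             # shape (n_obs,)
    n_obs = d_exp.size
    # Assumed p(sigma): equal weight on each log-spaced grid point.
    w_sigma = np.full(sigma_grid.size, 1.0 / sigma_grid.size)
    sq_err = np.sum((predicted - d_exp) ** 2, axis=1)  # squared error per state
    s2 = sigma_grid[:, None] ** 2
    # like[s, x] = Q(D | X = x, sigma = s), the Gaussian likelihood.
    like = (2.0 * np.pi * s2) ** (-0.5 * n_obs) * np.exp(-sq_err[None, :] / (2.0 * s2))
    z_posterior = np.sum(w_sigma[:, None] * like * prior_pop[None, :])
    z_prior = np.sum(prior_pop) * np.sum(w_sigma)
    return float(-np.log(z_posterior) + np.log(z_prior))

sigma_grid = np.exp(np.linspace(np.log(0.1), np.log(10.0), 50))
predicted = [[4.0], [6.0]]      # one observable predicted for each of two states
d_exp = [6.1]                   # measurement close to the second state

score_model_a = biceps_like_score([0.2, 0.8], predicted, d_exp, sigma_grid)
score_model_b = biceps_like_score([0.8, 0.2], predicted, d_exp, sigma_grid)
print(score_model_a, score_model_b)   # the lower score is the better-supported prior
```

Model A, whose prior already favours the state that matches the measurement, receives the lower score, which is the sense in which the score ranks competing force fields.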

Table 1: Key Theoretical Concepts in BICePs

| Concept | Mathematical Representation | Role in BICePs |
|---|---|---|
| Prior Distribution | \( P(X) \) | Represents theoretical predictions of conformational populations from simulations |
| Likelihood Function | \( Q(D \mid X,\sigma) \) | Quantifies agreement between conformational states and experimental data |
| Posterior Distribution | \( P(X \mid D) \) | Balanced estimate of conformational populations considering both theory and experiment |
| Reference Potential | \( Q_{\text{ref}}(r) \) | Accounts for inherent distributions of observables without experimental bias |
| BICePs Score | \( -\ln Z_{\text{posterior}} + \ln Z_{\text{prior}} \) | Enables model selection by quantifying total evidence for a model |

BICePs in Automated Force Field Parameterization

Variational Optimization Framework

Recent advancements have extended BICePs to perform automated force field refinement through variational minimization of the BICePs score [4]. This approach treats force field parameters as adjustable variables \( \theta \) and optimizes them by minimizing the BICePs score, which serves as an objective function that naturally regularizes against overfitting [4]:

\[ \theta^* = \arg\min_{\theta} \left[ -\ln \int P(X, D \mid \theta)\, dX \right] \]

This variational framework leverages gradient information for efficient optimization in high-dimensional parameter spaces [4]. The derivation of first and second derivatives of the BICePs score enables the use of sophisticated optimization algorithms that converge more rapidly than derivative-free approaches [4].

Handling Systematic Errors and Outliers

BICePs incorporates specialized likelihood functions robust to systematic errors and experimental outliers [4]. The Student's model, for instance, marginalizes uncertainty parameters for individual observables while assuming mostly uniform noise levels with occasional erratic measurements [4]. This approach limits the number of uncertainty parameters that need sampling while effectively capturing outliers [4].
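The effect of a heavy-tailed likelihood on outliers can be seen by comparing a Gaussian with a generic Student's t density. This sketch uses the textbook location-scale Student's t form with an assumed ν = 3; it is meant to illustrate the robustness argument and is not claimed to be the exact functional form of the BICePs Student's model.

```python
import math

def gaussian_logpdf(resid, sigma):
    """Log density of a zero-mean Gaussian error with standard deviation sigma."""
    return -0.5 * math.log(2.0 * math.pi * sigma ** 2) - 0.5 * (resid / sigma) ** 2

def student_t_logpdf(resid, sigma, nu):
    """Log density of a location-scale Student's t error with nu degrees of freedom."""
    z = resid / sigma
    return (math.lgamma(0.5 * (nu + 1.0)) - math.lgamma(0.5 * nu)
            - 0.5 * math.log(nu * math.pi) - math.log(sigma)
            - 0.5 * (nu + 1.0) * math.log1p(z * z / nu))

sigma, nu = 1.0, 3.0                      # assumed scale and degrees of freedom
for resid in (0.5, 2.0, 5.0):             # residuals in units of sigma
    g = gaussian_logpdf(resid, sigma)
    t = student_t_logpdf(resid, sigma, nu)
    print(f"residual {resid:>3}: Gaussian {g:8.3f}   Student-t {t:8.3f}")

outlier_penalty_gauss = gaussian_logpdf(0.0, sigma) - gaussian_logpdf(5.0, sigma)
outlier_penalty_t = student_t_logpdf(0.0, sigma, nu) - student_t_logpdf(5.0, sigma, nu)
```

For a residual of five σ, the Gaussian penalty grows quadratically while the Student's t penalty grows only logarithmically, so a single erratic measurement cannot dominate the posterior.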

For replica-averaged simulations, the uncertainty parameter \( \sigma_j \) combines Bayesian error \( \sigma_j^B \) and standard error of the mean \( \sigma_j^{\text{SEM}} \) [4]:

\[ \sigma_j = \sqrt{(\sigma_j^B)^2 + (\sigma_j^{\text{SEM}})^2} \]

where \( \sigma_j^{\text{SEM}} \) is estimated from the finite sample of replicas and decreases with the square root of the number of replicas [4].
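The combination of the two error sources is a sum in quadrature. The sketch below estimates the SEM from per-replica predictions and combines it with a given Bayesian error; the function name and the numbers are illustrative assumptions.

```python
import numpy as np

def total_uncertainty(sigma_bayes, replica_predictions):
    """Return (sigma_j, sigma_SEM_j) from a Bayesian error and per-replica predictions.

    replica_predictions has the replica index on axis 0.
    """
    values = np.asarray(replica_predictions, dtype=float)
    n_replicas = values.shape[0]
    # Standard error of the mean: sample standard deviation / sqrt(N_r).
    sem = values.std(axis=0, ddof=1) / np.sqrt(n_replicas)
    return np.sqrt(sigma_bayes ** 2 + sem ** 2), sem

# Two replicas predicting one observable (made-up values).
sigma_j, sem_j = total_uncertainty(0.75, [1.0, 3.0])
print(sigma_j, sem_j)
```

Adding replicas shrinks only the SEM term, so beyond a certain replica count the Bayesian error dominates and further replicas bring little benefit.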

Application to Forward Model Parameterization

BICePs has also been adapted for empirical forward model parameterization [17]. Two novel methods have been developed:

- Nuisance parameter integration: Treats forward model parameters as nuisance parameters and integrates over them in the full posterior distribution [17].

- Variational minimization: Employs BICePs score minimization to optimize parameters, applicable to any differentiable forward model [17].

This approach has been successfully demonstrated in refining Karplus parameters for J-coupling constant predictions, showing improved accuracy for both toy model systems and human ubiquitin [17].

Computational Protocols and Implementation

BICePs Workflow

The following diagram illustrates the standard BICePs workflow for conformational ensemble refinement:

BICePs Software Specifications

BICePs v2.0 is implemented as a free, open-source Python package that provides comprehensive tools for ensemble reweighting [15] [16]. Key features include:

Table 2: BICePs v2.0 Software Capabilities

| Feature | Description | Supported Data Types |
|---|---|---|
| Experimental Observables | NMR data processing | NOE distances, J-couplings, chemical shifts, HDX protection factors [15] [16] |
| Sampling Methods | Posterior distribution sampling | Markov Chain Monte Carlo (MCMC) [13] |
| Uncertainty Quantification | Error model estimation | Nuisance parameter sampling for σ values [13] [14] |
| Analysis Tools | Posterior analysis and visualization | Convergence assessment, statistical significance evaluation [15] |
| Model Selection | BICePs score calculation | Free energy estimation for model comparison [14] |

Protocol for Force Field Parameter Optimization

The following workflow illustrates the extended BICePs protocol for automated force field parameter optimization:

Step-by-Step Implementation:

1. Initialization: Begin with initial force field parameters and experimental reference data [4].

2. Ensemble Generation: Perform molecular simulations to generate conformational ensembles using current force field parameters [4].

3. BICePs Score Calculation: Compute the BICePs score comparing ensemble predictions with experimental data [14] [4].

4. Gradient Estimation: Calculate derivatives of the BICePs score with respect to force field parameters using adjoint methods or automatic differentiation [4].

5. Parameter Update: Adjust force field parameters using gradient-based optimization algorithms to minimize the BICePs score [4].

6. Convergence Check: Repeat steps 2-5 until the BICePs score stabilizes and parameters converge [4].

7. Validation: Assess optimized force field performance on independent test systems not used during parameter optimization [4].
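
The loop structure of these steps can be sketched as follows. This is a toy skeleton under stated assumptions: `toy_score` is a placeholder quadratic objective rather than a BICePs score, and a central finite difference stands in for the adjoint or automatic-differentiation gradients mentioned above. In a real workflow, each score evaluation would involve regenerating or reweighting the ensemble.

```python
import numpy as np

def finite_difference_gradient(score_fn, params, h=1e-5):
    """Central-difference gradient; a stand-in for adjoint or autodiff gradients."""
    grad = np.zeros_like(params)
    for i in range(params.size):
        step = np.zeros_like(params)
        step[i] = h
        grad[i] = (score_fn(params + step) - score_fn(params - step)) / (2.0 * h)
    return grad

def optimize(score_fn, params0, learning_rate=0.1, tol=1e-8, max_iter=500):
    """Repeat gradient estimation and parameter update until the score stabilizes."""
    params = np.asarray(params0, dtype=float).copy()
    score = score_fn(params)
    for iteration in range(max_iter):
        grad = finite_difference_gradient(score_fn, params)
        params = params - learning_rate * grad          # parameter update
        new_score = score_fn(params)
        converged = abs(score - new_score) < tol        # convergence check
        score = new_score
        if converged:
            break
    return params, score, iteration + 1

def toy_score(params):
    """Placeholder objective with a known minimum at (1.0, -2.0); NOT a BICePs score."""
    target = np.array([1.0, -2.0])
    return float(np.sum((params - target) ** 2))

best_params, best_score, n_iter = optimize(toy_score, params0=[0.0, 0.0])
```

Validation on held-out systems is deliberately left outside the loop, since it should use data that never influenced the parameter updates.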

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Tool/Resource | Function | Application Context |

|---|---|---|

| BICePs v2.0 Software | Open-source Python package for Bayesian ensemble reweighting | Core algorithm implementation [15] [16] |

| Molecular Dynamics Engine | Generate conformational ensembles | Prior distribution sampling (e.g., GROMACS, OpenMM) [4] |

| NMR Data Processing | Prepare experimental observables | Input data preparation for NOE, J-couplings, chemical shifts [15] |

| Markov Chain Monte Carlo | Posterior distribution sampling | Uncertainty quantification and ensemble refinement [13] |

| Automatic Differentiation | Gradient computation for optimization | Force field parameter sensitivity analysis [4] |

| Reference Potential Database | Baseline distributions for observables | Proper normalization of experimental restraints [13] [14] |

BICePs provides a powerful framework for reconciling theoretical predictions with experimental measurements through Bayesian inference. Its core innovations—proper reference potential implementation and the BICePs score for model selection—enable both conformational ensemble refinement and force field parameter optimization. Recent extensions toward automated parameter optimization using variational methods and robust error handling demonstrate BICePs' evolving capabilities in addressing key challenges in computational biophysics and molecular design.

The integration of BICePs into automated force field parameterization workflows represents a significant advancement for the field, offering a principled approach to force field development that naturally accounts for experimental uncertainties and systematic errors. As molecular simulations continue to play an increasingly important role in drug discovery and materials design, BICePs provides an essential bridge between theoretical modeling and experimental validation.

Accurate molecular force fields are critical for reliable simulations in structural biology and drug design. However, force field parameterization is fundamentally challenged by multiple sources of uncertainty, including sparse or noisy experimental data and approximations in computational models [4]. Bayesian inference provides a powerful framework for addressing these challenges through the explicit treatment of uncertainty. This application note examines the role of nuisance parameters and specialized error models within automated force field optimization workflows, with a focus on practical implementation for research scientists.

Nuisance parameters enable the quantification of uncertainty in both experimental measurements and forward model predictions, while robust likelihood functions automatically identify and down-weight outliers affected by systematic error [4] [12]. The integration of these elements into methods like Bayesian Inference of Conformational Populations (BICePs) allows for the simultaneous refinement of force field parameters and quantification of uncertainty, leading to more reliable and interpretable models [4] [12].

Quantitative Analysis of Bayesian Error Models

Table 1: Key Likelihood Functions and Error Models in Bayesian Force Field Optimization

| Model Name | Mathematical Formulation | Handled Uncertainty | Key Advantages |

|---|---|---|---|

| Gaussian Likelihood [4] | ( p(D \mid X, \sigma) \propto \prod_{j=1}^{N_j} \frac{1}{\sqrt{2\pi\sigma_j^2}} \exp\left[-\frac{(d_j - f_j(\mathbf{X}))^2}{2\sigma_j^2}\right] ) | Random error | Simple, widely applicable; works with replica-averaged forward model. |

| Student's t Likelihood [4] | Derived by marginalizing individual uncertainties ( \sigma_j ) conditioned on a typical uncertainty ( \sigma_0 ). | Random error and systematic outliers | Robust to outliers; limits number of uncertainty parameters needing sampling. |

| Replica-Averaged Forward Model [4] [12] | ( \sigma_j = \sqrt{(\sigma_j^B)^2 + (\sigma_j^{\text{SEM}})^2} ) where ( \sigma_j^{\text{SEM}} = \sqrt{ \frac{1}{N} \sum_r^N (f_j(X_r) - \langle f_j(\mathbf{X}) \rangle)^2 } ) | Finite sampling error and experimental noise | Separately accounts for Bayesian uncertainty (( \sigma^B )) and standard error of the mean (( \sigma^{\text{SEM}} )) from finite replica sampling. |

Table 2: Comparison of Bayesian Force Field Optimization Frameworks

| Framework | Core Objective | Treatment of Nuisance Parameters | Representative Applications |

|---|---|---|---|

| BICePs [4] [12] | Force field refinement and model selection against ensemble-averaged data. | Sampled via MCMC; includes conformational states ( X ) and experimental uncertainties ( \sigma ). | Protein lattice models; Karplus parameter optimization for ubiquitin [12]. |

| BioFF [2] | Iterative force field parameterization using ensemble refinement. | Regularization via entropic prior on ensemble and simplified prior on force field parameters. | Simple polymer model using label-distance data [2]. |

| Bayesian Partial Charge Optimization [5] | Learning charge distributions from ab initio MD data. | Uncertainty in parameters is inherent to the posterior distribution; uses surrogate models for efficiency. | 18 biologically relevant molecular fragments; calcium binding to troponin [5]. |

Experimental Protocol: BICePs for Force Field Optimization

This protocol outlines the procedure for automating force field parameter refinement using the BICePs algorithm, based on the methodology described by Raddi et al. [4] [12].

Stage 1: System Preparation and Prior Definition

- Generate a Prior Conformational Ensemble: Perform molecular dynamics (MD) simulations using an initial force field to generate a structural ensemble. This provides the prior distribution of conformational populations, ( p(X) ) [4] [12].

- Define Observables and Forward Model: Identify the set of experimental observables ( D ) (e.g., NMR J-couplings, distance measurements). Establish a forward model ( f(X) ) that computes these observables from any molecular configuration ( X ) [12].

Stage 2: BICePs Setup and Configuration

- Specify Nuisance Parameters: Define the uncertainty parameters ( \sigma_j ) for each experimental observable. These will be treated as nuisance parameters and sampled [4].

- Select Likelihood Function:

- For data with primarily random error, the Gaussian likelihood is appropriate.

- For datasets suspected to contain outliers or systematic errors, the Student's t likelihood is recommended, as it marginalizes over individual uncertainties and is more robust [4].

- Implement Replica-Averaging: Configure the replica-averaged forward model, ( f_j(\mathbf{X}) = \frac{1}{N_r}\sum_{r}^{N_r} f_j(X_r) ), where ( N_r ) is the number of replicas. This accounts for finite sampling error in the simulation [4] [12].
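
To illustrate why the likelihood choice matters, the sketch below compares how much a single erratic measurement lowers the log-likelihood under a Gaussian model and under a generic location-scale Student's t model. This is an assumption-laden illustration: the t density used here is the textbook form with `nu` degrees of freedom, not the specific marginalized likelihood implemented in BICePs, and the residuals are made up.

```python
import math
import numpy as np

def gaussian_loglik(residuals, sigma):
    """Sum of Gaussian log-densities for residuals d_j - f_j with a shared sigma."""
    r = np.asarray(residuals, dtype=float)
    return float(np.sum(-0.5 * np.log(2.0 * np.pi * sigma**2) - r**2 / (2.0 * sigma**2)))

def student_t_loglik(residuals, sigma, nu=2.0):
    """Generic location-scale Student's t log-likelihood with nu degrees of freedom."""
    r = np.asarray(residuals, dtype=float)
    log_norm = (math.lgamma((nu + 1.0) / 2.0) - math.lgamma(nu / 2.0)
                - 0.5 * math.log(nu * math.pi) - math.log(sigma))
    return float(np.sum(log_norm - 0.5 * (nu + 1.0) * np.log1p((r / sigma) ** 2 / nu)))

clean = np.array([0.1, -0.2, 0.05, 0.15])      # hypothetical well-behaved residuals
with_outlier = np.append(clean, 5.0)           # one erratic measurement added

sigma = 0.2
gauss_penalty = gaussian_loglik(clean, sigma) - gaussian_loglik(with_outlier, sigma)
t_penalty = student_t_loglik(clean, sigma) - student_t_loglik(with_outlier, sigma)
```

The Gaussian penalty grows quadratically with the residual, while the heavy-tailed model grows only logarithmically, so one outlier cannot dominate the fit.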

Stage 3: Sampling and Optimization Execution

- Sample the Posterior: Use Markov Chain Monte Carlo (MCMC) sampling to draw from the full posterior distribution: ( p(\mathbf{X}, \bm{\sigma}, \theta \mid D) \propto p(D \mid g(\mathbf{X}, \theta), \bm{\sigma}) p(\mathbf{X}) p(\bm{\sigma}) p(\theta) ). This samples conformational states ( \mathbf{X} ), nuisance parameters ( \bm{\sigma} ), and force field parameters ( \theta ) simultaneously [4] [12].

- Variational Optimization (Alternative): Alternatively, optimize force field parameters by minimizing the BICePs score, a free energy-like quantity. This variational approach can leverage gradient information for faster convergence in high-dimensional parameter spaces [4] [12]. The parameters are updated as ( \theta_{\text{new}} = \theta_{\text{old}} - l_{\text{rate}} \cdot \nabla u + \eta \cdot \mathcal{N}(0,1) ), where ( l_{\text{rate}} ) is the learning rate, ( \nabla u ) is the gradient of the objective, and the stochastic noise term ( \eta ) helps the search escape local minima [12].

Stage 4: Validation and Analysis

- Convergence Diagnostics: Monitor MCMC chains for convergence of both force field parameters ( \theta ) and nuisance parameters ( \sigma ).

- Model Validation: Validate the refined force field by assessing its performance on independent experimental data or observables not used in the training set.

- Uncertainty Quantification: Report the posterior distributions of the optimized parameters, which provide natural confidence intervals [5].
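
The convergence and uncertainty checks in this stage can be sketched with two small helpers: a Gelman-Rubin potential scale reduction factor computed across independent chains, and a percentile-based credible interval. The chains below are synthetic draws generated for illustration, not output from a BICePs run, and the function names are hypothetical.

```python
import numpy as np

def gelman_rubin(chains):
    """Potential scale reduction factor for chains of shape (n_chains, n_samples)."""
    chains = np.asarray(chains, dtype=float)
    n = chains.shape[1]
    within = chains.var(axis=1, ddof=1).mean()          # mean within-chain variance
    between = n * chains.mean(axis=1).var(ddof=1)       # between-chain variance
    pooled = (n - 1) / n * within + between / n
    return float(np.sqrt(pooled / within))

def credible_interval(samples, level=0.95):
    """Equal-tailed credible interval from posterior samples."""
    tail = 100.0 * (1.0 - level) / 2.0
    lo, hi = np.percentile(samples, [tail, 100.0 - tail])
    return float(lo), float(hi)

rng = np.random.default_rng(0)
mixed = rng.normal(loc=1.0, scale=0.1, size=(4, 2000))      # four well-mixed chains
stuck = mixed + np.array([[0.0], [0.0], [0.5], [0.5]])      # two chains offset from the rest

rhat_mixed = gelman_rubin(mixed)
rhat_stuck = gelman_rubin(stuck)
interval = credible_interval(mixed.ravel())
```

Values of the factor close to 1 suggest the chains agree; a value well above 1, as for the offset chains here, indicates that more sampling or better proposals are needed before reporting intervals.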

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Tool/Reagent | Function in Workflow | Implementation Notes |

|---|---|---|

| Conformational Ensemble [4] [12] | Serves as the prior distribution ( p(X) ) for Bayesian inference. | Typically generated from MD simulation; should broadly cover conformational space. |

| Forward Model [12] | Computes theoretical observables ( f(X) ) from structures for comparison with experiment. | Can be empirical (e.g., Karplus equation) or machine learning-based; must be differentiable for gradient-based optimization. |

| BICePs Software [4] [12] | Implements the core Bayesian inference algorithm, MCMC sampling, and BICePs score calculation. | Key features: replica-averaging, robust likelihoods, and gradient calculation for optimization. |

| MCMC Sampler [4] [5] | Samples the posterior distribution of parameters and nuisance variables. | Critical for uncertainty quantification; requires convergence diagnostics. |

| Ab Initio MD Data [5] | Provides high-quality reference data for parameterizing force fields against quantum-mechanical targets. | Used as a condensed-phase benchmark; captures many-body interactions and polarization effects. |

Handling Sparse, Noisy, and Ensemble-Averaged Experimental Data

Automated force field optimization represents a paradigm shift in molecular simulation, moving beyond traditional manual parameterization towards robust, data-driven workflows. Within this domain, a central challenge is the effective utilization of experimental data that is often limited in quantity (sparse), contains significant measurement errors (noisy), and provides only averaged structural information (ensemble-averaged). Bayesian inference frameworks have emerged as particularly powerful tools for addressing these challenges, as they explicitly account for uncertainty in both parameters and data. These methods enable researchers to extract maximum information from limited experimental observations while avoiding overfitting and providing natural mechanisms for quantifying confidence in the resulting models. This application note examines the key advantages of Bayesian approaches for handling difficult data scenarios and provides detailed protocols for implementation in force field development pipelines, particularly relevant for drug discovery applications where experimental data may be scarce or expensive to acquire.

Key Advantages of Bayesian Methods for Challenging Data

Bayesian methods provide several distinct advantages over traditional optimization approaches when working with sparse, noisy, and ensemble-averaged experimental data. These advantages stem from the probabilistic nature of Bayesian inference, which treats both parameters and data as probability distributions rather than fixed values.

Table 1: Advantages of Bayesian Methods for Different Data Challenges

| Data Challenge | Bayesian Approach | Key Advantage | Representative Method |

|---|---|---|---|

| Sparse Data | Prior distributions regularize inference | Prevents overfitting; incorporates physical constraints | Bayesian Inference of Conformational Populations (BICePs) [4] |

| Noisy Data | Explicit error models with nuisance parameters | Separates experimental error from model discrepancy | Student's t-likelihood for outlier resilience [4] |

| Ensemble-Averaged Data | Replica-averaged forward models | Connects single structures to experimental observables | Maximum Entropy reweighting [4] |

| Systematic Errors | Hierarchical error models | Automatically detects and down-weights outliers | Marginalized uncertainty parameters [4] |

| Transferability | Uncertainty quantification | Natural confidence intervals for parameters | Posterior distribution analysis [5] |

Formal Statistical Treatment of Uncertainty

Unlike conventional force field optimization methods that typically treat experimental uncertainties as fixed, known quantities, Bayesian methods formally incorporate uncertainty as part of the inference problem. The BICePs algorithm, for instance, treats the extent of uncertainty in experimental observables (σ) as nuisance parameters that are sampled alongside conformational populations [4]. This approach uses a Bayesian posterior of the form:

[ p(X,\sigma|D) \propto p(D|X,\sigma)p(X)p(\sigma) ]

where (X) represents conformational states, (D) is the experimental data, and (\sigma) captures uncertainties in measurements. The Jeffreys prior (p(\sigma) \sim \sigma^{-1}) provides a non-informative starting point for uncertainty estimation [4]. This formal treatment means that rather than simply minimizing discrepancies between simulation and experiment, the algorithm jointly infers both the most likely conformational populations and the most likely extent of experimental error.
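
A toy Metropolis sampler makes this joint inference concrete. The sketch below assumes three discrete conformational states, a single shared σ, and a Jeffreys prior bounded to a finite range so that it is proper; all populations, predictions, and data values are invented for illustration and the code is not the BICePs implementation.

```python
import numpy as np

def log_posterior(state, log_sigma, prior, predictions, data):
    """ln p(X, sigma | D) up to a constant, expressed in the variable ln(sigma).

    The Jeffreys prior p(sigma) ~ 1/sigma is flat in ln(sigma), so it adds no term.
    """
    sigma = np.exp(log_sigma)
    resid = data - predictions[state]
    log_like = -data.size * np.log(sigma) - np.sum(resid**2) / (2.0 * sigma**2)
    return np.log(prior[state]) + log_like

def sample(prior, predictions, data, n_steps=20000, seed=0,
           log_sigma_bounds=(np.log(0.01), np.log(10.0))):
    """Metropolis sampling over (X, ln sigma) with symmetric proposals."""
    rng = np.random.default_rng(seed)
    state, log_sigma = 0, 0.0
    current = log_posterior(state, log_sigma, prior, predictions, data)
    states = np.empty(n_steps, dtype=int)
    sigmas = np.empty(n_steps)
    for i in range(n_steps):
        new_state = rng.integers(prior.size)                 # uniform state proposal
        new_log_sigma = log_sigma + rng.normal(scale=0.2)    # random walk in ln(sigma)
        if log_sigma_bounds[0] <= new_log_sigma <= log_sigma_bounds[1]:
            proposed = log_posterior(new_state, new_log_sigma, prior, predictions, data)
            if np.log(rng.random()) < proposed - current:
                state, log_sigma, current = new_state, new_log_sigma, proposed
        states[i] = state
        sigmas[i] = np.exp(log_sigma)
    return states, sigmas

prior = np.array([0.5, 0.3, 0.2])                            # hypothetical p(X)
predictions = np.array([[1.0, 2.0], [2.0, 3.0], [4.0, 1.0]]) # f(X) for two observables
data = np.array([2.1, 2.9])                                  # hypothetical measurements

states, sigmas = sample(prior, predictions, data)
populations = np.bincount(states, minlength=prior.size) / states.size
```

Although state 0 has the largest prior population, the posterior concentrates on state 1, whose predictions match the data, and the sampled σ shrinks toward the size of the remaining residuals.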

Robustness to Outliers and Systematic Errors

Bayesian methods can incorporate specialized likelihood functions that provide exceptional resilience to problematic data points. The Student's likelihood model implements a data error model that marginalizes uncertainty parameters for individual observables, operating under the assumption that most measurements share a similar noise level except for a few erratic measurements [4]. This approach automatically detects and down-weights outliers without requiring manual intervention or pre-filtering of data. The mathematical derivation involves a model where uncertainties for particular observables (\sigma_j) are distributed about some typical uncertainty (\sigma_0) according to a conditional probability (p(\sigma_j|\sigma_0)), which is then marginalized to derive a posterior with a single uncertainty parameter [4]. This provides significant advantages for drug development applications where experimental conditions may introduce systematic errors that are difficult to characterize in advance.

Natural Regularization for Sparse Data

The incorporation of prior information in Bayesian methods provides inherent regularization that prevents overfitting when working with limited data. This is particularly valuable in force field parameterization where the number of adjustable parameters may be large relative to the available experimental data. Bayesian approaches maintain a balance between fitting the experimental data and maintaining physically reasonable parameter values through the prior distribution [5]. The resulting posterior distributions provide not just single "best-fit" parameters but entire probability landscapes, enabling researchers to understand which parameters are well-constrained by the data and which remain uncertain [5]. This uncertainty quantification is crucial for establishing the domain of applicability of force fields, especially when extending to novel molecular systems in drug discovery.

Quantitative Assessment of Method Performance

Table 2: Performance Metrics for Bayesian Force Field Optimization

| Performance Metric | Traditional Methods | Bayesian Methods | Experimental Validation |

|---|---|---|---|

| Uncertainty Quantification | Limited or post-hoc | Native to inference | Posterior distributions provide natural confidence intervals [5] |

| Outlier Resilience | Manual exclusion required | Automatic detection | Student's likelihood minimizes impact of erratic measurements [4] |

| Convergence Stability | Sensitive to initial parameters | More robust to initialization | MCMC sampling explores parameter space more completely [4] [5] |

| Computational Cost | Lower per iteration | Higher per iteration | Surrogate models (e.g., LGPs) can accelerate sampling [5] |

| Transferability Assessment | Limited insights | Built-in uncertainty propagation | Posterior predictive checks validate transferability [5] |

Experimental Protocols

Protocol: BICePs with Replica-Averaged Forward Model

Purpose: To reconcile molecular simulations with sparse or noisy ensemble-averaged experimental measurements while automatically determining appropriate uncertainty parameters.

Materials and Equipment:

- Molecular dynamics simulation software (e.g., GROMACS, NAMD, OpenMM)

- BICePs software package (Python implementation)

- Experimental data (NMR, FRET, or other ensemble-averaged measurements)

- High-performance computing cluster

Procedure:

- Generate Prior Ensemble: Run molecular dynamics simulations to generate an initial structural ensemble. This serves as the prior distribution (p(X)) [4].

- Define Forward Model: Implement mathematical functions that calculate experimental observables from atomic coordinates (e.g., J-couplings, chemical shifts, distance measurements) [4].

- Set Up Replica-Averaged Likelihood: Configure the replica-averaged forward model with Gaussian likelihood: [ p(\mathbf{X},\bm{\sigma}|D) \propto \prod_{r=1}^{N_r}\left\{p(X_r)\prod_{j=1}^{N_j}\frac{1}{\sqrt{2\pi\sigma_j^2}}\exp\left[-\frac{(d_j-f_j(\mathbf{X}))^2}{2\sigma_j^2}\right]p(\sigma_j)\right\} ] where (f_j(\mathbf{X}) = \frac{1}{N_r}\sum_{r}^{N_r}f_j(X_r)) is the replica-averaged forward model prediction [4].

- Configure MCMC Sampling: Set up Markov chain Monte Carlo sampling to sample simultaneously from conformational states and uncertainty parameters.

- Run Sampling Phase: Execute extended MCMC sampling to obtain posterior distributions of conformational populations and uncertainty parameters.

- Calculate BICePs Score: Compute the BICePs score for model selection, which represents the free energy of turning on conformational populations under experimental restraints [4].

- Validate with Synthetic Data: Test the workflow using synthetic data with known ground truth to verify proper implementation.
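
The replica-averaged posterior in this procedure can be written as a negative log-probability and checked on synthetic data with a known answer. The sketch below follows the product form given in the procedure, with a Jeffreys prior on each σ; the two-state system, the function names, and all numbers are assumptions made for the test case.

```python
import numpy as np

def replica_average(predictions, replica_states):
    """f_j(X): mean forward-model prediction over the states occupied by the replicas."""
    return predictions[replica_states].mean(axis=0)

def neg_log_posterior(replica_states, sigma, prior, predictions, data):
    """-ln p(X, sigma | D) up to a constant for the replica-averaged Gaussian model."""
    replica_states = np.asarray(replica_states)
    sigma = np.asarray(sigma, dtype=float)
    n_replicas = replica_states.size
    f_avg = replica_average(predictions, replica_states)
    prior_term = -np.sum(np.log(prior[replica_states]))
    per_observable = (0.5 * np.log(2.0 * np.pi * sigma**2)
                      + (data - f_avg) ** 2 / (2.0 * sigma**2)
                      + np.log(sigma))            # -ln of the Jeffreys prior 1/sigma
    # The likelihood and sigma prior sit inside the product over replicas.
    return float(prior_term + n_replicas * np.sum(per_observable))

# Synthetic ground truth: the measurement is the 50/50 average of two states.
prior = np.array([0.5, 0.5])
predictions = np.array([[1.0], [3.0]])
data = np.array([2.0])
sigma = np.array([0.5])

e_mixed = neg_log_posterior([0, 1], sigma, prior, predictions, data)
e_pure = neg_log_posterior([0, 0], sigma, prior, predictions, data)
```

No single state reproduces the measurement, but a pair of replicas occupying different states does, which is the behavior a replica-averaged model is meant to capture.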

Troubleshooting Tips:

- If convergence is slow, consider reducing the number of replicas or adjusting MCMC proposal distributions.

- If uncertainty parameters grow excessively large, check for systematic discrepancies between forward model predictions and experimental data.

- For better performance with outliers, implement the Student's likelihood model instead of Gaussian likelihood.

Protocol: Bayesian Force Field Optimization with AIMD Reference

Purpose: To optimize force field parameters using ab initio molecular dynamics data as reference within a Bayesian framework, providing uncertainty quantification for parameters.

Materials and Equipment:

- Ab initio molecular dynamics software (e.g., CP2K, VASP)

- Classical molecular dynamics software (e.g., GROMACS, OpenMM)

- Bayesian inference software with Gaussian process capabilities

- High-performance computing resources

Procedure:

- Generate AIMD Reference Data: Run ab initio molecular dynamics simulations for target molecular fragments in explicit solvent to capture condensed-phase behavior [5].

- Extract Quantities of Interest: Calculate radial distribution functions, hydrogen bond counts, and other structural properties from AIMD trajectories as reference data [5].

- Define Parameter Priors: Establish physically motivated prior distributions for force field parameters (e.g., truncated normal distributions for partial charges) [5].

- Train Surrogate Model: Develop local Gaussian process surrogate models that map force field parameters to structural quantities of interest, bypassing the need for full MD simulations during inference [5].

- Set Up Bayesian Inference: Configure the likelihood function to measure agreement between classical MD and AIMD reference data.

- Sample Posterior Distribution: Use MCMC sampling to explore the posterior distribution of force field parameters.

- Validate Optimized Force Field: Run validation simulations with the optimized parameters and compare against experimental data not used in training [5].
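
The surrogate-model step can be illustrated with a plain Gaussian process regression in NumPy. This is a generic zero-mean GP with an RBF kernel, offered as a stand-in for the local Gaussian process surrogates of [5], whose details are not reproduced here. The function `expensive_property` is a placeholder for a structural quantity that would normally require a full MD simulation, and the kernel hyperparameters are arbitrary.

```python
import numpy as np

def rbf_kernel(a, b, length_scale=0.5, variance=1.0):
    """Squared-exponential kernel between two 1-D arrays of inputs."""
    sq_dist = (a[:, None] - b[None, :]) ** 2
    return variance * np.exp(-0.5 * sq_dist / length_scale**2)

def gp_fit_predict(x_train, y_train, x_test, noise=1e-6):
    """Posterior mean and variance of a zero-mean GP with an RBF kernel."""
    k_tt = rbf_kernel(x_train, x_train) + noise * np.eye(x_train.size)
    k_st = rbf_kernel(x_test, x_train)
    alpha = np.linalg.solve(k_tt, y_train)
    mean = k_st @ alpha
    v = np.linalg.solve(k_tt, k_st.T)
    var = rbf_kernel(x_test, x_test).diagonal() - np.sum(k_st * v.T, axis=1)
    return mean, np.maximum(var, 0.0)

def expensive_property(charge):
    """Placeholder for a structural quantity obtained from a full MD run."""
    return np.sin(3.0 * charge) + 0.5 * charge

x_train = np.linspace(-1.0, 1.0, 9)          # hypothetical partial-charge values
y_train = expensive_property(x_train)        # one "simulation" per training point
x_test = np.array([-0.6, 0.1, 0.7])
mean, var = gp_fit_predict(x_train, y_train, x_test)
```

Once trained, the surrogate can be evaluated inside every MCMC step at negligible cost, and its predictive variance indicates where additional training simulations would be most useful.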

Troubleshooting Tips:

- If surrogate model predictions are inaccurate, increase the training set size for the Gaussian process.

- If MCMC mixing is poor, consider adjusting proposal distributions or using gradient-based samplers.

- If force field performance is unsatisfactory on validation tests, reconsider the parameter prior distributions or the choice of reference data.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Bayesian Force Field Optimization

| Tool/Resource | Type | Function in Workflow | Implementation Notes |

|---|---|---|---|

| BICePs | Software package | Bayesian inference of conformational populations | Handles replica-averaged forward models; provides BICePs score for model selection [4] |

| ForceBalance | Optimization framework | Systematic force field parameter optimization | Supports multiple reference data types; includes regularization [18] |

| Local Gaussian Process (LGP) | Surrogate model | Accelerates Bayesian inference | Predicts structural properties from parameters faster than full MD [5] |

| Replica Exchange MD | Sampling enhancement | Improves conformational sampling | Provides better prior ensembles for Bayesian inference |

| Student's Likelihood | Statistical model | Robustness to outliers | Alternative to Gaussian likelihood; marginalizes individual uncertainties [4] |

| Ab Initio MD | Reference data generator | Provides training data for force field optimization | Captures condensed-phase behavior with electronic structure accuracy [5] |

Discussion

The application of Bayesian methods to force field optimization represents a significant advancement in molecular simulation, particularly for scenarios involving sparse, noisy, or ensemble-averaged experimental data. These approaches transform the fundamental nature of parameterization from a deterministic optimization problem to a probabilistic inference problem, with several important implications for computational drug discovery.

The explicit treatment of uncertainty makes Bayesian methods particularly valuable in early-stage drug development where experimental data may be limited. By quantifying uncertainty in force field parameters, researchers can make more informed decisions about which simulation results can be trusted and which require experimental verification. The natural regularization provided by prior distributions helps prevent overfitting to limited datasets, producing more transferable force fields that perform better on novel molecular systems [5].

The robustness to outliers and systematic errors provided by specialized likelihood functions, such as the Student's model in BICePs, reduces the need for manual data curation and enables more automated workflows [4]. This is particularly valuable in high-throughput settings where manual inspection of all experimental data points is impractical. The ability to automatically detect and down-weight problematic measurements while still extracting information from the remaining data represents a significant efficiency improvement over traditional methods.

For drug development professionals, these Bayesian approaches offer a more rigorous statistical foundation for leveraging expensive experimental data. The quantification of uncertainty provides a natural stopping criterion for parameterization efforts: refinement can stop once parameter uncertainties fall below functionally significant thresholds. Furthermore, the posterior distributions of parameters enable more robust sensitivity analyses, identifying which parameters most strongly influence key simulation outcomes relevant to drug binding or stability.

As force field development continues to evolve, Bayesian methods provide a natural framework for incorporating diverse data sources - from quantum mechanical calculations to various experimental measurements - while properly accounting for the different uncertainties associated with each data type. This unified approach to force field parameterization will play an increasingly important role in developing the next generation of molecular models for computer-aided drug design.

Methodological Workflows: Implementing Bayesian Optimization from Theory to Practice

Accurate molecular force fields are fundamental to the predictive power of molecular simulations in computational chemistry and structural biology. The parameterization of these force fields, however, presents a significant challenge due to high-dimensional parameter spaces, interdependent parameters, and the sparse, noisy nature of experimental data used for validation [19] [2]. Bayesian Inference of Conformational Populations (BICePs) addresses these challenges through a statistically rigorous framework that reconciles theoretical predictions with experimental measurements [14]. A key innovation within this framework is the BICePs score, a free energy-like quantity that enables both model selection and variational optimization of force field parameters [19] [12]. This application note details the theoretical foundation, practical implementation, and experimental protocols for using the BICePs score in automated force field parameter optimization workflows.

Theoretical Foundation of the BICePs Score

Bayesian Inference of Conformational Populations

BICePs employs a Bayesian framework to infer the posterior distribution of conformational states ( X ) and experimental uncertainty parameters ( \sigma ), given experimental data ( D ) [19] [14]: [ p(X, \sigma | D) \propto \underbrace{p(D | X, \sigma)}_{\text{likelihood}} \underbrace{p(X)}_{\text{prior}} \underbrace{p(\sigma)}_{\text{prior}} ] Here, the prior ( p(X) ) typically comes from a theoretical model or molecular simulation, while the likelihood function ( p(D | X, \sigma) ) quantifies the agreement between forward model predictions ( f(X) ) and experimental observations [19]. The inclusion of uncertainty parameters ( \sigma ) as nuisance variables allows BICePs to handle random and systematic errors in experimental data without requiring prior error estimates [12] [4].

The BICePs Score as a Model Selection Metric

The BICePs score is defined as the negative logarithm of the marginalized posterior probability [14]: [ \text{BICePs score} = -\ln \int p(D | X, \sigma) p(X) p(\sigma) dX d\sigma ] In practice, this is computed as the free energy change associated with "turning on" the experimental restraints [19] [4]. This score represents the total evidence for a model given the experimental data, providing an unequivocal metric for model selection [14]. Lower BICePs scores indicate models whose predicted ensembles are more consistent with experimental observations.

Table 1: Interpretation of BICePs Score Values in Model Assessment

| BICePs Score Range | Model Consistency with Data | Recommended Action |

|---|---|---|

| Significantly Lower | High consistency | Preferred model for parameterization |

| Similar Values | Comparable consistency | Further refinement or additional data needed |

| Significantly Higher | Low consistency | Model rejection or substantial revision |
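
For a system small enough to enumerate, the negative log evidence defined above can be evaluated by direct summation, which gives a feel for how the score ranks competing models. The sketch below is a simplified stand-in: it uses discrete states, a single shared σ on a bounded log-spaced grid (a discretized Jeffreys prior), and invented populations and data. Production BICePs calculations estimate this quantity as a free energy from sampling rather than by enumeration.

```python
import numpy as np

def neg_log_evidence(prior, predictions, data, sigma_grid):
    """-ln of the evidence, summing over discrete states and a log-spaced sigma grid.

    Equal weights on a log-spaced grid act as a discretized, bounded Jeffreys prior.
    """
    resid_sq = np.sum((data - predictions) ** 2, axis=1)       # one value per state
    n_obs = data.size
    log_like = (-0.5 * n_obs * np.log(2.0 * np.pi * sigma_grid[None, :] ** 2)
                - resid_sq[:, None] / (2.0 * sigma_grid[None, :] ** 2))
    log_joint = np.log(prior)[:, None] + log_like - np.log(sigma_grid.size)
    m = log_joint.max()                                        # log-sum-exp for stability
    return float(-(m + np.log(np.sum(np.exp(log_joint - m)))))

predictions = np.array([[1.0, 2.0], [2.0, 3.0], [4.0, 1.0]])   # f(X) per state
data = np.array([2.1, 2.9])                                    # hypothetical measurements
sigma_grid = np.geomspace(0.05, 5.0, 200)

model_a = np.array([0.2, 0.7, 0.1])   # candidate prior favoring the state that fits the data
model_b = np.array([0.7, 0.1, 0.2])   # candidate prior favoring a poorly fitting state

score_a = neg_log_evidence(model_a, predictions, data, sigma_grid)
score_b = neg_log_evidence(model_b, predictions, data, sigma_grid)
```

Model A receives the lower score because its prior already places most of its population on the state that agrees with the measurements, matching the interpretation in the table above.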

Advancements in BICePs Score Calculation

Recent enhancements to BICePs have equipped it with a replica-averaging forward model, transforming it into a maximum-entropy (MaxEnt) reweighting method [12] [4]. This approach uses replica-averaged forward model predictions: [ f_j(\mathbf{X}) = \frac{1}{N_r} \sum_{r}^{N_r} f_j(X_r) ] where ( N_r ) is the number of replicas. This formulation eliminates the need for adjustable regularization parameters to balance experimental information with the prior [12]. The BICePs score derived from this enhanced method contains inherent regularization, making it particularly powerful for variational optimization [19].

Variational Optimization Using the BICePs Score

Optimization Framework

The BICePs score serves as a powerful objective function for variational optimization of force field parameters [19]. Consider a force field with parameters ( \mathbf{c} = (c_1, c_2, \ldots, c_m) ) that we wish to optimize. The optimization problem can be formulated as: [ \mathbf{c}^* = \arg\min_{\mathbf{c}} \left[ \text{BICePs score}(\mathbf{c}) \right] ] where ( \mathbf{c}^* ) represents the optimal parameter set. This approach enables automated force field refinement while simultaneously sampling the full distribution of uncertainties [19].

Gradient-Based Optimization

For efficient optimization in high-dimensional parameter spaces, BICePs utilizes gradient information [12]. The first and second derivatives of the BICePs score with respect to force field parameters enable efficient exploration of complex parameter landscapes. The parameter update mechanism follows: [ \mathbf{c}_{\text{trial}} = \mathbf{c}_{\text{old}} - l_{\text{rate}} \cdot \nabla u + \eta \cdot \mathcal{N}(0,1) ] where ( l_{\text{rate}} ) is the learning rate, ( \nabla u ) is the gradient of the objective function, and ( \eta ) introduces stochastic noise to facilitate escape from local minima [12]. This gradient-based approach significantly accelerates convergence, particularly for higher-dimensional optimization problems [12].
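
The update rule above translates directly into a few lines of NumPy. In this sketch the function name, the gradient values, and the settings for the learning rate and noise amplitude are placeholders; how a trial move is subsequently accepted or rejected is not specified here and is left out.

```python
import numpy as np

def noisy_gradient_step(c_old, grad_u, l_rate, eta, rng):
    """c_trial = c_old - l_rate * grad_u + eta * N(0, 1), applied element-wise."""
    c_old = np.asarray(c_old, dtype=float)
    noise = rng.standard_normal(c_old.shape)
    return c_old - l_rate * np.asarray(grad_u, dtype=float) + eta * noise

rng = np.random.default_rng(1)
c_old = np.array([0.5, -1.0])
grad_u = np.array([2.0, -4.0])     # placeholder gradient values

# With eta = 0 the step reduces to plain gradient descent.
c_det = noisy_gradient_step(c_old, grad_u, l_rate=0.1, eta=0.0, rng=rng)
# A nonzero eta perturbs the step so the search can leave shallow local minima.
c_noisy = noisy_gradient_step(c_old, grad_u, l_rate=0.1, eta=0.05, rng=rng)
```

Setting the noise amplitude to zero recovers deterministic descent, which is a convenient way to debug the gradient before turning the stochastic term back on.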

Figure: Complete variational optimization workflow using the BICePs score.

Key Research Reagents and Computational Tools

Table 2: Essential Research Reagents and Computational Tools for BICePs Optimization

| Tool/Reagent | Function in BICePs Workflow | Implementation Notes |

|---|---|---|

| BICePs Software | Core algorithm for posterior sampling and score calculation | BICePs v2.0 provides improved user interface and extensibility [20] |

| Molecular Dynamics Engine | Generates prior conformational ensembles | GROMACS, AMBER, or OpenMM typically used [21] |

| Reference Potentials | Corrects for bias from non-informative restraints | Polymer models for chain molecules; maximum-entropy distributions for macrocycles [14] |

| Gradient Calculation | Enables efficient parameter optimization | First and second derivatives of BICePs score accelerate convergence [12] |

| Enhanced Likelihood Functions | Handles outliers and systematic errors | Student's t-model marginalizes uncertainty parameters for robust error handling [4] |

Experimental Protocol: Force Field Parameter Optimization

This protocol outlines the steps for optimizing force field parameters using variational minimization of the BICePs score, demonstrated for a 12-mer HP lattice model and all-atom systems [19] [14].

Step-by-Step Procedure

Step 1: Initial Ensemble Generation

- Simulate conformational ensemble using initial force field parameters ( \mathbf{c}_0 ) [2].

- Ensure adequate sampling of conformational states ( X ) to represent the equilibrium distribution.

- Calculate theoretical observables ( f(X) ) from the ensemble using appropriate forward models.

Step 2: Experimental Data Preparation

- Compile ensemble-averaged experimental measurements ( D = \{d_1, d_2, \ldots, d_N\} ) (e.g., NMR observables, distance measurements) [19] [14].

- Identify potential sources of error but note that precise error estimates are not required as BICePs treats uncertainties as nuisance parameters [4].

Step 3: BICePs Score Calculation

- Implement replica-averaged forward model using sufficient replicas (( N_r )) to minimize standard error [12]: [ \sigma_j^{\text{SEM}} = \sqrt{\frac{1}{N_r} \sum_{r}^{N_r} (f_j(X_r) - \langle f_j(\mathbf{X}) \rangle)^2} ]

- Sample the full posterior distribution using Markov Chain Monte Carlo (MCMC) over conformational states ( X ) and uncertainty parameters ( \sigma ) [19] [14].

- Compute BICePs score as the free energy of applying experimental restraints.
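As a rough illustration of the replica-averaging step, the sketch below computes the replica-averaged prediction and the spread term exactly as the formula above is written (root-mean-square deviation of the per-replica predictions from their average). The function name and array layout are assumptions made for this example.

```python
import numpy as np

def replica_average_and_sem(f_replicas):
    """f_replicas: array of shape (N_r, N_j) holding f_j(X_r) for each replica r.

    Returns the replica-averaged prediction per observable and the
    spread term from the formula in Step 3.
    """
    f_replicas = np.asarray(f_replicas, dtype=float)
    f_mean = f_replicas.mean(axis=0)                              # <f_j(X)>
    sigma_sem = np.sqrt(np.mean((f_replicas - f_mean) ** 2, axis=0))
    return f_mean, sigma_sem

# Two replicas, two observables (made-up numbers).
f_replicas = np.array([[1.0, 4.0],
                       [3.0, 4.0]])
f_mean, sigma_sem = replica_average_and_sem(f_replicas)
```

The first observable differs between replicas and so carries a nonzero spread, while the second is identical across replicas and carries none.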

Step 4: Parameter Optimization Loop

- Calculate gradients of the BICePs score with respect to force field parameters [12].

- Update parameters using gradient-based optimization: [ \mathbf{c}_{\text{new}} = \mathbf{c}_{\text{old}} - l_{\text{rate}} \cdot \nabla (\text{BICePs score}) + \eta \cdot \mathcal{N}(0,1) ]

- Repeat Steps 1-3 with updated parameters until convergence criteria are met.

Step 5: Validation and Model Selection

- Compare BICePs scores across different force field models [14].

- Select model with lowest BICePs score as the optimal parameterization.

- Validate optimized force field against independent experimental data not used in optimization.

Critical Steps and Troubleshooting

- Convergence Monitoring: Ensure MCMC sampling reaches equilibrium by monitoring trace plots of key parameters [14].

- Gradient Stability: Adjust learning rate ( l_{\text{rate}} ) and noise term ( \eta ) to balance convergence speed and stability [12].

- Systematic Error Handling: Employ the Student's t likelihood model to automatically detect and down-weight outliers [4].

Application Examples and Case Studies

Lattice Model Demonstration

In a proof-of-concept study, BICePs was used to optimize interaction parameters for a 12-mer HP lattice model using ensemble-averaged distance measurements [19]. The method successfully identified correct energy parameters despite significant experimental noise and sparse data, demonstrating robustness in the presence of random and systematic errors [19].

All-Atom Protein Force Field Optimization

BICePs has been applied to optimize Karplus parameters for J-coupling constant predictions in human ubiquitin [12]. The approach successfully refined six distinct sets of Karplus parameters for different J-coupling types (( {}^3J_{H^N H^\alpha} ), ( {}^3J_{H^\alpha C'} ), ( {}^3J_{H^N C^\beta} ), ( {}^3J_{H^N C'} ), ( {}^3J_{C' C^\beta} ), ( {}^3J_{C' C'} )), improving agreement between simulated ensembles and NMR measurements [12].

Neural Network Potential Optimization

The BICePs framework has been extended to optimize neural network-based forward models [12]. By using the BICePs score as a loss function, researchers can train neural networks to predict experimental observables from molecular configurations, demonstrating the method's flexibility for complex, differentiable forward models [12].

The mathematical relationships and computational flow in the BICePs optimization process are illustrated below:

The BICePs score provides a powerful foundation for automated force field parameter optimization through Bayesian inference. Its formulation as a free energy-like quantity enables both rigorous model selection and efficient variational optimization, even in the presence of sparse, noisy experimental data. The protocols outlined here offer researchers a practical roadmap for implementing this approach in diverse molecular systems, from simple lattice models to all-atom proteins and complex molecular assemblies. As force field accuracy requirements continue to grow, BICePs-based optimization represents a promising direction for robust, automatic parameterization of molecular potentials.

Replica-Averaged Forward Models for Maximum-Entropy Reweighting

The accurate determination of biomolecular conformational ensembles is fundamental to understanding function, yet it remains a significant challenge in structural biology. Computational methods like molecular dynamics (MD) simulations can generate structural ensembles, but their accuracy is often limited by force field imperfections and finite sampling. Conversely, experimental techniques provide crucial data but are inherently ensemble-averaged and subject to noise. Replica-averaged forward models for maximum-entropy reweighting represent a powerful class of post-processing algorithms that integrate these complementary sources of information. By treating experimental measurements as ensemble averages over multiple replicas of structures, these methods refine theoretical ensembles to be more consistent with experimental data while making minimal assumptions about uncertainty. This approach provides a robust statistical framework for force field validation and optimization through Bayesian inference, enabling the systematic improvement of molecular models [22] [4].

Theoretical Foundation

Core Principles of Replica Averaging and Maximum Entropy

Replica-averaged modeling is a maximum-entropy approach that reconciles simulated conformational ensembles with experimental observations. The fundamental principle involves using a forward model to predict experimental observables from atomic coordinates, then comparing these predictions to actual measurements. The key innovation lies in treating the experimental data as restraints on replica-averaged observables rather than on individual structures.

In this framework, an initial ensemble of structures is generated from molecular simulations, with each conformation assigned a preliminary statistical weight. The experimental observables are not compared to predictions from single structures, but to the average prediction across multiple replicas of the system. This replica averaging accounts for the fact that experiments measure properties averaged over enormous numbers of molecules and time scales [23] [4].

The maximum-entropy principle ensures that the reweighted ensemble remains as close as possible to the original simulation-based ensemble while satisfying the experimental constraints. Mathematically, this is achieved by minimizing the Kullback-Leibler divergence between the refined and original ensembles, subject to the constraint that the replica-averaged forward model predictions match the experimental data within their uncertainties [2] [23].
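For a single observable, minimizing the Kullback-Leibler divergence subject to an average constraint yields the standard exponential form, in which each original weight is multiplied by a factor `exp(-lam * f_i)` and the result is renormalized. The sketch below solves for the multiplier `lam` by bisection. It is a simplified illustration: it enforces the experimental value exactly instead of within an uncertainty, and all names and numbers are made up for the example.

```python
import numpy as np

def maxent_weights(w0, f, lam):
    """Reweighted populations w_i proportional to w0_i * exp(-lam * f_i)."""
    log_w = np.log(w0) - lam * f
    log_w -= log_w.max()                 # guard against overflow
    w = np.exp(log_w)
    return w / w.sum()

def solve_lambda(w0, f, d, lam_lo=-50.0, lam_hi=50.0, tol=1e-10, max_iter=200):
    """Bisection on lam; the reweighted average decreases as lam increases."""
    for _ in range(max_iter):
        lam = 0.5 * (lam_lo + lam_hi)
        avg = np.dot(maxent_weights(w0, f, lam), f)
        if abs(avg - d) < tol:
            break
        if avg > d:
            lam_lo = lam                 # average too high: increase lam
        else:
            lam_hi = lam                 # average too low: decrease lam
    return lam

def kl_divergence(w, w0):
    """Kullback-Leibler divergence between refined and original weights."""
    mask = w > 0
    return np.sum(w[mask] * np.log(w[mask] / w0[mask]))

w0 = np.array([0.5, 0.3, 0.2])           # simulation weights (made up)
f = np.array([1.0, 2.0, 3.0])            # forward-model value per state
d = 2.2                                  # target ensemble average
lam = solve_lambda(w0, f, d)
w = maxent_weights(w0, f, lam)
```

The target must lie strictly between the smallest and largest forward-model values for a solution to exist; the reported divergence quantifies how far the refined ensemble had to move from the original one.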

Bayesian Formulation with Replica Averaging

The Bayesian formulation of replica-averaged modeling incorporates experimental data through a likelihood function that depends on replica-averaged observables. The posterior probability takes the general form:

$$ p(\mathbf{X},\bm{\sigma}|D) \propto \prod_{r=1}^{N_r}\left\{p(X_r)\prod_{j=1}^{N_j}\frac{1}{\sqrt{2\pi\sigma_j^{2}}}\exp\left[-\frac{(d_j-f_j(\mathbf{X}))^2}{2\sigma_j^{2}}\right]p(\sigma_j)\right\} $$

Where:

- $\mathbf{X}$ represents the set of $N_r$ conformation replicas

- $d_j$ is the experimental value for observable $j$ from a set of $N_j$ measurements

- $f_j(\mathbf{X}) = \frac{1}{N_r}\sum_{r}^{N_r}f_j(X_r)$ is the replica-averaged forward model prediction

- $\sigma_j$ represents uncertainty parameters for each observable

- $p(X_r)$ is the prior probability of conformation $X_r$

- $p(\sigma_j)$ is typically a non-informative Jeffreys prior [4]

This formulation allows simultaneous inference of conformational populations and uncertainty parameters, with the replica average ensuring proper comparison to ensemble-averaged experimental data.
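A minimal sketch of the unnormalized log-posterior, written to follow the expression above term by term (including the product over replicas enclosing the likelihood and the uncertainty prior), might look as follows. The discrete-state representation, the function name, and the argument layout are assumptions of this example, not the BICePs API.

```python
import numpy as np

def log_posterior(state_idx, sigma, log_prior, f_states, d):
    """Unnormalized ln p(X, sigma | D) for a discrete set of conformational states.

    state_idx : (N_r,) int array, state occupied by each replica
    sigma     : (N_j,) uncertainty parameter per observable
    log_prior : (N_states,) ln p(X) for each state
    f_states  : (N_states, N_j) forward-model predictions per state
    d         : (N_j,) experimental values
    """
    n_r = len(state_idx)
    f_avg = f_states[state_idx].mean(axis=0)           # replica-averaged f_j(X)
    log_like = np.sum(-0.5 * np.log(2 * np.pi * sigma ** 2)
                      - (d - f_avg) ** 2 / (2 * sigma ** 2))
    log_jeffreys = -np.sum(np.log(sigma))              # p(sigma_j) ~ 1 / sigma_j
    # The product over replicas applies the bracketed terms N_r times.
    return log_prior[state_idx].sum() + n_r * (log_like + log_jeffreys)

log_prior = np.log(np.array([0.5, 0.5]))
f_states = np.array([[1.0], [3.0]])
d = np.array([2.0])
lp = log_posterior(np.array([0, 1]), np.array([1.0]), log_prior, f_states, d)
```

In this example the two replicas occupy different states, so their average prediction matches the measurement exactly even though neither individual state does.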

The BICePs Score for Model Selection

A key advantage of the Bayesian approach is the ability to perform objective model selection through the BICePs score. This free energy-like quantity measures the evidence for a model given the experimental data:

$$ f^{(k)} = -\ln\frac{Z^{(k)}}{Z_0} $$

where $Z^{(k)}$ is the evidence for model $k$ with prior $P^{(k)}(X)$, and $Z_0$ is the evidence for a reference model with uniform populations. Lower BICePs scores indicate models more consistent with experimental data. This score provides a robust metric for force field validation and parameter optimization, with inherent regularization that prevents overfitting [24] [4].
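To make the evidence ratio concrete, the toy sketch below evaluates it by brute-force summation over a handful of discrete states and a log-spaced grid of uncertainty values. It assumes a single replica and one uncertainty parameter shared by all observables, both simplifications chosen for this example. A practical implementation would estimate the score from posterior samples instead of by direct summation.

```python
import numpy as np

def evidence(populations, f_states, d, sigmas):
    """Sum of prior * Gaussian likelihood over states and a sigma grid.

    On a log-spaced grid the Jeffreys prior p(sigma) ~ 1/sigma gives every
    grid point equal weight, so the constant cancels in evidence ratios.
    """
    populations = np.asarray(populations, dtype=float)
    n_j = f_states.shape[1]
    resid2 = np.sum((d - f_states) ** 2, axis=1)        # (N_states,)
    s2 = sigmas[None, :] ** 2                           # (1, N_sigma)
    like = (2 * np.pi * s2) ** (-n_j / 2) * np.exp(-resid2[:, None] / (2 * s2))
    return np.sum(populations[:, None] * like)

def toy_score(populations, f_states, d, sigmas):
    """-ln(Z_model / Z_uniform) for the toy system."""
    uniform = np.full(len(populations), 1.0 / len(populations))
    return -np.log(evidence(populations, f_states, d, sigmas)
                   / evidence(uniform, f_states, d, sigmas))

sigmas = np.geomspace(0.05, 5.0, 200)    # log-spaced uncertainty grid
f_states = np.array([[1.0], [3.0]])      # one observable, two states
d = np.array([1.2])                      # measurement close to state 0
score_good = toy_score([0.9, 0.1], f_states, d, sigmas)
score_bad = toy_score([0.1, 0.9], f_states, d, sigmas)
```

The prior that favors the state consistent with the measurement receives a negative score, the prior that favors the inconsistent state receives a positive one, and a uniform prior scores exactly zero by construction.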

Computational Protocol

Workflow Implementation

The following diagram illustrates the complete workflow for replica-averaged reweighting using BICePs:

Step-by-Step Protocol

Step 1: Initial Ensemble Generation

- Procedure: Perform molecular dynamics or Monte Carlo simulations using your chosen force field to generate a diverse structural ensemble. For protein systems, enhanced sampling methods may be necessary to adequately explore conformational space.

- Critical Parameters:

  - Simulation length must ensure convergence for properties of interest

  - Save snapshots at appropriate intervals to ensure conformational diversity

  - For large systems, consider coarse-grained representations to enhance sampling

- Validation: Assess sampling convergence through block analysis or redundancy measurements [23] [24].

Step 2: Forward Model Calculations

- Procedure: For each saved structure, compute theoretical values for experimental observables using appropriate forward models:

  - NMR chemical shifts: Use SHIFTX2 or similar algorithms

  - J-couplings: Calculate from torsion angles using empirical relationships

  - NOE distances: Compute interatomic distances

  - HDX protection factors: Estimate using empirical models based on solvent accessibility and hydrogen bonding

- Implementation: Automate calculations using scripts to process all structures in the ensemble [24] [25].
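As one example of such a forward model, J-couplings are commonly predicted from a backbone torsion angle with a Karplus relation of the form J = A cos²θ + B cos θ + C. The sketch below applies it to an array of torsion angles. The default coefficients and the phase offset are illustrative values only and should be replaced with the parameter set appropriate to the coupling type and study.

```python
import numpy as np

def karplus_j(phi_deg, A=6.51, B=-1.76, C=1.60, phase_deg=-60.0):
    """Karplus relation J = A cos^2(theta) + B cos(theta) + C,
    with theta = phi + phase. Coefficients are illustrative defaults."""
    theta = np.deg2rad(np.asarray(phi_deg, dtype=float) + phase_deg)
    return A * np.cos(theta) ** 2 + B * np.cos(theta) + C

# Per-structure predictions for a small made-up set of phi angles (degrees).
phi_angles = np.array([-120.0, -60.0, 60.0])
j_per_structure = karplus_j(phi_angles)

# Ensemble-averaged prediction with uniform weights.
j_ensemble = j_per_structure.mean()
```

Because the relation is nonlinear in the torsion angle, the averaged coupling generally differs from the coupling evaluated at the average angle, which is why predictions are computed per structure before averaging.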

Step 3: BICePs Input Preparation

- Procedure: Use the BICePs.Preparation class to format inputs:

- Data Structure: Store experimental values and forward model predictions in Pandas DataFrame objects for efficient access [24].

Step 4: Posterior Sampling

- Procedure: Execute Markov Chain Monte Carlo sampling to estimate the posterior distribution of conformational populations and uncertainty parameters:

- Parameters:

  - Number of replicas (typically 10-100)

  - MCMC steps (typically 10^5-10^7)

  - Burn-in period (typically 10-20% of total steps)

- Parallelization: Utilize parallel tempering for improved sampling of multimodal distributions [24] [4].
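The sampling step can be illustrated with a toy Metropolis sampler over replica state assignments and a single shared uncertainty parameter. This is a sketch under simplifying assumptions (discrete states, one shared sigma, no parallel tempering) and does not reproduce the BICePs sampler or its interface; all function names are hypothetical.

```python
import numpy as np

def log_posterior(states, sigma, log_prior, f_states, d):
    """Unnormalized log-posterior with replica averaging and a Jeffreys prior."""
    n_r, n_j = len(states), len(d)
    f_avg = f_states[states].mean(axis=0)
    log_like = np.sum(-0.5 * np.log(2 * np.pi * sigma ** 2)
                      - (d - f_avg) ** 2 / (2 * sigma ** 2))
    return log_prior[states].sum() + n_r * (log_like - n_j * np.log(sigma))

def metropolis(log_prior, f_states, d, n_replicas=8, n_steps=20000,
               burn_in=0.2, sigma_step=0.1, seed=0):
    rng = np.random.default_rng(seed)
    n_states = len(log_prior)
    states = rng.integers(n_states, size=n_replicas)
    sigma = 1.0
    logp = log_posterior(states, sigma, log_prior, f_states, d)
    counts = np.zeros(n_states)
    sigma_trace = []
    n_burn = int(burn_in * n_steps)
    for step in range(n_steps):
        new_states, new_sigma = states.copy(), sigma
        if rng.random() < 0.5:
            # Move one randomly chosen replica to a random state.
            new_states[rng.integers(n_replicas)] = rng.integers(n_states)
        else:
            # Symmetric random-walk move in sigma.
            new_sigma = sigma + sigma_step * rng.standard_normal()
        if new_sigma > 0:                      # non-positive sigma is rejected
            new_logp = log_posterior(new_states, new_sigma, log_prior, f_states, d)
            if np.log(rng.random()) < new_logp - logp:
                states, sigma, logp = new_states, new_sigma, new_logp
        if step >= n_burn:                     # discard burn-in samples
            counts += np.bincount(states, minlength=n_states)
            sigma_trace.append(sigma)
    return counts / counts.sum(), np.array(sigma_trace)

log_prior = np.log(np.array([0.5, 0.5]))
f_states = np.array([[1.0], [3.0]])
d = np.array([1.6])
populations, sigma_trace = metropolis(log_prior, f_states, d)
```

With eight replicas and a measurement of 1.6, the replica average closest to the data places two replicas in the second state, so the sampled populations shift toward roughly three quarters in the first state. Note that a measurement the replica average can match exactly would let sigma collapse toward zero under a Jeffreys prior, so the toy data are chosen to avoid that case.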

Step 5: Convergence Assessment

- Procedure: Monitor convergence through:

  - Trace plots of population parameters

  - Gelman-Rubin statistics for multiple chains

  - Autocorrelation analysis

- Acceptance Criteria:

  - Gelman-Rubin statistic < 1.1 for all parameters

  - Stable population estimates across independent runs

  - Sufficient effective sample size (>100-1000 independent samples) [24].
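The Gelman-Rubin criterion can be checked with a few lines of NumPy. The sketch below implements the classic form of the statistic for one scalar parameter sampled by several independent chains; the synthetic chains are made up for illustration.

```python
import numpy as np

def gelman_rubin(chains):
    """Potential scale reduction factor for an (m, n) array:
    m independent chains, each with n samples of one scalar parameter."""
    chains = np.asarray(chains, dtype=float)
    m, n = chains.shape
    within = chains.var(axis=1, ddof=1).mean()          # W: mean within-chain variance
    between = n * chains.mean(axis=1).var(ddof=1)       # B: between-chain variance
    var_hat = (n - 1) / n * within + between / n        # pooled variance estimate
    return np.sqrt(var_hat / within)

rng = np.random.default_rng(0)
converged = rng.standard_normal((4, 2000))              # chains sampling the same target
offsets = np.array([0.0, 5.0, 0.0, 5.0])[:, None]
stuck = converged + offsets                             # chains trapped in different modes

r_converged = gelman_rubin(converged)
r_stuck = gelman_rubin(stuck)
```

Chains drawn from the same distribution give a value very close to 1, whereas chains centered on different modes give a value well above the 1.1 threshold, signalling that more sampling or better mixing is needed.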

Step 6: Ensemble Analysis and Force Field Optimization

- Procedure:

  - Extract reweighted populations from the posterior distribution

  - Calculate the BICePs score for model selection

  - For force field optimization, use variational methods to minimize the BICePs score with respect to force field parameters

- Validation: Compute observables not included in refinement to assess transferability [4].

Research Reagent Solutions

Table 1: Essential Software Tools for Replica-Averaged Reweighting

| Tool Name | Type | Primary Function | Application Note |
|---|---|---|---|
| BICePs v2.0 | Python package | Bayesian reweighting of conformational ensembles | Supports NOE distances, J-couplings, chemical shifts, HDX protection factors [24] |
| GROMACS | MD simulation engine | Generate initial conformational ensembles | Optimized for biomolecular systems with GPU acceleration |
| NAMD | MD simulation engine | Alternative for ensemble generation | Particularly effective for large systems [26] |
| Sassena | Computing tool | Calculate scattering intensities from MD | Critical for comparing to neutron scattering data [26] |
| ForceBalance | Optimization package | Systematic force field parameterization | Can be integrated with BICePs score for parameter optimization [18] |
| Alexandria Chemistry Toolkit (ACT) | Machine learning software | Evolutionary optimization of force fields | Implements genetic algorithms for parameter space exploration [27] |

Application to Force Field Optimization

Automated Parameterization Workflow

Replica-averaged reweighting provides a powerful approach for automated force field optimization through variational minimization of the BICePs score. The fundamental insight is that the BICePs score serves as a differentiable objective function that measures the agreement between simulation and experiment while automatically accounting for various sources of uncertainty.

The optimization workflow involves:

- Initialization: Begin with initial force field parameters and generate a conformational ensemble

- BICePs Evaluation: Compute the BICePs score for the current parameter set

- Gradient Calculation: Compute derivatives of the BICePs score with respect to force field parameters

- Parameter Update: Adjust parameters using gradient-based optimization methods

- Iteration: Repeat until convergence of the BICePs score [4]

This approach has been successfully demonstrated for lattice models and von Mises-distributed polymer systems, showing robust convergence even in the presence of substantial experimental error.

Handling Experimental Uncertainty

A critical advantage of the Bayesian formulation is its explicit treatment of experimental uncertainty. The method naturally handles: