Advances in Explicit Solvent Ensemble Generation: From Machine Learning Potentials to Biomedical Applications

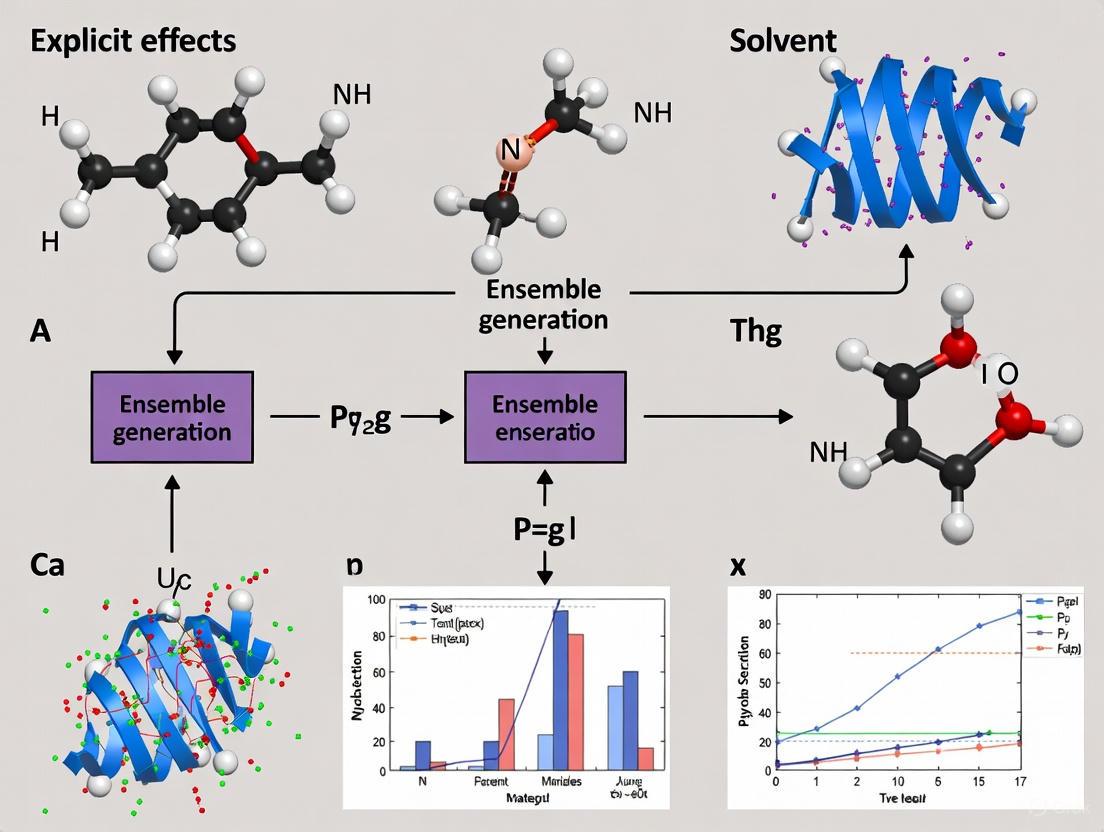



Abstract

This comprehensive review explores cutting-edge computational strategies for generating conformational ensembles in explicit solvents, a critical capability for understanding biomolecular function and drug binding. We examine the foundational principles distinguishing explicit from implicit solvent models and their impact on capturing realistic dynamics. The article details emerging machine learning methodologies, including active learning potentials and generative models, that overcome traditional sampling limitations. We provide practical troubleshooting guidance for optimizing simulations and rigorous validation frameworks for benchmarking model performance against experimental and explicit solvent references. Designed for computational researchers and drug development professionals, this synthesis highlights how accurate, efficient explicit solvent ensemble generation is revolutionizing the prediction of molecular behavior in biomedical research.

Explicit vs Implicit Solvent Models: Why Atomic Detail Matters in Ensemble Generation

The Critical Limitations of Implicit Solvent Models for Conformational Sampling

FAQs and Troubleshooting Guides

Frequently Asked Questions (FAQs)

Q1: What is the primary factor behind the conformational sampling speedup observed in implicit solvent models? The accelerated conformational sampling in implicit solvent models, such as Generalized Born (GB), is primarily due to the reduction of solvent viscosity rather than fundamental changes to the free-energy landscape of the solute. In explicit solvent simulations (e.g., TIP3P water with particle mesh Ewald, PME, electrostatics), the discrete water molecules create a frictional drag that slows molecular motion. The speedup factor is highly system-dependent: approximately 1-fold for small changes such as dihedral flips, about 7-fold for miniprotein folding, and up to about 100-fold for large conformational changes like nucleosome tail collapse [1].

Q2: For which type of chemical reactions are implicit solvent models likely to be sufficient? Implicit solvent models can be sufficient for reactions where the solvent acts as a bulk dielectric medium without forming specific, directional interactions with the solute at the transition state. Studies on silver-catalyzed furan ring formation in DMF (a polar aprotic solvent) showed that implicit models could correctly identify the most favorable reaction pathway, as there was no direct chemical participation of solvent molecules in the reaction mechanism [2].

Q3: When is an explicit solvent model absolutely necessary? An explicit solvent model is crucial when solvent molecules engage in specific, non-covalent interactions (e.g., hydrogen bonding) with the solute that significantly alter the electronic structure or stability of intermediates and transition states. This is often the case in aqueous systems and for processes where water acts as a reactant or a direct participant in the mechanism. Furthermore, implicit models often fail to accurately reproduce experimental ring puckering conformations in highly flexible and charged systems like glycosaminoglycans [3].

Q4: How does the choice of explicit water model affect simulation outcomes? The choice of explicit water model (e.g., TIP3P, TIP4P, TIP5P, OPC, SPC/E) can significantly influence conformational sampling and stability. For instance, in simulations of a heparin dodecamer, TIP3P and SPC/E models yielded stable conformations, while TIP4P, TIP5P, and OPC introduced greater structural variability. These differences arise from variations in how the models treat charge distribution and molecular geometry, affecting their ability to reproduce bulk water properties and local solute-solvent interactions [3].

Q5: Can machine learning potentials (MLPs) help overcome the limitations of explicit solvent simulations? Yes, machine learning potentials trained on accurate quantum mechanics data offer a promising path forward. They can model chemical processes in explicit solvent at a fraction of the computational cost of ab initio molecular dynamics (AIMD). Active learning strategies, which use descriptor-based selectors to build efficient training sets, enable the generation of MLPs that can capture complex solute-solvent interactions and provide reaction rates in agreement with experimental data [4].

Troubleshooting Common Issues

Issue 1: Unrealistically fast conformational dynamics in implicit solvent simulations.

- Problem: The simulated molecule samples conformational space too quickly, leading to non-physical kinetics and potentially incorrect population distributions.

- Solution: The reduced viscosity in implicit solvent is a known cause. To obtain more physically realistic kinetics, introduce a Langevin thermostat with a collision frequency that mimics the effective viscosity of the desired explicit solvent. Be aware that the primary value of the simulation in this case may be the identification of stable states, rather than the transition rates between them [1].
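To make the role of the collision frequency concrete, here is a minimal sketch of a Langevin integrator for a single 1D particle in reduced units. It uses the BAOAB splitting, which is one common choice and an assumption here, not a scheme named in [1]; the function names `baoab_step` and `mean_square_position` are illustrative only.

```python
import math
import random


def baoab_step(x, v, force, mass, dt, gamma, kT, rng):
    """Advance one Langevin step (BAOAB splitting) for a 1D particle."""
    v += 0.5 * dt * force(x) / mass          # B: half kick from the force
    x += 0.5 * dt * v                        # A: half drift
    c1 = math.exp(-gamma * dt)               # O: friction plus thermal noise
    c2 = math.sqrt(kT * (1.0 - c1 * c1) / mass)
    v = c1 * v + c2 * rng.gauss(0.0, 1.0)
    x += 0.5 * dt * v                        # A: half drift
    v += 0.5 * dt * force(x) / mass          # B: half kick
    return x, v


def mean_square_position(gamma, n_steps=100_000, dt=0.05, kT=1.0, seed=0):
    """Average x^2 in a harmonic well U = x^2 / 2 (reduced units, mass = 1)."""
    rng = random.Random(seed)
    x, v, total = 0.0, 0.0, 0.0
    for _ in range(n_steps):
        x, v = baoab_step(x, v, lambda q: -q, 1.0, dt, gamma, kT, rng)
        total += x * x
    return total / n_steps
```

In this toy system the equilibrium average of x² should approach kT regardless of `gamma`, while the rate at which the particle relaxes depends directly on `gamma`. That mirrors the caveat above: the thermostat setting changes the kinetics far more than it changes the populations of stable states.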

Issue 2: Implicit solvent model fails to reproduce experimentally observed conformations for a charged, flexible polymer.

- Problem: Simulations of a heparin oligosaccharide using an implicit solvent model do not match experimental data on ring puckering or global chain architecture.

- Solution: Switch to an explicit solvent model. Implicit models often poorly capture the strong, specific electrostatic and hydrogen-bonding interactions between the solute and water molecules that are critical for structuring flexible, charged systems like glycosaminoglycans. A model like TIP3P or OPC is recommended in such cases [3].

Issue 3: Need to model a reaction in solution, but full explicit solvent QM calculations are computationally prohibitive.

- Problem: The system is too large for routine QM calculations with explicit solvent molecules, but an implicit solvent model may be inadequate.

- Solution: Consider a multi-scale approach:

- Option A (QM/MM): Treat the reactive core with quantum mechanics (QM) and the surrounding solvent with a molecular mechanics (MM) force field. This captures specific solvent effects at a lower cost than full QM [2].

- Option B (Machine Learning Potentials): Use an active learning workflow to generate a machine learning potential. This involves running short quantum mechanics/molecular mechanics (QM/MM) or cluster calculations to create a training set that spans the relevant chemical and conformational space, resulting in a potential that can drive extensive explicit solvent MD simulations [4].

Issue 4: Inconsistent results across different explicit water models.

- Problem: The conformational ensemble of a protein or biomolecule changes significantly when using TIP3P vs. TIP4P vs. OPC water models.

- Solution: This is a known challenge, as different water models have varying dipole moments and parameterizations that can influence solute behavior. If available, compare your results against experimental data (e.g., NMR, SAXS) to determine which model is most accurate for your system. If no experimental data exists, report results using multiple established models like TIP3P and OPC to demonstrate robustness—or uncertainty—in your findings [3].

Experimental Protocols & Data

Quantitative Comparison of Sampling Speed

The following table summarizes the speedup of conformational sampling for a Generalized Born (GB) implicit solvent model compared to an explicit solvent (PME with TIP3P) model, as reported in a systematic study [1].

Table 1: Conformational Sampling Speedup: Implicit vs. Explicit Solvent

| Conformational Change Type | System Example | Approximate Sampling Speedup (GB vs. PME) | Primary Cause of Speedup |
|---|---|---|---|
| Small | Dihedral angle flips in a protein | ~1-fold | Reduction in solvent viscosity |
| Large | Nucleosome tail collapse, DNA unwrapping | ~1 to 100-fold | Reduction in solvent viscosity |
| Mixed | Folding of a miniprotein | ~7-fold | Reduction in solvent viscosity |

Protocol: Benchmarking Solvent Models for a Biomolecular System

This protocol outlines steps to evaluate the impact of solvent model choice on conformational sampling, based on methodologies used in recent literature [1] [3].

System Preparation:

- Construct the initial coordinates of your solute (e.g., protein, DNA, carbohydrate).

- Generate parameter/topology files for the solute using a force field like CHARMM36m or AMBER.

- Prepare the solvation boxes using tools like CHARMM-GUI or tleap.

Solvation and Neutralization:

- Solvate the solute in a periodic box (e.g., cubic or octahedral) with a minimum distance (e.g., 10-12 Å) between the solute and the box edge.

- Add a sufficient number of counterions (e.g., Na⁺, Cl⁻) to neutralize the system's total charge. For explicit solvent simulations, use ion parameters that are consistent with the chosen water model.

Simulation Setup:

- Perform energy minimization using a steepest descent algorithm until the maximum force is below a reasonable tolerance (e.g., 1000 kJ/mol/nm).

- Equilibrate the system in the NVT ensemble for 100-250 ps at the target temperature (e.g., 300 K), applying positional restraints on heavy atoms of the solute.

- Further equilibrate in the NPT ensemble for 100-250 ps to stabilize the pressure (e.g., 1 bar), again with solute restraints.

- Run production molecular dynamics simulations without restraints. The required length depends on the system size and the conformational change of interest (nanoseconds to microseconds).

Comparative Analysis:

- For each simulation (e.g., GB implicit, TIP3P explicit, OPC explicit), calculate key structural metrics:

- Root-mean-square deviation (RMSD): To monitor overall stability.

- Radius of gyration (Rg): To measure compactness.

- End-to-end distance: For polymeric molecules.

- Dihedral angle distributions: For specific torsions of interest.

- Compare the resulting conformational ensembles and the rate of sampling between the different solvent models.
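The structural metrics listed above can be sketched in a few lines of plain Python. This is an illustrative, unoptimized version that assumes coordinates are given as lists of `(x, y, z)` tuples, that all atoms carry equal weight, and that frames are already superimposed before the RMSD is taken; production analyses would normally rely on a trajectory analysis package instead. All function names are hypothetical.

```python
import math


def _sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def _cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def radius_of_gyration(coords):
    """Unweighted radius of gyration of a list of (x, y, z) points."""
    n = len(coords)
    center = [sum(p[i] for p in coords) / n for i in range(3)]
    return math.sqrt(sum(math.dist(p, center) ** 2 for p in coords) / n)


def end_to_end_distance(coords):
    """Distance between the first and last point of a chain."""
    return math.dist(coords[0], coords[-1])


def rmsd_no_fit(coords, reference):
    """RMSD without superposition; assumes the frames are already aligned."""
    sq = sum(math.dist(p, q) ** 2 for p, q in zip(coords, reference))
    return math.sqrt(sq / len(coords))


def dihedral_degrees(p0, p1, p2, p3):
    """Signed dihedral angle (degrees) defined by four points."""
    b0, b1, b2 = _sub(p0, p1), _sub(p2, p1), _sub(p3, p2)
    norm = math.sqrt(_dot(b1, b1))
    b1 = tuple(x / norm for x in b1)
    # Project b0 and b2 onto the plane perpendicular to the central bond.
    v = _sub(b0, tuple(_dot(b0, b1) * x for x in b1))
    w = _sub(b2, tuple(_dot(b2, b1) * x for x in b1))
    return math.degrees(math.atan2(_dot(_cross(b1, v), w), _dot(v, w)))
```

Computing the same functions frame by frame for each solvent model yields time series and histograms that can be compared directly between GB, TIP3P, and OPC runs.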


Workflow Diagram: Solvent Model Benchmarking Workflow

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Computational Tools for Solvent Model Research

| Item | Function / Description | Example Use Case |
|---|---|---|
| Explicit Solvent Models | Atomistic representation of water molecules. | Capturing specific solute-solvent interactions like hydrogen bonding. |
| TIP3P | A common 3-site water model; efficient and widely used. | General-purpose biomolecular simulation where a balance of cost and accuracy is needed [3]. |
| OPC | A 4-site water model parameterized for high accuracy. | When seeking improved agreement with experimental water properties over TIP3P [3]. |
| Implicit Solvent Models | Treats solvent as a continuous dielectric medium (e.g., GB, PBSA). | Rapid conformational sampling; MM-PBSA binding free energy calculations [1] [3]. |
| Machine Learning Potentials (MLPs) | Surrogate models trained on QM data to accurately represent potential energy surfaces. | Modeling chemical reactions in explicit solvent at near-QM accuracy but lower cost [4]. |
| Hybrid QM/MM | Combines a QM region (reactive core) with an MM region (solvent). | Studying reaction mechanisms where the electronic structure of the solute is perturbed by the solvent [2]. |
| Active Learning | An iterative strategy to automatically build efficient training sets for MLPs. | Generating a robust and data-efficient MLP for a chemical reaction in solution [4]. |

Selection Logic Diagram: Solvent Model Selection Guide

Technical Support Center: Troubleshooting Explicit Solvent Simulations

Frequently Asked Questions (FAQs)

FAQ 1: My explicit solvent molecular dynamics (MD) simulations are computationally prohibitive for adequate conformational sampling. What are efficient alternatives?

- Issue: The high computational cost of explicit solvent MD simulations limits the ability to achieve sufficient sampling for robust ensemble generation.

- Solution: Consider leveraging machine learning potentials (MLPs) trained on explicit solvent data. These models act as accurate surrogates, enabling the generation of conformational ensembles at a fraction of the computational cost while maintaining physical fidelity [5] [6]. Furthermore, specialized deep learning generators like aSAM (atomistic structural autoencoder model) can be trained on existing MD simulation datasets to produce heavy atom protein ensembles, effectively capturing backbone and side-chain torsion angle distributions [5].

FAQ 2: How can I model the effect of environmental conditions, like temperature, on my structural ensembles?

- Issue: Standard ensemble generators often produce ensembles for a single, fixed condition (e.g., 300 K), limiting their predictive power.

- Solution: Utilize newly developed temperature-conditioned models. For instance, aSAMt is a generative model that produces protein conformational ensembles conditioned on temperature. Trained on multi-temperature datasets like mdCATH, it can recapitulate temperature-dependent ensemble properties and generalize to temperatures outside its training data [5].

FAQ 3: My simulations fail to capture key solvent-induced conformational changes or stabilization. What could be wrong?

- Issue: Implicit solvent models, while computationally efficient, can sometimes fail to capture specific solute-solvent interactions that stabilize certain conformations.

- Solution: Ensure you are using an explicit solvent model for production simulations, especially for systems where solvent-induced interactions are critical. Research confirms that untruncated solvent-induced interactions between solute elements are sufficient to generate a rich free energy landscape, stabilizing specific conformations in a way that implicit models may not fully capture [7] [8]. For chemical reactions in solution, using MLPs with explicit solvent is crucial for accurately modeling the influence of solvent on reaction rates and mechanisms [6].

FAQ 4: How many solvent molecules are sufficient for a cluster-based explicit solvent model to be accurate?

- Issue: When using a cluster model (a solute surrounded by a finite number of solvent molecules) to train MLPs, defining the appropriate cluster size is challenging.

- Solution: The radius of the solvent shell around the substrate should be no less than the cut-off radius used for training the MLP. This prevents artificial forces near the solvent-vacuum interface in the cluster data. Studies show that MLPs trained on such cluster data demonstrate good transferability to systems with periodic boundary conditions, accurately predicting bulk properties [6].
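The shell-radius rule can be enforced at the point where clusters are carved out of a larger solvated snapshot. The sketch below is one possible way to do this, assuming coordinates in ångström stored as `(x, y, z)` tuples and solvent molecules stored as lists of atom positions; `carve_cluster` and its arguments are hypothetical names, not part of the workflow in [6].

```python
import math


def carve_cluster(solute_atoms, solvent_molecules, shell_radius, mlp_cutoff):
    """Keep whole solvent molecules with any atom within shell_radius of the solute.

    Raises ValueError if the shell is thinner than the MLP cut-off radius,
    since atoms near the solvent-vacuum interface would then see truncated
    environments in the training data.
    """
    if shell_radius < mlp_cutoff:
        raise ValueError("solvent shell radius must be >= the MLP cut-off radius")
    kept = []
    for molecule in solvent_molecules:
        if any(math.dist(atom, s) <= shell_radius
               for atom in molecule for s in solute_atoms):
            kept.append(molecule)  # keep the molecule intact, never split it
    return kept
```

Selecting by whole molecule rather than by atom avoids leaving fragments such as isolated hydrogens at the edge of the cluster.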

FAQ 5: How do I ensure my training data for a Machine Learning Potential (MLP) adequately covers the relevant chemical space?

- Issue: The quality and size of the training dataset are paramount for an MLP to reliably capture solute-solvent interactions at energy minima and transition state regions.

- Solution: Implement an active learning (AL) strategy combined with descriptor-based selectors. This approach automates the construction of data-efficient training sets that span the relevant chemical and conformational space. Using descriptors like Smooth Overlap of Atomic Positions (SOAP) helps assess whether the training set adequately represents the chemical space of interest, ensuring robust MLP performance [6].

Troubleshooting Guides

Problem: Inaccurate Reaction Rates in Solution

- Potential Cause: The machine learning potential (MLP) lacks sufficient data in the transition state regions or does not adequately capture specific solute-solvent interactions.

- Resolution Steps:

- Implement Active Learning: Use an AL workflow with descriptor-based selectors to iteratively improve the MLP. This identifies and incorporates underrepresented configurations in the training set [6].

- Validate with Cluster Models: Generate initial training data using cluster models containing solvent molecules placed at relevant positions around the solute, ensuring accurate description of non-covalent interactions [6].

- Benchmark Against Experiment: Compare the MLP-predicted reaction rates with experimental data to validate the model's accuracy, as demonstrated in studies of Diels-Alder reactions [6].
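When benchmarking against experiment, it often helps to convert between activation free energies and rate constants so both sit on the same scale. The sketch below uses the Eyring equation from transition state theory as one common convention. It assumes a transmission coefficient of 1 and a first-order rate constant (or one already normalized to a standard state); it is not necessarily the procedure used in [6], and the function names are illustrative.

```python
import math

K_B = 1.380649e-23      # Boltzmann constant, J/K
H = 6.62607015e-34      # Planck constant, J*s
R_KCAL = 1.987204e-3    # gas constant, kcal/(mol*K)


def eyring_rate(delta_g_kcal, temperature=298.15):
    """Rate constant (1/s) from an activation free energy in kcal/mol."""
    prefactor = K_B * temperature / H
    return prefactor * math.exp(-delta_g_kcal / (R_KCAL * temperature))


def barrier_from_rate(rate, temperature=298.15):
    """Activation free energy (kcal/mol) implied by a rate constant in 1/s."""
    prefactor = K_B * temperature / H
    return -R_KCAL * temperature * math.log(rate / prefactor)
```

Because the rate depends exponentially on the barrier, an error of RT·ln 10 (about 1.4 kcal/mol at room temperature) in the predicted barrier corresponds to a tenfold error in the rate, which is a useful yardstick when judging MLP accuracy.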

Problem: Poor Sampling of Multi-State Protein Ensembles

- Potential Cause: The generative model struggles to explore conformational states distant from the initial input structure.

- Resolution Steps:

- Leverage High-Temperature Training Data: Models like aSAMt, trained on MD simulations at higher temperatures (e.g., 320-450 K), show an enhanced ability to explore conformational landscapes. If using your own generator, incorporate high-temperature simulation data into training [5].

- Quantitatively Compare Ensembles: Use metrics like WASCO-global scores (based on Cβ positions) and principal component analysis (PCA) to compare the diversity of your generated ensemble against long, reference MD simulations [5].

- Check Local Torsions: Evaluate the model's ability to capture backbone (φ/ψ) and side-chain (χ) torsion angle distributions using scores like WASCO-local. A failure here may indicate a need for models with atomistic resolution like aSAM [5].
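WASCO-type scores are built on Wasserstein distances between distributions. As a much simpler illustration of the idea, and not an implementation of WASCO itself, the sketch below computes the 1D Wasserstein-1 distance between two equal-size samples of a scalar observable (for example, one inter-residue distance taken from a generated and a reference ensemble). The function name is hypothetical, and torsion angles would need a circular variant that this sketch does not provide.

```python
def wasserstein_1d(sample_a, sample_b):
    """Wasserstein-1 distance between two equal-size 1D samples.

    For empirical distributions with the same number of points, the optimal
    transport plan pairs the sorted values, so the distance is the mean
    absolute difference between order statistics.
    """
    if len(sample_a) != len(sample_b):
        raise ValueError("this sketch assumes equal sample sizes")
    a, b = sorted(sample_a), sorted(sample_b)
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)
```

A value near zero indicates that the generated ensemble reproduces the reference distribution of that observable; larger values flag observables where the generator under- or over-samples part of the range.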

Experimental Protocols & Data Presentation

Detailed Methodology: Active Learning for MLPs in Explicit Solvent

This protocol outlines a general strategy for generating reactive machine learning potentials to model chemical processes in explicit solvents [6].

Initial Data Generation:

- Gas Phase/Implicit Solvent Set: Generate configurations by randomly displacing atomic coordinates of the solute. For reactions, start from the transition state (TS) geometry.

- Explicit Solvent Cluster Set: Create cluster models with the solute and a shell of explicit solvent molecules. The solvent shell radius must be at least the size of the MLP's cut-off radius. Configurations can be sourced from snapshots of quantum mechanics/molecular dynamics (QM/MD) simulations.

Initial MLP Training: Train the first version of the MLP using the small, initially generated set of configurations labeled with reference energies and forces.

Active Learning Loop:

- Propagation: Use the current MLP to run molecular dynamics simulations, exploring new configurations.

- Selection: Employ descriptor-based selectors (e.g., SOAP) to identify configurations that are underrepresented in the current training set.

- Labeling: Perform accurate quantum mechanical (QM) calculations on the selected new configurations to obtain energies and forces.

- Retraining: Expand the training set with the new labeled data and retrain the MLP.

- Convergence Check: Repeat until the MLP's predictions on a validation set stabilize and key properties (e.g., reaction rates, radial distribution functions) match reference data.

The workflow is designed for efficient exploration of complex potential energy surfaces with high data efficiency.
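The loop above can be illustrated with a deliberately tiny toy problem. In this sketch every component is a stand-in and an assumption: a 1D double well replaces the QM reference, a nearest-neighbour lookup replaces the MLP, the coordinate itself replaces a SOAP descriptor, and Metropolis Monte Carlo replaces MD for propagation. Only the structure of the loop (propagate, select, label, retrain, stop when nothing new is found) follows the protocol.

```python
import math
import random


def reference_energy(x):
    """Stand-in for a QM single-point calculation: a 1D double well."""
    return (x * x - 1.0) ** 2


def predict(train, x):
    """Stand-in 'MLP': energy of the nearest labelled configuration."""
    return min(train, key=lambda item: abs(item[0] - x))[1]


def novelty(known_xs, x):
    """Distance to the closest known point in descriptor space (descriptor = x)."""
    return min(abs(x0 - x) for x0 in known_xs)


def active_learning(n_rounds=20, n_steps=200, threshold=0.1, kT=0.5, seed=0):
    rng = random.Random(seed)
    train = [(x, reference_energy(x)) for x in (-1.0, 1.0)]  # initial data set
    for _ in range(n_rounds):
        x = rng.choice(train)[0]
        picked = []
        for _ in range(n_steps):
            # Propagation: Metropolis Monte Carlo on the surrogate energy,
            # restrained to [-2, 2] so the walker cannot drift away.
            trial = x + rng.uniform(-0.3, 0.3)
            if abs(trial) <= 2.0:
                d_e = predict(train, trial) - predict(train, x)
                if d_e <= 0.0 or rng.random() < math.exp(-d_e / kT):
                    x = trial
            # Selection: keep configurations far from everything known so far.
            known = [x0 for x0, _ in train] + picked
            if novelty(known, x) > threshold:
                picked.append(x)
        if not picked:
            break  # no under-represented configurations left
        # Labelling and retraining: for this lookup model, adding data is retraining.
        train += [(p, reference_energy(p)) for p in picked]
    return sorted(train)
```

The distance threshold plays the role of the descriptor-based selector: it prevents near-duplicate configurations from being labelled, which is where the data efficiency of the approach comes from.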

Workflow Diagram: Active Learning for ML Potentials

The table below summarizes key methods, their descriptions, and applications for handling solvent effects in computational research.

| Method | Key Description | Application Context |
|---|---|---|
| Explicit Solvent MD [7] [8] | Atomistic representation of solvent molecules. Considered the most accurate but computationally expensive. | Generating reference ensembles; studying specific solute-solvent interactions (e.g., hydrogen bonding). |
| Implicit Solvent (Continuum) [8] [9] | Models solvent as a polarizable continuum. Computationally efficient but may miss specific interactions. | Rapid conformational sampling; initial structure screening; large systems where explicit solvent is infeasible. |
| Machine Learning Potentials (MLPs) [6] [10] | Surrogate models trained on QM data. Offer near-QM accuracy at lower computational cost. | Generating ensembles; modeling chemical reactions in explicit solvent; long-time-scale dynamics. |
| Deep Generative Models (e.g., aSAM/aSAMt) [5] | Neural networks trained on MD data to generate new conformational ensembles. Can be conditioned on variables like temperature. | Rapid production of structural ensembles; exploring temperature-dependent protein dynamics. |
| Cluster-Continuum (Microsolvation) [9] | Hybrid approach combining a few explicit solvent molecules with an implicit continuum model. | Balancing accuracy and cost for systems where specific solvent interactions are critical. |

The Scientist's Toolkit: Research Reagent Solutions

This table details essential computational tools and datasets used in modern explicit solvent and ensemble generation research.

| Item | Function & Explanation |
|---|---|
| Machine Learning Potentials (MLPs) | Fast, accurate surrogates for quantum mechanical calculations, enabling feasible molecular dynamics in explicit solvent [6] [10]. |
| Active Learning (AL) Workflows | Automated strategies for building optimal training sets for MLPs, ensuring they cover relevant chemical space efficiently [6]. |
| Multi-Temperature Datasets (e.g., mdCATH) | MD simulation datasets run at various temperatures, used to train conditional generative models that predict temperature-dependent ensemble properties [5]. |
| Neural Network Potentials (NNPs) | A type of MLP using neural networks; models like eSEN and UMA offer state-of-the-art accuracy for molecular energy and force predictions [10]. |
| Benchmark Sets (e.g., FlexiSol) | Curated datasets of experimental solvation energies and partition ratios for flexible, drug-like molecules, used to test and validate solvation models [9]. |
| Latent Diffusion Models (e.g., aSAM) | A class of generative AI that learns the distribution of molecular structures in a compressed latent space, used for generating diverse conformational ensembles [5]. |

Workflow Diagram: Temperature-Conditioned Ensemble Generation

Computational Bottlenecks in Traditional Explicit Solvent Molecular Dynamics

Frequently Asked Questions

FAQ 1: What is the primary computational cost in explicit solvent Molecular Dynamics (MD)? The primary cost arises from calculating a vast number of non-bonded interactions (electrostatics and van der Waals) between explicit solvent molecules and between the solvent and solute. Unlike implicit solvent models that treat the solvent as a continuous medium, explicit solvent models atomistically represent every solvent molecule, increasing the number of atoms by orders of magnitude and drastically raising computational demands [4] [11].
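A quick back-of-the-envelope estimate shows how fast the atom count grows once water is added. The sketch below assumes liquid water at roughly 1 g/cm³ (about 0.0334 molecules per cubic ångström), a cubic box, and a 3-site water model by default; it ignores ions and any density changes near the solute. The function names are illustrative.

```python
import math

WATER_NUMBER_DENSITY = 0.0334  # molecules per cubic angstrom at ~1 g/cm^3


def estimate_water_count(box_edge, solute_volume=0.0):
    """Approximate number of waters in a cubic box (lengths in angstrom)."""
    return round(WATER_NUMBER_DENSITY * (box_edge ** 3 - solute_volume))


def neighbours_within_cutoff(cutoff, sites_per_water=3):
    """Average number of solvent atoms inside a spherical cut-off around one atom."""
    atom_density = WATER_NUMBER_DENSITY * sites_per_water
    return atom_density * 4.0 / 3.0 * math.pi * cutoff ** 3
```

For a 60 Å cubic box this gives roughly 7,200 water molecules, or about 21,600 solvent atoms with a 3-site model, and each atom has on the order of 400 neighbours inside a 10 Å cut-off. Most of the non-bonded work therefore goes into solvent-solvent pairs rather than the solute itself.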

FAQ 2: My explicit solvent MD simulations are too slow for sufficient conformational sampling. What are my options? You can consider multi-scale approaches. One effective strategy is using machine learning potentials (MLPs) trained on accurate quantum chemical data. These MLPs can serve as fast and accurate surrogates for the underlying potential energy surface, enabling longer and larger-scale simulations that are infeasible with traditional ab initio MD [4]. Alternatively, the variational explicit-solute implicit-solvent (VESIS) model can significantly improve efficiency by reducing the number of particles in the system [11].

FAQ 3: How can I accurately model chemical reactions in explicit solvent without the prohibitive cost of ab initio MD? A promising method involves generating a reactive machine learning potential using an active learning (AL) strategy. This approach automates the construction of a compact, yet comprehensive, training set that spans the relevant chemical and conformational space. The resulting MLP allows for accurate modeling of reaction mechanisms, rates, and solvent effects at a fraction of the computational cost [4].

FAQ 4: Are there methods to accelerate the calculation of solvation forces in MD? Yes, deep learning methods are being developed to predict solvation free energies and atomic forces directly from a molecule's internal coordinates. These models, often accelerated on GPUs, learn from data generated by solving physical equations like the Poisson-Boltzmann equation. They are particularly suitable for processing many snapshots from long MD trajectories and can be used in enhanced sampling simulations [12].

Troubleshooting Guides

Problem 1: Inadequate Sampling of Solvent Configurations

Symptoms

- Poor convergence of free energy estimates.

- Inaccurate prediction of solvent-influenced properties, such as pKa values or reaction rates.

- Failure to capture key solvent-shell structures around the solute.

Solution: Enhanced Sampling Techniques. Implement advanced sampling algorithms to improve the efficiency of phase space exploration.

- Protocol: Constant pH Replica Exchange MD (pH-REMD) in Explicit Solvent

This method enhances sampling of both protonation states and solvent configurations.

- System Setup: Prepare the solute and explicit solvent box using standard procedures.

- Replica Setup: Create multiple replicas of the system, each running at a different pH value.

- Dynamics Propagation: Run standard MD in explicit solvent for a short period (e.g., a few picoseconds) on each replica.

- Protonation State Change: Periodically, attempt a change to the protonation state of titratable residue(s). These attempts are evaluated using an implicit solvent model (like Generalized Born) to approximate the solvation free energy difference, avoiding the high barrier present in explicit solvent.

- Replica Exchange: After protonation attempts, allow for a brief solvent relaxation. Then, attempt to exchange configurations between neighboring pH replicas based on a Metropolis criterion.

- Repeat: Cycle through the dynamics propagation, protonation state change, and replica exchange steps to generate a well-sampled ensemble [13].
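The replica exchange step can be sketched as follows. The acceptance rule used here, min{1, exp[ln 10 · (N_i − N_j)(pH_i − pH_j)]} with N the number of titratable protons carried by each replica, is the form commonly used for discrete-protonation-state pH-REMD when all replicas share one temperature. It is stated here as an assumption rather than quoted from [13], and the function names are hypothetical.

```python
import math
import random


def ph_exchange_probability(n_prot_i, ph_i, n_prot_j, ph_j):
    """Metropolis acceptance probability for swapping two pH replicas.

    n_prot_i, n_prot_j: titratable protons currently bound in replicas i and j.
    ph_i, ph_j: solution pH assigned to replicas i and j.
    """
    exponent = math.log(10.0) * (n_prot_i - n_prot_j) * (ph_i - ph_j)
    return 1.0 if exponent >= 0.0 else math.exp(exponent)


def attempt_exchange(n_prot_i, ph_i, n_prot_j, ph_j, rng=random):
    """Return True if the swap between neighbouring pH replicas is accepted."""
    return rng.random() < ph_exchange_probability(n_prot_i, ph_i, n_prot_j, ph_j)
```

Under this rule a swap is always accepted when it moves the more protonated configuration to the lower pH, and it is penalized by a factor of 10 per proton per pH unit in the opposite direction.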


Problem 2: High Computational Cost of Free Energy Calculations

Symptoms

- Inability to run simulations long enough to obtain statistically meaningful results.

- Need for massive computational resources to simulate biologically relevant time scales.

Solution: Leverage Machine Learning Potentials and Implicit Solvent Models. Replace expensive energy/force calculations with faster, data-driven models.

- Protocol: Active Learning for MLP Generation in Solution

This workflow generates a data-efficient MLP for chemical processes in explicit solvents.

- Initial Data Generation:

- Generate a small set of diverse configurations for the solute (e.g., from random displacements or along a reaction path).

- For solvent, use cluster models with a solvent shell radius at least as large as the MLP's cut-off. Label these clusters with reference energies and forces from high-level electronic structure calculations.

- Active Learning Loop:

- Train MLP: Train an initial MLP on the current dataset.

- Run MLP-MD: Propagate MD simulations using the trained MLP.

- Select New Data: Use descriptor-based selectors (e.g., Smooth Overlap of Atomic Positions, SOAP) to identify new configurations that are poorly represented in the training set.

- Label and Expand: Compute reference energies/forces for these new configurations and add them to the training set.

- Iterate: Repeat the train-simulate-select loop until the MLP's performance converges [4].


- Protocol: Variational Explicit-Solute Implicit-Solvent (VESIS) Model

This model reduces cost by treating the solvent implicitly while keeping the solute atoms explicit.

- Define Free Energy Functional: The functional, G[Γ, R], depends on the solute-solvent interface (Γ) and solute atomic positions (R). It includes surface energy, solute-solvent van der Waals interactions, and continuum electrostatics.

- Two-Stage Iterative Minimization:

- Stage 1 (Interface Optimization): Fix solute atomic positions (R). Use a fast binary level-set method to find the equilibrium solute-solvent interface (Γ) that minimizes the free energy.

- Stage 2 (Solute Relaxation): Fix the optimized interface (Γ). Use an adaptive-mobility gradient descent method to relax the solute atomic positions (R), minimizing the total energy.

- GPU Implementation: Implement the binary level-set and gradient descent algorithms on a GPU to significantly accelerate the minimization process [11].
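The two-stage structure of the minimization can be illustrated on a toy problem in which both the interface Γ and the solute coordinates R are reduced to single numbers. This sketch is only an analogy: the quadratic functional is invented for illustration, Stage 1 uses a closed-form minimum instead of a binary level-set method, and Stage 2 uses plain gradient descent instead of the adaptive-mobility scheme described in [11].

```python
def free_energy(gamma, r, a=1.0, b=1.0, c=1.0, r0=1.0):
    """Toy stand-in for G[Gamma, R]: coupling + solute term + interface term."""
    return a * (gamma - r) ** 2 + b * (r - r0) ** 2 + c * gamma ** 2


def optimise_interface(r, a=1.0, c=1.0):
    """Stage 1: minimise over gamma with r fixed (closed form for this toy)."""
    return a * r / (a + c)


def relax_solute(gamma, r, a=1.0, b=1.0, r0=1.0, step=0.1, n_steps=50):
    """Stage 2: plain gradient descent on r with gamma fixed."""
    for _ in range(n_steps):
        grad = -2.0 * a * (gamma - r) + 2.0 * b * (r - r0)
        r -= step * grad
    return r


def two_stage_minimise(r=0.0, tol=1e-10, max_cycles=100):
    """Alternate the two stages until the solute coordinate stops changing."""
    gamma = optimise_interface(r)
    for _ in range(max_cycles):
        r_new = relax_solute(gamma, r)
        gamma = optimise_interface(r_new)
        if abs(r_new - r) < tol:
            return gamma, r_new
        r = r_new
    return gamma, r
```

Each stage can only lower the free energy while the other variable is held fixed, so alternating them descends monotonically toward a joint minimum. In this toy case that minimum is Γ = 1/3, R = 2/3.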


Experimental Protocols & Data

Detailed Methodology: Active Learning for MLPs

The following workflow details the process of creating a machine learning potential for modeling reactions in explicit solvents, as referenced in the troubleshooting guide [4].

Quantitative Performance Data

The table below summarizes key metrics associated with the computational bottlenecks and solutions discussed.

| Method / Challenge | Key Metric | Reported Value / Comparison | Source |
|---|---|---|---|
| Traditional Explicit Solvent AIMD | Computational Cost | Prohibitive for free energy calculations requiring extensive sampling. | [4] |
| Machine Learning Potentials (MLP) | Data Efficiency | Active learning with descriptor-based selectors creates accurate potentials with much smaller datasets than traditional neural network potentials (requiring 1000s of configurations). | [4] |
| Variational Explicit-Solute Implicit-Solvent (VESIS) | Speedup | GPU implementation offers a significant improvement in efficiency over CPU implementation for determining equilibrium molecular conformations. | [11] |
| Deep Learning for Solvation Forces | Application | Accurately predicts solvation free energies/forces; free energy landscape closely resembles explicit solvent simulations. | [12] |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function / Description |
|---|---|
| Machine Learning Potentials (MLPs) | Fast and accurate surrogates for quantum mechanical potential energy surfaces, enabling longer and larger-scale simulations. |
| Active Learning (AL) | A strategy for building small, data-efficient training sets for MLPs by intelligently selecting the most informative new configurations. |
| SOAP Descriptors | (Smooth Overlap of Atomic Positions) Used in active learning to quantify similarity between atomic structures and identify under-sampled regions of chemical space. |
| Explicit Solvent Cluster Data | A computationally efficient way to generate training data for MLPs that captures specific solute-solvent interactions, with good transferability to periodic bulk systems. |
| Variational Implicit-Solvent Model (VISM) | A coarse-grained model that represents the solvent as a continuum, defined by a solute-solvent interface, to calculate solvation free energies efficiently. |
| Constant pH Replica Exchange MD (pH-REMD) | An enhanced sampling method that runs multiple replicas at different pH levels to improve the sampling of protonation states and associated solvent configurations. |
| Binary Level-Set Method | A fast numerical algorithm for tracking the evolution of the solute-solvent interface in implicit solvent models, optimized for GPU acceleration. |
| ωB97M-V/def2-TZVPD | A high-level density functional theory (DFT) method and basis set used for generating accurate reference data for training MLPs (e.g., in the OMol25 dataset). |

FAQs: Navigating Explicit Solvent Simulations

Why is an explicit solvent model non-negotiable for studying Intrinsically Disordered Proteins (IDPs)?

Explicit solvents are essential for IDPs because their function and binding are governed by dynamic, transient interactions that implicit models cannot capture. Implicit solvent models, which represent the solvent as a polarizable continuum, fail to describe the specific solute-solvent interactions, entropy effects, and pre-organization that dictate IDP behavior [14]. The dynamic and heterogeneous nature of unbound IDPs means their conformational ensemble is highly sensitive to the environment; reliable ensemble generation requires atomistic representation of solvent molecules to model these specific, weak, and transient interactions accurately [14].

What are the primary technical challenges of running explicit solvent simulations for drug binding?

The main challenges are computational cost and sufficient sampling. Explicit solvent simulations, particularly with ab initio molecular dynamics (AIMD), require immense resources because they need extensive sampling to achieve statistically meaningful ensembles [4]. This is especially true for processes like drug binding or chemical reactions, where accurately capturing the transition state regions demands a high-quality, diverse training set for the potential energy surface [4]. Enhanced sampling techniques and advanced computing hardware, like GPUs, are often necessary to overcome these bottlenecks [14].

How do explicit solvents provide a more accurate picture for solvent-sensitive reactions?

Explicit solvents atomistically represent solute-solvent interactions, which can significantly alter reaction dynamics, rates, and product ratios [4]. Unlike continuum models, they can capture specific effects such as hydrogen bonding, pre-organization of solvent molecules around the solute, and entropy contributions [4]. For instance, modeling a Diels-Alder reaction in both water and methanol with explicit solvents allows researchers to obtain reaction rates that match experimental data and analyze the distinct influence of each solvent on the reaction mechanism [4].

Troubleshooting Common Experimental Issues

Problem: Poor Convergence in Oligonucleotide Conformational Sampling

- Observation: Your replica exchange molecular dynamics (REMD) simulations of an RNA oligonucleotide show poor convergence even after microseconds of simulation per replica. Replica RMSD profiles indicate the ensemble is not well-equilibrated [15].

- Solution: Implement a reservoir replica exchange molecular dynamics method. This modified version of traditional REMD has proven to be a more cost-effective and reliable alternative, demonstrating much better convergence for explicitly solvated RNA systems like the rGACC tetramer [15].

- Protocol:

- Set up your explicit solvent system as for traditional REMD.

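- Instead of relying only on exchanges between neighboring replicas, couple the highest-temperature replica to a pre-generated, diverse ensemble of structures (the "reservoir") and periodically attempt exchanges with structures drawn from it, so that well-sampled conformations propagate down the temperature ladder.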

- This approach allows for a more comprehensive exploration of the rugged energy landscape of nucleic acids in a feasible simulation time [15].
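The exchange test at the heart of this protocol can be sketched in a few lines. This is a minimal illustration rather than the implementation used in [15]: it assumes a Boltzmann-weighted reservoir generated at a known temperature, and the function names, units (kcal/mol, K), and energies are hypothetical.

```python
import math
import random

K_B = 0.0019872041  # Boltzmann constant in kcal/(mol*K)

def exchange_probability(e_i, e_j, t_i, t_j):
    """Metropolis probability of swapping configurations between two
    ensembles at temperatures t_i and t_j with potential energies e_i, e_j."""
    beta_i = 1.0 / (K_B * t_i)
    beta_j = 1.0 / (K_B * t_j)
    delta = (beta_i - beta_j) * (e_i - e_j)
    return 1.0 if delta >= 0.0 else math.exp(delta)

def attempt_reservoir_exchange(e_replica, t_replica, reservoir_energies,
                               t_reservoir, rng=random):
    """Draw a random reservoir structure and test it against the
    highest-temperature replica (assumes a Boltzmann-weighted reservoir)."""
    idx = rng.randrange(len(reservoir_energies))
    p = exchange_probability(e_replica, reservoir_energies[idx],
                             t_replica, t_reservoir)
    return rng.random() < p, idx
```

On acceptance, the replica would adopt reservoir structure `idx`; the reservoir itself is left unchanged, which is what lets it act as a fixed source of pre-sampled conformations.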

Problem: Inefficient Generation of Machine Learning Potentials for Reactions in Solution

- Observation: Your machine learning potential (MLP) for a chemical reaction in explicit solvent is inaccurate, requiring thousands of ab initio MD configurations that are computationally prohibitive [4].

- Solution: Adopt an active learning (AL) strategy with descriptor-based selectors. This builds data-efficient training sets that span the relevant chemical and conformational space without exhaustive sampling [4].

- Protocol:

- Initial Training Set: Generate a small set of initial configurations. For the solute, start from the transition state and randomly displace atomic coordinates. For the solvent, use cluster models with a solvent shell radius at least as large as the MLP's cut-off radius to avoid artificial forces [4].

- Train Initial MLP on this set.

- Active Learning Loop:

- Run short MD simulations using the current MLP.

- Use a molecular descriptor, like Smooth Overlap of Atomic Positions (SOAP), to evaluate whether new structures are well-represented in the existing training set.

- Structures lying outside the known descriptor space are sent for ab initio calculation and added to the training set.

- Re-train the MLP with the expanded set [4].

- This iterative process ensures the MLP is trained on the most informative data points, leading to an accurate and generalizable potential.
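The loop above can be sketched end to end on a toy problem. Everything in this sketch is a stand-in chosen for illustration: a one-dimensional double well replaces the ab initio reference, a small kernel ridge regression replaces the MLP, a Metropolis walk replaces MD, and the distance to the nearest training point replaces a SOAP similarity. None of these choices come from [4].

```python
import numpy as np

def reference_energy(x):
    """Stand-in for an expensive ab initio calculation (toy double well)."""
    return (x**2 - 1.0) ** 2

def fit_model(xs, es, length=0.3, reg=1e-8):
    """Small Gaussian-kernel ridge regression playing the role of the MLP."""
    xs = np.asarray(xs, dtype=float)
    es = np.asarray(es, dtype=float)
    kernel = np.exp(-((xs[:, None] - xs[None, :]) ** 2) / (2 * length**2))
    alpha = np.linalg.solve(kernel + reg * np.eye(len(xs)), es)
    return lambda x: float(np.exp(-((x - xs) ** 2) / (2 * length**2)) @ alpha)

def active_learning(n_cycles=20, n_steps=50, threshold=0.15, kt=0.5, seed=0):
    rng = np.random.default_rng(seed)
    # Initial set: small random displacements around the "transition state" at x = 0.
    train_x = [float(v) for v in rng.normal(0.0, 0.05, size=3)]
    train_e = [reference_energy(v) for v in train_x]
    for _ in range(n_cycles):
        model = fit_model(train_x, train_e)          # (re-)train the surrogate
        x = train_x[rng.integers(len(train_x))]      # start from a known structure
        for _ in range(n_steps):                     # short sampling run on the surrogate
            trial = x + rng.normal(0.0, 0.1)
            d_e = model(trial) - model(x)
            if d_e <= 0 or rng.random() < np.exp(-d_e / kt):
                x = trial
            # Descriptor-based selector: is x far from everything seen so far?
            if np.min(np.abs(np.asarray(train_x) - x)) > threshold:
                train_x.append(float(x))             # label with the reference method
                train_e.append(reference_energy(x))
                break                                # retrain before sampling further
    return np.asarray(train_x), fit_model(train_x, train_e)
```

Each cycle adds at most one labeled structure, which keeps the number of reference calls equal to the number of genuinely new regions visited.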

Problem: Distinguishing Binding Mechanisms for Disordered Proteins

- Observation: You have a potential binder for an Intrinsically Disordered Protein (IDP), but it's unclear whether it works by inducing a new conformation (induced fit) or by stabilizing a pre-existing one (conformational selection) [16].

- Solution: Utilize umbrella sampling molecular dynamics simulations to quantify mechanism-specific binding affinities [16].

- Protocol:

- Use molecular dynamics to induce and fix the IDP (e.g., islet amyloid polypeptide) in distinct conformations relevant to its function, such as an α-helix or β-sheet.

- Employ umbrella sampling to measure the affinity of your binder for these different fixed conformations.

- Analyze the binding preferences. A binder that shows high selectivity for one pre-structured conformation is likely operating via conformational selection. This approach can reveal how sequence and conformational specificity jointly contribute to binding, informing the rational design of disordered protein binders [16].

Research Reagent Solutions

Table 1: Essential Computational Tools for Explicit Solvent Research

| Item/Reagent | Function/Benefit |

|---|---|

| GPU-Accelerated MD Codes (e.g., run on an NVIDIA RTX 2080Ti) | Drastically accelerates explicit solvent MD sampling, achieving 100-200 ns/day for ~1 million atom systems [14]. |

| Reservoir Replica Exchange MD | A modified REMD method providing superior convergence for explicit solvent simulations of rugged landscapes (e.g., RNA) [15]. |

| Machine Learning Potentials (MLPs) | Surrogate models (e.g., ACE, GAP) that provide QM-level accuracy for explicit solvent reactions at a fraction of the cost [4]. |

| Active Learning (AL) Strategy | Efficiently builds small, high-quality training sets for MLPs by selecting the most informative structures via descriptor-based selectors [4]. |

| Umbrella Sampling | A computational technique to calculate conformational-specific binding affinities and free energies in explicit solvent environments [16]. |

Workflow Visualization

Diagram 1: Active learning workflow for generating machine learning potentials in explicit solvent.

Diagram 2: Strategic pathways for targeting intrinsically disordered proteins.

Next-Generation Methods: Machine Learning Potentials and Generative Models for Explicit Solvent Ensembles

Active Learning with Machine Learning Potentials for Data-Efficient Explicit Solvent Modeling

Troubleshooting Guide: Common Issues and Solutions

Q1: My ML potential performs well on training configurations but fails during molecular dynamics (MD) simulations, leading to unphysical structures or simulation crashes. What is wrong?

This is typically caused by the ML potential encountering configurations that are outside its learned domain, a problem known as extrapolation [17]. The training set lacks diversity and does not span the full chemically relevant conformational space of your solute-solvent system [4].

- Solution: Implement a robust Active Learning (AL) loop. After initial training, run short MD simulations using your ML potential. For new configurations encountered, use a selector to decide which ones to add to your training set [4].

- Recommended Selector: Use a descriptor-based selector like Smooth Overlap of Atomic Positions (SOAP) to quantify the similarity of new structures to your existing training set. Structures with low similarity (high "distance") should be selected for quantum mechanics (QM) calculation and added to the training data [4].

- Advanced Protocol (Uncertainty-Driven Dynamics): Modify the potential energy surface in your MD simulations to bias the system towards regions where the ML model has high uncertainty. This can be achieved by adding a bias potential, $E_{\text{bias}}$, that is a function of the model's uncertainty estimate, $\sigma_E^2$, accelerating the exploration of under-sampled configurations [17].
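To make the idea of an uncertainty-dependent bias concrete, here is a minimal sketch. The Gaussian-shaped functional form and the parameters `a` (bias depth) and `b` (uncertainty scale) are assumptions chosen for illustration; consult [17] for the exact expression and normalization used there.

```python
import numpy as np

def ensemble_uncertainty(energies):
    """Variance of the energy predicted by each member of a model ensemble."""
    return float(np.var(np.asarray(energies, dtype=float)))

def bias_energy(sigma2, a=1.0, b=0.1):
    """Illustrative bias: zero where the models agree and approaching -a
    as disagreement grows, so uncertain regions become energetically favorable."""
    return a * (np.exp(-sigma2 / b**2) - 1.0)

def biased_energy(energies, a=1.0, b=0.1):
    """Ensemble-mean energy plus the uncertainty-dependent bias."""
    return float(np.mean(energies)) + bias_energy(ensemble_uncertainty(energies), a, b)
```

Because the bias vanishes where the ensemble agrees, well-learned regions are sampled on the unbiased surface, while poorly learned regions are lowered by up to `a`.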

Q2: How can I generate a good initial training set that includes explicit solvent effects without incurring prohibitive computational costs?

Generating a massive set of explicit solvent configurations from ab initio MD (AIMD) is often computationally infeasible for chemically relevant systems [4]. Using configurations from classical molecular dynamics (MD) force fields is not recommended, as they often have a weak overlap with the true quantum mechanical potential energy surface [4].

- Solution: Use a cluster-continuum approach. Generate training data using cluster models that contain your solute surrounded by a shell of explicit solvent molecules [4].

- Protocol:

- The radius of the solvent shell should be at least as large as the cut-off radius used for training the MLP to avoid artificial forces at the cluster boundary [4].

- Label these cluster configurations (energy and forces) using your chosen QM method.

- Studies show that MLPs trained on cluster data demonstrate good transferability to larger periodic boundary condition (PBC) systems, accurately predicting bulk properties [4].
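The cluster-carving step can be sketched as follows. This is a simplified illustration that assumes coordinates are already unwrapped (no periodic images are considered) and that every solvent molecule has the same number of atoms; `carve_cluster` and its arguments are hypothetical names.

```python
import numpy as np

def carve_cluster(solute_xyz, solvent_xyz, shell_radius, mlp_cutoff):
    """Keep whole solvent molecules that have any atom within shell_radius
    of any solute atom.

    solute_xyz:  (n_solute_atoms, 3)
    solvent_xyz: (n_molecules, n_atoms_per_molecule, 3)
    """
    if shell_radius < mlp_cutoff:
        raise ValueError("shell radius must be at least the MLP cut-off radius")
    solute = np.asarray(solute_xyz, dtype=float)
    solvent = np.asarray(solvent_xyz, dtype=float)
    # Distances between every solvent atom and every solute atom:
    # shape (n_molecules, n_atoms_per_molecule, n_solute_atoms).
    dist = np.linalg.norm(solvent[:, :, None, :] - solute[None, None, :, :], axis=-1)
    keep = dist.min(axis=(1, 2)) <= shell_radius
    return solvent[keep]
```

Selecting whole molecules rather than individual atoms avoids cutting covalent bonds at the cluster boundary.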

Q3: What are the best practices for selecting new structures for training within an active learning cycle to ensure data efficiency?

Random selection or selection based only on energy criteria is inefficient. The goal is to maximize the diversity and chemical relevance of your training set with as few QM calculations as possible [4] [17].

- Solution 1 (Descriptor-Based): Employ a SOAP descriptor kernel. This measures the similarity between atomic environments. New structures are selected for QM calculation if their similarity to the existing training set is below a defined threshold [4].

- Solution 2 (Query-by-Committee): Train an ensemble of ML models (e.g., 5-10 neural networks with the same architecture but different initializations). Use the variance in the predicted energies of the ensemble as an uncertainty metric. Frames with high uncertainty are candidates for training [17].

- Batch Selection: For selecting multiple structures at once (batch mode), methods like COVDROP can be used. This approach uses Monte Carlo dropout to estimate a covariance matrix between predictions and selects the batch that maximizes the joint entropy, ensuring both high uncertainty and diversity in the selected batch [18].
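The batch-selection idea (maximize the log-determinant of the covariance submatrix) can be sketched with a simple greedy search. This assumes the prediction covariance matrix has already been estimated, for example from repeated stochastic forward passes; the greedy strategy and the function name are illustrative assumptions, not necessarily the optimizer used in [18].

```python
import numpy as np

def greedy_logdet_batch(cov, batch_size, jitter=1e-9):
    """Greedily pick candidates whose covariance submatrix has the largest
    log-determinant, favoring uncertain and mutually uncorrelated samples."""
    cov = np.asarray(cov, dtype=float)
    cov = cov + jitter * np.eye(len(cov))   # keep submatrices positive definite
    selected = []
    for _ in range(min(batch_size, len(cov))):
        best, best_val = None, -np.inf
        for i in range(len(cov)):
            if i in selected:
                continue
            idx = selected + [i]
            sign, logdet = np.linalg.slogdet(cov[np.ix_(idx, idx)])
            val = logdet if sign > 0 else -np.inf
            if best is None or val > best_val:
                best, best_val = i, val
        selected.append(best)
    return selected
```

With two highly correlated high-variance candidates and one independent candidate, the search takes one of the correlated pair and then the independent one, rather than both members of the redundant pair.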

Q4: How can I accurately model chemical reaction rates and mechanisms in explicit solvent using ML potentials?

Accurately capturing the effect of solvent on transition states and reaction barriers is crucial [4]. Standard MD struggles to sample these rare events.

- Solution: Combine your ML potential with enhanced sampling techniques.

- Protocol:

- Use your data-efficient ML potential to run extensive MD simulations.

- Identify key collective variables (CVs) for the reaction.

- Employ methods like metadynamics or umbrella sampling to drive the system through the reaction pathway and compute free energy barriers [17].

- The ML potential allows for the required extensive sampling at a fraction of the cost of direct AIMD, providing statistically meaningful ensembles for calculating reaction rates that agree with experimental data [4].
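For the umbrella sampling option, the restraint setup along a single collective variable can be sketched as below. The window range, spring constant, and helper names are hypothetical; in practice the windows are chosen so that neighboring CV distributions overlap.

```python
import numpy as np

def umbrella_windows(cv_min, cv_max, n_windows):
    """Evenly spaced restraint centers along the collective variable."""
    return np.linspace(cv_min, cv_max, n_windows)

def umbrella_bias(cv, center, k_spring):
    """Harmonic restraint energy added to the ML potential in one window."""
    return 0.5 * k_spring * (cv - center) ** 2

def thermal_width(k_spring, kt):
    """Approximate spread of the CV in a window on a flat underlying surface;
    a rough guide for choosing a window spacing that still overlaps."""
    return (kt / k_spring) ** 0.5
```

A common rule of thumb is to keep the spacing between centers comparable to `thermal_width`, then combine the windows with a reweighting method to recover the free energy profile.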

Active Learning Selector Performance Comparison

The table below summarizes different strategies for selecting new data points in an active learning loop.

| Selector Method | Key Metric | Key Advantage | Applicable Model Types |

|---|---|---|---|

| Descriptor-Based (e.g., SOAP) [4] | Similarity of atomic environments | General metric, low computational cost, model-agnostic | All MLP types (ACE, GAP, NequIP, etc.) |

| Query-by-Committee (QBC) [17] | Variance in energy predictions from an ensemble | Directly targets model uncertainty; physically intuitive | Neural Network ensembles (ANI, PhysNet, etc.) |

| Uncertainty-Driven Dynamics (UDD) [17] | Bias potential based on model uncertainty | Actively drives MD to explore uncertain regions; efficient for rare events | Neural Network ensembles |

| Batch Selection (COVDROP) [18] | Joint entropy (log-determinant) of a covariance matrix | Selects diverse, non-redundant batches in one cycle; ideal for drug discovery | Deep learning models (Graph Neural Networks) |

Experimental Protocol: Building an ML Potential for a Diels-Alder Reaction in Solution

This protocol outlines the key steps for building a machine learning potential to study a Diels-Alder reaction between cyclopentadiene (CP) and methyl vinyl ketone (MVK) in water, based on a successful application in recent literature [4].

1. Initial Data Set Generation

- Gas Phase/Implicit Solvent: Start by generating a small set of configurations for the reacting substrates (CP and MVK) in the gas phase. This can be done by randomly displacing atomic coordinates from the transition state geometry [4].

- Explicit Solvent Clusters: Generate cluster models containing the solute (CP+MVK) surrounded by a first solvation shell of explicit water molecules. The shell radius must be at least equal to the cut-off radius of the MLP descriptor [4].

- Reference Calculations: Perform QM calculations (e.g., DFT with a dispersion correction) on all generated configurations to obtain reference energies and forces.
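Generating the initial configurations by random displacement from the transition state can be sketched as follows. The Gaussian displacement model and the default width `sigma` (in the same length units as the input geometry) are assumptions made for illustration, not values taken from [4].

```python
import numpy as np

def displace_from_ts(ts_xyz, n_configs, sigma=0.05, seed=0):
    """Return n_configs copies of the transition-state geometry with
    independent Gaussian noise added to every Cartesian coordinate."""
    rng = np.random.default_rng(seed)
    ts = np.asarray(ts_xyz, dtype=float)
    noise = rng.normal(0.0, sigma, size=(n_configs,) + ts.shape)
    return ts[None, :, :] + noise
```

Each returned geometry would then be labeled with reference energies and forces before the first training round.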

2. Active Learning Loop Workflow

The following diagram illustrates the iterative process of building a robust ML potential.

3. Production Simulation and Analysis

- Use the final, validated ML potential to run long, stable MD simulations.

- Analyze the reaction mechanism, compute free energy profiles using enhanced sampling methods, and obtain reaction rates for comparison with experimental data [4].

The Scientist's Toolkit: Essential Research Reagents & Solutions

The table below lists key computational tools and methods referenced in this guide.

| Item Name | Function/Brief Explanation | Example/Reference |

|---|---|---|

| SOAP Descriptor [4] | A mathematical descriptor to quantify the similarity between local atomic environments; used for selecting diverse structures in AL. | Used as a kernel in descriptor-based active learning selectors. |

| Atomic Cluster Expansion (ACE) [4] | A linear regression-based machine learning potential model known for its data efficiency. | Applied in building ML potentials for Diels-Alder reactions in solution [4]. |

| ANI Neural Network Potential [17] | A neural network-based potential (e.g., ANI-1ccx, ANI-2x) used for organic molecules; often deployed in ensembles. | Used in Query-by-Committee and Uncertainty-Driven Dynamics [17]. |

| Cluster-Continuum Model [4] | A hybrid approach using a QM-treated solute with explicit solvent molecules embedded in an implicit continuum solvent model. | Efficient method for generating initial training data for explicit solvent effects [4]. |

| Uncertainty-Driven Dynamics (UDD) [17] | A biased MD technique that uses the ML model's own uncertainty to explore under-sampled configurations. | Accelerates the discovery of transition states and rare events in AL loops [17]. |

| COVDROP Method [18] | A batch active learning method that uses Monte Carlo dropout to estimate model uncertainty and select diverse batches. | Shown to improve model performance for ADMET and affinity predictions in drug discovery [18]. |

Generative Adversarial Networks (GANs) for Direct Conformational Ensemble Generation

Frequently Asked Questions (FAQs)

Q1: What is the key advantage of using a GAN over traditional Molecular Dynamics (MD) for ensemble generation? GANs can generate thousands of statistically independent conformations in fractions of a second, circumventing the formidable computational cost and kinetic barriers that limit MD sampling [19].

Q2: Can a GAN model generate ensembles for protein sequences not seen during training? Yes, conditional generative models like idpGAN are designed for this purpose. By training on data from multiple molecules and using sequence composition as conditional input, the model learns transferable features and can predict sequence-dependent ensembles for novel sequences [19].

Q3: My research focuses on structured proteins. Are GANs only applicable to Intrinsically Disordered Proteins (IDPs)? No. While IDPs are a natural initial target due to their conformational variability, the method can in principle be extended to any protein system. The idpGAN approach has been retrained on atomistic simulation data, showing its potential for higher-resolution ensemble generation for a broader range of proteins [19].

Q4: How does the model ensure that generated 3D conformations are physically realistic? The Generator network is trained adversarially against a Discriminator network that learns to distinguish generated conformations from real simulation data. Furthermore, architectural choices, such as using invariant input features like interatomic distances for the Discriminator, help ensure the physical realism of the outputs [19].

Q5: What are the current limitations of this approach? A primary limitation is the resolution of the generated ensembles. The proof-of-principle model (idpGAN) was trained on coarse-grained (Cα) simulation data. While extending to atomistic resolution is possible, it presents greater complexity [19]. The accuracy of the generated ensemble is also inherently tied to the quality and diversity of the training simulation data.

Troubleshooting Guide

| Common Issue | Possible Cause | Proposed Solution |

|---|---|---|

| Non-physical or collapsed structures | Generator instability or mode collapse during GAN training. | Review training dynamics; ensure diverse and representative training data; consider using multiple Discriminator networks (MD-GAN) for stability [19]. |

| Poor transferability to new sequences | Training data does not adequately cover the sequence space of interest. | Expand training set to include a wider variety of sequences and conformational states; consider transfer learning by fine-tuning a pre-trained model on a smaller, target-specific dataset. |

| Insufficient conformational diversity | Discriminator over-penalizing rare conformations. | Analyze the latent space; employ techniques like mini-batch discrimination or add an explicit diversity term to the generator's loss function. |

| Mismatch in ensemble properties vs. reference data | Generator has not fully learned the underlying Boltzmann distribution of the training data. | Reweight the generated ensemble, or consider "Boltzmann Generators," which explicitly learn to sample from the energy landscape [20]. |

Experimental Protocols & Data

Key Methodology: Training a Conditional GAN for Conformational Generation

The following protocol summarizes the method used to train idpGAN, a model for generating coarse-grained conformational ensembles [19].

- Data Curation: Gather a large set of molecular dynamics (MD) simulation trajectories for various proteins (e.g., IDPs). The training set should span a significant portion of the sequence space to enable transferability.

- Data Preprocessing: Extract molecular conformations and represent them as 3D coordinates of Cα atoms. Calculate the interatomic distance matrix for each conformation to ensure E(3) invariance (invariance to translation, rotation, and reflection).

- Model Architecture:

- Generator (G): A transformer-based network that takes a latent vector $z$ and a one-hot encoded amino acid sequence $a$ as input. It outputs a sequence of 3D coordinates for the Cα atoms. The self-attention mechanism helps form globally consistent structures.

- Discriminator (D): A network that takes a protein conformation (as a distance matrix) and the corresponding amino acid sequence. It outputs a probability of the conformation being "real" from the training data. For variable-length proteins, use multiple discriminators or a convolutional network with padding.

- Adversarial Training: Train G and D in a competitive min-max game. G aims to generate conformations that fool D, while D aims to correctly classify real and generated samples.

- Validation: Evaluate the trained model on a held-out test set of proteins not present in the training data. Quantify performance by comparing generated ensemble properties (e.g., radius of gyration, contact maps) against those derived from reference MD simulations.
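The preprocessing and validation quantities named in this protocol are straightforward to compute from Cα coordinates. The sketch below is a generic illustration, not code from [19]; the 8 Å contact cutoff is an assumed value and the radius of gyration uses equal masses.

```python
import numpy as np

def distance_matrix(xyz):
    """Pairwise Cα-Cα distances; unchanged by translation, rotation, or reflection."""
    xyz = np.asarray(xyz, dtype=float)
    return np.linalg.norm(xyz[:, None, :] - xyz[None, :, :], axis=-1)

def radius_of_gyration(xyz):
    """Equal-mass radius of gyration of one conformation."""
    xyz = np.asarray(xyz, dtype=float)
    centered = xyz - xyz.mean(axis=0)
    return float(np.sqrt((centered**2).sum(axis=1).mean()))

def contact_map(ensemble, cutoff=8.0):
    """Fraction of conformations in which each residue pair lies within cutoff."""
    return np.mean([distance_matrix(conf) < cutoff for conf in ensemble], axis=0)
```

Comparing `contact_map` and the distribution of `radius_of_gyration` between generated and reference ensembles gives a first check on whether the model reproduces sequence-specific structure.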

Quantitative Performance Data

The table below summarizes key quantitative findings from the idpGAN study, demonstrating its ability to reproduce ensemble properties from MD simulations [19].

| Model / Metric | Training Data | Transferability | Key Result |

|---|---|---|---|

| idpGAN (Cα) | CG MD of IDPs | Yes (on IDP_test set) | Reproduced sequence-specific contact probability maps for unseen sequences; generated "realistic" conformations qualitatively matching MD data [19]. |

| idpGAN (all-atom) | All-atom implicit solvent (ABSINTH) | Demonstrated in principle | Showed the approach can be extended to higher-resolution conformational ensemble generation [19]. |

Research Reagent Solutions

The following table details key computational "reagents" and their roles in developing GANs for conformational ensemble generation.

| Item | Function in the Experiment |

|---|---|

| Molecular Dynamics (MD) Simulation Data | Serves as the source of "ground truth" conformational data for training the generative model. The quality and breadth of this data directly determine the model's capabilities [19] [20]. |

| Coarse-Grained (Cα) Force Field | A simplified molecular model that reduces computational cost for generating initial training data and for the proof-of-concept model, allowing for faster iteration and validation [19]. |

| Transformer-based Generator | The neural network that creates new protein conformations. Its self-attention mechanism is crucial for modeling long-range dependencies within a sequence to produce globally consistent 3D structures [19]. |

| Generative Adversarial Network (GAN) Framework | Provides the adversarial training setup that forces the generator to produce physically realistic conformations that are indistinguishable from real MD samples [19] [20]. |

Workflow Visualization

GAN Training and Ensemble Generation Workflow

From Sequence to Ensemble: The idpGAN Pipeline

Graph Neural Network-Based Implicit Solvation with Explicit Solvent Accuracy

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: Our GNN implicit solvent model runs slower than anticipated. What are the primary factors affecting computational performance?

Performance is predominantly influenced by the GNN architecture complexity and the system setup. Using larger hidden layer sizes in the Multi-Layer Perceptrons (MLPs) of your GNN, such as 128 versus 48, increases the number of parameters and computation time [21] [22]. Furthermore, modern neural network implementations are optimized for parallel operations on GPUs, whereas Molecular Dynamics (MD) relies on fast, consecutive evaluations of the Hamiltonian. To maximize efficiency, simulate multiple replicates of a molecule simultaneously on a GPU to leverage parallel processing [21] [22].

Q2: When is it appropriate to use our model for solvation free energy (ΔG) calculations, and when is it not recommended?

Your model is suitable for calculating conformational landscapes and relative free energy differences. However, a fundamental limitation exists: models trained solely by force-matching learn potential energies only up to an arbitrary constant. This makes them inherently unsuitable for calculating absolute solvation free energies [23]. For accurate absolute ΔG predictions, ensure your model and training procedure incorporate derivatives with respect to alchemical coupling parameters (λ) in addition to forces, which anchors the energy to a physically meaningful scale [24] [23].

Q3: Our model performs well on most small organic molecules but fails on a new compound class. What could be the cause?

This indicates a transferability failure. The model's performance is constrained by the chemical space covered in its training data [21] [22]. If the new compounds feature atoms, functional groups, or molecular weights outside the scope of the training set (e.g., the model was trained on molecules <500 Da but is now applied to molecules of 500-700 Da), the predictions will be unreliable [21] [22]. Always verify that your target molecules fall within the domain of your model's training data.

Q4: How does the accuracy of our GNN implicit solvent model compare to traditional explicit and implicit methods?

When properly trained and applied within its domain, a GNN implicit solvent model can achieve accuracy on par with explicit solvent simulations for reproducing conformational ensembles and mean forces [21] [22]. It significantly outperforms traditional continuum implicit solvent models like GB-Neck2, particularly in capturing local solvation effects that continuum models miss [21] [22]. The table below provides a quantitative comparison.

Q5: What is the key difference between "embedding concatenation" and "embedding merging" in solvation free energy prediction, and why does it matter?

- Embedding Concatenation: Uses separate GNNs to encode the solute and solvent molecules independently. Their resulting embeddings are then simply concatenated for the final prediction. This method primarily captures intramolecular features but fails to explicitly model critical intermolecular interactions [25].

- Embedding Merging: Also uses separate encoders initially, but then employs structured integration mechanisms (e.g., attention) to model interactions between solute and solvent atoms. This more closely mimics real chemical processes like hydrogen bonding, leading to more accurate and physically meaningful predictions of solvation free energy [25].
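The difference between the two strategies can be seen in a small numerical sketch. The cross-attention used here is only one possible integration mechanism, picked for illustration; it is not the architecture of [25], and the atom embeddings are random placeholders for the outputs of the two encoders.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def concat_readout(solute_h, solvent_h):
    """Concatenation: pool each molecule independently, then join the vectors."""
    return np.concatenate([solute_h.mean(axis=0), solvent_h.mean(axis=0)])

def merged_readout(solute_h, solvent_h):
    """Merging: let each solute atom attend over solvent atoms before pooling."""
    dim = solute_h.shape[1]
    attn = softmax(solute_h @ solvent_h.T / np.sqrt(dim), axis=1)
    solute_ctx = solute_h + attn @ solvent_h   # solute atoms now carry solvent context
    return np.concatenate([solute_ctx.mean(axis=0), solvent_h.mean(axis=0)])
```

With concatenation, the solute half of the output is identical whatever the solvent is; with merging, it changes with the solvent, which is what allows pair-specific interactions to be represented.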

Common Error Messages and Solutions

| Error Scenario / Symptom | Potential Root Cause | Recommended Solution |

|---|---|---|

| Unphysical molecular geometries or energies during MD. | The model is generating its own non-physical states not present in the training data. This is a known challenge for ML potentials. | Incorporate enhanced sampling techniques to guide the simulation back to physically realistic regions. Continuously monitor energy and geometry for sanity checks. |

| Poor prediction of local solvation effects (e.g., hydrogen bonding). | Standard continuum implicit solvent models underlying the GNN's functional form cannot capture discrete solvent effects. | The GNN is designed to correct this. Ensure your model uses a functional form that allows it to learn local corrections to the continuum model, particularly in the non-polar term [21] [22]. |

| Model fails to generalize to new solvent types. | The model was trained on data for a specific solvent (e.g., water). | ML-based solvent models are typically solvent-specific. For a new solvent, you must retrain the model using training data generated from explicit simulations of that specific solvent [21] [22]. |

| Inaccurate solvation free energies despite good force-matching. | The model was trained using a loss function based only on force-matching. | Adopt a multi-term loss function that includes, in addition to forces, the derivatives of the energy with respect to alchemical variables (λelec, λsteric). This ensures the energy surface is correct, not just its gradients [23]. |

Quantitative Data and Performance

Performance Comparison of Solvation Models

The table below summarizes key performance metrics for different types of solvation models, highlighting the position of GNN-based implicit solvents.

| Model Type | Key Example | Accuracy (vs. Explicit) | Computational Speed (Relative) | Key Limitation(s) |

|---|---|---|---|---|

| Explicit Solvent | TIP3P Water Model | Gold Standard | 1x (Baseline) | Extremely high computational cost; slow dynamics [21]. |

| Continuum Implicit Solvent | GB-Neck2 (GBSA) | Low; misses local effects [21] | >18x faster [21] | Inaccurate description of local solvation (e.g., H-bonds) [21]. |

| Early ML Implicit Solvent | Specific-system ML Potentials | High for trained system | Often slower than explicit [21] [22] | Lacks transferability; new data and training needed per system [21]. |

| GNN Implicit Solvent | Katzberger & Riniker model [21] [22] | On par with explicit [21] [22] | Up to 18x faster than explicit [21] [22] | Performance depends on training data diversity and size [21]. |

| Advanced GNN for ΔG | ReSolv [24] | MAE close to experimental uncertainty [24] | Cheaper than explicit MD [24] | Requires careful top-down training with experimental data [24]. |

GNN Architecture Trade-offs: Accuracy vs. Speed

The choice of GNN architecture complexity directly impacts the balance between prediction accuracy and simulation speed. The following table, based on experiments with hidden layer size variation, illustrates this trade-off [21] [22].

| Hidden Layer Size | Model Parameter Count | Relative Simulation Speed | Prediction Accuracy (on Test Set) |

|---|---|---|---|

| 128 | Highest | Slowest | Highest |

| 96 | High | Slow | High |

| 64 | Medium | Medium | Medium |

| 48 | Lowest | Fastest | Acceptable (Slight degradation) |

Experimental Protocols and Workflows

Workflow for Developing a Transferable GNN Implicit Solvent Model

The following diagram outlines the comprehensive workflow for developing and validating a general GNN-based implicit solvation model.

Protocol: Free Energy Calculation with LSNN

For researchers requiring accurate absolute solvation free energies, the following detailed protocol using the λ-Solvation Neural Network (LSNN) methodology is recommended [23].

Objective: To calculate the hydration free energy (ΔGsolv) of a small organic molecule using a GNN implicit solvent model with accuracy comparable to explicit solvent alchemical simulations.

Principles: Standard ML potentials trained only by force-matching predict energies up to an arbitrary constant, making them unsuitable for absolute free energy calculations. The LSNN approach overcomes this by extending the training to include derivatives with respect to alchemical coupling parameters, which define a physically meaningful energy zero-point [23].

Step-by-Step Procedure:

- Model Architecture Setup:

- Begin with a GNN-implicit solvent model that uses a physically motivated functional form, such as a modified GBSA model where a GNN predicts scaling factors for the polar and non-polar terms [23].

- The non-polar solvation contribution is calculated as:

- ΔGnon-polar = Σi=1N σ(φ(R, λelecq, λstericsra, r, Rcutoff)) γ λsterics(ri + rw)2

- Here,

σis the sigmoid function,φis the GNN, andλ_elecandλ_stericare the alchemical coupling parameters [23].

Multi-Term Loss Function Training:

- Train the model using a composite loss function that goes beyond simple force-matching:

ℒ = w_F * (⟨∂U_solv/∂r_i⟩ - ∂f/∂r_i)^2 + w_elec * (⟨∂U_solv/∂λ_elec⟩ - ∂f/∂λ_elec)^2 + w_steric * (⟨∂U_solv/∂λ_steric⟩ - ∂f/∂λ_steric)^2

- The weights

w_F,w_elec, andw_stericare empirically tuned [23]. This ensures the model learns the correct energy surface, not just its gradients.

- Train the model using a composite loss function that goes beyond simple force-matching:

Free Energy Calculation:

- With the trained LSNN model, the solvation free energy can now be computed as a physical, comparable value.

- Perform free energy simulations or analysis (e.g., using thermodynamic perturbation or integration) leveraging the accurate potential of mean force (PMF) provided by the model [23].

Validation:

- Validate the predicted ΔGsolv values against experimental hydration free energy databases such as FreeSolv [24].

- The model should achieve a Mean Absolute Error (MAE) close to the average experimental uncertainty, significantly outperforming standard explicit solvent force fields like GAFF or CGenFF, which are known to systematically overestimate hydration free energies [24].
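The validation step reduces to a few summary statistics over paired predicted and experimental values. The sketch below assumes the two lists are already aligned molecule by molecule and in the same units; the numbers are made-up placeholders, not FreeSolv entries.

```python
import numpy as np


def hydration_error_stats(predicted, experimental):
    """Return MAE, RMSE, and mean signed error (predicted minus experimental)."""
    diff = np.asarray(predicted, dtype=float) - np.asarray(experimental, dtype=float)
    return {
        "mae": float(np.mean(np.abs(diff))),
        "rmse": float(np.sqrt(np.mean(diff ** 2))),
        "mean_signed_error": float(np.mean(diff)),  # nonzero value hints at systematic bias
    }


# Placeholder values in kcal/mol, for illustration only.
stats = hydration_error_stats([-1.0, -3.0, -5.0], [-2.0, -3.0, -3.0])
print(stats)
```

Reporting the mean signed error alongside the MAE makes a systematic over- or underestimation visible, which the MAE alone would hide.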

The Scientist's Toolkit: Essential Research Reagents

This section details the key computational "reagents" required to successfully implement and experiment with GNN-based implicit solvation models.

Key Research Reagent Solutions

| Item Name | Function / Purpose | Technical Specifications & Notes |
|---|---|---|
| Reference Datasets | Provides target data for training and benchmarking. | FreeSolv Database: Curated experimental hydration free energies for small neutral molecules [24]. CombiSolv / MNSol Database: Large collections of experimental solvation free energies for diverse solute-solvent pairs [25]. |
| Base Implicit Solvent Model | Serves as the physically-motivated foundation for the GNN correction. | GB-Neck2 (GBSA): A generalized Born model providing a good starting point for long-range electrostatic effects. The GNN learns a local correction to this continuum [21] [22]. |
| GNN Architecture | The core machine learning component that learns the solvation potential. | Invariant GNN: A 3-layer graph neural network that operates on molecular graphs [21] [22]. MMHNN: A more advanced architecture using hypergraphs and prior knowledge to efficiently model solute-solvent interactions [25]. |
| Training Data (MAFs) | The fundamental input for standard force-matching training. | Mean Applied Forces (MAFs): Reference forces obtained by averaging over explicit solvent configurations. Represents the mean force exerted by the solvent on the solute [21] [23]. |
| Alchemical Coupling Parameters (λ) | Enables accurate free energy calculation in advanced models like LSNN. | λ_elec and λ_steric: Scalars that couple/decouple the electrostatic and van der Waals interactions between the solute and solvent. Their derivatives are used in the loss function to pin the absolute energy [23]. |
| Molecular Graph Featurizer | Converts molecular structures into machine-readable graph inputs. | RDKit Package: A standard cheminformatics tool used to convert SMILES strings into molecular graphs and compute initial atom and bond features (e.g., atom type, hybridization, partial charge) [25]. |

Hybrid QM/MM and Multiscale Approaches for Chemically Reactive Systems

Frequently Asked Questions (FAQs)

Q1: What is the primary advantage of using a QM/MM approach over a full QM treatment for studying reactions in biomolecular systems?

A1: The key advantage is computational efficiency. Quantum mechanical (QM) methods are necessary for describing chemical reactions but are computationally prohibitive for systems larger than a few hundred atoms. Molecular mechanical (MM) methods efficiently handle the size and complexity of biopolymers (up to ~100,000 atoms). QM/MM combines these by applying a QM treatment only to the chemically active region (e.g., substrates, co-factors) and an MM treatment to the surroundings (e.g., protein, solvent), making reactive biomolecular simulations feasible and accurate [26].

Q2: My QM/MM simulations are not capturing rare reactive events. What strategies can I use to improve sampling?

A2: This is a common limitation as typical QM/MM simulations are often restricted to short timescales (hundreds of picoseconds). You can address this by employing enhanced sampling techniques, which accelerate the observation of rare events by applying controlled biases to the system. Examples include thermodynamic integration and umbrella sampling [27]. Another strategy is multiple time step (MTS) MD, which accelerates integration by performing expensive QM calculations less frequently than cheaper MM force calculations [27].
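The multiple time step idea can be sketched on a toy one-dimensional system. The integrator below follows the impulse (r-RESPA-style) splitting: the expensive force is applied as half kicks at the start and end of each outer step, while the cheap force is integrated with velocity Verlet on a finer inner step. The two harmonic forces, the step sizes, and all function names are assumptions chosen for illustration; in a QM/MM setting the expensive part would be the QM contribution.

```python
import numpy as np

K_CHEAP, K_EXPENSIVE = 100.0, 1.0  # toy spring constants (assumed)


def cheap_force(x):
    """Stand-in for the inexpensive force, evaluated every inner step."""
    return -K_CHEAP * x


def expensive_force(x):
    """Stand-in for the costly force, evaluated once per outer step."""
    return -K_EXPENSIVE * x


def mts_step(x, v, f_exp, mass, dt_outer, n_inner):
    """One outer step of an impulse multiple time step integrator."""
    dt_inner = dt_outer / n_inner
    v = v + 0.5 * dt_outer * f_exp / mass        # half kick, expensive force
    f_cheap = cheap_force(x)
    for _ in range(n_inner):                     # velocity Verlet, cheap force
        v = v + 0.5 * dt_inner * f_cheap / mass
        x = x + dt_inner * v
        f_cheap = cheap_force(x)
        v = v + 0.5 * dt_inner * f_cheap / mass
    f_exp = expensive_force(x)                   # single expensive call
    v = v + 0.5 * dt_outer * f_exp / mass        # closing half kick
    return x, v, f_exp


def run(n_steps=1000, dt_outer=0.05, n_inner=10, mass=1.0):
    """Integrate from x=1, v=0 and return the total energy after each step."""
    x, v = 1.0, 0.0
    f_exp = expensive_force(x)
    energies = []
    for _ in range(n_steps + 1):
        energies.append(0.5 * mass * v ** 2 + 0.5 * (K_CHEAP + K_EXPENSIVE) * x ** 2)
        x, v, f_exp = mts_step(x, v, f_exp, mass, dt_outer, n_inner)
    return np.array(energies)


energies = run()
print("relative energy fluctuation:", np.max(np.abs(energies - energies[0])) / energies[0])
```

The outer step must stay well below the period of the fastest motion to avoid resonance artifacts, which is why the gain from this scheme is bounded in practice.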

Q3: How can I model solvent effects more accurately than with a simple continuum model?

A3: While implicit solvent models (e.g., polarisable continuum) are efficient, they fail to capture specific solute-solvent interactions. For higher accuracy, use explicit solvent models. Recent advances using Machine Learning Potentials (MLPs) trained on QM data now allow for the modeling of chemical processes in explicit solvent at a much lower computational cost than direct ab initio MD, providing reaction rates in agreement with experimental data [4].

Q4: How can I assess if my enhanced sampling simulation has converged and produced a reliable ensemble?

A4: Demonstrating convergence is critical. For replica exchange MD (REMD) simulations, you can:

- Analyze replica RMSD profiles to check whether each replica wanders sufficiently through temperature space.

- Perform a detailed ensemble analysis through clustering to see if the populations of major conformational states have stabilized.

- Be aware that for even small RNA systems, convergence in explicit solvent may require microseconds of simulation per replica. Methods like Reservoir REMD (R-REMD) can significantly enhance convergence rates [28].
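One simple way to quantify whether cluster populations have stabilized is to compare the populations computed from the first and second halves of a trajectory. The sketch below assumes that frames have already been assigned integer cluster labels by some clustering tool; the function names are illustrative, and any acceptance threshold for the drift is a choice left to the user.

```python
import numpy as np


def cluster_populations(labels, n_clusters):
    """Fraction of frames assigned to each cluster."""
    counts = np.bincount(np.asarray(labels, dtype=int), minlength=n_clusters)
    return counts / counts.sum()


def half_split_drift(labels, n_clusters):
    """Largest population difference between the two halves of a trajectory."""
    labels = np.asarray(labels, dtype=int)
    half = len(labels) // 2
    first = cluster_populations(labels[:half], n_clusters)
    second = cluster_populations(labels[half:], n_clusters)
    return float(np.max(np.abs(first - second)))


# Toy label sequences: one well mixed, one that switches state halfway.
print(half_split_drift([0, 0, 1, 1, 0, 0, 1, 1], n_clusters=2))
print(half_split_drift([0, 0, 0, 0, 1, 1, 1, 1], n_clusters=2))
```

A small drift is necessary but not sufficient for convergence: states never visited in either half do not show up in this metric, so comparing independent runs remains important.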

Troubleshooting Guides

Poor Sampling and Convergence

| Symptom | Possible Cause | Solution |
|---|---|---|
| Rare chemical reaction events are not observed within simulation time. | QM/MM MD timescales are too short for slow reaction kinetics. | Implement enhanced sampling methods (e.g., umbrella sampling, metadynamics) to bias the system along a reaction coordinate [27]. |
| Inadequate conformational sampling of the protein or solvent. | Rugged energy landscape; insufficient simulation time. | Use Replica Exchange MD (REMD). For better efficiency in explicit solvent, consider Reservoir REMD (R-REMD), which uses a pre-generated high-temperature reservoir to drive convergence [28]. |
| Ensembles from multiple simulations show significant disparities. | Lack of convergence; incomplete sampling of the free energy landscape. | Extend simulation time. Use multiple, independent simulations with different initial velocities. Quantify convergence via cluster population stability and replica RMSD analysis [28]. |

Technical and Numerical Instabilities

| Symptom | Possible Cause | Solution |
|---|---|---|
| Discontinuities or energy spikes at the QM/MM boundary. | Covalent bonds cut the QM/MM boundary, creating unphysical terminal atoms. | Employ a link atom or pseudopotential scheme to saturate the valencies of the QM region atoms at the boundary [27] [29]. |
| Unphysical polarization or electrostatic interactions between subsystems. | Use of simple mechanical embedding without electrostatic polarization. | Switch to electrostatic embedding, where the MM point charges polarize the QM electron density. For higher accuracy, consider advanced polarizable potentials for the MM region [27] [29]. |
| High computational cost limits simulation length. | The QM calculation at each step is too expensive. | Use multiple time step (MTS) algorithms (e.g., RESPA) to compute QM forces less frequently than MM forces. Utilize efficient software frameworks like MiMiC designed for high-performance QM/MM [27]. |

Essential Experimental and Computational Protocols

Protocol for Enhanced Sampling QM/MM MD of a Reaction in Explicit Solvent

This protocol outlines the steps to study a chemical reaction in a biological system using accelerated QM/MM.

System Setup:

- Obtain the initial structure (e.g., from a crystal structure or homology modeling).

- Parameterization: Use a standard MM force field (e.g., AMBER ff14SB for proteins, ff99bsc0 for DNA) for the MM region [27]. For the QM region, select an appropriate method (e.g., DFT) and basis set.

- Solvation: Solvate the entire system in an explicit solvent box (e.g., TIP3P water model) [27] [28].

Equilibration:

- Perform energy minimization to remove bad contacts.

- Carry out classical MM MD equilibration in stages: first with positional restraints on the solute, then with restraints only on the active site, and finally a full unrestrained equilibration to stabilize temperature and density.

Defining the Reaction Coordinate:

- Identify a key geometric parameter that describes the reaction progress (e.g., a bond distance, bond angle, or a combination thereof). This will be used as the collective variable (CV) for enhanced sampling.
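A common "combination" CV for transfer reactions is the difference between the breaking and forming bond distances. The sketch below computes it from a coordinate array; the atom indices and the toy geometry are hypothetical, and the appropriate CV always depends on the reaction being studied.

```python
import numpy as np


def distance(coords, i, j):
    """Euclidean distance between atoms i and j."""
    return float(np.linalg.norm(coords[i] - coords[j]))


def transfer_cv(coords, donor, transferred, acceptor):
    """Breaking-bond length minus forming-bond length.

    Negative values correspond to the reactant side (short donor bond),
    positive values to the product side (short acceptor bond).
    """
    return distance(coords, donor, transferred) - distance(coords, transferred, acceptor)


# Toy collinear geometry (arbitrary length units): donor, transferred atom, acceptor.
coords = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [3.0, 0.0, 0.0]])
print(transfer_cv(coords, donor=0, transferred=1, acceptor=2))
```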

Enhanced Sampling Production Run:

- Run a QM/MM MD simulation with an enhanced sampling method such as umbrella sampling.

- Procedure: Restrain the system at multiple successive windows along the pre-defined CV. In each window, perform a QM/MM MD simulation with a harmonic restraint on the CV to collect its biased probability distribution.

- Use the Weighted Histogram Analysis Method (WHAM) to unbias the data from all windows and combine them into the Potential of Mean Force (PMF), which provides the reaction free energy profile and barrier [27].
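The WHAM step can be sketched for a one-dimensional CV with harmonic restraints of the form 0.5 * k * (x - center)^2. This is a minimal self-consistent iteration written for illustration under those assumptions; the function name, arguments, and convergence settings are not taken from [27], and dedicated WHAM or MBAR tools are normally used in practice.

```python
import numpy as np


def wham_1d(samples, centers, k_spring, bin_edges, kT=1.0, n_iter=5000, tol=1e-8):
    """Combine umbrella windows into a PMF (in units of kT's energy unit).

    samples   : list of 1D arrays, CV values recorded in each window
    centers   : restraint centers, one per window
    k_spring  : force constant of the harmonic bias 0.5 * k * (x - c)^2
    bin_edges : histogram bin edges along the CV
    """
    bin_edges = np.asarray(bin_edges, dtype=float)
    centers = np.asarray(centers, dtype=float)
    x = 0.5 * (bin_edges[:-1] + bin_edges[1:])                   # bin centers
    hist = np.array([np.histogram(s, bins=bin_edges)[0] for s in samples])
    n_window = hist.sum(axis=1).astype(float)                    # samples per window
    total = hist.sum(axis=0).astype(float)                       # counts per bin
    bias = 0.5 * k_spring * (x[None, :] - centers[:, None]) ** 2  # (windows, bins)

    def unbiased_prob(f):
        denom = np.sum(n_window[:, None] * np.exp((f[:, None] - bias) / kT), axis=0)
        return total / denom

    f = np.zeros(len(centers))                                   # window free energies
    for _ in range(n_iter):
        prob = unbiased_prob(f)
        f_new = -kT * np.log(np.sum(prob[None, :] * np.exp(-bias / kT), axis=1))
        f_new -= f_new[0]                                        # fix the arbitrary offset
        converged = np.max(np.abs(f_new - f)) < tol
        f = f_new
        if converged:
            break

    prob = unbiased_prob(f)
    prob = prob / prob.sum()
    with np.errstate(divide="ignore"):
        pmf = -kT * np.log(prob)                                 # empty bins become +inf
    return x, pmf - pmf[np.isfinite(pmf)].min()
```

A call such as `x, pmf = wham_1d(samples, centers, k_spring, bin_edges, kT)` returns the bin centers and the PMF shifted so that its minimum is zero. Adjacent windows need overlapping histograms for the iteration to produce a meaningful profile.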

Protocol for Building a Machine Learning Potential for Reactions in Solution

This protocol describes a modern, data-driven approach to achieve long timescales with QM-level accuracy [4].

Initial Data Generation:

- Create two initial training sets:

- Gas Phase/Implicit Solvent Set: Generate configurations of the reacting substrates by randomly displacing atomic coordinates, starting from minima and transition states.

- Explicit Solvent Cluster Set: Create cluster models with the solute surrounded by a shell of explicit solvent molecules. The shell radius should be at least the cut-off radius planned for the MLP.

Active Learning Loop:

- Train Initial MLP: Use the initial data sets (with reference QM energies and forces) to train the first version of the MLP.

- MD Propagation and Selection: Run short MD simulations using the current MLP. Use descriptor-based selectors (e.g., Smooth Overlap of Atomic Positions - SOAP) to identify new configurations that are poorly represented in the existing training set.

- Retrain: Compute QM-level energies and forces for the newly selected structures and add them to the training set. Retrain the MLP. Iterate this process until the MLP is stable and accurate.
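The train, explore, select, and retrain cycle can be sketched on a one-dimensional toy problem. Everything here is a simplified stand-in assumed for illustration: `reference_energy` replaces the QM calculation, a polynomial fit replaces the MLP, random candidate points replace MLP-driven MD, and a nearest-neighbour distance in the coordinate replaces a SOAP-based selector.

```python
import numpy as np


def reference_energy(x):
    """Hypothetical stand-in for an expensive QM single-point calculation."""
    x = np.asarray(x, dtype=float)
    return np.sin(2.0 * x) + 0.5 * x ** 2


def fit_surrogate(x_train, y_train, max_degree=8):
    """Toy surrogate model: least-squares polynomial fit to the labelled data."""
    degree = min(max_degree, len(x_train) - 1)
    return np.polynomial.Polynomial.fit(x_train, y_train, degree)


def select_novel(candidates, x_train, min_dist):
    """Keep candidates farther than min_dist from every known configuration."""
    known = list(x_train)
    picked = []
    for c in candidates:
        if min(abs(c - k) for k in known) > min_dist:
            picked.append(c)
            known.append(c)
    return np.array(picked, dtype=float)


def active_learning(x_init, n_rounds=10, min_dist=0.1, tol=1e-2, seed=0):
    """Iterate: fit, propose candidates, select novel ones, label, retrain."""
    rng = np.random.default_rng(seed)
    x_train = np.asarray(x_init, dtype=float)
    y_train = reference_energy(x_train)
    for _ in range(n_rounds):
        model = fit_surrogate(x_train, y_train)
        candidates = rng.uniform(-2.0, 2.0, size=50)   # stand-in for MLP-driven MD
        new_x = select_novel(candidates, x_train, min_dist)
        if new_x.size == 0:                            # nothing new to learn from
            break
        new_y = reference_energy(new_x)                # "QM" labels for new points
        worst = np.max(np.abs(model(new_x) - new_y))   # error on unseen points
        x_train = np.concatenate([x_train, new_x])
        y_train = np.concatenate([y_train, new_y])
        if worst < tol:                                # model already accurate there
            break
    return fit_surrogate(x_train, y_train), x_train


model, x_train = active_learning(np.linspace(0.8, 1.2, 8))
print("training set size:", len(x_train))
```

The error of the current model on freshly labelled configurations doubles as a stopping criterion, mirroring the instruction to iterate until the potential is stable and accurate.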

Production Simulation:

- Use the final, validated MLP to run extensive MD simulations in explicit solvent (with periodic boundary conditions) to observe reactive events, compute reaction rates, and analyze mechanisms.

The workflow for this protocol is summarized in the following diagram:

The Scientist's Toolkit: Key Research Reagents and Computational Solutions

The following table details essential computational tools and their functions in multiscale modeling.

| Item Name | Function / Application | Key Context |
|---|---|---|
| MiMiC Framework [27] | A software framework for efficient QM/MM MD on HPC systems. | Enables flexible and high-performance simulations by coupling different QM and MM programs without performance loss. |
| Enhanced Sampling Methods (e.g., Umbrella Sampling, Metadynamics) [27] | Accelerates the observation of rare events (e.g., chemical reactions, large conformational changes) by biasing simulation along reaction coordinates. | Crucial for overcoming the timescale limitation of standard QM/MM MD to achieve converged free energy estimates. |
| Multiple Time Step (MTS) Algorithms [27] | Speeds up simulation by calculating expensive QM forces less frequently than cheap MM forces. | Addresses the high computational cost of QM calculations, allowing for longer or faster simulations. |
| Machine Learning Potentials (MLPs) (e.g., ACE, GAP, NequIP) [4] | Acts as a fast and accurate surrogate for the QM potential energy surface. | Allows for extensive sampling of reactions in explicit solvent at near-QM accuracy but at a fraction of the computational cost. |
| Reservoir Replica Exchange MD (R-REMD) [28] | An enhanced sampling variant where a pre-computed reservoir of high-temperature structures accelerates convergence. | A cost-effective and reliable method for generating well-converged conformational ensembles in explicit solvent, vital for force field validation. |
| Electrostatic Embedding [27] [29] | A QM/MM electrostatic scheme where MM point charges polarize the QM region's electron density. | Provides a more physically accurate description of the environment's effect on the reactive site compared to mechanical embedding. |

Key Challenges & Fundamental Concepts

What are the principal challenges when targeting Intrinsically Disordered Proteins (IDPs) with small molecules?

IDPs lack stable three-dimensional structures and defined binding pockets, which presents several challenges for drug discovery:

- Lack of Stable Binding Sites: Unlike folded proteins, IDPs populate a conformational ensemble of rapidly interconverting structures, making it difficult to identify a single, stable binding site for small molecules [30].

- Dynamic Binding Mechanisms: Small molecules often bind through a "dynamic shuttling" mechanism, transitioning among networks of spatially proximal interactions without significantly altering the IDP's conformational ensemble. This makes affinity and specificity difficult to predict and optimize [30].

- System-Dependent Effects: Small molecules can affect IDP conformational ensembles in various ways—causing entropic expansion, population shifts, compaction, or even oligomerization—requiring system-specific characterization [30].