Advanced Strategies for Refining Force Field Parameters Against Experimental Data

Abstract

Accurate force fields are the cornerstone of reliable molecular dynamics simulations in computational drug discovery and materials science. This article is a guide for researchers to modern strategies for refining molecular mechanics force field parameters against experimental data. It covers foundational principles, from the role of force fields in simulating biomolecular systems to the inherent limitations of models trained only on quantum mechanics (QM) data. It then describes methodological frameworks for parameter optimization, including Bayesian inference and machine learning (ML) techniques. Finally, it addresses practical challenges such as handling data uncertainty and presents validation protocols for assessing and comparing refined force fields against both QM benchmarks and key experimental observables.

The Foundation of Force Field Refinement: Bridging Theory and Experiment

The Critical Role of Force Fields in Molecular Dynamics Simulations

Frequently Asked Questions (FAQs)

1.1 What are the most significant challenges in refining force fields against experimental data? Refining force fields involves several key challenges: achieving a balance between protein-protein and protein-solvent interactions to correctly model both folded and disordered proteins, dealing with sparse or noisy experimental data, and managing the high dimensionality and interdependence of force field parameters. Furthermore, integrating multiple data sources, such as various quantum chemical calculations and diverse experimental observables, without introducing conflicting parameter adjustments is non-trivial [1] [2] [3].

1.2 Which experimental observables are most valuable for force field validation and refinement? A combination of experimental data provides the most robust validation. Key observables include:

- NMR Data: Scalar coupling constants (ⁿJ_HH, ⁿJ_CH) and chemical shifts report in detail on local conformations and dynamics [4] [1].

- Scattering Data: Small-Angle X-Ray Scattering (SAXS) profiles report on global chain dimensions and are crucial for validating the ensembles of intrinsically disordered proteins [1] [2].

- Crystal Structure Data: Comparing simulated structures to aggregated crystal structure data helps validate backbone dihedrals and side-chain torsions in folded proteins [5].

- Mechanical Properties: For materials science applications, elastic constants and lattice parameters are fundamental targets for refinement [6].

1.3 My simulation of an intrinsically disordered protein (IDP) appears too compact. How can I address this? Over-compaction of IDPs is a well-known limitation of many force fields. Modern solutions use force fields specifically refined to strengthen protein-water interactions. For example, the DES-Amber and ff99SBws force fields incorporate adjustments, such as scaled Lennard-Jones parameters, that improve hydration and reduce excessive intramolecular attraction, yielding more expanded IDP ensembles that agree better with experiment [1] [2].

1.4 How can I refine force field parameters for a novel molecule, like a unique bacterial lipid? A systematic, quantum mechanics (QM)-based parameterization strategy is required. This involves:

- Atom Typing: Define new atom types based on the unique chemical environments of the molecule.

- Charge Derivation: Calculate partial atomic charges using methods like Restrained Electrostatic Potential (RESP) fitting based on QM calculations.

- Torsion Optimization: Optimize dihedral parameters by minimizing the difference between QM-calculated and classically computed torsion energies for key rotatable bonds [7].
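As a minimal sketch of the torsion-optimization step, assume the dihedral term has the cosine-series form E(φ) = Σ_n (V_n/2)(1 + cos(nφ)) with all phases fixed at zero, and that the scan is uniformly spaced over a full rotation. Under those assumptions the cosine terms are orthogonal on the grid, so projecting the QM-minus-MM residual onto each cosine gives the least-squares amplitudes. The function name and the synthetic scan data are hypothetical; real fits usually also optimize phases and handle non-uniform scans with a general least-squares solver.

```python
import math

def fit_torsion_amplitudes(angles_deg, residual_energy, max_n=3):
    """Return {n: V_n} for E(phi) = sum_n (V_n / 2) * (1 + cos(n * phi)).

    Assumes zero phases and angles uniformly spaced over 360 degrees, so the
    cosine terms are orthogonal and projection equals a least-squares fit.
    """
    n_points = len(angles_deg)
    if n_points <= 2 * max_n:
        raise ValueError("need more than 2 * max_n scan points")
    amplitudes = {}
    for n in range(1, max_n + 1):
        projection = sum(
            e * math.cos(n * math.radians(a))
            for a, e in zip(angles_deg, residual_energy)
        )
        # The coefficient of cos(n * phi) is V_n / 2, hence the factor 4 / N.
        amplitudes[n] = 4.0 * projection / n_points
    return amplitudes

# Synthetic "QM minus MM" residual built from known amplitudes (kcal/mol).
true_v = {1: 0.8, 2: -1.5, 3: 2.4}
angles = [15.0 * i for i in range(24)]  # 0, 15, ..., 345 degrees
residual = [
    sum(0.5 * v * (1.0 + math.cos(n * math.radians(a))) for n, v in true_v.items())
    for a in angles
]
fitted = fit_torsion_amplitudes(angles, residual)
```

Because the residual here is generated from the same functional form, the fitted amplitudes recover the input values; with real QM data the leftover misfit indicates how well the chosen series can represent the profile.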

1.5 Can machine learning be integrated with experimental data for force field development? Yes, this is a cutting-edge approach. Machine learning potentials can be trained not only on quantum chemical data (e.g., from Density Functional Theory) but also directly on experimental observables. A fused data learning strategy alternates training between DFT-calculated energies/forces and experimental properties (like elastic constants), resulting in a more accurate and constrained model that respects both first-principles physics and real-world measurements [6].
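The alternating scheme can be illustrated with a deliberately tiny toy problem. The sketch below is not the method of [6]: it assumes a one-parameter harmonic "model" whose equilibrium distance r0 is pulled toward 1.00 by invented "DFT" energies and toward 1.02 by an invented "measured" value, and it alternates one gradient step on each loss. All names, data, and learning rates are hypothetical.

```python
def dft_loss(r0, k=100.0):
    """Mean squared energy error against toy 'DFT' energies generated with r0 = 1.00."""
    distances = [0.90, 0.95, 1.00, 1.05, 1.10]
    return sum(
        (0.5 * k * (r - r0) ** 2 - 0.5 * k * (r - 1.00) ** 2) ** 2 for r in distances
    ) / len(distances)

def exp_loss(r0, r0_measured=1.02):
    """Squared error against a toy 'measured' equilibrium distance."""
    return (r0 - r0_measured) ** 2

def grad(loss, x, h=1e-6):
    """Central finite-difference derivative of a one-parameter loss."""
    return (loss(x + h) - loss(x - h)) / (2.0 * h)

r0 = 0.95  # initial guess
for epoch in range(200):
    # Alternate: one step on the DFT loss, then one step on the experimental loss.
    r0 -= 0.002 * grad(dft_loss, r0)
    r0 -= 0.1 * grad(exp_loss, r0)
```

The parameter settles between the two targets, which is the qualitative behaviour the fused strategy aims for: neither data source is fitted exactly, but both constrain the result.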

Troubleshooting Guides

Problem: Inaccurate Torsional Energy Profiles in Small Molecules

Issue: Simulations of drug-like small molecules fail to reproduce the correct rotational energy barriers around flexible bonds, leading to errors in conformational populations.

Solution: Implement a data-driven parameterization approach using high-quality quantum mechanics (QM) data and machine learning.

Investigation & Resolution Steps:

- Verify the Issue: Compare the torsional energy profile from your simulation against a high-level QM calculation for the same molecule or molecular fragment.

- Adopt a Modern Force Field: Consider using a recently developed, data-driven force field like ByteFF. This force field was trained on a massive dataset of 3.2 million torsion profiles and 2.4 million optimized molecular fragments at the B3LYP-D3(BJ)/DZVP level of theory, providing expansive coverage of chemical space [8].

- Utilize a Specialized ML Framework: For systems where no pre-parameterized force field exists, use a tool like the Alexandria Chemistry Toolkit (ACT). This software employs evolutionary machine learning (genetic algorithms combined with Monte Carlo) to discover optimal force field parameters that best reproduce the QM training data [9].

Preventative Measures:

- When working with novel chemical space, avoid relying solely on traditional look-up table-based force fields.

- Validate torsional profiles for a representative subset of molecules before embarking on large-scale production simulations.

Problem: Incorrect Structural Ensembles for Intrinsically Disordered Proteins (IDPs)

Issue: Simulated IDP ensembles are overly compact and do not match experimental data from techniques like SAXS or NMR.

Solution: Re-balance the force field by strengthening protein-water interactions or using refined torsional parameters.

Investigation & Resolution Steps:

- Quantify the Discrepancy: Calculate the radius of gyration (Rg) from your simulation and compare it to the Rg derived from experimental SAXS data.

- Switch to a Balanced Force Field: Select a force field that has been explicitly refined for IDPs, such as DES-Amber or ff99SBws [1] [2].

- Validate with Multiple Observables: Ensure the new force field not only corrects the Rg but also agrees with NMR data (e.g., scalar couplings, chemical shifts, and relaxation times) to confirm local structure is also correct [1].
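The first step above, quantifying the discrepancy in Rg, can be sketched as follows. The code assumes coordinates are available as plain tuples per frame; the function names are hypothetical. It also assumes that the quantity comparable to a Guinier-derived Rg is the root-mean-square Rg over the ensemble, which is worth checking against how the experimental value was obtained.

```python
import math

def radius_of_gyration(coords, masses):
    """Mass-weighted radius of gyration for one frame.

    coords: list of (x, y, z) tuples; masses: list of atomic masses.
    """
    total_mass = sum(masses)
    center = [
        sum(m * c[axis] for m, c in zip(masses, coords)) / total_mass
        for axis in range(3)
    ]
    weighted_sq = sum(
        m * sum((c[axis] - center[axis]) ** 2 for axis in range(3))
        for m, c in zip(masses, coords)
    )
    return math.sqrt(weighted_sq / total_mass)

def ensemble_rg(frames, masses):
    """Root-mean-square Rg over all frames of a trajectory."""
    squares = [radius_of_gyration(frame, masses) ** 2 for frame in frames]
    return math.sqrt(sum(squares) / len(squares))

# Two-atom toy frames with separations 2 and 4 (arbitrary length units).
frames = [[(0.0, 0.0, 0.0), (2.0, 0.0, 0.0)], [(0.0, 0.0, 0.0), (4.0, 0.0, 0.0)]]
rg_sim = ensemble_rg(frames, masses=[1.0, 1.0])
```

The resulting value can then be compared with the experimental Rg and its reported uncertainty to decide whether the ensemble is too compact.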

Preventative Measures:

- Always consult recent literature for force field benchmarks on systems similar to yours, especially when simulating disordered states.

- Use a multi-observable validation strategy against NMR and SAXS data.

Problem: Force Field Inaccuracy in Specific Materials (e.g., Moiré Structures or Titanium)

Issue: For complex materials, universal force fields or those trained on general datasets fail to reproduce key material properties with the required meV-level accuracy.

Solution: Construct a highly accurate, system-specific Machine Learning Force Field (MLFF).

Investigation & Resolution Steps:

- Identify the Accuracy Gap: Benchmark your current force field or a universal MLFF against DFT-calculated forces and energies for a test set of relevant structures.

- Use a Specialized Workflow: For moiré structures (e.g., twisted bilayers), employ a tool like DPmoire. This software automates the generation of training data from shifted non-twisted bilayers and subsequent MLFF training, ensuring high accuracy for the target system [10].

- Implement Fused Data Learning: For materials like titanium, train the MLFF concurrently on both DFT data (energies, forces) and experimental data (elastic constants, lattice parameters). This hybrid approach corrects for inherent DFT inaccuracies and produces a model that satisfies all target objectives [6].

- Validate on Target Properties: Rigorously test the final MLFF on its ability to reproduce the properties it was designed for, such as electronic band structures in relaxed moiré systems [10] or temperature-dependent mechanical properties [6].

Table: Key Experimental Observables for Force Field Validation

| Observable | System Type | Information Provided | Common Experimental Sources |
|---|---|---|---|
| Scalar Couplings (ⁿJ) | Biomolecules, Organic Molecules | Dihedral angles, conformational preferences, stereochemistry | NMR Spectroscopy [4] |
| Chemical Shifts | Biomolecules, Organic Molecules | Local electronic environment, secondary structure | NMR Spectroscopy [4] |
| Radius of Gyration | Intrinsically Disordered Proteins | Global chain dimensions and compaction | SAXS [1] [2] |
| Elastic Constants | Materials | Mechanical response to stress | Ultrasonic measurements, mechanical testing [6] |
| Lattice Parameters | Crystalline Materials | Unit cell dimensions | X-ray Diffraction (XRD) [6] |
| SAXS Form Factors | Proteins, Complex Structures | Solution-state structure and shape | SAXS [5] |

Problem: Parameterizing Force Fields for Novel Bacterial Membrane Lipids

Issue: Standard bio-membrane force fields (e.g., CHARMM36, Lipid21) do not contain parameters for unique bacterial lipids like those in Mycobacterium tuberculosis, leading to inaccurate simulations.

Solution: Develop dedicated force field parameters using a modular QM-based approach.

Investigation & Resolution Steps:

- Define New Atom Types: Create specialized atom types (e.g., cX for cyclopropane carbons, cT for lipid tail carbons) to capture the unique chemical features of the lipid [7].

- Calculate Partial Charges:

- Divide the large lipid molecule into manageable segments.

- For each segment, perform geometry optimization and RESP charge fitting at a high QM level (e.g., B3LYP/def2-TZVP).

- Average charges over multiple conformations and reassemble the total molecular charge by integrating segments and removing capping groups [7].

- Optimize Torsion Parameters:

- Further subdivide the molecule into smaller elements containing the rotatable bonds.

- Optimize the torsion parameters (Vn, n, γ) to minimize the difference between the QM-calculated and classically computed potential energy for that torsion [7].

- Validate with Biophysical Data: Run simulations with the new parameters (e.g., BLipidFF) and validate against available experimental data, such as membrane rigidity and lateral diffusion coefficients measured by Fluorescence Recovery After Photobleaching (FRAP) [7].
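The charge-averaging step in the procedure above can be sketched in a few lines. This is an illustration under stated assumptions, not the BLipidFF protocol: it assumes per-atom charges for each conformer are already available in the same atom order, and it spreads any residual charge uniformly over all atoms, which is only one of several possible redistribution choices. The function name and the numbers are hypothetical.

```python
def average_and_neutralize(conformer_charges, target_total=0.0):
    """Average per-atom charges over conformers, then shift to hit the target total.

    conformer_charges: list of per-conformer charge lists (same atom order).
    The residual is spread uniformly over all atoms.
    """
    n_conf = len(conformer_charges)
    n_atoms = len(conformer_charges[0])
    mean = [
        sum(conf[i] for conf in conformer_charges) / n_conf for i in range(n_atoms)
    ]
    correction = (target_total - sum(mean)) / n_atoms
    return [q + correction for q in mean]

# Three hypothetical conformers of a four-atom segment (charges in e).
conformers = [
    [-0.52, 0.21, 0.18, 0.15],
    [-0.48, 0.19, 0.17, 0.14],
    [-0.50, 0.20, 0.16, 0.13],
]
charges = average_and_neutralize(conformers, target_total=0.0)
```

In a segment-based workflow the target total for each segment would be chosen so that the reassembled molecule carries the correct net charge once capping groups are removed.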

Preventative Measures:

- For specialized systems, anticipate the need for bespoke parameterization.

- Establish a standardized QM calculation and validation protocol to ensure parameter consistency and quality.

Research Reagent Solutions

Table: Essential Tools and Resources for Force Field Refinement

| Reagent / Tool | Type | Primary Function | Example Use Case |
|---|---|---|---|
| BICePs Algorithm [3] | Software Algorithm | Bayesian inference for reconciling simulation ensembles with sparse/noisy experimental data. | Robust force field refinement against ensemble-averaged measurements like NMR J-couplings. |
| Alexandria Chemistry Toolkit (ACT) [9] | Software | Uses evolutionary machine learning (genetic algorithms) to optimize force field parameters from scratch. | Automated parameter discovery for organic molecules against QM training data. |
| DPmoire [10] | Software | Automated construction of machine learning force fields for twisted moiré material systems. | Generating accurate MLFFs for relaxed structures of twisted bilayer materials like WSe₂. |
| BLipidFF [7] | Force Field | Specialized all-atom force field for bacterial membrane lipids. | Simulating the unique structure and dynamics of the Mycobacterium tuberculosis outer membrane. |
| ByteFF [8] | Force Field | Data-driven, Amber-compatible force field for drug-like molecules. | Achieving accurate torsional profiles and conformational energies across expansive chemical space. |
| DES-Amber [1] | Force Field | Refined protein force field for balanced simulation of folded proteins and IDPs. | Simulating an IDP that undergoes a coil-helix transition without over-stabilizing compact states. |
| Validated NMR Dataset [4] | Experimental Dataset | Curated collection of proton-carbon and proton-proton scalar coupling constants for organic molecules. | Benchmarking computational methods for predicting NMR parameters or validating force fields. |

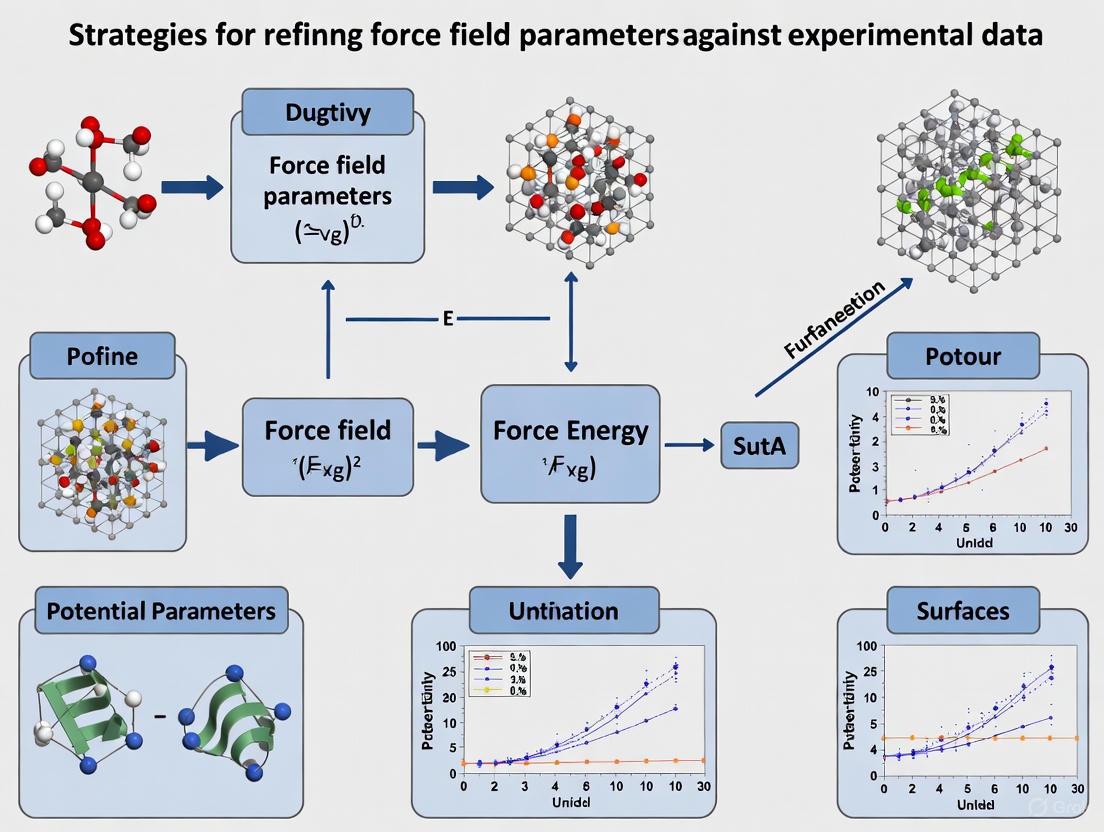

Workflow Diagrams

[Diagram: Force Field Refinement Workflow]

[Diagram: ML Potential Training with Fused Data]

Limitations of Purely QM-Trained Force Fields and the Experimental Gap

Frequently Asked Questions (FAQs)

FAQ 1: Why does my force field, trained only on quantum mechanics (QM) data, produce inaccurate macroscopic properties like density or viscosity in molecular dynamics (MD) simulations?

- Answer: This discrepancy arises because the limited functional forms of classical force fields are unable to fully capture the complex quantum mechanical nature of interatomic interactions. Purely QM-trained force fields often lack the error cancellation that occurs when parameters are refined against experimental data. Consequently, small errors in microscopic interactions can accumulate during MD simulations, leading to significant inaccuracies in predicted bulk properties [11].

FAQ 2: What is the "transferability" problem in machine-learned force fields (MLFFs)?

- Answer: Transferability refers to a force field's ability to make accurate predictions for molecular configurations or systems that were not included in its training data. MLFFs trained on QM data often struggle with this. They can be highly accurate within their training domain but fail when simulating different states of matter (e.g., liquids vs. crystals), chemical environments, or temperatures outside their training set. This is because they may not have learned the underlying physical laws that govern these new scenarios [12] [13].

FAQ 3: My force field fails to simulate bond formation and breaking correctly. What is the limitation?

- Answer: Standard classical force fields use fixed bonding terms (bonds, angles, dihedrals) and cannot dynamically model chemical reactions where bonds break and form. While reactive force fields (like ReaxFF) and some advanced MLFFs are designed for this purpose, they are more complex and computationally expensive to parameterize and train. A purely QM-trained non-reactive force field inherently lacks this capability [14].

FAQ 4: Why do my simulations of magnetic materials using a standard non-magnetic force field yield incorrect material properties?

- Answer: The properties of magnetic materials are governed by spin-dependent interactions. A standard force field trained on non-magnetic QM data completely neglects these spin degrees of freedom. This can lead to major inaccuracies in predicting key properties. For instance, a non-magnetic potential may correctly predict dynamic properties like viscosity at high temperatures where magnetic moments self-average, but it will fail to capture static properties like equilibrium lattice parameters, which are highly sensitive to magnetic states [12].

Troubleshooting Guides

Issue: Inaccurate Bulk Liquid Properties

You have trained a force field on high-level QM data of isolated molecules and dimers, but MD simulations of the bulk liquid yield incorrect density, enthalpy of vaporization, or transport properties.

Root Cause: The force field's functional form may be too simplistic to capture many-body effects (e.g., polarization, charge transfer) critical in the condensed phase. The parameters derived from gas-phase QM calculations do not account for the complex many-body environment of a liquid [11].

Solution:

- Incorporate Polarizability: Move from a fixed-charge force field to a polarizable one. This allows the charge distribution of each molecule to respond to the electric field of its local environment, which models dielectric properties and interaction energies more accurately [11].

- Refit against Condensed-Phase QM: Use QM data of molecular clusters or periodic condensed-phase systems for training, which better represent the target environment.

- Refine against Experimental Data: As a final step, refine key force field parameters (e.g., Lennard-Jones, charge scaling factors) against a small set of experimental bulk properties like density and enthalpy of vaporization to achieve error cancellation [11].

Issue: Poor Performance for Intrinsically Disordered Proteins (IDPs)

When simulating proteins, your force field incorrectly predicts overly collapsed or overly extended structures for disordered regions, not matching Small-Angle X-Ray Scattering (SAXS) or NMR data.

Root Cause: An imbalance between protein-protein and protein-water interactions. Many force fields over-stabilize protein-protein interactions due to imperfectly tuned van der Waals or electrostatic terms, leading to unnatural collapse of flexible regions [2].

Solution:

- Upscale Protein-Water Interactions: Empirically scale up the Lennard-Jones parameters between protein atoms and water molecules. This strengthens solvation and helps prevent excessive intramolecular association, leading to more realistic chain dimensions for IDPs [2].

- Use Advanced Water Models: Replace simple 3-site water models (e.g., TIP3P) with more accurate 4-site models (e.g., TIP4P/2005, OPC) that have a better description of electrostatic distributions and dispersion forces [2].

- Refine Backbone Torsions: Reparameterize backbone torsional potentials against high-level QM data of peptide fragments or experimental NMR data (e.g., scalar couplings) to correct biases in secondary structure propensities [2].
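The first remedy above, strengthening protein-water Lennard-Jones interactions, can be sketched as follows. The code assumes Lorentz-Berthelot combination rules and assumes that the scaling is applied to the pair well depth ε only; both are assumptions for illustration, and the atom parameters are invented rather than taken from any published force field.

```python
import math

def lj_energy(r, sigma, epsilon):
    """12-6 Lennard-Jones energy at separation r."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)

def scaled_protein_water_lj(sigma_p, eps_p, sigma_w, eps_w, scale=1.10):
    """Lorentz-Berthelot pair parameters with the well depth multiplied by `scale`."""
    sigma_pw = 0.5 * (sigma_p + sigma_w)            # arithmetic mean of sigma
    eps_pw = scale * math.sqrt(eps_p * eps_w)       # scaled geometric mean of epsilon
    return sigma_pw, eps_pw

# Hypothetical parameters (nm, kJ/mol) for a protein carbon and a water oxygen.
sigma_pw, eps_pw = scaled_protein_water_lj(0.34, 0.40, 0.316, 0.90, scale=1.10)

# The LJ minimum sits at r = 2^(1/6) * sigma, where the energy equals -epsilon.
well_depth = -lj_energy(2 ** (1 / 6) * sigma_pw, sigma_pw, eps_pw)
```

In a simulation package, the scaled value would be supplied as an explicit protein-water pair override so that protein-protein and water-water interactions remain unchanged.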

Issue: Lack of Transferability Across Chemical Space

Your MLFF performs well on its training molecules but shows degraded accuracy when applied to new molecules with different functional groups or elements.

Root Cause: The training dataset lacks sufficient chemical diversity, and the model has overfitted to the specific chemical motifs present in the training set. The locality approximation in many MLFFs can also limit their ability to capture long-range interactions [8] [13].

Solution:

- Expand and Diversify Training Data: Actively sample chemical space. Use algorithms to select maximally diverse training molecules or fragments, covering a wide range of functional groups, elements, and charge states relevant to your target application [8].

- Employ Active Learning: Implement an active learning workflow where the MLFF itself identifies gaps in its knowledge during MD simulations. Configurations with high predictive uncertainty are sent for QM calculation and then added to the training set, iteratively improving coverage and robustness [12].

- Adopt a Global Representation: For materials, consider using MLFF models with global representations (like BIGDML) that incorporate the full symmetry of the crystal and do not rely on a strict locality approximation, thus better capturing long-range correlations [13].
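The active-learning selection step described above can be illustrated with a committee of models. This sketch assumes predictive uncertainty is estimated from the spread of force predictions across independently trained models, which is one common proxy rather than the only option. The function name, the data layout, and the threshold are hypothetical.

```python
import math

def select_uncertain(configs, committee_forces, threshold):
    """Return configs whose committee force predictions disagree beyond threshold.

    committee_forces[m][i] is model m's predicted force magnitude for config i.
    """
    selected = []
    n_models = len(committee_forces)
    for i, config in enumerate(configs):
        preds = [committee_forces[m][i] for m in range(n_models)]
        mean = sum(preds) / n_models
        std = math.sqrt(sum((p - mean) ** 2 for p in preds) / n_models)
        if std > threshold:
            selected.append(config)  # candidate for a new QM calculation
    return selected

configs = ["frame_a", "frame_b", "frame_c"]
committee = [
    [1.0, 2.0, 3.0],  # model 1
    [1.0, 2.5, 3.0],  # model 2
    [1.0, 1.5, 3.1],  # model 3
]
to_label = select_uncertain(configs, committee, threshold=0.1)
```

Only the configuration on which the models disagree strongly is forwarded for labelling, which keeps the number of expensive QM calculations small.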

Experimental Protocols for Force Field Refinement

Protocol 1: Refining Force Fields Using SAXS Data for Biomolecules

This protocol details how to use Small-Angle X-Ray Scattering (SAXS) data to adjust force field parameters and achieve accurate conformational ensembles for proteins, especially Intrinsically Disordered Proteins (IDPs).

- Objective: To balance protein-water and protein-protein interactions by matching computed and experimental SAXS profiles.

- Materials & Reagents:

- Protein system of interest (e.g., an IDP sequence).

- Experimental SAXS data for the protein in solution.

- Molecular dynamics simulation software (e.g., GROMACS, AMBER, OpenMM).

- A modern force field (e.g., a variant of AMBER, CHARMM).

- A 4-site water model (e.g., TIP4P/2005, OPC).

- Methodology:

- Initial Simulation: Run multiple, long-timescale MD simulations of the protein in explicit solvent using a baseline force field.

- Compute Theoretical SAXS: From the simulation trajectory, compute the theoretical SAXS intensity profile, I(q), using methods such as CRYSOL or FoXS.

- Compare to Experiment: Calculate the discrepancy (e.g., via a χ² value) between the computed I(q) and the experimental I(q).

- Parameter Adjustment: If the computed chain dimensions are too collapsed compared to experiment, systematically scale up the Lennard-Jones (LJ) interaction parameters between protein atoms and water oxygen atoms by a small factor (e.g., 1.08 to 1.10).

- Iterate: Repeat the preceding four steps with the scaled parameters until the theoretical SAXS profile matches the experimental data within an acceptable error margin [2].
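The comparison step in this protocol can be sketched as a reduced χ² between computed and experimental intensities. The code assumes both profiles are already on the same q grid and that experimental errors σ(q) are available; it fits a single scale factor analytically because measured intensities are often on an arbitrary scale. The function name and the toy data are hypothetical, and dedicated SAXS tools typically also fit hydration-layer or background terms.

```python
def saxs_chi2(i_calc, i_exp, sigma):
    """Reduced chi-square between computed and experimental SAXS intensities.

    A scale factor c is fitted by weighted least squares before comparison.
    Returns (reduced chi-square, fitted scale factor).
    """
    num = sum(ic * ie / s ** 2 for ic, ie, s in zip(i_calc, i_exp, sigma))
    den = sum(ic * ic / s ** 2 for ic, s in zip(i_calc, sigma))
    c = num / den
    chi2 = sum(((c * ic - ie) / s) ** 2 for ic, ie, s in zip(i_calc, i_exp, sigma))
    # One degree of freedom is consumed by the fitted scale factor.
    return chi2 / (len(i_exp) - 1), c

# Toy profiles: the "experiment" is the computed curve on a different scale.
i_calc = [10.0, 8.0, 5.0, 2.0]
i_exp = [20.0, 16.0, 10.0, 4.0]
sigma = [1.0, 1.0, 1.0, 1.0]
chi2_red, scale = saxs_chi2(i_calc, i_exp, sigma)
```

Tracking this value across iterations of the protocol gives a simple stopping criterion: scaling is adjusted until the reduced χ² stops improving or reaches a value consistent with the experimental noise.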

Protocol 2: Optimizing Torsional Parameters Against QM and NMR Data

This protocol uses quantum mechanics (QM) calculations and NMR spectroscopy data to refine torsional dihedral parameters, which are critical for accurate conformational sampling.

- Objective: To correct inaccuracies in backbone and sidechain dihedral potentials to match QM energy scans and experimental NMR observables.

- Materials & Reagents:

- Representative molecular fragments (e.g., dipeptides for backbone, isolated molecules for sidechains).

- Quantum chemistry software (e.g., Gaussian, Q-Chem) for QM calculations.

- Experimental NMR data (e.g., scalar J-couplings, chemical shifts).

- Force field parameterization tools.

- Methodology:

- QM Torsion Scans: For the molecular fragment of interest, perform a relaxed QM potential energy surface scan by rotating the target dihedral angle. The QM method should be a good balance of accuracy and cost (e.g., B3LYP-D3(BJ)/DZVP) [8].

- Compute MM Torsion Profile: Calculate the molecular mechanics (MM) energy for the same set of geometries using the initial force field parameters.

- Parameter Fitting: Adjust the torsional force constants (Vn) and phase shifts (γ) in the force field's dihedral term to minimize the difference between the QM and MM energy profiles.

- NMR Validation: Run MD simulations of a small peptide or protein with the refined parameters. Calculate NMR observables (e.g., J-couplings) from the simulation ensemble and compare them to experimental NMR data. This serves as a secondary, solution-phase validation of the torsional refinement [2].
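The NMR validation step requires converting simulated dihedral angles into couplings. A minimal sketch for the backbone ³J(HN,Hα) coupling is shown below, assuming a Karplus relation J(φ) = A cos²(φ − 60°) + B cos(φ − 60°) + C. The coefficients used here are one commonly quoted set and are an assumption; published parameterizations differ, and the choice contributes to the uncertainty of the comparison.

```python
import math

# Assumed Karplus coefficients in Hz for 3J(HN,HA); other published sets exist.
A, B, C = 6.51, -1.76, 1.60

def karplus_3j_hnha(phi_deg):
    """Karplus estimate of 3J(HN,HA) for a backbone dihedral phi in degrees."""
    theta = math.radians(phi_deg - 60.0)
    return A * math.cos(theta) ** 2 + B * math.cos(theta) + C

def ensemble_j(phi_samples_deg, weights=None):
    """Weighted average of per-frame couplings (not the coupling of the mean angle)."""
    if weights is None:
        weights = [1.0] * len(phi_samples_deg)
    total = sum(weights)
    return sum(w * karplus_3j_hnha(p) for w, p in zip(weights, phi_samples_deg)) / total

# Two toy frames with phi = 60 and -120 degrees, equally weighted.
j_avg = ensemble_j([60.0, -120.0])
```

Averaging the coupling over frames, rather than evaluating it at the average angle, matters because the Karplus curve is strongly nonlinear in φ.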

Comparative Data on Force Field Performance

Table 1: Comparison of Force Field Types and Their Characteristic Limitations

| Force Field Type | Typical Number of Parameters | Key Limitations of Purely QM-Trained Variants | Common Experimental Refinement Targets |
|---|---|---|---|
| Classical Non-Reactive | 10 - 100 [14] | Cannot simulate chemical reactions; limited by fixed functional forms leading to inaccurate many-body interactions [14] [11]. | Density, enthalpy of vaporization, hydration free energy, SAXS profiles for IDPs [2] [11]. |
| Reactive Force Fields | 100+ [14] | High computational cost; parameterization is complex and can be system-specific [14]. | Reaction barriers, crystal structures, elastic constants. |
| Machine Learning (ML) | 100,000+ (network weights) [14] | Can lack transferability; data-inefficient if not properly constrained; may neglect long-range interactions [12] [13]. | Bulk properties (to ensure transferability), spectroscopic data. |
| Polarizable Force Fields | Varies | Parameterization is complex; higher computational cost than fixed-charge FFs; transferability can be uncertain [11]. | Dielectric constants, liquid densities, interaction energies of molecular dimers [11]. |

Table 2: Example Artifacts from the QM-Experiment Gap and Their Solutions

| Observed Artifact / Inaccuracy | Associated System | Proposed Refinement Strategy | Reference |
|---|---|---|---|
| Overly collapsed disordered protein chains | Intrinsically Disordered Proteins (IDPs) | Scale up protein-water van der Waals interactions; use 4-site water models [2]. | [2] |
| Incorrect lattice parameters & equilibrium volumes | Magnetic Fe-Cr-C alloys | Train on spin-polarized DFT data to account for magnetic moments; use transfer learning to reduce cost [12]. | [12] |
| Inaccurate bulk liquid density & transport properties | Organic liquids & electrolytes | Use polarizable force fields trained on energy decomposition analysis (EDA) of molecular dimers [11]. | [11] |
| Lack of transferability to new molecules | Drug-like small molecules | Train graph neural networks on massive, diverse QM datasets of molecular fragments [8]. | [8] |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Computational and Experimental Resources for Force Field Refinement

| Reagent / Resource | Function / Application | Example Use Case |
|---|---|---|
| 4-Site Water Models (TIP4P/2005, OPC) | More accurate representation of water's electrostatic distribution and dispersion compared to 3-site models. | Rebalancing protein-water interactions to obtain correct IDP dimensions and folded protein stability [2]. |
| Energy Decomposition Analysis (ALMO-EDA) | Splits interaction energy into physically meaningful components (electrostatics, polarization, charge transfer). | Training polarizable force fields by fitting individual energy terms to their corresponding QM-derived components [11]. |
| Graph Neural Networks (GNNs) | Machine learning models that predict force field parameters directly from a molecule's 2D graph structure. | Creating transferable force fields that cover expansive chemical space for drug discovery [8] [11]. |
| Active Learning Workflows (e.g., DPGEN) | Automatically identifies and adds the most informative new configurations to a training dataset. | Efficiently building robust and transferable machine-learned force fields for complex materials [12]. |
| Small-Angle X-Ray Scattering (SAXS) | Provides low-resolution structural information about the size and shape of molecules in solution. | Validating and refining the global chain dimensions of IDPs from MD simulations [2]. |
| Symmetry-Enhanced Global Descriptors (BIGDML) | ML representations that use the full symmetry of a crystal, avoiding the locality approximation. | Achieving high data efficiency and accuracy in force fields for periodic materials [13]. |

Workflow Diagram: Bridging the QM-Experiment Gap

[Diagram: Force Field Refinement Workflow]

Force Field Optimization by Imposing Kinetic Constraints with Path Reweighting

Frequently Asked Questions (FAQs)

FAQ 1: What types of experimental data are most critical for refining force field parameters? A comprehensive refinement strategy should target a diverse set of experimental data to ensure the force field is not overfitted to a single property. Key targets include:

- Thermodynamic Properties: Such as pure-liquid densities and vaporization enthalpies, which help calibrate cohesive energies and intermolecular interactions [15].

- Kinetic Properties: Such as rate constants for molecular processes like isomerization or protein-ligand unbinding. These provide crucial constraints on the energy landscape's dynamics [16].

- Structural Properties: Including data from X-ray crystallography and NMR (e.g., J-coupling constants, NOE intensities) to validate the ensemble of conformations a protein or molecule adopts [17].

FAQ 2: My force field reproduces thermodynamic data well but fails on kinetic properties. What should I do? This is a common issue, as many force fields are primarily optimized for structural and thermodynamic accuracy. To address this, consider methods that incorporate kinetic constraints directly into the optimization process. The path reweighting method, for example, allows you to adjust force field parameters so that simulated rate constants match experimental values. This is done by reweighting an ensemble of molecular trajectories from a prior simulation to satisfy the new kinetic constraints with minimal perturbation, following the Maximum Entropy principle [16].
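The maximum-entropy idea behind such reweighting can be shown with a generic single-constraint example. This sketch is not the path reweighting method of [16]: it assumes each stored trajectory contributes one scalar observable f_i (here a hypothetical indicator of whether the path crossed the barrier), starts from uniform weights, and finds weights of the form w_i ∝ exp(−λ f_i) whose average matches a target value. The target must lie strictly between the smallest and largest f_i.

```python
import math

def reweighted(observable, lam):
    """Normalized weights proportional to exp(-lam * f_i), and the weighted mean of f."""
    exponents = [-lam * f for f in observable]
    shift = max(exponents)  # subtract the maximum for numerical stability
    raw = [math.exp(e - shift) for e in exponents]
    z = sum(raw)
    weights = [r / z for r in raw]
    return weights, sum(w * f for w, f in zip(weights, observable))

def maxent_reweight(observable, target, lam_lo=-50.0, lam_hi=50.0, n_iter=200):
    """Find lam by bisection so that the reweighted mean of f equals target."""
    for _ in range(n_iter):
        lam = 0.5 * (lam_lo + lam_hi)
        weights, avg = reweighted(observable, lam)
        if avg > target:
            lam_lo = lam  # mean too high: a larger lam lowers it
        else:
            lam_hi = lam
    return weights, lam

# Ten toy trajectories, two of which are reactive; target a reactive fraction of 0.4.
reactive = [1, 0, 0, 0, 1, 0, 0, 0, 0, 0]
weights, lam = maxent_reweight(reactive, target=0.4)
```

The exponential form is what makes the perturbation minimal in the relative-entropy sense: among all weight sets that satisfy the constraint, it stays closest to the original uniform ensemble.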

FAQ 3: How can I handle uncertainty and potential errors in experimental data during force field optimization? Bayesian inference methods are particularly well-suited for this challenge. Algorithms like Bayesian Inference of Conformational Populations (BICePs) treat experimental uncertainty as a parameter to be sampled. This allows the optimization to automatically account for random and systematic errors in the data. BICePs can use specialized likelihood functions, such as a Student's model, to identify and down-weight outliers, leading to more robust parameterization [3].
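The effect of a heavy-tailed likelihood on outliers can be seen by comparing negative log-likelihoods directly. The sketch below uses the textbook Gaussian and Student's t densities with a scale σ; it is an illustration of the general principle and makes no claim about how BICePs parameterizes its Student's model. The choice of ν = 3 degrees of freedom is arbitrary.

```python
import math

def gaussian_nll(residual, sigma):
    """Negative log-likelihood of a residual under a Gaussian error model."""
    return 0.5 * (residual / sigma) ** 2 + math.log(sigma * math.sqrt(2.0 * math.pi))

def student_t_nll(residual, sigma, nu=3.0):
    """Negative log-likelihood under a scaled Student's t error model."""
    z2 = (residual / sigma) ** 2
    log_norm = (
        math.lgamma(0.5 * (nu + 1.0))
        - math.lgamma(0.5 * nu)
        - 0.5 * math.log(nu * math.pi)
        - math.log(sigma)
    )
    return 0.5 * (nu + 1.0) * math.log1p(z2 / nu) - log_norm

# Penalty added by a 10-sigma outlier relative to a perfect match.
gauss_penalty = gaussian_nll(10.0, 1.0) - gaussian_nll(0.0, 1.0)
student_penalty = student_t_nll(10.0, 1.0) - student_t_nll(0.0, 1.0)
```

The Gaussian penalty grows quadratically with the residual, while the Student's t penalty grows only logarithmically, so a single bad measurement pulls far less on the inferred parameters.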

FAQ 4: What is the risk of optimizing a force field against only a narrow set of experimental properties? Optimizing against a small range of properties carries a high risk of overfitting. A force field might show excellent performance for the targeted properties but perform poorly for others. For example, improvements in agreement with one metric (e.g., residual dipolar couplings) are often offset by a loss of agreement in another (e.g., structural or thermodynamic properties) [17]. A robust force field should be validated against a wide range of non-target properties to ensure its transferability [15].

FAQ 5: Are there automated approaches for force field parameterization against large, diverse chemical datasets? Yes, modern data-driven approaches are tackling this challenge. One method involves using graph neural networks (GNNs) trained on large-scale quantum mechanics (QM) datasets. These models predict bonded and non-bonded parameters for drug-like molecules directly from their chemical structure, ensuring permutational invariance and chemical symmetry. This approach allows for expansive chemical space coverage beyond traditional look-up tables [8].

Troubleshooting Guides

Issue 1: Poor Reproduction of Protein NMR Observables

Problem: Simulations using your refined force field do not match experimental NMR data, such as J-coupling constants or NOE intensities.

Diagnosis and Solution Steps:

| Step | Action | Technical Details |
|---|---|---|
| 1 | Verify Data Quality | Scrutinize the experimental data for sparseness or noise. NMR observables are ensemble-averaged and can have significant uncertainties [17]. |
| 2 | Use Bayesian Reweighting | Employ a tool like BICePs to reweight your simulation ensemble. BICePs samples the full posterior distribution of conformational populations while accounting for experimental uncertainty [3]. |
| 3 | Check for Compensating Errors | Ensure improvement in NMR observables isn't degrading other properties. Validate against a curated set of high-resolution protein structures and other metrics like radius of gyration or SASA [17]. |
| 4 | Refine Parameters | If reweighting is successful but the prior ensemble is poor, use the BICePs score as an objective function to automatically refine the underlying force field parameters against the NMR data [3]. |

Issue 2: Inaccurate Ligand Unbinding or Conformational Transition Rates

Problem: The simulated rates of key molecular processes (e.g., ligand unbinding, isomerization) are orders of magnitude slower or faster than experimental measurements.

Diagnosis and Solution Steps:

| Step | Action | Technical Details |
|---|---|---|
| 1 | Confirm Rare Event Sampling | Use enhanced sampling methods (e.g., metadynamics, umbrella sampling) to ensure adequate sampling of the transition state and free energy barriers. |
| 2 | Apply Path Reweighting | Implement a maximum-caliber path reweighting approach. Generate an ensemble of trajectories with an initial force field, then reweight this ensemble to impose experimental rate constants as constraints [16]. |
| 3 | Identify Sensitive Parameters | The reweighting procedure provides insight into which force field parameters (e.g., specific dihedral angles or non-bonded interactions) are most sensitive to the kinetics, guiding targeted refinement [16]. |
| 4 | Iterate and Validate | Run new simulations with the optimized parameters and re-calculate the rates to validate the improvement. |

Experimental Targets and Protocols

The table below summarizes key experimental properties used for force field refinement and their significance.

Table 1: Key Experimental Targets for Force Field Refinement

| Property Category | Specific Observable | Significance in Force Field Refinement | Common Experimental Source |

|---|---|---|---|

| Thermodynamic | Liquid Density (\(\rho_{liq}\)) | Calibrates overall packing and van der Waals interactions in the condensed phase [15]. | Densimetry |

| Thermodynamic | Vaporization Enthalpy (\(\Delta H_{vap}\)) | Probes the strength of total intermolecular interactions (van der Waals and electrostatic) [15]. | Calorimetry |

| Kinetic | Rate Constants (e.g., isomerization, unbinding) | Directly constrains the heights of free energy barriers and the dynamics on the energy landscape [16]. | Stopped-flow, FRET, single-molecule spectroscopy |

| Structural | J-coupling constants | Provides information on backbone and side-chain dihedral angle distributions [17]. | NMR Spectroscopy |

| Structural | NOE Intensities | Yields interatomic distance restraints for validating three-dimensional structures [17]. | NMR Spectroscopy |

| Structural | Root-mean-square deviation (RMSD) | Measures the average deviation of simulated structures from a reference (e.g., X-ray structure) [17]. | X-ray Crystallography |

Workflow Diagram for Force Field Refinement

The following diagram illustrates a robust, iterative workflow for refining force fields against experimental data, integrating several modern methodologies discussed in the FAQs.

Force Field Refinement Workflow

The Scientist's Toolkit: Key Reagents & Solutions

Table 2: Essential Computational Tools for Force Field Refinement

| Tool / Resource | Function | Example Use Case |

|---|---|---|

| Path Reweighting Algorithm [16] | Adjusts force field parameters to match experimental kinetic data by reweighting trajectories. | Optimizing a protein-ligand force field to reproduce experimental unbinding rate constants. |

| BICePs Software [3] | A Bayesian reweighting algorithm that reconciles simulation ensembles with noisy experimental data. | Determining the most consistent conformational populations from a set of sparse NMR observables. |

| Graph Neural Networks (GNNs) [8] | Machine learning models that predict force field parameters directly from molecular structures. | Rapidly generating parameters for a novel drug-like molecule not covered by traditional force fields. |

| Quantum Mechanics (QM) Datasets [8] | Large-scale datasets of molecular energies, geometries, and Hessians used for training ML models. | Providing high-quality target data for training a GNN to predict accurate intramolecular parameters. |

| CombiFF Workflow [15] | An automated system for calibrating force field parameters against thermodynamic data for compound families. | Systematically optimizing Lennard-Jones parameters for a new class of organic molecules. |

Troubleshooting Guides and FAQs

FAQ: Addressing Data Challenges in Force Field Refinement

1. How can we improve force field accuracy when high-quality experimental data is scarce? Data scarcity is a common challenge in force field parameterization. A modern data-driven approach can address this by generating expansive and highly diverse molecular datasets for training. For instance, one methodology involves creating a dataset of 2.4 million optimized molecular fragment geometries with analytical Hessian matrices and 3.2 million torsion profiles at a consistent quantum mechanics (QM) level of theory (e.g., B3LYP-D3(BJ)/DZVP). A graph neural network (GNN) can then be trained on this dataset to predict all bonded and non-bonded molecular mechanics force field parameters simultaneously across a broad chemical space, achieving state-of-the-art performance [8].

2. What strategies exist for managing noisy or inconsistent historical experimental data? Historical datasets are often inconsistent or poorly annotated, which can mislead computational models. The primary solution is prioritizing data integrity first, algorithms second. Before using historical data for parameter refinement, invest in robust data curation to clean and properly annotate it. Implement FAIR (Findable, Accessible, Interoperable, and Reusable) data principles at the point of data generation, "baking it in by design" rather than treating it as an afterthought. This ensures data is captured with consistent terminology and rich metadata, making it reliable for future use [18].

3. Our force field performs well on folded proteins but fails on disordered polypeptides. How can we achieve better balance? Achieving a balance between modeling folded proteins and intrinsically disordered proteins (IDPs) is a recognized challenge. Imbalances often stem from inadequate protein-water interactions or torsional parameters. Two refined strategies have proven effective:

- Refining Protein-Water Interactions: Upscaling protein-water van der Waals interactions can improve the prediction of IDP chain dimensions while maintaining folded protein stability.

- Targeted Torsional Refinements: Specifically re-parameterizing backbone and side chain torsional parameters (e.g., for residues like glutamine) can correct biases, such as overestimated helicity, and yield more accurate conformational sampling for both folded and disordered states [2].

4. How can we integrate diverse data types (multi-omics, various assays) for more holistic force field validation? Integrating high-dimensional, heterogeneous data is complex. Multi-omics data integration methods offer a framework. Advanced computational techniques, particularly deep generative models like Variational Autoencoders (VAEs), are effective for this task. These methods can handle high-dimensionality and heterogeneity, performing tasks such as data imputation, denoising, and the creation of joint embeddings from different data types, which can be used to validate force fields against a wider set of experimental observables [19].

5. What is the impact of data imbalance on predictive maintenance models for laboratory equipment, and how can it be mitigated? In predictive maintenance, data is often severely imbalanced, with very few failure instances compared to many healthy operation instances. This can cause models to fail to learn failure patterns. A proven solution is the creation of failure horizons. This technique labels the last 'n' observations before a failure event as 'failure,' thereby increasing the number of failure cases in the training set and providing the model with a temporal window to learn pre-failure behavior [20].
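The failure-horizon labeling described above can be sketched in a few lines. This is a minimal illustration, not the implementation from [20]: the function name `label_failure_horizon` and the choice to label the failure observation itself together with the `horizon` observations before it are assumptions made for this example.

```python
from typing import List


def label_failure_horizon(failure_flags: List[int], horizon: int) -> List[int]:
    """Return labels where each failure and the `horizon` observations
    preceding it are marked 1 (assumed convention), all others 0."""
    labels = [0] * len(failure_flags)
    for idx, flag in enumerate(failure_flags):
        if flag:
            start = max(0, idx - horizon)  # clip the window at the series start
            for k in range(start, idx + 1):
                labels[k] = 1
    return labels


# Hypothetical time series: 1 marks an observed failure event.
flags = [0, 0, 0, 0, 0, 1, 0, 0, 0, 1]
labels = label_failure_horizon(flags, horizon=2)
print(labels)
```

A longer horizon adds more positive examples but also labels observations that may not yet show pre-failure behavior, so the window length is a tuning choice.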

Key Experimental Protocols

Protocol 1: Generating a High-Diversity QM Dataset for Force Field Parametrization

This protocol outlines the creation of a large-scale quantum mechanics dataset for training data-driven force fields, as demonstrated in the development of ByteFF [8].

- 1. Molecular Selection: Curate an initial set of drug-like molecules from databases like ChEMBL and ZINC20 based on criteria such as aromatic rings, polar surface area (PSA), and quantitative estimate of drug-likeness (QED).

- 2. Molecular Fragmentation: Cleave the selected molecules into smaller fragments (e.g., <70 atoms) using a graph-expansion algorithm that traverses each bond, angle, and non-ring torsion. This preserves local chemical environments.

- 3. Protonation State Expansion: Expand the fragment library to cover various protonation states within a physiologically relevant pKa range (e.g., 0.0 to 14.0) using tools like Epik.

- 4. QM Calculations: Perform quantum mechanics calculations on the final, deduplicated fragment set.

  - Method: Use the B3LYP-D3(BJ)/DZVP level of theory.

  - Optimization Dataset: Generate optimized geometries for all fragments.

  - Torsion Dataset: Create torsion profiles by scanning dihedral angles.

Table: Key Components of a QM Dataset for Force Field Training

| Component | Description | Scale Example |

|---|---|---|

| Molecular Fragments | Cleaved molecules preserving local chemical environments. | 2.4 million unique fragments [8] |

| Optimized Geometries | Energy-minimized 3D structures with analytical Hessian matrices. | 2.4 million geometries [8] |

| Torsion Profiles | Scans of dihedral angles to capture rotational energy barriers. | 3.2 million profiles [8] |

| QM Theory Level | The specific method and basis set used for calculations (e.g., B3LYP-D3(BJ)/DZVP). | Balanced accuracy and computational cost [8] |

Protocol 2: Refining Force Fields for Balanced Protein and IDP Performance

This protocol describes a strategy to correct imbalances in protein force fields, enabling accurate simulation of both folded proteins and intrinsically disordered polypeptides (IDPs) [2].

- 1. Identify the Source of Imbalance: Run microsecond-scale simulations of benchmark systems (e.g., a stable folded protein like Ubiquitin and a known IDP). Compare results to experimental data from SAXS (chain dimensions) and NMR (secondary structure propensities) to identify deficiencies.

- 2. Select a Refinement Strategy:

  - Option A: Refine Protein-Water Interactions. Selectively upscale the Lennard-Jones parameters between protein atoms and water to strengthen protein-water interactions, which can prevent IDPs from overly collapsing.

  - Option B: Refine Torsional Parameters. Perform targeted re-parameterization of backbone and/or side chain dihedral parameters for specific amino acids (e.g., glutamine) that show biased sampling.

- 3. Validate the Refined Force Field: Conduct extensive simulations on a diverse set of protein systems, including:

  - Folded proteins and protein-protein complexes (to assess stability).

  - A series of IDPs with known experimental data (to assess chain dimensions and secondary structure).

  - Compare all results against experimental observables to ensure balanced improvement.
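Option A can be illustrated with a small sketch of how a scaling factor enters a protein-water Lennard-Jones pair. The geometric-mean combination rule for the well depth, the function names, and every numerical value (including the factor 1.10) are assumptions for illustration only; the source does not specify them, and the actual combination rule depends on the force field family.

```python
import math


def protein_water_epsilon(eps_protein: float, eps_water: float,
                          scale: float = 1.0) -> float:
    """Pair well depth from a geometric-mean rule (assumed), multiplied by a
    protein-water scaling factor; scale > 1 strengthens the attraction."""
    return scale * math.sqrt(eps_protein * eps_water)


def lj_energy(r: float, sigma: float, epsilon: float) -> float:
    """12-6 Lennard-Jones energy at separation r."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)


# Hypothetical per-atom well depths (kJ/mol) and pair sigma (nm).
base_eps = protein_water_epsilon(0.4, 0.9)               # unscaled pair
scaled_eps = protein_water_epsilon(0.4, 0.9, scale=1.10)  # upscaled pair
r_min = 2 ** (1 / 6) * 0.3                                # LJ minimum for sigma = 0.3
print(lj_energy(r_min, 0.3, base_eps), lj_energy(r_min, 0.3, scaled_eps))
```

Because the well depth at the minimum equals the pair epsilon, the scaling factor translates directly into a proportionally deeper protein-water attraction while leaving protein-protein and water-water pairs untouched.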

Table: Validation Metrics for Balanced Protein Force Fields

| System Type | Key Validation Metrics | Experimental Technique |

|---|---|---|

| Folded Proteins | Backbone RMSD, RMSF, stability over µs-timescales | X-ray Crystallography, NMR |

| Protein Complexes | Binding interface stability, association free energy | NMR, SAXS, ITC |

| Intrinsically Disordered Proteins (IDPs) | Radius of gyration (Rg), end-to-end distance, secondary structure propensity | SAXS, smFRET, NMR |
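Two of the IDP metrics in the table, radius of gyration and end-to-end distance, are simple functions of the coordinates of a single frame. The sketch below is a plain-Python illustration with hypothetical function names; in practice these quantities would be averaged over many frames before comparison with SAXS or smFRET data.

```python
import math
from typing import List, Optional, Sequence


def radius_of_gyration(coords: Sequence[Sequence[float]],
                       masses: Optional[List[float]] = None) -> float:
    """Mass-weighted radius of gyration of one frame (equal masses by default)."""
    if masses is None:
        masses = [1.0] * len(coords)
    total = sum(masses)
    # Center of mass, one component per Cartesian dimension.
    com = [sum(m * c[d] for m, c in zip(masses, coords)) / total for d in range(3)]
    sq = sum(m * sum((c[d] - com[d]) ** 2 for d in range(3))
             for m, c in zip(masses, coords))
    return math.sqrt(sq / total)


def end_to_end_distance(coords: Sequence[Sequence[float]]) -> float:
    """Distance between the first and last particle of the chain."""
    return math.dist(coords[0], coords[-1])


# Hypothetical three-bead chain on a line (arbitrary length units).
frame = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
print(radius_of_gyration(frame), end_to_end_distance(frame))
```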

Workflow Visualizations

Data-Driven Force Field Workflow

Force Field Refinement Cycle

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational Tools and Data for Force Field Research

| Item | Function / Description |

|---|---|

| Graph Neural Networks (GNNs) | Symmetry-preserving ML models that predict molecular mechanics force field parameters directly from molecular structure, ensuring permutational and chemical invariance [8]. |

| Generative Adversarial Networks (GANs) | A deep learning framework used to generate synthetic data that mimics real data patterns, helping to overcome data scarcity in model training [20] [21]. |

| Variational Autoencoders (VAEs) | Deep generative models particularly useful for multi-omics data integration, capable of data imputation, denoising, and creating joint latent representations from heterogeneous data sources [19]. |

| FAIR Data Principles | A set of guidelines (Findable, Accessible, Interoperable, Reusable) for data management to ensure data is clean, contextualized, and reusable, which is critical for AI-driven discovery [18] [22]. |

| Application Programming Interfaces (APIs) | Software intermediaries that allow different applications and siloed data sources (e.g., ELNs, LIMS) to communicate, enabling automated data integration and analysis [22]. |

| High-Throughput QM Datasets | Large-scale, high-quality quantum mechanics datasets (e.g., optimized geometries, torsion profiles) that serve as the foundational training data for developing accurate, data-driven force fields [8]. |

Methodologies for Experimental Data Integration: From Bayesian Inference to ML Potentials

Bayesian Inference of Conformational Populations (BICePs) for Robust Parameter Refinement

Bayesian Inference of Conformational Populations (BICePs) is a statistically rigorous algorithm designed to reconcile theoretical predictions of conformational state populations with sparse and/or noisy experimental measurements [23]. In computational chemistry and drug discovery, accurately determining structural ensembles is crucial for understanding biomolecular function, but this is often challenged by experimental data that are ensemble-averaged, sparse, and subject to random and systematic errors [3] [23].

BICePs addresses these challenges through a Bayesian framework that samples the full posterior distribution of conformational populations while simultaneously treating experimental uncertainties as nuisance parameters [3] [24]. A key advantage of BICePs is its ability to perform objective model selection and parameter optimization through a quantity called the BICePs score, which reflects the integrated posterior evidence in favor of a given model [23]. This makes BICePs particularly valuable for force field validation and refinement, where it can automatically discover optimal parameter sets while accounting for multiple sources of uncertainty [3] [24].

Core Concepts and Terminology

- Conformational Populations: The relative probabilities of different molecular structures or conformations that a molecule can adopt in solution.

- Prior Distribution (p(X)): Theoretical predictions of conformational state populations, typically from molecular simulations [23].

- Likelihood Function (p(D|X,σ)): Quantifies how well forward model predictions f(X) agree with experimental data D, incorporating uncertainty parameters σ [3].

- Posterior Distribution (p(X,σ|D)): The refined conformational populations and uncertainty estimates obtained after combining prior knowledge with experimental data [3].

- BICePs Score: A free energy-like quantity used for model selection and parameter optimization [23].

- Forward Model: A computational framework that predicts experimental observables from molecular configurations [24].

- Reference Potentials: Distributions of observable values in the absence of experimental restraint information, crucial for avoiding unnecessary bias [23].
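The prior, likelihood, and posterior listed above are linked by Bayes' theorem. Written schematically, and assuming independent priors on the conformational states and on the uncertainty parameters (a simplification made for this sketch, using the same symbols as the definitions above), the relation reads:

```latex
\[
  p(X, \sigma \mid D) \;\propto\; p(D \mid X, \sigma)\, p(X)\, p(\sigma)
\]
```

Here p(σ) denotes the prior on the uncertainty parameters. Sampling X and σ jointly is what lets the experimental uncertainty be inferred rather than fixed in advance.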

Troubleshooting Guide: Frequently Asked Questions

Q1: My BICePs calculations show poor convergence. What could be wrong?

Possible Causes and Solutions:

Insufficient Replica Sampling:

- Problem: The replica-averaged forward model requires enough replicas to accurately approximate the ensemble average. Too few replicas lead to high standard error of the mean (SEM), which can be misinterpreted as true uncertainty [3].

- Solution: Increase the number of replicas (N_r). The SEM decreases in proportion to the inverse square root of N_r, so quadrupling the number of replicas halves the SEM [3].
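The inverse-square-root scaling of the SEM can be checked numerically. The sketch below is not BICePs code: it assumes each replica contributes an independent Gaussian-distributed observable with spread `sigma`, and the function names are hypothetical.

```python
import math
import random
import statistics


def sem_of_replica_average(sigma: float, n_replicas: int) -> float:
    """Analytical SEM of an average over n independent replicas."""
    return sigma / math.sqrt(n_replicas)


def empirical_sem(sigma: float, n_replicas: int,
                  n_trials: int = 2000, seed: int = 0) -> float:
    """Spread of replica averages over repeated draws (Gaussian toy model)."""
    rng = random.Random(seed)
    means = [
        statistics.fmean([rng.gauss(0.0, sigma) for _ in range(n_replicas)])
        for _ in range(n_trials)
    ]
    return statistics.pstdev(means)


for n in (4, 16, 64):
    print(n, sem_of_replica_average(1.0, n), round(empirical_sem(1.0, n), 3))
```

Each fourfold increase in replicas halves the SEM, which is why the returns from adding replicas diminish quickly.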

Poorly Parameterized Forward Model:

- Problem: Inaccurate empirical relationships (e.g., in Karplus parameters for J-coupling predictions) introduce systematic errors that hinder convergence [24].

- Solution: Use BICePs to refine the forward model parameters (θ) by either sampling them as nuisance parameters or through variational minimization of the BICePs score [24].

Inadequate Treatment of Outliers:

- Problem: Experimental datasets often contain a few erratic measurements that can disproportionately influence the results [3].

- Solution: Employ BICePs' specialized likelihood functions, such as the Student's model, which marginalizes uncertainty parameters for individual observables and is robust to outliers [3].

Q2: How do I know if my force field parameters are optimized correctly?

Validation Strategies:

- Monitor the BICePs Score: The BICePs score reports the free energy of "turning on" the experimental restraints. During variational optimization, a lower (more negative) BICePs score indicates a model that has stronger evidence given the data [3] [24].

- Check Parameter Reproducibility: Perform repeated optimizations from different initial parameter values. Correctly optimized parameters should converge to the same optimal values, ensuring reproducibility [3].

- Assess Robustness to Error: Test the optimized parameters against synthetic datasets with varying extents of random and systematic error. A robust parameterization should perform well even in the presence of significant noise [3].

- Examine Posterior Distributions: Well-optimized parameters will yield posterior distributions of conformational populations that are consistent with the experimental data without overfitting, which is achieved through the inherent regularization in the BICePs score [3] [23].

Q3: What is the role of reference potentials, and how do I choose them?

Importance and Selection:

- Role: Reference potentials (Q_ref(r)) account for the distribution of observable values in the absence of experimental information. They prevent unnecessary bias, especially when many simultaneous restraints are used, by ensuring that only informative data significantly reweights the ensemble [23].

- Selection Criteria:

  - For distance restraints in linear chains, a Gaussian reference potential or one derived from polymer physics of a random-coil is appropriate [23].

  - For cyclic molecules, a maximum-entropy distribution parameterized from average distances across the conformational state space is often effective [23].

  - The choice can be problem-dependent, but the key is to use a physically reasonable model for the system in the absence of specific experimental restraints.

Q4: Can BICePs handle different types of experimental data?

Supported Data Types and Forward Models:

BICePs is adaptable to various ensemble-averaged experimental measurements through the use of appropriate forward models. The table below summarizes common data types and their corresponding forward models.

Table 1: Experimental Data Types and Forward Models Compatible with BICePs

| Experimental Data Type | Description of Forward Model | Key Parameters |

|---|---|---|

| NMR J-couplings [24] | Karplus relation: ³J(ϕ) = A cos²(ϕ) + B cos(ϕ) + C | Karplus parameters (A, B, C), dihedral angle (ϕ) |

| NMR chemical shifts [23] | Empirical or machine learning models mapping structure to chemical shift | Structure-based descriptors, neural network weights |

| NOE distance restraints [23] | Inverse sixth-power averaging: ⟨r⁻⁶⟩^(-1/6) | Interatomic distances |

| FRET efficiency [23] | Distance-dependent energy transfer models | Donor-acceptor distance, Förster radius |
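Two of the forward models in the table are compact enough to sketch directly. The code below is an illustration rather than the BICePs implementation: the function names are hypothetical and the Karplus coefficients are placeholder values, not a published parameter set.

```python
import math
from typing import Sequence


def karplus_j(phi_rad: float, A: float, B: float, C: float) -> float:
    """Karplus relation: J = A cos^2(phi) + B cos(phi) + C."""
    c = math.cos(phi_rad)
    return A * c * c + B * c + C


def ensemble_average(values: Sequence[float], weights: Sequence[float]) -> float:
    """Population-weighted average of a per-conformer observable."""
    total = sum(weights)
    return sum(w * v for w, v in zip(weights, values)) / total


def noe_effective_distance(distances: Sequence[float],
                           weights: Sequence[float]) -> float:
    """Inverse sixth-power averaging: <r^-6>^(-1/6)."""
    return ensemble_average([r ** -6 for r in distances], weights) ** (-1.0 / 6.0)


# Placeholder Karplus coefficients (Hz) and two hypothetical conformers.
A, B, C = 7.0, -1.0, 1.0
phis = [math.radians(-60.0), math.radians(-120.0)]
populations = [0.7, 0.3]
j_avg = ensemble_average([karplus_j(p, A, B, C) for p in phis], populations)
d_eff = noe_effective_distance([2.0, 4.0], [0.5, 0.5])
print(j_avg, d_eff)
```

The NOE example shows why ensemble averaging matters: the effective distance is dominated by the short-distance conformer and is smaller than the arithmetic mean of the two distances.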

Q5: How does BICePs compare to other Bayesian inference methods?

BICePs shares the core Bayesian framework of methods like Inferential Structure Determination (ISD) and MELD but offers key distinctions [23]. Its unique advantages include:

- Explicit use of reference potentials to avoid bias from non-informative restraints [23].

- Quantitative model selection via the BICePs score, which is computed through free energy estimation methods [23].

- A replica-averaged forward model that makes it a maximum-entropy reweighting method without needing adjustable regularization parameters [3] [24].

Essential Research Reagent Solutions

To implement BICePs for force field parameter refinement, researchers require a suite of computational tools and data.

Table 2: Essential Research Reagents for BICePs Experiments

| Reagent / Tool | Function / Purpose | Examples / Notes |

|---|---|---|

| Conformational Ensemble | Provides the prior distribution p(X) of molecular structures. | Generated by MD simulation, Monte Carlo methods, or enhanced sampling [23]. |

| Experimental Datasets | Sparse, ensemble-averaged data used for reweighting and validation. | NMR observables (J-couplings, chemical shifts, NOEs), FRET data [23] [24]. |

| Forward Model | Computes theoretical observables f(X) from structural configurations. | Empirical relationships (e.g., Karplus equation) or neural networks [24]. |

| BICePs Software | Core algorithm for sampling the posterior and computing scores. | Implementations may vary; the theoretical foundation is described in [3] [23] [24]. |

| Quantum Chemistry Data | High-quality reference data for validating and training force fields. | Used in workflows like ByteFF to generate accurate torsion profiles and Hessian matrices [8]. |

Standard Experimental Protocol for Parameter Refinement

This protocol outlines the steps for refining force field or forward model parameters using BICePs with a variational optimization approach [3] [24].

Step 1: Prepare the Prior Conformational Ensemble

- Generate a diverse set of molecular conformations using molecular dynamics or Monte Carlo simulations with an initial force field.

- Ensure adequate sampling of the relevant conformational space.

Step 2: Select and Prepare Experimental Data

- Gather ensemble-averaged experimental measurements (D).

- Choose appropriate forward models (f_j) for each observable.

Step 3: Define the BICePs Posterior and Score

- Set up the replica-averaged posterior distribution, which includes likelihood functions (e.g., Gaussian, Student's-t), the prior p(X), and priors for uncertainties p(σ) [3].

- The BICePs score is calculated as the free energy of applying the experimental restraints.

Step 4: Perform Variational Optimization

- Compute the first and second derivatives of the BICePs score with respect to the force field or forward model parameters (θ).

- Use an optimization algorithm to minimize the BICePs score, updating parameters to find the values with the strongest evidence given the data [3] [24].

Step 5: Validate the Refined Parameters

- Assess convergence by running repeated optimizations.

- Test the refined parameters on independent datasets or through cross-validation to ensure they are not overfitted.

The following diagram illustrates the iterative workflow for this protocol.

BICePs Parameter Refinement Workflow

Advanced Applications: Neural Network Forward Models

BICePs is not limited to empirical forward models. Its framework generalizes to any differentiable forward model, including neural networks (NNs) [24]. This is particularly promising for observables where empirical relationships are poorly defined or insufficiently accurate.

Troubleshooting NN Forward Models:

- Problem: Overfitting to sparse training data.

- Solution: The BICePs score provides a form of inherent regularization. Furthermore, the Bayesian framework allows for the treatment of NN weights as parameters to be sampled or optimized, naturally incorporating uncertainty [24].

- Problem: High computational cost of training.

- Solution: Leverage the derivatives of the BICePs score to perform efficient gradient-based optimization of the NN parameters, which can be more data-efficient than traditional training [24].

The following diagram contrasts the refinement of traditional analytical forward models with neural network-based models.

Forward Model Refinement Pathways

Differentiable Trajectory Reweighting (DiffTRe) for Gradient-Based Optimization

Differentiable Trajectory Reweighting (DiffTRe) presents a paradigm shift for refining force field parameters against experimental data. It addresses a central challenge in molecular dynamics: how to systematically improve the accuracy of a potential energy model, particularly a neural network potential (NNP), when high-fidelity ab initio data is unavailable or insufficient. DiffTRe enables gradient-based optimization of force fields by leveraging experimental observables within a top-down learning framework [25]. This methodology bypasses the need for differentiation through the entire molecular dynamics trajectory, which is computationally expensive and prone to numerical instability. Instead, it uses a reweighting scheme based on statistical mechanics to compute the gradients needed to update potential parameters, achieving around two orders of magnitude speed-up in gradient computation compared to methods that backpropagate through the simulation [25]. For researchers engaged in force field refinement, DiffTRe offers a path to enrich models with experimental data, thereby enhancing their predictive accuracy for real-world systems, from small molecules to complex biomolecules like proteins [26].

Frequently Asked Questions (FAQs)

Q1: What is the core computational advantage of DiffTRe over fully differentiable molecular simulations? DiffTRe's primary advantage is its avoidance of backpropagation through the MD simulation for time-independent observables. It achieves this by leveraging thermodynamic perturbation theory to reweight an existing trajectory generated with a reference potential. This bypasses the memory overhead and exploding gradients associated with storing all operations of a forward simulation for reverse-mode automatic differentiation [25] [26]. The result is a method that is more memory-efficient and numerically stable.

Q2: For which types of experimental observables is DiffTRe suitable? DiffTRe is explicitly designed for time-independent equilibrium observables [25] [27]. This includes a wide range of properties commonly measured in experiments, such as:

- Thermodynamic properties: Enthalpy of vaporization, pressure [25].

- Structural properties: Radial distribution functions (RDFs), angular distribution functions [25], and native protein conformations [26].

- Mechanical properties: Stiffness tensor [25].

It is important to note that DiffTRe is not generally applicable to time-dependent properties, such as diffusion coefficients, relaxation rates, or reaction rates [27] [28].

Q3: My training loss is unstable, and my potential is not improving. What could be wrong?

Instability often arises from a low effective sample size during reweighting. This occurs when the updated potential parameters θ produce energies that are too different from the reference potential θ̂, making a few states dominate the weighted average [25]. To troubleshoot:

- Monitor Effective Sample Size (Neff): Track Neff during training. A significant drop indicates the reference trajectory is no longer representative. The effective sample size is approximated by Neff ≈ exp(-Σ_i w_i log(w_i)), where w_i are the reweighting weights [25].

- Shorten the Update Interval: Regularly update the reference potential θ̂ and generate a new trajectory with the current parameters θ before Neff becomes too small [25] [26].

- Verify Observable Calculation: Ensure that the observable calculated from your simulation (e.g., RDF) is computed correctly from the reweighted ensemble and that the loss function is appropriate for your target.
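The effective sample size check can be sketched as follows, using the entropy-based estimate quoted above. The function names and the 50% threshold are assumptions chosen for this example; a suitable threshold depends on the system and the number of sampled states.

```python
import math
from typing import Sequence


def effective_sample_size(weights: Sequence[float]) -> float:
    """Neff = exp(-sum_i w_i log w_i) for normalized reweighting weights."""
    entropy = -sum(w * math.log(w) for w in weights if w > 0.0)
    return math.exp(entropy)


def needs_new_reference(weights: Sequence[float],
                        min_fraction: float = 0.5) -> bool:
    """Flag the reference trajectory as stale when Neff falls below a chosen
    fraction of the number of sampled states (threshold is an assumption)."""
    return effective_sample_size(weights) < min_fraction * len(weights)


uniform = [0.25, 0.25, 0.25, 0.25]   # current parameters equal the reference
skewed = [0.97, 0.01, 0.01, 0.01]    # one state dominates the average
print(effective_sample_size(uniform), needs_new_reference(uniform))
print(effective_sample_size(skewed), needs_new_reference(skewed))
```

Uniform weights give Neff equal to the number of states, while weights concentrated on a single state drive Neff toward 1, which is the signal to regenerate the trajectory.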

Q4: Can DiffTRe be applied to coarse-grained systems in addition to all-atom models? Yes, a significant strength of DiffTRe is its applicability to both all-atom and coarse-grained models. The method has been successfully used to learn a coarse-grained water model [25] and, importantly, to train neural network potentials for coarse-grained proteins using only their native experimental structures as training data [26]. This demonstrates its generality for developing transferable force fields at different resolutions.

Q5: How does DiffTRe compare to other gradient-based force field optimization methods? The table below summarizes how DiffTRe fits into the landscape of parameter optimization methods.

Table 1: Comparison of Gradient-Based Force Field Optimization Methods

| Method Class | Method Name | Key Advantages | Key Limitations |

|---|---|---|---|

| Ensemble-based | DiffTRe [25] | Memory-efficient; avoids exploding gradients; fast gradient computation. | Not applicable to time-dependent observables. |

| Ensemble-based | Ensemble Reweighting (e.g., ForceBalance [27]) | Applicable to a variety of equilibrium properties. | Not applicable to time-dependent properties. |

| Trajectory-based | Differentiable Simulation (DMS) [27] | Accurate gradients; applicable to time-dependent observables. | Poor memory scaling with trajectory length; can be unstable. |

| Trajectory-based | Reversible Simulation [27] | Memory-efficient; applicable to time-dependent observables. | Requires custom implementation; can be unstable. |

| Numerical | Finite Differences | Simple to implement; uses standard simulation software. | Poor scaling with parameter number; gradients can be inaccurate. |
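The scaling limitation of finite differences in the last table row is easy to see in a sketch. The central-difference routine below is generic; the quadratic `toy_loss` is a hypothetical stand-in for a loss that, in a real refinement, would require a full simulation per evaluation.

```python
from typing import Callable, List, Sequence


def central_difference_gradient(loss: Callable[[Sequence[float]], float],
                                params: Sequence[float],
                                step: float = 1e-4) -> List[float]:
    """Estimate dL/dtheta_i with two loss evaluations per parameter."""
    grad = []
    for i in range(len(params)):
        up, down = list(params), list(params)
        up[i] += step
        down[i] -= step
        grad.append((loss(up) - loss(down)) / (2.0 * step))
    return grad


def toy_loss(p: Sequence[float]) -> float:
    """Hypothetical smooth loss used only to exercise the routine."""
    return (p[0] - 1.0) ** 2 + 3.0 * p[1]


grad = central_difference_gradient(toy_loss, [0.0, 0.0])
print(grad)
```

With P parameters this costs 2P loss evaluations per gradient, and when each evaluation carries sampling noise from a finite simulation the difference quotient amplifies that noise, which is the inaccuracy noted in the table.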

Troubleshooting Guides

Diagnosing and Resolving Low Effective Sample Size

A low effective sample size (Neff) is the most common cause of poor training performance in DiffTRe. Follow the diagnostic steps below to identify and correct the issue.

Problem: The reweighted ensemble is dominated by very few states from the reference trajectory, leading to high-variance gradients and unstable training.

Solution Steps:

- Quantify the Problem: Calculate Neff during each training step. A sharp drop is a clear warning sign [25].

- Update Reference State: The most direct solution is to update the reference potential parameters θ̂ with the current parameters θ and generate a new, more representative trajectory. This should be done periodically during training [26].

- Adjust Optimization Hyperparameters: If Neff drops too rapidly between reference updates, reduce the learning rate of your optimizer (e.g., Adam). This results in smaller parameter updates and keeps θ closer to θ̂ for longer.

Handling Non-Convergence and Poor Force Field Generalization

Problem: The training loss decreases, but the resulting force field performs poorly in validation simulations or fails to capture the target physics.

Solution Steps:

- Verify Reference Data: Ensure the experimental data used as the training target is accurate and appropriate for your system.

- Inspect the Prior Potential: When using a neural network potential, it is often paired with a classical prior potential U_λ (e.g., for bonded terms and steric repulsion). A poorly defined prior can prevent the NNP from learning the correct corrections. Check that your prior does not already bias the system against the target state [26].

- Review the Training Set: For coarse-grained protein folding, using a diverse set of protein structures in training is crucial for developing a generalizable model (G-NNP). A model trained on a small, non-diverse set (FF-NNP) may not extrapolate well [26].

- Regularize the Loss Function: Add regularization terms to the loss function to prevent overfitting to the specific training observables at the expense of other important physical properties.

The Scientist's Toolkit: Essential Research Reagents

To implement a DiffTRe experiment for force field refinement, the following computational tools and reagents are essential.

Table 2: Key Research Reagents and Tools for DiffTRe Experiments

| Reagent / Tool | Function / Purpose | Implementation Examples |

|---|---|---|

| Differentiable MD Engine | A molecular dynamics engine that allows for gradient computation through energy and force calculations. | TorchMD [26], JAX-MD [29] |

| Neural Network Potential (NNP) Architecture | A flexible model to represent the potential energy function. | DimeNet++ [25], SchNet [26] |

| Thermodynamic Reweighting Core | The code that implements the reweighting of trajectory states using the DiffTRe equation. | Custom code in PyTorch [26] or JAX [25] |

| Reference Dataset | The experimental data used as the optimization target. | RDFs, enthalpies, protein native structures [25] [26] |

| Optimization Algorithm | A gradient-based optimizer to update force field parameters. | Adam [30] |

Standard Experimental Protocol: Refining a Coarse-Grained Protein Potential

This protocol outlines the key steps for training a coarse-grained neural network potential for proteins using DiffTRe, based on the methodology by Navarro et al. [26].

Objective: To train a coarse-grained NNP such that simulations with it produce ensembles with minimal RMSD to native protein conformations.

Step-by-Step Workflow:

System Setup and CG Mapping:

- Define the coarse-grained representation. A common choice is a Cα model where each amino acid is represented by a single bead located at its Cα atom [26].

- Assign bead types based on the 20 standard amino acids.
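The mapping step can be sketched as follows. The input layout (a list of `(residue_name, atom_name, xyz)` tuples) is a hypothetical stand-in for a parsed structure file, and the alphabetical ordering of bead types is an arbitrary choice made for this example.

```python
# Three-letter codes of the 20 standard amino acids, in alphabetical order.
STANDARD_RESIDUES = [
    "ALA", "ARG", "ASN", "ASP", "CYS", "GLN", "GLU", "GLY", "HIS", "ILE",
    "LEU", "LYS", "MET", "PHE", "PRO", "SER", "THR", "TRP", "TYR", "VAL",
]
BEAD_TYPE = {name: index for index, name in enumerate(STANDARD_RESIDUES)}


def map_to_calpha(atoms):
    """Keep one bead per residue, placed at the C-alpha atom.

    `atoms` is a list of (residue_name, atom_name, (x, y, z)) tuples
    (hypothetical layout). Returns bead coordinates and integer bead types.
    """
    coords, types = [], []
    for residue_name, atom_name, xyz in atoms:
        if atom_name == "CA":
            coords.append(xyz)
            types.append(BEAD_TYPE[residue_name])
    return coords, types


# Two-residue toy fragment with made-up coordinates.
atoms = [
    ("GLY", "N", (0.0, 0.0, 0.0)),
    ("GLY", "CA", (1.5, 0.0, 0.0)),
    ("ALA", "N", (3.0, 1.0, 0.0)),
    ("ALA", "CA", (3.8, 2.2, 0.0)),
    ("ALA", "CB", (5.0, 2.0, 1.0)),
]
bead_coords, bead_types = map_to_calpha(atoms)
```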

Define the Energy Function:

- The total potential energy is U_total = U_θ(NNP) + U_λ(Prior). The prior potential U_λ includes essential terms to prevent chain breaking and atomic overlap, and to enforce chirality [26].

- Choose and initialize a graph neural network architecture (e.g., SchNet) for U_θ.
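To make the decomposition U_total = U_θ + U_λ concrete, here is a toy prior for a Cα chain. The functional forms (harmonic bonds, a purely repulsive (σ/r)^6 term) and every numerical parameter are assumptions for illustration, not the prior used in [26]; the chirality term is left out to keep the sketch short, and the NNP is represented by an arbitrary callable.

```python
import math


def prior_energy(coords, bond_length=3.8, bond_k=10.0, sigma=4.0, eps=1.0):
    """Toy prior U_lambda for a C-alpha chain (all parameter values are placeholders).

    - Harmonic bonds between consecutive beads keep the chain intact.
    - A purely repulsive (sigma / r)**6 term between beads at least three
      positions apart prevents overlap.
    The chirality (dihedral) term mentioned in the text is omitted for brevity.
    """
    energy = 0.0
    n = len(coords)
    for i in range(n):
        for j in range(i + 1, n):
            r = math.dist(coords[i], coords[j])
            if j == i + 1:
                energy += bond_k * (r - bond_length) ** 2
            elif j - i >= 3:
                energy += eps * (sigma / r) ** 6
    return energy


def total_energy(coords, nnp_energy):
    """U_total = U_theta (learned correction) + U_lambda (fixed prior)."""
    return nnp_energy(coords) + prior_energy(coords)


# Straight four-bead chain at the equilibrium bond length; the NNP is stubbed out as zero.
chain = [(3.8 * i, 0.0, 0.0) for i in range(4)]
u_total = total_energy(chain, nnp_energy=lambda c: 0.0)
```

Because the prior is fixed, only the parameters of the learned term receive gradients during training.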

Generate Initial Trajectory and Sample States:

- Using an initial set of parameters θ̂, run short, parallel MD simulations starting from native or extended structures.

- From these trajectories, sample K uncorrelated states {S_i}.

Compute Reweighted Ensemble Average:

- For each sampled state S_i, compute the RMSD between its coordinates and the native conformation.

- Calculate the ensemble average RMSD using the DiffTRe reweighting formula ⟨RMSD⟩ = Σ_i [ w_i * RMSD(S_i) ], where w_i = exp[-β(U_θ(S_i) - U_θ̂(S_i))] / Σ_j exp[-β(U_θ(S_j) - U_θ̂(S_j))] and β = 1/(k_B T) [26].
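The reweighting formula can be sketched in a few lines. The snippet assumes the energies of the K sampled states under the current parameters (`u_new`) and the reference parameters (`u_ref`) are already available as lists. The effective-sample-size estimator shown is the Kish formula, one common choice and an assumption of this sketch.

```python
import math


def reweighting_weights(u_new, u_ref, beta):
    """Weights w_i proportional to exp(-beta * (U_theta(S_i) - U_theta_hat(S_i))).

    The largest exponent is subtracted before exponentiating for numerical
    stability; this leaves the normalized weights unchanged.
    """
    exponents = [-beta * (un - ur) for un, ur in zip(u_new, u_ref)]
    shift = max(exponents)
    unnormalized = [math.exp(e - shift) for e in exponents]
    norm = sum(unnormalized)
    return [w / norm for w in unnormalized]


def reweighted_average(values, weights):
    """Ensemble average <O> = sum_i w_i * O(S_i)."""
    return sum(w * v for w, v in zip(weights, values))


def effective_sample_size(weights):
    """Kish estimate 1 / sum_i w_i**2; equals K for uniform weights."""
    return 1.0 / sum(w * w for w in weights)


# Three states with placeholder values; the third is penalized by the new potential.
rmsd_values = [1.0, 2.0, 3.0]
w = reweighting_weights([0.0, 0.0, math.log(2.0)], [0.0, 0.0, 0.0], beta=1.0)
mean_rmsd = reweighted_average(rmsd_values, w)
ess = effective_sample_size(w)
```

When θ equals θ̂ all weights are 1/K and the reweighted average reduces to the plain trajectory average.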

Define and Compute Loss Function:

- A margin-ranking loss is often effective: L = max(0, ⟨RMSD⟩ + m), where m is a target margin (e.g., -1 Å). With m = -1 Å, the loss and its gradient vanish once ⟨RMSD⟩ falls below 1 Å, which prevents further optimization once the RMSD is sufficiently low [26].

Compute Gradients and Update Parameters:

- Compute the gradient of the loss L with respect to the NNP parameters θ using automatic differentiation.

- Update the parameters θ using a gradient-based optimizer.
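To show what automatic differentiation computes in this step, the sketch below writes the gradient of the margin loss out by hand for a toy potential that is linear in its parameters, U_θ(S) = Σ_k θ_k φ_k(S). For an observable that does not itself depend on θ, differentiating the reweighted average gives ∂⟨RMSD⟩/∂θ_k = -β (⟨RMSD φ_k⟩ - ⟨RMSD⟩⟨φ_k⟩), with all averages taken under the weights w_i. The linear potential, the feature values, and the function names are assumptions for illustration; a real NNP would rely on a framework's autodiff instead.

```python
import math


def weights(theta, theta_ref, features, beta):
    """Reweighting factors for U = sum_k theta_k * phi_k (toy linear potential)."""
    exps = [-beta * sum((t - tr) * f for t, tr, f in zip(theta, theta_ref, phi))
            for phi in features]
    shift = max(exps)
    raw = [math.exp(e - shift) for e in exps]
    total = sum(raw)
    return [r / total for r in raw]


def margin_loss(theta, theta_ref, features, rmsd, beta, margin):
    """L = max(0, <RMSD> + m) with the reweighted ensemble average."""
    w = weights(theta, theta_ref, features, beta)
    mean_rmsd = sum(wi * r for wi, r in zip(w, rmsd))
    return max(0.0, mean_rmsd + margin)


def margin_loss_gradient(theta, theta_ref, features, rmsd, beta, margin):
    """dL/dtheta_k = -beta * (<RMSD * phi_k> - <RMSD> * <phi_k>) while the hinge is active."""
    w = weights(theta, theta_ref, features, beta)
    mean_rmsd = sum(wi * r for wi, r in zip(w, rmsd))
    if mean_rmsd + margin <= 0.0:
        return [0.0] * len(theta)  # loss is flat once the margin is satisfied
    grad = []
    for k in range(len(theta)):
        mean_phi = sum(wi * phi[k] for wi, phi in zip(w, features))
        mean_rmsd_phi = sum(wi * r * phi[k] for wi, r, phi in zip(w, rmsd, features))
        grad.append(-beta * (mean_rmsd_phi - mean_rmsd * mean_phi))
    return grad


# Four states with two features each; all numbers are placeholders.
features = [[1.0, 0.5], [0.2, 1.5], [0.8, 0.1], [0.4, 0.9]]
rmsd = [3.0, 1.5, 4.0, 2.0]
theta, theta_ref, beta, margin = [0.3, -0.2], [0.0, 0.0], 1.0, -1.0
grad = margin_loss_gradient(theta, theta_ref, features, rmsd, beta, margin)
```

The covariance form makes the key property of DiffTRe visible: the gradient needs only per-state energies and observables, not a backward pass through the simulation.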

Iterate to Convergence:

- Periodically update the reference parameters θ̂ with the current θ and regenerate the trajectory to maintain a high effective sample size.

- Repeat steps 3-6 (trajectory generation through the parameter update) until the training loss stabilizes and validation checks confirm the model's quality.
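The full iteration can be illustrated on a deliberately small toy problem. The sketch below makes several simplifying assumptions that are not part of the protocol in [26]: the system is a 1D harmonic potential U_θ(x) = θx²/2, exact Gaussian sampling stands in for MD, the observable is ⟨x²⟩ with a squared-error loss rather than the RMSD margin loss, plain gradient descent replaces Adam, and the trajectory is regenerated whenever the Kish effective sample size drops below 90% of K.

```python
import math
import random


def sample_states(theta_ref, beta, n_states, rng):
    """Draw uncorrelated states from exp(-beta * theta_ref * x**2 / 2).

    The Boltzmann distribution of this toy potential is Gaussian, so exact
    sampling replaces the MD simulation of the real protocol.
    """
    sigma = 1.0 / math.sqrt(beta * theta_ref)
    return [rng.gauss(0.0, sigma) for _ in range(n_states)]


def reweight(states, theta, theta_ref, beta):
    """Normalized weights for moving from theta_ref to theta."""
    exps = [-beta * 0.5 * (theta - theta_ref) * x * x for x in states]
    shift = max(exps)
    raw = [math.exp(e - shift) for e in exps]
    total = sum(raw)
    return [r / total for r in raw]


def train(target, theta=1.0, beta=1.0, n_states=2000, n_steps=100,
          learning_rate=1.0, ess_fraction=0.9, seed=0):
    rng = random.Random(seed)
    theta_ref = theta
    states = sample_states(theta_ref, beta, n_states, rng)
    for _ in range(n_steps):
        w = reweight(states, theta, theta_ref, beta)
        # Regenerate the trajectory when the effective sample size gets too small.
        if 1.0 / sum(wi * wi for wi in w) < ess_fraction * n_states:
            theta_ref = theta
            states = sample_states(theta_ref, beta, n_states, rng)
            w = [1.0 / n_states] * n_states
        obs = [x * x for x in states]        # observable O(S_i) = x**2
        du = [0.5 * x * x for x in states]   # dU/dtheta for U = theta * x**2 / 2
        mean_obs = sum(wi * o for wi, o in zip(w, obs))
        mean_du = sum(wi * d for wi, d in zip(w, du))
        mean_obs_du = sum(wi * o * d for wi, o, d in zip(w, obs, du))
        d_obs = -beta * (mean_obs_du - mean_obs * mean_du)
        # Gradient descent on the squared error (mean_obs - target)**2.
        theta -= learning_rate * 2.0 * (mean_obs - target) * d_obs
    return theta


# For beta = 1 the analytic optimum is theta = 1 / target = 2; the fit should
# land close to it, up to sampling noise.
theta_fit = train(target=0.5)
```

Between regenerations, many parameter updates reuse the same set of states, which is the source of DiffTRe's efficiency relative to resimulating after every step.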

Advanced Applications and Integration

DiffTRe's flexibility allows it to be integrated into more sophisticated training schemes. It can be combined with other algorithms within a unified framework like chemtrain [31]. For instance, a potential can be pre-trained using bottom-up methods like Force Matching on ab initio data and subsequently refined against experimental data using DiffTRe in a top-down approach. This data fusion strategy leverages the strengths of both paradigms, resulting in a molecular model of higher accuracy than one trained on a single data source [31] [32]. Furthermore, DiffTRe generalizes classical bottom-up coarse-graining methods like Iterative Boltzmann Inversion, extending them to many-body correlation functions and arbitrary potential forms [25].

The refinement of molecular mechanics force fields is a critical endeavor in computational chemistry and drug development. Traditional parameterization strategies often rely exclusively on either quantum mechanical (QM) data or experimental observations. Training on QM data, a "bottom-up" approach, ensures a fundamental physical basis but can propagate and even amplify inaccuracies inherent in the underlying QM method, such as those from approximate Density Functional Theory (DFT) functionals [6]. Conversely, a "top-down" approach using only experimental data can result in force fields that are under-constrained, accurately reproducing a handful of target properties but failing unpredictably on others [6] [33].

Fused data learning emerges as a powerful strategy to overcome these limitations by concurrently training a single force field model on both QM-derived data (energies, forces, virial stresses) and experimentally measured properties (lattice parameters, elastic constants) [6]. This methodology leverages the complementary strengths of both data sources: the broad, high-resolution configurational sampling provided by QM calculations and the physical ground truth embedded in experimental measurements. This technical support guide is framed within a broader thesis on refining force field parameters against experimental data, providing researchers with practical troubleshooting and foundational knowledge for implementing this advanced parameterization strategy.

The Scientist's Toolkit: Essential Reagents and Computational Solutions

The following table details the key computational tools, data types, and software required to implement a fused data learning protocol for force field development.

Table 1: Key Research Reagent Solutions for Fused Data Learning

| Item Name | Type | Primary Function in Fused Learning |
|---|---|---|
| DFT Database | Data | Provides target labels for energy, atomic forces, and virial stress across a diverse set of atomic configurations for "bottom-up" learning [6]. |
| Experimental Properties | Data | Supplies macroscopic target observables (e.g., elastic constants, lattice parameters) to constrain the force field to physical reality ("top-down" learning) [6]. |
| Graph Neural Network (GNN) Potential | Model | A high-capacity machine learning model that serves as the flexible functional form for the force field, capable of learning complex multi-body interactions [6]. |
| Differentiable Trajectory Reweighting (DiffTRe) | Algorithm | Enables gradient-based optimization of force field parameters against experimental observables without backpropagating through the entire MD simulation, overcoming memory and instability issues [6]. |
| ForceBalance | Software | An automated parameter fitting tool that can optimize force field parameters against both QM and experimental target data simultaneously [33] [34]. |

Core Methodology and Workflow

The successful implementation of fused data learning requires a structured workflow that integrates data preparation, model training, and validation. The diagram below illustrates the high-level concurrent training protocol.

Workflow Description: The process begins with an initial Machine Learning (ML) potential, often pre-trained solely on DFT data [6]. The core of fused learning is the alternating training loop. In one epoch, the DFT Trainer updates the model parameters (θ) by minimizing the mean-squared error between the ML potential's predictions and the DFT-calculated energies, forces, and virial stresses. In the next epoch, the EXP Trainer takes over, using the Differentiable Trajectory Reweighting (DiffTRe) method to compute gradients of experimental observables with respect to θ and updating the parameters to match measured properties [6]. This cycle continues until the model converges, simultaneously satisfying the constraints from both data sources.

Detailed Experimental Protocol

The following steps outline a specific protocol for training an ML potential for a metallic system (e.g., titanium) using fused data learning, as demonstrated in the referenced research [6].

DFT Database Generation:

- Perform high-throughput Density Functional Theory (DFT) calculations on a diverse set of atomic configurations. This dataset should include:

  - Equilibrium crystal structures (e.g., HCP, BCC, FCC).

  - Strained and elastically deformed configurations.

  - Randomly perturbed atomic structures.

  - High-temperature molecular dynamics snapshots (generated via ab initio MD).

- The dataset should be broad enough to avoid distribution shift during subsequent MD simulations. For a typical system, this may involve thousands of configurations [6].

- Extract and store the target properties for each configuration: total energy, atomic forces, and the virial stress tensor.

Experimental Data Curation:

- Collect experimentally measured mechanical properties and lattice parameters across a range of temperatures. For example, elastic constants and lattice parameters of HCP titanium from 4 K to 973 K [6].

- To balance computational cost with constraint quality, select a subset of representative temperatures (e.g., 23 K, 323 K, 623 K, 923 K) for the primary training loop. The remaining data can be used for validation.

Model and Training Configuration:

- Initialization: Initialize a Graph Neural Network (GNN) potential using parameters from a model pre-trained only on the DFT database. This provides a physically reasonable starting point and avoids the need for a simple prior potential [6].

- Loss Function Construction:

  - DFT Loss Term: A weighted sum of mean-squared errors for energy, forces, and virial stress.

  - Experimental Loss Term: A weighted sum of mean-squared errors between simulation-calculated and experimentally measured properties (e.g., elastic constants, lattice parameters via zero pressure condition).

- Training Loop: Implement an alternating training schedule. For one epoch, update parameters using batches from the DFT database. For the next epoch, update parameters by running NVT ensemble simulations at the target experimental temperatures and using the DiffTRe method to minimize the experimental loss [6]. Batch-wise switching is also a feasible alternative.

- Validation and Early Stopping: Monitor the model's error on a separate validation set of DFT data and experimental properties not used in training. Halt training when validation performance stops improving.
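The two loss terms and the epoch-wise alternation described above can be sketched as follows. The dictionary layout of the inputs, the default weights, and the function names are illustrative assumptions; the actual simulation and DiffTRe gradient step of the EXP trainer are not shown.

```python
def mean_squared_error(predicted, reference):
    """Mean of squared differences over two equally long flat lists."""
    return sum((p - r) ** 2 for p, r in zip(predicted, reference)) / len(reference)


def dft_loss(pred, ref, w_energy=1.0, w_force=1.0, w_virial=1.0):
    """Weighted sum of MSEs over energies, force components, and virial components.

    `pred` and `ref` are dicts of flat lists keyed by "energy", "forces", and
    "virial"; this layout and the default weights are assumptions.
    """
    return (w_energy * mean_squared_error(pred["energy"], ref["energy"])
            + w_force * mean_squared_error(pred["forces"], ref["forces"])
            + w_virial * mean_squared_error(pred["virial"], ref["virial"]))


def experimental_loss(simulated, measured, weights):
    """Weighted squared errors between simulated and measured observables."""
    return sum(weights[name] * (simulated[name] - measured[name]) ** 2
               for name in measured)


def trainer_for_epoch(epoch):
    """Alternate epoch-wise: even epochs use the DFT trainer, odd epochs the EXP trainer."""
    return "DFT" if epoch % 2 == 0 else "EXP"


schedule = [trainer_for_epoch(e) for e in range(4)]
```

The per-term weights matter because energies, forces, stresses, and experimental observables carry different units and magnitudes, so an unweighted sum would let one term dominate the gradient.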

Troubleshooting Guides

Guide: Experimental Properties Are Not Reproduced Accurately

Problem: After training, your fused model fails to match the target experimental properties within an acceptable error margin.

Check 1: Insufficient Experimental Constraints

- Symptoms: Good performance on DFT test set but large deviations from experiment.

- Solution: Increase the weight of the experimental loss term during training. Furthermore, ensure your experimental dataset is diverse enough to constrain the model; a single data point is often inadequate [6]. Incorporate multiple types of properties (e.g., elastic constants, lattice parameters, and perhaps phonon frequencies) if available.

Check 2: Inconsistency Between DFT and Experimental Data

- Symptoms: The model struggles to minimize both DFT and experimental losses simultaneously, with errors oscillating without converging.

- Solution: This indicates a fundamental inconsistency, often where the DFT functional itself is inaccurate for the target properties [6]. The fused learning approach is designed to correct for this. Ensure the DFT training database is sufficiently diverse and trust the experimental data as the higher-level truth. The model should learn to deviate from the DFT baseline to match experiment.

Check 3: Poor Sampling in EXP Trainer Simulations

- Symptoms: Unstable or noisy gradients during the experimental training phase.

- Solution: The DiffTRe method relies on simulations to compute observables. Ensure your MD simulations in the EXP trainer are long enough to yield well-converged ensemble averages for properties like elastic constants. Check for equilibration before starting property measurement.
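One standard way to check whether an ensemble average is converged is block averaging, sketched below. The source does not prescribe this particular diagnostic. The number of blocks is an arbitrary default, and trailing samples that do not fill a block are ignored.

```python
import math


def block_standard_error(series, n_blocks=10):
    """Mean and standard error of the mean from non-overlapping block averages.

    Correlated MD samples make the naive standard error too optimistic; block
    means are closer to independent once blocks exceed the correlation time.
    """
    block_len = len(series) // n_blocks
    means = [sum(series[b * block_len:(b + 1) * block_len]) / block_len
             for b in range(n_blocks)]
    grand_mean = sum(means) / n_blocks
    variance = sum((m - grand_mean) ** 2 for m in means) / (n_blocks - 1)
    return grand_mean, math.sqrt(variance / n_blocks)


# A steadily drifting series (e.g., an unequilibrated observable) yields a
# large block standard error relative to a stationary one.
drifting_mean, drifting_error = block_standard_error(list(range(100)))
```

If the estimated error keeps growing as the number of blocks is reduced, the blocks are still shorter than the correlation time and the simulation should be extended.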

Guide: Model Fails to Generalize to "Off-Target" Properties

Problem: The model performs well on the trained-upon QM and experimental data but fails to accurately predict other properties (e.g., phonon spectra, liquid structure, or properties of a different phase).

Check 1: Overfitting to a Narrow Set of Configurations

- Symptoms: Excellent accuracy on target data but physically implausible results for other properties.

- Solution: Review the diversity of your DFT training database. It must sample the relevant regions of configurational space (e.g., different phases, defect structures, surfaces). A database containing only perfect crystal structures will not generalize to disordered systems. Augment the DFT database with active learning to include new configurations sampled during exploratory simulations [6].

Check 2: Lack of Long-Range Interactions in Training

- Symptoms: Failure to reproduce properties dependent on long-wavelength phonons or elastic phenomena.

- Solution: The DFT training data, often generated from small supercells (e.g., <100 atoms), may not capture long-range interactions [6]. If possible, incorporate larger-scale DFT calculations or use experimental data that inherently probes long-range effects (like elastic constants) to provide these constraints.

Guide: Unstable or Divergent Training Dynamics

Problem: The training process is unstable, with the loss function exhibiting large oscillations or diverging completely.

Check 1: Incorrect Loss Weighting

- Symptoms: Wild oscillations in error metrics after switching between DFT and EXP trainers.