Accurate Temperature and Pressure Control in MD Simulations: A Guide to Reliable Ensemble Generation for Biomolecular Research


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on achieving accurate temperature and pressure control in Molecular Dynamics (MD) simulations. It covers the foundational principles of thermodynamic ensembles and the molecular definition of temperature, explores the mechanisms and selection criteria for modern thermostats and barostats, and addresses common challenges in generating physically valid conformational ensembles. Further, it discusses rigorous validation protocols against experimental data and emerging machine-learning methods for enhanced sampling. The content synthesizes best practices to equip scientists with the knowledge to perform robust MD simulations, crucial for applications in drug design and understanding biomolecular dynamics.

The Fundamentals of Thermodynamic Ensembles and Molecular-Level Control

Defining Temperature and Pressure at the Molecular Scale

Frequently Asked Questions (FAQs)

1. Why is precise temperature control critical in my MD simulation? Temperature is a fundamental thermodynamic parameter that directly influences the conformational ensemble of biomolecules according to the Boltzmann distribution. Accurate temperature control ensures your simulation samples physically realistic states. Poor control can prevent the system from reaching a proper thermodynamic equilibrium, invalidating any results derived from the trajectory [1] [2].

2. My simulation results are physically unrealistic. What are the first parameters I should check? Before running a production simulation, always double-check that your temperature and pressure coupling parameters are correctly carried over from your NVT and NPT equilibration steps. A common mistake is a mismatch between the temperature for velocity generation and your system's target equilibrated temperature [3].

3. How can I be sure my simulation has reached equilibrium? A system can be considered equilibrated when the average of key properties (like energy or RMSD) calculated over successive time windows shows only small fluctuations around a stable value after a convergence time. Be aware that some properties, especially those dependent on infrequent transitions to low-probability conformations, may require much longer simulation times to converge than others [2].

4. What is the consequence of an incorrectly sized pair-list buffer in GROMACS? An undersized buffer for the Verlet pair list can lead to an energy drift. This happens because particle pairs may move from outside the interaction cut-off to inside it between list updates, causing missed interactions. GROMACS can automatically determine this buffer size based on a tolerance for the energy drift [4].

Troubleshooting Guides

Issue 1: Simulation Not Sampling Correct Thermodynamic Ensemble

Problem: Your simulation is not reproducing expected equilibrium properties or the temperature/pressure is unstable.

Diagnosis and Solutions:

- Check Thermostat/Barostat Choice: The selection of a thermostat or barostat algorithm can significantly impact the sampling of physical observables like potential energy. For instance, while the Nosé-Hoover chain and Bussi thermostats provide reliable temperature control, their potential energy sampling can show a pronounced dependence on the integration time step [5].

- Verify Equilibration: Do not begin production analysis until the system is equilibrated. Plot the potential energy and root-mean-square deviation (RMSD) of the biomolecule as a function of time. The simulation can be considered preliminarily equilibrated when these properties reach a stable plateau [2].

- Inspect Parameter Transfer: When setting up a production run, ensure all temperature and pressure coupling parameters (e.g., tau_t, tau_p, and the coupling types tcoupl and pcoupl) match those used during a stable NPT equilibration phase [3].
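The parameter-transfer check can be automated with a few lines of Python. The following is a minimal sketch, not an official GROMACS utility: the helper names `parse_mdp` and `coupling_mismatches` and the two example parameter files are hypothetical, and the key list assumes the standard GROMACS `.mdp` coupling options (GROMACS accepts both `tau_t` and `tau-t` spellings, so the parser normalizes hyphens to underscores).

```python
# Hypothetical helper: compare coupling settings between two .mdp files.
COUPLING_KEYS = ("tcoupl", "tc_grps", "tau_t", "ref_t",
                 "pcoupl", "pcoupltype", "tau_p", "ref_p", "compressibility")

def parse_mdp(text):
    """Parse .mdp text into a dict; keys are lower-cased, '-' mapped to '_'."""
    params = {}
    for line in text.splitlines():
        line = line.split(";", 1)[0].strip()      # drop ';' comments
        if "=" not in line:
            continue
        key, value = line.split("=", 1)
        key = key.strip().lower().replace("-", "_")
        params[key] = " ".join(value.split())     # normalize whitespace
    return params

def coupling_mismatches(equil_text, prod_text, keys=COUPLING_KEYS):
    """Return {key: (equilibration value, production value)} where they differ."""
    equil, prod = parse_mdp(equil_text), parse_mdp(prod_text)
    return {k: (equil.get(k), prod.get(k))
            for k in keys if equil.get(k) != prod.get(k)}

# Illustrative inputs (made-up values, not a recommended setup)
npt_mdp = """
tcoupl = V-rescale        ; thermostat
tau_t  = 0.1 0.1
ref_t  = 300 300
pcoupl = Parrinello-Rahman
tau_p  = 2.0
"""
prod_mdp = """
tcoupl = V-rescale
tau-t  = 0.1   0.1
ref-t  = 310 310
pcoupl = Parrinello-Rahman
tau-p  = 2.0
"""
print(coupling_mismatches(npt_mdp, prod_mdp))
```

In this example only `ref_t` is reported, because the production file targets 310 K while the equilibration used 300 K. The comparison is purely textual, so values that differ only in letter case or number formatting would also be flagged.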

Issue 2: Energy Drift in Long Simulations

Problem: The total energy of your system shows a consistent drift over time, indicating a possible flaw in the simulation setup.

Diagnosis and Solutions:

- Analyze Pair-List Settings: In GROMACS, an energy drift can occur if the Verlet pair-list buffer is too small. Use the mdrun output to monitor the average number of interactions per step that were beyond the pair-list cut-off. A significant number indicates a need for a larger buffer (rlist) or more frequent neighbor searching (nstlist) [4].

- Remove Center-of-Mass Motion: The center-of-mass velocity should be set to zero at every step. Although there is usually no net external force, the update algorithm can introduce a slow change in the center-of-mass velocity, leading to a drift in the total kinetic energy over very long runs [4].
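To make the center-of-mass correction concrete, here is a minimal NumPy sketch of linear center-of-mass velocity removal. It illustrates the idea only and is not how any particular MD engine implements it; the function name `remove_com_velocity` is hypothetical.

```python
import numpy as np

def remove_com_velocity(velocities, masses):
    """Subtract the mass-weighted mean velocity so total linear momentum is zero.

    velocities: array of shape (n_atoms, 3); masses: array of shape (n_atoms,).
    """
    velocities = np.asarray(velocities, dtype=float)
    masses = np.asarray(masses, dtype=float)
    v_com = (masses[:, None] * velocities).sum(axis=0) / masses.sum()
    return velocities - v_com

# Two particles: the lighter one moves, the heavier one is at rest
v = remove_com_velocity([[1.0, 0.0, 0.0], [0.0, 0.0, 0.0]], [1.0, 3.0])
print(v)
```

After the correction the mass-weighted sum of velocities vanishes, which is the property an MD engine enforces when center-of-mass motion removal is switched on.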

Issue 3: System Instability and Crash

Problem: Your simulation crashes shortly after starting, often with errors related to forces or particle displacement.

Diagnosis and Solutions:

- Review Topology: Use tools like gmx check to verify the integrity of your topology. Common errors include missing atoms in the coordinate file, incorrect atom names that don't match the force field's residue database, or long bonds due to missing atoms [6].

- Confirm Force Field Combining Rules: The Lennard-Jones parameters between different atom types are computed using combining rules specified in the force field. Using an incorrect rule (e.g., using GROMOS rule 1 for an AMBER force field that requires rule 2) will lead to unrealistic interactions and instability. This is defined in the forcefield.itp file [7].
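The effect of the combining rule can be seen in a short sketch. The function below follows the three GROMACS combination rules as they are commonly described (rule 1: geometric mean of C6 and C12; rule 2: Lorentz-Berthelot on sigma and epsilon; rule 3: geometric mean of both sigma and epsilon). The function name `combine_lj` and the parameter values are illustrative assumptions, not values from any force field.

```python
import math

def combine_lj(params_i, params_j, comb_rule):
    """Combine per-atom-type Lennard-Jones parameters into pair parameters.

    comb_rule 1: inputs are (C6, C12); both combined by geometric mean.
    comb_rule 2: inputs are (sigma, epsilon); arithmetic sigma, geometric epsilon.
    comb_rule 3: inputs are (sigma, epsilon); both combined by geometric mean.
    """
    a_i, b_i = params_i
    a_j, b_j = params_j
    if comb_rule in (1, 3):
        return math.sqrt(a_i * a_j), math.sqrt(b_i * b_j)
    if comb_rule == 2:
        return 0.5 * (a_i + a_j), math.sqrt(b_i * b_j)
    raise ValueError("comb_rule must be 1, 2, or 3")

# Same (sigma, epsilon) inputs, different rules: the pair sigma differs
type_a, type_b = (0.30, 0.50), (0.40, 0.20)   # made-up sigma (nm), epsilon (kJ/mol)
print(combine_lj(type_a, type_b, 2))
print(combine_lj(type_a, type_b, 3))
```

The two rules give different pair distances for identical inputs, and rule 1 additionally interprets the same two columns as C6 and C12 rather than sigma and epsilon, which is why a mismatched rule distorts every cross-type interaction.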

Thermostat Algorithm Performance

The table below summarizes key findings from a benchmark study of thermostat algorithms using a binary Lennard-Jones glass-former model, providing guidance for algorithm selection [5].

Table: Benchmarking Thermostat Algorithms in MD Simulations

| Thermostat Algorithm | Sampling Reliability | Time-Step Dependence | Key Characteristic / Consideration |

|---|---|---|---|

| Nosé-Hoover Chain (NHC2) | Reliable temperature control | Pronounced in potential energy | Extended Hamiltonian formalism; canonical ensemble. |

| Bussi (Stochastic velocity rescaling) | Reliable temperature control | Pronounced in potential energy | Minimal disturbance on Hamiltonian dynamics; corresponds to a global Langevin thermostat. |

| Langevin (GJF) | Consistent temperature & potential energy sampling | Low | Direct discretisation; accurate configurational sampling and diffusion. |

| Langevin (BAOAB) | Accurate configurational sampling | Moderate | Operator-splitting method. |

| Langevin (ABOBA) | - | Moderate | Operator-splitting method. |

| Langevin (VV) | - | Moderate | Velocity-Verlet integration. |

Experimental Protocols

Protocol 1: Standard Protocol for System Equilibration and Production

This protocol outlines the standard steps for preparing an MD system, equilibrating it, and running a production simulation, with a focus on temperature and pressure control [3] [8] [4].

- System Setup: Construct your system topology using a tool like pdb2gmx and place it in a simulation box with solvent and ions.

- Energy Minimization: Run a steepest descent or conjugate gradient minimization to remove any steric clashes and bad contacts, relaxing the system to the nearest local energy minimum.

- NVT Equilibration: Equilibrate the system with a thermostat (e.g., Nosé-Hoover, Bussi) at the target temperature (e.g., 300 K) for 50-100 ps, typically with positional restraints on solute heavy atoms. This allows the solvent and ions to relax around the solute.

- NPT Equilibration: Equilibrate the system with both a thermostat and a barostat (e.g., Parrinello-Rahman) at the target temperature and pressure (e.g., 1 bar) for 100-200 ps, often with the same positional restraints. This adjusts the system density to the correct value.

- Production MD: Run the final, unrestrained simulation for the required time to sample the desired properties. Crucially, ensure the temperature and pressure parameters from the stable NPT equilibration are correctly transferred to this step.

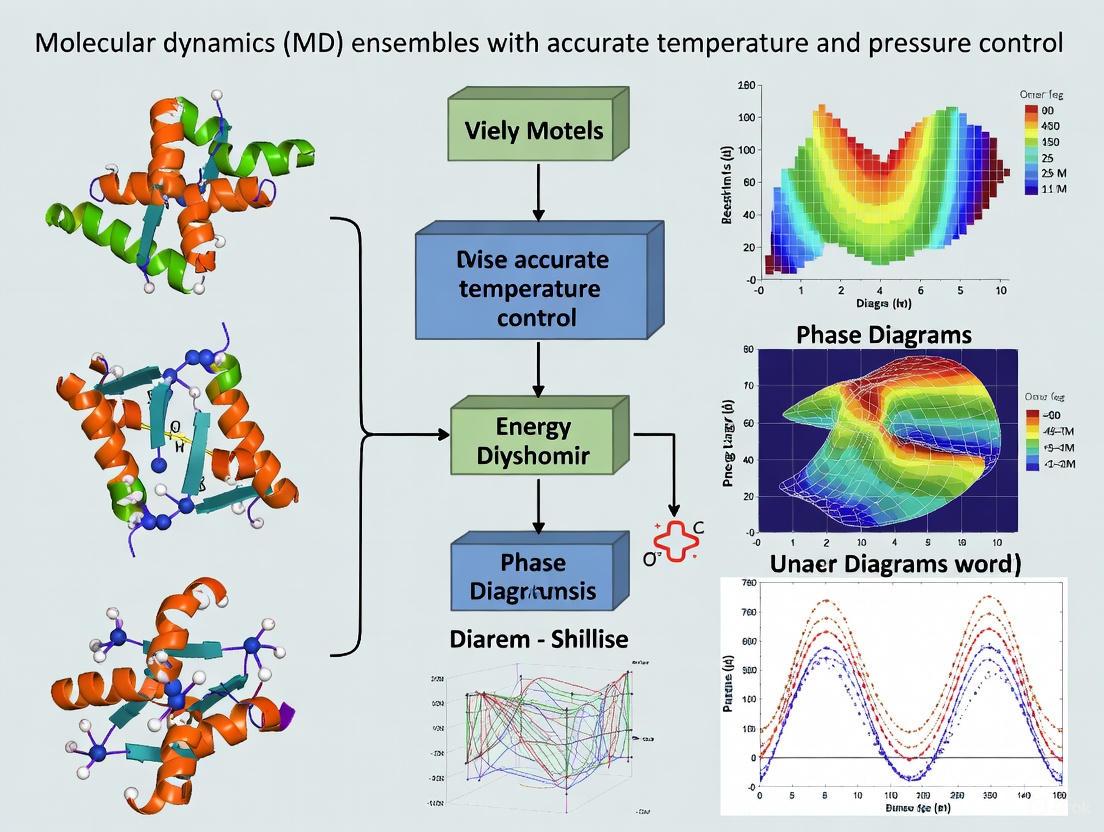

Diagram: MD Ensemble Equilibration and Production Workflow.

Protocol 2: Assessing Convergence of Simulated Ensembles

Convergence is not guaranteed by long simulation times alone; it must be actively verified. This protocol provides a method to assess whether your simulation has sampled enough to be considered converged for a property of interest [2] [9].

- Property Selection: Choose one or more properties A (e.g., RMSD, radius of gyration, specific inter-atomic distance, potential energy).

- Calculate Running Average: For a trajectory of total length T, calculate the running average of property A, denoted <A>(t), from time 0 to t for all t < T.

- Visual Inspection and Analysis: Plot <A>(t) as a function of time t.

- Convergence Criterion: Identify if there is a convergence time t_c after which the fluctuations of <A>(t) around the final average <A>(T) remain small and bounded for a significant portion of the remaining trajectory. The system can be considered equilibrated with respect to property A after t_c.
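The steps above can be sketched in NumPy as follows. This is one possible reading of the criterion, not the cited authors' code: it uses a fixed tolerance `tol` chosen by the user and requires the running average to stay within that tolerance for all remaining frames, which is stricter than "a significant portion". The function names are hypothetical.

```python
import numpy as np

def running_average(a):
    """Running average <A>(t) over frames 0..t for every frame t."""
    a = np.asarray(a, dtype=float)
    return np.cumsum(a) / np.arange(1, a.size + 1)

def convergence_index(a, tol):
    """First frame after which |<A>(t) - <A>(T)| stays within tol."""
    avg = running_average(a)
    outside = np.abs(avg - avg[-1]) > tol
    if not outside.any():
        return 0
    # The last frame is never outside (difference is zero), so +1 is a valid index
    return int(np.flatnonzero(outside)[-1]) + 1

series = [4.0, 0.0, 2.0, 2.0, 2.0, 2.0]      # toy property values per frame
print(running_average(series))
print(convergence_index(series, tol=0.1))
```

Because the running average always matches the final average at the last frame, an index close to the end of the trajectory is not evidence of convergence; it is only meaningful when a long stretch of the trajectory follows it.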

Table: Typical Convergence Timescales for Different Systems

| System Type | Approximate Convergence Timescale | Key Converged Properties (excluding terminal regions) |

|---|---|---|

| Small DNA duplex | ~1–5 μs | Helix structure and dynamics [9] |

| Dialanine (22-atom peptide) | Multi-microsecond (some properties unconverged) | - [2] |

| Globular Protein Domains | Varies; multi-microsecond trajectories often needed | Properties of high biological interest (e.g., local flexibility, native-state dynamics) [1] [2] |

The Scientist's Toolkit

Table: Essential "Research Reagent Solutions" for Temperature/Pressure Controlled MD

| Item / Resource | Function / Purpose | Example Use-Case |

|---|---|---|

| GROMACS MD Package | A versatile suite for performing MD simulations and analysis. | Used for simulating the temperature-dependent structural ensembles of proteins in the aSAM/aSAMt study [1]. |

| Nosé-Hoover Chain Thermostat | A deterministic algorithm for canonical (NVT) ensemble generation via an extended Hamiltonian. | Provides reliable temperature control in simulations of biomolecules [5]. |

| Bussi Thermostat | A stochastic velocity-rescaling method designed to minimally perturb the Hamiltonian dynamics. | An alternative global thermostat for constant-temperature simulations [5]. |

| Langevin Thermostat (GJF) | A stochastic algorithm; the Grønbech-Jensen-Farago implementation provides accurate configurational sampling. | Useful for consistent sampling of both temperature and potential energy [5]. |

| Parrinello-Rahman Barostat | An algorithm for maintaining constant pressure, allowing for fluctuations in box shape and size. | Standard choice for NPT ensemble simulations of biomolecular systems in solution. |

| mdCATH / ATLAS Datasets | Curated MD simulation datasets of proteins at various temperatures. | Used for training and benchmarking machine learning models like aSAMt for ensemble generation [1]. |

| AMBER, CHARMM, GROMOS | Class I biomolecular force fields defining interaction potentials. | Provide the empirical energy functions and parameters needed to compute forces during an MD simulation [7]. |

Molecular Dynamics (MD) simulations are a cornerstone of computational chemistry and materials science, enabling researchers to study the temporal evolution of molecular systems at an atomic level. The concept of a thermodynamic ensemble is central to this process, providing a statistical framework derived from the laws of classical and quantum mechanics for deriving a system's thermodynamic properties [10]. Essentially, an ensemble is a collection of points that can independently describe all possible states of a system, allowing researchers to probe phase space to compute accurate observables [11].

Different ensembles represent systems with varying degrees of separation from their surroundings, ranging from completely isolated systems to completely open ones [10]. The choice of ensemble depends on the specific problem and the experimental conditions one aims to mimic. This technical support document focuses on the three most prevalent ensembles in MD simulations: NVE (microcanonical), NVT (canonical), and NPT (isothermal-isobaric). By controlling which state variables—such as energy (E), volume (V), temperature (T), pressure (P), and number of particles (N)—are kept constant, each ensemble generates a distinct statistical sampling from which various structural, energetic, and dynamic properties can be calculated [12].

Table: Key Thermodynamic Ensembles in Molecular Dynamics

| Ensemble | Name | Constant Parameters | Fluctuating Quantities | Typical Application |

|---|---|---|---|---|

| NVE | Microcanonical | Number of particles (N), Volume (V), Energy (E) | Temperature (T), Pressure (P) | Studying isolated systems; exploring constant-energy surfaces [12] [11] |

| NVT | Canonical | Number of particles (N), Volume (V), Temperature (T) | Energy (E), Pressure (P) | Simulations where volume is fixed, e.g., conformational searches in vacuum or solids [12] [13] |

| NPT | Isobaric-Isothermal | Number of particles (N), Pressure (P), Temperature (T) | Energy (E), Volume (V) | Mimicking common lab conditions; determining equilibrium density [12] [10] |

The following diagram illustrates the logical relationship and primary use case for each ensemble in a typical simulation workflow:

Detailed Ensemble Specifications and Theoretical Foundations

The Microcanonical Ensemble (NVE)

The NVE ensemble represents an isolated system that cannot exchange energy or matter with its surroundings [10]. It is characterized by the conservation of the total energy (E) of the system, which is the sum of kinetic (KE) and potential energy (PE) (E = KE + PE = constant) [11]. This ensemble is obtained by directly integrating Newton's equations of motion without any temperature or pressure control [12].

In practice, while total energy is conserved, the potential and kinetic energies can fluctuate as the system moves through valleys (low PE) and peaks (high PE) on the Potential Energy Surface (PES). To keep the total energy constant, a decrease in potential energy must be compensated by an increase in kinetic energy, and vice versa. This directly affects the velocities of atoms, leading to temperature fluctuations [11]. Although energy conservation is the ideal, slight energy drift can occur due to numerical rounding and integration truncation errors [12].
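The link between kinetic energy and temperature can be made explicit through the equipartition relation T = 2·KE / (N_df·k_B), where N_df is the number of degrees of freedom. The sketch below assumes GROMACS-style units (masses in u, velocities in nm/ps, so energies come out in kJ/mol); the function name and the treatment of constraints and center-of-mass removal are illustrative assumptions.

```python
import numpy as np

KB = 0.0083144626  # Boltzmann constant in kJ mol^-1 K^-1

def instantaneous_temperature(masses, velocities, n_constraints=0, com_removed=True):
    """Instantaneous kinetic temperature in K.

    masses in u, velocities in nm/ps (1 u nm^2 ps^-2 = 1 kJ/mol).
    Degrees of freedom: 3N minus constraints, minus 3 if COM motion is removed.
    """
    masses = np.asarray(masses, dtype=float)
    velocities = np.asarray(velocities, dtype=float)
    kinetic = 0.5 * np.sum(masses[:, None] * velocities**2)
    n_df = 3 * masses.size - n_constraints - (3 if com_removed else 0)
    return 2.0 * kinetic / (n_df * KB)

# Toy system: two unit-mass particles moving in opposite directions
print(instantaneous_temperature([1.0, 1.0], [[1.0, 0.0, 0.0], [-1.0, 0.0, 0.0]]))
```

In an NVE run this quantity fluctuates from step to step as kinetic and potential energy are exchanged, which is the behaviour described above.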

Appropriate Use Cases and Limitations

NVE is the most fundamental ensemble and is the direct result of integrating Newton's equations. It is highly valuable for exploring the constant-energy surface of conformational space [12] and for investigating dynamical properties using formalisms like the Green-Kubo relation [11]. However, it is generally not recommended for the equilibration phase of a simulation because, without energy flow facilitated by a thermostat, it is difficult to achieve a desired, specific temperature [12]. The inherent temperature fluctuations can also be problematic, for instance, potentially causing a protein to unfold if the kinetic energy increases significantly, making it unsuitable for simulating isothermal conditions [10].

The Canonical Ensemble (NVT)

The NVT ensemble maintains a constant number of atoms (N), a constant volume (V), and a constant temperature (T) [10]. In this ensemble, the system is allowed to exchange heat with an external reservoir, often visualized as a giant thermostat or heat bath [10] [11]. The total energy is not constant, meaning that as the system moves on the PES, the kinetic energy does not have to compensate for changes in potential energy, thus stabilizing the temperature [11].

Thermostat Methods

Temperature control is achieved through algorithms known as thermostats. The Discover program, for example, uses direct temperature scaling during initialization and temperature-bath coupling during data collection [12]. Common thermostat implementations include [13]:

- Berendsen Thermostat: A weak-coupling method that scales velocities periodically. It has good convergence but can produce unnatural dynamics.

- Langevin Thermostat: A stochastic method that applies random forces to individual atoms, providing good control even in mixed phases but making trajectories non-reproducible.

- Nosé-Hoover Thermostat: An extended system method that introduces additional degrees of freedom to represent the heat bath. It is widely used and, in principle, reproduces the correct NVT ensemble.
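As an illustration of how the simplest of these methods acts on velocities, the sketch below computes the standard Berendsen weak-coupling scaling factor, λ = sqrt(1 + (Δt/τ)(T₀/T − 1)), by which all velocities are multiplied at each step. The function name is hypothetical and the numbers are made up for demonstration.

```python
import math

def berendsen_lambda(t_inst, t_target, dt, tau):
    """Velocity scaling factor of the Berendsen weak-coupling thermostat.

    t_inst: instantaneous temperature, t_target: bath temperature,
    dt: time step, tau: coupling time constant (same time units as dt).
    """
    return math.sqrt(1.0 + (dt / tau) * (t_target / t_inst - 1.0))

# A system that is 10 K too hot, 2 fs step, 0.1 ps coupling constant
print(berendsen_lambda(t_inst=310.0, t_target=300.0, dt=0.002, tau=0.1))
```

A larger `tau` pushes the factor closer to 1, which means weaker coupling. Because the rescaling always pulls the kinetic energy toward the target, it suppresses kinetic-energy fluctuations, which is consistent with the caveat about unnatural dynamics noted in the list.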

Appropriate Use Cases

NVT is the default ensemble in many MD programs, such as Discover [12]. It is the appropriate choice for conformational searches of molecules in vacuum without periodic boundary conditions, as volume, pressure, and density are not defined in such setups [12]. It is also suitable for simulations where lattice vectors must remain constant, such as studying ion diffusion in solids or reactions on surfaces [13]. A key consideration is that thermostats de-correlate atomic velocities, which can affect the system's dynamics [11].

The Isobaric-Isothermal Ensemble (NPT)

The NPT ensemble maintains a constant number of atoms (N), constant pressure (P), and constant temperature (T) [10]. This is achieved by coupling the system to both a thermal bath (thermostat) to maintain temperature and a mechanical bath (barostat) to maintain pressure by dynamically adjusting the simulation box volume [11]. This ensemble allows fluctuations in both the total energy and the volume of the system.

Appropriate Use Cases

The NPT ensemble is the most suitable for mimicking standard experimental conditions, where reactions are typically carried out at constant temperature and pressure [10]. It is the ensemble of choice when correct pressure, volume, and density are critical to the simulation [12]. It is particularly valuable for studying phase transitions, traversing phase diagrams, and determining a system's equilibrium density [11]. It can also be used during equilibration to achieve the desired temperature and pressure before switching to another ensemble for data collection [12].

Table: Troubleshooting Common Ensemble-Related Issues

| Problem | Potential Cause | Solution |

|---|---|---|

| Large pressure fluctuations after switching from NPT to NVE [14] | Switching to instantaneous (non-equilibrated) box dimensions from NPT. Volume is away from its equilibrium value. | Use sufficiently averaged box dimensions from the NPT run, not the final instantaneous snapshot, when setting up the NVE simulation. |

| Density does not converge to expected value in NPT [15] | Poor equilibration protocol; simulation time too short; incorrect forcefield parameters. | Ensure a smarter, multi-step equilibration protocol. Extend simulation time, especially for compression. Validate forcefield and simulation settings. |

| Total energy fluctuates excessively in NVE [15] | Normal fluctuation in finite-sized systems with discrete timesteps. | Understand that fluctuations are normal. Verify that the magnitude is reasonable for your system size and timestep. |

| Unphysical dynamics or poor sampling [11] | Overly strong coupling to thermostat/barostat, disturbing natural motion. | Use longer time constants for the thermostat and barostat to ensure weaker, more physical coupling to the external baths. |
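To judge whether NVE energy behaviour is normal fluctuation or a genuine drift, it can help to separate the two numerically. The sketch below fits a straight line to the total energy and reports the slope per atom alongside the RMS fluctuation around the fit. The function name, the units (ps and kJ/mol), and the use of a simple linear fit are assumptions for illustration.

```python
import numpy as np

def energy_drift(time_ps, total_energy, n_atoms):
    """Return (drift in kJ/mol per ns per atom, RMS fluctuation about the linear fit)."""
    t = np.asarray(time_ps, dtype=float)
    e = np.asarray(total_energy, dtype=float)
    slope, intercept = np.polyfit(t, e, 1)       # least-squares line through E(t)
    residual = e - (slope * t + intercept)
    return slope * 1000.0 / n_atoms, residual.std()

# Synthetic example: small systematic drift plus bounded oscillation
t = np.arange(0.0, 100.0, 0.1)
e = -5000.0 + 0.002 * t + 0.5 * np.sin(t)
drift, rms = energy_drift(t, e, n_atoms=1000)
print(drift, rms)
```

A small slope with a bounded RMS term matches the "normal fluctuation" row of the table, while a slope that dominates over the length of the run points to an integration or setup problem.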

FAQs and Troubleshooting Guides

Frequently Asked Questions

Q1: Shouldn't all ensembles give the same results in principle? In the thermodynamic limit (for an infinite system size), and away from phase transitions, there is an equivalence of ensembles for basic thermodynamic properties [16]. However, for the finite-sized systems used in practical MD simulations, the choice of ensemble can yield different results. Furthermore, fluctuations of quantities (e.g., energy in NVT or volume in NPT) are ensemble-dependent and are related to different thermodynamic derivatives, such as specific heat or compressibility [12] [16].
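The connection between fluctuations and thermodynamic derivatives can be written down directly. The sketch below uses the standard fluctuation formulas, C_V = Var(E) / (k_B T²) for total-energy fluctuations in NVT and κ_T = Var(V) / (k_B T ⟨V⟩) for volume fluctuations in NPT. Function names and units (kJ/mol, nm³, K) are assumptions, and results are left in those input units.

```python
import numpy as np

KB = 0.0083144626  # Boltzmann constant in kJ mol^-1 K^-1

def heat_capacity_nvt(total_energies, temperature):
    """C_V from energy fluctuations in NVT, in kJ mol^-1 K^-1."""
    e = np.asarray(total_energies, dtype=float)
    return e.var() / (KB * temperature**2)

def compressibility_npt(volumes, temperature):
    """Isothermal compressibility from volume fluctuations in NPT.

    With volumes in nm^3 the result is in nm^3 mol kJ^-1.
    """
    v = np.asarray(volumes, dtype=float)
    return v.var() / (KB * temperature * v.mean())

print(heat_capacity_nvt([1.0, 3.0], temperature=100.0))
print(compressibility_npt([9.0, 11.0], temperature=100.0))
```

These estimators only make sense when the thermostat or barostat generates the correct ensemble fluctuations, which is one reason why weak-coupling schemes are usually avoided for production runs that rely on fluctuation properties.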

Q2: Which ensemble should I use for my production run? The choice depends on the experimental conditions you wish to mimic and the free energy you are interested in [16].

- Use NPT to mimic the vast majority of laboratory experiments, which are conducted at constant atmospheric pressure and temperature [10] [16]. It is also necessary for calculating properties related to the Gibbs free energy.

- Use NVT when you need to hold the volume constant, for example, when studying a system in a fixed container or when pressure is not a significant factor and you want less perturbation of the trajectory [12] [13].

- Use NVE for studying isolated systems, exploring constant-energy surfaces, or calculating properties derived from the fluctuation-dissipation theorem (e.g., via Green-Kubo formalism) [12] [11].

Q3: Why do I get large pressure spikes when switching from NPT to NVE? This is a common issue that often indicates that the volume used for the NVE simulation is not the equilibrium volume. When switching from NPT to NVE, you should not use the instantaneous box dimensions from the end of the NPT run. Instead, use sufficiently averaged box dimensions to ensure the system is at its equilibrium volume for the given temperature and pressure. Additionally, ensure that the time constant (tau) for the barostat in the NPT simulation was large enough to avoid an unphysical strong coupling that masks the true pressure fluctuations [14].
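A minimal sketch of the averaging step is shown below. It assumes an orthorhombic box whose edge lengths have been extracted per frame into an array, discards an initial fraction of the NPT run as equilibration, and returns both the averaged box and the scale factors that would bring the final frame to that box. The function name and the 50% default are illustrative choices.

```python
import numpy as np

def averaged_box(box_lengths, skip_fraction=0.5):
    """Average box edge lengths over the tail of an NPT run.

    box_lengths: array of shape (n_frames, 3) for an orthorhombic box.
    Returns (mean box, per-axis scale factors mapping the last frame onto it).
    """
    box = np.asarray(box_lengths, dtype=float)
    start = int(skip_fraction * len(box))
    mean_box = box[start:].mean(axis=0)
    return mean_box, mean_box / box[-1]

frames = [[3.0, 3.0, 3.0], [5.0, 5.0, 5.0], [4.0, 4.0, 4.0], [6.0, 6.0, 6.0]]
mean_box, scale = averaged_box(frames)
print(mean_box, scale)
```

The scale factors can then be applied to the box and coordinates of the last frame, followed by a short re-equilibration at fixed volume, before the NVE run is started.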

Q4: My density in NPT doesn't reach the expected value. What's wrong? This problem can stem from several sources, as seen in a case where a water simulation failed to reach a density of 1 g/cm³ [15]. Potential causes include:

- Insufficient equilibration: The system might have been equilibrated for too long at an incorrect density, making it difficult to compress/expand to the target density.

- Inadequate simulation time: Compressing a system often requires longer simulation time than expanding it.

- Incorrect forcefield or parameters: The interaction parameters (e.g., for a water model like TIP4P) may not be set up correctly to reproduce the experimental density.

The solution is to review and optimize your entire simulation protocol, including the equilibration phase, and to ensure your forcefield parameters are appropriate for your system [15].

Standard Simulation Protocol and Workflow

A typical and robust MD procedure is not performed within a single ensemble but involves a sequence of simulations to properly equilibrate the system before production data is collected [10]. The following workflow diagram outlines a standard protocol:

Step-by-Step Methodology:

- Energy Minimization: This is the initial step to relax the initial structure and remove any high-energy steric clashes or unrealistic geometry. It is not a dynamics step and prepares the system for stable dynamics.

- NVT Equilibration: The minimized structure is first subjected to dynamics in the NVT ensemble. This brings the system to the desired temperature by allowing the velocities to equilibrate. The volume is held fixed during this phase [10].

- NPT Equilibration: After temperature stabilization, the system is switched to the NPT ensemble. This allows the density (and thus the volume) to adjust to the target temperature and pressure, ensuring the system is in a state of full thermodynamic equilibrium [10].

- Production Run: Once the system is equilibrated (evidenced by stable temperature, pressure, and energy), the production simulation is run. The ensemble for this phase (NPT, NVT, or NVE) is chosen based on the scientific question. For comparison with most experiments, NPT is used [10] [16].

- Data Analysis: The trajectory generated from the production run is used to compute structural, energetic, and dynamic properties of interest.

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational Tools for Ensemble Control

| Tool / Reagent | Function / Purpose | Example Implementations |

|---|---|---|

| Thermostat | Controls the system temperature by adding/removing kinetic energy. | Berendsen, Nosé-Hoover, Langevin (in LAMMPS, GROMACS, Amber) [12] [13] |

| Barostat | Controls the system pressure by adjusting the simulation box volume. | Berendsen, Parrinello-Rahman (in LAMMPS, GROMACS, Amber) [12] [10] |

| Force Field | Provides the potential energy function (PES) governing atomic interactions. | AMBER (for biomolecules), CHARMM, OPLS, EAM (for metals) [1] [11] |

| Software Package | Performs numerical integration of equations of motion and ensemble control. | LAMMPS, GROMACS, AMBER, BioSIM [12] [15] [17] |

| Analysis Suite | Processes trajectory data to compute averages, fluctuations, and other properties. | MDAnalysis, VMD, GROMACS analysis tools, in-house scripts |

The Critical Role of Ensembles in Capturing Biomolecular Dynamics and Function

Technical Support & Troubleshooting Hub

Frequently Asked Questions (FAQs)

Q1: Why are multiple simulation replicas (ensembles) necessary instead of a single long simulation? A single simulation can produce results specific to its initial conditions, a problem known as poor sampling. Ensemble-based approaches, using multiple replicas that differ in their initial atomic velocities, are required to achieve reliability, accuracy, and precision in MD calculations [18]. They allow you to estimate the mean and variance of any calculated quantity, providing a measure of its statistical uncertainty. Relying on a single simulation can lead to unreproducible or unreliable results due to the intrinsically chaotic nature of MD [18].

Q2: My NPT simulation shows large oscillations in volume and pressure. Is this normal? Pronounced oscillations in volume and pressure around the target values are a typical outcome of Nosé-Hoover-like pressure control (barostat) [19]. As long as the magnitude of these oscillations remains within reasonable bounds and does not increase dramatically throughout the simulation, it is usually not a cause for concern. The period and amplitude of these oscillations can often be adjusted via the barostat time constant parameter [19].

Q3: How do I choose between the Berendsen and Parrinello-Rahman barostat methods for NPT simulations? The choice depends on the flexibility you need for your simulation cell and the properties you are studying:

- Parrinello-Rahman: Allows all degrees of freedom of the simulation cell to vary. It is more versatile for studying phenomena like phase transitions in solids but requires careful setting of the pfactor parameter, which is related to the system's bulk modulus [20].

- Berendsen: Efficiently controls pressure for quick convergence and is typically used with a fixed cell shape (only volume changes) or fixed cell angles. It requires an appropriate setting of the compressibility parameter [20].
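The relation between pfactor and the bulk modulus can be sketched numerically. The example assumes the convention described in the ASE documentation for its NPT dynamics, where pfactor is approximately the square of a characteristic barostat time multiplied by the bulk modulus. The function names, the 100 fs barostat time, and the 140 GPa bulk modulus are illustrative assumptions rather than recommended values.

```python
import math

def pfactor_gpa_fs2(bulk_modulus_gpa, ptime_fs):
    """Estimate pfactor (GPa * fs^2) as ptime^2 * B for a chosen barostat time."""
    return ptime_fs**2 * bulk_modulus_gpa

def ptime_from_pfactor(pfactor, bulk_modulus_gpa):
    """Inverse relation: barostat time (fs) implied by a given pfactor."""
    return math.sqrt(pfactor / bulk_modulus_gpa)

# Illustrative numbers: B of roughly 140 GPa, 100 fs barostat time
print(pfactor_gpa_fs2(140.0, 100.0))      # 1400000.0
print(ptime_from_pfactor(2.0e6, 140.0))
```

To pass such a value to ASE it would still need converting to ASE's internal units, for example by multiplying with `units.GPa * units.fs**2` from `ase.units`.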

Q4: How can I integrate experimental data to improve my conformational ensemble? A robust method is Maximum Entropy Reweighting. This integrative approach refines your computational ensemble by adjusting the statistical weights of structures from an MD simulation to achieve better agreement with experimental data (e.g., from NMR or SAXS) while introducing the minimal possible perturbation to the original model [21] [22]. This helps generate a force-field independent, accurate conformational ensemble [21].

Q5: What is the recommended number of replicas and their length for a reliable ensemble? For a fixed amount of computational resources, running more shorter simulations is generally better than running fewer longer ones. It is recommended to use protocols like 30 replicas of 2 ns each or 20 replicas of 3 ns each to maximize sampling and minimize error bars for a fixed computational cost [18]. While a common guideline suggests at least three replicas, many observed quantities of interest exhibit non-Gaussian distributions, meaning more replicas are often needed to reliably characterize the underlying distribution [18].
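Once the replicas have run, the per-replica results can be summarized as in the sketch below. It reports the mean, the standard error, and a bootstrap percentile interval, the last being a common choice when the distribution may not be Gaussian. This is a generic illustration, not the analysis used in [18]; the function name, the 95% level, and the sample values are assumptions.

```python
import numpy as np

def replica_summary(values, n_boot=2000, seed=0):
    """Mean, standard error, and 95% bootstrap interval of per-replica results."""
    x = np.asarray(values, dtype=float)
    rng = np.random.default_rng(seed)
    sem = x.std(ddof=1) / np.sqrt(x.size)
    # Resample replicas with replacement and collect the resampled means
    boot_means = rng.choice(x, size=(n_boot, x.size), replace=True).mean(axis=1)
    low, high = np.percentile(boot_means, [2.5, 97.5])
    return x.mean(), sem, (low, high)

# Made-up binding free energies (kcal/mol) from five replicas
mean, sem, interval = replica_summary([-8.1, -7.4, -9.0, -7.9, -8.6])
print(mean, sem, interval)
```

With only a handful of replicas the bootstrap interval itself is noisy, which is another way of seeing why more replicas are recommended.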

Troubleshooting Guide

| Problem | Possible Causes | Recommended Solutions |

|---|---|---|

| Large energy drift in NVE ensemble | 1. Incorrect initial conditions 2. Poor conservation of total energy | 1. Ensure initial velocities are correctly thermalized [23]. 2. Monitor the total energy curve; it should remain constant with only small oscillations [19]. |

| Failure to converge conformational properties | 1. Insufficient sampling 2. Non-Gaussian distribution of QoIs | 1. Use ensemble-based approaches with multiple replicas [18]. 2. Increase the number of replicas to better characterize the distribution [18]. |

| Poor agreement with experimental data | 1. Inaccurate force field 2. Inadequate sampling of relevant states 3. Imperfect forward model | 1. Use a modern, validated force field [24] [21]. 2. Integrate experiments with simulations using maximum entropy reweighting [21] [22]. 3. Ensure the model used to calculate observables from structures is accurate [22]. |

| Unstable NPT simulation (cell collapse/explosion) | 1. Poorly chosen barostat parameters (pfactor/compressibility) 2. Excessively large time step | 1. For Parrinello-Rahman, use a pfactor on the order of 10^6 to 10^7 GPa·fs² for crystalline metals as a starting point [20]. 2. Reduce the integration time step, especially for systems with fast vibrations. |

Essential Methodologies & Protocols

Protocol 1: Setting Up a Basic NPT Ensemble Simulation

This protocol outlines the steps for performing an NPT simulation using the ASE package, as demonstrated in a study of fcc-Cu's thermal expansion [20].

- System Preparation: Start with an energy-minimized structure. For solids, create a sufficient supercell (e.g., 3x3x3 unit cells).

- Calculator Assignment: Assign a force field or calculator to the atoms object (e.g., EMT or PFP).

- Initialization:

- Dynamics Object Creation: Create an NPT dynamics object, specifying:

- time_step: The integration time step (e.g., 1 fs).

- temperature_K: The target temperature.

- externalstress: The target external pressure (e.g., 1 bar).

- ttime: Time constant for the thermostat (e.g., 20 fs).

- pfactor: Barostat parameter for Parrinello-Rahman (e.g., 2e6 GPa·fs²).

- Production Run: Attach a logger and run the simulation for a sufficient number of steps (e.g., 20,000 steps for 20 ps). Equilibration is reached when properties like temperature and pressure fluctuate around their set values.

Protocol 2: Determining an Accurate Conformational Ensemble for an IDP

This protocol, based on a 2025 study, describes how to obtain an accurate ensemble for an intrinsically disordered protein (IDP) by integrating MD with experimental data [21].

- Generate Initial Ensembles: Run long-timescale, all-atom MD simulations (e.g., 30 µs) of the IDP using multiple state-of-the-art force fields (e.g., a99SB-disp, C22*, C36m) [21].

- Collect Experimental Data: Gather extensive experimental data, such as NMR chemical shifts and SAXS profiles, under identical conditions.

- Apply Maximum Entropy Reweighting:

- Use a forward model to predict experimental observables from each frame of the MD simulation.

- Use a maximum entropy reweighting procedure to compute new statistical weights for each conformation in the ensemble. The goal is to achieve optimal agreement with the experimental data while minimizing the deviation from the original simulation ensemble [21] [22].

- Use a single free parameter, such as the desired effective ensemble size (Kish Ratio, K), to automatically balance the restraints from different experimental datasets [21].

- Validation and Analysis:

- Validate the reweighted ensemble by checking its agreement with experimental data not used in the reweighting.

- Quantify the similarity of ensembles derived from different force fields to assess if a force-field independent solution has been found [21].

Data Presentation: Ensemble Simulation Protocols

The table below summarizes findings from a study that investigated the optimal way to divide a fixed computational budget (60 ns) for binding free energy calculations. The results demonstrate the impact of different ensemble strategies on the reliability of the results [18].

Table 1: Comparison of Ensemble Simulation Protocols for a Fixed 60 ns Computational Budget

| Protocol (Number of Replicas × Simulation Length) | Key Advantages | Key Limitations / Uncertainties |

|---|---|---|

| 1 × 60 ns | - | Not reproducible; prone to large errors; results are not reliable [18]. |

| 60 × 1 ns | - | Production run is typically too short for results to converge [18]. |

| 20 × 3 ns | Recommended protocol [18]; good balance of ensemble size and simulation length; smaller error bars. | Simulation duration may be too short to capture very slow conformational changes. |

| 30 × 2 ns | Recommended protocol [18]; maximizes ensemble size for a fixed cost; smallest error bars. | Short simulations may miss rare events with high energy barriers. |

| 12 × 5 ns | Longer sampling per replica. | Larger statistical uncertainties due to smaller ensemble size [18]. |

| 6 × 10 ns | Even longer sampling per replica. | Even larger statistical uncertainties [18]. |
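
The error bars referred to in the table come from the spread across independent replicas. A minimal sketch of that estimate is given here, assuming one binding free energy per replica; the numbers are hypothetical and the function name `ensemble_estimate` is introduced only for illustration.

```python
import math
import statistics

def ensemble_estimate(replica_values):
    """Mean and standard error of the mean over independent replicas."""
    n = len(replica_values)
    mean = statistics.fmean(replica_values)
    # Sample standard deviation needs at least two replicas.
    sem = statistics.stdev(replica_values) / math.sqrt(n)
    return mean, sem

# Hypothetical binding free energies (kcal/mol), one value per replica.
dG = [-8.1, -7.6, -8.4, -7.9, -8.0]
mean, sem = ensemble_estimate(dG)
print(f"dG = {mean:.2f} +/- {sem:.2f} kcal/mol (n = {len(dG)})")
```

With a single replica the standard deviation is undefined and `statistics.stdev` raises an error, which reflects why a 1 × 60 ns run gives no handle on reproducibility.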

Workflow Visualization

Diagram 1: Integrative Ensemble Refinement Workflow

This diagram illustrates the workflow for refining a conformational ensemble by integrating molecular dynamics simulations with experimental data.

Diagram 2: NPT Ensemble Control System

This diagram shows the logical relationships and control mechanisms in an NPT molecular dynamics simulation.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Ensemble-Based Biomolecular Simulations

| Item | Function / Description | Example Use Case |

|---|---|---|

| MD Software Packages | Software to perform molecular dynamics simulations. They differ in algorithms, performance, and supported force fields. | GROMACS [24] [25], AMBER [24], NAMD [24], CHARMM [25], LAMMPS [25], QuantumATK [19], ASE [20]. |

| Force Fields | Empirical mathematical functions and parameters that describe the potential energy of a system of particles. | AMBER ff99SB-ILDN [24], CHARMM36 [24], CHARMM36m [21], a99SB-disp [21]. Choice depends on the system (e.g., proteins, water, lipids). |

| Water Models | Specific parameter sets to simulate the behavior of water molecules. | TIP3P [24] [21], TIP4P-EW [24], a99SB-disp water [21]. Must be compatible with the chosen force field. |

| Thermostat Algorithms | Control the temperature of the simulation by rescaling or otherwise modifying particle velocities. | Nosé-Hoover [20] [19], Berendsen [20]. Essential for NVT and NPT ensembles. |

| Barostat Algorithms | Control the pressure of the simulation by adjusting the simulation cell volume. | Parrinello-Rahman [20], Berendsen [20], Martyna-Tobias-Klein [19]. Essential for NPT ensembles. |

| Analysis Tools (Bio3D, g_covar) | Software packages for analyzing MD trajectories, such as calculating dynamic cross-correlation matrices. | Bio3D R package [25], g_covar in GROMACS [25]. Used to identify correlated and anti-correlated motions in proteins. |

| Maximum Entropy Reweighting Tools | Software to integrate experimental data with simulation ensembles by reweighting. | Custom scripts and frameworks [21] used to implement the Bayesian/Maximum Entropy reweighting protocol [22]. |

Linking Ensemble-Averaged Properties to Experimental Observables

Troubleshooting Guides

Guide 1: Resolving Discrepancies Between Simulated and Experimental Data

Problem: My molecular dynamics (MD) ensemble shows poor agreement with key experimental observables, such as NMR chemical shifts or SAXS profiles.

Solution: Follow this systematic workflow to identify the source of the discrepancy and refine your ensemble.

1. Diagnose the Source of Error

- Check Force Field Selection: Different force fields (e.g., a99SB-disp, CHARMM36m, CHARMM22*) have varying accuracies for different classes of proteins, especially intrinsically disordered proteins (IDPs). Try simulating with multiple state-of-the-art force fields to see if the discrepancy persists [21].

- Verify Sampling Adequacy: Ensure your simulation is long enough to achieve ergodic sampling. Use metrics like the Kish ratio to check the effective ensemble size and identify if you are overfitting to a limited conformational space [21].

- Validate the Forward Model: The mathematical model used to back-calculate experimental observables (e.g., chemical shifts, relaxation rates) from your atomic coordinates must be accurate. An error here can cause disagreement even with a perfect structural ensemble [21] [26].

2. Apply Integrative Refinement

If the initial diagnosis suggests a plausible but imperfect ensemble, use experimental data to refine it via reweighting.

- Use a Maximum Entropy Reweighting Protocol: This method minimally adjusts the weights of structures in your initial MD ensemble to achieve the best possible agreement with a comprehensive set of experimental data. The strength of restraints is automatically balanced based on a target effective ensemble size (Kish ratio, e.g., K=0.10) to prevent overfitting [21].

- Combine Multiple Data Types: Integrate various experimental data sources simultaneously (e.g., NMR chemical shifts, residual dipolar couplings (RDCs), paramagnetic relaxation enhancements (PREs), and SAXS data) for a more robust and conclusive refinement [21] [26].

Guide 2: Handling Instabilities in Finite-Temperature MD Simulations

Problem: My MD simulation becomes unstable at the target temperature and pressure, crashing or producing unphysical results.

Solution: Instabilities often stem from inaccuracies in the force field or its integration, particularly for complex systems at finite temperatures.

1. System Setup and Equilibration

- Thermostat and Barostat Settings: Use established thermostats (e.g., Nosé-Hoover chains, NHC) and barostats (e.g., Martyna-Tobias-Klein, MTK) for reliable temperature and pressure control. Ensure the coupling constants (Tau key) are appropriate for your system to avoid oscillatory or poor control [27].

- Gradual Heating: Do not start simulations at the target temperature from a low-energy minimized structure. Use the InitialVelocities block to assign random velocities corresponding to a lower temperature and gradually heat the system to the target temperature over hundreds of picoseconds to avoid high-energy clashes [27].

2. Force Field and Model Considerations

- Test Foundational Models Critically: Be aware that some machine learning force fields (e.g., M3GNet, CHGNet) or "universal" force fields, while accurate for static properties at 0 K, may struggle with finite-temperature dynamics and phase behavior. Their performance is tied to the density functional theory (DFT) functionals used in their training data [28].

- Consider Fine-Tuning: If using a machine learning force field, investigate if fine-tuning on a smaller, system-specific dataset computed with a more suitable DFT functional can correct for inherited biases and improve dynamic reliability [28].

Frequently Asked Questions (FAQs)

FAQ 1: What is the most robust method to create an MD ensemble that agrees with my experimental data?

The most robust and automated method currently available is a maximum entropy reweighting procedure. This approach integrates your unbiased MD simulation with extensive experimental datasets. Its key advantage is that it uses a single free parameter (the desired effective ensemble size) to automatically balance the restraints from all experimental data types, preventing overfitting and producing a statistically robust ensemble with minimal perturbation to the original simulation [21].

FAQ 2: Can I use AlphaFold2-predicted structures as a starting point for generating accurate conformational ensembles?

Yes, AlphaFold2-predicted structures are increasingly used as promising starting points for MD simulations. For structured proteins, you can initiate free MD simulations from an AlphaFold-generated structure and then select trajectory segments that show stable RMSD and align well with experimental NMR relaxation data to build your dynamic ensemble [26]. For IDPs, AlphaFold can also generate multiple models that serve as a diverse starting ensemble [21].

FAQ 3: My simulation agrees with some NMR data but not others. Which experimental observables are most important to use for reweighting?

It is crucial to use multiple, complementary experimental data types to constrain different aspects of the ensemble. No single observable is sufficient.

- NMR Chemical Shifts: Sensitive to local secondary structure and backbone conformation [21] [26].

- Residual Dipolar Couplings (RDCs): Provide long-range structural information on molecular orientation and topology [26].

- Spin Relaxation Data (R1, R2, NOE): Probe dynamics on picosecond-to-nanosecond timescales, yielding generalized order parameters (S²) [26].

- Paramagnetic Relaxation Enhancements (PREs): Offer long-range distance restraints that can reveal transient contacts [26].

- SAXS Data: Reports on the global shape and radius of gyration of the ensemble [21].

Integrating all these data types provides a comprehensive set of restraints that leads to a more accurate and trustworthy ensemble [21] [26].

FAQ 4: How do I know if my refined ensemble is overfitting the experimental data?

A key metric to prevent overfitting is the Kish ratio: the Kish effective sample size divided by the number of conformations in the initial ensemble. It measures the fraction of conformations that still carry significant weight after reweighting. A very low Kish ratio (e.g., K < 0.05) indicates that only a tiny subset of structures is being used to fit the data, which is a sign of overfitting. A good practice is to set a minimum threshold for the Kish ratio (e.g., K=0.10) during the reweighting process to ensure your final ensemble retains a broad and representative sample of conformations [21].
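
The Kish ratio can be computed directly from the frame weights. The sketch below uses the standard definition K = (Σw)² / (N·Σw²); the function name `kish_ratio` and the two example weight vectors are illustrative and not taken from the cited work.

```python
def kish_ratio(weights):
    """Kish effective sample size divided by the number of frames.

    K = (sum w)^2 / (N * sum w^2). K equals 1 for uniform weights and
    1/N when a single frame carries all of the weight.
    """
    n = len(weights)
    total = sum(weights)
    sum_sq = sum(w * w for w in weights)
    return total * total / (n * sum_sq)

uniform = [1.0] * 1000
skewed = [1.0] * 100 + [0.0] * 900   # only 10% of frames keep any weight

print(kish_ratio(uniform))
print(kish_ratio(skewed))
```

The weights do not need to be normalized beforehand, because the ratio is invariant to a common scale factor.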

Experimental Protocols & Methodologies

Protocol 1: Maximum Entropy Reweighting of MD Ensembles

This protocol details how to refine an MD ensemble using experimental data from NMR and SAXS [21].

1. Generate Initial Ensembles

- Run long-timescale (e.g., 30 µs) all-atom MD simulations of your system using multiple modern force fields (e.g., a99SB-disp, CHARMM36m, CHARMM22*).

- Extract a large number of snapshots (e.g., 30,000 structures) from the equilibrated trajectory to form the initial ensemble.

2. Calculate Experimental Observables from the Ensemble

- For each snapshot in the ensemble, use forward models to back-calculate the experimental observables you have measured.

- This includes predicting NMR chemical shifts, J-couplings, RDCs, and SAXS profiles from the atomic coordinates.

3. Perform Maximum Entropy Reweighting

- Use a reweighting algorithm that maximizes the entropy of the final weights distribution while minimizing the discrepancy (χ²) between the back-calculated and experimental ensemble-averaged observables.

- The key parameter to set is the target effective ensemble size, defined by the Kish ratio (e.g., K=0.10). The algorithm will automatically determine the restraint strengths needed to achieve this.

4. Validate the Reweighted Ensemble

- Check that the reweighted ensemble shows improved agreement with the experimental data used for refinement.

- Validate the ensemble against experimental data not used in the reweighting process, if available.

- Analyze the Kish ratio to ensure the ensemble is not overfit.
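
To make the reweighting step concrete, the following is a deliberately simplified sketch: a single observable, a uniform prior over frames, and exact matching of one experimental average. Under those assumptions the maximum entropy solution has weights proportional to exp(-λ·f_i), and λ can be found by bisection. This is not the multi-dataset, Kish-ratio-targeted procedure of [21]; the function names and the per-frame values are hypothetical.

```python
import math

def reweight(values, lam):
    """Maximum-entropy weights proportional to exp(-lam * f_i), uniform prior."""
    shift = min(lam * v for v in values)            # keeps every exponent <= 0
    raw = [math.exp(-(lam * v - shift)) for v in values]
    norm = sum(raw)
    return [r / norm for r in raw]

def average(values, weights):
    return sum(v * w for v, w in zip(values, weights))

def fit_lambda(values, target, lo=-50.0, hi=50.0, tol=1e-10):
    """Bisect on lam; the reweighted average decreases monotonically with lam."""
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if average(values, reweight(values, mid)) > target:
            lo = mid          # average still too high, so lam must increase
        else:
            hi = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)

# Hypothetical per-frame radius of gyration (nm) and an experimental average.
rg = [1.8, 2.0, 2.2, 2.4, 2.6, 3.0]
rg_expt = 2.2
lam = fit_lambda(rg, rg_expt)
weights = reweight(rg, lam)
print(f"lambda = {lam:.4f}, reweighted <Rg> = {average(rg, weights):.4f} nm")
```

The target must lie between the smallest and largest per-frame value, otherwise no set of weights can reproduce it. Real applications replace the exact constraint with a χ² term that accounts for experimental and forward-model uncertainty.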

Protocol 2: Validating Ensembles with NMR Relaxation Data

This protocol describes a trajectory-selection method to validate dynamic ensembles using NMR relaxation [26].

1. Run MD Simulation and Segment Trajectory

- Initiate an MD simulation, ideally starting from an AlphaFold2-predicted structure.

- Divide the long MD trajectory into shorter segments (e.g., based on RMSD plateaus that indicate stable conformational sampling).

2. Back-calculate NMR Relaxation Parameters

- For each trajectory segment, back-calculate NMR relaxation parameters, such as longitudinal (R1) and transverse (R2) relaxation rates, heteronuclear NOE, and cross-correlated relaxation (ηxy) rates.

3. Select Consistent Trajectory Segments

- Compare the back-calculated relaxation parameters from each segment directly to the experimental values.

- Identify and select the trajectory segments whose back-calculated data show the best agreement with the experimental results.

4. Construct the Final Ensemble

- Combine the selected trajectory segments to build the final, validated 4D conformational ensemble that is most consistent with the experimental NMR dynamics.
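
The selection step can be sketched as a ranking of trajectory segments by their deviation from experiment. The example below uses a plain RMSD over one observable; the segment names, the R1 values, and the `n_keep` cutoff are hypothetical, and a real analysis would typically combine several relaxation parameters and normalize by experimental uncertainty.

```python
import math

def rmsd(calc, expt):
    """Root-mean-square deviation between back-calculated and measured values."""
    return math.sqrt(sum((c - e) ** 2 for c, e in zip(calc, expt)) / len(expt))

def select_segments(segments, expt, n_keep):
    """Return the names of the n_keep segments with the lowest RMSD to experiment."""
    ranked = sorted(segments, key=lambda name: rmsd(segments[name], expt))
    return ranked[:n_keep]

# Hypothetical per-residue R1 rates (1/s): experiment and three trajectory segments.
expt_r1 = [1.50, 1.62, 1.48, 1.55]
segments = {
    "seg_0-100ns":   [1.70, 1.80, 1.30, 1.75],
    "seg_100-200ns": [1.52, 1.60, 1.50, 1.53],
    "seg_200-300ns": [1.45, 1.66, 1.44, 1.60],
}
best = select_segments(segments, expt_r1, n_keep=2)
print(best)
```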

Research Reagent Solutions

The table below lists key computational and experimental resources used in the field of integrative ensemble modeling.

| Item Name | Type | Function/Brief Explanation |

|---|---|---|

| a99SB-disp Force Field | Computational Force Field | A protein force field and water model combination noted for its accuracy in simulating IDPs and generating ensembles that show good initial agreement with experimental data [21]. |

| Charmm36m Force Field | Computational Force Field | An all-atom protein force field designed for simulating a wide range of proteins, including membrane proteins and IDPs, often used for comparative ensemble studies [21]. |

| Maximum Entropy Reweighting Code | Computational Software | A software protocol (often custom Python scripts) that reweights MD ensembles to match experimental data with minimal bias, using the maximum entropy principle [21]. |

| NMR Relaxation Data (R1, R2, NOE, ηxy) | Experimental Data | NMR measurements that provide detailed, site-specific insights into protein dynamics on fast timescales, crucial for validating and refining conformational ensembles [26]. |

| SAXS Data | Experimental Data | Small-angle X-ray scattering data that provides low-resolution information about the global shape and size (radius of gyration) of a protein in solution, constraining the overall ensemble properties [21]. |

| AlphaFold2 | Computational Model | An AI system that predicts protein structures from amino acid sequences. Its predictions can serve as high-quality starting points for MD simulations [26]. |

| Cross-Correlated Relaxation (ηxy) | Experimental Data | An advanced NMR relaxation parameter that is less biased by slow conformational exchange processes than R2, making it highly valuable for accurate ensemble validation [26]. |

Workflow Diagrams

Integrative Ensemble Modeling Workflow

Experimental Data Integration Logic

Implementing Thermostats and Barostats: From Theory to Practice

Frequently Asked Questions

Q1: My production simulation results show suppressed energy fluctuations. What could be the cause? The Berendsen thermostat is known to suppress the fluctuations of the system’s kinetic energy and produce an energy distribution with a lower variance than a true canonical ensemble [29] [30]. While it is excellent for relaxing a system to equilibrium, it does not generate a correct thermodynamic ensemble. For production runs, switch to an algorithm like the Nosé-Hoover chain, V-rescale (Bussi), or Langevin thermostat with a low friction coefficient, which are designed to correctly sample the canonical ensemble [29] [30].

Q2: Why are the dynamic properties (e.g., diffusivity) in my system inaccurate when I use a stochastic thermostat? Stochastic thermostats like Andersen and Langevin work by randomizing particle velocities, which interferes with the natural, correlated motion of particles [29] [30]. This "violent perturbation" of particle dynamics can artificially slow down the system's kinetics and lead to inaccurate diffusion coefficients and viscosity [30]. If you are studying dynamic properties, consider using a deterministic extended system thermostat like Nosé-Hoover, which minimally disturbs the Newtonian dynamics [29] [30].
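
To check whether a thermostat is distorting diffusion, the diffusion coefficient can be estimated from the mean-squared displacement (MSD) through the Einstein relation, MSD(t) = 2·d·D·t in d dimensions. The sketch below fits a straight line to MSD versus time; the function name and the synthetic data are illustrative, and only the linear (diffusive) part of a real MSD curve should be passed in.

```python
def diffusion_coefficient(times, msd, dim=3):
    """Least-squares slope of MSD(t) divided by 2*dim (Einstein relation)."""
    n = len(times)
    t_mean = sum(times) / n
    m_mean = sum(msd) / n
    num = sum((t - t_mean) * (m - m_mean) for t, m in zip(times, msd))
    den = sum((t - t_mean) ** 2 for t in times)
    return (num / den) / (2 * dim)

# Synthetic MSD in nm^2 versus time in ps, generated from D = 2.3e-3 nm^2/ps.
d_true = 2.3e-3
times = [10.0 * i for i in range(1, 51)]
msd = [6 * d_true * t for t in times]

d_fit = diffusion_coefficient(times, msd)
# 1 nm^2/ps equals 1e-2 cm^2/s.
print(f"D = {d_fit:.4g} nm^2/ps = {d_fit * 1e-2:.4g} cm^2/s")
```

Running the same analysis on trajectories produced with different thermostats or friction coefficients gives a direct view of how strongly each one perturbs the kinetics.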

Q3: My system exhibits large, unphysical temperature oscillations during an NPT simulation. How can I fix this? The Nosé-Hoover thermostat can introduce periodic temperature fluctuations, especially in systems far from equilibrium or when coupled with a barostat in NPT simulations [29] [30]. A common solution is to use a Nosé-Hoover chain, which adds multiple thermostat variables with different 'masses' to help dampen these oscillations [29]. Furthermore, note that NPT and non-equilibrium MD (NEMD) simulations often require a stronger (more efficient) thermostat coupling than NVT simulations to maintain the target temperature [30].

Q4: I need to heat my system to a target temperature quickly for equilibration. Which thermostat is most effective? For rapid heating, cooling, or system relaxation, the Berendsen thermostat is highly effective due to its predictable, exponential decay of temperature deviations and robust convergence [29]. Its strong coupling method quickly removes the difference between the current and target temperature. However, remember that this should only be used during equilibration; you must switch to a different thermostat for production data collection [29].
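
The exponential relaxation can be illustrated with the Berendsen velocity scaling factor, λ = sqrt(1 + (Δt/τ_T)(T₀/T − 1)). The toy loop below applies only the scaling and ignores the exchange between kinetic and potential energy that occurs in a real simulation, so it shows the idealized decay rather than actual MD behavior. The starting temperature, time step, and τ_T are illustrative values.

```python
import math

def berendsen_lambda(t_current, t_target, dt, tau):
    """Velocity scaling factor of the Berendsen weak-coupling thermostat."""
    return math.sqrt(1.0 + (dt / tau) * (t_target / t_current - 1.0))

t, t_target = 100.0, 300.0      # kinetic temperature and target, in K
dt, tau = 0.002, 0.1            # time step and coupling time constant, in ps

for step in range(1, 251):
    lam = berendsen_lambda(t, t_target, dt, tau)
    t *= lam * lam              # kinetic temperature scales with lam squared
    if step % 50 == 0:
        print(f"t = {step * dt:.1f} ps  T = {t:.1f} K")
```

In this idealized picture the deviation from the target shrinks by a factor of (1 − Δt/τ_T) per step, so after a few multiples of τ_T the temperature is within about 1% of the target.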

Q5: Why does my simulation of a small solute in solvent show unrealistic temperature fluctuations? This can occur when using a local thermostat on a small group of atoms. While local thermostats are useful for large solutes, the temperature of small solutes can fluctuate significantly because they have fewer degrees of freedom to absorb and redistribute kinetic energy [29]. For small molecules, a global thermostat that controls the temperature of the entire system uniformly is often more appropriate [29].

Troubleshooting Guides

Problem: Erratic Energy Drift in Long Simulations

- Symptoms: A steady, non-physical increase or decrease in the total energy of the system over time in an NVT simulation.

- Possible Causes and Solutions:

- Cause 1: Inefficient temperature coupling. The thermostat is not able to properly maintain the kinetic energy.

- Solution: Adjust the thermostat's coupling parameter. For the Berendsen, Nosé-Hoover, or V-rescale thermostats, this is the time constant τ_T. A very large τ_T results in weak coupling and poor temperature control, while a very small τ_T can overly perturb the dynamics. A value of 0.1-1.0 ps is often a good starting point for biomolecular simulations [29] [30].

- Cause 2: The development of appreciable center-of-mass motion.

- Solution: Ensure your MD engine removes the center-of-mass motion at every step [4]. Most modern packages do this by default.

Problem: Unphysical Sampling of Configurational or Kinetic Properties

- Symptoms: The distribution of particle velocities does not match the Maxwell-Boltzmann distribution, or configurational properties like potential energy show a strong time-step dependence.

- Possible Causes and Solutions:

- Cause 1: Use of a non-canonical thermostat for production.

- Cause 2: High friction coefficient in a Langevin thermostat.

- Solution: The friction term in the Langevin equation directly damps motion. For equilibrium MD, use a low friction coefficient (e.g., 0.1-1 ps⁻¹) to minimize interference with the system's natural dynamics [5] [30]. A recent benchmarking study found that diffusion coefficients systematically decrease with increasing friction [5].

Problem: Poor Performance in NPT or Non-Equilibrium MD (NEMD) Simulations

- Symptoms: In NPT simulations, the temperature or pressure control is unstable. In NEMD (e.g., shear flow), the thermostat fails to evacuate excess heat effectively.

- Possible Causes and Solutions:

- Cause: Insufficiently strong thermostat coupling.

- Solution: NPT and NEMD simulations often require stronger thermostat coupling (shorter τ_T) than NVT equilibrium MD to maintain the target temperature [30]. This is because the barostat's volume scaling and the external work in NEMD can act as strong heat sources/sinks. If using Nosé-Hoover, try switching to a Nosé-Hoover chain for better stability [29].

Comparative Performance of Thermostat Algorithms

The table below summarizes key characteristics and performance metrics of common thermostat algorithms, based on recent benchmarking studies and theoretical foundations [29] [5] [30].

Table 1: Thermostat Algorithm Comparison

| Thermostat Algorithm | Type | Canonical Ensemble? | Disturbance on Dynamics | Key Strengths | Key Weaknesses | Best Use Cases |

|---|---|---|---|---|---|---|

| Velocity Rescaling | Strong Coupling | No [29] | High | Simple, fast equilibration [29] | Can create hot spots; incorrect ensemble [29] | Heating/Cooling only [29] |

| Berendsen | Weak Coupling | No [29] [30] | Low | Robust, fast relaxation to target T [29] | Suppresses energy fluctuations [29] [30] | System equilibration [29] |

| Andersen | Stochastic | Yes [29] | Very High | Correct ensemble; simple concept [29] | Randomization impairs correlated motion & dynamics [29] [30] | Sampling static properties only [30] |

| Langevin | Stochastic | Yes [5] | High (Friction-dependent) | Correct ensemble; very stable [5] [30] | High computational cost; reduces diffusion [5] | Systems with implicit solvent; NEMD [30] |

| Bussi (V-rescale) | Stochastic | Yes [29] [5] | Low | Correct ensemble; fast & robust like Berendsen [29] | Potential energy can be time-step dependent [5] | General purpose NVT production [30] |

| Nosé-Hoover | Extended System | Yes [29] | Low | Correct ensemble; good for kinetics [29] | Can cause temperature oscillations [29] [30] | NVT of systems near equilibrium [29] |

| Nosé-Hoover Chains | Extended System | Yes [29] | Low | Correct ensemble; suppresses oscillations [29] | More complex with multiple parameters [29] | Recommended for most production NVT [29] [30] |

Table 2: Quantitative Benchmarking Data (Lennard-Jones System)

Data adapted from Shiraishi et al. (2025) [5].

| Thermostat Algorithm | Relative Computational Cost | Diffusion Coefficient (D) at low friction | Potential Energy Time-Step Dependence |

|---|---|---|---|

| Nosé-Hoover Chain (NHC2) | Low | High | Pronounced |

| Bussi | Low | High | Pronounced |

| Langevin (BAOAB, low γ) | ~2x Higher [5] | High (but decreases with γ) [5] | Low |

| Langevin (GJF, low γ) | ~2x Higher [5] | High (but decreases with γ) [5] | Low |

Experimental Protocol: Benchmarking Thermostats for a New System

When applying thermostats to a novel system, such as a new protein-ligand complex, it is good practice to perform a small-scale benchmark to inform your choice for the full production simulation.

Objective: To evaluate the performance of different thermostat algorithms on a model system (e.g., a protein in a water box) by assessing their ability to generate correct kinetic and potential energy distributions and to produce stable dynamics.

Methodology:

- System Preparation: Create a solvated system of your protein of interest, following standard energy minimization and equilibration procedures.

- Equilibration: Use the Berendsen thermostat to quickly bring the system to the target temperature (e.g., 300 K).

- Production Runs: Launch multiple short (e.g., 1-5 ns) MD simulations in the NVT ensemble from the same equilibrated structure, each using a different thermostat (e.g., Nosé-Hoover Chains, Bussi, Langevin with low friction).

- Data Analysis:

- Velocity Distribution: Plot the distribution of velocities for all atoms and fit it to the Maxwell-Boltzmann distribution for the target temperature. A good thermostat will show an excellent fit [29].

- Energy Fluctuations: Calculate the variance of the total potential and kinetic energy. Compare it to the expected variance for a canonical ensemble. The Berendsen thermostat will show suppressed fluctuations [30].

- Stability: Monitor the stability of the temperature and the potential energy over time. Look for drifts or unphysical oscillations.

- Dynamics: Calculate the mean-squared displacement (MSD) of water or ligand atoms to check if stochastic thermostats are unduly suppressing diffusion.
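
The energy-fluctuation check can be made quantitative with a standard result for the canonical ensemble: the relative standard deviation of the instantaneous kinetic temperature is sqrt(2/N_dof), where N_dof is the number of kinetic degrees of freedom. The sketch below compares an observed temperature series against that expectation. The function names are illustrative, and the two series are synthetic Gaussian samples standing in for thermostat output.

```python
import math
import random
import statistics

def expected_relative_sd(n_dof):
    """Canonical ensemble: sigma_T / <T> = sqrt(2 / N_dof)."""
    return math.sqrt(2.0 / n_dof)

def fluctuation_ratio(temperatures, n_dof):
    """Observed / expected relative fluctuation of the kinetic temperature.

    Values near 1 are consistent with canonical sampling; values clearly
    below 1 indicate that the thermostat suppresses fluctuations.
    """
    observed = statistics.pstdev(temperatures) / statistics.fmean(temperatures)
    return observed / expected_relative_sd(n_dof)

random.seed(0)
n_dof, t_ref = 60_000, 300.0
sigma = t_ref * expected_relative_sd(n_dof)     # about 1.73 K for this system size

canonical = [random.gauss(t_ref, sigma) for _ in range(5000)]
suppressed = [random.gauss(t_ref, 0.3 * sigma) for _ in range(5000)]

print(f"canonical-like : {fluctuation_ratio(canonical, n_dof):.2f}")
print(f"suppressed     : {fluctuation_ratio(suppressed, n_dof):.2f}")
```

When applying this to real output, N_dof should account for constraints and removed center-of-mass motion, and the series should be taken after equilibration.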

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Software and Algorithmic "Reagents" for Temperature Control

| Item | Function | Example Implementation / Notes |

|---|---|---|

| Nosé-Hoover Chains (NHC) | Extended system thermostat for canonical sampling with minimal perturbation to dynamics [29]. | In GROMACS: tcoupl = Nose-Hoover, nh-chain-length = 10 (default) [29]. |

| Bussi Stochastic Velocity Rescaling | Stochastic thermostat that corrects the Berendsen method to produce a canonical ensemble [29] [5]. | In GROMACS: tcoupl = V-rescale [29]. In LAMMPS: fix temp/csvr. |

| Langevin Thermostat (BAOAB/GJF) | Stochastic thermostat that integrates a friction and noise term; excellent configurational sampling [5]. | Use the BAOAB or GJF integrator for accuracy. The friction coefficient (gamma or gamma_ln) is a critical parameter [5]. |

| Berendsen Thermostat | Weak-coupling algorithm for fast and robust system relaxation to a target temperature [29] [30]. | Use only for equilibration. In GROMACS: tcoupl = Berendsen [29]. |

| Parrinello-Rahman Barostat | An extended-system barostat for correct NPT ensemble sampling that supports isotropic, semi-isotropic, and anisotropic coupling; often paired with Nosé-Hoover or Bussi thermostats [30]. | In GROMACS: pcoupl = Parrinello-Rahman. Recommended for production NPT simulations [30]. |

Thermostat Selection Workflow

The following diagram outlines a logical decision process for selecting an appropriate thermostat algorithm based on your simulation goals.

Choosing the Right Barostat for Biomolecular Simulations in Solvent

Barostat FAQs and Troubleshooting Guide

What is a barostat and why is it critical for my biomolecular simulations?

A barostat is an algorithm in Molecular Dynamics (MD) software that maintains the system's pressure at a constant target value, on average. This is essential for simulating NPT (isothermal-isobaric) ensembles, which mirror common experimental conditions in solution and are necessary for obtaining accurate densities and volumes for your biomolecular system. Correct barostat selection is vital because an inappropriate choice can suppress natural fluctuations or disturb Newtonian dynamics, leading to inaccurate physical properties and unreliable simulation outcomes [30].

Which barostat should I use for production simulations of proteins in solvent?

For production-level NPT simulations, the Parrinello-Rahman barostat is highly recommended [30]. It correctly samples the NPT ensemble by allowing for fluctuations in both the volume and shape of the simulation box. This makes it particularly suitable for biomolecular systems in solution, where accurate energy and volume fluctuations are critical. It should be used with a moderate coupling strength for optimal performance [30].

I see a warning that the Berendsen barostat is "obsolete." Should I be concerned?

Yes. This warning, common in MD software like GROMACS, should be taken seriously for production runs [31]. While the Berendsen barostat is efficient at quickly relaxing the system to the target pressure, it achieves this by suppressing the natural fluctuations of volume in the NPT ensemble [30]. It is acceptable for the initial equilibration of your system but should not be used for production simulations where accurate sampling and property calculation are the goals [30] [31].

My NPT simulation is unstable or exhibits large oscillations. What could be wrong?

If you are using the Parrinello-Rahman barostat and observe unphysical, large oscillations, it often indicates that your system is far from equilibrium [30]. We recommend the following troubleshooting steps:

- Re-equilibrate your system: Ensure your system is thoroughly equilibrated using a more robust method like the Berendsen barostat before switching to Parrinello-Rahman for production.

- Check coupling strength: Coupling that is too strong or too weak can cause instabilities. Use a moderate coupling time constant, typically between 1 and 10 ps, and refer to your software's documentation for guidance.

- Verify system preparation: Ensure your initial structure is sound, with proper protonation states and no steric clashes, and that the solvent box is of appropriate size [32].

Barostat Performance Comparison

The table below summarizes the key characteristics of common barostats to guide your selection [30].

Table 1: Comparison of Barostat Algorithms for Biomolecular Simulations

| Barostat | Ensemble Sampled | Key Mechanism | Pros | Cons | Recommended Use |

|---|---|---|---|---|---|

| Berendsen | Incorrect NPT | Scales coordinates/box vectors; first-order pressure relaxation [30]. | Fast pressure stabilization, good for equilibration. | Suppresses volume fluctuations, yields inaccurate properties for production [30]. | Initial system equilibration only. |

| Parrinello-Rahman | Correct NPT | Extended system method; allows independent change of box vectors [30]. | Correct NPT ensemble, allows for anisotropic box deformation. | Can produce oscillations if system is far from equilibrium [30]. | Production simulations (with moderate coupling). |
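
The recommendation in the table (Berendsen for equilibration, Parrinello-Rahman for production) can be captured in a small helper that renders the coupling section of a GROMACS .mdp file. The option names are standard GROMACS mdp keys, but the helper itself and the numeric values (time constants, reference temperature and pressure, the water compressibility) are illustrative assumptions that should be checked against your own system and software version.

```python
def coupling_block(stage, ref_t=300.0, ref_p=1.0):
    """Render temperature/pressure coupling lines for a GROMACS .mdp file."""
    if stage == "equilibration":
        # Fast, robust relaxation; not suitable for production sampling.
        tcoupl, pcoupl, tau_p = "Berendsen", "Berendsen", 2.0
    elif stage == "production":
        # Algorithms intended to sample the NPT ensemble correctly.
        tcoupl, pcoupl, tau_p = "V-rescale", "Parrinello-Rahman", 5.0
    else:
        raise ValueError(f"unknown stage: {stage}")
    settings = {
        "tcoupl": tcoupl,
        "tc-grps": "System",
        "tau-t": 0.5,                 # ps, illustrative
        "ref-t": ref_t,               # K
        "pcoupl": pcoupl,
        "pcoupltype": "isotropic",
        "tau-p": tau_p,               # ps, illustrative
        "ref-p": ref_p,               # bar
        "compressibility": 4.5e-5,    # 1/bar, typical value for water
    }
    return "\n".join(f"{key:16s}= {value}" for key, value in settings.items())

print(coupling_block("equilibration"))
print()
print(coupling_block("production"))
```

Keeping both stages in one function makes it harder to carry the equilibration barostat into the production run by accident.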

Workflow for Barostat Selection and Use

The following diagram outlines a logical workflow for selecting and applying barostats in a typical biomolecular simulation protocol.

Barostat Implementation Workflow for Stable NPT Ensembles

Essential Parameter Relationships

Understanding how key parameters interact is crucial for stable simulations. This diagram shows the core relationships in the Parrinello-Rahman algorithm.

Key Parameter Interactions in the Parrinello-Rahman Barostat

The Scientist's Toolkit: Essential Materials and Reagents

Table 2: Key Research Reagent Solutions for Biomolecular MD Simulations

| Item | Function | Example(s) / Notes |

|---|---|---|

| Force Field | Defines potential energy functions and parameters for atoms and molecules. | AMBER99SB-ILDN, CHARMM36, OPLS-AA. Select one parameterized for proteins and your specific solvent [32]. |

| Water Model | Represents solvent molecules and their interactions with the solute. | TIP3P, SPC, TIP4P. Must be compatible with the chosen force field [32]. |

| Simulation Software | Provides the engine to run MD simulations, including integrators and ensemble controls. | GROMACS, NAMD, AMBER, LAMMPS [33] [31] [32]. |

| Thermostat | Maintains constant temperature. Must be chosen in tandem with the barostat. | Nosé-Hoover or V-rescale are recommended for production with Parrinello-Rahman barostat [33] [30]. |

| Barostat | Maintains constant pressure. Critical for NPT ensemble sampling. | Parrinello-Rahman for production; Berendsen for initial equilibration [30]. |

| System Building Tool | Prepares initial simulation system: solvation, ionization, etc. | PACKMOL, CHARMM-GUI, GROMACS pdb2gmx [32]. |

| Trajectory Analysis Tools | Analyzes output trajectories to compute physical properties and validate results. | Built-in GROMACS tools, VMD, MDAnalysis, PyTraj. |

This guide provides a foundational protocol for configuring and troubleshooting NPT (isothermal-isobaric) ensemble simulations, a critical step in molecular dynamics (MD) for achieving physically meaningful and stable production runs. Proper NPT equilibration allows a system to reach the correct density by allowing volume fluctuations, which is essential for simulating realistic conditions in solvated proteins, membrane patches, and other biomolecular systems [34]. The following FAQs, protocols, and tables are designed within the broader research context of developing accurate temperature and pressure control methods for MD ensembles, providing drug development professionals and researchers with clear, actionable guidance.

Core Concepts and NPT Essentials

What is the primary purpose of an NPT equilibration simulation?

The primary purpose of an NPT equilibration simulation is to stabilize the system density under conditions of constant particle Number (N), Pressure (P), and Temperature (T). Unlike NVT (constant Volume) equilibration, the NPT ensemble allows the simulation box volume to fluctuate, which is crucial for systems like solvated proteins or membrane patches where achieving a realistic, experimentally comparable density is essential before beginning production simulations [34]. This step ensures the system is in a stable state representative of the target physical conditions.

How do I know if my NPT simulation has been successful?

Success is determined by the stabilization of key thermodynamic properties over time. Look for plateau behavior in plots of density and pressure [34]. Specifically:

- The density should plateau around an expected value (e.g., near 1008 kg/m³ for the SPC/E water model).

- The pressure should fluctuate around the reference value you set. Instantaneous pressure fluctuates strongly from frame to frame, so judge it by its running average rather than by individual values.

These plateaus indicate the system has reached equilibrium and is ready for production MD runs.
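The plateau check can be automated. The following is a minimal sketch, assuming the density time series has already been extracted from the energy output into two plain Python lists; the function names, the 50% tail window, and the 1 kg/m³ drift threshold are illustrative choices, not values taken from the cited tutorial.

```python
from statistics import mean

def linear_slope(times, values):
    """Least-squares slope of values against times."""
    t_mean, v_mean = mean(times), mean(values)
    num = sum((t - t_mean) * (v - v_mean) for t, v in zip(times, values))
    den = sum((t - t_mean) ** 2 for t in times)
    return num / den

def has_plateaued(times_ps, density, tail_fraction=0.5, max_drift=1.0):
    """True if the fitted drift over the tail of the run is below max_drift.

    times_ps      : sample times in ps
    density       : density samples in kg/m^3
    tail_fraction : fraction of the run (from the end) used for the fit (assumed)
    max_drift     : tolerated total drift over the tail, kg/m^3 (assumed)
    """
    start = int(len(times_ps) * (1.0 - tail_fraction))
    t_tail, d_tail = times_ps[start:], density[start:]
    drift = abs(linear_slope(t_tail, d_tail)) * (t_tail[-1] - t_tail[0])
    return drift < max_drift

# Synthetic illustration: a noisy but flat series versus a steadily rising one.
times = list(range(100))
flat = [1008.0 + 0.2 * (-1) ** i for i in times]
rising = [950.0 + 0.5 * i for i in times]
print(has_plateaued(times, flat), has_plateaued(times, rising))
```

A slope-based test like this complements visual inspection; it does not replace it, because a slow drift can hide inside a short tail window.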

Detailed Experimental Protocol

A Ten-Step Protocol for System Preparation

For explicitly solvated biomolecules, a gradual relaxation protocol is recommended to ensure stability. The following steps, adapted from an established protocol, comprise 4000 minimization steps and 40,000 MD steps (totaling 45 ps) before a final, extended NPT step [35].

This workflow illustrates the multi-step equilibration protocol for stable production simulations.

Protocol Execution Notes:

- System Division: The protocol divides the system into "mobile" molecules (fast-diffusing, e.g., water, ions) and "large" molecules (slow-diffusing, e.g., proteins, lipids). Mobile molecules are relaxed first [35].

- Positional Restraints: Strong positional restraints (e.g., 5.0 kcal/(mol·Å²) on heavy atoms of large molecules) are applied in initial steps and gradually weakened or removed to allow controlled relaxation [35].

- Precision for Minimization: Due to potential numerical overflows from atomic overlaps, it is recommended to perform minimization steps with full double precision, even if subsequent MD uses GPU acceleration [35].

- Final NPT Step: The final step (Step 10) is run until the system density meets a predefined plateau criterion, indicating stabilization [35].
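To make the effect of weakening the restraints concrete, here is a small sketch of a harmonic positional restraint energy. It assumes the form E = k·|r − r₀|² with no ½ prefactor (some engines include the ½, so check your engine's convention), and it uses the force constants 5.0, 2.0, and 0.1 kcal/(mol·Å²) quoted in this guide; the function name and the test coordinates are hypothetical.

```python
def restraint_energy(coords, ref_coords, k):
    """Total harmonic restraint energy in kcal/mol.

    coords, ref_coords : lists of (x, y, z) tuples in Angstrom
    k                  : force constant in kcal/(mol*A^2)
    Assumes E = k * |r - r0|^2 per atom (no 1/2 prefactor).
    """
    total = 0.0
    for (x, y, z), (x0, y0, z0) in zip(coords, ref_coords):
        total += k * ((x - x0) ** 2 + (y - y0) ** 2 + (z - z0) ** 2)
    return total

# One heavy atom displaced 0.5 A from its reference position.
reference = [(0.0, 0.0, 0.0)]
displaced = [(0.5, 0.0, 0.0)]
for k in (5.0, 2.0, 0.1):  # progressively weaker restraints
    print(k, restraint_energy(displaced, reference, k))
```

The same displacement costs 50 times less energy at the weakest setting than at the strongest, which is why the large molecules can relax gradually as the restraints are released.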

Troubleshooting Common NPT Issues

Why is my system density incorrect after NPT equilibration?

An incorrect final density often stems from an inadequate simulation protocol or parameter selection.

- Insufficient Equilibration Time: The system might not have been allowed enough time to reach equilibrium. The NPT simulation must be long enough for the density to plateau. If an obvious trend to shrink or expand is visible, the run needs to be significantly longer [15].

- Poor Initial System Configuration: If the system is equilibrated for a very long time at the wrong density, it can be difficult for it to compress or expand to the correct density within a reasonable simulation time. A smarter equilibration protocol that starts from a better initial guess of the volume is recommended [15].

- Incorrect Pressure Coupling: The choice of barostat and its parameters, such as the time constant (tau_p) and compressibility, must be appropriate for your system.
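How tau_p and compressibility enter the volume response can be seen from the standard Berendsen weak-coupling expression, in which the box lengths are scaled each step by μ = [1 − (β·Δt/τ_p)(P₀ − P)]^(1/3). The sketch below evaluates that factor for isotropic coupling; the function and argument names are illustrative, and real engines may apply a linearized or tensorial version of the same idea.

```python
def berendsen_box_scale(p_inst, p_ref, dt, tau_p, compressibility):
    """Isotropic Berendsen scaling factor for box lengths and coordinates.

    p_inst, p_ref   : instantaneous and reference pressure (bar)
    dt, tau_p       : time step and coupling time constant (ps)
    compressibility : isothermal compressibility (1/bar)
    """
    factor = 1.0 - compressibility * (dt / tau_p) * (p_ref - p_inst)
    return factor ** (1.0 / 3.0)

# Pressure 100 bar above target: the box expands very slightly this step.
mu = berendsen_box_scale(p_inst=101.0, p_ref=1.0, dt=0.002, tau_p=2.0,
                         compressibility=4.5e-5)
print(mu)
```

A smaller tau_p or a larger compressibility makes each correction bigger, which speeds up relaxation but can destabilize a system that starts far from the target pressure.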

Why does my simulation box deform excessively in one direction?

This is a common issue in systems with anisotropic components, such as lipid bilayers.

- Incorrect Pressure Coupling for the Phase: Using semi-isotropic or anisotropic pressure coupling before a membrane has self-assembled or is correctly oriented can cause box deformation. The pressure coupling assumes the membrane normal is aligned with the z-axis [36].

- Solution: For self-assembling systems or those without a predefined orientation, begin with isotropic pressure coupling. Once the membrane is formed and its orientation is known, the system can be rotated, and semi-isotropic coupling can be applied for the production run [36].

Why are the energy fluctuations in my subsequent NVE run too large?

Large, non-constant fluctuations in total energy in an NVE (microcanonical) ensemble run following NPT often indicate the system was not fully equilibrated.

- Lack of Equilibration: The system may not have reached a true equilibrium state during the NPT phase. The energy fluctuations should be around a stable average; a significant drift suggests the system is still relaxing [15].

- Finite System Size and Time Discretization: Some fluctuation is normal in MD simulations due to the finite size of the system and the discrete time steps. However, the fluctuations should be within a reasonable range expected for your system size and temperature [15].
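One way to tell drift from normal fluctuation is to fit a straight line to the total energy and compare the fitted trend with the scatter around it. This is a minimal sketch under that assumption; the function name and the synthetic data are hypothetical, and what counts as an acceptable drift depends on your system size and integrator settings.

```python
from math import sqrt
from statistics import mean

def drift_and_noise(times, energies):
    """Return (slope, rms) for a total-energy time series.

    slope : least-squares energy drift per unit time
    rms   : root-mean-square deviation of the samples from the fitted line
    """
    t_mean, e_mean = mean(times), mean(energies)
    num = sum((t - t_mean) * (e - e_mean) for t, e in zip(times, energies))
    den = sum((t - t_mean) ** 2 for t in times)
    slope = num / den
    intercept = e_mean - slope * t_mean
    residuals = [e - (slope * t + intercept) for t, e in zip(times, energies)]
    rms = sqrt(mean([r * r for r in residuals]))
    return slope, rms

# Synthetic series with a steady upward trend and no noise.
times = [float(i) for i in range(50)]
energies = [-1000.0 + 0.5 * t for t in times]
slope, rms = drift_and_noise(times, energies)
print(slope, rms)
```

If the drift accumulated over the run (slope times run length) is much larger than the RMS scatter, the system is most likely still relaxing rather than fluctuating around equilibrium.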

Implementation and Parameterization

Configuration and Parameter Tables

Selecting the right parameters is crucial for a stable NPT simulation. The following tables summarize key settings.

Table 1: Common Barostats and Key Parameters

| Barostat | Algorithm Type | Key Parameters | Typical Use Case |

|---|---|---|---|

| Parrinello-Rahman [37] [20] | Extended System | pfactor (e.g., 10⁶ - 10⁷ GPa·fs² for metals), tau_p (time constant) | Solids, phase transitions, anisotropic systems. Allows full cell changes. |

| Berendsen [20] | Weak Coupling | tau_p (time constant), compressibility (e.g., 4.5e-5 bar⁻¹ for water) [36] | Efficient scaling for equilibration. Not recommended for production. |

| Monte Carlo [35] | Stochastic | Volume change attempt frequency (e.g., every 100 steps) | Inhomogeneous systems, can avoid artifacts of weak-coupling methods. |
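For the Monte Carlo barostat, the volume change attempted every so many steps is accepted or rejected with a Metropolis test. The sketch below uses the textbook criterion ΔW = ΔU + P·ΔV − N·k_BT·ln(V_new/V_old); the function name is hypothetical, all quantities are assumed to be in one consistent unit system, and production implementations differ in details such as whether N counts atoms or molecules.

```python
import math
import random

def mc_volume_move_accepted(delta_u, pressure, v_old, v_new,
                            n_scaled, kT, rng=random):
    """Metropolis test for a trial volume change at constant N, P, T.

    delta_u  : potential energy change caused by the rescaling
    pressure : target pressure (units such that pressure * volume is an energy)
    n_scaled : number of independently scaled particles or molecules
    kT       : thermal energy k_B * T in the same energy units
    """
    delta_w = (delta_u
               + pressure * (v_new - v_old)
               - n_scaled * kT * math.log(v_new / v_old))
    if delta_w <= 0.0:
        return True  # downhill moves are always accepted
    return rng.random() < math.exp(-delta_w / kT)

# A trial move that lowers the energy at unchanged volume is always accepted.
print(mc_volume_move_accepted(-1.0, 1.0, 100.0, 100.0, 500, 1.0))
```

Because the test uses only energies and volumes, it needs no virial calculation, which is one reason this barostat is attractive for inhomogeneous systems.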

Table 2: Typical NPT Equilibration Parameters and Diagnostics

| Parameter Category | Example Setting | Purpose and Notes |

|---|---|---|

| Simulation Length | 100 ps - 1 ns+ [34] | Must be long enough for density/pressure to plateau. |

| Reference Pressure | 1.0 bar [20] [36] | Target pressure for the barostat. |

| Pressure Coupling Type | Isotropic, Semi-isotropic, Anisotropic | Must match system geometry [36]. |

| Compressibility | 4.5e-5 bar⁻¹ [36] | System-specific. Critical for accurate volume response. |

| Time Constant (tau_p) | 1.0 - 5.0 ps [34] [36] | How quickly the barostat responds. |

| Success Diagnostic | Density plateau (e.g., ~1008 kg/m³ for SPC/E water) [34] | Primary indicator of equilibration success. |
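As an illustration of how these settings might be written down for one engine, the sketch below renders the table's example values as a GROMACS-style parameter fragment. The key names follow GROMACS .mdp conventions as an assumption about your engine; the choice of the Berendsen barostat and tau_p = 2.0 ps is only an example for equilibration, and the helper function is hypothetical. Check your engine's documentation for the options supported by your version.

```python
# Example NPT equilibration settings (values taken from the table above).
npt_settings = {
    "pcoupl": "Berendsen",       # barostat used for equilibration only
    "pcoupltype": "isotropic",   # must match system geometry
    "tau_p": 2.0,                # ps, within the 1.0 - 5.0 ps range
    "ref_p": 1.0,                # bar
    "compressibility": 4.5e-5,   # 1/bar, value for water
}

def render_mdp(settings):
    """Format a dict as aligned 'key = value' lines."""
    width = max(len(key) for key in settings)
    return "\n".join(f"{key:<{width}} = {value}"
                     for key, value in settings.items())

print(render_mdp(npt_settings))
```

Keeping the settings in one data structure makes it easier to carry the same reference pressure and compressibility from equilibration into production while changing only the barostat.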

The Scientist's Toolkit: Essential Research Reagents and Software

Table 3: Key Software and Components for NPT Simulations

| Item | Function in NPT Simulation | Example / Note |

|---|---|---|

| MD Engine | Executes the numerical integration of the equations of motion. | GROMACS [34], AMBER [35], NAMD, LAMMPS [15], VASP [37], ASE [20] |

| Force Field | Defines the potential energy surface and interatomic forces. | CHARMM, AMBER, GROMOS, OPLS-AA, TIP4P (water) [15] |

| Barostat Algorithm | Regulates the system pressure by adjusting the simulation box. | Parrinello-Rahman [37] [20], Berendsen [20], Monte Carlo [35] |

| Thermostat Algorithm | Regulates the system temperature by modifying particle velocities. | Nosé-Hoover [20], Langevin [37], v-rescale [36] |

| Positional Restraints | Harmonically restrain atoms to initial positions during relaxation. | Force constants of 5.0, 2.0, and 0.1 kcal/(mol·Å²) [35] |

| Visualization/Analysis Tool | Monitors simulation progress and analyzes results. | SAMSON [34], VMD, PyMOL, matplotlib |

Advanced Topics and Best Practices

How does the choice of thermostat/barostat affect my results?

The choice of algorithm can influence both the stability of the simulation and the physical correctness of the generated ensemble.

- Thermostats: The Nosé-Hoover thermostat generates a correct canonical (NVT) ensemble but can exhibit energy drift in poorly equilibrated systems. The Langevin thermostat provides robust temperature control and is often used during equilibration [35].

- Barostats: The Berendsen barostat is efficient at driving the system to the target pressure but does not generate a correct isothermal-isobaric (NPT) ensemble and is thus recommended for equilibration only. The Parrinello-Rahman barostat is more rigorous and suitable for production simulations, especially for solids or studies involving phase transitions [20]. It has been shown that weak-coupling barostats can introduce artifacts in inhomogeneous systems, for which a Monte Carlo barostat may be preferable [35].

Are NVT and NPT equilibrations always necessary?

The necessity depends on the scientific goal and the initial state of the system.

- For Stable, Pre-formed Systems: If you start with a well-equilibrated system and only change a minor component (e.g., a ligand), a full NVT+NPT protocol might be abbreviated.

- For Self-Assembly Studies: If you are simulating a process like lipid bilayer self-assembly from a random mixture, the assembly is the process of interest. In this case, you may skip standard NPT equilibration to avoid introducing biases, though energy minimization is still critical [36]. The key is to justify your approach and, if skipping equilibration, discard the initial non-equilibrium part of the trajectory from analysis.

Frequently Asked Questions (FAQs)

Q1: My system's temperature drifts significantly during NVE production runs. What is wrong? In an NVE (microcanonical) ensemble, the total energy is conserved, but the temperature, calculated from the instantaneous kinetic energy, is expected to fluctuate naturally [19] [38]. A significant drift, rather than oscillation around an average, often indicates that the system was not properly equilibrated beforehand. To resolve this, switch to an NVT (canonical) ensemble using a thermostat like Nosé-Hoover or Bussi stochastic velocity rescaling for the equilibration phase. These thermostats correctly sample the canonical ensemble and will stabilize the temperature around your target value [29] [19]. Only after the system properties (temperature, energy, density) have stabilized should you switch to an NVE ensemble for production dynamics [38].
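The instantaneous temperature mentioned above follows from equipartition: T = 2·E_kin / (N_dof·k_B). Here is a minimal sketch, assuming masses in amu and velocities in nm/ps (so that kinetic energy comes out in kJ/mol); the function name and the degrees-of-freedom bookkeeping arguments are illustrative.

```python
K_B = 0.0083144626  # Boltzmann constant in kJ/(mol*K)

def instantaneous_temperature(masses, velocities,
                              n_constraints=0, remove_com=True):
    """Temperature in K from the instantaneous kinetic energy.

    masses        : atomic masses in amu
    velocities    : (vx, vy, vz) tuples in nm/ps
    n_constraints : number of holonomic constraints (e.g., fixed bonds)
    remove_com    : subtract 3 degrees of freedom for removed
                    center-of-mass motion
    """
    e_kin = 0.5 * sum(m * (vx * vx + vy * vy + vz * vz)
                      for m, (vx, vy, vz) in zip(masses, velocities))
    n_dof = 3 * len(masses) - n_constraints - (3 if remove_com else 0)
    return 2.0 * e_kin / (n_dof * K_B)

# Two unit-mass particles moving in opposite directions.
print(instantaneous_temperature([1.0, 1.0],
                                [(1.0, 0.0, 0.0), (-1.0, 0.0, 0.0)],
                                remove_com=False))
```

Because the kinetic energy changes every step, this value fluctuates even in a perfectly equilibrated NVE run; only a sustained trend in its average points to an equilibration problem.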

Q2: How do I choose the right thermostat for my NVT production simulation? The choice of thermostat depends on whether you need correct thermodynamic sampling for property calculation or if your priority is rapid equilibration.

- For production simulations where accurate thermodynamic sampling is critical, use the Nosé-Hoover thermostat (or its chain variant) or the Bussi stochastic velocity rescaling thermostat. These generate a correct canonical ensemble [29].

- For system relaxation and equilibration, the Berendsen thermostat is robust and converges quickly to the target temperature, though it produces an energy distribution with lower variance than a true canonical ensemble and should be avoided for production runs [29].

The table below summarizes common thermostats and their characteristics [29].

Table 1: Common Thermostats and Their Applications

| Thermostat | Algorithm Type | Key Characteristics | Recommended Use |

|---|---|---|---|

| Berendsen | Weak coupling | Fast, robust convergence; does not produce correct ensemble | System relaxation & equilibration |

| Nosé-Hoover | Extended system | Correct canonical ensemble; does not impair correlated motion | Production simulations |

| Bussi Velocity Rescaling | Stochastic | Correct canonical ensemble; based on Berendsen but corrected | Production simulations |

| Andersen | Stochastic | Correct canonical ensemble; impairs kinetics by randomizing velocities | Studying static properties, not dynamics |
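The weak-coupling behavior of the Berendsen thermostat can be sketched with its velocity scaling factor, λ = sqrt(1 + (Δt/τ_T)(T₀/T − 1)). The example below applies only the scaling step repeatedly, with no dynamics in between, to show the exponential relaxation toward the target; the function name and parameter values are illustrative.

```python
from math import sqrt

def berendsen_velocity_scale(t_inst, t_ref, dt, tau_t):
    """Berendsen factor by which all velocities are multiplied each step."""
    return sqrt(1.0 + (dt / tau_t) * (t_ref / t_inst - 1.0))

# Temperature scales with velocity squared, so T -> lambda^2 * T per step.
temperature = 250.0
for _ in range(1000):
    lam = berendsen_velocity_scale(temperature, t_ref=300.0,
                                   dt=0.002, tau_t=0.1)
    temperature *= lam ** 2
print(temperature)
```

The scaling always pushes the kinetic energy straight toward the target and never adds fluctuations of its own, which is consistent with the narrower-than-canonical energy distribution noted above and with the recommendation to reserve this thermostat for equilibration.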

Q3: My molecular docking results are biologically irrelevant. Could the protein flexibility be the issue? Yes, this is a common issue. Traditional docking treats the protein as a rigid body, which can fail if your ligand requires a different protein conformation than the one in your starting crystal structure [39]. A best practice is to use ensemble docking. You can generate an ensemble of protein conformations by running an MD simulation of the protein (or protein-ligand complex), clustering the trajectory, and using the central structures from the top clusters as multiple docking targets [39]. This workflow indirectly incorporates protein flexibility and can lead to more biologically relevant results [39].
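The clustering step can be sketched as follows. The source does not name a clustering method, so this example assumes a simple neighbor-count scheme (in the style often called GROMOS clustering): the frame with the most neighbors within a cutoff becomes a cluster center, its neighbors are removed, and the procedure repeats. It operates on a precomputed pairwise distance matrix (for example, backbone RMSD between frames); the function name, cutoff, and toy data are hypothetical.

```python
def cluster_centers(dist, cutoff, max_clusters=3):
    """Pick representative frames from a pairwise distance matrix.

    dist         : square matrix, dist[i][j] = distance between frames i and j
    cutoff       : neighbor threshold in the same units as dist
    max_clusters : number of top clusters to return
    Returns a list of (center_frame, member_frames), largest cluster first.
    """
    remaining = set(range(len(dist)))
    clusters = []
    while remaining and len(clusters) < max_clusters:
        # Frame with the most remaining neighbors; ties go to the lowest index.
        center = max(sorted(remaining),
                     key=lambda i: sum(1 for j in remaining
                                       if dist[i][j] <= cutoff))
        members = {j for j in remaining if dist[center][j] <= cutoff}
        clusters.append((center, sorted(members)))
        remaining -= members
    return clusters

# Toy data: five "frames" described by one coordinate each.
positions = [0.0, 0.1, 0.2, 5.0, 5.1]
dist = [[abs(a - b) for b in positions] for a in positions]
for center, members in cluster_centers(dist, cutoff=0.15):
    print(center, members)
```

The returned center frames are the structures you would extract from the trajectory and use as separate docking receptors.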

Q4: What is a standard workflow to go from a crystal structure to a production ensemble? A typical workflow involves multiple stages of minimization and MD with progressively released restraints to equilibrate the system properly before production [40]. The following diagram illustrates a robust, multi-stage equilibration protocol.

Troubleshooting Guides

Issue: Unstable Energy Conservation in NVE Ensemble

Problem: The total energy in your NVE simulation shows a pronounced drift instead of small, stable oscillations [19] [38].

Solutions: