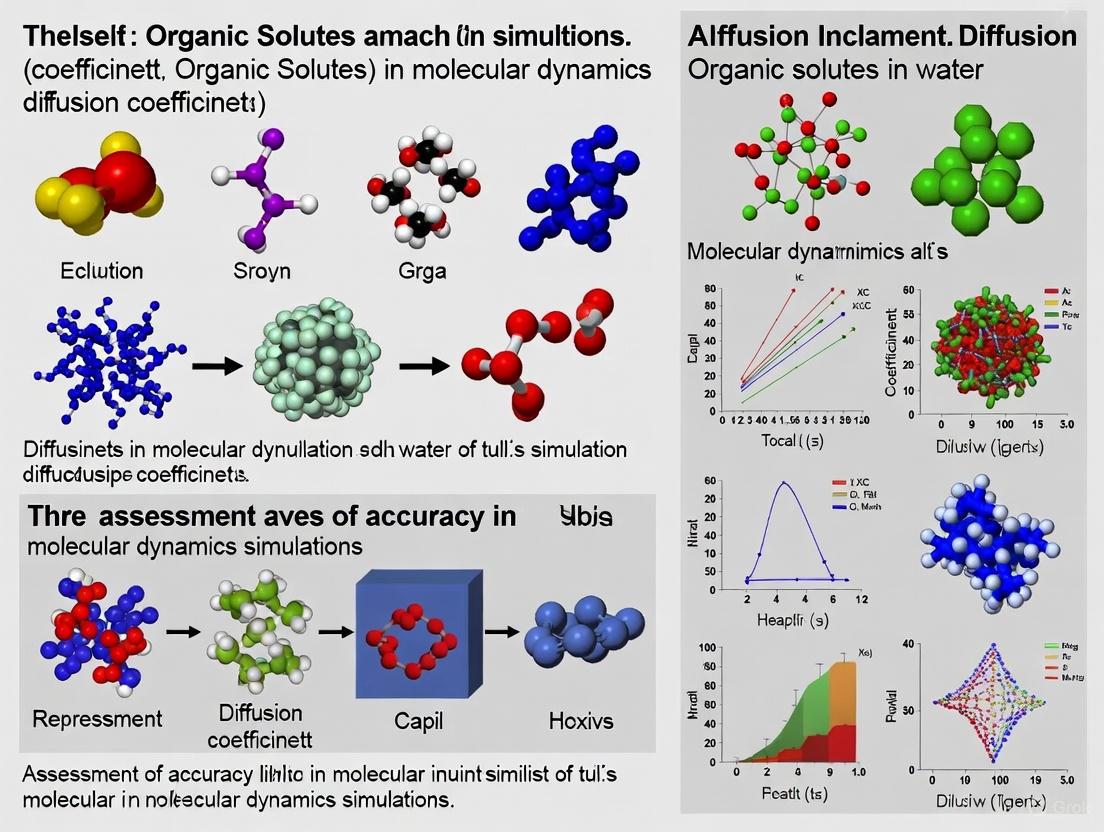

Accuracy Assessment of Diffusion Coefficients for Organic Solutes in Water: From Foundational Principles to Advanced Predictive Models

Abstract

Accurate determination of diffusion coefficients for organic solutes in water is critical for pharmaceutical development, environmental forecasting, and chemical process design. This article provides a comprehensive accuracy assessment spanning foundational principles, established experimental methods like Taylor dispersion, common error sources in measurement, and the emergence of machine learning models. By synthesizing recent research, we offer a systematic framework for researchers and drug development professionals to evaluate, troubleshoot, and select optimal strategies for predicting and measuring these vital parameters, ultimately enhancing the reliability of diffusion-driven processes.

Why Accuracy Matters: The Critical Role of Diffusion Coefficients in Biomedical and Environmental Processes

Core Concepts and Fundamental Principles

The diffusion coefficient, often symbolized as D, is a transport property that quantifies the rate of molecular diffusion. It is defined as the proportionality constant in Fick's first law of diffusion, which states that the molecular flux (J) is proportional to the negative of the concentration gradient (dc/dx) [1]. Physically, it represents the amount of a substance that diffuses across a unit area in one second under the influence of a unit concentration gradient [2] [3].

The SI unit for the diffusion coefficient is square meters per second (m²/s), though square centimeters per second (cm²/s) is also commonly used [3] [4] [1]. A higher diffusion coefficient indicates a faster rate of diffusion between substances [4].
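For reference, Fick's first law in one dimension can be written out with its units. The molar units chosen for J and c below are an assumption for illustration; any consistent amount unit gives the same unit for D.

```latex
J = -D\,\frac{\mathrm{d}c}{\mathrm{d}x},
\qquad
[J] = \mathrm{mol\,m^{-2}\,s^{-1}},\quad
[c] = \mathrm{mol\,m^{-3}},\quad
[x] = \mathrm{m}
\;\Longrightarrow\;
[D] = \mathrm{m^{2}\,s^{-1}},
\qquad
1\ \mathrm{cm^{2}\,s^{-1}} = 10^{-4}\ \mathrm{m^{2}\,s^{-1}}
```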

Quantitative Data Comparison: Diffusion Coefficients of Representative Substances

The diffusion coefficient of a substance is not an absolute value but depends on the state of matter and the specific medium through which diffusion occurs. The table below provides a comparison of diffusion coefficients for various substances in different media, illustrating typical orders of magnitude.

Table 1: Experimentally Determined Diffusion Coefficients in Different Media

| Solute | Solvent/Medium | Temperature (°C) | Diffusion Coefficient, D | Source / Context |
|---|---|---|---|---|
| Oxygen (O₂) | Air (gas) | 25 | 0.210 cm²/s [4] | Binary diffusion in gas phase |
| Carbon Dioxide (CO₂) | Air (gas) | 25 | 0.160 cm²/s [4] | Binary diffusion in gas phase |
| Hydrogen (H₂) | Air (gas) | 25 | 0.410 cm²/s [4] | Binary diffusion in gas phase |
| Oxygen (O₂) | Water (liquid) | 25 | 2.10 × 10⁻⁵ cm²/s [4] | Solute at infinite dilution |
| Carbon Dioxide (CO₂) | Water (liquid) | 25 | 1.92 × 10⁻⁵ cm²/s [4] | Solute at infinite dilution |
| Glucose | Water (liquid) | 25 | ~6.70 × 10⁻⁶ cm²/s [5] | Experimental data from Taylor dispersion method |
| Sorbitol | Water (liquid) | 25 | ~6.60 × 10⁻⁶ cm²/s [5] | Experimental data from Taylor dispersion method |
| Acetone | Water (liquid) | 25 | 1.16 × 10⁻⁵ cm²/s [4] | Solute at infinite dilution |
| Ethanol | Water (liquid) | 25 | 0.84 × 10⁻⁵ cm²/s [4] | Solute at infinite dilution |

The data show that diffusion coefficients in gases are typically about 10,000 times greater than in liquids [4]; for O₂, compare 0.210 cm²/s in air with 2.10 × 10⁻⁵ cm²/s in water. Within liquids, larger molecules like glucose and sorbitol have significantly smaller diffusion coefficients than smaller molecules like oxygen or acetone [4] [5].
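The gas-to-liquid comparison can be checked directly from the tabulated values. The short sketch below copies the O₂ and CO₂ entries from the table; the function names are illustrative, not taken from any cited source.

```python
# Diffusion coefficients at 25 °C copied from the table above (cm^2/s).
D_IN_AIR = {"O2": 0.210, "CO2": 0.160}
D_IN_WATER = {"O2": 2.10e-5, "CO2": 1.92e-5}


def gas_to_liquid_ratio(solute: str) -> float:
    """Ratio of the gas-phase to the aqueous diffusion coefficient."""
    return D_IN_AIR[solute] / D_IN_WATER[solute]


def cm2_to_m2(d_cm2_per_s: float) -> float:
    """Convert a diffusion coefficient from cm^2/s to m^2/s."""
    return d_cm2_per_s * 1e-4


for solute in D_IN_AIR:
    ratio = gas_to_liquid_ratio(solute)
    print(f"{solute}: D_air / D_water is about {ratio:,.0f}")
```

Both ratios fall close to four orders of magnitude, which is the basis for the rule of thumb quoted above.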

Experimental Protocols for Determining Diffusion Coefficients

Accurate measurement of diffusion coefficients, especially for organic solutes in water, is critical for research and process design. Unlike viscosity or thermal conductivity, there is no single universally standardized technique, and methods are often chosen based on the specific system [6]. The following sections detail two prominent methodologies.

Taylor Dispersion Method

The Taylor dispersion method is a widely used, indirect technique for measuring mutual diffusion coefficients in liquid systems, valued for its relative experimental simplicity [5].

Detailed Workflow:

- Apparatus Setup: A long (e.g., 20 m), narrow-bore (e.g., internal diameter 3.945×10⁻⁴ m), coiled capillary tube is placed in a thermostatic bath to maintain a constant temperature [5].

- Solvent Flow: A solvent (e.g., water) is pumped through the capillary tube under laminar flow conditions [5].

- Solute Injection: A small, precise volume (e.g., 0.5 cm³) of a solution with a slightly different composition (e.g., glucose in water) is injected as a sharp pulse into the solvent stream [5].

- Dispersion and Detection: As the pulse travels through the capillary, the parabolic velocity profile of the laminar flow causes the solute to disperse, forming a characteristic concentration distribution that is detected at the outlet, typically using a differential refractive index analyzer [5].

- Data Analysis: The resulting concentration distribution approaches a Gaussian shape, and in the Taylor regime its temporal variance is inversely proportional to the diffusion coefficient of the solute. D is obtained by fitting the detected profile to the solution of the Taylor dispersion equation [5].
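The data-analysis step can be sketched with a moment calculation instead of a full profile fit. The sketch assumes the long-tube Taylor limit, in which the temporal variance of the peak satisfies σ_t² = R² t_R / (24 D), with R the tube radius and t_R the mean residence time. It also assumes a baseline-corrected, uniformly sampled detector trace. The residence time used for the synthetic peak is hypothetical, and the function name is illustrative.

```python
import math


def taylor_dispersion_d(times_s, signal, tube_radius_m):
    """Estimate D (m^2/s) from a baseline-corrected detector trace.

    Uses the first two temporal moments of the peak and the Taylor
    relation sigma_t^2 = R^2 * t_R / (24 * D). Uniform sampling assumed.
    """
    area = sum(signal)
    t_mean = sum(t * s for t, s in zip(times_s, signal)) / area
    variance = sum((t - t_mean) ** 2 * s for t, s in zip(times_s, signal)) / area
    return tube_radius_m ** 2 * t_mean / (24.0 * variance)


# Synthetic check: build a Gaussian peak for a known D and recover it.
RADIUS_M = 3.945e-4 / 2.0  # half of the internal diameter quoted above
D_TRUE = 6.7e-10           # m^2/s, glucose in water at 25 °C (table above)
T_RETENTION_S = 6000.0     # hypothetical mean residence time
sigma_sq = RADIUS_M ** 2 * T_RETENTION_S / (24.0 * D_TRUE)

times = [float(t) for t in range(0, 12001)]  # 1 s sampling
trace = [math.exp(-(t - T_RETENTION_S) ** 2 / (2.0 * sigma_sq)) for t in times]

d_est = taylor_dispersion_d(times, trace, RADIUS_M)
print(f"recovered D = {d_est:.3e} m^2/s")
```

A moment estimate is sensitive to baseline drift and peak tailing, which is one reason a fit of the full profile, as described in the workflow, is usually preferred for real data.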

Transient Uptake/Release Methods for Biofilms and Granular Solids

For complex, porous media like biofilms or granular sludge, methods based on transient mass balance are common, though they often face challenges with precision and accuracy [7].

Detailed Workflow for Transient Uptake:

- Biomass Preparation: Granules or biofilm particles, initially free of the solute of interest, are placed in a well-mixed solution of finite volume with a known solute concentration [7].

- Deactivation (if necessary): For non-reactive solutes, the biomass may be deactivated to prevent biological consumption from confounding diffusion measurements [7].

- Concentration Monitoring: The decrease in solute concentration in the liquid bulk is monitored over time as the solute diffuses into the granules [7].

- Data Analysis: The time-dependent concentration data is fitted to the solution of Fick's second law of diffusion for a sphere, yielding the effective diffusivity [7].

Detailed Workflow for Transient Release: This is the reverse process, where solute-loaded granules are placed in a solute-free solution, and the increase in bulk concentration is monitored and analyzed to determine the diffusion coefficient [7].

Decision Workflow for Method Selection

The flowchart below outlines a logical pathway for researchers to select an appropriate method for measuring diffusion coefficients based on their specific system and requirements.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful experimental determination of diffusion coefficients requires specific reagents and instrumentation. The following table details key materials and their functions in the protocols described above.

Table 2: Key Research Reagent Solutions and Essential Materials

| Item Name | Function / Application | Example & Notes |
|---|---|---|
| Taylor Dispersion Apparatus | Measures mutual diffusion coefficients in liquid solutions. | Includes a long coiled capillary tube (e.g., 20 m Teflon), peristaltic pump, thermostatic bath, and differential refractive index detector [5]. |
| Microelectrodes | Measures concentration profiles within porous media like biofilms at micro-scale. | Used for O₂, pH, CO₂; provides high-resolution spatial data in steady-state or transient methods [7]. |
| Model Organic Solutes | Well-characterized, pure compounds for method calibration and fundamental studies. | D(+)-Glucose (≥99.5%), D-Sorbitol (≥98%) for studying sugar transport [5]. Acetone, Ethanol for simpler systems [4]. |
| Deionized / Ultra-pure Water | Standard solvent for preparing aqueous solutions and ensuring no ionic interference. | Obtained from systems like Millipore Elix 3 (conductivity 1.6 μS) [5]. |
| Predictive Software & Models | Estimates diffusion coefficients using theoretical and empirical correlations. | Wilke-Chang and Hayduk-Minhas correlations for liquids; Chapman-Enskog theory for gases [3] [5]. Modern approaches use machine learning [8]. |
| Thermostatic Bath | Maintains constant temperature during measurement, critical as D is temperature-sensitive. | Required for methods like Taylor dispersion to ensure data reliability and study temperature dependence [5]. |

Critical Considerations for Accuracy Assessment

Assessing the accuracy of measured diffusion coefficients, particularly for organic solutes in water, requires acknowledging significant methodological challenges.

- Method Limitations: A 2020 simulation study concluded that existing methods for measuring diffusion coefficients in complex media like biofilms are inherently imprecise and inaccurate, with some methods having a theoretical relative standard deviation of up to 61% and potential underestimation of the true value by up to 37% [7]. This is attributed to factors like solute sorption, mass transfer boundary layers, and inaccurate assumptions about granule size and shape [7].

- Empirical Correlation Errors: Predictive models like Wilke-Chang are valuable but can significantly overestimate experimental results, especially at higher temperatures. For instance, in glucose-water systems, the Wilke-Chang correlation showed good agreement at 25-45°C but substantially overestimated the diffusion coefficient at 65°C [5]. This highlights the importance of experimental validation for specific conditions.

- System Dependency: The diffusion coefficient is not an intrinsic property of the solute alone. In porous media, the effective diffusion coefficient is always less than in free solution due to tortuosity (τ) and porosity (ε), following the relationship \( D_{\mathrm{eff}} = D \cdot \varepsilon / \tau \) [7] [9]. Ignoring these factors introduces significant inaccuracy.
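The porosity and tortuosity correction in the last item is a one-line calculation. The sketch below applies D_eff = D·ε/τ with simple input checks; the porosity and tortuosity values in the example are hypothetical and chosen only to show the arithmetic.

```python
def effective_diffusivity(d_free, porosity, tortuosity):
    """Effective diffusion coefficient in a porous medium: D * eps / tau."""
    if not 0.0 < porosity <= 1.0:
        raise ValueError("porosity must lie in (0, 1]")
    if tortuosity < 1.0:
        raise ValueError("tortuosity must be >= 1")
    return d_free * porosity / tortuosity


# Glucose in water at 25 °C (about 6.7e-10 m^2/s) in a hypothetical matrix.
d_eff = effective_diffusivity(6.7e-10, porosity=0.8, tortuosity=1.5)
print(f"D_eff = {d_eff:.2e} m^2/s")
```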

The accurate determination of diffusion coefficients (D) for organic solutes in water is a cornerstone of predictive modeling across diverse scientific and engineering disciplines. This parameter quantifies the rate at which molecules disperse due to random thermal motion and is critical for designing and optimizing processes in pharmaceutical science, environmental engineering, and chemical reactor design. Variations in the methods used to obtain this value, ranging from theoretical estimation to experimental measurement, can lead to significantly different outcomes in real-world applications. This guide provides a comparative analysis of how diffusion coefficients are applied and validated within these key fields, offering researchers a framework for assessing the accuracy and appropriateness of different determination methods.

Comparative Data Analysis of Diffusion Coefficient Applications

The table below summarizes the core applications, key parameters, and comparative findings related to diffusion coefficients across three critical fields.

Table 1: Key Applications of Diffusion Coefficients in Water: A Comparative Analysis

| Application Field | Key Organic Solutes/Polymers Studied | Determination Method | Key Parameter(s) / Outcome | Comparative Finding / Impact |
|---|---|---|---|---|
| Drug Transport [10] [11] | Diltiazem HCl, Theophylline in Ethyl Cellulose (EC), Eudragit RS 100 | Experimental release from thin films (monolithic solutions); Fick's law analysis | Diffusion Coefficient (D) in polymer; Drug release kinetics | D significantly influenced by plasticizer type/amount (e.g., 17.5% w/w TBC in EC 10: D = 1.2 × 10⁻¹⁰ cm²/s for Theophylline). Polymer chain length had minor effect. |
| Pollutant Dispersion [12] [13] | Ammonia Nitrogen (NH₃–N), Total Phosphorus (TP), Chemical Oxygen Demand (COD) | Integrated numerical modeling (SWMM-EFDC); 2D advection-dispersion model | Longitudinal Dispersion Coefficient (DL); Pollutant concentration | DL highly dependent on flow velocity profile: 0.17 m²/s (gradient flow) vs. 89.94 m²/s (drift flow), drastically altering predicted pollution spread. |
| Reactor Design [14] | Glucose, Sorbitol | Experimental measurement vs. Theoretical estimation (Wilke-Chang, Hayduk-Minhas correlations) | Diffusion Coefficient (D) in aqueous solution; Reactor conversion profile | At 65°C, model estimates significantly overestimated D versus experimental data, leading to inaccurate prediction of glucose conversion along the reactor axis. |

Experimental Protocols and Methodologies

A critical understanding of the data presented above requires insight into the experimental and numerical methodologies employed to obtain them.

Protocol for Determining Drug Diffusion in Polymers

In the development of diffusion-controlled drug delivery systems, the diffusion coefficient of an active pharmaceutical ingredient within a polymer matrix is typically determined through a desorption kinetics experiment [10].

- Film Preparation: A thin film (e.g., ~50 μm thick) is created where the drug is molecularly dispersed (a monolithic solution) within a polymer (e.g., Ethyl Cellulose) and plasticizer (e.g., Tributyl Citrate) mixture.

- Drug Desorption: The drug-containing film is immersed in a well-stirred release medium (e.g., buffer solution) at a constant temperature (e.g., 37°C).

- Concentration Monitoring: The concentration of the drug released into the medium is measured at regular time intervals until release is complete.

- Data Fitting with Fick's Law: The entire release profile is fitted to the solution of Fick's second law of diffusion for a plane sheet. The cumulative release \( M_t \) as a fraction of the total drug \( M_\infty \) can be described by a series solution [10]: \( \frac{M_t}{M_\infty} = 1 - \sum_{n=1}^{\infty} \frac{2G^2 \exp(-\beta_n^2 D t / L^2)}{\beta_n^2 (\beta_n^2 + G^2 + G)} \) where \( G = L h / D \), \( h \) is the mass transfer coefficient, \( L \) is the film thickness (the half-thickness when the film releases from both faces), \( D \) is the diffusion coefficient, and \( \beta_n \) are the positive roots of \( \beta \tan \beta = G \). The diffusion coefficient (D) is the primary fitting parameter extracted from this analysis.
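The series solution in the last step can be evaluated numerically once the roots of β tan β = G are known. The sketch below finds one root per branch of the tangent by bisection and sums the series for the released fraction. The value of G, the 25 μm half-thickness (half of the ~50 μm film mentioned above), and the function names are assumptions for illustration; the value of D is the theophylline example quoted in the comparison table, converted to m²/s.

```python
import math


def _bisect(f, lo, hi, iterations=100):
    """Plain bisection; assumes f(lo) and f(hi) have opposite signs."""
    f_lo = f(lo)
    for _ in range(iterations):
        mid = 0.5 * (lo + hi)
        f_mid = f(mid)
        if (f_mid > 0.0) == (f_lo > 0.0):
            lo, f_lo = mid, f_mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def sheet_roots(g, n_terms=200):
    """Positive roots of beta * tan(beta) = G, one per branch of tan."""
    roots = []
    for n in range(n_terms):
        lo, hi = n * math.pi, n * math.pi + 0.5 * math.pi
        # Singularity-free form of beta*tan(beta) - G = 0.
        roots.append(_bisect(lambda b: b * math.sin(b) - g * math.cos(b), lo, hi))
    return roots


def fraction_released(t, d, length, g, roots):
    """M_t / M_inf for a plane sheet with a surface mass transfer resistance."""
    total = 0.0
    for beta in roots:
        b2 = beta * beta
        total += (2.0 * g * g * math.exp(-b2 * d * t / length ** 2)
                  / (b2 * (b2 + g * g + g)))
    return 1.0 - total


D_FILM = 1.2e-14       # m^2/s (1.2e-10 cm^2/s, theophylline example above)
HALF_THICKNESS = 25e-6  # m, assumed half of a ~50 um film
G = 10.0                # hypothetical value of L*h/D
betas = sheet_roots(G)

for hours in (1, 6, 24, 72):
    frac = fraction_released(hours * 3600.0, D_FILM, HALF_THICKNESS, G, betas)
    print(f"{hours:>3} h: fraction released = {frac:.3f}")
```

In a fitting context, `fraction_released` would be wrapped in a least-squares routine with D as the adjustable parameter; because G depends on D, the roots need recomputing for each trial value unless h is large enough that the surface resistance is negligible.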

Protocol for Numerical Analysis of Pollutant Dispersion

For predicting the spread of pollutants in rivers and coastal zones, a two-dimensional depth-averaged numerical model is often used [13]. The workflow involves solving a system of differential equations.

- Governing Equation: The core model is the depth-averaged advection-dispersion equation, written here in conservative form: \( \frac{\partial (h \bar{c})}{\partial t} + \frac{\partial (h \bar{u}_x \bar{c})}{\partial x} + \frac{\partial (h \bar{u}_y \bar{c})}{\partial y} = \frac{\partial}{\partial x} \left( h D_{xx} \frac{\partial \bar{c}}{\partial x} + h D_{xy} \frac{\partial \bar{c}}{\partial y} \right) + \frac{\partial}{\partial y} \left( h D_{yx} \frac{\partial \bar{c}}{\partial x} + h D_{yy} \frac{\partial \bar{c}}{\partial y} \right) \) where \( \bar{c} \) is depth-averaged concentration, \( \bar{u}_x, \bar{u}_y \) are depth-averaged velocity components, and \( h \) is water depth.

- Dispersion Tensor: The components of the dispersion tensor (\( D_{xx} \), \( D_{xy} \), etc.) are calculated using the longitudinal (\( D_L \)) and transverse (\( D_T \)) dispersion coefficients, which are themselves functions of the flow velocity and bottom friction [13]. A key step is characterizing the vertical velocity profile as either "gradient" (driven by gravity/pressure) or "drift" (driven by surface wind stress), which leads to vastly different \( D_L \) values.

- Model Simulation: The equations are solved numerically over a computational mesh of the study area (e.g., a bay) with input from a hydrodynamic model that provides the velocity field. The output is the spatial and temporal distribution of pollutant concentration.
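The dispersion-tensor step can be illustrated with the rotation commonly used in depth-averaged transport models, in which the longitudinal and transverse coefficients are aligned with the local flow direction. This particular form is an assumption here, since the cited model's exact expressions are not reproduced above. The transverse coefficient in the example is hypothetical; the longitudinal value is the drift-flow figure from the comparison table.

```python
def dispersion_tensor(ux, uy, d_long, d_trans):
    """Return (Dxx, Dxy, Dyx, Dyy) for depth-averaged velocity (ux, uy).

    Assumes the tensor is diagonal in flow-aligned coordinates with
    entries d_long (along the flow) and d_trans (across the flow).
    """
    speed_sq = ux * ux + uy * uy
    if speed_sq == 0.0:
        # No preferred direction: fall back to an isotropic tensor (assumption).
        return d_trans, 0.0, 0.0, d_trans
    dxx = (d_long * ux * ux + d_trans * uy * uy) / speed_sq
    dyy = (d_long * uy * uy + d_trans * ux * ux) / speed_sq
    dxy = (d_long - d_trans) * ux * uy / speed_sq
    return dxx, dxy, dxy, dyy


# Drift-flow D_L from the comparison table; D_T is a hypothetical value.
dxx, dxy, dyx, dyy = dispersion_tensor(ux=0.3, uy=0.3, d_long=89.94, d_trans=1.0)
print(f"Dxx={dxx:.2f}  Dxy={dxy:.2f}  Dyy={dyy:.2f}  (m^2/s)")
```

The trace Dxx + Dyy equals D_L + D_T for any flow direction, which is a quick consistency check when implementing the tensor in a solver.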

Protocol for Experimental vs. Theoretical Diffusion in Reactors

In reactor design, particularly for laminar flow reactors, validating theoretical diffusion coefficients is essential [14].

- Experimental Measurement: The diffusion coefficients of key reactants and products (e.g., glucose and sorbitol) are measured experimentally across a range of relevant temperatures and concentrations, typically by Taylor dispersion or NMR-based techniques.

- Theoretical Estimation: The same diffusion coefficients are estimated using established correlations, such as the Wilke-Chang or Hayduk-Minhas equations, which are based on properties like molecular weight and viscosity.

- Reactor Simulation & Comparison: A reactor model is run twice: first using the experimentally determined diffusion coefficients, and then using the theoretically estimated ones. The outputs (e.g., glucose conversion profile along the reactor axis) are compared to quantify the impact of the accuracy of D on the model's predictive power.
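The theoretical-estimation step can be sketched with the Wilke-Chang correlation in its usual form, D = 7.4 × 10⁻⁸ (φM)^0.5 T / (η V^0.6), with D in cm²/s, T in K, η in cP, and V the solute molar volume at its normal boiling point in cm³/mol. The association factor of 2.6 for water is the standard value. The solute molar volume used in the example is a placeholder, not a value from the cited study, so the printed deviation only illustrates the comparison and should not be read as a result.

```python
def wilke_chang(temperature_k, viscosity_cp, solute_molar_volume_cm3_mol,
                association_factor=2.6, solvent_molar_mass=18.015):
    """Wilke-Chang estimate of D at infinite dilution, in cm^2/s."""
    return (7.4e-8 * (association_factor * solvent_molar_mass) ** 0.5
            * temperature_k
            / (viscosity_cp * solute_molar_volume_cm3_mol ** 0.6))


def relative_deviation(predicted, measured):
    """Signed relative deviation; positive means the model overestimates."""
    return (predicted - measured) / measured


# Water at 25 °C has a viscosity of about 0.890 cP.
# 170 cm^3/mol is a placeholder molar volume for a glucose-sized solute.
d_pred = wilke_chang(298.15, 0.890, 170.0)
d_meas = 6.70e-6  # cm^2/s, glucose at 25 °C from the experimental table
print(f"Wilke-Chang: {d_pred:.2e} cm^2/s, "
      f"deviation {100.0 * relative_deviation(d_pred, d_meas):+.0f}%")
```

Running the same comparison at each temperature of interest, with the viscosity updated accordingly, gives the deviation profile that is then propagated through the reactor model.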

Visualization of Workflows and Relationships

The following diagrams illustrate the core experimental and numerical workflows discussed in this guide.

Drug Release and Polymer Diffusivity

Diagram 1: Workflow for determining drug diffusion coefficients in polymers.

Pollutant Dispersion Modeling

Diagram 2: Numerical workflow for pollutant dispersion simulation.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Materials and Their Functions in Diffusion Studies

| Material / Reagent | Function in Research | Application Field |
|---|---|---|
| Ethyl Cellulose (EC) [10] | A hydrophobic polymer used to form the controlled-release matrix; its viscosity grade and chain length can influence drug diffusivity. | Drug Transport |
| Acetyltributyl Citrate (ATBC) [10] | A water-insoluble plasticizer; incorporated into the polymer matrix to increase polymer chain mobility and thereby increase drug diffusion coefficient. | Drug Transport |
| Eudragit RS 100 [10] | A copolymer for drug delivery; forms a permeable, non-swelling film that allows for diffusion-controlled release. | Drug Transport |
| Chloride Ion (Cl⁻) [15] | Used as a conservative tracer (e.g., in sodium chloride) in field studies to track groundwater flow and calibrate dispersion models. | Pollutant Dispersion |
| Glucose [14] | A common reactant and solute; its experimentally measured diffusion coefficient is crucial for accurate modeling of reactor performance in processes like sorbitol production. | Reactor Design |
| Sorbitol [14] | A reaction product; measuring its diffusion coefficient is important for understanding its transport away from the catalyst site in a reactor. | Reactor Design |
| Acoustic Doppler Current Profiler (ADCP) [13] | A field instrument used to measure water velocity profiles, which are essential for calculating empirical dispersion coefficients in rivers and coastal zones. | Pollutant Dispersion |

The accurate determination of diffusion coefficients for organic solutes in aqueous solutions represents a fundamental challenge with significant implications across scientific and industrial domains. In pharmaceutical research, these values predict drug mobility in biological fluids; in chemical engineering, they inform reactor and separation process design; and in environmental science, they dictate the transport of organic contaminants. The core challenges in accurate diffusion coefficient assessment revolve around three interconnected factors: molecular size of the solute, system temperature, and the complex solute-solvent interactions that occur in different chemical environments. Different experimental methodologies have been developed to probe these parameters, each with distinct advantages and limitations. This guide objectively compares the performance of key experimental approaches and the predictive models that support them, providing researchers with a framework for selecting appropriate methodologies based on their specific accuracy requirements.

The stakes for accurate measurement are substantial. Recent research demonstrates that using estimated rather than experimentally determined diffusion coefficients can significantly alter the predicted conversion profile in reactor simulations, directly impacting process optimization and scale-up [16] [5]. Furthermore, the assumption that widely used predictive models like Stokes-Einstein and Wilke-Chang maintain accuracy across all conditions has been critically tested, revealing significant deviations in specific temperature regimes and solution compositions [16] [17]. This assessment provides a structured comparison of methodological approaches, delivering the experimental data and protocol details necessary for informed decision-making in diffusion coefficient research.

Quantitative Data Comparison

Diffusion Coefficients of Organic Solutes in Various Aqueous Systems

Table 1: Experimentally Measured Diffusion Coefficients of Organic Solutes

| Solute | Solvent System | Temperature (°C) | Diffusion Coefficient (m²/s) | Measurement Technique | Key Observation |
|---|---|---|---|---|---|
| Phenol/Toluene | SDS Solutions (below CMC) | Not Specified | Almost independent of SDS concentration | Taylor Dispersion | Demonstrates micelle-independent diffusion in absence of micelle formation [18] |
| Phenol/Toluene | SDS Solutions (above CMC) | Not Specified | Rapid decrease | Taylor Dispersion | Shows significant reduction due to micelle solubilization [18] |
| Glucose | Water | 25-65 | Measured across temperature range | Taylor Dispersion | Temperature dependence observed; models overestimate at higher temperatures [16] [5] |
| Sorbitol | Water | 25-65 | Measured across temperature range | Taylor Dispersion | Similar temperature dependence to glucose [16] [5] |
| Fluorescein | Sucrose-Water (aw=0.38) | Not Specified | 1.9 × 10⁻¹⁷ | Fluorescence Recovery After Photobleaching (FRAP) | Stokes-Einstein underpredicted by factor of 118 [17] [19] |
| Rhodamine 6G | Sucrose-Water (aw=0.38) | Not Specified | 1.5 × 10⁻¹⁸ | FRAP | Stokes-Einstein underpredicted by factor of 17 [17] |
| Calcein | Sucrose-Water (aw=0.38) | Not Specified | 7.7 × 10⁻¹⁸ | FRAP | Stokes-Einstein underpredicted by factor of 70 [17] |
| Polyethylene Glycols (≤4 kDa) | Aerobic Granules | 4.0 ± 0.1 | Not significantly different from water | Transient Uptake Method | No significant obstruction by granule matrix [20] |
| PEG (10 kDa) | Aerobic Granules | 4.0 ± 0.1 | Could not penetrate entire granule | Transient Uptake Method | Diffusion hindered by semi-solid regions [20] |

Table 2: Predictive Model Performance Across Conditions

| Predictive Model | Application Domain | Accuracy Conditions | Limitations | Key References |
|---|---|---|---|---|
| Stokes-Einstein Relation | Sucrose-water solutions (proxy for SOA) | Accurate at water activity ≥0.6 (viscosity ≤360 Pa·s) | Underpredicts diffusion by factors of 17-118 at water activity of 0.38 (high viscosity) [17] | Chenyakin et al., 2017 [17] [19] |
| Wilke-Chang Correlation | Glucose-Water, Sorbitol-Water | Similar to experimental data at 25-45°C | Significantly overestimates experimental results at 65°C [16] [5] | Taddeo et al., 2025 [16] [5] |
| Hayduk-Minhas Correlation | Glucose-Water, Sorbitol-Water | Similar to experimental data at 25-45°C | Significantly overestimates experimental results at 65°C [16] | Taddeo et al., 2025 [16] [5] |

Experimental Protocols and Methodologies

Detailed Methodologies for Diffusion Coefficient Measurement

Taylor Dispersion Technique

The Taylor dispersion method has become a predominant technique for measuring mutual diffusion coefficients in both binary and ternary systems due to its relatively straightforward experimental setup and measurement execution [16]. The protocol is based on the dispersion of a small pulse of solution into a carrier stream of slightly different composition flowing through a long, thin capillary tube under laminar flow conditions. The standard implementation involves:

- Using Teflon tubing of approximately 20 meters in length with a very small internal diameter (e.g., 3.945 × 10⁻⁴ m) coiled into a helix of approximately 40 centimeters diameter.

- Maintaining constant temperature through immersion in a thermostat.

- Injecting a precise volume (e.g., 0.5 cm³) of solution into the carrier stream using a peristaltic pump and injector system.

- Monitoring the outlet stream with a differential refractive index analyzer with high sensitivity (e.g., 8 × 10⁻⁸ RIU).

- Recording the signal continuously through a data acquisition system [16] [5].

The method assumes fully developed laminar flow with a parabolic velocity profile and depends on the analysis of the concentration distribution variance at the tube outlet to calculate diffusion coefficients. For ternary systems, the approach was extended from its original binary formulation, allowing determination of cross-diffusion coefficients [16].

Fluorescence Recovery After Photobleaching (FRAP)

FRAP provides an alternative methodology particularly valuable for measuring diffusion in viscous or complex matrices. The technique involves:

- Incorporating fluorescent probe molecules (e.g., fluorescein, rhodamine 6G, calcein) into the sample matrix.

- Using a focused laser beam to photobleach a small region of the fluorescent sample.

- Monitoring the subsequent recovery of fluorescence in the bleached area as unbleached molecules diffuse into it.

- Analyzing the recovery kinetics to calculate diffusion coefficients [17] [19].

This method has been particularly useful for studying diffusion in highly viscous systems like sucrose-water solutions that serve as proxies for secondary organic aerosols, where it revealed significant deviations from Stokes-Einstein predictions at low water activities [17].

Transient Uptake of Non-Reactive Solute

This method is specifically adapted for measuring diffusion in porous granular structures like aerobic granular sludge. The standardized protocol includes:

- Preparing a granule solution in a volumetric flask with a specific ratio of water volume to granule volume (typically α-value ≈ 4).

- Creating a separate solution containing the solute of interest (e.g., polyethylene glycols of varying molecular weights).

- Combining the solutions in a jacketed glass vessel maintained at constant temperature (4.0 ± 0.1°C to minimize biological activity).

- Sampling at irregular intervals using pipette tips covered with stainless steel mesh to exclude granules.

- Replacing sampled volume immediately with solution of expected final solute concentration to maintain constant volume.

- Determining final granule volume using the modified Dextran Blue method [20].

This approach has revealed that diffusion coefficients for molecules up to 4 kDa in aerobic granules are not significantly different from their values in water, indicating minimal obstruction by the granule matrix [20].

Experimental Workflow Visualization

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents and Experimental Materials

| Reagent/Material | Function in Diffusion Experiments | Application Examples | Technical Specifications |
|---|---|---|---|
| Sodium Dodecyl Sulfate (SDS) | Surfactant for studying micelle-mediated diffusion | Investigating solute-micelle interactions and solubilization effects [18] | Critical micelle concentration dependent; purity ≥99% |
| Fluorescent Dyes (Fluorescein, Rhodamine 6G, Calcein) | Molecular probes for FRAP measurements | Measuring diffusion in viscous sucrose-water solutions [17] [19] | High quantum yield, photostable, specific excitation/emission profiles |
| Polyethylene Glycols (PEGs) | Model substrates of varying molecular weights | Studying molecular weight effects on diffusion in porous granules [20] | Molecular weight range: 62 Da - 10,000 Da; monodisperse preferred |
| d(+)-Glucose | Model solute for binary and ternary systems | Diffusion studies in aqueous solutions at varying temperatures [16] [5] | High purity (≥99.5%); dried at 40°C for 2 hours before use |
| d-Sorbitol | Model solute for binary and ternary systems | Diffusion studies in aqueous solutions at varying temperatures [16] [5] | High purity (≥98%); dried at 40°C for 2 hours before use |
| Sucrose | Matrix former for viscous solutions | Creating proxy systems for secondary organic aerosols [17] [19] | Analytical grade; prepared at specific water activities |
| Teflon Capillary Tubing | Flow conduit for Taylor dispersion | Housing laminar flow for dispersion measurements [16] [5] | Length: ~20 m; Internal diameter: ~0.4 mm; coiled configuration |
| Differential Refractive Index Analyzer | Detection system for concentration changes | Monitoring solute dispersion in Taylor method [16] [5] | High sensitivity (e.g., 8×10⁻⁸ RIU); continuous data acquisition |

Critical Analysis of Methodological Performance

Assessment of Experimental Techniques

The comparative analysis of experimental methodologies reveals a clear trade-off between applicability, accuracy, and complexity. The Taylor dispersion technique demonstrates exceptional versatility across binary and ternary systems with straightforward implementation, but requires careful control of flow conditions and temperature stability. Recent applications in glucose-sorbitol-water systems highlight its precision in capturing temperature-dependent behavior, though proper execution demands substantial tubing length (10-20 meters) and precise internal diameter control [16] [5]. The method's reliability depends heavily on maintaining laminar flow regimes through appropriate flow rates and capillary dimensions.

The FRAP technique offers distinct advantages for studying diffusion in highly viscous or complex matrices where conventional methods face limitations. Its application in sucrose-water systems revealed the critical breakdown of Stokes-Einstein predictions at low water activities, underscoring its value for challenging measurement environments [17] [19]. However, this method requires incorporation of fluorescent probes that may potentially alter system properties, and the data interpretation depends on appropriate modeling of recovery kinetics. The technique successfully captured diffusion coefficients spanning four to five orders of magnitude as water activity varied from 0.38 to 0.80, demonstrating its dynamic range [17].

The transient uptake method provides specialized capability for measuring diffusion in porous media and biological matrices like aerobic granular sludge. Its key advantage lies in directly quantifying solute penetration into complex structures, revealing that molecules up to 4 kDa diffuse through granules without significant obstruction [20]. The method requires careful temperature control (4.0 ± 0.1°C) to minimize biological activity during measurements and specialized sampling techniques to exclude granular material from liquid samples.

Evaluation of Predictive Models

The assessment of predictive models against experimental data reveals context-dependent performance with significant implications for researchers. The Stokes-Einstein relation provides reasonable predictions in sucrose-water solutions at water activities ≥0.6 (viscosity ≤360 Pa·s), but substantially underpredicts diffusion coefficients at lower water activities (higher viscosities), with errors ranging from 17 to 118-fold depending on the specific molecule [17]. This breakdown at high viscosities challenges its uncritical application in glassy or highly viscous systems relevant to atmospheric aerosol science and pharmaceutical formulations.
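For orientation, the Stokes-Einstein relation D = k_B T / (6π η r) and the underprediction factor discussed above can be sketched as follows. The temperature and hydrodynamic radius in the example are hypothetical inputs chosen only to show the order of magnitude; the 360 Pa·s viscosity is the threshold quoted in the text, and the function names are illustrative.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23  # exact SI value


def stokes_einstein(temperature_k, viscosity_pa_s, radius_m):
    """Stokes-Einstein diffusion coefficient in m^2/s."""
    return BOLTZMANN_J_PER_K * temperature_k / (
        6.0 * math.pi * viscosity_pa_s * radius_m)


def underprediction_factor(d_measured, d_predicted):
    """How many times larger the measured D is than the predicted D."""
    return d_measured / d_predicted


# Hypothetical probe: radius 0.5 nm at 295 K in a 360 Pa·s solution.
d_se = stokes_einstein(295.0, 360.0, 0.5e-9)
print(f"Stokes-Einstein D at 360 Pa·s: {d_se:.1e} m^2/s")
```

Because D scales as 1/η in this relation, any decoupling of probe diffusion from bulk viscosity shows up directly as a factor greater than one in `underprediction_factor`.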

The Wilke-Chang and Hayduk-Minhas correlations offer convenient estimation for organic solutes in aqueous systems, demonstrating reasonable agreement with experimental data for glucose and sorbitol at moderate temperatures (25-45°C) [16] [5]. However, both models significantly overestimate diffusion coefficients at elevated temperatures (65°C), indicating temperature-dependent limitations that must be considered in process design applications. This temperature-sensitive inaccuracy directly impacts reactor simulation outcomes, as demonstrated by different glucose conversion profiles when using experimental versus predicted diffusion values [16].

The accuracy assessment of diffusion coefficient methodologies reveals that strategic selection depends critically on the specific research context and system properties. For standard aqueous organic solutions at moderate temperatures, Taylor dispersion provides robust, reliable data with established protocols. For viscous, glassy, or complex matrices, FRAP offers unique capabilities but requires careful validation against potential probe effects. For porous media and biological systems, transient uptake methods deliver relevant penetration data but with increased experimental complexity.

The performance comparison of predictive models underscores that while computational estimations provide valuable screening tools, critical applications require experimental validation, particularly at temperature extremes or in high-viscosity regimes. The consistent finding that model deviations follow predictable patterns (e.g., systematic overprediction at higher temperatures) enables researchers to apply appropriate correction factors when experimental determination is impractical.

This comparison guide provides the foundational framework for researchers to match methodological approaches to their specific accuracy requirements, system properties, and experimental constraints. The compiled experimental data, technical protocols, and performance assessments create a decision-making resource for advancing diffusion coefficient research across pharmaceutical, environmental, and chemical processing applications.

In scientific research and industrial application, the concept of "accuracy" is an imperative that transcends individual disciplines. Whether the subject is a statistical model forecasting clinical outcomes or a physical model predicting the diffusion of an organic solute in water, the reliability of the prediction directly impacts scientific credibility and operational success. In predictive analytics, accuracy refers to how well a model's forecasts align with actual observed outcomes, measured through statistical metrics and validation techniques [21] [22]. In physical chemistry, accuracy manifests in the precise determination of parameters like diffusion coefficients, which quantify how substances disperse through media, a critical factor in processes from drug delivery to environmental remediation [23] [5].

This guide explores this accuracy imperative through an interdisciplinary lens, comparing different methodological approaches for assessing predictive reliability. We demonstrate how principles for evaluating machine learning models find direct parallels in laboratory protocols for measuring physicochemical properties, creating a unified framework for accuracy assessment across computational and experimental domains.

Evaluating Predictive Models: Metrics and Methodologies

Core Accuracy Metrics for Predictive Models

The assessment of predictive models employs distinct metrics tailored to the model's task—classification versus regression—and the specific business or research context [21] [22].

Table 1: Key Metrics for Predictive Model Evaluation

| Metric Category | Specific Metric | Interpretation and Application |
|---|---|---|
| Overall Performance | Brier Score [24] | Measures the average squared difference between predicted probabilities and actual outcomes (0=perfect; 0.25=non-informative for 50% incidence). |
| Discrimination | C-statistic (AUC-ROC) [24] | Indicates the model's ability to distinguish between classes (e.g., patients with vs. without disease). Value from 0.5 (no discrimination) to 1 (perfect discrimination). |
| Discrimination | Discrimination Slope [24] | The difference in the mean of predictions between subjects with and without the outcome. Easy to visualize with box plots. |
| Calibration | Calibration Slope [24] | The slope of the linear predictor; a value of 1 indicates ideal calibration. Critical for external validation. |
| Calibration | Hosmer-Lemeshow Test [24] | A goodness-of-fit test comparing observed to predicted events by decile of predicted probability. |
| Clinical Usefulness | Net Benefit (Decision Curve Analysis) [24] | A decision-analytic measure that quantifies the net benefit of using a model to make decisions across a range of threshold probabilities. |
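
As a small illustration of the Brier score row, the sketch below computes the score for a hypothetical four-case dataset with 50% incidence. The function name and the data are assumptions made for this example only.

```python
def brier_score(predicted_probs, outcomes):
    """Mean squared difference between predicted probabilities and 0/1 outcomes."""
    if len(predicted_probs) != len(outcomes):
        raise ValueError("inputs must have the same length")
    return sum((p - y) ** 2 for p, y in zip(predicted_probs, outcomes)) / len(outcomes)


outcomes = [1, 0, 1, 0]                 # hypothetical data with 50% incidence
uninformative = [0.5, 0.5, 0.5, 0.5]    # always predicts the base rate
perfect = [1.0, 0.0, 1.0, 0.0]          # predicts every case correctly

print(brier_score(uninformative, outcomes))  # 0.25
print(brier_score(perfect, outcomes))        # 0.0
```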

For classification models (e.g., predicting customer churn), accuracy alone can be misleading, especially with imbalanced datasets. A fraud detection model trained on data with 99% non-fraud cases might achieve 99% accuracy by always predicting "no fraud," rendering it useless. Therefore, metrics like precision (how many positive predictions were correct) and recall (how many actual positives were identified) provide better insights. The F1-score combines both, balancing false positives and false negatives [22].
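
The imbalanced-data pitfall can be reproduced in a few lines. This is a minimal sketch with hypothetical labels (10 positives in 1,000 cases) and a trivial classifier that always predicts the negative class; the function name is an assumption for illustration.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall, and F1 for binary labels coded as 0/1."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)) if (precision + recall) else 0.0
    return {"accuracy": (tp + tn) / len(pairs), "precision": precision,
            "recall": recall, "f1": f1}


y_true = [1] * 10 + [0] * 990      # hypothetical: 1% positive cases
always_negative = [0] * 1000       # classifier that never flags a positive
metrics = classification_metrics(y_true, always_negative)
print(metrics)
```

The accuracy comes out at 99% while recall and F1 are zero, which is the behavior the paragraph above warns about.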

For regression models (e.g., forecasting continuous outcomes like house prices or chemical reaction yields), common metrics include Root Mean Squared Error (RMSE) or Mean Absolute Error (MAE), which quantify the average deviation of predictions from actual values. R-squared measures the proportion of variance in the outcome that is explained by the model [21] [22].
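
The regression metrics can be sketched as follows. The observed and predicted values are hypothetical numbers, loosely framed as diffusion coefficients in units of 1e-10 m²/s, and are not experimental data.

```python
import math


def regression_metrics(observed, predicted):
    """Return (MAE, RMSE, R^2) for paired observed and predicted values."""
    n = len(observed)
    residuals = [o - p for o, p in zip(observed, predicted)]
    mae = sum(abs(r) for r in residuals) / n
    ss_res = sum(r * r for r in residuals)
    rmse = math.sqrt(ss_res / n)
    mean_obs = sum(observed) / n
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    r_squared = 1.0 - ss_res / ss_tot
    return mae, rmse, r_squared


observed = [6.7, 5.2, 9.1, 12.4]     # hypothetical measurements
predicted = [7.0, 5.0, 9.5, 11.8]    # hypothetical model output
mae, rmse, r_squared = regression_metrics(observed, predicted)
print(f"MAE={mae:.3f}  RMSE={rmse:.3f}  R^2={r_squared:.3f}")
```

RMSE is never smaller than MAE, and the gap between them grows when a few predictions are far off, so reporting both gives a quick indication of outliers.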

Advanced Validation Techniques

Beyond single metrics, robust validation techniques are crucial to ensure models generalize to new, unseen data [21]. Cross-validation, particularly k-fold cross-validation, partitions the dataset into k subsets. The model is trained on k-1 subsets and tested on the remaining one, repeating this process k times. This technique provides a more comprehensive view of model performance and helps mitigate overfitting, where a model learns noise rather than underlying patterns, excelling on training data but failing on new data [21].
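
A minimal sketch of k-fold partitioning, using only the standard library, is shown below. The "model" is deliberately trivial (it predicts the training-set mean), and the data values are hypothetical; both are assumptions chosen to keep the example short.

```python
import random


def k_fold_indices(n_samples, k, seed=0):
    """Yield (train_indices, test_indices) for each of k shuffled folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]   # k roughly equal subsets
    for i in range(k):
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, folds[i]


def cross_validated_mae(y, k=5):
    """Average test-fold MAE of a model that predicts the training-set mean."""
    fold_errors = []
    for train, test in k_fold_indices(len(y), k):
        train_mean = sum(y[i] for i in train) / len(train)
        fold_errors.append(sum(abs(y[i] - train_mean) for i in test) / len(test))
    return sum(fold_errors) / len(fold_errors)


y = [6.7, 5.2, 9.1, 12.4, 7.8, 6.1, 10.3, 8.8, 5.9, 11.0]   # hypothetical values
print(f"5-fold cross-validated MAE: {cross_validated_mae(y):.3f}")
```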

For assessing the reliability of individual predictions, advanced approaches include:

- Perturbation of Input Cases: Testing whether small alterations in input features lead to significantly different predictions, which would question the prediction's stability [25].

- Local Quality Measures: Evaluating model performance in the specific local region of the feature space where a new data point lies, as performance can vary across different areas of the data [25].
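
The perturbation idea can be sketched as below: each input is jittered by a small relative Gaussian noise and the spread of the resulting predictions is compared with the baseline prediction. The toy model (temperature divided by viscosity and radius, in arbitrary units) and all numeric inputs are hypothetical stand-ins for a real predictor.

```python
import random


def perturbation_spread(model, inputs, relative_noise=0.01, n_trials=500, seed=0):
    """Relative standard deviation of predictions under small input perturbations."""
    rng = random.Random(seed)
    baseline = model(inputs)
    predictions = []
    for _ in range(n_trials):
        jittered = [value * (1.0 + rng.gauss(0.0, relative_noise)) for value in inputs]
        predictions.append(model(jittered))
    mean = sum(predictions) / n_trials
    variance = sum((p - mean) ** 2 for p in predictions) / (n_trials - 1)
    return variance ** 0.5 / abs(baseline)


def toy_diffusion_model(inputs):
    """Hypothetical predictor: proportional to T / (viscosity * radius)."""
    temperature, viscosity, radius = inputs
    return temperature / (viscosity * radius)


spread = perturbation_spread(toy_diffusion_model, [298.15, 0.89, 0.36])
print(f"relative spread of predictions: {spread:.2%}")
```

A spread that is much larger than the input noise would suggest that the prediction at this point is unstable and should be treated with caution.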

Accuracy in Experimental Science: The Case of Diffusion Coefficients

The Critical Role of Accurate Diffusion Coefficients

In chemical engineering and pharmaceutical research, the diffusion coefficient (D) is a fundamental physical parameter with direct implications for predictive model reliability. It quantifies the rate at which a molecule (e.g., an organic solute) diffuses through a solvent (e.g., water) [5]. Accurate values for D are critical for:

- Reactor Design and Simulation: Optimizing processes like the catalytic hydrogenation of glucose to sorbitol, where simulations using experimentally determined diffusion coefficients yield significantly different conversion profiles compared to those using estimated values [5].

- Environmental Forecasting: Predicting the evaporation and transport of volatile organic compounds (VOCs) from wastewater, which depends on their diffusion rates and other properties like Henry's law constant [26].

- Geological Storage Security: Modeling the diffusion of water in supercritical CO₂ during carbon sequestration in saline aquifers, as this controls brine evaporation and salt precipitation that can impact injection efficiency [23].

Experimental Protocols for Measuring Diffusion Coefficients

Several experimental methods exist for determining diffusion coefficients, each with specific protocols, advantages, and limitations. The choice of method significantly impacts the accuracy and reliability of the obtained values [7].

Table 2: Comparison of Methods for Measuring Diffusion Coefficients in Aqueous Systems

| Method | Basic Principle | Typical System | Key Challenges and Error Sources |
|---|---|---|---|
| Taylor Dispersion [5] | A pulse of solution is injected into a solvent flowing laminarly through a capillary tube. The dispersion of the pulse is measured to determine D. | Organic solute-water solutions (e.g., glucose, sorbitol). | Requires precise temperature control and a well-characterized flow system. Laminar flow regime is essential. |
| Quantitative Raman Spectroscopy [23] | Used to acquire concentration profiles of a solute (e.g., water in CO₂) in a capillary tube over time. D is determined based on Fick's laws. | High-pressure and high-temperature systems (e.g., CO₂ sequestration). | Sensitive to calibration and instrument stability. Avoids convection interference. |
| Transient Uptake/Release [7] | Measures the temporal change in bulk concentration as a solute diffuses into (uptake) or out of (release) a porous body like a granule or biofilm. | Biofilms, granular sludge. | Susceptible to error from solute sorption to biomass, granule shape irregularities, and size distribution. |
| Microelectrode Profiling [7] | A microelectrode measures the concentration profile of a solute (e.g., oxygen) within a biofilm or granule under steady-state or transient conditions. | Biofilms, granular sludge, single granules. | Presence of a mass transfer boundary layer can lead to underestimation of D. Requires invasive probes. |

A Monte Carlo analysis of methods for measuring diffusion coefficients in biofilms has revealed that these methods can be imprecise (relative standard deviation from 5% to 61%) and inaccurate, with one theoretical experiment showing a 37% underestimation of the true value due to error sources like solute sorption and mass transfer boundary layers [7].

The following diagram illustrates the logical relationship between the core concepts of predictive accuracy, its application in two distinct fields, and the shared imperative of rigorous methodology.

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for Featured Experiments

| Reagent / Material | Function and Application | Example Context |
|---|---|---|
| Silica Capillary Tube | Serves as a high-pressure cell for observing diffusion processes; its small diameter helps avoid convection interference [23]. | Studying diffusion of water in supercritical CO₂ for carbon sequestration [23]. |
| Microelectrodes | Miniature sensors used to measure concentration profiles of specific solutes (e.g., O₂) within biofilms or granules with high spatial resolution [7]. | Determining diffusion coefficients and reaction zones in aerobic granular sludge [7]. |
| Raman Spectrometer | Provides quantitative, non-destructive analysis of concentration profiles in real-time during a diffusion experiment [23]. | Acquiring water concentration profiles in CO₂ to determine diffusion coefficients [23]. |
| Teflon Capillary Tube | The core component in the Taylor dispersion method; laminar flow within the tube is essential for measuring solute dispersion [5]. | Determining diffusion coefficients of glucose and sorbitol in water [5]. |
| Differential Refractive Index Analyzer | Detects the difference in refractive index between the carrier stream and the dispersed pulse at the outlet of the capillary in Taylor dispersion [5]. | Analyzing the dispersion profile of glucose/water and sorbitol/water systems [5]. |

The imperative for accuracy creates a common thread linking the seemingly disparate fields of predictive analytics and physical chemical measurement. In both domains, reliability is not a single number but a multi-faceted property assessed through rigorous methodology—be it cross-validation and perturbation tests for algorithms or Taylor dispersion and error analysis for diffusion coefficients. The most reliable outcomes, whether a clinical prognosis or a reactor simulation, arise from a disciplined commitment to quantifying and validating predictive accuracy at every stage, from business understanding and data preparation to experimental protocol and deployment. This disciplined approach ensures that predictions, in all their forms, can be trusted to inform critical decisions in science and industry.

Bench and Screen: A Guide to Experimental and Computational Determination Methods

In the realm of pharmaceutical research and development, accurately determining the diffusion coefficients of organic solutes in aqueous solutions is fundamental for understanding molecular size, behavior, and stability. This assessment forms the critical bridge to calculating hydrodynamic radii, a key parameter for characterizing therapeutic molecules from small peptides to complex proteins and nanoparticles [27]. Among the techniques available, Taylor Dispersion Analysis (TDA) and Dynamic Light Scattering (DLS) have emerged as prominent gold-standard methods. While both techniques rely on the Stokes-Einstein relationship to connect diffusion coefficients with hydrodynamic size, their underlying physical principles, operational methodologies, and applicability domains differ significantly [27] [28]. This guide provides an objective comparison of TDA and DLS performance, supported by experimental data, to inform researchers and drug development professionals in selecting the optimal technique for their specific analytical challenges in diffusion coefficient accuracy assessment.

Fundamental Principles and Methodologies

Taylor Dispersion Analysis (TDA)

Taylor Dispersion Analysis is an absolute method based on the dispersion of a solute plug under laminar Poiseuille flow within a uniform cylindrical capillary. First described by Taylor in 1953 and later refined by Aris, TDA measures the temporal broadening of an injected analyte band as it travels through a capillary immersed in a temperature-controlled bath [27]. The method operates by injecting a nanoliter-scale sample plug into a carrier stream of buffer moving through a fused-silica capillary. As the sample is transported through the capillary, the combined action of the parabolic flow velocity profile and radial diffusion causes characteristic band dispersion. The hydrodynamic radius ($R_h$) is calculated from the peak arrival times and standard deviations at two detection windows using the derived equation:

\begin{equation} R_h = \frac{4 k_B T \left(\tau_2^2 - \tau_1^2\right)}{\pi \eta r^2 \left(t_2 - t_1\right)} \end{equation}

where $k_B$ is the Boltzmann constant, $T$ is the absolute temperature, $\eta$ is the viscosity, $r$ is the capillary radius, $t_1$ and $t_2$ are the peak center times at the two detection windows, and $\tau_1$ and $\tau_2$ are the corresponding standard deviations of the peaks [27]. This expression follows from the Taylor-Aris result $D = r^2 (t_2 - t_1) / [24 (\tau_2^2 - \tau_1^2)]$ combined with the Stokes-Einstein relation $D = k_B T / (6 \pi \eta R_h)$. Modern TDA instruments use pixelated UV area imaging to enhance data collection quality, enabling routine measurement of therapeutic proteins and peptides.
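
The two-window calculation can be sketched in a few lines. This example assumes the standard Taylor-Aris relation D = r²(t₂ − t₁) / [24(τ₂² − τ₁²)] followed by the Stokes-Einstein relation; the peak times, peak widths, capillary radius, and viscosity are hypothetical inputs in SI units, not measured values.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K


def tda_diffusion_coefficient(t1, t2, tau1, tau2, capillary_radius):
    """Diffusion coefficient (m^2/s) from two-window Taylor dispersion data.

    t1, t2      peak center times at the two windows (s)
    tau1, tau2  peak standard deviations at the two windows (s)
    """
    return capillary_radius ** 2 * (t2 - t1) / (24.0 * (tau2 ** 2 - tau1 ** 2))


def stokes_einstein_radius(diffusion_coefficient, temperature, viscosity):
    """Hydrodynamic radius (m) from D (m^2/s), T (K), and viscosity (Pa*s)."""
    return K_B * temperature / (6.0 * math.pi * viscosity * diffusion_coefficient)


# Hypothetical inputs: 75 um inner-diameter capillary, water near 25 C.
d = tda_diffusion_coefficient(t1=100.0, t2=250.0, tau1=4.0, tau2=6.5,
                              capillary_radius=37.5e-6)
r_h = stokes_einstein_radius(d, temperature=298.15, viscosity=8.9e-4)
print(f"D = {d:.3e} m^2/s, R_h = {r_h * 1e9:.2f} nm")
```

Using the difference between two windows removes the contribution of the injection plug and any dispersion upstream of the first window, which is why the variances are subtracted rather than used individually.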

Dynamic Light Scattering (DLS)

Dynamic Light Scattering, also known as photon correlation spectroscopy, determines particle size by measuring fluctuations in the intensity of scattered light caused by Brownian motion of particles in solution [28]. When a laser beam illuminates a sample, particles scatter light in all directions, with smaller particles moving rapidly and causing fast intensity fluctuations, while larger particles move more slowly and generate slower fluctuations [29]. The core of DLS analysis involves constructing an autocorrelation function (ACF) from these intensity fluctuations:

\begin{equation} g(\tau) = \frac{\langle I(t)I(t+\tau)\rangle}{\langle I(t)\rangle^2} \end{equation}

where $I(t)$ is the intensity at time $t$, and $\tau$ is the delay time [28]. This ACF is typically fitted as an exponential function:

\begin{equation} g(\tau) = b_{\infty} + b_0 \exp(-2\Gamma\tau) \end{equation}

where $b_{\infty}$ is the baseline value, $b_0$ is the amplitude of the ACF above the baseline at $\tau = 0$, and $\Gamma$ is the decay rate. The diffusion coefficient $D$ is obtained from the decay rate through $\Gamma = D q^2$, where $q$ is the magnitude of the scattering vector, and the hydrodynamic radius $R_h$ is subsequently calculated using the Stokes-Einstein equation:

\begin{equation} D = \frac{k_B T}{6 \pi \eta R_h} \end{equation}

where $k_B$ is Boltzmann's constant, $T$ is absolute temperature, and $\eta$ is solvent viscosity [28]. DLS instruments typically employ a 90° or 173° scattering angle configuration, with advanced systems offering multi-angle detection for improved resolution of polydisperse samples [30].
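
The conversion from a fitted decay rate to a hydrodynamic radius can be sketched as follows. The example assumes the standard relations Γ = Dq² and q = (4πn/λ) sin(θ/2), where n is the solvent refractive index, λ the laser wavelength in vacuum, and θ the scattering angle; the laser wavelength, refractive index, viscosity, and decay rate are hypothetical inputs.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K


def scattering_vector(wavelength_m, refractive_index, angle_deg):
    """Magnitude of the scattering vector q (1/m)."""
    return (4.0 * math.pi * refractive_index / wavelength_m
            * math.sin(math.radians(angle_deg) / 2.0))


def dls_size(decay_rate, wavelength_m, refractive_index, angle_deg,
             temperature, viscosity):
    """Return (D in m^2/s, R_h in m) from a fitted decay rate Gamma (1/s)."""
    q = scattering_vector(wavelength_m, refractive_index, angle_deg)
    diffusion_coefficient = decay_rate / q ** 2
    radius = K_B * temperature / (6.0 * math.pi * viscosity * diffusion_coefficient)
    return diffusion_coefficient, radius


# Hypothetical inputs: 633 nm laser, water at 25 C, 173 degree backscatter.
d, r_h = dls_size(decay_rate=3.0e4, wavelength_m=633e-9, refractive_index=1.33,
                  angle_deg=173.0, temperature=298.15, viscosity=8.9e-4)
print(f"D = {d:.3e} m^2/s, R_h = {r_h * 1e9:.2f} nm")
```

Note that the fitted exponent in the intensity ACF is 2Γ, so the decay rate passed to this function is half of the exponential rate constant obtained from the fit.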

Figure 1: Dynamic Light Scattering (DLS) Experimental Workflow. The process begins with laser illumination of the sample, detection of scattered light intensity fluctuations, autocorrelation function analysis, and calculation of hydrodynamic size via the Stokes-Einstein equation.

Experimental Performance Comparison

Analytical Capabilities and Limitations

Table 1: Technical Performance Comparison of TDA and DLS

| Parameter | Taylor Dispersion Analysis (TDA) | Dynamic Light Scattering (DLS) |
|---|---|---|
| Size Range | 0.1 nm - 100 nm (small molecules to proteins) [31] | 0.3 nm - 15 μm [30] |
| Concentration Range | 0.05 - 50 mg/mL (therapeutic proteins) [27] | 0.1 mg/mL (lysozyme) to 50% w/v [30] |
| Sample Volume | 56 nL [27] | 1.5 μL - 50 μL [30] |
| Measurement Principle | Flow-induced dispersion in capillary | Fluctuations in scattered light intensity |
| Diffusion Coefficient Accuracy | High for monodisperse solutions [31] | Moderate, affected by polydispersity [27] |
| Aggregate Detection Sensitivity | Lower sensitivity to large aggregates [27] | High sensitivity (scattering ∝ r⁶) [27] [28] |
| Small Molecule Analysis | Suitable (e.g., gadolinium contrast agents) [31] | Challenging below 1 nm [27] |
| Polydisperse Sample Analysis | Limited, provides average diffusion coefficient [27] | Better with multi-angle detection [30] |
| Excipient Interference | Minimal [27] | Significant, requires careful background subtraction |

Experimental Data from Comparative Studies

Table 2: Experimental Sizing Results for Therapeutic Molecules (TDA vs. DLS)

| Molecule | Concentration | Condition | TDA Hydrodynamic Radius (nm) | DLS Hydrodynamic Radius (nm) | Reference Method |
|---|---|---|---|---|---|
| Oxytocin | 0.5 mg/mL | Native | 1.2 ± 0.1 | Not measurable | Literature values [27] |
| Bovine Serum Albumin | 5 mg/mL | Native | 3.8 ± 0.2 | 3.7 ± 0.3 | Literature values [27] |
| IgG1 mAb | 1 mg/mL | Native | 5.4 ± 0.3 | 5.5 ± 0.4 | HP-SEC [27] |
| IgG1 mAb | 1 mg/mL | Thermally stressed (75°C) | 6.1 ± 0.4 | 8.2 ± 0.7 | HP-SEC with aggregate detection [27] |
| Etanercept | 25 mg/mL | Native | 6.8 ± 0.3 | 6.6 ± 0.5 | HP-SEC [27] |
| Etanercept | 25 mg/mL | Thermally stressed (65°C) | 7.5 ± 0.4 | 10.3 ± 0.9 | HP-SEC with aggregate detection [27] |
| Lipid Nanoparticles | 0.1 mg/mL | Formulated for mRNA | 45.2 ± 2.1 | 46.8 ± 3.2 | Complementary NTA [32] |

Comparative studies of therapeutic peptides and proteins demonstrate that TDA and DLS provide comparable sizing results for monodisperse systems in a concentration range of approximately 0.5 to 50 mg/mL [27]. However, TDA performs better at lower concentrations, where DLS tends to yield Z-average radii above the theoretically expected values. A critical distinction emerges in analyzing stressed formulations: DLS shows significantly larger apparent hydrodynamic radii due to its heightened sensitivity toward aggregates, while TDA provides values closer to the monomeric species [27]. This makes DLS exceptionally valuable for aggregate detection but less accurate for determining the primary size in polydisperse systems.
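
The effect of intensity weighting on the apparent size can be illustrated with a short calculation. This sketch assumes Rayleigh-regime scattering (intensity per particle proportional to r⁶) and treats the Z-average as an intensity-weighted harmonic mean of the radii; the two-population mixture (monomer plus a trace of aggregates) is hypothetical.

```python
def weighted_radii(radii_nm, number_fractions):
    """Return (number-average radius, intensity-weighted harmonic mean radius)."""
    total_number = sum(number_fractions)
    number_mean = sum(n * r for n, r in zip(number_fractions, radii_nm)) / total_number
    # Rayleigh assumption: each population scatters in proportion to n * r^6.
    intensities = [n * r ** 6 for n, r in zip(number_fractions, radii_nm)]
    # DLS averages diffusion coefficients (proportional to 1/r), hence a harmonic mean.
    z_like = sum(intensities) / sum(i / r for i, r in zip(intensities, radii_nm))
    return number_mean, z_like


# Hypothetical mixture: 99.9% monomer (5.4 nm) and 0.1% aggregates (50 nm) by number.
number_mean, z_like = weighted_radii([5.4, 50.0], [0.999, 0.001])
print(f"number-average radius:      {number_mean:.2f} nm")
print(f"intensity-weighted radius:  {z_like:.2f} nm")
```

Even a trace population of large particles dominates the intensity-weighted result in this toy case, which is consistent with the larger DLS radii reported for stressed samples.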

Research Reagent Solutions and Essential Materials

Table 3: Essential Research Materials for TDA and DLS Experiments

| Category | Specific Items | Function/Application | Compatible Techniques |
|---|---|---|---|
| Buffer Components | Phosphate buffers, citrate buffers, NaCl, arginine-HCl | Maintain physiological pH and ionic strength | TDA, DLS [27] |
| Stabilizers | Sucrose, mannitol, polysorbate 80 | Prevent aggregation and surface adsorption | TDA, DLS [27] |
| Quality Control Standards | NIST-traceable latex/nanoparticle standards | Instrument calibration and validation | DLS [30] |
| Capillaries | Fused silica capillaries (various diameters) | Sample transport and dispersion measurement | TDA [27] |
| Cuvettes | Quartz cuvettes (low volume: 45 μL) | Sample containment for light scattering measurements | DLS [30] |
| Therapeutic Proteins | Bovine serum albumin, IgG antibodies, etanercept | Model systems for method development and validation | TDA, DLS [27] |
| Small Molecules | Gadolinium-based contrast agents, oxytocin | Small molecule diffusion studies | TDA (preferred) [27] [31] |

Application-Specific Protocol Recommendations

Taylor Dispersion Analysis for Small Molecules and Peptides

Protocol: TDA for Gadolinium-Based Contrast Agents (Adapted from [31])

Instrument Setup: Utilize a TDA instrument equipped with UV detection and temperature control. Condition fused silica capillary (length: 1-2 m, internal diameter: 50-75 μm) with running buffer.

Buffer Preparation: Prepare appropriate aqueous buffer matching the formulation requirements. Filter through 0.2 μm membrane and degas prior to use.

Sample Preparation: Dissolve gadolinium-based contrast agents in running buffer at concentrations of 0.1-10 mg/mL. Centrifuge at 10,000-15,000 × g for 10 minutes to remove particulate matter.

Analysis Parameters: Set the mean linear flow velocity to 2 mm/s, the injection volume to 56 nL, and the detection wavelength according to the analyte's UV absorption (typically 200-280 nm for peptides).

Data Acquisition: Inject sample and monitor peak profiles at two detection windows. Record arrival times (t₁, t₂) and corresponding peak variances (τ₁², τ₂²).

Data Analysis: Calculate diffusion coefficient using the TDA equation. Derive hydrodynamic radius via Stokes-Einstein relationship. For frontal TDA mode, adapt calculations accordingly for improved sensitivity [31].

This protocol has demonstrated inter-capillary relative standard deviation of approximately 3.6% for hydrodynamic diameter measurements of gadolinium chelates, confirming good reproducibility [31].

Dynamic Light Scattering for Protein Aggregation Studies

Protocol: DLS for Stressed Monoclonal Antibody Formulations (Adapted from [27])

Sample Stress Induction: Subject therapeutic proteins (e.g., IgG1, etanercept) to thermal stress using a thermomixer. Typical conditions: 60-80°C for 10 minutes in 1.5 mL reaction tubes.

Instrument Calibration: Verify DLS performance using NIST-traceable latex size standards. Ensure laser warm-up time of at least 6 minutes for signal stability [30].

Sample Preparation: Dilute stressed and control proteins in formulation buffer to concentrations of 0.1-5 mg/mL. For high concentration formulations (50 mg/mL), dilute to appropriate scattering intensity range.

Measurement Parameters: Set temperature to 25°C, measurement angle to 90° or 173°, acquisition duration of 10-30 seconds per run with 10-15 repetitions.

Data Collection: Perform measurements in triplicate. Monitor correlation function decay and transmittance for signs of sedimentation or agglomeration during measurement.

Data Analysis: Apply cumulant analysis for polydispersity index (PDI) and z-average hydrodynamic radius. Use regularization algorithms for size distribution analysis when PDI > 0.2.

This protocol successfully identified size increases in thermally stressed monoclonal antibodies, with DLS showing greater responsiveness to aggregate formation compared to TDA [27].

Figure 2: Taylor Dispersion Analysis (TDA) Experimental Workflow. The process involves sample injection into capillary flow, formation of laminar flow profile with radial diffusion, detection of band broadening at two positions, and calculation of hydrodynamic radius via the Stokes-Einstein equation.

The choice between Taylor Dispersion Analysis and Dynamic Light Scattering for diffusion coefficient measurement depends critically on sample characteristics and research objectives. TDA excels in analyzing small molecules and peptides, provides accurate results across wide concentration ranges with minimal excipient interference, and is particularly valuable for absolute diffusion coefficient determination in monodisperse systems [27] [31]. Conversely, DLS offers superior sensitivity for aggregate detection in protein formulations, handles broader size ranges including nanoparticles, and provides more comprehensive information for polydisperse systems through advanced distribution algorithms [27] [30] [29]. For complete characterization of complex biologics such as lipid nanoparticle-based mRNA vaccines, employing both techniques orthogonally provides the most comprehensive size and distribution profile [32]. Researchers should select TDA when precise diffusion coefficients for small molecules are required, while opting for DLS when monitoring protein aggregation or analyzing heterogeneous nanoparticle systems.

The Role of Microelectrodes and Transient Uptake/Release Assays in Biofilms

In biofilm research, accurately determining the diffusion coefficients of organic solutes is paramount for understanding mass transfer limitations and predicting metabolic activity. Among the various techniques employed, microelectrodes and transient uptake/release assays represent critical methodological approaches. These techniques enable researchers to probe the internal environment of biofilms with high spatial and temporal resolution, providing essential data on solute transport. However, the rigorous demands of modern water research and drug development call for a comprehensive comparison of their experimental protocols, accuracy, and applicability. This guide objectively evaluates the performance of these core techniques against alternative methods, framing the analysis within the broader thesis of accuracy assessment for diffusion coefficients in aquatic biofilm systems.

Theoretical Significance of Biofilm Diffusion Coefficients

The biofilm matrix, composed of extracellular polymeric substances (EPS) and microbial cells, imposes a diffusive resistance on the transport of metabolites, leading to concentration profiles that affect local microbial reaction rates [33]. This often results in severe mass transfer limitations and partially penetrated, less effective biofilms [33]. The effective diffusion coefficient ($D_e$) is the key parameter characterizing this diffusive transport, typically lower than the diffusion coefficient in water due to the obstruction posed by the biofilm matrix [7]. The accurate determination of $D_e$ is therefore considered essential for modeling and scaling up microbial conversions in systems ranging from wastewater treatment to medical biofilms [33].

Despite its importance, the literature reveals a wide variation in reported $D_e$ values, even for the same solutes [7]. This variability is partially attributed to genuine differences in biofilm density and composition, but also significantly to the inherent limitations and methodological differences in the experimental techniques used to measure them [7].

Comparative Analysis of Measurement Techniques

Researchers have developed numerous methods to measure diffusion coefficients in biofilms, broadly categorized into steady-state and transient techniques. A critical review of the literature identifies six common methods, each with distinct operational principles and applications [7]. The choice of method involves trade-offs between precision, invasiveness, and technical complexity.

Table 1: Comparison of Biofilm Diffusion Coefficient Measurement Methods

| Method Name | Type | Measured Parameter | Key Requirement | Primary Advantage | Primary Disadvantage |
|---|---|---|---|---|---|
| Steady-State Reaction [7] | Mass Balance | Effective Diffusive Permeability | A priori knowledge of kinetic constants | Measures active biofilms under realistic conditions | Highly sensitive to inaccurate kinetic parameters |
| Transient Uptake of Non-Reactive Solute [7] | Mass Balance | Effective Diffusivity | Biomass deactivation or use of inert tracer | Avoids complications from microbial reaction | Deactivation may alter biofilm structure; tracer may not mimic real solute |
| Transient Release of Non-Reactive Solute [7] | Mass Balance | Effective Diffusivity | Biomass deactivation or use of inert tracer | Simpler liquid phase analysis than uptake | Same as transient uptake; potential for solute sorption errors |
| Steady-State Concentration Profiles [7] | Microelectrode | Effective Diffusive Permeability | Detectable concentration gradient in boundary layer | Direct measurement of internal concentration profile | Requires precise electrode positioning and calibration |
| Steady-State Reaction with Internal Profile [7] | Microelectrode | Effective Diffusive Permeability | Measured internal concentration gradient | Combines flux data with internal profile, less sensitive to boundary layer | Requires microelectrode measurement and external flux calculation |
| Transient Penetration to Center [7] | Microelectrode | Effective Diffusivity | Microelectrode positioned at granule center | Measures diffusion directly in active biofilms; high temporal resolution | Technically challenging setup; single-point measurement |

Performance and Accuracy Assessment

A Monte Carlo simulation analysis has revealed significant differences in the theoretical precision of these methods, with relative standard deviations ranging from 5% to 61% [7]. Furthermore, a model-based simulation of a diffusion experiment identified six key sources of error that can lead to an underestimation of the diffusion coefficient by up to 37% [7]. These error sources are:

- Solute Sorption: The non-specific binding of the solute to the biofilm matrix.

- Biomass Deactivation: Potential alteration of biofilm physical properties during inactivation.

- Mass Transfer Boundary Layer: Failure to account for external liquid resistance.

- Granule/Biofilm Roughness: Deviation from ideal spherical or smooth geometry.

- Granule/Biofilm Shape: Assumption of perfect spherical symmetry.

- Granule/Biofilm Size Distribution: Use of an average size instead of actual distribution.

These findings highlight that diffusion coefficients cannot be determined with high accuracy using existing experimental methods. Importantly, the need for highly precise measurements as input for biofilm models can be questioned, as model output generally has limited sensitivity to the diffusion coefficient [7].
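
To show how a Monte Carlo analysis of this kind works in principle, the sketch below propagates a single error source, uncertainty in the measured granule radius, into the fitted diffusion coefficient. It assumes that transient methods fit the dimensionless group D·t/R², so that an error in the radius translates into an apparent coefficient D_true·(R_measured/R_true)². This is a deliberately simplified illustration with hypothetical inputs and is not the analysis reported in [7].

```python
import random
import statistics


def monte_carlo_radius_error(d_true, radius_true, radius_rsd, n_trials=10000, seed=1):
    """Return (relative standard deviation, relative bias) of the apparent D.

    Each trial draws a measured radius with Gaussian error and rescales the
    true coefficient by (R_measured / R_true)^2.
    """
    rng = random.Random(seed)
    estimates = []
    for _ in range(n_trials):
        radius_measured = rng.gauss(radius_true, radius_rsd * radius_true)
        estimates.append(d_true * (radius_measured / radius_true) ** 2)
    mean_estimate = statistics.fmean(estimates)
    rsd = statistics.stdev(estimates) / mean_estimate
    bias = mean_estimate / d_true - 1.0
    return rsd, bias


# Hypothetical inputs: D = 1e-9 m^2/s, 1 mm granule radius, 5% radius uncertainty.
rsd, bias = monte_carlo_radius_error(d_true=1.0e-9, radius_true=1.0e-3, radius_rsd=0.05)
print(f"relative standard deviation of D: {rsd:.1%}, relative bias: {bias:+.2%}")
```

Because the coefficient scales with the square of the radius, a given relative uncertainty in size is roughly doubled in the estimated coefficient under this assumption.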

Detailed Experimental Protocols

Microelectrode-Based Transient Penetration Assay

This method leverages microelectrodes to monitor the transient diffusion of a solute into a single biofilm particle or granule, allowing for the determination of the effective diffusivity in active biofilms [7].

Protocol Steps:

- Biofilm Preparation: Well-defined model biofilms, such as aerobic granular sludge, are used. Alternatively, artificial biofilms can be constructed from agar containing inert polystyrene particles to simulate bacterial obstruction [33].

- Microelectrode Positioning: A microelectrode (e.g., for oxygen or a specific ion) is carefully positioned at the center of a single, representative biofilm granule suspended in a well-mixed solution [7].

- Concentration Step-Change: A rapid step-change in the concentration of the target solute is introduced into the well-mixed bulk liquid [7].

- Transient Response Monitoring: The microelectrode continuously monitors the transient concentration profile at the center of the granule as the solute diffuses inward [7].

- Data Analysis: The recorded transient response data is fitted to a solution of Fick's second law of diffusion using least-squares optimization. The effective diffusivity ($D_e$) is the fitting parameter that minimizes the difference between the model and experimental data [7].
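
The fitting step above can be sketched as follows. The example assumes an idealized case: a homogeneous, non-reacting sphere that is initially solute-free, a surface that jumps instantly to the bulk concentration, and no external boundary layer. Under these assumptions the normalized center concentration is given by the classical series solution 1 + 2 Σ (−1)ⁿ exp(−D n² π² t / R²). The granule radius, time points, and search range are hypothetical, and a simple log-spaced grid search stands in for a proper least-squares optimizer.

```python
import math


def center_concentration(t, d_eff, radius, n_terms=200):
    """Normalized concentration at the center of a sphere after a surface step change.

    The truncated series is reliable when d_eff * t / radius**2 is above about 1e-3.
    """
    series = sum((-1) ** n * math.exp(-d_eff * n ** 2 * math.pi ** 2 * t / radius ** 2)
                 for n in range(1, n_terms + 1))
    return 1.0 + 2.0 * series


def fit_effective_diffusivity(times, signals, radius, d_low, d_high, n_grid=400):
    """Least-squares estimate of D_e by grid search over a log-spaced range."""
    best_d, best_sse = d_low, float("inf")
    for i in range(n_grid):
        d_trial = d_low * (d_high / d_low) ** (i / (n_grid - 1))
        sse = sum((center_concentration(t, d_trial, radius) - s) ** 2
                  for t, s in zip(times, signals))
        if sse < best_sse:
            best_d, best_sse = d_trial, sse
    return best_d


# Synthetic, noise-free "measurements" generated with an assumed D_e of 1e-9 m^2/s.
RADIUS = 1.0e-3                                    # m, hypothetical granule radius
times = [30.0, 60.0, 120.0, 240.0, 480.0, 900.0]   # s
signals = [center_concentration(t, 1.0e-9, RADIUS) for t in times]
d_fit = fit_effective_diffusivity(times, signals, RADIUS, d_low=1e-10, d_high=1e-8)
print(f"fitted D_e = {d_fit:.3e} m^2/s")
```

With real microelectrode data, sorption, boundary-layer resistance, and non-spherical geometry would all violate these assumptions, which is where the error sources listed earlier enter the fit.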

Transient Uptake/Release Mass Balance Assay

This method relies on monitoring solute concentration changes in the bulk liquid to infer diffusion properties, avoiding the need for complex internal measurements.

Protocol Steps:

- For Transient Uptake: Biofilm granules, free of the solute of interest, are placed in a well-mixed solution of finite volume with a known initial solute concentration. The decrease in bulk liquid concentration as the solute diffuses into the granules is monitored over time [7].

- For Transient Release: This is the reverse process. Biofilm granules are first soaked with the solute and then placed in a well-mixed solution that is initially solute-free. The subsequent increase in bulk liquid concentration is monitored [7].

- Data Analysis: For both variants, the time-dependent concentration data in the liquid phase is fitted to a solution of Fick's second law of diffusion for a sphere (or other relevant geometry) to obtain the effective diffusivity [7]. This method works best with inert tracer molecules or with microbial activity halted via deactivation, though deactivation may alter biofilm properties [33] [7].
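For reference, one commonly used closed-form solution for this fitting step is the classical result for diffusion between a sphere and a well-stirred solution of limited volume. It is given here as a sketch under stated assumptions, not as the specific model used in [7]: identical spherical granules of radius \(a\), no reaction, no external mass-transfer resistance, and a partition coefficient of one. The symbols \(\alpha\), \(q_n\), \(M_t\), and \(M_\infty\) are introduced only for this illustration.

```latex
% Fractional uptake by the granules at time t
\frac{M_t}{M_\infty}
  = 1 - \sum_{n=1}^{\infty}
    \frac{6\alpha(\alpha+1)\,\exp\!\left(-D_e q_n^{2} t / a^{2}\right)}
         {9 + 9\alpha + q_n^{2}\alpha^{2}},
\qquad
\tan q_n = \frac{3 q_n}{3 + \alpha q_n^{2}} \quad (q_n > 0)

% alpha = (liquid volume) / (total granule volume)
% Bulk liquid concentration during uptake, starting from C_0
\frac{C(t)}{C_0} = 1 - \frac{1}{1+\alpha}\,\frac{M_t}{M_\infty}
```

Fitting then amounts to adjusting \(D_e\) until the predicted bulk concentration matches the measured curve. The assumptions of spherical shape and a single granule size correspond directly to the error sources listed earlier.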

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful execution of these assays requires specific tools and materials. The table below details key solutions and their functions in biofilm diffusion research.

Table 2: Essential Research Reagent Solutions for Biofilm Diffusion Experiments

| Item | Function/Application | Key Considerations |
|---|---|---|
| Microelectrodes [33] [7] | Sensing specific analytes (e.g., O₂, pH, glucose) inside biofilms with high spatial resolution. | Tip diameter (μm-range); selectivity and sensitivity; calibration stability; mechanical robustness for penetration. |
| Artificial Biofilm Matrices [33] | Well-defined model systems to study obstruction effects without microbial activity. | Typically agar or other hydrogels with controlled inclusion of inert particles (e.g., polystyrene) to simulate bacteria. |
| Non-Reactive Tracers [33] [7] | Used in transient uptake/release assays to study diffusion without metabolic conversion. | Must closely resemble metabolites in size/charge; common examples include fluorescent dyes or inert sugars. |
| Phosphate Buffered Saline (PBS) [34] | Electrochemical measurement medium; rinsing buffer to remove unattached cells. | Provides stable ionic strength and pH, minimizing confounding electrochemical effects from metabolites. |
| Specific Analytic Solutions | Solutes for diffusion studies (e.g., glucose, oxygen, pharmaceuticals, micropollutants). | Purity and accurate concentration preparation are critical; relevant to the research context (environmental/medical). |

The assessment of diffusion coefficients in biofilms remains a challenging endeavor with no single perfect technique. Microelectrode-based transient assays provide direct, high-resolution data from within active biofilms but are technically demanding. Mass balance-based transient assays offer a more accessible approach but are prone to inaccuracies from biofilm deformation and solute-matrix interactions. The choice of method should be guided by the specific research question, the available technical expertise, and the required precision, while acknowledging the inherent limitations and error sources in each technique. Future advancements in non-contact electrochemical evaluation and sensor miniaturization hold promise for more accurate and less invasive measurements, further refining our understanding of solute transport in these complex biological systems.

Molecular diffusion coefficients are fundamental transport properties critical for the design and simulation of mass transfer processes in fields ranging from chemical engineering to pharmaceutical development. In the absence of experimental data, engineers and scientists frequently turn to empirical correlations for estimation. Among the most widely recognized are the Wilke-Chang equation (1955) and the Hayduk-Minhas correlation, both developed for predicting binary diffusion coefficients at infinite dilution in liquid systems.

This guide provides a comprehensive comparison of these two models, focusing on their predictive performance for organic solutes in aqueous systems—a context of particular importance for pharmaceutical research where drug solubility and transport often involve aqueous environments. We evaluate these correlations against modern machine learning approaches and experimental data, providing researchers with the quantitative analysis necessary to select appropriate models for their applications.

Model Formulations and Theoretical Foundations

The Wilke-Chang Equation

Proposed in 1955, the Wilke-Chang equation is a hydrodynamic model based on the Stokes-Einstein relationship, which treats diffusion as a solute particle moving through a continuous solvent medium. The model incorporates an association parameter intended to account for association of the solvent (for example, through hydrogen bonding), with different values recommended for water, methanol, ethanol, and unassociated solvents [35].

The Wilke-Chang equation remains the most widely used correlation for estimating binary diffusivities, primarily due to its simplicity and long-standing presence in engineering literature [36]. It requires only the solvent viscosity, the solvent molar mass, the solute molar volume at its normal boiling point, and the temperature.
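As a minimal sketch of how the correlation is evaluated, the snippet below implements the commonly quoted form \(D = 7.4\times10^{-8}\,(\phi M_B)^{0.5}\,T / (\eta_B V_A^{0.6})\), with \(D\) in cm²/s, \(T\) in K, solvent viscosity \(\eta_B\) in cP, solvent molar mass \(M_B\) in g/mol, and solute molar volume \(V_A\) in cm³/mol. The function name is hypothetical, and the example inputs (water at 25 °C with a viscosity of about 0.89 cP, an association parameter of 2.6 for water, and a solute molar volume of 96 cm³/mol) are illustrative assumptions rather than values from the cited studies.

```python
def wilke_chang(temp_k, solvent_visc_cp, solute_molar_vol_cm3,
                solvent_molar_mass=18.015, association=2.6):
    """Infinite-dilution diffusion coefficient in cm^2/s (Wilke-Chang form).

    Defaults describe water as the solvent: M_B = 18.015 g/mol, phi = 2.6.
    """
    if solvent_visc_cp <= 0 or solute_molar_vol_cm3 <= 0:
        raise ValueError("viscosity and molar volume must be positive")
    return (7.4e-8 * (association * solvent_molar_mass) ** 0.5 * temp_k
            / (solvent_visc_cp * solute_molar_vol_cm3 ** 0.6))

# Illustrative inputs (assumed): water at 298.15 K, solute V_A = 96 cm^3/mol
d_wc = wilke_chang(temp_k=298.15, solvent_visc_cp=0.8904,
                   solute_molar_vol_cm3=96.0)
print(f"Wilke-Chang estimate: {d_wc:.3e} cm^2/s")
```

For these inputs the estimate is on the order of 10⁻⁵ cm²/s, which is the typical magnitude for small organic solutes in water.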

The Hayduk-Minhas Correlation

The Hayduk-Minhas correlation (1982) is a later empirical approach developed to address some limitations of earlier models. Like Wilke-Chang, it is based on hydrodynamic principles, but it uses different correlating parameters, including the molar volume, parachor, and radius of gyration of both solute and solvent [37].

This correlation has shown improved accuracy over previous models for predicting diffusivities in specific solutions such as normal paraffins, aqueous solutions, and generally for both polar and non-polar solutions according to its developers [37].
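For aqueous solutions specifically, the form of the correlation usually quoted in reference texts needs only the solute molar volume, the temperature, and the viscosity of water: \(D = 1.25\times10^{-8}\,(V_A^{-0.19} - 0.292)\,T^{1.52}\,\eta_w^{\varepsilon}\) with \(\varepsilon = 9.58/V_A - 1.12\). The sketch below evaluates that form, with \(D\) in cm²/s, \(T\) in K, \(\eta_w\) in cP, and \(V_A\) in cm³/mol. The function name and the example inputs are assumptions chosen for illustration. The variants of the correlation for paraffins and other non-aqueous systems use additional parameters and are not covered here.

```python
def hayduk_minhas_aqueous(temp_k, water_visc_cp, solute_molar_vol_cm3):
    """Infinite-dilution diffusion coefficient in water, cm^2/s.

    Aqueous-solution form of the Hayduk-Minhas correlation.
    """
    if water_visc_cp <= 0 or solute_molar_vol_cm3 <= 0:
        raise ValueError("viscosity and molar volume must be positive")
    exponent = 9.58 / solute_molar_vol_cm3 - 1.12
    volume_term = solute_molar_vol_cm3 ** -0.19 - 0.292
    return 1.25e-8 * volume_term * temp_k ** 1.52 * water_visc_cp ** exponent

# Illustrative inputs (assumed): water at 298.15 K, solute V_A = 96 cm^3/mol
d_hm = hayduk_minhas_aqueous(temp_k=298.15, water_visc_cp=0.8904,
                             solute_molar_vol_cm3=96.0)
print(f"Hayduk-Minhas estimate: {d_hm:.3e} cm^2/s")
```

Note that the volume term becomes negative for very large molar volumes, so this form should only be applied within the range of solutes for which it was developed.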

Performance Comparison and Accuracy Assessment

Quantitative Performance Metrics

Table 1: Overall Accuracy Assessment of Diffusion Coefficient Correlations

| Model | Average Absolute Relative Deviation (AARD) | Test Conditions | Key Limitations |
|---|---|---|---|
| Wilke-Chang | 13.03% [38] | Aqueous systems, 1192 data points | Limited association parameters; struggles with specific solvent systems [35] |
| Wilke-Chang | 10-15% (general estimate) [35] | General liquid phase systems | (as above) |
| Wilke-Chang | >20% errors at higher temperatures [16] | Glucose-water system at 65°C | (as above) |
| Hayduk-Minhas | <20% for aqueous-organic mixtures [39] | Methanol/water and acetonitrile/water mixtures | Performance varies significantly with system type |
| Machine Learning | 3.92% [38] | Aqueous systems, 1192 data points | Requires substantial computational resources and expertise |
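The AARD values in the table follow the usual definition, \(\text{AARD} = \frac{100}{N}\sum_i |D_i^{\text{calc}} - D_i^{\text{exp}}| / D_i^{\text{exp}}\). A minimal sketch of the calculation is shown below. The function name and the three data points are hypothetical and serve only to show the arithmetic.

```python
def aard_percent(predicted, measured):
    """Average absolute relative deviation, in percent."""
    if len(predicted) != len(measured) or not measured:
        raise ValueError("inputs must be non-empty and of equal length")
    total = sum(abs(p - m) / m for p, m in zip(predicted, measured))
    return 100.0 * total / len(measured)

# Hypothetical diffusion coefficients in m^2/s (not taken from the cited studies)
measured = [1.0e-9, 2.0e-9, 0.5e-9]
predicted = [1.1e-9, 1.8e-9, 0.5e-9]

aard = aard_percent(predicted, measured)
print(f"AARD = {aard:.2f}%")  # relative errors of 10%, 10%, and 0% average to about 6.67%
```

Because each deviation is divided by the measured value, AARD weights small and large diffusion coefficients equally, which makes it convenient for comparing models across many solutes.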

Table 2: Performance Across Different System Types

| System Type | Best Performing Model | Typical Error Range | Alternative Options |
|---|---|---|---|
| Aqueous Systems | Machine Learning (RDKit descriptors) [38] | ~4% AARD | Scheibel correlation (<20% error) [39] |
| Methanol/Water Mixtures | Scheibel, Wilke-Chang, or Lusis-Ratcliff [39] | <20% error | Hayduk-Laudie for acetonitrile/water [39] |
| Acetonitrile/Water Mixtures | Scheibel, Wilke-Chang, or Hayduk-Laudie [39] | <20% error | Varies by specific solute |
| Reservoir Fluids | No consistently superior model [40] | Varies by system | Wilke-Chang, Hayduk-Minhas, extended Sigmund |

Contextual Performance Analysis

The evaluation of these correlations reveals several important patterns:

Temperature Dependence: Recent research on glucose-water systems demonstrates that both Wilke-Chang and Hayduk-Minhas correlations provide reasonable estimates at lower temperatures (25-45°C), but significantly overestimate experimental results at elevated temperatures (65°C) [16].

System Specificity: A comprehensive evaluation of diffusion coefficients in systems related to reservoir fluids found that no correlation shows consistent and dominant superiority for all binary mixtures, although some perform better for particular groups or regions [40].

Comparative Performance: In studies comparing multiple correlations, the Scheibel correlation sometimes outperforms the more widely used Wilke-Chang method for aqueous-organic mixtures, showing the smallest errors according to some analyses [39].

Experimental Validation Methodologies

Standard Experimental Techniques

The accuracy assessments of empirical correlations depend heavily on reliable experimental data obtained through several established techniques:

Table 3: Key Experimental Methods for Diffusion Coefficient Measurement

| Method | Key Principle | Advantages | Limitations |
|---|---|---|---|
| Taylor Dispersion | Measures dispersion of solute pulse in laminar flow through capillary [16] | Easy assembly and execution [35] | Requires long capillaries (10-20 m) and precise flow control |
| Peak Parking (PP) | Measures axial band broadening during stationary parking period [35] | Uses conventional HPLC equipment; no special skills needed | Less familiar methodology; requires specialized data analysis |
| Diaphragm Cell | Diffusion through porous membrane separating different concentrations [35] | Established historical method | Tedious and complicated procedures |
| NMR | Pulsed field gradient measures molecular displacement [35] | Non-destructive; provides structural information | Expensive instrumentation; limited to appropriate nuclei |

Experimental Workflow

The Taylor dispersion method is currently used "almost exclusively" for diffusion coefficient measurement, for reasons that include the easy assembly of the experimental system and the ease of measurement execution [16].
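The data reduction for a Taylor dispersion run can be sketched as follows. This is a simplified illustration, not the procedure of [16]: it assumes a uniformly sampled, baseline-corrected detector peak, a narrow injection pulse, and conditions under which the Taylor limit applies, so that \(D = r^2 t_R / (24\,\sigma_t^2)\), where \(r\) is the capillary inner radius, \(t_R\) the mean residence time, and \(\sigma_t^2\) the temporal variance of the peak. The function name and the synthetic Gaussian peak are hypothetical. The radius is half of the 3.945×10⁻⁴ m inner diameter quoted for the capillary in Table 4.

```python
import math

def taylor_dispersion_d(times, signal, radius_m):
    """Diffusion coefficient (m^2/s) from the moments of a detector peak.

    Assumes uniform sampling, a baseline-corrected signal, and the Taylor limit.
    """
    area = sum(signal)
    if area <= 0:
        raise ValueError("signal must have positive area")
    t_mean = sum(t * s for t, s in zip(times, signal)) / area
    variance = sum((t - t_mean) ** 2 * s for t, s in zip(times, signal)) / area
    return radius_m ** 2 * t_mean / (24.0 * variance)

# Synthetic peak (assumed values): residence time 6000 s, standard deviation 120 s
times_s = [5000.0 + i for i in range(2001)]
peak = [math.exp(-0.5 * ((t - 6000.0) / 120.0) ** 2) for t in times_s]

capillary_radius_m = 3.945e-4 / 2.0
d_est = taylor_dispersion_d(times_s, peak, capillary_radius_m)
print(f"estimated D = {d_est:.3e} m^2/s")
```

In practice, moment-based estimates are sensitive to baseline drift and peak tailing, so fitting a Gaussian to the peak is a common alternative.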

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Reagents and Materials for Diffusion Experiments

| Reagent/Material | Function/Application | Example Specifications |
|---|---|---|
| Teflon Capillary Tubes | Flow channel for Taylor dispersion measurements | Length: 20 m; Inner diameter: 3.945×10⁻⁴ m [16] |
| Differential Refractive Index Detector | Detection of concentration differences at capillary outlet | Sensitivity: 8×10⁻⁸ RIU [16] |
| Thermostatic Bath | Temperature control for temperature-dependent studies | Range: 25-65°C [16] |
| HPLC System with Pump | Mobile phase delivery for peak parking methods | Conventional HPLC or microflow capillary systems [35] |
| Non-porous Silica Particles | Packing material for obstructive factor determination in PP methods | Particle diameter: specific to application [35] |
| High-Purity Solutes | Study of specific solute-solvent systems | Example: d(+)-Glucose (≥99.5% purity) [16] |

Emerging Alternatives: Machine Learning Approaches

Recent advances in machine learning have introduced novel approaches that significantly outperform traditional empirical correlations. One study developed machine learning models using 195 molecular descriptors computed automatically from molecular structure, achieving an AARD of just 3.92% on aqueous systems compared to 13.03% for Wilke-Chang [38].

These models leverage RDKit cheminformatics packages to generate molecular descriptors from structure, then apply advanced algorithms to predict diffusion coefficients with remarkable accuracy. The best machine learning models use temperature and automatically calculated molecular descriptors as inputs, making them both accurate and convenient for practical application [38].

Similar machine learning approaches have been successfully applied to polar and nonpolar solvent systems (excluding water), with gradient boosted algorithms achieving AARD values of approximately 5%. In the same study, the Wilke-Chang equation showed AARDs of 40.92% for polar and 29.19% for nonpolar systems [36].

The Wilke-Chang and Hayduk-Minhas correlations represent important historical developments in the prediction of diffusion coefficients, but their limited accuracy (typically 10-20% error) and system-dependent performance constrain their utility in modern research applications, particularly in pharmaceutical development where precise transport properties are often critical.

For applications requiring the highest possible accuracy, machine learning approaches now offer substantially improved performance, while the Scheibel correlation may provide a middle ground for certain aqueous-organic mixtures where traditional models are preferred. When selecting a predictive model, researchers should consider the specific solvent system, temperature range, and availability of experimental data for validation, while recognizing that all correlations perform poorly for some systems and conditions.